From Validation to Courtroom: A Comparative Analysis of Error Rates Across Forensic Method TRLs

Abstract

This article provides a comprehensive analysis of the relationship between Technology Readiness Levels (TRLs) and empirically measured error rates in forensic science. Aimed at researchers, forensic practitioners, and legal stakeholders, it synthesizes current research to explore the foundational challenges of defining and measuring forensic error, examines methodological factors influencing reliability across disciplines like DNA, fingerprints, and toolmarks, discusses strategies for troubleshooting and error rate optimization, and presents a comparative framework for validating emerging techniques such as comprehensive two-dimensional gas chromatography (GC×GC). The review underscores that foundational validity, established through rigorous empirical testing, is a prerequisite for estimating meaningful error rates and for the responsible integration of new forensic methods into the justice system.

Defining the Problem: The Elusive Nature of Error in Forensic Science

The admissibility of expert testimony and scientific evidence in United States courts hinges on its reliability. Two frameworks anchor this requirement: the Daubert Standard, a legal standard established by the 1993 U.S. Supreme Court case Daubert v. Merrell Dow Pharmaceuticals Inc., and the 2016 report by the President's Council of Advisors on Science and Technology (PCAST), which sets out scientific criteria for validity [1] [2]. The Daubert Standard provides a systematic framework for trial judges, who act as "gatekeepers," to assess the reliability and relevance of expert witness testimony before it is presented to a jury [1]. This standard explicitly includes the "known or potential rate of error" as one of its key factors for determining whether an expert's methodology is scientifically valid [1] [3]. The PCAST report reinforced and expanded upon this imperative, emphasizing that foundational validity requires empirical evidence, typically from rigorous studies designed to measure reliability and estimate error rates [4] [2]. For forensic science disciplines, and for any scientific evidence presented in court, these frameworks create a strong imperative to quantify, understand, and communicate error rates.

The Daubert Standard and Its Error Rate Factor

The Daubert Standard marked a significant shift from the older Frye Standard (which focused primarily on "general acceptance") by placing responsibility on trial judges to scrutinize not only an expert's conclusions but also the underlying scientific methodology and principles [1]. The Court provided a non-exhaustive list of factors for judges to consider:

- Whether the technique or theory can be and has been tested

- Whether it has been subjected to peer review and publication

- Its known or potential error rate

- The existence and maintenance of standards controlling its operation

- Whether it has attracted widespread acceptance in a relevant scientific community [1]

While judges sometimes struggle to apply the error rate factor, research indicates that they often engage in "implicit error rate analysis" by closely examining the quality of the expert's methodology [3]. According to that research, this implicit analysis predicts admissibility more strongly than the other Daubert factors do [3]. Subsequent Supreme Court cases refined the standard: General Electric Co. v. Joiner (1997) held that appellate courts review these admissibility decisions for abuse of discretion, and Kumho Tire Co. v. Carmichael (1999) extended the "gatekeeping" obligation to all expert testimony, not just scientific testimony [1].

The PCAST Report and Its Emphasis on Foundational Validity

The 2016 PCAST report, "Forensic Science in Criminal Courts: Ensuring Scientific Validity of Feature-Comparison Methods," provided a powerful reinforcement of Daubert's principles, with a specific focus on forensic science [4] [2]. PCAST emphasized that for a forensic method to be foundationally valid, it must be demonstrated through empirical testing to be repeatable, reproducible, and accurate, with established error rates [4]. The report specifically recommended well-designed "black-box" studies that mirror real-world casework conditions to measure how often practitioners reach incorrect conclusions [4]. These studies involve practicing forensic analysts interpreting evidence samples with known origins, with their conclusions compared to ground truth to calculate false positive and false negative rates [4]. PCAST distinguished between foundational validity (whether a method is reliable in general) and validity as applied (whether it was reliably executed in a particular case), placing error rate estimation at the core of establishing foundational validity [2].

Comparative Error Rates Across Forensic Disciplines

Empirical studies conducted in response to Daubert and PCAST have revealed significant variation in error rates across different forensic disciplines. The following table summarizes key findings from recent "black-box" studies:

Table 1: Comparative Error Rates in Forensic Disciplines

| Forensic Discipline | False Positive Rate | False Negative Rate | Study Details |
|---|---|---|---|
| Bloodstain Pattern Analysis | 11.2% (average overall error rate) | Not specified | 75 analysts, conclusions wrong ~11% of the time; consensus seldom wrong [4] |
| Striated Toolmark Analysis | 0.45% - 7.24% (pooled: 2.0%) | Not specified | Range from three open-set studies; pooled weighted average: 2.0% [5] |
| Latent Fingerprint Analysis | 0.1% | 7.5% | Miami-Dade Research Study on ACE-V process [2] |
| Bitemark Analysis | Up to 64.0% | Up to 22.0% | Illustrates wide variability in published error rates [2] |

These quantitative differences highlight why general statements about the reliability of "forensic science" can be misleading. The 11.2% average error rate in bloodstain pattern analysis, for instance, is more than a hundred times the 0.1% false positive rate reported for latent fingerprint analysis. The two figures measure different kinds of decisions, but the gap still suggests different levels of scientific maturity and methodological standardization across disciplines [4] [2]. Furthermore, error rates are not monolithic: the same method may produce different error rates depending on examiner training, laboratory protocols, and the specific nature of the evidence being examined.

The Multiple Comparisons Problem in Forensic Science

A critical methodological issue affecting error rates is the multiple comparisons problem, which persists across various forensic disciplines. It arises when a single conclusion relies on numerous comparisons, either explicitly or implicitly, which increases the probability of at least one false discovery [5]. For example, matching a cut wire to a wire-cutting tool requires comparing multiple surfaces and alignments. Research demonstrates that with a single-comparison false discovery rate (FDR) of 2.0% (the pooled average for toolmark analysis), conducting just 100 comparisons raises the family-wise false discovery rate (the probability of at least one false discovery when the comparisons are treated as independent) to 86.7% [5]. The following table illustrates this relationship:

Table 2: Impact of Multiple Comparisons on Family-Wise Error Rates

| Single-Comparison FDR (Source) | 10 Comparisons | 100 Comparisons | 1,000 Comparisons |
|---|---|---|---|
| 7.24% (Mattijssen) | 52.8% | 99.9% | ~100.0% |
| 2.00% (Pooled) | 18.3% | 86.7% | ~100.0% |
| 0.70% (Bajic) | 6.8% | 50.7% | 99.9% |
| 0.45% (Best) | 4.5% | 36.6% | 98.9% |

This mathematical relationship underscores why forensic methods requiring extensive comparisons need exceptionally low single-comparison error rates to maintain overall reliability. Failing to account for these multiple comparisons leaves the inflated family-wise error rate unacknowledged and can contribute to wrongful accusations [5].
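
The family-wise figures in Table 2 follow from $E_n = 1 - (1 - e)^n$, which treats the $n$ comparisons as independent. The short Python sketch below recomputes the table from the single-comparison rates as printed; the function and dictionary names are our own, not taken from the cited study. The pooled 2.00% row reproduces 18.3% and 86.7% exactly, but the published 0.70% and 0.45% rows may have been computed from unrounded study rates, so recomputed values for those rows can differ by a few tenths of a percentage point.

```python
def family_wise_error(e: float, n: int) -> float:
    """Probability of at least one false discovery in n independent
    comparisons, each with single-comparison error rate e."""
    return 1.0 - (1.0 - e) ** n


# Single-comparison rates as printed in Table 2 (fractions, not percent).
RATES = {
    "7.24% (Mattijssen)": 0.0724,
    "2.00% (Pooled)": 0.0200,
    "0.70% (Bajic)": 0.0070,
    "0.45% (Best)": 0.0045,
}

if __name__ == "__main__":
    # Print one row per source rate: E_10, E_100, E_1000 as percentages.
    for label, e in RATES.items():
        row = [f"{100 * family_wise_error(e, n):.1f}%" for n in (10, 100, 1000)]
        print(label, *row, sep=" | ")
```

Reading the rows side by side makes the point of the table concrete: even the lowest single-comparison rate exceeds a 1-in-3 family-wise risk by 100 comparisons.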

Experimental Protocols for Establishing Error Rates

"Black-Box" Proficiency Studies

The most direct method for estimating error rates involves "black-box" studies where practicing forensic analysts examine evidence samples with known origins under conditions mimicking routine casework [4]. The protocol for the bloodstain pattern analysis error rate study exemplifies this approach:

- Participant Recruitment: 75 practicing bloodstain pattern analysts participated [4]

- Evidence Preparation: Bloodstains were produced under known conditions or taken from actual casework [4]

- Analysis Phase: Participants responded to classification prompts or questions about the bloodstains' origins [4]

- Comparison to Ground Truth: Researchers compared analysts' conclusions to known causes to calculate error rates [4]

- Consensus Analysis: Researchers examined how often the average group response was wrong versus how often individual analysts contradicted each other [4]

This methodology revealed the overall 11.2% error rate and an 8% rate of contradiction between analysts; it also showed that technical review (a common error-prevention method) failed to catch errors 18-34% of the time [4].
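
To make the quantities in this protocol concrete, the sketch below computes an individual error rate, a consensus error rate, and a pairwise contradiction rate from a small set of responses. The stain labels and answers are hypothetical, and using the majority answer as the "group response" and pooled analyst pairs as the contradiction denominator are illustrative assumptions, not the cited study's definitions.

```python
from collections import Counter
from itertools import combinations

# Hypothetical data: item -> (ground truth, answers from each analyst).
RESULTS = {
    "stain_1": ("impact", ["impact", "impact", "cast-off"]),
    "stain_2": ("cast-off", ["cast-off", "cast-off", "cast-off"]),
    "stain_3": ("expirated", ["impact", "expirated", "expirated"]),
}


def individual_error_rate(results):
    """Share of all individual answers that disagree with ground truth."""
    answers = [(truth, a) for truth, given in results.values() for a in given]
    return sum(a != truth for truth, a in answers) / len(answers)


def consensus_error_rate(results):
    """Share of items whose most common answer is wrong.
    Ties are broken by first-seen order (Counter.most_common behaviour)."""
    wrong = 0
    for truth, given in results.values():
        majority, _ = Counter(given).most_common(1)[0]
        wrong += majority != truth
    return wrong / len(results)


def contradiction_rate(results):
    """Share of analyst pairs, pooled over items, that gave different answers."""
    pairs = [p for _, given in results.values() for p in combinations(given, 2)]
    return sum(a != b for a, b in pairs) / len(pairs)


if __name__ == "__main__":
    print("individual:", individual_error_rate(RESULTS))
    print("consensus:", consensus_error_rate(RESULTS))
    print("contradiction:", contradiction_rate(RESULTS))
```

On this toy data the individual error rate is 2/9 and the contradiction rate 4/9, yet the majority answer is correct for every stain, the same qualitative pattern as "consensus seldom wrong."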

Computational Approaches for Multiple Comparisons

For disciplines involving pattern matching, researchers have developed computational methods to quantify the implicit multiple comparisons problem:

- Define Comparison Parameters: Determine the physical dimensions of the evidence (e.g., blade cut length $b$ and wire diameter $d$) and the scanning resolution $r$ [5]

- Calculate Comparison Range: Compute the minimum ($b/d$) and maximum ($b/r - d/r + 1$) number of comparisons needed [5]

- Account for All Surfaces: Multiply by the number of distinct surfaces requiring comparison (e.g., 2-4 for wire-cutting tools) [5]

- Compute Family-Wise Error Rate: Calculate the overall false discovery probability using the formula $E_n = 1 - (1 - e)^n$, where $e$ is the single-comparison error rate and $n$ is the number of comparisons [5]

This approach demonstrates why subjective matching techniques without quantified similarity thresholds are particularly vulnerable to inflated error rates from multiple comparisons.
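
The counting steps above can be sketched in Python as follows. The dimensions (a 10 mm cut, a 2 mm wire, a 10 µm scan step), working in integer micrometres, and rounding down to whole comparisons are our own illustrative assumptions, not values from the cited study [5]; the 2.0% single-comparison rate is the pooled toolmark figure.

```python
def comparison_range(b_um: int, d_um: int, r_um: int) -> tuple[int, int]:
    """Minimum (b/d) and maximum (b/r - d/r + 1) comparisons along one blade.
    Lengths are integer micrometres; floor division rounds down (assumption)."""
    minimum = b_um // d_um                     # non-overlapping wire placements
    maximum = b_um // r_um - d_um // r_um + 1  # one placement per scan step
    return minimum, maximum


def family_wise_error(e: float, n: int) -> float:
    """E_n = 1 - (1 - e)^n, assuming independent comparisons."""
    return 1.0 - (1.0 - e) ** n


if __name__ == "__main__":
    lo, hi = comparison_range(b_um=10_000, d_um=2_000, r_um=10)
    surfaces_min, surfaces_max = 2, 4  # e.g., 2-4 surfaces for wire cutters
    for n in (lo * surfaces_min, hi * surfaces_max):
        print(n, "comparisons:", f"{100 * family_wise_error(0.02, n):.1f}%")
```

With these hypothetical dimensions the count runs from 10 to 3,204 comparisons, so the family-wise rate moves from about 18% to effectively certain, which is why an unquantified similarity threshold is so exposed.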

The Scientist's Toolkit: Key Concepts and Reagents

Table 3: Essential Conceptual Toolkit for Error Rate Research

| Concept/Reagent | Function/Definition | Application in Error Rate Studies |
|---|---|---|
| Black-Box Studies | Proficiency tests where analysts examine evidence of known origin without prior knowledge of the "correct" answer | Mimics real-world conditions to measure actual performance rather than theoretical best-case performance [4] |
| False Positive Rate | The rate at which analysts incorrectly conclude a match between non-matching samples | Critical for legal contexts where false incrimination is a primary concern; often prioritized by analysts [2] |
| False Negative Rate | The rate at which analysts incorrectly exclude a true match | Important for investigative completeness but typically considered less problematic than false positives [2] |
| Multiple Comparisons Correction | Statistical adjustments to account for the increased false discovery risk when conducting many tests | Essential for forensic methods involving database searches or optimal alignment finding [5] |
| Ground Truth Samples | Physical evidence or synthetic samples with definitively known origins | Provides the benchmark against which analyst performance is measured in validation studies [4] |
| Proficiency Testing | Regular assessment of analyst competence using standardized tests | Though not perfect, provides ongoing monitoring of individual and laboratory performance [2] |

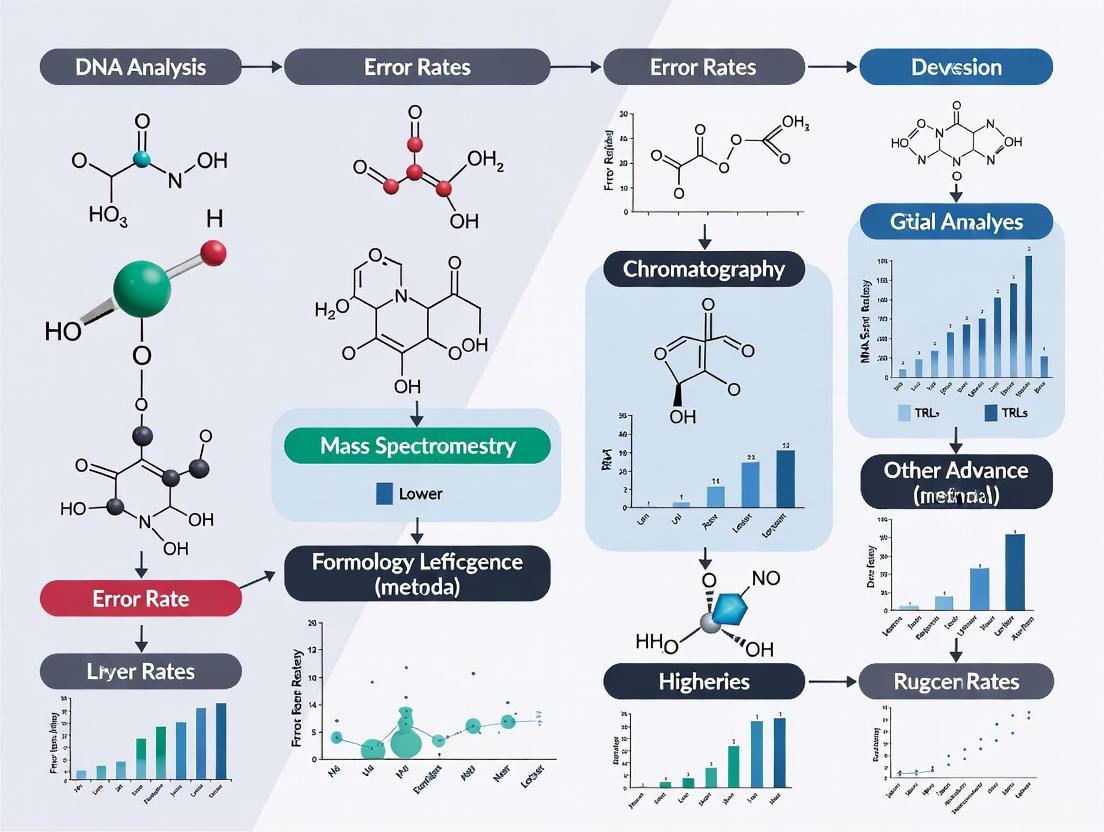

Visualizing the Daubert-PCAST Framework

This diagram illustrates the synergistic relationship between the legal standards established by Daubert and the scientific framework provided by PCAST, culminating in improved evidentiary reliability.

The Daubert and PCAST standards, though arising from different branches of government and separated by more than two decades, converge on a single imperative for forensic science: quantifying error rates. This requirement stems from both legal principles (ensuring reliable evidence reaches jurors) and scientific principles (establishing foundational validity through empirical testing). The research conducted in response to these standards has revealed substantial variation in reliability across forensic disciplines, with some methods exhibiting error rates exceeding 10% while others demonstrate error rates below 1% [4] [2]. This variability underscores why courts cannot treat "forensic science" as a monolith when making admissibility determinations. As research continues to expose the complexities of forensic evidence, including the multiple comparisons problem that inflates family-wise false discovery rates, the legal and scientific communities must continue their collaborative efforts to establish transparent, empirically grounded error rates for all forensic methods used in legal proceedings [5] [6]. The ultimate goal remains the same: ensuring that scientific evidence presented in court meets the highest standards of reliability to promote justice.

The scientific validity of forensic evidence presented in courtrooms has undergone significant scrutiny over the past two decades, revealing critical gaps between legal reliance and scientific foundation. Forensic science encompasses a wide spectrum of disciplines, from traditional feature-comparison methods like fingerprints and toolmarks to advanced instrumental techniques such as comprehensive two-dimensional gas chromatography (GC×GC). Each discipline operates at a different level of technological maturity and has a very different depth of error rate documentation. Understanding this landscape is crucial for researchers, legal professionals, and policymakers working to strengthen forensic practice.

Recent authoritative reports from the National Academy of Sciences (NAS) and the President's Council of Advisors on Science and Technology (PCAST) have highlighted that many long-accepted forensic methods lack proper scientific validation [7]. The legal framework for admitting forensic evidence, primarily the Daubert Standard and Federal Rule of Evidence 702, requires consideration of a method's known or potential error rate [8]. However, as this analysis shows, the forensic science community faces significant challenges in establishing and communicating these error rates across methodologies and disciplines, and the problem is compounded by the degree of subjective interpretation each method involves, which strongly affects reliability.

Lesson 1: Error Rates Vary Dramatically Across Forensic Disciplines

Substantial empirical evidence demonstrates that error rates are not consistent across forensic science disciplines. The variation spans orders of magnitude, reflecting fundamental differences in methodological maturity, standardization, and subjective interpretation.

Table 1: Documented Error Rates Across Forensic Disciplines

| Forensic Discipline | False Positive Error Rate | False Negative Error Rate | Key Studies/References |
|---|---|---|---|
| Latent Fingerprint Analysis | 0.1% | 7.5% | Miami-Dade Research Study [2] |
| Bitemark Analysis | Up to 64.0% | Up to 22.0% | Bitemark Profiling Inquiry [2] |
| DNA Mixture Interpretation | Varied by laboratory & protocol | Varied by laboratory & protocol | STRmix Collaborative Exercise [2] |
| Modern GC×GC Methods | Under validation; not yet established | Under validation; not yet established | Current research focus [8] |

The extraordinarily wide range from 0.1% to 64% in false positive rates illustrates that not all forensic evidence carries equivalent weight or reliability. This variation stems from core methodological differences: techniques relying heavily on human pattern matching (like bitemarks) show significantly higher error rates than those supported by automated instrumentation and statistical models (like modern DNA analysis) [2]. This lesson underscores the critical importance of discipline-specific validation rather than treating "forensic science" as a monolith when considering error rates.

Lesson 2: Legal Standards Demand Known Error Rates That Often Remain Unestablished

Legal systems explicitly require error rate consideration for scientific evidence, creating a significant challenge for many forensic disciplines where these rates remain poorly quantified or entirely unestablished.

The Daubert Standard and Its Requirements

The Daubert Standard, which governs the admissibility of scientific evidence in federal courts and many state courts, sets out a non-exhaustive list of factors for judges to consider: (1) whether the technique can be and has been tested; (2) whether it has been subjected to peer review and publication; (3) its known or potential error rate; (4) the existence and maintenance of standards controlling its operation; and (5) whether it has gained general acceptance in the relevant scientific community [8] [7]. The 2000 amendment to Federal Rule of Evidence 702 further reinforced these requirements, mandating that expert testimony be based on "reliable principles and methods" reliably applied to the facts of the case [7].

The Practical Reality of Error Rate Evidence

Despite these legal requirements, most forensic disciplines lack well-established, published error rates derived from large-scale empirical studies [9] [2]. A survey of 183 practicing forensic analysts revealed that while analysts perceive errors to be rare, most could not specify where error rates for their discipline were documented or published [9]. Their estimates of error in their fields were "widely divergent – with some estimates unrealistically low" [9]. This gap between legal expectations and scientific reality creates a fundamental tension in the justice system, where courts must evaluate evidence whose error characteristics are not fully understood.

Lesson 3: Distinguishing Method Performance from Method Conformance Is Critical

A crucial conceptual framework for understanding forensic error involves separating two distinct concepts: method performance and method conformance.

Table 2: Method Performance vs. Method Conformance

| Aspect | Method Performance | Method Conformance |
|---|---|---|
| Definition | The inherent capacity of a method to discriminate between different propositions of interest (e.g., mated vs. non-mated comparisons) | Whether the outcome of a method results from the analyst's adherence to defined procedures |
| Relates To | Fundamental validity and reliability of the method itself | Proper execution and application of the method |
| Assessment Through | Black box studies, validation studies, proficiency tests | Technical review, protocol compliance checks |
| Impact on Error | Limits of what the method can achieve under ideal conditions | How human and operational factors affect real-world results |

Method performance reflects the fundamental capability of a forensic method to distinguish between different conditions, such as whether two samples share a common source. This is typically measured through controlled studies that examine the method's accuracy and reliability across many samples and examiners [10]. Method conformance, in contrast, assesses whether an analyst properly adhered to established procedures during a specific examination. An error can occur through either poor method performance (the method itself is unreliable) or poor method conformance (the method was improperly executed) [10]. This distinction is essential for diagnosing sources of error and implementing targeted improvements.

Lesson 4: Inconclusive Results Represent a Critical Dimension of Forensic Error

The treatment of inconclusive decisions represents a complex dimension in understanding forensic error rates, particularly for subjective feature-comparison disciplines.

Inconclusive decisions are neither "correct" nor "incorrect" in the traditional binary sense, but can be evaluated as either "appropriate" or "inappropriate" given the available data quality and methodological limitations [10]. The frequency and handling of inconclusive results significantly impact reported error rates. For example, when examiners decline to make definitive judgments on challenging samples, calculated false positive and false negative rates based only on definitive conclusions may appear artificially favorable. Recent collaborative testing in DNA mixture interpretation reveals that error rates vary substantially across laboratories and protocols, with inconclusive rates forming an important part of the complete accuracy picture [2]. A comprehensive understanding of forensic error must therefore account for the entire spectrum of possible conclusions, including inconclusives, rather than focusing exclusively on definitive judgments.
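
A small numerical sketch shows why the handling of inconclusives matters. It computes a false positive rate on non-mated pairs under three conventions: inconclusives excluded from the denominator, kept in the denominator but not counted as errors, and counted as errors. The conventions are illustrative rather than prescribed by the cited studies, the function name is our own, and the counts in the test are hypothetical.

```python
from fractions import Fraction


def false_positive_rates(identifications: int, exclusions: int,
                         inconclusives: int) -> dict[str, Fraction]:
    """False positive rate on NON-MATED pairs under three inconclusive conventions.
    'identifications' are wrong same-source calls, i.e. false positives.
    Assumes at least one definitive conclusion was reached."""
    definitive = identifications + exclusions
    total = definitive + inconclusives
    return {
        # Only definitive conclusions enter the denominator.
        "excluded": Fraction(identifications, definitive),
        # Inconclusives stay in the denominator but are not errors.
        "counted_correct": Fraction(identifications, total),
        # Inconclusives treated as errors (a deliberately strict convention).
        "counted_error": Fraction(identifications + inconclusives, total),
    }


if __name__ == "__main__":
    for name, rate in false_positive_rates(2, 78, 20).items():
        print(f"{name}: {float(rate):.1%}")
```

For hypothetical counts of 2 false identifications, 78 exclusions, and 20 inconclusives on 100 non-mated pairs, the three conventions give 2.5%, 2%, and 22% for the same set of responses.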

Lesson 5: Technology Readiness Level (TRL) Correlates With Error Rate Documentation

The concept of Technology Readiness Levels (TRL) provides a useful framework for understanding how forensic methods evolve from research to validated practice, with error rate documentation typically improving as methods mature.

Table 3: Technology Readiness Levels in Forensic Science

| TRL | Stage Description | Error Rate Status | Example Forensic Methods |
|---|---|---|---|
| 1-2 | Basic principle observed/formulated | No systematic error assessment | Novel spectroscopic techniques [11] |
| 3-4 | Experimental proof of concept | Preliminary precision data only | Early GC×GC research applications [8] |
| 5-6 | Technology validated in relevant environment | Initial black box studies beginning | Handheld XRF for ash analysis [11] |
| 7-8 | System prototype demonstrated in operational environment; system completed and qualified | Multi-laboratory validation underway | GC×GC for oil spill tracing [8] |
| 9 | Actual system proven in operational environment | Well-established through repeated testing | Standardized DNA analysis [7] |

Traditional forensic methods such as fingerprint analysis, which reached widespread operational use (effectively TRL 9) before modern validation standards existed, now face scrutiny for insufficient error rate documentation despite decades of use [7]. Meanwhile, newer instrumental techniques such as comprehensive two-dimensional gas chromatography (GC×GC) are progressing through defined TRL stages, with current research focused on the expanded intra- and inter-laboratory validation and error rate analysis needed for court admissibility [8]. This progression shows that error rate characterization is an evolving process rather than a static achievement: methods at different TRLs should be expected to carry different levels of error rate documentation.

Lesson 6: Modern Instrumental Methods Are Shifting the Error Paradigm

Advanced analytical technologies are transforming forensic science by reducing subjective interpretation and generating quantitative data that supports statistical error analysis.

The GC×GC Revolution in Separation Science

Comprehensive two-dimensional gas chromatography (GC×GC) represents a significant advancement over traditional 1D GC methods, providing increased peak capacity and improved detection of trace compounds in complex forensic samples [8]. In GC×GC, a modulator connects primary and secondary separation columns with different stationary phases, creating two independent separation mechanisms that dramatically improve resolution of complex mixtures like illicit drugs, fingerprint residues, and ignitable liquid residues [8]. This technological advancement reduces ambiguity in chemical identification—a significant source of error in traditional chromatography—though it requires extensive validation to establish new error rate parameters for courtroom applications.

Spectroscopic Advances in Evidence Analysis

Modern spectroscopic techniques are providing more objective, quantitative approaches to traditional forensic questions. For example:

- Raman Spectroscopy: Advanced systems with improved optics and data processing enable non-destructive analysis of trace evidence with definitive molecular identification [11].

- Handheld X-ray Fluorescence (XRF): Provides non-destructive elemental analysis of materials like cigarette ash for brand discrimination [11].

- ATR FT-IR Spectroscopy with Chemometrics: Accurately estimates the age of bloodstains at crime scenes, adding temporal information to evidence interpretation [11].

- LIBS Sensors: Portable laser-induced breakdown spectroscopy systems allow rapid, on-site elemental analysis of forensic samples with enhanced sensitivity [11].

These instrumental approaches generate fundamentally different types of data compared to traditional pattern-matching disciplines, with error rates that can be more readily quantified through repeated measurements and statistical analysis.

Lesson 7: Research Priorities Are Systematically Addressing Error Rate Gaps

Significant coordinated efforts are underway to address foundational validity and error rate documentation across forensic disciplines, reflecting a paradigm shift toward scientifically rigorous practice.

The National Institute of Justice's Forensic Science Strategic Research Plan 2022-2026 outlines five strategic priorities, several of which bear directly on error rate challenges [12]. These are: (I) Advancing applied research and development; (II) Supporting foundational research; (III) Maximizing research impact; (IV) Cultivating the workforce; and (V) Coordinating across communities of practice [12]. The objectives most relevant to error rates include developing "standard criteria for analysis and interpretation," conducting research on the "foundational validity and reliability of forensic methods," measuring "the accuracy and reliability of forensic examinations (e.g., black box studies)," and identifying "sources of error (e.g., white box studies)" [12]. This framework represents the research community's systematic response to the error rate challenges identified in the NAS and PCAST reports, focusing resources on the most critical validity gaps.

Experimental Protocols & Methodologies

Black Box Study Design for Error Rate Determination

Black box studies represent the gold standard for estimating real-world error rates in forensic feature-comparison disciplines. The fundamental protocol involves:

- Recruiting practicing forensic analysts representing multiple laboratories and experience levels

- Creating a test set containing both mated pairs (samples from the same source) and non-mated pairs (samples from different sources), with ground truth known to researchers but not to participants

- Presenting samples to examiners in their normal working environment without special notification that they are being tested

- Collecting all examination conclusions using the discipline's standard conclusion scale

- Analyzing results to calculate false positive, false negative, and inconclusive rates across different conditions and examiner characteristics [2] [7]

These studies directly address the Daubert standard's requirement for a "known or potential error rate" by providing empirical data on how often examiners reach erroneous conclusions under realistic conditions.
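
The test-set construction, blinding, and analysis steps of this protocol can be sketched as below. The `Pair` class, the neutral `P001`-style IDs, the split into a participant-facing list and a researcher-held answer key, and the three-level conclusion scale (identification, exclusion, inconclusive) are all hypothetical choices made for illustration; real disciplines use their own conclusion scales and study logistics.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Pair:
    """One comparison item; 'mated' is ground truth known only to researchers."""
    questioned: str
    reference: str
    mated: bool


def build_blinded_set(pairs: list[Pair], seed: int = 0):
    """Shuffle pairs and assign neutral IDs, returning:
    - participant_view: list of (pair_id, questioned, reference), no ground truth
    - answer_key: dict pair_id -> mated, held back by the researchers
    """
    rng = random.Random(seed)
    shuffled = list(pairs)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    participant_view, answer_key = [], {}
    for i, pair in enumerate(shuffled, start=1):
        pair_id = f"P{i:03d}"
        participant_view.append((pair_id, pair.questioned, pair.reference))
        answer_key[pair_id] = pair.mated
    return participant_view, answer_key


def score(conclusions: dict[str, str], answer_key: dict[str, bool]) -> dict[str, int]:
    """Tally conclusions against the answer key."""
    tally = dict.fromkeys(
        ("true_pos", "false_pos", "true_neg", "false_neg", "inconclusive"), 0
    )
    for pair_id, call in conclusions.items():
        mated = answer_key[pair_id]
        if call == "inconclusive":
            tally["inconclusive"] += 1
        elif call == "identification":
            tally["true_pos" if mated else "false_pos"] += 1
        elif call == "exclusion":
            tally["false_neg" if mated else "true_neg"] += 1
        else:
            raise ValueError(f"unknown conclusion: {call!r}")
    return tally
```

Keeping the answer key in a separate structure mirrors the requirement that ground truth stay with the researchers; the tally then feeds directly into false positive, false negative, and inconclusive rates.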

GC×GC Method Validation Protocol

For advanced instrumental techniques like comprehensive two-dimensional gas chromatography, establishing error rates requires rigorous method validation:

- Specificity: Demonstrate baseline separation of target analytes from interferents in complex matrices

- Linearity and Range: Establish calibration curves across expected concentration ranges with correlation coefficients >0.99

- Limit of Detection/Quantification: Determine through serial dilution of spiked samples

- Precision: Conduct repeatability (intra-day) and reproducibility (inter-day, inter-operator, inter-instrument) studies with %RSD targets <15% for retention times and <20% for peak areas

- Robustness: Deliberately vary method parameters (temperature ramp, carrier gas flow) to establish operational limits

- Matrix Effects: Analyze targets in various forensic-relevant matrices to quantify suppression or enhancement [8]

This comprehensive validation provides the statistical foundation for quantifying measurement uncertainty, the instrumental counterpart of error rates in subjective disciplines.
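
Two of the numeric acceptance checks above, calibration linearity and precision expressed as %RSD, can be computed with the Python standard library as sketched below. The calibration points and replicate values are invented for illustration, and the thresholds are simply the targets quoted in the text; an actual validation would follow the laboratory's own protocol and software.

```python
import math
import statistics


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient between concentrations and responses."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def percent_rsd(values: list[float]) -> float:
    """Relative standard deviation in percent, using the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical calibration data: concentration vs. peak area.
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
area = [1020.0, 2050.0, 4980.0, 10100.0, 19900.0]

# Hypothetical replicate injections of one analyte.
retention_times = [12.41, 12.43, 12.40, 12.42, 12.44]
peak_areas = [5010.0, 4890.0, 5120.0, 4975.0, 5060.0]

checks = {
    "linearity (r > 0.99)": pearson_r(conc, area) > 0.99,
    "retention time %RSD < 15": percent_rsd(retention_times) < 15.0,
    "peak area %RSD < 20": percent_rsd(peak_areas) < 20.0,
}

if __name__ == "__main__":
    for name, ok in checks.items():
        print(name, "pass" if ok else "fail")
```

A correlation coefficient above 0.99 can still hide curvature in a calibration curve, so inspecting residuals is a common complement to this check.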

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Essential Materials for Advanced Forensic Chemistry Research

| Item/Category | Function/Application | Specific Examples |
|---|---|---|
| GC×GC Instrument System | Separation of complex forensic mixtures | Dual-stage cryogenic modulator; dual-column setups with orthogonal stationary phases [8] |
| Advanced Detectors | Identification and quantification of separated analytes | High-resolution mass spectrometry (HRMS); time-of-flight (TOF) MS; FID/TOFMS dual detection [8] |
| Reference Standards | Method validation and quantitative analysis | Certified reference materials for drugs, explosives, petroleum markers, and synthetic cannabinoids [8] |
| Data Processing Software | Handling complex multidimensional data | Instrument-specific software for peak detection, integration, and pattern recognition algorithms [8] [12] |
| Portable Spectrometers | Non-destructive field analysis of evidence | Handheld XRF, portable LIBS sensors, Raman spectrometers for crime scene investigation [11] |

| Portable Spectrometers | Non-destructive field analysis of evidence | Handheld XRF, portable LIBS sensors, Raman spectrometers for crime scene investigation [11] |

Visualizing the Forensic Error Assessment Framework

The following diagram illustrates the conceptual relationship between forensic method maturity, error rate documentation, and legal admissibility requirements:

Forensic Method Maturity Pathway. This diagram illustrates the progression of forensic methods from development through implementation, showing how error rate documentation requirements intensify as methods approach courtroom admissibility.

The second diagram details the experimental workflow for establishing forensic error rates:

Error Rate Determination Workflow. This diagram outlines the systematic process for establishing error rates across both subjective pattern-matching disciplines and objective instrumental methods, highlighting the shared experimental framework despite methodological differences.

The seven lessons detailed in this analysis collectively demonstrate that understanding forensic error requires navigating a complex, multidimensional landscape where methodological maturity, subjective interpretation, legal standards, and technological advancement intersect. The forensic science community has made significant progress in acknowledging and systematically addressing error rate gaps, particularly through coordinated research initiatives like the NIJ Strategic Plan [12]. However, substantial work remains to establish comprehensive error rate documentation across all forensic disciplines, especially those relying heavily on human pattern matching.

For researchers and practitioners, this analysis underscores that error rate consideration must evolve from an afterthought into a fundamental component of method development, validation, and implementation. The ongoing transition from purely subjective methods to instrument-supported techniques with quantifiable uncertainty represents the most promising pathway for strengthening forensic science's scientific foundation. As this evolution continues, keeping these seven lessons in view should help error rate understanding keep pace with technological advancement, ultimately producing more reliable evidence for the justice system.

In forensic science, the accuracy of analytical methods is paramount, as results can directly determine outcomes in judicial proceedings. Legal standards for the admissibility of scientific evidence, such as the Daubert Standard, guide courts to consider the known error rates of forensic techniques [8]. However, a significant challenge persists: a disconnect between what forensic scientists believe about error rates in their disciplines and the empirical reality of those errors, which often remain poorly documented [9]. This guide compares perceptual survey data with empirical data across forensic methods at varying Technology Readiness Levels (TRL) to illuminate this critical gap. The analysis reveals that while forensic analysts generally perceive errors as rare, the empirical validation of these perceptions and the integration of quantitative, objective methods are still evolving, particularly for newer technologies.

Survey Data on Forensic Analysts' Perceptions of Error

A foundational 2019 survey of 183 practicing forensic analysts provides direct insight into how the profession views error in its work [9]. The results indicate a community that is highly confident in its methodologies.

Key Perceptual Findings from Analyst Survey: The survey found that analysts perceive all types of errors to be rare, with false positive errors considered even rarer than false negatives [9]. Consistent with this perception, most analysts reported that they prefer to minimize the risk of false positives over false negatives, reflecting a strong professional aversion to incorrect incrimination [9]. A critical finding was that most analysts could not specify where error rates for their discipline were documented or published, and their estimates were "widely divergent—with some estimates unrealistically low" [9].

Table 1: Summary of Forensic Analyst Perceptions from Survey Data

| Perception Aspect | Survey Finding | Implication |
|---|---|---|
| General Error Frequency | Perceived as rare across disciplines | High confidence in the reliability of forensic methods |
| False Positives vs. False Negatives | False positives perceived as even rarer | Reflects a conscious preference to avoid wrongful incrimination |
| Documentation of Error Rates | Most analysts could not specify where error rates are documented | Suggests a lack of standardized, accessible error rate data |
| Estimate Consensus | Estimates were widely divergent and sometimes unrealistically low | Highlights a potential need for better communication and training on method limitations |

The Empirical Reality of Forensic Method Error Rates

The empirical landscape of forensic error rates is complex, characterized by a push for more objective data and a recognized lack of established rates for many techniques.

The Push for Empirical Validation and a Paradigm Shift

A significant movement within forensic science advocates for a paradigm shift away from methods based on human perception and subjective judgment toward those grounded in quantitative measurements and statistical models [13]. The call is for methods that are transparent, reproducible, and intrinsically resistant to cognitive bias, using the logically correct likelihood-ratio framework for evidence interpretation [13]. This shift is essential for empirical validation under casework conditions.
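The following minimal Python sketch makes the likelihood-ratio idea concrete by evaluating one similarity score under two competing propositions. It assumes, purely for illustration, that scores follow normal distributions for same-source and for different-source comparisons. The parameter values and function names are hypothetical and are not taken from any cited study. Operational LR systems estimate these distributions from validation data and may use different density models.

```python
import math

def normal_pdf(x: float, mean: float, sd: float) -> float:
    """Probability density of a normal distribution at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))

def likelihood_ratio(score: float, same: tuple[float, float], diff: tuple[float, float]) -> float:
    """LR = p(score | same source) / p(score | different source).

    `same` and `diff` are hypothetical (mean, sd) pairs for normal score models.
    """
    return normal_pdf(score, *same) / normal_pdf(score, *diff)

SAME_SOURCE = (0.80, 0.08)       # hypothetical same-source similarity-score model
DIFFERENT_SOURCE = (0.40, 0.10)  # hypothetical different-source similarity-score model

for s in (0.35, 0.60, 0.85):
    lr = likelihood_ratio(s, SAME_SOURCE, DIFFERENT_SOURCE)
    print(f"score={s:.2f}  LR={lr:.3g}  log10(LR)={math.log10(lr):+.2f}")
```

An LR above 1 supports the same-source proposition, and one below 1 supports the different-source proposition. The size of the ratio, rather than a categorical call, expresses the strength of the evidence.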

Documented Error Rates and Technological Advancements

For some established and emerging technologies, empirical data is beginning to surface:

- Traditional Human-Based Methods: Recent reviews of forensic science conclude that error rates for some common techniques are not well-documented or even established [9]. This empirical void exists for many techniques reliant on human judgment.

- Digital Forensics (AI): In digital forensics, AI-powered deepfake detection algorithms have shown high empirical accuracy, with one report noting a 92% detection rate [14]. However, challenges like algorithmic "black box" models and training data bias can undermine credibility and potentially amplify forensic errors [14] [15].

- Bullet Comparison: To address the subjectivity of traditional bullet analysis, the Forensic Bullet Comparison Visualizer (FBCV) was developed. The tool uses algorithms to provide statistical support for examiners' comparisons, with the aim of making complex data usable in practice and improving comparison accuracy [16].

- Next-Generation Sequencing (NGS): This DNA technology is described as highly reliable and is transforming investigations by providing in-depth genetic insights. The cited source does not report specific error rates, but it highlights NGS's ability to analyze damaged or minute samples [16].

Table 2: Comparative Overview of Forensic Methods: TRL, Perceptions, and Empirical Data

| Forensic Method | Technology Readiness Level (TRL) | Analyst Perception of Error | Empirical Reality & Error Rate Data |
|---|---|---|---|
| Subjective Pattern Matching (e.g., fingerprints) | High (TRL 4) | Errors perceived as very rare, especially false positives [9] | Error rates not well-documented or established; calls for paradigm shift to quantitative methods [9] [13] |
| Digital Forensics (Deepfake Detection) | Emerging (TRL 3-4) | Perceptions not specifically surveyed | NIST (2024) reports detection accuracy of ~92%; challenges with algorithm transparency remain [14] |
| Bullet Comparison (FBCV) | Mid-High (TRL 4) | Traditional method seen as subjective | New algorithmic tools (FBCV) provide objective statistical support to enhance accuracy [16] |
| DNA (Next-Generation Sequencing) | High (TRL 4) | Generally high confidence | Considered highly reliable; speeds up investigations and reduces backlogs [16] |
| GC×GC-MS Forensic Applications | Low-Mid (TRL 1-3) | Not yet widely surveyed in practice | Research stage; requires validation and error rate analysis for court admissibility [8] |

Experimental Protocols for Error Rate Studies

Understanding the methodology behind error rate studies is crucial for interpreting their findings. The following are generalized protocols for the key types of studies cited.

Protocol for Perceptual Survey Studies (e.g., Murrie et al., 2019)

- Objective: To examine what forensic analysts know or believe about error rates in their disciplines.

- Participant Recruitment: A sample of practicing forensic analysts (e.g., n=183) is recruited across multiple disciplines.

- Data Collection: Participants complete an anonymous survey containing both quantitative and qualitative components.

- Quantitative Estimates: Analysts are asked to provide numerical estimates for false positive and false negative error rates in their field.

- Preference Elicitation: Questions gauge whether analysts prioritize minimizing false positives or false negatives.

- Knowledge Assessment: Analysts are asked to identify where error rates for their discipline are documented.

- Data Analysis: Quantitative estimates are analyzed for central tendency and variability. Qualitative responses are coded for themes. Results are synthesized to reveal perceptions and knowledge gaps [9].
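The quantitative analysis step of such a survey can be sketched in a few lines of Python. This is a minimal illustration with hypothetical estimates, not data from Murrie et al., and the function name is an assumption. Analysts' estimates can span several orders of magnitude, so the sketch reports a log-scale spread alongside the median and interquartile range. It does not cover qualitative coding of open-ended responses.

```python
import math
import statistics

def summarize_estimates(estimates_pct: list[float]) -> dict[str, float]:
    """Central tendency and spread of analysts' numeric error-rate estimates (%)."""
    q1, _, q3 = statistics.quantiles(estimates_pct, n=4)
    positive = [e for e in estimates_pct if e > 0]
    return {
        "n": len(estimates_pct),
        "median": statistics.median(estimates_pct),
        "iqr": q3 - q1,
        "min": min(estimates_pct),
        "max": max(estimates_pct),
        # Log-scale spread: how many orders of magnitude the nonzero estimates span.
        "orders_of_magnitude": (math.log10(max(positive) / min(positive))
                                if positive else float("nan")),
    }

# Hypothetical false-positive estimates (%) from one discipline; illustrative only.
fp_estimates = [0.0, 0.001, 0.01, 0.01, 0.1, 0.5, 1.0, 5.0]
summary = summarize_estimates(fp_estimates)
print(summary)
```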

Protocol for Empirical Validation Studies (e.g., Digital Forensics)

- Objective: To empirically determine the accuracy and error rate of a specific forensic tool or method, such as a deepfake detection algorithm.

- Reference Dataset Curation: A large, diverse dataset of known authentic and manipulated media is assembled. This serves as the ground truth.

- Tool/Algorithm Testing: The forensic tool or algorithm is run against the reference dataset. The testing is often blinded to avoid analyst bias.

- Outcome Measurement: For each item in the dataset, the tool's output (e.g., "fake" or "authentic") is compared to the ground truth.

- Statistical Analysis: Outcomes are tabulated into a confusion matrix to calculate key metrics [14]:

  - Accuracy: (True Positives + True Negatives) / Total Cases

  - False Positive Rate: False Positives / Total Actual Negatives

  - False Negative Rate: False Negatives / Total Actual Positives

- Validation: The process is repeated across different conditions to ensure reliability.
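The statistical analysis step above can be sketched in Python as follows. The class labels ("fake", "authentic") and the counts in the usage example are hypothetical and serve only to show the arithmetic.

```python
from collections import Counter

def confusion_metrics(ground_truth: list[str], predicted: list[str],
                      positive: str = "fake") -> dict[str, float]:
    """Tabulate a 2x2 confusion matrix and derive accuracy, FPR and FNR.

    `positive` is the class the tool is meant to detect (here, manipulated media).
    """
    if len(ground_truth) != len(predicted):
        raise ValueError("ground_truth and predicted must be the same length")
    counts = Counter()
    for truth, pred in zip(ground_truth, predicted):
        actual_pos, called_pos = truth == positive, pred == positive
        if actual_pos and called_pos:
            counts["TP"] += 1
        elif actual_pos:
            counts["FN"] += 1
        elif called_pos:
            counts["FP"] += 1
        else:
            counts["TN"] += 1
    tp, tn, fp, fn = counts["TP"], counts["TN"], counts["FP"], counts["FN"]
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "false_positive_rate": fp / (fp + tn) if fp + tn else float("nan"),
        "false_negative_rate": fn / (fn + tp) if fn + tp else float("nan"),
    }

# Hypothetical run: 50 manipulated and 50 authentic items.
truth = ["fake"] * 50 + ["authentic"] * 50
pred = ["fake"] * 46 + ["authentic"] * 4 + ["authentic"] * 48 + ["fake"] * 2
metrics = confusion_metrics(truth, pred)
print(metrics)
```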

Protocol for Technical/Methodological Research (e.g., GC×GC-MS)

- Objective: To develop and validate a new analytical method for a specific forensic application, such as illicit drug analysis or odor decomposition.

- Method Development: The GC×GC-MS parameters are optimized, including column selection, temperature programming, and modulation period.

- Calibration & Sensitivity: The method is calibrated using known standards to establish linearity, limit of detection (LOD), and limit of quantification (LOQ).

- Precision & Accuracy: Repeatability (intra-day) and reproducibility (inter-day) are measured. Accuracy is determined via spike-and-recovery experiments.

- Specificity: The method is tested against complex, real-world mixtures to demonstrate its peak capacity and ability to separate co-eluting analytes.

- Application to Case-Ready Samples: The validated method is applied to authentic forensic samples (e.g., drug seizures, fire debris) to demonstrate practical utility [8].

- Error Analysis: Throughout validation, potential sources of error are identified and quantified where possible, a requirement for meeting legal admissibility standards like Daubert [8].
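The calibration, precision, and accuracy steps above reduce to a few standard calculations, sketched here in Python. The sketch assumes the calibration-curve convention LOD = 3.3σ/S and LOQ = 10σ/S described in the ICH Q2 guideline, where σ is the residual standard deviation of the regression and S is its slope. The cited source does not state which convention a given GC×GC study used. The function names and calibration numbers are hypothetical.

```python
import math
import statistics

def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Ordinary least squares: returns (slope, intercept, residual standard deviation)."""
    n = len(x)
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    resid_sd = math.sqrt(sum(r * r for r in residuals) / (n - 2))  # n - 2 degrees of freedom
    return slope, intercept, resid_sd

def lod_loq(slope: float, resid_sd: float) -> tuple[float, float]:
    """Calibration-curve convention: LOD = 3.3*sigma/S, LOQ = 10*sigma/S."""
    return 3.3 * resid_sd / slope, 10.0 * resid_sd / slope

def percent_rsd(replicates: list[float]) -> float:
    """Relative standard deviation (%) of replicate results (repeatability/reproducibility)."""
    return 100.0 * statistics.stdev(replicates) / statistics.fmean(replicates)

def percent_recovery(measured: float, spiked: float, background: float = 0.0) -> float:
    """Spike-and-recovery accuracy: share of the added amount that the method finds."""
    return 100.0 * (measured - background) / spiked

# Hypothetical calibration data (arbitrary concentration units); illustrative only.
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
resp = [0.11, 0.19, 0.52, 0.98, 2.03]
slope, intercept, sd = linear_fit(conc, resp)
lod, loq = lod_loq(slope, sd)
print(f"slope={slope:.4f} intercept={intercept:.4f} LOD={lod:.2f} LOQ={loq:.2f}")
print(f"RSD={percent_rsd([0.98, 1.01, 0.99, 1.02]):.1f}%  recovery={percent_recovery(9.4, 10.0):.0f}%")
```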

Visualizing the Legal and Empirical Framework for Forensic Error

The path from a forensic method's development to its acceptance in court is governed by legal standards that explicitly require consideration of error rates. The following diagram illustrates this framework and the critical role of empirical validation.

Diagram 1: Forensic Error Rate Admissibility Framework. This diagram illustrates the relationship between legal admissibility standards, analyst perceptions, and the empirical reality of forensic methods. A "validation gap" exists between perceptions and reality, driving a necessary paradigm shift toward quantitative methods for court acceptance.

The Scientist's Toolkit: Key Research Reagent Solutions

The advancement of forensic science, particularly the move towards more empirical and quantitative methods, relies on a suite of sophisticated tools and reagents.

Table 3: Essential Research Reagents and Tools for Modern Forensic Method Development

| Tool/Reagent | Function in Forensic Research & Validation |
|---|---|
| GC×GC-MS System | Provides superior separation of complex mixtures (e.g., drugs, fire debris) for non-targeted analysis, increasing detectability of trace analytes [8]. |
| Next-Generation Sequencing (NGS) | Allows for detailed analysis of degraded, minimal, or mixed DNA samples, providing greater discriminatory power than traditional methods [16]. |
| AI/ML Algorithms | Automates the analysis of large datasets (e.g., digital media, logs) to identify patterns, anomalies, and potential evidence, improving efficiency [14] [15]. |
| Likelihood Ratio Software | Provides the statistical framework for objectively evaluating evidence strength, moving interpretation away from subjective judgment [13]. |
| Certified Reference Materials | Essential for method calibration, determining accuracy (spike-and-recovery), and establishing limits of detection and quantification [8]. |
| Forensic Bullet Comparison Visualizer (FBCV) | Uses algorithms to provide objective statistical support for bullet comparisons, reducing subjectivity of traditional methods [16]. |
| Cloud Forensic Tools | Specialized software for acquiring and analyzing data from distributed cloud storage platforms, addressing jurisdictional and technical challenges [14] [15]. |

In any complex scientific system, error is unavoidable [6]. For forensic science, a discipline with profound implications for justice, understanding and managing this spectrum of error is not merely an academic exercise but a fundamental ethical requirement. Errors range from discrete procedural mistakes in a laboratory to the subtle statistical risk of coincidental matches, each with different causes and consequences. A comparative analysis of forensic methods, evaluated through the framework of Technology Readiness Levels (TRL), reveals how the maturity of a discipline influences its error profile. This guide objectively compares the error rates and reliability of various forensic methods, providing researchers and development professionals with the experimental data and protocols necessary to critically assess and improve forensic technologies.

Defining the Spectrum of Forensic Error

A critical first step is recognizing that 'error' is subjective and multidimensional [6]. What constitutes an error can vary depending on perspective—whether that of a laboratory manager, a testifying expert, or a legal practitioner.

- 1.1. A Typology of Forensic Errors: A comprehensive study analyzing wrongful convictions established a forensic error typology, categorizing five primary error types [17]. This typology provides a structured framework for comparison, moving beyond a simplistic "right or wrong" dichotomy.

- 1.2. Subjective and Multidimensional Nature: Disagreement exists on what counts as an error. Is a mistake that is caught before a report is issued (a "near miss") equivalent to one that leads to a wrongful conviction? Different stakeholders may have different answers, and various studies compute error rates based on different outcomes, from individual proficiency tests to departmental-level error rates [6].

The following diagram illustrates the logical relationships between the major categories of forensic error and their primary contributing factors, as identified in contemporary research.

Comparative Error Rates Across Forensic Disciplines

Not all forensic disciplines are equally prone to error. A landmark analysis of 732 exoneration cases and 1,391 forensic examinations revealed that certain disciplines contribute disproportionately to wrongful convictions [17]. The following table summarizes the key findings, highlighting disciplines with higher observed error rates and their primary associated error types.

Table 1: Forensic Discipline Error Profile Based on Case Analysis

| Forensic Discipline | Prevalence of Error in Cases | Dominant Error Type(s) | Common Root Causes |
|---|---|---|---|
| Bitemark Analysis | Disproportionately high | Type 2 (Incorrect Individualization) | Inadequate scientific foundation; examiners often outside structured labs [17] |
| Hair Comparison | High | Type 3 (Testimony Error) | Testimony conformed to past, now-outdated standards [17] |
| Serology | High | Type 3 (Testimony), Best Practice Failures | Failure to collect reference samples or to conduct tests correctly [17] |
| Latent Fingerprints | Lower, but severe | Type 2 (Incorrect Individualization) | Fraud or examiners violating basic standards [17] |
| Seized Drug Analysis | High (primarily field testing) | Type 5 (Evidence Handling/Reporting) | Reliance on unconfirmed presumptive tests in the field [17] |
| DNA Analysis | Lower, but present | Type 2 (Interpretation Error) | Complex DNA mixture interpretation; early, less reliable methods [17] |

Quantitative data from controlled black-box studies further allows for the calculation of False Discovery Rates (FDR) for specific comparative tasks. The table below pools data from multiple studies on striated toolmark analysis, demonstrating how the initial FDR for a single comparison compounds as the number of comparisons increases—a phenomenon known as the multiple comparisons problem [5].

Table 2: Impact of Multiple Comparisons on Family-Wise False Discovery Rate (FDR) in Toolmark Analysis [5]

| Source Study | Single-Comparison FDR (e) | Family-Wise FDR after 10 Comparisons (E10) | Family-Wise FDR after 100 Comparisons (E100) | Max Comparisons for Family-Wise FDR ≤ 10% |
|---|---|---|---|---|
| Mattijssen et al. [5] | 7.24% | 52.8% | 99.9% | 1 |
| Pooled Study Data [5] | 2.00% | 18.3% | 86.7% | 5 |
| Bajic et al. [5] | 0.70% | 6.8% | 50.7% | 14 |
| Best et al. [5] | 0.45% | 4.5% | 36.6% | 23 |
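The family-wise values in Table 2 follow from E_n = 1 - (1 - e)^n, which assumes the n comparisons are independent. The short Python sketch below reproduces the calculation; the function names are illustrative. Recomputing from the two-decimal e values reproduces the Mattijssen and pooled rows. The Bajic and Best rows differ by a few tenths of a percentage point (for example, 50.5% rather than 50.7% for Bajic at 100 comparisons), presumably because the table rounds e.

```python
def family_wise_rate(e: float, n: int) -> float:
    """Probability of at least one false association in n independent comparisons."""
    return 1.0 - (1.0 - e) ** n

def max_comparisons(e: float, limit: float = 0.10) -> int:
    """Largest n whose family-wise rate stays at or below `limit`."""
    if not 0.0 < e < 1.0:
        raise ValueError("single-comparison rate must lie strictly between 0 and 1")
    if not 0.0 <= limit < 1.0:
        raise ValueError("limit must lie in [0, 1)")
    n = 0
    while family_wise_rate(e, n + 1) <= limit:
        n += 1
    return n

single_comparison = {"Mattijssen et al.": 0.0724, "Pooled": 0.0200,
                     "Bajic et al.": 0.0070, "Best et al.": 0.0045}
for study, e in single_comparison.items():
    print(f"{study:18s} e={e:.2%}  E10={family_wise_rate(e, 10):.1%}  "
          f"E100={family_wise_rate(e, 100):.1%}  max n (<=10%)={max_comparisons(e)}")
```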

Experimental Protocols for Error Rate Estimation

A robust understanding of error rates requires data from well-designed experiments. The following are detailed methodologies for two key types of studies cited in this guide.

3.1. Protocol for a Black-Box Study (Striated Toolmark Analysis): These studies are designed to estimate the intrinsic false discovery rate of a method by testing examiner proficiency with ground-truthed samples.

- Objective: To determine the false positive and false negative rates in matching a cut wire to a specific wire-cutting tool.

- Sample Preparation: A known wire-cutting tool is used to create cuts in wires of a specific composition (e.g., 12-gauge aluminum). These constitute the "evidence." The tool is also used to create reference "blade cuts" in a sheet of the same material, which may be made at multiple angles. "Non-matching" samples are created using different tools [5].

- Examination Process: Examiners are provided with the evidence wire and several potential tool marks (both matching and non-matching) without knowing the ground truth. The process involves:

  - Comparing each side of the wire to each cutting surface of the blade cuts under a comparison microscope.

  - Physically sliding and aligning the wire along the blade cut to match striation patterns, a process that inherently involves hundreds to thousands of implicit comparisons [5].

  - Declaring an identification, exclusion, or inconclusive result for each comparison.

- Data Analysis: The FDR is calculated as the proportion of known non-matching samples that were incorrectly declared as an identification. The false negative rate is calculated from known matching samples that were excluded. The family-wise error rate for the entire examination is then modeled as \( E_n = 1 - (1 - e)^n \), where \( e \) is the single-comparison FDR and \( n \) is the number of independent comparisons performed [5].

3.2. Protocol for a Practitioner Survey (Perceived Error Rates): Surveys assess the gap between perceived and empirically measured error rates.

- Objective: To examine what forensic analysts believe and estimate about error rates in their own disciplines [9].

- Survey Design: A cross-sectional survey is administered to a sample of practicing forensic analysts (e.g., 183 analysts across multiple disciplines). The survey includes both quantitative and qualitative components.

- Measures:

  - Quantitative Estimates: Analysts are asked to provide numerical estimates for the rates of false positives and false negatives in their discipline.

  - Error Preference: Analysts are asked whether their methodology is designed to minimize false positives or false negatives.

  - Documentation Source: Analysts are asked to identify where error rates for their discipline are documented or published.

- Data Analysis: Responses are analyzed to reveal central tendencies and variability in perceptions. A key finding is that analysts often perceive errors to be very rare, with some estimates being unrealistically low, and many cannot specify where documented error rates can be found [9].

The Scientist's Toolkit: Research Reagent Solutions

Advancing forensic methods requires specific tools and materials. The following table details key reagents and their functions in developing and validating new forensic techniques, such as comprehensive two-dimensional gas chromatography (GC×GC).

Table 3: Essential Research Reagents and Materials for Advanced Forensic Method Development

| Reagent/Material | Function in Research & Development |
|---|---|
| GC×GC Instrumentation | Core analytical platform for separating complex mixtures (e.g., drugs, ignitable liquids). Consists of a primary column, modulator, and secondary column to achieve higher peak capacity than 1D-GC [8]. |
| Modulator | The "heart" of the GC×GC system. It traps and re-injects effluent from the first dimension column onto the second dimension column, preserving separation and dramatically increasing resolution [8]. |
| Time-of-Flight Mass Spectrometry (TOFMS) | A high-speed detector capable of collecting full-range mass spectra very rapidly, which is essential for deconvoluting the fast-separating peaks generated by GC×GC [8]. |
| Certified Reference Materials (CRMs) | High-purity analytical standards with certified chemical and physical properties. Used for method development, calibration, and determining the false positive/negative rates of a new technique [18] [8]. |
| Proficiency Test Samples | Blind or declared samples provided by external vendors (e.g., Collaborative Testing Services). Used in validation studies and ongoing quality assurance to measure practical error rates in a laboratory [18]. |

Legal and Analytical Readiness: The Path from Research to Courtroom

For any forensic method, admission as evidence in legal proceedings is the ultimate test of its reliability. Courts in the United States and Canada apply specific standards to evaluate the admissibility of expert testimony, which directly impacts the adoption of new technologies.

5.1. Legal Standards for Admissibility:

- Daubert Standard (U.S. Federal Courts): Judges act as gatekeepers and consider several factors, including whether the theory or technique has been tested, its known or potential error rate, and whether it has been peer-reviewed and is generally accepted [8].

- Frye Standard (Some U.S. States): Requires that the scientific technique be "generally accepted" by the relevant scientific community [8].

- Mohan Criteria (Canada): Focuses on the relevance and necessity of the expert evidence, the absence of exclusionary rules, and the presence of a properly qualified expert [8].

5.2. The TRL Framework and Error Rates: A method's Technology Readiness Level (TRL) is a useful metric for gauging its maturity. Lower-TRL methods (e.g., novel research techniques like GC×GC for fingermark chemistry) often lack established error rates and extensive validation, which makes it difficult for them to satisfy Daubert [8]. High-TRL methods (e.g., standardized DNA analysis) have well-documented error rates from proficiency testing and are generally accepted. The journey to court admission requires a deliberate focus on intra- and inter-laboratory validation, error rate analysis, and standardization [8].

The following workflow diagram maps the critical path a novel forensic method must take to progress from basic research to being court-ready, highlighting the integral role of error rate management at each stage.

Measuring the Immeasurable: Methodologies for Quantifying Forensic Error Rates

The estimation of method error rates is a cornerstone of establishing reliability in forensic science. The choice of experimental design—black-box or white-box—fundamentally shapes how these error rates are calculated, interpreted, and contextualized within a validation framework. Black-box studies, which treat the forensic system as an opaque unit, measure overall performance outputs without regard to internal processes. In contrast, white-box studies peer inside the system to understand how internal components, logic, and decision-making pathways contribute to the final outcome. This guide provides an objective comparison of these two experimental approaches, framing them within the broader thesis of evaluating the comparative error rates of forensic methods at different Technology Readiness Levels (TRL). The analysis is supported by experimental data and detailed protocols to inform researchers and professionals in forensic science and drug development.

Core Conceptual Differences

The distinction between black-box and white-box studies lies in the experimenter's knowledge of and access to the system's internals.

- Black-Box Studies investigate a system's performance by analyzing its responses to inputs without any knowledge of its internal workings. The system is treated as an "opaque box" [19] [20]. The focus is exclusively on the correlation between inputs and outputs to assess overall performance and error rates.

- White-Box Studies require full knowledge of and access to the system's internal structure, logic, and code [19] [21]. The experiment is designed to probe specific internal pathways, components, and decision-making processes to understand how and why errors occur.

Table 1: Conceptual Comparison of Black-Box and White-Box Experimental Approaches

| Aspect | Black-Box Studies | White-Box Studies |
|---|---|---|
| Core Focus | Overall system performance, input-output relationships [19] [21] | Internal logic, code structure, and execution paths [19] [21] |
| System Knowledge | None; the system is opaque [20] [22] | Full knowledge of source code and architecture [20] [21] |
| Primary Goal | Estimate real-world error rates and functional accuracy [19] | Identify root causes of errors, verify internal logic, achieve high code coverage [19] [20] |
| Data Basis for Tests | Requirements, specifications, and user scenarios [23] [21] | Source code, algorithms, and internal design documents [20] [21] |
| Ideal Application | System-level validation, acceptance testing, user-centric performance [19] [22] | Unit testing, code-level validation, security vulnerability detection [20] [21] |

Diagram 1: High-Level Experimental Design Workflow

Quantitative Error Rate Data from Comparative Studies

Empirical data reveals how these two approaches yield different, yet complementary, error metrics. Black-box studies often report higher-level functional error rates, while white-box studies provide granular data on code and logic coverage.

Error Rates in Software Testing Contexts

Table 2: Comparative Error Detection Rates in Software Testing

| Testing Type | Reported Defect Detection Rate | Typical Code Coverage | Key Findings |
|---|---|---|---|
| Black-Box Testing | Catches ~75% of functional/UI bugs via boundary value analysis [19] | Unknown (code not reviewed) [19] | Effective for user-facing functionality; misses hidden logic flaws [19] [20] |
| White-Box Testing | Defect detection up to 90% when combined with code reviews [19] | Can push coverage above 85% using statement/branch analysis [19] | Uncovers hidden bugs, logic flaws, and security vulnerabilities [19] [20] |
| Combined Approach | Far surpasses black-box-only approaches [19] | Provides a complete view of software health [19] | Creates a more balanced view of performance and reliability [19] [22] |

Error Rates in Forensic Science Applications

Black-box studies are prominently used to estimate error rates in forensic feature-comparison disciplines, though the treatment of "inconclusive" results significantly impacts the final rate.

Table 3: Forensic Black-Box Study Error Rates for Striated Toolmark Analysis

| Study Reference | False Discovery Rate (FDR) per Single Comparison | Family-Wise FDR after 100 Comparisons | Key Methodology Notes |
|---|---|---|---|
| Mattijssen et al. (2020) [5] | 7.24% | 99.9% | Open-set study design; highlights impact of multiple comparisons [5] |
| Pooled Data Analysis [5] | 2.00% | 86.7% | Weighted average from multiple studies on striated evidence [5] |
| Bajic (2019) [5] | 0.70% | 50.7% | Suggests a maximum of 14 comparisons to keep family-wise FDR <10% [5] |
| Best Case (2021) [5] | 0.45% | 36.6% | Represents the lower bound of error rates found in literature [5] |

A re-evaluation of firearm examination black-box studies found that how inconclusive results are treated is a major factor in calculating final error rates, with variations including excluding them, counting them as correct, or counting them as incorrect [24]. Furthermore, process errors occurred at higher rates than examiner errors [24], a finding more likely to emerge from a white-box analysis of the examination process.

Experimental Protocols and Methodologies

Protocol for a Forensic Black-Box Study

Black-box studies in forensics are designed to simulate real-world operational conditions to obtain a realistic estimate of overall performance.

- Define the Population and Samples: Create a representative set of "ground truth" samples, including both mated (same source) and non-mated (different source) comparisons. The study can be "closed-set" (all samples have a known match in the set) or more realistic "open-set" (some samples have no match) [24] [5].

- Engage Participants: Multiple examiners from different laboratories participate without knowledge of the ground truth to avoid bias [24].

- Define the Conclusion Scale: Establish a standardized scale for examiners to report their findings (e.g., Identification, Inconclusive, Elimination) [10].

- Execute Comparisons: Examiners perform comparisons based on their standard operating procedures, providing conclusions for each pair.

- Data Analysis and Error Rate Calculation:

  - Compare examiner conclusions to the ground truth.

  - False Positive Rate (FPR): Calculate the proportion of non-mated comparisons erroneously reported as "Identification."

  - False Negative Rate (FNR): Calculate the proportion of mated comparisons erroneously reported as "Elimination."

  - Treat Inconclusives: Decide on a statistical treatment for inconclusive results (e.g., exclude, count as error, or count as correct), as this choice profoundly affects the final error rate [24].
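The following small Python sketch shows how much the inconclusive decision matters. Its three policies mirror the options listed above: exclude, count as correct, and count as error. The counts and names are hypothetical and were chosen only to show how far the same data can move.

```python
from dataclasses import dataclass

@dataclass
class Tally:
    """Counts of examiner conclusions for one ground-truth category."""
    identification: int
    inconclusive: int
    elimination: int

def error_rate(t: Tally, wrong: str, policy: str) -> float:
    """Error rate for one ground-truth category under an inconclusive policy.

    wrong:  conclusion that counts as an error for this category
            ('identification' for non-mated pairs, 'elimination' for mated pairs).
    policy: 'exclude' drops inconclusives entirely; 'correct' keeps them in the
            denominator only; 'error' adds them to numerator and denominator.
    """
    if wrong not in ("identification", "elimination"):
        raise ValueError(f"unexpected error category: {wrong}")
    errors = getattr(t, wrong)
    total = t.identification + t.inconclusive + t.elimination
    if policy == "exclude":
        num, den = errors, total - t.inconclusive
    elif policy == "correct":
        num, den = errors, total
    elif policy == "error":
        num, den = errors + t.inconclusive, total
    else:
        raise ValueError(f"unknown policy: {policy}")
    return num / den if den else float("nan")

# Hypothetical counts, for illustration only.
non_mated = Tally(identification=5, inconclusive=300, elimination=695)
mated = Tally(identification=900, inconclusive=80, elimination=20)

for policy in ("exclude", "correct", "error"):
    fpr = error_rate(non_mated, "identification", policy)
    fnr = error_rate(mated, "elimination", policy)
    print(f"{policy:8s} FPR={fpr:.2%}  FNR={fnr:.2%}")
```

With these illustrative counts, the false positive rate ranges from 0.5% to 30.5% depending solely on the policy. This is why a study's treatment of inconclusives should be reported alongside its error rates.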

Protocol for a White-Box Study

White-box studies deconstruct the system to evaluate its components and internal logic.

- Gain Full Access: Obtain complete access to the system's internals, including source code, algorithms, training data for machine learning models, and detailed standard operating procedures (SOPs) for human-in-the-loop systems [19] [20].

- Code and Logic Analysis:

  - Design Targeted Tests: Create tests to force execution down specific paths. Key techniques include:

    - Statement Coverage: Ensure every line of code or procedural step is executed at least once [19] [21].

    - Branch Coverage: Test all possible outcomes of every decision point (e.g., every "if" and "else" condition) [19] [21].

    - Path Testing: Test all possible routes through a combination of logical decisions [19].

    - Loop Testing: Test loops for correct behavior at their boundaries, including zero, one, and multiple iterations [19].

- Execute and Measure: Run the tests and measure coverage metrics. The goal is to identify not just if an error occurred, but the exact location and logical cause of any failure [20].
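For a software-based system, branch coverage means that at least one test exercises each decision outcome. The sketch below illustrates this with a hypothetical three-way decision rule. The function name and thresholds are assumptions and do not come from any cited tool. It includes one test per branch, plus the boundary values that branch and loop testing target. The tests are plain functions, so they run under pytest or directly.

```python
def classify_score(score: float, id_threshold: float = 0.8, elim_threshold: float = 0.3) -> str:
    """Hypothetical decision rule for an automated comparison system."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must lie in [0, 1]")
    if score >= id_threshold:
        return "Identification"
    if score <= elim_threshold:
        return "Elimination"
    return "Inconclusive"

# One test per decision outcome, including boundary values where
# off-by-one style mistakes (>= vs >) tend to hide.
def test_identification_branch():
    assert classify_score(0.95) == "Identification"
    assert classify_score(0.8) == "Identification"   # boundary: >= id_threshold

def test_elimination_branch():
    assert classify_score(0.1) == "Elimination"
    assert classify_score(0.3) == "Elimination"      # boundary: <= elim_threshold

def test_inconclusive_branch():
    assert classify_score(0.5) == "Inconclusive"

def test_out_of_range_branch():
    try:
        classify_score(1.5)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for an out-of-range score")

if __name__ == "__main__":
    for test in (test_identification_branch, test_elimination_branch,
                 test_inconclusive_branch, test_out_of_range_branch):
        test()
    print("all branch tests passed")
```

A coverage tool with branch measurement enabled would report whether any decision outcome was left untested. One example is coverage.py run with its `--branch` option.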

Diagram 2: Comparative Experimental Workflows

The Researcher's Toolkit: Essential Materials and Reagents

A robust error rate study requires careful selection of materials, software, and methodological tools.

Table 4: Essential Research Reagents and Solutions for Error Rate Studies

| Item / Solution | Function / Purpose | Application Context |
|---|---|---|
| Standardized Evidence Sets | Provides the "ground truth" with known characteristics for controlled testing. | Core to both black-box and white-box studies in forensics [24] [5]. |
| Static Code Analysis Tools (e.g., SonarQube) | Automates the review of source code for vulnerabilities, bugs, and code smells without execution. | Foundational for white-box testing of software-based forensic systems [20] [21]. |
| Unit Testing Frameworks (e.g., JUnit, pytest) | Provides a structure to write and execute automated tests for individual units of code. | Essential for white-box testing to verify the logic of specific functions and algorithms [20] [21]. |
| Code Coverage Tools (e.g., JaCoCo) | Measures the percentage of code executed during tests, ensuring thoroughness. | A key metric in white-box studies to quantify test completeness (e.g., statement, branch coverage) [19] [20]. |
| Cross-Correlation Function Algorithms | Quantifies the similarity between two patterns (e.g., striations on bullets or wires). | Used in both algorithmic and visual white-box analysis of forensic comparisons; highlights the multiple comparisons problem [5]. |
| Black-Box Testing Suites (e.g., Selenium for UI, Postman for API) | Automates end-to-end functional tests from a user's perspective without code access. | Used for system-level black-box validation of forensic software systems [20] [21]. |
| Standardized Conclusion Scales | Provides a consistent framework for examiners to report findings in black-box studies. | Critical for ensuring consistency and interpretability in forensic black-box studies [10]. |

In forensic science, the "multiple comparisons problem" arises when an examiner conducts a large number of comparative tests between evidence samples, increasing the probability of falsely associating non-matching items purely by chance. This statistical phenomenon presents particular challenges in toolmark examination, where practitioners must determine whether marks found at a crime scene were made by a specific tool. As the field faces increasing scrutiny regarding the scientific validity and reliability of its methods, understanding and addressing this problem through objective, automated approaches has become imperative [25] [26].

Traditional toolmark examination has relied heavily on subjective human judgment using comparison microscopes, where examiners assess microscopic features based on their training and experience. This process lacks precisely defined, scientifically justified protocols that yield objective determinations with well-characterized confidence limits and error rates [26]. The problem intensifies when conducting multiple comparisons across large datasets or consecutively manufactured tools with subtle variations, potentially leading to false positive identifications with serious legal consequences.

This case study examines how emerging automated methodologies address the multiple comparisons problem in toolmark and wire examination through statistically rigorous frameworks. By implementing objective similarity metrics, likelihood ratio calculations, and controlled error rate measurements, these approaches strengthen the scientific foundation of toolmark identification while providing transparent accountability for comparison results.

Comparative Analysis of Toolmark Examination Methods

Performance Metrics Across Methodologies

Table 1: Comparative performance of toolmark examination methodologies

| Methodology | Error Rate Range | Statistical Foundation | Automation Level | Data Type | Multiple Comparisons Handling |
|---|---|---|---|---|---|
| Traditional Examiner-Based | Not fully quantified [26] | Subjective pattern recognition | Manual | 2D microscopic images | Limited systematic adjustment |
| Automated Plier Mark Analysis | 0-4% misleading evidence rate [27] | Likelihood ratios with machine learning | High automation | 3D topographies | Explicit statistical control |
| Automated Screwdriver Mark Analysis | 4% false positive, 2% false negative (96% specificity, 98% sensitivity) [28] | Beta distribution fitting | High automation | 3D toolmarks | Threshold-based classification |
| NIST Chisel/Punch Study | 0% false positives in controlled study [26] | Objective similarity metrics (ACCFMAX) | Full automation | 2D profile & 3D topography | Statistical baseline establishment |

Quantitative Error Rate Comparison

Table 2: Experimental error rates across toolmark studies

| Study Focus | Tool Types | False Positive Rate | False Negative Rate | Misleading Evidence Rate | Sample Set |
|---|---|---|---|---|---|
| Plier Mark Comparison [27] | Cutting pliers | 0-4% | Not specified | 0-4% | Various brands and models |
| Screwdriver Mark Analysis [28] | Slotted screwdrivers | 4% | 2% | Not specified | Consecutively manufactured |
| NIST Protocol [26] | Chisels and punches | 0% | 0% | Not specified | 40 known/unknown marks |
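The rates in these tables are proportions of comparisons with known ground truth. The sketch below shows the standard definitions. It uses hypothetical counts that do not reproduce any cited study. It also makes explicit two complements: sensitivity is the complement of the false negative rate, and specificity is the complement of the false positive rate.

```python
from dataclasses import dataclass


@dataclass
class ComparisonCounts:
    """Outcomes of a ground-truth validation study (hypothetical counts)."""
    true_pos: int   # same-source pairs reported as identifications
    false_neg: int  # same-source pairs reported as exclusions
    true_neg: int   # different-source pairs reported as exclusions
    false_pos: int  # different-source pairs reported as identifications

    def sensitivity(self) -> float:
        return self.true_pos / (self.true_pos + self.false_neg)

    def specificity(self) -> float:
        return self.true_neg / (self.true_neg + self.false_pos)

    def false_negative_rate(self) -> float:
        # Share of same-source pairs that were missed.
        return 1.0 - self.sensitivity()

    def false_positive_rate(self) -> float:
        # Share of different-source pairs that were wrongly associated.
        return 1.0 - self.specificity()


counts = ComparisonCounts(true_pos=98, false_neg=2, true_neg=96, false_pos=4)
print(f"sensitivity={counts.sensitivity():.2f}, FNR={counts.false_negative_rate():.2f}")
print(f"specificity={counts.specificity():.2f}, FPR={counts.false_positive_rate():.2f}")
```

With these counts, a sensitivity of 0.98 corresponds to a 2% false negative rate. A specificity of 0.96 corresponds to a 4% false positive rate.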

Experimental Protocols in Automated Toolmark Analysis

3D Topography Acquisition and Treatment

Advanced automated comparison methods begin with high-resolution 3D topography acquisition of toolmarks. This process involves using confocal microscopes or optical profilometers to capture surface characteristics at micron-level resolution, converting qualitative visual assessments into quantitative data. The 3D topographic data undergoes specific treatment to enable valid comparisons, including:

- Data filtering to remove noise and artifacts while preserving relevant topographic features

- Surface registration to align compared marks in three-dimensional space

- Feature extraction to identify and quantify statistically relevant characteristics

- Data normalization to account for variations in mark creation conditions [27] [28]
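The sketch below applies two of these treatment steps, filtering and normalization, to a single 1D striation profile. It assumes the profile has already been extracted from the 3D scan. Polynomial form removal and a moving-average filter serve as simple stand-ins. The function name and parameters are hypothetical. Surface registration and feature extraction are omitted, and published systems use dedicated surface-metrology filters rather than this simplification.

```python
import numpy as np


def preprocess_profile(heights, window: int = 5, form_degree: int = 2) -> np.ndarray:
    """Hypothetical filtering and normalization of a 1D striation profile
    (surface heights sampled along the mark)."""
    heights = np.asarray(heights, dtype=float)
    x = np.arange(heights.size)
    # Remove overall form (tilt, curvature) with a low-order polynomial fit.
    coeffs = np.polyfit(x, heights, form_degree)
    residual = heights - np.polyval(coeffs, x)
    # Suppress high-frequency noise with a simple moving average.
    kernel = np.ones(window) / window
    smoothed = np.convolve(residual, kernel, mode="same")
    # Standardize to zero mean and unit variance so marks made under
    # different conditions (depth, pressure) share a common scale.
    std = smoothed.std()
    if std == 0:
        raise ValueError("profile has no variation after filtering")
    return (smoothed - smoothed.mean()) / std
```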

For striated toolmarks (e.g., from pliers or wire cutters), the specific zone along the blade must be carefully selected to build appropriate within-source variability models, which is crucial for addressing the multiple comparisons problem by establishing valid baselines for similarity assessments [27].

Correlation Metrics and Machine Learning Integration

Automated systems employ correlation metrics to quantitatively assess similarity between toolmarks. The typical workflow involves:

- Pairwise comparison of all toolmarks in the dataset

- Similarity score calculation using metrics like the ACCFMAX value [26]

- Distribution analysis of known matching and non-matching comparisons

- Threshold establishment for identification decisions based on statistical analysis of these distributions

- Machine learning algorithm application to combine multiple comparison metrics and compute likelihood ratios [27]
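The sketch below covers the pairwise scoring and threshold steps. It assumes preprocessed 1D profiles. As a simplified stand-in for a score such as ACCFMAX, it uses the maximum normalized cross-correlation over a range of shifts. The function names, the `max_lag` parameter, and the threshold rule are hypothetical. The machine learning step that combines several metrics is not shown.

```python
import numpy as np


def max_cross_correlation(a, b, max_lag: int) -> float:
    """Highest Pearson correlation between overlapping segments of profiles
    a and b over relative shifts of -max_lag..max_lag samples."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    if not 0 <= max_lag < min(len(a), len(b)):
        raise ValueError("max_lag must be smaller than both profile lengths")
    best = -1.0
    for lag in range(-max_lag, max_lag + 1):
        # Align a[i + lag] with b[i] for lag >= 0, and a[i] with b[i - lag] otherwise.
        if lag >= 0:
            x, y = a[lag:], b[: len(b) - lag]
        else:
            x, y = a[: len(a) + lag], b[-lag:]
        n = min(len(x), len(y))
        if n < 3:
            continue
        r = np.corrcoef(x[:n], y[:n])[0, 1]
        if np.isfinite(r):
            best = max(best, float(r))
    return best


def pairwise_scores(profiles, labels, max_lag: int):
    """Score every pair once and split the scores into known matches
    (same source label) and known non-matches."""
    matches, non_matches = [], []
    for i in range(len(profiles)):
        for j in range(i + 1, len(profiles)):
            score = max_cross_correlation(profiles[i], profiles[j], max_lag)
            (matches if labels[i] == labels[j] else non_matches).append(score)
    return matches, non_matches


def zero_fp_threshold(non_match_scores):
    """Hypothetical rule: identify only above the highest known non-match
    score. This yields no false positives on the reference set itself,
    not a guaranteed rate on new marks."""
    return max(non_match_scores)
```

Setting the threshold at the highest known non-match score shows why baselines matter. The more non-matching pairs a reference set contains, the higher that maximum tends to climb, which is the multiple comparisons problem expressed in a single number.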

This approach allows for the derivation of likelihood ratios that assign weight to forensic evidence, providing a transparent and statistically valid framework for addressing the multiple comparisons problem [27]. The machine learning component enhances performance by learning optimal weightings for different correlation metrics based on empirical data.

Figure 1: Automated toolmark analysis workflow integrating machine learning for statistical decision-making

Statistical Framework for Multiple Comparisons

Addressing the multiple comparisons problem requires specialized statistical frameworks that control for increased Type I errors (false positives) when conducting numerous simultaneous tests. Automated toolmark analysis implements several key approaches:

- Likelihood Ratio Framework: Provides a continuous measure of evidence strength rather than a binary decision, incorporating both within-source and between-source variability [27]

- Distribution Fitting: Beta distributions are fitted to known match and known non-match densities, allowing derivation of likelihood ratios for new toolmark pairs [28]

- Cross-Validation: Classification accuracy is assessed on data withheld from model fitting, with a reported cross-validated sensitivity of 98% and specificity of 96% for screwdriver marks [28]

- Baseline Establishment: Using distributions of similarity metrics for known matching and non-matching comparisons to establish statistically valid identification baselines [26]
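The sketch below illustrates distribution fitting and likelihood ratio derivation. It fits Beta distributions to known-match and known-non-match similarity scores. It then returns the ratio of the two densities at a new score. Beta fitting follows the description of [28]. The following are assumptions made here for illustration:

- the method-of-moments estimator (the cited work may use a different one)
- the function names
- the example scores

```python
import math
from statistics import fmean, variance


def fit_beta_moments(scores):
    """Method-of-moments Beta(alpha, beta) fit to scores in (0, 1)."""
    m = fmean(scores)
    v = variance(scores)  # sample variance
    if not 0 < m < 1 or v <= 0 or v >= m * (1 - m):
        raise ValueError("scores are not compatible with a Beta distribution")
    common = m * (1 - m) / v - 1
    return m * common, (1 - m) * common


def beta_pdf(x, alpha, beta):
    """Beta density evaluated on the log scale for numerical stability."""
    log_norm = math.lgamma(alpha + beta) - math.lgamma(alpha) - math.lgamma(beta)
    return math.exp(log_norm + (alpha - 1) * math.log(x) + (beta - 1) * math.log(1 - x))


def score_likelihood_ratio(score, km_params, knm_params):
    """Score-based LR: known-match density over known-non-match density."""
    return beta_pdf(score, *km_params) / beta_pdf(score, *knm_params)


# Hypothetical similarity scores in (0, 1) from a reference set.
km = fit_beta_moments([0.82, 0.88, 0.91, 0.79, 0.86, 0.90])
knm = fit_beta_moments([0.21, 0.35, 0.28, 0.40, 0.25, 0.31])
print(score_likelihood_ratio(0.85, km, knm))  # well above 1: supports same source
print(score_likelihood_ratio(0.30, km, knm))  # below 1: supports different sources
```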

These statistical controls specifically address the multiple comparisons problem by quantifying and accounting for the increased risk of false associations when conducting numerous tests, thereby enhancing the scientific validity of conclusions [27] [28].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and instruments for automated toolmark analysis

| Item | Function | Application Example |
|---|---|---|
| Confocal Microscope | High-resolution 3D topography acquisition | Non-contact measurement of toolmark surface topography [26] |
| Stylus Profilometer | 2D cross-section profile measurement | Quantitative assessment of striated mark depth and spacing [26] |
| Automated Comparison Algorithms | Objective similarity assessment | Calculating correlation metrics between toolmark pairs [28] |
| Machine Learning Algorithms | Likelihood ratio computation | Combining multiple comparison metrics for evidence weighting [27] |
| Consecutively Manufactured Tools | Reference dataset creation | Studying toolmark variability within and between tools [26] |
| Standardized Sample Preparation | Controlled mark generation | Reproducible creation of toolmarks with controlled variables [26] |
| Statistical Software Packages | Distribution analysis and error rate calculation | Fitting Beta distributions to known match/non-match densities [28] |

Cross-Disciplinary Implications for Forensic Method Validation

The automated approaches developed for toolmark examination provide valuable frameworks for addressing the multiple comparisons problem across forensic disciplines. The implementation of objective similarity metrics, likelihood ratios, and statistically quantified error rates establishes a template for enhancing scientific validity in other pattern evidence domains [29].

Recent research initiatives focus on developing statistically rigorous methods for computing score-based likelihood ratios for impression and pattern evidence, which would directly address multiple comparison challenges across disciplines [29]. These approaches aim to provide forensic examiners with quantitative results to bolster what have traditionally been subjective opinions, while simultaneously controlling for statistical artifacts arising from multiple testing scenarios.

The transition from subjective to objective comparison methods represents a paradigm shift in forensic science, responding to critiques from scientific advisory bodies that have questioned the reliability of traditional examination methods [25] [26]. By explicitly addressing the multiple comparisons problem through statistical frameworks, automated toolmark analysis demonstrates a path forward for enhancing forensic science validity across multiple disciplines.

Figure 2: Logical framework addressing the multiple comparisons problem in toolmark analysis

Automated toolmark analysis methodologies demonstrate significant advances in addressing the multiple comparisons problem that challenges traditional forensic examination. Through the implementation of objective similarity metrics, likelihood ratio frameworks, and statistically quantified error rates, these approaches enhance scientific validity while providing transparent accountability for comparison results. The reported misleading evidence rates of 0-4% for automated plier mark comparisons and the 4% false positive rate (96% specificity) for screwdriver mark analysis give these methods an empirical error-rate basis. Traditional examiner-based methods, whose error rates have not been fully quantified, currently lack such a basis [27] [28].

The cross-disciplinary implications of these statistical frameworks extend beyond toolmark examination to other pattern evidence domains, offering a template for addressing fundamental validation challenges in forensic science. As the field continues evolving toward more objective methodologies, the systematic approach to managing multiple comparisons developed in toolmark analysis provides an essential foundation for enhancing reliability across forensic disciplines.

Proficiency Testing (PT) serves as a critical tool for assessing the performance of forensic laboratories and examiners. Within a broader thesis on the comparative error rates of forensic methods at different Technology Readiness Levels (TRL), understanding the role of PT is paramount. PT provides an external quality assessment mechanism, enabling laboratories to benchmark their performance against established standards and peer institutions [30]. The fundamental premise is that the reliability and probative value of forensic science evidence are inextricably linked to the rates at which examiners make errors [31]. For jurors and other stakeholders, rationally assessing the significance of a reported forensic match requires information about the false positive error rates associated with the methodology [31].
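A short calculation shows why the false positive rate matters. Treat the examiner's reported match as the evidence. Its likelihood ratio is then P(reported match | same source) divided by P(reported match | different sources), that is (1 - FNR) / FPR. Because the numerator cannot exceed 1, the false positive rate caps the weight a reported match can carry. The sketch ignores inconclusive outcomes, and the rates are illustrative rather than drawn from any study.

```python
def reported_match_lr(false_positive_rate: float, false_negative_rate: float = 0.0) -> float:
    """Likelihood ratio of a reported match, ignoring inconclusive outcomes.

    Numerator:   P(report match | same source)       = 1 - FNR
    Denominator: P(report match | different sources) = FPR
    """
    if not 0.0 < false_positive_rate <= 1.0:
        raise ValueError("false positive rate must be in (0, 1]")
    if not 0.0 <= false_negative_rate <= 1.0:
        raise ValueError("false negative rate must be in [0, 1]")
    return (1.0 - false_negative_rate) / false_positive_rate


# Illustrative rates only: with FNR = 0 the LR equals its upper bound 1 / FPR.
for fpr in (0.1, 0.01, 0.001):
    print(f"FPR = {fpr}: LR of a reported match = {reported_match_lr(fpr):.0f}")
```

Under this model, a method with a 1% false positive rate can support a reported match by a factor of at most 100.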

PT schemes typically involve characterized samples designed to represent the types of samples, matrices, and targets analyzed in forensic laboratories [30]. These samples contain measured values not disclosed to participants, functioning as blind samples that mimic real casework. Participants analyze these samples using their standard protocols and report results to the PT provider for confidential evaluation and grading against established reference values [30]. This process facilitates interlaboratory comparison and helps identify potential systematic issues within laboratory processes.
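In quantitative PT schemes, grading against the reference value is commonly expressed as a z-score. The sketch below assumes the ISO 13528 convention: z = (x - X) / σ_pt. Under that convention, |z| ≤ 2 is satisfactory, 2 < |z| < 3 is questionable, and |z| ≥ 3 is unsatisfactory. Individual providers may grade differently. Qualitative conclusions such as identifications are graded by agreement with ground truth rather than by z-score. The values used are hypothetical.

```python
def pt_z_score(result: float, assigned_value: float, sigma_pt: float) -> float:
    """z = (x - X) / sigma_pt, where X is the assigned (reference) value and
    sigma_pt is the standard deviation for proficiency assessment."""
    if sigma_pt <= 0:
        raise ValueError("sigma_pt must be positive")
    return (result - assigned_value) / sigma_pt


def grade(z: float) -> str:
    """Conventional interpretation of |z| (ISO 13528-style thresholds)."""
    if abs(z) <= 2.0:
        return "satisfactory"
    if abs(z) < 3.0:
        return "questionable"
    return "unsatisfactory"


# Hypothetical quantitative PT round: assigned value 0.080, sigma_pt 0.004.
for reported in (0.081, 0.089, 0.095):
    z = pt_z_score(reported, 0.080, 0.004)
    print(f"{reported:.3f}: z={z:+.2f} -> {grade(z)}")
```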

This article examines both the utility and limitations of PT as a mechanism for estimating error rates across forensic disciplines, with particular attention to how these limitations manifest differently across TRLs. We explore experimental data from multiple forensic domains, analyze methodological frameworks for PT implementation, and discuss the implications for error rate estimation in both established and emerging forensic methods.

The Utility of Proficiency Testing in Error Rate Estimation

Core Functions and Implementation

Proficiency Testing serves multiple essential functions within forensic science quality systems. Primarily, it provides an external validation of laboratory competency, supplementing internal quality control measures. For accredited laboratories, participation in PT is often mandatory for maintaining accreditation status under standards such as ISO/IEC 17025, with PT providers themselves requiring accreditation under ISO/IEC 17043 [30]. This standardized framework ensures that PT schemes meet minimum quality requirements and provide meaningful assessments.

The statistical foundation of PT enables quantitative error rate estimation. Laboratories evaluate their performance using four concepts [30]:

- accuracy: closeness to the true value
- precision: closeness of repeated measurements to one another
- bias: systematic deviation from the true value
- error: the difference between a measurement and the true value

This statistical rigor allows for the calculation of empirical error rates that can be tracked over time, revealing trends in laboratory performance and method reliability.
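The sketch below computes bias and precision for repeated measurements and an error rate tracked across PT rounds. The PT history is hypothetical. The Wilson score interval is one reasonable way to attach uncertainty to an error proportion; it is an assumption here, not something the source prescribes. Uncertainty matters most when a round has few or zero errors.

```python
import math
from statistics import fmean, stdev


def bias_and_precision(measurements, reference):
    """Bias: mean deviation from the reference value. Precision: sample SD."""
    return fmean(measurements) - reference, stdev(measurements)


def wilson_interval(errors: int, trials: int, z: float = 1.96):
    """Approximate 95% Wilson score interval for an error proportion."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical PT history: (year, erroneous conclusions, total conclusions).
history = [(2021, 3, 120), (2022, 1, 150), (2023, 0, 140)]
for year, errors, trials in history:
    low, high = wilson_interval(errors, trials)
    print(f"{year}: rate={errors / trials:.3f}, 95% CI=({low:.3f}, {high:.3f})")
```

A round with zero observed errors still yields a non-zero upper bound: about 2.7% for 0 errors in 140 conclusions. A clean PT record therefore does not establish a zero error rate.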