From Lab to Crime Lab: Implementing TRL-Driven Forensic Biology Evidence Screening Technologies


Abstract

This article provides a comprehensive framework for researchers, scientists, and forensic development professionals on the implementation of Technology Readiness Level (TRL) frameworks for forensic biology evidence screening. It explores the foundational research priorities set by national institutes, details cutting-edge methodological applications from spectroscopy to genomics, addresses critical troubleshooting for real-world lab integration and backlogs, and establishes validation protocols against rigorous quality assurance standards. Together, these elements offer a strategic roadmap for advancing forensic biology from research to reliable practice, with significant implications for the efficiency and integrity of the criminal justice system.

The Strategic Roadmap: National Priorities and Core Concepts for Forensic Biology Screening

Understanding the NIJ's Strategic Research Plan for Forensic Science (2022-2026)

The National Institute of Justice (NIJ) Forensic Science Strategic Research Plan for 2022-2026 provides a comprehensive framework to strengthen the quality and practice of forensic science through targeted research and development. This plan addresses critical opportunities and challenges faced by the forensic science community, emphasizing collaborative partnerships between government, academic, and industry sectors to advance the field [1]. The strategic agenda is particularly relevant for forensic biology evidence screening technology implementation, as it directs research investments toward overcoming current limitations in evidence processing, interpretation, and validation.

NIJ's forensic science mission focuses on enhancing forensic practice through scientific innovation and information exchange, with specific emphasis on developing highly discriminating, accurate, reliable, and cost-effective methods for physical evidence analysis [2]. For researchers and practitioners implementing forensic biology evidence screening technologies, this plan establishes clear priorities for validating emerging methods, integrating advanced technologies, and ensuring the reliable adoption of new protocols into operational workflows.

Strategic Priority I: Advance Applied Research and Development in Forensic Science

Strategic Priority I focuses on meeting practitioner needs through applied research and development, resulting in improved procedures, methods, processes, devices, and materials. This priority is particularly relevant for evidence screening technology implementation as it addresses specific technical challenges encountered in operational forensic biology laboratories.

Key Objectives for Forensic Biology Evidence Screening

Table 1: Strategic Priority I Objectives Relevant to Evidence Screening Technologies

| Objective Category | Specific Research Objectives | Relevance to Evidence Screening |
|---|---|---|
| Application of Existing Technologies | Machine learning methods for forensic classification; Tools increasing sensitivity and specificity | AI-driven forensic workflows; Improved evidence triaging |
| Novel Technologies & Methods | Differentiation techniques for biological evidence; Investigation of novel evidence aspects | Body fluid identification; Microbiome analysis |
| Evidence Differentiation | Detection/identification during collection; Differentiation in complex matrices | Mixture deconvolution; Secondary transfer understanding |
| Expedited Information Delivery | Expanded triaging tools; Workflows for investigative enhancement | Rapid DNA technologies; Field-deployable systems |
| Automated Support Tools | Objective methods supporting interpretations; Technology for complex mixture analysis | Probabilistic genotyping software; AI for mixture interpretation |

Multiple objectives within Priority I directly support the advancement of forensic biology evidence screening technologies. The plan emphasizes developing tools that increase sensitivity and specificity of forensic analysis, which aligns with the need for more precise evidence screening platforms [1]. Additionally, the focus on machine learning methods for forensic classification enables more automated and objective screening processes, reducing subjective interpretation and increasing throughput efficiency.

The NIJ plan specifically identifies the need for biological evidence screening tools that can identify areas on evidence with DNA, estimate time since sample deposition, detect single source versus mixed samples, determine proportions of contributors, or identify sex of contributors [3]. These capabilities represent significant advancements beyond current screening methods and would substantially enhance efficiency in forensic biology workflows. The plan also highlights the importance of mixture interpretation algorithms for all forensically relevant markers and machine learning tools for mixed DNA profile evaluation, both critical for implementing next-generation evidence screening technologies [3].

Strategic Priority II: Support Foundational Research in Forensic Science

Strategic Priority II addresses the fundamental scientific basis of forensic analysis, ensuring methods are valid, reliable, and scientifically sound. For evidence screening technology implementation, this priority provides the critical foundation for validating new technologies and establishing their limitations.

Foundational Research Objectives for Technology Implementation

Table 2: Foundational Research Needs for Evidence Screening Validation

| Research Category | Specific Research Needs | Technology Implementation Relevance |
|---|---|---|
| Validity & Reliability | Understanding fundamental scientific basis; Quantifying measurement uncertainty | Establishing error rates; Defining performance metrics |
| Decision Analysis | Measuring accuracy/reliability (black box studies); Identifying sources of error (white box studies) | Validation protocols; User proficiency testing |
| Evidence Limitations | Understanding value beyond individualization; Activity level propositions | Contextual interpretation; Transfer persistence studies |
| Stability & Transfer | Effects of environmental factors; Primary vs. secondary transfer; Storage condition impacts | Evidence preservation protocols; Contamination prevention |

Foundational research is particularly crucial for emerging evidence screening technologies, as it establishes the scientific validity and reliability thresholds necessary for courtroom admissibility. The plan emphasizes the need to quantify measurement uncertainty in forensic analytical methods, a critical requirement for implementing new screening technologies whose error rates may not be fully characterized [1]. This includes understanding the fundamental scientific basis of forensic science disciplines, which provides the theoretical framework for developing and validating innovative screening platforms.

For evidence screening implementation, decision analysis research represents a particularly valuable component, including measurements of accuracy and reliability through black box studies and identification of sources of error through white box studies [1]. These studies are essential for establishing standard operating procedures, defining competency requirements for operators, and developing appropriate quality control measures for new screening technologies. Additionally, research on the stability, persistence, and transfer of evidence provides critical context for interpreting screening results, especially for sensitive techniques capable of detecting minute quantities of biological material [1].

Experimental Protocols for Evidence Screening Technology Validation

Protocol 1: Validation Framework for Novel Evidence Screening Platforms

Purpose: Establish standardized validation protocols for emerging forensic biology evidence screening technologies, ensuring reliability, reproducibility, and admissible results.

Materials and Equipment:

- Reference biological samples (blood, saliva, semen, touch DNA)

- Mock evidence items (fabric swatches, hard surfaces)

- Candidate screening technology/platform

- Standard comparison methods (current laboratory protocols)

- Statistical analysis software

- Data recording and management system

Procedure:

- Sensitivity Determination:
  - Prepare dilution series of reference biological samples
  - Apply candidate screening technology to determine limit of detection
  - Compare results with standard methods
  - Establish minimum sample requirements for reliable detection
- Specificity Assessment:
  - Test technology against common interferents (soil, dyes, other body fluids)
  - Evaluate cross-reactivity with non-human biological material
  - Determine false positive and false negative rates
- Reproducibility Testing:
  - Conduct intra-operator repeatability testing (n=20)
  - Perform inter-operator reproducibility testing (multiple analysts, n=20 each)
  - Assess inter-instrument reproducibility where applicable
  - Calculate precision metrics and confidence intervals
- Robustness Evaluation:
  - Introduce minor variations in protocol (temperature, humidity, processing time)
  - Assess impact on results and establish operating parameters
  - Determine environmental tolerances for field-deployable systems
- Comparison Studies:
  - Process identical sample sets with candidate and standard technologies
  - Perform statistical analysis of concordance
  - Identify and investigate discrepant results

Data Analysis: Calculate sensitivity, specificity, positive predictive value, negative predictive value, and overall accuracy with 95% confidence intervals. Perform statistical testing to establish significant differences between methods. Document all validation data for technology transition and training purposes.
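The metrics named in the Data Analysis step can be computed from a 2x2 table of candidate results against ground truth. The sketch below is illustrative only: the function names and the example counts are hypothetical, and the Wilson score interval is one common choice for a 95% confidence interval on a proportion, not a method the protocol prescribes.

```python
from math import sqrt

Z_95 = 1.959964  # two-sided 95% quantile of the standard normal distribution


def wilson_interval(successes: int, total: int, z: float = Z_95) -> tuple[float, float]:
    """Wilson score confidence interval for a binomial proportion."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    centre = (p + z**2 / (2 * total)) / denom
    half_width = z * sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


def screening_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, tuple[float, float, float]]:
    """Return (estimate, ci_low, ci_high) for each validation metric."""
    # Each metric is a proportion: (numerator count, denominator count).
    counts = {
        "sensitivity": (tp, tp + fn),
        "specificity": (tn, tn + fp),
        "ppv": (tp, tp + fp),
        "npv": (tn, tn + fn),
        "accuracy": (tp + tn, tp + fp + tn + fn),
    }
    return {name: (k / n, *wilson_interval(k, n)) for name, (k, n) in counts.items()}


if __name__ == "__main__":
    # Hypothetical counts from a mock validation run, not real data.
    for name, (est, low, high) in screening_metrics(tp=90, fp=5, tn=95, fn=10).items():
        print(f"{name}: {est:.3f} (95% CI {low:.3f} to {high:.3f})")
```

Reporting the interval alongside each point estimate makes the effect of sample size visible, which matters when a validation set contains only a few dozen samples per body fluid or substrate.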

Protocol 2: Implementation Framework for AI-Assisted Mixture Interpretation

Purpose: Establish standardized protocols for implementing and validating AI-assisted tools for forensic mixture interpretation within evidence screening workflows.

Materials and Equipment:

- Prepared mixed DNA samples with known contributor proportions

- AI-assisted interpretation software/platform

- Traditional probabilistic genotyping software (comparator)

- Computing infrastructure meeting software specifications

- Data security and backup systems

Procedure:

- Software Verification:
  - Confirm installation and configuration according to developer specifications
  - Run internal validation tests provided by developer
  - Verify algorithm performance against benchmark datasets
- Threshold Establishment:
  - Process known mixture samples across concentration ranges
  - Determine optimal analytical thresholds for contributor detection
  - Establish interpretation thresholds for reliable profile inclusion
- Performance Characterization:
  - Evaluate software accuracy with progressively complex mixtures (2-5 contributors)
  - Assess performance with degraded and low-template samples
  - Determine computational requirements and processing times
- Comparison Studies:
  - Process identical sample sets with AI-assisted and traditional methods
  - Compare results for concordance across quantitative and qualitative metrics
  - Assess workflow efficiency improvements
- Implementation Planning:
  - Develop standard operating procedures incorporating new tool
  - Establish training requirements and competency assessment
  - Define quality assurance measures and ongoing monitoring

Data Analysis: Document number of contributors correctly identified, allele detection rates, mixture proportion estimates, and computational processing times. Perform statistical analysis of results compared to known ground truth and traditional methods.
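As a rough illustration of how the ground-truth comparison in this Data Analysis step might be tabulated, the sketch below summarizes contributor-count accuracy, allele detection rate, and mixture proportion error over a set of known mixtures. The `MixtureResult` record, its field names, and the rank-wise pairing of proportions (major contributor to minor) are assumptions made for this example; they do not reflect the output format of any particular interpretation software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MixtureResult:
    """One known mixture and what the software reported (hypothetical schema)."""
    true_contributors: int
    called_contributors: int
    true_proportions: tuple[float, ...]
    estimated_proportions: tuple[float, ...]
    true_alleles: frozenset[str]
    called_alleles: frozenset[str]


def summarize(results: list[MixtureResult]) -> dict[str, Optional[float]]:
    if not results:
        raise ValueError("no results to summarize")
    noc_correct = sum(r.true_contributors == r.called_contributors for r in results)

    errors: list[float] = []
    for r in results:
        # Proportion error is only scored when the number of estimated
        # proportions matches the number of true contributors.
        if len(r.true_proportions) != len(r.estimated_proportions):
            continue
        # Assumption: contributors are paired by rank, largest share first.
        pairs = zip(sorted(r.true_proportions, reverse=True),
                    sorted(r.estimated_proportions, reverse=True))
        errors.extend(abs(t - e) for t, e in pairs)

    expected = sum(len(r.true_alleles) for r in results)
    detected = sum(len(r.true_alleles & r.called_alleles) for r in results)
    return {
        "contributor_count_accuracy": noc_correct / len(results),
        "proportion_mae": sum(errors) / len(errors) if errors else None,
        "allele_detection_rate": detected / expected if expected else None,
    }
```

Running the same summary on the comparator probabilistic genotyping output gives a like-for-like view of the two methods against the same ground truth.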

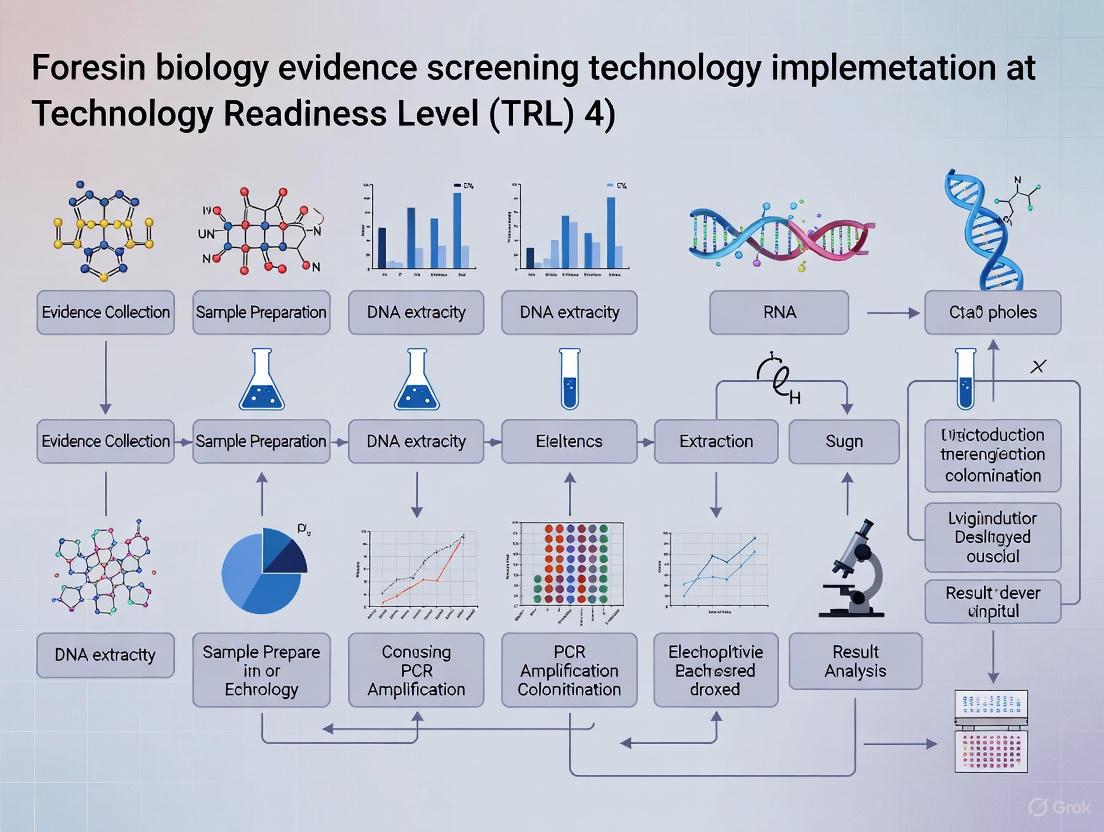

Research and Development Workflow for Evidence Screening Technologies

The following diagram illustrates the complete research, development, and implementation pathway for forensic biology evidence screening technologies as guided by the NIJ Strategic Research Plan:

Technology Development Pathway: This workflow illustrates the evidence screening technology development pathway from need identification through impact assessment, showing how NIJ's strategic priorities guide research activities at each stage while maintaining feedback mechanisms for continuous improvement.

The Scientist's Toolkit: Research Reagent Solutions for Evidence Screening

Table 3: Essential Research Reagents and Materials for Evidence Screening Technology Development

| Reagent/Material | Function/Application | Implementation Considerations |
|---|---|---|
| Magnetic Bead-based Extraction Kits | DNA purification from complex biological matrices; Integration with microfluidic systems | Enables automation; Reduces manual handling contamination; Improves yield from low-template samples |
| Microfluidic Chip Platforms | Miniaturized DNA analysis; Portable forensic technology development | Enables rapid, on-site DNA extraction; Reduces reagent consumption; Requires specialized instrumentation |
| Stable Fluorescent Dyes & Tags | Evidence visualization; Body fluid identification and differentiation | Enables non-destructive testing; Must maintain evidence integrity for subsequent DNA analysis |
| Probabilistic Genotyping Software | Complex mixture interpretation; Statistical weight of evidence calculation | Requires extensive validation; Computational resource intensive; Training essential for proper use |
| Reference DNA Standards | Method validation; Quality control; Instrument calibration | Essential for quantitative assays; Must represent diverse population groups; Enables inter-laboratory comparison |
| Rapid DNA Amplification Kits | Field-deployable DNA analysis; Expedited processing workflows | Reduced processing time; May have limitations with complex or degraded samples |
| Surface Sampling Devices | Efficient DNA recovery from various evidence substrates | Material composition affects DNA adsorption/release; Standardization needed across evidence types |
| Stabilization Buffers & Preservation Media | Maintain DNA integrity during storage and transport | Critical for field collections; Temperature stability requirements vary; Affects downstream analysis |

Strategic Integration with Forensic Biology Evidence Screening

The NIJ Strategic Research Plan provides a comprehensive framework for advancing forensic biology evidence screening technologies through coordinated research activities across multiple domains. For researchers and implementers, understanding the interconnected nature of these strategic priorities is essential for developing technologies that are not only scientifically sound but also operationally viable.

The emphasis on workforce development within Strategic Priority IV ensures that the implementation of new evidence screening technologies includes appropriate training, competency assessment, and continuing education programs [1]. This human factor is critical for successful technology transition, as even the most advanced screening platforms require skilled operators to generate reliable, interpretable results. Similarly, Strategic Priority V's focus on coordination across communities of practice facilitates the information sharing and collaborative partnerships necessary for standardized implementation of new screening technologies across diverse laboratory environments [1].

For forensic biology evidence screening technology implementation specifically, the NIJ plan addresses critical needs such as methods to associate cell type with DNA profile, technologies to improve DNA recovery, and approaches to optimize DNA processing workflows [3]. These directed research priorities provide clear guidance for developers and implementers regarding the operational challenges most needing innovative solutions. By aligning evidence screening technology development with these strategically identified needs, researchers can maximize the impact and adoption potential of their work.

Defining Technology Readiness Levels (TRL) in a Forensic Biology Context

Technology Readiness Levels (TRL) are a systematic metric used to assess the maturity level of a particular technology. The scale consists of nine levels, with TRL 1 being the lowest (basic principles observed) and TRL 9 being the highest (actual system proven in operational environment) [4]. This standardized approach provides a common framework for researchers, developers, and funding agencies to communicate about technological development status. Originally developed by NASA, the TRL framework has been widely adopted across multiple sectors including aerospace, energy, and healthcare [5]. In forensic biology, implementing new evidence screening technologies requires careful navigation through each TRL stage to ensure reliability, validity, and eventual admissibility in legal proceedings [6].

The forensic science landscape is undergoing significant transformation, with forensic biology laboratories increasingly processing complex biological evidence while facing heightened scrutiny regarding scientific integrity and evidence reliability [7]. This environment makes the TRL framework particularly valuable for guiding the development and implementation of new technologies in a manner that satisfies both scientific and legal standards. The adoption of emerging technologies in forensic biology, such as next-generation sequencing (NGS), rapid DNA analysis, and artificial intelligence-driven workflows, must be carefully managed through structured readiness assessments to meet the rigorous standards required for courtroom evidence [6] [8].

TRL Framework Adaptation for Forensic Biology

The generalized TRL scale requires contextual adaptation for forensic biology applications to address field-specific requirements, including validation standards, contamination control, and legal admissibility considerations. The table below outlines a tailored TRL framework for forensic biology evidence screening technologies.

Table 1: Technology Readiness Levels Adapted for Forensic Biology Context

| TRL | Definition | Forensic Biology Specific Criteria | Validation Requirements |
|---|---|---|---|
| 1 | Basic principles observed and reported | Literature review of fundamental biological principles; identification of potential forensic markers | Review of scientific knowledge base; assessment of foundational research [9] |
| 2 | Technology concept formulated | Practical application of principles to forensic scenarios; initial hypothesis for evidence screening | Concept generation; development of experimental designs [9] |
| 3 | Analytical and experimental proof-of-concept | Laboratory studies with controlled samples; initial demonstration of forensic applicability | Characterization of preliminary candidates; feasibility demonstration [9] |
| 4 | Component validation in laboratory environment | Basic forensic components integrated; testing with mock biological evidence | Optimization for assay development; finalization of critical design requirements [9] |
| 5 | Component validation in relevant environment | Breadboard system tested with forensically relevant samples; integration of key subsystems | Product development of reagents, components, and subsystems; pilot scale manufacturing preparations [9] |
| 6 | System model demonstration in relevant environment | Prototype testing with authentic case-type samples; evaluation in simulated forensic laboratory | System integration and testing with alpha/beta instruments; pilot lot production [4] [9] |
| 7 | Prototype demonstration in operational environment | Working prototype demonstrated in forensic laboratory setting; comparison with standard methods | Analytical verification with contrived and retrospective samples; preparation for clinical/validation studies [9] |
| 8 | Actual system completed and qualified | Technology validated for specific forensic applications; establishment of standard operating procedures | Clinical studies/evaluation; FDA clearance/approval for diagnostic components; finalization of GMP manufacturing [9] |
| 9 | Actual system proven in operational setting | Routine use in casework; successful challenge in legal proceedings; integration into quality management systems | Actual technology proven through successful deployment in operational setting [4] |
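For tracking purposes, the nine-level scale in the table can be represented as a small data structure. The sketch below is a hypothetical illustration: the enum member names are shorthand invented here, and `assess_trl` encodes the common reading that a technology sits at the highest level for which every lower level has also been satisfied.

```python
from enum import IntEnum
from typing import Mapping, Optional


class TRL(IntEnum):
    """Nine-level scale; member names are shorthand for the table's definitions."""
    BASIC_PRINCIPLES = 1
    CONCEPT_FORMULATED = 2
    PROOF_OF_CONCEPT = 3
    LAB_COMPONENT_VALIDATION = 4
    RELEVANT_ENV_COMPONENT_VALIDATION = 5
    RELEVANT_ENV_SYSTEM_DEMO = 6
    OPERATIONAL_PROTOTYPE_DEMO = 7
    SYSTEM_QUALIFIED = 8
    OPERATIONALLY_PROVEN = 9


def assess_trl(evidence: Mapping[TRL, bool]) -> Optional[TRL]:
    """Return the highest level reached without skipping any lower level.

    `evidence` maps each level to whether its criteria have been documented
    as met. Levels missing from the mapping count as not met.
    """
    achieved: Optional[TRL] = None
    for level in TRL:  # IntEnum iterates in definition order, 1 through 9
        if not evidence.get(level, False):
            break
        achieved = level
    return achieved


if __name__ == "__main__":
    # Hypothetical status: levels 1-4 documented, level 5 outstanding.
    status = {TRL(i): True for i in range(1, 5)}
    status[TRL.RELEVANT_ENV_SYSTEM_DEMO] = True  # does not count while level 5 is open
    print(assess_trl(status))
```

Treating the levels as cumulative keeps a later-stage demonstration from masking an unaddressed validation gap at an earlier stage.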

Experimental Protocols for TRL Advancement in Forensic Biology

Protocol for TRL 3-4 Transition: Proof-of-Concept Validation

Objective: To establish analytical proof-of-concept for a novel forensic biology screening technology using controlled samples and laboratory conditions.

Materials and Reagents:

- Reference DNA standards (purified human genomic DNA)

- Mock biological samples (saliva, blood, touch DNA samples on various substrates)

- Extraction and purification kits (magnetic bead-based systems recommended)

- Positive and negative control materials

- All necessary buffers and solutions

Procedure:

- Prepare dilution series of reference DNA standards covering expected forensic range (0.1-50 ng/μL)

- Spike mock samples with known quantities of DNA standards

- Process samples through the proposed screening technology following manufacturer's instructions

- Analyze results for sensitivity, specificity, and reproducibility

- Compare results with current standard methods (e.g., conventional PCR, electrophoresis)

- Perform statistical analysis to determine significant differences (p < 0.05 considered significant)

- Document all procedures, results, and observations in controlled laboratory notebooks

Acceptance Criteria:

- Technology demonstrates ability to detect biological material at forensically relevant concentrations

- False positive rate < 5% in negative controls

- Coefficient of variation < 15% for replicate analyses
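The two numeric acceptance criteria above lend themselves to a simple automated check. The following sketch assumes replicate measurements are available as a list of numbers and negative-control outcomes as a list of booleans (True meaning a positive call); the function names and example values are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def coefficient_of_variation(values: Sequence[float]) -> float:
    """Sample coefficient of variation, expressed as a percentage."""
    if len(values) < 2:
        raise ValueError("at least two replicates are required")
    centre = mean(values)
    if centre == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return 100 * stdev(values) / centre


def false_positive_rate(negative_control_calls: Sequence[bool]) -> float:
    """Fraction of negative controls that the technology called positive."""
    if not negative_control_calls:
        raise ValueError("no negative controls supplied")
    return sum(negative_control_calls) / len(negative_control_calls)


def check_acceptance(replicates: Sequence[float],
                     negative_control_calls: Sequence[bool],
                     max_cv_percent: float = 15.0,
                     max_fpr: float = 0.05) -> dict[str, object]:
    """Apply the CV < 15% and false positive rate < 5% criteria."""
    cv = coefficient_of_variation(replicates)
    fpr = false_positive_rate(negative_control_calls)
    return {
        "cv_percent": cv,
        "false_positive_rate": fpr,
        "passed": cv < max_cv_percent and fpr < max_fpr,
    }


if __name__ == "__main__":
    # Hypothetical replicate readings and 20 clean negative controls.
    print(check_acceptance([10.0, 11.0, 9.0, 10.0], [False] * 20))
```

With only a handful of negative controls, an observed rate of zero does not by itself demonstrate a true rate below 5%, so the number of controls run should be reported with the result.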

Protocol for TRL 5-6 Transition: Simulated Environment Testing

Objective: To validate technology components in a simulated forensic environment using forensically relevant samples and conditions.

Materials and Reagents:

- Authentic-type forensic samples (bloodstains, saliva swabs, touched objects)

- Environmentally challenged samples (UV-exposed, humidified, heated)

- Inhibitor-containing samples (soil, dye, humic acid)

- Miniaturized extraction kits for field deployment

- Portable power sources and environmental control equipment

Procedure:

- Collect authentic-type samples using standard forensic collection techniques

- Subject sample subsets to environmental challenges reflecting casework conditions

- Process samples using the proposed technology in a simulated operational environment

- Incorporate intentional contamination controls to assess robustness

- Train multiple operators with varying experience levels to assess usability

- Compare results with laboratory-based standard methods

- Assess ease of use, processing time, and required technical expertise

- Document any procedural challenges or failure modes

Acceptance Criteria:

- Technology performs reliably with environmentally challenged samples

- Multiple operators can achieve consistent results with minimal training

- Technology demonstrates superiority or equivalence to existing methods in at least one key parameter

Protocol for TRL 7-8 Transition: Operational Environment Qualification

Objective: To qualify the complete technology system in an operational forensic laboratory environment following established quality standards.

Materials and Reagents:

- Casework-type samples (blind-coded)

- ISO/IEC 17025 compliant quality control materials

- Documentation systems for chain of custody

- Reference standard methods and equipment

- Proficiency test materials

Procedure:

- Establish technology within accredited forensic laboratory workflow

- Process blind-coded casework-type samples alongside routine casework

- Implement full chain-of-custody documentation and evidence handling procedures

- Conduct comparative analysis with standard methods using statistical equivalence testing

- Perform robustness testing under varying laboratory conditions

- Validate according to SWGDAM guidelines or equivalent international standards

- Assess data integration with laboratory information management systems (LIMS)

- Document all validation data for regulatory submission purposes

Acceptance Criteria:

- Technology meets all relevant forensic validation standards (SWGDAM, ENFSI, etc.)

- Successful integration with laboratory quality management system

- Demonstration of fitness-for-purpose for intended forensic applications
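The statistical equivalence testing called for in the procedure is often carried out with two one-sided tests (TOST) on paired differences between the candidate and the standard method. The sketch below uses a large-sample normal approximation so that it needs only the standard library; for small sample sizes a t-distribution would be the more appropriate reference. The function name, the equivalence margin, and the example data are all assumptions for illustration, and the margin in particular has to be justified by the laboratory.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev
from typing import Sequence


def tost_paired(differences: Sequence[float], margin: float, alpha: float = 0.05) -> dict[str, object]:
    """Large-sample TOST for paired differences (candidate minus standard).

    Equivalence is concluded when the mean difference is shown to lie
    inside (-margin, +margin) at significance level alpha.
    """
    n = len(differences)
    if n < 2:
        raise ValueError("at least two paired differences are required")
    if margin <= 0:
        raise ValueError("margin must be positive")
    standard_error = stdev(differences) / sqrt(n)
    if standard_error == 0:
        raise ValueError("differences have zero variance")
    d_bar = mean(differences)
    normal = NormalDist()
    # H0 (lower): true mean difference <= -margin
    p_lower = 1 - normal.cdf((d_bar + margin) / standard_error)
    # H0 (upper): true mean difference >= +margin
    p_upper = normal.cdf((d_bar - margin) / standard_error)
    p_value = max(p_lower, p_upper)  # both one-sided nulls must be rejected
    return {"mean_difference": d_bar, "p_value": p_value, "equivalent": p_value < alpha}


if __name__ == "__main__":
    # Hypothetical paired differences in quantitation results (ng/uL).
    diffs = [0.1, -0.1, 0.05, -0.05, 0.0, 0.02, -0.02, 0.08, -0.08, 0.0]
    print(tost_paired(diffs, margin=0.2))
```

Unlike a conventional difference test, a non-significant result here means equivalence was not demonstrated, which is the conservative outcome for a validation study.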

Technology Development Workflow

The following diagram illustrates the complete technology development pathway from basic research to operational implementation in forensic biology.

Technology Development Pathway in Forensic Biology

Forensic Biology Research Reagent Solutions

The successful development and implementation of forensic biology technologies requires specific research reagents and materials tailored to evidentiary applications. The table below details essential solutions for technology development across TRL stages.

Table 2: Key Research Reagent Solutions for Forensic Biology Technology Development

| Reagent/Material | Function | TRL Application Range | Forensic-Specific Considerations |
|---|---|---|---|
| Reference DNA Standards | Quantification calibration and method comparison | TRL 3-9 | Should include degraded DNA and low-copy number samples to mimic forensic evidence [8] |
| Mock Biological Samples | Controlled testing without evidentiary constraints | TRL 3-6 | Should include blood, saliva, semen, and touch DNA on various substrates [8] |
| Inhibitor Panels | Assessment of inhibition resistance | TRL 4-7 | Should include common forensic inhibitors: hematin, humic acid, tannins, dyes [8] |
| Stabilization Buffers | Preservation of biological integrity during testing | TRL 5-9 | Must maintain DNA stability under various storage conditions [8] |
| Rapid Extraction Kits | Efficient DNA recovery from complex substrates | TRL 5-9 | Should be optimized for minimal hands-on time and maximum yield from trace samples [8] |
| Portable Detection Kits | Field-based screening and analysis | TRL 6-9 | Must include environmental controls and user-friendly interface [8] |
| Quality Control Materials | Validation and proficiency testing | TRL 7-9 | Should be traceable to international standards and commutable with casework samples [7] |
| LIMS Integration Modules | Data management and chain of custody | TRL 7-9 | Must comply with forensic standards for data integrity and security [7] |

Legal and Regulatory Considerations for TRL Progression

Advancement through TRL stages in forensic biology requires careful attention to legal admissibility standards alongside technical validation. Courtroom admissibility standards, including the Frye Standard, the Daubert Standard, and Federal Rule of Evidence 702 in the United States and the Mohan Criteria in Canada, establish rigorous requirements for scientific evidence [6]. These standards demand that forensic technologies demonstrate reliability, known error rates, peer review and acceptance, and standardized protocols, all of which must be addressed throughout TRL progression.

The transition from TRL 7 to TRL 8 particularly requires thorough documentation of validation studies, error rate analysis, and proficiency testing to satisfy these legal standards [6]. Technologies intended for forensic implementation must also integrate with established quality management systems, typically based on ISO/IEC 17025 standards for forensic testing laboratories [7]. This integration includes implementing appropriate chain-of-custody procedures, evidence tracking systems, and casework documentation protocols that will withstand legal scrutiny.

The structured framework of Technology Readiness Levels provides an essential roadmap for developing and implementing novel technologies in forensic biology. By systematically addressing technical, operational, and legal requirements at each TRL stage, researchers and developers can effectively advance promising technologies from basic concept to court-admissible applications. The specialized protocols, validation criteria, and reagent solutions outlined in this document offer practical guidance for navigating this complex pathway while maintaining scientific rigor and legal compliance. As forensic biology continues to evolve with emerging technologies such as next-generation sequencing, rapid DNA analysis, and artificial intelligence applications, the TRL framework will remain crucial for ensuring that innovation translates into reliable, validated forensic practice.

Within the framework of Technology Readiness Level (TRL) research for implementing novel forensic biology evidence screening technologies, establishing foundational validity and reliability is a critical precursor to admissibility and widespread adoption. The forensic science community faces increasing scrutiny regarding the scientific underpinnings of its methods, particularly for non-DNA evidence [10]. Courts, guided by standards such as those from the Daubert ruling, require that expert testimony be based on scientifically valid methods with known error rates and adherence to established standards [10]. The National Institute of Justice (NIJ) explicitly identifies "Foundational Validity and Reliability of Forensic Methods" as a strategic priority, emphasizing the need to understand the fundamental scientific basis of forensic disciplines and to quantify measurement uncertainty [1]. This document outlines application notes and experimental protocols designed to address these core needs, providing a structured pathway for researchers to rigorously evaluate new forensic biology screening technologies before they are deployed in the criminal justice system.

Application Notes: Quantitative Frameworks for Evidence Analysis

The evolution from purely qualitative assessments to integrated quantitative and statistical frameworks is central to modern forensic biology. The following application notes summarize key methodologies and data for establishing the validity and reliability of biological evidence screening.

Analytical Techniques for Qualitative and Quantitative Analysis

Forensic analysis often involves a tandem approach, where qualitative analysis identifies the presence or absence of specific substances, and subsequent quantitative analysis determines the concentration or amount of those substances [11]. This is crucial in forensic biology for applications such as DNA quantitation, toxicology, and body fluid identification.

Table 1: Common Analytical Techniques in Forensic Biology

| Technique | Primary Use in Forensic Biology | Qualitative/Quantitative Application | Key Metric |
|---|---|---|---|
| Massively Parallel Sequencing (MPS) | STR/SNP sequencing, mixture deconvolution, body fluid ID [12] | Both | Detects sequence variation in alleles; high discrimination power [12] |
| Quantitative PCR (qPCR) | DNA quantitation, body fluid identification via RNA analysis [12] | Quantitative | Measures DNA concentration; determines presence of body fluid-specific RNA [13] [12] |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Drug detection, biomarker identification [11] [14] | Both (Confirmatory & Quantitative) | Identifies and quantifies compounds based on mass-to-charge ratio |
| Immunochromatography | Presumptive tests for drugs, specific proteins [14] | Qualitative | Rapid, visual identification of target substances |
| Probabilistic Genotyping | DNA mixture interpretation [12] | Quantitative (Statistical) | Calculates Likelihood Ratios (LR) to weigh evidence [12] |

Performance Metrics for Technology Validation

Evaluating a new forensic technology requires assessing its performance against benchmark standards. The following metrics are essential for establishing validity and reliability.

Table 2: Key Validation Metrics for Forensic Screening Technologies

| Performance Metric | Definition | Target for New Technology | Experimental Protocol Reference |

|---|---|---|---|

| Sensitivity | The minimum amount of target analyte (e.g., DNA, specific RNA) that can be reliably detected. | ≤10 pg DNA for advanced methods [12] | Protocol 3.1 |

| Specificity | The ability to distinguish the target analyte from similar, non-target substances. | >95% for body fluid-specific mRNA markers [12] | Protocol 3.2 |

| Accuracy/Precision | The closeness of agreement between a measured value and a true value (accuracy), and the repeatability of measurements (precision). | CV < 10% for quantitative measurements (precision) | Protocol 3.3 |

| Stochastic Threshold | The DNA quantity below which allele drop-out due to random effects becomes significant. | Determined empirically via validation studies [12] | Protocol 3.1 |

| Error Rate | The frequency of false positives and false negatives in controlled tests. | Must be established and reported for the method and lab [10] | Protocol 3.4 |

| Limit of Detection (LOD) | The lowest concentration of an analyte that can be consistently identified. | Defined by statistical models from validation data | Protocol 3.1 |

Experimental Protocols

Protocol 3.1: Determining Sensitivity and Stochastic Threshold

Objective: To establish the minimum quantity of DNA that can be reliably amplified and profiled using a specific screening technology (e.g., STR kit, MPS panel), and to determine the stochastic threshold.

- Sample Preparation: Serially dilute a control DNA standard of known concentration (e.g., a dilution series spanning 1 ng/µL down to 0.5 pg/µL).

- Replication: For each dilution level, prepare a minimum of n=10 replicates to account for stochastic effects.

- Amplification & Analysis: Process all replicates through the entire analytical workflow (extraction, quantitation, amplification, separation/sequencing).

- Data Collection: Record the following for each replicate:

  - Whether a full, partial, or no profile was obtained.

  - The number of alleles detected versus expected.

  - Peak heights (for CE) or read depths (for MPS).

  - Presence of allelic drop-in.

- Data Analysis:

  - Sensitivity (LOD): Plot the profile success rate (%) against the input DNA quantity. The LOD is typically the lowest quantity where ≥95% of replicates produce a usable profile.

  - Stochastic Threshold: Calculate the average peak height/read depth of heterozygous alleles at each dilution. The stochastic threshold is the peak height/read depth value below which heterozygous balance falls outside an acceptable range (e.g., <60%) and allelic drop-out is observed in >5% of replicates.
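The data analysis steps above can be sketched in a few lines of Python. This is a minimal illustration, not a validated implementation: the function names, the dictionary layout, and all numeric values are hypothetical. The LOD rule used here is slightly stricter than the text, because it also requires every higher input level to meet the success criterion. The stochastic threshold is approximated as the highest mean peak height among dilution levels that fail both the balance and the drop-out criteria.

```python
def profile_success_rate(outcomes):
    """Fraction of replicates scored as producing a usable profile (True)."""
    return sum(outcomes) / len(outcomes)


def estimate_lod(replicates_by_input_pg, min_success=0.95):
    """Lowest input (pg) meeting min_success, scanning from high input to low.

    Assumption: the scan stops at the first level that fails, so an isolated
    pass below a failing level is ignored.
    """
    lod = None
    for input_pg in sorted(replicates_by_input_pg, reverse=True):
        if profile_success_rate(replicates_by_input_pg[input_pg]) >= min_success:
            lod = input_pg
        else:
            break
    return lod


def stochastic_threshold(levels, min_balance=0.60, max_dropout=0.05):
    """Highest mean peak height among levels showing stochastic behaviour.

    A level is flagged when heterozygote balance is below min_balance AND
    the drop-out fraction exceeds max_dropout (the example criteria above).
    """
    flagged = [
        lv["mean_peak_rfu"]
        for lv in levels
        if lv["het_balance"] < min_balance and lv["dropout_fraction"] > max_dropout
    ]
    return max(flagged) if flagged else None


# Hypothetical validation data: usable-profile calls for n=10 replicates per level.
replicates = {
    1000.0: [True] * 10,
    100.0: [True] * 10,
    10.0: [True] * 10,
    1.0: [True] * 6 + [False] * 4,
}
# Hypothetical per-level summaries for heterozygous loci.
levels = [
    {"input_pg": 100, "mean_peak_rfu": 1800, "het_balance": 0.88, "dropout_fraction": 0.00},
    {"input_pg": 10, "mean_peak_rfu": 210, "het_balance": 0.55, "dropout_fraction": 0.10},
    {"input_pg": 1, "mean_peak_rfu": 60, "het_balance": 0.31, "dropout_fraction": 0.40},
]

print("LOD (pg):", estimate_lod(replicates))
print("Stochastic threshold (RFU):", stochastic_threshold(levels))
```

Note that with n=10 replicates a ≥95% criterion can only be met when all ten replicates succeed, so a larger replicate count may be needed if a single failure should be tolerated.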

Protocol 3.2: Assessing Specificity and Cross-Reactivity

Objective: To verify that the screening technology detects only the intended target (e.g., a specific body fluid, drug metabolite) and does not cross-react with common interferents.

- Test Panel Creation: Assemble a panel of samples including:

  - Positive Controls: Pure, known target substances.

  - Common Interferents: Biologically relevant substances that may be present at a crime scene (e.g., other body fluids, soil, cleaning agents, common pharmaceuticals).

  - Mixtures: Samples containing the target mixed with interferents in various ratios.

  - Negative Controls: Samples known to lack the target (e.g., water).

- Blinded Analysis: Code the samples and analyze them using the screening technology under validation.

- Data Collection & Scoring: Record the result (positive/negative, or quantitative value) for each sample.

- Data Analysis:

  - Calculate the false positive rate (number of interferents incorrectly identified as target / total number of interferents).

  - Calculate the false negative rate (number of positive controls incorrectly called negative / total number of positive controls).

  - For quantitative assays, report the degree of signal suppression or enhancement caused by interferents in mixture samples.
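As a rough sketch of the scoring step, the rates and the interferent effect can be computed as below. The sample-type labels, function names, and example values are illustrative assumptions. Signal change is expressed as a percentage relative to a target-only reference, which is one common convention rather than a requirement of the protocol.

```python
def specificity_rates(results):
    """Compute false positive and false negative rates from blinded calls.

    results: list of (sample_type, called_positive) tuples, where sample_type
    is 'positive_control' or 'interferent' (other types are ignored here).
    """
    interferents = [called for kind, called in results if kind == "interferent"]
    positives = [called for kind, called in results if kind == "positive_control"]
    if not interferents or not positives:
        raise ValueError("panel needs at least one interferent and one positive control")
    false_positive_rate = sum(interferents) / len(interferents)
    false_negative_rate = sum(not called for called in positives) / len(positives)
    return false_positive_rate, false_negative_rate


def signal_change_percent(mixture_signal, target_only_signal):
    """Percent change caused by an interferent; negative values mean suppression."""
    if target_only_signal == 0:
        raise ValueError("reference signal must be non-zero")
    return 100.0 * (mixture_signal - target_only_signal) / target_only_signal


# Hypothetical decoded panel: four interferents, five positive controls.
panel = [
    ("interferent", False), ("interferent", False),
    ("interferent", True), ("interferent", False),
    ("positive_control", True), ("positive_control", True),
    ("positive_control", True), ("positive_control", False),
    ("positive_control", True),
]
fpr, fnr = specificity_rates(panel)
print(f"FPR = {fpr:.2f}, FNR = {fnr:.2f}")
print("Signal change (%):", signal_change_percent(80.0, 100.0))
```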

Protocol 3.3: Intra- and Inter-Laboratory Reproducibility

Objective: To measure the precision (repeatability and reproducibility) of the screening technology within a single laboratory and across multiple laboratories.

- Sample & Standard Preparation: Prepare a set of homogeneous, stable reference samples or standards with known properties. These should span low, medium, and high concentrations of the target analyte.

- Intra-Laboratory Study (Repeatability):

  - Within a single lab, have two or more analysts test each reference sample multiple times (e.g., n=5) over a short period (e.g., one day) using the same equipment and reagents.

  - Calculate the standard deviation and coefficient of variation (CV) for the results from each sample level.

- Inter-Laboratory Study (Reproducibility):

  - Distribute identical sets of the reference samples to a minimum of three participating laboratories.

  - Each lab follows a standardized protocol (the one being validated) to analyze the samples.

  - Each sample should be tested a minimum of n=3 times per lab.

- Statistical Analysis:

  - Perform an analysis of variance (ANOVA) to partition the total variance into components representing between-lab and within-lab variability.

  - Report the overall reproducibility standard deviation and the interlaboratory CV.
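The variance partition described above can be illustrated with a balanced one-way random-effects ANOVA for a single sample level. This sketch assumes every laboratory reports the same number of replicates. The function name and the example measurements are hypothetical, and a real study would typically add outlier screening before this step.

```python
from math import sqrt
from statistics import mean


def precision_components(results_by_lab):
    """Partition variance into within-lab and between-lab components.

    results_by_lab: dict mapping lab name -> list of replicate results
    (balanced design: same replicate count n in every lab).
    """
    labs = list(results_by_lab.values())
    p = len(labs)
    n = len(labs[0])
    if p < 2 or n < 2 or any(len(x) != n for x in labs):
        raise ValueError("need >= 2 labs with the same replicate count (>= 2) each")

    lab_means = [mean(x) for x in labs]
    grand_mean = mean(lab_means)

    # Mean squares from the one-way ANOVA table.
    ms_within = sum((v - m) ** 2 for x, m in zip(labs, lab_means) for v in x) / (p * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in lab_means) / (p - 1)

    var_repeat = ms_within                                # within-lab variance
    var_between = max(0.0, (ms_between - ms_within) / n)  # between-lab variance, floored at 0
    s_reprod = sqrt(var_repeat + var_between)

    return {
        "grand_mean": grand_mean,
        "s_repeatability": sqrt(var_repeat),
        "s_between_lab": sqrt(var_between),
        "s_reproducibility": s_reprod,
        "cv_reproducibility_pct": 100.0 * s_reprod / grand_mean,
    }


# Hypothetical results (e.g., ng/uL) for one reference sample in three labs.
data = {
    "Lab A": [9.0, 10.0, 11.0],
    "Lab B": [11.0, 12.0, 13.0],
    "Lab C": [10.0, 11.0, 12.0],
}
for name, value in precision_components(data).items():
    print(f"{name}: {value:.3f}")
```

Flooring the between-lab variance at zero is a common convention for the case where the between-lab mean square happens to be smaller than the within-lab mean square.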

Protocol 3.4: Black Box Study to Estimate Error Rates

Objective: To empirically measure the false positive and false negative rates of a forensic feature-comparison method under realistic conditions [10].

- Study Design: Create a set of trial specimens that includes:

  - True Matches: Pairs of samples known to originate from the same source.

  - True Non-Matches: Pairs of samples known to originate from different sources.

  - Set the ratio of matches to non-matches to reflect a realistic, challenging caseload rather than an even 50:50 split.

- Blinding & Presentation: The set of trials is presented to examiners in a "black box" format where they have no contextual information about the cases. The order of trials is randomized.

- Examination & Conclusion: Each examiner compares the pairs and selects a conclusion from a predefined scale (e.g., Identification, Inconclusive, Exclusion).

- Data Analysis:

  - False Positive Rate (FPR): (Number of incorrect "Identification" decisions on true non-match pairs / Total number of true non-match pairs).

  - False Negative Rate (FNR): (Number of incorrect "Exclusion" decisions on true match pairs / Total number of true match pairs).

  - Report rates with confidence intervals to quantify uncertainty.
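One way to attach confidence intervals to the rates above is the Wilson score interval, which behaves better than the simple normal approximation when error counts are small or zero. The protocol does not prescribe an interval method, so the choice of Wilson, the function names, and the trial counts below are assumptions for illustration. Inconclusive decisions remain in the denominators but are not counted as errors, matching the formulas in the text.

```python
from math import sqrt


def black_box_counts(trials):
    """Tally errors from (ground_truth, conclusion) pairs.

    ground_truth: 'match' or 'non-match'
    conclusion:   'Identification', 'Inconclusive', or 'Exclusion'
    """
    non_match = [c for truth, c in trials if truth == "non-match"]
    match = [c for truth, c in trials if truth == "match"]
    return {
        "false_positives": non_match.count("Identification"),
        "n_non_match": len(non_match),
        "false_negatives": match.count("Exclusion"),
        "n_match": len(match),
    }


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require 0 <= errors <= trials and trials > 0")
    p = errors / trials
    denom = 1 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical study: 100 non-match pairs and 50 match pairs.
trials = (
    [("non-match", "Exclusion")] * 95
    + [("non-match", "Identification")] * 2
    + [("non-match", "Inconclusive")] * 3
    + [("match", "Identification")] * 45
    + [("match", "Exclusion")] * 5
)
c = black_box_counts(trials)
fpr_lo, fpr_hi = wilson_interval(c["false_positives"], c["n_non_match"])
fnr_lo, fnr_hi = wilson_interval(c["false_negatives"], c["n_match"])
print(f"FPR = {c['false_positives'] / c['n_non_match']:.3f} (95% CI {fpr_lo:.3f} to {fpr_hi:.3f})")
print(f"FNR = {c['false_negatives'] / c['n_match']:.3f} (95% CI {fnr_lo:.3f} to {fnr_hi:.3f})")
```

A study with zero observed errors still yields a non-zero upper bound, which is the figure most relevant when reporting that an error rate is "no greater than" some value.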

Visualizing Workflows and Logical Frameworks

[Figure: Foundational Research Workflow]

[Figure: Evidence Evaluation Logic]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Foundational Validation Studies

| Item | Function in Research & Development |

|---|---|

| Standard Reference Material (SRM) | Provides a material with certified properties (e.g., DNA concentration, sequence) for calibrating instruments, assessing accuracy, and ensuring consistency across experiments and laboratories [1]. |

| Control DNA | Used in sensitivity, stochastic, and reproducibility studies to monitor assay performance and distinguish true results from artifacts. Includes male/female, single-source, and mixture controls. |

| Characterized Body Fluid Samples | Saliva, blood, semen, etc., samples collected under IRB approval and used as positive controls and knowns for developing and validating body fluid identification assays [12]. |

| Probabilistic Genotyping Software | Sophisticated software that uses statistical models to interpret complex DNA mixtures, accounting for stutter, drop-out, and drop-in, and providing a Likelihood Ratio to weigh the evidence [12]. |

| MPS Platform & Kits | Enables high-throughput, sequence-based analysis of multiple marker types (STRs, SNPs) from a single sample, increasing discriminatory power and aiding in the analysis of challenging samples [12]. |

| Inhibitor Stocks | Purified substances (e.g., humic acid, hematin, tannin) that are added to samples during validation to test the robustness of the DNA extraction and amplification process to common PCR inhibitors. |

| Synthetic Oligonucleotides | Custom-designed DNA sequences used as positive controls for qPCR assays, as components in multiplex assay design, and for creating synthetic DNA mixtures with precisely known genotypes. |

Microbiome analysis represents a paradigm shift in forensic science, moving beyond traditional human DNA analysis to exploit the unique microbial communities associated with human bodies and environmental samples. The microbiome comprises all microorganisms—bacteria, fungi, viruses, and their genes—inhabiting a specific environment [15]. In forensics, this approach provides investigative leads for individual identification, geolocation inference, and post-mortem interval (PMI) estimation [15]. The field has evolved significantly from culture-dependent techniques to next-generation sequencing (NGS) technologies, enabling comprehensive characterization of microbial communities without the limitations of laboratory cultivation [15] [16].

Key Analytical Methods in Forensic Microbiome Research

Sequencing Technologies

Two primary NGS methods are employed in forensic microbiome analysis, each with distinct advantages and limitations for forensic applications:

Table 1: Comparison of Microbiome Sequencing Methods

| Feature | Amplicon Sequencing | Shotgun Metagenomic Sequencing |

|---|---|---|

| Target | Marker genes (e.g., 16S rRNA, ITS) [15] | All genomic DNA in sample [15] |

| Resolution | Genus to species level [15] | Species to strain level [15] |

| Cost | Lower [15] | Higher [15] |

| Suitable for Low-Biomass | Better performance [15] | Poorer performance [15] |

| Information Output | Taxonomic composition [15] | Taxonomic & functional gene information [15] |

| Common Platforms | Illumina MiSeq, Oxford Nanopore, PacBio [15] | Illumina platforms [15] |

Bioinformatics Pipelines

Data analysis pipelines are critical for converting raw sequencing data into forensically actionable information. The bioinformatics workflow for amplicon sequencing typically involves:

- Sequence Denoising: Using algorithms like DADA2 or Deblur to generate exact amplicon sequence variants (ASVs), which are more precise than traditional operational taxonomic units (OTUs) [15].

- Taxonomic Classification: Assigning taxonomy to ASVs using reference databases such as SILVA, Greengenes, or UNITE [15].

- Statistical Analysis: Multivariate statistical methods to identify discriminatory microbial signatures for forensic applications.

For shotgun metagenomic data, analysis includes:

- Quality Control & Host Depletion: Using tools like KneadData and Trimmomatic to remove low-quality sequences and host DNA contamination [15].

- Taxonomic Profiling: Utilizing tools like MetaPhlAn2 or Kraken 2 for comprehensive taxonomic assignment [15].

- Functional Profiling: Analysis with tools like HUMAnN3 to determine microbial metabolic capabilities, which may provide additional contextual information [15].

Figure 1: Bioinformatics Workflow for Forensic Microbiome Analysis

Experimental Protocols

Sample Collection and Preservation

Proper sample collection is critical for reliable microbiome analysis. Forensic samples may include:

- Skin swabs: Collected from palmar surfaces using sterile swabs moistened with molecular-grade water [16].

- Soil samples: Collected from potential crime scenes at depths of 0-5 cm using sterile corers [16].

- Body fluids: Vaginal fluid, saliva, and fecal material collected with sterile swabs or containers [16].

- Decomposition samples: Swabs from skin, soil beneath remains, or internal organs during autopsy [16].

Preservation Protocol: Immediately after collection, samples should be placed in DNA/RNA stabilization buffer and stored at -80°C to prevent microbial community shifts. Storage duration and conditions should be standardized and documented, as variations can affect bacterial community analysis [16].

DNA Extraction and Library Preparation

DNA Extraction:

- Use mechanical lysis (bead beating) combined with chemical lysis for comprehensive cell disruption.

- Employ commercial kits (e.g., DNeasy PowerSoil Kit) optimized for environmental samples.

- Include extraction controls to monitor contamination.

- Quantify DNA yield using fluorometric methods (e.g., Qubit).

16S rRNA Amplicon Library Preparation:

- Amplify the V4 hypervariable region of the 16S rRNA gene using primers 515F/806R.

- Attach dual-index barcodes and Illumina sequencing adapters.

- Clean amplified libraries using magnetic bead-based purification.

- Quantify libraries and pool in equimolar ratios.

- Validate library quality using capillary electrophoresis.
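The equimolar pooling step depends on converting each library's mass concentration to molarity. A minimal sketch follows, using the standard approximation of 660 g/mol per base pair of double-stranded DNA. The library names, concentrations, average fragment length, and target amount per library are all hypothetical values chosen for illustration.

```python
def library_molarity_nm(conc_ng_per_ul, mean_length_bp, g_per_mol_per_bp=660.0):
    """Convert a dsDNA library concentration from ng/uL to nM."""
    if conc_ng_per_ul <= 0 or mean_length_bp <= 0:
        raise ValueError("concentration and length must be positive")
    return conc_ng_per_ul * 1e6 / (g_per_mol_per_bp * mean_length_bp)


def pooling_volumes_ul(molarity_nm_by_library, fmol_per_library):
    """Volume of each library (uL) that contributes the same molar amount.

    1 nM equals 1 fmol/uL, so volume = target fmol / molarity in nM.
    """
    return {
        name: fmol_per_library / molarity
        for name, molarity in molarity_nm_by_library.items()
    }


# Hypothetical fluorometric readings (ng/uL) and an assumed 400 bp mean library size.
readings = {"swab_01": 2.64, "swab_02": 1.32, "soil_01": 5.28}
molarities = {name: library_molarity_nm(c, 400) for name, c in readings.items()}
volumes = pooling_volumes_ul(molarities, fmol_per_library=20.0)

for name in readings:
    print(f"{name}: {molarities[name]:.1f} nM -> pool {volumes[name]:.2f} uL")
```

In practice the mean library length would come from the capillary electrophoresis trace rather than an assumed constant.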

Shotgun Metagenomic Library Preparation:

- Fragment genomic DNA to ~350 bp using acoustic shearing.

- Perform end repair, A-tailing, and adapter ligation.

- Size-select fragments using magnetic beads.

- Amplify library with index primers.

- Quantify and normalize libraries for sequencing.

Sequencing and Data Analysis

Sequencing Parameters:

- Illumina MiSeq: 2×250 bp for 16S rRNA amplicons

- Illumina NovaSeq: 2×150 bp for shotgun metagenomics

Bioinformatics Analysis Protocol:

- Quality Filtering: Remove low-quality reads (Q-score <20) and trim adapters.

- Denoising: For 16S data, use DADA2 to infer exact amplicon sequence variants (ASVs).

- Taxonomic Assignment: Classify sequences against the SILVA database for 16S data or RefSeq for shotgun data.

- Contamination Removal: Identify and remove potential contaminants using the decontam package (R).

- Statistical Analysis: Perform alpha and beta diversity analyses, differential abundance testing, and machine learning classification for forensic applications.
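To make the alpha and beta diversity step concrete, the sketch below computes the Shannon index (an alpha diversity measure) and Bray-Curtis dissimilarity (a beta diversity measure) from ASV count vectors. These two metrics are common choices but are not specified by the protocol, and the count vectors are invented. Production analyses would normally rely on an established package and on rarefied or otherwise normalized counts.

```python
from math import log


def shannon_index(counts):
    """Shannon diversity H' (natural log) for one sample's ASV counts."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("sample has no reads")
    return -sum((c / total) * log(c / total) for c in counts if c > 0)


def bray_curtis(sample_a, sample_b):
    """Bray-Curtis dissimilarity between two samples (0 = identical, 1 = no shared ASVs).

    Both vectors must list counts for the same ASVs in the same order.
    """
    if len(sample_a) != len(sample_b):
        raise ValueError("samples must cover the same ASVs")
    denominator = sum(sample_a) + sum(sample_b)
    if denominator == 0:
        raise ValueError("both samples are empty")
    return sum(abs(x - y) for x, y in zip(sample_a, sample_b)) / denominator


# Hypothetical ASV counts (same ASV order in each vector).
keyboard_swab = [120, 30, 0, 50]
suspect_hand = [110, 40, 5, 45]
unrelated_hand = [5, 0, 180, 15]

print("Shannon (keyboard):", round(shannon_index(keyboard_swab), 3))
print("BC keyboard vs suspect:", round(bray_curtis(keyboard_swab, suspect_hand), 3))
print("BC keyboard vs unrelated:", round(bray_curtis(keyboard_swab, unrelated_hand), 3))
```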

Forensic Applications and Validation

Individual Identification

The human skin microbiome is highly personalized and stable over time, making it valuable for associating individuals with objects or locations [15] [16]. Key findings include:

- Individuals leave unique microbial fingerprints on surfaces like computer keyboards and phones [15].

- Microbial signatures can persist for weeks to months on touched objects [15].

- Cohabiting individuals develop similar microbial profiles, enabling relationship inference [15].

Post-Mortem Interval (PMI) Estimation

Thanatomicrobiome (microbes inhabiting the body after death) and epinecrotic communities (microbes on the body surface) follow predictable successional patterns that correlate with time since death [16]. Research demonstrates:

- Distinct microbial community shifts occur at specific decomposition stages [16].

- Soil microbiota beneath decomposing remains changes predictably [16].

- Machine learning models using microbial markers can estimate PMI with increasing accuracy [16].

Body Fluid Identification

Microbial signatures can differentiate body fluids when conventional methods are inconclusive:

- Vaginal fluid: Characterized by Lactobacillus crispatus and Lactobacillus jensenii [16].

- Saliva: Identified by Streptococcus salivarius and other oral commensals [16].

- Feces: Distinguished by Bacteroides species and other gut microbiota [16].

Geolocation Inference

Soil microbiomes exhibit biogeographical patterns that can associate samples with specific regions [15] [16]. Applications include:

- Linking suspects to crime scenes through soil microbial signatures [15].

- Determining the origin of illicit materials based on environmental microbiomes [15].

- Regional identification using distinctive microbial taxa [15].

Table 2: Technology Readiness Levels for Forensic Microbiome Applications

| Application | Current TRL | Key Validation Needs | Legal Considerations |

|---|---|---|---|

| Individual Identification | 3-4 (Experimental Proof) | Standardized protocols, error rate analysis, population studies [3] | Could meet Daubert standards for reliability and peer review, but only after further validation [6] |

| PMI Estimation | 3 (Analytical Formulation) | Succession model validation across environments, precision estimates [3] | Requires known error rates and general acceptance in forensic pathology [6] |

| Geolocation | 2-3 (Technology Concept) | Comprehensive spatial databases, statistical models for probability [3] | Necessitates demonstration of reliability under varying environmental conditions [6] |

| Body Fluid ID | 4 (Lab Validation) | Multi-center validation studies, mixture interpretation protocols [3] | Must establish specificity and sensitivity thresholds for courtroom admissibility [6] |

Figure 2: Technology Readiness Level Framework for Forensic Implementation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Forensic Microbiome Analysis

| Reagent/Kit | Function | Application Notes |

|---|---|---|

| DNeasy PowerSoil Pro Kit | DNA extraction from soil and difficult samples | Optimal for inhibitor removal; includes bead beating for cell lysis [15] |

| Illumina 16S Metagenomic Sequencing Library Preparation Reagents | 16S rRNA amplicon library prep | Includes primers targeting hypervariable regions; compatible with dual indexing [15] |

| ZymoBIOMICS Microbial Community Standard | Positive control for sequencing runs | Validates entire workflow from extraction to analysis; quantifies technical variation [15] |

| Qubit dsDNA HS Assay Kit | Accurate DNA quantification | Fluorometric method preferred over spectrophotometry for low-biomass samples [15] |

| DADA2 (R package) | Amplicon sequence variant inference | More exact than OTU clustering; reduces spurious sequence variants [15] |

| MetaPhlAn2 | Taxonomic profiling from shotgun data | Species-level resolution using clade-specific marker genes [15] |

| SILVA Database | 16S rRNA reference database | Curated alignment and taxonomy; regularly updated [15] |

Current Challenges and Future Directions

Despite its potential, forensic microbiome analysis faces several challenges that must be addressed for routine implementation:

- Standardization: Lack of standardized protocols for sample collection, storage, and analysis [16].

- Validation: Need for established error rates, reproducibility metrics, and population-specific databases [16] [3].

- Bioinformatics: Requirement for user-friendly, validated bioinformatics pipelines accessible to forensic laboratories [15].

- Legal Admissibility: Meeting Daubert standards (testing, peer review, error rates, acceptance) and Mohan criteria (relevance, necessity, reliability) for courtroom evidence [6].

Future development should focus on:

- Reference Databases: Expanding curated microbial databases for forensic applications [3].

- Quantitative Models: Developing statistical frameworks for evaluating evidence weight [3].

- Integration: Combining microbiome data with other forensic markers for stronger associations [3].

- Rapid Detection: Creating field-deployable tools for preliminary microbiome screening [3].

The Critical Role of Reference Collections and Databases for Method Development

In forensic biology, the implementation of new evidence screening technologies relies on robust method development and validation. Central to this process are forensic reference collections and databases, which provide the foundational materials and data necessary to develop, optimize, and validate analytical methods. These resources enable the transition of technologies from basic research to operational use by providing authenticated materials for testing and comparison. The National Institute of Justice (NIJ) emphasizes this need through its Research and Development Technology Working Group, which has identified specific operational requirements where improved databases and reference materials would significantly advance forensic capabilities [3]. This document outlines the critical function of these resources within the technology readiness level (TRL) framework for forensic biology evidence screening.

Forensic reference collections encompass both physical samples and digital data records that serve as benchmarks for analytical method development. These resources provide known materials against which unknown evidentiary samples can be compared, ensuring analytical accuracy and reliability. The following table summarizes key forensic databases and their characteristics relevant to method development.

Table 1: Key Forensic Reference Databases and Collections

| Database/Collection Name | Maintaining Organization | Primary Content | Scale of Collection | Access Method |

|---|---|---|---|---|

| International Forensic Automotive Paint Data Query (PDQ) [17] | Royal Canadian Mounted Police (RCMP) | Chemical and color information of original automotive paints | ~13,000 vehicles; ~50,000 paint layers | Annual CD release to member law enforcement agencies |

| FBI Lab - Forensic Automobile Carpet Database (FACD) [17] | Federal Bureau of Investigation (FBI) | Carpet fibers from vehicles; microscopic characteristics, IR microscopy | ~800 samples | Request-based analysis by FBI for law enforcement |

| European Collection of Automotive Paints (EUCAP) [17] | French Police Lab (Germany BKA handles data analysis) | Paint samples from salvage vehicles | Not Specified | Internet access with secure ID and password |

| FBI Lab - Fiber Library [17] | Federal Bureau of Investigation (FBI) | FTIR spectra of textile fibers; physical fiber samples | 86 spectral records | Analysis request to FBI; library in OMNIC software |

| Barcode of Life Database (BOLD) [18] | University of Guelph, Canada | DNA sequences for species identification | 1.8M+ specimen records; 111,289+ species with barcodes | Free online access with optional login |

These resources address the critical need for authenticated reference materials that underpin reliable method development. As noted by NIJ's research priorities, there remains a pressing need for "additional characterization of existing databases and further development of population data of forensically relevant genetic markers" particularly for "populations that are currently underrepresented in existing databases" [3]. This is especially true in forensic biology, where population genetics statistics are essential for calculating evidential weight.

Quantitative Analysis of Database Utility

The effectiveness of reference databases in method development can be quantitatively assessed through parameters such as sample diversity, analytical precision, and discrimination power. The following table summarizes key quantitative metrics for evaluating database utility in forensic method development.

Table 2: Quantitative Metrics for Database Utility in Method Development

| Performance Parameter | Application in Method Development | Target Threshold | Impact on Technology Implementation |

|---|---|---|---|

| Sample Diversity | Assessing method robustness across different sample types | Comprehensive population coverage | Reduces false negatives in evidence screening |

| Discrimination Power | Evaluating method's ability to distinguish between sources | >0.99 for highly similar materials | Ensures evidentiary value of matches |

| Analytical Precision | Establishing reproducibility of measurements | CV <5-15% depending on analyte | Validates repeatability across instruments and operators |

| Limit of Detection (LOD) | Determining minimum detectable quantity of target | Substance-dependent (e.g., ng/mL for drugs) | Defines application range for trace evidence |

| False Positive Rate | Validating method specificity | <1% for confirmatory methods | Ensures reliability in casework applications |

Quantitative data analysis methods, including descriptive analysis and diagnostic analysis, are essential for interpreting these performance parameters [19]. For instance, statistical analysis of database records helps establish appropriate match thresholds and confidence intervals for new screening technologies. The move toward probabilistic genotyping for DNA mixture interpretation exemplifies how database-driven statistical models are transforming forensic biology [3].
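As an illustration of how one of these parameters might be computed from a reference collection, the sketch below estimates discrimination power using a commonly used pairwise definition: one minus the fraction of sample pairs that the method cannot tell apart. The definition is an assumption here, since the text does not specify one, and the group sizes are invented.

```python
def discrimination_power(group_sizes):
    """Empirical discrimination power from groups of indistinguishable samples.

    group_sizes: number of samples in each group that the method cannot
    distinguish from one another (a fully resolved sample is a group of 1).
    DP = 1 - (indistinguishable pairs) / (all possible pairs).
    """
    n = sum(group_sizes)
    if n < 2:
        raise ValueError("need at least two samples")
    total_pairs = n * (n - 1) // 2
    matching_pairs = sum(g * (g - 1) // 2 for g in group_sizes)
    return 1 - matching_pairs / total_pairs


# Hypothetical collection of 200 reference samples: 190 are uniquely resolved,
# and 10 fall into five indistinguishable pairs.
groups = [1] * 190 + [2] * 5
dp = discrimination_power(groups)
print(f"Discrimination power: {dp:.5f}")
print("Meets >0.99 target:", dp > 0.99)
```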

Experimental Protocols for Method Validation

Protocol 1: Method Development Lifecycle for Forensic Screening

The development of analytical methods follows a structured lifecycle to ensure reliability and admissibility in legal contexts. This protocol adapts the pharmaceutical industry's rigorous approach to method development for forensic applications [20].

1. Requirements Identification

- Define the analytical need based on operational gaps (e.g., detecting new synthetic drugs)

- Review existing/compendial procedures for adaptability

- Establish target performance characteristics (specificity, sensitivity, precision)

2. Method Development

- Select appropriate analytical techniques (e.g., LC-MS/MS, GC×GC-MS)

- Optimize instrument parameters through systematic testing

- Establish sample preparation procedures

- Develop preliminary acceptance criteria

3. Validation Planning & Execution

- Prepare a validation protocol defining experiments and acceptance criteria

- Evaluate specificity, limit of detection, limit of quantitation, linearity, accuracy, range, and precision

- Document all procedures and results thoroughly

4. Implementation & Monitoring

- Develop Standard Operating Procedures (SOPs) for routine execution

- Define criteria for revalidation and system suitability tests

- Implement continuous monitoring for fitness-for-purpose

5. Technology Transfer

- Qualify receiving laboratories through comparative testing

- Document transfer under pre-approved protocol

- Ensure procedural knowledge is effectively communicated

This structured approach aligns with international guidelines from ICH, FDA, and EMA, adapted for forensic contexts [20]. The process emphasizes documentation rigor and regulatory compliance, which are equally essential in forensic science where evidence must withstand legal challenges.

Protocol 2: GC×GC-MS for Complex Mixture Analysis in Trace Evidence

Comprehensive two-dimensional gas chromatography-mass spectrometry (GC×GC-MS) provides enhanced separation for complex forensic samples. This protocol details its application for trace evidence analysis, based on published methodologies [21].

Sample Preparation

- Lubricant Analysis: Conduct hexane solvent extraction of suspected lubricant samples (50-100 µL)

- Paint/Polymer Analysis: Employ flash pyrolysis with Pyroprobe 4000: start at 50°C for 2s, ramp to 750°C at 50°C/s, hold for 2s

- Sample Size: Use minimal material (~50 µg) to preserve evidence integrity

Instrument Configuration

- GC System: Agilent 7890B gas chromatograph with split-splitless injector

- MS System: Agilent 5977 quadrupole mass spectrometer

- Columns: Combination of non-polar/moderately polar columns for two-dimensional separation

- Modulator: Differential flow modulation (DFM) for comprehensive 2D separation

- Temperature Program: Optimized gradient from 50°C to 280°C based on analyte properties

Data Acquisition & Analysis

- Mass Spectrometry Conditions: Electron ionization (EI) mode at 70 eV; mass range 35-550 m/z

- Data Processing: Use specialized software to generate 2D chromatographic "fingerprints"

- Pattern Recognition: Compare sample fingerprints to reference database entries

- Component Identification: Leverage increased peak capacity to resolve co-eluting compounds

This protocol demonstrates a significant advancement over traditional GC-MS, particularly for discriminating between sources of complex mixtures like sexual lubricants, automotive paints, and tire rubber [21]. The enhanced separation power addresses a key limitation in trace evidence analysis where coelution can prevent correct identification.
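The pattern recognition step, comparing a sample fingerprint against reference database entries, can be sketched as a similarity ranking. The example assumes each 2D chromatogram has already been reduced to a fixed-length vector of binned intensities and uses cosine similarity as the comparison metric. Both are illustrative assumptions, as are the reference names and values; the cited methodology may use different features or statistics.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length intensity vectors."""
    if len(a) != len(b):
        raise ValueError("fingerprints must have the same number of bins")
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("fingerprint has no signal")
    return sum(x * y for x, y in zip(a, b)) / (norm_a * norm_b)


def rank_references(sample, references):
    """Return (name, similarity) pairs sorted from most to least similar."""
    scored = [(name, cosine_similarity(sample, vec)) for name, vec in references.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical binned fingerprints (arbitrary intensity units).
reference_db = {
    "lubricant_A": [0.9, 0.1, 0.0, 0.3],
    "lubricant_B": [0.1, 0.8, 0.4, 0.0],
    "lubricant_C": [0.0, 0.2, 0.9, 0.5],
}
questioned = [0.85, 0.15, 0.05, 0.25]

for name, score in rank_references(questioned, reference_db):
    print(f"{name}: {score:.3f}")
```

A ranking like this only suggests candidate sources. Any reported association would still need validated match criteria and known error rates.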

Research Reagent Solutions for Forensic Method Development

The following table details essential materials and reagents required for implementing advanced analytical methods in forensic biology evidence screening.

Table 3: Essential Research Reagents for Forensic Biology Method Development

| Reagent/Material | Function in Method Development | Application Examples | Critical Specifications |

|---|---|---|---|

| Authenticated Reference Standards | Method calibration and quantitative accuracy | Drug quantification, toxicology testing, DNA quantification | Purity >95%, concentration verification, stability data |

| DNA Extraction Kits | Recovery of amplifiable DNA from diverse evidence types | Low-copy number DNA, touch DNA, challenging substrates (e.g., metal) | Yield efficiency, inhibitor removal, compatibility with downstream analysis |

| Chromatography Columns | Separation of complex mixtures | GC×GC-MS for lubricants, paints; LC-MS/MS for drugs | Stationary phase selectivity, column efficiency, temperature stability |

| Mass Spectrometry Calibrants | Instrument calibration and mass accuracy confirmation | Daily MS system tuning, quantitative method validation | Known m/z ratios, chemical stability, compatibility with analysis mode |

| Population DNA Databases | Statistical assessment of evidentiary value | Probabilistic genotyping, mixture interpretation, kinship analysis | Representative population coverage, quality-controlled data, ethical compliance |

| Optical Glass Standards | Microscope calibration for physical evidence analysis | Refractive index measurements of glass fragments | Certified refractive index range (e.g., 1.34 to 2.40), traceable documentation |

These research reagents form the foundational toolkit for developing and implementing new screening technologies. Their quality directly impacts the reliability of analytical results and the eventual admissibility of evidence in legal proceedings. As highlighted by NIJ, there is a particular need for "improved DNA collection devices or methods for recovery and release of human DNA (e.g., from metallic items)" [3], demonstrating how reagent development directly addresses operational challenges.

Reference collections and databases serve as the cornerstone of method development in forensic biology evidence screening. They provide the essential materials for technology validation, performance assessment, and operational implementation. As analytical techniques evolve toward greater sensitivity and specificity, the role of comprehensive, well-characterized reference resources becomes increasingly critical. The ongoing development of these resources, particularly for emerging evidence types and underrepresented populations, will directly determine the success of new technology implementation across the forensic science community. Future advancements should focus on expanding database diversity, improving accessibility, and enhancing integration with statistical interpretation tools to maximize their impact on forensic method development.

Forensic laboratories worldwide are operating in an era of unprecedented technological transformation. They face growing pressure to implement advanced analytical methods while managing increasing case backlogs, complex evidence, and high expectations for expedited results. This application note details the current technological demands, provides validated experimental protocols for key forensic analyses, and visualizes core workflows to support researchers and scientists in the field of forensic biology evidence screening. The content is framed within the context of Technology Readiness Level (TRL) research to facilitate the implementation and maturation of emerging forensic technologies.

Market Dynamics and Quantitative Landscape

The global forensic technology market is experiencing significant growth, driven by technological advancements and increasing demand for reliable criminal identification procedures. The table below summarizes key quantitative data for the DNA forensics market, a critical segment of forensic laboratories' technological portfolio.

Table 1: Global DNA Forensics Market Projections and Segment Analysis

| Metric | Details |
|---|---|
| 2024 Market Value | $3.1 billion [22] |
| 2025 Projected Market Value | $3.3 billion [22] |
| 2030 Projected Market Value | $4.7 billion [22] |
| CAGR (2025-2030) | 7.7% [22] |
| Dominant Product Segment | Kits and Consumables [22] |
| Key Techniques | PCR, STR, Next-Generation Sequencing (NGS) [22] |
| Major Applications | Criminal Testing, Paternity & Familial Testing [22] |
| Regional Market Leader | North America ($1.1 billion in 2024) [22] |

This growth is fueled by several factors: rising crime rates necessitating accurate tools, increased government funding for forensic science, technological developments in testing, and the expansion of national DNA databases which now exist in over 70 countries [22]. Furthermore, the integration of artificial intelligence (AI) and machine learning into forensic processes is emerging as a key trend, enabling improved analysis and automation [22].
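As a quick arithmetic check on Table 1, the sketch below recomputes the compound annual growth rate from the rounded 2025 and 2030 values and, conversely, projects the 2025 value forward at the reported 7.7%. The function name `cagr` is illustrative. The rounded endpoints imply a rate slightly below the reported figure, a gap that is within what rounding of the published values to one decimal place can produce.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Values in billions of USD, taken from Table 1 (rounded as published).
implied_rate = cagr(3.3, 4.7, years=5)
projected_2030 = 3.3 * (1 + 0.077) ** 5

print(f"CAGR implied by rounded endpoints: {implied_rate:.1%}")
print(f"2030 value implied by 7.7% CAGR: ${projected_2030:.2f}B")
```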

Key Technological Pressures and Advanced Protocols

Pressure: Demand for Rapid Results

Solution: Rapid DNA Integration

A significant pressure is the demand to reduce turnaround times. The Federal Bureau of Investigation (FBI) has approved the integration of Rapid DNA technology into the Combined DNA Index System (CODIS), effective July 1, 2025 [23]. This allows law enforcement to process DNA samples in hours instead of days or weeks and compare them directly to the national database.

Table 2: Research Reagent Solutions for DNA Quantitation via qPCR

| Item | Function |
|---|---|
| Quantitative PCR (qPCR) Kits | Provide optimized primers, probes, enzymes, and buffers for specific quantification of human DNA [24]. |
| Human DNA Quantitation Standard (e.g., NIST SRM 2372) | Serves as a quality control measure to assess the accuracy of quantitation results, ensuring data reliability [24]. |
| DNA Extraction Kits | Isolate and purify DNA from complex biological samples prior to quantitation and downstream analysis. |
| Fluorescent Dyes/Probes | Intercalate with or bind to DNA, emitting a fluorescent signal that is monitored during PCR cycles to measure amplification [24]. |

Protocol 1: Data Analysis for Quantitative PCR (qPCR)

Purpose: To accurately determine the quantity and quality of human DNA in a forensic sample prior to downstream STR analysis [24].

Methodology:

- Amplification: The qPCR process is monitored in real-time via a fluorescent signal. The process occurs in three phases:

- Exponential Phase: Amplicons theoretically double with each cycle. Baseline fluorescence (background noise) is established from the early cycles, and a fluorescence threshold is set above it. The Cycle Threshold (CT) is the cycle number at which a sample's fluorescence crosses this threshold [24].

- Linear Phase: Reagent consumption impedes efficiency; this phase is not used for data analysis [24].

- Plateau Region: Amplification ceases due to critical reagent depletion [24].

- Standard Curve Generation: A regression line is derived by plotting the CT values of known DNA standards against the log of their concentrations [24].

- Sample Quantitation: The concentration of an unknown sample is determined by comparing its CT value against the standard curve. A lower CT indicates a higher initial DNA concentration [24].
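The standard curve and quantitation steps above can be sketched in a few lines. This is a minimal illustration, not a validated implementation: the dilution series and CT values are invented for the example, the function names are hypothetical, and the efficiency formula (10^(-1/slope) - 1) is the conventional qPCR relation rather than something stated in the protocol itself.

```python
import numpy as np

def fit_standard_curve(concentrations, ct_values):
    """Least-squares line: CT = slope * log10(concentration) + intercept."""
    log_conc = np.log10(np.asarray(concentrations, dtype=float))
    slope, intercept = np.polyfit(log_conc, np.asarray(ct_values, dtype=float), 1)
    return float(slope), float(intercept)

def quantify(ct, slope, intercept):
    """Invert the standard curve to estimate concentration from a CT value."""
    return 10 ** ((ct - intercept) / slope)

def amplification_efficiency(slope):
    """Efficiency implied by the slope (1.0 corresponds to perfect doubling)."""
    return 10 ** (-1.0 / slope) - 1.0

# Invented tenfold dilution series (ng/uL) and CT values, for illustration only.
standards = [50.0, 5.0, 0.5, 0.05, 0.005]
standard_cts = [22.4, 25.7, 29.0, 32.4, 35.7]

slope, intercept = fit_standard_curve(standards, standard_cts)
unknown_conc = quantify(27.0, slope, intercept)

print(f"slope={slope:.2f}, intercept={intercept:.2f}")
print(f"efficiency={amplification_efficiency(slope):.1%}")
print(f"unknown sample (CT 27.0): {unknown_conc:.2f} ng/uL")
```

A slope near -3.3 corresponds to roughly 100% efficiency. In practice the acceptance criteria for slope and fit quality would come from the laboratory's validated procedure and the kit manufacturer's guidance.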

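In sequence, the workflow runs from real-time amplification monitoring, through standard curve generation from known DNA standards, to quantitation of the unknown sample against that curve.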

Pressure: Casework Backlog and Resource Allocation

Solution: AI-Powered Predictive Modeling

Forensic labs face substantial backlogs. AI and machine learning can transform case management through predictive modeling [25].

Protocol 2: AI for Case Prioritization and Resource Allocation

Purpose: To leverage historical case data to predict processing times, prioritize evidence, and optimize resource allocation [25].

Methodology:

- Data Aggregation: Compile historical case data including evidence type, complexity, processing time, and analyst workload.

- Model Training: Train a machine learning model on this data to identify patterns and correlations between case characteristics and required resources.

- Prediction and Prioritization:

- Case Duration Estimation: Input characteristics of new cases to predict processing time, aiding in staffing and equipment planning [25].

- Evidence Prioritization: The model automatically scans and ranks incoming evidence based on its predicted investigative value, helping to prioritize high-yield samples [25].

- Human Verification: A critical guardrail. All AI-generated recommendations must be verified by a qualified forensic analyst to prevent misclassification that could have serious consequences [25]. An audit trail documenting all AI inputs and conclusions is essential [25].
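The steps above can be sketched as a small pipeline. Everything specific here is an assumption made for illustration: the evidence categories, field names, synthetic history, and the use of ordinary least squares as a stand-in for whatever machine learning model a laboratory would actually validate. The ranking rule (shortest predicted time first) is only a placeholder, since ranking by investigative value would need its own labelled outcome data.

```python
import numpy as np
from datetime import datetime, timezone

EVIDENCE_TYPES = ["swab", "bloodstain", "touch_dna"]  # hypothetical categories

def encode(case):
    """One-hot evidence type followed by two numeric features."""
    one_hot = [1.0 if case["evidence_type"] == t else 0.0 for t in EVIDENCE_TYPES]
    return one_hot + [float(case["complexity"]), float(case["analyst_workload"])]

def train(history):
    """Fit a linear model of processing days (stand-in for a validated model)."""
    X = np.array([encode(c) for c in history])
    y = np.array([c["processing_days"] for c in history], dtype=float)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

def recommend(coef, new_cases, audit_log):
    """Predict duration, log every input and output, and return a ranked list."""
    entries = []
    for case in new_cases:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "case_id": case["case_id"],
            "inputs": {k: case[k] for k in ("evidence_type", "complexity", "analyst_workload")},
            "predicted_days": round(float(np.dot(encode(case), coef)), 1),
            "status": "pending_analyst_review",  # nothing is acted on yet
        }
        audit_log.append(entry)
        entries.append(entry)
    return sorted(entries, key=lambda e: e["predicted_days"])

def analyst_review(entry, analyst_id, approved):
    """Record the human decision on an AI-generated recommendation."""
    entry["status"] = "approved" if approved else "rejected"
    entry["reviewed_by"] = analyst_id

# Synthetic history generated from an arbitrary rule, for illustration only.
BASE_DAYS = {"swab": 3.0, "bloodstain": 5.0, "touch_dna": 8.0}
rows = [("swab", 1, 3), ("swab", 2, 5), ("swab", 3, 4),
        ("bloodstain", 1, 6), ("bloodstain", 3, 2), ("bloodstain", 2, 4),
        ("touch_dna", 2, 3), ("touch_dna", 4, 6), ("touch_dna", 3, 1)]
history = [{"evidence_type": t, "complexity": c, "analyst_workload": w,
            "processing_days": BASE_DAYS[t] + 2.0 * c + 0.5 * w} for t, c, w in rows]

audit_log = []
coef = train(history)
incoming = [
    {"case_id": "N-001", "evidence_type": "touch_dna", "complexity": 3, "analyst_workload": 2},
    {"case_id": "N-002", "evidence_type": "swab", "complexity": 1, "analyst_workload": 2},
]
ranked = recommend(coef, incoming, audit_log)
analyst_review(ranked[0], analyst_id="analyst-07", approved=True)
```

Keeping the audit entry and the review decision in the same record means each recommendation carries its inputs, its prediction, and the identity of the analyst who accepted or rejected it.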

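In outline, the workflow moves from data aggregation to model training, then to prediction and prioritization, with analyst verification required before any AI-generated recommendation is acted on.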

Pressure: Integration of Complex Data Types

Solution: Forensic Data Analysis (FDA) and Intelligence Synthesis

Labs must handle diverse data from DNA, latent prints, and trace evidence. Forensic Data Analysis (FDA) provides a structured process to examine this data for patterns of fraudulent or non-standard activities [26].

Protocol 3: The Forensic Data Analysis (FDA) Process

Purpose: To identify risk areas, detect non-standard activities, and synthesize intelligence from multiple forensic data streams [26].

Methodology: The FDA process is iterative and consists of four stages:

- Acquisition: Identify and gather relevant data from multiple sources (e.g., databases, logs) into a centralized structure like a data warehouse [26].

- Examination: Use exploratory data analysis and data visualization to look at data set characteristics and identify patterns of activity [26].

- Analysis: Create queries, process results, and review emerging patterns. This stage involves creating and testing hypotheses through iterative simulation; if no evidence is found, a new hypothesis is developed [26].

- Reporting: Present findings via written reports, graphical dashboards, or other business intelligence techniques [26].

The process is cyclical: when the analysis stage finds no supporting evidence, a new hypothesis is developed and the cycle repeats.

Discussion and Forward-Facing Considerations

The current landscape requires forensic labs to be agile in adopting new technologies like Rapid DNA and AI, while maintaining rigorous scientific standards. The implementation of these technologies aligns with higher TRLs, facilitating their transition from research to operational use. Key challenges that must be managed include:

- Accuracy and Validation: For Rapid DNA, ensuring proper sample collection and processing to prevent contamination is critical for maintaining evidence credibility [23].

- AI Oversight and Trust: AI systems require careful human oversight and proven reliability before deployment. The "black box" nature of some models necessitates robust audit trails and a framework for responsible AI use in forensics [25].

- Standardization: A lack of universal standards for data forensics and AI applications presents an administrative challenge, highlighting the need for community-wide consensus on best practices [26] [25].

Forensic labs are navigating a complex environment shaped by the pressure to do more, faster, and with greater analytical depth. The protocols for qPCR, AI-driven resource management, and forensic data analysis detailed in this application note provide a roadmap for leveraging current technologies to meet these demands. Successful implementation within a TRL framework requires a balanced approach that embraces innovation while adhering to the foundational principles of validation, standardization, and expert oversight.

Cutting-Edge Tools: From Spectroscopy to Genomics in Evidence Screening

Estimating the time since deposition (TSD) of a bloodstain is often considered a "holy grail" in forensic science, as it can provide critical information for reconstructing the timeline of a crime [27]. While numerous analytical techniques have been explored, attenuated total reflectance Fourier transform infrared (ATR FT-IR) spectroscopy has emerged as a particularly powerful method due to its non-destructive nature, rapid analysis time, and high sensitivity to the biochemical changes occurring in blood as it ages [28] [29]. This Application Note details the experimental protocols and performance data for implementing ATR FT-IR spectroscopy for bloodstain age determination, framed within the context of advancing the Technology Readiness Level (TRL) of this methodology for forensic practice.

Experimental Protocols

Sample Preparation Protocol

The following protocol ensures the generation of reliable and reproducible bloodstain samples for age estimation studies.

- Blood Collection: Obtain fresh whole blood samples via venipuncture from healthy, consenting donors. Document whether an anticoagulant (e.g., EDTA) is used and keep that choice consistent across all samples; anticoagulant-free blood may better mimic real-world conditions [28]. Institutional ethical approval and donor informed consent are mandatory.

- Substrate Deposition: Deposit blood droplets (typical volume 5-50 µL) onto relevant substrates. For a comprehensive model, include both non-rigid (e.g., white cotton fabric, cellulose paper) and rigid (e.g., glass) surfaces, as surface properties significantly impact spectral data and model performance [30].

- Aging Conditions: Age stains under controlled conditions that simulate real crime scene environments. Key parameters to control and document include:

- Temperature and Humidity: Use environmental chambers for stability.

- Light Exposure: Differentiate between indoor (dim/dark) and outdoor (full spectrum light) conditions [28].

- Time Series: Collect data across a relevant time frame. Studies have successfully monitored changes from hours up to 212 days [28] [30]. A suggested initial time course is 1, 3, 7, 12, 19, 30, 50, 85, and 107 days.

Spectral Acquisition Parameters

Standardized instrument settings are crucial for cross-laboratory reproducibility.

- Instrumentation: Use an FT-IR spectrometer equipped with an ATR accessory featuring a diamond crystal.

- Spectral Range: Collect data in the mid-infrared region, typically 900–1800 cm⁻¹ (the "biofingerprint" region) [28]. Some methods focus on key peaks within the narrower 1300–1800 cm⁻¹ window [31].

- Resolution and Scans: Set a resolution of 4 cm⁻¹ and co-add 32 scans per spectrum to ensure a high signal-to-noise ratio [28].

- Replication: Acquire multiple technical replicates (e.g., 3-5 spectra from different spots on the same stain) and average them to create a single representative spectrum for each sample.

Data Preprocessing and Chemometric Analysis

Extracting meaningful age-related information from complex spectral data requires a robust chemometric workflow.

- Preprocessing: Apply the following steps to minimize non-chemical spectral variations:

- Baseline Correction: Removes instrumental offsets and scattering effects.

- Standard Normal Variate (SNV) or Multiplicative Scatter Correction (MSC): Further corrects for light scattering, particularly important for stains on porous substrates [28] [32].

- Smoothing: Use techniques like Savitzky-Golay smoothing to reduce high-frequency noise [32] [33].

- Multivariate Analysis:

- Regression for Age Prediction: Use Partial Least Squares Regression (PLSR) to model the relationship between spectral data (X-matrix) and the known TSD (Y-matrix) [28] [30] [33]. The model's complexity (number of latent variables) should be optimized via cross-validation to prevent overfitting.

- Machine Learning for Age Prediction: Advanced techniques like Support Vector Machines for Regression (SVMr) and Artificial Neural Networks (ANNs) trained with the Levenberg-Marquardt algorithm have shown superior performance, achieving coefficients of determination (R²) above 0.92 in prediction [34] [31].

- Validation: Always validate models using an external test set of samples not included in the model building. Internal validation via 10-fold cross-validation is also recommended [28].
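The preprocessing steps listed above can be sketched with NumPy alone. This is an illustrative outline under stated assumptions: the spectra are synthetic, the function names are hypothetical, the baseline step is a deliberately crude minimum subtraction rather than a fitted baseline, and the Savitzky-Golay filter uses simple edge padding, so values near the ends of the spectrum will differ from library implementations with other edge modes.

```python
import numpy as np

def average_replicates(replicates):
    """Average technical replicates (rows) into one representative spectrum."""
    return np.asarray(replicates, dtype=float).mean(axis=0)

def baseline_offset_correct(spectrum):
    """Crude baseline step: subtract the spectrum minimum (placeholder only)."""
    return spectrum - spectrum.min()

def snv(spectrum):
    """Standard Normal Variate: centre and scale each spectrum individually."""
    return (spectrum - spectrum.mean()) / spectrum.std(ddof=1)

def savgol_smooth(spectrum, window=11, polyorder=2):
    """Savitzky-Golay smoothing via a local least-squares polynomial fit."""
    half = window // 2
    offsets = np.arange(-half, half + 1)
    design = np.vander(offsets, polyorder + 1, increasing=True)
    weights = np.linalg.pinv(design)[0]           # fitted value at window centre
    padded = np.pad(spectrum, half, mode="edge")  # simple edge handling
    return np.convolve(padded, weights[::-1], mode="valid")

# Synthetic replicates: one Gaussian band with varying scale, offset, and noise.
rng = np.random.default_rng(0)
wavenumbers = np.linspace(900, 1800, 451)
band = np.exp(-((wavenumbers - 1650) / 25) ** 2)
replicates = [band * s + 0.05 + rng.normal(0, 0.01, band.size) for s in (0.9, 1.0, 1.1)]

# Order follows the list above: baseline, then SNV, then smoothing.
processed = savgol_smooth(snv(baseline_offset_correct(average_replicates(replicates))))
```

The window length and polynomial order are tuning choices; they should be fixed during method development and applied identically to calibration and casework spectra.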

Figure 1: ATR-FTIR workflow for bloodstain age estimation, showing key steps from sample preparation to final TSD prediction.
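The regression and validation steps of this workflow can be illustrated as follows. This sketch assumes a single-response PLS model fitted with the NIPALS algorithm, written out in NumPy so that each step is visible; a laboratory would more likely use an established chemometrics package. The spectra are synthetic (two arbitrary Gaussian bands whose intensities drift linearly with age), and all function names are hypothetical. The number of latent variables is chosen by 10-fold cross-validation on the calibration set, and R², RMSEP, and RPD (standard deviation of the reference values divided by RMSEP) are then computed on an external test set.

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """Fit single-response PLS regression with the NIPALS algorithm."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr
        w = w / np.linalg.norm(w)      # weight vector
        t = Xr @ w                     # scores
        p = Xr.T @ t / (t @ t)         # X loadings
        q_a = (yr @ t) / (t @ t)       # y loading
        Xr = Xr - np.outer(t, p)       # deflate X
        yr = yr - q_a * t              # deflate y
        W.append(w); P.append(p); q.append(q_a)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)
    return x_mean, y_mean, coef

def pls1_predict(model, X):
    x_mean, y_mean, coef = model
    return (X - x_mean) @ coef + y_mean

def cv_rmse(X, y, n_components, k=10, seed=0):
    """Root mean square error of k-fold cross-validated predictions."""
    idx = np.random.default_rng(seed).permutation(len(y))
    preds = np.empty(len(y))
    for fold in np.array_split(idx, k):
        train = np.setdiff1d(idx, fold)
        preds[fold] = pls1_predict(pls1_fit(X[train], y[train], n_components), X[fold])
    return float(np.sqrt(np.mean((y - preds) ** 2)))

def metrics(y_true, y_pred):
    """R2, RMSEP, and RPD on an external test set."""
    rmsep = float(np.sqrt(np.mean((y_true - y_pred) ** 2)))
    r2 = 1.0 - np.sum((y_true - y_pred) ** 2) / np.sum((y_true - y_true.mean()) ** 2)
    return {"R2": float(r2), "RMSEP": rmsep, "RPD": float(np.std(y_true, ddof=1)) / rmsep}

# Synthetic data: band intensities drift linearly with age, plus noise.
rng = np.random.default_rng(42)
wavenumbers = np.linspace(900, 1800, 200)
ages = rng.uniform(1, 107, size=80)                       # days
band_1 = np.exp(-((wavenumbers - 1650) / 30) ** 2)
band_2 = np.exp(-((wavenumbers - 1540) / 30) ** 2)
spectra = (np.outer(1.0 - 0.004 * ages, band_1)
           + np.outer(0.5 + 0.003 * ages, band_2)
           + rng.normal(0, 0.005, size=(80, 200)))

X_train, y_train = spectra[:60], ages[:60]                # calibration set
X_test, y_test = spectra[60:], ages[60:]                  # external test set

cv_scores = {a: cv_rmse(X_train, y_train, a) for a in range(1, 6)}
best = min(cv_scores, key=cv_scores.get)                  # latent variables
results = metrics(y_test, pls1_predict(pls1_fit(X_train, y_train, best), X_test))
print(best, results)
```

Because the synthetic relationship is clean and linear, the resulting figures are far better than anything to expect from real stains; the point is only to show how the cross-validation and external-validation metrics are obtained.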

Performance Data and Model Validation

The following tables summarize the predictive performance of ATR FT-IR spectroscopy for bloodstain age estimation as reported in recent literature.

Table 1: Performance of ATR-FTIR for bloodstain age estimation under different conditions.

| Model Type / Condition | Time Range | R² | RMSEP | RPD | Key Techniques | Source |

|---|---|---|---|---|---|---|

| Indoor Model | 7-85 days | 0.94 | 5.83 days | 4.08 | PLSR | [28] |