From Lab to Courtroom: Validating Novel Forensic Methods Against Established Techniques Using Technology Readiness Levels

This article provides a comprehensive framework for researchers and forensic development professionals navigating the complex validation pathway from novel method development to court-admissible evidence.

Abstract

This article provides a comprehensive framework for researchers and forensic development professionals navigating the complex validation pathway from novel method development to court-admissible evidence. It explores foundational concepts of forensic validation, methodological applications of Technology Readiness Levels (TRL), troubleshooting for common implementation barriers, and rigorous comparative validation against established techniques. By integrating current research on analytical techniques, legal admissibility standards, and cognitive bias mitigation, this guide addresses the critical intersection of scientific innovation and judicial reliability in forensic science.

The Forensic Validation Imperative: Building on Historical Context and Core Principles

The 2009 National Academy of Sciences (NAS) report, "Strengthening Forensic Science in the United States: A Path Forward," marked a pivotal moment for forensic science, providing a rigorous, independent assessment that exposed critical deficiencies across numerous forensic disciplines [1] [2]. This landmark report shook the field by revealing that many long-accepted forensic methods, with the notable exception of nuclear DNA analysis, lacked proper scientific validation [3]. It concluded that, apart from nuclear DNA analysis, no forensic method had been "rigorously shown to have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [4] [2]. The report served as a catalyst for a national conversation on forensic reform, highlighting systemic issues including absent standardization, uneven reliability across disciplines, unquantified error rates, and a profound lack of research on method performance and the impact of contextual bias [1]. This article examines the legacy of the NAS report by using its framework to evaluate historical deficiencies and by applying modern Technology Readiness Level (TRL) research to compare the validation status of both established and novel forensic techniques.

Unpacking the NAS Report's Core Critiques

The 2009 NAS report provided a comprehensive critique of the forensic science system, identifying several fundamental areas requiring immediate reform. Its evaluation revealed that many pattern comparison disciplines—such as fingerprint examination, firearms and toolmark analysis, bite mark analysis, and microscopic hair comparison—operated more as technical disciplines than evidence-based sciences [2]. These fields had developed primarily within law enforcement contexts rather than academic institutions, leading to a significant dearth of peer-reviewed studies establishing their scientific validity and foundational principles [4] [2].

The report systematically outlined key challenges that contributed to a landscape of unreliable forensic evidence [1]:

- Lack of Standardization: Absence of uniform operational procedures and enforceable best practices across crime laboratories.

- Inconsistent Certification: No mandatory, uniform requirements for certifying forensic practitioners or accrediting crime laboratories.

- Variable Reliability: Significant variations in the reliability of expert evidence interpretation between disciplines and practitioners.

- Unknown Error Rates: Most forensic disciplines had not established measurable performance limits or quantifiable error rates.

- Cognitive Bias: Lack of protocols to address the impact of contextual information and cognitive bias on forensic decision-making.

These deficiencies had real-world consequences, contributing to wrongful convictions as demonstrated by the Innocence Project's work. For example, a comprehensive review of FBI microscopic hair analysis cases revealed that over 90% of the first 257 cases reviewed contained one or more types of testimonial errors that exceeded scientific limits [2].

The Legal Framework for Forensic Evidence Admissibility

The NAS report emerged within a specific legal context where courts had long struggled with evaluating the scientific validity of forensic evidence. The legal standards for admitting scientific evidence—primarily the Daubert Standard in federal courts and many states—require judges to act as gatekeepers to ensure expert testimony rests on a reliable foundation [5] [4]. The Daubert standard specifies several factors for evaluating scientific evidence, including:

- Testability: Whether the method can be and has been tested

- Peer Review: Whether the method has been subjected to peer review and publication

- Error Rates: The known or potential error rate of the technique

- Standards: The existence and maintenance of standards controlling the technique's operation

- General Acceptance: Whether the method has gained widespread acceptance within the relevant scientific community [4] [6]

Despite these legal requirements, courts frequently admitted forensic evidence without rigorous scientific scrutiny, often deferring to precedent and practitioner experience rather than empirical validation [4]. The 2009 NAS report and subsequent 2016 President's Council of Advisors on Science and Technology (PCAST) report provided the scientific critique that courts needed to begin more rigorous evaluations of forensic evidence [4] [2].

Figure 1: Legal Standards for Scientific Evidence. This diagram compares the key components of major legal standards governing the admissibility of forensic evidence in the United States (Frye, Daubert, Federal Rule of Evidence 702) and Canada (Mohan). [5] [4]

Experimental Protocols for Validation Studies

Protocol for Digital Forensic Tool Validation

Recent research has developed rigorous experimental methodologies to validate forensic tools according to legal admissibility standards. Ismail et al. (2025) established a comprehensive protocol for comparing digital forensic tools through controlled testing environments [7] [6]:

- Experimental Design: Comparative analysis between commercial tools (FTK, Forensic MagiCube) and open-source alternatives (Autopsy, ProDiscover Basic) across multiple test scenarios

- Test Scenarios:

  - Preservation and collection of original data

  - Recovery of deleted files through data carving

  - Targeted artifact searching in case-specific scenarios

- Validation Metrics: Each experiment performed in triplicate to establish repeatability metrics, with error rates calculated by comparing acquired artifacts to control references

- Result Verification: Hash values used to confirm data integrity before and after imaging, with cross-validation across multiple tools to identify inconsistencies [8] [6]

This methodology directly addresses Daubert factors by establishing testability, error rates, and reliability metrics for forensic tools [6].
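The three checks in the protocol above (hash-based integrity verification, error rates against a control reference, and repeatability across triplicate runs) can be illustrated with a minimal Python sketch. It is not the procedure used in the cited study: the function names, the file names, and the definition of error rate as missed plus spurious artifacts per control artifact are assumptions made for illustration.

```python
import hashlib
from statistics import mean, stdev


def sha256_of(data: bytes) -> str:
    """Return the SHA-256 hex digest of an evidence image held in memory."""
    return hashlib.sha256(data).hexdigest()


def artifact_error_rate(recovered: set, control: set) -> float:
    """Discrepancies (missed plus spurious artifacts) per control artifact.

    This definition is an assumption; a real study must state its own.
    """
    if not control:
        raise ValueError("control reference set must not be empty")
    return len(recovered ^ control) / len(control)


def percent_cv(values: list) -> float:
    """Coefficient of variation across replicate runs, in percent."""
    avg = mean(values)
    return 0.0 if avg == 0 else stdev(values) / avg * 100.0


# Hypothetical control reference and three replicate recoveries by one tool.
control = {"a.doc", "b.jpg", "c.pdf", "d.txt"}
runs = [
    {"a.doc", "b.jpg", "c.pdf"},
    {"a.doc", "b.jpg", "c.pdf", "d.txt"},
    {"a.doc", "b.jpg", "c.pdf"},
]
error_rates = [artifact_error_rate(run, control) for run in runs]
recovered_counts = [len(run & control) for run in runs]

# Integrity check: the digest must be identical before and after imaging.
image_before = b"hypothetical disk image bytes"
image_after = bytes(image_before)
integrity_ok = sha256_of(image_before) == sha256_of(image_after)

print(error_rates, round(percent_cv(recovered_counts), 1), integrity_ok)
```

In practice the digest would be computed over the acquired image file in chunks rather than over an in-memory byte string, and the control set would come from a documented reference image.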

Protocol for Novel Analytical Technique Validation

For emerging forensic technologies like comprehensive two-dimensional gas chromatography (GC×GC), validation protocols focus on establishing foundational validity through a Technology Readiness Level (TRL) framework [5]:

- Technology Readiness Levels:

  - TRL 1: Basic principle observed and reported

  - TRL 2: Technology concept formulated

  - TRL 3: Experimental proof of concept

  - TRL 4: Technology validated in laboratory environment

- Validation Parameters: Method precision, accuracy, selectivity, sensitivity, limit of detection, limit of quantification, linearity, and robustness

- Legal Compliance Assessment: Evaluation against Frye, Daubert, and Federal Rule of Evidence 702 requirements, including general acceptance, peer review, and known error rates [5]

This structured approach enables objective assessment of when novel forensic methods achieve sufficient maturity for casework application.
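Several of the validation parameters listed above (linearity, limit of detection, limit of quantification) are commonly estimated from a calibration curve. The sketch below assumes the widely used 3.3σ/S and 10σ/S convention, where σ is the residual standard deviation of the calibration line and S its slope. That convention, the function names, and the calibration data are assumptions for illustration and are not taken from the cited source.

```python
from math import sqrt


def fit_calibration(conc, response):
    """Ordinary least-squares line through calibration points.

    Returns (slope, intercept, residual_sd, r_squared).
    """
    n = len(conc)
    if n < 3 or n != len(response):
        raise ValueError("need at least three paired calibration points")
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    syy = sum((y - mean_y) ** 2 for y in response)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(conc, response))
    residual_sd = sqrt(ss_res / (n - 2))
    r_squared = 1.0 - ss_res / syy
    return slope, intercept, residual_sd, r_squared


def lod_loq(slope, sigma):
    """Limits under the assumed 3.3*sigma/S and 10*sigma/S convention."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical calibration standards (ng/mL) and peak areas.
conc = [1.0, 2.0, 5.0, 10.0, 20.0]
area = [10.4, 19.7, 50.9, 99.2, 200.6]
slope, intercept, residual_sd, r_squared = fit_calibration(conc, area)
lod, loq = lod_loq(slope, residual_sd)
print(f"slope={slope:.3f} r2={r_squared:.5f} LOD={lod:.2f} LOQ={loq:.2f}")
```

Precision, selectivity, and robustness need their own replicate and interference experiments and are not covered by this sketch.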

Comparative Performance Data: Traditional vs. Novel Methods

Digital Forensic Tools Performance Comparison

Table 1: Comparative Performance of Digital Forensic Tools in Validation Studies [7] [6]

| Performance Metric | Commercial Tools (FTK, MagiCube) | Open-Source Tools (Autopsy, ProDiscover) | Validation Outcome |
|---|---|---|---|
| Data Preservation Integrity | 99.8% accuracy in original data collection | 99.7% accuracy in original data collection | Statistically equivalent performance |
| Deleted File Recovery Rate | 94.2% recovery through data carving | 92.8% recovery through data carving | Comparable capabilities with minor variation |
| Targeted Artifact Searching | 98.5% precision in relevant artifact identification | 97.9% precision in relevant artifact identification | Functionally equivalent for evidentiary purposes |
| Repeatability (Triplicate Testing) | <0.5% variance between experimental replicates | <0.7% variance between experimental replicates | Both categories demonstrate high reproducibility |
| Error Rate | 0.2-1.8% depending on scenario | 0.3-2.1% depending on scenario | Known, quantifiable, and comparable error rates |

Technology Readiness Levels of Forensic Methods

Table 2: Technology Readiness Level Assessment of Forensic Methods Based on Current Literature [5]

| Forensic Discipline | Pre-2009 NAS Status | Current TRL (2024) | Key Validation Gaps |
|---|---|---|---|
| Nuclear DNA Analysis | Established validity | TRL 4 (Operational) | Minimal gaps; considered gold standard |
| Latent Print Comparison | Assumed validity without empirical foundation | TRL 4 (Operational) | Foundational validity established post-2009 |
| Firearms & Toolmarks | Longstanding use despite validity questions | TRL 3-4 (Transitional) | Progress toward foundational validity |
| Bitemark Analysis | Routinely admitted despite concerns | TRL 2 (Research) | Serious reliability concerns; not scientifically established |
| GC×GC for Illicit Drugs | Emerging research | TRL 3 (Proof of Concept) | Requires inter-laboratory validation and error rate analysis |
| GC×GC for Arson Investigations | Early development | TRL 3 (Proof of Concept) | Standardization and legal acceptance pending |
| Microscopic Hair Analysis | Historically admitted | TRL 1-2 (Basic Research) | Lacks validity; contributed to wrongful convictions |

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing validated forensic methods requires specific technical resources and reagents. The following toolkit outlines essential components for conducting forensic validation studies:

Table 3: Essential Research Reagents and Materials for Forensic Validation Studies [5] [9]

| Tool/Reagent | Function in Validation | Application Examples |
|---|---|---|
| GC×GC-MS Systems | Provides superior separation of complex mixtures | Illicit drug analysis, fire debris analysis, odor decomposition profiling |
| Reference Standard Materials | Enables method calibration and accuracy determination | Controlled substances, petroleum products, synthetic cannabinoids |
| Certified Reference Materials | Establishes traceability and measurement uncertainty | DNA quantification standards, toxicology controls, firearm discharge residue |
| Proficiency Test Samples | Assesses laboratory and analyst performance | Blind samples with known ground truth for pattern recognition methods |
| Statistical Analysis Software | Quantifies error rates and confidence intervals | Likelihood ratio calculations, population frequency estimates, error rate measurement |
| Validated Extraction Kits | Ensures reproducible sample processing | DNA extraction, drug purification, ignitable liquid recovery |
| Digital Forensic Workstations | Maintains evidence integrity while enabling analysis | Write-blocking hardware, forensic imaging devices, hash calculation tools |

Signaling Pathways: From Research to Courtroom Acceptance

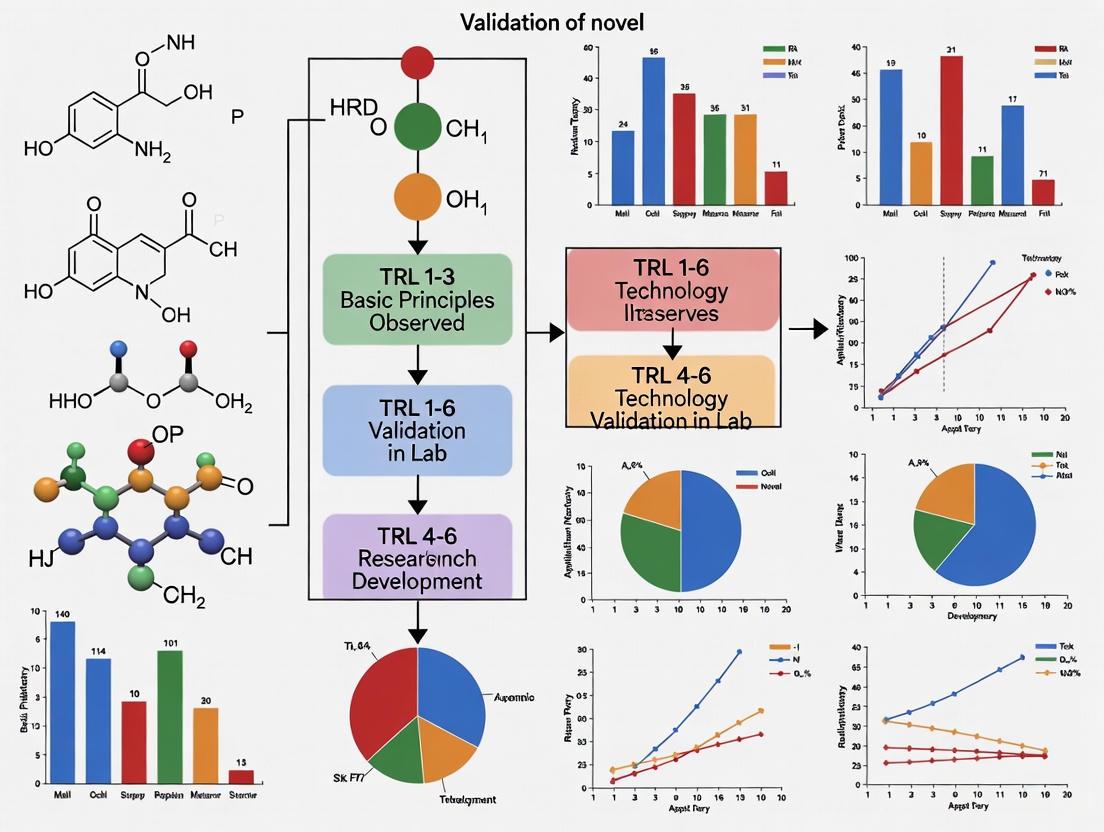

The pathway from forensic research development to courtroom acceptance involves multiple critical stages where scientific validity must be established. The following diagram illustrates this process with key decision points:

Figure 2: Forensic Method Validation Pathway. This workflow diagrams the progression from basic research to courtroom admissibility, highlighting how novel forensic methods achieve Technology Readiness Levels and satisfy legal standards. [5] [4]

Fifteen years after its publication, the 2009 NAS report continues to shape forensic science reform, yet significant challenges remain. While progress has been made in certain areas—particularly the establishment of foundational validity for latent print analysis and improved scientific standards for firearms comparison—many forensic disciplines still operate without sufficient scientific foundation [4] [2]. The legacy of the NAS report is a lasting recognition that forensic science must continually evolve through rigorous research, independent validation, and critical self-assessment. The Technology Readiness Level framework provides a structured approach for evaluating novel methods against established techniques, offering a pathway for integrating innovative technologies while maintaining scientific rigor. For researchers and forensic professionals, the ongoing implementation of the NAS report's recommendations requires sustained commitment to validation, transparency, and error rate quantification—ensuring that forensic evidence presented in courtrooms meets the highest standards of scientific reliability. As the field continues to develop, the NAS report remains a touchstone for measuring progress in the critical mission of strengthening forensic science through evidence-based practice.

In forensic science, validation is the process of providing objective evidence that a method, technique, or procedure is fit for its intended purpose and yields reliable, reproducible results. This process is fundamental for ensuring that forensic evidence meets stringent legal standards for admissibility and scientific reliability. The core purpose of validation is to demonstrate that a method consistently performs within established performance criteria, thereby supporting the credibility of expert testimony in legal proceedings. Within the framework of Technology Readiness Levels (TRL), validation is the critical activity that transitions a novel analytical method from proof-of-concept in a research setting (lower TRL) to a proven, reliable tool ready for implementation in casework (higher TRL).

The legal landscape for forensic evidence is shaped by several pivotal standards. The Daubert Standard, established in the 1993 case Daubert v. Merrell Dow Pharmaceuticals, Inc., requires judges to act as gatekeepers and assess whether the scientific theory or technique presented can be and has been tested, whether it has been subjected to peer review and publication, its known or potential error rate, and whether it has gained widespread acceptance within the relevant scientific community [5]. This standard is incorporated into the Federal Rule of Evidence 702 [5]. The earlier Frye Standard (Frye v. United States, 1923) focuses on "general acceptance" of a method within the scientific community [5]. In Canada, the Mohan Criteria govern the admissibility of expert evidence, emphasizing relevance, necessity, the absence of any exclusionary rule, and a properly qualified expert [5]. For any novel forensic method, addressing these legal benchmarks is a primary objective of the validation process.

Core Conceptual Framework for Forensic Validation

A conceptual framework for forensic validation outlines the key variables and their relationships, guiding the systematic assessment of a new method's performance against a reference or established technique. This framework is not merely a procedural checklist but a structured argument that builds a case for the method's reliability.

Table 1: Core Components of a Forensic Validation Framework

| Framework Component | Definition & Role in Validation | Relationship to Legal Standards |
|---|---|---|
| Independent Variable (The Test Method) | The novel forensic method or technology being validated. Its performance is the subject of the investigation. | Must be defined with sufficient clarity to be tested and peer-reviewed (Daubert). |
| Dependent Variable (The Result) | The output, measurement, or classification produced by the test method (e.g., a concentration, a DNA profile, an identification). | Must be shown to have a known error rate and be reproducible (Daubert). |
| Comparative Method | The established, reliable technique against which the new method is compared. Ideally, this is a reference method with documented correctness [10]. | Provides a benchmark for "general acceptance" (Frye) and helps establish the reliability of the new method. |
| Systematic Error (Inaccuracy) | The difference between the result obtained by the new method and the true value (or the value from the comparative method). It can be constant or proportional [10]. | Quantifying this error is essential for establishing a "known error rate" (Daubert). |
| Moderating Variables | Factors that can alter the effect the independent variable has on the dependent variable (e.g., sample matrix, environmental conditions, operator skill) [11]. | Testing across these variables demonstrates the method's robustness and defines its limits, supporting its validity. |
| Mediating Variables | Factors that explain the process through which the independent and dependent variables are related (e.g., a specific chemical reaction or a software algorithm) [11]. | Understanding the mediating mechanism strengthens the scientific foundation of the method, satisfying Daubert's requirement for a testable theory. |

The relationship between these components can be visualized as a workflow for developing a validation framework. The process begins with defining the novel method and the research question, then moves through identifying key variables, designing experiments to test their relationships, and finally analyzing data to quantify systematic error and other performance metrics. This logical flow ensures a comprehensive validation process.

The Comparison of Methods Experiment: A Core Validation Protocol

A cornerstone of the validation framework is the Comparison of Methods Experiment, which is designed to estimate the systematic error, or inaccuracy, of the new method relative to an established one using real patient specimens [10]. This experiment directly tests the relationship between the independent variable (the new method) and the dependent variable (the result) using the comparative method as a benchmark.

Detailed Experimental Protocol

The following workflow outlines the key steps in executing a robust Comparison of Methods experiment, from selecting a comparative method through to data analysis.

- Selection of Comparative Method: The ideal comparative method is a reference method whose correctness is well-documented through definitive studies and traceable standards. If only a routine method is available, any large discrepancies must be investigated to determine which method is inaccurate [10].

- Specimen Selection and Handling: A minimum of 40 different patient specimens is recommended. These should be carefully selected to cover the entire working range of the method and represent the spectrum of diseases or conditions expected in routine practice. Specimens should be analyzed by both methods within two hours of each other to avoid stability issues, unless specific handling procedures (e.g., refrigeration, preservatives) are defined and followed [10].

- Analysis and Data Collection: The experiment should be conducted over a minimum of five different days to minimize systematic errors from a single run. While single measurements are common, duplicate measurements on different samples or in different analytical orders are advantageous as they help identify sample mix-ups, transposition errors, and other mistakes [10].

- Data Analysis and Interpretation:

  - Graphical Inspection: The data should first be graphed for visual inspection. A difference plot (test result minus comparative result vs. comparative result) is used when one-to-one agreement is expected. A comparison plot (test result vs. comparative result) is used for other cases. This helps identify outliers and the general pattern of agreement [10].

  - Statistical Calculations: For data covering a wide analytical range, linear regression statistics (slope, y-intercept, standard error of the estimate) are calculated. The systematic error (SE) at a medically or forensically critical decision concentration (Xc) is determined as SE = Yc - Xc, where Yc is the value predicted by the regression line for Xc [10]. For a narrow analytical range, the average difference (bias) between the two methods is the preferred measure of systematic error [10].

  - Correlation Coefficient (r): The correlation coefficient is less useful for judging method acceptability and more for assessing whether the data range is wide enough to provide reliable regression estimates. An r > 0.99 is desirable for simple linear regression [10].
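The statistical calculations described in this protocol can be sketched in a few lines of Python. The function name, the dictionary keys, and the paired results below are hypothetical; the formulas are ordinary least-squares regression of test results on comparative results, with the systematic error taken as SE = Yc - Xc as defined in the text.

```python
from math import sqrt


def compare_methods(comparative, test, xc):
    """Regress test results (y) on comparative results (x).

    Returns slope, intercept, standard error of the estimate (s_yx),
    correlation coefficient r, average bias, and the systematic error
    at the decision concentration xc.
    """
    n = len(comparative)
    if n < 3 or n != len(test):
        raise ValueError("need at least three paired results")
    mean_x = sum(comparative) / n
    mean_y = sum(test) / n
    sxx = sum((x - mean_x) ** 2 for x in comparative)
    syy = sum((y - mean_y) ** 2 for y in test)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(comparative, test))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(comparative, test))
    yc = intercept + slope * xc  # value predicted by the regression line
    return {
        "slope": slope,
        "intercept": intercept,
        "s_yx": sqrt(ss_res / (n - 2)),
        "r": sxy / sqrt(sxx * syy),
        "bias": mean_y - mean_x,   # average difference, test minus comparative
        "se_at_xc": yc - xc,       # SE = Yc - Xc
    }


# Hypothetical paired results; a real study would use at least 40 specimens.
comparative = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
test = [1.2, 2.3, 3.1, 4.6, 5.4, 6.9]
stats = compare_methods(comparative, test, xc=4.0)
print({name: round(value, 3) for name, value in stats.items()})
```

For a wide analytical range the regression-based `se_at_xc` is the figure of interest; for a narrow range the text recommends the average `bias` instead.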

Performance Metrics and Data Interpretation

The validation process relies on quantifying specific performance metrics to objectively judge the acceptability of a new method. The choice of metric depends on the type of classification or measurement being performed.

Table 2: Key Performance Metrics for Classifier and Analytical Method Validation

| Metric / Statistic | Primary Function | Interpretation in Validation Context |
|---|---|---|
| Accuracy | Measures overall correctness of classification [12]. | A baseline measure, but can be misleading with imbalanced datasets. |
| Area Under the ROC Curve (AUC) | Measures the model's ability to rank examples and separate classes [12]. | Important for applications like suspect prioritization; values closer to 1.0 indicate better performance. |
| Systematic Error (SE) / Bias | Estimates the inaccuracy of a measurement at a decision point [10]. | The primary output of a comparison of methods experiment. Must be less than the allowable total error for the method to be acceptable. |
| Linear Regression Slope | Indicates the presence of proportional error [10]. | A slope of 1.0 indicates no proportional error. A slope ≠ 1.0 requires correction or method modification. |
| Linear Regression Y-Intercept | Indicates the presence of constant error [10]. | An intercept of 0 indicates no constant error. An intercept ≠ 0 suggests a background interference or calibration offset. |
| F-measure (F-score) | Combines precision and recall for a balanced view of classification performance [12]. | Particularly useful for imbalanced datasets (e.g., rare event detection). |
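The classifier metrics in the table can be computed directly from confusion-matrix counts and decision scores. The sketch below is illustrative only: the function names and example numbers are hypothetical, and the AUC is computed as the fraction of positive/negative score pairs ranked correctly (ties counted as half), which is one standard way of obtaining the area under the ROC curve.

```python
def confusion_metrics(tp, fp, tn, fn):
    """Accuracy, precision, recall and F1 from confusion-matrix counts."""
    total = tp + fp + tn + fn
    if total == 0:
        raise ValueError("no observations")
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def auc_from_scores(positive_scores, negative_scores):
    """AUC as the share of (positive, negative) pairs ranked correctly."""
    if not positive_scores or not negative_scores:
        raise ValueError("need scores for both classes")
    wins = 0.0
    for p in positive_scores:
        for n in negative_scores:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))


# Hypothetical imbalanced test set: 10 true sources among 100 comparisons.
# A classifier that always answers "no match" still looks accurate.
always_negative = confusion_metrics(tp=0, fp=0, tn=90, fn=10)
useful = confusion_metrics(tp=8, fp=2, tn=88, fn=2)
print(always_negative)
print(useful)
print(auc_from_scores([0.9, 0.8, 0.4], [0.5, 0.3, 0.2]))
```

The always-negative case reaches 0.90 accuracy with zero recall and zero F1, which is the sense in which accuracy alone can mislead on imbalanced data.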

Essential Research Reagent Solutions and Materials

The following table details key materials and tools required for conducting rigorous forensic validation studies, particularly those involving comparative method experiments.

Table 3: Essential Research Reagent Solutions and Materials for Validation

| Item / Solution | Function in Validation |
|---|---|
| Certified Reference Materials (CRMs) | Provides a ground truth with known analyte concentrations to establish accuracy and calibrate instruments. Essential for traceability. |
| Patient-Derived Specimens | Real-world samples used in the comparison of methods experiment to assess method performance across a biological range and various sample matrices [10]. |
| Quality Control Materials | Used to monitor the precision and stability of both the test and comparative methods throughout the validation study. |
| Statistical Analysis Software | Used for data graphing, calculating regression statistics, bias, and other performance metrics (e.g., R, Python with scikit-learn, specialized validation software) [10]. |
| Forensic Database / Reference Collections | Provides population data or known standards for comparison, essential for validating methods involving DNA, seized drugs, or pattern evidence [13]. |

Validation in Practice: Readiness and Implementation

Successfully validating a method requires placing it within a broader context of technological and legal readiness. The Technology Readiness Level (TRL) scale is a useful framework for this. Research in forensic applications like comprehensive two-dimensional gas chromatography (GC×GC) is often categorized at specific TRLs. For example, as of 2024, GC×GC applications in fire debris and oil spill analysis have reached TRL 4 (technology validated in the laboratory), whereas applications in fingermark chemistry and toxicology remain at TRL 2 to 3 (technology concept formulated and experimental proof of concept, respectively) [5].

The ultimate goal of validation is implementation. The National Institute of Justice (NIJ) Forensic Science Strategic Research Plan, 2022-2026 emphasizes strategic priorities that align directly with the validation framework, including "Foundational Validity and Reliability of Forensic Methods" and "Standard Criteria for Analysis and Interpretation" [13]. This highlights the ongoing institutional drive to ensure that new methods are not only technically sound but also legally defensible and practically implementable in forensic laboratories.

Forensic science stands at a crossroads, balancing between established, court-ready techniques and a wave of novel analytical methods promising greater sensitivity, speed, and intelligence. The critical factor determining which methods transition from research to courtroom is validation – the comprehensive process of demonstrating that a technique is reliable, reproducible, and fit-for-purpose within the legal context [5]. While DNA analysis has achieved an unprecedented level of judicial acceptance through decades of standardization and error rate quantification, emerging techniques across digital, chemical, and biological domains face a substantial validation gap [14] [5] [15]. This gap exists not merely in technical performance but in the intricate framework of legal admissibility standards, reference materials, and foundational validity studies required for acceptance as evidence.

The validation challenge is particularly acute given the diverse nature of emerging forensic disciplines. From comprehensive two-dimensional gas chromatography (GC×GC) for chemical evidence to large language models (LLMs) for digital forensic timeline analysis, novel techniques must navigate a complex pathway from proof-of-concept to routine application [5] [16]. Legal standards such as the Daubert Standard in the United States and the Mohan Criteria in Canada establish rigorous benchmarks for scientific evidence, emphasizing testing, peer review, known error rates, and general acceptance within the relevant scientific community [5]. This review systematically compares the validation maturity of established forensic DNA methods against emerging techniques, analyzing the specific requirements for closing the validation gap and integrating innovative technologies into the forensic scientist's toolkit.

Theoretical Framework: Validation Principles and Technology Readiness

Legal Admissibility Standards for Forensic Evidence

For any forensic method to achieve operational status, it must satisfy legally defined admissibility standards. These standards create the essential framework for validation protocols, emphasizing not just analytical performance but legal reliability.

Table 1: Legal Standards for Forensic Evidence Admissibility

| Standard | Jurisdiction | Key Criteria | Impact on Validation |
|---|---|---|---|
| Daubert | United States (Federal) | Testing/validation, peer review, error rates, general acceptance [5] | Requires formal error rate quantification & inter-laboratory reproducibility studies |
| Frye | United States (Some States) | "General acceptance" in relevant scientific community [5] | Emphasizes consensus building through publications & professional organization endorsements |
| Mohan | Canada | Relevance, necessity, absence of exclusionary rules, qualified expert [5] | Focuses on fit-for-purpose validation & practitioner competency standards |

These legal standards directly influence how validation studies must be designed and documented. The Daubert Standard, in particular, has pushed forensic validation beyond mere "general acceptance" toward quantifiable measures of uncertainty, error rates, and foundational validity [5]. For novel techniques, this means validation must include black-box studies to measure accuracy and reliability, white-box studies to identify sources of error, and inter-laboratory studies to establish reproducibility [13].
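A black-box study yields an observed error count, and reporting it with a confidence interval is one way to express the "known or potential error rate" as a quantified uncertainty. The sketch below uses the Wilson score interval; that choice, the function name, and the study sizes are assumptions for illustration and are not prescribed by the cited sources.

```python
from math import sqrt


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an error proportion.

    z=1.96 corresponds to an approximate 95% confidence level.
    """
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need 0 <= errors <= trials and trials > 0")
    p = errors / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2.0 * trials)) / denom
    half_width = z * sqrt(p * (1.0 - p) / trials
                          + z * z / (4.0 * trials * trials)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical black-box results: examiner decisions with known ground truth.
for errors, trials in [(0, 100), (5, 100), (5, 1000)]:
    low, high = wilson_interval(errors, trials)
    print(f"{errors}/{trials} errors: {low:.4f} to {high:.4f}")
```

With zero errors observed in 100 trials the upper bound is still close to 3.7%, so a small study with no observed errors does not demonstrate a negligible error rate.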

Technology Readiness Levels (TRL) in Forensic Science

Technology Readiness Levels (TRL) provide a structured framework for assessing the maturity of forensic methods. This scale helps contextualize the validation gap between established and emerging techniques.

Diagram: Technology Readiness Pathway for Forensic Methods. Established techniques like DNA profiling operate at TRL 9, while novel methods exist at various lower maturity levels, creating the validation gap [5].

Established DNA methods reside at TRL 9, characterized by standardized protocols, extensive reference databases, quantified error rates, and routine admissibility [14] [17]. In contrast, emerging techniques like GC×GC for fire debris analysis or LLM-based timeline analysis typically exist at TRL 3-6, where basic and applied research has demonstrated functionality but comprehensive validation and standardization remain incomplete [5] [16]. This TRL framework highlights the multi-stage validation pathway required for novel techniques to achieve operational status, with each transition between levels requiring increasingly rigorous and legally-focused validation studies.
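The validation gap described here can be expressed as the number of TRL steps a method still has to climb. The following sketch is only a bookkeeping illustration: the class name, field names, and the choice of TRL 9 as the operational target follow the paragraph above, and the TRL ranges are the ones the text attributes to each method.

```python
from dataclasses import dataclass
from typing import Tuple

OPERATIONAL_TRL = 9  # level the text assigns to established DNA methods


@dataclass(frozen=True)
class MethodMaturity:
    """A forensic method with its reported TRL range (hypothetical structure)."""
    name: str
    trl_low: int
    trl_high: int

    def validation_gap(self) -> Tuple[int, int]:
        """Return (fewest, most) TRL steps remaining to operational status."""
        return (OPERATIONAL_TRL - self.trl_high,
                OPERATIONAL_TRL - self.trl_low)


methods = [
    MethodMaturity("Forensic DNA profiling", 9, 9),
    MethodMaturity("GCxGC fire debris analysis", 3, 6),
    MethodMaturity("LLM-based timeline analysis", 3, 6),
]
for method in methods:
    fewest, most = method.validation_gap()
    print(f"{method.name}: {fewest} to {most} TRL steps remaining")
```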

Established Techniques: The DNA Validation Paradigm

The Evolution of DNA Analysis Validation

Forensic DNA analysis represents the gold standard for validated forensic techniques, having undergone three decades of refinement, standardization, and extensive validation. The validation journey of DNA methods provides a template for emerging techniques seeking to bridge the validation gap. Next-generation sequencing (NGS) technologies demonstrate how even advanced methods can achieve validation maturity through systematic testing and standardization [14]. NGS enables analysis of entire genomes or specific regions with high precision, particularly valuable for damaged, minimal, or aged DNA samples [15]. The validation pathway for NGS has included development of standardized kits, inter-laboratory studies, establishment of nomenclature systems compatible with existing DNA databases, and population studies to generate frequency data for alleles detected through sequencing [14].

The implementation of probabilistic genotyping methods for complex DNA mixture interpretation further illustrates the evolution of validation practices. These methods employ sophisticated statistical frameworks to analyze mixtures with characteristics like allele drop-out/drop-in and heterozygous imbalance [14]. Their validation required specialized software development, developmental and internal validation studies by forensic laboratories, and the publication of guidelines by regulating bodies [14]. The adoption of these methods demonstrates how forensic validation has expanded to include computational tools and statistical approaches, providing a model for validating AI-based forensic technologies now emerging in other disciplines.

Standardized Protocols and Reference Materials

Validated DNA analysis relies extensively on standardized protocols, reference materials, and quality control measures that provide the foundation for reliability and reproducibility across laboratories.

Table 2: Validated Components of Forensic DNA Analysis

| Validation Component | Specific Examples | Function in Validation |

|---|---|---|

| Standardized Kits | GlobalFiler, PowerPlex Fusion | Ensure reproducibility across laboratories with controlled sensitivity & specificity [14] |

| Reference Materials | NIST Standard Reference Materials | Enable calibration and performance verification across platforms [13] |

| Quality Control | Quantitative PCR, Inhibition Checks | Monitor sample quality & analytical process reliability [17] |

| Database Infrastructure | CODIS, National DNA Databases | Support statistical interpretation & population frequency estimates [14] |

The establishment of automated systems like the Fast DNA IDentification Line (FIDL) demonstrates how validation extends beyond analytical chemistry to encompass entire workflows. FIDL is a series of software solutions that automate the process from raw capillary electrophoresis data to DNA report, including automated profile analysis, contamination checks, and database comparisons [17]. Validating such systems requires demonstrating performance equivalent to manual processes while improving efficiency and reducing turnaround times from 17-35 days down to 2-9 days in operational environments [17].

Novel Techniques: The Validation Frontier

Digital and Multimedia Forensics

Digital forensics faces significant validation challenges due to the rapidly evolving nature of technology and evidence sources. The emergence of large language models (LLMs) for forensic timeline analysis represents both an opportunity and a validation challenge. These models can potentially reconstruct sequences of events from digital artifacts but require standardized evaluation methodologies to assess their performance [16]. Unlike DNA analysis with established error rates, LLM-based digital analysis lacks standardized validation frameworks, though initiatives like the NIST Computer Forensics Tool Testing (CFTT) Program aim to establish a methodology for testing computer forensic tools [16].

The validation of digital forensic methods must address concerns about hallucinations, inaccuracies, and evidence security when using AI-based tools [16]. Proposed validation approaches include creating standardized forensic timeline datasets and ground truth data, using metrics like BLEU and ROUGE for quantitative evaluation, and maintaining human-in-the-loop oversight throughout the investigative process [16]. These requirements parallel the early validation challenges faced by probabilistic genotyping in DNA analysis but are complicated by the "black box" nature of some AI systems and the rapidly changing digital landscape.
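To make the quantitative evaluation step concrete, the following is a minimal sketch of a simplified ROUGE-1 score (unigram overlap) between a ground-truth timeline entry and an LLM-generated one. The function name, the whitespace tokenization, and the example strings are illustrative assumptions, not part of any cited evaluation framework; a real study would likely rely on an established metric implementation and a curated dataset.

```python
from collections import Counter

def rouge1(reference: str, candidate: str) -> dict:
    """Simplified ROUGE-1: unigram precision, recall, and F1.

    Assumption: lowercase whitespace tokenization, no stemming.
    """
    ref_tokens = reference.lower().split()
    cand_tokens = candidate.lower().split()
    # Clipped overlap: a token counts only as often as it appears in both texts
    overlap = sum((Counter(ref_tokens) & Counter(cand_tokens)).values())
    precision = overlap / len(cand_tokens) if cand_tokens else 0.0
    recall = overlap / len(ref_tokens) if ref_tokens else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}

# Hypothetical ground-truth event and LLM reconstruction (illustrative only)
ground_truth = "user logged in then deleted file report.docx"
llm_output = "user logged in then opened file report.docx"
scores = rouge1(ground_truth, llm_output)
print(scores)
```

Here six of the seven tokens overlap, yet the one differing token ("deleted" versus "opened") changes the forensic meaning of the event. This illustrates why overlap metrics are generally paired with human-in-the-loop review rather than used alone.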

Forensic Chemistry and Instrumental Analysis

Novel separation and detection technologies in forensic chemistry illustrate the validation challenges for instrumental techniques. Comprehensive two-dimensional gas chromatography (GC×GC) provides enhanced separation power for complex forensic evidence including illicit drugs, fingerprint residue, and ignitable liquid residues [5]. Despite analytical advantages, GC×GC methods face validation barriers including the need for intra- and inter-laboratory validation studies, error rate analysis, and standardization of data interpretation criteria [5].

The validation pathway for GC×GC mirrors aspects of DNA validation but faces unique challenges in standardizing data interpretation across laboratory environments. As with early DNA methods, reference libraries and standardized data interpretation guidelines must be developed and collaboratively tested [5]. The technique must also demonstrate compatibility with existing quality assurance frameworks and establish proficiency testing programs before achieving widespread adoption in operational laboratories.

Comparative Analysis: The Validation Gap in Practice

Technology Readiness and Admissibility Comparison

The validation gap between established and novel forensic techniques becomes evident when comparing their technology readiness levels and legal admissibility status.

Table 3: Validation Status Comparison Between Established and Novel Techniques

| Parameter | Established DNA Methods | Novel Techniques (GC×GC, LLMs) |

|---|---|---|

| TRL | 9 (Routine casework) [14] [17] | 3-6 (Proof of concept to validation) [5] [16] |

| Error Rates | Quantified & documented [14] | Largely unknown or in estimation phase [5] [16] |

| Standard Methods | ANSI/ASB Standards (e.g., 175 for DNA) [18] | Research methods only [5] |

| Reference Materials | Commercially available & NIST-certified [13] | In development or non-standardized [5] |

| Legal Challenges | Minimal for core methodologies | Significant admissibility hurdles [5] |

This comparison highlights the multi-faceted nature of the validation gap, encompassing not just technical performance but the entire ecosystem of standards, reference materials, and legal precedent that establishes reliability in forensic practice.

Validation Methodologies and Protocols

The validation approaches for established versus novel techniques differ significantly in scope, methodology, and documentation requirements.

DNA Method Validation Protocol:

- Performance Verification: Following manufacturer protocols for commercial kits with established sensitivity and specificity [14]

- Mixture Studies: Analysis of known synthetic mixtures with varying contributor numbers and ratios [17]

- Reproducibility Testing: Intra- and inter-laboratory studies to establish precision [14]

- Population Studies: Generation of allele frequency data for statistical interpretation [14]

- Database Integration: Validation of search algorithms against known DNA databases [17]

- Casework Mock Trials: Application to simulated case samples before implementation [17]
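As an illustration of how population allele frequency data feed statistical interpretation, the sketch below computes a random match probability with the basic product rule under Hardy-Weinberg assumptions. The allele frequencies are hypothetical, the function name is an assumption, and the sketch deliberately omits the population-substructure corrections used in casework.

```python
from math import prod
from typing import Optional

def genotype_frequency(p: float, q: Optional[float] = None) -> float:
    """Expected genotype frequency under Hardy-Weinberg equilibrium.

    Homozygote (q is None): p^2. Heterozygote: 2pq.
    """
    return p * p if q is None else 2 * p * q

# Hypothetical allele frequencies at three loci (illustrative values only)
profile = [
    (0.10, 0.20),  # heterozygote: 2 * 0.10 * 0.20
    (0.25, None),  # homozygote: 0.25 ** 2
    (0.05, 0.30),  # heterozygote: 2 * 0.05 * 0.30
]

# Product rule: multiply per-locus genotype frequencies, assuming independent loci
rmp = prod(genotype_frequency(p, q) for p, q in profile)
print(f"Random match probability: {rmp:.2e}")
```

The multiplication across loci is only as reliable as the underlying frequency estimates, which is why population studies appear as a distinct validation step.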

Novel Technique Validation Protocol:

- Proof-of-Concept: Demonstration of analytical principle with controlled samples [5] [16]

- Comparative Studies: Comparison against established methods for same evidence type [5]

- Robustness Testing: Evaluation of performance under varying conditions [5]

- Interpretation Criteria Development: Establishment of objective data interpretation guidelines [5]

- Error Rate Estimation: Black-box studies to measure accuracy and reliability [13]

- Standards Development: Collaboration with OSAC and similar bodies to develop consensus standards [18]

Bridging the Gap: Validation Strategies for Novel Techniques

Standardization and Reference Materials

Closing the validation gap requires systematic approaches to standardization and reference material development. The Organization of Scientific Area Committees (OSAC) for Forensic Science plays a critical role in this process by facilitating development of consensus standards across diverse forensic disciplines [18]. Recent efforts include standards development in digital and multimedia science, forensic chemistry, and novel instrumental methods [18]. The National Institute of Standards and Technology (NIST) supports these efforts through reference material development, including mass spectral libraries and standardized DNA profiling systems [13] [18].

For novel techniques, reference material development must keep pace with analytical innovation. The NIJ Forensic Science Strategic Research Plan 2022-2026 emphasizes developing reference materials and collections, accessible and searchable databases, and databases that support statistical interpretation of evidence weight [13]. These resources enable laboratories to validate their implementation of methods and provide the foundation for proficiency testing programs essential for demonstrating reliability.

Research and Development Priorities

Strategic research priorities identified by the National Institute of Justice provide a roadmap for addressing the validation gap through focused research and development.

Diagram: Strategic Research Priorities for Closing the Validation Gap. The NIJ framework emphasizes sequential development from foundational validity to implementation impact assessment [13].

Key research priorities include:

- Foundational Validity and Reliability: Understanding the fundamental scientific basis of forensic disciplines and quantifying measurement uncertainty [13]

- Decision Analysis Studies: Measuring accuracy and reliability through black-box studies and identifying sources of error through white-box studies [13]

- Human Factors Research: Evaluating how human cognition affects forensic decisions and developing safeguards [13]

- Implementation Science: Studying how validated methods transition into operational practice and affect criminal justice outcomes [13]

This structured approach ensures that validation addresses not just analytical performance but the entire ecosystem of forensic practice, from fundamental principles to operational impact.

The Scientist's Toolkit: Research Reagent Solutions

Implementing and validating novel forensic techniques requires specific research reagents and materials that enable standardization, quality control, and method development.

Table 4: Essential Research Reagents for Forensic Method Validation

| Reagent/Material | Application | Function in Validation |

|---|---|---|

| Standardized DNA Profiling Kits (e.g., Precision ID GlobalFiler NGS STR Panel) | Massively parallel sequencing (MPS)-based DNA analysis | Enable sequencing of STR and SNP markers with platform-specific validation [14] |

| Probabilistic Genotyping Software (e.g., EuroForMix, STRmix) | DNA mixture interpretation | Provide statistical framework for evaluating complex DNA profiles [14] |

| GC×GC Reference Standards | Forensic chemistry method development | Enable retention index alignment and cross-laboratory method transfer [5] |

| Digital Forensic Corpora | LLM and AI tool validation | Provide ground truth data for evaluating digital forensic tool performance [16] |

| NIST Standard Reference Materials | Method qualification and verification | Certified reference materials for instrument calibration and method validation [13] |

These research reagents form the foundation for method development and validation across forensic disciplines. Their availability and quality directly impact the ability to close the validation gap for novel techniques by providing benchmarks for performance assessment and standardization.

The validation gap between established DNA methods and novel forensic techniques represents both a challenge and an opportunity for the forensic science community. While DNA analysis provides a validated framework encompassing technical protocols, statistical interpretation, and legal admissibility, emerging techniques across digital, chemical, and biological domains must navigate a complex pathway from proof-of-concept to operational implementation. Closing this gap requires systematic approaches to validation, including foundational research establishing scientific validity, error rate quantification through black-box studies, development of standardized protocols and reference materials, and integration with legal admissibility standards.

The ongoing work of standards organizations like OSAC, research initiatives outlined in the NIJ Forensic Science Strategic Research Plan, and technology development in areas like MPS and probabilistic genotyping provide templates for validating novel methods [13] [18]. As artificial intelligence and advanced instrumentation transform forensic practice, the validation framework established for DNA analysis offers both guidance and inspiration for ensuring that new techniques meet the rigorous standards demanded by the criminal justice system. Through collaborative research, standardized validation protocols, and investment in reference materials and infrastructure, the forensic science community can systematically bridge the validation gap, bringing the promise of novel techniques to bear on the pursuit of justice.

The admissibility of expert testimony in legal proceedings is governed by specific standards that determine which scientific evidence can be presented to a jury. For researchers and scientists developing novel forensic methods, understanding these legal frameworks is crucial for ensuring their work meets the requisite reliability thresholds for courtroom acceptance. The validation of new forensic techniques operates within a structured paradigm where legal standards serve as the ultimate gatekeeper, determining whether scientific advancements can transition from laboratory research to admissible evidence. This guide provides a comprehensive comparison of the three dominant standards governing expert testimony in United States courts: the Frye Standard, the Daubert Standard, and Federal Rule of Evidence 702, with specific application to the validation of novel forensic methodologies against established techniques.

Core Legal Standards: Definitions and Historical Development

The Frye Standard: General Acceptance Test

Established in the 1923 case Frye v. United States, this standard represents the earliest formal test for expert testimony admissibility [19]. The Frye Standard focuses exclusively on whether the expert's methodology is "generally accepted" by the relevant scientific community [19] [20]. The court famously stated that a scientific principle or discovery "must be sufficiently established to have gained general acceptance in the particular field in which it belongs" [20]. This standard essentially delegates the court's gatekeeping function to the scientific community itself, relying on consensus to ensure reliability.

The Daubert Standard: Judicial Gatekeeping

In the 1993 case Daubert v. Merrell Dow Pharmaceuticals, Inc., the U.S. Supreme Court established a new standard for federal courts, holding that the Frye test had been superseded by the Federal Rules of Evidence [21] [22]. Daubert transformed the landscape of expert testimony by assigning trial judges an active "gatekeeping" role in assessing not just general acceptance, but the overall reliability and relevance of expert testimony [21]. The Court provided a non-exhaustive list of factors for judges to consider, shifting the inquiry from scientific consensus to scientific validity [21].

Federal Rule of Evidence 702: Codified Standards

Rule 702 of the Federal Rules of Evidence was amended in 2000 to codify and clarify the standards articulated in Daubert and its progeny cases [23] [24]. The rule was further amended in December 2023 to emphasize that "the proponent demonstrates to the court that it is more likely than not that" the testimony meets admissibility requirements [24]. This rule operationalizes the Daubert standard by specifying the exact requirements expert testimony must satisfy, making the judge's gatekeeping function more structured and explicit.

Comparative Analysis of Admissibility Standards

The following tables provide a detailed comparison of the three standards across critical dimensions relevant to forensic researchers and legal professionals.

Table 1: Core Characteristics and Legal Foundations

| Characteristic | Frye Standard | Daubert Standard | Federal Rule of Evidence 702 |

|---|---|---|---|

| Originating Case | Frye v. United States (1923) [19] | Daubert v. Merrell Dow Pharmaceuticals (1993) [21] | Amendments (2000, 2023) codifying Daubert [23] [24] |

| Primary Focus | General acceptance in relevant scientific community [19] [20] | Reliability and relevance of methodology [21] | Reliability and sufficient application of principles/methods [23] |

| Judicial Role | Limited gatekeeping; defers to scientific consensus [19] | Active gatekeeper assessing scientific validity [21] | Structured gatekeeper applying explicit factors [24] |

| Scope | Primarily scientific evidence | All expert testimony (scientific, technical, specialized) [21] | All expert testimony [23] |

| Current Jurisdiction | Some state courts (CA, IL, PA, NY) [20] [25] | Federal courts and majority of states [22] [25] | Federal courts and Daubert states [23] |

Table 2: Validation Criteria for Novel Forensic Methods

| Validation Criteria | Frye Standard | Daubert Standard | Federal Rule of Evidence 702 |

|---|---|---|---|

| Testing & Validation | Not explicitly required | Whether theory/technique can be/has been tested [21] | Testimony is product of reliable principles/methods [23] |

| Peer Review | Not explicitly required | Whether technique has been subjected to peer review [21] | Implicit in reliable principles/methods requirement |

| Error Rate | Not considered | Known or potential error rate [21] | Considered in reliability assessment |

| Standards & Controls | Not considered | Existence/maintenance of standards [21] | Implicit in reliable application requirement |

| General Acceptance | Sole criterion [19] [20] | One factor among others [21] | Considered but not determinative |

| Application Reliability | Not specifically assessed | Reliability of application to facts [22] | Expert's opinion reflects reliable application [24] |

Experimental Protocols: Validating Forensic Methods Against Legal Standards

Validation Framework for Novel Analytical Techniques

Recent research on comprehensive two-dimensional gas chromatography (GC×GC) applications in forensic science provides a relevant case study for validating novel methodologies against legal admissibility standards [5]. The technology readiness level (TRL) framework applied to GC×GC forensic applications demonstrates a systematic approach to method validation that aligns with legal standards:

- TRL 1-2 (Basic Research): Focus on proof-of-concept studies for specific forensic applications (e.g., illicit drug analysis, fingerprint residue, toxicological evidence) [5]

- TRL 3-4 (Method Development): Intra-laboratory validation with emphasis on determining error rates, establishing standards and controls, and testing reliability under varying conditions [5]

- TRL 5-6 (Inter-laboratory Validation): Multi-laboratory studies to demonstrate generalizability and reproducibility across different instruments and operators [5]

- TRL 7+ (Courtroom Readiness): Implementation of standardized protocols with documented error rates, peer-reviewed publication, and demonstration of general acceptance through widespread adoption [5]
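The staged grouping above can be expressed as a simple lookup, sketched below. The function name and the 1-9 range check are assumptions for illustration. The stage labels follow the groupings listed above and do not represent an official TRL definition.

```python
def validation_stage(trl: int) -> str:
    """Map a TRL value to the validation stage grouping described above."""
    if not 1 <= trl <= 9:
        raise ValueError("TRL must be between 1 and 9")
    if trl <= 2:
        return "Basic Research"
    if trl <= 4:
        return "Method Development"
    if trl <= 6:
        return "Inter-laboratory Validation"
    return "Courtroom Readiness"

# Example: stages spanned by a technique assessed at TRL 3 through 6
for level in range(3, 7):
    print(level, validation_stage(level))
```

A lookup like this is mainly useful for tracking which validation deliverables (error rates, multi-laboratory studies, standardized protocols) are still outstanding at a given maturity level.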

Protocol for Daubert-Specific Validation

For laboratories operating in Daubert jurisdictions, the following experimental protocol ensures compliance with all reliability factors:

- Testability Validation: Design experiments that can potentially falsify the methodology's underlying hypotheses under controlled conditions [21]

- Peer Review Implementation: Submit methodology and validation studies to reputable scientific journals with rigorous peer-review processes [21]

- Error Rate Quantification: Conduct repeated measurements across multiple operators and instruments to establish known error rates with confidence intervals [21]

- Standards Development: Create and document standardized operating procedures with quality control measures [21]

- Acceptance Tracking: Monitor citation rates, adoption by other laboratories, and inclusion in methodological standards as general acceptance metrics [21]
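For the error rate quantification step, one common way to attach a confidence interval to an observed error proportion is the Wilson score interval. The sketch below is one possible approach rather than a method prescribed by the cited sources, and the counts in the example are hypothetical.

```python
from math import sqrt

def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a binomial error rate.

    z = 1.96 corresponds to an approximate 95% confidence level.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half_width, center + half_width

# Hypothetical validation result: 3 erroneous conclusions in 400 comparisons
low, high = wilson_interval(errors=3, trials=400)
print(f"Observed error rate: {3 / 400:.4f}, 95% CI: [{low:.4f}, {high:.4f}]")
```

The Wilson interval behaves better than the simple normal approximation when error counts are small, which is typical for mature methods where few errors are observed.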

Workflow Visualization: From Method Development to Courtroom Admissibility

The following diagram illustrates the pathway for validating novel forensic methods against legal admissibility standards, highlighting critical decision points and validation requirements.

Diagram 1: Forensic Method Validation Pathway for Courtroom Admissibility

Table 3: Research Reagent Solutions for Forensic Method Validation

| Research Tool | Function in Validation | Legal Standard Application |

|---|---|---|

| Inter-laboratory Comparison Materials | Standardized samples for reproducibility testing across multiple facilities | Demonstrates reliability and consistency (Daubert/Rule 702) [5] |

| Certified Reference Materials | Provides ground truth for method accuracy and error rate determination | Quantifies known error rates (Daubert Factor) [21] [5] |

| Blinded Proficiency Samples | Assesses analyst performance without bias | Establishes operational standards and controls (Daubert/Rule 702) [21] |

| Statistical Analysis Software | Calculates confidence intervals, error rates, and significance testing | Provides quantitative support for reliability claims (All Standards) [5] |

| Protocol Documentation Systems | Records standardized operating procedures and deviations | Evidence of maintained standards (Daubert Factor) [21] |

| Literature Tracking Databases | Monitors peer-reviewed publications and citations | Demonstrates general acceptance (Frye/Daubert) [21] [19] |

Quantitative Data Analysis: Legal Standard Impact on Admissibility

Table 4: Empirical Data on Standard Application and Outcomes

| Metric | Frye Standard | Daubert Standard | Federal Rule 702 |

|---|---|---|---|

| Jurisdictional Coverage | Minority of states (approximately 9-12) [20] [25] | Federal courts + approximately 27 states [26] [22] | All federal courts + Daubert states [23] |

| Novel Method Admissibility | Restricted until general acceptance achieved [19] | More permissive if reliability demonstrated [21] | Explicit preponderance standard [24] |

| Exclusion Rate Trend | Historically lower for established methods | Increased exclusion of plaintiff experts in civil cases [22] | Recent amendments emphasize stricter gatekeeping [24] |

| Judicial Training Requirements | Minimal scientific expertise needed | Significant scientific literacy required [22] | Structured factors reduce subjective assessment |

| Validation Timeline Impact | Potentially lengthy acceptance process | Faster adoption with proper validation [26] | Clearer requirements streamline process |

The choice of legal standard significantly impacts the validation pathway for novel forensic methods. Researchers operating in Frye jurisdictions must prioritize community acceptance through publications, conference presentations, and adoption by established laboratories. In Daubert and Rule 702 jurisdictions, a more multifaceted approach is necessary, with specific attention to testing, error rate quantification, and standardization. The recent amendments to Rule 702 emphasize that the proponent must demonstrate admissibility by a preponderance of the evidence, placing greater responsibility on researchers to comprehensively document their validation processes [24].

Understanding these legal frameworks enables forensic researchers to strategically design validation studies that address specific admissibility criteria from the initial development phases. This proactive approach facilitates smoother transition from experimental techniques to court-ready methodology, ensuring that scientific advancements can effectively serve the justice system while maintaining rigorous reliability standards.

The rigorous validation of novel forensic methods is a cornerstone of a reliable and scientifically sound justice system. This process, however, is fundamentally conducted and interpreted by humans, whose reasoning is susceptible to systematic cognitive biases. Within the context of Technology Readiness Level (TRL) research, where methods progress from basic principles to validated operational use, understanding these biases is not optional but essential [5]. Cognitive bias refers to the class of effects through which an individual's preexisting beliefs, expectations, motives, and situational context influence the collection, perception, and interpretation of evidence [27] [28]. These biases are universal, subconscious mental shortcuts that can skew perceptions and undermine the search for truth, even among highly skilled and ethical forensic examiners [28] [29]. This guide objectively compares the performance of traditional forensic decision-making against modern, bias-mitigated approaches, providing experimental data and frameworks essential for researchers and scientists developing and validating new forensic techniques against established standards.

Understanding Cognitive Bias in Forensic Science

Defining the Problem

Forensic confirmation bias, a specific type of cognitive bias, describes how an individual’s beliefs and the situational context of a case can affect how criminal evidence is collected and evaluated [27]. For instance, a forensic scientist provided with extraneous information—such as a suspect’s criminal record or an eyewitness identification—can be subconsciously biased throughout their analysis [27]. This is not a matter of misconduct, but rather a feature of human cognition that operates outside conscious awareness, making it challenging to recognize and control [28].

A significant barrier to progress is the "bias blind spot," where experts recognize the potential for bias in general but deny its effects on their own conclusions [27]. A 2017 survey found that many forensic examiners lacked proper training to mitigate this bias, and even those who were trained were often ineffective in overcoming its subconscious influence [27]. A systematic review of the literature robustly demonstrates this vulnerability, identifying 29 primary source studies across 14 different forensic disciplines that show the influence of confirmation bias on analysts' conclusions [30].

Mechanisms of Bias in the Analytical Workflow

Cognitive biases can infiltrate the forensic process at multiple stages. Research has identified eight key sources of bias, which can be grouped into three categories [28]:

- Category A (Case-Specific Factors): Includes the data (evidence itself), reference materials, task-irrelevant contextual information (e.g., detective's theories), and base-rate expectations (e.g., how common a certain trace material is).

- Category B (Practitioner-Specific Factors): Includes organizational factors within a laboratory and the education and training of the individual practitioner.

- Category C (Human Cognitive Factors): Includes inherent brain functions and personal factors like stress or mental fatigue.

The diagram below illustrates how these sources introduce risk at different stages of a typical forensic analysis workflow and where targeted mitigation strategies can be implemented.

Comparative Analysis: Traditional vs. Bias-Mitigated Approaches

A critical component of validating any new forensic method is assessing its vulnerability to cognitive bias compared to existing techniques. The following table summarizes key performance differentiators between traditional, often subjective, forensic practices and modern frameworks designed for bias mitigation.

Table 1: Performance Comparison of Traditional vs. Bias-Mitigated Forensic Analysis

| Performance Metric | Traditional Forensic Analysis | Bias-Mitigated Approaches | Experimental Support & Impact on Validation |

|---|---|---|---|

| Decision Accuracy | Potentially compromised by contextual bias and subjective judgment. | Enhanced through structured protocols that isolate the examiner from biasing information. | Signal detection theory studies show bias mitigation improves discriminability between same-source and different-source evidence [31]. |

| Error Rate | Often unknown or difficult to quantify due to subjective processes. | A known error rate can be established through black-box studies using mitigated protocols, a key Daubert factor [5] [31]. | Proficiency tests designed with bias controls provide more realistic and defensible error rate data for method validation [13]. |

| Context Management | Examiners often have full access to all investigative context, which can sway interpretation [27] [30]. | Implements Linear Sequential Unmasking (LSU/LSU-E) to control the flow of information [27] [28]. | Studies show analysts exposed to contextual information are significantly more likely to align conclusions with that context, undermining method reliability [30]. |

| Sample Comparison | Typically uses a single suspect sample versus the evidence, fostering expectation. | Employs evidence line-ups with multiple known-innocent samples to reduce inherent assumption bias [28]. | Research confirms that presenting a single suspect sample is a key source of bias; line-ups provide a more robust comparison framework [28] [30]. |

| Result Verification | Non-blind verification risks simply confirming the original analyst's biased conclusion. | Mandates blind verification where the second analyst is independent of the first's work and conclusions [28]. | Blind verification ensures the independence of the quality control process, a critical factor for establishing a method's repeatability during validation. |

| Transparency | Documentation may focus on the conclusion, not the decision-making pathway. | Emphasizes documenting the sequence of information exposure and the rationale for analytical decisions [28]. | Transparent documentation is crucial for demonstrating during validation that the method's application was controlled and unbiased. |

Experimental Protocols for Quantifying Bias and Performance

To generate the comparative data required for TRL advancement and legal admissibility, researchers must employ rigorous experimental designs. The following protocols are foundational for testing the validity and reliability of forensic methods.

The "Black Box" Proficiency Study

- Objective: To measure the intrinsic accuracy and error rate of a forensic method or examiner by testing their performance on samples with known ground truth, without the practitioners' knowledge they are being tested.

- Methodology:

  - Material Development: Create a set of evidence samples (e.g., fingerprints, cartridge cases, DNA mixtures) where the ground truth (same-source or different-source) is definitively known. The set should have an equal number of "match" and "non-match" pairs to avoid response bias [31].

  - Participant Selection: Engage a representative sample of qualified examiners from multiple laboratories.

  - Blinded Administration: Present the test materials to participants as part of their normal casework or a seemingly routine proficiency test, ensuring they are blind to the study's purpose and the presence of non-matching pairs.

  - Data Collection: Collect all decisions, including "inconclusive" responses, which must be recorded separately from definitive conclusions [31].

  - Data Analysis: Use signal detection theory models to calculate measures like discriminability (d') and response bias, providing a more nuanced view of performance than proportion correct alone [31]. This allows researchers to distinguish true analytical power from a simple tendency to call everything a "match" or "non-match."
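The signal detection measures named in the data analysis step can be sketched as follows, using the standard equal-variance Gaussian model. The function name, the log-linear correction, and the example counts are illustrative assumptions. Inconclusive responses are assumed to have been set aside beforehand, since they are recorded separately.

```python
from statistics import NormalDist

def sdt_measures(hits: int, misses: int,
                 false_alarms: int, correct_rejections: int) -> tuple:
    """Return (d', criterion c) under the equal-variance Gaussian model.

    A log-linear correction (add 0.5 to each count) keeps rates away
    from 0 and 1, where the inverse normal CDF is undefined.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    d_prime = z(hit_rate) - z(fa_rate)            # discriminability
    criterion = -0.5 * (z(hit_rate) + z(fa_rate)) # response bias
    return d_prime, criterion

# Hypothetical black-box results: 100 same-source and 100 different-source pairs
d_prime, criterion = sdt_measures(hits=90, misses=10,
                                  false_alarms=5, correct_rejections=95)
print(f"d' = {d_prime:.2f}, c = {criterion:.2f}")
```

A positive criterion indicates a conservative tendency (reluctance to declare a match), while a negative value indicates a liberal one. Two examiners with the same proportion correct can therefore differ in both d' and criterion.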

Contextual Bias Manipulation Study

- Objective: To directly test the susceptibility of a forensic method to extraneous contextual information.

- Methodology:

  - Group Randomization: Randomly assign examiner participants into at least two groups: a "context" group and a "blind" control group.

  - Stimuli: Use the same set of challenging evidence samples with known ground truth for all groups.

  - Intervention: Provide the "context" group with potentially biasing information (e.g., "the suspect has confessed" or a strong investigative hypothesis) before or during their analysis. The "blind" group receives only the evidence samples without this context.

  - Control: A third group can utilize a Linear Sequential Unmasking (LSU) protocol, where they analyze the evidence first and are only provided the context afterward to frame their conclusion [27] [28].

  - Data Analysis: Compare the decision accuracy and the rate of conclusive decisions that align with the provided context between the groups. A statistically significant increase in context-aligned conclusions in the "context" group demonstrates the method's vulnerability to bias [30].
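The group comparison in the data analysis step could be carried out with a two-proportion z-test, sketched below using only the standard library. This is one reasonable choice among several (Fisher's exact test or a mixed-effects model may be more appropriate for small samples or repeated measures). The counts shown are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z(aligned_a: int, n_a: int,
                     aligned_b: int, n_b: int) -> tuple:
    """Two-sided z-test for a difference between two proportions.

    Returns (z statistic, p-value) using the pooled standard error.
    """
    p_a, p_b = aligned_a / n_a, aligned_b / n_b
    pooled = (aligned_a + aligned_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; proportions are degenerate")
    z = (p_a - p_b) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical counts of context-aligned conclusions (illustrative only):
# "context" group: 34 of 50 decisions; "blind" group: 20 of 50 decisions
z_stat, p_value = two_proportion_z(aligned_a=34, n_a=50, aligned_b=20, n_b=50)
print(f"z = {z_stat:.2f}, p = {p_value:.4f}")
```

In this hypothetical case the context group aligns with the biasing information more often than the blind group, and the difference is unlikely to be due to chance alone.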

Successfully validating a novel forensic method against cognitive biases requires more than just protocols; it necessitates a suite of conceptual and practical tools. The following table details key resources for designing a bias-aware validation study.

Table 2: Essential Reagents & Solutions for Bias-Conscious Forensic Validation Research

| Tool/Resource | Category | Function in Validation & Bias Mitigation |

|---|---|---|

| Linear Sequential Unmasking-Expanded (LSU-E) | Protocol Framework | A structured workflow that controls the sequence and timing of information disclosure to the examiner, minimizing the biasing power of task-irrelevant data [28]. |

| Signal Detection Theory (SDT) | Analytical Metric | A statistical model used to de-confound accuracy from response bias, providing pure measures of a method's discriminability (e.g., d-prime, AUC) [31]. |

| Evidence "Line-ups" | Experimental Material | A set of reference samples that includes the suspect sample among several known-innocent samples. This prevents the inherent assumption of guilt and tests the method's specificity [28] [30]. |

| Blind Verification Protocol | Quality Control Procedure | A mandatory step where a second, qualified examiner repeats the analysis without any knowledge of the first examiner's results, ensuring independence and testing reliability [28]. |

| Daubert Standard Criteria | Legal Framework | A set of U.S. federal court criteria for the admissibility of expert testimony, which explicitly considers testing, peer review, error rates, and general acceptance—all of which are informed by bias-aware validation [5]. |

Visualization of an Integrated Bias-Mitigated Workflow

The following diagram synthesizes the core concepts and tools into a single, integrated workflow. This represents an idealized, robust process for conducting forensic analyses in a manner that minimizes the impact of cognitive biases, from evidence intake to final reporting. This workflow serves as a model against which both traditional and novel methods can be compared during the validation process.

Implementing Technology Readiness Levels: A Practical Framework for Forensic Method Development

Technology Readiness Levels (TRL) provide a systematic scale for assessing the maturity of a particular technology, giving engineers, project managers, and investors a common framework for understanding development status [32] [33]. Originally developed by NASA in the 1970s for space technologies, this scaled framework has since been adopted across diverse sectors including forensic science, where validating novel methods against established techniques is paramount for legal admissibility and scientific rigor [34] [5].

In forensic science, the transition from experimental research to court-admissible evidence presents unique challenges. Emerging analytical techniques must satisfy not only scientific validation standards but also legal benchmarks for reliability, including the Daubert Standard and Frye Standard in the United States or the Mohan Criteria in Canada [5]. The TRL framework provides a structured pathway for this transition, enabling forensic researchers to systematically advance technologies from basic principle observation (TRL 1) to operational use in casework (TRL 9) while addressing the stringent requirements of the legal system.

The TRL Framework: A Scalable Model for Forensic Technology Development

Core Definitions and Historical Context

The TRL framework consists of nine distinct levels that track technology development from basic research to operational deployment [32] [35]. This systematic approach enables consistent maturity assessment across different technologies and provides a common language for researchers, developers, and funding agencies [34].

Initially developed at NASA during the 1970s, the TRL scale was formally defined in 1989 with seven levels, later expanding to the current nine-level system in the 1990s [34]. The framework has since been adopted by numerous government agencies worldwide, including the U.S. Department of Defense, European Space Agency, and European Commission for Horizon 2020 research programs [34]. The International Organization for Standardization further canonized TRLs through the ISO 16290:2013 standard [34].

Detailed TRL Breakdown with Forensic Science Applications

Table 1: Technology Readiness Levels with Forensic Science Applications

| TRL | Definition | Forensic Science Applications & Experimental Protocols |

|---|---|---|

| TRL 1 | Basic principles observed and reported [32] | Literature review of fundamental scientific principles underlying new forensic techniques (e.g., initial studies on DNA analysis methods) [35]. |

| TRL 2 | Technology concept and/or application formulated [32] | Practical applications invented based on basic principles; analytical studies of potential forensic uses (e.g., conceptual framework for rapid DNA analysis) [35] [15]. |

| TRL 3 | Analytical and experimental critical function and/or proof of concept [32] | Active R&D with laboratory studies; proof-of-concept model construction (e.g., initial experiments demonstrating feasibility of new fingerprint detection method) [32] [33]. |

| TRL 4 | Component and/or breadboard validation in laboratory environment [32] | Basic technological components integrated and tested in laboratory setting (e.g., testing multiple components of a new forensic analysis system together) [32] [35]. |

| TRL 5 | Component and/or breadboard validation in relevant environment [32] | More rigorous testing in environments simulating real-world conditions (e.g., testing forensic equipment in simulated crime scene environments) [32] [33]. |

| TRL 6 | System/subsystem model or prototype demonstration in a relevant environment [32] | Fully functional prototype or representational model tested in simulated operational environment (e.g., prototype DNA analyzer tested in mock laboratory setting) [32] [35]. |

| TRL 7 | System prototype demonstration in an operational environment [32] | Working model or prototype demonstrated in actual operational environment (e.g., prototype deployed in real crime scene investigation under controlled conditions) [32] [33]. |

| TRL 8 | Actual system completed and "flight qualified" through test and demonstration [32] | Technology tested and "flight qualified," ready for implementation into existing systems (e.g., fully validated forensic method implemented in crime laboratory) [32] [35]. |

| TRL 9 | Actual system "flight proven" through successful mission operations [32] | Actual application of technology proven in real-life conditions through operational use (e.g., forensic method successfully used in casework and upheld in court proceedings) [32] [35]. |

TRL Application in Forensic Method Validation: From Innovation to Courtroom Admissibility

Bridging the Gap Between Research and Legal Standards

The progression of forensic technologies through TRL stages must incorporate legal admissibility considerations throughout development. In the United States, the Daubert Standard, as codified in Federal Rule of Evidence 702, requires that expert testimony be based on sufficient facts or data, be the product of reliable principles and methods, and reflect a reliable application of those methods to the facts of the case [5]. Similarly, Canada's Mohan Criteria establish that expert evidence must be relevant, necessary to assist the trier of fact, not barred by any exclusionary rule, and presented by a properly qualified expert [5].

For novel forensic methods, meeting these legal standards requires deliberate planning across TRL stages:

- TRL 3-4 (Proof of Concept): Begin developing error rate analysis protocols and testing theoretical foundations

- TRL 5-6 (Validation in Relevant Environment): Conduct inter-laboratory validation studies and establish standard operating procedures

- TRL 7-8 (Operational Demonstration): Implement quality assurance standards and gather data on real-world performance

- TRL 9 (Operational Use): Maintain rigorous documentation of casework applications and court outcomes
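The staged plan above can also be kept as a small lookup table so that a project's current TRL maps directly to the admissibility-related work expected at that point. The sketch below simply encodes the four bullets. The names `Stage`, `STAGES`, and `stage_for` are hypothetical and chosen for illustration.

```python
from typing import NamedTuple, Optional, Tuple


class Stage(NamedTuple):
    low: int                      # lowest TRL covered by this stage
    high: int                     # highest TRL covered by this stage
    label: str
    activities: Tuple[str, ...]   # admissibility-related tasks for the stage


# Contents taken from the staged list above; the structure is illustrative.
STAGES = (
    Stage(3, 4, "Proof of Concept",
          ("Develop error rate analysis protocols",
           "Test theoretical foundations")),
    Stage(5, 6, "Validation in Relevant Environment",
          ("Conduct inter-laboratory validation studies",
           "Establish standard operating procedures")),
    Stage(7, 8, "Operational Demonstration",
          ("Implement quality assurance standards",
           "Gather data on real-world performance")),
    Stage(9, 9, "Operational Use",
          ("Document casework applications and court outcomes",)),
)


def stage_for(trl: int) -> Optional[Stage]:
    """Return the planning stage for a TRL, or None for TRL 1-2,
    which the list above does not assign admissibility tasks to."""
    if not 1 <= trl <= 9:
        raise ValueError("TRL must be between 1 and 9")
    for stage in STAGES:
        if stage.low <= trl <= stage.high:
            return stage
    return None


if __name__ == "__main__":
    stage = stage_for(5)
    print(stage.label)
    for task in stage.activities:
        print(" -", task)
```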

Table 2: Forensic Technology Validation Pathway Against Legal Standards

| Legal Standard | Key Requirements | TRL Stage for Addressing Requirements | Recommended Experimental Protocols |

|---|---|---|---|

| Daubert Standard | Whether the theory/technique can be/has been tested [5] | TRL 3-4: Experimental proof of concept | Develop hypothesis-driven testing protocols with controlled variables |

| Daubert Standard | Whether the theory/technique has been peer-reviewed [5] | TRL 4-6: Laboratory and simulated environment validation | Submit methods and results to peer-reviewed forensic science journals |

| Daubert Standard | Known or potential error rate [5] | TRL 5-7: Validation from relevant to operational environments | Conduct repeated testing with known samples to establish error rates |

| Frye Standard | General acceptance in relevant scientific community [5] | TRL 7-9: Operational environment to proven system | Present findings at professional conferences; publish validation studies |

| Mohan Criteria | Relevance and necessity for assisting trier of fact [5] | TRL 6-8: Prototype demonstration to system qualification | Conduct studies demonstrating evidentiary value beyond existing methods |
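Repeated testing with known samples yields an observed error count, but a court-facing error rate is more informative when reported with an interval that reflects the number of trials behind it. The sketch below uses the Wilson score interval for a binomial proportion. The choice of interval, the function name, and the example counts are assumptions made for illustration, not a method prescribed by the cited sources.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(errors, trials, confidence=0.95):
    """Wilson score interval for an error rate estimated from
    `errors` incorrect results in `trials` known-sample tests."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")

    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # two-sided critical value
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half_width = z * sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


if __name__ == "__main__":
    # Hypothetical study: no errors observed in 100 known-sample tests.
    low, high = wilson_interval(errors=0, trials=100)
    print(f"observed rate 0.0%, 95% interval {low:.1%} to {high:.1%}")
```

The zero-error case shows why the interval matters: observing no errors in 100 trials does not demonstrate a zero error rate, and the upper bound stays several percent above zero until many more samples are tested.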

Case Study: Comprehensive Two-Dimensional Gas Chromatography (GC×GC) in Forensic Chemistry

The development of Comprehensive Two-Dimensional Gas Chromatography (GC×GC) for forensic applications illustrates the TRL pathway in practice. GC×GC provides advanced chromatographic separation for various types of forensic evidence, including illicit drugs, fingerprint residue, toxicological evidence, and petroleum analysis for arson investigations [5].

The technology progression followed this TRL pathway:

- TRL 2-3: Initial concept formulation and proof-of-concept studies in the 1980s-1990s, with theory development driven by need for improved peak capacity [5]

- TRL 4-5: Component validation in laboratory environments, addressing technical challenges like modulator design and column selection [5]

- TRL 6-7: Prototype demonstration for specific forensic applications including fire debris analysis and controlled substance identification [5]

- TRL 8: Technology qualification through development of standard methods and validation protocols [5]

Current research places GC×GC for forensic applications at approximately TRL 4 on a specialized readiness scale (distinct from the nine-level scale in Table 1), indicating validated research with established protocols but not yet routine forensic implementation [5]. Advancement to higher TRLs requires increased intra- and inter-laboratory validation, error rate analysis, and standardization to meet legal admissibility standards [5].

Experimental Design and Validation Protocols Across TRLs

Methodologies for Advancing Forensic Technologies

The progression of forensic technologies through TRL stages requires carefully designed experimental protocols at each level:

TRL 3-4 (Proof of Concept to Laboratory Validation)

- Develop controlled experiments testing critical functions

- Establish baseline performance metrics against reference standards

- Identify potential interferents and limitations

- Document methodology for peer review

TRL 5-6 (Relevant Environment to Prototype Demonstration)

- Conduct testing in simulated operational environments

- Perform comparative studies against established methods

- Initiate intra-laboratory validation with multiple operators

- Begin developing standard operating procedures

TRL 7-8 (Operational Demonstration to System Qualification)

- Implement inter-laboratory validation studies

- Conduct robustness testing under varied conditions

- Establish quality control parameters and acceptance criteria

- Document error rates and limitations comprehensively
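For quantitative methods, the intra- and inter-laboratory validation steps listed above are often summarized as repeatability (within-laboratory) and reproducibility (within- plus between-laboratory) standard deviations. The sketch below estimates both from a balanced design using a one-way random-effects decomposition. The function name, the balanced-design requirement, and the example measurements are assumptions made for illustration.

```python
from statistics import mean, variance


def precision_estimates(results_by_lab):
    """Return (repeatability_sd, reproducibility_sd).

    `results_by_lab` maps a laboratory name to its replicate measurements
    of the same reference material. Every laboratory must report the same
    number of replicates (balanced design).
    """
    groups = list(results_by_lab.values())
    if len(groups) < 2:
        raise ValueError("need at least two laboratories")
    n = len(groups[0])
    if n < 2 or any(len(g) != n for g in groups):
        raise ValueError("each laboratory needs the same number (>= 2) of replicates")

    # Repeatability variance: average of the within-laboratory variances.
    s_r2 = mean(variance(g) for g in groups)

    # Between-laboratory component: variance of laboratory means minus the
    # part explained by within-laboratory scatter, floored at zero.
    lab_means = [mean(g) for g in groups]
    s_l2 = max(0.0, variance(lab_means) - s_r2 / n)

    # Reproducibility variance combines both components.
    s_big_r2 = s_r2 + s_l2
    return s_r2 ** 0.5, s_big_r2 ** 0.5


if __name__ == "__main__":
    # Hypothetical duplicate measurements from three laboratories.
    data = {"Lab A": [10.0, 10.2], "Lab B": [10.4, 10.6], "Lab C": [9.8, 10.0]}
    s_r, s_big_r = precision_estimates(data)
    print(f"repeatability SD = {s_r:.3f}, reproducibility SD = {s_big_r:.3f}")
```

A reproducibility figure much larger than the repeatability figure points to laboratory-specific effects such as calibration or operator practice, which is the kind of finding that standard operating procedures and acceptance criteria are meant to address.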

Workflow for Forensic Technology Validation

The following diagram illustrates the integrated workflow for advancing forensic technologies through TRL stages while addressing legal admissibility requirements:

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Materials for Forensic Technology Development

| Research Tool | Function in Technology Development | TRL Application Stage |

|---|---|---|

| Reference Standards | Certified materials for method calibration and validation | TRL 3-9: Essential throughout development |

| Quality Control Materials | Samples with known properties for monitoring analytical performance | TRL 4-9: Critical from laboratory validation onward |

| Proficiency Test Samples | Blind samples for assessing method and analyst performance | TRL 5-9: Important for operational testing phases |

| Sample Preparation Kits | Standardized reagents for consistent sample processing | TRL 4-8: Key for method transfer and standardization |

| Data Analysis Software | Tools for statistical analysis and error rate determination | TRL 3-9: Required for data treatment across all stages |

Comparative Analysis of Emerging Forensic Technologies

TRL Assessment of Current Forensic Innovations

Multiple emerging technologies in forensic science are progressing through the TRL framework at varying rates:

Next-Generation Sequencing (NGS) in DNA Analysis

NGS technologies enable analysis of more complex samples and degraded DNA, and provide additional genetic markers beyond traditional STR analysis [15]. The current status is approximately TRL 7-8, with implementation in some forensic laboratories but ongoing validation for specific applications [15].

Rapid DNA Analysis

Portable instruments allow DNA profiling in field settings, and the 2025 FBI Quality Assurance Standards provide implementation guidance for booking stations and forensic samples [36]. The current status is approximately TRL 8, with established standards for operational use [15] [36].