From Lab to Courtroom: Integrating TRL Assessment into the Forensic Software Development Lifecycle


Abstract

This article provides a comprehensive framework for integrating Technology Readiness Level (TRL) assessment into the forensic software development lifecycle. Aimed at developers, forensic scientists, and laboratory managers, it bridges the gap between theoretical innovation and court-admissible digital tools. The content explores the foundational principles of TRL, outlines a methodological approach for its application in development, addresses common troubleshooting and optimization challenges, and establishes validation protocols for legal and scientific standards. By adopting a structured TRL-driven approach, organizations can enhance the reliability, admissibility, and effectiveness of digital forensic tools in an era of rapidly evolving cyber threats and complex data environments.

Understanding TRL and the Modern Digital Forensics Landscape

Technology Readiness Levels (TRLs) are a systematic metric used to assess the maturity of a particular technology during its development and acquisition phases. The framework establishes a unified scale from basic research (TRL 1) to full commercial application (TRL 9), enabling consistent discussion of technical maturity across different types of technology. Originally developed by NASA during the 1970s, the TRL scale has since been adopted by the U.S. Department of Defense, the European Space Agency (ESA), the European Union, and various other organizations and industries worldwide [1] [2].

This application note details the standardized definitions, assessment protocols, and integration methodologies for implementing TRL assessment within the forensic software development lifecycle. The structured approach facilitates risk management, funding decisions, and strategic planning for technology development and transition [1].

The 9-Level TRL Scale: Definitions and Applications

The following table summarizes the standardized definitions and characteristics for each of the nine Technology Readiness Levels.

Table 1: Technology Readiness Levels (TRLs) Definition Scale

| TRL | Description | Key Activities & Milestones | Outputs & Evidence |
|---|---|---|---|
| TRL 1 | Basic principles observed and reported [3] [4]. | Initial scientific research; translation of results into future R&D [3]. | Published research papers documenting underlying principles. |
| TRL 2 | Technology concept and/or application formulated [3] [4]. | Practical applications are postulated based on observed principles [3] [1]. | Specification of technology concept; no experimental proof. |
| TRL 3 | Analytical and experimental critical function and/or characteristic proof-of-concept [3] [4]. | Active R&D; analytical/lab studies; proof-of-concept model construction [3]. | Experimental proof-of-concept; validation of critical function. |
| TRL 4 | Component and/or breadboard validation in laboratory environment [3] [1]. | Multiple component pieces are integrated and tested in a lab [3]. | Basic technology validation in a laboratory environment [4]. |
| TRL 5 | Component and/or breadboard validation in relevant environment [3] [1]. | Rigorous testing of breadboard technology in simulated realistic environments [3]. | Technology basic validation in a relevant environment [4]. |
| TRL 6 | System/subsystem model or prototype demonstration in a relevant environment [3] [1]. | A fully functional prototype or representational model is tested [3]. | Technology model/prototype demonstration in a relevant environment [4]. |
| TRL 7 | System prototype demonstration in an operational environment [3] [1]. | Working model or prototype is demonstrated in a space/operational environment [3]. | Technology prototype demonstration in an operational environment [4]. |
| TRL 8 | Actual system completed and "flight qualified" through test and demonstration [3] [1]. | System is tested, "flight qualified," and ready for implementation [3]. | Actual technology completed and qualified through test and demonstration [4]. |
| TRL 9 | Actual system "flight proven" through successful mission operations [3] [1]. | Technology has been proven during a successful mission [3]. | Actual technology qualified through successful mission operations [4]. |

TRL Assessment Protocol for Forensic Software Development

Integrating TRL assessment within the forensic software development lifecycle requires a phased experimental and validation protocol. The following workflow delineates the key assessment activities for each major development phase.

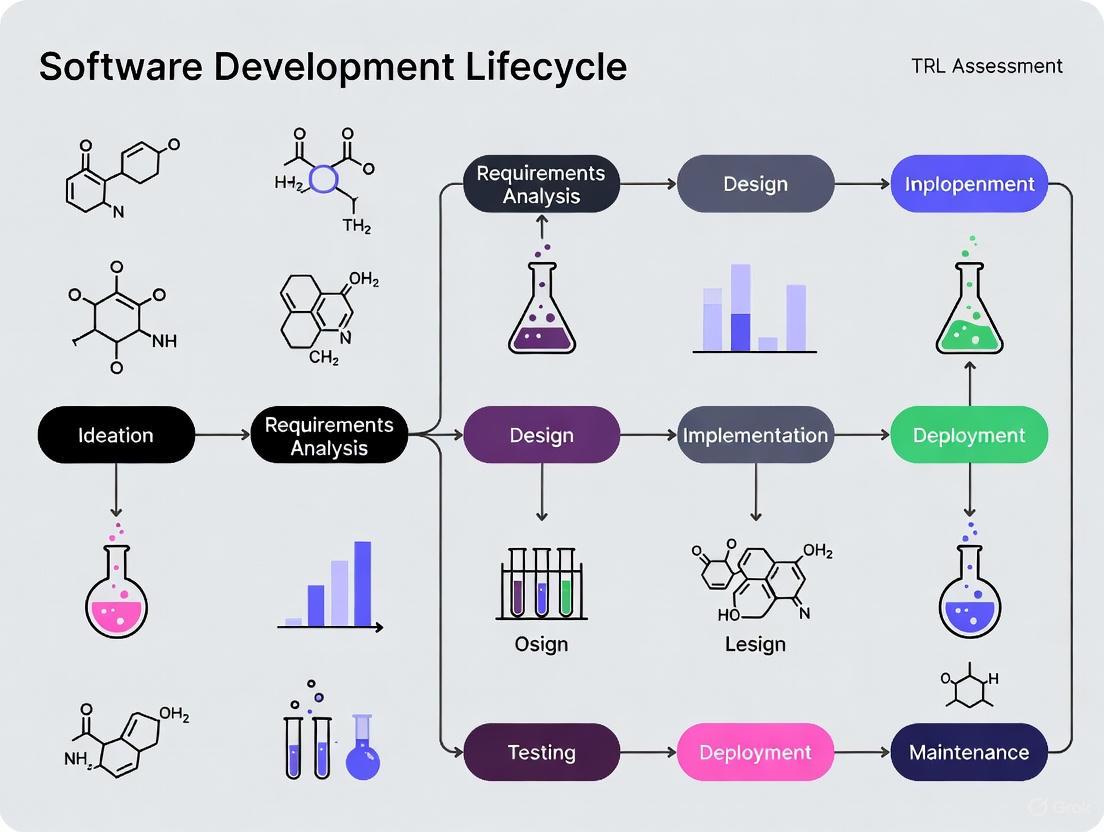

Diagram 1: TRL Assessment in Forensic Software Development Lifecycle

Phase 1: Foundational Research (TRL 1-2)

Objective: Establish scientific basis and formulate a practical technology concept for forensic application.

Experimental Protocol:

- Literature Review & Gap Analysis: Conduct a systematic review of existing digital forensics frameworks, secure software development lifecycles (S-SDLC), and cloud forensic challenges [5]. Map identified gaps to potential novel software solutions.

- Abuse Case Formulation: Define hypothetical misuse scenarios and adversarial requirements specific to the forensic context. These abuse cases will inform security and forensic-ready requirements [6].

- Feasibility Study: Perform analytical studies to determine if the core software concept can address the identified gaps and abuse cases. The output is a Technology Concept Document specifying potential application and theoretical performance benchmarks.

Phase 2: Proof-of-Concept & Laboratory Validation (TRL 3-4)

Objective: Demonstrate critical functional feasibility and validate core components in a controlled lab environment.

Experimental Protocol:

- Critical Function Prototyping: Develop a limited, non-integrated software module (e.g., a specific evidence collection algorithm or log parser) to prove a core concept. This constitutes the Proof-of-Concept (PoC) Model [3].

- Component Breadboarding & Lab Testing: Integrate multiple discrete software components (e.g., evidence collection, secure storage, integrity hashing) into a "breadboard" architecture. Test this integrated unit in a controlled laboratory environment using synthetic or historical non-sensitive data.

- Validation Metrics: Measure performance against predefined criteria from the feasibility study, such as data processing accuracy, integrity verification success rate, and error handling. Success leads to a TRL 4 Validation Report.
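The validation step above can be sketched as a comparison of measured lab results against the acceptance criteria fixed in the feasibility study. The `LabRunResult` fields, the `evaluate_trl4` function, and the threshold values are hypothetical placeholders chosen for illustration, not values prescribed by this framework.

```python
from dataclasses import dataclass


@dataclass
class LabRunResult:
    """Counts from one lab test run of the integrated breadboard (hypothetical schema)."""
    artifacts_expected: int   # artifacts seeded into the synthetic test data
    artifacts_correct: int    # artifacts the breadboard processed correctly
    hashes_checked: int       # integrity checks attempted
    hashes_verified: int      # integrity checks that matched the reference hash
    unhandled_errors: int     # exceptions that escaped the error-handling path


# Hypothetical acceptance criteria; real values come from the feasibility study.
CRITERIA = {
    "processing_accuracy": 0.99,
    "integrity_success_rate": 1.0,
    "max_unhandled_errors": 0,
}


def evaluate_trl4(result: LabRunResult, criteria: dict = CRITERIA) -> dict:
    """Compare one lab run with the predefined criteria and report pass/fail per metric."""
    accuracy = result.artifacts_correct / result.artifacts_expected
    integrity = result.hashes_verified / result.hashes_checked
    checks = {
        "processing_accuracy": accuracy >= criteria["processing_accuracy"],
        "integrity_success_rate": integrity >= criteria["integrity_success_rate"],
        "error_handling": result.unhandled_errors <= criteria["max_unhandled_errors"],
    }
    return {
        "accuracy": accuracy,
        "integrity_success_rate": integrity,
        "checks": checks,
        "trl4_validated": all(checks.values()),  # every criterion must pass
    }
```

Keeping each criterion as a separate check, rather than a single score, lets the TRL 4 Validation Report state exactly which requirement failed.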

Phase 3: Relevant Environment Testing (TRL 5-6)

Objective: Validate the technology in environments that simulate real-world operational conditions.

Experimental Protocol:

- Relevant Environment Simulation: Create a high-fidelity test environment that mirrors a production forensic infrastructure. This includes replicating network configurations, cloud service APIs (e.g., from AWS or Azure), and data loads comparable to real casework [6].

- Alpha-Prototype Development: Build a fully functional, integrated software prototype that includes all major forensic and security features.

- Rigorous Functional & Security Testing: Subject the prototype to comprehensive testing in the simulated environment.

  - Static Application Security Testing (SAST): Analyze source code for vulnerabilities [6].

  - Dynamic Application Security Testing (DAST): Test the running application for runtime flaws [6].

  - Forensic Readiness Drills: Simulate incident response scenarios to test the prototype's ability to collect, preserve, and report admissible digital evidence effectively.

Phase 4: Operational Demonstration & Qualification (TRL 7-8)

Objective: Demonstrate the system prototype in a live operational environment and complete final qualification.

Experimental Protocol:

- Controlled Field Demonstration (TRL 7): Deploy the system prototype within a limited, monitored segment of a live forensic laboratory or corporate IT environment. Use the system to process and analyze real digital evidence from a non-critical, approved case under strict supervision.

- Data Collection & Performance Analysis: Collect extensive performance data, including system reliability, evidence processing speed, resource utilization, and admissibility of generated outputs.

- Final System Qualification (TRL 8): The final software system undergoes formal qualification testing against all functional, security, and forensic requirements. This includes:

  - Audit and Compliance Review: Ensuring compliance with standards like ISO/IEC 27034 or NIST SSDF [6].

  - Final Security Assessment: Penetration testing and code review.

  - Production Readiness Review: A formal gate review authorizing the system for full deployment.

Phase 5: Mission Operations (TRL 9)

Objective: Prove the actual system through successful use in full-scale mission operations.

Experimental Protocol:

- Full-Scale Deployment: Roll out the qualified system across the intended operational environments (e.g., multiple forensic labs, corporate security teams).

- Operational Monitoring & Feedback Loop: Implement continuous monitoring to track system performance, reliability, and the admissibility of evidence it helps to collect in real cases over an extended period.

- Post-Mission Analysis: Document the system's performance, lessons learned, and any identified areas for improvement. Successful performance in this phase confirms TRL 9 status.
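One way to operationalize the five phases is to record which exit milestones have been met and report the highest TRL reached without gaps, since each level presupposes the ones below it. The milestone names in `TRL_MILESTONES` paraphrase the phase activities described above and are hypothetical identifiers; the "highest contiguous level" scoring rule is an assumption of this sketch, not a rule stated in the TRL definitions.

```python
# Hypothetical exit milestones per TRL, paraphrasing the five phases above.
TRL_MILESTONES = {
    1: {"literature_review"},
    2: {"abuse_cases", "technology_concept_document"},
    3: {"poc_model"},
    4: {"trl4_validation_report"},
    5: {"relevant_env_simulation"},
    6: {"alpha_prototype", "sast_dast", "readiness_drills"},
    7: {"field_demonstration"},
    8: {"compliance_review", "security_assessment", "production_readiness_review"},
    9: {"post_mission_analysis"},
}


def achieved_trl(completed: set[str]) -> int:
    """Highest TRL whose milestones, and those of every lower level, are complete.

    Returns 0 if even TRL 1 has not been met.
    """
    level = 0
    for trl in sorted(TRL_MILESTONES):
        if TRL_MILESTONES[trl] <= completed:  # subset test: all milestones met
            level = trl
        else:
            break  # a gap stops progression; later milestones do not count
    return level


def missing_for_next(completed: set[str]) -> set[str]:
    """Milestones still needed to reach the next TRL (empty once TRL 9 is reached)."""
    nxt = achieved_trl(completed) + 1
    return TRL_MILESTONES.get(nxt, set()) - completed
```

Stopping at the first gap means an early field demonstration cannot be used to claim TRL 7 while the TRL 4 validation report is still outstanding.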

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key tools, standards, and frameworks essential for conducting TRL assessments in forensic software development.

Table 2: Key Research Reagents & Solutions for TRL Assessment

| Tool/Reagent | Function/Description | Application in Forensic S-SDLC |
|---|---|---|
| Threat Modeling Frameworks | Systematic approach to identify and mitigate security threats during design [6]. | Informs security requirements and abuse cases at TRL 2-3; critical for "forensic-by-design" [5]. |
| SAST/DAST Tools | Static and Dynamic Application Security Testing tools to automatically scan for vulnerabilities [6]. | Core validation tools for component (TRL 4-5) and system-level (TRL 6-7) testing. |
| Software Bill of Materials (SBOM) | A nested inventory of all software components and dependencies [6]. | Manages supply-chain risk; essential for verification and audit at TRL 6-8. |
| Forensic Readiness Drills | Simulated incident response exercises to test evidence collection and handling procedures. | Validates the "forensic-ready" property of the software in relevant (TRL 6) and operational (TRL 7) environments. |
| Policy-as-Code Gates | Automated security and compliance checks embedded within the CI/CD pipeline [6]. | Enforces security standards continuously from TRL 4 onwards; gates deployment at TRL 8. |
| ISO/IEC 15288 & 12207 | International standards for systems and software engineering life cycle processes [5]. | Provides the overarching process framework for aligning forensic-by-design (FbD) development with engineering best practices. |
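A policy-as-code gate of the kind listed in the table above can be sketched as a small check that a CI/CD job runs before promoting a build. The `BuildReport` format (severity counts merged from SAST/DAST output plus an SBOM component list), the `policy_gate` function, and the specific rules are hypothetical; a real pipeline would parse the reports its own scanners emit.

```python
from dataclasses import dataclass, field


@dataclass
class BuildReport:
    """Hypothetical summary of scanner output for one build."""
    trl: int                       # TRL the build is being assessed at
    findings: dict[str, int]       # severity -> open finding count (SAST + DAST)
    sbom_components: list[str] = field(default_factory=list)


def policy_gate(report: BuildReport) -> list[str]:
    """Return policy violations; an empty list means the gate passes."""
    violations = []
    # From TRL 4 onward: no open critical or high-severity findings.
    if report.trl >= 4:
        for severity in ("critical", "high"):
            if report.findings.get(severity, 0) > 0:
                violations.append(f"{report.findings[severity]} open {severity} finding(s)")
    # From TRL 6 onward: an SBOM is required for supply-chain verification and audit.
    if report.trl >= 6 and not report.sbom_components:
        violations.append("missing SBOM")
    return violations
```

In a pipeline, a non-empty result would typically map to a non-zero exit code so the stage fails and the violations appear in the build log.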

Risk Management: Navigating the "Valley of Death"

A critical concept in TRL progression is the "Valley of Death"—the difficult transition from a validated prototype (TRL 6) to a system demonstrated in an operational environment (TRL 7) [2]. This phase requires a significant increase in funding, rigorous testing, and access to real-world deployment opportunities.

Diagram 2: Risk Profile and the TRL Valley of Death

Mitigation Strategies for Forensic Software:

- Incremental Demonstration: Use phased rollouts in progressively more realistic environments, such as internal lab networks before live forensic environments.

- Strategic Partnerships: Collaborate with end-user organizations (e.g., law enforcement digital forensics units) early to co-develop requirements and secure test opportunities.

- Targeted Funding: Seek grants or internal funding specifically earmarked for technology demonstration (TRL 6-7) to bridge this critical gap.

The digital forensics field is confronting unprecedented challenges that threaten its fundamental capacity to conduct effective investigations. The convergence of exponential data growth, the geographical and legal complexities of cloud computing, and the evidentiary ambiguities introduced by AI-generated media are creating critical impediments to justice and security [7] [8] [9]. These challenges are not merely operational but deeply technical, demanding a more structured and rigorous approach to the development of forensic tools and methodologies. This application note frames these core challenges within the context of integrating Technology Readiness Level (TRL) assessment into the forensic software development lifecycle. By characterizing these challenges and providing experimental protocols and structured frameworks, this document aims to equip researchers and developers with the methodologies needed to advance forensic capabilities in the face of these evolving threats.

Analysis of Core Challenges

The scale and impact of the primary challenges facing digital forensics can be characterized along several dimensions to guide research and development priorities. This characterization underscores the need for a structured development approach to achieve admissible and actionable results.

Table 3: Analysis of Digital Forensics Core Challenges

| Challenge Dimension | Key Characteristics | Impact on Digital Forensics | Structured Development Imperative |
|---|---|---|---|
| Data Volume & Variety [7] [8] | Exponential data growth from IoT, mobile, and enterprise systems. Evidence formats include video, audio, logs, documents, and IoT data streams. | Creates major processing bottlenecks [7]. Increases the risk of critical evidence being overlooked during manual review [8]. | Requires scalable, AI-powered analytics for intelligent indexing and triage [8] [10]. Necessitates a modular software architecture to handle diverse data parsers. |
| Cloud Complexity [7] [9] [10] | Data distributed across multiple jurisdictions and platforms. Data retention and access policies differ among providers. | Lengthy evidence acquisition due to cross-border legal processes [7]. Introduces chain-of-custody gaps and potential legal challenges [8]. | Demands tools with standardized APIs for cloud data extraction [10]. Requires cryptographic hashing and tamper-evident audit logs integrated early in the development lifecycle [8]. |
| AI-Generated Evidence [7] [9] | Deepfake technology creates realistic fake video and audio. "Cheapfakes" and other manipulated media are increasingly common. | Undermines evidence integrity and trust in digital media [7]. Can be used for blackmail, fraud, and misinformation [7]. | Drives the need for integrated deepfake detection modules (e.g., analyzing pixel patterns and audio frequencies) [7] [9]. Tools must provide verifiable metrics on media authenticity for court admissibility. |
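The modular parser architecture called for in the first row can be sketched as a registry that dispatches each evidence item to a parser by its file signature, so new formats can be added without touching the core pipeline. The `register`/`dispatch` names and the parser functions are hypothetical stubs; only the magic numbers (PNG, JPEG, SQLite) are real format signatures.

```python
from typing import Callable

# Registry of (magic-number prefix, parser) pairs; parsers receive the raw bytes.
_PARSERS: list[tuple[bytes, Callable[[bytes], dict]]] = []


def register(magic: bytes):
    """Decorator that registers a parser for data starting with the given signature."""
    def wrap(fn: Callable[[bytes], dict]) -> Callable[[bytes], dict]:
        _PARSERS.append((magic, fn))
        return fn
    return wrap


@register(b"\x89PNG\r\n\x1a\n")
def parse_png(data: bytes) -> dict:
    return {"type": "png", "size": len(data)}  # stub: a real parser would extract metadata


@register(b"\xff\xd8\xff")
def parse_jpeg(data: bytes) -> dict:
    return {"type": "jpeg", "size": len(data)}  # stub: e.g. EXIF extraction would go here


@register(b"SQLite format 3\x00")
def parse_sqlite(data: bytes) -> dict:
    return {"type": "sqlite", "size": len(data)}  # e.g. mobile app databases


def dispatch(data: bytes) -> dict:
    """Route an evidence item to the first parser whose signature matches."""
    for magic, parser in _PARSERS:
        if data.startswith(magic):
            return parser(data)
    # Unrecognized formats are flagged for manual review rather than silently dropped.
    return {"type": "unknown", "size": len(data)}
```

Dispatching on content signatures rather than file extensions also guards against evidence whose extension has been changed to hide its type.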

Experimental Protocols for Challenge Validation

To systematically evaluate and mitigate these challenges, rigorous experimental protocols are essential. The following methodologies provide a framework for validating the effectiveness of new forensic tools and techniques.

Protocol for Data Volume and Variety Processing

Objective: To quantify the efficiency and accuracy of a forensic tool in processing large, multi-format datasets and identifying relevant evidence.

Evidence Set Curation:

- Assemble a standardized corpus of digital evidence exceeding 10 TB in total size.

- Incorporate a wide variety of formats: disk images, mobile device backups, cloud export data (e.g., from Facebook, Instagram), CCTV footage, and IoT device logs [8] [10].

- Seed the corpus with known target artifacts, including documents, specific images, and communication records.

Tool Deployment and Configuration:

- Configure the tool under test using analysis presets tailored to the simulated case type (e.g., data theft, communication analysis) [10].

- Enable AI-powered features for object detection, face recognition, and text pattern analysis [10].

- For the control, run the same corpus through a baseline forensic tool without these automated features.

Metrics and Measurement:

- Processing Time: Measure the total time to ingest, index, and analyze the complete corpus.

- Recall and Precision: Calculate the percentage of seeded target artifacts correctly identified (recall) and the percentage of flagged items that are genuinely relevant (precision).

- System Resource Utilization: Monitor CPU, memory, and storage I/O throughout the process to assess scalability.
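Because the corpus is seeded with known target artifacts, recall and precision reduce to set comparisons between the seeded artifact IDs and the IDs the tool flags. This sketch assumes an item counts as "relevant" exactly when it is one of the seeded targets; the artifact identifiers are hypothetical (in practice they might be file hashes or paths).

```python
def recall_precision(seeded: set[str], flagged: set[str]) -> tuple[float, float]:
    """Recall and precision of a tool's flagged items against the seeded ground truth.

    Assumes an item is relevant exactly when it is one of the seeded targets.
    """
    true_positives = len(seeded & flagged)
    recall = true_positives / len(seeded) if seeded else 0.0
    precision = true_positives / len(flagged) if flagged else 0.0
    return recall, precision


# Usage: run once for the tool under test and once for the baseline control.
seeded = {"doc-01", "img-07", "chat-12", "doc-33"}
tool_flags = {"doc-01", "img-07", "log-99"}
print(recall_precision(seeded, tool_flags))
```

For the comparison against the baseline tool, the same function is simply applied to the baseline's flagged set.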

Protocol for Cloud Evidence Acquisition and Integrity

Objective: To verify the reliability and legal defensibility of a cloud forensics tool in acquiring evidence from various platforms while maintaining a secure chain of custody.

Test Environment Setup:

- Create controlled user accounts on multiple cloud platforms (e.g., a social media platform, a cloud storage service).

- Populate these accounts with a known set of data, including files, messages, and metadata.

Evidence Acquisition:

- Use the tool under test to simulate evidence collection via provider APIs, acting as a client application [10].

- The tool should acquire user data without altering the original evidence in the cloud.

Integrity and Logging Verification:

- Upon acquisition, the tool must automatically generate cryptographic hashes (e.g., SHA-256) for all collected files [8].

- Verify that a tamper-evident audit log is created, documenting every action with timestamps and user IDs [8].

- Validate the integrity of the evidence by comparing the generated hashes before and after the transfer to an evidence repository.
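The integrity check in the last step can be sketched with the Python standard library alone: hash every acquired file, copy it to the repository, re-hash the copy, and record whether the two digests match. The directory layout and the manifest format returned by the hypothetical `transfer_and_verify` function are assumptions of this sketch.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large evidence files need not fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def transfer_and_verify(acquired_dir: Path, repository_dir: Path) -> dict[str, dict]:
    """Hash each acquired file, copy it to the repository, and re-hash the copy.

    Returns a manifest mapping relative path -> {acquired, stored, match}.
    """
    manifest = {}
    for src in sorted(p for p in acquired_dir.rglob("*") if p.is_file()):
        rel = src.relative_to(acquired_dir)
        before = sha256_file(src)               # hash generated at acquisition
        dst = repository_dir / rel
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)                  # copy2 also preserves file timestamps
        after = sha256_file(dst)                # hash recomputed in the repository
        manifest[rel.as_posix()] = {"acquired": before, "stored": after,
                                    "match": before == after}
    return manifest
```

Any entry with `match` set to `False` indicates that the copy in the repository no longer corresponds to what was acquired and should be investigated before the evidence is used.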

Protocol for AI-Generated Media Detection

Objective: To evaluate the efficacy of a forensic tool in distinguishing between authentic and AI-manipulated media.

Media Dataset Preparation:

- Compile a verified dataset containing both authentic media and AI-generated deepfakes/cheapfakes.

- The manipulated media should be generated using state-of-the-art tools (e.g., GANs, diffusion models) and should include various types: face-swapped videos, synthesized audio, and generated images.

Analysis and Detection:

- Process the entire dataset using the forensic tool's deepfake detection module.

- The tool should analyze the media for digital fingerprints of manipulation, such as inconsistencies in pixel patterns, audio frequencies, or lighting [7] [9].

Evaluation of Results:

- Construct a confusion matrix to determine the tool's true positive, false positive, true negative, and false negative rates.

- Calculate the accuracy and F1-score to quantify the tool's detection performance.

- The tool should generate a forensic report detailing the evidence for or against authenticity, suitable for expert testimony [7].
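The evaluation step can be made concrete with a small function that tallies the confusion matrix from ground-truth labels and the detector's verdicts, treating "manipulated" as the positive class. Encoding labels as booleans (`True` = manipulated) and the `evaluate_detector` name are assumptions of this sketch; the accuracy and F1 formulas are the standard ones.

```python
from collections import Counter


def evaluate_detector(truth: list[bool], predicted: list[bool]) -> dict:
    """Confusion matrix, accuracy and F1 for a deepfake detector.

    True means 'manipulated' (the positive class); False means 'authentic'.
    """
    if len(truth) != len(predicted):
        raise ValueError("truth and predicted must have the same length")
    counts = Counter(zip(truth, predicted))
    tp = counts[(True, True)]     # manipulated, flagged as manipulated
    fn = counts[(True, False)]    # manipulated, passed as authentic
    fp = counts[(False, True)]    # authentic, wrongly flagged
    tn = counts[(False, False)]   # authentic, correctly passed
    total = tp + fn + fp + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"tp": tp, "fp": fp, "tn": tn, "fn": fn,
            "accuracy": accuracy, "f1": f1,
            "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0}
```

Reporting the false positive rate separately matters in court: an authentic recording wrongly labeled as a deepfake can be as damaging as a missed fake.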

Technology Readiness Level (TRL) Assessment in Forensic SDLC

Integrating TRL assessment into a forensically aware Software Development Lifecycle (SDLC) helps ensure that tools are not only functionally sound but also legally robust and reliable. The following workflow visualizes this integration, highlighting critical forensic validation gates.

The Scientist's Toolkit: Research Reagent Solutions

Advancing digital forensics requires a suite of specialized "research reagents"—both technical tools and procedural frameworks. The following table details essential components for developing and validating forensic solutions tailored to modern challenges.

Table 4: Key Research Reagents for Digital Forensics Development

| Category | Item/Technique | Function & Application in Forensic Research |
|---|---|---|
| Data Ingestion & Triage | AI-Powered Analysis Presets [10] | Pre-configured workflows to automate repetitive analysis tasks (e.g., hash filtering, YARA rule scanning, file carving), ensuring consistency and saving time in large-scale investigations. |
| Data Ingestion & Triage | Automated Metadata Tagging [8] | Intelligently indexes evidence upon ingestion, making files immediately searchable by time, location, person, or object. Crucial for managing evidence variety and velocity. |
| Evidence Integrity | Cryptographic Hashing (e.g., SHA-256) [8] | Generates a unique digital fingerprint for a file or data set. Any alteration changes this hash, providing a primary means of verifying evidence integrity throughout the chain of custody. |
| Evidence Integrity | Tamper-Evident Audit Logs [8] | Automatically records every action performed on a piece of evidence (upload, view, share), with timestamps and user IDs, creating a tamper-evident record for courtroom validation. |
| Advanced Analysis | Deepfake Detection Algorithms [7] [9] | Analyzes video and audio files for digital fingerprints of manipulation, such as inconsistencies in pixel patterns, audio frequencies, or lighting, to verify media authenticity. |
| Advanced Analysis | Offline LLM (e.g., BelkaGPT) [10] | A Large Language Model that operates on isolated case data to process text-based artifacts (emails, chats), detecting topics and emotional tones without compromising data privacy. |
| Validation & Standards | ISO/IEC 27037 Guidelines [7] | An international standard providing guidelines for identifying, collecting, and preserving digital evidence. Serves as a benchmark for developing legally admissible forensic tools. |
| Validation & Standards | Controlled Evidence Corpora | Standardized, well-documented datasets of digital evidence (including known deepfakes and authentic media) used for tool benchmarking, validation, and comparative performance analysis. |
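One common way to make an audit log tamper-evident, as the Tamper-Evident Audit Logs row describes, is hash chaining: each entry stores the SHA-256 of the previous entry, so altering an earlier record breaks every later link. This is a minimal sketch; the field names and the `append`/`verify` helpers are hypothetical, and a production log would also need protected storage, trusted timestamps, and an externally anchored copy of the latest hash (a chain alone cannot reveal that its most recent entries were truncated).

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder 'previous hash' for the first entry


def _entry_hash(entry: dict) -> str:
    """SHA-256 over the entry's canonical JSON form, excluding its own hash field."""
    body = {k: v for k, v in entry.items() if k != "hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append(log: list[dict], user_id: str, action: str, evidence_id: str) -> dict:
    """Append an action record linked to the previous record's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "action": action,            # e.g. upload, view, share
        "evidence_id": evidence_id,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _entry_hash(entry)
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute every hash and link.

    Detects edits to any record and removal or reordering of any record except the
    most recent ones; tail truncation needs an externally stored head hash.
    """
    prev = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev or entry["hash"] != _entry_hash(entry):
            return False
        prev = entry["hash"]
    return True
```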

The tripartite challenge of data volume, cloud complexity, and AI-generated evidence represents a fundamental inflection point for digital forensics. Overcoming these obstacles requires a departure from ad hoc tool development toward a structured, rigorous lifecycle model informed by TRL assessment. The challenge analysis, experimental protocols, and integration framework provided in this application note establish a foundation for this transition. By adopting these structured development practices, researchers and forensic software developers can create solutions that are not only technologically advanced but also scalable, legally defensible, and capable of preserving evidential integrity in an increasingly complex digital ecosystem. This approach is critical for maintaining the pace of justice and upholding the probative value of digital evidence in 2025 and beyond.

The integration of digital forensic tools into the justice system carries profound implications for individual liberty and legal outcomes. Courts increasingly rely on digital evidence, yet its admissibility depends heavily on the scientific validity and legal reliability of the tools and methods used to extract and analyze it [11] [12]. The legal standards for admissibility, particularly the Daubert standard, establish a rigorous framework under which courts consider whether forensic tools have been empirically tested and peer reviewed, whether they have known error rates, and whether they are widely accepted in the relevant scientific community [12] [13]. Failure to meet these standards can result in the exclusion of critical evidence or, worse, wrongful decisions based on flawed technical findings [11] [13]. This document provides detailed application notes and protocols for integrating Technology Readiness Level (TRL) assessment into the forensic software development lifecycle, ensuring that tools not only perform technically but also withstand legal scrutiny.

Legal and Scientific Foundations for Admissibility

Core Legal Standards

Forensic evidence in the United States is evaluated against legal tests that determine its admissibility in court. The shift from the Frye standard to the Daubert standard, which governs federal courts and has been adopted by many states, represents a move toward a more rigorous, scientific evaluation of evidence [11].

- Frye Standard: Originating from Frye v. United States (1923), this standard requires that the scientific technique or principle underlying the evidence be "sufficiently established to have gained general acceptance in the particular field in which it belongs" [11].

- Daubert Standard: Established in Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993), this standard charges trial judges with the responsibility of being "gatekeepers" of scientific evidence. It provides a more flexible set of factors for judges to consider [11] [13]:

  - Whether the theory or technique can be (and has been) tested.

  - Whether it has been subjected to peer review and publication.

  - The known or potential error rate.

  - The existence and maintenance of standards controlling its operation.

  - The degree of general acceptance within the relevant scientific community.

The Federal Rules of Evidence (FRE), particularly Rule 901, further govern the authentication of digital evidence, requiring that the proponent produce evidence sufficient to support a finding that the digital item is what the proponent claims it is [13].

The NRC and PCAST Reports: A Call for Reform

Two landmark reports have critically shaped the modern expectation for forensic science:

- 2009 National Research Council (NRC) Report: This report shattered the "myth of accuracy" surrounding many traditional forensic disciplines, revealing that many methods, with the exception of DNA analysis, lacked proper scientific validation and foundation [11].

- 2016 PCAST Report: The President's Council of Advisors on Science and Technology (PCAST) reinforced the NRC's findings, calling for stricter scientific validation of forensic feature-comparison methods and highlighting the need for empirical measurement of reliability [11].

These reports have collectively exposed systemic shortcomings, including flawed forensic methods, legal gaps, and issues with the scientific literacy of judges and attorneys, creating an urgent need for reforms that ensure unreliable forensic methods are excluded from judicial proceedings [11].

Validation Protocols and Experimental Methodologies

A robust validation framework is essential for demonstrating that a forensic tool produces reliable, accurate, and repeatable results. The following protocol, adapted from rigorous experimental designs in the field, provides a template for comprehensive tool validation [12].

Comprehensive Tool Validation Protocol

Objective: To quantitatively validate the performance and reliability of a digital forensic tool against established legal and scientific criteria.

Experimental Design:

- Controlled Testing Environment: Utilize isolated, forensically sterile workstations to prevent evidence contamination.

- Comparative Analysis: Compare the tool under test against a validated commercial counterpart (e.g., FTK, EnCase) or an accepted reference tool.

- Triplicate Testing: Conduct all experiments in triplicate to establish repeatability and calculate error rates.

- Blinded Analysis: Where possible, implement blinding to minimize examiner bias during result interpretation.

Test Scenarios & Data Preparation: Prepare controlled evidence samples containing known data artifacts. The testing must encompass at least the following three scenarios:

- Preservation and Collection of Original Data: Verify the tool's ability to create a forensically sound bit-for-bit copy of the original source without alteration, and generate accurate hash values (MD5, SHA-1, SHA-256) for integrity checking.

- Recovery of Deleted Files via Data Carving: Assess the tool's capability to recover files that have been deleted from the filesystem, using a pre-defined set of file types (documents, images, archives).

- Targeted Artifact Searching: Evaluate the tool's search functionality and its ability to identify and parse specific forensic artifacts (e.g., browser history, registry entries, application logs) from a disk image.
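The data-carving scenario above can be illustrated with the simplest carving technique, header/footer signature matching, shown here for JPEG (start-of-image marker FF D8 FF, end-of-image marker FF D9). This is a teaching sketch, not how any particular tool carves: it assumes files are stored contiguously, ignores fragmentation and embedded thumbnails, and the `max_size` cap is an arbitrary assumption.

```python
JPEG_HEADER = b"\xff\xd8\xff"
JPEG_FOOTER = b"\xff\xd9"


def carve_jpegs(image: bytes, max_size: int = 20 * 1024 * 1024) -> list[tuple[int, bytes]]:
    """Return (offset, data) for each header...footer span found in a raw disk image.

    Assumes contiguous storage; fragmented files will be missed or truncated.
    """
    carved = []
    pos = image.find(JPEG_HEADER)
    while pos != -1:
        # Look for the footer only within the size cap after this header.
        end = image.find(JPEG_FOOTER, pos + len(JPEG_HEADER), pos + max_size)
        if end == -1:
            # No footer within the cap: skip this header and keep scanning.
            pos = image.find(JPEG_HEADER, pos + 1)
            continue
        end += len(JPEG_FOOTER)
        carved.append((pos, image[pos:end]))
        pos = image.find(JPEG_HEADER, end)  # resume after the carved file
    return carved
```

Against a benchmark image with known deleted files, the recovery rate is the share of known files whose hashes match a carved output, which feeds directly into the error-rate metrics below.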

Metrics and Data Analysis: Calculate the following key performance indicators for each test scenario:

Table 5: Key Validation Metrics and Their Calculations

| Metric | Description | Calculation Method |
|---|---|---|
| Accuracy | The proportion of true results (both true positives and true negatives) among the total number of cases examined. | (True Positives + True Negatives) / Total Artifacts |
| Error Rate | The proportion of incorrect results (false positives and false negatives) produced by the tool. | (False Positives + False Negatives) / Total Artifacts |
| Repeatability | The tool's ability to produce the same results under identical conditions over multiple trials. | Consistent results in all triplicate runs |
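The metrics in the table above can be computed per run and then compared across the three runs. The per-run format (artifact ID mapped to an outcome label `TP`, `TN`, `FP`, or `FN`) and the function names are hypothetical, and "consistent results" is interpreted strictly here as identical outcomes for every artifact in every run, which is an assumption about how repeatability is judged.

```python
from collections import Counter

OUTCOMES = ("TP", "TN", "FP", "FN")


def run_metrics(outcomes: dict[str, str]) -> dict[str, float]:
    """Accuracy and error rate for one run, per the formulas in the table."""
    counts = Counter(outcomes.values())
    unknown = set(counts) - set(OUTCOMES)
    if unknown:
        raise ValueError(f"unexpected outcome labels: {unknown}")
    total = sum(counts.values())
    accuracy = (counts["TP"] + counts["TN"]) / total
    error_rate = (counts["FP"] + counts["FN"]) / total
    return {"accuracy": accuracy, "error_rate": error_rate}


def triplicate_summary(runs: list[dict[str, str]]) -> dict:
    """Per-run metrics for the three runs plus a strict repeatability check."""
    if len(runs) != 3:
        raise ValueError("the protocol calls for exactly three runs")
    metrics = [run_metrics(r) for r in runs]
    # Repeatable only if every run produced the same outcome for every artifact.
    repeatable = all(r == runs[0] for r in runs[1:])
    return {"runs": metrics, "repeatable": repeatable,
            "mean_error_rate": sum(m["error_rate"] for m in metrics) / len(metrics)}
```

Comparing full outcome maps rather than aggregate scores catches runs that reach the same accuracy by getting different artifacts wrong.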

Validation Reporting: The final validation report must document the entire process, including the experimental setup, raw data, calculated metrics, and a definitive conclusion on the tool's reliability for its intended forensic purpose.

Protocol for Validating Explainable AI in Forensic Tools

With the rise of AI in digital forensics, a specialized validation protocol is required to address the "black box" problem and meet the demands of the Daubert standard and FRE 901 [13].

Objective: To validate that an AI-powered forensic tool adheres to the principles of Explainable AI (XAI) and produces forensically sound, court-admissible outputs.

The Four Principles of Explainable AI (per NIST) [13]:

- Explanation: The system must deliver accompanying evidence or reasons for all outputs.

- Meaningful: Explanations must be understandable to the end-user (e.g., the forensic examiner).

- Explanation Accuracy: The provided explanation must correctly reflect the system's process for generating the output.

- Knowledge Limits: The system must only operate under conditions for which it was designed or when it reaches a sufficient confidence in its output.

Validation Workflow:

- Input a diverse set of test data into the AI tool, including edge cases and known problematic data.

- Record all outputs and, crucially, the justifications and confidence scores provided by the tool for each decision.

- Audit the explanation accuracy by having a subject matter expert trace the tool's reasoning against its internal logic (if accessible) and the known ground truth of the test data.

- Test knowledge limits by inputting data outside the tool's intended scope and verifying that it appropriately withholds judgment or flags the input as unsuitable.

- Evaluate meaningfulness by having a certified forensic examiner, trained on the tool, review the explanations and confirm they are comprehensible and sufficient for articulating in a written report or courtroom testimony.
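The knowledge-limits test presumes the tool has a way to withhold judgment. A minimal sketch of such a guard wraps a classifier so that it returns no verdict when the input falls outside its declared scope or the confidence falls below a threshold, and always records the reason. The classifier interface (returning label, confidence, rationale), the `guarded` helper, the scope check, and the 0.9 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    verdict: Optional[str]   # None means the tool withheld judgment
    confidence: float
    explanation: str         # reason recorded with every output


def guarded(classify: Callable[[bytes], tuple[str, float, str]],
            in_scope: Callable[[bytes], bool],
            threshold: float = 0.9) -> Callable[[bytes], Decision]:
    """Wrap a classifier returning (label, confidence, rationale) with knowledge-limit checks."""
    def decide(item: bytes) -> Decision:
        if not in_scope(item):
            return Decision(None, 0.0, "input outside the tool's validated scope")
        label, confidence, rationale = classify(item)
        if confidence < threshold:
            return Decision(None, confidence,
                            f"confidence {confidence:.2f} below threshold {threshold:.2f}; {rationale}")
        return Decision(label, confidence, rationale)
    return decide
```

During validation, out-of-scope test inputs should all come back with `verdict` set to `None` and an explanation the examiner can cite.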

Integrating TRL Assessment into the Forensic SDLC

Integrating TRL assessment into the Software Development Life Cycle (SDLC) ensures that technological maturity and legal admissibility are core considerations from inception to deployment. The concept of forensic readiness must be embedded from the earliest planning phases [14].

Diagram 3: TRL integration in forensic SDLC

The diagram above illustrates how TRL assessment maps onto a forensically-ready SDLC. This integration ensures that every development phase includes activities specifically designed to advance the tool's technological maturity while building the evidence base required for legal admissibility.

Table 6: TRL Milestones and Forensic Admissibility Activities in the SDLC

| SDLC Phase | TRL Range | Key Activities for Legal Admissibility | Outputs for Courtroom Defense |
|---|---|---|---|
| Planning & Design | TRL 1-3 (Basic Research to Proof-of-Concept) | Define legal requirements (Daubert, FRE). Establish forensic readiness protocols. Design for explainability, audit trails, and immutable logs. | Admissibility Requirements Document. Architecture diagrams showing data integrity measures. |
| Coding & Development | TRL 4-6 (Lab Validation to Prototype in Relevant Environment) | Implement detailed logging and evidence provenance tracking. Code modularly for testing and validation. Integrate forensic markers for data tracing. | Peer-reviewed technical papers on the method. Source code documentation for transparency. |
| Testing & Validation | TRL 4-6 (Continued) | Execute the Comprehensive Tool Validation Protocol and the Explainable AI validation protocol described above. Conduct independent peer review. Calculate accuracy and error rates. | Comprehensive validation report with error rates. Results of peer review. Certification from standards bodies (if applicable). |
| Deployment & Maintenance | TRL 7-9 (System Proven in Operational Environment) | Deploy with a certification package (all documentation). Monitor performance in real cases. Plan for updates and re-validation. | Chain-of-custody documentation from real cases. Testimony from other experts on widespread acceptance. Audit logs from the tool's operational use. |

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key components and their functions in building and validating forensically sound digital tools.

Table 3: Essential Research Reagents for Forensic Tool Development & Validation

| Item / Solution | Function in Development & Validation |
|---|---|
| Controlled Test Image Generator | Creates standardized, forensically sound disk images with known artifacts (files, logs, deleted data) for controlled tool testing and benchmarking. |
| Hash Value Calculator (Reference) | Provides a ground-truth checksum (e.g., SHA-256) for verifying the absolute integrity of data during preservation and collection tests. |
| Data Carving Benchmark Suite | A collection of file system images with known deleted files to quantitatively measure a tool's file recovery capabilities and error rates. |
| Open-Source Forensic Tools (e.g., Autopsy, Sleuth Kit) | Serves as a reference or baseline for comparative analysis and validation of results, promoting transparency and peer review [12]. |
| Commercial Forensic Tools (e.g., FTK, EnCase) | Acts as a validated commercial benchmark against which the performance and output of new or open-source tools can be compared [12]. |
| Explainable AI (XAI) Framework | A software library or set of principles (per NIST) integrated into AI tools to ensure they provide understandable reasons for their outputs, which is critical for courtroom testimony [13]. |
| Standardized Validation Framework | A structured methodology (e.g., based on NIST Computer Forensics Tool Testing) that outlines a rigorous experimental design for testing tool reliability, repeatability, and error rates [12]. |
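To illustrate how a controlled test image and a carving benchmark combine into a quantitative recovery rate, here is a minimal Python sketch. It embeds fake JPEG artifacts at known offsets in zero-filled space (only the `FF D8 FF` start and `FF D9` end markers are realistic), records each artifact's SHA-256 as ground truth, runs a deliberately naive header/footer carver standing in for the tool under test, and scores the result. All function names are hypothetical; a real benchmark would use a validated image such as a NIST CFReDS set.

```python
import hashlib

JPEG_HEADER = b"\xff\xd8\xff"  # SOI marker followed by the next marker's 0xFF
JPEG_FOOTER = b"\xff\xd9"      # EOI marker


def build_test_image(payloads, gap=512):
    """Embed fake JPEG artifacts in zero-filled space; return (image, ground_truth)."""
    image = bytearray(gap)  # zero-filled leading gap
    truth = []
    for body in payloads:
        if b"\xff" in body:
            # Keeps marker bytes from appearing inside the synthetic payload.
            raise ValueError("payload bodies must not contain 0xFF bytes")
        artifact = JPEG_HEADER + body + JPEG_FOOTER
        truth.append({"offset": len(image), "sha256": hashlib.sha256(artifact).hexdigest()})
        image += artifact + bytes(gap)
    return bytes(image), truth


def carve_jpegs(image):
    """Naive header/footer carver standing in for the tool under test."""
    carved, pos = [], 0
    while (start := image.find(JPEG_HEADER, pos)) != -1:
        end = image.find(JPEG_FOOTER, start + len(JPEG_HEADER))
        if end == -1:
            break  # header without a footer: nothing more can be carved
        carved.append(image[start:end + len(JPEG_FOOTER)])
        pos = end + len(JPEG_FOOTER)
    return carved


def score(carved, truth):
    """Recall and false-positive count, matching recovered bytes by SHA-256."""
    expected = {t["sha256"] for t in truth}
    found = {hashlib.sha256(c).hexdigest() for c in carved}
    recall = len(expected & found) / len(expected) if expected else 0.0
    return {"recall": recall, "false_positives": len(found - expected)}
```

Truncating the image partway through the last artifact is a quick way to confirm that the scoring reports a reduced recall rather than silently passing.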

Adherence to rigorous, scientifically grounded validation protocols is no longer optional for digital forensic tools; it is a prerequisite for admitting their output as evidence in court. By systematically integrating TRL assessment into the software development lifecycle, developers and researchers can create a verifiable evidentiary trail that demonstrates a tool's reliability, quantifies its error rates, and documents that its operations are transparent and explainable. This structured approach maps directly onto the Daubert factors (testability, peer review, known error rates, and general acceptance) and responds to the need for reform highlighted by the NRC and PCAST reports [11]. Ultimately, this disciplined framework helps uphold the integrity of the justice system by ensuring that digital evidence is a reliable basis for decisions rather than a source of judicial error.

The integrity of digital evidence, and by extension judicial outcomes, is fundamentally reliant on the reliability of the digital forensics tools used in investigations. The development of these tools, however, faces a unique convergence of challenges: the breakneck pace of technological change in platforms and devices, the absolute requirement for legal defensibility, and the methodological divide between modern agile development practices and traditional, plan-driven Software Development Life Cycle (SDLC) models [15] [16] [9]. This creates a critical gap where the urgent need for updated tools can compromise the rigorous validation they require.

Simultaneously, the field is grappling with an explosion of data volume, variety, and velocity, alongside sophisticated anti-forensic techniques and the complexities of cloud and IoT evidence [8] [10]. These pressures often force tool developers to choose between speed (Agile) and rigor (Traditional SDLC), a compromise that can introduce risk into the entire investigative process. This paper argues for the integration of Technology Readiness Level (TRL) assessment as a unifying framework to bridge this methodological gap. Integrating TRL provides a structured, evidence-based mechanism to guide forensic tools from conceptual, research-oriented prototypes to court-ready, legally defensible products, without sacrificing adaptability or thoroughness.

The Evolving Landscape and Pressing Challenges in Digital Forensics

The environment in which digital forensics tools operate is more dynamic and demanding than ever. Key trends for 2025 illuminate the specific pressures placed on development lifecycles:

- Data Complexity and Scale: Digital evidence is growing exponentially in volume, variety, and velocity [8]. Investigations now routinely involve petabyte-scale datasets from cloud sources, a vast array of IoT devices, and encrypted mobile platforms, making purely manual analysis impractical [16] [10].

- The Anti-Forensics Challenge: Cybercriminals are increasingly employing sophisticated techniques to erase, obscure, or manipulate digital evidence. These methods include encryption, steganography, and data wiping, which deliberately aim to frustrate forensic analysis [10].

- The Cloud Forensics Hurdle: The distributed nature of cloud storage introduces significant obstacles for investigators, including data fragmentation across global servers, jurisdictional conflicts due to differing national laws, and a lack of visibility and control compared to traditional data sources [16] [17]. Legacy forensic workflows are often incompatible with cloud infrastructure, where data is in constant motion [17].

- The Demand for Automation and AI: To manage data scale and complexity, the field is rapidly adopting AI and machine learning. These technologies accelerate tasks like pattern recognition in logs, media analysis for explicit content, and processing communications via Natural Language Processing (NLP) [10]. However, these AI-driven tools introduce their own need for validation, as "black box" models and training data bias can undermine the credibility of evidence in court [16] [10].

Table 1: Key Market and Technical Drivers Shaping Forensic Tool Development

| Driver | Impact on Forensic Tool Development | Supporting Data |
|---|---|---|
| Market Growth | Increased investment and competition, necessitating faster development cycles. | Global digital forensics market projected to reach $18.2 billion by 2030 (CAGR 12.2%) [16]. |
| Cloud Data Proliferation | Tools must adapt to API-based collection, cross-jurisdictional data retrieval, and petabyte-scale analysis. | Over 60% of newly generated data will reside in the cloud by 2025 [16]. |
| AI Integration | Development requires new validation protocols for AI-generated findings to ensure legal admissibility. | AI can increase deepfake audio detection accuracy to 92% [16]. |
| Device Proliferation & Security | Tools must continuously update to handle new mobile, IoT, and vehicle systems with advanced encryption. | Tens of billions of IoT devices expected worldwide by 2025 [9]. |

Current SDLC Methodologies and Their Limitations in Forensics

Agile Methodology

Agile development, with its emphasis on iteration, customer collaboration, and responding to change, is highly effective for rapidly adapting to new forensic challenges. Its principles are showcased in the development of tools like LinkForensics, where developer-law enforcement collaboration and almost weekly feedback loops enabled the swift creation of an automated tool for identifying harmful link pathways—a process previously done manually [18]. This approach allows teams to "action [new requirements] immediately" [18], which is crucial in a field where exploit techniques change constantly.

However, the very strength of Agile—its flexibility—becomes a liability for ensuring the rigorous, repeatable validation required for courtroom evidence. An iterative cycle may prioritize a new feature without dedicating sufficient time to the extensive, documented testing needed to prove the tool's findings are forensically sound and reproducible.

Traditional SDLC and Secure-SDLC (S-SDLC)

Traditional SDLC models, and their secure counterparts like the Secure Software Development Life Cycle (S-SDLC), provide the structured rigor that Agile lacks. Methodologies such as McGraw's "Seven Touchpoints" integrate security activities—including security requirements, design, testing, and maintenance—throughout all phases of development [19]. This ensures that foundational practices like secure coding, penetration testing, and static analysis are not afterthoughts but are built into the process from the beginning [19]. This is essential for creating a "forensically ready" SDLC that produces a verifiable audit trail and ensures evidence integrity [20].

The limitation of these plan-driven models is their inherent inflexibility. They can be too slow to keep pace with the evolving threat landscape, potentially resulting in tools that are secure and reliable but obsolete by the time they are deployed.

The Systemic Gap

The core problem is a systemic one. Current development practices, whether Agile or Traditional, often lack techniques to "represent and reason about the systemic problems that are created by inadequate investment, by poor management leadership and by the breakdown in communication between development teams" [21]. The focus tends to be on technical execution rather than on a framework for ensuring that a tool progresses methodically from a research concept to a judicially robust product. This gap can lead to tools that are either rapidly delivered but not properly validated, or thoroughly validated but no longer relevant.

Technology Readiness Levels (TRL) as a Unifying Framework

The TRL framework, originally developed by NASA, provides a standardized scale to assess the maturity of a particular technology. Its integration into forensic software development can create a common language between developers, researchers, and legal professionals, objectively measuring progress toward a forensically sound product.

The framework's power lies in translating abstract goals like "courtroom readiness" into a series of concrete, evidence-based milestones. This bridges the Agile-Traditional SDLC divide by allowing for iterative development within a given TRL stage (an Agile strength), while requiring specific, rigorous deliverables to advance to the next level of maturity (a Traditional SDLC strength).

Table 2: Technology Readiness Levels (TRL) Adapted for Digital Forensics Tools

| TRL | Stage Definition | Forensic-Specific Validation Criteria | Primary SDLC Phase |
|---|---|---|---|
| 1-3: Research | Basic principles observed and formulated. Initial experimental proof-of-concept. | Concept validates a core forensic function (e.g., parsing a new file system). | Requirements & Design |
| 4-5: Development | Component validation in laboratory (TRL 4) and relevant (TRL 5) environments. | Tool reliably extracts and carves data from a controlled disk image; output is consistent across repeated runs. | Implementation & Testing |
| 6-7: Prototyping | System prototype demonstrated in relevant (TRL 6) and operational (TRL 7) environments. | Tool processes evidence from a real, but non-case, device (e.g., a donated phone). | Testing & Deployment |
| 8-9: Operation | System complete and qualified. Proven in operational environment. | Tool used successfully in actual investigations; results withstand peer review and legal discovery. | Deployment & Maintenance |

TRL Integration Logic

The following diagram visualizes how the TRL framework creates a bridge between Agile and Traditional SDLC methodologies, ensuring a continuous flow of validation and feedback throughout the development lifecycle.

Application Notes: TRL Integration Protocols for Forensic Tool Development

This section provides detailed, actionable protocols for integrating TRL assessment into the development of digital forensics tools.

Protocol 1: Establishing a TRL Assessment Baseline

Objective: To define the specific, measurable criteria a forensic tool must meet at each TRL stage.

Methodology:

- Convene a Cross-Functional Panel: Assemble a team including digital forensics examiners, software developers, legal advisors (to address admissibility concerns), and quality assurance analysts.

- Define TRL Exit Criteria: For each TRL (1-9), the panel will document the required evidence of maturity. This moves beyond generic software metrics to forensic-specific validation.

- Example for TRL 5 (Component Validation): "The tool's data carving module must successfully recover 99.5% of specified file types (JPEG, PDF, SQLite) from a standardized NIST-based test image, producing a cryptographically hash-verifiable output log."

- Example for TRL 7 (System Demonstrated in Operational Environment): "The tool must complete a full extraction and analysis of a donated smartphone (e.g., Android 13, iOS 16) in a lab setting that mimics a real investigation, producing a report that aligns with the findings of an established commercial tool (e.g., Cellebrite, Oxygen Forensic Suite) for 95% of common artifacts."

- Documentation: All criteria must be documented in a TRL Assessment Handbook, which will serve as the objective benchmark for all maturity evaluations.
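One way to make the TRL Assessment Handbook machine-checkable is to store each exit criterion as data and evaluate measured evidence against it. The Python sketch below encodes the two examples above. The metric names, the `evaluate_trl` function, and the rule that a TRL with no documented criteria cannot be passed are illustrative assumptions, not part of any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitCriterion:
    trl: int
    metric: str        # key into the measured-evidence dictionary
    minimum: float     # inclusive lower bound the measurement must meet
    description: str


# Hypothetical handbook entries mirroring the TRL 5 and TRL 7 examples above.
HANDBOOK = [
    ExitCriterion(5, "carving_recovery_rate", 0.995,
                  "Recover >=99.5% of JPEG, PDF, and SQLite files from the test image"),
    ExitCriterion(5, "output_log_hash_verified", 1.0,
                  "Output log verifies against its cryptographic hash"),
    ExitCriterion(7, "artifact_agreement_with_reference", 0.95,
                  "Agree with an established commercial tool on >=95% of common artifacts"),
]


def evaluate_trl(trl, evidence, handbook=HANDBOOK):
    """Return (passed, failures) for all exit criteria documented for `trl`."""
    criteria = [c for c in handbook if c.trl == trl]
    if not criteria:
        # A level with no documented criteria cannot be claimed.
        return False, [f"No exit criteria documented for TRL {trl}"]
    failures = []
    for c in criteria:
        value = evidence.get(c.metric)
        if value is None or value < c.minimum:
            failures.append(f"{c.description} (measured: {value})")
    return not failures, failures
```

Keeping the criteria as data rather than code means the cross-functional panel can revise thresholds in the handbook without touching the evaluation logic, and the failure messages double as an audit record of why a maturity claim was rejected.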

Protocol 2: TRL-Gated S-SDLC Integration

Objective: To embed TRL assessment gates into the Secure Software Development Life Cycle, ensuring security and forensic soundness are validated at each stage.

Methodology:

- Map TRLs to SDLC Phases: Align TRL milestones with specific SDLC phases as shown in Table 2. For instance, completing the Design phase requires achieving at least TRL 3.

- Implement Phase-Gate Reviews: Before a project can proceed from one SDLC phase to the next, a formal review must be held. The gate review for moving from Testing to Deployment, for example, is conditional upon the tool achieving TRL 7.

- Incorporate Security Touchpoints: Integrate the security activities from models like McGraw's Seven Touchpoints [19] directly into the TRL criteria. Advancement to TRL 6, for example, requires passing a security-focused code review and static analysis.
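The phase-gate logic described above can be expressed as a small decision function. This Python sketch uses hypothetical names (`GATE_MIN_TRL`, `SECURITY_PREREQS`, `gate_review`). The Design (TRL 3), Testing-to-Deployment (TRL 7), and TRL 6 security requirements come from the text; the Implementation threshold is an assumed placeholder.

```python
# Minimum TRL required to leave each SDLC phase. Design (3) and Testing (7)
# follow the text; the Implementation value is an assumed placeholder.
GATE_MIN_TRL = {"design": 3, "implementation": 5, "testing": 7}

# Security touchpoints that must be passed before a given TRL can be claimed.
SECURITY_PREREQS = {6: {"security_code_review", "static_analysis"}}


def gate_review(phase, achieved_trl, passed_checks):
    """Decide whether a project may exit `phase`; returns (approved, reasons)."""
    required = GATE_MIN_TRL.get(phase)
    if required is None:
        return False, [f"No gate defined for phase {phase!r}"]
    reasons = []
    if achieved_trl < required:
        reasons.append(f"Achieved TRL {achieved_trl} is below required TRL {required}")
    # Any TRL at or below the achieved level must have its security checks passed.
    for trl, checks in SECURITY_PREREQS.items():
        missing = checks - set(passed_checks)
        if achieved_trl >= trl and missing:
            reasons.append(f"TRL {trl} claimed without passing: {sorted(missing)}")
    return not reasons, reasons
```

Returning the reasons alongside the decision lets the formal gate review record exactly which criterion blocked advancement.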

Protocol 3: Agile-TRL Hybrid for Rapid Feature Development

Objective: To allow for Agile development of new features for a mature tool without compromising its overall validated state.

Methodology:

- Feature-Specific TRL Tracking: When a new feature (e.g., support for a new messaging app) is added to a tool already at TRL 8, that feature is assigned its own, lower TRL (e.g., TRL 4).

- Sandboxed Iteration: The feature is developed and iterated upon using Agile sprints within a dedicated development branch. Its progression (TRL 4 → 5 → 6) is tracked independently.

- Promotion to Main Branch: The new feature is only merged into the main, TRL 8-certified branch of the tool once it has itself reached TRL 7, validated per the protocols in 5.1 and 5.2. This ensures the core tool's maturity is never degraded by new, unproven code.
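The feature-level tracking in Protocol 3 can be sketched as two small classes: a feature carries its own TRL and an evidence trail, and the certified tool refuses a merge below TRL 7. The class names, the one-level-at-a-time advancement rule, and the use of `PermissionError` are illustrative assumptions, not a prescribed implementation.

```python
from dataclasses import dataclass, field

MERGE_MIN_TRL = 7  # features join the certified main branch only at TRL 7


@dataclass
class Feature:
    name: str
    trl: int
    history: list = field(default_factory=list)  # (from_trl, to_trl, evidence_ref)

    def advance(self, new_trl, evidence_ref):
        """Record a single-level TRL advance backed by a named evidence artifact."""
        if new_trl != self.trl + 1:
            raise ValueError(f"{self.name}: cannot jump from TRL {self.trl} to {new_trl}")
        self.history.append((self.trl, new_trl, evidence_ref))
        self.trl = new_trl


@dataclass
class CertifiedTool:
    trl: int
    features: list = field(default_factory=list)

    def merge(self, feature):
        """Refuse any feature below the promotion threshold so the tool's TRL is preserved."""
        if feature.trl < MERGE_MIN_TRL:
            raise PermissionError(
                f"{feature.name} is at TRL {feature.trl}; TRL {MERGE_MIN_TRL} required")
        self.features.append(feature)
```

In use, a new parser starts as `Feature("new_messaging_app_parser", trl=4)`, is advanced through TRL 5, 6, and 7 with a validation report cited at each step, and only then can be merged into a `CertifiedTool(trl=8)`.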

The Scientist's Toolkit: Essential Research Reagents for Forensic Tool Validation

The following reagents and materials are critical for conducting the experiments and validation procedures required to advance a forensic tool's TRL.

Table 3: Key Research Reagents for Digital Forensics Tool Validation

| Reagent / Material | Function in Development & Validation | Example Use Case |
|---|---|---|
| NIST CFReDS Kit | Provides standardized, pre-built digital corpora for controlled testing and tool calibration. | Used in TRL 4-5 to establish baseline accuracy of file carving and parsing algorithms against a known-ground-truth dataset. |
| Donated Device Library | A collection of sanitized, real-world mobile phones, IoT devices, and hard drives from various manufacturers and OS versions. | Used in TRL 6-7 for operational testing in a realistic environment, ensuring tool compatibility with diverse hardware. |
| Forensic Software Toolsuite | Established commercial and open-source tools (e.g., Autopsy, Belkasoft X, Cellebrite) used for cross-validation. | Used as a reference standard at TRL 7 to verify that a new tool's output is forensically consistent with accepted industry tools. |
| Cryptographic Hash Generator | Software (e.g., sha256sum) to generate unique digital fingerprints for evidence files and tool outputs. | Critical at all TRLs for proving evidence integrity and ensuring tool operations do not alter the source data. |
| Controlled Test Images | Custom disk images containing known artifacts, hidden data, and anti-forensic challenges (e.g., steganography, encrypted volumes). | Used to test and score a tool's effectiveness against specific threats and techniques during TRL 5-6 development. |
| Legal Admissibility Checklist | A document, developed in consultation with legal experts, outlining the technical requirements for courtroom evidence. | Guides development from TRL 1 to ensure the final product (TRL 9) meets the legal standards for discovery and testimony. |
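The hash generator's role in showing that tool operations do not alter the source can be demonstrated with Python's standard `hashlib` module. This minimal sketch assumes a hypothetical `run_tool` callable that processes the evidence file at the given path; it hashes the file before and after the run and reports whether the digests match. In practice the tool would operate on a verified working copy behind a write blocker.

```python
import hashlib


def sha256_file(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large images need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_unaltered(path, run_tool):
    """Hash, run the (hypothetical) tool, hash again; report whether bytes changed."""
    before = sha256_file(path)
    run_tool(path)
    after = sha256_file(path)
    return before == after, before, after
```

Both digests are returned, not just the comparison, so they can be recorded in the case notes or the validation report.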

The integration of the Technology Readiness Level framework into both Agile and Traditional SDLC models offers a pragmatic and systematic solution to the core challenges in modern digital forensics tool development. It provides a structured pathway to transform innovative research into legally defensible technology. By adopting TRL gating, the field can foster an environment where tools are developed with both the speed to react to new threats and the rigor to withstand judicial scrutiny. This bridges the critical gap between rapid innovation and the unwavering reliability required by the justice system, ultimately strengthening the integrity of digital evidence worldwide.

A Practical TRL Integration Framework for the Forensic SDLC

Technology Readiness Levels (TRL) are a systematic metric used to assess the maturity of a particular technology. The scale ranges from TRL 1 (basic principles observed) to TRL 9 (actual system proven in operational environment) [3] [1]. This application note details the activities, outputs, and validation criteria for TRL 1 through TRL 3 within the context of forensic science research and development. This early phase transforms a fundamental scientific observation into a validated proof-of-concept, establishing its potential for forensic application.

Integrating TRL assessment into the forensic software development lifecycle ensures that new tools meet rigorous scientific standards and practical investigative needs from the outset [14]. The objective of Phase 1 is to define precise forensic requirements and demonstrate analytical proof-of-concept, laying a foundation for future development and eventual integration into operational forensic workflows.

TRL Definitions and Phase 1 Objectives

The following table outlines the specific definitions and core focus for each TRL within Phase 1.

Table 1: Technology Readiness Levels 1-3: Definitions and Focus

| TRL | Official Definition | Phase 1 Focus in Forensic Context |
|---|---|---|
| TRL 1 | Basic principles observed and reported [1]. | Initial scientific research begins. Fundamental knowledge of a technique (e.g., a chemical reaction, a physical property, an algorithm) is documented for its potential forensic relevance. |
| TRL 2 | Technology concept and/or application formulated [1]. | Practical application of the basic principles is invented. A specific forensic use case is proposed (e.g., "This spectroscopic method could differentiate body fluid stains."). |
| TRL 3 | Analytical and experimental critical function and/or characteristic proof-of-concept [1]. | Active R&D is initiated. Analytical and laboratory studies validate the core concept. A proof-of-concept model confirms the technology's viability for the proposed forensic application. |

Defining Forensic Requirements: The Strategic Framework

The transition from TRL 1 to TRL 3 must be guided by a clear strategic framework aligned with the documented needs of the forensic community. The National Institute of Justice (NIJ) Forensic Science Strategic Research Plan, 2022-2026 provides critical guidance for defining these requirements [22].

Applied Research and Development Objectives

Forensic technology concepts should aim to fulfill one or more of the following applied research objectives [22]:

- Tools that increase sensitivity and specificity of forensic analysis.

- Methods to maximize the information gained from limited or degraded evidence.

- Nondestructive or minimally destructive methods that preserve evidence for further testing.

- Machine learning methods for the classification of forensic evidence.

- Rapid and reliable field-deployable technologies for use at crime scenes.

- Automated tools to support examiners' conclusions and reduce subjective bias.

Foundational Research Objectives

Concurrently, foundational research must assess the fundamental validity of the proposed method [22]:

- Understanding the fundamental scientific basis of the new forensic method.

- Quantifying measurement uncertainty associated with the analytical technique.

- Establishing the limitations of the evidence analyzed by the method, including its stability, persistence, and transfer properties.

Experimental Protocols for Analytical Proof-of-Concept

The following protocols provide a framework for achieving experimental proof-of-concept (TRL 3) in key areas of forensic science.

Protocol: Proof-of-Concept for Body Fluid Identification Using Novel Spectroscopy

This protocol outlines the steps to validate a novel spectroscopic method for differentiating body fluids, a common trace evidence type [23].

1. Objective: To demonstrate that a novel analytical technique (e.g., FTIR Spectroscopy) can reliably distinguish between dried stains of blood, semen, and saliva on a representative substrate (e.g., cotton cloth).

2. Materials and Reagents:

- Reference Materials: Purified samples of blood, semen, and saliva from approved ethical sources.

- Substrates: 5 cm x 5 cm squares of white 100% cotton cloth.

- Analytical Instrument: Fourier-Transform Infrared (FTIR) Spectrometer.

- Software: Multivariate statistical analysis software supporting PCA and PLS-DA.

3. Experimental Procedure:

- Sample Preparation (Day 1):

- Spot 10 µL of each reference body fluid onto separate, labeled cloth substrates (n=5 per fluid).

- Allow all spots to air-dry completely at room temperature for 24 hours.

- Data Acquisition (Day 2):

- Using the FTIR spectrometer, collect absorption spectra from each dried stain.

- Instrument Settings: Resolution: 4 cm⁻¹, Number of Scans: 64, Spectral Range: 4000 - 600 cm⁻¹.

- Ensure background scans are collected immediately before each sample set.

- Data Analysis (Day 3):

- Pre-process all spectra (e.g., baseline correction, vector normalization).

- Input processed spectral data into the multivariate software.

- Perform Principal Component Analysis (PCA) to visualize natural clustering of the three body fluid types.

- Develop a Partial Least Squares-Discriminant Analysis (PLS-DA) model and use cross-validation to calculate classification accuracy.

4. Success Criteria for TRL 3: The PLS-DA model must achieve a cross-validated classification accuracy of ≥95% in differentiating the three body fluids, demonstrating a robust proof-of-concept.
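The pre-processing steps and the TRL 3 acceptance check can be sketched in plain Python. The two-point linear baseline used here is a simplifying assumption (spectroscopy packages offer more robust baseline algorithms), and the PLS-DA model itself is assumed to come from the multivariate software; one common approach fits a PLS regression to one-hot-encoded class labels and assigns each sample to the class with the largest predicted score. Function names are hypothetical.

```python
import math


def baseline_correct(spectrum):
    """Subtract a straight line drawn through the first and last points."""
    n = len(spectrum)
    first, last = spectrum[0], spectrum[-1]
    return [y - (first + (last - first) * i / (n - 1)) for i, y in enumerate(spectrum)]


def vector_normalize(spectrum):
    """Scale the spectrum to unit Euclidean (L2) norm."""
    norm = math.sqrt(sum(y * y for y in spectrum))
    return [y / norm for y in spectrum] if norm else list(spectrum)


def preprocess(spectrum):
    """Baseline correction followed by vector normalization, as in the protocol."""
    return vector_normalize(baseline_correct(spectrum))


def ftir_meets_trl3(true_labels, cv_predicted_labels, min_accuracy=0.95):
    """Compare cross-validated PLS-DA predictions with the >=95% criterion."""
    correct = sum(t == p for t, p in zip(true_labels, cv_predicted_labels))
    accuracy = correct / len(true_labels)
    return accuracy >= min_accuracy, accuracy
```

Note what the threshold implies for this design: with n = 5 stains per fluid (15 in total), a single misclassification gives 14/15 ≈ 93.3% accuracy, so the ≥95% criterion effectively requires every cross-validated prediction to be correct.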

Protocol: Proof-of-Concept for Drug Analysis Using Chromatography

This protocol outlines the development of an initial proof-of-concept for separating and identifying compounds in a complex mixture, such as a seized illicit-drug sample [24].

1. Objective: To develop a Gas Chromatography-Mass Spectrometry (GC-MS) method that separates and provides a preliminary identification of three common compounds in a simulated seized drug sample.

2. Materials and Reagents:

- Analytical Standards: Certified reference materials of caffeine, procaine, and heroin.

- Simulated Sample: A mixture of the three standards in methanol at approximately 100 µg/mL each.

- Solvents: HPLC-grade methanol and dichloromethane.

- Analytical Instrument: Gas Chromatograph coupled to a Mass Spectrometer (GC-MS).

3. Experimental Procedure:

- Method Development:

- Inject individual standards to determine their retention times and characteristic mass spectra.

- Optimize the GC oven temperature program to achieve baseline separation of all three compounds.

- Sample Analysis:

- Inject 1 µL of the simulated sample mixture using the optimized method.

- GC Conditions: Inlet Temp: 250°C, Split Ratio: 10:1, Oven Program: 50°C (hold 1 min) to 300°C at 15°C/min.

- MS Conditions: Ion Source Temp: 230°C, Transfer Line: 280°C, Scan Range: m/z 40-550.

- Data Interpretation:

- Analyze the total ion chromatogram (TIC) to confirm separation of three distinct peaks.

- For each peak, compare the generated mass spectrum to a reference spectral library (e.g., NIST) for identification. A match factor >80% is considered a preliminary identification.

4. Success Criteria for TRL 3: The method must separate the three components in the mixture with a resolution (Rs) >1.5 between every pair of adjacent peaks, and the library search must yield a preliminary identification (match factor >80%) for each.
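The resolution criterion can be checked with the standard baseline-width formula, Rs = 2(tR2 - tR1) / (w1 + w2), where tR is retention time and w is the baseline peak width in the same units. The Python sketch below applies it to adjacent peaks and also checks the library match factor. The peak-tuple layout and function names are hypothetical, not the export format of any particular instrument.

```python
def resolution(t1, w1, t2, w2):
    """Rs = 2 (t2 - t1) / (w1 + w2), with baseline widths in the same time units."""
    return 2.0 * (t2 - t1) / (w1 + w2)


def gcms_meets_trl3(peaks, min_rs=1.5, min_match_pct=80.0):
    """peaks: (name, retention_time, baseline_width, library_match_pct) tuples.

    Returns (passed, {pair: Rs}) for every pair of adjacent peaks.
    """
    ordered = sorted(peaks, key=lambda p: p[1])
    pair_rs = {
        (a[0], b[0]): resolution(a[1], a[2], b[1], b[2])
        for a, b in zip(ordered, ordered[1:])
    }
    separated = all(rs > min_rs for rs in pair_rs.values())
    identified = all(p[3] > min_match_pct for p in peaks)
    return separated and identified, pair_rs
```

Checking adjacent pairs is sufficient: if every adjacent pair has Rs > 1.5, a non-adjacent pair spans the sum of the intervening gaps, so its resolution also exceeds 1.5. If the data system reports NIST-style match factors on a 0-999 scale rather than as a percentage, the threshold would need rescaling accordingly.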

Workflow Visualization and Data Analysis

The logical progression from basic principle to validated proof-of-concept follows a defined pathway. The diagram below illustrates this workflow and the critical decision gates.

Quantitative Data Analysis and Success Metrics

At the culmination of TRL 3, experimental data must be evaluated against pre-defined quantitative metrics. The following table summarizes example success criteria for different types of forensic proof-of-concept studies.

Table 2: Example Success Criteria for TRL 3 in Forensic Proof-of-Concept Studies

| Analytical Technique | Proof-of-Concept Goal | Key Performance Metrics | TRL 3 Success Threshold |
|---|---|---|---|
| Multivariate Spectroscopy [23] | Differentiate biological stains | Classification Accuracy | ≥ 95% |
| Chromatography (GC-MS) [24] | Separate drug mixtures | Chromatographic Resolution (Rs) | > 1.5 between all critical pairs |
| Mass Spectrometry [24] | Identify explosive residue | Library Match Factor / Signal-to-Noise | > 80% / > 10:1 |
| Capillary Electrophoresis [24] | Detect trace DNA | Limit of Detection (LOD) | < 50 pg DNA |
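The text does not specify how the LOD is determined. One widely used approach, described in the ICH Q2(R1) validation guideline, estimates it from a calibration curve as LOD = 3.3σ/S, where σ is the residual standard deviation of the regression and S its slope. The Python sketch below assumes that approach and a hypothetical function name; forensic DNA laboratories may instead define detection limits through analytical thresholds, so treat this as one illustrative option.

```python
import math


def calibration_lod(amounts, responses, k=3.3):
    """Estimate LOD = k * sigma / S from a linear calibration (ICH Q2-style).

    sigma is the residual standard deviation of the fitted line (n - 2 degrees
    of freedom) and S is its slope. The result is in the units of `amounts`.
    """
    n = len(amounts)
    if n < 3:
        raise ValueError("at least three calibration points are required")
    mean_x = sum(amounts) / n
    mean_y = sum(responses) / n
    sxx = sum((x - mean_x) ** 2 for x in amounts)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(amounts, responses))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(amounts, responses))
    sigma = math.sqrt(ss_res / (n - 2))
    return k * sigma / slope
```

Because both σ and S scale with detector response, the estimate is independent of the response units, which makes it comparable against a threshold expressed in picograms of DNA.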

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, materials, and instruments essential for conducting proof-of-concept experiments in forensic analytical chemistry.

Table 3: Key Research Reagent Solutions and Materials for Forensic Proof-of-Concept Studies

| Item | Function / Application | Example in Protocol |
|---|---|---|
| Certified Reference Materials | Provides a ground-truth standard for method validation and calibration. | Purified drug standards (e.g., heroin, caffeine) for GC-MS identification [24]. |
| Body Fluid Standards (Ethically Sourced) | Used to develop and validate methods for body fluid identification. | Purified blood, semen, and saliva for spectroscopic differentiation [23]. |
| Fourier-Transform Infrared (FTIR) Spectrometer | Identifies organic functional groups and compounds by measuring infrared absorption. | Generating molecular "fingerprints" to classify unknown body fluids [23] [24]. |
| Gas Chromatograph-Mass Spectrometer (GC-MS) | Separates volatile mixtures (GC) and provides definitive identification of components (MS). | Separating and identifying compounds in a complex seized drug sample [24]. |
| Capillary Electrophoresis (CE) System | Separates ionic molecules like DNA fragments based on size and charge. | Creating a DNA profile from trace biological evidence [24]. |
| Multivariate Statistical Software | Analyzes complex, multi-dimensional data to find patterns and build classification models. | Performing PCA and PLS-DA on spectral data to differentiate body fluids [23]. |

Integrating Technology Readiness Level (TRL) assessment into the forensic software development lifecycle provides a structured framework for de-risking technology development and objectively evaluating maturity. Phase 2 (TRL 4-6) encompasses validation in laboratory and relevant environments, representing a critical transition from basic component testing to integrated prototype demonstration. This phase ensures that forensic software components and systems function reliably under controlled and realistic conditions before deployment in operational settings [25].

The rigorous application of standardized testing protocols during this phase is paramount for building confidence in the software's capabilities. For digital forensic tools, this rigor bears directly on the admissibility and defensibility of digital evidence in legal proceedings [25] [26]. This document outlines detailed application notes and experimental protocols for conducting component and prototype validation using forensic datasets, providing a roadmap for researchers and developers in the field.

Core Principles and Quantitative Metrics for Validation

Validation at TRL 4-6 is guided by core principles that ensure the process is systematic, thorough, and legally defensible. These principles include a methodological approach, reproducibility, validation against real-world scenarios, and thorough documentation [25]. Quantitative metrics are essential for objectively measuring a tool's performance against these principles and established benchmarks.

Table 1: Key Quantitative Validation Metrics for Forensic Software at TRL 4-6

| Metric Category | Specific Metric | TRL 4 (Lab) Target | TRL 5-6 (Relevant Environment) Target | Measurement Method |
|---|---|---|---|---|
| Data Integrity | Hash Verification Success Rate | 100% | 100% | SHA-1, MD5 hashing of source vs. image [27] |
| Processing Accuracy | File Carving Accuracy | >95% | >98% | Comparison against known file set [28] |
| Processing Accuracy | Data Parsing Fidelity | >90% | >95% | Comparison of parsed data to raw database bytes [26] |
| Performance | Data Processing Throughput (GB/hour) | Baseline | ≥20% improvement over baseline | Timed processing of standardized dataset [25] |
| Reliability | Test Result Repeatability | 100% | 100% | Repeated tests in the same environment (ISO 5725 repeatability conditions) [25] |
| Functional Coverage | Percentage of NIST CFTT Tests Passed | Baseline for tool category | >90% of relevant tests | Execution of CFTT test procedures [25] [29] |

The National Institute of Standards and Technology's Computer Forensics Tool Testing (CFTT) program provides a critical foundation for this testing, developing general tool specifications, test procedures, and test criteria [25] [29]. ISO 5725 distinguishes repeatability (agreement between results obtained under the same conditions, such as the same laboratory, operator, and equipment) from reproducibility (agreement between results obtained in different laboratories); a validated tool should yield the same findings under both, whether it is used in the same lab or a different one [25].
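The reliability and performance targets above reduce to simple calculations once each run's output is captured. This Python sketch assumes output records are available as strings and uses hypothetical function names. It fingerprints each run with an order-independent SHA-256 digest, reports the fraction of repeated runs that match the first, and computes throughput in decimal gigabytes per hour plus the relative improvement used for the ≥20% target. Comparing digests from runs in a second laboratory would extend the same check from repeatability to reproducibility.

```python
import hashlib


def output_digest(records):
    """Order-independent SHA-256 over a run's output records (strings)."""
    h = hashlib.sha256()
    for rec in sorted(records):
        data = rec.encode("utf-8")
        h.update(len(data).to_bytes(8, "big"))  # length prefix keeps records unambiguous
        h.update(data)
    return h.hexdigest()


def repeatability_rate(runs):
    """Fraction of repeated runs whose output matches the first run exactly."""
    reference = output_digest(runs[0])
    return sum(output_digest(r) == reference for r in runs) / len(runs)


def throughput_gb_per_hour(bytes_processed, seconds):
    """Decimal gigabytes (1e9 bytes) processed per hour of wall-clock time."""
    return (bytes_processed / 1e9) / (seconds / 3600.0)


def relative_improvement(new, baseline):
    """Fractional gain over baseline; the TRL 5-6 target is >= 0.20."""
    return (new - baseline) / baseline
```

Sorting before hashing is a design choice: it tolerates tools that emit the same records in a different order, which is usually acceptable for repeatability but should be disabled if output ordering is itself part of what is being validated.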

Experimental Protocols

Protocol 1: Component-Level Validation of a Data Parsing Algorithm (TRL 4)

1. Objective: To validate the accuracy and reliability of a software component (e.g., a SQLite database parser) in an isolated laboratory environment.

2. Materials and Reagents:

- Test System: A dedicated, forensically sterile workstation with a configured write-blocker.

- Forensic Software Prototype: The version of the software containing the parser component to be tested.

- Reference Tool: An established, validated forensic tool (e.g., FTK, Autopsy) for result comparison [27] [28].

- CFReDS Dataset: A Computer Forensic Reference Data Set (CFReDS) from NIST, containing a known set of data artifacts with verified content [29].

- Custom Dataset: A laboratory-created dataset with a precisely known structure and content, including intentionally corrupted records to test error handling.

3. Methodology:

   1. Preparation: Place the CFReDS and custom datasets on a test storage device. Create a forensic image of this device using a validated hardware imager, and verify the image integrity using a cryptographic hash (e.g., SHA-1) [27].
   2. Execution:
      - Process the forensic image through the prototype software's parser component.
      - Execute the same parsing operation using the reference tool.
      - For both runs, record all extracted database records, including deleted entries where applicable.
   3. Data Analysis:
      - Compare the output of the prototype parser against the known ground truth of the datasets.
      - Quantify the number of correctly parsed records, missed records (false negatives), and incorrectly interpreted records (false positives).
      - Cross-validate the prototype's output against the output from the reference tool, noting any discrepancies.
      - Document the component's behavior when encountering corrupted or unexpected data structures.

4. Acceptance Criteria: The parser component must correctly extract no less than 95% of known records from the CFReDS dataset and demonstrate robust error handling without catastrophic failure. Results must be 100% reproducible upon repeated testing [25].
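The data-analysis and acceptance steps of this protocol reduce to set comparisons against ground truth. The following Python sketch assumes each record is represented by a unique, hashable key (for example, a tuple of table name, row ID, and field values); `score_parser` and `meets_acceptance` are hypothetical names. In this simplified model, a record parsed with a wrong value counts both as missed and as misinterpreted.

```python
from collections.abc import Hashable, Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class ParserScore:
    correct: int         # parsed records that exactly match ground truth
    missed: int          # ground-truth records not produced (false negatives)
    misinterpreted: int  # produced records absent from ground truth (false positives)

    @property
    def extraction_rate(self) -> float:
        """Share of ground-truth records that were extracted correctly."""
        total = self.correct + self.missed
        return self.correct / total if total else 0.0


def score_parser(ground_truth: Iterable[Hashable], parsed: Iterable[Hashable]) -> ParserScore:
    """Compare parser output with ground truth using set operations."""
    truth, out = set(ground_truth), set(parsed)
    return ParserScore(
        correct=len(truth & out),
        missed=len(truth - out),
        misinterpreted=len(out - truth),
    )


def meets_acceptance(score: ParserScore, threshold: float = 0.95) -> bool:
    """Apply the 'no less than 95% of known records' criterion."""
    return score.extraction_rate >= threshold
```

With 20 known records, 19 correct extractions give an extraction rate of exactly 0.95, which meets the threshold. Cross-validation against the reference tool can reuse `score_parser` with the reference output in place of the ground truth, treating any non-zero `missed` or `misinterpreted` count as a discrepancy to investigate.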

Protocol 2: Integrated Prototype Demonstration with Synthetic Forensic Datasets (TRL 5)

1. Objective: To demonstrate the performance of an integrated software prototype in a relevant environment using a synthetic, scenario-based forensic dataset that includes coherent background activity.

2. Materials and Reagents:

- Relevant Environment: A virtual machine or dedicated test computer that simulates a typical user's device.

- Integrated Software Prototype: The complete forensic software application with all key components integrated.

- Synthetic Disk Image: A dataset generated using a framework like Re-imagen, which leverages Large Language Models (LLMs) to create realistic device usage scenarios, including "wear-and-tear" artifacts and background user activity [30].

- Analysis Plan: A predefined plan outlining the investigative scenario (e.g., "identify evidence of data exfiltration") and specific artifacts to target.

3. Methodology:

   1. Scenario Setup: Use the Re-imagen framework to generate a synthetic disk image. The scenario should involve a specific evidential action (e.g., copying a confidential file to a USB drive) amid normal, LLM-generated user persona activities (e.g., web browsing, email, document editing) [30].
   2. Blinded Analysis: Provide the integrated software prototype and the synthetic disk image to an analyst without disclosing the ground truth of the scenario.
   3. Processing and Examination: The analyst uses the prototype to conduct a full investigation, including evidence acquisition, data carving, keyword searching, and timeline generation [28].
   4. Reporting: The analyst produces a report detailing the findings, including the evidence of the key evidential action and a reconstruction of user activity.
   5. Validation: Compare the prototype-generated report against the known ground truth of the synthetic scenario. Evaluate not only success in finding the key evidence but also the accuracy and coherence of the reconstructed background activity.

4. Acceptance Criteria: The prototype must correctly identify the key evidential actions and provide a timeline of activity that is consistent with the known scenario. The software should effectively distinguish significant evidence from incidental background noise.
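Part of the validation step can be automated by comparing the analyst's reported events with the scenario's ground truth. This sketch assumes both are available as lists of uniquely labelled events with timestamps; the `Event` type, the action labels, and the two-second tolerance are illustrative assumptions, not features of Re-imagen. It checks two things: that every key evidential action was reported, and that reported timestamps fall within the tolerance and preserve the true ordering.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    action: str  # unique, illustrative label such as "usb_copy" (hypothetical)
    timestamp: datetime


def key_actions_found(reported: list[Event], key_actions: set[str]) -> bool:
    """True if every key evidential action appears in the analyst's report."""
    return key_actions <= {e.action for e in reported}


def timeline_consistent(
    truth: list[Event],
    reported: list[Event],
    tolerance: timedelta = timedelta(seconds=2),
) -> bool:
    """Check reported events against ground truth for timing and ordering."""
    true_time = {e.action: e.timestamp for e in truth}
    # Only events that exist in the ground truth can be compared.
    matched = [(e, true_time[e.action]) for e in reported if e.action in true_time]
    # Every matched timestamp must fall within the tolerance window.
    if any(abs(e.timestamp - t) > tolerance for e, t in matched):
        return False
    # The order implied by the reported times must match the true order.
    by_reported = [e.action for e, _ in sorted(matched, key=lambda p: p[0].timestamp)]
    by_truth = [e.action for e, _ in sorted(matched, key=lambda p: p[1])]
    return by_reported == by_truth
```

Reported events that the ground truth lacks are ignored here; counting them separately would give a rough measure of how well the tool separates evidence from background noise.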

Protocol 3: Performance Benchmarking and Robustness Testing (TRL 6)

1. Objective: To benchmark the performance and robustness of the prototype against large-scale, multi-source datasets and to test its resilience against non-standard inputs.

2. Materials and Reagents:

- High-Performance Computing Node: A system with significant processing power and memory.

- Benchmarking Prototype: The software prototype configured for performance logging.

- NSRL Subset: A substantial subset of the National Software Reference Library (NSRL) Reference Data Set (RDS) to test file filtering capabilities [29].

- Multi-source Evidence Dataset: A large corpus comprising data from multiple device types (e.g., hard drives, smartphone images, cloud data exports).

3. Methodology:

   1. Throughput Test: Process the multi-source evidence dataset with the prototype and record the time to complete key stages (e.g., ingestion, indexing, analysis). Compare against baseline performance metrics.
   2. Scalability Test: Measure system resource utilization (CPU, RAM, storage I/O) while processing datasets of increasing size.
   3. Robustness Test: Introduce datasets with known anomalies, such as non-standard file system features, intentionally corrupted partitions, or files with manipulated extensions. Document the prototype's ability to handle these gracefully.
   4. Hash Filtering Efficiency Test: Process a disk image containing a known mixture of known-good (e.g., OS files from NSRL) and unknown files. Verify the prototype's accuracy in filtering and categorizing files.

4. Acceptance Criteria: The prototype must process data at a throughput meeting or exceeding project requirements, scale efficiently with dataset size, and maintain stability when encountering anomalous data. Hash filtering must achieve a false-positive rate of less than 0.1%.
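The hash-filtering criterion can be scored as the share of genuinely unknown files that the prototype wrongly discards as known-good. A minimal Python sketch follows. It assumes the NSRL subset has already been loaded as a set of hex SHA-1 digests (parsing RDS files is out of scope) and that the test image's ground truth lists which files are truly known-good; all function names are hypothetical.

```python
import hashlib
from pathlib import Path


def sha1_hex(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-1 and return an upper-case hex digest."""
    h = hashlib.sha1()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest().upper()


def filter_known(file_hashes: dict[str, str], known_good: set[str]) -> set[str]:
    """Return IDs of files whose SHA-1 appears in the known-good reference set."""
    known = {k.upper() for k in known_good}  # normalize case on both sides
    return {fid for fid, digest in file_hashes.items() if digest.upper() in known}


def false_positive_rate(flagged_known: set[str], truly_known: set[str], all_files: set[str]) -> float:
    """Share of truly unknown files that were wrongly filtered out as known-good."""
    truly_unknown = all_files - truly_known
    if not truly_unknown:
        return 0.0
    return len(flagged_known & truly_unknown) / len(truly_unknown)
```

The acceptance check for this protocol is then `false_positive_rate(...) < 0.001`, where `flagged_known` is taken from the prototype's output rather than from `filter_known`, which serves only as a simple reference implementation.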


The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and digital "reagents" required for the rigorous validation of forensic software at TRL 4-6.

Table 2: Key Research Reagents for Forensic Software Validation

| Reagent / Material | Function in Validation | Example Sources / Instances |
|---|---|---|
| CFReDS (Computer Forensic Reference Data Sets) | Provides simulated digital evidence with known content for testing tool accuracy and verifying findings [29]. | NIST |
| NSRL (National Software Reference Library) | Reference Data Set (RDS) of file profiles used to filter known files, testing the software's ability to identify unknown or relevant data [29]. | NIST |
| Synthetic Dataset Generation Frameworks | Creates realistic, scalable, and privacy-compliant datasets with coherent background activity for testing in relevant environments [30]. | Re-imagen |
| Validated Reference Tools | Provides a benchmark for comparing the output and performance of the prototype under test, ensuring parity with established methods [25] [27]. | Forensic Toolkit (FTK), EnCase, Autopsy |
| Forensic Hardware Interfaces | Ensures the integrity of original evidence during the testing process by preventing write operations to source media [27]. | Hardware write-blockers |
| Hash Algorithm Suites | Fundamental for verifying the integrity of evidence and forensic images throughout all testing phases [27]. | SHA-1, SHA-256, MD5 |
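For the hash algorithm suites listed above, source and image digests can be computed in a single pass over each input. This Python sketch uses only the standard-library `hashlib`; `multi_hash` and `verify_image` are hypothetical names. It assumes a raw (dd-style) image, where the image file's hash should equal the source's hash. Container formats such as E01 store metadata alongside the data, so for those the comparison should instead be made against the hash of the extracted media stream. Because practical collision attacks exist against MD5 and SHA-1, SHA-256 is computed alongside them.

```python
import hashlib
from pathlib import Path

# MD5 and SHA-1 kept for compatibility with legacy reports; SHA-256 is the stronger check.
DEFAULT_ALGORITHMS = ("md5", "sha1", "sha256")


def multi_hash(path: Path, algorithms=DEFAULT_ALGORITHMS, chunk_size: int = 1 << 20) -> dict[str, str]:
    """Read the input once, feeding each chunk to every requested hash."""
    hashers = {name: hashlib.new(name) for name in algorithms}
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            for hasher in hashers.values():
                hasher.update(chunk)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


def verify_image(source: Path, image: Path) -> bool:
    """True only if every digest of the source equals the corresponding image digest."""
    return multi_hash(source) == multi_hash(image)
```

In practice the source would be the original media read through a hardware write-blocker; the sketch simply treats it as a readable path.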

Technology Readiness Levels (TRLs) provide a systematic metric for assessing the maturity of a particular technology. The scale ranges from 1 (basic principles observed) to 9 (actual system proven in operational environment) [3]. This application note details the critical final phases of forensic software maturation—TRLs 7 through 9—where technologies transition from advanced prototypes to fully operational systems qualified for live investigations.

In digital forensics, this progression ensures that tools not only function technically but also meet the rigorous demands of evidentiary standards, chain-of-custody requirements, and operational workflows. The transition from TRL 6 to TRL 7 is often considered a critical chasm, marking the point where a product begins to be used in real conditions by users with higher expectations and lower tolerance for imperfections [31]. Successfully navigating this "valley of death"—where neither academia nor the private sector typically prioritizes investment—requires coordinated collaboration between developers, forensic examiners, and legal experts [32].

TRL Definitions and Progression Criteria

Detailed TRL Definitions for Forensic Software

TRL 7: System Prototype Demonstration in Operational Environment

A TRL 7 technology has a working model or prototype demonstrated in an actual operational environment [3]. For forensic software, this represents a major step increase in maturity where a prototype system is verified in a real investigative context, though potentially with limited scope. The software must handle genuine evidence sources and produce forensically sound results under realistic conditions.

TRL 8: System Complete and Qualified

At TRL 8, the technology has been tested and "flight qualified" and is ready for implementation into an already existing technology or technology system [3]. In forensic terms, this means the software has completed all validation testing, is fully documented, and is qualified for use in investigations that may produce evidence for legal proceedings.

TRL 9: Actual System Proven in Operational Environment

TRL 9 represents the highest maturity level, where the actual system has been "flight proven" during a successful mission [3]. For forensic tools, this means successful deployment in multiple real investigations, potentially across different organizations, with demonstrated reliability and effectiveness in producing admissible digital evidence.

Quantitative Progression Metrics

Table 1: Key Progression Criteria for TRLs 7-9 in Digital Forensics

| TRL | Validation Environment | Minimum Case Threshold | Evidence Integrity Requirements | Performance Benchmarks |
|---|---|---|---|---|
| TRL 7 | Live investigative environment with supervised use | 3-5 controlled investigations | Write-blocking functionality verified; hash validation implemented | Processing speed ≥80% of production tools; false positive rate <15% |
| TRL 8 | Multiple operational environments across different organizations | 10+ diverse case types | Chain-of-custody logging automated; compliance with ISO/IEC 27043 | Processing speed ≥95% of industry-standard tools; false positive rate <5% |
| TRL 9 | Full deployment across intended user base | 25+ successful investigations with evidence presented in legal proceedings | Zero unrecoverable errors in evidence processing; full audit trail compliance | 99.9% reliability in processing supported evidence types; user efficiency improved by ≥20% |
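The quantitative columns of the table above can be encoded as a simple gate, which makes explicit that each level builds on the one below. This is an illustration, not an assessment procedure: the `Evidence` fields are hypothetical, the qualitative criteria (write-blocking verification, ISO/IEC 27043 compliance, audit-trail completeness) are assumed to be assessed separately, and the cumulative structure (a TRL 8 claim must also satisfy the TRL 7 thresholds) is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Evidence:
    controlled_investigations: int  # supervised investigations completed
    distinct_case_types: int        # diverse case types processed across organizations
    cases_in_court: int             # investigations whose evidence reached legal proceedings
    speed_vs_reference: float       # 0.80 means 80% of the reference tool's speed
    false_positive_rate: float      # 0.05 means 5%
    reliability: float              # share of supported evidence items processed correctly
    efficiency_gain: float          # 0.20 means examiners are 20% more efficient


def highest_quantitative_trl(e: Evidence) -> Optional[int]:
    """Return 9, 8, 7, or None using only the numeric thresholds from the table."""
    trl7 = (e.controlled_investigations >= 3
            and e.speed_vs_reference >= 0.80
            and e.false_positive_rate < 0.15)
    trl8 = (trl7
            and e.distinct_case_types >= 10
            and e.speed_vs_reference >= 0.95
            and e.false_positive_rate < 0.05)
    trl9 = (trl8
            and e.cases_in_court >= 25
            and e.reliability >= 0.999
            and e.efficiency_gain >= 0.20)
    if trl9:
        return 9
    if trl8:
        return 8
    if trl7:
        return 7
    return None
```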

Experimental Protocols for TRL Validation

Protocol for TRL 7 Operational Demonstration

Objective: Validate that the forensic software prototype functions effectively in a live investigative environment under supervised conditions.

Materials and Setup:

- Forensic workstation meeting minimum system requirements

- Write-blocking hardware for evidence acquisition

- Test evidence samples representing common case types (disk images, mobile device backups, memory dumps)

- Comparison tools (commercial forensic suites for result validation)

Methodology:

- Environment Configuration: Install the prototype software on designated forensic workstations following standard operating procedures for tool validation.

- Evidence Processing: Process at least three different evidence types through the complete forensic workflow—from acquisition to analysis and reporting.

- Result Validation: Compare findings with those obtained from established tools (e.g., EnCase, Autopsy, X-Ways Forensics) [33].

- Performance Metrics Collection: Document processing times, resource utilization, and accuracy metrics.

- User Feedback Integration: Forensic examiners complete standardized assessment forms covering usability, reliability, and output quality.
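For the performance metrics collection step, each workflow stage can be wrapped so that its timing and memory use are recorded consistently. This Python sketch is an illustration under stated assumptions: `measure` and `RunMetrics` are hypothetical, and `tracemalloc` only tracks memory allocated by the Python interpreter, so native libraries and external processes need an OS-level resource monitor instead.

```python
import time
import tracemalloc
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class RunMetrics:
    stage: str
    wall_seconds: float     # elapsed real time
    cpu_seconds: float      # CPU time of the current process
    peak_python_bytes: int  # peak Python-level allocation during the stage


def measure(stage: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> tuple[Any, RunMetrics]:
    """Run one workflow stage and return its result together with basic metrics."""
    tracemalloc.start()
    wall0, cpu0 = time.perf_counter(), time.process_time()
    try:
        result = fn(*args, **kwargs)
    finally:
        # Always stop tracing, even if the stage raises.
        wall1, cpu1 = time.perf_counter(), time.process_time()
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
    return result, RunMetrics(stage, wall1 - wall0, cpu1 - cpu0, peak)
```

Collecting one `RunMetrics` per stage and per evidence type gives a table that can be compared directly with the same stages run in the established tools.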

Success Criteria:

- Software processes evidence without altering original data

- Key functionalities perform as specified in design requirements

- No critical errors that halt investigation processes

- Results are forensically sound and reproducible
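The first success criterion can also be checked in software by hashing the evidence before and after each processing run. The sketch below is a hypothetical helper built on `hashlib` and `contextlib`; it complements, and does not replace, hardware write-blocking, and it detects changes to file content only, not to file-system metadata such as access times.

```python
import hashlib
from contextlib import contextmanager
from pathlib import Path
from typing import Iterator


class EvidenceAlteredError(RuntimeError):
    """Raised when evidence content changes during processing."""


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 and return the hex digest."""
    h = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


@contextmanager
def evidence_guard(path: Path) -> Iterator[str]:
    """Yield the pre-processing hash; re-hash on exit and fail if it changed."""
    before = sha256_file(path)
    try:
        yield before
    finally:
        # Checked even if processing raised, so alteration is never silently missed.
        after = sha256_file(path)
        if after != before:
            raise EvidenceAlteredError(f"{path}: SHA-256 changed from {before} to {after}")
```

Typical use is `with evidence_guard(image_path) as baseline_hash: run_analysis(image_path)`, where `run_analysis` stands in for the prototype's processing entry point, with `baseline_hash` written to the case log.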

Protocol for TRL 8 System Qualification

Objective: Qualify the complete forensic system for use in investigations that may yield evidence for legal proceedings.

Materials and Setup:

- Multiple forensic workstations across different organizational units

- Diverse evidence types including challenging scenarios (encrypted volumes, anti-forensic techniques)

- Documentation for legal compliance (validation protocols, error logging procedures)

Methodology:

- Multi-Site Deployment: Install the qualified software version across at least two independent forensic laboratories.

- Blinded Testing: Examiners process cases without developer support, using only official documentation.

- Stress Testing: Process large-scale evidence sets (>1 TB) and complex scenarios, such as advanced persistent threat (APT) intrusions and anti-forensic techniques [34].

- Legal Compliance Review: Verify that output meets standards for evidence presentation, including comprehensive audit trails and chain-of-custody documentation.

- Interoperability Testing: Validate integration with existing forensic ecosystems (centralized repositories, evidence management systems).
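Comprehensive audit trails are often made tamper-evident by hash chaining, where each log entry includes the digest of its predecessor. The Python sketch below illustrates that technique under stated assumptions: the `AuditLog` class and its field names are hypothetical, and storage, digital signatures, and clock trust are out of scope.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict[str, Any]) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of an entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, examiner: str, action: str, evidence_id: str) -> dict[str, Any]:
        """Add an entry that commits to the hash of the previous entry."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "seq": len(self.entries),
            "time": datetime.now(timezone.utc).isoformat(),
            "examiner": examiner,
            "action": action,
            "evidence_id": evidence_id,
            "prev": prev,
        }
        body["hash"] = _digest(body)  # computed before the "hash" key is added
        self.entries.append(body)
        return body

    def verify(self) -> bool:
        """Recompute every link; any edited, removed, or reordered entry breaks the chain."""
        prev = GENESIS
        for i, entry in enumerate(self.entries):
            unhashed = {k: v for k, v in entry.items() if k != "hash"}
            if entry["seq"] != i or entry["prev"] != prev or entry["hash"] != _digest(unhashed):
                return False
            prev = entry["hash"]
        return True
```

Chaining only makes edits detectable relative to a trusted head: someone able to rewrite the whole log can recompute every hash, so the latest digest should also be recorded somewhere the log writer cannot alter, such as the case file or an external timestamping service.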

Success Criteria:

- Consistent performance across different environments and operators

- Comprehensive documentation supporting legal admissibility

- Effective handling of edge cases and challenging evidence

- Successful integration with existing laboratory workflows

Protocol for TRL 9 Operational Provenance