From AFIS Scores to Likelihood Ratios: A Scientific Framework for Quantitative Fingerprint Evidence Evaluation

Abstract

This article provides a comprehensive guide for forensic researchers and professionals on converting Automated Fingerprint Identification System (AFIS) similarity scores into forensically valid Likelihood Ratios (LR). It explores the foundational Bayesian framework underpinning this conversion, details methodological approaches including parametric and non-parametric calibration techniques, addresses critical troubleshooting aspects such as accounting for typicality and image quality, and outlines robust validation protocols. By translating raw, uncalibrated similarity scores into calibrated, quantitative LRs, this process enhances the scientific validity, transparency, and reliability of fingerprint evidence in judicial contexts, marking a crucial shift from experience-based to data-driven forensic evaluation.

The Bayesian Bedrock: Understanding the 'Why' Behind LR Conversion for AFIS

The Analysis, Comparison, Evaluation, and Verification (ACE-V) framework has long served as the methodological cornerstone of forensic fingerprint examination. However, its reliance on subjective human judgment presents significant limitations for scientific validation and transparent evidence reporting. The scientific imperative now demands a transition toward fully quantitative evaluation methods that compute the strength of evidence using statistical models and computational algorithms. This paradigm shift centers on converting similarity scores from Automated Fingerprint Identification Systems (AFIS) into calibrated Likelihood Ratios (LRs) that objectively quantify evidence strength under competing propositions [1].

This transformation addresses fundamental scientific requirements by enabling transparent validation, empirical measurement of error rates, and proper calibration of evidential strength. Recent research has demonstrated that quantitative models considering the position and direction of minutiae as three-dimensional feature variables can effectively quantify fingerprint individuality and provide a statistical foundation for refining AFIS scoring mechanisms [2]. The movement beyond subjective ACE-V to quantitative evaluation represents not merely a technical improvement but a fundamental requirement for meeting modern scientific standards in forensic practice.

Theoretical Foundation of Likelihood Ratio Calculation

Fundamental Principles

The Likelihood Ratio framework provides a coherent statistical approach for evaluating evidence under two competing propositions:

- H1 (Same-Source Proposition): The fingermark and fingerprint originate from the same finger of the same donor [1]

- H2 (Different-Source Proposition): The fingermark originates from a random finger of another donor from the relevant population [1]

The LR is computed as the ratio of the probability of the evidence under these two competing hypotheses: LR = P(Evidence|H1) / P(Evidence|H2)

This quantitative approach enables forensic scientists to articulate evidential strength numerically rather than through categorical statements, thereby providing a more transparent and scientifically defensible framework for expressing the value of forensic evidence. The LR framework logically separates the role of the forensic scientist (who provides the LR) from that of the fact-finder (who combines the LR with prior case information) [1].

Advantages Over Traditional ACE-V

Table: Qualitative ACE-V vs. Quantitative LR Approaches

| Aspect | Traditional ACE-V | Quantitative LR Framework |

|---|---|---|

| Output | Categorical conclusions (Identification, Exclusion, Inconclusive) | Continuous measure of evidence strength |

| Transparency | Subjective expert judgment | Computationally derived, algorithmically transparent |

| Error Measurement | Difficult to quantify empirically | Can be empirically measured and validated |

| Calibration | Varies between examiners | Systematic calibration against known datasets |

| Scientific Foundation | Pattern recognition expertise | Statistical modeling and probability theory |

| Validation | Proficiency testing | Comprehensive performance metrics [1] |

Quantitative LR methods address the "black box" nature of AFIS comparison algorithms by treating them as feature extractors and similarity score generators, then applying statistical models to convert these scores into properly calibrated LRs [1]. This approach acknowledges that commercial AFIS algorithms were primarily developed for candidate selection rather than evidential weight evaluation, making the statistical transformation essential for forensic evidence evaluation [1].

Experimental Protocols for LR Method Validation

Core Validation Framework

A comprehensive validation protocol for LR methods must assess multiple performance characteristics through structured experiments. The validation matrix should specify performance characteristics, metrics, graphical representations, validation criteria, data requirements, experimental protocols, analytical results, and validation decisions for each aspect of method performance [1].

Table: Essential Performance Characteristics for LR Method Validation

| Performance Characteristic | Performance Metrics | Graphical Representations | Validation Criteria |

|---|---|---|---|

| Accuracy | Cllr | ECE Plot | According to definition [1] |

| Discriminating Power | EER, Cllrmin | ECEmin Plot, DET Plot | According to definition [1] |

| Calibration | Cllrcal | ECE Plot, Tippett Plot | According to definition [1] |

| Robustness | Cllr, EER, Range of LR | ECE Plot, DET Plot, Tippett Plot | According to definition [1] |

| Coherence | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | According to definition [1] |

| Generalization | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | According to definition [1] |
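As an illustration of one metric from the table above, the Equal Error Rate can be approximated by sweeping a decision threshold over the observed values. This is a minimal sketch, not the procedure from [1]: the function name `equal_error_rate` is hypothetical, and it assumes that higher values (scores or LRs) support the same-source proposition.

```python
def equal_error_rate(same_source_values, diff_source_values):
    """Approximate the EER by trying every observed value as a threshold.

    Assumption: a comparison is "accepted" as same-source when value >= threshold.
    """
    best_gap, eer = float("inf"), None
    for threshold in sorted(set(same_source_values) | set(diff_source_values)):
        # Same-source comparisons wrongly rejected at this threshold.
        fnr = sum(v < threshold for v in same_source_values) / len(same_source_values)
        # Different-source comparisons wrongly accepted at this threshold.
        fpr = sum(v >= threshold for v in diff_source_values) / len(diff_source_values)
        gap = abs(fnr - fpr)
        if gap < best_gap:
            best_gap, eer = gap, (fnr + fpr) / 2
    return eer


# Toy values only; real validation uses thousands of comparisons.
print(equal_error_rate([1, 3], [0, 2]))
```

With small samples the two error curves rarely cross exactly, so the sketch reports the midpoint at the threshold where they are closest.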

Dataset Requirements and Preparation

Proper experimental design requires distinct datasets for development and validation stages:

- Development Dataset: Used for training statistical models and establishing scoring distributions. This may include simulated data or known fingerprints with controlled characteristics.

- Validation Dataset: Must consist of forensically relevant data, preferably fingermarks from real cases, to ensure ecological validity [1]. For privacy reasons, the original fingerprint images cannot typically be shared, but the derived LRs constitute the core data for validation [1].

Experimental protocols should specify the source, size, and characteristics of both development and validation datasets. The Netherlands Forensic Institute protocol, for example, used fingerprints scanned using the ACCO 1394S live scanner, converted into biometric scores using the Motorola BIS 9.1 algorithm [1].

Workflow for Quantitative Evidence Evaluation

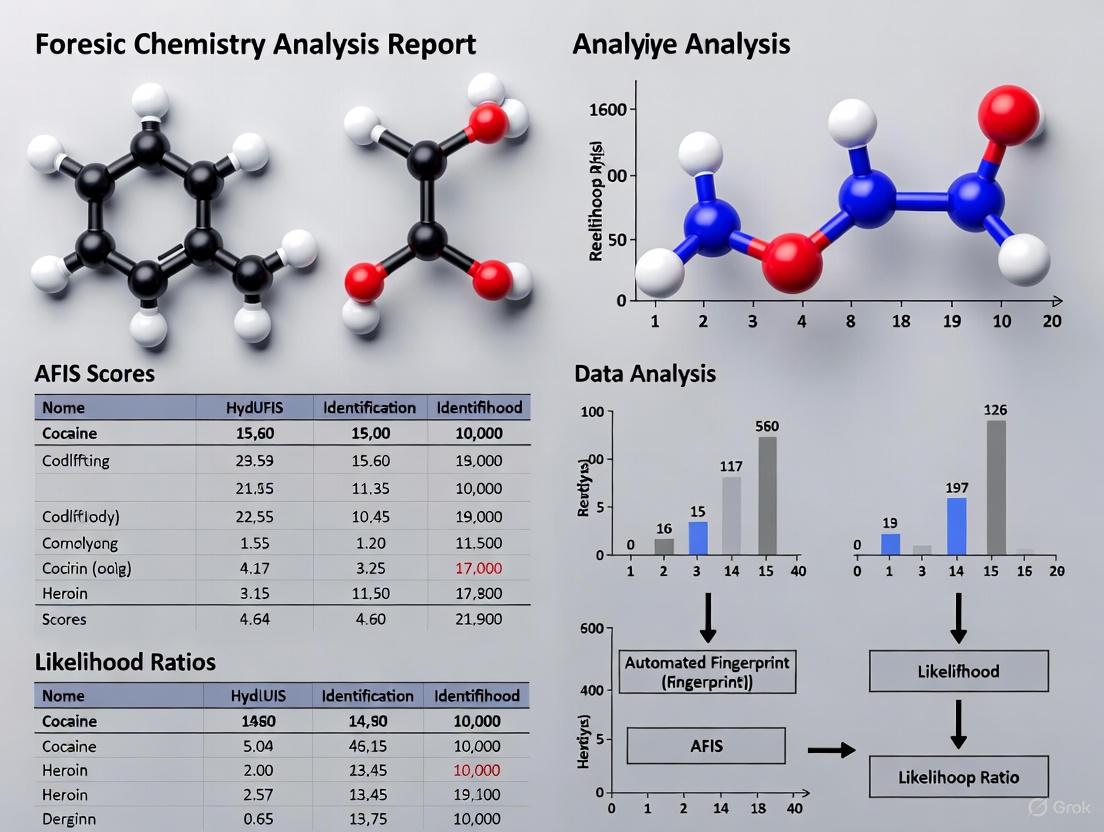

The following diagram illustrates the complete workflow for quantitative fingerprint evidence evaluation, from image acquisition to LR calculation:

Quantitative Individuality Assessment Protocol

Building on traditional minutiae comparison, advanced protocols now incorporate three-dimensional feature distribution analysis:

- Data Extraction: Extract 3D feature data (position and direction of minutiae) from known fingerprints in AFIS databases [2]

- Data Processing: Perform data calibration, translation, and error correction to normalize minutiae distribution [2]

- Distribution Analysis: Statistically analyze distribution density of minutiae across different pattern types (whorl, left loop, right loop, arch, accidental) [2]

- Individuality Scoring: Calculate individuality scores for fingerprints using quantitative models that account for observed distribution patterns [2]

- Score Integration: Modify AFIS scoring mechanisms to incorporate individuality scores, enhancing discrimination between same-source fingerprints and close non-matches [2]

This protocol leverages large-scale data analysis (e.g., 56,812,114 known fingerprints) to establish statistical foundations for refined AFIS scoring mechanisms and LR evidence evaluation frameworks [2].

The Scientist's Toolkit: Essential Research Reagents and Materials

Core Research Materials

Table: Essential Materials for LR Method Development and Validation

| Research Reagent | Function/Application | Implementation Example |

|---|---|---|

| AFIS with Score Export | Generates similarity scores for fingerprint comparisons | Motorola BIS/Printrak 9.1 algorithm [1] |

| Forensic Fingerprint Database | Provides ground-truthed data for method development and validation | Real forensic case data from Netherlands Forensic Institute [1] |

| Statistical Modeling Software | Implements LR calculation algorithms and performance metrics | R, Python with scikit-learn or custom implementations [1] |

| Validation Framework | Defines performance characteristics, metrics, and criteria | Validation matrix specifying accuracy, discrimination, calibration, etc. [1] |

| 3D Minutiae Distribution Data | Enables quantification of fingerprint individuality | Dataset of 56,812,114 known fingerprints with 3D feature variables [2] |

| Ordered Probit Model | Constructs LRs from examiner responses in error rate studies | Alternative to categorical reporting scales for palmprint comparisons [3] |

Data Presentation and Performance Metrics

Quantitative Results from Validation Studies

Comprehensive validation requires presentation of quantitative results across all performance characteristics. The following table summarizes typical outcomes from LR method validation:

Table: Exemplary Quantitative Results from LR Method Validation

| Performance Characteristic | Baseline Method Results | Enhanced Method Results | Relative Improvement | Validation Decision |

|---|---|---|---|---|

| Accuracy (Cllr) | 0.25 | 0.18 | -28.0% | Pass [1] |

| Discriminating Power (Cllrmin) | 0.15 | 0.10 | -33.3% | Pass [1] |

| Calibration (Cllrcal) | 0.12 | 0.08 | -33.3% | Pass [1] |

| Robustness (Cllr) | 0.28 | 0.20 | -28.6% | Pass [1] |

| Coherence (Cllr) | 0.26 | 0.19 | -26.9% | Pass [1] |

| Generalization (Cllr) | 0.27 | 0.21 | -22.2% | Pass [1] |

Interpretation of Quantitative Outcomes

The quantitative outcomes from validation studies provide critical insights for method improvement and implementation:

- Accuracy reflects how well the computed LRs correspond to ground truth, with lower Cllr values indicating better accuracy [1]

- Discriminating Power measures the method's ability to distinguish between same-source and different-source comparisons, with EER (Equal Error Rate) and Cllrmin as key metrics [1]

- Calibration assesses whether LRs are properly scaled, such that an LR of X corresponds to the appropriate strength of evidence [1]

- Robustness evaluates performance consistency across different data variations and conditions [1]

- Coherence ensures that the method produces logically consistent results across related evidence types [1]

- Generalization measures performance when applied to new data not used in development [1]
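To make the accuracy metric concrete, the sketch below computes Cllr, assuming the usual definition of the log-likelihood-ratio cost: the average of log2(1 + 1/LR) over same-source comparisons and log2(1 + LR) over different-source comparisons, halved. The function name `cllr` and the toy inputs are illustrative and are not taken from [1].

```python
import math


def cllr(lrs_same_source, lrs_diff_source):
    """Log-likelihood-ratio cost; lower is better, 1.0 matches an uninformative system."""
    # Penalty grows when a same-source comparison receives a small LR.
    ss_penalty = sum(math.log2(1 + 1 / lr) for lr in lrs_same_source) / len(lrs_same_source)
    # Penalty grows when a different-source comparison receives a large LR.
    ds_penalty = sum(math.log2(1 + lr) for lr in lrs_diff_source) / len(lrs_diff_source)
    return 0.5 * (ss_penalty + ds_penalty)


# A system that always reports LR = 1 carries no information.
print(cllr([1, 1], [1, 1]))
```

A method that always outputs LR = 1 scores exactly 1.0, which is why values well below 1 are read as evidence that the method is informative and reasonably calibrated.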

Recent studies applying these metrics suggest that traditional articulation scales may overstate the strength of support for same-source propositions by up to five orders of magnitude relative to quantitatively derived LRs, highlighting the critical need for properly calibrated quantitative approaches [3].

Implementation Framework and Future Directions

Integration with Traditional Forensic Workflow

The following diagram illustrates how quantitative LR methods integrate with and enhance traditional forensic examination workflows:

Future Research Directions

The transition beyond subjective ACE-V to quantitative evaluation opens several promising research avenues:

- Integration of 3D Minutiae Distribution Models: Leveraging large-scale minutiae distribution data to refine AFIS scoring mechanisms and LR calculation [2]

- Multi-modal Biometric Evaluation: Developing LR frameworks that combine fingerprint evidence with other forensic modalities

- Case-specific Proposition Definition: Refining hypothesis formulation to address relevant case circumstances rather than generic propositions [1]

- Standardized Validation Protocols: Establishing universally accepted validation criteria and performance thresholds for forensic LR methods [1]

- Computational Efficiency Optimization: Enhancing algorithm performance for practical implementation in operational forensic laboratories

The scientific imperative for moving beyond subjective ACE-V to quantitative evaluation represents a fundamental evolution in forensic science. By implementing robust LR methods with comprehensive validation protocols, the field advances toward truly scientific evidence evaluation that is transparent, measurable, and scientifically defensible.

In the evaluation of scientific evidence, the Bayesian framework provides a coherent and intuitive method for updating beliefs in light of new data. Unlike frequentist statistics, which calculates the probability of observing the data given a hypothesized model (e.g., a p-value, denoted as P(D|H)), Bayesian statistics answers the more directly relevant question: what is the probability that a hypothesis is true given the observed data (denoted as P(H|D)) [4]. The Likelihood Ratio (LR) serves as the fundamental engine for this belief updating, quantifying how much more likely the evidence is under one hypothesis compared to an alternative.

The application of this methodology is particularly valuable in fields like diagnostic development and forensic science. For AFIS (Automated Fingerprint Identification System) score conversion research, the LR provides a rigorous, quantitative measure of how strongly a similarity score (the evidence) supports the proposition that two fingerprints originate from the same source over the proposition that they originate from different sources. The LR is not itself the probability of a common source; that posterior probability requires combining the LR with prior odds. Its utility extends to drug development, where it can integrate diverse data types to predict drug-target interactions with high accuracy, which can substantially reduce development time and costs and expose fewer patients to ineffective treatments [4] [5].

The Mathematical Foundation of the Likelihood Ratio

Bayes' Theorem and the Role of the LR

Bayes' Theorem mathematically describes how prior beliefs are updated with new evidence to form a posterior belief. The theorem is formally expressed as:

P(H|E) = [P(E|H) * P(H)] / P(E)

Where:

- P(H|E) is the Posterior Probability: The probability of the hypothesis (H) given the evidence (E).

- P(E|H) is the Likelihood: The probability of the evidence (E) if the hypothesis (H) is true.

- P(H) is the Prior Probability: The initial probability of the hypothesis before seeing the evidence.

- P(E) is the Marginal Probability of the Evidence: The total probability of the evidence under all possible hypotheses.

The Likelihood Ratio appears when we compare two competing and mutually exclusive hypotheses, typically termed H1 (the prosecution's hypothesis in forensics, or the alternative hypothesis in drug discovery) and H2 (the defense's hypothesis, or the null hypothesis). By writing Bayes' Theorem for both H1 and H2 and dividing the two expressions, we obtain the odds form of Bayes' Theorem:

[P(H1|E) / P(H2|E)] = LR * [P(H1) / P(H2)]

This can be summarized as:

Posterior Odds = Likelihood Ratio × Prior Odds

The Likelihood Ratio (LR) is the factor that converts the prior odds into the posterior odds. It is defined as:

LR = P(E|H1) / P(E|H2)

Interpretation of the Likelihood Ratio

The LR is a measure of the diagnostic strength of the evidence (E).

- An LR > 1 supports hypothesis H1. The larger the value, the more the evidence supports H1.

- An LR < 1 supports hypothesis H2. The closer the value is to zero, the more the evidence supports H2.

- An LR = 1 provides no support for either hypothesis, as the evidence is equally likely under both.
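The odds form of Bayes' Theorem can be checked numerically. The sketch below is illustrative only: the function name `posterior_probability` and the prior values are assumptions, and in casework the prior belongs to the fact-finder rather than the forensic scientist.

```python
def posterior_probability(prior_probability, likelihood_ratio):
    """Apply Posterior Odds = LR x Prior Odds, then convert odds back to a probability."""
    prior_odds = prior_probability / (1 - prior_probability)
    posterior_odds = likelihood_ratio * prior_odds
    return posterior_odds / (1 + posterior_odds)


# The same LR of 1000 leads to very different posteriors under different priors.
for prior in (0.5, 0.001):
    print(prior, posterior_probability(prior, 1000))
```

The loop shows why an LR should not be read as a posterior: with a prior of 0.001, an LR of 1000 brings the posterior only to about 0.5.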

The following diagram illustrates the logical workflow of how the Likelihood Ratio functions within the Bayesian framework to update belief.

Core Components and Quantitative Values

Table 1: Core Components of the Bayesian Framework using Likelihood Ratios

| Component | Mathematical Representation | Interpretation in AFIS Research | Interpretation in Drug Development (BANDIT) |

|---|---|---|---|

| Evidence (E) | A measured similarity score, data point, or set of observations. | The AFIS similarity score between two fingerprints. | Multiple data types: drug efficacy, transcriptional response, structure, etc. [5]. |

| Hypotheses | H1: Proposition 1; H2: Proposition 2 | H1: The two prints are from the same source. H2: The two prints are from different sources. | H1: Two drugs share a target. H2: Two drugs do not share a target [5]. |

| Likelihood P(E|H1) | Probability density function under H1. | Density of the score distribution for mated (same-source) comparisons. | Similarity of data profiles for drug pairs known to share a target [5]. |

| Likelihood P(E|H2) | Probability density function under H2. | Density of the score distribution for non-mated (different-source) comparisons. | Similarity of data profiles for drug pairs known to not share a target [5]. |

| Likelihood Ratio (LR) | LR = P(E|H1) / P(E|H2) | The strength of evidence the AFIS score provides for same-source vs. different-source. | The Total Likelihood Ratio (TLR) combining all data types for target prediction [5]. |

Application in Drug Target Identification: The BANDIT Case Study

The BANDIT (Bayesian ANalysis to determine Drug Interaction Targets) platform is a prime example of the LR's power in modern drug development. It addresses the critical bottleneck of target identification by integrating over 20 million data points from six distinct types [5].

Protocol: Calculating the Total Likelihood Ratio (TLR) for Drug-Target Prediction

Objective: To predict whether a query drug shares a target with a known drug in the database by integrating multiple, diverse data types.

Experimental Workflow Overview:

Step-by-Step Methodology:

Data Collection and Similarity Calculation:

- For a query drug and all drugs in the database with known targets, calculate pairwise similarity scores across multiple data types [5].

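- Data Types Used: Drug structures, drug efficacy (e.g., NCI-60 GI50 values), post-treatment transcriptional responses, reported adverse effects, and bioassay results [5]. Known drug targets, the sixth data type, serve as the ground truth for calibration rather than as a similarity input.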

- Similarity calculations are specific to each data type (e.g., Tanimoto coefficient for structures, correlation for transcriptional responses).
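As an example of a data-type-specific similarity, the Tanimoto coefficient between two chemical structure fingerprints (molecular feature sets, unrelated to dermal fingerprints) is the size of their intersection divided by the size of their union. The sketch below assumes each structure is represented as a set of "on" feature indices; the function name and the sample sets are hypothetical.

```python
def tanimoto(features_a, features_b):
    """Tanimoto (Jaccard) similarity between two sets of structural features."""
    a, b = set(features_a), set(features_b)
    if not a and not b:
        return 0.0  # Convention chosen here for two empty feature sets.
    return len(a & b) / len(a | b)


# Two hypothetical compounds sharing 2 of 4 distinct features.
print(tanimoto({1, 2, 3}, {2, 3, 4}))
```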

Calibration of Individual Likelihood Ratios:

- For each data type, separate all drug pairs into two populations: those that share a known target and those that do not.

- For each data type's similarity score, construct density distributions for both the "shared-target" and "non-shared-target" populations.

- For a given observed similarity score (S) for a new drug pair, calculate the individual LR for that data type as: LR_datatype = (Density of S in Shared-Target distribution) / (Density of S in Non-Shared-Target distribution) [5].
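The density-ratio step can be sketched with a simple Gaussian kernel density estimate. This is one possible non-parametric estimator, not necessarily the one used in [5]; the function names, the fixed bandwidth of 0.1, and the toy scores are all assumptions made for illustration.

```python
import math


def gaussian_kde_density(x, sample, bandwidth):
    """Gaussian kernel density estimate of `sample`, evaluated at x."""
    norm = 1.0 / (len(sample) * bandwidth * math.sqrt(2 * math.pi))
    return norm * sum(math.exp(-0.5 * ((x - xi) / bandwidth) ** 2) for xi in sample)


def kde_likelihood_ratio(score, shared_scores, non_shared_scores, bandwidth=0.1):
    """LR = density of the score among shared-target pairs / density among non-shared pairs."""
    numerator = gaussian_kde_density(score, shared_scores, bandwidth)
    denominator = gaussian_kde_density(score, non_shared_scores, bandwidth)
    # Far in the tails the denominator can underflow to 0; real systems cap or smooth the LR.
    return numerator / denominator


shared = [0.7, 0.8, 0.9]      # hypothetical similarity scores for shared-target pairs
non_shared = [0.1, 0.2, 0.3]  # hypothetical similarity scores for non-shared pairs
print(kde_likelihood_ratio(0.8, shared, non_shared))
```

In practice the bandwidth would be chosen from the data, and a library estimator such as `scipy.stats.gaussian_kde` could replace the hand-written kernel.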

Integration into a Total Likelihood Ratio (TLR):

- Assuming conditional independence of the data types, the individual LRs are combined by multiplication to yield the TLR [5]: TLR = LR_structure × LR_GI50 × LR_transcriptional × LR_sideeffect × LR_bioassay

- The TLR is proportional to the odds that the query drug and the database drug share a target, given all available evidence.
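The multiplication step is often carried out in log space, where products become sums and very large or very small LRs are easier to handle. The sketch below assumes the individual LRs are supplied as a dictionary containing only the data types available for a given drug pair; the function name and the values are hypothetical.

```python
import math


def total_likelihood_ratio(individual_lrs):
    """Combine per-data-type LRs under the conditional-independence assumption."""
    # Summing log10(LR) is equivalent to multiplying the LRs.
    log_tlr = sum(math.log10(lr) for lr in individual_lrs.values())
    return 10 ** log_tlr


# Hypothetical LRs; a data type with no data is simply left out of the dictionary.
lrs = {"structure": 10, "bioassay": 100, "side_effect": 0.5}
print(total_likelihood_ratio(lrs))
```

An LR below 1 for one data type (here `side_effect`) pulls the total down, so weak or contradictory evidence is reflected in the TLR rather than ignored.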

Target Prediction via Voting Algorithm:

- For a query orphan compound, its TLR is calculated against all drugs in the database with known targets.

- A "voting" algorithm identifies specific protein targets: if a protein appears as a known target in many of the top-TLR shared-target predictions, it is likely a target for the query compound [5].

- The accuracy of this method increases with the TLR cutoff value, reaching ~90% in validation studies [5].
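The voting idea can be sketched as a simple tally. This is an illustrative reading of the description above, not the exact algorithm from [5]; the function name, the cutoff, and the drug and target labels are all hypothetical.

```python
from collections import Counter


def vote_for_targets(tlr_by_drug, targets_by_drug, tlr_cutoff, top_n=3):
    """Count how often each known target appears among database drugs above the TLR cutoff."""
    votes = Counter()
    for drug, tlr in tlr_by_drug.items():
        if tlr >= tlr_cutoff:
            votes.update(targets_by_drug.get(drug, ()))
    return votes.most_common(top_n)


# TLRs of a query compound against three database drugs (made-up values).
tlr_by_drug = {"drug_A": 500, "drug_B": 300, "drug_C": 2}
targets_by_drug = {
    "drug_A": ["target_X", "target_Y"],
    "drug_B": ["target_X"],
    "drug_C": ["target_Z"],
}
print(vote_for_targets(tlr_by_drug, targets_by_drug, tlr_cutoff=100))
```

Raising `tlr_cutoff` leaves fewer but stronger votes, which mirrors the reported trade-off between accuracy and the number of predictions.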

Research Reagent Solutions for Bayesian Drug-Target Identification

Table 2: Essential Materials and Data Sources for Implementing a BANDIT-like Protocol

| Reagent / Data Source | Function / Description | Utility in Bayesian Analysis |

|---|---|---|

| Chemical Structure Database (e.g., PubChem) | Provides canonical molecular structures for small molecules [5]. | Enables calculation of structural similarity, a primary feature for LR calculation. |

| Drug Sensitivity Profiling (e.g., NCI-60 GI50 screens) | Measures growth inhibition of drugs across a panel of 60 human tumor cell lines [5]. | Provides drug efficacy data; similarity in sensitivity profiles is a strong predictor of shared targets. |

| Transcriptional Response Database (e.g., LINCS L1000) | Catalogues gene expression changes in cell lines after drug treatment [5]. | Allows computation of similarity in gene expression signatures for LR calculation. |

| Adverse Event Reporting System (e.g., FAERS) | Database of reported side effects for approved drugs [5]. | Similarity in side effect profiles provides phenotypic evidence for shared mechanisms of action (LR input). |

| Bioassay Activity Database (e.g., PubChem BioAssay) | Contains results from high-throughput screening assays against various biological targets [5]. | Provides a broad, unbiased set of biological activity data for comprehensive similarity scoring. |

| Known Drug-Target Database (e.g., ChEMBL, DrugBank) | A curated repository of known interactions between drugs and their protein targets [5]. | Serves as the "ground truth" for calibrating the likelihood distributions for shared vs. non-shared target pairs. |

Advanced Considerations and Best Practices

Validation and Performance

The BANDIT platform was validated using 5-fold cross-validation on approximately 2000 compounds with known targets, achieving an Area Under the Receiver Operating Characteristic curve (AUROC) of 0.89 [5]. The predictive power consistently increased as more data types were integrated into the TLR, confirming the value of the multi-faceted Bayesian approach [5]. Furthermore, BANDIT's predictions for novel kinase inhibitors showed that its predicted targets had significantly higher levels of experimental inhibition compared to non-predictions (p < 1e-5), demonstrating its practical utility in guiding experimental work [5].

Visualizing Data Type Contribution

The discriminative power of different data types, as measured by their ability to separate drug pairs that share targets from those that do not, varies significantly. The following table, derived from the BANDIT study's Kolmogorov-Smirnov test analysis, summarizes this performance.

Table 3: Discriminative Power of Different Data Types for Shared-Target Prediction

| Data Type | K-S Statistic (D) | Relative Performance | Interpretation |

|---|---|---|---|

| Structural Similarity | 0.390 | Highest | The most powerful single differentiator of shared targets [5]. |

| Bioassay Similarity | 0.327 | High | Unbiased bioactivity data is a highly discriminative feature [5]. |

| Drug Efficacy (GI50) | 0.331 | High | Similarity in growth inhibition patterns is a strong predictor [5]. |

| Adverse Effects | 0.14 | Low | Side effect profile similarity is a weaker differentiator [5]. |

| Transcriptional Response | 0.10 | Lowest | Gene expression response similarity was the weakest single predictor [5]. |

| Total LR (TLR) | 0.69 | Highest (Integrated) | Combining all data types drastically outperforms any single data type [5]. |
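The K-S statistic D in the table is the largest vertical gap between the empirical cumulative distributions of two samples. The sketch below is a plain-Python two-sample version for illustration; the function name and the toy samples are assumptions, and the values in the table come from [5], not from this code.

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: max |ECDF_a(x) - ECDF_b(x)|."""
    a, b = sorted(sample_a), sorted(sample_b)
    d = 0.0
    for x in a + b:
        # bisect_right counts how many sorted values are <= x.
        ecdf_a = bisect_right(a, x) / len(a)
        ecdf_b = bisect_right(b, x) / len(b)
        d = max(d, abs(ecdf_a - ecdf_b))
    return d


# D = 0 for identical samples, D = 1 for fully separated samples.
print(ks_statistic([1, 2, 3, 4], [3, 4, 5, 6]))
```

For real analyses, `scipy.stats.ks_2samp` returns the same statistic together with a p-value.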

An Automated Fingerprint Identification System (AFIS) is a digital biometric system designed to capture, store, analyze, and compare fingerprint data against a vast database of known and unknown records [6]. Central to its operation is the generation of a similarity score, a numerical measure representing the degree of correspondence between two fingerprint impressions [7]. These scores form the computational foundation for identification decisions in forensic science, yet their interpretation requires careful statistical framing to avoid contextual biases and overstatement of evidential value [8] [9].

The evolution from experience-based fingerprint examination toward scientifically valid quantitative evaluation has been accelerated by judicial scrutiny following highly publicized misidentifications [8]. Modern forensic science increasingly employs statistical models, particularly those based on the likelihood ratio (LR), to weigh fingerprint evidence transparently [8] [9]. Converting AFIS similarity scores into LRs provides a logically correct framework for expressing evidential strength, helping to address concerns about subjective interpretation and the lack of measurable error rates identified in foundational reports like the 2009 National Academy of Sciences report [8].

Quantitative Foundations of Similarity Scores

Score Distribution Modeling

The computational process of fingerprint matching involves comparing minutiae data—ridge endings, bifurcations, and their spatial relationships [6]. AFIS algorithms generate similarity scores by comparing the spatial patterns and relationships of minutiae points between a query fingerprint (e.g., from a crime scene) and reference prints in the database [7]. The distribution of these scores differs significantly depending on whether the comparisons are from the same source (the same finger) or different sources (different fingers) [8] [9].

Research indicates that the statistical distributions of similarity scores vary with multiple factors, including the number of minutiae compared and their specific configurations [8]. Under same-source conditions, gamma and Weibull distributions fit best when scores are modeled by number of minutiae, while normal, Weibull, and lognormal distributions fit best when scores are modeled by minutiae configuration [8]. Under different-source conditions, the lognormal distribution is typically selected for models by number of minutiae, and Weibull, gamma, and lognormal distributions for models by minutiae configuration [8].

Table 1: Optimal Distribution Models for AFIS Similarity Scores Under Different Conditions

| Comparison Type | Feature Considered | Optimal Distribution Models |

|---|---|---|

| Same-Source | Number of Minutiae | Gamma, Weibull |

| Same-Source | Minutiae Configuration | Normal, Weibull, Lognormal |

| Different-Source | Number of Minutiae | Lognormal |

| Different-Source | Minutiae Configuration | Weibull, Gamma, Lognormal |

Performance Metrics and Discriminatory Power

The accuracy of AFIS similarity scores is intrinsically linked to the quantity and quality of minutiae available for comparison. Studies demonstrate that LR models show increased accuracy as the number of minutiae increases, indicating strong discriminative and corrective power [8]. However, the discriminative ability varies significantly between models based on different numbers of minutiae versus those based on different minutiae configurations, with the former generally outperforming the latter [8].

The table below summarizes key quantitative findings from recent research on score-based LR methods:

Table 2: Key Performance Findings for Score-Based Likelihood Ratio Methods

| Performance Factor | Impact on Accuracy/Performance | Research Findings |

|---|---|---|

| Number of Minutiae | Positive correlation | LR accuracy increases with more minutiae [8] |

| Minutiae Configuration | Variable impact | Lower accuracy compared to minutiae count models [8] |

| Database Size | Critical for stability | Large databases (up to 10M fingerprints) used for model building [8] |

| Between-Finger Variability | Dependent on multiple factors | Investigated factors: general pattern, finger number, minutiae count [9] |

Likelihood Ratio Conversion of Similarity Scores

Theoretical Framework

The likelihood ratio provides a logical framework for interpreting AFIS similarity scores by comparing the probability of the evidence under two competing hypotheses [8] [9]. The LR formula is expressed as:

$$\mathrm{LR} = \frac{f(s \mid H_p)}{f(s \mid H_d)}$$

Where:

- $s$ = observed similarity score

- $H_p$ = prosecution hypothesis (same source)

- $H_d$ = defense hypothesis (different sources)

- $f(s \mid H_p)$ = probability density of score $s$ under the same-source hypothesis

- $f(s \mid H_d)$ = probability density of score $s$ under the different-source hypothesis [9]

This Bayesian framework allows forensic experts to quantify evidential strength numerically, moving away from non-probabilistic assertions of identity [8]. The numerator models within-source variability (how much scores vary when the same finger is compared against itself under different conditions), while the denominator models between-source variability (how much scores vary when comparing different fingers) [9].
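A parametric version of this ratio can be sketched by plugging fitted densities into the numerator and denominator. The example below assumes a Weibull model for same-source scores and a lognormal model for different-source scores, in line with the distribution families reported in [8]; the parameter values and the score scale are invented for illustration and do not come from any real AFIS.

```python
import math


def weibull_pdf(x, shape, scale):
    """Weibull probability density."""
    if x <= 0:
        return 0.0
    z = x / scale
    return (shape / scale) * z ** (shape - 1) * math.exp(-(z ** shape))


def lognormal_pdf(x, mu, sigma):
    """Lognormal probability density, with mu and sigma on the log scale."""
    if x <= 0:
        return 0.0
    z = (math.log(x) - mu) / sigma
    return math.exp(-0.5 * z * z) / (x * sigma * math.sqrt(2 * math.pi))


def score_lr(score, same_source_params, diff_source_params):
    """LR = f(s | Hp) / f(s | Hd) for one observed similarity score."""
    # A zero denominator (extreme tail) would need capping in a real system.
    return weibull_pdf(score, *same_source_params) / lognormal_pdf(score, *diff_source_params)


SAME_SOURCE = (3.0, 4000.0)          # hypothetical Weibull shape and scale
DIFF_SOURCE = (math.log(500), 0.5)   # hypothetical lognormal mu and sigma

for s in (400, 3500):
    print(s, score_lr(s, SAME_SOURCE, DIFF_SOURCE))
```

Under these made-up parameters a low score yields an LR below 1 and a high score an LR well above 1, which is the qualitative behavior a score-based LR model should show.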

Computational Workflow

The conversion of raw similarity scores to calibrated likelihood ratios follows a systematic process that incorporates statistical modeling of both within-finger and between-finger variability [9]. This workflow ensures that the final LR output accurately represents the evidential strength of fingerprint comparisons.

Experimental Protocols for LR Validation

Protocol 1: Database Construction and Management

Purpose: To establish a representative fingerprint database for modeling score distributions and computing reliable LRs [8] [9].

Materials:

- High-resolution fingerprint scanners (live-scan devices)

- Digital imaging software for latent print enhancement

- Secure storage infrastructure for biometric data

- Computational resources for large-scale data processing

Procedure:

- Sample Collection: Acquire fingerprint sets using standardized acquisition protocols, ensuring representation of diverse pattern types (loops, whorls, arches) and finger numbers [9].

- Quality Control: Implement quality metrics to exclude poor-quality impressions that could skew similarity score distributions.

- Database Structuring: Organize the database to facilitate efficient retrieval and comparison, incorporating metadata such as pattern class, finger number, and acquisition conditions.

- Size Determination: Build a database large enough for stable distribution estimation; published LR models have been built on databases containing 10 million fingerprints from different sources [8].

- Security Measures: Implement strict access controls and data protection protocols to maintain privacy and integrity of biometric information [10].

Protocol 2: Similarity Score Generation and Distribution Fitting

Purpose: To generate similarity scores from fingerprint comparisons and fit appropriate statistical distributions for LR computation [8] [9].

Materials:

- AFIS with configurable matching algorithms

- Statistical computing environment (R, Python with scipy)

- High-performance computing resources for large-scale comparisons

Procedure:

- Intra-Source Comparisons: Generate similarity scores by comparing multiple impressions of the same finger under different conditions (within-finger variability) [9].

- Inter-Source Comparisons: Generate similarity scores by comparing fingerprints from different sources (between-finger variability) using systematic sampling from the background database [9].

- Distribution Selection: Test candidate distributions (normal, Weibull, gamma, lognormal) for best fit to both same-source and different-source score populations using goodness-of-fit tests (e.g., Kolmogorov-Smirnov, Anderson-Darling) [8].

- Parameter Estimation: Calculate maximum likelihood estimates for distribution parameters for each modeled condition (e.g., specific minutiae counts, pattern types) [8].

- Model Validation: Assess fitted models through cross-validation techniques, measuring calibration and discrimination performance [10].
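The parameter-estimation and goodness-of-fit steps above can be sketched for one candidate family. The example fits a lognormal distribution by maximum likelihood (mean and population standard deviation of the log scores) and measures its Kolmogorov-Smirnov distance to the data. The function names and the sample scores are hypothetical, and only the lognormal case is shown because its estimates have a closed form.

```python
import math
from statistics import NormalDist, fmean, pstdev


def fit_lognormal(scores):
    """Maximum likelihood estimates (mu, sigma) of a lognormal distribution."""
    logs = [math.log(s) for s in scores]
    return fmean(logs), pstdev(logs)


def ks_distance_lognormal(scores, mu, sigma):
    """One-sample K-S distance between the empirical CDF and the fitted lognormal CDF."""
    model = NormalDist(mu, sigma)
    xs = sorted(scores)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs):
        cdf = model.cdf(math.log(x))
        # Compare against the empirical CDF just before and just after x.
        d = max(d, abs(cdf - i / n), abs((i + 1) / n - cdf))
    return d


scores = [120.0, 340.0, 95.0, 410.0, 220.0, 180.0]  # made-up different-source scores
mu, sigma = fit_lognormal(scores)
print(mu, sigma, ks_distance_lognormal(scores, mu, sigma))
```

Because the parameters are estimated from the same data, the distance is best used to rank candidate families rather than as a formal test. For gamma and Weibull candidates, library routines such as `scipy.stats.gamma.fit` and `scipy.stats.weibull_min.fit` provide numerical maximum likelihood estimates.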

Protocol 3: Likelihood Ratio Performance Validation

Purpose: To validate the discriminative ability and calibration of computed likelihood ratios [8] [10].

Materials:

- Validation dataset independent from development data

- Computing resources for performance metrics calculation

- Visualization tools for diagnostic plots

Procedure:

- Test Set Construction: Create a balanced set of same-source and different-source comparisons not used in model development.

- LR Computation: Calculate LRs for all test comparisons using the developed models.

- Discrimination Assessment: Compute Tippett plots and calculate rates of misleading evidence (both for same-source and different-source cases) [9].

- Calibration Assessment: Evaluate the relationship between assigned LRs and ground truth using calibration plots [8].

- Error Rate Estimation: Quantify empirical error rates under specified decision thresholds, providing measurable performance metrics for courtroom testimony [8] [9].
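As a rough sketch of the discrimination step, the rates of misleading evidence can be computed directly from validation LRs with known ground truth. The LR values below are invented for illustration and the function name is hypothetical.

```python
def misleading_evidence_rates(ss_lrs, ds_lrs):
    """Return (share of same-source LRs below 1, share of different-source LRs above 1)."""
    ss_misleading = sum(lr < 1 for lr in ss_lrs) / len(ss_lrs)
    ds_misleading = sum(lr > 1 for lr in ds_lrs) / len(ds_lrs)
    return ss_misleading, ds_misleading

# Toy validation LRs (made up for the example).
ss_lrs = [120.0, 35.0, 8.0, 0.6, 950.0]
ds_lrs = [0.01, 0.2, 3.0, 0.05, 0.4, 0.002, 0.9, 0.08]

ss_rate, ds_rate = misleading_evidence_rates(ss_lrs, ds_lrs)
print(f"same-source LR < 1: {ss_rate:.3f}, different-source LR > 1: {ds_rate:.3f}")
```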

Forensic Limitations and Methodological Constraints

Technical Limitations

Despite advances in statistical modeling, several technical limitations affect the reliability of AFIS similarity scores and their conversion to LRs:

- Database Dependencies: The between-finger variability distribution is highly dependent on the composition and size of the background database, with rare fingerprint pattern combinations requiring extremely large databases for stable estimation [9].

- Algorithmic Variability: Different feature extraction algorithms and AFIS systems produce different similarity scores for the same fingerprint pairs, complicating universal standardization [10].

- Minutiae Configuration Impact: LR models based solely on different numbers of minutiae outperform those based on different minutiae configurations, indicating persistent challenges in quantifying qualitative feature relationships [8].

- Contextual Bias: The ACE-V (Analysis, Comparison, Evaluation, and Verification) methodology remains vulnerable to contextual bias and subjective interpretation, despite attempts to introduce quantitative frameworks [8] [11].

Human Factors and System Limitations

Human expertise and system design introduce additional constraints in the interpretation of AFIS outputs:

- Human Error: Despite technological advancements, fingerprint examination still relies heavily on human expertise, introducing possibilities for error in identification and interpretation [11].

- Complexity of Latent Prints: Analyzing latent prints from crime scenes presents challenges due to partial prints, smudges, or poor quality, which affect both automated scoring and human verification [11].

- Technological Gaps: Automated systems are not foolproof and can miss matches that human examiners might identify, particularly when examiners rely too heavily on AFIS suggestions [11].

- Training Inconsistencies: Maintaining consistent standards across different jurisdictions and ensuring adequate training for fingerprint examiners remain ongoing challenges [11].

Research Reagents and Computational Tools

The experimental workflow for AFIS score conversion to likelihood ratios requires specialized computational resources and statistical tools. The following table details essential components of the research toolkit for this field.

Table 3: Essential Research Toolkit for AFIS Score and Likelihood Ratio Studies

| Tool Category | Specific Examples/Functions | Research Application |
|---|---|---|
| Biometric Data Acquisition | Live-scan devices, latent print enhancement tools | Capture high-quality fingerprint images for database construction [6] |
| AFIS Platforms | Commercial and open-source matching algorithms | Generate similarity scores for same-source and different-source comparisons [7] [6] |
| Statistical Computing Environments | R, Python with scipy, pandas, numpy | Distribution fitting, parameter estimation, and LR computation [8] |
| Data Visualization Libraries | matplotlib, seaborn, ggplot2 | Generate Tippett plots, calibration plots, and distribution visualizations [8] [9] |
| High-Performance Computing Resources | Parallel processing clusters, cloud computing | Manage large-scale fingerprint comparisons and database searches [12] |
| Validation Frameworks | Cross-validation scripts, error rate calculators | Assess discrimination and calibration performance of LR models [10] |

The conversion of AFIS similarity scores to likelihood ratios represents a significant advancement in forensic science, moving fingerprint identification from subjective experience toward scientifically valid quantitative evaluation. This transformation addresses fundamental concerns about the reliability and validity of fingerprint evidence while providing a transparent framework for expressing evidential strength. However, technical limitations including database dependencies, algorithmic variability, and the complex relationship between minutiae quantity and configuration continue to present research challenges. Future work should focus on standardizing validation protocols, improving model calibration across diverse fingerprint characteristics, and developing more robust methods for quantifying the discriminative value of minutiae configurations. As these statistical approaches mature, they will enhance the scientific foundation of fingerprint evidence while maintaining essential human oversight in forensic decision-making.

The field of forensic science, particularly fingerprint identification, is undergoing a fundamental paradigm shift. This transition moves the discipline from a foundation of subjective expert experience towards one of objective, quantifiable science. This evolution is largely driven by two pivotal reports: the 2009 National Academy of Sciences (NAS) report and the 2016 President's Council of Advisors on Science and Technology (PCAST) report. These documents critically assessed forensic feature-comparison methods and mandated greater scientific rigor, empirical validation, and quantitative expression of evidential strength [8] [13]. Within fingerprint examination, this has catalyzed research into converting traditional Automatic Fingerprint Identification System (AFIS) similarity scores into forensically interpretable Likelihood Ratios (LRs), providing a statistical framework for evaluating evidence [8] [1]. These application notes detail the protocols and methodologies central to this research, framed within the broader context of AFIS score conversion to LR calculation.

The Driving Critiques: NAS and PCAST Reports

The NAS and PCAST reports served as catalysts for reform by highlighting critical methodological shortcomings in traditional forensic practices.

Key Findings and Recommendations

Table 1: Core Tenets of the NAS and PCAST Reports

| Aspect | 2009 NAS Report | 2016 PCAST Report |
|---|---|---|
| Primary Critique | Reliance on subjective, experience-based conclusions lacking quantifiable reliability and accuracy testing [8]. | Forensic methods require "foundational validity" established through empirical studies to be repeatable, reproducible, and accurate [13]. |
| Recommended Framework | Establishment of a statistical probabilistic evaluation system [8]. | Use of likelihood ratios to quantify the strength of evidence [8]. |
| Emphasis | Need for basic research to establish scientific validity [8]. | Importance of empirical error rates and validation studies [13]. |

Impact on Forensic Practice

The reports forced a reckoning within the forensic community. In response, organizations like the International Association for Identification removed prohibitions on statistical language and began endorsing statistically valid models for evidence evaluation [8]. This created the necessary impetus for the development and validation of LR methods, which provide a transparent and logically sound framework for weighing evidence under competing propositions (e.g., same-source vs. different-source) [8] [1].

The Scientific Framework: Likelihood Ratio Calculation

The Likelihood Ratio is the cornerstone of the modern, quantitative approach to forensic evidence evaluation. For fingerprint evidence, it is calculated by comparing the probability of the observed AFIS similarity score under two competing hypotheses.

Definition and Components

The LR for a given AFIS similarity score \(S\) is defined as:

$$LR = \frac{f(S|H_{SS})}{f(S|H_{DS})}$$

Where:

- \(H_{SS}\): The prosecution hypothesis that the fingermark and fingerprint originate from the same source.

- \(H_{DS}\): The defense hypothesis that the fingermark and fingerprint originate from different sources.

- \(f(S|H_{SS})\): The probability density of the score \(S\) under the same-source hypothesis.

- \(f(S|H_{DS})\): The probability density of the score \(S\) under the different-source hypothesis [1].

An LR greater than 1 supports the same-source hypothesis, while an LR less than 1 supports the different-source hypothesis. The further the LR is from 1, the stronger the evidence.
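As a purely illustrative calculation (the density values are invented, not taken from any dataset), suppose the fitted same-source density at the observed score is 0.012 and the fitted different-source density is 0.00003. Then:

$$LR = \frac{f(S|H_{SS})}{f(S|H_{DS})} = \frac{0.012}{0.00003} = 400$$

Under these assumed values, the observed score is 400 times more probable if the mark and print share a source than if they do not.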

Workflow for LR Calculation from AFIS Scores

The conversion of a raw AFIS score into a calibrated Likelihood Ratio follows a multi-stage process. The workflow below outlines the key steps from initial evidence processing to the final interpretation of the calculated LR.

Experimental Protocols for LR Method Validation

The PCAST report's emphasis on "foundational validity" necessitates rigorous, empirical validation of any LR method before it can be deployed in casework. The following protocol outlines a comprehensive validation framework.

Core Validation Protocol

Objective: To empirically validate the performance and reliability of a score-based LR method for fingerprint evidence evaluation.

Propositions:

- HSS: The fingermark and fingerprint originate from the same source.

- HDS: The fingermark and fingerprint originate from different sources [1].

Procedure:

Dataset Curation:

- Utilize separate, forensically relevant datasets for model development and validation to ensure generalizability [1].

- The validation dataset should include real forensic fingermarks to reflect casework conditions [1].

- Database construction should involve a large number of fingerprints (e.g., millions) from different sources to ensure robustness [8].

Score Generation:

- Compare fingermarks against fingerprints using a commercial AFIS algorithm (e.g., Motorola BIS/Printrak) treated as a "black box" [1].

- For each comparison, record the similarity score and the ground truth (SS or DS).

LR Calculation:

- For a given score S, compute f(S|HSS) by modeling the distribution of scores from known same-source comparisons.

- Compute f(S|HDS) by modeling the distribution of scores from known different-source comparisons.

- Statistical models (e.g., gamma, Weibull, lognormal distributions) are fitted to these score distributions to derive the probability densities [8].

Performance Assessment: Evaluate the method against pre-defined validation criteria using the following metrics and visualizations.

Table 2: Validation Matrix for LR Methods [1]

| Performance Characteristic | Performance Metric | Graphical Representation | Validation Criteria Example |
|---|---|---|---|
| Accuracy | Cllr (Cost of log LR) | ECE (Empirical Cross-Entropy) Plot | Cllr < 0.3 |
| Discriminating Power | EER (Equal Error Rate), Cllrmin | DET (Detection Error Trade-off) Plot | EER < 5% |
| Calibration | Cllrcal | ECE Plot | Cllrcal ≈ 0 (Cllr ≈ Cllrmin) |
| Robustness | Cllr, EER | Tippett Plot | Performance stability across datasets |
| Coherence | Cllr, EER | Tippett Plot | Consistent performance across data subsets |
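The EER listed in the table can be approximated by sweeping a decision threshold over the observed scores. The sketch below is a simple exhaustive search on invented toy scores; the function name is hypothetical and no interpolation between thresholds is attempted.

```python
def equal_error_rate(ss_scores, ds_scores):
    """Return (approximate EER, threshold) where the two error rates are closest."""
    best = None
    for t in sorted(set(ss_scores) | set(ds_scores)):
        # Same-source pairs scoring below t would be wrongly rejected.
        fnr = sum(s < t for s in ss_scores) / len(ss_scores)
        # Different-source pairs scoring at or above t would be wrongly accepted.
        fpr = sum(s >= t for s in ds_scores) / len(ds_scores)
        gap = abs(fnr - fpr)
        if best is None or gap < best[0]:
            best = (gap, (fnr + fpr) / 2, t)
    return best[1], best[2]

# Toy scores (made up for the example).
ss_scores = [0.9, 0.8, 0.75, 0.6, 0.4]
ds_scores = [0.7, 0.5, 0.3, 0.2, 0.1]
eer, threshold = equal_error_rate(ss_scores, ds_scores)
```

With these toy values both error rates are 20% at a threshold of 0.6, which would fail the example criterion of EER < 5%.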

Advanced Consideration: Accounting for Typicality

A critical advancement in LR calculation is ensuring that the metric accounts for both the similarity between two fingerprints and their typicality within the relevant population. Methods based solely on similarity scores without considering the rarity of the features in the population are flawed [14]. The "common-source method" is recommended as it properly incorporates this typicality, providing a more statistically valid LR [14].

The Scientist's Toolkit: Essential Research Reagents

The transition to quantitative evidence evaluation requires a new set of "research reagents"—methodologies, software, and data resources.

Table 3: Key Research Reagents for AFIS-LR Research

| Reagent / Tool | Function / Purpose | Example / Note |
|---|---|---|
| AFIS with API | Generates raw similarity scores from fingerprint/fingermark comparisons. | Treated as a "black box"; e.g., Motorola BIS algorithm [1]. |
| Forensic Databases | Provides data for model development and validation. Must be large and forensically relevant. | Databases of 10+ million fingerprints; real casework fingermarks [8] [1]. |
| Statistical Software (R, Python, Matlab) | Used for statistical modeling, distribution fitting, and LR calculation. | Matlab code for typicality-aware LR calculations [14]. |
| Distribution Models | Models the probability of scores under HSS and HDS. | Gamma, Weibull, and Lognormal distributions [8]. |
| Validation Metrics Suite | A set of tools to measure the performance of the LR method. | Cllr, EER, ECE plots, Tippett plots [1]. |
| Quality Metric Tools | Quantifies the clarity of the evidence, which can impact score distributions. | Analogous to OFIQ (Open-Source Facial Image Quality) in facial recognition [15]. |

The critiques laid out by the NAS and PCAST reports were not an endpoint but a vital catalyst. They initiated an essential evolution in forensic science, pushing fingerprint identification from a craft based on accumulated experience toward a rigorous, quantitative discipline. The research protocols and application notes detailed herein focus on the conversion of AFIS scores into Likelihood Ratios, which sits at the heart of this transformation. The ongoing development, rigorous validation, and implementation of these statistical methods are paramount for improving the accuracy and reliability of fingerprint evidence, thereby strengthening the foundation of the judicial process.

The Conversion Toolkit: Statistical Methods for Calculating LRs from AFIS Scores

The conversion of similarity scores into probabilistically meaningful Likelihood Ratios (LRs) represents a fundamental paradigm in modern forensic evidence evaluation. Within the context of Automated Fingerprint Identification System (AFIS) research, this calibration process transforms abstract similarity metrics into evidential weight statements that are both scientifically valid and forensically informative. A Likelihood Ratio is formally defined as the ratio of the probability of the evidence under two competing propositions: the same-source proposition (H1) and the different-source proposition (H2) [1]. This framework provides a coherent statistical basis for expressing the strength of forensic evidence while clearly separating the roles of the forensic examiner and the judicial decision-maker.

The calibration process addresses a critical limitation of raw similarity scores generated by AFIS algorithms. These systems, primarily designed for investigative prioritization, produce scores that, while useful for ranking candidates, lack probabilistic interpretability for evidential assessment [1]. Proper calibration bridges this gap by converting scores into LRs that properly balance similarity and typicality considerations [16]. Scores accounting only for similarity between specimens produce poorly calibrated LRs, while effective scores incorporate both similarity and the typicality of the features within relevant population data [16]. This transformation enables forensic practitioners to move beyond simplistic "match/no-match" dichotomies toward a more nuanced and statistically rigorous expression of evidential value.

Theoretical Foundations of Likelihood Ratio Calculation

Core Principles of Forensic Evidence Evaluation

The theoretical basis for likelihood ratio calculation in forensic science rests upon Bayesian inference frameworks, which provide a method for updating prior beliefs about propositions in light of new evidence [17]. The LR quantitatively expresses how much more likely the evidence is under one proposition compared to another, serving as a multiplicative factor that modifies prior odds into posterior odds. This approach requires explicit definition of the competing propositions relevant to the case context, typically formulated at source level as follows [1]:

- H1 (Same-Source Proposition): The fingermark and fingerprint originate from the same finger of the same donor.

- H2 (Different-Source Proposition): The fingermark originates from a random finger of another donor from the relevant population.
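The multiplicative role of the LR can be shown with a short sketch. The prior odds used here are arbitrary and only illustrate the arithmetic; in practice the prior belongs to the judicial decision-maker, not the examiner, and the function names are hypothetical.

```python
def posterior_odds(prior_odds, likelihood_ratio):
    """Bayes' rule in odds form: posterior odds = LR x prior odds."""
    return likelihood_ratio * prior_odds

def odds_to_probability(odds):
    return odds / (1.0 + odds)

# Arbitrary illustrative values: prior odds of 1 to 1000 for H1, LR of 10,000.
post = posterior_odds(prior_odds=1 / 1000, likelihood_ratio=10_000)
prob = odds_to_probability(post)
print(f"posterior odds = {post:.1f}, posterior probability = {prob:.3f}")
```

The same LR of 10,000 gives very different posterior probabilities under different priors. This is why the examiner reports the LR and leaves the prior to the court.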

A critical insight in score-based LR estimation is that effective scores must incorporate both similarity and typicality considerations [16]. Similarity-only measures, which merely quantify the degree of agreement between two specimens, produce poorly calibrated LRs because they fail to account for the rarity of the observed features in the relevant population. Properly calibrated scores must therefore reflect not only how similar two specimens are to each other, but also how typical the questioned specimen is within the population specified by the defense hypothesis [16].

Performance Metrics for Validation

The validation of LR methods requires assessment across multiple performance characteristics, organized systematically in a validation matrix [1]. Key metrics include:

Table 1: Essential Performance Metrics for LR Validation

| Performance Characteristic | Performance Metric | Graphical Representation | Validation Criteria |
|---|---|---|---|
| Accuracy | Cllr | ECE Plot | According to definition |
| Discriminating Power | EER, Cllrmin | ECEmin Plot, DET Plot | According to definition |
| Calibration | Cllrcal | ECE Plot, Tippett Plot | According to definition |
| Robustness | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | According to definition |
| Coherence | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | According to definition |
| Generalization | Cllr, EER | ECE Plot, DET Plot, Tippett Plot | According to definition |

The Cllr (Cost of log Likelihood Ratio) serves as a particularly important metric, measuring the accuracy of the LR system by penalizing both discriminability loss and calibration errors [17]. Lower Cllr values indicate better performance: a perfect system achieves Cllr = 0, while an uninformative system that always reports LR = 1 yields Cllr = 1. Additional metrics like the Equal Error Rate (EER) focus specifically on discriminating power, representing the point where false acceptance and false rejection rates are equal [1].
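For reference, Cllr is commonly computed as the average of two log-penalties, one over same-source LRs and one over different-source LRs. The sketch below follows that standard definition. The LR values are invented and the function name is hypothetical.

```python
import math

def cllr(ss_lrs, ds_lrs):
    """Cost of log LR: mean log2 penalty over SS and DS comparisons, averaged."""
    ss_cost = sum(math.log2(1 + 1 / lr) for lr in ss_lrs) / len(ss_lrs)
    ds_cost = sum(math.log2(1 + lr) for lr in ds_lrs) / len(ds_lrs)
    return 0.5 * (ss_cost + ds_cost)

uninformative = cllr([1.0, 1.0], [1.0, 1.0])      # always LR = 1
strong = cllr([1000.0, 500.0], [0.001, 0.002])    # large, correct LRs
misleading = cllr([0.01], [0.01])                 # same-source pair given LR << 1
```

A single strongly misleading LR dominates the average, which is how the metric penalizes overconfident systems.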

Experimental Protocols for AFIS Score Calibration

Data Collection and Preparation

The calibration of AFIS scores into likelihood ratios requires carefully structured datasets representing both same-source and different-source comparisons. The experimental protocol must utilize separate datasets for development (training) and validation stages to ensure unbiased performance assessment [1]. For fingerprint applications, datasets should include fingermarks with varying minutiae counts (typically 5-12 minutiae) to represent real forensic conditions [1].

The data collection process involves:

- Acquisition of fingerprint images using standardized scanning equipment (e.g., ACCO 1394S live scanner)

- Generation of comparison scores using AFIS algorithms (e.g., Motorola BIS/Printrak 9.1) treated as a black box

- Organization of score data into same-source (SS) and different-source (DS) categories based on ground truth

- Partitioning of data into development and validation sets, ensuring no overlapping specimens
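One way to enforce the no-overlap requirement is to partition by source finger rather than by comparison, then discard comparisons whose two specimens fall in different partitions. The sketch below assumes each comparison record carries hypothetical `mark_source` and `print_source` identifiers; this data layout is an illustration, not one prescribed by the cited work.

```python
import random

def split_by_source(comparisons, validation_fraction=0.3, seed=0):
    """Assign every source finger to exactly one partition, then route comparisons."""
    sources = sorted({c["mark_source"] for c in comparisons}
                     | {c["print_source"] for c in comparisons})
    rng = random.Random(seed)
    rng.shuffle(sources)
    n_val = max(1, round(len(sources) * validation_fraction))
    val_sources = set(sources[:n_val])

    development, validation = [], []
    for c in comparisons:
        in_val = (c["mark_source"] in val_sources, c["print_source"] in val_sources)
        if all(in_val):
            validation.append(c)
        elif not any(in_val):
            development.append(c)
        # Comparisons straddling both partitions are dropped to avoid leakage.
    return development, validation

# Toy data: every ordered pair among 10 hypothetical fingers.
fingers = [f"finger-{i:02d}" for i in range(10)]
comparisons = [{"mark_source": a, "print_source": b, "same_source": a == b}
               for a in fingers for b in fingers]
development, validation = split_by_source(comparisons)
```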

For forensic facial image comparison, similar protocols apply, utilizing datasets such as SCface containing surveillance camera images or ENFSI proficiency test data representing casework-related images [17]. These datasets should include variations in image quality, resolution, and acquisition conditions to ensure method robustness.

Logistic Regression Calibration Protocol

Logistic regression provides a widely adopted method for converting scores to log-likelihood ratios [18]. The protocol involves:

Diagram 1: Logistic Regression Calibration Workflow

Step 1: Data Preparation

- Collect SS scores (comparisons from known matching pairs)

- Collect DS scores (comparisons from known non-matching pairs)

- Label SS scores as class 1 and DS scores as class 0 for regression

Step 2: Model Training

- Apply logistic regression to the scores with their class labels

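- The fitted logistic model maps scores to natural-log likelihood ratios: log(LR) = β₀ + β₁ × score

- Estimate parameters β₀ and β₁ using maximum likelihood estimation

- Because logistic regression models posterior log-odds, β₀ + β₁ × score equals log(LR) only when SS and DS comparisons carry equal total weight in training; otherwise, subtract the log of the training SS:DS count ratio from β₀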

Step 3: Application

- For a new comparison with score s, compute: LR = exp(β₀ + β₁ × s)

- This transforms the score into a calibrated likelihood ratio
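The three steps can be sketched with a small standard-library implementation. It fits the two parameters by Newton-Raphson and gives each class a total weight of 0.5, so the fitted intercept can be read directly as a log-LR intercept. The scores are simulated from two unit-variance Gaussians, for which the true relationship is log(LR) = 2s − 2, and the function names are hypothetical. In practice a library such as scikit-learn would normally be used.

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def fit_logistic_calibration(ss_scores, ds_scores, max_iter=50, tol=1e-10):
    """Class-balanced logistic regression; returns (b0, b1) with log(LR) = b0 + b1*score."""
    data = [(s, 1.0, 0.5 / len(ss_scores)) for s in ss_scores]
    data += [(s, 0.0, 0.5 / len(ds_scores)) for s in ds_scores]
    b0 = b1 = 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for s, y, w in data:
            p = sigmoid(b0 + b1 * s)
            g0 += w * (y - p)            # gradient of the weighted log-likelihood
            g1 += w * (y - p) * s
            v = w * p * (1.0 - p)        # entries of the (negated) Hessian
            h00 += v
            h01 += v * s
            h11 += v * s * s
        det = h00 * h11 - h01 * h01
        if det <= 0.0:
            break
        step0 = (h11 * g0 - h01 * g1) / det
        step1 = (h00 * g1 - h01 * g0) / det
        b0 += step0
        b1 += step1
        if abs(step0) + abs(step1) < tol:
            break
    return b0, b1

# Simulated scores (assumption): SS ~ N(2, 1), DS ~ N(0, 1), deliberately unbalanced.
rng = random.Random(1)
ss_scores = [rng.gauss(2.0, 1.0) for _ in range(1000)]
ds_scores = [rng.gauss(0.0, 1.0) for _ in range(4000)]

b0, b1 = fit_logistic_calibration(ss_scores, ds_scores)

def score_to_lr(score):
    return math.exp(b0 + b1 * score)
```

The class weighting is a design choice. Fitting without it on the unbalanced data would shift the intercept by log(1000/4000), which would then have to be subtracted before interpreting the output as an LR. Raw AFIS scores on large numeric scales may need rescaling before fitting.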

This approach can be extended to multimodal fusion when multiple scores are available from different systems, using multivariate logistic regression to combine them into a single LR [18].

Advanced Calibration Techniques

Beyond basic logistic regression, several advanced calibration methods have been developed to address specific challenges:

Quality-Based Calibration: This approach incorporates quality metrics of the input samples to improve calibration performance. Research in facial image comparison has demonstrated that quality-based calibration outperforms naive approaches, particularly for non-ideal samples such as surveillance imagery [17].

Same-Feature Calibration: This technique uses development data with similar characteristics to the test data, ensuring the calibration parameters are appropriate for the specific case context. Studies show this method improves both Cllr and ECE metrics compared to generic calibration [17].

Kernel Density Estimation (KDE): As an alternative to parametric methods like logistic regression, KDE provides a non-parametric approach to estimate the probability density functions for SS and DS scores, from which LRs can be directly computed [16].
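A minimal KDE-based LR can be sketched as follows. It uses a Gaussian kernel with a normal-reference rule-of-thumb bandwidth, which is an assumption made here for brevity rather than a choice taken from the cited work. The scores are simulated and the function names are hypothetical.

```python
import math
import random
import statistics

def gaussian_kde(samples, bandwidth=None):
    """Return a function estimating the density of `samples` with Gaussian kernels."""
    n = len(samples)
    if bandwidth is None:
        # Normal-reference rule of thumb (assumed default for this sketch).
        bandwidth = 1.06 * statistics.stdev(samples) * n ** (-1 / 5)
    norm = 1.0 / (n * bandwidth * math.sqrt(2 * math.pi))

    def density(x):
        return norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples)

    return density

# Simulated scores (assumption): SS ~ N(2, 1), DS ~ N(0, 1).
rng = random.Random(2)
ss_density = gaussian_kde([rng.gauss(2.0, 1.0) for _ in range(1000)])
ds_density = gaussian_kde([rng.gauss(0.0, 1.0) for _ in range(1000)])

def kde_lr(score):
    return ss_density(score) / ds_density(score)
```

KDE-based LRs become unstable in the tails, where one or both densities are estimated from very few scores. Far enough from the data the denominator can underflow to zero, so operational systems typically bound or extrapolate the LR there.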

Implementation Framework and Validation

LR Method Implementation

The implementation of a calibrated LR system within an AFIS framework follows a structured processing pipeline:

Diagram 2: LR Method Implementation Framework

The implementation consists of two primary components:

- Scorer: The biometric system (AFIS) that generates raw similarity or distance scores from fingerprint comparisons

- Calibrator: The statistical model that transforms scores into calibrated likelihood ratios

This separation allows forensic institutions to treat commercial AFIS algorithms as black-box scorers while implementing transparent, validated calibration methods to produce forensically interpretable LRs [1]. The Netherlands Forensic Institute has demonstrated this approach using Motorola BIS/Printrak 9.1 as the scoring engine with custom calibration modules [1].
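The scorer/calibrator separation can be expressed as two loosely coupled components. Everything in the sketch below is hypothetical: `fake_afis_score` stands in for a vendor AFIS call, and the calibration parameters are arbitrary rather than fitted values.

```python
import math
from dataclasses import dataclass
from typing import Callable

@dataclass
class LogisticCalibrator:
    """Transparent calibration stage: log(LR) = intercept + slope * score."""
    intercept: float
    slope: float

    def lr(self, score: float) -> float:
        return math.exp(self.intercept + self.slope * score)

@dataclass
class LRSystem:
    scorer: Callable[[str, str], float]  # black-box comparison engine
    calibrator: LogisticCalibrator

    def evaluate(self, mark_id: str, print_id: str) -> dict:
        score = self.scorer(mark_id, print_id)
        return {"score": score, "lr": self.calibrator.lr(score)}

def fake_afis_score(mark_id: str, print_id: str) -> float:
    """Placeholder scorer: high score when both IDs share a finger prefix."""
    return 3.0 if mark_id.split("-")[0] == print_id.split("-")[0] else 0.5

system = LRSystem(scorer=fake_afis_score,
                  calibrator=LogisticCalibrator(intercept=-2.0, slope=2.0))
same = system.evaluate("fingerA-mark", "fingerA-print")
different = system.evaluate("fingerA-mark", "fingerB-print")
```

Keeping the calibrator separate means it can be refitted and revalidated after an AFIS update without touching the scoring engine.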

Validation Methodology

Comprehensive validation of calibrated LR systems follows a structured approach using a validation matrix that specifies performance characteristics, metrics, and criteria [1]. The validation protocol includes:

Table 2: Validation Protocol for Calibrated LR Systems

| Validation Stage | Procedure | Data Requirements | Success Criteria |
|---|---|---|---|
| Accuracy Assessment | Compute Cllr on validation data | Independent validation dataset | Cllr < threshold (e.g., 0.2) |
| Discriminating Power Evaluation | Calculate EER, Cllrmin | Balanced SS and DS scores | Improvement over baseline |
| Calibration Verification | Analyze Tippett plots | Validation scores with ground truth | Proper score distribution |
| Robustness Testing | Performance under variations | Data with quality degradation | Limited performance loss |
| Coherence Assessment | Consistency across conditions | Multiple data subsets | Stable performance |
| Generalization Testing | Cross-dataset evaluation | External datasets | Maintained performance |

The validation should compare the performance of the calibrated system against established baseline methods, reporting relative improvements or degradations in percentage terms [1]. For instance, a validation report might indicate "Cllr improved by 15% compared to the baseline method."
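The percentage in such a statement is a relative change with respect to the baseline. A minimal sketch, using invented Cllr values chosen to reproduce the 15% figure:

```python
def relative_cllr_improvement(baseline_cllr, candidate_cllr):
    """Positive values mean the candidate has a lower (better) Cllr than the baseline."""
    return 100.0 * (baseline_cllr - candidate_cllr) / baseline_cllr

# Illustrative values only: baseline Cllr 0.20, calibrated system Cllr 0.17.
improvement = relative_cllr_improvement(0.20, 0.17)
print(f"Cllr improved by {improvement:.0f}% compared to the baseline method")
```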

Essential Research Reagents and Materials

The implementation of score calibration protocols requires specific computational tools and data resources. The following table details essential components for establishing a calibrated LR system:

Table 3: Research Reagent Solutions for Score Calibration

| Component | Specification | Function/Purpose | Example Sources |
|---|---|---|---|
| AFIS Algorithm | Commercial or open-source comparison engine | Generates raw similarity scores from fingerprint comparisons | Motorola BIS/Printrak 9.1 [1] |
| Development Dataset | Curated fingerprint pairs with ground truth | Training calibration models | Netherlands Forensic Institute data [1] |
| Validation Dataset | Independent fingerprint pairs with ground truth | Testing calibrated LR performance | Forensic casework data [1] |
| Calibration Software | Logistic regression or KDE implementation | Transforms scores to LRs | R, Python with scikit-learn [18] |
| Performance Metrics | Cllr, EER implementation | Quantifies system performance | FoCal Toolkit, BOSARIS Toolkit [17] |
| Quality Metrics | Image quality assessment algorithms | Enhances calibration for quality variations | NFIQ or custom quality measures [19] |

The selection of appropriate datasets is particularly critical, as the performance of calibrated systems depends heavily on the representativeness and completeness of the development data. Ideally, datasets should reflect the actual casework conditions, including variations in fingerprint quality, minutiae count, and acquisition methods [1].

Performance Assessment and Interpretation

Quantitative Performance Metrics

The assessment of calibrated LR systems generates quantitative metrics that must be properly interpreted:

Cllr Interpretation: The Cllr metric can be decomposed as Cllr = Cllrmin + Cllrcal, where Cllrmin is the discrimination component and Cllrcal the calibration component, allowing separate assessment of these performance aspects [17]. Well-calibrated systems show small differences between Cllr and Cllrmin, that is, a Cllrcal close to 0.
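Cllrmin is usually obtained by optimally recalibrating the validation LRs with the pool-adjacent-violators (PAV) algorithm and recomputing Cllr. Cllrcal is then the difference between the two. The sketch below is a compact standard-library version under that assumption, with invented LR values. Toolkits such as BOSARIS provide reference implementations.

```python
import math

def pav(values, weights):
    """Pool-adjacent-violators: weighted non-decreasing fit to `values`."""
    blocks = []  # each block holds [weighted mean, total weight, item count]
    for v, w in zip(values, weights):
        blocks.append([v, w, 1])
        while len(blocks) > 1 and blocks[-2][0] > blocks[-1][0]:
            m2, w2, c2 = blocks.pop()
            m1, w1, c1 = blocks.pop()
            blocks.append([(m1 * w1 + m2 * w2) / (w1 + w2), w1 + w2, c1 + c2])
    fitted = []
    for mean, _, count in blocks:
        fitted.extend([mean] * count)
    return fitted

def cllr(ss_lrs, ds_lrs):
    ss_cost = sum(math.log2(1 + 1 / lr) for lr in ss_lrs) / len(ss_lrs)
    ds_cost = sum(math.log2(1 + lr) for lr in ds_lrs) / len(ds_lrs)
    return 0.5 * (ss_cost + ds_cost)

def cllr_min(ss_lrs, ds_lrs):
    """Cllr after optimal monotone recalibration (PAV with equal class weight)."""
    items = [(lr, 1) for lr in ss_lrs] + [(lr, 0) for lr in ds_lrs]
    # Ties are ordered SS-first so that PAV pools them into one block.
    items.sort(key=lambda item: (item[0], -item[1]))
    weights = [0.5 / len(ss_lrs) if label else 0.5 / len(ds_lrs) for _, label in items]
    post = pav([float(label) for _, label in items], weights)
    # With p = LR / (1 + LR): log2(1 + 1/LR) = -log2(p) and log2(1 + LR) = -log2(1 - p).
    ss_cost = sum(-math.log2(p) for p, (_, label) in zip(post, items) if label) / len(ss_lrs)
    ds_cost = sum(-math.log2(1 - p) for p, (_, label) in zip(post, items) if not label) / len(ds_lrs)
    return 0.5 * (ss_cost + ds_cost)

# Toy validation LRs (made up for the example).
ss_lrs, ds_lrs = [2.0, 50.0], [0.1, 5.0]
total = cllr(ss_lrs, ds_lrs)
minimum = cllr_min(ss_lrs, ds_lrs)
calibration_loss = total - minimum  # Cllrcal
```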

ECE Plots: The Empirical Cross-Entropy plot visualizes how the LR values would affect decision accuracy across different prior probabilities, providing a clear representation of the practical utility of the system [1].

Tippett Plots: These plots show the cumulative distribution of LR values for both SS and DS comparisons, allowing visual assessment of discrimination and calibration [1]. Well-performing systems show LRs > 1 for most SS comparisons and LRs < 1 for most DS comparisons.
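The curves in a Tippett plot are simple to compute: for each value on a log10(LR) grid, take the proportion of comparisons whose LR is at least that large. The sketch below produces the two curves from invented LRs and leaves the plotting to a library such as matplotlib. The function name is hypothetical.

```python
import math

def tippett_curve(lrs, grid):
    """Proportion of comparisons with log10(LR) >= each grid value."""
    logs = [math.log10(lr) for lr in lrs]
    return [sum(v >= g for v in logs) / len(logs) for g in grid]

# Toy validation LRs (made up for the example).
ss_lrs = [0.5, 20.0, 300.0, 4000.0]
ds_lrs = [0.002, 0.02, 0.3, 4.0]
grid = [-3, -2, -1, 0, 1, 2, 3]

ss_curve = tippett_curve(ss_lrs, grid)
ds_curve = tippett_curve(ds_lrs, grid)
```

At log10(LR) = 0, the SS curve shows the share of same-source comparisons that correctly support H1. The DS curve at the same point shows the share of different-source comparisons that misleadingly support it.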

Practical Implementation Considerations

Successful implementation of calibrated LR systems in operational forensic environments requires attention to several practical aspects:

Computational Efficiency: Calibration should introduce minimal computational overhead to maintain practical workflow efficiency, especially in high-volume casework environments.

Transparency and Explainability: Despite the statistical complexity of calibration methods, the implementation should provide transparent results that can be effectively communicated in legal proceedings.

Robustness to Data Limitations: Methods should maintain reasonable performance even with limited development data, through techniques like regularization in logistic regression or Bayesian approaches to density estimation [18].

Continuous Validation: Ongoing performance monitoring should be implemented to detect performance degradation due to changes in casework characteristics or AFIS algorithm updates.

The integration of properly calibrated LR systems into forensic practice represents a significant advancement toward more transparent, quantitative, and scientifically valid evidence evaluation. By transforming similarity scores into probabilistically meaningful LRs, forensic practitioners can provide clearer assessments of evidential strength while maintaining appropriate scientific rigor.

Parametric survival analysis plays a crucial role in reliability engineering, biomedical research, and forensic science by enabling the modeling of time-to-event data. Within the context of Automated Fingerprint Identification System (AFIS) score conversion research, these methods provide the mathematical foundation for calculating precise likelihood ratios that quantify the strength of fingerprint evidence. The gamma, Weibull, and lognormal distributions offer particularly flexible frameworks for capturing diverse failure rate patterns and data structures encountered in practical applications.

Each distribution possesses unique characteristics that make it suitable for different types of data. The Weibull distribution effectively models data with monotonic hazard rates that can be increasing, decreasing, or constant, making it invaluable for reliability testing and failure analysis [20]. The lognormal distribution is characterized by its right-skewed shape and is often appropriate for modeling data where the logarithm of the variable follows a normal distribution, such as repair times or certain biological processes. The gamma distribution provides a flexible two-parameter family that can accommodate various shapes, including the exponential distribution as a special case, and is particularly useful for modeling heterogeneous survival data [21] [22].

Recent methodological advances have demonstrated the enhanced capability of these distributions when applied through sophisticated statistical frameworks. The integration of these parametric methods within accelerated failure time (AFT) models and network meta-analysis frameworks has expanded their utility in complex research domains [21] [23]. Furthermore, the development of shifted mixture models that combine Weibull, lognormal, and gamma distributions has shown promising results in capturing complex data structures that single distributions cannot adequately represent [22].

Theoretical Foundations

Distribution Properties and Characteristics

Each of the three distributions possesses distinct mathematical properties that determine its appropriateness for different data types and research questions.

The Weibull distribution is defined by its probability density function (PDF): f(t) = (β/α)(t/α)^(β-1) exp(-(t/α)^β) for t ≥ 0, where α > 0 is the scale parameter and β > 0 is the shape parameter. The flexibility of the Weibull distribution stems from its shape parameter β, which directly determines the behavior of the hazard function: when β < 1, the hazard decreases over time; when β = 1, the hazard is constant (reducing to the exponential distribution); and when β > 1, the hazard increases over time [20]. This property makes the Weibull distribution particularly valuable for modeling data with monotonic hazard rates, such as component failures in engineering systems or disease progression in medical research.

The lognormal distribution has a PDF given by: f(t) = 1/(tσ√(2π)) exp(-(ln(t) - μ)^2/(2σ^2)) for t > 0, where μ is the mean of the logarithm of the variable and σ is the standard deviation of the logarithm. The lognormal distribution is characterized by its right-skewed shape and its relationship to the normal distribution, which facilitates parameter estimation through logarithmic transformation of the data. This distribution typically exhibits a hazard function that increases initially and then decreases, making it suitable for modeling phenomena such as repair times, reaction times, and certain disease processes [21].

The gamma distribution has a PDF: f(t) = (t^(k-1) exp(-t/θ))/(θ^k Γ(k)) for t > 0, where k > 0 is the shape parameter and θ > 0 is the scale parameter. The gamma distribution offers considerable flexibility, as it can take on various shapes depending on the value of the shape parameter k. When k = 1, it reduces to the exponential distribution; when k > 1, the distribution is unimodal and right-skewed; and when k < 1, the density is unbounded near the origin and decreases monotonically. The hazard function of the gamma distribution is increasing for k > 1, decreasing for k < 1, and constant for k = 1 [21] [22].
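The three densities translate directly into code. The sketch below transcribes the formulas above using only the standard library. The function names are hypothetical, and the final lines check the special cases mentioned in the text.

```python
import math

def weibull_pdf(t, alpha, beta):
    """alpha: scale, beta: shape."""
    return (beta / alpha) * (t / alpha) ** (beta - 1) * math.exp(-((t / alpha) ** beta))

def weibull_hazard(t, alpha, beta):
    """Hazard rate f(t) / (1 - F(t)) of the Weibull distribution."""
    return (beta / alpha) * (t / alpha) ** (beta - 1)

def lognormal_pdf(t, mu, sigma):
    """mu, sigma: mean and standard deviation of ln(t)."""
    return math.exp(-((math.log(t) - mu) ** 2) / (2 * sigma ** 2)) / (t * sigma * math.sqrt(2 * math.pi))

def gamma_pdf(t, k, theta):
    """k: shape, theta: scale."""
    return t ** (k - 1) * math.exp(-t / theta) / (theta ** k * math.gamma(k))

# Special cases: Weibull with beta = 1 and gamma with k = 1 are both exponential.
exponential = math.exp(-2.0 / 3.0) / 3.0
weibull_case = weibull_pdf(2.0, alpha=3.0, beta=1.0)
gamma_case = gamma_pdf(2.0, k=1.0, theta=3.0)
```

When these densities are fitted to similarity scores rather than times, t simply denotes the score.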

Table 1: Comparative Characteristics of Parametric Distributions

| Distribution | Parameters | Hazard Function Behavior | Typical Applications |
|---|---|---|---|
| Weibull | α (scale), β (shape) | Increasing (β>1), Decreasing (β<1), Constant (β=1) | Reliability analysis, Failure time modeling [20] |
| Lognormal | μ (location), σ (scale) | Increases to peak then decreases | Repair times, Biological measurements [21] |
| Gamma | k (shape), θ (scale) | Increasing (k>1), Decreasing (k<1), Constant (k=1) | Survival data, Insurance claims, Bayesian analysis [21] [22] |
| Generalized Gamma | μ, σ, Q | Flexible: can approximate Weibull, lognormal, gamma | Complex survival patterns, Network meta-analysis [21] |

Relationship to Likelihood Ratio Calculation

In AFIS score conversion research, parametric distributions provide the mathematical foundation for calculating likelihood ratios that quantify the strength of evidence. Because similarity scores are treated as continuous, the likelihood ratio (LR) is the ratio of the probability densities of the observed similarity score under two competing hypotheses: the prosecution hypothesis (Hp), that the latent print comes from the suspect, and the defense hypothesis (Hd), that it comes from another individual in the relevant population.

The general form of the likelihood ratio can be expressed as: LR = f(x|Hp) / f(x|Hd), where f(x|H) represents the probability density function evaluated at similarity score x under hypothesis H. Parametric fitting methods allow for the estimation of these probability density functions from relevant score distributions [24].
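The ratio above can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the scores are synthetic, and modeling genuine scores with a Weibull and impostor scores with a lognormal is an arbitrary choice for the example, not a recommendation from the cited work. The function name log10_lr is hypothetical.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

# Synthetic similarity scores (illustrative only, not real AFIS output).
genuine = stats.weibull_min(c=4.0, scale=80.0).rvs(size=2000, random_state=rng)
impostor = stats.lognorm(s=0.5, scale=10.0).rvs(size=2000, random_state=rng)

# Fit one parametric density per hypothesis, with the location fixed at 0.
c_hat, _, w_scale_hat = stats.weibull_min.fit(genuine, floc=0)
s_hat, _, ln_scale_hat = stats.lognorm.fit(impostor, floc=0)

def log10_lr(score):
    """log10 of f(score | Hp) / f(score | Hd) under the fitted densities."""
    log_num = stats.weibull_min.logpdf(score, c_hat, loc=0, scale=w_scale_hat)
    log_den = stats.lognorm.logpdf(score, s_hat, loc=0, scale=ln_scale_hat)
    return (log_num - log_den) / np.log(10)

for score in (10.0, 40.0, 80.0):
    print(f"score={score:5.1f}  log10(LR)={float(log10_lr(score)):+.2f}")
```

Working with log densities avoids underflow when a score falls far into the tail of one of the fitted distributions.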

The choice of distribution directly impacts the calculated likelihood ratios and consequently the strength of evidence statements. Proper model selection is therefore critical to ensuring the validity and reliability of forensic conclusions. Research has shown that mixture models, which combine multiple parametric distributions, can provide enhanced flexibility for modeling complex score distributions that may arise from heterogeneous populations or multiple contributing factors [22].

Experimental Protocols

Parameter Estimation Methods

Protocol 3.1.1: Maximum Likelihood Estimation for Weibull Distribution

Purpose: To estimate Weibull distribution parameters (α, β) from survival data or similarity scores using maximum likelihood estimation (MLE).

Materials and Reagents:

- Statistical software (R with the flexsurv package [21] or equivalent)

- Dataset containing observed times or scores

- Computational resources for numerical optimization

Procedure:

- Data Preparation: Compile complete dataset of observed event times or similarity scores. For AFIS research, this would include genuine and impostor similarity scores.

- Likelihood Function Specification: Define the log-likelihood function for the Weibull distribution for uncensored data: ln L(α,β) = Σ_i [ln(β) - β ln(α) + (β-1) ln(t_i) - (t_i/α)^β].

- Numerical Optimization: Implement optimization algorithm (e.g., Newton-Raphson, BFGS) to find parameter values (α, β) that maximize the log-likelihood function.

- Convergence Verification: Check optimization convergence criteria and ensure solution stability.

- Goodness-of-Fit Assessment: Evaluate model fit using appropriate diagnostics (e.g., Q-Q plots, AIC, BIC).

Notes: For censored data common in survival analysis, the likelihood function must be modified to incorporate information from censored observations [20] [23].
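The procedure above could be sketched in Python roughly as follows, for uncensored data only. The function names, the synthetic sample, and the choice to optimize on the log scale (so that α and β stay positive) are assumptions made for this illustration. Censored data would need the modified likelihood mentioned in the notes.

```python
import numpy as np
from scipy.optimize import minimize

def weibull_neg_loglik(log_params, t):
    """Mean negative Weibull log-likelihood for uncensored data."""
    alpha, beta = np.exp(log_params)  # log scale keeps alpha, beta > 0
    loglik_terms = (np.log(beta) - beta * np.log(alpha)
                    + (beta - 1.0) * np.log(t) - (t / alpha) ** beta)
    # Averaging instead of summing does not move the optimum
    # and keeps gradients well scaled for BFGS.
    return -np.mean(loglik_terms)

def fit_weibull_mle(t):
    """Return (alpha_hat, beta_hat, optimizer_result)."""
    t = np.asarray(t, dtype=float)
    start = np.array([np.log(t.mean()), 0.0])  # alpha ~ mean, beta = 1
    result = minimize(weibull_neg_loglik, start, args=(t,), method="BFGS")
    alpha_hat, beta_hat = np.exp(result.x)
    return alpha_hat, beta_hat, result

# Synthetic check: true alpha = 50, true beta = 2.5.
rng = np.random.default_rng(1)
sample = 50.0 * rng.weibull(2.5, size=5000)
alpha_hat, beta_hat, result = fit_weibull_mle(sample)
print(f"alpha_hat={alpha_hat:.2f}, beta_hat={beta_hat:.2f}")
print("optimizer message:", result.message)
```

The optimizer message and the recovered parameters on synthetic data give a quick convergence check before the fit is applied to real scores.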

Protocol 3.1.2: Expectation-Maximization Algorithm for Mixture Models

Purpose: To estimate parameters of mixture distributions combining gamma, Weibull, and lognormal components using the Expectation-Maximization (EM) algorithm.

Materials and Reagents:

- Programming environment with statistical capabilities (R, Python with SciPy)

- Dataset for analysis

- Initial parameter estimates for mixture components

Procedure:

- Initialization: Provide initial estimates for mixture proportions and component parameters.

- E-step: Calculate the posterior probabilities of component membership for each observation.

- M-step: Update parameter estimates for each component using weighted MLE based on current posterior probabilities.

- Iteration: Alternate between E-step and M-step until convergence criteria are met (e.g., change in log-likelihood < 1e-6).

- Model Selection: Compare models with different numbers of components using information criteria (AIC, BIC).

Notes: The EM algorithm is particularly valuable for fitting shifted mixture models, which have shown superior performance for small datasets in recent research [22].
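To make the E-step and M-step concrete, here is a deliberately simplified Python sketch: a two-component mixture of lognormals only, where the weighted MLE in the M-step has a closed form on the log scale. The mixtures described in [22] also include gamma and Weibull components and a shift parameter, which would require numerical weighted MLE; none of that is shown here. All names and the synthetic data are assumptions for illustration.

```python
import numpy as np
from scipy import stats
from scipy.special import logsumexp

def em_lognormal_mixture(x, n_components=2, tol=1e-6, max_iter=500):
    """EM for a lognormal mixture; returns weights, mu, sigma, log-likelihood."""
    log_x = np.log(np.asarray(x, dtype=float))[:, None]
    # Initialization: equal weights, means spread over quantiles of log(x).
    weights = np.full(n_components, 1.0 / n_components)
    mu = np.quantile(log_x, np.linspace(0.25, 0.75, n_components))
    sigma = np.full(n_components, log_x.std())
    prev_ll = -np.inf
    for _ in range(max_iter):
        # E-step: posterior membership probabilities (responsibilities).
        # Lognormal log-density of x = normal log-density of log(x) - log(x).
        log_comp = np.log(weights) + stats.norm.logpdf(log_x, mu, sigma) - log_x
        log_mix = logsumexp(log_comp, axis=1)
        resp = np.exp(log_comp - log_mix[:, None])
        # M-step: weighted MLE for each component.
        nk = resp.sum(axis=0)
        weights = nk / log_x.shape[0]
        mu = (resp * log_x).sum(axis=0) / nk
        sigma = np.sqrt((resp * (log_x - mu) ** 2).sum(axis=0) / nk)
        # Convergence check on the log-likelihood from the E-step.
        ll = log_mix.sum()
        if abs(ll - prev_ll) < tol:
            break
        prev_ll = ll
    return weights, mu, sigma, ll

# Synthetic data: 70% lognormal(mu=1, sigma=0.3), 30% lognormal(mu=3, sigma=0.4).
rng = np.random.default_rng(2)
n = 3000
from_first = rng.random(n) < 0.7
data = np.exp(np.where(from_first, rng.normal(1.0, 0.3, n), rng.normal(3.0, 0.4, n)))
weights, mu, sigma, ll = em_lognormal_mixture(data)
print("weights:", np.round(weights, 3), "mu:", np.round(mu, 3))
```

Because EM is sensitive to starting values, a fuller implementation would rerun this from several initializations and keep the solution with the highest log-likelihood.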

Model Selection and Validation

Protocol 3.2.1: Comprehensive Goodness-of-Fit Assessment

Purpose: To evaluate and compare the fit of different parametric distributions to empirical data.

Materials and Reagents:

- Multiple fitted parametric models

- Diagnostic plotting capabilities

- Information criterion calculation tools

Procedure:

- Visual Inspection: Generate probability-probability (P-P) plots and quantile-quantile (Q-Q) plots for each fitted distribution.

- Statistical Tests: Apply goodness-of-fit tests (Kolmogorov-Smirnov, Anderson-Darling, Cramér-von Mises) to assess formal fit.

- Information Criteria: Calculate Akaike Information Criterion (AIC) and Bayesian Information Criterion (BIC) for model comparison.

- Residual Analysis: Examine Cox-Snell residuals for survival models or standardized residuals for continuous data.

- Predictive Validation: If sample size permits, implement cross-validation to assess out-of-sample predictive performance.

Notes: For AFIS score conversion, particular attention should be paid to the fit in the extreme tails of the distribution, as these regions significantly impact calculated likelihood ratios for strong evidence [24].
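The information-criterion and test steps of this protocol might be sketched in Python as below. The helper name compare_fits and the synthetic scores are assumptions for illustration. One caveat is flagged in the code: Kolmogorov-Smirnov p-values are optimistic when the parameters are estimated from the same data, so they are best read as a relative diagnostic.

```python
import numpy as np
from scipy import stats

def compare_fits(x, candidates):
    """Fit each candidate by MLE (location fixed at 0) and collect fit metrics."""
    x = np.asarray(x, dtype=float)
    n = x.size
    table = {}
    for name, dist in candidates.items():
        params = dist.fit(x, floc=0)
        loglik = np.sum(dist.logpdf(x, *params))
        k = len(params) - 1  # the fixed location is not a free parameter
        ks = stats.kstest(x, dist.cdf, args=params)
        table[name] = {
            "aic": 2 * k - 2 * loglik,
            "bic": k * np.log(n) - 2 * loglik,
            "ks_stat": ks.statistic,
            # Optimistic: parameters were estimated from the same sample.
            "ks_pvalue": ks.pvalue,
        }
    return table

candidates = {
    "weibull": stats.weibull_min,
    "lognormal": stats.lognorm,
    "gamma": stats.gamma,
}

# Synthetic scores, illustrative only.
rng = np.random.default_rng(3)
scores = stats.gamma(a=3.0, scale=5.0).rvs(size=1500, random_state=rng)

table = compare_fits(scores, candidates)
best = min(table, key=lambda name: table[name]["aic"])
for name, row in table.items():
    print(f"{name:10s} AIC={row['aic']:.1f} BIC={row['bic']:.1f} KS={row['ks_stat']:.3f}")
print("lowest AIC:", best)
```

Global criteria such as AIC summarize the overall fit, so they do not replace a separate look at the tails, for example with Q-Q plots.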

Table 2: Parameter Estimation Methods for Different Distributions

| Distribution | Estimation Methods | Software Implementation | Convergence Considerations |
|---|---|---|---|
| Weibull | MLE, Least Squares, Weighted Least Squares [20] | flexsurv R package [21] | Generally stable with adequate sample size |
| Lognormal | MLE via log transformation, Bayesian methods | survreg in R, flexsurv [21] | Straightforward after log transformation |
| Gamma | MLE, Method of Moments, EM algorithm for mixtures | flexsurv [21], custom EM implementation [22] | May require constraint on parameters (k>0, θ>0) |
| Mixture Models | EM algorithm, Bayesian Markov Chain Monte Carlo | Custom implementation in R/Python [22] | Sensitive to initial values; multiple restarts recommended |

Applications in AFIS Score Conversion

Implementation Workflow for Likelihood Ratio Calculation

The application of parametric fitting methods to AFIS score conversion follows a systematic workflow that transforms raw similarity scores into forensically interpretable likelihood ratios. This process involves multiple stages of data handling, model fitting, and validation.

Advanced Modeling Approaches

Recent methodological advances have expanded the toolbox available for AFIS score conversion research. Network meta-analysis approaches, while developed for biomedical applications, offer frameworks for synthesizing evidence across multiple studies or populations that could be adapted for forensic applications [21]. These methods enable the simultaneous comparison of multiple treatment effects (or, in the forensic context, multiple sources of variability) within a unified statistical model.

The accelerated failure time (AFT) model provides an intuitive alternative to proportional hazards models for survival data [23]. In the AFT framework, the effect of covariates is to accelerate or decelerate the survival time, which can be more interpretable than hazard ratios. For AFIS research, this framework could be adapted to model how case-specific factors (e.g., fingerprint quality, number of features) influence similarity scores.

Mixture models that combine gamma, Weibull, and lognormal distributions have demonstrated superior performance for complex datasets, particularly when population heterogeneity is present [22]. In forensic science applications, such mixture models could account for distinct subpopulations (e.g., different fingerprint pattern types, varying quality characteristics) that might otherwise complicate score distribution modeling.

Validation and Quality Assurance

Robust validation of parametric models is essential for ensuring reliable likelihood ratio calculation in forensic applications. The pointwise reliability assessment framework [24] provides methodologies for evaluating the reliability of individual predictions, which aligns closely with the needs of forensic evaluation where each case must be assessed independently.

Key validation approaches include:

Discrimination Assessment: Evaluating how well the model distinguishes between genuine and impostor comparisons using metrics such as the Area Under the ROC Curve (AUC) and Detection Error Tradeoff (DET) curves.

Calibration Validation: Assessing whether calculated likelihood ratios are statistically well-calibrated, using approaches such as the log-likelihood ratio cost (Cllr) and calibration plots.

Reliability Testing: Implementing methods such as the density principle and local fit principle [24] to identify predictions that may be unreliable due to being in sparsely populated regions of the feature space.
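As one example of the calibration metrics listed above, the log-likelihood ratio cost can be computed with a few lines of Python. The sketch uses the commonly stated definition Cllr = 0.5 [mean over genuine comparisons of log2(1 + 1/LR) + mean over impostor comparisons of log2(1 + LR)]. The function name and the example LR values are hypothetical.

```python
import numpy as np

def cllr(lr_genuine, lr_impostor):
    """Log-likelihood ratio cost; lower is better, 1.0 matches an uninformative LR of 1."""
    lr_genuine = np.asarray(lr_genuine, dtype=float)
    lr_impostor = np.asarray(lr_impostor, dtype=float)
    # Genuine comparisons are penalized for small LRs.
    penalty_genuine = np.mean(np.log2(1.0 + 1.0 / lr_genuine))
    # Impostor comparisons are penalized for large LRs.
    penalty_impostor = np.mean(np.log2(1.0 + lr_impostor))
    return 0.5 * (penalty_genuine + penalty_impostor)

# A system that always reports LR = 1 conveys no information.
uninformative = cllr([1.0, 1.0], [1.0, 1.0])

# Hypothetical LRs that strongly support the correct proposition.
well_separated = cllr([1000.0, 100.0], [0.001, 0.01])

print("uninformative Cllr:", uninformative)
print("well-separated Cllr:", round(float(well_separated), 4))
```

A value near or above 1 suggests the reported LRs are no better than always answering LR = 1, which makes Cllr a convenient single-number check alongside calibration plots.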

Research Reagent Solutions

Table 3: Essential Computational Tools for Parametric Distribution Modeling

| Tool/Software | Primary Function | Application Note | Distribution Compatibility |
|---|---|---|---|
| R flexsurv package [21] | Parametric survival modeling | Supports Weibull, gamma, lognormal, generalized gamma with time-varying effects | All distributions discussed |
| Python SciPy library | Statistical analysis and optimization | Provides PDF, CDF, and parameter estimation for standard distributions | Weibull, gamma, lognormal |
| Stan probabilistic programming | Bayesian modeling | Enables custom distribution fitting and hierarchical models | All distributions, including mixtures |
| EM algorithm custom code [22] | Mixture model fitting | Required for implementing shifted mixture distributions | Weibull-gamma-lognormal mixtures |
| Goodness-of-fit testing suite | Model validation | Comprehensive fit assessment (KS, AD, CvM tests) | All distributions |

Parametric fitting methods using gamma, Weibull, and lognormal distributions provide a powerful framework for statistical modeling in AFIS score conversion research. Each distribution offers unique characteristics that make it suitable for different data patterns, with the Weibull distribution excelling for monotonic hazard rates, the lognormal for right-skewed data, and the gamma for its flexible shape parameter. The emergence of mixture models that combine these distributions has further enhanced our ability to capture complex data structures.

The implementation of these methods requires careful attention to parameter estimation, model selection, and validation protocols. Maximum likelihood estimation and the EM algorithm serve as foundational approaches for parameter estimation, while comprehensive goodness-of-fit assessment ensures model adequacy. For AFIS applications specifically, the translation of fitted distributions into likelihood ratios demands rigorous validation to ensure forensic reliability.

As research in this field advances, the integration of Bayesian methods, mixture models, and reliability assessment frameworks will continue to strengthen the statistical foundation of forensic evidence evaluation. The protocols and applications outlined in this document provide a roadmap for researchers implementing these methods in both experimental and operational contexts.