Forensic Text Comparison System Fusion: Techniques, Validation, and Biomedical Applications

This article provides a comprehensive examination of fusion techniques in forensic text comparison (FTC) systems for researchers and forensic science professionals.


Abstract

This article provides a comprehensive examination of fusion techniques in forensic text comparison (FTC) systems for researchers and forensic science professionals. It explores the foundational principles of the Likelihood Ratio (LR) framework and its application in authorship analysis, detailing specific methodologies like multivariate kernel density with lexical features and N-grams. The content covers advanced fusion approaches, notably logistic-regression fusion, and addresses critical troubleshooting aspects such as performance pitfalls and data relevance. Furthermore, it outlines rigorous validation protocols and comparative performance assessments using metrics like Cllr and Tippett plots. The discussion extends to the implications of these forensic techniques for enhancing data integrity and analysis in biomedical and clinical research contexts.

The Core Principles of Forensic Text Comparison and the LR Framework

The Likelihood Ratio (LR) framework is widely recognized as the logically and legally correct approach for evaluating forensic evidence, including textual evidence in authorship analysis [1]. An LR is a quantitative measure of evidence strength that compares the probability of the evidence under two competing hypotheses: the prosecution hypothesis (Hp) and the defense hypothesis (Hd) [2]. This is formally expressed in the equation:

LR = p(E|Hp) / p(E|Hd)

where E represents the observed evidence [1]. When the LR is greater than 1, the evidence supports Hp; when it is less than 1, it supports Hd. The further the value is from 1, the stronger the support for the respective hypothesis [1]. The LR framework enables forensic scientists to present a transparent, reproducible, and quantifiable measure of evidence strength that is intrinsically resistant to cognitive bias, addressing serious limitations of traditional expert-led opinion testimony in forensic linguistics [1].

Application in Forensic Text Comparison

In Forensic Text Comparison (FTC), the typical Hp states that "the source-questioned and source-known documents were produced by the same author," while Hd states that they were produced by different individuals [1]. The LR framework allows for the evaluation of both the similarity (how similar the writing styles are) and the typicality (how common or distinctive this similarity is) of the textual evidence [2] [1].

Two primary methodological approaches exist for LR estimation in FTC:

- Score-based methods: Reduce multivariate textual features to a single similarity score (e.g., Cosine distance). Likelihood Ratios are then estimated based on the distribution of these scores [3] [2].

- Feature-based methods: Compute LRs by directly assigning probabilities to the multivariate features using discrete statistical models, such as Poisson-based models [3] [2].
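As a rough illustration of the score-based route, the sketch below reduces two word-count vectors to a cosine distance and converts that score to an LR using two score distributions. The training scores, the example count vectors, and the choice of a normal distribution for each score model are assumptions made for illustration only; they are not taken from the cited studies.

```python
import math
from statistics import NormalDist, mean, stdev

def cosine_distance(u, v):
    """Cosine distance (1 - cosine similarity) between two count vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)

def fit_score_model(scores):
    """Fit a normal distribution to training scores (a modelling assumption)."""
    return NormalDist(mean(scores), stdev(scores))

def score_based_lr(score, same_model, diff_model):
    """LR = density of the score under the same-author model divided by
    its density under the different-author model."""
    return same_model.pdf(score) / diff_model.pdf(score)

# Hypothetical cosine distances from pairs with known ground truth.
same_author_scores = [0.05, 0.08, 0.10, 0.12, 0.07]
diff_author_scores = [0.30, 0.42, 0.35, 0.50, 0.38]
same_model = fit_score_model(same_author_scores)
diff_model = fit_score_model(diff_author_scores)

questioned = [12, 7, 3, 0, 5]  # hypothetical word counts, questioned document
known = [10, 8, 2, 1, 4]       # hypothetical word counts, known document
score = cosine_distance(questioned, known)
lr = score_based_lr(score, same_model, diff_model)
print(f"score={score:.3f}, LR={lr:.3g}")
```

Because the whole feature vector is collapsed into one number before any probability is assigned, this sketch also shows where the information loss attributed to score-based methods comes from.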

Table 1: Comparison of Score-Based and Feature-Based Methods for LR Estimation in FTC

| Aspect | Score-Based Methods | Feature-Based Methods |

|---|---|---|

| Core Approach | Reduces features to a similarity score (e.g., Cosine) [2] | Directly models multivariate feature probabilities [2] |

| Information Preservation | Loss of information from dimensionality reduction [2] | Preserves full multivariate feature structure [2] |

| Key Components | Assesses similarity only [2] | Incorporates both similarity and typicality [2] |

| Theoretical Fit for Text | Lower; assumes normality, violated by count data [2] | Higher; uses discrete models (e.g., Poisson) [3] [2] |

| Data Efficiency | More robust with limited data [2] | Requires larger quantities of data [2] |

| Reported Performance | Generally conservative LRs [2] | Outperforms score-based methods (Cllr lower by roughly 0.09) [3] |

System Fusion Techniques

Given that different LR estimation procedures (e.g., based on different feature sets or models) can yield varying results, fusion techniques offer a powerful strategy to create a more robust and accurate system. The core principle involves combining the LRs or scores from multiple independent procedures to generate a single, more reliable LR.

Research has demonstrated that a fused forensic text comparison system can outperform any of its individual constituent procedures [4]. For instance, one study fused LRs from three different procedures (a multivariate kernel density method with authorship attribution features, word token N-grams, and character N-grams) using logistic regression [4]. The performance of the fused system, measured by the log-likelihood-ratio cost (Cllr), was superior to any single procedure, achieving a Cllr of 0.15 at a token length of 1500 [4].

Table 2: Performance of a Fused FTC System vs. Single Procedures [4]

| LR Estimation Procedure | Relative Performance (Cllr) |

|---|---|

| Multivariate Kernel Density (MVKD) with Authorship Features | Best performing single procedure |

| N-grams (Word Tokens) | Lower performance than MVKD |

| N-grams (Characters) | Lower performance than MVKD |

| Fused System (Logistic Regression) | Superior to all single procedures |

Experimental Protocols for FTC System Validation

Core Protocol: LR Estimation via Feature-Based Poisson Model

This protocol details the procedure for estimating LRs using a feature-based Poisson model, which has been shown to outperform score-based methods [3].

Text Preprocessing and Feature Extraction

- Data Preparation: Collect and clean text corpora. A large dataset is recommended; studies have used datasets from 2,157 authors [3] [2].

- Feature Selection: Create a bag-of-words representation for each document by counting the N-most common words across all documents. The value of N can be systematically varied (e.g., from 5 to 400) to assess impact [2].

- Feature Vector Construction: Each document is represented as a vector of word counts. Feature selection can be applied to improve performance [3].
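The feature steps above can be sketched as follows. The tokenizer (lowercasing and keeping runs of letters and apostrophes) and the two toy documents are assumptions for illustration; a real study would choose its own tokenization and a far larger corpus.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and keep runs of letters/apostrophes (an assumption)."""
    return re.findall(r"[a-z']+", text.lower())

def build_vocabulary(documents, n_most_common):
    """Return the N most frequent words across all documents."""
    totals = Counter()
    for doc in documents:
        totals.update(tokenize(doc))
    return [word for word, _ in totals.most_common(n_most_common)]

def to_count_vector(document, vocabulary):
    """Represent one document as counts of each vocabulary word."""
    counts = Counter(tokenize(document))
    return [counts[word] for word in vocabulary]

docs = [
    "the cat sat on the mat and the dog sat too",
    "the dog and the cat ran to the park",
]
vocab = build_vocabulary(docs, n_most_common=3)
vectors = [to_count_vector(d, vocab) for d in docs]
print(vocab, vectors)
```

Varying `n_most_common` corresponds to varying N in the feature selection step.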

Model Fitting and LR Calculation

- Model Selection: Employ a Poisson model to model the discrete (count-based) nature of the textual features [3] [2]. Consider variations like a one-level zero-inflated Poisson model or a two-level Poisson-gamma model for complex data structures [2].

- Parameter Estimation: Calculate the probability of the observed evidence (the questioned and known documents' feature vectors) under both Hp (same author) and Hd (different authors) using the fitted Poisson model.

- LR Derivation: Compute the LR by taking the ratio of the two probabilities as per the fundamental LR equation [1].
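A minimal sketch of a feature-based Poisson LR follows. It is a simplified one-level model, not the exact model of the cited studies: under Hp each word count in the questioned document is Poisson with a rate estimated from the known document, and under Hd the rate comes from a background population. The shrinkage parameter `prior_strength`, the population rates, and the counts are all hypothetical.

```python
import math

def poisson_log_pmf(count, mean):
    """Log of the Poisson probability of `count` given `mean` (mean > 0)."""
    return count * math.log(mean) - mean - math.lgamma(count + 1)

def poisson_log_lr(questioned, known, population_rates, n_q, n_k,
                   prior_strength=50.0):
    """Natural-log LR summed over words, treating words as independent.

    questioned, known: word counts for the two documents
    population_rates:  per-token rates of each word in a background corpus
    n_q, n_k:          total token counts of the two documents
    prior_strength:    pseudo-tokens shrinking the author rate toward the
                       population rate (an assumption, avoids zero rates)
    """
    log_lr = 0.0
    for x_q, x_k, pop_rate in zip(questioned, known, population_rates):
        author_rate = (x_k + prior_strength * pop_rate) / (n_k + prior_strength)
        log_lr += poisson_log_pmf(x_q, n_q * author_rate)  # numerator, Hp
        log_lr -= poisson_log_pmf(x_q, n_q * pop_rate)     # denominator, Hd
    return log_lr

population_rates = [0.05, 0.03, 0.02]  # hypothetical background rates
known_counts = [80, 20, 10]            # known document, 1000 tokens
same_author_q = [75, 22, 12]           # resembles the known document
diff_author_q = [48, 31, 21]           # resembles the background population

log_lr_same = poisson_log_lr(same_author_q, known_counts, population_rates, 1000, 1000)
log_lr_diff = poisson_log_lr(diff_author_q, known_counts, population_rates, 1000, 1000)
print(f"log LR (same-author-like): {log_lr_same:.2f}")
print(f"log LR (different-author-like): {log_lr_diff:.2f}")
```

The numerator rewards similarity to the known document, while the denominator accounts for typicality in the population, which is the property the feature-based approach is credited with.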

Performance Assessment

Validation Protocol: Addressing Casework Conditions

Empirical validation of an FTC system must replicate the conditions of the case under investigation using relevant data [1]. The following protocol uses topic mismatch as a case study.

Experimental Setup

- Define Condition: Identify a specific casework condition to test, such as a mismatch in topics between the questioned and known documents [1].

- Curate Relevant Data: Partition a text database to create two experimental conditions:

- Matched Condition: Source-known and source-questioned documents share the same topic.

- Mismatched Condition: Source-known and source-questioned documents cover different topics [1].

Execution and Analysis

- Run LR Estimation: Apply the chosen LR estimation method (e.g., the feature-based Poisson protocol described above) to both the matched and mismatched conditions.

- Compare Performance: Calculate and compare the Cllr for both conditions. A properly validated system must demonstrate reliability under the specific challenging condition (here, mismatched topics) that the casework application involves [1].

- System Calibration: Apply logistic regression calibration to the derived LRs if necessary to improve their validity [1].
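The comparison step can be sketched with the standard definition of the log-likelihood-ratio cost: the mean of log2(1 + 1/LR) over same-author comparisons and the mean of log2(1 + LR) over different-author comparisons, averaged together. The LR values below are hypothetical and serve only to show the calculation for a matched and a mismatched condition.

```python
import math

def cllr(same_author_lrs, diff_author_lrs):
    """Log-likelihood-ratio cost; lower is better, and a system that always
    outputs LR = 1 (no information) scores exactly 1."""
    sa_cost = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    da_cost = sum(math.log2(1 + lr) for lr in diff_author_lrs) / len(diff_author_lrs)
    return 0.5 * (sa_cost + da_cost)

# Hypothetical LRs for the two experimental conditions.
matched_sa, matched_da = [50, 120, 8, 300], [0.01, 0.2, 0.005, 0.05]
mismatched_sa, mismatched_da = [3, 0.8, 12, 1.5], [0.4, 1.6, 0.1, 0.7]

cllr_matched = cllr(matched_sa, matched_da)
cllr_mismatched = cllr(mismatched_sa, mismatched_da)
print(f"Cllr matched:    {cllr_matched:.3f}")
print(f"Cllr mismatched: {cllr_mismatched:.3f}")
```

A Cllr at or above 1 for the mismatched condition would indicate that the system offers no useful information for that kind of case, whatever its performance on matched data.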

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for FTC Research

| Item/Solution | Function in FTC Research |

|---|---|

| Text Corpora | Provides the fundamental data for building and validating FTC systems. Studies recommend using large datasets (e.g., 2,000+ authors) with controlled variables like document length and topic [2] [5] [1]. |

| Bag-of-Words Model | A standard text representation that converts documents into numerical feature vectors based on word counts, serving as input for statistical models [2]. |

| Function Words List | A predefined set of high-frequency, low-meaning words (e.g., "the", "and", "of") that serve as stable stylistic features for authorship attribution [2]. |

| N-gram Generator | Software tool to extract contiguous sequences of N words or characters from text. Used as features for some LR estimation procedures [4]. |

| Poisson Model | A discrete statistical model appropriate for count-based textual data. Used in feature-based LR estimation to compute probabilities [3] [2]. |

| Cosine Distance Metric | A similarity measure used in score-based methods to reduce a document's multivariate feature vector to a single score for comparison [3] [2]. |

| Logistic Regression Calibration | A computational method to calibrate raw output scores or LRs, improving their validity and reliability. Also used for fusing multiple LRs [2] [4] [1]. |

| Cllr (log-LR cost) Metric | A central gradient metric for objectively assessing the overall performance and quality of the LRs produced by an FTC system [3] [4]. |

Defining Forensic Text Comparison (FTC) and Authorship Attribution

Forensic Text Comparison (FTC) is a scientific discipline concerned with the analysis and evaluation of textual evidence for legal purposes. Within this framework, Authorship Attribution specifically refers to the process of identifying the most likely author of a questioned text from a set of candidate authors [6]. This technique plays a crucial role in several fields, including forensic linguistics, literary analysis, and historical research, where determining the true authorship of a document can change the understanding of its significance [6]. The methods used in authorship attribution often rely on statistical analysis of language patterns, word usage, and other textual characteristics to draw conclusions about the likely author [6].

The Likelihood Ratio Framework in FTC

The Likelihood Ratio (LR) framework is increasingly held to be the logically and legally correct approach for evaluating forensic evidence, including textual evidence [7] [1]. This framework provides a transparent, reproducible, and quantitatively rigorous method for assessing the strength of evidence. The LR is a quantitative statement of the strength of evidence, expressed as the ratio of the probability of the evidence assuming the prosecution hypothesis (Hp) is true to the probability of the same evidence assuming the defense hypothesis (Hd) is true [1]:

LR = p(E|Hp) / p(E|Hd)

In the context of FTC, the typical Hp is that "the source-questioned and source-known documents were produced by the same author" or "the defendant produced the source-questioned document." The typical Hd is that "the source-questioned and source-known documents were produced by different individuals" or "the defendant did not produce the source-questioned document" [1]. An LR greater than 1 supports the prosecution hypothesis, while an LR less than 1 supports the defense hypothesis. The further the value is from 1, the stronger the evidence [1].

Bayesian Interpretation of Forensic Evidence

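The LR framework operates within the broader context of Bayes' Theorem, which describes how prior beliefs should be updated in light of new evidence. The odds form of Bayes' Theorem is expressed as [1]:

p(Hp|E) / p(Hd|E) = [p(Hp) / p(Hd)] × [p(E|Hp) / p(E|Hd)]

that is, posterior odds = prior odds × LR.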

The prior odds represent the fact-finder's belief about the hypotheses before considering the new evidence. The posterior odds represent the updated belief after considering the evidence. It is legally inappropriate for forensic scientists to present posterior odds because this concerns the ultimate issue of the suspect's guilt or innocence—a decision reserved for the trier-of-fact [1].
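A short worked example of the odds form may help. The LR and the two prior odds below are hypothetical; the point is that the same LR leads to different posterior odds depending on the prior, which is why the forensic scientist reports only the LR.

```python
def posterior_odds(prior_odds, likelihood_ratio):
    """Odds form of Bayes' Theorem: posterior odds = prior odds * LR."""
    return prior_odds * likelihood_ratio

def odds_to_probability(odds):
    """Convert odds in favour of Hp to a probability of Hp."""
    return odds / (1.0 + odds)

lr = 1000.0  # hypothetical LR reported by the forensic scientist
for prior in (1 / 100, 1 / 10000):  # two hypothetical fact-finder priors
    post = posterior_odds(prior, lr)
    print(f"prior odds {prior:g} -> posterior odds {post:g} "
          f"(probability of Hp {odds_to_probability(post):.3f})")
```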

System Fusion Techniques in FTC

System fusion techniques in FTC involve combining multiple computational procedures to improve the reliability and discriminability of authorship analysis. Research has demonstrated that fusing the results from different textual analysis methods significantly enhances system performance compared to any single procedure [7] [8] [4].

Individual Procedures for LR Estimation

A fused FTC system typically integrates multiple analytical approaches, each with distinct strengths in capturing different aspects of authorship style:

Multivariate Kernel Density (MVKD) Procedure: Models each message group as a vector of authorship attribution features, including vocabulary richness, average token number per message line, uppercase character ratio, and other stylistic markers [7] [4].

Token N-grams Procedure: Utilizes word token-based N-grams (contiguous sequences of N words) to capture syntactic patterns and frequent word combinations characteristic of an author's style [7] [8].

Character N-grams Procedure: Employs character-based N-grams to capture sub-word orthographic patterns, morphological features, and typing habits that are often subconscious and difficult to manipulate [7] [8].
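To make the MVKD feature vector concrete, the sketch below computes three of the named features for a group of message lines. Using the type-token ratio as the vocabulary richness measure is an assumption, as is whitespace tokenization; the cited work may define these features differently.

```python
def stylometric_features(message_lines):
    """Compute a small authorship feature vector for a group of messages."""
    tokens = [tok for line in message_lines for tok in line.split()]
    letters = [ch for line in message_lines for ch in line if ch.isalpha()]
    types = {tok.lower() for tok in tokens}
    return {
        # Vocabulary richness, here approximated by the type-token ratio.
        "type_token_ratio": len(types) / len(tokens),
        # Average number of tokens per message line.
        "avg_tokens_per_line": len(tokens) / len(message_lines),
        # Share of alphabetic characters that are uppercase.
        "uppercase_ratio": sum(ch.isupper() for ch in letters) / len(letters),
    }

features = stylometric_features(["Hello there", "hello AGAIN my friend"])
print(features)
```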

Logistic Regression Fusion

Logistic-regression fusion is a robust technique for combining the LRs separately estimated from multiple procedures into a single, more reliable LR for each author comparison [7]. This method involves training a logistic regression model on the outputs of the individual systems to optimize their combined discriminative performance. Empirical studies have demonstrated that this fusion approach is particularly beneficial when dealing with small sample sizes (e.g., 500-1500 tokens), which is advantageous for real casework where data scarcity is a common challenge [7].
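A minimal sketch of logistic-regression fusion is given below, assuming natural-log LRs from three procedures as inputs and plain gradient descent as the optimizer. The training values are hypothetical. The training set is balanced, so the fitted log-odds can be read as a fused log LR; with unbalanced training data the log prior odds of the training set would be subtracted, which the `log_prior_odds` parameter allows.

```python
import math

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)

def train_fusion(log_lrs, labels, learning_rate=0.1, epochs=2000):
    """Fit intercept and one weight per procedure by gradient descent.

    log_lrs: rows of log LRs, one column per procedure
    labels:  1 for same-author pairs, 0 for different-author pairs
    """
    n_features = len(log_lrs[0])
    weights = [0.0] * (n_features + 1)  # weights[0] is the intercept
    n = len(labels)
    for _ in range(epochs):
        grad = [0.0] * (n_features + 1)
        for row, y in zip(log_lrs, labels):
            z = weights[0] + sum(w * x for w, x in zip(weights[1:], row))
            err = sigmoid(z) - y
            grad[0] += err
            for j, x in enumerate(row):
                grad[j + 1] += err * x
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad)]
    return weights

def fuse(weights, row, log_prior_odds=0.0):
    """Fused log LR: weighted sum of the procedures' log LRs, shifted by the
    training log prior odds (zero for a balanced training set)."""
    return weights[0] + sum(w * x for w, x in zip(weights[1:], row)) - log_prior_odds

# Hypothetical log LRs: columns = MVKD, token N-grams, character N-grams.
train_rows = [
    [2.1, 0.8, 1.0], [1.5, -0.2, 0.6], [0.9, 1.1, 0.4], [-0.3, 0.7, 0.9],      # same author
    [-1.8, -0.5, -0.9], [-1.1, 0.3, -0.7], [-0.6, -1.2, -0.2], [0.4, -0.8, -1.0],  # different
]
train_labels = [1, 1, 1, 1, 0, 0, 0, 0]
weights = train_fusion(train_rows, train_labels)

fused_same = fuse(weights, [2.0, 1.0, 1.0])
fused_diff = fuse(weights, [-2.0, -1.0, -1.0])
print(f"fused log LR: {fused_same:.2f} (same-like), {fused_diff:.2f} (different-like)")
```

In practice the fusion weights would be trained on a calibration set kept separate from the test comparisons, so that reported performance is not inflated.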

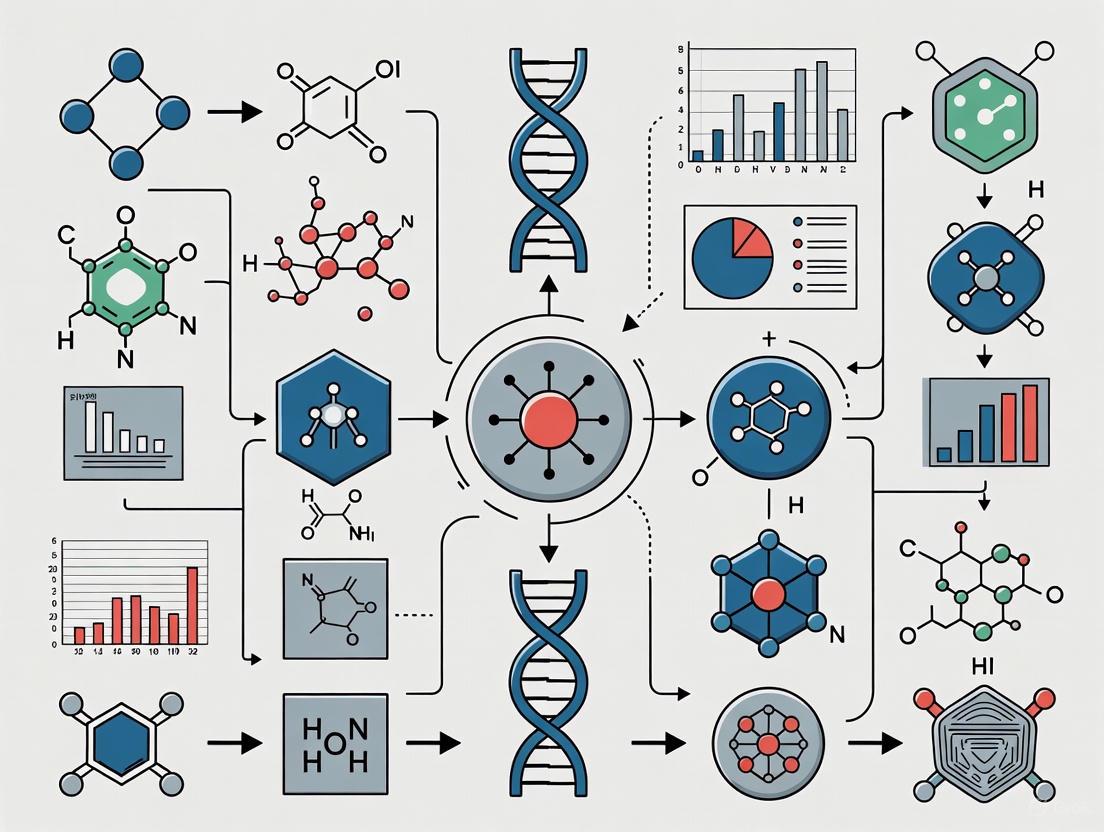

Figure 1: FTC System Fusion Architecture

Quantitative Performance of Fused Systems

Research on predatory chatlog messages from 115 authors demonstrated that fused systems consistently outperform individual procedures across various sample sizes. System performance is typically assessed using the log likelihood ratio cost (Cllr), a gradient metric for evaluating the quality of LRs, with lower values indicating better performance [7] [8].

Table 1: Performance Comparison of Individual vs. Fused FTC Systems (Cllr Values)

| System Type | 500 Tokens | 1000 Tokens | 1500 Tokens | 2500 Tokens |

|---|---|---|---|---|

| MVKD Procedure | 0.31 | 0.21 | 0.18 | 0.16 |

| Token N-grams | 0.44 | 0.33 | 0.27 | 0.21 |

| Character N-grams | 0.42 | 0.31 | 0.25 | 0.19 |

| Fused System | 0.21 | 0.16 | 0.15 | 0.13 |

The table above illustrates several key findings: (1) the MVKD procedure with authorship attribution features consistently performed best among the individual procedures across all token sizes; (2) all systems showed improved performance (lower Cllr values) with increasing token numbers; and (3) most significantly, the fused system achieved superior performance compared to any individual procedure at every data level [7] [8]. For example, with 1500 tokens, the fused system achieved a Cllr value of 0.15, outperforming the best individual procedure (MVKD) which achieved 0.18 [8].

Experimental Protocols for FTC Validation

Essential Research Reagents and Materials

Table 2: Essential Research Reagents for FTC Experiments

| Reagent/Resource | Function in FTC Research | Specifications |

|---|---|---|

| Forensic Text Corpus | Provides authentic textual data for method development and validation | Should include known authorship texts with metadata; examples include predatory chatlog messages [7] or Amazon Authorship Verification Corpus [1] |

| Tokenization Algorithm | Segments continuous text into analyzable units (words, characters) | Critical preprocessing step for feature extraction; affects N-gram generation [7] |

| Authorship Attribution Features | Quantifies stylistic characteristics for author discrimination | Includes vocabulary richness, average sentence length, punctuation frequency, capitalization patterns [7] [4] |

| N-gram Generators | Produces sequential language models for syntactic analysis | Configurable for token (word) or character N-grams of varying lengths [7] [8] |

| Statistical Modeling Framework | Implements LR calculation and calibration | Dirichlet-multinomial model for score calculation; logistic regression for calibration [1] |

| Validation Metrics | Assesses system performance and reliability | Cllr for overall system performance; Tippett plots for evidence strength visualization [7] [1] |

Detailed Experimental Workflow

The following workflow outlines the standardized protocol for conducting validated FTC experiments:

Figure 2: FTC Experimental Workflow

Phase 1: Data Collection and Preparation

- Database Selection: Utilize forensically relevant textual databases such as the Amazon Authorship Verification Corpus (AAVC) which contains reviews classified into different topics, or curated chatlog messages from known authors [1] [7].

- Topic Matching: Ensure the experimental design reflects casework conditions by controlling for topic mismatch between questioned and known documents, as this significantly impacts system performance [1].

- Data Partitioning: Divide data into three mutually exclusive sets: Test, Reference, and Calibration databases to ensure proper validation [1].
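The partitioning step might be sketched as follows. Splitting by author into three equal parts, so that no author contributes to more than one database, is an assumption about how mutual exclusivity is enforced; the author identifiers are hypothetical.

```python
import random

def partition_authors(author_ids, seed=0):
    """Split authors into three mutually exclusive groups of (nearly) equal size."""
    authors = sorted(set(author_ids))
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(authors)
    third = len(authors) // 3
    return {
        "test": authors[:third],
        "reference": authors[third:2 * third],
        "calibration": authors[2 * third:],  # receives any remainder
    }

authors = [f"author{i}" for i in range(9)]  # hypothetical author IDs
parts = partition_authors(authors)
print({name: len(group) for name, group in parts.items()})
```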

Phase 2: Text Preprocessing

- Tokenization: Convert continuous text into individual word tokens using standardized tokenization algorithms [1].

- Normalization: Apply consistent text normalization procedures (case folding, punctuation handling) while preserving potentially discriminative features.

- Document Pairing: Generate same-author and different-author pairs of documents for system training and testing. For robust validation, use at least 1776 same-author and 1776 different-author pairs [1].
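Document pairing can be sketched as below, assuming each document is identified by a hypothetical `(author_id, doc_id)` tuple. Every unordered pair is assigned to the same-author or the different-author set according to its author labels.

```python
from itertools import combinations

def make_pairs(documents):
    """Return (same_author_pairs, different_author_pairs) of document IDs.

    documents: list of (author_id, doc_id) tuples
    """
    same, diff = [], []
    for (a1, d1), (a2, d2) in combinations(documents, 2):
        (same if a1 == a2 else diff).append((d1, d2))
    return same, diff

docs = [("A", "a1"), ("A", "a2"), ("B", "b1"), ("B", "b2"), ("C", "c1")]
same_pairs, diff_pairs = make_pairs(docs)
print(len(same_pairs), "same-author pairs;", len(diff_pairs), "different-author pairs")
```

Different-author pairs usually far outnumber same-author pairs, so a study that wants equal numbers of each would subsample the larger set.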

Phase 3: Feature Extraction

- Implement the three core feature extraction procedures in parallel:

- MVKD Features: Calculate authorship attribution features including vocabulary richness, average token number per message line, uppercase character ratio, and other stylistic markers [7] [4].

- Token N-grams: Generate word-based N-gram models (typically bi-grams or tri-grams) to capture syntactic patterns [7].

- Character N-grams: Extract character-based N-grams (typically 4-grams or 5-grams) to capture sub-word orthographic patterns [7].
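The two N-gram procedures differ only in the unit being counted. A minimal sketch, assuming whitespace tokenization and a hypothetical example message:

```python
from collections import Counter

def token_ngrams(tokens, n):
    """Count contiguous sequences of n word tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def char_ngrams(text, n):
    """Count contiguous sequences of n characters, spaces included."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))

tokens = "see you later see you soon".split()
bigrams = token_ngrams(tokens, 2)
four_grams = char_ngrams("hello hello", 4)
print(bigrams.most_common(1), four_grams.most_common(2))
```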

Phase 4: Model Development and Fusion

- Score Calculation: Compute raw similarity scores using appropriate statistical models such as the Dirichlet-multinomial model for bag-of-words representations [1].

- Calibration: Convert raw scores to LRs using logistic regression calibration to ensure well-calibrated LRs that accurately represent evidence strength [7] [1].

- Fusion Implementation: Apply logistic-regression fusion to combine LRs from the three separate procedures into a single, more robust LR output [7].

Phase 5: System Validation

- Performance Assessment: Evaluate system performance using the Cllr metric, which measures the overall quality of the LR system [7] [1].

- Evidence Strength Visualization: Generate Tippett plots to visually represent the strength of the derived LRs and the system's discriminative ability [7] [8].

- Boundary Management: Apply the Empirical Lower and Upper Bound (ELUB) method if unrealistically strong LRs are observed, to prevent potentially misleading evidence [7] [8].
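The data behind a Tippett plot can be computed without any plotting library. The sketch below assumes one common convention, in which both curves show the proportion of comparisons whose log10 LR is at or above each threshold; other presentations reverse the direction for one of the curves. The LR values are hypothetical.

```python
import math

def tippett_curve(lrs, thresholds):
    """Proportion of log10 LRs greater than or equal to each threshold."""
    log_lrs = [math.log10(lr) for lr in lrs]
    return [sum(v >= t for v in log_lrs) / len(log_lrs) for t in thresholds]

thresholds = [-2, -1, 0, 1, 2]     # log10 LR axis
same_lrs = [0.5, 5, 40, 200]       # hypothetical same-author LRs
diff_lrs = [0.002, 0.05, 0.3, 4]   # hypothetical different-author LRs

sa_curve = tippett_curve(same_lrs, thresholds)
da_curve = tippett_curve(diff_lrs, thresholds)
for t, sa, da in zip(thresholds, sa_curve, da_curve):
    print(f"log10 LR >= {t:>2}: same-author {sa:.2f}, different-author {da:.2f}")
```

Reading the curves at a threshold of 0 gives the rates of misleading evidence: the share of different-author comparisons with LR of at least 1, and one minus the share of same-author comparisons at that point.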

Emerging Challenges and Future Directions

The field of FTC faces several significant challenges that require ongoing research attention. The rapid advancement of Large Language Models (LLMs) has complicated authorship attribution by blurring the lines between human and machine authorship [9]. This development has created four distinct authorship attribution problems: (1) Human-written Text Attribution; (2) LLM-generated Text Detection; (3) LLM-generated Text Attribution; and (4) Human-LLM Co-authored Text Attribution [9].

Validation remains a critical challenge, with studies demonstrating that FTC systems must be validated using data and conditions that accurately reflect casework scenarios, particularly regarding topic matching between compared documents [1]. Systems validated under conditions that do not match the case (for example, on same-topic data when the case involves a topic mismatch) may perform significantly worse when applied to real casework, potentially misleading triers-of-fact [1]. Other persistent challenges include dealing with sparse data, accounting for an author's stylistic variation across different contexts, and maintaining explainability in increasingly complex computational models [10] [9].

Future research directions should focus on developing more robust fusion techniques that can adapt to these emerging challenges, particularly in handling LLM-generated content and cross-domain authorship analysis. There is also a pressing need for standardized validation protocols and shared resources to advance the reliability and scientific acceptance of FTC methodologies [1] [9].

The idiolect is defined as an individual's unique and distinctive use of language, encompassing the totality of their possible utterances [11]. In forensic science, this linguistic individuality becomes a critical behavioral signal for attributing authorship to questioned texts, such as those found in SMS messages, chatlogs, or emails [7] [12]. The analysis of the idiolect has evolved from qualitative assessment to quantitative measurement within a statistically rigorous framework. Modern forensic text comparison (FTC) now operates within the likelihood ratio (LR) framework, which provides a logically and legally correct method for evaluating evidence strength [7] [1]. This framework quantifies evidence as the probability of observing the textual evidence if the prosecution hypothesis is true versus if the defense hypothesis is true [1]. However, a single method is often insufficient. System fusion techniques, which combine multiple analytical procedures, have been empirically demonstrated to enhance the performance and reliability of authorship attribution, outperforming individual methods [7] [8] [4]. This document outlines the application notes and experimental protocols for implementing these fused systems in forensic text comparison.

Quantitative Foundations of Idiolectal Analysis

Performance Metrics for Forensic Text Comparison Systems

The performance of a forensic text comparison system is quantitatively assessed using specific metrics, primarily the log likelihood ratio cost (Cllr). This gradient metric evaluates the quality of the likelihood ratios produced by a system [7]. A lower Cllr value indicates better system performance. Research has demonstrated that fused systems achieve superior performance compared to individual methods. The following table summarizes the quantitative performance of individual procedures versus a fused system from a key study using chatlog messages:

Table 1: Performance comparison (Cllr values) of individual procedures and a fused system across different token sizes [7] [4]

| Token Size | MVKD Procedure | Token N-grams | Character N-grams | Fused System |

|---|---|---|---|---|

| 500 | 0.34 | 0.56 | 0.54 | 0.21 |

| 1000 | 0.23 | 0.48 | 0.42 | 0.17 |

| 1500 | 0.18 | 0.41 | 0.36 | 0.15 |

| 2500 | 0.14 | 0.35 | 0.30 | 0.11 |

The Impact of Data Quantity on Idiolectal Analysis

The amount of available text data significantly influences the reliability of idiolectal analysis. As shown in Table 1, system performance improves consistently as the token count increases from 500 to 2500 tokens [7]. This highlights a critical consideration for forensic applications: the scarcity of data is a common challenge in real casework. The fusion of multiple procedures has been shown to be particularly advantageous in these low-token scenarios, mitigating the limitations of individual methods when data is limited [7].

Experimental Protocols for Fused Forensic Text Comparison

Protocol 1: A Multi-Procedure Fusion System

This protocol is based on the seminal work by Ishihara (2017), which fused three distinct procedures to estimate LRs for predatory chatlog messages [7] [4].

Objective: To estimate the strength of linguistic evidence via a fused FTC system that combines the Multivariate Kernel Density (MVKD), Token N-grams, and Character N-grams procedures.

Materials: Chatlog messages from a known set of authors (e.g., 115 authors); Computational environment (e.g., R or Python).

Workflow:

- Data Preparation: For each author, sample multiple groups of messages at varying token sizes (e.g., 500, 1000, 1500, 2500 tokens).

- Feature Extraction:

- MVKD Procedure: Model each message group as a vector of traditional authorship attribution features. These may include vocabulary richness, average sentence/token length, ratio of function words, and character case ratios [7].

- Token N-grams Procedure: Extract contiguous sequences of N words (e.g., bigrams, trigrams) from the texts.

- Character N-grams Procedure: Extract contiguous sequences of N characters (e.g., 4-grams, 5-grams) from the texts.

- Likelihood Ratio Estimation: Calculate LRs for each author comparison separately for the three procedures.

- The MVKD procedure uses its specific formula to compute LRs based on the feature vectors.

- The N-gram procedures use their respective models to compute LRs.

- Logistic-Regression Fusion: Fuse the three sets of derived LRs into a single, combined LR for each comparison using a logistic regression model [7] [12].

- Performance Validation:

Protocol 2: Validation Under Casework-Relevant Conditions

This protocol addresses the critical need for empirical validation of FTC methods under conditions that reflect real casework, as emphasized by Ishihara (2023) [1].

Objective: To validate an FTC system by replicating specific conditions of a case, such as topic mismatch between questioned and known documents.

Materials: Text corpora with metadata on topic, genre, and author; FTC software (e.g., the idiolect R package [13]).

Workflow:

- Define Casework Conditions: Identify the specific condition to be validated (e.g., mismatch in topics between documents).

- Select Relevant Data: Choose text data that accurately reflects the defined condition. For topic mismatch, this involves using known and questioned documents that differ in subject matter but share other relevant characteristics (e.g., genre, register) [1].

- LR Calculation and Calibration: Calculate LRs using an appropriate statistical model (e.g., a Dirichlet-multinomial model), followed by logistic regression calibration [1].

- Performance Assessment: Compare the system's performance under two scenarios:

- Matched Conditions: Where the validation data and setup perfectly mirror the casework conditions.

- Mismatched Conditions: Where the validation overlooks specific casework factors (e.g., topic).

- Result Interpretation: Analyze the divergence in performance (e.g., Cllr values) between the two scenarios. A significant divergence indicates that validation under casework-relevant conditions is essential to avoid misleading the trier-of-fact [1].

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and computational "reagents" required for conducting forensic text comparison research.

Table 2: Key research reagents and computational tools for forensic text comparison

| Research Reagent / Tool | Type | Function / Application | Exemplar / Citation |

|---|---|---|---|

| idiolect R Package | Software Package | Provides a comprehensive suite for comparative authorship analysis in a forensic context, including methods like Delta and N-gram Tracing, and LR calibration. | [13] [14] |

| Quanteda R Package | Software Package | A foundational natural language processing (NLP) tool used for corpus creation, tokenization, and feature extraction (e.g., N-grams). | [13] |

| Authorship Attribution Features | Linguistic Metrics | Traditional stylometric features (e.g., vocabulary richness, sentence length, function word ratios) used to model an author's style. | [7] [4] |

| N-grams (Token & Character) | Textual Features | Contiguous sequences of words or characters that capture idiosyncratic lexical and sub-lexical patterns in an author's idiolect. | [7] [12] |

| Logistic-Regression Fusion | Statistical Method | A robust technique for combining the likelihood ratios output by multiple, independent FTC procedures into a single, more reliable LR. | [7] [12] |

| Log Likelihood Ratio Cost (Cllr) | Validation Metric | A primary metric for assessing the overall performance and discriminative power of a likelihood ratio-based system. | [7] [1] |

| Tippett Plots | Visualization Tool | A graphical representation used to display the distribution of LRs for both same-author and different-author comparisons, illustrating the strength of the evidence. | [7] [4] |

| Corpus for Idiolectal Research (CIDRE) | Data Resource | An example of a longitudinal corpus with dated works, essential for studying the evolution of an author's idiolect over time. | [11] |

Forensic Text Comparison (FTC) applies scientific principles to analyze textual evidence, aiming to provide insights regarding the authorship of questioned documents. A scientifically robust FTC framework relies on quantitative measurements, statistical models, and interpretation within the Likelihood Ratio (LR) framework, all of which must be empirically validated [1]. This application note addresses two central challenges in achieving valid and reliable FTC: topic mismatch and variable writing styles. We detail protocols for experimental design and system fusion that are critical for researchers developing methods resistant to the complex realities of forensic casework.

The Likelihood Ratio Framework is the logically and legally correct approach for evaluating forensic evidence, including authorship [7] [1]. An LR quantifies the strength of evidence by comparing the probability of the evidence under two competing hypotheses: the prosecution hypothesis (Hp, e.g., the same author wrote both documents) and the defense hypothesis (Hd, e.g., different authors wrote the documents) [1]. The resulting LR value informs the trier of fact without encroaching on the ultimate issue of guilt or innocence.

The Core Challenges: Topic Mismatch and Variable Writing Styles

Topic Mismatch

Topic mismatch occurs when the known and questioned documents under comparison differ in their subject matter. This is a frequent occurrence in real casework and poses a significant threat to the validity of an analysis.

- Impact on Validation: Empirical validation of an FTC system must replicate the conditions of the case under investigation. If the case involves a topic mismatch, but the validation experiment uses only same-topic documents, the resulting performance metrics will be unrealistically optimistic and mislead the trier-of-fact [1]. Validation is therefore condition-specific.

- Underlying Cause: An author's vocabulary and syntactic structures can shift with the topic. An FTC system trained on emails about work projects may perform poorly when comparing a work email to a questioned document about sports, not because the author is different, but because the linguistic register has changed.

Variable Writing Styles

An individual's idiolect is not a fixed, monolithic entity but is influenced by a multitude of factors beyond topic.

- Complex Influences: Writing style can vary based on the genre (e.g., email vs. text message), the intended audience, the author's emotional state, and the level of formality [1]. A single author may demonstrate markedly different linguistic profiles in a technical report, a personal blog post, and a series of SMS messages.

- Idiolect and Group Membership: A text simultaneously encodes information about the unique identity of the author (their idiolect) and their membership in broader social groups (e.g., based on age, gender, or regional dialect) [1]. A robust FTC system must be sensitive to the individuating markers of authorship while being robust to these other sources of variation.

Experimental Protocols for Addressing Topic Mismatch

To build FTC systems that are valid under realistic conditions, researchers must design experiments that explicitly account for topic mismatch.

Protocol 1: Cross-Topic Validation

Aim: To assess the performance of an FTC system when the known and questioned documents have different topics.

Procedure:

- Database Curation: Assemble a corpus of texts from multiple authors. For each author, collect texts spanning at least two distinct, well-defined topics.

- Define Experimental Conditions:

- Same-Topic Condition: Use texts on the same topic for both known and questioned author representations.

- Cross-Topic Condition: Use texts on different topics for the known and questioned author representations.

- LR Calculation: For each author and condition, calculate LRs using the chosen statistical model (e.g., Dirichlet-multinomial model [1] or a Multivariate Kernel Density model [7]).

- Performance Assessment: Evaluate and compare system performance for both conditions using the log-likelihood-ratio cost (Cllr) and Tippett plots [7] [1].
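The two experimental conditions in this protocol can be set up mechanically once each text is labelled with its author and topic. The following is a minimal sketch in Python, assuming a hypothetical corpus stored as a nested dictionary (author, then topic, then list of texts); the function names and toy data are illustrative and not taken from the cited studies.

```python
from itertools import combinations

def build_trials(corpus):
    """Build comparison trials from {author: {topic: [text, ...]}}.

    Returns (text_a, text_b, same_author, same_topic) tuples, one per
    unordered pair of texts in the corpus.
    """
    # Flatten the nested corpus into (author, topic, text) records.
    records = [
        (author, topic, text)
        for author, topics in corpus.items()
        for topic, texts in topics.items()
        for text in texts
    ]
    return [
        (x1, x2, a1 == a2, t1 == t2)
        for (a1, t1, x1), (a2, t2, x2) in combinations(records, 2)
    ]

def split_by_condition(trials):
    """Separate trials into the same-topic and cross-topic conditions."""
    same_topic = [t for t in trials if t[3]]
    cross_topic = [t for t in trials if not t[3]]
    return same_topic, cross_topic

# Hypothetical toy corpus: two authors, two topics each.
corpus = {
    "author_A": {"work": ["a-work-1", "a-work-2"], "sport": ["a-sport-1"]},
    "author_B": {"work": ["b-work-1"], "sport": ["b-sport-1", "b-sport-2"]},
}
trials = build_trials(corpus)
same_topic, cross_topic = split_by_condition(trials)
print(len(same_topic), len(cross_topic))
```

Each condition then feeds the same LR model, so any difference in the resulting metrics can be attributed to the topic mismatch rather than to the model.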

Table 1: Key Quantitative Metrics for System Assessment

| Metric | Description | Interpretation |

|---|---|---|

| Cllr (Log-Likelihood-Ratio Cost) | A single numerical index measuring the overall quality of the LR system; lower values indicate better performance [7]. | Gradient metric for system discrimination and calibration. |

| Cllr_min | The Cllr value after optimal calibration, representing the pure discrimination power of the system. | Measures lack of discrimination. |

| Tippett Plots | A graphical representation of the cumulative proportion of LRs supporting the correct vs. incorrect hypothesis. | Visualizes the strength and reliability of evidence. |
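For reference, Cllr is commonly defined as half the sum of two average penalties: the mean of log2(1 + 1/LR) over same-author trials and the mean of log2(1 + LR) over different-author trials. The sketch below implements that standard definition in plain Python; the function name and example values are illustrative.

```python
import math

def cllr(same_author_lrs, diff_author_lrs):
    """Log-likelihood-ratio cost for two lists of (non-log) LRs."""
    # Same-author trials are penalised when the LR is small.
    penalty_same = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs)
    penalty_same /= len(same_author_lrs)
    # Different-author trials are penalised when the LR is large.
    penalty_diff = sum(math.log2(1 + lr) for lr in diff_author_lrs)
    penalty_diff /= len(diff_author_lrs)
    return 0.5 * (penalty_same + penalty_diff)

# A system that always outputs LR = 1 carries no information: Cllr = 1.
print(cllr([1.0, 1.0], [1.0, 1.0]))
# Strong, correct LRs give a value close to 0.
print(cllr([100.0, 1000.0], [0.01, 0.001]))
```

Values above 1 indicate a system whose LRs are, on balance, misleading, which is why Cllr is read as a cost rather than an accuracy.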

Protocol 2: Fusion of Multiple Textual Features

Aim: To improve the robustness and discriminability of an FTC system by fusing evidence from multiple, complementary linguistic analyses.

Rationale: Different feature types (e.g., lexical, character-based) are affected differently by topic changes. Fusing them can create a more stable and accurate system [7] [12].

Procedure:

- Feature Extraction: For each text, extract at least three different types of features:

- Lexical/Stylistic Features: Vocabulary richness, average sentence length, punctuation ratios, etc. [7].

- Character N-grams: Contiguous sequences of 'n' characters.

- Token N-grams: Contiguous sequences of 'n' words.

- Independent LR Estimation: Compute a set of LRs for each author comparison using each feature type independently.

- Logistic Regression Fusion: Fuse the multiple LR values into a single, combined LR using a logistic regression fusion technique [7] [12].

- Performance Comparison: Assess the performance of the fused system against each individual feature system using Cllr.
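The fusion step can be sketched as a linear model on log LRs: fused log-LR = w0 + w1·logLR1 + w2·logLR2 + w3·logLR3, with the weights fitted by logistic regression on trials of known origin. The code below is a minimal pure-Python illustration that uses plain gradient descent and equal class weighting; the training values, learning rate, and function names are assumptions made for the example, not the configuration used in the cited studies.

```python
import math

def fuse(w, row):
    """Return the fused natural-log LR for one comparison."""
    return w[0] + sum(wi * x for wi, x in zip(w[1:], row))

def train_fusion(log_lrs, labels, epochs=2000, step=0.1):
    """Fit fusion weights by class-weighted logistic regression.

    log_lrs: rows of natural-log LRs, one column per procedure.
    labels:  1 for same-author trials, 0 for different-author trials.
    """
    n_same = sum(labels)
    n_diff = len(labels) - n_same
    # Weight both classes equally so the fitted log-odds can be read
    # as a log-LR (effective prior odds of 1).
    class_weight = {1: 0.5 / n_same, 0: 0.5 / n_diff}
    w = [0.0] * (len(log_lrs[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for row, y in zip(log_lrs, labels):
            z = max(-30.0, min(30.0, fuse(w, row)))  # guard against overflow
            p = 1.0 / (1.0 + math.exp(-z))
            err = class_weight[y] * (p - y)
            grad[0] += err
            for i, x in enumerate(row, start=1):
                grad[i] += err * x
        w = [wi - step * gi for wi, gi in zip(w, grad)]
    return w

# Hypothetical log LRs from three procedures (lexical, char, token).
train_rows = [
    [2.0, 1.0, 0.5], [1.5, -0.2, 0.8], [0.3, 0.9, -0.1], [-0.4, 0.2, -0.3],
    [-1.8, -0.7, -0.5], [-0.9, 0.3, -1.1], [0.2, -1.2, -0.3], [0.8, 0.4, 0.3],
]
train_labels = [1, 1, 1, 1, 0, 0, 0, 0]
weights = train_fusion(train_rows, train_labels)
print(fuse(weights, [1.2, 0.4, 0.6]))
```

In a real experiment the weights would be fitted on a development set and applied to separate test trials, so that the fused LRs are not evaluated on the data used to train the fusion.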

Table 2: Research Reagent Solutions for FTC Experiments

| Reagent (Data & Model) | Function in FTC |

|---|---|

| Reference Text Corpus | A collection of texts from a population of authors; provides background data for estimating the typicality of a writing style under Hd [1]. |

| Dirichlet-Multinomial Model | A statistical model used for calculating LRs from count-based linguistic data (e.g., word frequencies, n-grams) [1]. |

| Multivariate Kernel Density (MVKD) Formula | A procedure for estimating LRs by modelling a set of messages as a vector of continuous-valued authorship attribution features [7]. |

| Logistic Regression Fusion | A robust technique for combining the quantitative output (LRs) from multiple, independent analysis procedures into a single, more powerful LR [7]. |

System Fusion Workflow

The following diagram illustrates the integrated workflow for a fused FTC system, from data preparation to the final fused LR.

Fused FTC System Architecture

Validation Framework for Real-World Application

For an FTC methodology to be scientifically defensible, it must undergo rigorous empirical validation that mirrors real-world conditions.

Essential Validation Requirements

Validation must fulfill two core requirements [1]:

- Reflect Case Conditions: The experimental design must replicate the specific challenges present in the case under investigation, such as topic mismatch, genre differences, or message length.

- Use Relevant Data: The data used for validation must be relevant to the case. This includes similarity in language, medium (e.g., SMS, email), and topic.

Validation Workflow Protocol

The following protocol outlines the key stages in a robust validation process for an FTC system.

FTC System Validation Process

Topic mismatch and variable writing styles present significant challenges to the reliability of Forensic Text Comparison. Overcoming these challenges requires a methodical approach centered on condition-specific validation and evidence fusion. By implementing the experimental protocols and validation framework outlined in this application note, researchers can develop more robust, transparent, and scientifically defensible FTC systems. The use of the LR framework, combined with fused feature sets and rigorous validation against relevant data, provides a path toward demonstrably reliable authorship analysis that meets the stringent demands of the legal context.

The Role of Quantitative Measurements and Statistical Models in Modern FTC

Forensic Text Comparison (FTC) has undergone a significant transformation, moving from qualitative, opinion-based analysis to a quantitative, data-driven scientific discipline. This paradigm shift is characterized by the adoption of quantitative measurements, statistical models, and rigorous validation frameworks, bringing FTC in line with other forensic comparative sciences [1]. The emergence of forensic data science represents a new paradigm in which methods based on human perception and subjective judgment are replaced with methods based on relevant data, quantitative measurements, and statistical models [15]. These approaches are not only transparent and reproducible but also intrinsically resistant to cognitive bias, addressing longstanding criticisms regarding the validation of traditional forensic linguistic approaches [1].

Central to this evolution is the Likelihood Ratio (LR) framework, increasingly recognized as the logically and legally correct approach for evaluating forensic evidence, including textual evidence [8] [7]. The LR provides a quantitative statement of the strength of evidence, allowing forensic scientists to communicate the probative value of their findings without encroaching on the ultimate issue reserved for the trier of fact [1]. This article details the application notes and protocols underpinning modern FTC systems, with particular emphasis on fusion techniques that combine multiple analytical procedures to enhance the reliability and discriminatory power of forensic text analysis.

Theoretical Foundation: The Likelihood Ratio Framework

The Likelihood Ratio framework provides a logically sound structure for evaluating the strength of forensic text evidence. It is formally expressed as:

LR = p(E|Hp) / p(E|Hd) [1]

Where:

- E represents the forensic text evidence under examination

- Hp is the prosecution hypothesis (typically that the same author produced both the questioned and known documents)

- Hd is the defense hypothesis (typically that different authors produced the documents) [1]

The LR quantitatively expresses how much more likely the evidence is under one hypothesis compared to the other. An LR greater than 1 supports Hp, while an LR less than 1 supports Hd. The further the LR is from 1, the stronger the evidence [1]. This framework logically updates the fact-finder's prior beliefs through Bayes' Theorem, ensuring a scientifically defensible and transparent interpretation of evidence [1].

Quantitative Features in Forensic Text Comparison

Modern FTC systems employ diverse quantitative features to capture an author's unique stylistic patterns. The research indicates that combining multiple feature types through fusion techniques significantly enhances system performance [8] [12].

Table 1: Quantitative Features Used in Forensic Text Comparison

| Feature Category | Specific Examples | Measurement Approach | Application in FTC |

|---|---|---|---|

| Lexical & Syntactic Features | Vocabulary richness, average token number per message line, uppercase character ratio [7] | Multivariate analysis using Kernel Density formulas [8] | Captures author-specific patterns in word usage and basic writing style [7] |

| Token N-Grams | Recurring sequences of words [8] | Frequency-based statistical models | Identifies habitual phrases and common word combinations [8] |

| Character N-Grams | Recurring character sequences [8] | Frequency-based statistical models | Captures sub-word patterns, spelling habits, and morphological preferences [8] |

System Fusion Techniques and Performance

Fusion techniques integrate results from multiple analytical procedures to produce a single, more robust likelihood ratio. Logistic regression fusion has proven particularly effective in FTC applications, demonstrating consistent performance improvements over individual procedures [8] [12].

Table 2: Performance Comparison of Single Procedure vs. Fused FTC Systems

| System Configuration | Sample Size (Tokens) | Performance Metric (Cllr) | Key Findings |

|---|---|---|---|

| MVKD Procedure Only | 1500 | Not specified (Best performer among singles) | MVKD with authorship attribution features performed best in terms of Cllr among single procedures [8] |

| Fused System | 1500 | 0.15 [8] | The fused system outperformed all three single procedures; fusion most beneficial with smaller samples (500-1500 tokens) [8] |

The empirical evidence demonstrates that fusion is particularly advantageous in casework where data scarcity is a recurring challenge [8]. The performance improvement stems from the system's ability to leverage complementary strengths of different feature types, creating a more robust and reliable author verification system.

Experimental Protocols and Workflows

Core FTC Experimental Protocol

The following workflow outlines the standard experimental procedure for a fused forensic text comparison system:

Protocol Steps

- Data Preparation and Text Preprocessing: Collect known and questioned text samples. For chatlog analysis, manually check and transform messages into computer-readable format [7]. Control for variables such as topic, genre, and register to ensure valid comparisons [1].

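- Feature Extraction: Convert texts into quantitative feature vectors using multiple procedures: MVKD with lexical and syntactic authorship attribution features, word token N-grams, and character N-grams [8].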

- Individual LR Estimation: Calculate likelihood ratios separately using each feature type. Apply appropriate statistical models for each procedure (e.g., Dirichlet-multinomial model for N-grams) [1].

- Logistic Regression Fusion: Input the LRs from all procedures into a logistic regression model to obtain a single, fused LR for each author comparison [8]. This technique is robust and has been successfully applied in various forensic comparison systems [7].

- Calibration and Validation: Assess system performance using the log-likelihood-ratio cost (Cllr) and visualize results with Tippett plots [8]. Apply validation methods such as the Empirical Lower and Upper Bound (ELUB) to address unrealistically strong LRs [8].

- Expert Reporting: Present the fused LR with appropriate explanations of its meaning and limitations, ensuring clear communication to the trier of fact without addressing the ultimate issue [1].
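The Tippett plot named in the Calibration and Validation step can be prepared from two lists of LRs. The sketch below computes the plotted proportions in plain Python, assuming one common convention: for same-author trials, the proportion with log10 LR at or above each threshold; for different-author trials, the proportion at or below it. Conventions differ between publications, and the function name and example values are illustrative.

```python
import math

def tippett_curves(same_author_lrs, diff_author_lrs, thresholds):
    """Return (threshold, prop_same, prop_diff) tuples for plotting."""
    same_logs = [math.log10(x) for x in same_author_lrs]
    diff_logs = [math.log10(x) for x in diff_author_lrs]
    curves = []
    for t in thresholds:
        # Same-author LRs at or above the threshold.
        prop_same = sum(v >= t for v in same_logs) / len(same_logs)
        # Different-author LRs at or below the threshold.
        prop_diff = sum(v <= t for v in diff_logs) / len(diff_logs)
        curves.append((t, prop_same, prop_diff))
    return curves

# Hypothetical LRs; one trial in each set points the wrong way.
same = [100.0, 10.0, 0.5, 1000.0]
diff = [0.01, 0.1, 2.0, 0.001]
for row in tippett_curves(same, diff, [-1.5, 0.0, 1.5]):
    print(row)
```

At a threshold of 0 (LR = 1), one minus each proportion gives the rate of LRs that support the wrong hypothesis for that set of trials.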

The Scientist's Toolkit: Research Reagents and Materials

Table 3: Essential Research Reagents for FTC System Development

| Tool/Resource | Specification | Application in FTC |

|---|---|---|

| Forensic Text Database | 115+ authors; predatory chatlog messages; 500-2500 token samples [8] | Provides realistic, forensically relevant data for system development and validation |

| Multivariate Kernel Density (MVKD) | Formula for modeling feature vectors [8] [7] | Estimates LRs from lexical and syntactic authorship attribution features |

| N-gram Models | Word token-based and character-based N-grams [8] | Captures sequential linguistic patterns at different granularities |

| Logistic Regression Fusion | Calibration and fusion technique [8] [12] | Robust method for combining multiple LR streams into a single, more accurate output |

| Performance Validation Metrics | Log-Likelihood-Ratio Cost (Cllr); Tippett plots [8] [1] | Objective assessment of LR system quality and discriminability |

| Calibration Methods | Bi-Gaussianized calibration [15] | Advanced technique for improving LR calibration and interpretation |

Validation Framework and Future Directions

Empirical validation remains critical for admissible FTC evidence. Validation must replicate case conditions using relevant data, particularly addressing challenging factors like topic mismatch between questioned and known documents [1]. The following conceptual diagram illustrates the essential elements of the new forensic data science paradigm:

Essential future research includes determining specific casework conditions that require validation, establishing what constitutes relevant data for casework, and defining quality and quantity thresholds for validation data [1]. These developments will contribute significantly to making scientifically defensible and demonstrably reliable FTC available to the justice system.

Implementing Fusion: From Individual Features to Integrated Systems

Within the broader research on forensic text comparison system fusion techniques, the Multivariate Kernel Density (MVKD) procedure represents a foundational methodology for quantifying the strength of evidence. This approach operates within the logically rigorous likelihood ratio (LR) framework, which is increasingly held as the standard for evaluating forensic evidence [7]. The MVKD procedure with lexical features enables the calculation of a likelihood ratio by comparing the probability of observing a questioned text under competing prosecution and defense hypotheses [7]. This document provides detailed application notes and experimental protocols for implementing this procedure, serving researchers and forensic scientists engaged in authorship attribution of electronic communications such as chatlogs, emails, and SMS messages.

Quantitative Performance Data

The performance of the MVKD procedure has been empirically evaluated against other methods, such as those based on character N-grams, using metrics like the log-likelihood-ratio-cost (Cllr) [16]. The following tables summarize key quantitative findings from comparative studies.

Table 1: Comparative Performance (Cllr) of MVKD vs. N-gram Procedures for Different Token Sizes

| Token Size | MVKD Procedure (Cllr) | Character N-gram Procedure (Cllr) | Token N-gram Procedure (Cllr) | Fused System (Cllr) |

|---|---|---|---|---|

| 500 | 0.34 | 0.66 | 0.51 | 0.22 |

| 1000 | 0.22 | 0.53 | 0.38 | 0.16 |

| 1500 | 0.18 | 0.46 | 0.32 | 0.15 |

| 2500 | 0.16 | 0.40 | 0.27 | 0.14 |

Source: Adapted from [7] and [4]. Lower Cllr values indicate better system performance.

Table 2: Core Lexical Feature Set for MVKD in Forensic Text Comparison

| Feature Category | Specific Features & Descriptions |

|---|---|

| Lexical Richness | Vocabulary richness (e.g., Type-Token Ratio) |

| Message Length | Average number of tokens per message line |

| Character Usage | Ratio of upper-case characters; digit and punctuation frequency |

| Structural Features | Word length distribution; sentence/line complexity |

Source: Summarized from [7].

Experimental Protocols

Data Collection and Preparation Protocol

- Source Material: Acquire chatlog messages from known authors. In the referenced study, data consisted of real chatlog communications between later-sentenced paedophiles and undercover police officers in the US, obtained from a public archive (http://pjfi.org/) [7].

- Data Curation: Manually check and transform messages from each author into a computer-readable format. This step is crucial for data integrity [7].

- Author Selection: Select a cohort of authors for analysis. The referenced study used messages from 115 authors [4].

- Sample Sizing: For each author, create message groups of varying token sizes (e.g., 500, 1000, 1500, and 2500 tokens) to model the effect of data quantity on system performance [7].
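The sample-sizing step can be sketched as follows. This is only one possible reading of "message groups of varying token sizes": whole messages are accumulated until the group reaches the target token count, and any incomplete trailing group is discarded. Whitespace tokenization and the function name are assumptions made for the illustration.

```python
def group_messages(messages, target_tokens):
    """Group consecutive messages until each group has >= target_tokens."""
    groups, current, count = [], [], 0
    for message in messages:
        current.append(message)
        count += len(message.split())  # whitespace tokenization (assumed)
        if count >= target_tokens:
            groups.append(current)
            current, count = [], 0
    # Messages left in `current` form an incomplete group and are dropped.
    return groups

# Toy messages with a tiny target; real groups would use 500-2500 tokens.
messages = ["a b c", "d e", "f g h i", "j"]
print(group_messages(messages, 5))
```

Running the same routine with each target size (500, 1000, 1500, 2500) on an author's messages would yield the sample sets needed to study the effect of data quantity.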

Feature Extraction Protocol

- Input: Prepared text samples (message groups) from known suspects/offenders and anonymous authors [7].

- Procedure: For each text sample, calculate a vector of lexical features. The specific features used in the foundational research include [7]:

- Vocabulary richness features.

- The average token number per message line.

- Upper-case character ratio.

- Additional features as detailed in Table 2 of this document.

- Output: A multivariate dataset where each author's text sample is represented by a numerical vector of the extracted lexical features.

Likelihood Ratio Calculation Protocol using MVKD

- Objective: To compute the likelihood ratio for a forensic text comparison.

- Hypotheses:

- Prosecution Hypothesis (Hp): The suspect and the offender are the same person.

- Defense Hypothesis (Hd): The suspect and the offender are different people [7].

- Calculation: The LR is the ratio of the probability of observing the evidence (the linguistic data) under Hp versus under Hd.

- MVKD Implementation: The MVKD procedure is used to model the distribution of the multivariate lexical feature vectors in the population of potential authors and to estimate the required probabilities for the LR calculation [16] [7].

- Formula: The likelihood ratio can be expressed as LR = p(E | Hp) / p(E | Hd), where E represents the evidence (the linguistic features of the text) [7].

Workflow Visualization

MVKD Forensic Text Comparison Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Reagents for MVKD-based Forensic Text Comparison

| Item Name | Function/Description | Application Note |

|---|---|---|

| Chatlog Database | A curated corpus of electronic messages from known authors. | Serves as the population data for modeling feature distributions. The Perverted Justice Foundation Inc. (PJFI) archive has been used in foundational studies [7]. |

| Lexical Feature Set | A defined vector of computable text features. | Enables quantitative representation of authorship style. Includes metrics for lexical richness, message length, and character usage [7]. |

| MVKD Software | Computational implementation of the Multivariate Kernel Density formula. | The core engine for calculating probability densities of feature vectors under competing hypotheses [16] [17]. |

| Log-Likelihood-Ratio Cost (Cllr) | A gradient metric for assessing the quality of calculated LRs. | The primary performance indicator for the system; lower values signify better discrimination and calibration [16] [7] [4]. |

| Logistic Regression Model | A statistical model for fusing LRs from multiple procedures. | Used to combine evidence from MVKD, token N-grams, and character N-grams into a single, more robust LR [7] [4]. |

This document details the application of Word Token-Based N-grams as an individual procedure within a broader research framework focused on fusing multiple forensic text comparison (FTC) systems. The fusion of distinct computational linguistic procedures has been demonstrated to yield superior performance over any single method, creating a more robust and reliable system for authorship analysis [8] [12]. This protocol describes the role, implementation, and evaluation of the word n-gram procedure, which captures an author's lexical and syntactic preferences by analyzing contiguous sequences of words.

Performance in a Fused System

In the context of fused forensic text comparison, individual procedures are evaluated both on their standalone performance and on their contribution to a combined system. The quantitative performance of a word n-gram system, alongside other procedures, is typically assessed using the log-likelihood-ratio cost (Cllr). Lower Cllr values indicate a more accurate and discriminating system.

The table below summarizes example performance metrics from a fused FTC study, illustrating the comparative performance of individual procedures and the performance gain achieved through fusion.

Table 1: Performance Comparison of Individual Procedures and a Fused System (Example Data from a Chatlog Message Study using 1500 Tokens)

| System / Procedure | Cllr Value | Relative Performance |

|---|---|---|

| MVKD (with authorship features) | ~0.19 (Inferred) | Best performing single procedure |

| Word Token-Based N-grams | ~0.27 (Inferred) | Mid-performing single procedure |

| Character-Based N-grams | ~0.32 (Inferred) | Lower-performing single procedure |

| Logistic-Regression Fused System | 0.15 | Outperforms all single procedures |

Interpretation: The fused system achieves a lower Cllr (0.15) than any of the individual procedures, demonstrating that the strengths of the word n-gram method, combined with the strengths of other procedures, create a more powerful and reliable FTC system [8] [4]. The fusion is particularly beneficial when data is scarce (e.g., 500-1500 tokens) [7].

Experimental Protocol for Word Token-Based N-gram Analysis

This protocol provides a step-by-step methodology for implementing a word token-based n-gram procedure for forensic text comparison.

Text Preprocessing and Data Preparation

- Data Collection: Gather the relevant text corpora. This includes the questioned document(s) (Q) and the known documents (K) from a suspect. For validation, a large, representative background corpus (B) is also required to model the population of potential authors [1].

- Text Normalization:

- Convert the entire text of each document to lowercase to ensure consistency.

- Remove or standardize non-lexical elements (e.g., URLs, email addresses, excessive punctuation), though the retention of certain stylistic markers can be configuration-dependent.

- Tokenization: Split the text into individual word tokens. This involves separating words based on spaces and punctuation.

- Stop Word Filtering (Optional): In many stylometric applications, very common function words (e.g., "the", "and", "of") are removed [18]. In forensic text comparison, however, these words can be highly discriminative because authors use them largely unconsciously, so the decision to filter them should be empirically validated.

- n-gram Generation: Using the tokenized text, generate contiguous sequences of n words.

- For example, from the sentence "The quick brown fox," the bigrams (2-grams) would be "the quick", "quick brown", "brown fox".

- The value of n (e.g., 1 for unigrams, 2 for bigrams, 3 for trigrams) is a key parameter to optimize.
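The normalization, tokenization, and n-gram generation steps above can be sketched in a few lines of Python. The regular expression used for tokenization is a simplifying assumption (it keeps letters, digits, and apostrophes); a production system would more likely use a dedicated tokenizer.

```python
import re
from collections import Counter

def word_ngrams(text, n):
    """Lowercase, tokenize, and return the list of word n-grams."""
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

bigrams = word_ngrams("The quick brown fox,", 2)
print(bigrams)
# Frequencies of each n-gram, ready to become a feature vector.
print(Counter(word_ngrams("the cat saw the cat", 2)))
```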

Feature Extraction and Model Training

- Feature Vector Creation: For each set of documents (Q, K, and the background corpus B), create a feature vector representing the frequency of each unique n-gram found in the text.

- Feature Selection: Due to the high dimensionality of n-gram feature spaces, it is often necessary to select the most discriminative features. This can be done by:

- Retaining the most frequent k n-grams across the background corpus.

- Using a statistical measure to identify n-grams that best distinguish authors.

- Model Estimation: Use a statistical model to estimate the probability of the evidence (the n-gram features) under two competing hypotheses. A common approach is the Dirichlet-multinomial model [1].

- Similarity (Prosecution Hypothesis, Hp): Calculate the probability of the n-gram features in the questioned document given the model of the known documents by the suspect, p(E | Hp).

- Typicality (Defense Hypothesis, Hd): Calculate the probability of the same n-gram features given a model built from the background corpus of many other authors, p(E | Hd).
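One simple way to turn the similarity and typicality terms into numbers is a Dirichlet-multinomial model in which the background corpus sets the prior pseudo-counts and the known documents update them. The sketch below follows that simplified scheme and is not necessarily the formulation used in [1]; the concentration parameter, the add-one smoothing, and the function names are assumptions made for the illustration.

```python
import math

def dm_log_likelihood(counts, alphas):
    """Dirichlet-multinomial log-likelihood of n-gram counts.

    The multinomial coefficient is omitted because it cancels in the LR.
    """
    total_alpha = sum(alphas)
    total_count = sum(counts)
    ll = math.lgamma(total_alpha) - math.lgamma(total_alpha + total_count)
    for x, a in zip(counts, alphas):
        ll += math.lgamma(a + x) - math.lgamma(a)
    return ll

def dm_log_lr(questioned, known, background, concentration=10.0):
    """Natural-log LR for questioned counts, given known and background."""
    # Prior pseudo-counts from smoothed background relative frequencies.
    total_bg = sum(background) + len(background)
    prior = [concentration * (b + 1) / total_bg for b in background]
    # Hp: the suspect's model is the prior updated with the known counts.
    suspect = [a + k for a, k in zip(prior, known)]
    # Hd: a typical author is represented by the prior alone.
    return dm_log_likelihood(questioned, suspect) - dm_log_likelihood(questioned, prior)

# Toy counts over a three-n-gram vocabulary (hypothetical values).
background = [50, 30, 20]
known = [2, 18, 0]
print(dm_log_lr([1, 9, 0], known, background))  # resembles the known texts
print(dm_log_lr([8, 0, 2], known, background))  # resembles the background
```

The first questioned sample shares the suspect's unusual preference for the second n-gram and yields a positive log LR; the second follows the background distribution more closely and yields a negative one.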

Likelihood Ratio Calculation and Fusion

- LR Calculation: Compute the Likelihood Ratio (LR) as the ratio of the two probabilities.

LR = p(E | Hp) / p(E | Hd)

An LR > 1 supports the prosecution hypothesis (same author), while an LR < 1 supports the defense hypothesis (different authors) [7] [1].

- Calibration (Optional): The raw output scores from the model may need to be transformed into well-calibrated LRs using a technique like logistic regression [1].

- Fusion: The LRs from the word n-gram procedure are combined with the LRs from other independent procedures (e.g., character n-grams, MVKD) using a logistic-regression fusion technique to produce a single, more robust LR for the evidence [8] [12].

The following workflow diagram illustrates the entire experimental protocol.

The Researcher's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Word N-gram Analysis

| Item / Tool | Function / Description | Application Note |

|---|---|---|

| Forensic Text Corpus | A collection of texts from known authors, used for modeling and validation. | Must be relevant to case conditions (e.g., topic, genre, medium). Predatory chatlogs [8] and SMS messages [12] have been used. |

| Background Population Corpus | A large, representative corpus of texts from many authors. | Models the population for the defense hypothesis (Hd) and is critical for estimating typicality [1]. |

| Tokenization Tool (e.g., NLTK) | Software library to split text into word tokens. | The Natural Language Toolkit (NLTK) in Python is a standard for this task [19]. |

| Statistical Computing Environment (e.g., R, Python) | Platform for implementing statistical models and calculations. | Used for building the Dirichlet-multinomial or other models and calculating probabilities and LRs [1]. |

| Likelihood Ratio (LR) Framework | The logical and legal framework for evaluating evidence strength. | Quantifies the strength of evidence for one hypothesis over another (e.g., same author vs. different authors) [7] [1]. |

| Logistic Regression Calibration & Fusion | A technique to convert model scores to calibrated LRs and fuse multiple LRs. | Critical for combining the output of the word n-gram procedure with other systems (e.g., character n-grams, MVKD) [8] [12]. |

| Evaluation Metric (Cllr) | The log-likelihood-ratio cost, a metric for LR system performance. | The primary metric for assessing the validity and reliability of the procedure; lower values indicate better performance [8] [4]. |

Within the framework of forensic text comparison system fusion techniques, character-based n-grams provide an exceptionally granular approach for analyzing textual evidence. Unlike word-level models that rely on complete lexical units, character n-grams identify sequences of consecutive characters, enabling the detection of subtle author-specific patterns, habitual misspellings, morphological variations, and other distinctive features that remain persistent across documents [20] [21]. This methodology proves particularly valuable for forensic analysis of short texts—such as threatening messages, social media posts, or smeared documents—where word-level models suffer from data sparsity and insufficient contextual information [20] [22]. By operating at the sub-word level, character n-grams capture stylistic consistencies that are largely unconscious and difficult for authors to disguise, thereby offering robust features for distinguishing between individuals in forensic authorship attribution.

The integration of character n-gram analysis into multimodal fusion frameworks represents a significant advancement for forensic science. As demonstrated in computer vision and natural language processing research, fusion techniques that combine multiple feature types and analysis levels substantially improve pattern recognition accuracy and system robustness [23] [24] [22]. Similarly, in forensic text comparison, fusing character-level patterns with word-level, syntactic, and semantic features creates a more comprehensive representation of authorship style, enhancing the discriminative power of comparison systems while mitigating the limitations inherent in any single analytical approach.

Research Reagent Solutions

Table 1: Essential Research Reagents and Computational Tools for Character-Based N-gram Analysis

| Reagent/Tool | Type/Function | Forensic Application |

|---|---|---|

| Text Preprocessing Pipeline | Normalization, cleaning, and encoding standardization | Ensures consistent analysis by handling variations in formatting, punctuation, and character encoding across evidentiary documents |

| N-gram Tokenization Library (e.g., tidytext R package [21]) | Generates contiguous character sequences of length n from raw text | Extracts foundational character-level features for subsequent pattern analysis and model development |

| Feature Weighting Algorithms (e.g., TF-IWF [20]) | Calculates term frequency-inverse word frequency weights | Identifies discriminative character sequences by emphasizing patterns frequent in a specific document but rare across the corpus |

| Dimensionality Reduction Methods (e.g., PCA, autoencoders) | Projects high-dimensional n-gram features into lower-dimensional space | Addresses the "curse of dimensionality" and enhances computational efficiency for comparison tasks |

| Similarity/Distance Metrics (e.g., cosine similarity, Jaccard index) | Quantifies the resemblance between document feature vectors | Provides quantitative measures for assessing authorship similarity in forensic comparisons |

| Fusion Framework (e.g., static linear or dynamic fusion [20]) | Integrates character n-gram features with other linguistic evidence | Creates a robust, multi-feature decision system that improves attribution accuracy and reliability |

Experimental Protocol for Forensic Analysis

Procedure: Character N-gram Feature Extraction and Comparison

Objective: To generate and compare character-based n-gram profiles from questioned and known writing samples for authorship attribution.

Materials and Reagents:

- Digital text documents (questioned and known specimens)

- Computational environment with necessary libraries (e.g., R with tidytext [21], Python with scikit-learn)

- Text preprocessing tools

Methodology:

Text Preprocessing: Normalize all documents by converting to lowercase, removing extraneous whitespace, and standardizing punctuation. Retain all alphanumeric characters, as selective removal may discard forensically significant patterns [20].

N-gram Generation: Utilize a tokenization library to decompose each document into overlapping sequences of n consecutive characters. For languages with alphabetic systems, empirically test values of n between 3 and 5 to balance specificity and generalizability [21].

Feature Vector Construction:

- Calculate the frequency of each unique character n-gram within every document.

- Apply a feature weighting scheme like TF-IWF (Term Frequency-Inverse Word Frequency) to emphasize n-grams that are common in a specific document but rare in the overall corpus [20]. This helps highlight author-specific patterns.

- Construct a document-feature matrix where rows represent documents and columns represent the weighted frequencies of each character n-gram.

Similarity Analysis:

- Compute pairwise similarity scores (e.g., cosine similarity) between the questioned document's feature vector and the feature vectors of all known specimens.

- Rank known specimens based on their similarity scores to the questioned document to identify potential authors.
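The extraction, weighting, and ranking steps above can be sketched in plain Python. This is a minimal illustration, not the cited authors' implementation: the function names, the toy messages, and the smoothed TF-IDF-style weighting (used here as a stand-in for the weighting scheme named in the procedure) are all assumptions of the sketch.

```python
import math
import re
from collections import Counter


def normalize(text):
    """Lowercase and collapse runs of whitespace (the preprocessing step)."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def char_ngrams(text, n=3):
    """Count overlapping character n-grams in the normalized text."""
    text = normalize(text)
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def weighted_vectors(docs, n=3):
    """Return one sparse {ngram: weight} vector per document.

    Assumption: a smoothed TF-IDF-style weight stands in for the
    weighting scheme described in the procedure.
    """
    counts = {name: char_ngrams(text, n) for name, text in docs.items()}
    doc_freq = Counter(g for c in counts.values() for g in c)
    n_docs = len(docs)
    vectors = {}
    for name, c in counts.items():
        total = sum(c.values())
        vectors[name] = {
            g: (tf / total) * (math.log((1 + n_docs) / (1 + doc_freq[g])) + 1)
            for g, tf in c.items()
        }
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse vectors."""
    dot = sum(w * v.get(g, 0.0) for g, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def rank_known(questioned, known, n=3):
    """Rank known specimens by similarity to the questioned document."""
    docs = dict(known)
    docs["__questioned__"] = questioned
    vecs = weighted_vectors(docs, n)
    q = vecs.pop("__questioned__")
    scores = {name: cosine(q, v) for name, v in vecs.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical specimens, invented for illustration only.
known = {
    "author_a": "c u l8r m8, gonna b late 2nite!!",
    "author_b": "I shall see you later this evening; I may be delayed.",
}
ranking = rank_known("gonna c u 2nite m8, dont b late!!", known)
```

Here `ranking` is a list of (specimen, similarity) pairs in descending order. A ranking of this kind is only a similarity score; it is not yet a likelihood ratio and would still need calibration before being reported as evidential strength.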

Procedure: Multi-Feature Fusion for Authorship Attribution

Objective: To integrate character n-gram features with word-level semantic features to create a more robust forensic text comparison system.

Materials and Reagents:

- Extracted character n-gram features (from Procedure 3.1)

- Word-level semantic features (e.g., from Word2Vec or BERT models [20] [22])

- Fusion framework (static linear or dynamic)

Methodology:

Feature-Level Fusion: Implement one of two primary fusion strategies to combine evidence [20]:

- Static Linear Fusion: Create a unified feature vector by concatenating the character n-gram vector and the word-level semantic vector, potentially applying fixed weights to each feature type:

F_fused = α * F_ngram + β * F_semantic

- Dynamic Fusion: Develop an adaptive model where the fusion weights (α, β) are not fixed but are determined based on the characteristics of the input text (e.g., its length or thematic content). This allows the system to rely more heavily on character n-grams for very short texts and on semantic features for longer, more contextual documents [20].
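The two strategies can be contrasted in a short Python sketch. It reads the static formula as a weighted concatenation of the two (L2-normalized) feature blocks, which is one possible interpretation; the length-based weighting rule and its `pivot` parameter are hypothetical and are not taken from [20].

```python
def l2_normalize(vec):
    """Scale a vector to unit length so neither block dominates by size."""
    norm = sum(x * x for x in vec) ** 0.5
    return [x / norm for x in vec] if norm else list(vec)


def static_fusion(f_ngram, f_semantic, alpha=0.5, beta=0.5):
    """Weighted concatenation with fixed weights alpha and beta."""
    return ([alpha * x for x in l2_normalize(f_ngram)]
            + [beta * x for x in l2_normalize(f_semantic)])


def dynamic_weights(n_tokens, pivot=50):
    """Hypothetical rule: the shorter the text, the larger the n-gram weight."""
    alpha = pivot / (pivot + n_tokens)
    return alpha, 1.0 - alpha


def dynamic_fusion(f_ngram, f_semantic, n_tokens, pivot=50):
    """Same concatenation, but with weights chosen from the text length."""
    alpha, beta = dynamic_weights(n_tokens, pivot)
    return static_fusion(f_ngram, f_semantic, alpha, beta)


# Toy vectors, invented for illustration.
fused_short = dynamic_fusion([3.0, 4.0], [1.0, 0.0, 0.0], n_tokens=10)
fused_long = dynamic_fusion([3.0, 4.0], [1.0, 0.0, 0.0], n_tokens=500)
```

With this rule a 10-token message places most of its weight on the character n-gram block, while a 500-token document places most of it on the semantic block. In practice the mapping from text properties to weights would be learned or tuned on validation data rather than fixed by hand.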

Model Training and Validation: Train a classifier (e.g., SVM, neural network) on the fused feature vectors from a training corpus of known authorship. Validate the model's performance using a separate test set, employing metrics such as accuracy, precision, and recall, with a particular focus on its ability to correctly attribute authorship of short texts [22].
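The validation metrics named above can be computed per candidate author as in the following minimal sketch. The labels are invented for illustration, and the helper name `attribution_metrics` is an assumption of this example.

```python
def attribution_metrics(y_true, y_pred, positive):
    """Accuracy overall, plus precision and recall for one candidate author."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    return {
        "accuracy": correct / len(pairs),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# Hypothetical held-out test set: true authors and model attributions.
y_true = ["A", "A", "B", "B", "A", "B"]
y_pred = ["A", "B", "B", "A", "A", "B"]
metrics = attribution_metrics(y_true, y_pred, positive="A")
```

In a forensic setting the false positives counted in `fp` (texts wrongly attributed to the candidate author) usually deserve the closest scrutiny, so precision is often reported alongside, not instead of, overall accuracy.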

Data Analysis and Interpretation

Table 2: Quantitative Performance Comparison of Text Representation Methods on Classification Tasks

| Representation Method | Feature Type | Reported Accuracy on Short Texts | Key Advantages for Forensic Analysis |
|---|---|---|---|
| Bag-of-Words (BoW) | Word-level | Baseline | Simple to implement, provides a basic lexical profile |
| Topic Models (LDA) | Global topic | Lower performance on short texts [20] | Captures document-level thematic content |
| Word Embeddings (Word2Vec) | Word-level semantic | Moderate [20] | Captures semantic relationships and contextual meaning |
| Character N-grams | Character-level | High for pattern recognition [21] | Resistant to lexicon variation, captures sub-word style |
| Fused Features (e.g., WWE + ETI) | Hybrid: Semantic + Topic | Highest [20] [22] | Combines strengths of multiple feature types, mitigates individual weaknesses |

The data from comparative studies strongly supports the fusion of feature types. Models relying on a single feature type, such as pure topic models, exhibit notable limitations when applied to short texts due to data sparsity [20]. Character n-grams address this sparsity directly by utilizing a much larger set of features derived from sub-word units. Furthermore, the successful application of weighted word embeddings and extended topic information demonstrates that emphasizing discriminative features and enriching context directly improves model performance [20]. In a forensic context, this translates to a higher confidence in attribution when multiple, complementary lines of textual evidence are combined.

Visualizations

[Figure: Workflow for Forensic Text Comparison]

[Figure: Character N-gram Analysis Process]

Application Notes

The primary application of character-based n-grams within forensic text comparison is resolving authorship of short, sparse texts, where traditional methods falter. This includes SMS messages, social media posts, graffiti, ransom notes, and forged documents. In one demonstrated methodology, a sliding window extension technique enriches the apparent context of a short text without altering its original word order or semantics, thereby providing a denser feature set for topic modeling and subsequent fusion with character-level patterns [20].

Successful implementation requires careful consideration of the fusion strategy. For operational environments where consistency is paramount, static linear fusion offers simplicity and reproducibility. For research or advanced casework involving diverse text types, dynamic fusion, which adapts weighting based on text properties like length, can optimize performance [20]. The fusion framework is analogous to those achieving state-of-the-art results in fine-grained image recognition, where combining features at multiple levels of granularity is essential for distinguishing between highly similar classes [23].

Forensic practitioners must validate their fused models on corpora representative of actual case material. Performance should be benchmarked against single-feature models to quantitatively demonstrate the added value of fusion, particularly focusing on reduction in false positive attributions. This rigorous, evidence-based approach ensures that character-based n-gram analysis and feature fusion meet the high standards of reliability required for forensic testimony.

The evaluation of forensic evidence is increasingly conducted within the Likelihood Ratio (LR) framework, which is recognized as a logically and legally sound method for expressing the strength of evidence [25]. This framework compares the probability of observing the evidence under two competing propositions, typically the prosecution hypothesis (H1) and the defence hypothesis (H2) [7]. The LR provides a transparent and balanced measure of evidential strength, overcoming the significant limitations of traditional binary classification methods that rely on arbitrary "cliff-edge" p-value cut-offs [25].

In complex forensic disciplines, multiple, independent forensic-comparison systems may analyze different characteristics of the same evidence. Logistic-regression fusion is a powerful statistical technique designed to combine the LRs or similarity scores from these multiple systems into a single, more robust, and better-calibrated LR [26]. This fused LR represents the combined strength of all available evidence, often resulting in improved system performance and greater discriminative power compared to any single system [12] [7]. This protocol details the application of logistic-regression fusion, with a specific focus on its role in advancing forensic text comparison system fusion techniques.

Theoretical Foundation

The Likelihood Ratio Framework

The Likelihood Ratio is the fundamental metric for evidence evaluation in modern forensic science. It is formally defined as:

LR = P(E|H1) / P(E|H2)

where P(E|H1) is the probability of observing the evidence (E) given that hypothesis H1 is true, and P(E|H2) is the probability of E given that H2 is true [25] [7].

The value of the LR quantitatively expresses the degree of support for one proposition over the other:

- An LR > 1 provides support for H1 over H2.

- An LR = 1 indicates the evidence is equally probable under both hypotheses and is therefore inconclusive.

- An LR < 1 provides support for H2 over H1 [25].

The magnitude of the LR can be interpreted using verbal scales, such as the one provided by the European Network of Forensic Science Institutes (ENFSI), which ranges from "weak support" to "extremely strong support" [25].
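As a small worked illustration of the definition and the three cases above, the following Python sketch computes an LR from two probabilities and reports which hypothesis it supports. The probability values are hypothetical, and no verbal-scale thresholds are encoded because the scale's boundaries are not given here.

```python
import math


def likelihood_ratio(p_e_given_h1, p_e_given_h2):
    """LR = P(E|H1) / P(E|H2)."""
    if p_e_given_h2 <= 0:
        raise ValueError("P(E|H2) must be positive")
    return p_e_given_h1 / p_e_given_h2


def supported_hypothesis(lr):
    """Direction of support: H1 if LR > 1, H2 if LR < 1, neither if LR = 1."""
    if lr > 1:
        return "H1"
    if lr < 1:
        return "H2"
    return "neither"


# Hypothetical values: the evidence is 40 times more probable under H1.
lr = likelihood_ratio(0.08, 0.002)
log10_lr = math.log10(lr)          # about 1.6; log scale is symmetric around 0
direction = supported_hypothesis(lr)
```

Working on the logarithmic scale is convenient because support for H1 and H2 becomes symmetric around zero: an LR of 40 and an LR of 1/40 have log10 values of equal magnitude and opposite sign.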

The Need for Fusion of Multiple Systems

A single type of analysis may provide only a partial view of the evidence. For instance, in forensic text comparison, an author's style can be captured through:

- Lexical features (e.g., vocabulary richness, word length distribution).

- Character N-grams (short sequences of characters capturing spelling habits).

- Token N-grams (sequences of words capturing phrase-level patterns) [7].

A system based solely on lexical features might miss syntactic patterns captured by token N-grams, and vice-versa. Using a single system risks overlooking valuable discriminatory information present in other feature types. Combining multiple systems mitigates this risk and leverages the complementary strengths of different analytical approaches.

The Role of Logistic Regression in Calibration and Fusion

Raw scores from forensic comparison systems, while indicative of similarity, are not directly interpretable as LRs. Their absolute values lack a probabilistic calibration [26]. Logistic regression is a robust and widely adopted method for converting these raw scores into well-calibrated LRs.

The procedure involves:

- Calibration: Transforming the output score from a single system into a log-likelihood ratio.

- Fusion: Combining the log-likelihood ratios from multiple systems into a single, fused log-likelihood ratio [26].

Logistic regression is suitable for this task because it directly models the posterior probability of a proposition (e.g., H1 being true) given the evidence, which can be algebraically rearranged to produce an LR [26] [7].
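The algebraic rearrangement can be written out explicitly. In the sketch below, s denotes the vector of scores from k systems and the β terms are the logistic-regression coefficients; the prior odds P(H1)/P(H2) are those implied by the training data (an assumption worth checking in any implementation).

```latex
\begin{align}
\log\frac{P(H_1 \mid s)}{P(H_2 \mid s)}
  &= \log\frac{p(s \mid H_1)}{p(s \mid H_2)} + \log\frac{P(H_1)}{P(H_2)}
  && \text{(Bayes' theorem in odds form)} \\
\log\frac{P(H_1 \mid s)}{1 - P(H_1 \mid s)}
  &= \beta_0 + \sum_{j=1}^{k} \beta_j s_j
  && \text{(logistic-regression model)} \\
\log \mathrm{LR}(s)
  &= \beta_0 + \sum_{j=1}^{k} \beta_j s_j - \log\frac{P(H_1)}{P(H_2)}
  && \text{(subtract the log prior odds)}
\end{align}
```

When the H1 and H2 comparisons are given equal total weight during training, the prior odds equal 1, the last term vanishes, and the linear predictor itself is the log-LR.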

Workflow and Signaling Pathway

The following diagram illustrates the logical sequence and data flow for applying logistic-regression fusion in a forensic context, from evidence processing to the final fused likelihood ratio.

Experimental Protocols

Protocol 1: Data Preparation and Feature Extraction for Forensic Text Comparison

This protocol outlines the initial steps for preparing text evidence and extracting features for multiple analysis systems.

1. Objective: To prepare a corpus of text messages and extract diverse feature sets suitable for calculating likelihood ratios from independent systems.

2. Materials:

- Text Corpus: A collection of text messages (e.g., chatlogs, SMS) from known authors. The corpus should be partitioned into a training set (for model development), a test set (for performance evaluation), and a background population set (for modelling the relevant population) [7].

- Computing Resources: A computer with sufficient processing power and memory for text analysis.

- Software: Programming environment (e.g., R, Python) with text processing libraries.

3. Procedure:

1. Data Cleaning and Tokenization:
   * Remove metadata and extraneous characters, but preserve orthographic features (e.g., "u" for "you") typical of informal text [7].
   * Split text into individual word tokens.

2. Feature Extraction for Multiple Systems:
   * System 1 (Lexical Features): Calculate a vector of features for each document/author. Example features include [7]:
      * Vocabulary richness (e.g., Type-Token Ratio).
      * Average sentence length (in tokens).
      * Ratio of function words to content words.
      * Character-level features (e.g., ratio of upper-case characters).
   * System 2 (Character N-grams):
      * Decompose the text into overlapping sequences of n consecutive characters (typical n=3-5).
      * Create a document-feature matrix representing the frequency of each character N-gram.
   * System 3 (Token N-grams):
      * Decompose the text into overlapping sequences of n consecutive word tokens (typical n=1-3).
      * Create a document-feature matrix representing the frequency of each token N-gram.

3. Data Partitioning:
   * Ensure the training, test, and background sets are mutually exclusive and representative of the population.
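The feature extraction for the three systems can be sketched as follows. This is a minimal illustration in plain Python: the function names and the two toy messages are assumptions, whitespace tokenization is a deliberate simplification that leaves informal spellings untouched, and only a subset of the lexical features listed above is computed.

```python
from collections import Counter


def tokenize(text):
    """Split on whitespace, keeping informal spellings such as 'u' intact."""
    return text.split()


def lexical_features(text):
    """System 1: a few lexical features (a subset, for illustration)."""
    tokens = tokenize(text)
    lowered = [t.lower() for t in tokens]
    letters = [ch for ch in text if ch.isalpha()]
    return {
        "type_token_ratio": len(set(lowered)) / len(tokens) if tokens else 0.0,
        "mean_token_length": sum(map(len, tokens)) / len(tokens) if tokens else 0.0,
        "uppercase_ratio": (sum(ch.isupper() for ch in letters) / len(letters)
                            if letters else 0.0),
    }


def char_ngram_counts(text, n=3):
    """System 2: counts of overlapping character n-grams."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def token_ngram_counts(text, n=2):
    """System 3: counts of overlapping token n-grams (as tuples of words)."""
    tokens = [t.lower() for t in tokenize(text)]
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def document_feature_matrix(count_list):
    """Rows are documents, columns are n-grams in sorted vocabulary order."""
    vocab = sorted({g for c in count_list for g in c})
    rows = [[c.get(g, 0) for g in vocab] for c in count_list]
    return vocab, rows


# Hypothetical messages, invented for illustration.
messages = ["u there? call me", "Call me when u r free"]
lexical = [lexical_features(m) for m in messages]
char_vocab, char_matrix = document_feature_matrix(
    [char_ngram_counts(m) for m in messages])
token_vocab, token_matrix = document_feature_matrix(
    [token_ngram_counts(m) for m in messages])
```

Each system yields its own representation of the same messages, which is what allows their scores to be calibrated and fused later. The vocabulary should be built from the training and background sets only, so that no information from the test set leaks into the feature space.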

Protocol 2: Training the Logistic-Regression Fusion Model

This protocol describes how to train a fusion model using scores from multiple systems.

1. Objective: To develop a logistic-regression model that fuses the log-LR outputs from multiple, independent forensic comparison systems.

2. Materials:

- Input Data: A set of log-Likelihood Ratios (or raw scores that have been converted to log-LRs) from k different systems for a series of known comparisons in the training set.

- Software: Statistical software capable of performing logistic regression (e.g., R, Python with scikit-learn).

3. Procedure:

1. Generate Input Scores: For each comparison i in the training set, obtain a vector of scores from the k systems. Let s_i = [s_i1, s_i2, ..., s_ik] be this vector, where each element s_ij (j = 1, ..., k) is ideally a log-LR. If systems output raw scores, a preliminary calibration step must be performed on each system's output separately [26].

2. Define Dependent Variable: Assign a binary label y_i for each comparison i:

* y_i = 1 for comparisons where H1 is true (e.g., same-origin).

* y_i = 0 for comparisons where H2 is true (e.g., different-origin).

3. Model Training: Fit a logistic regression model to the training data. The model predicts the probability that H1 is true, given the scores from the k systems [26] [7]:

P(H1 | s_i) = σ(β_0 + β_1*s_i1 + β_2*s_i2 + ... + β_k*s_ik)

where σ(.) is the logistic sigmoid function.

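4. Derive Fused Log-LR: The fused log-Likelihood Ratio for a new set of scores s_new is calculated from the model's linear predictor [26]. This reading assumes that the H1 and H2 comparisons carry equal total weight in training; otherwise the linear predictor is a log posterior odds, and the log prior odds implied by the training set must be subtracted from it: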

Fused log-LR(s_new) = β_0 + β_1*s_new1 + β_2*s_new2 + ... + β_k*s_newk

The final fused LR is obtained by exponentiation: Fused LR = exp(Fused log-LR).
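The training and fusion steps of this protocol can be sketched in plain Python. This is a minimal illustration under stated assumptions: the per-system log-LRs are invented toy values (natural-log scale), the model is fitted by simple gradient ascent, not by a library routine, and the two classes are weighted equally so that the linear predictor can be read directly as a log-LR.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fused_log_lr(beta, s):
    """Linear predictor: beta_0 + beta_1*s_1 + ... + beta_k*s_k."""
    return beta[0] + sum(b * x for b, x in zip(beta[1:], s))


def train_fusion(scores, labels, step=0.5, epochs=2000):
    """Fit beta by gradient ascent on a class-balanced log-likelihood.

    Each class contributes half of the total weight, so the prior odds
    implied by training are 1 and no prior correction is needed.
    """
    k = len(scores[0])
    n1 = sum(labels)
    n0 = len(labels) - n1
    weights = [0.5 / n1 if y == 1 else 0.5 / n0 for y in labels]
    beta = [0.0] * (k + 1)
    for _ in range(epochs):
        grad = [0.0] * (k + 1)
        for s, y, w in zip(scores, labels, weights):
            err = w * (y - sigmoid(fused_log_lr(beta, s)))
            grad[0] += err
            for j, x in enumerate(s, start=1):
                grad[j] += err * x
        beta = [b + step * g for b, g in zip(beta, grad)]
    return beta


# Hypothetical log-LRs from k = 2 systems for known comparisons.
train_scores = [
    [2.1, 1.0], [0.8, 1.9], [1.5, -0.2], [-0.3, 0.9], [1.2, 0.7], [-0.5, 0.2],
    [-1.8, -0.9], [-0.6, -2.2], [0.4, -1.1], [-1.0, 0.3], [0.6, 0.1],
]
train_labels = [1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0]  # 1 = H1 true, 0 = H2 true

beta = train_fusion(train_scores, train_labels)
new_log_lr = fused_log_lr(beta, [1.4, 0.9])
new_lr = math.exp(new_log_lr)
```

The fitted coefficients weight each system according to how much it contributes beyond the others, which is why fusion can outperform a simple sum of log-LRs when the systems are correlated. With real data, a library implementation with class weighting or an explicit prior-odds correction would normally replace the hand-written optimizer.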

Protocol 3: System Performance Evaluation

This protocol defines the methods for assessing the performance and validity of the fused LR system.