Forensic Text Comparison Methodology: Principles, Validation, and Applications for Scientific Research


Abstract

This article provides a comprehensive overview of Forensic Text Comparison (FTC), a scientific discipline for evaluating the strength of textual evidence. Aimed at researchers and scientists, it explores the foundational Likelihood Ratio framework for quantitative evidence evaluation, details core methodological approaches including feature-based and score-based systems, and addresses critical challenges like topic mismatch and data scarcity. The content emphasizes the necessity of rigorous empirical validation under case-relevant conditions and discusses performance benchmarking, offering insights into the application of these methodologies in scientific and investigative contexts.

The Foundations of Forensic Text Comparison: From Idiolect to the Likelihood Ratio Framework

Defining Forensic Text Comparison and the Concept of Idiolect

Forensic Text Comparison (FTC) is a scientific methodology within forensic linguistics that aims to evaluate the strength of the evidence for whether a specific individual authored a particular questioned text. It operates on the core premise that every individual possesses a unique and habitual language pattern, known as an idiolect [1] [2]. This technical guide explores the definition of idiolect, the methodological framework of FTC, and its application, providing researchers and scientists with a detailed overview of the current state of this interdisciplinary field.

The principle that an individual's language use is distinctive provides the theoretical foundation for applying linguistic analysis in legal and investigative contexts [1]. This review is situated within broader research on forensic text comparison methodology, which seeks to develop robust, reliable, and scientifically validated techniques for authorship analysis.

Core Concepts

The Concept of Idiolect

An idiolect is defined as an individual's unique and personal use of language. This encompasses their characteristic choices in vocabulary, grammar, and pronunciation [1] [2]. The term itself is derived from the Greek idio- (meaning 'own, personal') and -lect (from 'dialect') [1]. Crucially, an idiolect is not static; it evolves over a person's lifetime through experiences, such as learning new words or moving to a different geographical region [2].

In essence, while people within a speech community share a mutually intelligible language (a dialect), the specific way each person employs that language is unique to them. Idiolects represent the most granular level of linguistic variation, forming the building blocks of a language, which is itself a composite of mutually intelligible idiolects [1] [2].

Forensic Text Comparison

Forensic Text Comparison (FTC) is the practical application of idiolect theory in forensic science. It involves comparing a text of unknown authorship (the questioned text) with texts of known authorship from a suspect (the reference texts) [1]. The goal is to assess the strength of the evidence for whether the suspect authored the questioned text.

This process is analogous to other forensic comparative sciences. The analysis does not typically rely on a single, conspicuous marker but on a constellation of subtle, often subconscious, linguistic habits. These can include the use of prepositions, punctuation, and other features that an author does not consciously control [2]. FTC provides a framework for quantifying the degree of similarity or difference between these linguistic patterns.

Quantitative Features and Analytical Techniques

Forensic text comparison relies on the computational analysis of quantifiable linguistic features. The table below summarizes the primary categories of features and analytical techniques used in modern FTC research.

Table 1: Key Analytical Features and Techniques in Forensic Text Comparison

| Feature Category | Specific Examples | Analytical Technique | Function/Purpose |
|---|---|---|---|
| Lexico-Grammatical Features | Pronoun frequency, negations, sensory descriptions [3] | Multivariate Kernel Density (MVKD) [4] | Models an author's style as a vector of features for statistical comparison. |
| N-grams | Consecutive sequences of 'n' words or characters [3] [4] | N-gram Models [4] | Captures habitual phrases and syntactic patterns. |
| Psycholinguistic Features | Deception, emotion (anger, fear), subjectivity [3] | NLP Libraries (e.g., Empath) [3] | Infers psychological state and cognitive patterns from language use. |
| Stylistic Features | Overconfidence, hedging, exaggeration [3] | Machine Learning Classifiers (SVM, Random Forest) [3] | Identifies stylistic markers associated with deception or specific author traits. |
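The N-gram row of the table can be made concrete with a small sketch. It counts overlapping word and character n-grams using only the Python standard library. The function names, the whitespace tokenization, and the sample strings are illustrative assumptions, not the procedure used in the cited studies.

```python
from collections import Counter


def char_ngrams(text: str, n: int) -> Counter:
    """Count overlapping character n-grams (spaces are kept as characters)."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def word_ngrams(text: str, n: int) -> Counter:
    """Count overlapping word n-grams after lowercasing and whitespace tokenization."""
    tokens = text.lower().split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def relative_frequencies(counts: Counter) -> dict:
    """Turn raw counts into relative frequencies so texts of different length are comparable."""
    total = sum(counts.values())
    return {gram: c / total for gram, c in counts.items()} if total else {}


# Hypothetical chat-style message, used only to exercise the functions.
sample = "i dont know i dont care"
bigrams = word_ngrams(sample, 2)
trigram_chars = char_ngrams("lol lol", 3)
print(bigrams.most_common(1))
print(relative_frequencies(trigram_chars))
```

Relative frequencies rather than raw counts are used here because the documents under comparison rarely have the same length.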

The performance of an FTC system is often evaluated using metrics like the log-likelihood-ratio cost (Cllr), which gauges the quality of the computed likelihood ratios [4]. Research indicates that a fusion of multiple techniques (e.g., combining MVKD and N-gram procedures) often yields superior performance and more reliable results than any single method alone [4].

Experimental Protocols in FTC Research

A Protocol for a Fused Forensic Text Comparison System

The following methodology is adapted from a study that demonstrated the efficacy of a fused system for estimating the strength of linguistic evidence using a likelihood ratio (LR) framework [4].

1. Objective: To estimate the strength of evidence for authorship by fusing LRs derived from multiple analytical procedures.

2. Materials and Data:

- Corpus: Chatlog messages from 115 authors.

- Text Samples: For each author, multiple groups of messages are sampled, with the token length progressively increased (e.g., 500, 1000, 1500, and 2500 tokens) to test the effect of data quantity.

3. Experimental Procedure:

- Step 1: Feature Extraction. For each author's set of messages, extract three independent sets of features:

- A vector of authorship attribution features (e.g., from Table 1).

- Word-based N-grams.

- Character-based N-grams.

- Step 2: Individual Likelihood Ratio Estimation. Calculate an LR for each author comparison using three different procedures:

- MVKD Procedure: Model each group of messages as a vector of authorship features.

- Word N-gram Procedure.

- Character N-gram Procedure.

- Step 3: Logistic-Regression Fusion. Fuse the three separately estimated LRs using logistic regression to obtain a single, more robust LR for each author comparison.

- Step 4: System Evaluation. Assess the performance of the individual procedures and the fused system using:

- Log-likelihood-ratio cost (Cllr): A single metric representing overall system accuracy.

- Tippett plots: Graphical representations of the distribution of LRs for ground-truth authors and non-authors.
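Step 3 of the protocol can be sketched as follows. This is a minimal, standard-library illustration of logistic-regression fusion, not the implementation of the cited study [4]. The toy natural-log LRs, the function names (`train_fusion`, `fuse`), and the training settings are all assumptions made for the example.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_fusion(log_lrs, labels, epochs=2000, step=0.1):
    """Fit w[0] + sum(w[k] * log_lr[k]) by batch gradient descent on the logistic loss.

    log_lrs: one list of per-procedure log LRs for each comparison.
    labels:  1 for same-author comparisons, 0 for different-author comparisons.
    """
    n_feat = len(log_lrs[0])
    w = [0.0] * (n_feat + 1)
    n = len(labels)
    for _ in range(epochs):
        grad = [0.0] * (n_feat + 1)
        for x, y in zip(log_lrs, labels):
            err = sigmoid(w[0] + sum(wk * xk for wk, xk in zip(w[1:], x))) - y
            grad[0] += err
            for k, xk in enumerate(x):
                grad[k + 1] += err * xk
        w = [wk - step * g / n for wk, g in zip(w, grad)]
    return w


def fuse(w, x, train_prior_log_odds=0.0):
    """Fused log LR: the regression output is a log posterior odds, so the
    log prior odds of the training set is subtracted (0 for balanced data)."""
    return w[0] + sum(wk * xk for wk, xk in zip(w[1:], x)) - train_prior_log_odds


# Hypothetical log LRs from the MVKD, word N-gram and character N-gram procedures.
same_author = [[1.2, 0.8, 1.5], [0.5, 1.1, 0.6], [2.0, 0.4, 0.9], [0.3, 0.9, 1.0]]
diff_author = [[-1.0, -0.5, -1.2], [-0.3, -1.4, -0.2], [-1.8, -0.1, -0.7], [-0.4, -0.9, -1.1]]
weights = train_fusion(same_author + diff_author, [1] * 4 + [0] * 4)
print(fuse(weights, [1.2, 0.8, 1.5]), fuse(weights, [-1.0, -0.5, -1.2]))
```

In practice the fusion weights would be trained on a development set that is separate from the test comparisons, so that the evaluation in Step 4 is not optimistic.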

A Protocol for Psycholinguistic NLP Analysis

This protocol outlines a methodology for identifying persons of interest by analyzing psycholinguistic features over time, as demonstrated in recent research [3].

1. Objective: To identify key suspects from a larger pool by reverse-engineering psycholinguistic features indicative of deceptive or emotional behavior.

2. Materials and Data:

- Corpus: A set of texts from multiple suspects (e.g., transcribed police interviews, emails).

- Ground Truth: Knowledge of the guilty parties (for validation).

3. Experimental Procedure:

- Step 1: Temporal Feature Tracking. For each suspect's text, calculate and track psycholinguistic variables (for example deception, emotions such as anger and fear, and subjectivity) over the duration of the discourse or interview.

- Step 2: Topic and Entity Analysis.

- Apply Latent Dirichlet Allocation (LDA) to identify key topics.

- Analyze correlation to investigative keywords and phrases.

- Identify contradictory narratives within the text.

- Step 3: Data Integration and Suspect Ranking. Combine the outputs from the previous steps to create a subset of suspects who are highly correlated with the psycholinguistic and topical patterns of interest. In effect, this step works like a feature-reduction algorithm applied to people: it narrows a large pool of suspects to a smaller subset for closer examination.

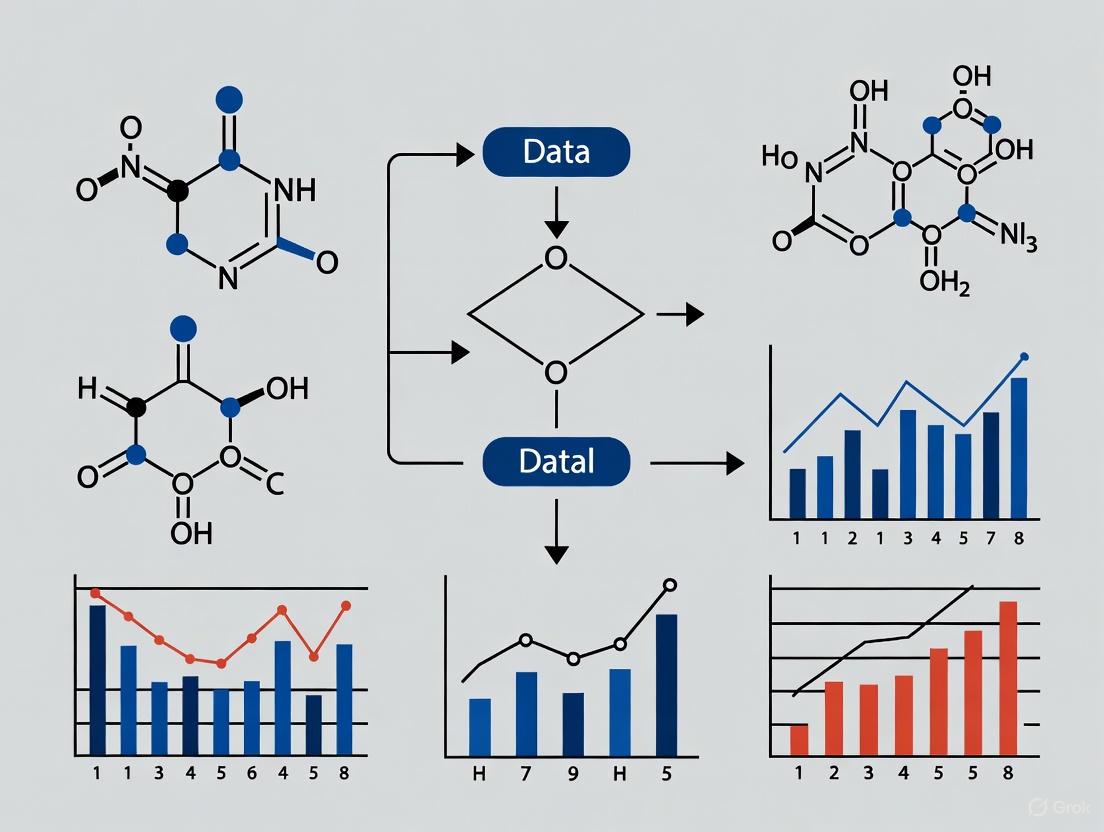

Visualization of Methodologies

The following diagrams illustrate the logical workflows of the core FTC methodologies described in this guide.

[Diagram: Fused Text Comparison System]

[Diagram: Psycholinguistic Analysis Workflow]

The Researcher's Toolkit

The following table details key reagents, software, and analytical solutions essential for conducting research in forensic text comparison.

Table 2: Essential Research Tools for Forensic Text Comparison

| Tool / Solution | Type | Primary Function in FTC |
|---|---|---|
| Empath [3] | Python Library | Analyzes text against built-in categories to generate and track features like deception and emotion over time. |
| LIWC (Linguistic Inquiry and Word Count) [3] | Software / Dictionary | Quantifies psychological and linguistic features in text, such as emotionality and cognitive processes. |
| MVKD (Multivariate Kernel Density) Procedure [4] | Statistical Model | Models an author's style as a multivariate distribution of linguistic features for likelihood ratio calculation. |
| N-gram Models (Word & Character) [3] [4] | Computational Linguistic Model | Captures frequent, habitual sequences of language elements that are characteristic of an author's idiolect. |
| Machine Learning Classifiers (e.g., SVM, Random Forest) [3] | Algorithm | Classifies texts based on learned stylistic patterns, often used for deception detection or authorship attribution. |
| LDA (Latent Dirichlet Allocation) [3] | Topic Modeling Algorithm | Discovers underlying thematic structures in a corpus of text, which can be used for narrative analysis. |

The Likelihood Ratio (LR) framework is widely recognized as the logically and legally correct method for the evaluation of forensic evidence [5]. Its adoption is being championed by scientific bodies and is becoming a regulatory requirement in an increasing number of jurisdictions. For instance, in the United Kingdom, the LR framework is slated for deployment across all major forensic science disciplines by October 2026 [5]. This framework provides a coherent and transparent method for quantifying the strength of evidence, moving away from categorical assertions towards a more nuanced and scientifically defensible interpretation. This guide explores the core principles of the LR framework, its application in forensic text comparison (FTC), and the empirical validation required for its defensible use, thereby situating it within the broader research agenda for robust forensic text comparison methodology.

Core Principles of the Likelihood Ratio

Fundamental Definition and Interpretation

At its heart, a Likelihood Ratio is a quantitative statement about the strength of evidence. It assesses the probability of the evidence under two competing propositions, typically the prosecution hypothesis \(H_p\) and the defense hypothesis \(H_d\) [5]. The LR is formally expressed in Equation (1):

\[ LR = \frac{p(E \mid H_p)}{p(E \mid H_d)} \tag{1} \]

Here, \(p(E \mid H_p)\) is the probability of observing the evidence \(E\) given that the prosecution's hypothesis is true. Conversely, \(p(E \mid H_d)\) is the probability of the same evidence given that the defense's hypothesis is true [5]. The prosecution hypothesis in a typical FTC case might be that "the questioned and known documents were produced by the same author," while the defense hypothesis would be that "they were produced by different individuals" [5].

The value of the LR indicates the direction and strength of the evidence:

- LR > 1: The evidence supports \(H_p\).

- LR = 1: The evidence is neutral; it is equally probable under both hypotheses.

- LR < 1: The evidence supports \(H_d\) [5].

The further the LR is from 1, the stronger the evidence. For example, an LR of 10 means the evidence is ten times more likely if \(H_p\) is true than if \(H_d\) is true. Conversely, an LR of 0.1 means the evidence is ten times more likely if \(H_d\) is true [5].
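The interpretation rules above can be expressed as a short sketch. The two probabilities passed in are placeholders that a statistical model would supply; the function names and the wording of the returned strings are assumptions for illustration only.

```python
import math


def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = p(E | Hp) / p(E | Hd); the denominator must be positive."""
    if p_e_given_hd <= 0:
        raise ValueError("p(E | Hd) must be greater than zero")
    return p_e_given_hp / p_e_given_hd


def describe(lr: float) -> str:
    """State the direction and size of the support in plain words."""
    if lr > 1:
        return f"evidence is {lr:g} times more probable under Hp than under Hd"
    if lr < 1:
        return f"evidence is {1 / lr:g} times more probable under Hd than under Hp"
    return "evidence is equally probable under Hp and Hd"


# Hypothetical probabilities, chosen so the LR is close to 10.
lr = likelihood_ratio(0.2, 0.02)
print(describe(10.0))
print(describe(0.1))
print(math.log10(lr))  # log10 LRs are symmetric around 0, which is convenient for plots
```

Reporting the LR on a log10 scale is a common convention because support for \(H_p\) and support for \(H_d\) of equal strength then sit at equal distances from zero.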

The Role of the LR in Updating Beliefs: Bayes' Theorem

The LR is the key component in the logical process of updating prior beliefs about the hypotheses in light of new evidence. This process is formally described by the odds form of Bayes' Theorem, shown in Equation (2):

\[ \underbrace{\frac{p(H_p)}{p(H_d)}}_{\text{prior odds}} \times \underbrace{\frac{p(E \mid H_p)}{p(E \mid H_d)}}_{\text{Likelihood Ratio (LR)}} = \underbrace{\frac{p(H_p \mid E)}{p(H_d \mid E)}}_{\text{posterior odds}} \tag{2} \]

This equation states that the prior odds (the fact-finder's belief about the hypotheses before considering the new evidence) multiplied by the LR yields the posterior odds (the updated belief after considering the evidence) [5].

It is critical to recognize the respective roles within this framework. The forensic scientist's task is to compute the LR based on the evidence. It is not the role of the forensic scientist to assign prior odds or to present the posterior odds, as these involve the fact-finder's domain and speak to the ultimate issue of guilt or innocence, which is the prerogative of the court [5]. The LR itself is a statement about the evidence, not the hypotheses.
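The arithmetic of the odds form of Bayes' Theorem can be illustrated with a small sketch. The prior odds below are purely hypothetical and stand in for a belief that only the fact-finder can hold; the forensic scientist would report the LR alone. The function names are assumptions for the example.

```python
def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' Theorem: posterior odds = prior odds x LR."""
    return prior_odds * lr


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of Hp into a probability of Hp."""
    return odds / (1.0 + odds)


# Hypothetical numbers: prior odds of 1 to 100 and an LR of 10.
prior = 1 / 100
post = posterior_odds(prior, 10.0)
print(post, odds_to_probability(post))
```

With these illustrative values the same LR of 10 moves the odds from 1:100 to 1:10, which shows why an LR cannot be read as the probability that the suspect is the author.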

Application of the LR Framework to Forensic Text Comparison

Forensic Text Comparison seeks to evaluate whether a questioned document originated from a particular known author. The complexity of textual evidence lies in the fact that a text encodes not only information about the author's idiolect but also about their social group, the topic, the genre, and the specific communicative situation [5]. The LR framework provides a structure for weighing the similarity and typicality of stylistic patterns observed in the texts.

Core Workflow in an FTC-LR System

The process of applying the LR framework in FTC involves a sequence of steps, from data preparation to the final calculation and validation of the LR. The workflow can be summarized as follows:

Diagram 1: Experimental workflow for an FTC-LR system.

Essential Research Reagent Solutions for FTC

To implement the workflow above, researchers and practitioners rely on a set of methodological "reagents" – essential components that ensure the analysis is scientifically sound.

Table 1: Essential Research Reagent Solutions for FTC-LR Analysis

| Item | Function in FTC-LR Analysis |
|---|---|
| Reference Data Corpora | Provides population-level data to estimate the typicality of features under \(H_d\). The data must be relevant to the case conditions (e.g., topic, genre) [5]. |
| Stylometric Features | Quantifiable aspects of writing style (e.g., "Average character number per word token," "Punctuation character ratio," vocabulary richness) used as measurements for comparison [6]. |
| Statistical Model | A computational model (e.g., Dirichlet-multinomial, Multivariate Kernel Density) used to calculate the probabilities \(p(E \mid H_p)\) and \(p(E \mid H_d)\) based on the extracted features [6]. |
| Calibration Model | A model, such as logistic regression calibration, applied to the output of the primary statistical model to ensure that the computed LRs are valid and well-calibrated [5]. |
| Validation Metrics | Performance measures like the log-likelihood-ratio cost (Cllr) and visualization tools like Tippett plots used to empirically test the accuracy and reliability of the LR system [5] [6]. |
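As a rough illustration of the stylometric features named in the table, the sketch below computes three of them with the standard library. The exact definitions used in the cited study [6] are not given here, so the tokenization, the punctuation set (`string.punctuation`), and the use of a type-token ratio as the vocabulary richness measure are assumptions.

```python
import string


def stylometric_features(text: str) -> dict:
    """Compute three simple stylometric measurements for one text sample."""
    tokens = text.split()
    # Strip leading/trailing punctuation so that "there." counts as "there".
    words = [t.strip(string.punctuation) for t in tokens]
    words = [w for w in words if w]
    n_chars = sum(1 for ch in text if not ch.isspace())
    n_punct = sum(1 for ch in text if ch in string.punctuation)
    return {
        # Average character number per word token.
        "avg_chars_per_word": sum(len(w) for w in words) / len(words) if words else 0.0,
        # Punctuation characters as a share of all non-space characters.
        "punct_char_ratio": n_punct / n_chars if n_chars else 0.0,
        # Type-token ratio as a basic vocabulary richness measure.
        "type_token_ratio": len({w.lower() for w in words}) / len(words) if words else 0.0,
    }


features = stylometric_features("Hi, there. Hi!")
print(features)
```

A feature vector of this kind, computed per text sample, is the sort of input a multivariate model such as MVKD would take.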

Experimental Validation and Performance Metrics

The Critical Importance of Validation

A core tenet of the scientific method applied to forensic inference is empirical validation. It is not sufficient to simply use an LR model; the model's performance must be rigorously tested under conditions that reflect casework. Two main requirements for empirical validation are [5]:

- Reflecting the conditions of the case: The experimental setup must mimic the challenges of real casework (e.g., mismatched topics between documents, limited text length).

- Using relevant data: The data used for validation must be pertinent to the specific conditions of the case under investigation.

Failure to meet these requirements can mislead the trier-of-fact. For example, using a model validated on same-topic texts for a case involving texts on different topics (a "topic mismatch") would produce LRs of unknown validity and potentially over- or under-state the strength of the evidence [5].

Key Quantitative Metrics for System Performance

The performance of an LR-based system is quantitatively assessed using specific metrics that evaluate its discrimination ability and calibration.

Table 2: Key Performance Metrics for LR-Based Forensic Systems

| Metric | Description | Interpretation |
|---|---|---|
| Log-Likelihood-Ratio Cost (Cllr) | A single scalar measure that evaluates the overall performance of a forensic LR system, considering both discrimination and calibration [6]. | A lower Cllr value indicates better system performance. A perfect system has a Cllr of 0. Values below 1 are generally indicative of a system with some discrimination ability [6]. |
| Tippett Plots | A graphical tool that shows the cumulative distribution of LRs for same-source and different-source conditions [5]. | Allows for a visual assessment of system performance. A good system will show LRs >1 for same-source cases (supporting \(H_p\)) on the right and LRs <1 for different-source cases (supporting \(H_d\)) on the left, with a clear separation between the two curves. |
| Discrimination Accuracy | The rate at which the system correctly provides evidence supporting the true hypothesis. | For example, a discrimination accuracy of 94% means the system correctly assigns LRs >1 to same-source pairs and LRs <1 to different-source pairs 94% of the time [6]. |
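The first and third metrics in the table can be computed directly from a set of test LRs with known ground truth. The sketch below uses the standard definition \(C_{llr} = \frac{1}{2}\left[\frac{1}{N_{ss}}\sum_i \log_2\left(1 + \frac{1}{LR_i}\right) + \frac{1}{N_{ds}}\sum_j \log_2\left(1 + LR_j\right)\right]\), where the first sum runs over same-source comparisons and the second over different-source comparisons. The function names and the example LR values are hypothetical.

```python
import math


def cllr(same_source_lrs, diff_source_lrs) -> float:
    """Log-likelihood-ratio cost: penalizes small LRs for same-source pairs
    and large LRs for different-source pairs."""
    ss = sum(math.log2(1 + 1 / lr) for lr in same_source_lrs) / len(same_source_lrs)
    ds = sum(math.log2(1 + lr) for lr in diff_source_lrs) / len(diff_source_lrs)
    return 0.5 * (ss + ds)


def discrimination_accuracy(same_source_lrs, diff_source_lrs) -> float:
    """Share of comparisons whose LR falls on the correct side of 1."""
    correct = sum(lr > 1 for lr in same_source_lrs) + sum(lr < 1 for lr in diff_source_lrs)
    return correct / (len(same_source_lrs) + len(diff_source_lrs))


# Hypothetical test LRs with known ground truth.
same = [10.0, 0.5, 40.0]
diff = [0.1, 0.2, 0.01]
print(cllr(same, diff), discrimination_accuracy(same, diff))
# A system that always answers LR = 1 carries no information and scores exactly 1.
print(cllr([1.0, 1.0], [1.0, 1.0]))
```

The uninformative case explains the threshold in the table: a Cllr of 1 is what a system achieves by always reporting a neutral LR, so only values below 1 indicate useful performance.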

Sample Experimental Protocol: Impact of Text Length

To illustrate a validated experiment, consider an investigation into how the amount of text influences the strength and accuracy of evidence in FTC.

1. Objective: To determine the effect of sample size on the performance of an LR-based authorship attribution system [6].

2. Materials: Chatlog messages from 115 authors from a real archive of evidence [6].

3. Feature Extraction: Stylometric features such as "Average character number per word token," "Punctuation character ratio," and vocabulary richness measures were extracted [6].

4. Variable: Text length was manipulated at four levels: 500, 1000, 1500, and 2500 words [6].

5. LR Calculation: LRs were calculated using the Multivariate Kernel Density formula, followed by logistic regression calibration [6].

6. Performance Assessment: The primary metric was the log-likelihood-ratio cost (Cllr). Other assessments included credible intervals and equal error rates [6].

The results of this experiment are summarized in the table below, demonstrating a clear relationship between text length and system performance.

Table 3: Experimental Results: Impact of Text Length on FTC-LR System Performance [6]

| Sample Size (Words) | Discrimination Accuracy (Approx.) | Log-Likelihood-Ratio Cost (Cllr) |
|---|---|---|
| 500 | 76% | 0.68258 |
| 1000 | Information Not Specified | Information Not Specified |
| 1500 | Information Not Specified | Information Not Specified |
| 2500 | 94% | 0.21707 |

The data show that a larger sample size is highly beneficial to FTC: between 500 and 2500 words, discrimination accuracy rises from roughly 76% to 94% and Cllr falls from 0.68258 to 0.21707. A larger sample results in improved discriminability, larger LRs when \(H_p\) is true, and smaller LRs when \(H_p\) is false [6]. Furthermore, certain features like "Average character number per word token" were found to be robust across different sample sizes [6].

Advanced Considerations and Methodological Challenges

A significant challenge in some forensic disciplines is the tradition of examiners using subjective, categorical conclusions (e.g., "Identification," "Inconclusive," "Elimination"). Recent research has proposed methods to convert these categorical conclusions into LRs by statistically modeling examiner responses from black-box studies [7].

However, these methods face major hurdles to provide LRs meaningful for a specific case:

- Examiner-specific performance: A model trained on data pooled from multiple examiners may not represent the performance of the specific examiner in a given case, who may perform better or worse than the average [7].

- Case-specific conditions: The model must be trained on data that reflects the specific conditions of the case (e.g., quality of the evidence, type of material). LRs calculated under one set of conditions can differ substantially from those calculated under another [7].

A proposed solution is a Bayesian framework that uses population data as an informed prior, which is then updated with the specific examiner's own proficiency test data as it becomes available, gradually tailoring the model to the individual practitioner [7].
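One simple way to picture this updating scheme is a Beta-binomial sketch, shown below. This is an illustrative simplification and not the model proposed in [7]. Pooled black-box results are treated as prior pseudo-counts for the probability that an examiner reports "Identification", the examiner's own proficiency-test counts are added, and an LR for that categorical conclusion is formed from the two posterior means. All counts and names are hypothetical.

```python
def posterior_mean(prior_yes: float, prior_no: float, obs_yes: int, obs_no: int) -> float:
    """Posterior mean of a Beta(prior_yes, prior_no) prior after observing
    obs_yes successes and obs_no failures (Beta-binomial conjugate update)."""
    a = prior_yes + obs_yes
    b = prior_no + obs_no
    return a / (a + b)


# Prior pseudo-counts standing in for pooled multi-examiner data (hypothetical).
# Observed counts stand in for one examiner's proficiency tests (hypothetical).
p_id_given_same = posterior_mean(90, 10, obs_yes=19, obs_no=1)  # P("Identification" | same source)
p_id_given_diff = posterior_mean(1, 99, obs_yes=0, obs_no=20)   # P("Identification" | different source)

# LR attached to an "Identification" conclusion from this examiner.
lr_identification = p_id_given_same / p_id_given_diff
print(p_id_given_same, p_id_given_diff, lr_identification)
```

As more examiner-specific results accumulate, the observed counts outweigh the prior pseudo-counts, which is the gradual tailoring described above.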

Presenting LRs for Maximum Understandability

A current frontier in LR research is how to best present LRs to legal decision-makers (e.g., judges, juries) to maximize comprehension. Existing empirical literature has explored the understanding of different formats, including:

- Numerical likelihood ratio values.

- Numerical random-match probabilities.

- Verbal strength-of-support statements.

A review of this literature concludes that there is no definitive answer on the "best" way to present LRs, highlighting a critical need for further research guided by robust methodologies [8]. Future studies must focus specifically on LR comprehension, using defined indicators like sensitivity, orthodoxy, and coherence to properly evaluate understanding [8].

The Likelihood Ratio framework provides a logically sound, transparent, and quantitative foundation for the evaluation of forensic evidence, including textual evidence. Its application in Forensic Text Comparison requires careful attention to statistical modeling, feature selection, and, most critically, empirical validation under casework-relevant conditions. While challenges remain—such as the integration of subjective examiner conclusions and the optimal communication of LR values to the courts—the LR framework represents the future of forensic science. It pushes the field towards greater scientific rigor, demonstrable reliability, and ultimately, a more robust and defensible administration of justice.

Forensic text comparison methodology research applies scientific principles and computational techniques to analyze written evidence, with the core objective of providing empirical support for one of two competing hypotheses: the prosecution's position (Hp) or the defense's position (Hd). This field integrates principles from psycholinguistics, computer science, and formal statistics to objectively evaluate linguistic evidence [3]. The process involves identifying and quantifying distinctive linguistic patterns to help triers of fact assess the strength of evidence in criminal cases, such as threats, forgeries, or anonymous communications.

The analytical framework is built upon a foundation of pattern-driven analysis, seeking symmetry between language (lingua) and the mind (psyche) [3]. By applying Natural Language Processing (NLP) and machine learning, researchers can extract measurable cues related to deception, emotion, and subjectivity from text sources like emails, instant messages, and transcribed interviews [3]. This technical guide details the methodologies and experimental protocols that underpin this rigorous scientific discipline.

Theoretical Foundation and Psycholinguistic Framework

Key Psycholinguistic Concepts in Hypothesis Formation

The theoretical foundation of forensic text comparison rests on the principle that language reflects cognitive and psychological states. Research demonstrates that deception and emotional arousal manifest in predictable, quantifiable linguistic patterns [3].

- Deception Patterns: Deceptive language often exhibits subtle but measurable changes, including alterations in pronoun use, an increase in negations, and excessive use of sensory descriptions. These features are frequently too subtle for human detection but can be identified with computational assistance [3].

- Emotion and Subjectivity: The presence of specific emotions, such as anger and fear, as well as heightened subjectivity in narratives, can serve as proxies for deception or consciousness of guilt. For instance, overconfident individuals may tell more lies, and subjective language can influence perception and trustworthiness, even in the absence of factual information [3].

- Contradictory Narratives: Inconsistencies in a narrative over time or logical contradictions within a single statement are key indicators examined under both prosecution and defense frameworks.

Formal Statement of Core Hypotheses

The competing hypotheses are formally defined propositions regarding the source of a questioned text.

- Prosecution Hypothesis (Hp): The suspect is the author of the questioned text.

- Defense Hypothesis (Hd): The suspect is not the author of the questioned text; the questioned text originates from another source within a relevant population.

The role of the forensic text analyst is not to determine guilt or innocence, but to evaluate the linguistic evidence and calculate a likelihood ratio that expresses the strength of the evidence for one hypothesis over the other [3].

Quantitative Analysis of Psycholinguistic Features

The following table summarizes key psycholinguistic features and their typical interpretation in support of the prosecution or defense hypotheses, as identified in recent research [3].

Table 1: Quantitative Analysis of Psycholinguistic Features in Hypothesis Testing

| Feature Category | Specific Metric | Measurement Method | Typical Interpretation in Support of Hp | Typical Interpretation in Support of Hd |
|---|---|---|---|---|
| Deception | Deception over time | Python Empath library; statistical comparison with word embeddings [3] | Sustained or elevated deception levels when discussing crime-related topics | Deception levels consistent with baseline or unrelated to crime topics |
| Emotion | Anger, Fear, Neutrality over time | N-gram analysis paired with emotion lexicons [3] | Increased fear or anger correlated with investigative keywords; unnatural neutrality | Emotional responses are contextually appropriate and not correlated with key crime terms |
| Subjectivity | Subjectivity vs. Objectivity | Lexical analysis (e.g., using LIWC) [3] | High subjectivity in factual accounts; contradictory narratives | Objective, consistent narrative without internal contradictions |
| Lexical Correlation | N-gram correlation | Pairwise correlation to investigative keywords and entities [3] | High correlation between suspect's language and specific crime-related entities/terms | Low correlation to key crime terms; language is generic |
| Narrative Consistency | Contradictory statements | Latent Dirichlet Allocation (LDA) for topic coherence; word vectors [3] | Fundamental contradictions in core narrative elements | Stable and coherent narrative throughout |

Experimental Protocols for Forensic Text Comparison

Protocol 1: NLP-Based Deception and Emotion Analysis

This protocol outlines the steps for a standardized evaluation of deception and emotion in suspect narratives, a common experimental approach in recent research [3].

Table 2: Key Research Reagent Solutions for NLP-Based Analysis

| Tool/Reagent | Type/Function | Brief Description of Role in Analysis |
|---|---|---|
| Empath Library | Python Library for NLP | Generates and analyzes lexical categories from text; used to calculate deception over time via statistical comparison with word embeddings [3]. |
| N-gram Models | Computational Linguistic Model | Identifies contiguous sequences of n words; used to track the frequency and context of investigative keywords and emotional language over time [3]. |
| LIWC (Linguistic Inquiry and Word Count) | Psycholinguistic Analysis Tool | Extracts features related to psychological states (e.g., emotion, subjectivity) from text, providing quantifiable data for machine learning [3]. |
| Latent Dirichlet Allocation (LDA) | Topic Modeling Algorithm | Discovers underlying thematic topics in a corpus of text; used to identify contradictory narratives or topic shifts [3]. |
| Word Embeddings (e.g., Word2Vec) | Word Vector Representation | Represents words in a high-dimensional space to measure semantic similarity; used for entity-to-topic correlation analysis [3]. |

- Data Collection and Preprocessing: Gather a corpus of text from the suspect(s) and, if available, from a relevant population sample. This can include transcribed police interviews, emails, or social media posts. Preprocess the text by tokenization, lemmatization, and removal of stop words.

- Feature Extraction:

- Calculate a deception score for each text segment using the Empath library.

- Extract emotion scores (anger, fear, neutrality) using n-grams paired with established emotion lexicons.

- Compute subjectivity scores using a tool like LIWC.

- Perform entity and topic extraction using LDA to identify key themes and named entities.

- Temporal Analysis: Plot the extracted features (deception, emotion, subjectivity) over the timeline of the text (e.g., interview sequence). This visualizes behavioral trends and predispositions.

- Correlation Analysis: Conduct a pairwise correlation between the suspect's use of specific keywords/entities and the central themes of the crime. A suspect highly correlated with key investigative terms may be of greater interest.

- Hypothesis Evaluation: Synthesize the results. A pattern of elevated deception, heightened fear or anger correlated with crime topics, and contradictory narratives may support the prosecution's position (Hp). A lack of these patterns may support the defense's position (Hd).
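The temporal and correlation steps of this protocol can be sketched with the standard library alone. The example counts investigative keywords per interview segment and correlates the counts with a per-segment emotion score. The segments, the keywords, and the fear scores are hypothetical; in a real analysis the scores would come from a tool such as Empath or an emotion lexicon.

```python
import math


def keyword_counts(segments, keywords):
    """Count keyword occurrences in each text segment (case-insensitive)."""
    kw = {k.lower() for k in keywords}
    return [sum(tok.strip(".,!?;:").lower() in kw for tok in seg.split())
            for seg in segments]


def pearson(xs, ys) -> float:
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return 0.0
    return cov / (sx * sy)


# Hypothetical interview segments in chronological order.
segments = [
    "I was at home all evening.",
    "I never saw the knife.",
    "The knife was not mine, I was near the garage.",
    "We talked about the weather.",
]
counts = keyword_counts(segments, ["knife", "garage"])
fear_scores = [0.1, 0.5, 0.7, 0.2]  # placeholder values, not real tool output
r = pearson(counts, fear_scores)
print(counts, r)
```

A high correlation on its own is only a lead for further examination; as the case study later in this guide notes, single metrics can fail to separate suspects.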

Protocol 2: Standardized LLM Evaluation for Forensic Timeline Analysis

Inspired by the NIST Computer Forensic Tool Testing Program, this protocol provides a framework for quantitatively evaluating the application of Large Language Models (LLMs) to forensic tasks, such as timeline analysis, which can support or challenge textual evidence [9].

- Dataset and Ground Truth Development: Create a standardized dataset that includes forensic timeline data (e.g., system logs, communication records). Establish a verified ground truth for this dataset.

- Timeline Generation and LLM Tasking: Process the data through a forensic timeline generator (e.g., log2timeline/plaso). Then, task an LLM (e.g., ChatGPT) with analyzing the timeline to answer specific investigative questions.

- Quantitative Evaluation with BLEU/ROUGE: Compare the LLM's output against the ground truth using quantitative metrics like BLEU (Bilingual Evaluation Understudy) and ROUGE (Recall-Oriented Understudy for Gisting Evaluation). These metrics assess the overlap and quality of the LLM-generated analysis versus the known facts [9].

- Hypothesis Support: The results determine the reliability of the LLM-generated analysis. Strong performance (high BLEU/ROUGE scores) indicates the tool can be used to generate credible insights that may support a case timeline for either Hp or Hd. Poor performance would undermine the credibility of such an analysis.
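To show what the overlap metrics in this protocol measure, the sketch below implements a simplified ROUGE-1 (unigram overlap) between an LLM answer and a ground-truth statement. It omits stemming and the other refinements of the reference implementations, and the two example strings are hypothetical. Published evaluations would normally rely on an established BLEU/ROUGE package rather than this function.

```python
from collections import Counter


def rouge1(candidate: str, reference: str) -> dict:
    """Simplified ROUGE-1: clipped unigram overlap between candidate and reference."""
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    overlap = sum((cand & ref).values())  # multiset intersection clips repeated words
    recall = overlap / sum(ref.values()) if ref else 0.0
    precision = overlap / sum(cand.values()) if cand else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical ground-truth statement and LLM-generated answer.
ground_truth = "the user logged in at noon"
llm_answer = "user logged in at noon"
scores = rouge1(llm_answer, ground_truth)
print(scores)
```

Overlap scores of this kind measure wording agreement with the ground truth and do not by themselves verify factual correctness, which is one reason a verified ground truth is a prerequisite in the first step.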

Case Study Application of the Framework

A research project successfully applied a psycholinguistic NLP framework to a fictional murder case with 18 suspects and two conspirators, whose identities were known only as ground truth [3]. The methodology involved analyzing separate, LLM-generated police interviews for each suspect.

- Initial Challenge: Initial analysis showed minimal variance in deception levels across all suspects, rendering this single metric ineffective [3].

- Application of Multi-Faceted Protocol: Researchers implemented a framework combining entity-to-topic correlation, deception detection, and emotion analysis.

- Outcome: By focusing on the correlation between a suspect's language and the key entities of the crime, and by analyzing emotional cues beyond deception, the methodology successfully identified the two guilty conspirators from the larger pool, thereby validating the prosecution's hypothesis (Hp) in the scenario [3]. This case demonstrates the necessity of a multi-feature approach rather than relying on a single linguistic metric.

The rigorous application of the core hypotheses framework is fundamental to the scientific validity of forensic text comparison. By employing standardized experimental protocols, quantitative analysis of psycholinguistic features, and a clear understanding of the prosecution and defense positions (Hp and Hd), researchers and forensic practitioners can provide objective, reliable, and actionable insights from linguistic evidence. The ongoing development of NLP and machine learning techniques, coupled with standardized evaluation methods, continues to enhance the field's precision and reliability, ensuring that its findings are robust and defensible.

Forensic text comparison methodology research represents a critical interdisciplinary frontier, integrating computational linguistics, psychology, and data science to address challenges in legal evidence analysis. This field has evolved from traditional qualitative document examination to sophisticated quantitative frameworks that disentangle the complex interplay of authorial style, genre conventions, and topical content in textual evidence. The burgeoning volume of digital communication in legal contexts—including emails, social media posts, and transcribed interviews—has created an urgent need for scientifically robust analytical protocols that can withstand judicial scrutiny [3].

Contemporary research focuses on developing transparent, replicable methodologies that account for the multifaceted nature of linguistic expression. The fundamental challenge lies in distinguishing between stable author-specific patterns, transient genre-appropriate conventions, and content-driven vocabulary selection. This whitepaper examines current technical approaches within the context of a broader thesis: that reliable forensic text comparison requires integrated multi-dimensional analysis rather than isolated feature examination. We present a comprehensive technical guide featuring experimental protocols, analytical frameworks, and visualization methodologies designed for researchers and forensic professionals engaged in developing validated text analysis procedures for legal applications [3] [10].

Theoretical Foundations

Psycholinguistic Underpinnings of Deceptive Communication

Psycholinguistics provides the theoretical foundation for understanding how cognitive processes manifest in linguistic output during deceptive communication. Research indicates that deception imposes additional cognitive load, resulting in measurable linguistic features including changes in pronoun distribution, verbal complexity, and emotional expression [3]. The Pythagorean concept of pattern-driven reality finds modern application in forensic text analysis, where computational methods detect subtle but consistent patterns linking psychological states to linguistic choices [3].

Forensic text comparison operates on the principle that individuals exhibit measurable patterns in their language use across multiple dimensions. The analytical challenge lies in distinguishing between three primary influences: author-specific patterns (relatively stable across an individual's texts), genre-constrained conventions (shared across documents serving similar functions), and topic-driven vocabulary (content-specific terminology). Research demonstrates that effective forensic analysis must account for all three dimensions simultaneously rather than in isolation [3].

Analytical Framework for Multi-Dimensional Text Analysis

A robust analytical framework for forensic text comparison must integrate three complementary perspectives: author attribution through stylistic analysis, genre classification through structural patterns, and topic modeling through content analysis. This tripartite approach enables researchers to isolate stable authorial fingerprints from variable contextual influences, thereby increasing the reliability of forensic conclusions [3] [11].

Table 1: Core Dimensions of Forensic Text Analysis

| Dimension | Key Features | Analytical Methods | Forensic Application |
|---|---|---|---|
| Author | Pronoun frequency, syntactic complexity, vocabulary richness, punctuation patterns | N-gram analysis, lexical richness metrics, function word frequency | Author attribution, identity verification |
| Genre | Text structure, formulaic expressions, register-appropriate vocabulary, document length | Structural templates, discourse markers, move analysis | Document classification, context assessment |
| Topic | Domain-specific terminology, semantic coherence, entity density, conceptual relationships | LDA topic modeling, word embeddings, entity extraction, knowledge graphs | Content verification, intent analysis |

Methodological Approaches

Quantitative Text Analysis Protocols

Modern forensic text analysis employs rigorous quantitative protocols to transform unstructured text into analyzable data structures. The MAXDictio module within MAXQDA provides comprehensive tools for quantitative content analysis, including vocabulary analysis, dictionary-based analysis, and visual text exploration [12]. These tools enable researchers to conduct systematic investigations of word frequencies, distributions, and patterns across document collections, forming the foundation for more advanced forensic comparisons [12].

The Word Tree visualization is well suited to exploring textual structure: it displays all word sequences that lead to or from a chosen word of interest, together with their frequencies [12]. This approach facilitates the identification of characteristic phrasing patterns that may distinguish individual authors or genre conventions. Advanced implementations incorporate lemmatization (grouping the inflected forms of a word under a shared base form), stop word lists for filtering common but uninformative terms, and integration with document variables or codes to segment analysis by relevant metadata [12].
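The data behind a word tree can be approximated with a few lines of code. The sketch below counts the word sequences that follow a chosen root word. It is an illustration of the idea only, not MAXQDA's implementation, and the function name `continuations` and the sample text are hypothetical.

```python
from collections import Counter

def continuations(text: str, root: str, depth: int = 2) -> Counter:
    """Count the word sequences (up to `depth` words) that follow `root`."""
    tokens = text.lower().split()
    branches = Counter()
    for i, tok in enumerate(tokens):
        if tok == root:
            following = tokens[i + 1 : i + 1 + depth]
            if following:
                branches[" ".join(following)] += 1
    return branches

sample = "i never said that . i never saw him . i only said hello"
tree = continuations(sample, "i")               # two-word branches after "i"
first_level = continuations(sample, "i", depth=1)  # one-word branches after "i"
```

Comparing the branch counts of two authors for the same root word gives a rough view of the phrasing preferences that a word tree displays graphically.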

Experimental Workflow for Forensic Text Comparison

A standardized experimental workflow ensures methodological consistency and reproducibility in forensic text comparison research. The following protocol outlines key stages in a comprehensive analysis:

Stage 1: Corpus Compilation and Preprocessing

- Document collection and metadata annotation

- Text normalization (lowercasing, punctuation handling)

- Tokenization and sentence boundary detection

- Linguistic annotation (part-of-speech tagging, syntactic parsing)

Stage 2: Feature Extraction and Selection

- Lexical feature extraction (character n-grams, word n-grams)

- Syntactic feature calculation (production rules, dependency relations)

- Semantic feature generation (entity density, topic distributions)

- Stylistic feature computation (readability metrics, cohesion indices)
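The lexical features in Stage 2 can be sketched with the standard library alone. The example below is a minimal illustration that assumes whitespace tokenization and already normalized text. The helper names (`char_ngrams`, `word_ngrams`, `relative`) are hypothetical, and a real pipeline would typically use a dedicated NLP library for tokenization.

```python
from collections import Counter

def char_ngrams(text: str, n: int = 3) -> Counter:
    """Counts of overlapping character n-grams, whitespace included."""
    return Counter(text[i : i + n] for i in range(len(text) - n + 1))

def word_ngrams(text: str, n: int = 2) -> Counter:
    """Counts of overlapping word n-grams on whitespace tokens."""
    tokens = text.split()
    return Counter(tuple(tokens[i : i + n]) for i in range(len(tokens) - n + 1))

def relative(counts: Counter) -> dict:
    """Convert raw counts to relative frequencies so texts of different length compare."""
    total = sum(counts.values())
    return {k: v / total for k, v in counts.items()} if total else {}

doc = "the cat sat on the mat"
tri = char_ngrams(doc, 3)   # character trigrams
bi = word_ngrams(doc, 2)    # word bigrams
```

Relative frequencies rather than raw counts are used here because documents in a forensic corpus rarely have the same length.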

Stage 3: Multi-Dimensional Analysis

- Author dimension: Stylometric fingerprinting using function words and character n-grams

- Genre dimension: Discourse structure analysis using move analysis and rhetorical patterns

- Topic dimension: Semantic field identification using topic models and word embeddings

Stage 4: Validation and Interpretation

- Cross-validation using known-author documents

- Statistical significance testing of distinctive features

- Likelihood ratio calculation for evidence weight assessment

- Results interpretation within forensic context

Diagram 1: Forensic Text Analysis Workflow

Advanced Visualization Methodologies

Effective visualization transforms complex textual patterns into interpretable visual representations, enabling researchers to identify relationships that might remain obscured in raw data. Modern text visualization tools employ multiple methodologies, each offering distinct analytical advantages [13].

Network graphs represent words or concepts as nodes and their relationships as edges, revealing structural patterns in discourse. Tools like InfraNodus use text network analysis algorithms to identify influential concepts and topical clusters, enabling researchers to explore relationships and gaps in textual data [13]. Timeline and frequency charts track the evolution of concepts across documents or narrative time, implemented in tools like Voyant Tools and MAXQDA through rank-frequency analysis and dispersion plots [13]. Embedding projections use dimensionality reduction techniques like t-SNE or UMAP to visualize semantic relationships in high-dimensional word vector spaces, while knowledge graphs instantiate entities and their typed relations based on domain ontologies, enabling logical reasoning over textual content [13].

Table 2: Text Visualization Tools for Forensic Analysis

| Tool | Primary Methodology | Key Features | Best Suited For |
|---|---|---|---|
| InfraNodus | AI-powered knowledge graphs, text network analysis | Interactive graph visualization, gap detection, AI-powered insights | Exploring conceptual relationships, identifying discourse gaps |
| Voyant Tools | Tag clouds, timeline analysis, frequency charts | Browser-based, timeline visualization, entity extraction | Initial text exploration, temporal pattern identification |
| MAXQDA | Coding representation, frequency visualization | Powerful coding features, code frequency analysis, thematic analysis | Systematic qualitative analysis, manual annotation |
| NotebookLM | AI-powered mindmaps | Mindmap generation, document chatting, structured overview | Document summarization, conceptual mapping |

Technical Implementation

Analytical Techniques for Deception Detection

Deception detection represents a critical application of forensic text analysis, employing specific linguistic features as indicators of deceptive communication. Research by Adkins et al. (2025) demonstrates that integrated analysis of deception cues, emotional markers, and subjectivity levels can effectively identify persons of interest in investigative contexts [3]. Their approach combines multiple NLP techniques to create a psycholinguistic profile based on temporal patterns in language use.

The Empath library provides a methodological framework for quantifying deception-related language through statistical comparison with word embeddings and built-in categories [3]. This approach identifies contextually relevant deception indicators in target text, normalizes token frequencies, and uses these normalized values as features for machine learning classification. Complementary research by Huang and Liu (2022) demonstrates that subjectivity-objectivity balance serves as a proxy for deception, with highly subjective communications often perceived as more trustworthy despite potential factual inaccuracies [3].
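The normalization step described above can be sketched without any external library. The example below does not use Empath's API. It assumes a tiny hand-made lexicon (`LEXICON`, hypothetical and not a validated set of deception indicators) and simply shows how category hits are divided by text length to obtain comparable feature values.

```python
from collections import Counter

# Hypothetical mini-lexicon for illustration only; real category word lists
# would come from a resource such as Empath or LIWC.
LEXICON = {
    "negation": {"not", "never", "no", "nothing"},
    "hedging": {"maybe", "perhaps", "probably", "guess"},
}

def category_features(text: str, lexicon: dict = LEXICON) -> dict:
    """Share of tokens falling in each lexicon category (normalized by text length)."""
    tokens = [t.strip(".,!?;:").lower() for t in text.split()]
    tokens = [t for t in tokens if t]
    counts = Counter()
    for tok in tokens:
        for category, words in lexicon.items():
            if tok in words:
                counts[category] += 1
    n = len(tokens)
    return {category: (counts[category] / n if n else 0.0) for category in lexicon}

features = category_features("I never saw him. Maybe he left, I guess.")
```

The resulting dictionary can be used as one row of a feature matrix for a classifier, with one such row per interview segment if change over time is of interest.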

Diagram 2: Deception Detection Framework

Research Reagent Solutions for Text Analysis

Forensic text analysis relies on specialized computational tools and linguistic resources that function as "research reagents" in experimental protocols. These standardized components enable reproducible, validated analyses across different research contexts and document types.

Table 3: Essential Research Reagent Solutions for Forensic Text Analysis

| Reagent Category | Specific Tools/Resources | Function in Analysis | Implementation Example |
|---|---|---|---|
| Linguistic Feature Extractors | NLTK, SpaCy, Stanford CoreNLP | Tokenization, lemmatization, part-of-speech tagging, dependency parsing | Extracting syntactic complexity metrics for author profiling |
| Psychological Text Analyzers | LIWC, Empath Library | Quantifying psychological constructs, emotional tone, cognitive processes | Measuring deception indicators and emotional markers over time |
| Topic Modeling Frameworks | Gensim, Mallet, BERTopic | Identifying latent thematic structures, conceptual relationships | Distinguishing topic-driven vocabulary from author-specific style |
| Visualization Platforms | InfraNodus, Voyant Tools, MAXQDA | Creating interpretable visualizations of complex textual patterns | Generating knowledge graphs for conceptual relationship analysis |
| Machine Learning Classifiers | Scikit-learn, TensorFlow, PyTorch | Building predictive models for authorship attribution | Implementing ensemble methods with Logistic Regression, SVM, Random Forest |

Validation Frameworks and Statistical Interpretation

Robust validation represents the cornerstone of forensically sound text comparison methodology. The critical review by Yang et al. emphasizes that persistent challenges—including substrate variability, environmental influences, and database deficiencies—require rigorous validation protocols specifically designed for forensic applications [10]. Their analysis of analytical techniques for forensic paper comparison highlights the necessity of standardized validation approaches across the field.

Forensic text comparison must address two distinct validation requirements: methodological validation (establishing that a technique reliably measures what it claims to measure) and interpretive validation (establishing appropriate statistical frameworks for drawing inferences from results). Methodological validation requires demonstrating repeatability (consistent results under identical conditions) and reproducibility (consistent results across different laboratories and operators) [10]. Interpretive validation requires establishing appropriate statistical models for evaluating the strength of evidence, with likelihood ratio frameworks increasingly recognized as the most appropriate approach for forensic applications [3] [10].

Challenges and Future Directions

Despite significant methodological advances, forensic text comparison faces persistent challenges that require continued research attention. A primary limitation identified across multiple studies is the dependency on sufficient sample sizes for reliable model training, particularly for authorship attribution tasks where limited known samples from potential authors may be available [3] [10]. Additionally, the dynamic nature of language use across contexts and over time complicates the identification of stable authorial fingerprints.

Future research directions should prioritize the development of adaptive models that account for linguistic change across time and context, improved normalization techniques for cross-genre comparison, and standardized validation protocols specifically designed for forensic applications. The integration of psycholinguistic theory with computational methods represents a particularly promising avenue for enhancing deception detection capabilities, moving beyond surface-level patterns to model the cognitive processes underlying linguistic production [3]. Furthermore, research should address the ethical implications of automated text analysis in legal contexts, ensuring that methodologies remain transparent, interpretable, and forensically validated.

Forensic text comparison methodology research has evolved from qualitative examination to sophisticated multi-dimensional frameworks that simultaneously address authorial, generic, and topical influences on linguistic production. This whitepaper has presented current technical approaches, experimental protocols, and analytical frameworks that enable researchers to disentangle these complex interactions for reliable forensic analysis. The continued development of validated, transparent methodologies remains essential for advancing the scientific rigor of textual evidence analysis in legal contexts. As computational capabilities advance and linguistic theories evolve, forensic text comparison methodologies will continue to increase in discriminative power, provided they remain grounded in robust validation frameworks and ethical implementation practices.

Core FTC Methodologies: Feature-Based, Score-Based, and Psycholinguistic Approaches

Forensic Text Comparison (FTC) is a scientific discipline concerned with quantifying the strength of linguistic evidence for authorship attribution. Within the judicial system, there is increasing agreement that the strength of forensic evidence, including textual evidence, should be quantified and presented using a Likelihood Ratio (LR) [14]. The LR framework provides a coherent and transparent method for evaluating evidence under two competing propositions: typically, a prosecution hypothesis (e.g., the suspect is the author of the questioned text) and a defense hypothesis (e.g., the suspect is not the author) [15] [14]. The application of the LR framework to textual evidence represents a significant methodological advancement over traditional, non-probabilistic approaches to authorship analysis.
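In its general form, the LR described above can be written as follows, where E denotes the textual evidence and H_p and H_d denote the prosecution and defense hypotheses. The symbols are chosen here for illustration and follow common usage rather than any notation fixed elsewhere in this article.

```latex
\mathrm{LR} = \frac{p(E \mid H_p)}{p(E \mid H_d)},
\qquad
\underbrace{\frac{P(H_p \mid E)}{P(H_d \mid E)}}_{\text{posterior odds}}
= \mathrm{LR} \times
\underbrace{\frac{P(H_p)}{P(H_d)}}_{\text{prior odds}}
```

Values above 1 support H_p, values below 1 support H_d, and a value of 1 is uninformative. The odds form of Bayes' theorem on the right shows why the LR separates the contribution of the evidence from the prior beliefs of the trier-of-fact.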

There are two conventional computational methods for calculating a Likelihood Ratio in FTC: score-based methods and feature-based methods [14]. Score-based methods reduce the multivariate data of a text (e.g., word counts) to a single, univariate similarity or distance score (e.g., Cosine distance, Burrows's Delta). The LR is then estimated based on the distributions of these scores from known and unknown sources [15] [14]. While computationally simpler and robust with limited data, this approach has a critical shortcoming: it inevitably loses information from the original multivariate feature space and does not directly assess the typicality of the evidence, only its similarity [14].
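The score-based route can be sketched as follows. The example assumes, purely for illustration, that same-author and different-author scores are each normally distributed. Real systems often use other density models and a separate calibration step, and the training scores below are hypothetical.

```python
from statistics import NormalDist

def fit(scores):
    """Fit a normal distribution to a list of training scores."""
    return NormalDist.from_samples(scores)

def score_lr(score, same_author_scores, diff_author_scores):
    """LR = density of the score under the same-author model
    divided by its density under the different-author model."""
    same, diff = fit(same_author_scores), fit(diff_author_scores)
    return same.pdf(score) / diff.pdf(score)

# Hypothetical cosine distances from training comparisons (smaller = more similar).
same_scores = [0.10, 0.15, 0.12, 0.20, 0.18]
diff_scores = [0.45, 0.60, 0.52, 0.38, 0.55]

lr_close = score_lr(0.14, same_scores, diff_scores)  # score near the same-author range
lr_far = score_lr(0.50, same_scores, diff_scores)    # score near the different-author range
```

Because only the single score enters the calculation, two very common writing styles and two very rare ones that happen to be equally similar receive the same LR. This is the loss of typicality information noted above.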

In contrast, feature-based methods directly compute LRs by assigning probabilities to the multivariate linguistic features themselves. This paper provides an in-depth technical guide on implementing two powerful classes of feature-based models—Poisson and Dirichlet-Multinomial models—which are theoretically more appropriate for the discrete, count-based nature of textual data and form a core part of modern forensic text comparison methodology research [15] [14] [16].

Table 1: Core Concepts in Forensic Text Comparison

| Concept | Description | Importance in FTC |
|---|---|---|
| Likelihood Ratio (LR) | A ratio of the probabilities of the evidence under two competing hypotheses (prosecution vs. defense). | Provides a quantitative, logically coherent measure of evidence strength for the court [14]. |
| Feature-Based Methods | Methods that compute LRs by directly modeling the multivariate distribution of linguistic features (e.g., word counts). | Preserves more information from the evidence and incorporates both similarity and typicality [14]. |
| Textual Typicality | The rarity or commonness of a set of linguistic features in a relevant population. | A key component of the LR; distinguishes feature-based from score-based methods [14]. |
| Bag-of-Words Model | A text representation model that discards word order and uses word frequencies as features. | A common, effective feature set for authorship attribution, forming the input for Poisson and DMM models [14] [16]. |

Theoretical Foundations of Poisson and Dirichlet-Multinomial Models

The Poisson Model for Count Data

The Poisson distribution is a discrete probability distribution that models the probability of a given number of events occurring within a fixed interval of time or space, assuming these events happen with a known constant mean rate and independently of the time since the last event [17]. Its probability mass function is given by:

(P(Y=k) = \frac{e^{-\lambda} \lambda^{k}}{k!})

where (k) is the number of occurrences (a non-negative integer) and (\lambda) is the expected number of occurrences, which is also the variance of the distribution [17].

In the context of FTC, the "events" are the occurrences of specific words or linguistic features in a text. A Poisson model is naturally suited to word count data because it handles discrete, non-negative counts. The basic model assumes that the variance equals the mean, however, so the over-dispersion often seen in word frequency distributions has to be captured by extensions such as the zero-inflated Poisson or the two-level Poisson-gamma model [14]. When implemented within a Generalized Linear Model (GLM) framework, Poisson regression models the logarithm of the expected count as a linear function of predictor variables. This log-link function ensures that the predicted counts are always non-negative [17]. For LR estimation, a Poisson model allows for the direct calculation of the probability of observing a particular set of word counts in a questioned document, given a specific author, thereby incorporating both the similarity between documents and the typicality of the author's writing style within a population [15] [14].

The Dirichlet-Multinomial Model for Topic and Cluster Analysis

While the Poisson model is a univariate model for counts of individual features, the Dirichlet-Multinomial model is a multivariate model often used for clustering short texts and discovering latent topics [16]. It is a generative model that assumes a document is generated by first drawing a topic mixture from a Dirichlet distribution, and then generating the words of the document from a multinomial distribution conditioned on the selected topic [16].

A key variant is the Dirichlet Multinomial Mixture (DMM) model, which assumes that each short text (e.g., a tweet or a message) belongs to a single topic [16]. This "one-topic-per-document" assumption is particularly effective for short text clustering, where the limited word co-occurrence information makes assigning multiple topics to a single document challenging [16]. The DMM model helps overcome the data sparsity and high-dimensionality problems inherent in short text analysis, making it a valuable tool for forensic analysts who often work with SMS messages, emails, or social media posts.
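The "one-topic-per-document" generative story can be simulated in a few lines. The sketch below is an illustration of the assumed generative process only, not an inference algorithm such as GSDMM. The concentration values (1.0 and 0.5), the vocabulary, and the function names are assumptions made for the example.

```python
import random

def dirichlet(alpha, rng):
    """Draw one sample from a Dirichlet distribution via normalized gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]

def generate_corpus(vocab, n_topics, n_docs, doc_len, seed=0):
    """Generate short documents where every document uses exactly one topic."""
    rng = random.Random(seed)
    # Corpus-level topic proportions.
    topic_weights = dirichlet([1.0] * n_topics, rng)
    # One word distribution per topic.
    topic_words = [dirichlet([0.5] * len(vocab), rng) for _ in range(n_topics)]
    corpus = []
    for _ in range(n_docs):
        # A single topic is drawn for the whole document.
        z = rng.choices(range(n_topics), weights=topic_weights)[0]
        # All words of the document come from that topic's word distribution.
        words = rng.choices(vocab, weights=topic_words[z], k=doc_len)
        corpus.append((z, words))
    return corpus

vocab = ["bank", "loan", "goal", "match", "flight", "hotel"]
docs = generate_corpus(vocab, n_topics=3, n_docs=5, doc_len=4)
```

Fitting a DMM amounts to reversing this process: given only the words, the model infers which single topic most plausibly produced each short text.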

Experimental Protocols and Performance Benchmarking

Protocol for Poisson-based Likelihood Ratio Estimation

The implementation of a Poisson model for LR estimation in FTC involves a structured workflow, from data preparation to performance validation. The core sequence of this protocol is described in the steps below.

Data Collection and Preparation: The foundational step is gathering a large, representative corpus of texts to serve as a reference population. A seminal study by Carne & Ishihara (2020) utilized documents from 2,157 authors to ensure robust model training and evaluation [15] [14]. Texts are preprocessed using standard natural language processing (NLP) techniques, which may include tokenization, lowercasing, and removal of punctuation. The features are then extracted, typically using a bag-of-words model that discards word order and represents each document as a vector of word counts [14].

Feature Selection and Model Training: The high dimensionality of text data (thousands of words) necessitates feature selection. A common approach is to select the N-most frequent words (e.g., N=400) across the corpus to create the feature vectors [14]. For the Poisson model, the parameters (e.g., the expected word counts λ for different authors) are estimated from the training data. The LR for a questioned document (Q) and a known document (K) from a suspect is then calculated by comparing the probability of observing the word counts in Q under the assumption that the author is the suspect (same source) versus the assumption that the author is a random member of the population (different source) [14]. This can be extended using more complex models like a two-level Poisson-gamma model to account for extra-Poisson variation [14].
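The same-source versus different-source comparison can be sketched with a simple one-level Poisson model. This is a simplified illustration, not the exact models evaluated in [14]: it assumes that words are independent, uses plug-in rate estimates, and applies an additive smoothing constant of 0.5 chosen for the example. All counts are hypothetical.

```python
import math

def log_poisson(k: int, lam: float) -> float:
    """Log of the Poisson pmf: k*log(lam) - lam - log(k!)."""
    return k * math.log(lam) - lam - math.lgamma(k + 1)

def rates(counts: dict, total_tokens: int, vocab: list, smooth: float = 0.5) -> dict:
    """Per-token occurrence rate of each feature word, with additive smoothing."""
    denom = total_tokens + smooth * len(vocab)
    return {w: (counts.get(w, 0) + smooth) / denom for w in vocab}

def poisson_log_lr(q_counts, q_len, k_counts, k_len, pop_counts, pop_len, vocab):
    """Sum over words of log P(count | suspect rate) - log P(count | population rate)."""
    suspect = rates(k_counts, k_len, vocab)
    population = rates(pop_counts, pop_len, vocab)
    log_lr = 0.0
    for w in vocab:
        y = q_counts.get(w, 0)
        log_lr += log_poisson(y, q_len * suspect[w]) - log_poisson(y, q_len * population[w])
    return log_lr

vocab = ["the", "of", "however", "whilst"]
pop = {"the": 6000, "of": 3000, "however": 50, "whilst": 5}    # reference population counts
known = {"the": 55, "of": 28, "however": 6, "whilst": 4}        # suspect's known text (K)
questioned = {"the": 50, "of": 30, "however": 5, "whilst": 3}   # questioned text (Q)
typical = {"the": 60, "of": 30, "however": 0, "whilst": 0}      # text resembling the population

log_lr_q = poisson_log_lr(questioned, 1000, known, 1000, pop, 100000, vocab)
log_lr_typical = poisson_log_lr(typical, 1000, known, 1000, pop, 100000, vocab)
```

In this toy setting the questioned text shares the suspect's unusually frequent use of "however" and "whilst", so its log LR is positive, while the population-like text yields a negative log LR. The rarity of those words in the reference population is what makes the match informative, which is the typicality component of the LR.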

Performance Validation: The performance of the LR system must be rigorously validated using a separate test set. The standard metric is the log-likelihood ratio cost (Cllr). This metric evaluates the system's overall performance by combining measures of its discrimination power (ability to distinguish between same-source and different-source comparisons) and its calibration (the accuracy of the LR values themselves) [15] [14]. A lower Cllr indicates better performance.
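The Cllr can be computed directly from the LRs of the test comparisons. The sketch below follows the standard definition of the metric, and the LR values are hypothetical.

```python
import math

def cllr(same_source_lrs, diff_source_lrs):
    """Log-likelihood-ratio cost: penalizes small LRs for same-source pairs
    and large LRs for different-source pairs; lower is better."""
    ss = sum(math.log2(1 + 1 / lr) for lr in same_source_lrs) / len(same_source_lrs)
    ds = sum(math.log2(1 + lr) for lr in diff_source_lrs) / len(diff_source_lrs)
    return 0.5 * (ss + ds)

uninformative = cllr([1.0, 1.0], [1.0, 1.0])  # a system that always reports LR = 1
good = cllr([100.0, 50.0], [0.01, 0.02])      # strong LRs pointing in the right direction
```

A system that always reports LR = 1 obtains a Cllr of exactly 1, which makes that value a natural reference point: a validated system is expected to score well below it.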

Protocol for Dirichlet-Multinomial Mixture for Short Text Clustering

The DMM protocol focuses on inferring the latent topic structure within a collection of short texts, which can help in organizing and understanding large volumes of forensic data, such as categorizing messages by theme or intent.

Data Preprocessing for Short Texts: Short texts present unique challenges, including sparse terms and a limited number of words per document, which leads to fewer word co-occurrences [16]. Preprocessing is critical and may involve more aggressive filtering (e.g., removing very rare words) and handling of noise like spelling errors, which is common in social media data [16].

Determining the Number of Topics (Clusters): A significant challenge with DMM and related topic models is that they typically require pre-specifying the number of topics, K, which is often unknown [16]. Advanced methods like Gibbs Sampling for DMM (GSDMM) can automatically infer the optimal number of topics, but at a high computational cost, especially if the initial maximum K is set too high [16].

Model Fitting and Cluster Refinement: The DMM model is fitted to the short text corpus, assigning each document to a single topic cluster. To enhance performance, a hybrid approach like the Topic Clustering based on Levenshtein Distance (TCLD) algorithm can be employed. After an initial clustering with DMM, TCLD evaluates the similarity between documents using the Levenshtein Distance (an edit-distance measure commonly used for fuzzy string matching). It then decides whether to keep a document in its initial cluster, move it to a more appropriate cluster, or mark it as an outlier, thereby optimizing the final topic clusters [16].
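The Levenshtein Distance itself is straightforward to compute with dynamic programming. The sketch below shows only the distance and a length-normalized similarity. How TCLD uses these values to reassign documents is not reproduced here, and the function `normalized_similarity` is a hypothetical helper.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]

def normalized_similarity(a: str, b: str) -> float:
    """Scale the distance to [0, 1], where 1 means identical strings."""
    longest = max(len(a), len(b))
    return 1.0 if longest == 0 else 1 - levenshtein(a, b) / longest

d = levenshtein("kitten", "sitting")
```

A normalized form is convenient for short texts because raw edit distances grow with message length and are otherwise hard to compare across documents.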

Quantitative Performance Comparison

Empirical studies have directly compared the performance of feature-based and score-based methods under controlled conditions. The following table synthesizes key findings from a large-scale evaluation.

Table 2: Empirical Performance Comparison of FTC Methods

| Method Type | Specific Model | Data & Features | Performance (Cllr) | Key Findings |
|---|---|---|---|---|
| Feature-Based | One-level Poisson, Zero-inflated Poisson, Two-level Poisson-gamma [14] | 2,157 authors; Bag-of-words (N=400) [14] | Cllr = 0.14-0.2 lower than score-based (best settings) [14] | Outperforms score-based methods. Performance can be further improved with feature selection [15] [14]. |
| Score-Based | Cosine Distance [15] [14] | 2,157 authors; Bag-of-words (N=400) [14] | Baseline for comparison (Cllr ~0.09 higher than feature-based) [15] | Violates statistical assumptions of textual data (e.g., normality). Assesses only similarity, not typicality [14]. |
| Hybrid Topic Model | TCLD (DMM + Levenshtein Distance) [16] | Six English benchmark short-text datasets [16] | 83% improvement in Purity; 67% improvement in NMI vs. baseline models [16] | Effectively addresses outlier problem and determines optimal topic number in short texts [16]. |

The Scientist's Toolkit: Essential Research Reagents

Implementing the methodologies described requires a suite of computational tools and conceptual frameworks. The following table details the key components of the forensic text analyst's toolkit.

Table 3: Research Reagent Solutions for Forensic Text Modeling

| Tool / Reagent | Type | Function in FTC Research |
|---|---|---|
| Bag-of-Words Model | Conceptual / Representational | Represents text as a multivariate vector of word counts, serving as the primary input for both Poisson and DMM models [14] [16]. |
| Log-Likelihood Ratio Cost (Cllr) | Evaluation Metric | The standard metric for validating the performance and reliability of a forensic LR system, assessing both discrimination and calibration [15] [14]. |
| Gibbs Sampling | Computational Algorithm | A Markov Chain Monte Carlo (MCMC) method used for approximate inference in complex probabilistic models like GSDMM, to estimate model parameters and cluster assignments [16]. |
| Levenshtein Distance Algorithm | Computational Algorithm | Measures the similarity between two strings by calculating the minimum number of single-character edits required to change one string into the other. Used in hybrid models like TCLD for post-clustering refinement [16]. |
| Empath / LIWC | Software Library / Lexicon | NLP libraries used for psycholinguistic feature extraction (e.g., detecting deception, emotion). Can be used to generate specialized feature sets for analysis [3]. |
| LASSO / Fused LASSO | Statistical Penalization | Regularization techniques used in time-dependent Poisson models to achieve sparsity and identify words with stable discriminatory power over time, handling high-dimensional parameters [18]. |

Advanced Implementations and Future Directions

Time-Dependent Poisson Models

A significant advancement in Poisson modeling for text is the development of time-dependent Poisson reduced rank models. Political lexicon and writing style are not static; they evolve. This model allows the parameters representing word weights (b_j^{(k)}) to change over time (t) [18]. The model is formulated as:

(Y_{ijt} \sim \text{Poisson}(\mu_{ijt}), \text{ where } \mu_{ijt} = \exp(\alpha_j + \beta_{it} + \sum_{k=1}^K b_{j,t}^{(k)} f_{it}^{(k)}))

To manage the high dimensionality of this formulation, estimation employs LASSO and Fused LASSO penalization techniques. This encourages sparsity (many word weights are zero) and temporal smoothness (word weights change gradually over time), allowing the model to automatically identify words that have a stable, discriminating effect on author or party positions across different time periods [18].
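The two penalties can be illustrated on the weight trajectory of a single word. The sketch below shows the general form of a LASSO term plus a fused LASSO term. The exact objective and tuning used in [18] are not reproduced here, and the parameter names `lam_sparse` and `lam_fused` are assumptions.

```python
def lasso_penalty(weights_over_time, lam_sparse, lam_fused):
    """Penalty for one word's weight trajectory b_1, ..., b_T:
    lam_sparse * sum |b_t|  +  lam_fused * sum |b_t - b_(t-1)|."""
    sparsity = sum(abs(b) for b in weights_over_time)
    smoothness = sum(
        abs(cur - prev)
        for prev, cur in zip(weights_over_time, weights_over_time[1:])
    )
    return lam_sparse * sparsity + lam_fused * smoothness

stable = [0.8, 0.8, 0.8, 0.8]     # weight that stays constant over four periods
erratic = [0.8, -0.8, 0.8, -0.8]  # weight of the same magnitude that flips sign

p_stable = lasso_penalty(stable, 1.0, 1.0)
p_erratic = lasso_penalty(erratic, 1.0, 1.0)
```

Both trajectories incur the same sparsity cost, but only the erratic one pays the fused term. This is the mechanism by which the penalized model favors words whose discriminating effect is stable over time.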

Integration with Psycholinguistic NLP Frameworks

The future of FTC methodology lies in the integration of sophisticated statistical models like Poisson and DMM with psycholinguistically informed NLP frameworks. Such frameworks move beyond simple word counts to analyze features like deception over time, emotion levels (e.g., anger, fear), and subjectivity in narratives [3]. By combining latent topic information from DMM models with psycholinguistic feature extraction tools (e.g., Empath), analysts can create a more nuanced profile of an author. This can help in identifying persons of interest by focusing on those whose communication is highly correlated with investigative keywords and who demonstrate linguistic patterns associated with deceptive or emotional states [3].

Handling Overdispersion with Negative Binomial

A key assumption of the standard Poisson model is that the mean equals the variance. Real-world text data often exhibits overdispersion, where the variance exceeds the mean. While a two-level Poisson-gamma model can account for this [14], a common and practical alternative is the Negative Binomial regression model, which can be viewed as a generalization of the Poisson model that incorporates extra-Poisson variation [17]. This model is generally preferable when overdispersion is detected in the count data: a Poisson fit that ignores overdispersion understates the standard errors, whereas the Negative Binomial model yields more reliable confidence intervals for the model parameters.
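A quick diagnostic for overdispersion is the variance-to-mean ratio of the counts. The sketch below is a rough screening check rather than a formal test, and the word counts are hypothetical.

```python
from statistics import mean, variance

def dispersion_index(counts):
    """Variance-to-mean ratio: about 1 for Poisson-like data, above 1 when overdispersed."""
    m = mean(counts)
    return variance(counts) / m if m > 0 else float("nan")

# Hypothetical counts of one word across ten equal-length text chunks.
even = [4, 5, 3, 4, 6, 5, 4, 3, 5, 4]      # word spread evenly through the text
bursty = [0, 0, 12, 0, 1, 0, 15, 0, 0, 2]  # word concentrated in a few chunks

idx_even = dispersion_index(even)
idx_bursty = dispersion_index(bursty)
```

Content words tend to behave like the bursty example because they cluster where their topic is discussed, which is one reason plain Poisson models can fit word counts poorly.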

Forensic Text Comparison (FTC) is a scientific discipline concerned with quantifying the evidence for authorship of textual materials. In the context of cybercrime, law enforcement, and intellectual property disputes, text messages are often the main medium of communication and may be the only available source of information leading to the identification of the wrongdoer(s) [19]. The foundational concept is that each person possesses a unique writing style, or idiolect, which manifests in author-specific characteristics within the text [19]. The core challenge for FTC is to develop methodologies that can quantitatively represent these stylistic patterns and reliably evaluate the strength of evidence for authorship attribution.

Score-based methods represent a significant methodological advancement within the likelihood ratio (LR) framework for FTC. These methods provide a structured paradigm for quantifying the strength of evidence by comparing the similarity between a questioned text and known author samples [19]. Within this framework, Cosine Distance and Burrows's Delta have emerged as two prominent score-generating functions for comparing paired text samples. Their efficacy lies in the ability to transform the multivariate structure of linguistic features into a univariate score, which can then be converted into a likelihood ratio—a statistically valid measure of evidence strength that helps the trier-of-fact (e.g., a judge or jury) assess whether the suspect and the author of an incriminating text are the same person [19] [20]. This technical guide explores the theoretical foundations, experimental protocols, and performance characteristics of these two core methods, positioning them within the broader research agenda to build demonstrably reliable systems for forensic authorship analysis.

Theoretical Foundations of Distance Measures in Stylometry

The Bag-of-Words Model and Feature Space

Score-based authorship attribution typically begins with the Bag-of-Words (BoW) model, a near-standard technique for representing textual data [19]. In this model, texts are converted into vectors in a high-dimensional space where each dimension corresponds to the normalized frequency of a specific word. The initial feature set usually comprises the N Most Frequent Words (MFW) in the entire corpus. Function words such as "the", "and", and "of" are typically retained rather than removed as stop words, since they make up much of the MFW list and are regarded as less topic-dependent. The relative frequencies of these MFW are often transformed using Z-score normalization to create a document-term matrix. This standardization is a critical step in Burrows's original Delta method and its variants: it expresses each word's frequency relative to that word's mean and standard deviation across the corpus, which keeps the most frequent words from dominating the comparison and makes feature values comparable across words and texts [21].

The core assumption is that an author's stylistic signature is encoded in their consistent patterns of word preference—their tendency to over-use or under-use common words relative to other authors. The BoW model, while discarding information about word order, effectively captures these statistical patterns. The choice of N (the number of MFW) is an experimental parameter, with research indicating that system performance can be robust across a wide range of N values, particularly when using Cosine Distance [21].
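The representation described above can be sketched in a few lines of standard-library Python. This is an illustrative sketch only: the tokenization (whitespace splitting), the toy documents, and the function names are assumptions, and the population standard deviation is used for the Z-scores, which is one of several reasonable choices.

```python
from collections import Counter
from statistics import mean, pstdev

def relative_frequencies(tokens, vocab):
    """Relative frequency of each vocabulary word in one non-empty token list."""
    counts = Counter(tokens)
    total = len(tokens)
    return [counts[w] / total for w in vocab]

def zscore_matrix(docs, n_mfw):
    """docs: list of token lists. Returns (vocab, document-term matrix of Z-scores)."""
    corpus_counts = Counter(tok for doc in docs for tok in doc)
    vocab = [w for w, _ in corpus_counts.most_common(n_mfw)]  # the N MFW
    freqs = [relative_frequencies(doc, vocab) for doc in docs]
    matrix = [[0.0] * len(vocab) for _ in docs]
    for j in range(len(vocab)):
        column = [row[j] for row in freqs]
        mu, sigma = mean(column), pstdev(column)
        for i, value in enumerate(column):
            # A word with identical frequency in every text carries no signal.
            matrix[i][j] = (value - mu) / sigma if sigma > 0 else 0.0
    return vocab, matrix

# Toy corpus (illustrative only).
docs = [
    "the cat and the dog".split(),
    "the bird and a cat and a fish".split(),
    "a dog saw the bird".split(),
]
vocab, Z = zscore_matrix(docs, 3)
print(vocab)
for row in Z:
    print([round(x, 2) for x in row])
```

Each column of the resulting matrix has mean zero across documents, so a positive value marks over-use and a negative value under-use of that word relative to the corpus.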

Defining the Distance Measures

The similarity or dissimilarity between two text vectors is quantified using a distance measure, which serves as the score in a score-based LR system. The two measures central to this guide are:

Cosine Distance: This measure is based on the cosine of the angle between two text vectors in the high-dimensional feature space and is computed as 1 minus the cosine similarity. The cosine similarity is the dot product of the two vectors divided by the product of their magnitudes (Euclidean norms), so the distance ranges from 0 (same direction) to 2 (opposite directions). A key property of Cosine Distance is its insensitivity to vector magnitude; it focuses solely on the directional alignment of the vectors, which corresponds to the qualitative pattern of word usage [21]. This property makes it particularly effective for authorship tasks, where the "key profile" of an author's style, meaning the pattern of over- and under-utilization of vocabulary, is more important than the actual amplitude of frequency deviations [21].
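The magnitude-insensitivity of Cosine Distance can be checked directly. The sketch below is a plain-Python illustration with made-up Z-score vectors; the function name is an assumption, not part of any cited toolkit.

```python
import math

def cosine_distance(u, v):
    """1 minus the cosine similarity of two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_u * norm_v)

# Illustrative Z-score vectors for a questioned (q) and a known (k) text.
q = [1.2, -0.5, 0.8, -1.0]
k = [0.6, -0.2, 0.5, -0.4]

d_original = cosine_distance(q, k)
d_scaled = cosine_distance(q, [3 * x for x in k])  # same direction, larger amplitude
print(d_original, d_scaled)
```

Scaling one vector by a positive constant leaves the distance unchanged up to floating-point error, which is the property the key-profile argument relies on.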

Burrows's Delta (Delta Bur): This is the original measure proposed by John Burrows, which has proven remarkably successful in computational stylistics [21]. It is defined as the mean of the absolute differences between the Z-scores of the MFW in two texts. For two texts A and B, Delta(A, B) = (1/N) * Σ |z_i(A) - z_i(B)|, where z_i(A) is the Z-score of the i-th MFW in text A and the sum runs over i = 1, ..., N. In essence, it is the Manhattan (L1) distance between the Z-score vectors, scaled by 1/N [21]. Unlike Cosine Distance, it is sensitive to the magnitudes of the Z-scores, making it potentially more susceptible to outliers, that is, extreme Z-score values specific to single texts rather than to all texts of a single author [21].
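A small sketch makes the sensitivity of Burrows's Delta to a single extreme value concrete. The vectors are invented for illustration and the function name is an assumption.

```python
def burrows_delta(z_a, z_b):
    """Mean absolute difference between two equal-length Z-score vectors."""
    if len(z_a) != len(z_b) or not z_a:
        raise ValueError("vectors must be non-empty and of equal length")
    return sum(abs(a - b) for a, b in zip(z_a, z_b)) / len(z_a)

z_a = [1.0, -0.5, 0.5, -1.0]
z_b = [0.5, -0.5, 1.0, -1.0]
z_b_outlier = [0.5, -0.5, 1.0, 3.0]  # last word has one extreme Z-score

delta_plain = burrows_delta(z_a, z_b)
delta_outlier = burrows_delta(z_a, z_b_outlier)
print(delta_plain, delta_outlier)
```

Changing a single component from -1.0 to 3.0 raises the distance fivefold in this toy case, even though the other three words are unchanged.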

The Key Profile Hypothesis vs. The Outlier Hypothesis

Research into why these algorithms work has led to two competing hypotheses, which have significant implications for understanding the robustness of different distance measures.

The Outlier Hypothesis (H1): This hypothesis posited that performance differences between measures were caused by single extreme Z-score values. It suggested that the positive effect of vector normalization (inherent in Cosine Distance) stemmed from the reduction of these outlier amplitudes [21].

The Key Profile Hypothesis (H2): This hypothesis, which has received stronger empirical support, argues that an author's stylistic signature manifests more in the qualitative combination of word preferences (the pattern) than in the actual amplitude of Z-scores [21]. A measure is successful if it emphasizes these structural differences without being overly influenced by amplitude variations.

Experiments have disproven H1 by showing that vector normalization, which drastically improves the performance of all Delta measures, hardly reduces the number of extreme Z-score values [21]. Conversely, H2 was confirmed by creating pure "key profile" vectors that only recorded whether a word frequency was above average (+1), unremarkable (0), or below average (-1). These ternary vectors performed almost as well as the full vector normalization, demonstrating that the profile of deviation across the MFW is the critical factor [21]. This finding explains the superior and robust performance of Cosine Distance, which intrinsically normalizes for vector length and is therefore a pure measure of the key profile.
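The ternary "key profile" transformation can be sketched as follows. The cut-off of 0.5 for what counts as "unremarkable" is a hypothetical value chosen for illustration; the threshold used in the cited experiments is not given here.

```python
def ternary_profile(z_scores, threshold=0.5):
    """Map each Z-score to +1 (above average), -1 (below average) or 0 (unremarkable).
    The threshold is an illustrative assumption."""
    profile = []
    for z in z_scores:
        if z > threshold:
            profile.append(1)
        elif z < -threshold:
            profile.append(-1)
        else:
            profile.append(0)
    return profile

# Two invented texts with the same pattern of preferences but different amplitudes.
strong = [1.8, -0.2, 0.9, -2.4]
mild = [0.7, 0.1, 0.6, -0.8]

print(ternary_profile(strong))
print(ternary_profile(mild))
```

Both vectors collapse to the same profile, which illustrates what it means for the pattern, rather than the amplitude, to carry the signal.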

Experimental Protocols for Forensic Validation

A General Workflow for Score-Based Authorship Analysis

The following workflow delineates the standard procedure for conducting a score-based authorship analysis, from data preparation to the calculation of a likelihood ratio. This process is visualized in Figure 1.

Figure 1: A generalized workflow for score-based forensic text comparison, showing the process from data collection to the calculation of a likelihood ratio.

Protocol 1: Implementing Cosine Distance with a BoW Model

This protocol details the steps of an experiment demonstrating the efficacy of Cosine Distance, as reported in [19].

Objective: To estimate score-based likelihood ratios for linguistic text evidence using a Bag-of-Words model and Cosine Distance as the score-generating function [19].

Corpus:

- Source: The Amazon Product Data Authorship Verification Corpus was used.

- Synthesis: Two groups of documents were synthesized for each author. Each group contained documents of approximately 700, 1400, and 2100 words. This design allowed for 720 same-author comparisons and 517,680 different-author comparisons to test system validity [19].
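The two comparison counts are consistent with one simple reading of the design, sketched below as a sanity check. The figure of 720 authors is an assumption inferred from the counts, not a number stated in this section: with two document groups per author, each author yields one same-author pair, and each pair of distinct authors yields two cross-author pairs.

```python
from math import comb

n_authors = 720  # assumption inferred from the reported counts

# One same-author comparison per author (group 1 vs group 2).
same_author = n_authors

# Two cross comparisons for every unordered pair of distinct authors.
different_author = 2 * comb(n_authors, 2)

print(same_author, different_author)
```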

Feature Engineering:

- Model: A Bag-of-Words model was implemented.

- Features: The Z-score normalized relative frequencies of the N most frequent words (N was varied in experiments, e.g., N=260).

- Vector Representation: Each text is represented as a high-dimensional vector of these normalized frequencies.

Score Calculation:

- The Cosine Distance between the vector of a questioned document (Q) and the vector of a known suspect document (K) is calculated.

- The Cosine Distance is defined as: 1 - ( (Q • K) / (||Q|| * ||K|| ) ), where '•' denotes the dot product and '|| ||' denotes the Euclidean norm (vector length).

Likelihood Ratio Estimation:

- Score Distributions: The distributions of same-author scores and different-author scores are compiled using a "common source method" [19].

- Model Fitting: The score distributions are approximated using parametric models (e.g., Normal, Log-normal, Gamma, Weibull). The best-fitting model is selected.

- Conversion: The calculated Cosine Distance score between K and Q is converted into a likelihood ratio using the fitted distributions. The LR is given by: LR = f(score | same-author) / f(score | different-author), where f is the probability density function.
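The conversion step can be illustrated with a minimal sketch that fits a Normal distribution to each set of scores and takes the ratio of the two densities. Using a Normal model for both distributions is an assumption made for brevity (the protocol selects the best-fitting family among several), and the score values are invented.

```python
from statistics import NormalDist

def fit_score_model(scores):
    """Fit a Normal distribution to a list of scores (needs at least two values)."""
    return NormalDist.from_samples(scores)

def likelihood_ratio(score, same_author_model, different_author_model):
    """LR = f(score | same-author) / f(score | different-author)."""
    return same_author_model.pdf(score) / different_author_model.pdf(score)

# Invented Cosine Distance scores from background comparisons.
sa_scores = [0.35, 0.42, 0.40, 0.30, 0.45, 0.38]
da_scores = [0.85, 0.95, 0.90, 1.05, 0.80, 1.00]

sa_model = fit_score_model(sa_scores)
da_model = fit_score_model(da_scores)

lr_close = likelihood_ratio(0.40, sa_model, da_model)  # small distance
lr_far = likelihood_ratio(0.95, sa_model, da_model)    # large distance
print(lr_close, lr_far)
```

A small distance gives an LR above 1 (support for the same-author hypothesis) and a large distance gives an LR below 1. In practice the tails of the fitted densities deserve care, since extreme scores can produce unrealistically large or small LRs.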

Validation:

- Metric: System validity is assessed using the log-likelihood-ratio cost (Cllr). A lower Cllr indicates better system performance.
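For reference, Cllr is commonly computed as the average of two penalty terms, one over the same-author LRs and one over the different-author LRs. The sketch below follows that standard definition; the function name and the example LR values are illustrative assumptions.

```python
import math

def cllr(same_author_lrs, different_author_lrs):
    """Log-likelihood-ratio cost.
    Penalizes same-author LRs below 1 and different-author LRs above 1."""
    sa_penalty = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    da_penalty = sum(math.log2(1 + lr) for lr in different_author_lrs) / len(different_author_lrs)
    return 0.5 * (sa_penalty + da_penalty)

uninformative = cllr([1.0, 1.0], [1.0, 1.0])   # LR = 1 everywhere
well_behaved = cllr([100.0, 10.0], [0.01, 0.1])
misleading = cllr([0.01, 0.1], [100.0, 10.0])  # LRs point the wrong way
print(uninformative, well_behaved, misleading)
```

A system that always outputs LR = 1 scores exactly 1, so values below 1 indicate that the system provides useful information and values above 1 indicate that it is misleading on balance.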

- Visualization: The strength and calibration of the derived LRs are visualized using Tippett plots.

Protocol 2: Comparing Delta Variants and the Key Profile

This protocol is based on experiments designed to test the key profile hypothesis by comparing different variants of Burrows's Delta.

Objective: To understand the performance differences between Burrows's Delta (Delta Bur), other Minkowski distances (Lp-Delta), and Cosine Delta (Delta Cos), and to test the outlier (H1) and key profile (H2) hypotheses [21].

Corpus:

- Composition: A corpus of 75 texts in German, with 25 different authors and 3 novels per author. Similar corpora in English and French were used for validation [21].

Feature Engineering:

- Model: The standard document-term matrix with Z-score normalization of MFW.

- Manipulations: Three data manipulations were applied:

- Vector Length Normalization: All feature vectors were normalized to a length of 1.

- Outlier Truncation (Clamping): All Z-scores with an absolute value greater than 2 were set to +2 or -2, according to their sign.

- Ternary Key Profiles: Vectors were converted to values of +1 (above average), 0 (unremarkable), or -1 (below average).
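The first two manipulations listed above can be sketched as simple vector operations. The function names and the example vector are assumptions for illustration; only the clamping limit of 2 comes from the protocol.

```python
import math

def normalize_length(z_scores):
    """Rescale a vector to Euclidean length 1."""
    norm = math.sqrt(sum(x * x for x in z_scores))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in z_scores]

def clamp(z_scores, limit=2.0):
    """Truncate every value to the interval [-limit, +limit]."""
    return [max(-limit, min(limit, x)) for x in z_scores]

z = [3.5, -0.4, 1.0, -2.6]  # invented Z-scores with two extreme values

unit = normalize_length(z)
clamped = clamp(z)
print([round(x, 3) for x in unit])
print(clamped)
```

Note the different effects: length normalization rescales every component by the same factor and so preserves the relative pattern, whereas clamping alters only the extreme components.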

Score Calculation & Analysis:

- Distance Measures: Multiple Lp-Delta measures were calculated, including L1 (Manhattan, Delta Bur), L2 (Euclidean, Delta Q), and L4 (highly sensitive to outliers).

- Cosine Distance (Delta Cos) was also calculated.

- Task: All 75 texts were automatically clustered into 25 groups (one per author) based on the calculated distance matrices.

Evaluation:

- Metric: Clustering quality was estimated using the Adjusted Rand Index (ARI). An ARI of 100% signifies perfect agreement between the clusters and the true authors, while 0% corresponds to the agreement expected from random cluster assignment.
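The ARI itself can be computed from pair counts. The sketch below is a plain-Python version of the standard formula, returning a value on a 0 to 1 scale rather than a percentage; the label vectors are invented and far smaller than the 75-text corpus.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(true_labels, pred_labels):
    """Adjusted Rand Index between two labelings of the same items (n >= 2)."""
    n = len(true_labels)
    cells = Counter(zip(true_labels, pred_labels))
    sum_cells = sum(comb(c, 2) for c in cells.values())
    sum_true = sum(comb(c, 2) for c in Counter(true_labels).values())
    sum_pred = sum(comb(c, 2) for c in Counter(pred_labels).values())
    expected = sum_true * sum_pred / comb(n, 2)   # agreement expected by chance
    max_index = 0.5 * (sum_true + sum_pred)
    if max_index == expected:
        return 1.0  # degenerate case, e.g. a single cluster in both labelings
    return (sum_cells - expected) / (max_index - expected)

authors = [0, 0, 0, 1, 1, 1, 2, 2, 2]      # three authors, three texts each
perfect = [5, 5, 5, 7, 7, 7, 9, 9, 9]      # same grouping, different cluster names
one_error = [0, 0, 1, 1, 1, 1, 2, 2, 2]    # one text assigned to the wrong cluster

ari_perfect = adjusted_rand_index(authors, perfect)
ari_one_error = adjusted_rand_index(authors, one_error)
print(ari_perfect, ari_one_error)
```

The index depends only on which texts are grouped together, not on the names given to the clusters, which is why it suits the evaluation of unsupervised clustering.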

- Comparison: The performance of each distance measure, with and without data manipulations, was compared across a wide range of MFW (e.g., from 100 to 5000 words).

Performance Data and Comparative Analysis

Quantitative Performance of Cosine Distance

The following table summarizes key performance data for the Cosine Distance measure from experimental results, highlighting its effectiveness and the impact of document length.

Table 1: Performance of Cosine Distance with a Bag-of-Words Model (N=260 MFW) [19]

| Document Length (Words) | Log-Likelihood-Ratio Cost (Cllr) | Interpretation |
|---|---|---|
| 700 | 0.70640 | Moderate discrimination accuracy |
| 1,400 | 0.45314 | Good discrimination accuracy |
| 2,100 | 0.30692 | Very good discrimination accuracy |

The data show a clear trend: Cllr falls from 0.70640 at 700 words to 0.30692 at 2,100 words, so increasing the amount of available text consistently improves system performance. This is a fundamental principle in forensic text comparison, as larger sample sizes provide a more stable and representative estimate of an author's style [6] [19].

Comparative Performance of Delta Measures

Experiments comparing different distance measures provide clear evidence for the superiority of Cosine Distance and the value of normalization.

Table 2: Comparison of Distance Measure Performance (Clustering Quality via ARI) [21]

| Distance Measure | Key Characteristic | Performance with Standard Z-scores | Performance with Vector Normalization |
|---|---|---|---|
| Cosine Delta (Delta Cos) | Insensitive to vector magnitude | High and Robust (e.g., ARI >90%) | (Inherently normalized) |
| Burrows's Delta (L1) | Manhattan distance, sensitive to magnitude | Moderate (worse than Cosine) | Dramatically Improved (~matches Cosine) |
| Argamon's Delta Q (L2) | Euclidean distance, more sensitive to outliers | Poor (worse than L1) | Dramatically Improved (identical to Cosine) |
| L4 Delta | Highly sensitive to single outliers | Very Poor | Improved, but still worse than others |

The results in Table 2 strongly support the Key Profile Hypothesis (H2). The dramatic improvement seen in all measures after vector normalization—which does not reduce outliers but standardizes amplitudes—indicates that the pattern of word use, not the magnitude of frequency differences, is the primary carrier of authorship signal [21]. The robustness of Cosine Delta across a wide range of MFW makes it a particularly reliable choice.
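The "identical to Cosine" entry for the L2 measure in Table 2 follows from a short identity. As a worked sketch, let u and v be two feature vectors normalized to unit length, θ the angle between them, and Δ_Cos = 1 - cos θ the Cosine Distance (the symbols are introduced here for illustration):

```latex
\begin{aligned}
\lVert u - v \rVert_2^2
  &= \lVert u \rVert_2^2 + \lVert v \rVert_2^2 - 2\, u \cdot v \\
  &= 1 + 1 - 2\cos\theta \\
  &= 2\,\Delta_{\mathrm{Cos}}(u, v)
\end{aligned}
```

Because the squared Euclidean distance between unit vectors is exactly twice the Cosine Distance, both measures rank every pair of texts in the same order, so any procedure that depends only on that ordering gives the same result for both.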

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational "reagents" essential for conducting experiments in score-based forensic text comparison.

Table 3: Essential Materials and Tools for Score-Based Forensic Text Comparison Research

| Item / Concept | Function in the Experimental Protocol |
|---|---|
| Reference Corpus | A collection of texts from many authors used to establish the background population and to select the N Most Frequent Words (MFW) for the model. Its relevance to the case context is critical for validation [20]. |
| Bag-of-Words (BoW) Model | The foundational data representation model that transforms unstructured text into a numerical matrix, allowing for quantitative analysis. It records word frequencies while discarding word order [19]. |
| Z-score Normalization | A statistical procedure that standardizes the frequency of each word across the corpus. It expresses each word's frequency in a text as the number of standard deviations it is from the mean frequency across all texts, ensuring comparability [21]. |
| Most Frequent Words (MFW) | The set of feature words (e.g., N=260, 500, 1000) used to represent the texts. These common, often function words (e.g., "the", "and", "of") are believed to be less topic-dependent and more reflective of subconscious stylistic habits [19] [21]. |