Forensic Method Validation and Standardization: Protocols, Implementation, and Impact on Scientific Integrity

Abstract

This article provides a comprehensive analysis of the processes, challenges, and significance of method validation and standardization in forensic science. Drawing on current standards from OSAC, ASB, and other regulatory bodies, it explores the foundational principles established by landmark reports, detailed methodological applications across disciplines like toxicology and DNA analysis, practical strategies for overcoming implementation hurdles, and comparative frameworks for assessing method validity. Tailored for researchers, scientists, and drug development professionals, the content bridges theoretical standards with practical application, emphasizing how rigorous validation protocols ensure reliability, admissibility, and ethical integrity in scientific findings.

The Foundation of Trust in Forensic Science: From NAS to Modern Standards

The 2009 report from the National Research Council (NRC) of the National Academies, titled "Strengthening Forensic Science in the United States: A Path Forward," shook the foundations of forensic practice. This comprehensive assessment revealed a critical gap between long-accepted forensic methods and rigorous scientific standards, noting that many disciplines, particularly those involving feature comparison, lacked proper scientific validation [1]. The report was prompted by growing concerns within the scientific and legal communities about the subjective nature of many forensic disciplines and their potential to contribute to wrongful convictions.

Building upon this foundation, the President's Council of Advisors on Science and Technology (PCAST) issued its own landmark report in 2016, "Forensic Science in Criminal Courts: Ensuring Scientific Validity of Feature-Comparison Methods" [1]. This report further developed the NRC's critical analysis by introducing a precise framework for assessing what it termed "foundational validity" – the requirement that a method must be shown through empirical studies to be repeatable, reproducible, and accurate before its results can be admitted in court [2]. Together, these reports have catalyzed an ongoing transformation in forensic science, pushing the field toward greater scientific rigor, reliability, and standardization.

The NRC Report: A Comprehensive Call for Reform

Core Findings and Critiques

The 2009 NRC report provided a systematic evaluation of the then-current state of forensic science, identifying several fundamental deficiencies that undermined the reliability of forensic evidence in criminal proceedings. Its most significant finding was that many forensic disciplines, particularly those involving subjective pattern matching, lacked proper scientific foundations. The report noted that techniques such as bite-mark analysis, firearm and toolmark identification, and even fingerprint analysis had evolved primarily for crime investigation rather than scientific inquiry, and had never been subjected to the rigorous validation required for scientific evidence [1].

The report further criticized the wide variation in standards and practices across crime laboratories, the potential for contextual bias in forensic examinations, and the general absence of mandatory certification and standardized training requirements. It also highlighted the inconsistent application of statistical methods and quantitative measures to express the probative value of forensic evidence, noting that many examiners presented conclusions as absolute certainties without proper statistical foundation.

Principal Recommendations for Systemic Improvement

The NRC committee proposed a comprehensive set of recommendations to address these systemic issues, including:

- Creation of an independent federal entity, the National Institute of Forensic Science (NIFS), to oversee and standardize forensic practices nationwide

- Standardization of terminology and reporting practices to ensure that forensic conclusions are communicated accurately and without overstatement

- Promotion of research-based validation for all forensic methods, particularly establishing the reliability and measurable error rates of feature-comparison disciplines

- Enhanced education and training in scientific methodology, statistics, and ethics for forensic practitioners

- Separation of forensic laboratories from law enforcement agencies to mitigate potential contextual biases

- Development of quantifiable measures for expressing the strength of forensic evidence, moving away from categorical statements of identification

The PCAST Report: Advancing the Scientific Framework

The Concept of "Foundational Validity"

The 2016 PCAST report advanced the NRC's work by articulating a more precise standard for evaluating forensic methods. It defined "foundational validity" as requiring that a method be based on empirical studies that establish its reliability and accuracy through appropriate measures, with known and acceptable error rates [2]. The report established that for a method to be considered scientifically valid, it must demonstrate:

- Repeatability: The ability of the same examiner to consistently obtain the same results when repeating an analysis

- Reproducibility: The ability of different examiners to independently obtain the same results when analyzing the same evidence

- Accuracy: The ability of the method to correctly identify true matches and true non-matches, as measured by false positive and false negative rates

PCAST further specified that these properties should be established through "black-box studies" that measure the performance of the method as a whole, rather than relying on theoretical arguments about why a method should work.
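To make the accuracy criterion concrete, the following is a minimal sketch of how a false positive rate and its uncertainty might be summarized from a black-box study. The counts are hypothetical, and the Wilson score interval is one common choice for a binomial proportion rather than a method prescribed by the report as described here.

```python
import math

def error_rate_with_wilson_ci(errors: int, trials: int, z: float = 1.96):
    """Return (point estimate, lower, upper) for an error proportion.

    Uses the Wilson score interval; z = 1.96 corresponds to roughly 95%.
    """
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return p, max(0.0, centre - half_width), min(1.0, centre + half_width)

# Hypothetical black-box study: examiners compared known different-source
# pairs and reported an identification in a small number of them.
false_positives = 6
different_source_comparisons = 3000

fpr, lower, upper = error_rate_with_wilson_ci(false_positives, different_source_comparisons)
print(f"False positive rate: {fpr:.3%} (approx. 95% interval {lower:.3%} to {upper:.3%})")
```

Reporting the upper end of the interval alongside the point estimate shows how much the observed rate could understate the true rate when the study is small.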

Discipline-Specific Assessments and Findings

PCAST applied its framework for foundational validity to several specific feature-comparison disciplines, with varying conclusions:

Table: PCAST Assessment of Forensic Disciplines for Foundational Validity

| Discipline | Foundational Validity Status | Key Limitations Noted | Recommended Actions |
|---|---|---|---|
| Single-source DNA | Established | None for basic methodology | Considered gold standard |
| Simple DNA mixtures (2 contributors) | Established | Minor contributor must constitute ≥20% of sample | Appropriate statistical framework required |
| Complex DNA mixtures (>3 contributors) | Limited | Accuracy diminishes with more contributors | More extensive empirical validation needed |
| Latent fingerprints | Established | Requires high-quality images | Testimony must acknowledge error rates |
| Firearms/Toolmarks | Lacking in 2016 | Insufficient black-box studies | Recommended against admission without further validation |
| Bitemark analysis | Lacking | No scientific basis for identification | Recommended against admission; advised against research investment |

For DNA analysis, PCAST determined that foundational validity was established for single-source samples and simple mixtures of up to two individuals, provided the minor contributor constituted at least 20% of the intact DNA and the sample met minimum quantity thresholds [2]. The report expressed greater concern about more complex DNA mixtures, noting that probabilistic genotyping methods required additional validation, particularly for samples with three or more contributors.

Regarding latent fingerprint analysis, PCAST concluded the method was foundationally valid but emphasized that this validity depended on using high-quality images and that testimony must acknowledge empirically established error rates from relevant black-box studies [2].

Most critically, PCAST found that firearms/toolmark analysis and bite-mark analysis lacked foundational validity. For firearms/toolmarks, the report noted insufficient black-box studies demonstrating adequate reliability and accuracy. For bite-mark analysis, PCAST went further, concluding not only that it lacked validity but also that the prospects for developing it into a scientifically valid method were poor, advising against government investment in such research [1].

Method Validation and Standardization Frameworks

Evolution of Method Validation Standards

In response to the NRC and PCAST critiques, forensic science has moved toward more rigorous and standardized method validation protocols. The ANSI/ASB Standard 036 now delineates minimum standards for validating analytical methods in forensic toxicology, requiring laboratories to demonstrate that their methods are "fit for their intended use" through comprehensive validation studies [3]. Similar standards have been developed for other forensic disciplines, creating a more consistent framework for establishing method reliability.

The fundamental reason for performing method validation is to ensure confidence and reliability in forensic test results by systematically demonstrating that a method consistently produces accurate and reproducible results appropriate for its intended application [3]. This represents a significant shift from earlier practices where many methods were adopted based on tradition rather than empirical validation.

Key Methodological Approaches for Quantitative Analysis

Forensic science increasingly employs sophisticated analytical techniques that can provide both qualitative identification and quantitative measurement of forensic materials:

Table: Analytical Techniques in Forensic Chemistry

| Technique | Primary Application | Qualitative/Quantitative Capability | Strengths |
|---|---|---|---|
| Chromatography (HPLC, GC) | Drug analysis, toxicology, explosives | Both qualitative and quantitative | High sensitivity and specificity |
| Spectroscopy (IR, FTIR) | Material identification, drug analysis | Primarily qualitative, some quantitative applications | Non-destructive; rapid analysis |
| Mass Spectrometry (LC-MS, GC-MS) | Confirmatory drug testing, toxicology | Both qualitative and quantitative | Gold standard for identification and quantification |
| Microscopy | Fiber analysis, material comparison | Primarily qualitative | Visual comparison capabilities |

Chromatographic methods are used extensively in forensic laboratories to analyze body fluids for illicit drugs, samples from crime scenes, and residues from explosives [4]. High-performance liquid chromatography (HPLC) has been used extensively for both qualitative and quantitative analyses of drugs, metabolites, explosives, marker dyes, and inks. Liquid chromatography coupled with mass spectrometry (LC-MS) is widely used in forensics for confirmatory and quantitative analyses and represents a powerful tool for drug screening [4].

Spectroscopic techniques can be qualitative or quantitative or both, depending on the procedures used and the types of measurements collected. For example, ultraviolet and visible spectrophotometry is generally used as a screening tool to determine the presence or absence of suspected compounds, but it can also be quantitative in single-substance solutions or with appropriate standards [4].

Implementation and Impact: The Post-PCAST Landscape

Judicial Response to the Reports

The PCAST report has significantly influenced judicial decision-making regarding the admissibility of forensic evidence, though its adoption has varied by jurisdiction and forensic discipline. The National Institute of Justice maintains a database of post-PCAST court decisions that reveals nuanced application of the report's recommendations [2].

For firearms and toolmark analysis, courts have frequently responded to reliability concerns by limiting the scope of expert testimony rather than excluding it entirely. A common limitation requires that "a firearms and toolmark expert may not give an unqualified opinion, or testify with absolute or 100% certainty, that based on ballistics pattern comparison matching a fatal shot was fired from one firearm to the exclusion of all other firearms" [2]. More recently, some courts have pointed to new black-box studies conducted after 2016 as potentially establishing the reliability of the method, permitting admission with appropriate limitations [2].

For bite-mark analysis, the trend has moved strongly toward non-admissibility. Courts have generally found that bite-mark analysis does not meet scientific standards for validity, or at minimum requires rigorous Daubert or Frye hearings before admission [2]. Even in cases where bite-mark evidence was previously admitted and resulted in conviction, courts have become increasingly skeptical of its reliability.

For complex DNA mixture interpretation, courts have generally admitted evidence using probabilistic genotyping software, though sometimes with limitations on testimony. Response studies to the PCAST report, such as the "STRmix PCAST Response Study," have persuaded some courts that these methods can be reliable with four or more contributors when properly validated and applied [2].

Advancing Quantitative Methodologies in Forensic Science

The NRC and PCAST reports have accelerated the development and adoption of quantitative approaches across forensic disciplines, moving beyond purely qualitative assessments:

Diagram: Quantitative Evaluation Framework for Digital Forensic Evidence

In digital forensics, researchers have begun developing quantitative frameworks to evaluate the plausibility of alternative hypotheses explaining how digital evidence came to exist on a device. These approaches include:

- Bayesian networks, which propagate probabilities from initial priors to final posteriors based on conditional probabilities [5]. For example, in cases of internet auction fraud, Bayesian analysis yielded a likelihood ratio of 164,000 in favor of the prosecution hypothesis, providing "very strong support" for this explanation [5].

- Conventional probability theory applied to specific defense scenarios. For the "inadvertent download defense" in cases involving illicit images, researchers have used Urn Models and the Binomial Theorem to calculate the probability of random download scenarios, with 95% confidence intervals typically showing very low probabilities (e.g., 0.03%-4.35%) supporting such defenses [5].

- Complexity analysis to evaluate alternative explanations such as the "Trojan Horse Defense." By counting the operations required to achieve the presence of recovered materials by different mechanisms and applying the principle of least contingency, researchers can compute odds ratios for competing hypotheses [5].
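The role of a likelihood ratio in these approaches can be illustrated with the odds form of Bayes' rule, in which posterior odds equal prior odds multiplied by the likelihood ratio. The sketch below uses the likelihood ratio of 164,000 quoted above; the prior odds are purely hypothetical values chosen to show how the same evidence combines with different starting assumptions.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' rule: posterior odds = prior odds x likelihood ratio."""
    return prior_odds * likelihood_ratio

def odds_to_probability(odds: float) -> float:
    """Convert odds in favor of a hypothesis to a probability."""
    return odds / (1 + odds)

LIKELIHOOD_RATIO = 164_000  # value reported for the auction fraud example [5]

# Hypothetical prior odds for the prosecution hypothesis (assumptions only).
for prior in (1 / 100_000, 1 / 1_000, 1.0):
    post = posterior_odds(prior, LIKELIHOOD_RATIO)
    print(f"prior odds {prior:g} -> posterior odds {post:g} "
          f"(probability {odds_to_probability(post):.4f})")
```

The likelihood ratio expresses only the strength of the evidence; the resulting posterior still depends on the prior odds, which is why evaluative reporting typically states the ratio rather than a posterior probability.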

The Research Toolkit: Essential Methods and Reagents

Core Analytical Technologies for Forensic Validation

Table: Essential Research Reagent Solutions for Forensic Method Development

| Tool/Reagent Category | Specific Examples | Primary Function in Validation | Application Context |
|---|---|---|---|
| Separation Techniques | HPLC, GC columns; mobile phase solvents | Separate complex mixtures into individual components | Drug analysis, toxicology, explosives residue |
| Detection Systems | Mass spectrometers; UV-Vis detectors; electrochemical detectors | Identify and quantify separated analytes | Confirmatory testing; trace evidence analysis |
| Reference Standards | Certified reference materials; internal standards | Provide calibration and quality control benchmarks | Method validation; quantitative accuracy assessment |
| Statistical Software | Probabilistic genotyping programs; Bayesian network tools | Calculate likelihood ratios; evaluate proposition plausibility | DNA mixture interpretation; digital evidence evaluation |
| Quality Control Materials | Positive/negative controls; proficiency test materials | Monitor analytical process performance | Ongoing method verification; laboratory accreditation |

The implementation of rigorous forensic validation requires not only sophisticated instrumentation but also certified reference materials, quality control reagents, and specialized software for statistical interpretation. Chromatographic reference standards are particularly critical for establishing calibration curves, determining linearity ranges, and calculating limits of detection and quantification during method validation [4].
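As an illustration of how reference standards feed into calibration, the sketch below fits a straight line to a set of calibrators, reports the coefficient of determination, and estimates detection and quantification limits. The concentrations and responses are invented, and the 3.3σ/slope and 10σ/slope formulas follow a widely used ICH-style convention; they are an assumption here, not a requirement taken from the standards cited in this article.

```python
import math

def fit_calibration(conc, response):
    """Ordinary least-squares line: returns slope, intercept, R^2, residual SD."""
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, response))
    ss_tot = sum((y - mean_y) ** 2 for y in response)
    r_squared = 1 - ss_res / ss_tot
    residual_sd = math.sqrt(ss_res / (n - 2))  # two fitted parameters
    return slope, intercept, r_squared, residual_sd

# Hypothetical calibrators (ng/mL) and analyte/internal-standard response ratios.
concentrations = [10, 25, 50, 100, 250, 500]
responses = [0.021, 0.050, 0.102, 0.198, 0.505, 0.997]

slope, intercept, r_squared, residual_sd = fit_calibration(concentrations, responses)

# ICH-style estimates (an assumed convention, see the note above).
lod = 3.3 * residual_sd / slope
loq = 10 * residual_sd / slope

print(f"slope={slope:.6f}, intercept={intercept:.6f}, R^2={r_squared:.5f}")
print(f"estimated LOD={lod:.1f} ng/mL, estimated LOQ={loq:.1f} ng/mL")
```

A high R² alone does not establish linearity; residual plots and back-calculated calibrator concentrations are usually examined as well.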

For DNA analysis, probabilistic genotyping software such as STRmix and TrueAllele has become essential for interpreting complex mixtures, though these tools require extensive validation to establish their reliability and measure potential error rates [2]. The debate over the validity of these methods for mixtures with more than three contributors illustrates the ongoing need for rigorous validation studies.

Experimental Protocols for Method Validation

Based on ANSI/ASB Standard 036 and related guidelines, core validation experiments for forensic methods should include:

- Accuracy and bias studies using certified reference materials at multiple concentrations across the method's working range

- Precision assessment through repeatability (within-run) and intermediate precision (between-run) experiments

- Limit studies to establish detection and quantification limits appropriate for forensic applications

- Specificity and selectivity testing to ensure the method can distinguish target analytes from potentially interfering substances

- Robustness testing to evaluate the method's resilience to minor variations in analytical conditions

- Stability assessments for analytes in relevant matrices under various storage conditions

For feature-comparison methods, validation must additionally include black-box studies that measure the actual performance of examiners using the method, establishing false positive and false negative rates across a range of representative casework samples [2].

The NRC and PCAST reports together represent a watershed moment in forensic science, catalyzing an essential transition from experience-based practice to evidence-based methodology. The NRC report exposed the lack of empirical validation behind many disciplines, and the PCAST report, by introducing and defining the concept of "foundational validity," established a clear scientific benchmark for the admission of forensic evidence in criminal proceedings [1] [2]. Their enduring impact continues to drive standardization efforts, method validation requirements, and judicial scrutiny of forensic evidence.

While significant progress has been made in addressing the critiques raised in these reports, full implementation of their recommendations remains an ongoing process. The development of empirically validated methods, the establishment of measurable error rates, the adoption of quantitative expression of evidence strength, and the reduction of contextual biases continue to present challenges and opportunities for the forensic science community. As these reforms progress, they strengthen not only the scientific foundation of forensic practice but also the integrity and reliability of the criminal justice system as a whole.

Within the complex ecosystem of forensic science, the establishment and maintenance of technically sound, consensus-based standards are fundamental to ensuring the reliability and admissibility of scientific evidence. This landscape is primarily shaped by three key organizations with complementary roles: the Organization of Scientific Area Committees (OSAC), the Academy Standards Board (ASB), and the American National Standards Institute (ANSI). For researchers, scientists, and drug development professionals, understanding the distinct yet interconnected roles of these bodies is crucial for navigating the rigorous processes of forensic method validation and standardization. OSAC identifies the need for and develops proposed standards, ASB functions as a primary Standards Development Organization (SDO) to formally produce these standards through a consensus process, and ANSI provides the overarching accreditation and recognition that confers on these documents the status of American National Standards (ANS). This section examines their roles, the standards development workflow, and the current state of the forensic science standards registry, all within the critical context of forensic method validation.

The Key Organizations and Their Functions

Organization of Scientific Area Committees (OSAC)

OSAC, administered by the National Institute of Standards and Technology (NIST), is a collaborative body composed of more than 600 forensic science and criminal justice practitioners. Its primary mission is to strengthen the nation's use of forensic science by facilitating the development and implementation of technically sound, consensus-based standards. OSAC itself does not publish standards; instead, it acts as a central hub for identifying needs, drafting proposals, and evaluating standards for placement on the OSAC Registry [6] [7]. This Registry is a curated list of standards that OSAC has reviewed and endorsed for implementation by forensic science service providers (FSSPs). As of May 2025, the Registry contained over 230 standards spanning more than 20 forensic disciplines, from toxicology and DNA to anthropology and document examination [8]. OSAC's review is intended to ensure that the standards it promotes are of high technical quality and fit for their intended purpose in the justice system.

Academy Standards Board (ASB)

The ASB is an accredited Standards Development Organization (SDO) that focuses exclusively on the development of standards for the forensic science community. It is the primary SDO partner for OSAC. While OSAC identifies needs and drafts proposals, the ASB is responsible for the formal, consensus-based development and publication of many forensic science standards [8]. The ASB follows a rigorous, open process to produce American National Standards (ANS), which are then submitted to OSAC for consideration for the OSAC Registry. For example, foundational documents such as ANSI/ASB Standard 036, Standard Practices for Method Validation in Forensic Toxicology, and ANSI/ASB Standard 056, Standard for Evaluation of Measurement Uncertainty in Forensic Toxicology, are ASB-published standards that carry the ANSI designation [6] [3]. The ASB provides the essential infrastructure for the balloting and public comment phases required for a standard to achieve ANSI accreditation.

American National Standards Institute (ANSI)

ANSI is a private, non-profit organization that oversees the development of voluntary consensus standards for products, services, processes, and systems in the United States. Its role is that of an accreditor and coordinator. ANSI does not develop standards itself but accredits SDOs like the ASB, ensuring they adhere to essential requirements for openness, balance, consensus, and due process [8]. When a standard developed by an accredited SDO like ASB completes its consensus process and is approved by ANSI, it earns the designation of an American National Standard (ANS), signifying it has met the highest level of recognition. This designation, denoted by the "ANSI/" prefix (e.g., ANSI/ASB Standard 017), is a mark of integrity and quality that is recognized by regulators and laboratories worldwide.

Table 1: Summary of Key Organization Roles

| Organization | Primary Role | Key Output | Governing Authority |
|---|---|---|---|
| OSAC | Identifies needs, drafts proposals, and maintains a registry of endorsed standards. | OSAC Registry & OSAC Proposed Standards | National Institute of Standards and Technology (NIST) |
| ASB | An accredited SDO that develops and publishes forensic science standards via a consensus process. | American National Standards (ANS) for forensic science. | Accredited by the American National Standards Institute (ANSI) |
| ANSI | Accredits SDOs and approves standards as American National Standards. | Accreditation of SDOs; ANSI designation for standards. | Serves as the U.S. member body to ISO and IEC. |

The Forensic Standards Development Workflow

The journey of a forensic science standard from conception to implementation is a multi-stage, collaborative process involving OSAC, an SDO like ASB, and ANSI oversight. The workflow ensures technical rigor, consensus, and transparency.

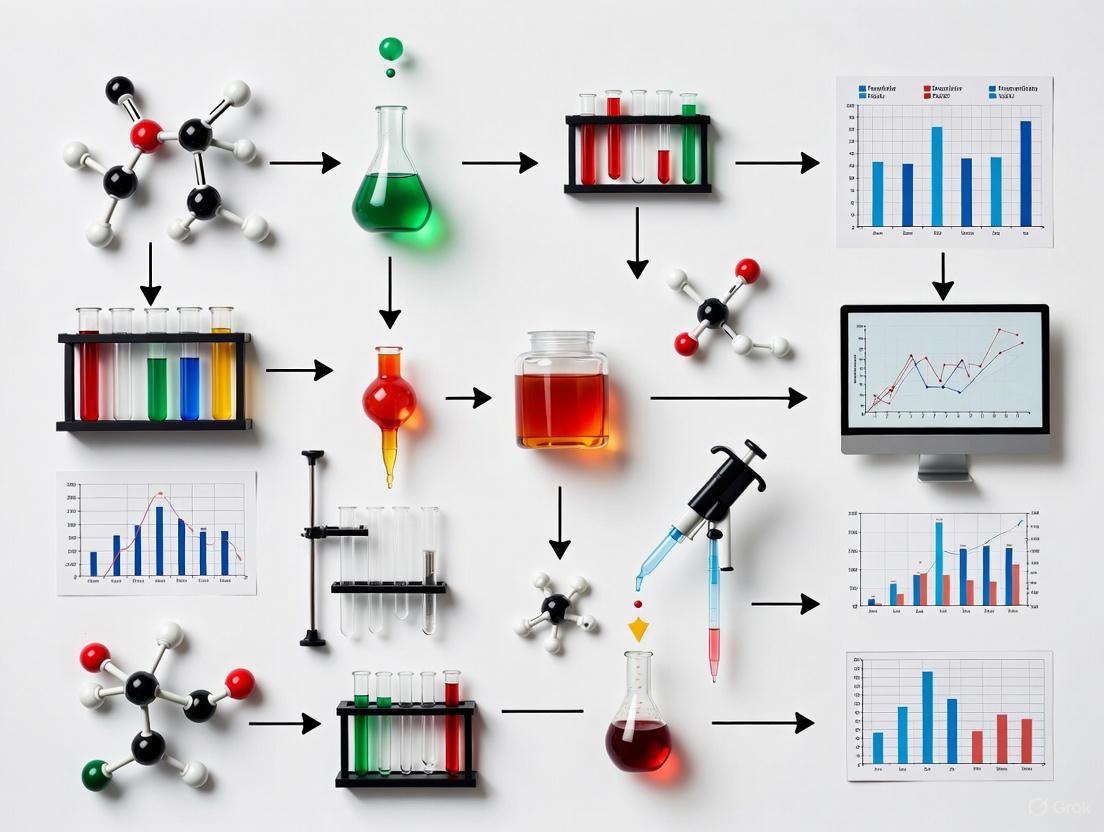

Figure 1: Forensic Standard Development Workflow

Stage 1: Need Identification and Internal OSAC Drafting

The process begins within an OSAC Subcommittee, where a specific gap or need for a new or revised standard is identified. A working group is formed to create a working draft. This draft is categorized as "Under Development" within the OSAC Standards Library and is not yet publicly available [7].

Stage 2: OSAC Proposed Standard and Public Comment

Once the internal draft is mature, it moves to the status of "OSAC Proposed Standard." At this point, OSAC publicly posts the draft and opens a formal comment period, welcoming feedback from all stakeholders on whether the draft is suitable for submission to an SDO [6]. For example, the OSAC Standards Bulletin from February 2025 listed several OSAC Proposed Standards open for comment, with a deadline for stakeholders to submit feedback [6].

Stage 3: Submission to a Standards Development Organization (SDO)

After incorporating feedback from the public comment period, the OSAC Proposed Standard is submitted to an accredited SDO, most commonly the ASB. The standard's status in the OSAC library changes to "In SDO Development" [7]. The May 2025 bulletin listed numerous examples of this, such as OSAC 2021-N-0010, Standard for Skeletal Preparation and Sampling in Forensic Anthropology, which had been submitted to ASB to begin work on ASB Standard 225 [8].

Stage 4: SDO Consensus Development and Public Comment

The SDO manages the formal consensus process. This includes balloting the standard within its membership and, crucially, holding a mandatory public comment period. The SDO is responsible for addressing all comments received before the standard can be finalized. The ASB frequently has multiple standards open for public comment simultaneously across various disciplines [6] [8].

Stage 5: ANSI Approval and SDO Publication

Once the SDO's consensus and public comment requirements are satisfied, the final standard is submitted to ANSI for approval as an American National Standard (ANS). Upon ANSI approval, the SDO publishes the standard, giving it the "ANSI/" designation (e.g., ANSI/ASTM E3406-25e1) [8]. It is now an "SDO Published Standard."

Stage 6: OSAC Registry Approval and Implementation

The newly published ANS is then sent back to OSAC for consideration for the OSAC Registry. OSAC evaluates the published standard for technical quality and suitability. If approved, it is added to the OSAC Registry, officially endorsing it for implementation by forensic science service providers (FSSPs) [7]. The cycle culminates in laboratories implementing the standard, with OSAC actively tracking implementation rates to measure impact [6].

The Current Forensic Science Standards Landscape

The forensic science standards landscape is dynamic, with a constant flow of new, revised, and proposed standards. The following tables summarize the quantitative scope of this landscape and provide examples of recently published and proposed standards.

Table 2: OSAC Standards Library Metrics (Snapshot)

| Category | Count | Description |
|---|---|---|
| OSAC Registry | 245 | SDO-published & OSAC Proposed Standards endorsed by OSAC [7]. |
| OSAC Registry Archive | 29 | Historical standards replaced by revised versions [7]. |
| SDO-Published Standards | 260 | Standards published by an SDO, not all on Registry [7]. |
| In SDO Development | 279 | Standards actively being developed at an SDO [7]. |

Table 3: Examples of Recently Added or Published Standards (as of May 2025)

| Standard Designation | Title | Discipline | Status & Notes |
|---|---|---|---|
| ANSI/ASTM E1386-23 | Standard Practice for Separation of Ignitable Liquid Residues from Fire Debris Samples by Solvent Extraction | Fire Debris | Added to OSAC Registry, May 2025 [8]. |
| OSAC 2023-N-0014 | Standard for the Medical Forensic Examination in the Clinical Setting | Forensic Nursing | First standard from Forensic Nursing Subcommittee on Registry [8]. |
| ANSI/ASB Standard 017 | Standard for Metrological Traceability in Forensic Toxicology | Toxicology | Newly published edition, 2025 [6]. |
| ANSI/ASTM E2998-25 | Standard Practice for Identification and Classification of Smokeless Powder | Explosives | Revision of a previous standard [8]. |

Experimental Protocols and Research Reagents for Method Validation

A core tenet of forensic science, reflected in accreditation requirements such as ISO/IEC 17025, is that all methods must be validated to ensure they are fit for purpose. The standards produced through the OSAC, ASB, and ANSI process provide the critical framework for this validation. ANSI/ASB Standard 036, Standard Practices for Method Validation in Forensic Toxicology, is a prime example of such a foundational document, delineating the minimum requirements for validating analytical methods [3]. The validation process outlined in these standards is a systematic set of experiments that demonstrates a method's reliability.

Detailed Methodology for a Method Validation Experiment

A validation study following ANSI/ASB Standard 036 would involve a series of experiments to characterize the following performance parameters [3]:

- Precision and Bias: Experiments are designed to assess the method's repeatability (same day, same analyst) and intermediate precision (different days, different analysts) by analyzing replicates of quality control samples at low, medium, and high concentrations. Bias is evaluated by comparing the mean measured value to the accepted true value of a certified reference material.

- Limit of Detection (LOD) and Limit of Quantitation (LOQ): The LOD (the lowest level at which an analyte can be detected) and LOQ (the lowest level at which an analyte can be reliably quantified) are determined through the analysis of serial dilutions of the analyte. Statistical methods or signal-to-noise ratios are applied to the data to establish these limits.

- Linearity and Range: A calibration curve is constructed by analyzing a series of standards with known concentrations across the expected working range of the method. The linearity is assessed via statistical measures like the coefficient of determination (R²), and the range is confirmed as the interval over which linearity, precision, and accuracy are acceptable.

- Carryover: This is tested by injecting a blank solvent sample immediately after a high-concentration calibration standard. If the blank shows no analyte peak, or only a response below the laboratory's predefined acceptance threshold, carryover is considered negligible.

- Interference Check: The method is challenged by analyzing samples that may contain structurally similar compounds or common metabolites to demonstrate that the method is specific for the target analyte and free from interferences.
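The precision and bias calculations described in the list above can be sketched as follows. The replicate values are invented, and the acceptance limits are placeholders; the actual criteria should be taken from the applicable standard and the laboratory's validation plan.

```python
import statistics

def percent_cv(values):
    """Coefficient of variation (%): sample standard deviation relative to the mean."""
    return 100 * statistics.stdev(values) / statistics.mean(values)

def percent_bias(values, nominal):
    """Bias (%): deviation of the mean measured value from the nominal value."""
    return 100 * (statistics.mean(values) - nominal) / nominal

# Placeholder acceptance limits (assumptions, not quoted from any standard).
MAX_CV_PERCENT = 20.0
MAX_ABS_BIAS_PERCENT = 20.0

# Hypothetical QC replicates in ng/mL: level -> (nominal, measured values).
qc_replicates = {
    "low": (30.0, [28.9, 31.2, 29.5, 30.8, 30.1]),
    "medium": (150.0, [147.2, 152.9, 149.5, 151.1, 148.3]),
    "high": (400.0, [392.0, 408.5, 401.2, 396.4, 404.9]),
}

results = {}
for level, (nominal, measured) in qc_replicates.items():
    cv = percent_cv(measured)
    bias = percent_bias(measured, nominal)
    acceptable = cv <= MAX_CV_PERCENT and abs(bias) <= MAX_ABS_BIAS_PERCENT
    results[level] = (cv, bias, acceptable)
    print(f"{level:>6}: CV={cv:.2f}%  bias={bias:+.2f}%  acceptable={acceptable}")
```

Running the same calculation on replicates pooled across several days and analysts gives an estimate of intermediate precision rather than within-run repeatability.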

The Scientist's Toolkit: Essential Research Reagents for Validation

Conducting a rigorous method validation study requires high-quality, traceable materials and reagents. The following table details key components of the "research reagent toolkit" essential for this process.

Table 4: Essential Research Reagents for Forensic Method Validation

| Reagent / Material | Function in Validation | Criticality and Technical Notes |
|---|---|---|
| Certified Reference Material (CRM) | Serves as the primary standard for establishing the true value of an analyte. Used to evaluate method bias and accuracy. | Critical; Must be traceable to a national metrology institute (e.g., NIST). ANSI/ASB Standard 017 provides guidelines for metrological traceability [6]. |
| Quality Control (QC) Materials | Used to monitor the precision and stability of the method over time during the validation study and in routine use. | High; Typically prepared at low, medium, and high concentrations within the linear range. |
| Blank Matrix | The analyte-free biological fluid (e.g., blood, urine) used to prepare calibration standards and QC samples. Used to assess specificity and LOD/LOQ. | High; The matrix should be as similar as possible to authentic casework samples to account for matrix effects. |
| Stable Isotope-Labeled Internal Standards | Added to all calibration standards, QCs, and unknown samples to correct for losses during sample preparation and variations in instrument response. | Critical; Essential for achieving high precision and accuracy in quantitative mass spectrometry-based methods. |
| System Suitability Test Solutions | Used to verify that the analytical instrument (e.g., LC-MS/MS, GC-MS) is performing adequately before a batch of samples is analyzed. | High; Confirms parameters like chromatographic retention, peak shape, and signal intensity are within predefined criteria. |

The interconnected framework of OSAC, ASB, and ANSI provides a robust, transparent, and consensus-driven system for advancing the quality and reliability of forensic science. OSAC acts as the engine for identifying needs and vetting standards, ASB provides the formal platform for their development, and ANSI confers the national-level accreditation that ensures integrity and due process. For researchers and practitioners, engagement with this standards landscape—from participating in public comment periods to the diligent implementation of registered standards—is not merely an administrative task. It is a fundamental component of the scientific method itself, ensuring that forensic analyses are grounded in technically sound, validated, and universally accepted practices. As the field continues to evolve, this collaborative structure is essential for building and maintaining the scientific foundation upon which justice depends.

Within scientific research and drug development, the credibility of findings hinges upon a foundation of rigorous methodology. Three interconnected core principles form the bedrock of this credibility: validation, standardization, and reliability. For researchers, scientists, and forensic professionals, a precise understanding of these concepts is non-negotiable for producing data that is both trustworthy and defensible.

This guide provides an in-depth technical examination of these principles, framing them within the critical context of forensic method validation and standardization processes. We will dissect their definitions, explore the methodologies to achieve them, and demonstrate their practical application through structured data, experimental protocols, and clear visualizations.

Defining the Core Principles

While often used interchangeably, validation, standardization, and reliability represent distinct concepts in the scientific landscape. The following table provides a structured comparison for clarity.

Table 1: Core Principles Comparison

| Principle | Core Question | Primary Focus | Relationship to Forensic Context |

|---|---|---|---|

| Validation [3] | Is the method measuring the right thing accurately and precisely? | Accuracy of the method and its results. | Ensures analytical methods (e.g., for seized drugs or toxicology) are "fit for purpose," providing confidence in reported results such as analyte identification and quantification [3]. |

| Standardization | Are the methods and procedures consistent across different labs and operators? | Consistency and uniformity of procedures. | Allows for the comparison of results across different forensic laboratories and over time, which is critical for collaborative investigations and upholding the integrity of the justice system. |

| Reliability [9] [10] | Can the method produce consistent results when repeated under the same conditions? | Consistency and repeatability of results. | Ensures that repeated analyses of the same evidence item, whether by the same analyst or a different one, will yield the same conclusive result, safeguarding against procedural errors. |

The relationship between these concepts, particularly between reliability and validity, is crucial. Reliability is concerned with the consistency of a measure—whether the same result can be reproducibly achieved under consistent conditions [9] [10]. In contrast, validity is concerned with the accuracy of a measure—whether it truly measures what it claims to measure [10]. A measurement can be reliable (consistent) without being valid (accurate). However, a valid measurement must generally also be reliable; you cannot accurately measure something if your tool gives inconsistent readings [10].

Diagram 1: Interrelationship of core scientific principles, showing how validation, standardization, and reliability contribute to trustworthy data.

Method Validation: Protocols and Practices

Method validation is a systematic process of proving that an analytical method is suitable for its intended use. In forensic toxicology, standards such as ANSI/ASB Standard 036 delineate the minimum practices for this process to ensure confidence and reliability in test results [3].

Key Validation Parameters and Experimental Protocols

The following table outlines the essential parameters that must be evaluated during method validation, along with a description of the typical experimental protocol for each.

Table 2: Key Method Validation Parameters and Experimental Protocols

| Parameter | Experimental Protocol & Methodology | Purpose & Evaluation |

|---|---|---|

| Accuracy | Analyze a minimum of 5 replicates of quality control (QC) samples at three concentrations (low, medium, high) against a certified reference material (CRM). | Measures closeness of the mean test result to the true value. Expressed as percent bias. |

| Precision | Intra-assay: Analyze 5 replicates at 3 concentrations in a single run. Inter-assay: Analyze 5 replicates at 3 concentrations over 3 different days. | Measures the agreement among a series of measurements. Expressed as %CV (Coefficient of Variation). |

| Specificity/Selectivity | Analyze a blank matrix and analyze the blank matrix spiked with the target analyte(s). Also, analyze samples spiked with potentially interfering substances (e.g., metabolites, structurally similar compounds, common adulterants). | Demonstrates that the method can unequivocally assess the analyte in the presence of other components. |

| Linearity & Range | Prepare and analyze a minimum of 5 calibration standards across the intended working range (e.g., 50-150% of the target concentration). Plot response vs. concentration. | Evaluates if the analytical procedure produces results directly proportional to analyte concentration. Assessed via the coefficient of determination (R²) and residual plots. |

| Limit of Detection (LOD) | Analyze a minimum of 5 independent blank matrices and determine the standard deviation (SD). LOD is typically calculated as (3 × SD of the blank) / (slope of the calibration curve). | The lowest amount of analyte that can be detected, but not necessarily quantified. |

| Limit of Quantification (LOQ) | Analyze a minimum of 5 replicates at a low concentration. LOQ is typically calculated as (10 × SD of the blank) / (slope of the calibration curve). Must be demonstrated with a precision of ≤20% CV and accuracy of 80-120%. | The lowest amount of analyte that can be quantitatively determined with acceptable precision and accuracy. |

| Robustness | Introduce small, deliberate variations in method parameters (e.g., pH ±0.2 units, temperature ±2°C, mobile phase composition ±2%). | Measures the capacity of a method to remain unaffected by small, intentional variations in procedural parameters. |
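
As a worked illustration of the Linearity, LOD, and LOQ rows in the table above, the sketch below fits a calibration line by ordinary least squares and then applies the 3 × SD / slope and 10 × SD / slope rules. The helper `fit_line`, the calibration data, and the blank responses are all hypothetical; an unweighted fit is assumed, although weighted regression is often preferred in practice.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept; also returns R^2."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical five-point calibration (concentration in ng/mL, detector response).
conc = [50.0, 75.0, 100.0, 125.0, 150.0]
response = [101.0, 149.0, 201.0, 250.0, 299.0]
slope, intercept, r2 = fit_line(conc, response)

# Hypothetical responses from five independent blank matrices.
blank_responses = [0.8, 1.2, 1.0, 0.9, 1.1]
sd_blank = stdev(blank_responses)

lod = 3 * sd_blank / slope    # lowest detectable concentration
loq = 10 * sd_blank / slope   # lowest quantifiable concentration

print(f"slope={slope:.3f}, R^2={r2:.5f}, LOD={lod:.3f}, LOQ={loq:.3f}")
```

A calculated LOQ is only a starting estimate: as the table notes, it still has to be confirmed experimentally against the precision and accuracy criteria.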

The Scientist's Toolkit: Essential Research Reagents and Materials

The execution of a validated method requires high-quality, traceable materials. The following table details key reagents and their critical functions in forensic analytical methods.

Table 3: Essential Research Reagent Solutions for Analytical Method Validation

| Reagent/Material | Function & Role in Validation |

|---|---|

| Certified Reference Material (CRM) | Provides a substance with a certified purity and concentration, serving as the ultimate standard for establishing method accuracy and calibrating instruments. |

| Internal Standard (IS) | A compound added in a constant amount to all samples, blanks, and calibrators to correct for variability in sample preparation and instrument response. |

| Quality Control (QC) Materials | Samples with known concentrations of analyte(s) used to monitor the ongoing performance and stability of the analytical method during validation and routine use. |

| Blank Matrix | The biological or sample material (e.g., blood, urine) free of the target analytes. Used to prepare calibration standards and QCs and to assess method specificity and potential interference. |

| Stable Isotope-Labeled Analytes | Often used as the ideal internal standard, these are chemically identical to the target analyte but have a different mass, allowing for compensation during mass spectrometric analysis. |

Measuring and Ensuring Reliability

Reliability focuses on the consistency and repeatability of data over time [9]. In a research context, this translates to ensuring that experiments and analyses yield stable and reproducible results.

Methodologies for Measuring Reliability

Several statistical and procedural approaches can be employed to measure reliability, each applicable in different experimental scenarios.

Table 4: Methods for Measuring Data and Method Reliability

| Method | Procedure | Application Context |

|---|---|---|

| Test-Retest Reliability [10] | The same measurement is repeated on the same subjects under the same conditions after a period of time. The results are compared using a correlation coefficient. | Assessing the stability of a measurement instrument (e.g., a diagnostic assay) over time. |

| Inter-rater Reliability [10] | Different analysts or observers independently measure or score the same set of samples. The degree of agreement among them is calculated (e.g., using Cohen's Kappa). | Quantifying subjectivity in methods that involve manual interpretation, such as microscopy or certain spectroscopic analyses. |

| Internal Consistency [10] | The consistency of results is assessed across items within a single test or measurement. Often measured by Cronbach's alpha or by a split-half correlation. | Evaluating the reliability of a multi-item questionnaire or a multi-parameter diagnostic panel. |

| Intra-assay & Inter-assay Precision | Intra-assay: Multiple replicates are measured in a single run. Inter-assay: Replicates are measured across multiple separate runs, days, or by different analysts. | A fundamental pillar of method validation in analytical chemistry, directly quantifying the random error of the method. |
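
To make the inter-rater row of the table above concrete, the following sketch computes Cohen's kappa for two analysts who classified the same samples. The function name `cohens_kappa` and the example calls are hypothetical; the calculation is the standard two-rater form, which corrects observed agreement for the agreement expected by chance.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning categorical labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal proportions, summed over labels.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical calls by two analysts on the same ten comparison samples.
analyst_1 = ["match", "match", "no match", "match", "no match",
             "no match", "match", "match", "no match", "match"]
analyst_2 = ["match", "match", "no match", "no match", "no match",
             "no match", "match", "match", "match", "match"]

kappa = cohens_kappa(analyst_1, analyst_2)
print(f"kappa = {kappa:.3f}")
```

Here the analysts agree on 8 of 10 samples, but because about half of that agreement would be expected by chance, kappa is noticeably lower than the raw 80% agreement figure.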

A Framework for Ensuring Reliability

Achieving reliable data is an ongoing process that integrates technology, well-defined policies, and human diligence [9]. Key steps include:

- Data Governance Framework: Establish a formal framework with standardized policies for data collection, storage, usage, and security [9].

- Data Collection Standards: Set clear guidelines for the types of data to be collected, acceptable sources, and methodologies to ensure consistency and comparability from the outset [9].

- Data Auditing and Monitoring: Implement regular audits and real-time monitoring systems to check for inconsistencies, gaps, or anomalies in the data [9].

- Staff Training and Awareness: Conduct regular training to reinforce the importance of data reliability and proper procedural execution, as the human element is often a critical variable [9].

The workflow below illustrates the continuous cycle for establishing and maintaining data reliability.

Diagram 2: Data reliability assurance cycle, showing the continuous process from governance to refinement.

Validation, standardization, and reliability are not isolated checkboxes but are deeply intertwined principles that form the foundation of scientific integrity. Validation ensures a method is fundamentally sound and fit-for-purpose, standardization ensures its consistent application across environments, and reliability provides the evidence of its ongoing, consistent performance.

For forensic science and drug development, where decisions have profound societal and health implications, a rigorous adherence to these principles is paramount. They transform raw data into trustworthy, defensible evidence, thereby upholding the integrity of the scientific process and the justice systems that rely upon it.

The admissibility of expert testimony in United States courts has undergone a profound transformation throughout the past century, evolving from a simple "general acceptance" test to a complex judicial gatekeeping function with significant implications for forensic method validation. This evolution from the Frye Standard to the Daubert Standard represents a fundamental shift in how courts assess scientific evidence, placing increased emphasis on empirical testing, error rate analysis, and methodological rigor [11]. For researchers, scientists, and drug development professionals, understanding these legal frameworks is crucial not only for courtroom testimony but for developing forensic and scientific methodologies that withstand rigorous judicial scrutiny.

The legal standards governing expert evidence have direct implications for research design and validation processes across multiple scientific disciplines. The transition from Frye to Daubert reflects a broader movement toward establishing scientific validity through demonstrable, data-driven metrics rather than professional consensus alone [12] [13]. This whitepaper examines the historical context, legal principles, and practical applications of these evidentiary standards, with particular focus on their impact on forensic method validation and standardization processes in research environments.

Historical Development: From Frye to the Daubert Trilogy

The Frye Standard: General Acceptance as the Benchmark

The original standard for admitting scientific evidence was established in Frye v. United States (1923), a District of Columbia Circuit case concerning the admissibility of systolic blood pressure deception test results [11] [14]. The Frye test focused exclusively on whether the scientific principle or discovery underlying the evidence had gained "general acceptance" in its relevant field [15]. The court famously stated:

"Just when a scientific principle or discovery crosses the line between the experimental and demonstrable stages is difficult to define. Somewhere in this twilight zone the evidential force of the principle must be recognized, and while courts will go a long way in admitting expert testimony deduced from a well-recognized scientific principle or discovery, the thing from which the deduction is made must be sufficiently established to have gained general acceptance in the particular field in which it belongs." [15]

Under Frye, the scientific community essentially acted as gatekeeper through its collective judgment about which methodologies were sufficiently reliable for courtroom application [16]. This standard prevailed for decades, particularly in state courts, despite criticisms that it could exclude novel but valid scientific techniques that had not yet gained widespread recognition [13] [14].

The Daubert Revolution: Judicial Gatekeeping and Scientific Rigor

In 1993, the United States Supreme Court decided Daubert v. Merrell Dow Pharmaceuticals, Inc., fundamentally reshaping the standard for admitting expert testimony in federal courts [11] [17]. The Court held that the Frye standard had been superseded by the Federal Rules of Evidence, particularly Rule 702, which governs expert testimony [15]. Daubert established trial judges as gatekeepers responsible for ensuring that expert testimony rests on a reliable foundation and is relevant to the case [11] [15].

The Daubert Court identified four non-exhaustive factors for judges to consider when assessing scientific validity:

- Testability: Whether the theory or technique can be (and has been) tested [11] [17]

- Peer Review: Whether the method has been subjected to peer review and publication [11] [17]

- Error Rate: The known or potential rate of error and the existence of standards controlling the technique's operation [11] [17]

- General Acceptance: The extent to which the method has gained general acceptance in the relevant scientific community [11] [17]

Unlike Frye's singular focus, Daubert established a multi-factor test that emphasizes the judge's role in evaluating methodological reliability [18]. The Court stressed that the inquiry must be flexible, focusing on principles and methodology rather than the conclusions they generate [15].

The Daubert Trilogy: Expanding and Clarifying the Standard

The Daubert standard was further refined in two subsequent Supreme Court decisions, collectively known as the "Daubert Trilogy" [13]:

General Electric Co. v. Joiner (1997): Established that appellate courts should review a trial judge's admissibility decision under an abuse of discretion standard, making it more difficult to overturn admissibility rulings on appeal [11] [17]. The Court also emphasized that conclusions and methodology are not entirely distinct, allowing judges to examine whether an expert's conclusions logically follow from the methodology employed [11].

Kumho Tire Co. v. Carmichael (1999): Extended Daubert's gatekeeping requirement to all expert testimony, not just scientific evidence [11] [17]. This expansion meant that technical and other specialized knowledge would be subject to the same judicial scrutiny as scientific evidence [17]. The Court clarified that the Daubert factors might not apply equally to all forms of expertise, and judges have discretion to determine how to assess reliability in each case [17].

Table 1: The Evolution of Expert Evidence Standards in United States Courts

| Case/Standard | Year | Key Principle | Gatekeeper | Primary Test |

|---|---|---|---|---|

| Frye | 1923 | General Acceptance | Scientific Community | Single-factor: Whether the method is generally accepted in the relevant scientific community |

| Daubert | 1993 | Reliability & Relevance | Trial Judge | Multi-factor: Testing, peer review, error rates, general acceptance |

| Joiner | 1997 | Methodology-Conclusion Connection | Trial Judge (with appellate deference) | Abuse of discretion standard for appellate review |

| Kumho Tire | 1999 | All Expert Testimony | Trial Judge | Flexible application of Daubert factors to all expert evidence |

Comparative Analysis: Key Differences and Practical Implications

Fundamental Distinctions Between Frye and Daubert

The transition from Frye to Daubert represents more than just a legal technicality; it signals a fundamental shift in how scientific evidence is evaluated within the legal system. While Frye focuses exclusively on consensus within the scientific community, Daubert requires active judicial assessment of methodological reliability through multiple factors [18]. This distinction has profound implications for both the admission of novel scientific techniques and the validation requirements for forensic methods.

Under Frye, courts essentially defer to the scientific community's collective judgment about which methodologies are valid [16]. This approach offers predictability but potentially excludes novel yet valid scientific techniques that have not yet gained widespread acceptance [14]. Daubert, in contrast, allows for the admission of newer methods if they demonstrate reliability through empirical testing, even before achieving broad acceptance within the field [18]. Conversely, Daubert may exclude evidence based on "generally accepted" methods if they yield "bad science" in a particular application [16].

Impact on Forensic Science and Method Validation

The Daubert standard has particularly significant implications for forensic science, where many traditional disciplines developed primarily within law enforcement contexts rather than academic scientific communities [13]. The 2009 National Academy of Sciences report highlighted that "no forensic method other than nuclear DNA analysis has been rigorously shown to have the capacity to consistently and with a high degree of certainty support conclusions about 'individualization'" [12]. This conclusion exposed significant validation gaps in many forensic disciplines when measured against Daubert's factors, particularly the requirement for known error rates [12].

The emphasis on error rate assessment has driven important changes in forensic science research and practice. As one researcher noted, "An empirical measurement of error rates is not simply a desirable feature; it is essential for determining whether a [forensic science] method is foundationally valid" [12]. This requirement has prompted initiatives such as the blind proficiency testing program at the Houston Forensic Science Center, which introduces mock evidence samples into ordinary workflows to develop statistical data on error rates [12].

Table 2: Impact of Daubert on Forensic Method Validation Requirements

| Daubert Factor | Traditional Forensic Practice | Post-Daubert Validation Requirements |

|---|---|---|

| Empirical Testing | Reliance on precedent and casework experience | Requirement for controlled validation studies under laboratory conditions |

| Error Rates | Often unknown or unquantified | Development of proficiency testing programs and statistical error rate measurement |

| Standards & Controls | Laboratory-specific protocols | Implementation of standardized protocols and quality control measures |

| Peer Review | Limited external scrutiny | Publication in peer-reviewed journals and external validation studies |

Methodological Implications: Validation Protocols and Research Design

Experimental Design for Daubert-Compliant Validation

For research scientists and drug development professionals, complying with Daubert standards requires rigorous experimental design focused on establishing foundational validity. The following protocols provide frameworks for generating forensically valid data:

Blind Proficiency Testing Protocol: The Houston Forensic Science Center has developed a model for blind testing that introduces mock evidence samples into normal workflows without analysts' knowledge [12]. This protocol includes:

- Sample Development: Creation of realistic mock evidence samples that represent various difficulty levels and scenarios encountered in casework

- Blind Introduction: Incorporation of these samples into the normal workflow through case management systems that prevent analysts from identifying test samples

- Data Collection: Systematic recording of results, including both correct and incorrect determinations

- Error Rate Calculation: Statistical analysis of results to establish method-specific and practitioner-specific error rates

- Process Evaluation: Assessment of the entire testing workflow, from evidence handling to reporting [12]
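
The error rate calculation step in the protocol above can be illustrated with a short sketch. Because blind testing programs often yield few errors over a modest number of trials, a point estimate alone can be misleading, so the example also reports a Wilson score interval. The choice of the Wilson interval, the function name `wilson_interval`, and the counts used are assumptions made for illustration; they are not drawn from the Houston program.

```python
from math import sqrt


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial error rate (z = 1.96 gives roughly 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("Require trials > 0 and 0 <= errors <= trials.")
    p = errors / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half_width = z * sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical blind-test outcome: 3 incorrect determinations in 200 mock cases.
errors, trials = 3, 200
rate = errors / trials
low, high = wilson_interval(errors, trials)
print(f"observed error rate = {rate:.3%}, approx. 95% interval = [{low:.3%}, {high:.3%}]")
```

Reporting the interval alongside the observed rate makes the effect of sample size visible: zero observed errors in a small study still leaves a non-trivial upper bound.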

Multi-Laboratory Validation Studies: These studies involve multiple laboratories testing the same samples using standardized protocols to establish reproducibility and inter-laboratory consistency:

- Protocol Standardization: Development of detailed, standardized testing protocols distributed to all participating laboratories

- Sample Distribution: Creation and distribution of identical sample sets to multiple laboratories

- Blinded Analysis: Independent analysis of samples without inter-laboratory communication

- Data Analysis: Statistical comparison of results across laboratories to identify methodological inconsistencies and establish reliability metrics

- Publication: Submission of results for peer review and publication to satisfy Daubert's peer review factor [12] [13]
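
For the data analysis step of a multi-laboratory study, one common approach is a one-way analysis of variance that separates within-laboratory scatter (repeatability) from total scatter including laboratory-to-laboratory differences (reproducibility). The sketch below assumes a balanced design with the same number of replicates per laboratory; the function name, laboratory labels, and results are hypothetical.

```python
from math import sqrt
from statistics import mean


def repeatability_reproducibility(results_by_lab):
    """Return (s_r, s_R): repeatability and reproducibility standard deviations.

    results_by_lab maps a laboratory label to its replicate results; every
    laboratory must report the same number of replicates (balanced design).
    """
    labs = list(results_by_lab.values())
    if len(labs) < 2:
        raise ValueError("At least two laboratories are required.")
    n = len(labs[0])
    if n < 2 or any(len(r) != n for r in labs):
        raise ValueError("Each laboratory needs the same number (>= 2) of replicates.")
    lab_means = [mean(r) for r in labs]
    grand_mean = mean(lab_means)
    # Mean squares from a one-way ANOVA with laboratory as the factor.
    ms_within = sum((x - m) ** 2 for r, m in zip(labs, lab_means) for x in r) / (len(labs) * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in lab_means) / (len(labs) - 1)
    var_between_labs = max(0.0, (ms_between - ms_within) / n)
    return sqrt(ms_within), sqrt(ms_within + var_between_labs)


# Hypothetical results (ng/mL) for one identical sample sent to three laboratories.
study = {
    "lab_A": [99.0, 101.0, 100.0],
    "lab_B": [103.0, 105.0, 104.0],
    "lab_C": [96.0, 98.0, 97.0],
}
s_r, s_R = repeatability_reproducibility(study)
print(f"repeatability SD = {s_r:.2f}, reproducibility SD = {s_R:.2f}")
```

A reproducibility SD much larger than the repeatability SD, as in this made-up data set, points to systematic differences between laboratories rather than random measurement noise.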

The Scientist's Toolkit: Research Reagent Solutions for Forensic Validation

Table 3: Essential Research Materials for Forensic Method Validation

| Research Reagent | Function in Validation Studies | Application Examples |

|---|---|---|

| Standard Reference Materials | Provides known controls for method calibration and verification | Controlled substances with certified purity, DNA standards with known profiles |

| Proficiency Test Samples | Allows assessment of analyst competency and method reliability | Mock case samples with known ground truth for blind testing |

| Quality Control Materials | Monitors analytical process stability and repeatability | Internal standards, control samples analyzed with each batch |

| Calibration Standards | Establishes quantitative relationship between signal and analyte concentration | Drug quantification standards, instrument calibration standards |

| Blinded Sample Sets | Eliminates cognitive bias during method validation | Samples with known characteristics but unknown to analysts during testing |

Visualization of Legal Standards and Their Application

Evolution of Expert Evidence Standards

Figure 1: Evolution of U.S. Expert Evidence Standards

Daubert Standard Application Workflow

Figure 2: Daubert Admissibility Decision Workflow

Current Landscape and Research Implications

State-by-State Adoption Patterns

The adoption of Daubert versus Frye across United States jurisdictions remains mixed, creating a complex patchwork of standards that researchers and expert witnesses must navigate [16]. As of 2025, the distribution includes:

- Daubert States: Approximately 27 states have adopted Daubert in some form, though only nine have adopted it in its entirety without modification [11]

- Frye States: Several significant jurisdictions including California, Illinois, and New York continue to adhere to the Frye standard [18]

- Hybrid Approaches: Some states apply modified Daubert or Frye standards, while others have developed unique approaches or apply different standards depending on case type [16]

This variation necessitates that researchers understand the specific evidentiary standards applicable in their jurisdiction, as validation requirements may differ significantly between Daubert and Frye jurisdictions [16] [18].

Future Directions in Forensic Method Validation

The ongoing implementation of Daubert standards continues to drive methodological improvements in forensic science and related research fields. Key developments include:

- Increased Emphasis on Error Rate Quantification: The forensic science community is developing more sophisticated approaches to measuring and reporting error rates, moving from binary "right/wrong" assessments to more nuanced reliability metrics [12]

- Blind Testing Integration: More forensic laboratories are implementing blind proficiency testing programs to generate empirical data on method reliability and analyst performance [12]

- Standardization Initiatives: Organizations including the National Institute of Standards and Technology (NIST) are developing standardized protocols and validation frameworks for various forensic disciplines [13]

- Interdisciplinary Collaboration: Increased collaboration between forensic practitioners, academic researchers, and statistical experts addresses validity questions that cross traditional disciplinary boundaries [12] [13]

For researchers and drug development professionals, these trends underscore the importance of building robust validation data directly into research designs rather than treating validation as an afterthought. The integration of statistical rigor, error analysis, and independent replication from the earliest stages of method development creates stronger scientific foundations that better withstand judicial scrutiny under Daubert standards [12] [19].

The evolution from Frye to Daubert represents a significant maturation in how legal systems evaluate scientific evidence, shifting from deference to professional consensus toward active judicial assessment of methodological reliability. For researchers, scientists, and drug development professionals, this legal evolution has profound implications for how scientific methods are developed, validated, and presented in legal contexts.

The Daubert framework, with its emphasis on testability, error rates, peer review, and standardization, aligns closely with core scientific values of empirical testing and methodological transparency [17] [19]. By integrating these principles into research design and validation processes, scientific professionals can enhance both the legal robustness and scientific integrity of their work. As forensic method validation continues to evolve in response to these legal standards, the intersection of law and science promises to yield more reliable, transparent, and scientifically rigorous approaches to evidence evaluation across multiple disciplines.

Implementing Rigorous Protocols: A Guide to Forensic Method Validation

ANSI/ASB Standard 036, titled "Standard Practices for Method Validation in Forensic Toxicology," establishes the minimum standards of practice for validating analytical methods that target specific analytes or analyte classes within forensic toxicology [3]. This standard provides a critical framework for ensuring confidence and reliability in forensic toxicological test results by demonstrating that any analytical method is fit for its intended purpose [3]. The standard applies across multiple subdisciplines, including postmortem forensic toxicology, human performance toxicology (e.g., drug-facilitated crimes), non-regulated employment drug testing, court-ordered toxicology, and general forensic toxicology involving non-lethal poisonings or intoxications [3].

The development and adoption of Standard 036 represent a significant advancement in forensic science standardization. It has officially replaced the previous version developed by the Scientific Working Group for Forensic Toxicology (SWGTOX), marking an evolution in validation practices [20]. This transition reflects the broader movement within forensic science toward empirically validated methods with demonstrated reliability, particularly in response to judicial scrutiny following the Daubert ruling, which requires judges to examine the empirical foundation for proffered expert testimony [21]. The standard aligns with the increasing emphasis on method validation across forensic disciplines, addressing concerns raised by scientific organizations about the limited research foundation supporting many traditional forensic techniques [21].

Core Validation Parameters and Experimental Protocols

Standard 036 outlines specific validation parameters that must be evaluated to establish a method's reliability. The quantitative criteria for these parameters are summarized in the table below, providing a template for implementation.

Table 1: Key Method Validation Parameters and Acceptance Criteria

| Validation Parameter | Experimental Protocol | Acceptance Criteria | Technical Requirements |
|---|---|---|---|
| Accuracy | Analysis of quality control samples at multiple concentrations across the calibration range | Typically ±15-20% of target concentration | Use certified reference materials when available |
| Precision | Repeated analysis of replicates (n≥5) at low, medium, and high concentrations within a run and across different runs | Coefficient of variation ≤15-20% | Evaluates both intra-day and inter-day variability |
| Selectivity | Analysis of blank samples from at least 10 different sources to check for interferences | No significant interference (<20% of LLOQ) | Tests potential cross-reactivity with similar compounds |
| Specificity | Challenge the method with compounds structurally similar to the target analyte | No significant response for analogues | Confirms method distinguishes target from interferents |
| Linearity | Analysis of calibration standards across the expected concentration range | Correlation coefficient (r) ≥0.99 | Minimum of 6 concentration levels recommended |
| Limit of Detection (LOD) | Analysis of decreasing analyte concentrations | Signal-to-noise ratio ≥3:1 | Determined by both empirical and statistical approaches |
| Lower Limit of Quantification (LLOQ) | Analysis of decreasing concentrations with acceptable precision and accuracy | Signal-to-noise ratio ≥10:1 with ±20% accuracy | Lowest concentration with reliable quantification |
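As a rough illustration of the linearity row in Table 1, the sketch below computes a correlation coefficient for a calibration series and compares it with the r ≥ 0.99 and six-level criteria. The function names and the calibration data are hypothetical, and the use of an unweighted Pearson correlation is an assumption; a laboratory may well prefer a weighted regression model and additional residual checks.

```python
import math


def pearson_r(conc, resp):
    """Unweighted Pearson correlation between concentration and response."""
    n = len(conc)
    if n != len(resp) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_c = sum(conc) / n
    mean_r = sum(resp) / n
    sxy = sum((c - mean_c) * (r - mean_r) for c, r in zip(conc, resp))
    sxx = sum((c - mean_c) ** 2 for c in conc)
    syy = sum((r - mean_r) ** 2 for r in resp)
    return sxy / math.sqrt(sxx * syy)


def linearity_acceptable(conc, resp, min_levels=6, min_r=0.99):
    """Apply the Table 1 criteria: enough distinct levels and r >= min_r."""
    if len(set(conc)) < min_levels:
        return False
    return pearson_r(conc, resp) >= min_r


# Hypothetical calibrators (ng/mL) and analyte/internal-standard area ratios.
calibrator_conc = [1, 5, 10, 25, 50, 100]
peak_area_ratio = [0.021, 0.098, 0.205, 0.490, 1.010, 1.980]

print(linearity_acceptable(calibrator_conc, peak_area_ratio))
```

A high correlation coefficient alone does not prove that a calibration model is appropriate, so a check like this is best treated as a screening step alongside inspection of residuals.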

The experimental design for validating a method according to Standard 036 requires a systematic approach that addresses each parameter with appropriate statistical rigor. For the accuracy and precision experiments, the protocol requires analysis of quality control samples at a minimum of three concentrations (low, medium, and high) across the calibration curve, with five replicates at each concentration level. These analyses should be performed on at least three separate days to establish both within-run and between-run precision [3]. The results should demonstrate that the method consistently produces results within the specified acceptance criteria, typically ±15% of the target concentration for accuracy and ≤15% coefficient of variation for precision [22].
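The accuracy and precision design described above (replicates at one QC level, repeated over several runs) can be summarized with a one-way analysis of variance. The following is a minimal sketch for a single QC level, assuming equal replicate counts per run; the function names, the example data, and the particular ANOVA formulation of between-run precision are illustrative assumptions rather than text taken from Standard 036.

```python
import math
from statistics import mean


def bias_and_precision(runs, nominal):
    """Return (bias %, within-run CV %, between-run CV %) for one QC level.

    runs: list of runs (e.g. days), each a list of replicate results.
    """
    k = len(runs)          # number of runs
    n = len(runs[0])       # replicates per run
    if k < 2 or n < 2 or any(len(r) != n for r in runs):
        raise ValueError("need >= 2 runs with equal replicate counts (>= 2)")

    all_values = [v for r in runs for v in r]
    grand_mean = mean(all_values)
    run_means = [mean(r) for r in runs]

    # One-way ANOVA mean squares with run as the grouping factor.
    ss_within = sum((v - m) ** 2 for r, m in zip(runs, run_means) for v in r)
    ss_between = n * sum((m - grand_mean) ** 2 for m in run_means)
    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)

    bias_pct = 100 * (grand_mean - nominal) / nominal
    within_cv = 100 * math.sqrt(ms_within) / grand_mean
    between_cv = 100 * math.sqrt((ms_between + (n - 1) * ms_within) / n) / grand_mean
    return bias_pct, within_cv, between_cv


def within_limits(bias_pct, within_cv, between_cv, limit_pct=15.0):
    """Compare all three figures with a single acceptance limit."""
    return abs(bias_pct) <= limit_pct and within_cv <= limit_pct and between_cv <= limit_pct


# Hypothetical low-QC results (ng/mL), five replicates on each of three days.
low_qc = [
    [9.8, 10.1, 10.3, 9.9, 10.0],
    [10.4, 10.2, 10.6, 10.1, 10.3],
    [9.7, 9.9, 9.6, 10.0, 9.8],
]
print(within_limits(*bias_and_precision(low_qc, nominal=10.0)))
```

The same calculation would be repeated for the medium and high QC levels, with the acceptance limit set to whatever the validation plan specifies.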

The selectivity and specificity experiments are designed to ensure the method accurately measures the target analyte without interference from other substances that might be present in forensic samples. The experimental protocol requires testing blank samples from at least ten different sources to check for endogenous interferences [3]. Additionally, the method should be challenged with compounds structurally similar to the target analyte, as well as common medications and drugs of abuse that might be present in casework samples. For mass spectrometric methods, this may include testing isobaric compounds that could produce similar transitions [22].
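The blank-matrix portion of the selectivity experiment lends itself to a simple automated check. The sketch below flags any blank source whose response at the analyte's retention time reaches 20% of the LLOQ response, the threshold listed in Table 1. The function name, the donor labels, and the peak areas are hypothetical.

```python
def interfering_sources(blank_responses, lloq_response,
                        min_sources=10, max_fraction=0.20):
    """Return the blank sources whose response is not below the limit.

    blank_responses: mapping of source label -> response (e.g. peak area)
                     measured at the analyte's retention time.
    lloq_response:   response of the analyte at the LLOQ.
    """
    if len(blank_responses) < min_sources:
        raise ValueError(f"need blanks from at least {min_sources} sources")
    limit = max_fraction * lloq_response
    return sorted(src for src, resp in blank_responses.items() if resp >= limit)


# Hypothetical peak areas for ten blank whole-blood donors.
blanks = {f"donor_{i:02d}": 12.0 for i in range(1, 11)}
blanks["donor_07"] = 260.0  # simulated endogenous interference

print(interfering_sources(blanks, lloq_response=1000.0))
```

An empty list would indicate that no blank source exceeded the threshold; any flagged source would call for investigation before the method is accepted.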

Method Validation Workflow and Implementation Framework

The following diagram illustrates the comprehensive workflow for method validation according to ANSI/ASB Standard 036, showing the sequential relationship between different validation components and decision points.

Figure: Method Validation Workflow

Implementing Standard 036 requires careful planning and documentation. The validation process begins with establishing a comprehensive validation plan that defines the scope, objectives, and acceptance criteria before any experiments are conducted. This plan should detail the specific experiments to be performed, the number of replicates, concentration levels, and statistical approaches for data analysis [3]. The plan must also address the context of application, specifying the biological matrices, analyte concentrations, and potential interferents relevant to the method's intended use in forensic toxicology [22].
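One way to make such a plan explicit before any experiment is run is to record it as a small data structure that can be checked automatically. The sketch below is an assumption about how a laboratory might do this, not a format prescribed by Standard 036; the class name, field names, analytes, and default limits are all hypothetical, while the minimums it checks (three QC levels, five replicates, three runs, ten blank sources) are the ones described in this section.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationPlan:
    analytes: tuple
    matrices: tuple
    qc_levels_ng_ml: tuple
    replicates_per_level: int = 5
    runs: int = 3
    blank_sources: int = 10
    max_bias_pct: float = 15.0
    max_cv_pct: float = 15.0

    def qc_injections_per_matrix(self):
        """QC injections needed for accuracy/precision in one matrix."""
        return len(self.qc_levels_ng_ml) * self.replicates_per_level * self.runs

    def problems(self):
        """List the ways the plan falls short of the minimums in the text."""
        issues = []
        if len(self.qc_levels_ng_ml) < 3:
            issues.append("fewer than three QC concentration levels")
        if self.replicates_per_level < 5:
            issues.append("fewer than five replicates per level")
        if self.runs < 3:
            issues.append("fewer than three separate runs")
        if self.blank_sources < 10:
            issues.append("fewer than ten blank matrix sources")
        return issues


# Hypothetical plan for a two-analyte whole-blood method.
plan = ValidationPlan(
    analytes=("fentanyl", "norfentanyl"),
    matrices=("whole blood",),
    qc_levels_ng_ml=(3.0, 40.0, 80.0),
)
print(plan.qc_injections_per_matrix(), plan.problems())
```

Freezing the dataclass reflects the idea that acceptance criteria are fixed before data collection; any later change would then have to be documented as a deviation.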

Documentation represents a critical component of the validation process. Standard 036 requires complete and transparent reporting of all validation data, including raw data, statistical analyses, and any deviations from the established protocol [3]. The final validation report should provide sufficient detail to allow an independent forensic toxicologist to understand and evaluate the method's performance characteristics. This documentation serves not only as an internal quality assurance measure but also as potential evidence in legal proceedings where the method's reliability may be challenged [21].

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of method validation according to Standard 036 requires specific reagents, materials, and instrumentation. The following table details the essential components of the validation toolkit and their functions in the process.

Table 2: Essential Research Reagent Solutions for Method Validation

| Toolkit Component | Function in Validation | Specific Application Examples |
|---|---|---|
| Certified Reference Materials | Provide traceable analyte quantification | Drug parent compounds and metabolites with certified purity |
| Internal Standards | Correct for analytical variability | Stable isotope-labeled analogs of target analytes |
| Quality Control Materials | Monitor method performance over time | Spiked samples at low, medium, and high concentrations |
| Extraction Solvents | Isolate analytes from biological matrices | Methyl tert-butyl ether (MTBE), ethyl acetate, hexane |
| Derivatization Reagents | Enhance detection of certain compounds | MSTFA, BSTFA, PFPA for GC-MS applications |
| Mobile Phase Additives | Improve chromatographic separation | Ammonium formate, ammonium acetate, formic acid |
| Biological Matrices | Validate method in relevant media | Blood, urine, oral fluid, liver homogenate |
| Solid-Phase Extraction Cartridges | Clean up and concentrate samples | C18, mixed-mode, polymer-based sorbents |

The selection of appropriate certified reference materials is particularly critical, as these form the foundation for all quantitative measurements. These materials should be obtained from accredited suppliers with documented purity and stability [3]. Similarly, internal standards, preferably stable isotope-labeled versions of the target analytes, are essential for correcting variations in sample preparation, injection volume, and ion suppression/enhancement effects in mass spectrometric detection [22].

The biological matrices used for validation should match those encountered in casework, with consideration for potential variations between specimen types. For example, methods validated for whole blood may require additional validation if applied to urine or alternative matrices [3]. The toolkit should include matrices from multiple sources to properly evaluate selectivity and matrix effects; Standard 036 specifies testing samples from at least ten different sources [3].

Relationship to Broader Forensic Standardization Initiatives

ANSI/ASB Standard 036 does not exist in isolation but functions within a broader framework of forensic science standardization. The following diagram illustrates how Standard 036 connects with other key standards and guidelines in the forensic validation ecosystem.

Figure: Forensic Standardization Framework

Standard 036 aligns with the emerging international standard for forensic sciences, ISO 21043, which provides requirements and recommendations designed to ensure quality throughout the forensic process, including vocabulary, recovery, analysis, interpretation, and reporting [23]. This alignment is crucial as it creates consistency across disciplines and jurisdictions. The standard also directly supports the admissibility of forensic toxicology evidence in legal proceedings by addressing the Daubert factors, particularly the requirements for testing, error rate determination, and adherence to professional standards [21].

Recent developments in forensic standardization continue to reinforce the importance of Standard 036. The Organization of Scientific Area Committees (OSAC) for Forensic Science has included Standard 036 on its Registry, recognizing it as a consensus standard for forensic practice [8]. Additionally, the Academy Standards Board (ASB) has proposed a new Guideline (ASB 236) specifically for "Conducting Test Method Development, Validation, and Verification in Forensic Toxicology," which will provide further guidance for implementing Standard 036 [8]. These interconnected standards create a comprehensive framework that supports the transparent and reproducible practices essential to modern forensic science [23].

ANSI/ASB Standard 036 provides a comprehensive template for method validation that meets the rigorous demands of modern forensic toxicology. By establishing minimum standards and clear validation criteria, the standard promotes confidence and reliability in forensic toxicology results, which is essential given the consequential nature of these analyses in legal proceedings [3]. The structured approach outlined in this deconstruction offers researchers and practitioners a roadmap for implementing validation protocols that withstand scientific and judicial scrutiny.

The ongoing evolution of Standard 036, including the development of a second edition noted by NIST [22], reflects the dynamic nature of forensic science and the commitment to evidence-based practices. As new analytical technologies emerge and novel psychoactive substances continue to challenge forensic toxicology laboratories, the principles embedded in Standard 036 provide a stable foundation for validating methods to address these changes. For researchers, drug development professionals, and forensic scientists, mastery of this standard is not merely a regulatory requirement but a fundamental component of scientific rigor and professional practice in the complex landscape of forensic toxicology.

Forensic science provides critical, objective evidence for the judicial system. The integrity of this evidence depends entirely on the reliability and validity of the analytical methods used to produce it. Method validation demonstrates that a scientific procedure is fit for its intended purpose, ensuring confidence in forensic results across disciplines. This case study examines the application of validation standards in three key forensic fields: forensic toxicology, DNA analysis, and digital forensics. Within the broader thesis on forensic method validation, this analysis reveals both the established, standardized frameworks in the chemical and biological sciences and the emerging, adaptive approaches required for digital evidence, highlighting a unified principle: validation is fundamental to forensic reliability.

Validation in Forensic Toxicology

Forensic toxicology focuses on detecting and quantifying drugs, alcohol, and poisons in biological specimens. The ANSI/ASB Standard 036 establishes minimum practices for validating analytical methods in this field, from postmortem analysis to human performance testing [3]. The standard's core principle is that validation must prove a method is "fit for its intended use," providing a framework that ensures confidence in results that can have profound legal consequences [3].

Experimental Protocol for Method Validation

A validation study for a novel liquid chromatography-tandem mass spectrometry (LC-MS/MS) method for quantifying synthetic opioids would follow this protocol:

- Step 1: Define Scope and Parameters: Establish the method's purpose, target analytes, and required validation parameters based on Standard 036.

- Step 2: Prepare Calibrators and Controls: Create a series of standard solutions at known concentrations for calibration and quality control samples.

- Step 3: Determine Selectivity/Specificity: Analyze a minimum of 10 independent sources of blank matrix to confirm no endogenous interference at the retention times of the target analytes and internal standards.

- Step 4: Establish Linearity and LOQ: Analyze calibrators across the working range (e.g., 1-100 ng/mL). The limit of quantification (LOQ) is the lowest concentration meeting predefined accuracy and precision criteria (e.g., ±20% bias, <20% CV).

- Step 5: Assess Accuracy and Precision: Analyze QC samples at low, medium, and high concentrations over multiple runs and days. Intra-day and inter-day precision should not exceed 15% CV, and accuracy should be within ±15% of the nominal concentration.

- Step 6: Evaluate Matrix Effects: Post-column infuse analyte solution while injecting blank matrix extracts to monitor signal suppression/enhancement. Quantify matrix factor by comparing analyte response in matrix to neat solution.

- Step 7: Verify Processed Sample Stability: Reinject analyzed samples after storage in the autosampler (e.g., 24-72 hours) to demonstrate analyte stability.
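The quantitative parts of Steps 6 and 7 reduce to simple ratio calculations. The sketch below computes a matrix factor as the ratio of the analyte response in matrix to the mean response in neat solution, and expresses processed-sample stability as the percent change on reinjection. The function names and peak areas are hypothetical, and the choice to normalize against the mean neat response is an assumption; laboratories may also report an internal-standard-normalized matrix factor.

```python
from statistics import mean, stdev


def summarize_matrix_effect(matrix_areas, neat_areas):
    """Return (mean matrix factor, % suppression/enhancement, CV % of factors).

    matrix_areas: analyte peak areas in extracts from different matrix sources.
    neat_areas:   analyte peak areas for the same amount in neat solution.
    A factor below 1 indicates ion suppression, above 1 ion enhancement.
    """
    neat_mean = mean(neat_areas)
    factors = [area / neat_mean for area in matrix_areas]
    mf_mean = mean(factors)
    effect_pct = (mf_mean - 1.0) * 100
    cv_pct = 100 * stdev(factors) / mf_mean
    return mf_mean, effect_pct, cv_pct


def reinjection_change_pct(initial_result, reinjected_result):
    """Percent change of a processed sample after autosampler storage."""
    return 100 * (reinjected_result - initial_result) / initial_result


# Hypothetical peak areas: six matrix sources versus three neat injections.
matrix = [8200.0, 7900.0, 8500.0, 8100.0, 7800.0, 8300.0]
neat = [10000.0, 10100.0, 9900.0]

print(summarize_matrix_effect(matrix, neat))
print(reinjection_change_pct(50.0, 47.5))
```

The acceptance limits applied to these figures would come from the validation plan; the code only produces the numbers to be compared against them.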

The workflow for this validation is systematic, ensuring each parameter is rigorously tested before the method is declared fit for use. The diagram below illustrates this workflow:

Key Reagents and Materials for Toxicology Validation

Table 1: Essential Research Reagent Solutions for Toxicology Method Validation

| Reagent/Material | Function in Validation |
|---|---|
| Certified Reference Materials | Provide known, high-purity analyte standards for preparing calibrators and quality control samples; essential for establishing accuracy and the calibration model. |
| Blank Biological Matrix | Drug-free human plasma, urine, or whole blood used to prepare calibration standards and QCs; critical for assessing selectivity and matrix effects. |
| Stable Isotope-Labeled Internal Standards | Correct for variability in sample preparation and for ionization suppression/enhancement in mass spectrometry; improve accuracy and precision. |
| Quality Control Samples | Independently prepared samples at low, medium, and high concentrations; used to evaluate the method's accuracy and precision across the analytical run. |
| Mobile Phase Solvents & Additives | High-purity solvents and modifiers for chromatographic separation; their consistency is vital for maintaining retention time stability and signal response. |

Validation in DNA Analysis

DNA analysis represents one of the most standardized disciplines in forensic science. ANSI/ASB Standard 020 governs validation for DNA mixtures, requiring labs to design studies that demonstrate reliable interpretation protocols for complex samples [24]. Furthermore, the FBI's Quality Assurance Standards (QAS), updated for 2025, provide a regulatory framework for all forensic DNA testing laboratories, with new clarifications for emerging technologies like Rapid DNA [25].

Experimental Protocol for DNA Mixture Interpretation

A validation study for a laboratory's probabilistic genotyping protocol for mixed DNA samples involves: