Evaluating Expanded Conclusion Scales for Forensic Chemical Evidence: A New Paradigm for Evidence Interpretation


Abstract

This article examines the implementation and impact of expanded conclusion scales in the evaluation of forensic chemical evidence. Moving beyond the traditional ternary system of Identification, Inconclusive, and Exclusion, we explore scales that incorporate support-based statements like 'Support for Common Source' and 'Support for Different Sources.' Grounded in the paradigm shift towards more transparent, data-driven forensic methods, this analysis covers the foundational theory behind expanded scales, methodological approaches for implementation in chemical analysis, strategies for optimizing examiner performance and mitigating cognitive bias, and comparative validation against traditional methods. The discussion is highly relevant for researchers, forensic scientists, and professionals in drug development and toxicology who seek to enhance the logical rigor and evidentiary value of chemical findings.

The Paradigm Shift in Forensic Evidence Interpretation: From Ternary to Expanded Scales

In forensic chemistry, the analytical process culminates in a formal conclusion regarding the evidence examined. For decades, the dominant framework for reporting these conclusions has been the traditional 3-conclusion scale, which limits examiners to three categorical decisions: Identification, Exclusion, or Inconclusive [1] [2]. This tripartite system has provided a seemingly straightforward approach to evidence interpretation across multiple forensic disciplines, including the analysis of controlled substances, toxicological specimens, fire debris, and explosives. However, modern forensic chemistry produces graded, quantitative data, and a three-category scale constrains how precisely examiners can express evidential strength and analytical certainty.

The inherent limitations of this traditional scale have prompted research into expanded conclusion scales that offer a more nuanced approach to reporting forensic chemical evidence. This article examines the fundamental constraints of the 3-conclusion scale through empirical data and experimental studies, and considers how expanded scales can provide a better framework for conveying the probative value of forensic chemical analyses. As forensic chemistry continues to evolve toward more quantitative and statistically robust practices, the adoption of expanded conclusion scales represents an important step in aligning reporting practices with scientific principles [3].

Fundamental Structural Differences

The traditional 3-conclusion scale forces a continuous spectrum of analytical evidence into three discrete categories, potentially losing significant information about the strength of evidence. In contrast, expanded scales introduce intermediate conclusions that better represent the continuum of analytical certainty.

Table 1: Structural Comparison of Conclusion Scale Frameworks

| Scale Characteristic | Traditional 3-Conclusion Scale | Expanded 5-Conclusion Scale |
|---|---|---|
| Conclusion Categories | Identification, Inconclusive, Exclusion | Identification, Support for Common Source, Inconclusive, Support for Different Sources, Exclusion |
| Information Resolution | Low | High |
| Evidential Strength Mapping | Three coarse categories | Five ordered categories, closer to a continuum |
| Risk of Information Loss | High | Low |
| Investigative Utility | Limited | Enhanced |

Quantitative Performance Metrics

Experimental studies comparing scale performance demonstrate significant differences in how examiners utilize expanded scales versus traditional frameworks. Research in latent print examinations, which share analogous decision-making challenges with forensic chemistry, reveals that when using the expanded scale, examiners became more risk-averse when making "Identification" decisions and tended to transition both weaker Identification and stronger Inconclusive responses to the "Support for Common Source" statement [1] [2]. This behavioral shift suggests that expanded scales prompt more calibrated decision-making that better aligns with the actual strength of analytical evidence.

Table 2: Experimental Performance Data from Comparative Studies

| Performance Metric | Traditional 3-Conclusion Scale | Expanded 5-Conclusion Scale |
|---|---|---|
| Rate of Definitive Conclusions | Higher | Moderately lower |
| Error Rate for Identifications | Potentially higher | Reduced through risk aversion |
| Inconclusive Rate | Variable, often higher for ambiguous cases | Lower, with reclassification to support statements |
| Evidential Transparency | Limited | Enhanced |
| Statistical Foundation | Weak | Strengthened |

Protocol for Comparative Decision-Making Studies

The fundamental methodology for evaluating conclusion scales involves controlled studies where forensic examiners analyze standardized sample sets using different scale frameworks. The following protocol outlines the key experimental design elements:

- Sample Set Preparation: Curate a balanced set of same-source and different-source chemical evidence sample pairs with predetermined ground truth. Samples should span a range of analytical challenges, including complex mixtures, low concentrations, and degraded materials.

- Participant Selection and Randomization: Engage qualified forensic chemists as participants, randomly assigning them to either the traditional or expanded scale condition to minimize selection bias.

- Blinded Analysis: Conduct examinations under blinded conditions where participants have no knowledge of the expected outcomes or sample origins.

- Data Collection and Signal Detection Theory Analysis: Record all conclusions and analyze results using Signal Detection Theory (SDT) to measure sensitivity (d') and decision threshold (β) parameters [1]. SDT provides a quantitative framework for determining whether the expanded scale changes the threshold for definitive conclusions.

- Error Rate Calculation: Compute false positive and false negative rates for each scale framework, establishing comparative reliability metrics.
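The SDT and error-rate steps above can be sketched in a few lines. The example below is a minimal illustration that assumes an equal-variance Gaussian SDT model and treats only "Identification" as a "yes" response. The function name, the log-linear correction, and all counts are hypothetical and are not taken from the cited studies.

```python
import math
from statistics import NormalDist


def sdt_parameters(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, criterion_c, beta) under an equal-variance Gaussian model."""
    # Log-linear correction (an assumption): keeps rates away from 0 and 1
    # so that the inverse normal transform stays finite.
    hit_rate = (hits + 0.5) / (hits + misses + 1.0)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1.0)

    z = NormalDist().inv_cdf
    z_hit, z_fa = z(hit_rate), z(fa_rate)

    d_prime = z_hit - z_fa                  # sensitivity
    criterion_c = -0.5 * (z_hit + z_fa)     # positive values are conservative
    beta = math.exp(d_prime * criterion_c)  # likelihood-ratio form of the threshold
    return d_prime, criterion_c, beta


# Made-up counts for 60 same-source and 60 different-source comparisons.
traditional = sdt_parameters(hits=45, misses=15, false_alarms=3, correct_rejections=57)
expanded = sdt_parameters(hits=38, misses=22, false_alarms=1, correct_rejections=59)

for name, (d, c, b) in (("traditional", traditional), ("expanded", expanded)):
    print(f"{name}: d'={d:.2f}, c={c:.2f}, beta={b:.2f}")
```

With these invented counts the sensitivity is similar in both conditions while the criterion is higher in the expanded condition, which is the pattern one would expect if the scale changes risk tolerance rather than discrimination ability.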

Protocol for Quantitative Evidential Strength Measurement

Forensic chemistry increasingly employs quantitative analytical approaches that generate continuous data, providing an ideal foundation for implementing expanded conclusion scales:

- Instrumental Analysis: Employ validated chromatographic and spectroscopic techniques (GC-MS, LC-MS/MS, HPLC) to generate quantitative data for chemical evidence [4] [5].

- Multivariate Statistical Modeling: Apply statistical learning tools to classify analytical results and generate likelihood ratios or similar continuous metrics of evidential strength [6].

- Threshold Establishment: Define statistical thresholds for conclusion categories based on empirical validation studies and probability models.

- Cross-Validation: Implement cross-validation procedures to estimate classification error rates and validate threshold selections.
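To make the threshold-establishment step concrete, the sketch below maps a likelihood ratio onto the five conclusion categories. The cut-points are purely hypothetical placeholders: in practice they would have to come from the validation studies described above, and the function and dictionary names are illustrative only.

```python
import math

# Hypothetical log10(LR) cut-points, for illustration only.
# Real thresholds must be derived from empirical validation data.
HYPOTHETICAL_CUTS = {
    "identification": 4.0,
    "support_common": 1.0,
    "support_different": -1.0,
    "exclusion": -4.0,
}


def conclusion_from_lr(lr, cuts=HYPOTHETICAL_CUTS):
    """Map a likelihood ratio onto the expanded 5-conclusion scale."""
    if lr <= 0:
        raise ValueError("A likelihood ratio must be positive.")
    log_lr = math.log10(lr)
    if log_lr >= cuts["identification"]:
        return "Identification"
    if log_lr >= cuts["support_common"]:
        return "Support for Common Source"
    if log_lr > cuts["support_different"]:
        return "Inconclusive"
    if log_lr > cuts["exclusion"]:
        return "Support for Different Sources"
    return "Exclusion"


for value in (1e5, 50, 1.0, 0.01, 1e-6):
    print(value, "->", conclusion_from_lr(value))
```

Keeping the cut-points in a single table makes it straightforward to re-run a validation set under alternative thresholds and compare the resulting error rates.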

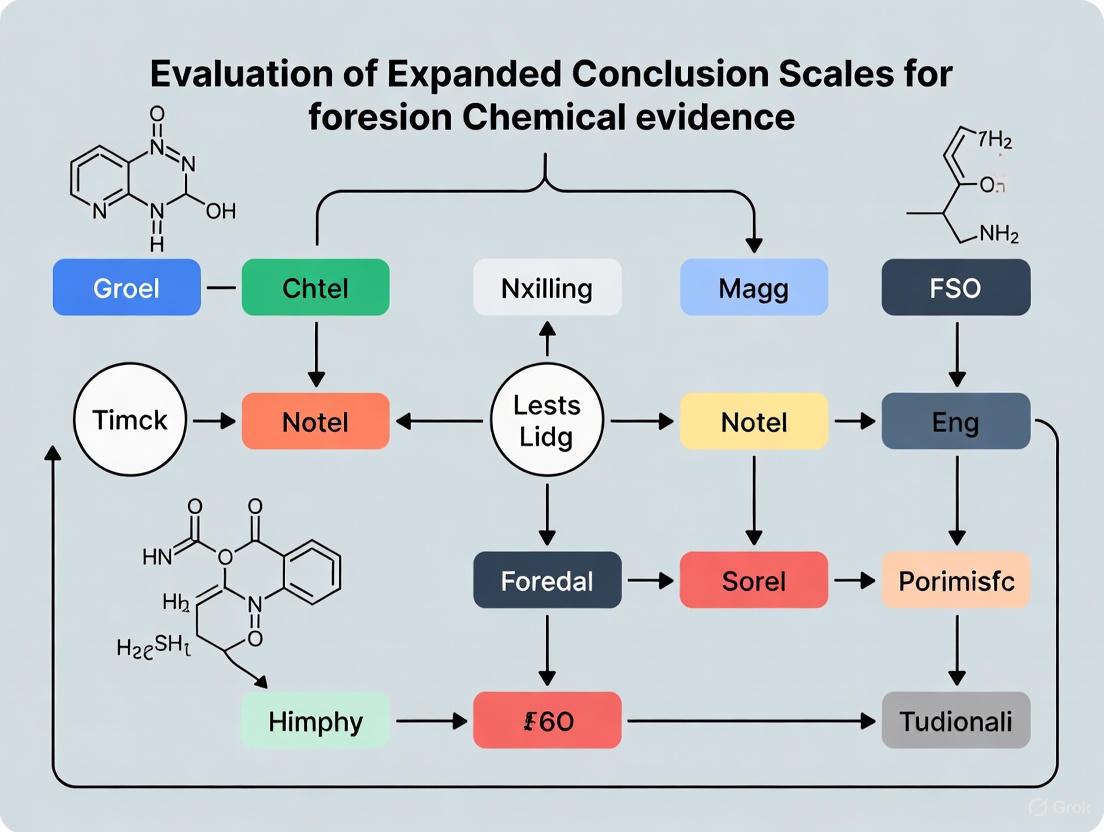

Decision Pathways and Analytical Workflows

The decision-making process in forensic chemical analysis follows a logical pathway from evidence examination to final conclusion. The expanded conclusion scale introduces additional decision nodes that provide more nuanced reporting options.

Figure 1: Decision pathway for expanded conclusion scales in forensic chemistry

Experimental Workflow for Quantitative Forensic Chemistry

Modern forensic chemistry employs sophisticated instrumental techniques that generate quantitative data suitable for statistical evaluation and classification using expanded conclusion frameworks.

Figure 2: Experimental workflow for quantitative forensic chemistry

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing robust expanded conclusion scales in forensic chemistry requires specific analytical tools and statistical approaches. The following reagents and methodologies represent essential components for conducting validation studies and operational analyses.

Table 3: Essential Research Reagents and Methodologies for Expanded Conclusion Research

| Tool/Reagent | Function in Conclusion Scale Research | Application Example |
|---|---|---|
| Gas Chromatography-Mass Spectrometry (GC-MS) | Separation and identification of chemical compounds in complex mixtures | Drug purity analysis, fire debris characterization [5] |
| Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) | Quantitative analysis of non-volatile or thermally labile compounds | Toxicology screening, drug metabolite quantification [5] |
| Deuterated Internal Standards | Correction for analytical variability and improved quantification accuracy | Compensation for matrix effects and recovery losses in trace analysis [5] |
| Statistical Learning Algorithms | Multivariate classification of analytical data for source attribution | Fracture surface matching, chemical profile comparison [6] |
| Likelihood Ratio Models | Quantitative expression of evidential strength under competing propositions | Bayesian evaluation of analytical data [3] |
| Reference Standard Materials | Method validation and quality assurance | Certified reference materials for instrument calibration [4] |
| Signal Detection Theory Framework | Measurement of decision thresholds and sensitivity | Comparison of examiner performance across conclusion scales [1] |

The limitations of the traditional 3-conclusion scale in forensic chemistry are both theoretical and practical, affecting the scientific validity and operational utility of forensic evidence. The restricted categorical framework fails to capture the continuous nature of analytical data generated by modern instrumental techniques, potentially losing significant information about evidential strength [4] [5]. Experimental studies demonstrate that expanded scales promote more calibrated decision-making, reduce categorical thinking, and provide greater transparency regarding analytical certainty [1] [2].

The implementation of expanded conclusion scales aligns with broader trends toward quantitative methodologies in forensic science, including statistical learning approaches for evidence classification [6] and Bayesian frameworks for evidence evaluation [3]. For forensic chemistry researchers and practitioners, adopting expanded scales represents an essential step toward enhancing scientific rigor, improving communication of evidential value, and strengthening the foundation of forensic evidence in legal contexts.

Expanded conclusion scales represent a significant evolution in forensic reporting, moving beyond the traditional three-value system of Identification, Inconclusive, or Exclusion. These new frameworks introduce support-based statements that provide a more nuanced expression of the strength of forensic evidence. Within forensic chemical evidence research and drug development, this shift allows scientists to communicate findings with greater scientific transparency and probative value, offering a more detailed mapping of the internal strength-of-evidence value to a conclusion [1].

The fundamental limitation of the traditional 3-conclusion scale is its tendency to lose information when translating complex analytical data into one of only three possible conclusions. The expanded scale, as proposed by bodies such as the Friction Ridge Subcommittee of OSAC, incorporates two additional values: "support for different sources" and "support for common sources" [1]. This approach aligns with a broader disciplinary push for fully transparent reporting that discloses fundamental principles, methodology, validity, error rates, assumptions, limitations, and areas of scientific controversy [7].

Comparative Analysis: Traditional vs. Expanded Scales

Theoretical Framework and Structural Comparison

Table 1: Structural Composition of Conclusion Scales

| Scale Type | Available Conclusions | Core Function |
|---|---|---|
| Traditional 3-Valued Scale | Identification, Inconclusive, Exclusion [1] | Categorical classification that can lose granular evidence strength during translation [1]. |
| Expanded 5-Valued Scale | Identification, Support for Common Source, Inconclusive, Support for Different Sources, Exclusion [1] | Provides a more continuous spectrum for expressing evidentiary strength, retaining more information [1]. |

Performance Outcomes from Experimental Data

Experimental data modeling using signal detection theory reveals how the adoption of expanded scales alters examiner decision-making thresholds and the ultimate distribution of conclusions.

Table 2: Experimental Outcomes from Latent Print Examination Study

| Performance Metric | Traditional 3-Value Scale | Expanded 5-Value Scale | Observed Change |
|---|---|---|---|
| Threshold for "Identification" | Baseline risk level | Increased threshold [1] | Examiners became more risk-averse [1]. |
| Conclusion Distribution | Weaker Identifications and stronger Inconclusives forced into distinct categories | Weaker Identifications and stronger Inconclusives transitioned to "Support for Common Source" [1] | Redistribution of conclusions, providing more granular information on the strength of evidence [1]. |
| Primary Utility | Simple, categorical decisions | More investigative leads and a more nuanced evidence presentation [1] | Trade-offs between correct and erroneous identifications [1]. |

Detailed Experimental Protocols

The following methodology details a protocol used to empirically evaluate the impact of expanded conclusion scales, providing a model for future research in forensic chemistry.

Examiner Comparison Task Workflow

The diagram below illustrates the experimental workflow used to compare scale performance.

Protocol Steps

- Participant Recruitment and Randomization: Certified latent print examiners are recruited and randomly assigned to one of two groups: one using the traditional 3-conclusion scale and the other using the expanded 5-conclusion scale [1].

- Comparison Task: Each examiner in both groups completes an identical set of 60 latent print comparisons. This sample size provides sufficient data for robust statistical modeling [1].

- Data Collection: The conclusion chosen for each comparison is systematically recorded. Because each examiner uses only one scale, the data set should be keyed by comparison item so that the classification of the same evidence can be compared across the two groups.

- Data Modeling with Signal Detection Theory: The collected data is analyzed using Signal Detection Theory (SDT). This statistical framework allows researchers to measure whether the use of the expanded scale systematically changes an examiner's threshold or sensitivity for making an "Identification" decision compared to the control group using the traditional scale [1].

- Analysis of Conclusion Redistribution: The analysis specifically examines how conclusions are redistributed, particularly focusing on whether examiners shift weaker "Identification" and stronger "Inconclusive" responses to the new "Support for Common Source" statement [1].
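The redistribution analysis in the final step can be illustrated with a simple tally. The sketch below compares the share of each conclusion category between the two groups for same-source comparisons. The responses are invented for illustration and the helper names are hypothetical.

```python
from collections import Counter

FIVE_POINT = [
    "Identification",
    "Support for Common Source",
    "Inconclusive",
    "Support for Different Sources",
    "Exclusion",
]


def conclusion_shares(responses):
    """Return the proportion of responses falling in each conclusion category."""
    if not responses:
        raise ValueError("At least one response is required.")
    counts = Counter(responses)
    return {label: counts.get(label, 0) / len(responses) for label in FIVE_POINT}


def redistribution(traditional, expanded):
    """Per-category change in share (expanded group minus traditional group)."""
    t, e = conclusion_shares(traditional), conclusion_shares(expanded)
    return {label: e[label] - t[label] for label in FIVE_POINT}


# Made-up responses to 60 same-source comparisons in each group.
traditional = ["Identification"] * 30 + ["Inconclusive"] * 25 + ["Exclusion"] * 5
expanded = (
    ["Identification"] * 24
    + ["Support for Common Source"] * 14
    + ["Inconclusive"] * 15
    + ["Support for Different Sources"] * 3
    + ["Exclusion"] * 4
)

shift = redistribution(traditional, expanded)
for label, change in shift.items():
    print(f"{label}: {change:+.3f}")
```

A negative change for "Identification" and "Inconclusive" together with a positive change for "Support for Common Source" would correspond to the shift described in the protocol. A real analysis would also need to account for sampling variability.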

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Conducting Scale Comparison Studies

| Item Name | Function/Application in Research |
|---|---|
| Validated Comparison Stimuli | A standardized set of latent and known prints (or chemical spectra/data) used as the test medium for all examiners/analysts to ensure consistency. |
| Signal Detection Theory (SDT) Model | A statistical framework used to quantify decision-making thresholds and sensitivity, measuring how the conclusion scale affects examiner/analyst behavior [1]. |
| Randomized Group Protocol | An experimental design that randomly assigns participants to different conclusion scale groups to control for confounding variables and ensure the validity of the comparison [1]. |
| Data Collection Framework | A structured database or system for recording all conclusions, which must be designed to handle the different response options of each scale being tested. |
| Statistical Analysis Software | Software capable of running advanced statistical models, including Signal Detection Theory analysis, to interpret the collected experimental data [1]. |

Signal Detection Theory (SDT) provides a robust framework for analyzing decision-making under conditions of uncertainty, offering a precise language and graphic notation for understanding how decisions are made when signals must be distinguished from noise [8]. Originally developed in the context of radar operation during World War II, SDT has since been applied to numerous fields including psychology, medicine, and notably, forensic science [9]. In forensic contexts, SDT illuminates the fundamental challenges experts face when evaluating evidence where the "signal" represents a true connection between a piece of evidence and a suspect, while "noise" represents the inherent variability and uncertainty in forensic analysis [10]. The theory acknowledges that nearly all reasoning and decision-making occurs amidst some degree of uncertainty, and provides tools to quantify both the inherent detectability of signals and the decision biases of those making the judgments [8].

The application of SDT to forensic science is particularly relevant for evaluating expanded conclusion scales in forensic chemical evidence research. It helps formalize how forensic scientists balance the competing risks of different types of errors when rendering conclusions about evidence [10]. As forensic science continues to evolve toward more nuanced expression of evidential strength, understanding the theoretical underpinnings provided by SDT becomes essential for researchers, scientists, and drug development professionals working in this interdisciplinary field. This framework allows for systematic analysis of how effectively practitioners can distinguish between evidence with different probative values, and how their decision thresholds affect the interpretation of forensic results.

Core Principles of Signal Detection Theory

Fundamental Concepts and Terminology

Signal Detection Theory formalizes decision-making under uncertainty through several key concepts. The theory begins with the premise that decision-makers must distinguish between two distinct states of reality: either a signal is present or absent [8]. In forensic contexts, this might correspond to whether evidence truly links a suspect to a crime scene (signal present) or does not (signal absent). The decision-maker then makes a binary choice: either respond "yes" (signal present) or "no" (signal absent) [11]. This combination of reality states and decisions creates four possible outcomes, as detailed in Table 1: Signal Detection Theory Outcome Matrix.

Table 1: Signal Detection Theory Outcome Matrix

| Examiner Response | Signal Present (reality) | Signal Absent (reality) |
|---|---|---|
| "Yes" Response | Hit | False Alarm |
| "No" Response | Miss | Correct Rejection |

In forensic science, these outcomes have significant implications. A hit occurs when a forensic expert correctly identifies a true connection between evidence and a suspect. A miss occurs when the expert fails to identify a true connection. A false alarm happens when the expert incorrectly claims a connection exists when none does, while a correct rejection occurs when the expert correctly identifies the absence of a connection [8]. The consequences of the two error types (misses and false alarms) differ substantially in forensic contexts, with false alarms potentially leading to wrongful accusations, and misses potentially allowing guilty parties to avoid detection.
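The four outcomes can be tallied directly from ground-truth trials. The sketch below is a minimal illustration with hypothetical function names and invented trial data. It returns the hit rate and false alarm rate that the rest of the SDT analysis builds on.

```python
from collections import Counter


def classify_outcome(signal_present, said_yes):
    """Label one trial with its SDT outcome."""
    if signal_present:
        return "hit" if said_yes else "miss"
    return "false alarm" if said_yes else "correct rejection"


def outcome_rates(trials):
    """Compute hit and false alarm rates from (signal_present, said_yes) pairs."""
    counts = Counter(classify_outcome(s, r) for s, r in trials)
    signal_trials = counts["hit"] + counts["miss"]
    noise_trials = counts["false alarm"] + counts["correct rejection"]
    if signal_trials == 0 or noise_trials == 0:
        raise ValueError("Need at least one signal trial and one noise trial.")
    return {
        "hit_rate": counts["hit"] / signal_trials,
        "false_alarm_rate": counts["false alarm"] / noise_trials,
    }


# Invented data: 10 same-source trials and 10 different-source trials.
trials = (
    [(True, True)] * 8
    + [(True, False)] * 2
    + [(False, True)] * 1
    + [(False, False)] * 9
)
rates = outcome_rates(trials)
print(rates)
```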

Internal Response Distributions and Decision Criteria

A central tenet of SDT is that both signals and noise exist along a continuum of strength, represented by overlapping probability distributions [8]. The noise-alone distribution represents the internal response when only background noise or non-relevant information is present, while the signal-plus-noise distribution represents the internal response when a true signal is present amidst the noise [11]. These distributions inevitably overlap, creating inherent uncertainty in the decision process [8].

The criterion (or decision threshold) is the internal response level at which a decision-maker switches from "no" to "yes" responses [8]. This criterion is influenced by both the perceived probabilities of signal presence and the consequences of different types of errors [8]. In forensic science, this criterion placement reflects an examiner's conservatism or liberalism in making identifications. A conservative criterion (set high) reduces false alarms but increases misses, while a liberal criterion (set low) increases hits but also increases false alarms [8]. The following diagram illustrates the relationship between these distributions and the decision criterion:

Diagram 1: Signal and Noise Distributions in SDT

The discriminability index (d') quantifies the degree of separation between the noise-alone and signal-plus-noise distributions, representing the inherent detectability of the signal [8]. A higher d' indicates better ability to distinguish signal from noise, which in forensic contexts might correspond to more discriminative analytical techniques or clearer evidence patterns.
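The interplay between d' and the criterion can be shown numerically. The sketch below assumes the common equal-variance Gaussian model, with noise centered at 0 and signal centered at d', both with unit variance. The specific d' and criterion values are arbitrary illustrations.

```python
from statistics import NormalDist


def rates_for_criterion(d_prime, criterion):
    """Hit and false alarm rates when noise ~ N(0, 1) and signal ~ N(d_prime, 1)."""
    noise = NormalDist(0.0, 1.0)
    signal = NormalDist(d_prime, 1.0)
    # A "yes" response is given whenever the internal response exceeds the criterion.
    hit_rate = 1.0 - signal.cdf(criterion)
    false_alarm_rate = 1.0 - noise.cdf(criterion)
    return hit_rate, false_alarm_rate


# Same discriminability, two different criterion placements (arbitrary values).
liberal = rates_for_criterion(d_prime=1.5, criterion=0.25)
conservative = rates_for_criterion(d_prime=1.5, criterion=1.25)

print("liberal      (hit, false alarm):", liberal)
print("conservative (hit, false alarm):", conservative)
```

Moving the criterion upward lowers both rates at once: false alarms fall, but so do hits. This is the trade-off an examiner faces when becoming more conservative without any change in the underlying evidence.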

Receiver Operating Characteristic (ROC) Curves

The Receiver Operating Characteristic (ROC) curve provides a comprehensive graphical representation of decision performance across all possible criterion settings [8]. This curve plots the hit rate against the false alarm rate as the decision criterion moves from conservative to liberal [8]. The shape and position of the ROC curve reflect the underlying discriminability (d') between signal and noise distributions. A curve that bows upward toward the upper left corner indicates better discriminability, while a curve closer to the diagonal chance line indicates poorer discriminability [8].

In forensic science, ROC analysis offers a powerful tool for evaluating the performance of different forensic techniques, methodologies, or individual examiners. By examining the entire ROC curve, researchers can identify optimal decision criteria that balance the costs and benefits of different error types based on the specific context and consequences [8]. This becomes particularly important when validating new analytical techniques or establishing standards for evidence interpretation in forensic chemistry.
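An ordered conclusion scale yields an empirical ROC curve directly, because each category boundary acts as one possible criterion. The sketch below accumulates counts from the strongest "same source" category downward and estimates the area under the curve with the trapezoid rule. The counts and function names are hypothetical.

```python
def roc_points(same_source_counts, different_source_counts):
    """Build ROC points from per-category counts.

    Both lists must be ordered from the strongest 'same source' category
    (e.g. Identification) to the strongest 'different source' category
    (e.g. Exclusion).
    """
    n_same = sum(same_source_counts)
    n_diff = sum(different_source_counts)
    points = [(0.0, 0.0)]
    cum_same = cum_diff = 0
    for same, diff in zip(same_source_counts, different_source_counts):
        cum_same += same
        cum_diff += diff
        # x = false alarm rate, y = hit rate at this criterion placement
        points.append((cum_diff / n_diff, cum_same / n_same))
    return points


def auc_trapezoid(points):
    """Area under a piecewise-linear ROC curve."""
    return sum(
        (x1 - x0) * (y0 + y1) / 2.0
        for (x0, y0), (x1, y1) in zip(points, points[1:])
    )


# Invented counts over the five categories, 60 comparisons of each kind.
same_source = [24, 14, 15, 3, 4]
different_source = [1, 3, 16, 15, 25]

points = roc_points(same_source, different_source)
area = auc_trapezoid(points)
print(points)
print(f"AUC = {area:.3f}")
```

An area of 0.5 corresponds to the diagonal chance line, and values closer to 1 indicate better discriminability. A five-category scale contributes four interior ROC points, compared with two for a three-category scale.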

SDT Application to Forensic Evidence Evaluation

Forensic Decision-Making as a Signal Detection Problem

The application of Signal Detection Theory to forensic science creates a powerful framework for understanding and improving forensic decision-making [10]. In this context, the "signal" represents a true association between forensic evidence and a source (e.g., a chemical profile truly matching a suspected source), while "noise" represents the random variations and uncertainties inherent in forensic analysis [10]. The forensic examiner must decide whether the observed data contains sufficient signal to conclude that a match exists.

Forensic decision-making involves two distinct components that align with SDT principles: information acquisition and criterion setting [8]. Information acquisition refers to the data gathered through forensic analysis, such as chemical spectra, chromatograms, or other analytical measurements. This component depends on the sensitivity and specificity of the analytical techniques employed. Criterion setting refers to the decision threshold adopted by the forensic examiner, which is influenced by subjective factors including perceived consequences of errors, organizational culture, and individual risk tolerance [8]. Research has demonstrated that forensic examiners may adjust their decision criteria based on their perception of the relative costs of false positives versus false negatives, with some erring toward "yes" decisions to avoid missing true connections, while others adopt more conservative criteria to minimize false accusations [8].

Forensic scientists express their conclusions using various conclusion scales, which can be broadly categorized as categorical conclusions or likelihood ratios [12]. Categorical conclusions provide definitive statements (e.g., "identification," "exclusion"), while likelihood ratios quantify the strength of evidence by comparing the probability of the evidence under two competing propositions [13]. The interpretation of these conclusions by criminal justice professionals presents significant challenges, with research indicating widespread misunderstanding of the intended meaning and strength of different conclusion types [12].

Recent studies have examined how criminal justice professionals interpret different forensic conclusion formats. In one comprehensive study, 269 professionals assessed forensic reports containing categorical (CAT), verbal likelihood ratio (VLR), or numerical likelihood ratio (NLR) conclusions with either low or high evidential strength [12]. The results revealed systematic misinterpretations across conclusion types, as summarized in Table 2: Interpretation of Forensic Conclusion Types by Professionals.

Table 2: Interpretation of Forensic Conclusion Types by Professionals

| Conclusion Type | Strength Level | Interpretation Trend | Understanding Issues |
|---|---|---|---|
| Categorical (CAT) | High | Overestimated strength | Perceived as stronger than comparable VLR/NLR |
| Categorical (CAT) | Low | Underestimated strength | Correctly emphasized uncertainty |
| Verbal LR (VLR) | High | Overestimated strength | - |
| Numerical LR (NLR) | High | Overestimated strength | - |
| All Types | - | Self-assessment overestimation | Professionals overestimated their actual understanding |

The study found that approximately a quarter of all questions measuring actual understanding of forensic reports were answered incorrectly [12]. Furthermore, professionals consistently overestimated their own understanding of all conclusion types, indicating a concerning metacognitive gap in their ability to evaluate their comprehension of forensic evidence [14]. These findings highlight the critical need for improved training and standardization in how forensic conclusions are communicated and interpreted within the criminal justice system.

Research on the interpretation of forensic conclusions typically employs controlled experimental designs where participants evaluate simulated forensic reports containing different conclusion types and strengths. One representative methodology involved an online questionnaire administered to 269 criminal justice professionals, including crime scene investigators, police detectives, public prosecutors, criminal lawyers, and judges [12]. Each participant assessed three fingerprint examination reports that were identical except for the conclusion section, which systematically varied in format (CAT, VLR, or NLR) and strength (high or low) [12].

The experimental protocol typically includes several key components. First, participants provide demographic information and complete self-assessment measures of their understanding of forensic reports. Next, they evaluate multiple forensic reports with randomized conclusion types and strengths. For each report, participants answer factual questions designed to measure their actual understanding of the conclusion's meaning and implications [12]. These questions might ask participants to estimate the probability of the suspect being the source of the evidence or to compare the strength of different conclusions. The data collection phase is followed by statistical analyses comparing performance across professional groups, conclusion types, and strength levels, while controlling for potential confounding variables [14].

Research comparing different forensic conclusion formats has yielded several consistent findings with important implications for forensic practice. Studies have demonstrated systematic differences in how forensic examiners and legal professionals interpret various conclusion formats compared to laypersons [15]. For instance, fingerprint examiners distinguish between "Identification" and "Extremely Strong Support for Common Source" conclusions, while members of the general public do not perceive a meaningful difference between these categories [15].

Additionally, statements incorporating numerical values tend to be perceived as having lower evidential strength than categorical conclusions, even when intended to convey equivalent strength [15]. This presents a particular challenge for implementing likelihood ratio approaches, as legal professionals and jurors may undervalue numerically expressed evidence compared to more authoritative-sounding categorical conclusions. Laypersons also tend to place the highest categorical conclusion in each scale at the very top of the evidence axis, potentially creating ceiling effects that limit the ability to discriminate between strong and very strong evidence [15].

Quantitative Analysis of Forensic Evidence Impact

Beyond conclusion interpretation, researchers have employed quantitative case processing methodology to examine the relationship between forensic evidence and criminal justice outcomes. One such study analyzed cases involving chemical trace evidence, biology (DNA) evidence, and ballistics/toolmarks evidence, collecting data from multiple disconnected sources to build a comprehensive database [16]. This approach allowed researchers to test specific hypotheses about how forensic evidence influences case outcomes, as detailed in Table 3: Impact of Forensic Evidence on Criminal Justice Outcomes.

Table 3: Impact of Forensic Evidence on Criminal Justice Outcomes

| Study Reference | Evidence Type | Impact on Investigations | Impact on Court Outcomes |
|---|---|---|---|
| Briody [2] | DNA | - | Significant relationship with convictions |
| Roman et al. [3] | DNA | Increased suspect identification and arrests | Increased prosecution acceptance |
| McEwen & Regoeczi [4] | DNA, fingerprints, ballistics | - | Higher charges, conviction rates, and sentence lengths |
| Schroeder & White [6] | DNA | No significant relationship with case clearance | - |
| Multiple US Jurisdictions | Mixed | Predictive for arrest and charges | Inconsistent impact on convictions |

These studies reveal a complex relationship between forensic evidence and case outcomes, with impacts varying by evidence type, crime type, and stage of the criminal justice process [16]. The inconsistencies in research findings highlight the methodological challenges in studying forensic evidence impact, including variations in how evidence is categorized (collected, analyzed, or probative) and differences in jurisdictional practices [16].

Likelihood Ratio Framework for Evidence Evaluation

Theoretical Foundation of the Likelihood Ratio

The likelihood ratio (LR) framework provides a statistical approach for evaluating forensic evidence that aligns with the principles of Signal Detection Theory while offering greater nuance than categorical conclusions. The LR quantifies the strength of evidence by comparing the probability of the evidence under two competing propositions [13]. The formula for calculating the likelihood ratio is:

LR = P(E|H₁) / P(E|H₂)

Where E represents the observed evidence, H₁ represents the prosecution hypothesis (typically that the evidence came from the suspect), and H₂ represents the defense hypothesis (typically that the evidence came from an alternative source) [13]. The LR takes values from 0 to +∞, with values greater than 1 supporting the prosecution hypothesis and values less than 1 supporting the defense hypothesis [13].
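As a worked illustration of the formula, the sketch below computes an LR for a single continuous measurement. It assumes, purely for illustration, that the evidence is a similarity score that follows a normal distribution under each proposition. The distribution parameters and the function name are hypothetical, and real casework models would be fitted to validation data.

```python
from statistics import NormalDist


def likelihood_ratio(evidence, model_h1, model_h2):
    """LR = P(E | H1) / P(E | H2), using probability densities for continuous E."""
    numerator = model_h1.pdf(evidence)
    denominator = model_h2.pdf(evidence)
    if denominator == 0.0:
        raise ZeroDivisionError("Evidence has zero density under H2.")
    return numerator / denominator


# Hypothetical score distributions under each proposition.
scores_if_same_source = NormalDist(mu=0.90, sigma=0.05)       # H1
scores_if_different_source = NormalDist(mu=0.60, sigma=0.15)  # H2

lr_high = likelihood_ratio(0.90, scores_if_same_source, scores_if_different_source)
lr_low = likelihood_ratio(0.50, scores_if_same_source, scores_if_different_source)

print(f"score 0.90 -> LR = {lr_high:.1f}")
print(f"score 0.50 -> LR = {lr_low:.3g}")
```

A score typical of same-source pairs yields an LR above 1, while a score typical of different-source pairs yields an LR below 1, matching the interpretation given above.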

The LR framework offers several advantages over traditional categorical approaches. First, it avoids the "falling off a cliff" problem associated with fixed threshold decisions, where minute differences in evidence strength lead to drastically different conclusions [13]. Second, it explicitly considers both propositions rather than focusing exclusively on the prosecution hypothesis. Third, it provides a continuous scale of evidence strength that can be translated into verbal equivalents for communication to legal decision-makers [13].

LR Verbal Equivalents and Implementation

To facilitate communication of LR values in legal contexts, standardized verbal scales have been developed. One widely adopted scale is provided by the European Network of Forensic Science Institutes (ENFSI), which categorizes LR values into strength of evidence statements [13]. For example, LRs between 1 and 10 provide "weak support" for H₁ over H₂, while LRs between 10,000 and 100,000 provide "very strong support" [13]. Similar scales exist for LRs less than 1, providing equivalent support for H₂ over H₁.
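
The mapping from a numerical LR to a verbal statement can be sketched as a simple lookup. Only the "weak support" band (1 to 10) and the "very strong support" band (10,000 to 100,000) are stated in the text. The intermediate and top bands below, and the name `verbal_equivalent`, are assumptions added for illustration and should be checked against the guideline actually in use.

```python
# (upper bound of band, verbal label). Bands other than "weak" and "very strong"
# are ASSUMED for illustration; they are not specified in this article.
VERBAL_BANDS = [
    (10, "weak support"),
    (100, "moderate support"),
    (1_000, "moderately strong support"),
    (10_000, "strong support"),
    (100_000, "very strong support"),
    (float("inf"), "extremely strong support"),
]


def verbal_equivalent(lr):
    """Translate a likelihood ratio into a verbal strength-of-evidence statement."""
    if lr <= 0:
        # An LR of exactly 0 (or a negative input) is out of scope for this sketch.
        raise ValueError("LR must be positive")
    if lr == 1:
        return "no support for either proposition"
    # For LR < 1 the reciprocal gives the equivalent strength in favour of H2.
    favoured, other = ("H1", "H2") if lr > 1 else ("H2", "H1")
    magnitude = lr if lr > 1 else 1 / lr
    for upper, label in VERBAL_BANDS:
        if magnitude <= upper:
            return f"{label} for {favoured} over {other}"


print(verbal_equivalent(5))
print(verbal_equivalent(50_000))
print(verbal_equivalent(0.2))
```

Using the reciprocal for LRs below 1 mirrors the statement that similar scales provide equivalent support for H₂ over H₁.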

The implementation of LR approaches in forensic chemistry has been demonstrated in various applications, including the discrimination between chronic and non-chronic alcohol drinkers using alcohol biomarkers [13]. In this context, statistical classification methods based on penalized logistic regression can be employed to calculate LRs, particularly when data separation occurs in two-class classification settings [13]. These methods offer flexibility in model assumptions and can handle situations where traditional approaches like Linear Discriminant Analysis encounter limitations.
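
The following sketch illustrates why a penalty helps when the two classes are perfectly separated. It assumes a single standardized biomarker, an L2 (ridge) penalty, and plain gradient descent. The penalty type, the data, and all function names are hypothetical, and the method in [13] may use a different penalty or fitting procedure. The LR is obtained by dividing the model's posterior odds by the prior odds implied by the training class proportions.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_penalized_logistic(xs, ys, penalty=1.0, step=0.1, n_iter=5000):
    """Fit P(y=1|x) = sigmoid(b0 + b1*x) by gradient descent with an L2 penalty on b1."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(n_iter):
        grad0 = grad1 = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(b0 + b1 * x) - y
            grad0 += err
            grad1 += err * x
        b0 -= step * grad0 / n
        # The penalty term pulls b1 back toward zero and keeps it finite.
        b1 -= step * (grad1 + penalty * b1) / n
    return b0, b1


def likelihood_ratio(x, b0, b1, prior_odds):
    """LR = posterior odds / prior odds for a new observation x."""
    posterior_odds = math.exp(b0 + b1 * x)
    return posterior_odds / prior_odds


# Hypothetical, perfectly separated training data (0 = non-chronic, 1 = chronic).
xs = [-2.0, -1.5, -1.0, -0.5, 0.5, 1.0, 1.5, 2.0]
ys = [0, 0, 0, 0, 1, 1, 1, 1]

b0, b1 = fit_penalized_logistic(xs, ys)
prior_odds = sum(ys) / (len(ys) - sum(ys))  # class proportions in the training set

lr_high = likelihood_ratio(1.0, b0, b1, prior_odds)
lr_low = likelihood_ratio(-1.0, b0, b1, prior_odds)
print(f"b1 = {b1:.3f}, LR(x=1.0) = {lr_high:.3f}, LR(x=-1.0) = {lr_low:.3f}")
```

Without the penalty term, the slope would keep growing on separated data because no finite maximum-likelihood estimate exists. With it, the slope settles at a finite value and the resulting LRs stay moderate.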

The Researcher's Toolkit: Experimental Protocols and Reagents

Key Research Reagent Solutions

Research on forensic evidence evaluation often utilizes specific analytical techniques and statistical tools. The following table details essential materials and methods used in experimental studies of forensic chemical evidence evaluation.

Table 4: Key Research Reagent Solutions and Methodologies

| Tool/Method | Function | Application Example |

|---|---|---|

| Logistic Regression-Based Classification | Statistical modeling for evidence evaluation | Calculating likelihood ratios from multivariate chemical data [13] |

| Penalized Logistic Regression | Handles data separation in classification | Forensic toxicology applications with limited sample sizes [13] |

| R Shiny Implementation | User-friendly interface for statistical analysis | Allows forensic practitioners to compute LRs without programming expertise [13] |

| Alcohol Biomarkers (EtG, FAEEs) | Direct markers of alcohol consumption | Discriminating chronic from non-chronic alcohol drinkers [13] |

| Receiver Operating Characteristic (ROC) Analysis | Visualizing decision performance across thresholds | Evaluating discriminability of different forensic techniques [8] |

Experimental Workflow for Forensic Evidence Studies

The typical experimental workflow for studies evaluating forensic conclusion scales follows a systematic process from study design through data analysis. The following diagram illustrates this workflow:

Diagram 2: Experimental Workflow for Conclusion Scale Studies

This workflow begins with careful study design and participant recruitment, typically targeting relevant professional groups such as forensic examiners, law enforcement personnel, legal professionals, and sometimes laypersons for comparison [12]. Researchers then create standardized forensic reports that are identical except for the conclusion section, which is systematically varied according to the experimental conditions [14]. Data collection occurs through controlled questionnaires that measure both self-assessed and actual understanding of the forensic conclusions [12]. Statistical analyses examine differences in interpretation across conclusion types, strength levels, and professional groups, while controlling for potential confounding variables [14]. Finally, findings inform the implementation of improved reporting standards and training materials to enhance the communication and interpretation of forensic evidence [15].

Signal Detection Theory provides a powerful theoretical framework for understanding how forensic examiners make decisions under conditions of uncertainty, balancing the competing risks of false positives and false negatives. The application of SDT principles to forensic science illuminates the complex interplay between the inherent discriminability of analytical techniques and the decision thresholds adopted by individual examiners. Research on the interpretation of forensic conclusions reveals systematic challenges in how different conclusion formats are understood by criminal justice professionals, with important implications for the implementation of expanded conclusion scales in forensic chemical evidence research.

The likelihood ratio framework offers a statistically rigorous approach to expressing evidential strength that aligns with SDT principles while avoiding the limitations of traditional categorical conclusions. However, effective implementation requires careful attention to how these quantitative expressions are communicated and interpreted by legal decision-makers. Future research should continue to explore optimal methods for conveying forensic conclusions, with particular emphasis on interdisciplinary collaboration between forensic scientists, statisticians, and legal professionals. By grounding forensic evidence evaluation in the theoretical foundations of Signal Detection Theory and implementing robust statistical approaches like likelihood ratios, the field can advance toward more transparent, reliable, and scientifically valid practices.

The forensic sciences are undergoing a fundamental transformation, moving away from methods based on human perception and subjective judgment toward those grounded in relevant data, quantitative measurements, and statistical models [17]. This paradigm shift is driven by a dual imperative: the ethical need for transparent reporting and the scientific requirement for empirical validation. In the specific domain of forensic chemical evidence, particularly drug analysis and toxicology, this shift manifests in the critical evaluation of how conclusions are reported. Traditional categorical conclusion scales (e.g., Identification/Inconclusive/Exclusion) are increasingly seen as information-limited and potentially misleading. This guide objectively compares the performance of traditional and expanded conclusion scales, framing the evaluation within the broader thesis that transparency and empirical validation are the primary forces advancing modern forensic practice. The adoption of expanded conclusion scales represents a concrete response to calls for more nuanced, scientifically defensible reporting practices that better communicate the strength of forensic evidence [1].

Experimental Comparison: Traditional vs. Expanded Scales

Experimental Protocol and Design

A seminal study published in the Journal of Forensic Sciences (March 2025) provides a robust empirical comparison of conclusion scales. The research employed a between-groups design where latent print examiners each completed 60 comparisons using one of two conclusion scales [1]. This experimental protocol is directly analogous to studies that could be conducted in forensic chemistry, such as comparing the interpretation of complex chromatographic data.

- Group 1 (Traditional Scale): Utilized a 3-conclusion scale: Identification, Inconclusive, or Exclusion.

- Group 2 (Expanded Scale): Utilized a 5-conclusion scale, adding two nuanced statements: Support for Common Source and Support for Different Sources.

The resulting data were modeled using Signal Detection Theory (SDT), a statistical framework that distinguishes between an examiner's inherent sensitivity to true matches/non-matches and their decision criterion (or risk tolerance). The primary measured outcome was whether the expanded scale changed the threshold for an "Identification" conclusion [1].
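
The separation of sensitivity from decision criterion can be illustrated with the standard equal-variance Gaussian SDT formulas. This is a minimal sketch under that assumption. The hit and false-alarm rates are hypothetical and are not results from [1], and the study itself may have used a different SDT model.

```python
from statistics import NormalDist


def sdt_measures(hit_rate, false_alarm_rate):
    """Return (d_prime, criterion) under the equal-variance Gaussian SDT model.

    Both rates must lie strictly between 0 and 1.
    """
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    d_prime = z(hit_rate) - z(false_alarm_rate)            # sensitivity
    criterion = -0.5 * (z(hit_rate) + z(false_alarm_rate))  # response bias
    return d_prime, criterion


# Hypothetical "Identification" rates on same-source (hits) and
# different-source (false alarms) trials for each scale.
d_trad, c_trad = sdt_measures(hit_rate=0.80, false_alarm_rate=0.05)
d_exp, c_exp = sdt_measures(hit_rate=0.70, false_alarm_rate=0.02)

print(f"Traditional scale: d' = {d_trad:.2f}, c = {c_trad:.2f}")
print(f"Expanded scale:    d' = {d_exp:.2f}, c = {c_exp:.2f}")
```

In this illustration, sensitivity is similar for both groups while the criterion is higher for the expanded scale, which is the pattern one would expect if the scale makes examiners more conservative about "Identification" without changing their ability to discriminate.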

Quantitative Data Comparison

The following table summarizes the key performance data derived from the experimental study, illustrating the operational impacts of adopting an expanded conclusion scale.

Table 1: Performance Comparison of Traditional 3-Conclusion and Expanded 5-Conclusion Scales

| Performance Metric | Traditional 3-Conclusion Scale | Expanded 5-Conclusion Scale | Implication for Forensic Chemistry |

|---|---|---|---|

| Decision Threshold | Fixed, high threshold for "Identification" | More flexible, dynamic thresholds | Allows for more nuanced reporting of complex analytical results |

| Information Fidelity | Loses information by compressing strength of evidence into 3 categories [1] | Preserves more information by mapping evidence to 5 categories [1] | Better communicates the strength of evidence from chemical analyses |

| Examiner Behavior | -- | Increased risk-aversion for definitive "Identification" decisions [1] | May promote conservatism in definitive source attributions for drug traces |

| Response Redistribution | -- | Weaker "Identification" and stronger "Inconclusive" responses transition to "Support for Common Source" [1] | Provides an intermediate category for evidence that is suggestive but not definitive |

| Investigative Utility | Limited to definitive conclusions | "Support" statements can generate more investigative leads [1] | Can guide investigations even when evidence does not support a definitive conclusion |

Visualizing the Analytical Workflow

The shift to expanded scales and statistical evaluation represents a new logical workflow for forensic analysis. The diagram below maps this process.

Figure 1: Analytical Workflow from Evidence to Transparent Report. The process begins with data collection, moves through empirical and statistical evaluation, and branches at the critical point of mapping to a conclusion scale, highlighting the divergent outputs of traditional (red) and expanded (green) systems.

The Decision Logic of Expanded Scales

The core advantage of an expanded scale lies in its more granular decision logic, which reduces information loss. The following diagram details this internal process.

Figure 2: Decision Logic and Information Fidelity. The expanded 5-scale (bottom) captures nuanced strength-of-evidence values by providing a dedicated output category for weak evidence, whereas the traditional 3-scale (top) collapses these nuanced states into a single, less informative "Inconclusive" category.
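
The information loss described for Figure 2 can be sketched by mapping the same strength-of-evidence score onto both scales. The score is assumed here to be on a log10-LR-like axis, and all threshold values and function names are hypothetical. The sketch also keeps the "Identification" threshold fixed across scales for simplicity, whereas the study in [1] reports that this threshold shifts.

```python
def traditional_scale(score, id_threshold=4.0, exclusion_threshold=-4.0):
    """Map a strength-of-evidence score to the 3-conclusion scale."""
    if score >= id_threshold:
        return "Identification"
    if score <= exclusion_threshold:
        return "Exclusion"
    return "Inconclusive"


def expanded_scale(score, id_threshold=4.0, support_threshold=1.0):
    """Map the same score to the 5-conclusion scale (thresholds are symmetric here)."""
    if score >= id_threshold:
        return "Identification"
    if score >= support_threshold:
        return "Support for Common Source"
    if score <= -id_threshold:
        return "Exclusion"
    if score <= -support_threshold:
        return "Support for Different Sources"
    return "Inconclusive"


for score in (5.0, 2.5, 0.3, -2.0, -6.0):
    print(f"{score:+.1f}: {traditional_scale(score):<15} | {expanded_scale(score)}")
```

Scores of +2.5 and -2.0 both collapse to "Inconclusive" on the traditional scale but remain distinguishable on the expanded scale.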

The Scientist's Toolkit: Research Reagent Solutions

Implementing empirically validated and transparent methods requires a suite of conceptual and technical tools. The following table details key "research reagents" essential for this work.

Table 2: Essential Toolkit for Research on Expanded Conclusion Scales and Empirical Validation

| Tool / Reagent | Function & Purpose | Application Example |

|---|---|---|

| Signal Detection Theory (SDT) | A statistical framework to model and disentangle an examiner's discrimination sensitivity from their decision-making criteria (bias) [1]. | Quantifying how an expanded scale changes risk aversion in "Identification" decisions, as demonstrated in the latent print study. |

| Likelihood Ratio (LR) Framework | The logically correct framework for interpreting evidence, quantifying the strength of evidence for one proposition versus another using statistical models [17]. | Providing a continuous, transparent scale of evidence strength that can later be mapped to categorical conclusion scales. |

| Empirical Validation Protocols | Experimental designs (e.g., black-box studies, proficiency testing) that test the performance and reliability of methods under casework-like conditions [17]. | Conducting studies to establish error rates and validity for chemical identification methods used in forensic toxicology. |

| Expanded Conclusion Scales | Reporting scales with additional categories (e.g., "Support for..." statements) that preserve more information about the strength of evidence [1]. | Providing a more nuanced report on a drug identification where the analytical data is strong but not conclusive due to sample degradation. |

| Transparency Taxonomy | A structured guide (e.g., Elliott's taxonomy) for determining what information to disclose in reports to achieve Reliability, Assessment, Justice, Accountability, and Innovation goals [18]. | Ensuring a forensic report includes the Basis, Justification, and Limitations of the analytical method and conclusions presented. |

The experimental data indicate that expanded conclusion scales alter examiner behavior, promoting greater caution in definitive identifications while capturing more information about the strength of evidence [1]. This shift is a direct operational response to the broader paradigm shift demanding transparency and empirical validation across forensic science [17]. For researchers and professionals in forensic chemistry and drug development, the adoption of these scales, supported by the Likelihood Ratio framework and Signal Detection Theory, represents a critical step forward. It moves the discipline toward a future where forensic reports are not just conclusions but transparent, validated, and scientifically robust communications of evidential weight, fulfilling obligations to both science and justice [18].

Implementing Expanded Scales in Forensic Chemical Analysis: A Practical Framework

In forensic chemical evidence research, the journey from raw analytical data to a definitive conclusion statement is a structured, multi-stage process. This guide provides practitioners with a framework for evaluating expanded conclusion scales, moving from data collection through statistical analysis and interpretation to ultimately form scientifically defensible conclusions. The integrity of this process is paramount, as it supports critical decisions in the criminal justice system. This objective comparison outlines the core methodologies, their protocols, and the essential tools that underpin reliable forensic practice.

Selecting the appropriate data analysis technique is foundational to interpreting analytical data correctly. The table below summarizes key quantitative methods used in forensic research for mapping data to conclusions.

Table 1: Core Data Analysis Methods for Forensic Evidence Research

| Method | Primary Purpose | Key Applications in Forensic Chemistry | Underlying Algorithm/Model |

|---|---|---|---|

| Regression Analysis [19] [20] | Models the relationship between a dependent variable and one or more independent variables. | Quantifying the relationship between drug concentration and instrument response; calibrating equipment. | Linear model: Y = β₀ + β₁X + ε, where Y is the dependent variable, X is the independent variable, β₀ and β₁ are coefficients, and ε is the error term [19]. |

| Factor Analysis [19] [20] [21] | Reduces data complexity by identifying underlying latent variables (factors). | Identifying patterns in complex chemical profiles (e.g., ink or drug sample composition) [22]. | Exploratory (EFA) to uncover structure; Confirmatory (CFA) to test a hypothesized structure [21]. |

| Monte Carlo Simulation [19] [20] | Estimates probabilities of different outcomes by running multiple trials with random sampling. | Assessing uncertainty in measurements and risk analysis for complex evidential interpretations [20]. | Computational technique using random sampling from defined probability distributions to model outcomes [19]. |

| Time Series Analysis [19] | Analyzes data points collected sequentially over time to identify trends and patterns. | Monitoring degradation of a substance over time or analyzing sequential evidence patterns. | -- |

| Diagnostic Analysis [19] [23] | Identifies the causes of observed outcomes or anomalies in the data. | Investigating the reasons for an outlier in chemical analysis or an unexpected experimental result. | Involves collecting data from various sources to identify patterns and correlations that explain an event [23]. |

| Statistical Inference [21] | Uses sample data to make generalizations about a larger population. | Determining if two samples originate from the same source using statistical tests. | Common techniques include t-tests (two groups), ANOVA (multiple groups), and chi-square tests (categorical variables) [21]. |

Experimental Protocols for Key Analytical Methods

Protocol for Regression Analysis

Objective: To establish a quantitative relationship between an independent variable (e.g., concentration) and a dependent variable (e.g., instrument response) for calibration and prediction [20].

- Define Variables: Identify the dependent variable (the outcome you want to predict, e.g., peak area) and independent variable(s) (the predictors, e.g., concentration of an analyte) [19].

- Data Collection: Collect a sufficient number of data points across the expected range of the independent variable to ensure a robust model.

- Model Fitting: Use statistical software (e.g., R, SPSS) to fit a regression model (e.g., linear, logistic) to the data. The core equation is Y = β₀ + β₁X + ε, where Y is the dependent variable, X is the independent variable, β₀ is the intercept, β₁ is the coefficient, and ε is the error term [19].

- Check Assumptions: Validate key assumptions including linearity, independence of observations, and normality of errors. Not meeting these can compromise result reliability [19].

- Interpret Results: Examine the coefficient (β1) to understand the direction and magnitude of the relationship, and the R-squared value to assess the model's goodness-of-fit.

- Make Predictions: Use the validated model to predict unknown values of the dependent variable based on new measurements of the independent variable.
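
The protocol steps above can be sketched with an ordinary least-squares fit written in plain Python. The calibration data, the new concentration, and the function name `fit_line` are hypothetical. Assumption checks such as residual diagnostics are omitted to keep the sketch short.

```python
def fit_line(x, y):
    """Ordinary least squares for Y = b0 + b1*X; returns (b0, b1, r_squared)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b1 = sxy / sxx                 # slope
    b0 = mean_y - b1 * mean_x      # intercept
    ss_res = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return b0, b1, 1 - ss_res / ss_tot


# Hypothetical calibration data: analyte concentration vs. peak area.
concentration = [1.0, 2.0, 5.0, 10.0, 20.0]
peak_area = [10.2, 19.8, 50.5, 99.7, 200.3]

b0, b1, r_squared = fit_line(concentration, peak_area)
predicted_area = b0 + b1 * 7.5  # predicted response at a new concentration

print(f"intercept = {b0:.3f}, slope = {b1:.3f}, R^2 = {r_squared:.5f}")
print(f"predicted peak area at 7.5: {predicted_area:.2f}")
```

In routine calibration work the fitted line is often inverted to estimate an unknown concentration from a measured response; the forward prediction shown here follows the protocol as written.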

Protocol for Monte Carlo Simulation for Uncertainty

Objective: To quantify uncertainty and assess risks by modeling the range of possible outcomes in a complex system [19] [20].

- Model Definition: Create a mathematical model of the system or process, pinpointing all deterministic and stochastic (uncertain) input variables [19].

- Define Distributions: For each uncertain input, define its probability distribution (e.g., normal, uniform) based on empirical data or expert knowledge.

- Random Sampling: The computer algorithm randomly draws a value for each uncertain input from its specified distribution [19].

- Run Iterations: The model is computed once using the set of random samples. This process is repeated thousands of times to build a comprehensive picture of all possible outcomes [19].

- Analyze Outputs: Aggregate the results from all iterations to produce a probability distribution of the possible outcomes. This allows you to determine the likelihood of specific events [19].
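
The steps above can be sketched for a simple measurement model in which a concentration is computed as (peak area - intercept) / slope. The model, the input distributions, and the 10.5 decision threshold are all hypothetical choices made for illustration.

```python
import random
import statistics


def simulate_concentrations(n_iter=10_000, seed=42):
    """Propagate input uncertainty through concentration = (peak_area - intercept) / slope."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    outcomes = []
    for _ in range(n_iter):
        peak_area = rng.gauss(100.0, 2.0)   # measured response, assumed normal
        slope = rng.gauss(10.0, 0.1)        # calibration slope, assumed normal
        intercept = rng.uniform(-0.5, 0.5)  # calibration intercept, assumed uniform
        outcomes.append((peak_area - intercept) / slope)
    return outcomes


outcomes = sorted(simulate_concentrations())
mean_conc = statistics.fmean(outcomes)
sd_conc = statistics.stdev(outcomes)
lower = outcomes[int(0.025 * len(outcomes))]        # approx. 2.5th percentile
upper = outcomes[int(0.975 * len(outcomes)) - 1]    # approx. 97.5th percentile
prob_above = sum(c > 10.5 for c in outcomes) / len(outcomes)

print(f"mean = {mean_conc:.3f}, sd = {sd_conc:.3f}")
print(f"approx. 95% interval: [{lower:.3f}, {upper:.3f}]")
print(f"estimated P(concentration > 10.5) = {prob_above:.4f}")
```

The sorted outcomes give an empirical distribution from which intervals and event probabilities are read directly, which is the "Analyze Outputs" step of the protocol.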

Protocol for Factor Analysis

Objective: To reduce data complexity and identify underlying structures (latent variables) that explain patterns in observed variables [19] [21].

- Data Preparation: Assemble a dataset with multiple observed variables (e.g., concentrations of various chemical components).

- Correlation Check: Ensure that the variables are sufficiently correlated, as factor analysis seeks to explain these correlations.

- Factor Extraction: Using software (e.g., R, SPSS, Python), perform factor extraction (Exploratory Factor Analysis is common for uncovering hidden structures). This identifies the initial set of factors [21].

- Determine Number of Factors: Use criteria (e.g., Kaiser criterion, scree plot) to decide the number of meaningful factors to retain.

- Factor Rotation: Apply a rotational method (e.g., Varimax) to make the output more interpretable by maximizing high and low factor loadings.

- Interpret Factors: Analyze the factor loadings (coefficients showing the correlation between variables and factors) to name and understand the latent constructs the factors represent [19].
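
The "Determine Number of Factors" step can be sketched by applying the Kaiser criterion (retain factors with eigenvalue greater than 1) to a correlation matrix. The matrix below is hypothetical, with two pairs of strongly correlated components. The eigenvalues are computed with a small Jacobi-rotation routine written for this sketch so that it needs no external libraries. This covers only the eigenvalue step and is not a full factor analysis, since no loadings or rotation are produced.

```python
import math


def jacobi_eigenvalues(matrix, max_sweeps=50, tol=1e-12):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations (descending order)."""
    a = [row[:] for row in matrix]
    n = len(a)
    for _ in range(max_sweeps):
        off_diag = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off_diag < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if abs(a[p][q]) < 1e-15:
                    continue
                # Rotation angle that zeroes the (p, q) off-diagonal element.
                theta = 0.5 * math.atan2(2 * a[p][q], a[q][q] - a[p][p])
                c, s = math.cos(theta), math.sin(theta)
                for k in range(n):  # rotate columns p and q
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # rotate rows p and q
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return sorted((a[i][i] for i in range(n)), reverse=True)


# Hypothetical correlation matrix for four chemical components.
correlation = [
    [1.0, 0.8, 0.1, 0.1],
    [0.8, 1.0, 0.1, 0.1],
    [0.1, 0.1, 1.0, 0.7],
    [0.1, 0.1, 0.7, 1.0],
]

eigenvalues = jacobi_eigenvalues(correlation)
n_factors = sum(ev > 1.0 for ev in eigenvalues)  # Kaiser criterion

print("eigenvalues:", [round(ev, 3) for ev in eigenvalues])
print("factors retained (Kaiser criterion):", n_factors)
```

In practice a statistics package would perform the extraction, rotation, and loading estimation; the sketch only shows how the retention rule operates on the eigenvalues.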

Visualizing the Analytical Workflow

The following diagrams map the logical flow from data acquisition to conclusion, illustrating the critical role of data analysis and accessibility in the process.

Accessible Color Application Logic

The Scientist's Toolkit: Essential Research Reagents & Materials

Forensic chemistry relies on specialized materials and instruments to generate valid and reliable data. The following table details key items used in modern forensic laboratories.

Table 2: Essential Research Reagent Solutions and Materials for Forensic Chemistry

| Item Name | Function / Application |

|---|---|

| Laboratory Information Management System (LIMS) [22] | Software for electronic barcode tracking of evidence from receipt through testing to disposition, ensuring chain of custody and providing real-time case updates. |

| International Ink Library [22] | The world's largest collection of writing inks, used for the chemical analysis and dating of inks on questioned documents. |

| Vacuum Metal Deposition (VMD) [22] | An advanced instrument using silver, gold, and zinc in a vacuum environment to develop latent prints on challenging surfaces as a last-resort capability. |

| Thermal Ribbon Analysis Platform (TRAP) [22] | An automated system developed to significantly improve the efficiency of examining counterfeit identification documents and financial documents. |

| Forensic Information System for Handwriting (FISH) [22] | A unique database used to associate handwritten threat letters in protective intelligence investigations, with AI evaluation underway to improve search algorithms. |

| Rapid DNA System [22] | Technology capable of performing DNA tests in approximately 90 minutes from mock evidence, complementing traditional lab tests for faster leads. |

Technology Readiness Levels (TRL) for Method Validation

Technology Readiness Levels (TRL), originally developed by NASA during the 1970s, provide a systematic metric for assessing the maturity of a particular technology. The scale ranges from TRL 1 (basic principles observed) to TRL 9 (actual system proven in operational environment), enabling consistent and uniform discussions of technical maturity across different types of technology [24]. This framework has since been adopted beyond its aerospace origins, with the European Union implementing it in research frameworks like Horizon 2020, and the U.S. Department of Defense utilizing it for procurement decisions [24]. In recent years, the TRL framework has gained relevance in forensic science as researchers and practitioners seek standardized methods to evaluate emerging analytical techniques, particularly those involving complex chemical evidence.

The adoption of TRL in forensic contexts addresses a critical need for structured technology assessment prior to courtroom implementation. Novel forensic methods must satisfy rigorous legal standards for evidence admissibility, including the Daubert Standard and Frye Standard in the United States, which require demonstrated scientific validity, known error rates, and peer acceptance [25]. Similarly, Canada's Mohan Criteria mandate that expert evidence meet threshold reliability standards [25]. The TRL framework provides a structured pathway for forensic researchers to systematically advance methods from basic research to legally admissible applications, thereby strengthening the scientific foundation of forensic evidence.

Within forensic chemistry, method validation encompasses multiple dimensions beyond analytical performance, including legal admissibility, reproducibility across laboratories, and resistance to contextual bias. The TRL framework offers a mechanism to track progress across these dimensions simultaneously, ensuring that methods mature not only technically but also within their operational legal context. This is particularly relevant for evaluating expanded conclusion scales in forensic chemical evidence research, where the translation of analytical data into likelihood statements requires rigorous validation at multiple levels.

TRL Frameworks and Definitions

Standard TRL Definitions and Historical Development

The TRL scale consists of nine distinct levels that represent a technology's progression from basic research to operational deployment. NASA's original definitions have been adapted by various organizations, but core concepts remain consistent across implementations [24] [26]. The scale begins with TRL 1, where basic principles are observed and reported, progressing through technology concept formulation (TRL 2), experimental proof of concept (TRL 3), and component validation in laboratory environments (TRL 4). Mid-level TRLs (5-6) involve validation in increasingly relevant environments, while higher levels (TRL 7-9) focus on system prototyping, qualification, and operational deployment [24].

The historical development of TRL reflects its evolution from a NASA-specific tool to a widely accepted assessment framework. The method was conceived at NASA in 1974 and formally defined in 1989 with seven levels, later expanding to the current nine-level scale in the 1990s [24]. This expansion allowed for more granular assessment of technology maturation. The U.S. Department of Defense began using TRLs for procurement in the early 2000s following a Government Accountability Office report that recommended assessing technology maturity prior to transition [24]. By 2008, the European Space Agency had adopted the scale, followed by the European Commission in 2010 [24].

Table: Standard Technology Readiness Level Definitions

| TRL | Stage | Definition | Key Characteristics |

|---|---|---|---|

| TRL 1 | Fundamental Research | Basic principles observed and reported | Scientific research begins with observation of basic properties |

| TRL 2 | Fundamental Research | Technology concept and/or application formulated | Practical applications identified; remains speculative with little experimental proof |

| TRL 3 | Research & Development | Experimental proof of concept | Active R&D begins; analytical and laboratory studies validate feasibility |

| TRL 4 | Research & Development | Component validation in laboratory environment | Basic technological components integrated in laboratory setting |

| TRL 5 | Research & Development | Component validation in relevant environment | Technology validated in simulated environment closer to final application |

| TRL 6 | Pilot & Demonstration | System/subsystem model demonstration in relevant environment | Prototype system demonstrated at pilot scale in simulated environment |

| TRL 7 | Pilot & Demonstration | System prototype demonstration in operational environment | Full-scale prototype demonstrated in operational environment under limited conditions |

| TRL 8 | Pilot & Demonstration | Actual system completed and qualified | Technology proven in final form under expected conditions |

| TRL 9 | Early Adoption | Actual system proven through successful deployment | Actual application in final form under full range of operational conditions |

TRL Adaptations for Specific Domains

While the core TRL framework remains consistent, various domains have developed adaptations to address field-specific requirements. In forensic science, the standard TRL scale requires careful interpretation to address legal admissibility requirements and evidentiary standards that differ from aerospace or defense contexts. The Government of Canada's Clean Growth Hub groups the nine TRLs into four broader technology development stages: Fundamental Research (TRL 1-2), Research and Development (TRL 3-5), Pilot and Demonstration (TRL 6-8), and Early Adoption (TRL 9) [27].
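
The four-stage grouping described above can be expressed as a small lookup, which may be convenient when tagging methods in a validation tracker. The stage boundaries follow the grouping given in the text [27]. The names `TRL_STAGES` and `trl_stage` are hypothetical.

```python
# Stage boundaries follow the Clean Growth Hub grouping described in the text [27].
TRL_STAGES = {
    "Fundamental Research": range(1, 3),      # TRL 1-2
    "Research and Development": range(3, 6),  # TRL 3-5
    "Pilot and Demonstration": range(6, 9),   # TRL 6-8
    "Early Adoption": range(9, 10),           # TRL 9
}


def trl_stage(level):
    """Return the development stage for an integer TRL between 1 and 9."""
    for stage, levels in TRL_STAGES.items():
        if level in levels:
            return stage
    raise ValueError(f"TRL must be an integer from 1 to 9, got {level!r}")


for level in (2, 5, 7, 9):
    print(f"TRL {level}: {trl_stage(level)}")
```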

Recent research has documented formal adaptations of TRL for specific applications. A 2024 study adapted TRL for implementation science (TRL-IS), making key modifications including the "removal of laboratory testing, limiting the use of 'operational' environment and a clearer distinction between level 6 (pilot in a relevant environment) and 7 (demonstration in the real world prior to release)" [28]. This adaptation demonstrates the framework's flexibility while maintaining its core assessment function. The TRL-IS showed evidence of good inter-rater reliability (ICC = 0.90) when tested across multiple case studies, indicating that appropriately adapted TRL scales can provide consistent maturity assessments across different evaluators [28].

For forensic method validation, key distinctions in environment relevance are particularly important. According to the Government of Canada's TRL Assessment Tool, a simulated environment represents "a relevant working environment with controlled realistic conditions, generally outside of the lab," while an operational environment constitutes the "'real-world' environment with conditions associated with typical use of the product and or process" [27]. This distinction becomes critical when validating forensic methods for courtroom applications, where the operational environment includes not just laboratory conditions but also legal proceedings and cross-examination.

TRL Application to Forensic Method Validation

Forensic-Specific TRL Assessment Criteria

Applying TRL to forensic method validation requires expanding standard technical criteria to encompass legal and operational considerations specific to forensic contexts. At lower TRLs (1-3), forensic method development focuses on establishing basic scientific principles and initial proof-of-concept demonstrations. For example, in forensic chemistry, this might involve demonstrating that a novel analytical technique can distinguish between chemically similar substances found as evidence [25]. Research at these levels typically occurs in controlled laboratory environments with purified standards rather than case-type samples.

At mid-level TRLs (4-6), validation activities shift toward demonstrating reliability with forensically relevant materials and conditions. This includes testing with casework-type samples that may be complex mixtures, degraded, or present in trace quantities [25]. A key consideration at these levels is establishing error rates and sensitivity limits, which are essential for meeting legal admissibility standards such as those outlined in the Daubert criteria [25]. Method validation at TRL 5-6 typically involves intra-laboratory studies with predefined protocols and statistical analysis of performance metrics.

Higher TRLs (7-9) for forensic methods require demonstration of reliability across multiple laboratories and under operational conditions that include the full evidence handling workflow. This includes establishing standard operating procedures, training requirements, and quality control measures [25]. At TRL 8, the method should be qualified through rigorous testing that establishes its fitness-for-purpose in casework, while TRL 9 requires successful deployment in routine casework and withstanding legal challenges to its reliability. A method is considered TRL 9 only when it has been generally accepted in the relevant scientific community and admitted as evidence in multiple court proceedings [25].

Table: TRL Assessment Criteria for Forensic Method Validation

| TRL Range | Technical Validation Milestones | Legal Readiness Milestones | Operational Implementation Milestones |
|---|---|---|---|
| TRL 1-3 | Basic principles observed; Proof-of-concept established with controlled samples | Research published in peer-reviewed literature | Laboratory techniques documented; Initial cost-benefit analysis |
| TRL 4-6 | Validation with forensically relevant materials; Established sensitivity and specificity | Initial evaluation against legal standards (Daubert/Mohan); Known error rates established | Protocol development; Analyst training requirements defined; Intra-laboratory validation |
| TRL 7-8 | Inter-laboratory validation; Demonstration with authentic case samples | Admissibility established in multiple jurisdictions; Challenges to methodology addressed | Quality assurance protocols implemented; Integration with laboratory information systems |
| TRL 9 | Continuous monitoring of casework performance; Method optimization based on operational experience | Widespread admissibility as generally accepted; Precedent established for evidence interpretation | Full implementation in casework; Proficiency testing programs; Sustainable training and certification |

Expanded conclusion scales represent a significant evolution in forensic reporting practices, moving beyond traditional categorical determinations (e.g., identification, exclusion, inconclusive) to include probabilistic statements and likelihood ratios that better communicate the strength of evidence. The implementation of such scales requires careful validation across multiple TRLs to ensure both scientific robustness and legal acceptability.

Recent research has demonstrated the utility of expanded conclusion scales in forensic disciplines. A 2025 study on latent print examinations found that when using an expanded scale with two additional values (support for different sources and support for common sources), "examiners became more risk-averse when making 'Identification' decisions and tended to transition both the weaker Identification and stronger Inconclusive responses to the 'Support for Common Source' statement" [1]. This shift in decision-making patterns highlights how methodological changes can impact operational practices, necessitating thorough validation across multiple TRLs before implementation.

For forensic chemical evidence, expanded conclusion scales enable more nuanced interpretation of complex mixture analysis, source attribution, and activity level propositions. However, implementing these scales requires validation of both the analytical methods producing the underlying data and the statistical frameworks used to interpret them. This dual validation requirement makes TRL assessment particularly valuable, as it forces concurrent consideration of analytical and interpretative maturity.

Experimental Protocols for TRL Assessment

Comprehensive Two-Dimensional Gas Chromatography (GC×GC) Case Study

Comprehensive two-dimensional gas chromatography (GC×GC) provides an illustrative case study of TRL assessment for an advanced analytical method in forensic chemistry. GC×GC expands upon traditional 1D GC by adjoining "two columns of different stationary phases in series with a modulator" to increase peak capacity and separation of complex mixtures [25]. The technique has been explored for various forensic applications including illicit drug analysis, fingerprint residue characterization, toxicology, decomposition odor analysis, and petroleum analysis for arson investigations [25].

The experimental protocol for validating GC×GC methods progresses through specific milestones at each TRL stage. At TRL 3-4, validation focuses on establishing basic method parameters using standards and controlled samples. This includes optimization of the modulator settings, column combinations, and temperature programs to achieve required separation for target analytes. Method performance characteristics such as linearity, detection limits, and reproducibility are established using certified reference materials [25].
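The linearity and detection-limit checks described above can be sketched as a short calculation. This is a minimal illustration, not a method taken from the cited review: it assumes an ordinary least-squares calibration line and the common 3.3·σ/slope convention for the detection limit, where σ is the residual standard deviation of the fit. The function name `calibration_summary` and the example data are hypothetical.

```python
import math
from statistics import mean


def calibration_summary(conc, response):
    """Fit response = intercept + slope * conc by ordinary least squares.

    Returns slope, intercept, R^2, and a detection limit estimated as
    3.3 * sigma / slope (an assumed convention, sigma = residual std. dev.).
    """
    n = len(conc)
    if n < 3 or n != len(response):
        raise ValueError("need at least three paired calibration points")
    x_bar, y_bar = mean(conc), mean(response)
    sxx = sum((x - x_bar) ** 2 for x in conc)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residuals of each calibration point from the fitted line
    residuals = [y - (intercept + slope * x) for x, y in zip(conc, response)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((y - y_bar) ** 2 for y in response)
    sigma = math.sqrt(ss_res / (n - 2))  # n - 2 degrees of freedom for a line
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": 1 - ss_res / ss_tot,
        "lod": 3.3 * sigma / slope,
    }


if __name__ == "__main__":
    # Hypothetical calibration data (concentration units are arbitrary)
    summary = calibration_summary([1, 2, 3, 4, 5], [2.1, 3.9, 6.2, 7.8, 10.0])
    print(summary)
```

A laboratory would substitute its own acceptance criteria (for example, a minimum R² or a replicate-based detection limit) according to its validation plan.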

At TRL 5-6, validation expands to include forensically relevant samples that exhibit the complexity expected in casework. For fire debris analysis, this might include testing with burned substrates containing weathered ignitable liquids. Experimental protocols at this stage must establish that the method can reliably identify target compounds in the presence of complex matrices and interferences. This includes determining false positive rates and false negative rates through controlled studies with known samples [25].
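As a rough sketch of how false positive and false negative rates from a known-sample study might be reported, the example below computes both rates together with Wilson score confidence intervals. The choice of the Wilson interval, the function names, and the counts are assumptions made for illustration; the source does not prescribe a particular interval method.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 ~ 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def error_rates(tp, fp, tn, fn):
    """False positive and false negative rates with Wilson intervals.

    tp/fn are counted over samples known to contain the target compound;
    fp/tn are counted over samples known not to contain it.
    """
    return {
        "false_positive_rate": fp / (fp + tn),
        "false_positive_ci": wilson_interval(fp, fp + tn),
        "false_negative_rate": fn / (fn + tp),
        "false_negative_ci": wilson_interval(fn, fn + tp),
    }


if __name__ == "__main__":
    # Hypothetical counts from a controlled study with known samples
    print(error_rates(tp=95, fp=2, tn=98, fn=5))
    # Zero observed errors still leave a non-zero upper bound
    print(wilson_interval(0, 100))
```

Reporting the interval alongside the point estimate matters most when few errors are observed: zero false positives in 100 trials does not demonstrate a zero error rate, only an upper bound of a few percent.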

Reaching TRL 7-8 requires inter-laboratory validation studies that demonstrate reproducibility across multiple instruments and analysts. The experimental design must include standardized protocols, shared reference materials, and statistical analysis of between-laboratory variation. For GC×GC methods, a 2024 review noted that "future directions for all applications should place a focus on increased intra- and inter-laboratory validation, error rate analysis, and standardization" to advance technical readiness [25]. These studies provide the empirical foundation for establishing the method's reliability in legal proceedings.
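One way to analyze between-laboratory variation is to separate repeatability (within-laboratory) variance from the between-laboratory component with a one-way analysis of variance. The sketch below assumes a balanced design in which every laboratory reports the same number of replicates on a shared reference material; the function name `precision_components` and the data are hypothetical, and a real study would also screen for outliers.

```python
from statistics import mean, variance


def precision_components(results_by_lab):
    """Estimate repeatability and reproducibility variances.

    results_by_lab maps a lab identifier to its replicate results.
    Assumes a balanced design (equal replicates per lab).
    """
    labs = list(results_by_lab.values())
    if len(labs) < 2:
        raise ValueError("need results from at least two laboratories")
    n = len(labs[0])
    if n < 2 or any(len(lab) != n for lab in labs):
        raise ValueError("balanced design with >= 2 replicates per lab required")
    # Repeatability: pooled within-lab variance (simple mean when balanced)
    s_r2 = mean([variance(lab) for lab in labs])
    # Mean square between labs, from the variance of the lab means
    ms_between = n * variance([mean(lab) for lab in labs])
    # Between-lab component; truncated at zero if the estimate is negative
    s_l2 = max(0.0, (ms_between - s_r2) / n)
    return {
        "repeatability_var": s_r2,
        "between_lab_var": s_l2,
        "reproducibility_var": s_r2 + s_l2,
    }


if __name__ == "__main__":
    # Hypothetical replicate measurements from three laboratories
    data = {"A": [10.0, 10.2], "B": [10.4, 10.6], "C": [9.8, 10.0]}
    print(precision_components(data))
```

A large between-laboratory component relative to repeatability suggests that the protocol, rather than random measurement noise, is driving the disagreement, which points toward further standardization.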

Validating expanded conclusion scales requires experimental protocols that address both the analytical methods producing the data and the interpretative frameworks used to reach conclusions. The protocol progresses through TRLs with increasing emphasis on operational relevance and legal considerations.

At TRL 3-4, initial validation focuses on the scale structure itself through psychometric testing. This includes assessing whether the scale categories are comprehensible to intended users, discriminative across different strength of evidence scenarios, and reliable across repeated evaluations. Studies at this level typically use controlled sample sets with known ground truth and involve participants trained in the new scale [1].
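Reliability across repeated evaluations can be quantified in several ways. The sketch below uses unweighted Cohen's kappa to compare one examiner's first and second conclusions on the same comparisons. The choice of kappa is an assumption made for illustration (the cited study is not stated to use it), and the conclusion labels and data are hypothetical; a weighted kappa may be preferable for an ordinal scale.

```python
from collections import Counter


def cohen_kappa(first, second):
    """Unweighted Cohen's kappa for two sets of categorical ratings."""
    if len(first) != len(second) or not first:
        raise ValueError("rating lists must be non-empty and equal in length")
    n = len(first)
    # Proportion of items given the same conclusion both times
    observed = sum(a == b for a, b in zip(first, second)) / n
    counts_1, counts_2 = Counter(first), Counter(second)
    # Agreement expected by chance from the marginal category frequencies
    expected = sum(
        counts_1[k] * counts_2[k] for k in set(counts_1) | set(counts_2)
    ) / n ** 2
    if expected == 1.0:
        return 1.0  # only one category was ever used; agreement is trivial
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical repeat evaluations on a five-level expanded scale
    round_1 = ["Identification", "Support for Common Source", "Inconclusive",
               "Support for Different Sources", "Exclusion", "Inconclusive"]
    round_2 = ["Identification", "Inconclusive", "Inconclusive",
               "Support for Different Sources", "Exclusion", "Inconclusive"]
    print(cohen_kappa(round_1, round_2))
```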

At TRL 5-6, validation expands to include the impact of expanded scales on decision-making. Experimental protocols employ signal detection theory to measure whether the expanded scale changes decision thresholds, as demonstrated in a 2025 study where "examiners each completed 60 comparisons using one of the two scales, and the resulting data were modeled using signal detection theory to measure whether the expanded scale changed the threshold for an 'Identification' conclusion" [1]. These studies typically include both novices and experienced practitioners to assess learning curves and expertise development.
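The idea of a shifted decision threshold can be illustrated with the simplest equal-variance signal detection model. The sketch below is not the model fitted in the cited study; it assumes that an 'Identification' on a same-source pair counts as a hit and on a different-source pair as a false alarm, applies a log-linear correction so that rates of 0 or 1 do not produce infinite values, and uses invented counts.

```python
from statistics import NormalDist


def sdt_measures(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, criterion) under an equal-variance Gaussian model.

    hits / misses: same-source pairs with / without an 'Identification'.
    false_alarms / correct_rejections: different-source pairs likewise.
    """
    z = NormalDist().inv_cdf
    # Log-linear correction: add 0.5 to each count, 1 to each total
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    d_prime = z(hit_rate) - z(fa_rate)            # discriminability
    criterion = -0.5 * (z(hit_rate) + z(fa_rate))  # larger = more conservative
    return d_prime, criterion


if __name__ == "__main__":
    # Hypothetical counts, not data from the cited study
    traditional = sdt_measures(25, 5, 3, 27)
    expanded = sdt_measures(20, 10, 1, 29)
    print("traditional scale:", traditional)
    print("expanded scale:   ", expanded)
```

In this toy comparison, a higher criterion under the expanded scale with similar discriminability would correspond to the more risk-averse 'Identification' behavior described above.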

Reaching TRL 7-8 requires field studies in operational environments with authentic casework. Protocols at this level focus on implementation challenges, including integration with laboratory information systems, reporting templates, and training requirements. A critical component is assessing how expanded conclusions are communicated in reports and testimony, and how they are understood by legal professionals [1]. Successful validation at these levels requires collaboration between forensic researchers, practitioners, and legal stakeholders.

The Scientist's Toolkit: Research Reagent Solutions

Implementing TRL assessment for forensic method validation requires specific materials and approaches tailored to the requirements of legally admissible scientific methods. The following toolkit outlines essential components for researchers developing and validating forensic methods across the TRL spectrum.

Table: Essential Research Reagents and Materials for Forensic Method Validation

| Category | Specific Materials/Resources | Application in Validation | TRL Range |
|---|---|---|---|
| Reference Standards | Certified reference materials (CRMs); Internal standards; Proficiency test samples | Establishing method accuracy, precision, and reliability through comparison with known values | TRL 3-9 |
| Quality Control Materials | Blank samples; Control samples; Calibration verification materials | Monitoring method performance, detecting contamination, ensuring consistency across analyses | TRL 4-9 |
| Forensically Relevant Matrices | Bloodstains on various substrates; Simulated fire debris; Artificial fingerprint residues | Testing method performance with complex matrices similar to casework evidence | TRL 5-8 |
| Data Analysis Tools | Statistical software (R, Python); Likelihood ratio calculators; Validation template spreadsheets | Quantitative assessment of method performance, error rates, and uncertainty measurement | TRL 3-9 |
| Documentation Templates | Standard operating procedure (SOP) templates; Validation plan templates; Data recording forms | Ensuring consistent documentation practices essential for legal admissibility | TRL 5-9 |
| Legal Framework Resources | Daubert criteria checklist; Frye standard summaries; Court ruling databases | Aligning validation studies with legal requirements for admissibility | TRL 4-9 |

Beyond physical materials, the toolkit for advancing forensic methods through TRLs includes conceptual frameworks and implementation strategies. The FAIR principles (Findable, Accessible, Interoperable, and Reusable) provide guidance for data management throughout validation, particularly important for establishing transparency and reliability [29]. Additionally, structured data collection using standardized formats enables more robust validation studies and facilitates the inter-laboratory comparisons necessary for higher TRLs.

For methods involving expanded conclusion scales, specific toolkit components include decision-making studies that evaluate how different reporting formats impact interpretation, and communication templates that ensure statistical statements are conveyed accurately and understandably in legal contexts. These components address the unique challenge of validating both the scientific and communicative aspects of novel forensic approaches.

Comparative Analysis of Forensic Technologies at Different TRLs

Current State of Forensic Method Readiness

The application of TRL assessment to forensic science reveals significant variation in maturity across different analytical techniques and applications. Understanding these differences helps prioritize research investments and implementation strategies for advancing methods toward operational use.

Comprehensive two-dimensional gas chromatography (GC×GC) illustrates this variation within a single analytical platform. A 2024 review categorized forensic applications of GC×GC using a simplified readiness scale from 1 to 4, finding that "oil spill forensics and decomposition odor as forensic evidence have reached 30+ works for each application," indicating higher maturity compared to other applications [25]. This disparity highlights how the same core technology can exist at different TRLs depending on the specific forensic application and the extent of validation completed.

Emerging technologies in forensic DNA analysis demonstrate another TRL progression pattern. Next-generation sequencing (NGS) represents a transformative technology that is "still relatively recent" and will "take time to become accessible, affordable, and fully established for regular forensic use" [30]. In contrast, rapid DNA analysis and mobile DNA platforms are "more commonly needed in specific scenarios, such as disaster recovery, or in particular locations like airports and border checkpoints to speed up the workflow" [30]. This suggests these technologies have reached higher TRLs for specific, limited applications while remaining at lower TRLs for general casework.

Table: Comparative TRL Assessment of Forensic Technologies

| Technology | Representative Applications | Estimated Current TRL | Key Validation Milestones Achieved | Major Barriers to Higher TRL |
|---|---|---|---|---|
| GC×GC-MS | Oil spill tracing; Decomposition odor | TRL 7-8 | Method optimization; Demonstrations with authentic samples; Some inter-lab studies | Standardization; Extensive inter-laboratory validation; Establishment of error rates |
| GC×GC-MS | Illicit drug analysis; Fingermark chemistry | TRL 5-6 | Proof-of-concept; Laboratory validation with standards and some realistic samples | Demonstration with authentic case samples; Legal challenges resolved |
| Next-Generation Sequencing | Forensic DNA analysis | TRL 6-7 | Validation studies published; Early implementation in some laboratories | Cost; Infrastructure requirements; Standardization across platforms |
| Rapid DNA Analysis | Disaster victim identification; Border control | TRL 8-9 | Extensive validation; Use in operational settings; Legal acceptance in specific contexts | Expansion to general casework; Integration with laboratory workflows |
| Expanded Conclusion Scales | Latent print analysis; Chemical evidence | TRL 5-7 | Laboratory studies; Some implementation studies; Limited casework use | Widespread adoption; Legal precedent across jurisdictions; Standardized training |

Validation Data Requirements Across TRLs

The type and extent of validation data required for forensic methods evolve significantly across the TRL spectrum. At lower TRLs (1-4), validation focuses on basic performance characteristics established through controlled experiments with standards and simple matrices. Data requirements include demonstration of specificity, sensitivity, and linearity under ideal conditions [25] [30].

At mid-TRLs (5-7), validation data must address performance with forensically relevant materials and conditions. This includes establishing robustness to variations in sample quality, reproducibility across multiple analysts and instruments, and stability of results over time. For quantitative methods, data must demonstrate accuracy and precision with complex matrices, while qualitative methods require comprehensive characterization of false positive and false negative rates [25].

At higher TRLs (8-9), validation data must support operational implementation and legal admissibility. This includes inter-laboratory study results, proficiency test performance, and casework validation with known and questioned samples. Most importantly, methods at these levels require data demonstrating reliability in court, including records of admissibility challenges the method has withstood and judicial rulings on its acceptability [25]. Collecting data across these technical, operational, and legal dimensions ensures that forensic methods meet the rigorous standards required for use in the justice system.