Establishing the Scientific Foundation of Forensic Chemistry: From Method Validation to Courtroom Admissibility


Abstract

This article addresses the critical need for establishing and validating the fundamental scientific basis of forensic chemistry disciplines, a priority underscored by the National Institute of Justice. Aimed at researchers, scientists, and drug development professionals, it explores the foundational validity and reliability of forensic methods, the application of novel analytical techniques like E-LEI-MS for seized drug analysis, strategies for troubleshooting and optimizing methods within complex matrices and resource constraints, and the implementation of robust validation frameworks through standards from OSAC and ISO. By synthesizing current research, strategic priorities, and emerging standards, this review provides a comprehensive roadmap for strengthening the scientific rigor and impact of forensic chemistry in both laboratory and legal contexts.

The Scientific Pillars of Forensic Chemistry: Assessing Core Validity and Reliability

Understanding the Fundamental Scientific Basis of Forensic Disciplines

Forensic science is undergoing a fundamental transformation from a discipline reliant on subjective expert opinion to one grounded in quantitative, statistically robust methodologies. This shift is driven by legal requirements for scientific evidence to be "not only relevant but reliable," as established in the Supreme Court decision Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993), and by critiques such as the landmark 2009 National Academy of Sciences report, which highlighted the lack of scientific validation, error rate determination, and reliability testing in many forensic disciplines [1]. In response, forensic researchers have developed novel approaches that leverage advanced instrumentation, statistical learning frameworks, and nanotechnology to establish objective scientific bases for forensic evidence analysis. This whitepaper examines the fundamental scientific principles, quantitative methodologies, and experimental protocols that are establishing forensic chemistry and related disciplines as rigorously validated scientific fields.

Theoretical Foundations: From Qualitative Pattern Matching to Quantitative Analysis

The theoretical underpinning of modern forensic science rests on the premise that certain physical characteristics exhibit sufficient randomness and complexity to be unique at relevant microscopic length scales. For fracture evidence, this premise of uniqueness arises from the interaction between a material's intrinsic properties, microstructural features, and the exposure history of external forces [1]. The complex jagged trajectory of fractured surfaces contains information that can be quantified rather than merely visually assessed.

The Transition from Self-Affine to Unique Fracture Characteristics

Research has demonstrated that fracture surface topography exhibits self-affine or fractal properties at small length scales, meaning the roughness scales with the observation window. However, at larger length scales (typically >50-70 μm for many materials), this self-affine behavior transitions to non-self-affine characteristics where the surface roughness reaches a saturation level that captures the individuality of the fracture surface [1]. This transition scale, typically about 2-3 times the average grain size for materials undergoing cleavage fracture, provides a scientifically-defensible basis for comparison and represents the stochastic critical distance for cleavage fracture initiation [1].

Table 1: Key Length Scales in Fracture Surface Topography Analysis

| Scale Type | Typical Size Range | Characteristics | Forensic Significance |
|---|---|---|---|
| Self-Affine Region | <10-20 μm | Fractal nature with similar topographical features | Limited discrimination value |
| Transition Scale | ~50-75 μm | Shift from self-affine to unique characteristics | Sets optimal observation scale |
| Analysis Field of View | >500-750 μm | Captures multiple unique regions | Provides statistical power for comparison |

Statistical Learning Frameworks for Evidence Evaluation

Modern forensic science increasingly employs statistical learning tools to classify evidence and quantify the strength of associations. Multivariate statistical models are trained on spectral analysis of surface topography mapped by three-dimensional microscopy to distinguish matching from non-matching specimens with near-perfect accuracy [1]. These approaches generate likelihood ratios that quantitatively express the strength of evidence by comparing probabilities of observations under alternative hypotheses (e.g., the same source versus different sources) [2]. This framework provides the statistical foundation called for by scientific and legal critics of traditional forensic methods.
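As a rough illustration of how a likelihood ratio compares two hypotheses, the sketch below evaluates a single comparison score under two normal distributions, one for same-source pairs and one for different-source pairs. The score scale, the normal model, and all distribution parameters are assumptions chosen for illustration; they are not taken from the cited studies.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of a normal distribution at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2.0 * math.pi))


def likelihood_ratio(score, same_mean, same_sd, diff_mean, diff_sd):
    """LR = p(score | same source) / p(score | different sources)."""
    numerator = normal_pdf(score, same_mean, same_sd)
    denominator = normal_pdf(score, diff_mean, diff_sd)
    return numerator / denominator


# Hypothetical score distributions (illustrative values only).
lr = likelihood_ratio(score=0.85, same_mean=0.90, same_sd=0.05,
                      diff_mean=0.30, diff_sd=0.15)
log10_lr = math.log10(lr)
print(f"LR = {lr:.0f}, log10(LR) = {log10_lr:.2f}")
```

An LR above 1 supports the same-source proposition and an LR below 1 supports the different-source proposition; reporting log10(LR) keeps very large or very small values readable.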

Quantitative Methodologies in Forensic Evidence Analysis

Fracture Surface Topography Analysis

Experimental Protocol: Quantitative Fracture Matching

Sample Preparation: Fractured specimens are mounted to ensure stability during imaging without altering surface features. Conduct initial visual examination to identify potential macro-scale correspondence.

3D Topographical Imaging: Map fracture surfaces using confocal microscopy or white light interferometry with resolution sufficient to capture features at the transition scale (typically <1 μm lateral resolution). The field of view should be at least 10 times the transition scale to avert signal aliasing [1].

Surface Roughness Quantification: Calculate the height-height correlation function, $\delta h(\delta x) = \sqrt{\langle [h(x+\delta x) - h(x)]^2 \rangle_x}$, where the $\langle \cdots \rangle_x$ operator denotes averaging over the x-direction. This function characterizes the surface roughness and identifies the transition from self-affine to unique characteristics [1].

Spectral Feature Extraction: Perform spectral analysis of the topography data to extract features across multiple frequency bands around the transition scale. These features serve as input for statistical classification.

Statistical Classification: Apply multivariate statistical learning algorithms (e.g., linear discriminant analysis, support vector machines) to classify specimen pairs as "match" or "non-match." The model outputs likelihood ratios expressing the strength of evidence.

Error Rate Estimation: Validate the model using known samples to establish empirical error rates and confidence intervals for the conclusions.

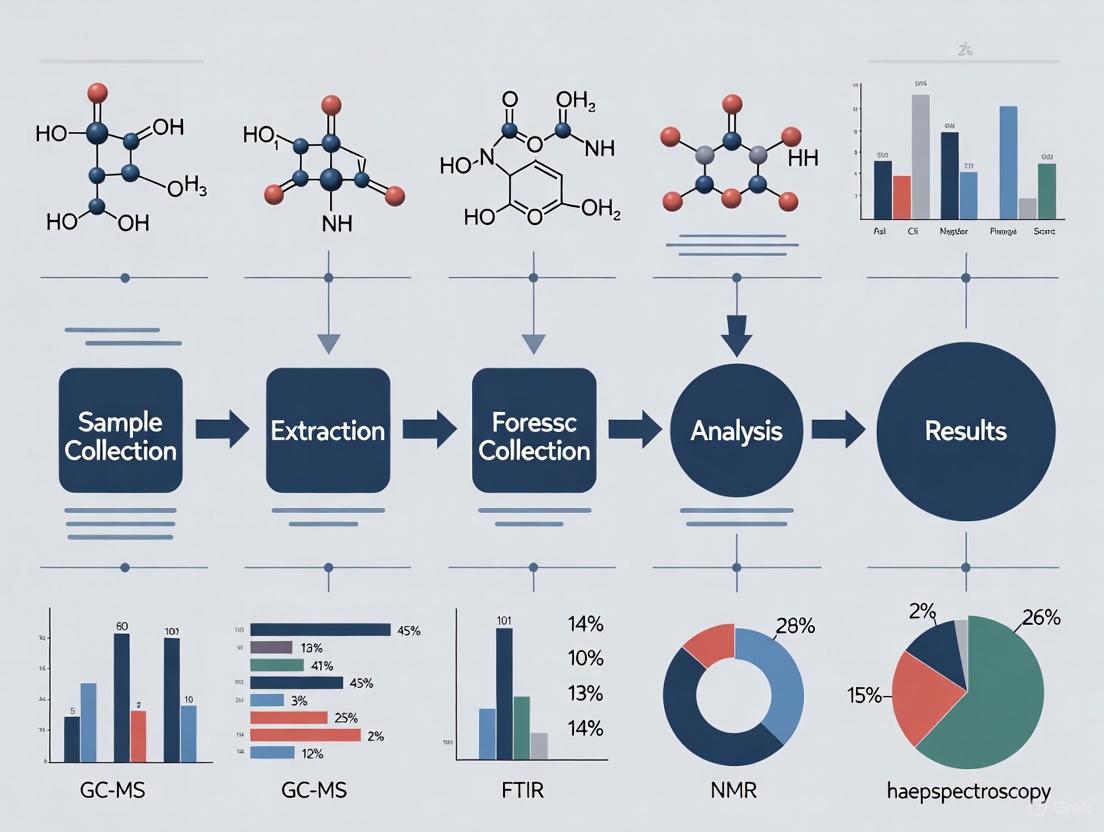

Figure 1: Experimental workflow for quantitative fracture surface analysis
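The surface roughness quantification step in the protocol above can be sketched for a single one-dimensional height profile. This is a minimal illustration that assumes evenly spaced samples and expresses the lag δx in sample counts; the function name and the synthetic profile are hypothetical, and a real analysis would work on 2D maps from the microscope.

```python
import math


def height_height_correlation(heights, max_lag):
    """Return delta_h for lags 1..max_lag (in samples) of a 1D height profile.

    delta_h(lag) = sqrt(mean over x of [h(x + lag) - h(x)]^2)
    """
    n = len(heights)
    if not 1 <= max_lag < n:
        raise ValueError("max_lag must be between 1 and len(heights) - 1")
    result = []
    for lag in range(1, max_lag + 1):
        squared = [(heights[i + lag] - heights[i]) ** 2 for i in range(n - lag)]
        result.append(math.sqrt(sum(squared) / len(squared)))
    return result


# Synthetic profile for illustration only: a slow tilt plus a sinusoid.
profile = [0.01 * i + math.sin(0.1 * i) for i in range(500)]
dh = height_height_correlation(profile, max_lag=50)
```

Plotting `dh` against lag on log-log axes is one way to look for the point where the curve departs from a power law, which is how the text describes the transition scale.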

Probabilistic Genotyping of DNA Mixtures

Experimental Protocol: Probabilistic Genotyping

DNA Extraction and Amplification: Extract DNA from evidence samples using standard extraction methods. Amplify short tandem repeat (STR) markers using polymerase chain reaction (PCR) with fluorescently labeled primers.

Capillary Electrophoresis: Separate amplified fragments by size using capillary electrophoresis. Detect alleles with laser-induced fluorescence to generate electropherograms.

Data Preprocessing: Analyze electropherograms to distinguish true alleles from artifacts (stutter, pull-up) based on peak characteristics, using quantitative (peak height) and qualitative (allele designation) information [2].

Probabilistic Modeling: Compute likelihood ratios using specialized software (e.g., STRmix, EuroForMix) that compares probabilities of the observed DNA profile under competing propositions about contributors to the mixture [2].

Interpretation and Reporting: Report likelihood ratios with appropriate uncertainty measures, following established guidelines for interpretation and communication.

Table 2: Comparison of Probabilistic Genotyping Software Approaches

| Software | Model Type | Data Utilized | Key Characteristics | Typical Output |
|---|---|---|---|---|
| LRmix Studio | Qualitative | Allele designations only | Considers detected alleles without quantitative information | Likelihood Ratio |
| STRmix | Quantitative | Allele designations and peak heights | Incorporates peak height information; continuous model | Generally higher LRs than qualitative |
| EuroForMix | Quantitative | Allele designations and peak heights | Open-source platform; quantitative model | Comparable to STRmix with minor variations |

Carbon Quantum Dots for Forensic Detection

Experimental Protocol: CQD-Based Evidence Detection

CQD Synthesis: Prepare carbon quantum dots using bottom-up approaches such as:

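Surface Functionalization: Enhance CQD properties through surface passivation (e.g., PEG polymers or SDS surfactant) and heteroatom doping (nitrogen, sulfur, or phosphorus compounds), as listed in Table 3.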

Characterization: Analyze CQD properties using:

- Transmission Electron Microscopy: Determine size distribution and morphology.

- Fluorescence Spectroscopy: Measure excitation/emission profiles and quantum yield.

- FT-IR Spectroscopy: Identify surface functional groups.

Forensic Application: Apply functionalized CQDs to evidence samples using appropriate protocols for specific evidence types (e.g., fingerprint development, drug detection, biological stain identification).

Detection and Imaging: Visualize CQD-labeled evidence using appropriate illumination (typically UV or blue light) and capture fluorescence signals with specialized imaging systems.

Figure 2: Carbon quantum dots synthesis and application workflow

Research Reagent Solutions and Essential Materials

Table 3: Key Research Reagents for Advanced Forensic Analysis

| Reagent/Material | Composition/Type | Function in Forensic Analysis | Application Examples |
|---|---|---|---|
| Carbon Quantum Dots | Nanoscale carbon particles (2-10 nm) | Fluorescent probes for trace evidence detection | Fingerprint enhancement, drug identification, biological stain analysis [3] |
| STR Amplification Kits | Primer sets, polymerase, nucleotides | Simultaneous amplification of multiple STR loci | DNA profiling for human identification [2] |
| Fluorescent Dyes | Organic fluorophores (e.g., SYBR Green) | DNA staining for quantification and detection | Real-time PCR, DNA fragment analysis [2] |
| Surface Passivation Agents | Polymers (PEG), surfactants (SDS) | Prevent nanoparticle aggregation and enhance stability | Maintaining CQD dispersion in solution [3] |
| Heteroatom Dopants | Nitrogen, sulfur, phosphorus compounds | Modify CQD electronic structure and optical properties | Enhancing fluorescence intensity and selectivity [3] |

Discussion: Validity, Reliability, and Error Rates in Forensic Science

The movement toward quantitative forensic methodologies addresses fundamental scientific concerns about the validity and reliability of forensic evidence. Validity refers to whether a method actually measures what it purports to measure, while reliability concerns the consistency of results when the same evidence is examined multiple times or by different examiners [4].

Traditional forensic disciplines such as bloodstain pattern analysis (BPA) face challenges to their scientific validity due to complex interacting variables that make precise mathematical calculations difficult, and because different causes can produce similar patterns (many-to-one relationship) [4]. The quantitative approaches described in this whitepaper address these concerns by establishing clear mathematical models that define the relationship between evidence characteristics and source associations.

Cognitive bias presents another significant challenge to forensic science reliability, as contextual information and expectations can influence perceptual and interpretive processes [4]. Quantitative methodologies that incorporate Linear Sequential Unmasking—where examiners are exposed to case information gradually rather than all at once—can minimize these biases while maintaining analytical rigor [4].

Establishing known error rates remains challenging but essential for forensic methodologies. Error rate studies for fracture matching using topographic analysis and statistical learning have demonstrated near-perfect discrimination between matching and non-matching specimens [1]. Similarly, probabilistic genotyping software has been validated through extensive interlaboratory studies that examine variation in results across different laboratories and platforms [2] [4].

Future Perspectives: Integration with Artificial Intelligence and Computational Simulations

The future of forensic science lies in the deeper integration of quantitative analytical methods with artificial intelligence and computational simulations. Machine learning algorithms can enhance the discrimination power of fracture surface analysis by identifying subtle patterns not captured by traditional spectral analysis [1]. Similarly, the convergence of carbon quantum dots with AI platforms could create automated detection systems for multiple evidence types with minimal human intervention [3].

Computational fluid dynamics simulations are being developed to model bloodstain pattern formation under various conditions, potentially placing BPA on a more rigorous scientific foundation [4]. These simulations can account for the complex interacting variables that challenge traditional BPA and provide testable predictions about pattern formation.

As forensic science continues its transformation toward quantitative rigor, the fundamental scientific basis of forensic disciplines will strengthen, providing more reliable evidence for legal proceedings while maintaining scientific credibility.

Quantifying Measurement Uncertainty in Forensic Analytical Methods

Measurement uncertainty is a fundamental metrological concept that quantifies the doubt associated with the result of any scientific measurement. In forensic chemistry, particularly in seized drug analysis and toxicology, establishing valid uncertainty estimates is critical for demonstrating the scientific validity and reliability of analytical results presented in legal proceedings. Without proper uncertainty quantification, forensic conclusions lack statistical rigor and may not meet evolving evidentiary standards required by courts. The National Institute of Justice (NIJ) specifically identifies "quantification of measurement uncertainty in forensic analytical methods" as a core research objective to strengthen the foundational validity of forensic science disciplines [5].

The international standard ISO 21043 provides requirements and recommendations designed to ensure quality throughout the entire forensic process, including analysis, interpretation, and reporting [6]. Similarly, ANSI/ASB Standard 056, Standard for Evaluation of Measurement Uncertainty in Forensic Toxicology, establishes specific protocols for uncertainty evaluation in analytical methods [7]. These standards emphasize the use of transparent and reproducible methods that are "empirically calibrated and validated under casework conditions" [6], providing the framework for implementing uncertainty quantification in operational forensic laboratories.

Table 1: Key International Standards Governing Measurement Uncertainty in Forensic Science

| Standard Identifier | Title | Scope | Relevance to Uncertainty Quantification |
|---|---|---|---|
| ISO 21043 | Forensic Sciences | Vocabulary, recovery, analysis, interpretation, and reporting | Provides overarching quality framework for uncertainty evaluation throughout forensic process |
| ANSI/ASB Standard 056 | Standard for Evaluation of Measurement Uncertainty in Forensic Toxicology | Specific to toxicological analysis | Establishes protocols for uncertainty evaluation in analytical methods |
| ANSI/ASB Standard 017 | Standard for Metrological Traceability in Forensic Toxicology | Metrological traceability requirements | Ensures measurement results can be traced to reference standards |

Methodological Approaches to Uncertainty Evaluation

Identification and Quantification of Uncertainty Components

A comprehensive uncertainty evaluation begins with systematic identification of all potential uncertainty sources throughout the analytical process. The cause-and-effect diagram (also called Ishikawa or fishbone diagram) provides a structured methodology for visualizing and categorizing these sources. For a typical forensic chemical analysis using chromatography-mass spectrometry, major uncertainty contributors include: sample preparation (weighing, dilution, extraction efficiency), instrumental analysis (calibration, detector response, retention time variation), data processing (integration algorithms, baseline correction), and reference standards (purity, stability).

Each identified uncertainty component must be quantified through experimental studies or literature data. For Type A evaluations (based on statistical analysis), replication experiments provide direct estimates of standard uncertainty. For example, intermediate precision studies conducted over 10-20 analytical runs quantify contributions from analyst-to-analyst variation, instrument performance drift, and environmental fluctuations. Method validation parameters including precision, accuracy, specificity, and linearity provide essential data for comprehensive uncertainty budgets [5].
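A Type A evaluation of this kind reduces to a sample standard deviation. The sketch below is a minimal illustration with made-up quality control results: it returns the standard deviation of the replicates (the standard uncertainty of a single result) and the standard uncertainty of their mean. The function name and data are hypothetical.

```python
import math
import statistics


def type_a_standard_uncertainty(replicates):
    """Return (s, s / sqrt(n)) for a list of replicate results.

    s           : sample standard deviation (uncertainty of a single result)
    s / sqrt(n) : standard uncertainty of the mean of the n replicates
    """
    n = len(replicates)
    if n < 2:
        raise ValueError("at least two replicates are required")
    s = statistics.stdev(replicates)
    return s, s / math.sqrt(n)


# Hypothetical QC results (mg/g) collected over ten analytical runs.
qc_results = [75.1, 74.8, 75.6, 75.3, 74.9, 75.4, 75.0, 75.2, 74.7, 75.5]
s_single, u_mean = type_a_standard_uncertainty(qc_results)
print(f"s = {s_single:.3f} mg/g, u(mean) = {u_mean:.3f} mg/g")
```

Which of the two values enters the budget depends on whether casework results are reported from single determinations or from the mean of replicates.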

Table 2: Experimental Protocols for Quantifying Major Uncertainty Components

| Uncertainty Component | Experimental Protocol | Calculation Method | Key Parameters |
|---|---|---|---|
| Balance Calibration | Repeat weighing of certified reference weights | Standard uncertainty from calibration certificate + temperature effects | Resolution, linearity, sensitivity |
| Sample Preparation | Multiple extractions from homogeneous sample | Standard deviation of recovery rates | Extraction efficiency, concentration factor variability |
| Instrument Response | Repeated analysis of quality control materials | Relative standard deviation of peak areas/heights | Injection volume precision, detector noise, signal drift |
| Calibration Curve | Analysis of standards at different concentrations | Residual standard error from regression statistics | Confidence intervals for predicted values |
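For the calibration curve row, the residual standard error can be obtained from an ordinary least-squares straight-line fit. The sketch below assumes a linear, unweighted calibration model, which may not suit every method; the concentrations and responses are invented for illustration.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares fit y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def residual_standard_error(x, y, slope, intercept):
    """sqrt(SS_residual / (n - 2)) for a two-parameter straight-line fit."""
    n = len(x)
    if n < 3:
        raise ValueError("need at least three calibration points")
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return math.sqrt(ss_res / (n - 2))


# Hypothetical calibration standards: concentration (mg/mL) vs. peak area ratio.
conc = [0.1, 0.25, 0.5, 1.0, 2.0]
resp = [0.052, 0.128, 0.247, 0.503, 0.998]
slope, intercept = fit_line(conc, resp)
rse = residual_standard_error(conc, resp, slope, intercept)
```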

The GUM Methodology for Uncertainty Propagation

The Guide to the Expression of Uncertainty in Measurement (GUM) provides the internationally recognized framework for combining individual uncertainty components into a combined standard uncertainty. This propagation approach mathematically models the measurement process as a functional relationship: y = f(x₁, x₂, ..., xₙ), where y is the measurand (e.g., drug concentration) and xᵢ are the input quantities. The combined standard uncertainty u_c(y) is calculated using the law of propagation of uncertainty:

$$u_c^2(y) = \sum_{i=1}^{n} \left(\frac{\partial f}{\partial x_i}\right)^2 u^2(x_i) + 2 \sum_{i=1}^{n-1} \sum_{j=i+1}^{n} \frac{\partial f}{\partial x_i} \frac{\partial f}{\partial x_j} \, u(x_i, x_j)$$

where $u(x_i)$ are the standard uncertainties of the input estimates and $u(x_i, x_j)$ are their estimated covariances. For forensic applications, where expanded uncertainty is typically reported at approximately the 95% confidence level, the combined standard uncertainty is multiplied by a coverage factor k=2 to yield the expanded uncertainty U [7].
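The propagation law can be sketched numerically. The example below assumes uncorrelated inputs, so the covariance term is dropped, and estimates the sensitivity coefficients ∂f/∂xᵢ by central finite differences. The measurement model (concentration = mass / volume) and all numbers are hypothetical.

```python
import math


def combined_standard_uncertainty(f, x, u, rel_step=1e-6):
    """Combined standard uncertainty of y = f(*x) for UNCORRELATED inputs.

    Sensitivity coefficients are approximated by central differences.
    """
    total = 0.0
    for i, (xi, ui) in enumerate(zip(x, u)):
        h = abs(xi) * rel_step or rel_step  # fall back to rel_step when xi == 0
        upper = list(x)
        lower = list(x)
        upper[i] = xi + h
        lower[i] = xi - h
        c_i = (f(*upper) - f(*lower)) / (2.0 * h)  # approximates df/dx_i
        total += (c_i * ui) ** 2
    return math.sqrt(total)


def concentration(mass_mg, volume_ml):
    """Hypothetical measurement model: concentration in mg/mL."""
    return mass_mg / volume_ml


u_c = combined_standard_uncertainty(concentration, [10.0, 5.0], [0.05, 0.02])
U = 2.0 * u_c  # expanded uncertainty with coverage factor k = 2
print(f"u_c = {u_c:.4f} mg/mL, U (k=2) = {U:.4f} mg/mL")
```

If inputs are correlated, for example two dilutions made with the same pipette, the covariance term of the propagation law would need to be added.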

Implementation Workflow for Forensic Laboratories

The implementation of robust measurement uncertainty protocols requires a systematic approach that integrates with existing quality management systems. The workflow encompasses method validation, data collection, statistical analysis, and continuous monitoring, ensuring that uncertainty estimates remain valid throughout the method's lifecycle. The process follows a logical sequence from initial uncertainty source identification through final reporting, with feedback mechanisms for ongoing improvement.

Diagram 1: Measurement Uncertainty Evaluation Workflow

Uncertainty Budget Development and Documentation

The uncertainty budget provides formal documentation of the uncertainty evaluation process, presenting a structured summary of all uncertainty components, their magnitudes, evaluation methods, and contribution to the combined uncertainty. A well-constructed budget enables forensic scientists to identify dominant uncertainty sources and prioritize method improvement efforts. It also provides transparency for technical review and courtroom testimony.

Table 3: Exemplary Uncertainty Budget for Cocaine HCl Quantification by GC-MS

| Uncertainty Source | Value | Standard Uncertainty | Probability Distribution | Sensitivity Coefficient | Contribution | Evaluation Type |
|---|---|---|---|---|---|---|
| Sample Weight (mg) | 10.2 | 0.041 | Normal | 0.98 | 0.040 | A |
| Calibration Curve | 1.00 | 0.025 | Normal | 1.02 | 0.026 | A |
| Extraction Efficiency | 98.5% | 0.015 | Rectangular | 1.01 | 0.015 | B |
| Dilution Volume | 10.0 mL | 0.032 | Triangular | 0.99 | 0.032 | B |
| Combined Standard Uncertainty | 0.057 | | | | | |
| Expanded Uncertainty (k=2) | 0.114 | | | | | |
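A budget like this is usually combined by root-sum-of-squares of the contributions, which also shows which source dominates. The sketch below uses the contribution values as printed in the table and assumes the components are uncorrelated. Under that assumption the rounded contributions give roughly 0.059 rather than the tabulated 0.057, so it should be read as an illustration of the arithmetic and not as a reproduction of the table's totals.

```python
import math

# Contributions (sensitivity coefficient x standard uncertainty) as printed above.
contributions = {
    "sample weight": 0.040,
    "calibration curve": 0.026,
    "extraction efficiency": 0.015,
    "dilution volume": 0.032,
}


def combine(values):
    """Root-sum-of-squares combination, assuming uncorrelated components."""
    return math.sqrt(sum(v ** 2 for v in values))


u_combined = combine(contributions.values())
u_expanded = 2.0 * u_combined  # coverage factor k = 2

# Fraction of the combined variance attributable to each source.
variance_share = {name: (v ** 2) / (u_combined ** 2)
                  for name, v in contributions.items()}
dominant = max(variance_share, key=variance_share.get)
print(f"u_c = {u_combined:.3f}, U = {u_expanded:.3f}, dominant source: {dominant}")
```

Ranking sources by their share of the variance is one way to prioritize method improvements, since reducing a minor component barely changes the combined value.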

Uncertainty Reporting and Interpretation in Legal Contexts

Standardized Reporting Formats

Forensic reports must communicate uncertainty estimates in a manner that is both scientifically accurate and comprehensible to legal professionals. The recommended format expresses the measured value with its expanded uncertainty and coverage factor: "The concentration of cocaine was determined to be 75.2 ± 2.4 mg/g, where the reported uncertainty is an expanded uncertainty calculated using a coverage factor of k=2 which gives a level of confidence of approximately 95%." This format aligns with international guidance while maintaining clarity for non-specialists.

Interpretation Framework for Decision-Making

In forensic chemistry, measurement uncertainty directly impacts interpretative conclusions regarding compliance with legal limits or comparison between samples. When assessing whether a measured value exceeds a legal threshold, the uncertainty interval must be considered. For example, if the legal threshold for a controlled substance is 1.0% and the measured value is 1.2% with an expanded uncertainty of ±0.3%, the lower bound of the interval (0.9%) falls below the threshold, indicating the measurement does not provide conclusive evidence of non-compliance. This approach aligns with the conservative principle in forensic science, protecting against false positive conclusions.
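The threshold comparison described above can be written as a small decision rule. This is a sketch of one conservative convention, in which a result counts as exceeding the limit only when the whole uncertainty interval lies above it. The function name and category labels are hypothetical, and laboratories may adopt different documented decision rules.

```python
def classify_against_threshold(measured, expanded_uncertainty, threshold):
    """Compare the interval [measured - U, measured + U] with a legal threshold."""
    lower = measured - expanded_uncertainty
    upper = measured + expanded_uncertainty
    if lower > threshold:
        return "exceeds threshold"
    if upper < threshold:
        return "below threshold"
    return "inconclusive"


# Example from the text: threshold 1.0%, measured 1.2%, U = 0.3%.
print(classify_against_threshold(1.2, 0.3, 1.0))  # prints "inconclusive"
```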

Quality Assurance and Continuous Improvement

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing robust uncertainty quantification requires specific materials and reference standards that ensure traceability and method validity. These reagents form the foundation for producing forensically defensible measurement uncertainty estimates.

Table 4: Essential Materials for Uncertainty Evaluation in Forensic Chemistry

| Item | Function | Critical Specifications |
|---|---|---|
| Certified Reference Materials (CRMs) | Establish metrological traceability to SI units; calibrate instruments | Certified purity values with stated uncertainties; stability documentation |
| Quality Control Materials | Monitor method performance over time; validate precision estimates | Matrix-matched to authentic samples; validated homogeneity |
| Certified Balance Weights | Quantify uncertainty contribution from sample weighing | Calibration traceable to national standards; appropriate mass range |
| Class A Volumetric Glassware | Control uncertainty from dilution and preparation steps | Certified tolerances; calibration documentation |
| Chromatographic Reference Standards | Identify and quantify uncertainty from retention time and detector response | High purity; stability under storage conditions; verified identity |

Proficiency Testing and Interlaboratory Comparisons

Regular participation in proficiency testing programs and interlaboratory comparisons provides external validation of uncertainty estimates. These programs allow forensic laboratories to benchmark their measurement performance against peer institutions and identify potential bias in their methods. The statistical analysis of results from multiple laboratories following the same protocol (as described in ISO 5725) provides robust estimates of method reproducibility, a critical component of measurement uncertainty that is difficult to quantify through single-laboratory studies [5].
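For a balanced interlaboratory design, repeatability and reproducibility standard deviations can be estimated with a one-way analysis of variance in the spirit of ISO 5725. The sketch below is a simplified illustration: it assumes every laboratory reports the same number of replicates and omits the outlier screening that a full ISO 5725 evaluation includes. The data are invented.

```python
import math
import statistics


def repeatability_reproducibility(lab_results):
    """Return (s_r, s_R) from a balanced design.

    lab_results: list of replicate lists, one list per laboratory,
                 all of the same length n >= 2.
    """
    n = len(lab_results[0])
    if len(lab_results) < 2 or n < 2 or any(len(r) != n for r in lab_results):
        raise ValueError("need >= 2 labs with the same number (>= 2) of replicates")
    # Repeatability variance: average within-laboratory variance.
    s_r2 = statistics.mean([statistics.variance(r) for r in lab_results])
    # Between-laboratory variance, floored at zero.
    lab_means = [statistics.mean(r) for r in lab_results]
    s_l2 = max(statistics.variance(lab_means) - s_r2 / n, 0.0)
    # Reproducibility variance combines both components.
    return math.sqrt(s_r2), math.sqrt(s_r2 + s_l2)


# Hypothetical purity results (%) from four laboratories, three replicates each.
results = [
    [75.1, 75.4, 74.9],
    [76.0, 75.8, 76.3],
    [74.6, 74.9, 74.4],
    [75.5, 75.2, 75.6],
]
s_r, s_R = repeatability_reproducibility(results)
print(f"s_r = {s_r:.3f}, s_R = {s_R:.3f}")
```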

Ongoing monitoring of quality control data using statistical control charts enables forensic laboratories to detect changes in measurement precision over time, triggering re-evaluation of uncertainty estimates when significant deviations occur. This continuous improvement cycle ensures that reported uncertainty values accurately reflect current method performance, maintaining the scientific integrity of forensic measurements and their admissibility in legal proceedings.
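A basic Shewhart-style control chart check can be sketched as follows. The limits are set at the baseline mean plus or minus three standard deviations, a common convention that is assumed here and not taken from the cited standards; the QC values are hypothetical.

```python
import statistics


def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits from baseline QC results."""
    center = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return center - k * sd, center, center + k * sd


def out_of_control(values, limits):
    """Indices of new QC results falling outside the control limits."""
    lower, _, upper = limits
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical baseline and new QC results.
baseline = [10.0, 10.2, 9.8, 10.1, 9.9]
limits = control_limits(baseline)
flagged = out_of_control([10.0, 10.6, 9.9, 9.4], limits)
print(flagged)  # prints [1, 3]
```

Flagged points would prompt an investigation and, if the shift persists, a re-evaluation of the precision component in the uncertainty budget.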

The Role of Foundational Research in Preventing Wrongful Convictions

Foundational research provides the critical scientific bedrock upon which reliable and valid forensic chemistry disciplines are built. Within the criminal justice system, the accuracy of forensic evidence is paramount; errors can lead to the ultimate failure—the wrongful conviction of the innocent. Recent data from the National Registry of Exonerations records over 3,000 cases of wrongful convictions in the United States, with false or misleading forensic evidence being a significant contributing factor [8]. Foundational research systematically addresses this problem by subjecting forensic methods to rigorous scientific validation, establishing known error rates, and identifying the boundaries of reliable interpretation. This whitepaper examines the specific role of such research in validating forensic chemistry disciplines, with a particular focus on the legal standards that evidence must meet and the practical methodologies that underpin reliable forensic practice.

The Problem: Forensic Evidence and Wrongful Convictions

Scope and Scale

Wrongful convictions represent a profound travesty of justice. The Innocence Project has worked to exonerate 375 individuals, including 21 who served on death row, often with forensic science issues playing a role [8]. A comprehensive study analyzed 732 wrongful conviction cases classified as involving "false or misleading forensic evidence," encompassing 1,391 individual forensic examinations [8] [9]. This dataset provides a robust evidence base for identifying systemic weaknesses and targeting research efforts where they are most needed.

A Typology of Forensic Error

Analysis of wrongful convictions reveals that errors related to forensic evidence are not monolithic but fall into distinct, categorizable types. The developed forensic error typology is essential for diagnosing root causes [8] [9].

Table 1: Forensic Evidence Error Typology (Adapted from Morgan, 2023) [8]

| Error Type | Description | Common Examples |
|---|---|---|
| Type 1: Forensic Science Reports | Misstatement of the scientific basis of an examination. | Lab error, poor communication, resource constraints. |
| Type 2: Individualization/Classification | Incorrect individualization, classification, or interpretation. | Interpretation error, fraudulent association. |
| Type 3: Testimony | Erroneous presentation of forensic results at trial. | Mischaracterized statistical weight or probability. |
| Type 4: Officer of the Court | Errors by legal actors related to forensic evidence. | Excluded evidence, accepting faulty testimony. |
| Type 5: Evidence Handling & Reporting | Failure to collect, examine, or report potentially probative evidence. | Chain of custody breaks, lost evidence, police misconduct. |

A critical finding from this research is that most errors are not direct identification or classification mistakes by forensic scientists [9]. More frequently, errors involve miscommunication of results, failure to conform to standards, or actions by criminal justice actors outside forensic science organizations' control, such as the suppression of exculpatory evidence or reliance on unconfirmed presumptive tests [8].

High-Risk Disciplines and Techniques

Quantitative analysis of exoneration cases identifies specific forensic disciplines that have been disproportionately associated with erroneous convictions. The table below highlights disciplines with high observed rates of error, providing a clear priority list for foundational research and reform.

Table 2: Forensic Discipline Error Rates in Wrongful Convictions [8]

| Discipline | % of Examinations with ≥1 Error | % with Individualization/Classification Errors | Primary Issues Identified |
|---|---|---|---|
| Seized Drug Analysis | 100% | 100% | Primarily errors using field drug testing kits (129 of 130 errors). |
| Bitemark Comparison | 77% | 73% | Disproportionate share of incorrect identifications; examiners often outside structured labs. |
| Fire Debris Investigation | 78% | 38% | Testimony and interpretation errors. |
| Forensic Medicine (Pediatric Physical Abuse) | 83% | 22% | High rate of case errors. |
| Serology | 68% | 26% | Testimony errors, best practice failures, inadequate defense. |
| Hair Comparison | 59% | 20% | Testimony conforming to outdated standards. |
| DNA Analysis | 64% | 14% | Use of early, unreliable methods; interpretation of complex mixtures. |
| Latent Fingerprints | 46% | 18% | Fraud or clear violations of basic standards by uncertified examiners. |

The Foundational Research Framework: Validity, Reliability, and Legal Admissibility

Core Scientific Principles

For any forensic chemistry method to be reliable, its foundational validity must be established. This aligns with core principles of research validity adapted for the forensic context [10]:

- Construct Validity: Does the analytical technique accurately measure the specific chemical compound or property it claims to measure? For example, does a chromatographic method for detecting a novel synthetic cannabinoid truly measure that compound and not a similar interferent?

- Reliability: Does the method produce consistent, reproducible results when applied by different examiners in different laboratories using the same sample?

- Criterion Validity: How do the results from a new technique compare to those from an established "gold standard" method? This is crucial for demonstrating that a novel method like comprehensive two-dimensional gas chromatography (GC×GC) is as reliable as established 1D GC methods [11].

Legal Standards for Admissibility

Foundational research must ensure that forensic methods meet the legal thresholds for admissibility as expert evidence in court. These standards define the requirements for scientific validity and reliability [11].

Table 3: Legal Standards for the Admissibility of Expert Evidence [11]

| Standard | Jurisdiction | Key Criteria |
|---|---|---|
| Daubert Standard | U.S. Federal Courts | (1) Whether the theory/technique can be and has been tested; (2) whether it has been subjected to peer review and publication; (3) the known or potential error rate; (4) the existence and maintenance of standards controlling its operation; (5) general acceptance in the relevant scientific community. |
| Frye Standard | Some U.S. State Courts | General acceptance in the relevant scientific community. |
| Federal Rule of Evidence 702 | U.S. Federal Courts | Testimony is based on sufficient facts/data, product of reliable principles/methods, and the expert has reliably applied them. |
| Mohan Criteria | Canada | Relevance, necessity in assisting the trier of fact, absence of exclusionary rules, and a properly qualified expert. |

The known or potential error rate criterion from Daubert is a direct mandate for foundational research. It requires rigorous, black-box studies to measure the accuracy of forensic methods and the individuals who use them [11] [5]. Furthermore, the legal principle of "general acceptance" necessitates that new techniques undergo extensive intra- and inter-laboratory validation and standardization before they can be implemented in routine casework [11].

Figure 1: The relationship between legal admissibility standards and the foundational research they necessitate.

Key Research Methodologies and Protocols

Foundational Validity and Reliability Studies

The National Institute of Justice's (NIJ) Forensic Science Strategic Research Plan prioritizes research that assesses the "fundamental scientific basis of forensic science disciplines" and quantifies "measurement uncertainty in forensic analytical methods" [5]. Key experimental approaches include:

- Black-Box Studies: These measure the accuracy and reliability of forensic examinations by providing certified examiners with evidence samples of known origin without revealing the ground truth. The results are compared to the known facts to calculate empirical error rates [5].

- White-Box Studies: These go beyond measuring error rates to identify the sources of error. They investigate human factors, cognitive biases, and the impact of contextual information on analytical decision-making [8] [5].

- Interlaboratory Studies: Multiple laboratories analyze the same set of samples using the same protocol. This is critical for establishing method robustness, reproducibility, and for defining standards for interpretation across the community [5].
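
The empirical error rates that a black-box study produces can be sketched as follows. The example tallies false positive and false negative rates from examiner decisions against ground truth and attaches a Wilson score interval, one common choice of binomial confidence interval. The function names and the decision counts are hypothetical and do not come from any study cited here.

```python
from math import sqrt

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

def error_rates(decisions):
    """decisions: iterable of (truth_positive, reported_positive) booleans.

    Returns (false_positive_rate, false_negative_rate).
    """
    fp = fn = positives = negatives = 0
    for truth, reported in decisions:
        if truth:
            positives += 1
            fn += int(not reported)   # missed a true positive
        else:
            negatives += 1
            fp += int(reported)       # reported a positive that was not there
    if positives == 0 or negatives == 0:
        raise ValueError("need at least one positive and one negative sample")
    return fp / negatives, fn / positives

# Hypothetical study: 50 known-positive and 50 known-negative samples
decisions = ([(True, True)] * 48 + [(True, False)] * 2
             + [(False, False)] * 49 + [(False, True)] * 1)
fpr, fnr = error_rates(decisions)
print(f"FPR={fpr:.3f}, FNR={fnr:.3f}, FPR 95% CI={wilson_interval(1, 50)}")
```

The interval matters as much as the point estimate. With zero errors in 100 trials, the upper Wilson bound is still about 3.7%, so a small study with no observed errors does not demonstrate a zero error rate.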

Advanced Analytical Techniques: GC×GC as a Case Study

Comprehensive two-dimensional gas chromatography (GC×GC) represents the cutting edge of separation science for complex forensic mixtures. It offers increased peak capacity and sensitivity compared to traditional 1D-GC, making it promising for applications in illicit drug analysis, fire debris investigation, and decomposition odor analysis [11].

Experimental Protocol: GC×GC-MS for Complex Seized Drug Analysis [11]

Instrumentation: A GC×GC system is configured with:

- Primary Column: A non-polar or mid-polarity column (e.g., 30 m length, 0.25 mm i.d.) for the first dimension separation based primarily on boiling point.

- Modulator: The "heart" of the system, which traps, focuses, and reinjects effluent segments from the first column onto the second column at precise intervals (e.g., 2-8 s modulation periods).

- Secondary Column: A polar column (e.g., 1-2 m length, 0.1 mm i.d.) for rapid second dimension separation based on polarity.

- Detector: Most commonly a Time-of-Flight Mass Spectrometer (TOF-MS) due to its fast acquisition rate, which is necessary to capture the very narrow peaks produced in the second dimension.

Sample Preparation: An aliquot of the seized material is dissolved in a suitable solvent (e.g., methanol) and diluted to an appropriate concentration. An internal standard may be added for quantitative analysis.

Data Acquisition: The sample is injected into the GC×GC system. The resulting data form a three-dimensional surface (first-dimension retention time vs. second-dimension retention time vs. signal intensity), usually viewed as a contour plot, that provides a characteristic chemical "fingerprint" of the sample.
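
The detector records a single continuous trace, so the two-dimensional view is obtained by cutting that trace into one slice per modulation cycle. The sketch below shows this folding step in plain Python. The function names, the acquisition rate, and the modulation period are assumptions chosen for illustration, and vendor software may handle details such as phase correction differently.

```python
def fold_chromatogram(signal, acquisition_rate_hz, modulation_period_s):
    """Split a 1D detector trace into one row per modulation cycle."""
    points_per_cycle = round(acquisition_rate_hz * modulation_period_s)
    if points_per_cycle < 1:
        raise ValueError("modulation period is shorter than one data point")
    n_cycles = len(signal) // points_per_cycle  # incomplete final cycle is dropped
    return [signal[i * points_per_cycle:(i + 1) * points_per_cycle]
            for i in range(n_cycles)]

def retention_times(row, col, acquisition_rate_hz, modulation_period_s):
    """Map a (row, col) index to (first-dimension, second-dimension) time in s."""
    return row * modulation_period_s, col / acquisition_rate_hz

# Toy trace: 10 points acquired at 2 Hz with a 2 s modulation period
folded = fold_chromatogram(list(range(10)), 2, 2.0)
print(folded)                          # rows = modulation cycles
print(retention_times(1, 2, 2, 2.0))   # times for row 1, column 2
```

In a real acquisition the numbers are much larger. For example, an assumed 100 Hz detector with a 4 s modulation period gives 400 points per row.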

Data Analysis and Validation:

- Targeted Analysis: Identifying and quantifying specific compounds of interest (e.g., fentanyl, synthetic cannabinoids) by comparing their retention times and mass spectra to certified reference standards analyzed under identical conditions.

- Non-Targeted Analysis: Using pattern recognition and chemometric software to identify unknown compounds or to compare the overall chemical profile of a sample to a database of known illicit drug preparations.

- Validation: The method must be validated for parameters including specificity, linearity, accuracy, precision, limit of detection (LOD), and limit of quantitation (LOQ) to meet legal admissibility standards like Daubert.
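
Several of the validation parameters above can be estimated from a single calibration series. The sketch below fits an ordinary least-squares line and derives LOD and LOQ from the residual standard deviation and the slope using the 3.3σ/S and 10σ/S convention described in ICH validation guidance. That convention, the function names, and the calibration data are assumptions for illustration, since the source does not prescribe a calculation.

```python
from math import sqrt

def linear_fit(x, y):
    """Least-squares line; returns (slope, intercept, r_squared, residual_sd)."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired calibration points")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    r_squared = 1 - ss_res / ss_tot
    residual_sd = sqrt(ss_res / (n - 2))  # n - 2 degrees of freedom
    return slope, intercept, r_squared, residual_sd

def lod_loq(slope, residual_sd):
    """LOD = 3.3*sigma/S and LOQ = 10*sigma/S, in concentration units."""
    return 3.3 * residual_sd / slope, 10 * residual_sd / slope

# Hypothetical calibration: concentration (ug/mL) vs. analyte/IS peak-area ratio
conc = [1, 2, 3, 4, 5]
response = [2.1, 3.9, 6.2, 7.8, 10.1]
slope, intercept, r2, s_res = linear_fit(conc, response)
lod, loq = lod_loq(slope, s_res)
print(f"slope={slope:.3f}, r2={r2:.4f}, LOD={lod:.2f}, LOQ={loq:.2f} ug/mL")
```

Estimates obtained this way are normally confirmed experimentally by analyzing samples prepared near the calculated limits.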

Figure 2: Generic workflow for a GC×GC-MS analysis of a complex forensic sample like seized drugs.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for Foundational Forensic Studies [11] [5] [12]

| Item / Solution | Function in Foundational Research |

|---|---|

| Certified Reference Materials (CRMs) | High-purity analytical standards used to validate instrument response, confirm analyte identity, and establish retention indices. Essential for demonstrating method specificity. |

| Internal Standards (Isotope-Labeled) | Added to samples to correct for analytical variability and matrix effects during quantitative analysis, improving accuracy and precision. |

| Characterized Proficiency Test Samples | Samples with known composition but unknown to the analyst, used in black-box and interlaboratory studies to measure method and examiner reliability. |

| Complex Mock Evidence Matrices | Simulated, well-characterized evidence samples (e.g., drug mixtures in common cutting agents, ignitable liquids on burnt debris) used to test method robustness and the limits of detection/quantitation. |

Impact and Implementation: From Research to Practice

Foundational research's ultimate value is realized when it translates into practices that prevent wrongful convictions. The NIJ's strategic plan emphasizes maximizing the impact of research by supporting its implementation into forensic laboratories [5]. Key impacts include:

- Development of Best Practices and Standards: Foundational research directly informs the creation of standardized methods, reporting formats, and conclusion scales. For example, research on the unreliable nature of bitemark analysis has led to calls for its severely restricted use and more conservative testimony standards [8] [9].

- Improved Communication of Results: Research into cognitive bias has led to reforms such as sequential unmasking, where examiners are shielded from extraneous, biasing information until after their initial analysis is complete [8].

- Sentinel Event Analysis: Dr. John Morgan's research advocates for treating wrongful convictions as "sentinel events"—critical failures that signal major system weaknesses. Foundational research provides the tools to conduct root-cause analyses of these events and implement systemic reforms to prevent their recurrence [8].

The trajectory of foundational research is guided by both scientific innovation and the enduring imperative to ensure justice. The NIJ's strategic priorities for 2022-2026 highlight future directions, including the development of standard criteria for analysis and interpretation, the use of automated tools to support examiner conclusions, and a deeper understanding of evidence stability, transfer, and persistence [5]. For novel techniques like GC×GC, the path forward requires a concerted focus on intra- and inter-laboratory validation, error rate analysis, and standardization to advance its Technology Readiness Level for courtroom acceptance [11].

In conclusion, foundational research is not an academic exercise; it is a critical safeguard for the integrity of the criminal justice system. By rigorously validating the scientific basis of forensic chemistry methods, establishing their known error rates, and translating these findings into standardized practices, such research directly addresses the root causes of erroneous convictions. It provides the necessary evidence to meet legal admissibility standards, strengthens examiner proficiency, and ultimately builds a forensic science infrastructure capable of reliably delivering truth and justice.

The National Institute of Justice (NIJ) Forensic Science Strategic Research Plan for 2022-2026 establishes a comprehensive framework to strengthen the scientific foundations of forensic disciplines through targeted research and development. This whitepaper examines the plan's five strategic priorities with specific emphasis on implications for forensic chemistry validity research. We analyze how these priorities address critical needs in method validation, error rate quantification, and analytical technique standardization to meet both scientific and legal admissibility standards. For forensic chemists and drug development professionals, this plan emphasizes transitioning from proof-of-concept demonstrations to court-ready methodologies supported by robust foundational data and appropriate statistical measures for expressing evidential weight.

The NIJ developed its Forensic Science Strategic Research Plan to communicate a cohesive research agenda addressing the complex challenges faced by the modern forensic science community. This plan emerges against a backdrop of increasing demands for forensic services coupled with diminishing resources, creating a pressing need for innovative, efficient, and scientifically robust approaches to evidence analysis [5]. The strategic priorities outlined in the plan closely parallel opportunities and challenges identified across the forensic science ecosystem, with particular relevance for disciplines requiring advanced chemical analysis techniques.

For forensic chemistry specifically, the plan emphasizes strengthening the fundamental scientific basis of analytical methods while simultaneously advancing applied research to meet evolving casework demands [5] [13]. This dual focus recognizes that for forensic methods to withstand legal scrutiny, they must be demonstrably valid, reliable, and well-understood within their limitations. The plan explicitly notes that "if forensic methods are demonstrated to be valid and the limits of those methods are well understood, then investigators, prosecutors, courts, and juries can make well-informed decisions" [5], directly addressing the core thesis of establishing scientific validity in forensic chemistry disciplines.

Strategic Priority Analysis: Implications for Forensic Chemistry

Priority I: Advance Applied Research and Development in Forensic Science

This priority focuses on translating scientific innovation into practical solutions for forensic practitioners, with multiple objectives directly relevant to forensic chemistry research and drug development.

Table 1: Applied R&D Objectives for Forensic Chemistry

| Objective Area | Specific Research Focus | Impact on Forensic Chemistry |

|---|---|---|

| Novel Technologies & Methods | Identification/quantitation of forensically relevant analytes (e.g., seized drugs, gunshot residue) [5] [13] | Development of more specific, sensitive, and efficient analytical methods for substance identification |

| Evidence Differentiation | Methods to differentiate evidence from complex matrices or conditions [5] | Enhanced capability to isolate and identify target compounds in mixed samples |

| Automated Tools | Library search algorithms for unknown compound identification [5] | Improved analytical workflows for rapid and accurate compound matching |

| Standard Criteria | Evaluation of methods to express weight of evidence (e.g., likelihood ratios) [5] [13] | Standardized approaches for statistical interpretation and reporting of chemical findings |

A critical application area within this priority is the development and validation of comprehensive two-dimensional gas chromatography (GC×GC) techniques. Recent research has demonstrated GC×GC's superior separation capabilities for complex forensic mixtures including illicit drugs, toxicological evidence, and ignitable liquid residues [11]. However, for such advanced techniques to transition from research settings to routine casework, they must meet rigorous legal admissibility standards including the Daubert Standard and Federal Rule of Evidence 702, which require demonstrated testing, peer review, known error rates, and general acceptance in the relevant scientific community [11].

Priority II: Support Foundational Research in Forensic Science

This priority addresses the fundamental scientific underpinnings of forensic methods, with direct implications for establishing the validity of forensic chemistry disciplines.

Table 2: Foundational Research Requirements

| Research Domain | Key Questions | Methodological Approaches |

|---|---|---|

| Foundational Validity & Reliability | Understanding fundamental scientific basis of forensic disciplines [5] | Basic research on analytical principles, measurement uncertainty quantification |

| Decision Analysis | Measurement of accuracy and reliability of forensic examinations [5] | Black box studies, white box studies, interlaboratory comparisons |

| Evidence Limitations | Understanding value of evidence beyond individualization [5] | Research on activity level propositions, transfer and persistence studies |

| Error Rate Quantification | Establishing known or potential error rates [11] | Validation studies, proficiency testing, statistical analysis of casework data |

Foundational research must specifically address the legal standards for admissibility, particularly the requirements established in Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993), which emphasizes whether the technique can be and has been tested, whether it has been subjected to peer review and publication, the known or potential error rate, and the degree of acceptance within the relevant scientific community [11]. For forensic chemistry methods, this translates to comprehensive validation studies that establish method robustness, specificity, sensitivity, and reliability under casework conditions.

Strategic Priorities III-V: Implementation Framework

The remaining priorities create the ecosystem necessary for research impact:

Priority III: Maximize Research Impact - Focuses on disseminating research products, implementing methods and technologies, and assessing program impact [5] [13]. For forensic chemistry, this includes developing evidence-based best practice guides and facilitating technology transfer from research to operational laboratories.

Priority IV: Cultivate Workforce - Addresses the development of current and future forensic science researchers and practitioners [5] [13]. This includes fostering the next generation of researchers, facilitating research within public laboratories, and implementing processes for workforce assessment and sustainability.

Priority V: Coordinate Across Communities - Emphasizes collaboration across academic, industry, and government sectors to maximize resources and address challenges caused by high demand and limited resources [5] [13].

Experimental Validation Pathway for Forensic Chemistry Methods

Diagram: Validation pathway for forensic chemistry methods, from development to courtroom adoption.

Advanced Analytical Techniques: GC×GC Case Study

Comprehensive two-dimensional gas chromatography (GC×GC) represents an exemplary model of technology advancement aligned with the NIJ strategic priorities. The technique provides significantly enhanced separation capabilities compared to traditional 1D-GC, particularly for complex mixtures encountered in forensic chemistry applications [11].

Diagram: GC×GC analytical workflow and its forensic applications.

Technology Readiness Levels for Forensic Applications

GC×GC research has progressed across multiple forensic chemistry domains, though at varying stages of maturity relative to courtroom admissibility requirements [11]:

- Level 4 (Court-Ready): Well-established methodologies with documented error rates and extensive validation (e.g., drug analysis, fire debris)

- Level 3 (Validation Phase): Demonstrated efficacy with ongoing interlaboratory studies (e.g., toxicology, chemical profiling)

- Level 2 (Proof-of-Concept): Established feasibility with limited validation (e.g., fingermark chemistry, decomposition odor)

- Level 1 (Early Research): Preliminary demonstrations with minimal validation data (e.g., chemical, biological, nuclear, radioactive materials)

Data Management and FAIR Principles in Forensic Research

Effective forensic chemistry research requires robust data management practices aligned with FAIR principles (Findable, Accessible, Interoperable, Reusable) [14]. Proper data classification and management are fundamental to establishing methodological validity and reliability.

Table 3: Data Classification in Forensic Chemistry Research

| Data Type | Description | Examples in Forensic Chemistry |

|---|---|---|

| Quantitative | Numerical measurements objectively collected [14] | Concentration values, peak areas, retention times, spectral intensities |

| Continuous | Measurable values that can be subdivided [14] | Temperature, pressure, response factors, calibration curves |

| Discrete | Counted values that are distinct and separate [14] | Number of peaks, identified compounds, replicate measurements |

| Qualitative | Descriptive characteristics, generally non-numerical [14] | Color tests, crystal morphology, chromatographic pattern descriptions |

| Ordinal | Qualitative data with inherent order or ranking [14] | Signal strength categories, match confidence scales |

Implementation of structured data management plans ensures that forensic chemistry research data remains accessible for verification, reanalysis, and statistical interpretation of evidentiary weight – a critical component for establishing foundational validity [5] [14].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of the NIJ strategic research priorities requires specific analytical resources and reference materials. The following table details essential research reagents and their functions in forensic chemistry research:

Table 4: Essential Research Reagents and Materials for Forensic Chemistry

| Reagent/Material | Function | Application Examples |

|---|---|---|

| Certified Reference Materials | Quantification and method validation [5] | Drug standards, controlled substance analogs, metabolite references |

| Internal Standards | Quality control and quantification accuracy [11] | Deuterated analogs, stable isotope-labeled compounds |

| Quality Control Materials | Method performance verification [5] | Proficiency test materials, internal quality control samples |

| Stationary Phases | Chromatographic separation [11] | GC columns (non-polar, mid-polar, specialized phases for GC×GC) |

| Derivatization Reagents | Analyte modification for enhanced detection [11] | Silylation, acylation, esterification reagents for GC analysis |

| Sample Preparation Materials | Extraction and cleanup [5] | Solid-phase extraction cartridges, solvents, filtration devices |

The NIJ Forensic Science Strategic Research Plan 2022-2026 establishes a comprehensive roadmap for advancing the scientific foundations of forensic chemistry through targeted research initiatives. Successful implementation requires focused attention on method validation, error rate quantification, and standardized interpretation frameworks that meet both scientific and legal standards. Future research directions should emphasize interlaboratory collaboration, open data practices, and workforce development to ensure forensic chemistry methodologies withstand evolving legal and scientific scrutiny while maintaining pace with emerging analytical technologies and complex evidence types.

Advanced Analytical Techniques in Practice: From Seized Drugs to New Psychoactive Substances

Illicit drug profiling, or chemical fingerprinting, is a fundamental process in forensic chemistry that involves the identification, quantitation, and categorization of drug samples into groups. This profiling provides investigative leads such as a common or different origin of seized samples, elucidation of synthetic pathways, identification of adulterants and impurities, and determination of geographic origin for plant-derived exhibits [15]. The global illicit drug market has seen significant growth, with approximately 275 million people consuming illicit drugs in 2020—a 10% increase from 2010—and this number is projected to increase by 11% worldwide by 2030 [15]. This expanding market, coupled with the emergence of new psychoactive substances (NPS), presents substantial challenges for law enforcement and forensic investigators, necessitating robust and sophisticated analytical approaches for drug profiling [15].

The validity of forensic chemistry disciplines, including drug profiling, requires careful scientific scrutiny. According to recent scientific guidelines, forensic feature-comparison methods must demonstrate plausibility, sound research design, intersubjective testability, and a valid methodology to reason from group data to statements about individual cases to be considered scientifically valid [16]. This article examines illicit drug profiling within this framework of scientific validity, focusing on the application of traditional and advanced analytical techniques including Gas Chromatography-Mass Spectrometry (GC-MS), Liquid Chromatography-Mass Spectrometry (LC-MS), and Inductively Coupled Plasma-Mass Spectrometry (ICP-MS).

Physical Profiling of Illicit Drugs

Physical profiling represents the initial stage of drug examination and involves documenting all physical characteristics of a seized drug sample. This includes attributes such as color, packaging material, thickness of packaging plastic, logos on tablets or packages, as well as tablet weight and dimensions [15]. These physical characteristics provide complementary information that may support subsequent chemical profiling and allow for the preliminary grouping of illicit drugs to speculate whether different samples originate from a similar source [15].

For example, if a batch of 3,4-methylenedioxymethamphetamine (MDMA) tablets or heroin blocks were pressed with a tool containing specific imperfections, these imperfections would be transferred to the entire batch, potentially providing evidence of a common source [15]. A 2012 study examining over 300 heroin samples focused on five different physical characteristics: color and weight of the substance, and width, weight, and thickness of the plastic package. The research found that film thickness was the least reliable characteristic due to significant variability between samples, while package dimensions were the most reliable and could potentially serve as a trademark for a particular production line [15].

However, physical profiling alone often provides insufficient data for definitive conclusions. Manufacturers may employ diverse concealment approaches to eliminate physical evidence that could link samples, and uncontrolled clandestine laboratory conditions can produce variations in a drug's physical characteristics [15]. Consequently, utilizing chemical profiling techniques becomes necessary for more definitive analysis and conclusions.

Chemical Profiling of Illicit Drugs

Chemical profiling involves gathering comprehensive chemical information about a drug sample and can be classified into organic and inorganic profiling based on the analytical technique applied and the type of impurity being investigated [15]. Organic profiling focuses on the active pharmaceutical ingredient, by-products, adulterants, and diluents, while inorganic profiling targets elemental traces originating from catalysts, reagents, or environmental contamination [15].

Table 1: Chemical Profiling Approaches for Illicit Drugs

| Profiling Type | Analytical Technique | Target Analytes | Information Obtained |

|---|---|---|---|

| Organic Profiling | Isotope-Ratio Mass Spectrometry (IRMS) | Stable isotopes (C, N) | Geographic origin, environmental conditions |

| Organic Profiling | Gas Chromatography-Mass Spectrometry (GC-MS) | Active compounds, by-products, impurities | Synthetic route, precursors, cutting agents |

| Organic Profiling | Liquid Chromatography-Mass Spectrometry (LC-MS) | Active compounds, by-products, impurities | Synthetic route, precursors, cutting agents |

| Organic Profiling | Ultra-High-Performance Liquid Chromatography (UHPLC) | Active compounds, by-products, impurities | Synthetic route, precursors, cutting agents with high separation |

| Organic Profiling | Thin Layer Chromatography (TLC) | Active compounds | Preliminary identification, separation |

| Inorganic Profiling | Inductively Coupled Plasma-Mass Spectrometry (ICP-MS) | Elemental traces (catalysts, impurities) | Synthetic route, geographic origin |

Some countries have established specific programs that define chemical fingerprints or signatures for common illicit drugs. For example, Australia has specific signatures for amphetamine-type substances (ATS), heroin, and cocaine. ATS have two main signatures: Signature I involves analyzing by-product content to understand synthetic routes and precursors using GC-MS, while Signature II involves analyzing elemental traces using ICP-MS to reveal information about synthetic routes [15].

Organic Chemical Profiling

Isotope-Ratio Mass Spectrometry (IRMS)

Isotope-Ratio Mass Spectrometry (IRMS) is a powerful tool in forensic investigations for drug profiling, particularly for natural illicit drugs derived from plants. The technique operates on the hypothesis that plant-derived drugs exhibit IRMS profiles reflecting environmental and growth conditions, providing information about geographic origin [15].

In 2006, researchers successfully identified links between provinces in Brazil through seized marijuana samples based on analysis of carbon and nitrogen isotopes, which primarily reflect climate and other environmental plant growth conditions [15]. Similarly, nitrogen isotope analysis was used to examine large cocaine seizures in 2007, where researchers could link certain logos to specific sample groups and found significant variations in nitrogen isotopes that correlated with successive precipitation steps in processing [15].
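
IRMS results are conventionally reported in delta notation, the per mil deviation of a sample's isotope ratio from that of a reference standard. The sketch below shows that calculation. The function name and the ratio values are placeholders, and the reference ratio should be taken from the certified standard actually used.

```python
def delta_per_mil(r_sample, r_standard):
    """Delta value in per mil: (R_sample / R_standard - 1) * 1000."""
    if r_standard <= 0:
        raise ValueError("reference ratio must be positive")
    return (r_sample / r_standard - 1.0) * 1000.0

# Placeholder heavy/light isotope ratios, not certified values
r_reference = 0.0100
r_seizure = 0.0097
print(f"delta = {delta_per_mil(r_seizure, r_reference):.1f} per mil")
```

A negative delta means the sample is depleted in the heavy isotope relative to the standard.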

Diagram 1: IRMS Workflow for Geographic Sourcing

Gas Chromatography-Mass Spectrometry (GC-MS)

GC-MS is one of the most widely used techniques for organic profiling of illicit drugs, providing separation capabilities combined with sensitive detection and identification. This technique is particularly valuable for analyzing volatile and semi-volatile organic compounds present in drug samples, including impurities, by-products, and cutting agents that provide information about synthetic routes and processing methods [15].

The application of GC-MS enables forensic chemists to identify specific synthetic pathways based on the by-products and intermediates detected. For example, different methods of methamphetamine production (e.g., ephedrine reduction, reductive amination) produce distinct impurity profiles that can be identified using GC-MS, providing crucial intelligence about manufacturing processes [15].
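
Once impurity peaks have been integrated, two seizures can be compared numerically. The sketch below normalizes the peak areas of a fixed set of target impurities and computes the Pearson correlation between profiles, one simple similarity measure among several in use. The impurity areas and function names are hypothetical, and any threshold for declaring a link would have to be established from validation data.

```python
from math import sqrt

def normalise(areas):
    """Scale peak areas so they sum to 1, removing the effect of sample amount."""
    total = sum(areas)
    if total <= 0:
        raise ValueError("peak areas must sum to a positive value")
    return [a / total for a in areas]

def pearson(a, b):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(a)
    if n != len(b) or n < 2:
        raise ValueError("profiles must have the same length (>= 2)")
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    if va == 0 or vb == 0:
        raise ValueError("a constant profile has no defined correlation")
    return cov / sqrt(va * vb)

# Hypothetical peak areas for the same four target impurities
seizure_a = [120, 45, 300, 15]
seizure_b = [240, 90, 600, 30]   # same pattern, twice the amount injected
seizure_c = [10, 400, 20, 350]   # different pattern
print(pearson(normalise(seizure_a), normalise(seizure_b)))
print(pearson(normalise(seizure_a), normalise(seizure_c)))
```

Normalizing first keeps the comparison focused on the relative impurity pattern rather than on how much material was injected.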

Table 2: GC-MS Parameters for Drug Profiling Analysis

| Parameter | Setting/Requirement | Purpose/Impact |

|---|---|---|

| Column Type | Fused silica capillary (5-30 m length) | Compound separation |

| Stationary Phase | Non-polar to mid-polar (e.g., 5% phenyl polysiloxane) | Separation efficiency |

| Injection Mode | Split or splitless | Sensitivity, resolution |

| Injection Temperature | 250-300°C | Volatilization without degradation |

| Oven Program | Hold at 60°C for 1 min, then ramp to 300°C at 10-20°C/min | Optimal separation |

| Carrier Gas | Helium or Hydrogen | Mobile phase |

| Ion Source Temperature | 230-300°C | Efficient ionization |

| Mass Range | 40-500 m/z | Coverage of drug compounds |
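
The oven program in the table fixes the run time per injection, which matters when planning throughput. The short sketch below computes the duration of a single-ramp program. The function name is hypothetical and any final hold is an assumption, since the table does not specify one.

```python
def oven_run_time_min(start_c, end_c, ramp_c_per_min,
                      initial_hold_min=0.0, final_hold_min=0.0):
    """Total duration in minutes of a single-ramp GC oven program."""
    if ramp_c_per_min <= 0 or end_c < start_c:
        raise ValueError("need a positive ramp rate and end_c >= start_c")
    return initial_hold_min + (end_c - start_c) / ramp_c_per_min + final_hold_min

# The two ends of the ramp range listed in the table, with the 1 min initial hold
print(oven_run_time_min(60, 300, 10, initial_hold_min=1))  # slower ramp
print(oven_run_time_min(60, 300, 20, initial_hold_min=1))  # faster ramp
```

With the table's settings, the program takes 25 min at 10°C/min and 13 min at 20°C/min, excluding any final hold and oven cool-down.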

Liquid Chromatography-Mass Spectrometry (LC-MS) and Ultra-High-Performance Liquid Chromatography (UHPLC)

LC-MS, increasingly with UHPLC as the separation front end, has become important in illicit drug profiling, particularly for less volatile, thermally labile, or polar compounds that are poorly suited to GC-MS analysis. These techniques are especially valuable for new psychoactive substances (NPS), which often have complex chemical structures and may decompose at the high temperatures used in GC [15].

UHPLC offers improved separation efficiency, resolution, and speed compared to conventional liquid chromatography, making it particularly suitable for the analysis of complex mixtures of drugs and their impurities. The technique is often coupled with high-resolution mass spectrometry for precise identification of compounds based on exact mass measurements [15].

Inorganic Chemical Profiling

Inductively Coupled Plasma-Mass Spectrometry (ICP-MS)

ICP-MS is the primary technique used for inorganic or elemental profiling of illicit drugs, providing extremely sensitive detection of trace elements present at parts per billion (ppb) or even parts per trillion (ppt) levels. These trace elements may originate from catalysts used in synthesis, processing equipment, water sources, or environmental contamination during production or storage [15].

Elemental profiling through ICP-MS can provide complementary information to organic profiling techniques, helping to establish links between seizures, identify common sources, and determine geographic origin. The technique is particularly valuable for amphetamine-type substances (ATS), where elemental traces from catalysts can reveal information about synthetic routes [15].

Diagram 2: ICP-MS Elemental Profiling Workflow

Table 3: ICP-MS Operating Conditions for Drug Profiling

| Parameter | Typical Setting | Notes |

|---|---|---|

| RF Power | 1.3-1.6 kW | Plasma stability |

| Nebulizer Gas Flow | 0.8-1.2 L/min | Sample introduction efficiency |

| Auxiliary Gas Flow | 0.9-1.2 L/min | Plasma maintenance |

| Plasma Gas Flow | 13-18 L/min | Plasma formation |

| Sample Uptake Rate | 0.5-1.5 mL/min | Analysis speed, sensitivity |

| Dwell Time | 10-100 ms/isotope | Signal stability |

| Resolution | 0.6-0.8 amu | Mass separation |

| Collision/Reaction Cell Gas | He, H₂, or NH₃ | Interference reduction |

Experimental Protocols

Sample Preparation for Organic Profiling

Proper sample preparation is critical for accurate and reproducible drug profiling results. For organic profiling using GC-MS or LC-MS, typical sample preparation involves:

- Sample Homogenization: The entire seized sample is ground and mixed to ensure homogeneity. For tablet samples, multiple tablets from the same batch are typically pooled and homogenized.

- Weighing: Approximately 10-100 mg of homogenized sample is accurately weighed into a suitable container.

- Extraction: The weighed sample is extracted with an appropriate solvent (e.g., methanol, chloroform, or buffer solutions) using sonication, vortex mixing, or shaking for 15-30 minutes.

- Centrifugation: The extract is centrifuged at 3000-5000 rpm for 10-15 minutes to separate insoluble particulates.

- Filtration: The supernatant is filtered through a 0.22-0.45 μm membrane filter to remove any remaining particles.

- Derivatization (for GC-MS): For compounds with polar functional groups, derivatization may be performed using agents like MSTFA (N-methyl-N-trimethylsilyltrifluoroacetamide) or BSTFA (N,O-bis(trimethylsilyl)trifluoroacetamide) to improve volatility and thermal stability.

- Analysis: The prepared sample is analyzed by GC-MS or LC-MS using optimized instrument parameters.
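
The centrifugation step above is given in rpm, but the force on the sample depends on the rotor radius. Converting to relative centrifugal force (RCF) makes the step transferable between instruments. The sketch below uses the standard relation RCF = 1.118 × 10⁻⁵ × r(cm) × rpm². The 10 cm rotor radius and the function names are assumptions for illustration.

```python
from math import sqrt

RCF_CONSTANT = 1.118e-5  # valid for radius in cm and speed in rpm

def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (multiples of g) at a given speed and radius."""
    return RCF_CONSTANT * radius_cm * rpm ** 2

def rpm_from_rcf(rcf, radius_cm):
    """Speed in rpm needed to reach a target RCF on a rotor of given radius."""
    return sqrt(rcf / (RCF_CONSTANT * radius_cm))

# Assumed 10 cm rotor radius at the two ends of the stated speed range
for rpm in (3000, 5000):
    print(f"{rpm} rpm -> {rcf_from_rpm(rpm, 10):.0f} x g")
```

Recording the RCF alongside the rpm in the method lets another laboratory reproduce the step on a different rotor.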

Sample Preparation for Inorganic Profiling

Sample preparation for ICP-MS analysis requires complete digestion of organic matrix and dissolution of elements:

- Weighing: 20-100 mg of homogenized drug sample is accurately weighed into a digestion vessel.

- Acid Addition: A mixture of high-purity nitric acid (2-5 mL) and optionally hydrogen peroxide (0.5-1 mL) is added to the sample.

- Digestion: The sample is digested using microwave-assisted digestion at elevated temperature (150-200°C) and pressure for 15-30 minutes, or using hot block digestion.

- Dilution: The digested sample is diluted to a final volume (typically 25-50 mL) with high-purity deionized water.

- Internal Standard Addition: An appropriate internal standard (e.g., Rh, In, Re) is added to correct for instrument drift and matrix effects.

- Analysis: The diluted digestate is analyzed by ICP-MS using optimized operating conditions and appropriate calibration standards.
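
The digestion and dilution steps above determine how a concentration measured in solution relates to the original solid. The sketch below applies a simple internal-standard correction and then back-calculates the element content per gram of drug sample. The function names and all numerical values are hypothetical, and real software may apply the internal-standard correction differently.

```python
def internal_standard_correct(analyte_counts, is_counts_sample, is_counts_calibration):
    """Rescale the analyte signal by the internal standard's relative response."""
    if is_counts_sample <= 0:
        raise ValueError("internal standard signal must be positive")
    return analyte_counts * is_counts_calibration / is_counts_sample

def solid_concentration_ug_per_g(solution_ug_per_l, final_volume_ml, sample_mass_mg):
    """Convert a digestate concentration (ug/L) to content in the solid (ug/g)."""
    if sample_mass_mg <= 0:
        raise ValueError("sample mass must be positive")
    volume_l = final_volume_ml / 1000.0
    mass_g = sample_mass_mg / 1000.0
    return solution_ug_per_l * volume_l / mass_g

# Hypothetical run: internal standard signal suppressed to 80% in the sample
print(internal_standard_correct(1000, 80_000, 100_000))  # corrected counts
# Hypothetical digest: 2.0 ug/L measured, 50 mL final volume, 50 mg sample
print(solid_concentration_ug_per_g(2.0, 50, 50))         # ug/g in the solid
```

Because 1 µg/L in solution is 1 ppb, this also shows how ppb-level readings in the digestate translate to µg/g levels in the seized material.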

Quality Control Procedures

To ensure the validity and reliability of drug profiling results, comprehensive quality control measures must be implemented:

- Method Blanks: Prepared and analyzed with each batch of samples to monitor contamination.

- Certified Reference Materials: Analyzed to verify method accuracy when available.

- Duplicate Samples: Prepared and analyzed to assess method precision.

- Spiked Samples: Prepared by adding known amounts of target analytes to assess recovery.

- Calibration Standards: Prepared fresh for each analytical batch and used to calibrate instruments.

- Continuing Calibration Verification: Analyzed periodically during analytical runs to verify calibration stability.
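
Several of the quality control measures above reduce to simple numerical checks. The sketch below shows commonly used formulas for spike recovery, duplicate precision (relative percent difference), and calibration verification drift; the example values are hypothetical, and acceptance limits are deliberately left out because they are set by each laboratory's validated method.

```python
def percent_recovery(spiked_result: float, unspiked_result: float,
                     spike_added: float) -> float:
    """Spike recovery: fraction of the added analyte that was measured, in %."""
    if spike_added <= 0:
        raise ValueError("spike amount must be positive")
    return 100.0 * (spiked_result - unspiked_result) / spike_added


def relative_percent_difference(result_a: float, result_b: float) -> float:
    """Duplicate precision: absolute difference relative to the pair mean, in %."""
    mean = (result_a + result_b) / 2.0
    if mean == 0:
        raise ValueError("mean of duplicates is zero")
    return 100.0 * abs(result_a - result_b) / mean


def ccv_percent_drift(measured: float, nominal: float) -> float:
    """Continuing calibration verification: signed deviation from nominal, in %."""
    if nominal == 0:
        raise ValueError("nominal concentration must be non-zero")
    return 100.0 * (measured - nominal) / nominal


if __name__ == "__main__":
    # Hypothetical batch values, in the same concentration units throughout.
    print(percent_recovery(14.5, 5.0, 10.0))
    print(relative_percent_difference(10.2, 9.8))
    print(ccv_percent_drift(9.6, 10.0))
```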

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Drug Profiling

| Item | Function/Application | Notes |
|---|---|---|
| High-Purity Solvents (Methanol, Acetonitrile, Chloroform) | Sample extraction, mobile phase preparation | HPLC or LC-MS grade recommended to minimize interference |
| Derivatization Reagents (MSTFA, BSTFA, TFAA) | Chemical modification for GC analysis | Improves volatility and stability of polar compounds |
| High-Purity Acids (Nitric, Hydrochloric) | Sample digestion for elemental analysis | Trace metal grade to prevent contamination |
| Certified Reference Materials | Method validation, quality control | Certified drug standards with known purity |
| Solid Phase Extraction (SPE) Cartridges | Sample clean-up, concentration | Various phases (C18, mixed-mode) for different applications |
| Isotopic Standards (¹³C, ¹⁵N labeled compounds) | Isotope ratio measurements, quantification | Essential for IRMS and isotope dilution methods |
| ICP-MS Tuning Solution | Instrument optimization | Contains elements covering full mass range |
| Mobile Phase Additives (Formic acid, Ammonium acetate) | LC-MS mobile phase modification | Enhances ionization, improves separation |

Validity Framework for Forensic Drug Profiling

The scientific validity of forensic drug profiling methods must be evaluated within established frameworks for forensic feature-comparison methods. Inspired by the Bradford Hill Guidelines for causal inference in epidemiology, the following guidelines can be applied to evaluate drug profiling techniques [16]:

- Plausibility: The theoretical foundation supporting drug profiling must be sound. For example, the hypothesis that isotopic ratios reflect geographic origin or that impurity profiles reveal synthetic pathways should be biologically and chemically plausible.

- Sound Research Design and Methods: The research design and methods used to develop and validate drug profiling techniques must demonstrate construct and external validity. This includes proper experimental controls, appropriate sample sizes, and realistic conditions.

- Intersubjective Testability: Drug profiling methods must be replicable and reproducible across different laboratories and examiners. Protocols should be clearly documented to allow independent verification.

- Valid Methodology for Individualization: The methodology must provide a valid basis for reasoning from group data (class characteristics) to statements about individual cases. This requires understanding the specificity and discrimination power of the profiling technique.

These guidelines align with the Daubert factors that U.S. courts consider when evaluating scientific evidence, including testing, error rates, standards, peer review, and general acceptance [16]. For drug profiling to be forensically valid, it must demonstrate empirical validation through properly designed studies that establish the scientific basis for linking chemical profiles to origins, routes, or common sources.
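
Error rates are one of the Daubert factors named above, and an observed error count from a validation study is more informative when reported with an interval. The following is a minimal sketch using the Wilson score interval; the choice of interval and the example counts are assumptions made for illustration and are not drawn from the cited framework.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an error rate (z = 1.96 gives roughly 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2.0 * trials)) / denom
    half_width = z * math.sqrt(p * (1.0 - p) / trials
                               + z * z / (4.0 * trials * trials)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical study: no false associations in 100 known-different comparisons.
    low, high = wilson_interval(errors=0, trials=100)
    print(f"observed 0/100, interval {low:.3f} to {high:.3f}")
```

Even with zero observed errors in 100 hypothetical comparisons, the upper bound is about 3.7%, which illustrates why the size of a validation study matters when an error rate is presented in court.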

Illicit drug profiling employing traditional and advanced analytical approaches represents a critical component of modern forensic chemistry. Techniques such as GC-MS, LC-MS, and ICP-MS provide complementary information for comprehensive chemical fingerprinting of seized drugs, enabling forensic chemists to extract valuable profiling data for intelligence and investigative purposes. The continued development and validation of these methods within established scientific frameworks ensures their reliability and admissibility in legal proceedings while advancing the field of forensic chemistry as a scientifically rigorous discipline.

Forensic chemistry faces a critical challenge: the need for analytical techniques that are not only fast and reliable but also scientifically valid, as emphasized by recent judicial and scientific reviews [16]. Extractive-liquid sampling electron ionization-mass spectrometry (E-LEI-MS) emerges as a novel analytical approach that addresses this challenge by combining ambient sampling with the high identification power of electron ionization (EI) [17]. This technique fulfills the growing demand for real-time analytical results across various fields, including pharmaceutical quality control and forensic drug analysis [18].

E-LEI-MS represents a significant advancement in direct mass spectrometry (DMS), where samples are introduced directly into the mass spectrometer without chromatographic separation or extensive preparation [17]. Unlike other ambient ionization techniques that use atmospheric pressure ionization sources like ESI or APCI, E-LEI-MS is the first real-time MS technique to utilize EI for compound ionization [19]. This unique combination provides highly informative and reproducible fragmentation patterns that are directly searchable against standard reference libraries such as the National Institute of Standards and Technology (NIST) database, significantly enhancing compound identification capabilities [17] [18].

Fundamental Principles of E-LEI-MS

Theoretical Foundation and Operational Mechanism

E-LEI-MS operates on the principle of direct liquid extraction coupled with electron ionization. A suitable solvent is deposited onto the sample surface, where analytes dissolve and are immediately aspirated through a sampling tip into the EI ion source by the instrument's high vacuum [17]. Sampling occurs at atmospheric pressure and ground potential and requires neither sample preparation nor manipulation [17].

Once the analyte solution enters the ion source, high-temperature and high-vacuum conditions promote rapid conversion to the gas phase. A 70 eV electron beam then ionizes the analytes, producing the characteristic EI fragmentation patterns that provide structural information [17]. The coupling of an EI source with liquid-phase analysis had previously been demonstrated by the Direct Electron Ionization (DEI) and Liquid Electron Ionization (LEI) interfaces [18].

A critical innovation in the E-LEI-MS system is the vaporization microchannel (VMC), positioned before the high-vacuum ion source to facilitate vaporization and transport of the liquid extract containing analytes into the ion source [18]. This component, inspired by the LEI interface, ensures efficient analyte introduction despite the challenging transition from atmospheric pressure to high vacuum.

Comparative Advantages in Forensic Analysis

The validity of forensic science methods has come under increased scrutiny, with courts requiring rigorous empirical validation of techniques [16]. E-LEI-MS addresses several key concerns in forensic chemistry:

- Standardized Spectral Libraries: Unlike many ambient MS techniques that produce protonated molecules with variable adducts, E-LEI-MS generates classical EI spectra that are directly comparable to well-established reference libraries [17]. This provides a foundation for reliable identification that meets forensic standards.

- Reduced Matrix Effects: Because ionization takes place in the gas phase, EI is applicable to a wide range of small molecules and is scarcely influenced by matrix composition or compound polarity [17], potentially reducing the uncertainty introduced by complex samples.

- Empirical Validation: The technique produces reproducible, searchable spectra that enable systematic validation against known standards, addressing concerns about the scientific foundation of forensic methods [16].

Technical Configuration and System Components

Core E-LEI-MS Apparatus

The E-LEI-MS apparatus integrates sampling and ionization components that together enable direct analysis under ambient conditions [17] [18].

E-LEI-MS Sampling and Ionization Workflow

Essential Research Reagents and Materials

The E-LEI-MS system requires specific components for optimal operation. The following table details the essential materials and their functions:

Table 1: Essential Research Reagent Solutions and Materials for E-LEI-MS

| Component | Specifications | Function |
|---|---|---|
| Sampling Tip (Inner Tubing) | Fused silica capillary; 30-50 μm I.D.; 375 μm O.D. [17] [18] | Core sampling component; transfers analyte solution to EI source via vacuum aspiration |
| Solvent Delivery Tubing | PEEK tubing; 450 μm I.D.; 660 μm O.D.; 8-10 cm length [17] [18] | Delivers appropriate solvent to sampling spot for analyte extraction |
| Inlet Capillary | Fused silica; 25-30 cm length; 40-50 μm I.D. [18] | Connects valve to MS; acts as an extension of the inner capillary |
| Vaporization Microchannel (VMC) | 530 μm I.D.; 600 μm O.D.; 24 cm length [18] | Facilitates vaporization and transport of liquid extract into high-vacuum ion source |
| Microfluidic Valve | MV201 manual 3-port valve; 170 nL valve volume [17] | Regulates access to ion source; prevents vacuum loss during sampling |
| Extraction Solvents | Acetonitrile, Methanol [17] [18] | Dissolves analytes from sample surface for transfer to MS |

System Configuration Variations

The E-LEI-MS system has been successfully adapted to different mass spectrometer platforms, with specific modifications to optimize performance:

- E-LEI-QqQ-MS System: Utilizes a 20 cm length sampling capillary with 40 μm I.D. and 25 cm inlet capillary [18]

- E-LEI-Q-ToF-MS System: Employs a 30 cm length sampling capillary with 50 μm I.D. and 30 cm inlet capillary [18]

These variations address the disparate suction forces exerted by different vacuum systems, demonstrating the technique's adaptability across analytical platforms [18].

Experimental Protocols and Methodologies

Standard E-LEI-MS Analytical Procedure

The E-LEI-MS analysis follows a systematic protocol designed to ensure reproducible results:

- Sample Preparation: No specific preparation is required. Solid samples are analyzed directly in their native state. For surface analysis, the sampling tip is positioned 0.1-0.5 mm above the surface [17].

- Solvent Selection and Delivery: An appropriate solvent (typically acetonitrile or methanol) is delivered via syringe pump at flow rates of 1-5 μL/min [17] [18]. The solvent choice depends on analyte solubility and polarity.

- Sampling Process: The solvent flows through the outer tubing to the sampling spot, where it dissolves analytes. The high vacuum of the ion source (10⁻⁵ to 10⁻⁶ Torr) immediately aspirates the solution through the inner tubing [17].

- Ionization and Detection: The analyte solution is vaporized in the VMC and introduced to the EI source. Ionization occurs at 70 eV, with mass analysis in either scan mode (for untargeted analysis) or SIM mode (for targeted compounds) [17].

- Data Acquisition: MS acquisition begins before valve actuation. The signal typically appears approximately 1 minute after valve opening, and analysis is complete within 3-5 minutes [17] [18].

Pharmaceutical Screening Protocol

For pharmaceutical applications, E-LEI-MS has been successfully applied to identify active ingredients in commercial tablets without any pretreatment [17]:

- Sample Presentation: Whole tablets are placed on the sampling stage. The sampling tip is positioned directly on the tablet surface.

- Solvent Conditions: Acetonitrile is typically used as the extraction solvent.

- Detection Parameters: Scan mode (m/z 50-500) for untargeted analysis; SIM mode for specific active ingredients.

- Identification: Experimental spectra are compared against NIST library using spectral match criteria (>90% similarity) [17].
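
The identification step above relies on a numerical similarity between an experimental spectrum and a library entry. Library search software computes its own match factor, so the sketch below is not that algorithm; it uses plain cosine similarity, scaled to a percentage, purely to illustrate how two peak lists can be compared. The peak lists are invented placeholders, not spectra of any real compound.

```python
import math


def cosine_match(spectrum_a: dict[int, float], spectrum_b: dict[int, float]) -> float:
    """Cosine similarity (0-100) between two spectra given as {m/z: intensity}."""
    shared_axis = set(spectrum_a) | set(spectrum_b)
    dot = sum(spectrum_a.get(mz, 0.0) * spectrum_b.get(mz, 0.0) for mz in shared_axis)
    norm_a = math.sqrt(sum(v * v for v in spectrum_a.values()))
    norm_b = math.sqrt(sum(v * v for v in spectrum_b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # an empty spectrum cannot match anything
    return 100.0 * dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Hypothetical peak lists (nominal m/z: relative intensity), for illustration only.
    measured = {51: 12.0, 77: 40.0, 105: 100.0, 182: 35.0}
    library = {51: 10.0, 77: 45.0, 105: 100.0, 182: 30.0}
    score = cosine_match(measured, library)
    print(f"similarity: {score:.1f}")
    print("passes 90% criterion" if score > 90.0 else "below 90% criterion")
```

A threshold such as the >90% criterion quoted above is only meaningful relative to the scoring algorithm it was validated with, so a score from this sketch should not be compared directly with a library match value.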

Forensic Drug Screening Protocol

For forensic applications, particularly drug-facilitated sexual assault (DFSA) investigations, the following protocol has been applied [18]:

- Sample Preparation: 20 μL of standard solutions or fortified beverages are spotted on watch glass surfaces and analyzed as dried spots.

- Target Analytes: Benzodiazepines (clobazam, clonazepam, diazepam, flunitrazepam, lorazepam, oxazepam) at concentrations of 20-100 mg/L.

- Quality Control: Blank samples and solvent controls are analyzed between specimens to prevent carryover.

- Data Interpretation: High-resolution MS capabilities enable differentiation of isobaric compounds.
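
The spotting volume and concentration range in this protocol fix the absolute amount of analyte in each dried spot. A short worked calculation, assuming the full 20 μL is deposited and dried without loss, makes that amount explicit; the function name is illustrative.

```python
def deposited_mass_ng(volume_ul: float, concentration_mg_per_l: float) -> float:
    """Mass of analyte in a dried spot, in nanograms.

    1 mg/L is numerically equal to 1 ng/uL, so the product of volume (uL)
    and concentration (mg/L) is already in ng.
    """
    if volume_ul < 0 or concentration_mg_per_l < 0:
        raise ValueError("volume and concentration must be non-negative")
    return volume_ul * concentration_mg_per_l


if __name__ == "__main__":
    for conc in (20.0, 100.0):
        print(f"20 uL at {conc:.0f} mg/L -> {deposited_mass_ng(20.0, conc):.0f} ng")
```

Under this assumption, each spot carries between 400 ng and 2000 ng (0.4-2 μg) of benzodiazepine across the stated 20-100 mg/L range.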

Applications and Performance Data

Pharmaceutical Analysis Applications

E-LEI-MS has been used to identify active ingredients in a variety of commercial formulations without sample preparation:

Table 2: E-LEI-MS Pharmaceutical Screening Applications and Results

| Pharmaceutical Product | Active Ingredient(s) | Sample Preparation | E-LEI-MS Results |
|---|---|---|---|
| Surgamyl Tablets | Tiaprofenic acid | None | Correct identification with 93.6% NIST spectral match [17] |
| Brufen Tablets | Ibuprofen | None | Unambiguous identification despite the presence of excipients [17] |
| NeoNisidina Tablets | Acetylsalicylic acid (250 mg), Acetaminophen (200 mg), Caffeine (25 mg) | None | All three active ingredients detected simultaneously using SIM mode [17] |
| 20 Industrial Drugs | 16 different APIs across various therapeutic classes | None | Successful detection of APIs and excipients in all samples [18] |

Forensic Science Applications