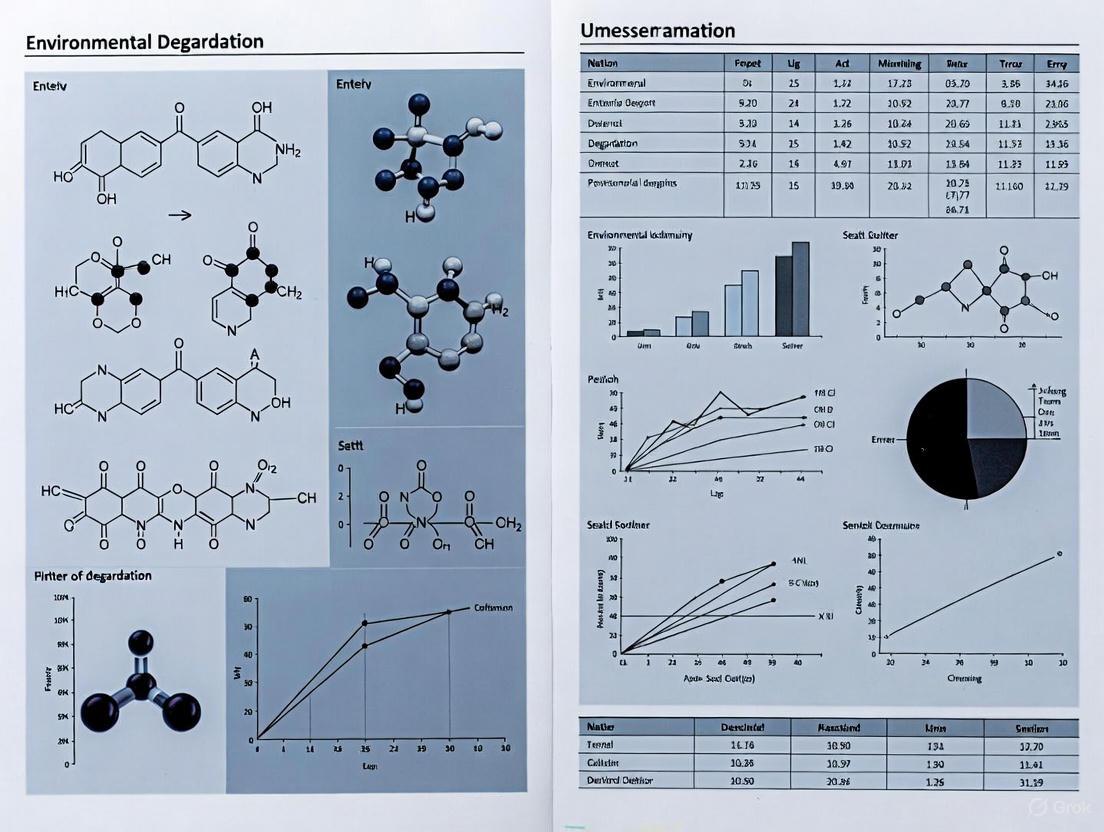

Environmental Degradation: Evidence, Impacts, and Research Implications for Biomedical Science

Abstract

This article synthesizes the most current scientific evidence on environmental degradation, establishing the unequivocal links between human activity, planetary change, and human health. Tailored for researchers, scientists, and drug development professionals, it moves from foundational evidence to methodological approaches for investigating these connections. It addresses critical challenges, including data limitations and global inequities, and validates pathways for sustainable solutions. The analysis concludes by outlining the profound implications for biomedical and clinical research, emphasizing the need for an integrated approach to safeguard global health in a changing environment.

The Unassailable Evidence: Linking Human Activity to Global Environmental Crisis

Troubleshooting Guides for Environmental Research

Guide 1: Troubleshooting Sensor-Based Environmental Data Collection

Problem: Low or inconsistent readings from portable air quality sensors in a community-based monitoring project.

Solution: Follow this systematic troubleshooting protocol to identify and resolve the issue [1] [2].

Step 1: Verify the Experimental Controls

Step 2: Inspect Equipment and Storage Conditions

Step 3: Isolate Variables Through Testing

Documentation Requirements: Maintain detailed records of all sensor deployments, calibration dates, environmental conditions, and any protocol deviations for regulatory compliance and data validation [3] [2].

Guide 2: Troubleshooting Ecotoxicity Bioassays in Pharmaceutical Assessment

Problem: Inconsistent results in aquatic ecotoxicity testing of pharmaceutical compounds during environmental risk assessment (ERA).

Solution: Implement this tiered troubleshooting approach to ensure data reliability [4].

Step 1: Confirm Test Organism Viability

Step 2: Validate Chemical Preparation and Exposure System

Step 3: Review Endpoint Measurement Methods

Frequently Asked Questions (FAQs)

Q1: How can citizen science data from portable sensors be validated for regulatory decision-making?

Community-collected data can support regulatory decisions when gathered using rigorous protocols [3]. This includes developing Standard Operating Procedures (SOPs), implementing quality assurance plans, using calibrated equipment, and comparing results with reference-grade monitors. The credibility of community-collected data often depends more on process transparency and documentation than absolute precision [3].
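Comparing a portable sensor against a reference-grade monitor can be sketched as a co-location calibration. The example below is a minimal illustration, assuming paired readings taken at the same site and time and a simple linear relationship between the two instruments; the function names and the PM2.5 values are hypothetical and are not drawn from the cited protocols.

```python
from statistics import mean


def fit_linear_calibration(sensor, reference):
    """Least-squares fit so that reference ~= slope * sensor + intercept."""
    if len(sensor) != len(reference) or len(sensor) < 2:
        raise ValueError("need at least two paired readings")
    sensor_mean, reference_mean = mean(sensor), mean(reference)
    sxx = sum((x - sensor_mean) ** 2 for x in sensor)
    if sxx == 0:
        raise ValueError("sensor readings are constant; cannot fit a slope")
    sxy = sum((x - sensor_mean) * (y - reference_mean)
              for x, y in zip(sensor, reference))
    slope = sxy / sxx
    intercept = reference_mean - slope * sensor_mean
    return slope, intercept


def r_squared(sensor, reference, slope, intercept):
    """Share of reference variance explained by the calibrated sensor."""
    reference_mean = mean(reference)
    ss_res = sum((y - (slope * x + intercept)) ** 2
                 for x, y in zip(sensor, reference))
    ss_tot = sum((y - reference_mean) ** 2 for y in reference)
    return 1.0 - ss_res / ss_tot


def apply_calibration(readings, slope, intercept):
    """Correct raw sensor readings with the fitted line."""
    return [slope * x + intercept for x in readings]


# Hypothetical paired hourly PM2.5 readings in µg/m³ (illustrative only).
sensor_pm25 = [10.0, 20.0, 30.0, 40.0]
reference_pm25 = [8.0, 14.0, 20.0, 26.0]

slope, intercept = fit_linear_calibration(sensor_pm25, reference_pm25)
fit_quality = r_squared(sensor_pm25, reference_pm25, slope, intercept)
corrected = apply_calibration([25.0, 35.0], slope, intercept)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={fit_quality:.3f}")
```

Recording the fitted slope, intercept, and R² alongside the calibration date supports the process transparency described above. Real deployments may also need humidity or temperature terms, which this sketch leaves out.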

Q2: What are the key gaps in environmental risk assessment (ERA) for pharmaceuticals, particularly antiparasitic drugs?

Major ERA gaps include missing chronic ecotoxicity data for drugs approved before 2006, limited testing of transformation products, and insufficient understanding of effects on nontarget species [4]. For antiparasitic drugs, which target evolutionarily conserved pathways, the risks to nontarget organisms are particularly concerning but poorly characterized. Only approximately 12% of drugs have complete ecotoxicity data sets [4].

Q3: How can researchers effectively communicate complex environmental health findings to diverse stakeholders?

Successful communication requires translating scientific findings into actionable information tailored to specific audiences [3]. Effective strategies include using clear visualizations, contextualizing data within local concerns, acknowledging limitations transparently, and engaging stakeholders throughout the research process rather than only at the end [3].

Q4: What methodologies help quantify disproportionate environmental health impacts on vulnerable populations?

Geographic Information Systems (GIS) mapping combined with environmental monitoring data can identify disproportionate impacts [5] [6]. Methodologies include mapping pollution sources with demographic data, calculating disease burden attributable to specific exposures, and employing participatory approaches that incorporate local community knowledge into assessment frameworks [5] [3].
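One common way to express disease burden attributable to an exposure is the population attributable fraction (Levin's formula), PAF = p(RR − 1) / (1 + p(RR − 1)), where p is the exposed share of the population and RR is the relative risk. The sketch below applies it to two hypothetical communities; the prevalence, relative risk, and case counts are invented for illustration, and the formula assumes an unconfounded relative risk.

```python
def population_attributable_fraction(exposure_prevalence, relative_risk):
    """Levin's formula: share of cases attributable to the exposure."""
    if not 0.0 <= exposure_prevalence <= 1.0:
        raise ValueError("exposure_prevalence must be between 0 and 1")
    if relative_risk <= 0:
        raise ValueError("relative_risk must be positive")
    excess = exposure_prevalence * (relative_risk - 1.0)
    return excess / (1.0 + excess)


def attributable_cases(total_cases, exposure_prevalence, relative_risk):
    """Number of cases attributable to the exposure in one community."""
    paf = population_attributable_fraction(exposure_prevalence, relative_risk)
    return total_cases * paf


# Hypothetical communities: same relative risk, different exposed share.
communities = {
    "near_corridor": {"cases": 300, "prevalence": 0.5},
    "comparison": {"cases": 300, "prevalence": 0.1},
}
RELATIVE_RISK = 2.0  # assumed value, for illustration only

for name, data in communities.items():
    cases = attributable_cases(data["cases"], data["prevalence"], RELATIVE_RISK)
    print(f"{name}: {cases:.1f} attributable cases")
```

Comparing the attributable fraction across mapped communities gives one quantitative handle on disproportionate impact, alongside the participatory methods described above.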

Quantitative Data on Environmental Stressors

Table 1: Global Climate Change Indicators and Impacts

| Indicator | Current Status | Observed Trends | Key Impacts |
|---|---|---|---|
| Global Temperature | 1.2°C above pre-industrial levels [7] | 2024 was hottest year on record; 2023 previously held record [7] | Extreme heat exposure, crop yield reductions, coral reef collapse [7] |
| Greenhouse Gases | CO₂ at highest in 2 million years [7] | Continued rise in 2024 after record 2023 levels [7] | Ocean acidification, long-term warming commitment [7] |
| Sea Level Rise | Accelerating due to ice melt [8] | Arctic and Antarctic sea ice extent well below average [7] | Coastal flooding, community relocation, ecosystem loss [8] |
| Extreme Weather | Increasing frequency/intensity [8] | More hurricanes, droughts, heatwaves, flooding [8] | Infrastructure damage, economic losses, health emergencies [8] |

Table 2: Pollution-Associated Health and Environmental Burdens

| Pollution Type | Scale of Impact | Vulnerable Populations | Documented Health Outcomes |
|---|---|---|---|
| Air Pollution | Millions of annual deaths globally [8] | Communities near industry/transport corridors [5] | Respiratory illness, cardiovascular disease, premature mortality [5] [8] |
| Chemical Contaminants | >99% decline in vulture populations in India/Pakistan caused by veterinary diclofenac [4] | Scavenging species exposed through food chain [4] | Renal failure, population collapse in non-target species [4] |
| Heavy Metals | Global burden of disease from lead exposure [5] | Socially vulnerable communities [5] | Ischemic heart disease, neurological impairment, developmental deficits [5] |
| Pharmaceutical Residues | Widespread aquatic contamination (ng/L to μg/L range) [4] | Freshwater organisms near wastewater discharges [4] | Endocrine disruption, feminization of fish, potential antibiotic resistance [4] |

Experimental Protocols

Protocol 1: Structured Process for Environmental Health Assessment

This methodology provides a framework for conducting comprehensive environmental health assessments that integrate quantitative data with community engagement [3].

Step 1: Form Partnership and Identify Stakeholders

- Establish collaborative teams including researchers, community representatives, government agencies, and non-profit organizations [3]

- Define roles, responsibilities, and decision-making processes

- Develop communication protocols and conflict resolution mechanisms

Step 2: Define Goals, Objectives, and Hypotheses

- Create specific, measurable assessment objectives aligned with partner needs

- Develop testable hypotheses regarding environmental exposure-health relationships

- Establish agreed-upon success metrics and outcomes [3]

Step 3: Identify Environmental Stressors and Salutary Factors

- Compile existing environmental, health, and socioeconomic data

- Incorporate local knowledge through community mapping and input sessions [6]

- Identify both negative stressors and positive protective factors [3]

Step 4: Collect Data and Expert Knowledge

- Implement environmental monitoring using appropriate technologies (sensors, lab analysis) [3]

- Gather quantitative health data and qualitative local experience

- Document topic-expert knowledge through structured interviews

Step 5: Rank Environmental Health Factors

- Develop prioritization criteria with stakeholder input

- Apply multi-criteria decision analysis to rank factors by concern level

- Validate rankings through technical assessment and community review

Step 6: Identify Risk Mitigation Strategies

- Brainstorm potential interventions across technical, policy, and community dimensions

- Evaluate feasibility considering technical, financial, and social factors

- Select promising strategies for further development

Step 7: Prioritize Risk Mitigation Strategies

- Assess strategies against defined criteria (effectiveness, cost, equity)

- Develop implementation roadmaps for high-priority strategies

- Identify responsible parties and resource requirements
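The ranking and prioritization steps (Steps 5 and 7) can be illustrated with a weighted-sum scoring scheme, one of the simplest forms of multi-criteria decision analysis. This is a sketch under stated assumptions: the criteria come from Step 7 (effectiveness, cost, equity), but the weights, the strategy names, and the 0 to 1 scores are hypothetical and would in practice be agreed with stakeholders.

```python
def rank_strategies(scores, weights):
    """Rank options by weighted average score (higher is better).

    scores  -- {strategy: {criterion: score between 0 and 1}}
    weights -- {criterion: non-negative weight}
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    ranked = []
    for strategy, criterion_scores in scores.items():
        missing = set(weights) - set(criterion_scores)
        if missing:
            raise ValueError(f"{strategy} lacks scores for {sorted(missing)}")
        weighted = sum(weights[c] * criterion_scores[c] for c in weights)
        ranked.append((strategy, weighted / total_weight))
    # Sort from the highest to the lowest combined score.
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical weights agreed with partners (illustrative only).
weights = {"effectiveness": 0.5, "cost": 0.2, "equity": 0.3}

# Hypothetical scores; "cost" is scored so that cheaper options score higher.
scores = {
    "indoor air filtration": {"effectiveness": 0.8, "cost": 0.4, "equity": 0.6},
    "truck rerouting": {"effectiveness": 0.6, "cost": 0.9, "equity": 0.7},
    "vegetated buffer": {"effectiveness": 0.5, "cost": 0.5, "equity": 0.9},
}

for strategy, score in rank_strategies(scores, weights):
    print(f"{strategy}: {score:.2f}")
```

A weighted sum lets partners see how each weight changes the ranking, which fits the community review in Step 5. Other MCDA methods may suit cases where criteria cannot be traded off against each other.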

Protocol 2: Environmental Risk Assessment for Veterinary Medicinal Products

This standardized protocol follows the European Medicines Agency's tiered approach for assessing ecological risks of veterinary pharmaceuticals [4].

Phase I: Initial Exposure Assessment

- Evaluate product characteristics: usage patterns, dosing regimens, excretion pathways

- Calculate Predicted Environmental Concentration (PEC) for relevant compartments

- Screen for potentially concerning products (PECsoil ≥ 100 μg/kg triggers Phase II) [4]

Phase II Tier A: Preliminary Effects Assessment

- Conduct standardized ecotoxicity tests on base set of organisms

- Calculate Predicted No-Effect Concentration (PNEC) from most sensitive endpoint

- Determine risk by PEC/PNEC ratio (>1 proceeds to Tier B) [4]

Phase II Tier B: Refined Risk Assessment

- Conduct fate studies: hydrolysis, photolysis, biodegradation

- Perform extended ecotoxicity testing using chronic endpoints

- Refine PEC and PNEC values with additional data

Phase II Tier C: Specialized Studies and Risk Mitigation

- Conduct field studies or microcosm/mesocosm experiments if needed

- Develop risk mitigation measures if risks identified

- Weigh environmental risks against product benefits for regulatory decision
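The tiered decision logic above can be sketched as a small function. The 100 μg/kg PECsoil trigger and the PEC/PNEC > 1 criterion are taken from the protocol text; everything else (function names, return strings, example concentrations) is a hypothetical illustration, not regulatory guidance.

```python
PHASE_II_TRIGGER_UG_PER_KG = 100.0  # PECsoil trigger quoted in Phase I above


def risk_quotient(pec, pnec):
    """PEC/PNEC ratio; both values must use the same units."""
    if pnec <= 0:
        raise ValueError("PNEC must be positive")
    return pec / pnec


def next_assessment_step(pec_soil_ug_per_kg, pnec_ug_per_kg=None):
    """Suggest the next tier from the exposure and effects estimates."""
    if pec_soil_ug_per_kg < PHASE_II_TRIGGER_UG_PER_KG:
        return "Phase I: below trigger, no Phase II assessment indicated"
    if pnec_ug_per_kg is None:
        return "Phase II Tier A: run base-set ecotoxicity tests to derive PNEC"
    if risk_quotient(pec_soil_ug_per_kg, pnec_ug_per_kg) > 1.0:
        return "Phase II Tier B: refine PEC and PNEC with fate and chronic data"
    return "Phase II Tier A: risk quotient not above 1, no refinement indicated"


# Hypothetical concentrations in µg/kg soil (illustrative only).
print(next_assessment_step(40.0))
print(next_assessment_step(250.0))
print(next_assessment_step(250.0, pnec_ug_per_kg=100.0))
print(next_assessment_step(250.0, pnec_ug_per_kg=500.0))
```

Keeping the thresholds as named constants makes it easy to record which trigger values were applied in an assessment.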

Research Workflow Visualizations

Environmental Health Assessment Workflow

Pharmaceutical Environmental Risk Assessment

Research Reagent Solutions

Table 3: Essential Materials for Environmental Health Research

| Research Tool | Application | Function | Technical Considerations |
|---|---|---|---|
| Portable Air Sensors | Community-based air quality monitoring [3] | Real-time measurement of pollutants (PM2.5, NO₂, O₃) | Require calibration, subject to environmental conditions, varying precision [3] |
| GIS Mapping Software | Spatial analysis of environmental justice indicators [6] | Visualize disproportionate impacts, identify hotspots | Dependent on data quality, scale, and appropriate indicator selection [5] [6] |
| Standardized Ecotoxicity Test Kits | Regulatory environmental risk assessment [4] | Determine effects on model organisms (Daphnia, algae) | Standardized protocols essential for regulatory acceptance [4] |
| Digital Data Loggers | Environmental exposure assessment | Continuous monitoring of temperature, humidity, other parameters | Require regular calibration, proper deployment, and maintenance [3] |
| Participatory Research Tools | Community-engaged environmental health assessment [3] | Incorporate local knowledge, build stakeholder capacity | Time-intensive, requires trust-building, essential for equitable outcomes [3] |

Technical Support FAQs

Q1: How can I troubleshoot high background noise when measuring atmospheric CH4 concentrations using isotope ratio mass spectrometry?

A: High background noise can stem from incomplete purification of sample gases. Implement a multi-stage trapping system as used in specialized CH4 analyzers [9].

- Check the Chemical Trap: Ensure the chemical trap containing I2O5 and quartz wool is active to remove atmospheric CO, which can interfere with measurements [9].

- Inspect the Pre-freeze Trap: Verify the pre-freeze cold trap is effectively removing other trace gases from the sample stream before it enters the oxidation furnace [9].

- Examine Water Management: Confirm that the adsorption water trap, packed with materials like magnesium perchlorate, is effectively removing water vapor, which is a common source of interference and can affect test precision [9].

Q2: What could cause low precision in carbon isotope (δ13C) data from ice core gas samples?

A: Low precision often results from low analyte concentration or contamination.

- Ensure Complete Oxidation: Verify the condition and temperature of the oxidation furnace. The furnace must completely convert CH4 to CO2 and H2O. An automatic oxygen injection valve can maintain the CuO oxidant's capacity, ensuring consistent and complete combustion [9].

- Optimize the Cold Trap System: Use a combination of cold traps for target gas purification and conversion. The CO2 produced should be concentrated in a liquid nitrogen cold trap, transferred to a second trap, and then passed through a chromatographic column for further separation to ensure sample purity before introduction to the mass spectrometer [9].
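Precision in δ13C work is usually judged from replicate measurements expressed in delta notation, δ = (R_sample / R_standard − 1) × 1000 (per mil). The sketch below computes δ values and their standard deviation across replicates. The reference ratio and the replicate ratios are placeholders chosen for illustration; substitute the certified ratio of your laboratory's reference material.

```python
from statistics import mean, stdev

# Placeholder 13C/12C reference ratio, illustrative only (an assumption,
# not a certified value).
R_STANDARD = 0.0112


def delta_permil(r_sample, r_standard=R_STANDARD):
    """Delta value in per mil relative to the reference ratio."""
    if r_standard <= 0:
        raise ValueError("reference ratio must be positive")
    return (r_sample / r_standard - 1.0) * 1000.0


def replicate_precision(r_samples, r_standard=R_STANDARD):
    """Mean delta and 1-sigma spread across replicate injections."""
    if len(r_samples) < 2:
        raise ValueError("need at least two replicates")
    deltas = [delta_permil(r, r_standard) for r in r_samples]
    return mean(deltas), stdev(deltas)


# Hypothetical replicate ratios from one ice core gas sample.
replicates = [0.010640, 0.010642, 0.010638]
mean_delta, one_sigma = replicate_precision(replicates)
print(f"mean d13C = {mean_delta:.2f} per mil, 1-sigma = {one_sigma:.2f} per mil")
```

Tracking the 1-sigma value across runs gives a simple check on whether changes to the furnace or cold trap settings actually improve precision.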

Q3: Our climate model projections for regional precipitation show high uncertainty. How can we improve them?

A: Regional climate projection uncertainty is a key research focus. The following methodologies are recommended:

- Employ Dynamical Downscaling: Use high-resolution Regional Climate Models (RCMs) coupled with an urban canopy model to better simulate local atmospheric processes. For example, projects are developing kilometer-scale future climate simulation datasets for the Yangtze River Delta to project changes in urban extreme events [10].

- Apply Statistical and AI Methods: Utilize statistical downscaling or artificial intelligence to correct biases in global climate model outputs. AI methods are also being developed to build impact assessment models for key sectors, creating a more robust climate change impact assessment system [10].
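As a concrete illustration of statistical bias correction, the sketch below applies linear scaling, one of the simplest approaches for precipitation: model output is multiplied by the ratio of the observed mean to the modelled mean over a shared historical period. This is an assumed example technique, not the method used in the cited projects, and all precipitation values are hypothetical.

```python
from statistics import mean


def precipitation_scaling_factor(observed_hist, modelled_hist):
    """Ratio of observed to modelled mean over the same historical period."""
    modelled_mean = mean(modelled_hist)
    if modelled_mean <= 0:
        raise ValueError("modelled historical mean must be positive")
    return mean(observed_hist) / modelled_mean


def bias_correct(modelled_future, factor):
    """Apply the multiplicative correction to projected values."""
    return [value * factor for value in modelled_future]


# Hypothetical monthly precipitation totals in mm (illustrative only).
observed_hist = [100.0, 120.0, 80.0]
modelled_hist = [150.0, 130.0, 170.0]   # model is too wet on average
modelled_future = [180.0, 150.0]

factor = precipitation_scaling_factor(observed_hist, modelled_hist)
corrected_future = bias_correct(modelled_future, factor)
print(f"scaling factor = {factor:.3f}")
print("corrected projection (mm):", [round(v, 1) for v in corrected_future])
```

Linear scaling corrects only the mean. Work on extremes typically needs distribution-based methods such as quantile mapping, so this sketch is a starting point rather than a recommended workflow.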

Q4: How can we quantitatively assess the contribution of different soil layers to total CH4 surface emissions?

A: This requires combining precise measurement with isotopic analysis.

- Use Isotope Techniques: Isotope technology is essential to resolve the production mechanisms and migration patterns of CH4 in soil profiles. The core of this methodology is to analyze the carbon and hydrogen isotopic composition of CH4 from different soil depths, which serves as a tracer to quantify the contribution of each layer to the total surface flux [9].

- Implement a Robust Analysis System: A dedicated CH4 carbon and hydrogen element enrichment analyzer is required. This system directly interfaces with an isotope ratio mass spectrometer, simultaneously enriching and analyzing both carbon and hydrogen isotopes from gas samples, which is necessary for handling the low-concentration gases found in soil samples [9].
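The tracer idea can be illustrated with a two-end-member isotope mixing model: if the surface flux is a mixture of CH4 from two layers with distinct δ13C signatures, the fraction from layer A is f_A = (δ_mix − δ_B) / (δ_A − δ_B). The sketch assumes only two sources and no isotopic fractionation during transport or oxidation, which real soil profiles often violate; the δ values are hypothetical.

```python
def two_source_fraction(delta_mix, delta_a, delta_b):
    """Fraction of the mixture contributed by source A (0 to 1)."""
    if delta_a == delta_b:
        raise ValueError("sources must have distinct isotopic signatures")
    fraction_a = (delta_mix - delta_b) / (delta_a - delta_b)
    if not 0.0 <= fraction_a <= 1.0:
        raise ValueError("mixture lies outside the range of the two sources")
    return fraction_a


# Hypothetical d13C-CH4 values in per mil (illustrative only).
DELTA_SHALLOW = -50.0
DELTA_DEEP = -70.0
DELTA_SURFACE_FLUX = -65.0

shallow_share = two_source_fraction(DELTA_SURFACE_FLUX, DELTA_SHALLOW, DELTA_DEEP)
deep_share = 1.0 - shallow_share
print(f"shallow layer: {shallow_share:.0%}, deep layer: {deep_share:.0%}")
```

Measuring hydrogen isotopes as well adds a second tracer, which in principle allows a third source to be separated with the same mass-balance approach.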

Experimental Protocols & Data

Protocol: Analysis of Carbon and Hydrogen Isotopes in Greenhouse Gas CH4

Application: For studying CH4 production mechanisms, migration patterns in soil profiles, and source apportionment in ecosystems like permafrost and glaciers [9].

Materials and Reagents:

- Helium (He) Carrier Gas: High-purity, used to transport the sample gas through the system [9].

- Chemical Trap: Packed with I2O5 and quartz wool to remove carbon monoxide (CO) from the air [9].

- Oxidation Furnace: Contains CuO and quartz wool, maintained at high temperature to oxidize CH4 to CO2 and H2O [9].

- Chromatographic Column: Separates CO2 from any residual gases after oxidation and trapping [9].

- Cr Reaction Furnace: Contains chromium metal powder and quartz wool, used to convert H2O into H2 gas for hydrogen isotope analysis [9].

Climate Impact Projection Data

Table: Key Focal Areas for Regional Climate Impact Modeling (2025-2026)

| Research Focus Area | Key Methodology | Primary Output/Objective |
|---|---|---|
| Compound Flood Events | Analysis of historical probabilities of combined floods, storm surges, and extreme precipitation; development of compound flood disaster evaluation models [10]. | Assess impact and risk of compound flooding on estuaries under climate change [10]. |
| High-Resolution Climate Simulation | Dynamical/statistical downscaling or AI methods using CMIP6/CMIP7 models; coupling with urban canopy models [10]. | Create kilometer-scale climate projection datasets to estimate future changes in extreme events in urban agglomerations [10]. |
| Saltwater Intrusion | Construction and simulation of estuary saltwater intrusion models [10]. | Evaluate past and future impacts of sea-level rise and climate change on estuary salinity [10]. |
| Urban Climate Resilience | Development of a climate resilience index integrating social, economic, and environmental indicators [10]. | Formulate a city climate resilience assessment system and planning recommendations [10]. |

The Scientist's Toolkit: Key Research Reagents & Materials

Table: Essential Reagents and Materials for Advanced Climate Science Research

| Item | Function/Application in Climate Research |
|---|---|
| Isotope Ratio Mass Spectrometer (IRMS) | The core instrument for precisely measuring the ratios of stable isotopes (e.g., 13C/12C, 2H/1H) in greenhouse gases like CO2 and CH4, used for tracing sources and sinks [9]. |
| Gas Pre-concentration Systems (e.g., PreCon, custom analyzers) | Essential for analyzing low-concentration trace gases from air, ice core, or soil samples. They purify and concentrate target molecules (e.g., CH4) before introduction to IRMS or GC systems [9]. |
| High-Resolution Regional Climate Models (RCMs) | Numerical models used to downscale global climate projections to regional scales (e.g., city-level), crucial for projecting local impacts like extreme heat and precipitation [10]. |
| Carbon Molecular Sieve/Chromatographic Columns | Used in gas chromatography systems to separate different gas species (e.g., CO2, N2O, CH4) from a mixed sample stream, ensuring pure analyte reaches the detector [9]. |
| Coupling Interfaces (Open-Split Interface) | A critical technical component that allows the direct connection of peripheral devices (e.g., gas chromatographs, elemental analyzers) to an IRMS, enabling continuous-flow isotope analysis [9]. |

Frequently Asked Questions (FAQs)

FAQ 1: Is the Earth currently experiencing a sixth mass extinction?

The scientific community is actively debating this question, and different interpretations of the data lead to different conclusions.

- Evidence Supporting the Crisis: Many studies argue that a sixth mass extinction is underway. One key study found the average rate of vertebrate species loss over the last century to be up to 100 times higher than the natural background rate [11]. This analysis, using a conservative background rate of 2 mammal extinctions per 10,000 species per 100 years (2 E/MSY), concluded that the number of species lost in the last century would have taken between 800 and 10,000 years to disappear at the background rate [11]. Some researchers estimate that, since around AD 1500, as many as 7.5–13% of all ~2 million known species may already have gone extinct, a figure far greater than the 0.04% listed as extinct on the IUCN Red List, which is biased toward vertebrates [12].

- An Opposing Viewpoint: Recent research challenges this characterization. A 2025 analysis argues that while biodiversity decline is real, the scale does not meet the geological definition of a mass extinction (loss of 75% of species) [13] [14]. This study, focusing on genus-level extinctions since 1500, found that 102 genera have gone extinct, representing 0.45% of the genera assessed by the IUCN. It also found that the majority of these extinctions were of island-dwelling species and that decade-by-decade extinction rates have been declining over the last 100 years, partly due to successful conservation efforts [13] [14].

FAQ 2: How does climate change interact with biodiversity loss?

Climate change and biodiversity loss are deeply interconnected crises that reinforce each other [15] [16].

- Climate Change as a Driver: Climate change is a significant driver of biodiversity loss and is projected to become the dominant cause in the coming decades [17] [16]. Its impacts include [18] [17] [15]:

  - Species Range Shifts: Rising temperatures force species to move to higher elevations or latitudes.

  - Ecosystem Disruption: It can disrupt ecological interactions, such as predator-prey balances and plant-pollinator relationships.

  - Habitat Destruction: Ocean acidification harms corals and shellfish, while rising sea levels destroy coastal habitats.

  - Increased Extinction Risk: The risk of species extinction increases with every degree of warming [18].

- Biodiversity as a Climate Defense: Healthy ecosystems are our strongest natural defense against climate change. They act as massive carbon sinks [18] [15].

  - Forests offer roughly two-thirds of the total mitigation potential of all nature-based solutions [18].

  - Peatlands cover only 3% of the world’s land but store twice as much carbon as all forests [18].

  - Ocean habitats like seagrasses and mangroves can sequester carbon dioxide at rates up to four times higher than terrestrial forests [18].

FAQ 3: What are the primary methodologies for quantifying extinction rates?

Researchers use several key methods, each with its own strengths and limitations.

- IUCN Red List Analysis: This involves assessing the conservation status of species against standardized criteria to determine their risk of extinction. A limitation is that the Red List is taxonomically biased, with comprehensive coverage for birds and mammals but very poor coverage for invertebrates, which constitute the majority of known species [12].

- Comparative Rate Analysis: This method compares current extinction rates to the "background extinction rate"—the average rate of species loss over geological time without human influence. The background rate for mammals is often estimated at 2 E/MSY (extinctions per million species per year), but this benchmark is itself a subject of scientific discussion [11].

- Genus- and Family-Level Analysis: Some studies examine extinctions at higher taxonomic levels (e.g., genus or family) to capture the loss of evolutionary history. This was the approach taken by both the 2023 study (73 genera extinct) [14] and the 2025 rebuttal (102 genera extinct) [13].

- Projection Modeling for Understudied Taxa: To address biases, scientists model projected extinction rates for understudied groups like invertebrates based on well-documented taxa or specific regional studies. One study used mollusc data to estimate a global extinction rate of 7.5-13% for all species [12].

Experimental Protocols & Data Tables

Protocol 1: Calculating Contemporary and Background Extinction Rates

Objective: To determine if the current rate of species extinction exceeds the natural background rate.

Workflow Diagram: Calculating Extinction Rates

Methodology:

- Data Compilation: Gather data on species officially declared extinct within a specified timeframe (e.g., since 1500 AD) from authoritative sources like the IUCN Red List. Data should be stratified by taxonomic group (mammals, birds, invertebrates, etc.) and habitat (continental vs. island) [13] [11].

- Calculate Modern Extinction Rate: Express the rate in Extinctions per Million Species per Year (E/MSY). The formula is:

  Modern Rate (E/MSY) = (Number of Extinctions / Total Number of Assessed Species) / Time Period in Years × 1,000,000

- Establish Background Rate: Use a consensus value from paleontological literature. For example, a conservative background rate for mammals is 2 E/MSY (meaning 2 extinctions per 10,000 species per 100 years) [11]. Note that this value is debated.

- Comparison: Calculate the ratio of the Modern Rate to the Background Rate. A ratio significantly greater than 1 indicates an accelerated extinction crisis [11].

- Trend Analysis: Analyze the data for temporal patterns (e.g., acceleration or deceleration over centuries or decades) and spatial patterns (e.g., hotspots of extinction on islands) [13] [14].
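The rate calculation and comparison steps above can be sketched in a few lines. The formula and the 2 E/MSY background rate come from the protocol text; the extinction count, number of assessed species, and time window are hypothetical inputs chosen to make the arithmetic easy to follow.

```python
BACKGROUND_RATE_EMSY = 2.0  # conservative mammal background rate cited above


def extinction_rate_emsy(n_extinct, n_assessed, years):
    """Extinctions per million species-years (E/MSY)."""
    if n_assessed <= 0 or years <= 0:
        raise ValueError("n_assessed and years must be positive")
    return n_extinct / n_assessed / years * 1_000_000


def rate_ratio(modern_rate, background_rate=BACKGROUND_RATE_EMSY):
    """How many times the modern rate exceeds the background rate."""
    return modern_rate / background_rate


def years_at_background(n_extinct, n_assessed,
                        background_rate=BACKGROUND_RATE_EMSY):
    """Years the same losses would take at the background rate."""
    expected_per_year = n_assessed * background_rate / 1_000_000
    return n_extinct / expected_per_year


# Hypothetical inputs: 10 extinctions among 5,000 assessed species in 100 years.
modern = extinction_rate_emsy(n_extinct=10, n_assessed=5_000, years=100)
print(f"modern rate: {modern:.1f} E/MSY")
print(f"ratio to background: {rate_ratio(modern):.1f}x")
print(f"equivalent time at background rate: {years_at_background(10, 5_000):.0f} years")
```

Running the same functions per taxonomic group or per habitat stratum (continental vs. island) supports the stratified comparison described in the data compilation step.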

Protocol 2: Implementing a Nature-Based Solution (NbS) Intervention

Objective: To design, implement, and monitor a conservation project that uses ecosystem management to simultaneously address biodiversity loss and climate change.

Workflow Diagram: NbS Project Workflow

Methodology:

- Site and Threat Assessment: Select a degraded ecosystem (e.g., a forest, peatland, or mangrove). Conduct a baseline survey to document existing biodiversity, carbon stocks, and primary threats (e.g., deforestation, invasive species, erosion) [16].

- Intervention Design: Formulate a specific, evidence-based action plan. Examples include [19] [16]:

  - Reforestation/Restoration: Planting native tree species to rebuild habitat and sequester carbon.

  - Invasive Species Removal: Eradicating non-native predators or plants to allow native species to recover.

  - Ecosystem Protection: Formally protecting areas to prevent further land-use change.

  - Community Livelihoods: Developing sustainable income alternatives for local communities to reduce pressure on natural resources.

- Implementation: Execute the planned actions, often through partnerships with NGOs, government agencies, and local communities [16].

- Monitoring: Track key performance indicators over the long term. This includes [20] [16]:

  - Biodiversity Metrics: Changes in species abundance, richness, and composition.

  - Ecosystem Metrics: Rates of habitat regeneration, reduction in soil erosion, and water quality improvements.

  - Climate Metrics: Quantification of carbon sequestration in biomass and soil.

  - Technology: Use drones, satellite imagery, environmental DNA (eDNA), and AI to enhance monitoring scale and efficiency [20].

- Adaptive Management: Use monitoring results to refine and improve the intervention strategies over time [16].
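The biodiversity metrics in the monitoring step can be computed from simple survey counts. The sketch below calculates species richness and the Shannon diversity index, H = −Σ p_i ln p_i, for a baseline and a follow-up survey. The choice of the Shannon index is an assumption for illustration (the source does not name a specific index), and the species labels and counts are hypothetical.

```python
import math


def species_richness(counts):
    """Number of species with at least one individual recorded."""
    return sum(1 for n in counts.values() if n > 0)


def shannon_index(counts):
    """Shannon diversity H = -sum(p * ln p) over observed species."""
    total = sum(counts.values())
    if total == 0:
        return 0.0
    h = 0.0
    for n in counts.values():
        if n > 0:
            p = n / total
            h -= p * math.log(p)
    return h


# Hypothetical survey counts at one restoration site (illustrative only).
baseline = {"species_a": 90, "species_b": 10, "species_c": 0}
follow_up = {"species_a": 50, "species_b": 30, "species_c": 20}

for label, survey in (("baseline", baseline), ("follow-up", follow_up)):
    print(f"{label}: richness={species_richness(survey)}, "
          f"H={shannon_index(survey):.3f}")
```

Comparing these values between survey rounds gives a quantitative input for the adaptive management step, though they should be read alongside composition changes, since an index alone cannot show which species were gained or lost.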

Data Tables

Table 1: Quantifying Genus-Level Extinctions Since 1500 AD

Data sourced from a 2025 analysis of IUCN information [13].

| Taxonomic Group | Number of Extinct Genera | Example of Extinct Genus | Key Context |
|---|---|---|---|
| All Animals | 90 | Raphus (dodo); Cylindraspis (giant tortoises) | Majority were monotypic (single-species) genera [14]. |
| All Plants | 12 | Not specified | Represents 179 total species lost [13]. |
| Mammals | 21 | Hydrodamalis (sea cow) | 76% of extinctions were on islands [13] [14]. |
| Birds | 37 | Moho (Hawaiian honeyeaters, family Mohoidae) | Represents loss of an entire family [13]. |

Table 2: Comparative Extinction Rates and Frameworks

Synthesized data from multiple studies and reports [11] [15] [16].

| Metric | Value | Context / Source |
|---|---|---|
| Living Planet Index (2024) | 73% average decline in monitored wildlife populations (1970-2020) | Measures population abundance, not extinctions. Freshwater populations declined by 85% [15]. |
| Vertebrate Extinction Rate | Up to 100x background rate | Conservative estimate; the last century's extinctions would have taken 800-10,000 years at background rates [11]. |
| Estimated Invertebrate-Inclusive Loss | 7.5-13% of all species since 1500 | Estimate based on mollusc data; highlights limitation of IUCN Red List [12]. |
| Kunming-Montreal Framework | Protect 30% of Earth's land/oceans by 2030 | Global biodiversity target to reverse nature loss [18] [17]. |

The Researcher's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Tools and Technologies for Biodiversity Research and Conservation

| Tool / Solution | Function & Application |
|---|---|
| IUCN Red List | The world's most comprehensive inventory of the global conservation status of biological species. Serves as a primary data source for calculating extinction rates, though it has taxonomic and geographic biases [13] [12]. |
| Environmental DNA (eDNA) | A tool for detecting species from soil, water, or air samples. Allows for non-invasive, large-scale biodiversity monitoring and detection of rare or elusive species [20]. |
| Satellite Imagery & Remote Sensing | Enables monitoring of large-scale habitat changes, such as deforestation, wetland loss, and urban expansion. Provides critical data for tracking land-use change, a major driver of biodiversity loss [20]. |
| Drones | Used for detailed aerial surveys, mapping hard-to-reach habitats, planting trees (e.g., Flash Forest project), and monitoring wildlife populations with minimal disturbance to ecosystems [20]. |
| AI & Machine Learning | Processes large datasets from camera traps, acoustic sensors, and satellite images to identify species, count individuals, and detect patterns of habitat change that would be impossible to analyze manually [20]. |
| Stable Isotope Analysis | Used to trace food webs, understand animal migration patterns, and study nutrient cycling within ecosystems. Helps in understanding the functional roles of species and the impact of their loss [17]. |

Technical Support Center: Environmental Research and Analysis

This technical support center provides troubleshooting guides and FAQs for researchers and scientists investigating the mechanisms and impacts of three major environmental threats: air pollution, plastic waste, and deforestation. The content is framed within the context of a broader thesis on addressing environmental degradation, with a focus on experimental evidence and methodological support.

Air Pollution Research Support

Frequently Asked Questions

Q1: Our epidemiological study found an association between PM2.5 and neurodegenerative outcomes, but reviewers request biological plausibility. What experimental models can demonstrate mechanism?

A: To establish mechanism, integrate findings from complementary models. A recent Science study provides a robust template [21]:

- Human Data Analysis: Begin with large-scale epidemiological analysis. The cited study used hospital data from 56.5 million U.S. Medicare patients, linking ZIP-code-level PM2.5 exposure to Lewy body dementia risk (17% higher risk of Parkinson's disease dementia per interquartile range increase in PM2.5) [21].

- Animal Models: Expose wild-type and genetically modified mice (e.g., alpha-synuclein knockouts) to PM2.5 from diverse global sources (U.S., China, Europe) for 5-10 months. Monitor for brain atrophy, cell death, and cognitive decline [21].

- Molecular Analysis: Characterize alpha-synuclein clumps using biophysical and biochemical assays. Identify structurally distinct "strains" of toxic protein aggregates induced by pollution [21].

Q2: How does air pollution trigger protein misfolding in neural tissues at the molecular level?

A: Research identifies specific chemical pathways. At Scripps Research, scientists found that air pollution triggers excessive protein S-nitrosylation, particularly affecting CRTC1, a protein essential for memory and learning [22]. This "SNO-storm" disrupts the interaction between CRTC1 and CREB, impairing gene expression critical for synaptic function and cell survival. To confirm this in your models:

- Cell Cultures: Expose human neural cells derived from stem cells to pollution-related molecules.

- Biochemical Assays: Test for S-nitrosylation of CRTC1 and disrupted CREB binding.

- Intervention: Engineer CRTC1 variants resistant to S-nitrosylation; this partially reversed memory deficits in Alzheimer's mouse models [22].

Air Pollution Experimental Data Summary

Table 1: Quantitative Findings from Recent Air Pollution Studies

| Study Focus | Population/Model | Exposure Type & Duration | Key Quantitative Finding | Source |
|---|---|---|---|---|
| Mental Health Risk | 14,800 people in Bradford, UK | Relocation to more polluted area (1 year) | 11% greater risk of new mental health drug prescriptions | [23] |
| Lewy Body Dementia Risk | 56.5 million U.S. Medicare patients | Long-term PM2.5 exposure (2000-2014) | 17% higher risk of Parkinson's disease dementia per IQR increase in PM2.5 | [21] |
| Protein S-Nitrosylation (SNO-storm) | Human & mouse neural cells | PM2.5 / NOx molecules | S-nitrosylation of CRTC1 protein disrupts CREB binding, impairing memory genes | [22] |
| Green Space Mitigation | Population in Bradford, UK | Access to quality green space | Proximity to poor-quality green space can worsen mental health | [23] |

Experimental Protocol: Assessing PM2.5-Induced Neurotoxicity in Mouse Models

Methodology (Adapted from Mao et al., Science) [21]:

- Animal Subjects: Utilize both wild-type mice and genetically modified models (e.g., mice lacking alpha-synuclein gene and humanized A53T alpha-synuclein mice).

- Exposure Regimen: Expose animals to concentrated ambient PM2.5 or collected particulate matter from various sources (e.g., vehicle exhaust, industrial emissions) every other day.

- Dose: Typical studies use environmentally relevant concentrations (e.g., 50-200 μg/m³).

- Duration: Chronic exposure for 5-10 months to model long-term human exposure.

- Behavioral Testing: Conduct cognitive assessments (e.g., Morris water maze, novel object recognition) and motor function tests (e.g., rotarod, beam walking) at regular intervals.

- Tissue Collection and Analysis:

- Perfuse and collect brain tissues (cortex, hippocampus, substantia nigra).

- Perform immunohistochemistry for alpha-synuclein, p-Tau, and markers of neuroinflammation (e.g., GFAP for astrocytes, Iba1 for microglia).

- Analyze for protein aggregates using protein misfolding cyclic amplification (PMCA) or similar techniques.

- Conduct RNA sequencing to assess transcriptomic changes and compare with human LBD signatures.

Visualization: Air Pollution Neurotoxicity Pathway

Plastic Waste Research Support

Frequently Asked Questions

Q3: Our laboratory wants to quantify and reduce its single-use plastic waste. What validated reduction and reuse approaches can we implement?

A: A 2020 case study provides a measurable framework for plastic reduction in research laboratories [24]:

- Baseline Assessment: Monitor all single-use plastic consumption for 4 weeks. One laboratory documented weekly use of nearly 2,000 single-use microbiology plastics and 2,200 tubes, generating 97 kg of biohazard waste over the 4-week period [24].

- Reduction Strategies:

- Reuse Protocol: Implement a chemical decontamination station for plastic tubes:

- Soak in high-level disinfectant (e.g., Distel) for >16 hours [24].

- Rinse thoroughly with water.

- Autoclave for sterilization.

- Impact Measurement: The cited study achieved substantial reductions in plastic consumption and significant cost savings through these approaches [24].

Q4: How can we accurately monitor global plastic pollution flows for large-scale environmental studies?

A: Utilize modeling tools and international data sources:

- EPA's Environmental Modeling Tools: The Environmental Modeling and Visualization Laboratory (EMVL) offers resources like the Real Time Geospatial Data Viewer (RETIGO) for analyzing air quality data and other environmental datasets [25].

- Satellite Monitoring: Leverage remote sensing data and the EPA's Remote Sensing Information Gateway (RSIG3D) to access multi-terabyte environmental datasets from satellites and monitoring sites [25].

- International Treaty Developments: Monitor the UN's ongoing process to create a legally binding international treaty on plastic pollution, which will influence future data collection frameworks [26].

Plastic Waste Experimental Data Summary

Table 2: Laboratory Plastic Waste Reduction Strategies and Efficacy

| Strategy Category | Specific Intervention | Replacement For | Efficacy & Notes | Source |

|---|---|---|---|---|

| Material Substitution | Metal inoculation loops | Plastic loops | Reusable, autoclavable | [24] |

| Material Substitution | Wooden inoculation sticks | Plastic spreaders | For bacterial colony picking | [24] |

| Process Change | Chemical decontamination station | Single-use plastic tubes | Soak in disinfectant >16 hrs, then autoclave | [24] |

| System Change | Centralized bulk ordering | Individual small orders | Reduces packaging waste | [24] |

| Global Context | --- | --- | 14 million tons of plastic enter oceans yearly; could grow to 29 million tons/year by 2040 without action | [26] |

Experimental Protocol: Implementing a Plastic Waste Reduction and Reuse System

Methodology (Adapted from McGorrian et al., 2020) [24]:

- Baseline Documentation (4 weeks):

- Empty all existing plastic consumables and waste bags.

- Introduce tracking systems (whiteboards, spreadsheets) for all laboratory members to record plastic items collected from communal stocks.

- Weigh all biohazard waste bags before disposal weekly.

- Intervention Implementation (7+ weeks):

- Procurement: Replace specific items with sustainable alternatives (see Table 2).

- Decontamination Station Setup: Establish a labeled container with appropriate disinfectant for reusable plastic tubes. Ensure safety protocols for handling and rinsing.

- Staff Training: Conduct sessions on new protocols for using metal loops, wooden sticks, and the decontamination station.

- Impact Assessment:

- Continue tracking plastic items collected and biohazard waste weight.

- Calculate percentage reduction in plastic consumption and waste generation.

- Analyze cost savings from reduced consumable purchases.
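The impact-assessment arithmetic can be sketched as follows. This is a minimal illustration, not the cited study's analysis: the function name and all weekly counts are hypothetical placeholders for a laboratory's own tracking data.

```python
def percent_reduction(baseline: float, post: float) -> float:
    """Percentage reduction from a baseline value to a post-intervention value."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - post) / baseline


# Hypothetical weekly item counts recorded from the tracking sheets.
baseline_weekly_items = [2000, 1950, 2100, 1980]   # 4-week baseline period
post_weekly_items = [1400, 1350, 1300, 1320]       # weeks after the intervention

baseline_mean = sum(baseline_weekly_items) / len(baseline_weekly_items)
post_mean = sum(post_weekly_items) / len(post_weekly_items)

print(f"Mean weekly items: {baseline_mean:.0f} -> {post_mean:.0f}")
print(f"Reduction: {percent_reduction(baseline_mean, post_mean):.1f}%")
```

The same function applies to biohazard waste weight or consumable cost, provided the baseline and post-intervention periods are measured in the same units.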

Visualization: Laboratory Plastic Waste Reduction Workflow

Deforestation Research Support

Frequently Asked Questions

Q5: Our ecological study needs to attribute local deforestation to specific human causes. What are the principal drivers we should quantify?

A: Research consistently identifies these primary human-induced causes [27] [28]:

- Agricultural Expansion: The leading cause, particularly for cattle ranching and cash crops like palm oil and soy [27] [26].

- Logging: Both legal and illegal timber extraction exceeding sustainable rates [28].

- Infrastructure Development: Road construction, urbanization, and dam projects [27] [28].

- Mining: Resource extraction requiring large-scale land clearance [27].

Quantification methods should include:

- Satellite Imagery Analysis: Use time-series data to track land-use changes.

- Economic Data Correlation: Cross-reference deforestation hotspots with agricultural commodity production data.

- Field Validation: Ground-truthing to confirm suspected causes.

Q6: What are the most critical consequences of deforestation we should prioritize in environmental impact assessments for development projects?

A: Focus on these evidence-based consequences with high ecological and societal impact [27] [28]:

- Biodiversity Loss: Habitat destruction is the primary driver of species extinction [26] [28].

- Climate Change Impact: Deforestation contributes significantly to greenhouse gas emissions and reduces carbon sequestration capacity [28].

- Soil Degradation: Loss of forest cover leads to erosion, reduced fertility, and desertification [28].

- Water Cycle Disruption: Altered rainfall patterns and increased flooding risk [28].

- Social Impacts: Displacement of indigenous communities and loss of livelihoods [27] [28].

Deforestation Experimental Data Summary

Table 3: Principal Causes and Consequences of Deforestation

| Category | Specific Factor | Key Impact / Metric | Source |

|---|---|---|---|

| Human Causes | Agricultural Expansion | Leading cause globally; for livestock and crops | [27] [28] |

| Human Causes | Logging & Wood Industry | Timber, paper products exceeding sustainable rates | [28] |

| Human Causes | Infrastructure Development | Roads, urban expansion, dams | [27] [28] |

| Human Causes | Mining | Resource extraction clearing large areas | [27] |

| Ecological Consequences | Biodiversity Loss | 68% average decline in population sizes of mammals, fish, birds, reptiles, and amphibians (1970-2016) | [26] |

| Ecological Consequences | Climate Change | Increased carbon emissions, altered weather patterns | [28] |

| Ecological Consequences | Soil Erosion | Loss of soil fertility, leading to desertification | [28] |

| Human Consequences | Indigenous Community Impact | Displacement and loss of traditional livelihoods | [27] [28] |

| Human Consequences | Disease Spread | Increased human-wildlife contact raising zoonotic disease risk | [28] |

Experimental Protocol: Monitoring Deforestation and Habitat Fragmentation

Methodology (Adapted from Bodo et al., 2021 and GeeksforGeeks, 2022) [27] [28]:

- Remote Sensing Data Acquisition:

- Source multi-temporal satellite imagery (e.g., Landsat, Sentinel) for the study area over 10-20 years.

- Ensure images are from the same season to minimize phenological variation.

- Land Use/Land Cover (LULC) Classification:

- Classify images into forest, agriculture, urban, water, and other relevant classes using supervised classification algorithms.

- Validate classification accuracy with ground truth data (>85% accuracy target).

- Change Detection Analysis:

- Perform post-classification comparison to identify forest loss areas.

- Calculate annual deforestation rates using the compound-interest formula:

$$r = \frac{1}{t_2 - t_1}\,\ln\!\left(\frac{A_2}{A_1}\right)$$

where $A_1$ and $A_2$ are the forest areas at times $t_1$ and $t_2$.

- Fragmentation Analysis:

- Use landscape metrics software to calculate:

- Patch density and size distribution

- Edge-to-interior ratio

- Connectivity indices

- Driver Attribution:

- Correlate deforestation patches with proximity to roads, settlements, and agricultural areas.

- Conduct field surveys to verify drivers in selected locations.
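The annual rate from the change-detection step can be computed directly from two classified forest areas. The sketch below assumes areas in hectares and dates in years; the function name and the example figures are illustrative only.

```python
import math


def annual_forest_change_rate(area_t1: float, area_t2: float,
                              year_t1: float, year_t2: float) -> float:
    """Annual rate r = ln(A2 / A1) / (t2 - t1); negative values mean net forest loss."""
    if area_t1 <= 0 or area_t2 <= 0:
        raise ValueError("forest areas must be positive")
    if year_t2 <= year_t1:
        raise ValueError("year_t2 must be later than year_t1")
    return math.log(area_t2 / area_t1) / (year_t2 - year_t1)


# Hypothetical example: 10,000 ha of forest in 2010 falling to 8,000 ha in 2020.
rate = annual_forest_change_rate(10_000, 8_000, 2010, 2020)
print(f"Annual change rate: {rate * 100:.2f}% per year")
```

Because the rate is logarithmic, it is comparable between study areas with different observation intervals, which a simple percentage loss is not.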

The Scientist's Toolkit

Research Reagent Solutions for Environmental Health Studies

Table 4: Essential Research Materials for Environmental Threat Investigation

| Reagent/Material | Specific Example | Research Function | Application Context |

|---|---|---|---|

| Autoclavable Tubes | 50 mL Falcon tubes (Greiner Bio-One) | Reusable sample containers; withstand sterilization | Plastic waste reduction in labs [24] |

| Sustainable Inoculation Tools | Metal loops (Fisher Scientific) | Replacing single-use plastic for microbiology | Bacterial culture work without plastic waste [24] |

| Wooden Application Tools | Wooden inoculation sticks (Sigma) | Sustainable alternative for patch plating | Microbiology techniques [24] |

| Chemical Decontaminants | Distel (Scientific Lab Supplies) | High-level disinfectant for reuse protocols | Decontamination station for plasticware [24] |

| PM2.5 Exposure Samples | Collected particulate matter from various sources | Trigger for neurodegenerative pathways in models | Air pollution neurotoxicity studies [21] |

| Alpha-Synuclein Models | Humanized A53T alpha-synuclein mice | Model protein misfolding in neurodegeneration | Studying pollution-induced Lewy body formation [21] |

| Anti-SNO Antibodies | S-nitrosylation detection reagents | Identify air pollution-induced protein changes | Detecting "SNO-storm" in neural cells [22] |

| Remote Sensing Data | Satellite imagery (Landsat, Sentinel) | Deforestation monitoring and quantification | Tracking forest loss and fragmentation [28] |

Frequently Asked Questions (FAQs)

FAQ 1: What are the main types of biomarkers used to study pollution exposure? Biomarkers are essential tools for linking environmental exposure to health effects. They are categorized into three main types [29]:

- Biomarkers of Exposure: Used to quantify the internal dose of a specific chemical. This involves measuring the chemical itself, its metabolites, or its reaction products in biological samples like blood or urine.

- Biomarkers of Effect: Measurable biochemical, physiological, or behavioral changes that indicate a biological response to an exposure. Examples include oxidative stress markers and cytogenetic damage.

- Biomarkers of Susceptibility: Indicators of an inherent or acquired ability of an organism to respond to the challenge of exposure, such as genetic polymorphisms in metabolic enzymes.

FAQ 2: How does air pollution like PM2.5 cause damage at the cellular level? Fine particulate matter (PM2.5) can penetrate deep into the lungs and enter the bloodstream. A key mechanism of its toxicity is the induction of oxidative stress [30] [31]. Particles can generate reactive oxygen species (ROS), leading to an imbalance that damages cellular macromolecules. This oxidative damage to lipids and DNA is a critical event that can trigger inflammatory responses and is a documented precursor to chronic diseases, including cancer and cardiovascular conditions [31] [32].

FAQ 3: My research focuses on pharmaceuticals. How can I assess their environmental impact? The environmental impact of pharmaceuticals is a growing concern. A multi-faceted approach is recommended [33] [34]:

- Green Drug Design: Develop pharmaceuticals that are benign and easily biodegradable after excretion.

- Lifecycle Assessment: Consider the entire lifecycle of a drug, from green manufacturing and rational consumption to proper disposal of unused medicines.

- Environmental Risk Assessment (ERA): Submit new pharmaceutical products for rigorous ERAs during the marketing authorization process, as mandated in regions like the European Union.

FAQ 4: What advanced methods can elucidate the mechanisms linking pollutants to complex diseases? Traditional toxicology tests are being supplemented with advanced computational and omics technologies. One innovative approach involves integrating epigenome data (e.g., from ATAC-Seq, which identifies regions of open chromatin) with large-scale transcription factor (TF) binding data (from ChIP-Seq) [35]. This method, such as the DAR-ChIPEA pipeline, can identify pivotal TFs whose binding is disrupted by pollutants, thereby revealing disease-associated mechanisms, such as how PM2.5 may lead to immune dysfunction by altering the activity of TFs like C/EBPs and Rela [35].

FAQ 5: How significant is the global disease burden from environmental pollution? Environmental pollution remains a major source of health risk worldwide. Global burden of disease studies have attributed approximately 8–9% of the total disease burden to pollution, with a considerably higher impact in developing countries [36]. The major sources of exposure include unsafe water, poor sanitation, poor hygiene, and indoor air pollution.

Experimental Protocols & Workflows

Protocol 1: Assessing Oxidative Damage from Particulate Matter Exposure

This protocol outlines the methodology for measuring oxidatively damaged DNA and lipids as biomarkers of biologically effective dose in individuals exposed to combustion particles like PM2.5 [31].

- Study Population & Recruitment: Define your cohort (e.g., occupational groups, high-risk urban populations). Include a control group matched for age, gender, and smoking status to account for confounders.

- Biological Sample Collection: Collect samples pre- and post-exposure.

- Blood Collection: Draw blood into EDTA tubes. Centrifuge to separate plasma for lipid peroxidation assays and lymphocytes for DNA damage analysis.

- Urine Collection: Collect spot or 24-hour urine samples. Stabilize with antioxidants (e.g., ascorbic acid) and store at -80°C.

- Biomarker Analysis:

- Oxidatively Damaged DNA: Quantify 8-oxo-7,8-dihydro-2'-deoxyguanosine (8-oxodG) in DNA from lymphocytes or in urine using techniques like HPLC-ECD or ELISA.

- Lipid Peroxidation: Measure products like malondialdehyde (MDA) in plasma or urine using the thiobarbituric acid reactive substances (TBARS) assay or HPLC.

- Data Analysis: Calculate the standardized mean difference between exposed and control groups. Perform multiple regression analysis to adjust for potential covariates like body mass index and dietary intake of antioxidants.
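The standardized mean difference in the data-analysis step can be sketched as below. The protocol does not say which SMD variant is used, so this example assumes Cohen's d with a pooled standard deviation and an optional Hedges small-sample correction. The biomarker values are hypothetical.

```python
import math
import statistics


def standardized_mean_difference(exposed, control, small_sample_correction=True):
    """Cohen's d with pooled SD; optionally scaled by the Hedges correction factor."""
    n1, n2 = len(exposed), len(control)
    if n1 < 2 or n2 < 2:
        raise ValueError("each group needs at least two observations")
    mean_diff = statistics.mean(exposed) - statistics.mean(control)
    # Pooled standard deviation from the two sample variances.
    pooled_var = ((n1 - 1) * statistics.variance(exposed)
                  + (n2 - 1) * statistics.variance(control)) / (n1 + n2 - 2)
    d = mean_diff / math.sqrt(pooled_var)
    if small_sample_correction:
        d *= 1 - 3 / (4 * (n1 + n2) - 9)  # approximate Hedges correction
    return d


# Hypothetical urinary 8-oxodG values (arbitrary units) for illustration only.
exposed_group = [5.1, 6.3, 5.8, 7.0, 6.1]
control_group = [4.2, 5.0, 4.8, 5.5, 4.6]
print(f"SMD: {standardized_mean_difference(exposed_group, control_group):.2f}")
```

Covariate adjustment (body mass index, dietary antioxidants) requires a regression model and is outside this sketch.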

Protocol 2: Computational Pipeline for Identifying Pollutant-Mediated Disease Mechanisms

This protocol describes a data-mining approach (DAR-ChIPEA) to identify transcription factors (TFs) that play a pivotal role in the modes of action of environmental pollutants [35].

- Data Acquisition:

- Obtain ATAC-Seq data from a chemical perturbation experiment (e.g., cells or tissues exposed to a pollutant like tributyltin or PM2.5).

- Retrieve genome-wide TF binding site data from a curated database like ChIP-Atlas.

- Acquire known TF-disease associations from a repository like DisGeNET.

- Identification of Differentially Accessible Regions (DARs): Process the ATAC-Seq data to identify genomic regions with statistically significant differences in chromatin accessibility between exposed and control conditions.

- Transcription Factor Enrichment Analysis (ChIPEA): Use the DARs as input for an enrichment analysis against the database of TF binding sites. This identifies TFs whose binding sites are significantly overrepresented in the pollutant-altered genomic regions.

- Triadic Association Mapping: Cross-reference the resulting pollutant-TF association matrix with the TF-disease database to construct a pollutant-TF-disease triadic association, predicting the molecular mechanisms by which a pollutant may trigger a specific disorder.
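The enrichment step can be illustrated with a generic overlap test. The sketch below is not the published DAR-ChIPEA implementation. It assumes that, for each TF, you have already counted how many DARs and how many background regions overlap that TF's binding sites, and it applies a one-sided Fisher's exact test. The TF names and counts are hypothetical.

```python
from math import comb


def fisher_enrichment_p(dar_hits: int, dar_total: int,
                        bg_hits: int, bg_total: int) -> float:
    """One-sided Fisher's exact p-value for TF-site overlap being enriched in DARs."""
    population = dar_total + bg_total   # all regions tested
    successes = dar_hits + bg_hits      # regions overlapping the TF's binding sites
    upper = min(successes, dar_total)
    # Hypergeometric upper tail: P(overlaps among DARs >= dar_hits).
    tail = sum(comb(successes, k) * comb(population - successes, dar_total - k)
               for k in range(dar_hits, upper + 1))
    return tail / comb(population, dar_total)


# Hypothetical overlap counts: TF -> (DARs overlapping, background regions overlapping).
overlap_counts = {"TF_A": (40, 5), "TF_B": (12, 10), "TF_C": (3, 4)}
dar_total, bg_total = 100, 100

ranked = sorted(
    ((tf, fisher_enrichment_p(hits, dar_total, bg, bg_total))
     for tf, (hits, bg) in overlap_counts.items()),
    key=lambda item: item[1],
)
for tf, p_value in ranked:
    print(f"{tf}: p = {p_value:.3g}")
```

In a real analysis the p-values would need multiple-testing correction across all TFs before the triadic mapping step.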

Quantitative Data on Pollution Biomarkers

The following tables summarize key quantitative findings from meta-analyses and studies on biomarkers of pollution exposure.

Table 1: Standardized Mean Differences (SMD) in Oxidative Damage Biomarkers from Air Pollution Exposure (Meta-Analysis) [31]

| Biomarker | Biological Matrix | SMD (95% Confidence Interval) | Interpretation |

|---|---|---|---|

| Oxidized DNA | Blood | 0.53 (0.29 - 0.76) | Medium to large effect size |

| Oxidized DNA | Urine | 0.52 (0.22 - 0.82) | Medium to large effect size |

| Oxidized Lipids | Blood | 0.73 (0.18 - 1.28) | Large effect size |

| Oxidized Lipids | Urine | 0.49 (0.01 - 0.97) | Small to large effect size |

| Oxidized Lipids | Airways | 0.64 (0.07 - 1.21) | Medium to large effect size |

Table 2: Specific Biomarkers of Inflammation and Oxidative Stress Linked to Air Particles [30]

| Air Pollutant | Biomarkers Studied | Key Findings | Associated Health Effects |

|---|---|---|---|

| PM2.5 | 8-OHdG, IL-8, CC16 | Personal exposure leads to oxidative DNA damage | Increased lung damage and cancer risk |

| PM10 & PM2.5 | TNF-α, IL-6, IL-12p40, IL-10 | PM2.5 alters balance between pro- and anti-inflammatory cytokines | Aberrant, dysregulated immune status |

| PM10, PM2.5, UFP | IL-6, TNF-α | Exposure increases IL-6; PM2.5 & UFP elevate TNF-α | Respiratory inflammation and systemic effects |

Signaling Pathways and Workflows

Pollutant-Induced Oxidative Stress and Inflammation Pathway

DAR-ChIPEA Computational Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for Pollution-Health Research

| Research Reagent / Kit | Function / Application | Example Biomarkers/Targets |

|---|---|---|

| HPLC-ECD / LC-MS/MS Kits | High-sensitivity quantification of oxidized nucleosides in DNA/urine. | 8-oxodG, 8-OHdG [30] [31] |

| TBARS Assay Kit | Colorimetric measurement of lipid peroxidation in plasma/serum. | Malondialdehyde (MDA) [31] |

| ELISA Kits (Multiplex) | Simultaneous measurement of multiple inflammatory cytokines in serum/supernatant. | IL-6, TNF-α, IL-8, IL-10 [30] |

| Clara Cell Protein (CC16) ELISA | Quantification of a biomarker for lung epithelial damage. | CC16 (Uteroglobin) [30] |

| ATAC-Seq Kit | Identifies genome-wide regions of open chromatin for epigenetic analysis. | Differentially Accessible Regions (DARs) [35] |

| ChIP-Seq Grade Antibodies | Immunoprecipitation of specific transcription factors or histone modifications. | TFs (e.g., C/EBPs, Rela), H3K27ac [35] |

Research Frameworks and Analytical Tools for Assessing Environmental Health Impacts

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Data Integration and Tool Sprawl

Problem: Users report inefficiencies, errors, and difficulty obtaining a unified view of data due to an unmanageable number of disconnected data tools.

- Step 1: Balance Stakeholder Priorities

- Symptoms: Central IT teams report governance and security concerns, while business users complain about slow access to data and a lack of flexibility.

- Solution: Architect your ecosystem to balance centralized control (for governance, security, stability) with decentralized capabilities (for business user speed and self-service) [37].

- Step 2: Audit and Consolidate Your Tool Stack

- Symptoms: High costs for maintaining multiple tools, gaps in data ownership, and complex, poorly documented data lineages.

- Solution: Evaluate each tool based on its strategic fit. Consider a comprehensive, cloud-native data integration platform that supports universal connectors for various data sources and allows seamless switching between ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) workflows [37].

- Step 3: Implement Intelligent Automation

- Symptoms: Repetitive, manual data management tasks and inconsistent documentation.

- Solution: Leverage an AI co-pilot within your data platform to automate repetitive tasks, recommend efficient workflows, and auto-document processes and data catalogs [37].

Guide 2: Addressing Data Quality and Accessibility

Problem: Researchers cannot access or trust the data needed for analysis, often due to siloed systems, inconsistent formats, or unclear governance.

- Step 1: Establish a Data Mesh Architecture

- Symptoms: Data is locked within specific departments or projects, and there is no single source of truth.

- Solution: Shift from a centralized data ownership model to a decentralized "data mesh." This architecture treats data as a product, with domain-specific owners ensuring its quality and accessibility. Implement global governance standards to ensure interoperability [38].

- Step 2: Manage Identity and Access

- Symptoms: Unauthorized data access or, conversely, researchers being unable to access the data they need.

- Solution: Implement a centralized identity management system (e.g., Okta, OpenID) or a decentralized system (e.g., using blockchain) to control access securely [38].

- Step 3: Create a Central API Catalog

- Symptoms: Difficulty discovering and connecting to available data sources.

- Solution: Develop a central catalog of all Application Programming Interfaces (APIs) to ensure consistent and discoverable methods for data access and sharing [38].

Frequently Asked Questions (FAQs)

FAQ 1: What is an integrative data ecosystem, and why is it critical for environmental health research? An integrative data ecosystem is a platform that combines data from numerous providers—such as environmental monitors, health records, and economic databases—and builds value through the usage of this processed, unified data [38]. It is critical because environmental degradation, health, and socioeconomic resilience are interdependent [5]. Understanding these complex feedback loops requires a paradigm shift towards integrative, data-informed governance [5].

FAQ 2: Our research is suffering from "tool sprawl." What is the best way to consolidate our data integration tools? The best approach is to adopt a comprehensive, cloud-native data integration platform [37]. Look for these key characteristics:

- Universal Connectors: Tool-agnostic connectivity that works with various on-cloud and on-premises systems.

- Intelligent Automation: AI-driven features to automate tasks, recommend workflows, and auto-catalog data.

- Flexible Deployment: The ability to start with free versions and scale up seamlessly to full-service solutions as needed [37].

FAQ 3: How can we ensure our data ecosystem is scalable and that data assets are discoverable? To ensure scalability in a heterogeneous environment, enforce robust governance requiring all participants to:

- Make data assets discoverable, addressable, and trustworthy.

- Use self-describing semantics and open standards for data exchange.

- Support secure, granular-level access to data [38].

FAQ 4: What are the key technical considerations for setting up the data exchange and architecture? When setting up your ecosystem, you must resolve five key questions:

- Data Exchange: How will data be exchanged among partners? Use standard mechanisms like secure API-based connections [38].

- Identity & Access: How is identity managed? Consider centralized (e.g., OpenID) or decentralized (e.g., blockchain) systems [38].

- Data Domains & Storage: How are data domains defined? A data mesh architecture is often preferred over strict centralization [38].

- Access & Consolidation: How is access to non-local data managed? Use a central API catalog with strong governance for data sharing [38].

- Scaling: How will the ecosystem scale? This is achieved through the governance and standards described in FAQ 3 [38].

Data Presentation and Protocols

Table 1: Core Color Palette for Visualizations

Adhere to this palette to ensure accessibility and visual consistency across all diagrams and interfaces.

| Color Name | Hex Code | RGB Code | Use Case Example |

|---|---|---|---|

| Google Blue | #4285F4 | (66, 133, 244) | Primary data source nodes, "Environmental" data flows |

| Google Red | #EA4335 | (234, 67, 53) | Data processing/transformation nodes, "Health" data flows, error states |

| Google Yellow | #FBBC05 | (251, 188, 5) | Integration/analysis nodes, "Socioeconomic" data flows, warnings |

| Google Green | #34A853 | (52, 168, 83) | Output/result nodes, successful validation, final indicators |

| White | #FFFFFF | (255, 255, 255) | Background for nodes and graphs |

| Light Gray | #F1F3F4 | (241, 243, 244) | Diagram canvas background, secondary elements |

| Dark Gray | #202124 | (32, 33, 36) | Primary text color on light backgrounds |

| Medium Gray | #5F6368 | (95, 99, 104) | Secondary text, borders |

Table 2: WCAG Color Contrast Requirements for Data Visualizations

Ensure all text and graphical elements in your diagrams meet at least Level AA contrast ratios.

| Element Type | Minimum Contrast Ratio | Example Use Case |

|---|---|---|

| Normal Text | 4.5:1 | All labels inside nodes [39] |

| Large Text (18pt+) | 3:1 | Main titles or headers in diagrams [39] |

| Graphical Objects | 3:1 | Lines, arrows, and symbols [39] |
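Whether a color pairing meets these thresholds can be checked programmatically. The sketch below implements the WCAG 2.x relative-luminance and contrast-ratio formulas for six-digit hex colors. The helper names are illustrative, and the colors tested come from the palette table above.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


# Check palette text colors against a white node background.
for name, color in [("Dark Gray", "#202124"), ("Medium Gray", "#5F6368"),
                    ("Google Yellow", "#FBBC05")]:
    ratio = contrast_ratio(color, "#FFFFFF")
    verdict = "passes" if ratio >= 4.5 else "fails"
    print(f"{name} on white: {ratio:.2f}:1 ({verdict} AA for normal text)")
```

Lighter palette colors such as Google Yellow fall below 3:1 against white, so they are better suited to node fills with dark text than to text or thin lines on light backgrounds.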

Experimental Protocol: Building an Integrative Data Ecosystem

This methodology outlines the steps for constructing a functional data ecosystem for interdisciplinary research.

1. Problem Formulation & Indicator Selection

- Objective: Define the specific research question linking environmental, health, and socioeconomic factors (e.g., "How does PM2.5 air pollution affect childhood asthma rates across socioeconomically diverse neighborhoods?").

- Procedure:

- Conduct a literature review to identify established and relevant indicators [5] [40].

- Environmental Indicators: PM2.5 levels, water quality indices, green space access [5].

- Health Indicators: Incidence rates of asthma, diabetes, obesity; mental health disorder prevalence [5] [40].

- Socioeconomic Indicators: Income levels, education quality, healthcare accessibility [5] [40].

2. Data Sourcing and Aggregation

- Objective: Collect and aggregate raw data from diverse sources.

- Procedure:

3. Data Processing and Integration

- Objective: Clean, standardize, and merge datasets into a unified model.

- Procedure:

- Employ both ETL and ELT workflows as needed to handle structured and unstructured data [37].

- Implement a decentralized "data mesh" architecture, where domain experts (e.g., environmental scientists, epidemiologists) are responsible for the quality and structure of their respective data [38].

- Apply data governance standards to ensure interoperability and quality across domains [38].

4. Analysis and Modeling

- Objective: Generate insights through statistical and spatial analysis.

- Procedure:

5. Visualization and Reporting

- Objective: Communicate findings effectively to stakeholders and policymakers.

- Procedure:

- Develop interactive dashboards and generate reports that show the interlinked trends, such as pollution maps overlaid with health outcome data and socioeconomic status [5].

- All visualizations must adhere to the color contrast and palette guidelines provided in Tables 1 and 2.

Mandatory Visualizations

Diagram 1: High-Level Architecture of an Integrative Data Ecosystem

Diagram 2: Data Processing Workflow from Source to Insight

Diagram 3: Logical Relationships Between Core Indicators

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for an Integrative Data Ecosystem

| Item / Solution | Function | Example in Context |

|---|---|---|

| Cloud-Native Data Integration Platform | Provides a unified, scalable environment for combining ETL and ELT workflows, ensuring flexibility and stability at scale [37]. | Informatica Cloud Data Integration; Apache Spark on Databricks [37] [41]. |

| Universal Connectors | Pre-built, tool-agnostic interfaces that enable seamless data extraction from a wide variety of sources and destinations without custom coding [37]. | Connectors for pulling data from government APIs (environmental), hospital EHRs (health), and census databases (socioeconomic). |

| Data Mesh Architecture | A decentralized operational model that treats data as a product, assigning ownership and quality control to domain-specific teams (e.g., environmental, health) [38]. | An environmental science team manages and curates all air and water quality data within the ecosystem. |

| Identity & Access Management (IAM) | A centralized or decentralized system that securely controls user authentication and authorization to data assets based on their role [38]. | Using Okta or a blockchain-based system to ensure only authorized researchers can access sensitive health records. |

| Central API Catalog | A discoverable registry of all available data interfaces, ensuring consistency, reusability, and clear governance for data sharing [38]. | A researcher can search the catalog to find the exact API endpoint for latest PM2.5 data or childhood obesity rates. |

| AI/ML Co-pilot & Automation | Intelligent tools that automate repetitive data engineering tasks, recommend optimal workflows, and auto-generate data catalogs and documentation [37]. | An AI suggests a data cleaning pipeline for new health data based on previous projects, saving analysts time. |

Global Burden at a Glance: Key Quantitative Data

The global health impacts of lead and PM2.5 are substantial. The tables below summarize the core quantitative data on mortality, morbidity, and associated economic losses.

Table 1: Global Mortality and Morbidity Burden (2019 Estimates)

| Pollutant | Attributable Deaths (Annual) | Key Morbidity Outcomes | Affected Populations |

|---|---|---|---|

| Lead Exposure | 5,545,000 adults from cardiovascular disease [42] | 765 million IQ points lost in children <5 years [42] | Children, adults, developing fetus [43] |

| PM2.5 Exposure | 4.14 million deaths from long-term exposure [44] | Ischemic heart disease, stroke, COPD, lung cancer, lower respiratory infections [44] | Older adults, children, people with pre-existing heart or lung disease [45] |

Table 2: Economic Costs and Regional Disparities

| Pollutant | Global Economic Cost | Regional Disparities |

|---|---|---|

| Lead Exposure | US\$6.0 trillion (6.9% of global GDP) [42] | 95% of IQ loss and 90% of CVD deaths occur in LMICs [42] |

| PM2.5 Exposure | Not quantified in the cited sources | China and India account for 58% of the global PM2.5 mortality burden [44] |

Methodological Toolkit: Core Experimental Protocols

Health Impact Model for Adult Lead Exposure and CVD Mortality

This protocol outlines the steps for developing a concentration-response function to estimate cardiovascular disease mortality from adult lead exposure [46].

- Step 1: Define the Goal - The goal is to develop a scalable, quantitative Health Impact Model that relates a unit change in adult lead exposure to a unit change in CVD mortality risk [46].

- Step 2: Literature Identification - Conduct a systematic literature review. Build upon existing comprehensive reviews and perform a supplemental search in databases like PubMed using strings such as:

(lead OR pb OR "blood lead") AND (Cardiovascular Diseases AND mortality) [46].

- Step 3: Study Selection Criteria - Apply predefined criteria to identify the most useful studies:

- The study sample must be representative of the general adult population.

- The study should report blood lead levels below 5 µg/dL to reflect current exposures and higher incremental impacts at lower doses.

- The study must be peer-reviewed and published in English [46].

- Step 4: Model Derivation - Prefer studies that present continuous concentration-response functions. Use functions from selected studies to derive the HIM, which can be applied to population data to estimate attributable deaths or avoided mortality from changes in exposure levels [46].
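As a rough illustration of Step 4, the sketch below applies a concentration-response function to population data. It assumes a log-linear functional form and uses entirely hypothetical inputs (the slope `BETA`, the reference level, the blood lead levels, and the death count are placeholders, not values from [46]); the form and parameters of a real model would come from the selected studies.

```python
import math

def relative_risk(blood_lead, reference, beta):
    """Relative risk under an assumed log-linear form: exp(beta * excess exposure).

    Exposure at or below the reference level is assigned RR = 1.
    """
    excess = max(blood_lead - reference, 0.0)
    return math.exp(beta * excess)

def avoided_deaths(observed_deaths, current, target, reference, beta):
    """Deaths avoided if exposure moved from `current` to `target` (µg/dL).

    Observed deaths scale with RR, so the avoided share is 1 - RR(target) / RR(current).
    """
    rr_current = relative_risk(current, reference, beta)
    rr_target = relative_risk(target, reference, beta)
    return observed_deaths * (1.0 - rr_target / rr_current)

# Hypothetical placeholder inputs, for illustration only.
BETA = 0.10          # assumed log-RR per 1 µg/dL of blood lead
REFERENCE = 1.0      # assumed counterfactual blood lead level (µg/dL)
CVD_DEATHS = 10_000  # assumed observed annual CVD deaths in the population

# Attributable deaths: the special case where the target is the reference level.
attributable = avoided_deaths(CVD_DEATHS, 3.0, REFERENCE, REFERENCE, BETA)
# Avoided deaths from a partial reduction, 3.0 -> 2.0 µg/dL.
avoided = avoided_deaths(CVD_DEATHS, 3.0, 2.0, REFERENCE, BETA)

print(f"Attributable deaths: {attributable:.0f}")
print(f"Deaths avoided by reducing 3.0 -> 2.0 µg/dL: {avoided:.0f}")
```

Treating attributable deaths as a special case of avoided deaths keeps one formula for both uses named in Step 4. A population with a distribution of blood lead levels would need the same calculation applied per exposure stratum and summed.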

Novel Exposure Model for PM2.5 and Mortality

This methodology assesses both short-term and long-term effects of PM2.5 exposure on population mortality using spatially resolved data [47].

- Exposure Assessment:

- Data: Utilize satellite-derived aerosol optical depth (AOD) measurements, land-use data, and meteorological variables.

- Model: Develop a prediction model to estimate daily PM2.5 concentrations at a high spatial resolution (e.g., 10 × 10 km). Incorporate a land-use regression component to refine estimates to the local address level [47].

- Health Data Analysis:

- Time-Series Analysis (Short-Term Effects): Regress daily counts of deaths in each geographic grid cell against cell-specific short-term PM2.5 exposure, controlling for temperature, socioeconomic data, and seasonal trends [47].

- Relative Incidence Analysis (Long-Term Effects): Use two long-term exposure metrics (regional PM2.5 predictions and local deviations) to analyze their relationship with the proportion of deaths from cardiovascular and respiratory diseases [47].
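The time-series step above can be sketched as a Poisson log-linear regression of daily death counts on PM2.5. The example below is a deliberately reduced illustration for a single grid cell: it fits only an intercept and a PM2.5 slope by Newton-Raphson, omits the temperature, socioeconomic, and seasonal terms that the method in [47] controls for, and uses noise-free synthetic data with assumed coefficients (`TRUE_A`, `TRUE_B`) so that the fit can be checked against known inputs.

```python
import math

def fit_poisson(x, y, iterations=25):
    """Fit log E[y] = a + b * x by Newton-Raphson on the Poisson log-likelihood."""
    a = math.log(sum(y) / len(y))  # start from the log of the mean count
    b = 0.0
    for _ in range(iterations):
        mu = [math.exp(a + b * xi) for xi in x]
        # Score vector (gradient of the log-likelihood).
        g_a = sum(yi - mi for yi, mi in zip(y, mu))
        g_b = sum((yi - mi) * xi for yi, mi, xi in zip(y, mu, x))
        # Fisher information (2 x 2 negative Hessian).
        h_aa = sum(mu)
        h_ab = sum(mi * xi for mi, xi in zip(mu, x))
        h_bb = sum(mi * xi * xi for mi, xi in zip(mu, x))
        det = h_aa * h_bb - h_ab * h_ab
        # Newton step: solve the 2 x 2 system H * delta = g in closed form.
        a += (h_bb * g_a - h_ab * g_b) / det
        b += (h_aa * g_b - h_ab * g_a) / det
    return a, b

# Synthetic, noise-free data for one hypothetical grid cell (assumed values).
TRUE_A = math.log(20.0)  # baseline of 20 expected deaths per day
TRUE_B = 0.001           # log-rate increase per 1 µg/m³ of PM2.5
pm25 = [5.0 + (i * 7) % 50 for i in range(100)]           # daily PM2.5, µg/m³
deaths = [math.exp(TRUE_A + TRUE_B * c) for c in pm25]    # expected daily counts

a_hat, b_hat = fit_poisson(pm25, deaths)
pct_per_10 = (math.exp(10 * b_hat) - 1.0) * 100.0
print(f"Estimated increase in mortality per 10 µg/m³: {pct_per_10:.2f}%")
```

In practice a statistical package would be used to fit the full model with confounders and to report standard errors; the hand-written solver here only makes the underlying estimating equations visible.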

Visualizing Pathways and Workflows

Health Impact Modeling Workflow

The diagram below outlines the logical workflow for developing a health impact model for environmental exposures, based on the protocol for lead and CVD mortality [46].

Mechanistic Pathways of Lead Toxicity

This diagram illustrates the primary biological mechanisms by which lead exposure leads to adverse health outcomes, particularly cardiovascular and neurological effects [46] [48] [43].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Data Sources for Burden of Disease Studies

| Item / Reagent | Function / Application in Research |
|---|---|
| Global Burden of Disease (GBD) Data | Provides standardized country-level blood lead level estimates and PM2.5 data for comparative risk assessment [42]. |
| Satellite Aerosol Optical Depth (AOD) | Serves as a key input for spatiotemporally resolved PM2.5 prediction models, enabling exposure assessment in areas without ground monitors [47]. |
| NHANES Blood Lead Data | Provides representative biomonitoring data for a population, crucial for calibrating exposure models and tracking temporal trends [46]. |
| Land-Use Regression (LUR) Models | Refines regional air pollution exposure estimates to a local scale using variables like traffic density, land cover, and altitude [47]. |
| Concentration-Response Function | The core quantitative reagent; a function (e.g., relative risk per 10 µg/m³ PM2.5) that translates exposure into health risk, derived from meta-analyses or major cohort studies [49] [47]. |
| Value of a Statistical Life (VSL) | A metric used in economics to estimate the welfare cost of premature mortality for cost-of-illness analyses [42]. |

Frequently Asked Questions (FAQs) for Researchers

Q1: Why is there a significant disparity between the GBD 2019 estimate for cardiovascular deaths from lead and the newer estimate of 5.5 million? A1: The newer estimate is approximately six times higher because it uses a health impact model that captures the effect of lead exposure on cardiovascular disease mortality mediated through mechanisms other than hypertension. The GBD 2019 estimate primarily included effects operating through hypertension, potentially missing a significant portion of the burden [42].

Q2: What is the key methodological advancement in recent PM2.5 exposure models that improves upon traditional methods? A2: Traditional models often rely on ground monitors, leading to exposure error and limited representativeness. Novel models combine satellite aerosol optical depth (AOD) with land-use data to create daily, high-resolution (e.g., 10 × 10 km) PM2.5 predictions. This provides full geographic coverage, reduces exposure misclassification, and allows for the assessment of both short-term and long-term effects in a single, population-wide study [47].

Q3: Is there a known safe threshold for blood lead concentration in children? A3: No. According to the WHO, there is no known safe blood lead concentration. Blood lead concentrations as low as 3.5 µg/dL are associated with decreased intelligence, behavioral difficulties, and learning problems in children. The harmful effects are believed to occur at any detectable level [43].

Q4: How do the economic costs of lead exposure break down? A4: The global US\$6.0 trillion cost is primarily driven by two factors: the welfare cost of premature cardiovascular mortality (about 77% of the total cost) and the present value of future income losses from IQ reduction in children (about 23% of the total cost) [42].
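The breakdown in A4 can be checked with a few lines of arithmetic. The sketch below uses only figures stated in this section (the US\$6.0 trillion total, the 77% and 23% shares, and the 6.9% of global GDP from Table 2); the variable names are illustrative, and the implied GDP figure is a back-calculation, not a value reported in [42].

```python
# Figures taken from the text above; names are illustrative.
TOTAL_COST_TRILLION = 6.0   # global cost of lead exposure, US$ trillion
CVD_SHARE = 0.77            # share from premature cardiovascular mortality
IQ_SHARE = 0.23             # share from future income losses due to IQ reduction
SHARE_OF_GDP = 0.069        # total cost as a fraction of global GDP

cvd_cost = TOTAL_COST_TRILLION * CVD_SHARE
iq_cost = TOTAL_COST_TRILLION * IQ_SHARE
implied_gdp = TOTAL_COST_TRILLION / SHARE_OF_GDP  # back-calculated, approximate

print(f"CVD mortality welfare cost: US${cvd_cost:.2f} trillion")
print(f"IQ-related income losses:   US${iq_cost:.2f} trillion")
print(f"Implied global GDP:         US${implied_gdp:.1f} trillion")
```

Because the published shares are rounded ("about 77%" and "about 23%"), the component costs of roughly US\$4.6 trillion and US\$1.4 trillion should be read as approximations.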

Frequently Asked Questions (FAQs)

Q1: What is green growth in the context of pharmaceutical research and development? A1: Green growth represents an economic development model that seeks to mitigate resource use and pollution by transitioning societies towards a low-carbon, efficient model of production and consumption. In pharmaceutical contexts, this involves nurturing innovation in cleaner technologies, investing in renewable energy, promoting resource conservation, and implementing environmental monitoring systems to ensure sustainable operations while maintaining product safety and compliance [50].

Q2: How does digital environmental monitoring directly support green growth objectives in a lab? A2: Digital environmental monitoring supports green growth by enhancing operational efficiency and preventing waste. It enables real-time tracking of critical parameters like airborne particles and microbial contamination; reported benefits include a 20% reduction in cleanroom validation time, a 15% decrease in microbial contamination incidents, and a 25% decrease in audit preparation time [51]. This proactive, data-driven approach minimizes batch rejections and resource wastage, contributing to more sustainable manufacturing [51].

Q3: What are the most critical parameters to monitor in a pharmaceutical cleanroom environment? A3: Critical parameters for pharmaceutical cleanrooms include [51]:

- Airborne particulate levels

- Microbial contamination (viable particles)

- Temperature and humidity

- Pressure differentials

Q4: We are seeing inconsistent environmental monitoring data. What are the first steps we should take? A4: Your first steps should follow a systematic troubleshooting approach:

- Understand the Problem: Reproduce the issue by checking if the monitoring equipment or software is functioning as expected. Gather data logs and identify specific patterns of inconsistency [52].

- Isolate the Issue: Remove complexity by checking for recent changes in the environment, calibration status of sensors, or updates to the software. Change one variable at a time (e.g., test a single sensor in a different location) to identify the root cause [52].

- Find a Fix: Based on your findings, this could involve recalibrating sensors, updating software, or re-training personnel on sampling procedures. Document the solution for future reference [52].
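For the "identify specific patterns of inconsistency" step, one simple screening approach is to flag readings that sit far from the median of a log. The sketch below uses the modified z-score based on the median absolute deviation (MAD); this technique, the 3.5 threshold, and the sample readings are assumptions chosen for illustration and are not prescribed by [52].

```python
from statistics import median

def flag_outliers(readings, threshold=3.5):
    """Return indices of readings whose modified z-score exceeds `threshold`.

    Modified z-score = 0.6745 * |x - median| / MAD, which is robust to the
    outliers it is trying to detect.
    """
    med = median(readings)
    mad = median([abs(r - med) for r in readings])
    if mad == 0:
        return []  # no spread to scale against; nothing can be flagged
    return [
        i for i, r in enumerate(readings)
        if 0.6745 * abs(r - med) / mad > threshold
    ]

# Hypothetical temperature log (°C) from one sensor; index 4 is a suspect spike.
log = [21.0, 21.2, 20.9, 21.1, 35.4, 21.0, 20.8, 21.3]
suspect = flag_outliers(log)
print("Suspect reading indices:", suspect)
```

A flagged reading is a prompt for investigation (calibration status, recent changes, sensor placement), not proof of a fault.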

Troubleshooting Guides

Guide 1: Resolving Data Integration Errors from Environmental Sensors

Issue: Environmental monitoring data is not streaming correctly from IoT sensors to the central data management platform, causing gaps in reporting.

Potential Causes:

- Cause 1: Network connectivity issues between the sensor and the hub.

- Cause 2: Incorrect configuration of the sensor's data output settings.

- Cause 3: Software version mismatch between the sensor firmware and the data platform.

Solutions:

- Solution 1: Verify Network Connectivity

- Description: Ensure the sensor has a stable connection to the local network.

- Step 1: Check the physical network connections and indicator lights on the sensor.

- Step 2: Use a network diagnostic tool to ping the sensor's IP address from the central server.

- Solution 2: Re-configure Sensor Settings

- Description: Validate and correct the sensor's data transmission settings.

- Step 1: Access the sensor's configuration menu via its local interface or software.

- Step 2: Confirm the correct data output format, transmission frequency, and destination IP address are set according to the system documentation.

Results: After following these steps, data should flow consistently from the sensor to the central platform, visible in the real-time dashboard and data logs.

Useful Resources: System Integration Manual, Network Troubleshooting Checklist.
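One way to confirm that data is flowing consistently after applying these solutions is to scan the logged timestamps for gaps. The sketch below is a minimal example under stated assumptions: the one-minute reporting interval, the 1.5× tolerance, and the sample timestamps are hypothetical, and a real platform would read timestamps from its own data logs.

```python
from datetime import datetime, timedelta

def find_gaps(timestamps, expected_interval, tolerance=1.5):
    """Return (last_seen, next_seen) pairs where consecutive readings are
    further apart than `tolerance` times the expected interval."""
    ordered = sorted(timestamps)
    limit = expected_interval * tolerance
    return [
        (earlier, later)
        for earlier, later in zip(ordered, ordered[1:])
        if later - earlier > limit
    ]

# Hypothetical log: one reading per minute, with minutes 4 to 6 missing.
start = datetime(2024, 1, 1, 12, 0)
received = [start + timedelta(minutes=m) for m in (0, 1, 2, 3, 7, 8, 9)]

gaps = find_gaps(received, expected_interval=timedelta(minutes=1))
for earlier, later in gaps:
    print(f"Gap of {later - earlier} between {earlier:%H:%M} and {later:%H:%M}")
```

Comparing gap start times against network or firmware events can help distinguish among the three potential causes listed above.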

Guide 2: Addressing High Particulate Count Alerts in a Cleanroom

Issue: The environmental monitoring system triggers repeated high particulate count alerts in a Grade A cleanroom zone.

Potential Causes:

- Cause 1: Compromised personnel gowning or aseptic technique.

- Cause 2: Failure or imbalance in the HVAC or filtration system.

- Cause 3: Equipment malfunction or improper introduction of materials into the zone.

Solutions:

- Solution 1: Escalate for Engineering Review

- Description: Immediately involve facilities engineering to inspect the HVAC system's integrity and performance.

- Step 1: Notify the engineering team and provide them with the alert logs and specific zone data.

- Step 2: Review pressure differential and air flow velocity logs for the affected zone.

- Solution 2: Enhance Personnel Monitoring

- Description: Increase the frequency and rigor of personnel monitoring and gowning qualification checks.

- Step 1: Conduct a focused gowning requalification for all staff accessing the zone.

- Step 2: Deploy additional personnel monitoring (e.g., contact plates, finger dabs) during subsequent operations to isolate the source [53].

Results: The root cause of the particulate excursion is identified and corrected, bringing the cleanroom environment back to its validated state and ensuring compliance.

Useful Resources: HVAC System Validation Protocol, Aseptic Gowning SOP.
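To separate repeated alerts from isolated spikes when reviewing the alert logs, consecutive exceedances can be grouped into runs. The sketch below is illustrative only: the limit of 3,520 particles/m³ is the ISO 14644-1 Class 5 concentration limit for particles ≥0.5 µm, used here as a placeholder, and the sample counts and the `min_run` setting are hypothetical. The site's validated alert and action limits should be substituted.

```python
def excursion_runs(counts, limit, min_run=2):
    """Return (start_index, length) for each run of at least `min_run`
    consecutive readings above `limit`."""
    runs = []
    run_start = None
    for i, count in enumerate(counts):
        if count > limit:
            if run_start is None:
                run_start = i  # a new run of exceedances begins here
        else:
            if run_start is not None and i - run_start >= min_run:
                runs.append((run_start, i - run_start))
            run_start = None
    # Close a run that continues to the end of the log.
    if run_start is not None and len(counts) - run_start >= min_run:
        runs.append((run_start, len(counts) - run_start))
    return runs

# Placeholder limit (particles/m³, >=0.5 µm); replace with the validated site limit.
LIMIT = 3520
# Hypothetical sequential particle counts from one sampling location.
counts = [1200, 3600, 1500, 3700, 3900, 4100, 2000, 3600]

runs = excursion_runs(counts, LIMIT)
print("Sustained excursions (start index, length):", runs)
```

Sustained runs point more toward systemic causes such as HVAC or filtration problems, while isolated spikes are more typical of transient events such as personnel movement or material transfer; either pattern still requires investigation under the site's procedures.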

Quantitative Data on Digital Monitoring Impact

The following table summarizes empirical data on the benefits of implementing digital environmental monitoring solutions in pharmaceutical settings [51].

Table 1: Measured Benefits of Digital Environmental Monitoring Systems

| Key Performance Indicator | Improvement Measured | Application Context |
|---|---|---|
| Cleanroom Validation Time | 20% reduction | Integration of IoT sensors for real-time alerts |
| Microbial Contamination Incidents | 15% reduction | Deployment of real-time microbial sensors integrated with MES |
| Audit Preparation Time | 25% decrease | Use of automated data collection and reporting tools |
| Production Throughput | 10% increase | Implementation of real-time monitoring to minimize batch delays |

Experimental Protocols

Protocol 1: Cleanroom Performance Qualification (PQ) Using Automated Monitoring

Objective: To qualify and validate that a cleanroom consistently operates within specified environmental parameters (e.g., particulate counts, pressure differentials, temperature, humidity) using a continuous, automated monitoring system.

Materials:

- Research Reagent Solutions & Essential Materials:

- Automated Particle Counter: Laser-based sensor for continuous monitoring of airborne particulate levels (e.g., particles ≥0.5 µm and ≥5.0 µm).

- Microbial Air Sampler: Active air sampler to capture viable particles for incubation and colony counting.

- Environmental Monitoring Software: A platform like CaliberEMpro for data aggregation, trend analysis, and alerting [53].

- Calibrated Sensors: For temperature, relative humidity, and pressure differentials, traceable to national standards.

Methodology:

- Installation and Calibration: Install sensors at pre-determined, critical locations as per the cleanroom mapping document. Ensure all sensors are calibrated and reporting to the central software.

- Baseline Data Collection: Operate the cleanroom under "at-rest" conditions (equipment on, no personnel present) and collect environmental data for a minimum of 24 hours to establish a baseline.