Ensuring Rigor in Environmental Evidence: A Comprehensive Guide to Peer-Reviewing Systematic Review Search Strategies

This article provides a definitive guide for researchers and professionals on implementing peer review for search strategies in environmental systematic reviews.


Abstract

This article provides a definitive guide for researchers and professionals on implementing peer review for search strategies in environmental systematic reviews. It covers the critical foundation of why search peer review is essential for minimizing bias and ensuring comprehensive evidence synthesis, aligning with standards from organizations like the Collaboration for Environmental Evidence (CEE). The guide offers a step-by-step methodological walkthrough for applying the Peer Review of Electronic Search Strategies (PRESS) checklist, a validated tool for evaluating conceptualization, syntax, and translation of searches. It further addresses practical troubleshooting for common errors and biases, and concludes with strategies for validating search performance and comparing peer review frameworks across disciplines. This resource is designed to enhance the quality, reproducibility, and reliability of systematic reviews in environmental science and related biomedical fields.

The Critical Role of Search Peer Review in Unbiased Environmental Evidence

Why Search Strategy Quality is the Foundation of a Valid Systematic Review

In environmental health sciences, where evidence informs critical public policy and regulatory decisions, the integrity of a systematic review hinges on the quality of its literature search. A flawed search strategy can introduce bias, miss pivotal studies, and lead to unreliable conclusions. This guide details the methodology for developing and troubleshooting robust search strategies, with a specific focus on the unique challenges of environmental systematic reviews.

Frequently Asked Questions (FAQs)

1. Why can't I just search a single database like PubMed for my environmental review? Relying on a single database is a common but critical mistake. Different databases index different journals and report types. For example, Embase has significantly greater coverage of European and pharmacological literature than MEDLINE, while Scopus and Web of Science offer broad, multidisciplinary coverage [1]. A comprehensive search requires multiple databases to ensure all relevant evidence is captured [2].

2. What is the difference between sensitivity and precision in searching, and which is more important?

- Sensitivity (Recall): The proportion of all relevant studies in the world that your search finds. A high-sensitivity search aims to miss as few relevant studies as possible.

- Precision: The proportion of the studies your search returns that are actually relevant. High-precision searches yield fewer irrelevant results.

For a full systematic review, high sensitivity is the primary goal to minimize the risk of bias [3]. However, an overly sensitive search can yield an unmanageable number of results. The art of search development lies in optimizing sensitivity while maintaining feasible precision [4].
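Both measures are simple ratios. The Python sketch below computes them for a single search. The counts are hypothetical, and in practice the total number of relevant studies is unknown and has to be estimated, for example from a gold-standard set of known eligible articles.

```python
def sensitivity(relevant_retrieved: int, relevant_total: int) -> float:
    """Proportion of all relevant studies that the search retrieved (recall)."""
    if relevant_total <= 0:
        raise ValueError("relevant_total must be positive")
    return relevant_retrieved / relevant_total


def precision(relevant_retrieved: int, total_retrieved: int) -> float:
    """Proportion of retrieved records that turned out to be relevant."""
    if total_retrieved <= 0:
        raise ValueError("total_retrieved must be positive")
    return relevant_retrieved / total_retrieved


# Hypothetical search: 2,000 records retrieved, 40 of them relevant,
# out of 50 relevant studies known to exist.
print(f"sensitivity = {sensitivity(40, 50):.2f}")   # 0.80
print(f"precision   = {precision(40, 2000):.3f}")   # 0.020
```

In this example the search misses one relevant study in five, while screeners must read 50 records (1 / 0.02) for each relevant one they find, which is the trade-off between sensitivity and feasible precision described above.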

3. How do I find specialized terminology for my environmental exposure (e.g., a specific chemical)? You must use a combination of approaches:

- Controlled Vocabulary: Use each database's thesaurus (e.g., MeSH in PubMed, Emtree in Embase) to find standardized terms [5].

- Text Word Searching: Identify synonyms, brand names, acronyms, and spelling variations from key articles and background reading. For chemicals, include CAS registry numbers [3].

- Search Existing Reviews: Identify systematic reviews on similar topics and adapt their search terms, with proper citation [4].
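The terms gathered in these three ways are usually grouped into one block per concept, with synonyms joined by OR and the concept blocks joined by AND. The Python sketch below assembles such blocks in PubMed-style syntax. It assumes PubMed field tags ([mh] for MeSH, [tiab] for title/abstract, and [rn] for registry numbers such as CAS numbers); the example terms are illustrative only, so verify each heading in the thesaurus and each field tag in the database's own help pages before use.

```python
def term_clause(term: str, field: str = "tiab") -> str:
    """Tag one free-text term; quote multi-word phrases so they are searched as phrases."""
    return f'"{term}"[{field}]' if " " in term else f"{term}[{field}]"


def concept_block(text_words, headings=(), cas_numbers=()) -> str:
    """OR together every way of expressing one concept."""
    clauses = [f'"{h}"[mh]' for h in headings]          # controlled vocabulary
    clauses += [term_clause(t) for t in text_words]      # synonyms, variants, truncation
    # Assumption: CAS numbers are searched in PubMed's registry-number field [rn].
    clauses += [f'"{n}"[rn]' for n in cas_numbers]
    return "(" + " OR ".join(clauses) + ")"


def combine_concepts(*blocks: str) -> str:
    """AND together the blocks for the separate concepts of the question."""
    return " AND ".join(blocks)


exposure = concept_block(
    ["glyphosate", "pesticid*", "plant protection product*"],
    headings=["Pesticides"],
    cas_numbers=["1071-83-6"],  # CAS registry number for glyphosate
)
outcome = concept_block(["water pollut*", "groundwater contamina*"],
                        headings=["Water Pollution"])
print(combine_concepts(exposure, outcome))
```

Keeping each concept as a separate list of terms also makes it easier to adapt the strategy for other platforms and to report it in full.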

4. Is it acceptable to limit my search to English-language articles? While sometimes done for practicality, limiting by language can introduce bias, as it may systematically exclude relevant studies published in other languages [5]. The best practice is to search without language restrictions and, if necessary, address the potential for language bias during the critical appraisal of the evidence [1].

5. What is search peer review, and is it necessary? Yes, peer review of the search strategy is a critical quality assurance step. The Peer Review of Electronic Search Strategies (PRESS) checklist is an evidence-based tool that prompts reviewers to check for errors in Boolean operators, spelling, syntax, and the appropriateness of subject headings and search terms [5] [3]. It is strongly recommended that an information specialist or another experienced searcher conduct this review [4].

Troubleshooting Common Search Strategy Problems

Table 1: Common Search Issues and Solutions

| Problem | Symptom | Underlying Cause | Solution |
|---|---|---|---|
| Low Sensitivity | Search fails to find known key papers; yield is suspiciously low. | Overly narrow search; missing synonyms or spelling variations; incorrect use of AND; failing to use database thesauri. | Brainstorm all possible terms for each concept; use the OR operator to combine them; exploit "explosion" in thesaurus searching; validate search with gold-standard articles [5] [4]. |
| Low Precision | Search yields far too many irrelevant results. | Overly broad search; omitting a key concept; incorrect use of OR; failing to use appropriate field tags (e.g., [tiab]). | Add a necessary search concept with AND; use proximity operators or field restrictions to focus terms; consider study design filters if appropriate for the question [5]. |
| Inconsistent Results Across Databases | The same search string returns vastly different numbers of results in different platforms. | Platform-specific syntax and controlled vocabularies. | Never copy-paste a search strategy between databases without adaptation. Adjust the syntax, field tags, and controlled vocabulary terms for each database [5] [2]. |
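The last row's advice to adapt rather than copy a strategy is easier to follow when each concept is held in a platform-neutral form. The Python sketch below renders the same concept in PubMed syntax and in Ovid MEDLINE syntax. It assumes only two kinds of clause (an exploded subject heading and a title/abstract text word) and uses the usual field conventions of each interface ([mh] and [tiab] in PubMed; exp Heading/ and .ti,ab. in Ovid). It does not map MeSH to Emtree or any other thesaurus; that step still requires checking each heading by hand.

```python
from typing import List, Tuple

# Each clause is (kind, text), where kind is "heading" or "textword".
Clause = Tuple[str, str]


def to_pubmed(clauses: List[Clause]) -> str:
    parts = []
    for kind, text in clauses:
        if kind == "heading":
            parts.append(f'"{text}"[mh]')      # PubMed explodes MeSH terms by default
        elif kind == "textword":
            parts.append(f'"{text}"[tiab]' if " " in text else f"{text}[tiab]")
        else:
            raise ValueError(f"unknown clause kind: {kind}")
    return "(" + " OR ".join(parts) + ")"


def to_ovid(clauses: List[Clause]) -> str:
    parts = []
    for kind, text in clauses:
        if kind == "heading":
            parts.append(f"exp {text}/")       # explicit explosion in Ovid
        elif kind == "textword":
            parts.append(f"{text}.ti,ab.")     # adjacent words are searched as a phrase
        else:
            raise ValueError(f"unknown clause kind: {kind}")
    return "(" + " or ".join(parts) + ")"


air = [("heading", "Air Pollution"), ("textword", "air pollut*"), ("textword", "smog")]
print(to_pubmed(air))
print(to_ovid(air))
```

Even with correct syntax, result counts will still differ between platforms because indexing and coverage differ; the aim is an equivalent strategy, not identical numbers.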

Experimental Protocols for Search Strategy Validation

Protocol 1: Using the PRESS Checklist for Peer Review

Objective: To systematically identify and correct errors in a draft search strategy before execution. Methodology:

- Preparation: The review team provides the draft search strategy and the full review protocol to the peer reviewer (ideally an information specialist).

- Review: The reviewer uses the PRESS checklist to assess the following domains [3]:

  - Translation of Question: Are the search concepts correct and complete?

  - Boolean and Proximity Operators: Are AND, OR, and NOT used correctly? Are proximity operators (e.g., N/3) applied properly?

  - Subject Headings: Are relevant controlled vocabulary terms (MeSH, Emtree) included and exploded appropriately? Are any key terms missing?

  - Text Word Searching: Are spelling variants, synonyms, acronyms, and plural forms accounted for? Is truncation used optimally?

  - Spelling/Syntax: Are there any spelling errors or platform-specific syntax errors?

  - Limits/Filters: Are any limits (e.g., by date, language) justified and documented?

- Feedback and Revision: The reviewer provides structured feedback. The search lead revises the strategy, and the process repeats until major issues are resolved.
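Part of the Spelling/Syntax domain can be pre-screened mechanically before the strategy goes to the peer reviewer. The Python sketch below flags a few common slips in a single search line: unbalanced parentheses, an odd number of double quotes, two Boolean operators in a row, a line that begins or ends with an operator, and empty parentheses. These rules are heuristic assumptions, not part of PRESS, and they cannot judge whether the concepts or terms are appropriate; that remains the reviewer's job.

```python
import re
from typing import List

OPERATOR = r"(?:AND|OR|NOT)"


def lint_search_line(line: str) -> List[str]:
    """Return a list of suspected syntax problems in one search line (heuristic)."""
    problems = []
    depth = 0
    for char in line:
        if char == "(":
            depth += 1
        elif char == ")":
            depth -= 1
            if depth < 0:
                problems.append("closing parenthesis without a matching opening one")
                depth = 0
    if depth > 0:
        problems.append(f"{depth} unclosed parenthesis(es)")
    if line.count('"') % 2:
        problems.append("unmatched double quote")
    # Two operators in a row (e.g., "AND OR") usually means a term was deleted.
    if re.search(rf"\b{OPERATOR}\s+{OPERATOR}\b", line, flags=re.IGNORECASE):
        problems.append("two Boolean operators in a row")
    # A leading or trailing operator has nothing to combine with.
    if re.search(rf"^\s*{OPERATOR}\b|\b{OPERATOR}\s*$", line, flags=re.IGNORECASE):
        problems.append("line starts or ends with a Boolean operator")
    if re.search(r"\(\s*\)", line):
        problems.append("empty parentheses")
    return problems


draft = '(smog[tiab] OR "air pollut*[tiab] AND OR asthma[tiab]'
for issue in lint_search_line(draft):
    print("-", issue)
```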

Protocol 2: Validation with a Gold-Standard Set of Articles

Objective: To empirically test the performance (sensitivity) of the search strategy. Methodology:

- Create a Gold-Standard Set: Assemble a small set of articles (e.g., 5-10) that are known to be eligible for inclusion in the review. These are identified through scoping searches, expert consultation, or key seminal papers [5] [4].

- Execute the Test: Run the final search strategy in the target database.

- Check for Retrieval: Determine if each article in the gold-standard set is retrieved by the search.

- Analyze and Refine: If any gold-standard articles are missed, analyze the reason. Revise the search strategy to include the missing terms or concepts that would have retrieved those articles, then re-test.
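The retrieval check and the analysis step above amount to a set comparison. The Python sketch below assumes that both the gold-standard articles and the exported search results carry DOIs; records without DOIs would need another identifier (such as a PubMed ID) or a manual check. It normalises the DOIs, which are case-insensitive, and reports the proportion of gold-standard articles retrieved together with the ones that were missed. The DOIs shown are placeholders.

```python
from typing import Iterable, List, Tuple

DOI_PREFIXES = ("https://doi.org/", "http://doi.org/", "doi:")


def normalise_doi(doi: str) -> str:
    """Lower-case a DOI and strip common URL or 'doi:' prefixes."""
    doi = doi.strip().lower()
    for prefix in DOI_PREFIXES:
        if doi.startswith(prefix):
            return doi[len(prefix):]
    return doi


def check_gold_standard(gold: Iterable[str], retrieved: Iterable[str]) -> Tuple[float, List[str]]:
    """Return (share of gold-standard articles retrieved, sorted list of missed DOIs)."""
    gold_set = {normalise_doi(d) for d in gold}
    if not gold_set:
        raise ValueError("the gold-standard set is empty")
    retrieved_set = {normalise_doi(d) for d in retrieved}
    missed = sorted(gold_set - retrieved_set)
    return (len(gold_set) - len(missed)) / len(gold_set), missed


gold_standard = ["10.1000/a1", "10.1000/a2", "https://doi.org/10.1000/A3", "doi:10.1000/a4"]
search_results = ["10.1000/A1", "10.1000/a3", "10.1000/a4", "10.1000/zz9"]
recall, missed = check_gold_standard(gold_standard, search_results)
print(f"retrieved {recall:.0%} of the gold standard; missed: {missed}")
```

Each missed article is a prompt to inspect how it is indexed (its subject headings, title, and abstract wording) and to add the terms that would have retrieved it before re-testing.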

Table 2: Key Reagents and Tools for Systematic Searching

| Tool / Resource | Function | Relevance to Environmental Systematic Reviews |
|---|---|---|
| Information Specialist / Librarian | Provides expertise in database selection, search syntax, and strategy development; often conducts peer review. | Critical for ensuring the search is comprehensive and reproducible, a core standard in evidence synthesis [6]. |
| Bibliographic Databases (e.g., MEDLINE, Embase, Scopus) | Primary sources for identifying peer-reviewed journal articles. | Embase is particularly valuable for its coverage of pharmaceutical and European literature, including environmental toxicology [1]. |
| Cochrane Handbook | The gold-standard methodological guide for systematic reviews. | Provides comprehensive guidance on all aspects of the search process, from sourcing to reporting [1] [2]. |
| PRESS Checklist | An evidence-based tool for the peer review of electronic search strategies. | Helps identify errors and improve search quality before resources are spent on screening [3] [4]. |
| Reference Management Software (e.g., EndNote, Zotero) | Manages, deduplicates, and stores search results from multiple databases. | Essential for handling the large volume of records generated by a comprehensive search [4]. |
| Grey Literature Sources (e.g., clinicaltrials.gov, agency websites) | Identifies unpublished or hard-to-find studies, reducing publication bias. | Crucial for environmental reviews, where significant evidence may reside in government or regulatory reports [2]. |
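The deduplication role listed for reference management software rests on a simple idea. The Python sketch below is a simplified illustration, not the algorithm of EndNote, Zotero, or any other tool: it treats two records as duplicates if they share a DOI (compared case-insensitively) or have identical titles once case, spaces, and punctuation are removed. Real tools use fuzzier matching, and title-only matching can wrongly merge distinct records with generic titles, so borderline cases still need a manual check.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Record:
    source: str                 # database the record was exported from
    title: str
    doi: Optional[str] = None


def title_key(title: str) -> str:
    """Keep only letters and digits (any script), case-folded, for exact comparison."""
    return "".join(ch for ch in title.casefold() if ch.isalnum())


def deduplicate(records: List[Record]) -> List[Record]:
    """Keep the first record seen for each DOI or normalised title."""
    seen = set()
    unique = []
    for record in records:
        keys = set()
        key = title_key(record.title)
        if key:                                   # empty titles are never matched
            keys.add(("title", key))
        if record.doi:
            keys.add(("doi", record.doi.strip().lower()))
        if keys & seen:                           # matches an earlier record on DOI or title
            continue
        seen |= keys
        unique.append(record)
    return unique


exported = [
    Record("Scopus", "Effects of Nitrate on Amphibians", "10.1000/xyz1"),
    Record("Web of Science", "Effects of nitrate on amphibians.", None),
    Record("CAB Abstracts", "Effects of nitrate on amphibians: a field study", "10.1000/XYZ1"),
    Record("Scopus", "Microplastics in agricultural soil", None),
]
for record in deduplicate(exported):
    print(record.source, "|", record.title)
```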

Search Strategy Development Workflow

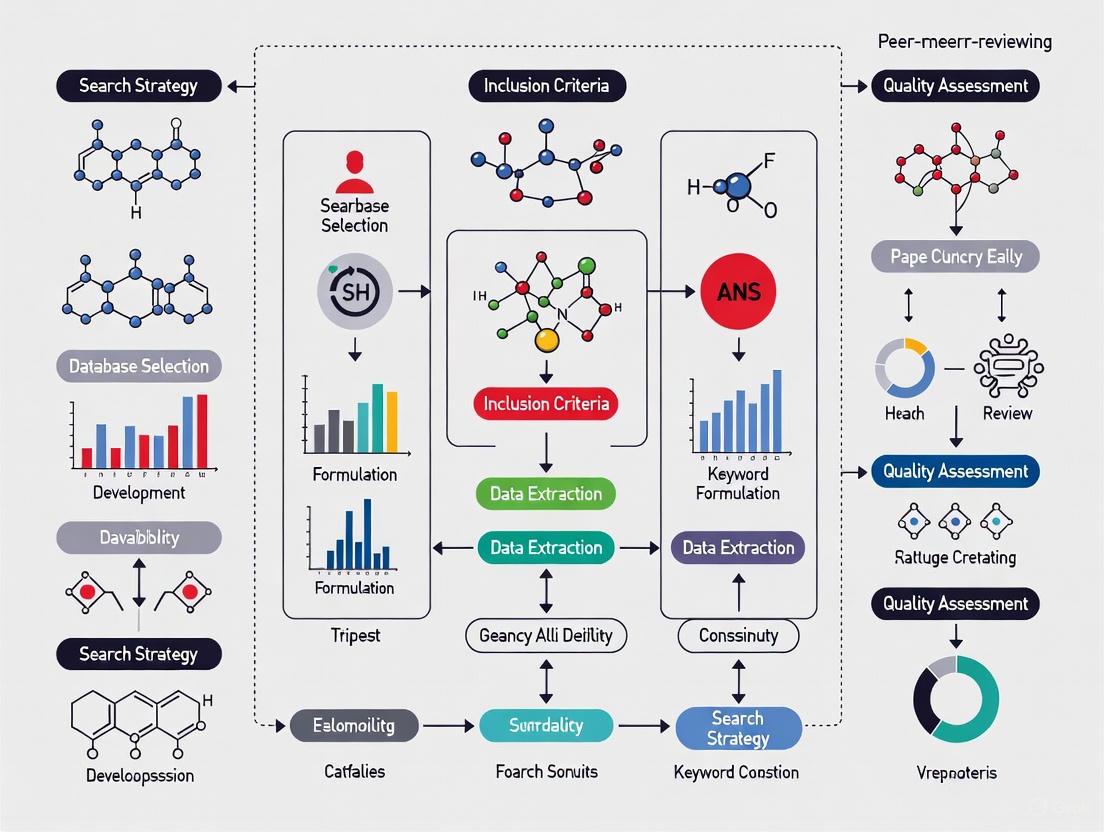

The following diagram outlines the logical workflow for developing, testing, and executing a high-quality search strategy for a systematic review.

This section provides troubleshooting guides and FAQs to help you identify and correct common search errors and protect the integrity of your systematic review.

FAQs on Search Strategy and Research Bias

1. What is research bias and how does it relate to literature searching?

Research bias is a systematic error that can occur at any stage of the research process, leading to inaccurate conclusions [7] [8]. In the context of literature searching for systematic reviews, a flawed search strategy is a primary source of such bias. If your search does not comprehensively and accurately capture the available evidence on a topic, the foundation of your review is compromised, leading to selection bias in the body of evidence you consider [7]. This can distort your results and undermine the validity of your findings.

2. What are common errors in electronic search strategies?

Studies have found that errors in search strategies are common and can significantly limit a search's effectiveness [9]. The Peer Review of Electronic Search Strategies (PRESS) initiative identifies key areas where errors often occur [10] [11]. The table below summarizes these common errors and their potential impact on your research.

Table: Common Search Errors and Their Biasing Effects

| Error Category | Description of Error | Potential Consequence for the Review |
|---|---|---|
| Boolean & Proximity Operators | Incorrect use of AND, OR, NOT, or adjacency operators [9] [11]. | Excludes relevant studies or retrieves a large number of irrelevant records. |
| Subject Headings | Missing relevant controlled vocabulary (e.g., MeSH) or using inappropriate terms [10] [9]. | Fails to capture all studies indexed under that concept, reducing recall. |
| Text Word Searching | Omitting key free-text synonyms, spelling variants, or truncation [10] [9]. | Fails to capture studies where the concept is only in the title/abstract. |
| Spelling & Syntax | Spelling errors and mistakes in line numbers within complex searches [10] [9]. | The search may not run as intended, potentially missing critical studies. |
| Search Limits | Inappropriate use of filters (e.g., by language, date) [10] [11]. | Can introduce language bias or time-lag bias by excluding valid evidence. |
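Boolean operators behave like set operations on the records each term retrieves: OR takes the union, AND the intersection, and NOT removes everything that matches the excluded term. The small Python sketch below uses made-up record IDs to show why NOT is a common source of lost studies: a record that studies both children and adults is discarded by NOT adults, even though it is relevant to a question about children.

```python
# Hypothetical record IDs retrieved by each single-term search.
index = {
    "lead": {1, 2, 3, 4, 5},
    "children": {2, 3, 4, 6},
    "adults": {4, 5, 7},
}

lead_or_children = index["lead"] | index["children"]      # OR  -> union
lead_and_children = index["lead"] & index["children"]     # AND -> intersection
excluding_adults = lead_and_children - index["adults"]    # NOT -> set difference

print("lead OR children:", sorted(lead_or_children))
print("lead AND children:", sorted(lead_and_children))
print("(lead AND children) NOT adults:", sorted(excluding_adults))
# Record 4 (a study of both children and adults) is lost by the NOT step.
```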

3. How can a flawed search strategy lead to publication bias in my review?

Publication bias occurs when the publication of research findings is influenced by the nature and direction of the results, with studies showing positive or statistically significant results being more likely to be published [7] [8] [12]. If your search strategy is not designed to also locate unpublished studies or those with negative or non-significant results (for example, by searching trial registries and grey literature), your systematic review will over-represent positive findings. This paints a misleading picture of the evidence, potentially making an intervention appear more effective than it truly is [8].

Troubleshooting Guide: Peer Reviewing Your Search Strategy

A formal peer review process for your search strategy is a critical method to identify and correct errors before they bias your conclusions [10] [9]. The following workflow and checklist provide a structured methodology.

Experimental Protocol: Implementing PRESS Peer Review

The Peer Review of Electronic Search Strategies (PRESS) is an evidence-based guideline for this process [9] [11]. The methodology below is adapted from the PRESS 2015 Guideline Statement.

Objective: To detect errors in electronic database search strategies before they are executed, thereby improving search quality and reducing the risk of missing relevant studies [10] [9].

Materials & Reagents:

- Research Question: A clearly defined question (e.g., using PICO/PICOS).

- Draft Search Strategy: The initial, untested search strategy for at least one bibliographic database (e.g., Ovid MEDLINE).

- PRESS Checklist: The validated PRESS 2015 Evidence-Based Checklist [10] [11].

- Peer Reviewer: An information specialist or experienced searcher independent of the search design.

Procedure:

- Preparation: The primary searcher finalizes the draft search strategy based on the research question.

- Self-Review: The primary searcher uses the PRESS Checklist to conduct an initial self-review of their own strategy to catch obvious errors.

- Formal Peer Review: The draft strategy and the PRESS Checklist are submitted to the peer reviewer. The reviewer systematically evaluates the strategy against the six core domains of the PRESS checklist:

  - Translation of the research question: Does the search strategy logically and comprehensively represent all key concepts of the research question?

  - Boolean and proximity operators: Are AND, OR, and NOT used correctly? Are proximity operators (e.g., NEAR) used appropriately if available?

  - Subject headings: Are all relevant controlled vocabulary terms (e.g., MeSH, Emtree) included? Are they exploded and are subheadings used appropriately?

  - Text word searching: Are adequate free-text terms and synonyms included for each concept? Is truncation used correctly?

  - Spelling, syntax, and line numbers: Are there any spelling errors? Is the syntax correct, especially when combining sets using line numbers?

  - Limits and filters: Are any applied limits (e.g., language, human) justified and appropriately documented?

- Feedback and Revision: The peer reviewer provides written feedback, often using a standardized form. The primary searcher and reviewer discuss the comments. The primary searcher then revises the search strategy accordingly.

- Finalization: The final, peer-reviewed search strategy is used to execute the literature search. The peer review process should be documented in the methods section of the final systematic review manuscript [9].
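The line-number check in the "Spelling, syntax, and line numbers" domain lends itself to a simple automated pre-check during self-review. The Python sketch below assumes an Ovid-style strategy in which each numbered line is either a search statement or a combination of earlier line numbers (such as 1 or 2); Ovid limit lines and other commands are not parsed. It flags combinations that point to the current or a later line, and any line that is never carried into a later combination, a typical sign that a block of terms was accidentally dropped. It is a heuristic aid, not a replacement for the peer reviewer.

```python
import re
from typing import List

OPERATORS = re.compile(r"\b(?:and|or|not)\b", re.IGNORECASE)


def is_combination(text: str) -> bool:
    """True if the line consists only of line numbers, operators and parentheses."""
    stripped = re.sub(r"[()\s]", "", OPERATORS.sub("", text))
    return stripped.isdigit()


def check_line_references(lines: List[str]) -> List[str]:
    """Check combination lines in a strategy whose lines are numbered from 1."""
    problems = []
    referenced = set()
    for number, text in enumerate(lines, start=1):
        if not is_combination(text):
            continue
        for ref in map(int, re.findall(r"\d+", text)):
            if ref < 1 or ref >= number:
                problems.append(f"line {number} refers to line {ref}, which is not an earlier line")
            else:
                referenced.add(ref)
    # Every line except the last should feed into a later combination.
    for number in range(1, len(lines)):
        if number not in referenced:
            problems.append(f"line {number} is never combined into a later line")
    return problems


strategy = [
    "exp Air Pollution/",
    "air pollut*.ti,ab.",
    "1 or 2",
    "exp Asthma/",
    "asthma*.ti,ab.",
    "4 or 5",
    "3 and 4",  # error: should be "3 and 6", so the text words on line 5 never reach the final set
]
for problem in check_line_references(strategy):
    print(problem)
```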

The Scientist's Toolkit: Essential Reagents for Unbiased Searching

Table: Key Resources for Developing and Validating Search Strategies

| Tool / Resource | Type | Primary Function in Preventing Search Bias |
|---|---|---|
| PRESS Checklist [9] [11] | Guideline | Provides a structured framework for identifying errors in electronic search strategies before execution. |
| Systematic Review Protocol (e.g., on PROSPERO or OSF) [13] [14] [15] | Planning Document | Locks in the planned methodology, including the search strategy, reducing reporting bias and ad-hoc changes. |
| Bibliographic Database Thesauri (e.g., MeSH in MEDLINE) | Terminology Tool | Ensures comprehensive capture of studies by identifying and using standardized subject headings, mitigating sample bias. |
| Information Specialist / Librarian | Human Expert | Brings specialized knowledge in search syntax and database-specific nuances to design a robust, unbiased strategy [9]. |

Common Errors & Troubleshooting Guides

Problem: My systematic review is being criticized for not being "systematic" enough. What did I miss?

- Root Cause: Over 95% of published environmental reviews claiming to be "Systematic Reviews" fall short of established methodological standards [16]. A common error is treating it as a simple literature review rather than a structured, bias-minimizing research project.

- Solution:

- Follow a Checklist: Use the CEE Checklist for Editors and Peer Reviewers to quickly validate your review's core methodology. A "yes" to all checklist questions is expected for a bona fide Systematic Review [16].

- Write a Protocol: Develop a detailed research plan before you begin and register it in advance. Use a protocol reporting guideline such as PRISMA-P to define your objectives and methods upfront [17].

- Document Comprehensively: Use the ROSES (RepOrting standards for Systematic Evidence Syntheses) forms to ensure all methodological information is fully reported [17].

Problem: The peer-reviewer requested a "full search strategy" for my systematic review. What does this entail?

- Root Cause: A lack of transparency and reproducibility in the literature search is a major shortcoming. Reviewers need to verify that your search was comprehensive and unbiased.

- Solution:

- Report Exhaustively: Document all databases used, complete search strings (including all keywords and Boolean operators), and any filters or limits applied [17].

- Justify Restrictions: Explain the rationale for any language or date restrictions.

- Use Reporting Guidelines: Follow PRISMA-S, the reporting guideline for literature searches, to structure your methodology section [17].
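Keeping a structured log while searching makes this reporting much easier. The Python sketch below records, for each database search, the items listed above (database and platform, the complete search string, and any limits) plus the search date and the number of records retrieved, and exports them as CSV for a supplementary file. The field names are illustrative assumptions rather than the official PRISMA-S item list, and the example values are placeholders.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import List


@dataclass
class SearchLogEntry:
    database: str
    platform: str
    date_searched: str        # ISO 8601 date, e.g. "2024-03-15"
    search_string: str        # full string exactly as run, not a summary
    limits: str               # limits applied and why, or "none"
    records_retrieved: int


def to_csv(entries: List[SearchLogEntry]) -> str:
    """Serialise log entries to CSV text, one row per database search."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(SearchLogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


log = [
    SearchLogEntry("MEDLINE", "Ovid", "2024-03-15",
                   "(exp Air Pollution/ or air pollut*.ti,ab.) and exp Asthma/",
                   "none", 1234),  # placeholder values
]
print(to_csv(log))
```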

Problem: I am an editor for a toxicology journal. How can I ensure the systematic reviews we publish are of high quality?

- Root Cause: Systematic reviews are complex projects requiring a distinct skill set, and without editorial standards, their quality can be inconsistent [18].

- Solution:

- Endorse and Enforce Guidelines: Officially endorse CEE or PRISMA guidelines in your author instructions and require submissions to include a completed checklist [18].

- Request Protocols: Encourage or mandate the submission of review protocols before the review is conducted. This allows for early feedback on the methodology [18].

- Leverage Peer Reviewer Expertise: Specifically recruit peer reviewers with demonstrated expertise in systematic review methodology and evidence synthesis [18].

Frequently Asked Questions (FAQs)

Q1: What is the single most important standard for conducting a Systematic Review in environmental management? The Collaboration for Environmental Evidence (CEE) Guidelines are the definitive standards for the commissioning and conduct of Systematic Reviews in this field. They provide comprehensive guidance on the entire process, from developing a protocol to reporting the final review, ensuring minimal bias and maximum transparency [17].

Q2: How do I choose the right guidelines for my systematic review? The guidelines you select depend on your review type, discipline, and journal requirements. The table below summarizes key guidelines [17].

| Discipline/Focus | Primary Conducting & Reporting Guidelines | Key Resources |
|---|---|---|
| Environmental Management | CEE Guidelines, ROSES | Collaboration for Environmental Evidence (CEE) [17] |
| Health & Medicine | Cochrane MECIR Standards, PRISMA | Cochrane Handbook [17] |
| Education, Social & Behavioral Sciences | Campbell MECCIR Standards | What Works Clearinghouse (WWC) [17] |
| General / Cross-Disciplinary | PRISMA (Preferred Reporting Items for Systematic reviews and Meta-Analyses) | PRISMA Statement, Checklist, and Flow Diagram [17] |

Q3: What are the critical data extraction and appraisal steps often overlooked by researchers? Two steps are frequently underperformed:

- Reliability Assessment (Risk of Bias): Critically appraising each included study for its propensity for systematic error is mandatory, not optional. Use a tool appropriate to your field and to the design of the included studies (e.g., ROBIS when the included studies are themselves systematic reviews) [18].

- Data Synthesis: The synthesis must be appropriate to the data extracted. This can range from narrative synthesis to quantitative meta-analysis, and the method must be pre-specified and justified [18].

Experimental Protocols & Workflows

Detailed Methodology: Conducting a CEE-Compliant Systematic Review

The following workflow outlines the key stages of a rigorous systematic review, integrating CEE standards and troubleshooting checkpoints.

Systematic Review Workflow with Quality Checks

The Scientist's Toolkit: Research Reagent Solutions

This table details key methodological resources essential for conducting a high-quality environmental systematic review.

| Resource / 'Reagent' | Function & Application in the 'Experiment' |
|---|---|
| CEE Checklist [16] | A rapid assessment tool for validating the core methodology of a Systematic Review. Used by authors for self-check and by peer reviewers. |
| ROSES Reporting Forms [17] | Specialized reporting standards for systematic evidence syntheses in environmental research. Ensures all relevant methodological details are disclosed. |
| PRISMA 2020 Statement [17] | An evidence-based minimum set of items (27-item checklist and flow diagram) for reporting systematic reviews and meta-analyses, widely used across disciplines. |
| CEE Guidelines [17] | The comprehensive manual for the commissioning and conduct of Systematic Reviews in environmental management. The primary protocol for the research process. |
| Campbell MECCIR Standards [17] | Methodological standards for the conduct and reporting of systematic reviews in social sciences (e.g., education, crime and justice). |

In environmental systematic reviews, the integrity of your conclusions is entirely dependent on the evidence base you gather. A flawed or incomplete search strategy can introduce critical biases that skew results and mislead policy and practice. This guide helps you identify, troubleshoot, and mitigate three core biases—Publication, Language, and Temporal Bias—that threaten the validity of your environmental research.

#1 Defining the Core Biases and Their Impact

What are Publication, Language, and Temporal Bias?

- Publication Bias: A type of reporting bias where the publication of research results is influenced by the nature and direction of the findings [19]. It is the tendency to handle the reporting of positive (i.e., statistically significant) results differently from negative or inconclusive results [7].

- Language Bias: A form of selection bias where research is overlooked because it is published in a language other than English or the primary language of the review team [20] [21]. This can produce an evidence base that favours certain linguistic or cultural contexts and overlooks others [20].

- Temporal Bias: Occurs when the evidence included in a review is not representative of the current context in time [20]. This can happen when using obsolete data or when a review is not updated to include recent studies, leading to conclusions that reflect past, not current, conditions [20] [21].

Why are These Biases Problematic?

These biases distort the available evidence, leading to a skewed understanding of environmental issues and interventions.

- Publication Bias creates an artificially positive picture of an intervention's effectiveness, as studies showing no effect or harmful effects remain unpublished [19] [7]. In healthcare, this has led to a false sense of security about treatment safety, resulting in patient harm [19].

- Language Bias can exclude locally relevant knowledge and evidence, particularly from non-English speaking regions, leading to conservation and policy strategies that are ineffective or inequitable [22] [21].

- Temporal Bias can cause reviews to be based on outdated science, especially in rapidly evolving fields. An environmental systematic map showed a 23% increase in evidence in just two years; failing to capture this new data renders a review unreliable [21].

#2 Troubleshooting Guide: Identifying Bias in Your Search Strategy

Use this guide to diagnose potential weaknesses in your search strategy that could introduce bias.

| Symptom | Potential Bias | Diagnostic Check | Implication for Your Review |
|---|---|---|---|
| Your meta-analysis shows a strong treatment effect, but the funnel plot is asymmetrical. | Publication Bias | Plot effect sizes against their precision; check for missing studies in areas of non-significance. | Overestimation of an intervention's true effect; potential for flawed recommendations. |
| All included studies are in English, but the topic is relevant to non-English-speaking countries. | Language Bias | Audit your database list and search strings for non-English sources; record the number of non-English studies excluded at full text. | Evidence base lacks cultural/contextual diversity; limited generalizability of findings. |
| The search is more than 2-3 years old, and the field is rapidly evolving. | Temporal Bias | Check publication trends of included studies; run a limited new search for recent years. | Conclusions are based on outdated evidence, missing new insights or refutations [21]. |
| Grey literature searches yield few or no results. | Publication Bias | Verify access to institutional repositories, pre-print servers, and targeted grey literature databases. | Exclusion of potentially crucial null or negative results, often found in theses and reports. |
| Included studies have a narrow geographical focus (e.g., only from Western countries). | Language & Selection Bias | Examine the "Methods" sections of included studies to map their geographical locations. | Findings may not be applicable to other ecological or socio-economic contexts. |
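The funnel-plot check in the first row can be quantified with Egger's regression test, which regresses each study's standardised effect (effect divided by its standard error) on its precision (one divided by the standard error); an intercept far from zero suggests small-study effects such as publication bias. The Python sketch below computes the intercept and its t statistic by ordinary least squares. It assumes effects on an additive scale (for example, log odds ratios or standardised mean differences). Converting t to a p-value needs the t distribution with n - 2 degrees of freedom (for example from SciPy), which is left out here. The example data are invented, and such tests have little power when only a few studies are available, so interpret them cautiously.

```python
import math
from typing import Sequence, Tuple


def egger_test(effects: Sequence[float], standard_errors: Sequence[float]) -> Tuple[float, float, int]:
    """Egger's regression test: return (intercept, t statistic, degrees of freedom)."""
    if len(effects) != len(standard_errors) or len(effects) < 3:
        raise ValueError("need at least three studies with matching effects and standard errors")
    x = [1 / se for se in standard_errors]                       # precision
    y = [e / se for e, se in zip(effects, standard_errors)]      # standardised effect
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("standard errors must vary across studies")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    sigma2 = residual_ss / (n - 2)                               # residual variance
    se_intercept = math.sqrt(sigma2 * (1 / n + mean_x ** 2 / sxx))
    t_stat = intercept / se_intercept if se_intercept > 0 else math.nan
    return intercept, t_stat, n - 2


# Invented data in which smaller studies (larger SEs) report larger effects.
log_odds_ratios = [0.9, 0.7, 0.5, 0.45, 0.4, 0.38]
ses = [0.40, 0.30, 0.20, 0.15, 0.10, 0.05]
intercept, t, df = egger_test(log_odds_ratios, ses)
print(f"intercept = {intercept:.2f}, t = {t:.2f}, df = {df}")
```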

#3 Frequently Asked Questions (FAQs)

Q1: How often should I update the searches for my systematic review? There is no universal rule, but a common guideline in environmental evidence is to consider an update every 5 years [21]. The decision should be based on factors like the volume of new publications, changes in the field, and the reliability of the existing review. A quick scoping search can help estimate the amount of new evidence.

Q2: Is it sufficient to search only the major English-language databases (e.g., Scopus, Web of Science)? No. Relying solely on major English-language databases is a primary cause of Language and Publication Bias. You should supplement these with regional databases that index literature in other languages (e.g., CNKI for Chinese literature) and with extensive searches of the grey literature to capture a more representative sample of the global evidence [21].

Q3: What is the difference between an update and an amendment to a systematic review? An Update involves searching for new studies using the original, identical methods to expand the evidence base through time. An Amendment involves any other change or correction to the original methods, such as improving the search strategy, adding new languages, or using a different synthesis method. Amendments require a new, peer-reviewed protocol [21].

Q4: How can I proactively prevent Publication Bias in my review? The most important action is to prospectively register your review protocol, which commits you to your methods and analysis plan. During the search, be diligent in searching for grey literature and unpublished studies. After the review, you can use statistical methods like funnel plots to test for the presence of this bias [19] [7].

Q5: Our team only speaks English. How can we mitigate Language Bias? You have several options: collaborate with researchers who are native speakers of other relevant languages; use translation software for initial screening of titles and abstracts (though full-text translation is more reliable); or explicitly acknowledge the limitation of language restrictions in your review's limitations section [21].

#4 Experimental Protocols for Mitigating Bias

Protocol 1: Comprehensive Search Strategy to Minimize Publication and Language Bias

Objective: To execute a search that captures a globally representative sample of evidence, including published, unpublished, and non-English literature.

Methodology:

- Database Selection: Include at least two major multidisciplinary databases (e.g., Scopus, Web of Science) AND relevant regional/subject-specific databases (e.g., CAB Abstracts, GreenFILE, CNKI, SciELO, LILACS, AGRIS).

- Grey Literature Search:

- Search institutional websites (e.g., World Bank, UNEP, USDA, EPA).

- Search thesis and dissertation repositories (e.g., ProQuest Dissertations & Theses Global, DART-Europe, Networked Digital Library of Theses and Dissertations - NDLTD).

- Search pre-print servers (e.g., arXiv, bioRxiv) and clinical trial registries for relevant ecological data.

- Contact subject matter experts for unpublished data or reports.

- Search Strategy:

- Develop a robust, tested search string in collaboration with a research librarian.

- Do not apply language filters in the initial search. Document the number of records retrieved in each language.

- Screening and Data Extraction:

- At a minimum, screen titles and abstracts of non-English records. If resources allow, translate the full text of studies that appear to meet the inclusion criteria.

- Document all excluded studies at the full-text stage with reasons for exclusion.

Expected Outcome: A more comprehensive and less biased evidence base, increasing the validity and generalizability of the review's findings.
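Documenting the number of records retrieved in each language is easy to automate if the exported records carry a language field. The Python sketch below assumes each record is a dictionary with an optional "language" key; the actual field name depends on each database's export format and is an assumption here. Missing or blank values are counted as "unknown" so that they stay visible in the report.

```python
from collections import Counter
from typing import Dict, Iterable, Mapping


def record_language(record: Mapping[str, str]) -> str:
    """Normalised language of one record; blank or missing values become 'unknown'."""
    return (record.get("language") or "").strip().lower() or "unknown"


def language_summary(records: Iterable[Mapping[str, str]]) -> Dict[str, int]:
    """Count records per language, most frequent first, ties in alphabetical order."""
    counts = Counter(record_language(r) for r in records)
    return dict(sorted(counts.items(), key=lambda item: (-item[1], item[0])))


retrieved_records = [
    {"language": "English"},
    {"language": "english"},
    {"language": "Spanish"},
    {"language": "Chinese"},
    {"title": "record exported without a language field"},
    {"language": " "},
]
print(language_summary(retrieved_records))
```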

Protocol 2: Systematic Review Update to Mitigate Temporal Bias

Objective: To ensure a systematic review remains current by incorporating newly available evidence.

Methodology [21]:

- Decision to Update: Assess the need for an update by:

- Reviewing publication trends in the field.

- Running a scoping search to estimate the volume of new evidence.

- Considering methodological advances that might warrant an amendment.

- Notification: Inform the relevant review registry (e.g., Collaboration for Environmental Evidence) of your intent to update.

- Search Update:

- Re-run the original search strategies.

- Apply a date filter starting from the end date of the last search. Include a small overlap (e.g., 3-6 months) to account for indexing delays.

- Study Incorporation:

- Apply the original inclusion/exclusion criteria to new search results.

- Integrate new eligible studies into the existing data extraction and synthesis.

- Re-run all analyses with the expanded dataset.

- Reporting:

- Clearly state the update in the final report.

- Highlight any changes in conclusions or the strength of evidence resulting from the new studies.

Expected Outcome: An up-to-date systematic review that reflects the most current state of knowledge, enhancing its reliability for decision-makers.
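The date arithmetic behind the search-update step can be made explicit, which also documents it. The Python sketch below computes the start of the update window by stepping back a fixed overlap from the end date of the previous search, then keeps only records not already screened in the original review. Expressing the 3-6 month overlap as a number of days (default 120, roughly four months) and matching records by identifier (DOI or database ID) are assumptions for illustration; the records it returns still need screening against the original eligibility criteria.

```python
from datetime import date, timedelta
from typing import Iterable, List, Optional, Tuple


def update_window(last_search_end: date, overlap_days: int = 120,
                  today: Optional[date] = None) -> Tuple[date, date]:
    """Return (start, end) dates for the update search, including an overlap."""
    if overlap_days < 0:
        raise ValueError("overlap_days must not be negative")
    start = last_search_end - timedelta(days=overlap_days)
    end = today if today is not None else date.today()
    return start, end


def new_records(update_ids: Iterable[str], original_ids: Iterable[str]) -> List[str]:
    """Identifiers retrieved by the update that were not screened in the original review."""
    return sorted(set(update_ids) - set(original_ids))


start, end = update_window(date(2022, 6, 30), overlap_days=91, today=date(2024, 1, 15))
print(f"search from {start.isoformat()} to {end.isoformat()}")
print(new_records(["10.1000/a", "10.1000/b", "10.1000/c"], ["10.1000/b"]))
```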

#5 The Researcher's Toolkit: Essential Reagent Solutions

This table details key methodological "reagents" essential for conducting a rigorous, unbiased systematic review.

| Item | Function in the Research Process |
|---|---|
| Registered Protocol (e.g., in PROSPERO, Open Science Framework) | A prospective plan that locks in the review's methods, preventing bias from post-hoc changes and reducing duplication of effort [23] [21]. |
| Reporting Guidelines (e.g., PRISMA, ROSES) | A checklist to ensure transparent and complete reporting of the review, which is crucial for identifying potential biases [23]. |
| Critical Appraisal Tool (e.g., Cochrane Risk of Bias Tool, GRADE) | Structured instruments to assess the risk of bias in individual studies (e.g., Cochrane Risk of Bias Tool) or the certainty of the overall body of evidence (GRADE), informing the strength of conclusions [19] [23]. |
| Grey Literature Sources (e.g., institutional repositories, theses databases) | Evidence sources that help mitigate Publication Bias by capturing studies with null or non-significant results that are often unpublished. |
| Data Synthesis Software (e.g., R, RevMan, NVivo) | Tools for performing quantitative (meta-analysis) or qualitative synthesis, allowing for the exploration of heterogeneity and bias across studies. |

#6 Visualizing the Bias Mitigation Workflow

The following diagram illustrates a logical workflow for integrating bias checks and mitigation strategies into the standard systematic review process.

Diagram 1: A workflow for integrating bias mitigation into systematic reviews. Key mitigation steps (blue) are embedded in the standard process, with critical checkpoints (yellow and red) to ensure review validity and longevity.

Search strategy errors can substantially undermine the quality and validity of a systematic review. In environmental systematic reviews, evidence synthesis informs critical policy and health decisions, so comprehensive and unbiased search strategies are essential for minimizing bias and reaching valid conclusions [24] [23]. Peer review of search strategies is a quality-control step that identifies and corrects errors before they compromise the review's integrity. This section provides evidence-based troubleshooting guidance to help researchers, scientists, and drug development professionals address common search strategy problems.

Quantitative Evidence on Search Strategy Errors

A 2019 study analyzing 137 systematic reviews published in MEDLINE/PubMed revealed a high prevalence of search strategy errors [24]. The table below summarizes the key quantitative findings:

Table 1: Frequency and Types of Errors in Systematic Review Search Strategies [24]

| Error Category | Specific Error Type | Frequency (n=137) | Percentage |
|---|---|---|---|
| Strategies with any error | All errors | 127 | 92.7% |
| Errors affecting recall | All recall-affecting errors | 107 | 78.1% |
| | Missing morphological variations (e.g., no truncation) | 68 | 49.6% |
| | Missing Medical Subject Headings (MeSH) terms | 30 | 21.9% |
| | MeSH terms not searched in [mesh] field | 14 | 10.2% |
| | Non-explosion of MeSH terms | Not available | Not available |
| Errors not affecting recall | All non-recall-affecting errors | 82 | 59.9% |

This evidence underscores the necessity of formal peer review processes, such as the Peer Review of Electronic Search Strategies (PRESS) checklist, to detect these common issues before execution [10].
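Each percentage in Table 1 is the frequency divided by the 137 strategies analysed. Recomputing the percentages, as in the minimal sketch below, is a quick consistency check when copying figures from a source.

The check also shows that the categories overlap, because one strategy can contain several error types. The sub-rows are therefore not expected to sum to their category totals. The dictionary only restates the counts from the table. The overlap calculation assumes every error is either recall-affecting or not.

```python
TOTAL_STRATEGIES = 137

# Counts restated from Table 1; the categories are not mutually exclusive.
error_counts = {
    "any error": 127,
    "recall-affecting errors": 107,
    "missing morphological variations": 68,
    "missing MeSH terms": 30,
    "MeSH terms not searched in [mesh] field": 14,
    "non-recall-affecting errors": 82,
}


def percentage(count: int, total: int = TOTAL_STRATEGIES) -> float:
    """Share of strategies with the error, rounded to one decimal place as in the table."""
    return round(100 * count / total, 1)


for label, count in error_counts.items():
    print(f"{label}: {count}/{TOTAL_STRATEGIES} = {percentage(count)}%")

# Inclusion-exclusion, assuming every error is either recall-affecting or not:
# strategies with both kinds = |recall| + |non-recall| - |any error|.
implied_overlap = (error_counts["recall-affecting errors"]
                   + error_counts["non-recall-affecting errors"]
                   - error_counts["any error"])
print(f"Strategies with both kinds of error: {implied_overlap}")
```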

Troubleshooting FAQs: Common Search Strategy Issues

How can I avoid missing relevant studies due to poor term coverage?

- Problem: Missing synonyms or morphological variants leads to low recall [24].

- Solution:

- Consult the MeSH database: Systematically identify all appropriate descriptors and entry terms (synonyms) for each concept [24].

- Use strategic truncation: Apply truncation correctly to retrieve word variants (e.g., plant* to find plant, plants, planting). Avoid truncating too short a root or inside quotation marks [24].

- Combine free-text and controlled language: Search for concepts using both natural language terms in free-text fields and controlled vocabulary (e.g., MeSH) in the designated [mesh] field [24].
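Before committing to a truncated stem, it can help to preview which words it would match. The sketch below converts a truncated or wildcarded term into a regular expression and applies it to a hand-made word list. It assumes two symbol meanings, roughly following Ovid's convention:

- `*` means zero or more characters.
- `?` means zero or one character.

Symbols and their meanings differ between databases and platforms, so check each interface's documentation. The word list and function names are illustrative only. In practice you would browse the database's index or test the term directly.

```python
import re


def truncation_to_regex(term: str) -> re.Pattern:
    """Translate a truncated/wildcarded term into a regex.

    Assumed symbol meanings (platform-dependent): '*' = zero or more letters,
    '?' = zero or one letter. All other characters are matched literally.
    """
    parts = []
    for ch in term.lower():
        if ch == "*":
            parts.append(r"[a-z]*")
        elif ch == "?":
            parts.append(r"[a-z]?")
        else:
            parts.append(re.escape(ch))
    return re.compile("".join(parts))


def preview_matches(term: str, vocabulary: list[str]) -> list[str]:
    """Return the vocabulary words the term would retrieve (whole-word, case-insensitive)."""
    pattern = truncation_to_regex(term)
    return [word for word in vocabulary if pattern.fullmatch(word.lower())]


words = ["plant", "plants", "planting", "plantar", "plantation", "plan",
         "colour", "color", "colours"]
print(preview_matches("plant*", words))   # ['plant', 'plants', 'planting', 'plantar', 'plantation']
print(preview_matches("colo?r*", words))  # ['colour', 'color', 'colours']
```

The matches for plantar and plantation show why the PRESS text-word domain asks whether truncation is too broad: even a reasonably long stem can retrieve unrelated words.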

Why are my search results missing key concepts despite using MeSH terms?

- Problem: Incorrect MeSH term application fails to retrieve all relevant records [24].

- Solution:

- Always "explode" MeSH terms: Use the explosion feature to include all more specific terms in the hierarchical tree. Deliberately not exploding should be a rare, justified decision for precision [24].

- Search MeSH in the correct field: Ensure MeSH terms are searched using the [mesh] field tag, not just in all fields [24].

- Supplement with free-text: Also search the MeSH term as a free-text keyword in title/abstract fields to catch records not yet fully indexed with MeSH [24].
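Explosion works because subject headings form a hierarchy and indexers assign the most specific applicable heading. The toy sketch below models this with a small dictionary:

- Searching a heading unexploded retrieves only records indexed with that exact heading.
- Exploding the heading also retrieves records indexed with narrower headings.

The hierarchy, record IDs, and function names are illustrative assumptions, not real MeSH data. Platform defaults also differ: PubMed explodes MeSH terms by default, whereas in Ovid you request explosion with `exp`.

```python
from collections import deque

# Simplified, illustrative hierarchy for demonstration only; NOT copied from
# the MeSH tree. Consult the MeSH Browser for real tree structures.
HIERARCHY = {
    "Environmental Pollution": ["Air Pollution", "Water Pollution"],
    "Air Pollution": ["Air Pollution, Indoor"],
    "Water Pollution": [],
    "Air Pollution, Indoor": [],
}


def explode(heading: str, hierarchy: dict[str, list[str]]) -> set[str]:
    """Return the heading plus every narrower heading beneath it (breadth-first walk)."""
    found = set()
    queue = deque([heading])
    while queue:
        current = queue.popleft()
        if current in found:
            continue
        found.add(current)
        queue.extend(hierarchy.get(current, []))
    return found


def retrieve(headings: set[str], index: dict[str, str]) -> set[str]:
    """Return IDs of records indexed with any of the given headings."""
    return {record_id for record_id, heading in index.items() if heading in headings}


# Hypothetical records, each indexed with its most specific heading.
record_index = {
    "rec1": "Environmental Pollution",
    "rec2": "Air Pollution",
    "rec3": "Air Pollution, Indoor",
    "rec4": "Water Pollution",
}

print(sorted(retrieve({"Air Pollution"}, record_index)))                    # ['rec2']
print(sorted(retrieve(explode("Air Pollution", HIERARCHY), record_index)))  # ['rec2', 'rec3']
```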

What is the most efficient way to check my search strategy for errors before peer review?

- Problem: Unstructured self-review misses common mistakes.

- Solution: Use the PRESS checklist as a systematic self-audit tool [10]. Key items to verify include:

- Translation of the research question: The search concepts accurately reflect the review question.

- Spelling errors and correct line numbers in multi-line strategies.

- Appropriate use of Boolean operators (OR/AND) and parentheses to group concepts logically.

- Comprehensive coverage of subject headings and natural language synonyms.

- Correct application of spelling variants and truncation.
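Some of the structural items above can be partly automated before a human self-audit. The sketch below checks an Ovid-style numbered strategy for three problems:

- Unbalanced parentheses.
- Combination lines (such as "1 or 2") that refer to a line that is not earlier in the strategy.
- "Orphan" lines that are never combined into a later line.

The function names and the rule for recognising a combination line are hypothetical. The check does not catch spelling errors or conceptual gaps, which still need the full PRESS review.

```python
import re

# Tokens allowed on a line that only combines earlier lines, e.g. "(1 or 2) and 4".
COMBINATION_TOKEN = re.compile(r"\d+|\b(?:and|or|not)\b|[()]", re.IGNORECASE)


def is_combination_line(text: str) -> bool:
    """True if the line consists only of line numbers, Boolean operators, and parentheses."""
    leftover = COMBINATION_TOKEN.sub("", text).strip()
    return leftover == "" and re.search(r"\d", text) is not None


def parentheses_balanced(text: str) -> bool:
    """Check that every '(' is closed and no ')' appears before its '('."""
    depth = 0
    for ch in text:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0


def find_problems(strategy: list[str]) -> list[str]:
    """Flag unbalanced parentheses, invalid line references, and orphan lines."""
    problems = []
    referenced = set()
    for number, text in enumerate(strategy, start=1):
        if not parentheses_balanced(text):
            problems.append(f"line {number}: unbalanced parentheses")
        if is_combination_line(text):
            for ref in map(int, re.findall(r"\d+", text)):
                if not 1 <= ref < number:
                    problems.append(f"line {number}: refers to line {ref}, which is not an earlier line")
                referenced.add(ref)
    # Every line except the last (the final result set) should feed into a later line.
    for number in range(1, len(strategy)):
        if number not in referenced:
            problems.append(f"line {number}: orphan line (never combined into a later line)")
    return problems


draft = [
    "exp Air Pollution/",          # 1
    "(air adj2 pollut*).tw.",      # 2
    "1 or 2",                      # 3
    "exp Asthma/",                 # 4
    "(asthma* or wheez*.tw.",      # 5: closing parenthesis missing
    "4 or 5",                      # 6
    "3 and 8",                     # 7: should probably be "3 and 6"
]
for problem in find_problems(draft):
    print(problem)
```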

Experimental Protocol: Peer Review of Search Strategies

Objective

To implement a standardized peer-review process for electronic search strategies in systematic reviews, ensuring that strategies are comprehensive, accurate, and free from common errors before execution.

Materials and Reagents

Table 2: Research Reagent Solutions for Search Strategy Development

| Item Name | Function/Application |
|---|---|
| PRESS Checklist | Provides a structured framework for evaluating search strategies, covering key elements like conceptualization, syntax, and term selection [10]. |
| MeSH Database | Controlled vocabulary thesaurus used to identify standardized subject headings and synonyms for comprehensive concept coverage [24]. |
| Bibliographic Database (e.g., PubMed, Ovid MEDLINE) | Platform where the search strategy is executed; understanding its specific syntax and functionalities is crucial [24]. |
| Search Syntax Validator | Tool(s) inherent to the database interface or separate software used to check for typographical errors, unmatched parentheses, and correct field tag usage. |

Methodology

- Pre-Review Preparation: The search strategist finalizes the draft strategy based on the research question and documents it line-by-line.

- Reviewer Selection: An independent reviewer with expertise in information retrieval and the subject domain is appointed. This is often a librarian or information specialist [24].

- Review Execution: The reviewer uses the PRESS checklist to evaluate the strategy [10]. The review focuses on:

- Conceptualization: Does the strategy correctly translate the research question?

- Boolean Logic & Syntax: Are AND/OR operators used correctly? Are parentheses properly used to group concepts?

- Vocabulary: Are all relevant subject headings (e.g., MeSH) and free-text synonyms included? Is truncation used appropriately?

- Spelling and Syntax: Are there typos or incorrect field tags?

- Feedback and Revision: The reviewer provides written feedback. The original strategist revises the search strategy accordingly.

- Finalization: The revised strategy is approved by the reviewer and executed across all designated databases.
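The Boolean logic the reviewer checks in this protocol follows a simple pattern. Synonyms within a concept are combined with OR, concept blocks are combined with AND, and parentheses keep each block intact. The sketch below assembles a single-line query from hypothetical PECO concept blocks so the grouping is explicit.

The terms, the PubMed-style `[tiab]` field tag, and the function names are illustrative assumptions. A real strategy would also include controlled vocabulary and would be checked against the target database's syntax.

```python
def or_block(terms: list[str]) -> str:
    """Join synonyms for one concept with OR and wrap them in parentheses."""
    if not terms:
        raise ValueError("each concept needs at least one term")
    return "(" + " OR ".join(terms) + ")"


def build_query(concepts: dict[str, list[str]]) -> str:
    """Combine concept blocks with AND; dictionary order sets the order of the blocks."""
    return " AND ".join(or_block(terms) for terms in concepts.values())


# Hypothetical PECO concepts; comparators are commonly not searched directly.
peco = {
    "population": ["child*[tiab]", "pediatric[tiab]", "paediatric[tiab]"],
    "exposure": ['"air pollution"[tiab]', "particulate*[tiab]"],
    "outcome": ["asthma*[tiab]", "wheez*[tiab]"],
}

print(build_query(peco))
```

Generating the parentheses from the concept structure, rather than typing them by hand, removes one common source of the nesting errors that PRESS reviewers look for.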

Workflow Visualization

The diagram below illustrates the logical workflow for the peer review of a search strategy.

A Step-by-Step Guide to Implementing the PRESS Framework

The Peer Review of Electronic Search Strategies (PRESS) Checklist is a structured, evidence-based tool designed to improve the quality of electronic literature search strategies for systematic reviews, health technology assessments, and other evidence syntheses [25] [11]. Developed through a systematic methodology that included a literature review, expert survey, and consensus forum, PRESS provides a comprehensive framework for peer-reviewing search strategies before they are executed [26]. This validated instrument addresses a critical need in evidence synthesis, as the search strategy forms the foundation upon which systematic reviews are built, and errors or sub-optimal strategies can introduce bias and affect review validity [10].

Within environmental systematic reviews, comprehensive and unbiased searching is particularly crucial due to the multidisciplinary nature of the evidence and its distribution across diverse sources [27]. The PRESS checklist helps researchers minimize errors and biases at the search stage, supporting the overall goal of environmental evidence synthesis to provide transparent, reproducible, and minimally biased conclusions [27]. By implementing PRESS, researchers and information specialists can systematically identify potential issues in search strategies, leading to more robust and reliable evidence synthesis.

Complete PRESS Checklist for Troubleshooting Search Strategies

The following table presents the complete PRESS 2015 Evidence-Based Checklist, organized by key domains for troubleshooting electronic search strategies. Use this checklist to systematically identify and address potential issues in your search strategies.

Table 1: PRESS 2015 Checklist for Peer Review of Search Strategies

| Domain | Key Review Questions | Common Issues to Identify |
|---|---|---|
| Translation of Research Question | Does the search match the research question/PICO/PECO? Are concepts clear and appropriately broad/narrow? [25] | Too many/few PICO elements; mismatched scope; unexplained complex strategies [25] |
| Boolean & Proximity Operators | Are Boolean operators (AND, OR, NOT) and nesting used correctly? Could precision be improved with proximity operators? [25] | Incorrect nesting with brackets; unintended exclusions from NOT; overly broad/narrow proximity [25] |
| Subject Headings | Are relevant subject headings included and exploded appropriately? Are major headings or subheadings used correctly? [25] | Missing relevant headings; too broad/narrow headings; improper exploding; missing floating subheadings [25] |
| Text Word Searching | Does the search include all spelling variants, synonyms, and truncation? Are acronyms and fields searched appropriately? [25] | Missing synonyms/spelling variants; too broad/narrow truncation; irrelevant acronyms; inappropriate field selection [25] |
| Spelling, Syntax & Line Numbers | Are there spelling errors or system syntax errors? Are there incorrect line combinations or orphan lines? [25] | Spelling mistakes; wrong truncation symbols; incorrect line combinations in final search [25] |
| Limits & Filters | Are all limits and filters appropriate for the research question and database? Are sources cited for filters? [25] | Irrelevant limits; missing helpful filters; unpublished filters without citation [25] |

Frequently Asked Questions (FAQs) on PRESS Implementation

Q1: At what stage in the search development process should PRESS peer review occur?

Most experts recommend that peer review using the PRESS checklist should be conducted after the MEDLINE search strategy has been prepared but before it has been translated to other databases [11] [26]. This timing allows for identification and correction of conceptual and structural issues before replicating the strategy across multiple platforms. Early review maximizes efficiency by preventing the propagation of errors to other database translations.

Q2: How does PRESS help mitigate bias in environmental systematic reviews?

PRESS addresses several potential biases in evidence synthesis through its comprehensive checking protocol [27]. The checklist helps researchers:

- Minimize publication bias by ensuring search strategies adequately capture grey literature and studies with non-significant results [27]

- Reduce language bias by verifying that search terms accommodate multiple languages and don't disproportionately favor English-language publications [27]

- Address database bias by confirming that search strategies are appropriately structured for different bibliographic sources beyond mainstream databases [27]

The rigorous peer review process helps identify gaps or biases in search term selection, database coverage, and search syntax that might otherwise skew the evidence base [10].

Q3: What are the most common errors identified through PRESS peer review?

Research and experience with PRESS implementation have identified several recurring issues in electronic search strategies:

- Missing key subject headings or natural language search terms for important concepts [10]

- Inappropriate use of Boolean operators and nesting, potentially excluding relevant records [25]

- Failure to include all relevant spelling variants, synonyms, and truncations for comprehensive coverage [25]

- Insufficient documentation of limits and filters, making the search difficult to reproduce [25]

Structured peer review using the PRESS checklist systematically identifies these and other errors before search execution, potentially improving both recall and precision [11].

Q4: How does PRESS address the unique challenges of environmental evidence synthesis?

Environmental systematic reviews often face particular challenges that PRESS helps mitigate:

- Multidisciplinary coverage: PRESS ensures search strategies adequately cover the diverse disciplines relevant to environmental topics [27]

- Grey literature importance: The checklist verifies appropriate inclusion of government reports, organizational documents, and other non-journal literature crucial for environmental policy [27]

- Geographic and linguistic diversity: PRESS reviews whether searches accommodate regional databases and non-English terminology common in environmental research [27]

- Complex intervention terminology: Environmental interventions often have multiple descriptive terms that PRESS helps identify and include [27]

Experimental Protocol: Implementing PRESS Peer Review

Methodology for Conducting PRESS Peer Review

The following workflow diagram illustrates the standardized protocol for conducting peer review of search strategies using the PRESS checklist:

Step-by-Step Experimental Protocol

Preparation Phase: Develop a complete search strategy for one database (typically MEDLINE/PubMed) based on the research question structured using PICO/PECO or other appropriate frameworks [27]. Document the strategy with all search lines, Boolean operators, subject headings, and limits.

Peer Review Initiation: Submit the complete search strategy to a peer reviewer with expertise in information retrieval methodology. This reviewer should be independent of the search development process to maintain objectivity [10].

Checklist Application: The reviewer systematically applies the PRESS 2015 Evidence-Based Checklist, evaluating the search strategy across all six domains: translation of the research question; Boolean and proximity operators; subject headings; text word searching; spelling, syntax and line numbers; and limits/filters [25] [11].

Evaluation and Feedback: The reviewer provides structured written feedback addressing each domain of the checklist, noting specific concerns and suggestions for improvement. Feedback should reference line numbers and specific terms in the original strategy [25].

Strategy Revision: The original searcher reviews the feedback, makes appropriate revisions to the search strategy, and documents all changes. This may involve adding missing synonyms, correcting Boolean logic, or modifying subject heading approaches.

Finalization and Translation: Once the revised strategy has been finalized and approved, it can be translated to other databases and information sources as needed for the comprehensive search [11].
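The review steps above amount to keeping a structured record with three parts:

- Comments tied to a checklist domain and, where relevant, a line number.
- The searcher's documented response to each comment.
- Approval only once every comment has been addressed.

The sketch below shows one possible way to hold that audit trail in code. The class and field names are hypothetical and are not part of the PRESS checklist itself, which is usually completed as a form.

```python
from dataclasses import dataclass, field
from typing import Optional

# The six PRESS 2015 domains as named in this section.
PRESS_DOMAINS = (
    "Translation of the research question",
    "Boolean and proximity operators",
    "Subject headings",
    "Text word searching",
    "Spelling, syntax and line numbers",
    "Limits and filters",
)


@dataclass
class ReviewComment:
    domain: str
    text: str
    line: Optional[int] = None   # strategy line the comment refers to, if any
    response: str = ""           # searcher's documented revision or justification

    def __post_init__(self) -> None:
        if self.domain not in PRESS_DOMAINS:
            raise ValueError(f"unknown PRESS domain: {self.domain!r}")


@dataclass
class PressReview:
    database: str
    comments: list[ReviewComment] = field(default_factory=list)

    def add(self, domain: str, text: str, line: Optional[int] = None) -> ReviewComment:
        comment = ReviewComment(domain, text, line)
        self.comments.append(comment)
        return comment

    def unresolved(self) -> list[ReviewComment]:
        """Comments that do not yet have a documented response."""
        return [c for c in self.comments if not c.response.strip()]

    def ready_for_translation(self) -> bool:
        """Approve only when every reviewer comment has a documented response."""
        return not self.unresolved()


review = PressReview("Ovid MEDLINE")
comment = review.add("Text word searching", "Add the British spelling paediatric*.", line=2)
print(review.ready_for_translation())  # False
comment.response = "Added paediatric*.tw. to line 2."
print(review.ready_for_translation())  # True
```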

Validation and Quality Control

The PRESS methodology has been validated through research showing its effectiveness in identifying errors and improving search term selection [11] [26]. Implementation studies suggest that structured peer review using PRESS can identify potential problems in search strategies that might otherwise be overlooked, thereby improving the quality of the evidence synthesis [10].

Research Reagent Solutions: Essential Components for Search Strategy Peer Review

Table 2: Essential Resources for Implementing PRESS Peer Review

| Resource Category | Specific Tool/Solution | Function in Search Peer Review |
|---|---|---|
| Reporting Guidelines | PRISMA-S (Extension for Searching) [2] | Ensures complete reporting of search methods, complementing PRESS quality assessment |
| Methodological Guidance | Cochrane Handbook (Chapter 4) [2] | Provides foundational principles for systematic search design and execution |
| Checklist Tools | PRESS 2015 Evidence-Based Checklist [25] | Primary validated instrument for structured assessment of search strategies |
| Evidence Synthesis Frameworks | CEE Guidelines (Environmental Evidence) [27] | Domain-specific guidance for environmental systematic reviews and maps |
| Documentation Standards | PRISMA-P (Protocol Guidelines) [2] | Standards for documenting planned search methods in review protocols |

Frequently Asked Questions

1. How do I ensure that text in my search documentation or visualization tools has sufficient contrast? To ensure text is readable, the contrast ratio between the text color and the background color should meet WCAG guidelines. For standard text, the minimum contrast ratio is 4.5:1 (Level AA), and for large-scale text (approximately 18pt, or 14pt bold), it is 3:1. For enhanced compliance (Level AAA), the ratios are 7:1 for standard text and 4.5:1 for large text [28]. Automated color contrast checker tools can validate this [29].

2. What is the most common error in formulating Boolean operators for systematic review searches? A common error is incorrect nesting of search terms using parentheses, which changes the logic and can inadvertently include or exclude vast numbers of records. A missing parenthesis can break the entire strategy. The PRESS framework emphasizes the verification of Boolean logic to ensure the search executes as intended.

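3. My search retrieves too many irrelevant results. Which PRESS element should I focus on? Low precision usually points to the Vocabulary and Boolean Operators elements. First, verify that you are using the most specific appropriate controlled vocabulary (e.g., MeSH for MEDLINE) for your key concepts rather than overly broad headings. Second, look for free-text terms, acronyms, or short truncated stems that also match unrelated words. Consider restricting free-text terms to title/abstract fields, or replacing AND with a proximity operator. Adding spelling variants, singular/plural forms, and hyphenation variants mainly improves recall, so that check helps you avoid missing relevant records rather than reducing irrelevant ones.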

4. How can I visually map my search strategy to validate its logic before execution? Creating a visual workflow of your search strategy can help identify logical flaws. The diagram below outlines the core process of search strategy validation, aligning with PRESS components. The colors used in this diagram adhere to accessibility contrast standards [30] [28].

5. What is the best way to document the peer-review process for my search strategy? Use a structured form or checklist based on the six PRESS elements. The table below summarizes quantitative benchmarks for evaluating a search strategy. Document the original strategy, the reviewer's comments, and all revisions made. This creates a transparent and reproducible audit trail.

PRESS Evaluation Checklist & Benchmarks

The following table outlines six evaluation elements, adapted from the PRESS domains, together with key checkpoints for the peer-review process.

| PRESS Element | Focus of Evaluation | Common Error Examples | Quantitative Checkpoints |
|---|---|---|---|
| Vocabulary | Appropriate use of controlled vocab (MeSH, Emtree) and free-text terms. | Using outdated MeSH terms; missing key synonyms. | Confirm >90% of core concepts have controlled vocab; check term specificity/recall. |
| Spelling | Comprehensive inclusion of spelling variants, plurals, and hyphenation. | US vs. UK spelling (e.g., tumor/tumour); "health-care" vs. "healthcare". | Document all variants used; test impact of adding variants on result count. |
| Boolean Operators | Correct use of AND, OR, NOT and proper nesting with parentheses. | Incorrect nesting: (A OR B) AND C vs. A OR (B AND C); overuse of NOT. | Validate logic with a small test dataset; check parentheses are balanced. |
| Translation | Accurate adaptation of the search strategy across multiple databases. | Field codes not adapted (e.g., [mesh] in PubMed vs. /exp in Embase). | Run search in 2+ databases; compare result counts for consistency. |
| Limits/Filters | Justified application of limits like date, language, or study type. | Applying a language filter that inadvertently excludes key non-English studies. | Record number of results pre- and post-filter application. |
| Peer Review | Formal review by a second information specialist or subject expert. | Review is informal or not documented. | Use a standardized checklist; document all suggestions and revisions. |
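The nesting error in the Boolean Operators row, and the "small test dataset" checkpoint, can be made concrete by treating each term's results as a set of record IDs. OR is set union, AND is intersection, and NOT is set difference. The record IDs below are invented purely for illustration.

```python
# Hypothetical result sets (record IDs) for four search terms.
A = {1, 2, 3}        # e.g. records matching a population term
B = {3, 4, 5}        # records matching a synonym of A
C = {2, 3, 4, 9}     # records matching an exposure term
D = {3, 7}           # records mentioning a setting the searcher wants to exclude

intended = (A | B) & C       # (A OR B) AND C
misnested = A | (B & C)      # A OR (B AND C)

print(sorted(intended))   # [2, 3, 4]
print(sorted(misnested))  # [1, 2, 3, 4]

# NOT removes every record containing the excluded term, including relevant
# records that only mention it in passing (record 3 here).
print(sorted(intended - D))  # [2, 4]

# Comparing result counts is a quick first signal that the logic changed.
print(len(intended), len(misnested))  # 3 4
```

Record 1 is retrieved by the mis-nested query even though it does not match the exposure concept at all. This is the kind of silent broadening a reviewer can miss if they compare only the query text.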

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential "reagents" or tools for developing and evaluating a systematic review search strategy.

| Tool / Resource | Function in Search Strategy Development |
|---|---|
| Bibliographic Databases (e.g., MEDLINE, Embase) | Primary interfaces for executing searches; each has unique coverage and requires tailored strategy translation. |
| PRESS Peer Review Checklist | A standardized tool to guide the formal evaluation of a search strategy's completeness and accuracy. |
| Color Contrast Analyzer | A software tool or browser extension to ensure that any text in search documentation or visualizations meets WCAG contrast requirements, aiding readability for all users [29]. |
| Protocol Registration Platform (e.g., PROSPERO) | A public repository to pre-register your systematic review protocol, enhancing transparency and reducing bias. |
| Reference Management Software (e.g., EndNote, Zotero) | Essential for de-duplicating records retrieved from multiple databases and managing the final corpus of studies. |
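The WCAG thresholds quoted in the FAQ above can also be checked in a few lines of code when a dedicated contrast analyzer is not to hand. The sketch below implements the WCAG 2.x relative-luminance and contrast-ratio formulas for 6-digit hex colors. The function names are hypothetical, and a dedicated contrast analyzer remains the practical choice for routine checks.

```python
def _channel_to_linear(value: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    hex_color = hex_color.lstrip("#")
    if len(hex_color) != 6:
        raise ValueError("expected a 6-digit hex color such as '#1a2b3c'")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _channel_to_linear(r)
            + 0.7152 * _channel_to_linear(g)
            + 0.0722 * _channel_to_linear(b))


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1:1 to 21:1."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_wcag(ratio: float, level: str = "AA", large_text: bool = False) -> bool:
    """Thresholds: AA 4.5 (3 for large text); AAA 7 (4.5 for large text)."""
    thresholds = {("AA", False): 4.5, ("AA", True): 3.0,
                  ("AAA", False): 7.0, ("AAA", True): 4.5}
    return ratio >= thresholds[(level, large_text)]


print(round(contrast_ratio("#767676", "#FFFFFF"), 2))    # 4.54
print(meets_wcag(contrast_ratio("#777777", "#FFFFFF")))  # False (about 4.48)
```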

Experimental Protocol: Executing a PRESS-Based Peer Review

Objective: To formally evaluate and refine a systematic review search strategy using the PRESS framework before final execution.

Methodology:

- Preparation: The original search strategist finalizes a draft strategy for one database (e.g., Ovid MEDLINE) and prepares a document with the strategy and the study's inclusion criteria.

- Peer Review: A second information specialist or trained peer independently reviews the strategy using the PRESS-based checklist (see table above). The reviewer evaluates the Vocabulary, Spelling, Boolean Operators, Translation, and Limits/Filters elements. The sixth element, Peer Review, is fulfilled by conducting and documenting this review.

- Revision & Documentation: The original strategist addresses all comments from the reviewer. The review comments, decisions, and all revisions to the search strategy are meticulously documented to create an audit trail.

- Finalization & Translation: The finalized strategy for the first database is then accurately translated to the syntax of all other databases to be searched.
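Much of the translation step is mechanical syntax conversion. The sketch below illustrates this for two common line types: an exploded subject heading and a title/abstract text word. The syntax table reflects commonly documented forms:

- PubMed: `[Mesh]` and `[tiab]`.
- Ovid MEDLINE: `exp Heading/` and `.ti,ab.`.
- Embase.com: `'heading'/exp` and `:ti,ab`.

Treat this table as an assumption to verify against each platform's help pages. The function names and the heading lookup are hypothetical. Syntax is the easy part: Emtree and MeSH headings do not map one-to-one, so each heading still has to be checked in the target thesaurus, and truncation and proximity rules differ too.

```python
# Assumed syntax templates for two line types; verify against each platform's help pages.
SYNTAX = {
    "pubmed":       {"heading": '"{term}"[Mesh]', "textword": "{term}[tiab]"},
    "ovid_medline": {"heading": "exp {term}/",    "textword": "{term}.ti,ab."},
    "embase_com":   {"heading": "'{term}'/exp",   "textword": "{term}:ti,ab"},
}


def translate_line(kind: str, term: str, platform: str) -> str:
    """Render one heading or text-word line using the platform's template."""
    try:
        template = SYNTAX[platform][kind]
    except KeyError:
        raise ValueError(f"no template for {kind!r} on {platform!r}") from None
    return template.format(term=term)


def translate_strategy(lines, platform, heading_lookup):
    """Translate (kind, value) lines; headings come from a per-platform lookup.

    Subject headings are looked up rather than copied, because MeSH and Emtree
    terms do not map one-to-one.
    """
    translated = []
    for kind, value in lines:
        term = heading_lookup[value][platform] if kind == "heading" else value
        translated.append(translate_line(kind, term, platform))
    return translated


# Hypothetical lookup, filled in by the searcher after checking each thesaurus.
HEADINGS = {"asthma concept": {"pubmed": "Asthma", "ovid_medline": "Asthma", "embase_com": "asthma"}}
draft = [("heading", "asthma concept"), ("textword", "wheez*")]

for platform in SYNTAX:
    print(platform, translate_strategy(draft, platform, HEADINGS))
```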

The logical relationships and decision points in this protocol are visualized below.

Frequently Asked Questions (FAQs)

Q1: What is PRESS and why is it critical for my environmental systematic review? PRESS (Peer Review of Electronic Search Strategies) is a structured, evidence-based checklist designed to improve the quality and reliability of database search strategies for systematic reviews [10]. In environmental science, where evidence is diverse and complex, a flawed search can lead to biased or incomplete conclusions. Peer review of your search strategy using PRESS helps identify errors and omissions, ensuring your review is built on a comprehensive and unbiased foundation of evidence [10] [11].

Q2: At what stage in the review process should the PRESS checklist be applied? The PRESS peer review should occur after you have developed a preliminary search strategy for at least one bibliographic database (like MEDLINE or Embase) but before you finalize and translate the search to other databases [11]. This ensures that any fundamental issues are corrected early, preventing the replication of errors across multiple search platforms.

Q3: I'm not a librarian. Who is qualified to conduct a PRESS review? The PRESS guideline was developed for and is ideally applied by information specialists or librarians with expertise in constructing systematic review searches [10] [11]. If such a specialist is unavailable, the review should be conducted by a member of the systematic review team who was not involved in developing the initial search strategy and who has a strong understanding of database-specific syntax and systematic search methods.

Q4: What are the most common errors caught by the PRESS process? Common issues identified during PRESS review include the omission of relevant subject headings or natural language synonyms, incorrect use of Boolean and proximity operators, spelling errors, and the inappropriate application of search limits that may inadvertently exclude relevant studies [10].

Q5: How does PRESS fit into broader systematic review methodologies like the Navigation Guide? The Navigation Guide is a rigorous methodology for translating environmental health science into evidence-based conclusions [31]. It explicitly requires a comprehensive and unbiased literature search as a foundational step. Applying the PRESS checklist to your search strategy directly supports and enhances the "Select the evidence" step of the Navigation Guide, ensuring the subsequent synthesis and rating of evidence are based on a robust and replicable search [31].

Troubleshooting Guide: Common PRESS Checklist Issues and Solutions

| Problem Identified | Potential Consequence | Recommended Corrective Action |
|---|---|---|
| Missed Subject Headings | Lowers search sensitivity (recall); misses key relevant studies. | Consult database thesauri (e.g., MeSH in MEDLINE, Emtree in Embase) to identify all controlled vocabulary terms for the concept. Check if newer terms have been introduced. |
| Inadequate Natural Language Terms | Lowers search sensitivity; fails to capture recent studies not yet indexed with subject headings. | Brainstorm synonyms, acronyms, plurals, and spelling variants (e.g., American vs. British). Use truncation (*) and wildcards (?) appropriately to capture these variations [10]. |
| Errors in Boolean/Proximity Operators | Incorrectly narrows or broadens the search, retrieving too many irrelevant records or excluding critical ones. | Review the logical structure: use AND to combine different concepts, OR to combine synonyms within a concept. Ensure proximity operators (e.g., N/n, W/n) are used and spaced correctly for the specific database. |
| Poor Translation of the Research Question | The search strategy does not accurately reflect the review's PICO/PECO (Population, Intervention/Exposure, Comparison, Outcome) question. | Re-map the search concepts against the PICO/PECO question. Verify that all key elements are represented with both subject headings and keywords. |
| Inappropriate Use of Search Limits | Unintentionally excludes valid studies, introducing bias. For example, using a language limit too early. | Justify every limit (e.g., date, language, document type) based on the review's protocol. Apply limits cautiously, if at all, during the primary search phase. |
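Proximity operators match two terms only when they occur within a set distance of each other. On EBSCOhost-style platforms, N/n matches the terms in any order and W/n only in the order entered. The sketch below applies that idea to a single sentence so a reviewer can see how the distance setting changes what is matched.

The sketch counts distance as the number of words between the terms. That convention is an assumption: platforms differ in how they count, and Ovid uses adjN instead. Confirm the convention in each database's help before setting n. The function names are hypothetical.

```python
import re


def words_of(text: str) -> list[str]:
    """Lower-case word tokens (letters and digits only)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def within(text: str, first: str, second: str, max_between: int, ordered: bool = False) -> bool:
    """True if `first` and `second` occur with at most `max_between` words between them.

    ordered=False mimics an N/n-style operator, ordered=True a W/n-style one.
    Exact-word matching only; truncation is not handled in this sketch.
    """
    tokens = words_of(text)
    first_positions = [i for i, w in enumerate(tokens) if w == first.lower()]
    second_positions = [i for i, w in enumerate(tokens) if w == second.lower()]
    for i in first_positions:
        for j in second_positions:
            gap = j - i if ordered else abs(j - i)
            if 1 <= gap <= max_between + 1:
                return True
    return False


sentence = "Pollution from road traffic near schools"
print(within(sentence, "traffic", "pollution", 2))                # True: two words between, any order
print(within(sentence, "traffic", "pollution", 1))                # False: distance too small
print(within(sentence, "traffic", "pollution", 2, ordered=True))  # False: wrong order
```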

The PRESS 2015 Evidence-Based Checklist

The core of the PRESS methodology is its evidence-based checklist. The following table summarizes the key elements a peer reviewer should evaluate [10] [11].

| Checklist Element | Description & What to Look For |
|---|---|
| 1. Translation of the Research Question | Does the search strategy accurately reflect all key concepts (e.g., PICO/PECO) of the systematic review question? |
| 2. Boolean and Proximity Operators | Are AND, OR, NOT used correctly? Are proximity operators (e.g., N/n, W/n) used and spaced appropriately for the specific database? |
| 3. Subject Headings | Are all relevant database-specific controlled vocabulary terms (e.g., MeSH, Emtree) included? Are they exploded where appropriate? Are any irrelevant headings removed? |
| 4. Text Word Search | Are comprehensive natural language terms (synonyms, acronyms, spelling variants) used for each concept? Is truncation and wildcarding used effectively? |
| 5. Spelling, Syntax, and Line Numbers | Are there any spelling errors? Is the syntax correct for the database? If line numbers are used (e.g., in Ovid), are they referenced correctly? |
| 6. Limits and Filters | Is the use of limits (e.g., by date, language, age group) justified and explained? Could any limit inadvertently exclude relevant studies? |

PRESS Workflow for Environmental Reviews

The following diagram illustrates the typical workflow for integrating PRESS into the development of a search strategy for an environmental systematic review.

Research Reagent Solutions: The PRESS Reviewer's Toolkit

Just as a lab requires specific reagents, effectively conducting a PRESS review requires a set of essential "tools."

| Item or Resource | Function in the PRESS Process |
|---|---|
| PRESS 2015 Evidence-Based Checklist | The core diagnostic tool that structures the peer review and ensures all critical elements of the search strategy are evaluated [10] [11]. |
| Bibliographic Database Thesauri (e.g., MeSH, Emtree) | Used to verify the completeness and accuracy of subject headings in the strategy, ensuring all relevant controlled vocabulary terms are included [10]. |
| Systematic Review Protocol | The reference document that defines the review's PICO/PECO question and eligibility criteria, against which the search strategy's conceptualization is checked [32]. |
| Search Strategy Documentation | A clear, annotated copy of the search strategy being reviewed, including the database and platform used, is essential for a replicable and thorough assessment [32]. |
| Text Editor with Syntax Highlighting | Helps the reviewer visually parse complex Boolean logic, spot spelling errors, and identify incorrect syntax or line numbers more easily. |

In the context of environmental systematic reviews, the integration of an information specialist (IS) into the research team is a core methodological recommendation. These professionals, often holding a master's degree in library and information science or a health-related field, are tasked with ensuring the search strategy is systematic, transparent, and reproducible [33]. Their involvement from the very start of a systematic review (SR) is crucial for minimizing bias, producing valid results, and reducing research waste, thereby increasing the overall trustworthiness of the review for informing health policy and clinical decision-making [33].

The complexity of conducting SRs has greatly increased due to a massive rise in available evidence and the complexity of information retrieval methods. This makes the information specialist's role not merely beneficial but essential for a high-quality, reliable output [33].

Troubleshooting Guides and FAQs

This section addresses common challenges teams face when integrating an information specialist, offering practical solutions based on established methodologies.

Frequently Asked Questions

Q1: What are the primary qualifications we should look for in an information specialist for our systematic review team?

The minimum requirements typically include a suitable university degree (e.g., a Master of Library and Information Science or an equivalent health/scientific qualification), several years of experience in information retrieval for evidence-based medicine, an understanding of health care, and evidence of continued education in information retrieval methods [33].

Q2: At what stage of the systematic review process should the information specialist be involved?

The information specialist should be routinely involved right from the start of the project. Their early involvement is critical for helping to formulate the research question, select appropriate information sources and techniques, and judge the potential complexity of the project, which ensures the search strategy is optimally designed from the outset [33].

Q3: Our team has limited resources. Is the involvement of an information specialist truly necessary?

While resource constraints are a recognized challenge, the involvement of an information specialist is considered a core methodological component for producing high-quality, reproducible systematic reviews. In resource-limited settings, exploring collaborations with larger organizations, specialist networks, or seeking consultancy from information specialists can be a way to access this expertise [33].

Q4: How does the role of an information specialist as a methodological peer-reviewer differ from a subject matter peer-reviewer?

Methodological peer-reviewers (often information specialists) focus on evaluating the conduct and reporting of the review's methodology, particularly the search strategy. Evidence shows that their comments are more focused on methodologies, are more frequently implemented by authors, and their recommendations carry significant weight in editorial decisions, sometimes leading to higher rejection rates due to methodological flaws [34].

Q5: What is the PRESS Checklist, and how is it used?

The Peer Review of Electronic Search Strategies (PRESS) Evidence-Based Checklist is a specially developed tool that assists in the scrutiny of search strategies. It is used to ensure search strategies have been designed appropriately for the topic and to avoid common mistakes, thereby improving the quality and reliability of the search [34].
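The 2015 version of PRESS organizes the review around six elements: translation of the research question; Boolean and proximity operators; subject headings; text word searching; spelling, syntax and line numbers; and limits and filters. A few of the mechanical checks under these headings can be pre-screened before a human reviewer reads the strategy. The Python sketch below assumes a single-line, PubMed-style query string; the function `precheck_search` and its heuristics are hypothetical and are not part of PRESS. It cannot judge whether the concepts are complete, which remains the peer reviewer's job.

```python
import re
from typing import List


def precheck_search(query: str) -> List[str]:
    """Return warnings for a few mechanical problems in a search string.

    Hypothetical pre-screen: heuristics only, not part of PRESS itself.
    """
    issues: List[str] = []

    # Spelling, syntax and line numbers: parentheses must balance.
    depth = 0
    for ch in query:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                issues.append("closing parenthesis with no matching opening one")
                depth = 0
    if depth > 0:
        issues.append(f"{depth} unclosed parenthesis(es)")

    # Boolean operators: NOT can silently exclude relevant records.
    if re.search(r"\bNOT\b", query):
        issues.append("uses NOT: confirm it excludes no relevant records")

    # Boolean operators: some interfaces (e.g. PubMed) require uppercase operators.
    if re.search(r"\s(and|or|not)\s", query):
        issues.append("lowercase Boolean operator: check how the interface reads it")

    # Limits and filters: PubMed date [dp] or language [la] limits need a rationale.
    if re.search(r"\[(dp|la)\]", query, flags=re.IGNORECASE):
        issues.append("date or language limit present: justify it in the protocol")

    return issues
```

For example, `precheck_search('(bee*[tiab] OR "Bees"[Mesh]) NOT honey[tiab]')` returns a single warning about the NOT operator. A clean result only means none of these heuristics fired, not that the strategy passes peer review.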

Troubleshooting Common Collaboration Issues

Problem: Resistance to integrating the information specialist's feedback on the search strategy.

- Solution: Foster a culture of transparency and shared ownership. Involve the entire team in discussions about the search strategy early on. Frame the information specialist's feedback as a collaborative effort to strengthen the review's methodology rather than as criticism. Leadership should clearly communicate the value the information specialist brings to the project's success [35] [34].

Problem: The search strategy is not reproducible, or key terms are missed.

- Solution: Implement a formal peer-review process for the search strategy using the PRESS checklist. Furthermore, the information specialist should document every decision made during the development of the search strategy, including the databases selected, the terms used, any limits applied, and the date the search was run. This documentation should be included in the final review to ensure complete transparency and reproducibility [34].
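The documentation items listed above map naturally onto one small record per executed search. The sketch below assumes Python 3.7+; the class `SearchRecord` and its field names are hypothetical, chosen to mirror the items named in this section (database, terms, limits, date run, records retrieved), and do not follow any particular reporting template. The example values are illustrative only.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class SearchRecord:
    """One executed search, documented for the review's appendix (hypothetical fields)."""
    database: str
    date_run: date
    strategy_lines: List[str]                         # terms and operators, verbatim
    limits: List[str] = field(default_factory=list)   # e.g. date or language limits
    records_retrieved: int = 0

    def to_appendix(self) -> str:
        """Render the record as plain text, numbering strategy lines as they were run."""
        limits = ", ".join(self.limits) if self.limits else "none"
        lines = [
            f"Database: {self.database}",
            f"Date searched: {self.date_run.isoformat()}",
            f"Limits: {limits}",
            f"Records retrieved: {self.records_retrieved}",
        ]
        lines += [f"{i}. {text}" for i, text in enumerate(self.strategy_lines, start=1)]
        return "\n".join(lines)


# Illustrative values, not results from a real search.
record = SearchRecord(
    database="PubMed",
    date_run=date(2024, 3, 1),
    strategy_lines=["bee*[tiab]", "pesticide*[tiab]", "#1 AND #2"],
    records_retrieved=412,
)
print(record.to_appendix())
```

Recording the date matters because database contents change over time, so the same strategy run later can return a different number of records.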

Problem: Team members are unsure of their roles, leading to duplicated efforts or tasks being overlooked.

- Solution: Clearly define roles and responsibilities at the project's outset. For the information specialist, this means spelling out which tasks they own and which belong to the subject experts and statisticians. Establishing clear protocols for communication and feedback loops, such as regular check-ins and designated facilitators, keeps the team aligned and on track [35] [33].

Quantitative Data on Collaboration and Peer-Review Impact

The tables below summarize quantitative findings on the benefits of collaborative workflows and the specific impact of information specialists acting as methodological peer-reviewers.

Table 1: Documented Benefits of Effective Real-Time Collaboration in Team Workflows

| Benefit Category | Specific Metric or Outcome | Source / Context |
|---|---|---|
| Efficiency & Speed | Boosts efficiency by 20–30% | General collaborative workflows [35] |
| | Reduces revision cycles by 30% | General collaborative workflows [35] |
| | Cuts time spent on emails and meetings by up to 30% | Use of integrated communication systems [35] |
| Workflow Quality | 76% of design teams report major workflow improvements | Use of collaborative design and prototyping tools [35] |
| | 14% rise in productivity; 23% increase in profitability | Teams with well-organized documentation [35] |
| Team Satisfaction | Increases employee satisfaction by 80% | Access to collaborative tools [35] |
| | 85% of employees report feeling happier at work | Access to collaborative tools [35] |

Table 2: Impact of Librarians as Methodological Peer-Reviewers on Manuscript Quality

| Aspect Analyzed | Finding for Methodological Peer-Reviewers (MPRs) | Finding for Subject Peer-Reviewers (SPRs) |
|---|---|---|
| Focus of Comments | Made more comments specifically on methodologies [34] | Fewer methodology-focused comments [34] |
| Author Implementation | 52 out of 65 recommended changes were implemented (80%) [34] | 51 out of 82 recommended changes were implemented (62%) [34] |
| Recommendation to Editor | Editors were more likely to follow the MPR's recommendation (9 times) [34] | Editors were less likely to follow the SPR's recommendation (3 times) [34] |
| Rejection Rate | More likely to recommend rejection (7 times) [34] | Less likely to recommend rejection (4 times) [34] |
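The percentages in Table 2 follow directly from the raw counts reported in [34], and recomputing them is a quick sanity check before citing such figures. A minimal sketch; the helper name `implementation_rate` is hypothetical:

```python
def implementation_rate(implemented: int, recommended: int) -> float:
    """Percentage of recommended changes that authors implemented."""
    if recommended <= 0:
        raise ValueError("recommended must be a positive count")
    if not 0 <= implemented <= recommended:
        raise ValueError("implemented must lie between 0 and recommended")
    return 100 * implemented / recommended


mpr = implementation_rate(52, 65)   # methodological peer-reviewers
spr = implementation_rate(51, 82)   # subject peer-reviewers
print(f"MPR {mpr:.0f}% vs SPR {spr:.0f}% ({mpr - spr:.1f} percentage points)")
# prints: MPR 80% vs SPR 62% (17.8 percentage points)
```

Because the denominators are small (65 and 82 recommendations), reporting the raw counts alongside the percentages, as the table does, lets readers judge how much weight the difference can bear.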

Experimental Protocols and Workflows

This section provides detailed methodologies for key collaborative activities.

Protocol: Developing and Peer-Reviewing a Search Strategy

This protocol outlines the steps for creating a robust, reproducible search strategy in collaboration with an information specialist.

Objective: To formulate, execute, and validate a comprehensive search strategy for a systematic review that minimizes bias and is fully reproducible.

Materials:

- Bibliographic databases (e.g., PubMed, Embase, Scopus)

- Trial registries and other grey literature sources

- Reference management software (e.g., EndNote, EPPI Reviewer)

- PRESS Evidence-Based Checklist [34]

Methodology:

- Initial Team Meeting: The information specialist meets with the research team to discuss and refine the research question, ensuring a shared understanding of the scope, key concepts, and inclusion/exclusion criteria.

- Preliminary Scoping: The information specialist may conduct a preliminary scoping search to identify key articles and relevant terminology.

- Draft Search Strategy Development:

    - The information specialist develops a draft search strategy for one primary database (e.g., PubMed).

    - The strategy uses a combination of controlled vocabulary (e.g., MeSH terms) and free-text keywords for each key concept.

    - Boolean operators combine the terms: OR links synonyms within a concept, AND links the concepts to each other, and NOT is used sparingly because it can exclude relevant records.

- Peer-Review of Search Strategy:

    - The draft search strategy is reviewed by a second information specialist or an experienced team member using the PRESS checklist.

    - The reviewer assesses the strategy for completeness, syntax errors, and logical structure.

- Strategy Finalization and Translation:

    - Feedback from the peer-review is incorporated to finalize the strategy.

    - The finalized strategy is then translated for each additional database, accounting for differences in thesauri and syntax.

- Search Execution and Documentation:

    - The searches are executed on all pre-specified databases and sources.

    - The full search strategy for every database, including the date of search and number of records retrieved, is recorded verbatim for inclusion in the review's appendix.
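The draft-strategy step can be illustrated by assembling a query from concept blocks, with synonyms ORed inside each block and the blocks ANDed together. This is a sketch under stated assumptions: it targets PubMed syntax (`[Mesh]` for subject headings, `[tiab]` for title/abstract free text), the helpers `concept_block` and `build_query` are hypothetical, and the example terms are illustrative only and should be checked against the MeSH browser before use.

```python
from typing import Dict, List


def concept_block(mesh: List[str], free_text: List[str]) -> str:
    """OR together subject headings and free-text synonyms for one concept."""
    terms = [f'"{heading}"[Mesh]' for heading in mesh]
    terms += [f"{word}[tiab]" for word in free_text]
    if not terms:
        raise ValueError("each concept needs at least one term")
    return "(" + " OR ".join(terms) + ")"


def build_query(concepts: Dict[str, Dict[str, List[str]]]) -> str:
    """AND the concept blocks together, in the order the concepts were given."""
    return " AND ".join(
        concept_block(c.get("mesh", []), c.get("free_text", []))
        for c in concepts.values()
    )


# Illustrative concepts for a hypothetical pesticide-and-pollinator question.
query = build_query({
    "exposure": {"mesh": ["Pesticides"], "free_text": ["pesticide*", "neonicotinoid*"]},
    "population": {"mesh": ["Bees"], "free_text": ["bee", "bees", "pollinator*"]},
})
print(query)
```

Translating to another database means regenerating each block with that database's own thesaurus and field syntax (for example, Emtree headings in Embase), which is why each translated version warrants its own check.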

Protocol: The Segmented Peer-Review Process for Manuscripts

This protocol describes a segmented peer-review model, which leverages the specific expertise of an information specialist.

Objective: To improve the quality of evidence synthesis manuscripts through a peer-review process that utilizes dedicated methodological experts for different aspects of the manuscript.

Materials: Manuscript submission to a journal that supports or is open to a segmented review process.

Methodology:

- Reviewer Identification: Upon submission, the journal editor or authors explicitly identify the areas of expertise required to review the paper (e.g., subject knowledge, statistical methods, and search methodology) [34].

- Reviewer Assignment: The editor assigns peer-reviewers based on this segmented expertise. The information specialist is invited specifically as a methodological peer-reviewer (MPR).

- Focused Review: The MPR focuses their review on the methods section, particularly the search strategy, source selection, and reporting of the search process. They do not need to be an expert in the paper's subject matter [34].

- Consolidation of Reviews: The editor receives separate reports from the subject peer-reviewer(s) and the methodological peer-reviewer.

- Editorial Decision: The editor synthesizes all reports, giving specific weight to the MPR's recommendations on methodological rigor, to make a final editorial decision [34].
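Steps 1 and 2 of this protocol amount to matching the required expertise areas against the available reviewers. The sketch below is a hypothetical editorial helper, not a feature of any journal system; the area labels follow the examples given in step 1, and the reviewer names are placeholders. It fails loudly when an area such as search methodology has no reviewer, rather than letting that segment of the manuscript go unreviewed.

```python
from typing import Dict, List, Set


def assign_reviewers(required: Set[str],
                     pool: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Map each required expertise area to the reviewers who cover it."""
    assignment: Dict[str, List[str]] = {}
    for area in sorted(required):
        matches = sorted(name for name, skills in pool.items() if area in skills)
        if not matches:
            # An uncovered segment means part of the manuscript goes unreviewed.
            raise ValueError(f"no reviewer available for: {area}")
        assignment[area] = matches
    return assignment


pool = {
    "Reviewer A": {"subject knowledge"},
    "Reviewer B": {"statistical methods"},
    "Librarian C": {"search methodology"},   # invited as the MPR
}
print(assign_reviewers({"subject knowledge", "statistical methods", "search methodology"}, pool))
```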

Workflow Visualization

The following diagram illustrates the integrated workflow of a systematic review team, highlighting the key responsibilities and collaboration points of the information specialist.

Systematic Review Workflow with Information Specialist Integration

This diagram visualizes the collaborative workflow for a systematic review, emphasizing the critical and ongoing role of the information specialist. The process begins with the team defining the research question, upon which the information specialist immediately begins work on the search strategy. A key quality control step is the formal peer-review of this strategy (e.g., using the PRESS checklist) before it is finalized and executed. The team then screens the results and proceeds with data synthesis. Finally, the information specialist can contribute to quality assurance again by acting as a methodological peer-reviewer for the completed manuscript, ensuring the search is reported accurately and rigorously.
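The sequence in this workflow can also be written as ordered stages with an explicit quality gate, which makes the rule "no strategy is finalized before peer review" checkable. The stage names and the `advance` function below are hypothetical, a sketch of the workflow's logic rather than any tool's API.

```python
from enum import IntEnum


class Stage(IntEnum):
    """Workflow stages in the order described above (hypothetical labels)."""
    DEFINE_QUESTION = 1
    DRAFT_SEARCH = 2
    PEER_REVIEW_SEARCH = 3   # e.g. using the PRESS checklist
    FINALIZE_SEARCH = 4
    EXECUTE_SEARCH = 5
    SCREENING = 6
    SYNTHESIS = 7
    MANUSCRIPT_REVIEW = 8    # information specialist as methodological peer-reviewer


def advance(current: Stage, press_review_done: bool) -> Stage:
    """Return the next stage, refusing to finalize an unreviewed strategy."""
    if current is Stage.MANUSCRIPT_REVIEW:
        raise ValueError("workflow is already complete")
    nxt = Stage(current + 1)
    if nxt is Stage.FINALIZE_SEARCH and not press_review_done:
        raise RuntimeError("search strategy has not been peer reviewed yet")
    return nxt


# Walk the full workflow once the peer review has been recorded.
stage = Stage.DEFINE_QUESTION
while stage is not Stage.MANUSCRIPT_REVIEW:
    stage = advance(stage, press_review_done=True)
print(stage.name)
```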

The Scientist's Toolkit: Research Reagent Solutions

The following table details key tools, platforms, and methodological resources essential for the information specialist and the research team to collaborate effectively on a systematic review.

Table 3: Essential Tools and Resources for Collaborative Systematic Reviews

| Tool / Resource Name | Category | Primary Function in the Workflow |
|---|---|---|
| PRISMA Checklist [33] | Reporting Guideline | Ensures the systematic review is reported completely and transparently. |
| PRESS Checklist [34] | Methodological Tool | Provides a structured framework for peer-reviewing electronic search strategies to identify errors and improve quality. |
| Cochrane Handbook [34] | Methodological Guideline | The definitive guide to the methodology for conducting systematic reviews of interventions. |
| EndNote / EPPI-Reviewer [33] | Reference Management | Software for managing the large volume of references retrieved, deduplicating records, and facilitating the screening process. |
| Bibliographic Databases (e.g., PubMed, Embase) [33] | Information Source | Comprehensive sources of published scientific literature that are systematically searched. |
| Librarian Peer Reviewer Database [34] | Human Resource | A database that connects journal editors with librarians who have expertise in evidence synthesis for peer-review. |
| Collaboration Platforms (e.g., Slack, Teams) [35] [36] | Communication Tool | Enables real-time communication and integrated discussion tied to the project context, reducing email overload. |
| Shared Documentation (e.g., Notion, Confluence) [35] [36] | Documentation Hub | Serves as a single source of truth for the study protocol, search strategies, and meeting notes, ensuring version control and access for all team members. |

Documenting and Reporting Peer Review Findings in Your Manuscript

Why is proper documentation of the peer review process critical for a systematic review?

Proper documentation of the peer review process is a cornerstone of rigorous and transparent systematic reviews. It demonstrates methodological integrity, allows for the replication of your study, and provides readers and editors with confidence in your findings. For researchers in environmental and drug development fields, where evidence often informs critical decisions, this transparency is paramount. Documenting this process typically involves reporting the use of standardized reporting guidelines and detailing the specific methodological steps taken to ensure the review's comprehensiveness and reduce bias [37].

What are the established reporting guidelines for systematic reviews?

The most widely adopted reporting guideline for systematic reviews is the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) statement [37] [38] [39]. PRISMA provides an evidence-based minimum set of items that authors of systematic reviews are strongly encouraged to report. Other review types are covered by dedicated PRISMA extensions or other standards.
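Part of PRISMA reporting is bookkeeping: the counts in the flow diagram must add up from records identified to studies included. The sketch below checks that arithmetic. The class `PrismaFlow` and its field names are hypothetical, the example counts are invented for illustration, and it simplifies by treating each report as one study, which real reviews often cannot assume because a single study may be described in several reports.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class PrismaFlow:
    """Simplified counts from a PRISMA 2020-style flow diagram (hypothetical names)."""
    identified: int                  # records from databases and registers
    removed_before_screening: int    # e.g. duplicates
    excluded_at_screening: int       # title/abstract exclusions
    not_retrieved: int               # reports sought but not obtained
    excluded_full_text: Dict[str, int] = field(default_factory=dict)  # reason -> count

    def screened(self) -> int:
        return self.identified - self.removed_before_screening

    def sought(self) -> int:
        return self.screened() - self.excluded_at_screening

    def assessed(self) -> int:
        return self.sought() - self.not_retrieved

    def included(self) -> int:
        return self.assessed() - sum(self.excluded_full_text.values())

    def __post_init__(self) -> None:
        raw = [self.identified, self.removed_before_screening, self.excluded_at_screening,
               self.not_retrieved, *self.excluded_full_text.values()]
        if any(n < 0 for n in raw):
            raise ValueError("counts cannot be negative")
        # A negative derived box means the reported numbers do not add up.
        for box in ("screened", "sought", "assessed", "included"):
            if getattr(self, box)() < 0:
                raise ValueError(f"negative count for '{box}': check the flow numbers")


flow = PrismaFlow(1250, 310, 860, 4, {"wrong exposure": 30, "no comparator": 21})
print(flow.screened(), flow.sought(), flow.assessed(), flow.included())
# prints: 940 80 76 25
```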

The table below summarizes key reporting guidelines and their applications:

| Review Type | Primary Reporting Guideline | Purpose & Focus |
|---|---|---|
| Systematic Review of Interventions | PRISMA 2020 [37] | The benchmark for reporting systematic reviews and meta-analyses; designed primarily for reviews evaluating the effects of interventions, whatever the design of the included studies. |
| Scoping Review | PRISMA for Scoping Reviews [37] | Guides reporting for scoping reviews, which aim to map the scope and volume of literature on a topic. |
| Review of Diagnostic Test Accuracy | PRISMA for Diagnostic Test Accuracy [37] | Provides specific guidance for the transparent reporting of diagnostic test accuracy reviews. |