Ensuring Forensic Accuracy: A Comprehensive Guide to Validating New Chemistry Techniques


Abstract

This article provides a systematic framework for the validation of new forensic chemistry techniques, addressing critical needs for reliability and admissibility in the criminal justice system. It explores the foundational principles and pressing challenges driving method development, such as the rise of novel psychoactive substances. The content details practical methodological applications, including the use of rapid GC-MS and other emerging technologies, and offers strategies for troubleshooting and optimization. A core focus is placed on comprehensive validation protocols, comparative assessments against established standards, and the translation of validated methods into routine practice to reduce error rates and enhance the scientific robustness of forensic evidence.

The Urgent Need for Validated Methods: Addressing Backlogs, Novel Substances, and Wrongful Convictions

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What are the key parameters I need to validate for a new forensic chemistry method, such as a rapid GC-MS screening technique? A full method validation should assess accuracy, precision, selectivity, specificity, range, carryover/contamination, robustness, ruggedness, and stability to ensure reliable and court-defensible results [1]. For seized drug analysis using techniques like rapid GC-MS, your validation must demonstrate the method's capability for isomer differentiation and its limitations in analyzing complex mixtures [1].

Q2: What acceptance criteria should I use for precision in my validation study? A common threshold for precision, expressed as the percent relative standard deviation (% RSD), is 10% or less for many accredited forensic laboratories [1]. You should define this and all other acceptance criteria in a validation protocol before initiating experiments [2].

Q3: My laboratory is implementing a new method from an external source. What type of validation is required? Transferring a fully validated method to a new laboratory requires, at a minimum, a transfer validation (also known as a method qualification). This process involves generating at least one set of accuracy and precision data in the new laboratory using the same method, vehicle, and predefined acceptance criteria [2].

Q4: How can our laboratory ensure our results are comparable and reliable across different instruments and analysts? Incorporate robustness and ruggedness tests into your validation. Robustness assesses how well the method tolerates small, deliberate variations in operational parameters (e.g., temperature, flow rate), while ruggedness evaluates its performance when used by different analysts or on different instruments within your laboratory [1] [2].

Q5: What is the consequence of a broken chain of custody for physical evidence? A broken chain of custody can render evidence inadmissible in court, significantly weakening a case. Proper procedures include labeling evidence with tamper-evident tape, maintaining detailed transfer logs, and using evidence management systems with barcodes or RFID tracking [3].

Troubleshooting Guides

Issue: Inconsistent or highly variable results (%RSD too high) during method development.

- Potential Cause & Solution: Assess container composition, sample stability, and filter bias. For low-dose formulations, verify that high amounts of vehicle components do not affect pH or specificity. Ensure solubility is properly evaluated, as visual observations can be unreliable [2].

Issue: Difficulty in differentiating isomeric species during seized drug analysis.

- Potential Cause & Solution: This is a known limitation of some techniques. Use a combination of retention time and mass spectral search scores for differentiation. If differentiation remains unsuccessful, note this as a limitation of the method, as not all isomers can be reliably distinguished [1].

Issue: Digital evidence is vulnerable to deletion, encryption, or hardware failure.

- Potential Cause & Solution: Isolate devices from networks immediately to prevent remote wiping. Clone hard drives to preserve original data integrity and maintain detailed logs of all access and duplication activities. Use proper warrants to ensure legal admissibility [3].
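One common way to show that a cloned drive image is identical to the original is to compare cryptographic hashes of the two files. The sketch below is a minimal illustration using Python's standard `hashlib`; the choice of SHA-256 and the function names are assumptions made for this example and are not taken from the cited source.

```python
import hashlib


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel stops when read() returns an empty bytes object
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def clone_matches_original(original_path, clone_path):
    """True only if both files produce the same SHA-256 digest."""
    return sha256_of_file(original_path) == sha256_of_file(clone_path)
```

Recording both digests in the access log at the time of duplication gives a later reviewer a simple, repeatable integrity check.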

Experimental Protocols for Method Validation

Protocol 1: Validation of Rapid GC-MS for Seized Drug Screening [1]

This protocol is designed to be comprehensive and can be adapted for various analytical techniques.

- Selectivity: Prepare and analyze mixtures of target analytes and potential interferents (e.g., isomeric species, diluents, excipients). The method should be able to differentiate the analyte from all interferents.

- Precision: Inject a minimum of six replicates of a homogeneous sample at low, mid, and high concentrations. Calculate the %RSD for the peak areas and retention times. The %RSD should not exceed the predefined threshold (e.g., 10%).

- Accuracy: Analyze quality control samples with known concentrations. The determined concentration should be within ±15% of the theoretical value.

- Robustness/Ruggedness: Deliberately vary method parameters (e.g., column temperature, flow rate) and have a second analyst perform the analysis on a different instrument. Results should remain within acceptance criteria.

- Stability: Analyze samples stored under various conditions (e.g., different temperatures, over time) to establish the stability profile of analytes in the formulation.
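The precision and accuracy checks in Protocol 1 reduce to two short calculations. The sketch below is illustrative only: the function names are hypothetical, and the default thresholds simply mirror the example criteria quoted above (%RSD of 10% or less, accuracy within ±15%). A laboratory would substitute the criteria defined in its own validation protocol.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Percent relative standard deviation: 100 * sample SD / |mean|."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    average = mean(values)
    if average == 0:
        raise ValueError("mean is zero; %RSD is undefined")
    return 100.0 * stdev(values) / abs(average)


def percent_bias(measured, theoretical):
    """Signed percent deviation of a measured value from the theoretical value."""
    return 100.0 * (measured - theoretical) / theoretical


def passes_precision(values, max_rsd=10.0):
    """Compare replicate spread against an example threshold (assumed default)."""
    return percent_rsd(values) <= max_rsd


def passes_accuracy(measured, theoretical, tolerance=15.0):
    """Compare absolute bias against an example tolerance (assumed default)."""
    return abs(percent_bias(measured, theoretical)) <= tolerance


# Hypothetical peak areas from six replicate injections at one concentration.
replicate_areas = [1020.0, 998.0, 1011.0, 987.0, 1005.0, 1003.0]
precision_ok = passes_precision(replicate_areas)
```

The same `percent_rsd` function applies to retention times; running it once per concentration level keeps the low, mid, and high results separate.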

Protocol 2: Assessment of Biological Evidence Integrity [3]

- Collection: Wear sterile gloves and use clean, sterile tools for collection. Place evidence in breathable containers (e.g., paper envelopes) to prevent mold growth.

- Labeling: Label the container immediately with details including the collection date, time, location, case number, and item description.

- Packaging: Package each item separately to avoid cross-contamination.

- Storage: Transfer evidence to a climate-controlled, secure storage facility as soon as possible to prevent degradation of genetic material.

- Chain of Custody: Document every individual who handles the evidence, including the date, time, and purpose of transfer.
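A transfer log of this kind can be checked for gaps automatically. The following is a minimal sketch, assuming a hypothetical `Transfer` record with the fields listed above (who released the item, who received it, when, and why); it is not the data model of any particular evidence management system.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Transfer:
    released_by: str
    received_by: str
    timestamp: datetime
    purpose: str


def custody_gaps(collector, transfers):
    """Return a list of problems found in a custody log (empty list means none)."""
    problems = []
    holder = collector
    last_time = None
    for index, transfer in enumerate(transfers, start=1):
        # Each transfer must be released by whoever currently holds the item.
        if transfer.released_by != holder:
            problems.append(
                f"transfer {index}: released by {transfer.released_by}, "
                f"but current holder is {holder}"
            )
        # Timestamps must not run backwards.
        if last_time is not None and transfer.timestamp < last_time:
            problems.append(f"transfer {index}: timestamp precedes previous transfer")
        # The purpose of every transfer must be documented.
        if not transfer.purpose.strip():
            problems.append(f"transfer {index}: purpose of transfer is missing")
        holder = transfer.received_by
        last_time = transfer.timestamp
    return problems
```

An empty result only shows that the log is internally consistent; it does not replace physical controls such as tamper-evident seals.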

Data Presentation Tables

Table 1: Key Validation Parameters and Acceptance Criteria for a Forensic Analytical Method

| Parameter | Description | Example Acceptance Criteria |

|---|---|---|

| Accuracy | Closeness of measured value to true value | Mean value within ±15% of theoretical concentration [2]. |

| Precision | Closeness of repeated measurements | %RSD ≤ 10% [1]. |

| Selectivity | Ability to distinguish analyte from interferents | Baseline resolution of analyte peak from all interferent peaks [1]. |

| Range | Interval between upper and lower concentration of analyte | Linearity and acceptable accuracy/precision across the specified range [2]. |

| Robustness | Reliability under small, deliberate parameter changes | Results remain within acceptance criteria [1]. |

| Ruggedness | Reproducibility under different conditions (analyst, instrument) | Results remain within acceptance criteria [1]. |

| Stability | Ability of analyte to remain unchanged over time | Concentration within ±15% of initial value under stated conditions [2]. |

Table 2: Essential Research Reagent Solutions for Seized Drug Analysis [1] [2]

| Reagent / Material | Function / Purpose |

|---|---|

| HPLC-Grade Methanol / Acetonitrile | Used as solvents for preparing standard solutions and sample extracts due to high purity and compatibility with GC-MS and LC-MS systems. |

| Analytical Reference Standards | Pure substances of target analytes and isomers used to prepare calibration standards, confirm identity, and establish retention times. |

| Custom Compound Test Solution | A mixture of multiple target compounds at a known concentration used for precision, robustness, and stability studies during validation. |

| Vehicle/Excipients (e.g., 0.5% Methylcellulose) | The material(s) used to deliver the test article; critical for assessing method specificity and matrix effects during validation. |

| Gas Chromatography-Mass Spectrometry (GC-MS) System | The standard confirmatory analytical instrument for separating and identifying chemical compounds in a sample. |

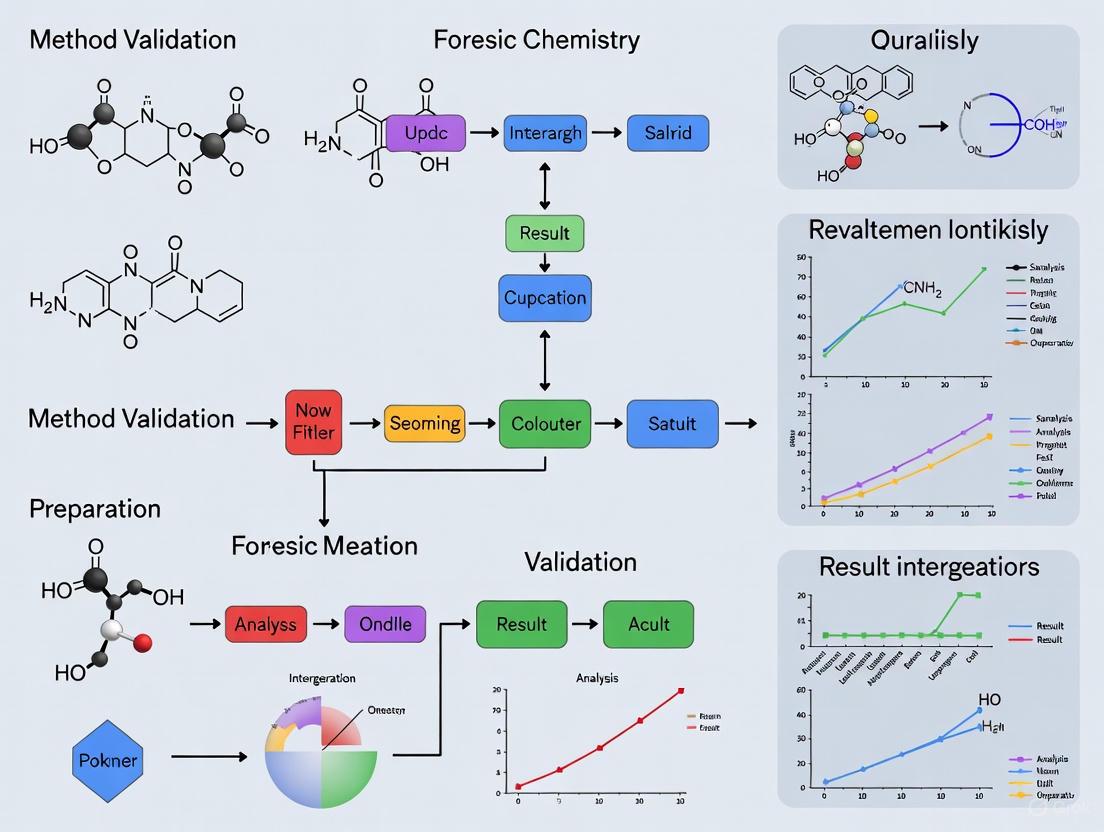

Workflow and Relationship Diagrams

Method Validation Workflow

Evidence Integrity Chain

The dynamic illicit drug market, characterized by the constant emergence of novel psychoactive substances (NPS), presents a formidable challenge for forensic and clinical laboratories. The rapid evolution of synthetic opioids, cathinones, and cannabinoids necessitates equally agile and advanced analytical method development. This technical support center is framed within a broader thesis on method validation for new forensic chemistry techniques. It addresses the specific, pressing challenges that drive innovation in this field, providing troubleshooting guidance and foundational protocols for researchers and drug development professionals.

FAQs & Troubleshooting Guides: Overcoming Core Analytical Challenges

Synthetic Opioids

Question: Our standard fentanyl screening fails to detect new synthetic opioids like nitazenes. What methodological changes are required?

Answer: The emergence of nitazenes, a class of novel synthetic opioids (NSOs) structurally distinct from fentanyl, renders traditional immunoassays and even some chromatographic methods ineffective [4]. Their extreme potency means they are often present in biological samples at very low concentrations (sub-ng/mL), demanding highly sensitive and specific techniques.

- Challenge: Structural dissimilarity to fentanyl and low concentrations in biological samples [4].

- Recommended Solution: Implement liquid chromatography-tandem mass spectrometry (LC-MS/MS) methods. These offer the required sensitivity and selectivity.

- Troubleshooting Tip:

- Problem: Inadequate sensitivity for low-dose intoxication cases.

- Solution: Employ a simple protein precipitation or solid-phase extraction (SPE) from a small sample volume (e.g., 50 µL of whole blood), followed by LC-MS/MS analysis with a low limit of quantification (LOQ of 0.1 ng/mL has been demonstrated) [5]. This approach is suitable for both postmortem and in vivo samples.

Question: How can we proactively identify an unknown novel synthetic opioid in a case sample?

Answer: Targeted methods are insufficient for unknown substances. A shift to non-targeted screening and data mining workflows is necessary.

- Challenge: The synthetic opioid market can see substances replaced every 3-6 months, creating a lag in targeted method development [6].

- Recommended Solution: Develop non-targeted testing protocols using high-resolution mass spectrometry (HRMS). This allows for the detection of unexpected NPS.

- Troubleshooting Tip:

- Problem: Inability to identify a novel compound during death investigation.

- Solution: Prioritize the analytical testing of seized drug powders from the same scene. Knowing what drug powder was present provides a critical reference for subsequent toxicological analysis of biological samples, guiding the identification process [6].
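In HRMS data review, a measured m/z is usually compared with a theoretical m/z by its mass error in parts per million. The sketch below shows that arithmetic and a simple suspect-list lookup. The 5 ppm tolerance, the function names, and the second list entry are assumptions for illustration; the fentanyl value is the protonated monoisotopic mass calculated from its formula, C22H28N2O.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def candidates_within_tolerance(measured_mz, candidates, tolerance_ppm=5.0):
    """Return (name, ppm error) pairs within tolerance, closest match first.

    candidates maps a compound name to its theoretical m/z.
    """
    hits = []
    for name, theoretical_mz in candidates.items():
        error = ppm_error(measured_mz, theoretical_mz)
        if abs(error) <= tolerance_ppm:
            hits.append((name, error))
    return sorted(hits, key=lambda hit: abs(hit[1]))


# Illustrative suspect list; the second entry is a made-up placeholder.
suspect_list = {
    "fentanyl [M+H]+": 337.2274,
    "hypothetical compound B [M+H]+": 337.2500,
}
matches = candidates_within_tolerance(337.2280, suspect_list)
```

An accurate-mass match narrows the candidates but does not identify a compound on its own. Isomers share the same exact mass, so retention time and fragmentation data are still needed.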

Synthetic Cathinones

Question: How can we differentiate between positional isomers of synthetic cathinones that produce nearly identical mass spectra?

Answer: This is a classic challenge in cathinone analysis. Standard electron ionization (EI) in GC-MS causes extensive fragmentation, often destroying the molecular ion and producing indistinguishable spectra for isomers [7].

- Challenge: Positional isomers (e.g., 2-, 3-, and 4-methylmethcathinone) yield nearly identical mass spectra with a base peak at m/z 58, making definitive identification impossible by GC-EI-MS alone [7].

- Recommended Solution 1: Develop a targeted GC-MS method that optimizes chromatographic parameters to maximize retention time differences between isomers. One study demonstrated a two-fold increase in retention time differences for a test mixture, allowing for separation and identification [8].

- Recommended Solution 2: Utilize advanced techniques like GC with cold electron ionization (Cold EI). Cold EI reduces the internal energy of analytes, preserving the molecular ion and providing a more detailed fragmentation pattern, which can aid in discriminating between some challenging isomers [7].

- Troubleshooting Tip:

- Problem: A pair of cathinone isomers co-elutes using a general-purpose GC-MS method.

- Solution: Reanalyze the sample using a biphenyl stationary phase in LC, which can provide better shape selectivity for aromatic isomers, or employ a cathinone-specific targeted GC-MS method with an optimized temperature ramp [8] [9].
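Whether two isomer peaks are adequately separated can be expressed with the standard chromatographic resolution formula, Rs = 2(t2 − t1) / (w1 + w2), where t is retention time and w is the baseline peak width in the same units. The sketch below applies it. The function names are hypothetical, and the Rs ≥ 1.5 cut-off is the conventional rule of thumb for baseline resolution rather than a criterion taken from the cited studies.

```python
def resolution(rt_first, rt_second, width_first, width_second):
    """Chromatographic resolution from retention times and baseline peak widths."""
    if width_first <= 0 or width_second <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(rt_second - rt_first) / (width_first + width_second)


def is_baseline_resolved(rt_first, rt_second, width_first, width_second,
                         minimum_rs=1.5):
    """Apply the conventional Rs >= 1.5 rule of thumb (assumed threshold)."""
    return resolution(rt_first, rt_second, width_first, width_second) >= minimum_rs
```

Comparing Rs before and after changing the temperature ramp gives a single number for judging whether an optimization step actually helped.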

Synthetic Cannabinoids

Question: Our laboratory wants to transition from color tests to a more informative screening method for seized drugs containing synthetic cannabinoids. What are the benefits and considerations?

Answer: While color tests are fast, they lack specificity and can yield false positives. Modern screening techniques provide definitive information with comparable speed.

- Challenge: Color tests provide presumptive results only and cannot identify specific synthetic cannabinoids or distinguish them from other drug classes [10].

- Recommended Solution: Implement Direct Analysis in Real Time mass spectrometry (DART-MS) for screening. A comparative study showed that DART-MS requires the same amount of time as color tests but yields significantly more chemical information, allowing for tentative identification of the specific synthetic cannabinoid present [10].

- Troubleshooting Tip:

- Problem: High sample throughput creates a bottleneck with confirmation testing.

- Solution: Use DART-MS screening to triage samples. Then, apply targeted GC-MS confirmation methods only to samples where a positive identification was made. This workflow reduces instrument time and consumption of reference materials compared to using general-purpose GC-MS methods on all samples [10].

Question: What is the optimal chromatographic method for quantifying both acidic and neutral cannabinoids in plant material or edibles?

Answer: The choice between Gas Chromatography (GC) and Liquid Chromatography (LC) is critical and depends on the analytes of interest.

- Challenge: The high temperatures in a GC injector and column cause decarboxylation of acidic cannabinoids (e.g., THCA, CBDA) into their neutral forms (e.g., THC, CBD), preventing accurate quantification of the native acidic compounds [11].

- Recommended Solution: Use High-Performance Liquid Chromatography (HPLC or UPLC). LC separations run at or near ambient temperature, far below the temperatures of a GC inlet, allowing for the direct quantification of both acidic and neutral cannabinoids without derivatization [9] [11]. Coupling to a mass spectrometer (LC-MS/MS) provides the highest level of sensitivity and specificity for complex matrices like edibles.

- Troubleshooting Tip:

- Problem: Poor resolution of structurally similar cannabinoids like THC and CBN on a C18 column.

- Solution: Utilize a biphenyl stationary phase. The biphenyl group enables π-π interactions, improving shape selectivity and providing better resolution of aromatic cannabinoids compared to conventional alkyl-silica phases [9].

Experimental Protocols for Key Analyses

1. Scope: This method is for the confirmatory analysis of synthetic cathinones in seized drug materials.

2. Materials:

- GC-MS System: Equipped with a standard EI source and a mass selective detector.

- Column: Low-polarity capillary GC column (e.g., 5% diphenyl/95% dimethyl polysiloxane).

- Standards: Certified reference materials for target cathinones.

3. Method Development & Optimization:

- Goal: Maximize retention time differences between target cathinones to aid in identifying spectrally similar compounds.

- Procedure:

- Prepare a test mixture of cathinones known to be challenging (e.g., including positional isomers).

- Systematically investigate GC parameters: oven temperature ramp rate, inlet temperature, and carrier gas flow rate.

- The optimal method is achieved when the retention time differences for the test solution compounds are maximized within a reasonable runtime.

4. Validation: Validate the final method for specificity, sensitivity (LOD/LOQ), linearity, precision, and accuracy according to laboratory guidelines.
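The optimization goal described above can be framed as a simple selection problem: among the candidate parameter sets that were actually run, keep those within the runtime limit and pick the one with the largest separation. The sketch below is one possible framing, not the procedure of the cited study. It assumes that the smallest gap between adjacent peaks is the quantity to maximize, and the dictionary keys are hypothetical.

```python
def min_adjacent_gap(retention_times):
    """Smallest retention time difference between neighbouring peaks."""
    if len(retention_times) < 2:
        raise ValueError("need at least two retention times")
    ordered = sorted(retention_times)
    return min(later - earlier for earlier, later in zip(ordered, ordered[1:]))


def best_method(candidates, max_runtime_min):
    """Pick the candidate with the largest minimum gap within the runtime limit.

    Each candidate is a dict with 'name', 'runtime_min', and 'retention_times'.
    Returns None if no candidate meets the runtime limit.
    """
    eligible = [c for c in candidates if c["runtime_min"] <= max_runtime_min]
    if not eligible:
        return None
    return max(eligible, key=lambda c: min_adjacent_gap(c["retention_times"]))
```

Using the worst-case gap as the score favors methods that separate the hardest isomer pair, which is usually the limiting case for identification.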

1. Scope: Simultaneous identification and quantification of synthetic opioids (e.g., fentanyl, nitazenes) and hallucinogens in whole blood.

2. Materials:

- LC-MS/MS System: Triple quadrupole mass spectrometer with electrospray ionization (ESI).

- LC Column: Reversed-phase C18 column.

- Sample: 50 µL of whole blood.

3. Sample Preparation:

- Perform a simple protein precipitation using an organic solvent (e.g., acetonitrile or methanol).

- Centrifuge, dilute the supernatant, and inject into the LC-MS/MS system.

4. Instrumental Analysis:

- Chromatography: Use a gradient elution with water and methanol, both containing a volatile additive (e.g., 0.1% formic acid).

- MS Detection: Operate in multiple reaction monitoring (MRM) mode. Monitor at least two precursor ion → product ion transitions per analyte for definitive identification.

5. Method Performance: The validated method demonstrated linearity from 0.1 to 20 ng/mL for most opioids, with an LOQ of 0.1 ng/mL, good precision (%RSD < 13%), and minimal matrix effects [5].
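Linearity over a calibration range such as 0.1 to 20 ng/mL is typically assessed by fitting a line to the calibrator responses and inspecting r². The sketch below uses an ordinary (unweighted) least-squares fit written in plain Python. This is a simplification made for the example: the cited method's regression model is not described here, and quantitative LC-MS/MS work often uses a weighted fit and an analyte-to-internal-standard response ratio instead.

```python
def fit_line(x, y):
    """Unweighted least-squares fit; returns (slope, intercept, r_squared)."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need at least three paired calibration points")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r_squared = 1.0 - ss_res / ss_tot
    return slope, intercept, r_squared


def back_calculate(response, slope, intercept):
    """Convert an instrument response to a concentration using the fitted line."""
    return (response - intercept) / slope
```

Back-calculating each calibrator with `back_calculate` and comparing the result with its nominal concentration shows whether the low end of the curve, near the LOQ, is being fitted acceptably.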

Visualizing Analytical Workflows

Diagram: Comparative Workflows for Seized Drug Analysis

This diagram contrasts a traditional workflow with a modern, information-rich workflow, based on a comparative study [10].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for NPS Analysis

| Research Reagent / Material | Function in Analysis |

|---|---|

| Certified Reference Standards | Critical for method development, calibration, and definitive identification of target analytes by providing known retention times and mass spectra [8] [7]. |

| Deuterated Internal Standards | Essential for quantitative LC-MS/MS and GC-MS to correct for matrix effects, recovery variations, and instrument fluctuations, ensuring accuracy [5]. |

| C18 & Biphenyl LC Columns | C18 is the workhorse for reversed-phase separation; biphenyl columns offer improved resolution for aromatic and structurally similar cannabinoids via π-π interactions [9]. |

| Non-Polar GC Columns (e.g., 5% diphenyl/95% dimethyl polysiloxane) | Standard for separating semi-volatile compounds like cathinones and cannabinoids; optimized temperature programs are key for isomer resolution [8] [11]. |

| Solid-Phase Extraction (SPE) Cartridges | Used to clean up and concentrate analytes from complex matrices like wastewater or biological fluids, improving method sensitivity and reducing matrix effects [9]. |

| LC-MS/MS Mobile Phase Additives (e.g., Formic Acid, Ammonium Acetate) | Volatile buffers and pH modifiers that enhance ionization efficiency in the mass spectrometer, significantly improving signal intensity and stability [5]. |

Table 1: Key Challenges and Methodological Solutions for NPS Classes

| NPS Class | Exemplary Challenge | Driving Force for Method Development | Recommended Technical Solution |

|---|---|---|---|

| Synthetic Opioids (e.g., Nitazenes) | Extreme potency, structural novelty, low concentrations in biology [4]. | Need for sensitive, specific, and proactive detection [6] [4]. | LC-MS/MS for targeted quantitation; HRMS for non-targeted screening [6] [5]. |

| Synthetic Cathinones | Extensive fragmentation in GC-EI-MS; indistinguishable spectra for isomers [7]. | Requirement for confident isomer differentiation and identification [8]. | Targeted GC-MS methods; Advanced ionization (e.g., Cold EI); LC on biphenyl phases [8] [7]. |

| Synthetic Cannabinoids | Constant structural changes to evade laws; complex plant matrices [9] [10]. | Need for rapid, informative screening and accurate quantification of diverse structures. | DART-MS for screening; LC-MS/MS (ESI/APCI) for confirmation and quantification [9] [10]. |

Table 2: Performance Metrics of Developed Analytical Methods from Literature

| Analyte Class | Matrices Tested | Analytical Technique | Key Performance Metrics (e.g., LOQ, Runtime) | Citation |

|---|---|---|---|---|

| 6 Synthetic Opioids & Hallucinogens | Whole Blood (50 µL) | LC-MS/MS | LOQ: 0.1 ng/mL; Linearity: 0.1-20 ng/mL (r² >0.99); Runtime: Fast (specific time not given) | [5] |

| Synthetic Cathinones | Seized Drug Materials | Targeted GC-MS | Runtime: 3.83 min shorter than general method; Result: Increased retention time differences for better resolution | [8] |

| Cannabinoids | Plant Material, Edibles | HPLC-UV/MS | Advantage: Quantifies acidic & neutral cannabinoids without derivatization; superior for thermo-unstable compounds | [9] [11] |

Technical Support Center: FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: What are the most common causes of backlogs in forensic chemistry laboratories? Several factors contribute to laboratory backlogs, often interacting to create significant delays [12]:

- Emergence of New Psychoactive Substances (NPS): The identification of NPS is complex and time-consuming because individual substances are short-lived on the market and often lack readily available certified reference materials for reliable identification [12].

- Increased Casework and Complexity: Laboratories face not only an increase in the volume of evidence but also a rise in case complexity, demanding more specialized equipment and prolonged examination times [12].

- Resource Constraints: Limitations in funding, personnel, and equipment prevent laboratories from scaling their operations to match the growing workload. Budget cuts can limit hiring, increasing the workload per analyst [12].

- Time-Consuming Validation: Implementing new, faster technology requires a lengthy validation process to ensure results are court-defensible. Developing these validation protocols can take analysts months, pulling them away from casework [13].

Q2: How does the subjectivity of traditional analysis methods impact forensic results? Subjective analysis, which relies on an analyst's visual judgment or personal interpretation (e.g., comparing color changes or visual chemical fingerprints), introduces challenges [14]:

- Difficulty in Defense: Subjective conclusions can be difficult to defend in court, as they lack a quantifiable measure of confidence [14].

- Potential for Human Bias: Visual judgment calls can be affected by human bias, potentially influencing the analyst's conclusions [14].

- Lack of Objectivity: The field is pushing toward objective, probabilistic interpretations to ensure consistency and reliability, similar to standards commonplace in forensic biology (DNA) [14].

Q3: What is the difference between subjective and objective assessment methods in a laboratory context? Understanding this distinction is crucial for method validation [15]:

- Subjective Assessment: Data is based on personal experiences, opinions, and perceptions. For example, a sensory panel evaluating a product's feel. This data is qualitative and provides insights into user experience but is not measurable scientific evidence [15].

- Objective Assessment: Data is based on observable, measurable, and factual evidence, free from personal bias. For example, using a mass spectrometer to identify a compound based on its unique mass-to-charge ratio. This provides hard, reproducible data required for scientific and regulatory validation [15].

Q4: Are there strategies to manage resource constraints effectively? Yes, strategic planning can help mitigate the impact of limited resources [16] [17]:

- Prioritize Tasks: Identify and focus resources on the most critical tasks and milestones [16].

- Effective Resource Allocation: Assign available resources to high-priority tasks and reallocate from less critical areas as needed [18].

- Predict Resource Capacity: Accurately forecast employee availability and factor in absences to identify potential shortages early [16].

- Expand Talent Access: Broaden talent searches, develop upskilling programs, and create a structure that supports global collaboration to access a wider pool of expertise [17].

- Build Resilient Supply Systems: Develop modular and adaptable processes to withstand disruptions in the supply chain [17].

Troubleshooting Common Experimental Hurdles

Issue: Inconclusive results during qualitative analysis of aged marijuana samples via Thin-Layer Chromatography (TLC).

- Background: The primary psychoactive compound in marijuana, Delta-9-THC, degrades over time into cannabinol (CBN), particularly when exposed to light and higher temperatures [12]. This chemical transformation can lead to inconclusive or false-negative TLC results if the analytical method cannot distinguish this degradation.

- Troubleshooting Steps:

- Review Storage Conditions: Verify that evidence samples are stored in dark, cool conditions to minimize post-seizure degradation [12].

- Confirm Analytical Specificity: Ensure your TLC method or subsequent confirmatory methods can separate and identify both THC and CBN. The presence of CBN may mask the presence of residual THC.

- Implement a Confirmatory Technique: Use a more specific analytical technique, such as Gas Chromatography-Mass Spectrometry (GC-MS) or High-Performance Liquid Chromatography (HPLC), to quantify the ratio of THC to CBN and provide a definitive identification [12].

Issue: Prolonged method validation for new instrumentation (e.g., rapid GC-MS).

- Background: Validating new equipment to meet accreditation and court requirements is essential but can take months, diverting analysts from active casework and contributing to backlogs [13].

- Troubleshooting Steps:

- Leverage Pre-Validated Templates: Utilize free, comprehensive validation guides and templates developed by institutions like the National Institute of Standards and Technology (NIST). These resources provide detailed instructions, necessary materials, and automated calculation spreadsheets [13].

- Collaborate with Peers: Engage with a network of laboratories to share validation data and best practices, reducing redundant effort [14].

- Phased Implementation: Plan a phased rollout of the new technology, starting with a limited number of applications, to manage the validation workload effectively.

Issue: Differentiating between subjective and objective data in method validation reports.

- Background: A robust validation report for a new forensic technique should leverage both subjective and objective data to provide a comprehensive view of the method's performance and user experience [15].

- Troubleshooting Steps:

- Categorize Data Sources: Clearly separate data generated by instruments (objective, quantitative) from data gathered from analyst or user feedback (subjective, qualitative).

- Use Combined Data Strategically: Use objective data (e.g., accuracy, precision, detection limits) for the scientific core of the validation. Use subjective data (e.g., ease of use, clarity of software interface) to support practical implementation and training needs [15].

- Table for Data Triage: The following table can help in planning and reporting:

Table: Differentiating Data Types in Validation Reports

| Data Type | Source | Example in Validation | How to Report |

|---|---|---|---|

| Objective | Instruments, reproducible measurements | Retention time precision, mass spectral matching, error rates | Quantitative metrics, statistical analysis |

| Subjective | Analyst observations, user panels | Assessment of chromatographic peak shape, ease of data interpretation | Qualitative summaries, categorized feedback |

Experimental Protocols & Data Presentation

Protocol: Validation of a Rapid GC-MS System for Seized Drug Screening

This protocol is based on resources provided by NIST to streamline the validation process for forensic laboratories [13].

1. Objective

To demonstrate that the rapid Gas Chromatography-Mass Spectrometry (GC-MS) system performs seized drug screening with the required precision, accuracy, and reliability for implementation in casework.

2. Materials

- Rapid GC-MS system

- Certified reference materials (CRMs) for target drugs (e.g., cocaine, methamphetamine, fentanyl, common NPS)

- Mass spectrometry-grade solvents

- Data analysis software

- NIST Rapid GC-MS Validation Template and associated spreadsheets [13]

3. Methodology

- Precision (Repeatability): Inject a mid-level concentration of each CRM (n=5) in a single sequence. Calculate the %RSD for retention times and peak areas.

- Accuracy and Specificity: Analyze each CRM and confirm the system correctly identifies the target analyte based on retention time and mass spectral match against a certified library.

- Robustness: Analyze the same set of CRMs over three different days (inter-day precision) and by two different analysts (if possible).

- Limit of Detection (LOD): Serially dilute CRMs to determine the lowest concentration at which the analyte can be reliably detected.

- Carryover: Run a blank solvent sample immediately after analyzing a high-concentration standard and check for any peak presence.

4. Data Analysis

Input the collected data (retention times, peak areas, identification results) into the automated spreadsheets provided in the NIST validation package. The built-in calculations will immediately indicate if the instrument meets the pre-set validation criteria [13].
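For laboratories that want an independent cross-check of the carryover and LOD steps, the underlying logic is brief. The sketch below is not the NIST spreadsheet calculation. The function names are hypothetical, and the rule that every replicate at a level must be detected is an assumed acceptance rule.

```python
def carryover_percent(blank_area, high_standard_area):
    """Peak area in the post-standard blank as a percentage of the standard."""
    if high_standard_area <= 0:
        raise ValueError("high standard area must be positive")
    return 100.0 * blank_area / high_standard_area


def lowest_reliable_level(detections, required_fraction=1.0):
    """Estimate the LOD from a serial dilution.

    detections maps concentration -> list of True/False flags, one per
    replicate. Walks from the highest concentration downward and stops at
    the first level that fails, so a pass below a failed level is ignored.
    Returns None if even the highest level fails.
    """
    lod = None
    for concentration in sorted(detections, reverse=True):
        flags = detections[concentration]
        if not flags or sum(flags) / len(flags) < required_fraction:
            break
        lod = concentration
    return lod
```

Stopping at the first failing level is a deliberately conservative choice: a detection below a failed level is treated as unreliable rather than as evidence of a lower LOD.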

Quantitative Data: Impact of Backlog on Analytical Outcomes

Table: Analysis of Marijuana Sample Backlog and Its Impact on TLC Results [12]

This table summarizes data from a study on marijuana samples, demonstrating how storage time and resulting THC degradation directly impact analytical outcomes.

| Storage Time | Sample Condition | TLC Result for THC | Primary Cannabinoid(s) Identified | Impact on Laboratory |

|---|---|---|---|---|

| Fresh (0-6 months) | Properly stored, limited light exposure | Positive | THC | Case proceeds normally. |

| Aged (1-2 years) | Exposed to light and variable temperatures | Inconclusive | Mixed THC and CBN | Requires re-analysis with confirmatory techniques (e.g., GC-MS), increasing workload and cost. |

| Very Aged (>2 years) | Poor storage conditions | Negative (False Negative) | CBN | Risk of incorrect exclusion; potential failure to provide forensic intelligence. |

Workflow Diagrams

Forensic Method Validation Workflow

Data Integration for Robust Method Validation

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials for Forensic Drug Analysis and Method Validation

| Item | Function in Research/Validation |

|---|---|

| Certified Reference Materials (CRMs) | Pure, authenticated chemical standards used to confirm the identity and quantity of target analytes (e.g., drugs). Essential for calibrating instruments and establishing method accuracy [12]. |

| Gas Chromatograph-Mass Spectrometer (GC-MS) | The gold-standard instrument for separating and identifying chemical compounds in a mixture. Provides objective, high-confidence identifications [13]. |

| Rapid GC-MS Systems | A faster screening version of GC-MS. While less precise, it significantly reduces analysis time per sample, helping to alleviate backlogs when properly validated [13]. |

| Validation Protocols & Templates | Standardized documents (e.g., from NIST) that outline the experiments and criteria needed to prove a new method is reliable and court-defensible. They save laboratories months of development time [13]. |

| Thin-Layer Chromatography (TLC) | A simple, cost-effective, and quick planar chromatographic technique used for initial screening of samples. However, it may lack specificity for complex or aged samples [12]. |

| Objective Data Analysis Software | Software that uses probabilistic or statistical models to interpret data (e.g., mass spectra). This reduces reliance on subjective analyst judgment and provides quantifiable confidence metrics [14]. |

Forensic science is at a pivotal juncture, where its foundational principles are being re-examined through the critical lens of past errors. The analysis of wrongful convictions reveals a disturbing pattern: misapplied forensic science has contributed to more than half of the Innocence Project's wrongful conviction cases and nearly a quarter of all documented wrongful convictions since 1989 [19]. These are not isolated incidents but symptoms of systemic failures that continue to challenge the integrity of forensic evidence. The case of Brandon Mayfield, wrongfully implicated in the Madrid train bombings due to a faulty fingerprint match, exemplifies how confirmation bias, inadequate training, and a lack of objective verification protocols can converge with devastating consequences [20]. Within this context, method validation emerges not as a bureaucratic hurdle but as an ethical imperative for forensic chemistry researchers developing new analytical techniques. This technical support guide addresses the critical need for robust validation frameworks that can withstand the complexities of modern forensic practice while safeguarding against the human and procedural vulnerabilities that have previously led to miscarriages of justice.

Troubleshooting Guides: Addressing Common Validation Challenges

FAQ: Method Development and Implementation

Q1: What are the most significant barriers to implementing new analytical technologies in forensic drug analysis, and how can they be overcome?

Forensic laboratories face multiple obstacles when implementing new technologies, including the substantial burden of validation required to demonstrate a method is fit-for-purpose, limited access to authentic samples for testing, and a shortage of discipline-specific training [21]. To address these challenges, researchers can develop comprehensive Validation and Implementation Packages that include method parameters, standard operating procedures, and data processing templates. These packages assume the burden of foundational validation, enabling laboratories to conduct simplified, yet rigorous, verification [21]. Additionally, initiatives such as providing panels of well-characterized authentic samples as research-grade test materials and offering specialized workshops on topics like mass spectral interpretation help lower these implementation barriers significantly.

Q2: How can we ensure analytical methods remain responsive to rapidly evolving illicit drug markets?

The dynamic nature of illicit drug markets, particularly the emergence of novel psychoactive substances and synthetic opioids, requires agile methodological adaptations. A multi-platform approach using ambient ionization mass spectrometry (AI-MS), GC-MS, and liquid chromatography-ion mobility-mass spectrometry (LC-IM-MS) provides complementary data streams for structural elucidation when reference materials are unavailable [21]. Maintaining frequently updated internal spectral databases and retrospectively mining data from previously analyzed samples allow laboratories to identify new compounds as they emerge. This strategy makes it possible to determine when a new compound first appeared in the drug supply, even if it was not formally identified at the time [21].
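Retrospective data mining can be illustrated with a minimal sketch. It assumes archived spectra are stored as m/z-to-intensity dictionaries and uses plain cosine similarity as the match metric; the archive records, the m/z values, and the 0.9 threshold are all hypothetical placeholders, not values from [21] or from any commercial library-search algorithm.

```python
import math

def cosine_similarity(spec_a, spec_b):
    """Cosine similarity between two spectra given as {m/z: intensity} dicts."""
    shared = set(spec_a) & set(spec_b)
    dot = sum(spec_a[mz] * spec_b[mz] for mz in shared)
    norm_a = math.sqrt(sum(v * v for v in spec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in spec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def earliest_match(archive, reference, threshold=0.9):
    """Return (date, sample_id, score) for the earliest archived spectrum
    whose similarity to the reference meets the threshold, or None."""
    hits = [
        (rec["date"], rec["sample_id"], cosine_similarity(rec["spectrum"], reference))
        for rec in archive
    ]
    hits = [hit for hit in hits if hit[2] >= threshold]
    # ISO-formatted dates sort chronologically as strings.
    return min(hits, default=None)

# Hypothetical archive of previously analyzed samples (made-up spectra).
archive = [
    {"sample_id": "A-001", "date": "2023-02-10", "spectrum": {58: 100, 91: 20, 134: 5}},
    {"sample_id": "A-002", "date": "2023-06-01", "spectrum": {72: 100, 149: 35, 178: 12}},
    {"sample_id": "A-003", "date": "2023-09-15", "spectrum": {72: 95, 149: 40, 178: 10}},
]
# Hypothetical spectrum of a newly characterized compound.
reference = {72: 100, 149: 38, 178: 11}

first_hit = earliest_match(archive, reference)
```

Here `first_hit` points to the earliest archived sample that resembles the new reference spectrum, which is the kind of "first appearance" date the strategy aims to recover. In practice the match would also be constrained by retention time and reviewed by an analyst.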

Q3: What procedural safeguards are most effective against cognitive bias in forensic analysis?

The Brandon Mayfield case demonstrated how confirmation bias can undermine forensic conclusions when examiners become aware of initial findings [20]. Implementing independent, double-blind peer review processes, where reviewers are unaware of original conclusions, is critical for ensuring unbiased outcomes [20]. Additionally, structured transparency in methodologies and open dialogue between forensic teams and external experts creates systems of accountability that help identify and rectify potential biases before they result in erroneous conclusions.

FAQ: Data Interpretation and Reporting

Q4: How can machine learning models appropriately communicate uncertainty in forensic classification tasks?

Traditional forensic reporting often requires categorical statements that do not reflect analytical uncertainty. A promising approach involves formulating subjective opinions composed of belief, disbelief, and uncertainty masses that sum to one [22]. For binary classification problems, this can be achieved by fitting predicted posterior probabilities from an ensemble of ML models to a beta distribution, where the shape parameters determine the uncertainty estimate [22]. This framework explicitly quantifies "I don't know" in forensic assessments, allowing analysts to identify high-uncertainty predictions that require additional scrutiny rather than definitive classification.

Q5: What statistical framework best supports the logical interpretation of forensic evidence?

The likelihood ratio (LR) framework has emerged as the logically correct approach for evaluating forensic evidence, as it quantitatively assesses the strength of evidence under competing propositions [23] [24]. This framework is being implemented in automated forensic systems, such as the Fast DNA IDentification Line, which uses probabilistic genotyping models like ProbRank for DNA database searching [24]. The LR framework provides a transparent, quantitative measure of evidential strength that helps prevent the overstatement of forensic conclusions—a historically common contributor to wrongful convictions.
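As a minimal illustration of the LR idea, the sketch below evaluates a similarity score under two competing propositions and updates prior odds. The normal score distributions and their parameters are hypothetical assumptions chosen for readability; operational systems such as those cited in [24] rely on validated, calibrated models rather than this toy setup.

```python
from statistics import NormalDist

def likelihood_ratio(score, h1_model, h2_model):
    """LR = p(score | H1) / p(score | H2) using fitted probability densities."""
    return h1_model.pdf(score) / h2_model.pdf(score)

def posterior_odds(prior_odds, lr):
    """Bayes' rule in odds form: posterior odds = prior odds x LR."""
    return prior_odds * lr

# Hypothetical score distributions under each proposition.
h1_scores = NormalDist(mu=0.90, sigma=0.05)  # H1: same source
h2_scores = NormalDist(mu=0.60, sigma=0.10)  # H2: different source

lr = likelihood_ratio(0.85, h1_scores, h2_scores)
odds = posterior_odds(1.0, lr)  # even prior odds, for illustration only
```

An LR above 1 supports H1 over H2, and an LR below 1 supports H2. Reporting the LR itself, rather than a categorical conclusion, leaves the prior odds to the trier of fact.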

Experimental Protocols: Validation Frameworks for Forensic Chemistry

Protocol for Subjective Opinion Machine Learning in Forensic Chemistry

The following protocol outlines a method for developing ML models that provide transparent uncertainty estimates for binary classification in forensic chemistry, specifically applied to fire debris analysis [22].

Step 1: Data Generation and Feature Selection

Generate ground truth data in silico by creating linear combinations of gas chromatography–mass spectrometry (GC-MS) data from ignitable liquids with pyrolysis GC-MS data from building materials and furnishings [22]. Select features with chemical significance to the classification problem (e.g., 33 initial features), then apply scaling and remove low-variance and highly correlated features to obtain a final feature set (e.g., 26 features) [22].
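The filtering part of Step 1 could be sketched as follows. This is an assumption-laden illustration, not the procedure from [22]: the variance check is applied to unscaled values, the thresholds are arbitrary, and the feature names are hypothetical. Scaling is omitted because the Pearson correlation used here is scale-invariant.

```python
import math

def variance(values):
    """Population variance of a list of numbers."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / len(values)

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def select_features(rows, names, min_variance=1e-4, max_abs_corr=0.95):
    """Drop low-variance features, then drop any feature that is highly
    correlated with a feature that has already been kept."""
    cols = {name: [row[i] for row in rows] for i, name in enumerate(names)}
    kept = []
    for name in names:
        if variance(cols[name]) < min_variance:
            continue  # nearly constant: carries no class information
        if any(abs(pearson(cols[name], cols[k])) > max_abs_corr for k in kept):
            continue  # redundant with a retained feature
        kept.append(name)
    return kept

# Hypothetical feature table: one row per sample, one column per feature.
names = ["ion_57", "ion_71", "ion_91", "ion_105"]
rows = [
    [0.10, 0.21, 0.50, 0.30],
    [0.20, 0.41, 0.50, 0.10],
    [0.30, 0.59, 0.50, 0.40],
    [0.40, 0.80, 0.50, 0.20],
]
kept = select_features(rows, names)
```

In this toy table, `ion_91` is constant and `ion_71` closely tracks `ion_57`, so only two features survive. The order in which features are visited determines which member of a correlated pair is retained.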

Step 2: Ensemble Model Training

Sample the in silico data reservoir through bootstrapping to generate multiple training datasets. Train an ensemble of ML models (e.g., 100 copies) using appropriate algorithms such as Linear Discriminant Analysis (LDA), Random Forest (RF), or Support Vector Machines (SVM) on the bootstrapped datasets [22].
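The bootstrapping idea in Step 2 can be shown with a deliberately simple stand-in model. The sketch below assumes a single feature, a nearest-centroid scorer in place of LDA, RF, or SVM, and resampling within each class so that every bootstrap set contains both classes; none of these choices come from [22].

```python
import math
import random

def bootstrap_ensemble(samples, n_models=100, seed=0):
    """Train one centroid model per bootstrap resample.
    samples: list of (feature_value, label) pairs with labels 0 or 1."""
    rng = random.Random(seed)
    by_class = {label: [x for x, y in samples if y == label] for label in (0, 1)}
    ensemble = []
    for _ in range(n_models):
        model = {}
        for label, values in by_class.items():
            # Resample with replacement within each class (an assumption).
            resample = rng.choices(values, k=len(values))
            model[label] = sum(resample) / len(resample)
        ensemble.append(model)
    return ensemble

def predict_proba(model, x, scale=0.1):
    """Stand-in posterior for class 1: logistic function of the difference
    between the distances to the class-0 and class-1 centroids."""
    d0 = abs(x - model[0])
    d1 = abs(x - model[1])
    return 1.0 / (1.0 + math.exp(-(d0 - d1) / scale))

# Hypothetical one-dimensional training data.
samples = [(0.1, 0), (0.2, 0), (0.3, 0), (0.7, 1), (0.8, 1), (0.9, 1)]
ensemble = bootstrap_ensemble(samples, n_models=100, seed=42)

# One posterior probability per ensemble member for a single test sample.
probs = [predict_proba(model, 0.85) for model in ensemble]
```

The spread of `probs` across ensemble members is what the next step summarizes as uncertainty.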

Step 3: Uncertainty Quantification

Apply the ensemble of ML models to validation data to obtain posterior probabilities of class membership. Fit these probabilities to a beta distribution for each validation sample. Calculate the subjective opinion (belief, disbelief, uncertainty) using the shape parameters of the fitted distribution [22].
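One way to sketch Step 3 is shown below. It assumes a method-of-moments beta fit (the fitting procedure in [22] may differ) and the standard subjective-logic mapping with base rate 0.5 and prior weight 2; clamping the evidence terms at zero is an added assumption for shape parameters below 1.

```python
from statistics import fmean, pvariance

def fit_beta_moments(probs):
    """Method-of-moments estimates of beta shape parameters (alpha, beta)."""
    m = fmean(probs)
    v = pvariance(probs)
    if v <= 0 or v >= m * (1 - m):
        raise ValueError("sample moments are not compatible with a beta distribution")
    common = m * (1 - m) / v - 1
    return m * common, (1 - m) * common

def subjective_opinion(alpha, beta, base_rate=0.5, prior_weight=2.0):
    """Map beta shape parameters to (belief, disbelief, uncertainty)."""
    r = max(alpha - prior_weight * base_rate, 0.0)        # evidence for the class
    s = max(beta - prior_weight * (1 - base_rate), 0.0)   # evidence against it
    total = r + s + prior_weight
    return r / total, s / total, prior_weight / total

# Hypothetical posterior probabilities from eight ensemble members.
probs = [0.80, 0.85, 0.90, 0.75, 0.88, 0.82, 0.86, 0.79]
alpha, beta = fit_beta_moments(probs)
belief, disbelief, uncertainty = subjective_opinion(alpha, beta)
```

The three masses sum to one by construction. Tightly clustered ensemble outputs yield large shape parameters and therefore low uncertainty, while widely scattered outputs push the uncertainty mass up.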

Step 4: Decision Making and Validation

Convert subjective opinions to decisions for performance validation by projecting probabilities to calculate log-likelihood ratio scores. Generate Receiver Operating Characteristic (ROC) curves and calculate Area Under the Curve (AUC) to evaluate performance [22]. Validate the method using laboratory-generated evidence samples with known ground truth.
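Step 4 can be sketched under two stated assumptions: the projected probability follows the usual subjective-logic form (belief plus base rate times uncertainty), and the log-likelihood ratio is taken as the log of the projected odds, which equals the log LR only when the prior odds are even. The opinions and labels below are hypothetical.

```python
import math

def projected_probability(belief, uncertainty, base_rate=0.5):
    """Project an opinion onto a single probability."""
    return belief + base_rate * uncertainty

def log_likelihood_ratio(p, eps=1e-12):
    """log10 of the projected odds (equals log10 LR under even prior odds)."""
    p = min(max(p, eps), 1 - eps)
    return math.log10(p / (1 - p))

def roc_auc(scores, labels):
    """AUC as the fraction of positive/negative pairs ranked correctly,
    counting ties as half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical (belief, disbelief, uncertainty) opinions with ground truth labels.
opinions = [(0.80, 0.15, 0.05), (0.60, 0.30, 0.10), (0.20, 0.70, 0.10), (0.45, 0.40, 0.15)]
labels = [1, 0, 0, 1]

scores = [log_likelihood_ratio(projected_probability(b, u)) for b, _, u in opinions]
auc = roc_auc(scores, labels)
```

Because the log-odds transform is monotonic, ranking by score and ranking by projected probability give the same AUC.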

Protocol for Implementing ISO 21043 Forensic Standards

ISO 21043 provides an international standard for forensic science processes. This protocol outlines implementation for forensic chemistry methods [23].

Step 1: Process Mapping to ISO Framework

Map existing laboratory procedures to the five parts of ISO 21043: (1) Vocabulary, (2) Recognition, recording, collecting, transport, and storage of items, (3) Analysis, (4) Interpretation, and (5) Reporting. Identify gaps in current practices relative to the standard's requirements [23].

Step 2: LR Framework Integration

Implement the likelihood ratio framework for evidence interpretation as specified in the standard. Develop proposition sets relevant to forensic chemistry analysis and establish calculation methods for LR values based on validated analytical data [23].

Step 3: Transparency and Documentation

Establish comprehensive documentation protocols ensuring all methodological details, validation data, and interpretation criteria are recorded. Implement quality control measures including regular audits and proficiency testing aligned with standard requirements [23].

Step 4: Reporting Standardization

Develop standardized report templates that clearly communicate methodological limitations, uncertainty estimates, and quantitative measures of evidential strength using the LR framework, avoiding categorical statements unless scientifically justified [23].

The experimental workflow for validating new forensic techniques, from development through to standardized reporting, is visualized below.

Quantitative Data: Performance Metrics in Forensic Science Research

Machine Learning Performance in Forensic Chemistry

Table 1: Performance metrics of machine learning algorithms applied to forensic fire debris analysis [22].

| Machine Learning Method | Training Set Size | Median Uncertainty | ROC AUC | Optimal Training Conditions |

|---|---|---|---|---|

| Linear Discriminant Analysis (LDA) | 60,000 samples | Lowest | 0.849 (with RF) | Statistically unchanged AUC beyond 200 samples |

| Random Forest (RF) | 60,000 samples | 1.39 × 10⁻² | 0.849 | Performance increases with sample size |

| Support Vector Machine (SVM) | 20,000 samples (max) | Highest | N/A | Limited by computational demands |

Error Analysis in Wrongful Convictions

Table 2: Forensic factors contributing to wrongful convictions based on Innocence Project exonerations [25] [19].

| Contributing Factor | Frequency in Wrongful Convictions | Examples of Problematic Methods |

|---|---|---|

| Official Misconduct | Most common factor in wrongful death penalty cases | Coercing witnesses, concealing exculpatory evidence, falsifying reports |

| False Testimony or Perjury | Nearly 70% of wrongful death penalty cases | Exaggerated statistical claims, misrepresented findings |

| Unreliable or Misapplied Forensic Science | ~50% of Innocence Project cases; ~33% of death row exonerations | Bite mark analysis, hair comparisons, tool mark evidence, arson investigation [19] |

| Eyewitness Misidentification | ~20% of wrongful death penalty convictions | Especially problematic cross-race identification |

| Cognitive Bias | Demonstrated in multiple high-profile errors | Confirmation bias, contextual bias [20] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key resources and materials for developing and validating novel forensic chemistry techniques.

| Resource/Material | Function in Research | Application Examples |

|---|---|---|

| In silico Generated Data | Provides large-volume ground truth training data for ML models | Fire debris analysis using linear combinations of IL and pyrolysis profiles [22] |

| Validation and Implementation Packages | Lowers barriers to adopting new technologies | Standardized protocols for method validation including SOPs and data templates [21] |

| Authentic Sample Panels | Well-characterized real-world materials for method validation | Research-grade test materials for assessing performance on street drugs [21] |

| Probabilistic Genotyping Software | Enables quantitative LR-based interpretation of complex evidence | STRmix, EuroForMix, DNAStatistX for DNA evidence [24] |

| Ambient Ionization Mass Spectrometry | Enables rapid, non-chromatographic screening of evidence | DART-MS for seized drug analysis in public health and safety [21] |

| Standardized Spectral Libraries | Supports reproducible compound identification | Curated databases for emerging illicit drugs including novel psychoactive substances [21] |

| Systematic Review Methodologies | Comprehensively summarizes state of the field | Informing courts and decision-makers about forensic method validity [26] |

The evolution of forensic chemistry must be guided by both technical excellence and historical awareness. Quantitative frameworks for uncertainty estimation, such as subjective opinions in machine learning [22], coupled with international standards for methodological rigor [23], provide a pathway toward more robust and transparent forensic practice. The implementation of automated systems with built-in quality controls, such as the Fast DNA ID Line [24], demonstrates that efficiency gains need not come at the expense of reliability. However, technical solutions alone are insufficient without corresponding cultural commitment to acknowledging and learning from error. By systematically addressing the vulnerabilities documented in wrongful convictions—through enhanced training, independent verification, bias mitigation, and transparent reporting—forensic chemistry researchers can develop techniques that not only advance analytical capabilities but also strengthen the foundation of justice itself.

Frequently Asked Questions (FAQs)

Q1: What are the core functions of SWGDRUG, UNODC, and ASTM in forensic drug chemistry?

The table below summarizes the primary focus and key outputs of these three major organizations to help you navigate the regulatory landscape [27] [28] [29].

Table 1: Core Functions of Key Forensic Standards Organizations

| Organization | Primary Focus | Key Outputs & Resources |

|---|---|---|

| SWGDRUG (Scientific Working Group for the Analysis of Seized Drugs) | Developing internationally accepted minimum standards and best practices for the forensic examination of seized drugs [27]. | Recommendations (e.g., Version 8.2), Drug Monographs, Spectral Libraries (MS & IR), Supplementary guidance documents [27]. |

| UNODC (United Nations Office on Drugs and Crime) | Addressing the global drug problem through policy, monitoring illicit drug markets, and strengthening international law enforcement cooperation [28] [30]. | World Drug Report (annual), Thematic area strategies, Programmatic support for member states [28] [30]. |

| ASTM (ASTM International) | Developing and publishing voluntary consensus technical standards for a wide range of materials, products, systems, and services, including forensic sciences [29] [31]. | Standard test methods, practices, and guides; Annual Book of ASTM Standards. Related validation guidance such as ANSI/ASB Standard 036 (method validation in forensic toxicology) is published by the AAFS Standards Board (ASB) rather than ASTM [29] [32] [31]. |

Q2: My laboratory is implementing a new rapid GC-MS method. What are the essential validation parameters I must assess?

For any new method, including rapid GC-MS, a comprehensive validation is crucial to demonstrate it is fit-for-purpose. The following parameters should be assessed, as demonstrated in recent literature [33] [34]:

- Selectivity/Specificity: Ensure the method can distinguish the analyte from other substances in the sample matrix.

- Precision: Demonstrate repeatability and reproducibility, often reported as Relative Standard Deviation (RSD). In a recent study, RSDs for retention time and mass spectral scores were ≤ 0.25% for stable compounds [34].

- Accuracy: Verify the closeness of agreement between the test result and the accepted reference value.

- Limit of Detection (LOD) and Limit of Quantitation (LOQ): Determine the lowest amount of analyte that can be detected and quantified with acceptable accuracy and precision. A recent rapid GC-MS method achieved an LOD for Cocaine as low as 1 μg/mL, a significant improvement over a conventional method's 2.5 μg/mL [34].

- Linearity and Range: Establish that the analytical procedure provides results directly proportional to the concentration of the analyte over a specified range.

- Robustness/Ruggedness: Evaluate the method's capacity to remain unaffected by small, deliberate variations in method parameters.

- Carryover/Contamination: Ensure that a sample does not influence the analysis of subsequent samples.
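The precision check in the list above reduces to a short calculation. The sketch below uses hypothetical replicate retention times and takes the ≤ 0.25% figure reported in [34] as the comparison threshold; a laboratory would substitute the acceptance criterion defined in its own validation protocol.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """Percent relative standard deviation (sample standard deviation / mean x 100)."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical retention times (minutes) from five replicate injections.
retention_times_min = [4.512, 4.515, 4.510, 4.514, 4.513]

rsd = percent_rsd(retention_times_min)
passes = rsd <= 0.25  # threshold taken from the value reported in [34]
```

The same function applies to mass spectral match scores or peak areas, each compared against its own predefined criterion.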

Q3: According to SWGDRUG, what are the critical components that must be included in a forensic drug analysis report?

SWGDRUG provides recommendations on report content to ensure clarity and completeness. Your report should generally include [35]:

- Laboratory and submitting agency information.

- A detailed description of all submitted items and samples.

- The clear results of the analysis (e.g., identity and weight of the seized drug).

- A list of all tests and techniques used (e.g., GC-MS, FTIR).

- The signature of the analyst.

- Dates of submittal and analysis.

- Any relevant remarks from the analyst.

Q4: We have encountered a seized drug sample with a very low analyte concentration. How can we improve detection sensitivity using rapid GC-MS?

Method optimization is key to improving sensitivity. Based on a recent validation study, consider the following approaches [34]:

- Optimize Temperature Programming: A carefully designed temperature ramp can enhance peak shape and resolution, improving signal response.

- Adjust Carrier Gas Flow Rate: Optimizing the helium flow rate (e.g., using a fixed rate of 2 mL/min) can improve analyte transport and detection.

- Column Selection: Using a 30-m DB-5 ms column with a 0.25 µm film thickness has proven effective for a broad range of drugs in a rapid method.

- Sample Preparation: Ensure your extraction technique (e.g., liquid-liquid extraction) is efficient for the target analytes to maximize the amount introduced into the instrument.

Troubleshooting Guides

Issue 1: Inconsistent Retention Times in Rapid GC-MS Analysis

Inconsistent retention times can lead to misidentification and unreliable results.

Table 2: Troubleshooting Inconsistent Retention Times

| Symptoms | Potential Causes | Corrective Actions |

|---|---|---|

| Retention time drift over multiple runs. | Unstable column flow rate or pressure; oven temperature instability. | Check for gas leaks and ensure regulator pressure is stable; verify oven calibration and integrity of insulation [34]. |

| Sudden shifts in all retention times. | Change in carrier gas type, purity, or flow rate; column damage. | Confirm carrier gas type and purity (e.g., 99.999% helium) and re-check method flow settings; inspect column for breaks or contamination [34]. |

| Irreproducible retention times for a specific analyte. | Active sites in the liner or column; non-optimized temperature program. | Replace or clean the injection liner and trim the column inlet; re-optimize the temperature program to ensure sufficient separation and elution [33]. |

Issue 2: Inability to Differentiate Isomers During Analysis

Some isomeric compounds may co-elute or produce highly similar mass spectra, making differentiation challenging.

- Confirm the Limitation: First, verify if your current method is inherently incapable of separating the isomers in question. The validation of a rapid GC-MS method confirmed this as a known limitation [33].

- Implement an Orthogonal Technique: Use a secondary technique that separates compounds based on a different chemical principle. SWGDRUG recommendations often require a combination of techniques for conclusive identification [27].

  - Gas Chromatography with a Different Column Phase: Switch from a non-polar (e.g., DB-5) to a more polar stationary phase.

  - Liquid Chromatography-Mass Spectrometry (LC-MS): LC separation is often better suited for isomer differentiation than GC.

  - Fourier-Transform Infrared Spectroscopy (FTIR): FTIR can provide structural information that distinguishes between isomers.

Issue 3: High Background Noise or Contamination in Blanks

Carryover or contamination can compromise results and lead to false positives.

- Check Solvent and Reagent Purity: Always use high-purity solvents (e.g., 99.9% methanol) and ensure they are not the contamination source [34].

- Intensify Cleaning Procedures: Perform extensive system maintenance, including:

  - Replace/Clean the Injection Liner: A dirty liner is a common source of carryover.

  - Trim the GC Column Inlet: Removing the first 10-50 cm of the column can eliminate non-volatile residues.

  - Perform Multiple Blank Injections: Inject a series of pure solvent blanks until the system is clean.

  - Bake-Out the Column: Run a high-temperature column bake (without injecting) to volatilize any residual compounds.

- Review Injection Technique and Hardware: Ensure the autosampler syringe is functioning correctly and is being properly rinsed between injections.

Experimental Protocols: Validating a Rapid GC-MS Method

This protocol is adapted from recent research to provide a detailed methodology for validating a rapid GC-MS method for seized drug screening [34].

1. Instrumentation and Materials

- GC-MS System: Agilent 7890B GC connected to a 5977A single quadrupole MSD or equivalent.

- Column: Agilent J&W DB-5 ms column (30 m × 0.25 mm × 0.25 µm).

- Carrier Gas: Helium (99.999% purity), constant flow mode at 2.0 mL/min.

- Software: Data acquisition and processing software (e.g., Agilent MassHunter).

- Reference Standards: Prepare test mixtures from certified reference materials (e.g., from Cerilliant/Sigma-Aldrich or Cayman Chemical). Example mixture includes Tramadol, Cocaine, Heroin, MDMA, etc., at approximately 0.05 mg/mL in methanol [34].

2. Optimized Rapid GC-MS Method Parameters

- Injection Volume: 1 µL (splitless mode)

- Injector Temperature: 280°C

- Oven Temperature Program:

  - Initial: 80°C (hold 0.5 min)

  - Ramp 1: 50°C/min to 180°C (hold 0 min)

  - Ramp 2: 30°C/min to 300°C (hold 0.5 min)

- Total Run Time: ~10 minutes

- MS Source Temperature: 230°C

- Quadrupole Temperature: 150°C

- Solvent Delay: 2.5 minutes

- Acquisition Mode: Scan (e.g., 40-550 m/z)
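When transcribing an oven program into instrument software, it can help to confirm the run time implied by the listed segments. The sketch below is a simple sum of hold and ramp durations. Note that the segments as listed here add up to 7.0 minutes, which is shorter than the approximately 10 minutes stated; the difference may reflect details not reproduced in this list, so the program should be checked against the cited method [34] before use.

```python
def program_run_time(initial_temp_c, initial_hold_min, ramps):
    """Total oven program time in minutes.
    ramps: list of (rate_c_per_min, target_temp_c, hold_min) tuples."""
    total = initial_hold_min
    current = initial_temp_c
    for rate, target, hold in ramps:
        total += abs(target - current) / rate + hold  # ramp time plus hold
        current = target
    return total

# Segments as listed in the parameter list above.
run_time = program_run_time(
    initial_temp_c=80.0,
    initial_hold_min=0.5,
    ramps=[(50.0, 180.0, 0.0), (30.0, 300.0, 0.5)],
)
```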

3. Step-by-Step Validation Procedure

- Selectivity: Analyze the test mixture and a blank solvent (methanol). The method should show no interference in the blank at the retention times of the target analytes.

- Precision (Repeatability): Inject the same test solution (n=5 or more) in a single sequence. Calculate the %RSD for retention times and mass spectral match scores. Acceptance criteria: %RSD ≤ 0.25% for retention times of stable compounds [34].

- LOD/LOQ Determination: Serially dilute the test mixture and analyze. The LOD is the lowest concentration yielding a recognizable chromatographic peak and a mass spectrum with a match score above the identification threshold (e.g., ≥ 90). The LOQ is the lowest concentration that can be quantified with acceptable accuracy and precision.

- Linearity: Prepare and analyze a calibration curve with at least 5 concentration levels. The coefficient of determination (R²) should typically be ≥ 0.990.

- Robustness: Deliberately introduce small changes in method parameters (e.g., flow rate ±0.1 mL/min, final oven temperature ±5°C). The system should meet acceptance criteria for precision and resolution under these varied conditions.

- Application to Real Samples: Extract and analyze 20 real case samples (e.g., solid powders and trace samples from swabs) using the validated method to confirm its practical utility [34].
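Two of the calculations in the procedure above, linearity and the screening LOD, can be sketched as follows. The concentrations, peak areas, and match scores are hypothetical. The LOD helper checks only the match-score criterion, so the requirement for a recognizable chromatographic peak would still need analyst review.

```python
def linear_fit_r2(x, y):
    """Ordinary least-squares line; returns (slope, intercept, R squared)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((b - (slope * a + intercept)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - my) ** 2 for b in y)
    return slope, intercept, 1 - ss_res / ss_tot

def estimate_lod(dilutions, min_score=90.0):
    """Lowest concentration that meets the match-score threshold, provided
    every higher concentration also meets it.
    dilutions: list of (concentration, match_score) pairs."""
    lod = None
    for conc, score in sorted(dilutions, reverse=True):
        if score < min_score:
            break
        lod = conc
    return lod

# Hypothetical five-level calibration (µg/mL versus peak area).
conc = [5.0, 10.0, 25.0, 50.0, 100.0]
area = [1020.0, 1990.0, 5050.0, 9900.0, 20100.0]
slope, intercept, r2 = linear_fit_r2(conc, area)
linearity_ok = r2 >= 0.990

# Hypothetical serial dilution results (µg/mL, library match score).
dilutions = [(10.0, 97), (5.0, 95), (2.5, 93), (1.0, 91), (0.5, 72)]
lod = estimate_lod(dilutions)
```

Stopping at the first failing level, rather than taking the minimum passing concentration, avoids reporting an LOD based on an isolated pass below a failed level.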

Workflow for Method Development and Validation

The following diagram illustrates the logical workflow for developing and validating a new analytical method, from initial setup to implementation in casework.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Seized Drug Analysis via GC-MS

| Item | Function / Purpose | Example from Literature |

|---|---|---|

| Certified Reference Standards | Provides known analytes for method development, calibration, and quality control. Essential for accurate identification and quantification. | Tramadol, Cocaine, Heroin, MDMA (sourced from Sigma-Aldrich/Cerilliant or Cayman Chemical) [34]. |

| High-Purity Solvents | Used for preparing standards, dilutions, and sample extraction. Minimizes background interference and contamination. | Methanol (99.9%), used for preparing test solutions and liquid-liquid extractions [34]. |

| DB-5 ms Capillary GC Column | A common non-polar/low-polarity stationary phase used for the separation of a wide range of organic compounds, including many seized drugs. | Agilent J&W DB-5 ms (30 m × 0.25 mm × 0.25 μm) [34]. |

| High-Purity Helium Gas | Serves as the carrier gas, transporting the vaporized sample through the GC column. | 99.999% purity helium at a fixed flow rate of 2 mL/min [34]. |

| Mass Spectral Libraries | Electronic databases of reference spectra used by the software to compare and identify unknown compounds from the sample. | Wiley Spectral Library, Cayman Spectral Library [34]. |

| Quality Control Materials | Used to verify the ongoing performance and accuracy of the analytical system (e.g., continuing calibration verification, blank samples). | Custom "general analysis" mixtures, procedural blanks, and control samples [33] [34]. |

Implementing Cutting-Edge Techniques: From Rapid GC-MS to Portable MS and AI

The escalating incidence of drug-related crimes and the emergence of novel psychoactive substances demand rapid and reliable analytical methods in forensic laboratories [34] [36]. Conventional Gas Chromatography-Mass Spectrometry (GC-MS), while highly specific and sensitive, often requires extensive analysis times (typically 20-30 minutes per sample), creating bottlenecks in judicial processes and law enforcement responses [34] [37]. This context frames a critical research thesis: that properly validated rapid GC-MS methodologies represent a paradigm shift for high-throughput seized drug screening, effectively reducing forensic backlogs while maintaining—and often enhancing—the analytical rigor required for forensic evidence [34] [1] [38].

Rapid GC-MS technologies address these challenges through significant instrumental and methodological optimizations. By employing accelerated temperature programming (ramps of 70°C/min versus conventional 15°C/min), shorter columns, and optimized flow rates, these methods achieve analysis times of 10 minutes or less—a threefold reduction compared to conventional methods—while preserving chromatographic resolution and detection sensitivity [34] [37]. This article establishes a technical support framework for implementing these advanced methodologies, providing troubleshooting guidance, experimental protocols, and resource documentation to support their validation and integration into forensic workflows.

Core Principles of Rapid GC-MS

Fundamental Technological Advancements

Rapid GC-MS achieves its significant time savings through several key technological modifications compared to conventional GC-MS systems. While traditional methods use slower temperature ramps (typically 10-20°C/min) on longer columns (20-30m), rapid approaches employ dramatically faster heating rates (up to 70°C/min) that propel analytes through the column more quickly [34]. These systems often utilize specialized columns with optimized dimensions and stationary phases—such as the DB-5ms (30m × 0.25mm × 0.25μm) or even shorter columns (1-2m) with narrower internal diameters—to maintain separation efficiency while reducing runtime [34] [37].

The mass spectrometry component typically employs electron ionization (EI), which generates highly reproducible, extensive fragmentation patterns suitable for library matching against extensive databases like Wiley and NIST, containing hundreds of thousands of reference spectra [39] [40]. This "hard" ionization approach provides characteristic fingerprint patterns for confident compound identification, making it ideal for comprehensive drug screening applications across multiple drug classes [39] [40].

Performance Validation and Advantages

Systematic validation studies demonstrate that optimized rapid GC-MS methods not only accelerate analysis but also enhance key performance metrics. Research shows limit of detection (LOD) improvements of at least 50% for key substances like cocaine and heroin, achieving detection thresholds as low as 1 μg/mL for cocaine compared to 2.5 μg/mL with conventional methods [34] [36]. These methods exhibit excellent repeatability and reproducibility with relative standard deviations (RSDs) for retention times consistently below 0.25% for stable compounds, ensuring reliable compound identification across multiple analyses [34].
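As a quick arithmetic check on the cocaine figures quoted above, the relative reduction in LOD works out as follows, which is consistent with the "at least 50%" statement:

```latex
\[
\frac{\mathrm{LOD}_{\text{conventional}} - \mathrm{LOD}_{\text{rapid}}}{\mathrm{LOD}_{\text{conventional}}}
= \frac{2.5 - 1}{2.5} = 0.60 = 60\%
\]
```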

When applied to real case samples from forensic laboratories, rapid GC-MS has successfully identified diverse drug classes—including synthetic opioids, stimulants, synthetic cannabinoids, and benzodiazepines—with match quality scores consistently exceeding 90% across tested concentrations [34] [37]. This performance, combined with significantly reduced analysis times, makes the technology particularly valuable for high-volume laboratories addressing case backlogs and needing rapid turnaround for law enforcement and public health initiatives [37] [38].

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Encountered Operational Challenges

Symptom: Poor Chromatographic Resolution or Peak Tailing

- Potential Cause: Active compounds interacting with non-inert liner or column

- Solution: Use Ultra Inert (UI) or specially deactivated liners and columns to reduce adsorption of active compounds like opioids and amphetamines [39]

- Potential Cause: Incorrect column selection for application

- Solution: Select appropriate column chemistry (e.g., DB-5ms for general screening, Wax columns for polar compounds) and ensure proper dimensions (shorter columns for rapid analysis) [39] [40]

Symptom: Elevated Baseline or Ghost Peaks

- Potential Cause: Column bleed exacerbated by rapid temperature programming

- Solution: Use MS-rated or Ultra Low Bleed (Q) columns specifically designed for sensitive mass spectrometric detection and rapid temperature programs [39]

- Potential Cause: Contamination from previous samples or septum degradation

- Solution: Implement regular maintenance schedule, replace injection port septum frequently, use high-temperature septa compatible with rapid method inlet temperatures [41] [39]

Symptom: Retention Time Drift During Sequence Analysis

- Potential Cause: Inadequate column equilibration in fast cycling methods

- Solution: Optimize post-run time and temperature conditions to ensure identical starting conditions for each analysis [41]

- Potential Cause: Carrier gas flow instability under rapid temperature changes

- Solution: Verify gas supply pressures, check for leaks, and consider electronic pressure control (EPC) verification [41]

Symptom: Reduced Sensitivity for Specific Compound Classes

- Potential Cause: Thermal degradation of labile compounds at high ramp rates

- Solution: Optimize temperature program to balance speed and compound stability; for highly labile compounds, consider derivatization prior to analysis [37] [40]

- Potential Cause: Source contamination from high-throughput analysis

- Solution: Implement more frequent source cleaning or utilize self-cleaning ion source technology (e.g., JetClean source) [39]

Method Development and Validation FAQs

What validation components are essential for implementing rapid GC-MS in forensic laboratories?

Comprehensive validation should assess selectivity, matrix effects, precision, accuracy, range, carryover/contamination, robustness, ruggedness, and stability [1]. These studies establish method capabilities and limitations, with acceptance criteria aligning with accredited laboratory requirements (e.g., % RSD thresholds of ≤10% for precision studies) [1].

How does rapid GC-MS handle isomer differentiation, a critical need in drug analysis?

Rapid GC-MS can differentiate some isomeric species using both retention time and mass spectral data, though capabilities vary. For example, method validation has demonstrated differentiation between methamphetamine, m-fluorofentanyl, and various positional isomers of pentylone, though not all isomeric pairs can be resolved [1]. This limitation should be documented during validation.

What strategies address carryover concerns in high-throughput screening environments? Carryover assessment should be integral to method validation. Mitigation strategies include: optimization of wash solvent sequences, implementation of blank injections between samples, and verification of injector and liner inertness [34] [1]. Acceptance criteria typically specify that carryover should not exceed a defined percentage of target analyte response.

How is method robustness demonstrated for rapid GC-MS methods? Robustness is evaluated by intentionally varying critical method parameters (e.g., temperature ramp rates ±5°C/min, flow rates ±0.1 mL/min) and measuring impact on retention time stability and identification confidence [1]. Successful validation demonstrates that typical instrumental variations do not compromise analytical outcomes.

What are the key considerations for transitioning from conventional to rapid GC-MS methods? Key considerations include: column selection and re-optimization of temperature programs, adaptation of data processing methods for narrower peaks, verification of detection limits for target compounds, and establishing correlation with existing confirmatory methods [34] [37].

Experimental Protocols for Method Validation

Instrument Configuration and Method Parameters

For optimal rapid GC-MS performance in seized drug screening, the following instrumental configuration has been demonstrated effective [34] [37]:

- GC System: Agilent 7890B or equivalent with advanced electronic pressure control

- MS System: Agilent 5977A/B single quadrupole mass spectrometer or equivalent

- Column: Agilent J&W DB-5ms UI or DB-5Q (30 m × 0.25 mm × 0.25 μm) for balanced speed and resolution

- Liner: Ultra Inert split liner (deactivated) for active compounds

- Carrier Gas: Helium (99.999% purity), constant flow mode at 2.0 mL/min

- Injection: Split mode (20:1), injection volume: 1 μL

- Inlet Temperature: 280°C

- Transfer Line Temperature: 280°C

The optimized rapid temperature program should be structured as follows [34]:

- Initial Temperature: 120°C (no hold)

- Ramp 1: 70°C/min to 300°C

- Hold Time: 7.43 minutes

- Total Run Time: 10.00 minutes
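
The listed run time can be cross-checked from the ramp and hold values. The following is a minimal sketch, assuming a single linear ramp with no initial hold as stated above; the function names are illustrative and do not come from any instrument software.

```python
def ramp_time_min(start_c: float, end_c: float, rate_c_per_min: float) -> float:
    """Minutes needed to heat linearly from start_c to end_c."""
    if rate_c_per_min <= 0:
        raise ValueError("ramp rate must be positive")
    return (end_c - start_c) / rate_c_per_min


def total_run_time_min(start_c: float, end_c: float, rate_c_per_min: float,
                       initial_hold_min: float = 0.0,
                       final_hold_min: float = 0.0) -> float:
    """Total oven program time: initial hold + ramp + final hold."""
    return (initial_hold_min
            + ramp_time_min(start_c, end_c, rate_c_per_min)
            + final_hold_min)


# Values taken from the temperature program above.
ramp = ramp_time_min(120, 300, 70)
total = total_run_time_min(120, 300, 70, final_hold_min=7.43)
print(f"ramp: {ramp:.2f} min, total: {total:.2f} min")
# prints "ramp: 2.57 min, total: 10.00 min"
```

The 2.57-minute ramp plus the 7.43-minute hold accounts for the 10.00-minute total, so the three listed values are mutually consistent.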

Mass spectrometer parameters should be configured for:

- Ionization Mode: Electron Ionization (EI)

- Ionization Energy: 70 eV

- Ion Source Temperature: 230°C

- Quadrupole Temperature: 150°C

- Scan Range: m/z 40-550

- Solvent Delay: Set as appropriate for the application (typically 1.5-2 minutes)

Systematic Method Validation Protocol

A comprehensive validation template for rapid GC-MS screening should include the following experimental studies, designed to thoroughly characterize method performance [1]:

Selectivity Assessment:

- Prepare test mixtures containing target analytes and structurally similar compounds/isomers at concentrations spanning expected range (e.g., 1-100 μg/mL)

- Analyze in triplicate to evaluate chromatographic resolution and spectral differentiation

- Document retention time differences and match factor scores for isomeric pairs

- Acceptance criterion: Baseline resolution (R > 1.5) for critical pairs or documented differentiation strategy
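
The resolution criterion can be evaluated with the standard chromatographic resolution formulas. The sketch below assumes retention times and peak widths have already been exported from the data system in the same time units; the function names are hypothetical.

```python
def resolution_base_width(t1: float, t2: float, w1: float, w2: float) -> float:
    """Rs = 2 * |t2 - t1| / (w1 + w2), using peak widths at the baseline."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t2 - t1) / (w1 + w2)


def resolution_half_height(t1: float, t2: float, wh1: float, wh2: float) -> float:
    """Rs = 1.18 * |t2 - t1| / (wh1 + wh2), using widths at half height."""
    if wh1 <= 0 or wh2 <= 0:
        raise ValueError("peak widths must be positive")
    return 1.18 * abs(t2 - t1) / (wh1 + wh2)


def is_baseline_resolved(rs: float, threshold: float = 1.5) -> bool:
    """Apply the R > 1.5 acceptance criterion from the protocol."""
    return rs > threshold


# Hypothetical isomer pair (times and widths in minutes), for illustration only.
rs = resolution_base_width(t1=1.842, t2=1.878, w1=0.020, w2=0.022)
print(f"Rs = {rs:.2f}, baseline resolved: {is_baseline_resolved(rs)}")
```

Pairs that fall below the threshold are not automatically failures under the protocol; they need the documented differentiation strategy instead, for example reliance on distinguishing mass spectral ions.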

Precision and Reproducibility Evaluation:

- Prepare quality control samples at low, medium, and high concentrations within linear range

- Analyze six replicates at each concentration level within a single sequence (repeatability)

- Analyze duplicate samples across three different days (intermediate precision)

- Calculate % RSD for retention times, spectral match scores, and quantitative response (if applicable)

- Acceptance criterion: % RSD ≤ 10% for retention times and spectral match scores
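
Percent relative standard deviation is the sample standard deviation divided by the mean, expressed as a percentage. The following minimal sketch uses only the Python standard library; the replicate values are invented for illustration and are not data from the cited studies.

```python
from statistics import mean, stdev


def percent_rsd(values: list[float]) -> float:
    """Return % RSD = 100 * sample standard deviation / |mean|."""
    if len(values) < 2:
        raise ValueError("at least two replicates are required")
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; % RSD is undefined")
    return 100.0 * stdev(values) / abs(m)


def passes_precision(values: list[float], limit_pct: float = 10.0) -> bool:
    """Apply the % RSD <= 10% acceptance criterion from the protocol."""
    return percent_rsd(values) <= limit_pct


# Hypothetical retention times (minutes) from six replicate injections.
retention_times = [2.412, 2.415, 2.410, 2.414, 2.413, 2.411]
print(f"% RSD = {percent_rsd(retention_times):.3f}")
print("within limit:", passes_precision(retention_times))
```

The same function can be applied to match scores or peak areas. Intermediate precision would pool the replicates collected on different days before the calculation.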

Limit of Detection (LOD) Determination:

- Prepare serial dilutions of target analytes from known stock solutions

- Identify concentration yielding signal-to-noise ratio ≥ 3:1 for qualifying ions

- Verify with minimum of six replicates at established LOD concentration

- Compare LOD values with those of the conventional GC-MS method to determine whether sensitivity is maintained or improved
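
Signal-to-noise conventions differ between laboratories and data systems. The sketch below assumes one common convention, baseline-corrected peak height divided by the standard deviation of a signal-free baseline region; the function names and dilution data are hypothetical.

```python
from statistics import mean, pstdev


def signal_to_noise(peak_height: float, baseline: list[float]) -> float:
    """S/N as (peak height - mean baseline) / standard deviation of baseline."""
    noise = pstdev(baseline)
    if noise == 0:
        raise ValueError("baseline has zero noise; S/N is undefined")
    return (peak_height - mean(baseline)) / noise


def estimate_lod(sn_by_concentration: dict[float, float], min_sn: float = 3.0):
    """Return the lowest tested concentration whose S/N meets min_sn, or None."""
    passing = [c for c, sn in sn_by_concentration.items() if sn >= min_sn]
    return min(passing) if passing else None


# Hypothetical dilution series: concentration (ug/mL) -> measured S/N.
series = {0.25: 1.4, 0.5: 2.1, 1.0: 3.4, 2.0: 7.0}
print("estimated LOD (ug/mL):", estimate_lod(series))
```

The value returned here is only a candidate. The protocol still calls for at least six replicates at that concentration to confirm it.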

Carryover Assessment:

- Inject high concentration standard (near upper limit of quantification) followed by blank solvent injection

- Measure residual analyte response in blank as percentage of high standard response

- Acceptance criterion: Carryover ≤ 1% of original response or ≤ LOD in blank
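
The carryover criterion reduces to a simple ratio check. A minimal sketch follows, assuming responses are peak areas or heights in the same units; `lod_response` is a hypothetical name for the response measured at the established LOD concentration.

```python
def carryover_percent(blank_response: float, high_std_response: float) -> float:
    """Residual response in the blank as a percentage of the high standard."""
    if high_std_response <= 0:
        raise ValueError("high standard response must be positive")
    return 100.0 * blank_response / high_std_response


def carryover_acceptable(blank_response: float, high_std_response: float,
                         lod_response: float, max_pct: float = 1.0) -> bool:
    """Pass if carryover <= max_pct of the standard, or the blank is at or below LOD."""
    pct = carryover_percent(blank_response, high_std_response)
    return pct <= max_pct or blank_response <= lod_response


# Hypothetical peak areas, for illustration only.
print(carryover_percent(50, 10_000))                       # prints 0.5
print(carryover_acceptable(50, 10_000, lod_response=30))   # prints True
```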

Robustness Testing:

- Deliberately vary critical method parameters (temperature ±2°C, flow rate ±0.1 mL/min, ramp rate ±5°C/min)

- Evaluate impact on retention time stability, peak symmetry, and resolution

- Establish system suitability criteria based on robustness results
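
A robustness study needs an explicit list of the conditions to run. The sketch below generates two candidate designs from the variations listed above. It assumes the "temperature ±2°C" variation applies to the inlet temperature, which the protocol does not specify. Nominal values are taken from the instrument configuration given earlier.

```python
from itertools import product

# Nominal settings from the method; which temperature is varied is an assumption.
NOMINAL = {"inlet_temp_c": 280.0, "flow_ml_min": 2.0, "ramp_c_min": 70.0}
DELTA = {"inlet_temp_c": 2.0, "flow_ml_min": 0.1, "ramp_c_min": 5.0}


def one_factor_at_a_time(nominal: dict, delta: dict) -> list[dict]:
    """Nominal run plus a low and a high run for each parameter (1 + 2k runs)."""
    runs = [dict(nominal)]
    for name, d in delta.items():
        for sign in (-1, 1):
            run = dict(nominal)
            run[name] = round(nominal[name] + sign * d, 6)
            runs.append(run)
    return runs


def full_factorial(nominal: dict, delta: dict) -> list[dict]:
    """Every combination of low, nominal, and high levels (3**k runs)."""
    names = list(nominal)
    levels = [[round(nominal[n] + s * delta[n], 6) for s in (-1, 0, 1)]
              for n in names]
    return [dict(zip(names, combo)) for combo in product(*levels)]


print(len(one_factor_at_a_time(NOMINAL, DELTA)), "one-factor-at-a-time runs")
print(len(full_factorial(NOMINAL, DELTA)), "full-factorial runs")
```

With three parameters, the one-factor-at-a-time design needs 7 runs and the full factorial needs 27. The smaller design is quicker but cannot reveal interactions between parameters.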

Accuracy Confirmation with Case Samples:

- Analyze adjudicated case samples (minimum 15-20 samples) with known composition

- Compare results with those obtained by validated reference methods

- Document match scores, retention time agreement, and correct identifications

- Acceptance criterion: >95% concordance with reference method results
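
Concordance is the percentage of case samples for which the rapid method and the reference method report the same identification. A minimal sketch follows, assuming each result is reduced to a single compound name per sample; real casework with mixtures would need a richer comparison.

```python
def concordance_percent(results: list[tuple[str, str]]) -> float:
    """Percentage of (rapid, reference) pairs with identical identifications."""
    if not results:
        raise ValueError("no results to compare")
    agree = sum(1 for rapid, reference in results if rapid == reference)
    return 100.0 * agree / len(results)


def meets_concordance(results: list[tuple[str, str]],
                      threshold_pct: float = 95.0) -> bool:
    """Apply the strict > 95% acceptance criterion from the protocol."""
    return concordance_percent(results) > threshold_pct


# Hypothetical set of 20 samples with one discordant identification.
pairs = [("cocaine", "cocaine")] * 19 + [("heroin", "fentanyl")]
print(concordance_percent(pairs))   # prints 95.0
print(meets_concordance(pairs))     # prints False
```

With only 15 to 20 samples, a single discordant result gives 95.0% or less, so a strict >95% criterion can only be met by complete agreement. A larger sample set is needed if the criterion is meant to tolerate any discordance.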

Table 1: Performance Metrics of Validated Rapid GC-MS Method for Selected Compounds [34] [37]

| Compound | LOD (μg/mL) | Retention Time RSD (%) | Match Quality Score (%) | Carryover Assessment |
|---|---|---|---|---|
| Cocaine | 1.0 | 0.18 | 96.2 | <0.5% |
| Heroin | 1.2 | 0.21 | 94.8 | <0.8% |
| MDMA | 0.8 | 0.15 | 97.1 | <0.3% |
| Methamphetamine | 0.9 | 0.17 | 95.7 | <0.4% |
| THC | 2.5 | 0.25 | 92.3 | <1.2% |
| Fentanyl | 1.1 | 0.19 | 95.5 | <0.6% |

Table 2: Comparison of Conventional vs. Rapid GC-MS Methods [34] [37]

| Parameter | Conventional GC-MS | Rapid GC-MS | Improvement |
|---|---|---|---|
| Analysis Time | 20-30 minutes | 1-10 minutes | 66-95% reduction |
| Carrier Gas Flow | 1 mL/min | 2 mL/min | Optimized for speed |
| Temperature Ramp | 15°C/min | 70°C/min | 367% faster |
| Cocaine LOD | 2.5 μg/mL | 1.0 μg/mL | 60% improvement |
| Retention Time RSD | 0.3-0.5% | <0.25% | Improved precision |
| Daily Throughput | 20-30 samples | 50-100+ samples | 150-400% increase |
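
The percentage figures in the Improvement column of Table 2 can be reproduced with simple relative-change arithmetic. The sketch below assumes particular pairings of the range endpoints, because the table does not state which values were compared.

```python
def percent_reduction(old: float, new: float) -> float:
    """Relative decrease from old to new, as a percentage of old."""
    return 100.0 * (old - new) / old


def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, as a percentage of old."""
    return 100.0 * (new - old) / old


# Endpoint pairings below are assumptions chosen to match the table.
print(f"run time 30 -> 10 min:      {percent_reduction(30, 10):.1f}% reduction")
print(f"run time 20 -> 1 min:       {percent_reduction(20, 1):.1f}% reduction")
print(f"ramp 15 -> 70 C/min:        {percent_increase(15, 70):.1f}% increase")
print(f"cocaine LOD 2.5 -> 1.0:     {percent_reduction(2.5, 1.0):.1f}% reduction")
print(f"throughput 20 -> 50 / day:  {percent_increase(20, 50):.1f}% increase")
print(f"throughput 20 -> 100 / day: {percent_increase(20, 100):.1f}% increase")
```

These pairings give 66.7%, 95.0%, 366.7%, 60.0%, 150.0%, and 400.0%, which match the tabulated values after rounding. Other pairings give different figures (for example, 20 to 10 minutes is a 50% reduction), so the tabulated ranges are best read as approximate.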

Visualizing Method Validation and Troubleshooting Workflows

[Figure: Rapid GC-MS Method Validation Pathway]

[Figure: Systematic Troubleshooting Logic Flow]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Rapid GC-MS Seized Drug Analysis

| Item | Function/Purpose | Technical Specifications | Application Notes |
|---|---|---|---|
| DB-5ms UI Capillary Column | Primary separation column for broad drug screening | 30 m × 0.25 mm × 0.25 μm; Ultra Inert deactivation | Optimal balance of speed and resolution for most applications [34] [39] |
| Ultra Inert Split Liner | Sample vaporization chamber with minimal activity | Deactivated glass wool; specially deactivated surface | Reduces peak tailing for active compounds like opioids and amphetamines [39] |
| Methanol (HPLC Grade) | Primary extraction and dilution solvent | 99.9% purity; low UV absorbance | Suitable for most drug extractions; minimal interference [34] [1] |
| Certified Reference Standards | Method development, calibration, and identification | Certified purity; traceable documentation | Essential for qualitative and quantitative method validation [34] [1] |
| Helium Carrier Gas | Mobile phase for chromatographic separation | 99.999% purity; with oxygen/moisture traps | Maintains consistent flow rates and reduces column degradation [34] [40] |
| Wiley and NIST Libraries | Spectral database for compound identification | Comprehensive EI mass spectral libraries | Critical for unknown identification; match scores >90% indicate high confidence [34] [39] |
| Quality Control Mix | System suitability and performance verification | Contains representative drugs at known concentrations | Verifies retention time stability, sensitivity, and resolution [1] [37] |
| Derivatization Reagents | Chemical modification of polar/non-volatile compounds | MSTFA, BSTFA, MBTFA for silylation | Enables analysis of compounds like cannabinoids and metabolites [40] |
| Internal Standards | Quantitation and injection volume normalization | Deuterated analogs (e.g., methamphetamine-d5, cocaine-d3) | Compensates for instrumental variations; improves quantitative accuracy [39] |
| Tuning Compounds | MS performance verification and calibration | PFTBA or similar standard with defined m/z ratios | Ensures optimal mass calibration and sensitivity before analysis [39] |

The integration of rapid GC-MS technologies into forensic drug screening workflows represents a significant advancement with demonstrated benefits for operational efficiency and analytical performance. Through systematic validation following established protocols (assessing selectivity, precision, sensitivity, carryover, and robustness), laboratories can confidently implement these methods to address the challenges of increasing casework and emerging drug threats [34] [1].

The troubleshooting guides, experimental protocols, and technical resources provided herein establish a comprehensive framework for successful method development and integration. When properly validated and implemented, rapid GC-MS methods deliver analytical results with equivalent or improved reliability compared to conventional approaches while providing threefold or greater improvements in analysis throughput [34] [37]. This paradigm shift enables forensic laboratories to more effectively support law enforcement responses, judicial processes, and public health initiatives through timely and reliable drug identification.

Troubleshooting Guides

Mass Spectrometer (MS) Troubleshooting Guide

This guide helps diagnose and resolve common issues during MS data acquisition [42].

| Observed Problem | Possible Causes | Diagnostic Steps | Recommended Solutions |
|---|---|---|---|
| Empty Chromatograms | Spray instability, method setup errors [42] | Check sample introduction, verify method parameters, inspect ion source [42] | Re-tune instrument, ensure proper solvent flow, correct method file [42] |
| Inaccurate Mass Values | Calibration drift [42] | Analyze calibration standard, check for contamination [42] | Re-calibrate instrument using fresh standard solution [42] |
| High Signal in Blank Runs | System contamination, sample carryover [42] | Run blank solvents, inspect and clean ion source and sample path [42] | Flush system with clean solvent, replace contaminated parts, implement cleaning protocol [42] |
| Instrument Communication Failure | Loose cables, software errors, hardware faults [42] | Verify physical connections, restart software and PC, check error logs [42] | Re-seat cables, reinstall drivers, contact service engineer for hardware repair [42] |

Portable XRF Analyzer Troubleshooting Guide

This guide addresses frequent issues with handheld X-ray Fluorescence analyzers.

| Observed Problem | Possible Causes | Diagnostic Steps | Recommended Solutions |
|---|---|---|---|
| Unstable or Drifting Results | Detector instability, X-ray tube inactivity [43] | Perform stability test, check instrument condition report [43] | Power cycle the instrument; during long storage, turn it on for a few minutes every 1-2 months [43] |
| Contaminated Instrument/Data | Dust, dirt, or debris in instrument nose [43] | Visually inspect ultralene window for damage or particles [43] | Regularly replace ultralene window; clean sample area before analysis [43] |
| System Crashes or Slows Down | Data storage overload [43] | Check internal storage space for thousands of accumulated scans [43] | Back up data daily to USB drive to free up system memory [43] |
| Physical Damage | Dropped instrument, impact damage [43] | Inspect housing, check graphene window (1-micron thick) [43] | Always use wrist strap; avoid using analyzer for non-analysis tasks [43] |

Frequently Asked Questions (FAQs)

Direct Analysis in Real Time Mass Spectrometry (DART-MS)

Q: What are the primary forensic applications of DART-MS? A: DART-MS is used for rapid screening and analysis of various forensic samples, including seized drugs, synthetic opioids, explosives, gunshot residues, inks, dyes, and paints. Its ability to provide results in under a minute makes it invaluable for real-time field analysis [21] [44].

Q: How does DART-MS achieve ionization without extensive sample preparation? A: DART-MS uses an ambient ionization mechanism. A stream of excited helium or nitrogen gas interacts with atmospheric water vapor to form protonated water clusters. These clusters then transfer protons to analyte molecules present on a sample surface, ionizing them for mass spectral analysis at atmospheric pressure, eliminating the need for complex preparation [44].

Q: What are the current software-related limitations of DART-MS and similar MS techniques? A: A key challenge is that data analysis software is often designed for "omics" fields and doesn't always translate well to small molecule forensics. Furthermore, proprietary software formats from different vendors can make it difficult to batch or merge datasets, complicating data management in multi-instrument labs [21].

Micro-X-Ray Fluorescence (μ-XRF)