Empirical Lower and Upper Bounds (ELUB) and the LR Method: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive overview of Empirical Lower and Upper Bounds (ELUB) and the Linear Regression (LR) validation method, tailored for researchers, scientists, and professionals in drug development and biomedical research. It covers foundational concepts, methodological applications, optimization techniques, and comparative validation, with a specific focus on empirical risk minimization and confidence interval estimation to enhance the reliability of predictive models in clinical and genetic studies.

Understanding ELUB and the LR Method: Core Principles and Statistical Foundations

Defining Empirical Lower and Upper Bounds in Statistical Learning

In statistical learning, empirical lower and upper bounds are not merely theoretical constructs but are pragmatically derived from observed data and computational experiments. These bounds provide critical, data-informed limits on model performance, algorithm complexity, and parameter estimates, offering a realistic assessment of what can be achieved given finite data and computational resources. The empirical lower bound often represents the minimum achievable error rate or the best possible performance guarantee established through observation, while the empirical upper bound typically quantifies the worst-case scenario, maximum complexity, or performance limit of a learning algorithm on specific datasets [1] [2]. Within the broader ELUB (Empirical Lower Upper Bound) research framework, these bounds are not assumed theoretically but are established through rigorous computational experimentation and data analysis, making them particularly valuable for applied researchers who must make decisions under uncertainty with real-world data constraints.

The distinction between theoretical and empirical bounds is fundamental. Theoretical bounds, such as those derived from VC-dimension or Rademacher complexity, provide general guarantees that hold under idealized conditions but may be overly pessimistic or computationally intractable for practical applications. In contrast, empirical bounds are grounded directly in observational and experimental evidence, capturing the actual performance of algorithms on benchmark datasets or through simulation studies [2]. This empirical approach is especially crucial in domains like pharmaceutical research, where decisions about trial design and drug progression must incorporate both statistical principles and practical constraints observed in historical data [3].

Theoretical Foundations and Mathematical Formalization

Core Definitions and Order-Theoretic Framework

The mathematical foundation for empirical bounds lies in order theory, where bounds define limits within partially ordered sets. Formally, for a set \( S \) with a partial order relation \( \leq \) and a subset \( A \subseteq S \), an element \( b \in S \) is an upper bound of \( A \) if \( \forall x \in A,\ x \leq b \). Dually, an element \( a \in S \) is a lower bound of \( A \) if \( \forall x \in A,\ a \leq x \) [1] [4]. In statistical learning, \( S \) may represent all possible values of a performance metric (e.g., error rates), while \( A \) contains the values observed across experimental conditions.

The least upper bound (supremum) and greatest lower bound (infimum) are particularly important concepts. The supremum is the least element of the set of upper bounds of a subset, and the infimum is the greatest element of the set of lower bounds, when these exist [4]. Applied empirically, these concepts translate into the tightest limits consistent with the observed data. For a finite set of observed error rates, for instance, the supremum is simply the maximum observed value: it is the tightest upper bound on the errors actually observed, but it is not a guarantee for datasets that were not part of the experiment.

Relationship to Probability of Success Frameworks

In pharmaceutical statistics, the concept of Probability of Success (PoS) provides a practical application of empirical bounds. PoS calculations incorporate uncertainty in effect size estimates through a "design prior" distribution, which captures the range of possible treatment effects based on available data [3]. This approach extends traditional power calculations by replacing a fixed effect size assumption with a distribution derived from empirical evidence, effectively creating probabilistic bounds on trial outcomes.

The predictive power approach developed by Spiegelhalter and Freedman calculates what they term "average power" or "predictive power" by integrating over a prior distribution for the treatment effect [3]. This generates empirically bounded success probabilities that more accurately reflect real-world uncertainty than traditional power calculations based on fixed, assumed effect sizes. For drug development professionals, these empirically-derived bounds support more informed decision-making at critical milestones, such as progressing from Phase II to Phase III trials [3].
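The difference between fixed-effect power and prior-averaged ("predictive") power can be sketched in a few lines. This is a minimal illustration under assumptions not stated in the source: a one-sided z-test at level `alpha`, a normally distributed effect estimate with known standard error `se`, and a normal design prior N(`mu0`, `sigma0`²). Function names and all numbers are hypothetical. Under these assumptions the averaged power has a closed form, which the Monte Carlo version checks by drawing effects from the prior and averaging the classical power.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def classical_power(delta, se, alpha=0.025):
    """Power of a one-sided z-test when the true effect is fixed at delta."""
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    return 1 - STD_NORMAL.cdf(z_crit - delta / se)


def predictive_power(mu0, sigma0, se, alpha=0.025):
    """Power averaged over a normal design prior N(mu0, sigma0^2), closed form.

    Marginally over the prior, the trial estimate is N(mu0, se^2 + sigma0^2);
    the trial succeeds if the estimate exceeds z_crit * se.
    """
    z_crit = STD_NORMAL.inv_cdf(1 - alpha)
    marginal = NormalDist(mu0, (se ** 2 + sigma0 ** 2) ** 0.5)
    return 1 - marginal.cdf(z_crit * se)


def predictive_power_mc(mu0, sigma0, se, alpha=0.025, n_draws=50_000, seed=0):
    """Monte Carlo check: draw true effects from the prior and average the power."""
    rng = random.Random(seed)
    total = sum(classical_power(rng.gauss(mu0, sigma0), se, alpha) for _ in range(n_draws))
    return total / n_draws


# Hypothetical design: assumed effect 0.3, standard error 0.1, prior SD 0.1.
print(classical_power(0.3, 0.1))
print(predictive_power(0.3, 0.1, 0.1), predictive_power_mc(0.3, 0.1, 0.1))
```

When the prior is centred on the effect assumed in the classical calculation, the averaged value is lower than the fixed-effect power whenever that power exceeds 0.5, which is one way to see why fixed-effect power can look optimistic.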

Quantitative Framework for Empirical Bound Estimation

Methodological Approaches for Bound Calculation

Table 1: Methods for Empirical Bound Estimation in Statistical Learning

| Method | Upper Bound Application | Lower Bound Application | Key Assumptions |
|---|---|---|---|
| Cross-Validation Bounds | Worst-case performance across validation folds | Best-case performance across validation folds | Data representative of population |
| Bootstrap Confidence Limits | Upper confidence limit for performance metrics | Lower confidence limit for performance metrics | Bootstrap samples approximate sampling distribution |
| Extreme Value Theory | Maximum expected loss in risk assessment | Minimum expected performance in optimization | Independent observations, tail behavior follows extreme value distribution |
| Empirical Bernstein Bounds | Concentration inequalities incorporating empirical variance | Performance guarantees with variance sensitivity | Bounded random variables, finite variance |
| Bayesian Posterior Intervals | Highest posterior density intervals for parameters | Credible intervals for predictive performance | Appropriate prior specification, model adequacy |
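Two of the methods in Table 1 can be compared on the same data with a short sketch: a percentile-bootstrap interval for mean accuracy and a two-sided empirical Bernstein interval for a mean of variables bounded in [0, 1]. The Bernstein form used here (deviation at most sqrt(2·V·ln(2/d)/n) + 7·ln(2/d)/(3(n − 1)) per side, with V the unbiased sample variance and d = delta/2 per side) is one standard version of the bound; the helper names and the correctness vector are hypothetical.

```python
import math
import random
import statistics


def percentile(values, q):
    """Percentile with linear interpolation between order statistics (q in [0, 100])."""
    xs = sorted(values)
    pos = (len(xs) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(xs) - 1)
    return xs[lo] + (xs[hi] - xs[lo]) * (pos - lo)


def bootstrap_mean_ci(values, n_boot=5000, alpha=0.05, seed=0):
    """Percentile-bootstrap confidence interval for the mean."""
    rng = random.Random(seed)
    means = [statistics.fmean(rng.choices(values, k=len(values))) for _ in range(n_boot)]
    return percentile(means, 100 * alpha / 2), percentile(means, 100 * (1 - alpha / 2))


def empirical_bernstein_interval(values, delta=0.05):
    """Two-sided empirical Bernstein interval for the mean of variables in [0, 1].

    Applies the one-sided bound at level delta/2 on each side (union bound),
    using the unbiased sample variance.
    """
    n = len(values)
    mean = statistics.fmean(values)
    log_term = math.log(4 / delta)  # ln(2 / (delta / 2))
    width = math.sqrt(2 * statistics.variance(values) * log_term / n) + 7 * log_term / (3 * (n - 1))
    return max(0.0, mean - width), min(1.0, mean + width)


# Hypothetical per-example correctness (1 = correct) on a 200-example test set.
correct = [1] * 170 + [0] * 30
print(bootstrap_mean_ci(correct))
print(empirical_bernstein_interval(correct))
```

The bootstrap interval is asymptotic and usually narrower; the Bernstein interval holds for any sample size under the boundedness assumption, which is why it is typically wider.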

Performance Metrics and Their Empirical Bounds

Table 2: Common Performance Metrics and Their Empirical Bound Interpretations

| Performance Metric | Empirical Lower Bound | Empirical Upper Bound | Estimation Approach |
|---|---|---|---|
| Classification Error Rate | Minimum achievable error across hyperparameters | Maximum error observed across configurations | Cross-validation with multiple random seeds |
| Algorithmic Time Complexity | Best-case runtime on benchmark instances | Worst-case runtime on adversarial instances | Experimental analysis on diverse inputs |
| Probability of Trial Success | Conservative estimate based on historical data | Optimistic estimate incorporating all available evidence | Bayesian meta-analysis of related trials [3] |
| Model Calibration | Minimum expected calibration error | Maximum miscalibration observed | Resampling methods with confidence intervals |
| Generalization Gap | Minimum difference between train and test performance | Maximum observed generalization gap | Multiple train-test splits with varying ratios |

Experimental Protocols for ELUB Research

Protocol 1: Establishing Empirical Bounds for Algorithm Performance

Objective: To determine empirical lower and upper bounds for classification performance of a learning algorithm across multiple benchmark datasets.

Materials and Reagents:

- Benchmark Datasets: Curated collection from public repositories (e.g., UCI, OpenML) with varying characteristics

- Computational Environment: Standardized computing infrastructure with controlled specifications

- Evaluation Framework: Scripted pipeline for consistent training, validation, and testing

- Statistical Analysis Tools: Software for calculating confidence intervals and bound estimates (R, Python with scipy)

Procedure:

- Dataset Selection and Characterization:
  - Select at least 10 benchmark datasets with varying dimensions, sample sizes, and problem complexities.
  - For each dataset, compute its characteristics: number of features, number of instances, class distribution entropy, and estimated Bayes error rate.
- Experimental Design:
  - Implement the learning algorithm with 100 different hyperparameter configurations sampled via a Latin Hypercube design.
  - For each hyperparameter configuration, perform 10-fold cross-validation with 5 different random seeds (50 executions per configuration on each dataset).
  - Execute all experiments on identical hardware to control for computational variability.
- Performance Measurement:
  - For each execution, record primary performance metrics (accuracy, F1-score, AUC-ROC) and secondary metrics (training time, memory usage).
  - Compute per-configuration statistics: mean, standard deviation, minimum, and maximum across all executions.
- Bound Calculation:
  - Using a loss-type metric such as the error rate (1 - accuracy), calculate the empirical lower bound as the 5th percentile of the best-performing configuration's results across datasets.
  - Calculate the empirical upper bound as the 95th percentile of the worst-performing configuration's results across datasets.
  - Compute 95% confidence intervals for both bounds using bootstrap resampling with 10,000 iterations.
- Validation:
  - Conduct a sensitivity analysis to assess robustness to dataset selection.
  - Perform pairwise statistical tests (Bonferroni-corrected) to verify significant differences between bounds.

Deliverables: Empirical bound estimates with confidence intervals, robustness analysis report, dataset characterization summary.
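The Bound Calculation step can be sketched as follows, assuming the error rates (1 - accuracy) of every execution have already been collected and pooled per configuration (folds x seeds, across datasets). Picking the best and worst configurations by mean error is one reasonable reading of the protocol, not a prescribed rule; the helper names and the error values are hypothetical. The usage lines use 2,000 bootstrap replicates for speed, whereas the protocol specifies 10,000 (the default of `bootstrap_ci`).

```python
import random
import statistics


def percentile(values, q):
    """Percentile with linear interpolation between order statistics (q in [0, 100])."""
    xs = sorted(values)
    pos = (len(xs) - 1) * q / 100.0
    lo = int(pos)
    hi = min(lo + 1, len(xs) - 1)
    return xs[lo] + (xs[hi] - xs[lo]) * (pos - lo)


def empirical_bounds(errors_by_config):
    """Lower bound: 5th percentile of the best config; upper: 95th percentile of the worst."""
    mean_err = {cfg: statistics.fmean(errs) for cfg, errs in errors_by_config.items()}
    best = min(mean_err, key=mean_err.get)
    worst = max(mean_err, key=mean_err.get)
    lower = percentile(errors_by_config[best], 5)
    upper = percentile(errors_by_config[worst], 95)
    return best, worst, lower, upper


def bootstrap_ci(values, stat, n_boot=10_000, alpha=0.05, seed=0):
    """Percentile-bootstrap confidence interval for an arbitrary statistic."""
    rng = random.Random(seed)
    reps = [stat(rng.choices(values, k=len(values))) for _ in range(n_boot)]
    return percentile(reps, 100 * alpha / 2), percentile(reps, 100 * (1 - alpha / 2))


# Hypothetical error rates: 100 configurations x 50 executions (10 folds x 5 seeds).
rng = random.Random(42)
errors = {
    f"config_{k}": [min(1.0, max(0.0, rng.gauss(0.10 + 0.002 * k, 0.02))) for _ in range(50)]
    for k in range(100)
}
best, worst, lower, upper = empirical_bounds(errors)
lower_ci = bootstrap_ci(errors[best], lambda v: percentile(v, 5), n_boot=2000)
upper_ci = bootstrap_ci(errors[worst], lambda v: percentile(v, 95), n_boot=2000)
print(best, worst, round(lower, 3), round(upper, 3), lower_ci, upper_ci)
```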

Protocol 2: Probability of Success Bounds in Clinical Development

Objective: To compute empirical lower and upper bounds for Phase III trial success probability based on Phase II data and external information sources.

Materials:

- Phase II Trial Data: Patient-level data from completed Phase II studies

- Historical Control Database: Curated repository of historical clinical trial results for similar compounds

- Real-World Data Sources: Relevant real-world evidence on target population and disease natural history

- Statistical Software: Bayesian analysis tools (Stan, JAGS, or specialized clinical trial software)

Procedure:

- Endpoint Mapping:
  - Establish the quantitative relationship between Phase II biomarkers/surrogate endpoints and Phase III clinical endpoints.
  - If direct endpoint data are unavailable in Phase II, use external data sources to model the relationship [3].
- Design Prior Specification:
  - Define a prior distribution for the Phase III treatment effect based on Phase II data.
  - Incorporate uncertainty through mixture priors or hierarchical models.
  - Validate prior assumptions using historical data and expert elicitation.
- Probability of Success Calculation:
  - Compute predictive power for Phase III success using the design prior.
  - Perform a sensitivity analysis across different prior specifications.
  - Quantify the impact of external data sources on PoS estimates.
- Empirical Bound Estimation:
  - Establish a lower bound for PoS using conservative prior specifications.
  - Establish an upper bound for PoS using optimistic but plausible assumptions.
  - Calculate intervals for PoS using bootstrap confidence intervals or Bayesian posterior intervals.
- Decision Framework Application:
  - Compare the empirical PoS bounds against pre-specified decision thresholds.
  - Evaluate resource allocation implications based on bound tightness.
  - Document the go/no-go recommendation with uncertainty quantification.

Deliverables: Probability of Success estimates with empirical bounds, sensitivity analysis report, decision framework recommendation with justification.
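The Empirical Bound Estimation and Decision Framework steps can be sketched under simple normal-normal assumptions (one-sided z-test, known Phase III standard error `se`, normal or mixture-of-normal design priors). Under a mixture prior the probability of success is the weighted average of the component probabilities, so a conservative scenario can be written as a robust mixture that adds a sceptical component. Scenario names, weights, prior parameters, and the decision threshold are hypothetical placeholders, not values from [3].

```python
from statistics import NormalDist


def assurance(mu0, sigma0, se, alpha=0.025):
    """P(one-sided z-test succeeds) averaged over a N(mu0, sigma0^2) design prior."""
    z_crit = NormalDist().inv_cdf(1 - alpha)
    return 1 - NormalDist(mu0, (se ** 2 + sigma0 ** 2) ** 0.5).cdf(z_crit * se)


def mixture_assurance(components, se, alpha=0.025):
    """components: list of (weight, mu0, sigma0) with weights summing to 1."""
    return sum(w * assurance(m, s, se, alpha) for w, m, s in components)


def pos_bounds(scenarios, se, alpha=0.025):
    """Empirical lower/upper PoS bounds across alternative prior specifications."""
    values = {name: mixture_assurance(comp, se, alpha) for name, comp in scenarios.items()}
    return min(values.values()), max(values.values()), values


def decide(lower, upper, threshold):
    """Hypothetical go/no-go rule based on where the bounds fall relative to a threshold."""
    if lower >= threshold:
        return "go"
    if upper < threshold:
        return "no-go"
    return "uncertain"


# Hypothetical prior scenarios for the Phase III effect (standardized units).
scenarios = {
    "conservative": [(0.5, 0.25, 0.12), (0.5, 0.0, 0.10)],  # robust mixture with sceptical part
    "base": [(1.0, 0.25, 0.12)],                              # Phase II-based prior
    "optimistic": [(1.0, 0.30, 0.08)],                        # plausible but favourable
}
lower, upper, values = pos_bounds(scenarios, se=0.08)
decision = decide(lower, upper, threshold=0.6)
print(values, lower, upper, decision)
```

An "uncertain" outcome signals that the bounds straddle the threshold, which is the situation where tightening them (for example with additional external data) has the most decision value.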

Visualization Framework for ELUB Concepts

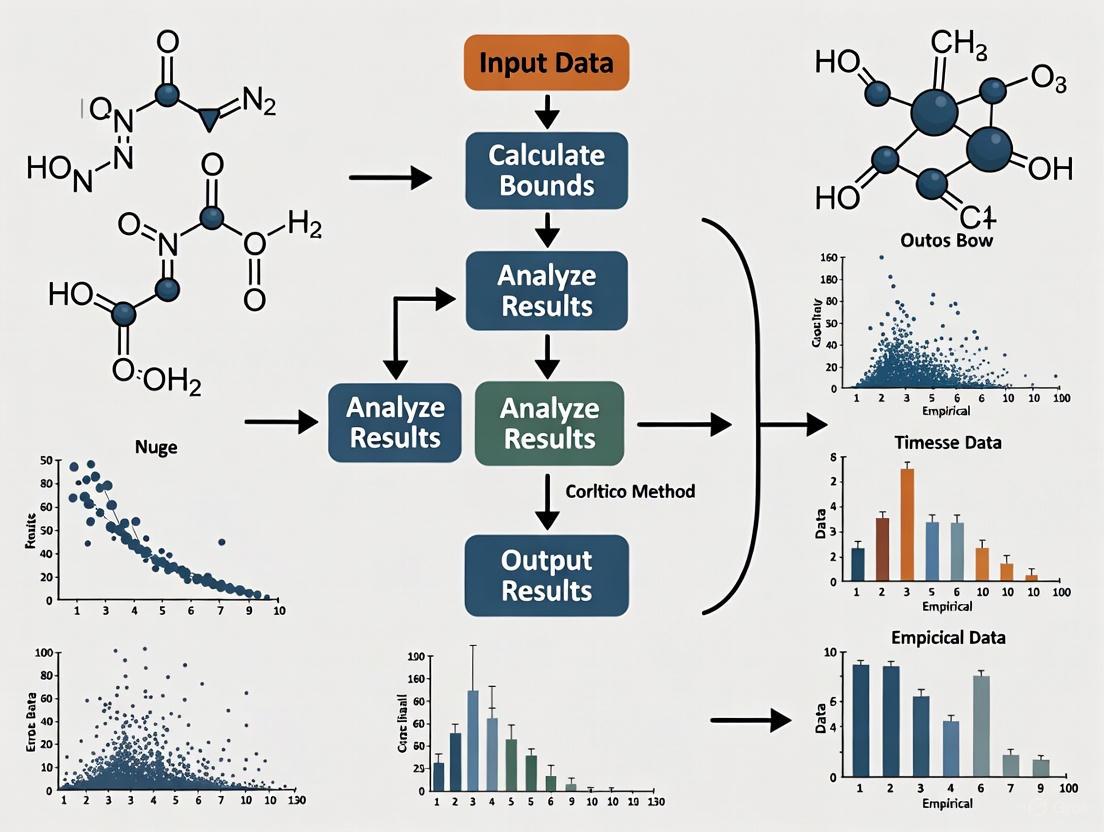

Empirical Bound Estimation Workflow

Probability of Success Calculation with External Data

Research Reagent Solutions for ELUB Experiments

Table 3: Essential Research Materials for Empirical Bound Research

| Research Component | Function in ELUB Research | Implementation Examples |
|---|---|---|
| Benchmark Dataset Collections | Provides standardized testing ground for empirical performance evaluation | UCI Machine Learning Repository, OpenML, DIMACS graph instances [5] |
| Performance Monitoring Tools | Tracks computational metrics during experimental runs | Custom logging frameworks, MLflow, Weights & Biases, TensorBoard |
| Statistical Analysis Packages | Computes empirical bounds with confidence intervals | R stats package, Python scipy, Bayesian analysis tools (Stan, PyMC3) |
| High-Performance Computing Infrastructure | Enables large-scale experimentation for robust bound estimation | Cloud computing platforms, computing clusters with job schedulers |
| External Data Repositories | Enhances prior specification in PoS calculations | ClinicalTrials.gov, PubMed, real-world data networks, historical trial databases [3] |
| Visualization Frameworks | Creates intuitive representations of empirical bounds | Graphviz, matplotlib, ggplot2, D3.js, Tableau |

The framework for defining and applying empirical lower and upper bounds in statistical learning represents a pragmatic approach to uncertainty quantification that is firmly grounded in observational and experimental evidence. By establishing performance boundaries through rigorous computation rather than theoretical assumption alone, ELUB research provides drug development professionals and other researchers with realistic assessments of what can be achieved given available data and resources. The experimental protocols and quantitative frameworks presented here offer practical guidance for implementing this approach across diverse applications, from algorithmic performance characterization to clinical trial probability of success estimation. As statistical learning continues to evolve, these empirical bound methodologies will play an increasingly critical role in bridging the gap between theoretical guarantees and practical performance in real-world applications.

The Role of the LR Method in Model Validation and Genetic Evaluations

The Linear Regression (LR) method for model validation is a statistical procedure for assessing the quality of predictions, particularly estimated breeding values (EBVs) in genetic evaluations. It compares predictions for the same individuals obtained from two datasets, a partial dataset and a whole dataset, to estimate key validation statistics such as bias, dispersion, and accuracy [6]. The ELUB concept appears in a related but distinct setting: in forensic science, empirical lower and upper bounds are applied to likelihood ratios (also abbreviated LR) so that evaluation systems do not overstate the strength of evidence [7]. Both uses matter in fields where precise and unbiased estimation is paramount. The application notes and protocols below detail the implementation of the LR method across scientific domains, with structured quantitative summaries, experimental workflows, and essential research tools.

The following tables consolidate key performance metrics of the LR method and related EBV analyses from empirical studies.

Table 1: Performance Metrics of the LR Method in Genetic Evaluations

| Scenario / Condition | Bias (True) | Bias (LR Estimated) | Dispersion (True) | Dispersion (LR Estimated) | Accuracy / Reliability |
|---|---|---|---|---|---|
| Benchmark (BEN) [6] | Unbiased | Accurately Estimated | ~1.0 | Accurately Estimated | Good agreement |
| 25% Pedigree Errors (PE-25) [6] | -0.13 genetic s.d. | +0.17 overestimation | Exhibited inflation | Slightly underestimated | Good agreement |
| 40% Pedigree Errors (PE-40) [6] | -0.18 genetic s.d. | +0.25 overestimation | ~1.0 | Accurately Estimated | Good agreement |
| Weak Connectedness (WCO) [6] | Significant true bias | Inaccurate magnitude/direction | ~1.0 | Accurately Estimated | Good agreement |

Table 2: LR Method Application in Genomic Prediction for Thai-Holstein Cows

| Evaluation Method | Bias | Dispersion | Ratio of Accuracies | Accuracy of Predictions |
|---|---|---|---|---|
| Traditional BLUP [8] | 0.44 | 0.84 | 0.33 | 0.18 |
| Single-Step Genomic BLUP (ssGBLUP) [8] | -0.04 | 1.06 | 0.97 | 0.36 |
| ssGBLUP (Excluding old data: 2009-2018) [8] | Not Reported | Not Reported | Not Reported | 0.32 |

Application Protocols

Protocol 1: LR Method for Validating Genetic Evaluations Under Pedigree Misspecification

This protocol is designed to evaluate the performance of the LR method in detecting bias and dispersion in genetic evaluations when pedigree errors are present, simulating common challenges in beef cattle programs [6].

Materials and Reagents

- Population Data: A pedigree dataset, such as from the Argentinean Brangus program, containing at least 33,000 animals [6].

- Genotypic Data: Genome-wide markers for a subset of the population (e.g., 882 animals genotyped with an Illumina BovineSNP50 Bead Chip) [8].

- Phenotypic Data: Records for a quantitative trait (e.g., milk yield, a trait with heritability of 0.4) [6] [8].

- Simulation Software: A capable platform like AlphaSimR for generating historical populations and conducting gene-dropping procedures [6].

- Genetic Evaluation Software: Programs such as REMLF90 for estimating breeding values using BLUP or ssGBLUP methods [8].

Experimental Workflow

- Historical Population Simulation: Use a Markovian Coalescent Simulator to create a founder population with defined demographic parameters and genetic architecture. Simulate a divergence event to model different subspecies [6].
- Gene-Dropping with Real Pedigree:
  - Prune a real pedigree to include the last two calf cohorts and all their ancestors.
  - Assign haplotypes from the simulated founder pool to the pedigree's founders.
  - Generate genomes for all descendants by randomly dropping these founder haplotypes through the real pedigree, which replicates complex linkage disequilibrium patterns [6].
- Trait Simulation and Selection:
  - From the last generation of the gene-dropping stage, select a base population (e.g., 300 males, 4000 females).
  - Simulate True Breeding Values (TBVs) for an additive trait. For example, use 10,000 randomly selected quantitative trait loci (QTL) with effects sampled from a Gamma distribution to achieve a desired heritability (e.g., 0.4) [6].
  - Simulate phenotypes by combining an overall mean, a herd effect, the TBV, and a random error term.
  - Apply selection for the simulated trait over several overlapping generations.
- Introduction of Pedigree Errors: Systematically introduce pedigree misspecification into the dataset at defined rates (e.g., 25% and 40%) to create test scenarios [6].
- Genetic Evaluation and LR Validation:
  - Perform genetic evaluations using both a partial dataset (EBV~p~) and a whole dataset (EBV~w~) for a focal group of individuals.
  - Apply the LR method by regressing EBV~w~ on EBV~p~.
  - Calculate the key statistics: bias (in the LR method, the difference between the means of EBV~p~ and EBV~w~), dispersion (the slope of the regression), and the correlation between EBV~p~ and EBV~w~ (the ratio of accuracies, related to accuracy and reliability) [6] [8].
  - Compare these estimated statistics against their expected values under optimal conditions (zero bias and a slope of 1) and against the known true values from the simulation.

Protocol 2: Application of the LR Method for Genomic Prediction Accuracy

This protocol outlines the use of the LR method to validate the accuracy of genomic predictions for complex traits in dairy cattle, demonstrating its utility in genomic selection programs [8].

Materials and Reagents

- Phenotypic and Pedigree Data: A comprehensive dataset, such as test-day milk yield records from the first parity of 15,380 Thai-Holstein cows collected over multiple years [8].

- Genotypic Data: Genotypes for a subset of animals (e.g., 882) using a platform like the Illumina BovineSNP50 Bead Chip [8].

- Environmental Data: Daily temperature and humidity records from nearby weather stations to calculate the Temperature-Humidity Index (THI) [8].

- Analysis Software: REMLF90 or equivalent for estimating genetic parameters and breeding values using a repeatability model with random regressions on THI [8].

Experimental Workflow

- Data Preparation and Quality Control:
  - Edit phenotypic data by applying filters (e.g., exclude records outside 6-305 days in milk, herd-test-dates with fewer than 30 cows, etc.).
  - Perform quality control on genomic data, retaining SNPs and animals with call rates > 0.9, and SNPs with minor allele frequencies > 0.05.
  - Calculate the daily THI from climate data and associate it with each test-day record [8].
- Model Definition and Analysis:
  - Define a statistical model that accounts for fixed effects (e.g., herd-month-year, farm-calving season, breed group) and random effects (e.g., general additive genetic effect, additive genetic effect for heat tolerance).
  - Incorporate a random regression on the THI function to model the effect of heat stress on the trait [8].
- Validation Population Definition: Identify a validation group of individuals (e.g., 66 bulls) whose phenotypes in the most recent generation are excluded to create a partial dataset [8].
- Genomic Evaluation:
  - Conduct genomic evaluation using ssGBLUP, which integrates pedigree, genotype, and phenotype data from the partial dataset to generate GEBVs for the validation group (GEBV~p~).
  - Perform a second genomic evaluation using the whole dataset (including the withheld phenotypes) to generate GEBVs (GEBV~w~) [8].
- LR Analysis and Metric Calculation:
  - Regress GEBV~w~ on GEBV~p~ for the validation individuals.
  - Estimate bias (in the LR method, the difference between the mean GEBV~p~ and the mean GEBV~w~), dispersion from the regression slope, and the ratio of accuracies from the correlation between GEBV~p~ and GEBV~w~. The accuracy of predictions can be derived from the model's coefficient of determination [8].
- Persistence Testing: Repeat the analysis while excluding older phenotypic data (e.g., the first 10 years) to assess the impact on prediction accuracy and the value of historical data [8].
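The genomic quality-control filter from the first step can be sketched as follows. The sketch assumes genotypes coded as 0/1/2 copies of one allele with `None` for a missing call, and filters SNPs first (call rate > 0.9 and MAF > 0.05) and then animals on the retained SNPs (call rate > 0.9); that ordering, the coding, and the function name are assumptions, not details taken from [8].

```python
def snp_qc(genotypes, min_call_rate=0.9, min_maf=0.05):
    """Return (kept SNP indices, kept animal indices).

    genotypes: list of animals, each a list of 0/1/2 allele counts or None (missing).
    """
    n_animals = len(genotypes)
    n_snps = len(genotypes[0])
    keep_snps = []
    for s in range(n_snps):
        called = [g[s] for g in genotypes if g[s] is not None]
        if not called:
            continue
        call_rate = len(called) / n_animals
        p = sum(called) / (2 * len(called))  # frequency of the counted allele
        maf = min(p, 1 - p)
        if call_rate > min_call_rate and maf > min_maf:
            keep_snps.append(s)
    keep_animals = []
    if keep_snps:
        for a, g in enumerate(genotypes):
            animal_call_rate = sum(g[s] is not None for s in keep_snps) / len(keep_snps)
            if animal_call_rate > min_call_rate:
                keep_animals.append(a)
    return keep_snps, keep_animals


# Hypothetical 10 animals x 3 SNPs: SNP 0 passes, SNP 1 is monomorphic, SNP 2 is half missing.
geno = [[1, 0, None if a < 5 else 2] for a in range(10)]
print(snp_qc(geno))
```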

Workflows and Logical Diagrams

LR Method Validation Workflow

ELUB in the Forensic Evaluation Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for LR Method Experiments

| Item / Reagent | Function / Application in Protocol |
|---|---|
| Illumina BovineSNP50 Bead Chip [8] | A genotyping array used to obtain genome-wide SNP markers from animal blood or tissue samples, providing the genomic data essential for ssGBLUP. |
| REMLF90 Software [8] | A specialized software program for estimating variance components and genetic parameters using Restricted Maximum Likelihood, and for predicting breeding values. |
| AlphaSimR Software [6] | An R package used for simulating population genomes, breeding programs, and genetic traits, crucial for creating synthetic datasets to test the LR method under controlled conditions. |
| Pedigree Database [6] | A comprehensive record of ancestral relationships within a population, serving as the foundational data for constructing the relationship matrix in genetic evaluations. |
| Temperature-Humidity Index (THI) Data [8] | A calculated index derived from temperature and humidity data, used as an environmental covariate in models to assess the impact of heat stress on livestock traits. |

Bilevel optimization problems, in which one optimization task is nested inside another, are increasingly important in machine learning. They naturally formulate hierarchical processes such as hyperparameter tuning, meta-learning, and neural architecture search. An important subclass is bilevel empirical risk minimization (BERM), where both the upper (outer) and lower (inner) objectives are empirical risks over finite datasets; this formulation underlies many modern machine learning paradigms. Recent work has proposed a bilevel extension of the SARAH algorithm for BERM together with a lower bound on the number of oracle calls, showing that the algorithm's sample complexity is near-optimal [9] [10] [11]. These results give a theoretical basis for applying bilevel methods in resource-intensive fields such as pharmaceutical development.

Within the broader context of Empirical Lower Upper Bound (ELUB) research, bilevel optimization offers a structured approach to managing uncertainty and hierarchical decision-making. The ability to simultaneously optimize primary objectives and constraint policies enables researchers to derive robust models even with complex, high-dimensional data. This document delineates the core theoretical principles of BERM, details a state-of-the-art algorithm, and presents structured protocols for its application in drug development, complete with quantitative comparisons and experimental workflows.

Theoretical Foundations and Algorithmic Advances

Problem Formulation of Bilevel Empirical Risk Minimization

In a standard bilevel optimization problem, the upper-level objective \( F \) depends on the solution \( y^* \) of a lower-level optimization problem. For empirical risk minimization, where objectives are averages of losses over samples, the BERM problem takes the form:

\[ \min_{x \in \mathbb{R}^{d_x}} \; F(x, y^*(x)) := \frac{1}{n} \sum_{i=1}^{n} f_i(x, y^*(x)) \]
\[ \text{subject to} \quad y^*(x) \in \arg\min_{y \in \mathbb{R}^{d_y}} \; G(x, y) := \frac{1}{m} \sum_{j=1}^{m} g_j(x, y) \]

Here, \( F \) and \( G \) are the upper-level and lower-level empirical risk functions, respectively. The vector \( x \) represents the upper-level variables (e.g., hyperparameters), while \( y \) denotes the lower-level variables (e.g., model parameters). The functions \( f_i \) and \( g_j \) are loss functions corresponding to individual data points, with \( n \) and \( m \) the number of samples for the upper and lower levels [9]. This structure captures a wide range of machine learning tasks; for instance, in hyperparameter optimization, \( x \) might be the hyperparameters and \( y^* \) the model parameters that minimize the training loss \( G \), with \( F \) representing the validation error.

A Near-Optimal Algorithm: Bilevel Extension of SARAH

The bilevel SARAH algorithm extends the SARAH stochastic variance-reduction algorithm to the bilevel setting and achieves a near-optimal sample complexity for BERM problems [9] [10] [11].

The key ingredient is the handling of the hypergradient, i.e., the gradient of the upper-level objective \( F(x, y^*(x)) \) with respect to \( x \). Computing the hypergradient exactly is expensive because it requires solving a linear system obtained by applying the implicit function theorem at the lower-level solution \( y^*(x) \). The bilevel SARAH algorithm avoids this bottleneck by maintaining stochastic estimates of the lower-level solution, of the linear-system solution, and of the update direction, and by updating them simultaneously with the upper-level variable. Written with single-sample derivative estimates, the update for the upper-level variable is:

\[ x_{t+1} = x_t - \gamma_x \left( \nabla_x f_{i_t}(x_t, y_t) - \nabla_{xy}^2 g_{j_t}(x_t, y_t)\, v_t \right) \]

Here, \( \gamma_x \) is the step size, \( i_t \) and \( j_t \) are randomly sampled indices, \( y_t \) approximates the lower-level solution \( y^*(x_t) \), and \( v_t \) is a running stochastic estimate of the solution to the linear system \( \nabla_{yy}^2 G(x_t, y_t)\, v = \nabla_y F(x_t, y_t) \) [12]. The estimates \( y_t \) and \( v_t \) are themselves updated with variance-reduced stochastic schemes similar to SAGA, which control the variance introduced by sampling and accelerate convergence [12].
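The hypergradient formula and the update above can be checked on a toy problem. This is a minimal sketch, not the bilevel SARAH algorithm of [9]: a scalar ridge regression with hypothetical data, where \( x \) is the log of the regularization strength, \( g_j(x, y) = \tfrac12 (a_j y - b_j)^2 + \tfrac12 e^{x} y^2 \) and \( f_i(x, y) = \tfrac12 (c_i y - d_i)^2 \). Because \( y \) is scalar, the linear system reduces to a division, so the implicit-function hypergradient can be compared against a finite difference of the upper-level loss. The stochastic loop then applies the update with single-sample estimates of \( y \), \( v \), and the direction, without any SARAH or SAGA variance reduction.

```python
import math
import random


def make_pairs(rng, n, w=2.0, noise=1.0):
    """Hypothetical 1-D regression data: (feature, target) pairs."""
    pairs = []
    for _ in range(n):
        a = rng.gauss(0.0, 1.0)
        pairs.append((a, w * a + rng.gauss(0.0, noise)))
    return pairs


rng = random.Random(0)
train = make_pairs(rng, 50)  # lower level: g_j = 0.5*(a*y - b)^2 + 0.5*exp(x)*y^2
val = make_pairs(rng, 50)    # upper level: f_i = 0.5*(c*y - d)^2 (no direct x dependence)
S_AA = sum(a * a for a, _ in train) / len(train)
S_AB = sum(a * b for a, b in train) / len(train)


def y_star(x):
    """Closed-form lower-level solution argmin_y G(x, y)."""
    return S_AB / (S_AA + math.exp(x))


def upper_loss(x):
    y = y_star(x)
    return sum(0.5 * (c * y - d) ** 2 for c, d in val) / len(val)


def hypergradient(x):
    """grad_x F - grad^2_xy G * v, where v solves grad^2_yy G * v = grad_y F."""
    y = y_star(x)
    grad_y_F = sum(c * (c * y - d) for c, d in val) / len(val)
    hess_yy_G = S_AA + math.exp(x)
    cross_xy_G = math.exp(x) * y  # derivative of grad_y G with respect to x
    v = grad_y_F / hess_yy_G      # scalar "linear system"
    return 0.0 - cross_xy_G * v   # grad_x F = 0 here


def stochastic_bilevel(x=0.0, steps=20_000, gx=0.01, gy=0.05, gv=0.05, seed=1):
    """Joint single-sample updates of (y, v, x); plain SGD, no variance reduction."""
    r = random.Random(seed)
    y = v = 0.0
    for _ in range(steps):
        a, b = r.choice(train)  # index j_t
        c, d = r.choice(val)    # index i_t
        grad_y_g = a * (a * y - b) + math.exp(x) * y
        hess_yy_g = a * a + math.exp(x)
        grad_y_f = c * (c * y - d)
        new_x = x - gx * (0.0 - math.exp(x) * y * v)     # upper-level update
        new_v = v - gv * (hess_yy_g * v - grad_y_f)       # SGD on 0.5*H*v^2 - grad_y_f*v
        y = y - gy * grad_y_g                             # SGD step on the lower level
        x, v = new_x, new_v
    return x


fd = (upper_loss(1e-5) - upper_loss(-1e-5)) / 2e-5
print(hypergradient(0.0), fd)
x_final = stochastic_bilevel()
print(x_final, upper_loss(0.0), upper_loss(x_final))
```

In realistic models \( y \) is high-dimensional and \( v \) solves a genuine linear system; the variance-reduced estimators in [9] and [12] replace the single-sample terms in this loop.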

Optimal Sample Complexity and Lower Bound

The main result of [9] is that bilevel SARAH reaches an \( \varepsilon \)-stationary point of the upper-level objective with \( \mathcal{O}((n+m)^{1/2} \varepsilon^{-1}) \) oracle calls. In other words, the number of required gradient evaluations scales with the square root of the total sample size \( n+m \) and inversely with the accuracy \( \varepsilon \) [9] [10] [11].

This rate is near-optimal: [9] proves a matching lower bound showing that \( \Omega((n+m)^{1/2} \varepsilon^{-1}) \) oracle calls are necessary to reach \( \varepsilon \)-stationarity in the BERM setting, under the oracle model and class of algorithms considered there. This establishes a fundamental limit on computational efficiency in bilevel learning.
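To make the scaling concrete, ignore constants and assume hypothetical sizes \( n + m = 10^6 \) and \( \varepsilon = 10^{-3} \). A full-batch method that evaluates all \( n + m \) per-sample gradients at each of order \( \varepsilon^{-1} \) iterations (a standard rate for deterministic gradient methods on smooth nonconvex problems) can then be compared with the variance-reduced rate:

\[ (n+m)^{1/2}\,\varepsilon^{-1} = (10^{6})^{1/2} \cdot 10^{3} = 10^{6}, \qquad (n+m)\,\varepsilon^{-1} = 10^{6} \cdot 10^{3} = 10^{9} \]

Under these assumptions the gap is a factor of \( (n+m)^{1/2} = 10^{3} \) in gradient evaluations; in practice the constants and the conditioning of the problem also matter.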

Table 1: Key Properties of the Bilevel SARAH Algorithm

| Property | Description | Theoretical Guarantee |
|---|---|---|
| Sample Complexity | Number of gradient computations to achieve \( \varepsilon \)-stationarity | \( \mathcal{O}((n+m)^{1/2} \varepsilon^{-1}) \) |
| Lower Bound | Minimum oracle calls any algorithm requires | Matches complexity: \( \Omega((n+m)^{1/2} \varepsilon^{-1}) \) |
| Variance Reduction | Technique to control error in gradient estimates | Global variance reduction (e.g., SAGA-based) |
| Convergence Rate | Speed of convergence to a stationary point | \( \mathcal{O}(1/T) \); linear under the PL condition |

Applications in Pharmaceutical Sciences and Drug Development

The BERM framework is particularly suited to complex decision-making processes in pharmacology, where decisions are often hierarchical and data is costly.

Cost-Sensitive Feature Selection in Medical Diagnostics

A direct application of empirical risk minimization in medicine is cost-constrained feature selection. In many diagnostic and prognostic tasks, medical features (e.g., lab tests, imaging) come with associated financial costs, risks, or acquisition times. The goal is to build a predictive model that maximizes accuracy while respecting a total cost budget for feature acquisition [13].

This can be formulated as a penalized empirical risk minimization problem:

\[ \min_{\beta} \; \frac{1}{n} \sum_{i=1}^{n} \mathcal{L}(y_i, \beta^{\top} x_i) + \lambda \sum_{j=1}^{p} c_j \, P(|\beta_j|) \]

where \( x_i \) and \( y_i \) are the feature vector and outcome of patient \( i \), \( \mathcal{L} \) is a loss function (e.g., logistic loss), \( \beta \) are the model coefficients, \( c_j \) is the cost of the \( j \)-th feature, and \( P \) is a penalty function (e.g., lasso, MCP) that incorporates feature costs into the regularization term. Because each coefficient's penalty is scaled by the cost of its feature, the model prefers cheaper, sufficiently informative features over more expensive ones, especially under tight budgets [13]. Experiments on the MIMIC-II dataset demonstrated that such cost-sensitive methods could achieve an AUC of 0.88 for predicting liver diseases using only 5% of the total available feature cost, significantly outperforming traditional methods that ignore cost [13].
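The objective above can be minimized with a short proximal-gradient (ISTA) sketch, assuming logistic loss, the lasso penalty \( P(|\beta_j|) = |\beta_j| \), and no intercept. This is an illustration of the formulation, not the method used in [13]; the data, feature costs, and \( \lambda \) are hypothetical. Two features carry nearly the same information but differ tenfold in cost, so the cost-scaled soft-thresholding should keep the cheap one and set the expensive coefficient exactly to zero.

```python
import math
import random


def soft_threshold(z, t):
    """Proximal operator of t * |b|."""
    return math.copysign(max(abs(z) - t, 0.0), z)


def fit_cost_weighted_lasso(X, y, costs, lam, step=0.1, iters=1000):
    """ISTA for (1/n) sum logistic_loss(y_i, beta^T x_i) + lam * sum_j c_j * |beta_j|."""
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    for _ in range(iters):
        grad = [0.0] * p
        for xi, yi in zip(X, y):
            prob = 1.0 / (1.0 + math.exp(-sum(b * v for b, v in zip(beta, xi))))
            for j in range(p):
                grad[j] += (prob - yi) * xi[j] / n
        # Gradient step on the smooth loss, then shrinkage proportional to each feature's cost.
        beta = [soft_threshold(beta[j] - step * grad[j], step * lam * costs[j]) for j in range(p)]
    return beta


# Hypothetical data: two tests measure the same latent signal; a third is uninformative.
rng = random.Random(3)
X, y = [], []
for _ in range(200):
    s = rng.gauss(0.0, 1.0)
    X.append([s + 0.1 * rng.gauss(0.0, 1.0),   # feature 0: expensive test
              s + 0.1 * rng.gauss(0.0, 1.0),   # feature 1: cheap test, similar information
              rng.gauss(0.0, 1.0)])            # feature 2: cheap, uninformative
    y.append(1 if rng.random() < 1.0 / (1.0 + math.exp(-2.0 * s)) else 0)

beta = fit_cost_weighted_lasso(X, y, costs=[10.0, 1.0, 1.0], lam=0.02)
print(beta)
```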

Bilevel Optimization for Pharmaceutical Risk Minimization

Risk-minimization programs for drugs with significant safety concerns can be viewed through a bilevel lens. A regulator (upper level) aims to minimize public health risk by mandating a risk-minimization program, whose design and implementation (lower level) is carried out by pharmaceutical companies and healthcare providers. The effectiveness of the upper-level regulatory objective depends on the optimal execution of the lower-level implementation tasks [14]. While not always a purely mathematical optimization in practice, this conceptual framework helps in designing more effective, evidence-based programs by explicitly considering the nested dependencies.

Molecular Optimization and Drug Design

Bilevel optimization is a natural fit for inverse design in molecular discovery. The upper-level goal is to generate molecular structures \( x \) with optimized properties (e.g., high efficacy, low toxicity). The lower-level problem involves a predictive model \( y \) that accurately estimates these properties for a given structure. The overall objective is to find molecules whose predicted properties, as determined by the best available model \( y^* \), are optimal [15]. Recent projects, such as those developing generative models for molecules via conditional diffusion and multi-property optimization, leverage such formulations to align generated molecular structures with a set of desired drug properties efficiently [15].

Table 2: Bilevel Applications in Pharmaceutical Development

| Application Area | Upper-Level Objective | Lower-Level Objective |
|---|---|---|
| Hyperparameter Tuning & Model Selection | Minimize validation error of a predictive model | Minimize training error of the model parameters |
| Cost-Sensitive Feature Selection | Maximize predictive accuracy under a total feature cost budget | Learn model parameters that optimally use selected features |
| Pharmaceutical Risk-Minimization | Minimize public health risk associated with a drug | Optimize implementation of risk-minimization tools by providers |
| Molecular Design | Generate molecular structures with optimal drug properties | Train a predictive model to accurately estimate molecular properties |

Experimental Protocols

Protocol 1: Hyperparameter Tuning for a Clinical Prediction Model

This protocol outlines a BERM approach for tuning hyperparameters of a machine learning model designed to predict patient outcomes from electronic health records.

Workflow Diagram: Hyperparameter Tuning

Materials and Reagents:

- Software: Python with JAX or PyTorch libraries for automatic differentiation.

- Data: De-identified patient dataset, split into training and validation sets.

- Computing: Access to a GPU cluster for efficient linear algebra computations.

Procedure:

Problem Formulation:

- Let $x$ be the vector of hyperparameters (e.g., learning rate, regularization strength).

- Let $y$ be the parameters of the prediction model (e.g., weights of a neural network).

- Upper-Level Objective: $F(x) = \frac{1}{n_{\text{val}}} \sum_{i=1}^{n_{\text{val}}} \mathcal{L}(y^*(x), \xi_i^{\text{val}})$, where $\xi_i^{\text{val}}$ are validation samples and $y^*(x)$ minimizes the lower-level objective.

- Lower-Level Objective: $G(x, y) = \frac{1}{n_{\text{train}}} \sum_{j=1}^{n_{\text{train}}} \mathcal{L}(y, \xi_j^{\text{train}}) + R(x, y)$, where $\xi_j^{\text{train}}$ are training samples and $R$ is a regularizer controlled by $x$.

Algorithm Initialization:

- Initialize hyperparameters $x_0$ and model parameters $y_0$.

- Set step sizes $\gamma_x, \gamma_y$ and the number of iterations $T$.

Iterative Optimization:

- Inner Loop (Approximate $y^*(x_t)$): For several steps, update $y$ using a stochastic gradient method on $G(x_t, y)$. The bilevel SARAH algorithm uses a variance-reduced update for this [12].

- Hypergradient Estimation: Compute a stochastic estimate of $\nabla F(x_t)$: $$ \hat{\nabla} F(x_t) = \nabla_x f_{i_t}(x_t, y_t) - \nabla_{xy}^2 g_{j_t}(x_t, y_t) \, v_t $$ where $f_{i_t}$ and $g_{j_t}$ are the per-sample upper- and lower-level objectives on a randomly drawn validation sample $i_t$ and training sample $j_t$, and $v_t$ is an iterative estimate of the solution of the linear system $\nabla_{yy}^2 G(x_t, y_t) \, v = \nabla_y f(x_t, y_t)$, with $f(x, y)$ the validation loss evaluated at model parameters $y$.

- Update Hyperparameters: $x_{t+1} = x_t - \gamma_x \hat{\nabla} F(x_t)$.

Validation: The final hyperparameters ( x^* ) are used to train a model on a combined training and validation set, and its performance is reported on a held-out test set.
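The hypergradient step can be checked on a problem small enough to solve exactly. The minimal sketch below assumes ridge regression as the lower-level problem, $G(x, y) = \frac{1}{2n}\lVert Ay - b\rVert^2 + \frac{e^{x}}{2}\lVert y\rVert^2$, with the log regularization strength $x$ as the only hyperparameter. It uses full-batch gradient steps and an exact linear solve for $v$ instead of the stochastic, variance-reduced bilevel SARAH updates of [12]. All function names and data are hypothetical.

```python
import numpy as np


def lower_solution(x, A, b):
    """Exact y*(x) = argmin_y ||A y - b||^2 / (2n) + exp(x) * ||y||^2 / 2."""
    n, p = A.shape
    H = A.T @ A / n + np.exp(x) * np.eye(p)
    return np.linalg.solve(H, A.T @ b / n)


def inner_gd(x, y, A, b, steps=50):
    """Inner loop: a few gradient steps on G(x, .), warm-started at y."""
    n, _ = A.shape
    lipschitz = np.linalg.norm(A, 2) ** 2 / n + np.exp(x)
    for _ in range(steps):
        grad = A.T @ (A @ y - b) / n + np.exp(x) * y
        y = y - grad / lipschitz
    return y


def hypergradient(x, y, A, b, A_val, b_val):
    """Implicit-function hypergradient of F(x) = f(y*(x)), evaluated at y ~ y*(x)."""
    n, p = A.shape
    m = A_val.shape[0]
    H_yy = A.T @ A / n + np.exp(x) * np.eye(p)    # Hessian of G in y
    grad_y_f = A_val.T @ (A_val @ y - b_val) / m  # gradient of the validation loss f
    v = np.linalg.solve(H_yy, grad_y_f)           # solve H_yy v = grad_y f
    H_xy = np.exp(x) * y                          # mixed derivative d(grad_y G)/dx
    return -(H_xy @ v)                            # grad_x f = 0 for this f


def tune(A, b, A_val, b_val, x0=0.0, gamma_x=0.5, T=100):
    """Outer loop: x_{t+1} = x_t - gamma_x * hypergradient."""
    x, y = x0, np.zeros(A.shape[1])
    for _ in range(T):
        y = inner_gd(x, y, A, b)
        x = x - gamma_x * hypergradient(x, y, A, b, A_val, b_val)
    return x, y


def val_loss(x, A, b, A_val, b_val):
    """F(x): validation loss at the exact lower-level solution."""
    r = A_val @ lower_solution(x, A, b) - b_val
    return r @ r / (2 * len(b_val))


rng = np.random.default_rng(0)
w_true = rng.standard_normal(5)
A, A_val = rng.standard_normal((80, 5)), rng.standard_normal((40, 5))
b = A @ w_true + 0.5 * rng.standard_normal(80)
b_val = A_val @ w_true + 0.5 * rng.standard_normal(40)
x_star, y_star = tune(A, b, A_val, b_val)
```

Comparing `hypergradient` at the exact `lower_solution` with a central finite difference of `val_loss` is a cheap correctness check to run before moving to stochastic estimates.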

Protocol 2: Cost-Sensitive Feature Selection with Budget Constraint

This protocol details an experiment to select a subset of clinical features without exceeding a predefined budget.

Workflow Diagram: Feature Selection

Materials and Reagents:

- Dataset: MIMIC-II or similar clinical dataset.

- Cost Vector: A pre-defined list of costs for each feature, which can be financial or based on resource usage.

- Software: R or Python with the glmnet or ncvreg packages for penalized regression.

Procedure:

- Data Preprocessing: Normalize all features and assign a cost ( c_j ) to each feature ( j ). Costs can be based on expert opinion or actual financial cost.

- Model Training: Solve the cost-sensitive ERM problem using a non-convex penalty like MCP, which is known to perform well under budget constraints [13]: $$ \min_{\beta} \ \frac{1}{n} \sum_{i=1}^{n} \log\left(1 + \exp(-y_i \beta^T x_i)\right) + \lambda \sum_{j=1}^{p} c_j \cdot P_{\text{MCP}}(|\beta_j|) $$ where the labels are coded as $y_i \in \{-1, +1\}$. The regularization parameter $\lambda$ is chosen via cross-validation among the values whose selected features stay within the desired budget.

- Evaluation:

- Calculate the total cost of the selected features: $\sum_{j: \beta_j \neq 0} c_j$.

- Measure the model's performance (e.g., AUC) on a held-out test set.

- Compute the Cost-Sensitive False Discovery Rate (CSFDR), which measures the proportion of the total cost wasted on irrelevant features [13].

- Comparison: Benchmark the performance and cost efficiency against traditional feature selection methods like standard lasso.
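The cost-related evaluation steps can be sketched as below. This assumes that ground truth about which features are relevant is available, for example in a simulation or a proxy-feature benchmark. It also assumes CSFDR is defined as the cost of selected irrelevant features divided by the cost of all selected features; the exact definition in [13] may differ. The function name and example values are hypothetical.

```python
import numpy as np


def selection_cost_summary(beta, costs, relevant):
    """Total cost of the selected features and a cost-weighted false discovery rate.

    CSFDR is taken here as (cost of selected irrelevant features) /
    (cost of all selected features); this definition is an assumption."""
    beta = np.asarray(beta, dtype=float)
    costs = np.asarray(costs, dtype=float)
    relevant = np.asarray(relevant, dtype=bool)
    selected = beta != 0
    total_cost = costs[selected].sum()
    wasted = costs[selected & ~relevant].sum()
    csfdr = wasted / total_cost if total_cost > 0 else 0.0
    return total_cost, csfdr


beta = [0.8, 0.0, -0.3, 0.1]           # fitted coefficients (illustrative)
costs = [1.0, 50.0, 2.0, 5.0]          # hypothetical per-feature costs c_j
relevant = [True, True, False, False]  # known ground truth, e.g., from a simulation
total_cost, csfdr = selection_cost_summary(beta, costs, relevant)
print(total_cost, csfdr)
```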

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item Name | Function/Description | Example Use Case |
|---|---|---|
| Variance-Reduced SGD | Stochastic optimization with controlled variance for stable convergence. | Core update step in the bilevel SARAH algorithm for both upper and lower levels [12]. |
| Automatic Differentiation | Software tool to compute exact gradients and Hessian-vector products. | Efficiently computing the hypergradient $\nabla F(x)$ in a bilevel problem [12]. |
| Non-Convex Penalties (MCP/SCAD) | Penalization functions that provide sparsity without excessive bias on large coefficients. | Enforcing cost-sensitive sparsity in feature selection models [13]. |
| Proxy Features | Artificially created low-cost, noisy versions of original features. | Simulating a cost-sensitive environment for benchmarking on datasets without known costs [13]. |
| KL Divergence / ELBO | Measures for comparing probability distributions and bounding log-likelihood. | Used in variational inference and connecting to the broader ELUB research context [16]. |

Key Applications in Drug Development and Clinical Research

Drug development and clinical research are undergoing a rapid transformation, driven by the adoption of sophisticated quantitative methods and innovative trial designs. These applications are critical for navigating the increasing complexity of clinical trials, which face challenges from rising costs, extensive data requirements, and stringent regulatory standards [17]. This document details key contemporary applications, with a specific focus on methodologies that align with the principles of empirical lower upper bound likelihood ratio (ELUB) research. These approaches enhance decision-making by providing a structured, quantitative framework to assess uncertainty, optimize resource allocation, and strengthen statistical inference throughout the drug development lifecycle. The following sections summarize current trends, provide detailed experimental protocols, and outline essential research tools.

Current Landscape and Quantitative Data

The biopharmaceutical industry is strategically focusing on high-value therapeutic areas and leveraging advanced analytics to improve R&D productivity. Table 1 summarizes the top therapeutic areas prioritized by drug developers and the key challenges they face, based on a recent global industry survey [17].

Table 1: Key Trends in Clinical Research (2025)

| Trend Area | Specific Focus | Industry Adoption/Impact Data |
|---|---|---|
| Therapeutic Area Prioritization | Oncology | 64% of sponsors are prioritizing this area [17]. |
| | Immunology/Rheumatology | 41% of sponsors are prioritizing this area [17]. |
| | Rare Diseases | 31% of sponsors are prioritizing this area [17]. |
| Top Industry Challenges | Rising Clinical Trial Costs | Cited as the top challenge by 49% of drug developers [17]. |
| | Patient Recruitment | Cited as the second top challenge by 39% of developers [17]. |
| Adoption of Innovative Methods | Use of Artificial Intelligence (AI) | 66% of large sponsors and 44% of small/mid-sized sponsors are pursuing AI [17]. |
| | Innovative Trial Designs | Highlighted as the top transforming trend by over half of surveyed sponsors [17]. |

Concurrently, the drug development pipeline for specific complex diseases continues to expand. For instance, the Alzheimer's disease (AD) pipeline for 2025 includes 138 drugs across 182 clinical trials. The pipeline is diverse, with 73% classified as disease-targeted therapies (30% biologics, 43% small molecules) and 27% as symptomatic therapies (14% cognitive enhancement, 11% neuropsychiatric symptoms, and 2% other) [18]. Biomarkers are integral to this progress, serving as primary outcomes in 27% of active AD trials [18].

Key Application Notes and Protocols

Application Note 1: Probability of Success (PoS) for Go/No-Go Decisions

1. Purpose: To quantify the probability of a successful Phase III trial outcome based on Phase II data, supporting the critical go/no-go decision for confirmatory evaluation [3].

2. Background: PoS moves beyond traditional power calculations by incorporating uncertainty in the treatment effect size, formalized through a "design prior" distribution. This provides a more realistic assessment of trial success [3].

3. Experimental Protocol:

- Step 1: Define Success Criteria. Clearly define the primary endpoint and the target effect size for Phase III success (e.g., a hazard ratio of 0.75 for a survival endpoint) [3].

- Step 2: Formulate the Design Prior. Construct a probability distribution for the treatment effect. This can be an informative prior based directly on Phase II data for the same endpoint, an elicited prior from clinical experts, or an informative prior derived from external data (e.g., real-world data or historical trials) [3].

- Step 3: Calculate Predictive Power. The PoS is computed as the expected power over the design prior. This is often referred to as assurance or average power [3]. The calculation integrates the statistical power across all plausible values of the treatment effect, weighted by their probability from the design prior.

- Step 4: Conduct Sensitivity Analysis. Perform a tipping-point analysis by varying the parameters of the design prior to assess how robust the PoS is to changes in assumptions [3]. This aligns with ELUB methodologies for evaluating inference stability.

4. Visualization of Workflow: The following diagram illustrates the logical workflow and decision points for the PoS calculation.
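Step 3 can be sketched for a time-to-event endpoint. The sketch assumes a one-sided log-rank test at level 0.025, Schoenfeld's approximation $\mathrm{SE}(\log \widehat{HR}) = 2/\sqrt{d}$ for $d$ events with 1:1 allocation, and a normal design prior on the log hazard ratio. Here "success" means a statistically significant Phase III result. The prior mean $\log 0.75$, prior SD 0.15, and 380 events are hypothetical inputs, and the function names are illustrative. The Monte Carlo version follows the definition (average power over the design prior), and the closed form serves as a check.

```python
import numpy as np
from statistics import NormalDist

_std_normal = NormalDist()
_phi = np.vectorize(_std_normal.cdf)  # vectorized standard normal CDF


def power_logrank(theta, events, alpha=0.025):
    """Approximate one-sided power for a true log hazard ratio theta (< 0 favours
    treatment), using SE(log HR) = 2 / sqrt(events) (Schoenfeld, 1:1 allocation)."""
    se = 2.0 / np.sqrt(events)
    z = _std_normal.inv_cdf(1 - alpha)
    return _phi(-np.asarray(theta) / se - z)


def assurance_mc(mu, tau, events, alpha=0.025, n_draws=100_000, seed=0):
    """PoS as expected power over a normal design prior theta ~ N(mu, tau^2)."""
    theta = np.random.default_rng(seed).normal(mu, tau, size=n_draws)
    return float(power_logrank(theta, events, alpha).mean())


def assurance_closed_form(mu, tau, events, alpha=0.025):
    """Same quantity in closed form: the Phase III estimate is marginally
    N(mu, se^2 + tau^2), and success means estimate < -z * se."""
    se = 2.0 / np.sqrt(events)
    z = _std_normal.inv_cdf(1 - alpha)
    return _std_normal.cdf((-z * se - mu) / np.sqrt(se**2 + tau**2))


mu, tau, events = np.log(0.75), 0.15, 380  # hypothetical design prior and trial size
pos = assurance_closed_form(mu, tau, events)
power_at_mu = float(power_logrank(mu, events))
print(pos, power_at_mu)
```

In this example the PoS is lower than the conventional power computed at the prior mean, reflecting the added uncertainty in the effect size. Re-running `assurance_closed_form` over a grid of prior SDs `tau` is one simple way to carry out the sensitivity analysis in Step 4.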

Application Note 2: Bayesian Tipping-Point Analysis for Prior Sensitivity

1. Purpose: To efficiently identify the threshold (tipping point) at which a prior distribution's influence changes the qualitative conclusion of a Bayesian analysis, which is essential for assessing robustness [19].

2. Background: Regulatory guidelines recommend sensitivity analysis for prior distributions. Tipping-point analysis systematically varies hyperparameters to find where a credible interval crosses a decision threshold (e.g., a null effect), quantifying the prior's impact [19].

3. Experimental Protocol using SIR:

- Step 1: Fit Base Model. Using Markov Chain Monte Carlo (MCMC), fit the Bayesian model with a baseline prior distribution to obtain posterior samples for the parameter of interest, θ [19].

- Step 2: Define Alternative Priors. Specify a range of alternative priors, π∗(θ), typically by varying a key hyperparameter, ψ [19].

- Step 3: Apply Sampling Importance Resampling (SIR). For each alternative prior:

- Calculate importance weights for each base posterior sample $\theta^{(m)}$, $m = 1, \dots, M$, where $\pi$ is the baseline prior: $w_m \propto \frac{\pi^*(\theta^{(m)})}{\pi(\theta^{(m)})}$

- Normalize the weights: $\tilde{w}_m = \frac{w_m}{\sum_{j=1}^{M} w_j}$

- Resample from the base posterior samples using the normalized weights to generate approximate posterior samples under π∗(θ) [19].

- Step 4: Compute Posterior Summaries. From the resampled data, calculate the posterior mean, credible intervals (e.g., 95% CrI), and probability of success for each ψ [19].

- Step 5: Identify Tipping Point. Plot the posterior summary (e.g., upper credible limit) against ψ. The tipping point is the value of ψ where the summary statistic equals the decision threshold (e.g., θ₀) [19]. A bisection algorithm can be used to find this value efficiently.

4. Visualization of Workflow: The diagram below outlines the SIR process for efficient tipping-point analysis, avoiding repeated MCMC runs.
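Steps 1 to 5 can be sketched end to end under simplifying assumptions. The sketch assumes a normal likelihood for a single effect estimate and a family of skeptical priors $\theta \sim N(0, \psi^2)$. To keep it self-contained, exact conjugate-posterior draws stand in for the MCMC output of Step 1; in practice these would come from Stan or JAGS. The decision rule (lower 95% credible limit above $\theta_0 = 0$), the data values, and all function names are hypothetical.

```python
import numpy as np
from statistics import NormalDist

# Hypothetical example: observed effect estimate and its standard error
X_OBS, S_OBS = 0.30, 0.12
BASE_SD = 10.0  # vague baseline prior theta ~ N(0, BASE_SD^2)


def base_posterior_samples(M=100_000, seed=1):
    """Stand-in for MCMC output: exact draws from the conjugate normal posterior
    under the baseline prior (an assumption that keeps the sketch short)."""
    prec = 1 / BASE_SD**2 + 1 / S_OBS**2
    mean = (X_OBS / S_OBS**2) / prec
    return np.random.default_rng(seed).normal(mean, prec**-0.5, size=M)


def log_normal_prior(theta, sd):
    """Log density of N(0, sd^2) up to an additive constant."""
    return -0.5 * (theta / sd) ** 2 - np.log(sd)


def sir_lower_limit(theta, psi, level=0.95, seed=2):
    """Lower credible limit under the alternative prior N(0, psi^2), via SIR."""
    log_w = log_normal_prior(theta, psi) - log_normal_prior(theta, BASE_SD)
    w = np.exp(log_w - log_w.max())    # importance weights pi*(theta) / pi(theta)
    w /= w.sum()                       # normalized weights
    rng = np.random.default_rng(seed)  # common random numbers across psi values
    resampled = rng.choice(theta, size=theta.size, replace=True, p=w)
    return np.quantile(resampled, (1 - level) / 2)


def tipping_point(theta, lo=0.01, hi=1.0, threshold=0.0, tol=1e-4):
    """Bisection on psi for where the lower limit crosses the threshold.
    Assumes the limit is below the threshold at lo and above it at hi."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if sir_lower_limit(theta, mid) < threshold:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def exact_tipping_point(level=0.95):
    """Closed-form check for this conjugate model (likelihood x N(0, psi^2) prior)."""
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return (((X_OBS / S_OBS**2) / z) ** 2 - 1 / S_OBS**2) ** -0.5


theta = base_posterior_samples()
psi_sir = tipping_point(theta)
psi_exact = exact_tipping_point()
print(psi_sir, psi_exact)
```

The fixed resampling seed makes the SIR limit change smoothly with ψ, which keeps the bisection stable. When an alternative prior moves far from the baseline, the importance weights degenerate, so monitoring the effective sample size, $1 / \sum_m \tilde{w}_m^2$, is advisable.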

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and computational tools essential for implementing the advanced methodologies described in this document.

Table 2: Essential Research Reagents and Tools

| Item Name | Type | Function / Application Note |
|---|---|---|
| High-Quality External Data | Data | Real-world data (RWD) and historical clinical trial data used to inform and strengthen the "design prior" in Probability of Success calculations [3]. |
| Validated Biomarker Assays | Biochemical / Diagnostic | Used for patient stratification, target engagement, and as primary or secondary endpoints in clinical trials, crucial for precision medicine and disease-targeted therapies [18]. |
| MCMC Software (Stan, JAGS) | Computational Tool | Platforms for performing Bayesian statistical modeling and generating posterior samples via Markov Chain Monte Carlo sampling, forming the base for SIR and tipping-point analysis [19]. |
| Clinical Trial Scenario Modeling Software | Computational Tool | AI and predictive analytics platforms that simulate trial outcomes under various conditions (e.g., different protocols, recruitment rates) to optimize design and identify bottlenecks [17]. |
| Sampling Importance Resampling (SIR) Algorithm | Computational Method | A resampling technique used to approximate posterior distributions under alternative prior settings without computationally expensive MCMC re-fitting, core to efficient sensitivity analysis [19]. |
| Protocol Deviation Tracking System | Operational Tool | Systems to monitor and manage protocol deviations, which are a top cause of FDA Warning Letters, ensuring data integrity and regulatory compliance [20]. |

Interpreting Bias, Dispersion, and Accuracy in Predictive Models

Core Concepts and Definitions

Bias, dispersion, and accuracy are fundamental metrics for evaluating the performance and reliability of predictive models across scientific domains, from drug development to genomic selection. These metrics provide crucial insights into how well models generalize to new data and whether their predictions can be trusted for critical decision-making.

Accuracy represents the degree to which a model's predictions match true values, measuring overall correctness [21]. In classification, it quantifies correct prediction rates, while in regression, metrics like Mean Absolute Error (MAE) and Mean Squared Error (MSE) quantify prediction error magnitude [21].

Bias occurs when models produce systematically different predictions for population subgroups who are identical on specific criteria [22]. This manifests as outcome disparity (differences in final result distributions) or error disparity (variations in prediction errors across groups) [22]. Bias can originate from multiple sources including training labels, sample selection methods, model fitting approaches, and data representation [22].

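Dispersion refers to the spread or variability of predictions around true values, with ideal models showing consistent error patterns across different datasets [23]. In genomic selection studies, dispersion is commonly summarized by the slope of the regression of observed (or later) values on predictions. Slopes close to 1.0 indicate desirable prediction stability, slopes below 1.0 indicate over-dispersion (predictions spread more widely than the values they predict), and slopes above 1.0 indicate under-dispersion [23].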

Table 1: Key Evaluation Metrics for Predictive Models

| Metric Category | Specific Metric | Formula/Definition | Interpretation |
|---|---|---|---|
| Accuracy Metrics | Classification Accuracy | (True Positives + True Negatives)/Total Predictions | Proportion of correct predictions [21] |
| | Mean Absolute Error (MAE) | Average absolute difference between predicted and actual values | Intuitive error interpretation in original units [21] |
| | Mean Squared Error (MSE) | Average of squared differences between predicted and actual values | Penalizes larger errors more severely [21] |
| Bias Assessment | Outcome Disparity | Differences in prediction distributions across subgroups | Reveals systematic favoring of specific groups [22] |
| | Error Disparity | Variation in error rates across demographic groups | Identifies performance inconsistencies [22] |
| | Predictive Bias Metric | D(Y, Ŷ \| A) = 2(log(p(Y \| A)) - log(p(Ŷ \| A))) | Quantifies disparity between ideal and actual distributions [22] |
| Dispersion Metrics | Regression Dispersion | Slope of regression line between predictions and actuals | Values near 1.0 indicate appropriate variability [23] |
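The regression metrics in Table 1 can be computed together with a simple error-disparity measure. This minimal sketch assumes a regression task and takes the dispersion slope from the regression of actual values on predictions. Error disparity is operationalized here, as a hypothetical choice, as the gap between the largest and smallest subgroup MAE. The predictive-bias metric from [22] is not implemented, and the data are illustrative.

```python
import numpy as np


def evaluation_metrics(y_true, y_pred, groups):
    """MAE, MSE, a dispersion slope, and per-group MAE (error disparity)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    groups = np.asarray(groups)
    err = y_pred - y_true
    # Slope of the regression of actual values on predictions (1.0 = ideal)
    slope = np.cov(y_pred, y_true)[0, 1] / np.var(y_pred, ddof=1)
    group_mae = {g.item(): float(np.mean(np.abs(err[groups == g])))
                 for g in np.unique(groups)}
    return {
        "MAE": float(np.mean(np.abs(err))),
        "MSE": float(np.mean(err**2)),
        "dispersion_slope": float(slope),
        "group_MAE": group_mae,
        "MAE_gap": max(group_mae.values()) - min(group_mae.values()),
    }


m = evaluation_metrics([1, 2, 3, 4], [1.5, 2, 2.5, 4], ["a", "b", "a", "b"])
print(m)
```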

Connection to ELUB Research Framework

The Empirical Lower and Upper Bound (ELUB) method addresses limitations in traditional predictive model evaluation by establishing realistic boundaries for likelihood ratios (LRs) in forensic applications [24]. Within the ELUB research framework, proper interpretation of bias, dispersion, and accuracy becomes essential for contextualizing model performance against empirical constraints.

ELUB emerged from observations of "unrealistically strong LRs" in forensic text comparison systems, where fused likelihood ratios from multiple procedures (multivariate kernel density, word token N-grams, character N-grams) required calibration to empirical boundaries [24]. This framework provides reference points for determining whether observed accuracy metrics represent genuine predictive power or statistical artifacts.

In drug development and genomic selection applications, the ELUB philosophy translates to establishing realistic performance expectations based on domain-specific constraints. For instance, research on virtual drug studies demonstrates how modeling and simulation face inherent accuracy boundaries dictated by biological complexity and data quality [25]. Similarly, genomic prediction accuracy in sheep populations shows empirical limits based on reference population size, genetic diversity, and pedigree error rates [23].

Quantitative Assessment in Practical Applications

Table 2: Empirical Performance Data Across Domains

| Application Domain | Model Type | Reported Accuracy/Bias Findings | Impact Factors |
|---|---|---|---|
| Genomic Prediction (Sheep) | Single-step Genomic BLUP | 4-8% accuracy improvement over pedigree-based BLUP; up to 20% accuracy increase in well-connected subpopulations [26] | Reference population size, genetic diversity, pedigree errors [23] |
| Hospital Readmission Prediction | LACE, HOSPITAL, ACG, HATRIX | LACE/HOSPITAL showed greatest bias potential; HATRIX demonstrated fewest bias concerns [27] | Data quality, feature selection, validation methodology [27] |
| Forensic Text Analysis | Fused Likelihood Ratio System | Cllr value of 0.15 achieved with 1500 token length; unrealistically strong LRs observed [24] | Text length, feature selection, fusion methodology [24] |
| Drug Discovery | Machine Learning Models | Potential to reduce failure rates but challenged by interpretability and repeatability [28] | Data quality, model transparency, validation rigor [28] |

Experimental Protocols for Evaluation

Protocol 1: Bias Assessment in Predictive Models

Purpose: Systematically evaluate potential bias sources throughout model development and deployment lifecycle.

Materials:

- Dataset with comprehensive demographic and clinical variables

- Bias evaluation checklist [27]

- Statistical software (R, Python with scikit-learn)

- Performance metrics (accuracy, precision, recall, F1-score across subgroups)

Procedure:

- Define disadvantaged groups and bias types relevant to predictive task

- Identify algorithm and validation evidence from model documentation

- Apply checklist questions across four phases:

- Model definition and design: Assess intended use case and potential impact

- Data acquisition and processing: Evaluate representativeness and preprocessing

- Validation: Scrutinize cross-validation strategies and subgroup analyses

- Deployment/model use: Monitor real-world performance drift [27]

- Quantify disparities using appropriate metrics (e.g., log-likelihood ratio)

- Implement countermeasures specific to identified bias sources:

- For label bias: Post-stratification, annotator retraining

- For selection bias: Stratified sampling, re-weighting techniques

- For over-amplification: Down-weight biased instances, modify cost function

- For semantic bias: Adjust embedding parameters [22]
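For the selection-bias countermeasure, a minimal re-weighting sketch follows. It assumes the target (e.g., population) share of each group is known and uses simple post-stratification weights, $w_g = \text{target share}_g / \text{sample share}_g$. The function name and group labels are hypothetical. The resulting weights can be passed to estimators that accept per-sample weights, such as the `sample_weight` argument of many scikit-learn `fit` methods.

```python
import numpy as np


def reweight_to_target(groups, target_shares):
    """Per-record weights so that weighted group shares match the target shares."""
    groups = np.asarray(groups)
    labels, counts = np.unique(groups, return_counts=True)
    sample_share = dict(zip(labels.tolist(), counts / counts.sum()))
    return np.array([target_shares[g] / sample_share[g] for g in groups.tolist()])


groups = ["A"] * 80 + ["B"] * 20  # group B is under-sampled
weights = reweight_to_target(groups, {"A": 0.5, "B": 0.5})
weighted_share_B = weights[np.array(groups) == "B"].sum() / weights.sum()
print(weighted_share_B)
```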

Protocol 2: Accuracy and Dispersion Evaluation in Genomic Prediction

Purpose: Assess accuracy, bias, and dispersion of genomically-enhanced breeding values (GEBVs) under different scenarios.

Materials:

- Phenotypic records (32,713 animals in example study) [26]

- Genotypic data (3,238 animals with medium-density SNP panels) [26]

- Pedigree information

- Statistical software (BLUPF90 family programs, R) [26]

- Quality control tools for genomic data

Procedure:

- Data Preparation:

- Perform quality control on genomic data (call rate >0.90, MAF >0.05)

- Impute missing genotypes using reference panels

- Correct pedigree errors based on genomic information [26]

Model Implementation:

Validation:

- Partition population into training and validation sets

- Calculate validation statistics for GEBVs

- Assess accuracy as correlation between predicted and actual values

- Evaluate bias as the difference between the mean predicted and mean actual values (target = 0)

- Measure dispersion as the slope of the regression of actual on predicted values (target = 1.0) [23]

Scenario Testing:

- Test different genotyping strategies (random, highest EBV, highest phenotypic values)

- Evaluate impact of pedigree error rates (0-20%)

- Assess effect of different proportions of animals genotyped [23]
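The validation statistics can be sketched using the partial-versus-whole comparison of the linear regression (LR) validation method. In that method, predictions for validation animals from a reduced (partial) dataset are compared with predictions from the whole dataset. The sketch assumes the LR convention in which bias is the mean difference (expected 0), dispersion is the slope of whole-data predictions regressed on partial-data predictions (expected 1, with values below 1 indicating over-dispersion), and the partial/whole correlation summarizes consistency. The function name and values are illustrative.

```python
import numpy as np


def lr_statistics(u_partial, u_whole):
    """LR-style validation statistics for the validation individuals.

    u_partial: predictions from the reduced (partial) data
    u_whole:   predictions from the whole data"""
    u_p = np.asarray(u_partial, dtype=float)
    u_w = np.asarray(u_whole, dtype=float)
    bias = u_p.mean() - u_w.mean()                             # expected 0
    dispersion = np.cov(u_p, u_w)[0, 1] / np.var(u_p, ddof=1)  # slope of u_w on u_p
    correlation = np.corrcoef(u_p, u_w)[0, 1]                  # partial vs whole
    return {"bias": float(bias), "dispersion": float(dispersion),
            "correlation": float(correlation)}


u_p = np.array([-1.0, -0.5, 0.0, 0.5, 1.0, 1.5])
u_w = 0.9 * u_p + 0.1  # illustrative: whole-data values shrunk and shifted
stats = lr_statistics(u_p, u_w)
print(stats)
```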

Research Reagent Solutions

Table 3: Essential Research Materials and Tools

| Tool/Reagent | Specific Examples | Function/Purpose |
|---|---|---|
| Genotyping Arrays | Illumina OvineSNP50 BeadChip, Axiom Ovine 60K, GeneSeek GGP | Genomic variant detection for breeding value prediction [26] |
| Statistical Software | BLUPF90 family, R packages (AlphaSimR), Python scikit-learn | Model implementation, variance component estimation, bias assessment [23] |
| Data Quality Tools | Seekparentf90, FImpute v3.0, preGSf90 | Pedigree error detection, genotype imputation, genomic data QC [26] |
| Bias Assessment Framework | Bias evaluation checklist, PROBAST, 3 central axes framework | Systematic identification of bias sources throughout model lifecycle [27] |
| Performance Metrics | Cllr (log-likelihood-ratio cost), MAE, MSE, ROC/AUC | Quantification of model accuracy, calibration, and discrimination [21] [24] |

Implementing ELUB and LR Methods: Practical Algorithms and Use Cases

Step-by-Step Guide to Data Truncation for Validation

Within empirical lower upper bound (ELUB) research, establishing robust and defensible bounds for data is paramount. Data truncation, as used here, means capping values at a specified range: values outside the range are replaced by the nearest bound rather than removed. It serves as a critical validation step to ensure that empirical observations remain within theoretically or empirically justified limits. This protocol outlines a standardized methodology for implementing data truncation, framed within the context of ELUB research for drug development. The procedures ensure data integrity, enhance the reliability of statistical models, and support regulatory compliance by providing a clear, auditable trail for handling boundary data [29].

Scope and Application

This document provides application notes and detailed protocols for data truncation. It is intended for researchers, scientists, and data professionals in pharmaceutical development and related fields where ELUB methods are applied to validate data ranges for critical parameters, such as drug concentration levels, physiological measurements, or assay results. The guide covers truncation logic, implementation workflows, and validation procedures [29].

Pre-Truncation Data Assessment

Defining Truncation Boundaries

The first step involves establishing the Lower Bound (LB) and Upper Bound (UB) for the dataset. These bounds must be justified empirically from historical data, through theoretical models, or by physiological and pharmacological constraints (e.g., a percentage cannot exceed 100%, or a concentration must lie within the assay's limits of quantification). In ELUB research, these bounds represent the empirical limits under investigation [29].

Data Quality Checks

Before truncation, perform foundational data validation checks to understand the dataset's profile and identify potential outliers [30] [29].

Table 1: Pre-Truncation Data Quality Checks

| Check Type | Description | ELUB Relevance |
|---|---|---|
| Data Type Validation | Ensures each field contains the expected data type (e.g., numeric, text). | Confirms data is suitable for numerical bound comparisons. |
| Range & Boundary Check | Identifies values that fall outside predefined, plausible limits. | Provides the initial list of values requiring truncation analysis. |
| Completeness Check | Verifies that mandatory fields are not null or empty. | Incomplete data can skew the determination of valid bounds. |
| Data Profiling | Analyzes the dataset to understand value distributions, patterns, and anomalies. | Informs the empirical justification for the selected LB and UB. |

Data Truncation Protocol

Truncation Logic and Algorithm

The core truncation operation is a conditional transformation applied to each data point. For a given variable $x$ with lower bound $LB$ and upper bound $UB$, the truncated value $x'$ is defined as: $$ x' = \begin{cases} LB & \text{if } x < LB \\ x & \text{if } LB \leq x \leq UB \\ UB & \text{if } x > UB \end{cases} $$
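The piecewise rule above is elementwise clipping. The minimal NumPy sketch below also returns the flags needed later for the flag audit and impact analysis. The function name is hypothetical, and the example bounds are illustrative values borrowed from the pharmacokinetic example in this section. Missing values (NaN) pass through unchanged and unflagged, so they should be caught by the completeness check rather than by truncation.

```python
import numpy as np


def truncate(values, lb, ub):
    """Cap values to [lb, ub]; return the capped values and boolean flags
    marking records that were set to LB and to UB."""
    if lb > ub:
        raise ValueError("LB must not exceed UB")
    x = np.asarray(values, dtype=float)
    flag_lb = x < lb  # NaN compares False, so NaNs are never flagged
    flag_ub = x > ub
    return np.clip(x, lb, ub), flag_lb, flag_ub


capped, at_lb, at_ub = truncate([-0.5, 0.1, 45.0, 165.0], lb=0.1, ub=150.0)
print(capped, at_lb, at_ub)
```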

Implementation Workflow

The following diagram illustrates the end-to-end workflow for data truncation and validation, from bound definition to final output.

Experimental Protocol for Truncation Validation

This protocol validates the truncation process to ensure it performs as intended without corrupting the dataset.

Aim: To verify that the data truncation procedure correctly limits values to the specified LB and UB, accurately flags modified records, and maintains dataset integrity.

Methodology:

- Test Dataset Creation: Generate a synthetic dataset containing known values below, within, and above the target LB and UB.

- Process Execution: Run the truncation algorithm on the test dataset.

- Output Validation:

- Record Count Reconciliation: Verify that the total number of records remains unchanged post-truncation.

- Value Inspection: Manually check that values below LB are set to LB, values above UB are set to UB, and intermediate values are unaltered.

- Flag Audit: Confirm that the flagging system correctly identifies all truncated records.

Table 2: Validation Metrics and Acceptance Criteria

| Metric | Measurement Method | Acceptance Criterion |
|---|---|---|
| Data Integrity | Record count comparison between source and output. | 100% record count match. |
| Truncation Accuracy | Inspection of values known to be outside bounds. | All values beyond LB/UB are correctly replaced. |
| Flagging Accuracy | Audit of the flagging column against the list of known out-of-bound values. | 100% of truncated records are flagged. |
| Performance | Execution time for the truncation process on a dataset of specified size. | Process completes within the required time window. |
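The validation protocol and the first three acceptance criteria can be automated as a small harness. This minimal sketch assumes the truncation step under test returns the capped values plus LB and UB flags. `validate_truncation` and `clip_with_flags` are hypothetical names, and the performance criterion is not covered. Passing the implementation under test as a parameter lets the same harness run against each new version of the truncation script, for example inside a unit-test suite.

```python
import numpy as np


def validate_truncation(truncate_fn, lb, ub, n=1000, seed=0):
    """Run the integrity, accuracy, and flagging checks on synthetic data.

    truncate_fn(values, lb, ub) must return (capped, flag_lb, flag_ub)."""
    rng = np.random.default_rng(seed)
    width = ub - lb
    # Synthetic values below, within, and above the bounds (known by construction)
    below = rng.uniform(lb - width, lb, size=n // 4)
    inside = rng.uniform(lb, ub, size=n // 2)
    above = rng.uniform(ub, ub + width, size=n - n // 4 - n // 2)
    source = np.concatenate([below, inside, above])
    known_lb, known_ub = source < lb, source > ub
    untouched = ~(known_lb | known_ub)
    capped, flag_lb, flag_ub = truncate_fn(source, lb, ub)
    return {
        "record_count_match": capped.size == source.size,
        "truncation_accuracy": bool(np.all(capped[known_lb] == lb)
                                    and np.all(capped[known_ub] == ub)),
        "inside_unchanged": bool(np.array_equal(capped[untouched], source[untouched])),
        "flagging_accuracy": bool(np.array_equal(flag_lb, known_lb)
                                  and np.array_equal(flag_ub, known_ub)),
    }


def clip_with_flags(values, lb, ub):
    """Implementation under test (illustrative)."""
    x = np.asarray(values, dtype=float)
    return np.clip(x, lb, ub), x < lb, x > ub


report = validate_truncation(clip_with_flags, lb=0.1, ub=150.0)
print(report)
```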

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Data Validation

| Item | Function in Protocol |
|---|---|
| Data Profiling Tool (e.g., Great Expectations, custom Python/Pandas scripts) | Performs initial data assessment, identifies current value ranges, and detects anomalies. Informs the empirical setting of LB and UB. |
| Truncation Algorithm Script (e.g., Python, R, SQL) | The core code that implements the truncation logic, transforming the data based on the defined bounds. |
| Validation Framework (e.g., dbt tests, unit tests in Python) | Automated scripts that run the checks outlined in the experimental protocol to validate the output. |
| Version Control System (e.g., Git) | Tracks changes to both the data and the truncation algorithms, ensuring reproducibility and auditability. |
| Metadata Repository | Documents the justification for LB/UB, the truncation rules, and the results of validation checks, which is critical for regulatory compliance. |

Post-Truncation Analysis and Documentation

Impact Analysis

After truncation, analyze the impact on the dataset. Key metrics to report include:

- The number and percentage of records truncated at the lower bound.

- The number and percentage of records truncated at the upper bound.

- Summary statistics (e.g., mean, standard deviation) of the variable before and after truncation to quantify the effect [30] [29].
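The impact metrics listed above can be computed as in this minimal sketch. It assumes the pre- and post-truncation values are aligned record by record. The function name and the five example values are hypothetical.

```python
import numpy as np


def impact_summary(before, after, lb, ub):
    """Counts and percentages truncated at each bound, plus before/after mean and SD."""
    before = np.asarray(before, dtype=float)
    after = np.asarray(after, dtype=float)
    n = before.size
    n_lb = int(np.sum(before < lb))
    n_ub = int(np.sum(before > ub))
    return {
        "n": n,
        "truncated_at_LB": (n_lb, 100.0 * n_lb / n),
        "truncated_at_UB": (n_ub, 100.0 * n_ub / n),
        "mean_before": float(before.mean()),
        "mean_after": float(after.mean()),
        "sd_before": float(before.std(ddof=1)),
        "sd_after": float(after.std(ddof=1)),
    }


before = np.array([-0.5, 10.0, 45.0, 80.0, 165.0])
after = np.clip(before, 0.1, 150.0)
summary = impact_summary(before, after, lb=0.1, ub=150.0)
print(summary)
```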

Table 4: Example Post-Truncation Summary for a Pharmacokinetic Parameter (e.g., Cmax)

| Statistic | Pre-Truncation | Post-Truncation |
|---|---|---|
| n | 10,000 | 10,000 |
| Lower Bound (LB) | - | 0.1 ng/mL |
| Upper Bound (UB) | - | 150.0 ng/mL |
| Minimum | -0.5 ng/mL | 0.1 ng/mL |
| Maximum | 165.0 ng/mL | 150.0 ng/mL |
| Mean | 45.2 ng/mL | 44.8 ng/mL |
| Records Truncated at LB | - | 15 (0.15%) |
| Records Truncated at UB | - | 28 (0.28%) |

Audit Log and Documentation

Maintain a comprehensive audit log of the truncation process. This is a cornerstone of ELUB research and regulatory compliance [30] [29]. The following diagram outlines the critical information relationships that must be documented.

Documentation must include:

- Bound Justification: The empirical, theoretical, or protocol-defined rationale for the selected LB and UB.

- Algorithm Specification: The exact code or logic used to perform the truncation, including its version.

- Input/Output Profiles: Summary statistics of the data before and after the process.

- Impact Analysis: The report detailing the number of records altered and the effect on overall dataset statistics.

- Audit Log: A single, traceable record linking all the above elements, with timestamps and user identifiers.
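A single audit-log entry can be sketched as a JSON record. This minimal sketch assumes SHA-256 fingerprints of the serialized input and output data are used to link the record to exact data versions. The field names, justification text, and user and version identifiers are hypothetical. In practice the record would be written to the metadata repository and versioned alongside the truncation code.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_of(values):
    """Fingerprint a list of numbers so input/output versions can be traced."""
    return hashlib.sha256(json.dumps(values).encode("utf-8")).hexdigest()


def audit_record(before, after, lb, ub, justification, algorithm_version, user):
    """One traceable record linking bounds, algorithm, data fingerprints, and impact."""
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "bounds": {"LB": lb, "UB": ub, "justification": justification},
        "algorithm_version": algorithm_version,
        "input_sha256": sha256_of(before),
        "output_sha256": sha256_of(after),
        "n_records": len(before),
        "n_truncated_LB": sum(v < lb for v in before),
        "n_truncated_UB": sum(v > ub for v in before),
    }


before = [-0.5, 10.0, 165.0]
after = [min(max(v, 0.1), 150.0) for v in before]
record = audit_record(
    before, after, 0.1, 150.0,
    justification="LB = assay LLOQ; UB = protocol-defined maximum",  # hypothetical
    algorithm_version="truncate.py@abc1234",                          # hypothetical
    user="analyst_01",                                                # hypothetical
)
print(json.dumps(record, indent=2))
```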

Algorithmic Implementation of Near-Optimal Bilevel Optimization

Bilevel optimization addresses hierarchical decision-making problems where the upper-level (leader) optimization is constrained by the optimal solution of a lower-level (follower) problem. This framework naturally models numerous scientific and industrial applications, from drug development and hyperparameter tuning to economic policy design. The empirical lower upper bound (ELUB) research provides a methodological foundation for analyzing the theoretical and practical limits of these algorithms, establishing performance boundaries that guide computational implementations.

Near-optimal bilevel optimization specifically addresses scenarios where the lower-level solution may deviate from strict optimality due to computational constraints, bounded rationality, or practical implementation limitations. This approach incorporates robustness against such deviations, ensuring upper-level feasibility and performance stability when the lower-level solution is ε-optimal rather than perfectly optimal. Within the ELUB research context, this framework enables researchers to quantify the trade-offs between solution accuracy, computational efficiency, and implementation robustness across diverse application domains, particularly in pharmaceutical development where such trade-offs have significant practical implications.

Theoretical Foundations

Problem Formulations and Near-Optimality Concepts

The general bilevel optimization problem is classically formulated as:

Upper-level problem: $$ \min_{x} F(x,v) $$ subject to: $$ G_k(x,v) \le 0 \quad \forall k \in [\![ m_u ]\!] $$ $$ x \in \mathcal{X} $$

Lower-level problem: $$ v \in \mathop{\mathrm{arg\,min}}\limits_{y \in \mathcal{Y}} \left\{ f(x,y) \ \text{s.t.}\ g_i(x,y) \le 0 \ \forall i \in [\![ m_l ]\!] \right\} $$

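where $F, f: \mathcal{X} \times \mathcal{Y} \rightarrow \mathbb{R}$ represent the upper- and lower-level objective functions, respectively, $G_k$ and $g_i$ are the upper- and lower-level constraint functions, and $[\![ m ]\!] = \{1, \dots, m\}$ [31].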

The near-optimal robust bilevel (NRB) problem introduces a robustness concept protecting the upper-level solution from limited deviations at the lower level. The near-optimality set for a given upper-level decision $x$ and tolerance $\varepsilon \geq 0$ is defined as: $$ \mathcal{S}(x, \varepsilon) = \left\{ y \in \mathcal{Y} : g_i(x,y) \le 0 \ \forall i \in [\![ m_l ]\!], \ f(x,y) \le \phi(x) + \varepsilon \right\} $$ where $\phi(x) = \min_{y \in \mathcal{Y}} \left\{ f(x,y) : g_i(x,y) \le 0 \ \forall i \in [\![ m_l ]\!] \right\}$ is the optimal value function of the lower-level problem [31].

This formulation acknowledges that in practical applications, including pharmaceutical portfolio optimization and adaptive therapy scheduling, followers may exhibit $\varepsilon$-rationality rather than perfect optimization due to computational limitations, incomplete information, or satisficing behavior.

ELUB Theoretical Framework

The empirical lower upper bound methodology establishes performance boundaries for bilevel optimization algorithms through two complementary kinds of results: impossibility results (lower bounds) and algorithmic achievability results (upper bounds). For bilevel empirical risk minimization whose objectives have a sum structure over $n+m$ total samples, recent theoretical work shows that $\mathcal{O}((n+m)^{1/2}\epsilon^{-1})$ oracle calls suffice to reach $\epsilon$-stationarity, and that this bound is tight [10].

For zeroth-order stochastic bilevel optimization, where only noisy function evaluations are available, recent methods achieve near-optimal sample complexity. Jacobian/Hessian-based approaches attain $\mathcal{O}(d^3/\epsilon^2)$ sample complexity, whereas penalty-based methods improve this to $\mathcal{O}(d/\epsilon^2)$, reducing the dependence on the dimension $d$ from cubic to linear while retaining the optimal $\epsilon^{-2}$ dependence on accuracy [32].
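The bounds above describe scaling, not exact costs. The sketch below evaluates them with every hidden constant and logarithmic factor set to 1 (an assumption), which is enough to see, for instance, that the gap between the two zeroth-order rates grows as $d^2$. The function names and example sizes are hypothetical.

```python
import math


def erm_bilevel_oracle_calls(n: int, m: int, eps: float) -> float:
    """Order of O((n + m)^{1/2} * eps^{-1}) oracle calls, with constants set to 1."""
    return math.sqrt(n + m) / eps


def zo_bilevel_samples(d: int, eps: float, penalty_based: bool) -> float:
    """Order of O(d / eps^2) (penalty-based) or O(d^3 / eps^2) (Jacobian/Hessian-based)."""
    return (d if penalty_based else d ** 3) / eps ** 2


# Illustrative sizes only: these numbers show growth rates, not real oracle counts.
print(erm_bilevel_oracle_calls(n=990_000, m=10_000, eps=1e-2))
for d in (10, 100, 1000):
    ratio = zo_bilevel_samples(d, 0.1, False) / zo_bilevel_samples(d, 0.1, True)
    print(d, ratio)  # approximately d^2, up to floating-point rounding
```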

In differentially private bilevel optimization, novel algorithms achieve near-optimal excess empirical risk bounds that essentially match optimal rates for standard single-level differentially private ERM, up to additional terms capturing the intrinsic complexity of the nested bilevel structure [33].

Algorithmic Approaches

Near-Optimal Robust Formulations

The near-optimal robust bilevel problem can be formulated as: $$ \min_{x} \sup_{y \in \mathcal{S}(x, \varepsilon)} F(x,y) $$ subject to: $$ G_k(x,y) \le 0 \quad \forall y \in \mathcal{S}(x, \varepsilon), \ k \in [\![m_u]\!] $$ $$ x \in \mathcal{X} $$

This pessimistic formulation ensures that the upper-level constraints hold for every lower-level response that is $\varepsilon$-close to optimal [31]. In the optimistic case, where the lower level cooperates by choosing the near-optimal response most favorable to the leader, the "sup" operator is replaced with "inf".
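On small problems, both variants can be evaluated by brute force, which is useful as a reference when testing a reformulation. The sketch below assumes finite grids for $x$ and $y$ (with the lower-level constraints already encoded in the $y$ grid) and omits the upper-level constraints $G_k$ for brevity; the function name `nrb_value` and the toy objectives are hypothetical.

```python
from typing import Callable, Sequence


def nrb_value(
    xs: Sequence[float],
    ys: Sequence[float],
    F: Callable[[float, float], float],
    f: Callable[[float, float], float],
    eps: float,
    pessimistic: bool = True,
) -> tuple[float, float]:
    """Brute-force the near-optimal bilevel problem over non-empty finite grids.

    Returns (best upper-level value, best x). Upper-level constraints G_k are omitted.
    """
    best_val, best_x = float("inf"), xs[0]
    for x in xs:
        phi = min(f(x, y) for y in ys)                 # lower-level optimal value phi(x)
        S = [y for y in ys if f(x, y) <= phi + eps]    # near-optimal set S(x, eps)
        # Pessimistic: worst near-optimal response (sup); optimistic: best one (inf).
        vals = [F(x, y) for y in S]
        val = max(vals) if pessimistic else min(vals)
        if val < best_val:
            best_val, best_x = val, x
    return best_val, best_x


# Hypothetical toy problem: the lower level tracks y = x, the leader prefers x = 1, y = 1.5.
grid = [i / 20 for i in range(41)]
F = lambda x, y: (x - 1) ** 2 + (y - 1.5) ** 2
f = lambda x, y: (y - x) ** 2
for eps in (0.0, 0.05):
    print(eps, nrb_value(grid, grid, F, f, eps), nrb_value(grid, grid, F, f, eps, False))
```

Because $\mathcal{S}(x, \varepsilon)$ only grows with $\varepsilon$, the pessimistic value can only rise and the optimistic value can only fall relative to the $\varepsilon = 0$ solution, which is a quick sanity check for any exact reformulation.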

When the lower-level problem is convex, the NRB problem can be reformulated as a single-level optimization problem using duality theory. For linear bilevel problems, in which both levels have linear objectives and constraints, an extended formulation can be derived that uses disjunctive constraints to linearize the resulting bilinear terms [31].
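As a sketch of where these bilinear terms come from, assume a linear lower level $\min_{y} \{ c^\top y : A x + B y \le b \}$ with $\mathcal{Y} = \mathbb{R}^{n_y}$ (any bounds on $y$ absorbed into $B y \le b - A x$) and one linear upper-level constraint $G_k(x,y) = h_k^\top x + q_k^\top y - r_k$. The symbols $A, B, b, c, h_k, q_k, r_k, \alpha_k, \beta_k$ are introduced here for illustration and are not taken from [31]. Provided $\mathcal{S}(x, \varepsilon)$ is nonempty, the robust constraint $G_k(x,y) \le 0 \ \forall y \in \mathcal{S}(x, \varepsilon)$ states that an inner linear program has a bounded worst case:

$$ \max_{y} \{ q_k^\top y : B y \le b - A x, \ c^\top y \le \phi(x) + \varepsilon \} \le r_k - h_k^\top x $$

By linear programming strong duality, this holds if and only if there exist multipliers $\alpha_k \ge 0$ and $\beta_k \ge 0$ such that

$$ B^\top \alpha_k + \beta_k c = q_k, \qquad \alpha_k^\top (b - A x) + \beta_k \left( \phi(x) + \varepsilon \right) \le r_k - h_k^\top x $$

The products $\alpha_k^\top A x$ and $\beta_k \phi(x)$, with $\phi(x)$ itself expressed through the lower-level optimality conditions, are bilinear in the decision variables; these are the kind of terms that the disjunctive extended formulation linearizes.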

Algorithm Classes and Performance Bounds

Table 1: Algorithmic Approaches for Near-Optimal Bilevel Optimization

| Algorithm Class | Key Mechanism | Theoretical Guarantees | Applicable Context |
|---|---|---|---|
| SARAH-based Bilevel [10] | Variance reduction for gradient estimation | $\mathcal{O}((n+m)^{1/2}\epsilon^{-1})$ oracle calls for $\epsilon$-stationarity | Bilevel empirical risk minimization |
| Zeroth-Order Penalty [32] | Penalty function reformulation with Gaussian smoothing | $\mathcal{O}(d/\epsilon^2)$ sample complexity | Stochastic bilevel with noisy evaluations |
| Differentially Private [33] | Exponential and regularized exponential mechanisms | Near-optimal excess empirical risk bounds | Privacy-sensitive bilevel applications |
| Near-Optimal Robust [31] | Duality-based reformulation | Protection against $\varepsilon$-deviations at lower level | Applications with bounded rationality |

Computational Implementation Workflow

Figure 1: Computational workflow for near-optimal bilevel optimization implementation

Applications in Pharmaceutical Research

R&D Project Portfolio Optimization

In pharmaceutical holding companies, R&D project portfolio optimization naturally fits a bilevel structure with multi-follower dynamics. The upper-level investment company allocates budgets across subsidiaries to maximize overall profit, while each subsidiary (lower-level) responds to its allocated budget by selecting and scheduling its optimal project portfolio [34].

The near-optimal robust formulation is particularly valuable in this context, as it protects the holding company's strategy against subsidiaries selecting projects that are near-optimal rather than strictly optimal from their local perspective. This accommodates practical decision-making where subsidiaries might prioritize projects based on secondary criteria not captured in the formal optimization model.

The resulting bi-level multi-follower mixed-integer optimization model can be converted to a single-level equivalent using parametric optimization approaches, enabling computational solution while preserving the hierarchical relationship [34].

Adaptive Cancer Therapy Optimization

Bilevel optimization provides a mathematical foundation for designing adaptive therapeutic schedules that combat drug resistance in metastatic castrate-resistant prostate cancer (mCRPC). The upper-level problem designs treatment schedules to maximize therapeutic efficacy, while the lower-level models cancer cell dynamics and evolution under treatment pressure [35].

The proposed optimal adaptive periodic therapy framework formulates a bilevel dynamic optimization problem with constraints to establish personalized adaptive therapeutic schedules. The solution identifies optimal therapeutic switches and doses under adaptive therapy, demonstrating superior performance compared to conventional maximum tolerated dose approaches through improved overall survival and reduced total drug doses [35].

This application exemplifies how near-optimal bilevel optimization can capture the dynamic interplay between treatment intervention and biological adaptation, with the near-optimality tolerance reflecting uncertainties in cancer cell dynamics and drug response mechanisms.

Experimental Protocols

Protocol 1: Near-Optimal Robust Bilevel Algorithm Implementation

Objective: Implement and validate a near-optimal robust bilevel optimization algorithm for a pharmaceutical R&D portfolio application.

Materials and Computational Environment:

- MATLAB (v2023a) or Python (v3.9+) with NumPy, SciPy

- Mixed-integer linear programming solver (e.g., Gurobi, CPLEX)

- Standard computing workstation (16GB RAM, multi-core processor)

Procedure:

Problem Formulation:

- Define upper-level objective: Maximize expected portfolio value subject to budget constraints

- Define lower-level objectives: Individual subsidiary profit maximization given allocated budget

- Specify linking constraints: Budget allocation variables appear in lower-level constraints

Near-Optimal Tolerance Setting:

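- Set the tolerance to a 5% optimality gap (ε = 0.05) based on historical decision variance analysis; because this gap is relative, use ε|φ(x)| in place of ε in the definition of $\mathcal{S}(x, \varepsilon)$ unless the lower-level objectives are normalized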

- Define near-optimal set $\mathcal{S}(x, \varepsilon)$ using lower-level objective tolerance

Reformulation:

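- Replace each lower-level problem with its Karush-Kuhn-Tucker conditions (exact only when the lower-level problem is convex, such as a continuous linear program; KKT conditions do not characterize optimality for integer lower-level variables)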

- Linearize complementarity conditions using big-M constraints

- Implement disjunctive constraints for near-optimal robust protection
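For a continuous linear lower level, written here (as an assumption for this sketch) as $\min_y \{ c^\top y : A x + B y \le b \}$ with profit maximization expressed by negating the objective, the KKT system and its big-M linearization read:

$$ c + B^\top \lambda = 0, \qquad \lambda \ge 0, \qquad b - A x - B y \ge 0 $$

$$ \lambda_i \le M z_i, \qquad (b - A x - B y)_i \le M (1 - z_i), \qquad z_i \in \{0, 1\} \quad \forall i $$

Each binary $z_i$ selects which factor of the complementarity condition $\lambda_i (b - A x - B y)_i = 0$ may be nonzero. The constant $M$ must upper-bound both the multipliers and the slacks at the solutions of interest: a value that is too small can cut off the true lower-level optimum, while one that is too large weakens the LP relaxation.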

Solution Algorithm:

- Initialize budget allocation $x^0$ uniformly across subsidiaries

- For iteration $k = 0, 1, 2, \ldots$ until convergence:

  - Solve the lower-level problems for all subsidiaries given $x^k$

  - Identify near-optimal lower-level responses within the ε-tolerance

  - Update the upper-level solution to $x^{k+1}$, protecting against the worst-case near-optimal responses

  - Check convergence: stop when $\|x^{k+1} - x^k\| < 10^{-4}$

Validation:

- Compare solution with standard bilevel approach (ε=0)

- Perform sensitivity analysis on ε parameter

- Validate constraint satisfaction under near-optimal lower-level responses
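The validation step (ε = 0 versus ε > 0) can be rehearsed on an instance small enough to enumerate. The sketch below swaps in brute-force enumeration for the KKT/big-M reformulation and the iterative scheme, uses a pessimistic holding company, and interprets the tolerance as a relative gap. The project data, the two subsidiaries, and all function names are hypothetical.

```python
from itertools import combinations

# Hypothetical instance (illustrative numbers, not from any study):
# each project is (cost, subsidiary profit, value to the holding company).
PROJECTS = {
    "A": [(3, 5.8, 4.0), (4, 6.0, 7.0), (5, 7.5, 5.0)],
    "B": [(2, 3.0, 3.5), (3, 4.2, 2.0), (6, 8.0, 9.0)],
}


def affordable_portfolios(projects, budget):
    """Yield every subset of projects whose total cost fits the allocated budget."""
    for r in range(len(projects) + 1):
        for combo in combinations(projects, r):
            if sum(p[0] for p in combo) <= budget:
                yield combo


def worst_case_value(projects, budget, gap):
    """Holding-company value of the worst near-optimal response of one subsidiary.

    A portfolio is near-optimal if its profit is within a relative `gap` of the
    subsidiary's best achievable profit (gap=0 keeps only exact optima).
    """
    options = list(affordable_portfolios(projects, budget))
    best = max(sum(p[1] for p in c) for c in options)
    near = [c for c in options if sum(p[1] for p in c) >= best - gap * abs(best)]
    return min(sum(p[2] for p in c) for c in near)


def robust_allocation(total_budget, gap):
    """Enumerate integer budget splits and maximize the guaranteed (worst-case) value."""
    best_split, best_val = None, float("-inf")
    for a in range(total_budget + 1):
        val = (worst_case_value(PROJECTS["A"], a, gap)
               + worst_case_value(PROJECTS["B"], total_budget - a, gap))
        if val > best_val:
            best_split, best_val = (a, total_budget - a), val
    return best_split, best_val


for gap in (0.0, 0.05):  # standard bilevel (epsilon = 0) vs. 5% near-optimal robust
    print(gap, robust_allocation(10, gap))
```

With these made-up numbers, the standard split (4, 6) is worth 16, but once 5% near-optimal subsidiary responses are admitted the guaranteed value falls to 13 (several splits tie at 13; the loop returns the first, (3, 7)). Gaps of this kind are what the sensitivity analysis on ε is meant to expose.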

Protocol 2: Zeroth-Order Bilevel Optimization for Clinical Trial Planning

Objective: Implement zeroth-order bilevel optimization for clinical trial planning with noisy outcome evaluations.

Materials:

- Python with TensorFlow or PyTorch for automatic differentiation

- Clinical trial simulation framework

- Gaussian smoothing parameters: $\mu = 0.01$, $\delta = 0.001$

Procedure:

Problem Setup:

- Upper-level: Optimize trial design parameters (dosage, frequency, patient selection)

- Lower-level: Model patient response and disease progression dynamics

Zeroth-Order Gradient Estimation:

- For iteration $t = 0, 1, 2, \ldots, T$:

  - Sample a random direction $d_t \sim \mathcal{N}(0, I)$

  - Estimate the gradient: $\hat{\nabla}F(x_t) = \frac{F(x_t + \mu d_t) - F(x_t - \mu d_t)}{2\mu} d_t$

  - Update the parameters: $x_{t+1} = x_t - \eta_t \hat{\nabla}F(x_t)$
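The estimation loop above can be sketched with NumPy. The sketch treats the upper-level objective as a black box $F(x)$ (standing for $F(x, y^*(x))$), uses the smoothing radius μ = 0.01 from the Materials list, and assumes a constant step size in place of $\eta_t$; the function names, step size, iteration count, and the noisy quadratic test objective are hypothetical.

```python
import numpy as np


def zo_gradient(F, x, mu, rng):
    """Two-point Gaussian-smoothing gradient estimate of F at x along one random direction."""
    d = rng.standard_normal(x.shape)  # d_t ~ N(0, I)
    return (F(x + mu * d) - F(x - mu * d)) / (2.0 * mu) * d


def zo_descent(F, x0, mu=0.01, eta=0.05, T=2000, seed=0):
    """Iterate x_{t+1} = x_t - eta * grad_hat F(x_t) using only evaluations of F."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    for _ in range(T):
        x = x - eta * zo_gradient(F, x, mu, rng)
    return x


# Hypothetical noisy black-box objective standing in for F(x) = F(x, y*(x)).
target = np.array([1.0, -2.0, 0.5])
noise = np.random.default_rng(123)
F = lambda x: float(np.sum((x - target) ** 2) + 1e-4 * noise.standard_normal())
print(zo_descent(F, np.zeros(3)))  # should land close to `target`
```

For a quadratic objective the central difference is exact along $d_t$, so the estimate equals $(\nabla F \cdot d_t)\, d_t$, whose expectation is the true gradient; evaluation noise is amplified by $1/(2\mu)$, which is why μ cannot be made arbitrarily small.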

Penalty Function Implementation:

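- Reformulate the bilevel problem as a single-level problem: $\min_{x,y} F(x,y) + \lambda \|y - y^*(x)\|^2$, where $y^*(x)$ denotes the lower-level optimal response to $x$

- Adapt the penalty parameter λ during optimization, typically increasing it so that $y$ is driven toward $y^*(x)$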
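The effect of increasing λ is easiest to see on a toy problem where $y^*(x)$ is known in closed form (in practice it requires a lower-level solve). The sketch assumes the hypothetical problem $F(x,y) = (x-1)^2 + (y-2)^2$ with lower level $y^*(x) = \arg\min_y (y-x)^2 = x$, whose bilevel solution is $x = y = 1.5$. Because the penalized objective is quadratic, each penalized problem is solved exactly via its 2×2 stationarity system; the function name and λ schedule are illustrative.

```python
import numpy as np


def penalized_solution(lam: float) -> np.ndarray:
    """Exactly minimize (x-1)^2 + (y-2)^2 + lam*(y-x)^2 for the hypothetical toy problem.

    Setting the gradient to zero gives the linear system H @ [x, y] = rhs.
    """
    H = np.array([[2 + 2 * lam, -2 * lam],
                  [-2 * lam, 2 + 2 * lam]])
    rhs = np.array([2.0, 4.0])
    return np.linalg.solve(H, rhs)


for lam in [0.0, 1.0, 10.0, 100.0, 1000.0]:  # geometrically increased penalty
    x, y = penalized_solution(lam)
    print(f"lambda={lam:7.1f}  x={x:.4f}  y={y:.4f}  |y - y*(x)|={abs(y - x):.4f}")
```

Solving the system by hand gives $x = (1+3\lambda)/(1+2\lambda)$ and $|y - y^*(x)| = 1/(1+2\lambda)$, so the penalized iterates approach the bilevel solution only as λ grows; this is the trade-off that adaptive λ schedules manage.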

Performance Validation:

- Compare with model-based gradient approaches

- Assess sample complexity relative to theoretical bounds

- Evaluate robustness to noise in objective evaluations

Research Reagent Solutions

Table 2: Essential Computational Tools for Bilevel Optimization Research

| Tool Category | Specific Implementation | Research Function | Application Context |
|---|---|---|---|
| Optimization Solvers | Gurobi, CPLEX, GAMS | Solve reformulated single-level problems | Mixed-integer linear bilevel problems |
| Algorithmic Frameworks | BilevelOptim.jl, BOA | Implement gradient-based bilevel algorithms | Smooth convex bilevel problems |
| Automatic Differentiation | PyTorch, TensorFlow, JAX | Gradient computation for neural net embeddings | Modern ML-based bilevel applications |
| Simulation Environments | Simulink, AnyLogic | Lower-level system dynamics simulation | Engineering and biological applications |
| Benchmark Problems | BOLIB, QAPLIB | Algorithm validation and comparison | General bilevel optimization research |

Near-optimal bilevel formulations extend hierarchical decision models to settings, such as pharmaceutical R&D portfolio planning and adaptive therapy design, where the lower level cannot be assumed to respond with perfect optimality. The empirical lower upper bound research framework complements these formulations by characterizing both fundamental performance limits (lower bounds) and achievable algorithmic guarantees (upper bounds).

Future research directions include developing more efficient algorithms for non-convex bilevel problems, improving scalability for high-dimensional applications, and enhancing integration with machine learning approaches for settings where lower-level dynamics are partially unknown or computationally intractable. The continued refinement of near-optimal robust formulations will further bridge the gap between theoretical bilevel optimization and practical decision-making under uncertainty.

Calculating Predictivity and Reliability in Genomic Predictions

Within genomic selection, the accurate calculation of predictivity and reliability is paramount for evaluating the performance of breeding values and forecasting genetic gain. These metrics are intrinsically linked to the uncertainty quantification inherent in predictive models. This protocol details the application of computational methods, framed within the broader research on empirical lower upper bound (ELUB) methods, to determine these crucial parameters. The approaches outlined herein leverage deterministic modeling and cross-validation techniques to provide researchers with robust tools for assessing genomic prediction efficacy in plant and animal breeding programs, as well as in biomedical research involving complex traits [36] [37].

Computational Foundations

Definitions and Core Concepts

- Predictivity: Typically reported as the predictive ability, it is the correlation between the genomic estimated breeding values (GEBV) and the observed or corrected phenotypic values in a validation population. This serves as a direct measure of model performance [37].

- Reliability: Represents the squared accuracy of the GEBV, indicating the proportion of genetic variance explained by the predictions. It is calculated as the square of the correlation between the true and estimated breeding values and is crucial for long-term breeding program design [38] [36].

- Empirical Lower Upper Bound (ELUB) Context: The methods for calculating predictivity and reliability provide empirical bounds on the expected performance of genomic prediction models. The reliability, in particular, sets a lower bound on the confidence of selection decisions, while predictivity offers an upper bound on realizable accuracy from a given model and dataset [39] [36].
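The two definitions can be computed directly once GEBV, phenotypes, and (in simulation) true breeding values are available. The sketch below uses a hypothetical simulated validation population with heritability $h^2 = 0.5$ and GEBV equal to the true breeding value plus independent noise; the function names, sample size, and noise levels are assumptions for illustration only.

```python
import numpy as np


def predictivity(gebv: np.ndarray, phenotype: np.ndarray) -> float:
    """Predictive ability: Pearson correlation between GEBV and (corrected) phenotypes."""
    return float(np.corrcoef(gebv, phenotype)[0, 1])


def reliability(gebv: np.ndarray, tbv: np.ndarray) -> float:
    """Reliability: squared correlation between true and estimated breeding values."""
    return float(np.corrcoef(gebv, tbv)[0, 1] ** 2)


# Hypothetical simulated validation population (assumption, for illustration only).
rng = np.random.default_rng(42)
n, h2 = 5000, 0.5
tbv = rng.normal(0.0, np.sqrt(h2), n)                   # true breeding values
phenotype = tbv + rng.normal(0.0, np.sqrt(1 - h2), n)   # phenotypes with heritability h2
gebv = tbv + rng.normal(0.0, 0.5, n)                    # noisy genomic predictions

pa = predictivity(gebv, phenotype)
rel = reliability(gebv, tbv)
print(f"predictive ability = {pa:.3f}, reliability = {rel:.3f}")
```

Under this simulation's assumptions, the expected reliability is $0.5/0.75 \approx 0.667$ and the expected predictive ability is $0.5/\sqrt{0.75} \approx 0.577$. Because true breeding values are only available in simulation, field studies estimate reliability indirectly, for example from prediction error variances or deterministic formulas.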

Key Deterministic Parameters