Discriminatory Power in Analytical Method Validation: A Comprehensive Guide for Pharmaceutical Scientists

This article provides a complete overview of discriminatory power, a critical attribute in analytical method validation that ensures methods can detect meaningful changes in a drug product's critical quality attributes.

Abstract

This article provides a complete overview of discriminatory power, a critical attribute in analytical method validation that ensures methods can detect meaningful changes in a drug product's critical quality attributes. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, methodological applications across various dosage forms, troubleshooting strategies for common challenges, and validation approaches aligned with FDA, USP, and EMA guidelines. The content synthesizes current regulatory expectations with practical case studies from dissolution testing of solid oral dosage forms, fast-dispersible tablets, and complex formulations to equip professionals with the knowledge to develop, optimize, and validate robust, discriminative analytical methods.

What is Discriminatory Power? Defining the Cornerstone of Meaningful Analytical Methods

The Ability to Detect Changes in Critical Quality Attributes

Core Definition and Importance

In analytical method validation, discriminatory power is the capability of an analytical procedure to detect changes in the Critical Quality Attributes (CQAs) of a drug product. A CQA is a physical, chemical, biological, or microbiological property or characteristic that should be within an appropriate limit, range, or distribution to ensure the desired product quality [1]. These attributes are fundamental to guaranteeing that a drug is safe, efficacious, and consistent from batch to batch. The ability to measure them accurately is a cornerstone of modern pharmaceutical development and quality control [1].

The concept of discriminatory power is central to the development of meaningful and robust analytical methods. Without it, a method cannot reliably distinguish between acceptable and unacceptable product quality, nor can it ensure that changes in the manufacturing process that impact performance are detected [2] [3]. For complex dosage forms, such as suspensions or fast-dispersing tablets, developing a method with high discriminatory power is particularly challenging yet critical, as it often serves as a surrogate for in vivo performance and is vital for biowaiver approval of generic products [2].

Experimental Protocols for Demonstrating Discriminatory Power

The following section outlines detailed methodologies from case studies that successfully developed and validated discriminatory analytical methods.

Case Study 1: Discriminatory Release Method for an Otic Suspension

This study aimed to establish an in-vitro release method for dexamethasone in a ciprofloxacin-dexamethasone otic suspension that could discriminate based on key CQAs [2].

- Objective: To develop a discriminatory in-vitro release profile for dexamethasone using a flow-through cell apparatus (USP Type IV) [2].

- Materials:

- Drug Substance: Dexamethasone (Sanofi) [2].

- Apparatus: Flow-through cell dissolution apparatus (USP Type IV) with 7.4 pH simulated tear fluid as the dissolution medium. The system incorporated GF/F glass filters and a 5 mm ruby bead [2].

- Analysis: Dexamethasone release was quantified using a model-independent approach, with the similarity factor (f2) used for profile comparison [2].

- Methodology:

- Formulation Variations: Several formulations with intentional variations in CQAs were prepared:

- Particle Size: Five formulations with different dexamethasone particle sizes (D90 ranging from 1.75 µm to 142 µm) [2].

- Polymer Concentration: Formulations with varying hydroxyethyl cellulose concentration, leading to viscosities from 0.4 cP to 18.5 cP [2].

- pH: Formulations with adjusted pH (low pH 3.56 and high pH 4.81) [2].

- In-Vitro Release Testing: The release profile of each formulation was tested using the flow-through cell apparatus [2].

- Data Analysis: The similarity factor (f2) was calculated to compare the release profile of each modified formulation against the control profile. An f2 value between 50 and 100 suggests similarity, while values below 50 indicate a difference in release profiles, demonstrating the method's discriminatory power [2].
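The f2 comparison described in the Data Analysis step can be sketched as follows. This is a minimal illustration of the standard similarity-factor formula, f2 = 50·log10(100 / sqrt(1 + mean squared difference)), not the study's own software; the function name and the two profiles are hypothetical and do not come from [2].

```python
import math


def similarity_factor_f2(reference, test):
    """Return the f2 similarity factor for two release profiles.

    Both arguments are sequences of mean % released at the same time points.
    """
    if len(reference) != len(test) or len(reference) == 0:
        raise ValueError("profiles must be non-empty and share time points")
    n = len(reference)
    mean_sq_diff = sum((r - t) ** 2 for r, t in zip(reference, test)) / n
    return 50.0 * math.log10(100.0 / math.sqrt(1.0 + mean_sq_diff))


# Hypothetical mean % released at six common time points (not study data).
control = [12.0, 28.0, 45.0, 63.0, 78.0, 88.0]
coarse_particles = [5.0, 12.0, 22.0, 35.0, 48.0, 60.0]

f2 = similarity_factor_f2(control, coarse_particles)
verdict = "different" if f2 < 50 else "similar"
print(f"f2 = {f2:.1f} ({verdict})")
```

Identical profiles give f2 = 100, and an average difference of about 10 percentage points at every time point lands just under 50, which is why 50 is used as the cut-off.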

Case Study 2: Discriminatory Dissolution Method for Fast-Dispersible Tablets

This study developed a discriminatory dissolution method for Domperidone Fast Dispersible Tablets (FDTs), a Biopharmaceutics Classification System (BCS) Class II drug [3].

- Objective: To develop and validate a dissolution method capable of discriminating between different formulations of Domperidone FDTs, which disintegrate very rapidly [3].

- Materials:

- Drug Substance: Domperidone reference standard [3].

- Apparatus: USP Apparatus II (paddle), eight-station Electrolab TDT-08L dissolution tester [3].

- Dissolution Media: Various media were screened, including 0.1 N HCl, phosphate buffer (pH 6.8), and sodium lauryl sulfate (SLS) in distilled water at concentrations of 0.5%, 1.0%, and 1.5% [3].

- Methodology:

- Solubility and Sink Condition Studies: The equilibrium solubility of domperidone was determined in all candidate media. The sink condition was evaluated to ensure the medium's capacity to dissolve the drug was not excessively high, which could mask discrimination [3].

- Method Optimization: Dissolution studies were performed on two different marketed FDTs (FDT1 and FDT2) across the different media and at agitation speeds of 50 and 75 rpm [3].

- Discrimination Testing: The optimized method (0.5% SLS in distilled water, 900 mL, USP Apparatus II, 50 rpm) was used to test the release profiles of two prepared FDT formulations (DOM-1 and DOM-2) [3].

- Data Analysis: Dissolution profiles were compared using similarity (f2) and difference (f1) factors. The method was validated for specificity, accuracy, precision, linearity, and robustness [3].
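The difference factor (f1) used in the Data Analysis step can be sketched as below. It expresses the summed absolute difference between two profiles as a percentage of the summed reference values. The function name and the example profiles are hypothetical and are not the data reported in [3].

```python
def difference_factor_f1(reference, test):
    """Return the f1 difference factor for two dissolution profiles.

    Both arguments are sequences of mean % dissolved at the same time points.
    """
    if len(reference) != len(test) or len(reference) == 0:
        raise ValueError("profiles must be non-empty and share time points")
    total_reference = sum(reference)
    if total_reference == 0:
        raise ValueError("reference profile must contain non-zero values")
    abs_diff = sum(abs(r - t) for r, t in zip(reference, test))
    return 100.0 * abs_diff / total_reference


# Hypothetical mean % dissolved for two formulations (illustrative only).
formulation_a = [20.0, 40.0, 60.0, 80.0]
formulation_b = [18.0, 36.0, 54.0, 72.0]

f1 = difference_factor_f1(formulation_a, formulation_b)
print(f"f1 = {f1:.1f}")
```

Values of f1 from 0 to 15 are conventionally read as similar, so a discriminating method should push f1 above that range when the formulations genuinely differ.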

Data Presentation and Analysis

The following tables summarize the quantitative data from the cited experiments, demonstrating how discriminatory power is measured and confirmed.

Table 1: Discriminatory Power of In-Vitro Release Method for Dexamethasone Otic Suspension [2]

| Critical Quality Attribute (CQA) | Formulation Variation | Measured Value | f2 vs. Control | Interpretation |
|---|---|---|---|---|
| Particle Size (D90) | Control (F1) | 8.0 µm | (Baseline) | (Baseline) |
| | Smaller Particles (F2) | 0.464 µm | 64 | Faster release |
| | Larger Particles (F3) | 20.0 µm | 41 | Slower release |
| | Larger Particles (F4) | 52.0 µm | 22 | Much slower release |
| | Larger Particles (F5) | 142.0 µm | 14 | Slowest release |
| Polymer Concentration (Viscosity) | Control (F1) | 2.4 cP | (Baseline) | (Baseline) |
| | No Polymer (F6) | 0.4 cP | 83 | Enhanced release |
| | High Polymer (F7) | 18.5 cP | 47 | Reduced release |
| pH | Control (F1) | 4.18 | (Baseline) | (Baseline) |
| | Low pH (F8) | 3.56 | 61 | Marginal difference |
| | High pH (F9) | 4.81 | 83 | Marginal difference |
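As a reading aid, the f2 values in Table 1 can be screened against the f2 < 50 criterion stated in the Data Analysis step. The sketch below only re-uses the tabulated values; the variable names are illustrative.

```python
F2_SIMILARITY_THRESHOLD = 50

# f2 versus control (F1), transcribed from Table 1.
f2_vs_control = {
    "F2": ("Particle size, smaller", 64),
    "F3": ("Particle size, larger", 41),
    "F4": ("Particle size, larger", 22),
    "F5": ("Particle size, larger", 14),
    "F6": ("No polymer", 83),
    "F7": ("High polymer", 47),
    "F8": ("Low pH", 61),
    "F9": ("High pH", 83),
}

# Formulations whose profiles the method separates from the control.
discriminated = [
    formulation
    for formulation, (_, f2) in f2_vs_control.items()
    if f2 < F2_SIMILARITY_THRESHOLD
]

for formulation, (variation, f2) in f2_vs_control.items():
    status = "different" if f2 < F2_SIMILARITY_THRESHOLD else "similar"
    print(f"{formulation} ({variation}): f2 = {f2} -> {status}")
```

Under this criterion the larger-particle formulations (F3 to F5) and the high-polymer formulation (F7) are flagged as different from the control, while the remaining variations fall in the similar range.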

Table 2: Research Reagent Solutions for Discriminatory Dissolution Testing [2] [3]

| Reagent / Material | Function in the Experiment |
|---|---|
| Flow-Through Cell (USP Type IV) | Apparatus that simulates dynamic fluid conditions, prevents saturation, and offers high discriminatory power for complex formulations [2]. |
| Simulated Tear Fluid (pH 7.4) | Dissolution medium that mimics the physiological environment for otic suspensions [2]. |
| Sodium Lauryl Sulfate (SLS) | Surfactant used in dissolution media to modulate solubility and sink conditions, crucial for discriminating the release of poorly soluble drugs [3]. |
| Hydroxyethyl Cellulose | Viscosity-modifying polymer; its concentration is a CQA that impacts drug release rate [2]. |
| GF/F Glass Filter & Ruby Bead | Components used in the flow-through cell to hold the suspension and ensure uniform flow, respectively [2]. |

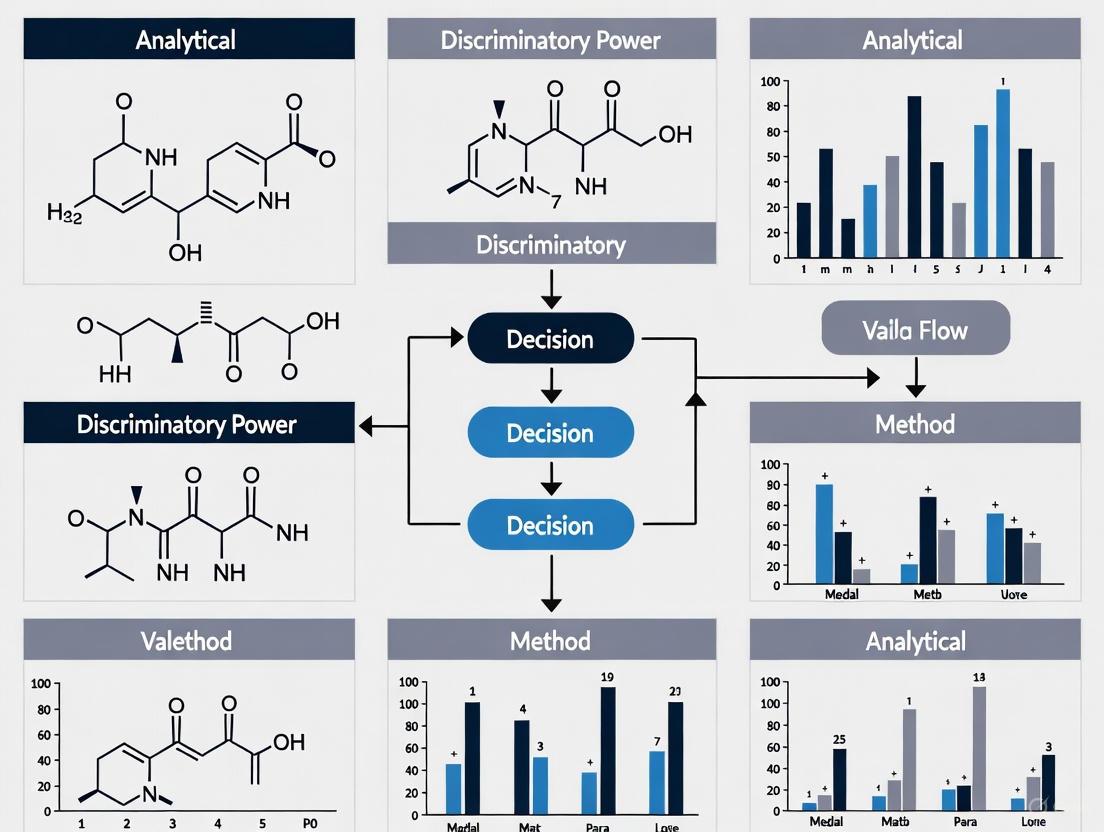

Visualization of Workflows and Relationships

The following diagrams, generated using Graphviz DOT language, illustrate the core logical relationships and experimental workflows in establishing discriminatory power.

Diagram 1: The Core Logic of Discriminatory Power.

Diagram 2: Experimental Workflow for Method Validation.

In the pharmaceutical industry, the quality of a drug product is not solely a function of its manufacturing process; it is intrinsically tied to the performance of the analytical methods used to measure its critical quality attributes (CQAs). Analytical method validation provides the scientific evidence that a test procedure is reliable, consistent, and suitable for its intended purpose, forming the bedrock of product quality assurance [4]. Without validated methods, the data generated to support a product's identity, strength, purity, and potency is questionable, introducing significant risk to patient safety and product efficacy.

This technical guide explores the pivotal link between method performance and product quality, with a specific focus on discriminatory power: the ability of an analytical method to detect changes in the product's performance characteristics. Discriminatory power is not merely a validation parameter; it is the core property that enables a method to function as a meaningful quality control tool, capable of guiding development, ensuring batch-to-batch consistency, and ultimately protecting patient health [5].

The Central Role of Discriminatory Power

Discriminatory power, also referred to as discriminative capacity, is the ability of an analytical procedure to detect differences in the quality attribute it is designed to measure when the product is intentionally or unintentionally altered. A method with high discriminatory power can distinguish between acceptable and unacceptable product, while a non-discriminatory method may fail to detect critical quality failures [5].

The development of a dissolution method for Carvedilol tablets, a BCS Class II drug, serves as a prime example. The objective was to create a method that could not only quantify drug release but also differentiate between formulations with different release characteristics. The researchers evaluated various conditions—including dissolution medium, volume, and paddle speed—to find a setup that was sensitive enough to reflect changes in the product's performance. The final optimized method (Apparatus II, 50 rpm, 900 ml of pH 6.8 phosphate buffer) successfully demonstrated its discriminative capacity by detecting significant differences in the dissolution profiles of three different commercial products [5]. This underscores that a method's value in quality control is directly proportional to its ability to discriminate.

The Consequences of Poor Discriminatory Power

A method lacking sufficient discriminatory power poses a substantial risk to the drug lifecycle:

- Inadequate Formulation Selection: During development, a non-discriminatory method cannot reliably guide scientists toward the optimal formulation, potentially leading to the selection of a suboptimal product with poor bioavailability or stability.

- Masking of Critical Process Changes: It may fail to detect the impact of scale-up and post-approval changes (SUPAC), such as changes in excipient suppliers or manufacturing equipment, allowing potentially detrimental variations to go unnoticed [5].

- Stability Failures: A method that cannot accurately track degradation over time may lead to an inaccurate shelf-life determination, risking the release of sub-potent or degraded product.

- Batch Release Failures: It provides a false sense of security, where a product passing a non-discriminatory test may still perform poorly in vivo, jeopardizing patient therapeutic outcomes.

A Case Study in Method Discrimination: Carvedilol Dissolution

The development of a discriminative dissolution method for Carvedilol tablets illustrates the practical application and critical importance of this concept [5].

Experimental Objectives and Design

The primary objective was to develop and validate a dissolution method capable of differentiating Carvedilol tablet formulations based on their in vitro release profiles. The study was conducted in two phases:

- Selection of Dissolution Conditions: Various conditions were screened using a commercial product (Product-A) to identify the most discriminative setup.

- Validation and Optimization: The selected method was validated and its discriminative power was confirmed by comparing dissolution profiles of tablets made with two different API particle sizes (API-I: d90 ~25.3 μm; API-II: d90 ~8.5 μm) and three different commercial products (Product-A, Product-B, and Product-C).

Materials and Research Reagent Solutions

Table 1: Key Materials and Reagents for Discriminative Dissolution Study

| Material/Reagent | Function in the Experiment |
|---|---|
| Carvedilol API (API-I & API-II) | Active Pharmaceutical Ingredient; difference in particle size used to challenge method discrimination. |
| USP Apparatus II (Paddle) | Standard equipment to simulate drug dissolution in the gastrointestinal tract. |
| pH 6.8 Phosphate Buffer | Dissolution medium providing sink conditions and relevant physiological pH. |
| Reverse Phase HPLC System | Analytical technique for precise quantification of dissolved Carvedilol. |
| LiChrospher 100 RP-18 Column | Stationary phase for chromatographic separation of Carvedilol. |
| 0.45 μm Membrane Filter | To remove undissolved particles from dissolution samples prior to HPLC analysis. |
| Mobile Phase: Buffer-Methanol-Acetonitrile | Liquid medium to carry the sample through the HPLC column for analysis. |

Detailed Methodology and Workflow

The following workflow outlines the key steps involved in developing and executing a discriminative dissolution study.

Key Experimental Steps [5]:

- Saturation Solubility: The saturation solubility of Carvedilol (API-II) was determined in triplicate in various media (0.1N HCl, SGF, pH 4.5 acetate buffer, pH 6.8 phosphate buffer, distilled water) by shaking an excess of drug at 37°C for 24 hours. The equilibrated samples were filtered and analyzed by HPLC to select a medium providing sink conditions.

- Dissolution Testing: Tests were performed using USP Apparatus II at 37±0.5°C. Aliquots (5 ml) were withdrawn at 5, 10, 15, 30, 45, 60, and 120 minutes and replaced with fresh medium. Samples were filtered and analyzed by a validated HPLC method.

- HPLC Analysis: The drug content was quantified using a Reverse Phase HPLC system with a LiChrospher 100 RP-18 column and a UV detector set at 242 nm. The mobile phase was a mixture of 0.03M potassium dihydrogen orthophosphate (pH 4.8) buffer, methanol, and acetonitrile (58:32:10) at a flow rate of 1.2 ml/min.
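Two pieces of arithmetic sit behind the steps above: checking sink conditions from the saturation solubility, and correcting cumulative release for the drug removed in each 5 ml aliquot when the medium is replaced. The sketch below assumes the commonly used convention that sink conditions require the medium to be able to dissolve at least three times the dose. The solubility, concentrations, and 25 mg label claim are hypothetical values, not data from [5].

```python
def sink_ratio(saturation_solubility_mg_per_ml, medium_volume_ml, dose_mg):
    """Return how many doses the medium could dissolve at saturation."""
    if dose_mg <= 0:
        raise ValueError("dose must be positive")
    return saturation_solubility_mg_per_ml * medium_volume_ml / dose_mg


def cumulative_percent_dissolved(concentrations_mg_per_ml, label_claim_mg,
                                 vessel_volume_ml=900.0, aliquot_volume_ml=5.0):
    """Return cumulative % dissolved, corrected for withdrawn aliquots.

    Assumes each aliquot is replaced with fresh medium, so the vessel
    volume stays constant while previously sampled drug is added back.
    """
    percent = []
    removed_mg = 0.0  # drug taken out of the vessel by earlier aliquots
    for conc in concentrations_mg_per_ml:
        dissolved_mg = conc * vessel_volume_ml + removed_mg
        percent.append(100.0 * dissolved_mg / label_claim_mg)
        removed_mg += conc * aliquot_volume_ml
    return percent


# Hypothetical inputs for illustration only.
ratio = sink_ratio(saturation_solubility_mg_per_ml=0.1,
                   medium_volume_ml=900.0, dose_mg=25.0)
print(f"sink ratio = {ratio:.1f} (sink conditions met: {ratio >= 3.0})")

profile = cumulative_percent_dissolved([0.010, 0.020], label_claim_mg=25.0)
print([round(p, 1) for p in profile])
```

The correction is small for a 5 ml aliquot in 900 ml, but it accumulates over seven sampling points and matters when profiles are compared with tight acceptance limits.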

Data Analysis and Interpretation of Discriminatory Power

The comparison of dissolution profiles was conducted using multiple statistical approaches to rigorously demonstrate the method's discriminatory power [5].

Table 2: Statistical Methods for Comparing Dissolution Profiles

| Method Category | Specific Method | Application in Assessing Discriminatory Power |
|---|---|---|
| ANOVA-based | One-way ANOVA at each time point | Identifies if statistically significant differences exist between the mean % dissolved of different products at specific time points. |
| Model-Independent | Difference Factor (f1) | Measures the relative error between two curves. Values of 0-15 indicate similarity. |
| | Similarity Factor (f2) | Measures the logarithmic reciprocal of the square root of the sum of squared errors. Values of 50-100 indicate similarity. |
| Model-Dependent | Fitting to kinetic models (e.g., zero-order, first-order, Higuchi, Korsmeyer-Peppas) | Compares the release kinetics and mechanisms between products. Significant differences in model parameters indicate different release behaviors. |
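To illustrate the model-dependent row, the sketch below fits the Korsmeyer-Peppas model, Mt/M∞ = k·t^n, by ordinary least squares on its log-log form. It is a simplified illustration rather than the fitting procedure used in [5]; the function name and the data are hypothetical, and in practice this model is commonly restricted to the early portion of the release curve.

```python
import math


def fit_korsmeyer_peppas(times_min, fraction_released):
    """Fit Mt/Minf = k * t**n by linear regression of ln(fraction) on ln(t).

    Returns (k, n). Times and fractions must be strictly positive.
    """
    if len(times_min) != len(fraction_released) or len(times_min) < 2:
        raise ValueError("need at least two matching (time, fraction) points")
    xs = [math.log(t) for t in times_min]
    ys = [math.log(f) for f in fraction_released]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    n_exponent = sxy / sxx                       # slope of the log-log line
    k = math.exp(y_mean - n_exponent * x_mean)   # back-transformed intercept
    return k, n_exponent


# Hypothetical fractions released for two products (illustrative only).
times = [5.0, 10.0, 15.0, 30.0]
product_x = [0.22, 0.31, 0.39, 0.55]
product_y = [0.08, 0.16, 0.25, 0.49]

for name, fractions in (("Product X", product_x), ("Product Y", product_y)):
    k, n = fit_korsmeyer_peppas(times, fractions)
    print(f"{name}: k = {k:.3f}, n = {n:.2f}")
```

Clearly different fitted exponents for two products point to different release behavior, which is the kind of evidence the model-dependent comparison contributes.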

The results conclusively demonstrated the method's discriminatory power. The dissolution profiles of the three different products showed significant differences when analyzed by all three methods. The model-independent approach (f1 and f2 factors) and ANOVA confirmed that the profiles were not similar, while the model-dependent analysis revealed potential differences in the drug release mechanisms [5].
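The ANOVA-based comparison at a single time point can be sketched as a plain one-way F statistic. This is a minimal illustration using only the standard library; it returns the F value and degrees of freedom, which would then be compared with a critical value or converted to a p-value using a statistics package. The example values are hypothetical and are not the results of [5].

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if k < 2 or n_total <= k:
        raise ValueError("need at least two groups and replicate values")
    grand_mean = sum(sum(g) for g in groups) / n_total
    group_means = [sum(g) / len(g) for g in groups]
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )
    if ss_within == 0:
        raise ValueError("no within-group variation; F is undefined")
    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical % dissolved at one time point, six vessels per product.
product_a = [62.1, 60.8, 63.4, 61.5, 62.9, 61.0]
product_b = [48.3, 50.1, 47.6, 49.2, 48.8, 49.9]
product_c = [71.4, 70.2, 72.8, 71.9, 70.7, 72.1]

f_stat, df_b, df_w = one_way_anova_f([product_a, product_b, product_c])
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```

Repeating the calculation at each sampling time shows where along the profile the products diverge.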

Ensuring Continued Method Performance

Method validation is not a one-time event. To maintain the vital link between method performance and product quality throughout a product's lifecycle, a strategy for Continued Method Performance Verification is essential [6]. This involves proactively monitoring method performance to ensure it remains in a state of control.

Tools for Continued Performance Verification

Commercial quality control laboratories can employ a toolkit of approaches to monitor method performance [6]:

Table 3: Tools for Continued Analytical Performance Verification

| Tool | Description | Best Use Case |
|---|---|---|
| Control Charting System Suitability | Trending attributes (e.g., resolution, peak asymmetry) from system suitability samples run with each analytical sequence. | A low-effort way to monitor the consistency of the analytical system itself over time. |
| Control Charting a Separate Sample | Incorporating an additional, well-characterized control sample in each run and charting its key quality attributes. | Provides direct insight into method performance for a specific material, but requires sample management. |
| Periodic Precision/Accuracy Assessment | Running a control sample repeatedly over a defined period (e.g., a quarter) to calculate precision and compare it to historical data. | For periodic, in-depth verification that method performance remains stable over longer timeframes. |
| Comparing Orthogonal Attributes | Charting the difference between two related test results (e.g., in-process test vs. release test) from production batches. | A high-level assessment to ensure method performance is consistent across different testing stages or labs. |

A successful strategy involves performing a comprehensive risk assessment for each method to select the most pertinent performance indicators and then intentionally applying the appropriate tools from the toolkit. This data must be integrated into a knowledge management program where trends are reviewed, and findings are communicated to testing personnel to foster scientific understanding and continuous improvement [6].
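One way to implement the control-charting tools is an individuals chart, where limits are set at the mean plus or minus three estimated standard deviations and the standard deviation is estimated from the average moving range. The sketch below assumes that chart type, which [6] does not prescribe, and uses hypothetical system suitability resolution values.

```python
def individuals_chart_limits(values):
    """Return (LCL, center line, UCL) for an individuals control chart."""
    if len(values) < 2:
        raise ValueError("need at least two historical values")
    center = sum(values) / len(values)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = sum(moving_ranges) / len(moving_ranges)
    sigma_hat = mr_bar / 1.128  # d2 constant for a moving range of two points
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


def out_of_control_points(values, lcl, ucl):
    """Return the indices of values that fall outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Hypothetical resolution values from historical system suitability runs.
historical = [2.41, 2.38, 2.45, 2.40, 2.36, 2.43, 2.39, 2.42]
lcl, center, ucl = individuals_chart_limits(historical)

# Hypothetical new runs checked against the established limits.
new_runs = [2.40, 2.37, 2.10]
flagged = out_of_control_points(new_runs, lcl, ucl)
print(f"limits: {lcl:.2f} to {ucl:.2f}; flagged run indices: {flagged}")
```

A point outside the limits is a prompt for investigation rather than proof of a method failure, which is why the charted data feed into the trend review described above.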

The critical link between method performance and product quality is undeniable. A robust, validated analytical method acts as the guardian of product quality, ensuring that every batch released to the market is safe, efficacious, and consistent. The discriminatory power of a method is its most crucial attribute, transforming it from a simple quantitative tool into a powerful sentinel capable of detecting meaningful changes in the product. Investing in the development of discriminative methods and implementing a vigilant continued performance strategy is not just a regulatory requirement—it is a fundamental commitment to patient safety and product excellence.

Analytical Method Validation (AMV) is a critical regulatory requirement in the pharmaceutical industry, ensuring that analytical procedures used for drug testing produce reliable, consistent, and accurate results. Global regulatory bodies including the U.S. Food and Drug Administration (FDA), the United States Pharmacopeia (USP), and the European Medicines Agency (EMA) have established harmonized yet distinct guidelines governing these validation processes. The recent implementation of updated ICH Q2(R2) and ICH Q14 guidelines in 2024 represents a significant evolution in regulatory expectations, placing greater emphasis on scientific rigor and risk-based approaches throughout the analytical procedure lifecycle [7] [8].

Within this validation framework, discriminatory power represents a fundamental characteristic of an analytical procedure, reflecting its ability to detect differences in the quality attribute being measured between samples. This capability is particularly crucial for methods intended to distinguish between drug substances and products with varying quality characteristics, stability profiles, or manufacturing processes. In method validation research, demonstrating sufficient discriminatory power provides scientific evidence that the procedure can adequately monitor critical quality attributes and detect potential quality deviations throughout the product lifecycle. The concept extends beyond simple specificity, encompassing the method's resolution capacity to differentiate between closely related analytes or product variants under various conditions [9].

Regulatory Framework and Recent Developments

The regulatory landscape for analytical method validation is undergoing significant transformation with the adoption of updated international guidelines. The ICH Q2(R2) guideline on "Validation of Analytical Procedures" provides a comprehensive framework for validation principles, while ICH Q14 on "Analytical Procedure Development" offers guidance on science-based approaches for developing robust analytical methods [7] [8]. These documents, implemented in June 2024, facilitate more efficient, science-based, and risk-based post-approval change management while encouraging innovation in analytical procedure development.

FDA Perspective

The FDA operates as a single national authority under the Department of Health and Human Services, providing centralized oversight of drug approval and quality surveillance. The agency formally adopted the ICH Q2(R2) guideline as final guidance in March 2024, emphasizing its application for the validation of analytical procedures, including spectroscopic data applications [7]. The FDA's approach integrates this guidance within its existing framework for drug evaluation and research, requiring manufacturers to demonstrate robust method performance throughout the product lifecycle.

EMA Perspective

The EMA functions as a coordinating body across EU member states, working alongside national competent authorities to maintain consistent quality standards throughout Europe. The EMA published ICH Q14 as Step 5 in January 2024, with an effective date of 14 June 2024 [8]. This guideline applies to both new and revised analytical procedures used for release and stability testing of commercial drug substances and products, encompassing both chemical and biological/biotechnological entities. The EMA's implementation accounts for the need for harmonization across multilingual markets while maintaining the flexibility for science- and risk-based approaches.

Converging Standards

The collaborative development of ICH guidelines through the International Council for Harmonisation has created substantial alignment between FDA, USP, and EMA requirements for analytical method validation. Current global standards are evolving to make analytical results more reliable and compliant, with specific emphasis on enhanced validation parameters including mandatory forced degradation studies, stricter precision limits, and higher linearity expectations [10]. This harmonization enables manufacturers to develop standardized validation approaches for global markets while addressing regional nuances in implementation.

Core Validation Parameters and Requirements

The updated regulatory guidelines define specific, stringent requirements for key validation parameters that collectively demonstrate the reliability and capability of analytical procedures. These parameters must be thoroughly evaluated during method validation to establish scientific evidence that the method is suitable for its intended purpose.

Specificity and Discrimination

Specificity remains a mandatory validation parameter with enhanced requirements under current guidelines. Regulatory standards now explicitly require forced degradation studies and peak purity assessment with minimum thresholds ≥0.99, ensuring the method can unequivocally discriminate between the analyte and potential interferants [10]. This parameter is intrinsically linked to discriminatory power, as it verifies the method's ability to measure the analyte accurately in the presence of other components such as impurities, degradation products, or matrix components. The demonstration of specificity provides foundational evidence of the method's capacity to distinguish between closely related entities.

Accuracy and Precision

Accuracy, representing the closeness of agreement between the conventional true value and the value found, has been refined with tighter acceptance criteria for impurities quantification (80-120%) [10]. Precision, encompassing repeatability, intermediate precision, and reproducibility, now operates under stricter repeatability limits, requiring more consistent results under identical operating conditions [10]. These parameters collectively ensure the method generates reliable data with sufficient resolution to detect meaningful quality differences.
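Accuracy and repeatability are usually reported as percent recovery and percent relative standard deviation (%RSD). The sketch below shows both calculations with the standard library; the function names, the spiked level, and the replicate results are hypothetical, and the 80-120% window is the impurity criterion quoted above from [10].

```python
import statistics


def percent_recovery(measured, nominal):
    """Return the measured amount as a percentage of the nominal amount."""
    if nominal <= 0:
        raise ValueError("nominal amount must be positive")
    return 100.0 * measured / nominal


def percent_rsd(values):
    """Return the relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical spiked-impurity results: nominal 0.50 µg/ml, six replicates.
nominal = 0.50
replicates = [0.49, 0.51, 0.48, 0.50, 0.52, 0.49]

recoveries = [percent_recovery(m, nominal) for m in replicates]
within_window = all(80.0 <= r <= 120.0 for r in recoveries)
print(f"mean recovery = {statistics.mean(recoveries):.1f}%")
print(f"repeatability = {percent_rsd(replicates):.1f}% RSD")
print(f"all recoveries within 80-120%: {within_window}")
```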

Linearity and Range

Linearity requirements have been enhanced with higher correlation coefficient expectations: ≥0.9999 for assay methods and ≥0.9995 for impurities [10]. This demonstrates the method's ability to produce results that are directly proportional to analyte concentration within a specified range, which is essential for accurately quantifying both major components and trace-level impurities. The range confirmation ensures the method maintains these linearity, accuracy, and precision characteristics throughout the intended operating interval.
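The correlation coefficient referred to here can be computed directly from calibration data. The sketch below implements Pearson's r with the standard library and compares it with a caller-supplied threshold; the calibration levels and responses are hypothetical, and the 0.9999 threshold is simply the assay figure quoted above from [10].

```python
import math


def pearson_r(x, y):
    """Return Pearson's correlation coefficient for paired values."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    x_mean = sum(x) / len(x)
    y_mean = sum(y) / len(y)
    sxy = sum((a - x_mean) * (b - y_mean) for a, b in zip(x, y))
    sxx = sum((a - x_mean) ** 2 for a in x)
    syy = sum((b - y_mean) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical five-level calibration (concentration in µg/ml, peak area).
concentration = [50.0, 75.0, 100.0, 125.0, 150.0]
peak_area = [1002.0, 1498.0, 2005.0, 2499.0, 3003.0]

r = pearson_r(concentration, peak_area)
meets_assay_threshold = r >= 0.9999
print(f"r = {r:.6f}, meets threshold: {meets_assay_threshold}")
```

A high r alone does not prove linearity, so residual plots and the intercept are normally reviewed alongside it.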

Detection and Quantification Limits

LOD/LOQ determination is now mandatory according to ICH Q2(R2)+Q14 requirements [10]. These parameters establish the lowest levels of analyte that can be reliably detected or quantified, directly contributing to the method's discriminatory power for low-concentration analytes. The validation must demonstrate sufficient signal-to-noise ratios or statistical approaches to verify these limits.
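One of the statistical approaches mentioned above derives the limits from the calibration curve: LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the calibration slope. The sketch below applies those formulas; the function name and input values are hypothetical.

```python
def lod_loq(response_sd, slope):
    """Return (LOD, LOQ) from the response SD and the calibration slope.

    Uses LOD = 3.3 * sd / slope and LOQ = 10 * sd / slope, so the results
    are in the concentration units of the calibration curve.
    """
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    if response_sd < 0:
        raise ValueError("standard deviation cannot be negative")
    return 3.3 * response_sd / slope, 10.0 * response_sd / slope


# Hypothetical values: residual SD of 0.5 area units, slope of 10 per µg/ml.
lod, loq = lod_loq(response_sd=0.5, slope=10.0)
print(f"LOD = {lod:.3f} µg/ml, LOQ = {loq:.3f} µg/ml")
```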

Robustness

Robustness testing has become a mandatory requirement, demonstrating the method's capacity to remain unaffected by small, deliberate variations in method parameters [10]. This parameter is typically evaluated during development but must be confirmed during validation, providing evidence that the method will maintain its discriminatory power under normal operational variations.

Table 1: Core Analytical Method Validation Parameters and Requirements

| Validation Parameter | FDA/USP/EMA Requirements | Relationship to Discriminatory Power |
|---|---|---|
| Specificity | Mandatory forced degradation & peak purity ≥0.99 [10] | Ensures method can distinguish analyte from interferants |
| Accuracy | Impurities refined to 80-120% [10] | Verifies method correctly measures true values |
| Precision | Stricter repeatability limits [10] | Confirms method produces consistent results |
| Linearity | Assay ≥0.9999; impurities ≥0.9995 [10] | Demonstrates proportional response across range |
| LOD/LOQ | Now mandatory per ICH Q2(R2)+Q14 [10] | Establishes lowest detectable/quantifiable levels |
| Robustness | Now mandatory [10] | Confirms reliability under parameter variations |

Assessing Discriminatory Power in Method Validation

Conceptual Framework

In analytical method validation, discriminatory power represents the procedure's capacity to detect meaningful differences in the quality attribute being measured between test samples. This concept extends beyond basic specificity to include the method's resolution capability and its ability to monitor critical quality attributes throughout the product lifecycle. A method with sufficient discriminatory power can effectively distinguish between acceptable and unacceptable product quality, differentiate stability changes, and detect manufacturing variations [9].

The discriminatory power of an analytical method is not typically expressed as a single numerical value but is instead demonstrated through a combination of validation parameters including specificity, precision, and sensitivity. The relationship between these parameters collectively establishes the method's overall capability to discriminate between different product quality states.

Methodologies for Evaluation

The evaluation of discriminatory power employs both quantitative and comparative approaches. Statistical methods for assessing discrimination include calculation of resolution factors between closely eluting peaks in chromatography, signal-to-noise ratios for detection capability, and statistical tests for distinguishing between sample populations with different quality attributes.
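Two of the quantities named above have simple closed forms. The sketch below assumes the pharmacopoeial-style definitions: resolution Rs = 2(t2 − t1)/(w1 + w2) using baseline peak widths, and signal-to-noise S/N = 2H/h using peak height and peak-to-peak noise. The function names and example values are hypothetical.

```python
def resolution(t_r1, t_r2, width_1, width_2):
    """Return the resolution between two peaks.

    Retention times and baseline peak widths must share the same time unit.
    """
    if width_1 <= 0 or width_2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * (t_r2 - t_r1) / (width_1 + width_2)


def signal_to_noise(peak_height, noise_peak_to_peak):
    """Return S/N as twice the peak height over the peak-to-peak noise."""
    if noise_peak_to_peak <= 0:
        raise ValueError("noise must be positive")
    return 2.0 * peak_height / noise_peak_to_peak


# Hypothetical degradant and main peak (minutes), plus a low-level impurity.
rs = resolution(t_r1=5.0, t_r2=6.0, width_1=0.4, width_2=0.4)
sn = signal_to_noise(peak_height=30.0, noise_peak_to_peak=6.0)
print(f"Rs = {rs:.2f}, S/N = {sn:.0f}")
```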

Experimental designs for demonstrating discriminatory power typically include:

- Forced degradation studies: Evaluating the method's ability to separate and quantify degradation products from the active pharmaceutical ingredient [10]

- Spiking experiments: Assessing detection of low-level impurities in the presence of the main component

- Matrix variation studies: Testing method performance across different batches, compositions, or manufacturing conditions

- Stability-indicating method validation: Demonstrating the method can detect and quantify changes in product quality over time

Table 2: Experimental Protocols for Assessing Discriminatory Power

| Experimental Approach | Protocol Description | Measured Outcomes |
|---|---|---|
| Forced Degradation Studies | Subjecting drug substance to stress conditions (acid, base, oxidation, thermal, photolytic) and analyzing degradation profile [10] | Peak purity, resolution from main peak, mass balance |
| Spiked Recovery Experiments | Adding known quantities of impurities or related substances to sample matrix and measuring recovery | Accuracy of impurity quantification, detection capability |
| Deliberate Variation Testing | Intentionally modifying manufacturing parameters and testing ability to detect differences | Method sensitivity to process-related changes |
| Comparative Analysis | Testing method performance across different product formulations or manufacturing sites | Ability to distinguish between acceptable quality variations |

Computational Assessment

For quantitative assessment of discriminatory power, Simpson's Index of Diversity provides a statistical measure of a method's ability to differentiate between samples. This index, adapted from microbiological typing methods, calculates the probability that two unrelated samples will be placed into different categories by the analytical method [11].

The formula for Simpson's Index of Diversity (DI) is:

$$DI = 1 - \frac{\sum_{j=1}^{s} n_j(n_j-1)}{N(N-1)}$$

Where:

- $s$ = number of distinct types identified by the method

- $n_j$ = number of isolates of the jth type

- $N$ = total number of isolates in the sample population

This statistical approach allows for direct comparison of different analytical methods regarding their discrimination capability, with higher values (closer to 1.0) indicating greater discriminatory power [11].
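The index can be computed directly from the category each sample is assigned to. The sketch below is a minimal implementation of the formula above; the function name and the two example label sets are hypothetical.

```python
from collections import Counter


def simpsons_index_of_diversity(type_labels):
    """Return Simpson's Index of Diversity for a list of assigned types."""
    n_total = len(type_labels)
    if n_total < 2:
        raise ValueError("need at least two samples")
    counts = Counter(type_labels)  # n_j for each distinct type j
    same_type_pairs = sum(n * (n - 1) for n in counts.values())
    return 1.0 - same_type_pairs / (n_total * (n_total - 1))


# Hypothetical results: the same eight samples classified by two methods.
method_a = ["T1", "T1", "T1", "T1", "T2", "T2", "T2", "T2"]
method_b = ["T1", "T1", "T2", "T2", "T3", "T3", "T4", "T4"]

print(f"Method A: DI = {simpsons_index_of_diversity(method_a):.3f}")
print(f"Method B: DI = {simpsons_index_of_diversity(method_b):.3f}")
```

In this illustration the second method splits the samples into more, smaller groups and therefore scores closer to 1.0.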

Analytical Procedure Lifecycle and Workflow

The modern regulatory framework emphasizes an integrated lifecycle approach to analytical procedures, connecting development, validation, and ongoing monitoring through science- and risk-based principles. ICH Q14 specifically addresses analytical procedure development, establishing a systematic framework for building quality into methods from initial conception through commercial application [8].

This integrated workflow demonstrates how discriminatory power is built into analytical methods beginning with the Analytical Target Profile (ATP) definition, where required discrimination needs are specified based on the method's intended purpose. Throughout development and validation, experiments are designed to verify the method meets these discrimination requirements, with ongoing monitoring confirming maintained performance throughout the method's lifecycle.

Comparative Regulatory Analysis: FDA vs. EMA

While FDA and EMA requirements for analytical method validation are largely harmonized through ICH guidelines, important distinctions remain in implementation approaches, documentation expectations, and review processes that impact global validation strategies.

Structural and Philosophical Differences

The FDA operates as a single national authority, enabling consistent application of standards across the United States. In contrast, the EMA functions as a coordinating network across multiple EU member states, requiring consideration of national implementation alongside centralized procedures [12]. This structural difference influences the approach to method validation, with the FDA providing more centralized interpretation of requirements while the EMA must accommodate broader implementation across the regulatory network.

For review timelines, standard FDA reviews typically complete within approximately 10 months (6 months for Priority Review), while EMA standard reviews under the centralized procedure take approximately 210 days, though these timelines often extend due to "clock stops" for additional information requests [12]. These timing differences can impact validation strategy, particularly for methods supporting accelerated approval pathways.

Validation Documentation and Submission

A significant practical difference between the agencies lies in documentation and language requirements. While both accept electronic Common Technical Document (eCTD) format submissions, the FDA requires documentation only in English, whereas the EMA requires product information, labeling, and patient leaflets in all official languages of member states where the product will be marketed [12]. This linguistic requirement extends to method validation documentation supporting product quality information.

Regarding the validation lifecycle, the FDA's process validation guidance employs a clear three-stage model (Process Design, Process Qualification, Continued Process Verification), while the EMA's Annex 15 categorizes validation approaches as Prospective, Concurrent, and Retrospective [13]. For analytical method validation, this translates to differences in how the lifecycle approach is documented and presented, though the scientific principles remain consistent.

Table 3: Comparative Analysis of FDA and EMA Regulatory Approaches

| Aspect | FDA Approach | EMA Approach |

|---|---|---|

| Regulatory Structure | Single national authority [12] | Coordinating network across EU member states [12] |

| Review Timelines | ~10 months standard, ~6 months Priority Review [12] | ~210 days standard, often extended due to "clock stops" [12] |

| Documentation Language | English only [12] | Multilingual - dossier in English, labeling in all EU languages [12] |

| Lifecycle Terminology | Three-stage model (Design, Qualification, Continued Verification) [13] | Prospective, Concurrent, Retrospective Validation [13] |

| Ongoing Monitoring | Continued Process Verification (CPV) [13] | Ongoing Process Verification (OPV) [13] |

Essential Research Reagents and Materials

The experimental assessment of discriminatory power in analytical method validation requires specific reagents, reference materials, and technological resources to conduct appropriate studies. The selection of these materials directly impacts the reliability and regulatory acceptance of validation data.

Table 4: Essential Research Reagent Solutions for Discriminatory Power Assessment

| Reagent/Material Category | Specific Examples | Function in Validation Studies |

|---|---|---|

| System Suitability Standards | USP resolution mixtures, chromatographic efficiency standards | Verifies instrumental performance before validation experiments |

| Forced Degradation Reagents | Hydrogen peroxide (oxidative), hydrochloric acid/NaOH (acid/base), heat/light sources | Creates degradation products for specificity demonstration |

| Reference Standards | Qualified impurity standards, degradation product standards, drug substance CRS | Provides known qualifiers for identification and quantification |

| Matrix Components | Placebo formulations, blank biological fluids, synthetic membranes | Evaluates selectivity in presence of non-active components |

| Chromatographic Materials | Different column chemistries (C18, phenyl, HILIC), varying mobile phase buffers | Assesses robustness and specificity under modified conditions |

These reagents and materials enable the comprehensive experimental evaluation of a method's discriminatory power through controlled variation of test conditions and comparison against known standards. The qualification and documentation of these materials form an essential component of the validation data package submitted to regulatory agencies.

The regulatory imperative for analytical method validation represents a harmonized yet nuanced framework across FDA, USP, and EMA jurisdictions. The recent implementation of ICH Q2(R2) and Q14 guidelines has strengthened requirements for demonstrating methodological reliability, with particular emphasis on the built-in discriminatory power necessary to detect meaningful quality differences throughout the product lifecycle. As regulatory standards continue to evolve toward more scientific, risk-based approaches, the demonstration of sufficient discriminatory power through comprehensive validation studies remains fundamental to regulatory compliance and product quality assurance. Pharmaceutical manufacturers must maintain vigilance in understanding both the convergences and distinctions between regulatory expectations across global markets to ensure efficient approval and ongoing quality monitoring of medicinal products.

In the pharmaceutical sciences, the discriminatory power of an analytical method is its ability to detect differences in product performance resulting from deliberate, clinically relevant changes to critical quality attributes (CQAs). A method lacking this power poses a significant risk to public health and product quality, as it can fail to identify batches of generic drug products that are not therapeutically equivalent to their reference counterparts. This technical guide details how poor method discrimination can lead to the release of non-bioequivalent batches, explores the underlying statistical and regulatory frameworks, and presents advanced methodologies for developing and validating truly discriminatory analytical procedures.

The Critical Link Between Discriminatory Power and Bioequivalence

Bioequivalence (BE) is the cornerstone for the approval of generic drugs, signifying that the generic product exhibits no significant difference in the rate and extent of drug absorption compared to the innovator product [14]. Regulators like the U.S. Food and Drug Administration (FDA) approve generic products based on demonstrated BE, and in vitro tests often serve as surrogates for costly clinical studies, for example when a biowaiver is justified [15].

The fundamental premise is that if a formulation is pharmaceutically equivalent and demonstrates comparable in vitro performance (e.g., dissolution) under discriminatory conditions, it will be therapeutically equivalent [15]. Discriminatory power is the property of the analytical method that validates this premise. A method with poor discriminatory power is "blind" to critical variations in CQAs, such as particle size or polymer viscosity, that can alter in vivo drug release and absorption. Consequently, it may falsely classify a non-bioequivalent batch as acceptable, undermining the entire generic drug approval and quality control system.

Table 1: Fundamental Concepts Linking Method Power to Bioequivalence

| Concept | Definition | Regulatory Impact |

|---|---|---|

| Pharmaceutical Equivalents | Drug products with identical active ingredients, dosage forms, strength, and route of administration [15]. | Prerequisite for generic substitution. |

| Therapeutic Equivalents | Pharmaceutical equivalents that are bioequivalent and can be expected to have the same clinical effect [15]. | Listed in the FDA Orange Book as substitutable. |

| Discriminatory Power | The ability of an analytical method to detect changes in a product's CQAs that impact its performance [2]. | Ensures in vitro BE tests are predictive of in vivo performance. |

Consequences of Inadequate Discriminatory Power

Clinical and Patient Safety Risks

The most severe consequence is the release of a generic product that is not therapeutically equivalent. For drugs with a Narrow Therapeutic Index (NTI), even small deviations in bioavailability can lead to therapeutic failure or toxic side effects [14]. A non-discriminatory dissolution method, for instance, would not detect changes in release profile that could lead to such clinical outcomes. Furthermore, undetected batch-to-batch variability increases the risk of adverse drug reactions and patient harm, eroding confidence in generic medicines.

Regulatory and Batch Failure Risks

Reliance on a non-discriminatory method for quality control creates a fragile quality system. A batch that passes internal quality control may still fail a regulatory bioequivalence study if the method did not accurately predict its performance. The financial and reputational costs of such batch failures, including product recalls and regulatory action, are substantial. Moreover, the method's inability to ensure consistent product quality across batches can lead to post-approval compliance issues and market withdrawal.

Economic and Development Setbacks

The use of a non-discriminatory method during formulation development can mislead scientists into believing that a suboptimal formulation is acceptable. This can result in a generic applicant submitting an Abbreviated New Drug Application (ANDA) with a formulation that subsequently fails the required BE study, leading to significant delays and costly re-development work. This inefficient process ultimately increases development costs and delays patient access to affordable medicines.

Quantitative Evidence: How Poor Discrimination Leads to Batch Failures

Experimental data demonstrate the direct impact of CQA variations on drug release and show how a discriminatory method detects these changes. A study on a ciprofloxacin-dexamethasone otic suspension developed a discriminatory in vitro release method using a flow-through cell apparatus (USP Type IV) [2].

The study deliberately altered CQAs and measured the impact on the release profile of dexamethasone, using the similarity factor (f2) for quantification. An f2 value between 50 and 100 indicates similar dissolution profiles, while a value below 50 indicates a difference [2].

Table 2: Impact of Critical Quality Attributes on Drug Release Profile [2]

| Critical Quality Attribute (CQA) | Formulation Variation | Observed f2 Value vs. Control | Interpretation |

|---|---|---|---|

| Particle Size (D90) | Smaller particles (1.75 µm) | 64 | Similar release |

| Particle Size (D90) | Larger particles (8.02 µm) | 41 | Different release |

| Particle Size (D90) | Larger particles (18.94 µm) | 14 | Significantly different release |

| Polymer Concentration (Viscosity) | No polymer (0.4 cPs) | 83 | Similar release |

| Polymer Concentration (Viscosity) | High polymer (18.5 cPs) | 47 | Different release |

| pH | Low pH (3.56) | 61 | Similar release |

| pH | High pH (4.81) | 83 | Similar release |

The data show that the method was discriminatory for increases in particle size and polymer concentration (f2 < 50), correctly identifying formulations with potentially different in vivo performance, whereas the smaller-particle variant and both pH variants remained similar to the control (f2 > 50). A non-discriminatory method would have failed to detect these critical differences, leading to the release of non-bioequivalent batches.
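The reading of Table 2 can be made explicit with a short sketch that flags, for each CQA, the variants whose f2 value falls below 50. The f2 values are transcribed from Table 2 [2]; the function name `discriminated_variants` and the dictionary layout are assumptions made for this illustration.

```python
F2_THRESHOLD = 50  # profiles with f2 < 50 are treated as different

# f2 values versus control, transcribed from Table 2 [2].
f2_results = {
    "Particle size (D90)": {"1.75 µm": 64, "8.02 µm": 41, "18.94 µm": 14},
    "Polymer concentration (viscosity)": {"0.4 cPs": 83, "18.5 cPs": 47},
    "pH": {"3.56": 61, "4.81": 83},
}


def discriminated_variants(results, threshold=F2_THRESHOLD):
    """Map each CQA to the variants whose f2 falls below the threshold."""
    return {
        cqa: [variant for variant, f2 in variants.items() if f2 < threshold]
        for cqa, variants in results.items()
    }


summary = discriminated_variants(f2_results)
for cqa, variants in summary.items():
    if variants:
        print(f"{cqa}: difference detected for {', '.join(variants)}")
    else:
        print(f"{cqa}: no difference detected in the range studied")
```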

Statistical and Methodological Foundations

The Challenge of Batch-to-Batch Variability

A significant challenge in bioequivalence testing is pharmacokinetic (PK) variability between batches of the same product. Standard BE studies use a single batch, which can be unreliable if batch-to-batch variability is high. For example, different batches of Advair Diskus have failed BE tests when compared against each other due to this variability [16]. This underscores the need for methods and study designs that account for this reality.

Advanced Statistical Approaches for BE Assessment

Regulatory agencies use different statistical methods to evaluate BE, each with limitations, especially concerning batch variability.

- Average Bioequivalence (ABE): The standard method in the EU, it uses the two one-sided tests (TOST) procedure, which is equivalent to checking whether the 90% confidence interval for the Test/Reference ratio falls within set limits (typically 80-125%) [17]. A major limitation is its "one-size-fits-all" criterion, which does not scale with the variability of the reference product [17].

- Population Bioequivalence (PBE): The standard method in the US for in vitro BE for certain complex products, it incorporates a scaling factor based on the reference product's variability [17]. However, it can be asymmetric, potentially accepting equivalence when the test product has lower variability than the reference [17].

- Between-Batch Bioequivalence (BBE): A proposed alternative that explicitly accounts for between-batch variability in its statistical model. Simulation studies show BBE can have a higher true positive rate than ABE and PBE when reference product variability is high (>15%), providing a more robust assessment without increasing sample size [17].
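The ABE criterion in the list above can be sketched as a confidence-interval check on the log scale. This is a simplified illustration, not the analysis used in [17]: it assumes a paired design with one ln(Test/Reference) value per subject and ignores period and sequence effects, and the t quantile is supplied by the caller rather than computed. All names and the data are hypothetical.

```python
import math
import statistics


def abe_confidence_interval(log_ratios, t_crit):
    """Return the 90% CI for the geometric mean Test/Reference ratio.

    log_ratios: per-subject ln(Test/Reference) values (simplified paired design).
    t_crit: one-sided 95% t quantile for len(log_ratios) - 1 degrees of freedom.
    """
    n = len(log_ratios)
    mean = statistics.mean(log_ratios)
    std_err = statistics.stdev(log_ratios) / math.sqrt(n)
    return math.exp(mean - t_crit * std_err), math.exp(mean + t_crit * std_err)


def is_bioequivalent(log_ratios, t_crit, limits=(0.80, 1.25)):
    """ABE is concluded only if the whole 90% CI lies inside the limits."""
    lower, upper = abe_confidence_interval(log_ratios, t_crit)
    return limits[0] <= lower and upper <= limits[1]


# Hypothetical data for 12 subjects; 1.796 is the t quantile for 11 df.
T_CRIT_DF11 = 1.796
low_variability = [0.05, -0.02, 0.10, 0.03, -0.04, 0.08,
                   0.01, 0.06, -0.01, 0.04, 0.07, 0.02]
high_variability = [0.5, -0.5] * 6

print(is_bioequivalent(low_variability, T_CRIT_DF11))
print(is_bioequivalent(high_variability, T_CRIT_DF11))
```

The second data set has a mean ratio of exactly 1 yet fails the check because its interval is too wide, which illustrates why a fixed criterion becomes hard to meet as variability grows.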

Multiple-Batch Pharmacokinetic Study Designs

To improve BE study reliability, multiple-batch approaches have been proposed [16]. These designs dose different cohorts of subjects with different batches of the test and reference products.

- Fixed Batch Effect: Batch is a fixed factor in the statistical model. The conclusion applies only to the specific batches studied.

- Random Batch Effect: Batch is a random factor. This allows the BE conclusion to be generalized to the entire population of batches from the products, better controlling the false equivalence (Type I) error [16].

- Superbatch: Data from multiple batches are pooled and analyzed as a single batch.

- Targeted Batch: Using a bio-predictive in vitro test, the batch closest to the median performance is selected for the BE study.

These approaches, particularly the Random Batch Effect model, better account for batch-to-batch variability and reduce the risk of erroneous BE conclusions that could result from using a single, non-representative batch [16].
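Of the designs listed above, the Targeted Batch selection rule is the simplest to illustrate. The sketch below picks the batch whose in vitro result lies closest to the median across batches. The batch identifiers, the choice of metric (percent released at a single time point), and the function name are hypothetical.

```python
import statistics


def select_targeted_batch(batch_metric):
    """Return the batch ID whose in vitro metric is closest to the median."""
    median_value = statistics.median(batch_metric.values())
    return min(batch_metric, key=lambda b: abs(batch_metric[b] - median_value))


# Hypothetical in vitro results (% released at one time point) for five batches.
batches = {"B001": 62.0, "B002": 71.5, "B003": 68.0, "B004": 80.0, "B005": 66.5}
targeted = select_targeted_batch(batches)
print(targeted)
```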

The following workflow illustrates the experimental and statistical pathway for assessing discriminatory power and bioequivalence, highlighting points of failure that can lead to the release of non-bioequivalent batches.

Experimental Protocols for Assessing Discriminatory Power

Development of a Discriminatory In-Vitro Release Method

The following protocol, adapted from a study on an otic suspension, outlines the steps for developing and validating a discriminatory method [2].

Objective: To establish an in vitro release method capable of differentiating dexamethasone release profiles based on changes in CQAs.

Materials and Equipment:

- Apparatus: Flow-through cell dissolution apparatus (USP Type IV) with simulated tear fluid (pH 7.4) as the dissolution medium.

- Analytical Instrumentation: HPLC system with UV detector for quantifying drug release.

- Materials: Drug substance, excipients, and glass microfiber filters (GF/F) for the flow-through cell.

Procedure:

- Formulate Variants: Create multiple formulations with deliberate, controlled variations in CQAs known or suspected to impact drug release. Key attributes include:

  - Particle size distribution (varied through milling processes).

  - Polymer concentration (varied to alter viscosity).

  - pH of the formulation.

- Perform In-Vitro Release Testing: For each formulation variant, conduct the release test using the flow-through cell apparatus. Use standardized conditions (e.g., flow rate, temperature) relevant to the physiological environment.

- Quantify Drug Release: At predetermined time intervals, collect samples and analyze them using the validated HPLC method to determine the percentage of drug released over time.

- Data Analysis: Plot the mean release profile for each formulation. Calculate the f2 similarity factor between the test formulation (variant) and the control (reference) formulation. The f2 factor is calculated as:

$$f_2 = 50 \cdot \log_{10}\left\{\left[1 + \frac{1}{n}\sum_{t=1}^{n}(R_t - T_t)^2\right]^{-0.5} \times 100\right\}$$

  where $n$ is the number of time points, and $R_t$ and $T_t$ are the reference and test release values at time $t$.

- Interpret Results: An f2 value ≥ 50 suggests the profiles are similar, and the method may not be discriminatory for that CQA. An f2 value < 50 indicates the method can detect a difference, confirming its discriminatory power for that specific attribute [2].
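The data analysis and interpretation steps above can be sketched as a small function. The release profiles below are hypothetical percent-released values invented for illustration, not data from [2], and the function name `f2_similarity` is an assumption.

```python
import math


def f2_similarity(reference, test):
    """Similarity factor f2 between two release profiles (% released).

    Both profiles must be sampled at the same time points.
    """
    if len(reference) != len(test) or not reference:
        raise ValueError("profiles must be non-empty and of equal length")
    n = len(reference)
    mean_sq_diff = sum((r - t) ** 2 for r, t in zip(reference, test)) / n
    # 50 * log10(100 * [1 + mean squared difference]^-0.5)
    return 50.0 * math.log10(100.0 / math.sqrt(1.0 + mean_sq_diff))


# Hypothetical mean % released at six time points.
control = [22, 41, 58, 72, 85, 93]
variant_close = [20, 39, 56, 70, 84, 92]   # small shift from control
variant_slow = [10, 22, 35, 48, 62, 74]    # markedly slower release

for name, profile in [("close", variant_close), ("slow", variant_slow)]:
    f2 = f2_similarity(control, profile)
    verdict = "similar" if f2 >= 50 else "different (method discriminates)"
    print(f"{name}: f2 = {f2:.1f} -> {verdict}")
```

Identical profiles give f2 = 100, and the value falls as the mean squared difference between profiles grows.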

Key Reagents and Research Solutions

The following toolkit is essential for executing the described experimental protocol.

Table 3: Research Reagent Solutions for Discriminatory Method Development

| Research Reagent / Material | Function in the Experiment |

|---|---|

| Flow-Through Cell (USP IV) Apparatus | Simulates dynamic fluid conditions of physiological environments (e.g., ear canal), preventing saturation and offering high discriminatory power for complex formulations [2]. |

| HPLC System with UV Detector | Provides precise and accurate quantification of the drug released from the formulation at various time points. |

| Glass Microfiber Filters (GF/F) | Serves as a support matrix within the flow-through cell, retaining the suspension while allowing the dissolution medium to pass through [2]. |

| Simulated Tear Fluid (pH 7.4) | Acts as a physiologically relevant dissolution medium to mimic the in vivo environment for otic or ophthalmic formulations [2]. |

| Malvern Mastersizer 3000 | Characterizes the particle size distribution of the drug substance, a critical quality attribute, in the different formulation variants [2]. |

The discriminatory power of an analytical method is not a mere technicality but a fundamental safeguard in pharmaceutical development and quality control. A poorly discriminatory method provides a false sense of security, creating a direct pathway for non-bioequivalent batches to enter the market, with serious implications for patient safety, regulatory integrity, and economic efficiency. As demonstrated, leveraging advanced apparatus like the flow-through cell, employing robust statistical models like BBE that account for batch variability, and adhering to rigorous experimental protocols for method validation are essential strategies to mitigate this risk. Ensuring that in vitro tests are truly predictive of in vivo performance is critical to fulfilling the public health promise of generic medicines.

Developing Discriminatory Methods: Practical Approaches for Different Dosage Forms

In analytical method validation, discriminatory power refers to the ability of a test method to detect meaningful differences in product quality attributes that may impact performance, such as bioavailability or therapeutic efficacy [18]. For dissolution and drug release testing, establishing scientifically sound test conditions is not merely a regulatory formality but a critical scientific endeavor to ensure that the method can distinguish between acceptable batches and those with critical variations in formulation or manufacturing [2] [19]. The apparatus, medium, and sink conditions collectively form the tripartite foundation upon which a discriminatory method is built, ensuring that in vitro release data provides a reliable predictor of in vivo behavior and product quality.

Regulatory agencies like the U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) emphasize that dissolution methods must be discriminatory for most products to ensure batch-to-batch consistency and detect non-bioequivalent batches [18]. This guide details the establishment of these core test conditions, framed within the broader objective of developing analytically rigorous and clinically predictive release methods.

Apparatus Selection and Configuration

The choice of dissolution apparatus is fundamental to simulating the relevant physiological environment and generating mechanically robust hydrodynamics for testing.

Flow-Through Cell Apparatus (USP Type IV)

The Flow-Through Cell Apparatus (FTCA) has emerged as a powerful tool for testing complex dosage forms like suspensions, where maintaining sink conditions and handling insoluble drugs is challenging [2] [19].

- Principle and Advantages: The FTCA operates via a continuous flow of fresh medium through a cell containing the dosage form. This design prevents saturation, maintains sink conditions, and closely mimics dynamic biological environments like the ear canal or tear film [2] [19]. Its high discriminatory power is particularly valuable for differentiating formulations with subtle variations in release profiles, which is crucial for quality control and therapeutic consistency [2].

- Configurations: The system can be run in open-loop mode (fresh medium passes through the cell once and is not reused) or closed-loop mode (a fixed volume of medium is recirculated) [19]. Open-loop configurations are often preferred for their ability to maintain sink conditions throughout the test.

- Critical Configurations: Key setup parameters include:

  - Cell Type and Size: Standard 22.6 mm or 12 mm cells are common.

  - Filter Selection: GF/F glass filters are often used to retain suspension particles while allowing dissolved drug to pass through [2].

  - Bed of Glass Beads: The inclusion of inert, small glass beads (e.g., 1 mm diameter) within the cell creates a larger surface area for deposition, helping to prevent filter blockage and channeling, thereby improving reproducibility [19].

Apparatus for Immediate-Release Solid Oral Dosage Forms

For conventional solid oral dosage forms, USP Apparatus I (basket) and II (paddle) are most common. The selection between them is based on the product's behavior, with the basket often preferred for formulations that tend to float or clog the paddle [18]. Verification of the method's discriminatory power involves testing "bad batches" manufactured with intentional, meaningful changes to Critical Process Parameters (CPPs) or Critical Material Attributes (CMAs) to ensure the method can detect these differences [18].

Table 1: Comparison of Key Dissolution Apparatuses

| Apparatus (USP Type) | Typical Applications | Key Discriminatory Advantages | Reported Configurations in Research |

|---|---|---|---|

| Flow-Through Cell (IV) | Otic suspensions [2], Ophthalmic suspensions [19], Poorly soluble drugs | Maintains sink conditions; simulates dynamic biological fluids; handles insoluble particulates | 22.6 mm cell; 5 mm ruby bead [2]; Open-loop system; 1 mm glass beads [19] |

| Paddle (II) | Immediate-release solid oral dosage forms | Standardized hydrodynamics; well-understood for quality control | Standard 50-75 rpm; sinkers for floating products |

| Basket (I) | Floating tablets, beads, formulations that may clog paddles | Confines the dosage form; provides consistent agitation | Standard 50-100 rpm |

Design of the Dissolution Medium

The dissolution medium must provide a biorelevant environment while enabling the detection of critical quality differences.

Composition and Physicochemical Properties

- pH: The pH of the medium is a primary consideration, as it profoundly influences drug solubility and dissolution rate. For otic and ophthalmic suspensions, a pH of 7.4 is often selected to mimic physiological conditions (e.g., simulated tear fluid) [2] [19]. Studies show that while pH changes can alter release profiles, a method's sensitivity to pH-related attributes may be less pronounced than to factors like particle size [2].

- Buffer Species: Common buffers include phosphate buffers (e.g., pH 6.8 for solid oral dosage forms) and simulated biological fluids like simulated tear fluid [2] [20].

- Surfactants: The addition of surfactants (e.g., sodium lauryl sulfate) is a critical tool for enhancing the solubility of poorly soluble drugs and achieving sink conditions [20]. However, their use must be justified, as excessive surfactant can mask the discriminatory power of the method by dissolving the drug too rapidly, thereby obscuring the impact of formulation variables [20]. A discriminatory method should ideally use the minimum surfactant concentration necessary to achieve sink conditions.

Volume and Hydrodynamics

- Volume: Standard volumes are 500-1000 mL for Apparatus I and II. In flow-through systems, the effective volume is determined by the flow rate (e.g., 4-16 mL/min [19]), which continuously provides fresh medium.

- Temperature and Degassing: The temperature is rigorously controlled, typically at 37±0.5°C, to simulate body temperature. Dissolved gases should be removed by degassing the medium prior to testing, as bubbles can interfere with dissolution, particularly in paddle and basket methods.
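To illustrate how flow rate determines the effective volume in an open-loop flow-through test, the sketch below converts the concentrations of collected fractions into a cumulative release profile. It assumes each measured concentration is the mean concentration of the fraction collected over its interval. The 8 mL/min flow rate lies within the range cited above, while the concentrations, the dose, and all names are hypothetical.

```python
def cumulative_percent_released(concentrations_mg_per_ml, interval_minutes,
                                flow_rate_ml_per_min, dose_mg):
    """Cumulative % released from open-loop fractions.

    Each concentration is taken as the mean concentration of the fraction
    collected over the corresponding interval (an assumption of this sketch).
    """
    cumulative_mg = 0.0
    profile = []
    for conc, minutes in zip(concentrations_mg_per_ml, interval_minutes):
        fraction_volume_ml = flow_rate_ml_per_min * minutes
        cumulative_mg += conc * fraction_volume_ml
        profile.append(100.0 * cumulative_mg / dose_mg)
    return profile


flow_rate = 8.0                          # mL/min
intervals = [15, 15, 15, 15, 30, 30]     # fractions ending at 15 ... 120 min
concentrations = [0.002, 0.0015, 0.001, 0.0008, 0.0004, 0.0002]  # mg/mL, hypothetical
dose = 1.0                               # mg of drug loaded in the cell, hypothetical

total_volume_ml = flow_rate * sum(intervals)
profile = cumulative_percent_released(concentrations, intervals, flow_rate, dose)
print(f"Total medium passed through the cell: {total_volume_ml:.0f} mL")
print([round(p, 1) for p in profile])
```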

Table 2: Examples of Discriminatory Dissolution Media from Case Studies

| Drug Product | Finalized Dissolution Medium | Rationale for Discriminatory Power | Reference |

|---|---|---|---|

| Ciprofloxacin-Dexamethasone Otic Suspension | Simulated Tear Fluid, pH 7.4 | Biorelevant medium that successfully differentiated formulations based on particle size and polymer viscosity. | [2] |

| Artemether-Lumefantrine Tablets | Phosphate Buffer, pH 6.8 (without surfactant) | The absence of surfactant created a sufficiently challenging environment to discriminate between conventional tablets and those made with solid dispersion technology. | [20] |

| Tobramycin-Dexamethasone Ophthalmic Suspension | Simulated Tear Fluid, pH 7.4 (Open-loop FTCA) | The dynamic, biorelevant medium in the flow-through cell allowed discrimination based on particle size, viscosity, and pH. | [19] |

Achieving and Verifying Sink Conditions

Sink conditions are defined as a volume of medium that is at least three times greater than the volume required to form a saturated solution of the drug substance. This ensures the driving force for dissolution—the concentration gradient—is maintained throughout the test.

The Principle of Sink Conditions

Maintaining sink conditions is critical for a discriminatory method because it ensures that the measured dissolution rate reflects the intrinsic properties of the dosage form (e.g., particle size, crystallinity, formulation matrix) rather than being limited by the solubility capacity of the medium itself [20]. When sink conditions are not met, the dissolution rate can slow artificially, and the method may fail to distinguish between different formulations.

Experimental Approach to Establishing Sink Conditions

The following workflow outlines the systematic process for establishing and verifying sink conditions for a discriminatory dissolution method.

The experimental protocol involves:

- Solubility Analysis: Determine the equilibrium solubility of the drug in the proposed medium under controlled conditions (37°C, agitation) [20].

- Sink Volume Calculation: Calculate the minimum volume required for sink condition (≥3 x saturation volume). Compare this with the intended test volume.

- Sink Condition Test: Perform a dissolution test on the product. A plot of cumulative release should reach at least 85% of the labeled claim without plateauing prematurely. The final concentration in the vessel should be well below the drug's solubility in the medium.
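The sink volume calculation in the protocol above can be sketched as follows. The dose, solubility, and vessel volume are hypothetical, and the function name `check_sink_condition` is an assumption; the factor of three follows the definition of sink conditions given earlier in this section.

```python
def check_sink_condition(dose_mg, solubility_mg_per_ml, medium_volume_ml,
                         sink_factor=3.0):
    """Compare the test volume with the minimum volume needed for sink conditions."""
    if solubility_mg_per_ml <= 0:
        raise ValueError("solubility must be positive")
    # Volume in which the full dose would just form a saturated solution.
    saturation_volume_ml = dose_mg / solubility_mg_per_ml
    required_volume_ml = sink_factor * saturation_volume_ml
    return {
        "saturation_volume_ml": saturation_volume_ml,
        "required_volume_ml": required_volume_ml,
        "meets_sink": medium_volume_ml >= required_volume_ml,
    }


# Hypothetical 25 mg dose tested in 900 mL at two equilibrium solubilities.
poorly_soluble = check_sink_condition(25.0, 0.05, 900.0)
more_soluble = check_sink_condition(25.0, 0.20, 900.0)
print(poorly_soluble)
print(more_soluble)
```

When the check fails, the options discussed in this guide are a larger or continuously renewed medium volume (for example, the open-loop flow-through cell) or the minimum justified surfactant concentration.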

Integrated Experimental Protocol for Discriminatory Power Assessment

This protocol synthesizes the elements of apparatus, medium, and sink conditions into a cohesive workflow for assessing a method's discriminatory power, using an ophthalmic suspension as a model [19].

Method Development Workflow

The development of a discriminatory dissolution method is an iterative process that integrates the selection and optimization of all test conditions.

Detailed Experimental Steps

- Step 1: Manufacture Test Batches. Prepare a control formulation and several "bad batches" with intentional, meaningful variations in Critical Material Attributes (CMAs). For a suspension, this typically includes:

  - Particle Size Variation: Create batches with larger particle sizes (e.g., D90 of 142 µm vs. control at 1.75 µm) using techniques like high-pressure homogenization for smaller particles or autoclaving to induce particle growth [2] [19].

  - Polymer Concentration Variation: Prepare batches with different concentrations of viscosity-enhancing polymers (e.g., Hydroxyethyl Cellulose) to alter viscosity (e.g., from 0.4 cPs to 18.5 cPs) [2] [19].

- Step 2: Execute Dissolution Test. Test all batches using the developed method. For a flow-through cell apparatus, conditions may be: Apparatus USP Type IV (open-loop), 22.6 mm cell, medium: Simulated Tear Fluid pH 7.4, flow rate: 8 mL/min, temperature: 37°C [19]. Sample at appropriate time intervals (e.g., 15, 30, 45, 60, 90, 120 min).

- Step 3: Quantitative Analysis. Quantify the drug release at each time point using a validated stability-indicating HPLC method with UV detection [19] [20].

- Step 4: Data Analysis and Interpretation. Use a model-independent approach to compare profiles. The similarity factor (f2) is a standard metric [2] [19].

$$f_2 = 50 \cdot \log_{10}\left\{\left[1 + \frac{1}{n}\sum_{t=1}^{n}(R_t - T_t)^2\right]^{-0.5} \times 100\right\}$$

Where:

- $n$ is the number of time points

- $R_t$ is the reference (control) percent dissolved at time $t$

- $T_t$ is the test (variant) percent dissolved at time $t$

An f2 value between 50 and 100 suggests similar profiles, while values below 50 indicate a significant difference, demonstrating the method's discriminatory power [2] [19].
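A short worked case may help explain where the threshold of 50 sits. Assuming, purely for illustration, that the test profile differs from the reference by exactly 10 percentage points at every time point, the mean squared difference is $10^2 = 100$ regardless of $n$, and the f2 expression above gives:

$$f_2 = 50 \cdot \log_{10}\left(\frac{100}{\sqrt{1 + 100}}\right) = 50 \cdot \left(2 - \tfrac{1}{2}\log_{10} 101\right) \approx 50 \times 0.9978 \approx 49.9$$

Under this assumption, an average difference of about 10 percentage points places a profile right at the similarity boundary, while identical profiles (all differences zero) give $f_2 = 50 \cdot \log_{10}(100) = 100$.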

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions and Materials

| Item | Function in Discriminatory Method Development | Example from Literature |

|---|---|---|

| Hydroxyethyl Cellulose (HEC) | A viscosity-modifying polymer used to create test batches with different release kinetics. Evaluating its impact is crucial for assessing discriminatory power. | Used to create formulations with viscosities from 0.4 cPs to 18.5 cPs to study polymer impact on release [2] [19]. |

| Simulated Tear Fluid (STF) | A biorelevant dissolution medium with pH 7.4 used for ophthalmic and otic suspensions to mimic the in-vivo environment closely. | Served as the dissolution medium in flow-through cell testing of otic and ophthalmic suspensions [2] [19]. |

| Surfactants (e.g., SLS) | Added to the dissolution medium to increase the solubility of poorly soluble drugs and help achieve sink conditions. Concentration must be carefully optimized to avoid over-solubilizing and losing discriminatory power. | The discriminatory method for Artemether was successfully developed in phosphate buffer without surfactant, highlighting its careful use [20]. |

| Glass Beads (1 mm) | Used as an inert filling material in flow-through cells to prevent filter blockage, minimize dead volume, and provide a large surface area for sample deposition, improving test reproducibility. | Used in the flow-through cell apparatus for testing tobramycin-dexamethasone ophthalmic suspension [19]. |

| Standard Reference Materials | High-purity drug substance used for calibration curves, recovery studies, and preparation of quality control samples during method validation. | Dexamethasone and Artemether reference standards were sourced for quantification of % release [2] [20]. |

Establishing test conditions for apparatus, medium, and sink conditions is a foundational and interlinked process in developing a dissolution method with proven discriminatory power. The flow-through cell apparatus offers significant advantages for complex dosage forms, while the careful design of the medium—including the judicious use of surfactants—is essential for creating a biorelevant and discriminating environment. Ultimately, verification of discriminatory power through intentional formulation variations and statistical analysis of release profiles, such as with the f2 factor, is a regulatory and scientific imperative. A rigorously developed and validated discriminatory method is not just a quality control tool; it is a critical component in ensuring therapeutic consistency and protecting patient safety.

The discriminatory power of a dissolution method is its ability to detect meaningful changes in the critical quality attributes (CQAs) of a drug product, such as alterations in the active pharmaceutical ingredient's (API) particle size, crystal form, or formulation composition [18]. For Biopharmaceutics Classification System (BCS) Class II drugs like carvedilol, which exhibit low solubility and high permeability, dissolution is the rate-limiting step for oral absorption, making discriminatory dissolution testing particularly critical [5] [21]. A well-developed discriminatory method ensures that batches with changes in critical process parameters (CPPs) or critical material attributes (CMAs) that could impact bioavailability will be detected during quality control, thereby preventing the release of non-bioequivalent batches [18]. This case study explores the development and validation of a discriminatory dissolution method for carvedilol, a weakly basic BCS Class II drug, detailing the experimental methodologies, data interpretation, and regulatory considerations essential for ensuring consistent product quality and performance.

Theoretical Foundations: Carvedilol as a Model BCS Class II Drug

Carvedilol is a non-selective β-adrenergic blocking agent with α1-blocking activity, widely used in treating cardiovascular diseases such as hypertension and congestive heart failure [5]. As a weak base with a pKa of approximately 7.8, carvedilol exhibits pronounced pH-dependent solubility [21]. It is characterized by high solubility in acidic environments (simulating the stomach) and low solubility in neutral to basic environments (simulating the intestine) [21]. This solubility profile, combined with its low absolute bioavailability (approximately 25%) and high lipophilicity (log P ≈ 3.8-3.967), firmly places carvedilol in BCS Class II [5] [21]. The table below summarizes the key physicochemical and biopharmaceutical properties of carvedilol.

Table 1: Key Properties of Carvedilol as a BCS Class II Model Drug

| Property | Description | Implication for Dissolution |
|---|---|---|
| BCS Classification | Class II (Low Solubility, High Permeability) | Dissolution is rate-limiting for absorption; discriminatory method is crucial [5]. |
| pKa (weak base) | ~7.8 [21] | Exhibits pH-dependent solubility; highly soluble at low pH, poorly soluble at higher pH [21]. |
| Log P | 3.8 - 3.967 [5] [21] | High lipophilicity contributes to poor aqueous solubility. |
| Solubility Profile | High in gastric pH (e.g., 545.1–2591.4 μg/mL at pH 1.2–5.0); Low in intestinal pH (e.g., 5.8–51.9 μg/mL at pH 6.5–7.8) [21] | Dissolution method must be designed to maintain sink conditions and be discriminative across relevant pH ranges. |
| Bioavailability | ~25% [5] | Low and variable absorption underscores the need for robust in vitro performance tests. |
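The pH-dependent solubility of a weak base described above can be illustrated with the idealised Henderson-Hasselbalch relation, S = S0 × (1 + 10^(pKa − pH)). The sketch below is not taken from the cited studies: the function name `weak_base_solubility` and the intrinsic solubility `S0_UG_PER_ML` are hypothetical, and only the pKa of about 7.8 comes from the text. Real solubility plateaus at low pH (salt solubility, buffer effects), so the ideal equation overestimates solubility in strongly acidic media and should be read as a trend only.

```python
def weak_base_solubility(ph: float, pka: float, s0: float) -> float:
    """Idealised total solubility of a monoprotic weak base at a given pH.

    S = S0 * (1 + 10**(pKa - pH)), where S0 is the intrinsic solubility
    of the un-ionised base. Ignores salt-solubility limits and buffer effects.
    """
    return s0 * (1.0 + 10.0 ** (pka - ph))


PKA = 7.8            # approximate pKa quoted in the text
S0_UG_PER_ML = 1.0   # hypothetical intrinsic solubility, for illustration only

if __name__ == "__main__":
    for ph in (1.2, 4.5, 6.8, 7.8):
        s = weak_base_solubility(ph, PKA, S0_UG_PER_ML)
        # With S0 = 1 the printed value is the solubility relative to S0.
        print(f"pH {ph}: {s:,.1f} x S0")
```

Under this model the predicted solubility falls steeply as the pH approaches the pKa, which is consistent with the qualitative contrast between gastric and intestinal pH shown in Table 1.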

Developing a Discriminatory Dissolution Method for Carvedilol

Critical Method Parameters and Selection

The development of a discriminatory dissolution method requires careful selection of apparatus and medium to ensure the test is biorelevant and capable of detecting critical changes. For carvedilol immediate-release tablets, research has demonstrated that USP Apparatus II (paddle) is most suitable [5]. Key parameters include an agitation speed of 50 rpm, which provides sufficient agitation without masking differences between formulations, and a medium volume of 900 mL to maintain sink conditions for a 25 mg tablet [5]. The choice of dissolution medium is paramount. While carvedilol dissolves completely in acidic media simulating gastric fluid (e.g., >95% release in 0.1N HCl or SGF within 60 minutes), these media lack discriminatory power [5] [21]. A pH 6.8 phosphate buffer has been identified as a discriminating medium, as it can effectively differentiate formulations based on critical attributes like API particle size and formulation composition [5]. The ionic strength and buffer capacity of the medium also significantly influence carvedilol solubility and dissolution rate and must be controlled [21].

"The Scientist's Toolkit": Essential Research Reagents and Materials

The following table details key materials and reagents required for developing and validating a discriminatory dissolution method for carvedilol tablets.

Table 2: Research Reagent Solutions for Carvedilol Dissolution Testing

| Reagent/Material | Function in the Experiment | Key Considerations |
|---|---|---|
| Carvedilol API | Active pharmaceutical ingredient for solubility studies and formulation of test batches. | Varying particle size (e.g., d90 of 8.5 μm vs. 25.3 μm) is a critical parameter for testing discriminatory power [5]. |
| pH 6.8 Phosphate Buffer | Discriminatory dissolution medium. | Must provide adequate buffer capacity to maintain constant pH; ionic strength impacts solubility [5] [21]. |
| USP Apparatus II (Paddle) | Standard dissolution apparatus for solid oral dosage forms. | Agitation speed (50 rpm) is critical to avoid coning and ensure proper hydrodynamics [5] [22]. |
| HPLC System with PDA/UV Detector | Analytical finish for quantifying carvedilol concentration in dissolution samples. | Provides selectivity and sensitivity; method typically uses a C18 column and a buffered mobile phase with organic modifiers [5] [23]. |
| Micronized Carvedilol (API-II) | Represents a critical material attribute (CMA) for discrimination. | Smaller particle size (d90 ~8.5 μm) should show enhanced dissolution compared to larger particles (d90 ~25.3 μm) [5]. |
| Microcrystalline Cellulose, Crospovidone | Common excipients in test formulations. | Variations in type and concentration can be used to challenge the method's discriminatory power [5]. |

Experimental Protocol and Data Interpretation

Workflow for Assessing Discriminatory Power

The following diagram illustrates the logical workflow for developing and validating a discriminatory dissolution method.

Diagram: Discriminatory Power Assessment Workflow

Detailed Experimental Methodology

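- Preparation of Test Batches: To establish discriminatory power, intentional "bad batches" are manufactured by introducing meaningful variations in CMAs or CPPs [18]. For carvedilol, the variations discussed in this case study are API particle size (e.g., d90 of 8.5 μm vs. 25.3 μm) and the type or concentration of excipients such as the disintegrant [5].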

- Dissolution Test Procedure:

  - Apparatus: USP Dissolution Apparatus II (Paddle) [5].

  - Medium: 900 mL of pH 6.8 phosphate buffer, maintained at 37 ± 0.5°C [5].

  - Agitation Speed: 50 rpm [5].

  - Sampling: Aliquots (e.g., 5 mL) are withdrawn at multiple time points (e.g., 5, 10, 15, 30, 45, and 60 minutes) and immediately replaced with fresh medium [5]. The samples are filtered through a 0.45 μm membrane filter.

  - Analytical Finish: The concentration of carvedilol in the samples is quantified using a validated HPLC method [5] [23]. A typical method uses a C18 column, a mobile phase of phosphate buffer and acetonitrile/methanol, and detection by UV at 242-254 nm [5] [23].
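Because each aliquot is replaced with fresh medium, the drug removed in earlier samples has to be added back when converting measured concentrations to cumulative percent released. The following is a minimal sketch of that standard correction, assuming the 900 mL vessel volume, 5 mL aliquot, and 25 mg dose mentioned in the text. The function name and the example concentrations are hypothetical and are not data from the cited study.

```python
from typing import List, Sequence


def cumulative_percent_released(
    conc_ug_per_ml: Sequence[float],
    vessel_volume_ml: float = 900.0,
    sample_volume_ml: float = 5.0,
    dose_mg: float = 25.0,
) -> List[float]:
    """Convert measured concentrations to cumulative % released.

    Assumes every aliquot is replaced with an equal volume of fresh medium,
    so the vessel volume stays constant and the drug withdrawn in earlier
    aliquots must be added back to the amount currently in the vessel.
    """
    dose_ug = dose_mg * 1000.0
    removed_ug = 0.0  # drug carried away by all earlier aliquots
    percent: List[float] = []
    for conc in conc_ug_per_ml:
        released_ug = conc * vessel_volume_ml + removed_ug
        percent.append(100.0 * released_ug / dose_ug)
        removed_ug += conc * sample_volume_ml
    return percent


if __name__ == "__main__":
    # Hypothetical HPLC results (ug/mL) at 5, 10, 15, 30, 45 and 60 minutes.
    measured = [6.0, 11.0, 15.0, 20.0, 23.0, 25.0]
    for t, pct in zip((5, 10, 15, 30, 45, 60), cumulative_percent_released(measured)):
        print(f"{t:>2} min: {pct:.1f}% released")
```

The correction is small for a 5 mL aliquot in 900 mL, but it accumulates over six sampling points and can matter when profiles are compared near a similarity threshold.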

Interpretation of Dissolution Data and the f2 Similarity Factor

Dissolution profiles are compared using the model-independent similarity factor (f2), as recommended by regulatory agencies [2] [22]. The f2 value is calculated as follows:

f2 = 50 * log10 { [1 + (1/n) Σ (Rt - Tt)² ]^(-0.5) * 100 }

Where:

- n is the number of time points

- Rt and Tt are the mean percent dissolved of the reference and test batches at time t

An f2 value between 50 and 100 suggests similar dissolution profiles, while a value less than 50 indicates a significant difference, confirming the method's ability to discriminate between the two batches [2] [22]. The table below summarizes how a discriminatory method responds to variations in carvedilol formulations.
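As a worked illustration of the f2 calculation, the sketch below implements the formula with a base-10 logarithm and applies it to two made-up profiles. The function name `f2_similarity` and the profile values are hypothetical and are not results from the carvedilol study. The sketch also omits regulatory conventions on which time points may be included, such as limiting the number of points after extensive release.

```python
import math
from typing import Sequence


def f2_similarity(reference: Sequence[float], test: Sequence[float]) -> float:
    """Similarity factor f2 for two mean dissolution profiles (% dissolved).

    Both profiles must be sampled at the same time points.
    """
    if len(reference) != len(test) or len(reference) == 0:
        raise ValueError("profiles must be non-empty and share the same time points")
    n = len(reference)
    mean_sq_diff = sum((r - t) ** 2 for r, t in zip(reference, test)) / n
    return 50.0 * math.log10(100.0 / math.sqrt(1.0 + mean_sq_diff))


if __name__ == "__main__":
    # Hypothetical mean % dissolved at 5, 10, 15, 30, 45 and 60 minutes.
    control_batch = [35.0, 55.0, 70.0, 85.0, 92.0, 96.0]
    coarse_api_batch = [20.0, 38.0, 52.0, 70.0, 82.0, 90.0]

    f2 = f2_similarity(control_batch, coarse_api_batch)
    verdict = "discriminated (f2 < 50)" if f2 < 50 else "similar (f2 >= 50)"
    print(f"f2 = {f2:.1f}: {verdict}")
```

Identical profiles give f2 = 100, and an average difference of about 10 percentage points at every time point gives a value just under 50, which is why 50 is used as the cut-off.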

Table 3: Discriminatory Power of the Dissolution Method for Carvedilol Formulations

| Formulation Variable | Example Change | Observed Impact on Dissolution Profile | f2 Value vs. Control | Method Discriminatory? |
|---|---|---|---|---|
| API Particle Size | Larger particle size (d90: 25.3 μm) vs. smaller (d90: 8.5 μm) [5]. | Slower release rate due to reduced surface area [5]. | f2 < 50 [5] | Yes |
| Disintegrant Type/Level | Reduction in superdisintegrant concentration. | Slower disintegration and dissolution. | f2 < 50 (inferred) | Yes |
| Batch with acceptable CQAs | Bio-batch or pivotal clinical batch. | Target release profile. | f2 > 50 [2] | (Reference) |

Regulatory Landscape and Implications

Regulatory bodies like the FDA and EMA emphasize the necessity of discriminatory dissolution methods. The FDA requires that even compendial (e.g., USP) methods be verified for discriminatory power before use in supporting bioequivalence studies or quality control [18]. The EMA similarly states that the dissolution test should ideally detect all non-bioequivalent batches [18]. For immediate-release solid oral dosage forms, the FDA recommends demonstrating discriminatory power by showing that batches with intentional variations (e.g., ±10–20% change in a critical variable) result in an f2 value of less than 50 when compared to the bio-batch [22]. An exception exists for highly soluble drugs (BCS Class I and III), where a discriminatory dissolution method may not be required and a disintegration test could suffice [18].

Developing a discriminatory dissolution method is a cornerstone of ensuring the consistent quality and in vivo performance of BCS Class II drugs like carvedilol. This process involves a science-driven approach to select appropriate apparatus and medium, and a rigorous validation protocol using intentionally varied batches to challenge the method. The use of the f2 similarity factor provides a robust, model-independent means of quantifying the method's discriminatory power. As detailed in this case study, a well-developed method for carvedilol in pH 6.8 phosphate buffer using Apparatus II at 50 rpm successfully discriminates between batches with critical differences in API particle size. Adherence to this paradigm is essential for effective formulation development, meaningful quality control, and successful regulatory submission, ultimately ensuring that only bioequivalent and therapeutically effective products reach patients.

In pharmaceutical analysis, discriminatory power is the ability of an analytical method to detect meaningful differences in a drug product's performance when critical quality attributes are altered. It is a validation parameter that ensures a method is not merely precise and accurate, but also scientifically meaningful and capable of detecting manufacturing or formulation changes that could impact in vivo performance. For complex dosage forms like Fast-Dispersible Tablets (FDTs) and otic suspensions, a method lacking discriminatory power may fail to identify suboptimal batches, potentially compromising therapeutic efficacy. This guide explores the application of this critical concept through the lens of two challenging dosage forms, providing technical frameworks for developing and validating methods that can reliably distinguish between acceptable and unacceptable product quality.

Discriminatory Method Development for Fast-Dispersible Tablets (FDTs)

The Analytical Challenge with FDTs

FDTs are designed to disintegrate or disperse within seconds when placed in the mouth, making conventional dissolution testing methods inadequate. Their rapid disintegration, combined with the potential for poor solubility of Biopharmaceutics Classification System (BCS) Class II drugs, creates a significant challenge for meaningful dissolution assessment. A discriminatory method must be capable of detecting changes in formulation composition, manufacturing process, or raw material characteristics that could alter drug release profiles.

Case Study: Domperidone FDTs

A research team developed and validated a discriminatory dissolution method for domperidone FDTs, a BCS Class II drug with poor water solubility and high permeability [24] [3].

Experimental Methodology

Formulation Preparation: FDTs containing 10 mg domperidone were prepared by direct compression using excipients including microcrystalline cellulose, croscarmellose sodium, magnesium stearate, sodium bicarbonate, and anhydrous citric acid [3].

Dissolution Method Optimization: Studies were performed using USP Apparatus II (paddle) with 900 mL of various dissolution media at 37±0.5°C and agitation speeds of 50 and 75 rpm [24] [3]. Tested media included:

- 0.1 N hydrochloric acid

- Simulated gastric fluid (SGF) pH 1.2 without enzymes

- Simulated intestinal fluid (SIF) pH 6.8

- Phosphate buffer solution (PBS) pH 6.8

- Distilled water with sodium lauryl sulfate (SLS) at concentrations of 0.5%, 1.0%, and 1.5%

Analysis: Samples were analyzed by UV spectrophotometer at 284 nm, with dissolution profiles compared using similarity (f2) and difference (f1) factors [3].
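The difference factor f1 mentioned above is the summed absolute difference between the two profiles, expressed as a percentage of the summed reference profile: f1 = [Σ |Rt − Tt| / Σ Rt] × 100. The sketch below assumes this standard definition. The function name and the example profiles are hypothetical and are not taken from the domperidone study. Values of f1 up to about 15 are conventionally read as indicating similar profiles.

```python
from typing import Sequence


def f1_difference(reference: Sequence[float], test: Sequence[float]) -> float:
    """Difference factor f1 for two mean dissolution profiles (% dissolved)."""
    if len(reference) != len(test) or len(reference) == 0:
        raise ValueError("profiles must be non-empty and share the same time points")
    total_reference = sum(reference)
    if total_reference == 0:
        raise ValueError("reference profile must contain non-zero release values")
    abs_diff = sum(abs(r - t) for r, t in zip(reference, test))
    return 100.0 * abs_diff / total_reference


if __name__ == "__main__":
    # Hypothetical mean % dissolved at four shared time points.
    reference_profile = [20.0, 40.0, 60.0, 80.0]
    test_profile = [18.0, 36.0, 54.0, 72.0]
    print(f"f1 = {f1_difference(reference_profile, test_profile):.1f}")
```

Unlike f2, f1 grows with the size of the difference, so the two factors are usually reported together: a high f1 and a low f2 both point to profiles that the method has distinguished.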

Results and Method Validation

The optimized method utilized 0.5% SLS in distilled water as the dissolution medium, which provided the highest discriminatory power while maintaining sink conditions [24] [3]. The method was validated for:

- Specificity: No interference from excipients