Dirichlet-Multinomial Models in Forensic Text Comparison: A Statistical Framework for Authorship Analysis and Evidence Evaluation

Abstract

This article explores the application of Dirichlet-multinomial models (DMM) in forensic text comparison, a statistically robust framework for authorship attribution and evidence evaluation. Aimed at researchers and forensic science professionals, it covers the foundational principles of DMM for analyzing multivariate count data like text, detailing methodological implementation for forensic contexts. The content addresses key challenges such as data sparsity and topic mismatch, and provides validation protocols and performance comparisons with alternative methods. By synthesizing recent research, this guide serves as a comprehensive resource for implementing scientifically defensible and legally sound text analysis in forensic casework.

Understanding the Dirichlet-Multinomial Framework for Forensic Text Analysis

Compositional data, representing parts of a whole, is fundamental to forensic text comparison. In authorship analysis, features like word frequencies, character n-grams, or syntactic pattern ratios form composition vectors that sum to a constant total. The Dirichlet-multinomial model provides the statistical foundation for analyzing this compositional nature of linguistic data, properly accounting for the inherent correlations between components that sum to a fixed total [1] [2].

Within forensic linguistics, these models enable quantitative authorship attribution through the likelihood ratio framework, addressing historical validation deficits in the field [2]. This approach aligns with modern forensic science requirements emphasizing empirically validated, quantitative methods resistant to cognitive bias [2].

Theoretical Foundation: Dirichlet-Multinomial Model

The Dirichlet-multinomial model operates within the likelihood ratio framework for forensic evidence evaluation. The likelihood ratio formula expresses the strength of evidence under competing hypotheses [2]:

$$LR = \frac{p(E|H_p)}{p(E|H_d)}$$

Where $H_p$ represents the prosecution hypothesis (same author) and $H_d$ represents the defense hypothesis (different authors). The Dirichlet distribution serves as a conjugate prior for the multinomial distribution of linguistic features, enabling Bayesian updating of author-specific compositional parameters.

Table 1: Core Components of the Dirichlet-Multinomial Model for Text Comparison

| Component | Mathematical Representation | Linguistic Interpretation |

|---|---|---|

| Feature Vector | $\mathbf{x} = (x_1, x_2, ..., x_k)$ | Counts of k linguistic features in a document |

| Compositional Proportions | $\mathbf{p} = (p_1, p_2, ..., p_k)$ | Underlying probability of each feature for an author |

| Concentration Parameters | $\boldsymbol{\alpha} = (\alpha_1, \alpha_2, ..., \alpha_k)$ | Author-specific stylistic consistency parameters |

| Dirichlet Prior | $P(\mathbf{p}) = \frac{1}{B(\boldsymbol{\alpha})} \prod_{i=1}^k p_i^{\alpha_i-1}$ | Prior belief about feature distribution before observing data |

This model accounts for the overdispersion common in linguistic data, that is, variability greater than a simple multinomial model would predict. The concentration parameters $\alpha_i$ capture author-specific consistency in the use of particular linguistic features, which is crucial for distinguishing between authors [2].
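The conjugate relationship described above can be illustrated with a minimal sketch. It assumes a Dirichlet prior with concentration vector α and a single vector of observed feature counts; the function names and the numeric values are hypothetical and chosen only for illustration.

```python
def dirichlet_posterior(alpha, counts):
    """Conjugate update: Dirichlet(alpha) prior plus multinomial counts
    gives a Dirichlet(alpha + counts) posterior."""
    if len(alpha) != len(counts):
        raise ValueError("alpha and counts must have the same length")
    return [a + x for a, x in zip(alpha, counts)]


def dirichlet_mean(alpha):
    """Expected feature proportions under Dirichlet(alpha)."""
    total = sum(alpha)
    return [a / total for a in alpha]


# Hypothetical values: three features, prior pseudo-counts from a background
# population, and counts observed in an author's known documents.
prior_alpha = [2.0, 1.0, 1.0]
known_counts = [30, 5, 15]

posterior_alpha = dirichlet_posterior(prior_alpha, known_counts)
posterior_mean = dirichlet_mean(posterior_alpha)
print(posterior_alpha)
print([round(p, 3) for p in posterior_mean])
```

The posterior mean sits between the prior proportions and the observed proportions, and it moves closer to the observed proportions as the known documents get longer.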

Experimental Protocol for Forensic Text Comparison

Corpus Design and Validation Requirements

Forensic text comparison validation must replicate casework conditions using relevant data [2]. The protocol must address topic mismatch between questioned and known documents, a significant challenge in authorship analysis.

Table 2: Corpus Design Specifications for Validation Experiments

| Requirement | Optimal Validation | Inadequate Validation |

|---|---|---|

| Topic Alignment | Documents with matched topics between known and questioned texts | Topic mismatch between comparison documents |

| Data Relevance | Data relevant to specific case conditions | Generic datasets without case-specific relevance |

| Text Length | Comparable to evidentiary documents | Divergent length distributions |

| Genre/Register | Matched genres and formality levels | Mixed genres without control |

| Temporal Factors | Contemporary texts from similar period | Texts from vastly different time periods |

Step-by-Step Analytical Protocol

Protocol 1: Dirichlet-Multinomial Authorship Analysis

1. Feature Extraction: Identify and count linguistic features (e.g., character n-grams, function words, syntactic patterns) from both questioned and known documents.

2. Prior Specification: Set Dirichlet concentration parameters based on population-level language models or reference corpora.

3. Posterior Calculation: Compute posterior distributions for both prosecution and defense hypotheses using Bayesian updating.

4. Likelihood Ratio Computation: Calculate LR using the formula $LR = \frac{p(E|H_p)}{p(E|H_d)}$ where $H_p$ assumes a common author and $H_d$ assumes different authors.

5. Logistic Regression Calibration: Apply calibration to improve the evidential interpretation of raw likelihood ratios [1].

6. Performance Assessment: Evaluate the system using log-likelihood-ratio cost (Cllr) and Tippett plots for validation [2].
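As an illustration of the feature extraction step in this protocol, the sketch below turns a text into a count vector over a fixed vocabulary. The eight-word function-word list, the tokenizer, and the two example sentences are assumptions made for the example; a real study would select its feature set from a relevant reference corpus.

```python
import re
from collections import Counter

# Illustrative vocabulary only; not a feature set taken from the cited studies.
FUNCTION_WORDS = ["the", "of", "and", "to", "a", "in", "that", "it"]


def tokenize(text):
    """Lowercase the text and keep alphabetic tokens (apostrophes allowed)."""
    return re.findall(r"[a-z']+", text.lower())


def count_vector(text, vocabulary=FUNCTION_WORDS):
    """Return the count of each vocabulary item, in vocabulary order."""
    counts = Counter(tokenize(text))
    return [counts[word] for word in vocabulary]


questioned = "It is the end of the road, and that is a fact."
known = "The cat sat in the hat and it purred to a tune."

x_q = count_vector(questioned)
x_k = count_vector(known)
print(x_q)
print(x_k)
```

Each vector has one entry per vocabulary item, so questioned and known documents are directly comparable regardless of their length.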

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Forensic Text Comparison Research

| Research Reagent | Function/Application | Specifications |

|---|---|---|

| Reference Corpus | Population-level language model development | Balanced genre representation, sufficient size for statistical power |

| Dirichlet Prior Estimator | Estimation of concentration parameters | Robust to sparse data, computationally efficient |

| LR Calibration Tool | Calibration of raw likelihood ratios | Logistic regression implementation with cross-validation |

| Validation Metrics Suite | Performance assessment | Cllr, Tippett plots, accuracy measures |

| Feature Extraction Library | Linguistic feature identification | Support for multiple feature types (lexical, syntactic, character) |

Signaling Pathways in Authorship Analysis

The analytical pathway for forensic text comparison involves multiple decision points and validation checkpoints to ensure scientifically defensible results.

Validation Framework and Casework Applications

Essential Validation Requirements

Empirical validation must fulfill two critical requirements for forensic text comparison [2]:

Reflect casework conditions: Validation experiments must replicate the specific conditions of the case under investigation, particularly addressing challenges like topic mismatch between questioned and known documents.

Use relevant data: The data employed in validation must be relevant to the specific case, including considerations of genre, register, topic, and temporal factors.

The Dirichlet-multinomial framework supports proper validation through its ability to incorporate case-specific parameters and account for the complex, multivariate nature of linguistic data. The model's concentration parameters can be tuned to reflect specific author characteristics and writing conditions.

Quantitative Performance Assessment

Table 4: Validation Metrics for Forensic Text Comparison Systems

| Metric | Calculation | Interpretation |

|---|---|---|

| Cllr (Log-Likelihood Ratio Cost) | $\frac{1}{2}\left[\frac{1}{N_{same}} \sum_{i=1}^{N_{same}} \log_2\left(1+\frac{1}{LR_i}\right) + \frac{1}{N_{diff}} \sum_{j=1}^{N_{diff}} \log_2\left(1+LR_j\right)\right]$ | Overall system performance (lower values indicate better performance) |

| Tippett Plot | Graphical representation of cumulative distributions of LRs for same-author and different-author comparisons | Visualization of discrimination and calibration |

| Accuracy | Proportion of correct authorship decisions | Traditional accuracy measure (requires threshold selection) |

| Cross-Entropy | Measure of agreement between predicted and true distributions | Model fit assessment |

The research highlights that neglecting proper validation requirements can significantly mislead the trier-of-fact in their final decision, underscoring the critical importance of rigorous, case-relevant validation protocols [2].

The Dirichlet and Multinomial distributions are fundamental probability distributions with a close mathematical relationship, often used in concert to model categorical data. The Multinomial distribution is a generalization of the binomial distribution that models the outcomes of experiments with multiple categories. It is parameterized by the total number of trials n and a probability vector π which lies on the simplex (i.e., its components sum to 1). The probability mass function for a multinomial random vector Y is given by:

f_M(y; π) = [n! / (∏(y_r!))] * ∏(π_r^(y_r)) [3].

The Dirichlet distribution is a multivariate continuous distribution that is conjugate to the multinomial. It is a distribution over the probability simplex—that is, it defines probabilities for the possible values of the multinomial parameter vector π. A K-dimensional Dirichlet distribution is parameterized by a concentration vector α = (α_1, ..., α_K), where α_k > 0. The probability density function for a vector π on the K-1 simplex is:

f_D(π; α) = [1 / B(α)] * ∏(π_k^(α_k - 1)), where B(α) is the multivariate Beta function [4].

A Dirichlet-Multinomial (DM) model is constructed by first drawing a probability vector π from a Dirichlet distribution, and then drawing a categorical count vector Y from a Multinomial distribution using this π: π ~ Dirichlet(α), then Y ~ Multinomial(n, π). This compound distribution is more flexible than a standalone multinomial as it can account for overdispersion—a common phenomenon in real-world data where the variability exceeds what the multinomial distribution predicts [3] [4].
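Integrating π out of the two-stage construction gives a closed-form probability mass function for the compound distribution. The sketch below evaluates it on the log scale with the standard library's log-gamma function; the function name `dm_log_pmf` and the toy parameters are assumptions for illustration.

```python
from math import exp, lgamma


def dm_log_pmf(counts, alpha):
    """Log-probability of a count vector under Dirichlet-multinomial(n, alpha),
    where n is the sum of the counts."""
    n = sum(counts)
    alpha_0 = sum(alpha)
    # log of the multinomial coefficient n! / prod(x_i!)
    log_coef = lgamma(n + 1) - sum(lgamma(x + 1) for x in counts)
    # log of Gamma(alpha_0) / Gamma(n + alpha_0)
    log_norm = lgamma(alpha_0) - lgamma(n + alpha_0)
    # log of prod Gamma(x_i + alpha_i) / Gamma(alpha_i)
    log_terms = sum(lgamma(x + a) - lgamma(a) for x, a in zip(counts, alpha))
    return log_coef + log_norm + log_terms


# Toy check with two categories, n = 3 and a flat prior alpha = (1, 1).
alpha = [1.0, 1.0]
probs = [exp(dm_log_pmf([k, 3 - k], alpha)) for k in range(4)]
total = sum(probs)
print([round(p, 4) for p in probs], round(total, 4))
```

With α = (1, 1) the four possible outcomes are equally likely at 0.25 each. A plain binomial with p = 0.5 would give the extreme outcomes only 0.125, which is the overdispersion effect in miniature.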

Table 1: Summary of Core Distributions

| Distribution | Type | Parameters | Support/Description |

|---|---|---|---|

| Multinomial | Discrete | n (count), π (probability vector) | Counts of K categories from n independent trials. |

| Dirichlet | Continuous | α (concentration vector) | A probability distribution over the (K-1)-simplex. |

| Dirichlet-Multinomial | Compound | n, α | A hierarchical model that accounts for overdispersion in count data. |

Application in Forensic Text Comparison

In forensic text comparison (FTC), the central task is to evaluate the strength of evidence regarding the authorship of a questioned document. The Likelihood Ratio (LR) framework is the logically and legally correct approach for this evaluation [2]. The LR quantifies the strength of evidence by comparing the probability of the observed evidence under two competing hypotheses:

- Prosecution Hypothesis (H_p): The suspect is the author of the questioned document.

- Defense Hypothesis (H_d): The suspect is not the author of the questioned document [2].

The LR is calculated as: LR = p(E | H_p) / p(E | H_d), where E represents the stylistic evidence extracted from the texts [2]. A critical requirement for validating any FTC system is that empirical validation must be performed by replicating the conditions of the case under investigation and using data relevant to the case [2]. The Dirichlet-multinomial model is particularly suited for this as it can formally incorporate population variability into the calculation of these probabilities.

Workflow of a Dirichlet-Multinomial Model for FTC

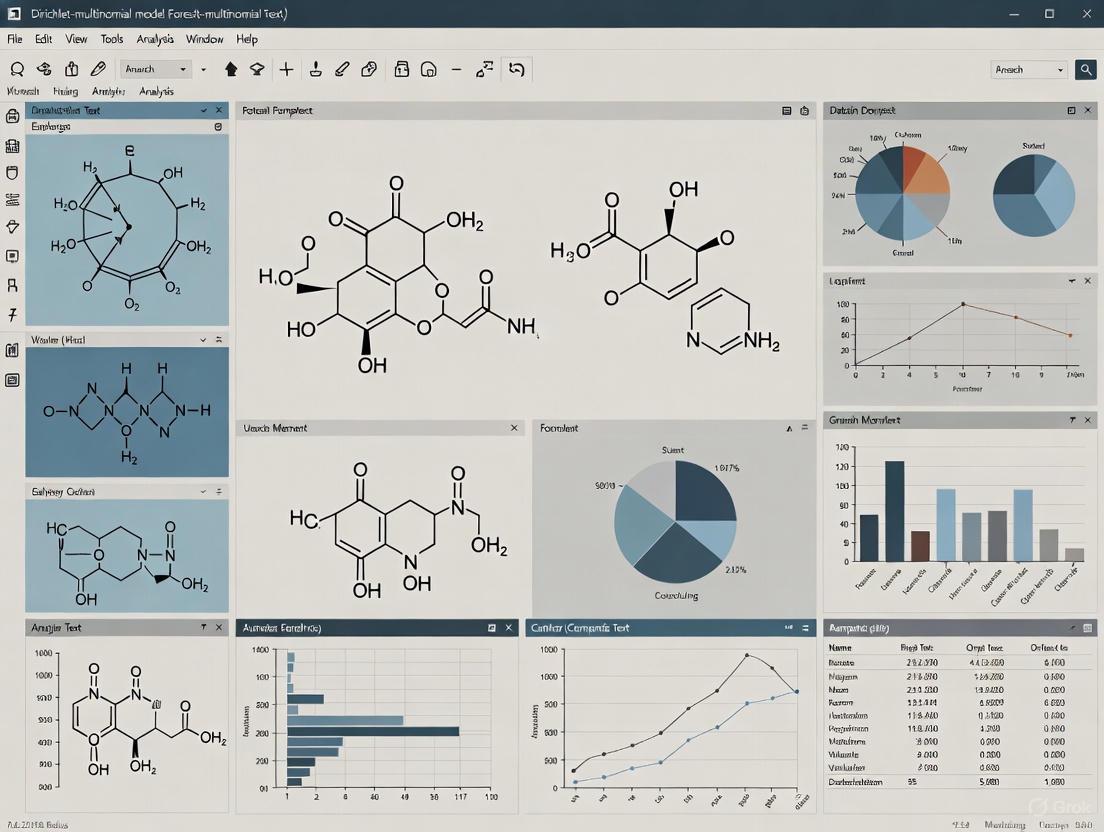

The following diagram illustrates the logical workflow and hierarchical structure of applying a Dirichlet-Multinomial model within the Likelihood Ratio framework for forensic text comparison.

Experimental Protocols and Methodologies

Protocol: Building a Dirichlet-Multinomial Model for Authorship

This protocol details the steps for constructing a Dirichlet-multinomial model to calculate a likelihood ratio for a questioned document.

1. Problem Formulation and Hypothesis Definition:

- Define H_p: "The known and questioned documents were written by the same author."

- Define H_d: "The known and questioned documents were written by different authors."

2. Feature Extraction and Vectorization:

- From the questioned document and a set of known documents from a suspect, extract relevant linguistic features (e.g., function word frequencies, character n-grams, syntactic patterns).

- Represent each document as a vector of counts for these features. The sum of the vector is the total number of features tallied in that document.

3. Compilation of a Relevant Background Corpus:

- Assemble a large corpus of documents from many different authors. The topics and genres of these documents should be relevant to the case conditions to ensure a valid assessment of typicality [2].

4. Model Fitting and Prior Elicitation:

- Use the background corpus to estimate the parameters α of the Dirichlet prior. This can be done via maximum likelihood or Bayesian methods. The vector α represents the "pseudo-counts" of features from the background population.

5. Likelihood Calculation:

- Under H_p, the known and questioned documents are treated as the work of a single author with one shared probability vector π. The probability of the evidence is calculated by integrating the multinomial likelihood of the combined data over π, with π weighted by the Dirichlet prior.

- Under H_d, the known and questioned documents are treated as coming from two different authors, each with their own π. The probability is the product of the probabilities for each document set, each calculated by the same integration over π applied to that data set alone.

6. Likelihood Ratio Computation and Calibration:

- Compute LR = p(E | H_p) / p(E | H_d).

- Apply post-hoc calibration, such as logistic regression calibration, to ensure that the computed LRs are valid and well-calibrated [2].

7. Validation and Performance Assessment:

- Evaluate the system's performance using metrics like the log-likelihood-ratio cost (C_llr) and Tippett plots to visualize the rates of support for the correct and incorrect hypotheses across many tested cases [2].
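Steps 5 and 6 of this protocol can be sketched in a few lines under simplifying assumptions: the known and questioned material are each summarized by one count vector, and both hypotheses share the same Dirichlet prior α estimated from the background corpus. In that setting the multinomial coefficients cancel and the LR reduces to a ratio of multivariate Beta functions. The function names and all numeric values below are hypothetical, and this is one possible formulation rather than the exact procedure of the cited studies.

```python
from math import lgamma, log


def log_beta(alpha):
    """Log of the multivariate Beta function B(alpha)."""
    return sum(lgamma(a) for a in alpha) - lgamma(sum(alpha))


def log10_lr(x_known, x_questioned, alpha):
    """log10 LR for 'same author' versus 'different authors'.

    H_p: one shared pi generates both count vectors.
    H_d: each count vector has its own pi drawn from the same prior.
    """
    pooled = [a + k + q for a, k, q in zip(alpha, x_known, x_questioned)]
    post_k = [a + k for a, k in zip(alpha, x_known)]
    post_q = [a + q for a, q in zip(alpha, x_questioned)]
    log_lr = (log_beta(pooled) + log_beta(alpha)
              - log_beta(post_k) - log_beta(post_q))
    return log_lr / log(10)


# Hypothetical prior and counts over three features.
alpha = [5.0, 5.0, 5.0]
x_known = [40, 5, 5]
x_similar = [38, 6, 6]     # usage pattern close to the known documents
x_dissimilar = [5, 40, 5]  # usage pattern far from the known documents

lr_similar = log10_lr(x_known, x_similar, alpha)
lr_dissimilar = log10_lr(x_known, x_dissimilar, alpha)
print(round(lr_similar, 2), round(lr_dissimilar, 2))
```

A positive log10 LR supports H_p and a negative one supports H_d. These raw values would still need the calibration and validation described in steps 6 and 7 before being reported.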

Table 2: Key Research Reagents and Computational Tools

| Reagent/Tool | Type | Function in FTC Research |

|---|---|---|

| Background Corpus | Data | Provides a representative sample of language use for estimating population parameters (the Dirichlet prior α). Must be relevant to case conditions [2]. |

| Linguistic Feature Set | Model Input | A predefined set of linguistic units (e.g., words, character n-grams) whose frequencies form the multivariate count data modeled by the multinomial distribution. |

| Dirichlet-Multinomial Model | Statistical Model | The core engine for calculating the probability of the observed evidence under the competing hypotheses H_p and H_d. |

| Likelihood Ratio (LR) Framework | Interpretative Framework | The logical structure for weighing evidence and reporting its strength, preventing the expert from opining on the ultimate issue [2]. |

| Calibration Model (e.g., Logistic Regression) | Statistical Method | Adjusts the output of the raw model to ensure that LRs are meaningful and correctly scaled (e.g., an LR of 10 truly provides 10:1 support for H_p) [2]. |

| PyMC / Probabilistic Programming Language | Software Library | Enables Bayesian inference for fitting Dirichlet-multinomial models and performing posterior predictive checks [4]. |

Workflow: Empirical Validation of an FTC System

The validation of a forensic text comparison system must be rigorous and mimic real-world conditions. The following workflow outlines the key stages for a robust empirical validation study.

Quantitative Data Presentation and Analysis

The performance of a forensic analysis method must be quantitatively assessed. For a Dirichlet-multinomial model in an FTC context, this involves summarizing the model's output and its diagnostic accuracy.

Table 3: Example Output from a Simulated FTC Experiment

This table simulates the results of a validation study where a Dirichlet-multinomial model was used to compute Likelihood Ratios for 10 document pairs, 5 of which were from the same author (H_p true) and 5 from different authors (H_d true). The log-Likelihood Ratio Cost (C_llr) is a single scalar measure of overall system performance, where a lower value indicates better accuracy and calibration [2].

| Comparison ID | Ground Truth | Raw LR | Log10(LR) | Calibrated LR | Supports Correct Hypothesis? |

|---|---|---|---|---|---|

| Comp_01 | H_p (Same) | 15.2 | 1.18 | 12.1 | Yes |

| Comp_02 | H_p (Same) | 8.1 | 0.91 | 7.5 | Yes |

| Comp_03 | H_d (Different) | 0.15 | -0.82 | 0.18 | Yes |

| Comp_04 | H_p (Same) | 120.5 | 2.08 | 85.3 | Yes |

| Comp_05 | H_d (Different) | 0.05 | -1.30 | 0.08 | Yes |

| Comp_06 | H_d (Different) | 1.5 | 0.18 | 1.1 | No (False Support for H_p) |

| Comp_07 | H_p (Same) | 2.3 | 0.36 | 2.8 | Yes |

| Comp_08 | H_d (Different) | 0.8 | -0.10 | 0.9 | Yes (Weakly) |

| Comp_09 | H_p (Same) | 45.0 | 1.65 | 38.2 | Yes |

| Comp_10 | H_d (Different) | 0.02 | -1.70 | 0.03 | Yes |

| Performance Metric | Value |

|---|---|

| Log-Likelihood Ratio Cost (C_llr) | 0.32 |
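The C_llr value can be recomputed from the table. The sketch below applies the standard C_llr definition to the Calibrated LR column, split by ground truth; the function name `cllr` is an assumption for illustration.

```python
from math import log2


def cllr(same_lrs, diff_lrs):
    """Log-likelihood-ratio cost for same-author and different-author LRs."""
    same_cost = sum(log2(1 + 1 / lr) for lr in same_lrs) / len(same_lrs)
    diff_cost = sum(log2(1 + lr) for lr in diff_lrs) / len(diff_lrs)
    return 0.5 * (same_cost + diff_cost)


# Calibrated LR column of the simulated table, grouped by ground truth.
calibrated_same = [12.1, 7.5, 85.3, 2.8, 38.2]  # Comp_01, 02, 04, 07, 09
calibrated_diff = [0.18, 0.08, 1.1, 0.9, 0.03]  # Comp_03, 05, 06, 08, 10

table_cllr = cllr(calibrated_same, calibrated_diff)
print(round(table_cllr, 2))
```

The result should come out close to the 0.32 reported in the table. A system that always outputs LR = 1 scores exactly 1 on this measure, which is the usual reference point for an uninformative system.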

Hierarchical Dirichlet-Multinomial Model (DMM) for Text

The Hierarchical Dirichlet-Multinomial Model (DMM) represents a powerful Bayesian probabilistic framework for analyzing multivariate count data, particularly within text analysis applications. In forensic science, this model provides a mathematically rigorous foundation for addressing authorship verification tasks. The model's capacity to handle overdispersed count data—a common characteristic of textual information represented in a bag-of-words format—makes it particularly suitable for analyzing the complex and variable nature of writing styles [3]. Furthermore, its hierarchical nature allows for the effective modeling of grouped data, such as multiple documents written by the same author.

Within the context of forensic text comparison (FTC), the primary goal is to evaluate the strength of evidence regarding the authorship of a questioned document. The DMM framework integrates naturally into the likelihood ratio (LR) framework, which is widely recognized as the logically and legally correct method for forensic evidence evaluation [2] [5]. This framework quantitatively assesses whether the observed textual evidence is more likely under the prosecution's hypothesis (Hp: the suspect is the author) or the defense's hypothesis (Hd: another person is the author).

Key Applications in Forensic Text Comparison

The application of the Hierarchical DMM in forensic text comparison centers on its use as a statistical engine for calculating likelihood ratios. The following table summarizes the core components of this application:

Table 1: Core Application of the Hierarchical DMM in Forensic Text Comparison

| Application Component | Description | Role of Hierarchical DMM |

|---|---|---|

| Authorship Verification | Quantifying the evidence for whether a suspect authored a questioned document. | Provides a probabilistic model for text generation, allowing calculation of the evidence probability under both Hp and Hd. [2] |

| Strength of Evidence | Reporting the strength of the evidence on a continuous scale, avoiding categorical conclusions. | The output Likelihood Ratio (e.g., LR=100) indicates how much more likely the evidence is under Hp than under Hd. [5] |

| Handling Topic Mismatch | Addressing the challenge when known and questioned documents differ in topic, which can affect writing style. | The model's robustness helps manage vocabulary variations, though validation requires relevant data matching case conditions. [2] |

Experimental Protocols

Protocol for Model Training and Likelihood Ratio Calculation

This protocol outlines the procedure for training a Dirichlet-Multinomial model and using it to calculate likelihood ratios for authorship verification, as derived from forensic text comparison research [2] [5].

1. Data Preparation and Preprocessing

- Corpus Selection: Utilize a relevant corpus of text documents for training and validation. The Amazon Authorship Verification Corpus (AAVC) is a recognized benchmark, containing over 21,000 product reviews from 3,227 authors, with documents categorized into 17 topics [2].

- Text Processing: Convert all documents to lowercase. Remove punctuation, numbers, and extra whitespace.

- Feature Extraction: Implement a Bag-of-Words (BoW) model. Select the most frequent word tokens (e.g., the 140 most frequent tokens) as features to reduce dimensionality and mitigate overfitting [5].

- Vectorization: For each document, create a count vector representing the frequency of each selected token.

2. Model Training and Parameter Estimation

- Model Specification: Assume the count vectors for documents by an author follow a Multinomial distribution. Place a Dirichlet prior on the multinomial probability parameters to account for overdispersion and enable Bayesian inference.

- Parameter Estimation: Using the training documents from a known author, estimate the posterior distribution of the Dirichlet parameters. This is often achieved through maximum likelihood estimation or Bayesian methods, resulting in a set of author-specific parameters.

3. Likelihood Ratio Calculation Pipeline

The calculation of a Likelihood Ratio for a pair of documents (a known document K and a questioned document Q) is a two-stage process [5]:

- Score Calculation: The raw similarity score is computed using the Dirichlet-multinomial model. This score is based on the probability of the evidence (the word counts in Q) given the author's model derived from K.

- Logistic Regression Calibration: The raw scores are then calibrated using logistic regression to produce well-calibrated Likelihood Ratios. This step is critical to ensure that the LRs are meaningful and not misleading [2] [5].
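The calibration stage can be sketched as a one-dimensional logistic regression that maps raw scores to log-odds, followed by subtraction of the training set's log prior odds to obtain a log LR. The sketch below uses plain gradient descent and made-up scores; the function names, learning rate, and data are assumptions, and a production system would more likely rely on an established logistic regression implementation with held-out calibration data.

```python
from math import exp, log


def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))


def fit_calibration(scores, labels, rate=0.1, epochs=5000):
    """Fit log-odds = a * score + b by gradient descent on the logistic loss.
    Labels are 1 for same-author pairs and 0 for different-author pairs."""
    a, b = 1.0, 0.0
    n = len(scores)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            err = sigmoid(a * s + b) - y
            grad_a += err * s
            grad_b += err
        a -= rate * grad_a / n
        b -= rate * grad_b / n
    return a, b


def calibrated_log10_lr(score, a, b, n_same, n_diff):
    """Convert a raw score to log10 LR by removing the training prior odds."""
    log_prior_odds = log(n_same / n_diff)
    return (a * score + b - log_prior_odds) / log(10)


# Hypothetical raw scores from a calibration set (overlapping on purpose).
same_scores = [2.1, 1.4, 0.3, 3.0, -0.2]
diff_scores = [-1.8, -0.6, 0.5, -2.5, -1.1]
scores = same_scores + diff_scores
labels = [1] * len(same_scores) + [0] * len(diff_scores)

a, b = fit_calibration(scores, labels)
high = calibrated_log10_lr(3.0, a, b, len(same_scores), len(diff_scores))
low = calibrated_log10_lr(-2.5, a, b, len(same_scores), len(diff_scores))
print(round(a, 3), round(b, 3), round(high, 2), round(low, 2))
```

Because the mapping is a monotonic shift and scale of the raw score, calibration changes the magnitude of the reported LRs without changing how pairs are ranked.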

Protocol for Empirical Validation in Casework

A critical requirement in forensic science is the empirical validation of methods under conditions reflecting actual casework [2]. This protocol ensures that the DMM-based system's performance is evaluated realistically.

1. Define Casework Conditions

- Identify specific conditions of the case under investigation. A common and challenging condition is a mismatch in topics between the known and questioned documents [2].

2. Use Relevant Data

- The validation must use data that is relevant to the defined conditions. For a topic mismatch case, this means using a corpus where documents can be paired across different topics. The AAVC, with its 17 defined topics, is well-suited for this [2].

- Create validation pairs with different degrees of topic dissimilarity (e.g., "Cross-topic 1": highly dissimilar, "Cross-topic 2": moderately dissimilar) to test system robustness.

3. Performance Assessment

- Generate a sufficient number of same-author and different-author document pairs (e.g., 1,776 of each) under the defined cross-topic conditions [2].

- Calculate the log-likelihood-ratio cost (Cllr) for the system. This single metric evaluates the overall performance of the LR-based system, with lower values indicating better performance. Tippett plots can be used for visualization [2] [5].
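Constructing cross-topic validation pairs can be sketched as follows. The record layout (`id`, `author`, `topic`) and the four toy documents are hypothetical and do not reflect the actual AAVC file format; the sketch only shows how same-author and different-author pairs can be restricted to mismatched topics.

```python
from itertools import combinations


def cross_topic_pairs(docs):
    """Split all cross-topic document pairs into same-author and
    different-author lists; same-topic pairs are skipped."""
    same_author, diff_author = [], []
    for d1, d2 in combinations(docs, 2):
        if d1["topic"] == d2["topic"]:
            continue  # keep only pairs with a topic mismatch
        pair = (d1["id"], d2["id"])
        if d1["author"] == d2["author"]:
            same_author.append(pair)
        else:
            diff_author.append(pair)
    return same_author, diff_author


# Hypothetical document records.
docs = [
    {"id": "a1", "author": "A", "topic": "books"},
    {"id": "a2", "author": "A", "topic": "music"},
    {"id": "b1", "author": "B", "topic": "books"},
    {"id": "b2", "author": "B", "topic": "toys"},
]

same_pairs, diff_pairs = cross_topic_pairs(docs)
print(same_pairs)
print(diff_pairs)
```

In a full study the different-author list is usually much longer than the same-author list, so it would typically be subsampled to obtain equal numbers of each pair type.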

Table 2: Key Performance Metrics for Validation

| Metric | Description | Interpretation |

|---|---|---|

| Log-Likelihood-Ratio Cost (Cllr) | A single scalar metric for the performance of a LR-based system across all its discrimination and calibration abilities. | A lower Cllr indicates better system performance. A Cllr > 1 suggests the system is jeopardizing the value of the evidence. [5] |

| Tippett Plots | A graphical representation showing the cumulative proportion of LRs supporting one hypothesis over the other for both same-source and different-source ground truths. | Allows visual assessment of the discrimination and calibration of the calculated LRs. |
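The data behind a Tippett plot can be computed without any plotting library. The sketch below returns, for each threshold, the proportion of comparisons whose log10 LR is at or above that threshold. Plotting conventions vary between authors, and the log10 LR values here are hypothetical.

```python
def tippett_curve(log10_lrs, thresholds):
    """Proportion of log10 LRs greater than or equal to each threshold."""
    n = len(log10_lrs)
    return [sum(1 for v in log10_lrs if v >= t) / n for t in thresholds]


# Hypothetical log10 LRs from a small validation run.
same_author = [1.18, 0.91, 2.08, 0.36, 1.65]
diff_author = [-0.82, -1.30, 0.18, -0.10, -1.70]
thresholds = [-2.0, -1.0, 0.0, 1.0, 2.0]

same_curve = tippett_curve(same_author, thresholds)
diff_curve = tippett_curve(diff_author, thresholds)
print(same_curve)
print(diff_curve)
```

At the threshold log10 LR = 0, the different-author curve gives the proportion of comparisons that misleadingly support H_p, and one minus the same-author curve gives the proportion that misleadingly support H_d.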

The Scientist's Toolkit

The following table details key reagents, software, and data resources essential for conducting DMM-based forensic text comparison research.

Table 3: Research Reagent Solutions for DMM-based Forensic Text Analysis

| Tool / Resource | Function / Description | Relevance to DMM Forensic Analysis |

|---|---|---|

| Amazon Authorship Verification Corpus (AAVC) | A benchmark corpus of 21,347 product reviews from 3,227 authors, categorized into 17 topics. | Provides a standardized, well-controlled dataset for model development and validation, especially for cross-topic analysis. [2] |

| Dirichlet-Multinomial Statistical Model | A probabilistic model for multivariate count data that accounts for overdispersion. | Serves as the core statistical engine for calculating the initial similarity scores between documents. [2] [5] |

| Logistic Regression Calibration | A statistical method for transforming raw model scores into well-calibrated probabilities. | A critical post-processing step to ensure the output LRs are meaningful and accurately represent the strength of evidence. [5] |

| Likelihood Ratio (LR) Framework | The logical and legal framework for evaluating the strength of forensic evidence. | Provides the interpretable output (e.g., "The evidence is 100 times more likely under Hp than Hd") for courtroom presentation. [2] |

Advanced Model Visualization

The Hierarchical Dirichlet-Multinomial Model can be extended for more complex analyses. The Hierarchical Dirichlet Process (HDP) mixture model allows for sharing mixture components across different groups of data (e.g., different authors) in a non-parametric way, which is useful for modeling large and diverse corpora [6].

The Hierarchical Dirichlet-Multinomial Model provides a robust statistical foundation for forensic text comparison. Its integration into the likelihood ratio framework allows for the quantitative and transparent evaluation of authorship evidence. The successful application of this model in a forensic context is contingent upon rigorous empirical validation using data and conditions that mirror those of the case under investigation. Future work in this field will focus on refining these models to handle the full complexity of textual evidence, including the interplay of author-specific, community-level, and situational factors that influence writing style.

Advantages of DMM for Multivariate, Overdispersed Count Data

The Dirichlet-multinomial (DMM) is a compound probability distribution that is particularly effective for modeling multivariate count data exhibiting overdispersion (extra-variation) [7] [8]. It serves as a robust alternative to the standard multinomial distribution, which often fails to account for the increased variability commonly found in real-world datasets [3] [9]. The DMM is generated by first drawing a probability vector p from a Dirichlet distribution and then drawing a count vector from a multinomial distribution using that same p [8]. This two-step process provides the flexibility needed to model data where the variance exceeds what the standard multinomial distribution can accommodate.

In forensic science, particularly in forensic text comparison (FTC), the Dirichlet-multinomial model provides a statistical foundation for evaluating evidence under the likelihood ratio (LR) framework [2]. This framework is considered the logically and legally correct approach for interpreting the strength of forensic evidence, including textual evidence [2]. The application of DMM in this context helps address the complex nature of textual data, which encodes multiple layers of information—including authorship idiolect, group-level sociolinguistic patterns, and situational influences—all of which contribute to the overdispersed nature of linguistic count data [2].

Theoretical Advantages over the Multinomial Model

Handling Overdispersion and Complex Variance

The primary advantage of the Dirichlet-multinomial model lies in its ability to effectively handle overdispersed count data, where the observed variance significantly exceeds the nominal variance assumed by the multinomial distribution [7]. Table 1 summarizes the key differences in the mean-variance structure between the standard multinomial and the Dirichlet-multinomial distributions.

Table 1: Comparison of Multinomial and Dirichlet-Multinomial Properties

| Property | Multinomial Distribution | Dirichlet-Multinomial Distribution |

|---|---|---|

| Data Type | Discrete | Discrete |

| Support | Vectors of counts summing to n | Vectors of counts summing to n |

| Mean Structure | E(Xᵢ) = npᵢ | E(Xᵢ) = nαᵢ/α₀ |

| Variance Structure | Var(Xᵢ) = npᵢ(1-pᵢ) | Var(Xᵢ) = n(αᵢ/α₀)(1-αᵢ/α₀)[(n+α₀)/(1+α₀)] |

| Covariance Structure | Cov(Xᵢ,Xⱼ) = -npᵢpⱼ | Cov(Xᵢ,Xⱼ) = -n(αᵢαⱼ/α₀²)[(n+α₀)/(1+α₀)] |

| Overdispersion | Cannot model overdispersion | Explicitly accounts for overdispersion |

| Correlation between counts | Always negative | Always negative, but with increased flexibility |

The variance of the Dirichlet-multinomial distribution includes an additional multiplicative factor of (n+α₀)/(1+α₀) compared to the multinomial variance [8]. This factor explicitly accounts for the extra variation, making the DMM particularly suitable for real-world data that often exhibits greater variability than theoretical models can capture [7].
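A short numeric sketch makes the size of this factor concrete. It evaluates the two variance formulas from Table 1 for one category; the document length n and the concentration vector are hypothetical values chosen for illustration.

```python
def multinomial_variance(n, p_i):
    """Var(X_i) under a multinomial with n trials and probability p_i."""
    return n * p_i * (1 - p_i)


def dm_variance(n, alpha_i, alpha_0):
    """Var(X_i) under a Dirichlet-multinomial with concentration sum alpha_0."""
    p_i = alpha_i / alpha_0
    return n * p_i * (1 - p_i) * (n + alpha_0) / (1 + alpha_0)


# Hypothetical setting: a 500-token document and three feature categories.
n = 500
alpha = [20.0, 30.0, 50.0]
alpha_0 = sum(alpha)

mult_var = multinomial_variance(n, alpha[0] / alpha_0)
dm_var = dm_variance(n, alpha[0], alpha_0)
inflation = dm_var / mult_var
print(round(mult_var, 2), round(dm_var, 2), round(inflation, 2))
```

Here the inflation factor is (500 + 100) / (1 + 100), roughly 5.9. The factor approaches 1 as α₀ grows large, so the Dirichlet-multinomial collapses to the multinomial when authors in the population barely differ from one another.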

Accommodating Diverse Correlation Structures

While the basic DMM maintains the negative correlation structure of the multinomial distribution, extended versions like the Generalized Dirichlet-Multinomial (GDM) and Deep Dirichlet-Multinomial (DDM) models can accommodate both positive and negative correlations between variables [3] [9]. This flexibility is crucial for modeling complex datasets such as those found in microbiome research [3], RNA sequencing [9], and mutational signature analysis [10], where the relationships between different categories can be complex and varied.

The ability of these extended models to capture complex correlation structures is a significant advantage over the standard multinomial model, which imposes a rigid negative correlation structure that may not reflect biological or linguistic reality [3] [9]. In RNA-seq data analysis, for example, the multinomial-logit model can produce seriously inflated Type I errors when testing null predictors, whereas the GDM approach keeps Type I error well controlled while retaining high power for detecting true effects [9].

Practical Implementation and Protocols

Experimental Design for Forensic Text Comparison

For forensic text comparison applications, the empirical validation of a Dirichlet-multinomial system should replicate the conditions of the case under investigation using relevant data [2]. The following protocol outlines the key steps:

Protocol 1: Dirichlet-Multinomial Model Application for Forensic Text Comparison

Data Collection and Preparation: Collect textual evidence from known and questioned sources. The Amazon Authorship Verification Corpus (AAVC) provides a suitable benchmark dataset, containing reviews from multiple authors across different topics [2].

Feature Extraction: Quantitatively measure properties of the documents. Common features include:

- Word or character n-gram frequencies

- Syntactic patterns

- Vocabulary richness measures

- Topic-specific terminology

Model Specification: Implement the Dirichlet-multinomial model with appropriate priors. The model can be specified as:

- p ~ Dirichlet(α)

- counts ~ Multinomial(n, p)

Likelihood Ratio Calculation: Compute likelihood ratios using the Dirichlet-multinomial model to evaluate the strength of evidence:

- LR = p(E|H_p) / p(E|H_d)

- Where H_p posits a common author and H_d different authors

Model Calibration: Apply logistic regression calibration to the derived likelihood ratios to ensure well-calibrated values [2].

Performance Assessment: Evaluate the system using appropriate metrics such as the log-likelihood-ratio cost (Cllr) and visualize results using Tippett plots [2].
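The performance-assessment step can be sketched in a few lines of Python. The function below implements the standard definition of Cllr for a set of validation trials with known ground truth; the LR values are invented purely for illustration and the function name is an assumption of this sketch.

```python
import math

def cllr(lrs_same, lrs_diff):
    """Log-likelihood-ratio cost for LRs from same-author and different-author trials."""
    # Same-author trials are penalized when the LR is small.
    penalty_same = sum(math.log2(1.0 + 1.0 / lr) for lr in lrs_same) / len(lrs_same)
    # Different-author trials are penalized when the LR is large.
    penalty_diff = sum(math.log2(1.0 + lr) for lr in lrs_diff) / len(lrs_diff)
    return 0.5 * (penalty_same + penalty_diff)

# Hypothetical validation LRs (not taken from any study).
same_author_lrs = [20.0, 150.0, 3.0, 0.8]
diff_author_lrs = [0.05, 0.3, 0.01, 1.5]

print(cllr(same_author_lrs, diff_author_lrs))
# A system that always reports LR = 1 carries no information and scores exactly 1.
print(cllr([1.0, 1.0], [1.0, 1.0]))  # 1.0
```

Values below 1 indicate that the system is more informative than one that always reports LR = 1; lower is better.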

Bayesian Implementation with Spike-and-Slab Priors

For variable selection in high-dimensional settings, a Bayesian implementation with spike-and-slab priors can be employed [3]. This approach allows for simultaneous parameter estimation and variable selection, which is particularly useful when dealing with many potential predictors.

Protocol 2: Bayesian Estimation with Variable Selection

Model Reparameterization: Reparameterize the DMM for regression purposes, linking covariates to the marginal mean of the multivariate response [3].

Prior Specification:

- Apply spike-and-slab mixtures for variable selection

- Use Hamiltonian Monte Carlo (HMC) for estimation

Parameter Estimation: Implement a tailored HMC sampling method to efficiently explore the parameter space [3].

Model Diagnostics: Check convergence using trace plots and effective sample sizes, similar to the PyMC implementation example [4].

Posterior Predictive Checks: Validate model fit by comparing observed data with simulated data from the posterior predictive distribution [4].

The Bayesian approach is particularly advantageous for forensic applications as it provides a natural framework for incorporating prior knowledge and quantifying uncertainty in conclusions.

Application in Forensic Text Comparison Research

Addressing the Challenges of Textual Evidence

Textual evidence presents unique challenges for statistical modeling due to its complex, multi-layered nature [2]. A single text encodes information about:

- Authorship (the individual's unique idiolect)

- Social group (the community the author belongs to)

- Communicative situation (genre, topic, formality level)

The Dirichlet-multinomial model accommodates this complexity through its flexible structure, making it particularly suitable for forensic text comparison. When applying DMM to textual data, researchers must pay special attention to potential mismatches between documents, particularly in topic or domain, which can significantly affect writing style and consequently the model performance [2].

Validation Requirements for Forensic Applications

For forensic applications, proper validation of Dirichlet-multinomial systems requires:

- Reflecting case conditions: Validation experiments must replicate the specific conditions of the case under investigation [2].

- Using relevant data: The data used for validation must be appropriate for the specific case context [2].

Failure to adhere to these validation principles may mislead the trier-of-fact in their final decision [2]. The Dirichlet-multinomial framework, when properly validated, provides a scientifically defensible approach to forensic text comparison that is transparent, reproducible, and resistant to cognitive bias [2].

Research Reagents and Computational Tools

Table 2: Essential Research Tools for Dirichlet-Multinomial Modeling

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| R mglm package [9] | Software Package | Fitting multiple multivariate GLMs | General multivariate count data analysis |

| PyMC [4] | Probabilistic Programming | Bayesian modeling with MCMC sampling | Flexible DMM implementation |

| CompSign R package [10] | Specialized Software | Dirichlet-multinomial mixed models | Mutational signature analysis |

| Amazon Authorship Verification Corpus [2] | Benchmark Dataset | Validation of authorship methods | Forensic text comparison |

| Spike-and-Slab Priors [3] | Statistical Method | Variable selection in high dimensions | Feature selection in text analysis |

| Hamiltonian Monte Carlo [3] | Estimation Algorithm | Efficient posterior sampling | High-dimensional parameter estimation |

| Logistic Regression Calibration [2] | Calibration Method | Improving LR reliability | Forensic evidence evaluation |

Workflow and Conceptual Diagrams

Dirichlet-Multinomial Model Structure

The following diagram illustrates the hierarchical structure and data-generating process of the Dirichlet-multinomial model:

Diagram 1: Dirichlet-multinomial model structure showing the hierarchical data-generating process where a probability vector is first drawn from a Dirichlet distribution and then used to generate count data via a multinomial distribution.

Forensic Text Comparison Workflow

The workflow for applying Dirichlet-multinomial models in forensic text comparison involves multiple stages from data collection to evidence interpretation:

Diagram 2: Forensic text comparison workflow using Dirichlet-multinomial models, showing the process from data collection through to evidence interpretation, with system validation impacting multiple stages.

The Dirichlet-multinomial model provides a powerful framework for analyzing multivariate, overdispersed count data with significant advantages over the standard multinomial distribution. Its ability to account for extra variation, accommodate complex correlation structures, and integrate seamlessly into Bayesian frameworks with variable selection capabilities makes it particularly valuable for forensic text comparison applications. When implemented with proper validation protocols and computational tools, the DMM offers a scientifically defensible approach to evaluating the strength of textual evidence under the likelihood ratio framework. The continued development of specialized implementations, such as mixed-effects extensions and deep Dirichlet-multinomial architectures, promises to further enhance its applicability to complex forensic science challenges.

The Likelihood Ratio Framework for Evaluating Forensic Evidence

The likelihood ratio (LR) has become a cornerstone for the evaluation of forensic evidence, providing a logically and legally correct approach for quantifying the strength of evidence [2]. This framework offers a transparent, reproducible, and statistically sound method for forensic interpretation, increasingly adopted across various disciplines including forensic text comparison [2]. The LR framework separates the role of the forensic expert, who assesses the evidence, from that of the decision-maker (e.g., judge or juror), who considers the evidence in the context of prior case information [11]. Within forensic text comparison, the LR framework enables quantitative assessment of authorship by balancing similarity (how similar questioned and known documents are) and typicality (how distinctive this similarity is within the relevant population) [2]. This paper details the application of the Dirichlet-multinomial model within this framework, providing comprehensive protocols for its implementation in forensic text comparison research.

Theoretical Foundation of Likelihood Ratios

Basic Principles and Bayesian Interpretation

The likelihood ratio is a quantitative statement of evidence strength expressed as [2]:

LR = p(E|Hp) / p(E|Hd)

Where:

- E represents the observed evidence

- p(E|Hp) is the probability of observing evidence E given the prosecution hypothesis Hp is true

- p(E|Hd) is the probability of observing evidence E given the defense hypothesis Hd is true

In forensic text comparison, typical hypotheses are:

- Hp: "The source-questioned and source-known documents were produced by the same author"

- Hd: "The source-questioned and source-known documents were produced by different authors" [2]

The LR functions within the broader framework of Bayesian reasoning, where it updates prior beliefs about hypotheses based on new evidence [11]. This relationship is formally expressed through the odds form of Bayes' Theorem [2]:

Posterior Odds = Prior Odds × LR

This formula separates the fact-finder's initial beliefs (prior odds) from the evidence strength (LR) provided by the forensic expert [11]. The interpretation of LR values follows a standardized scale, where values further from 1 indicate stronger evidence [12]:

Table 1: Likelihood Ratio Interpretation Guide

| LR Value | Interpretation | Support for Hp |

|---|---|---|

| LR < 1 | Evidence supports Hd | Negative |

| LR = 1 | Evidence neutral | None |

| 1 < LR < 10 | Limited evidence | Weak |

| 10 ≤ LR < 100 | Moderate evidence | Moderate |

| 100 ≤ LR < 1000 | Moderately strong evidence | Moderately strong |

| 1000 ≤ LR < 10000 | Strong evidence | Strong |

| LR ≥ 10000 | Very strong evidence | Very strong |
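As a small illustration of the odds form of Bayes' Theorem and the verbal scale in Table 1, the following Python sketch updates a prior with an LR and looks up the corresponding verbal category. The prior odds and LR used here are hypothetical numbers, and the function names are assumptions of this sketch.

```python
def posterior_odds(prior_odds, lr):
    """Odds form of Bayes' Theorem: posterior odds = prior odds x LR."""
    return prior_odds * lr

def odds_to_probability(odds):
    """Convert odds in favour of Hp into a probability."""
    return odds / (1.0 + odds)

def verbal_scale(lr):
    """Map an LR to the interpretation column of Table 1."""
    if lr < 1:
        return "Evidence supports Hd"
    if lr == 1:
        return "Evidence neutral"
    if lr < 10:
        return "Limited evidence"
    if lr < 100:
        return "Moderate evidence"
    if lr < 1000:
        return "Moderately strong evidence"
    if lr < 10000:
        return "Strong evidence"
    return "Very strong evidence"

# Hypothetical case: the fact-finder's prior odds are 1 to 100, the expert reports LR = 500.
prior = 0.01
lr = 500.0
post = posterior_odds(prior, lr)
print(verbal_scale(lr), post, odds_to_probability(post))
```

The sketch keeps the two roles separate: the expert supplies only `lr`, while `prior` belongs to the decision-maker.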

Methodological Considerations and Uncertainty

The computation of LRs involves inherent subjectivity, as the LR in Bayes' formula is properly the personal LR of the decision-maker [11]. When experts provide LRs to decision-makers, this represents a hybrid adaptation of the Bayesian framework that requires careful uncertainty characterization [11]. The assumptions lattice and uncertainty pyramid concepts provide frameworks for assessing this uncertainty by exploring the range of LR values attainable under different reasonable models and assumptions [11]. This is particularly crucial in forensic text comparison, where methodological choices significantly impact LR values.

Dirichlet-Multinomial Model for Forensic Text Comparison

Model Foundation and Mathematical Formulation

The Dirichlet-multinomial distribution is a compound probability distribution that results from a multinomial distribution with a Dirichlet-distributed parameter vector [8]. Also known as the Dirichlet compound multinomial (DCM) or multivariate Pólya distribution, it provides a flexible framework for modeling multivariate count data with overdispersion, making it particularly suitable for textual data [8].

For a random vector of category counts x = (x₁, ..., x_K) with total count n and parameter vector α = (α₁, ..., α_K), the probability mass function is given by [8]:

Pr(x∣n,α) = [Γ(α₀)Γ(n+1) / Γ(n+α₀)] × ∏ₖ₌₁ᴷ [Γ(xₖ + αₖ) / (Γ(αₖ)Γ(xₖ + 1))]

Where:

- α₀ = ∑αₖ (sum of all parameters)

- Γ is the gamma function

- K is the number of categories (e.g., vocabulary terms in text)

The mean and variance of the distribution are [8]:

- E(Xᵢ) = nαᵢ/α₀

- Var(Xᵢ) = n(αᵢ/α₀)(1 - αᵢ/α₀)[(n + α₀)/(1 + α₀)]
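The probability mass function and moments above translate directly into code. The following is a minimal Python sketch using log-gamma functions for numerical stability; the function names and example values are illustrative assumptions rather than part of any cited implementation.

```python
import math

def dm_log_pmf(x, alpha):
    """Log of the Dirichlet-multinomial PMF for counts x and parameters alpha."""
    n = sum(x)
    a0 = sum(alpha)
    out = math.lgamma(a0) + math.lgamma(n + 1) - math.lgamma(n + a0)
    for xk, ak in zip(x, alpha):
        out += math.lgamma(xk + ak) - math.lgamma(ak) - math.lgamma(xk + 1)
    return out

def dm_mean_var(n, alpha):
    """Per-category means and variances for total count n."""
    a0 = sum(alpha)
    inflation = (n + a0) / (1.0 + a0)  # overdispersion factor relative to the multinomial
    means = [n * a / a0 for a in alpha]
    variances = [n * (a / a0) * (1.0 - a / a0) * inflation for a in alpha]
    return means, variances

# Hypothetical three-feature example.
alpha = [2.0, 5.0, 3.0]
print(math.exp(dm_log_pmf([1, 6, 3], alpha)))
print(dm_mean_var(10, alpha))
```

Working on the log scale matters in practice because text feature vectors often have hundreds or thousands of categories, where the raw gamma values overflow.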

The Dirichlet-multinomial model effectively addresses the overdispersion common in textual data, where variability exceeds what standard multinomial models can capture [3]. This makes it particularly valuable for forensic text comparison, where both the presence of rare features and the absence of common ones contribute to authorship discrimination.

Application to Forensic Text Comparison

In forensic text comparison, the Dirichlet-multinomial model serves as the statistical foundation for calculating likelihood ratios in authorship analysis [2]. The model treats text as a collection of linguistic features (typically word frequencies or syntactic patterns) and calculates the probability of observing the specific feature distribution under both the prosecution (same-author) and defense (different-author) hypotheses [2].

The Dirichlet-multinomial model offers significant advantages over simple multinomial models or distance-based approaches (e.g., Cosine distance) because it [13]:

- Naturally accommodates overdispersed count data common in texts

- Provides better modeling of rare features through smoothing

- Enables more accurate probability estimation for small sample sizes

- Incorporates both similarity and typicality considerations

Table 2: Comparison of Text Comparison Methods

| Method | Similarity Assessment | Typicality Assessment | Handling of Sparse Data | Theoretical Foundation |

|---|---|---|---|---|

| Distance-based (e.g., Cosine) | Yes | Limited | Poor | Geometric |

| Simple Multinomial | Yes | Yes | Poor | Probability |

| Dirichlet-Multinomial | Yes | Yes | Good | Probability |

| Poisson Model | Yes | Yes | Moderate | Probability |

Feature-based methods using the Dirichlet-multinomial model have demonstrated superior performance compared to score-based methods using Cosine distance, with improvements quantified by the log-LR cost (Cllr) metric [13]. Performance can be further enhanced through appropriate feature selection techniques that identify the most discriminative linguistic features [13].

Experimental Protocols for Forensic Text Comparison

Core Validation Principles

Empirical validation of forensic text comparison methodologies must satisfy two critical requirements [2]:

- Reflecting case conditions: Experiments must replicate the specific conditions of the case under investigation

- Using relevant data: Validation must employ data relevant to the specific case circumstances

These requirements ensure that validation studies accurately represent the challenges present in actual casework, such as topic mismatch between questioned and known documents, which significantly impacts method performance [2]. Different types of mismatches (e.g., topic, genre, register) present distinct challenges and require separate validation [2].

Dirichlet-Multinomial LR Calculation Protocol

Objective: Calculate a likelihood ratio for authorship attribution using the Dirichlet-multinomial model.

Materials Required:

- Questioned document (Q)

- Known documents from suspected author (K)

- Reference corpus representing relevant population

- Computational resources for statistical analysis

Procedure:

Feature Extraction and Selection

- Identify and extract linguistic features (e.g., word frequencies, character n-grams, syntactic patterns)

- Apply feature selection to retain the most discriminative features

- Create a combined feature set across Q, K, and reference corpus

Model Training

- Estimate Dirichlet-multinomial parameters from reference corpus

- Calculate background feature probabilities

- Determine concentration parameters α for the model

Probability Calculation

- Compute p(E|Hp): Probability of observing feature counts in Q given K was written by the same author

- Compute p(E|Hd): Probability of observing feature counts in Q given K was written by a different author from the population

LR Computation and Calibration

- Calculate LR = p(E|Hp) / p(E|Hd)

- Apply logistic regression calibration if necessary

- Compute performance metrics (e.g., Cllr)

Validation and Reporting:

- Conduct black-box studies with known ground truth

- Report empirical cross-entropy or Tippett plots

- Provide uncertainty estimates for LR values

- Document all modeling assumptions and parameter choices
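The probability calculation and LR computation steps can be sketched as follows. This is a deliberately simplified one-level model and an assumption of this sketch, not the procedure of the cited studies: the background parameters α are taken as already estimated from the reference corpus, p(E|Hd) is the Dirichlet-multinomial probability of the questioned counts under α, and p(E|Hp) is the same probability after α has been updated with the known-author counts (the conjugate posterior). All counts and parameter values are invented.

```python
import math

def dm_log_pmf(x, alpha):
    """Log Dirichlet-multinomial probability of counts x given parameters alpha."""
    n, a0 = sum(x), sum(alpha)
    out = math.lgamma(a0) + math.lgamma(n + 1) - math.lgamma(n + a0)
    for xk, ak in zip(x, alpha):
        out += math.lgamma(xk + ak) - math.lgamma(ak) - math.lgamma(xk + 1)
    return out

def log_lr(questioned, known, alpha_background):
    """Natural-log LR for same-author (Hp) versus different-author (Hd)."""
    # Hp: the author's feature probabilities are informed by the known documents.
    alpha_posterior = [a + k for a, k in zip(alpha_background, known)]
    log_p_hp = dm_log_pmf(questioned, alpha_posterior)
    # Hd: the questioned document comes from some other author in the population.
    log_p_hd = dm_log_pmf(questioned, alpha_background)
    return log_p_hp - log_p_hd

alpha_background = [4.0, 3.0, 2.0, 1.0]   # hypothetical reference-corpus estimate
known = [30, 5, 10, 5]                    # feature counts in K
q_similar = [12, 2, 4, 2]                 # questioned counts resembling K
q_dissimilar = [2, 12, 2, 4]              # questioned counts unlike K

log_lr_similar = log_lr(q_similar, known, alpha_background)
log_lr_dissimilar = log_lr(q_dissimilar, known, alpha_background)
print(math.exp(log_lr_similar), math.exp(log_lr_dissimilar))
```

The background parameters enter both the numerator and the denominator, which is how the model combines similarity to K with typicality in the population. In casework the raw LRs would still need calibration and validation as described in the protocol.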

Workflow Visualization

Advanced Methodological Considerations

Model Extensions and Limitations

The standard Dirichlet-multinomial model cannot capture the full complexity of microbiome data, and the same limitation applies to textual data [3]. Its rigid covariance structure imposes pairwise negative correlations, which limits its ability to model co-occurrence relationships [3]. This has led to the development of extended models such as the Extended Flexible Dirichlet-Multinomial (EFDM) distribution, which accommodates both negative and positive dependence among variables [3].

The EFDM model can be viewed as a structured Dirichlet-multinomial mixture with specific parameter constraints that maintain interpretability while enhancing flexibility [3]. This extension provides explicit expressions for inter- and intraclass correlations, offering a more nuanced understanding of association patterns [3]. For forensic text comparison, this translates to improved modeling of feature co-occurrence patterns that may be author-specific.

Validation Framework and Uncertainty Quantification

Proper validation requires a systematic approach to uncertainty characterization through the assumptions lattice and uncertainty pyramid framework [11]. This involves:

- Identifying critical assumptions in the modeling process

- Exploring alternative assumptions and their impact on LR values

- Quantifying uncertainty across different assumption sets

- Reporting the range of plausible LR values

This approach acknowledges that even career statisticians cannot objectively identify one model as authoritatively appropriate, but can suggest criteria for assessing whether a given model is reasonable [11].

Table 3: Essential Research Reagents for Forensic Text Comparison

| Research Reagent | Function | Implementation Example |

|---|---|---|

| Reference Corpus | Represents relevant population for typicality assessment | Large collection of texts from potential authors |

| Feature Set | Defines measurable linguistic characteristics | Vocabulary items, character n-grams, syntactic patterns |

| Dirichlet-Multinomial Model | Statistical framework for probability calculation | Custom implementation or specialized software |

| Validation Dataset | Tests system performance with known ground truth | Controlled authorship dataset with verified authors |

| Calibration Tool | Adjusts raw scores to improve validity | Logistic regression or Platt scaling |

| Performance Metrics | Quantifies system reliability | Cllr, Tippett plots, accuracy measures |

Implementation Framework

System Architecture and Dependencies

The implementation of a Dirichlet-multinomial forensic text comparison system requires careful consideration of computational architecture and statistical dependencies. The system must handle the high-dimensional sparse data characteristic of textual evidence while providing statistically defensible results.

The key components include:

- Text preprocessing pipeline for normalization and feature extraction

- Feature selection mechanisms to identify discriminative linguistic features

- Parameter estimation routines for Dirichlet-multinomial models

- Probability calculation modules for both prosecution and defense hypotheses

- Validation frameworks for performance assessment and uncertainty quantification

Logical Relationship Diagram

The likelihood ratio framework provides a scientifically rigorous approach to forensic evidence evaluation, with the Dirichlet-multinomial model offering a powerful statistical foundation for forensic text comparison. The protocols and methodologies outlined in this document establish a comprehensive framework for implementation, validation, and uncertainty quantification. As the field advances, extended models such as the EFDM distribution promise enhanced capability to capture complex feature relationships while maintaining interpretability. Proper application requires strict adherence to validation principles, particularly replicating case-specific conditions and using relevant data, to ensure scientifically defensible and demonstrably reliable forensic text comparison.

Implementing DMM for Authorship Attribution and Forensic Text Comparison

Stylometric analysis is founded on the principle that every author possesses a unique, individual use of language manifested in their writings, which can be characterized through quantitative style markers [14]. The analysis does not focus on the content of a text but on the ways in which an author uses language features, making content-independent markers like grammatical categories, function words, or syntactic structures particularly valuable [15]. The core of any stylometric procedure involves the selection and extraction of relevant stylistic features, with n-grams representing one of the most powerful and commonly employed style markers for authorship attribution tasks [15].

The application of stylometry has evolved from literary analysis to forensic science, where it assists in inferring the origin of disputed documents [14]. The field has seen a significant shift towards scientifically defensible approaches, particularly with the adoption of the likelihood ratio (LR) framework for evaluating evidence strength [14]. Within this framework, the Dirichlet-multinomial model has emerged as a statistically rigorous method for handling the discrete, multivariate nature of stylometric feature data, offering advantages over simpler distance-based measures or continuous statistical models [14].

Categories of Stylometric Features and N-grams

Style Marker Classification

Style markers in stylometric analysis can be broadly categorized based on the linguistic level they target and their independence from thematic content. The most robust markers are those that authors use unconsciously, providing a reliable fingerprint of individual style [16].

Table 1: Categories of Stylometric Features

| Feature Category | Description | Examples | Applications |

|---|---|---|---|

| Character N-grams | Contiguous sequences of characters of length n | Letters, punctuation, digits | Authorship attribution, plagiarism detection [15] |

| Word N-grams | Contiguous sequences of words of length n | Frequent words, phrases | Fake news detection, authorship verification [15] |

| Syntactic Features | Features capturing grammatical structure | POS tags, syntactic relations n-grams | Detecting writing style changes over time [15] |

| Structural Features | Document-level organizational patterns | Sentence length, paragraph length, punctuation frequency | Preliminary authorship screening [17] |

N-gram Features in Detail

N-grams constitute one of the most fundamental and successful feature types in stylometry. An n-gram is a contiguous sequence of n elements extracted from a longer sequence of text, with the value of n determining the granularity of the stylistic information captured [15].

Character N-grams identify the frequency of use at the level of the alphabet of a language, including letters, capital letters, punctuation marks, or digits [15]. These features are particularly valuable because they are largely language-independent and can capture sub-word stylistic patterns, such as common misspellings, preferred suffixes, or typing habits.

Word N-grams relate to the vocabulary and phraseology used in a document. These features encompass not only the frequency of individual words but also collocations and fixed expressions [15]. Function words (e.g., "the," "and," "of") are especially discriminative in word n-gram analyses as they are used largely unconsciously and are relatively independent of text topic [16].

Part-of-Speech (POS) N-grams and Syntactic Relation N-grams represent the grammatical and syntactic structure of text. POS n-grams are sequences of grammatical tags assigned to words, while syntactic relation n-grams capture relationships between words in dependency parse trees [15]. These features are highly content-independent as they focus on how ideas are expressed rather than what ideas are expressed.

Table 2: N-gram Types and Their Characteristics

| N-gram Type | Elements Captured | Discriminatory Power | Topic Independence |

|---|---|---|---|

| Character (n=3-5) | Orthographic patterns, misspellings | High | Moderate to High |

| Word Unigrams | Vocabulary preferences, function words | High | Moderate (except function words) |

| Word Bigrams/Trigrams | Phrasal patterns, collocations | Very High | Low to Moderate |

| POS Tag N-grams | Grammatical patterns, syntax | Moderate to High | High |

| Syntactic Relation N-grams | Clause structures, dependency relations | High | High |

Dirichlet-Multinomial Model for Forensic Text Comparison

Theoretical Foundation

The Dirichlet-multinomial model provides a mathematically sound framework for forensic text comparison within the likelihood ratio paradigm. This model is particularly appropriate for stylometric features because it respects their discrete, multivariate nature, unlike continuous models that may violate statistical assumptions when applied to count data [14].

The model is based on the Dirichlet-multinomial distribution, which arises when multinomial distributions have their parameters drawn from a Dirichlet distribution. The probability mass function is defined as [18]:

$\Pr(\mathbf{x}\mid\boldsymbol{\alpha})=\frac{\left(n!\right)\Gamma\left(\alpha_0\right)}{\Gamma\left(n+\alpha_0\right)}\prod_{k=1}^{K}\frac{\Gamma(x_{k}+\alpha_{k})}{\left(x_{k}!\right)\Gamma(\alpha_{k})}$

where:

- $x_k$ is the k-th count (frequency of a specific n-gram)

- $n = \sum_k x_k$ (total number of n-grams)

- $\alpha_k$ is a prior belief about the k-th count

- $\alpha_0 = \sum_k \alpha_k$

- $\Gamma$ is the gamma function

In forensic applications, this model serves as a feature-based method that maintains the original multidimensional features for estimating likelihood ratios, preserving more authorship information compared to score-based methods that project features onto a univariate space [14].

Workflow and Integration

The following diagram illustrates the complete workflow for forensic text comparison using the Dirichlet-multinomial model with n-gram features:

Experimental Protocols for Feature Extraction and Analysis

Text Preprocessing and Normalization Protocol

Objective: To standardize text inputs before feature extraction, minimizing noise from formatting inconsistencies while preserving stylistic patterns.

Materials:

- Raw text documents (questioned and known authorship)

- Computational environment with text processing capabilities

Procedure:

Text Cleaning:

- Remove extraneous metadata, headers, and footers

- Convert encoding to uniform standard (UTF-8 recommended)

- Replace special characters with canonical equivalents

- Mark or remove direct quotations to avoid source contamination

Tokenization:

- For word n-grams: Split text into word tokens using language-specific rules

- For character n-grams: Treat text as continuous character stream

- Preserve sentence boundaries for structural features

- Document all normalization decisions for forensic transparency

Consistency Checks:

- Verify uniform treatment of hyphenated words and contractions

- Standardize case handling (recommend lowercasing for n-grams)

- Ensure consistent handling of numbers and symbols

Quality Control: Process a small sample manually to verify automated procedures. Maintain detailed preprocessing log for forensic accountability.
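A minimal Python sketch of the cleaning and tokenization steps is given below, using only the standard library. The specific choices (NFKC normalization for canonical equivalents, lowercasing, a regular expression that keeps contractions and hyphenated words as single tokens) are assumptions made for illustration; a casework pipeline would document and justify its own choices.

```python
import re
import unicodedata

def normalize_text(raw):
    """Map characters to canonical equivalents, lowercase, and collapse whitespace."""
    text = unicodedata.normalize("NFKC", raw)
    text = text.lower()
    return re.sub(r"\s+", " ", text).strip()

def word_tokens(text):
    """Split text into word tokens, keeping internal apostrophes and hyphens."""
    return re.findall(r"[^\W_]+(?:['-][^\W_]+)*", text)

sample = "  The  Writer DIDN'T re-use   this phrase.\n"
clean = normalize_text(sample)
print(clean)
print(word_tokens(clean))
```

For character n-grams the normalized string `clean` would be used directly as a character stream, while `word_tokens` feeds word n-gram extraction.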

N-gram Feature Extraction Protocol

Objective: To generate comprehensive n-gram features from preprocessed texts for stylistic analysis.

Materials:

- Preprocessed text documents

- Computational linguistics toolkit (e.g., NLTK, SpaCy) or specialized stylometry software

Table 3: N-gram Extraction Parameters

| N-gram Type | Recommended N values | Culling Threshold | Domain Considerations |

|---|---|---|---|

| Character N-grams | 3, 4, 5 | Minimum frequency: 5 | Language-specific character sets |

| Word N-grams | 1, 2, 3 | Minimum frequency: 2 | Topic sensitivity assessment |

| POS N-grams | 2, 3, 4 | Minimum frequency: 3 | Tagset consistency |

| Syntactic N-grams | 2, 3 | Minimum frequency: 2 | Parser accuracy validation |

Procedure:

Parameter Configuration:

- Set n-gram type and n-value range based on research questions

- Establish frequency thresholds to filter rare n-grams

- Determine maximum feature set size based on computational resources

Feature Generation:

- Extract n-grams according to specified parameters

- Count raw frequencies for each n-gram in each document

- Apply feature selection if necessary (e.g., mutual information, chi-square)

Vector Representation:

- Create document-term matrix with documents as rows and n-grams as columns

- Consider normalization (e.g., relative frequencies, TF-IDF)

- Preserve raw counts for Dirichlet-multinomial modeling

Validation: Extract n-grams from control texts with known authorship to verify system discriminative power.
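The feature generation and vector representation steps can be sketched with Python's standard library as follows. The example builds a raw-count document-term matrix of character n-grams with a corpus-level minimum-frequency cull; the function names, toy documents, and threshold are illustrative assumptions.

```python
from collections import Counter

def char_ngrams(text, n):
    """Count contiguous character n-grams in a preprocessed text."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))

def build_count_matrix(docs, n, min_freq):
    """Return (vocabulary, matrix) with documents as rows and n-grams as columns."""
    per_doc = [char_ngrams(doc, n) for doc in docs]
    totals = Counter()
    for counts in per_doc:
        totals.update(counts)
    # Cull n-grams whose total corpus frequency is below the threshold.
    vocab = sorted(g for g, f in totals.items() if f >= min_freq)
    # Raw counts are kept, since the Dirichlet-multinomial model works on counts.
    matrix = [[counts[g] for g in vocab] for counts in per_doc]
    return vocab, matrix

docs = ["the cat sat on the mat", "the dog sat on the log"]
vocab, matrix = build_count_matrix(docs, n=3, min_freq=2)
print(vocab)
print(matrix)
```

Word or POS n-grams can be handled the same way by passing a list of tokens or tags instead of a string and joining each slice into a tuple.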

Dirichlet-Multinomial Model Implementation Protocol

Objective: To implement a Dirichlet-multinomial model for calculating likelihood ratios in forensic text comparison.

Materials:

- N-gram feature matrices (raw counts)

- Statistical computing environment (R, Python with appropriate packages)

- Reference population data for background modeling

Procedure:

Prior Specification:

- Set Dirichlet prior parameters α based on reference population data

- Consider symmetric priors (α_k = α for all k) for minimal informativeness

- Validate prior sensitivity through robustness checks

Model Training:

- For each known-author document set, estimate posterior distributions

- Use empirical Bayes approaches for hyperparameter tuning

- Implement smoothing to handle zero counts appropriately

Likelihood Ratio Calculation:

- Compute probability of evidence under prosecution hypothesis (same author)

- Compute probability of evidence under defense hypothesis (different authors)

- Calculate LR as ratio of these probabilities: LR = P(E|Hp) / P(E|Hd)

Performance Validation:

- Use log-likelihood ratio cost (C_llr) to assess system performance

- Conduct cross-validation with held-out texts

- Evaluate calibration and discrimination separately

Forensic Reporting: Document all modeling decisions, assumptions, and validation results. Report LRs with appropriate measures of uncertainty.
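One way to set α from reference population data, in the spirit of the empirical Bayes step above, is maximum-likelihood estimation with Minka's fixed-point iteration. The sketch below is an assumption of this rewrite rather than the method of the cited studies. It uses only the standard library, so the digamma function is approximated by a recurrence plus an asymptotic series, and the reference data are simulated with made-up parameters.

```python
import math
import random

def digamma(x):
    """Digamma for x > 0: upward recurrence, then an asymptotic series."""
    result = 0.0
    while x < 6.0:
        result -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    return result + math.log(x) - 0.5 / x - inv2 * (1.0 / 12 - inv2 * (1.0 / 120 - inv2 / 252))

def fit_alpha(count_vectors, iterations=500):
    """Fixed-point estimate of alpha; assumes every feature occurs at least once."""
    num_docs = len(count_vectors)
    alpha = [1.0] * len(count_vectors[0])
    for _ in range(iterations):
        a0 = sum(alpha)
        denom = sum(digamma(sum(x) + a0) for x in count_vectors) - num_docs * digamma(a0)
        alpha = [
            a * (sum(digamma(x[k] + a) for x in count_vectors) - num_docs * digamma(a)) / denom
            for k, a in enumerate(alpha)
        ]
    return alpha

def sample_dm(n, alpha, rng):
    """Simulate one count vector: p ~ Dirichlet(alpha), counts ~ Multinomial(n, p)."""
    gammas = [rng.gammavariate(a, 1.0) for a in alpha]
    counts = [0] * len(alpha)
    for k in rng.choices(range(len(alpha)), weights=gammas, k=n):
        counts[k] += 1
    return counts

rng = random.Random(42)
true_alpha = [2.0, 5.0, 3.0]                      # hypothetical population parameters
reference = [sample_dm(200, true_alpha, rng) for _ in range(100)]
alpha_hat = fit_alpha(reference)
print([round(a, 2) for a in alpha_hat])
```

With real n-gram data the vocabulary is far larger and many features are rare, so the culling thresholds and the robustness checks listed under Prior Specification become important.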

Research Reagent Solutions

Table 4: Essential Tools and Resources for Stylometric Analysis

| Tool/Resource | Type | Function | Implementation Notes |

|---|---|---|---|

| Signature | GUI-based software | Generates frequency data for word lengths, sentence lengths, and other basic features | User-friendly for beginners; limited analytical options [17] |

| JGAAP | Java-based platform | Provides extensive customization for text normalization, feature extraction, and analysis | Used in high-profile cases including J.K. Rowling pseudonym discovery [17] |

| R-stylo | R package | Offers comprehensive, customizable analytical options for advanced stylometry | Requires coding knowledge; active development community [17] |

| Fast Stylometry | Python library | Implements Burrows' Delta and other distance measures for authorship attribution | Includes probability calibration techniques [19] |

| Dirichlet-Multinomial Code | Custom implementation | Implements the core statistical model for forensic text comparison | Requires mathematical and programming expertise [14] [18] |

Advanced Methodological Considerations

Feature Selection and Fusion

When working with n-gram features, the dimensionality of the feature vector can become extremely large, with some studies reporting 20,000 to 500,000 dimensions [14]. Effective feature selection is therefore critical for model performance and interpretability.

The following diagram illustrates the feature fusion approach for combining multiple n-gram categories in a forensic comparison system:

Research indicates that feature fusion approaches, which estimate LRs separately for each feature type (e.g., character unigrams, bigrams, trigrams; word unigrams, bigrams, trigrams) and then combine them using logistic regression fusion, can yield superior performance compared to single-feature-type models [14].
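Logistic regression fusion can be sketched as follows. Each validation trial contributes a vector of log LRs, one per feature type, and a logistic regression trained on trials with known ground truth learns the weights used to combine them. The training loop is plain batch gradient descent written with the standard library, and the scores are invented; both are assumptions of this sketch.

```python
import math

def train_fusion(scores, labels, step=0.1, epochs=2000):
    """Fit logistic-regression weights w and bias b by batch gradient descent."""
    dim = len(scores[0])
    w, b = [0.0] * dim, 0.0
    n = len(scores)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for s, y in zip(scores, labels):
            z = b + sum(wi * si for wi, si in zip(w, s))
            err = 1.0 / (1.0 + math.exp(-z)) - y
            for i in range(dim):
                grad_w[i] += err * s[i]
            grad_b += err
        w = [wi - step * g / n for wi, g in zip(w, grad_w)]
        b -= step * grad_b / n
    return w, b

def fused_log_lr(s, w, b, n_same, n_diff):
    """Fused natural-log LR: model log-odds minus the training-set prior log-odds."""
    return b + sum(wi * si for wi, si in zip(w, s)) - math.log(n_same / n_diff)

# Hypothetical log LRs from two feature types (e.g. character and word n-grams).
same = [[1.2, 0.8], [0.5, 1.5], [-0.2, 0.9], [2.0, 0.3], [-0.5, 0.2]]
diff = [[-1.0, -0.5], [-0.3, -1.2], [0.4, -0.8], [-1.5, 0.2], [0.6, 0.1]]
w, b = train_fusion(same + diff, [1] * len(same) + [0] * len(diff))

fused_same = [fused_log_lr(s, w, b, len(same), len(diff)) for s in same]
fused_diff = [fused_log_lr(s, w, b, len(same), len(diff)) for s in diff]
print(w, b)
```

Subtracting the training-set prior log-odds turns the model output into a log LR rather than a posterior log-odds, so the fused value does not depend on the proportion of same-author trials in the training data.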

Validation and Forensic Considerations

For forensic applications, rigorous validation of the entire stylometric analysis pipeline is essential. This includes:

System Performance Validation:

- Use appropriate metrics such as the log-likelihood-ratio cost (Cllr), which can be decomposed into separate discrimination and calibration components [14] [20]

- Conduct black-box tests with material similar to casework

- Validate with different text genres and lengths

Case-Specific Validation:

- Assess the suitability of the reference population

- Evaluate the robustness of results to modeling choices

- Conduct sensitivity analyses for key parameters

Forensic Reporting:

- Clearly communicate the strength of evidence using the LR scale

- Acknowledge limitations and assumptions

- Provide meaningful context for fact-finders

The Dirichlet-multinomial model represents a statistically rigorous approach for forensic text comparison that properly handles the discrete, multivariate nature of n-gram features, providing a solid foundation for scientifically defensible authorship analysis in forensic contexts.

Modeling Text as a Multivariate Response with DMM

Forensic text comparison (FTC) aims to evaluate whether two texts were written by the same author, a critical task in criminal investigations involving disputed authorship. The Dirichlet-multinomial model (DMM) provides a robust statistical framework for this analysis by treating text as a multivariate response of word counts, effectively capturing author-specific writing styles while accounting for the inherent variability in natural language. This approach aligns with the movement in forensic science toward quantitative measurements, statistical models, and the likelihood-ratio framework for evaluating evidence [2].

In FTC, the core hypothesis is that each author possesses a unique "idiolect": a distinctive, individuating way of speaking and writing. However, a text is a complex reflection of human activity, encoding not only authorship but also information about the author's social group, the communicative situation, genre, and topic [2]. The DMM is particularly suited to this context because it models the word count vectors from a set of documents while accommodating the overdispersion common in count data, where variability exceeds what a simple multinomial distribution can capture [8]. This makes it a better fit than a plain multinomial for the rich and varied features of textual data.

Theoretical Foundation of the Dirichlet-Multinomial Model

The Dirichlet-multinomial distribution is a compound probability distribution. It arises when the probability vector p of a multinomial distribution is itself drawn from a Dirichlet distribution with parameter vector α [8]. This two-stage process makes it an excellent model for text, where the word counts in a document can be thought of as a multinomial sample, and the underlying word probabilities can vary from document to document according to a Dirichlet distribution.

For a random vector of word counts x = (x₁, ..., x_K) from a vocabulary of size K, and a total word count per document n, the probability mass function is given by:

Pr(x | n, α) = [Γ(α₀) Γ(n+1)] / [Γ(n + α₀)] * Π_{k=1}^K [Γ(x_k + α_k)] / [Γ(α_k) Γ(x_k + 1)]

where α₀ = Σ α_k and Γ is the Gamma function [8].
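The probability mass function above can be evaluated in log space, which avoids overflow in the Gamma terms for realistic document lengths. The following is a minimal sketch using only the Python standard library; the function name `dirmult_logpmf` is an illustrative choice and not taken from the cited work.

```python
import math

def dirmult_logpmf(x, alpha):
    """Log-probability of count vector x under DirMult(n, alpha), with n = sum(x)."""
    n = sum(x)
    a0 = sum(alpha)
    # Leading term: Gamma(a0) * Gamma(n + 1) / Gamma(n + a0)
    logp = math.lgamma(a0) + math.lgamma(n + 1) - math.lgamma(n + a0)
    # Product term: one factor per vocabulary item
    for xk, ak in zip(x, alpha):
        logp += math.lgamma(xk + ak) - math.lgamma(ak) - math.lgamma(xk + 1)
    return logp

# With K = 2 and alpha = (1, 1), every split of n = 3 words is equally likely,
# so each of the four possible count vectors has probability 1/4.
p = math.exp(dirmult_logpmf([2, 1], [1.0, 1.0]))
```

Working with log-probabilities also makes it straightforward to add up contributions from many documents when fitting or scoring.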

Key Properties and Advantages for Text Modeling

- Mean and Variance: The mean of the count for the k-th word is E(X_k) = n * (α_k / α₀). The variance is Var(X_k) = n * (α_k / α₀)(1 - α_k / α₀) * [(n + α₀) / (1 + α₀)], which is larger than the multinomial variance by a factor of (n + α₀) / (1 + α₀), thus explicitly modeling overdispersion [8].

- Negative Covariance: All covariances between counts of different words are negative, Cov(X_i, X_j) = -n * (α_i α_j) / α₀² * [(n + α₀) / (1 + α₀)], because for a fixed document length n, an increase in one word's count necessitates a decrease in another's [8].

- Handling Sparsity: The model can efficiently handle the high-dimensional and sparse nature of text data, where the number of possible words (K) is large, but only a subset appears in any given document [8].
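The mean, variance, and covariance expressions above can be checked numerically. The sketch below is illustrative only: the parameter values (n = 100, α = (2, 3, 5)) and the function names are assumptions chosen to make the arithmetic easy to follow.

```python
def dirmult_moments(n, alpha):
    """Return per-word means, variances, and the overdispersion factor."""
    a0 = sum(alpha)
    p = [a / a0 for a in alpha]
    factor = (n + a0) / (1 + a0)  # inflation relative to the multinomial variance
    mean = [n * pk for pk in p]
    var = [n * pk * (1 - pk) * factor for pk in p]
    return mean, var, factor

def dirmult_cov(n, alpha, i, j):
    """Covariance between the counts of two different words i and j."""
    a0 = sum(alpha)
    return -n * alpha[i] * alpha[j] / a0**2 * (n + a0) / (1 + a0)

# Hypothetical example: a 100-word document over a 3-word vocabulary.
mean, var, factor = dirmult_moments(100, [2.0, 3.0, 5.0])
cov_01 = dirmult_cov(100, [2.0, 3.0, 5.0], 0, 1)
# Here alpha_0 = 10, so the factor is (100 + 10) / (1 + 10) = 10: each variance
# is ten times what a multinomial with the same mean proportions would give.
```

Because the counts always sum to n, each word's variance is exactly offset by its covariances with all other words, which is a quick sanity check on any implementation.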

Application Protocol: DMM for Forensic Text Comparison

This protocol details the process of applying the DMM to calculate a likelihood ratio (LR) for a forensic authorship comparison, based on the methodology described by Ishihara et al. [2] [5].

Experimental Workflow

The following diagram illustrates the end-to-end workflow for a DMM-based forensic text comparison, from data preparation to the final interpretation of the likelihood ratio.

Detailed Methodologies

Stage 1: Data Preparation and Feature Extraction

- Text Preprocessing: For both the questioned (Q) and known (K) texts, perform word tokenization. This involves splitting the text into individual words, often with additional steps like converting to lowercase and removing punctuation [21] [5].

- Bag-of-Words (BoW) Model: Represent each document as a vector of word counts, ignoring word order. The model uses a fixed vocabulary, typically the N most frequent words in the relevant corpus (e.g., N=140) [21] [5].

- Data Partitioning for Validation: Establish three mutually exclusive databases to ensure rigorous validation [5]:

  - Test Database: Contains the specific Q and K text pairs from the case under investigation.

  - Reference Database: A large collection of texts from many authors, used to model the population distribution of writing styles (i.e., to estimate the parameters of the background DMM).

  - Calibration Database: A set of text pairs with known ground truth (same-author and different-author), used to learn the calibration function that maps raw scores to LRs.
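The tokenization and bag-of-words steps of Stage 1 can be sketched as follows. This is a minimal illustration under stated assumptions: the regular expression used for tokenization, the function names, and the toy texts are all hypothetical, and a real pipeline may tokenize differently.

```python
import re
from collections import Counter

def tokenize(text):
    # Lowercase, then keep runs of letters and apostrophes; punctuation is dropped.
    return re.findall(r"[a-z']+", text.lower())

def build_vocabulary(reference_texts, n_most_frequent):
    """Pick the N most frequent words across the reference texts."""
    counts = Counter()
    for text in reference_texts:
        counts.update(tokenize(text))
    return [word for word, _ in counts.most_common(n_most_frequent)]

def bag_of_words(text, vocabulary):
    """Count vector over the fixed vocabulary; out-of-vocabulary words are ignored."""
    counts = Counter(tokenize(text))
    return [counts[word] for word in vocabulary]

# Toy example with N = 2 (the text above mentions N = 140 for a real corpus).
reference = ["The cat sat on the mat.", "The dog sat."]
vocab = build_vocabulary(reference, 2)
x_q = bag_of_words("The the SAT, the end!", vocab)
```

Building the vocabulary from the reference material only, rather than from the questioned and known texts, keeps the feature definition independent of the case data.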

Stage 2: Statistical Modeling and Score Calculation with DMM

- Model Specification: The word count vector for a document, x, with total word count n and vocabulary size K, is modeled as x ~ DirMult(n, α). The parameter vector α = (α₁, ..., α_K) characterizes the underlying word probability distribution for an author or a population [8] [5].

- Fitting the Population Model: Use the Reference Database to estimate the α parameters of a "background" DMM. This model represents the typical word usage in the relevant population of potential authors. Estimation is typically done via maximum likelihood.

- Calculate a Raw Score: For a pair of documents (Q, K), a raw score quantifying their similarity is calculated. This is not yet a likelihood ratio. The score is derived from the probability of observing the two documents under the fitted DMM. This step reduces the multivariate word count data to a single, scalar value for comparison [21] [5].
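One plausible way to turn the fitted background model into a scalar score is a log Bayes factor: the probability of the two count vectors if they share a single word-probability vector drawn from Dirichlet(α), divided by the probability if each has its own independent draw. The text above does not spell out the exact score formula, so the sketch below is an assumption for illustration, not a reproduction of the cited method. It also assumes α has already been estimated from the Reference Database.

```python
import math

def log_beta(a):
    """Log of the multivariate Beta function B(a)."""
    return sum(math.lgamma(ak) for ak in a) - math.lgamma(sum(a))

def dmm_score(x_q, x_k, alpha):
    """Log Bayes factor: shared word-probability vector vs. independent vectors.

    The multinomial coefficients cancel between numerator and denominator,
    leaving log B(alpha + x_q + x_k) + log B(alpha)
            - log B(alpha + x_q) - log B(alpha + x_k).
    """
    a_q = [a + q for a, q in zip(alpha, x_q)]
    a_k = [a + k for a, k in zip(alpha, x_k)]
    a_qk = [a + q + k for a, q, k in zip(alpha, x_q, x_k)]
    return log_beta(a_qk) + log_beta(alpha) - log_beta(a_q) - log_beta(a_k)

# Hypothetical counts over a 3-word vocabulary with a flat background alpha.
alpha = [1.0, 1.0, 1.0]
score_similar = dmm_score([8, 1, 1], [7, 2, 1], alpha)     # similar profiles
score_dissimilar = dmm_score([8, 1, 1], [1, 1, 8], alpha)  # dissimilar profiles
```

In this toy case the similar pair scores above zero and the dissimilar pair below zero. Whatever score is used, it still has to pass through the calibration stage before it is reported as a likelihood ratio.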

Stage 3: Calibration to Likelihood Ratio

- The Need for Calibration: Raw scores are often misleading and cannot be directly interpreted as a valid LR [5]. Calibration is the process of transforming these scores into well-calibrated LRs.

- Logistic Regression Calibration: Use the Calibration Database, which contains many scored pairs with known ground truth, to train a logistic regression model. This model learns the relationship between the raw scores and the log-odds of the texts being from the same author [2] [5].

- LR Calculation: The calibrated LR for a new case is obtained by applying the trained regression model to the raw score of the (Q, K) pair. The final output is an LR of the form: LR = p(E | H_p) / p(E | H_d), where H_p is the prosecution hypothesis (same author) and H_d is the defense hypothesis (different authors) [2].
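The calibration stage can be sketched as a one-feature logistic regression whose output is shifted by the prior log-odds of the calibration set, so that the result is a log LR rather than a posterior log-odds. The hand-written gradient descent below stands in for any standard logistic regression routine; the learning rate, epoch count, function names, and toy scores are assumptions for illustration.

```python
import math

def fit_calibration(scores, labels, lr=0.1, epochs=5000):
    """Fit log-odds = a + b * score by gradient descent on the mean log-loss.

    labels: 1 for same-author pairs, 0 for different-author pairs.
    Returns (offset, slope) such that natural-log LR = offset + slope * score.
    """
    a, b = 0.0, 1.0
    m = len(scores)
    for _ in range(epochs):
        grad_a = grad_b = 0.0
        for s, y in zip(scores, labels):
            p = 1.0 / (1.0 + math.exp(-(a + b * s)))
            grad_a += (p - y) / m
            grad_b += (p - y) * s / m
        a -= lr * grad_a
        b -= lr * grad_b
    # Remove the prior log-odds implied by the calibration set's class balance.
    n_sa = sum(labels)
    prior_log_odds = math.log(n_sa / (m - n_sa))
    return a - prior_log_odds, b

def calibrated_log10_lr(score, offset, slope):
    """Calibrated log10 likelihood ratio for a raw score."""
    return (offset + slope * score) / math.log(10)

# Hypothetical calibration set: overlapping same-author and different-author scores.
scores = [2.0, 1.5, 0.5, 3.0, -0.5, -2.0, -1.0, 0.5, -3.0, 1.0]
labels = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
offset, slope = fit_calibration(scores, labels)
log10_lr_case = calibrated_log10_lr(3.0, offset, slope)
```

The mapping is monotonic in the raw score, so calibration changes the magnitude of the reported evidence without changing the ranking of the compared pairs.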

Critical Validation Considerations

A key finding in FTC research is that validation must replicate the conditions of the case. For example, if the case involves texts on different topics (e.g., a questioned email about politics and a known blog post about sports), the validation experiments must also be performed under this cross-topic condition using a relevant dataset [2]. Failure to do so can lead to over- or under-estimation of the LR, potentially misleading a court.

Table 1: Key Experimental Factors and Their Impact on DMM Performance

| Experimental Factor | Consideration | Impact on Validation |
|---|---|---|
| Topic Mismatch | The degree of topic dissimilarity between Q and K texts. | Using an irrelevant topic setting for validation (e.g., same-topic) when the case is cross-topic can drastically overestimate system performance [2]. |
| Document Length | The word count of the texts under comparison. | Shorter documents provide less data, leading to higher uncertainty and potentially weaker LRs. Performance generally improves with longer documents [21]. |
| Feature Vector Dimension (N) | The number of most-frequent words used in the BoW model. | An optimal N exists; too small loses discriminative power, too large introduces noise. Must be determined empirically for a given corpus [21]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Resources for DMM-based FTC Research

| Item / Resource | Function / Purpose in the Protocol |
|---|---|
| Specialized Text Corpora (e.g., Amazon Product Data Corpus) | Provides a controlled, topic-labeled dataset of authentic texts for developing and validating models under specific conditions like cross-topic comparison [2] [5]. |
| Bag-of-Words Feature Extractor | Converts raw text documents into numerical feature vectors (word counts) required for statistical modeling. A foundational pre-processing step. |
| Dirichlet-Multinomial Fitting Algorithm | Estimates the parameters (α) of the DMM from the reference population data. Essential for building the background model. |
| Logistic Regression Calibrator | Transforms the raw similarity scores from the DMM into properly calibrated likelihood ratios, ensuring the validity of the evidence weight. |
| Performance Metrics (e.g., Cllr) | The log-likelihood-ratio cost is a primary metric for numerically assessing the accuracy and discrimination of the computed LRs [2] [21]. |
| Visualization Tools (e.g., Tippett Plot Generator) | Provides a visual assessment of LR system performance, showing the cumulative proportion of LRs supporting the correct and incorrect hypotheses for same-author and different-author pairs [2] [21]. |

Data Presentation and Experimental Findings

The following table summarizes quantitative outcomes from simulated experiments that highlight the critical importance of proper validation, specifically regarding topic mismatch.

Table 3: Impact of Validation Design on FTC System Performance (Cllr)

| Validation Experiment Design | Description | Key Finding (Cllr) | Interpretation |
|---|---|---|---|
| Matches Casework (Cross-topic 1) | Validation data perfectly mirrors the topic mismatch in the case. | Highest Cllr (e.g., ~0.8, indicating worst performance in this context) | This result is the most forensically relevant and reliable for the specific case, honestly reflecting the difficulty of the comparison [2]. |
| Ignores Casework (Any-topic) | Validation uses a mixture of topic matches and mismatches. | Lower Cllr (e.g., ~0.5, indicating apparently better performance) | This overestimates real-world performance for the cross-topic case and is forensically misleading [2]. |
| Uses Irrelevant Data | Calibration data is not relevant to the case condition. | Cllr can exceed 1.0 | This is highly detrimental, completely jeopardizing the value of the evidence and leading to potentially highly misleading LRs [5]. |

Note on Cllr: The log-likelihood-ratio cost is a scalar metric that measures the average performance of a system across all its LRs. A lower Cllr indicates better performance, with a value of 0 representing a perfect system. A Cllr of 1 represents an uninformative system [21].
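For reference, the standard definition of the log-likelihood-ratio cost averages a logarithmic penalty over same-author LRs (penalized when small) and different-author LRs (penalized when large). The sketch below implements that definition; the function name and the example LR values are illustrative assumptions.

```python
import math

def cllr(lrs_same_author, lrs_diff_author):
    """Log-likelihood-ratio cost from LRs of known same- and different-author pairs."""
    # Same-author pairs: penalty grows as the LR falls below 1.
    pen_sa = sum(math.log2(1 + 1 / lr) for lr in lrs_same_author) / len(lrs_same_author)
    # Different-author pairs: penalty grows as the LR rises above 1.
    pen_da = sum(math.log2(1 + lr) for lr in lrs_diff_author) / len(lrs_diff_author)
    return 0.5 * (pen_sa + pen_da)

# A system that always reports LR = 1 carries no information and scores exactly 1.
uninformative = cllr([1.0, 1.0], [1.0, 1.0, 1.0])
# Strong LRs pointing the wrong way push the cost above 1.
misleading = cllr([0.1], [10.0])
```

This makes concrete why values above 1, as in the last row of Table 3, signal a system that is worse than reporting no evidence at all.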

Modeling text as a multivariate response using the Dirichlet-multinomial model provides a scientifically defensible framework for forensic text comparison. Its ability to handle the overdispersed, count-based nature of textual data makes it a better choice than simpler models such as the plain multinomial. The outlined protocol, from data preparation through DMM scoring to LR calibration, provides a roadmap for rigorous application. However, the core tenet of this approach is that scientific validity is paramount. As demonstrated, failing to validate the system under conditions that reflect the actual casework, including topic mismatch, or validating with data that are not relevant to the case, can render the resulting likelihood ratios forensically unreliable. Future work must focus on developing comprehensive validation protocols that address the full complexity of textual evidence.

Forensic text comparison (FTC) is a scientific discipline that involves the analysis and interpretation of textual evidence for legal purposes. Within the broader thesis research on the application of the Dirichlet-multinomial model in FTC, this document establishes a detailed, practical workflow for implementing this statistical approach. The methodology outlined here adheres to the fundamental principles of forensic science: the use of quantitative measurements, statistical models, the likelihood-ratio (LR) framework, and, crucially, empirical validation of the method under conditions reflecting casework realities [2]. This protocol is designed for researchers and forensic practitioners, providing a standardized yet flexible pathway from initial evidence handling to the calculation of a statistically robust measure of evidence strength.

Background and Theoretical Framework

The Likelihood-Ratio Framework in Forensic Science

The likelihood ratio is the logically and legally correct framework for evaluating the strength of forensic evidence, including textual evidence [2]. It provides a transparent and quantitative measure that helps the trier-of-fact update their beliefs based on the evidence presented.