Digital Forensic Readiness Maturity Models Compared: A Framework for Secure Research

Abstract

This article provides a comprehensive analysis of Digital Forensic Readiness (DFR) maturity models, a critical component for building cyber-resilient research organizations. Aimed at researchers, scientists, and drug development professionals, it explores the foundational concepts of DFR and its necessity in protecting sensitive intellectual property and clinical data. The content systematically compares established maturity models and frameworks, evaluates their application in complex research environments, and addresses common implementation challenges. By validating these models against legal and scientific standards, this guide offers a structured approach for research institutions to assess and enhance their forensic capabilities, ensuring both data integrity and regulatory compliance in an evolving threat landscape.

Understanding Digital Forensic Readiness: Why Maturity Matters for Research Security

Defining Digital Forensic Readiness (DFR) and Its Core Objectives

Digital Forensic Readiness (DFR) is an anticipatory approach within the digital forensics domain that prepares organizations to manage and use digital evidence effectively before cyber incidents occur [1]. It involves assessing the structures and maturity levels needed to improve preparedness against potential cyber threats [1]. The concept was first formally published in 2001 by John Tan, who described its primary objectives as maximizing an organization's ability to collect credible digital evidence while minimizing the cost of forensics during an event or incident [1].

DFR operates on the assumption that an incident will occur, enabling organizations to make the most efficient use of digital evidence instead of relying on the traditionally reactive nature of incident management [1]. This proactive stance is crucial in today's landscape, where a notable increase in cybercriminal activity has created difficulties for law enforcement agencies worldwide [2]. DFR is not merely a technical initiative but a strategic organizational capability that requires the participation of multiple stakeholders, including investigative teams, senior and executive management, human resources, privacy and compliance, corporate security, IT support staff, and legal counsel [1].

Core Objectives of Digital Forensic Readiness

The implementation of Digital Forensic Readiness serves multiple interconnected objectives that collectively enhance an organization's security posture, operational efficiency, and legal compliance.

Primary Strategic Objectives

Maximize Prospective Use of Digital Evidence: DFR enables organizations to proactively maximize their prospective use of electronically stored information, ensuring that potential digital evidence is available when needed without requiring extensive reactive efforts [1]. This involves establishing processes for identifying, preserving, and managing digital evidence from various sources within the organization's infrastructure.

Minimize Investigative Costs and Business Disruption: Preparing in advance for potential incidents reduces the costs associated with digital forensic investigations, including personnel time, specialized tools, and business interruption [1] [3]. Mature programs are reported to incur lower per-incident costs than reactive approaches [4].

Enhance Legal Compliance and Evidence Admissibility: A properly implemented DFR program ensures that digital evidence is collected, preserved, and presented in a manner that meets legal admissibility requirements across jurisdictions [1] [2]. This includes adhering to standards such as ISO/IEC 27037:2012 for digital evidence handling and satisfying legal tests like the Daubert Standard, which assesses the reliability and validity of scientific evidence in court [2].

Secondary Operational Objectives

Strengthen Information Management Strategies: DFR strengthens broader information management strategies, including data retention, disaster recovery, and business continuity planning [1]. By integrating forensic readiness into these domains, organizations create synergistic effects that enhance overall resilience.

Support Regulatory and Insurance Requirements: Many regulatory frameworks (such as GDPR, DPA) and cyber insurance providers now require proof of log retention, incident documentation, and forensic readiness before processing claims [5]. Implementing DFR helps organizations meet these obligations efficiently.

Facilitate Efficient Incident Response: Digital forensics investigates how an attack happened and complements incident response, which focuses on containment and recovery [5]. DFR ensures that investigative activities do not impede the restoration of business operations.

Comparative Analysis of Digital Forensic Readiness Maturity Models

Various frameworks and maturity models provide structured approaches for organizations to implement and assess their Digital Forensic Readiness programs. The table below compares four prominent models and their key characteristics.

Table 1: Comparison of Digital Forensic Readiness Maturity Models

| Maturity Model | Core Dimensions | Maturity Levels | Primary Focus | Key Differentiators |
|---|---|---|---|---|
| Digital Forensic Readiness Commonalities Framework (DFRCF) [1] | Strategy, Systems and Events, Legal Involvement | Not Specified | Enterprise-wide adoption of proactive digital forensics | Considers interconnected domains and subdomains with emphasis on legal aspects |
| Digital Forensic Maturity Model (DFMM) [1] | People, Process, Technology | 5 levels with specific compliance conditions | Assessing forensic readiness and security incident response | Enables organizations to assess readiness and identify improvement roadmaps |
| Digital Forensics Management Framework (DFMF) [1] | Governance, Operational, Technical | Layered model approach | Managing forensic readiness capability | Advocates for clear separation of responsibilities and establishment of overall digital forensic policy |
| People-Process-Technology (PPT) Framework [6] | People, Process, Technology | 5 maturity levels | Organizational maturity and readiness | Applies long-recognized organizational improvement concepts to digital forensics |

Model Methodologies and Implementation Approaches

Each maturity model employs distinct methodologies for assessing and improving Digital Forensic Readiness:

The Digital Forensic Maturity Model (DFMM) enables organizations to assess their forensic readiness and security incident responses through five levels of maturity that require compliance with specific conditions for each level [1]. This structured approach allows organizations to measure their current capabilities against defined benchmarks and plan systematic improvements.

The Digital Forensics Management Framework (DFMF) provides a layered model to manage forensic readiness capability, advocating for the establishment of an overall digital forensic policy and clear separation of responsibilities in investigations [1]. This framework emphasizes governance aspects alongside technical capabilities, recognizing that organizational structure is crucial for effective digital forensics.

The People-Process-Technology (PPT) framework applies long-recognized organizational improvement concepts specifically to digital forensics, addressing how these three elements must evolve together to enhance investigative capabilities [6]. This model is particularly valuable for addressing the non-technical challenges that often impede forensic readiness.
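The DFMM's rule that each level requires compliance with specific conditions can be read as a staged gate: an organization sits at the highest level for which it meets every condition at that level and at all levels below it. The sketch below assumes that interpretation. The condition names in `LEVEL_CONDITIONS` are hypothetical placeholders chosen for illustration, not the DFMM's actual criteria.

```python
from typing import Dict, List, Set

# Hypothetical conditions per maturity level (illustrative placeholders only).
LEVEL_CONDITIONS: Dict[int, List[str]] = {
    1: ["incident_contact_defined"],
    2: ["evidence_sources_inventoried", "log_retention_policy"],
    3: ["chain_of_custody_sop", "staff_trained"],
    4: ["readiness_metrics_tracked"],
    5: ["continuous_improvement_review"],
}


def assess_level(satisfied: Set[str]) -> int:
    """Return the highest level whose conditions, and those of all lower levels, are met."""
    achieved = 0
    for level in sorted(LEVEL_CONDITIONS):
        if all(cond in satisfied for cond in LEVEL_CONDITIONS[level]):
            achieved = level
        else:
            break  # a gap at this level blocks every higher level
    return achieved


def gaps_to_next_level(satisfied: Set[str]) -> List[str]:
    """List the unmet conditions blocking the next level (empty at the top level)."""
    next_level = assess_level(satisfied) + 1
    return [c for c in LEVEL_CONDITIONS.get(next_level, []) if c not in satisfied]


current = {
    "incident_contact_defined",
    "evidence_sources_inventoried",
    "log_retention_policy",
    "staff_trained",
}
print(assess_level(current))        # level reached under the staged-gate reading
print(gaps_to_next_level(current))  # conditions that form the improvement roadmap
```

Returning the unmet conditions alongside the level mirrors the model's stated purpose of identifying an improvement roadmap rather than producing a score alone.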

Experimental Protocols for Maturity Model Validation

Research into Digital Forensic Readiness maturity models employs rigorous methodologies to validate frameworks and assess their effectiveness in organizational settings.

Systematic Literature Review Protocol

Multiple studies have utilized Systematic Literature Reviews (SLRs) as a foundational approach for developing and validating DFR maturity models [3] [6]. The typical SLR protocol involves:

- Research Question Formulation: Clearly defining objectives and scope for the literature review [6]

- Source Selection: Identifying relevant academic databases (e.g., IEEE Xplore, ScienceDirect, Springer Link, Semantic Scholar, Emerald Insight) [6]

- Search String Development: Creating comprehensive search queries using keywords such as 'digital forensic', 'forensic readiness', 'digital evidence', and 'organisational and digital forensic' [3]

- Inclusion/Exclusion Criteria Application: Screening publications based on relevance, publication date (typically 5-10 year window), and methodological rigor [6]

- Quality Assessment: Evaluating selected studies against predefined quality standards [3]

- Data Extraction and Synthesis: Systematically extracting relevant findings and synthesizing them to identify patterns, gaps, and conceptual frameworks [3]

This methodology was employed in a 2021 study that reviewed literature from 2011-2020 to identify indicators for maturity and readiness for digital forensic investigation in the era of Industrial Revolution 4.0 [6].
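The screening steps above (search string, then inclusion and exclusion by publication window) can be sketched as a simple filter. This is a minimal illustration, not the cited studies' tooling: the `Publication` record, the function names, and the sample entries are assumptions made up for the example, while the keywords and the 2011-2020 window come from the text.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Publication:
    title: str
    year: int
    abstract: str


# Keywords taken from the search-string step described above.
KEYWORDS = ("digital forensic", "forensic readiness", "digital evidence")


def matches_search(pub: Publication, keywords: Sequence[str] = KEYWORDS) -> bool:
    """True if any keyword appears in the title or abstract (case-insensitive)."""
    text = f"{pub.title} {pub.abstract}".lower()
    return any(keyword in text for keyword in keywords)


def screen(pubs: List[Publication], start_year: int, end_year: int) -> List[Publication]:
    """Apply the publication-window and keyword inclusion criteria."""
    return [p for p in pubs if start_year <= p.year <= end_year and matches_search(p)]


# Made-up sample records, for illustration only.
candidates = [
    Publication("Forensic readiness in cloud platforms", 2018, "A readiness survey."),
    Publication("Digital evidence handling", 2009, "Outside the review window."),
    Publication("Network throughput tuning", 2019, "Unrelated to forensics."),
]
for pub in screen(candidates, 2011, 2020):
    print(pub.year, pub.title)
```

Quality assessment and data extraction remain manual judgment steps; only the mechanical screening is automated here.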

Focus Group Evaluation Protocol

The ETHICore framework development utilized focus group discussions as a key validation methodology [3]. The experimental protocol included:

- Expert Recruitment: Establishing a comprehensive scoring system to select eligible experts based on academic qualifications (Master's or PhD in digital forensics), professional experience (minimum 5 years), research contributions, and technical expertise [3]

- Structured Discussions: Conducting five focus group discussions to elaborate, test, and obtain feedback on framework development [3]

- Qualitative Analysis: Thematically analyzing expert input to identify areas for improvement and refinement of the framework [3]

- Framework Iteration: Incorporating expert feedback to enhance the framework's practicality and effectiveness in organizational settings [3]

This approach ensured that the resulting framework balanced theoretical soundness with practical applicability, addressing real-world challenges in implementing digital forensic readiness.

Comparative Tool Validation Protocol

Studies evaluating digital forensic tools, including those relevant to DFR implementation, often employ rigorous experimental methodologies [2]. A typical validation protocol includes:

- Controlled Testing Environment: Implementing comparative analyses between different tools or approaches using standardized testing environments [2]

- Scenario Testing: Designing distinct test scenarios (e.g., preservation and collection of original data, recovery of deleted files, targeted artifact searching) [2]

- Repeatability Metrics: Conducting each experiment in triplicate to establish repeatability metrics and calculate error rates [2]

- Benchmarking: Comparing results against control references or established commercial tools to validate performance [2]

This methodology was used in a 2025 study that compared commercial tools (FTK and Forensic MagiCube) with open-source alternatives (Autopsy and ProDiscover Basic), demonstrating that properly validated approaches can produce reliable and repeatable results with verifiable integrity [2].
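The repeatability step can be illustrated with a small calculation. The sketch below assumes one plausible definition, which the source does not spell out: the error rate of a run is the fraction of reference artifacts the tool failed to recover, and a tool is repeatable when all runs return the same set. The function names and artifact labels are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Set, Union


def error_rate(expected: Set[str], recovered: Set[str]) -> float:
    """Fraction of reference artifacts missed in a single run."""
    if not expected:
        raise ValueError("reference set must not be empty")
    return len(expected - recovered) / len(expected)


def summarize_runs(expected: Set[str], runs: List[Set[str]]) -> Dict[str, Union[float, bool]]:
    """Aggregate repeated runs (for example, a triplicate) of one test scenario."""
    if not runs:
        raise ValueError("at least one run is required")
    rates = [error_rate(expected, run) for run in runs]
    return {
        "mean_error_rate": mean(rates),
        "repeatable": all(run == runs[0] for run in runs),
    }


# Hypothetical control reference and three runs of a deleted-file recovery scenario.
reference = {"report.docx", "photo.jpg", "notes.txt", "ledger.xlsx"}
triplicate = [
    {"report.docx", "photo.jpg", "notes.txt"},
    {"report.docx", "photo.jpg", "notes.txt"},
    {"report.docx", "photo.jpg", "notes.txt"},
]
print(summarize_runs(reference, triplicate))
```

Reporting an error rate against a control reference is also what ties this protocol to the Daubert factors mentioned earlier, which ask for known error rates.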

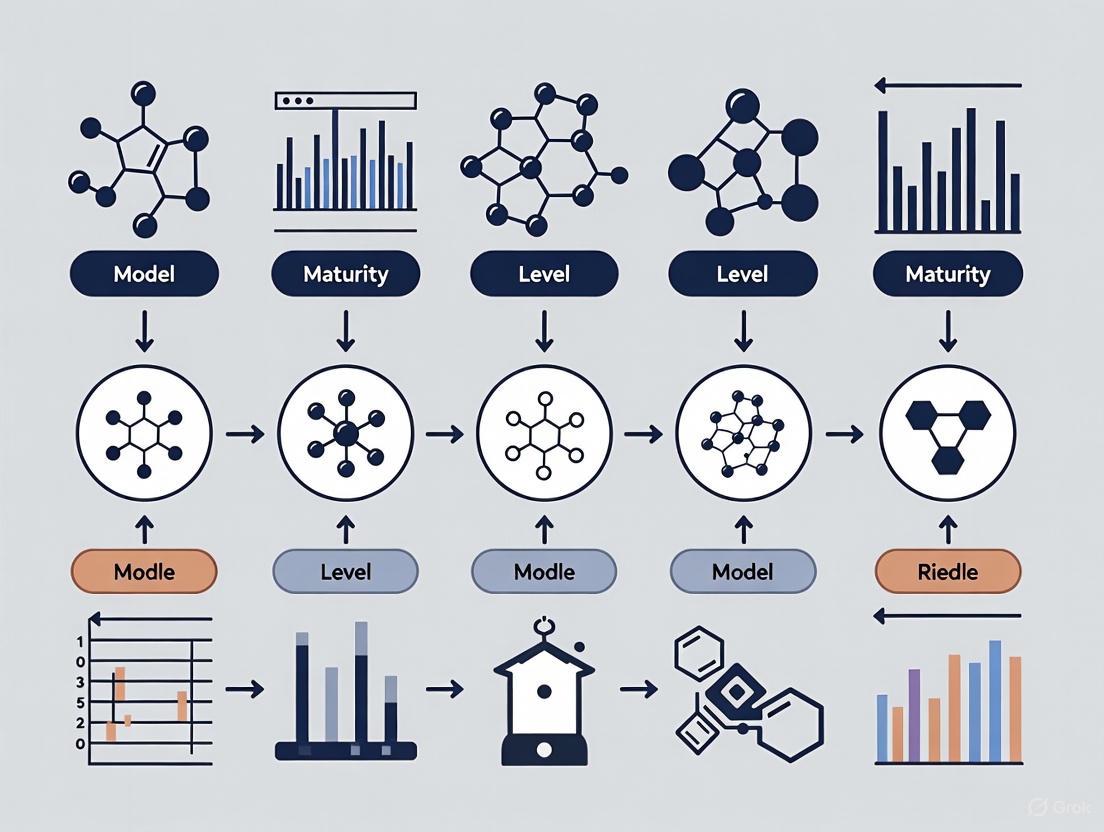

Visualization of Digital Forensic Readiness Framework Relationships

The following diagram illustrates the structural relationships between core components of a comprehensive Digital Forensic Readiness program:

DFR Framework Component Relationships

This visualization illustrates how Digital Forensic Readiness serves as an overarching concept supported by three foundational pillars (Strategic Foundation, Operational Implementation, and Target Outcomes), with specific interdependencies between component elements.

Research Reagent Solutions: Essential Materials for DFR Implementation

The table below details key "research reagents" - essential tools, standards, and frameworks - required for implementing and assessing Digital Forensic Readiness programs.

Table 2: Essential Research Reagent Solutions for Digital Forensic Readiness

| Research Reagent | Type | Function in DFR Implementation | Exemplars |
|---|---|---|---|
| Maturity Models | Assessment Framework | Enable organizations to evaluate current DFR capabilities and identify improvement roadmaps | Digital Forensic Maturity Model (DFMM), People-Process-Technology (PPT) Framework [1] [6] |
| International Standards | Guidelines | Provide standardized processes for digital evidence handling to ensure legal admissibility | ISO/IEC 27037:2012 (digital evidence), ISO/IEC 27043 (Readiness Process Class) [1] [2] |
| Forensic Tools | Technical Solutions | Enable identification, collection, preservation, and analysis of digital evidence | Commercial (FTK, EnCase), Open-Source (Autopsy, Sleuth Kit) [2] |
| Legal Standards | Admissibility Criteria | Define requirements for digital evidence to be accepted in legal proceedings | Daubert Standard (testability, peer review, error rates, general acceptance) [2] |
| Validation Frameworks | Methodology | Ensure tools and processes produce reliable, repeatable results with verifiable integrity | NIST Computer Forensics Tool Testing standards, Experimental validation protocols [2] |

Digital Forensic Readiness represents a fundamental shift from reactive digital forensics to a proactive organizational capability that maximizes evidence collection while minimizing investigative costs. Through the implementation of structured maturity models such as the Digital Forensic Maturity Model (DFMM) and People-Process-Technology (PPT) Framework, organizations can systematically assess and improve their preparedness for digital incidents. The experimental protocols developed through systematic literature reviews, focus group evaluations, and comparative tool validation give DFR implementation a sound methodological basis. As digital environments continue to evolve with IoT, cloud computing, and other Industrial Revolution 4.0 technologies, Digital Forensic Readiness will remain critical for organizations seeking to maintain robust security postures, ensure legal compliance, and effectively respond to cyber incidents.

The Critical Role of DFR in Protecting Research Data and Intellectual Property

In the life sciences and drug development sectors, research data and intellectual property (IP) represent the cornerstone of innovation and competitive advantage. Digital Forensic Readiness (DFR) is no longer a reactive IT measure but a strategic necessity, forming a critical layer of defense for these invaluable assets. A DFR framework ensures that an organization is optimally prepared to collect, preserve, and analyze digital evidence in a forensically sound manner, which is essential for responding to data breaches, proving IP ownership, and maintaining regulatory compliance [7]. This guide explores the maturity models that help organizations assess and elevate their DFR capabilities, providing a structured path to robustly safeguarding research and IP.

DFR Maturity Models: A Comparative Framework

Digital Forensic Readiness maturity models provide a structured pathway for organizations to evaluate and enhance their capabilities. These models assess an organization's preparedness across multiple dimensions, ensuring a holistic approach to forensic readiness. Based on synthesis of current research, the following table compares the core components and indicators of two predominant models discussed in the literature.

Table: Comparison of DFR Maturity Model Components

| Maturity Dimension | PPT-Based Model [6] | Operational & Infrastructural Model [7] | Key Assessment Indicators |
|---|---|---|---|
| People | Technical training, skills development, staffing levels | Operational Readiness (focus on individuals) | Staff expertise, defined roles, awareness training, cultural adoption |
| Process | Standard Operating Procedures (SOPs), chain of custody, incident response | Governance, policy, planning, and control | Forensic policies, audit trails, evidence handling protocols, regulatory adherence |
| Technology | Tools for data acquisition, analysis, and secure storage | Infrastructural Readiness (focus on data processes) | Advanced forensic tools, immutable backup solutions, encryption, access controls |
| Strategy & Governance | Integrated within Process dimension; policy development | Explicitly called out via top management support and governance | Executive buy-in, dedicated budget, integration with enterprise risk management |

The relationship between these dimensions forms the foundation of an effective DFR program: strategic governance drives the development of People, Process, and Technology capabilities, which collectively work to protect research data and intellectual property.

Experimental Validation of DFR Protocols

Validating DFR maturity models requires a methodological approach to gathering empirical data from industry experts. The components and protocol below outline a qualitative research methodology, as employed in recent studies, to assess and refine DFR frameworks.

Table: Key Components for DFR Assessment Methodology

| Component | Function in DFR Assessment |
|---|---|
| Expert Focus Groups | Provides qualitative, in-depth data on real-world forensic challenges and operational needs [7]. |
| Case Study Scenarios | Simulates real-world incidents (e.g., data exfiltration) to test the applicability of DFR frameworks in a controlled manner [7]. |
| Categorization Matrix (NVivo) | Enables systematic analysis of qualitative feedback to identify recurring themes and critical success factors [7]. |
| Systematic Literature Review | Establishes a foundational understanding of existing challenges and maturity indicators [6]. |

Experimental Protocol: Focus Group Analysis for DFR Maturity

- Participant Recruitment: Select 11-15 experts with diverse backgrounds in digital forensics, including professionals from business, consulting, law, and military sectors to ensure a comprehensive perspective [7].

- Stimulus Material: Develop detailed case studies based on realistic scenarios relevant to the life sciences industry, such as intellectual property theft or a ransomware attack disrupting a clinical trial.

- Moderation and Data Collection: Conduct multiple focus group sessions, each lasting approximately two hours. A senior researcher moderates the session, first assessing baseline knowledge before guiding a discussion based on the provided case study and the draft DFR framework [7].

- Data Analysis: Transcribe recordings of the sessions verbatim. Analyze the transcripts using content analysis methods and software tools like NVivo to code the data against a predefined categorization matrix based on the maturity model dimensions (People, Process, Technology, Strategy) [7].

- Synthesis: Identify areas of expert consensus and disagreement. Use these insights to validate, critique, and refine the indicators and structure of the proposed DFR maturity model.
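Coding transcripts against a categorization matrix is normally done by trained analysts in a tool such as NVivo. As a rough illustration of the idea only, the sketch below tags transcript segments with a maturity dimension whenever an indicator term appears. The term lists and sample segments are assumptions invented for the example, and simple substring matching is a crude stand-in for human coding.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical indicator terms per maturity dimension (illustrative only).
MATRIX: Dict[str, List[str]] = {
    "People": ["training", "skills", "staff"],
    "Process": ["policy", "procedure", "chain of custody"],
    "Technology": ["tool", "backup", "encryption"],
    "Strategy": ["budget", "management support", "governance"],
}


def code_segment(segment: str) -> List[str]:
    """Return every dimension whose indicator terms occur in the segment."""
    text = segment.lower()
    return [dim for dim, terms in MATRIX.items() if any(term in text for term in terms)]


def tally(segments: List[str]) -> Counter:
    """Count how many segments were coded to each dimension."""
    counts: Counter = Counter()
    for segment in segments:
        counts.update(code_segment(segment))
    return counts


# Made-up transcript excerpts standing in for verbatim focus group data.
segments = [
    "We lack training for lab staff.",
    "Without management support there is no budget for tools.",
    "Our chain of custody procedure is undocumented.",
]
print(tally(segments))
```

A tally like this can flag which dimensions experts raise most often, but consensus and disagreement still have to be judged by reading the coded passages.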

The Researcher's Toolkit: Essential DFR Solutions

Building forensic readiness requires a combination of technical tools, strategic policies, and security controls. The following table details key solutions and their functions in protecting research data.

Table: Essential Digital Forensic Readiness Solutions Toolkit

| Solution / Control | Primary Function in DFR | Relevance to Research Data & IP |
|---|---|---|
| Immutable Backups | Creates data copies that cannot be altered or deleted, preserving evidence and enabling recovery. | Mitigates ransomware impact on clinical trials; ensures data integrity for drug discovery research [8]. |
| Identity & Access Management (IAM) | Manages and controls user access to data and systems based on the principle of least privilege. | Prevents unauthorized access to sensitive research data; provides audit trails for regulatory compliance (e.g., FDA 21 CFR Part 11) [9]. |
| End-to-End Encryption | Protects data confidentiality both at rest and in transit. | Safeguards intellectual property, such as proprietary compound libraries, from interception or theft [8] [9]. |
| DFIR Retainer Services | Pre-negotiated contracts with digital forensics and incident response experts for immediate assistance. | Satisfies evolving regulatory and cyber insurance requirements; provides expert response to minimize business disruption from a security incident [10]. |

Strategic Implementation and Future Trends

Successfully implementing a DFR program requires more than just technology. Top management support and a strong security culture are consistently identified as the most critical success factors [7] [6]. Executive leadership ensures adequate funding and organizational priority, while a culture of awareness empowers all employees, especially researchers and scientists, to become the first line of defense.

The field of digital forensics is continuously evolving. Key trends that will impact DFR in life sciences include:

- AI and Machine Learning: These technologies are being leveraged to accelerate digital forensics triage, analyze vast datasets for anomalies, and improve threat detection, which is crucial for managing the high-volume data from clinical trials and research [8] [10].

- Regulatory Scrutiny: Regulations like the EU's Digital Operational Resilience Act (DORA), which is aimed at financial entities, are reported to be turning DFR retainers and proven readiness programs from a best practice into an expected requirement [10].

- Proactive Defense: The integration of DFR with proactive measures like tabletop exercises and incident readiness assessments is becoming standard to build holistic cyber resilience [10].

For research institutions and life science companies, intellectual property and research data are assets that demand the highest level of protection. Digital Forensic Readiness provides a structured, measurable approach to transforming cybersecurity from a reactive cost center into a proactive, strategic function. By adopting a maturity model focused on People, Process, Technology, and Strategy, organizations can systematically build their resilience. This readiness not only minimizes the impact of security incidents but also serves as a powerful competitive advantage, safeguarding the innovation that defines the industry.

In the contemporary digital landscape, the preparedness of an organization to investigate security incidents, known as digital forensic readiness, has become a critical component of cybersecurity and risk management. Framed within the broader research context of comparing digital forensic readiness maturity models, this analysis explores the tangible consequences of forensic unreadiness. Such unreadiness undermines an organization's ability to effectively respond to data breaches and compromises the integrity of digital evidence. The maturity and capability of an organization's forensic processes directly influence its resilience against cyber threats and its capacity to perform robust root cause analysis, ensure regulatory compliance, and maintain operational continuity [6]. This guide objectively compares states of readiness and unreadiness, synthesizing current research to provide researchers and professionals with a structured understanding of the impacts and requisite methodologies for improvement.

Defining Forensic Readiness and Unreadiness

Digital forensic readiness is defined as the achievement of an appropriate level of capability by an organization to collect, preserve, protect, and analyze digital evidence so that this evidence can be effectively used in legal matters, disciplinary proceedings, or internal investigations [11]. It represents a proactive stance, aiming to maximize the potential to use digital evidence while minimizing the cost of an investigation.

Conversely, forensic unreadiness is a state of inadequate preparedness characterized by the absence of policies, tools, and trained personnel necessary for competent digital evidence handling. This state significantly increases an organization's vulnerability, leading to prolonged system downtimes, irreversible data loss, and an inability to identify the root causes of security incidents [5]. The core distinction lies in an organization's ability to not only react to incidents but to do so in a way that ensures evidence is technically sound, legally admissible, and operationally efficient [12].

Comparative Analysis: Readiness vs. Unreadiness

The consequences of an organization's level of forensic preparedness become starkly evident during a cybersecurity incident. The following table contrasts the outcomes based on preparedness levels.

Table 1: Consequences of Forensic Readiness vs. Unreadiness

| Aspect | Forensic Readiness | Forensic Unreadiness |
|---|---|---|
| Incident Response Time | Swift response and containment; Efficient evidence collection [12] | Slow reaction; Prolonged investigation and system downtime [5] |
| Evidence Integrity & Availability | Evidence is preserved with a strong chain of custody; Logs are retained and accessible [5] [11] | Fragile evidence is lost or corrupted; Lack of logs prevents root cause analysis [5] |
| Financial Impact | Reduced investigation costs; Lower regulatory fines; Successful insurance claims [12] [11] | Higher recovery costs; Potential for massive regulatory fines; Insurance claim denials [5] |
| Legal & Regulatory Compliance | Meets e-discovery and data protection laws (e.g., GDPR); Demonstrates due diligence [12] [11] | Non-compliance with disclosure requirements; Risk of negligence charges [11] |
| Root Cause Analysis | Effective identification of attack vectors and system vulnerabilities [12] | Inability to determine how a breach occurred, leading to repeat incidents [5] |
| Reputational & Trust Impact | Maintains customer and investor confidence through demonstrated competence [12] [11] | Erosion of stakeholder trust due to poor handling of the incident [5] |

Experimental Protocols for Assessing Readiness

To quantitatively assess an organization's forensic readiness, researchers and auditors can employ specific experimental protocols. These methodologies evaluate the practical implementation of readiness frameworks.

Protocol 1: Mock Incident Response and Evidence Collection

This protocol tests the end-to-end capability of an organization to handle a digital forensics incident.

- Objective: To measure the time-to-collection and forensic soundness of digital evidence acquired during a simulated security breach.

- Methodology:

  - Scenario Design: Develop a realistic breach scenario (e.g., a ransomware infection on an endpoint, a phishing-based account compromise).

  - Evidence Seeding: Pre-place specific digital artifacts (e.g., a specific registry key, a dummy file, a unique log entry) on target systems without the knowledge of the response team.

  - Trigger and Monitoring: Initiate the scenario and monitor the response team's actions, documenting the time taken to identify the incident, isolate affected systems, and begin evidence collection.

  - Collection and Analysis: The team must collect evidence from identified sources (e.g., memory, disk, logs) while maintaining a verifiable chain of custody. The output is analyzed for completeness and adherence to forensic standards.

- Success Metrics: Percentage of pre-seeded artifacts successfully collected; Adherence to chain-of-custody documentation; Total time from incident trigger to secured evidence.
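The first and last success metrics for Protocol 1 reduce to simple arithmetic once the exercise is logged. The sketch below is one way to compute them. The function names, artifact labels, and timestamps are hypothetical, and chain-of-custody adherence is left out because it requires a documentation review rather than a calculation.

```python
from datetime import datetime
from typing import Set


def artifact_recovery_pct(seeded: Set[str], collected: Set[str]) -> float:
    """Percentage of pre-seeded artifacts that appear in the collected evidence."""
    if not seeded:
        raise ValueError("at least one artifact must be seeded")
    return 100.0 * len(seeded & collected) / len(seeded)


def minutes_to_secured(trigger: datetime, secured: datetime) -> float:
    """Elapsed minutes from incident trigger to secured evidence."""
    if secured < trigger:
        raise ValueError("evidence cannot be secured before the trigger")
    return (secured - trigger).total_seconds() / 60.0


# Hypothetical exercise record.
seeded = {"registry_key", "dummy_file", "log_entry", "scheduled_task"}
collected = {"registry_key", "dummy_file", "log_entry", "browser_history"}

print(artifact_recovery_pct(seeded, collected))
print(minutes_to_secured(datetime(2024, 5, 1, 9, 0), datetime(2024, 5, 1, 11, 30)))
```

Items the team collected that were never seeded (here `browser_history`) do not raise the score, so the metric measures coverage of the planted evidence only.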

Protocol 2: Log Retention and Integrity Verification

This protocol evaluates the data governance and preservation controls that are foundational to forensic readiness.

- Objective: To verify that critical log data from diverse sources (e.g., cloud platforms, network devices, endpoints) is retained for a defined period and is protected against undetected tampering.

- Methodology:

  - Scope Definition: Identify and inventory all critical evidence sources as defined in a forensic readiness policy [12].

  - Retention Checking: For each source, verify that the configured retention period meets or exceeds the policy mandate (e.g., 90-180 days) [5].

  - Integrity Testing: For a sample of stored logs, calculate cryptographic hashes (e.g., SHA-256) at the beginning and end of a test period. Any change in the hash indicates potential tampering or corruption.

  - Access Control Audit: Review access permissions to log repositories to ensure they are restricted to authorized personnel only.

- Success Metrics: 100% of critical sources meet retention policy; 0% integrity check failures; No unauthorized access permissions.
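The retention check and the integrity test in Protocol 2 can be sketched with Python's standard `hashlib` module. This is a minimal illustration under stated assumptions: the function names, source names, and file contents are hypothetical, and the integrity check only makes sense for archived or closed log files, since a log that is still being written will change legitimately.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, Iterable, List


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large log archives do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def take_baseline(paths: Iterable[Path]) -> Dict[str, str]:
    """Record a SHA-256 hash per file at the start of the test period."""
    return {str(path): sha256_of(path) for path in paths}


def integrity_failures(baseline: Dict[str, str]) -> List[str]:
    """Return files that are missing or whose hash differs from the baseline."""
    failures = []
    for name, expected in baseline.items():
        path = Path(name)
        if not path.is_file() or sha256_of(path) != expected:
            failures.append(name)
    return failures


def retention_gaps(configured_days: Dict[str, int], policy_days: int) -> List[str]:
    """Return sources whose configured retention falls short of the policy."""
    return sorted(src for src, days in configured_days.items() if days < policy_days)


with tempfile.TemporaryDirectory() as tmp:
    log = Path(tmp) / "firewall-archive.log"
    log.write_text("2024-01-01 ALLOW 10.0.0.5\n")
    baseline = take_baseline([log])
    print(integrity_failures(baseline))           # nothing has changed yet
    log.write_text("2024-01-01 DENY 10.0.0.5\n")  # simulate tampering
    print(integrity_failures(baseline))           # the altered file is reported

print(retention_gaps({"cloud_audit": 180, "vpn": 30, "endpoint_edr": 90}, policy_days=90))
```

The baseline hashes themselves need to be stored somewhere an attacker with access to the log repository cannot rewrite them; otherwise a matching hash proves little.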

Visualizing the Forensic Readiness Logic

The following diagram illustrates the decision-making workflow and logical relationships in achieving forensic readiness, from foundational steps to full maturity.

For professionals developing or evaluating forensic readiness maturity models, a core set of tools and frameworks is essential. The table below details key resources referenced in contemporary research.

Table 2: Key Forensic Readiness Frameworks and Tools

| Resource Name | Type | Primary Function | Relevant Context |
|---|---|---|---|
| ISO/IEC 27037 | International Standard | Provides guidelines for identification, collection, acquisition, and preservation of digital evidence [12]. | Foundational for evidence handling procedures in any maturity model. |
| NIST Cybersecurity Framework (CSF) | Framework | A risk-based framework for improving cybersecurity, which includes forensic readiness as a component of response and recovery functions [12]. | Helps integrate forensic readiness into broader organizational cybersecurity risk management. |
| Digital Forensics & Incident Response (DFIR) Framework | Operational Framework | A structured approach combining digital forensics and incident response to manage security incidents effectively [12]. | Core methodology for building mature incident response and forensic capabilities. |
| ThreatResponder FORENSICS (TRF) | Software Tool | An agentless, portable tool for forensic acquisition and triage on Windows endpoints, useful for rapid evidence collection [13]. | Enables practical evidence collection in isolated or sensitive environments; supports protocol validation. |
| People-Process-Technology (PPT) | Model | A maturity model indicator focusing on the interaction between skilled personnel, defined processes, and appropriate technology [6]. | A holistic model for assessing and building the key pillars of organizational forensic readiness. |

The comparative analysis presented in this guide underscores a clear dichotomy: forensic readiness is an indispensable element of organizational resilience, while forensic unreadiness leads directly to severe operational, financial, and legal consequences, including prolonged data breaches and irrevocable loss of critical evidence. For researchers and professionals, the evaluation protocols and resources detailed herein provide a foundation for systematically assessing and enhancing forensic capabilities. The integration of robust maturity models, such as those based on the People-Process-Technology framework, is not merely a technical exercise but a strategic imperative. As digital environments grow in complexity, the objective comparison and continuous improvement of forensic readiness processes will remain vital for mitigating risk, ensuring compliance, and safeguarding an organization's future.

Maturity Models (MMs) are strategic tools used to assess and improve the current state of processes, objects, or people within an organization, with the ultimate goal of achieving continuous performance enhancement [14]. The core concept of "maturity" implies an evolutionary progress in demonstrating a specific ability, moving from an initial to a desired or normally occurring end stage [15]. These models divide this evolutionary progress into a sequence of distinct levels or stages that form a logical path from an initial state to a final level of maturity, providing organizations with a structured framework to evaluate their current capabilities and identify areas for improvement [15].

Maturity models serve multiple purposes in organizational development. They function both as assessment tools and as frameworks for continuous improvement, helping organizations gain a comprehensive understanding of their capabilities, prioritize improvements, and drive continuous enhancement [14] [15]. According to research, maturity models can be categorized into three primary purposes: descriptive (for 'as-is' assessments), comparative (enabling internal or external benchmarking), and prescriptive (indicating how to determine desirable maturity levels and providing improvement guidance) [15].

The origins of modern maturity models lie in software development, specifically with the Capability Maturity Model (CMM) and its successor, Capability Maturity Model Integration (CMMI), both developed by the Software Engineering Institute [14] [15]. These pioneering models established the foundational five-level approach that many subsequent maturity models have adopted, describing an evolutionary path of increasingly organized and systematic maturity stages [15].

Maturity Models in Digital Forensics

In the specialized field of digital forensics, maturity models have emerged as critical tools for addressing growing cybersecurity challenges and increasing sophistication of cybercrimes. Digital Forensic Readiness (DFR) represents an anticipatory approach within the digital forensics domain that seeks to maximize an organization's ability to collect digital evidence while minimizing the cost of such operations [16]. The implementation of maturity models in this context is particularly crucial as organizations face evolving challenges in the era of Industrial Revolution 4.0 (IR 4.0), where technologies such as the Internet of Things (IoT), cloud computing, blockchains, and big data have created new vulnerabilities and complexities for digital forensic investigations [6].

The fundamental objective of any digital forensic investigation is to reveal events that occurred, with digital evidence playing a crucial role. Digital evidence is defined as data or information stored or transmitted in digital form that can be applied to support or reject hypotheses about digital events [6]. In criminal investigations, this constitutes information that holds critical links between the cause of crime and the victim. The digital forensic investigation process must follow appropriate scientific methods consisting of identification, preservation, analysis, and presentation to ensure evidence is accepted and understood by courts [6].

Recent research has highlighted the growing importance of Digital Forensic Maturity Models (DFMM) as organizations increasingly recognize the need to measure their security mechanisms and forensic readiness to mitigate economic crime exploitation risks [16]. Without a structured means to assess DFR maturity, organizations remain exposed to cyber incidents as weaknesses and opportunities to strengthen preparedness remain undiscovered [16].

The People-Process-Technology Framework in Digital Forensic Maturity

Research indicates that effective digital forensic maturity models typically incorporate the People-Process-Technology (PPT) framework as a foundational element. This framework has long been recognized as key to improving organizations and ensuring comprehensive maturity assessment [6]. Within digital forensics, these components encompass:

- People: The qualifications, training, and expertise of digital forensic personnel

- Process: Standardized procedures for evidence handling, chain of custody, and investigation methodologies

- Technology: Appropriate tools and infrastructure for digital evidence collection, preservation, and analysis

This holistic framework ensures that maturity assessments consider all critical aspects of digital forensic operations rather than focusing narrowly on technical capabilities alone.

Comparative Analysis of Digital Forensic Readiness Maturity Models

Established Digital Forensic Maturity Models

Table 1: Comparison of Digital Forensic Readiness Maturity Models

| Model Name | Core Focus | Maturity Levels | Key Assessment Dimensions | Primary Applications |

|---|---|---|---|---|

| Extended Digital Forensic Maturity Model (DFMM) [16] | Digital Forensic Readiness | 5 levels (unspecified names) | Based on extended DFRCFv2 structure; domains identified through practitioner feedback | Financial services, general enterprise DFR assessment |

| DF Maturity Indicators Framework [6] | DF Investigation Capability | Not specified | People-Process-Technology (PPT) indicators; IR 4.0 readiness | Law enforcement agencies, DF laboratories |

| Global Guidelines for DF Labs (Interpol, 2019) [6] | DF Laboratory Standards | Not specified | Premises, staff, equipment, management, procedures, quality assurance | International DF laboratory standardization |

Key Assessment Dimensions in Digital Forensic Maturity

The study in [6] identifies key indicators of maturity and readiness for digital forensic organizations in the IR 4.0 era. These indicators are grouped by PPT dimension:

- Process Dimensions: Chain of custody procedures, evidence handling protocols, case management systems, quality assurance mechanisms

- Technology Dimensions: Forensic tool capabilities, evidence preservation infrastructure, data analysis capacity, encryption handling capabilities

- People Dimensions: Staff qualifications and certifications, training programs, expertise with emerging technologies, continuing education

The adaptation of maturity models to digital forensics requires consideration of legal admissibility requirements, evidentiary standards, and jurisdictional variations in digital evidence handling, which represent unique challenges not present in other application domains [6] [16].

Visualization of Maturity Model Progression

Diagram: Digital Forensic Maturity Evolution Pathway

Diagram: Maturity Assessment Methodology Workflow

Experimental Protocols and Validation Methods

Systematic Literature Review Methodology

Recent comprehensive studies on maturity models have employed systematic literature review (SLR) methodologies following PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) guidelines [15]. The protocol typically involves:

- Planning Phase: Defining research questions and developing review protocols

- Search Strategy: Identifying relevant databases and developing comprehensive search strings

- Selection Process: Applying inclusion/exclusion criteria through screening phases

- Quality Assessment: Evaluating selected studies for methodological rigor

- Data Extraction: Systematic extraction of relevant data using standardized forms

- Synthesis: Analyzing and synthesizing findings through both quantitative and qualitative methods

This methodology was applied in a recent review of information and cyber security maturity assessment, which analyzed 96 studies from 2012-2024, with detailed qualitative synthesis limited to 36 research papers [15].

Qualitative Validation with Practitioners

The development of robust digital forensic maturity models typically incorporates qualitative validation approaches with forensic practitioners. The methodology used in developing the Extended Digital Forensic Maturity Model exemplifies this approach [16]:

Table 2: Digital Forensic Maturity Model Validation Protocol

| Research Phase | Methodology | Participant Profile | Data Collection Methods | Analysis Approach |

|---|---|---|---|---|

| Model Development | Design Science Research | Forensic academics & researchers | Literature synthesis, Framework analysis | Thematic analysis, Model structuring |

| Model Refinement | Semi-structured interviews | Experienced forensic practitioners (multiple industries) | Qualitative interviews, Domain expert feedback | Content analysis, Pattern identification |

| Model Validation | Expert review | Board-certified forensic experts | Structured feedback sessions, Model assessment | Gap analysis, Consensus building |

This methodology follows a design science approach adapted from maturity assessment model design frameworks, incorporating multiple iterations of development and validation to ensure practical applicability and theoretical soundness [16]. The qualitative approach involves interviewing participants and analyzing inputs using thematic analysis to identify common patterns and domain-specific requirements.

The Researcher's Toolkit: Essential Components for Maturity Model Implementation

Table 3: Digital Forensic Maturity Assessment Research Toolkit

| Tool/Component | Category | Function | Application Context |

|---|---|---|---|

| Semi-structured Interview Protocols | Data Collection | Gather qualitative insights on current practices and challenges | Initial assessment, model validation |

| Likert-like Questionnaires | Assessment Tool | Quantify maturity levels across defined dimensions | Benchmarking, comparative analysis |

| Maturity Grids | Visualization | Matrix representation of maturity levels and characteristics | Results communication, gap analysis |

| Systematic Literature Review Framework | Methodology | Comprehensive analysis of existing research | Model development, trend identification |

| Thematic Analysis Framework | Data Analysis | Identify, analyze, and report patterns in qualitative data | Interview data interpretation |

| People-Process-Technology (PPT) Framework | Assessment Framework | Ensure comprehensive evaluation across organizational dimensions | Model structuring, assessment design |

| Design Science Research Approach | Methodology | Structured framework for model development and iteration | Model creation and refinement |
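
As an illustration of how Likert-like questionnaire responses might be turned into a maturity reading across the PPT dimensions, the following is a minimal sketch rather than a method taken from the cited models. The response values are invented, and the "weakest link" rule (overall maturity equals the lowest dimension score, rounded down) is an assumption chosen for simplicity.

```python
from statistics import mean

# Hypothetical 1-5 Likert responses grouped by PPT dimension (illustrative values only).
responses = {
    "People": [4, 3, 4, 5],
    "Process": [2, 3, 2, 3],
    "Technology": [4, 4, 3, 5],
}


def dimension_scores(answers):
    """Return the mean Likert score for each dimension."""
    return {dimension: mean(values) for dimension, values in answers.items()}


def overall_level(scores):
    """Assumed weakest-link rule: the lowest dimension score, rounded down."""
    return int(min(scores.values()))


scores = dimension_scores(responses)
level = overall_level(scores)
weakest = min(scores, key=scores.get)

print(f"Dimension scores: {scores}")
print(f"Overall level: {level} (limited by {weakest})")
```

A weakest-link rule makes gaps visible: here the Process dimension caps the overall result even though People and Technology score higher. A weighted average would be an equally defensible choice if an organization prefers a smoother scale.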

Current Trends and Future Directions

Recent analyses of maturity models in specialized fields including digital forensics reveal several emerging trends. There is a growing emphasis on sector-specific customization rather than generic models, with particular attention to the unique requirements of different organizational contexts [15]. Research also indicates increasing development of lightweight maturity models specifically designed for small and medium-sized enterprises (SMEs) that may lack extensive resources for implementation [15].

The integration of emerging technologies such as artificial intelligence and machine learning into maturity assessment processes represents another significant trend, potentially enabling more dynamic and responsive maturity evaluation [15]. Furthermore, recent literature shows heightened focus on models assessing Industry 4.0 readiness and sustainability principles, reflecting broader technological and societal shifts [14].

Future developments in digital forensic maturity models are likely to address current gaps identified in research, including limited attention to optimizing and integrating logistic processes, underutilized and unvalidated models, and the absence of comprehensive improvement guidelines in existing frameworks [14]. The application of maturity models in digital forensics continues to evolve as organizations recognize their strategic importance in building resilience against increasingly sophisticated cyber threats.

Digital Forensic Readiness (DFR) is the proactive ability of an organization to maximize its potential to use digital evidence while minimizing the costs of an investigation [12]. In the context of modern cybersecurity, DFR has evolved from a reactive, post-incident activity to a crucial strategic function integrated throughout the organizational infrastructure. A robust DFR framework ensures that when a security incident occurs, an organization can efficiently collect, preserve, and analyze digital evidence in a way that is technically sound, legally admissible, and operationally efficient [12]. This preparedness facilitates swift incident response, ensures regulatory compliance, minimizes operational impact, and preserves organizational reputation [12].

The fundamental premise of DFR is to shift digital forensics from an "after-the-fact" investigation to a built-in organizational capability. For researchers and professionals evaluating maturity models, understanding these core components provides a structured basis for comparing how different frameworks operationalize forensic readiness. This analysis is particularly critical as organizations face increasingly sophisticated threats that rely on techniques such as Living Off the Land Binaries (LOLBins) and cloud-native persistence [17]. The following sections detail the essential components, compare implementation frameworks, and provide methodological guidance for assessing DFR maturity.

Core Components of a DFR Framework

A comprehensive Digital Forensic Readiness framework consists of several interconnected components that span policy, technical infrastructure, and human resources. The table below summarizes these key elements and their primary functions within a DFR strategy.

Table: Key Components of a Digital Forensic Readiness Framework

| Component Category | Specific Component | Description & Function |

|---|---|---|

| Policy & Governance | Readiness Policy | A comprehensive policy outlining the organization’s approach to evidence collection, preservation, and legal considerations [12]. |

| Policy & Governance | Legal & Compliance Alignment | Ensures evidence collection adheres to regulations (e.g., GDPR, HIPAA) and maintains legal admissibility [12]. |

| Policy & Governance | Scope Definition | Clearly identifies which systems, departments, and data types fall within the DFR framework's scope [12]. |

| Technical Infrastructure | Evidence Source Identification | Mapping all potential sources of digital evidence (network logs, cloud services, user devices) [12]. |

| Technical Infrastructure | Evidence Collection Mechanisms | Tools and systems (e.g., SIEM) to automate logging, monitoring, and forensically-sound collection of critical data [12]. |

| Technical Infrastructure | Secure Evidence Storage | Immutable, secure storage solutions that preserve evidence integrity and chain of custody [12] [17]. |

| Operational Processes | Incident Response Integration | Embedding forensic capabilities within the incident response team for real-time analysis during breaches [12] [18]. |

| Operational Processes | Proactive Monitoring | Continuous monitoring of systems to identify potential incidents and gather baseline data [17]. |

| Operational Processes | Forensic Tooling | Investment in specialized digital forensic tools for imaging, cloud forensics, and big data analysis [12] [18]. |

| Human Resources | Trained Personnel | Training for IT, security, and legal staff on proper evidence handling and analysis procedures [12]. |

| Human Resources | Dedicated Forensic Team | Designated team with clear roles for managing forensic investigations, potentially including external experts [12]. |

| Human Resources | Cross-Functional Training | Ensuring security architects and cloud engineers design systems with investigative capabilities in mind [17]. |

The Relationship Between DFR Components

The components of a DFR framework do not operate in isolation; they form a cohesive system where policy guides operations, technical infrastructure enables processes, and human resources execute the plan. The following diagram illustrates the logical relationships and workflow between these core component groups.

Diagram 1: Logical relationships between DFR framework components

This interconnectedness highlights that effective DFR requires continuous feedback between an organization's policies, its technical infrastructure, its active processes, and its people. For instance, a forensic readiness policy mandates the identification of evidence sources, which drives the technical implementation of collection mechanisms. These technical capabilities then enable operational processes like proactive monitoring, which are carried out by trained personnel. Lessons learned during operations subsequently inform updates to both policy and technical systems [12] [17].

Comparison of DFR Frameworks and Standards

Several standardized frameworks provide structured guidelines for implementing digital forensic readiness. These frameworks help organizations integrate digital forensics with incident response, ensuring they can manage incidents effectively. The table below compares the most prominent frameworks based on their focus, applicability, and originating authority.

Table: Comparison of Digital Forensic Readiness Frameworks

| Framework Name | Issuing Body/Organization | Applicable Environments | Core Focus & Distinctive Features |

|---|---|---|---|

| ISO/IEC 27043 | International Organization for Standardization (ISO) and International Electrotechnical Commission (IEC) | General IT environments [12]. | Provides internationally standardized guidelines for incident investigation processes, including the identification, collection, and preservation of digital evidence [19] [12]. |

| Digital Forensics and Incident Response (DFIR) | National Institute of Standards and Technology (NIST), SANS Institute | General IT, OT, hybrid environments [12]. | Integrates digital forensics with incident response, providing a structured approach for detection, containment, and recovery while preserving evidence [12]. |

| Cloud Forensic Readiness Framework | Various academic and industry bodies | Cloud environments (IaaS, PaaS, SaaS) [12]. | Addresses unique challenges of cloud forensics, including evidence collection in distributed systems and compliance with cloud-specific regulations [12]. |

| ETHICore Framework | Developed through collaborative research | General IT cybersecurity environments [12]. | Integrates technical and ethical aspects of forensic readiness, addressing concerns like data privacy, integrity, and algorithmic bias [12]. |

| NIST Cybersecurity Framework (CSF) | National Institute of Standards and Technology (NIST) | IT, OT, and Critical Infrastructure [12]. | A broader risk management framework that includes forensic readiness as a component of recovery and response functions. |

Maturity Progression in DFR Frameworks

The concept of maturity is central to evaluating and implementing any DFR framework. Organizations typically progress from ad-hoc, reactive forensic practices to a state where forensic considerations are deeply embedded in their architecture and operations. The following diagram visualizes this maturity progression, drawing parallels to established AI maturity models in cybersecurity [20].

Diagram 2: Digital Forensic Readiness maturity model progression

This maturity model illustrates a clear pathway for organizations. It begins at L0, characterized by reactive, manual processes after an incident occurs. As maturity increases to L1, basic automation and logging are implemented. At L2, standardized policies and defined forensic processes are established. L3 represents integration with incident response and proactive monitoring capabilities. The most advanced stage, L4, involves optimized processes where technologies like AI and machine learning are used for predictive analysis and autonomous response [20]. This maturity model provides researchers with a structured scale for comparing the forensic readiness of different organizations or systems.
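
The level descriptions above can be read as cumulative requirements, where an organization reaches a level only once it satisfies that level and every level below it. The following is a minimal sketch under that assumption. The capability identifiers are hypothetical labels paraphrasing the text, not terms defined by the cited model.

```python
# Capabilities assumed to be required at each level, paraphrasing the description above.
# The identifier names are hypothetical.
LEVEL_REQUIREMENTS = [
    (1, {"basic_automation", "logging"}),
    (2, {"standardized_policies", "defined_forensic_processes"}),
    (3, {"incident_response_integration", "proactive_monitoring"}),
    (4, {"predictive_analysis", "autonomous_response"}),
]


def maturity_level(capabilities):
    """Return the highest level whose requirements, and those of all lower
    levels, are present. Level 0 (reactive, manual) is the default."""
    level = 0
    for candidate, required in LEVEL_REQUIREMENTS:
        if required <= capabilities:  # subset test: every requirement is met
            level = candidate
        else:
            break  # a gap at this level blocks all higher levels
    return level


organization = {
    "basic_automation",
    "logging",
    "standardized_policies",
    "defined_forensic_processes",
    "proactive_monitoring",  # present, but incident response integration is missing
}

print(f"Assessed level: L{maturity_level(organization)}")
```

In this example the organization is held at L2 because one L3 requirement is missing, which is the kind of gap a staged model is meant to surface.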

Experimental Protocols for DFR Assessment

Evaluating the effectiveness of a DFR framework requires rigorous, empirical methodologies. Researchers and auditors can employ the following experimental protocols to assess an organization's forensic readiness and the performance of specific tools within its environment.

Mock Investigation Drill

Objective: To simulate a real-world security incident to evaluate the end-to-end effectiveness of the DFR framework, from detection to evidence presentation.

- Methodology:

  - Scenario Design: Develop a realistic attack scenario (e.g., a compromised user account exfiltrating data) that triggers the organization's detection and response protocols. The scenario should involve multiple evidence sources, such as endpoint logs, network traffic, and cloud service audit trails [12] [17].

  - Execution: Execute the scenario in a controlled testing environment that mirrors the production network. The incident response and forensic teams should not be given prior warning of the exact timing or nature of the drill.

  - Measurement: Measure key performance indicators (KPIs), including:

    - Time to Detect (TTD): The time from the initial malicious action to its detection by monitoring systems.

    - Time to Evidence Collection: The time taken to initiate forensically-sound collection of relevant data from all identified sources [12].

    - Evidence Integrity: The ability to maintain a verifiable chain of custody for all collected evidence.

    - Root Cause Analysis Accuracy: The correctness and completeness of the investigative findings regarding the attack's methodology and scope [12].

  - Data Analysis: Analyze the results to identify gaps in logging, flaws in collection procedures, and bottlenecks in the analysis workflow. The output is a quantitative and qualitative assessment of the DFR framework's operational efficacy.
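
The timing and integrity KPIs listed above can be computed directly from a drill timeline. The following is a minimal sketch with invented timestamps and evidence records. It assumes that Time to Evidence Collection is measured from the moment of detection, which the protocol text does not specify, and that Evidence Integrity is reported as the share of items with a complete custody record.

```python
from datetime import datetime


def minutes_between(start, end):
    """Elapsed time between two timestamps, in minutes."""
    return (end - start).total_seconds() / 60


# Hypothetical drill timeline (illustrative values only).
drill = {
    "malicious_action": datetime(2025, 1, 15, 9, 0),
    "detected": datetime(2025, 1, 15, 9, 42),
    "collection_started": datetime(2025, 1, 15, 10, 30),
}

# Hypothetical evidence items and whether their custody record is complete.
evidence_items = [
    {"source": "endpoint_logs", "custody_complete": True},
    {"source": "network_capture", "custody_complete": True},
    {"source": "cloud_audit_trail", "custody_complete": False},
]

time_to_detect = minutes_between(drill["malicious_action"], drill["detected"])
# Assumption: the collection clock starts at detection.
time_to_collection = minutes_between(drill["detected"], drill["collection_started"])
integrity_rate = sum(item["custody_complete"] for item in evidence_items) / len(evidence_items)

print(f"Time to Detect: {time_to_detect:.0f} min")
print(f"Time to Evidence Collection: {time_to_collection:.0f} min")
print(f"Evidence Integrity: {integrity_rate:.0%} of items with complete custody")
```

Root Cause Analysis Accuracy is left out because it depends on expert judgment of the investigative findings rather than on timestamps.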

Tool Efficacy and Performance Benchmarking

Objective: To quantitatively compare the performance of different digital forensic tools in processing evidence from relevant environments (e.g., cloud, mobile, endpoints).

- Methodology:

  - Test Environment Setup: Create a standardized, forensically-sound dataset that incorporates diverse data types (disk images, memory dumps, cloud audit logs, mobile device backups). The dataset should be of a known size and contain hidden artifacts for recovery.

  - Tool Selection: Select a range of commercial, open-source, and specialized tools (e.g., forensics imaging tools, cloud forensics technologies) for testing [12] [18].

  - Controlled Testing: For each tool, perform identical tasks:

    - Data Ingestion & Indexing: Measure the time and computational resources required to ingest and index the standardized dataset.

    - Artifact Recovery: Measure the tool's success rate in recovering and parsing known-hidden artifacts, such as deleted files or specific log entries.

    - Reporting Capabilities: Evaluate the clarity, comprehensiveness, and customizability of the generated forensic reports.

  - Analysis of AI-Powered Features: For tools incorporating AI/ML, design tests to evaluate their anomaly detection capabilities and resistance to false positives. This is crucial given that Agentic GenAI systems can be error-prone and require strong human oversight [20].

  - Data Analysis: The results provide a comparative benchmark of tool performance, helping organizations select the right tools based on their specific environment and investigative needs.
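
The artifact recovery measurement in this protocol reduces to comparing each tool's output against the artifacts known to be planted in the test dataset. The following is a minimal sketch of that comparison. The tool names, artifact names, and the `timed` helper are hypothetical, and a real benchmark would wrap each tool's actual ingestion run instead of the stand-in call shown here.

```python
import time


def recovery_rate(recovered, planted):
    """Share of planted artifacts that the tool recovered."""
    planted = set(planted)
    return len(planted & set(recovered)) / len(planted)


def spurious_hits(recovered, planted):
    """Number of reported artifacts that were never planted."""
    return len(set(recovered) - set(planted))


def timed(task, *args):
    """Run a task and return its result with the elapsed wall-clock seconds."""
    start = time.perf_counter()
    result = task(*args)
    return result, time.perf_counter() - start


# Hypothetical ground truth and tool outputs (illustrative values only).
planted = {"deleted_report.docx", "carved_image.jpg", "auth_log_entry", "memory_string"}
tool_output = {
    "tool_a": {"deleted_report.docx", "carved_image.jpg", "auth_log_entry", "temp_file.tmp"},
    "tool_b": {"deleted_report.docx", "carved_image.jpg", "auth_log_entry", "memory_string"},
}

benchmark = {
    name: {
        "recovery_rate": recovery_rate(found, planted),
        "spurious_hits": spurious_hits(found, planted),
    }
    for name, found in tool_output.items()
}
ranking = sorted(benchmark, key=lambda name: benchmark[name]["recovery_rate"], reverse=True)

# Stand-in for timing a tool's ingestion step.
_, elapsed_seconds = timed(recovery_rate, tool_output["tool_a"], planted)

for name in ranking:
    print(name, benchmark[name])
```

Reporting spurious hits alongside the recovery rate keeps a tool from ranking well simply by over-reporting.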

The Researcher's Toolkit: Essential Components for DFR

Implementing and testing a DFR framework requires a specific set of technical solutions and tools. The following table details key "research reagent solutions" — the essential materials and technologies used in the field of digital forensic readiness.

Table: Essential Research Reagents for Digital Forensic Readiness

| Category | Tool/Solution | Function in DFR Implementation & Testing |

|---|---|---|

| Evidence Collection | Security Information and Event Management (SIEM) | Centralizes logging and monitoring data from across the infrastructure, providing a primary source for timeline reconstruction [12]. |

| Evidence Collection | Write Blockers | Hardware or software tools that prevent modification of original evidence during the acquisition process, preserving integrity [17]. |

| Evidence Analysis | Forensic Imaging Tools (e.g., FTK, EnCase) | Create bit-for-bit copies of digital storage media for analysis without altering the original evidence [12]. |

| Evidence Analysis | Cloud Forensics Platforms | Specialized tools designed to acquire and analyze evidence from diverse cloud environments (IaaS, PaaS, SaaS) while navigating provider-specific APIs and data formats [12] [18]. |

| Infrastructure & Storage | Immutable Logging & Storage | Secure storage solutions where data cannot be altered or deleted after being written, crucial for preserving evidence integrity [17]. |

| Infrastructure & Storage | Virtualized Test Environment | A sandboxed replica of the production network for conducting mock investigation drills and tool testing without operational risk. |

| Advanced Analytics | AI & Machine Learning Platforms | Analyze large volumes of data to identify patterns, anomalies, and potential leads, thereby augmenting human analysts [20] [18] [21]. |

| Advanced Analytics | Deepfake Detection Tools | Specialized software to analyze digital media for subtle inconsistencies, verifying the authenticity of video and audio evidence [18]. |
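
To make the idea of immutable logging in the table above more concrete, one common technique is a hash-chained log in which each entry stores the hash of its predecessor, so that any later modification becomes detectable. The following is a minimal sketch of that technique and not a description of any product cited here. The field names and sample entries are hypothetical, and a chain like this is tamper-evident rather than tamper-proof, so it complements write-once storage instead of replacing it.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder predecessor for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log, actor, action, evidence_id, timestamp):
    """Append a custody event that is linked to the previous entry's hash."""
    body = {
        "actor": actor,
        "action": action,
        "evidence_id": evidence_id,
        "timestamp": timestamp,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    log.append({**body, "entry_hash": _digest(body)})


def verify_log(log):
    """Return True only if every entry is unmodified and correctly linked."""
    expected_prev = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "entry_hash"}
        if body["prev_hash"] != expected_prev or _digest(body) != entry["entry_hash"]:
            return False
        expected_prev = entry["entry_hash"]
    return True


# Hypothetical custody events (illustrative values only).
custody_log = []
append_entry(custody_log, "analyst_1", "acquired", "disk-001", "2025-01-15T10:30:00Z")
append_entry(custody_log, "analyst_2", "transferred", "disk-001", "2025-01-15T14:05:00Z")

intact = verify_log(custody_log)
print(f"Custody log intact: {intact}")
```

Editing any field of an earlier entry changes its digest and breaks the link stored in the next entry, so `verify_log` returns `False` for the altered log.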

A robust Digital Forensic Readiness framework is a multi-faceted system composed of interdependent components spanning policy, technology, processes, and people. As the comparison of frameworks demonstrates, organizations can choose from several standardized approaches, such as the holistic model based on ISO/IEC 27043 or the specialized Cloud Forensic Readiness Framework, to guide their implementation [19] [12].

The progression towards forensic maturity is a strategic journey that transforms forensics from a reactive cost center into a proactive strategic capability. For researchers and professionals, the experimental protocols and toolkit outlined provide a foundation for empirically evaluating and comparing the effectiveness of different DFR approaches. As cyber threats continue to evolve in sophistication, leveraging AI and machine learning will become increasingly central to advanced DFR frameworks, though this must be balanced with rigorous oversight and ethical considerations [20] [18]. Ultimately, integrating forensic readiness into the very fabric of an organization's architecture is no longer optional but a fundamental requirement for resilient cybersecurity operations in 2025 and beyond.

A Deep Dive into DFR Maturity Models: Structures, Dimensions, and Assessment

Digital Forensics Readiness Maturity Models (DFRMMs) provide organizational roadmaps for building sustainable capabilities to manage digital evidence and respond to incidents. These models enable organizations to systematically assess and improve their digital forensic capabilities through defined evolutionary stages and multidimensional perspectives [22]. As cybercrime complexity increases, particularly with emerging technologies, these frameworks have become essential for law enforcement, corporations, and academic institutions seeking to validate their investigative methodologies and ensure evidence admissibility [6] [2]. This guide objectively compares architectural components across prominent maturity models, analyzing their structural dimensions, progression mechanisms, and validation protocols to inform research and development in digital forensic science.

Core Architectural Framework of Maturity Models

Maturity models share fundamental architectural components that create standardized assessment frameworks. These structural elements establish consistent evaluation criteria across different organizational contexts and digital forensic specializations.

Foundational Components

Evolutionary Stages: Sequential maturity levels depicting capability progression from initial/ad hoc practices to optimized/adaptive states [23] [24]. Most models incorporate 4-6 hierarchical stages with descriptive characteristics for each capability level [24].

Assessment Dimensions: Categorical areas representing organizational facets requiring development. Most models implement multidimensional assessment across 4-9 domains to evaluate holistic capability [24]. The People-Process-Technology (PPT) framework serves as the foundational dimensional structure for many models [6].

Maturity Indicators: Specific, measurable attributes defining capability achievement within each dimension-stage combination. These operationalize abstract maturity concepts into assessable organizational characteristics [6] [22].

Structural Visualization

The following diagram illustrates the core architectural relationships between these components, showing how dimensions and stages interact within a maturity model framework:

Maturity Model Architecture - This diagram illustrates the core components and their relationships in maturity model design.

Comparative Analysis of Digital Forensic Maturity Models

Model Dimensions Comparison

Digital forensic maturity models emphasize different capability areas through their dimensional structures. The following table compares dimensions across prominent models:

| Model Name | Core Dimensions | Dimension Count | Primary Focus Areas |

|---|---|---|---|

| DF-C²M² [22] | People, Processes, Tools | 3 | Organizational capabilities, investigative methodologies, technological infrastructure |

| DFR Maturity Framework [6] | People, Process, Technology | 3 | Staff competencies, procedural rigor, tool integration |

| Industry 4.0 Maturity [25] [24] | Strategy, Culture, Technology, Processes, Products/Services | 5-9 | Business alignment, digital transformation, operational integration |

| DF Readiness Indicators [6] | Organizational, Technical, Procedural | 3 | Governance structures, system capabilities, evidence handling protocols |

Evolutionary Stages Comparison

Maturity progression follows similar evolutionary patterns across models, though with varying terminology and stage counts:

| Model/Standard | Stage 1 | Stage 2 | Stage 3 | Stage 4 | Stage 5 | Stage 6 |

|---|---|---|---|---|---|---|

| CMMI (staged representation) [23] [24] | Initial | Managed | Defined | Quantitatively Managed | Optimizing | - |

| IMPULS [24] | Outsider | Beginner | Intermediate | Experienced | Expert | Top Performer |

| Industrie 4.0 Index [24] | Computerization | Connectivity | Visibility | Transparency | Predictability | Adaptability |

| Digital Enterprise [24] | Digital Novice | Vertical Integrator | Horizontal Collaborator | Digital Champion | - | - |

| DREAMY [24] | Initial | Managed | Defined | Integrated & Interoperable | Digital-Oriented | - |

Experimental Validation Protocols

Digital Forensic Tool Validation Methodology

Rigorous experimental validation ensures maturity model assessments produce reliable, admissible evidence. The following protocol, adapted from Ismail et al. (2025), provides a standardized approach for validating tools referenced in maturity models [2]:

Experimental Design: Controlled testing environment utilizing two Windows-based workstations for comparative analysis between commercial and open-source tools. Testing incorporates three distinct forensic scenarios:

- Preservation and collection of original data

- Recovery of deleted files through data carving

- Targeted artifact searching in case-specific scenarios

Validation Metrics: Each experiment performed in triplicate to establish repeatability metrics with error rates calculated by comparing acquired artifacts to control references. Tools evaluated against these criteria:

- Testability: Methods must be testable and capable of independent verification

- Peer Review: Methods subject to peer review and publication

- Error Rates: Established error rates or capability for accurate results

- General Acceptance: Wide acceptance by relevant scientific community [2]

Procedural Controls: Maintenance of chain of custody documentation throughout evidence handling process. Hash verification (MD5, SHA-1) of acquired evidence to ensure integrity. Controlled access to testing environment to prevent evidence contamination.
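
The hash verification and error-rate steps in this protocol can be sketched with the Python standard library. The example below is illustrative only: the file contents, artifact names, and run results are invented, and the definition of error rate as the share of control artifacts a run failed to acquire is an assumption about how the comparison to control references might be scored. MD5 and SHA-1 are used because the protocol names them, and the `algorithms` tuple can be extended (for example with `"sha256"`) where a stronger digest is required.

```python
import hashlib
import os
import tempfile


def file_digests(path, algorithms=("md5", "sha1"), chunk_size=1 << 20):
    """Hash a file in chunks so large images need not fit in memory."""
    hashers = {name: hashlib.new(name) for name in algorithms}
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            for hasher in hashers.values():
                hasher.update(chunk)
    return {name: hasher.hexdigest() for name, hasher in hashers.items()}


def error_rate(acquired, control):
    """Assumed metric: share of control artifacts that a run failed to acquire."""
    control = set(control)
    return len(control - set(acquired)) / len(control)


# Throwaway files standing in for an original evidence image and its acquired copy.
with tempfile.TemporaryDirectory() as workdir:
    original = os.path.join(workdir, "original.img")
    acquired_copy = os.path.join(workdir, "copy.img")
    for path in (original, acquired_copy):
        with open(path, "wb") as handle:
            handle.write(b"example evidence bytes")
    copy_matches = file_digests(original) == file_digests(acquired_copy)

# Hypothetical control reference and three repeated runs of the same experiment.
control = {"a.doc", "b.jpg", "c.pdf", "d.txt"}
runs = [{"a.doc", "b.jpg", "c.pdf"} for _ in range(3)]

rates = [error_rate(run, control) for run in runs]
repeatable = all(run == runs[0] for run in runs)

print(f"Copy matches original: {copy_matches}")
print(f"Error rates per run: {rates}, repeatable: {repeatable}")
```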

Maturity Assessment Validation

Organizational maturity assessments require different validation approaches focusing on measurement consistency:

Assessment Protocol: Multi-method evaluation combining document analysis, tool verification, staff interviews, and procedural observation. Triangulation of findings across data sources to minimize single-method bias.

Reliability Measures: Inter-rater reliability testing with multiple assessors evaluating same organizational functions. Test-retest reliability assessment with evaluations repeated after 30-day interval.
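
Inter-rater reliability is often summarized with an agreement statistic. The source does not name one, so the following sketch assumes Cohen's kappa for two assessors, which corrects observed agreement for the agreement expected by chance. The ratings are invented maturity levels assigned to eight organizational functions.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who scored the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must score the same non-empty set of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both raters pick the same category independently.
    expected = sum(counts_a[category] * counts_b[category] for category in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical maturity levels assigned by two assessors (illustrative values only).
assessor_1 = [1, 2, 2, 3, 3, 3, 4, 2]
assessor_2 = [1, 2, 3, 3, 3, 3, 4, 2]

kappa = cohens_kappa(assessor_1, assessor_2)
print(f"Cohen's kappa: {kappa:.3f}")
```

The same function can be applied to test-retest data by passing the scores from the first assessment and from the repeat assessment after the 30-day interval.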

The experimental workflow for validating digital forensic maturity follows a systematic process.

[Figure: Experimental Validation Workflow. The diagram outlines the systematic process for validating digital forensic tools and methodologies.]

Essential Research Reagents and Solutions

DFRMM research requires specific methodological tools and assessment instruments. The following table details key "research reagent solutions" essential for experimental work in this field:

| Research Reagent | Function | Exemplars | Application Context |
|---|---|---|---|
| Commercial Forensic Tools | Baseline comparison for capability assessment | FTK, Forensic MagiCube, EnCase [2] | Tool validation studies, capability benchmarking |
| Open-Source Forensic Tools | Cost-effective alternatives for resource-constrained environments | Autopsy, ProDiscover Basic, The Sleuth Kit [2] | Admissibility framework testing, tool reliability verification |
| Standardized Test Images | Controlled reference material for tool comparison | Digital Forensic Tool Testing images, customized scenario builds [2] | Experimental validation, tool capability assessment |
| Maturity Assessment Instruments | Structured tools for evaluating organizational capability | DF-C²M² assessment framework, PPT evaluation matrix [6] [22] | Organizational maturity measurement, capability gap analysis |
| Legal Admissibility Frameworks | Criteria for evaluating evidence acceptability | Daubert Standard, ISO/IEC 27037:2012 [2] | Evidence validation, procedural compliance verification |

Cross-Model Analysis and Research Implications

Architectural Patterns and Trends

Analysis reveals consistent architectural patterns across DFRMMs despite varying terminology. Most models follow a progressive maturation sequence beginning with technical capability development, advancing through process integration, and culminating in strategic organizational alignment [23] [24]. The People-Process-Technology framework appears in 78% of analyzed models as the foundational dimensional structure, with specialized models adding domain-specific dimensions [6] [24].

Model specialization correlates with application context. Law enforcement-focused models emphasize evidence admissibility and chain of custody procedures [22], while enterprise models prioritize regulatory compliance and incident response capabilities [5]. This contextual adaptation demonstrates the framework's flexibility but complicates cross-domain comparison.

Research Applications and Limitations

DFRMMs enable standardized capability assessment across four primary research contexts:

- Comparative Organizational Studies: Benchmarking forensic capability across departments or institutions [22]

- Longitudinal Development Tracking: Measuring capability improvement following intervention initiatives [6]

- Regulatory Compliance Assessment: Evaluating adherence to evolving standards like ISO/IEC 27037 [2]

- Technology Integration Research: Measuring organizational adoption of new forensic tools and methodologies [26]

Current model limitations include inconsistent validation methodologies and minimal empirical evidence establishing correlation between maturity levels and investigative outcomes [23]. Commercial tool dominance in validation studies may also introduce bias against open-source solutions despite comparable technical capabilities [2]. Future research should address these limitations through standardized validation protocols and broader tool inclusivity.

Analysis of the Extended Digital Forensic Readiness and Maturity Model (DFRMM)

Digital Forensic Readiness (DFR) is defined as an anticipatory approach within the digital forensics domain that seeks to maximize an organization's ability to collect digital evidence while minimizing the cost of such an operation [16]. The concept has gained significant importance as organizations face growing cyber threats and potential regulatory requirements for evidence collection. The fundamental goal of implementing DFR is to ensure that organizations can effectively gather admissible digital evidence to support potential investigations, whether for internal disciplinary actions, civil litigation, or criminal prosecution [16].

The Extended Digital Forensic Readiness and Maturity Model (DFRMM) emerges as a structured framework to assess and improve an organization's preparedness for digital forensic investigations. This model addresses the critical need for organizations to measure their current capabilities and systematically enhance their forensic processes over time [16]. The development of the DFRMM represents an evolution from earlier frameworks, integrating concepts from the Digital Forensics Readiness Commonalities Framework (DFRCF) and the Digital Forensics Management Framework (DFMF) to create a more comprehensive assessment tool [16].

Within the context of Industrial Revolution 4.0 (IR 4.0), characterized by technologies such as the Internet of Things (IoT), cloud computing, and big data, the challenges for digital forensics have intensified [6]. These technologies not only expand the attack surface for cybercriminals but also complicate the process of evidence collection and preservation. In this complex landscape, maturity models like the DFRMM provide essential guidance for organizations seeking to strengthen their forensic capabilities amid evolving technological challenges [6].

Theoretical Foundations and Development

The Extended DFRMM builds upon established principles from organizational management and cybersecurity, particularly adopting the People-Process-Technology (PPT) framework as a foundational structure [6]. This tripartite approach recognizes that effective digital forensic readiness requires synchronized capabilities across human resources, defined procedures, and appropriate technological tools. The model's development followed a rigorous design science methodology, incorporating qualitative research methods including semi-structured interviews with digital forensic practitioners to ensure practical relevance and validity [16].

The model addresses a critical gap identified in research: many organizations lack a systematic mechanism to measure their digital forensic readiness, leaving them vulnerable to undetected weaknesses that could be exploited during cyber incidents [16]. By providing a structured assessment framework, the DFRMM enables organizations to identify areas for improvement and prioritize investments in their digital forensic capabilities. The model's theoretical underpinnings also draw from capability maturity concepts widely used in other domains, adapting them specifically to the unique requirements of digital forensics [27].

Core Components and Structure

The Extended DFRMM organizes digital forensic capabilities into several key domains, each containing specific subdomains that represent critical aspects of forensic readiness. While the complete list of domains is extensive, the model notably emphasizes Legal Involvement as a central component, positioning it as the foundational axis around which other capabilities revolve [27]. This structural decision reflects the fundamental importance of legal admissibility and compliance in digital evidence collection and handling.

The model's architecture enables organizations to assess their maturity across multiple dimensions simultaneously, providing a holistic view of their forensic readiness posture. Unlike earlier models that focused predominantly on technical aspects, the Extended DFRMM incorporates organizational and procedural elements essential for sustainable forensic capabilities. This comprehensive approach ensures that improvements in one domain are supported by corresponding capabilities in related areas, creating a cohesive and effective digital forensic ecosystem within the organization [16].
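A multi-dimensional assessment of this kind can be represented as a simple maturity profile. The sketch below is illustrative only: the four domain names follow the key components this article attributes to the Extended DFRMM (People, Process, Technology, Legal), while the 1 to 5 level scale, the rule that overall maturity equals the weakest domain, and all class and method names are assumptions rather than part of the published model.

```python
from dataclasses import dataclass

DOMAINS = ("People", "Process", "Technology", "Legal")
MIN_LEVEL, MAX_LEVEL = 1, 5  # hypothetical scale, not defined by the source


@dataclass(frozen=True)
class MaturityProfile:
    levels: dict  # maps each domain name to its assessed maturity level

    def __post_init__(self):
        if set(self.levels) != set(DOMAINS):
            raise ValueError(f"expected exactly these domains: {DOMAINS}")
        for domain, level in self.levels.items():
            if not MIN_LEVEL <= level <= MAX_LEVEL:
                raise ValueError(f"{domain} level {level} is out of range")

    def overall(self) -> int:
        """Assumed rule: readiness is limited by the weakest domain."""
        return min(self.levels.values())

    def gaps(self, target: int) -> dict:
        """Domains below the target level and how far each falls short."""
        return {d: target - lvl for d, lvl in self.levels.items() if lvl < target}


# Hypothetical assessment result for one organization.
profile = MaturityProfile({"People": 3, "Process": 2, "Technology": 4, "Legal": 2})
print(profile.overall())
print(profile.gaps(target=3))
```

Taking the minimum rather than the average reflects the point that strength in one domain does not compensate for weakness in a related one; an organization that prefers a weighted score could substitute its own aggregation rule.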

Comparative Analysis of Digital Forensic Maturity Models

Key Maturity Models in Digital Forensics

The Extended DFRMM exists within a landscape of several maturity models developed to address digital forensic capabilities. Table 1 provides a comparative overview of prominent models in this field, highlighting their distinct characteristics and focus areas.

Table 1: Comparison of Digital Forensic Maturity Models

| Model Name | Primary Focus | Key Components | Strength Areas | Citation |
|---|---|---|---|---|
| Extended DFRMM | Comprehensive organizational readiness | People, Process, Technology, Legal | Holistic coverage, legal compliance focus | [16] |
| DF-C²M² | Digital forensics organizational capabilities | Process areas, capability levels | Structured improvement roadmaps | [6] |
| C2M2 for IT Services | Cybersecurity capabilities for IT services | Maturity indicator levels | IT service integration | [6] |
| NIST SP 800-86 | Forensic evidence analysis framework | Collection, examination, analysis, reporting | Technical guidance | [27] |
| ISO/IEC 27037 | Digital evidence preservation | Identification, collection, acquisition, preservation | International standard compliance | [27] |

Distinctive Features of the Extended DFRMM

The Extended DFRMM differentiates itself through several key characteristics. First, it explicitly integrates legal considerations throughout all capability domains, recognizing that technical evidence collection must align with legal standards for admissibility [16]. This integration is particularly valuable for organizations operating in regulated industries or those that may need to present digital evidence in legal proceedings.

Second, the model emphasizes proactive preparedness rather than reactive investigation capabilities. This orientation encourages organizations to implement systems and processes that facilitate evidence collection before incidents occur, potentially reducing investigation costs and improving evidence quality [16]. The proactive stance aligns with modern cybersecurity practices that emphasize prevention and preparedness alongside detection and response.

Third, the Extended DFRMM was specifically validated with forensic practitioners, ensuring that its components reflect real-world challenges and priorities [16]. This practical validation distinguishes it from theoretically derived models and enhances its utility for organizations seeking to improve their actual forensic capabilities rather than merely achieving compliance with standards.

Methodological Approaches in DFRMM Research

Research Design and Validation

The development of the Extended DFRMM employed a qualitative research methodology grounded in thematic analysis of data collected through semi-structured interviews with digital forensic professionals [16]. This approach allowed researchers to capture nuanced insights from practitioners with direct experience in digital forensic investigations across various industries and contexts. The participatory design process ensured that the resulting model addressed practical concerns rather than purely academic considerations.

The research methodology followed a design science approach, which focuses on creating artifacts that solve identified organizational problems [16]. This methodology is particularly appropriate for maturity model development, as it emphasizes utility and practicality alongside theoretical soundness. The design science process typically involves five key stages: problem identification, objectives definition, design and development, demonstration, and evaluation [16]. The Extended DFRMM development adhered to this structured approach, contributing to its robustness as an assessment tool.

Data Collection and Analysis

Data collection for the Extended DFRMM validation involved engaging with professionals possessing diverse backgrounds in digital forensics, including variations in industry experience, organizational size, and forensic specializations [16]. This diversity strengthened the model's generalizability across different organizational contexts. The semi-structured interview format allowed researchers to explore both anticipated themes and emergent insights from participants, creating a rich dataset for analysis.

The analytical process employed thematic analysis techniques to identify patterns and relationships within the qualitative data [16]. Through iterative coding and categorization, researchers distilled the extensive practical knowledge shared by participants into structured capability domains and maturity indicators. This rigorous analytical approach helped ensure that the resulting model comprehensively represented the critical components of digital forensic readiness while maintaining practical applicability for organizations.

Core Components and Signaling Pathways in DFRMM

Conceptual Architecture of the Extended DFRMM

The Extended DFRMM organizes digital forensic readiness capabilities into a structured framework with interconnected components. The model's architecture emphasizes the integration of legal considerations throughout all capability domains, reflecting the essential requirement for digital evidence to meet legal standards for admissibility. The following diagram illustrates the core conceptual relationships and signaling pathways within the Extended DFRMM:

[Figure: DFRMM Core Conceptual Relationships]