Designing TRL 4 Inter-Laboratory Validation Studies for Court-Ready Forensic Methods

Abstract

This article provides a comprehensive framework for designing inter-laboratory validation studies to advance forensic methods to Technology Readiness Level (TRL) 4. It guides researchers and forensic professionals through the foundational principles, methodological execution, troubleshooting strategies, and final validation required to demonstrate that a method is robust, standardized, and ready for implementation in casework. Special emphasis is placed on meeting the stringent legal admissibility standards, such as the Daubert Standard and Federal Rule of Evidence 702, which require known error rates, peer review, and general acceptance within the scientific community.

Laying the Groundwork: From TRL 3 to TRL 4 and Legal Admissibility

Defining Technology Readiness Level 4 in Forensic Contexts

Technology Readiness Levels (TRLs) represent a systematic metric for assessing the maturity of a particular technology, typically on a scale from 1 (basic principles observed) to 9 (system proven in operational environment) [1]. In forensic science, this framework helps standardize the development and implementation of new analytical methods, ensuring they meet the rigorous demands of legal proceedings. The transition from promising research to court-admissible evidence requires careful navigation of both technical and legal standards, including the Daubert Standard and Federal Rule of Evidence 702 in the United States, which emphasize testing, peer review, error rates, and general acceptance within the scientific community [2].

Within forensic chemistry publications, a specialized four-level TRL scale is often employed to better reflect the development pathway of analytical methods intended for crime laboratory implementation [3]. This adapted framework places specific emphasis on validation and standardization requirements at each stage. TRL 4 represents a critical milestone where methods transition from preliminary proof-of-concept to being substantiated through multi-laboratory validation, making them candidates for implementation in operational forensic laboratories [3].

Defining TRL 4 in Forensic Contexts

Core Definition and Position in the Development Pathway

In forensic contexts, Technology Readiness Level 4 signifies the stage where a method undergoes refinement, enhancement, and inter-laboratory validation to become a standardized protocol ready for implementation in forensic laboratories [3]. Research at this level generates knowledge that can be "immediately adopted or used in casework" [3]. This represents a significant advancement beyond TRL 3, where techniques are applied to specific forensic applications with measured figures of merit and aspects of intra-laboratory validation, but lack independent verification across multiple laboratories [3].

The fundamental distinction of TRL 4 research is its focus on establishing reproducibility and reliability across different institutional settings, instruments, and operators. This inter-laboratory validation is essential for forensic methods because results must withstand legal scrutiny and be independent of the specific laboratory that generated them. Methods reaching TRL 4 have typically addressed key variables that could affect analytical outcomes and have demonstrated robustness through standardized protocols.

Comparative Analysis of TRL Frameworks

Table 1: TRL 4 Definitions Across Different Frameworks

| Framework | TRL 4 Definition | Key Emphasis | Primary Context |
|---|---|---|---|
| Forensic Chemistry Journal | "Refinement, enhancement, and inter-laboratory validation of a standardized method ready for implementation in forensic laboratories" [3] | Inter-laboratory validation, error rate measurement, database development | Forensic method development for crime laboratories |
| Traditional NASA/ESA Scale | "Component and/or breadboard validation in laboratory environment" [1] | Component integration and testing in laboratory setting | Aerospace and general technology development |
| Canadian Government Scale | "Component and/or validation in a laboratory environment" [4] | Integration of basic technological components in laboratory | Broad technology assessment |
| Medical Countermeasures | "Optimization and Preparation for Assay, Component, and Instrument Development" [5] | Down-selecting targets, finalizing methods, developing detailed plans | Medical device and diagnostic development |

As illustrated in Table 1, the forensic chemistry adaptation of TRL 4 places greater emphasis on collaborative validation and immediate applicability to casework compared to more traditional TRL frameworks. While the NASA/ESA scale focuses on component-level validation in laboratory environments, the forensic context specifically requires multi-laboratory participation to establish method reliability across the forensic community.

Experimental Protocols for TRL 4 Validation Studies

Comprehensive Inter-Laboratory Comparison Design

A representative example of TRL 4 research in forensic science is demonstrated in a 2025 study published in Forensic Chemistry titled "Improving inter-laboratory comparability of tooth enamel carbonate stable isotope analysis (δ13C, δ18O)" [6]. This study exemplifies the systematic approach required for establishing method reliability across multiple laboratories.

The experimental protocol involved:

- Sample Selection and Preparation: Ten "modern" faunal teeth obtained from field recoveries were selected as test samples. Enamel powder subsamples were prepared using standardized protocols across participating laboratories [6].

- Variable Testing: The study systematically compared the effects of multiple methodological variables:
  - Chemically pretreated versus untreated samples
  - Standardized versus non-standardized acid reaction temperatures
  - Samples analyzed with and without baking to remove moisture before analysis [6]

- Data Analysis: Isotopic δ values (δ13C and δ18O) generated by different laboratories were compared using statistical methods to identify systematic differences and their causes [6].

This experimental design allowed researchers to identify that "δ values from the two laboratories were systematically different when samples were chemically pretreated, but that differences were smaller or negligible for untreated samples" [6]. Such findings are crucial for establishing standardized protocols that minimize inter-laboratory variability.
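
A two-laboratory comparison of this kind can be summarized with per-sample differences. The following is a minimal sketch, assuming each laboratory measured the same samples once; the function name `paired_bias` and all δ13C values are hypothetical and are not taken from the cited study, whose own analysis was carried out in R.

```python
import math
import statistics


def paired_bias(lab_a, lab_b):
    """Summarize per-sample differences between two laboratories.

    Returns (mean difference, SD of differences, paired t statistic).
    The t statistic is None when all differences are identical.
    """
    if len(lab_a) != len(lab_b) or len(lab_a) < 2:
        raise ValueError("need two equal-length series with at least two samples")
    diffs = [a - b for a, b in zip(lab_a, lab_b)]
    mean_diff = statistics.mean(diffs)   # systematic offset between the labs
    sd_diff = statistics.stdev(diffs)    # scatter of that offset across samples
    t_stat = mean_diff / (sd_diff / math.sqrt(len(diffs))) if sd_diff > 0 else None
    return mean_diff, sd_diff, t_stat


# Hypothetical delta13C values (per mil) for the same ten enamel samples,
# invented for illustration only; they are NOT data from the cited study.
lab_a = [-10.2, -9.8, -11.5, -8.9, -12.1, -10.7, -9.4, -11.0, -10.1, -9.9]
lab_b = [-10.5, -10.0, -11.9, -9.1, -12.3, -11.1, -9.6, -11.4, -10.3, -10.2]

mean_diff, sd_diff, t_stat = paired_bias(lab_a, lab_b)
print(f"mean bias = {mean_diff:.2f} per mil, SD = {sd_diff:.2f}, "
      f"t = {t_stat:.1f} (df = {len(lab_a) - 1})")
```

Running the same summary separately for pretreated and untreated subsamples is one way to show whether a systematic offset is tied to a specific preparation step. The t statistic would still need to be compared against a t distribution with the stated degrees of freedom.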

Methodologies for Forensic Chemistry Applications

In forensic chemistry applications such as comprehensive two-dimensional gas chromatography (GC×GC), TRL 4 validation requires specific experimental approaches:

- Intra- and Inter-laboratory Validation: Conducting rigorous testing within a single laboratory followed by collaborative trials across multiple laboratories to establish reproducibility [2].

- Error Rate Analysis: Quantifying methodological uncertainty and potential sources of error through controlled experiments and statistical analysis [2].

- Standardization Development: Creating detailed protocols that can be implemented across different laboratory settings with different instrument configurations [2].

Table 2: Key Experimental Components for TRL 4 Forensic Validation

| Component | Protocol Requirements | Validation Metrics | Outcome Measures |
|---|---|---|---|
| Inter-laboratory Testing | Identical samples analyzed across multiple laboratories using standardized protocols | Statistical comparison of results (e.g., ANOVA, t-tests) | Establishment of reproducibility limits and systematic biases |
| Error Rate Analysis | Controlled introduction of known variables and potential interferents | Quantification of false positive/negative rates, measurement uncertainty | Defined confidence intervals for analytical results |
| Method Robustness | Deliberate variations in analytical conditions (temperature, timing, reagents) | Determination of critical parameters affecting results | Established tolerances for methodological variables |
| Reference Materials | Development and characterization of standardized control materials | Consistency in measurement across laboratories and over time | Quality control framework for ongoing method implementation |

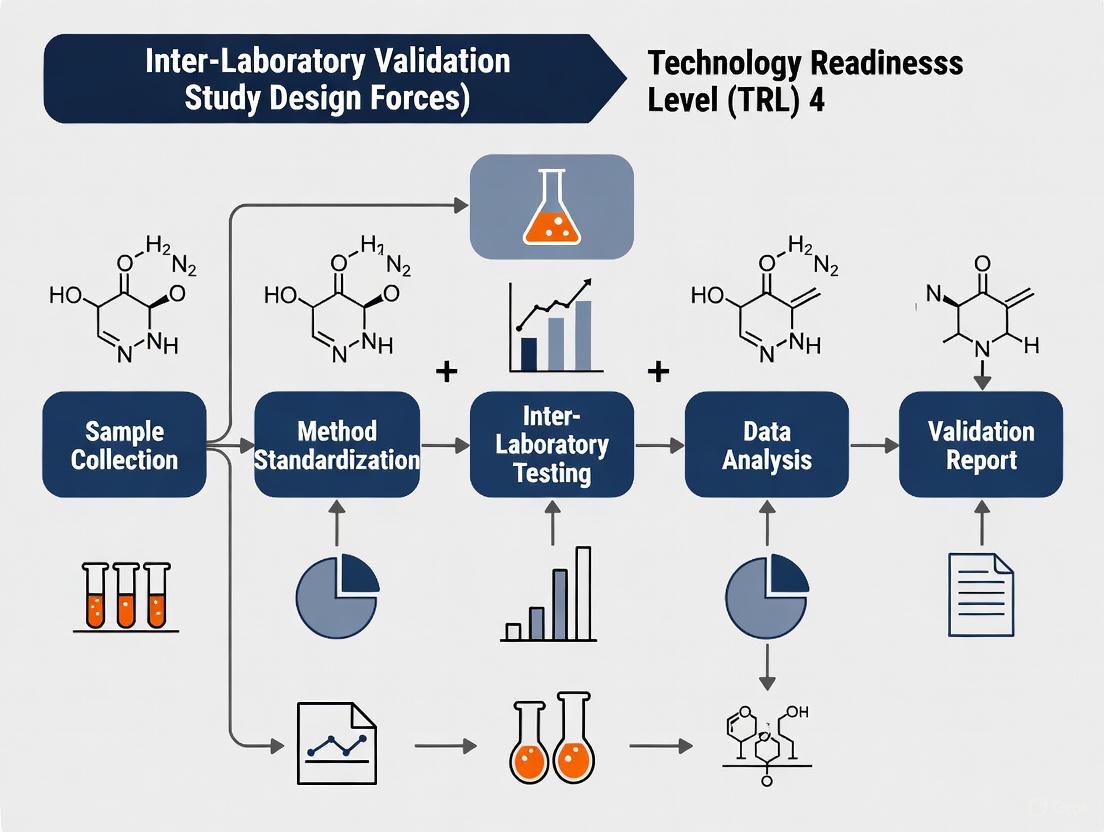

Visualization of TRL 4 Advancement Pathway

The following diagram illustrates the progression from TRL 3 to TRL 4 in forensic contexts and the key components required for validation:

Diagram 1: TRL 4 Advancement Pathway in Forensic Science

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for TRL 4 Forensic Validation Studies

| Item | Function in TRL 4 Research | Application Examples |
|---|---|---|
| Reference Standard Materials | Provide calibrated benchmarks for inter-laboratory comparison and method validation | Characterized control samples with known properties for instrument calibration [6] |
| Certified Reference Materials | Establish traceability and accuracy in quantitative analyses | Materials with certified isotopic compositions or chemical concentrations [6] |
| Standardized Chemical Reagents | Ensure consistency in sample preparation and treatment across laboratories | High-purity acids, solvents, and derivatization agents with specified lot-to-lot consistency [6] |
| Stable Isotope Standards | Enable comparative analysis of isotopic ratios across different instrumental platforms | Internationally recognized isotopic reference materials for forensic isotope analysis [6] |
| Quality Control Materials | Monitor analytical performance over time and across different laboratory environments | Control samples analyzed repeatedly to establish method precision and reproducibility [6] |

Comparative Performance Data for TRL 4 Methods

Quantitative Assessment of Method Performance

TRL 4 validation requires comprehensive quantitative data demonstrating method performance across multiple laboratories. The tooth enamel carbonate stable isotope study provides exemplary data for such assessment:

Table 4: Performance Comparison of TRL 4 Validated Method Versus Pre-Validation State

| Performance Metric | Pre-TRL 4 (Single Laboratory) | Post-TRL 4 (Multi-Laboratory Validated) | Improvement |
|---|---|---|---|
| Inter-laboratory Variability (δ13C) | Significant systematic differences between laboratories (e.g., up to 0.5‰) [6] | Reduced differences (e.g., < 0.1‰) through protocol standardization [6] | >80% reduction in systematic bias |
| Effect of Chemical Pretreatment | Introduced measurable bias in isotopic measurements [6] | Elimination of pretreatment-induced variability through protocol modification [6] | Removal of significant error source |
| Data Comparability | Limited due to methodological heterogeneity [6] | Enabled through standardized protocols and elimination of unnecessary steps [6] | Establishment of reliable cross-study comparisons |
| Method Robustness | Susceptible to variations in sample preparation protocols [6] | Resilient to minor variations in implementation across laboratories [6] | Enhanced reproducibility across different operational environments |

Legal Admissibility Considerations

For forensic methods, TRL 4 validation directly addresses key legal admissibility criteria:

- Testing and Error Rates: TRL 4 research explicitly quantifies methodological uncertainty and error rates through inter-laboratory studies, which speaks directly to the Daubert factors on testability and known or potential error rate [2].

- Peer Review and Publication: Research at this level is typically published in peer-reviewed journals, providing independent scientific validation [2] [6].

- General Acceptance: Inter-laboratory validation across multiple institutions demonstrates growing acceptance within the relevant scientific community [2].

- Standardization: Development of standardized protocols supports consistent application across forensic laboratories, enhancing reliability [2].

Technology Readiness Level 4 represents a critical transition point in forensic method development where techniques progress from single-laboratory applications to multi-laboratory validated protocols ready for implementation in casework. The defining characteristics of TRL 4 research include systematic inter-laboratory comparison, rigorous error rate analysis, and development of standardized protocols that can be consistently applied across different forensic laboratory environments.

The experimental approaches and validation methodologies required at TRL 4 directly address the legal standards for admissibility of scientific evidence in judicial proceedings, particularly the Daubert Standard and Federal Rule of Evidence 702 in the United States. By establishing method reliability through collaborative validation studies, TRL 4 research provides the necessary foundation for forensic techniques to withstand legal scrutiny while producing scientifically robust evidence. As forensic science continues to evolve toward more quantitative, data-driven approaches [7], the rigorous validation standards embodied by TRL 4 will become increasingly essential for maintaining and enhancing the quality and reliability of forensic evidence in the justice system.

For researchers and scientists developing novel forensic methods, navigating the legal standards for evidence admissibility is a critical final step in the technology transfer pipeline. The admissibility of expert testimony in U.S. courts is governed primarily by two competing standards: the Frye standard established in 1923 and the Daubert standard from 1993, with Federal Rule of Evidence 702 providing the statutory framework for federal courts [8]. For forensic methods at Technology Readiness Level (TRL) 4, where a standardized method is undergoing inter-laboratory validation, understanding these legal frameworks during study design is paramount for eventual courtroom acceptance [2].

Recent amendments to Federal Rule of Evidence 702, effective December 2023, have clarified that the proponent of expert testimony must demonstrate to the court that "it is more likely than not that" the testimony meets all admissibility requirements [9]. This heightened emphasis on the judge's gatekeeping role makes rigorous inter-laboratory validation studies essential for novel forensic techniques like comprehensive two-dimensional gas chromatography (GC×GC) and other analytical methods being developed for forensic applications [2].

Comparative Analysis of Legal Standards

The Frye Standard: General Acceptance Test

The Frye standard, derived from Frye v. United States (1923), establishes that expert testimony is admissible only if the scientific technique on which the opinion is based is "generally accepted" as reliable in the relevant scientific community [10]. This standard essentially makes the scientific community the gatekeeper of evidence admissibility, with courts considering the issue once and not revisiting it in subsequent cases after establishing general acceptance [11].

Under Frye, novel scientific methods that produce "good science" may be excluded if they have not yet reached the level of general acceptance within their field [11]. Conversely, techniques that are generally accepted but poorly applied in a specific case ("bad science") will likely still be admitted, with challenges going to the weight rather than admissibility of the evidence [8].

The Daubert Standard and Federal Rule 702

The Daubert standard, established in Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993), significantly expanded the judge's role as evidentiary gatekeeper [12]. Daubert held that Rule 702 of the Federal Rules of Evidence superseded Frye's general acceptance test, requiring trial judges to ensure that proffered expert testimony rests on a reliable foundation and is relevant to the case [13].

The Daubert decision provided a non-exclusive checklist of factors for trial courts to consider [14]:

- Whether the theory or technique can be and has been tested

- Whether it has been subjected to peer review and publication

- Its known or potential error rate

- The existence and maintenance of standards controlling its operation

- Whether it has attracted widespread acceptance in a relevant scientific community

The 2000 and 2023 amendments to Federal Rule of Evidence 702 codified and clarified these principles, emphasizing that judges must evaluate whether the proponent has demonstrated by a preponderance of the evidence that: (a) the expert is qualified; (b) the testimony is based on sufficient facts or data; (c) the testimony is the product of reliable principles and methods; and (d) the expert has reliably applied the principles and methods to the facts of the case [14] [9].

Jurisdictional Application of Standards

| Jurisdiction Type | Governing Standard | Key Characteristics |
|---|---|---|
| Federal Courts | Daubert + FRE 702 [12] | Judges act as active gatekeepers; flexible, multi-factor analysis [8] |
| Daubert States (Majority) | Daubert/Modified Daubert [11] | Variations include "Shreck/Daubert" (CO), "Porter/Daubert" (CT) [11] |
| Frye States (Minority) | Frye Standard [11] | CA, IL, PA, WA; focuses primarily on "general acceptance" [13] |
| Hybrid Jurisdictions | Mixed Standards [11] | NJ applies different standards depending on case type [11] |

Table 1: Jurisdictional application of expert testimony admissibility standards across United States courts.

Implications for TRL 4 Forensic Research Design

Designing Inter-Laboratory Studies for Legal Admissibility

For forensic methods at TRL 4, the stage at which a standardized method is validated across laboratories, inter-laboratory validation studies must be designed with specific legal admissibility criteria in mind [2]. Research indicates that GC×GC and other novel forensic applications face significant hurdles in courtroom implementation due to strict legal standards, despite their analytical advantages [2].

A comprehensive review of forensic applications using GC×GC noted that "future directions for all applications should place a focus on increased intra- and inter-laboratory validation, error rate analysis, and standardization" to meet legal admissibility requirements [2]. This aligns directly with Daubert factors emphasizing known error rates and maintenance of standards.

Experimental Protocols for Legal Readiness

Error Rate Determination: Under Daubert, courts consider "the known or potential rate of error" of a technique [12]. TRL 4 research should incorporate protocols that quantitatively assess method reliability across multiple laboratories. For example, a recent inter-laboratory comparison of tooth enamel carbonate stable isotope analysis (δ13C, δ18O) implemented a systematic comparison of isotope delta values measured in two different laboratories, evaluating variations across pretreatment protocols and analytical conditions [6].

Standardization Protocols: The existence and maintenance of standards controlling a technique's operation is another key Daubert factor [12]. Research should establish standardized protocols that can be consistently applied across laboratory environments. The tooth enamel study demonstrated that standardization of acid reaction temperature and baking improved inter-laboratory comparability, while chemical pretreatment introduced unnecessary variability [6].

Blinded Testing: Incorporating blinded testing procedures across multiple laboratories helps establish whether a technique can be tested objectively—another Daubert consideration [12]. This approach minimizes contextual bias and demonstrates methodological rigor.

Data Transparency: Complete documentation of all methodological variations, statistical analyses, and raw data supports peer review and scientific acceptance. The authors of the tooth enamel study made their data and R code publicly available on GitHub, facilitating transparency and further validation [6].

The Scientist's Toolkit: Essential Materials for Admissibility-Focused Research

| Research Component | Function in Validation | Relevance to Legal Standards |
|---|---|---|
| Inter-laboratory Protocols | Standardized procedures across multiple labs | Demonstrates "existence of standards" (Daubert) [12] |
| Reference Materials | Certified materials with known properties | Establishes methodology reliability and testing capability [12] |
| Statistical Analysis Packages | Quantify error rates and variability | Addresses "known or potential error rate" (Daubert) [12] |
| Blinded Sample Sets | Controls for analyst bias during testing | Supports objective testability requirement [12] |
| Data Transparency Platforms | Share raw data and analytical code | Facilitates peer review and scientific acceptance [2] |

Table 2: Essential research components for designing TRL 4 validation studies that address legal admissibility criteria.

Case Study: GC×GC Forensic Applications at TRL 4

Comprehensive two-dimensional gas chromatography (GC×GC) is an illustrative case study of an advanced analytical technique navigating the path toward courtroom admissibility. A recent review summarized current research on forensic uses of GC×GC, assessed its analytical advances and technology readiness, and categorized seven forensic chemistry applications into technology readiness levels based on the current literature [2].

These applications face significant admissibility hurdles despite their analytical advantages. As noted in the research, "routine evidence analysis in forensic science laboratories does not currently use GC×GC–MS as an analytical technique due to strict criteria set by legal systems that limit the entrance of scientific expert testimony into a legal proceeding" [2]. This challenge is particularly relevant for analytical chemists developing new methods, as "the standards required of research for eventual admission into the legal system are not set by scientists but rather other stakeholders in the legal system" [2].

Visualizing the Legal-Admissibility Pathway for Forensic Research

Diagram 1: Legal-admissibility pathway for TRL 4 research.

The pathway from laboratory validation to courtroom admissibility for novel forensic methods requires strategic research design that explicitly addresses the legal standards of the relevant jurisdiction. For TRL 4 research, this means designing inter-laboratory studies that not only establish analytical validity but also specifically generate the evidence needed to satisfy Daubert factors or the Frye general acceptance test.

Researchers should prioritize error rate quantification, inter-laboratory standardization, robust sample sizes, and peer-reviewed publication to build the foundation for eventual expert testimony admissibility. As the 2023 amendments to Rule 702 have emphasized, the burden is squarely on the proponent of expert testimony to demonstrate its reliability by a preponderance of the evidence, making rigorous validation studies at the TRL 4 stage more critical than ever for forensic method development.

The Critical Role of Inter-Laboratory Comparisons (ILC) and Proficiency Testing (PT)

In the rigorous field of forensic science, the validation of new analytical methods is paramount to ensuring that results are reliable, reproducible, and defensible in a court of law. For methods at Technology Readiness Level (TRL) 4, characterized by the refinement and inter-laboratory validation of a standardized method ready for implementation, this process is particularly critical [15]. Within this framework, Inter-Laboratory Comparisons (ILC) and Proficiency Testing (PT) emerge as indispensable tools. According to international standards, an Inter-Laboratory Comparison (ILC) is the organization, performance, and evaluation of tests on the same or similar items by two or more laboratories under predetermined conditions. Proficiency Testing (PT), a specific type of ILC, is defined as the evaluation of participant performance against pre-established criteria [16] [17]. While the terms are often used interchangeably, a key distinction exists: PT is a formal, third-party-managed exercise that includes a reference laboratory to determine participant performance, whereas an ILC can be a simpler agreement between laboratories to compare results among themselves [17]. For forensic methods transitioning from development to operational use, these processes provide the external, objective evidence needed to demonstrate that a method is not only functional in a single laboratory but also robust and transferable across multiple facilities, thereby forming the bedrock of methodological credibility [15] [18].

The Strategic Importance of ILC/PT in Forensic Research

Participation in ILC and PT schemes offers strategic benefits that extend far beyond mere regulatory compliance. For a forensic laboratory, these activities are a cornerstone of quality assurance.

Promoting Confidence and Ensuring Compliance: Successful participation in ILC/PT promotes confidence among external stakeholders, including regulators, customers, and the legal system, as well as within the laboratory's own staff and management [16]. Furthermore, it is a direct requirement for accreditation to international standards such as ISO/IEC 17025 [19]. For forensic evidence, which can directly impact individual liberties and legal outcomes, this external validation is not just beneficial—it is essential [18].

Assessing and Improving Laboratory Competence: ILC/PT provides an unparalleled, holistic assessment of a laboratory's entire testing process. It simultaneously evaluates all factors influencing a test result, including the validity of methods, the adequacy of equipment, the correctness of data handling, and the competence of personnel [19]. This comprehensive check offers laboratories an early warning of potential measurement problems, allowing for corrective actions before casework is compromised [20].

Supporting Method Validation and Uncertainty Estimation: From a TRL 4 research perspective, ILC/PT data is vital for method validation. It helps demonstrate method precision, accuracy, and robustness across different laboratory environments [16]. The results provide valuable data for comparing results obtained from different methods and are crucial for the realistic estimation of measurement uncertainty by revealing laboratory-specific bias and generating reproducibility standard deviations that account for all known and unknown sources of error [16] [19].

Cost-Benefit Analysis: The cost of participating in a proficiency test is typically only a few hundred euros. When weighed against the potentially catastrophic costs of unreliable forensic results—which can include miscarriages of justice, loss of reputation, and massive litigation—the investment is overwhelmingly justified [19].

The following diagram illustrates the logical relationship between the core concepts of ILC/PT and their critical outcomes in a forensic research context.

Experimental Protocols for ILC/PT in Validation Studies

Designing and executing a robust ILC or PT study for a forensic method at TRL 4 requires meticulous planning and adherence to established protocols. The process can be broken down into three key phases, with specific considerations for method validation at this stage.

Phase 1: Pre-Testing Preparation and Planning

- Developing the PT Plan: Laboratories must develop and document a formal PT plan. For accreditation, this often entails a four-year plan to ensure annual participation and adequate coverage of the laboratory's full scope of accreditation within the cycle [16]. For a TRL 4 method validation study, the plan should specify the number of participating laboratories, the homogeneity and stability testing of test items, and the statistical model for evaluation.

- Enrollment and Sample Selection: Laboratories must enroll in a CMS-approved PT program (if applicable) or, for a novel method, establish a collaborative agreement with other laboratories [21]. The test materials must be homogeneous, stable, and mimic real casework samples as closely as possible. For a shooting distance determination test, for example, this might involve preparing a series of controlled specimens at various known distances [15].

- Defining Objective Performance Criteria: Before testing begins, a validation plan must define the objective performance criteria for the method. This framework, crucial for developmental validation, should address parameters such as specificity, sensitivity, reproducibility, and false-positive and false-negative rates [18].
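
False-positive and false-negative rates defined in the validation plan are more defensible when reported with an interval rather than as a bare proportion. The sketch below assumes simple binary outcomes against known ground truth and uses the Wilson score interval, which is one common choice and not one prescribed by the sources cited here. The function names and all counts are hypothetical.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95% coverage)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def error_rates(tp, fp, tn, fn):
    """Point estimates and Wilson intervals for false-positive and false-negative rates."""
    negatives = fp + tn   # ground-truth negative samples
    positives = fn + tp   # ground-truth positive samples
    return {
        "false_positive": (fp / negatives, wilson_interval(fp, negatives)),
        "false_negative": (fn / positives, wilson_interval(fn, positives)),
    }


# Hypothetical pooled counts from a multi-laboratory exercise (illustration only).
rates = error_rates(tp=96, fp=0, tn=100, fn=4)
for name, (rate, (low, high)) in rates.items():
    print(f"{name}: {rate:.3f} (interval {low:.3f} to {high:.3f})")
```

With zero observed false positives the point estimate is 0, yet the upper interval bound stays above zero. That is the more honest figure to report when the number of trials is modest.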

Phase 2: Sample Processing and Testing

- Routine Sample Handling: A cardinal rule of PT is that the proficiency test samples must be processed in the same manner as routine casework samples to the extent possible [21]. This means using the same methods, personnel, equipment, and data handling procedures. Testing should be rotated among all analysts who normally perform the test.

- Avoiding Unusual Practices: Laboratories must refrain from repeating tests on PT samples unless such repetition is standard operating procedure for routine casework samples. Furthermore, inter-laboratory communication regarding the PT samples is prohibited until after the results submission deadline [21].

- Blind Testing: For a rigorous internal validation, the use of blind samples—where the analyst is unaware that the sample is part of a test—can provide the most unbiased assessment of routine performance.
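
One way to prepare blind samples is to relabel every test item with a random code and keep the decoding key with the study coordinator only. The sketch below is an illustrative assumption about how that bookkeeping might look; the function `blind_codes`, the `BL-` code format, and the sample and laboratory names are all hypothetical.

```python
import random


def blind_codes(sample_ids, labs, seed=None):
    """Assign a unique random code to every (lab, sample) pair.

    Returns (manifests, key): manifests maps each lab to its shuffled list of
    codes; key maps each code back to (lab, sample) and stays with the coordinator.
    """
    total = len(sample_ids) * len(labs)
    if total > 9000:
        raise ValueError("four-digit code space exhausted")
    rng = random.Random(seed)                        # seed only for reproducible demos
    numbers = iter(rng.sample(range(1000, 10000), total))  # unique four-digit numbers
    manifests, key = {}, {}
    for lab in labs:
        codes = []
        for sample in sample_ids:
            code = f"BL-{next(numbers)}"
            key[code] = (lab, sample)
            codes.append(code)
        rng.shuffle(codes)                           # hide the original sample order
        manifests[lab] = codes
    return manifests, key


# Hypothetical sample and laboratory names.
manifests, key = blind_codes(["T1", "T2", "T3"], ["LabA", "LabB"], seed=1)
print(manifests["LabA"])
```

Each laboratory receives only its manifest, and results are matched back to true sample identities through `key` after submission.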

Phase 3: Results Analysis and Reporting

- Data Collection and Submission: Participants submit their results to the coordinating body according to the prescribed format and timeline. For a quantitative test, this includes the measured value and its associated estimate of measurement uncertainty [17].

- Performance Evaluation (Z-Score and Eₙ Number): The coordinating body evaluates performance using statistical metrics. The Z-score indicates how many standard deviations a laboratory's result is from the consensus mean of all participants, with |Z| ≤ 2 considered satisfactory. The Normalized Error (Eₙ) compares the participant's result to the reference value, considering the uncertainty of both, with |Eₙ| ≤ 1 indicating satisfactory performance [17].

- Internal Corrective Action: If questionable (2 < |Z| < 3) or unsatisfactory (|Z| ≥ 3 or |Eₙ| > 1) results are obtained, the laboratory must initiate a root cause analysis and implement a corrective action plan. This is a fundamental part of the quality improvement cycle and is essential for demonstrating a commitment to reliability [17].

The workflow for a typical PT scheme, from preparation to corrective action, is visualized below.

Performance Evaluation and Data Analysis

The quantitative data generated through ILC/PT programs are analyzed using standardized statistical methods to provide an objective measure of a laboratory's performance. The two primary metrics used are the Z-score and the Normalized Error (Eₙ).

Table 1: Key Statistical Metrics for Evaluating ILC/PT Results

| Metric | Calculation Formula | Performance Interpretation | Primary Use Case |
|---|---|---|---|
| Z-Score | $Z = \frac{x_{lab} - X}{\sigma}$, where $x_{lab}$ is the lab's result, $X$ is the assigned value (e.g., consensus mean), and $\sigma$ is the standard deviation for proficiency assessment. | Satisfactory: $\lvert Z \rvert \le 2$; Questionable: $2 < \lvert Z \rvert < 3$; Unsatisfactory: $\lvert Z \rvert \ge 3$ | Comparing a laboratory's result to the population of all participants to identify outliers. |
| Normalized Error (Eₙ) | $E_n = \frac{x_{lab} - x_{ref}}{\sqrt{U_{lab}^2 + U_{ref}^2}}$, where $x_{lab}$ and $x_{ref}$ are the lab and reference values, and $U_{lab}$ and $U_{ref}$ are their expanded uncertainties. | Satisfactory: $\lvert E_n \rvert \le 1$; Unsatisfactory: $\lvert E_n \rvert > 1$ | Determining conformance when the reference value and the expanded uncertainties of both the participant and the reference laboratory are known and reliable. |
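
The two metrics in the table can be computed directly from a participant's submission. The following is a minimal sketch using the formulas and thresholds given above; the function names and the numerical values are hypothetical.

```python
import math


def z_score(x_lab, assigned_value, sigma_pt):
    """Z = (x_lab - X) / sigma, with sigma the standard deviation for proficiency assessment."""
    if sigma_pt <= 0:
        raise ValueError("sigma_pt must be positive")
    return (x_lab - assigned_value) / sigma_pt


def classify_z(z):
    """Apply the thresholds from the table: <= 2, between 2 and 3, >= 3."""
    magnitude = abs(z)
    if magnitude <= 2:
        return "satisfactory"
    if magnitude < 3:
        return "questionable"
    return "unsatisfactory"


def normalized_error(x_lab, x_ref, u_lab, u_ref):
    """E_n = (x_lab - x_ref) / sqrt(U_lab^2 + U_ref^2), using expanded uncertainties."""
    combined = math.sqrt(u_lab ** 2 + u_ref ** 2)
    if combined == 0:
        raise ValueError("at least one expanded uncertainty must be non-zero")
    return (x_lab - x_ref) / combined


def classify_en(en):
    return "satisfactory" if abs(en) <= 1 else "unsatisfactory"


# Hypothetical participant result, assigned value, and uncertainties (illustration only).
z = z_score(x_lab=10.9, assigned_value=10.0, sigma_pt=0.25)
en = normalized_error(x_lab=10.9, x_ref=10.0, u_lab=0.6, u_ref=0.8)
print(f"Z = {z:.2f} ({classify_z(z)}), En = {en:.2f} ({classify_en(en)})")
```

The same result can pass one metric and fail the other, because the Z-score measures distance from the participant population while Eₙ measures agreement within the stated uncertainties.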

The power of ILC/PT data extends beyond a simple pass/fail grade. For a TRL 4 research project, analyzing results across multiple laboratories allows for the determination of key method performance characteristics, such as the method's repeatability standard deviation (within-lab precision) and reproducibility standard deviation (between-lab precision) [19]. This data is indispensable for defining the reportable range of the method and understanding its limitations under different operating conditions, as required for a rigorous developmental validation [18].
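
As an illustration of how repeatability and reproducibility standard deviations can be estimated from multi-laboratory data, the sketch below uses a balanced one-way analysis of variance of the kind described in ISO 5725-2. It assumes every laboratory reports the same number of replicates on one homogeneous material and that outliers have already been handled; the function name and the data are hypothetical.

```python
import math
from statistics import mean, variance


def precision_estimates(results: dict[str, list[float]]) -> tuple[float, float]:
    """Return (s_r, s_R) from a balanced set of replicate results per laboratory.

    s_r: repeatability SD (pooled within-laboratory spread).
    s_R: reproducibility SD (within- plus between-laboratory spread).
    """
    labs = list(results.values())
    p = len(labs)            # number of laboratories
    n = len(labs[0])         # replicates per laboratory
    if p < 2 or n < 2 or any(len(r) != n for r in labs):
        raise ValueError("need a balanced design with >= 2 labs and >= 2 replicates")

    lab_means = [mean(r) for r in labs]
    grand_mean = mean(lab_means)

    # Within-laboratory mean square: average of the per-lab sample variances.
    ms_within = mean(variance(r) for r in labs)
    # Between-laboratory mean square.
    ms_between = n * sum((m - grand_mean) ** 2 for m in lab_means) / (p - 1)

    s_r2 = ms_within
    # The between-laboratory variance component is floored at zero.
    s_l2 = max(0.0, (ms_between - ms_within) / n)
    s_big_r2 = s_r2 + s_l2
    return math.sqrt(s_r2), math.sqrt(s_big_r2)


# Hypothetical duplicate results (arbitrary units) from three laboratories.
data = {"lab_A": [10.0, 10.2], "lab_B": [10.4, 10.6], "lab_C": [9.8, 10.0]}
s_r, s_R = precision_estimates(data)
print(f"s_r = {s_r:.3f}, s_R = {s_R:.3f}")
```

Because the reproducibility variance is the sum of the repeatability variance and the between-laboratory component, s_R can never be smaller than s_r in this model.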

Essential Research Reagent Solutions for ILC/PT

The successful execution of an ILC/PT study, particularly for validating novel forensic methods, relies on a suite of essential materials and reagents. These components ensure the integrity of the test and the validity of the resulting data.

Table 2: Key Materials and Reagents for Forensic ILC/PT Studies

| Item Category | Specific Examples | Critical Function in ILC/PT |

|---|---|---|

| Homogeneous Test Materials | Certified Reference Materials (CRMs), synthetic saliva/drug mixtures, fortified substrates, controlled gunshot residue patterns [15]. | Serves as the consistent, stable, and uniform test item circulated among participants; fundamental for a fair comparison of results. |

| Calibration Standards | Pure analyte standards, internal standards, calibration solutions traceable to national metrology institutes. | Ensures the traceability and accuracy of all measurements performed by participating laboratories. |

| Specialized Assay Components | Specific primers and probes for DNA/RNA targets, antibodies for immunoassays, enzymes, buffers, and extraction kits. | Enables the specific detection, identification, and quantification of the target analytes (e.g., drugs, explosives, biological agents). |

| Quality Control Materials | Positive, negative, and sensitivity controls. | Run concurrently with PT samples to monitor the correct performance of the assay and instrument stability throughout the testing event. |

For forensic methods at TRL 4, on the threshold of implementation, Inter-Laboratory Comparisons and Proficiency Testing are not optional exercises but fundamental components of a robust validation framework. They provide the critical, external evidence required to transition a method from a research prototype to an operational tool that can withstand legal scrutiny. Through structured experimental protocols and rigorous data analysis using metrics like Z-scores and the Eₙ number, ILC/PT delivers an objective assessment of a method's precision, accuracy, and reproducibility across multiple laboratory environments. By participating in these programs, forensic researchers and laboratory managers can confidently demonstrate the reliability of their results, fulfill accreditation requirements, and, most importantly, uphold the integrity of the justice system.

Establishing the Scope and Objectives for a TRL 4 Validation Study

Technology Readiness Levels (TRL) are a systematic metric used to assess the maturity of a particular technology. The scale runs from TRL 1 (basic principles observed) to TRL 9 (actual system proven through successful mission operations). TRL 4 represents a critical stage where component validation is performed in a laboratory environment. According to NASA's definition, this level is achieved when a proof-of-concept technology is ready and "multiple component pieces are tested with one another" [22]. In forensic science, this stage bridges foundational research and practical application, establishing that an analytical method functions correctly as an integrated system before advancing to more complex testing environments.

Reaching TRL 4 is particularly significant for forensic methods due to the stringent legal admissibility standards they must eventually meet. At this stage, the scientific research transitions from speculative investigation to practical application, setting the foundation for eventual implementation in casework [2]. For techniques like comprehensive two-dimensional gas chromatography (GC×GC), which is being explored for forensic applications including illicit drug analysis, toxicology, and fire debris analysis, TRL 4 validation provides the initial laboratory evidence that the method can deliver reliable, reproducible results under controlled conditions [2]. This stage establishes the groundwork for the more rigorous inter-laboratory studies required at higher TRLs.

Defining the Scope of a TRL 4 Validation Study

Core Components of Scope

The scope of a TRL 4 validation study must be carefully delineated to demonstrate that the method is "fit for purpose" while acknowledging the limitations of this development stage. The scope should explicitly define the boundaries of the validation, including the specific forensic applications, sample types, and analytical ranges covered. For a GC×GC method, this might include defining the specific compound classes it can detect, the concentration ranges validated, and the sample matrices tested [2].

A properly scoped TRL 4 study also identifies what falls outside its current parameters. While the method should be tested with forensically relevant materials, it may not yet address all the complexities of real casework evidence. The UK Government's guidance on method validation in digital forensics emphasizes that "data for all validation studies have to be representative of the real life use the method will be put to," but at TRL 4, this may involve controlled samples that approximate, rather than perfectly replicate, actual forensic evidence [23]. The scope should clearly state that the validation occurs in a laboratory environment and may not yet account for all the variables encountered in operational forensic settings.

Technology Readiness in Context

Understanding where TRL 4 sits in the broader technology development pathway helps clarify its appropriate scope. The table below outlines the progression from basic research to operational implementation:

Table: Technology Readiness Levels for Forensic Methods

| TRL | Stage Description | Key Activities | Forensic Context |

|---|---|---|---|

| TRL 1-2 | Basic principles observed and formulated | Fundamental research; practical applications conceived | Exploring feasibility of new analytical techniques [22] |

| TRL 3 | Active research and design initiated | Analytical and laboratory studies; proof-of-concept model construction | Experimental proof of concept for forensic application [22] [2] |

| TRL 4 | Component validation in laboratory environment | Multiple component pieces tested together; basic functionality established | Integrated testing of analytical method with controlled forensic samples [22] |

| TRL 5-6 | Validation in relevant environment | Rigorous testing in simulated conditions; prototype development | Testing with realistic forensic evidence; establishing error rates [22] [2] |

| TRL 7-9 | System demonstration in operational environment | Field testing; method qualification; implementation in real cases | Courtroom admissibility; use in casework [22] [2] |

Establishing Core Objectives for TRL 4 Validation

Primary Validation Objectives

The objectives of a TRL 4 validation study should focus on generating objective evidence that the method performs reliably for its intended purpose. The UK Government's validation guidance emphasizes that "validation involves demonstrating that a method used for any form of analysis is fit for the specific purpose intended, i.e. the results can be relied on" [23]. At TRL 4, this translates to several key objectives:

First, the study must demonstrate that all integrated components of the analytical system function together correctly. For a GC×GC-MS method, this would involve verifying that the modulator, columns, detector, and data processing software work seamlessly as a system to produce reliable chromatographic separations [2]. Second, the study should establish basic performance characteristics under controlled laboratory conditions, including sensitivity, specificity, and reproducibility for the target analytes. Third, the validation should identify any significant limitations or failure modes of the method within the tested parameters.

Analytical Performance Metrics

At TRL 4, specific performance metrics should be established to quantitatively evaluate the method. These metrics form the basis for assessing whether the method meets its intended purpose and provide benchmarks for comparison with existing methods. The validation should employ a validation matrix that clearly links performance characteristics with specific metrics, graphical representations, and validation criteria [24].

Table: Essential Performance Metrics for TRL 4 Validation

| Performance Characteristic | Recommended Metrics | TRL 4 Acceptance Criteria | Measurement Approach |

|---|---|---|---|

| Accuracy | Cllr (Log-likelihood ratio cost) | Maximum acceptable value established (lower Cllr indicates better performance) | Comparison of method results with known ground truth [24] [25] |

| Discriminating Power | EER (Equal Error Rate), \( C_{llr}^{min} \) | Maximum acceptable error rate defined | Ability to distinguish between similar and non-similar sources [24] |

| Calibration | \( C_{llr}^{cal} \) | Threshold for calibration quality set | Agreement between calculated likelihood ratios and ground truth [24] |

| Robustness | Variation in Cllr, EER under modified conditions | Acceptable performance range established | Testing with deliberate variations in method parameters [24] [26] |

| Reproducibility | Percentage correct decisions, AUC (Area Under Curve) | Minimum reproducibility standard defined | Repeated testing across multiple runs and analysts [25] [26] |
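
To make the Cllr metric in the table concrete, the following sketch computes the log-likelihood ratio cost from two sets of likelihood ratios with known ground truth. It uses the standard definition of Cllr; the function name and the example values are hypothetical, and the EER and calibration components are not covered here.

```python
import math


def cllr(lr_same_source: list[float], lr_diff_source: list[float]) -> float:
    """Log-likelihood ratio cost.

    lr_same_source: LRs from comparisons where the sources are truly the same.
    lr_diff_source: LRs from comparisons where the sources truly differ.
    Lower is better; a method that always reports LR = 1 scores exactly 1.
    """
    if not lr_same_source or not lr_diff_source:
        raise ValueError("both ground-truth sets must be non-empty")
    if any(lr <= 0 for lr in lr_same_source + lr_diff_source):
        raise ValueError("likelihood ratios must be positive")

    # Penalty for same-source comparisons grows as the LR falls below 1.
    penalty_ss = sum(math.log2(1 + 1 / lr) for lr in lr_same_source) / len(lr_same_source)
    # Penalty for different-source comparisons grows as the LR rises above 1.
    penalty_ds = sum(math.log2(1 + lr) for lr in lr_diff_source) / len(lr_diff_source)
    return 0.5 * (penalty_ss + penalty_ds)


# Hypothetical validation results.
print(f"uninformative method: {cllr([1.0, 1.0], [1.0, 1.0]):.3f}")
print(f"well-performing method: {cllr([100.0, 50.0], [0.01, 0.02]):.3f}")
```

An acceptance criterion for Cllr is therefore naturally expressed as an upper limit, with values approaching or exceeding 1 indicating that the method conveys little or misleading information.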

Experimental Protocols for TRL 4 Validation

Core Validation Protocol

A robust TRL 4 validation protocol should be designed to stress test the method under conditions that challenge its reliability while remaining within laboratory parameters. The experimental design must incorporate appropriate controls and reference materials to generate meaningful validation data. The protocol should include:

Controlled Sample Analysis: Testing the method with samples of known composition that represent the expected range of forensic evidence. For drug analysis, this might include certified reference materials at various concentrations in appropriate matrices [2]. The dataset should be carefully designed to "include data challenges that can stress test the method" without overwhelming it with unrealistic complexity at this development stage [23].

Systematic Variation of Critical Parameters: A key objective at TRL 4 is understanding how the method performs when operating conditions change slightly. As outlined in chromatography validation literature, this involves "deliberate variations in procedural parameters listed in the documentation" such as mobile phase composition, temperature, or instrumental settings [26]. This systematic approach helps establish the method's robustness and identifies which parameters require strict control.

Experimental Workflow

The following diagram illustrates the typical experimental workflow for a TRL 4 validation study in forensic science:

TRL 4 Experimental Workflow

Robustness Testing Design

Robustness testing is a critical component of TRL 4 validation that investigates a method's capacity to remain unaffected by small, deliberate variations in method parameters. According to chromatographic validation literature, "robustness traditionally has not been considered as a validation parameter in the strictest sense because usually it is investigated during method development" [26]. However, at TRL 4, formal robustness testing becomes essential.

Effective robustness studies employ multivariate experimental designs rather than one-variable-at-a-time approaches. These designs efficiently identify which factors significantly affect method performance. Common approaches include:

- Full Factorial Designs: All possible combinations of factors are tested (practical for up to 5 factors)

- Fractional Factorial Designs: A carefully chosen subset of factor combinations is tested (efficient for larger numbers of factors)

- Plackett-Burman Designs: Highly efficient screening designs where only main effects are of interest [26]

For a chromatographic method, typical factors to vary might include mobile phase composition, pH, flow rate, temperature, and detection wavelength. The results from robustness testing help establish system suitability parameters and define the operational boundaries for the method.
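
The design types listed above can be generated programmatically. The sketch below builds a two-level full factorial design and the classic 12-run Plackett-Burman screening design (cyclic shifts of a generator row plus a final all-low run). The factor names and levels are hypothetical placeholders, not values taken from any cited protocol.

```python
from itertools import product


def full_factorial(factors: dict[str, list]) -> list[dict]:
    """Every combination of the supplied factor levels (2^k runs for k two-level factors)."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*(factors[n] for n in names))]


# Generator row of the 12-run Plackett-Burman design (+1 = high level, -1 = low level).
PB12_GENERATOR = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]


def plackett_burman_12() -> list[list[int]]:
    """12 runs x 11 columns; assign up to 11 factors to columns, leave the rest as dummies."""
    g = PB12_GENERATOR
    rows = [g[-shift:] + g[:-shift] for shift in range(len(g))]  # 11 cyclic shifts
    rows.append([-1] * len(g))                                   # final all-low run
    return rows


# Hypothetical robustness factors, each at a low and a high setting.
factors = {
    "flow_rate_mL_min": [0.9, 1.1],
    "oven_temp_C": [38, 42],
    "mobile_phase_pH": [2.9, 3.1],
}
runs = full_factorial(factors)
print(f"full factorial: {len(runs)} runs, first run = {runs[0]}")

design = plackett_burman_12()
print(f"Plackett-Burman: {len(design)} runs for up to {len(design[0])} factors")
```

The trade-off is visible in the run counts: eleven two-level factors would need 2048 runs in a full factorial, whereas the screening design estimates their main effects in 12 runs at the cost of ignoring interactions.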

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful TRL 4 validation requires specific materials and reference standards to ensure the reliability and relevance of the validation data. The following table outlines essential research reagent solutions for forensic method validation:

Table: Essential Research Reagent Solutions for TRL 4 Validation

| Category | Specific Examples | Function in TRL 4 Validation | Forensic Relevance |

|---|---|---|---|

| Certified Reference Materials | Certified drug standards, controlled substance analogs, metabolite standards | Provide ground truth for method accuracy assessment; enable quantification and identification verification [2] [23] | Essential for validating methods against known standards with established properties |

| Quality Control Materials | Internal standards, system suitability test mixtures, proficiency test materials | Monitor method performance during validation; detect instrumental drift or performance issues [23] [26] | Ensure consistent method performance across validation experiments |

| Sample Matrices | Synthetic bodily fluids, fortified substrates, simulated casework samples | Test method performance with forensically relevant materials without operational evidence [2] [23] | Bridge between clean standards and complex real-world evidence |

| Data Quality Tools | Validation software, statistical packages, likelihood ratio calculation tools | Quantify performance metrics; calculate error rates; support objective decision making [24] [25] | Enable statistical rigor required for admissibility standards |

| Chromatographic Supplies | GC×GC columns, modulators, liners, septa, specialty gases | Ensure system components meet specification; test method with different column batches [2] [26] | Critical for separation science methods common in forensic chemistry |

Successful completion of a TRL 4 validation study represents a significant milestone in forensic method development. It transforms a proof-of-concept into a laboratory-validated integrated system with documented performance characteristics and recognized limitations. The data generated at this stage provides the evidentiary foundation for deciding whether to advance the method to higher TRLs, where it will face more rigorous testing in forensically relevant environments.

The scope and objectives established at TRL 4 directly support subsequent validation stages. The performance metrics, robustness data, and operational boundaries defined at this level inform the design of TRL 5-6 studies, which focus on testing the method with realistic forensic evidence and establishing known error rates [2]. By thoroughly addressing the component validation objectives at TRL 4, researchers create a robust platform for the inter-laboratory studies and eventual implementation needed to meet legal admissibility standards such as Daubert and Frye [2] [27].

Building the Blueprint: A Step-by-Step Study Design Protocol

Selecting Participating Laboratories and Defining Sample Logistics

The transition of a forensic analytical method from research to routine casework is a critical juncture. For methods at Technology Readiness Level 4, defined as the refinement, enhancement, and inter-laboratory validation of a standardized method ready for implementation, this transition is predicated on robust inter-laboratory validation studies [3]. The design of these studies, particularly the selection of participating laboratories and the definition of sample logistics, forms the bedrock of generating defensible, reliable, and legally admissible data. This guide objectively compares different approaches to these core design elements, providing a framework for researchers to build studies that meet the stringent requirements of the legal system, including the Daubert Standard and Federal Rule of Evidence 702, which emphasize testing, known error rates, and standardization [2].

Laboratory Selection Frameworks

The choice of laboratories for a validation study directly impacts the generalizability and acceptance of the results. A poorly selected laboratory cohort can introduce bias and limit the perceived applicability of the method.

Comparative Approaches to Laboratory Selection

The table below outlines three primary models for laboratory selection, comparing their objectives, implementation, and suitability for TRL 4 research.

Table 1: Objective Comparison of Laboratory Selection Frameworks for Validation Studies

| Selection Framework | Primary Objective | Implementation Strategy | Key Performance Metrics | Suitability for TRL 4 |

|---|---|---|---|---|

| Representative Sampling | To reflect the operational conditions and resource levels of the target community of forensic labs. | Recruit labs based on stratified sampling (e.g., by size, funding, geographic location). | Demographics of participating labs; diversity of instrument platforms. | High. Provides data on real-world robustness and implementation ease [3]. |

| Expert Performance-Based | To establish the upper limits of method performance under optimal, expert conditions. | Select labs with proven expertise and state-of-the-art instrumentation in the specific method domain. | Sensitivity; specificity; rate of inconclusive decisions; adherence to protocol [28]. | Medium. Essential for initial benchmark setting but may overestimate typical lab performance. |

| Census-Based Invitation | To achieve maximum uptake and demonstrate broad community consensus. | Invite all accredited forensic laboratories within a jurisdiction or network to participate. | Participation rate as a percentage of the total invited lab population. | Medium-High. Builds widespread acceptance but may be resource-intensive [2]. |

Experimental Protocols for Laboratory Evaluation

Prior to final selection, a lab qualification protocol is recommended. This involves:

- Pre-Study Questionnaire: Distributing a detailed survey to potential labs to catalog their capabilities, including instrument types and models (e.g., GC×GC–MS configurations), analyst qualifications and experience, and current casework volumes [29].

- Method Demonstration Kit: Providing a small set of pre-characterized samples for labs to analyze using the proposed method. This verifies basic competency and instrumental compatibility before the full study begins, providing an early measure of procedural adherence [28].

Sample Logistics and Design

The design and distribution of samples are perhaps the most critical operational aspect of an inter-laboratory study. Flaws here can invalidate the entire dataset.

Sample Design Strategies

A successful sample set must challenge the method across its intended scope while being logistically feasible to produce, distribute, and analyze.

Table 2: Comparison of Sample Set Design and Logistics Models

| Aspect | Blinded Proficiency Model | Collaborative Validation Model | Tiered-Difficulty Model |

|---|---|---|---|

| Core Principle | Mimics routine proficiency testing; labs are unaware of sample identities and expected results. | Open collaboration; all participants know the sample compositions and work together to characterize method performance. | Sample set includes a gradient of difficulty, from straightforward to highly challenging samples. |

| Key Data Outputs | False positive rate; false negative rate; rates of inconclusive decisions; measures reproducibility in a "real-world" context [28]. | Reproducibility standard deviation; collaborative assessment of systematic bias (trueness). | Diagnostic sensitivity and specificity across a spectrum of realistic scenarios; identifies method limitations [29]. |

| Logistics Complexity | High. Requires secure, centralized packaging and distribution to prevent decoding. Blind coding must be impeccable. | Moderate. Simplified logistics as blinding is not required, but sample homogeneity is still critical. | High. Requires careful design and pre-testing to ensure the difficulty gradient is accurate and informative. |

| Statistical Power | Provides direct estimates of error rates suitable for courtroom testimony under the Daubert standard [2]. | Provides high-quality data on precision and trueness for method refinement. | Offers a comprehensive view of method robustness and analyst skill under varying conditions [28]. |

Experimental Protocol for Sample Logistics

- Sample Preparation & Homogeneity Testing: A single, large batch of each sample type is prepared. Random sub-samples are analyzed in replicate using a reference method to statistically confirm homogeneity. This is a non-negotiable step; without it, observed inter-lab variance cannot be separated from sample-to-sample variation and so cannot be attributed to the method or the laboratories [29].

- Stability Testing: Samples are stored under accelerated aging conditions (e.g., elevated temperature) and re-analyzed to verify that they remain stable for the duration of the study.

- Blinding & Randomization: Each sample is assigned a unique, random code. The sample set for each lab is assembled to include replicates and a randomized order of presentation to control for sequence effects and within-lab bias [28].

- Structured Data Reporting: Participants receive a standardized data sheet or electronic portal for reporting. This sheet must explicitly capture inconclusive responses separately from forced binary choices (match/non-match) to allow for proper data analysis according to best practices in forensic science [28].
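
The blinding and randomization step can be sketched as follows. This is an illustrative outline only: the function name, code format, sample types, and laboratory labels are hypothetical, and a real study would also need secure storage of the decoding key by the coordinating body.

```python
import random


def build_blinded_sets(sample_types, labs, replicates=2, seed=None):
    """Assign unique random codes and a randomized presentation order per laboratory.

    Returns (shipments, key):
      shipments: lab -> ordered list of blind codes, in the order to be analyzed
      key:       code -> (lab, true sample type), held only by the coordinator
    """
    rng = random.Random(seed)  # fixed seed gives a reproducible, auditable allocation
    n_items = len(sample_types) * replicates * len(labs)
    # Draw without replacement so that no code is ever reused.
    code_numbers = iter(rng.sample(range(1000, 10000), n_items))

    shipments, key = {}, {}
    for lab in labs:
        items = [s for s in sample_types for _ in range(replicates)]
        rng.shuffle(items)  # randomized order controls for sequence effects
        codes = []
        for sample_type in items:
            code = f"S{next(code_numbers)}"
            key[code] = (lab, sample_type)
            codes.append(code)
        shipments[lab] = codes
    return shipments, key


# Hypothetical study: three sample types, duplicate replicates, three laboratories.
shipments, key = build_blinded_sets(
    sample_types=["known_positive", "known_negative", "near_threshold"],
    labs=["lab_01", "lab_02", "lab_03"],
    replicates=2,
    seed=2024,
)
for lab, codes in shipments.items():
    print(lab, codes)
```

Giving each laboratory its own codes, rather than reusing one code per sample type, prevents participants from inferring identities by comparing notes.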

The Scientist's Toolkit: Essential Materials for Inter-Laboratory Studies

The following reagents and materials are critical for executing a forensic chemistry inter-laboratory study, particularly for techniques like comprehensive two-dimensional gas chromatography (GC×GC).

Table 3: Key Research Reagent Solutions and Materials

| Item Name | Function/Application | Critical Specifications |

|---|---|---|

| Consecutively Manufactured Tools | Provides a source of known-match and known-non-match samples for toolmark or impression evidence studies. Essential for establishing foundational data on method discrimination [29]. | Tools (e.g., screwdrivers) from the same production batch to minimize intrinsic variation. |

| Certified Reference Materials (CRMs) | To calibrate instruments across all participating laboratories and provide a benchmark for quantifying trueness. | Independently certified purity and concentration, with a valid chain of custody. |

| Stable Isotope-Labeled Internal Standards | Used in quantitative MS-based methods (e.g., toxicology) to correct for analyte loss during sample preparation and instrument variability. | High chemical and isotopic purity; must be spectrally distinct from the target analyte. |

| Inert Sample Storage Vials | To maintain sample integrity during storage and shipping. Prevents adsorption, contamination, or degradation of volatile analytes. | Headspace vials with polytetrafluoroethylene (PTFE)-lined septa, certified for the analytes of interest (e.g., for ignitable liquid residues) [2]. |

| Modulator Cryogen & Consumables | Specific to GC×GC systems, the modulator is critical for separation. A consistent supply of consumables (e.g., liquid nitrogen, CO₂) or modulator parts is needed for methods at this technical level [2]. | Purity and supply reliability to prevent study interruptions. |

Visualizing Study Workflows

The following diagrams illustrate the logical relationships and workflows in inter-laboratory study design.

Lab Selection and Validation Workflow

This diagram outlines the sequential process for selecting participating laboratories and validating their readiness.

Sample Logistics and Data Analysis Process

This diagram details the pathway for sample preparation, distribution, and the subsequent analysis of returned data.

TRL Progression to Legal Admissibility

This diagram shows the logical relationship between technology readiness, inter-laboratory validation, and the criteria for legal admissibility.

The development of a robust test plan for forensic methods at Technology Readiness Level (TRL) 4 requires rigorous validation frameworks to ensure scientific reliability and legal admissibility. Inter-laboratory studies at this stage must demonstrate that analytical techniques are accurate, reproducible, and fit-for-purpose within the justice system. Research in forensic science must adhere to international standards and legal precedents governing expert testimony and evidence admission [2] [30]. The ISO 21043 standard provides requirements and recommendations designed to ensure the quality of the forensic process, covering vocabulary, recovery, transport, storage of items, analysis, interpretation, and reporting [30]. This guide outlines the comprehensive test plan structure necessary for validating emerging forensic methods through multi-laboratory studies, with particular focus on materials, standardized methodologies, and data reporting protocols that meet both scientific and legal requirements.

Materials and Research Reagent Solutions

A standardized set of materials and reagents is fundamental to any inter-laboratory validation study. The consistent use of certified reference materials and quality-controlled reagents across participating laboratories minimizes variability and ensures comparable results. The following table details essential research reagent solutions for forensic method validation:

Table: Essential Research Reagent Solutions for Forensic Method Validation

| Item Name | Function/Application | Specifications/Standards |

|---|---|---|

| Certified Reference Materials (CRMs) | Calibration and quality control; provides known quantitative values for method accuracy assessment | Traceable to national/international standards; certificate of analysis with stated uncertainty |

| Internal Standards (IS) | Correction for analytical variability in mass spectrometry; improves data accuracy and precision | Stable isotope-labeled analogs of target analytes; high chemical purity (>95%) |

| Quality Control Materials | Monitoring analytical process performance; detecting systematic errors and drift | Characterized pools with established target values and acceptable ranges |

| Mobile Phase Solvents | Liquid chromatography separation; compound elution and ionization | HPLC or LC-MS grade; low UV absorbance; minimal particulate matter |

| Stationary Phase Columns | Compound separation based on chemical properties; critical for resolution and sensitivity | Specified dimensions, particle size, and surface chemistry; from reputable manufacturers |

| Derivatization Reagents | Chemical modification of analytes to enhance detection, volatility, or stability | High purity; demonstrated reaction efficiency with target compounds |

The selection of these materials must be documented with detailed specifications, including manufacturer, lot numbers, storage conditions, and expiration dates. For inter-laboratory studies, central procurement and distribution of critical reagents enhance consistency across participating sites [31].

Methodological Protocols for Inter-laboratory Validation

Experimental Design and Sample Preparation

Inter-laboratory validation studies for TRL 4 forensic methods require meticulously controlled experimental protocols to generate statistically meaningful data. The sample set should include certified reference materials, real-world case-type samples, and negative controls to comprehensively evaluate method performance. Sample preparation protocols must be explicitly detailed, including extraction methods, purification steps, and derivatization procedures where applicable. For comprehensive two-dimensional gas chromatography (GC×GC) applications, which provide advanced chromatographic separation for forensic evidence, method parameters including column selection, temperature programs, and modulation periods must be standardized across participating laboratories [2]. All protocols should specify equipment calibration procedures, acceptance criteria for quality control samples, and contingency plans for protocol deviations.

Key Validation Parameters and Testing Protocols

Forensic method validation requires systematic assessment of multiple performance parameters to establish reliability, accuracy, and robustness. The following experimental protocols outline the core validation tests required for TRL 4 inter-laboratory studies:

Table: Core Validation Parameters and Testing Methodologies

| Validation Parameter | Experimental Protocol | Acceptance Criteria | Data Reporting Requirements |

|---|---|---|---|

| Accuracy and Trueness | Analysis of certified reference materials (n≥5 replicates) and comparison to reference values; recovery studies at multiple concentration levels | Mean accuracy 85-115%; CV <15% for most analytes | Reported as percent recovery or bias; statistical significance testing |

| Precision | Intra-day (n≥5) and inter-day (n≥3 days) replication at low, medium, and high concentrations; inter-laboratory comparison | Intra-laboratory CV <15%; inter-laboratory CV <20% | CV values for each concentration level; ANOVA components of variance |

| Selectivity/Specificity | Analysis of blank matrix samples and samples with potentially interfering compounds; assessment of chromatographic resolution | No significant interference at target analyte retention times; resolution >1.5 between critical pairs | Chromatograms demonstrating separation; peak purity data |

| Linearity and Range | Analysis of calibration standards at 5-7 concentration levels across expected measurement range; triplicate measurements | R² ≥0.990; residual plots without systematic patterns | Regression equation, R² value, residual plots |

| Limit of Detection (LOD) / Limit of Quantification (LOQ) | Serial dilution of low-concentration samples; signal-to-noise ratio of 3:1 for LOD and 10:1 for LOQ | LOD/LOQ appropriate for intended application; sufficient sensitivity for casework | Justification for established limits; supporting chromatograms |

| Robustness | Deliberate, small variations in method parameters (pH, temperature, flow rate); Youden's ruggedness test | Method performance maintained within acceptable criteria under varied conditions | Experimental design matrix; results of parameter variations |
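
As a worked illustration of the accuracy and precision rows in the table, the sketch below computes percent recovery and coefficient of variation for replicate measurements of a reference material and checks them against the stated criteria (85-115% recovery, CV below 15%). The function names and the replicate values are hypothetical.

```python
from statistics import mean, stdev


def recovery_and_cv(measured: list[float], reference_value: float) -> tuple[float, float]:
    """Return (percent recovery, percent CV) for replicate measurements of one material."""
    if len(measured) < 2:
        raise ValueError("at least two replicates are needed to estimate the CV")
    avg = mean(measured)
    recovery_pct = 100.0 * avg / reference_value
    cv_pct = 100.0 * stdev(measured) / avg  # sample SD relative to the mean
    return recovery_pct, cv_pct


def meets_criteria(recovery_pct: float, cv_pct: float,
                   recovery_range=(85.0, 115.0), max_cv=15.0) -> bool:
    """Apply the acceptance criteria from the table; limits can be overridden per analyte."""
    low, high = recovery_range
    return low <= recovery_pct <= high and cv_pct < max_cv


# Hypothetical: five replicates of a reference material certified at 10.0 ng/mL.
replicates = [9.6, 10.1, 9.9, 10.3, 10.1]
rec, cv = recovery_and_cv(replicates, reference_value=10.0)
print(f"recovery = {rec:.1f}%, CV = {cv:.1f}%, pass = {meets_criteria(rec, cv)}")
```

Keeping the limits as parameters reflects the table's wording that the stated thresholds apply to most, not all, analytes.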

Implementation of these validation protocols across multiple laboratories provides essential data on method transferability and reliability—key factors for legal admissibility under standards such as Frye, Daubert, and Federal Rule of Evidence 702 in the United States, and the Mohan criteria in Canada [2]. These legal frameworks require that scientific techniques be generally accepted in the relevant scientific community, peer-reviewed, testable, and have known error rates [2] [31].

Data Analysis and Reporting Requirements

Statistical Analysis Framework

Inter-laboratory validation studies require sophisticated statistical analysis to evaluate method performance across multiple sites. Data should be analyzed using both descriptive statistics (mean, standard deviation, coefficient of variation) and inferential statistics (ANOVA, regression analysis, outlier tests). The use of the likelihood-ratio framework for interpretation of evidence provides a logically correct framework that is consistent with the forensic-data-science paradigm [30]. Statistical packages should be specified in the test plan, along with predetermined significance levels (typically α=0.05). Data normalization procedures should be documented, and all statistical tests should be justified based on data distribution characteristics.

Standardized Reporting Protocols

Comprehensive documentation is essential for forensic method validation. The test plan must specify standardized reporting templates that include all elements required by ISO 21043, particularly Part 4 (interpretation) and Part 5 (reporting) [30]. Reports should transparently document all procedures, software versions, logs, and chain-of-custody records [31]. Error rate analysis is particularly critical for legal proceedings and must be explicitly reported with confidence intervals [2] [31]. All reports should include statements of uncertainty for quantitative measurements and clearly distinguish between observational data and interpretive conclusions.
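
One way to report an error rate with a confidence interval is the Wilson score interval for a binomial proportion, sketched below. The choice of the Wilson interval is an assumption made for illustration (the cited standards do not prescribe a specific interval here), and the function name and counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(errors, trials, confidence=0.95):
    """Wilson score confidence interval for an observed error proportion."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require trials > 0 and 0 <= errors <= trials")
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)  # two-sided critical value
    p_hat = errors / trials
    denom = 1.0 + z ** 2 / trials
    centre = (p_hat + z ** 2 / (2 * trials)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical study outcome: 3 erroneous conclusions in 200 ground-truth-known trials.
low, high = wilson_interval(3, 200)
print(f"observed error rate = {3 / 200:.3%}, 95% CI = [{low:.3%}, {high:.3%}]")
```

Unlike the simple normal-approximation interval, this interval remains informative when no errors are observed: zero errors in a finite number of trials still yields a non-zero upper bound, which is the figure a court is likely to ask about.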

Workflow Visualization for Inter-laboratory Validation

The following diagram illustrates the complete workflow for developing and executing an inter-laboratory test plan for forensic method validation at TRL 4:

Legal Admissibility Criteria Mapping

The successful implementation of a forensic test plan requires alignment with legal admissibility standards. The following diagram maps the relationship between validation activities and legal criteria:

Statistical Framework for Data Analysis and Determining Consensus

The validation of forensic methods at Technology Readiness Level (TRL) 4 represents a critical juncture in the transition of analytical techniques from proof-of-concept to operational implementation. At this stage, methods undergo inter-laboratory validation to demonstrate reliability across different institutional settings, instrumentation, and personnel. The statistical frameworks used to analyze these validation data and determine consensus are fundamental to establishing the scientific rigor and legal admissibility of forensic techniques. This section compares statistical frameworks applicable to TRL 4 forensic research, evaluating their performance characteristics, implementation requirements, and applicability to various evidence types.

Within forensic science, TRL 4 is defined by the refinement and inter-laboratory validation of a standardized method ready for implementation in forensic laboratories [3]. Achieving this requires robust statistical approaches to demonstrate that a method produces consistent, reproducible, and reliable results across multiple laboratories—a process essential for meeting the admissibility standards outlined in legal precedents such as Daubert and Frye [2].

Comparative Analysis of Statistical Frameworks

The following analysis compares three statistical frameworks with demonstrated applicability to forensic validation studies and consensus determination.

Table 1: Comparison of Statistical Frameworks for Data Analysis and Consensus Determination

| Framework Feature | Median Aggregation with ICC Validation [32] | Functional Linear Mixed Models (FLMM) [33] | Histogram-Based Classification [34] |

|---|---|---|---|

| Primary Application | Multi-rater evaluation systems without objective ground truth | Analysis of trial-level temporal dynamics (e.g., photometry) | Categorizing opinion distributions (e.g., survey data) |

| Core Methodology | Robust median estimation; Intraclass Correlation Coefficient (ICC2k) | Functional regression exploiting signal autocorrelation; joint confidence intervals | Bin-counting algorithm; pre-defined category thresholds |

| Consensus Metric | Inter-rater reliability (ICC2k ≥ 0.955 reported) | Statistical significance of covariate effects across time-points | Qualitative categories: Perfect Consensus, Consensus, Polarization, Clustering, Dissensus |

| Handling of Variance | Quantifies individual rater alignment via consistency metrics (R², variance) | Accounts for within-trial, between-trial, and between-animal variance | Uses bin count thresholds (T₁, T₂) to discriminate signal from noise |

| Key Performance | 67% reduction in computational cost with minimal reliability loss | Identifies significant effects obscured by trial-averaging | Captures evolution of qualitative states via transition tables |

| Technology Readiness | High (validated on ~14,384 samples) | Emerging (primarily in neuroscience) | Moderate (validated on World Values Survey data) |

| Implementation Complexity | Low to Moderate | High | Moderate |

Experimental Protocols for Framework Evaluation

The following section details the experimental methodologies employed in the cited studies to generate the performance data summarized in Table 1.

Protocol for Median Aggregation and ICC Validation

This protocol is designed to assess inter-laboratory consensus when objective ground truth is unavailable [32].

- Step 1: Data Collection. Multiple evaluators (e.g., laboratories, instruments, algorithms) analyze an identical set of samples. In the cited study, 17 Large Language Models evaluated ~14,384 samples for semantic similarity.

- Step 2: Consensus Estimation. Calculate the median value across all evaluators for each individual sample. The median provides a robust consensus estimate with a 50% breakdown point, resistant to outliers.

- Step 3: Reliability Assessment. Compute the Intraclass Correlation Coefficient (ICC2k) using the median consensus as a reference standard. ICC2k is the two-way random-effects, absolute-agreement model for the average of k raters, which makes it appropriate for assessing the reliability of a pooled multi-rater score.

- Step 4: Core Set Optimization. Apply algorithms to select a minimal subset of evaluators that maintains high reliability (e.g., ICC > 0.95), thereby reducing computational or operational costs.
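
Steps 2 and 3 of this protocol can be sketched in a few lines. The code below computes the per-sample median consensus and the ICC(2,k) coefficient from two-way ANOVA mean squares (the standard Shrout-Fleiss formulation). It is an illustration rather than the cited study's implementation: the function names and the example scores are hypothetical, the data are assumed complete (every evaluator scores every sample), and the core-set selection of Step 4 is not covered.

```python
from statistics import mean, median


def median_consensus(scores):
    """Per-sample consensus: the median across evaluators.

    scores is a list of rows, one row per sample, one value per evaluator.
    """
    return [median(row) for row in scores]


def icc2k(scores):
    """ICC(2,k): two-way random effects, absolute agreement, average of k raters."""
    n = len(scores)      # samples (rows)
    k = len(scores[0])   # evaluators (columns)
    if n < 2 or k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a complete table with >= 2 samples and >= 2 evaluators")

    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (ms_rows + (ms_cols - ms_error) / n)


# Hypothetical scores: four samples, each rated by three laboratories.
lab_scores = [
    [0.82, 0.80, 0.85],
    [0.40, 0.45, 0.38],
    [0.65, 0.60, 0.90],  # third laboratory is an outlier on this sample
    [0.10, 0.12, 0.11],
]
print("median consensus:", median_consensus(lab_scores))
print("ICC(2,k):", round(icc2k(lab_scores), 3))
```

On the third sample the median stays at 0.65 despite the outlying 0.90, which is the robustness property Step 2 relies on.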

Protocol for Functional Linear Mixed Models (FLMM)

This protocol is optimized for analyzing complex, time-series data from repeated-measures experiments, common in instrumental analysis [33].

- Step 1: Model Specification. Formulate a functional linear mixed model. The model incorporates fixed effects for experimental conditions (e.g., treatment/control) and random effects to account for variability between subjects (e.g., different laboratories) and within subjects across repeated trials.

- Step 2: Hypothesis Testing. Test the association between covariates and the signal at every time-point within the trial, rather than condensing the signal into a single summary statistic.

- Step 3: Confidence Interval Construction. Exploit the inherent autocorrelation in the time-series signal to calculate joint 95% confidence intervals. These intervals account for multiple comparisons across the entire trial without being overly conservative.

- Step 4: Visualization and Interpretation. Generate plots showing covariate effect estimates and their statistical significance at each time-point, unifying hypothesis testing with data visualization.

Protocol for Histogram-Based Classification

This protocol provides a structured method for categorizing quantitative data into qualitative consensus states [34].

- Step 1: Data Binning. Partition the data range (e.g., -1 to +1 for opinion data) into M bins of equal width. For 10-point Likert scale data, M=10 is typical.

- Step 2: Bin Classification. Convert bin counts to percentages of the total. Classify each bin as:

  - Green: > T₁% (e.g., T₁=50, indicating a majority)

  - Blue: < T₂% (e.g., T₂=5, indicating residual noise)

  - Red: between T₂% and T₁%

- Step 3: Group Formation. Define a "group" as consecutive green or red bins.

- Step 4: Category Assignment. Apply classification criteria:

  - Perfect Consensus: A single green bin exists.

  - Consensus: One group of ≤ B bins containing >50% of responses.

  - Polarization: Two groups, separated by ≥ K bins, containing >50% of responses combined.

  - Clustering: More than two groups containing >50% of responses.

  - Dissensus: No grouping contains a majority.

Figure 1: Workflow for the histogram-based classification algorithm, illustrating the logical sequence from data input to final consensus category assignment [34].
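
The four steps of this protocol can be sketched as a single function. This is one possible reading of the rules as summarized here, not the reference implementation from [34]. The T₁ and T₂ defaults follow the examples in the text; the defaults for B and K, the function name, and the rule that any case matching no earlier category falls through to Dissensus are assumptions made for illustration.

```python
def classify_consensus(values, lo, hi, m=10, t1=50.0, t2=5.0, b=3, k=2):
    """Classify a distribution into a qualitative consensus category.

    t1 and t2 are percentages; b (maximum group width) and k (minimum gap
    between groups) use hypothetical defaults.
    """
    if not values:
        raise ValueError("values must not be empty")
    # Step 1: bin the data into m equal-width bins over [lo, hi].
    width = (hi - lo) / m
    counts = [0] * m
    for v in values:
        if not lo <= v <= hi:
            raise ValueError(f"value {v} outside [{lo}, {hi}]")
        counts[min(int((v - lo) / width), m - 1)] += 1

    # Step 2: convert to percentages and colour each bin.
    pct = [100.0 * c / len(values) for c in counts]
    colors = ["green" if p > t1 else "blue" if p < t2 else "red" for p in pct]

    # Step 3: a group is a run of consecutive green or red (non-blue) bins.
    groups, start = [], None
    for i, color in enumerate(colors + ["blue"]):  # sentinel closes a trailing run
        if color != "blue" and start is None:
            start = i
        elif color == "blue" and start is not None:
            groups.append((start, i - 1))
            start = None
    share = sum(sum(pct[s:e + 1]) for s, e in groups)  # % of responses in groups

    # Step 4: assign the category (literal reading of the listed criteria).
    if colors.count("green") == 1:
        return "Perfect Consensus"
    if len(groups) == 1 and groups[0][1] - groups[0][0] + 1 <= b and share > 50:
        return "Consensus"
    if len(groups) == 2 and groups[1][0] - groups[0][1] - 1 >= k and share > 50:
        return "Polarization"
    if len(groups) > 2 and share > 50:
        return "Clustering"
    return "Dissensus"  # assumption: everything else counts as no majority grouping


# Hypothetical 10-point Likert responses; bins are centred on the integers 1..10.
polarized = [1] * 25 + [2] * 25 + [9] * 25 + [10] * 25
print(classify_consensus(polarized, lo=0.5, hi=10.5))
```

Choosing `lo=0.5` and `hi=10.5` with ten bins gives each Likert score its own bin and avoids values landing exactly on a bin edge.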

The Scientist's Toolkit: Essential Reagents & Materials

Successful implementation of statistical frameworks for inter-laboratory validation requires both computational and experimental resources. The following table details key solutions and their functions.

Table 2: Key Research Reagent Solutions for Inter-Laboratory Validation

| Reagent/Material | Function in Validation Study | Example Application |

|---|---|---|

| Standardized Reference Materials | Provides a common, homogeneous sample for all participating laboratories to analyze, enabling direct comparison of results. | Ten "modern" faunal teeth used across labs in isotope analysis [6]. |

| Validated Calibrants & Controls | Ensures analytical instruments across different laboratories are producing accurate and comparable measurements. | GMP-compliant pilot lots in drug development [35]. |

| Open-Source Data Analysis Platforms (e.g., R, GitHub Code) | Promotes transparency, reproducibility, and allows all laboratories to apply the exact same statistical algorithms. | R code provided on GitHub for inter-laboratory comparison [6]. |

| Statistical Reference Datasets | Serves as a benchmark for testing and validating new statistical frameworks and software implementations. | World Values Survey data for testing opinion formation models [34]. |

| Documented Standard Operating Procedures (SOPs) | Guarantees that all sample preparation, analysis, and data collection steps are performed identically across labs. | ISO 21043 standards for forensic analysis [36]. |

The selection of an appropriate statistical framework is paramount for robust inter-laboratory validation at TRL 4. Median Aggregation with ICC Validation offers a computationally efficient, outlier-resistant way to establish consensus in subjective evaluation tasks, and its reliability estimates speak to legal expectations of reliability and known error rates [2] [32]. For forensic disciplines generating complex temporal or spectral data, FLMM offers greater power to detect significant effects because it models the full signal rather than a trial-averaged summary [33]. Finally, Histogram-Based Classification provides a transparent and intuitive method for translating quantitative results into qualitative categories that support decision-making [34].

Future directions should emphasize the development of standardized, discipline-specific validation frameworks that incorporate these statistical principles, enabling more efficient adoption of novel forensic methods into operational casework.

Defining Performance Metrics and Acceptance Criteria for Success

The transition of a forensic method from research to routine casework is a critical juncture. For methods at Technology Readiness Level 4 (TRL 4), defined as the stage for "refinement, enhancement, and inter-laboratory validation of a standardized method ready for implementation in forensic laboratories," establishing robust performance metrics and acceptance criteria is the cornerstone of success [15]. The core objective of a TRL 4 validation study is to demonstrate that an analytical method is not only functionally effective but also reliable, reproducible, and legally defensible across multiple independent laboratories.

This process is governed by a stringent framework of legal and scientific standards. Before forensic evidence can be admitted in court, the underlying analytical method must satisfy specific legal precedents, such as the Daubert Standard in the United States or the Mohan Criteria in Canada [2]. These standards require that a method has been tested, subjected to peer review, has a known error rate, and is generally accepted in the scientific community [2]. Therefore, the performance metrics and acceptance criteria defined during inter-laboratory validation are not merely scientific exercises; they are essential for ensuring the method's admissibility and the integrity of subsequent justice outcomes.

Performance Metrics Framework for Forensic Validation

The validation of a forensic method requires a multi-faceted approach to performance assessment. The following metrics are universally critical for evaluating a method's fitness for purpose.

Table 1: Core Performance Metrics and Their Definitions in Forensic Validation

| Metric | Definition | Significance in Forensic Context |

|---|---|---|

| Trueness (Accuracy) | The closeness of agreement between the average value obtained from a large series of test results and an accepted reference value [37]. | Ensures that evidence is correctly identified and quantified, preventing miscarriages of justice. |

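| Precision | The closeness of agreement between independent test results obtained under stipulated conditions [37]. | Shows whether results are consistent within a laboratory (repeatability) and between laboratories (reproducibility); the latter is the central question of an inter-laboratory study. |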

| Specificity | The ability of the method to distinguish the target analyte from other substances in a complex mixture [37]. | Critical for analyzing trace evidence or complex mixtures where contaminants may be present. |

| Limit of Detection (LOD) | The lowest concentration of an analyte that can be detected, but not necessarily quantified, under the stated experimental conditions [37]. | Defines the sensitivity of the method for analyzing minimal or degraded samples. |

| Limit of Quantification (LOQ) | The lowest concentration of an analyte that can be quantified with acceptable levels of trueness and precision [37]. | Essential for reliable quantitative analysis, such as determining drug concentrations. |