Defining End-User Requirements in Forensic Method Validation: A Strategic Framework for Researchers and Scientists

Abstract

This article provides a comprehensive framework for defining and implementing end-user requirements in forensic method validation, a critical process for ensuring analytical methods are scientifically sound and legally defensible. Tailored for researchers, scientists, and development professionals, it explores the foundational principles of establishing fitness-for-purpose, outlines methodological steps for requirement specification, addresses common challenges in the validation lifecycle, and presents collaborative models for efficient verification. By synthesizing current guidelines and best practices, this guide aims to enhance the robustness, reliability, and accreditation readiness of validated methods in forensic and biomedical research.

The Cornerstone of Reliability: Understanding End-User Requirements and Fitness for Purpose

Defining Fitness for Purpose in Forensic Science

Fitness for purpose is a foundational principle in forensic science, serving as the benchmark for the validity and admissibility of scientific evidence within the criminal justice system. It is formally defined as a method or process being "good enough to do the job it is intended to do, as defined by the specification developed from the end-user requirement" [1]. This concept moves beyond mere technical function, demanding that forensic science activities demonstrably fulfill the needs of all stakeholders—from the investigating officers to the courts—by producing reliable, accurate, and interpretable results upon which legal decisions can be based [1].

The legal and regulatory imperative for this principle is unequivocal. Courts are expected to consider the validity of the methods by which an expert's data were obtained [1]. Furthermore, demonstrating fitness for purpose through method validation is a central requirement for accreditation to international standards such as ISO/IEC 17025 and is mandated by the Forensic Science Regulator’s Codes of Practice and Conduct [1] [2]. This document provides an in-depth technical guide to defining and demonstrating fitness for purpose, framed within the critical context of establishing explicit end-user requirements for forensic method validation research.

The Regulatory and Standardization Framework

The landscape of forensic science is guided by a robust and evolving framework of international standards and regulatory codes, all of which anchor their requirements to the principle of fitness for purpose.

- ISO/IEC 17025: This is the cornerstone standard for testing and calibration laboratories. Accreditation to ISO/IEC 17025 includes an assessment that an organization's methods are valid and that the organization is competent to perform them [1]. The standard necessitates a process for validating methods to ensure they are fit for the intended purpose.

- The Forensic Science Regulator’s Code of Practice: In England and Wales, the Forensic Science Regulator Act 2021 established a statutory code of practice. This code requires forensic units to implement effective quality management systems, with validation of techniques being a key element to understand and manage the risk of a quality failure, the consequences of which can be profound for the administration of justice [2].

- Emerging ISO 21043 Series: Recognizing that generic standards like ISO 17025 may have limitations for forensic science, the International Organization for Standardization is developing the ISO 21043 series, a dedicated standard for forensic sciences [3]. This multi-part standard covers the entire forensic process, from vocabulary and crime scene investigation to analysis, interpretation, and reporting, providing a more tailored framework for ensuring quality and fitness for purpose [4].

A significant development in harmonizing practices globally is the Sydney Declaration (SD) for Forensic Sciences. This initiative outlines seven fundamental tenets, redefining forensic science as "the oriented research activity based on cases... that uses scientific principles to study traces… to understand anomalous events of public interest" [3]. The SD emphasizes that forensic science deals with a continuum of uncertainties and that its findings acquire meaning in context, thereby providing a principled foundation for defining fitness for purpose, particularly in regions like Africa that are building their forensic capabilities [3].

The Core Principle: Linking End-User Requirements to Fitness for Purpose

At its heart, demonstrating fitness for purpose is an evidence-based process that connects a method's performance to a clearly defined need. The "end-user requirement" is the critical starting point, acting as the specification against which fitness is measured [1].

Defining End-User Requirements

The end-user requirement captures what the different users of the method's output need it to reliably accomplish. In their simplest form, these requirements define the aspects of the method the expert will rely on for their critical findings in a statement or report [1]. Failure to define these requirements at the outset can lead to unfocused testing that amasses data which may not increase understanding or confidence in the method [1].

Identifying End-Users: The process involves identifying all parties who are users of the information. This typically includes:

- The Forensic Scientist/Expert: Requires the method to produce accurate, reliable, and interpretable data to form an objective opinion.

- Investigating Officers: Need intelligence and evidence that is actionable and reliable to guide an investigation.

- The Courts (Judge and Jury): Require evidence that is scientifically sound, understandable, and whose limitations are clear to aid in the administration of justice.

The Validation Process: A Structured Workflow

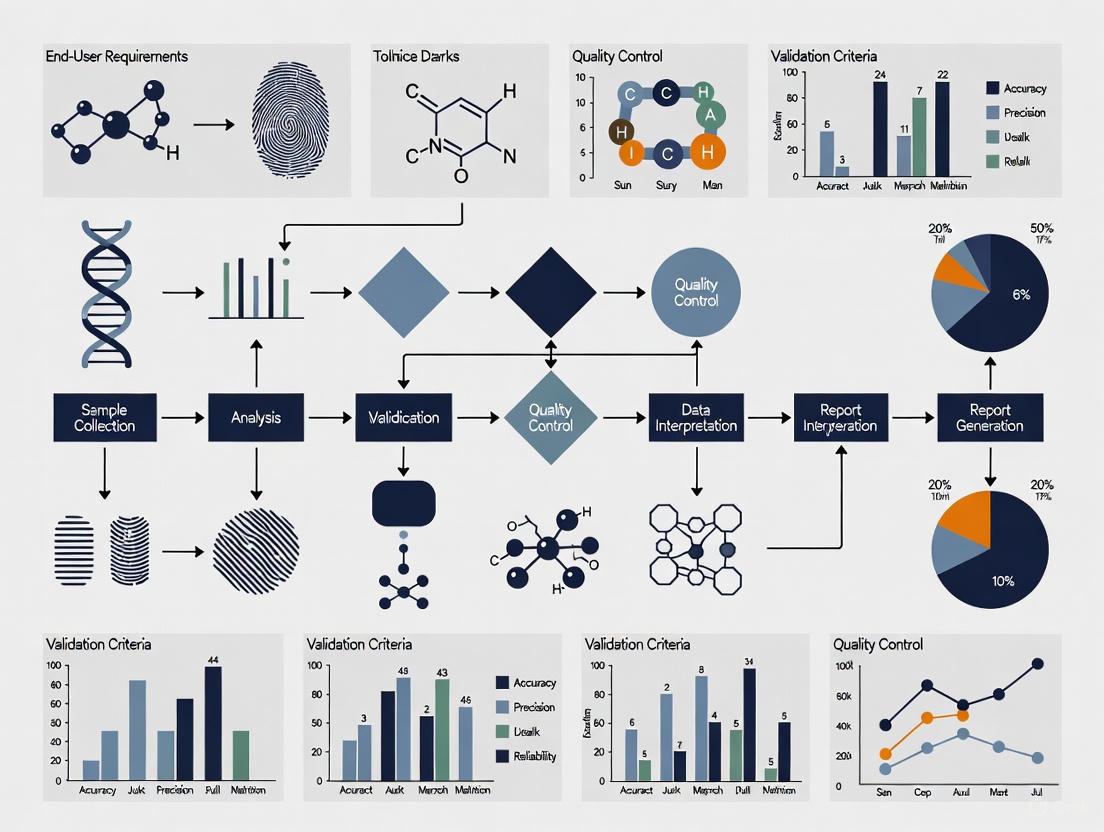

The process for validating a method, and thus demonstrating its fitness for purpose, follows a logical sequence. The framework published in the Forensic Science Regulator's Codes of Practice outlines the essential stages, which are visualized in the workflow below [1].

Figure 1: Forensic Method Validation Workflow. This diagram outlines the key stages for validating a forensic method, from defining requirements to implementation. Critical stages for defining fitness for purpose are highlighted.

Experimental Design for Validation Studies

The objective evidence that a method meets its acceptance criteria is the test data generated during the validation exercise. Therefore, the selection and design of tests are critical [1].

Core Methodological Principles

- Representative Test Data: Data for all validation studies must be representative of the real-life use to which the method will be put. This requires test materials that replicate the range of materials encountered in casework, including degraded, mixed, or otherwise challenging samples [1] [5].

- Stress Testing: For a robust validation, the method must also be tested with data challenges that "stress test" it. This involves pushing the method beyond ideal conditions to understand its limitations and failure modes [1].

- Accuracy and Reliability: The validation must empirically assess the method's performance, testing for accuracy (how close the results are to the true value) and reliability (the consistency of results under defined conditions) [5].

- Calibration: The results should be well-calibrated to the expected result, meaning that the reported probabilities or confidence levels accurately reflect the true underlying probabilities [5].

Quantitative Frameworks for Validation Data

The design of a validation study must be tailored to the method's intended use. The table below summarizes key experimental parameters and metrics that should be considered.

Table 1: Key Experimental Parameters and Metrics for Validation Studies

| Parameter Category | Specific Metric | Methodology for Assessment | Link to Fitness for Purpose |
|---|---|---|---|
| Accuracy & Precision | Measurement uncertainty, False positive/negative rates, Repeatability (same conditions), Reproducibility (different conditions) | Repeated analysis of certified reference materials (CRMs) and control samples with known values by multiple practitioners over time. | Ensures results are both correct and consistent, which is fundamental for evidential reliability. |
| Specificity & Selectivity | Ability to distinguish target analyte from interferents or mixtures. | Challenging the method with samples containing known potential interferents and complex mixtures. | Demonstrates the method is targeted and robust in complex, real-world sample matrices. |
| Sensitivity | Limit of Detection (LoD), Limit of Quantitation (LoQ). | Analyzing a series of samples with decreasing concentrations of the target analyte to determine the lowest detectable and quantifiable level. | Defines the scope of the method and its applicability to traces with minimal material. |
| Robustness & Ruggedness | Performance under deliberate, small variations in method parameters (e.g., temperature, pH, analyst). | Introducing minor, predefined variations to the standard protocol and measuring the impact on the results. | Ensures the method remains reliable despite minor, inevitable fluctuations in the operational environment. |
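The false positive and false negative rates listed in Table 1 are estimated from a finite number of ground-truth samples, so an observed rate of zero does not show that the true rate is zero. The sketch below is one possible way to report an interval alongside the observed rate, using the Wilson score interval. The choice of interval, the 95% level, and the sample counts are illustrative assumptions, not requirements taken from the cited guidance.

```python
import math
from typing import Tuple


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial error rate (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= errors <= trials:
        raise ValueError("errors must lie between 0 and trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical validation counts (not from the source):
# 200 known-negative samples with 0 false positives,
# 150 known-positive samples with 3 false negatives.
fp_low, fp_high = wilson_interval(errors=0, trials=200)
fn_low, fn_high = wilson_interval(errors=3, trials=150)

print(f"False positive rate: 0/200, 95% interval {fp_low:.4f} to {fp_high:.4f}")
print(f"False negative rate: 3/150, 95% interval {fn_low:.4f} to {fn_high:.4f}")
```

With these assumed counts, the upper bound for the false positive rate stays near 2% even though no false positives were observed, which is the kind of limitation an end-user specification may need to state explicitly.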

Collaborative versus Traditional Validation Models

A significant development in validation strategy is the move towards collaborative models, which offer substantial efficiencies. The table below contrasts this with the traditional approach.

Table 2: Comparison of Traditional and Collaborative Validation Models

| Aspect | Traditional Independent Validation | Collaborative Validation Model |
|---|---|---|
| Core Principle | Each Forensic Science Service Provider (FSSP) independently designs and executes a full validation for its own use. | FSSPs work cooperatively to standardize methods and share validation data. An originating FSSP publishes a peer-reviewed validation for others to verify [6]. |
| Process | The FSSP follows all stages in Figure 1 independently. | Subsequent FSSPs review the published validation data. If it fits their purpose, they perform a verification to demonstrate competence, avoiding full re-validation [6]. |
| Resource Impact | High cost, time-consuming, and laborious, with significant redundancy across the community [6]. | Significant savings in time, cost, and labor. Allows smaller FSSPs to implement new technology more efficiently [6]. |
| Data Comparability | No benchmark for cross-comparison of results between FSSPs. | Emulation of a published validation provides an inter-FSSP study, building a shared body of knowledge and enabling direct cross-comparison of data [6]. |
| Business Case | High opportunity cost as resources are diverted from casework [6]. | Reduces activation energy for technology adoption and raises all FSSPs to the highest published standard simultaneously [6]. |

The methodology for a collaborative verification, following a published validation, is outlined in the diagram below.

Figure 2: Collaborative Method Verification Process. This workflow shows the steps for a laboratory to verify a method that has been previously validated and published by another organization.

The Scientist's Toolkit: Essential Research Reagents for Validation

While specific reagents vary by discipline, the conceptual "reagents" for a robust validation study are universal. These are the essential materials and resources required to execute the experimental protocols described above.

Table 3: Essential Research "Reagents" for Method Validation

| Tool / Material | Function in Validation | Critical Application Notes |
|---|---|---|
| Certified Reference Materials (CRMs) | Provides a ground truth with a known, certified value for assessing method accuracy and establishing calibration curves. | Must be traceable to a national or international standard. Used to test the method across the dynamic range of the assay. |
| Characterized Real-World Samples | Serves as representative test material to challenge the method with the complexity and variability encountered in casework. | Should include a portfolio of samples of varying quality, quantity, and composition (e.g., clean, degraded, mixed). |
| Proficiency Test (PT) Samples | Provides an external, blind assessment of the method's performance and the practitioner's competency in a controlled setting. | Participation in inter-laboratory PT schemes is a key requirement for accreditation and ongoing quality assurance. |
| Data Analysis & Statistical Software | Enables the quantitative analysis of validation data, calculation of metrics (e.g., LoD, precision), and assessment against acceptance criteria. | Software tools and scripts used must be verified and their use documented in the standard operating procedure. |
| Documented Standard Operating Procedure (SOP) | The definitive protocol against which the validation is performed. Ensures the validation study is conducted on the final, documented method. | The creation of a draft SOP is a recommended good practice before commencing any validation study [1]. |

Defining fitness for purpose is not an abstract exercise but a rigorous, evidence-based process that sits at the very heart of reliable and credible forensic science. It is achieved by systematically linking a method's performance, through robust experimental validation, to explicitly defined end-user requirements. The frameworks provided by standards such as ISO/IEC 17025, the new ISO 21043 series, and the principles of the Sydney Declaration offer a pathway to this demonstration.

The growing adoption of collaborative validation models presents a powerful opportunity to increase efficiency, standardize best practices, and enhance the comparability of forensic data across jurisdictions. As forensic science continues to evolve, with an increasing reliance on automated tools and complex data analysis, the principles outlined in this guide will become even more critical. Ultimately, a steadfast commitment to defining and demonstrating fitness for purpose is the primary safeguard for producing forensic evidence that is safe, impartial, and worthy of trust in the criminal justice system.

The Critical Role of End-User Requirements in Legal Admissibility and ISO 17025 Accreditation

Within the rigorous framework of forensic method validation research, end-user requirements represent the specific, documented needs and objectives that a forensic method must fulfill to be considered fit-for-purpose in the criminal justice system. These requirements form the fundamental criteria against which a method's performance is measured during validation, creating an unambiguous link between scientific procedure and legal utility. The Forensic Capability Network (FCN) defines validation as "a comprehensive scientific study which includes a series of tests that produces objective evidence that a finalised method, process, or equipment is fit for the specific purpose intended" [7]. In practice, this process begins with "determining and reviewing the end user requirements and specification" before any testing occurs [7].

The international standard ISO/IEC 17025:2017 establishes the foundational requirements for laboratory competence, impartiality, and consistent operation [8] [9] [10]. For forensic science service providers, accreditation to this standard demonstrates technical competence and provides the judicial system with confidence in the reliability of evidence presented. The standard's requirements for method validation create a structured pathway for incorporating end-user needs into formal scientific protocols, thereby ensuring that forensic methods not only produce scientifically sound results but also meet the practical and legal demands of their application [8].

The Intersection of End-User Requirements and ISO 17025

Method Validation as a Core ISO 17025 Requirement

ISO/IEC 17025 mandates that laboratories validate non-standard methods, laboratory-designed methods, and standard methods used outside their intended scope [8]. This process requires objective evidence that a method is fit for its intended purpose, which is fundamentally defined by its end-user requirements. The standard specifies that laboratories must use "appropriate methods and procedures for all laboratory activities" and evaluate "measurement uncertainty for all calibrations and testing where applicable" [8]. These requirements compel laboratories to formally document the performance characteristics needed from a method based on the specific forensic questions it must answer and the legal standards it must satisfy.

The management system requirements outlined in ISO 17025 emphasize the importance of a structured approach to laboratory operations, including documentation control, risk management, and continual improvement [9]. This framework ensures that end-user requirements are not merely considered during initial validation but are maintained throughout the method's lifecycle. As the FCN notes, "validation is a continuous iterative process" that requires periodic review and potentially re-validation when methods change or new information emerges about user needs [7].

Defining End-User Requirements for Forensic Applications

End-user requirements in forensic science encompass multiple dimensions that extend beyond basic technical performance. These requirements must address the needs of all stakeholders in the criminal justice process, from investigators to courts. The following table summarizes the core components of end-user requirements in forensic method validation:

Table 1: Core Components of End-User Requirements in Forensic Method Validation

| Requirement Category | Definition | Stakeholders Served |
|---|---|---|
| Technical Sensitivity | The minimum level of detection required for the analyte of interest | Forensic practitioners, investigators |
| Specificity/Selectivity | The ability to distinguish target analytes from interfering substances | Forensic practitioners, quality managers |
| Legal Reliability | The standard of proof required for admissibility in legal proceedings | Courts, legal professionals, oversight boards |
| Reporting Clarity | The format and content requirements for clear, unambiguous reporting | Legal professionals, juries, investigators |
| Operational Practicality | Considerations of time, cost, and equipment for implementation | Laboratory management, funding bodies |
| Uncertainty Quantification | The measurement uncertainty thresholds acceptable for the application | Quality managers, scientific peers |

The FCN emphasizes that validation must confirm that methods are "fit for the specific purpose intended" and that "any limitations are well understood and communicated appropriately" [7]. This necessitates a thorough understanding of how the method will be used in practice and what demands the legal system will place upon its results. Recent research has highlighted transparency as a "core principle and fundamental obligation of forensic science reporting," requiring disclosure of information about the "scientists' Authority, Compliance, Basis, Justification, Validity, Disagreements, and Context" [11]. These transparency obligations must be incorporated into the definition of end-user requirements from the outset.

Experimental Protocols for Defining and Validating Against End-User Requirements

Protocol for Establishing End-User Specifications

A systematic approach to defining end-user requirements ensures that all relevant criteria are captured and documented before method validation begins. The following protocol provides a structured methodology for establishing these specifications:

Stakeholder Identification and Analysis: Convene a panel representing all end-user groups, including forensic practitioners, investigators, prosecutors, defense attorneys, laboratory management, and quality assurance personnel. Document the specific needs and expectations of each group through structured interviews or surveys [7].

Regulatory and Legal Framework Review: Systematically identify all applicable standards, guidelines, and legal precedents that will govern method admissibility and implementation. This includes the ISO/IEC 17025 standard, the Forensic Science Regulator's Code (in the UK), relevant judicial rulings, and organizational policies [8] [7].

Technical Performance Parameter Definition: Based on stakeholder input and regulatory requirements, establish quantitative performance criteria for the method. This must include:

- Accuracy and Precision Requirements: Define acceptable thresholds for systematic and random error based on the method's intended application.

- Sensitivity and Detection Limits: Establish the required detection and quantification limits appropriate for the forensic context.

- Specificity Parameters: Document required discrimination power for the evidence type in question.

- Robustness and Ruggedness Criteria: Define acceptable performance boundaries under varying conditions [8].

Operational Requirement Specification: Document practical implementation requirements, including:

- Sample throughput and turnaround time expectations

- Equipment and facility requirements

- Personnel competency and training needs

- Data management and reporting formats

- Cost constraints and resource limitations [9]

Uncertainty and Reliability Thresholds: Establish acceptable measurement uncertainty targets and reliability standards based on the consequences of potential errors in the legal context. This includes defining statistical confidence levels required for reporting conclusions [8].

The output of this protocol is a comprehensive end-user requirement specification document that serves as the foundation for all subsequent validation activities.
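To make the idea of a requirement specification document concrete, the following is a minimal sketch of how such a specification could be held in a structured, checkable form. The class names, field names, identifiers such as `EUR-001`, and the example criteria are all hypothetical; the source does not prescribe any particular data model.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass(frozen=True)
class Requirement:
    """One end-user requirement with a measurable acceptance criterion."""
    req_id: str
    category: str
    statement: str
    stakeholders: Tuple[str, ...]
    acceptance_criterion: str


@dataclass
class RequirementSpec:
    """Versioned collection of requirements for one method (hypothetical model)."""
    method_name: str
    version: str
    requirements: List[Requirement] = field(default_factory=list)

    def add(self, req: Requirement) -> None:
        # Reject duplicates and requirements that cannot be tested.
        if any(r.req_id == req.req_id for r in self.requirements):
            raise ValueError(f"duplicate requirement id: {req.req_id}")
        if not req.acceptance_criterion.strip():
            raise ValueError(f"{req.req_id} has no acceptance criterion")
        self.requirements.append(req)

    def categories(self) -> List[str]:
        return sorted({r.category for r in self.requirements})


# Illustrative entries only; the values are assumptions, not recommendations.
spec = RequirementSpec(method_name="example-assay", version="0.1-draft")
spec.add(Requirement(
    "EUR-001", "Accuracy",
    "Reported concentrations agree with certified values",
    ("forensic practitioner", "court"),
    "Mean CRM recovery within 85-115%",
))
spec.add(Requirement(
    "EUR-002", "Operational",
    "Results are reported within the agreed turnaround time",
    ("investigator", "laboratory management"),
    "Turnaround of 10 working days or fewer",
))
print(spec.categories())
```

Forcing every entry to carry an acceptance criterion reflects the point that a requirement which cannot be tested gives the validation study nothing to measure against.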

Protocol for Validation Against End-User Requirements

Once end-user requirements are formally documented, a validation protocol must be designed to test the method against each requirement. The following experimental approach ensures comprehensive validation:

Validation Plan Development: Create a detailed plan that directly links each validation activity to specific end-user requirements. The plan should include:

- Experimental designs for testing each performance parameter

- Acceptance criteria for each requirement directly quoted from the specification document

- Statistical approaches for data analysis and interpretation

- Contingency procedures for addressing failures to meet requirements [7]

Technical Performance Verification: Execute experiments to verify the method meets all technical requirements:

- Accuracy and Precision Studies: Conduct replicate analyses of certified reference materials and quality control samples across multiple runs, operators, and instruments.

- Sensitivity Studies: Determine detection and quantification limits using serial dilutions of target analytes in relevant matrices.

- Specificity Assessment: Challenge the method with potentially interfering substances and similar but non-target materials.

- Robustness Testing: Deliberately introduce minor variations in procedure, reagents, equipment, or environmental conditions to establish method tolerance [8].

Operational Capability Demonstration: Conduct practical trials to verify operational requirements:

- Perform method trials in the actual operational environment with typical casework samples.

- Demonstrate that the method can be successfully executed by multiple operators with appropriate training.

- Verify that reporting formats meet stakeholder needs for clarity and comprehensiveness.

- Confirm that turnaround times can be met under normal operating conditions [9].

Uncertainty Quantification: Evaluate all significant sources of measurement uncertainty and calculate combined uncertainty estimates for the method. Verify that these estimates fall within the acceptable range defined in the end-user requirements [8].

Comparative Analysis (where applicable): Compare method performance with existing validated methods or reference methods to establish relative performance characteristics.
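The link between validation activities and end-user requirements described in this protocol can be checked mechanically. The sketch below assumes a simple, hypothetical representation in which each planned activity lists the requirement identifiers it covers; it then reports requirements with no planned test and activities that serve no requirement.

```python
from typing import Dict, List, Sequence


def untested_requirements(requirement_ids: Sequence[str], plan: List[Dict]) -> List[str]:
    """Requirement ids that no planned validation activity covers."""
    covered = {rid for activity in plan for rid in activity["covers"]}
    return sorted(set(requirement_ids) - covered)


def unlinked_activities(requirement_ids: Sequence[str], plan: List[Dict]) -> List[str]:
    """Names of activities that do not map to any known requirement."""
    known = set(requirement_ids)
    return [a["name"] for a in plan if not known.intersection(a["covers"])]


# Hypothetical identifiers and plan entries, for illustration only.
requirement_ids = ["EUR-001", "EUR-002", "EUR-003"]
plan = [
    {"name": "CRM replicate study", "covers": ["EUR-001"]},
    {"name": "Interference challenge", "covers": ["EUR-002"]},
    {"name": "Exploratory matrix screen", "covers": []},
]

gaps = untested_requirements(requirement_ids, plan)
orphans = unlinked_activities(requirement_ids, plan)
print("Requirements without a planned test:", gaps)
print("Activities not linked to a requirement:", orphans)
```

An empty `gaps` list does not show that the tests are adequate, only that each requirement has at least one activity assigned to it.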

The following workflow diagram illustrates the integrated process of defining end-user requirements and validating methods against them:

Quantitative Frameworks for End-User Requirement Validation

The validation of methods against end-user requirements necessitates the collection and analysis of quantitative data to demonstrate compliance with established criteria. The following table presents a structured approach to data collection for requirement verification:

Table 2: Quantitative Data Collection Framework for End-User Requirement Validation

| Requirement Category | Data to Collect | Statistical Analysis Method | Acceptance Criteria |
|---|---|---|---|
| Accuracy | Mean recovery percentage from certified reference materials; comparison with reference method results | t-Tests; regression analysis; bias estimation | Recovery within 85-115%; no significant bias (p>0.05) |
| Precision | Replicate results across multiple runs, days, operators | Relative Standard Deviation (RSD); ANOVA | RSD <5% within run; <10% between runs |
| Sensitivity | Signal-to-noise ratios at lowest concentrations; replicate measurements of blanks | 3x standard deviation of blank; calibration curve parameters | Limit of Detection (LOD) sufficient for casework samples |
| Specificity | Results from analysis of potentially interfering substances; false positive/negative rates | Specificity and selectivity calculations; cross-reactivity assessment | No false positives in negative controls; correct identification in mixtures |
| Measurement Uncertainty | All significant uncertainty contributors; combined uncertainty estimates | Uncertainty budget development; coverage factor application | Combined uncertainty within pre-defined thresholds for legal applications |
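As a rough illustration of how the accuracy, precision, and sensitivity rows of Table 2 might be evaluated, the sketch below computes mean recovery, within-run RSD, and an LOD estimated as three times the standard deviation of the blank divided by the calibration slope. All measurement values, the slope, and the required LOD are invented for the example, and the LOD convention is one common choice, not the only one.

```python
import statistics
from typing import Sequence


def mean_recovery_pct(measured: Sequence[float], certified_value: float) -> float:
    """Mean measured value as a percentage of the certified value."""
    return 100.0 * statistics.mean(measured) / certified_value


def rsd_pct(values: Sequence[float]) -> float:
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def lod_from_blanks(blank_signals: Sequence[float], slope: float) -> float:
    """LOD in concentration units: 3 x SD of blank signal / calibration slope."""
    return 3.0 * statistics.stdev(blank_signals) / slope


# Hypothetical data (arbitrary concentration and signal units).
crm_replicates = [98.2, 101.5, 99.7, 100.9, 97.8, 100.4]
certified_value = 100.0
blank_signals = [0.011, 0.013, 0.009, 0.012, 0.010, 0.011, 0.012]
calibration_slope = 0.05   # signal per unit concentration (assumed)
required_lod = 0.1         # assumed casework requirement

recovery = mean_recovery_pct(crm_replicates, certified_value)
rsd = rsd_pct(crm_replicates)
lod = lod_from_blanks(blank_signals, calibration_slope)

# Thresholds mirror the example criteria in Table 2.
results = {
    "accuracy": 85.0 <= recovery <= 115.0,
    "precision_within_run": rsd < 5.0,
    "sensitivity": lod <= required_lod,
}
print(f"recovery={recovery:.2f}%  RSD={rsd:.2f}%  LOD={lod:.3f}")
print(results)
```

The between-run criterion and the significance test for bias are omitted here for brevity; they would need data grouped by run, day, or operator.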

Quantitative data analysis for requirement validation employs both descriptive and inferential statistical approaches. Descriptive statistics summarize the central tendency and dispersion of validation data, including measures such as mean, median, standard deviation, and relative standard deviation [12]. Inferential statistics enable conclusions beyond the immediate dataset, using techniques such as hypothesis testing, confidence intervals, and regression analysis to determine whether the method meets the established requirements [12]. For forensic applications, the evaluation of measurement uncertainty is particularly critical, as it provides judicial stakeholders with information about the reliability of reported results [8].
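For measurement uncertainty, a widely used approach combines independent standard uncertainties in quadrature and multiplies the result by a coverage factor. The sketch below assumes uncorrelated contributors with unit sensitivity coefficients and a coverage factor of k = 2 (roughly 95% coverage for a normal distribution). The contributor names, values, and threshold are hypothetical.

```python
import math
from typing import Dict


def combined_standard_uncertainty(components: Dict[str, float]) -> float:
    """Root-sum-of-squares of uncorrelated standard uncertainties."""
    return math.sqrt(sum(u**2 for u in components.values()))


def expanded_uncertainty(u_c: float, k: float = 2.0) -> float:
    """Expanded uncertainty U = k * u_c."""
    return k * u_c


# Hypothetical budget; all values are in the same units as the result.
budget = {
    "calibration": 0.012,
    "repeatability": 0.015,
    "reference_material": 0.008,
    "volumetric": 0.005,
}
max_expanded_uncertainty = 0.05  # assumed threshold from the requirement spec

u_c = combined_standard_uncertainty(budget)
U = expanded_uncertainty(u_c)
largest = max(budget, key=budget.get)

print(f"u_c={u_c:.4f}  U(k=2)={U:.4f}  within threshold: {U <= max_expanded_uncertainty}")
print(f"Largest contributor: {largest}")
```

Identifying the largest contributor is useful when the expanded uncertainty exceeds the threshold, since it indicates where method refinement is most likely to help. Correlated contributors or non-unit sensitivity coefficients would require the fuller propagation formula.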

Successful validation against end-user requirements necessitates specific resources and tools. The following table details essential components of the validation toolkit:

Table 3: Essential Research Reagent Solutions for Method Validation

| Tool/Resource | Function in Validation | Application Example |
|---|---|---|
| Certified Reference Materials (CRMs) | Provide traceable standards for accuracy determination and calibration | CRM for blood alcohol concentration to validate forensic toxicology methods [8] |
| Proficiency Test Materials | Assess method and laboratory performance compared to peers | Collaborative testing program samples for DNA analysis methods [8] |
| Quality Control Materials | Monitor ongoing method performance and stability | Control samples with known drug concentrations for daily instrument verification [9] |
| Statistical Analysis Software | Perform required statistical calculations and uncertainty analysis | R, Python, or specialized packages for statistical evaluation of validation data [13] [12] |
| Document Management System | Maintain records of requirements, validation protocols, and results | Laboratory Information Management System (LIMS) for document control and version management [9] |
| Uncertainty Budget Templates | Structure the identification and quantification of uncertainty sources | Spreadsheet templates for systematic compilation of uncertainty contributors [8] |

Laboratories must ensure that reference materials and critical reagents are obtained from competent producers and are traceable to international standards where applicable [8]. The management of these resources should be incorporated into the laboratory's quality management system, with procedures for receipt, verification, storage, and use that prevent compromise of their integrity.

Legal Admissibility: The Consequence of Requirement-Driven Validation

The legal admissibility of forensic evidence hinges on the demonstration that methods used to generate it are scientifically valid and reliably applied. Recent U.S. Supreme Court decisions, including Smith v. Arizona, have "redefined the boundaries of forensic testimony and the Confrontation Clause," placing increased scrutiny on the validity and reliability of forensic methods [14]. Properly documented end-user requirements and validation against those requirements provide the foundational evidence needed to withstand such scrutiny.

The framework of transparency advocated by forensic science researchers requires "disclosing information about the scientists' Authority, Compliance, Basis, Justification, Validity, Disagreements, and Context" [11]. End-user requirement documentation directly supports this transparency by explicitly recording the methodological goals, performance standards, and limitations that define a method's appropriate application. This documentation becomes particularly crucial when forensic findings are challenged in legal proceedings, as it provides objective evidence that the method was designed and validated with the specific demands of the legal system in mind.

Validation that incorporates end-user requirements also addresses growing concerns about cognitive bias in forensic decision-making. As noted in discussions of independent audits, "troubling patterns of systemic deficiencies, questionable determinations, and possible bias" can undermine confidence in forensic results [14]. A requirement-driven validation approach establishes objective criteria for method performance and application, creating a barrier against subjective influences and ensuring that methods produce consistent, reliable results regardless of the specific practitioner or context.

The integration of end-user requirements into forensic method validation represents a critical nexus between scientific rigor and legal utility. The ISO/IEC 17025 standard provides the framework for this integration, mandating validation processes that objectively demonstrate methodological fitness for purpose. By systematically defining, documenting, and validating against end-user requirements, forensic science service providers not only satisfy accreditation requirements but also build a foundation for legal admissibility and professional credibility.

The evolving landscape of forensic science, with increasing emphasis on transparency, cognitive bias mitigation, and scientific validity, makes requirement-driven validation increasingly essential. As oversight bodies and legal standards continue to evolve, the explicit linkage between end-user needs and methodological validation will likely become even more central to forensic practice. Forensic researchers and laboratory managers should therefore prioritize the development of robust processes for requirement definition and validation, ensuring that their methods meet both scientific and legal standards for reliability and relevance.

Within the framework of modern forensic science, the validation of new methods is not merely a scientific exercise but a critical process that ensures the reliability and admissibility of evidence in the legal system. Defining end-user requirements is the foundational step in method validation research, serving as the benchmark against which a method's performance, limitations, and fitness for purpose are measured [15] [7]. This process is intrinsically stakeholder-driven. A comprehensive understanding of the needs, constraints, and expectations of all entities involved—from the laboratory bench to the courtroom—is therefore paramount. The international standard ISO 21043, which outlines requirements for the entire forensic process, underscores the necessity of this multi-stakeholder approach [4]. Failures in adequately considering stakeholder requirements can lead to flawed methodologies, evidence exclusion in court, and ultimately, miscarriages of justice [16] [7]. This guide provides a technical roadmap for identifying these key stakeholders and systematically integrating their requirements into forensic method validation research.

Mapping the Stakeholder Ecosystem

The ecosystem for a validated forensic method comprises a diverse network of individuals and organizations, each with distinct roles, interests, and requirements. These stakeholders can be categorized into several core groups, as detailed in Table 1.

Table 1: Key Stakeholders in Forensic Method Validation and Their Requirements

| Stakeholder Category | Specific Roles / Sub-groups | Primary Requirements & Interests |

|---|---|---|

| Forensic Service Providers (FSPs) | Forensic Laboratory Managers; DNA Analysts; Latent Print Examiners; Digital Evidence Examiners; Crime Scene Investigators; Medicolegal Death Investigators | Technical: Method reliability, reproducibility, sensitivity, specificity, and defined error rates [16] [17]. Operational: Throughput, cost-effectiveness, compatibility with existing workflows, and clear standard operating procedures (SOPs) [7]. Quality & Compliance: Adherence to standards (e.g., ISO 21043, FSR Code), accreditation requirements, and robust documentation for validation [4] [15]. |

| Judicial System Actors | Judges; Prosecuting Attorneys; Defense Attorneys; Juries | Admissibility: Scientific validity and reliability under relevant legal standards (e.g., Daubert, Frye) [16]. Clarity & Transparency: Understandable and logically correct reporting of evidence, including clear statements of limitations and uncertainty (e.g., via Likelihood Ratios) [4] [17]. Scrutiny: Ability to meaningfully challenge evidence, including access to underlying data and algorithms [17]. |

| Research & Standardization Bodies | National Institute of Standards and Technology (NIST); Organization of Scientific Area Committees (OSAC); ISO Committees; Scientific Research Communities | Scientific Rigor: Empirically calibrated and validated methods under casework conditions [4]. Standardization: Development of uniform standards, best practices, and terminology to ensure consistency across disciplines and jurisdictions [4]. Innovation: Promotion of transparent, reproducible, and bias-resistant methods like those in the forensic-data-science paradigm [4]. |

| Oversight & Funding Entities | The Forensic Science Regulator (FSR); National Institute of Justice (NIJ); Police and Government Agencies | Accountability & Governance: Compliance with legal and quality standards [7]. Public Trust: Ensuring forensic evidence is reliable and impartial. Resource Management: Efficient use of funding and resources, supporting a resilient workforce [18]. |

| The Subject of Analysis | Defendant / Accused; Victim | Rights & Fairness: Evidence that is obtained and processed fairly, and that is adequately reliable to avoid wrongful conviction [16]. Understanding: The ability to comprehend the evidence presented against them. |

Experimental Protocols for Eliciting Stakeholder Requirements

A structured, scientific approach is essential for gathering robust data on stakeholder needs. The following protocols outline methodologies for conducting this critical research.

Protocol for Semi-Structured Interviews with Criminal Justice Stakeholders

This qualitative method is ideal for exploring the complex, in-depth perspectives of key figures in the judicial system [17].

- Objective: To elicit detailed perspectives on interpretation and reporting practices, the use of computational algorithms, and the practical challenges of integrating new forensic methods into the legal process.

- Materials:

  - Recruitment materials and informed consent forms.

  - An interview protocol guide with open-ended questions and probes.

  - High-quality audio/video recording capability (e.g., a video-conferencing platform such as Zoom).

  - Transcription software and services.

  - Qualitative data analysis software (e.g., NVivo).

- Methodology:

  - Participant Solicitation: Identify and recruit participants via invitation based on their active engagement in forensic science policy and practice. Target a range of roles, including laboratory managers, prosecutors, defense attorneys, judges, and academic scholars [17].

  - Data Collection: Conduct one-on-one, semi-structured interviews using a video-based virtual meeting platform. The semi-structured format allows for consistency while permitting flexibility to explore emerging themes.

  - Data Analysis: Transcribe interviews verbatim. Employ a thematic analysis approach, which involves:

    - Familiarization: Repeated reading of transcripts.

    - Coding: Generating systematic codes for key phrases and ideas.

    - Theme Development: Collating codes into potential themes and sub-themes that represent patterns of meaning across the dataset.

    - Review and Refinement: Ensuring themes accurately reflect the coded data and the entire dataset.

- Outcome Measures: A rich, qualitative account of stakeholder values, concerns, and preferences, identifying both consensus and conflict points across different groups [17].

Protocol for National Surveys on Operational and Stress Factors

This quantitative method is effective for measuring the prevalence of specific issues, such as work-related stress, and its impact on operational requirements.

- Objective: To quantify work-related stress levels, organizational practices, and resource gaps among specific forensic professional groups, such as medicolegal death investigators (MDIs) or digital evidence examiners [18].

- Materials:

  - A validated survey instrument, which may include standardized scales (e.g., for PTSD, depression, burnout, coping self-efficacy).

  - A secure online survey platform (e.g., Qualtrics).

  - Statistical analysis software (e.g., R, SPSS).

- Methodology:

  - Sampling: Administer a cross-sectional survey to a large, national sample of the target professional group (e.g., 1,000 MDIs) [18].

  - Measures: The survey should capture:

    - Demographic and professional background.

    - Frequency and duration of exposure to traumatic materials.

    - Levels of stress, depression, and burnout using validated scales.

    - Perceptions of organizational support and availability of wellness resources.

    - Current agency mitigation practices and perceived barriers to wellness.

  - Data Analysis: Use descriptive statistics to summarize findings. Employ inferential statistics (e.g., regression analysis) to identify relationships between variables, such as the correlation between organizational wellness culture and reduced burnout [18].

- Outcome Measures: Quantitative data on the prevalence of stressors, gaps in protective practices, and statistically significant factors that impact workforce resilience and, by extension, operational reliability.
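The data analysis step of this survey protocol can be illustrated with a minimal sketch. The scores below are invented placeholder values, and the names `support_score` and `burnout_score` are hypothetical; a real study would use validated scales, a far larger sample, and a dedicated statistics package rather than hand-rolled formulas.

```python
from statistics import mean, stdev

def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length samples."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def simple_regression(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum(
        (a - mx) ** 2 for a in x
    )
    return slope, my - slope * mx

# Hypothetical survey scores (illustration only, not real data).
support_score = [1, 2, 3, 4, 5, 6]   # perceived organizational support
burnout_score = [9, 8, 8, 6, 5, 4]   # burnout scale score

# Descriptive statistics summarize each variable.
print(f"burnout mean={mean(burnout_score):.2f}, sd={stdev(burnout_score):.2f}")

# Inferential step: strength and direction of the relationship.
r = pearson_r(support_score, burnout_score)
slope, intercept = simple_regression(support_score, burnout_score)
print(f"r={r:.3f}, slope={slope:.3f}, intercept={intercept:.3f}")
```

A negative slope in such an analysis would be consistent with higher perceived support accompanying lower burnout, although a cross-sectional design cannot establish causation.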

Essential Research Reagents and Tools for Stakeholder Analysis

Table 2: Research Reagent Solutions for Stakeholder Requirement Studies

| Item / Tool | Function in Stakeholder Research |

|---|---|

| Qualitative Data Analysis Software (e.g., NVivo) | Facilitates the organization, coding, and thematic analysis of complex textual data from interviews and open-ended survey questions. |

| Video Conferencing Platform (e.g., Zoom) | Enables remote, face-to-face data collection via semi-structured interviews, allowing for a wider geographical reach of participants [17]. |

| Validated Psychometric Scales | Provides objective, quantitative measures of psychological constructs like stress, trauma, burnout, and coping self-efficacy within survey-based research [18]. |

| Statistical Analysis Software (e.g., R, SPSS, SAS) | Used to perform descriptive and inferential statistical analyses on quantitative survey data, identifying significant patterns and correlations. |

| Validation Plan Template | A structured document (as recommended by FCN) that guides the process of defining and documenting end-user requirements, acceptance criteria, and testing protocols [7]. |

Visualizing Stakeholder Relationships and Validation Workflows

The following diagrams, generated using Graphviz DOT language, illustrate the complex relationships within the stakeholder ecosystem and the iterative process of integrating their requirements into method validation.

Diagram 1: Forensic Method Stakeholder Ecosystem

Diagram 2: Requirement-Driven Validation Workflow

The journey of a forensic method from development to courtroom acceptance is paved by the requirements of its diverse stakeholders. A systematic approach to identifying these groups—encompassing forensic practitioners, judicial actors, standard-setting bodies, and oversight entities—and rigorously eliciting their needs is not optional but fundamental to scientific validity and legal robustness. By employing structured methodologies, such as semi-structured interviews and national surveys, researchers can capture the critical data necessary to define fitness-for-purpose. Integrating these end-user requirements into every stage of the validation lifecycle, as visualized in the provided workflows, ensures that forensic methods are not only scientifically sound but also legally defensible, operationally viable, and ultimately, trustworthy pillars of the justice system.

Translating Investigative Needs into Testable Technical Specifications

In forensic method validation research, the accuracy, reliability, and admissibility of scientific evidence depend fundamentally on a rigorous foundation of well-defined technical specifications. This process begins with the precise articulation of investigative needs—the complex problems and questions arising from forensic casework—and their systematic translation into testable technical specifications for analytical methods. This translation ensures that developed methods are not only scientifically sound but also legally defensible and practically applicable to real-world scenarios. The core challenge lies in transforming often-qualitative user requirements from various stakeholders—including laboratory analysts, legal professionals, and law enforcement investigators—into unambiguous, quantifiable parameters that can be systematically validated. This guide provides a structured framework for bridging this critical gap, enabling researchers and drug development professionals to create robust validation protocols that stand up to scientific and legal scrutiny.

Foundational Concepts: User Needs and Requirements

Understanding User Needs

User needs represent the fundamental desires, goals, and expectations of end-users when they interact with a product, system, or, in this context, a forensic method [19]. In forensic science, these needs extend beyond basic functionality to encompass critical factors such as reliability, reproducibility, sensitivity, specificity, and legal admissibility. A key challenge is that users may not always articulate these needs explicitly or may express them as solutions rather than underlying problems. The famous adage attributed to Henry Ford illustrates this point: "If I asked people what they wanted, they would have said faster horses" [20]. Therefore, the researcher's role involves deep investigation to uncover the real needs behind stated requests through careful observation and empathetic engagement with the forensic workflow.

Classifying User Requirements

User requirements can be systematically categorized to ensure comprehensive coverage of all critical aspects. Understanding these categories helps in structuring technical specifications that address the full spectrum of user needs [21]:

Table: Types of User Requirements in Forensic Method Development

| Requirement Type | Definition | Forensic Science Examples |

|---|---|---|

| Functional Requirements | Specific functionalities and behaviors the system must exhibit | Method must detect target analyte at concentrations ≤ 5 ng/mL; Must distinguish between structural isomers; Must generate interpretable output within 4 hours |

| Usability Requirements | Aspects related to user interaction efficiency and effectiveness | Method protocol must be executable by trained analysts with ≤ 2 hours training; Critical steps must have clear indicators; Error recovery must be possible without sample loss |

| User Interface Requirements | Visual design, layout, and presentation elements | Software interface must display chromatograms with adjustable scaling; Results must be exportable in standardized reporting formats; Alert thresholds must be visually distinct |

A Framework for Translating Needs into Specifications

The User Need Statement Framework

A powerful tool for initiating the translation process is the user need statement, a structured approach that captures who the user is, what they need, and why that need is important [20]. This three-part format follows the pattern: [A user] needs [need] in order to accomplish [goal].

In forensic contexts, this might translate to: "A forensic toxicologist needs to reliably quantify 12 common benzodiazepines and their metabolites in blood samples at concentrations as low as 0.5 ng/mL in order to provide conclusive evidence for impaired driving cases that meets Daubert standards."

This statement format offers multiple benefits for the validation process:

- Captures the user and the need: Distills complex research insights into a single, actionable sentence

- Aligns the team: Serves as a consistent reference point for all team members and stakeholders

- Identifies a benchmark for success: Provides a clear metric for validation before ideation and prototyping begin
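One way to keep need statements consistent across a validation team is to store the three parts as structured data. The sketch below is only an illustration of that idea; the class name `UserNeedStatement` and its fields are hypothetical and not part of any cited framework.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class UserNeedStatement:
    """Three-part need statement: who the user is, what they need, and why."""
    user: str
    need: str
    goal: str

    def render(self) -> str:
        # Follows the pattern "[A user] needs [need] in order to [goal]."
        return f"{self.user} needs {self.need} in order to {self.goal}."

# Shortened version of the toxicology example above.
statement = UserNeedStatement(
    user="A forensic toxicologist",
    need="to reliably quantify 12 common benzodiazepines in blood "
         "at concentrations as low as 0.5 ng/mL",
    goal="provide evidence for impaired driving cases that meets Daubert standards",
)
print(statement.render())
```

Keeping the parts separate makes it straightforward to trace each later technical specification back to the user and goal that motivated it.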

From Needs to Technical Specifications

The transition from user need statements to testable specifications requires systematic decomposition of each need into measurable parameters. The following workflow diagram illustrates this translation process:

Specification Development Methodology

The translation process employs several critical techniques to ensure comprehensive specification development:

Stakeholder Analysis: Actively involve all relevant stakeholders—including laboratory analysts, quality managers, legal experts, and instrument specialists—throughout the requirement gathering process [21]. Conduct structured workshops and interviews to capture diverse perspectives and ensure alignment.

User Stories and Use Cases: Employ narrative formats to capture requirements from the user's perspective [21]. For example: "As a forensic chemist, I need to automatically flag potential isobaric interferences so that I can focus verification efforts on high-risk samples." These stories should include acceptance criteria that define when the requirement is satisfied.

Gap Analysis: Compare current capabilities with desired outcomes to identify specific technical hurdles [12]. This involves assessing existing instrumentation, methodology, and expertise against the requirements of the new method.

Quantitative Technical Specifications for Forensic Validation

Core Performance Parameters

For forensic method validation, user needs must be translated into specific, quantifiable parameters that can be systematically tested. The following table summarizes critical technical specifications derived from common investigative needs:

Table: Technical Specifications for Forensic Toxicology Method Validation

| Investigative Need | Technical Parameter | Testable Specification | Acceptance Criterion |

|---|---|---|---|

| Detect minute quantities of analyte | Sensitivity | Limit of Detection (LOD) | ≤ 0.1 ng/mL with signal-to-noise ratio ≥ 3:1 |

| Accurately measure concentration | Accuracy | Percent recovery of known standards | 85-115% across calibration range |

| Produce consistent results | Precision | Relative Standard Deviation (RSD) | Intra-day RSD ≤ 5%; Inter-day RSD ≤ 10% |

| Distinguish target from interferents | Specificity | Resolution from closest eluting interferent | Resolution factor ≥ 1.5 for all structurally similar compounds |

| Handle realistic sample volumes | Extraction Efficiency | Absolute recovery | ≥ 70% across low, medium, and high QC concentrations |

| Ensure method robustness | Ruggedness | RSD under varied conditions | ≤ 8% when operator, instrument, or day is changed |
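The acceptance criteria in the table above lend themselves to a machine-checkable form. The following sketch encodes each numeric threshold as a predicate and evaluates a set of results against it. The dictionary keys and the measured values are hypothetical, and the signal-to-noise condition attached to the LOD criterion is omitted for brevity.

```python
# Thresholds transcribed from the table above; key names are hypothetical.
ACCEPTANCE_CRITERIA = {
    "lod_ng_per_ml": lambda v: v <= 0.1,
    "recovery_pct": lambda v: 85 <= v <= 115,
    "intra_day_rsd_pct": lambda v: v <= 5,
    "inter_day_rsd_pct": lambda v: v <= 10,
    "resolution": lambda v: v >= 1.5,
    "absolute_recovery_pct": lambda v: v >= 70,
    "ruggedness_rsd_pct": lambda v: v <= 8,
}

def evaluate(measured):
    """Return {parameter: bool}; a parameter with no measured value fails."""
    return {
        name: (name in measured and check(measured[name]))
        for name, check in ACCEPTANCE_CRITERIA.items()
    }

# Invented validation results, for illustration only.
measured = {
    "lod_ng_per_ml": 0.08,
    "recovery_pct": 97.2,
    "intra_day_rsd_pct": 3.1,
    "inter_day_rsd_pct": 11.4,   # exceeds the 10% limit
    "resolution": 1.8,
    "absolute_recovery_pct": 74.0,
    "ruggedness_rsd_pct": 6.5,
}

report = evaluate(measured)
failed = [name for name, ok in report.items() if not ok]
print("Failed criteria:", failed)
```

Treating a missing measurement as a failure is a deliberate design choice in this sketch: a specification that was never tested should not be reported as met.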

Experimental Protocol for Key Parameters

For each technical specification, a detailed experimental protocol must be developed to ensure consistent testing and evaluation:

Protocol for Determining Limit of Detection (LOD) and Limit of Quantification (LOQ):

- Prepare a series of standard solutions at decreasing concentrations (e.g., 5, 1, 0.5, 0.1, 0.05, 0.01 ng/mL)

- Analyze each concentration in replicates (n=6) using the complete method

- Plot signal-to-noise ratio (S/N) versus concentration

- LOD = lowest concentration at which S/N ≥ 3:1

- LOQ = lowest concentration at which S/N ≥ 10:1 and precision is ≤ 15% RSD

- Verify by independent preparation and analysis of standards at the determined LOD and LOQ concentrations
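The decision rules in this protocol can be sketched in a few lines. The example assumes that the mean S/N across replicates is used at each concentration and that the series is scanned from the highest concentration downward until a criterion first fails; both are assumptions of this sketch, not requirements stated in the protocol. All numbers are invented, and three replicates are shown instead of six to keep the listing short.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)

def lowest_passing(concentrations, passes):
    """Scan from high to low concentration; stop at the first failure."""
    lowest = None
    for conc in sorted(concentrations, reverse=True):
        if not passes(conc):
            break
        lowest = conc
    return lowest

def estimate_lod_loq(sn_by_conc, response_by_conc):
    """Return (LOD, LOQ) in the concentration units of the input keys."""
    lod = lowest_passing(sn_by_conc, lambda c: mean(sn_by_conc[c]) >= 3)
    loq = lowest_passing(
        sn_by_conc,
        lambda c: mean(sn_by_conc[c]) >= 10
        and rsd_percent(response_by_conc[c]) <= 15,
    )
    return lod, loq

# Hypothetical replicate data keyed by concentration in ng/mL.
sn_by_conc = {
    5: [148, 152, 150], 1: [29, 31, 30], 0.5: [14, 16, 15],
    0.1: [3.4, 3.6, 3.5], 0.05: [1.7, 1.9, 1.8], 0.01: [0.4, 0.6, 0.5],
}
response_by_conc = {
    5: [100, 102, 98], 1: [20, 21, 19], 0.5: [10.0, 10.5, 9.5],
    0.1: [2.0, 2.6, 1.5], 0.05: [1.0, 1.6, 0.5], 0.01: [0.2, 0.5, 0.1],
}

lod, loq = estimate_lod_loq(sn_by_conc, response_by_conc)
print(f"LOD = {lod} ng/mL, LOQ = {loq} ng/mL")
```

The values found this way are only candidates; the final protocol step still applies, so standards prepared independently at those concentrations need to confirm them.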

Protocol for Establishing Precision:

- Prepare quality control samples at three concentrations (low, medium, high) covering the calibration range

- Analyze six replicates of each QC level within a single analytical batch (intra-day precision)

- Repeat the analysis of QC samples in three separate analytical batches run on different days (inter-day precision)

- Calculate mean, standard deviation, and relative standard deviation (%RSD) for each set

- Compare results against pre-defined acceptance criteria
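The precision calculation can be sketched as follows for a single QC level. The concentrations are invented, and the inter-day figure is computed here by pooling all replicates from the three batches, which is a simplifying assumption; an ANOVA-based variance decomposition is a common alternative.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical results (ng/mL) for one QC level, six replicates per batch.
batches = {
    "day1": [10.1, 9.9, 10.0, 10.2, 9.8, 10.0],
    "day2": [10.4, 10.2, 10.3, 10.5, 10.1, 10.3],
    "day3": [9.7, 9.5, 9.6, 9.8, 9.4, 9.6],
}

# Intra-day precision: one %RSD per analytical batch.
intra_day = {day: rsd_percent(values) for day, values in batches.items()}

# Inter-day precision: %RSD over all replicates pooled across batches.
pooled = [value for values in batches.values() for value in values]
inter_day = rsd_percent(pooled)

# Example limits: intra-day RSD <= 5% and inter-day RSD <= 10%.
passes = max(intra_day.values()) <= 5.0 and inter_day <= 10.0

for day, rsd in intra_day.items():
    print(f"{day}: intra-day RSD = {rsd:.2f}%")
print(f"inter-day RSD = {inter_day:.2f}%, acceptable = {passes}")
```

The same calculation would be repeated for the low, medium, and high QC levels, each compared against the pre-defined limits.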

The relationship between these experimental protocols and their role in method validation can be visualized as follows:

The Scientist's Toolkit: Essential Research Reagents and Materials

The translation of investigative needs into testable specifications requires specific materials and reagents that ensure methodological rigor and reproducibility. The following table catalogues essential components for forensic method development and validation:

Table: Essential Research Reagents for Forensic Method Development

| Reagent/Material | Technical Function | Application Example |

|---|---|---|

| Certified Reference Standards | Provides known identity and purity for quantification | Creating calibration curves for targeted analyte quantification |

| Stable Isotope-Labeled Internal Standards | Compensates for matrix effects and procedural losses | Correcting for extraction efficiency variations in complex biological matrices |

| Mass Spectrometry-Grade Solvents | Minimizes background interference and ion suppression | Mobile phase preparation for LC-MS/MS to maintain signal stability |

| Solid Phase Extraction Cartridges | Isolates and concentrates analytes from complex matrices | Extracting drugs of abuse from blood or urine samples prior to analysis |

| Derivatization Reagents | Enhances detection characteristics of target compounds | Improving chromatographic behavior or mass spectrometric response |

| Quality Control Materials | Monitors method performance over time | Inter-laboratory reproducibility assessment and longitudinal performance tracking |

Validation and Verification of Technical Specifications

Establishing Validation Protocols

Once technical specifications have been defined, rigorous validation protocols must be established to verify that each specification can be met consistently. This involves designing experiments that stress the method under conditions mimicking real-world scenarios. For forensic applications, this includes testing with case-type samples that may contain complex matrices, potential interferents, and analyte concentrations at the extremes of the measuring range.

The validation process should employ a combination of descriptive statistics to summarize data characteristics (mean, standard deviation, range) and inferential statistics to make generalizations about method performance [12]. For example, regression analysis demonstrates the relationship between instrument response and analyte concentration, while t-tests or ANOVA can determine if significant differences exist between results obtained under varying conditions.
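As an illustration of the regression step, the sketch below fits a straight-line calibration by ordinary least squares and reports the coefficient of determination. The calibration data are invented, and an unweighted linear model is assumed; weighted or non-linear models are often needed in practice.

```python
from statistics import mean

def fit_calibration(conc, response):
    """Unweighted least-squares fit: response = slope * conc + intercept."""
    mx, my = mean(conc), mean(response)
    sxx = sum((x - mx) ** 2 for x in conc)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = my - slope * mx
    # R^2 = 1 - (residual sum of squares / total sum of squares)
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(conc, response))
    ss_tot = sum((y - my) ** 2 for y in response)
    return slope, intercept, 1.0 - ss_res / ss_tot

# Hypothetical calibration standards (ng/mL) and instrument responses.
conc = [1, 2, 5, 10, 20]
response = [2.1, 4.0, 10.2, 19.9, 40.1]

slope, intercept, r_squared = fit_calibration(conc, response)
print(f"slope={slope:.4f}, intercept={intercept:.4f}, R^2={r_squared:.5f}")

# Back-calculate an unknown sample from its response.
unknown_response = 15.0
print(f"estimated concentration = {(unknown_response - intercept) / slope:.2f} ng/mL")
```

A high R² alone does not demonstrate linearity; inspecting the residuals for trends across the concentration range remains necessary.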

Data Visualization for Method Validation

Appropriate data visualization is essential for interpreting validation data and demonstrating that technical specifications have been met. The selection of visualization methods should match the data type and analytical question [22]:

Table: Data Visualization Methods for Technical Specification Validation

| Analytical Question | Recommended Visualization | Application Example |

|---|---|---|

| Comparison of means between groups | Box plots or bar charts | Comparing extraction efficiency across different sample preparation methods |

| Distribution of continuous data | Histograms or dot plots | Assessing normality of calibration curve residuals |

| Relationship between variables | Scatter plots with regression lines | Demonstrating linearity of detector response across concentration range |

| Monitoring process over time | Control charts or line graphs | Tracking quality control results across multiple analytical batches |
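For the control chart row above, the numbers behind the plot can be sketched as follows. The example assumes the common Shewhart convention of action limits at the baseline mean ± 3 standard deviations, which is not specified in the table, and all QC values are invented.

```python
from statistics import mean, stdev

def control_limits(baseline, n_sd=3.0):
    """Return (lower, center, upper) limits from baseline QC results."""
    center = mean(baseline)
    spread = n_sd * stdev(baseline)
    return center - spread, center, center + spread

def out_of_control(results, lower, upper):
    """Flag each result that falls outside the control limits."""
    return [not (lower <= value <= upper) for value in results]

# Hypothetical QC concentrations (ng/mL) from earlier, in-control batches.
baseline = [10.0, 10.2, 9.8, 10.1, 9.9]
lower, center, upper = control_limits(baseline)

# New QC results from later batches to be plotted against the limits.
new_results = [10.1, 10.6, 9.9]
flags = out_of_control(new_results, lower, upper)

print(f"limits: {lower:.3f} to {upper:.3f} (center {center:.3f})")
print("out of control:", flags)
```

Plotting `new_results` in batch order with horizontal lines at `lower`, `center`, and `upper` gives the control chart itself.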

The translation of investigative needs into testable technical specifications represents a critical pathway to forensically sound, legally defensible analytical methods. This process requires systematic decomposition of often-vague user requirements into discrete, measurable parameters that can be objectively validated. By employing the structured frameworks, classification systems, and visualization tools presented in this guide, researchers and drug development professionals can create validation protocols that not only meet scientific standards but also address the practical realities of forensic casework. The ultimate goal is to establish a clear, documented chain of logic connecting investigative needs to technical capabilities, ensuring that analytical methods produce reliable evidence that withstands scientific and legal scrutiny.

Inadequate definition of end-user requirements during the initial phases of forensic method validation introduces profound legal and scientific risks that compromise the entire judicial process. Poorly specified requirements lead to non-compliance with international standards, admissibility challenges of scientific evidence in legal proceedings, and fundamental failures in scientific reproducibility. Within forensic science research and practice, where methods must be demonstrably fit-for-purpose, the failure to precisely capture and validate against end-user needs creates cascading vulnerabilities across the criminal justice system. This technical guide examines these interconnected risks through quantitative analysis, experimental protocols, and conceptual frameworks, providing researchers and drug development professionals with structured approaches for mitigating liability through robust requirement definition.

The validation of forensic methods constitutes a comprehensive scientific study designed to produce objective evidence that a method, process, or piece of equipment is fit for its specific intended purpose [7]. Within this framework, the precise definition of end-user requirements establishes the foundational criteria against which all validation activities are measured. These requirements specify the operational context, performance thresholds, and analytical outputs necessary for a method to reliably support legal conclusions.

Inadequate requirement definition creates a latent vulnerability at the most critical phase of method development—the point at which scientific capability is formally linked to legal utility. When requirements are ambiguous, incomplete, or misaligned with actual forensic needs, the resulting validation gaps propagate through subsequent scientific processes, ultimately manifesting as legal challenges to evidence, reproducibility failures in independent verification studies, and operational breakdowns in casework applications. The following sections detail the specific legal and scientific consequences of these deficiencies, supported by quantitative data and analytical frameworks.

Legal Consequences of Inadequate Requirement Definition

Regulatory Non-Compliance and Legal Liability

Forensic science operates within a stringent regulatory landscape where method validation is mandated by codes of practice such as the Forensic Science Regulator's requirements [7]. Inadequate requirement definition directly violates the fundamental principle of establishing "objective evidence that a finalised method, process, or equipment is fit for the specific purpose intended" [7]. This failure constitutes regulatory non-compliance with cascading legal implications:

- Civil Liability: Organizations may face negligence claims when inadequate requirements lead to erroneous results causing harm. Legal liability arises from breaches of "duty of care" where entities fail to implement necessary precautions to protect stakeholders [23].

- Regulatory Penalties: Non-compliant organizations face civil and criminal liabilities, including fines, sanctions, and operational restrictions. Regulatory authorities may impose significant financial penalties or even revoke licenses and permits [23] [24].

- Contractual Disputes: Ambiguous requirements in forensic service contracts lead to disputes over performance, delivery, and intellectual property clauses, potentially resulting in litigation and damaged stakeholder relationships [25].

Table 1: Financial and Operational Consequences of Legal Non-Compliance

| Consequence Type | Specific Impact | Quantitative Measure |

|---|---|---|

| Financial Penalties | Cost of non-compliance | 2.71x higher than compliance costs (averaging $14.82M annually) [25] |

| Financial Penalties | Data breach costs | Global average of $4.88M per incident (2024) [25] |

| Operational Disruption | Regulatory proceedings | 61% of companies faced ≥1 proceeding (avg. 3.9 proceedings) [25] |

| Operational Disruption | Litigation volume | Median of 6 lawsuits per company (42% expected increase) [25] |

Evidence Admissibility Challenges

In legal proceedings, the admission of forensic evidence hinges on its reliability, validity, and relevance. Courts increasingly scrutinize the methodological foundations of forensic evidence, particularly the rigor of validation processes [7]. Inadequately defined requirements create critical vulnerabilities in this admissibility framework:

- Interpretation Challenges: Methods lacking clear requirement boundaries enable contradictory interpretations of identical evidence, potentially leading to miscarriages of justice [4].

- Context Misalignment: Requirements that fail to specify operational conditions may produce valid results in laboratory settings that nevertheless misrepresent real-world forensic scenarios [26].

- Documentation Deficiencies: Inadequate documentation of requirement definition processes impedes the ability to demonstrate methodological rigor during legal challenges [23].

The implementation of ISO 21043 for forensic sciences further institutionalizes the necessity of precise requirement definition, emphasizing vocabulary standardization, interpretation protocols, and reporting consistency as essential components of legally defensible forensic practice [4].

Scientific Risks of Inadequate Requirement Definition

Reproducibility and Replicability Failures

The scientific credibility of forensic methods depends fundamentally on their reproducibility—the ability to consistently obtain the same results when studies are repeated under specified conditions. Inadequate requirement definition directly undermines this foundation by introducing methodological ambiguities that propagate through experimental workflows.

Table 2: Taxonomy of Reproducibility Types in Scientific Research

| Reproducibility Type | Core Definition | Validation Focus |

|---|---|---|

| Type A: Methods Reproducibility | Ability to implement identical computational procedures with same data/tools [27] | Verification of analytical pipelines |

| Type B: Results Reproducibility | Production of corroborating results using same experimental methods [27] | Direct replication studies |

| Type C: Inferential Reproducibility | Drawing qualitatively similar conclusions from independent replication [28] | Theoretical framework validation |

| Type D: Cumulative Reproducibility | New data from same laboratory produces same conclusion [27] | Internal consistency assessment |

| Type E: Independent Reproducibility | New data from different laboratory produces same conclusion [27] | External validity verification |

Research demonstrates alarming reproducibility failure rates across scientific domains. In preclinical cancer research, 47 of 53 published papers could not be validated despite attempts to consult original authors [27]. Similarly, large-scale replication efforts in psychology have confirmed only 40% of positive effects and 80% of null effects [27]. These systematic reproducibility failures frequently originate from poorly defined methodological requirements that permit uncontrolled variability across experimental implementations.

Methodological Limitations and Validation Gaps

Forensic method validation requires comprehensive testing of method limits, identification of potential error sources, and clear communication of limitations [7]. Inadequate requirement definition creates fundamental validation gaps:

- Uncalibrated Error Rates: Requirements that fail to specify acceptable error thresholds prevent meaningful measurement of method reliability and accuracy [26].

- Context Insensitivity: Methods validated against narrow requirements may perform poorly when applied to novel evidentiary materials or atypical casework scenarios [7].

- Instrumentation Dependencies: Requirements that omit specification of necessary equipment capabilities create interoperability challenges and introduce unquantified technical variability [26].

The conceptual relationship between requirement definition, validation activities, and scientific/legal risks can be visualized through the following workflow:

Experimental Protocols for Requirement Validation

Protocol 1: Digital Forensic Location Data Validation

The validation of location data in digital forensics exemplifies the critical importance of precise requirement definition. This protocol addresses the specific risk of misinterpretation between carved and parsed location data [26]:

Objective: To validate that location artifacts (GPS coordinates, Wi-Fi access points, cell tower data) accurately represent real-world device presence and movement patterns.

Required Materials:

- Forensic imaging tools (e.g., Cellebrite UFED, FTK Imager)

- Analytical software (e.g., Cellebrite Physical Analyzer, X-Ways)

- Reference devices with known location history

- Documentation framework for recording validation outcomes

Experimental Workflow:

- Data Extraction: Create forensic images of test devices with known location history using multiple extraction methods.

- Parsed Data Analysis: Extract location records from known database schemas (e.g., Cache.sqlite from Apple's RoutineD service on iOS devices).

- Carved Data Analysis: Perform pattern-based carving for location coordinates in unallocated space and app cache files.

- Corroborative Validation: Compare parsed and carved results against known device location history.

- Contextual Analysis: Examine source files and surrounding bytes for carved artifacts to determine semantic context.

- Error Quantification: Document false positive rates, coordinate precision variances, and timestamp misinterpretations.

Validation Metrics:

- Coordinate precision tolerance: ≤10 meters for high-confidence locations

- Timestamp synchronization: ≤60 seconds for correlated events

- False positive rate: ≤5% for carved location artifacts

This protocol demonstrates how explicitly defined accuracy requirements enable meaningful validation and prevent the presentation of misleading digital evidence in legal proceedings [26].
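
The validation metrics above can be checked mechanically once ground truth is available. The following is a minimal sketch, not a definitive implementation. The `LocationFix` record, the function names, and the definition of a false positive (a carved artifact with no ground-truth fix within both tolerances) are illustrative assumptions and do not come from the cited protocol.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


@dataclass(frozen=True)
class LocationFix:
    """Hypothetical record for one location artifact or ground-truth point."""
    lat: float        # latitude in decimal degrees
    lon: float        # longitude in decimal degrees
    timestamp: float  # seconds since the Unix epoch (UTC)


def haversine_m(a: LocationFix, b: LocationFix) -> float:
    """Great-circle distance between two fixes, in metres."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(h))


def matches_ground_truth(artifact, truth, max_dist_m=10.0, max_dt_s=60.0):
    """True if any known fix lies within both the distance and time tolerance."""
    return any(
        haversine_m(artifact, t) <= max_dist_m
        and abs(artifact.timestamp - t.timestamp) <= max_dt_s
        for t in truth
    )


def false_positive_rate(artifacts, truth, max_dist_m=10.0, max_dt_s=60.0):
    """Share of artifacts with no corroborating ground-truth fix (assumed definition)."""
    if not artifacts:
        return 0.0
    unmatched = sum(
        1 for a in artifacts
        if not matches_ground_truth(a, truth, max_dist_m, max_dt_s)
    )
    return unmatched / len(artifacts)


# Illustrative data only: one known fix, two carved candidates.
truth = [LocationFix(51.5, -0.12, 1000.0)]
carved = [
    LocationFix(51.50005, -0.12, 1030.0),  # a few metres and 30 s from the known fix
    LocationFix(48.85, 2.35, 1030.0),      # hundreds of kilometres away
]
fpr = false_positive_rate(carved, truth)
print(f"carved false positive rate: {fpr:.0%}, within 5% limit: {fpr <= 0.05}")
```

The tolerances are passed as parameters so that the same check can be rerun if the end-user requirement specifies different thresholds.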

Protocol 2: Method Transfer Verification Between Laboratories

The transfer of validated methods between laboratories represents a critical point where inadequate requirement definition creates reproducibility failures:

Objective: To verify that a forensic method validated in one laboratory produces equivalent results when implemented in a different laboratory setting.

Required Materials:

- Standardized reference materials with known properties

- Identical or comparable analytical instrumentation

- Detailed method documentation including acceptance criteria

- Statistical analysis package for comparative analysis

Experimental Workflow:

- Documentation Review: The receiving laboratory reviews all method requirements, including equipment specifications, reagent qualifications, and procedural tolerances.

- Training and Knowledge Transfer: Personnel from the implementing laboratory receive training from the originating laboratory.

- Blinded Sample Analysis: Both laboratories analyze identical reference materials using the transferred method.

- Statistical Comparison: Results are compared against equivalence criteria fixed before the analysis begins (e.g., an equivalence test at a significance level of 0.05 and an effect size of Cohen's d < 0.5).

- Proficiency Assessment: Implementing laboratory analysts demonstrate competency through successful analysis of proficiency test materials.

Validation Metrics:

- Inter-laboratory correlation coefficient: ≥0.95

- Mean difference between results: ≤5% of measured value

- Success rate on proficiency tests: ≥90%

This verification protocol directly addresses the "reproducibility crisis" documented across scientific disciplines by ensuring that methodological requirements contain sufficient specificity to enable successful implementation across different laboratory environments [27].
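
The three validation metrics for method transfer can be computed from paired results on the same reference materials. The sketch below is one possible reading of those metrics. It assumes that the correlation coefficient is Pearson's r and that "mean difference" means the mean absolute difference relative to the originating laboratory's value. The function names and sample values are hypothetical.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def mean_pct_difference(origin, receiving):
    """Mean absolute difference, as a percentage of the originating lab's value."""
    return mean(abs(r - o) / abs(o) * 100 for o, r in zip(origin, receiving))


def transfer_report(origin, receiving, passes, total,
                    min_r=0.95, max_pct_diff=5.0, min_success=0.90):
    """Evaluate paired results against the three transfer acceptance criteria."""
    r = pearson_r(origin, receiving)
    diff = mean_pct_difference(origin, receiving)
    success = passes / total
    checks = {
        "correlation": r >= min_r,
        "mean_difference": diff <= max_pct_diff,
        "proficiency": success >= min_success,
    }
    return {
        "correlation": r,
        "mean_pct_difference": diff,
        "proficiency_rate": success,
        "checks": checks,
        "passed": all(checks.values()),
    }


# Illustrative values only: four reference materials measured by both labs,
# and 9 of 10 proficiency samples analysed successfully.
origin = [10.0, 20.0, 30.0, 40.0]
receiving = [10.2, 19.8, 30.3, 40.4]
report = transfer_report(origin, receiving, passes=9, total=10)
print(report["checks"], report["passed"])
```

Reporting each check separately, rather than a single pass/fail flag, makes it easier to see which requirement was under-specified when a transfer fails.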

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Reagent Solutions for Forensic Method Validation

| Reagent/Material | Function in Validation | Critical Quality Attributes |
|---|---|---|
| Certified Reference Materials | Provide ground truth for method accuracy assessment | Documented purity, traceable certification, stability data |
| Negative Control Matrices | Establish baseline signals and interference thresholds | Representative composition, documented lot variability |
| Proficiency Test Panels | Assess analyst competency and method robustness | Blind coding, realistic concentrations, stability documentation |
| Internal Standard Solutions | Correct for analytical variability and instrument drift | Isotopic purity, chemical stability, compatibility with analytes |
| Quality Control Materials | Monitor method performance over time | Defined acceptance ranges, long-term stability |
| Inhibitor Testing Materials | Identify sample-specific interferences | Representative inhibitor profiles, concentration gradients |

The selection and qualification of these research reagents must be explicitly guided by end-user requirements that specify the necessary analytical sensitivity, specificity, and reliability needed for casework applications. Each reagent must be documented according to ISO 21043 standards for forensic vocabulary and reporting requirements [4].

Inadequate definition of end-user requirements creates interconnected legal and scientific risks that undermine the validity and reliability of forensic science. The consequences extend beyond individual casework to impact systemic trust in criminal justice outcomes. Robust requirement definition establishes the necessary foundation for method validation, reproducibility verification, and legal defensibility.

Forensic researchers and drug development professionals must implement structured approaches to requirement definition that explicitly link scientific capabilities to operational needs. This includes the development of comprehensive validation protocols, standardized documentation practices, and reproducibility assessments throughout the method lifecycle. By addressing these fundamental requirements, the forensic science community can enhance scientific credibility, reduce legal vulnerabilities, and fulfill its essential role in the justice system.

From Concept to Criteria: A Step-by-Step Methodology for Defining and Documenting Requirements

Conducting a Stakeholder Analysis to Capture All End-User Needs

In forensic science, the validity of a method is fundamentally determined by its fitness for purpose [1]. This principle places the accurate identification and understanding of end-user needs at the very foundation of reliable forensic method validation research. Forensic science is an applied discipline where scientific principles are employed to obtain results that investigating officers and courts can expect to be reliable [1]. The process of validation involves providing objective evidence that a method, process, or device is fit for its specific intended purpose, ensuring results can be relied upon within the criminal justice system [1]. When courts assess the reliability of expert opinion, they explicitly consider "the extent and quality of the data on which the expert's opinion is based, and the validity of the methods by which they were obtained" [1].

Stakeholder analysis serves as the critical bridge between technical method development and real-world applicability. Without systematic identification of all relevant stakeholders and their requirements, forensic methods risk being technically sound but practically inadequate. The goal of validation is for both the user of the method (the forensic unit) and the user of any information derived from it (the end user) to be confident about whether the method is fit for purpose while understanding its limitations [1]. This confidence can only be established when stakeholder needs are comprehensively captured and translated into measurable requirements. In the context of evolving international standards like ISO 21043, which covers the entire forensic process from recovery to reporting, the formalization of stakeholder needs becomes increasingly paramount [4].

Defining Stakeholders in the Forensic Ecosystem

Primary Stakeholder Categories

The forensic science ecosystem comprises multiple stakeholder groups with varying needs and expectations. Properly classifying these groups ensures comprehensive coverage during requirements gathering.

Table 1: Key Stakeholder Categories in Forensic Method Validation

| Stakeholder Category | Key Representatives | Primary Needs and Concerns |
|---|---|---|
| End Users of Information | Investigating Officers, Prosecutors, Defense Attorneys, Judges, Juries | Reliable, interpretable results; understanding of limitations; adherence to legal standards; clarity in reporting [1] [4] |
| Method Operators | Forensic Practitioners, Laboratory Analysts, Digital Forensic Examiners | Robust, reproducible protocols; clear operating procedures; adequate training; competent tools; quality control mechanisms [1] [5] |
| Method Developers | In-house Developers, Tool Vendors, Research Scientists, Software Engineers | Detailed technical specifications; performance parameters; resource constraints; integration capabilities [1] [5] |
| Oversight Bodies | Accreditation Bodies, Forensic Science Regulator, Quality Managers | Compliance with standards (ISO 17025); validation records; competency frameworks; quality assurance [1] [29] |
| Indirect Stakeholders | Victims, Defendants, General Public | Impartiality; scientific rigor; procedural fairness; privacy considerations [29] |

The Stakeholder Identification Process

Identifying stakeholders is an iterative process that should begin during the initial planning phase of method development or adoption. The first step involves brainstorming a comprehensive list of all individuals, groups, or organizations affected by the implementation and outputs of the forensic method. This includes those who provide input to the process, are involved in its operation, or use its results for decision-making.

Following initial identification, categorization and prioritization are essential. A power-interest grid can be a valuable tool for this purpose, helping to classify stakeholders based on their level of influence over the project and their interest in its outcomes. This analysis guides the development of an appropriate engagement strategy for each group. High-power, high-interest stakeholders, for instance, require close management and active involvement, while those with low power and low interest may simply need monitoring. The final component is documenting stakeholder attributes, including their specific roles, expectations, potential influence on the project, and key concerns related to the method's performance and output.
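
A power-interest grid reduces to a simple two-threshold classification. The sketch below is illustrative only. The 1-to-5 scoring scale, the threshold, and the example scores are hypothetical. The labels for the two mixed quadrants ("keep satisfied", "keep informed") follow common project-management usage and are an assumption, since the text above names only the high/high and low/low cases.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stakeholder:
    name: str
    power: int     # influence over the project, 1 (low) to 5 (high); hypothetical scale
    interest: int  # interest in the outcome, 1 (low) to 5 (high); hypothetical scale


def engagement_strategy(s: Stakeholder, threshold: int = 3) -> str:
    """Map a stakeholder to a power-interest quadrant and its engagement strategy."""
    high_power = s.power >= threshold
    high_interest = s.interest >= threshold
    if high_power and high_interest:
        return "manage closely"
    if high_power:
        return "keep satisfied"   # assumed label for high power, low interest
    if high_interest:
        return "keep informed"    # assumed label for low power, high interest
    return "monitor"


# Example scores are invented for illustration, not assessments of real bodies.
register = [
    Stakeholder("Accreditation body", power=5, interest=4),
    Stakeholder("Laboratory analysts", power=2, interest=5),
    Stakeholder("General public", power=1, interest=2),
]
for s in register:
    print(f"{s.name}: {engagement_strategy(s)}")
```

Keeping the scores alongside each name gives a ready-made record of the stakeholder attributes that the final documentation step calls for.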

Methodologies for Eliciting End-User Requirements

Research and Engagement Techniques

Capturing the authentic voice of the customer requires structured and multifaceted research approaches. No single method can fully illuminate all aspects of user needs; a combination of techniques provides the most robust understanding.

- Observational Techniques: Methods like job shadowing and ethnography involve spending significant time with device users in their actual working environment [30]. This approach allows researchers to witness firsthand the challenges, workarounds, and contextual factors that users might not think to verbalize in an interview setting.

- Interviewing Techniques: Individual interviews and focus groups provide a direct channel for understanding user perspectives [31] [30]. These structured conversations should explore users' current workflows, pain points with existing methods, and desired outcomes from a new solution.

- Usability Techniques: Contextual inquiries or cognitive walkthroughs involve asking users to perform specific tasks while verbalizing their thought process [30]. This technique is particularly effective for understanding the cognitive load associated with a method and identifying interface or process improvements.

- Market Research Techniques: A competitive analysis of similar products or methods can reveal established user expectations and identify gaps in current market offerings [30]. Reviewing validation records from adopted methods used in other organizations can also provide valuable insights [1].

A Framework for Remote Engagement

The COVID-19 pandemic necessitated the development of robust remote engagement frameworks, which remain valuable for reaching geographically dispersed stakeholders. One such framework, developed for security research projects, consists of four key steps designed to assure high-quality user requirement collection in online settings [31].

[Figure: Stakeholder Engagement Framework]

This systematic approach ensures that requirements are not only gathered but also evaluated, prioritized, and technically assessed. The framework offers a structured methodology that is easily adaptable to different forensic contexts and project types, while mitigating drawbacks associated with remote collaboration such as reduced informal networking opportunities [31].

Translating Stakeholder Needs into Technical Requirements

Distinguishing User Needs from Requirements

A critical step in the process is the formal translation of broadly stated user needs into specific, testable technical requirements. This translation forms the foundation for both method development and subsequent validation.

Table 2: Translation from User Needs to Technical Requirements

| User Need (Stakeholder Perspective) | Technical Requirement (Validation Perspective) | Acceptance Criteria |
|---|---|---|
| "As a digital forensic examiner, I need to efficiently extract data from mobile devices." | The method must successfully extract a minimum of 95% of user-generated data (SMS, contacts, images) from supported iOS and Android devices. | Data extraction completeness is measured against a known reference set and meets the 95% threshold across 20 test devices. |
| "As a forensic biologist, I need to distinguish between multiple contributors in a DNA mixture." | The probabilistic genotyping software must accurately estimate the number of contributors in mixtures of 2-4 individuals with 98% accuracy. | Performance is validated using 100 simulated mixtures with known ground truth; contributor number is correctly estimated in ≥98 instances. |
| "As a reporting officer, I need to understand the limitations of the method for court testimony." | The method documentation must clearly state limitations regarding sample quality, known interferences, and statistical uncertainty. | A limitations section is included in the validation report and standard operating procedure, reviewed and approved by quality assurance. |
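
A requirement becomes testable once its threshold and minimum sample size are recorded alongside the user need. The sketch below encodes the DNA-mixture row from Table 2 as one possible representation. The `Requirement` class and its field names are hypothetical, and the qualitative documentation requirement in the last row is not covered by this kind of numeric check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    """Hypothetical record linking a user need to a numeric acceptance criterion."""
    user_need: str
    technical_requirement: str
    threshold_pct: int  # minimum percentage of successful trials
    min_trials: int     # minimum number of trials for the result to count

    def evaluate(self, successes: int, trials: int) -> bool:
        """True only if enough trials were run and the success threshold is met."""
        if trials < self.min_trials:
            return False
        # Integer comparison avoids floating-point rounding at the boundary.
        return successes * 100 >= self.threshold_pct * trials


contributor_estimation = Requirement(
    user_need="Distinguish between multiple contributors in a DNA mixture",
    technical_requirement="Estimate the number of contributors in mixtures of 2-4 individuals",
    threshold_pct=98,
    min_trials=100,
)

print(contributor_estimation.evaluate(successes=98, trials=100))  # threshold met
print(contributor_estimation.evaluate(successes=97, trials=100))  # below threshold
print(contributor_estimation.evaluate(successes=49, trials=50))   # too few trials
```

Treating an undersized study as a failure, rather than extrapolating from it, keeps the acceptance decision tied to the sample size stated in the acceptance criteria.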