Data Quality and Validation in Drug Development: Ensuring Integrity from Discovery to Regulatory Approval

Abstract

This article provides a comprehensive guide to data quality and validation for researchers and drug development professionals. It explores the foundational dimensions of data quality—such as accuracy, completeness, and consistency—and their critical role in generating reliable evidence for regulatory submissions. The content details practical methodologies for implementing validation rules and quality checks throughout the drug development lifecycle, addresses common challenges like data heterogeneity and volume, and outlines rigorous validation frameworks required for AI/ML models and regulatory acceptance. By synthesizing current standards, best practices, and emerging trends, this resource aims to equip scientific teams with the knowledge to build robust, data-driven development strategies that accelerate the delivery of safe and effective therapies.

The Pillars of Data Quality: Core Dimensions and Regulatory Imperatives in Biomedical Research

Data Quality FAQs for Research and Drug Development

What are the core dimensions of data quality in regulatory-grade research?

Data quality dimensions are standardized criteria used to evaluate the accuracy, consistency, and reliability of data [1]. For regulatory-grade research, such as studies supporting drug development, ensuring data reliability is paramount. The US Food and Drug Administration's (FDA) Real-World Evidence Program guidance highlights that data reliability rests on dimensions including accuracy, completeness, and traceability [2].

- Accuracy: This is the degree to which data correctly represents the real-world events or objects it is intended to describe [3]. In healthcare, an inaccurate medication dosage in a patient record could threaten lives if acted upon [3]. In validation studies, accuracy can be quantified with the F1 score, the harmonic mean of precision and recall, measured against a reference standard [2].

- Completeness: This dimension ensures that all required data is available and sufficiently detailed [4]. An incomplete dataset, such as one missing patient address information, can disrupt processes and lead to misinformed decisions [4]. In research, completeness can be estimated per patient as a weighted share of the data sources available over the study period, then averaged across patients [2].

- Traceability: This provides an estimate of the proportion of data elements successfully tracked to a source of truth, such as clinical source documentation [2]. It is crucial for auditability and verifying the provenance of data points in validation studies.
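As a minimal sketch of how the accuracy figure is computed, the Python below scores one extracted variable against a manually annotated reference standard. It assumes (hypothetically) that both are collections of (patient ID, value) pairs and that a pair counts as correct only on an exact match; real studies may define matches more loosely.

```python
def precision_recall_f1(extracted, reference):
    """Score extracted (patient_id, value) pairs against a reference standard.

    A pair is a true positive only if it appears in both collections.
    """
    extracted, reference = set(extracted), set(reference)
    true_pos = len(extracted & reference)
    precision = true_pos / len(extracted) if extracted else 0.0
    recall = true_pos / len(reference) if reference else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical example: smoking status for four patients.
reference = {("P1", "current"), ("P2", "never"), ("P3", "former"), ("P4", "never")}
extracted = {("P1", "current"), ("P2", "never"), ("P3", "never")}

p, r, f1 = precision_recall_f1(extracted, reference)
print(f"precision={p:.2f} recall={r:.2f} F1={f1:.2f}")
```

Here two of the three extracted values are correct (precision 0.67) and two of the four reference values are recovered (recall 0.50), giving an F1 of about 0.57.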

Other critical dimensions include:

- Timeliness: This involves data being up-to-date and available when it is needed [4]. A lack of timeliness results in decisions based on old information, which is dangerous in fast-moving domains [3].

- Uniqueness: This ensures that all data entities are represented only once in the dataset, preventing duplication [4]. Duplicate patient records can lead to incorrect treatment decisions and skewed analysis [5].

- Consistency: This ensures that data does not conflict between systems or within a dataset [3]. Inconsistent data leads to "multiple versions of the truth," causing misreporting [3].

- Validity: This refers to whether data follows defined formats, values, and business rules [4].
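Several of these dimensions can be expressed as simple proportions over a dataset. The following sketch is illustrative only: the records, field names, allowed code list, reporting date and 365-day freshness window are all assumptions, not values from the cited sources.

```python
from datetime import date

# Hypothetical records; field names and values are illustrative only.
records = [
    {"subject_id": "ABC-001", "sex": "F", "visit_date": "2024-03-01"},
    {"subject_id": "ABC-002", "sex": None, "visit_date": "2024-13-01"},
    {"subject_id": "ABC-002", "sex": "M", "visit_date": "2022-06-15"},
    {"subject_id": "ABC-003", "sex": "X", "visit_date": "2024-05-20"},
]


def completeness(rows, field):
    """Share of rows where the field is present and non-empty."""
    return sum(r.get(field) not in (None, "") for r in rows) / len(rows)


def uniqueness(rows, key):
    """Share of rows whose key value occurs exactly once."""
    counts = {}
    for r in rows:
        counts[r[key]] = counts.get(r[key], 0) + 1
    return sum(counts[r[key]] == 1 for r in rows) / len(rows)


def validity(rows, field, allowed):
    """Share of rows whose value belongs to an allowed code list."""
    return sum(r.get(field) in allowed for r in rows) / len(rows)


def timeliness(rows, field, as_of, max_age_days):
    """Share of rows with a parseable ISO date no older than max_age_days."""
    fresh = 0
    for r in rows:
        try:
            d = date.fromisoformat(r[field])
        except (TypeError, ValueError):
            continue  # unparseable dates (e.g. month 13) count as not timely
        if 0 <= (as_of - d).days <= max_age_days:
            fresh += 1
    return fresh / len(rows)


as_of = date(2024, 6, 30)  # assumed reporting date
profile = {
    "completeness(sex)": completeness(records, "sex"),
    "uniqueness(subject_id)": uniqueness(records, "subject_id"),
    "validity(sex)": validity(records, "sex", {"F", "M"}),
    "timeliness(visit_date)": timeliness(records, "visit_date", as_of, 365),
}
for name, score in profile.items():
    print(f"{name}: {score:.0%}")
```

Each score is a fraction of rows, so the same functions can be rerun after every data load to track whether quality is improving.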

Why is data uniqueness critical in clinical research datasets?

Data uniqueness is critical because duplicate records can distort analytical outcomes and skew ML models when used as training data [6]. In clinical research specifically, duplicate patient records can lead to:

- Incorrect treatment decisions and poor performance on core measures reporting [5].

- Compliance and audit violations, potentially triggering lawsuits and fines [5].

- Inflated patient counts, leading to flawed conclusions about treatment efficacy or disease prevalence [3].

- Operational inefficiencies as valuable time and resources are spent managing and reconciling duplicate data [5].

How can we quantitatively measure data quality improvements in a study?

The effectiveness of different data handling approaches can be quantitatively measured and compared. The following table summarizes findings from a quality improvement study involving records of 120,616 patients, which compared traditional data approaches (using single-source structured data) with advanced approaches (incorporating multiple data sources and AI technologies) [2].

Table 1: Quantitative Comparison of Traditional vs. Advanced Data Approaches

| Data Quality Dimension | Traditional Approach Performance | Advanced Approach Performance |
|---|---|---|
| Accuracy (F1 Score) | 59.5% [2] | 93.4% [2] |
| Completeness | 46.1% (95% CI, 38.2%-54.0%) [2] | 96.6% (95% CI, 85.8%-107.4%) [2] |
| Traceability | 11.5% (95% CI, 11.4%-11.5%) [2] | 77.3% (95% CI, 77.3%-77.3%) [2] |

These results indicate that measuring data reliability in line with FDA guidance is achievable, and that the advanced approach substantially improved all three dimensions [2].

What are common data quality issues and their solutions?

Table 2: Common Data Quality Issues and Remediation Strategies

| Data Quality Issue | Impact on Research | How to Deal With It |
|---|---|---|
| Duplicate Data | Inflates metrics, skews analysis, and can lead to faulty conclusions [5]. | Use rule-based data quality management and tools that detect fuzzy and exact matches [6]. |
| Inaccurate or Missing Data | Does not provide a true picture, leading to poor decision-making [6]. | Use specialized data quality solutions to proactively correct concerns early in the data lifecycle [6]. |
| Outdated Data | Leads to inaccurate insights, poor decision-making, and misleading results [6]. | Review and update data regularly, develop a data governance plan, and use machine learning solutions for detection [6]. |
| Inconsistent Data | Creates confusion, erodes confidence, and leads to misreporting [3]. | Use a data quality management tool that automatically profiles datasets and flags quality concerns [6]. |
| Hidden or Dark Data | Causes organizations to miss opportunities to improve services or optimize procedures [6]. | Use tools that find hidden correlations and a data catalog solution to make data discoverable [6]. |
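The duplicate-data remedy in Table 2 mentions exact and fuzzy matching. A minimal sketch using only the Python standard library follows; `difflib.SequenceMatcher` stands in for the matching engines of commercial tools, and the record fields, the date-of-birth blocking step and the 0.85 similarity threshold are illustrative assumptions.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical patient records.
patients = [
    {"id": 1, "name": "Maria Gonzalez", "dob": "1980-04-12"},
    {"id": 2, "name": "maria  gonzalez", "dob": "1980-04-12"},
    {"id": 3, "name": "Maria Gonzales", "dob": "1980-04-12"},
    {"id": 4, "name": "John Smith", "dob": "1975-09-30"},
    {"id": 5, "name": "Maria Gonzalez", "dob": "1991-01-01"},
]


def normalize(name):
    """Lower-case and collapse whitespace so trivial formatting differences vanish."""
    return " ".join(name.lower().split())


def find_duplicates(records, threshold=0.85):
    """Return (id_a, id_b, kind, score) for candidate duplicate pairs.

    Only records sharing a date of birth are compared (a simple blocking step).
    Identical normalized names are 'exact'; similar ones above threshold are 'fuzzy'.
    """
    matches = []
    for a, b in combinations(records, 2):
        if a["dob"] != b["dob"]:
            continue
        name_a, name_b = normalize(a["name"]), normalize(b["name"])
        if name_a == name_b:
            matches.append((a["id"], b["id"], "exact", 1.0))
            continue
        score = SequenceMatcher(None, name_a, name_b).ratio()
        if score >= threshold:
            matches.append((a["id"], b["id"], "fuzzy", round(score, 2)))
    return matches


for match in find_duplicates(patients):
    print(match)
```

Record 5 shares a name with record 1 but not a date of birth, so the blocking step never compares them. Blocking keeps the number of comparisons manageable but will miss duplicates whose blocking field was itself mistyped.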

Experimental Protocols for Data Quality Validation

Protocol for Measuring Data Accuracy and Completeness

This protocol is derived from a real-world evidence quality improvement study [2].

1. Objective: To quantify the accuracy and completeness of a real-world data (RWD) cohort for a specific disease area (e.g., asthma).

2. Data Sources:

- Traditional Approach: Relies on a single data source, such as medical and pharmacy claims data.

- Advanced Approach: Integrates multiple data sources, including EHRs (structured and unstructured data extracted using AI methods), medical claims, pharmacy claims, and mortality registry data [2].

3. Patient Cohort:

- Identify eligible patients based on diagnosis codes and treatment criteria within a specified date range.

- Apply minimum data requirements for inclusion (e.g., continuous enrollment, presence of key variables) [2].

4. Accuracy Measurement:

- Variable Selection: Select clinical variables relevant to the disease area a priori, based on clinical specialist input [2].

- Reference Standard Development: A subset of clinical encounters is manually annotated by multiple clinician annotators with a predefined minimum interrater reliability (e.g., Cohen κ score of 0.7) [2].

- Calculation: For each variable, data accuracy is quantified as recall, precision, and the F1 score against the reference standard [2].

5. Completeness Measurement:

- Weighting: Assign weights to different data sources (e.g., medical claims, pharmacy claims, EHR structured data, EHR unstructured data) based on their known contribution of clinical information [2].

- Calculation: For each patient, calculate a completeness percentage per calendar year based on the sum of weights for available data sources. The mean completeness score across all patients and years is the overall estimate [2].

6. Traceability Measurement:

- Calculate the proportion of data elements in the final dataset that can be identified and traced back to the original clinical source documentation [2].
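Steps 4 to 6 can be sketched in a few functions. This is a hedged illustration, not the cited study's code: the annotator labels, source weights, patient-years and source references below are hypothetical, and completeness is normalized by the total weight so that a patient-year with every source available scores 100%.

```python
from statistics import mean

MIN_KAPPA = 0.7  # interrater reliability threshold from step 4


def cohen_kappa(rater_a, rater_b):
    """Cohen's kappa for two annotators labelling the same items."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    categories = set(rater_a) | set(rater_b)
    expected = sum((rater_a.count(c) / n) * (rater_b.count(c) / n) for c in categories)
    if expected == 1:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)


def weighted_completeness(availability, weights):
    """Mean over patient-years of (weight of available sources / total weight)."""
    total = sum(weights.values())
    return mean(sum(weights[s] for s in sources) / total
                for sources in availability.values())


def traceability(elements):
    """Share of data elements linked to clinical source documentation."""
    return sum(e.get("source_ref") is not None for e in elements) / len(elements)


# Hypothetical inputs for illustration only.
annotator_a = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "yes"]
annotator_b = ["yes", "yes", "no", "yes", "yes", "no", "yes", "yes", "no", "yes"]
source_weights = {"medical_claims": 0.2, "pharmacy_claims": 0.1,
                  "ehr_structured": 0.3, "ehr_unstructured": 0.4}
availability = {
    ("P1", 2021): {"medical_claims", "pharmacy_claims", "ehr_structured", "ehr_unstructured"},
    ("P1", 2022): {"medical_claims", "ehr_structured"},
    ("P2", 2021): {"pharmacy_claims"},
}
elements = [
    {"name": "fev1", "source_ref": "note-118"},
    {"name": "smoking_status", "source_ref": "note-204"},
    {"name": "eosinophils", "source_ref": "lab-77"},
    {"name": "bmi", "source_ref": None},
]

kappa = cohen_kappa(annotator_a, annotator_b)
print(f"kappa = {kappa:.2f} ({'meets' if kappa >= MIN_KAPPA else 'below'} threshold)")
print(f"completeness = {weighted_completeness(availability, source_weights):.1%}")
print(f"traceability = {traceability(elements):.0%}")
```

The kappa check gates the reference standard: only if annotators agree well beyond chance (here about 0.78) are the F1 scores computed against it meaningful.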

The Scientist's Toolkit: Essential Data Quality Solutions

Table 3: Research Reagent Solutions for Data Quality

| Tool or Methodology | Function | Example Use Case in Research |
|---|---|---|
| Data Quality Tools (e.g., Great Expectations, Soda) | Automated software for data validation, profiling, and monitoring [7]. | Embedding validation checks directly into CI/CD pipelines to catch schema issues and anomalies early [7]. |
| Data Governance Framework | A structured framework with defined roles (e.g., data stewards) and policies for managing data [8]. | Ensuring clear accountability for data quality and compliance with established standards across a research consortium [8]. |
| AI & Machine Learning | Technologies to process unstructured data at scale and predict potential data quality issues [2] [9]. | Extracting critical patient information from unstructured clinical notes in EHRs to improve data completeness and accuracy [2]. |
| Data Catalog | A centralized, searchable inventory of data assets, including metadata and lineage [6]. | Making data discoverable across a research organization and providing context on data sources and definitions [6]. |
| Data Cleansing | The process of identifying and correcting inaccuracies, duplicates, and outdated information [8]. | Preparing a clinical trial dataset for analysis by removing duplicate patient records and standardizing lab value formats [8]. |

Data Validation FAQs for Researchers

What is data validation and why is it a critical first step in research data management?

Data validation is a process used in data management and database systems to ensure that data entered or imported into a system meets specific quality and integrity standards [10]. Its primary goal is to prevent inaccurate, incomplete, or inconsistent data from being stored or processed, which can lead to errors in various applications and analyses [10].

For researchers, scientists, and drug development professionals, data validation serves as the first line of defense because it [10]:

- Prevents costly errors at the point of entry, reducing the need for later data correction.

- Safeguards data integrity, which is paramount in regulated environments like clinical trials.

- Ensures compliance with regulatory requirements such as FDA 21 CFR Part 11 and Good Clinical Practice (GCP) [11].

- Leads to more reliable and informed decision-making by providing a trustworthy foundation for analysis.

What are the different types of data validation and when should I use them?

Data validation can be broken down into three main types, each serving a distinct purpose in the research data lifecycle [10].

Table 1: Types of Data Validation

| Type of Validation | Purpose | Common Examples |
|---|---|---|
| Pre-entry Validation [10] | Prevents obviously incorrect data from being entered. Occurs before data is submitted. | Required fields, data type checks (e.g., date fields), format checks (e.g., email address structure). |
| Entry Validation [10] | Provides real-time checks and feedback during data entry. | Drop-down menus, auto-suggestions, flagging out-of-range values (e.g., a negative number for a quantity). |
| Post-entry Validation [10] | Assesses and maintains quality of data already in the system. | Data cleansing (removing duplicates), checking referential integrity, periodic batch validation checks. |

What are some common data validation rules I can implement in my research forms?

Implementing a mix of rule types ensures comprehensive data quality control.

Table 2: Common Data Validation Rules and Checks

| Validation Rule | Description | Research Application Example |
|---|---|---|
| Data Type Check [10] | Verifies data matches the expected format (text, number, date). | Ensuring a "Patient Age" field contains only numbers. |
| Range Check [10] | Confirms a numerical value falls within an acceptable range. | Checking that a lab result value is within physiologically plausible limits. |
| Format Check [10] | Ensures data adheres to a specific structure. | Validating that a participant ID follows a predefined alphanumeric pattern (e.g., ABC-001). |
| Consistency Check | Checks if data in one field logically aligns with data in another. | Verifying that a "Treatment End Date" is not earlier than the "Treatment Start Date." |
| Uniqueness Check [10] | Confirms that a value is not duplicated where it shouldn't be. | Ensuring a "Subject Identifier" is unique across all records in a clinical trial database. |
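The rules in Table 2 can be combined into a small record-level checker. In this sketch the field names, the ABC-001 identifier pattern and the potassium plausibility limits are assumptions chosen for illustration; the limits are not clinical guidance.

```python
import re
from datetime import date

ID_PATTERN = re.compile(r"[A-Z]{3}-\d{3}")  # assumed participant ID format, e.g. ABC-001
POTASSIUM_LIMITS = (1.5, 10.0)  # illustrative plausibility limits in mmol/L


def validate_record(rec):
    """Apply type, range, format and consistency rules to one record."""
    errors = []
    age = rec.get("age")
    # Data type check (bool is a subclass of int, so exclude it explicitly).
    if not isinstance(age, int) or isinstance(age, bool):
        errors.append("age: must be an integer")
    # Range check.
    k = rec.get("potassium")
    if k is not None and not POTASSIUM_LIMITS[0] <= k <= POTASSIUM_LIMITS[1]:
        errors.append("potassium: outside plausible limits")
    # Format check.
    if not ID_PATTERN.fullmatch(rec.get("subject_id") or ""):
        errors.append("subject_id: does not match pattern ABC-001")
    # Consistency check: end date must not precede start date.
    start, end = rec.get("trt_start"), rec.get("trt_end")
    if start and end and end < start:
        errors.append("trt_end: earlier than trt_start")
    return errors


def duplicate_ids(records, key="subject_id"):
    """Uniqueness check: return identifiers that occur more than once."""
    seen, dupes = set(), set()
    for rec in records:
        if rec[key] in seen:
            dupes.add(rec[key])
        seen.add(rec[key])
    return sorted(dupes)


records = [
    {"subject_id": "ABC-001", "age": 54, "potassium": 4.1,
     "trt_start": date(2024, 1, 10), "trt_end": date(2024, 3, 1)},
    {"subject_id": "abc-2", "age": "54", "potassium": 41.0,
     "trt_start": date(2024, 2, 1), "trt_end": date(2024, 1, 15)},
    {"subject_id": "ABC-001", "age": 61, "potassium": None,
     "trt_start": date(2024, 1, 5), "trt_end": None},
]

for i, rec in enumerate(records, start=1):
    print(i, validate_record(rec) or "OK")
print("duplicate IDs:", duplicate_ids(records))
```

Per-record rules run at entry time, while the uniqueness check needs the whole table and therefore fits post-entry validation.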

How can I design accessible data visualization and interfaces for my research tools?

When creating diagrams, charts, or user interfaces, ensuring sufficient color contrast is crucial for accessibility and legibility for all users, including those with low vision or color blindness [12] [13].

- Follow WCAG Guidelines: The Web Content Accessibility Guidelines (WCAG) recommend a minimum contrast ratio of 4.5:1 for standard text and 3:1 for large-scale text (approximately 18pt or 14pt bold) for an AA rating. For the highest AAA rating, aim for 7:1 for standard text and 4.5:1 for large text [13].

- Use High-Contrast Color Palettes: Choose foreground and background colors that have a significant difference in luminance. The Google News color palette, for instance, provides a range of colors that can be combined for good contrast (e.g., #174EA6 on #FFFFFF) [14].

- Utilize Checking Tools: Use online color contrast analyzers or browser developer tools to verify your color combinations meet these ratios [12] [13].
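The WCAG ratios quoted above can also be checked programmatically. This sketch follows the WCAG 2.x relative-luminance and contrast-ratio formulas; the `wcag_level` helper is a hypothetical convenience that applies the AA and AAA thresholds listed earlier.

```python
def _linearize(channel):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a colour given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a, color_b):
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1:1 to 21:1."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def wcag_level(ratio, large_text=False):
    """Hypothetical helper applying the AA/AAA thresholds quoted in the text."""
    aa, aaa = (3.0, 4.5) if large_text else (4.5, 7.0)
    if ratio >= aaa:
        return "AAA"
    if ratio >= aa:
        return "AA"
    return "fail"


ratio = contrast_ratio("#174EA6", "#FFFFFF")
print(f"#174EA6 on #FFFFFF: {ratio:.2f}:1 -> {wcag_level(ratio)}")
```

With these formulas the example pair comes out at about 7.8:1, which clears the 7:1 AAA threshold for standard text.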

What is an experimental protocol for validating a research data capture system?

The following workflow outlines a comprehensive, risk-based protocol for validating an Electronic Data Capture (EDC) system like REDCap in a regulated research environment, based on industry best practices [11].

EDC System Validation Workflow

Step 1: Define User Requirements Specification (URS). Document all functional and non-functional requirements for the system. This includes detailed specifications for data entry forms, user workflows, reporting capabilities, and security needs. The URS serves as the foundation for the entire validation process [11].

Step 2: Conduct a Risk Assessment. Identify potential threats to data integrity, patient safety, and regulatory compliance. Focus validation efforts on high-risk areas, such as modules handling patient randomization, adverse event reporting, or electronic signatures, using a Risk-Based Validation (RBV) approach [11].

Step 3: Execute Testing Protocols

- Functional Testing: Rigorously test every system module, including data entry forms, automated calculations, branching logic, and data export functions, to ensure they meet URS specifications [11].

- Performance Testing: Simulate high-load conditions to verify the system can handle large datasets and multiple concurrent users without performance degradation [11].

- Security Validation: Verify role-based access controls, encryption mechanisms, and audit trails to protect sensitive patient data and ensure compliance with regulations such as HIPAA [11].

Step 4: Compile Validation Report and Establish Change Control. Document all test scripts, execution logs, and results. A formal validation report summarizes the evidence that the system is fit for use. Implement a change control process to ensure any future system modifications are documented and re-validated as necessary [11].

Research Reagent Solutions for Data Quality Assurance

Just as a lab experiment requires specific reagents, ensuring data quality requires a toolkit of specialized solutions.

Table 3: Essential Research Reagents for Data Quality

| Reagent / Tool | Function |
|---|---|
| Automated Validation Software [15] [11] | Executes automated test scripts to perform functional and performance tests, reducing manual effort and improving accuracy in the validation process. |
| Electronic Data Capture (EDC) System [11] | Provides a structured platform for data collection, often with built-in validation checks (e.g., REDCap). |
| Color Contrast Analyzer [12] [13] | A tool to verify that color choices in data visualization and interface design meet accessibility standards, ensuring legibility for all users. |
| Audit Trail System [11] | A secure, computer-generated log that chronologically records details of data creation, modification, and deletion, which is a regulatory requirement for data integrity. |
| Risk Management Framework [11] | A systematic process for identifying, assessing, and mitigating risks to data integrity and patient safety throughout the research lifecycle. |
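To illustrate the audit-trail entry in Table 3, here is a minimal append-only log. The field names and the rule that modifications and deletions need a reason are assumptions for this sketch; it shows the record structure only, not the access controls or secure storage a regulated system needs.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One immutable audit-trail record."""
    timestamp: datetime
    user: str
    action: str          # "create", "modify" or "delete"
    record_id: str
    field: str
    old_value: object
    new_value: object
    reason: str


class AuditTrail:
    """Append-only, system-timestamped log of data changes (illustrative)."""

    ACTIONS = {"create", "modify", "delete"}

    def __init__(self):
        self._entries = []

    def log(self, user, action, record_id, field, old_value, new_value, reason=""):
        if action not in self.ACTIONS:
            raise ValueError(f"unknown action: {action}")
        if action in {"modify", "delete"} and not reason:
            # Assumed policy for this sketch: changes to existing data need a reason.
            raise ValueError(f"a reason is required to {action} data")
        entry = AuditEntry(datetime.now(timezone.utc), user, action, record_id,
                           field, old_value, new_value, reason)
        self._entries.append(entry)
        return entry

    def entries(self):
        """Chronological entries as a tuple, so callers cannot rewrite history."""
        return tuple(self._entries)

    def history(self, record_id):
        return [e for e in self._entries if e.record_id == record_id]


trail = AuditTrail()
trail.log("jdoe", "create", "SUBJ-001", "weight_kg", None, 72.5)
trail.log("jdoe", "modify", "SUBJ-001", "weight_kg", 72.5, 75.2,
          reason="transcription error corrected from source")
for e in trail.history("SUBJ-001"):
    print(e.timestamp.isoformat(), e.user, e.action, e.field, e.old_value, "->", e.new_value)
```

Timestamps are generated by the system rather than supplied by the caller, and entries are frozen dataclasses, so neither the time nor the recorded values can be edited after the fact through this interface.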

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common data standard-related errors that cause submission rejections?

A common reason for rejection is non-compliance with FDA Validator Rules and Business Rules [16]. These rules check that study data, formatted in standards like SEND and SDTM, are compliant and support meaningful review. Submissions, particularly medical device submissions such as 510(k)s, also fail due to incomplete test reports, disorganized documentation, or missing justifications for omitted sections [17]. Regular internal audits and use of the FDA's Refuse to Accept (RTA) checklist during document preparation can help identify and correct these issues early [17].

FAQ 2: How does ICH E6(R3) change the approach to data quality and management in clinical trials?

ICH E6(R3) modernizes Good Clinical Practice (GCP) by advocating for flexible, risk-based approaches and encouraging the use of innovative technology and trial designs [18] [19]. It emphasizes quality by design and proportionality, meaning data management efforts should be scaled based on the risks to participant safety and data reliability [18]. This guideline also provides clearer guidance on data governance, helping sponsors and investigators implement more efficient and focused data quality oversight [19].

FAQ 3: Where can I find the complete, official list of required data standards for my submission?

The definitive source is the FDA Data Standards Catalog [20] [21]. This catalog lists all supported or required standards and indicates their implementation dates. It is the primary resource for verifying which standards apply to your specific regulatory submission [21].

FAQ 4: Is electronic submission mandatory, and what is the standard format?

Yes, for many submission types, electronic format is required. The standard method for submitting applications is the Electronic Common Technical Document (eCTD) [21]. Submitting electronically speeds up processing and allows for automatic validation checks, which helps to ensure the completeness and correctness of the submission [22].

FAQ 5: What should I do if my 510(k) submission for a medical device is delayed due to requests for additional performance data?

This challenge is often due to incomplete test protocols or summaries [17]. To overcome it, ensure you provide full test reports that include clear results, detailed test protocols, and the rationales for all tests conducted. Align all testing with current FDA and consensus standards, and plan testing schedules early in the development process to avoid data gaps [17].

Troubleshooting Guides

Issue 1: FDA Validator Rule Failures in Study Data

- Problem: Your study data submission fails one or more of the FDA's automated Validator Rules.

- Solution:

- Identify Specific Rules: Obtain the specific rule identification numbers and error messages from the FDA's feedback.

- Consult Documentation: Refer to the technical specifications for the relevant data standard (e.g., CDISC SDTM or SEND) to understand the requirement that was violated.

- Correct the Dataset: Fix the underlying data in your dataset to ensure compliance with the standard's structure and formatting rules.

- Re-validate Locally: Before re-submission, use a local copy of the FDA Validator Rules (version 1.6 or newer) to check your data [16].

Issue 2: Gaps in Quality Management System (QMS) Documentation

- Problem: The FDA identifies flaws in your Quality Management System or risk management controls during a review.

- Solution:

- Implement a QMS: Establish a QMS that conforms to a recognized standard, such as ISO 13485, at the start of the product development project [17].

- Maintain Design History Files (DHFs): Use DHFs to meticulously document all design changes, tests, and risk control measures throughout the device lifecycle [17].

- Conduct Internal Audits: Schedule regular internal document audits to verify ongoing compliance and identify any missing data or inconsistencies [17].

Issue 3: Inadequate Evidence for Substantial Equivalence in a 510(k)

- Problem: The FDA questions whether your medical device is substantially equivalent to the chosen predicate device.

- Solution:

- Re-evaluate Predicate Selection: Thoroughly research FDA databases to ensure your predicate device is appropriate and not technologically obsolete or subject to a recall [17].

- Strengthen Comparative Data: Clearly outline the similarities and differences between your device and the predicate. Support your claims with robust performance data and risk analysis [17].

- Use Supplementary Evidence: Where feasible, supplement physical test data with advanced modeling, simulation, and statistical analyses to provide robust performance evidence for novel features [17].

Data Quality and Validation Protocols

Protocol 1: Data Cleaning and Quality Assurance for Research Datasets

Prior to statistical analysis, research data requires systematic quality assurance to ensure accuracy, consistency, and reliability [23]. The following workflow outlines the key steps:

Table 1: Key Steps in Data Quality Assurance

| Step | Description | Key Considerations |
|---|---|---|
| Check for Duplications | Identify and remove identical copies of data, leaving only unique participant data [23]. | Particularly important for online data collection where respondents might complete a questionnaire twice [23]. |
| Assess Missing Data | Establish percentage thresholds for inclusion/exclusion and analyze the pattern of missingness [23]. | Test whether data are Missing Completely at Random (MCAR). Decide on thresholds (e.g., 50% completeness) and use imputation methods if data are not missing completely at random [23]. |
| Check for Anomalies | Detect data that deviate from expected/usual patterns [23]. | Run descriptive statistics to ensure all responses are within the expected scoring range (e.g., Likert scale boundaries) [23]. |
| Establish Psychometric Properties | Test the reliability and validity of standardized instruments [23]. | Report Cronbach's alpha (scores >0.7 are acceptable) for your study sample, or from similar studies if the sample size is small [23]. |
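The missing-data, anomaly and reliability steps in Table 1 can be chained as below. The 5-point scale and the responses are hypothetical; for simplicity the sketch drops anomalous rows (in practice they would be queried against source) and computes Cronbach's alpha on complete cases only, with no MCAR test or imputation.

```python
from statistics import pvariance

LIKERT_MIN, LIKERT_MAX = 1, 5   # assumed 5-point scale
MIN_COMPLETENESS = 0.5          # 50% completeness threshold from the table

# Hypothetical responses: one row per participant, one column per item; None = missing.
responses = [
    [4, 5, 4, 4],
    [3, 3, 4, 3],
    [5, 5, 5, 4],
    [2, 3, 2, 2],
    [None, None, None, 4],  # 25% complete: below threshold
    [4, 4, 6, 4],           # 6 lies outside the 1-5 scale: anomaly
]


def row_completeness(row):
    return sum(v is not None for v in row) / len(row)


def out_of_range(rows):
    """(row, item, value) triples that fall outside the scale boundaries."""
    return [(i, j, v) for i, row in enumerate(rows) for j, v in enumerate(row)
            if v is not None and not LIKERT_MIN <= v <= LIKERT_MAX]


def cronbach_alpha(rows):
    """alpha = k/(k-1) * (1 - sum of item variances / variance of total scores)."""
    k = len(rows[0])
    item_variance = sum(pvariance(item) for item in zip(*rows))
    total_variance = pvariance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_variance / total_variance)


anomalies = out_of_range(responses)
flagged = {i for i, _, _ in anomalies}
kept = [row for i, row in enumerate(responses)
        if row_completeness(row) >= MIN_COMPLETENESS and i not in flagged]
complete_cases = [row for row in kept if None not in row]
alpha = cronbach_alpha(complete_cases)
print("anomalies:", anomalies)
print(f"{len(complete_cases)} of {len(responses)} rows analysed; alpha = {alpha:.2f}")
```

Population variance is used for both the items and the total score; because the same normalizing factor appears in numerator and denominator, sample variance would give the same alpha.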

Protocol 2: Implementing Data Validation Rules

Data validation involves setting rules to ensure data entered into a system meets specific criteria, preventing errors and inconsistencies [24]. The table below summarizes common validation types.

Table 2: Common Data Validation Types and Rules

| Validation Type | Description | Example |
|---|---|---|
| Data Type | Ensures data matches the expected data type [24]. | A field must contain only numerical values. |
| Range | Restricts data entry to values within a specified range [24]. | A patient's age must be between 0 and 120. |
| List | Limits data entry to a predefined list of acceptable values [24]. | A dropdown menu for "Ethnicity" with specific options. |
| Pattern Matching | Validates data based on specific patterns or formats [24]. | Ensuring an email address contains an "@" symbol. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Resources for Regulatory Submissions and Data Quality

| Item | Function |
|---|---|
| FDA Data Standards Catalog | The official list of data standards currently supported or required by the FDA for regulatory submissions [20] [21]. |
| eCTD (Electronic Common Technical Document) | The standard format for submitting regulatory applications, amendments, supplements, and reports to the FDA's CDER and CBER centers [21]. |
| CDISC Standards (e.g., SDTM, SEND) | Define a standard way to exchange clinical and nonclinical research data between computer systems, ensuring consistency and predictability for FDA reviewers [21] [16]. |
| FDA Validator Rules | A set of rules used by the FDA to ensure submitted study data are standards-compliant and support meaningful review and analysis [16]. |
| ICH E6(R3) Guideline | The international standard for Good Clinical Practice, outlining a modern, flexible, and risk-based approach to conducting clinical trials [18] [19]. |
| Pre-Submission Meeting (Q-Sub) | A formal process to obtain FDA feedback on a proposed regulatory strategy or specific issues before officially submitting an application [17]. |

Technical Support Center

Troubleshooting Guides

Guide 1: Troubleshooting Poor Quality in Clinical Data Submissions

Problem: Regulatory submissions are delayed or rejected due to non-compliant clinical data.

| Symptoms | Potential Root Causes | Corrective & Preventive Actions |
|---|---|---|
| Receipt of Data Integrity deficiency letters from regulators [25] | • Data collection processes not aligned with CDISC standards [26] • Manual data entry errors and inconsistent formats [26] | • Implement CDISC standards (SDTM, ADaM) from study start [26] [27] • Invest in CDISC-compliant EDC systems and staff training [26] |
| Inability to maintain Audit Readiness [25] | • Lack of standardized processes for data documentation [26] • Paper-based or disparate digital systems [25] | • Adopt a Digital Validation Tool (DVT) to centralize data and documents [25] • Establish a Validation Master Plan with continuous monitoring [28] |
| High costs and delays in Data Management [26] | • Need for extensive data cleansing and transformation late in the study [26] • Lack of risk-based validation approach [28] | • Apply Quality by Design (QbD) principles to build quality into processes [28] • Conduct risk assessments with FMEA to prioritize critical systems [28] |

Guide 2: Troubleshooting Inconsistent Results in High-Throughput Screening (HTS)

Problem: HTS assays produce highly variable potency estimates (e.g., AC50), leading to unreliable data for compound prioritization.

| Symptoms | Potential Root Causes | Corrective & Preventive Actions |
|---|---|---|
| Wide variance in potency estimates for a single compound [29] | • Systematic experimental factors (e.g., compound supplier, preparation site) [29] • Multiple cluster response patterns not identified [29] | • Implement the CASANOVA ANOVA-based clustering method to flag inconsistent compounds [29] • Apply integrated SSMD and AUROC metrics for robust quality control [30] |
| Poor concordance between different HTS studies [29] | • Differences in laboratory protocols and conditions [29] • No systematic Q/C procedure for concentration-response data [29] | • Standardize assay methods and laboratory conditions across runs [29] • Incorporate positive and negative controls for standardized effect size measurement [30] |
| False positive/negative calls in screening [29] | • Single-concentration HTS design [29] • Heteroscedastic responses and outliers not accounted for [29] | • Use quantitative HTS (qHTS) that tests at multiple concentrations [29] • Employ robust statistical modeling (e.g., Hill model with preliminary test estimation) [29] |
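The SSMD and AUROC metrics mentioned above can be computed from positive- and negative-control wells. This minimal sketch uses the SSMD estimate for two independent groups and the Mann-Whitney form of AUROC; it does not reproduce the integrated QC procedure of [30], and the control signals are invented.

```python
from math import sqrt
from statistics import mean, variance


def ssmd(positive, negative):
    """SSMD for independent groups: (mean_pos - mean_neg) / sqrt(var_pos + var_neg)."""
    return (mean(positive) - mean(negative)) / sqrt(variance(positive) + variance(negative))


def auroc(positive, negative):
    """AUROC as P(pos > neg) + 0.5 * P(tie) over all control pairs (Mann-Whitney form)."""
    score = sum((p > n) + 0.5 * (p == n) for p in positive for n in negative)
    return score / (len(positive) * len(negative))


# Hypothetical control signals (e.g. % activity) for a well-separated and a weak plate.
plates = {
    "plate A": ([95, 90, 92, 88, 97], [10, 14, 8, 12, 11]),
    "plate B": ([30, 22, 15, 40], [20, 15, 10, 25]),
}
for name, (pos, neg) in plates.items():
    print(f"{name}: SSMD = {ssmd(pos, neg):.1f}, AUROC = {auroc(pos, neg):.2f}")
```

SSMD summarizes the separation of the control distributions relative to their spread, while AUROC only asks how often a positive control outranks a negative one, so the two can disagree on plates with outliers.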

Frequently Asked Questions (FAQs)

Q1: Our validation team is struggling with audit readiness and growing workloads with limited staff. What solutions can we implement?

A: This is a common challenge, with 39% of companies reporting having fewer than three dedicated validation staff [25]. A two-pronged approach is recommended:

- Adopt Digital Validation Tools (DVTs): These systems streamline document workflows, centralize data access, and support a state of continuous inspection readiness. Adoption rose from 30% in 2024 to 58% in 2025 [25].

- Implement a Risk-Based Approach: Use tools like Failure Modes and Effects Analysis (FMEA) to prioritize validation efforts on critical systems and processes that impact product quality, maximizing the efficiency of limited resources [28].
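A minimal FMEA-style prioritization might look like this. The systems echo the high-risk areas named in the EDC validation workflow, but the failure modes, the 1-10 scores and the RPN cut-off of 100 are assumptions for illustration only.

```python
from dataclasses import dataclass

RPN_FULL_VALIDATION = 100  # assumed cut-off for the most rigorous test tier


@dataclass
class FailureMode:
    system: str
    failure: str
    severity: int    # 1 (negligible) to 10 (critical impact on patients or data)
    occurrence: int  # 1 (rare) to 10 (frequent)
    detection: int   # 1 (almost certainly caught) to 10 (likely undetected)

    @property
    def rpn(self):
        """Risk Priority Number = severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


modes = [
    FailureMode("Randomization", "wrong treatment arm assigned", 10, 2, 6),
    FailureMode("Adverse event form", "serious event not escalated", 9, 3, 5),
    FailureMode("Electronic signatures", "signature not bound to record", 8, 2, 7),
    FailureMode("Report generator", "cosmetic formatting error", 2, 5, 2),
]

ranked = sorted(modes, key=lambda m: m.rpn, reverse=True)
for m in ranked:
    tier = "full validation" if m.rpn >= RPN_FULL_VALIDATION else "reduced testing"
    print(f"{m.rpn:4d}  {m.system:<22} {tier}")
```

Ranking by RPN lets a small team spend most of its test effort on the few systems whose failures would be severe, likely and hard to detect.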

Q2: We are preparing a submission that includes Pharmacokinetic (PK) data. What are the common pitfalls in making PK data CDISC-compliant?

A: The main pitfall is failing to properly integrate data from different sources. Successful CDISC-compliant PK datasets require:

- Merging CRF and Bioanalytical Data: The PK concentration (PC) domain is built by merging sample timing from the EDC with drug concentration results from the BA lab [27].

- Creating the Related Records (RELREC) Domain: This critical but often overlooked domain links the PC domain with the PK parameters (PP) domain, ensuring traceability and a cohesive submission package [27].

- Using the Correct Analysis Datasets: The Analysis Dataset of PK Concentrations (ADPC) must be generated to support Non-Compartmental Analysis (NCA), followed by the ADPP dataset for the resulting parameters [27].

Q3: What is the single most impactful step we can take to improve data quality and reduce regulatory risk in clinical trials?

A: The most impactful step is the early and consistent implementation of CDISC data standards. CDISC directly addresses quality and risk by [26]:

- Enhancing Data Quality: Standardized formats like SDTM and ADaM ensure data is clear, consistent, and minimize errors from integrating different sources.

- Accelerating Regulatory Approval: The FDA and PMDA require CDISC standards, and a compliant submission reduces the risk of rejections and requests for clarification.

- Mitigating Costs: While initial implementation has a cost, CDISC streamlines data management and significantly reduces the time and money spent on data cleansing and validation later in the trial.

Table 1: Financial & Operational Impact of Data Quality Initiatives

| Initiative | Key Metric | Impact | Source |
|---|---|---|---|
| CDISC Standards Adoption | Regulatory review & audit processes | Considerably accelerated [26] | Clinilaunch |
| CDISC Standards Adoption | Data management costs and delays | Significant reduction [26] | Clinilaunch |
| Digital Validation Tools (DVT) Adoption | Industry adoption rate (2024 to 2025) | 30% to 58% [25] | Kneat/ISPE |
| Quality Control (CASANOVA method) | Error rate (clustering) | < 5% [29] | Front. Genet. |

| Challenge / Resource | Statistic | Detail |
|---|---|---|
| Top Challenge | Audit Readiness | #1 challenge, above compliance and data integrity [25] |
| Team Size | 39% of companies | Have fewer than three dedicated validation staff [25] |
| Workload | 66% of companies | Report increased validation workload over past 12 months [25] |

Experimental Protocols

Protocol 1: CASANOVA for Quality Control in Quantitative High-Throughput Screening (qHTS)

Purpose: To identify and filter out compounds with multiple cluster response patterns in order to produce trustworthy potency (AC50) estimates [29].

Methodology:

- Data Input: For a given compound, collect all concentration-response profiles ("repeats") from the qHTS assay [29].

- Cluster Analysis by Subgroups using ANOVA (CASANOVA): Apply an analysis of variance (ANOVA) model to the response patterns of the compound to cluster the repeats into statistically supported subgroups [29].

- Interpretation & Filtering:

- A compound with repeats that fall into a single cluster is considered to have "consistent" responses. Its AC50 can be estimated via a weighted average approach [29].

- A compound with repeats that fall into multiple clusters is flagged as "inconsistent." The wide variance in AC50 estimates (e.g., from 3.93 × 10⁻¹⁰ to 19.57 μM) makes the overall potency estimate unreliable for downstream analysis [29].

- Validation: The method demonstrates low error rates (<5% for incorrect separation or clumping of clusters) in simulation studies [29].
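The weighted-average step for a compound whose repeats fall into a single cluster can be sketched as follows. This is not the published CASANOVA code and does not reproduce its ANOVA clustering: it assumes each repeat already has a fitted AC50 (in μM) and a standard error on the log10 scale, and it pools repeats with inverse-variance weights on log10(AC50), which is our assumption about the weighting scheme.

```python
import math

def weighted_ac50(ac50_um, se_log10):
    """Inverse-variance weighted AC50 for repeats that formed a single cluster.

    Assumptions (illustrative, not the published CASANOVA weighting):
    - ac50_um: fitted AC50 of each repeat, in micromolar
    - se_log10: standard error of each repeat's log10(AC50)
    Returns (pooled AC50 in uM, standard error of the pooled log10 AC50).
    """
    if not ac50_um or len(ac50_um) != len(se_log10):
        raise ValueError("need one standard error per AC50")
    weights = [1.0 / se ** 2 for se in se_log10]   # precise repeats count more
    log_values = [math.log10(a) for a in ac50_um]  # potency is pooled on the log scale
    total = sum(weights)
    pooled_log = sum(w * x for w, x in zip(weights, log_values)) / total
    return 10 ** pooled_log, math.sqrt(1.0 / total)

# Three repeats of one "consistent" compound (hypothetical values)
print(weighted_ac50([1.2, 0.9, 1.5], [0.10, 0.15, 0.20]))
```

Pooling on the log scale reflects that potency estimates are usually compared in orders of magnitude; for a compound flagged as "inconsistent", no pooled value should be reported.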

Protocol 2: Implementing CDISC-Compliant Pharmacokinetic (PK) Data Structure

Purpose: To structure PK data from collection through analysis to meet regulatory submission standards and ensure traceability [27].

Methodology:

- Data Collection:

- SDTM Domain Creation:

- PC (Pharmacokinetic Concentrations) Domain: Merge CRF sample-collection data with bioanalytical (BA) laboratory results using unique identifiers (e.g., participant ID, sample matrix, nominal time). Combine with other relevant SDTM domains (e.g., EX for exposure, DM for demographics) [27].

- ADaM Analysis Dataset Creation:

- ADPC (Analysis Dataset of PK Concentrations): Translate the PC domain into an analysis-ready dataset. Add variables such as calculated elapsed time, imputed concentration values for samples below the limit of quantification (BLQ), and analysis flags [27].

- ADPP (Analysis Dataset of PK Parameters): After performing Non-Compartmental Analysis (NCA), create this dataset from the PP (Pharmacokinetic Parameters) domain to hold the resulting PK parameters (e.g., Cmax, Tmax, half-life) [27].

- Linking for Traceability:

- RELREC (Related Records) Domain: Create this domain to formally relate the concentration records in the PC domain to the parameter records in the PP domain, using USUBJID and the record sequence variables (PCSEQ and PPSEQ) as keys [27].
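The merge and BLQ steps of this protocol can be sketched with plain in-memory records, as below. This is a minimal illustration rather than a CDISC-conformant implementation: the join keys follow the identifiers named above (participant ID, sample matrix, nominal time), the input dictionaries are hypothetical, AVAL and ATPTN are used loosely as ADaM-style names, and both the BLQ imputation value (0) and the flag name BLQFL are assumptions that a real analysis plan would specify.

```python
from dataclasses import dataclass

@dataclass
class PCRecord:
    """One SDTM-style PC row (a small subset of variables, for illustration)."""
    usubjid: str
    pcspec: str      # sample matrix, e.g. "PLASMA"
    pctptnum: float  # nominal time point, hours post-dose
    pcorres: str     # result as reported by the lab: a number or "BLQ"
    pcseq: int

def build_pc(crf_samples, lab_results):
    """Join CRF sample records to bioanalytical results on
    (participant ID, sample matrix, nominal time); fail loudly on gaps."""
    lab = {(r["usubjid"], r["matrix"], r["nominal_h"]): r["result"] for r in lab_results}
    records = []
    for seq, sample in enumerate(crf_samples, start=1):
        key = (sample["usubjid"], sample["matrix"], sample["nominal_h"])
        if key not in lab:
            raise KeyError(f"no bioanalytical result for {key}")
        records.append(PCRecord(*key, lab[key], seq))
    return records

def derive_adpc(pc_records, blq_impute=0.0):
    """ADPC-like rows: numeric AVAL, BLQ imputed to a fixed value and flagged."""
    rows = []
    for r in pc_records:
        is_blq = r.pcorres.strip().upper() == "BLQ"
        rows.append({
            "USUBJID": r.usubjid,
            "ATPTN": r.pctptnum,
            "AVAL": blq_impute if is_blq else float(r.pcorres),
            "BLQFL": "Y" if is_blq else "N",  # hypothetical flag name
            "PCSEQ": r.pcseq,                 # kept for traceability back to PC
        })
    return rows

crf = [{"usubjid": "S001", "matrix": "PLASMA", "nominal_h": 0.0},
       {"usubjid": "S001", "matrix": "PLASMA", "nominal_h": 1.0}]
bioanalytical = [{"usubjid": "S001", "matrix": "PLASMA", "nominal_h": 0.0, "result": "BLQ"},
                 {"usubjid": "S001", "matrix": "PLASMA", "nominal_h": 1.0, "result": "12.5"}]
for row in derive_adpc(build_pc(crf, bioanalytical)):
    print(row)
```

Carrying PCSEQ into the analysis rows is what later allows each analysis value to be traced back to its source record.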

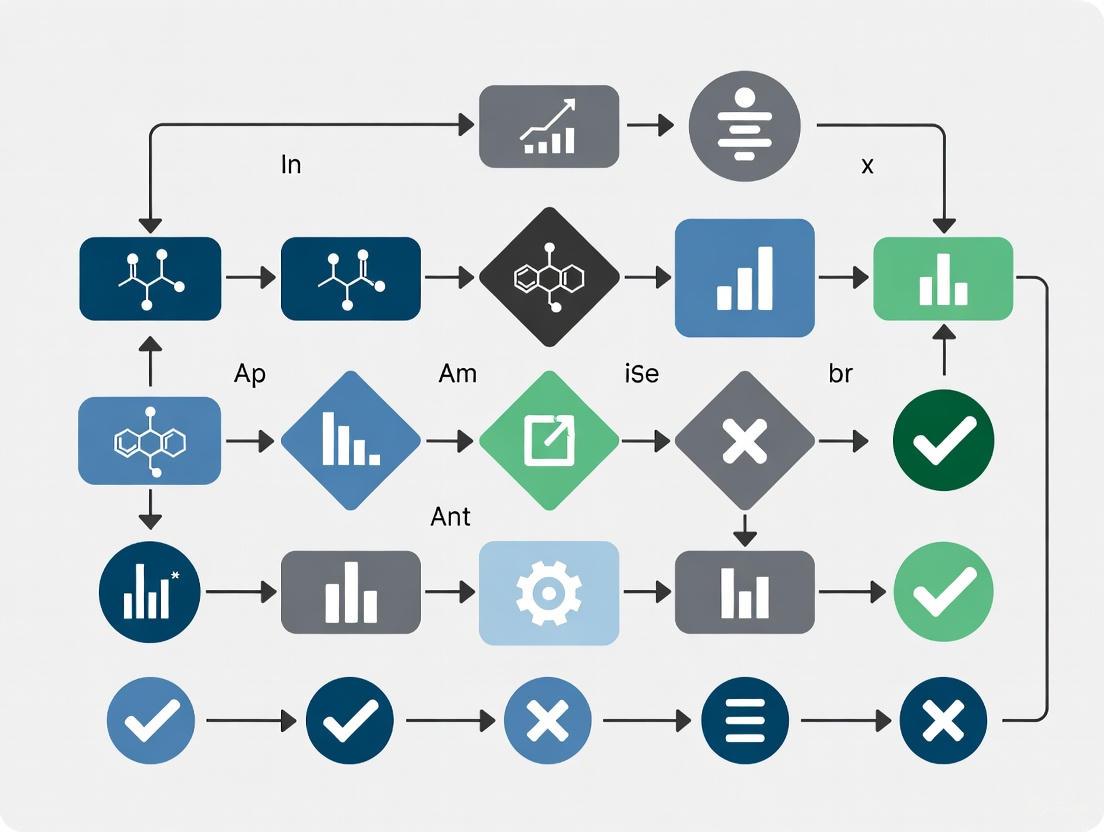

Workflow Visualizations

HTS Quality Control Pathway

CDISC PK Data Flow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Application |
|---|---|
| CDISC Standards (SDTM/ADaM) | Global standards for structuring clinical trial data to ensure regulatory compliance, enhance data quality, and streamline reviews [26] [27]. |
| Digital Validation Tool (DVT) | Software to digitalize validation processes, centralize data, manage documents, and maintain continuous audit readiness [25]. |
| CASANOVA Algorithm | An ANOVA-based clustering method used in qHTS to identify compounds with inconsistent response patterns, improving the reliability of potency estimates [29]. |
| SSMD & AUROC Metrics | Integrated statistical metrics for robust quality control in HTS; SSMD measures effect size, while AUROC assesses discriminative power [30]. |
| Structured Datasets (e.g., DOSAGE) | Curated, machine-readable datasets (e.g., for antibiotic dosing) that provide reliable, guideline-based logic for consistent decision-making [31]. |
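SSMD and AUROC can both be computed directly from a plate's positive and negative control wells. The sketch below assumes independent control groups, so SSMD is estimated as the difference of sample means divided by the square root of the summed sample variances; AUROC is computed in its Mann-Whitney form, as the probability that a randomly chosen positive control scores above a randomly chosen negative control (ties count one half). How [30] combines the two metrics into a single QC decision is not reproduced here, and the control values are hypothetical.

```python
import statistics

def ssmd(pos, neg):
    """Strictly standardized mean difference for independent control groups:
    (mean_pos - mean_neg) / sqrt(var_pos + var_neg), using sample variances."""
    diff = statistics.mean(pos) - statistics.mean(neg)
    return diff / (statistics.variance(pos) + statistics.variance(neg)) ** 0.5

def auroc(pos, neg):
    """Probability that a random positive control scores above a random
    negative control (Mann-Whitney form); ties contribute 0.5."""
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical control-well signals from one plate
positive = [10.0, 12.0, 11.0, 13.0]
negative = [1.0, 2.0, 3.0, 2.0]
print(f"SSMD = {ssmd(positive, negative):.2f}, AUROC = {auroc(positive, negative):.2f}")
```

The two metrics are complementary: SSMD grows with the separation of the control distributions relative to their spread, while AUROC saturates at 1.0 once the controls no longer overlap.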

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is the key difference between structured and unstructured data in a clinical trial context? A1: Structured data is highly organized, with separate fields for specific data elements like numeric results or coded terminology (e.g., lab values, vital signs). It is easily queried and stored in relational databases. In contrast, unstructured data, such as clinical notes or medical imaging, does not fit predefined models or formats and is stored in its native form, making it harder to search and analyze without specialized tools [32] [33].

Q2: Our site is struggling with the manual entry of EHR data into the EDC system. Are there automated solutions? A2: Yes, EHR-to-EDC technology can automate this transfer. For instance, one pilot study demonstrated that 100% of vital signs and laboratory data could be successfully mapped and transferred from the EHR to the EDC, resulting in significant time savings and reduced source data verification (SDV) efforts [32]. These solutions use standardized data formats like HL7 FHIR to ensure interoperability [32] [34].

Q3: A large portion of our data comes from physician notes. How can we effectively analyze this unstructured data? A3: Generative AI (GenAI) and Natural Language Processing (NLP) are emerging as key solutions. These technologies can process free-text notes, extract critical information (e.g., diagnoses, treatments), and transform it into a structured format suitable for analysis. This automates the categorization and summarization of previously difficult-to-use data [32] [35].

Q4: What are the best practices for ensuring the quality of real-time data streams from wearables or IoT devices? A4: Implement data validation rules at the point of entry to enforce format requirements and prevent invalid data [8]. Furthermore, utilizing a data architecture that supports real-time processing, such as a clinical data lakehouse (cDLH), can help manage the velocity and variety of this data while maintaining governance. Regular data audits are also essential to identify and rectify inaccuracies early [36] [37].
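Point-of-entry validation for a stream can be as simple as checking each reading before it is accepted. The sketch below is illustrative only: the field names (device_id, timestamp, heart_rate) and the plausibility bounds of 20 to 250 bpm are assumptions to be replaced by the study protocol, and timestamps are assumed to arrive as datetime objects.

```python
from datetime import datetime

HR_RANGE = (20, 250)  # assumed plausibility bounds in bpm; set per protocol

def validate_stream(readings):
    """Yield (reading, errors) for each incoming wearable reading.

    Checks: required fields present, heart rate numeric and within range,
    and timestamps strictly increasing per device (catches duplicates and
    out-of-order delivery). Only error-free readings update the device state.
    """
    last_seen = {}  # device_id -> timestamp of the last accepted reading
    for r in readings:
        errors = []
        device, ts, hr = r.get("device_id"), r.get("timestamp"), r.get("heart_rate")
        if not device or ts is None or hr is None:
            errors.append("missing required field")
        else:
            if isinstance(hr, bool) or not isinstance(hr, (int, float)):
                errors.append("heart_rate is not numeric")
            elif not HR_RANGE[0] <= hr <= HR_RANGE[1]:
                errors.append(f"heart_rate {hr} outside {HR_RANGE}")
            prev = last_seen.get(device)
            if prev is not None and ts <= prev:
                errors.append("timestamp not after previous reading (duplicate or out of order)")
        if not errors:
            last_seen[device] = ts
        yield r, errors

t0, t1 = datetime(2024, 1, 1, 8, 0), datetime(2024, 1, 1, 8, 1)
incoming = [
    {"device_id": "W1", "timestamp": t0, "heart_rate": 72},
    {"device_id": "W1", "timestamp": t0, "heart_rate": 75},
    {"device_id": "W1", "timestamp": t1, "heart_rate": 400},
    {"device_id": "W1", "timestamp": t1, "heart_rate": "80"},
]
for reading, problems in validate_stream(incoming):
    print(reading["heart_rate"], problems)
```

Because the function is a generator, it can sit in front of a stream consumer and route rejected readings to a quarantine queue for audit rather than silently dropping them.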

Q5: We need an infrastructure that can handle both structured datasets and unstructured text. What are our options? A5: A clinical data lakehouse (cDLH) is a modern architecture designed for this purpose. It combines the scalable, flexible storage of a data lake (ideal for unstructured data) with the management and querying capabilities of a data warehouse (ideal for structured data). This hybrid approach supports advanced analytics and AI research on diverse data types [36].

Troubleshooting Common Data Issues

| Problem | Possible Cause | Solution |
|---|---|---|
| High error rate in manually entered data | Lack of validation rules; human error during transcription [8]. | Implement data validation rules at the point of entry (e.g., range checks, format checks). Use automated EHR-to-EDC data transfer where possible [32] [8]. |
| Inability to analyze physician notes | Data is locked in unstructured free-text format [32]. | Employ AI and NLP tools to extract and structure relevant information from the text, such as specific medical events or patient outcomes [35]. |
| Difficulty integrating diverse data sources | Incompatible schemas and formats; use of siloed systems [37]. | Adopt a data architecture like a lakehouse and enforce common data standards (e.g., FHIR) for interoperability [36] [34]. |
| Slow analysis due to data volume/variety | Traditional data warehouse struggles with unstructured data and real-time streams [36]. | Migrate to a more scalable solution like a clinical data lake or lakehouse that can handle large volumes and varieties of data [36]. |
| Poor data quality affecting analysis | Lack of regular data quality checks and cleansing processes [8]. | Establish a data governance framework, conduct regular audits, and use data quality tools for automated cleansing and monitoring [8] [37]. |

Data Classification and Characteristics

The table below summarizes the core characteristics of the three data types, helping to inform data management and infrastructure choices.

| Feature | Structured Data | Unstructured Data | Real-Time Streaming Data |
|---|---|---|---|
| Definition | Highly organized data with predefined formats [32] [33]. | Data stored in its native format without a predefined model [33] [38]. | Information that is continuously updated and provided with minimal delay [34]. |
| Proportion in Clinical Trials | ~50% of clinical trial data [32]. | Majority of overall healthcare data (~80%) [32] [35]. | Growing volume with wearables and IoT [34]. |
| Common Examples | Lab results, vital signs, coded medications [32]. | Clinical notes, medical imaging, patient feedback [32] [35]. | Continuous vital signs from ICU monitors, data from wearable sensors [34]. |
| Primary Storage | Data Warehouses [33] [36]. | Data Lakes [33] [36]. | Data Lakes / Lakehouses (for processing) [36]. |
| Ease of Analysis | Easy to query and analyze with standard tools [33]. | Requires specialized AI/NLP tools for analysis [32] [33]. | Requires stream processing engines for real-time analysis [34]. |
| Key Challenges | Lack of flexibility; predefined purpose [33]. | Difficult to search and analyze; variations in terminology [32]. | Low latency requirements; data consistency at high velocity [34]. |

Experimental Protocols and Data Handling

Protocol for Implementing EHR-to-EDC Data Transfer

This methodology automates the transfer of structured data from Electronic Health Records to an Electronic Data Capture system.

1. Mapping and Configuration

- Objective: Define the correspondence between source (EHR) and target (EDC) data fields.

- Procedure:

- Identify all data domains to be transferred (e.g., Vital Signs, Labs, Concomitant Medications).

- Use a mapping engine to link each specific field in the EHR (e.g., systolic blood pressure in the FHIR standard) to its corresponding field in the EDC [32].

- Perform a pilot mapping to validate that a high percentage (e.g., >95%) of targeted CRF fields can be mapped [32].

2. System Integration and Validation

- Objective: Establish a secure, automated data pipeline.

- Procedure:

- Utilize REST APIs, commonly supported by modern EHRs using standards like HL7 FHIR, to enable communication [34].

- Implement a secure connection, ensuring compliance with data protection regulations (e.g., HIPAA, GDPR).

- For a defined pilot patient group, execute the automated transfer for initial visits.

- Validate the process by comparing a sample of electronically transferred data against manually entered data to ensure 100% accuracy for mapped domains [32].

3. Operational Deployment and Monitoring

- Objective: Integrate the automated transfer into the live study workflow.

- Procedure:

- Activate the automated transfer for all subsequent patient visits.

- Monitor the system for failed transfers or data mismatches.

- Measure key performance indicators, such as time savings on data entry and reduction in source data verification (SDV) efforts [32].
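The mapping, pilot-coverage, and reconciliation steps of this protocol can be sketched as follows. The sketch assumes FHIR Observation resources already parsed from JSON into dictionaries and reads only the standard `code.coding` and `valueQuantity` elements; the LOINC codes are real, but the EDC field names (VS.SYSBP and so on) and the mapping table are hypothetical. Panel-style blood pressure Observations that carry systolic and diastolic values as components would need additional handling.

```python
LOINC = "http://loinc.org"

# Hypothetical mapping table: (code system, code) -> EDC field name.
FHIR_TO_EDC = {
    (LOINC, "8480-6"): "VS.SYSBP",  # systolic blood pressure
    (LOINC, "8462-4"): "VS.DIABP",  # diastolic blood pressure
    (LOINC, "8867-4"): "VS.PULSE",  # heart rate
    (LOINC, "8310-5"): "VS.TEMP",   # body temperature
}

def map_observation(obs):
    """Return (edc_field, value, unit) for a FHIR Observation (parsed JSON),
    or None when none of its codings is in the mapping table."""
    for coding in obs.get("code", {}).get("coding", []):
        field = FHIR_TO_EDC.get((coding.get("system"), coding.get("code")))
        if field is not None:
            quantity = obs.get("valueQuantity", {})
            return field, quantity.get("value"), quantity.get("unit")
    return None

def mapping_coverage(target_crf_fields):
    """Share of targeted CRF fields that have a source mapping (pilot metric)."""
    mapped = set(FHIR_TO_EDC.values())
    return sum(field in mapped for field in target_crf_fields) / len(target_crf_fields)

def agreement(transferred, manual_sample):
    """Share of manually entered sample values reproduced exactly by the transfer.
    Keys are (subject, visit, edc_field); a missing transfer counts as a mismatch."""
    matches = sum(transferred.get(key) == value for key, value in manual_sample.items())
    return matches / len(manual_sample)

obs = {
    "resourceType": "Observation",
    "code": {"coding": [{"system": LOINC, "code": "8480-6"}]},
    "valueQuantity": {"value": 128, "unit": "mmHg"},
}
print(map_observation(obs))
print(mapping_coverage(["VS.SYSBP", "VS.DIABP", "VS.PULSE", "VS.WEIGHT"]))
```

Measuring agreement against the manual sample, rather than only over records that transferred, keeps silent transfer failures from inflating the accuracy figure.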

Protocol for Structuring Unstructured Clinical Notes with GenAI

This methodology uses Generative AI to extract meaningful, structured information from free-text clinical notes.

1. Data Preparation and Model Selection

- Objective: Prepare the unstructured data and select an appropriate AI model.

- Procedure:

- Data Aggregation: Collect clinical notes from EHRs, ensuring a de-identification process is in place for patient privacy [35].

- Model Selection: Choose a GenAI model specialized in medical knowledge. Models with high performance on clinical benchmarks (e.g., high accuracy on the MedQA benchmark) are preferred [35].

- Local Hosting: For enhanced data security, consider hosting the selected open-source model (e.g., from platforms like Hugging Face) on local servers to comply with GDPR/HIPAA [35].

2. AI Processing and Information Extraction

- Objective: Automate the extraction and categorization of key medical concepts.

- Procedure:

- Define the target structured output (e.g., a table with columns for Diagnosis, Medication, Dosage, Adverse Event).

- Use the GenAI model to process the clinical notes. The model will analyze the text, identify relevant entities, and classify them into the predefined categories [35].

- The output is a structured dataset (e.g., CSV, database entries) where information from the notes is organized into queryable fields.

3. Quality Assurance and Insight Generation

- Objective: Validate the output and use the structured data for analysis.

- Procedure:

- Perform a manual review of a sample of the AI-generated structured data to check for accuracy and completeness.

- Once validated, the structured data can be integrated with other trial data for comprehensive analysis, such as identifying trends in adverse events or patient outcomes [35].
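The manual review in step 3 can be quantified with precision, recall, and F1 against the reviewed sample. The sketch assumes that each note's extracted output and its reviewed ground truth are both represented as sets of (category, normalized value) pairs; the category names, the normalization, and the sampling seed are illustrative assumptions, and no GenAI model is called here.

```python
import random

def sample_for_review(note_ids, k, seed=2024):
    """Reproducible random sample of notes for manual review (seed is arbitrary)."""
    return random.Random(seed).sample(sorted(note_ids), k)

def extraction_scores(predicted, reviewed):
    """Micro-averaged precision, recall and F1 of AI-extracted entities against
    a manually reviewed sample. Both args: {note_id: set of (category, value)}."""
    tp = fp = fn = 0
    for note_id, truth in reviewed.items():
        pred = predicted.get(note_id, set())
        tp += len(pred & truth)   # extracted and confirmed by the reviewer
        fp += len(pred - truth)   # extracted but not supported by the note
        fn += len(truth - pred)   # present in the note but missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

predicted = {
    "n1": {("Diagnosis", "pneumonia"), ("Medication", "amoxicillin")},
    "n2": {("AdverseEvent", "rash")},
}
reviewed = {
    "n1": {("Diagnosis", "pneumonia"), ("Medication", "amoxicillin"), ("Dosage", "500 mg")},
    "n2": {("AdverseEvent", "nausea")},
}
print(sample_for_review(["n1", "n2", "n3", "n4"], 2))
print(extraction_scores(predicted, reviewed))
```

Recall matters most when missed adverse events are the main risk; precision matters most when extracted values flow directly into analysis datasets.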

Workflow Visualization

Clinical Trial Data Management Workflow

The diagram below illustrates the logical flow and integration points for structured, unstructured, and real-time data within a modern clinical data architecture.

The Scientist's Toolkit: Research Reagent Solutions

Essential Tools for Modern Clinical Data Management

This table details key technologies and standards essential for handling the diverse data types in contemporary clinical research.

| Tool / Technology | Function | Relevant Data Type |
|---|---|---|
| EHR-to-EDC Automation | Automates the transfer of structured data from hospital EHRs to clinical trial EDC systems, saving time and reducing errors [32]. | Structured Data |
| HL7 FHIR Standard | A modern interoperability standard for healthcare data exchange. Using RESTful APIs, it enables seamless and secure data sharing between different systems [34]. | Structured Data, Real-Time Data |
| Clinical Data Lakehouse | A hybrid data architecture that combines the cost-effective storage of a data lake with the data management and querying features of a data warehouse. It is ideal for managing diverse data types and supporting AI/ML research [36]. | All Data Types |
| Generative AI (GenAI) / NLP | Processes and interprets unstructured text (e.g., clinical notes). It extracts key information, summarizes content, and transforms it into a structured format for analysis [35]. | Unstructured Data |
| Stream Processing Engines | Software frameworks designed to process continuous, real-time data streams with low latency, enabling immediate analysis of data from sources like wearables [34]. | Real-Time Streaming Data |
| Data Quality Tools | Software that automates data profiling, cleansing, validation, and monitoring to ensure data accuracy, completeness, and consistency throughout its lifecycle [8] [37]. | All Data Types |

From Theory to Practice: Implementing Data Quality Checks and Validation Rules Across the Drug Development Lifecycle

In scientific research and drug development, the integrity of data directly dictates the success of operations, from AI-powered insights to daily process automation [39]. Data validation acts as a systematic quality control measure, ensuring that data is accurate, consistent, and fit for its intended purpose before it enters critical systems [39] [40] [41]. For researchers, implementing robust validation is not merely an IT task but a fundamental scientific practice that prevents a ripple effect of flawed decision-making, operational inefficiencies, and compromised results [39]. This guide provides a technical deep-dive into four core validation techniques—type, range, list, and pattern matching—framed within the context of ensuring data quality for validation studies.

FAQ & Troubleshooting Guide

What are the fundamental data validation checks I should implement first?

The six main data validation checks provide a foundational framework for data quality control [41].

| Check Type | Core Function | Research Application Example |
|---|---|---|
| Data Type | Ensures data matches the expected type (number, text, date) [39] [41]. | Rejecting text entries in a numerical column for patient age [40]. |
| Format | Checks data adheres to a specific structural rule [39]. | Validating that a lab specimen ID follows the required 'ID-XXX-XXX' pattern [39]. |
| Range | Confirms numerical data falls within a predefined, acceptable spectrum [39] [41]. | Ensuring a physiological measurement like pH is between 6.5 and 8.5 [39]. |
| Consistency | Ensures data is logically consistent across related fields [41]. | Verifying that a patient's disease diagnosis is consistent with their reported symptoms. |
| Uniqueness | Ensures records do not contain duplicate entries [41]. | Preventing duplicate patient enrollment IDs in a clinical trial database [39]. |
| Completeness | Ensures all required fields are populated [41]. | Mandating entry of a principal investigator's name before a case report form can be submitted [40]. |
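The six checks can be combined into a single pass over a dataset, as in the sketch below. All field names are hypothetical; 'ID-XXX-XXX' is read as three digits, a hyphen, and three digits (an assumption); and because the diagnosis/symptom example needs clinical logic, consistency is illustrated instead with a simpler cross-field rule, namely that a visit cannot precede enrollment. Uniqueness is checked at dataset level because it cannot be judged from one row alone.

```python
import re
from datetime import date

# 'ID-XXX-XXX' read as three digits, a hyphen, three digits (assumption).
SPECIMEN_ID = re.compile(r"ID-[0-9]{3}-[0-9]{3}")
REQUIRED = ("subject_id", "age", "specimen_id", "pi_name")  # hypothetical field names

def check_record(rec):
    """Row-level checks: completeness, data type, format, range, consistency."""
    errors = []
    for field in REQUIRED:  # completeness
        if rec.get(field) in (None, ""):
            errors.append(f"completeness: {field} is missing")
    age = rec.get("age")  # data type
    if age is not None and (isinstance(age, bool) or not isinstance(age, int)):
        errors.append("type: age must be an integer")
    specimen = rec.get("specimen_id")  # format
    if specimen and not SPECIMEN_ID.fullmatch(specimen):
        errors.append("format: specimen_id must look like ID-XXX-XXX")
    ph = rec.get("ph")  # range (only meaningful once the value is numeric)
    if ph is not None:
        if isinstance(ph, bool) or not isinstance(ph, (int, float)):
            errors.append("type: pH must be numeric")
        elif not 6.5 <= ph <= 8.5:
            errors.append("range: pH outside 6.5-8.5")
    enrolled, visit = rec.get("enrolled_on"), rec.get("visit_on")  # consistency
    if enrolled and visit and visit < enrolled:
        errors.append("consistency: visit date precedes enrollment date")
    return errors

def check_dataset(records):
    """Row-level checks plus the dataset-level uniqueness check on subject_id."""
    report = {i: check_record(rec) for i, rec in enumerate(records)}
    first_seen = {}
    for i, rec in enumerate(records):
        sid = rec.get("subject_id")
        if sid in (None, ""):
            continue  # already reported by the completeness check
        if sid in first_seen:
            report[i].append(f"uniqueness: subject_id {sid} repeats row {first_seen[sid]}")
        else:
            first_seen[sid] = i
    return report

good = {"subject_id": "S001", "age": 54, "specimen_id": "ID-123-456", "pi_name": "Dr. A",
        "ph": 7.4, "enrolled_on": date(2024, 1, 5), "visit_on": date(2024, 2, 1)}
bad = {"subject_id": "S001", "age": "54", "specimen_id": "ID-12-456", "pi_name": "",
       "ph": 9.1, "enrolled_on": date(2024, 3, 1), "visit_on": date(2024, 2, 1)}
for row, problems in check_dataset([good, bad]).items():
    print(row, problems)
```

Returning every failure for a row, rather than stopping at the first, mirrors how EDC edit checks raise all open queries on a form at once.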

Why does my dropdown list (list validation) allow an invalid entry that I can see is not in the list?

This behavior is typically by design in systems like Excel or Google Sheets, which offer different levels of strictness for handling invalid data [42].

- Problem: The validation rule for the dropdown list is likely configured to show a warning instead of rejecting the input outright [42]. A user can override a warning and submit the invalid data.

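- Solution: Reconfigure the data validation rule to reject invalid input rather than show a warning. In Google Sheets, choose "Reject the input" for invalid data in the rule's advanced options; in Excel, set the Error Alert style to "Stop" instead of "Warning" or "Information".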

How can I validate data that depends on the value of another field (cross-field validation)?

This requires multivariate validation, which enforces complex business rules and data integrity requirements beyond simple formats or ranges [39] [44].

- Problem: You need to enforce a logical relationship between two or more data points. For example, if a patient is marked as deceased, the date of death must be provided [44].

- Solution: Use a custom formula to create a conditional rule.

- In your data validation settings, select the "Custom formula" option [43].

- For the example above, a formula like =NOT(AND(A2="Yes", B2="")) checks whether "Yes" is selected in cell A2 (deceased status) while cell B2 (date of death) is empty. The validation fails if both conditions are true.
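Outside a spreadsheet, for example in a data-cleaning script or an EDC export check, the same cross-field rule can be written as a predicate over a record. A minimal sketch, assuming hypothetical field names deceased and death_date, where a missing or empty death date counts as blank just as B2="" does:

```python
def death_date_ok(record):
    """Valid unless the subject is marked deceased ("Yes") and the death date is blank.
    Same logic as the spreadsheet rule =NOT(AND(A2="Yes", B2=""))."""
    return not (record.get("deceased") == "Yes" and not record.get("death_date"))

def failing_rows(records, rule):
    """Indices of records that violate a cross-field rule."""
    return [i for i, rec in enumerate(records) if not rule(rec)]

rows = [
    {"deceased": "Yes", "death_date": "2024-05-01"},
    {"deceased": "Yes", "death_date": ""},
    {"deceased": "No", "death_date": ""},
]
print(failing_rows(rows, death_date_ok))
```

Keeping each rule as a separate function makes it straightforward to list the rules in a validation plan and to test each one against positive and negative cases.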

My custom pattern for email validation is rejecting known good addresses. What is wrong?

The regular expression (regex) used for pattern matching might be too rigid or contain an error [39].

- Problem: Overly complex or handwritten regex can fail to account for all valid address formats (e.g., newer top-level domains, plus signs, or special characters) [39].

- Solution:

- Use Well-Tested Libraries: Instead of writing complex regex from scratch, leverage established, vetted libraries for common formats like email addresses, phone numbers, or postal codes [39].

- Test Thoroughly: Use a regex testing tool to validate your pattern against a comprehensive set of both valid and invalid examples [39].

- Simplify if Possible: Many systems, including Google Sheets, have built-in validators for common formats like "Text is valid email," which can be more reliable than a custom pattern [43].
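The "Test Thoroughly" advice can be applied in a few lines by keeping explicit lists of addresses that must pass and must fail. The pattern below is a deliberately permissive assumption (one "@", no whitespace, at least one dot in the domain) and is not an RFC 5322 validator; the point of the sketch is the test harness, which works equally well for any candidate pattern.

```python
import re

# Permissive pattern (an assumption): one "@", no whitespace, a dot in the domain.
EMAIL = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")

SHOULD_PASS = ["jane.doe@example.org", "first+lab@sub.example.co.uk", "x@example.museum"]
SHOULD_FAIL = ["no-at-sign.example.org", "a@b", "two@@example.org", "space in@example.org", ""]

def failures(pattern, should_pass, should_fail):
    """Return the examples the pattern gets wrong (an empty list means all correct)."""
    wrong = [s for s in should_pass if not pattern.fullmatch(s)]
    wrong += [s for s in should_fail if pattern.fullmatch(s)]
    return wrong

print(failures(EMAIL, SHOULD_PASS, SHOULD_FAIL))
```

Adding every address that users report as wrongly rejected to SHOULD_PASS turns each bug report into a permanent regression test.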

How do I create a dynamic list where the options change based on another input?

This advanced technique, called conditional data validation, often requires a helper function like FILTER to dynamically generate the list of valid options [43].

- Problem: You need a dropdown list in one cell (e.g., Substance) to only show options relevant to a selection in another cell (e.g., Experiment Type).

- Solution (Google Sheets Example):

- Create a main reference table that maps all Experiment Types to their valid Substances.

- Use the FILTER function in a separate "helper" range to extract only the substances that match the selected Experiment Type [43].

- Set the data validation rule for the Substance cell to be a list based on that dynamic helper range.

The following workflow visualizes the structured process for implementing and troubleshooting data validation in a research environment.

Data Validation Implementation Workflow

Experimental Protocols & Methodologies

Protocol 1: Implementing and Testing a Range Validation Rule

This protocol details the steps to establish a range validation check, a fundamental technique for confirming numerical, date, or time-based data fits within a predefined, acceptable spectrum [39].

- 1. Define Boundaries: Establish realistic minimum and maximum values based on scientific or business logic. For example, set a plausible range for human body temperature (e.g., 36°C to 42°C) or a lab instrument's detection limit [39] [44].

- 2. Configure the Rule: In your system (e.g., Excel, Google Sheets, or an Electronic Data Capture - EDC - system), select the target cells and access the data validation menu. Choose "Number" (or "Decimal"/"Whole number") and set the condition to "between," entering your predefined limits [43] [42].

- 3. Set Up Alerts: Customize the error message to be precise and guiding. Instead of "Invalid input," use "Error: Body temperature must be between 36.0 and 42.0 degrees Celsius" [39] [44].

- 4. Test the Validation:

- Positive Control: Enter a value within the range (e.g., 37.2). The system should accept it.

- Negative Control: Enter a value outside the range (e.g., 5 or 50). The system should display your custom error alert [44].
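The same rule, with its guiding error message and the positive and negative controls from step 4, can be sketched in code for systems where validation is scripted rather than configured through a menu. The bounds and message follow the body-temperature example above; the factory function make_range_check is a hypothetical helper name.

```python
def make_range_check(name, low, high, unit):
    """Build an inclusive range validator that returns None when the value is
    accepted, or a precise, guiding error message when it is not."""
    def check(value):
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return f"Error: {name} must be a number."
        if not low <= value <= high:
            return f"Error: {name} must be between {low} and {high} {unit}."
        return None
    return check

body_temp = make_range_check("Body temperature", 36.0, 42.0, "degrees Celsius")

print(body_temp(37.2))        # positive control: accepted
for bad in (5, 50):           # negative controls: rejected with the custom alert
    print(bad, "->", body_temp(bad))
```

Checking the type before the range keeps text entries from producing a misleading "out of range" message.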

Protocol 2: Establishing a Pattern Matching (Format) Validation Rule

This protocol outlines the use of pattern matching, often implemented through regular expressions (regex), to validate that text data adheres to a specific structural format [39].

- 1. Define the Pattern: Clearly specify the required structure. For a lab sample ID that must be "LAB-" followed by exactly 5 digits, the regex pattern is ^LAB-\d{5}$.

- 2. Implement the Rule:

- 3. Test the Validation:

- True Positive: Test with a correctly formatted ID (e.g., LAB-12345). It should pass.

- True Negative: Test with invalid formats (e.g., lab-123, LAB-12X34, 12345). These should be rejected [39].
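In Python, the pattern and its positive and negative tests might look like the sketch below. Two details differ from the literal ^LAB-\d{5}$: Python's \d also matches non-ASCII digits, so [0-9] is used instead; and with re.match, a trailing $ also accepts a final newline ("LAB-12345\n"), so re.fullmatch is used to anchor both ends. The helper name is_valid_sample_id is hypothetical.

```python
import re

# "LAB-" followed by exactly five ASCII digits.
SAMPLE_ID = re.compile(r"LAB-[0-9]{5}")

def is_valid_sample_id(text):
    """True only if the entire string is a well-formed sample ID."""
    return SAMPLE_ID.fullmatch(text) is not None

for candidate in ["LAB-12345", "lab-123", "LAB-12X34", "12345", "LAB-12345\n"]:
    print(repr(candidate), is_valid_sample_id(candidate))
```

Other regex engines differ in these details (for example, whether \d is ASCII-only), so the same test strings should be rerun in whichever system enforces the rule.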

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and tools essential for designing and implementing data validation within research environments.

| Tool / Solution | Function in Data Validation |
|---|---|
| Electronic Data Capture (EDC) System | A specialized software platform for clinical data collection that provides built-in, audit-ready validation rules (edit checks) for complex clinical trial data [44]. |
| Regular Expressions (Regex) | A powerful syntax for defining text patterns, used to enforce format validation on structured identifiers like sample IDs, patient codes, and genetic sequences [39]. |
| Data Validation in Spreadsheets | Features in tools like Excel and Google Sheets that allow setting rules (list, range, custom formula) directly on cells to ensure clean, error-free data entry [43] [42]. |
| FAIR Data Principles | A guiding framework to ensure data is Findable, Accessible, Interoperable, and Reusable. High-quality validation is a prerequisite for creating FAIR data, which is critical for AI and machine learning applications in bio/pharma [45]. |
| Audit Trail | A secure, computer-generated log that chronologically records events related to data creation, modification, or deletion, providing transparency for all validation actions and queries [40] [44]. |

This technical support center provides a framework for ensuring data quality throughout the drug development lifecycle. For researchers, scientists, and development professionals, maintaining high data quality is not an administrative task but a scientific imperative that underpins the validity, reliability, and regulatory acceptance of your work. The following troubleshooting guides, FAQs, and protocols are structured within the context of data quality validation studies to provide practical support for the specific challenges encountered during experimentation and data collection at each development stage.

Drug Discovery Stage

Quality Dimensions Framework

The discovery phase focuses on identifying and validating active compounds. Data quality here ensures that initial findings are robust and reproducible for subsequent development.

Table: Key Data Quality Dimensions for Drug Discovery

| Quality Dimension | Target Application | Validation Method | Acceptance Criteria |
|---|---|---|---|
| Accuracy [37] | High-throughput screening results, compound structure data | Cross-verification with reference standards, control samples [37] | >95% agreement with known control values |
| Completeness [8] | Chemical library data, assay results, experimental metadata | Data profiling to count missing values and fields [8] | <5% missing critical data fields in any experimental run |
| Consistency [8] | Compound naming conventions, data formats across assays | Automated checks against predefined formatting rules [8] | 100% uniform nomenclature and units across all data outputs |
| Reliability [37] | Replication of experimental results | Statistical analysis of replicate samples and experiments [37] | Coefficient of variation <15% for replicate measurements |
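Two of these acceptance criteria, the replicate coefficient of variation and the share of missing critical fields, are straightforward to compute per run. A minimal sketch, assuming replicate measurements arrive as a list of numbers and run metadata as dictionaries with hypothetical field names; CV is taken as the sample standard deviation divided by the absolute mean.

```python
import statistics

def cv_percent(replicates):
    """Coefficient of variation (%) = sample SD / |mean| x 100."""
    mean = statistics.mean(replicates)
    if mean == 0:
        raise ValueError("CV is undefined for a zero mean")
    return statistics.stdev(replicates) / abs(mean) * 100

def missing_fraction(rows, critical_fields):
    """Share of critical field values that are None or empty in one run."""
    cells = [row.get(field) for row in rows for field in critical_fields]
    return sum(value in (None, "") for value in cells) / len(cells)

def run_passes(replicates, rows, critical_fields):
    """Table criteria: CV < 15% and < 5% missing critical data fields."""
    return cv_percent(replicates) < 15 and missing_fraction(rows, critical_fields) < 0.05

rows = [{"batch_id": "B1", "purity": 0.98}, {"batch_id": "", "purity": 0.97}]
print(cv_percent([100, 110, 90]), missing_fraction(rows, ("batch_id", "purity")))
```

Reporting both numbers per run, rather than only a pass/fail flag, makes gradual drift visible before a run actually fails.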

Troubleshooting Guide & FAQs

FAQ: Our high-throughput screening (HTS) data shows high variability between replicate plates. What could be causing this, and how can we improve data reliability?

- Answer: High inter-plate variability often stems from instrumentation drift, reagent degradation, or environmental fluctuations. Implement this systematic troubleshooting protocol:

- Review Instrument Calibration: Confirm that liquid handlers and readers were recently calibrated. Check log files for errors.

- Analyze Control Patterns: Examine the spatial distribution of controls on the plate. Edge effects may indicate evaporation issues; consider using edge-safe plates or volume adjustments.

- Validate Reagent Stability: Use a freshly prepared control compound to test for reagent degradation.

- Standardize Assay Conditions: Ensure consistent incubation times, temperature, and humidity across all runs.

- Expected Data Quality Outcome: Following this protocol should improve the reliability of your HTS data, reducing the coefficient of variation to within acceptable limits (<15%) [37].

FAQ: How do we ensure the completeness of metadata for our compound libraries to avoid future reproducibility issues?

- Answer: Incomplete metadata is a common source of experimental dead ends. Implement a structured data capture system:

- Define Mandatory Fields: Establish a minimum set of required metadata for each compound entry (e.g., source, batch ID, purity, solvent, concentration, storage conditions).

- Use Data Validation at Entry Points: Configure your Laboratory Information Management System (LIMS) with dropdown menus and real-time validation rules to prevent incomplete submissions [46].

- Perform Regular Data Audits: Schedule monthly reviews to identify and rectify records with missing critical metadata [8] [46].

Experimental Protocol: Data Quality Assurance for a High-Throughput Screening Campaign

Objective: To generate accurate, complete, and reliable data from a high-throughput screen while minimizing false positives and negatives.

Methodology:

- Plate Design:

- Include positive controls (known active compound) and negative controls (vehicle only) in designated wells on every plate.

- Randomize compound placement to avoid systematic bias.

- Data Acquisition:

- Perform instrument calibration before each run.

- Capture raw data and all instrumental metadata automatically.

- Data Preprocessing:

- Apply normalization using plate-based controls.

- Use statistical methods (e.g., Z'-factor calculation) to assess assay quality for each plate. Plates with a Z' factor < 0.5 should be flagged and potentially repeated.

- Primary Hit Identification:

- Set activity thresholds based on statistical significance (e.g., mean + 3 SD of the negative controls).

- Document all criteria and steps for full traceability.
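The preprocessing and hit-calling steps can be sketched per plate. The Z'-factor follows its standard definition, Z' = 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|; normalization maps the negative-control mean to 0% and the positive-control mean to 100%. The sketch assumes that a higher raw signal means higher activity, so hits are wells above the negative-control mean plus 3 SD; well names and signals are hypothetical.

```python
import statistics

def z_prime(pos, neg):
    """Z'-factor = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = 3 * (statistics.stdev(pos) + statistics.stdev(neg))
    return 1 - spread / abs(statistics.mean(pos) - statistics.mean(neg))

def percent_activity(raw, pos, neg):
    """Normalize one well to the plate controls: 0% = negative mean, 100% = positive mean."""
    mean_neg, mean_pos = statistics.mean(neg), statistics.mean(pos)
    return (raw - mean_neg) / (mean_pos - mean_neg) * 100

def qc_plate(samples, pos, neg, z_cutoff=0.5, k=3):
    """Flag the plate if Z' < z_cutoff; call hits above mean + k*SD of negative controls."""
    z = z_prime(pos, neg)
    threshold = statistics.mean(neg) + k * statistics.stdev(neg)
    hits = [well for well, value in samples.items() if value > threshold]
    return {"z_prime": z, "flagged": z < z_cutoff, "threshold": threshold, "hits": hits}

pos = [100.0, 102.0, 98.0, 100.0]   # positive-control wells
neg = [10.0, 12.0, 8.0, 10.0]       # vehicle-only wells
print(qc_plate({"A3": 50.0, "A4": 12.0}, pos, neg))
print(percent_activity(55.0, pos, neg))
```

Hits from a flagged plate should not be reported; the plate is repeated first, as the protocol specifies.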

Research Reagent Solutions

Table: Essential Reagents for Discovery-Stage Quality Control

| Reagent / Material | Function in Quality Control |
|---|---|
| Validated Control Compounds | Serves as a benchmark for assessing accuracy and reliability of assay results across experimental runs. |
| Standardized Assay Kits | Provides pre-optimized protocols and reagents to minimize inter-experimental variability, enhancing consistency. |
| LIMS (Laboratory Information Management System) | Enforces data standardization and captures mandatory metadata, ensuring completeness and consistency [46]. |

Data Quality Workflow

Data Quality Workflow in Drug Discovery

Preclinical Development Stage

Quality Dimensions Framework

This stage establishes safety and efficacy in biological systems. Data quality must support critical decisions for filing an Investigational New Drug (IND) application [47].

Table: Key Data Quality Dimensions for Preclinical Development

| Quality Dimension | Target Application | Validation Method | Acceptance Criteria |
|---|---|---|---|
| Validity [48] | Toxicology study protocols, pharmacokinetic (PK) models | Adherence to ICH guidelines (e.g., S1-S12), GLP standards [47] [49] | Compliance with all prescribed regulatory and scientific standards |
| Timeliness [48] | In-life study observations, sample analysis | Monitoring of data entry timestamps against sample collection times [48] | >99% of critical data entered within 24 hours of observation/analysis |
| Traceability [37] | Chain of custody for bioanalytical samples, data lineage | Audit trails tracking data from origin to report [37] | Unbroken chain of custody for all samples; full data lineage for key results |
| Cohesiveness [48] | Integrating data from pharmacology, PK, and toxicology | Logical alignment checks between study findings (e.g., exposure in PK and findings in tox) [48] | All data forms a logically consistent story with no unexplained contradictions |

Troubleshooting Guide & FAQs

FAQ: During a GLP toxicology study, we are encountering issues with the timeliness of data entry, leading to potential gaps. How can we resolve this?

- Answer: Delayed data entry compromises timeliness and can introduce errors. Implement a real-time data capture strategy:

- Use Electronic Data Capture (EDC) Systems: Replace paper-based forms with tablets or direct-entry systems in the animal facility.

- Implement Automated Data Feeds: For instrumental data (e.g., clinical pathology analyzers), use automated transfers to the study database to prevent manual entry lag.

- Establish Clear SOPs: Define required timeframes for data entry post-observation (e.g., "within 4 hours") and assign accountability.

- Audit for Timeliness: Include timeliness checks in your regular data audits [48].

- This ensures data is current and available for ongoing study monitoring, a key aspect of timeliness [48].
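A timeliness audit reduces to comparing each record's entry timestamp with its observation timestamp. The sketch assumes both are available as datetime pairs (for example, from the audit trail) and uses the 24-hour window from the timeliness criterion in the preclinical table; entries timestamped before the observation are reported separately, since they point to clock or process problems rather than delays.

```python
from datetime import datetime, timedelta

def timeliness_report(events, window=timedelta(hours=24)):
    """events: list of (observed_at, entered_at) datetime pairs.
    Returns the on-time rate plus indices of late and impossible entries."""
    late, impossible = [], []
    for i, (observed, entered) in enumerate(events):
        lag = entered - observed
        if lag < timedelta(0):
            impossible.append(i)   # entered before it was observed: investigate
        elif lag > window:
            late.append(i)
    on_time = len(events) - len(late) - len(impossible)
    return {"rate": on_time / len(events), "late": late, "impossible": impossible}

t = datetime(2024, 3, 1, 9, 0)
events = [
    (t, t + timedelta(hours=2)),
    (t, t + timedelta(hours=30)),
    (t, t - timedelta(minutes=5)),
    (t, t + timedelta(hours=24)),
]
report = timeliness_report(events)
print(report, "meets >99% target:", report["rate"] > 0.99)
```

An entry exactly at the 24-hour mark counts as on time here; whether the boundary is inclusive should be stated in the SOP.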

FAQ: A regulatory question has arisen regarding a specific pharmacokinetic parameter. How can we quickly trace the raw data, its transformations, and the final reported value?

- Answer: This requires robust traceability, achieved through:

- Data Lineage Mapping: Use tools that automatically track the provenance of data. This maps how raw concentration data is processed through non-compartmental analysis to yield the final parameter (e.g., AUC) [37].

- Version Control for Analysis Scripts: All data processing scripts (e.g., in R or Python) must be under version control, linking specific script versions to the generated results.

- Comprehensive Audit Trails: Ensure your database systems generate immutable audit trails that log every change, including who made it, when, and why.

- A well-documented data lineage is critical for responding to regulatory inquiries efficiently [37].
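One lightweight way to tie a reported parameter to its exact inputs is to store a content hash of the raw data next to the version identifier of the analysis script. The sketch below is illustrative: the record layout and the script name nca.py are hypothetical, and the script version (for example, a git commit hash) is supplied by the caller rather than discovered automatically.

```python
import hashlib
import json
from datetime import datetime, timezone

def sha256_hex(data: bytes) -> str:
    """Content fingerprint of the raw input bytes."""
    return hashlib.sha256(data).hexdigest()

def lineage_record(raw_data: bytes, script_name: str, script_version: str, result: dict) -> dict:
    """Link a reported result to the exact input bytes and analysis-script version."""
    return {
        "input_sha256": sha256_hex(raw_data),
        "script": script_name,
        "script_version": script_version,  # e.g. a git commit hash, supplied by the caller
        "result": result,
        "created_utc": datetime.now(timezone.utc).isoformat(),
    }

def input_unchanged(record: dict, raw_data: bytes) -> bool:
    """True if the raw data still matches the fingerprint stored in the record."""
    return record["input_sha256"] == sha256_hex(raw_data)

raw = b"USUBJID,TIME,CONC\nS001,1.0,12.5\n"
rec = lineage_record(raw, "nca.py", "abc123", {"AUC": 100.0})
print(json.dumps(rec, indent=2))
```

If the source file is later edited, input_unchanged returns False, which shows immediately that the reported value no longer corresponds to the current data.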

Experimental Protocol: Quality Control for a GLP Bioanalytical Method

Objective: To validate a bioanalytical method (e.g., LC-MS/MS) for determining drug concentration in plasma, ensuring the generated data is accurate, reliable, and valid for regulatory submission.

Methodology:

- Method Development and Qualification: Establish chromatography and mass spectrometry conditions.

- Full Method Validation (Per FDA/EMA guidelines):

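- Accuracy and Precision: Run QC samples at the lower limit of quantification (LLOQ) and at low, medium, and high concentrations, with at least five replicates per level in each run and at least three runs. Mean accuracy should be within 85-115% of nominal (80-120% at the LLOQ), and precision (RSD) should not exceed 15% (20% at the LLOQ).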

- Selectivity: Demonstrate no interference from blank plasma matrix.

- Calibration Curve Linearity: Analyze a minimum of 6 non-zero standards. The correlation coefficient (r) should be >0.99, and back-calculated standard concentrations should fall within ±15% of nominal (±20% at the LLOQ).

- Stability: Evaluate analyte stability under various conditions (freeze-thaw, benchtop, long-term).

- Documentation: Record all procedures, raw data, processed results, and deviations in a bound notebook or electronic system. This ensures full traceability and validity.
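The accuracy, precision, and calibration checks can be scripted so that every run is evaluated the same way. A minimal sketch, assuming measured QC concentrations and calibration responses are available as plain lists: it applies 85-115% accuracy and a 15% precision limit (treated as "not exceeding"), widened to 80-120% and 20% when the level is the LLOQ, as ICH M10 allows. The calibration check covers only the number of non-zero standards and r > 0.99; back-calculation of standards against the fitted curve is not implemented here.

```python
import statistics

def qc_level_summary(nominal, measured, is_lloq=False):
    """Accuracy (% of nominal) and precision (CV %) for one QC level in one run."""
    if len(measured) < 5:
        raise ValueError("need at least five replicates per QC level")
    mean = statistics.mean(measured)
    accuracy = mean / nominal * 100
    cv = statistics.stdev(measured) / mean * 100
    limit = 20 if is_lloq else 15
    return accuracy, cv, abs(accuracy - 100) <= limit and cv <= limit

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5

def calibration_ok(concentrations, responses, min_standards=6, r_min=0.99):
    """At least `min_standards` non-zero standards and r above `r_min`."""
    nonzero = [(c, r) for c, r in zip(concentrations, responses) if c > 0]
    if len(nonzero) < min_standards:
        return False
    xs, ys = zip(*nonzero)
    return pearson_r(xs, ys) > r_min

# Hypothetical data: one mid-level QC run and one calibration curve
print(qc_level_summary(10.0, [9.8, 10.1, 10.3, 9.9, 10.0]))
print(calibration_ok([0, 1, 2, 5, 10, 20, 50], [0.01, 0.11, 0.20, 0.52, 1.01, 1.98, 5.05]))
```

A high r alone can hide poor fit at the low end of the curve, which is why back-calculated accuracy per standard is the more informative acceptance check.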

Research Reagent Solutions

Table: Essential Reagents for Preclinical-Stage Quality Control

| Reagent / Material | Function in Quality Control |
|---|---|
| Certified Reference Standards | Essential for calibrating instruments and validating bioanalytical methods, ensuring accuracy and validity. |
| Quality Control Samples | Used to monitor the precision and accuracy of bioanalytical runs over time, confirming reliability. |
| Audit Trail Software | Provides an immutable record of data creation and modification, which is mandatory for traceability in GLP studies. |

Figure: Preclinical Data Integration and Quality

Clinical Trial Stage

Quality Dimensions Framework

Clinical trials test the drug in humans. Data quality is paramount for protecting patient safety and proving efficacy for regulatory approval [47].

Table: Key Data Quality Dimensions for Clinical Trials

| Quality Dimension | Target Application | Validation Method | Acceptance Criteria |
|---|---|---|---|
| Accuracy [37] [48] | Case Report Form (CRF) entries, lab data, endpoint adjudication | Source Data Verification (SDV), electronic data validation checks [37] | >99.5% error-free data points in critical efficacy/safety variables |
| Uniqueness [48] | Patient identifiers across sites | Use of unique subject IDs, screening for duplicate enrollments [48] | 100% unique patient identification across the entire trial database |
| Consistency [8] | Data collected across multiple sites and timepoints | Centralized monitoring to detect systematic site differences [8] | Consistent data distributions and trends across all investigative sites |
| Compliance | Adherence to approved protocol, GCP, and regulations | Protocol deviation tracking, audit preparation | Minimal major protocol deviations affecting primary endpoints |

Troubleshooting Guide & FAQs

FAQ: We are seeing an unusual number of data queries for a specific lab parameter at one clinical site. How should we investigate this inconsistency?

- Answer: This signals a potential consistency issue. Isolate the root cause:

- Isolate the Issue: Determine if the problem is with the site's equipment, sample handling, or data entry process.

- Remove Complexity: Ask the site to use a central lab for the next set of samples, if possible, to rule out local analyzer issues [50].

- Change One Thing at a Time: If using a local lab, vary a single factor per repeat test (for example, first a different analyzer, then a different reagent lot, then a different technician) so that any change in results can be attributed to that one variable [50].

- Compare to a Working Version: Compare the site's procedures and results against a site that is not generating queries [50].

- This systematic isolation process will identify whether the issue is technical, procedural, or human, allowing for a targeted correction [50].
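The "compare to a working version" step can be made quantitative. This is a minimal sketch, assuming the parameter's values are grouped by site. It computes a Welch-type z statistic for each site's mean against all other sites pooled and flags sites beyond a threshold. The function names and the threshold of 3 are illustrative assumptions; the result is a screening aid for the investigation, not a formal hypothesis test.

```python
from math import sqrt
from statistics import mean, variance


def site_z_scores(values_by_site):
    """Welch-type z statistic comparing each site's mean with all other sites pooled."""
    scores = {}
    for site, values in values_by_site.items():
        others = [v for other, vals in values_by_site.items() if other != site for v in vals]
        std_error = sqrt(variance(values) / len(values) + variance(others) / len(others))
        scores[site] = (mean(values) - mean(others)) / std_error
    return scores


def flag_sites(values_by_site, threshold=3.0):
    """Sites whose mean differs from the rest by more than `threshold` standard errors."""
    return sorted(site for site, z in site_z_scores(values_by_site).items() if abs(z) > threshold)
```

One strongly deviant site also shifts the pooled reference for every other site. Borderline flags at other sites are therefore worth re-checking after the outlying site is excluded.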

FAQ: How can we prevent duplicate patient records from being created in our clinical database when using multiple recruitment sites?

- Answer: Duplicate records violate uniqueness and can compromise patient safety and data integrity.

- Implement a Centralized Subject Registration System: Use a system that requires a unique identifier (not just name/DOB) before a subject is registered.

- Use Automated Duplicate Detection Tools: Configure your EDC system with algorithms that flag potential duplicates based on multiple fields for review before database lock [46] [48].

- Establish Clear SOPs for Subject Enrollment: Train sites on the precise procedure for checking if a potential subject is already in the system.

- Proactive measures are significantly more effective than post-hoc cleaning for ensuring uniqueness [48].
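The following is a minimal sketch of multi-field duplicate flagging. It assumes each subject record carries a `subject_id` plus hypothetical matching fields (`dob`, `sex`, `initials`, `postcode`). Values are normalized before comparison, and pairs that agree on at least three fields are queued for human review rather than merged automatically. The field choice and match threshold are assumptions to be fixed in the study's SOPs.

```python
from itertools import combinations

# Hypothetical matching fields; choose per protocol and local privacy rules
MATCH_FIELDS = ("dob", "sex", "initials", "postcode")


def _normalize(record):
    """Upper-case and strip spaces so trivial formatting differences do not hide a match."""
    return {f: str(record.get(f) or "").upper().replace(" ", "") for f in MATCH_FIELDS}


def potential_duplicates(records, min_matching_fields=3):
    """Return (subject_id_a, subject_id_b, n_matching) for pairs needing manual review."""
    normalized = [(r["subject_id"], _normalize(r)) for r in records]
    flagged = []
    for (id_a, a), (id_b, b) in combinations(normalized, 2):
        # Blank fields never count as a match
        matching = sum(1 for f in MATCH_FIELDS if a[f] and a[f] == b[f])
        if matching >= min_matching_fields:
            flagged.append((id_a, id_b, matching))
    return flagged
```

The pairwise comparison grows quadratically with the number of records. For large databases, a blocking step (for example, comparing only records with the same date of birth) keeps the workload manageable.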

Experimental Protocol: Data Quality Review Plan for a Phase III Clinical Trial

Objective: To ensure the accuracy, consistency, and completeness of clinical trial data prior to database lock and statistical analysis.

Methodology:

- Risk-Based Monitoring:

- Identify critical-to-quality (CtQ) data points (primary/secondary endpoints, key safety data).

- Focus source data verification (SDV) and query efforts on these CtQ elements.

- Centralized Statistical Monitoring:

- Use statistical algorithms to detect outliers, implausible data, and systematic site differences that may indicate consistency problems.

- Query Management:

- Manage the lifecycle of all data queries from initiation to resolution within the EDC system. Track query rates as a performance metric.

- User Acceptance Testing (UAT) of the EDC System:

- Before study initiation, thoroughly test all electronic data validation rules to ensure they correctly flag invalid or out-of-range entries, preventing errors at the point of entry [46].
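UAT of edit checks can itself be scripted. In this sketch, each edit check is a named rule on one field. Each UAT case pairs a test entry with the checks expected to fire, and the cases include boundary values. The field names and plausibility ranges are hypothetical placeholders, not clinical recommendations; in practice they come from the study's data validation plan.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class EditCheck:
    name: str
    field: str
    is_valid: Callable[[Optional[float]], bool]  # True when the value is acceptable


def in_range(low, high):
    """Build a rule that rejects missing values and values outside [low, high]."""
    return lambda value: value is not None and low <= value <= high


# Hypothetical plausibility limits, for illustration only
EDIT_CHECKS = [
    EditCheck("SBP_RANGE", "systolic_bp_mmhg", in_range(60, 250)),
    EditCheck("WEIGHT_RANGE", "weight_kg", in_range(30, 250)),
]


def run_edit_checks(entry):
    """Names of the edit checks that fire (i.e., would raise a query) for one entry."""
    return [check.name for check in EDIT_CHECKS if not check.is_valid(entry.get(check.field))]


# UAT cases: (test entry, checks expected to fire)
UAT_CASES = [
    ({"systolic_bp_mmhg": 120, "weight_kg": 70}, []),             # valid entry
    ({"systolic_bp_mmhg": 300, "weight_kg": 70}, ["SBP_RANGE"]),  # out of range
    ({"systolic_bp_mmhg": 120}, ["WEIGHT_RANGE"]),                # missing value
    ({"systolic_bp_mmhg": 60, "weight_kg": 250}, []),             # boundaries accepted
]


def run_uat():
    """Return every case where the checks did not behave as specified."""
    failures = []
    for entry, expected in UAT_CASES:
        actual = run_edit_checks(entry)
        if actual != expected:
            failures.append((entry, expected, actual))
    return failures
```

An empty result from `run_uat()` is the scripted equivalent of a signed-off UAT step. Any failure lists the entry, the expected checks, and the checks that actually fired.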

Research Reagent Solutions

Table: Essential Tools for Clinical-Stage Quality Control

| Reagent / Tool | Function in Quality Control |
|---|---|
| EDC (Electronic Data Capture) System | Enforces data validation at entry, standardizes data formats, and provides an audit trail, ensuring accuracy and consistency. |
| Clinical Trial Management System (CTMS) | Tracks protocol adherence and manages site performance, supporting compliance with the study protocol. |
| IVRS/IWRS (Interactive Voice/Web Response System) | Manages patient randomization and drug inventory, ensuring the uniqueness of patient treatment assignments. |

Figure: Clinical Trial Data Flow and Quality Control

Post-Market Surveillance Stage

Quality Dimensions Framework

After drug approval, monitoring continues in the general population. Data quality ensures the timely identification of rare or long-term risks [47].

Table: Key Data Quality Dimensions for Post-Market Surveillance

| Quality Dimension | Target Application | Validation Method | Acceptance Criteria |
|---|---|---|---|
| Timeliness [48] | Adverse Event (AE) reporting | Monitoring time from AE awareness to regulatory submission [48] | 100% of serious AEs reported within mandated regulatory timelines |
| Completeness [8] [48] | AE report forms, patient registry data | Checklists to ensure all required fields (e.g., patient demographics, event description, outcome) are populated [8] [48] | <2% of mandatory fields missing in submitted AE reports |
| Uniqueness [48] | Global safety database records | Deduplication algorithms for reports from multiple sources (e.g., HCP, patient, literature) [48] | >99.9% duplicate-free case series for signal detection |
| Cohesiveness [48] | Integrated safety signal from multiple data sources (spontaneous reports, registries, EHRs) | Logical alignment and reconciliation of data from disparate sources to form a unified safety profile [48] | Safety signals can be coherently explained across all available data sources |
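As a sketch of the Timeliness check, the code below computes the share of serious AE reports submitted within a configurable deadline. It counts the awareness date as day 0 and judges still-pending reports against an `as_of` date. The 15-calendar-day default is an assumption modeled on expedited reporting of serious, unexpected reactions (e.g., FDA 15-day Alert reports); applicable deadlines vary by jurisdiction and case type. The field names (`case_id`, `serious`, `awareness`, `submitted`) are hypothetical.

```python
from datetime import date


def is_late(report: dict, as_of: date, deadline_days: int) -> bool:
    """Day 0 is the awareness date; unsubmitted reports are judged against `as_of`."""
    end = report["submitted"] or as_of
    return (end - report["awareness"]).days > deadline_days


def late_serious_reports(reports, as_of, deadline_days=15):
    """Case IDs of serious reports that missed (or are already past) the deadline."""
    return [r["case_id"] for r in reports if r["serious"] and is_late(r, as_of, deadline_days)]


def timeliness_rate(reports, as_of, deadline_days=15):
    """Share of serious reports not late; pending reports still inside the window count as not late."""
    serious = [r for r in reports if r["serious"]]
    if not serious:
        return 1.0
    return 1.0 - len(late_serious_reports(reports, as_of, deadline_days)) / len(serious)
```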

Troubleshooting Guide & FAQs

FAQ: Our safety database is receiving adverse event reports with critical missing information (e.g., outcome, concomitant medications). How can we improve completeness?

- Answer: Incomplete reports hinder robust safety analysis. Implement a two-pronged approach:

- At the Point of Entry: Design the AE reporting form (e.g., MedWatch) with structured fields and real-time validation rules that mandate completion of critical fields before submission [46] [48].

- Post-Submission Process: Establish a dedicated pharmacovigilance team to follow up on submitted reports that are incomplete. Use a tracking system to monitor the timeliness and rate of follow-up completion.
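Both prongs can share one definition of "critical fields". The sketch below uses a hypothetical field list. It blocks submission while critical fields are blank, builds a follow-up queue of incomplete cases, and computes the missing-field rate in which the Completeness criterion of the post-market table is expressed.

```python
# Hypothetical critical fields; align with the organization's AE intake specification
CRITICAL_FIELDS = ("patient_age", "patient_sex", "event_description",
                   "suspect_drug", "outcome", "concomitant_medications")


def missing_fields(report):
    """Critical fields that are absent, None, an empty string, or an empty list."""
    return [f for f in CRITICAL_FIELDS if report.get(f) in (None, "", [])]


def can_submit(report):
    """Point-of-entry rule: submission is blocked while any critical field is missing."""
    missing = missing_fields(report)
    return len(missing) == 0, missing


def follow_up_queue(reports):
    """Post-submission: incomplete cases with the fields the PV team must follow up on."""
    queue = []
    for report in reports:
        missing = missing_fields(report)
        if missing:
            queue.append((report["case_id"], missing))
    return queue


def missing_field_rate(reports):
    """Proportion of critical fields missing across all reports (table target: < 2%)."""
    total = len(reports) * len(CRITICAL_FIELDS)
    return sum(len(missing_fields(r)) for r in reports) / total if total else 0.0
```

An empty concomitant-medication list is treated as missing. A reporter's explicit "none" should be captured as a value (for example `["NONE"]`) so that it is not confused with unreported data.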

FAQ: We suspect duplicate reporting of the same adverse event from a healthcare professional and a patient for the same case. How should we handle this to maintain data uniqueness?

- Answer: Managing duplicates is critical for accurate frequency calculations.

- Automated Detection: Use safety database software with configurable deduplication algorithms that check for matches on key identifiers (e.g., patient initials, age, event, drug, date).

- Manual Triage: Flag potential duplicates for review by a safety scientist.

- Merge Logic: Establish and document clear rules for merging duplicate cases, ensuring all information is retained in a single "master" case.

- Source Verification: Where possible, follow up with the reporters to confirm if the reports are for the same event.

- A rigorous deduplication process is non-negotiable for maintaining the uniqueness of safety cases [48].
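The detection and merge steps can be sketched as follows, assuming hypothetical field names. Reports that agree on all normalized key identifiers are grouped as candidates for manual triage. Once a safety scientist confirms a group, `merge_cases` builds a master case that keeps every non-empty value, links the source case IDs, and records conflicting values for review instead of silently overwriting them. Production safety databases typically use configurable, often fuzzy, matching rather than the exact-key match shown here.

```python
from collections import defaultdict

# Hypothetical key identifiers and bookkeeping fields
KEY_FIELDS = ("patient_initials", "patient_age", "event_term", "suspect_drug", "event_date")
META_FIELDS = ("case_id", "reporter_type")


def _norm(value):
    return str(value).strip().upper()


def candidate_groups(reports):
    """Group reports sharing all normalized key identifiers; groups of 2+ go to manual triage."""
    groups = defaultdict(list)
    for report in reports:
        groups[tuple(_norm(report.get(f, "")) for f in KEY_FIELDS)].append(report)
    return [group for group in groups.values() if len(group) > 1]


def merge_cases(confirmed_group):
    """Build one master case from confirmed duplicates without discarding information."""
    master = {"case_id": confirmed_group[0]["case_id"], "linked_cases": [], "sources": [], "conflicts": {}}
    for report in confirmed_group:
        master["linked_cases"].append(report["case_id"])
        master["sources"].append(report.get("reporter_type"))
        for key, value in report.items():
            if key in META_FIELDS or value in (None, ""):
                continue
            if key not in master:
                master[key] = value  # first non-empty value fills the field
            elif _norm(master[key]) != _norm(value):
                values = master["conflicts"].setdefault(key, [master[key]])
                if value not in values:
                    values.append(value)  # keep every distinct value for the reviewer
    return master
```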

Experimental Protocol: Signal Detection and Validation in Pharmacovigilance

Objective: To proactively identify potential new safety signals from disparate data sources and validate them through rigorous analysis.

Methodology:

- Data Aggregation: Integrate data from spontaneous reports, electronic health records (EHRs), literature, and patient registries. A key challenge is ensuring the cohesiveness of this integrated data [48].

- Data Cleaning and Standardization:

- Apply MedDRA coding to all adverse event terms.

- Deduplicate case reports to ensure uniqueness.

- Quantitative Signal Detection:

- Use disproportionality analysis (e.g., calculating Reporting Odds Ratios) to identify drug-event combinations reported more frequently than expected.

- Clinical Review and Validation:

- A safety physician reviews the potential signal, considering factors like clinical plausibility, strength of association, and completeness of case information.

- Action: Validated signals may lead to updates in the product label, required safety studies, or communication to healthcare providers.
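The disproportionality step can be illustrated with the standard 2×2 reporting odds ratio and its 95% confidence interval on the log scale. The screening rule used here (at least three cases and a lower 95% bound above 1) is a commonly used convention, assumed for illustration rather than a regulatory requirement. A flagged drug-event combination is a candidate for clinical review, not a validated signal.

```python
from math import exp, log, sqrt


def reporting_odds_ratio(a, b, c, d, z=1.96):
    """ROR = (a/b) / (c/d) = ad / bc, with a confidence interval computed on the log scale.

    a: reports with the drug and the event    b: the drug, other events
    c: other drugs, the event                 d: other drugs, other events
    """
    if min(a, b, c, d) == 0:
        raise ValueError("a zero cell makes the ROR undefined without a continuity correction")
    ror = (a * d) / (b * c)
    se_log = sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # standard error of ln(ROR)
    return ror, exp(log(ror) - z * se_log), exp(log(ror) + z * se_log)


def is_potential_signal(a, b, c, d, min_cases=3):
    """Screening rule: enough cases and a lower confidence bound above 1."""
    if a < min_cases:
        return False
    _, lower, _ = reporting_odds_ratio(a, b, c, d)
    return lower > 1.0
```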

Research Reagent Solutions

Table: Essential Tools for Post-Market-Stage Quality Control

| Reagent / Tool | Function in Quality Control |
|---|---|
| MedDRA (Medical Dictionary for Regulatory Activities) | Standardizes the terminology for adverse event reporting, ensuring consistency and cohesiveness in safety data analysis. |
| Pharmacovigilance Database | Provides a centralized repository with deduplication and data validation features to manage uniqueness and completeness. |
| Data Mining & Signal Detection Software | Automates the analysis of large datasets to identify potential safety issues in a timely manner. |

For researchers and scientists in drug development, the integrity of the data underpinning validation studies is paramount. The adage "garbage in, garbage out" applies with particular force in this field, where data-driven decisions affect patient safety and regulatory submissions. Modern software tools shift data quality work from manual, error-prone checks to automated, continuous monitoring, often called data observability. This technical support guide provides practical troubleshooting and foundational knowledge for applying these technologies in validation research that must meet defined data quality and quantity requirements [51] [52].

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between data cleaning and data observability?

- Data Cleaning (or Cleansing): This is a corrective, one-time or batch task focused on fixing identified errors and inconsistencies in a static dataset. Its goal is to make data accurate, complete, and consistent for a specific analysis or model training. Techniques include deduplication, handling missing values, and standardizing formats [52] [53].

- Data Observability: This is a continuous, holistic practice focused on understanding and monitoring the health of data across the entire pipeline. It uses automated monitoring, lineage tracking, and anomaly detection to answer not just if data is broken, but why, where, and what the impact is. It helps catch unknown or unexpected issues ("unknown unknowns") that traditional cleaning would miss [54] [55] [56].

2. Why are AI and Machine Learning (ML) particularly suited for data quality in drug development research?

AI and ML excel at identifying complex patterns and anomalies in large, high-dimensional datasets, which are common in omics studies, high-throughput screening, and clinical trial data. They enable:

- Automated Anomaly Detection: ML models learn the "normal" behavior of your data and can flag subtle drifts in data distributions, freshness, or volume without needing pre-defined rules [54] [57] [56].

- Handling Scale and Complexity: Manual data quality checks do not scale with the volume and velocity of modern research data. AI-powered tools can monitor millions of data points in real time [57].

- Proactive Issue Resolution: By detecting issues early—such as a failed data ingestion from a lab instrument or a schema change in a clinical data capture system—teams can remediate problems before they corrupt downstream analyses or models [55].
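As a minimal stand-in for the learned baselines described above, the sketch below flags days whose ingested record count deviates sharply from a trailing window. It is a simple statistical rule, not a machine learning model. The window length and 3-SD threshold are illustrative assumptions; observability tools typically learn such baselines, including seasonality, automatically.

```python
from statistics import mean, stdev


def volume_anomalies(daily_counts, window=7, threshold=3.0):
    """Indices of days whose count deviates more than `threshold` SDs from the trailing window."""
    flagged = []
    for i in range(window, len(daily_counts)):
        history = daily_counts[i - window:i]
        mu, sd = mean(history), stdev(history)
        if sd == 0:
            # A perfectly flat history: any change at all is unusual
            if daily_counts[i] != mu:
                flagged.append(i)
            continue
        if abs(daily_counts[i] - mu) / sd > threshold:
            flagged.append(i)
    return flagged
```

Because an anomalous day stays in the trailing window for the next `window` days, it inflates the baseline's standard deviation during that period. Excluding flagged points or using a median-based baseline makes the rule more robust.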

3. Our validation study is subject to strict regulatory oversight (e.g., FDA, EMA). How do these tools support compliance?

Regulatory agencies like the FDA and EMA emphasize data integrity, traceability, and the principles of ALCOA+: data should be Attributable, Legible, Contemporaneous, Original, and Accurate (ALCOA), plus Complete, Consistent, Enduring, and Available. Modern tools support this by:

- Automated Audit Trails: Data lineage features provide a complete, visual map of data from its source through all transformations, making it easy to demonstrate provenance and trace the impact of changes [54] [25].