Cross-Topic Authorship Verification: Experimental Protocols for Robust and Clinically Relevant Biomarker Development


Abstract

This article provides a comprehensive framework for designing and implementing cross-topic authorship verification experimental protocols, tailored for biomedical and clinical research audiences. We explore the foundational principles of authorship verification, detailing how stylistic and semantic features can function as unique 'biomarkers' of writing. The piece offers practical methodologies for feature extraction and model architecture, including advanced neural networks like Siamese and Feature Interaction Networks. It addresses critical challenges such as topic leakage and dataset bias, presenting optimization strategies like the HITS sampling method. Finally, we establish validation frameworks and comparative analyses of state-of-the-art models, culminating in a discussion of the profound implications for research integrity, pharmacovigilance, and clinical documentation in the pharmaceutical and drug development sectors.

Understanding Authorship Verification: From Writing Style as a Digital Biomarker to Cross-Topic Challenges

Defining Authorship Verification and Its Core Task in Textual Analysis

Authorship Verification is a fundamental task in computational linguistics and digital text forensics. It is defined as the process of analyzing a set of documents to determine whether they were written by a specific author [1]. In its most common form, the task addresses the following problem: given a set of documents known to be written by an author and a document of doubtful attribution to that author, the verification system must decide whether that document was truly written by that author [2]. This process relies on stylometry—the statistical analysis of linguistic style—to quantify an author's unique writing patterns into a measurable "fingerprint" for comparison [3].

The core task distinguishes itself from related authorship analysis problems through its specific decision structure. Unlike authorship attribution, which seeks to identify the most likely author from a set of candidates, verification presents a binary decision regarding a single candidate author [4]. This functionality is essential for applications where the question is not "who wrote this?" but rather "did this specific person write this?"—a scenario frequently encountered in forensic, academic, and cybersecurity contexts [1] [3].

Core Tasks and Decision Problems

Authorship verification addresses three principal decision problems, each tailored to different evidential scenarios [1]:

- AV_Core: This is the fundamental decision problem. Given two documents, D1 and D2, the task is to determine whether both were written by the same author. This setup is symmetric and does not require pre-existing author profiles.

- AV_Known: This common forensic scenario involves a set of documents D_A = {D1, D2, ...} known to be written by author A, and a document D_U of unknown authorship. The system must determine whether A also wrote D_U (a Y-case), or whether it was written by a different author (¬A, an N-case).

- AV_Batch: This problem extends the verification to sets of documents. Given two sets, D_A and D_B, each containing documents written by a single author, the task is to decide whether both sets were written by the same author.

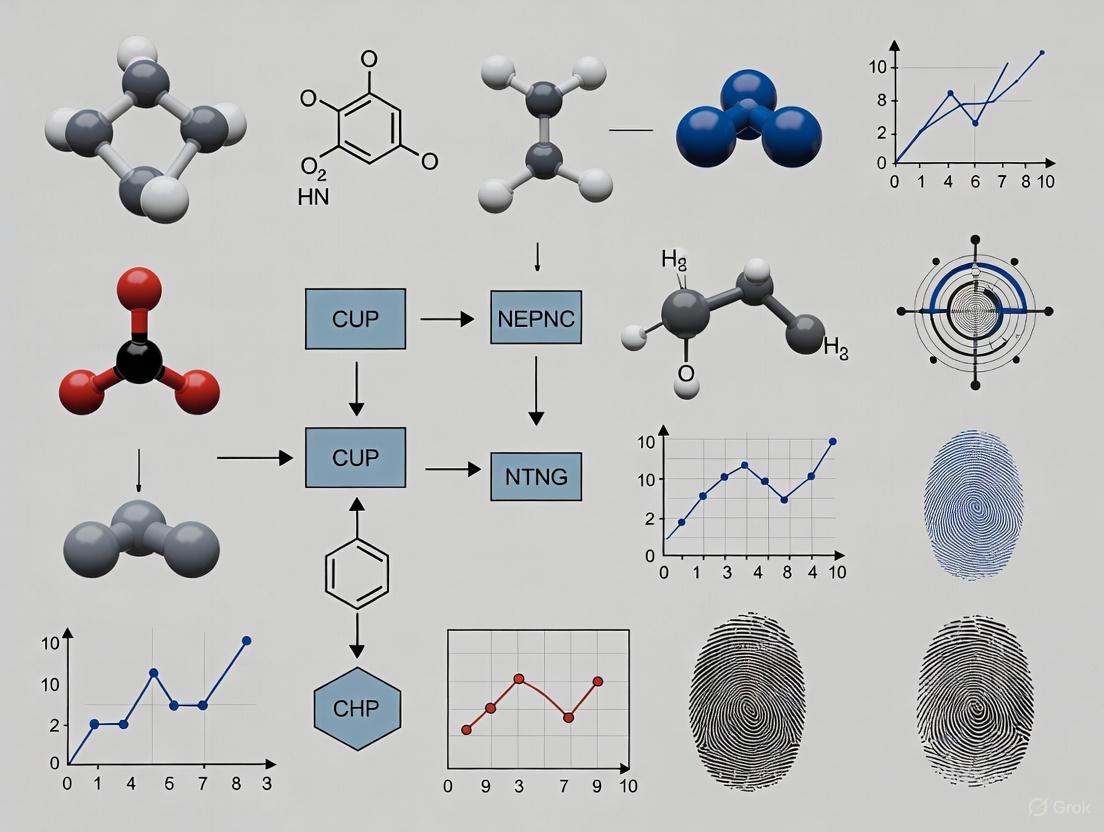

The following workflow generalizes the process for addressing these verification problems, particularly the AV_Known scenario:

Critical Methodologies and Feature Engineering

Linguistic Feature Categories

The effectiveness of authorship verification hinges on the extraction and analysis of linguistic features that capture an author's unique stylistic signature. These features are broadly categorized as follows:

Table 1: Categories of Linguistic Features for Authorship Verification

| Feature Category | Description | Specific Examples | Performance Notes |
|---|---|---|---|
| Lexical Features [2] | Analyze word-level choices and patterns | Word n-grams, word frequency, word-length distribution [5] [4] | Lower individualization for Classical Arabic [2] |
| Syntactic Features [2] | Capture sentence structure and grammar | POS (Part-of-Speech) distributions, syntactic n-grams, sentence length [5] [4] | High discriminative power; core of grammar models [2] [1] |
| Morphological Features [2] | Examine word formation and structure | Character n-grams, suffixes/prefixes | Lower individualization for Classical Arabic [2] |
| Semantic Features [5] | Relate to meaning and topic | RoBERTa embeddings, topic models [5] | Risk of topic bias; requires control [6] |

Advanced Computational Models

Recent research has developed sophisticated models that integrate multiple feature types to improve verification accuracy:

Feature-Integrated Deep Learning Models: These include architectures like the Feature Interaction Network, Pairwise Concatenation Network, and Siamese Network, which combine RoBERTa embeddings (semantic features) with stylistic features such as sentence length and punctuation to enhance performance [5].

Grammar Model Likelihood Ratio (LambdaG): This method calculates the ratio (λG) between the likelihood of a document given a model of the candidate author's grammar and the likelihood given a model of a reference population's grammar. The grammar models are estimated using n-gram language models trained solely on grammatical features, making the approach particularly robust and interpretable [1].

LLM-Based Style Transfer (OSST Score): A novel unsupervised approach leverages the causal language modeling (CLM) pre-training of Large Language Models (LLMs). It uses an LLM's log-probabilities to measure style transferability between texts, providing a powerful metric for verification without requiring supervised training [4].
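The LambdaG likelihood ratio described above can be illustrated with a deliberately simplified sketch. It assumes documents have already been reduced to grammatical tokens (function words and POS tags) upstream, and it swaps in add-one smoothed bigram models for whatever n-gram estimator the published method uses. The class and function names (`AddOneBigramModel`, `lambda_g`) and the toy token sequences are hypothetical and serve only to show the shape of the computation.

```python
import math
from collections import Counter


class AddOneBigramModel:
    """Bigram language model with add-one smoothing (a stand-in estimator)."""

    def __init__(self, documents, vocab_size):
        self.bigrams = Counter()
        self.contexts = Counter()
        self.vocab_size = vocab_size
        for tokens in documents:
            padded = ["<s>", *tokens, "</s>"]
            self.bigrams.update(zip(padded, padded[1:]))
            self.contexts.update(padded[:-1])

    def log_prob(self, tokens):
        """Log-likelihood of one token sequence under the model."""
        padded = ["<s>", *tokens, "</s>"]
        total = 0.0
        for prev, cur in zip(padded, padded[1:]):
            numerator = self.bigrams[(prev, cur)] + 1
            denominator = self.contexts[prev] + self.vocab_size
            total += math.log(numerator / denominator)
        return total


def lambda_g(questioned, author_docs, reference_docs):
    """Log-likelihood ratio: author grammar model vs. reference population model."""
    vocab = {t for doc in [questioned, *author_docs, *reference_docs] for t in doc}
    vocab_size = len(vocab) + 1  # +1 for the end-of-sequence marker
    author_model = AddOneBigramModel(author_docs, vocab_size)
    reference_model = AddOneBigramModel(reference_docs, vocab_size)
    return author_model.log_prob(questioned) - reference_model.log_prob(questioned)


# Toy grammatical token sequences (illustrative only, not real data).
author_docs = [["the", "NOUN", "of", "the", "NOUN"],
               ["the", "NOUN", "VERB", "of", "the", "NOUN"]]
reference_docs = [["a", "NOUN", "VERB", "a", "NOUN"],
                  ["NOUN", "VERB", "and", "NOUN", "VERB"]]
questioned = ["the", "NOUN", "of", "the", "NOUN"]

score = lambda_g(questioned, author_docs, reference_docs)
print(f"lambda_G (log scale): {score:.3f}")  # positive values favour the candidate author
```

A positive score means the questioned document is more probable under the candidate author's grammar model than under the reference population, and a negative score points the other way. Any decision threshold would still need to be calibrated on held-out data.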

The LambdaG method, which has demonstrated state-of-the-art performance, can be visualized as follows:

Experimental Protocols for Cross-Topic Authorship Verification

A primary challenge in authorship verification is ensuring models rely on genuine stylistic patterns rather than topical cues. The following protocol provides a framework for robust, cross-topic evaluation.

Protocol 1: Cross-Topic Evaluation with HITS

Objective: To evaluate and benchmark authorship verification models under conditions that minimize the confounding effect of topic leakage.

Background: Conventional cross-topic evaluations assume minimal topic overlap between training and test data, but topic leakage—where topics from the test set are represented in the training set—can lead to misleading performance and unstable model rankings [6] [7].

Materials:

- PAN Datasets: Standardized datasets from PAN competitions, which include fanfiction, essays, emails, and social media posts [8] [4].

- RAVEN Benchmark: The Robust Authorship Verification bENchmark, designed specifically for topic shortcut testing [6] [7].

- HITS Script: Implementation of the Heterogeneity-Informed Topic Sampling procedure.

Procedure:

- Topic Annotation: Manually or automatically annotate all documents in the corpus with topic labels.

- HITS Sampling: Apply Heterogeneity-Informed Topic Sampling (HITS) to create evaluation splits [6]. This involves:

  - Identifying all topics present in the corpus.

  - Sampling a heterogeneous set of topics to ensure the test set contains a balanced and diverse topic distribution that is distinct from the training/development sets.

- Model Training: Train the AV models (e.g., Siamese Networks, LambdaG, OSST) on the training split, which contains a specific set of topics.

- Model Testing: Evaluate the trained models on the HITS-sampled test set, which contains a different, heterogeneously distributed set of topics.

- Metric Calculation: Calculate standard performance metrics, including Accuracy and Area Under the Curve (AUC).

- Robustness Analysis: Analyze the stability of model rankings across multiple random seeds and HITS-generated splits to ensure performance is not dependent on a favorable topic alignment.

Validation: A valid cross-topic evaluation will show a stable ranking of models across different HITS-sampled splits and will typically reveal a performance drop for models that are overly reliant on semantic/topic features [6].
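One way to make the "stable ranking" criterion concrete is to compare model rankings pairwise across splits with a rank correlation. The sketch below uses Kendall's tau (tau-a) averaged over all pairs of splits. The helper names and the scores are hypothetical placeholders, not results from any experiment, and other stability measures would be equally defensible.

```python
from itertools import combinations


def kendall_tau(scores_a, scores_b):
    """Kendall tau-a between two {model_name: score} dicts with identical keys."""
    models = sorted(scores_a)
    concordant = discordant = 0
    for m1, m2 in combinations(models, 2):
        agreement = (scores_a[m1] - scores_a[m2]) * (scores_b[m1] - scores_b[m2])
        if agreement > 0:
            concordant += 1
        elif agreement < 0:
            discordant += 1
    n_pairs = len(models) * (len(models) - 1) // 2
    return (concordant - discordant) / n_pairs


def ranking_stability(results_per_split):
    """Mean Kendall tau over every pair of evaluation splits (1.0 = identical rankings)."""
    taus = [kendall_tau(a, b) for a, b in combinations(results_per_split, 2)]
    return sum(taus) / len(taus)


# Placeholder AUC values for three models on three splits (made up for illustration).
splits = [
    {"siamese": 0.81, "lambdag": 0.86, "osst": 0.84},
    {"siamese": 0.79, "lambdag": 0.85, "osst": 0.83},
    {"siamese": 0.84, "lambdag": 0.83, "osst": 0.80},
]
print(f"ranking stability: {ranking_stability(splits):.3f}")
```

In this toy input the first two splits agree completely while the third reverses two of the three model pairs, so the averaged tau is low. A sampling procedure that reduces topic leakage would be expected to push this value toward 1.0.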

The Scientist's Toolkit: Key Research Reagents

Table 2: Essential Materials and Resources for Authorship Verification Research

| Resource Name | Type | Function in Research | Key Characteristics |
|---|---|---|---|
| PAN Datasets [8] [4] | Data Corpus | Provides standardized benchmarks for training and evaluating AV models. | Includes diverse genres (fanfiction, essays, emails, social media); central to modern AV research. |
| Enron Email Dataset [3] | Data Corpus | Serves as a rich source of genuine, multi-author text for building author profiles. | Contains >600k emails from 158 authors; provides "ground truth" for known authors. |
| Blog Authorship Corpus [3] | Data Corpus | Enables testing of AV models on long-form, multi-topic texts from many authors. | Contains over 600 authors and 300,000 posts; high topic diversity. |
| RoBERTa Model [5] | Computational Model | Provides deep contextualized semantic embeddings for text. | Transformer-based; used to capture semantic features in feature-integrated models. |
| HITS (Heterogeneity-Informed Topic Sampling) [6] [7] | Methodology | Creates evaluation splits with controlled topic distribution to minimize topic leakage. | Improves stability of model rankings; crucial for rigorous cross-topic evaluation. |
| LambdaG Algorithm [1] | Algorithm | Computes the likelihood ratio for verification based on grammatical models. | High accuracy and AUC; robust to genre variations; more interpretable than deep learning models. |
| OSST Score Algorithm [4] | Algorithm | Provides an unsupervised, LLM-based metric for authorship by measuring style transferability. | Zero-shot capability; performance scales with base LLM size. |

Quantitative Performance Comparison

Empirical evaluations across multiple datasets and against various baseline methods provide a clear picture of the relative performance of modern AV approaches.

Table 3: Comparative Performance of Authorship Verification Methods

| Methodology | Key Features | Reported Accuracy/AUC | Strengths and Limitations |
|---|---|---|---|
| LambdaG (Grammar Model) [1] | Likelihood ratio of author-specific vs. population grammar models (n-grams). | Outperformed baselines in 11 out of 12 datasets in terms of accuracy and AUC. | Strengths: High accuracy; robust to genre variation; interpretable. Limitations: Requires a representative reference population. |
| Feature-Integrated Deep Models [5] | Combination of RoBERTa (semantics) and style features (punctuation, sentence length). | Consistently improved over semantic-only models; competitive on challenging datasets. | Strengths: Leverages both style and deep semantics. Limitations: Requires careful feature engineering; performance can be sensitive to dataset. |
| Siamese Network [5] | Deep learning model that learns similarity between text pairs. | Competitive results, but can be outperformed by LambdaG [1]. | Strengths: Effective at capturing complex stylistic similarities. Limitations: Can be computationally complex; less interpretable. |
| LLM One-Shot Style Transfer (OSST) [4] | Unsupervised method using LLM log-probabilities to measure style transfer. | Higher accuracy than contrastively trained baselines when controlling for topic. | Strengths: Zero-shot capability; no training data needed. Limitations: Performance and cost depend on underlying LLM size. |
| Traditional Feature Ensemble [2] | Ensemble of lexical, morphological, and syntactic features. | 87.1% accuracy on corpus of 31 Classical Arabic books. | Strengths: Effective with specific feature combinations. Limitations: Performance varies significantly by feature category and language. |

The validation of authorship verification (AV) systems requires methodologies that can distinguish an author's unique writing style from topic-specific content. This application note proposes a framework that treats semantic and stylometric features as discriminative biomarkers, adapting rigorous validation principles from biomedical sciences [9] [10] [11] to computational linguistics. We detail experimental protocols designed to address the critical challenge of topic leakage [12] [13], which can lead to misleading performance metrics and unstable model rankings. By introducing the Heterogeneity-Informed Topic Sampling (HITS) method [12] [13] and leveraging large-scale, cross-domain corpora like the Million Authors Corpus [14], we provide a pathway for developing robust, cross-topic AV systems with validated probative value for forensic applications [15].

In forensic science, the statistical analysis of writing style, or stylometry, is founded on the principle that every individual possesses a distinct, albeit variable, writing style [15]. The central challenge in modern authorship verification is to build models that recognize this stylistic "biomarker" independent of the text's topic. A model that fails to do so may rely on spurious correlations between topic-specific keywords and authors, rather than genuine stylistic patterns [12]. This is analogous to a clinical biomarker test that confuses a correlated symptom with the underlying disease state [9] [11]. The phenomenon of topic leakage—where test data unintentionally contains topical information similar to training data—has been shown to inflate performance and compromise the evaluation of an AV system's true robustness [12] [13]. This note outlines a protocol for the discovery and validation of stylometric biomarkers, ensuring they are diagnostically specific to author identity.

Biomarker Discovery: Feature Extraction and Rationale

The first step in the AV pipeline is the selection and extraction of features that serve as potential authorship biomarkers. These features can be categorized as either individual characteristics, specific to an author, or class characteristics, common to a broader population [15].

- Lexical-Syntactic Biomarkers: These include features such as:

  - Function Word Frequencies: The usage rates of words like "the," "and," "of," which are largely employed unconsciously and are resistant to topic influence [15].

  - Character N-Grams: Sub-word sequences that capture idiosyncratic spelling, hyphenation, or morphological preferences [15].

  - Syntax Tree Structures: Patterns in sentence construction and grammar.

- Semantic Biomarkers: These features capture content-related choices that may still be style-indicative, such as:

The rationale for biomarker selection must be pre-specified, and the analytical validity of the feature extraction process must be ensured through standardized, reproducible scripts [10].
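As an illustration of what a standardized, reproducible extraction script might look like, the sketch below computes two of the lexical-syntactic biomarkers named above: relative function-word frequencies and character n-gram frequencies. The function-word list is a short illustrative sample rather than a validated inventory, and the function names and tokenization rule are assumptions made for this example.

```python
import re
from collections import Counter

# Short illustrative list; a real study would pre-specify a much fuller inventory.
FUNCTION_WORDS = ("the", "and", "of", "to", "in", "a", "that", "it")


def function_word_frequencies(text, function_words=FUNCTION_WORDS):
    """Relative frequency of each function word among all word tokens."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    total = len(tokens) or 1  # avoid division by zero on empty input
    return {word: counts[word] / total for word in function_words}


def char_ngram_frequencies(text, n=3, top_k=5):
    """Relative frequency of the top_k most common character n-grams."""
    normalized = re.sub(r"\s+", " ", text.lower()).strip()
    grams = Counter(normalized[i:i + n] for i in range(len(normalized) - n + 1))
    total = sum(grams.values()) or 1
    return {gram: count / total for gram, count in grams.most_common(top_k)}


sample = "The cat and the dog sat in the garden."
print(function_word_frequencies(sample))
print(char_ngram_frequencies(sample))
```

Fixing the word list, the tokenization rule, and the n-gram order in code before looking at outcome data is one practical way to honor the pre-specification requirement.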

Experimental Protocol for Cross-Topic Validation

A critical phase in validating authorship biomarkers is assessing their performance under a strict cross-topic regimen. The following protocol mitigates the risk of topic leakage.

Protocol: Heterogeneity-Informed Topic Sampling (HITS)

Objective: To create evaluation datasets with topically heterogeneous splits, thereby reducing topic leakage and enabling a more reliable assessment of model robustness [12] [13].

Materials:

- A source corpus with topic annotations for documents (e.g., fanfiction datasets from PAN competitions [12] or the Million Authors Corpus [14]).

- Computing environment with standard machine learning libraries (e.g., scikit-learn) and SentenceBERT models for generating topic representations.

Procedure:

- Topic Representation: For each topic category in the source corpus, calculate a vector representation by averaging the SentenceBERT embeddings of all documents belonging to that topic [13].

- Initialization: Start with an empty set S for selected topics. Choose the first topic as the one with the highest average pairwise similarity to all other topics. Remove it from the candidate pool and add it to S.

- Iterative Selection: While the number of selected topics is less than the desired sample size (k):

  a. For each topic in the candidate pool, calculate its maximum similarity to any topic already in S.

  b. Select the candidate topic with the smallest maximum similarity (i.e., the most distinct from all already-selected topics).

  c. Add this topic to S and remove it from the candidate pool.

- Dataset Construction: Populate the final dataset using documents only from the selected heterogeneous topic set S. Perform a train-test split ensuring that no topic in the training set is present in the test set.
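The selection loop can be sketched in plain Python as follows. This is an illustration of the procedure as written in this note, not the reference HITS implementation: it assumes document embeddings (for example from SentenceBERT) have been computed beforehand, it takes the initialization rule from the text as given, and it reads "most distinct" as having the lowest similarity to the closest already-selected topic. The function names and the two-dimensional toy vectors are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def mean_vector(vectors):
    """Topic representation: element-wise mean of its document embeddings."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def hits_select(topic_vectors, k):
    """Greedily pick k topics from {topic: vector}, favouring mutual dissimilarity."""
    topics = list(topic_vectors)
    sim = {(a, b): cosine(topic_vectors[a], topic_vectors[b])
           for a in topics for b in topics if a != b}

    # Initialization as stated in the procedure: highest average similarity to the rest.
    first = max(topics,
                key=lambda t: sum(sim[(t, o)] for o in topics if o != t) / (len(topics) - 1))
    selected = [first]
    candidates = [t for t in topics if t != first]

    while len(selected) < k and candidates:
        # Most distinct candidate: its closest selected topic is the least similar.
        nxt = min(candidates, key=lambda t: max(sim[(t, s)] for s in selected))
        selected.append(nxt)
        candidates.remove(nxt)
    return selected


# Toy 2-D "embeddings" standing in for averaged document vectors.
topic_vectors = {
    "sports": [1.0, 0.0],
    "football": [0.9, 0.2],
    "cooking": [0.0, 1.0],
    "baking": [0.3, 0.9],
}
print(hits_select(topic_vectors, k=3))
```

With these toy vectors the loop skips "football" once "sports" is selected, because the two are near-duplicates. That is the behaviour the heterogeneity criterion is meant to produce.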

Validation: The success of HITS can be measured by the increased stability of model rankings across different random seeds and evaluation splits compared to conventional random sampling [12].

Workflow: Cross-Topic Authorship Verification

The following diagram illustrates the complete experimental workflow for cross-topic authorship verification, integrating the HITS protocol.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources essential for conducting rigorous cross-topic authorship verification studies.

Table 1: Essential Research Reagents for Authorship Verification

| Research Reagent | Function & Description | Exemplars |
|---|---|---|
| Cross-Topic Benchmarks | Provides a controlled environment for evaluating model robustness against topic shifts by ensuring training and test sets are topically distinct. | RAVEN benchmark [12], PAN Fanfiction dataset [12] |
| Large-Scale Multi-Domain Corpora | Enables large-scale training and cross-domain ablation studies to test generalizability across vastly different writing contexts. | Million Authors Corpus (MAC) [14] |
| Stylometric Feature Extractors | Software libraries for quantifying an author's unconscious writing style, transforming text into analyzable biomarkers. | N-gram counters, function word lists, syntactic parsers [15] |
| Topic-Representation Models | Generates semantic vector representations for topics, which is a prerequisite for executing the HITS sampling protocol. | SentenceBERT models [13] |
| Validation & Analysis Suites | Provides statistical tools to control for multiple comparisons, assess within-subject correlation, and compute robust performance metrics. | Mixed-effects models, False Discovery Rate (FDR) control [11] |

Validation Metrics and Data Analysis

Interpreting the results of a cross-topic AV experiment requires careful statistical analysis to avoid false discoveries and ensure findings are reproducible [11].

Key Performance Metrics

Performance should be reported using multiple metrics to provide a comprehensive view of model capability. The following table summarizes the core metrics used in biomarker validation.

Table 2: Key Statistical Metrics for Biomarker Validation [9] [16]

| Metric | Formula / Description | Interpretation in AV Context |
|---|---|---|
| Sensitivity (Recall) | True Positives / (True Positives + False Negatives) | The proportion of same-author text pairs correctly identified. |
| Specificity | True Negatives / (True Negatives + False Positives) | The proportion of different-author text pairs correctly identified. |
| Area Under the Curve (AUC) | Area under the Receiver Operating Characteristic (ROC) curve. | Overall measure of how well the model distinguishes between same-author and different-author pairs, across all classification thresholds. A value of 0.5 is no better than chance. |
| Positive Predictive Value (Precision) | True Positives / (True Positives + False Positives) | The probability that a text pair predicted to be from the same author is truly from the same author. Highly dependent on the base rate of same-author pairs in the test set. |
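The four metrics in the table can be computed directly from pair labels and model scores. The following sketch uses only the standard library. AUC is computed through its pairwise-comparison interpretation (the probability that a same-author pair scores higher than a different-author pair, with ties counted as one half). The labels, scores, and the 0.5 threshold are illustrative assumptions.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, tn, fn) for binary labels, where 1 = same author."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, fp, tn, fn


def auc(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    positives = [s for t, s in zip(y_true, scores) if t == 1]
    negatives = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


# Illustrative labels and similarity scores for six text pairs.
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.2]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # 0.5 is an arbitrary threshold

tp, fp, tn, fn = confusion_counts(y_true, y_pred)
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
precision = tp / (tp + fp)
print(sensitivity, specificity, precision, auc(y_true, scores))
```

Sensitivity, specificity, and precision all depend on the chosen threshold, whereas AUC does not. This is one reason to report AUC alongside the thresholded metrics.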

Addressing Common Data Analysis Concerns

- Multiplicity: When evaluating a large panel of stylometric features, the probability of false positives increases. Correction methods like the False Discovery Rate (FDR) must be applied to ensure that only truly discriminative biomarkers are selected [9] [11].

- Within-Author Correlation: Multiple text samples from the same author are not independent. Statistical models (e.g., mixed-effects models) that account for this intra-class correlation are necessary to generate accurate p-values and confidence intervals [11].
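For the multiplicity concern, a common FDR-controlling method is the Benjamini-Hochberg step-up procedure, sketched below. The p-values are made up for illustration, and the function name is an assumption. In practice an established statistics library would normally be used in place of a hand-rolled version.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans: True where the hypothesis is rejected at FDR alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # indices by ascending p-value

    # Find the largest rank whose p-value falls under its step-up threshold.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            max_rank = rank

    # Reject every hypothesis ranked at or below that point.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values for five candidate stylometric features.
p_values = [0.001, 0.008, 0.039, 0.041, 0.20]
print(benjamini_hochberg(p_values))
```

An uncorrected 0.05 cut-off would keep four of these five features, while the step-up procedure keeps only the first two. This is the kind of pruning the multiplicity item calls for.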

This application note establishes a rigorous framework for treating semantic and stylometric features as validated discriminative biomarkers. By adopting protocols from clinical biomarker development (such as pre-specified analytical plans, controlled validation studies, and careful statistical correction), researchers can significantly improve the reliability of authorship verification systems. The explicit mitigation of topic leakage through the HITS protocol is a critical advancement, ensuring that models are evaluated on their ability to capture an author's unconscious signature rather than superficial topic cues. The continued development and application of these principles are essential for the acceptance of stylometry as a robust forensic discipline [15].

The Critical Challenge of Topic Shift and Dataset Bias in Real-World Applications

The reliability of machine learning models in real-world applications is critically threatened by dataset shift, a phenomenon where the data used during the model's deployment differs from the data it was trained on. Within this broad challenge, topic shift—a change in the thematic content of data—presents a particularly insidious problem in tasks like authorship verification (AV), where models may inadvertently learn to recognize topics rather than an author's unique stylistic signature [6]. Similarly, in computer vision, models often learn spurious correlations from biased datasets, causing them to fail when these correlations change in the test environment [17] [18]. These issues are not merely academic; they lead to systemic failures, perpetuate inequalities, and erode trust in AI systems [19]. This document outlines the core challenges, provides experimental protocols for studying these biases, and presents mitigation strategies, with a specific focus on cross-topic authorship verification. The insights are framed within a broader thesis on developing robust, topic-invariant AV models.

The Problem: Topic Leakage and Spurious Correlations

Topic Leakage in Authorship Verification

In authorship verification, the ideal is to identify an author based on stylistic, topic-agnostic features. However, topic leakage occurs when there is unintended thematic overlap between training and test datasets [6] [7]. This creates a "topic shortcut," allowing models to achieve deceptively high performance by simply matching topics instead of learning the more nuanced, stable features of an author's writing style. Consequently, model evaluations become misleading, and their real-world robustness is severely overestimated. The conventional evaluation practice, which assumes minimal topic overlap, is insufficient to prevent this leakage, necessitating more rigorous benchmarking frameworks like RAVEN (Robust Authorship Verification bENchmark) [6].

Spurious Correlations and Dataset Bias

Beyond text, computer vision models are similarly hampered by dataset bias. A model might learn to associate a background feature (e.g., the presence of a ruler in dermatology images, or a specific environment in bird photographs) with a target class, rather than the actual pathological or object-related features [17] [18]. These spurious correlations are a form of correlation shift. Research shows that even small, low-intensity correlation shifts between training and test data are sufficient to cause significant performance degradation, posing a serious dataset-bias issue [17]. This is compounded by the fact that models often learn robust features during training but default to using spurious ones during testing [17].

Experimental Protocols for Analysis and Mitigation

Protocol 1: Heterogeneity-Informed Topic Sampling (HITS) for Authorship Verification

The HITS protocol is designed to create evaluation datasets that minimize the confounding effects of topic leakage, enabling a more accurate assessment of a model's true stylistic understanding [6] [7].

Objective: To generate a benchmark dataset that reduces topic leakage and produces a stable ranking of AV models.

Application: Cross-topic authorship verification.

Methodology:

- Data Collection and Topic Annotation: Assemble a large, topic-diverse corpus of documents. Annotate each document with its primary topic label.

- Heterogeneous Topic Set Construction: Instead of a simple train-test split, construct a topic set that ensures heterogeneity. This involves selecting topics for the test set such that they are maximally distinct from each other and from the topics in the training set.

- Stratified Sampling of Documents: For each selected topic, sample documents from a wide variety of authors. This ensures that the evaluation tests the ability to verify authorship across topics, not within a single, homogeneous topic.

- Benchmark Creation (RAVEN): Compile the sampled documents into the Robust Authorship Verification bENchmark (RAVEN). This benchmark is explicitly designed to include a "topic shortcut test" to uncover and penalize models that rely on topic-specific features.

- Model Evaluation and Ranking: Train and evaluate multiple AV models on the RAVEN benchmark. The use of a heterogeneously distributed topic set yields a more stable and reliable ranking of model performance across different random seeds and data splits [6].
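The split and pairing logic implied by the steps above can be sketched as follows, assuming each document is a record with an identifier, an author, and a topic label. The field names, helper functions, and toy records are hypothetical. The point is only to show a topic-disjoint split and verification pairs that always cross topics.

```python
from itertools import combinations


def topic_disjoint_split(docs, test_topics):
    """Split documents so that no topic appears in both train and test."""
    test_topics = set(test_topics)
    train = [d for d in docs if d["topic"] not in test_topics]
    test = [d for d in docs if d["topic"] in test_topics]
    return train, test


def cross_topic_pairs(docs):
    """Build (id_a, id_b, label) pairs from documents on different topics.

    label is 1 for same-author pairs and 0 for different-author pairs.
    """
    pairs = []
    for a, b in combinations(docs, 2):
        if a["topic"] != b["topic"]:
            pairs.append((a["id"], b["id"], int(a["author"] == b["author"])))
    return pairs


# Toy corpus (illustrative records only).
docs = [
    {"id": 1, "author": "A", "topic": "t1"},
    {"id": 2, "author": "A", "topic": "t2"},
    {"id": 3, "author": "B", "topic": "t1"},
    {"id": 4, "author": "B", "topic": "t3"},
    {"id": 5, "author": "A", "topic": "t3"},
    {"id": 6, "author": "B", "topic": "t2"},
]

train, test = topic_disjoint_split(docs, test_topics={"t2", "t3"})
leaked = {d["topic"] for d in train} & {d["topic"] for d in test}
print("leaked topics:", leaked)
print(cross_topic_pairs(test))
```

Checking that the set of leaked topics is empty only guards against exact label overlap. Topics that are semantically close but labelled differently can still leak, which is the gap the heterogeneity-informed selection is meant to narrow.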

The following workflow diagram illustrates the HITS protocol:

Protocol 2: Quantifying Correlation and Diversity Shifts

This protocol provides a framework for systematically investigating the nuanced impacts of different types of dataset shifts, particularly the interplay between correlation and diversity shifts [17].

Objective: To analyze how varying intensities of correlation and diversity shifts impact model performance and reliance on spurious features.

Application: General model robustness evaluation, especially in healthcare and biased imaging datasets.

Methodology:

- Dataset Generation with Controlled Bias: Start with a base dataset (e.g., CelebA [18] or a synthetic dataset like Waterbirds [17]). Introduce a known, controlled spurious correlation (e.g., between a background feature and a class label) at varying intensity levels (e.g., from low 55% to high 95% correlation).

- Define Multiple Test Sets: Create a battery of test sets to probe model behavior under different conditions:

  - Same-Source (In-Distribution): Test set shares the same data distribution as the training set.

  - Diversity-Shifted: Test set contains new, unseen variations of the core classes (e.g., skin lesions on dark skin when trained mostly on light skin [17]).

  - Correlation-Shifted (No Shortcuts): Test set breaks the spurious correlation present in training (e.g., "waterbirds" on land backgrounds).

- Model Training and Evaluation: Train models on the biased training sets and evaluate comprehensively across all test sets. Monitor not only overall accuracy but also performance disaggregated by subgroups affected by the spurious correlation.

- Internal Bias Analysis: Utilize advanced metrics like Attention-IoU (Intersection over Union) [18] to analyze the model's internal attention maps. This reveals whether the model is focusing on the core features of interest (e.g., a bird's beak) or the spurious features (e.g., the background water).
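The controlled-bias and disaggregated-evaluation steps can be illustrated with a small synthetic sketch. It assumes each example is reduced to a class label plus one binary spurious attribute (a stand-in for "background"), and it uses a deliberately naive predictor that looks only at that attribute. All names and settings here are hypothetical and are not taken from the cited studies.

```python
import random


def make_biased_dataset(n, correlation, seed=0):
    """Generate n examples whose spurious attribute matches the label with the given probability."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        background = label if rng.random() < correlation else 1 - label
        data.append({"label": label, "background": background})
    return data


def subgroup_accuracy(examples, predict):
    """Accuracy disaggregated by (label, background) subgroup."""
    tallies = {}
    for ex in examples:
        key = (ex["label"], ex["background"])
        correct, total = tallies.get(key, (0, 0))
        tallies[key] = (correct + int(predict(ex) == ex["label"]), total + 1)
    return {key: correct / total for key, (correct, total) in tallies.items()}


def shortcut_model(example):
    """A predictor that relies entirely on the spurious attribute."""
    return example["background"]


train_like = make_biased_dataset(1000, correlation=0.95)   # high-intensity bias
shifted = make_biased_dataset(1000, correlation=0.0)        # correlation fully reversed

overall = sum(shortcut_model(ex) == ex["label"] for ex in train_like) / len(train_like)
print(f"in-distribution accuracy of the shortcut: {overall:.2f}")
print("per-subgroup accuracy:", subgroup_accuracy(train_like, shortcut_model))
print("accuracy under correlation shift:",
      sum(shortcut_model(ex) == ex["label"] for ex in shifted) / len(shifted))
```

The shortcut predictor looks strong on the biased data but is always wrong on the subgroups where label and background disagree. Overall accuracy hides that failure, and the disaggregated report exposes it.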

Table 1: Taxonomy of Dataset Shifts and Their Characteristics

| Type of Shift | Definition | Primary Manifestation | Common Evaluation Protocol |
|---|---|---|---|
| Prior Probability Shift [20] [21] | Change in the distribution of the class labels, P(Y). | Prevalence of classes differs between training and test sets. | Artificial Prevalence Protocol (APP) [20]. |
| Covariate Shift [20] [21] | Change in the distribution of the input features, P(X). | Data distribution (features) differs between training and test sets. | Testing on data from a different domain or population. |
| Concept Shift [20] [21] | Change in the relationship between inputs and outputs, P(Y\|X). | The underlying concept or mapping from X to Y changes. | Evaluation over time or in non-stationary environments (e.g., pre/post financial crisis). |
| Internal Covariate Shift [21] | Change in the distribution of internal network activations. | Input distribution to hidden layers changes during training, slowing learning. | Use of Batch Normalization layers to stabilize distributions. |

The Scientist's Toolkit: Key Research Reagents and Materials

For researchers developing and evaluating models against topic shift and dataset bias, a specific set of "research reagents" is essential.

Table 2: Essential Research Reagents for Bias and Shift Analysis

| Reagent / Resource | Type | Primary Function | Example / Reference |
|---|---|---|---|
| RAVEN Benchmark | Dataset & Benchmark | Provides a controlled environment for evaluating AV models' robustness to topic leakage, free from topic shortcuts. | [6] [7] |
| CelebA Dataset | Dataset | A real-world, biased image dataset used to study spurious correlations (e.g., accessories correlated with gender). | [18] |
| Waterbirds Dataset | Dataset | A synthetic dataset where birds are artificially placed on land/water backgrounds, creating a known spurious correlation. | [17] [18] |
| Attention-IoU Metric | Metric & Tool | Uses model attention maps to quantify which image features a model uses for prediction, revealing internal bias. | [18] |
| AI Fairness 360 (AIF360) | Software Toolkit | An open-source library containing metrics and algorithms to check and mitigate bias in datasets and ML models. | [19] |
| Fairlearn | Software Toolkit | An open-source project for assessing and improving fairness of AI systems. | [19] |

Visualization of Model Reliance on Spurious Features

The following diagram illustrates the core problem of spurious feature reliance and how different types of shifts can intervene, based on findings from Bissoto et al. [17]:

Addressing topic shift and dataset bias is not a single-step process but requires a rigorous, protocol-driven approach integrated throughout the machine learning lifecycle. The experimental frameworks of HITS and controlled shift analysis are critical for moving beyond misleading in-distribution metrics and building models that are truly robust in the real world. Key findings indicate that even small, often overlooked shifts can be critically damaging [17], and that diversity shift can, in some cases, attenuate a model's reliance on spurious correlations [17]. Future work must focus on developing more realistic and comprehensive benchmarks, integrating bias detection and mitigation tools like AIF360 [19] and Attention-IoU [18] into standard development workflows, and establishing rigorous reporting standards akin to SPIRIT [22] for model transparency. For authorship verification specifically, the RAVEN benchmark and the HITS protocol provide a necessary foundation for developing the next generation of topic-invariant stylometric models.

The integrity of scientific publications and clinical documentation is foundational to progress in biomedical research, ensuring that findings are reliable, reproducible, and trustworthy. Authorship verification is a critical component of this integrity, serving to authenticate the provenance of scientific texts and protect intellectual property [5]. Within the context of a broader thesis on cross-topic authorship verification, this protocol explores the application of advanced natural language processing (NLP) models to discern an author's unique stylistic signature, irrespective of the document's topic. This is particularly vital for detecting plagiarism, confirming authorship in multi-contributor papers, and safeguarding the authenticity of clinical trial documentation [5] [6]. The following sections provide a detailed application note, presenting a standardized experimental protocol for robust authorship verification, complete with data presentation, workflow visualizations, and a catalogue of essential research reagents.

Background and Significance

Authorship verification (AV) is defined as the task of determining whether two texts were written by the same author [5] [6]. In biomedical research, where collaboration is the norm and the stakes for accuracy are high, robust AV systems are essential for several reasons. They help prevent fraudulent claims of authorship, ensure proper credit is assigned, and protect the chain of custody for data and findings in clinical documentation.

A significant challenge in this domain is topic leakage, where an AV model makes predictions based on shared subject matter between texts rather than on genuine stylistic cues unique to an author [6]. This confounds the evaluation of a model's true capability to identify writing style. To address this, recent research emphasizes cross-topic evaluation setups, which deliberately use texts on different topics to train and test models, ensuring they learn stylistic features rather than topic-based shortcuts [6] [23]. The integration of deep learning models that combine semantic features (meaning and content) with stylistic features (sentence length, punctuation, word frequency) has been shown to significantly improve model accuracy and robustness in real-world, stylistically diverse datasets [5].

The evaluation of authorship verification models relies on several key performance metrics. The following table summarizes these common metrics and the impact of different feature types on model performance, providing a basis for comparing experimental results.

Table 1: Key Performance Metrics for Authorship Verification Models

| Metric | Description | Interpretation in AV Context |

|---|---|---|

| Accuracy | The proportion of correct predictions (same author/different author) out of all predictions. | Provides a general measure of model effectiveness, but can be misleading on imbalanced datasets [5]. |

| Macro-averaged F1-Score | The harmonic mean of precision and recall, averaged across all classes (same/different author). | A robust metric for imbalanced datasets, as it treats both classes equally and is less sensitive to class distribution [23]. |

| Model Ranking Stability | The consistency of a model's performance ranking across different evaluation splits or random seeds. | Highlights a model's reliability; improved by evaluation methods like HITS that mitigate topic leakage [6]. |
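
To make the contrast between accuracy and macro-averaged F1 concrete, the following minimal sketch computes both on a small, made-up set of imbalanced labels. The function names and the toy labels are illustrative assumptions, not part of the cited studies; in practice a library routine such as scikit-learn's `f1_score` with `average="macro"` would normally be used.

```python
def accuracy(y_true, y_pred):
    """Fraction of pairs whose predicted label matches the gold label."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def f1_for_class(y_true, y_pred, cls):
    """F1 for one class, treating `cls` as the positive label."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred, classes=(0, 1)):
    """Unweighted mean of the per-class F1 scores."""
    return sum(f1_for_class(y_true, y_pred, c) for c in classes) / len(classes)


# Toy, made-up labels: 1 = same author, 0 = different author (8:2 imbalance).
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
# A degenerate model that always predicts "different author".
y_pred = [0] * 10

acc = accuracy(y_true, y_pred)
mf1 = macro_f1(y_true, y_pred)
print(f"accuracy={acc:.3f} macro_f1={mf1:.3f}")
```

On this toy input the always-negative model looks acceptable by accuracy but scores poorly on macro-F1, because the same-author class contributes an F1 of zero.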

Table 2: Impact of Feature Types on Authorship Verification Model Performance

| Feature Category | Examples | Contribution to Model Performance |

|---|---|---|

| Semantic Features | RoBERTa embeddings, contextual word meanings [5]. | Captures the underlying meaning and content of the text. Essential for deep understanding but susceptible to topic bias if used alone. |

| Stylistic Features | Sentence length, word frequency, punctuation usage [5]. | Captures an author's unique writing habits that are largely independent of topic. Crucial for cross-topic robustness. |

| Combined Features | Interaction of semantic and stylistic features in a single model [5]. | Consistently improves model performance and generalizability by leveraging the strengths of both feature types. |

Experimental Protocol for Cross-Topic Authorship Verification

Protocol Title

Validation of a Combined Semantic and Stylistic Feature Model for Robust, Cross-Topic Authorship Verification in Biomedical Text.

Author Information

[Affiliation: Department, Research Institution, City, Country for each author]

This protocol details a methodology for applying and evaluating deep learning models for authorship verification (AV) in a cross-topic setting, a critical challenge for ensuring integrity in biomedical publications. It combines RoBERTa-based semantic embeddings with hand-crafted stylistic features to enhance model robustness against topic shifts. The protocol is designed to minimize the effects of topic leakage, providing a more reliable assessment of true writing style and offering a tool for authenticating scientific and clinical documents.

Key Features

- Cross-Topic Robustness: Employs evaluation splits designed to minimize topic overlap between training and testing data.

- Hybrid Feature Approach: Integrates state-of-the-art semantic understanding with fundamental stylistic features for improved accuracy.

- Bias Mitigation: Utilizes the HITS (Heterogeneity-Informed Topic Sampling) method to create evaluation datasets that ensure stable model rankings [6].

- Real-World Applicability: Tested on challenging, imbalanced, and stylistically diverse datasets reflective of actual biomedical literature.

Keywords

Authorship Verification, Cross-Topic Evaluation, RoBERTa, Style Features, Topic Leakage, Biomedical Text Analysis.

A graphical overview of the experimental workflow is provided in Section 4.13.

Background

Authorship verification is a key task in Natural Language Processing (NLP), essential for applications like plagiarism detection and content authentication in biomedical research. Conventional AV evaluations often suffer from topic leakage, where models exploit topical similarities rather than learning genuine stylistic markers, leading to inflated and misleading performance metrics [6]. This protocol is situated within a thesis focused on developing experimental setups that isolate and measure a model's ability to verify authorship across different topics, thereby ensuring that the systems are learning authorial style [23]. The methodology described herein is adapted from recent work that demonstrates the efficacy of combining semantic and stylistic features in deep learning architectures such as Feature Interaction Networks, Pairwise Concatenation Networks, and Siamese Networks [5].

Materials and Reagents

Table 3: Research Reagent Solutions for Authorship Verification Experiments

| Item | Function / Application | Specifications / Notes |

|---|---|---|

| PAN AV Dataset | A benchmark dataset for authorship verification tasks. | Provides text pairs with same-author/different-author labels. Ensure usage of a cross-topic split [5] [23]. |

| RAVEN Benchmark | A specialized benchmark for testing AV model robustness against topic shortcuts [6]. | Used for the final evaluation to assess real-world performance. |

| RoBERTa Model | A pre-trained transformer model for generating semantic text embeddings. | Captures deep contextual semantic information from text inputs [5]. |

| Python Programming Language | The primary language for implementing and executing the AV models. | Version 3.8 or above. Essential for scripting the analysis pipeline. |

| Relevant Software Libraries | Provides pre-built functions for machine learning and NLP. | Libraries include PyTorch or TensorFlow, Transformers, Scikit-learn, NLTK, Pandas. |

Equipment

- Computer Workstation: High-performance computing workstation with a multi-core CPU (e.g., Intel Xeon or AMD Ryzen 7/9), minimum 32 GB RAM, and a GPU (e.g., NVIDIA RTX 3080 or higher with 12+ GB VRAM) to accelerate deep learning model training and inference.

- Storage: Fast Solid State Drive (SSD) with at least 1TB of storage for housing datasets, model files, and experiment logs.

Software and Datasets

- Operating System: Ubuntu 20.04 LTS or Windows 10/11.

- Python Libraries: PyTorch (v1.12+), Transformers (v4.20+), Scikit-learn (v1.1+), NLTK (v3.7), Pandas (v1.5), NumPy (v1.22).

- Datasets: PAN Authorship Verification dataset [5], RAVEN benchmark [6].

Procedure

CAUTION: Always ensure data privacy and ethical guidelines are followed when handling text data, especially clinical documents.

Data Acquisition and Preparation: a. Download the PAN AV dataset and the RAVEN benchmark. b. CRITICAL: Apply the HITS sampling method to create a heterogeneously distributed topic set for evaluation to mitigate topic leakage [6]. This step is crucial for a valid cross-topic assessment. c. Partition the data into training, validation, and test sets, ensuring no author or topic overlaps between the splits unless intentionally designed for a specific cross-validation experiment. d. Preprocess the text: lowercasing, removing extraneous whitespace, and tokenization.

Feature Extraction: a. Semantic Features: Use the pre-trained roberta-base model from the Hugging Face Transformers library to generate contextual embeddings for each text in the pair. Average the token embeddings to create a fixed-length document vector [5]. b. Stylistic Features: For each text, extract a set of predefined stylistic features, including average sentence length, average word length, punctuation frequency (e.g., commas, semicolons), and function word frequency. c. PAUSE POINT: The extracted feature sets can be saved to disk for future runs to expedite the model training process.

Model Architecture and Training: a. Implement one of the proposed deep learning architectures (e.g., Feature Interaction Network) that takes both the semantic embedding vector and the stylistic feature vector as input [5]. b. The model should be designed to learn interactions between the two feature types. c. Train the model using the training set. Use the validation set for hyperparameter tuning and to monitor for overfitting. Employ a binary cross-entropy loss function and an optimizer like AdamW.

Model Evaluation: a. CRITICAL: Run the final evaluation on the held-out test set that was constructed using HITS sampling [6]. b. Calculate key performance metrics: Accuracy, Macro-averaged F1-Score, and observe Model Ranking Stability if multiple models are being compared. c. Benchmark performance against the RAVEN dataset to test for reliance on topic-specific features [6].
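
The stylistic features named in the Feature Extraction step can be sketched with the standard library alone. This is a minimal illustration under stated assumptions: the function name, the short function-word list, the regex-based sentence splitter, and the example sentence are all hypothetical simplifications, and a real pipeline would likely use a proper tokenizer such as NLTK.

```python
import re

# Small illustrative function-word list (an assumption; real studies use longer lists).
FUNCTION_WORDS = {"the", "a", "an", "of", "in", "on", "and", "or", "but", "to", "with", "that"}


def stylistic_features(text):
    """Return simple style measurements for one text as a dict."""
    # Naive sentence split on terminal punctuation; empty fragments are dropped.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    n_words = max(len(words), 1)  # guard against empty input
    return {
        "avg_sentence_len": len(words) / max(len(sentences), 1),
        "avg_word_len": sum(len(w) for w in words) / n_words,
        "comma_rate": text.count(",") / n_words,
        "semicolon_rate": text.count(";") / n_words,
        "function_word_rate": sum(w in FUNCTION_WORDS for w in words) / n_words,
    }


# Made-up example sentence pair, for illustration only.
features = stylistic_features(
    "The assay was repeated; results, however, varied. We report the mean."
)
for name, value in features.items():
    print(f"{name}: {value:.3f}")
```

Rates are expressed per word so that texts of different lengths remain comparable; other normalizations (for example per 1000 words) are equally plausible.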

Data Analysis

- Statistical Analysis: Perform significance testing (e.g., paired t-test) to determine if the performance improvement gained by adding stylistic features is statistically significant across multiple runs with different random seeds.

- Error Analysis: Manually inspect text pairs where the model made incorrect predictions. Categorize the errors to identify common failure modes (e.g., short texts, highly formulaic writing).

- Validation of Protocol: The protocol's robustness rests on two design choices: combining stylistic with semantic features, which prior studies report outperforms semantic features alone [5], and using the HITS method to obtain a stable and reliable evaluation [6].
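
The paired significance test mentioned under Statistical Analysis can be sketched as follows. The scores are made-up placeholders for per-seed macro-F1 values, and the function name is hypothetical. The sketch computes only the t statistic and degrees of freedom; a p-value would require the t distribution, which a routine such as `scipy.stats.ttest_rel` provides.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """Return (t, degrees_of_freedom) for paired samples, e.g. one score per seed."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (divides by n - 1)
    t = mean_diff / (sd_diff / math.sqrt(n))
    return t, n - 1


# Made-up macro-F1 scores over five random seeds, for illustration only.
combined = [0.81, 0.79, 0.83, 0.80, 0.82]       # semantic + stylistic features
semantic_only = [0.78, 0.77, 0.80, 0.78, 0.79]  # semantic features alone

t_stat, dof = paired_t_statistic(combined, semantic_only)
print(f"t = {t_stat:.2f} with {dof} degrees of freedom")
```

The test is paired because both configurations are evaluated under the same seeds and splits, so the per-seed differences are the quantity of interest.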

Workflow and Logical Diagrams

Diagram 1: AV Model Workflow

Diagram 2: Topic Leakage Solution

Building Robust Verification Systems: Architectures, Feature Engineering, and Protocol Design

The proliferation of large language models (LLMs) has revolutionized text generation but also introduced significant challenges in authorship verification (AV), particularly in identifying the sources of AI-generated text and countering misinformation [24]. Conventional AV methods often rely on a single feature type, which makes them susceptible to cross-domain performance degradation when topic-based features overshadow genuine authorship signatures. Advanced feature extraction, which combines dense, contextual embeddings from pre-trained models like RoBERTa with hand-crafted stylometric features, offers a strong remedy. This approach is central to cross-topic authorship verification protocols because it lets models capture both deep semantic representations and surface-level stylistic patterns that are largely topic-agnostic [14]. Integrating these feature types yields a more robust and generalizable representation of an author's unique writing signature, which is essential for applications ranging from identity verification and plagiarism detection to forensic analysis of AI-generated content [24] [14].

Theoretical Foundation

RoBERTa Embeddings

RoBERTa (Robustly Optimized BERT Pre-training Approach) is a transformer-based model that provides dense, contextualized embeddings for text. Unlike static word embeddings, RoBERTa generates dynamic representations that adapt to the surrounding context of each word in a sentence. This allows the model to capture nuanced semantic meanings and syntactic relationships that are characteristic of an author's writing style at a deep, linguistic level. In the context of neural authorship attribution, the embeddings from RoBERTa's final layers serve as a high-dimensional feature space where texts from the same LLM are hypothesized to cluster together [24].

Stylometric Features

Stylometric features are quantitative measures of an author's writing style, traditionally used in authorship analysis. They can be categorized into several groups:

- Lexical Features: These include vocabulary richness, word length distribution, and character-level n-grams. They capture an author's choice of words and their patterns of use.

- Syntactic Features: These features describe the grammatical structure of sentences, including part-of-speech (POS) tag frequencies, usage of function words, sentence length variability, and the prevalence of active versus passive voice [24].

- Structural Features: These encompass document-level characteristics such as average paragraph length, punctuation frequency, and capitalization patterns [24].

Complementary Nature

RoBERTa embeddings and stylometric features offer complementary strengths. RoBERTa excels at modeling complex, contextual linguistic phenomena, while stylometrics provide interpretable, surface-level markers of style. Their combination mitigates the risk of models latching onto topic-specific artifacts, thereby enhancing cross-topic robustness. Research has shown that the fusion of these features creates a writing signature vector that is both comprehensive and distinctive, improving the ability to differentiate between authors and AI models, including distinguishing between proprietary (e.g., GPT-3.5, GPT-4) and open-source LLMs (e.g., Llama 1, GPT-NeoX) [24].

Experimental Protocols

Dataset Generation and Curation

A high-quality, diverse dataset is foundational for training and evaluating a robust authorship verification model. The following protocol outlines the steps for dataset creation, drawing from established methodologies [24] [14].

- Step 1: Source Selection. Identify and select a wide array of text sources. The Million Authors Corpus (MAC) is an exemplary resource, containing 60.08 million textual chunks from 1.29 million Wikipedia authors across dozens of languages, ensuring inherent cross-lingual and cross-domain variability [14].

- Step 2: LLM Text Generation. To incorporate AI-generated text, use multiple LLMs (both proprietary like GPT-3.5/GPT-4 and open-source like Llama 1/2 and GPT-NeoX). Generate text by prompting these models with human-authored article headlines to produce news-style articles, controlling for domain while eliciting model-specific stylistic signatures [24].

- Step 3: Data Preprocessing and Balancing. Clean the collected texts by removing metadata and standardizing formatting. For classification tasks, ensure the dataset is balanced by sampling an equal number of text samples per author or per source LLM to prevent model bias toward majority classes [24].

Feature Extraction Methodology

This protocol details the parallel extraction of RoBERTa embeddings and stylometric features.

Step 1: Stylometric Feature Extraction.

- Lexical: Calculate type-token ratio (TTR), hapax legomena, and frequency of character n-grams (e.g., n=3,4).

- Syntactic: Use a POS tagger to compute the normalized frequency of nouns, verbs, adjectives, prepositions, and adverbs. Calculate average sentence length and standard deviation.

- Structural: Determine average paragraph length (in words), frequency of commas, semicolons, and exclamation marks per 1000 words.

- Normalization: Normalize all extracted features to a common scale (e.g., Z-score normalization) to form the final stylometry feature vector [24].
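
A minimal sketch of the lexical measurements and the Z-score normalization in Step 1, using only the standard library. The function names are hypothetical, whitespace tokenization is a simplifying assumption, and the hapax count is reported as a ratio to token count (one of several common conventions).

```python
import statistics
from collections import Counter


def lexical_features(text, n=3):
    """Type-token ratio, hapax ratio, and character n-gram counts for one text."""
    tokens = text.lower().split()
    n_tokens = max(len(tokens), 1)  # guard against empty input
    counts = Counter(tokens)
    hapax = sum(1 for c in counts.values() if c == 1)  # words used exactly once
    ngrams = Counter(text[i:i + n] for i in range(len(text) - n + 1))
    return {
        "ttr": len(counts) / n_tokens,
        "hapax_ratio": hapax / n_tokens,
        "char_ngrams": ngrams,
    }


def z_score_columns(rows):
    """Z-score each feature column across texts; constant columns map to 0."""
    normalized_columns = []
    for col in zip(*rows):
        mean = statistics.mean(col)
        sd = statistics.pstdev(col)  # population standard deviation
        normalized_columns.append([(v - mean) / sd if sd > 0 else 0.0 for v in col])
    return [list(row) for row in zip(*normalized_columns)]


feats = lexical_features("the cat saw the dog")
print(feats["ttr"], feats["hapax_ratio"], feats["char_ngrams"]["the"])

# Two texts, two numeric features each (toy values).
print(z_score_columns([[1.0, 5.0], [3.0, 5.0]]))
```

Normalization statistics should be estimated on the training split only and then reused for validation and test texts, otherwise information leaks across splits.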

Step 2: RoBERTa Embedding Extraction.

- Model Setup: Utilize a pre-trained RoBERTa model (e.g., roberta-base).

- Input Processing: Tokenize the input text using the RoBERTa tokenizer. For each text sample, pass the tokenized input through the model.

- Embedding Pooling: Extract the hidden state from the final layer. To obtain a fixed-length representation for the entire text, apply a pooling strategy, such as mean pooling over all token embeddings, to create a dense contextual embedding vector [24].
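
The pooling step can be illustrated without loading a model. The sketch below applies mean pooling to toy per-token vectors while skipping padding positions; the function name and the toy values are assumptions. In a real pipeline the vectors would be the final-layer hidden states returned by the RoBERTa model (768 dimensions for roberta-base), and the mask would be the tokenizer's attention mask.

```python
def mean_pool(token_embeddings, attention_mask):
    """Average token vectors, skipping padding positions (mask value 0)."""
    dim = len(token_embeddings[0])
    totals = [0.0] * dim
    kept = 0
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            kept += 1
            for j, value in enumerate(vector):
                totals[j] += value
    # max(kept, 1) avoids division by zero for an all-padding input.
    return [t / max(kept, 1) for t in totals]


# Toy 2-dimensional "hidden states" for four positions; the last one is padding.
hidden = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [9.0, 9.0]]
mask = [1, 1, 1, 0]

doc_vector = mean_pool(hidden, mask)
print(doc_vector)
```

Ignoring padded positions matters when texts are batched to a common length; otherwise short texts would be pulled toward the padding vector.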

Step 3: Feature Fusion.

- Concatenation: Combine the normalized stylometry feature vector and the pooled RoBERTa embedding vector into a single, unified feature vector via concatenation. This fused vector represents the comprehensive "writing signature" [24].

Model Training and Evaluation

This protocol covers the training and systematic evaluation of the authorship verification model.

Step 1: Model Architecture Selection.

- Option 1 (Traditional ML): Use a gradient-boosting framework like XGBoost, which can effectively handle the fused feature vector (XGBstylo) or a bag-of-words baseline (XGBbow) [24].

- Option 2 (Neural): Employ a neural classifier that takes the fused embeddings as input. Alternatively, fine-tune the RoBERTa model end-to-end, using the stylometric features as auxiliary inputs in a later layer.

Step 2: Experimental Design for Cross-Topic Verification.

- Train-Test Split: Partition the dataset into training and testing sets, ensuring that texts from the same author (or source LLM) are present in both sets but with distinct topics. This is crucial for forcing the model to learn topic-agnostic features.

- Cross-Validation: Perform k-fold cross-validation, where folds are stratified by author/LLM but varied by topic, to obtain reliable performance estimates.
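
One way to realize the split and the topic-varied folds in Step 2 is sketched below. The record layout `(author, topic, text)`, the function names, and the toy documents are assumptions made for illustration; the sketch simply holds out whole topics and keeps only authors that appear on both sides.

```python
def cross_topic_split(documents, test_topics):
    """Split (author, topic, text) records so test topics never appear in training.

    Authors that do not occur on both sides are dropped, since the design asks
    for the same authors in both sets but with distinct topics.
    """
    train = [d for d in documents if d[1] not in test_topics]
    test = [d for d in documents if d[1] in test_topics]
    shared = {d[0] for d in train} & {d[0] for d in test}
    train = [d for d in train if d[0] in shared]
    test = [d for d in test if d[0] in shared]
    return train, test


def topic_folds(topics, k):
    """Partition the sorted topic list into k disjoint held-out groups."""
    ordered = sorted(topics)
    return [set(ordered[i::k]) for i in range(k)]


# Toy corpus with hypothetical authors and topics.
docs = [
    ("author_a", "oncology", "text 1"),
    ("author_a", "cardiology", "text 2"),
    ("author_b", "oncology", "text 3"),
    ("author_b", "genomics", "text 4"),
    ("author_c", "cardiology", "text 5"),
]

train_docs, test_docs = cross_topic_split(docs, {"oncology"})
print(len(train_docs), len(test_docs))

for held_out in topic_folds({d[1] for d in docs}, k=3):
    fold_train, fold_test = cross_topic_split(docs, held_out)
    print(sorted(held_out), len(fold_train), len(fold_test))
```

Holding out topics by label only removes explicit overlap; it does not by itself address residual topical similarity between different labels.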

Step 3: Model Interpretation.

- SHAP Analysis: Apply SHapley Additive exPlanations (SHAP) to the trained model (e.g., XGBoost) to identify which stylometric features (e.g., specific POS tags, lexical diversity) are most influential in distinguishing between authors or LLM categories [24].

Table 1: Key Stylometric Features for Differentiating Proprietary and Open-Source LLMs (based on SHAP analysis)

| Feature Category | Specific Feature | Importance for Differentiation |

|---|---|---|

| Lexical | Lexical Diversity | High |

| Syntactic | Preposition Frequency | High |

| Syntactic | Adjective Frequency | High |

| Syntactic | Noun Frequency | High |

| Structural | Paragraph Length | Medium |

Visualization of Workflows

Feature Extraction and Fusion Process

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools for Authorship Verification

| Item Name | Type/Function | Application in Protocol |

|---|---|---|

| Million Authors Corpus (MAC) | Dataset | Provides a massive, cross-lingual, and cross-domain benchmark for evaluating model generalizability [14]. |

| RoBERTa (base model) | Pre-trained Language Model | Serves as the core engine for generating contextualized, deep semantic embeddings from text inputs [24]. |

| XGBoost | Machine Learning Classifier | A robust gradient boosting framework used for classification based on fused or individual feature sets [24]. |

| SHAP (SHapley Additive exPlanations) | Model Interpretation Library | Provides post-hoc explainability, identifying the most influential stylometric features for model decisions [24]. |

| t-SNE | Dimensionality Reduction Algorithm | Used for visualizing the separation of different author/LLM classes in high-dimensional embedding spaces [24]. |

Results and Data Presentation

The efficacy of the fused feature approach is demonstrated through quantitative results from controlled experiments. The following tables summarize key performance metrics.

Table 3: Performance Comparison of Different Feature Configurations in Neural Authorship Attribution

| Model / Feature Set | Proprietary vs. Open-Source Accuracy | Intra-Proprietary Accuracy | Intra-Open-Source Accuracy |

|---|---|---|---|

| XGBoost (Stylometry only) | 89.2% | 85.7% | 78.3% |

| RoBERTa (Embeddings only) | 91.5% | 88.1% | 80.9% |

| Fusion (RoBERTa + Stylometry) | 95.8% | 92.4% | 85.6% |

Table 4: Impact of Llama 2 on Open-Source Category Classification Performance

| Scenario | Open-Source Classification Accuracy | Notes |

|---|---|---|

| Open-Source (Excluding Llama 2) | 88.1% | Clearer separation between older open-source models. |

| Open-Source (Including Llama 2) | 80.7% | Performance drop of 7.4 percentage points, indicating Llama 2's style is distinct and closer to proprietary models [24]. |

In the domain of authorship verification (AV), which aims to determine whether a pair of texts is written by the same author, robust feature learning is paramount. The core challenge lies in learning a representation space where feature embeddings from the same author are mapped closely together, while those from different authors are pushed apart. This document details application notes and experimental protocols for three powerful deep learning architectures adept at this task: Siamese Networks, Feature Interaction Networks, and Pairwise Concatenation Networks. The content is framed within cross-topic authorship verification research, which emphasizes model robustness against topic shifts and minimizes reliance on topic-specific features [6].

Architectural Definitions and Application Rationale

Siamese Neural Networks

A Siamese Neural Network is a specialized class of neural network that contains two or more identical sub-networks with shared weights, working in tandem on two different input vectors to compute comparable output vectors [25] [26]. Because the weights are shared, two highly similar input samples cannot be mapped to very different locations in the feature space. The network is trained using a contrastive or triplet loss function, which minimizes the distance between feature embeddings from the same author (positive pairs) and maximizes the distance between embeddings from different authors (negative pairs) [25] [26]. This architecture is particularly suitable for authorship verification, a task often framed as a similarity learning problem in which the model must learn to verify whether a pair of text samples belongs to the same author.
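
As a rough illustration of the contrastive objective, the sketch below evaluates a common margin-based form of the loss on a single pair of embeddings. The function names, the default margin, and the toy vectors are assumptions; some formulations also include a factor of one half, which is omitted here.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def contrastive_loss(emb_1, emb_2, same_author, margin=1.0):
    """Margin-based contrastive loss for one pair of embeddings.

    Same-author pairs are penalized by their squared distance; different-author
    pairs are penalized only while they are closer than `margin`.
    """
    d = euclidean(emb_1, emb_2)
    if same_author:
        return d ** 2
    return max(0.0, margin - d) ** 2


# Toy embeddings as both sub-networks (with shared weights) might produce them.
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], same_author=True))   # far apart, same author
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], same_author=False))  # far apart, different authors
print(contrastive_loss([0.0, 0.0], [0.0, 0.5], same_author=False))  # too close, different authors
```

The margin prevents the loss from rewarding ever larger distances between different-author pairs once they are already well separated.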

Feature Interaction Networks

Feature interaction refers to the phenomenon where the combination of two or more features produces a non-additive effect on the model's prediction. In the context of AV, different writing style markers (e.g., lexical, syntactic, and structural features) can interact in complex ways that are highly indicative of a unique authorial style. Table 1 summarizes key feature interaction types in AV. Modeling these interactions explicitly can allow the model to capture the complex, compositional nature of an author's writing style more effectively than considering features in isolation.

Table 1: Types of Feature Interactions in Authorship Verification

| Interaction Type | Description | AV Application Example |

|---|---|---|

| Statistical Pairwise | Quantifiable, non-additive effect between two features. | Interaction strength measured via H-statistics [27]. |

| Spatio-Temporal | Correlation between spatial and temporal signal features. | In EEG, integrates spatial distribution & temporal dynamics [28]. |

| Logical/Sequential | Interactions governed by logical or sequential constraints. | Analyzed using formal methods and logic [29]. |

Pairwise Concatenation Networks

Pairwise Concatenation is a fundamental yet effective method for combining features from two input samples. This operation involves concatenating the feature vectors (or embeddings) of the two text samples in a pair, typically after they have been processed by a base network. The resulting combined vector is then passed through one or more fully connected layers to learn the non-linear relationships between the features of the two samples, ultimately leading to a binary (same/not-same) classification. While simpler than a Siamese architecture with a specialized loss, it allows the model to directly learn discriminative patterns from the juxtaposed feature sets.

Quantitative Performance Comparison

The performance of deep learning architectures is quantitatively evaluated on standard benchmarks. The following table summarizes key metrics, providing a basis for comparison and selection.

Table 2: Performance Comparison of Deep Learning Architectures for AV and Related Tasks

| Architecture | Dataset | Key Metric(s) | Performance | Key Feature |

|---|---|---|---|---|

| AVSiam (Siamese ViT) [30] | AudioSet-20K, VGGSound | Audio-visual Retrieval | Competitive or superior to state-of-the-art | Single shared backbone for audio & visual inputs. |

| Siamese Network (EEG) [28] | BCI IV-2a | Classification Accuracy | Better than baseline | High discriminative feature learning for cross-subject tasks. |

| InHRecon (Feature Interaction) [27] | Multiple Feature Sets | Model Improvement (vs. baseline) | Significant improvement | Interaction-aware hierarchical reinforced reconstruction. |

| AVA-Net (Artery-Vein) [31] | OCTA Images (DR) | Arterial-Venous PID Ratio (AV-PIDR) | Significant differences among control, NoDR, mild DR | Most sensitive feature for early disease detection. |

Experimental Protocols

Protocol 1: Siamese Network for Textual Similarity

This protocol outlines the steps for training a Siamese network for authorship verification using a triplet loss function.

Procedure:

- Data Preparation: Compile a dataset of text documents with author labels. From this, generate triplets for training: an anchor text (A), a positive text (P) from the same author as A, and a negative text (N) from a different author.

- Input Encoding: Convert all text samples into a numerical representation, such as word embeddings or TF-IDF vectors.

- Sub-network Forward Pass: Process the anchor, positive, and negative samples through the identical sub-networks (e.g., a multi-layer perceptron or a recurrent neural network) with shared weights to obtain their respective feature embeddings: ( f(A) ), ( f(P) ), and ( f(N) ).

- Loss Calculation: Compute the triplet loss using the formula: ( Loss_{\text{Triplet}} = \sum_{i}^{N} \left[ \|f(A_i) - f(P_i)\|_2^2 - \|f(A_i) - f(N_i)\|_2^2 + \lambda \right]_+ ) where ( [x]_+ = \max(0, x) ), so that triplets already separated by the margin contribute no loss, and ( \lambda ) is a margin that enforces a minimum distance between positive and negative pairs [26].

- Backpropagation & Optimization: Update the weights of the shared sub-network using backpropagation and an optimizer like Adam to minimize the triplet loss.

- Inference: For a new text pair, compute their embeddings and calculate their Euclidean or cosine distance. Classify as "same author" if the distance is below a learned threshold.
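
The loss calculation and the inference step of this procedure can be sketched on precomputed embeddings. The function names, margin, threshold, and toy vectors are illustrative assumptions; the sketch clips each triplet term at zero, as in the standard triplet formulation, and leaves out the sub-network and the optimizer.

```python
def squared_l2(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def triplet_loss(anchors, positives, negatives, margin=1.0):
    """Sum of hinged triplet terms over a batch of (anchor, positive, negative)."""
    total = 0.0
    for a, p, n in zip(anchors, positives, negatives):
        total += max(0.0, squared_l2(a, p) - squared_l2(a, n) + margin)
    return total


def same_author(emb_1, emb_2, threshold):
    """Inference rule: same author if the Euclidean distance is below the threshold."""
    return squared_l2(emb_1, emb_2) ** 0.5 < threshold


# Toy embeddings: the first triplet is already well separated, the second is not.
anchors = [[0.0, 0.0], [0.0, 0.0]]
positives = [[0.0, 1.0], [3.0, 4.0]]
negatives = [[3.0, 4.0], [0.0, 1.0]]

print(triplet_loss(anchors, positives, negatives, margin=1.0))
print(same_author([0.0, 0.0], [3.0, 4.0], threshold=6.0))
```

In practice the decision threshold would be tuned on the validation set rather than fixed by hand.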

Protocol 2: Feature Interaction Modeling with Hierarchical Reinforcement

This protocol describes a method for automated feature space reconstruction that explicitly captures and leverages feature interactions, which can be adapted for AV.

Procedure:

- Problem Formulation: Define the task as learning an optimal and meaningful feature set ( \mathcal{F}^* ) that maximizes the performance ( V_A ) on the authorship verification task: ( \mathcal{F}^* = \arg\max_{\hat{\mathcal{F}}} V_A(\hat{\mathcal{F}}, y) ) [27].

- Agent Setup: Implement a hierarchical reinforcement learning structure with three agents:

- An Operation Agent that selects a mathematical operation (e.g., "Combine", "Multiply") from a predefined set ( \mathcal{O} ).

- Two Feature Agents that each select one existing feature from the feature pool.

- Feature Generation: At each step, apply the selected operation to the two selected features to generate a new feature.

- Interaction-Aware Reward: Quantify the strength of the interaction between the selected features using a statistical measure like H-statistics [27]. Reward the agents based on this interaction strength and the operational validity of the new feature.

- Iterative Reconstruction: Repeat the generation and selection process. The agents learn a policy to create an optimal, interpretable feature set that enhances the downstream AV classifier's performance.
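
The interaction-strength measurement in this procedure can be illustrated in isolation. The source names H-statistics without giving a formula, so the sketch below assumes Friedman and Popescu's pairwise form for a function of exactly two features: the squared gap between the joint partial dependence and the sum of the two single-feature partial dependences, divided by the squared joint partial dependence. The function name and the grid data are hypothetical, and the reinforcement-learning agents are not modeled.

```python
from statistics import mean


def h_squared(f, xs_j, xs_k):
    """Pairwise H^2 for a two-feature function f over paired observations."""
    # Partial dependence of each feature: average f over the other feature's values.
    pd_j = [mean([f(a, b) for b in xs_k]) for a in xs_j]
    pd_k = [mean([f(a, b) for a in xs_j]) for b in xs_k]
    # With only two features, the joint partial dependence is f itself.
    pd_jk = [f(a, b) for a, b in zip(xs_j, xs_k)]

    def center(values):
        m = mean(values)
        return [v - m for v in values]

    pd_j, pd_k, pd_jk = center(pd_j), center(pd_k), center(pd_jk)
    numerator = sum((jk - j - k) ** 2 for jk, j, k in zip(pd_jk, pd_j, pd_k))
    denominator = sum(jk ** 2 for jk in pd_jk)
    return numerator / denominator if denominator > 0 else 0.0


# Full 3x3 grid of toy feature values.
grid = [-1.0, 0.0, 1.0]
xs_j = [a for a in grid for _ in grid]
xs_k = [b for _ in grid for b in grid]

additive = h_squared(lambda a, b: a + b, xs_j, xs_k)        # no interaction
multiplicative = h_squared(lambda a, b: a * b, xs_j, xs_k)  # pure interaction
print(additive, multiplicative)
```

A value near 0 indicates that the two features act additively, while a value near 1 indicates that their joint effect is dominated by the interaction.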

Protocol 3: Pairwise Concatenation for Author Discrimination

This protocol provides a straightforward method for combining features from two text samples for direct classification.

Procedure:

- Feature Extraction: For each text in a pair, extract a comprehensive set of features (e.g., character n-grams, syntactic patterns, vocabulary richness indices).

- Vector Concatenation: For a text pair (Text₁, Text₂), let their feature vectors be ( V_1 ) and ( V_2 ). Create a combined feature vector ( V_{\text{pair}} = V_1 \oplus V_2 ), where ( \oplus ) denotes the concatenation operation.

- Classification Network: Feed the concatenated vector ( V_{\text{pair}} ) into a fully connected neural network.

- Output and Training: The final layer uses a sigmoid activation function to output a probability that the two texts are from the same author. Train the network end-to-end using binary cross-entropy loss.
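
The forward pass of this procedure is sketched below with hand-set, untrained weights, purely to show the data flow: concatenation, one hidden fully connected layer, a sigmoid output, and the binary cross-entropy for a single pair. All names, layer sizes, and parameter values are assumptions; an actual implementation would use a framework such as PyTorch and learn the weights by backpropagation.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(vector, weights, biases):
    """One fully connected layer; `weights` holds one row per output unit."""
    return [sum(w * x for w, x in zip(row, vector)) + b for row, b in zip(weights, biases)]


def relu(vector):
    return [max(0.0, v) for v in vector]


def predict_same_author(v1, v2, params):
    """Probability that the two texts share an author, from concatenated features."""
    v_pair = v1 + v2  # list concatenation of the two feature vectors
    hidden = relu(dense(v_pair, params["w_hidden"], params["b_hidden"]))
    logit = dense(hidden, params["w_out"], params["b_out"])[0]
    return sigmoid(logit)


def binary_cross_entropy(prob, label, eps=1e-12):
    """Loss for one pair; label is 1 for same author, 0 otherwise."""
    return -(label * math.log(prob + eps) + (1 - label) * math.log(1 - prob + eps))


# Hand-set, untrained parameters for 2-dimensional feature vectors (illustrative only).
params = {
    "w_hidden": [[1.0, 0.0, -1.0, 0.0], [0.0, 1.0, 0.0, -1.0]],
    "b_hidden": [0.0, 0.0],
    "w_out": [[-1.0, -1.0]],
    "b_out": [0.0],
}

prob = predict_same_author([1.0, 2.0], [1.0, 2.0], params)
loss = binary_cross_entropy(prob, label=1)
print(prob, loss)
```

Because plain concatenation is order-sensitive, a trained model may score (Text₁, Text₂) and (Text₂, Text₁) differently; presenting both orderings during training is one common way to reduce that asymmetry.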

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials

| Item Name | Function/Application |

|---|---|

| Transformer Models (e.g., BERT) | Serves as a foundational sub-network for generating contextualized text embeddings in Siamese or Pairwise architectures [30]. |

| H-Statistic | A statistical measure used to quantify the interaction strength between selected features during reinforced feature space reconstruction [27]. |

| Triplet Loss Function | A discriminative loss function that trains Siamese networks by pulling anchor and positive samples together while pushing anchor and negative samples apart [25] [26]. |

| Contrastive Loss Function | An alternative loss for Siamese networks that reduces the distance for positive pairs and increases it for negative pairs beyond a margin [26]. |

| Hierarchical Reinforcement Learning (HRL) Framework | A structure with cascading Markov Decision Processes to automate feature and operation selection for feature interaction modeling [27]. |

A foundational challenge in authorship verification (AV) is ensuring that models genuinely learn an author's unique writing style rather than relying on topic-specific vocabulary, which acts as a confounding variable. Conventional cross-topic evaluations aim to measure model robustness to topic shifts by assuming minimal topic overlap between training and test data. However, topic leakage—the residual presence of topic-related features in the test data—can lead to misleading performance and unstable model rankings, as models may exploit these subtle topic shortcuts rather than learning style-invariant features [6]. This Application Note details advanced protocols for designing experimental splits that effectively isolate writing style from topic bias, a critical requirement for developing robust AV models in scientific and pharmaceutical research, where verifying authorship can have significant implications for intellectual property and data integrity.

Core Concepts and Quantitative Evidence

The Problem of Topic Leakage

Topic leakage occurs when the evaluation data, despite an intended cross-topic split, contains residual topic information that creates an inadvertent shortcut for AV models. This compromises the validity of the evaluation because a model can achieve high performance by detecting topical similarities rather than stylistic consistencies [6]. The Heterogeneity-Informed Topic Sampling (HITS) method was developed to address this by constructing evaluation datasets with a heterogeneously distributed topic set, thereby reducing the effects of topic leakage and yielding more stable model rankings across different evaluation splits [6].

Comparative Analysis of Dataset Partitioning Strategies

The table below summarizes the characteristics of different dataset partitioning strategies, highlighting the advantages of the HITS method.

Table 1: Characteristics of Dataset Partitioning Strategies for Authorship Verification

| Partitioning Strategy | Core Principle | Key Advantage | Primary Limitation | Impact on Model Ranking Stability |
|---|---|---|---|---|
| Random Split | Random assignment of texts to training and test sets. | Simple to implement. | High risk of topic leakage; fails to test cross-topic robustness. | Low (Highly unstable across seeds/splits) [6]. |
| Naive Cross-Topic Split | Attempts to separate training and test sets by topic. | Explicitly aims for topic independence. | Susceptible to insufficient topic isolation and latent topic leakage. | Moderate (Can be unstable) [6]. |
| HITS (Heterogeneity-Informed Topic Sampling) | Creates a smaller, heterogeneously distributed topic set for evaluation. | Actively mitigates topic leakage by design. | May require more sophisticated sampling and reduce dataset size. | High (More stable across seeds/splits) [6]. |

Experimental Protocols

Protocol 1: Implementing Heterogeneity-Informed Topic Sampling (HITS)

The HITS protocol is designed to create evaluation splits that minimize the risk of models leveraging topic-based shortcuts [6].

3.1.1 Reagents and Materials

- Raw Text Corpus: A collection of documents labeled with author and topic identifiers (e.g., PAN AV datasets).

- Computing Environment: Standard hardware capable of running natural language processing (NLP) libraries.

- Software Tools: Python with scikit-learn, NumPy, and pandas for data manipulation and sampling.

3.1.2 Step-by-Step Procedure

- Topic Identification and Labeling: Manually or algorithmically assign a discrete topic label to every document in the corpus. The granularity of topics (e.g., "Molecular Biology" vs. "Cell Culture Techniques") should be appropriate to the corpus's domain.

- Author-Topic Matrix Construction: Create a matrix where rows represent authors, columns represent topics, and each cell indicates the number of documents an author has written on a given topic.

- Heterogeneity Calculation: For each author, calculate a heterogeneity score based on the distribution of their documents across topics (e.g., using entropy or the Gini-Simpson index).

- Stratified Author Selection: Prioritize authors with high heterogeneity scores for inclusion in the test set. These authors provide diverse topic contexts, which is crucial for a robust evaluation.

- Iterative Split Generation: For the selected authors, algorithmically partition their documents into training and test splits, ensuring that no topic present in the test split is represented in the training split for that author. This is a non-trivial step that may require an optimization procedure to maximize the number of usable author-topic pairs while respecting the topic-exclusivity constraint.

- Validation: Manually inspect a sample of the final splits to confirm the absence of obvious topical overlap and that the topic distribution is sufficiently heterogeneous.

Figure 1: The HITS methodology workflow for creating robust cross-topic evaluation splits.
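The matrix construction, heterogeneity scoring, and author prioritization steps of Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the reference HITS implementation from [6]: the toy corpus, the function names, and the rule of holding out each author's rarest topic for testing are all hypothetical choices made for this example.

```python
from collections import Counter, defaultdict
from math import log

# Toy (author, topic) labels; a real run would read these from the labeled corpus.
DOCS = [
    ("alice", "molecular_biology"), ("alice", "cell_culture"),
    ("alice", "statistics"), ("alice", "statistics"),
    ("bob", "molecular_biology"), ("bob", "molecular_biology"),
    ("bob", "molecular_biology"),
    ("carol", "cell_culture"), ("carol", "statistics"),
]


def author_topic_matrix(records):
    """Return {author: Counter(topic -> number of documents)}."""
    matrix = defaultdict(Counter)
    for author, topic in records:
        matrix[author][topic] += 1
    return matrix


def shannon_entropy(counts):
    """Shannon entropy (in nats) of one author's topic distribution."""
    total = sum(counts.values())
    return -sum((n / total) * log(n / total) for n in counts.values())


def gini_simpson(counts):
    """Gini-Simpson index: 1 minus the sum of squared topic proportions."""
    total = sum(counts.values())
    return 1.0 - sum((n / total) ** 2 for n in counts.values())


def topic_exclusive_split(counts, n_test_topics=1):
    """Assumed rule (hypothetical): hold out the author's rarest topics for testing.

    Returns (train_topics, test_topics); the two sets never share a topic.
    """
    ordered = sorted(counts, key=lambda t: (counts[t], t))
    return set(ordered[n_test_topics:]), set(ordered[:n_test_topics])


matrix = author_topic_matrix(DOCS)
entropy = {author: shannon_entropy(c) for author, c in matrix.items()}
# Authors with the most heterogeneous topic profiles come first.
ranked_authors = sorted(entropy, key=entropy.get, reverse=True)
train_topics, test_topics = topic_exclusive_split(matrix[ranked_authors[0]])
```

Entropy and the Gini-Simpson index usually rank authors similarly; either can serve as the heterogeneity score. An author who writes on a single topic scores zero on both and cannot contribute a topic-exclusive split.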

Protocol 2: Establishing a Baseline with the RAVEN Benchmark

The Robust Authorship Verification bENchmark (RAVEN) provides a standardized framework for conducting a "topic shortcut test" to diagnose a model's over-reliance on topic features [6].

3.2.1 Reagents and Materials

- HITS-Processed Dataset: The dataset generated from Protocol 1.

- AV Models: The authorship verification models to be evaluated.

- Evaluation Framework: A codebase for training models, running inference, and calculating performance metrics (e.g., AUC, F1-score).

3.2.2 Step-by-Step Procedure

- Model Training: Train the candidate AV models on the training portion of the HITS dataset.

- Standard Evaluation: Evaluate the trained models on the standard HITS test set, recording standard performance metrics (e.g., accuracy, AUC). This provides the primary measure of cross-topic robustness.

- Topic Shortcut Test:

  - From the test set, identify pairs of documents that are on the same topic but are from different authors.

  - Use the trained model to generate predictions for these same-topic, different-author pairs.

  - A model that has learned genuine stylistic features should predominantly predict "different author." A high rate of "same author" false positives indicates that the model is conflating topic similarity with author identity.

- Performance Comparison: Compare the model's performance on the standard test set with its performance on the topic shortcut test. A significant performance drop in the shortcut test is indicative of topic bias.

- Benchmarking: Rank all evaluated models based on a composite score that balances performance on the standard test and the topic shortcut test.

Figure 2: The RAVEN benchmark workflow for evaluating model robustness and identifying topic shortcuts.
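The topic shortcut test in Protocol 2 reduces to a false positive rate over same-topic, different-author pairs. The sketch below illustrates that calculation only; it is not the RAVEN codebase. The toy test set, the function names, and the word-overlap "model" (a deliberately topic-biased stand-in for a trained AV model) are assumptions introduced for this example.

```python
from itertools import combinations

# Toy test documents; field names are hypothetical.
TEST_DOCS = [
    {"author": "a1", "topic": "assay",
     "text": "the assay buffer was diluted before the assay run"},
    {"author": "a2", "topic": "assay",
     "text": "assay buffer dilution preceded each assay run"},
    {"author": "a1", "topic": "trial",
     "text": "patients in the trial were randomised"},
    {"author": "a3", "topic": "trial",
     "text": "we randomised trial patients across sites"},
]


def shortcut_pairs(docs):
    """Yield index pairs that share a topic but come from different authors."""
    for (i, a), (j, b) in combinations(enumerate(docs), 2):
        if a["topic"] == b["topic"] and a["author"] != b["author"]:
            yield i, j


def shortcut_false_positive_rate(docs, predict_same_author):
    """Fraction of same-topic, different-author pairs predicted 'same author'.

    predict_same_author(text_a, text_b) -> bool stands in for a trained model.
    Returns None when the test set contains no such pairs.
    """
    pairs = list(shortcut_pairs(docs))
    if not pairs:
        return None
    false_positives = sum(
        bool(predict_same_author(docs[i]["text"], docs[j]["text"]))
        for i, j in pairs
    )
    return false_positives / len(pairs)


def lexical_overlap_model(text_a, text_b, threshold=0.25):
    """Topic-biased stand-in: says 'same author' when vocabularies overlap."""
    words_a, words_b = set(text_a.split()), set(text_b.split())
    jaccard = len(words_a & words_b) / len(words_a | words_b)
    return jaccard > threshold


fpr = shortcut_false_positive_rate(TEST_DOCS, lexical_overlap_model)
```

Because every pair in the shortcut set has different authors, any "same author" prediction is a false positive. A model driven by shared vocabulary, like the stand-in here, scores poorly on this test even if it looks strong on the standard evaluation.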

The Scientist's Toolkit

This section details the key resources required to implement the protocols described in this note.

Table 2: Essential Research Reagent Solutions for Cross-Topic Authorship Verification

| Item Name | Function/Description | Example/Format | Critical Parameters |
|---|---|---|---|
| PAN AV Datasets | Provides standardized, pre-collected text corpora with author and topic labels for benchmarking. | Datasets from PAN@CLEF competitions (e.g., PAN 2020, 2023) [23]. | Topic granularity, number of authors, number of documents per author. |
| Topic Labeling Tool | Algorithmically assigns topic labels to documents when manual labeling is infeasible. | Latent Dirichlet Allocation (LDA), BERTopic. | Number of topics, topic coherence score. |
| HITS Sampling Script | Implements the Heterogeneity-Informed Topic Sampling algorithm to generate robust train/test splits. | Custom Python script using pandas and NumPy. | Heterogeneity metric (e.g., entropy), target test set size. |
| RAVEN Benchmark Suite | A standardized software package for running the topic shortcut test and evaluating model robustness. | Python-based evaluation framework [6]. | Metrics for standard evaluation and shortcut test (e.g., AUC, false positive rate). |
| AV Model Architectures | The candidate models whose robustness is being assessed. | Fine-tuned Large Language Models (LLMs), Siamese Neural Networks, InstructAV [23]. | Model capacity, hyperparameters, fine-tuning method. |

This document provides detailed Application Notes and Protocols for implementing a robust experimental pipeline for cross-topic authorship verification (AV). It addresses the challenge of topic leakage, in which models exploit topic-specific features rather than genuine stylistic patterns, producing inflated and misleading performance metrics [6]. The protocols are intended for researchers and scientists developing reliable AV systems that generalize across topics and domains.

The core challenge in cross-topic AV is ensuring that models learn authorial style independently of text topic. Conventional evaluations often contain hidden topic overlaps between training and test splits, a phenomenon known as topic leakage [6]. This protocol outlines a workflow, from data collection using Heterogeneity-Informed Topic Sampling (HITS) [6] through modern post-training techniques [32], for building models that are robust to topic shifts.

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Materials and Reagents for Authorship Verification Research

| Item Name | Function/Application | Key Characteristics |
|---|---|---|
| PAN AV Datasets [6] [23] | Standardized benchmarks for training and evaluating AV models. | Contains text pairs labeled for authorship; often includes cross-topic or cross-domain splits. |
| RAVEN Benchmark [6] | Evaluates model robustness against topic shortcuts. | Implements HITS sampling; provides a "topic shortcut test" to uncover reliance on topic-specific features. |
| Pre-trained Language Models (e.g., BERT, LLMs) [23] | Foundation for feature extraction or base for fine-tuning. | Provides generalized text representations; can be adapted for stylistic analysis. |
| HITS Sampling Protocol [6] | Creates evaluation datasets with controlled topic distribution. | Reduces topic leakage by ensuring a heterogeneous topic set; stabilizes model ranking. |
| Verification-oriented Orchestration [33] | Improves quality of AI-generated annotations (e.g., for data labeling). | Uses self- and cross-verification with LLMs to increase annotation reliability. |

Data Collection and Annotation Protocols

Core Data Collection and Curation Workflow

A rigorous data collection strategy is fundamental for cross-topic evaluation. The following protocol, centered on HITS, mitigates topic leakage [6].

- Objective: To assemble a dataset where topics are heterogeneously distributed between training and test splits, preventing models from exploiting topic similarities as a shortcut for authorship decisions.

- Materials: A raw corpus of texts with associated metadata, including author identity and topic labels.

- Procedure:

  - Topic Identification: Manually or automatically label all documents in the corpus with a discrete set of topics.

  - Heterogeneity-Informed Topic Sampling (HITS):

    - Select a subset of topics that maximizes intra-topic diversity and inter-topic heterogeneity.

    - Partition the selected topics into training and test sets, ensuring minimal thematic overlap.

    - From the chosen topics, sample document pairs for the authorship verification task, ensuring a balanced representation of same-author and different-author pairs within and across topics.

  - Dataset Splitting: Formally create training, validation, and test splits based on the topic partitions, not random sampling. The test set must contain topics unseen during training.

  - Benchmark Creation: Package the resulting dataset as a benchmark, such as the Robust Authorship Verification bENchmark (RAVEN) [6], for standardized evaluation.
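The dataset splitting and pair sampling steps above can be illustrated as follows. This is a simplified sketch that assumes the test topics have already been chosen; it does not implement the topic selection criterion of HITS itself. The toy records, field names, and both helper functions are hypothetical.

```python
import random
from itertools import combinations

# Toy corpus; each record carries hypothetical id, author, and topic fields.
LABELS = [
    ("a1", "oncology"), ("a1", "oncology"), ("a2", "oncology"),
    ("a2", "cardiology"), ("a1", "cardiology"), ("a2", "cardiology"),
    ("a1", "neurology"), ("a2", "neurology"),
    ("a1", "neurology"), ("a2", "neurology"),
]
DOCS = [{"id": i, "author": a, "topic": t} for i, (a, t) in enumerate(LABELS)]


def split_by_topic(docs, test_topics):
    """Split on topic, not at random, so test topics are unseen in training."""
    test_topics = set(test_topics)
    train = [d for d in docs if d["topic"] not in test_topics]
    test = [d for d in docs if d["topic"] in test_topics]
    return train, test


def sample_balanced_pairs(docs, n_per_class, seed=0):
    """Sample equal numbers of same-author (1) and different-author (0) pairs.

    Pairs are drawn within the given split only; fewer than n_per_class pairs
    per class are returned when the split cannot supply that many.
    """
    rng = random.Random(seed)
    same, diff = [], []
    for a, b in combinations(docs, 2):
        bucket = same if a["author"] == b["author"] else diff
        bucket.append((a["id"], b["id"]))
    k = min(n_per_class, len(same), len(diff))
    positives = [(pair, 1) for pair in rng.sample(same, k)]
    negatives = [(pair, 0) for pair in rng.sample(diff, k)]
    return positives + negatives


train_docs, test_docs = split_by_topic(DOCS, {"neurology"})
test_pairs = sample_balanced_pairs(test_docs, n_per_class=2)
```

Fixing the random seed keeps the sampled pairs reproducible, which matters when model rankings are compared across splits.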

AI-Assisted Data Annotation and Verification

For projects requiring manual annotation (e.g., labeling tutoring moves or stylistic features), LLMs can scale the process, but their outputs require verification [33].

- Objective: To produce high-quality, reliable annotations for qualitative data using LLM orchestration.

- Materials: Unlabeled text data (e.g., tutoring transcripts), a detailed codebook (rubric) of constructs to label, and access to frontier LLMs (e.g., GPT, Claude, Gemini).

- Procedure:

  - Unverified Annotation: A primary LLM ("annotator") generates initial labels based on the codebook prompt.

  - Verification Orchestration:

    - Self-Verification: The same LLM that generated the initial labels is prompted to re-check and justify its own outputs.

    - Cross-Verification: A different LLM ("verifier") audits the initial labels generated by the annotator model.

  - Adjudication: The final label is determined based on the verification step. This process can nearly double agreement with human annotations (Cohen's κ) compared to unverified baselines [33].

  - Documentation: Use the notation verifier(annotator) (e.g., Gemini(GPT)) to standardize reporting of the orchestration method [33].
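The effect of verification on agreement with human labels can be measured with Cohen's κ, as in the sketch below. Everything in it is illustrative: the annotator and verifier are rule-based stubs standing in for LLM calls, the adjudication rule (the verifier's label is final) is an assumption rather than the procedure from [33], and the items and labels are invented toy data.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: chance-corrected agreement between two label sequences."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and equal in length")
    n = len(labels_a)
    observed = sum(x == y for x, y in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:  # both raters used a single identical label
        return 1.0
    return (observed - expected) / (1.0 - expected)


def orchestrate(items, annotator, verifier):
    """Assumed adjudication: the verifier keeps or overrides each initial label."""
    return [verifier(item, annotator(item)) for item in items]


def orchestration_name(verifier_name, annotator_name):
    """Format the verifier(annotator) reporting notation."""
    return f"{verifier_name}({annotator_name})"


# Toy data and rule-based stubs; real pipelines would call LLM APIs here.
ITEMS = ["great job", "try again", "what do you think?", "correct", "why?", "no"]
HUMAN = ["praise", "feedback", "question", "praise", "question", "feedback"]


def stub_annotator(item):
    return "question" if item.endswith("?") else "praise"


def stub_verifier(item, label):
    if label == "praise" and item in {"try again", "no"}:
        return "feedback"  # override an implausible initial label
    return label


unverified = [stub_annotator(item) for item in ITEMS]
verified = orchestrate(ITEMS, stub_annotator, stub_verifier)
kappa_unverified = cohens_kappa(HUMAN, unverified)
kappa_verified = cohens_kappa(HUMAN, verified)
```

Reporting both κ values next to the orchestration name, for example orchestration_name("Gemini", "GPT"), makes results comparable across verifier and annotator pairings.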

Table 2: Impact of HITS Sampling and Verification on Key Performance Metrics

| Method / Condition | Reported Performance Improvement | Primary Effect |
|---|---|---|
| HITS Sampling [6] | "More stable ranking of models across random seeds and evaluation splits." | Mitigates topic leakage, leading to more robust and reliable model evaluation. |
| Self-Verification Orchestration [33] | "Nearly doubles agreement relative to unverified baselines." | Significantly improves AI annotation reliability, especially for challenging constructs. |
| Cross-Verification Orchestration [33] | "Achieves a 37% improvement [in Cohen's κ] on average." | Leverages complementary model strengths to improve annotation quality, though benefits are pair-dependent. |

Table 3: Comparative Cost and Focus of Modern LLM Training Stages

| Training Stage | Primary Objective | Relative Cost & Data Focus |
|---|---|---|
| Pretraining [34] | Learn general language patterns and world knowledge via next-token prediction. | Extremely high cost; uses massive, raw text corpora. |
| Post-Training [32] | Align model with human preferences and specific tasks (e.g., instruction following). | Growing cost, but less than pretraining; increasingly uses synthetic/AI-generated data. |

Experimental Training Pipeline Protocol

The modern LLM training pipeline is broadly divided into pretraining and post-training. For AV, this pipeline is applied to adapt a general-purpose model to the specific task of stylistic analysis [32] [34].

Pipeline Workflow Diagram

Protocol: Post-Training for Authorship Verification