Comparing Statistical Multivariate Analysis Methods in Chemical Forensics: A Guide for Forensic Researchers and Scientists

Abstract

This article provides a comprehensive comparison of statistical multivariate analysis methods essential for chemical forensics, a field critical for attributing the source and origin of chemical warfare agents and other substances of forensic interest. It covers foundational principles, practical applications for impurity profiling and source identification, strategies for troubleshooting and optimizing analytical workflows, and a direct validation of method performance and comparability. Tailored for researchers, scientists, and drug development professionals, the content synthesizes current research to guide the selection, implementation, and standardization of these powerful statistical tools for robust forensic investigations.

The Role of Multivariate Analysis in Modern Chemical Forensics

Source attribution in chemical forensics is the process of linking a chemical sample to a specific origin. This field relies heavily on advanced statistical and multivariate analysis methods to objectively evaluate evidence, moving beyond traditional subjective comparisons. The imperative is clear: to provide scientifically robust, reproducible, and defensible conclusions in legal and research contexts. This guide compares the performance of established chemometric methods with emerging machine learning (ML) approaches, underpinned by experimental data and detailed protocols.

Experimental Comparison of Source Attribution Methods

A 2025 study provides a direct performance benchmark for source attribution methods, comparing a machine learning approach with two traditional statistical models using the same dataset of gas chromatography-mass spectrometry (GC-MS) chromatograms from 136 diesel oil samples [1].

Experimental Protocol [1]:

- Sample Preparation: Each diesel oil sample was diluted with approximately 7 mL of dichloromethane and transferred to a GC vial.

- Instrumental Analysis: Analysis was performed using an Agilent 7890A GC coupled with an Agilent 5975C mass spectrometric detector.

- Data Modeling: Three models were evaluated on the same data.

  - Model A (ML): A score-based model using a Convolutional Neural Network (CNN) trained on the raw chromatographic signal.

  - Model B (Traditional Statistical): A score-based model using similarity scores from ten selected peak height ratios.

  - Model C (Traditional Statistical): A feature-based model constructing probability densities in a 3D space defined by three peak height ratios.

- Performance Metrics: The models were compared using the log-likelihood-ratio cost (Cllr), which measures both the discrimination and the calibration of a forensic method, with lower values indicating better performance [1].
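The Cllr metric can be computed directly from the likelihood ratios (LRs) a method produces for comparisons with known ground truth. The sketch below uses the standard definition of Cllr; the LR values are hypothetical and are not data from [1].

```python
import math

def cllr(lr_same_source, lr_diff_source):
    """Log-likelihood-ratio cost from LRs of known same- and different-source pairs."""
    # Same-source penalty: large when an LR is small (misleading evidence)
    pen_ss = sum(math.log2(1 + 1 / lr) for lr in lr_same_source) / len(lr_same_source)
    # Different-source penalty: large when an LR is large (misleading evidence)
    pen_ds = sum(math.log2(1 + lr) for lr in lr_diff_source) / len(lr_diff_source)
    return 0.5 * (pen_ss + pen_ds)

# Hypothetical LR values, for illustration only
good = cllr([3200, 900, 150], [0.001, 0.02, 0.1])
# A system that always reports LR = 1 carries no information and scores exactly 1
uninformative = cllr([1, 1, 1], [1, 1, 1])
```

A value near 0 indicates well-calibrated, strongly discriminating LRs, while values at or above 1 indicate a method that is no better than reporting LR = 1 for every comparison.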

Quantitative Performance Data [1]:

| Model | Type | Key Features | Median LR for H₁ (Same Source) | Cllr (Performance) |
|---|---|---|---|---|
| Model C | Feature-Based Statistical | Three peak height ratios | 3,200 | 0.09 |
| Model A | Score-Based Machine Learning | CNN on raw chromatographic data | 1,800 | 0.13 |
| Model B | Score-Based Statistical | Ten peak height ratios | 180 | 0.32 |

Interpretation: The feature-based statistical model (C) showed the best performance (lowest Cllr), while the score-based ML model (A) outperformed the score-based classical model (B). This demonstrates that while advanced ML is powerful, well-designed traditional methods can still lead in specific applications.
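To make the idea of a score-based model concrete, the following minimal sketch turns a similarity score into a likelihood ratio by comparing its density under same-source and different-source score distributions. Modeling both distributions as normal is an assumption made here for brevity (kernel density estimates are a common alternative), and the scores and function names are hypothetical rather than taken from [1].

```python
from statistics import NormalDist

def fit_score_model(same_scores, diff_scores):
    """Fit one normal distribution per hypothesis to training similarity scores."""
    return NormalDist.from_samples(same_scores), NormalDist.from_samples(diff_scores)

def score_lr(score, model):
    """LR = density of the score under same-source / density under different-source."""
    same, diff = model
    return same.pdf(score) / diff.pdf(score)

# Hypothetical training scores (higher means more similar chromatograms)
same_scores = [0.91, 0.95, 0.88, 0.97, 0.93]
diff_scores = [0.35, 0.52, 0.41, 0.60, 0.47]
model = fit_score_model(same_scores, diff_scores)

lr_high = score_lr(0.92, model)  # score typical of same-source pairs: LR above 1
lr_low = score_lr(0.50, model)   # score typical of different-source pairs: LR below 1
```

A feature-based model differs in that the densities are built over the measured features themselves (for example, three peak height ratios) rather than over a single similarity score.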

Core Methodologies: Workflows and Method Selection

The analytical process in chemical forensics follows a structured workflow, from evidence to statistical interpretation.

Generalized Workflow for Chemical Forensic Analysis

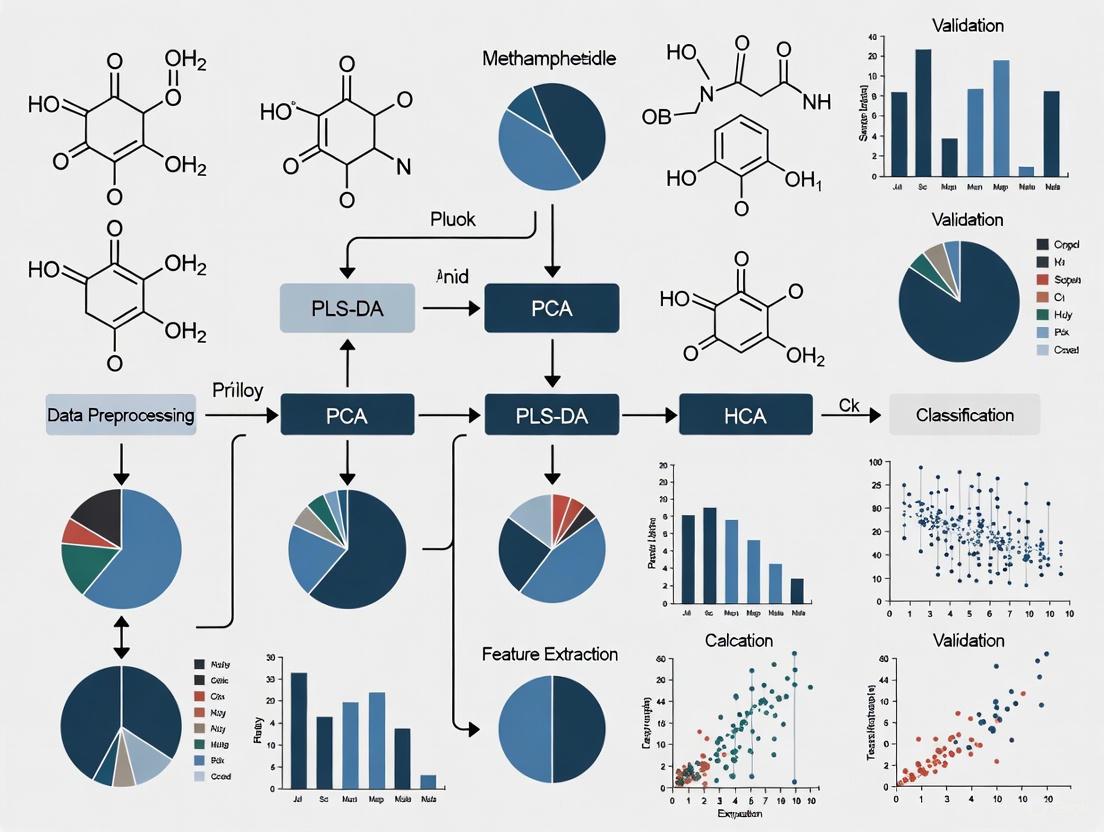

The following diagram outlines the standard pathway for processing forensic evidence.

Method Selection Logic for Source Attribution

Choosing the right analytical and statistical method is critical and depends on the data and question being asked.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful chemical forensic analysis relies on a suite of analytical techniques and data processing tools.

| Category | Item / Technique | Primary Function in Source Attribution |
|---|---|---|
| Core Analytical Instruments | Gas Chromatography-Mass Spectrometry (GC-MS) | Separates and identifies volatile compounds in complex mixtures (e.g., drugs, ignitable liquids) [2]. |
| | Fourier-Transform Infrared (FTIR) Spectroscopy | Provides a molecular fingerprint for material identification (e.g., fibers, paints, polymers) [2]. |
| | Inductively Coupled Plasma-Mass Spectrometry (ICP-MS) | Determines trace elemental composition for comparing glass, soil, and gunshot residue [2]. |
| Chemometric & ML Software | Partial Least Squares Regression (PLSR) | Models the relationship between analytical data (X) and a quantitative property (Y), such as estimating the age of a sample [3]. |
| | Principal Component Analysis (PCA) | An unsupervised technique for exploring data, reducing dimensionality, and identifying inherent patterns or groupings [4] [5]. |
| | Linear Discriminant Analysis (LDA) | A classification method that finds features that best separate predefined sample classes [6] [4]. |
| | Convolutional Neural Networks (CNN) | A deep learning algorithm capable of automatically learning relevant features from complex, raw data like chromatograms or images [1]. |
| Key Chemical Reagents | Dichloromethane (DCM) | A common organic solvent for diluting non-polar evidence samples (e.g., oils, paints) prior to GC-MS analysis [1]. |
| | Deuterated Solvents | Used for nuclear magnetic resonance (NMR) spectroscopy to provide a solvent signal that does not interfere with the sample analysis. |

Detailed Experimental Protocols for Key Techniques

Multivariate Regression for Forensic Dating

(O)PLS regression is widely used to estimate the age of forensic evidence like bloodstains or inks [3].

Workflow [3]:

- Sample Collection & Preparation: A set of samples of known age is collected and prepared according to standardized protocols to minimize pre-analytical variation.

- Analytical Measurement: An analytical technique (e.g., spectroscopy) is used to generate a signal (X-matrix) for each sample.

- Model Training: The known ages of the samples (Y-variable) are correlated with their analytical signals using (O)PLSR to build a predictive calibration model.

- Model Validation: The model's predictive accuracy is tested on a separate set of validation samples not used in the training step.

- Age Estimation: The validated model is used to predict the age of unknown samples from casework based on their analytical signal.
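The calibration, validation, and prediction steps above can be sketched with a single-latent-variable PLS1 model. This is a simplified stand-in for the (O)PLSR models described in [3]: it uses one component only, and the synthetic noise-free "signal" function, the ages, and all names are assumptions for illustration.

```python
import math

def fit_pls1(X, y):
    """One-latent-variable PLS1 calibration; returns (x_mean, y_mean, coefficients)."""
    n, m = len(X), len(X[0])
    x_mean = [sum(row[j] for row in X) / n for j in range(m)]
    y_mean = sum(y) / n
    Xc = [[row[j] - x_mean[j] for j in range(m)] for row in X]
    yc = [v - y_mean for v in y]
    # Weight vector: direction in X-space with maximal covariance with y
    w = [sum(Xc[i][j] * yc[i] for i in range(n)) for j in range(m)]
    norm = math.sqrt(sum(v * v for v in w))
    w = [v / norm for v in w]
    # Scores (projection of each sample on w) and the y-loading
    t = [sum(Xc[i][j] * w[j] for j in range(m)) for i in range(n)]
    q = sum(ti * yi for ti, yi in zip(t, yc)) / sum(ti * ti for ti in t)
    return x_mean, y_mean, [q * wj for wj in w]

def predict_age(model, x):
    x_mean, y_mean, coef = model
    return y_mean + sum(c * (xj - mj) for c, xj, mj in zip(coef, x, x_mean))

def rmse(model, X, y):
    errors = [(predict_age(model, x) - yi) ** 2 for x, yi in zip(X, y)]
    return math.sqrt(sum(errors) / len(errors))

def signal(age):
    """Synthetic three-variable signal that drifts linearly with age (hypothetical)."""
    return [1.0 - 0.05 * age, 0.2 + 0.03 * age, 0.5]

train_ages = [1, 2, 4, 7, 10, 14]   # samples of known age (days)
valid_ages = [3, 8, 12]             # held-out validation samples
model = fit_pls1([signal(a) for a in train_ages], train_ages)
rmsep = rmse(model, [signal(a) for a in valid_ages], valid_ages)
estimate = predict_age(model, signal(5))  # "unknown" sample whose true age is 5
```

Because the synthetic data are noise-free and governed by a single underlying factor, the validation error is essentially zero; real spectra would need more components, chosen by cross-validation, and would show a non-trivial prediction error.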

Chemometric Classification of Synthetic Fibers

A 2021 study demonstrated the use of FTIR spectroscopy with chemometrics for classifying synthetic fibers, a common form of trace evidence [5].

Workflow [5]:

- FT-IR Analysis: Fiber samples are analyzed using FTIR spectroscopy to obtain their infrared absorption spectra.

- Data Pre-processing: Spectral data is pre-processed using techniques like the Savitzky-Golay first derivative and Standard Normal Variate (SNV) to remove baseline offsets and enhance spectral features.

- Pattern Recognition: A PCA model is generated to visualize natural clustering of the different fiber types.

- Classification Model: A classification model, such as Soft Independent Modelling by Class Analogy (SIMCA), is built using the training set. This model defines a "class space" for each fiber type.

- Sample Identification: Unknown test samples are projected into the model and assigned to a class based on their fit, enabling objective identification.
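The pre-processing step in this workflow can be illustrated with a small sketch of a Savitzky-Golay first derivative followed by SNV. The 5-point quadratic window is an assumption chosen for brevity (the window used in [5] is not stated here), and the spectrum values are hypothetical.

```python
from statistics import mean, stdev

# Savitzky-Golay first-derivative coefficients for a 5-point quadratic fit
SG_D1_5PT = (-2, -1, 0, 1, 2)

def sg_first_derivative(spectrum, spacing=1.0):
    """First derivative at interior points; two points are lost at each edge."""
    out = []
    for i in range(2, len(spectrum) - 2):
        window = spectrum[i - 2:i + 3]
        out.append(sum(c * v for c, v in zip(SG_D1_5PT, window)) / (10 * spacing))
    return out

def snv(spectrum):
    """Standard Normal Variate: centre and scale each spectrum individually."""
    m, s = mean(spectrum), stdev(spectrum)
    return [(v - m) / s for v in spectrum]

# Hypothetical absorbance band, and the same band with a constant baseline offset
raw = [0.10, 0.12, 0.20, 0.45, 0.80, 0.45, 0.20, 0.12, 0.10]
shifted = [v + 0.3 for v in raw]

d_raw = sg_first_derivative(raw)
d_shifted = sg_first_derivative(shifted)   # the derivative removes the offset
processed = snv(d_raw)                     # zero mean, unit standard deviation
```

The two derivative spectra agree to within floating-point rounding, which is the sense in which differentiation removes baseline offsets before the data reach PCA or SIMCA.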

Performance and Application Across Forensic Disciplines

The choice of method is often dictated by the specific forensic application and the nature of the evidence.

| Forensic Discipline | Evidence Type | Recommended Analytical Technique | Suitable Multivariate Method(s) |
|---|---|---|---|
| Arson & Fire Debris | Ignitable liquids (e.g., diesel) | GC-MS [1] [2] | CNN, Feature-based LR models, PCA [1] |
| Trace Evidence | Synthetic fibers, Paints | FTIR Spectroscopy [5] [2] | PCA, SIMCA, PLS-DA [4] [5] |
| Explosives Investigation | Homemade explosives (HMEs) | IR Spectroscopy, GC-MS [6] | PCA, LDA, PLS-DA [6] |
| Questioned Documents | Ink age | Spectroscopy, Chromatography | (O)PLSR [3] |
| Toxicology | Drugs in biological fluids | HPLC, GC-MS [2] | PLSR, ML models [4] |

Challenges and Future Directions: Despite their power, widespread adoption of these advanced data analysis methods faces hurdles. Key challenges include the need for extensive validation using known "ground-truth" samples, establishing defined procedures for legal admissibility, and the requirement for statistical expertise among forensic experts [3] [4]. The future lies in integrating artificial intelligence (AI) and machine learning more deeply to enhance real-time decision-making and improve the robustness of field-deployable technologies [6].

In chemical forensics and chemometrics, multivariate statistical techniques are indispensable for interpreting complex data from analytical instruments such as mass spectrometers and NMR spectrometers. These techniques allow scientists to extract meaningful information, classify samples, and identify trace chemical markers that are crucial for applications like forensic tracking of chemical warfare agents and biomarker discovery [7] [8]. Techniques like Principal Component Analysis (PCA), Hierarchical Cluster Analysis (HCA), Partial Least Squares Discriminant Analysis (PLS-DA), and Orthogonal Partial Least Squares Discriminant Analysis (OPLS-DA) each provide a unique lens for data analysis, ranging from unsupervised exploration to supervised classification and regression. The choice of method depends on the research objective, whether it is an initial exploratory analysis of a new dataset or building a predictive model to distinguish pre-defined sample classes [9] [10]. This guide provides an objective comparison of these core techniques, supported by experimental data and protocols from active research fields.

Technique Definitions and Core Characteristics

Unsupervised Methods

Principal Component Analysis (PCA) is an unsupervised technique used for exploring data structure without prior class labels. It reduces data dimensionality by identifying new, uncorrelated variables called Principal Components (PCs), which sequentially capture the maximum possible variance in the data. PC1 represents the direction of greatest variance, PC2 the second greatest, and so on [9] [11]. PCA is primarily used for data overview, identifying outliers, assessing the quality of biological replicates, and visualizing overall data trends [9] [12].
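As a rough illustration of how PC1 is obtained, the sketch below mean-centres a small data matrix, forms its covariance matrix, and finds the direction of greatest variance by power iteration. Power iteration is one simple way to get the leading component and is used here only to keep the example dependency-free; the data and function name are hypothetical.

```python
import math

def first_principal_component(X, n_iter=200):
    """Return (loading vector, scores, fraction of variance explained) for PC1."""
    n, m = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(m)]
    Xc = [[row[j] - means[j] for j in range(m)] for row in X]
    # Sample covariance matrix of the centred data
    cov = [[sum(Xc[i][a] * Xc[i][b] for i in range(n)) / (n - 1)
            for b in range(m)] for a in range(m)]
    # Power iteration converges to the eigenvector with the largest eigenvalue
    v = [1.0] * m
    for _ in range(n_iter):
        w = [sum(cov[a][b] * v[b] for b in range(m)) for a in range(m)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    eigenvalue = sum(v[a] * sum(cov[a][b] * v[b] for b in range(m)) for a in range(m))
    total_variance = sum(cov[a][a] for a in range(m))
    scores = [sum(Xc[i][j] * v[j] for j in range(m)) for i in range(n)]
    return v, scores, eigenvalue / total_variance

# Two strongly correlated hypothetical features (second is roughly twice the first)
X = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8], [5.0, 10.1]]
loadings, scores, explained = first_principal_component(X)
```

Here almost all of the variance falls on PC1, whose loadings reflect the roughly 1:2 relationship between the two features; the scores are the coordinates plotted in a PCA scores plot.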

Hierarchical Cluster Analysis (HCA) is another unsupervised pattern recognition method. It seeks to identify inherent groupings in the data by calculating pairwise distances between samples and building a tree-like structure (dendrogram) that illustrates the hierarchy of similarities between samples [7]. It is often used in the initial stages of analysis to reveal natural clusters without using prior class information.
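The pairwise-distance and merging logic behind a dendrogram can be sketched in a few lines. This example uses Euclidean distance with average linkage, which is one common choice among several; the sample coordinates are hypothetical.

```python
import math
from itertools import combinations

def average_linkage(points):
    """Agglomerative clustering; returns merges as (members_a, members_b, distance)."""
    clusters = {i: [i] for i in range(len(points))}
    next_id = len(points)
    merges = []

    def linkage(a, b):
        # Average pairwise Euclidean distance between the members of two clusters
        dists = [math.dist(points[i], points[j])
                 for i in clusters[a] for j in clusters[b]]
        return sum(dists) / len(dists)

    while len(clusters) > 1:
        # Merge the two closest clusters and record the height of the merge
        a, b = min(combinations(sorted(clusters), 2), key=lambda pair: linkage(*pair))
        d = linkage(a, b)
        left, right = clusters.pop(a), clusters.pop(b)
        merges.append((sorted(left), sorted(right), d))
        clusters[next_id] = left + right
        next_id += 1
    return merges

# Six hypothetical samples forming two well-separated groups
points = [(0.0, 0.1), (0.2, 0.0), (0.1, 0.3), (5.0, 5.1), (5.2, 4.9), (4.9, 5.3)]
merges = average_linkage(points)
final_a, final_b, final_height = merges[-1]
```

The recorded merge distances are the branch heights of the dendrogram; the large jump at the final merge is what reveals the two natural groups.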

Supervised Methods

Partial Least Squares Discriminant Analysis (PLS-DA) is a supervised classification method. It uses known class membership information to find latent variables that maximize the covariance between the input data (X) and the class labels (Y) [9] [12]. This forces a separation between the pre-defined groups, making it a powerful tool for classification and biomarker selection. However, because it is supervised, it is prone to overfitting, especially with noisy datasets or a small number of samples, making rigorous validation essential [11] [12].

Orthogonal Partial Least Squares Discriminant Analysis (OPLS-DA) is an extension of PLS-DA, also operating as a supervised method. Its key innovation is the separation of the data variation into two distinct parts: predictive variation, which is directly correlated to the class difference, and orthogonal variation, which is uncorrelated (orthogonal) to the class difference [9] [10]. This separation simplifies model interpretation by allowing researchers to focus specifically on the variables that contribute to class separation, while filtering out unrelated systematic variation [9] [13].

The table below summarizes the core characteristics of these four techniques.

Table 1: Core Characteristics of Key Multivariate Techniques

| Feature | PCA | HCA | PLS-DA | OPLS-DA |
|---|---|---|---|---|
| Supervision Type | Unsupervised | Unsupervised | Supervised | Supervised |
| Primary Objective | Explore data structure, reduce dimensions, find outliers | Identify inherent clustering and hierarchies | Maximize class separation for classification | Separate class-predictive from unrelated variation |
| Use of Class Labels | No | No | Yes | Yes |
| Key Outputs | Scores, Loadings, Variance explained | Dendrogram | Scores, Loadings, VIP scores | Predictive Scores, Orthogonal Scores, Loadings |
| Risk of Overfitting | Low | Low | Moderate to High | Moderate to High |
| Ideal For | Data quality control, trend discovery, outlier detection | Discovering natural groupings without prior assumptions | Classification, biomarker discovery, predictive modeling | Enhanced interpretation, identifying key discriminatory variables |

Experimental Protocols and Workflows

A Representative Workflow from Chemical Forensics

A study on the impurity profiling of methylphosphonothioic dichloride, a chemical warfare precursor, provides a robust experimental protocol for applying these techniques in sequence [7].

1. Analytical Measurement: The first step involves analyzing samples using comprehensive two-dimensional gas chromatography coupled with time-of-flight mass spectrometry (GC×GC-TOFMS). This advanced separation and detection technology generates a high-dimensional chemical fingerprint for each sample [7].

2. Data Pre-processing: The raw instrument data is processed to identify and quantify chemical compounds, resulting in a data matrix where rows represent samples and columns represent the abundance of specific chemical features.

3. Hierarchical Analytical Modeling:

- Step 1 - Unsupervised Pattern Recognition: HCA and PCA are applied as initial discovery tools. These methods reveal the inherent clustering of samples based on their synthetic pathways without using prior knowledge of their origin. The natural grouping observed here provides a preliminary check of the data structure [7].

- Step 2 - Supervised Classification Modeling: OPLS-DA is then used to build a definitive classification model. In this study, the OPLS-DA model achieved 100% classification accuracy (R2 = 0.990) and identified 15 key discriminating features, selected by their Variable Importance in Projection (VIP) scores, that differentiate the synthetic pathways [7].

- Step 3 - Model Validation: The reliability of the OPLS-DA model is rigorously tested. This involves a permutation test (n=2000) to rule out overfitting and validation with an external set of samples (n=12) to confirm the model's predictive power, which also showed 100% accuracy in this case [7].

The following diagram illustrates this hierarchical workflow.

Figure 1: Hierarchical Analytical Workflow for Chemical Forensics.

Validation is Critical for Supervised Methods

A critical protocol element when using PLS-DA or OPLS-DA is rigorous validation, as these models can aggressively force separation even when no real difference between the classes exists [11]. Key validation steps include:

- Cross-validation: Used to calculate metrics like Q2 (predictive ability). A model with Q2 > 0.5 is generally considered valid, while Q2 > 0.9 is outstanding [12].

- Permutation Testing: This involves randomly scrambling the class labels numerous times (e.g., 2000 permutations) and re-running the model. A valid model will have significantly higher R2Y and Q2 values for the real data compared to the permuted data [7] [12].

- External Validation: Testing the model on a completely new set of samples not used in model building is the gold standard for assessing predictive performance [7].
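The permutation test listed above can be sketched as follows. To keep the example self-contained, a nearest-centroid classifier scored by leave-one-out cross-validation stands in for the OPLS-DA model, and 500 permutations are used instead of 2000; the data, labels, and function names are hypothetical.

```python
import random

def loo_accuracy(X, labels):
    """Leave-one-out accuracy of a nearest-centroid classifier."""
    correct = 0
    for i in range(len(X)):
        centroids = {}
        for c in sorted(set(labels)):
            members = [X[j] for j in range(len(X)) if j != i and labels[j] == c]
            centroids[c] = [sum(col) / len(members) for col in zip(*members)]
        predicted = min(centroids, key=lambda c: sum(
            (a - b) ** 2 for a, b in zip(X[i], centroids[c])))
        correct += predicted == labels[i]
    return correct / len(X)

def permutation_p_value(X, labels, n_perm=500, seed=0):
    """Share of label shufflings that score at least as well as the real labels."""
    rng = random.Random(seed)
    observed = loo_accuracy(X, labels)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any real link between data and class
        if loo_accuracy(X, shuffled) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)

# Two hypothetical synthesis routes, six samples each, two features per sample
route_a = [(1.0, 1.2), (0.8, 1.0), (1.1, 0.9), (0.9, 1.1), (1.2, 1.0), (1.0, 0.8)]
route_b = [(3.0, 3.1), (2.9, 2.8), (3.2, 3.0), (3.1, 3.2), (2.8, 3.0), (3.0, 2.9)]
X = route_a + route_b
labels = ["A"] * 6 + ["B"] * 6
observed, p_value = permutation_p_value(X, labels)
```

If the model were merely fitting noise, shuffled labels would perform about as well as the real ones and the p-value would be large; a small p-value indicates that the observed separation depends on the true class structure.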

Comparative Performance and Applications

Objective Performance Comparison

The table below summarizes quantitative and qualitative performance data from cited research, highlighting the distinct roles and efficacies of each technique.

Table 2: Experimental Performance and Application Comparison

| Technique | Reported Performance / Outcome | Primary Application Context | Advantages | Limitations |
|---|---|---|---|---|
| PCA | Used as QC tool; reveals major variation trends [9]. | Exploratory analysis of NMR, MS data; quality control [11] [14]. | Provides an unbiased overview; low risk of overfitting [9] [12]. | Poor separation if class difference is not the largest variance [10] [12]. |
| HCA | Revealed inherent clustering of synthetic pathways [7]. | Unsupervised discovery of sample groupings in forensics [7]. | Intuitive visual output (dendrogram); no prior group info needed. | Results can be sensitive to distance metrics used. |
| PLS-DA | Foundation for classification and VIP scores for feature selection [12]. | Classifying groups; biomarker discovery in metabolomics [9] [12]. | Maximizes class separation; handles high-dimensional data well. | Prone to overfitting without validation; model can be complex [11] [12]. |
| OPLS-DA | 100% classification and prediction accuracy; identified 15 VIP features [7]. | Discriminant analysis for two-group problems; spectral analysis [7] [10]. | Improved interpretability by separating predictive and orthogonal variation [9] [13]. | Higher computational complexity; risk of overfitting remains [9] [11]. |

Conceptual Diagram of OPLS-DA Separation

The power of OPLS-DA lies in its ability to separate different types of variation. This is conceptually different from PCA, as illustrated below.

Figure 2: OPLS-DA separates between-group from within-group variation. In PCA (top), group separation may be obscured if it's not the largest source of variance. OPLS-DA (bottom) rotates the view to maximize group separation on the predictive component, while within-group variation is captured on orthogonal components.

Essential Research Reagents and Solutions

The application of these multivariate techniques relies on data generated from sophisticated analytical platforms. The following table lists key research reagents and solutions central to the experimental workflows cited in this guide.

Table 3: Key Research Reagents and Solutions for Metabolomics and Forensics

| Item Name | Function / Application | Research Context |
|---|---|---|
| GC×GC-TOFMS | Comprehensive two-dimensional gas chromatography coupled with time-of-flight mass spectrometry for high-resolution separation and detection of complex mixtures. | Used for impurity profiling of chemical warfare precursors, generating the high-dimensional data for PCA/HCA/OPLS-DA [7]. |
| NMR Spectroscopy | Nuclear Magnetic Resonance spectroscopy for identifying and quantifying metabolites in a non-destructive manner. | Used in metabolomics studies to generate spectral data for multivariate analysis with PCA and PLS-DA [11] [14]. |
| LC-MS (HILIC Mode) | Liquid Chromatography-Mass Spectrometry using Hydrophilic Interaction Chromatography to retain and analyze polar metabolites. | Applied in multi-platform metabolomics as a complementary tool to NMR, expanding metabolite coverage for analysis [14]. |
| Chemometrics Software (e.g., SIMCA, Metware Cloud) | Software platforms dedicated to building, validating, and visualizing multivariate statistical models like PCA, PLS-DA, and OPLS-DA. | Essential for performing the computational analysis, model validation, and generating scores/loadings plots [9] [10]. |

In analytical chemistry, particularly in fields like chemical forensics and metabolomics, the data generated by Gas Chromatography-Mass Spectrometry (GC-MS) and Liquid Chromatography-Mass Spectrometry (LC-MS) is inherently multivariate. Each sample produces complex signals across retention times, mass-to-charge ratios, and peak intensities, creating multidimensional datasets that are impossible to fully interpret with univariate statistical methods alone [15]. Multivariate statistical data analysis provides a collection of methods to analyze datasets with multiple dependent or independent variables simultaneously, with the primary objective of understanding the interactions between these variables and their combined effect on outcomes [16]. This analytical approach represents a conceptual shift from examining variables in isolation to analyzing their joint behavior, reflecting the reality that variables in complex chemical systems rarely operate independently [17].

The integration of multivariate statistics with chromatographic techniques is particularly valuable in forensic science, where it brings a new level of objectivity and statistically validated interpretation to evidence analysis. By applying chemometric techniques to data from GC-MS and LC-MS platforms, forensic scientists can move beyond subjective visual comparisons toward data-driven interpretations that enhance accuracy and mitigate human bias [4]. This statistical framework is equally crucial in drug development and metabolomics, where researchers must identify subtle patterns in complex biological matrices to discover biomarkers or understand disease mechanisms [18] [15].

Comparative Analysis of GC-MS and LC-MS Platforms

Fundamental Technical Differences and Complementary Strengths

GC-MS and LC-MS represent two complementary analytical approaches with distinct operating principles and application domains. GC-MS employs gas chromatography to separate compounds based on their volatility and interaction with the chromatographic column, requiring samples to be vaporized and thus limiting its application to compounds that can withstand high temperatures without degradation [19]. This technique is exceptionally suited for analyzing volatile and semi-volatile compounds, such as environmental pollutants, fragrances, hydrocarbons, and synthetic drugs [20] [19]. In contrast, LC-MS utilizes liquid chromatography to separate compounds in a liquid mobile phase, making it particularly advantageous for analyzing non-volatile, thermally labile, or high-molecular-weight compounds that would decompose under GC-MS conditions [19]. This capability makes LC-MS ideal for analyzing biological samples, pharmaceuticals, peptides, and other complex molecules prevalent in metabolomic studies [15] [19].

The fundamental difference in separation mechanisms results in distinct application profiles for each technique. GC-MS is renowned for its exceptional separation capability and high sensitivity for volatile compounds, providing robust and reproducible results essential for routine analysis and long-term studies [19]. Meanwhile, LC-MS offers superior versatility in analyzing a broader range of compounds, with flexibility in ionization techniques such as electrospray ionization (ESI) and atmospheric pressure chemical ionization (APCI) that can be tailored to specific analytical needs [19]. Many analytical laboratories employ both techniques to achieve comprehensive metabolite coverage, as their application domains overlap minimally and together they provide a more complete picture of the sample composition [15].

Performance Comparison in Metabolomic and Forensic Applications

Experimental comparisons between GC-MS and LC-MS platforms demonstrate their complementary performance characteristics in practical applications. In metabolomic studies, GC-MS typically identifies approximately 100 metabolites, while LC-MS can detect close to 500 metabolites in the same samples [15]. This differential coverage stems from their distinct physicochemical separation mechanisms, with GC-MS excelling in the analysis of volatile organic compounds, lipids, and derivatizable molecules, while LC-MS better handles semi-polar metabolites [15].

Table 1: Performance Comparison of GC-MS and LC-MS in Metabolomic Analysis

| Parameter | GC-MS | LC-MS | Combined Approach |
|---|---|---|---|
| Number of Metabolites Typically Identified | ~100 [15] | ~500 [15] | Enhanced coverage |
| Primary Compound Classes Analyzed | Volatile compounds, organic acids, fatty acids, steroids [19] | Non-volatile, thermally labile, polar compounds [19] | Comprehensive profiling |
| Sample Preparation Complexity | Often requires derivatization [15] | Less extensive preparation [15] | Multiple protocols needed |
| Analysis of Thermally Labile Compounds | Limited [19] | Excellent [19] | Complete coverage |

Advanced GC×GC-MS systems provide even greater analytical power compared to conventional GC-MS. In a comparative study analyzing human serum samples, GC×GC-MS detected approximately three times as many peaks as GC-MS at a signal-to-noise ratio ≥ 50, and three times the number of metabolites were identified with high spectral similarity scores [21]. This enhanced capability directly translated to biomarker discovery, with 34 metabolites showing statistically significant differences between patient and control groups in GC×GC-MS data compared to only 23 in GC-MS data [21]. The improved performance was primarily attributed to the superior chromatographic resolution of GC×GC-MS, which reduces peak overlap and facilitates more accurate spectrum deconvolution for metabolite identification and quantification [21].

Multivariate Statistical Methods for Chromatographic Data

Classification of Multivariate Techniques

Multivariate statistical methods can be broadly classified into two categories: dependence techniques and interdependence techniques. This distinction is fundamental for selecting the appropriate analytical approach based on the research question and data structure [18].

Dependence techniques explore relationships between one or more dependent variables and their independent predictors [18]. These methods are employed when researchers can clearly designate certain variables as outcomes (dependent variables) that may be influenced by other measured factors (independent variables). In the context of GC-MS and LC-MS data analysis, dependence methods help determine how specific experimental conditions or sample characteristics affect the chromatographic profiles or metabolite concentrations.

Interdependence techniques, in contrast, make no distinction between dependent and independent variables but treat all variables equally in a search for underlying patterns and structures [18]. These methods are particularly valuable for exploratory analysis of complex datasets without predefined hypotheses about variable relationships, allowing the data itself to reveal its inherent organization.

Table 2: Classification of Multivariate Statistical Techniques for Chromatographic Data Analysis

| Technique Type | Specific Methods | Primary Application in GC-MS/LC-MS Data |
|---|---|---|
| Dependence Techniques | Multiple Linear Regression [18] [16] | Modeling relationship between multiple predictors and a continuous outcome variable |
| | Logistic Regression [18] | Predicting categorical outcomes from multiple predictors |
| | MANOVA [16] | Comparing group means across multiple dependent variables |
| | Discriminant Analysis [16] | Classifying samples into predefined groups |
| Interdependence Techniques | Principal Component Analysis (PCA) [18] [16] | Dimensionality reduction and exploratory data analysis |
| | Factor Analysis [16] | Identifying latent variables explaining patterns in data |
| | Cluster Analysis [18] [16] | Identifying naturally occurring groups in data |
| | Canonical Correlation Analysis [16] | Exploring relationships between two sets of variables |

Key Multivariate Methods in Detail

Multiple Linear Regression

Multiple linear regression models the relationship between two or more metric explanatory variables and a single metric response variable by fitting a linear equation to observed data [18]. This technique addresses research questions such as whether age, height, and weight explain the variation in fasting blood glucose levels, or whether fasting blood glucose levels can be predicted from age, height, and weight [18]. The mathematical model for multiple regression with n predictors is expressed as:

y = β₀ + β₁X₁ + β₂X₂ + β₃X₃ + … + βₙXₙ + ε

where β₀ is the intercept (a constant), ε is a random error term, and β₁, β₂, …, βₙ are the regression coefficients associated with each predictor [18]. A partial regression coefficient indicates the amount by which the dependent variable y changes when a particular predictor changes by one unit while all other predictors are held constant [18].
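As a minimal sketch of how the coefficients in this model are estimated, the example below fits an ordinary least-squares regression by solving the normal equations with Gaussian elimination. The data are synthetic and generated exactly from y = 1 + 2X₁ + 3X₂ (no error term), so the fit should recover those values; the function names are illustrative.

```python
def solve_linear_system(A, b):
    """Gauss-Jordan elimination with partial pivoting for a small square system."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(n):
            if r != col:
                factor = M[r][col] / M[col][col]
                M[r] = [x - factor * y for x, y in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]

def fit_mlr(X, y):
    """Least-squares coefficients [b0, b1, ..., bn] from the normal equations."""
    Z = [[1.0] + list(row) for row in X]   # leading column of ones for the intercept
    p = len(Z[0])
    ZtZ = [[sum(z[a] * z[b] for z in Z) for b in range(p)] for a in range(p)]
    Zty = [sum(z[a] * yi for z, yi in zip(Z, y)) for a in range(p)]
    return solve_linear_system(ZtZ, Zty)

# Synthetic predictors and a response generated as y = 1 + 2*x1 + 3*x2
X = [(1, 2), (2, 1), (3, 4), (4, 3), (5, 6)]
y = [1 + 2 * x1 + 3 * x2 for x1, x2 in X]
b0, b1, b2 = fit_mlr(X, y)
```

Each fitted slope is a partial regression coefficient: b1 is the change in y per unit change in the first predictor with the second held fixed.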

Logistic Regression

Logistic regression analysis models binary or categorical dependent variables from two or more independent predictor variables [18]. It addresses similar questions as discriminant function analysis or multiple regression but without strict distributional assumptions on the predictors [18]. The technique utilizes the logit function, which is the natural logarithm of the odds (probability of occurrence divided by probability of non-occurrence), to create a linear relationship with the predictor variables [18]. The regression equation in logistic regression takes the form:

ln[p/(1 − p)] = β₀ + β₁X₁ + β₂X₂ + … + βₙXₙ

where ln[p/(1 − p)] is logit(p), with p being the probability of occurrence of the event in question [18]. The unique impact of each predictor in logistic regression is expressed as an odds ratio, which can be tested for statistical significance against the null hypothesis that the ratio is 1 [18].
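The link between the logit equation, the predicted probability, and the odds ratio can be checked numerically. In this sketch the coefficients are hypothetical values chosen for illustration, not fitted estimates from any cited study.

```python
import math

def probability(intercept, coefs, x):
    """Invert the logit: p = 1 / (1 + exp(-(b0 + sum(bi * xi))))."""
    logit = intercept + sum(b * xi for b, xi in zip(coefs, x))
    return 1 / (1 + math.exp(-logit))

def odds(p):
    return p / (1 - p)

# Hypothetical fitted model with two predictors
intercept, coefs = -2.0, [0.8, -0.5]

p_mid = probability(intercept, coefs, [2.5, 0.0])   # logit is 0, so p is 0.5

# Odds ratio for the first predictor: exp(b1)
odds_ratio_x1 = math.exp(coefs[0])

# The same ratio recovered from predictions one unit apart in the first predictor
p_before = probability(intercept, coefs, [1.0, 2.0])
p_after = probability(intercept, coefs, [2.0, 2.0])
empirical_ratio = odds(p_after) / odds(p_before)
```

An odds ratio of 1 corresponds to a coefficient of 0, which is why the significance test for a predictor is framed against a ratio of 1.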

Principal Component Analysis (PCA) and Factor Analysis

Principal Component Analysis (PCA) is a dimensionality reduction technique that converts large sets of correlated variables into smaller sets of uncorrelated components called principal components [16]. This method is particularly valuable in GC-MS and LC-MS data analysis, where datasets may contain hundreds or thousands of correlated mass spectral features. PCA simplifies these complex datasets by transforming them into a new coordinate system where the greatest variance lies on the first coordinate (first principal component), the second greatest variance on the second coordinate, and so on [16]. This allows researchers to visualize high-dimensional data in two or three dimensions while retaining as much information as possible from the original variables.

Factor analysis is a related technique that seeks to identify latent variables (factors) that explain the pattern of correlations within the observed variables [16]. While PCA focuses on explaining variance, factor analysis aims to explain covariance between variables, making it particularly useful for identifying underlying constructs that influence multiple measured variables in tandem. In forensic science, these techniques have been applied to analyze complex chemical data from techniques like FT-IR and Raman spectroscopy, revealing hidden trends that might be missed through traditional univariate analysis [4].

Experimental Protocols and Forensic Applications

Rapid GC-MS Method for Seized Drug Analysis

A recent study developed and optimized a rapid GC-MS method for screening seized drugs in forensic investigations, significantly reducing total analysis time from 30 to 10 minutes while maintaining analytical accuracy [22]. The methodology was comprehensively validated and applied to real case samples from Dubai Police Forensic Laboratories, demonstrating its practical utility in authentic forensic contexts.

The experimental protocol employed an Agilent 7890B gas chromatograph system connected to an Agilent 5977A single quadrupole mass spectrometer, equipped with a 7693 autosampler and an Agilent J&W DB-5 ms column (30 m × 0.25 mm × 0.25 μm) [22]. Helium (99.999% purity) served as the carrier gas at a fixed flow rate of 2 mL/min. The rapid GC-MS method utilized optimized temperature programming with an initial oven temperature of 80°C held for 0.3 minutes, followed by a ramp of 80°C/min to 180°C, then a second ramp of 40°C/min to 300°C held for 1.5 minutes [22]. Summing these segments (0.3 + 1.25 + 3.0 + 1.5 min) gives an oven program of roughly 6 minutes, which fits within the 10-minute total analysis time reported for the method and is a significant reduction from the 30 minutes required by the conventional method.
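As a quick consistency check on a temperature program like the one quoted above, the hold and ramp segments can be summed directly. The sketch uses a hypothetical helper, `oven_program_minutes`, and covers oven time only. It ignores injection, equilibration, and cool-down.

```python
def oven_program_minutes(initial_temp, initial_hold, segments):
    """Sum hold and ramp times for a GC oven program.

    segments: list of (ramp_rate_C_per_min, target_temp_C, hold_min).
    """
    total = initial_hold
    temp = initial_temp
    for rate, target, hold in segments:
        total += abs(target - temp) / rate + hold  # ramp time plus hold
        temp = target
    return total

# Values quoted in the text: 80 C held 0.3 min, 80 C/min to 180 C,
# then 40 C/min to 300 C held 1.5 min.
run_time = oven_program_minutes(80, 0.3, [(80, 180, 0.0), (40, 300, 1.5)])
print(f"{run_time:.2f} min")
```

Small differences from an instrument-reported run time can arise from rounding or from segments not listed in the text.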

The method performed well in validation studies, with limits of detection improved by at least 50% for key substances including cocaine and heroin [22]. Specifically, the detection threshold for cocaine was as low as 1 μg/mL, compared with 2.5 μg/mL for the conventional method [22]. The method also exhibited excellent repeatability and reproducibility, with relative standard deviations (RSDs) below 0.25% for stable compounds under operational conditions [22]. When applied to 20 real case samples from Dubai Police Forensic Labs, the rapid GC-MS method accurately identified diverse drug classes including synthetic opioids and stimulants, with match quality scores consistently exceeding 90% across tested concentrations [22].

Chemometric Approaches in Forensic Evidence Analysis

Chemometrics applies statistical approaches to analyze complex chemical data and has shown growing utility across forensic disciplines [4]. Techniques such as principal component analysis (PCA), linear discriminant analysis (LDA), and partial least squares-discriminant analysis (PLS-DA) are widely used for pattern recognition in complex datasets, while newer methods like support vector machines (SVM) and artificial neural networks (ANNs) are emerging as powerful tools for more sophisticated modeling [4].

In forensic toxicology, chemometric models enhance the identification of unknown substances by comparing spectral data against extensive chemical databases [4]. For arson investigations, chemometrics has been employed to differentiate between accelerants and other chemical residues, providing clearer insights into fire causes [4]. The integration of chemometrics with GC×GC-MS has proven particularly powerful for analyzing complex forensic evidence such as sexual lubricants, automobile paints, and tire rubber, where traditional GC-MS often suffers from coelution issues that limit discriminatory power [20].

Diagram 1: Multivariate Analysis Workflow in Forensic Chemistry

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful integration of multivariate statistics with GC-MS and LC-MS data analysis requires specific research reagents and materials optimized for chromatographic separation and mass spectrometric detection. The following table details essential solutions and their functions based on experimental protocols from recent studies.

Table 3: Essential Research Reagent Solutions for GC-MS and LC-MS Analysis

| Reagent/Material | Function | Application Context |

|---|---|---|

| Methanol (99.9%) | Extraction solvent for drugs and metabolites | Sample preparation for seized drug analysis [22] |

| Heptadecanoic Acid (10 μg/mL) | Internal standard for quantification | Quality control in metabolomic studies [21] |

| Norleucine (10 μg/mL) | Internal standard for retention time alignment | Metabolite profiling in biological samples [21] |

| Methoxyamine in Pyridine (20 mg/mL) | Derivatization reagent for GC-MS analysis | Protection of carbonyl groups in metabolites [21] |

| MSTFA with 1% TMCS | Silylation derivatization reagent | Enhancement of volatility for polar compounds in GC-MS [21] |

| DB-5 ms GC Column | (5%-phenyl)-methylpolysiloxane stationary phase | Primary separation column for GC-MS and GC×GC-MS [21] [22] |

| DB-17 ms GC Column | (50%-phenyl)-methylpolysiloxane stationary phase | Secondary separation column for GC×GC-MS [21] |

| Alkane Retention Index Standard (C10-C40) | Retention time calibration | Retention index calculation for metabolite identification [21] |

The integration of multivariate statistical methods with GC-MS and LC-MS data represents a powerful analytical framework that enhances the interpretation of complex chemical data across forensic, pharmaceutical, and metabolomic applications. The complementary nature of GC-MS and LC-MS platforms, when coupled with appropriate multivariate techniques such as multiple linear regression, logistic regression, PCA, and factor analysis, provides researchers with a comprehensive toolkit for extracting meaningful patterns from complex datasets. Experimental protocols demonstrate that optimized GC-MS methods can significantly reduce analysis times while maintaining or improving detection limits, particularly when enhanced by chemometric approaches for pattern recognition and classification. As analytical technologies continue to evolve, the synergy between sophisticated separation platforms and multivariate statistical methods will remain fundamental to advancing chemical forensics research and drug development initiatives.

In chemical forensics, impurities, by-products, and degradation products are not merely contaminants; they are critical sources of intelligence. These chemical attribution signatures (CAS) provide a chemical "fingerprint" that can reveal a compound's origin, manufacturing route, and history [23]. The systematic analysis of these signatures enables researchers and forensic investigators to perform two key functions: sample matching, which determines if two samples share a common origin, and production route sourcing, which identifies the specific synthetic method or starting materials used to produce a chemical substance [23]. The analytical workflows for characterizing these targets rely heavily on sophisticated separation techniques and multivariate statistical analysis to extract meaningful patterns from complex chemical data, forming the foundation of modern chemical attribution profiling.

Comparative Analysis of Key Forensic Targets

The table below summarizes the core characteristics and forensic intelligence value of the three key chemical targets.

Table 1: Comparative Analysis of Key Forensic Targets in Chemical Forensics

| Target | Origin & Formation | Primary Forensic Intelligence Value | Stability & Persistence | Representative Analytical Techniques |

|---|---|---|---|---|

| Impurities | Starting materials, reagents, catalysts, synthetic intermediates from the manufacturing process [24] [25]. | Reveals the specific synthetic pathway, manufacturer, and batch-to-batch variations [23]. | Typically stable under standard storage conditions; profile is consistent over time unless purified. | GC-MS [23], LC-MS [24], HPLC [24], ICP-MS [24]. |

| By-products | Formed through side reactions, incomplete reactions, or under specific reaction conditions (e.g., temperature, pressure) [23]. | Provides a highly specific signature for matching samples to a common production batch or process [23]. | Generally stable; their profile is a direct reflection of the reaction mechanics and conditions. | GC-MS [23], LC-MS [25], NMR [25], FTIR [24]. |

| Degradation Products | Formed post-manufacturing from the decomposition of the active substance due to factors like heat, light, humidity, or pH [26] [24] [25]. | Can indicate sample age, storage history, and handling; used in stability studies and to understand decomposition pathways. | Can evolve over time, changing the chemical profile; studied through forced degradation (stress testing) [26]. | Stability-indicating HPLC [26], LC-MS [26], GC-MS, NMR [25]. |

Analytical Techniques for Profiling and Comparison

The identification and quantification of chemical signatures require a suite of complementary analytical techniques. The choice of method depends on the nature of the target analyte, the required sensitivity, and the level of structural confirmation needed.

Table 2: Analytical Techniques for Signature Profiling and Comparison

| Technique | Primary Function | Key Strengths | Typical Data Output for Multivariate Analysis |

|---|---|---|---|

| Gas Chromatography-Mass Spectrometry (GC-MS) | Separation and identification of volatile and semi-volatile compounds [23]. | Excellent separation power; provides retention index and mass spectral data for confident identification [23]. | Relative peak areas, retention indices, mass spectral libraries [23]. |

| Liquid Chromatography-Mass Spectrometry (LC-MS) | Separation and identification of non-volatile, thermally labile, or polar compounds [24]. | Broad applicability; ideal for pharmaceuticals and high-molecular-weight impurities; high sensitivity. | Relative peak areas, mass-to-charge ratios, fragmentation patterns. |

| Nuclear Magnetic Resonance (NMR) Spectroscopy | Structural elucidation and confirmation of chemical structures [25]. | Powerful for de novo structure determination without pure standards; can distinguish isomers [25]. | Chemical shifts, coupling constants, integration values [25]. |

| Fourier Transform Infrared (FTIR) Spectroscopy | Functional group identification and compound characterization [24]. | Rapid analysis; provides complementary structural information to MS and NMR. | Infrared absorption spectra (fingerprint region). |

Experimental Protocol: Interlaboratory Validation of a Chemical Profiling Method

A 2023 interlaboratory study provides a robust protocol for validating a GC-MS-based chemical profiling method for a nerve agent precursor, methylphosphonic dichloride (DC), demonstrating the application of multivariate analysis for forensic comparisons [23].

Research Reagent Solutions and Materials

Table 3: Key Reagents and Materials for Chemical Profiling

| Item | Function/Description |

|---|---|

| Methylphosphonic Dichloride (DC) | The nerve agent precursor of interest, synthesized via different production routes to create distinct batches [23]. |

| CAS Reference Mixture | A solution of known impurities used for calibrating instrumentation, evaluating GC column performance, and aligning data across laboratories [23]. |

| Internal Standard | A compound not expected to be in the sample; used to normalize analytical responses. |

| Non-polar GC Column | A GC column used for separation. The study used a DB-5MS column to ensure reproducibility across labs [23]. |

| n-Alkane Solution | Used for the experimental determination of Retention Indices (RI) for each analyte, critical for aligning data from different GC systems [23]. |

Methodology and Workflow

The experimental workflow for the interlaboratory study is summarized in the diagram below.

Sample Preparation

Two distinct batches of DC, produced by different synthetic routes, were distributed to eight participating laboratories. Samples were prepared by dissolving the DC in an appropriate solvent, often dichloromethane, with the addition of an internal standard for data normalization [23].

Instrumental Analysis

All laboratories used gas chromatography-mass spectrometry (GC-MS) but were permitted to use their own established methods and instrumentation. A key harmonizing factor was the use of a common non-polar GC column and the calculation of Kovats retention indices (RI) for each impurity, which minimizes the effect of retention time variability between instruments [23]. A targeted MS library of 16 known chemical attribution signatures was used to identify relevant impurities in the chromatograms [23].

Data Processing and Multivariate Analysis

For each sample, laboratories reported:

- Retention Indices (RI) for each impurity.

- Mass spectral data for identification.

- Relative peak areas (normalized to the internal standard) for each impurity.

The compiled data, consisting of the relative abundances of multiple impurities, formed a chemical attribution profile for each sample. These profiles were then compared in multivariate space using a similarity measure based on Euclidean distance [23].
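A distance-based profile comparison of this kind can be sketched as follows. The source does not state how distances were converted to similarity values, so the mapping used here (unit-length normalization, then 1 − d/√2) is an assumption chosen so that identical profiles score 1 and non-overlapping profiles score 0. The peak areas are hypothetical and are not data from the study.

```python
import math

def normalize(profile):
    """Scale a profile of relative peak areas to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in profile))
    return [x / norm for x in profile]

def euclidean_distance(a, b):
    """Straight-line distance between two equal-length profiles."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def similarity(a, b):
    """Assumed mapping: 1 - d/sqrt(2) on unit-length, non-negative profiles."""
    d = euclidean_distance(normalize(a), normalize(b))
    return 1.0 - d / math.sqrt(2.0)

# Hypothetical relative peak areas for four impurities.
batch_a_lab1 = [0.50, 0.30, 0.15, 0.05]
batch_a_lab2 = [0.48, 0.32, 0.14, 0.06]
batch_b_lab1 = [0.05, 0.10, 0.35, 0.50]

within = similarity(batch_a_lab1, batch_a_lab2)
between = similarity(batch_a_lab1, batch_b_lab1)
print(within, between)  # within-batch should be the larger value
```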

Results and Statistical Interpretation

The interlaboratory study demonstrated the robustness of the chemical profiling method. When the within-batch data from all eight laboratories were compared, the chemical profiles showed high similarity, with values ranging from 0.720 to 0.995 [23]. This high similarity indicates excellent interlaboratory reproducibility for profiling the same batch.

In contrast, the between-batch comparison of the two different DC productions showed markedly lower similarity, with values ranging from 0.509 to 0.576 [23]. This clear separation in multivariate space confirms that each synthesis route produced a distinct impurity profile, enabling reliable production route sourcing and sample matching.

The forensic characterization of impurities, by-products, and degradation products is a powerful tool for generating actionable intelligence. The consistent results from the interlaboratory study confirm that, with proper method harmonization (particularly retention indices and targeted MS libraries), GC-MS profiling coupled with multivariate comparison based on Euclidean distance is a robust and reliable approach for comparing chemical signatures across different laboratories [23]. This rigorous, data-driven framework is essential for supporting forensic conclusions regarding the origin and history of chemical substances, from pharmaceutical impurities to agents of security concern.

Implementing Multivariate Methods for Forensic Profiling and Source Identification

Chemical attribution profiling is a powerful forensic tool that leverages the unique pattern of impurities and by-products in a substance, known as Chemical Attribution Signatures (CAS), to trace its origin, synthesis pathway, and batch history [23]. In the enforcement of the Chemical Weapons Convention (CWC), attributing a seized chemical warfare agent (CWA) to a specific precursor or production method is a critical forensic objective. This process relies on detecting CAS that serve as a chemical "fingerprint," providing intelligence for non-proliferation and counter-terrorism efforts [23] [7]. The core principle is that impurities originating from starting materials, reagents, or specific reaction conditions are carried through the synthesis process, creating a detectable linkage between a precursor and the final nerve agent [23].

The analytical challenge lies not only in detecting these trace-level impurities but also in robustly interpreting the complex, multivariate data they generate. This case study objectively compares the performance of different analytical and chemometric platforms used for the attribution of CWA precursors, focusing on methylphosphonic dichloride (DC) and methylphosphonothioic dichloride. We present supporting experimental data to guide researchers in selecting appropriate methodologies for forensic chemical analysis.

Comparative Analytical Platforms & Workflows

Two distinct analytical platforms for impurity profiling are prevalent in modern forensic laboratories: one based on standardized one-dimensional gas chromatography-mass spectrometry (GC/MS), and another employing advanced comprehensive two-dimensional GC coupled with time-of-flight MS (GC×GC-TOFMS). The following workflows and subsequent data compare their application to CWA precursor attribution.

Workflow 1: Standardized GC-MS Profiling

The first workflow utilizes a widely available GC-MS system following a standardized procedure, making it suitable for implementation across multiple designated laboratories [23].

Workflow 2: Advanced GC×GC-TOFMS-Chemometrics Platform

The second workflow employs a more advanced instrumental setup coupled with a hierarchical chemometric analysis for deeper pathway discrimination [7].

Experimental Protocols & Performance Data

Protocol A: Interlaboratory GC-MS Method for Methylphosphonic Dichloride (DC)

Sample Preparation: DC samples are dissolved in dichloromethane. A CAS reference mixture containing key impurities is used for system suitability testing and retention index (RI) calibration [23].

Chromatographic Separation:

- Column: Non-polar 5% phenyl arylene polymer GC column (e.g., DB-5MS, VF-5MS).

- Detection: Mass Spectrometer in electron ionization (EI) mode.

- RI Calibration: A homologous series of n-alkanes is used to calculate retention indices for each detected impurity, enabling alignment of GC/MS data across different laboratories and instrument setups [23].

Data Processing: Sixteen target CAS are identified by matching their mass spectra and RIs (± 14 RI units) against a targeted library. The relative peak areas of these CAS are measured to construct the chemical profile for statistical comparison [23].
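The retention-index step can be illustrated with a small sketch. It assumes the linear (temperature-programmed) form of the retention index, interpolating between the two bracketing n-alkanes, and it checks only the RI window. Real identification also requires a mass spectral match. All retention times, library names, and library values are hypothetical.

```python
from bisect import bisect_right

def linear_retention_index(t_x, alkanes):
    """Interpolate a retention index from bracketing n-alkanes.

    alkanes: list of (carbon_number, retention_time_min), sorted by time.
    """
    times = [t for _, t in alkanes]
    i = bisect_right(times, t_x) - 1
    if i < 0 or i >= len(alkanes) - 1:
        raise ValueError("retention time outside the bracketing n-alkane range")
    (n, t_n), (n_next, t_next) = alkanes[i], alkanes[i + 1]
    return 100.0 * (n + (n_next - n) * (t_x - t_n) / (t_next - t_n))

def match_library(ri, library, tolerance=14.0):
    """Return library entries whose reference RI lies within the tolerance."""
    return [name for name, ref_ri in library.items() if abs(ri - ref_ri) <= tolerance]

# Hypothetical n-alkane ladder and targeted library.
alkane_ladder = [(10, 5.0), (11, 6.0), (12, 7.2)]
library = {"impurity_A": 1142.0, "impurity_B": 1190.0}

ri = linear_retention_index(6.6, alkane_ladder)
print(ri, match_library(ri, library))
```

Because the index is expressed relative to the alkane ladder rather than in minutes, the same impurity should fall in the same RI window on different instruments.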

Protocol B: GC×GC-TOFMS Method for Methylphosphonothioic Dichloride

Sample Preparation: The precursor sample is diluted in an appropriate solvent for direct injection [7].

Chromatographic Separation:

- System: Comprehensive two-dimensional GC (GC×GC) coupled to a Time-of-Flight Mass Spectrometer (TOFMS).

- Modulation: A thermal or cryogenic modulator is used to focus and re-inject effluent from the first column onto a second column with a different stationary phase.

- First Dimension: A non-polar column for primary separation.

- Second Dimension: A mid-polar or polar column for rapid secondary separation [7].

Data Processing: The high-resolution TOFMS data is processed using non-targeted analysis to find all detectable impurities. An impurity database is built, and peak areas are used for chemometric modeling [7].

Quantitative Performance Comparison

The table below summarizes the key performance metrics of the two analytical platforms as applied to CWA precursor profiling.

Table 1: Analytical Platform Performance Comparison

| Performance Metric | Standardized GC-MS Workflow [23] | GC×GC-TOFMS-Chemometrics Platform [7] |

|---|---|---|

| Primary Application | Sample matching & batch linkage | Synthesis pathway decoding |

| Key Precursor Studied | Methylphosphonic dichloride (DC) | Methylphosphonothioic dichloride |

| Identified Impurities | 16 target CAS | 58 unique compounds |

| Data Reproducibility | High intra-/inter-lab similarity (0.720–0.995) | Not explicitly quantified |

| Classification Accuracy | Distinct batch classes (between-batch similarity: 0.509–0.576) | 100% (R² = 0.990) via oPLS-DA |

| Traceability Threshold | Not specified | ≤ 0.5% impurity level |

| Key Chemometric Tools | Principal Component Analysis (PCA), Euclidean Distance | HCA, PCA, oPLS-DA with VIP features |

| Method Validation | Interlaboratory study (8 labs) | Permutation tests (n=2000), external validation (n=12, 100% accuracy) |

Statistical Analysis: The Role of Multivariate Methods

The interpretation of CAS data is critically dependent on multivariate statistical analysis, which distills complex impurity profiles into actionable forensic intelligence.

- Principal Component Analysis (PCA) is widely used for exploratory data analysis, as seen in both protocols, to visualize inherent clustering of samples based on their production batches or synthesis routes without prior class information [23] [7].

- Orthogonal Projections to Latent Structures-Discriminant Analysis (oPLS-DA) is a supervised method that provides superior classification performance. It maximizes the separation between predefined classes (e.g., synthesis pathways) and identifies key discriminating impurities through Variable Importance in Projection (VIP) scores [7].

- Compositional Data Analysis (CoDa) is an emerging paradigm recommended for forensic science. Since impurity profiles are compositional (the parts constitute a whole), classical statistics can lead to erroneous conclusions. CoDa, based on log-ratio transformations, yields unbiased and more interpretable results, boosting classification accuracy and reducing misclassification rates [27].

- Hierarchical Cluster Analysis (HCA) is another unsupervised technique used to reveal natural groupings within a dataset, often presented as a dendrogram [7] [6].
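The log-ratio idea behind CoDa, mentioned in the list above, can be illustrated with the centered log-ratio (clr) transform. This is a minimal sketch with a hypothetical impurity profile. It assumes all parts are strictly positive, so zero or below-detection values would need separate treatment first.

```python
import math

def clr(composition):
    """Centered log-ratio transform of strictly positive parts."""
    if any(x <= 0 for x in composition):
        raise ValueError("clr requires strictly positive parts; handle zeros first")
    logs = [math.log(x) for x in composition]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [v - mean_log for v in logs]

# Hypothetical relative impurity abundances (fractions of total peak area).
profile = [0.50, 0.30, 0.15, 0.05]
rescaled = [100 * x for x in profile]  # same composition expressed in percent

print(clr(profile))
print(clr(rescaled))  # same values: clr depends only on the ratios of parts
```

The transformed values sum to zero and do not change when the profile is rescaled, which is the property that makes them suitable inputs for methods such as PCA.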

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for CWA Precursor Profiling

| Item | Function / Application |

|---|---|

| Non-polar GC Columns (e.g., DB-5MS) | Robust, reproducible separation of impurities; the standard for OPCW verification analyses [23]. |

| CAS Reference Mixture & n-Alkanes | System suitability testing and calculation of Retention Indices (RI) for cross-laboratory data alignment [23]. |

| Targeted MS Library | Contains mass spectra and RI data of known impurities, enabling consistent identification of CAS across instruments [23]. |

| Chemometric Software | Essential for performing multivariate statistical analyses like PCA, HCA, and oPLS-DA to interpret complex impurity profiles [23] [7] [6]. |

| Homemade Explosive (HME) Reference Materials | Used for method development and validation in the broader context of forensic chemical analysis of threat materials [6]. |

Variable selection stands as a critical preprocessing step in the development of robust chemometric models for forensic chemical analysis. The process of identifying the most relevant predictors from high-dimensional instrumental data directly influences model accuracy, interpretability, and predictive capability. Within forensic chemistry, where analytical techniques such as spectroscopy and chromatography generate datasets with numerous variables, effective variable selection becomes paramount for distinguishing meaningful chemical signatures from irrelevant noise [28] [4]. This guide provides an objective comparison of three prominent variable selection techniques—the F-ratio test, Variable Importance in Projection (VIP) scores, and model weight analysis—within the context of forensic science applications.

The adoption of chemometric approaches in forensic workflows addresses growing demands for objective, statistically validated evidence interpretation. As noted by researchers at Curtin University, these methods help mitigate human bias and improve courtroom confidence in forensic conclusions [4]. Variable selection techniques specifically enhance this process by refining multivariate models to focus on chemically significant variables, thereby improving classification accuracy and reliability in disciplines ranging from drug profiling to arson debris analysis [28].

Theoretical Foundations of Variable Selection Methods

F-Ratio Test

The F-ratio test provides a statistical framework for evaluating the discriminatory power of individual variables between predefined classes. This method operates on the principle that variables with larger between-group variance relative to within-group variance offer better separation capability. The mathematical foundation lies in calculating an F-value for each variable, representing the ratio of inter-class variance to intra-class variance [29].
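A per-variable F-value of this kind can be computed as in the sketch below, which uses the standard one-way ANOVA form (between-class mean square divided by within-class mean square). This is an illustrative baseline with synthetic data, and it does not implement the specific statistics or weighting scheme of reference [29].

```python
import numpy as np

def f_ratio(X, labels):
    """One-way ANOVA F-value for each column of X (samples x variables)."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    classes = np.unique(labels)
    n, k = X.shape[0], classes.size
    grand_mean = X.mean(axis=0)
    ss_between = np.zeros(X.shape[1])
    ss_within = np.zeros(X.shape[1])
    for c in classes:
        Xc = X[labels == c]
        ss_between += Xc.shape[0] * (Xc.mean(axis=0) - grand_mean) ** 2
        ss_within += ((Xc - Xc.mean(axis=0)) ** 2).sum(axis=0)
    # Mean squares: between-class (k - 1 d.o.f.) over within-class (n - k d.o.f.)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

# Synthetic data: two classes of 20 samples; only variable 0 differs by class.
rng = np.random.default_rng(1)
labels = np.array([0] * 20 + [1] * 20)
X = rng.normal(size=(40, 4))
X[labels == 1, 0] += 3.0

F = f_ratio(X, labels)
print(F)  # variable 0 should have by far the largest F-value
```

Ranking variables by these values and keeping the top candidates is a simple screening step that can precede a multivariate model.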

In practice, researchers can derive three test statistics from what is termed the F-ratio σ²(F)/F². A Bayesian formalism then assigns weights to hypotheses and their corresponding measures, leading to complete, partial, or non-inclusion of these measures into an optimized feature vector [29]. This approach proved effective in distinguishing EEG signals of healthy subjects from those of patients diagnosed with schizophrenia, achieving 81% discrimination performance based on selectively included features [29].

Variable Importance in Projection (VIP) Scores

VIP scores emerge from Partial Least Squares (PLS) based models, particularly Partial Least Squares-Discriminant Analysis (PLS-DA). This method identifies latent variables that maximize covariance between independent variables (X) and corresponding dependent variables (Y) [30]. VIP scores quantify the contribution of each variable to the PLS model by summarizing its importance across all model components.

The VIP method enables forensic analysts to extract relevant information from high-dimensional datasets while addressing potential multicollinearity among predictors [30]. In practical applications, researchers often employ a threshold approach, typically retaining variables with VIP scores >1.0, as demonstrated in forensic document examination where VIP-based selection maintained model performance despite a 50% reduction in input variables [30].
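The VIP calculation and threshold can be sketched with a small single-response PLS (PLS1) model fitted by the NIPALS algorithm. This is a numpy-only illustration with synthetic data, and it is not the implementation used in the cited studies. The function name `pls1_vip` is hypothetical. For a two-class PLS-DA problem, the response could be coded as 0/1.

```python
import numpy as np

def pls1_vip(X, y, n_components):
    """Fit PLS1 by NIPALS and return VIP scores for each column of X."""
    Xr = X - X.mean(axis=0)            # centered predictors (deflated in loop)
    yr = y - y.mean()                  # centered response (deflated in loop)
    p = Xr.shape[1]
    W = np.zeros((p, n_components))    # unit-length weight vectors
    ss = np.zeros(n_components)        # y-variance explained per component
    for a in range(n_components):
        w = Xr.T @ yr
        w /= np.linalg.norm(w)
        t = Xr @ w                     # scores
        tt = t @ t
        q = (yr @ t) / tt              # y-loading
        p_load = Xr.T @ t / tt         # X-loading
        Xr = Xr - np.outer(t, p_load)  # deflate X
        yr = yr - q * t                # deflate y
        W[:, a] = w
        ss[a] = q ** 2 * tt
    # VIP_j = sqrt(p * sum_a(ss_a * w_ja^2) / sum_a(ss_a))
    return np.sqrt(p * (W ** 2 @ ss) / ss.sum())

# Synthetic data: only the first two of six variables drive the response.
rng = np.random.default_rng(0)
X = rng.normal(size=(60, 6))
y = 2.0 * X[:, 0] - 2.0 * X[:, 1] + 0.1 * rng.normal(size=60)

vip = pls1_vip(X, y, n_components=2)
selected = np.flatnonzero(vip > 1.0)   # common VIP > 1.0 retention rule
print(vip, selected)
```

Because the squared VIP scores average to 1 across variables, the 1.0 cut-off separates variables with above-average influence from the rest.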

Model Weight Analysis

Model weight analysis utilizes the coefficients from multivariate calibration models, particularly those derived from PLS regression, to identify influential variables. These weights reflect the direction and magnitude of each variable's contribution to predicting the property of interest. Unlike VIP scores which provide an overall importance measure, weight analysis offers insights into the specific relationship between variables and the predicted response.

In the context of Ordered Predictors Selection (OPS), model weight analysis forms the basis for sorting variables from informative vectors and systematically investigating regression models to identify the most relevant variable sets [31]. The core OPS algorithm examines regression models to select important variables by comparing cross-validation parameters, with newer OPS approaches demonstrating superior performance over genetic algorithms and other selection methods [31].

Comparative Experimental Analysis

Methodology for Performance Evaluation

To objectively compare the performance of F-ratio, VIP, and model weight selection methods, we established a standardized evaluation protocol based on forensic case studies from the literature. The assessment framework incorporates multiple datasets to ensure generalizability, with particular emphasis on forensic applications including document analysis, toxicology, and soil metal prediction [30] [32].

Each variable selection method was evaluated using the following protocol:

- Data Preprocessing: Raw spectral data were preprocessed using multiplicative scatter correction (MSC), standard normal variate (SNV), and Savitzky-Golay smoothing to minimize instrumental artifacts [32].

- Model Development: Partial Least Squares (PLS) and Partial Least Squares-Discriminant Analysis (PLS-DA) models were constructed following variable selection.

- Validation Procedure: Models were validated using external prediction sets and k-fold cross-validation to prevent overfitting.

- Performance Metrics: Multiple figures of merit were calculated, including root mean square error of prediction (RMSEP), residual prediction deviation (RPD), and classification accuracy.
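Two of the figures of merit named in the protocol above can be computed as follows. This is a Python sketch for illustration only, using made-up reference and predicted values. RPD is taken here as the sample standard deviation of the reference values divided by RMSEP, which is one common convention.

```python
import math
import statistics

def rmsep(y_true, y_pred):
    """Root mean square error of prediction on an external test set."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))

def rpd(y_true, y_pred):
    """Residual prediction deviation: SD of reference values over RMSEP."""
    return statistics.stdev(y_true) / rmsep(y_true, y_pred)

# Hypothetical reference values and model predictions.
y_ref = [1.0, 2.0, 3.0, 4.0, 5.0]
y_hat = [1.1, 1.9, 3.2, 3.8, 5.0]

print(rmsep(y_ref, y_hat))
print(rpd(y_ref, y_hat))
```

Because RPD scales the prediction error by the spread of the reference data, it allows models for analytes with different concentration ranges to be compared on a common footing.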

All computations were performed in MATLAB R2023a with custom scripts for F-ratio implementation and the PLS Toolbox for VIP and model weight analyses.

Quantitative Performance Comparison

Table 1: Performance Metrics of Variable Selection Methods in Forensic Applications

| Selection Method | Application Context | Model Type | Accuracy (%) | RMSEP | RPD | Variables Selected |

|---|---|---|---|---|---|---|

| F-ratio Test | EEG Classification [29] | LDA | 81.0 | - | - | 3 (from original set) |

| VIP Scores | Document Dating [30] | ANN | F1-score 0.951 (accuracy not reported) | - | - | 50% reduction |

| VIP Scores | Soil Metal Analysis [32] | PLS-DA | - | - | >2.0 | ~15-20 intervals |

| Model Weights (OPS) | Multivariate Calibration [31] | PLSR | - | Significant improvement | >2.0 | Varies by dataset |

| Firefly Algorithm | Soil Metal Prediction [32] | FFiPLS | - | Lower than deterministic methods | >2.0 (Al, Fe, Ti) | Optimal intervals |

Table 2: Method Characteristics and Implementation Requirements

| Selection Method | Computational Demand | Interpretability | Handling of Collinearity | Ease of Implementation | Best-Suited Applications |

|---|---|---|---|---|---|

| F-ratio Test | Low | High | Moderate | High | Initial feature screening, biomedical signals [29] |

| VIP Scores | Moderate | Moderate | Good | Moderate | Spectral data, forensic discrimination [30] [32] |

| Model Weights (OPS) | Moderate to High | High | Good | Moderate | Multivariate calibration, QSAR [31] |

| Genetic Algorithm | High | Low | Good | Low | Complex optimization problems [33] [31] |

The comparative analysis reveals that while VIP scores provide a balanced approach for most forensic spectroscopy applications, newer OPS strategies demonstrated superior performance in complex calibration tasks, outperforming genetic algorithms and interval-based methods in prediction capability and variable selection accuracy [31]. The F-ratio test offers computational efficiency for initial feature screening but may overlook interactive effects in complex spectral data.

Forensic Science Case Studies

Document Dating Analysis

In a comprehensive study on forensic document dating, researchers employed VIP scores to select relevant features from paper fingerprint data obtained via 2D formation sensors. The VIP approach enabled a 50% reduction in input variables while maintaining classification performance with an F1-score of 0.951 in Artificial Neural Network models [30]. This application demonstrates the value of VIP scores in maintaining model performance while significantly reducing dimensionality in forensic evidence analysis.

Soil Metal Prediction

A comparison of variable selection algorithms for predicting metals in river basin soils using near-infrared spectroscopy revealed that stochastic methods like the Firefly algorithm by intervals in PLS (FFiPLS) outperformed deterministic VIP-based approaches for certain metals [32]. The FFiPLS models for aluminum, iron, and titanium achieved RPD values greater than 2.0, indicating excellent predictive capability, while models for beryllium, gadolinium, and yttrium failed to achieve adequate performance regardless of selection method, likely due to their low concentrations in the samples [32].

Implementation Workflows

F-Ratio Test Implementation

The F-ratio test provides a statistically rigorous approach to variable selection, particularly effective for initial feature screening in classification problems. The following workflow diagram illustrates the sequential process for implementing the F-ratio test:

Figure 1: F-Ratio Test Variable Selection Workflow

The implementation begins with variance calculations for each variable across predefined classes. The F-ratio is computed as the quotient of between-group variance to within-group variance, with higher values indicating better discriminatory power [29]. A key advantage of this approach is the incorporation of Bayesian weighting to determine variable inclusion, which allows for both complete and partial inclusion of measures into the final optimized feature vector [29].

VIP Score Implementation

Variable Importance in Projection operates within the PLS-DA framework to identify variables that contribute most significantly to class separation. The implementation process involves:

Figure 2: VIP Score Variable Selection Workflow

VIP scores summarize the importance of each variable in projecting both X (predictor) and Y (response) information in PLS models [30]. The threshold for variable retention is typically set at VIP > 1.0, though this can be optimized for specific applications. This method proved particularly effective in forensic document examination, where it maintained model performance despite a 50% reduction in input variables [30].

Comprehensive Comparison Workflow

For forensic practitioners selecting an appropriate variable selection method, the following decision pathway provides guidance based on analytical objectives and data characteristics:

Figure 3: Variable Selection Method Decision Pathway

Essential Research Reagents and Materials

Table 3: Essential Research Materials for Forensic Chemometrics

| Material/Software | Specification | Application in Variable Selection |
|---|---|---|
| UHPLC-MS System | Ultra-High Performance Liquid Chromatography-Mass Spectrometry | Metabolic profiling for hypothermia detection [34] |
| NIR Spectrometer | Reflectance mode, 1000-2500 nm range | Soil metal content prediction [32] |
| 2D Formation Sensor | Techpap, France | Paper fingerprint analysis for document dating [30] |
| MATLAB | R2023a or later with PLS Toolbox | Implementation of VIP and model weight methods [30] [32] |
| Q Exactive MS | Thermo Scientific, HESI ion source | Nontargeted metabolomic profiling [34] |
| Python/R | With scikit-learn/chemometrics packages | Custom implementation of F-ratio and OPS methods [31] |

The comparative analysis of variable selection techniques reveals a nuanced landscape where method performance is highly dependent on specific application requirements. For forensic applications requiring high interpretability and statistical rigor, the F-ratio test provides a transparent approach for feature screening, particularly in classification tasks [29]. VIP scores offer a balanced solution for spectral data analysis, effectively reducing dimensionality while maintaining model performance in applications such as document dating [30]. For complex multivariate calibration challenges, newer OPS approaches and model weight analyses demonstrate superior prediction capability and variable selection accuracy [31].

The integration of chemometrics, including sophisticated variable selection techniques, represents a paradigm shift in forensic science toward more objective, statistically validated evidence interpretation [4]. As forensic science continues to embrace these methodologies, practitioners should consider the fundamental trade-offs between computational efficiency, model interpretability, and predictive performance when selecting appropriate variable selection strategies. Future developments will likely focus on hybrid approaches that leverage the strengths of multiple techniques while addressing the specific demands of forensic applications, particularly in the realms of drug profiling, toxicology, and trace evidence analysis [28] [4].

Environmental forensics leverages advanced analytical and statistical techniques to attribute environmental contamination to specific sources. This guide compares the performance of various multivariate statistical methods for classifying coal tars from different historical manufacturing processes. Based on a seminal study analyzing 23 coal tar samples from 15 former manufactured gas plants (FMGPs), we demonstrate that Principal Component Analysis (PCA) of data obtained through sophisticated chemical analysis offers superior classification potential. The performance of PCA is objectively compared against univariate analysis and other clustering methods, providing researchers with a data-driven framework for selecting appropriate methodologies in hydrocarbon fingerprinting.

Coal tar is a dense non-aqueous phase liquid (DNAPL) and a primary contaminant at thousands of former manufactured gas plant (FMGP) sites worldwide [35]. These sites represent a significant environmental challenge due to the toxicity and persistence of coal tar constituents, many of which are known carcinogens [36] [37]. The core objective in the environmental forensics of coal tars is to identify the source of contamination, a process crucial for allocating liability and guiding remediation efforts under legislations like the Comprehensive Environmental Response, Compensation, and Liability Act (CERCLA) in the U.S. [35] [38].

The chemical composition of coal tar is not uniform; it is heavily influenced by the specific historical manufacturing process used at the FMGP, such as the type of retort and operating conditions like temperature [35] [39]. This compositional disparity forms the basis for source identification. This guide directly addresses the need for a comparative evaluation of statistical methods used to detect and interpret these compositional differences, framing the discussion within the broader context of chemical forensics research.

Analytical Foundation: Chemical Fingerprinting of Coal Tar

Before statistical analysis, detailed chemical characterization is essential. Coal tar is a highly complex mixture, potentially containing up to 10,000 compounds, including polycyclic aromatic hydrocarbons (PAHs), their alkylated derivatives, phenols, and heterocyclic compounds containing nitrogen, oxygen, and sulfur [35].

Advanced Analytical Protocol

The compared statistical methods rely on data generated through a rigorous analytical workflow:

- Sample Preparation: Accelerated solvent extraction is used to prepare coal tar samples for analysis [40] [41] [39].

- Chemical Separation and Analysis: Two-dimensional gas chromatography coupled to time-of-flight mass spectrometry (GC×GC-TOFMS) is the preferred analytical technique. It provides a superior separation of complex mixtures compared to one-dimensional GC, resulting in robust chemical profiles for source differentiation [40] [35] [39]. The cited study utilized this method to assess over 3,479 peaks per sample [39].

- Data Preprocessing: The raw data must be normalized and preprocessed to ensure comparability between samples. This step is critical for the subsequent application of multivariate statistical methods [40] [39].
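The preprocessing step can be sketched as below. The source does not specify which normalization was applied, so total-area normalization followed by autoscaling is an assumption chosen for illustration; the function name `preprocess` and the peak-area values are hypothetical.

```python
import numpy as np

def preprocess(peak_areas, eps=1e-12):
    """Total-area normalize each sample, then autoscale each peak variable."""
    A = np.asarray(peak_areas, dtype=float)
    # Total-area normalization: each row (sample) sums to 1, which removes
    # differences caused by injected amount or overall concentration
    normalized = A / A.sum(axis=1, keepdims=True)
    # Autoscaling: zero mean and unit variance per column (peak)
    mean = normalized.mean(axis=0)
    std = normalized.std(axis=0, ddof=1)
    scaled = (normalized - mean) / (std + eps)
    return normalized, scaled

# Hypothetical peak-area table: 4 samples x 3 peaks.
# Sample 2 is sample 1 at twice the concentration.
areas = np.array([[100.0,  50.0,  50.0],
                  [200.0, 100.0, 100.0],
                  [ 30.0,  60.0,  10.0],
                  [ 80.0,  10.0,  10.0]])

normalized, scaled = preprocess(areas)
print(normalized)
```

After normalization the first two samples have identical profiles, which is the comparability between samples that the preprocessing step is meant to ensure.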

Comparative Performance of Statistical Methods

The effectiveness of different statistical techniques for classifying coal tars was evaluated using a set of 23 samples derived from various FMGP processes, including horizontal retorts, vertical retorts, coke ovens, and carburetted water gas generators [40] [39].

Table 1: Comparison of Statistical Methods for Coal Tar Classification

| Statistical Method | Key Function | Performance in Classification | Key Advantage | Key Limitation |
|---|---|---|---|---|
| Univariate Analysis | Compares single-variable ratios (e.g., specific PAH ratios) | Failed to effectively cluster major coal tar types [39]. | Simple to compute and interpret. | Lacks the resolving power for complex mixtures; ignores multivariate relationships. |
| Hierarchical Cluster Analysis (HCA) | Groups samples based on overall similarity in chemical profiles | Identified four main sample clusters, generally corresponding to manufacturing processes [39]. | Provides an intuitive visual dendrogram of sample relationships. | Outcome can be sensitive to the distance metric and linkage algorithm used. |
| Principal Component Analysis (PCA) | Reduces data dimensionality to reveal underlying patterns | Superior performance; explained 82% of the variance and successfully predicted the manufacturing process of unknown samples [40] [41] [39]. | Powerful visualization; simplifies complex data while retaining essential information for classification. | Requires data normalization; results may need careful interpretation by an expert. |

Key Experimental Findings and Data

The core finding of the benchmark study was the clear superiority of multivariate methods over univariate approaches. While univariate PAH ratios were insufficient for reliable classification, multivariate techniques successfully discriminated tars based on their manufacturing origin [39].
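The PCA-based classification described above can be illustrated with a small sketch. It uses synthetic data as a stand-in for real GC×GC-TOFMS peak tables, and the nearest-centroid assignment of the unknown sample is a simplifying assumption rather than the procedure of the cited study; the names `fit_pca`, `project`, `process_A`, and `process_B` are hypothetical.

```python
import numpy as np

def fit_pca(X, n_components=2):
    """PCA via SVD of the mean-centered data matrix."""
    mean = X.mean(axis=0)
    U, S, Vt = np.linalg.svd(X - mean, full_matrices=False)
    explained = S ** 2 / np.sum(S ** 2)   # fraction of variance per component
    return mean, Vt[:n_components], explained[:n_components]

def project(X, mean, components):
    """Project samples onto the retained principal components."""
    return (np.atleast_2d(X) - mean) @ components.T

rng = np.random.default_rng(1)
# Synthetic stand-in: two hypothetical process classes, 5 samples each, 8 peak variables
centers = {"process_A": np.zeros(8), "process_B": np.full(8, 4.0)}
X = np.vstack([c + rng.normal(scale=0.5, size=(5, 8)) for c in centers.values()])
labels = np.repeat(list(centers), 5)

mean, comps, explained = fit_pca(X)
scores = project(X, mean, comps)
centroids = {k: scores[labels == k].mean(axis=0) for k in centers}

# Assign an unknown sample to the closest class centroid in PC space
unknown = np.full(8, 3.8)
u = project(unknown, mean, comps)[0]
predicted = min(centroids, key=lambda k: np.linalg.norm(u - centroids[k]))
print(np.round(explained, 3), predicted)
```

In practice the score plot would be inspected visually before any assignment is made, since the separation between classes in real data is rarely this clean.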

Table 2: Relationship Between Manufacturing Process and Coal Tar Chemistry

| Manufacturing Process | Characteristic Chemical Features | Statistical Classification Outcome |
|---|---|---|
| Coke Ovens | High parent PAH content [39]. | Distinctly separated from retort tars by PCA [39]. |
| Vertical Retorts | Presence of distinctive phenolic compounds [39]. | HCA and PCA successfully grouped these samples [39]. |
| Horizontal Retorts | Unique chemical signature influenced by process conditions [39]. | Differentiated from other processes in multivariate space [39]. |
| Carburetted Water Gas (CWG) | Presence of low molecular weight alkanes [39]. | Successfully identified as a distinct class [39]. |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful forensic classification requires a suite of analytical reagents and materials. The following table details key solutions and their functions based on the cited methodologies.

Table 3: Essential Reagents and Materials for Coal Tar Forensic Analysis

| Research Reagent / Material | Function in Experimental Protocol |
|---|---|
| Certified Reference PAH Standards | Calibration and quantification of target polycyclic aromatic hydrocarbons during GC×GC-TOFMS analysis. |
| Accelerated Solvent Extraction Cells | High-pressure, high-temperature vessels for efficient and automated extraction of organic compounds from solid matrices. |
| High-Purity Solvents (e.g., acetone, methanol, benzene) | Extraction and dilution of coal tar samples; different solvent mixtures can affect derivatization and are studied for method optimization [35]. |
| Derivatization Reagents | Chemicals used to modify polar compounds (e.g., phenols) to improve their volatility and chromatographic behavior. |
| Silica Gel / Solid-Phase Extraction Cartridges | Used for sample clean-up to remove interfering compounds, either "in-cell" or as a separate step after extraction [35]. |
| GC×GC-TOFMS System | Instrumentation comprising a two-dimensional gas chromatograph coupled to a time-of-flight mass spectrometer for high-resolution separation and identification of thousands of compounds. |

Operational Workflow for Forensic Classification

Implementing a forensic classification strategy involves a sequence of logical decisions to move from raw data to a forensically sound conclusion.

Discussion and Implications

The ability to accurately classify coal tars using multivariate statistics like PCA has direct and significant applications. It provides a scientifically robust method for allocating liability at contaminated sites, directly supporting the "polluter-pays" principle enshrined in regulations like CERCLA [35] [38]. By linking a contaminated sample to a specific manufacturing process, stakeholders can more accurately identify responsible parties and recover remediation costs.

This comparison establishes that the choice of statistical methodology is not merely academic but has practical consequences for the conclusiveness of a forensic investigation. While HCA offers valuable insights, PCA of normalized, preprocessed GC×GC-TOFMS data has been demonstrated to have the greatest potential for the source identification of coal tars, including predicting the processes used to create unknown samples [40] [41] [39]. This approach forms a powerful toolkit for researchers, scientists, and environmental consultants working on hydrocarbon contamination.

Tip-Enhanced Raman Spectroscopy (TERS) represents a powerful analytical technique that combines the single-molecule sensitivity of surface-enhanced Raman spectroscopy with the exceptional spatial resolution of scanning probe microscopy. This synergy enables chemical imaging at the subnanometer scale, providing researchers with site-specific chemical fingerprints of surfaces and nanostructures [42] [43]. However, the full potential of TERS has long been constrained by a fundamental challenge: the inherent weakness of Raman signals often results in poor signal-to-noise ratios (SNRs) at the individual pixel level, particularly when attempting high-resolution imaging of complex molecular architectures [43].

The integration of multivariate analysis techniques has revolutionized TERS imaging by enabling researchers to extract meaningful chemical information from noisy spectral data. Where conventional single-peak analysis methods fail to distinguish complex molecular structures, multivariate approaches leverage the entire spectral fingerprint, providing an unbiased panoramic view of chemical identity and spatial distribution across a sample surface [42] [43]. This analytical advancement has proven particularly valuable in chemical forensics research, where precise molecular identification can provide critical evidence for investigative purposes.

This guide provides a comprehensive comparison of multivariate analysis methodologies for TERS, detailing experimental protocols, statistical frameworks, and performance metrics to assist researchers in selecting appropriate analytical techniques for their specific chemical imaging requirements.

Experimental Protocols: TERS with Multivariate Analysis

Instrumentation and Sample Preparation

The foundational TERS methodology discussed here employs a scanning tunneling microscope (STM)-controlled system operating at low temperature (≈80 K) under ultrahigh vacuum (UHV) conditions (base pressure: ~1 × 10⁻¹⁰ Torr) [43]. This configuration provides the stability necessary for subnanometer-resolution imaging. The optical component consists of a side-illumination confocal system with a continuous-wave 532 nm laser source. The laser power density is maintained at approximately 100 W cm⁻² over the junction area to ensure sufficient signal generation while minimizing potential sample damage [43].

Sample preparation involves thermal evaporation of analyte molecules onto atomically flat Ag(111) substrates. For example, in demonstrated applications, zinc-5,10,15,20-tetraphenyl-porphyrin (ZnTPP) and free-base meso-tetrakis(3,5-di-tertiarybutyl-phenyl)-porphyrin (H₂TBPP) are sequentially deposited through thermal sublimation at approximately 580 K [43]. Electrochemically etched silver tips serve a dual purpose: STM topography imaging and plasmon-enhanced Raman signal generation. The Ag nanogap between tip and substrate creates the strong plasmonic enhancement essential for detectable Raman signals [43].

Data Acquisition Parameters