Comparing Discriminatory Power of Data-Driven Methods: A Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on evaluating the discriminatory power of data-driven techniques. It covers foundational principles, from defining discriminatory power and its importance in distinguishing clinical groups or predicting outcomes, to practical methodologies like Global Difference Maps (GDMs) and feature selection criteria. The content addresses common challenges in model comparison and optimization, including handling high-dimensional data and mitigating overfitting. Finally, it outlines robust validation frameworks using real-world case studies from fMRI analysis and survival modeling to ensure reliable, interpretable results for critical biomedical applications.

What is Discriminatory Power and Why Does It Matter in Biomedical Research?

Discriminatory power is a fundamental concept in data-driven research, quantifying the capability of a model, test, or system to effectively distinguish between distinct classes, groups, or outcomes. Within the broader scope of methodological research for comparing data-driven techniques, a precise understanding and measurement of discriminatory power is paramount. It directly influences a model's practical utility, determining its reliability in applications ranging from pharmaceutical development to fairness audits in artificial intelligence. This article delineates the core principles, measurement protocols, and application-specific considerations for evaluating discriminatory power, providing researchers and scientists with a structured framework for robust methodological comparisons.

The core principle of discriminatory power lies in its ability to measure separation. In machine learning, this is the model's proficiency in separating one class from another (e.g., sick versus healthy patients) [1] [2]. In analytical chemistry, it refers to a method's sensitivity in detecting differences between formulations or batches [3] [4]. In microbial typing, it is the probability that a system will assign different types to two unrelated strains [5]. Despite the contextual differences, the unifying goal is to validate that a method or model is sufficiently sensitive to meaningful distinctions.

Core Principles and Quantitative Metrics

The evaluation of discriminatory power is rooted in specific, quantitative metrics. The choice of metric is dictated by the problem domain, whether it involves classification, regression, or physical testing protocols.

Metrics for Classification and Machine Learning

In machine learning, discriminatory power is assessed through metrics derived from the confusion matrix, which tabulates true positives (TP), false positives (FP), true negatives (TN), and false negatives (FN) [1] [2].

Table 1: Key Evaluation Metrics for Classification Models

| Metric | Formula | Interpretation and Focus |
|---|---|---|
| Sensitivity (Recall) | TP / (TP + FN) | Measures the ability to correctly identify all relevant positive instances. |
| Specificity | TN / (TN + FP) | Measures the ability to correctly identify all relevant negative instances. |
| Precision | TP / (TP + FP) | Measures the accuracy of positive predictions. |
| F1 Score | 2 * (Precision * Recall) / (Precision + Recall) | Harmonic mean of precision and recall; balances the two. |
| AUC-ROC | Area under the ROC curve | Measures the model's ability to separate classes across all possible thresholds. |

The AUC-ROC (Area Under the Receiver Operating Characteristic Curve) is a particularly important metric. The ROC curve plots the True Positive Rate (Sensitivity) against the False Positive Rate (1 - Specificity) at various classification thresholds. A model with perfect discriminatory power has an AUC of 1.0, while a model with no discriminatory power (equivalent to random guessing) has an AUC of 0.5 [1] [2]. The AUC provides a single scalar value that summarizes the model's ranking performance, independent of any specific classification threshold.
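
The threshold-dependent metrics in Table 1 can be computed directly from the four confusion-matrix counts. The following is a minimal sketch; the function name and the counts are hypothetical and chosen only for illustration, and the code assumes no denominator is zero.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute the threshold-dependent metrics from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)          # recall / true positive rate
    specificity = tn / (tn + fp)          # true negative rate
    precision = tp / (tp + fp)            # positive predictive value
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }

# Hypothetical counts: 100 diseased and 100 healthy patients.
metrics = classification_metrics(tp=80, fp=10, tn=90, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Because each of these values depends on the chosen threshold, they are usually reported alongside a threshold-free summary such as the AUC.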

Metrics for Fairness in AI

In the context of AI fairness, discriminatory power is framed in terms of ensuring equitable outcomes across different demographic groups. Key metrics here include [6] [7]:

- Demographic Parity: Requires the probability of a positive outcome to be the same across different groups.

- Equal Opportunity: Requires that true positive rates are similar across groups.

- Statistical Parity Difference (SPD): Quantifies the difference in the average probability of a positive outcome between a privileged group and a disadvantaged group.
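
As an illustration of the fairness metrics listed above, the sketch below computes a statistical parity difference and an equal-opportunity (true positive rate) difference. The sign convention (disadvantaged minus privileged), the group coding (0 = disadvantaged, 1 = privileged), and the toy data are assumptions made for this example, not definitions taken from [6] [7].

```python
def rate(values):
    """Mean of a list of 0/1 values."""
    return sum(values) / len(values)

def statistical_parity_difference(y_pred, group):
    """P(pred = 1 | disadvantaged) - P(pred = 1 | privileged)."""
    disadvantaged = [p for p, g in zip(y_pred, group) if g == 0]
    privileged = [p for p, g in zip(y_pred, group) if g == 1]
    return rate(disadvantaged) - rate(privileged)

def equal_opportunity_difference(y_true, y_pred, group):
    """TPR(disadvantaged) - TPR(privileged), using actual positives only."""
    disadvantaged = [p for t, p, g in zip(y_true, y_pred, group) if g == 0 and t == 1]
    privileged = [p for t, p, g in zip(y_true, y_pred, group) if g == 1 and t == 1]
    return rate(disadvantaged) - rate(privileged)

# Toy data (hypothetical): first four individuals are in group 0, last four in group 1.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 0, 0, 0, 1, 1, 1, 0]
group = [0, 0, 0, 0, 1, 1, 1, 1]

spd = statistical_parity_difference(y_pred, group)
eod = equal_opportunity_difference(y_true, y_pred, group)
print(f"SPD = {spd:+.2f}, equal opportunity difference = {eod:+.2f}")
```

Values near zero indicate parity under the respective definition; how large a deviation is acceptable is a context-dependent judgment.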

Metrics in Physical Sciences

In pharmaceutical development, the discriminatory power of a dissolution method is its ability to detect changes in the performance of a drug product resulting from variations in manufacturing or formulation [3] [4]. This is often validated by intentionally creating batches with meaningful variations (e.g., ±10–20% change to a critical variable) and demonstrating that the dissolution profiles are statistically different, often using the similarity factor (f2). An f2 value of less than 50 indicates a difference in profiles, confirming the method's discriminatory power [4].

In microbial studies, discriminatory power (D) is defined as "the average probability that the typing system will assign a different type to two unrelated strains randomly sampled in the microbial population" [5].
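
This probability is commonly computed with Simpson's index of diversity, D = 1 − [1 / (N(N − 1))] Σ n_j(n_j − 1), where N is the number of strains and n_j the number of strains assigned to type j. The sketch below assumes that this is the formula intended by [5]; the strain labels are hypothetical.

```python
from collections import Counter

def discriminatory_index(type_labels):
    """Probability that two strains drawn without replacement get different types."""
    n = len(type_labels)
    if n < 2:
        raise ValueError("at least two strains are required")
    counts = Counter(type_labels)
    # Number of ordered pairs of strains that share a type.
    same_type_pairs = sum(c * (c - 1) for c in counts.values())
    return 1 - same_type_pairs / (n * (n - 1))

# Hypothetical typing result for ten unrelated strains.
strains = ["A", "A", "A", "B", "B", "C", "D", "E", "E", "F"]
d_index = discriminatory_index(strains)
print(f"D = {d_index:.3f}")
```

A typing system that assigns every strain to the same type yields D = 0, and one that assigns every strain a unique type yields D = 1.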

Experimental Protocols for Assessing Discriminatory Power

A standardized, cross-disciplinary protocol is essential for consistent and comparable results when evaluating the discriminatory power of data-driven techniques.

General Workflow for Model Comparison

The following workflow, adapted from a neuroscientific method for comparing factorization algorithms like ICA and IVA on fMRI data, provides a robust template for general model comparison [8].

Protocol 1: Comparing Data-Driven Factorization Techniques

This protocol is based on the Global Difference Maps (GDMs) method, which was developed to compare techniques like Independent Component Analysis (ICA) and Independent Vector Analysis (IVA) on real fMRI data where the ground truth is unknown [8].

Data Acquisition and Preparation:

- Acquire a dataset with a known or hypothesized structure of groups or classes. The example used fMRI data from 109 patients with schizophrenia and 138 healthy controls performing three tasks [8].

- Preprocess the data according to standard practices for the field.

Application of Data-Driven Techniques:

- Apply the multiple data-driven techniques to be compared (e.g., ICA and IVA) to the same dataset.

- Generate the resultant factors, components, or models from each technique.

Generation of Global Difference Maps (GDMs):

- The core of this method involves creating difference maps that visually and quantitatively highlight the disparities in the results produced by the different techniques.

- This step allows for the quantification of relative performance and helps visually identify regions or features where the techniques yield divergent results, even in the absence of a perfect ground truth [8].

Interpretation:

- In the cited study, GDMs revealed that IVA was more effective than ICA at determining brain regions that were discriminatory between patients and controls, though it was less effective at emphasizing regions found in only a subset of the tasks [8].

Protocol for Pharmaceutical Dissolution Testing

This protocol outlines the steps for developing and validating a discriminatory dissolution method for Immediate Release (IR) solid oral dosage forms, based on FDA guidance and related research [3] [4].

Protocol 2: Developing a Discriminatory Dissolution Method

Apparatus and Condition Selection:

- Apparatus: Typically use USP Apparatus 1 (basket) or 2 (paddle) [4].

- Agitation Speed: Select a speed that avoids "excessive agitation," which can lead to a failure to discriminate between inequivalent formulations. Common speeds are 50-75 rpm for the paddle method [4]. A study on fast-dispersible tablets used 50 and 75 rpm for optimization [3].

- Volume and Temperature: Standard volume is 500, 900, or 1000 mL, maintained at 37 ± 0.5°C [4].

Dissolution Medium Optimization:

- The medium must provide sink conditions (volume sufficient to dissolve at least three times the amount of drug in the dosage form) to ensure the dissolution rate is influenced by formulation rather than drug solubility [3] [4].

- The composition (e.g., pH, use of surfactants such as sodium lauryl sulfate, SLS) should be justified based on drug substance properties. The goal is to find a medium that discriminates between formulations without being so aggressive that it masks real differences [3]. For a domperidone tablet, 0.5% SLS in distilled water was found to be optimal [3].

Validation of Discriminatory Power:

- Sample Preparation: Prepare batches of the drug product that are intentionally manufactured with meaningful variations in the most relevant critical manufacturing variables (e.g., ±10–20% change in disintegrant or lubricant concentration) [4].

- Testing and Analysis: Perform dissolution testing on the altered batches and compare their profiles to the profile of the bio- or clinical batch.

- Similarity Factor (f2) Calculation: Calculate the f2 value. The method is considered to have satisfactory discriminatory power if the f2 value is less than 50 [4].
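
The f2 calculation in the final step can be sketched as follows, using the standard definition f2 = 50 · log10(100 / sqrt(1 + mean squared difference)), where the differences are taken between the reference and test percent-dissolved values at matching time points. The profile values below are hypothetical, and the sketch does not enforce regulatory rules on which time points may be included.

```python
import math

def similarity_factor_f2(reference, test):
    """Similarity factor f2 for two dissolution profiles (percent dissolved)."""
    if len(reference) != len(test) or not reference:
        raise ValueError("profiles must be non-empty and share the same time points")
    n = len(reference)
    mean_sq_diff = sum((r - t) ** 2 for r, t in zip(reference, test)) / n
    return 50 * math.log10(100 / math.sqrt(1 + mean_sq_diff))

# Hypothetical percent-dissolved values at four sampling times.
clinical_batch = [35, 60, 80, 92]
altered_batch = [20, 42, 63, 80]   # e.g., a batch with a modified disintegrant level

f2 = similarity_factor_f2(clinical_batch, altered_batch)
print(f"f2 = {f2:.1f} -> {'discriminated' if f2 < 50 else 'similar'}")
```

Identical profiles give f2 = 100, and a uniform difference of 10 percentage points at every time point gives a value just under 50, which is why 50 is used as the cut-off.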

Table 2: Research Reagent Solutions for Discriminatory Dissolution Testing

| Reagent/Material | Function/Justification | Example from Literature |
|---|---|---|
| Sodium Lauryl Sulfate (SLS) | Anionic surfactant; lowers surface tension to improve drug solubility and wettability in the medium. | Used at 0.5%, 1.0%, and 1.5% concentrations in water to find the optimally discriminatory medium for domperidone FDTs [3]. |
| pH Buffers | Maintains a constant pH throughout the test, critical for ionizable drugs (weak acids/bases). | Simulated Gastric Fluid (pH 1.2) and Simulated Intestinal Fluid (pH 6.8) without enzymes were tested [3]. |
| Deaerated Medium | Prevents air bubbles from adhering to the dosage form or apparatus, which can adversely affect dissolution rates and result reliability [4]. | Prepared by heating, filtering, and drawing a vacuum on the medium prior to use [4]. |

Application Notes and Interpretation of Results

Successfully implementing the aforementioned protocols requires careful consideration of several factors to ensure valid and interpretable results.

The Trade-off Between Standardization and Realism: In sensory science, studies have shown that highly standardized test setups can increase discriminatory power by reducing noise. However, introducing elements of a natural environment (or mixed reality) can sometimes further enhance discriminatory power and consumer engagement, suggesting that the optimal setup balances control with ecological validity [9].

The Accuracy-Fairness Trade-off in ML: In machine learning, highly accurate models can still be unfair. A model may demonstrate high discriminatory power in separating classes overall but do so in a way that disproportionately harms a specific demographic group. Therefore, evaluation must include fairness metrics like Demographic Parity and Equal Opportunity alongside traditional performance metrics [6] [7]. Sometimes, a less accurate but fairer model is the more desirable outcome.

Context is Critical for Interpretation: The interpretation of a metric is entirely context-dependent. An AUC of 0.8 might be excellent for a diagnostic tool in a difficult domain but unacceptable for a mission-critical system. Similarly, in dissolution testing, the level of difference that must be detected (and thus the required discriminatory power) is defined by the product's quality control and performance requirements [4].

The proliferation of data-driven analytical methods across scientific domains, from neuroscience to cosmology, has created an urgent need for robust comparison frameworks. Researchers and drug development professionals face fundamental challenges when evaluating which algorithm or factorization technique will perform best for their specific dataset and research question. Two interconnected problems consistently hamper these efforts: the alignment problem, where matching factors or components across different methods is impractical and imprecise, and the challenge of unknown ground truth, where researchers lack ideal benchmarks to validate results against objective reality [10] [8]. This application note examines these core challenges through the lens of discriminatory power comparison and provides structured protocols for objective method evaluation.

Core Challenges in Method Comparison

The Alignment Problem

The alignment problem emerges when researchers attempt to compare multivariate methods that produce multiple factors, components, or networks. Traditional approaches require manually matching these outputs across methods, a process that becomes rapidly more difficult as the number of components and methods grows.

Key Aspects of the Alignment Problem:

- Factor Correspondence: Different methods may identify similar underlying patterns but label, scale, or group them differently [10]

- Dimensionality Mismatch: Techniques may produce varying numbers of components, making one-to-one mapping impossible

- Property Variance: Each method exploits different statistical properties of the signal (independence, sparsity, non-negativity), resulting in fundamentally different decompositions [10]

In real-world applications such as functional magnetic resonance imaging (fMRI) analysis, aligning even a subset of factors from multiple techniques can be prohibitively time-consuming, while visual comparisons remain inherently subjective [10].

The Unknown Ground Truth Challenge

When evaluating data-driven methods on real-world datasets, researchers rarely possess perfect knowledge of the underlying system being modeled. This absence of objective benchmarks makes quantitative method comparison exceptionally difficult.

Manifestations of Unknown Ground Truth:

- Simulation-Reality Gap: Artificial datasets used for validation often oversimplify complex real-world phenomena [10]

- Validation Paradox: Methods are often compared using metrics that inherently favor certain algorithmic approaches

- Explanation Contradiction: Multiple contradictory explanations can appear equally valid for a single model prediction when ground truth is unavailable [11]

The table below summarizes key challenges and their implications for method comparison:

Table 1: Core Challenges in Comparing Data-Driven Methods

| Challenge | Technical Definition | Practical Impact | Common Domains Affected |
|---|---|---|---|
| Factor Alignment | Inability to establish precise correspondence between components across different decomposition methods | Subjective comparison, labor-intensive manual matching | Neuroimaging (ICA, IVA) [10], Cosmological analysis [12] |
| Unknown Ground Truth | Absence of objective benchmark for validating method outputs | Inability to quantitatively verify results, reliance on proxy metrics | fMRI analysis [10] [8], XAI evaluation [11], Generative AI [13] |
| Methodological Heterogeneity | Different methods optimize for different statistical properties | Apples-to-oranges comparison, method selection bias | Sustainability clustering [14], Optimization methods [15] |

Methodological Solutions and Evaluation Frameworks

Global Difference Maps (GDMs) for Factorization Methods

Global Difference Maps (GDMs) address the alignment problem in factorization-based analyses by providing a visualization framework that highlights differences between method outputs without requiring explicit factor matching [10] [8].

Theoretical Basis: GDMs quantify and visualize the relational or discriminatory power of different decompositions by creating composite maps that emphasize regions where methods disagree most strongly [10].

Application Context: Originally developed for comparing Independent Component Analysis (ICA) and Independent Vector Analysis (IVA) on fMRI data from 109 patients with schizophrenia and 138 healthy controls across three cognitive tasks [10] [8].

Key Findings from GDM Application:

- IVA identified brain regions with higher discriminatory power between patients and controls than ICA

- ICA proved more effective at emphasizing task-specific networks present in only a subset of tasks

- The trade-off between relational power and task-specificity became quantitatively measurable [10]

Agnostic Explanation Evaluation (AXE) Framework

For explanation methods where ground truth is unknowable, the AXE framework evaluates local feature-importance explanations through predictive accuracy rather than comparison to ideal benchmarks [11].

Core Principle: A good explanation correctly identifies features most predictive of model behavior, enabling users to emulate and predict model outputs [11].

Three Foundational Principles:

- Local Contextualization: Evaluation must consider the specific data point being explained

- Model Relativism: Quality measures should be relative to the model being explained

- On-Manifold Evaluation: Perturbations for evaluation should remain on the data manifold [11]

Table 2: Comparison of Explanation Evaluation Metrics

| Evaluation Metric | Requires Ground Truth | Sensitivity-Based | Satisfies AXE Principles | Primary Use Case |
|---|---|---|---|---|
| Feature Agreement | Yes [11] | No | ✕ ✕ [11] | Synthetic data with known factors |
| Rank Agreement | Yes [11] | No | ✕ ✕ [11] | Controlled validation studies |
| Prediction-Gap Important (PGI) | No [11] | Yes [11] | ✕ [11] | Faithfulness verification |
| Prediction-Gap Unimportant (PGU) | No [11] | Yes [11] | ✕ [11] | Faithfulness verification |
| AXE Framework | No [11] | No [11] | ✓ [11] | Real-world applications without ground truth |

Experimental Protocols

Protocol 1: Applying Global Difference Maps for Method Comparison

This protocol details the application of GDMs to compare factorization methods, using fMRI analysis as an exemplar [10].

Research Reagent Solutions:

Table 3: Essential Research Reagents for GDM Analysis

| Reagent/Resource | Specifications | Function in Protocol |
|---|---|---|
| Multi-task fMRI Dataset | 109 patients, 138 controls, 3 tasks (AOD, SIRP, SM) [10] | Primary experimental data for method comparison |
| SPM Toolbox | Statistical Parametric Mapping (SPM5, 2011) [10] | Preprocessing and feature extraction via linear regression |
| ICA Algorithm | Standard implementation (e.g., FastICA) [10] | Baseline factorization method for comparison |
| IVA Algorithm | Multiset extension of ICA [10] | Joint analysis method for comparison |
| GDM Computation Script | Custom MATLAB/Python implementation [10] | Generation of global difference maps from method outputs |

Methodological Steps:

Feature Extraction

- For each subject, run separate linear regressions on each task's fMRI data using SPM

- Use regression coefficient maps as features for subsequent analysis [10]

Method Application

- Apply ICA to each task individually (traditional approach)

- Apply IVA jointly to all tasks (multiset approach)

- Extract component spatial maps and subject weights for each method [10]

GDM Generation

- Compute relational GDMs by highlighting regions with significant between-method differences in component weights

- Compute discriminatory GDMs by emphasizing regions with significant group differences (patients vs. controls) [10]

- Use brightness intensity in GDMs to represent statistical significance of differences [10]

Interpretation

- Brighter regions in GDMs indicate greater methodological differences or discriminatory power

- Quantify overall performance by measuring extent and intensity of significant regions [10]

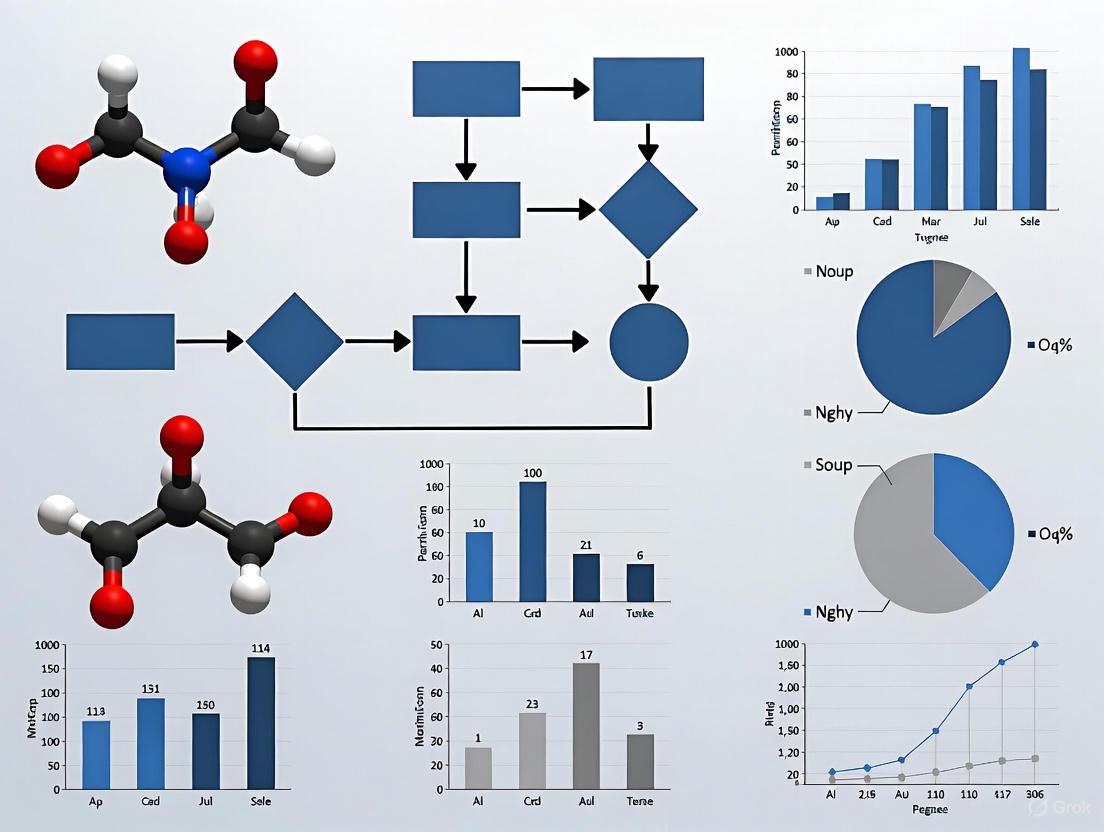

GDM Analysis Workflow: This diagram illustrates the parallel processing and comparison of factorization methods using Global Difference Maps.
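
The idea behind a discriminatory difference map can be illustrated with a small NumPy sketch. This is one plausible reading of the steps above and not the implementation from [10]: each component's z-scored spatial map is weighted by the absolute Welch t-statistic of its patient-versus-control subject weights, the weighted maps are summed per method, and the two summed maps are subtracted. All function names, array shapes, and the synthetic data are assumptions made for illustration.

```python
import numpy as np

def welch_t(a, b):
    """Welch two-sample t-statistic for two 1-D samples."""
    return (a.mean() - b.mean()) / np.sqrt(a.var(ddof=1) / a.size + b.var(ddof=1) / b.size)

def discriminatory_map(spatial_maps, weights, is_patient):
    """Collapse all components of one method into a single voxel-wise map.

    spatial_maps: (n_components, n_voxels); weights: (n_subjects, n_components).
    """
    centered = spatial_maps - spatial_maps.mean(axis=1, keepdims=True)
    z = centered / spatial_maps.std(axis=1, keepdims=True)
    t = np.array([welch_t(weights[is_patient, k], weights[~is_patient, k])
                  for k in range(weights.shape[1])])
    # Weight each |z| map by how strongly its component separates the groups.
    return np.abs(t) @ np.abs(z)

rng = np.random.default_rng(0)
n_subjects, n_components, n_voxels = 40, 3, 200
is_patient = np.arange(n_subjects) < 20

# Hypothetical "method A": component 0 is active in voxels 0-9 and differs by group.
maps_a = rng.normal(size=(n_components, n_voxels))
maps_a[0, :10] += 5.0
weights_a = rng.normal(size=(n_subjects, n_components))
weights_a[is_patient, 0] += 3.0

# Hypothetical "method B": no component carries a group difference.
maps_b = rng.normal(size=(n_components, n_voxels))
weights_b = rng.normal(size=(n_subjects, n_components))

# Positive values mark voxels where method A is more discriminatory than method B.
gdm = (discriminatory_map(maps_a, weights_a, is_patient)
       - discriminatory_map(maps_b, weights_b, is_patient))
print(gdm[:10].mean(), gdm[10:].mean())
```

Because every component contributes to the summed map, no one-to-one matching of components between the two methods is needed, which is the property that lets this style of comparison sidestep the alignment problem.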

Protocol 2: Ground Truth Generation for AI Question-Answering Evaluation

This protocol adapts enterprise-scale ground truth generation practices from AWS for scientific method evaluation, particularly useful when no ground truth exists [13].

Methodological Steps:

Human Curation Foundation

- Subject Matter Experts (SMEs) manually curate a small, high-signal dataset of question-answer pairs

- Focus on fundamental questions critical to the business or research domain

- Objectives: stakeholder alignment, evaluation process awareness, high-fidelity starter dataset [13]

LLM-Scaling Pipeline

- Implement serverless batch processing architecture (AWS Step Functions, Lambda, S3)

- Process source documents through chunking and prompt-based generation

- Use carefully designed prompts with chain-of-thought logic to generate question-answer-fact triplets [13]

Human-in-the-Loop Review

- Apply risk-based sampling for SME review of LLM-generated ground truth

- Verify critical business/research logic is appropriately represented

- Maintain SME involvement despite automation to preserve domain alignment [13]

Implementation Considerations:

- For scientific applications, modify prompt templates to emphasize technical precision over business language

- Implement validation checks specific to scientific domain knowledge

- Maintain audit trails for regulatory compliance in drug development contexts

Ground Truth Generation Pipeline: This workflow combines human expertise with scalable automation to create evaluation benchmarks.

Application Across Scientific Domains

Neuroscience and fMRI Analysis

The comparison of ICA and IVA using GDMs demonstrates how methodological trade-offs become quantifiable even without perfect ground truth [10]. IVA's superior identification of discriminatory networks for schizophrenia diagnosis came at the cost of reduced sensitivity to task-specific activation patterns, enabling researchers to select methods based on study priorities rather than defaulting to established techniques.

Cosmological Model Evaluation

In cosmology, traditional statistical methods (MCMC, nested sampling) and machine learning approaches face similar validation challenges when discriminating between cosmological models like ΛCDM and alternative dark energy theories [12]. Feature selection techniques, particularly Boruta, significantly improved model performance, revealing potential improvements to initially weak models that could guide future observational campaigns [12].

Sustainability Science

Machine learning clustering of global sustainability performance demonstrated how hybrid unsupervised-supervised approaches can identify structural disparities without pre-existing categorization [14]. The perfect classification accuracy (AUC=1.0) achieved by Random Forest, SVM, and ANN validated cluster robustness, while feature importance analysis revealed SDG and regional scores as most predictive of cluster membership [14].

The alignment problem and unknown ground truth present significant but surmountable challenges in comparing data-driven methods. Frameworks like GDMs and AXE enable researchers to move beyond subjective comparisons and ground-truth dependence by focusing on relational differences and predictive accuracy. As methodological diversity continues to grow across scientific domains, these approaches provide structured pathways for evidence-based method selection that acknowledges inherent trade-offs rather than seeking illusory universal superiority. For drug development professionals and researchers, implementing these protocols can standardize evaluation practices and enhance reproducibility in complex analytical workflows.

In data-driven research, particularly in fields like medicine and drug development, accurately evaluating model performance is paramount. The discriminatory power of a model—its ability to distinguish between different states or outcomes—is often assessed using core metrics such as the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) and the Concordance Index (C-index) [16] [17]. While sometimes used interchangeably, they serve distinct purposes. AUC-ROC typically evaluates binary classification models, whereas the C-index is predominantly used in survival analysis to assess how well a model ranks survival times [18] [17]. This article details these metrics, their protocols for application, and methods for establishing the statistical significance of findings, providing a framework for robust model comparison.

Metric Definitions and Theoretical Foundations

AUC-ROC: For Binary Classification

The Receiver Operating Characteristic (ROC) curve is a fundamental tool for evaluating binary classifiers. It visualizes the trade-off between the True Positive Rate (TPR) and the False Positive Rate (FPR) across all possible classification thresholds [19] [18].

- True Positive Rate (TPR/Sensitivity/Recall): The proportion of actual positives correctly identified. $TPR = \frac{TP}{TP + FN}$ [18] [20].

- False Positive Rate (FPR): The proportion of actual negatives incorrectly classified as positives. $FPR = \frac{FP}{FP + TN}$ [18] [20].

The Area Under the ROC Curve (AUC-ROC or simply AUC) summarizes the classifier's performance across all thresholds into a single value [20]. Its value represents the probability that the model will rank a randomly chosen positive instance higher than a randomly chosen negative instance [19]. A perfect model has an AUC of 1.0, a model no better than random guessing has an AUC of 0.5, and an AUC below 0.5 indicates the model is performing worse than chance [18] [20].
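
The probabilistic interpretation of the AUC can be checked directly by comparing every positive instance with every negative instance. The sketch below is a brute-force illustration with hypothetical scores; ties are counted as half a win, which matches the usual Mann-Whitney convention.

```python
def auc_by_pairs(scores_pos, scores_neg):
    """Fraction of (positive, negative) pairs in which the positive scores higher."""
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5   # a tie counts as half a correctly ranked pair
    return wins / (len(scores_pos) * len(scores_neg))

# Hypothetical model scores for three diseased and four healthy patients.
positive_scores = [0.9, 0.8, 0.4]
negative_scores = [0.7, 0.3, 0.2, 0.1]

pairwise_auc = auc_by_pairs(positive_scores, negative_scores)
print(f"AUC = {pairwise_auc:.3f}")   # 11 of the 12 pairs are ranked correctly
```

This quadratic-time version is meant only to make the definition concrete; library routines compute the same quantity from the ROC curve far more efficiently.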

C-index: For Survival Analysis

The Concordance Index (C-index or C-statistic) is the primary metric for evaluating the discriminatory power of survival models [17]. It measures a model's ability to correctly rank pairs of individuals by their survival times or risk scores [21] [17].

In essence, a pair of individuals is "concordant" if the individual who experienced the event first had a higher risk score predicted by the model. The C-index calculates the proportion of all comparable pairs that are concordant, where a pair is comparable if the order of events can be determined (i.e., the individual with the shorter observed time experienced the event rather than being censored) [17]. A value of 1 indicates perfect ranking, 0.5 indicates random ranking, and 0 indicates perfect inverse ranking.
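
A minimal sketch of this calculation, in the style of Harrell's C-index, is shown below. It treats a pair as comparable only when the individual with the shorter observed time had an observed event, counts tied risk scores as half concordant, and ignores tied times for simplicity. The data are hypothetical, and production analyses would normally rely on an established survival analysis library.

```python
def concordance_index(times, events, risk_scores):
    """Harrell-style C-index; events[i] is 1 if the event was observed, 0 if censored."""
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # Individual i must have the shorter time AND an observed event.
            if times[i] < times[j] and events[i] == 1:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Hypothetical follow-up data for five patients (second and fifth are censored).
times = [2, 4, 6, 8, 10]
events = [1, 0, 1, 1, 0]
risk_scores = [0.9, 0.5, 0.2, 0.3, 0.4]

c_index = concordance_index(times, events, risk_scores)
print(f"C-index = {c_index:.3f}")   # 4 concordant pairs out of 7 comparable pairs
```

Note that the censored patient at time 4 never starts a comparable pair, but still appears as the longer-surviving member of the pair formed with the patient who had an event at time 2.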

Table 1: Core Metric Comparison

| Feature | AUC-ROC | C-index |
|---|---|---|
| Primary Use Case | Binary Classification | Survival (Time-to-Event) Analysis |
| Core Interpretation | Probability a positive instance is ranked higher than a negative instance. | Probability that predicted risk scores correctly order survival times. |
| Perfect Score | 1.0 | 1.0 |
| Random Guessing | 0.5 | 0.5 |
| Handles Censoring | No | Yes |
| Key Limitation | Can be optimistic for imbalanced datasets [18]. | Conservative; insensitive to meaningful model improvements [22] [17]. |

Experimental Protocols and Application

Protocol for AUC-ROC Analysis in Binary Classification

This protocol outlines the steps for evaluating a binary classifier using AUC-ROC, using a comparison between Logistic Regression and Random Forest as an example [18].

1. Research Question: Which of the two models better distinguishes between patients with and without a specific disease?

2. Data Preparation:

- Generate or obtain a dataset with binary outcomes (e.g., disease: yes/no).

- Split the dataset into training (e.g., 80%) and testing (e.g., 20%) sets to ensure unbiased evaluation [18].

3. Model Training:

- Train a Logistic Regression model on the training set: LogisticRegression(random_state=42).fit(X_train, y_train) [18].

- Train a Random Forest model on the training set: RandomForestClassifier(n_estimators=100, random_state=42).fit(X_train, y_train) [18].

4. Prediction and Probability Calculation:

- Use the trained models to generate predicted probabilities for the positive class on the test set: .predict_proba(X_test)[:, 1] for each model [18].

5. ROC Curve Calculation and Plotting:

- For each model, compute the FPR and TPR at various thresholds using roc_curve(y_test, y_pred_proba) [18].

- Calculate the AUC for each model using auc(fpr, tpr) [18].

- Plot the ROC curves for both models on the same graph, including a diagonal line for the random classifier (AUC=0.5) for reference [18].

6. Interpretation:

- The model with the higher AUC is generally considered to have better overall discriminatory power.

- Visually, the curve that is closer to the top-left corner indicates better performance [20].
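
The steps above can be assembled into a single script. The sketch below uses scikit-learn with a synthetic dataset from make_classification standing in for real patient data; the dataset parameters, the stratified split, and max_iter=1000 are assumptions added for this example, and plotting is omitted so the script runs without a display.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import auc, roc_curve
from sklearn.model_selection import train_test_split

# Step 2: synthetic binary-outcome data (assumption: stands in for disease yes/no).
X, y = make_classification(n_samples=1000, n_features=20, n_informative=5,
                           random_state=42)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=42)

# Step 3: the two models being compared.
models = {
    "Logistic Regression": LogisticRegression(max_iter=1000, random_state=42),
    "Random Forest": RandomForestClassifier(n_estimators=100, random_state=42),
}

# Steps 4-5: predicted probabilities, ROC points, and AUC for each model.
results = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    y_pred_proba = model.predict_proba(X_test)[:, 1]
    fpr, tpr, _ = roc_curve(y_test, y_pred_proba)
    results[name] = {"fpr": fpr, "tpr": tpr, "auc": auc(fpr, tpr)}
    print(f"{name}: AUC = {results[name]['auc']:.3f}")
```

The stored fpr and tpr arrays can be passed to a plotting library to draw both curves together with the diagonal reference line.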

Protocol for C-index Evaluation in Survival Analysis

This protocol describes how to validate a survival model, such as a Cox Proportional Hazards model, using the C-index [21] [17].

1. Research Question: How well does a prognostic model rank cervical cancer patients by their risk of mortality?

2. Data Source and Preprocessing:

- Utilize a relevant dataset (e.g., the SEER database for cancer studies) [21].

- Preprocess data: handle missing values (e.g., via imputation), normalize continuous variables, and encode categorical variables [21].

- Split the data into training and independent test sets (e.g., 70%/30%) [21].

3. Model Training:

- Train the survival model (e.g., Cox PH with Elastic Net regularization) on the training dataset. Use cross-validation on the training set to optimize hyperparameters [21].

4. Risk Score Generation and Ranking:

- Use the trained model to generate risk scores for each individual in the test set.

5. C-index Calculation:

- Calculate the C-index on the test set by comparing the model's risk score rankings against the actual observed survival times and event indicators.

- Formally, the C-index is the proportion of all usable pairs where the predictions and outcomes are concordant [17].

6. Interpretation:

- A C-index significantly above 0.5 indicates the model has predictive power. In clinical contexts, a value of 0.7-0.8 is often considered acceptable, and >0.8 is considered strong [21].

Table 2: Essential Materials for Survival Analysis

| Research Reagent / Material | Function / Explanation |
|---|---|
| SEER Database | A large, publicly available cancer registry dataset used for developing and validating oncological survival models [21]. |
| Cox Proportional Hazards (Cox PH) Model | A semi-parametric statistical model that relates survival time to predictors via hazard rates; provides interpretable hazard ratios [21]. |
| Elastic Net Regularization | A regularization technique that combines L1 (Lasso) and L2 (Ridge) penalties. It prevents overfitting and performs feature selection in high-dimensional data [21]. |
| Random Survival Forest (RSF) | A non-parametric, machine learning model that can capture complex, non-linear relationships between covariates and survival without assuming a specific hazard structure [21]. |
| Integrated Brier Score (IBS) | A metric used alongside the C-index to evaluate the overall accuracy of predicted survival probabilities, accounting for calibration across the follow-up period [21]. |

Assessing Statistical Significance and Robustness

Statistical Testing for AUC-ROC

When comparing models or assessing fairness, it is not enough to observe a difference in AUC values; one must test if this difference is statistically significant.

- Comparing Two Models on the Same Dataset: Use methods like DeLong's test to compare the AUCs of two models evaluated on the same test set. This determines if one model's superior performance is likely real and not due to random chance.

- Algorithmic Fairness with ABROCA: To assess if a model is biased against a demographic group, the Area Between ROC Curves (ABROCA) metric can be used. It measures the disparity in model performance (AUC) between subgroups [23]. Due to its potentially skewed distribution, nonparametric randomization tests are recommended for reliable significance testing of the ABROCA statistic [23]. A significant ABROCA result indicates that the performance difference between groups is statistically unlikely to have occurred by chance.
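
To make the ABROCA idea concrete, the sketch below computes the area between two subgroup ROC curves and tests it with a label-permutation procedure. Permuting group labels is one common form of randomization test and is an assumption here, not necessarily the exact procedure of [23]. The ROC helper assumes untied continuous scores, and the synthetic data, in which the model is deliberately less accurate for group 1, are hypothetical.

```python
import numpy as np

def roc_points(y_true, scores):
    """ROC curve as (fpr, tpr) arrays, starting at (0, 0); assumes untied scores."""
    order = np.argsort(-scores, kind="mergesort")
    y_sorted = y_true[order]
    tps = np.cumsum(y_sorted)
    fps = np.cumsum(1 - y_sorted)
    tpr = np.concatenate([[0.0], tps / tps[-1]])
    fpr = np.concatenate([[0.0], fps / fps[-1]])
    return fpr, tpr

def abroca(y_true, scores, group):
    """Area between the ROC curves of group 0 and group 1."""
    grid = np.linspace(0.0, 1.0, 1001)
    curves = []
    for g in (0, 1):
        fpr, tpr = roc_points(y_true[group == g], scores[group == g])
        curves.append(np.interp(grid, fpr, tpr))
    gap = np.abs(curves[0] - curves[1])
    # Trapezoidal integration of the absolute gap over the FPR grid.
    return float(np.sum((gap[1:] + gap[:-1]) / 2 * np.diff(grid)))

def abroca_permutation_test(y_true, scores, group, n_permutations=500, seed=0):
    """Observed ABROCA and a one-sided permutation p-value."""
    rng = np.random.default_rng(seed)
    observed = abroca(y_true, scores, group)
    null = np.array([abroca(y_true, scores, rng.permutation(group))
                     for _ in range(n_permutations)])
    p_value = (1 + np.sum(null >= observed)) / (1 + n_permutations)
    return observed, float(p_value)

# Synthetic example: scores carry a strong signal for group 0, a weak one for group 1.
rng = np.random.default_rng(0)
n = 400
group = rng.integers(0, 2, size=n)
y_true = rng.integers(0, 2, size=n)
scores = y_true * np.where(group == 0, 1.0, 0.2) + rng.normal(size=n)

observed, p_value = abroca_permutation_test(y_true, scores, group)
print(f"ABROCA = {observed:.3f}, permutation p = {p_value:.3f}")
```

The p-value adds one to both numerator and denominator so that it can never be exactly zero, a conventional safeguard for permutation tests with a finite number of shuffles.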

Limitations of the C-index and Complementary Metrics

The C-index, while popular, has known limitations. It is a rank-based statistic that is often conservative and insensitive to the addition of new, clinically significant biomarkers to an already robust model [22] [17]. It measures discrimination (ranking) but not calibration (the agreement between predicted and observed event rates) [17].

A comprehensive survival model evaluation should therefore complement the C-index with other metrics:

- Brier Score: Measures the overall accuracy of probabilistic predictions, with lower scores indicating better accuracy [22].

- Calibration Plots: Visually assess the agreement between predicted survival probabilities and observed outcomes (e.g., via Kaplan-Meier estimates) at specific time points [21].

- Net Reclassification Improvement (NRI): Quantifies how well a new model reclassifies individuals (to higher or lower risk groups) correctly compared to an old model [22].
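As a rough illustration of two of these metrics, the sketch below computes a Brier score and a categorical NRI for a binary outcome observed at a fixed time horizon. It deliberately ignores censoring, which a real survival analysis would need to handle (for example with inverse-probability-of-censoring weights). The risk cutoffs of 0.1 and 0.2 and the function names are hypothetical.

```python
import numpy as np

def brier_score(y_event, risk):
    """Mean squared difference between predicted risk and observed outcome."""
    y = np.asarray(y_event, dtype=float)
    return float(np.mean((np.asarray(risk, dtype=float) - y) ** 2))

def categorical_nri(y_event, risk_old, risk_new, cutoffs=(0.1, 0.2)):
    """Categorical NRI of a new model versus an old one.

    NRI = [P(up | event) - P(down | event)]
        + [P(down | non-event) - P(up | non-event)],
    where 'up' and 'down' mean moving to a higher or lower risk category.
    The cutoffs are illustrative assumptions, not clinical thresholds.
    """
    y = np.asarray(y_event).astype(bool)
    cat_old = np.digitize(risk_old, cutoffs)
    cat_new = np.digitize(risk_new, cutoffs)
    up, down = cat_new > cat_old, cat_new < cat_old
    nri_events = up[y].mean() - down[y].mean()
    nri_nonevents = down[~y].mean() - up[~y].mean()
    return float(nri_events + nri_nonevents)
```

The NRI ranges from -2 to 2. A positive value means the new model moves more events upward and more non-events downward than the reverse.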

The Role of Discriminatory Power in fMRI, Survival Analysis, and Drug Development

Application Note: Discriminatory Power in Functional Magnetic Resonance Imaging (fMRI)

Core Concept and Quantitative Benchmarking

In fMRI, discriminatory power refers to the capacity of analytical methods to differentiate distinct neural states, individual subjects, or clinical groups based on functional connectivity (FC) patterns. The choice of pairwise interaction statistic used to calculate FC from regional time series data fundamentally influences this power. A comprehensive benchmark of 239 pairwise statistics revealed substantial variation in their ability to capture canonical features of brain networks and predict individual differences in behavior [24].

Table 1: Benchmarking Performance of Select fMRI Pairwise Statistics

| Family of Statistics | Example Measures | Structure-Function Coupling (R²) | Individual Fingerprinting Accuracy | Key Strengths |
|---|---|---|---|---|
| Covariance | Pearson's Correlation | Moderate | Moderate | Standard approach, good all-rounder [24] |
| Precision | Partial Correlation | High (up to ~0.25) | High | Emphasizes direct connections; high correspondence with structural connectivity and biological similarity networks [24] |
| Information Theoretic | Mutual Information | Moderate | Moderate | Sensitive to non-linear dependencies [24] |
| Spectral | Imaginary Coherence | High | Moderate | Robust to certain artifacts; high structure-function coupling [24] |
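To make the difference between the covariance and precision families concrete, the following sketch computes both a Pearson-correlation FC matrix and a partial-correlation FC matrix from a regional time-series array. It assumes the input has shape (timepoints, regions). The optional `ridge` term is an illustrative regularizer for ill-conditioned covariance matrices and is not taken from [24].

```python
import numpy as np

def pearson_fc(ts):
    """Covariance-family FC: Pearson correlation between all region pairs."""
    return np.corrcoef(ts, rowvar=False)

def partial_correlation_fc(ts, ridge=0.0):
    """Precision-family FC: partial correlation from the inverse covariance.

    partial_ij = -P_ij / sqrt(P_ii * P_jj), where P is the precision matrix.
    `ridge` adds a small value to the covariance diagonal (an assumption
    for numerical stability when regions outnumber timepoints).
    """
    cov = np.cov(ts, rowvar=False)
    cov = cov + ridge * np.eye(cov.shape[0])
    precision = np.linalg.inv(cov)
    d = np.sqrt(np.diag(precision))
    partial = -precision / np.outer(d, d)
    np.fill_diagonal(partial, 1.0)
    return partial
```

In a chain where region A drives B and B drives C, Pearson correlation reports a sizeable A-C connection while partial correlation shrinks it toward zero. This is the sense in which precision-based statistics emphasize direct connections.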

Task vs. Resting-State Paradigms

The discriminatory power of fMRI is also highly dependent on the experimental paradigm. Task-based fMRI, which engages specific neural circuits, often outperforms resting-state fMRI in predictive modeling for behaviorally relevant outcomes. Evidence suggests there are unique optimal pairings between specific fMRI tasks and the neuropsychological outcomes they best predict [25]. For instance, emotional N-back tasks may be more effective for investigating conditions like depression, while gradual-onset continuous performance tasks show stronger links with sensitivity and sociability outcomes [25].

Advanced Data-Driven Methods

Beyond pairwise statistics, advanced factorization methods like Independent Component Analysis (ICA) and its multiset extension, Independent Vector Analysis (IVA), offer different discriminatory advantages. In a study comparing patients with schizophrenia and healthy controls, IVA was found to determine brain networks that were more discriminatory between the groups, whereas ICA was more effective at emphasizing task-specific networks present in only a subset of tasks [10]. Global Difference Maps (GDMs) provide a novel method to visually highlight and quantify these performance differences between analytical techniques on real fMRI data where the ground truth is unknown [10].

Protocol: Comparing Factorization Methods with Global Difference Maps (GDMs)

Objective

To quantitatively and visually compare the discriminatory power of different data-driven factorization methods (e.g., ICA vs. IVA) for fMRI data in differentiating two or more subject groups (e.g., patients vs. controls).

Materials and Reagent Solutions

Table 2: Essential Research Toolkit for fMRI Factorization Analysis

| Item | Function/Description | Example |
|---|---|---|
| fMRI Data | Preprocessed BOLD time series from subjects. | Data from tasks (AOD, SIRP, SM) and/or resting-state [10]. |
| Feature Extraction Tool | Software to create subject-level feature maps. | Statistical Parametric Mapping (SPM) toolbox for generating regression coefficient maps [10]. |
| Factorization Algorithms | Software packages to perform decompositions. | ICA (e.g., FastICA) and IVA implementations [10]. |
| Statistical Testing Suite | Environment for hypothesis testing on subject weights. | MATLAB or Python with functions for t-tests/ANOVA [10]. |
| GDM Computation Script | Custom code to calculate and visualize Global Difference Maps. | In-house scripts as described in [10]. |

Experimental Workflow

Step-by-Step Procedure

- Feature Extraction: For each subject, run a first-level analysis (e.g., a general linear model in SPM) on the fMRI data from each task. Use the resulting regression coefficient maps per task as the input features for the factorization methods [10].

- Factorization: Apply the data-driven methods to be compared (e.g., ICA and IVA) to the feature maps from all subjects. This will decompose the data into spatial components (for ICA) or source vectors (for IVA) and their corresponding subject-specific weights [10].

- Identify Discriminatory Components: For each component from each method, perform a statistical test (e.g., two-sample t-test) on the subject weights to determine which components significantly differentiate the pre-defined groups (e.g., patients vs. controls) [10].

- Calculate GDM:

  - For each method, create a binary mask of all voxels that belong to any component found to be significantly discriminatory in the previous step.

  - For each voxel in the mask, calculate its GDM value. This value is a function of the statistical significance (p-values) of the subject weights for all components that the voxel belongs to. The function can be defined as the negative logarithm of the product of the p-values for that voxel across components, thereby giving higher values to voxels in more significantly discriminatory components [10].

- Visualization and Interpretation: Plot the GDM for each method. Brighter regions in the GDM indicate brain areas where the method has found more statistically significant differences between groups. The GDMs can be compared visually and quantitatively to assess which method highlights more, or different, discriminatory brain networks [10].
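The GDM calculation step above can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in [10]. A voxel is treated as "belonging" to a component when the absolute value of its z-scored map exceeds `z_threshold`, components count as discriminatory when `p < alpha`, and the natural logarithm is used. The function name and all three parameters are hypothetical.

```python
import numpy as np

def global_difference_map(component_maps, p_values, z_threshold=2.0, alpha=0.05):
    """Per-voxel GDM value for one factorization method.

    component_maps : (n_components, n_voxels) z-scored spatial maps
    p_values       : (n_components,) p-values from the group test on
                     each component's subject weights
    Returns an (n_voxels,) array that is zero outside the mask.
    """
    maps = np.asarray(component_maps, dtype=float)
    p = np.clip(np.asarray(p_values, dtype=float), 1e-300, 1.0)  # avoid log(0)
    significant = p < alpha
    # Membership rule (assumption): suprathreshold voxels of significant components.
    member = (np.abs(maps) > z_threshold) & significant[:, None]
    # -log(prod_k p_k) equals sum_k -log(p_k) over the components a voxel belongs to.
    return np.where(member, -np.log(p)[:, None], 0.0).sum(axis=0)
```

Running this once per method (for example on the ICA maps and on the IVA maps) yields two arrays on the same voxel grid that can be plotted side by side or subtracted to see where one method finds stronger group differences.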

Application Note: Discriminatory Power in Survival Analysis

Core Concept and Metric Comparison

In survival analysis, discriminatory power often refers to a model's ability to correctly rank individuals by their risk of an event (e.g., death, disease progression). The C-index (concordance index) is the standard metric for assessing this aspect of model performance [26]. Beyond discrimination, calibration—the agreement between predicted and observed survival probabilities—is crucial. The novel A-calibration method has been introduced as a more powerful goodness-of-fit test for model calibration under censoring compared to the existing D-calibration method [27].
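For readers who want to see what the C-index measures, here is a minimal sketch of Harrell's concordance index for right-censored data. It assumes higher `risk` means earlier expected events, counts tied risk scores as half-concordant, and does not treat tied event times specially. The function name is illustrative. Established survival packages provide more complete implementations.

```python
import numpy as np

def harrell_c_index(time, event, risk):
    """Fraction of comparable pairs in which the subject who failed
    earlier was assigned the higher predicted risk."""
    time = np.asarray(time, dtype=float)
    event = np.asarray(event).astype(bool)
    risk = np.asarray(risk, dtype=float)
    concordant, comparable = 0.0, 0
    for i in np.flatnonzero(event):
        # Subject i had an observed event; any subject still under
        # observation after time[i] forms a comparable pair with i.
        later = time > time[i]
        comparable += int(later.sum())
        concordant += (risk[i] > risk[later]).sum()
        concordant += 0.5 * (risk[i] == risk[later]).sum()
    return concordant / comparable

# Small usage example with one censored subject (event == 0).
c = harrell_c_index(time=[1, 2, 3, 4], event=[1, 1, 0, 1], risk=[4, 3, 2, 1])
```

Censored subjects only enter as the "later" member of a pair, which is why heavy censoring reduces the number of comparable pairs and makes the estimate less stable.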

Table 3: Comparison of Calibration Tests for Survival Models

| Feature | D-Calibration | A-Calibration |
|---|---|---|
| Core Principle | Pearson's goodness-of-fit test on transformed survival times [27]. | Akritas's goodness-of-fit test designed for censored data [27]. |
| Handling of Censoring | Uses an imputation approach, which can lead to conservative tests and loss of power [27]. | Specifically designed for randomly censored time-to-event data [27]. |
| Statistical Power | Lower; sensitive to censoring mechanism and rate [27]. | Similar or superior power in all tested cases; less sensitive to censoring [27]. |
| Primary Advantage | Provides a single numeric value for calibration across follow-up time [27]. | More robust and powerful test for assessing the accuracy of predicted survival distributions [27]. |

Model Comparisons in Cancer Prognosis

Studies comparing traditional parametric survival models (e.g., Weibull, log-logistic) with machine learning (ML) algorithms (e.g., Random Survival Forests, neural networks) show that ML methods can achieve high discriminatory power. For example, in breast cancer prognosis, neural networks have exhibited the highest predictive accuracy, and Random Survival Forests have been noted for their strong performance and balance between model fit and complexity [26]. A key finding is that ML models like Random Survival Forest and DeepHit can sometimes slightly outperform the traditional Cox proportional hazards model in terms of the C-index [26].

Protocol: Implementing A-Calibration for Survival Model Validation

Objective

To assess the calibration of a predictive survival model using the A-calibration method, which tests the agreement between the model's predicted survival distributions and the observed outcomes in the presence of censoring.

Materials and Reagent Solutions

Table 4: Essential Research Toolkit for Survival Model Validation

| Item | Function/Description |
|---|---|
| Survival Dataset | Time-to-event data including event indicator (e.g., 1 for death, 0 for censored) and predicted survival probabilities from the model under evaluation [27]. |
| Statistical Software | Environment with survival analysis and statistical testing capabilities (e.g., R, Python). |
| A-Calibration Implementation | Code for performing Akritas's goodness-of-fit test. This may require a custom implementation based on the original paper [27]. |

Validation Workflow

Step-by-Step Procedure

- Model Prediction: Using the trained survival model, generate predicted survival probabilities for all subjects in the validation dataset across all observed time points.

- Apply Test: Perform the A-calibration test (Akritas's goodness-of-fit test). This test is designed specifically for censored data and compares the model's predicted survival probabilities with the observed survival data, accounting for the censoring mechanism [27].

- Obtain Results: The output of the test is a test statistic and an associated p-value.

- Interpretation: A non-significant p-value (e.g., greater than 0.05) means there is no statistically significant evidence against the null hypothesis that the model is well-calibrated. In other words, the test does not detect a discrepancy between the model's predictions and the observed outcomes, although this does not prove good calibration, particularly in small samples. A significant p-value indicates poor calibration [27].

Application Note: Discriminatory Power in Drug Development and Biomarker Discovery

Core Concept and Quantitative Metrics

In drug development, discriminatory power is the ability of a diagnostic tool or biomarker to accurately distinguish between disease states (e.g., healthy vs. diseased) or between different levels of disease severity. The Area Under the Receiver Operating Characteristic Curve (AUC or AUROC) is the primary quantitative metric used for this purpose. An AUC of 1 represents perfect discrimination, while 0.5 represents discrimination no better than chance. Sensitivity and specificity at an optimal cutoff are also key reporting metrics.
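The sketch below shows one way to compute these quantities for a single continuous biomarker. The AUC is obtained from its Mann-Whitney interpretation (the probability that a randomly chosen diseased subject scores higher than a randomly chosen healthy one). The "optimal" cutoff is chosen by maximizing Youden's J, which is an assumed criterion, since the text does not specify one. Function names are illustrative.

```python
import numpy as np

def auc_mann_whitney(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly,
    counting ties as half."""
    y = np.asarray(y_true).astype(bool)
    s = np.asarray(scores, dtype=float)
    diff = s[y][:, None] - s[~y][None, :]
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size)

def youden_cutoff(y_true, scores):
    """Cutoff maximizing J = sensitivity + specificity - 1.
    Returns (cutoff, sensitivity, specificity); the first maximizer wins ties."""
    y = np.asarray(y_true).astype(bool)
    s = np.asarray(scores, dtype=float)
    best = (-np.inf, None, None, None)
    for c in np.unique(s):
        pred = s >= c  # classify as positive at or above the cutoff
        sens = (pred & y).sum() / y.sum()
        spec = (~pred & ~y).sum() / (~y).sum()
        if sens + spec - 1 > best[0]:
            best = (sens + spec - 1, float(c), float(sens), float(spec))
    return best[1:]
```

A cutoff selected on the same data used to report sensitivity and specificity will look optimistic, so it is usually re-evaluated on an independent cohort.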

Exemplary Biomarker Performance

Recent studies highlight biomarkers with high discriminatory power across various diseases:

- Pancreatic Cancer: Serum fucosylated receptor expression-enhancing protein 5 (REEP5) demonstrated an AUC of 0.928 for detecting pancreatic ductal adenocarcinoma (PDAC) versus non-cancer controls, outperforming the current standard CA19-9 (AUC=0.805). For early-stage (I & II) PDAC, its performance was even more remarkable (AUC=0.962) [28].

- Prostate Cancer: Thymidine Kinase 1 (TK1) showed excellent discriminatory power (AUC=0.973) for diagnosing prostate cancer. When combined with the standard PSA test, the AUC improved to 0.996, near-perfect discrimination [29].

- Non-Alcoholic Steatohepatitis (NASH): An AI-based biomarker (iBiopsy) applied to MRI/MRE images achieved an AUROC of 0.90 for diagnosing advanced fibrosis (F3), with a specificity of 0.89 and sensitivity of 0.86 [30].

- Multiple Sclerosis (MS): A multimodal approach combining biomarkers from MRI (gray matter volume), OCT (retinal layers), and blood (serum neurofilament light chain) achieved the highest discriminative accuracy for predicting 4-year disability progression, outperforming any single biomarker alone [31].

Table 5: Discriminatory Power of Novel Biomarkers in Drug Development

| Disease | Biomarker | AUC | Sensitivity / Specificity | Clinical Context |
|---|---|---|---|---|
| Pancreatic Cancer | Fucosylated REEP5 [28] | 0.928 | High (exact values not specified) | Detection vs. non-cancer controls |
| Pancreatic Cancer (Early Stage) | Fucosylated REEP5 [28] | 0.962 | High (exact values not specified) | Detection of Stage I & II cancer |
| Prostate Cancer | Thymidine Kinase 1 (TK1) [29] | 0.973 | 91.11% / 88.89% | Diagnosis |
| Prostate Cancer | TK1 + Total PSA [29] | 0.996 | 95.56% / 97.78% | Diagnosis (combined markers) |
| NASH Fibrosis | AI iBiopsy (on MRE) [30] | 0.90 | 86% / 89% | Diagnosing advanced fibrosis (F3) |

Protocol: Developing a Multimodal Biomarker Signature

Objective

To develop and validate a biomarker signature that combines measures from different modalities (e.g., imaging, liquid biopsy, clinical tests) to maximize discriminatory power for predicting a specific clinical outcome.

Materials and Reagent Solutions

Table 6: Essential Research Toolkit for Multimodal Biomarker Development

| Item | Function/Description | Example in Multiple Sclerosis [31] |
|---|---|---|
| Imaging Modality | Provides structural or functional data on disease pathology. | MRI for Lesion Volume (LV) and Gray Matter Volume (GMV). |
| Liquid Biopsy Assay | Measures circulating biomarkers reflecting cellular damage. | SiMoA technology for Serum Neurofilament Light Chain (sNfL) and Glial Fibrillary Acidic Protein (sGFAP). |
| Other Non-Invasive Test | Captures additional disease-relevant data. | Optical Coherence Tomography (OCT) for Retinal Nerve Fiber Layer (RNFL) and Ganglion Cell-Inner Plexiform Layer (GCIPL). |
| Statistical Software | For advanced statistical modeling and ROC analysis. | Software capable of Structural Equation Modeling (SEM) and logistic regression. |

Experimental Workflow

Step-by-Step Procedure

- Cohort Definition: Establish a well-characterized patient cohort with clearly defined clinical outcomes (e.g., disease progression over 4 years, as defined by an EDSS increase). Collect baseline data across the chosen modalities [31].

- Data Acquisition and Processing:

  - Acquire structural MRI scans (e.g., T1-weighted, FLAIR) and process them using tools like SPM and the lesion segmentation toolbox to quantify T2-hyperintense Lesion Volume (LV) and Gray Matter Volume (GMV) [31].

  - Collect serum samples and measure concentrations of biomarkers like sNfL and sGFAP using highly sensitive assays (e.g., SiMoA technology) [31].

  - Perform OCT imaging to extract thickness measurements of retinal layers (RNFL and GCIPL) [31].

- ROC Analysis: Perform Receiver Operating Characteristic (ROC) analysis for each individual biomarker to assess its standalone discriminatory power (AUC, sensitivity, specificity). Then, evaluate all possible combinations of biomarkers (e.g., MRI+OCT, MRI+Blood, OCT+Blood, all three) to identify which combination yields the highest discriminative accuracy for the clinical outcome [31].

- Causal Modeling (Optional but Advanced): Apply Structural Equation Modeling (SEM) to the individual biomarkers to model their hypothesized causal inter-relationships. This can help elucidate the pathways through which biomarkers influence the clinical outcome. For example, SEM might reveal that lesion volume mediates a significant part of the effect of gray matter volume and sNfL on disability progression [31].

- Signature Validation: The final step is to validate the identified optimal multimodal biomarker signature in an independent, larger patient cohort to confirm its clinical utility and generalizability.
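The ROC analysis step of this procedure, evaluating every combination of biomarkers, can be sketched as below. The sketch combines markers with a logistic regression fitted by Newton's method in plain NumPy and scores each combination by a cross-validated AUC. The marker names, the small ridge penalty, the fold count, and all function names are hypothetical. The cited study [31] may have combined and evaluated markers differently.

```python
import itertools
import numpy as np

def auc(y, s):
    """Mann-Whitney AUC, counting ties as half."""
    y = np.asarray(y).astype(bool)
    diff = s[y][:, None] - s[~y][None, :]
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size)

def fit_logistic(X, y, n_iter=25, ridge=1e-3):
    """Logistic regression via Newton's method with a small ridge penalty."""
    Xb = np.column_stack([np.ones(len(X)), X])
    w = np.zeros(Xb.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        grad = Xb.T @ (p - y) + ridge * w
        hess = (Xb * (p * (1 - p))[:, None]).T @ Xb + ridge * np.eye(len(w))
        w -= np.linalg.solve(hess, grad)
    return w

def cv_auc(X, y, n_folds=5, seed=0):
    """AUC of out-of-fold linear predictors, pooled across folds."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    oof = np.empty(len(y))
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        mu, sd = X[train].mean(axis=0), X[train].std(axis=0)
        w = fit_logistic((X[train] - mu) / sd, y[train])
        oof[test] = w[0] + ((X[test] - mu) / sd) @ w[1:]
    return auc(y, oof)

def rank_combinations(markers, y):
    """markers: dict of name -> 1-D array. Returns [(combo, AUC)], best first."""
    y = np.asarray(y, dtype=float)
    results = {}
    for k in range(1, len(markers) + 1):
        for combo in itertools.combinations(markers, k):
            X = np.column_stack([markers[name] for name in combo])
            results[combo] = cv_auc(X, y)
    return sorted(results.items(), key=lambda kv: kv[1], reverse=True)
```

Cross-validation is used here because larger combinations have more free parameters, so comparing in-sample AUCs would tend to favor them regardless of their real value.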

Practical Techniques and Frameworks for Direct Method Comparison

The proliferation of data-driven factorization methods for analyzing complex biomedical data, such as functional magnetic resonance imaging (fMRI), has created an urgent need for robust comparison frameworks. Traditional approaches for comparing methods like Independent Component Analysis (ICA) and Independent Vector Analysis (IVA) face significant limitations when applied to real-world data where ground truth is unknown. Global Difference Maps (GDMs) emerge as a novel solution to this challenge, providing both a quantitative and visual means to compare the results of different fMRI analysis techniques on real data without requiring tedious factor alignment steps [10] [8]. This capability is particularly valuable in psychiatric disorder research, where understanding neural function disruptions requires methods that can highlight biologically meaningful differences between patient and control groups.

The fundamental innovation of GDMs lies in their ability to transform abstract methodological comparisons into visually interpretable spatial maps while simultaneously quantifying the relative performance of different factorization approaches. By bypassing the need for precise factor alignment across methods—a process described as "impractical and imprecise" for real fMRI data—GDMs enable researchers to objectively assess which analytical approach best captures clinically or biologically relevant signals in their specific dataset [10]. This addresses a critical gap in the analytical pipeline for neuroimaging and other complex data domains, where method selection significantly impacts findings but has historically lacked rigorous comparison frameworks.

Theoretical Foundation and Comparative Framework

The Factorization Method Comparison Challenge

Factor model performance is inherently dependent on the validity of underlying modeling assumptions for the specific dataset being analyzed. This dependency motivates direct comparison of different factor models, but such comparison presents substantial methodological challenges [10]. Traditional comparison approaches have primarily relied on simulated data, but these artificial datasets often lack the complexity of real biological data [10]. When applied to real data, most comparison techniques require aligning factors from different methods and relying on visual comparison, which is not only time-consuming but also inherently subjective [10].

Global Difference Maps address these limitations through a structured framework that evaluates factorization methods based on two primary criteria: discriminatory power (the ability to differentiate between groups, such as patients and controls) and relational power (the ability to identify biologically related networks) [10]. This dual-evaluation framework allows researchers to select methods based on their specific analytical goals, whether focused on biomarker discovery or understanding fundamental network organization.

Mathematical Underpinnings of GDM

While the complete mathematical formulation of GDMs is beyond our scope here, the core concept involves calculating significant differences in factor weights between experimental groups and aggregating these differences into composite spatial maps. The GDM approach incorporates the statistical significance of latent subject weights into the visualization, with brighter regions in the maps corresponding to more significant discriminative power [10]. This creates an intuitive yet statistically grounded visualization that summarizes decomposition results while maintaining a direct connection to the underlying statistical evidence.

Table: Core Comparison Metrics for Factorization Methods

| Metric Category | Specific Measures | Interpretation |
|---|---|---|
| Discriminatory Power | Between-group significance of component weights | Brightness in GDM indicates stronger group separation |
| Relational Power | Cross-task consistency of identified networks | Measures biological coherence across conditions |
| Spatial Specificity | Focus and spread of significant regions | Indicates whether methods emphasize broad or focal differences |

Experimental Protocols for GDM Implementation

Data Preparation and Preprocessing

The application of GDMs requires careful data preparation to ensure valid comparisons. For neuroimaging applications, the process begins with feature extraction tailored to the experimental design [10]. When analyzing multi-task fMRI data with different stimulus timing, a linear regression approach is recommended using statistical parametric mapping tools. Regressors should be created by convolving the hemodynamic response function with task-specific predictors, producing regression coefficient maps that serve as features for each subject and task [10]. This standardized feature extraction ensures that subsequent factorization methods operate on comparable inputs, a critical prerequisite for meaningful methodological comparison.
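The feature extraction described above can be sketched in a few lines. The example builds a task regressor by convolving a boxcar with a double-gamma hemodynamic response function and then estimates per-voxel regression coefficients by ordinary least squares. The HRF parameters (peak near 6 s, undershoot near 16 s, ratio 1/6) are commonly used canonical values and are assumed here, as are the function names. A real SPM analysis adds filtering, noise modeling, and nuisance regressors that this sketch omits.

```python
import math
import numpy as np

def double_gamma_hrf(tr, duration=32.0, peak=6.0, undershoot=16.0, ratio=1 / 6):
    """Double-gamma HRF sampled every `tr` seconds, normalized to sum to 1."""
    t = np.arange(0.0, duration, tr)

    def gamma_pdf(x, shape):  # gamma density with unit scale
        return x ** (shape - 1) * np.exp(-x) / math.gamma(shape)

    h = gamma_pdf(t, peak) - ratio * gamma_pdf(t, undershoot)
    return h / h.sum()

def task_regressor(onsets_s, block_len_s, n_scans, tr):
    """Boxcar for the task blocks convolved with the HRF."""
    box = np.zeros(n_scans)
    for onset in onsets_s:
        box[int(onset / tr): int((onset + block_len_s) / tr)] = 1.0
    return np.convolve(box, double_gamma_hrf(tr))[:n_scans]

def beta_maps(bold, regressors):
    """OLS regression coefficients per voxel.

    bold       : (n_scans, n_voxels) preprocessed time series
    regressors : (n_scans,) or (n_scans, n_tasks) design columns
    Returns (n_tasks, n_voxels); the intercept row is dropped.
    """
    regressors = np.asarray(regressors, dtype=float)
    if regressors.ndim == 1:
        regressors = regressors[:, None]
    design = np.column_stack([regressors, np.ones(len(regressors))])
    betas, *_ = np.linalg.lstsq(design, bold, rcond=None)
    return betas[:-1]
```

Each row of the returned array is one task's coefficient map for a subject, which is the kind of feature that the factorization methods then take as input.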

Data organization follows a structured pipeline: (1) subject-level processing to extract relevant features, (2) quality control to identify outliers or artifacts, and (3) data formatting for compatibility with different factorization algorithms. For multi-subject studies involving group comparisons (e.g., patients vs. controls), group assignment must be maintained throughout the pipeline to support subsequent discriminatory analysis. The dataset should include a sufficient sample size to ensure statistical power; exemplar studies utilizing GDMs have included substantial cohorts (e.g., 109 patients with schizophrenia and 138 healthy controls) [10] [8].

Factorization Method Implementation

With prepared data, researchers implement the factorization methods to be compared. For ICA, multiple algorithms are available, with FastICA and Entropy Bound Minimization (EBM) being commonly used approaches [10]. For IVA, the IVA-GL algorithm (combining IVA with multivariate Gaussian and Laplace source component vectors) has been widely used in neuroimaging applications and provides an attractive tradeoff between complexity and performance [32]. This algorithm can be accessed through the Group ICA for fMRI toolbox (GIFT) and involves performing subject-level PCA on each subject's data before applying IVA-GL to estimate subject-specific components and time courses [32].

Figure: GDM Experimental Workflow

GDM Computation and Visualization

The core GDM algorithm processes the results from multiple factorization methods to generate comparative visualizations. The implementation involves calculating significant differences in component weights between groups for each method and transforming these statistical differences into spatial maps [10]. The technical process can be implemented using MATLAB, Python, or R, with specialized neuroimaging toolboxes like GIFT providing foundational functions [32].

The visualization component of GDMs should highlight regions where different factorization methods yield divergent results in terms of discriminatory or relational power. Brighter regions in the resulting maps indicate areas where the factorization method demonstrates stronger discriminatory power between groups [10]. This visualization should be accompanied by quantitative metrics that capture the overall performance differences between methods, allowing for both visual inspection and statistical comparison.

Application to ICA and IVA Comparison

Performance Profiling of Factorization Methods

Applied to the comparison of ICA and IVA, GDMs reveal distinct performance profiles for each method. Studies consistently show that IVA demonstrates superior discriminatory power for identifying regions that differentiate patient populations (e.g., schizophrenia patients vs. healthy controls) [10] [8]. This enhanced sensitivity to group differences makes IVA particularly valuable for clinical neuroscience applications aimed at identifying potential biomarkers. However, this advantage comes with a tradeoff: IVA is less effective than ICA at emphasizing regions that appear in only a subset of tasks [10].

Complementary research comparing IVA with Group Information Guided ICA (GIG-ICA) further refines our understanding of these methodological tradeoffs. GIG-ICA shows better recovery accuracy for both components and time courses than IVA for subject-common sources, while IVA outperforms GIG-ICA in component and time course estimation for subject-unique sources [32]. This suggests that GIG-ICA is more appropriate for estimating networks consistent across subjects, while IVA better captures networks with significant inter-subject variability [32].

Table: Comparative Performance of ICA and IVA in fMRI Analysis

| Performance Dimension | ICA | IVA |
|---|---|---|
| Group Discrimination | Moderate | Superior [10] [8] |
| Task-Specific Networks (present in a subset of tasks) | Strong | Limited [10] |
| Subject-Common Sources | Strong | Moderate [32] |
| Subject-Unique Sources | Moderate | Strong [32] |
| Network Reliability | High | Variable [32] |

Context-Dependent Method Selection

The GDM framework enables context-dependent method selection by clearly delineating the strengths of each approach. IVA is particularly advantageous in scenarios with substantial inter-subject variability or when the primary analytical goal is maximizing sensitivity to group differences [10] [32]. This makes it well-suited for clinical applications focusing on disorder characterization or biomarker identification. Additionally, when subject-mode patterns differ across time windows, IVA has demonstrated particular accuracy in capturing these dynamic changes [33].

Conversely, ICA remains preferable when analyzing networks consistent across subjects or when the research aims to identify task-specific regional engagement that appears only in subsets of experimental conditions [10] [32]. ICA also produces more reliable spatial functional networks and yields higher, more robust modularity properties of functional network connectivity compared to IVA [32]. This makes ICA better suited for studies focused on fundamental network organization rather than group discrimination.

Advanced Applications and Extensions

Integration with Tensor Factorization Approaches

Recent methodological advances have expanded the comparison landscape beyond ICA and IVA to include tensor factorization approaches. The PARAFAC2 model has emerged as a powerful alternative, particularly for analyzing time-evolving data arranged as subject × voxel × time window tensors [33]. This approach compactly summarizes dynamic data by revealing underlying networks, their temporal evolution, and associated temporal patterns [33]. Comparative studies indicate that PARAFAC2 provides a compact representation across all modes (subjects, time, and voxels), simultaneously revealing temporal patterns and evolving spatial networks [33].

The expanding methodological ecosystem underscores the continued value of GDMs for objective comparison. As the number of analytical options grows, tools that enable direct performance comparison on real datasets become increasingly essential for methodological selection and validation.

Translational Applications in Drug Development

While initially developed for neuroimaging, the GDM framework holds significant promise for translational applications, including drug development. The ability to objectively compare analytical methods directly supports biomarker identification and validation—critical components of modern drug development pipelines [34] [35]. As pharmaceutical research increasingly focuses on neuropsychiatric disorders and central nervous system therapeutics, robust analytical frameworks for neuroimaging data become essential for establishing drug efficacy and understanding mechanisms of action.

The GDM approach could be particularly valuable during the clinical trial phase of drug development, where understanding how experimental therapeutics affect brain network organization could provide crucial evidence of biological effects beyond behavioral measures [34]. Furthermore, the method's ability to highlight differential sensitivity between analytical approaches helps researchers select the most appropriate method for their specific application context, potentially reducing false leads and enhancing research efficiency.

Research Reagent Solutions

Table: Essential Research Tools for GDM Implementation

| Tool Category | Specific Solutions | Application Context |
|---|---|---|
| Data Processing | Statistical Parametric Mapping (SPM) | Feature extraction via linear regression [10] |
| Factorization Algorithms | Group ICA for fMRI Toolbox (GIFT) | Implementation of ICA, IVA, and GIG-ICA [32] |
| Visualization Platforms | MATLAB with customized scripts | GDM generation and visualization [10] |
| Statistical Analysis | R or Python with specialized packages | Significance testing of component weights [10] |
| Data Management | Structured data formats (NIfTI, CIFTI) | Standardized handling of neuroimaging data [10] |

Global Difference Maps represent a significant advancement in the methodological toolkit for comparing data-driven factorization approaches. By providing both quantitative metrics and visual representations of methodological performance on real datasets, GDMs enable more informed method selection and enhance the interpretability of analytical results. The application of this framework to ICA and IVA comparison has revealed complementary strengths—with IVA offering superior discriminatory power for group comparisons, while ICA provides more consistent identification of task-specific networks. As analytical methods continue to evolve, frameworks like GDMs will play an increasingly important role in ensuring methodological rigor and biological relevance in computational analysis of complex biomedical data.

Feature selection is a critical dimensionality reduction step in the analysis of high-dimensional data, serving to improve model interpretability, mitigate overfitting, and enhance computational efficiency [36]. Within this domain, two principal criteria guide the selection of features: discrimination-based feature selection (DFS) and reliability-based feature selection (RFS). The former prioritizes features based on their ability to distinguish between predefined classes or brain states, while the latter selects features that demonstrate high stability across samples or repeated measurements [37]. In the context of comparing the discriminatory power of data-driven techniques, this application note provides a structured comparison of these two paradigms. It details experimental protocols and offers a practical toolkit for researchers, particularly those in scientific fields such as drug development, to inform their analytical workflows.

Comparative Analysis of DFS and RFS

The core distinction between these paradigms lies in their optimization target: DFS maximizes separation between classes, whereas RFS maximizes consistency within classes. A large-scale study on fMRI data from the Human Connectome Project (HCP), encompassing 987 subjects, provides empirical evidence for their complementary strengths and weaknesses [37].

DFS features, often selected using metrics like Analysis of Variance (ANOVA), excel at maximizing classification accuracy. They are particularly effective at identifying salient biomarkers that differentiate biological states or treatment outcomes [37]. However, a known limitation is their potential instability; the specific features selected can be sensitive to variations in the sample population, which may raise concerns about the generalizability of the findings [37] [38].

Conversely, RFS features, selected using metrics like Kendall's concordance coefficient, offer superior stability. These features remain consistent across different subsets of subjects or data splits, making the analytical results more reliable and reproducible—a critical consideration in preclinical and clinical development [37] [36]. This stability, however, can come at the cost of raw discriminatory power, as the most stable features are not always the most distinguishing [37].
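As a rough illustration of the reliability criterion, the sketch below computes Kendall's W for a single feature. It assumes each row holds one subject's responses for that feature across conditions, so that W measures how strongly subjects agree on the ordering of conditions. This data layout and the function names are illustrative assumptions rather than the exact procedure of [37], and no tie correction is applied.

```python
def ranks(values):
    """Rank values from 1 (smallest) to n (largest); assumes no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for position, index in enumerate(order, start=1):
        result[index] = position
    return result


def kendalls_w(ratings):
    """Kendall's coefficient of concordance.

    ratings[s][t] is the response of one feature for subject s under
    condition t (hypothetical layout). Returns a value in [0, 1].
    """
    m, n = len(ratings), len(ratings[0])       # m subjects, n conditions
    rank_sums = [0] * n
    for row in ratings:
        for t, r in enumerate(ranks(row)):
            rank_sums[t] += r
    mean_rank_sum = m * (n + 1) / 2
    s = sum((total - mean_rank_sum) ** 2 for total in rank_sums)
    return 12 * s / (m ** 2 * (n ** 3 - n))


# Three subjects who order the conditions identically, then three who disagree.
consistent = [[0.1, 0.5, 0.9], [0.2, 0.6, 1.0], [0.0, 0.3, 0.7]]
inconsistent = [[0.1, 0.5, 0.9], [0.5, 0.9, 0.1], [0.9, 0.1, 0.5]]
print(kendalls_w(consistent), kendalls_w(inconsistent))
```

Under this layout, features would be ranked by W and the top-N retained, in the same way that DFS ranks features by an ANOVA F score.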

Table 1: Quantitative Comparison of DFS vs. RFS from an fMRI Study

| Metric | Discrimination-Based (DFS) | Reliability-Based (RFS) |
|---|---|---|
| Classification Performance | Superior at distinguishing brain states [37] | Lower compared to DFS [37] |
| Feature Stability | Less stable across subject subsets [37] | Highly stable as the number of subjects and features varies [37] |
| Sensitivity to Feature Number | Performance varies with the number of features selected [37] | Performance is more stable across different numbers of selected features [37] |
| Primary Application | When the goal is maximal prediction accuracy [37] | When reproducibility and reliability are paramount [37] |

Furthermore, the performance and characteristics of these methods are influenced by dataset dimensions. The distribution of selected features can shift as the number of features extracted increases, often expanding from primary sensory areas to associative regions of the brain in neuroimaging data [37]. It is also crucial to note that the "curse of dimensionality"—where a large number of features confronts a small sample size—is a common challenge that feature selection aims to address [36].

Experimental Protocols for Evaluation

To rigorously compare feature selection methods, a standardized evaluation framework is essential. The following protocols outline the core workflow and key metrics.

General Evaluation Workflow

A robust evaluation involves a cross-validation procedure to assess how the selected features generalize to unseen data. The typical workflow is as follows [37] [36]:

- Data Partitioning: Split the dataset into k folds (e.g., k=10).

- Feature Selection: In each training fold, apply both DFS and RFS algorithms to select the top-N features.

- Model Training & Validation: Train a classifier (e.g., Support Vector Machine, Logistic Regression) using the selected features from the training set and evaluate its performance on the held-out test fold.

- Performance Aggregation: Calculate average performance metrics across all k folds.

- Stability Assessment: Measure the consistency of the selected feature subsets across the different training folds.
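The workflow above can be sketched in plain Python. This is a minimal illustration on synthetic data, not the pipeline of [37] or [36]: the ANOVA F score stands in for DFS, a nearest-centroid rule stands in for the SVM or logistic regression classifier, and all function names are hypothetical. An RFS score would replace `anova_f` inside `select_top_n`. The point to note is that feature selection runs inside each training fold, so the held-out fold never influences which features are chosen.

```python
import random
from statistics import mean


def anova_f(values, labels):
    """One-way ANOVA F statistic of a single feature across class labels."""
    groups = {}
    for v, y in zip(values, labels):
        groups.setdefault(y, []).append(v)
    grand = mean(values)
    between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups.values())
    within = sum((v - mean(g)) ** 2 for g in groups.values() for v in g)
    df_between, df_within = len(groups) - 1, len(values) - len(groups)
    return (between / df_between) / (within / df_within + 1e-12)


def select_top_n(X, y, n):
    """Indices of the n features with the highest F score."""
    scores = [anova_f([row[j] for row in X], y) for j in range(len(X[0]))]
    return sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)[:n]


def nearest_centroid_predict(X_train, y_train, X_test, features):
    """Assign each test sample to the class with the closest centroid."""
    centroids = {}
    for c in set(y_train):
        rows = [x for x, label in zip(X_train, y_train) if label == c]
        centroids[c] = [mean([r[j] for r in rows]) for j in features]

    def predict(x):
        return min(centroids, key=lambda c: sum(
            (x[j] - m) ** 2 for j, m in zip(features, centroids[c])))

    return [predict(x) for x in X_test]


def cross_validate(X, y, k=5, n_features=2):
    folds = [list(range(i, len(X), k)) for i in range(k)]
    accuracies, selected = [], []
    for test_idx in folds:
        held_out = set(test_idx)
        train_idx = [i for i in range(len(X)) if i not in held_out]
        X_tr, y_tr = [X[i] for i in train_idx], [y[i] for i in train_idx]
        feats = select_top_n(X_tr, y_tr, n_features)  # training data only
        preds = nearest_centroid_predict(X_tr, y_tr, [X[i] for i in test_idx], feats)
        correct = sum(p == y[i] for p, i in zip(preds, test_idx))
        accuracies.append(correct / len(test_idx))
        selected.append(set(feats))               # kept for stability assessment
    return mean(accuracies), selected


# Synthetic data: features 0 and 1 carry class signal, features 2-5 are noise.
random.seed(0)
y = [i % 2 for i in range(40)]
X = [[random.gauss(3.0 * label if j < 2 else 0.0, 1.0) for j in range(6)] for label in y]
accuracy, selected = cross_validate(X, y, k=5, n_features=2)
print(f"mean accuracy: {accuracy:.2f}")
print("features chosen per fold:", selected)
```

The per-fold feature sets returned alongside the accuracy are what the stability assessment step compares.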

Detailed Protocol: A Comparative Study

This protocol is adapted from a comparison study using large-scale fMRI data [37].

- Aim: To compare the classification performance and stability of DFS and RFS criteria.

- Dataset:
  - Source: 987 subjects from the Human Connectome Project (HCP).
  - Tasks: fMRI data from six different tasks (e.g., working memory, gambling).
  - Features: Voxel-wise brain activity maps.

- Feature Selection Methods:
  - DFS Proxy: ANOVA, chosen for its ability to maximize separation between task-specific brain states.
  - RFS Proxy: Kendall's concordance coefficient, chosen for its measurement of feature stability across subjects.

- Evaluation Metrics:
  - Performance: Classification accuracy, Area Under the Curve (AUC).
  - Stability: Measured using metrics like the Kuncheva index or Jaccard similarity to quantify the overlap of feature subsets selected across different subject groups or data splits [36].

- Experimental Variables:
  - Systematically vary the number of subjects (e.g., 50, 100, 200) and the number of top features selected (e.g., 50, 100, 500) to evaluate the robustness of each method.

Protocol for Stability Assessment

Stability is a critical metric for RFS and should be evaluated separately [36] [38].

- Aim: To quantify the consistency of a feature selection algorithm under perturbations of the training data.

- Method:
  - Generate multiple subsamples (e.g., 100 iterations) of the training dataset via bootstrapping or random sampling.
  - Apply the feature selection algorithm to each subsample.
  - For each pair of selected feature subsets (e.g., from two different subsamples), compute a stability index, then average over all pairs.

- Stability Metrics:
  - Jaccard Index: The size of the intersection divided by the size of the union of two feature sets.
  - Kuncheva Index: A chance-corrected overlap measure for two subsets of equal size k drawn from n features, computed as (rn − k²) / (k(n − k)), where r is the size of the intersection. Unlike the Jaccard index, it discounts the overlap expected by chance, which grows as k approaches n.
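Both indices are short enough to write out directly. The sketch below is a minimal illustration with hypothetical function names. It assumes feature subsets are given as sets of feature indices and, for the Kuncheva index, that both subsets have the same size.

```python
from itertools import combinations


def jaccard(a, b):
    """Intersection over union of two feature subsets."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def kuncheva(a, b, n_features):
    """Chance-corrected overlap of two equal-size subsets of n_features features."""
    a, b = set(a), set(b)
    k = len(a)
    if len(b) != k or not 0 < k < n_features:
        raise ValueError("subsets must share a size k with 0 < k < n_features")
    r = len(a & b)
    return (r * n_features - k * k) / (k * (n_features - k))


def mean_pairwise(subsets, index):
    """Average a pairwise stability index over all pairs of subsets."""
    pairs = list(combinations(subsets, 2))
    return sum(index(a, b) for a, b in pairs) / len(pairs)


# Feature subsets selected on three hypothetical subsamples of a 10-feature dataset.
subsets = [{0, 1, 2}, {1, 2, 3}, {0, 1, 2}]
print(mean_pairwise(subsets, jaccard))
print(mean_pairwise(subsets, lambda a, b: kuncheva(a, b, n_features=10)))
```

In practice the subsets would come from running the feature selection algorithm on each bootstrap or random subsample, as described in the method steps.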

Visualization of Workflows and Relationships

The following diagrams illustrate the core concepts and experimental workflows discussed.

Diagram 1: A comparison of the DFS and RFS paradigms, highlighting their distinct goals, metrics, and primary applications.

Diagram 2: A standard experimental workflow for the comparative evaluation of feature selection methods, utilizing k-fold cross-validation.

The Scientist's Toolkit

Table 2: Key Reagents and Computational Tools for Feature Selection Research

| Item Name | Function / Description | Example Use Case |
|---|---|---|
| ANOVA (Analysis of Variance) | A discrimination-based filter method that scores features based on their ability to separate groups. | Selecting voxels in fMRI data that best distinguish between task conditions or patient cohorts [37]. |
| Kendall's W (Concordance) | A reliability-based filter method that measures the agreement or stability of a feature across multiple subjects or trials. | Identifying genes or imaging biomarkers that show consistent expression patterns across different sample batches [37]. |
| Stability Index (e.g., Kuncheva) | A metric to quantify the consistency of selected feature subsets across different data samples. | Evaluating the robustness of a proposed biomarker signature to variations in the study population [36] [38]. |
| Python FS Framework | An open-source, extensible framework for benchmarking feature selection algorithms against multiple metrics. | Systematically comparing new and existing feature selection methods on custom datasets for performance and stability [36]. |

Feature selection (FS) is a critical preprocessing step in machine learning and data mining, aimed at identifying the most informative attributes or variables from high-dimensional data to build predictive models while eliminating redundant or irrelevant noise features [39]. In the context of drug development and precision medicine, this process is particularly vital for building interpretable models that can predict drug responses from molecular profiles, ultimately guiding personalized treatment strategies [40] [41].

Traditional FS methods can be broadly categorized into filter and wrapper approaches [39]. Filter methods utilize a simple weight score criterion to estimate feature goodness and are classifier-independent, making them computationally efficient. However, they often disregard feature correlations and may select subsets with redundant information [39]. Wrapper methods depend on a specific classifier to evaluate feature subsets, generally yielding superior classification accuracy but at a significantly higher computational cost due to repeated classifier training [39].

A fundamental limitation of many conventional methods, including popular mutual information-based techniques, is their focus on evaluating features individually [39] [42]. These univariate approaches ignore features that, while weak in discriminatory power alone, may become highly informative when combined with others [43] [42]. Furthermore, they are often ineffective at eliminating redundant features [39]. Subset evaluation methods offer a better alternative by considering feature relevance and redundancy collectively [39]. Community modularity presents a novel solution to the feature subset evaluation problem by providing a criterion that selects highly informative features as a group, even if these features are not relevant individually [39].

Theoretical Foundation of Community Modularity in Feature Selection

Community modularity is a concept borrowed from complex network theory that measures the strength of division of a network into modules or communities [39]. Networks with high community modularity exhibit strong internal connections within communities and relatively sparse connections between different communities [39] [42].

When applied to feature selection, this concept is implemented by constructing a sample graph (SG) where nodes represent individual samples, and edges represent the similarities between samples when projected into the space defined by a particular feature subset [39]. In this graph, a good feature subset will cause samples from the same class to form tight clusters (communities) that are well-separated from samples of other classes [39]. The community modularity (Q value) quantitatively measures this property, with higher values indicating feature subsets with greater discriminative power [39].

The key advantage of this approach is its ability to capture what is termed "relevant in-dependency": the discriminatory power that a feature subset has as a group, as opposed to the sum of individually strong features [39]. This allows the method to identify feature subsets in which each feature has weak discriminative power on its own but the combination is strongly discriminative [39].
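A small sketch can make the group effect concrete. The code below builds a sample graph for a candidate feature subset and scores it with Newman's modularity, using the class labels as the community assignment. The k-nearest-neighbour graph construction, the function names, and the XOR-style toy data are assumptions made for illustration; the graph construction in [39] may differ.

```python
import math


def knn_sample_graph(points, k):
    """Undirected k-nearest-neighbour graph over samples, as a set of index pairs."""
    edges = set()
    for i, p in enumerate(points):
        nearest = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        for _, j in nearest[:k]:
            edges.add((min(i, j), max(i, j)))
    return edges


def modularity(edges, labels):
    """Newman modularity Q, treating each class label as one community."""
    m = len(edges)
    degree, intra = {}, {}
    for i, j in edges:
        degree[labels[i]] = degree.get(labels[i], 0) + 1
        degree[labels[j]] = degree.get(labels[j], 0) + 1
        if labels[i] == labels[j]:
            intra[labels[i]] = intra.get(labels[i], 0) + 1
    return sum(intra.get(c, 0) / m - (d / (2 * m)) ** 2 for c, d in degree.items())


def subset_q(points, labels, features, k=2):
    """Q value of the sample graph built in the space of the chosen features."""
    projected = [tuple(p[j] for j in features) for p in points]
    return modularity(knn_sample_graph(projected, k), labels)


# XOR-style toy data: the class depends on both features jointly, on neither alone.
points, labels = [], []
for cx in (0, 1):
    for cy in (0, 1):
        for i in range(5):
            points.append((cx + 0.02 * i + 0.01 * cy, cy + 0.02 * i + 0.01 * cx))
            labels.append(cx ^ cy)

q_x = subset_q(points, labels, [0])
q_y = subset_q(points, labels, [1])
q_both = subset_q(points, labels, [0, 1])
print(f"Q(x)={q_x:.3f}  Q(y)={q_y:.3f}  Q(x,y)={q_both:.3f}")
```

On this toy data each feature alone yields a sample graph whose edges mostly join samples of different classes, so Q is negative, while the pair yields a graph with only within-class edges. A univariate ranking would discard both features, whereas a subset-level criterion retains them together.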

Table 1: Key Concepts in Community Modularity for Feature Selection

| Concept | Definition | Role in Feature Selection |
|---|---|---|
| Sample Graph (SG) | A graph where nodes represent samples and edges represent similarities between samples in the feature space [39] | Provides the structural foundation for evaluating feature subsets |
| Community Structure | The organization of nodes into groups with dense internal connections and sparser connections between groups [39] | Reflects how well samples from the same class cluster together in the feature subset |
| Modularity (Q value) | A scalar value measuring the strength of the community structure in a network [39] | Serves as the evaluation criterion for ranking feature subsets |
| Relevant In-dependency | The collective discriminative power of features as a group rather than as individuals [39] | Enables identification of features that are only powerful when combined |

Quantitative Comparison of Feature Selection Methods

Evaluations of feature selection methods, including community modularity-based approaches, typically employ classification accuracy as the primary performance metric [39] [42]. Standard experimental protocols involve multiple runs of k-fold cross-validation (typically 10-fold) to obtain reliable accuracy estimates and to avoid the optimistic bias that arises when a model is scored on the data used to fit it [39] [44]. Common classifiers for evaluation include 1-Nearest Neighbor (1NN) and Support Vector Machines (SVM) with radial basis function kernels [39] [42].

Table 2: Performance Comparison of Feature Selection Methods on Cancer Classification Tasks

| Dataset | Community Modularity Method | mRMR | MIFS-U | CMIM | Relief | SVMRFE |
|---|---|---|---|---|---|---|
| ALL-AML-3C | 98.57% (1NN), 98.75% (SVM) [42] | Not specified | Not specified | Not specified | Not specified | Not specified |
| DLBCL_A | 98.62% (1NN), 99.28% (SVM) [42] | 95.71% (1NN), 98.66% (SVM) [42] | Lower than proposed method [42] | Lower than proposed method [42] | Lower than proposed method [42] | Not specified |
| SRBCT | 100% (1NN & SVM) [42] | 100% (with more genes) [42] | Lower than proposed method [42] | Lower than proposed method [42] | Lower than proposed method [42] | Not specified |
| MLL | 100% (1NN & SVM) [42] | 100% (with more genes) [42] | Lower than proposed method [42] | Lower than proposed method [42] | Lower than proposed method [42] | Not specified |
| Lymphoma | 100% (1NN & SVM) [42] | 100% (with similar gene count) [42] | Lower than proposed method [42] | Lower than proposed method [42] | Lower than proposed method [42] | Not specified |

In broader comparative studies of feature reduction methods for drug response prediction, knowledge-based approaches and feature transformation methods have shown competitive performance [40]. For instance, transcription factor activities have demonstrated superior performance in predicting drug responses for multiple compounds, effectively distinguishing between sensitive and resistant tumors [40]. Ridge regression often performs as well as or better than other machine learning models across different feature reduction methods [40].

Experimental Protocols and Methodologies

Community Modularity-based Feature Selection Protocol

The following protocol details the implementation of community modularity-based feature selection, adaptable for various data types including gene expression, SNP data, or high-content screening features [39] [42] [44].

Procedure: