Comparative Analysis of Machine Learning Algorithms for Explosives Classification: From Fundamentals to Cutting-Edge Applications

Abstract

This article provides a comprehensive analysis of machine learning algorithms applied to explosives classification, addressing the critical needs of researchers, scientists, and security professionals. It explores foundational algorithms including Naive Bayes, SVM, Decision Trees, and neural networks, while examining their implementation across diverse detection modalities such as OFETs, Raman spectroscopy, FTIR, and hyperspectral imaging. The content systematically evaluates performance optimization strategies, addresses real-world challenges like background interference and data scarcity, and presents rigorous validation metrics for objective algorithm comparison. By synthesizing current research trends and performance data, this review serves as an essential resource for selecting, implementing, and advancing ML solutions in explosives detection and related chemical analysis domains.

Fundamental Machine Learning Paradigms for Explosives Sensing

In the face of evolving global security challenges, the rapid and accurate identification of hazardous materials has become a critical imperative. Traditional methods for detecting explosives and other threats often face limitations in speed, sensitivity, or adaptability. Integrating machine learning (ML) into analytical sciences lets systems learn from complex spectral and imaging data and identify threats with accuracies above 90% in recent controlled studies [5] [6]. This guide compares the three core machine learning paradigms (supervised, unsupervised, and reinforcement learning) within the specific context of explosives classification research. By examining experimental protocols, performance data, and real-world applications, we aim to equip researchers and security professionals with the knowledge to select and implement the most effective ML strategies for their specific threat detection challenges.

Machine learning algorithms are typically categorized into three primary types based on their learning mechanism and the nature of the problems they solve. The table below summarizes their fundamental characteristics, particularly within security and classification contexts.

Table 1: Fundamental Characteristics of Core Machine Learning Types

| Aspect | Supervised Learning | Unsupervised Learning | Reinforcement Learning |

|---|---|---|---|

| Core Principle | Learns from labeled data to map inputs to known outputs [1] [2] | Identifies hidden patterns or structures in unlabeled data [1] [3] | Learns optimal actions through trial-and-error interactions with an environment [1] [2] |

| Primary Security Tasks | Classification (e.g., explosive/non-explosive), Regression [1] | Clustering, Anomaly Detection, Dimensionality Reduction [1] [4] | Sequential decision-making (e.g., robot navigation for bomb disposal) [1] |

| Data Requirements | Large amounts of accurately labeled historical data [3] | Unlabeled data; raw datasets are acceptable [3] | No prior training data; requires an environment to interact with [3] |

| Common Algorithms | SVM, Random Forest, Neural Networks, KNN [1] [5] | K-Means, PCA, Autoencoders [1] [4] | Q-learning, Deep Q-Networks (DQN), Policy Gradients [1] [2] |

| Key Advantage | High accuracy for well-defined prediction tasks [3] | No need for costly and time-consuming data labeling [3] | Adapts to dynamic, complex environments and learns optimal strategies [3] |

| Primary Challenge | Prone to overfitting; cannot handle classes not seen during training [2] [3] | Results can be unpredictable and difficult to validate [2] [3] | Training can be slow, resource-intensive, and complex to implement [2] [3] |

The following diagram illustrates the logical relationship between the data state and the primary learning objectives of these three paradigms.

Diagram 1: Logical flow from data state to security applications for core ML paradigms.

Experimental Comparisons in Explosives Classification

The theoretical strengths and limitations of each ML paradigm are best understood through their application in real-world experimental settings. Recent research in spectroscopic and imaging analysis of explosives provides robust, quantitative data for comparison.

Case Study 1: Terahertz Spectroscopy with Traditional ML and Deep Learning

A 2025 study by Periketi and Chaudhary directly compared multiple algorithms for classifying five high-energy secondary explosives (RDX, TNT, HMX, PETN, Tetryl) using terahertz time-domain spectroscopy (THz-TDS) [5].

Table 2: Performance Comparison of ML Models on Terahertz Spectroscopic Data [5]

| Algorithm | Algorithm Type | Input Features | Reported Accuracy | Key Strengths |

|---|---|---|---|---|

| 1D-CNN | Supervised (Deep Learning) | FFT Amplitude, Absorption Coefficient, Refractive Index | 99.58% | Automatically extracts relevant features without manual preprocessing; computationally efficient. |

| SVM (RBF Kernel) | Supervised (Traditional ML) | Absorption Coefficient & Refractive Index | 95.83% | Effective in high-dimensional spaces. |

| Random Forest | Supervised (Traditional ML) | Absorption Coefficient & Refractive Index | 93.75% | Robust to outliers. |

| K-Nearest Neighbors (KNN) | Supervised (Traditional ML) | Absorption Coefficient & Refractive Index | 91.67% | Simple to implement and understand. |

Experimental Protocol Summary [5]:

- Sample Preparation: 100 mg of each explosive material was mixed with 200 mg of Teflon powder and pressed into pellets.

- Data Acquisition: Terahertz time-domain signals were acquired in reflection geometry over a frequency range of 0.2–3.0 THz. A gold-coated mirror served as the reference.

- Feature Extraction: For the 1D-CNN, raw FFT amplitude, absorption coefficient, and refractive index data were used. For traditional models (SVM, RF, KNN), the absorption coefficient and refractive index were calculated and used as input features.

- Model Training & Evaluation: The dataset was split into training and testing sets. The 1D-CNN architecture was designed to process sequential spectral data, while traditional models were trained on the extracted optical features.

Case Study 2: Near-Infrared Hyperspectral Imaging with CNN

Another 2025 study developed a custom near-infrared (NIR) hyperspectral imaging system (900–1700 nm) for the stand-off identification of hazardous materials, including TNT, RDX, and PETN [6]. The system was designed to detect trace levels of explosives as low as 10 mg/cm², even when concealed by barriers like clothing, plastic, or glass [6].

Experimental Protocol Summary [6]:

- Imaging System: A custom-built NIR hyperspectral imager with a transmissive grating and lateral scanning mechanism was used.

- Data Collection: Hyperspectral cubes (spatial and spectral data) were collected for target chemicals on various surfaces and under concealment.

- Model Training: A Convolutional Neural Network (CNN) was trained on the hyperspectral data to learn the distinct NIR spectral signatures of each explosive.

- Performance: The CNN model's performance was benchmarked against traditional classifiers like Support Vector Machine (SVM) and K-Nearest Neighbors (KNN).

Table 3: Performance of CNN vs. Traditional Models on NIR Hyperspectral Data [6]

| Model | Accuracy | Recall | Precision | F1-Score |

|---|---|---|---|---|

| Convolutional Neural Network (CNN) | 91.08% | 91.15% | 90.17% | 0.924 |

| Support Vector Machine (SVM) | Lower than CNN | Lower than CNN | Lower than CNN | Lower than CNN |

| K-Nearest Neighbors (KNN) | Lower than CNN | Lower than CNN | Lower than CNN | Lower than CNN |

The study concluded that the CNN significantly outperformed the traditional methods, highlighting the advantage of deep learning in interpreting complex spectroscopic data for real-world, stand-off detection scenarios [6].

Case Study 3: Fluorescence Sensing with Similarity Measures

A 2025 study on trace TNT detection explored a different analytical modality: fluorescence sensing [7]. This research combined a highly specific and reversible fluorescent sensor (LPCMP3) with time-series similarity measures for classification.

Experimental Protocol Summary [7]:

- Sensing Mechanism: The fluorescent sensor film was exposed to TNT acetone solutions and common chemical reagents. Interaction with TNT causes fluorescence quenching via photoinduced electron transfer.

- Data Recording: The fluorescence response over time was recorded for different concentrations and under varying conditions (e.g., UV irradiation time).

- Classification Method: Instead of a traditional ML model, the classification was performed using similarity measures between the unknown time-series response and reference responses. The methods tested were:

  - Pearson Correlation Coefficient

  - Spearman Correlation Coefficient

  - Dynamic Time Warping (DTW) distance

  - Derivative Dynamic Time Warping (DDTW) distance

- Results: The method achieved a limit of detection (LOD) of 0.03 ng/μL with a response time of less than 5 seconds. The integration of the Spearman correlation coefficient and DDTW distance was found to be an effective classification method [7].

This approach demonstrates an alternative to model-based classification that is highly effective for specific, well-defined sensing tasks.
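As a rough illustration of the similarity measures listed above, the sketch below implements Pearson and Spearman correlation, DTW, and a derivative-based DTW in plain Python. It is not the cited study's implementation: the first-difference derivative, the `is_match` decision rule, its thresholds (`min_rho`, `max_ddtw`), and the toy fluorescence curves are all assumptions made for illustration.

```python
import math


def ranks(values):
    """Return 1-based ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def spearman(a, b):
    """Spearman correlation: Pearson correlation of the rank-transformed data."""
    return pearson(ranks(a), ranks(b))


def dtw_distance(a, b):
    """Dynamic time warping distance with an absolute-difference step cost."""
    inf = float("inf")
    cost = [[inf] * (len(b) + 1) for _ in range(len(a) + 1)]
    cost[0][0] = 0.0
    for i in range(1, len(a) + 1):
        for j in range(1, len(b) + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[len(a)][len(b)]


def ddtw_distance(a, b):
    """DTW on first differences (a simplified stand-in for the DDTW derivative)."""
    da = [a[i + 1] - a[i] for i in range(len(a) - 1)]
    db = [b[i + 1] - b[i] for i in range(len(b) - 1)]
    return dtw_distance(da, db)


def is_match(reference, unknown, min_rho=0.9, max_ddtw=0.2):
    """Hypothetical rule: require both a high Spearman rho and a low DDTW distance."""
    return spearman(reference, unknown) >= min_rho and ddtw_distance(reference, unknown) <= max_ddtw


# Toy normalised fluorescence traces (illustrative values, not measured data).
reference = [1.0, 0.8, 0.6, 0.5, 0.45, 0.42]   # quenching reference response
quenched = [1.0, 0.82, 0.63, 0.5, 0.46, 0.43]  # unknown with a similar decay
flat = [1.0, 0.99, 1.01, 1.0, 0.99, 1.0]       # unknown with no quenching
print(is_match(reference, quenched), is_match(reference, flat))
```

Combining a rank-based measure with a shape-based distance means a response must both trend in the same direction as the reference and decay at a similar rate before it is accepted.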

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, materials, and instruments used in the featured explosives classification experiments, providing a reference for researchers seeking to replicate or build upon this work.

Table 4: Key Research Reagents and Materials for Explosives Detection Experiments

| Item Name | Function/Application | Example from Research Context |

|---|---|---|

| Secondary Explosive Samples | Target analytes for classification and detection. | RDX, HMX, TNT, PETN, Tetryl [5]. |

| Terahertz Time-Domain Spectrometer (THz-TDS) | A non-destructive spectroscopic technique that captures both amplitude and phase of THz waves, allowing calculation of complex optical parameters. | Used to characterize the absorption coefficient and refractive index of explosives in reflection geometry [5]. |

| Near-Infrared (NIR) Hyperspectral Imager | An imaging system that captures spatial and spectral information across many contiguous bands, enabling material identification. | A custom NIR imager (900–1700 nm) was used for stand-off identification of TNT, RDX, and PETN [6]; a Hypersec VNIR-A system (400–1000 nm) was used to image explosive fragments and background materials [8]. |

| Fluorescent Sensing Material (LPCMP3) | A polymer whose fluorescence is quenched upon interaction with nitroaromatic explosives, enabling highly sensitive detection. | Used as the active element in a trace explosive fluorescence detection system for TNT [7]. |

| Binding Agent (Teflon Powder) | Used to mix with and stabilize explosive powders for pellet formation in spectroscopic analysis. | Mixed with explosive samples to prepare pellets for THz-TDS measurements [5]. |

| Halogen Lamp Light Source | Provides broad-spectrum illumination required for hyperspectral imaging. | Used in the hyperspectral image acquisition system to ensure consistent, sufficient lighting [8]. |

Integrated Workflows and Hybrid Approaches

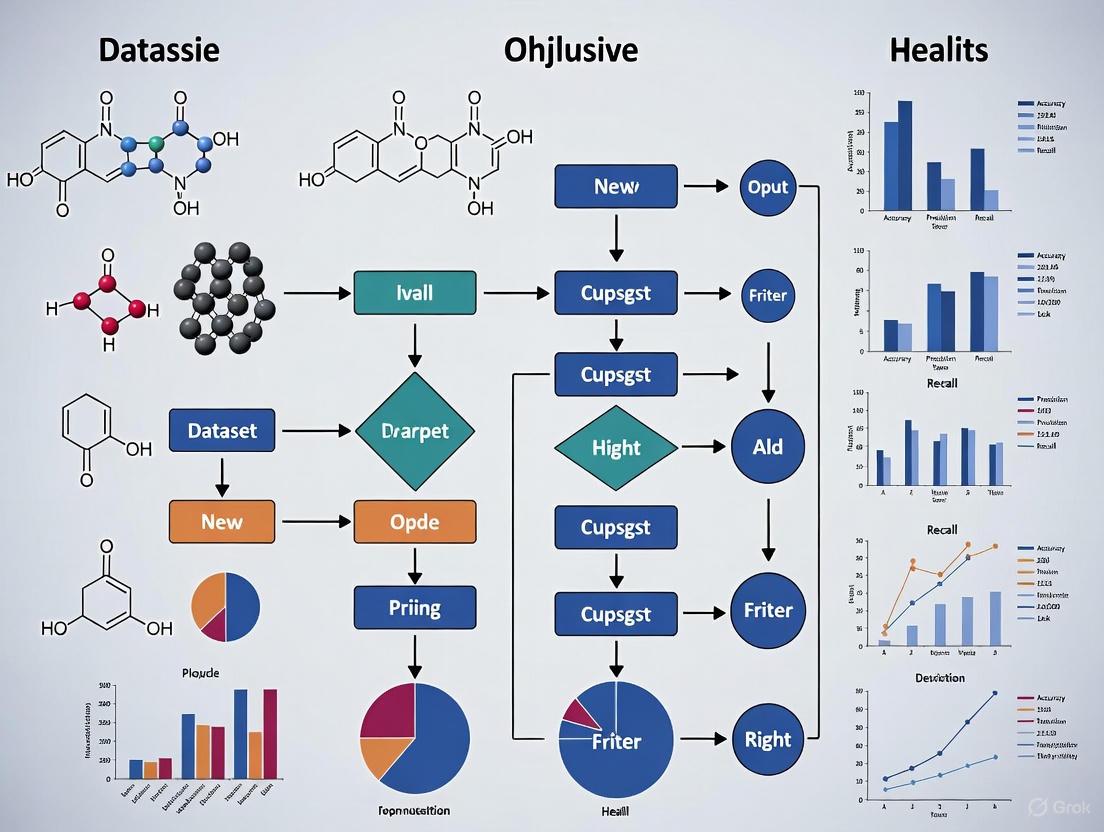

Modern security systems often leverage hybrid approaches that combine multiple ML paradigms to create more robust solutions. The workflow below illustrates how different learning types can be integrated into a comprehensive explosives classification system, from data acquisition to final identification.

Diagram 2: Integrated workflow for explosives classification combining multiple ML approaches.

The experimental data demonstrate that model choice has a strong effect on the performance of explosives classification systems. Supervised learning, particularly deep learning models such as the 1D-CNN, currently gives the highest reported accuracy for classifying known explosives from spectral data: 99.58% on THz-TDS features [5] and 91.08% on NIR hyperspectral data [6], both in controlled studies. Unsupervised learning and similarity-based methods play a crucial role in anomaly detection and specialized sensing applications, offering solutions when labeled data is scarce [7]. While less prominent in direct classification, reinforcement learning holds potential for optimizing broader security processes, such as autonomous robot navigation for inspection in hazardous environments [1] [3].

Future research will likely focus on hybrid models that combine the strengths of these paradigms, improve explainability of AI decisions for forensic applications, and enhance robustness against adversarial attacks. The trend towards using deep learning for multi-sensor data fusion, as seen in spatial-spectral combination algorithms for hyperspectral imaging [8], is particularly promising for developing next-generation, field-deployable security systems that can operate effectively in complex, real-world environments.

In the field of machine learning, particularly for classification tasks such as explosives detection in security and research applications, several traditional algorithms have established strong theoretical foundations and demonstrated significant practical utility. Naive Bayes, Support Vector Machines (SVM), and Decision Trees represent three fundamentally distinct approaches to pattern recognition, each with unique mathematical principles and operational characteristics. These algorithms form the backbone of many classification systems, offering varying trade-offs between interpretability, computational efficiency, and predictive performance. Understanding their theoretical underpinnings is essential for researchers and scientists seeking to select appropriate methodologies for specific classification problems, including the critical domain of explosives detection where accuracy and reliability are paramount.

The selection of an appropriate machine learning algorithm depends heavily on the nature of the dataset, the problem context, and the relative importance of factors such as interpretability, computational resources, and predictive accuracy. This comprehensive review examines the theoretical bases, operational mechanisms, and practical considerations of these three key algorithms, providing a structured framework for their comparison and application in research settings, particularly those involving sensitive classification tasks such as explosives identification where misclassification carries significant consequences.

Theoretical Foundations and Algorithmic Principles

Naive Bayes: Probabilistic Classification Based on Conditional Independence

Naive Bayes classifiers are founded on Bayesian probability theory and operate under the fundamental assumption of feature independence given the class label. Despite the often unrealistic nature of this "naive" independence assumption, these classifiers perform remarkably well in many practical applications, including text classification and medical diagnosis [9] [10]. The algorithm applies Bayes' Theorem to calculate the posterior probability of a class given the observed features:

Bayes' Theorem Formula: P(y|X) = [P(X|y) * P(y)] / P(X)

Where:

- P(y|X) is the posterior probability of class y given features X

- P(X|y) is the likelihood of features X given class y

- P(y) is the prior probability of class y

- P(X) is the probability of features X [10]

The "naive" conditional independence assumption simplifies the calculation by assuming that features are independent of each other given the class variable, allowing the joint probability to be expressed as the product of individual probabilities: P(x₁, x₂, ..., xₙ|y) = P(x₁|y) * P(x₂|y) * ... * P(xₙ|y) [9]. This simplification makes the model highly scalable: the number of parameters to estimate grows only linearly with the number of features [9].
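The factorised posterior can be sketched in a few lines for the Gaussian variant. The code below is a minimal illustration under stated assumptions: the function names, the small variance floor, and the two-feature toy data are hypothetical and are not taken from the cited sources.

```python
import math
from collections import defaultdict


def fit_gaussian_nb(samples, labels):
    """Estimate a prior plus per-feature mean and variance for each class."""
    grouped = defaultdict(list)
    for x, y in zip(samples, labels):
        grouped[y].append(x)
    model = {}
    for y, rows in grouped.items():
        n = len(rows)
        means = [sum(col) / n for col in zip(*rows)]
        variances = [
            sum((v - m) ** 2 for v in col) / n + 1e-9  # small floor avoids division by zero
            for col, m in zip(zip(*rows), means)
        ]
        model[y] = (n / len(samples), means, variances)
    return model


def log_posterior(model, x):
    """Unnormalised log P(y|X) = log P(y) + sum_i log P(x_i|y); P(X) is the same for all y."""
    scores = {}
    for y, (prior, means, variances) in model.items():
        log_like = sum(
            -0.5 * math.log(2 * math.pi * var) - (xi - mu) ** 2 / (2 * var)
            for xi, mu, var in zip(x, means, variances)
        )
        scores[y] = math.log(prior) + log_like
    return scores


def predict(model, x):
    """Return the class with the highest unnormalised log posterior."""
    scores = log_posterior(model, x)
    return max(scores, key=scores.get)


# Toy two-feature data (illustrative values only).
samples = [[1.0, 1.2], [0.8, 1.0], [1.2, 0.9], [5.0, 5.1], [4.8, 5.3], [5.2, 4.9]]
labels = ["class_a", "class_a", "class_a", "class_b", "class_b", "class_b"]
model = fit_gaussian_nb(samples, labels)
print(predict(model, [1.1, 1.0]), predict(model, [5.1, 5.0]))
```

Working in log space turns the product of per-feature likelihoods into a sum, which avoids numerical underflow when there are many features.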

Table: Types of Naive Bayes Classifiers and Their Applications

| Type | Data Characteristics | Common Applications |

|---|---|---|

| Gaussian Naive Bayes | Continuous features assumed to follow normal distribution | Medical diagnosis, weather prediction [10] [11] |

| Multinomial Naive Bayes | Discrete features representing frequencies or counts | Text classification, document categorization, sentiment analysis [10] [11] |

| Bernoulli Naive Bayes | Binary/boolean features indicating presence or absence | Spam filtering, document classification with binary term occurrence [10] |

Support Vector Machines (SVM): Maximum-Margin Classification

Support Vector Machines represent a fundamentally different approach, operating on the principle of structural risk minimization and maximum-margin classification [12]. Rather than relying on probability estimates, SVMs seek to find the optimal hyperplane that separates classes in the feature space with the greatest possible margin. The algorithm transforms the classification problem into a convex optimization task with the objective of finding the decision boundary that maximizes the distance to the nearest data points from any class [12] [13].

For a linearly separable dataset, a hard-margin SVM finds the hyperplane that completely separates classes with maximum margin. However, for non-linearly separable data, soft-margin SVMs introduce slack variables (ζ) that allow some misclassification while penalizing it in the objective function [12]. The optimization problem for a soft-margin SVM can be formalized as:

SVM Optimization Problem:

Minimize: ‖w‖² + C Σᵢ ζᵢ

Subject to: yᵢ(wᵀxᵢ - b) ≥ 1 - ζᵢ and ζᵢ ≥ 0 for all i [12]

Where w is the weight vector, b is the offset (bias) term, C is the regularization parameter, and ζᵢ are slack variables. The parameter C controls the trade-off between maximizing the margin and minimizing classification errors: a larger C penalizes margin violations more heavily [12]. A key innovation in SVM is the kernel trick, which enables efficient non-linear classification by implicitly mapping inputs into higher-dimensional feature spaces without explicitly computing the transformed coordinates [12] [13]. This approach allows SVMs to handle complex, non-linear decision boundaries while maintaining computational tractability.
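To make the roles of the slack variables and C concrete, the sketch below evaluates the soft-margin objective for a fixed hyperplane, using the formulation quoted above. It does not solve the optimization problem. The function names and the one-dimensional toy data are assumptions, and the RBF kernel is included only to show what a kernel function computes.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def slack_variables(w, b, samples, labels):
    """zeta_i = max(0, 1 - y_i (w.x_i - b)): the smallest slack that satisfies each constraint."""
    return [max(0.0, 1.0 - y * (dot(w, x) - b)) for x, y in zip(samples, labels)]


def soft_margin_objective(w, b, samples, labels, C):
    """||w||^2 + C * sum(zeta_i) for a given hyperplane (w, b)."""
    return dot(w, w) + C * sum(slack_variables(w, b, samples, labels))


def rbf_kernel(x, z, gamma):
    """k(x, z) = exp(-gamma * ||x - z||^2), a similarity that replaces the inner product."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))


# Toy 1-D data with labels in {+1, -1}; the last point lies on the wrong side.
samples = [[2.0], [1.0], [-1.0], [0.5]]
labels = [1, 1, -1, -1]
print(slack_variables([1.0], 0.0, samples, labels))
print(soft_margin_objective([1.0], 0.0, samples, labels, C=1.0))
print(soft_margin_objective([1.0], 0.0, samples, labels, C=10.0))
```

Only the misclassified point contributes non-zero slack, so raising C from 1 to 10 increases the objective solely through that point's penalty.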

Decision Trees: Hierarchical Feature Partitioning

Decision Trees employ a fundamentally different strategy based on recursive partitioning of the feature space [14] [15]. These algorithms construct a tree-like model of decisions and their potential consequences, creating a hierarchical structure where internal nodes represent feature tests, branches represent test outcomes, and leaf nodes represent class predictions [14]. The tree construction process follows a divide-and-conquer approach, conducting a greedy search to identify optimal split points within the tree [14].

Several mathematical metrics are used to determine the optimal feature for splitting at each node:

- Information Gain: Based on entropy reduction, where entropy measures the impurity of the sample values [14]. Information Gain represents the difference in entropy before and after a split on a given attribute [14].

- Gini Impurity: Measures how often a randomly chosen element would be incorrectly classified if it were randomly labeled according to the class distribution in the subset [14].

- Entropy: Quantifies the uncertainty or randomness in the data, with higher values indicating greater impurity [14].

The decision tree construction process continues in a top-down, recursive manner until a stopping criterion is met, such as when all or most records have been classified under specific labels or when further splitting provides no significant information gain [14]. To prevent overfitting, techniques like pre-pruning (halting tree growth early) or post-pruning (removing subtrees after construction) are employed [14] [15].
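The splitting metrics above are short to compute. The following sketch defines entropy, Gini impurity, and information gain, then runs a greedy threshold search on a single feature. The helper names and the toy data are illustrative assumptions, not code from the cited references.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of the class distribution."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def gini(labels):
    """Gini impurity: 1 - sum of squared class proportions."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def information_gain(parent, left, right):
    """Entropy of the parent minus the size-weighted entropy of the two children."""
    n = len(parent)
    weighted = (len(left) / n) * entropy(left) + (len(right) / n) * entropy(right)
    return entropy(parent) - weighted


def best_threshold(values, labels):
    """Greedy search over midpoints between consecutive sorted feature values."""
    pairs = sorted(zip(values, labels))
    best_gain, best_split = 0.0, None
    for i in range(1, len(pairs)):
        if pairs[i - 1][0] == pairs[i][0]:
            continue  # no midpoint exists between identical values
        threshold = (pairs[i - 1][0] + pairs[i][0]) / 2
        left = [y for v, y in pairs if v <= threshold]
        right = [y for v, y in pairs if v > threshold]
        gain = information_gain(labels, left, right)
        if gain > best_gain:
            best_gain, best_split = gain, threshold
    return best_gain, best_split


values = [1.0, 2.0, 3.0, 4.0]
labels = ["a", "a", "b", "b"]
print(entropy(labels), gini(labels), best_threshold(values, labels))
```

A full tree builder would apply `best_threshold` to every feature at each node and recurse on the two resulting subsets until a stopping criterion is met.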

Algorithm Comparison and Performance Characteristics

Theoretical and Operational Comparison

Table: Comprehensive Comparison of Algorithm Characteristics

| Characteristic | Naive Bayes | Support Vector Machines | Decision Trees |

|---|---|---|---|

| Theoretical Basis | Bayesian probability with conditional independence assumption | Statistical learning theory, maximum-margin classification | Hierarchical recursive partitioning, information theory |

| Key Assumptions | Feature independence given class, specific distribution forms (e.g., Gaussian) | Data is representative, appropriate kernel selection | Features can be partitioned effectively, hierarchical structure captures patterns |

| Mathematical Metrics | Posterior probability, likelihood, prior probability | Margin width, kernel similarity, regularization penalty | Information gain, Gini impurity, entropy |

| Handling of Non-linearity | Limited unless features are transformed | Excellent through kernel trick (RBF, polynomial, etc.) | Moderate through recursive partitioning |

| Training Approach | Maximum likelihood estimation, closed-form calculation | Convex optimization (quadratic programming) | Greedy top-down recursive partitioning |

| Interpretability | Moderate (probabilistic reasoning) | Low (black-box, especially with kernels) | High (clear decision rules and hierarchy) |

| Computational Efficiency | High (fast training and prediction) | Moderate to low (depends on kernel and dataset size) | Moderate (efficient training, but can grow complex) |

Performance Considerations for Research Applications

Each algorithm presents distinct advantages and limitations that must be carefully considered for research applications such as explosives classification:

Naive Bayes offers exceptional scalability and requires only a small amount of training data to estimate parameters necessary for classification [9] [10]. The algorithm is highly resilient to irrelevant attributes, though it can be influenced by them when they are correlated with meaningful features [10]. Its primary limitation lies in the strong feature independence assumption, which rarely holds completely in real-world scenarios, potentially limiting its ability to capture complex feature interactions [9] [11].

Support Vector Machines demonstrate particular strength in high-dimensional spaces and are effective even when the number of dimensions exceeds the number of samples [12]. Their resilience to noisy data and misclassified examples makes them suitable for datasets with measurement inaccuracies [12]. A significant limitation, however, is their poor interpretability, especially when using non-linear kernels, as the transformation to high-dimensional space obscures the decision-making process [13].

Decision Trees provide exceptional transparency, as the hierarchical decision process is easily visualized and understood by domain experts [14] [15]. They require minimal data preparation, handling various data types without extensive preprocessing [14]. However, they are prone to overfitting, particularly with complex trees, and can exhibit high variance, where small variations in data can produce significantly different trees [14] [15].

Experimental Framework for Algorithm Evaluation

Standardized Experimental Protocol

To ensure rigorous comparison of algorithm performance in research contexts such as explosives classification, the following experimental protocol is recommended:

Dataset Preparation and Partitioning:

- Apply appropriate data cleaning procedures to handle missing values and outliers

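- Normalize or standardize continuous features; this matters most for SVM, whose margins and kernels depend on feature scale, while Decision Trees and Gaussian Naive Bayes are largely insensitive to per-feature rescaling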

- Partition data into training, validation, and test sets using stratified sampling to maintain class distribution

- For feature selection, apply consistent criteria across all algorithms to ensure fair comparison
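A stratified partition can be sketched as follows. This is a minimal illustration that assumes a 60/20/20 split and hypothetical class labels; the function name and its parameters are not prescribed by the protocol above.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.6, 0.2, 0.2), seed=0):
    """Return (train, validation, test) index lists that preserve class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)  # shuffle within each class before slicing
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical imbalanced label vector: 10 TNT, 10 RDX, 20 blank samples.
labels = ["TNT"] * 10 + ["RDX"] * 10 + ["blank"] * 20
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

Splitting class by class keeps rare classes represented in every partition, which a plain random split does not guarantee on small datasets.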

Model Training and Hyperparameter Tuning:

- Implement k-fold cross-validation on the training set to optimize hyperparameters

- For Naive Bayes: Select appropriate variant (Gaussian, Multinomial, or Bernoulli) based on feature characteristics [10] [11]

- For SVM: Optimize regularization parameter C and kernel parameters (e.g., γ for RBF kernel) [12] [13]

- For Decision Trees: Optimize maximum depth, minimum samples per leaf, and splitting criterion (Gini impurity or information gain) [14] [15]
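The tuning loop has the same shape for all three algorithms. The sketch below shows k-fold index generation and an exhaustive grid search. The `fit_and_score` callback is a hypothetical hook where a real model would be trained on the training indices and scored on the validation indices. The `toy_score` function is only a placeholder, so the example runs without a dataset.

```python
from itertools import product


def k_fold_indices(n_samples, k):
    """Yield (train, validation) index lists for k contiguous folds.

    Assumes the samples were shuffled (or stratified) beforehand.
    """
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, val
        start += size


def grid_search(param_grid, fit_and_score, n_samples, k=5):
    """Return (best_params, best_mean_score) over the Cartesian product of param_grid."""
    names = sorted(param_grid)
    best_params, best_score = None, float("-inf")
    for combo in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, combo))
        scores = [fit_and_score(params, tr, va) for tr, va in k_fold_indices(n_samples, k)]
        mean_score = sum(scores) / len(scores)
        if mean_score > best_score:
            best_params, best_score = params, mean_score
    return best_params, best_score


def toy_score(params, train_idx, val_idx):
    """Placeholder for 'train on train_idx, return validation accuracy on val_idx'."""
    return -abs(params["C"] - 1.0) - abs(params["gamma"] - 0.1)


grid = {"C": [0.1, 1.0, 10.0], "gamma": [0.01, 0.1, 1.0]}
print(grid_search(grid, toy_score, n_samples=20, k=5))
```

Selecting hyperparameters on cross-validated training folds keeps the held-out test set untouched until the final evaluation.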

Performance Evaluation Metrics:

- Calculate standard classification metrics: accuracy, precision, recall, F1-score

- Generate ROC curves and compute AUC values for probabilistic assessments

- Record computational efficiency metrics: training time, prediction latency, memory usage

- Assess model stability through repeated experiments with different random seeds
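For the binary case, the listed metrics can be computed directly from the confusion counts. The sketch below is a minimal illustration with made-up labels and scores. It computes AUC by its rank interpretation (the probability that a random positive scores above a random negative) rather than by integrating an ROC curve.

```python
def classification_report(y_true, y_pred, positive):
    """Accuracy, precision, recall, and F1 for one positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    tn = len(y_true) - tp - fp - fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def roc_auc(y_true, scores, positive):
    """AUC as the fraction of positive/negative pairs ranked correctly (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == positive]
    neg = [s for t, s in zip(y_true, scores) if t != positive]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Illustrative labels (1 = explosive, 0 = benign), predictions, and classifier scores.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.6, 0.1, 0.7]
print(classification_report(y_true, y_pred, positive=1))
print(roc_auc(y_true, scores, positive=1))
```

In screening applications, recall for the explosive class is often weighted more heavily than precision, because a missed detection usually costs more than a false alarm.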

Research Reagent Solutions: Essential Computational Tools

Table: Essential Research Reagents for Algorithm Implementation

| Research Reagent | Function in Analysis | Algorithm Application |

|---|---|---|

| Feature Selection Algorithms | Identify most discriminative features, reduce dimensionality | Critical for all algorithms, improves performance and interpretability |

| Cross-Validation Framework | Hyperparameter tuning, performance estimation, avoid overfitting | Essential for robust evaluation, particularly for SVM and Decision Trees |

| Kernel Functions (Linear, RBF, Polynomial) | Transform feature space for non-linear separation | SVM-specific, significantly impacts model capability |

| Pruning Methods (Pre-pruning, Post-pruning) | Reduce tree complexity, prevent overfitting | Decision Tree-specific, crucial for generalization |

| Probability Calibration Methods | Improve reliability of probability estimates | Particularly beneficial for Naive Bayes and Decision Trees |

| Ensemble Methods (Bagging, Boosting) | Combine multiple models, reduce variance, improve accuracy | Applicable to all algorithms, especially effective for Decision Trees |

Visualization of Algorithm Decision Mechanisms

[Diagrams: Naive Bayes Probabilistic Decision Process; SVM Maximum-Margin Optimization; Decision Tree Recursive Partitioning]

The comparative analysis of Naive Bayes, Support Vector Machines, and Decision Trees reveals that each algorithm possesses distinct strengths and limitations rooted in their theoretical foundations. For research applications such as explosives classification, algorithm selection should be guided by dataset characteristics, performance requirements, and interpretability needs.

Naive Bayes offers exceptional computational efficiency and performs well with limited training data, making it valuable for rapid prototyping and applications with clear feature independence [10] [11]. Support Vector Machines provide powerful non-linear classification capabilities through the kernel trick, demonstrating particular strength in high-dimensional spaces and noisy datasets [12] [13]. Decision Trees deliver superior interpretability and minimal data preparation requirements, functioning effectively with both numerical and categorical data while providing transparent decision logic [14] [15].

In practice, the optimal approach often involves empirical evaluation of all three algorithms on representative datasets, as theoretical predictions of performance may not account for domain-specific characteristics. For critical applications such as explosives classification, ensemble methods that combine the strengths of multiple algorithms may provide enhanced robustness and predictive accuracy. Future advancements in explainable AI may further bridge the gap between the high performance of complex models like SVM and the interpretability of Decision Trees, offering researchers increasingly powerful tools for sensitive classification tasks.

Convolutional Neural Networks (CNNs) for Spectral and Image Data

Within the critical field of explosives classification research, the accurate analysis of spectral and image data is paramount for applications ranging from threat detection in security checkpoints to the development of novel energetic materials. The ability to automatically and precisely identify explosive compounds hinges on the effective processing of complex data signatures. Among machine learning algorithms, Convolutional Neural Networks (CNNs) have emerged as a powerful tool, capable of learning high-level spatial and spectral features directly from data. This guide provides an objective comparison of CNN performance against other machine learning algorithms, presenting supporting experimental data to inform researchers and scientists in their selection of analytical methods.

Performance Comparison: CNNs vs. Alternative Machine Learning Algorithms

The performance of CNNs and other algorithms for spectral/image classification has been quantitatively evaluated across multiple studies. Key metrics include overall accuracy and computational efficiency.

Table 1: Performance Comparison on Hyperspectral Image Classification (Indian Pines Dataset)

| Algorithm | Input Data Type | Overall Accuracy | Key Features Extracted |

|---|---|---|---|

| 1D-CNN with Spectral-Spatial Data [16] | Augmented vector (spectral bands + spatial PCA) | 98.1% | Deep spectral features & spatial correlation from adjacent pixels |

| 1D-CNN with Pixel-wise Data [16] | Pixel spectral data only | Lower than 98.1% (exact value not specified) | Spectral features only |

| 2D-CNN with Principal Components [16] | Spatial-spectral principal components | Lower than 98.1% (exact value not specified) | Spatial-spectral features via PCA |

| SVM with PCA [17] | Principal components after dimensionality reduction | Lower than CNN (exact value not specified) | Linear patterns in reduced feature space |

Table 2: Broad Algorithm Performance in Forecasting and Classification

| Algorithm Category | Example Algorithms | Relative Performance | Noted Strengths and Weaknesses |

|---|---|---|---|

| Deep Learning | CNN, LSTM, RNN | CNN outperformed SVM on image data [18]; LSTM and RNN were classified as "inefficient" for gas forecasting [19] | CNNs excel with spatial patterns; some deep models can be computationally inefficient for certain forecasting tasks |

| Traditional Machine Learning | SVM, RF, LR, KNN | SVM and RF were among the most efficient for short-term gas forecasting [19]; SVM was outperformed by CNN on image classification [17] [18] | Often efficient and robust, especially with smaller datasets or less complex data patterns |

| Other | ARIMA, Perceptron | ARIMA was "efficient" for forecasting; Perceptron was "suboptimal" [19] | Performance is highly dependent on the specific application and data characteristics |

Experimental Protocols and Methodologies

Hyperspectral Image Classification with CNNs

Dataset: The Indian Pines dataset, a hyperspectral scene comprising about two-thirds agricultural crops and one-third forest or other natural perennial vegetation [16].

Methodology Overview: Several 1D and 2D CNN architectures were developed and compared [16].

- 1D-CNN with Pixel-wise Spectral Data: The input vector was created by extracting the spectral data from each pixel individually. The network typically consisted of convolutional layers (with batch normalization, ReLU activation, and maxpooling), followed by fully connected layers and a softmax classifier [16].

- 1D-CNN with Spectral-Spatial Data: The input vector was augmented by concatenating the spectral bands of the target pixel with the Principal Component Analysis (PCA) data extracted from the surrounding pixels. This approach exploits spatial correlation between neighboring pixels [16].R×R

- 2D-CNN with Principal Components: PCA was first applied to all spectral bands of each pixel to extract the first Q principal components. The Q components from each of the surrounding pixels formed an input layer for the 2D-CNN, which used convolutional layers with 2D kernels to learn spatial-spectral features [16].R×R

- Band Selection CNN (BSCNN): A CNN was first trained using all spectral bands. Then, N′ bands were randomly selected over L iterations, and the combination delivering the highest accuracy was used to retrain the CNN [16].
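The random band search used by BSCNN can be sketched in a few lines. This is a minimal illustration, not the implementation from [16]: `select_bands`, `toy_score`, the band count of 200, and the subset size of 20 are all assumptions made for the example, and `score_fn` stands in for "train a CNN on these bands and return its accuracy".

```python
import random

def select_bands(num_bands, subset_size, iterations, score_fn, seed=0):
    """Random band-subset search: keep the subset with the highest score.

    score_fn is a hypothetical callable that evaluates a classifier on the
    given band indices and returns its validation accuracy.
    """
    rng = random.Random(seed)
    best_bands, best_score = None, float("-inf")
    for _ in range(iterations):
        # Draw N' distinct band indices for this iteration.
        bands = sorted(rng.sample(range(num_bands), subset_size))
        score = score_fn(bands)
        if score > best_score:
            best_bands, best_score = bands, score
    return best_bands, best_score

# Toy stand-in for "train a CNN and return accuracy" (assumption for the demo).
def toy_score(bands):
    return 1.0 - sum(bands) / (len(bands) * 200)

best_bands, best_score = select_bands(200, 20, 50, toy_score)
```

In practice the scoring step dominates the cost, since each of the L iterations requires training or at least evaluating a network on the candidate band subset.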

Traditional Machine Learning Baseline

Methodology Overview: A comparative study utilized the same Indian Pines dataset to evaluate a traditional machine learning pipeline [17].

- Dimensionality Reduction: Principal Component Analysis (PCA) was applied to the hyperspectral data to reduce redundancy and curtail the high dimensionality of the data [17].

- Classification: A Support Vector Machine (SVM) classifier was then trained on the principal components to perform the terrain classification [17].

Workflow and Signaling Pathways

The following diagram illustrates a generalized experimental workflow for comparing CNN architectures against traditional methods for spectral data classification, as described in the cited research [16] [17].

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and computational tools used in advanced spectral and image data analysis for explosives research, as derived from the experimental methodologies.

Table 3: Essential Research Materials and Tools for Spectral Data Analysis

| Item Name | Function/Brief Explanation | Example Context |

|---|---|---|

| Hyperspectral Image (HSI) Cube | A three-dimensional data structure containing spatial information (x, y) and spectral information across many bands. | Core data format for terrain and agricultural classification [16]. |

| Principal Component Analysis (PCA) | A feature extraction algorithm used to reduce data dimensionality while preserving dominant spectral information [16]. | Preprocessing step for 2D-CNN input and traditional SVM classification [16] [17]. |

| Fluorescent Sensing Material (e.g., LPCMP3) | A material that undergoes fluorescence quenching upon interaction with nitroaromatic explosives like TNT via photoinduced electron transfer (PET) [7]. | Used in trace explosive detection systems to generate response signals [7]. |

| Raman Spectrometer | An analytical instrument that fires a laser at a sample to excite molecules and detects the scattered light to chart unique vibrational frequencies, creating a spectral signature [20]. | Used for identifying explosive compounds at security checkpoints by matching spectra to a chemical library [20]. |

| Chiral-Specified SMILES Strings | A line notation for representing molecular structures that preserves chiral center and 3D bond orientation information, crucial for accurate property prediction [21]. | Input for machine learning models predicting crystal density and detonation properties of high explosives [21]. |

Emerging Self-Supervised and Semi-Supervised Approaches for Limited Labeled Data

In machine learning applications for specialized domains like explosives classification and drug development, a significant bottleneck is the scarcity of expert-annotated data, which is expensive to produce. Self-supervised learning (Self-SL) and semi-supervised learning (Semi-SL), both commonly abbreviated as SSL, have emerged as powerful paradigms to overcome this challenge by leveraging readily available unlabeled data to improve model performance. Self-supervised learning methods learn useful representations from unlabeled data by defining a pretext task that generates its own supervisory signals from the data's structure [22] [23]. In contrast, semi-supervised learning methods simultaneously learn from a small set of labeled data and a larger pool of unlabeled data, often using techniques like consistency regularization to guide the learning process [24] [25]. For researchers dealing with sensitive or distributed data, such as in explosives research or multi-institutional medical studies, Federated Learning (FL) frameworks combine these approaches to enable collaborative model training without sharing raw data [26]. This guide provides a systematic comparison of these emerging approaches, focusing on their practical application, experimental performance, and implementation protocols.

Comparative Analysis of Performance and Methodology

Key Concepts and Definitions

Self-Supervised Learning (Self-SL): A machine learning paradigm where models learn from unlabeled data by generating their own supervisory signals through pretext tasks, such as predicting missing parts of the input or contrasting similar and dissimilar data pairs [22] [23] [27]. The process typically involves two stages: (1) self-supervised pre-training where the model learns general data representations, and (2) supervised fine-tuning where the model adapts to a specific task using limited labeled data [26] [23].

Semi-Supervised Learning (Semi-SL): A learning method that utilizes both a small amount of labeled data and large amounts of unlabeled data to improve model accuracy [24] [25]. These methods often rely on the smoothness assumption, which posits that data points close to each other are likely to share the same label [25].

Federated Learning (FL): A distributed training technique that trains machine learning models on decentralized data across multiple private clients without exchanging the data itself [26].

Table 1: Fundamental comparison between self-supervised and semi-supervised learning approaches

| Aspect | Self-Supervised Learning | Semi-Supervised Learning |

|---|---|---|

| Core Principle | Learns representations by creating supervisory signals from unlabeled data [23] | Leverages both labeled and unlabeled data simultaneously [25] |

| Data Requirements | Primarily unlabeled data; small labeled set for fine-tuning [26] | Requires a mix of labeled and unlabeled data [24] |

| Common Techniques | Masked image modeling, contrastive learning, pretext tasks [26] [23] | Consistency regularization, pseudo-labeling, entropy minimization [24] [25] |

| Training Phases | Two-stage: pre-training then fine-tuning [23] | Single-stage: joint optimization on labeled and unlabeled data [24] |

| Typical Applications | Representation learning, transfer learning, data compression [23] [27] | Scenarios with limited labeled data, medical imaging, chemical property prediction [24] [25] |

Quantitative Performance Comparison

Recent systematic evaluations provide compelling evidence for the effectiveness of these methods in real-world scenarios with limited labeled data.

Table 2: Performance comparison of self-supervised and semi-supervised methods across domains

| Method | Domain | Dataset | Performance | Comparison Baseline |

|---|---|---|---|---|

| MixMatch (Semi-SL) [24] | Medical Imaging | 4 classification tasks | Most reliable gains across datasets | Superior to supervised baselines and other SSL methods |

| SSL-GCN (Semi-SL) [25] | Chemical Toxicity | Tox21 (12 endpoints) | Avg. ROC-AUC: 0.757 (6% improvement) | Outperformed supervised GCN and traditional ML |

| MAE/BEiT (Self-SL) [26] | Medical Imaging (Federated) | Retinal, Dermatology, Chest X-ray | 5.06%, 1.53%, 4.58% accuracy improvements | Surpassed supervised ImageNet pre-training under severe heterogeneity |

| Masked Image Modeling (Self-SL) [26] | Medical Imaging | Non-IID datasets | Significant robustness to distribution shifts | Better generalization to out-of-distribution data |

Federated Learning Integration

Federated Learning frameworks address critical data privacy concerns in sensitive domains like explosives research and healthcare. These frameworks become particularly powerful when combined with self-supervised approaches:

- Federated Self-Supervised Pre-training: Uses masked image modeling (e.g., BEiT, MAE) to learn visual representations from decentralized unlabeled data without centralizing sensitive information [26].

- Federated Supervised Fine-tuning: Transfers the learned representations to specific target tasks using limited labeled data available at each client [26].

- Advantages in Non-IID Settings: Vision Transformers with self-supervised pre-training demonstrate remarkable robustness against various degrees of data heterogeneity, a common challenge in real-world federated scenarios [26].

Experimental Protocols and Methodologies

Semi-Supervised Learning with Mean Teacher for Chemical Toxicity Prediction

Objective: To predict chemical toxicity using limited annotated data by leveraging unlabeled molecular structures [25].

Dataset:

- Labeled data: Tox21 dataset with 12 toxicological endpoints

- Unlabeled data: Compounds from other chemical databases

- Optimal ratio: Unlabeled to annotated data between 1:1 and 4:1 [25]

Architecture: Graph Convolutional Neural Network (GCN)

- Molecules represented as undirected graphs (atoms as nodes, bonds as edges)

- Layer-wise propagation based on Kipf et al. (2017) [25]

- Equation: $h_i^{(l+1)} = \text{ReLU}\left(b^{(l)} + \sum_{j \in N(i)} \frac{1}{\sqrt{|N(i)|\,|N(j)|}} h_j^{(l)} W^{(l)}\right)$, where $N(i)$ is the set of neighbors of node $i$
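To make the propagation rule concrete, the following is a minimal pure-Python sketch of a single layer. It sums over the neighbor set N(i) exactly as the equation is written (some formulations also add self-loops, which are not included here). The function name `gcn_layer` and the three-atom toy graph are assumptions for illustration, not the model from [25].

```python
import math

def gcn_layer(h, neighbors, W, b):
    """One graph-convolution step with symmetric degree normalization.

    h: list of node feature vectors (length d_in each)
    neighbors: neighbors[i] lists the nodes bonded to node i
    W: d_in x d_out weight matrix; b: bias vector of length d_out
    """
    d_in, d_out = len(W), len(W[0])
    out = []
    for i, nbrs in enumerate(neighbors):
        # Aggregate neighbor features scaled by 1 / sqrt(|N(i)| * |N(j)|).
        agg = [0.0] * d_in
        for j in nbrs:
            norm = 1.0 / math.sqrt(len(neighbors[i]) * len(neighbors[j]))
            for k in range(d_in):
                agg[k] += norm * h[j][k]
        # Linear transform, add bias, apply ReLU.
        row = [max(0.0, b[c] + sum(agg[k] * W[k][c] for k in range(d_in)))
               for c in range(d_out)]
        out.append(row)
    return out

# Toy three-atom chain 0-1-2 with one scalar feature per atom.
h = [[1.0], [2.0], [3.0]]
neighbors = [[1], [0, 2], [1]]
h_next = gcn_layer(h, neighbors, W=[[1.0]], b=[0.0])
```

Stacking several such layers lets each atom's representation absorb information from progressively larger neighborhoods of the molecular graph.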

Semi-SL Algorithm: Mean Teacher

- Maintains two models: Student model (standard weights) and Teacher model (exponential moving average of student weights)

- Consistency loss between predictions of student and teacher models

- Combined classification loss on labeled data and consistency loss on unlabeled data [25]
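The three ingredients listed above can be sketched as follows. This is an illustrative outline on plain Python lists rather than a training loop; the function names, the decay value `alpha`, and the choice of mean squared error as the consistency measure are assumptions, since the source does not specify them.

```python
def ema_update(teacher, student, alpha=0.99):
    """Move each teacher weight toward the student weight (exponential moving average)."""
    return [alpha * t + (1.0 - alpha) * s for t, s in zip(teacher, student)]

def consistency_loss(student_probs, teacher_probs):
    """Mean squared difference between student and teacher predictions."""
    diffs = [(s - t) ** 2 for s, t in zip(student_probs, teacher_probs)]
    return sum(diffs) / len(diffs)

def total_loss(classification_loss, student_probs, teacher_probs, weight):
    """Supervised loss on labeled data plus weighted consistency loss on unlabeled data."""
    return classification_loss + weight * consistency_loss(student_probs, teacher_probs)

# Toy values: two weights, two-class predictions on one unlabeled molecule.
teacher = ema_update(teacher=[0.0, 1.0], student=[1.0, 1.0], alpha=0.9)
loss = total_loss(0.5, [0.8, 0.2], [0.6, 0.4], weight=2.0)
```

Only the student is updated by gradient descent; the teacher changes solely through the moving average, which is what makes its predictions a smoother target.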

Experimental Protocol:

- Molecular featurization using graph representations

- Model training with progressive ramp-up of consistency weight

- Evaluation on held-out test set using ROC-AUC metrics

- Ablation studies on unlabeled data ratios [25]
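The progressive ramp-up of the consistency weight mentioned in the protocol can be illustrated with a sigmoid-shaped schedule. The specific form below, exp(-5(1 - t)^2), is a common choice in the Mean Teacher literature and is assumed here; the source does not state which schedule [25] used, and the function and parameter names are hypothetical.

```python
import math

def consistency_weight(step, ramp_steps, max_weight):
    """Sigmoid-shaped ramp-up: near zero at step 0, max_weight once ramp_steps is reached."""
    if ramp_steps <= 0:
        return max_weight
    t = min(max(step / ramp_steps, 0.0), 1.0)  # training progress clipped to [0, 1]
    return max_weight * math.exp(-5.0 * (1.0 - t) ** 2)

# Weight at the start, middle, and end of a 100-step ramp.
schedule = [consistency_weight(s, 100, 1.0) for s in (0, 50, 100)]
```

Starting with a small weight keeps the unreliable early teacher predictions from dominating the supervised signal.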

Self-Supervised Learning with Masked Image Modeling for Federated Settings

Objective: To enable robust representation learning across decentralized medical datasets with limited labels and data heterogeneity [26].

Architecture: Vision Transformer (ViT) with Masked Image Modeling

- Pretext task: Reconstruct randomly masked patches of input images

- Two implementations: BEiT and MAE frameworks [26]

Federated Learning Framework:

- Federated Self-Supervised Pre-training:

- Local training on each client using masked image modeling

- Server aggregation of model weights using FedAvg

- Multiple communication rounds [26]

- Federated Supervised Fine-tuning:

- Transfer learning to downstream tasks with limited labeled data

- Final model evaluation on test datasets [26]
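The server aggregation step can be sketched as a sample-count-weighted average of client parameters, which is the usual formulation of FedAvg. The flat weight lists and the name `fed_avg` are simplifications assumed for the example; a real model would aggregate each tensor in the same way.

```python
def fed_avg(client_weights, client_sizes):
    """Average client weight vectors, weighted by each client's number of samples."""
    total = sum(client_sizes)
    num_params = len(client_weights[0])
    return [
        sum(w[p] * n for w, n in zip(client_weights, client_sizes)) / total
        for p in range(num_params)
    ]

# Two hypothetical clients holding 100 and 300 local images.
global_weights = fed_avg([[1.0, 0.0], [3.0, 4.0]], client_sizes=[100, 300])
```

One communication round consists of sending the global weights to clients, training locally, and repeating this aggregation; no raw images leave the client.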

Datasets and Evaluation:

- Multiple medical imaging modalities: retinal, dermatology, chest X-ray

- Real-world benchmark: COVID-FL with data from 8 medical sites

- Comparison against supervised baselines with ImageNet pre-training

- Assessment of label efficiency with varying fractions of labeled data [26]

Table 3: Essential research reagents and computational tools for self-supervised and semi-supervised learning research

| Resource | Type | Function/Purpose | Example Applications |

|---|---|---|---|

| Tox21 Dataset [25] | Labeled Data | Benchmark for chemical toxicity prediction | Semi-supervised learning for molecular property prediction |

| Graph Convolutional Networks [25] | Algorithm | Processes molecular graph structures | Chemical toxicity prediction, molecular property estimation |

| Mean Teacher Algorithm [25] | Semi-SL Algorithm | Provides consistent targets for unlabeled data | Semi-supervised classification with limited labels |

| Vision Transformers (ViT) [26] | Architecture | Self-attention based model for image processing | Masked image modeling, federated self-supervised learning |

| BEiT/MAE Frameworks [26] | Self-SL Algorithm | Masked image modeling pre-training | Representation learning from unlabeled images |

| Federated Averaging (FedAvg) [26] | Distributed Algorithm | Aggregates model updates in federated learning | Privacy-preserving collaborative training |

| FTIR Spectroscopy [28] | Analytical Tool | Molecular fingerprinting through infrared absorption | Explosives residue classification, material identification |

The systematic comparison of self-supervised and semi-supervised approaches reveals their significant potential for applications with limited labeled data, such as explosives classification and drug development. Semi-supervised methods like MixMatch and Mean Teacher with GCNs demonstrate reliable performance gains in chemical domain applications, while self-supervised approaches using masked image modeling show exceptional robustness in federated settings with data heterogeneity. The experimental protocols and performance metrics outlined provide researchers with practical guidance for implementing these approaches. For explosives classification research specifically, the combination of FTIR spectroscopy with these advanced learning paradigms offers promising avenues for more accurate and data-efficient identification of hazardous materials, though careful attention to hyperparameter tuning and realistic validation set sizes remains crucial for success.

The accurate detection and identification of high-energy materials are critical for security, industrial safety, and environmental monitoring. This guide provides a systematic comparison of five critical explosive targets: RDX, TNT, PETN, HMX, and ammonium nitrate. Within the broader context of machine learning algorithms for explosives classification, we objectively compare their performance characteristics and the experimental protocols used for their analysis. The increasing deployment of techniques like terahertz time-domain spectroscopy (THz-TDS) and near-infrared (NIR) hyperspectral imaging, combined with convolutional neural networks (CNNs) and other machine learning models, enables rapid, non-destructive, and standoff detection of these materials [5] [6]. This guide serves as a reference for researchers and professionals developing next-generation detection and classification systems.

Fundamental Properties and Characteristics

An explosive is categorized as a high explosive when it detonates and the detonation propagates at a velocity greater than 1,000 meters per second (m/s) [29]. Properties of explosives are measurable physical attributes typical of a single crystal, while characteristics are performance attributes measured during or after the chemical reaction [29].

Table 1: Fundamental Physical and Chemical Properties

| Property | RDX | TNT | PETN | HMX | Ammonium Nitrate |

|---|---|---|---|---|---|

| Chemical Name | Cyclotrimethylenetrinitramine | Trinitrotoluene | Pentaerythritol tetranitrate | Cyclotetramethylene-tetranitramine | Ammonium nitrate |

| Chemical Formula | C₃H₆N₆O₆ | C₇H₅N₃O₆ | C₅H₈N₄O₁₂ | C₄H₈N₈O₈ | NH₄NO₃ |

| Molar Mass (g/mol) | - | - | - | - | 80.043 |

| Density (g/cm³) | - | - | - | - | 1.725 (at 20°C) |

| Melting Point (°C) | - | - | - | - | 169.6 |

| Decomposition Temperature (°C) | - | - | - | - | ~210 |
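Molar masses follow directly from the chemical formulas in the table. As a worked example, the sketch below sums standard atomic masses for each formula; the atomic mass values are rounded standard values, and the dictionary and function names are illustrative assumptions.

```python
# Rounded standard atomic masses in g/mol.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999}

# Element counts read from the chemical formulas in Table 1.
COMPOSITION = {
    "RDX": {"C": 3, "H": 6, "N": 6, "O": 6},
    "TNT": {"C": 7, "H": 5, "N": 3, "O": 6},
    "PETN": {"C": 5, "H": 8, "N": 4, "O": 12},
    "HMX": {"C": 4, "H": 8, "N": 8, "O": 8},
    "Ammonium Nitrate": {"H": 4, "N": 2, "O": 3},
}

def molar_mass(composition):
    """Sum atomic mass times atom count over all elements in the formula."""
    return sum(ATOMIC_MASS[el] * n for el, n in composition.items())

molar_masses = {name: round(molar_mass(c), 3) for name, c in COMPOSITION.items()}
```

For ammonium nitrate this reproduces the tabulated 80.043 g/mol, which serves as a check on the element counts.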

Table 2: Detonation and Sensitivity Characteristics

| Characteristic | RDX | TNT | PETN | HMX | Ammonium Nitrate |

|---|---|---|---|---|---|

| Detonation Velocity (m/s) | - | ~6,800 [29] | - | - | ~2,500 [30] |

| Shock Sensitivity | - | - | - | - | Very Low [30] |

| Friction Sensitivity | - | - | - | - | Very Low [30] |

| TNT Equivalency | ~1.5 | 1.0 (by definition) | ~1.66 | ~1.70 | Varies with mixture |

| Major Uses | Military compositions, mining | Military shells, mining | Detonation cords, boosters | High-performance propellants, PBX | Fertilizer, ANFO industrial explosive [30] |

Ammonium nitrate (AN) itself has poor explosive properties but is a powerful oxidizer. Its explosive power is realized in mixtures like ANFO (Ammonium Nitrate Fuel Oil), which accounts for 80% of explosives used in North American mining and quarrying [30]. The sensitivity of AN increases dramatically with contaminants like organic materials, sulfur, or metals [31] [32]. Its decomposition is complex and can become explosive under conditions of confinement and high temperatures, leading to disasters such as the Beirut port explosion [31].

Experimental Methodologies for Characterization

Advanced spectroscopic techniques are central to modern explosives characterization, providing the data for machine learning algorithms.

Terahertz Time-Domain Spectroscopy (THz-TDS)

Terahertz radiation (0.1-10 THz) is non-destructive and can penetrate many common materials, making it ideal for detecting concealed explosives [5].

- Sample Preparation: For reflection geometry measurements, 100 mg of the explosive material is mixed with 200 mg of Teflon powder. This mixture is then pressed under 2-3 tons of pressure to form a pellet approximately 1 mm thick and 13 mm in diameter [5].

- Data Acquisition: A pulsed terahertz beam is directed at the sample. A gold-coated mirror serves as a reference due to its high reflectivity in the THz range. The system captures the reflected time-domain electric field from both the sample and the reference [5].

- Data Analysis: The time-domain signals are converted to the frequency domain using a Fourier transform. The complex optical properties—absorption coefficient and refractive index—are calculated by comparing the sample's signal with the reference. These spectra show distinct features for each explosive, serving as fingerprints for identification [5].
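The first part of the analysis step, moving from time-domain traces to a reference-normalized spectrum, can be sketched as below. This is a simplified illustration using a direct discrete Fourier transform; the function names and the sampling interval are assumptions, and the further conversion of this ratio into absorption coefficient and refractive index (which depends on the measurement geometry) is deliberately not shown.

```python
import cmath

def dft(signal):
    """Discrete Fourier transform of a real trace, non-negative frequencies only."""
    n = len(signal)
    return [
        sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(signal))
        for k in range(n // 2 + 1)
    ]

def reflectance_spectrum(sample, reference, dt_ps):
    """Return (frequency in THz, amplitude ratio, phase difference) per frequency bin."""
    s, r = dft(sample), dft(reference)
    n = len(sample)
    out = []
    for k in range(1, len(s)):          # skip the DC bin
        if abs(r[k]) == 0.0:
            continue                    # no reference signal at this frequency
        ratio = s[k] / r[k]
        # With dt in picoseconds, k / (n * dt) is directly in THz.
        out.append((k / (n * dt_ps), abs(ratio), cmath.phase(ratio)))
    return out

# Toy traces: the "sample" reflects half the reference field at every frequency.
reference = [0.0, 1.0, 0.5, -0.3, 0.1, 0.0, 0.0, 0.0]
sample = [0.5 * x for x in reference]
spectrum = reflectance_spectrum(sample, reference, dt_ps=0.1)
```

A production pipeline would use a fast Fourier transform and apply windowing before the transform; the quadratic-time version here is only meant to show the ratio being formed.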

Near-Infrared Hyperspectral Imaging (NIR-HSI)

NIR-HSI (900-1700 nm) combines spatial and spectral information, allowing for non-contact, standoff detection of trace substances on various surfaces [6].

- System Setup: A custom NIR hyperspectral imaging system uses a transmissive grating for spectral dispersion and a lateral scanning mechanism. This setup captures detailed data across large areas [6].

- Data Collection: The system scans the target area, collecting a full spectrum for each pixel in the image. For example, ammonium nitrate shows a strong absorption band at 1585 nm, while TNT has several smaller, identifiable absorptions [6].

- Classification: The hyperspectral data cube is processed by a machine learning model, such as a Convolutional Neural Network (CNN), which learns to classify the hazardous materials based on their unique spectral signatures [6].

Machine Learning for Explosives Classification

Machine learning algorithms are critical for automating the accurate and rapid identification of explosives from complex spectral data.

Algorithm Performance Comparison

In a study classifying RDX, TNT, HMX, PETN, and Tetryl using THz-TDS data, a 1D Convolutional Neural Network (1D-CNN) was implemented and compared against traditional machine learning models [5]. The input features for the models were the extracted spectral features, including FFT amplitude, absorption coefficient, and refractive index [5].

Table 3: Machine Learning Model Performance for Explosives Classification

| Machine Learning Model | Reported Classification Accuracy | Key Advantages |

|---|---|---|

| 1D Convolutional Neural Network (1D-CNN) | High (Specific metrics not provided in source) | Automatically extracts relevant features from raw spectral data; computationally efficient for sequential data [5]. |

| Support Vector Machine (SVM) | Outperformed by CNN [5] | Effective in high-dimensional spaces; robust against overfitting. |

| K-Nearest Neighbors (KNN) | Outperformed by CNN [5] | Simple implementation and interpretation. |

| Random Forest (RF) | Outperformed by CNN [5] | Handles non-linear data well; provides feature importance. |

Similarly, in NIR hyperspectral imaging, a CNN model demonstrated superior performance with 91.08% accuracy, 91.15% recall, and 90.17% precision, significantly outperforming traditional methods like SVM and KNN [6].

Classification Workflow

The process of applying machine learning to spectroscopic data follows a structured pipeline, from data acquisition to final classification.

The Scientist's Toolkit: Key Research Reagents and Materials

This section details essential materials and reagents used in the preparation and analysis of explosive mixtures in a research context.

Table 4: Essential Research Reagents and Materials

| Item | Function/Description | Example Use Case |

|---|---|---|

| Ammonium Nitrate (Ground) | The primary oxidizer in many industrial explosive mixtures. | Base component in ammonals and ANFO analogs [32]. |

| Flaked Aluminium (Alf) Powder | Fuel that increases the blast wave characteristics and energy output of an explosion. | Added to AN mixtures to form ammonals; enhances afterburning reactions [32]. |

| Aluminium-Magnesium (AlMg) Alloy Powder | A reactive fuel additive that can increase the temperature and duration of the fireball. | Modifying AN/Al mixtures to increase blast overpressure and detonation product temperature [32]. |

| Teflon Powder | An inert binding agent used to create uniform pellets for spectroscopic analysis. | Preparing samples for THz-TDS measurements by pressing explosive/Teflon mixtures [5]. |

| Pyrite (FeS₂) | A common sulfide mineral that can catalyze the exothermic decomposition of ammonium nitrate. | Studying the risk of spontaneous explosion in mining environments where ANFO contacts sulfide ores [33]. |

| Fuel Oil (FO) | A combustible liquid fuel that serves as a reducer in the most common AN-based explosive. | Mixed with porous AN prills to create ANFO [30]. |

This guide has provided a comparative characterization of RDX, TNT, PETN, HMX, and ammonium nitrate, emphasizing the experimental data and protocols relevant to machine learning classification. The distinct physicochemical and detonation properties of these materials, coupled with their unique spectral fingerprints in the terahertz and near-infrared ranges, form the basis for their identification. The integration of advanced spectroscopic techniques with robust machine learning models, particularly 1D-CNNs, demonstrates a powerful and evolving methodology for the accurate, non-destructive, and standoff detection of hazardous explosives. This field continues to advance with ongoing research, such as the SpectrEx project, which aims to create large, annotated spatio-spectral-temporal datasets to further improve detection algorithms [34].

Implementation Across Detection Modalities and Real-World Deployment

Organic Field-Effect Transistors (OFETs) with ML Pattern Recognition

Organic Field-Effect Transistors (OFETs) have emerged as a promising sensing platform, combining the amplification function of a transistor with the selective sensing capabilities of organic materials. A typical OFET consists of three electrodes (source, drain, and gate), a gate dielectric, and an organic semiconductor (OSC) layer [35] [36]. When exposed to target analytes, interactions at the OSC layer modulate the channel current, providing a measurable signal that can be amplified by the transistor itself [35]. This unique combination enables OFET-based sensors to detect various stimuli with high sensitivity, flexibility, and potential for low-cost manufacturing through solution processing techniques [35] [36].

The integration of machine learning (ML) with OFET sensing addresses key challenges in chemical detection, particularly for explosives classification. While OFETs provide the physical sensing mechanism, ML algorithms excel at pattern recognition in complex datasets, enabling accurate identification of target substances based on their unique spectral signatures [5] [6]. This synergistic approach enhances detection capabilities beyond what either technology could achieve independently, offering improved accuracy, sensitivity, and specificity in identifying hazardous materials.

Fundamental Sensing Mechanisms of OFETs

Operational Principles and Device Architectures

OFETs operate on the field-effect principle, where a gate voltage modulates the current flowing between source and drain electrodes through the organic semiconductor channel. The fundamental current-voltage relationships are described by:

In the linear region ($V_{DS} < V_{GS} - V_T$): $I_{DS} = \frac{W}{L} \mu C_i (V_{GS} - V_T) V_{DS}$ [36]

In the saturation region ($V_{DS} > V_{GS} - V_T$): $I_{DS} = \frac{W}{2L} \mu C_i (V_{GS} - V_T)^2$ [36]

Where $W$ and $L$ represent channel width and length, $\mu$ is field-effect mobility, $C_i$ is insulator capacitance per unit area, and $V_T$ is the threshold voltage.
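The two current-voltage relationships can be evaluated numerically as a quick sanity check on device parameters. The sketch below assumes an n-channel sign convention and treats the channel as fully off below threshold; the function name and the example parameter values are illustrative only. Because the simplified linear expression is accurate mainly for drain voltages well below the gate overdrive, the two branches do not meet exactly at the boundary.

```python
def drain_current(v_gs, v_ds, v_t, mobility, c_i, width, length):
    """Ideal drain current (A) from the linear and saturation equations.

    Units: volts, mobility in cm^2/(V s), c_i in F/cm^2, width and length
    in the same length unit (only their ratio matters).
    """
    overdrive = v_gs - v_t
    if overdrive <= 0:
        return 0.0                      # below threshold: channel treated as off
    if v_ds < overdrive:                # linear region
        return (width / length) * mobility * c_i * overdrive * v_ds
    return (width / (2 * length)) * mobility * c_i * overdrive ** 2  # saturation

# Hypothetical device: W/L = 100, mobility 0.1 cm^2/(V s), C_i = 10 nF/cm^2.
i_linear = drain_current(10.0, 1.0, 2.0, 0.1, 1e-8, 1000.0, 10.0)
i_saturation = drain_current(10.0, 20.0, 2.0, 0.1, 1e-8, 1000.0, 10.0)
```

In a sensing context, analyte exposure is typically modeled as a shift in the threshold voltage or mobility passed to such a function, which then shows up as a change in drain current.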

For sensing applications, four primary OFET architectures are employed, each offering distinct advantages for specific detection scenarios:

- Bottom Gate/Bottom Contact (BGBC): Gate electrode positioned below semiconductor layer with source/drain contacts on bottom [36]

- Bottom Gate/Top Contact (BGTC): Gate electrode below with source/drain contacts on top of semiconductor [36]

- Top Gate/Bottom Contact (TGBC): Gate electrode above with source/drain contacts below semiconductor [36]

- Top Gate/Top Contact (TGTC): Gate electrode above with source/drain contacts on top of semiconductor [36]

The sensing mechanism in OFET-based detectors relies on changes in electrical parameters (threshold voltage, mobility, drain current) when the OSC layer interacts with target molecules. These interactions may involve physical adsorption, chemical reactions, or supramolecular interactions that alter charge transport properties [35] [36]. For explosives detection, electron-deficient nitro groups in explosive compounds can act as charge traps when interacting with electron-rich OSCs, leading to measurable changes in transistor characteristics that serve as detection signals [35].

Material Considerations for Explosives Detection

The organic semiconductor layer is the critical component determining sensing performance in OFET-based explosives detection. Key material considerations include:

- Energy Level Alignment: The HOMO/LUMO levels of the OSC must facilitate charge transfer interactions with explosive compounds, which typically feature electron-deficient nitro groups [35] [36]

- Morphology and Molecular Packing: Crystalline domains and π-orbital overlap affect charge carrier mobility and provide sites for analyte interaction [35]

- Functionalization: Incorporating specific receptor moieties into OSC materials enhances selectivity toward target explosives through molecular recognition [35]

Table: Key Material Classes for OFET-Based Explosives Sensing

| Material Type | Example Materials | Key Properties | Relevance to Explosives Detection |

|---|---|---|---|

| Polymer OSCs | Polythiophenes, P3HT, P3OT | Good solution processability, mechanical flexibility | Enable printable, large-area sensors [36] |

| Small Molecule OSCs | Pentacene, Rubrene, C₈-BTBT | High crystallinity, pure domains | Provide well-defined interaction sites [35] |

| Functionalized OSCs | Receptor-grafted semiconductors | Specific molecular recognition | Enhanced selectivity for target analytes [35] |

| Composite Materials | OSC-nanoparticle blends | Synergistic properties | Amplified response signals [35] |

Machine Learning Algorithms for Explosives Classification

Traditional Machine Learning Approaches

Traditional machine learning algorithms provide effective solutions for explosives classification using OFET-generated data. These methods typically require feature extraction as a preprocessing step before classification:

Support Vector Machines (SVM) construct hyperplanes in high-dimensional space to separate different classes of explosives based on their spectral features. SVMs are particularly effective for small to medium-sized datasets and can handle non-linear decision boundaries through kernel functions [5] [37].

Random Forest (RF) operates by constructing multiple decision trees during training and outputting the class that is the mode of the classes of individual trees. This ensemble method reduces overfitting and provides robust performance across diverse datasets [5].

K-Nearest Neighbors (KNN) is a simple, instance-based learning algorithm that classifies samples based on the majority class among their k-nearest neighbors in the feature space. While computationally intensive for large datasets, KNN requires no explicit training phase [5] [37].

These traditional methods typically achieve prediction accuracies above 90% for explosives classification when applied to terahertz spectral data, providing reliable baseline performance [5] [37].
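Of the three traditional methods, k-nearest neighbors is simple enough to show in full. The following sketch classifies a feature vector by majority vote among its nearest training samples; the two-dimensional feature values and class labels are invented toy data, and `knn_predict` is a hypothetical helper name.

```python
import math
from collections import Counter

def knn_predict(train_x, train_y, query, k=3):
    """Label a query vector by majority vote among its k nearest training samples."""
    # Euclidean distance from the query to every stored sample.
    dists = sorted(
        (math.dist(x, query), label) for x, label in zip(train_x, train_y)
    )
    votes = Counter(label for _, label in dists[:k])
    return votes.most_common(1)[0][0]

# Invented two-feature samples for two classes.
train_x = [[0.0, 0.1], [0.1, 0.0], [1.0, 1.0], [0.9, 1.1]]
train_y = ["TNT", "TNT", "RDX", "RDX"]
predicted = knn_predict(train_x, train_y, query=[0.05, 0.05], k=3)
```

The absence of a training phase is visible here: all work happens at query time, which is why cost grows with the size of the stored dataset.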

Deep Learning and 1D-CNN Architectures

One-dimensional Convolutional Neural Networks (1D-CNNs) have demonstrated superior performance for explosives classification using spectral data from OFET-based sensing platforms. Unlike traditional ML approaches that require manual feature engineering, 1D-CNNs automatically learn relevant features directly from raw spectral data through multiple convolutional layers [5].

The 1D-CNN architecture for spectral analysis typically includes:

- Input Layer: Accepts raw spectral data (absorption, refractive index, FFT amplitude)

- Convolutional Layers: Apply filters to extract local patterns and features

- Pooling Layers: Reduce dimensionality while retaining important information

- Fully Connected Layers: Integrate features for final classification [5]
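The layer sequence above can be traced on a toy spectrum with a forward pass written in plain Python. This is only a sketch of the data flow: the kernel, weights, and six-point spectrum are made-up values, the function names are assumptions, and a real model would learn its parameters with a deep learning framework.

```python
def conv1d(signal, kernel, bias=0.0):
    """Valid 1D convolution (cross-correlation form) followed by ReLU."""
    n = len(signal) - len(kernel) + 1
    return [
        max(0.0, bias + sum(signal[i + j] * kernel[j] for j in range(len(kernel))))
        for i in range(n)
    ]

def max_pool1d(signal, size=2):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(signal[i:i + size]) for i in range(0, len(signal) - size + 1, size)]

def dense(features, weights, biases):
    """Fully connected layer returning one raw score per class."""
    return [b + sum(f * w for f, w in zip(features, row))
            for row, b in zip(weights, biases)]

# Toy spectrum with one peak; the kernel responds to local maxima.
spectrum = [0.0, 1.0, 3.0, 1.0, 0.0, 0.0]
features = max_pool1d(conv1d(spectrum, kernel=[-1.0, 2.0, -1.0]))
scores = dense(features, weights=[[1.0, 0.0], [0.0, 1.0]], biases=[0.0, 0.0])
```

The peak-sensitive kernel here is hand-picked; the point of training is that such filters are discovered from the spectra rather than designed by hand.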

This architecture has demonstrated prediction accuracies exceeding 95% for secondary explosives including RDX, HMX, TNT, PETN, and Tetryl, significantly outperforming traditional machine learning methods [5] [37].

ML Workflow for Explosives Classification

Comparative Performance Analysis

Algorithm Performance Metrics

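Comprehensive evaluation of machine learning algorithms for explosives classification reveals significant differences in performance metrics. The following table summarizes quantitative comparisons between traditional ML approaches and deep learning methods. Accuracy values come from terahertz spectral data of secondary explosives [5] [37], while precision, recall, and F1-score are taken from the NIR hyperspectral imaging study [6], so the columns are not drawn from a single experiment: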

Table: Performance Comparison of ML Algorithms for Explosives Classification

| Algorithm | Accuracy (%) | Precision (%) | Recall (%) | F1-Score | Training Time | Computational Requirements |

|---|---|---|---|---|---|---|

| 1D-CNN | >95 [5] [37] | 90.17 [6] | 91.15 [6] | 0.924 [6] | Moderate-High | GPU recommended |

| SVM | >90 [5] [37] | ~85 [6] | ~86 [6] | ~0.85 [6] | Low-Moderate | CPU sufficient |

| Random Forest | >90 [5] [37] | ~84 [6] | ~85 [6] | ~0.84 [6] | Low | CPU sufficient |

| K-NN | >90 [5] [37] | ~82 [6] | ~83 [6] | ~0.82 [6] | Very Low (lazy learner) | CPU (memory-intensive) |

The superior performance of 1D-CNN stems from its ability to automatically learn hierarchical features from raw spectral data without manual feature engineering, which is particularly advantageous for capturing subtle spectral patterns characteristic of different explosive compounds [5].
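The F1-score is the harmonic mean of precision and recall. As a small worked example, the sketch below evaluates it for the CNN precision and recall reported in [6] (90.17% and 91.15%); the function name is illustrative.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (both as fractions between 0 and 1)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# CNN precision and recall from the NIR hyperspectral study [6].
f1_cnn = f1_score(0.9017, 0.9115)
```

For these two inputs the harmonic mean is roughly 0.907. Per-class averaging in an original study can yield a somewhat different aggregate, so this is best read as a consistency check rather than a reproduction of any reported figure.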

Experimental Protocols and Methodologies

Standardized experimental protocols are essential for reproducible ML-based explosives classification using OFET platforms:

Sample Preparation Protocol:

- Precisely weigh 100 mg of explosive material (RDX, HMX, TNT, PETN, or Tetryl)

- Mix with 200 mg Teflon powder as binding agent

- Press mixture into pellets using hydraulic press under controlled pressure

- Verify uniform thickness and density across all samples [5]

Spectral Data Acquisition:

- Utilize Terahertz Time-Domain Spectroscopy (THz-TDS) in reflection geometry

- Set frequency range: 0.2-3.0 THz

- Collect time-domain signals with gold-coated mirror as reference

- Measure each sample multiple times to account for variability

- Extract absorption coefficient and refractive index spectra [5]

Data Preprocessing for ML:

- Apply Fast Fourier Transform (FFT) to time-domain signals

- Normalize spectral data to zero mean and unit variance

- Perform Principal Component Analysis (PCA) for dimensionality reduction (traditional ML)

- Split dataset into training (70%), validation (15%), and test (15%) sets [5]
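Two of the preprocessing steps, normalization and the 70/15/15 split, can be sketched with the standard library alone. The example assumes each spectrum is scaled individually; if features are instead scaled across samples, the mean and standard deviation should be estimated on the training split only. Function names and the fixed seed are assumptions.

```python
import random
import statistics

def standardize(values):
    """Scale one spectrum to zero mean and unit variance (population standard deviation)."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]    # flat spectrum: nothing to scale
    return [(v - mean) / std for v in values]

def split_indices(n, train=0.70, val=0.15, seed=0):
    """Shuffle sample indices and split them into train/validation/test lists."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    n_train = round(n * train)
    n_val = round(n * val)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

z = standardize([1.0, 2.0, 3.0])
train_idx, val_idx, test_idx = split_indices(100)
```

When the same pellet is measured several times, keeping all repeats of a pellet in the same split avoids leaking near-duplicate spectra into the test set.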

Model Training and Evaluation:

- Implement 5-fold cross-validation to assess model robustness

- For 1D-CNN: optimize hyperparameters (learning rate, filter size, network depth)

- For traditional ML: perform grid search for optimal parameters

- Evaluate final models on held-out test set

- Compute performance metrics: accuracy, precision, recall, F1-score [5] [37]
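The 5-fold cross-validation step amounts to partitioning the sample indices into five validation folds and training on the remainder each time. A minimal index generator is sketched below; the name `k_fold_indices` is hypothetical, and the sketch assumes the samples were already shuffled (and, ideally, stratified by class) beforehand.

```python
def k_fold_indices(n_samples, k=5):
    """Yield (train_indices, validation_indices) for k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train, val
        start += size

folds = list(k_fold_indices(12, k=5))
```

Averaging the metric over the five validation folds gives the robustness estimate, while the held-out test set remains untouched until the final evaluation.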

Research Reagent Solutions and Materials

Successful implementation of OFET-ML platforms for explosives detection requires specific materials and reagents with defined functions:

Table: Essential Research Reagents and Materials for OFET-ML Explosives Detection

| Material/Reagent | Function | Specifications | Example Applications |
|---|---|---|---|
| Organic Semiconductors | Charge transport and sensing element | High purity, tailored HOMO/LUMO levels | P3HT, pentacene for sensing layer [35] [36] |
| Secondary Explosives | Target analytes for classification | Analytical standard grade (>98% purity) | RDX, HMX, TNT, PETN, Tetryl [5] [37] |
| Teflon Powder | Binding agent for sample preparation | 200 mg per 100 mg explosive | Sample pellet formation [5] |
| Dielectric Materials | Gate insulation in OFET structure | High capacitance, low leakage current | PMMA, PI, Al₂O₃ [35] |
| Substrate Materials | Mechanical support for OFET devices | Flexible, thermally stable | PET, PI, PEN for flexible sensors [36] |
| Electrode Materials | Source, drain, and gate contacts | High conductivity, appropriate work function | Au, Ag, PEDOT:PSS [35] [36] |

Implementation Considerations and Challenges

Technical Challenges and Limitations

Despite promising performance, several technical challenges persist in implementing OFET-ML systems for explosives detection:

OFET Stability Issues: Operational instability remains a significant limitation, with device performance degrading over time due to interactions with oxygen and moisture [35]. This instability manifests as decreased current, increased threshold voltage, and hysteresis in transfer characteristics, ultimately affecting detection reliability [35].

Response and Recovery Times: OFET-based sensors often exhibit slow response and recovery kinetics. Response time is defined as the time required to reach 90% of the maximum response, and recovery time as the time required to return to 10% of that response above baseline [35]. This limitation restricts real-time monitoring capabilities in field applications.
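
The 90%/10% definitions can be applied directly to a recorded sensor transient. The sketch below is one way to do so, under assumptions that are not from the source: the baseline value and the exposure start and stop times (`t_on`, `t_off`) are known, and the 10% recovery level is taken as 10% of the maximum change relative to baseline.

```python
import numpy as np

def response_time(t, signal, baseline, t_on, fraction=0.9):
    """Time after exposure starts (t_on) until the signal change first
    reaches `fraction` of its maximum change from baseline."""
    change = np.abs(signal - baseline)
    after_on = t >= t_on
    target = fraction * change[after_on].max()
    first = np.argmax(after_on & (change >= target))  # first True index
    return t[first] - t_on

def recovery_time(t, signal, baseline, t_off, fraction=0.1):
    """Time after the analyte is removed (t_off) until the signal change
    falls back to `fraction` of its maximum change from baseline."""
    change = np.abs(signal - baseline)
    recovered = (t >= t_off) & (change <= fraction * change.max())
    if not recovered.any():
        return np.nan  # the sensor never recovered within the recording
    return t[np.argmax(recovered)] - t_off
```

For an ideal first-order transient with time constant τ, both values come out near τ·ln(10) ≈ 2.3τ, which gives a quick sanity check on measured data.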

Sensitivity-Selectivity Trade-off: While high sensitivity enables detection of trace explosives, it also increases susceptibility to interference from similar compounds or environmental contaminants [35]. Achieving optimal balance requires careful material selection and ML model training.

Data Requirements for ML: Deep learning approaches like 1D-CNN typically require large, annotated datasets for training, which can be challenging to acquire for hazardous materials like explosives [5].

Emerging Solutions and Future Directions

Several strategies are being developed to address these challenges and advance OFET-ML platforms:

Material Engineering: Novel organic semiconductors with improved environmental stability and tailored interaction sites for specific explosives are under development [35]. Hybrid materials combining OSCs with inorganic nanoparticles show promise for enhanced performance [38].

Device Architecture Optimization: Advanced OFET structures including extended-gate, electrolyte-gated, and dual-gate configurations improve sensing capabilities while mitigating stability issues [35].

Federated Learning Approaches: Emerging privacy-preserving ML techniques enable model training across multiple institutions without sharing sensitive explosive spectral data, addressing data scarcity challenges [39].
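
To illustrate how training can proceed without pooling raw spectra, the following sketch implements one round of federated averaging, a common aggregation scheme. The source does not say which scheme [39] uses, so this choice is an assumption, as is the logistic-regression model standing in for a real classifier; all names are illustrative.

```python
import numpy as np

def local_update(weights, X, y, lr=0.1, epochs=5):
    """A few gradient steps of logistic regression on one site's private data."""
    w = weights.copy()
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(X @ w)))  # predicted probabilities
        w -= lr * X.T @ (p - y) / len(y)    # mean log-loss gradient
    return w

def federated_average(client_weights, client_sizes):
    """Dataset-size-weighted mean of the client models."""
    sizes = np.asarray(client_sizes, dtype=float)
    return np.average(np.stack(client_weights), axis=0, weights=sizes)

def federated_round(global_w, clients):
    """clients: list of (X, y) pairs that stay at each institution.
    Only the updated weight vectors are sent back for aggregation."""
    updates = [local_update(global_w, X, y) for X, y in clients]
    return federated_average(updates, [len(y) for _, y in clients])
```

Repeating `federated_round` for several rounds lets each institution contribute to a shared model while its spectral measurements never leave the site.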

Edge Computing Integration: Deploying optimized ML models for real-time inference on edge devices reduces latency and enables field deployment of OFET-based detection systems [39].

System Architecture for OFET-ML Explosives Detection

The integration of Organic Field-Effect Transistors with machine learning pattern recognition represents a significant advancement in explosives detection technology. OFETs provide a versatile, sensitive, and potentially low-cost sensing platform, while ML algorithms, particularly 1D-CNNs, enable accurate classification of explosive compounds based on their unique spectral signatures.

Performance comparisons demonstrate that 1D-CNN architectures achieve superior accuracy (>95%) compared to traditional machine learning methods (>90%) for classifying secondary explosives including RDX, HMX, TNT, PETN, and Tetryl [5] [37]. This enhanced performance stems from the automatic feature learning capability of deep learning models, which effectively capture subtle spectral patterns that might be overlooked in manual feature engineering approaches.

Future developments in OFET-ML detection systems will likely focus on improving device stability through material engineering, optimizing model architectures for efficient edge deployment, and implementing federated learning approaches to address data scarcity while maintaining privacy [35] [39]. These advancements will gradually transition this technology from laboratory settings to practical field applications in security screening, environmental monitoring, and emergency response scenarios.

Raman Spectroscopy Combined with AI/ML for Rapid Library Updates

The rapid and accurate identification of explosive compounds is a critical challenge in security and forensic science. Traditional Raman spectroscopy, while a powerful non-destructive analytical tool, relies on extensive spectral libraries for material identification. Updating these libraries with new or emerging threat compounds has historically been a slow, labor-intensive process, creating a significant capability gap in the field [20]. The integration of Artificial Intelligence (AI) and Machine Learning (ML) is revolutionizing this process, enabling rapid library updates and enhancing the classification of complex mixtures. This guide objectively compares the performance of various AI/ML algorithms when combined with Raman spectroscopy for explosives classification, providing researchers and development professionals with a clear analysis of available methodologies and their experimental backing.

Performance Comparison of AI/ML Algorithms for Raman Spectroscopy

The effectiveness of Raman spectroscopy for explosive identification is directly tied to the algorithms processing the spectral data. The following table summarizes the documented performance of various machine learning algorithms applied to Raman and other spectroscopic data for hazardous material classification.

Table 1: Performance Comparison of Machine Learning Algorithms for Explosive Classification

| Algorithm | Application & Context | Reported Performance Metrics | Key Experimental Findings |
|---|---|---|---|
| Convolutional Neural Network (CNN) | Raman spectroscopy of pure drugs & mixtures [40] | 100% correct ID for pure substances; 64% for binary mixtures | Superior correct identification vs. other algorithms; outperforms traditional methods. |
| Convolutional Neural Network (CNN) | NIR Hyperspectral Imaging of explosives [6] | 91.08% accuracy, 91.15% recall, 90.17% precision, F1 score: 0.924 | Significantly outperformed SVM and KNN in classification accuracy. |
| Random Forest (RF) | Raman spectroscopy of pure drugs & mixtures [40] | 97% correct identification for pure substances | Comparable, but slightly lower performance than CNN. |
| Artificial Neural Network (NN) | Raman spectroscopy of pure drugs & mixtures [40] | 65% correct ID for binary mixtures (both compounds) | Superior performance on authentic binary mixtures data. |
| Support Vector Machine (SVM) | Raman spectroscopy of pure drugs & mixtures [40] | High accuracies observed | Use of a linear kernel suggested data was linearly separable. |
| k-Nearest Neighbors (kNN) | Raman spectroscopy of pure drugs & mixtures [40] | Lower accuracy compared to NN and CNN | Outperformed by deep learning methods on complex spectra. |
| Naive Bayes (NB) | Classification of explosives using OFETs [41] | Fast results with reasonable accuracy | Simple to calculate and suitable for large databases. |
| Hybrid LDA-PCA | FTIR spectroscopy of post-blast residues [28] | Successful identification achieved | Provided best results for classifying post-blast explosive residues. |

Key Performance Insights

- Deep Learning Superiority: Convolutional Neural Networks (CNNs) consistently demonstrate superior performance in classifying spectroscopic data. In Raman analysis, a CNN achieved perfect (100%) identification of pure test compounds, outperforming other algorithms [40]. Similarly, in NIR hyperspectral imaging, a CNN model significantly surpassed traditional methods like SVM and kNN, achieving 91.08% accuracy, 91.15% recall, and 90.17% precision [6].

- Advantage for Complex Mixtures: For complex samples like binary mixtures of drugs and diluents, deep learning methods (NN and CNN) resulted in superior correct identification (65% and 64%, respectively) compared to other algorithms [40]. This highlights their enhanced capability to handle real-world, complex spectra where traditional correlation methods can struggle.

- Traditional Methods for Linear Data: Algorithms like Support Vector Machines (SVM) with linear kernels can achieve high accuracy when the spectral data is linearly separable, offering a potentially less complex solution [40].

Detailed Experimental Protocols

To evaluate and compare the performance of these AI/ML algorithms, researchers follow rigorous experimental protocols. The workflow below illustrates the general process for developing an AI/ML-enhanced Raman system, from data acquisition to final validation.

Diagram 1: AI/ML-Raman Development Workflow

Spectra Acquisition and Dataset Creation

In a seminal study evaluating ML for portable Raman, spectra were acquired using a TacticID portable Raman spectrometer (B&W Tek) with a 785 nm laser and 9 cm⁻¹ resolution. Samples included 14 drugs and 15 diluents, measured through glass vials and plastic bags, resulting in 444 pure spectra. These spectra were baseline-corrected and truncated to the 176–2000 cm⁻¹ range. To simulate real-world complexity, a large dataset of 39,000 synthetic mixtures (binary, ternary, and quaternary) was computationally created by scaling and adding pure spectra together, representing "worst-case" scenarios for identification [40].
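
The "scaling and adding" step used to build synthetic mixtures can be sketched as follows. This is not the procedure from [40], whose exact scaling rules are not given here: the uniform random weights, their normalization to sum to one, and the `min_weight` floor are all assumptions made for illustration.

```python
import numpy as np

def truncate(wavenumbers, spectra, lo=176.0, hi=2000.0):
    """Keep only the 176-2000 cm^-1 window (spectra rows are samples)."""
    keep = (wavenumbers >= lo) & (wavenumbers <= hi)
    return wavenumbers[keep], spectra[:, keep]

def make_mixtures(pure_spectra, n_mixtures, n_components, rng, min_weight=0.1):
    """Build each mixture as a weighted sum of distinct pure spectra.
    Returns (mixtures, component indices, weights)."""
    n_pure = pure_spectra.shape[0]
    # Pick n_components different pure compounds for every mixture
    idx = np.stack([rng.choice(n_pure, n_components, replace=False)
                    for _ in range(n_mixtures)])
    # Random scaling factors, normalized so each mixture's weights sum to 1
    w = rng.uniform(min_weight, 1.0, size=(n_mixtures, n_components))
    w /= w.sum(axis=1, keepdims=True)
    mixtures = np.einsum("mc,mcf->mf", w, pure_spectra[idx])
    return mixtures, idx, w

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    wavenumbers = np.arange(100.0, 2301.0, 2.0)     # hypothetical axis
    pure = rng.random((29, wavenumbers.size))       # stand-in for 14 drugs + 15 diluents
    wavenumbers, pure = truncate(wavenumbers, pure)
    binary, idx, w = make_mixtures(pure, 1000, 2, rng)
    print(binary.shape, idx[0], w[0].round(2))
```

Because the component indices and weights are returned with each mixture, they can serve directly as multi-label training targets.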

AI/ML Model Training and Validation

The core of the methodology involves training multiple algorithms on the created dataset. In the same study, six machine learning algorithms—k-Nearest Neighbors (kNN), Naive Bayes (NB), Random Forest (RF), Support Vector Machine (SVM), Neural Networks (NN), and Convolutional Neural Networks (CNN)—were trained and evaluated. The models were trained to report the identification of compounds and their broader class. A critical step is the validation process, where the trained models are tested against "authentic" samples not used in training. Furthermore, the process follows a "trust, but verify" principle, where the AI's identifications are rigorously challenged with samples containing additives and masking agents to ensure it is not fooled by background noise or deliberate obfuscation [40] [20].

Impact on Rapid Library Updates

The most significant advantage of integrating AI/ML with Raman spectroscopy is the dramatic reduction in the time required to update spectral libraries with new threat compounds.

Closing the Capability Gap

The traditional process for adding a new explosive compound to a detection library is slow and meticulous: scientists and contractors manually program the compound's spectrographic characteristics to ensure a high Probability of Detection (PD) and a low Probability of False Alarm (PFA). This process can take one to two years [20]. Research funded by the Department of Homeland Security Science and Technology Directorate (DHS S&T) has demonstrated that AI/ML solutions can close this critical time gap. The new process, which involves training the AI with examples of the new compound and then rigorously validating its ability to identify it amidst interference, can be completed in a matter of days or weeks [20].
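
PD and PFA are typically estimated from validation trials as simple ratios. The helper below is a minimal sketch of that calculation; the function name and the example counts are hypothetical and do not come from [20].

```python
def detection_rates(tp, fn, fp, tn):
    """Return (PD, PFA) from validation trial counts.
    tp / fn: threat samples that did / did not raise an alarm.
    fp / tn: benign samples that did / did not raise an alarm."""
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError("need at least one threat trial and one benign trial")
    pd = tp / (tp + fn)   # fraction of threats detected
    pfa = fp / (fp + tn)  # fraction of benign samples that alarmed
    return pd, pfa

if __name__ == "__main__":
    # Hypothetical counts: 100 threat trials, 200 benign trials
    pd, pfa = detection_rates(tp=95, fn=5, fp=2, tn=198)
    print(f"PD = {pd:.2f}, PFA = {pfa:.2f}")  # PD = 0.95, PFA = 0.01
```

Reporting both numbers matters because a model can trivially raise PD by alarming more often, at the cost of a higher PFA.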

Enhanced Identification of Complex Compositions

AI/ML models excel in identifying mixtures and pure compounds even through barriers. For example, a portable NIR hyperspectral imaging system combined with a CNN successfully identified trace levels (as low as 10 mg/cm²) of explosives like TNT and ammonium nitrate through glass, plastic, and clothing [6]. This demonstrates the model's robustness to environmental interference, a common challenge in real-world scenarios. Furthermore, CNNs have been specifically designed to analyze pure compounds, binary, and ternary mixtures with accuracies of 99.9%, 96.7%, and 85.7% respectively, far exceeding the capabilities of traditional library matching using Hit Quality Index (HQI) in complex situations [40].
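
For comparison, library matching with a Hit Quality Index can be sketched in a few lines. The formula below, the squared cosine similarity between query and reference spectra, is one common HQI definition; vendors differ in scaling and preprocessing, so treat it as an assumption rather than the metric used in [40]. The library entries are hypothetical.

```python
import numpy as np

def hit_quality_index(query, reference):
    """Squared cosine similarity between two spectra (1.0 = identical shape)."""
    num = np.dot(query, reference) ** 2
    den = np.dot(query, query) * np.dot(reference, reference)
    return float(num / den) if den > 0 else 0.0

def best_library_match(query, library):
    """library: dict mapping compound name -> reference spectrum.
    Returns (name, hqi) of the highest-scoring entry."""
    scores = {name: hit_quality_index(query, ref) for name, ref in library.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]

if __name__ == "__main__":
    # Two hypothetical, non-overlapping reference spectra
    a = np.array([1.0, 0.0, 0.0])
    b = np.array([0.0, 1.0, 0.0])
    library = {"compound_A": a, "compound_B": b}
    print(best_library_match(a, library))              # pure sample: HQI of 1.0
    print(hit_quality_index(0.5 * a + 0.5 * b, a))     # 50/50 mixture: HQI of 0.5
```

The second call shows why single-hit matching degrades on mixtures: an equal blend of two compounds scores only 0.5 against either pure reference, even though both are present.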

The Scientist's Toolkit: Key Research Reagents and Materials

The following table details essential materials and software solutions used in the featured experiments for developing AI/ML-enhanced Raman systems.

Table 2: Essential Research Reagents and Software Solutions

| Item Name | Function / Application in Research |
|---|---|
| Portable Raman Spectrometer (e.g., TacticID, Agilent Resolve) | Field-deployable instrument for on-site spectral acquisition; some models offer through-barrier analysis [40] [42]. |
| Commercial Spectral Libraries (e.g., Metrohm MCRL, Agilent Resolve) | Large, validated databases (>13,000 spectra) of known compounds for initial algorithm training and validation [42] [43] [44]. |
| Fluorescent Sensing Material (LPCMP3) | Polymer used in fluorescence-based trace explosive detection; interacts with nitroaromatics via photoinduced electron transfer [7]. |
| R Software Environment & RStudio | Code-driven, open-source platform for statistical analysis, data pre-treatment, and implementation of machine learning classification techniques [28]. |