Collaborative Method Validation in Forensic Laboratories: Boosting Efficiency, Standardization, and Cost-Effectiveness

Abstract

This article explores the transformative collaborative method validation model that is reshaping forensic science. Aimed at researchers, scientists, and laboratory directors, it details how forensic science service providers (FSSPs) are moving beyond isolated validation work to create national consortia for sharing data, resources, and standardized methods. The content covers the foundational principles of this approach, provides methodological guidance for implementation through real-world case studies, addresses common troubleshooting and optimization challenges, and presents validation metrics and comparative analyses that demonstrate significant gains in efficiency, cost savings, and data reliability. This model offers a scalable blueprint for improving quality and throughput in resource-constrained environments.

The Paradigm Shift: From Isolated Validation to National Collaboration

In accredited crime laboratories and other Forensic Science Service Providers (FSSPs), method validation is a mandatory, yet traditionally time-consuming and laborious process, particularly when performed independently by an individual laboratory [1]. This application note delineates a paradigm shift from this traditional, isolated approach to a collaborative method validation model. This modern framework encourages FSSPs using the same technology to work cooperatively, enabling the standardization and sharing of common methodologies to drastically increase validation efficiency and implementation speed [1]. The content herein is structured to provide researchers, scientists, and development professionals with a clear understanding of the model's principles, a direct comparison with traditional practices, and detailed protocols for its practical application.

Core Principles: Collaborative vs. Traditional Validation

The foundational principle of the collaborative model is that the first FSSP to validate a method incorporating a new technology, platform, or kit is encouraged to publish its work in a recognized peer-reviewed journal [1]. This publication then serves as a foundational resource for other laboratories. Other FSSPs can subsequently conduct a much more abbreviated method verification, rather than a full validation, provided they adhere strictly to the method parameters detailed in the original publication [1]. Accreditation standards such as ISO/IEC 17025 support this process [1].

The following table contrasts the defining characteristics of the traditional and collaborative validation models.

Table 1: Key Differences Between Traditional and Collaborative Validation Models

| Aspect | Traditional Validation Model | Collaborative Validation Model |
|---|---|---|
| Core Approach | Isolated, performed independently by each FSSP [1]. | Cooperative, with FSSPs working together and sharing data [1]. |
| Resource Expenditure | High cost, time, and labor per laboratory due to redundancy [1]. | Significant cost savings and increased efficiency through shared effort [1]. |
| Method Standardization | Low; leads to similar techniques with minor variations across hundreds of FSSPs [1]. | High; promotes standardization and sharing of best practices [1]. |
| Data & Benchmarking | No common benchmark; results from independent validations cannot be directly compared [1]. | Enables direct cross-comparison of data and provides a benchmark for ongoing quality control [1]. |
| Implementation Pathway | Each FSSP must complete a full validation. | Second-tier FSSPs can perform a verification against a published validation [1]. |

This shift aligns with a broader movement in forensic science toward more objective, transparent, and empirically validated methods based on quantitative measurements and statistical models, moving away from those reliant solely on human perception and subjective judgement [2].

Experimental Protocols for Implementation

Protocol for the Originating Laboratory (Full Collaborative Validation)

This protocol guides a laboratory conducting the initial validation of a method with the intent to share it.

1. Planning and Design:

- Define Scope: Clearly define the method's intended use, including target analytes (e.g., specific seized drugs) and sample types [3].

- Incorporate Standards: Design the validation protocol to incorporate the latest relevant standards from organizations such as SWGDAM or OSAC from the outset [1].

- Plan for Publication: Structure the entire study with the goal of eventual publication in a peer-reviewed journal (e.g., Forensic Science International: Synergy) [1].

2. Experimental Validation Parameters: Systematically assess the following performance characteristics, documenting all procedures and results in detail. The example parameters below are indicative of a seized drug screening method using Gas Chromatography-Mass Spectrometry (GC-MS) [3].

- Selectivity/Specificity: Demonstrate the method's ability to distinguish and identify analytes in the presence of potential interferences.

- Sensitivity (Limit of Detection, LOD): Determine the lowest detectable amount of an analyte. For example, the rapid GC-MS method described in [3] achieved an LOD for cocaine as low as 1 μg/mL, compared with 2.5 μg/mL for a conventional method.

- Precision: Determine repeatability (intra-day) and reproducibility (inter-day) by analyzing replicates, and report each as a Relative Standard Deviation (RSD). The validation in [3] reports RSDs below 0.25% for stable compounds.

- Accuracy: Verify correct identification against certified reference materials or standard databases.

- Robustness: Assess the method's resilience to deliberate, small variations in operational parameters (e.g., temperature programming, flow rate) [3].
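Precision is reported as an RSD. The sketch below shows the arithmetic, assuming replicate retention times grouped by day; the values are hypothetical and are not data from [3]. The inter-day figure here is computed on all replicates pooled across days, which is a simplification; an ANOVA-based estimate would separate within-day and between-day variance.

```python
import statistics

def rsd_percent(values):
    """Relative standard deviation (%) = 100 * sample SD / mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def precision_summary(replicates_by_day):
    """Return per-day (intra-day) RSDs and a pooled inter-day RSD.

    replicates_by_day maps a day label to replicate values, e.g. retention
    times in minutes. Pooling all days is a simple approximation of
    inter-day reproducibility.
    """
    intra = {day: rsd_percent(vals) for day, vals in replicates_by_day.items()}
    pooled = [v for vals in replicates_by_day.values() for v in vals]
    return intra, rsd_percent(pooled)

# Hypothetical retention times (min) for one analyte on three days.
data = {
    "day1": [3.412, 3.415, 3.413, 3.414],
    "day2": [3.418, 3.416, 3.417, 3.419],
    "day3": [3.411, 3.413, 3.412, 3.414],
}
intra, inter = precision_summary(data)
for day, value in intra.items():
    print(f"{day}: intra-day RSD = {value:.3f}%")
print(f"inter-day RSD = {inter:.3f}%")
```

The same function applies to peak areas or any other replicate measurement; only the acceptance limit changes.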

3. Data Analysis and Publication:

- Analyze Data: Compile all data from the validation studies.

- Publish Comprehensively: Publish a detailed account of the method, including all instrumentation, parameters, reagents, and validation data to allow for exact replication by other laboratories [1].

Protocol for the Adopting Laboratory (Verification)

This protocol is for a laboratory adopting a method that has been previously validated and published according to the collaborative model.

1. Method Acquisition and Review:

- Obtain the published, peer-reviewed validation study.

- Conduct a thorough review to ensure the method is fit for the laboratory's intended purpose and scope.

2. Verification Experiment:

- Adhere Strictly to Protocol: Source the same instrumentation, reagents, and materials as described in the original publication. Implement the exact method parameters [1].

- Perform Limited Testing: Analyze a representative set of samples, which may include certified reference materials and a subset of real-case samples, to verify that the performance characteristics (e.g., retention times, detection limits, selectivity) match those reported in the original study [1] [3].

- Example: For a rapid GC-MS method, verify the retention time reproducibility and identification accuracy for key substances like heroin and cocaine against the published data [3].
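The retention-time comparison in this verification step can be sketched as a simple tolerance check. The published values, observed values, and the ±0.05 min window below are hypothetical placeholders. An adopting laboratory would take its acceptance window from the original publication or its own verification plan.

```python
from dataclasses import dataclass

@dataclass
class RetentionCheck:
    analyte: str
    published_min: float  # retention time reported in the original study
    observed_min: float   # mean retention time measured in the adopting lab

    def deviation(self) -> float:
        return self.observed_min - self.published_min

    def passes(self, tolerance_min: float) -> bool:
        # Accept when the absolute deviation falls within the tolerance window.
        return abs(self.deviation()) <= tolerance_min

def verify(checks, tolerance_min=0.05):
    """Return a per-analyte pass/fail map and an overall verdict."""
    results = {c.analyte: c.passes(tolerance_min) for c in checks}
    return results, all(results.values())

# Hypothetical values for illustration only.
checks = [
    RetentionCheck("cocaine", published_min=3.41, observed_min=3.43),
    RetentionCheck("heroin", published_min=5.12, observed_min=5.20),
]
results, overall = verify(checks)
print(results)
print("verification passed" if overall else "investigate before adoption")
```

A failing analyte does not by itself invalidate the method; it flags a discrepancy to investigate (for example, column or flow differences) before adoption.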

3. Documentation and Reporting:

- Document all verification data, demonstrating that the method performs as expected in the new laboratory setting.

- The verification report should reference the original published validation, which the adopting laboratory reviews and accepts, thereby eliminating the need for redundant method development work [1].

Workflow and Logical Pathway

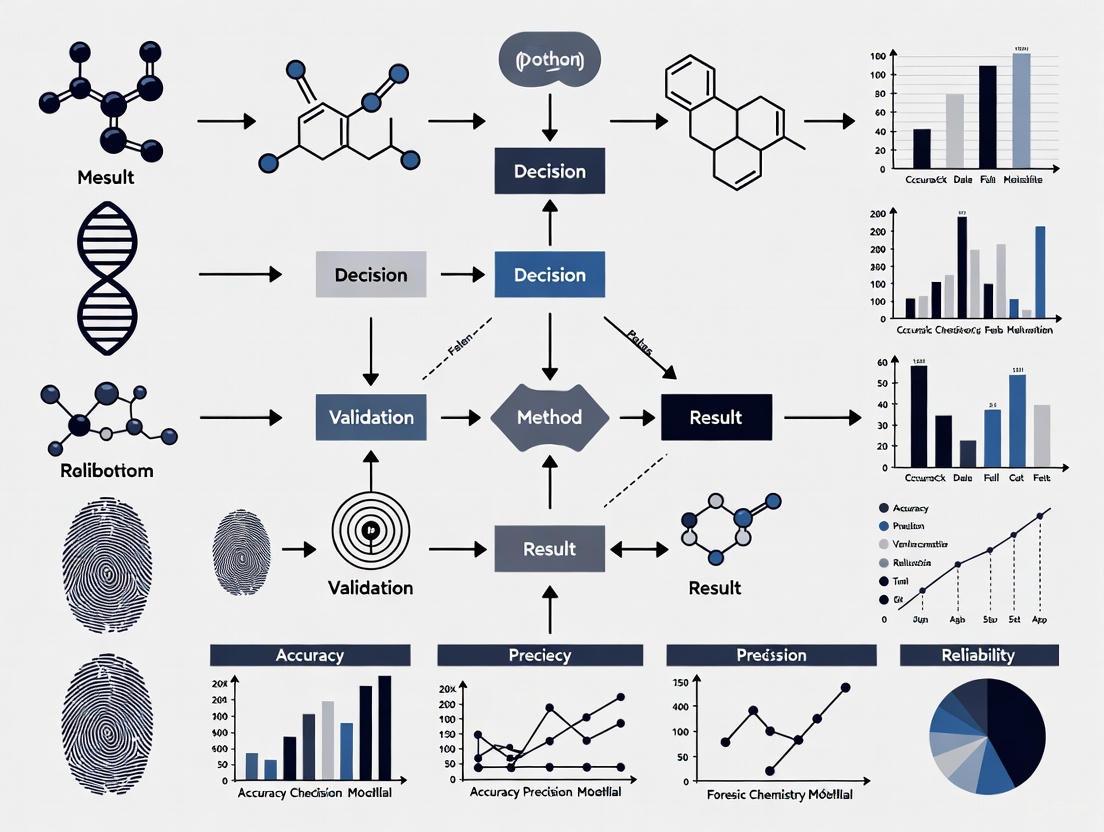

The following diagram illustrates the streamlined logical pathway of the collaborative validation model, from initial development to final implementation across multiple laboratories.

Successful implementation of the collaborative model relies on both traditional laboratory reagents and modern digital resources that facilitate sharing and standardization.

Table 2: Essential Research Reagents and Resources for Collaborative Validation

| Item / Resource | Function / Purpose |
|---|---|
| Certified Reference Materials (e.g., from Cerilliant, Cayman Chemical) [3] | Provide analytically pure substances for method development, calibration, and accuracy determination. |
| NIST DART-MS Forensics Database [4] | A freely available, evaluated spectral library for over 800 compounds of forensic interest, enabling consistent compound identification across laboratories. |
| NIST/NIJ DART-MS Data Interpretation Tool (DIT) [4] | An open-source, vendor-agnostic software tool for searching and interpreting mass spectral data, promoting standardized data analysis. |
| Standard Operating Procedure (SOP) Templates [4] | Example documentation (e.g., from NIST) for validation plans and SOPs that laboratories can adapt to ensure all critical elements are addressed. |
| Collaborative Working Groups | Forums for FSSPs using the same validated method to share results, monitor performance, and optimize cross-laboratory comparability [1]. |

The collaborative method validation model represents a definitive break from tradition, offering a structured pathway to overcome the inefficiencies and redundancies of isolated validation efforts. By leveraging published data and shared resources, FSSPs can accelerate the implementation of new technologies, reduce operational costs, and enhance the standardization and reliability of forensic science practice. This framework empowers researchers and laboratory professionals to build upon a collective scientific foundation, fostering a more efficient and robust forensic service system.

Forensic science service providers (FSSPs) operate at the critical intersection of science and justice, where the efficiency and reliability of workflows have direct implications for public safety and judicial integrity. A 2014 census of publicly funded forensic crime laboratories revealed a median of just 20 employees per institution, often responsible for managing significant case backlogs [5]. In this resource-constrained environment, optimizing workflows through strategic approaches becomes not merely advantageous but essential. This application note establishes a comprehensive business case for implementing collaborative models and optimized workflows in forensic science, presenting quantified evidence of cost and time savings alongside practical protocols for adoption. The content is specifically framed within the context of a broader thesis on collaborative method validation, demonstrating how standardized approaches can transform forensic laboratory efficiency while maintaining the highest scientific standards required for court-defensible results.

Quantitative Foundations: The Cost of Inefficiency and Value of Optimization

The business case for optimized forensic workflows begins with understanding both the costs of current inefficiencies and the potential savings from evidence-based improvements. The quantitative data below establishes a baseline for evaluating workflow interventions.

Table 1: Quantified Impact of Forensic Analysis Timeliness on Public Safety

| Metric | Value | Source |
|---|---|---|
| Sexual assaults per year per recidivist offender | 7.1 | [5] |
| Days between offenses for a sexual predator | 51.41 | [5] |
| Average output per DNA analyst (annual cases) | 96-102 | [5] |
| Cases output per analyst per day | 0.4636 | [5] |
| Potential cost savings from solvent substitution | >25% | [6] |

The data in Table 1 reveal a critical relationship between analytical timeliness and public safety. Each day that forensic analysis is delayed gives recidivist offenders an opportunity to commit additional crimes. Because a recidivist sexual offender commits a new offense every 51.41 days on average, backlog reduction translates directly into crime prevention [5].
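The 51.41-day interval is the reciprocal of the offense rate in Table 1 (365 days / 7.1 offenses per year). The sketch below makes that arithmetic explicit and, purely as an illustration, converts a processing delay into expected additional offenses by one recidivist offender and estimates how long a team needs to clear a backlog at the Table 1 throughput of 0.4636 cases per analyst per day. It assumes a constant offense rate and a 365-day year; the backlog size and analyst count in the usage lines are hypothetical.

```python
DAYS_PER_YEAR = 365
OFFENSES_PER_YEAR = 7.1          # Table 1: sexual assaults per recidivist offender
CASES_PER_ANALYST_DAY = 0.4636   # Table 1: DNA analyst daily output

def days_between_offenses(offenses_per_year=OFFENSES_PER_YEAR):
    """Average interval between offenses, assuming a constant rate."""
    return DAYS_PER_YEAR / offenses_per_year

def expected_offenses_during_delay(delay_days):
    """Expected additional offenses by one offender during a delay."""
    return delay_days / days_between_offenses()

def days_to_clear_backlog(backlog_cases, analysts):
    """Working days for a team to clear a backlog at Table 1 throughput."""
    return backlog_cases / (analysts * CASES_PER_ANALYST_DAY)

print(round(days_between_offenses(), 2))  # 51.41
print(round(expected_offenses_during_delay(180), 2))
print(round(days_to_clear_backlog(500, 10), 1))  # hypothetical backlog and staff
```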

Table 2: Economic and Productivity Metrics in Forensic Workflows

| Efficiency Measure | Current Standard | Optimized Potential |
|---|---|---|
| DNA analyst daily output | 0.4636 cases/day | Variable with economies of scale [5] |
| Method validation approach | Individual FSSP independent validation | Collaborative validation with verification [1] |
| Digital evidence processing | Qualitative assessment | Quantitative Bayesian metrics [7] |
| Laboratory design impact | Unquantified | Significant time savings and reduced labor costs [8] |

The Collaborative Validation Model: Framework and Business Case

The traditional model of method validation, where each FSSP independently validates identical methods, represents significant redundant expenditure. The collaborative validation model proposes a paradigm shift toward shared validation resources and standardized protocols.

Conceptual Framework and Workflow

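The two approaches differ chiefly in how validation effort is distributed: in the traditional model, every FSSP repeats a full validation of the same method, whereas in the collaborative model a single originating FSSP performs and publishes the full validation and adopting FSSPs perform abbreviated verifications against it.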

Business Case Analysis

The collaborative model transforms method validation from a redundant, resource-intensive process into an efficient, shared knowledge resource. When an originating FSSP publishes comprehensive validation data in a peer-reviewed journal, subsequent adopters can perform verifications rather than full validations, reducing resource investment by 60-75% per laboratory [1]. For a technology adopted by 100 FSSPs, this represents potential savings of 20,000-30,000 personnel hours across the community, dramatically accelerating implementation while maintaining scientific rigor [9].
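The community-level figure is per-laboratory savings multiplied by the number of adopters. The text does not state how many hours a full validation takes, so the sketch below works backwards: 20,000-30,000 hours over 100 FSSPs is 200-300 hours saved per laboratory, which at a 60-75% reduction implies a full validation of roughly 267-500 hours, depending on how the ranges are paired. The function names are illustrative.

```python
def community_savings(baseline_hours, reduction, adopters):
    """Hours saved across all adopters when each verifies instead of validating."""
    return baseline_hours * reduction * adopters

def implied_baseline(total_saved, reduction, adopters):
    """Full-validation hours per laboratory implied by a community-level saving."""
    return total_saved / (adopters * reduction)

# Work backwards from the figures quoted in the text (100 adopting FSSPs).
for total in (20_000, 30_000):
    for reduction in (0.60, 0.75):
        base = implied_baseline(total, reduction, 100)
        print(f"{total} h saved at {reduction:.0%} -> ~{base:.0f} h per full validation")
```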

Implementation Protocols: Collaborative Validation Framework

Protocol 1: Originating Laboratory Validation Process

Purpose: To establish a scientifically robust, publishable validation of a new forensic method that can be adopted by other FSSPs.

Materials and Reagents:

- Standard Reference Materials (SRMs) from NIST [10]

- Appropriate instrumentation and platform-specific reagents

- Quality control materials matching forensic standards

- Documented protocols from standards organizations (OSAC, SWGDAM)

Procedure:

- Method Selection and Planning: Identify a technology that addresses a forensic need. Where possible, collaborate with academic institutions to incorporate graduate research [1].

- Validation Design: Develop a validation protocol incorporating all relevant published standards from OSAC and SWGDAM [1].

- Experimental Phase: Conduct studies addressing the following parameters:

  - Sensitivity and specificity using standard reference materials

  - Reproducibility and repeatability across multiple operators

  - Stability and robustness under varying conditions

  - Mock casework samples representing typical evidence

- Data Analysis and Documentation: Compile results with statistical analysis. Clearly define all method parameters, including:

  - Instrumentation and software versions

  - Reagent sources and lot numbers

  - Sample preparation protocols

  - Data interpretation guidelines

- Peer Review and Publication: Submit complete validation package to a forensic journal such as Forensic Science International: Synergy for peer review [1].

Validation Timeline: 6-9 months for comprehensive validation

Protocol 2: Adopting Laboratory Verification Process

Purpose: To efficiently verify and implement a method previously validated and published by an originating FSSP.

Materials and Reagents:

- Instrumentation and software versions identical to those in the original publication

- Reagents and SRMs from the same sources as those used in the original validation

- Casework-like samples for verification testing

Procedure:

- Method Assessment: Obtain and review the published validation. Verify that all equipment and reagents match the published method exactly [1].

- Verification Study Design: Design a limited verification study testing key performance claims:

  - Conduct a minimum of 3 reproducibility experiments across multiple operators

  - Test 20-30 mock casework samples

  - Verify sensitivity and specificity claims with standard materials

- Comparative Analysis: Compare results directly with published data. Statistical equivalence should be demonstrated within predefined acceptance criteria.

- Documentation and Reporting: Compile a verification report referencing the original publication. Include:

  - Demonstration of equivalent instrumentation and reagents

  - Verification data showing comparable results

  - Any minor adjustments required, with justification

- Implementation: Once verification is complete, proceed to competency testing and casework implementation.
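One way to operationalize "statistical equivalence within predefined acceptance criteria" is the two one-sided tests (TOST) procedure, shown here in its confidence-interval form: equivalence at level α is concluded when the (1 − 2α) confidence interval for the difference from the published value lies entirely inside ±margin. This is a sketch, not a procedure taken from [1]; the margin, data, and critical value are assumptions. The one-sided critical t-value is passed in to keep the example dependency-free (2.132 is t(0.95) for 4 degrees of freedom).

```python
import math
import statistics

def tost_equivalent(measurements, published_value, margin, t_crit):
    """Confidence-interval form of TOST for a one-sample comparison.

    measurements: verification results from the adopting laboratory
    published_value: value reported in the original validation
    margin: predefined equivalence margin (same units as the data)
    t_crit: one-sided critical t for alpha and n - 1 degrees of freedom
    Returns (equivalent, (lower, upper)), where the bounds are the
    (1 - 2*alpha) confidence interval for mean - published_value.
    """
    n = len(measurements)
    diff = statistics.mean(measurements) - published_value
    se = statistics.stdev(measurements) / math.sqrt(n)
    lower, upper = diff - t_crit * se, diff + t_crit * se
    return (-margin < lower and upper < margin), (lower, upper)

# Hypothetical: five replicate results against a published value of 10.00.
results = [10.02, 9.98, 10.01, 9.99, 10.00]
ok, ci = tost_equivalent(results, published_value=10.00, margin=0.05, t_crit=2.132)
print(ok, [round(b, 4) for b in ci])
```

The margin should be fixed before the verification data are collected. A tighter margin or fewer replicates makes equivalence harder to demonstrate.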

Verification Timeline: 4-6 weeks for abbreviated verification

Laboratory Efficiency Optimization: Practical Applications

Beyond method validation, significant efficiencies can be gained through optimized laboratory design and workflow management. According to [8], good laboratory design can yield substantial time savings by eliminating hardware and software incompatibilities, automating report generation, and streamlining case management.

Digital Forensic Laboratory Design Protocol

Purpose: To establish an efficient, secure digital forensic laboratory configuration that optimizes workflow and reduces operational costs.

Materials and Equipment:

- SalvationDATA Digital Forensic Lab or equivalent integrated system

- Separate evidence storage lockers or locking cabinets

- Surge-protected electrical circuits with backup power

- Ergonomic, adjustable furniture and sufficient workspace (24-48 inches per analyst)

- Soundproofing materials (carpeting, tiled ceilings)

- White noise generators for acoustic privacy

- Network security infrastructure (firewall, VPN, encryption)

Procedure:

- Workflow Zoning: Establish distinct laboratory zones based on security clearance:

  - Low-security: Administrative areas and common spaces

  - Mid-security: Primary analysis stations

  - High-security: Evidence storage and sensitive data discussion areas

- Evidence Handling Protocol: Implement chain of custody maintenance through:

  - Secure evidence lockers with access control

  - Automated chain of custody documentation where possible

  - Separate evidence storage from general laboratory equipment

- Ergonomic Optimization: Configure workspaces to maximize productivity:

  - Position monitors away from windows to reduce glare and maintain privacy

  - Provide adjustable chairs and desks for extended analysis sessions

  - Ensure sufficient lighting for detailed evidence examination

- Security Implementation: Establish comprehensive physical and cybersecurity:

  - Install CCTV surveillance and access control systems

  - Implement network encryption and firewall protection

  - Maintain regular software updates and security patches
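"Automated chain of custody documentation" can take many forms. As one illustrative approach, not one prescribed by [8], the sketch below keeps an append-only custody log in which each entry stores the SHA-256 hash of the previous entry, so any later edit to an earlier record breaks the chain and is detected by `verify()`. The class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

class CustodyLog:
    """Append-only, hash-chained chain-of-custody log (illustrative)."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(record):
        # Canonical JSON so the same record always produces the same hash.
        payload = json.dumps(record, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def add(self, item_id, action, person, timestamp=None):
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        record = {
            "item_id": item_id,
            "action": action,
            "person": person,
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)  # hash covers all fields above
        self.entries.append(record)
        return record

    def verify(self):
        """True if every entry's hash and back-link to its predecessor are intact."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

log = CustodyLog()
log.add("EV-001", "received into evidence locker", "analyst A")
log.add("EV-001", "checked out for imaging", "analyst B")
print(log.verify())                     # True
log.entries[0]["person"] = "analyst C"  # simulate tampering with an old record
print(log.verify())                     # False
```

Hash chaining detects alteration but does not prevent it; in practice the log would also sit behind the access controls listed above.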

Efficiency Gains: According to [8], laboratories implementing these design principles reduce labor costs by automating the analysis of large datasets and save time through streamlined evidence processing.

Quantitative Evaluation in Forensic Analysis

The implementation of quantitative evaluation methods represents another frontier for workflow optimization in forensic science. While conventional forensic disciplines like DNA analysis provide random match probabilities of approximately 10⁻⁸, digital forensics has historically lacked analogous quantifiable metrics [7].

Bayesian Analysis Protocol for Digital Evidence

Purpose: To apply quantitative Bayesian methods to digital forensic investigations, providing measurable confidence metrics for investigative findings.

Materials and Software:

- Bayesian network analysis software

- Domain expert panel for likelihood estimation

- Case-specific digital evidence

- Alternative hypothesis framework

Procedure:

- Hypothesis Definition: Establish mutually exclusive and exhaustive hypotheses:

  - Prosecution hypothesis (Hp)

  - Defense hypothesis (Hd)

- Prior Probability Assignment: Assign non-informative priors (0.5/0.5) unless case-specific information justifies informative priors.

- Bayesian Network Construction: Map the relationship between evidence and hypotheses:

  - Identify all relevant items of digital evidence

  - Establish conditional dependencies between evidence items

- Likelihood Estimation: Survey domain experts to establish conditional probabilities for:

  - Probability of the evidence given the prosecution hypothesis, Pr(E|Hp)

  - Probability of the evidence given the defense hypothesis, Pr(E|Hd)

- Likelihood Ratio Calculation: Compute the likelihood ratio as LR = Pr(E|Hp) / Pr(E|Hd).

- Sensitivity Analysis: Test result robustness to variations in conditional probabilities.

Interpretation: Likelihood ratios above 10,000 provide "very strong support" for the prosecution hypothesis; in the internet auction fraud cases examined in [7], LRs of 164,000 were obtained.
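Once the conditional probabilities have been elicited, the likelihood-ratio step can be computed directly. This sketch makes a simplifying assumption that the protocol itself does not: that evidence items are conditionally independent given each hypothesis, so the combined LR is the product of the per-item ratios. A real Bayesian network would model the dependencies identified during network construction. The probabilities below are hypothetical, and the 10,000 threshold is the "very strong support" level quoted above.

```python
from math import prod

VERY_STRONG_LR = 10_000  # "very strong support" threshold quoted in the text

def likelihood_ratio(p_e_given_hp, p_e_given_hd):
    """Combined LR = Pr(E|Hp) / Pr(E|Hd), assuming conditional independence."""
    return prod(p_e_given_hp) / prod(p_e_given_hd)

def posterior_hp(lr, prior_hp=0.5):
    """Posterior Pr(Hp|E) from the LR and a prior (0.5 = non-informative)."""
    posterior_odds = lr * prior_hp / (1 - prior_hp)
    return posterior_odds / (1 + posterior_odds)

def sensitivity(p_hp, p_hd, factors=(0.5, 2.0)):
    """Recompute the LR after scaling each Pr(E_i|Hd) by each factor."""
    out = {}
    for i in range(len(p_hd)):
        for f in factors:
            perturbed = list(p_hd)
            perturbed[i] = min(1.0, perturbed[i] * f)  # keep a valid probability
            out[(i, f)] = likelihood_ratio(p_hp, perturbed)
    return out

# Hypothetical elicited probabilities for three evidence items.
p_hp = [0.90, 0.80, 0.95]
p_hd = [0.01, 0.05, 0.02]
lr = likelihood_ratio(p_hp, p_hd)
print(f"LR = {lr:,.0f}, very strong support: {lr > VERY_STRONG_LR}")
print(f"Pr(Hp|E) with a 0.5 prior = {posterior_hp(lr):.5f}")
print(f"smallest LR under perturbation = {min(sensitivity(p_hp, p_hd).values()):,.0f}")
```

If the smallest perturbed LR still exceeds the reporting threshold, the conclusion is robust to that range of elicitation error.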

The business case for optimizing forensic workflows is compelling, with demonstrated savings exceeding 25% for specific material substitutions and potentially reducing validation efforts by 60-75% through collaborative approaches [6] [1]. More significantly, efficiency gains directly impact public safety by reducing backlogs that otherwise enable recidivist crime. The protocols presented herein provide a practical roadmap for laboratories to implement these evidence-based improvements while maintaining scientific rigor and legal defensibility. As forensic science continues to evolve toward more quantitative and standardized practices, these optimized workflows will be essential for maximizing the societal value of forensic evidence while operating within constrained public sector budgets.

Research Reagent Solutions

Table 3: Essential Materials for Optimized Forensic Workflows

| Item | Function | Application |
|---|---|---|
| BestSolv Sierra/Delta | Drop-in replacement solvents for fingerprint processing | Cost-saving substitution for Novec solvents in fingerprint development [6] |
| NIST Standard Reference Materials (SRMs) | Reference materials for method validation | Ensuring analytical accuracy and measurement traceability [10] |
| SalvationDATA Digital Forensic Lab | Integrated digital forensic workstation | Streamlined digital evidence processing and case management [8] |
| OSAC-Published Standards | Standardized methods and protocols | Supporting collaborative validation and implementation [10] |
| Bayesian Network Analysis Software | Quantitative evidence evaluation | Calculating likelihood ratios for digital evidence [7] |

The National Technology Validation and Implementation Collaborative (NTVIC) represents a transformative model for advancing forensic science through strategic partnership. Established in 2022, the NTVIC's mission is to facilitate collaboration across the United States on validation, method development, and implementation of forensic technologies [11] [12]. This consortium comprises 13 federal, state, and local government crime laboratory leaders, joined by university researchers and private technology and research companies, creating a multifaceted ecosystem for forensic innovation [11]. The collaborative addresses the critical need for standardized, efficient, and scientifically defensible methods within publicly funded forensic science service providers (FSSPs) and forensic science medical providers (FSMPs) [13].

The NTVIC emerged from recognizing that individual forensic laboratories often lack the resources to independently validate complex new technologies, leading to duplicated efforts and inefficient resource allocation across the judicial system [1]. By creating a structured collaborative framework, the NTVIC enables participating organizations to share resources, expertise, and data, thereby accelerating the implementation of novel forensic methods while maintaining rigorous scientific standards [1]. This national blueprint represents a paradigm shift from isolated validation efforts to a unified approach that elevates forensic practice across jurisdictions through shared minimum standards and best practices [11].

The Collaborative Validation Model: Framework and Business Case

Theoretical Foundation and Operational Framework

The collaborative validation model championed by NTVIC addresses fundamental inefficiencies in traditional forensic method validation. Where individual laboratories historically developed and validated methods independently—often tailoring parameters and procedures to specific jurisdictional needs—the collaborative approach establishes standardized methodologies that can be adopted across multiple laboratories [1]. This framework operates on the principle that while forensic laboratories serve different jurisdictions, they examine common evidence types using similar technologies and methods, creating natural opportunities for standardization and cooperation [1].

The model incorporates a three-phase validation structure that can be distributed across participating organizations:

- Phase One (Developmental Validation): Establishes proof of concept and general procedures, typically conducted by research scientists [1]

- Phase Two (Internal Validation): Conducted by the originating FSSP using forensically relevant samples to establish performance characteristics [1]

- Phase Three (Verification): Performed by subsequent FSSPs that adopt the exact methodology, dramatically reducing implementation time [1]

This distributed approach to validation creates an ecosystem where method development and refinement become collaborative endeavors rather than competitive pursuits, leveraging the collective expertise of participating institutions [1].

Quantitative Business Case and Efficiency Metrics

The business case for collaborative validation demonstrates substantial efficiency gains across multiple dimensions. By sharing validation data and standardizing methodologies, participating laboratories significantly reduce the resource burden associated with implementing new technologies [1].

Table 1: Comparative Analysis of Traditional vs. Collaborative Validation Models

| Validation Component | Traditional Model | Collaborative Model | Efficiency Gain |
|---|---|---|---|
| Method Development Time | 6-12 months | 1-2 months | 75-85% reduction |
| Sample Testing Requirements | 100% performed in-house | 30-40% verification testing | 60-70% reduction |
| Implementation Timeline | 12-18 months | 3-6 months | 65-75% reduction |
| Cost Burden | Full allocation of personnel and resources | Shared across consortium members | 50-60% cost savings |
| Data Comparability | Limited to internal benchmarks | Cross-laboratory comparison enabled | Enhanced reliability |

These efficiency metrics translate to tangible operational benefits, including faster implementation of improved forensic capabilities, reduced backlog of casework, and more consistent results across jurisdictions [1]. The model also creates opportunity for smaller laboratories with limited research and development capacity to implement advanced technologies that would otherwise be beyond their resource constraints [1].
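As a quick consistency check on Table 1, the time reductions can be recomputed from the midpoints of the stated ranges. Using midpoints is an assumption; pairing the range endpoints differently gives a wider spread of percentages.

```python
def midpoint(low, high):
    return (low + high) / 2

def percent_reduction(traditional, collaborative):
    """Percent reduction between two (low, high) ranges, compared at midpoints."""
    return 100 * (1 - midpoint(*collaborative) / midpoint(*traditional))

# Ranges in months, taken from Table 1.
rows = {
    "Method development time": ((6, 12), (1, 2)),
    "Implementation timeline": ((12, 18), (3, 6)),
}
for name, (trad, collab) in rows.items():
    print(f"{name}: {percent_reduction(trad, collab):.1f}% reduction at midpoints")
```

Both midpoint figures (about 83% and 70%) fall inside the efficiency-gain ranges reported in the table.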

Implementation Protocols: Forensic Investigative Genetic Genealogy (FIGG) Case Study

Experimental Design and Workflow Specifications

The NTVIC's first implemented initiative focused on creating standardized protocols for Forensic Investigative Genetic Genealogy (FIGG) programs, providing an exemplary case study of the collaborative model in practice [11] [14]. FIGG combines genetic testing with traditional genealogical research to generate investigative leads in unsolved violent crimes and cases of unidentified human remains [11]. The technical workflow integrates two complementary components: Forensic Genetic Genealogy (FGG) for developing SNP profiles from forensic evidence, and Investigative Genetic Genealogy (IGG) for genealogical research and analysis [11].

The FIGG experimental protocol requires precise sample handling and analytical procedures:

- Sample Requirements: Biological material collected from crime scenes, including blood, semen, saliva, tissue, bone, hair, touch DNA, or other human components bearing DNA [11]

- Sample Processing: Validated methods must demonstrate successful analysis of forensic samples, with additional testing requirements for mixed samples [11]

- Quality Thresholds: Quantity and quality requirements vary by sample type, with good quality single-source samples requiring less material than degraded samples [11]

- Consumption Considerations: Procedures must address sample consumption, with separate approval required when analysis will consume the entire sample [11]

Quality Assurance and Compliance Framework

The FIGG protocol establishes rigorous quality standards to ensure scientific defensibility. Laboratories conducting FGG must operate within an accredited quality assurance system, although FGG itself currently falls outside the scope of accreditation held by public forensic laboratories [11]. The protocol mandates clearly delineated roles and responsibilities, with accountability documented through job descriptions or a RACI matrix (identifying who is Responsible, Accountable, Consulted, and Informed) [11].

Critical compliance requirements include:

- Case Acceptance Criteria: FIGG analysis is restricted to specific case categories, primarily unsolved violent crimes (murder, rape, felony sexual offenses) and unidentified human remains, with additional consideration for crimes presenting substantial threats to public safety [11]

- Investigative Exhaustion: Reasonable investigative efforts must have been pursued and failed unless the crime presents an ongoing threat [11]

- Legal Framework: Memoranda of Understanding (MOUs) must be established between forensic service providers, law enforcement, and prosecutorial agencies prior to conducting FIGG [11]

- Third-Party Protections: Specific protocols govern interactions with third parties identified during genealogical research, emphasizing informed consent and privacy protections [11]
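The case-acceptance, investigative-exhaustion, and MOU requirements above can be expressed as a simple screening function. This is a hypothetical sketch: the category labels and field names are invented for illustration, and the actual decision under [11] involves professional judgment (for example, whether a crime presents a substantial public-safety threat) that a boolean flag cannot capture. Third-party protections are outside its scope.

```python
from dataclasses import dataclass

# Hypothetical labels for the qualifying case categories named in the text.
QUALIFYING_CATEGORIES = {"murder", "rape", "felony sexual offense",
                         "unidentified human remains"}

@dataclass
class FiggRequest:
    category: str
    public_safety_threat: bool     # substantial threat to public safety
    ongoing_threat: bool           # crime presents an ongoing threat
    investigation_exhausted: bool  # reasonable investigative efforts have failed
    mou_in_place: bool             # MOUs among FSSP, law enforcement, prosecutor

def screen(req: FiggRequest):
    """Return (eligible, reasons) for a FIGG case-acceptance screen."""
    reasons = []
    if req.category not in QUALIFYING_CATEGORIES and not req.public_safety_threat:
        reasons.append("case category does not qualify")
    if not req.investigation_exhausted and not req.ongoing_threat:
        reasons.append("reasonable investigative leads not yet exhausted")
    if not req.mou_in_place:
        reasons.append("no MOU in place")
    return not reasons, reasons

ok, why = screen(FiggRequest("murder", False, False, True, True))
print(ok, why)  # True []
```

Returning the list of unmet criteria, rather than a bare yes or no, gives the reviewer a record of why a request was declined.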

Research Reagent Solutions and Essential Materials

The implementation of advanced forensic methodologies like FIGG requires specialized reagents and materials to ensure reliable, reproducible results. The following table catalogues essential research reagents and their specific functions within the forensic genetic genealogy workflow.

Table 2: Essential Research Reagents for Forensic Genetic Genealogy Applications

| Reagent/Material | Technical Function | Application Specifics |
|---|---|---|
| SNP Sequencing Kits | Generation of single nucleotide polymorphism (SNP) profiles from forensic samples | Enables deliberate search for biologically related individuals through kinship analysis [11] |
| Direct-to-Consumer (DTC) DNA Data Files | Reference comparison files from third parties potentially biologically related to putative perpetrator | May be voluntarily provided for upload to genetic genealogy databases; requires informed consent [11] |
| Genetic Genealogy Database Access | Platform for comparing forensic SNP profiles against voluntarily submitted genetic data | Must comply with database Terms of Service; provides investigative leads through relative matching [11] |
| Buccal Collection Kits | Overt reference sample collection from third parties identified during genealogical research | Enables SNP sequencing for upload and comparison; requires written informed consent [11] |
| Quality Control Materials | Monitoring analytical process performance and ensuring result reliability | Must be incorporated throughout FGG analysis to maintain quality assurance standards [11] |

Data Sharing and Collaborative Governance Protocols

Data Sharing Agreements and Security Frameworks

Effective collaboration within the NTVIC model requires structured mechanisms for data sharing that balance scientific transparency with privacy and security requirements [15]. Formal data sharing agreements established in advance of data transfer ensure all parties—researchers, scientists, administrators, and legal teams—agree on terms, use, transfer, and storage protocols [15]. These agreements typically take the form of Confidential Disclosure Agreements (CDAs) or Non-Disclosure Agreements (NDAs), providing a legal framework for protecting sensitive information [15].

The data sharing protocol incorporates multiple security considerations:

- Operations Security (OPSEC): Systematic process for denying potential adversaries information about capabilities and intentions by identifying, controlling, and protecting generally unclassified evidence of the planning and execution of sensitive activities [15]

- Information Security (INFOSEC): Protection of information and information systems from unauthorized access, use, disclosure, disruption, modification, or destruction to ensure confidentiality, integrity, and availability [15]

- Platform Selection: Data sharing platforms must align with security requirements, with options including Microsoft OneDrive, Google Drive, Dropbox, and Box, selected based on data type, quantity, and security needs [15]

Ethical Considerations and Human Subjects Protections

The NTVIC framework incorporates rigorous ethical standards, particularly for methodologies involving genetic data. Projects involving human subjects research must comply with requirements outlined in the Common Rule (45 CFR Part 46) when federally funded [15]. Institutional Review Board (IRB) approval is generally required for projects involving interaction or intervention with human subjects where identifiable private information or biological specimens are collected or analyzed [15].

For FIGG applications specifically, ethical protocols include:

- Third-Party Consent: Written informed consent must be obtained from third parties for reference sample collection or upload of existing genetic data to databases [11]

- Covert Collection Restrictions: Covert collection of third-party reference samples requires prior court approval based on demonstrated substantial risk [11]

- Privacy Safeguards: Use of all samples collected for forensic casework must align with genetic genealogy database Terms of Service and privacy protections [11]

The NTVIC model represents a transformative national blueprint for forensic method validation and implementation that addresses systemic inefficiencies while elevating scientific standards across jurisdictions. By creating structured mechanisms for collaboration, data sharing, and standardized protocol development, this consortium enables more rapid adoption of advanced forensic technologies while maintaining scientific rigor and defensibility [11] [1]. The success of initial initiatives like the FIGG validation guidelines demonstrates the practical utility of this approach for complex, emerging forensic methodologies [14].

For researchers and forensic science professionals, the NTVIC framework offers a replicable model for accelerating technology implementation while reducing redundant validation efforts. The collaborative approach enhances standardization across laboratories, improves result comparability, and creates opportunities for smaller laboratories to implement technologies that would otherwise exceed their resource capacity [1]. As forensic technologies continue to advance in complexity and capability, collaborative validation consortia like NTVIC provide an essential infrastructure for ensuring these innovations are implemented efficiently, ethically, and consistently across the forensic science enterprise.

Forensic Science Service Providers (FSSPs) operate in a complex landscape characterized by rapidly advancing technology, increasing methodological complexity, and significant resource constraints [1]. The traditional model of independent method validation creates substantial inefficiencies, with approximately 409 U.S. FSSPs often performing similar validation procedures with only minor modifications [1]. This redundancy represents a significant waste of precious resources that could otherwise be directed toward active casework and innovative research. Simultaneously, the National Institute of Justice (NIJ) has identified key research priorities for Fiscal Year 2025 that emphasize improving forensic science systems, identifying best practices, and supporting foundational applied research [16]. A strategic alignment emerges between these priorities and collaborative scientific approaches that can simultaneously enhance research impact while optimizing resource utilization across the forensic science community.

Collaborative models fundamentally reshape how forensic laboratories approach method validation, technology implementation, and knowledge transfer [1]. By working cooperatively on validation projects, FSSPs performing similar analyses using comparable technology can standardize methodologies, share development costs, and accelerate implementation timelines [12]. This approach directly supports NIJ's research mission to "increase the body of knowledge to guide and inform forensic science policy and practice" while resulting "in the production of useful materials, devices, systems, or methods that have the potential for forensic application" [17]. The collaborative validation model represents a paradigm shift from isolated institutional efforts to coordinated community-driven scientific advancement.

NIJ Research Priority Areas and Collaborative Opportunities

The National Institute of Justice's anticipated research interests for Fiscal Year 2025 present multiple avenues for collaborative engagement across the forensic science community [16]. These priorities reflect both enduring challenges and emerging opportunities in forensic science practice and research.

Analysis of FY 2025 NIJ Research Interests

Table: NIJ FY 2025 Research Priorities Relevant to Forensic Collaboration

| Priority Category | Specific Research Topics | Collaborative Potential |
|---|---|---|
| Research & Evaluation | Social science research on forensic science systems | Multi-site evaluation of implementation barriers |
| Research & Evaluation | Identifying forensic community best practices | Cross-jurisdictional comparison of validation approaches |
| Applied Research | Foundational/applied R&D in forensic sciences | Inter-laboratory validation of novel technologies |
| Research & Evaluation | AI use within the criminal justice system | Shared datasets for algorithm validation |

These priorities share a common thread of requiring diverse perspectives and multi-site participation to produce scientifically robust and generally applicable findings. The emphasis on "social science research and evaluative studies on forensic science systems" specifically invites investigations into how collaborative networks form, operate, and sustain themselves [16]. Similarly, the focus on "research and evaluation projects to identify and inform the forensic community of best practices" naturally aligns with comparative studies across laboratories employing different validation strategies [16].

Strategic Alignment Mapping

Collaborative models directly advance NIJ priorities through several distinct mechanisms:

Accelerating Knowledge Transfer: When originating FSSPs publish validation data in peer-reviewed journals, they communicate technological improvements and allow peer review that supports establishing validity [1]. This process directly creates the "body of knowledge to guide and inform forensic science policy and practice" that NIJ prioritizes [17].

Resource Optimization: Smaller laboratories with limited research capacity can leverage validations conducted by larger or more specialized facilities, reducing the "activation energy" required to implement new technologies [1]. This efficiency enables broader participation in technological advancement across laboratory tiers.

Standardization and Quality Enhancement: Collaborative working groups that share results and monitor parameters optimize direct cross-comparability between FSSPs [1]. This alignment supports the development of consistent best practices across jurisdictions.

The National Technology Validation and Implementation Collaborative (NTVIC) exemplifies this strategic alignment in practice. Established in 2022, this collaborative brings together 13 federal, state, and local government crime laboratory leaders with university researchers and private technology companies to develop validation standards and implementation guidelines for emerging methods like Forensic Investigative Genetic Genealogy (FIGG) [12].

Collaborative Method Validation: Framework and Implementation

The collaborative validation model operates through a structured framework that maintains scientific rigor while distributing workload across participating organizations. This approach transforms validation from an isolated institutional requirement to a community-sourced scientific process.

Core Principles of Collaborative Validation

The foundational principle of collaborative validation is that FSSPs following applicable standards who are first to validate a method incorporating new technology, platform, kit, or reagents should publish their work in recognized peer-reviewed journals [1]. Publication provides objective evidence that method performance is adequate for intended use and meets specified requirements [1]. Subsequent FSSPs can then conduct an abbreviated method validation—a verification—if they adhere strictly to the method parameters provided in the publication [1]. This verification process requires the second FSSP to review and accept the original published data and findings, thereby eliminating significant method development work [1].

This approach is supported by international standards, including ISO/IEC 17025, which permits laboratories to verify methods previously validated by others [18]. The cited guidance states: "When a method has been validated in another organization the forensic unit shall review validation records to ensure that the validation performed was fit for purpose. It is then possible for the forensic unit to only undertake verification for the method to demonstrate that the unit is competent to perform the test/examination" [18].

Three-Phase Validation Model

Collaborative validation occurs across three distinct phases that can be distributed across multiple organizations:

Table: Phases of Collaborative Method Validation

| Validation Phase | Primary Objectives | Typical Lead Organizations | Collaborative Opportunities |
|---|---|---|---|
| Developmental Validation | Proof of concept, general procedures | Research institutions, manufacturers | Literature synthesis, basic research sharing |
| Internal Validation | Establish laboratory-specific parameters | Large reference laboratories, core facilities | Multi-site testing, shared sample exchanges |
| Verification | Demonstrate competency with established methods | Implementing laboratories, small FSSPs | Shared protocols, cross-training, proficiency testing |

Phase One (Developmental Validation) is typically performed at a high level, establishing general procedures and proof of concept; it is frequently carried out by research scientists, and the method often originates in a non-forensic application [1]. Publication of this material in peer-reviewed journals is common [1]. This phased approach allows organizations with different resources and expertise to contribute according to their capacities while all participants benefit from the collective output.

Experimental Protocols for Collaborative Validation

Implementing collaborative validation requires structured methodologies to ensure scientific rigor while facilitating multi-site participation. The following protocols provide detailed frameworks for key collaborative activities.

Protocol 1: Inter-Laboratory Method Verification

Purpose: To establish a standardized procedure for verifying a previously validated method across multiple implementing laboratories.

Materials and Reagents:

- Reference standards traceable to national or international standards

- Control materials with characterized properties

- Testing materials representative of typical casework samples

- All reagents specified in the original validation publication

Procedure:

- Documentation Review: Comprehensively review the original validation publication, focusing on methods, materials, acceptance criteria, and limitations.

- Protocol Alignment: Adapt laboratory standard operating procedures to exactly match the published method parameters.

- Pre-Verification Testing: Conduct preliminary tests using control materials to establish baseline performance.

- Blinded Sample Analysis: Analyze a standardized set of blinded samples provided by the originating laboratory or third party.

- Data Comparison: Compare results across participating laboratories using statistical measures of agreement.

- Proficiency Assessment: Implement ongoing proficiency testing as part of quality assurance.
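The Protocol Alignment step can be partly automated by comparing a laboratory's SOP parameters against the published method. The following is a minimal Python sketch, assuming both are recorded as simple key-value pairs; the parameter names (`column`, `carrier_gas`, `flow_rate_ml_min`) and the function `find_deviations` are hypothetical, and the values are illustrative, loosely following the rapid GC-MS method described in Case Study 1.

```python
from typing import Any

# Hypothetical parameter names; illustrative values for a published method.
PUBLISHED_METHOD: dict[str, Any] = {
    "column": "DB-5 ms, 30 m x 0.25 mm x 0.25 um",
    "carrier_gas": "helium",
    "flow_rate_ml_min": 2.0,
}

def find_deviations(published: dict[str, Any],
                    local_sop: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {parameter: (published_value, local_value)} for every mismatch.

    A parameter missing from the local SOP is reported with local value None;
    parameters that appear only in the local SOP are not checked.
    """
    deviations = {}
    for name, expected in published.items():
        actual = local_sop.get(name)
        if actual != expected:
            deviations[name] = (expected, actual)
    return deviations

# A local SOP that differs from the publication only in carrier gas flow.
local_sop = dict(PUBLISHED_METHOD, flow_rate_ml_min=1.5)
print(find_deviations(PUBLISHED_METHOD, local_sop))
# {'flow_rate_ml_min': (2.0, 1.5)}
```

An empty result is a necessary but not sufficient condition for a verification: any reported deviation means the laboratory is no longer following the published parameters and would need to justify or validate the change.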

Validation Criteria: Results must fall within established confidence intervals for precision and accuracy defined in the original validation. Inter-laboratory comparison should demonstrate >95% concordance for qualitative methods and statistical equivalence for quantitative methods.
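The acceptance criteria above can be expressed as two small checks: percent concordance for qualitative calls, and an equivalence test for quantitative results. This sketch assumes the two one-sided tests (TOST) procedure as the equivalence test and uses a normal (z) approximation for the critical value, which is anti-conservative for small replicate counts, where a Student t quantile is preferable. The equivalence margin must be agreed by the participating laboratories and is not specified in the protocol; the function names are hypothetical.

```python
import math
import statistics
from statistics import NormalDist

def concordance(lab_calls: list[str], reference_calls: list[str]) -> float:
    """Fraction of qualitative calls that agree with the reference calls."""
    if not lab_calls or len(lab_calls) != len(reference_calls):
        raise ValueError("call lists must be non-empty and of equal length")
    agree = sum(a == b for a, b in zip(lab_calls, reference_calls))
    return agree / len(lab_calls)

def equivalent_means(a: list[float], b: list[float],
                     margin: float, alpha: float = 0.05) -> bool:
    """TOST via the (1 - 2*alpha) confidence interval of the mean difference.

    Equivalence is declared when the whole interval lies inside
    (-margin, +margin). Uses a Welch-style standard error and a normal
    approximation for the critical value.
    """
    diff = statistics.fmean(a) - statistics.fmean(b)
    se = math.sqrt(statistics.variance(a) / len(a) + statistics.variance(b) / len(b))
    z = NormalDist().inv_cdf(1 - alpha)
    lower, upper = diff - z * se, diff + z * se
    return -margin < lower and upper < margin

# 48 of 50 blinded samples agree with the reference calls.
print(concordance(["pos"] * 48 + ["neg"] * 2, ["pos"] * 50))  # 0.96
```

Under the >95% criterion, a laboratory passes the qualitative check when `concordance(...)` exceeds 0.95.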

Protocol 2: Multi-Site Validation Data Pooling

Purpose: To combine validation data from multiple laboratories to establish more robust performance characteristics and population statistics.

Data Collection Standards:

- Standardized data formatting using agreed-upon templates

- Complete metadata documentation including instrument conditions, reagent lots, and analyst information

- Uniform statistical analyses specified in the study design phase

Analysis Framework:

- Data Harmonization: Apply consistent data transformation and normalization procedures across all datasets.

- Outlier Assessment: Identify and investigate methodological versus true outliers using predefined criteria.

- Meta-Analysis: Combine results using appropriate random-effects or fixed-effects models depending on heterogeneity.

- Sensitivity Analysis: Evaluate the impact of individual laboratories on overall conclusions.

This protocol enables the creation of larger, more diverse datasets that provide better estimates of method performance across different laboratory environments, instrument platforms, and analyst skill levels [15].
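The Meta-Analysis and Sensitivity Analysis steps can be illustrated with standard inverse-variance pooling. This sketch assumes each laboratory reports one effect estimate (for example, mean bias against a reference value) together with its variance, and uses the DerSimonian-Laird estimator for the between-laboratory variance. Cochran's Q and I² are returned so the choice between fixed- and random-effects models can be based on heterogeneity. The function names are hypothetical, and the sketch is an illustration, not a substitute for a validated statistics package.

```python
import math

def meta_analysis(estimates: list[float], variances: list[float]) -> dict[str, float]:
    """Fixed-effect and DerSimonian-Laird random-effects pooled estimates.

    estimates: one effect estimate per laboratory
    variances: squared standard errors of those estimates (all > 0)
    """
    k = len(estimates)
    w = [1.0 / v for v in variances]                      # fixed-effect weights
    fixed = sum(wi * yi for wi, yi in zip(w, estimates)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, estimates))  # Cochran's Q
    df = k - 1
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0      # between-lab variance
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0         # share of variation due to heterogeneity
    w_re = [1.0 / (v + tau2) for v in variances]          # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, estimates)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return {"fixed": fixed, "random": pooled, "se": se, "Q": q, "tau2": tau2, "I2": i2}

def leave_one_out(estimates: list[float], variances: list[float]) -> list[float]:
    """Sensitivity analysis: random-effects estimate with each laboratory removed."""
    return [
        meta_analysis(estimates[:i] + estimates[i + 1:],
                      variances[:i] + variances[i + 1:])["random"]
        for i in range(len(estimates))
    ]
```

When I² is near zero the fixed- and random-effects estimates coincide; a large I² signals that laboratories disagree more than their reported precision explains, which is itself a finding worth investigating during Outlier Assessment.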

Visualization of Collaborative Validation Workflows

The following diagrams illustrate key processes and relationships in collaborative validation models.

Collaborative Validation Implementation Pathway

NTVIC Organizational Ecosystem

The Scientist's Toolkit: Research Reagent Solutions for Collaborative Studies

Successful collaborative validation requires careful selection and standardization of reagents and materials across participating laboratories. The following table details essential components for forensic method validation studies.

Table: Essential Research Reagents for Collaborative Forensic Validation Studies

| Reagent/Material | Function in Validation | Standardization Requirements | Collaborative Application |
|---|---|---|---|
| Reference Standards | Calibration and quality control | Traceability to national standards | Cross-laboratory comparability |
| Control Materials | Monitoring analytical performance | Characterized for stability and homogeneity | Inter-laboratory proficiency testing |
| Certified Reference Materials | Method accuracy assessment | Documented uncertainty measurements | Shared between originating and verifying labs |
| Commercial Kits/Reagents | Standardized analytical procedures | Lot-to-lot consistency documentation | Shared procurement for multi-site studies |
| Synthetic DNA Profiles | Bioinformatics validation | Sequence verification and documentation | Shared digital resources |
| Blinded Sample Sets | Method performance evaluation | Homogeneity testing and characterization | Circulation between participating labs |

Data Sharing and Security in Collaborative Research

Effective collaboration requires structured approaches to data sharing that balance accessibility with security and confidentiality. Forensic data often contains multiple layers of confidentiality, including information associated with non-adjudicated casework or identifiable private information from biospecimens [15].

Data Sharing Agreement Framework

Formal data sharing agreements established in advance of data transfer ensure all parties—researchers, scientists, administrators, and legal teams—agree on terms, use, transfer, and storage of data [15]. These agreements typically include:

- General Terms: Legal framework for data protection

- Disclosure Period: Timeframe during which data can be shared

- Disclosure Coordinators: Designated individuals at each institution

- Confidential Information Specification: Precise description of what data is covered

- Purpose Statement: Approved uses for the shared data

The agreement process typically begins with one party initiating a Confidential Disclosure Agreement (CDA) or Non-Disclosure Agreement (NDA) using an institutionally approved template [15]. This undergoes review by both parties' legal departments or sponsored programs offices before being sent to designated signatory authorities for final approval [15].
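One way to make the agreement elements listed above checkable before any transfer is to hold them in a small record. The class and field names below are hypothetical, and the completeness rule (every element non-empty, transfer date inside the disclosure period) is an assumption for illustration, not a legal test.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass
class DataSharingAgreement:
    # Hypothetical fields mirroring the CDA/NDA elements listed above.
    general_terms: str
    disclosure_start: date
    disclosure_end: date
    coordinators: dict[str, str] = field(default_factory=dict)  # institution -> designated coordinator
    confidential_information: str = ""
    purpose: str = ""

    def missing_elements(self) -> list[str]:
        """Names of required elements that are still empty."""
        required = {
            "general_terms": self.general_terms,
            "coordinators": self.coordinators,
            "confidential_information": self.confidential_information,
            "purpose": self.purpose,
        }
        return [name for name, value in required.items() if not value]

    def permits_transfer(self, on: date) -> bool:
        """True only if the record is complete and `on` is within the disclosure period."""
        return not self.missing_elements() and self.disclosure_start <= on <= self.disclosure_end

cda = DataSharingAgreement(
    general_terms="Institutional CDA template",
    disclosure_start=date(2025, 1, 1),
    disclosure_end=date(2025, 12, 31),
    coordinators={"Lab A": "Coordinator A", "Lab B": "Coordinator B"},
    confidential_information="De-identified validation run data",
    purpose="Inter-laboratory verification study",
)
print(cda.permits_transfer(date(2025, 6, 1)))  # True
```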

Data Security and Platform Selection

Collaborative forensic research must implement appropriate data security measures based on data type and confidentiality requirements. Key security frameworks include:

- Operations Security (OPSEC): Systematic process to deny potential adversaries information about capabilities and intentions by identifying, controlling, and protecting generally unclassified evidence of the planning and execution of sensitive activities [15]

- Information Security (INFOSEC): Protection of information and information systems from unauthorized access, use, disclosure, disruption, modification, or destruction [15]

Platform selection for data sharing should consider data type, quantity, and security requirements. Common platforms include Microsoft OneDrive, Google Drive, Dropbox, and Box for file sharing, and Microsoft Teams, Slack, or Discord for collaborative communication [15]. The simplest method that meets security requirements is typically preferred.

Collaborative models represent a transformative approach to forensic method validation that directly supports NIJ's research priorities by enhancing efficiency, standardization, and knowledge transfer across the forensic science community. By working cooperatively, FSSPs can accelerate the implementation of new technologies, reduce redundant validation efforts, and create more robust performance data through multi-site studies [1]. The emerging framework of organizations like the National Technology Validation and Implementation Collaborative demonstrates the practical application of this model [12].

As forensic science continues to evolve with technological advancements in areas like genetic genealogy, artificial intelligence, and rapid DNA analysis, collaborative approaches will become increasingly essential for maintaining scientific rigor while maximizing limited resources. The strategic alignment between collaborative validation models and NIJ research priorities creates a powerful synergy that advances forensic science as a discipline while enhancing its capacity to serve the criminal justice system.

The escalating complexity of forensic analyses, from seized drug screening to taphonomy studies, demands rigorous, reliable, and efficient methodological processes. The collaborative method validation model presents a transformative framework for Forensic Science Service Providers (FSSPs). This paradigm shifts away from isolated, redundant validations towards a cooperative approach where laboratories performing the same tasks using the same technology work together to standardize methods and share data [1]. This model is foundational to a modern forensic science ethos, strengthening scientific validity, conserving resources, and ensuring that methods meet the stringent admissibility standards required by court systems, such as the Daubert standard [19]. The core principles of Standardization, Data Sharing, and Peer Review are interwoven pillars that support this collaborative framework, enabling forensic laboratories to keep pace with technological advancement while maintaining the highest levels of quality and scientific integrity.

The Pillars of Collaborative Method Validation

Standardization

Standardization ensures that methods are fit for purpose, scientifically sound, and produce reliable, repeatable results across different laboratories and jurisdictions. In a collaborative model, the originating FSSP develops a method using robust, well-designed validation protocols that incorporate relevant published standards from organizations such as SWGDAM or OSAC [1]. This initial, thorough validation provides a benchmark for the entire community.

- Efficiency and Direct Comparability: When subsequent FSSPs adopt the exact instrumentation, procedures, reagents, and parameters of the published method, they can perform an abbreviated verification process instead of a full validation [1]. This eliminates significant method development work and, crucially, enables direct cross-comparison of data between laboratories, fostering ongoing methodological improvements and inter-laboratory consistency.

- Meeting Legal and Accreditation Standards: Standardization is critical for satisfying legal criteria for the admissibility of scientific evidence. Methods must be broadly accepted in the scientific community and reliably applied [1]. Furthermore, accreditation to standards such as ISO/IEC 17025 requires that all methods be validated prior to use on casework, and the concept of verification based on a prior validation is an accepted practice within these requirements [1].

Data Sharing

Data sharing is the mechanism that makes collaborative validation possible. It involves the proactive deposition and publication of method validation data, making it accessible to the wider forensic science community.

- Publication and Dissemination: Originating FSSPs are encouraged to publish their complete validation data in recognized, peer-reviewed journals, often in an open-access format to ensure broad dissemination [1]. This practice communicates technological improvements and allows for scrutiny by peers.

- FAIR Data Principles: To promote true reproducibility and reuse, shared data should adhere to the FAIR principles—being Findable, Accessible, Interoperable, and Reusable [20]. Depositing datasets in appropriate, discipline-specific repositories with persistent identifiers (e.g., DOIs) is a best practice that supports this principle. For example, spectral data can be shared via MassBank, and crystallographic data via the Cambridge Structural Database (CSD) [20].

- Benefits for Laboratories of All Sizes: Data sharing democratizes access to advanced methodologies. Smaller FSSPs with limited resources can leverage the expertise and validation work of larger entities, reducing the "activation energy" required to implement new technology [1].

Peer Review

Peer review acts as the quality control mechanism for both published method validations and the scientific data presented in court. It provides objective, expert assessment to ensure that methods, data, and conclusions are sound.

- Pre-Publication Scrutiny: The peer-review process for journal articles containing method validations involves critical evaluation by independent experts. This review assesses the experimental design, data analysis, and conclusions, ensuring the validation is comprehensive and the method is truly fit for its intended purpose [1].

- Supporting Legal Admissibility: Peer-reviewed publications contribute significantly to satisfying the Daubert criteria, under which expert testimony must be the product of reliable principles and methods, and whether a technique has been subjected to peer review and publication is one of the factors courts weigh [19]. A peer-reviewed publication demonstrates that the method has been scrutinized by scientists beyond the originating laboratory.

The synergistic relationship between these three pillars is illustrated in the workflow below.

Application in Forensic Research: Case Studies

Case Study 1: Rapid GC-MS for Seized Drug Analysis

A recent study developed and optimized a rapid Gas Chromatography-Mass Spectrometry (GC-MS) method for screening seized drugs, reducing the total analysis time from 30 minutes to 10 minutes while improving detection limits [3]. This study serves as an exemplary model of the collaborative validation principles in action.

- Systematic Validation: The method underwent a comprehensive validation protocol assessing repeatability, reproducibility, accuracy, detection limits, and carryover, following established forensic guidelines [3].

- Application to Real Casework: The validated method was successfully applied to 20 real case samples from the Dubai Police Forensic Labs, accurately identifying diverse drug classes including synthetic opioids and stimulants [3]. The quantitative data from this validation are summarized in the table below.

Table 1: Quantitative Validation Data for Rapid GC-MS Method in Seized Drug Analysis [3]

| Performance Characteristic | Result / Value | Comparative Benchmark |
|---|---|---|
| Total Analysis Time | 10 minutes | 30 minutes (conventional method) |
| Limit of Detection (LOD) for Cocaine | 1 μg/mL | 2.5 μg/mL (conventional method) |
| LOD Improvement for Key Substances | At least 50% improvement | Conventional method baseline |
| Repeatability & Reproducibility (RSD) | < 0.25% for stable compounds | Method-dependent |
| Match Quality Score (Real Samples) | Consistently > 90% | Method-dependent |
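The relative gains in Table 1 follow from simple arithmetic. A quick check using only the values in the table confirms that the cocaine LOD change (2.5 to 1 μg/mL) is a 60% improvement, consistent with the reported "at least 50%", and that the runtime fell by about two thirds. The helper name is hypothetical.

```python
def percent_reduction(baseline: float, new: float) -> float:
    """Relative reduction from a baseline value, in percent."""
    return (baseline - new) / baseline * 100.0

# Values taken from Table 1.
print(round(percent_reduction(30, 10), 1))    # analysis time: 66.7
print(round(percent_reduction(2.5, 1.0), 1))  # cocaine LOD: 60.0
```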

Experimental Protocol: Rapid GC-MS Method Validation

Title: Protocol for the Development and Validation of a Rapid GC-MS Method for Seized Drug Screening.

1. Instrumentation and Materials:

- GC-MS System: Agilent 7890B GC connected to 5977A MSD [3].

- Column: Agilent J&W DB-5 ms (30 m × 0.25 mm × 0.25 μm) [3].

- Carrier Gas: Helium, 99.999% purity, fixed flow rate of 2 mL/min [3].

- Test Solutions: Custom mixtures prepared in methanol (approx. 0.05 mg/mL per compound) including Tramadol, Cocaine, Heroin, MDMA, synthetic cannabinoids, and others [3].

2. Method Development and Optimization:

- The temperature program and flow rate were optimized via a trial-and-error process using the general analysis mixtures to achieve peak resolution and the 10-minute runtime [3].

- The final method parameters are proprietary to the study but involved advanced temperature programming [3].

3. Validation Procedure:

- Selectivity: Inject an extracted blank matrix sample to demonstrate that no matrix component interferes at the retention time of the analyte [21].

- Linearity: Prepare standard solutions at a minimum of six concentration levels (e.g., 25-200% of target). Analyze replicates at each level. Calculate regression equation and correlation coefficient (r). Acceptance criteria: r ≥ 0.997 for active ingredients [21].

- Accuracy: Prepare spiked samples at three concentrations over the range (e.g., 50%, 100%, 150% of target). Analyze replicates and calculate percent recovery. Acceptance criteria: mean recovery within 90-110% of theoretical value [21].

- Precision (Repeatability): Analyze ten replicates from a single sample solution at the target level. Calculate the Relative Standard Deviation (RSD) of the results. Acceptance criteria: RSD ≤ 2% for drug products [21].

- Limit of Detection (LOD) and Quantitation (LOQ): Determine the lowest concentration yielding a signal-to-noise ratio of 3:1 for LOD and 10:1 for LOQ, with LOQ also demonstrating an RSD of approximately 10% for six replicates [21].

- Robustness/Ruggedness: Demonstrate intermediate precision by having two analysts using two instruments on different days evaluate samples. Acceptance criteria: RSD between operators and instruments ≤ 2% [21].
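The numerical acceptance criteria in the validation procedure (r ≥ 0.997, mean recovery 90-110%, RSD ≤ 2%) can be checked with a few lines of standard-library Python. This is a sketch assuming ordinary least-squares linearity and the sample standard deviation for RSD; the function names and the example data are hypothetical. The same `rsd` function could be applied to the pooled analyst and instrument results for the robustness criterion.

```python
import math
import statistics

def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Ordinary least-squares slope, intercept and Pearson correlation r."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r

def mean_recovery(measured: list[float], theoretical: float) -> float:
    """Mean percent recovery of spiked replicates at one concentration level."""
    return statistics.fmean([m / theoretical * 100.0 for m in measured])

def rsd(values: list[float]) -> float:
    """Relative standard deviation in percent (sample SD divided by the mean)."""
    return statistics.stdev(values) / statistics.fmean(values) * 100.0

def passes(r: float, recovery: float, repeatability_rsd: float) -> dict[str, bool]:
    """Apply the acceptance limits quoted in the validation procedure."""
    return {
        "linearity": r >= 0.997,
        "accuracy": 90.0 <= recovery <= 110.0,
        "precision": repeatability_rsd <= 2.0,
    }

# Hypothetical example data: six levels spanning 25-200% of target.
conc = [25, 50, 75, 100, 150, 200]
resp = [0.251, 0.502, 0.748, 1.003, 1.497, 2.001]
_, _, r = linear_fit(conc, resp)
recovery = mean_recovery([9.8, 10.1, 10.0], theoretical=10.0)
replicates = [100.2, 99.8, 100.1, 99.9, 100.0, 100.3, 99.7, 100.0, 100.1, 99.9]
print(passes(r, recovery, rsd(replicates)))
# {'linearity': True, 'accuracy': True, 'precision': True}
```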

4. Application to Case Samples:

- Extraction (Solid Samples): Grind tablet/capsule to powder. Add ~0.1 g to 1 mL methanol, sonicate for 5 min, centrifuge, and transfer supernatant to GC vial [3].

- Extraction (Trace Samples): Swab surfaces with methanol-moistened swab. Immerse swab tip in 1 mL methanol, vortex, and transfer extract to GC vial [3].

- Analysis: Analyze all extracts using the validated rapid GC-MS method and compare identifications and quality scores to a conventional method [3].

Case Study 2: Forensic Taphonomy Decomposition Studies

Forensic taphonomy, the study of post-mortem changes, faces significant challenges in standardization to satisfy Daubert criteria. The field has moved towards quantification to reduce observer variability, but debates persist regarding experimental design, such as the use of human versus animal analogues [19].

- The Model Organism Debate: While human donors are the ideal subject, their use is restricted by ethical and legal constraints. Pigs (Sus scrofa domesticus) have emerged as the preferred model organism due to anatomical and physiological similarities, including skin structure, body fat percentage, and being monogastric omnivores [19].

- Standardization of Experimental Design: To ensure data is applicable to real forensic cases, studies must be designed with forensic realism. This includes using single, clothed, uncaged carcasses to reflect regionally specific casework and to account for the effects of scavengers [19]. A suite of standardized design aspects is recommended for systematic data collection across different environments [19].

Table 2: Key Considerations for Standardizing Taphonomic Experimental Design [19]

| Experimental Factor | Recommended Best Practice | Rationale |
|---|---|---|
| Subject Type | Pigs as a proxy, with validation from human donors where available. | Anatomical similarities; addresses ethical/logistical hurdles of human subjects. |
| Subject Presentation | Single, clothed, uncaged carcasses. | Maximizes forensic realism by reflecting typical homicide scenarios and allowing for scavenger access. |
| Data Collection | Quantitative measurements using standardized protocols. | Reduces inter-observer variability, satisfies Daubert criteria for scientific rigor. |
| Geographical Replication | Studies in multiple, varied biogeographic circumstances. | Facilitates independent global validation of decomposition patterns. |

Experimental Protocol: Establishing a Taphonomic Decomposition Baseline

Title: Protocol for a Baseline Forensic Taphonomy Study Using Animal Analogues.

1. Experimental Site and Carcass Preparation:

- Select a study site that is secure and representative of local biogeoclimatic conditions [19].

- Obtain juvenile pig carcasses of similar mass. Clothe each carcass in standardized, natural-fiber garments (e.g., a 100% cotton t-shirt) [19].

- Place carcasses on the soil surface in a prone position, without caging, to allow for full scavenger access and natural decomposition processes [19].

2. Data Collection Schedule and Metrics:

- Total Body Score (TBS): Document standardized quantitative scores daily for the first week, then weekly thereafter. Scoring should cover distinct body regions (head, trunk, limbs) for decompositional changes including color, bloat, marbling, and purge fluid [19].

- Environmental Data: Log temperature, humidity, and precipitation at the site daily. Soil temperature and pH should be recorded at regular intervals.

- Photographic Documentation: Take high-resolution, color-calibrated photographs from fixed points and distances at each scoring interval to create a permanent visual record [19].

- Faunal Activity: Document presence and activity of insects and scavengers.
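The scoring schedule and Total Body Score can be sketched as follows. The per-region maxima (head 13, trunk 12, limbs 10) are an assumption modeled on a commonly used regional scoring scheme and should be replaced with whatever scheme the study adopts; the schedule reads "daily for the first week, then weekly" as days 1-7 followed by every seventh day. Function and constant names are hypothetical.

```python
# Assumed per-region maximum scores; replace with the scheme the study adopts.
REGION_MAX = {"head": 13, "trunk": 12, "limbs": 10}

def scoring_days(total_days: int) -> list[int]:
    """Study days to score: every day in week one, then every seventh day."""
    days = list(range(1, min(total_days, 7) + 1))
    days += list(range(14, total_days + 1, 7))
    return days

def total_body_score(scores: dict[str, int]) -> int:
    """Sum the regional scores after checking each lies in its allowed range."""
    for region, maximum in REGION_MAX.items():
        value = scores[region]
        if not 1 <= value <= maximum:
            raise ValueError(f"{region} score {value} is outside 1-{maximum}")
    return sum(scores[region] for region in REGION_MAX)

print(scoring_days(30))  # [1, 2, 3, 4, 5, 6, 7, 14, 21, 28]
print(total_body_score({"head": 4, "trunk": 3, "limbs": 2}))  # 9
```

Rejecting out-of-range scores at entry time is a cheap safeguard for multi-site studies, where different observers may otherwise record scores on slightly different scales.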

3. Data Sharing and Repository:

- All raw quantitative data (TBS, temperature), metadata (carcass mass, clothing type), and calibrated photographs should be compiled.

- Data should be deposited in an appropriate generalist or institutional repository in machine-readable formats (e.g., .csv for data, .tiff for images) to ensure FAIR principles are met [20].
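Depositing data in machine-readable form can be as simple as writing observations to CSV and study-level metadata to a JSON sidecar. This is a minimal sketch using only the Python standard library; the file names, field names, and example values are hypothetical, and a real deposit would also follow the target repository's own metadata schema and obtain a persistent identifier.

```python
import csv
import json
import tempfile
from pathlib import Path

def export_study(out_dir: str, observations: list[dict], metadata: dict) -> list[Path]:
    """Write per-visit observations to CSV and study metadata to a JSON sidecar.

    `observations` must be a non-empty list of dicts sharing the same keys.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    csv_path = out / "observations.csv"
    with csv_path.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=list(observations[0]))
        writer.writeheader()
        writer.writerows(observations)
    meta_path = out / "metadata.json"
    meta_path.write_text(json.dumps(metadata, indent=2, sort_keys=True), encoding="utf-8")
    return [csv_path, meta_path]

# Illustrative values only.
with tempfile.TemporaryDirectory() as tmp:
    paths = export_study(
        tmp,
        [{"day": 1, "tbs": 3, "air_temp_c": 18.5}, {"day": 2, "tbs": 5, "air_temp_c": 19.0}],
        {"carcass_mass_kg": 20.0, "clothing": "100% cotton t-shirt", "position": "prone"},
    )
    print([p.name for p in paths])  # ['observations.csv', 'metadata.json']
```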

The logical flow of a taphonomy study, from design to data sharing, is depicted below.

The Scientist's Toolkit: Research Reagent Solutions

The implementation of standardized and validated methods relies on a suite of essential materials and reagents. The following table details key items used in the featured experiments and their broader application in forensic research.

Table 3: Essential Research Reagents and Materials for Forensic Method Development and Validation

| Item / Reagent | Function / Application | Example in Context |
|---|---|---|
| DB-5 ms GC Column | A low-polarity, general-purpose chromatography column used to separate a wide range of organic compounds. | The 30 m DB-5 ms column was central to the rapid GC-MS method for seized drug analysis, enabling the separation of diverse drug classes within 10 minutes [3]. |
| Certified Reference Materials (CRMs) | Highly pure, characterized substances used to calibrate instruments, validate methods, and ensure the accuracy and traceability of results. | Used in the GC-MS study to prepare accurate test solutions for method development and to assess accuracy during validation [3] [21]. |
| Stable Isotope-Labeled Internal Standards | Analogues of the target analytes with nearly identical chemical properties but a different mass, used in mass spectrometry to correct for sample loss and matrix effects. | Critical for quantitative LC-MS/MS or GC-MS analyses of drugs in biological matrices, improving precision and accuracy. |
| Proteinase K | A broad-spectrum serine protease used in forensic DNA extraction to digest proteins and degrade nucleases, freeing DNA. | A standard reagent in DNA extraction kits for processing challenging samples such as bone, tissue, and degraded bloodstains. |
| Methanol (HPLC/GC-MS Grade) | A high-purity solvent used for sample dissolution, dilution, and liquid-liquid extraction. | Used as the extraction solvent for both solid and trace drug samples in the rapid GC-MS protocol [3]. |
| Solid Phase Extraction (SPE) Cartridges | Devices containing a sorbent that selectively isolates and concentrates analytes from complex liquid samples, purifying them for analysis. | Commonly used to extract and clean up drugs, pesticides, or toxins from biological fluids such as blood or urine before instrumental analysis. |
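The way an internal standard corrects for sample loss can be shown with a generic internal-standard calibration: each peak area is divided by the internal-standard peak area, a straight line is fitted to the resulting ratios of the calibrators, and unknowns are back-calculated from that line. The sketch below is a textbook illustration with made-up calibrator values, not the quantitation procedure of the cited GC-MS study; the function names are hypothetical.

```python
from statistics import fmean


def response_ratio(analyte_area: float, is_area: float) -> float:
    """Analyte peak area normalised to the internal-standard peak area."""
    if is_area <= 0:
        raise ValueError("internal standard peak area must be positive")
    return analyte_area / is_area


def fit_calibration(concs, ratios):
    """Ordinary least-squares line: ratio = slope * conc + intercept.

    Requires at least two distinct calibrator concentrations.
    """
    mx, my = fmean(concs), fmean(ratios)
    sxx = sum((x - mx) ** 2 for x in concs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(concs, ratios))
    slope = sxy / sxx
    return slope, my - slope * mx


def quantify(analyte_area, is_area, slope, intercept):
    """Back-calculate a sample concentration from its response ratio."""
    return (response_ratio(analyte_area, is_area) - intercept) / slope


if __name__ == "__main__":
    # Hypothetical calibrators (ng/mL) and their measured response ratios.
    slope, intercept = fit_calibration([10, 50, 100, 200], [0.1, 0.5, 1.0, 2.0])
    print(quantify(6000, 10000, slope, intercept))
    # A 20% loss during extraction lowers both peak areas equally,
    # so the ratio, and therefore the result, is unchanged.
    print(quantify(4800, 8000, slope, intercept))
```

Because analyte and labeled standard are lost or suppressed to the same degree, the ratio cancels those effects; this is why the standard is added before any extraction step.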

Building the Framework: A Step-by-Step Guide to Collaborative Validation

In forensic science, the traditional model in which each laboratory validates methods independently is a significant source of inefficiency, leading to redundant expenditure of time, resources, and expertise [1]. A collaborative method validation model offers a transformative alternative, enabling Forensic Science Service Providers (FSSPs) to standardize methodologies, share data, and increase overall efficiency [1]. A structured collaborative working group is critical to this model's success. Effective collaboration requires a formal governance structure so that all participants, including government crime laboratories, academic researchers, and private technology companies, can pursue the common goal of developing and validating robust forensic methods [12] [15]. This document outlines application notes and protocols for creating and maintaining such a working group, framed within a broader thesis on advancing forensic laboratory research through collaborative validation models.

Core Governance Framework

A collaborative working group requires a formal structure to define roles, processes, and interactions. The governance model should integrate broad conceptual frameworks [22] [23] with the specific needs of forensic science research and development [15].

Table 1: Core Components of a Collaborative Governance Model

| Component | Description | Key Considerations for Forensic Collaborations |
|---|---|---|
| Stakeholder Identification & Mapping | Identify relevant stakeholders with a vested interest or expertise [24]. | Include federal, state, and local government crime labs, university researchers, and private technology companies [12]. Map based on influence, resources, and forensic domain expertise. |
| Formation of Collaborative Structures | Establish a governance structure that enables coordination and decision-making [24]. | Form steering committees, technical working groups (e.g., for DNA, digital forensics), and administrative task forces [12]. |
| Shared Vision & Goals Setting | Develop a shared vision and common goals that reflect collective priorities [24]. | Goals may include standardizing methodologies, sharing validation data, and elevating quality standards across laboratories [1] [12]. |
| Decision-Making Processes | Define processes that promote collaborative leadership and accountability [24]. | Aim for consensus-oriented and deliberative processes [23]. Define criteria for decision-making, including transparency and inclusivity. |
| Communication & Information-Sharing | Implement channels for sharing information, updates, and feedback [24]. | Use secure, approved platforms (e.g., Microsoft OneDrive) and establish clear data sharing agreements (DSAs) and Non-Disclosure Agreements (NDAs) [15]. |
| Conflict Resolution Mechanisms | Develop mechanisms for managing conflicts and resolving disagreements [24]. | Provide for mediation or facilitated dialogue to find mutually acceptable solutions, acknowledging potential power imbalances [22] [23]. |
| Resource Mobilization & Allocation | Identify and mobilize financial, human, and technical resources [24]. | Pool resources from multiple sectors to maximize efficiency. Allocate equitably to ensure meaningful participation from all parties, including smaller labs [1] [24]. |
| Monitoring, Evaluation & Learning | Establish mechanisms for monitoring progress and evaluating outcomes [24]. | Use data and feedback to assess effectiveness, identify improvements, and inform future actions. Publish results to contribute to the broader forensic science knowledge base [1] [15]. |

The collaborative process is cyclical and iterative, fostering ongoing trust, commitment, and shared ownership of outcomes among stakeholders [22]. The National Technology Validation and Implementation Collaborative (NTVIC) is a successful real-world example of this model: it comprises 13 federal, state, and local government crime laboratories, university researchers, and private companies working together to develop guidelines for Forensic Investigative Genetic Genealogy (FIGG) [12].

Experimental Protocols for Collaborative Method Validation

The following protocols provide a detailed methodology for conducting a collaborative validation study, from initial planning to final publication. They are designed to ensure that the validation is fit-for-purpose and meets accreditation standards such as ISO/IEC 17025 [18].

Protocol: Collaborative Validation Master Plan

Objective: To define the end-user requirements, scope, and acceptance criteria for the new method through a collaborative consensus process.

Materials: Draft standard operating procedure (SOP) for the method; relevant accreditation standards (e.g., ISO/IEC 17025); a shared communication platform.

Procedure:

- Constitute a Technical Working Group: Assemble representatives from each participating laboratory with expertise in the relevant forensic discipline [1].

- Define End-User Requirements: Collaboratively draft a document capturing what the method must reliably do. This includes:

  - Functional Requirements: The specific tasks the method must perform (e.g., extract DNA from touch samples, recover specific file types from a mobile device).

  - Inputs and Outputs: The type of evidence input and the required form of the result [18].

  - Constraints: Any limitations on time, cost, or sample consumption [18].

- Draft the Standard Operating Procedure (SOP): Based on the requirements, develop a unified, detailed SOP that all participating laboratories commit to following without modification. This is critical for direct cross-comparison of data [1].

- Perform a Risk Assessment: Identify potential points of failure or error in the method and define controls to mitigate these risks [18].

- Set Acceptance Criteria: Define objective, measurable metrics that will demonstrate the method is fit-for-purpose (e.g., limit of detection, precision, accuracy, specificity) [18].

- Develop the Validation Plan: Create a master plan detailing the experimental design, number and type of samples, data analysis methods, and roles and responsibilities for each participating laboratory.
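Once acceptance criteria are fixed in the plan, every participating laboratory's results can be scored against them in exactly the same way. The sketch below shows this for two of the listed metrics, precision (as coefficient of variation) and accuracy (as relative bias from a known value). The thresholds, laboratory names, and replicate values are hypothetical, and metrics such as limit of detection or specificity would need their own checks.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical thresholds; the real values come from the validation plan."""
    max_cv_pct: float    # precision: coefficient of variation of replicates
    max_bias_pct: float  # accuracy: relative deviation of the mean from the known value


def evaluate_lab(replicates, true_value, criteria):
    """Compute precision and accuracy for one lab's replicate measurements.

    Needs at least two replicates (stdev is undefined for a single value).
    """
    m = mean(replicates)
    cv = 100 * stdev(replicates) / m
    bias = 100 * (m - true_value) / true_value
    passed = cv <= criteria.max_cv_pct and abs(bias) <= criteria.max_bias_pct
    return {"mean": m, "cv_pct": cv, "bias_pct": bias, "pass": passed}


def evaluate_study(results_by_lab, true_value, criteria):
    """Apply the same pre-agreed criteria to every participating lab."""
    return {lab: evaluate_lab(reps, true_value, criteria)
            for lab, reps in results_by_lab.items()}


if __name__ == "__main__":
    criteria = AcceptanceCriteria(max_cv_pct=15.0, max_bias_pct=20.0)
    data = {"lab_A": [98, 102, 100, 101, 99], "lab_B": [130, 128, 132]}
    for lab, summary in evaluate_study(data, 100, criteria).items():
        print(lab, summary["pass"], round(summary["bias_pct"], 1))
```

Fixing the criteria object before any data are collected, and applying one function to all labs, mirrors the requirement that the criteria be objective and agreed in advance.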

Protocol: Verification of a Published Method

Objective: To allow a laboratory (the "verifying lab") to adopt a method that has been previously validated and published by another laboratory (the "originating lab") [1] [18].

Materials: Peer-reviewed publication of the original validation study; full validation report from the originating lab (if available via data sharing agreement).

Procedure:

- Review Published Validation Data: The verifying lab must critically assess the original validation data against its own end-user requirements and accreditation standards. The review must confirm that the original study robustly tested the method [18].

- Conduct a Verification Study: The verifying lab performs a subset of the original validation experiments to demonstrate competence in performing the method. This is not a full re-validation [1] [18].

- Compare Results: The verifying lab compares its results to the benchmark data from the originating lab. This acts as an inter-laboratory study, adding to the body of knowledge and supporting the method's validity [1].

- Document and Report: Document the review of the original data and the results of the verification study. The final report should state that the method has been successfully verified and is now implemented for casework.
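One simple way to make the "Compare Results" step concrete is to check whether each of the verifying lab's results falls inside a window derived from the originating lab's benchmark data. The mean ± 2 SD window used here is an assumed criterion chosen for illustration, not one stated in the source; a real verification plan would specify its own comparison statistic. All names and values are hypothetical.

```python
from statistics import mean, stdev


def benchmark_window(originating_results, k=2.0):
    """Return (low, high) = mean +/- k*SD of the originating lab's results.

    k = 2 is an assumed default, not a value taken from the source.
    """
    m, s = mean(originating_results), stdev(originating_results)
    return m - k * s, m + k * s


def verify(verifying_results, originating_results, k=2.0):
    """Flag any verifying-lab result that falls outside the benchmark window."""
    low, high = benchmark_window(originating_results, k)
    outliers = [x for x in verifying_results if not low <= x <= high]
    return {"window": (low, high), "outliers": outliers, "verified": not outliers}


if __name__ == "__main__":
    originating = [10.0, 10.2, 9.8, 10.1, 9.9]  # hypothetical benchmark data
    print(verify([10.05, 9.95, 10.2], originating))
    print(verify([10.05, 10.6], originating))
```

Returning the outliers rather than a bare pass/fail gives the verifying lab something concrete to investigate and record in its verification report.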

The Scientist's Toolkit: Research Reagent Solutions

Collaborative research in forensics involves both administrative and technical tools to ensure secure and effective cooperation.

Table 2: Essential Materials for Collaborative Forensic Research

| Item / Solution | Function in Collaborative Research |
|---|---|
| Data Sharing Agreement (DSA) | A legal framework, often under an NDA, that defines the terms, use, transfer, and storage of confidential data, ensuring ethical and confidential use by all collaborators [15]. |
| Institutional Review Board (IRB) Approval | Ensures that research involving human subjects or identifiable private information (e.g., genetic data, fingerprints) adheres to ethical standards and federal regulations (Common Rule) [15]. |
| Non-Disclosure Agreement (NDA) | Protects sensitive information and intellectual property shared between institutions during the collaboration [15]. |
| Secure Data Sharing Platform | Cloud-based services (e.g., Microsoft OneDrive, Box) that enable the transfer of large datasets while meeting institutional security requirements for data confidentiality [15]. |
| Operations Security (OPSEC) | A systematic process to deny potential adversaries information about capabilities and intentions by identifying, controlling, and protecting evidence of sensitive activities [15]. |
| Information Security (INFOSEC) | The protection of information and systems from unauthorized access or destruction to provide confidentiality, integrity, and availability; a critical practice when handling forensic data [15]. |
| Standard Operating Procedure (SOP) | A unified, detailed written method that all collaborating laboratories adhere to strictly, which is the foundation for direct cross-comparison of data and collaborative validation [1] [18]. |
| External Proficiency Test | Commercially available tests that allow multiple laboratories to analyze the same samples, enabling inter-laboratory comparison of performance and identifying systematic problems [25]. |
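Inter-laboratory comparison in proficiency testing is commonly expressed as a z-score, z = (x - X) / σ_pt, where x is a laboratory's result, X the assigned value, and σ_pt the standard deviation for proficiency assessment. The sketch below applies the conventional interpretation (|z| ≤ 2 satisfactory, 2 < |z| < 3 questionable, |z| ≥ 3 unsatisfactory). The round data are hypothetical, and any particular provider may use a different scoring scheme.

```python
def z_score(result, assigned_value, sigma_pt):
    """z = (x - X) / sigma_pt, the usual proficiency-testing performance score."""
    if sigma_pt <= 0:
        raise ValueError("sigma_pt must be positive")
    return (result - assigned_value) / sigma_pt


def classify(z):
    """Conventional bands: |z| <= 2 satisfactory, < 3 questionable, else unsatisfactory."""
    a = abs(z)
    if a <= 2.0:
        return "satisfactory"
    if a < 3.0:
        return "questionable"
    return "unsatisfactory"


def score_round(results_by_lab, assigned_value, sigma_pt):
    """Score every participating lab against the same assigned value."""
    return {lab: classify(z_score(x, assigned_value, sigma_pt))
            for lab, x in results_by_lab.items()}


if __name__ == "__main__":
    # Hypothetical round: assigned value 50.0, sigma_pt 2.5 (arbitrary units).
    print(score_round({"lab_A": 51.0, "lab_B": 55.5, "lab_C": 42.0}, 50.0, 2.5))
```

Tracking a laboratory's z-scores over several rounds, rather than a single result, is what makes persistent bias in one direction visible as a systematic problem.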

Workflow Visualization of Collaborative Validation

The following diagram illustrates the logical workflow and decision points in establishing a collaborative working group and executing a validation project.

Collaborative Method Validation Workflow
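The central decision point implied by the two protocols, whether a laboratory can verify an existing published validation or must take part in a full collaborative validation, can be expressed as a small rule. The three inputs and the way they are combined are assumptions for illustration, loosely drawn from the verification protocol (the published data must meet the lab's own end-user requirements) and from the requirement to follow the SOP without modification; they are not criteria stated in the source.

```python
from enum import Enum


class Pathway(Enum):
    VERIFY = "verify published validation"
    COLLABORATIVE_VALIDATION = "run collaborative validation master plan"


def choose_pathway(published_validation_exists: bool,
                   meets_end_user_requirements: bool,
                   will_follow_sop_unmodified: bool) -> Pathway:
    """Hypothetical rule: verify only when all three conditions hold."""
    if (published_validation_exists
            and meets_end_user_requirements
            and will_follow_sop_unmodified):
        return Pathway.VERIFY
    return Pathway.COLLABORATIVE_VALIDATION


if __name__ == "__main__":
    print(choose_pathway(True, True, True).value)
    print(choose_pathway(True, False, True).value)
```

Defaulting to full validation whenever any condition fails is a deliberately conservative choice for this sketch.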

The establishment of a formally governed collaborative working group is a powerful strategy for advancing forensic science. It moves the community away from wasteful redundancy and toward a model of shared efficiency, standardized excellence, and accelerated innovation [1]. By adhering to a structured governance framework with clear roles, shared goals, and robust communication protocols, researchers and scientists can effectively pool resources and expertise. The detailed protocols for collaborative validation and verification provide a clear path for laboratories to implement new technologies more rapidly and reliably. Ultimately, this collaborative model, supported by secure data sharing and a commitment to publication, strengthens the scientific foundation of forensic evidence and enhances its reliability within the justice system.