Chemometric Machine Learning for Document Paper Discrimination: Techniques, Applications, and Future Frontiers


Abstract

This article provides a comprehensive review of the application of chemometric and machine learning techniques for the discrimination and comparison of document papers, a critical task in forensic science and quality control. It explores the foundational principles of paper composition and analytical methods like spectroscopy and chromatography. The scope extends to detailed methodologies, including data preprocessing, feature selection, and the application of both shallow and deep learning algorithms. The content further addresses crucial troubleshooting and optimization strategies to overcome real-world challenges, and concludes with a rigorous discussion on model validation, comparative performance analysis, and the future trajectory of this interdisciplinary field, highlighting its potential implications for biomedical and clinical research documentation integrity.

The Foundation of Paper Discrimination: Composition, Analytical Techniques, and Data Fundamentals

Modern paper is a complex, engineered composite material whose physicochemical properties provide a powerful basis for forensic discrimination. The inherent diversity in raw materials and manufacturing processes endows different paper products with distinct signatures, offering crucial associative or exclusionary evidence in questioned document examinations [1]. Paper is a ubiquitous and forensically significant substrate, primarily composed of a network of cellulosic fibers integrated with a suite of inorganic fillers, sizing agents, optical brightening agents (OBAs), and other functional additives designed to impart specific properties [1]. This compositional complexity creates a unique, measurable signature that can differentiate paper sources or production batches.

However, a significant challenge exists in translating analytical potential from research into reliable, validated protocols for routine forensic casework. Analysis of the paper substrate itself remains underdeveloped compared to the examination of overlying inks or printed text [1]. This application note provides detailed methodologies for characterizing paper's complex composition, framed within chemometric machine learning research to robustly discriminate documents.

Compositional Analysis of Paper

Core Components and Their Functions

The table below summarizes the primary components of modern paper, their typical chemical identities, and their functional roles in the final paper product.

Table 1: Core Components of Modern Paper and Their Functions

| Component Category | Example Substances | Primary Function in Paper |
|---|---|---|
| Cellulosic Fibers | Wood pulp (softwood/hardwood), cotton linters, recycled fibers | Forms the foundational fibrous network; provides basic mechanical strength and structure. |
| Inorganic Fillers | Precipitated Calcium Carbonate (PCC), Kaolin (clay), Titanium Dioxide (TiO₂) | Improves optical properties (brightness, opacity), smoothness, and printability. |
| Sizing Agents | Rosin, Alkyl Ketene Dimer (AKD), Alkenyl Succinic Anhydride (ASA) | Imparts hydrophobicity to control liquid penetration (e.g., ink). |
| Optical Brighteners | Stilbene-, coumarin-, or pyrazoline-based compounds (OBAs) | Enhances perceived whiteness and brightness by absorbing UV light and emitting blue light. |
| Other Additives | Starch, polyacrylamide resins, dyes, biocides | Improves dry/wet strength, provides color, and prevents microbial growth. |

Quantitative Profile of Waste Paper Contaminants

Beyond deliberate additives, paper contains substances from its raw materials, manufacturing, and usage. Analysis of waste paper identified 138 distinct compounds, whose origins and hazard profiles are quantified below [2].

Table 2: Organic Compounds Identified in Waste Paper and Their Hazards

| Origin of Compounds | Number of Identified Compounds | Examples and Hazard Notes |
|---|---|---|
| Virgin Wood | 31 | Pesticides and natural wood extractives. |
| Paper Manufacturing & Recycling | 19 | Process chemicals and by-products. |
| Fragrance Compounds | 15 | Added for sensory properties in certain products. |
| Printing Inks | 67 | Solvents, pigments, resins, and plasticizers. |
| Solvents (Largest Subgroup) | 25 | Exhibited the highest proportion of hazardous classifications. |
| Other (surface treatments, ink formulations) | Not specified | Includes persistent organic pollutants like benzophenone, butylated hydroxytoluene (BHT), bis(2-ethylhexyl) phthalate, bisphenol A, and bisphenol S [2]. |

Analytical Techniques for Paper Characterization

A multi-technique approach is essential for comprehensive forensic characterization, as no single method can capture the full physicochemical diversity of paper [1].

Spectroscopic Techniques

Spectroscopy provides non-destructive or minimally destructive probes into the molecular and elemental composition of paper.

- Vibrational Spectroscopy: Fourier-Transform Infrared (FTIR) and Raman spectroscopy probe molecular vibrations, yielding information about cellulose structure, fillers, and sizing agents. Attenuated Total Reflectance (ATR) accessories enable direct solid-sample analysis [1].

- Elemental Techniques: Laser-Induced Breakdown Spectroscopy (LIBS) and X-ray Fluorescence (XRF) provide elemental signatures, crucial for identifying and quantifying filler minerals like calcium carbonate (Ca) and kaolin (Al, Si) [1]. LIBS is a micro-destructive technique with high sensitivity, while XRF is non-destructive.

- Other Spectroscopic Methods: Near-Infrared (NIR) spectroscopy, combined with chemometrics, has been demonstrated as a powerful tool for discriminating materials like tobacco trademarks, an approach directly transferable to paper analysis [3]. UV-Vis spectroscopy with integrating spheres can measure optical properties such as whiteness and brightness [1].

Chromatographic and Mass Spectrometric Techniques

These techniques provide detailed chemical characterization of organic additives and contaminants.

- Gas Chromatography-Mass Spectrometry (GC-MS): Ideal for volatile and semi-volatile organic compounds. It is well suited to identifying solvents, sizing agents, and contaminants like those listed in Table 2. Thermal Desorption (TD)-GC/MS can additionally detect compounds embedded within the paper matrix that would otherwise go undetected [2].

- Isotope Ratio Mass Spectrometry (IRMS): Measures stable isotope ratios (e.g., 13C/12C), which can trace the geographical and botanical origin of cellulose fibers, providing a high-level discriminatory signature [1].
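For orientation, stable isotope ratios such as 13C/12C are conventionally reported in delta notation relative to a reference standard, in parts per thousand (per mil). The expression below is the standard general definition, not a formula taken from the cited source; the carbon reference is usually VPDB.

```latex
\delta^{13}\mathrm{C} \;=\; \left( \frac{R_{\mathrm{sample}}}{R_{\mathrm{standard}}} - 1 \right) \times 1000,
\qquad
R = \frac{{}^{13}\mathrm{C}}{{}^{12}\mathrm{C}}
```

A more negative value means the sample is depleted in 13C relative to the standard.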

Other Analytical Methods

- X-ray Diffraction (XRD): Identifies crystalline phases, allowing for precise differentiation between different forms of calcium carbonate fillers (e.g., calcite vs. aragonite) and other minerals [1].

- Thermal Analysis: Techniques like Thermogravimetric Analysis (TGA) measure changes in mass as a function of temperature, providing information on filler content (as residue) and the thermal stability of organic components [1].
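As an illustration of how a TGA curve can be turned into a filler estimate, the sketch below converts the mass loss of the carbonate decomposition step (CaCO₃ → CaO + CO₂) into a calcium carbonate content. It assumes that this step is well resolved and caused only by CaCO₃. The function name and the 8.8 wt% reading are hypothetical and not from the cited sources.

```python
# Estimate CaCO3 filler content from the carbonate decomposition step of a TGA curve.
# CaCO3 -> CaO + CO2: each gram of CO2 lost corresponds to M_CaCO3 / M_CO2 grams of CaCO3.
M_CACO3 = 100.09  # g/mol, calcium carbonate
M_CO2 = 44.01     # g/mol, carbon dioxide


def caco3_wt_percent(co2_step_loss_wt_percent: float) -> float:
    """Convert the CO2 mass-loss step (wt% of initial sample) into CaCO3 content (wt%)."""
    return co2_step_loss_wt_percent * M_CACO3 / M_CO2


# HYPOTHETICAL reading: an 8.8 wt% loss in the carbonate decomposition step
print(f"{caco3_wt_percent(8.8):.1f} wt% CaCO3")
```

Other fillers such as kaolin lose mass by a different mechanism (dehydroxylation), so a real curve would need each step assigned before applying a conversion like this.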

Experimental Protocols

Protocol 1: Acetic Acid Extraction of Fillers and Contaminants

This protocol, adapted from research on sustainable fiber reuse, effectively removes inorganic fillers and a significant portion of organic contaminants from paper samples [2].

- Sample Preparation: Cut approximately 20 g of the questioned paper document into small pieces (approx. 1 cm²) to increase surface area.

- Reaction: Place the paper pieces into a glass beaker and add 500 mL of 0.2 M acetic acid (CH₃COOH). Gently stir the suspension for a defined period (e.g., 1-2 hours) at room temperature.

- Chemical Principle: Acetic acid reacts selectively with calcium carbonate (PCC) to form soluble calcium acetate, water, and carbon dioxide, without significantly damaging the crystalline structure of cellulose fibers [2].

- Filtration and Washing: Recover the de-filled cellulose fibers by filtration. Wash the residue thoroughly with deionized water to remove any soluble reaction products and residual acid.

- Analysis of Extracts:

- The liquid filtrate can be analyzed via ICP-MS or ICP-OES to quantify the elemental composition of the dissolved fillers.

- The washed solid residue (fibers) can be analyzed by FTIR-ATR or XRD to confirm filler removal and assess fiber integrity. The efficiency of this method for PCC removal can reach 86% [2].

- Contaminant Analysis: The extraction also removes hazardous organic solvents with an efficiency of 93%. To profile organic contaminants, subject the filtrate to liquid-liquid extraction with an organic solvent (e.g., dichloromethane), concentrate the organic phase, and analyze it by GC-MS [2].
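As a rough plausibility check on the reagent quantities in this protocol, the stoichiometry CaCO₃ + 2 CH₃COOH → Ca(CH₃COO)₂ + H₂O + CO₂ sets an upper bound on how much carbonate filler the stated acid volume can dissolve. The sketch below assumes complete reaction of the acid. The 20 wt% filler loading is a hypothetical value chosen for illustration, not a figure from the source.

```python
# Upper bound on the CaCO3 mass that a given volume of acetic acid can dissolve.
# Reaction: CaCO3 + 2 CH3COOH -> Ca(CH3COO)2 + H2O + CO2
M_CACO3 = 100.09  # g/mol, calcium carbonate


def max_caco3_dissolved_g(acid_volume_l: float, acid_molarity: float) -> float:
    """Mass of CaCO3 (g) consumed if all acid reacts (2 mol acid per mol CaCO3)."""
    mol_acid = acid_volume_l * acid_molarity
    return (mol_acid / 2.0) * M_CACO3


capacity_g = max_caco3_dissolved_g(0.500, 0.2)  # 500 mL of 0.2 M acid, as in Protocol 1
filler_g = 20.0 * 0.20                          # ASSUMED 20 wt% filler in a 20 g sample
print(f"acid capacity: {capacity_g:.2f} g CaCO3")
print(f"assumed filler load: {filler_g:.2f} g; acid sufficient: {capacity_g >= filler_g}")
```

Because acetic acid is a weak acid and the reaction slows as it is consumed, the practical capacity is likely lower than this bound. Heavily filled papers may need a smaller sample or more acid.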

Protocol 2: NIR Spectroscopy with Chemometrics for Paper Discrimination

This non-destructive protocol is ideal for a rapid preliminary classification of paper samples.

- Sample Presentation: Ensure the paper sample is flat and clean. If using a pressed pellet, prepare by pulverizing and pressing at 20 tons in a hydraulic press to ensure surface homogeneity [3].

- Spectral Acquisition: Use a NIR spectrometer with a diffuse reflectance accessory. Acquire spectra in the range of 7600–3900 cm⁻¹. Collect multiple replicates (e.g., 3) per sample, averaging 64 scans per spectrum at a resolution of 4 cm⁻¹ to ensure a high signal-to-noise ratio [3].

- Spectral Pre-processing: Apply pre-processing techniques to the raw spectral data to remove physical artifacts like light scattering. Standard Normal Variate (SNV) transformation is a common and effective method for this purpose [3].

- Chemometric Analysis:

- Exploratory Analysis: Use Principal Component Analysis (PCA), an unsupervised method, to explore the natural clustering of samples in a reduced-dimensionality space. This can reveal inherent groupings and outliers without prior class assignments.

- Classification Modeling: Use Partial Least Squares-Discriminant Analysis (PLS-DA), a supervised method, to develop a calibration model that maximizes the separation between pre-defined classes (e.g., different paper brands or types) [3].
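The pre-processing and exploratory steps of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the workflow of the cited study. The spectra are synthetic stand-ins for two paper "brands" with three replicates each, and the helper names `snv` and `pca_scores` are hypothetical.

```python
import numpy as np


def snv(spectra: np.ndarray) -> np.ndarray:
    """Standard Normal Variate: centre and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std


def pca_scores(X: np.ndarray, n_components: int = 2):
    """PCA via SVD of the column-centred matrix; returns scores and explained-variance ratios."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * s[:n_components]
    explained = (s ** 2) / np.sum(s ** 2)
    return scores, explained[:n_components]


# SYNTHETIC data: two "brands" x 3 replicates, each with a random multiplicative
# and additive scatter effect of the kind SNV is meant to remove.
rng = np.random.default_rng(0)
wavenumbers = np.linspace(7600, 3900, 200)
band_a = np.exp(-((wavenumbers - 5200) / 150) ** 2)
band_b = np.exp(-((wavenumbers - 4400) / 150) ** 2)
pure = [band_a + 0.5 * band_b, 0.5 * band_a + band_b]
spectra = np.array([
    rng.uniform(0.8, 1.2) * pure[k] + rng.uniform(-0.1, 0.1)
    + rng.normal(0, 0.005, size=wavenumbers.size)
    for k in (0, 0, 0, 1, 1, 1)
])

scores, explained = pca_scores(snv(spectra), n_components=2)
print(np.round(scores[:, 0], 2))   # PC1 scores: replicates of a brand should group together
print(np.round(explained, 3))
```

In this toy case the two brands separate along the first principal component once the scatter effects are removed. Real paper spectra differ far more subtly, which is why a supervised step usually follows.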

Chemometric Machine Learning Workflow

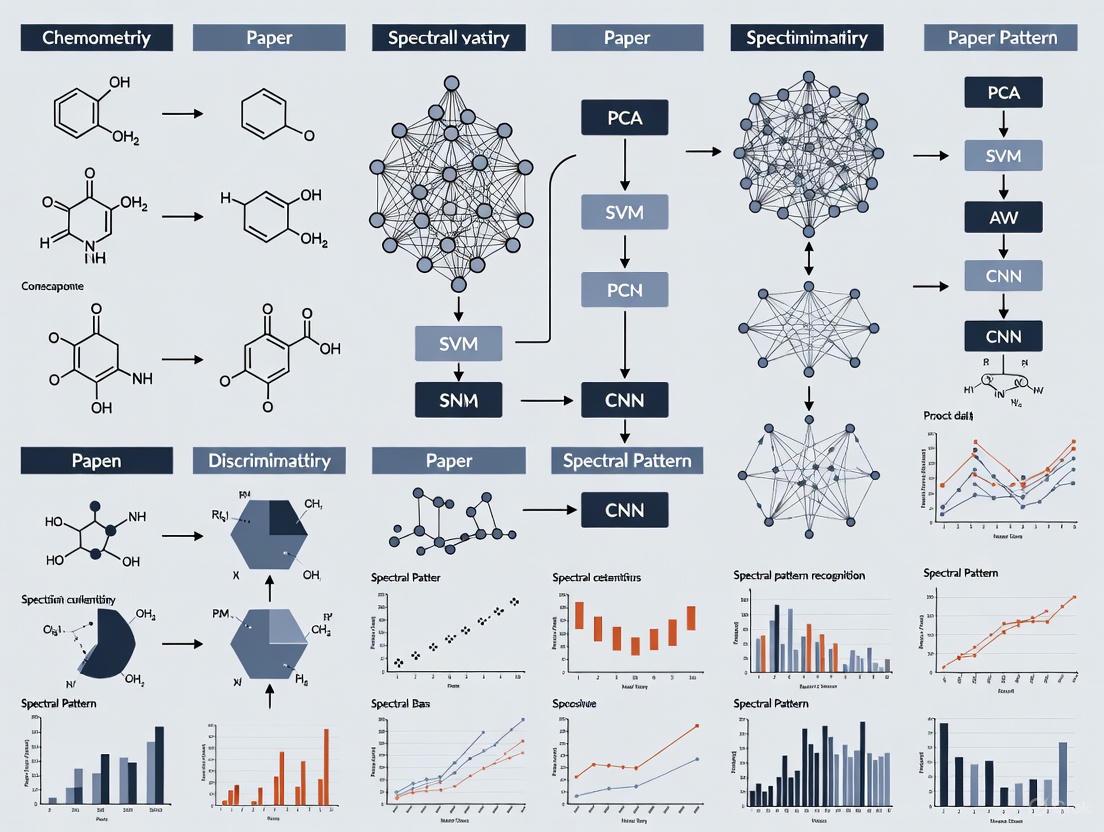

The power of modern paper discrimination lies in the fusion of analytical data with chemometric machine learning models. The following workflow diagrams the process from sample to validated result.

Diagram 1: Integrated Chemometric Workflow for Paper Analysis

Model Development and Validation Pathway

The development of a robust classification model requires a structured approach to handle data, train models, and evaluate their performance.

Diagram 2: Machine Learning Model Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Paper Analysis Protocols

| Reagent/Material | Function/Application | Key Consideration for Protocol |
|---|---|---|
| Acetic Acid (CH₃COOH), 0.2 M | Selective extraction of calcium carbonate fillers and co-removal of organic contaminants. | A "gentle" acid that minimizes cellulose degradation compared to strong mineral acids [2]. |
| Deionized Water | Washing and rinsing of samples post-extraction. | Removes soluble reaction products and residual reagents to prevent interference in subsequent analysis. |
| Potassium Bromide (KBr) | Matrix for preparing pellets for FTIR transmission analysis. | Must be of spectroscopic grade and thoroughly dried. |
| NIR Spectrometer | Non-destructive acquisition of spectral profiles for chemometric analysis. | Requires an integrating sphere for diffuse reflectance measurements on solid samples [3]. |
| GC-MS System | Separation and identification of volatile and semi-volatile organic compounds. | TD-GC/MS is particularly effective for detecting embedded compounds in the paper matrix [2]. |
| The Unscrambler / CAMO Software | Industry-standard platform for performing PCA, PLS-DA, and other multivariate analyses. | Critical for reducing spectral data dimensionality and building classification models [3]. |

In modern analytical science, the discrimination of complex materials such as paper presents a significant challenge, requiring a multifaceted approach to uncover subtle compositional differences. This application note details the integration of four core analytical techniques within a chemometric machine learning framework: FT-IR and Raman vibrational spectroscopy, Laser-Induced Breakdown Spectroscopy (LIBS), and X-Ray Fluorescence (XRF). The synergy of these methods provides a powerful tool for high-throughput analysis of paper substrates that is non-destructive or, in the case of LIBS, micro-destructive, enabling precise classification and provenance determination essential for forensic document analysis, historical preservation, and quality control in manufacturing. By combining the molecular specificity of vibrational spectroscopy with the elemental sensitivity of LIBS and XRF, and processing the resulting multivariate data through advanced machine learning algorithms, researchers can build robust predictive models for paper discrimination that surpass the capabilities of any single technique.

Experimental Protocols and Methodologies

Vibrational Spectroscopy: FT-IR and Raman

Principle: FT-IR and Raman spectroscopy provide complementary molecular information about vibrational energy levels in a sample. FT-IR measures absorption of infrared light, while Raman measures inelastic scattering of monochromatic light, typically from a laser source. For paper analysis, these techniques probe molecular structures of cellulose, hemicellulose, lignin, fillers, and coatings.

Sample Preparation:

- FT-IR Analysis: For paper samples, employ Attenuated Total Reflection (ATR) objective with diamond crystal for direct measurement without preparation. Ensure flat, clean sample surface contact with ATR crystal. Apply consistent pressure for reproducible contact. For heterogeneous samples, map multiple regions (minimum 5 points per sample) [4].

- Raman Analysis: Minimal preparation required. Place paper sample on microscope slide. Focus laser on area of interest. Avoid fluorescent additives that may interfere with signal. Use low laser power (1-10% of maximum, typically 1-10 mW) to prevent sample degradation, especially for historical documents [4].

Instrument Parameters:

- FT-IR Settings: Spectral range: 4000-650 cm⁻¹; Resolution: 4 cm⁻¹; Scans: 32; Detector: MCT cooled with liquid nitrogen [4].

- Raman Settings: Laser wavelength: 785 nm (reduces fluorescence); Spectral range: 100-3200 cm⁻¹; Grating: 600 lines/mm; Acquisition time: 10-30 seconds; Accumulations: 3-5 [4].

Data Collection Workflow:

- Instrument calibration using background reference (FT-IR) or silicon wafer (Raman)

- Sample positioning on microscope stage

- Region selection via visual inspection

- Spectral acquisition with specified parameters

- Data export in JCAMP-DX or ASCII format for chemometric analysis

Laser-Induced Breakdown Spectroscopy (LIBS)

Principle: LIBS uses a high-energy laser pulse to ablate a micro-sample and create a plasma, whose emitted atomic and ionic line spectra reveal elemental composition. For paper discrimination, LIBS detects trace elements from fillers, inks, coatings, and manufacturing residues.

Sample Preparation:

- Mount paper samples on rigid, non-reflective backing

- Ensure flat surface to maintain consistent laser focus distance

- No other preparation required, enabling rapid analysis

Instrument Parameters [5]:

- Laser: Pulsed Nd:YAG laser

- Wavelength: 1064 nm (fundamental) or 266 nm (quadrupled)

- Pulse energy: 10-100 mJ (adjust based on sample sensitivity)

- Spot size: 50-200 µm

- Pulse width: 5-20 ns

- Repetition rate: 1-20 Hz

- Detector: ICCD (Intensified CCD) gated detector

- Delay time: 1 µs (to avoid continuum background)

- Gate width: 5-10 µs

- Spectrometer: Echelle type with broadband coverage (200-900 nm)

Data Collection Workflow:

- System alignment and laser energy verification

- Sample positioning with camera monitoring

- Laser focusing on sample surface

- Plasma generation and spectral acquisition

- Multi-pulse analysis (3-5 spots per sample) to account for heterogeneity

- Data export with wavelength calibration using standard reference materials

X-Ray Fluorescence (XRF)

Principle: XRF identifies elements by measuring characteristic X-rays emitted when sample atoms are excited by a primary X-ray source. For paper analysis, XRF detects major and trace elements from fillers, pigments, and contaminants.

Sample Preparation:

- For handheld XRF: Direct measurement on paper surface

- For lab-based XRF: Place sample in spectrometer cup with polypropylene film window

- For quantitative analysis: Prepare pressed pellets with binder or fuse with lithium borate for homogeneous distribution

Instrument Parameters [6]:

- X-ray tube: Rhodium anode, 4-50 kV, 0.05-2.0 mA (adjustable for light/heavy elements)

- Detector: Silicon Drift Detector (SDD) with Peltier cooling

- Analysis time: 30-60 seconds per spot

- Atmosphere: Air, helium, or vacuum for light elements

- Spot size: 1-10 mm (handheld); down to 3 µm (micro-XRF)

Data Collection Workflow:

- Instrument calibration with certified reference materials

- Selection of analysis mode (empirical vs fundamental parameters)

- Sample positioning in X-ray beam

- Spectral acquisition with live-time counting

- Qualitative and quantitative analysis using manufacturer software

- Data export for multivariate analysis

Chromatography for Paper Analysis

Principle: Chromatography separates complex mixtures in paper extracts (inks, sizing agents, degradation products) for identification and quantification. Common approaches include:

Sample Preparation:

- Accelerated Solvent Extraction (ASE) for efficient extraction of organic compounds

- Solid-phase microextraction (SPME) for volatile components

- Derivatization for GC analysis of non-volatile compounds

Instrument Parameters (based on analogous applications [7]):

- GC-MS/MS: For analysis of volatile organics, pesticides, and POPs in complex matrices

- HPAE-PAD: For carbohydrate profiling (cellulose/hemicellulose degradation products)

- IC: For anion/cation analysis from paper fillers and coatings

Chemometric Machine Learning Integration

Data Preprocessing for Paper Discrimination

The integration of spectroscopic and chromatographic data requires systematic preprocessing to optimize model performance. Implement the following preprocessing pipeline:

Spectral Data Preprocessing:

- Smoothing: Savitzky-Golay filter (window: 9-15 points, polynomial order: 2-3) to reduce high-frequency noise

- Baseline Correction: Asymmetric least squares (AsLS) or modified polynomial fitting to remove fluorescence and scattering effects

- Normalization: Standard Normal Variate (SNV) or vector normalization to correct for path length and concentration variations

- Alignment: Correlation optimized warping (COW) or dynamic time warping (DTW) to correct for wavelength shifts between measurements

- Outlier Detection: Mahalanobis distance or Hotelling's T² to identify spectral outliers for exclusion or separate modeling
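To make the smoothing step concrete, here is a minimal NumPy sketch of a Savitzky-Golay filter built from its least-squares definition, using a window and polynomial order within the ranges listed above. The function names and the synthetic band are assumptions for illustration. In practice an established library routine would normally be used instead.

```python
import numpy as np


def savgol_coeffs(window: int, polyorder: int) -> np.ndarray:
    """Weights that return the least-squares polynomial fit evaluated at the window centre."""
    if window % 2 == 0 or polyorder >= window:
        raise ValueError("window must be odd and larger than polyorder")
    half = window // 2
    offsets = np.arange(-half, half + 1, dtype=float)
    # Design matrix with columns offsets**0 ... offsets**polyorder
    A = np.vander(offsets, polyorder + 1, increasing=True)
    # The first row of the pseudo-inverse yields the fitted value at offset 0
    return np.linalg.pinv(A)[0]


def savgol_smooth(y: np.ndarray, window: int = 11, polyorder: int = 2) -> np.ndarray:
    """Smooth a 1-D spectrum; the edges are handled by reflecting the signal."""
    half = window // 2
    padded = np.pad(y, half, mode="reflect")
    weights = savgol_coeffs(window, polyorder)
    # np.convolve flips its kernel, so reverse the weights to apply them as written
    return np.convolve(padded, weights[::-1], mode="valid")


# SYNTHETIC band with added noise (not real spectral data)
rng = np.random.default_rng(3)
x = np.arange(200)
clean = np.exp(-((x - 100) / 20.0) ** 2)
noisy = clean + rng.normal(0, 0.05, size=x.size)
smoothed = savgol_smooth(noisy, window=11, polyorder=2)
print(np.std(noisy - clean), np.std(smoothed - clean))
```

Wider windows suppress more noise but start to flatten narrow bands, so the window should be chosen relative to the narrowest feature of interest.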

Feature Engineering:

- Peak Detection: Local maxima identification with automated baseline recognition

- Feature Extraction: Principal Component Analysis (PCA) for dimensionality reduction before model training

- Data Fusion: Mid-level fusion of features from multiple techniques before classifier input
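Mid-level fusion as described above can be sketched as follows: each technique's block is autoscaled, reduced to a few PCA scores, and the scores are concatenated per sample. The block sizes, component counts, and random placeholder data are assumptions for illustration only.

```python
import numpy as np


def autoscale(X: np.ndarray) -> np.ndarray:
    """Mean-centre each variable and scale it to unit variance."""
    std = X.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # leave constant variables unscaled
    return (X - X.mean(axis=0)) / std


def pca_scores(X: np.ndarray, n_components: int) -> np.ndarray:
    """Scores on the first principal components, computed via SVD."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, :n_components] * s[:n_components]


def mid_level_fusion(blocks, n_components):
    """Extract PCA scores from each block, then concatenate them sample-wise."""
    features = [pca_scores(autoscale(X), k) for X, k in zip(blocks, n_components)]
    return np.hstack(features)


# PLACEHOLDER data: 12 samples measured by three techniques (random numbers, not spectra)
rng = np.random.default_rng(1)
n_samples = 12
ftir = rng.normal(size=(n_samples, 300))
libs = rng.normal(size=(n_samples, 500))
xrf = rng.normal(size=(n_samples, 40))

fused = mid_level_fusion([ftir, libs, xrf], n_components=[3, 3, 2])
print(fused.shape)  # one row per sample, 3 + 3 + 2 fused features
```

In a real study the scaling parameters and PCA loadings would be estimated on the training set only and then applied to validation and test samples. Otherwise information leaks into the performance estimate.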

Machine Learning Algorithms for Paper Classification

The following machine learning approaches are recommended for paper discrimination based on spectroscopic and elemental data:

Table 1: Machine Learning Algorithms for Paper Discrimination

| Algorithm | Type | Application in Paper Analysis | Advantages |
|---|---|---|---|
| Principal Component Analysis (PCA) | Unsupervised | Exploratory data analysis, outlier detection, dimensionality reduction | Identifies natural clustering, reduces data complexity, visualizes patterns [8] |
| Partial Least Squares-Discriminant Analysis (PLS-DA) | Supervised | Classification of paper types, origins, or manufacturing batches | Handles multicollinear variables, works with more variables than samples, provides variable importance [8] |
| Random Forest (RF) | Supervised | Authentication, provenance determination, quality grading | Robust to outliers, provides feature importance rankings, handles nonlinear relationships [8] |
| Support Vector Machine (SVM) | Supervised | Discrimination of similar paper types, counterfeit detection | Effective in high-dimensional spaces, works well with limited samples, versatile through kernel functions [8] |
| Convolutional Neural Networks (CNN) | Supervised | Automated feature extraction from raw spectral data, pattern recognition | Learns relevant features automatically, handles complex spectral patterns, state-of-the-art performance [8] |

Model Validation Protocols

Implement rigorous validation to ensure model reliability:

- Data Splitting: 70% training, 15% validation, 15% test sets with stratified sampling

- Cross-Validation: k-fold (k=5-10) with Venetian blinds or random subsets

- Performance Metrics: Accuracy, precision, recall, F1-score, and Cohen's kappa

- External Validation: Prediction on completely independent sample set not used in model development
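The splitting scheme and two of the listed metrics can be sketched in plain NumPy. The helper names and the toy labels below are hypothetical. The split fractions follow the 70/15/15 scheme above, and the metric formulas are the standard definitions of Cohen's kappa and macro-averaged F1.

```python
import numpy as np


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Return index arrays (train, val, test), splitting each class separately."""
    rng = np.random.default_rng(seed)
    parts = [[], [], []]
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_train = int(round(fractions[0] * len(idx)))
        n_val = int(round(fractions[1] * len(idx)))
        parts[0].extend(idx[:n_train])
        parts[1].extend(idx[n_train:n_train + n_val])
        parts[2].extend(idx[n_train + n_val:])  # remainder goes to the test set
    return tuple(np.array(p) for p in parts)


def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm


def cohen_kappa(cm):
    """Agreement corrected for the agreement expected by chance."""
    n = cm.sum()
    p_observed = np.trace(cm) / n
    p_expected = (cm.sum(axis=0) * cm.sum(axis=1)).sum() / n ** 2
    return (p_observed - p_expected) / (1.0 - p_expected)


def macro_f1(cm):
    """Unweighted mean of the per-class F1 scores."""
    tp = np.diag(cm).astype(float)
    precision = tp / np.maximum(cm.sum(axis=0), 1)
    recall = tp / np.maximum(cm.sum(axis=1), 1)
    f1 = 2 * precision * recall / np.maximum(precision + recall, 1e-12)
    return f1.mean()


labels = np.repeat([0, 1, 2], 20)  # TOY labels: 3 paper classes, 20 samples each
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))

cm = confusion_matrix([0, 0, 1, 1, 2, 2], [0, 0, 1, 2, 2, 2], n_classes=3)
print(cohen_kappa(cm), macro_f1(cm))
```

When several replicate spectra come from the same sheet, the split should be made at the sheet level so that replicates of one sample never land in both training and test sets.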

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Materials for Paper Analysis Techniques

| Material/Reagent | Function/Purpose | Application Technique |
|---|---|---|
| ATR Diamond Crystal | Internal reflection element for FT-IR measurement | FT-IR Spectroscopy [4] |
| Silicon Wafer Standard | Raman wavelength and intensity calibration | Raman Spectroscopy [4] |
| Certified Reference Materials | Quantitative calibration and method validation | XRF, LIBS [6] |
| Polypropylene Film | Sample support for XRF analysis | XRF Spectroscopy [6] |
| Liquid Nitrogen | Cooling for semiconductor detectors | XRF, FT-IR (MCT detector) [6] |
| Accelerated Solvent Extractor | Automated extraction of organic compounds | Chromatography sample prep [7] |
| Boron/Lithium Tetraborate | Flux for fused bead sample preparation | XRF quantitative analysis [6] |
| Microcrystalline Cellulose | Reference standard for paper component analysis | All techniques |

Experimental Workflows

The following diagrams illustrate the integrated experimental and computational workflows for paper discrimination research.

Paper Analysis Experimental Workflow

Chemometric Machine Learning Pipeline

Data Analysis and Interpretation

Quantitative Elemental and Molecular Signatures

Table 3: Characteristic Analytical Signatures for Paper Discrimination

| Analytical Technique | Measurable Parameters | Paper Discrimination Markers | Typical Detection Limits |
|---|---|---|---|
| FT-IR Spectroscopy | Molecular functional groups | Cellulose crystallinity, lignin content, filler types (carbonates, sulfates), coating polymers | 0.1-1.0 wt% for major components |
| Raman Spectroscopy | Molecular vibrations, crystal structures | Pigment identification (TiO₂ polymorphs), cellulose structure, synthetic dyes | 0.5-2.0 wt% for most components |
| LIBS | Elemental composition | Trace metals (Ca, Mg, Al, Si, Fe, Cu), filler elements, contaminants | 1-100 ppm for most elements |
| XRF | Elemental composition | Major fillers (CaCO₃, kaolin), trace elements, heavy metal contaminants | 0.1-10 ppm for most elements |

Case Study: Paper Document Discrimination

A representative study demonstrates the application of these integrated techniques:

Objective: Discriminate between 15 historically significant paper types from different manufacturers and time periods.

Methodology:

- FT-IR and Raman analysis of molecular composition

- LIBS and XRF for elemental profiling

- Data fusion and PCA for exploratory analysis

- Random Forest classification for provenance prediction

Results:

- FT-IR successfully differentiated lignin-containing papers from rag papers

- Raman identified distinct TiO₂ polymorphs (anatase vs. rutile) as manufacturing markers

- LIBS detected trace element signatures (Mn/Cu ratios) characteristic of water sources

- XRF quantified filler composition (Ca/Si ratios) specific to manufacturers

- Combined model achieved 96.2% classification accuracy versus 72-85% for individual techniques

The integration of vibrational spectroscopy (FT-IR, Raman), LIBS, XRF, and chromatography within a chemometric machine learning framework provides an unparalleled approach to paper discrimination research. This multimodal methodology leverages the complementary strengths of each technique—molecular specificity from vibrational methods, elemental sensitivity from LIBS and XRF, and separation power from chromatography—to build comprehensive chemical profiles of paper substrates. The implementation of advanced machine learning algorithms, particularly Random Forest and Convolutional Neural Networks, enables robust classification models that can identify subtle compositional differences invisible to individual techniques. This approach establishes a powerful paradigm for document authentication, historical analysis, and forensic investigations, with potential applications extending to other complex material systems requiring non-destructive characterization and classification.

In the fields of analytical chemistry, pharmacognosy, and food science, reliably discriminating between highly similar complex mixtures—such as medicinal plants, food products, or geological samples—presents a significant challenge. Traditional analytical techniques often struggle to capture the holistic chemical composition of such samples, leading to potential misidentification with consequences for drug safety, food authenticity, and product quality [9] [10]. The paradigm has shifted with the adoption of spectral and chromatographic fingerprinting, a concept where the entire profile of a sample, as generated by techniques like chromatography or spectroscopy, is treated as a unique identifier. Interpreting these complex, multidimensional fingerprints, however, requires sophisticated statistical and machine learning approaches, collectively known as chemometrics [11] [12]. This application note details the practical integration of analytical fingerprinting and chemometric modeling to create a robust framework for sample discrimination, providing validated protocols and workflows for researchers and drug development professionals.

The Analytical Toolkit: Fingerprinting Techniques

The foundation of this discriminatory approach lies in generating high-quality, reproducible fingerprints that capture a sample's intrinsic chemical characteristics. The following core techniques are commonly employed, each providing complementary information.

Chromatographic Fingerprints

Chromatographic methods, such as High-Performance Liquid Chromatography (HPLC) and Liquid Chromatography coupled with high-resolution mass spectrometry (LC-HR-Q-TOF-MS/MS), separate the individual chemical components of a complex mixture. The resulting chromatogram, with its unique pattern of peaks, serves as a fingerprint. This technique is particularly powerful for identifying specific marker compounds. For instance, in differentiating the poisonous Asarum heterotropoides (AH) from Cynanchum paniculatum (CP), LC-HR-Q-TOF-MS/MS identified 91 and 90 compounds in each, respectively, with the unique presence of toxic aristolochic acid D in AH serving as a critical discriminatory marker [10].

Vibrational Spectroscopic Fingerprints

Vibrational spectroscopy, including Fourier-Transform Infrared (FTIR) and Near-Infrared (NIR) spectroscopy, measures the interaction of infrared light with molecular bonds, providing a rapid and non-destructive chemical snapshot. The resulting spectra are dominated by functional group vibrations, creating a unique fingerprint for each sample. Key spectral regions for discrimination include the carbohydrate fingerprint region (1200–950 cm⁻¹) and the C–H stretching zone (2935–2885 cm⁻¹) [13]. NIR spectroscopy (12,000–4000 cm⁻¹) is especially useful for capturing overtone and combination bands of C–H, O–H, and N–H groups [9].

Electronic Sensor Fingerprints

Electronic noses (E-nose) and electronic tongues (E-tongue) mimic human senses by using sensor arrays to respond to volatile (odor) and non-volatile (taste) compounds in a sample, respectively. They provide a distinct sensor response pattern as a fingerprint. An E-nose analysis was able to identify 25 major odor components in AH and 12 in CP in a single 140-second run, offering a rapid preliminary discrimination tool [10].

Electrochemical Fingerprints

This technique involves recording the current-potential response of a sample within an electrochemical cell (e.g., the Belousov-Zhabotinsky reaction). The resulting voltammogram acts as a fingerprint that reflects the holistic redox-active composition of the sample. It is a low-cost and rapid technique that can achieve 100% classification accuracy when combined with pattern recognition methods like Principal Component Analysis (PCA) [10].

Table 1: Summary of Key Analytical Fingerprinting Techniques

| Technique | Measured Signal | Key Applications | Advantages | Limitations |
|---|---|---|---|---|
| LC-MS | Separation & mass detection of compounds | Identification of specific toxic markers (e.g., aristolochic acids) [10] | High specificity and sensitivity | Expensive instrumentation; complex sample prep |
| FTIR/NIR | Molecular bond vibrations | Discrimination of nectar botanical origin [13]; monitoring TCM processing [9] | Rapid, non-destructive, high-throughput | Limited sensitivity for trace components |
| E-nose / E-tongue | Sensor array response to odors/tastes | Rapid odor/taste profiling of medicinal plants [10] | Fast, objective, mimics human senses | Less specific for individual compounds |
| Electrochemical | Redox behavior of sample | Overall characterization of herbal medicines [10] | Low-cost, simple sample treatment | Lacks specificity for individual components |

The Computational Engine: Chemometric and Machine Learning Workflow

Raw fingerprint data is complex and multivariate. Extracting meaningful discriminatory information requires a structured chemometric workflow encompassing data preprocessing, fusion, and modeling.

Data Preprocessing

Spectral and chromatographic data often contain non-chemical variances (noise, baseline drift, light scattering effects). Preprocessing is critical to enhance the chemical signal. Common strategies include:

- Smoothing (e.g., Savitzky-Golay): Reduces high-frequency noise [13].

- Scatter Correction (e.g., Multiplicative Signal Correction - MSC): Corrects for additive and multiplicative baseline effects.

- Derivatives (e.g., first or second order): Enhance resolution of overlapping peaks by highlighting inflection points.
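The scatter-correction step can be illustrated with a short NumPy sketch of MSC: each spectrum is regressed against a reference (here the mean spectrum) and the fitted offset and slope are removed. The function name and the synthetic spectra are assumptions for illustration, not taken from the cited studies.

```python
import numpy as np


def msc(spectra: np.ndarray, reference=None) -> np.ndarray:
    """Correct each spectrum (row) for additive and multiplicative effects
    estimated by linear regression against a reference spectrum."""
    ref = spectra.mean(axis=0) if reference is None else reference
    corrected = np.empty_like(spectra, dtype=float)
    for i, row in enumerate(spectra):
        # Fit row ~ intercept + slope * ref; polyfit returns the highest degree first
        slope, intercept = np.polyfit(ref, row, deg=1)
        corrected[i] = (row - intercept) / slope
    return corrected


# SYNTHETIC example: one underlying band distorted by three different
# offset/gain combinations, mimicking scatter differences between measurements.
x = np.linspace(0, 1, 150)
band = np.exp(-((x - 0.5) / 0.1) ** 2)
spectra = np.array([1.0 * band + 0.00,
                    1.3 * band + 0.05,
                    0.7 * band - 0.02])

corrected = msc(spectra)
print(np.abs(corrected - corrected[0]).max())  # spread between spectra after correction
```

Because all three synthetic spectra share the same underlying band, they collapse onto one curve after correction. Genuine chemical differences are not linear in the reference and therefore survive the correction.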

Data Fusion Strategies

To overcome the limitations of any single technique, data from multiple analytical platforms can be fused to create a more comprehensive chemical descriptor of the sample [9] [14].

- Low-Level Fusion: Concatenates raw or preprocessed data from multiple instruments into a single matrix. It is simple to implement and can yield high accuracy with limited data [9].

- Mid-Level Fusion: Involves feature extraction (e.g., via PCA) from each data block first, followed by concatenation of the selected features.

- High-Level Fusion: Builds separate models on different data blocks and combines their predictions.

- Complex-Level Ensemble Fusion (CLF): An advanced two-layer algorithm that jointly selects variables from concatenated data, projects them with Partial Least Squares (PLS), and stacks the latent variables into a powerful ensemble model like XGBoost, effectively capturing feature- and model-level complementarities [14].
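To make the difference between low-level and mid-level fusion concrete, the sketch below builds both fused matrices from two simulated data blocks. It assumes only NumPy; the block names (`nir`, `enose`), their sizes, and the number of retained components are hypothetical, and block autoscaling before concatenation is one common choice rather than a requirement of the cited work.

```python
import numpy as np


def autoscale(block):
    """Column-wise z-scoring so that no instrument dominates by scale alone."""
    block = np.asarray(block, dtype=float)
    std = block.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # guard against constant columns
    return (block - block.mean(axis=0)) / std


def pca_scores(block, n_components):
    """PCA scores obtained from the SVD of the mean-centered block."""
    centered = block - block.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def low_level_fusion(blocks):
    """Concatenate the (scaled) variables of all blocks side by side."""
    return np.hstack([autoscale(b) for b in blocks])


def mid_level_fusion(blocks, n_components=3):
    """Extract a few PCA scores per block, then concatenate the scores."""
    return np.hstack([pca_scores(autoscale(b), n_components) for b in blocks])


# Simulated data: 12 samples measured on two instruments (placeholder values).
rng = np.random.default_rng(1)
nir = rng.normal(size=(12, 300))    # e.g. 300 wavelengths
enose = rng.normal(size=(12, 10))   # e.g. 10 sensor responses

low = low_level_fusion([nir, enose])
mid = mid_level_fusion([nir, enose], n_components=3)
print(low.shape, mid.shape)
```

Low-level fusion keeps every variable, so the larger block contributes far more columns; mid-level fusion gives each block the same number of features, which is one reason it is often preferred when block sizes differ greatly.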

Machine Learning for Discrimination and Classification

Both traditional chemometric and modern machine learning (ML) algorithms are used to model the data.

- Unsupervised Learning (Exploration): Principal Component Analysis (PCA) is the most common method for exploratory data analysis. It reduces data dimensionality and allows visualization of natural sample clustering and outlier detection [13] [10].

- Supervised Learning (Prediction): These models are trained on labeled data to predict the class of unknown samples.

- PLS-Discriminant Analysis (PLS-DA): A classical chemometric method that finds a linear relationship between spectral data (X) and class membership (Y) [13].

- Support Vector Machine (SVM): Effective for both linear and non-linear classification, especially with limited training samples [12].

- Random Forest (RF): An ensemble of decision trees that provides robust performance and feature importance rankings [12].

- XGBoost: An advanced gradient-boosting algorithm known for high predictive accuracy in regression and classification tasks [12] [14].

Diagram 1: Data Fusion and Modeling Workflow. This diagram outlines the logical flow from sample analysis through multiple techniques, data preprocessing, fusion of fingerprints, and final model-based discrimination.

Application Notes & Detailed Experimental Protocols

Protocol 1: Discrimination of Medicinal Plants via Multi-Sensor and Fingerprint Fusion

This protocol is adapted from research on discriminating Asarum heterotropoides (AH) from Cynanchum paniculatum (CP) [10].

4.1.1 Research Reagent Solutions & Materials

Table 2: Essential Materials for Protocol 1

| Item | Function / Description | Source Example |

|---|---|---|

| Plant Material | 7+ batches each of AH and CP, authenticated by a botanist. | Regional medicinal herb trading centers. |

| Chemical Standards | e.g., asarinin, methyl eugenol; purity >98% for method validation. | Commercial biotechnology suppliers (e.g., Chengdu Push). |

| Belousov-Zhabotinsky Reagents | H₂SO₄, CH₂(COOH)₂, (NH₄)₂SO₄·Ce(SO₄)₂ for electrochemical fingerprinting. | Standard chemical reagent suppliers (e.g., Sinopharm). |

| Purified Water | Solvent for E-tongue and LC-MS mobile phase preparation. | Commercial suppliers (e.g., Wahaha Group). |

4.1.2 Step-by-Step Procedure

- Sample Preparation:

- Pulverize dried plant material to a homogeneous powder.

- For E-nose/E-tongue: Use a defined weight of powder, optionally with a standardized extraction procedure.

- For LC-MS: Extract powder with a suitable solvent (e.g., methanol/water) and filter through a 0.22 μm membrane.

- For Electrochemical fingerprint: Prepare an aqueous extract for injection into the electrochemical cell.

- Instrumental Analysis:

- E-nose: Place the sample headspace into the sensor chamber. Acquire data for a set time (e.g., 140 s) to capture the full sensor response profile.

- E-tongue: Immerse the sensor array into the liquid sample or extract. Record the taste response signal.

- LC-HR-Q-TOF-MS/MS: Inject the sample extract. Use a C18 column and a water-acetonitrile gradient elution. Operate the MS in positive/negative ion mode for broad metabolite coverage.

- Electrochemical Fingerprint: Load the sample extract into the reaction cell containing the B-Z reaction mixture. Initiate the reaction and record the potential/time or current/potential profile.

- Data Processing & Modeling:

- Export raw data from all instruments.

- Preprocess spectra and chromatograms (smoothing, alignment, etc.).

- Fuse the processed data from the four techniques (DES and DFS) using a low-level or mid-level fusion approach.

- Input the fused data matrix into a pattern recognition model:

- Use PCA for unsupervised exploration of natural clustering.

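- Use OPLS-DA or SVM to build a supervised classification model. The source study reported 100% classification accuracy with this approach [10]; performance on new sample sets should be confirmed by cross-validation or an independent test set.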

Protocol 2: Monitoring Herbal Processing with Multimodal Spectroscopy

This protocol is based on quality control of processed Trionycis Carapax using chromatography, E-eye, E-nose, and NIR [9].

4.2.1 Step-by-Step Procedure

- Sample Processing & Design:

- Obtain 10+ batches of raw material.

- Subject them to different processing degrees (e.g., raw, steamed for 45 mins, vinegar-processed).

- Multimodal Analysis:

- HPLC Fingerprinting: Perform amino acid profile analysis. Use a C18 column and a gradient elution. Employ a fingerprint similarity evaluation system to identify common peaks.

- Electronic Eye (E-eye): Capture images of the samples under standardized lighting. Convert the colors to CIELAB (L*a*b*) parameters for quantitative analysis.

- E-nose & NIR: Follow standard operational procedures as described in Protocol 1 for these techniques.

- Data Fusion and Modeling:

- Concatenate the key variables from HPLC (peak areas), E-eye (L*a*b* values), E-nose (sensor responses), and NIR (absorbance at key wavelengths) into a single data matrix using low-level fusion.

- Analyze the fused data with PCA to visualize the clustering of samples based on processing method.

- Build a PLS-DA or Random Forest model to predict the processing degree of unknown samples and identify which analytical variables are most influential in the discrimination.
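The last step, ranking which analytical variables drive the discrimination, might look like the sketch below when a Random Forest is used. The data are simulated and the variable names (`hplc_peak_0`, `enose_s0`, and so on) are hypothetical placeholders; only one variable is made informative on purpose so that the ranking is easy to check.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

# Simulated fused matrix: 15 samples for each of three processing degrees.
rng = np.random.default_rng(2)
n_per_class = 15
labels = np.repeat(["raw", "steamed", "vinegar"], n_per_class)
names = (
    [f"hplc_peak_{i}" for i in range(5)]
    + ["L*", "a*", "b*"]
    + [f"enose_s{i}" for i in range(4)]
)
X = rng.normal(size=(labels.size, len(names)))

# Assumption for the demo: only the b* color coordinate shifts with processing.
X[:, names.index("b*")] += np.repeat([0.0, 3.0, 6.0], n_per_class)

rf = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, labels)

# Sort variables by impurity-based importance, largest first.
ranking = sorted(zip(names, rf.feature_importances_), key=lambda t: t[1], reverse=True)
for name, importance in ranking[:3]:
    print(f"{name:12s} {importance:.3f}")
```

Impurity-based importances can be biased when variables are strongly correlated, as neighboring NIR wavelengths usually are, so they are best read as a screening aid rather than a definitive ranking.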

The fusion of spectral/chromatographic fingerprinting and chemometric machine learning represents a powerful and transformative approach for the discrimination of complex samples. By moving beyond single-technique analysis and embracing multimodal data fusion, researchers can build models that are more accurate, robust, and informative. The protocols outlined herein provide a clear roadmap for implementing this strategy, enabling advancements in drug safety, food authentication, and quality control across industries. As machine learning algorithms continue to evolve, their integration with these analytical techniques will further enhance our ability to decode the complex chemical narratives contained within spectral and chromatographic fingerprints.

Chemometrics, defined as the chemical discipline that uses mathematics, statistics, and formal logic to design optimal experiments and extract relevant chemical information from data, has undergone a profound transformation [11]. From its early foundations in linear methods like Principal Component Analysis (PCA), the field has progressively integrated advanced machine learning (ML) and artificial intelligence (AI) techniques to handle the complexity and volume of modern chemical data [15] [8]. This evolution has enabled researchers to move beyond simple linear relationships to model intricate, non-linear patterns in complex datasets, revolutionizing areas from spectroscopy to drug development.

The integration of AI represents a paradigm shift in chemometrics [8]. Modern AI and machine learning techniques, including supervised, unsupervised, and reinforcement learning, are now applied across spectroscopic methods using near-infrared (NIR), infrared (IR), Raman, and atomic spectroscopy [8]. This partnership enhances spectroscopy by automating feature extraction and nonlinear calibration, significantly improving the analysis of complex datasets [8].

Theoretical Foundations and Evolution

From Classical Chemometrics to Artificial Intelligence

The field of chemometrics emerged in the 1970s, with seminal work bringing computer-assisted analysis to chromatography, UV, IR, 13C-NMR, and mass spectrometric data [11]. Early efforts focused on pattern recognition influenced by two primary approaches: statistical methods (including discriminant analysis and Bayesian models) and kernel methods (which would later evolve into machine learning techniques like self-organizing maps and support vector machines) [11].

A fundamental distinction exists between classical chemometrics and modern machine learning. Traditional chemometrics primarily relies on linear relationships within data, while machine learning excels at handling large, non-linear datasets [11]. Machine learning involves training algorithms with chemical data, allowing them to learn from examples rather than following exclusively pre-programmed rules [11].

Key Definitions in Modern Chemometric AI:

- Artificial Intelligence (AI): The engineering of systems capable of producing intelligent outputs, predictions, or decisions based on human-defined objectives [8].

- Machine Learning (ML): A subfield of AI that develops models capable of learning from data without explicit programming, identifying structure in data and improving performance with more examples [8].

- Deep Learning (DL): A specialized subset of ML employing multi-layered neural networks capable of hierarchical feature extraction, including architectures like convolutional neural networks (CNNs) and recurrent neural networks (RNNs) [8].

- Generative AI (GenAI): Extends deep learning by enabling models to create new data, spectra, or molecular structures based on learned distributions, useful for balancing datasets or simulating missing spectral data [8].

Core Algorithm Types in Modern Chemometrics

The machine learning algorithms applied in chemometrics fall into several key categories, each with distinct strengths for analytical chemistry applications.

Table 1: Core Machine Learning Models in Modern Chemometrics

| Model | Primary Function | Key Strengths | Common Spectroscopic Applications |

|---|---|---|---|

| Principal Component Analysis (PCA) | Dimensionality reduction, exploratory analysis | Identifies patterns, highlights similarities/differences, reduces data dimensionality without significant information loss | Outlier detection, data structure visualization, exploratory spectral analysis [8] |

| Partial Least Squares (PLS) | Regression, classification | Handles correlated variables, works with more variables than samples, models relationship between spectra and properties | Quantitative calibration, multivariate classification, concentration prediction [8] |

| Support Vector Machine (SVM) | Classification, regression | Effective in high-dimensional spaces, handles non-linear relationships via kernels, robust with limited samples | Food authentication, pharmaceutical quality control, disease diagnosis based on spectral patterns [8] |

| Random Forest (RF) | Classification, regression | Reduces overfitting, handles non-linearity, provides feature importance rankings | Spectral classification, authentication, process monitoring, identifying diagnostic wavelengths [8] |

| Multilayer Perceptron (MLP) | Regression, classification | Models complex non-linear relationships, learns hierarchical features, high predictive accuracy | Drug release prediction, complex spectral quantification, pattern recognition in spectral data [16] [17] |

Application Note: Predicting Drug Release in Polysaccharide-Coated Formulations

Experimental Background and Objective

Targeted colonic drug delivery requires formulations that remain intact in stomach conditions but release their active ingredients in the colonic tissue [16]. This is typically achieved by coating drug formulations with polysaccharides [16]. In this application note, we detail a methodology based on PCA and machine learning regression for predicting 5-aminosalicylic acid (5-ASA) drug release from polysaccharide-coated formulations, providing a robust framework for similar analytical challenges in pharmaceutical development.

The primary objective was to develop a predictive model that could accurately forecast drug release behavior at different time points using Raman spectral data, thereby reducing the need for extensive physical testing and accelerating formulation development [16].

Research Reagent Solutions and Materials

Table 2: Essential Research Materials and Their Functions

| Material/Reagent | Specifications | Function in Experiment |

|---|---|---|

| 5-aminosalicylic acid (5-ASA) | Active Pharmaceutical Ingredient (API) | Model drug compound for colonic delivery formulations [16] |

| Polysaccharide Coatings | Various types (e.g., chitosan, alginate) | Formulation coating that provides persistence in stomach conditions and targeted release in colonic tissue [16] |

| Raman Spectrometer | Spectral data collection capability | Analytical instrument for non-destructive collection of spectral data from pharmaceutical formulations [16] |

| Experimental Media | Control, Patient, Rat, Dog media conditions | Simulates different biological environments for drug release testing [16] |

| Computational Tools | Python/R with ML libraries, Slime Mould Algorithm | Environment for model development, hyperparameter tuning, and data analysis [16] |

Experimental Protocol and Workflow

Step 1: Data Collection and Dataset Construction

- Collect Raman spectral data from polysaccharide-coated 5-ASA formulations under different conditions [16].

- Structure a dataset containing 155 data points with over 1500 spectral features as predictor variables [16].

- Include critical metadata: "time" (2, 8, and 24 hours), "medium" (Control, Patient, Rat, Dog), and "polysaccharide name" as categorical variables [16].

- Define the target variable as the measured release of 5-ASA drug [16].

Step 2: Data Preprocessing and Enhancement

- Apply standardization (z-score scaling) so that every feature has a mean of zero and a standard deviation of one, preventing features with larger scales from disproportionately influencing the models [16].

- Implement Principal Component Analysis (PCA) for dimensionality reduction, retaining the most significant variance while simplifying the feature space from 1500+ features [16].

- Perform outlier detection using Cook's Distance to identify and exclude influential outliers that could distort regression models [16].
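For readers unfamiliar with Cook's Distance, the sketch below computes it for an ordinary least-squares fit using NumPy only. It is an illustration under assumptions: the source does not state which regression the distance was computed for, the data are simulated, and the 4/n cut-off is a common rule of thumb rather than a value taken from [16].

```python
import numpy as np


def cooks_distance(X, y):
    """Cook's distance for each observation of an ordinary least-squares fit."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    A = np.column_stack([np.ones(len(y)), X])      # design matrix with intercept
    n, p = A.shape
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ beta
    # Leverage: diagonal of the hat matrix A (A^T A)^-1 A^T.
    leverage = np.einsum("ij,ji->i", A, np.linalg.pinv(A))
    mse = resid @ resid / (n - p)
    return resid ** 2 / (p * mse) * leverage / (1.0 - leverage) ** 2


# Simulated example: a linear trend with one corrupted, high-leverage point.
rng = np.random.default_rng(6)
x = np.linspace(-1.0, 1.0, 30)
y = 2.0 * x + rng.normal(0.0, 0.05, size=x.size)
y[29] += 3.0                                        # injected outlier

d = cooks_distance(x[:, None], y)
threshold = 4.0 / len(y)                            # rule-of-thumb cut-off (assumption)
flagged = np.where(d > threshold)[0]
print("flagged observations:", flagged)
```

Because the distance combines residual size with leverage, a point at the edge of the predictor range is flagged much more readily than an equally large residual near the center.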

Step 3: Model Selection and Hyperparameter Tuning

- Select multiple machine learning models for comparison: Elastic Net (EN), Group Ridge Regression (GRR), and Multilayer Perceptron (MLP) [16].

- Optimize model hyperparameters using the Slime Mould Algorithm (SMA), inspired by the food foraging behavior of slime moulds, which efficiently balances exploration of new solutions with exploitation of promising areas in the solution space [16].

Step 4: Model Validation and Performance Assessment

- Implement k-fold cross-validation (k=3) to mitigate overfitting and provide reliable performance estimates across different data subsets [16].

- Evaluate models using multiple metrics: coefficient of determination (R²), root mean square error (RMSE), and mean absolute error (MAE) [16].

- Compare actual versus predicted values using parity plots and analyze learning curves to assess model reliability and identify potential overfitting [16].
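Steps 2 to 4 can be chained into a single cross-validated pipeline. The sketch below is a stand-in under clearly stated assumptions: the data are simulated to match only the reported dimensions (155 samples, 1500 features), Elastic Net is used with hand-picked hyperparameters rather than the Slime Mould Algorithm, the categorical metadata are omitted, and the number of principal components is arbitrary.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import ElasticNet
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score
from sklearn.model_selection import KFold, cross_val_predict
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Simulated low-rank "spectra": 5 latent factors drive 1500 correlated features.
rng = np.random.default_rng(3)
n_samples, n_features = 155, 1500
latent = rng.normal(size=(n_samples, 5))
loadings = rng.normal(size=(5, n_features))
X = latent @ loadings + 0.1 * rng.normal(size=(n_samples, n_features))
y = latent @ np.array([0.5, -0.3, 0.2, 0.0, 0.1]) + 0.05 * rng.normal(size=n_samples)

# Scaling and PCA sit inside the pipeline so they are refit on each training fold.
model = make_pipeline(
    StandardScaler(),
    PCA(n_components=10),
    ElasticNet(alpha=0.01, l1_ratio=0.5),
)
cv = KFold(n_splits=3, shuffle=True, random_state=0)
y_pred = cross_val_predict(model, X, y, cv=cv)

r2 = r2_score(y, y_pred)
rmse = float(np.sqrt(mean_squared_error(y, y_pred)))
mae = float(mean_absolute_error(y, y_pred))
print(f"R2 = {r2:.3f}, RMSE = {rmse:.3f}, MAE = {mae:.3f}")
```

Keeping the scaler and PCA inside the pipeline matters: fitting them on the full dataset before splitting would leak information from the held-out fold into the model and inflate the reported metrics.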

Results and Performance Metrics

The comparative analysis revealed significant performance differences among the three machine learning models evaluated for predicting 5-ASA drug release.

Table 3: Performance Comparison of Machine Learning Models for Drug Release Prediction

| Model | R² Score | RMSE | MAE | Key Characteristics |

|---|---|---|---|---|

| Elastic Net (EN) | 0.9760 | 0.0342 | 0.0267 | Blends LASSO and Ridge regression, offers feature selection and regularization [16] |

| Group Ridge Regression (GRR) | 0.7137 | 0.0907 | 0.0744 | Applies regularization at group level, effective for structured data [16] |

| Multilayer Perceptron (MLP) | 0.9989 | 0.0084 | 0.0067 | Deep learning model with multiple neuron layers, excels at nonlinear patterns [16] |

The MLP model performed best, with R² = 0.9989, RMSE = 0.0084, and MAE = 0.0067 (Table 3), indicating close agreement between measured and predicted drug release values [16]. Elastic Net was also strong (R² = 0.9760), whereas Group Ridge Regression lagged behind (R² = 0.7137). Parity plots and learning curves further supported the MLP's predictive reliability, showing efficient learning with minimal overfitting compared to the other models [16].

Advanced Applications in Spectroscopy and Drug Discovery

Spectroscopy and Chemical Analysis

The integration of chemometrics with spectroscopy has transformed analytical chemistry, enabling rapid, non-destructive, and high-throughput chemical analysis across numerous domains [8]. In food chemistry, machine learning techniques discriminate between quality grades of products like sauce-flavor baijiu based on biomarker and key flavor compound screening [17]. Similar approaches are applied in food authentication, pharmaceutical quality control, and environmental analysis [8].

Deep learning approaches have shown particular promise for enhancing spectroscopic data analysis. Convolutional Neural Networks (CNNs) have been successfully implemented as single-step preprocessing tools for Raman spectra, handling multiple preprocessing steps including cosmic ray removal, smoothing, and baseline subtraction simultaneously [15]. These AI-driven approaches often achieve higher quality results than traditional reference methods like second-difference, asymmetric least squares, and cross-validation [15].

Drug Discovery and Development

Artificial intelligence is revolutionizing traditional drug discovery and development models by seamlessly integrating data, computational power, and algorithms [18]. This synergy enhances the efficiency, accuracy, and success rates of drug research while shortening development timelines and reducing costs [18].

AI and machine learning demonstrate significant advancements across multiple pharmaceutical domains, including drug characterization, target discovery and validation, small molecule drug design, and clinical trial acceleration [18]. Through molecular generation techniques, AI facilitates the creation of novel drug molecules while predicting their properties and activities, and virtual screening optimizes drug candidates [18].

Challenges and Future Perspectives

Despite the remarkable progress in chemometric machine learning applications, several challenges remain. Data availability and reproducibility represent particular concerns in applying machine learning to chemistry [11]. Furthermore, AI-driven pharmaceutical companies must effectively integrate biological sciences with algorithms, ensuring the successful fusion of wet and dry laboratory experiments [18].

The establishment of robust data-sharing mechanisms and more comprehensive intellectual property protections for algorithms will be crucial for advancing the field [18]. Additionally, as models become more complex, interpretability remains a challenge, motivating the use of explainable AI (XAI) techniques to preserve chemical insight while leveraging powerful predictive models [8].

Future developments will likely focus on enhanced automation, improved model interpretability, and the integration of generative AI for synthetic data generation to address data scarcity issues [8]. As these technical and methodological barriers are addressed, AI-driven therapeutics and analytical methods are poised for broader and more impactful implementation across the chemical and pharmaceutical sciences [18].

Defining the Forensic and Industrial Challenges in Paper Analysis and Differentiation

The analysis and differentiation of paper substrates represent a critical challenge at the intersection of forensic science and industrial manufacturing. In forensic contexts, it facilitates the investigation of document forgery, fraud, and historical authentication, while industrially, it supports quality control, brand protection, and the development of sustainable products [19] [20]. The convergence of increased data complexity, evolving material compositions, and the demand for non-destructive, rapid analysis necessitates advanced analytical frameworks. This document details the specific challenges and provides application notes and protocols, framed within a thesis on chemometric machine learning for paper discrimination research, to guide researchers and scientists in developing robust analytical solutions.

Defining the Challenges

The field of paper analysis is constrained by a series of interconnected forensic and industrial challenges, which are summarized in the table below.

Table 1: Core Challenges in Forensic and Industrial Paper Analysis

| Challenge Domain | Specific Challenge | Impact on Analysis & Differentiation |

|---|---|---|

| Forensic Challenges | Cross-Modal Authorship Verification [19] | Difficulty in determining if handwritten documents on physical paper and digital devices are from the same author. |

| Forensic Challenges | Data Volume & Variety [21] | Large amounts of data from multiple sources (e.g., paper, digital scans) complicate evidence processing. |

| Forensic Challenges | Evidence Authenticity [21] | Proliferation of AI-generated forgeries (e.g., deepfakes) challenges the verification of document authenticity. |

| Industrial Challenges | Resource Intensive Production [20] [22] | High water and energy consumption, alongside wastewater generation, complicates sustainable analysis. |

| Industrial Challenges | Raw Material Cost & Supply [20] | Price volatility and supply chain disruptions for wood pulp affect batch-to-batch consistency and analysis. |

| Industrial Challenges | Digital Media Competition [20] | Declining demand for graphic paper pushes analysis focus towards packaging and specialty papers. |

| Industrial Challenges | Labor Shortages [20] | Lack of skilled personnel for traditional analysis accelerates the need for automated, machine-learning solutions. |

| Technical & Analytical Challenges | Complex Data Interpretation | Data from techniques like spectroscopy require multivariate analysis (chemometrics) for accurate classification. |

| Technical & Analytical Challenges | Need for Non-Destructive Methods | Forensic and valuable historical samples require analytical techniques that preserve sample integrity. |

Chemometric Machine Learning Framework for Paper Discrimination

Chemometrics, which applies mathematical and statistical methods to chemical data, is fundamental to modern paper analysis. When combined with machine learning (ML), it creates a powerful framework for discriminating between paper types based on their chemical or physical signatures [23] [24]. The general workflow is depicted below.

Figure 1: Chemometric Machine Learning Workflow for Paper Analysis. This diagram outlines the standard pipeline for differentiating paper samples, from data acquisition to final classification.

Key Chemometric and Machine Learning Techniques

- Principal Component Analysis (PCA): An unsupervised technique used for exploratory data analysis and dimensionality reduction. It helps visualize natural clustering of paper samples based on their intrinsic properties [25] [24].

- Partial Least Squares Discriminant Analysis (PLS-DA): A supervised classification method that finds a linear relationship between spectral data (X) and the class membership of paper samples (Y). It is highly effective for building predictive models [25] [24].

- Hierarchical Cluster Analysis (HCA): Another unsupervised method that builds a hierarchy of clusters, often visualized as a dendrogram, to show the relatedness between different paper samples [25].

- Deep Convolutional Neural Networks (DCNNs): These deep learning models are highly effective for analyzing complex data like spectral images, offering superior accuracy and noise tolerance, though they require significant computational resources [26].
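Of the techniques above, hierarchical cluster analysis is the quickest to try on a small set of paper samples. The sketch below uses SciPy's hierarchical clustering routines on simulated feature vectors; the three "paper types", the Ward linkage, and the choice of three clusters are illustrative assumptions.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage

# Simulated feature vectors (e.g. a few PCA scores) for three paper types,
# six sheets each; the centers and noise level are placeholders.
rng = np.random.default_rng(4)
centers = np.array([[0.0, 0.0, 0.0], [5.0, 5.0, 0.0], [0.0, 5.0, 5.0]])
X = np.vstack([c + 0.3 * rng.normal(size=(6, 3)) for c in centers])

# Ward linkage merges the pair of clusters that least increases within-cluster variance.
Z = linkage(X, method="ward")

# Cut the tree so that at most three clusters remain.
clusters = fcluster(Z, t=3, criterion="maxclust")
print(clusters)
```

Passing `Z` to `scipy.cluster.hierarchy.dendrogram` draws the tree itself, which is usually how the relatedness between samples is reported.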

Application Notes & Experimental Protocols

Protocol 1: Mid-Infrared Spectroscopy with Chemometric Analysis for Paper Fiber Identification

This protocol is adapted from methodologies used in plastic waste discrimination and is tailored for paper analysis [26]. It is designed to identify the primary fiber composition (e.g., wood pulp, cotton, bamboo) and detect additives.

1. Objective: To discriminate between paper types based on their molecular fingerprint using Attenuated Total Reflectance Fourier Transform Infrared (ATR-FTIR) spectroscopy coupled with chemometric analysis.

2. Research Reagent Solutions & Essential Materials

Table 2: Key Materials for ATR-FTIR Analysis of Paper

| Item | Function/Description |

|---|---|

| ATR-FTIR Spectrometer | Instrument for collecting mid-infrared spectra. Equipped with a diamond or other crystal (e.g., ZnSe or Ge) ATR accessory. |

| Paper Samples | Samples of interest, including standards of known composition for model training. |

| Laboratory Press | (Optional) Used to create uniform, smooth pellets if a transmission mode is used instead of ATR. |

| Hydraulic Pellet Press | (Optional) Used with KBr to create transparent pellets for transmission FTIR. |

| Potassium Bromide (KBr) | High-purity salt used for preparing solid sample pellets for transmission FTIR analysis. |

| Spectroscopy Software | Vendor software for instrument control, data acquisition, and initial spectral processing. |

| Chemometrics Software | Software platform (e.g., Python with scikit-learn, R, MATLAB, commercial suites) for multivariate analysis. |

3. Experimental Procedure:

- Step 1: Sample Preparation. Cut a small section (approx. 2 mm × 2 mm) from the paper sample. For ATR-FTIR, no further preparation is typically needed. Flatten the sample to ensure good contact with the ATR crystal. If using transmission FTIR, homogenize the sample and prepare a KBr pellet.

- Step 2: Spectral Acquisition. Place the paper sample firmly onto the ATR crystal. Collect spectra in the range of 4000 - 400 cm⁻¹. Set the resolution to 4 cm⁻¹ and accumulate 32-64 scans to ensure a high signal-to-noise ratio. Record a background spectrum with a clean ATR crystal before measuring each sample or set of samples.

- Step 3: Data Preprocessing. Process all raw spectra to minimize the influence of non-compositional variances. Standard preprocessing steps include:

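- Absorbance Conversion: Fourier-transform the raw interferograms to single-beam spectra, ratio them against the background, and convert the result to absorbance (most instrument software performs this automatically).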

- Vector Normalization: Scale the spectra to account for minor path length or concentration differences.

- Savitzky-Golay Smoothing: Apply to reduce high-frequency noise.

- Standard Normal Variate (SNV) or Multiplicative Scatter Correction (MSC): Correct for light scattering effects, particularly if sample surface texture varies.
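Two of the preprocessing steps above, vector normalization and SNV, are simple row-wise operations. The sketch below shows both with NumPy on a simulated band; the function names are hypothetical helpers, and the gain and offset values are arbitrary.

```python
import numpy as np


def snv(spectra):
    """Standard normal variate: center and scale each spectrum individually."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std


def vector_normalize(spectra):
    """Scale each spectrum to unit Euclidean length."""
    spectra = np.asarray(spectra, dtype=float)
    return spectra / np.linalg.norm(spectra, axis=1, keepdims=True)


# The same simulated band recorded with different gain and offset.
x = np.linspace(0.0, 1.0, 150)
base = np.exp(-((x - 0.4) ** 2) / 0.01)
raw = np.vstack([base, 2.0 * base + 0.3, 0.5 * base - 0.1])

snv_spectra = snv(raw)
unit_spectra = vector_normalize(raw)
print(np.allclose(snv_spectra[0], snv_spectra[1]))   # offset and gain removed
print(np.linalg.norm(unit_spectra, axis=1))          # every row has length 1
```

SNV removes both the offset and the gain of each spectrum, whereas vector normalization only rescales, so the two are not interchangeable when additive baseline shifts are present.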

4. Chemometric Modeling & Differentiation:

- Exploratory Analysis: Perform PCA on the preprocessed spectral data to visualize inherent clustering and identify potential outliers.

- Classification Model: Develop a PLS-DA model using the spectra from a training set of paper samples with known origins or compositions.

- Model Validation: Validate the model's performance using a separate test set of samples. Employ cross-validation (e.g., leave-one-out or k-fold) to assess the model's robustness and predictive accuracy.

Protocol 2: Paper-Based Analytical Devices (PADs) for Colorimetric Analysis of Paper Coatings

This protocol leverages the principles of paper-based analytical devices, turning the paper substrate itself into a sensor platform [24]. It can be used to detect and semi-quantify specific chemical agents (e.g., coatings, fillers, or residues) on paper surfaces.

1. Objective: To develop a simple, low-cost colorimetric assay on a paper platform for the rapid detection of specific chemical components in paper coatings.

2. Experimental Workflow:

The logical flow for developing and utilizing a PAD for paper analysis is as follows.

Figure 2: Workflow for Paper-Based Colorimetric Analysis. This diagram outlines the steps for creating and using a paper-based device to detect chemical components.

3. Research Reagent Solutions & Essential Materials

Table 3: Key Materials for Paper-Based Colorimetric Analysis

| Item | Function/Description |

|---|---|

| Filter/Chromatography Paper | The substrate for creating the microfluidic PAD. |

| Wax Printer or Plotter | Used to create hydrophobic barriers on the paper, defining the hydrophilic test zones. |

| Colorimetric Probe | A chemical reagent that changes color upon reaction with the target analyte (e.g., ninhydrin for proteins). |

| Micropipettes | For precise application of reagents and sample solutions. |

| Hot Plate/Oven | To melt printed wax and form solid hydrophobic barriers. |

| Imaging Device | Flatbed scanner or smartphone with a fixed mount for consistent image capture. |

| Image Analysis Software | Software (e.g., ImageJ) to convert color intensity in the test zones into numerical values. |

4. Experimental Procedure:

- Step 1: PAD Fabrication. Design a simple pattern with one or more test zones and a sample introduction zone. Print the pattern onto filter paper using a wax printer. Heat the paper on a hot plate (e.g., ~120°C for 2 minutes) to allow the wax to penetrate the paper and create complete hydrophobic barriers.

- Step 2: Reagent Deposition. Apply a precise volume (e.g., 1-5 µL) of the colorimetric reagent solution to the test zone(s). Allow the PAD to dry completely at room temperature, protected from light if the reagent is light-sensitive.

- Step 3: Sample Preparation & Introduction. Prepare an extract from the paper sample under investigation. This may involve soaking a small piece of the paper in a solvent (e.g., water, ethanol) to dissolve the target analyte. Apply a controlled volume (e.g., 10-30 µL) of the sample extract to the sample introduction zone of the PAD.

- Step 4: Image Acquisition & Analysis. After the color development is complete (e.g., after 5-10 minutes), capture an image of the PAD under consistent lighting conditions using a scanner or smartphone. Use image analysis software to measure the color intensity (e.g., in the red, green, and blue channels) of the test zone. Correlate the intensity value to analyte concentration using a calibration curve constructed with standards of known concentration.
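The calibration in Step 4 amounts to fitting a curve to the standards and inverting it for unknowns. The sketch below assumes a linear response; the concentrations and intensity readings are invented for illustration, and `estimate_concentration` is a hypothetical helper.

```python
import numpy as np

# Hypothetical calibration standards and their measured color signal
# (for example 255 minus the mean green-channel value of the test zone).
conc = np.array([0.0, 0.5, 1.0, 2.0, 4.0])            # mg/mL, illustrative
signal = np.array([2.1, 12.0, 21.8, 42.5, 81.9])      # arbitrary units, illustrative

# Linear calibration: signal = slope * concentration + intercept.
slope, intercept = np.polyfit(conc, signal, deg=1)

# Coefficient of determination of the fit.
fitted = slope * conc + intercept
r_squared = 1.0 - np.sum((signal - fitted) ** 2) / np.sum((signal - signal.mean()) ** 2)


def estimate_concentration(measured_signal):
    """Invert the calibration line to estimate concentration from a signal."""
    return (measured_signal - intercept) / slope


print(f"slope = {slope:.2f}, intercept = {intercept:.2f}, R2 = {r_squared:.4f}")
print(f"signal 30.0 -> {estimate_concentration(30.0):.2f} mg/mL")
```

Colorimetric responses often saturate at high concentrations, so estimates are only trustworthy inside the range covered by the standards.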

The challenges in paper analysis and differentiation are multifaceted, spanning forensic, industrial, and technical domains. The integration of advanced analytical techniques, such as spectroscopy and colorimetric assays, with a robust chemometric machine learning framework provides a powerful solution. The application notes and detailed protocols outlined herein offer researchers and scientists a structured approach to tackle these challenges, enabling precise discrimination, authentication, and quality assessment of paper substrates. This structured, data-driven methodology is essential for advancing research and application in both forensic science and industrial paper manufacturing.

Methodologies in Action: Building Robust Chemometric and Machine Learning Models

In chemometric machine learning for document paper discrimination, spectral data acquired from analytical techniques like Raman, FT-IR, or NIR spectroscopy is inherently affected by various non-ideal phenomena that can obscure chemically relevant information. These undesired effects include instrumental noise, baseline shifts, and light scattering effects caused by physical sample properties. Without proper correction, these artifacts can severely degrade the performance of multivariate classification and regression models, leading to inaccurate discrimination of paper types, inks, or other forensic evidence. Data preprocessing serves as a critical bridge between raw spectral acquisition and meaningful chemometric modeling, transforming raw data into chemically interpretable features by minimizing systematic noise and sample-induced variability [27].

The fundamental challenge in document analysis research lies in ensuring that spectral differences used for machine learning models reflect genuine compositional variations between paper samples rather than artifacts from sample presentation or instrument drift. Proper preprocessing ensures that subtle spectral features crucial for discriminating between chemically similar papers are enhanced and made accessible to pattern recognition algorithms. This protocol outlines a systematic approach to spectral preprocessing, providing researchers with standardized methodologies for achieving reliable, reproducible results in document discrimination studies.

Core Preprocessing Techniques: Principles and Applications

Smoothing Techniques

Smoothing algorithms reduce high-frequency random noise in spectral data while preserving the underlying signal shape. This process is essential for enhancing the signal-to-noise ratio before subsequent analysis steps.

Savitzky-Golay Smoothing: This widely used method performs local polynomial regression to smooth spectral data. It fits successive subsets of adjacent data points with a low-degree polynomial by linear least squares. The key advantage of Savitzky-Golay filtering is that it preserves the shape and height of spectral peaks better than adjacent-averaging techniques. For Raman spectra of paper samples, a common implementation uses a 7-point quadratic filter with a first-order derivative to simultaneously smooth spectra and remove baseline variations [28].
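As a minimal sketch of the 7-point quadratic filter described above, the following uses `scipy.signal.savgol_filter` on a synthetic spectrum. The Gaussian band, noise level, and axis range are illustrative assumptions, not measured paper data.

```python
import numpy as np
from scipy.signal import savgol_filter

# Synthetic stand-in for a Raman spectrum: one Gaussian band plus random noise.
rng = np.random.default_rng(0)
shift = np.linspace(200, 1800, 801)              # Raman shift axis, 2 cm^-1 spacing
clean = np.exp(-0.5 * ((shift - 1095) / 15) ** 2)
noisy = clean + rng.normal(0, 0.05, shift.size)

# 7-point quadratic Savitzky-Golay filter, smoothing only (deriv=0 is the default).
smoothed = savgol_filter(noisy, window_length=7, polyorder=2)

# Same window and order, but returning the first derivative.
# delta is the sample spacing, so the derivative is expressed per cm^-1.
first_deriv = savgol_filter(noisy, window_length=7, polyorder=2,
                            deriv=1, delta=shift[1] - shift[0])

# Compare the error against the known clean signal before and after smoothing.
rmse_before = np.sqrt(np.mean((noisy - clean) ** 2))
rmse_after = np.sqrt(np.mean((smoothed - clean) ** 2))
print(f"RMSE before: {rmse_before:.4f}, after: {rmse_after:.4f}")
```

On real spectra no clean reference exists, so the window length is usually judged by checking that known bands are not visibly broadened.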

Wavelet Transform Denoising: Wavelet-based methods provide multi-resolution analysis capabilities, making them particularly effective for signals with non-stationary noise characteristics. The process involves decomposing spectra into different frequency components using a chosen wavelet function (e.g., 'db6'), selectively suppressing high-frequency coefficients corresponding to noise, and reconstructing the signal. This approach is highly effective for removing complex noise patterns from NIR spectra of document papers while preserving critical discriminant features [29].
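The decompose, threshold, and reconstruct sequence can be sketched with PyWavelets as follows. The function name `wavelet_denoise` is hypothetical, and the universal threshold with a median-based noise estimate is one common choice rather than the only option. The requested level is capped because the maximum usable decomposition level depends on spectrum length.

```python
import numpy as np
import pywt

def wavelet_denoise(spectrum, wavelet="db6", level=None):
    """Soft-threshold the detail coefficients using the universal threshold."""
    spectrum = np.asarray(spectrum, dtype=float)
    n = spectrum.size
    # Cap the level at what the signal length allows for this wavelet.
    max_level = pywt.dwt_max_level(n, pywt.Wavelet(wavelet).dec_len)
    level = max_level if level is None else min(level, max_level)

    coeffs = pywt.wavedec(spectrum, wavelet, level=level)
    # Noise estimate from the finest detail band (median absolute deviation).
    sigma = np.median(np.abs(coeffs[-1])) / 0.6745
    threshold = sigma * np.sqrt(2.0 * np.log(n))

    # Keep the approximation coefficients; shrink every detail band.
    shrunk = [coeffs[0]] + [pywt.threshold(c, threshold, mode="soft")
                            for c in coeffs[1:]]
    # waverec can return one extra sample for odd-length input, so trim to n.
    return pywt.waverec(shrunk, wavelet)[:n]
```

For a 1024-point spectrum and 'db6', the cap works out to 6 levels, so a request for 7 levels is silently reduced in this sketch.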

Baseline Correction Methods

Baseline correction addresses low-frequency background signals caused by fluorescence, detector drift, or sample matrix effects that can obscure Raman and NIR spectral features crucial for paper discrimination.

Asymmetric Least Squares (ALS): This iterative algorithm fits a smooth baseline to spectra by weighting positive deviations (points above the fit, i.e. peaks) far less than negative deviations (points below the fit). The method uses two key parameters: λ (smoothness) and p (asymmetry). Typical values for Raman spectra are λ = 10³ to 10⁹ and p = 0.001 to 0.1, with optimal parameters determined through systematic evaluation. ALS effectively handles the varying baseline shapes commonly encountered in paper document analysis, particularly with aged or degraded samples [29].
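A compact sketch of ALS in the widely circulated second-difference-penalty form is given below. The function name `als_baseline` and the defaults (λ = 10⁶, p = 0.01, 10 iterations) are assumptions chosen to match the values quoted in this article. It is not a tuned implementation.

```python
import numpy as np
from scipy import sparse
from scipy.sparse.linalg import spsolve

def als_baseline(y, lam=1e6, p=0.01, n_iter=10):
    """Estimate a smooth baseline under the peaks of a 1-D spectrum."""
    y = np.asarray(y, dtype=float)
    n = y.size
    # Second-difference operator; lam * D^T D penalizes baseline curvature.
    D = sparse.diags([1.0, -2.0, 1.0], [0, 1, 2], shape=(n - 2, n))
    penalty = lam * (D.T @ D)
    w = np.ones(n)
    z = y.copy()
    for _ in range(n_iter):
        W = sparse.diags(w, 0)
        z = spsolve((W + penalty).tocsc(), w * y)
        # Points above the current baseline (peaks) get the small weight p,
        # points below it get 1 - p, which pushes the fit under the peaks.
        w = np.where(y > z, p, 1.0 - p)
    return z

# Illustrative use: linear background plus one band.
x = np.arange(500, dtype=float)
true_baseline = 0.5 + 0.002 * x
spectrum = true_baseline + np.exp(-0.5 * ((x - 250) / 10) ** 2)
baseline = als_baseline(spectrum)
corrected = spectrum - baseline
```

Larger λ gives a stiffer baseline and smaller p ignores peaks more strongly, so both are normally tuned together.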

Wavelet Transform Baseline Correction: Operating as the inverse of wavelet denoising, this method removes low-frequency components by setting the approximation coefficients to zero after wavelet decomposition. While computationally efficient, this approach may oversimplify complex baselines in paper spectra with broad fluorescence backgrounds, requiring careful selection of wavelet type and decomposition level [29].

Derivative-Based Correction: First and second derivatives of spectra effectively eliminate constant and linear baseline offsets respectively. The Savitzky-Golay algorithm is frequently employed to compute derivatives while simultaneously smoothing data. Second-derivative transformation is particularly effective for resolving overlapping peaks in NIR spectra of complex paper compositions [27].
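The effect of differentiation on baseline terms can be checked numerically. In this sketch the band shape, offset, and slope are arbitrary illustrative values, and the 11-point quadratic window is an assumption. The first derivative is unchanged by a constant offset, while only the second derivative is unchanged by a linear baseline.

```python
import numpy as np
from scipy.signal import savgol_filter

x = np.arange(400, dtype=float)
band = np.exp(-0.5 * ((x - 200) / 12) ** 2)   # hypothetical absorption band
offset_only = band + 3.0                       # constant baseline offset
sloped = band + 3.0 + 0.01 * x                 # constant plus linear baseline

def sg_deriv(y, order):
    # Savitzky-Golay derivative with an 11-point quadratic window.
    return savgol_filter(y, window_length=11, polyorder=2, deriv=order)

d1_offset_removed = np.allclose(sg_deriv(offset_only, 1), sg_deriv(band, 1))
d1_slope_removed = np.allclose(sg_deriv(sloped, 1), sg_deriv(band, 1))
d2_slope_removed = np.allclose(sg_deriv(sloped, 2), sg_deriv(band, 2))
print(d1_offset_removed, d1_slope_removed, d2_slope_removed)  # True False True
```

The first derivative of the sloped spectrum still carries the constant 0.01 contributed by the linear term, which is why the middle comparison fails.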

Scatter Correction Techniques

Light scattering effects from surface irregularities and particle size differences in paper samples can create multiplicative effects that dominate spectral variance, masking chemically relevant information.

Multiplicative Scatter Correction (MSC): This method models and removes scattering effects by comparing each spectrum to an ideal reference spectrum (typically the mean spectrum). MSC calculates two parameters for each spectrum: an additive term (baseline shift) and a multiplicative term (scale effect). The algorithm effectively normalizes spectra to a common scale, making it particularly valuable for paper discrimination studies where surface texture variations might otherwise dominate classification models [27] [30].
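A minimal NumPy sketch of MSC follows, assuming spectra are stored as rows of a 2-D array and the mean spectrum serves as the reference unless another is supplied. The function name `msc` is illustrative.

```python
import numpy as np

def msc(spectra, reference=None):
    """Multiplicative scatter correction against a reference spectrum."""
    spectra = np.asarray(spectra, dtype=float)
    ref = spectra.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(spectra)
    for i, row in enumerate(spectra):
        # Least-squares fit: row ~ intercept + slope * ref
        slope, intercept = np.polyfit(ref, row, deg=1)
        # Remove the additive term, then undo the multiplicative term.
        corrected[i] = (row - intercept) / slope
    return corrected, ref
```

When new samples are corrected later, the reference computed from the training spectra should be passed in explicitly so that all data share one scale.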

Standard Normal Variate (SNV): SNV processes each spectrum individually by centering (subtracting the mean) and scaling (dividing by the standard deviation). This approach is particularly effective when no ideal reference spectrum exists, making it suitable for heterogeneous document collections with diverse paper types and compositions. SNV successfully reduces scattering effects from irregular paper surfaces and fiber density variations [27] [30].
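SNV reduces to a row-wise standardization. The sketch below assumes spectra are stored as rows and uses the sample standard deviation (`ddof=1`), which is a common convention but an assumption here.

```python
import numpy as np

def snv(spectra):
    """Standard normal variate: center and scale each spectrum on its own."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std
```

Because each spectrum is processed independently, SNV needs no training set and can be applied to a single new sample without stored parameters.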

Extended Multiplicative Scatter Correction (EMSC): An advanced extension of MSC, this method incorporates wavelength-dependent effects and can separate chemical light absorption from physical light scattering. EMSC is particularly valuable for paper discrimination research as it can model and correct for specific known interferents, such as fillers or coatings, that might otherwise confound classification algorithms [30].
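The sketch below shows only the basic polynomial form of EMSC, in which each spectrum is modeled as a scaled reference plus a low-order polynomial in the wavelength axis. The function name `emsc_basic` and the quadratic default are assumptions. Known interferent spectra, which this article mentions, would enter as additional columns of the design matrix and are not included here.

```python
import numpy as np

def emsc_basic(spectra, reference=None, poly_order=2):
    """Basic EMSC: fit x = b * ref + polynomial baseline, then invert the fit."""
    spectra = np.asarray(spectra, dtype=float)
    n_points = spectra.shape[1]
    ref = spectra.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    # Axis rescaled to [-1, 1] keeps the polynomial columns well conditioned.
    t = np.linspace(-1.0, 1.0, n_points)
    poly = np.vander(t, poly_order + 1, increasing=True)    # columns 1, t, t^2, ...
    design = np.column_stack([ref, poly])
    coefs, *_ = np.linalg.lstsq(design, spectra.T, rcond=None)
    b = coefs[0]                        # multiplicative term, one per spectrum
    baseline = (poly @ coefs[1:]).T     # additive polynomial part per spectrum
    return (spectra - baseline) / b[:, None]
```

Setting `poly_order=0` reduces this sketch to ordinary MSC, which makes the relationship between the two methods explicit.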

Table 1: Performance Comparison of Scatter Correction Methods for Paper Sample Classification

| Method | Accuracy Improvement | Processing Time | Key Advantage | Limitation |
|---|---|---|---|---|
| MSC | 25-35% | Fast | Preserves chemical band ratios | Requires representative reference |
| SNV | 20-30% | Fast | No reference needed | May over-correct in noisy regions |
| EMSC | 30-40% | Moderate | Separates chemical/physical effects | Requires prior knowledge of components |
| OPLEC | 35-45% | Moderate | Optimal for multi-parameter estimation | Complex parameter optimization |

Experimental Protocols for Document Analysis Applications

Comprehensive Spectral Preprocessing Workflow

The following protocol outlines a systematic approach for preprocessing spectral data in document discrimination research, from initial quality assessment through to preparation for chemometric modeling.

Step 1: Data Quality Assessment and Validation

- Visually inspect all raw spectra to identify obvious abnormalities, saturation effects, or instrumental artifacts

- Calculate signal-to-noise ratios for key diagnostic peaks (e.g., cellulose band at 1095 cm⁻¹ in Raman spectra)

- Establish quality control metrics and exclusion criteria for spectra failing to meet minimum data quality standards
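One way to turn the signal-to-noise check in Step 1 into code is sketched below. The definition used (band height above the mean of a band-free region, divided by the standard deviation of that region) is one common convention among several. The function name, the choice of noise region, and any acceptance threshold are assumptions to be set per instrument.

```python
import numpy as np

def band_snr(shift, intensity, band_center, band_halfwidth, noise_region):
    """Signal-to-noise ratio of one band relative to a band-free region."""
    shift = np.asarray(shift, dtype=float)
    intensity = np.asarray(intensity, dtype=float)
    in_band = np.abs(shift - band_center) <= band_halfwidth
    low, high = noise_region
    in_noise = (shift >= low) & (shift <= high)
    background = intensity[in_noise].mean()
    signal = intensity[in_band].max() - background
    noise = intensity[in_noise].std(ddof=1)
    return signal / noise

# Illustrative use on a synthetic spectrum with a band at 1095 cm^-1.
rng = np.random.default_rng(0)
shift = np.linspace(200, 2000, 901)
spectrum = np.exp(-0.5 * ((shift - 1095) / 15) ** 2) + rng.normal(0, 0.02, shift.size)
snr = band_snr(shift, spectrum, band_center=1095, band_halfwidth=20,
               noise_region=(1800, 2000))
```

Spectra whose value falls below a laboratory-defined minimum would then be flagged for exclusion or re-measurement.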

Step 2: Spectral Smoothing Procedure

- Select smoothing parameters based on spectral characteristics and noise level

- For Savitzky-Golay smoothing:

  - Apply a 7-point window with second-order polynomial fitting

  - Validate smoothing effectiveness by monitoring signal-to-noise improvement without significant peak broadening

- For wavelet denoising:

  - Use 'db6' wavelet with 7 decomposition levels

  - Apply soft thresholding to detail coefficients using universal threshold rule

- Compare smoothed spectra to originals to ensure critical discriminant features are preserved

Step 3: Baseline Correction Implementation

- Assess baseline shape and complexity to determine optimal correction method

- For asymmetric least squares (ALS) baseline correction:

  - Set initial parameters to λ=10⁶ and p=0.01

  - Perform 10 iterations or until convergence (baseline change < 0.1%)

  - Optimize parameters using simulated datasets with known baselines

- Validate correction by confirming baseline flatness in known peak-free regions

- Ensure residual baseline does not exceed 1% of dominant peak intensity

Step 4: Scatter Correction Application

- Apply SNV correction to address multiplicative effects:

  - Calculate mean and standard deviation for each spectrum individually

  - Center and scale each spectrum using these sample-specific statistics

- For MSC, use class-specific mean spectra as references when known paper types are available

- Verify correction effectiveness through PCA scores plots showing improved clustering by paper type rather than surface texture
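The PCA check in Step 4 can be sketched with a plain SVD. The two synthetic "paper types", their band ratios, and the random scatter factors below are invented purely for illustration. The SNV helper is repeated so the block runs on its own.

```python
import numpy as np

def snv(spectra):
    spectra = np.asarray(spectra, dtype=float)
    return ((spectra - spectra.mean(axis=1, keepdims=True))
            / spectra.std(axis=1, ddof=1, keepdims=True))

def pca_scores(spectra, n_components=2):
    """PCA scores and explained-variance ratios via SVD of mean-centered data."""
    X = np.asarray(spectra, dtype=float)
    Xc = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]
    explained = S ** 2 / np.sum(S ** 2)
    return scores, explained[:n_components]

# Two hypothetical paper types that differ only in the ratio of two bands.
rng = np.random.default_rng(1)
x = np.linspace(0, 1, 200)
band1 = np.exp(-0.5 * ((x - 0.3) / 0.03) ** 2)
band2 = np.exp(-0.5 * ((x - 0.7) / 0.03) ** 2)
types = np.repeat([0, 1], 10)
pure = np.where(types[:, None] == 0, band1 + 0.5 * band2, band1 + 0.8 * band2)

# Simulated scatter: random multiplicative and additive effects per sample.
scale = rng.uniform(0.5, 1.5, size=(20, 1))
offset = rng.uniform(0.0, 0.5, size=(20, 1))
raw = scale * pure + offset + rng.normal(0, 0.002, pure.shape)

scores, explained = pca_scores(snv(raw))
pc1 = scores[:, 0]
```

Plotting `scores[:, 0]` against `scores[:, 1]`, colored by paper type, before and after correction gives the visual comparison the protocol calls for.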

Step 5: Data Integrity Validation

- Confirm that preprocessing maintains relative peak intensities between known standards

- Verify that class differences in validation samples are enhanced rather than diminished

- Ensure processed spectra maintain mathematical validity (non-negative intensities where appropriate)

Figure: Spectral Preprocessing Workflow for Document Analysis

Protocol for Preprocessing Method Optimization

Selecting optimal preprocessing parameters requires systematic evaluation to maximize model performance while avoiding over-processing that could discard chemically relevant information.

Experimental Design for Parameter Optimization

- Prepare a representative dataset including all expected paper types and conditions

- Divide data into training, validation, and test sets (60/20/20 split recommended)

- Process training set with varying parameter combinations

- Evaluate preprocessing effectiveness using both qualitative (visual inspection) and quantitative (model performance metrics) approaches
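A 60/20/20 split that keeps every paper type represented in each subset can be sketched as follows. The function name `stratified_split` and the per-class rounding rule are assumptions. A library routine could be substituted.

```python
import numpy as np

def stratified_split(labels, fractions=(0.6, 0.2, 0.2), seed=0):
    """Return index arrays for training, validation, and test sets, split per class."""
    labels = np.asarray(labels)
    rng = np.random.default_rng(seed)
    train, val, test = [], [], []
    for cls in np.unique(labels):
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_train = int(round(fractions[0] * idx.size))
        n_val = int(round(fractions[1] * idx.size))
        train.extend(idx[:n_train])
        val.extend(idx[n_train:n_train + n_val])
        # Whatever remains for this class goes to the test set.
        test.extend(idx[n_train + n_val:])
    return np.array(train), np.array(val), np.array(test)

# Illustrative use: 100 spectra from three paper types.
labels = np.repeat([0, 1, 2], [50, 30, 20])
train_idx, val_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(val_idx), len(test_idx))  # 60 20 20
```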

Parameter Optimization Procedure

- Establish baseline performance with raw spectra using a standard classification algorithm (e.g., PLS-DA)

- Systematically test preprocessing combinations using a factorial design:

  - Smoothing: Window size (5-15 points), polynomial order (2-3)

  - Baseline correction: Method (ALS, derivative, wavelet), parameters (λ, p)

  - Scatter correction: Method (SNV, MSC, EMSC)

- Fit each combination on the training set, cross-validating model settings there, and compare combinations by classification accuracy on the validation set

- Select optimal parameter set that maximizes discrimination accuracy while maintaining model interpretability

- Validate final workflow on independent test set to estimate real-world performance
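The factorial design above can be enumerated with `itertools.product`. In this sketch only odd window sizes are listed, the method-specific parameters (λ, p) are left out for brevity, and `select_best` together with its `score_fn` argument are hypothetical placeholders for whatever validation-accuracy routine is used.

```python
from itertools import product

smoothing_windows = (5, 7, 9, 11, 13, 15)
polynomial_orders = (2, 3)
baseline_methods = ("als", "derivative", "wavelet")
scatter_methods = ("snv", "msc", "emsc")

# Full factorial grid: every combination of the four factors.
grid = [
    {"window": w, "polyorder": o, "baseline": b, "scatter": s}
    for w, o, b, s in product(smoothing_windows, polynomial_orders,
                              baseline_methods, scatter_methods)
]
print(len(grid))  # 108

def select_best(configs, score_fn):
    """Return the configuration with the highest score from score_fn."""
    return max(configs, key=score_fn)
```

Here `score_fn` would preprocess the training and validation spectra with one configuration, fit the classifier, and return the validation accuracy. The test set is not touched until a single configuration has been chosen.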

Performance Metrics for Optimization

- Calculate root mean squared error (RMSE) of prediction for quantitative models

- Determine classification accuracy, precision, and recall for discriminant models

- Assess model robustness through ratio of performance to inter-quartile distance (RPIQ)

- Evaluate model simplicity through number of latent variables required
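The metrics listed above have short NumPy definitions. The sketch assumes RPIQ is computed as the interquartile range of the observed values divided by the RMSE, and that precision and recall are reported for one designated class at a time. Function names are illustrative.

```python
import numpy as np

def rmse(y_true, y_pred):
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def rpiq(y_true, y_pred):
    # Interquartile range of the observed values divided by the prediction error.
    q1, q3 = np.percentile(y_true, [25, 75])
    return float((q3 - q1) / rmse(y_true, y_pred))

def classification_metrics(y_true, y_pred, positive):
    """Accuracy over all classes; precision and recall for the class `positive`."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tp = np.sum((y_pred == positive) & (y_true == positive))
    fp = np.sum((y_pred == positive) & (y_true != positive))
    fn = np.sum((y_pred != positive) & (y_true == positive))
    accuracy = float(np.mean(y_true == y_pred))
    precision = float(tp / (tp + fp)) if tp + fp else 0.0
    recall = float(tp / (tp + fn)) if tp + fn else 0.0
    return accuracy, precision, recall
```

For example, `classification_metrics([1, 1, 0, 0], [1, 0, 0, 0], positive=1)` returns an accuracy of 0.75, a precision of 1.0, and a recall of 0.5.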

Table 2: Essential Computational Tools and Methods for Spectral Preprocessing

| Category | Specific Tool/Software | Application in Document Analysis | Key Parameters |
|---|---|---|---|
| Spectral Processing Software | R Language (v4.1.2+) | Spectral preprocessing and feature selection | Packages: prospectr, baseline, hyperSpec |
| Python Libraries | Python (v3.10.1+) | Full-range ML model development | Libraries: PyWavelets, SciPy, Scikit-learn |
| Smoothing Algorithms | Savitzky-Golay Filter | Removal of high-frequency noise from paper spectra | Window size: 7-15 points, Polynomial order: 2-3 |
| Baseline Correction Methods | Asymmetric Least Squares | Correction of fluorescence background in Raman spectra | λ: 10³-10⁹, p: 0.001-0.1, Iterations: 5-15 |
| Scatter Correction Techniques | Standard Normal Variate | Normalization for surface texture variations in paper | Individual spectrum centering and scaling |
| Wavelet Analysis Tools | PyWavelets Library | Multi-resolution analysis for noise and baseline removal | Wavelet type: 'db6', Levels: 5-7, Threshold: universal |

Advanced Applications and Ensemble Approaches

Ensemble Preprocessing Strategies

Recent advances in chemometric preprocessing have demonstrated that combining multiple preprocessing techniques in complementary ways can remove artifacts more effectively than any single method. Ensemble approaches are particularly valuable for document discrimination research where multiple interference types often coexist.

Complementary Method Selection: Combine techniques that address different types of artifacts:

- SNV followed by derivative processing to address both scattering and baseline effects

- Wavelet denoising combined with ALS baseline correction for complex backgrounds

- MSC with EMSC extensions to handle both general and specific scattering effects

Multi-Block Data Analysis: This advanced ensemble approach combines multiple preprocessed versions of the same spectral data, treating each version as a separate data block. The method has shown superior performance for complex classification tasks involving historical documents with multiple interference sources [31].

Fusion Method Implementation:

- Apply multiple preprocessing techniques to the same spectral dataset

- Develop separate classification models for each preprocessed version

- Combine model outputs using consensus voting or stacked generalization

- Validate ensemble performance against individual preprocessing methods
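Consensus voting across models built on differently preprocessed data can be sketched as below. Class labels are assumed to be encoded as non-negative integers, and `majority_vote` is an illustrative name. Stacked generalization, the other option mentioned, would instead train a second-level model on these predictions and is not shown.

```python
import numpy as np

def majority_vote(predictions):
    """Combine class predictions of shape (n_models, n_samples) by majority vote."""
    predictions = np.asarray(predictions)
    n_classes = predictions.max() + 1
    # Count votes per class for every sample (one column per sample).
    counts = np.apply_along_axis(np.bincount, 0, predictions, minlength=n_classes)
    # argmax breaks ties in favor of the lowest class index.
    return counts.argmax(axis=0)

# Three models (e.g. trained on SNV, MSC, and derivative versions), three samples.
votes = np.array([[0, 1, 1],
                  [0, 0, 1],
                  [1, 0, 1]])
print(majority_vote(votes))  # [0 0 1]
```

Using an odd number of models avoids ties in two-class problems. With more classes, a tie-breaking rule should be chosen deliberately.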

Method Selection Guidelines for Document Types

The optimal preprocessing strategy varies significantly based on document type, analytical technique, and specific research questions:

- Historical Documents: For aged papers with fluorescence and degradation products, implement ALS baseline correction followed by SNV normalization

- Modern Office Papers: For papers with more uniform surfaces, Savitzky-Golay smoothing with MSC provides efficient preprocessing

- Security Documents: For complex documents with coatings and security features, ensemble approaches combining multiple techniques yield best results

- Mixed Quality Collections: When analyzing heterogeneous document sets, implement robust preprocessing with SNV and derivative methods