Challenging the Numbers: A Guide to Cross-Examining Likelihood Ratio Testimony in Scientific and Legal Contexts

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the foundational principles, practical application, and critical evaluation of Likelihood Ratio (LR) testimony. It explores the statistical underpinnings of the LR as a measure of evidential weight, its application in drug safety signal detection and forensic science, and the essential methods for challenging its validity in legal and regulatory settings. The content addresses common pitfalls, optimization strategies for LR methodologies, and comparative analyses with alternative statistical approaches. By synthesizing insights from forensic statistics, legal evidence, and clinical research, this article equips professionals with the knowledge to critically assess, validate, and effectively communicate the strengths and limitations of LR-based evidence.

Demystifying the Likelihood Ratio: Core Concepts and Legal Relevance

Frequently Asked Questions (FAQs)

Foundational Concepts

Q1: What is a likelihood ratio (LR) within a Bayesian framework? The likelihood ratio is a central component of Bayesian inference, quantifying how strongly observed evidence supports one hypothesis relative to another. It is the engine that updates prior beliefs to posterior beliefs. Formally, the LR is the probability of observing the evidence under one hypothesis (H1) divided by the probability of observing that same evidence under an alternative hypothesis (H2): LR = P(Evidence | H1) / P(Evidence | H2) [1] [2]. Within Bayes' theorem, the LR bridges the prior and posterior odds: Posterior Odds = LR × Prior Odds [2].
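The odds form of Bayes' theorem in Q1 can be written as a few lines of Python. This is a minimal sketch: the function names are hypothetical, and the example probabilities (0.8 and 0.25 for the evidence, a 20% prior for H1) are illustrative assumptions, not values from the cited sources.

```python
def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = LR x prior odds."""
    if prior_odds < 0 or lr < 0:
        raise ValueError("odds and likelihood ratios must be non-negative")
    return lr * prior_odds


def odds_to_prob(odds: float) -> float:
    """Convert odds in favour of H1 into the probability of H1."""
    return odds / (1.0 + odds)


def prob_to_odds(p: float) -> float:
    """Convert a probability of H1 (0 <= p < 1) into odds in favour of H1."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must be in [0, 1)")
    return p / (1.0 - p)


# Hypothetical evidence: P(E | H1) = 0.8 and P(E | H2) = 0.25, so LR = 3.2.
lr = 0.8 / 0.25
prior = prob_to_odds(0.2)          # prior odds of 0.25 (1 : 4)
post = posterior_odds(prior, lr)   # posterior odds of 0.8
print(round(post, 3), round(odds_to_prob(post), 3))  # prints 0.8 0.444
```

The LR and the prior enter only through their product, which is why the same LR can lead to very different posterior probabilities.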

Q2: How does the Bayesian interpretation of the LR differ from other statistical approaches? The key difference lies in the interpretation of probability and the final output. The Bayesian framework uses the LR to update personal degrees of belief in hypotheses, resulting in direct probability statements about those hypotheses (e.g., "The probability this suspect is the source of the evidence is X%") [1] [2]. In contrast, frequentist methods may use the LR for model comparison (for example, in likelihood ratio tests) but do not output probabilities of hypotheses, because parameters are treated as fixed rather than random [1] [3].

Q3: What are the most common cognitive biases that affect the interpretation of LRs, and how can they be mitigated? Research has identified two primary probabilistic biases:

- Conservatism Bias: Individuals underweight new evidence and inadequately update their prior beliefs [4]. This is often observed in abstract, "small-world" tasks like urn problems.

- Base-Rate Neglect: Individuals underweight or ignore prior probabilities (base rates) and over-rely on the new evidence [4]. This is frequently seen in realistic, "large-world" scenarios like the taxi problem.

Mitigation strategies include using relative frequency formats instead of probabilities and designing evidence presentation to make prior information more salient [4].

Presentation and Communication

Q4: What is the best way to present LRs to maximize understanding for legal decision-makers? The existing empirical literature does not conclusively identify a single best format [5]. Studies have compared numerical LRs, numerical random-match probabilities, and verbal statements of the strength of support, but none have found a method that guarantees comprehension. Research indicates that simply explaining the meaning of an LR in testimony leads to only a small improvement in laypersons' understanding and does not reduce the occurrence of reasoning fallacies like the prosecutor's fallacy [6]. Therefore, presentation methods remain an active area of research.

Q5: What is the "prosecutor's fallacy" and how is it related to the LR? The prosecutor's fallacy is a common error of logic that confuses two different conditional probabilities. In the context of LRs, it manifests as mistaking the LR for the probability of the hypothesis being true. For example, an LR of 1000 does not mean there is a 99.9% probability the suspect is the source of the evidence; it only means the evidence is 1000 times more likely if the suspect is the source than if they are not. This fallacy arises from neglecting the prior odds of the hypothesis [6].
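To make the fallacy concrete, the sketch below holds the LR at 1000 and varies the prior probability. The priors are arbitrary illustrations; only a 1 : 1 prior reproduces the fallacious "99.9%" reading.

```python
def posterior_probability(lr: float, prior_prob: float) -> float:
    """P(H1 | E) from a likelihood ratio and a prior probability of H1."""
    prior_odds = prior_prob / (1.0 - prior_prob)
    post_odds = lr * prior_odds
    return post_odds / (1.0 + post_odds)


LR = 1000.0
# The fallacy reads LR = 1000 as P(H1 | E) = 1000 / 1001 (about 99.9%),
# which Bayes' theorem only gives when the prior odds are 1 : 1.
for prior in (0.5, 0.01, 1e-4, 1e-6):
    print(f"prior={prior:g}  posterior={posterior_probability(LR, prior):.4f}")
```

With a prior of 1 in 10,000, the same LR of 1000 yields a posterior of only about 9%.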

Experimental Validation and Application

Q6: How is the LR methodology applied in pharmacovigilance for drug safety? Likelihood Ratio Test (LRT) methods are statistical tools for identifying adverse events (AEs) associated with specific drugs in spontaneous reporting system databases, helping to distinguish true safety signals from random noise. Recently proposed pseudo-LRT methods are reported to handle real-world data challenges better, such as zero-inflated data (many drug-AE combinations with zero reports), offering better control of false discovery rates and substantially enhanced computational power [7].
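A quick way to see whether zero-inflation is plausible is to compare the observed share of zero counts with the share a single Poisson model would predict. This is a rough screening heuristic under stated assumptions (one Poisson rate, estimated by the sample mean, for all cells), not the pseudo-LRT method of [7]; the cell counts are hypothetical.

```python
import math
from statistics import mean


def zero_inflation_check(counts: list[int]) -> tuple[float, float]:
    """Return (observed share of zeros, share of zeros expected under Poisson).

    Under a single Poisson(lam) model with lam estimated by the sample mean,
    P(count = 0) = exp(-lam). An observed zero share far above this is a rough
    warning sign of zero-inflation, not a formal test.
    """
    lam = mean(counts)
    observed_zero = sum(1 for c in counts if c == 0) / len(counts)
    expected_zero = math.exp(-lam)
    return observed_zero, expected_zero


# Hypothetical drug-AE cell counts with many zeros.
cells = [0, 0, 0, 0, 0, 0, 0, 0, 3, 5, 4, 6]
obs, exp_ = zero_inflation_check(cells)
print(f"observed zeros {obs:.2f}, Poisson-expected zeros {exp_:.2f}")
# prints: observed zeros 0.67, Poisson-expected zeros 0.22
```

A gap of this size would suggest checking a Zero-Inflated Poisson model before relying on a standard Poisson LRT.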

Q7: How can the LR be used to re-interpret evidence from randomized clinical trials? The LR provides a method to quantify the strength of evidence for different treatment effects based on trial data. It moves beyond simple significance testing (p-values) to offer a more nuanced measure of how much the trial data supports one hypothesis about the treatment effect over another. This allows for a continuous interpretation of evidence, which can be particularly useful for trials that are not definitively positive or negative [8].
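One simple way to compute such an LR, assuming the trial's effect estimate is approximately normal around the true effect with a known standard error, is to compare the normal likelihood at two candidate effect sizes. The function name and the trial numbers (risk difference 0.05, SE 0.03) are hypothetical.

```python
import math


def normal_lr(estimate: float, se: float, delta1: float, delta0: float = 0.0) -> float:
    """LR for true effect delta1 versus delta0, given an estimate and its SE.

    Assumes the estimate is approximately normal around the true effect, so the
    likelihood of a candidate effect d is proportional to
    exp(-(estimate - d)^2 / (2 * se^2)).
    """
    z1 = (estimate - delta1) / se
    z0 = (estimate - delta0) / se
    return math.exp(-0.5 * z1 * z1 + 0.5 * z0 * z0)


# Hypothetical trial: estimated risk difference 0.05 with SE 0.03
# (two-sided p of roughly 0.10, i.e. "not significant" at the 5% level).
for target in (0.025, 0.05, 0.10):
    print(target, round(normal_lr(0.05, 0.03, target), 2))
```

Here the data favour an effect of 0.05 over no effect by about four to one, while an effect of 0.10 and no effect are supported equally (LR = 1), a more graded reading than "not significant".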

Troubleshooting Guides

Problem: Legal Decision-Makers Misinterpret Your LR Testimony

Potential Causes and Solutions:

- Cause 1: The trier of fact is committing the prosecutor's fallacy.

- Solution: Proactively address this in your testimony. Explicitly state what the LR means and what it does not mean. Use clear, non-technical language to explain that the LR is not the probability the hypothesis is true.

- Cause 2: The format of the LR presentation is confusing.

- Cause 3: The base rate (prior odds) is being neglected.

- Solution: Ensure that the prior odds are clearly communicated alongside the LR. Use educational interventions that emphasize the importance of both the prior information and the new evidence in forming a correct conclusion [4].

Problem: Your Computational Model for LR Calculation Fails to Converge or Produces Errors

Diagnostic Steps:

- Check Model Specification: Verify the underlying statistical model (e.g., Poisson, Zero-Inflated Poisson) is appropriate for your data type. Model misspecification is a common cause of failure [7].

- Examine the Data: Look for issues such as zero-inflation, over-dispersion, or outliers. If zero-inflation is present, a standard Poisson model may be invalid, and a Zero-Inflated Poisson (ZIP) model should be used instead [7].

- Validate Priors (Bayesian Models): If using a Bayesian model, conduct a sensitivity analysis. Check whether your results change substantially under different, but still reasonable, prior distributions. An overly informative or poorly chosen prior can lead to computational instability or biased results [1] [2].

- Assess Algorithm Convergence: When using Markov Chain Monte Carlo (MCMC) methods (e.g., in Stan or JAGS), use diagnostics such as trace plots, the Gelman-Rubin statistic (R-hat), and effective sample size (ESS) to check whether the chains appear to have converged to the target posterior distribution. These diagnostics can reveal non-convergence but cannot prove convergence [1].
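As a rough illustration of R-hat, the sketch below computes the classic (non-split) Gelman-Rubin statistic for one scalar parameter from equal-length chains. Stan reports a rank-normalized split-R-hat, which is the more robust modern variant, so in practice the built-in diagnostics (or a library such as ArviZ) are preferable; the simulated chains here are hypothetical.

```python
import random
from statistics import mean, variance


def gelman_rubin_rhat(chains: list[list[float]]) -> float:
    """Classic (non-split) Gelman-Rubin potential scale reduction factor.

    chains: m >= 2 chains of equal length n >= 2 for a single scalar parameter.
    """
    m = len(chains)
    n = len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least 2 chains of equal length >= 2")
    chain_means = [mean(c) for c in chains]
    grand_mean = mean(chain_means)
    # Between-chain variance B and mean within-chain variance W.
    b = n / (m - 1) * sum((cm - grand_mean) ** 2 for cm in chain_means)
    w = mean(variance(c) for c in chains)
    # Pooled estimate of the posterior variance.
    var_hat = (n - 1) / n * w + b / n
    return (var_hat / w) ** 0.5


rng = random.Random(1)
mixed = [[rng.gauss(0, 1) for _ in range(1000)] for _ in range(4)]
stuck = [[rng.gauss(mu, 1) for _ in range(1000)] for mu in (0, 0, 0, 3)]
print(round(gelman_rubin_rhat(mixed), 3), round(gelman_rubin_rhat(stuck), 3))
```

Well-mixed chains give values close to 1, while one chain stuck in a different region pushes R-hat far above commonly cited thresholds such as 1.1 or, more recently, 1.01.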

Experimental Protocols for Key Cited Studies

Protocol 1: Investigating Context-Dependence of Probabilistic Biases

This protocol is based on the research detailed in [4], which studied how task context influences the weighting of priors and evidence.

1. Objective: To determine how "small-world" (abstract) versus "large-world" (realistic) scenarios affect the manifestation of conservatism bias and base-rate neglect.

2. Materials:

- Participants: 48 individuals (a mix of students and staff).

- Task Scenarios: 12 probability judgment tasks.

- Small-world scenarios: Classic urn problems (e.g., "An urn contains 85% green and 15% blue balls...").

- Large-world scenarios: Realistic narratives like the taxi problem (e.g., "A taxi was involved in a hit-and-run. 85% of taxis are Green, 15% are Blue. A witness identifies the taxi as Blue...").

- Framing: Each scenario is presented in both probability and relative frequency formats.

- Data Collection Tool: Paper-and-pencil or digital questionnaire.

3. Methodology:

- Independent Variables:

- Task Content: Urn (small-world) vs. Taxi (large-world).

- Amount of Evidence: Single instance vs. multiple samples.

- Framing: Probability vs. relative frequency.

- Procedure:

- Participants are randomly assigned to different conditions.

- For each task, prior probabilities and evidence likelihoods are provided.

- Participants are asked to provide a subjective probability judgment (the posterior probability).

- Dependent Variable: The participant's stated posterior probability, which is compared to the normative Bayesian posterior calculated via Bayes' theorem [4].

4. Analysis:

- Calculate the deviation of subjective judgments from the Bayesian norm.

- Use statistical models (e.g., ANOVA) to test for main effects and interactions between the independent variables.

- Identify systematic patterns: underweighting of evidence (conservatism) in urn tasks and underweighting of priors (base-rate neglect) in taxi tasks [4].
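The comparison with the Bayesian norm can be sketched by measuring how much of the normative log-odds update a participant's judgment achieves. The "update fraction" is a hypothetical summary chosen for illustration, not the analysis used in [4], and the witness accuracy of 80% is an assumption because the scenario text above elides it.

```python
import math


def normative_posterior(prior: float, hit_rate: float, false_alarm: float) -> float:
    """Bayes' theorem for a binary hypothesis H given a positive report.

    prior: P(H); hit_rate: P(report | H); false_alarm: P(report | not H).
    """
    num = prior * hit_rate
    return num / (num + (1.0 - prior) * false_alarm)


def logit(p: float) -> float:
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))


def update_fraction(judged: float, prior: float, normative: float) -> float:
    """Share of the normative log-odds update that a judgment achieves.

    About 1: normative. Between 0 and 1: under-updating (conservatism-like).
    Above 1: over-updating, i.e. the base rate is underweighted
    (base-rate-neglect-like). Negative: update in the wrong direction.
    """
    step = logit(normative) - logit(prior)
    if step == 0:
        raise ValueError("uninformative evidence (LR = 1): no update expected")
    return (logit(judged) - logit(prior)) / step


# Taxi problem: 15% of taxis are Blue and the witness says "Blue".
# ASSUMPTION: the witness is correct 80% of the time.
p_norm = normative_posterior(prior=0.15, hit_rate=0.8, false_alarm=0.2)
for judged in (0.80, 0.41, 0.20):
    print(f"judged {judged:.2f}: update fraction {update_fraction(judged, 0.15, p_norm):.2f}")
```

Under these assumptions the normative posterior is about 0.41; a judgment of 0.80 corresponds to an update fraction of about 2.25 (base rate largely ignored), while 0.20 falls well short of the normative update.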

Protocol 2: Testing the Efficacy of LR Explanations in Expert Testimony

This protocol is modeled on the experiment from [6], which evaluated whether explaining LRs improves comprehension.

1. Objective: To assess if an expert witness's explanation of the meaning of LRs leads to more accurate interpretation by laypersons and reduces the prosecutor's fallacy.

2. Materials:

- Participants: A cohort of laypersons representing a jury pool.

- Stimuli: Video recordings of realistic expert testimony presenting LRs.

- Experimental Group Video: Includes an explanation of the LR (e.g., "The likelihood ratio tells us how much more likely we are to see this evidence if the prosecution's proposition is true compared to if the defense's proposition is true.").

- Control Group Video: Presents the LR value without an explanation.

- Data Collection: Electronic survey to elicit prior and posterior odds from participants.

3. Methodology:

- Design: Between-subjects design (Explanation vs. No Explanation).

- Procedure:

- Participants are randomly assigned to one of the two groups.

- Before the testimony, they are asked to state their prior odds (belief in the hypothesis before hearing the evidence).

- They then watch the assigned video testimony.

- After the testimony, they are asked to state their posterior odds (updated belief after hearing the evidence).

- Dependent Variable: The Effective Likelihood Ratio (ELR), calculated as: ELR = Posterior Odds / Prior Odds. This ELR is then compared to the LR presented by the expert (PLR) [6].

4. Analysis:

- Compare the percentage of participants in each group whose ELR equals the PLR.

- Compare the rate of the prosecutor's fallacy between groups (identified by participants stating a posterior probability that equates to the value of the LR, thus neglecting the prior).
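The two analysis steps can be sketched as follows. The 5% tolerance and the fallacy flag (stated posterior odds equal to the presented LR although the stated prior odds were not 1 : 1) are one hypothetical operationalization, not necessarily the coding used in [6]; the responses are invented.

```python
import math
from dataclasses import dataclass


@dataclass
class Response:
    prior_odds: float      # elicited before the testimony
    posterior_odds: float  # elicited after the testimony


def effective_lr(r: Response) -> float:
    """ELR = posterior odds / prior odds."""
    return r.posterior_odds / r.prior_odds


def matches_presented(r: Response, plr: float, rel_tol: float = 0.05) -> bool:
    """True if the participant's ELR agrees with the presented LR (PLR)."""
    return math.isclose(effective_lr(r), plr, rel_tol=rel_tol)


def looks_like_prosecutors_fallacy(r: Response, plr: float, rel_tol: float = 0.05) -> bool:
    """Hypothetical flag: the posterior odds equal the PLR even though the
    prior odds were not 1 : 1, i.e. the prior was ignored."""
    return (math.isclose(r.posterior_odds, plr, rel_tol=rel_tol)
            and not math.isclose(r.prior_odds, 1.0, rel_tol=rel_tol))


PLR = 100.0
group = [Response(0.1, 10.0), Response(0.1, 100.0), Response(1.0, 100.0), Response(0.5, 2.0)]
share_correct = sum(matches_presented(r, PLR) for r in group) / len(group)
share_fallacy = sum(looks_like_prosecutors_fallacy(r, PLR) for r in group) / len(group)
print(share_correct, share_fallacy)  # prints 0.5 0.25
```

Note that a participant who starts at 1 : 1 odds and correctly reports posterior odds of 100 cannot be distinguished from one committing the fallacy, which is why the flag excludes even prior odds.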

Data Presentation

Table 1: Comparison of Common Prior Distributions in Bayesian Analysis

This table summarizes types of prior probability distributions, which are combined with the likelihood to form the posterior [2].

| Prior Type | Description | Expected Effect | Common Use Case |
|---|---|---|---|
| Uninformative / Flat | Represents minimal prior knowledge; all parameter values are equally likely. | Neutral | Default choice when no reliable prior information exists; lets the data "speak for itself." [2] |
| Skeptical | An informative prior centered on "no effect" with limited range. | Neutral | To build a high burden of proof; new data must be strong to shift belief away from no effect [2]. |
| Optimistic | An informative prior where probability is concentrated on a beneficial effect. | Positive | When pre-existing evidence or theory suggests a positive outcome [2]. |
| Pessimistic | An informative prior where probability is concentrated on a harmful effect. | Negative | When pre-existing evidence suggests potential harm or lack of efficacy [2]. |
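How these prior types shift the posterior can be sketched with a conjugate normal model for a treatment effect, which is an assumption made for illustration: the posterior mean is a precision-weighted average of the prior mean and the estimate. The log hazard ratio estimate, its SE, and the prior settings are all hypothetical; rerunning the same update under several priors is also a simple form of the prior sensitivity analysis mentioned earlier.

```python
import math


def normal_posterior(prior_mean: float, prior_sd: float,
                     est: float, se: float) -> tuple[float, float]:
    """Conjugate normal update: returns (posterior mean, posterior sd)."""
    w_prior = 1.0 / prior_sd ** 2   # prior precision
    w_data = 1.0 / se ** 2          # data precision
    post_var = 1.0 / (w_prior + w_data)
    post_mean = post_var * (w_prior * prior_mean + w_data * est)
    return post_mean, math.sqrt(post_var)


# Hypothetical log hazard ratio estimate (negative = benefit) and its SE.
estimate, se = -0.30, 0.15
priors = {
    "flat (approx.)": (0.0, 100.0),  # very wide, nearly uninformative
    "skeptical": (0.0, 0.10),        # centred on no effect, narrow
    "optimistic": (-0.30, 0.10),     # mass on benefit
    "pessimistic": (0.20, 0.10),     # mass on harm
}
for name, (m, s) in priors.items():
    pm, psd = normal_posterior(m, s, estimate, se)
    print(f"{name:15s} posterior mean {pm:+.3f} (sd {psd:.3f})")
```

The flat prior essentially returns the estimate, the skeptical prior pulls it toward zero, and the pessimistic prior can reverse its sign, which is the "Expected Effect" column made numerical.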

Table 2: Key Probabilistic Biases in Interpreting Forensic Evidence

This table outlines the two key biases identified in research on subjective probability judgments [4].

| Bias Name | Description | Typical Context | Impact on Bayesian Reasoning |
|---|---|---|---|
| Conservatism | The tendency to underweight new evidence, leading to inadequate updating of prior beliefs. | Small-world, abstract tasks (e.g., urn problems) | Posterior beliefs are not updated enough from the prior, as the likelihood is underweighted [4]. |
| Base-Rate Neglect | The tendency to underweight or ignore prior probabilities (base rates) in favor of new, singular evidence. | Large-world, realistic scenarios (e.g., taxi problem, eyewitness testimony) | Posterior beliefs are overly influenced by the likelihood, as the prior is underweighted [4]. |

Visualizations

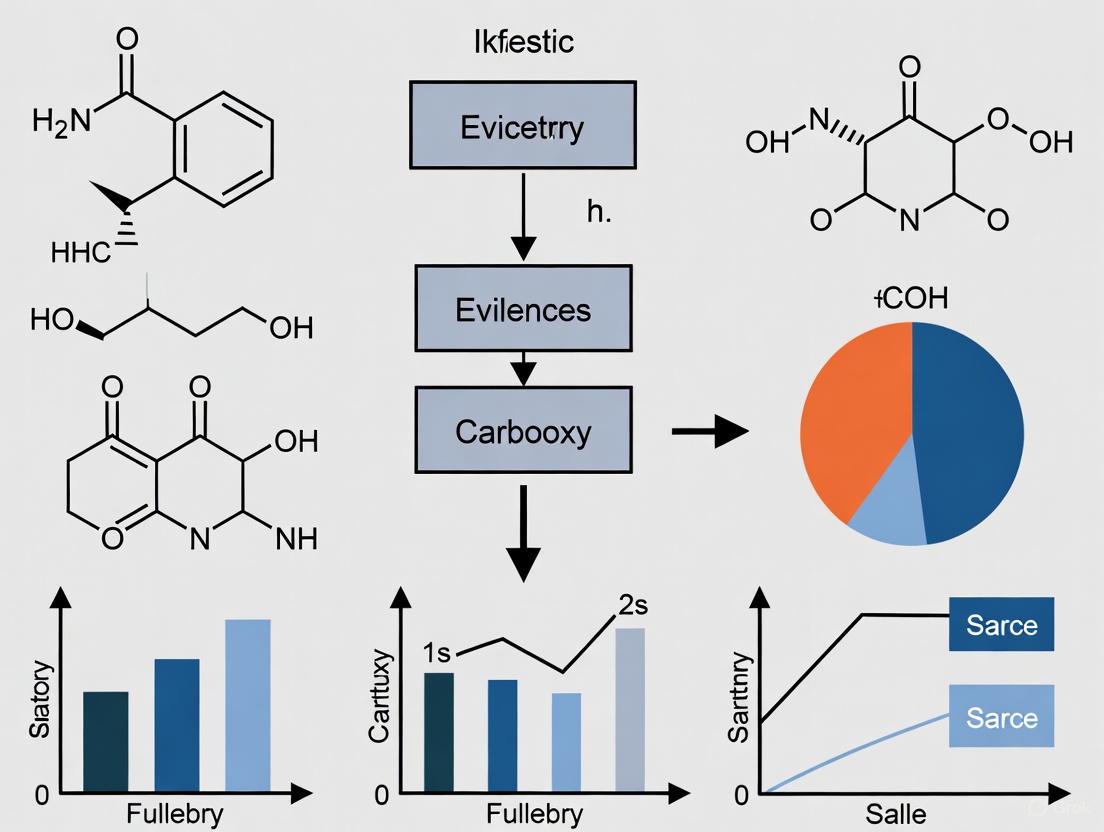

Diagram 1: The Bayesian Inference Workflow

Workflow of Belief Update

Diagram 2: Research Reagent Solutions for Likelihood Ratio Studies

Experimental Research Toolkit

The Scientist's Toolkit: Key Research Reagents

Table 3: Essential Materials for Likelihood Ratio and Bayesian Reasoning Research

| Item | Function / Application |
|---|---|
| Statistical Software (R, Python) | Primary environments for data analysis, statistical modeling, and running Bayesian computational engines [1]. |
| Computational Engines (Stan, JAGS) | Specialized platforms that perform Markov Chain Monte Carlo (MCMC) sampling to approximate complex posterior distributions that are analytically intractable [1] [2]. |
| Experimental Paradigms (Urn & Taxi Problems) | Standardized cognitive tasks used to probe how individuals integrate prior information and evidence, allowing for the study of biases like conservatism and base-rate neglect [4]. |
| Data Collection Platforms | Online survey tools (e.g., Qualtrics) or laboratory software used to present stimuli and collect probability judgments from participants in a controlled manner [4] [6]. |
| Convergence Diagnostics (R-hat, Trace Plots) | Essential tools for validating MCMC output. They help detect whether the sampling process has failed to converge to the target posterior distribution, which must be ruled out before computed LRs and other parameters can be trusted [1]. |

Conceptual Foundations of the Likelihood Ratio

What is a Likelihood Ratio and how is it calculated?

A Likelihood Ratio (LR) is a statistical measure used to quantify the strength of forensic evidence or a safety signal by comparing two competing hypotheses [9]. It is the ratio of two probabilities of observing the same evidence under different scenarios.

The fundamental formula for a Likelihood Ratio is: LR = P(E|H1) / P(E|H0)

Where:

- P(E|H1) is the probability of observing the evidence (E) given that the first hypothesis (H1) is true.

- P(E|H0) is the probability of observing the evidence (E) given that the competing hypothesis (H0) is true [9].

In forensic science, H1 typically represents the prosecution's proposition (e.g., the DNA came from the suspect), while H0 represents the defense's proposition (e.g., the DNA came from a random individual) [9]. In drug safety, H1 might represent the hypothesis that a drug causes an adverse event, while H0 represents the hypothesis that it does not [10].

How should LR results be interpreted?

The numerical value of the LR indicates the degree of support the evidence provides for one hypothesis over the other [9].

Table 1: Interpreting Likelihood Ratio Values

| LR Value Range | Interpretation | Strength of Evidence |
|---|---|---|
| LR > 10,000 | Very strong evidence to support H1 | Very Strong |
| LR 1,000 - 10,000 | Strong evidence to support H1 | Strong |
| LR 100 - 1,000 | Moderately strong evidence to support H1 | Moderately Strong |
| LR 10 - 100 | Moderate evidence to support H1 | Moderate |
| LR 1 - 10 | Limited evidence to support H1 | Limited |
| LR = 1 | Evidence supports both hypotheses equally | Non-informative |
| LR < 1 | Evidence has more support for H0 | Supports Alternative |
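The interpretation table can be turned into a lookup function. Because adjacent ranges in the table share their endpoints, the sketch assumes each band includes its upper endpoint (so exactly 10,000 is "Strong" and anything above it is "Very Strong"); the function name is hypothetical.

```python
from bisect import bisect_left

# Upper endpoints of the bands above LR = 1. ASSUMPTION: each band includes its
# upper endpoint, since the table's ranges share endpoints.
_UPPER = [10, 100, 1_000, 10_000]
_LABELS = ["Limited", "Moderate", "Moderately Strong", "Strong", "Very Strong"]


def verbal_equivalent(lr: float) -> str:
    """Map a likelihood ratio to the strength-of-evidence labels in the table."""
    if lr <= 0:
        raise ValueError("a likelihood ratio must be positive")
    if lr < 1:
        return "Supports Alternative"
    if lr == 1:
        return "Non-informative"
    return _LABELS[bisect_left(_UPPER, lr)]


for value in (0.5, 1, 5, 50, 500, 5_000, 2e6):
    print(value, verbal_equivalent(value))
```

Any verbal scale discards information at the band edges (an LR of 990 and one of 1,010 get different labels), which is one reason to report the numerical value alongside the verbal equivalent.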

Methodological Protocols

What is the standard workflow for LR assessment?

The following diagram illustrates the core logical process for conducting a Likelihood Ratio assessment, applicable across forensic and pharmacovigilance contexts.

How is the LR method applied to drug safety signal detection with multiple studies?

The Likelihood Ratio Test (LRT) method for drug safety signal detection from multiple studies involves a two-step approach to handle heterogeneity across different data sources [10]. The workflow below details this process.

The test statistic for a drug i and adverse event j in a single study is derived from a Poisson model and calculated as [10]:

$$LR_{ij} = \left(\frac{n_{ij}}{E_{ij}}\right)^{n_{ij}} \left(\frac{n_{.j} - n_{ij}}{n_{.j} - E_{ij}}\right)^{n_{.j} - n_{ij}}$$

Where:

- $n_{ij}$ = cell count for drug i and adverse event j

- $n_{.j}$ = total count for adverse event j across all drugs

- $n_{i.}$ = total count for drug i across all adverse events

- $n_{..}$ = total count for all drugs and adverse events

- $E_{ij} = n_{i.}\, n_{.j} / n_{..}$ = expected count under the null hypothesis

For the overall analysis, the Maximum Likelihood Ratio (MLR) test statistic is used [10]: $MLR = \max_{i,j} LR_{ij}$
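The single-study statistic can be sketched in Python for a drug-by-AE count table. The sketch works on the log scale because the powers in $LR_{ij}$ overflow quickly for realistic counts. Two details are assumptions rather than statements from the text: cells where $n_{ij}$ does not exceed $E_{ij}$ are assigned LR = 1 (log LR = 0) so that only excess reporting counts toward a signal, and 0 log 0 is taken as 0. The counts are hypothetical, and judging whether the MLR is significant would additionally need a null reference distribution (for example, from simulation), which is not shown.

```python
import math


def log_lr_table(counts: list[list[int]]) -> list[list[float]]:
    """log LR_ij for every drug i (row) and adverse event j (column).

    log LR_ij = n_ij log(n_ij / E_ij)
              + (n_.j - n_ij) log((n_.j - n_ij) / (n_.j - E_ij)),
    with E_ij = n_i. n_.j / n_.. and the convention 0 log 0 = 0.
    ASSUMPTION: cells with n_ij <= E_ij get log LR = 0.
    """
    row_tot = [sum(r) for r in counts]
    col_tot = [sum(c) for c in zip(*counts)]
    total = sum(row_tot)

    def xlogy(x: float, y: float) -> float:
        return 0.0 if x == 0 else x * math.log(y)

    out = []
    for i, row in enumerate(counts):
        out_row = []
        for j, n_ij in enumerate(row):
            e_ij = row_tot[i] * col_tot[j] / total
            if n_ij <= e_ij:
                out_row.append(0.0)
                continue
            rest = col_tot[j] - n_ij  # reports of AE j for all other drugs
            out_row.append(xlogy(n_ij, n_ij / e_ij)
                           + xlogy(rest, rest / (col_tot[j] - e_ij)))
        out.append(out_row)
    return out


table = [  # hypothetical counts: rows = drugs, columns = AEs
    [20, 5, 75],
    [4, 10, 186],
    [6, 15, 279],
]
llr = log_lr_table(table)
mlr, (i, j) = max((v, (i, j)) for i, r in enumerate(llr) for j, v in enumerate(r))
print(f"max log LR = {mlr:.2f} at drug {i}, AE {j}")
```

In this toy table drug 0 has 20 reports of AE 0 against an expected 5, so that cell dominates the MLR.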

Implementation Tools & Data

What are the key methodological components for LR analysis?

Table 2: Research Reagent Solutions for LR Analysis

| Component | Function | Application Context |
|---|---|---|
| Poisson Model | Models cell counts in contingency tables as Poisson random variables. | Fundamental to the LRT method for drug safety signal detection [10]. |
| 2x2 Contingency Table | Organizes data into a structured format for calculating observed and expected frequencies. | Used in both forensic evidence evaluation and drug-AE association analysis [10]. |
| Bayes' Rule Framework | Provides the theoretical foundation for updating prior beliefs with new evidence. | The odds form (Posterior Odds = Prior Odds × LR) separates the fact (LR) from context (Prior Odds) [11]. |
| Verbal Equivalence Scale | Translates numerical LR values into qualitative statements of support. | Aids communication to non-statisticians such as jurors and legal professionals [9]. |
| Uncertainty Pyramid Framework | Assesses the range of LR values under different reasonable models and assumptions. | Critical for evaluating the fitness for purpose of a reported LR value [11]. |

Troubleshooting Guide

Why might my LR implementation fail and how can I resolve these issues?

Problem: Inconsistent LR values across studies

- Cause: Heterogeneity across studies in terms of sample sizes, study sites, personnel, patients enrolled, and study timing [10].

- Solution: Use weighted LRT methods that incorporate total drug exposure information by study, or apply random-effects models to account for between-study variance [10].

Problem: Difficulty interpreting LR for legal testimony

- Cause: The subjective nature of probability assessment and the misconception that an expert's LR can directly replace a decision-maker's personal LR in Bayesian updating [11].

- Solution: Clearly communicate that the LR is a measure of evidence strength, not a probability of guilt or causation. Use verbal equivalents alongside numerical values, and acknowledge uncertainties in the analysis [11] [9].

Problem: Inadequate uncertainty characterization

- Cause: Failure to account for sampling variability, measurement errors, and variability in choice of assumptions and models [11].

- Solution: Implement an assumptions lattice and uncertainty pyramid framework to explore the range of LR values attainable under different reasonable models and explicitly report this uncertainty [11].

Problem: Lack of drug exposure information

- Cause: In passive surveillance systems, precise exposure data may be unavailable, forcing reliance on adverse event reports rather than true patient exposure [10].

- Solution: Use reporting rates (e.g., nij/ni.) as approximations when exposure data is unavailable, but clearly state this limitation. When exposure data is available from clinical trials, replace ni. with actual exposure measures (Pi) for more accurate risk ratios [10].
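The difference between the two rates can be shown with hypothetical numbers. In this invented example, drug A looks four times worse by reporting rate but five times better once exposure is taken into account, which is why the limitation needs stating. The function names and exposure units (patient-years) are assumptions.

```python
def reporting_rate(n_ij: int, n_i: int) -> float:
    """Share of drug i's reports that mention AE j (n_ij / n_i.)."""
    return n_ij / n_i


def exposure_rate(n_ij: int, exposure: float) -> float:
    """AE j reports per unit of exposure to drug i (n_ij / P_i)."""
    return n_ij / exposure


# Hypothetical: both drugs have 20 reports of AE j.
# Drug A: 100 reports in total, 10,000 patient-years of exposure.
# Drug B: 400 reports in total, 2,000 patient-years of exposure.
rr_ratio = reporting_rate(20, 100) / reporting_rate(20, 400)
exp_ratio = exposure_rate(20, 10_000) / exposure_rate(20, 2_000)
print(f"A vs B by reporting rate: {rr_ratio:.1f}x; by exposure rate: {exp_ratio:.1f}x")
```

The reversal arises because the reporting rate's denominator counts reports, not patients, so it depends on how often other events were reported for each drug.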

Frequently Asked Questions

Can I testify about a Likelihood Ratio without an uncertainty assessment?

No. An extensive uncertainty analysis is critical for assessing when and how likelihood ratios should be used [11]. Even career statisticians cannot objectively identify one model as authoritatively appropriate for translating data into probabilities, nor can they state what modeling assumptions one should accept [11]. Without an uncertainty assessment, the fact-finder cannot properly evaluate the weight to give the evidence. The assumptions lattice and uncertainty pyramid framework should be used to explore the range of LR values attainable by models that satisfy stated criteria for reasonableness [11].

How do I handle conflicting LR values from different analytical methods?

This is expected and should be transparently reported. Different reasonable models will often produce different LR values [11]. The exploration of several such ranges, each corresponding to different criteria, provides the opportunity to better understand the relationships among interpretation, data, and assumptions [11]. Document all approaches used (e.g., simple pooled LRT, weighted LRT, maximum LRT) and present the range of results, providing context for why different methods might yield different values [10].
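A toy illustration of reporting a range rather than a single value: for a feature shared by the trace and the suspect, assume P(E | H1) = 1 so that LR = 1/p, where p is the feature's population frequency estimated from k occurrences in a reference sample of n. Each estimator below is an arguably reasonable assumption that yields a different LR. This is a hypothetical sketch of the idea, not the assumptions lattice or uncertainty pyramid of [11] itself.

```python
import math


def lr_range(k: int, n: int) -> dict[str, float]:
    """LR = 1 / p under several estimates of the feature frequency p.

    ASSUMPTIONS: P(E | H1) = 1, and p is estimated from k occurrences in a
    reference sample of size n (0 < k < n).
    """
    if not 0 < k < n:
        raise ValueError("need 0 < k < n")
    raw = k / n
    estimates = {
        "raw frequency": raw,
        "add-one (Laplace)": (k + 1) / (n + 2),
        # Crude one-sided 95% upper bound (normal approximation): a conservative choice.
        "upper 95% bound": raw + 1.645 * math.sqrt(raw * (1 - raw) / n),
    }
    return {name: 1.0 / p for name, p in estimates.items()}


lrs = lr_range(3, 500)
for name, value in lrs.items():
    print(f"{name:20s} LR = {value:7.1f}")
print(f"range: {min(lrs.values()):.0f} to {max(lrs.values()):.0f}")
```

With only three occurrences in 500 reference samples, the defensible LR spans roughly a factor of two, and reporting that span tells the fact-finder more than any single number.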

What are the common pitfalls when presenting LR testimony?

The most significant pitfall is the "hybrid adaptation" fallacy: presenting an expert's LR as if it can be directly multiplied by a decision-maker's prior odds [11]. Bayesian decision theory applies only to personal decision making, not to the transfer of information from an expert to a separate decision maker [11]. Other pitfalls include:

- Failing to explain that the LR is a measure of evidence strength, not probability of guilt [9].

- Using numerical LRs without verbal equivalents that are more accessible to laypersons [9].

- Not maintaining all supporting documentation in the case file as required by quality assurance standards [12].

Is the LR method scientifically valid for forensic testimony?

Yes, when implemented with proper uncertainty characterization and empirical validation. However, it is not the "only logical approach" [11]. Recent reports focus on the scientific validity of expert testimony, requiring empirically demonstrable error rates, often through "black-box" studies where practitioners assess constructed control cases where ground truth is known [11]. The LR provides a potential tool, but forensic experts should openly consider what communication methods are scientifically valid and most effective for each forensic discipline [11].

For researchers and scientists in drug development, the application of statistical and probabilistic reasoning extends beyond the laboratory into the legal and regulatory spheres. When scientific evidence is presented in court, the framework for evaluating that evidence hinges on understanding propositions, probabilities, and the proper role of the expert witness. This is particularly critical for testimony involving the likelihood ratio (LR), a method for evaluating the strength of forensic evidence. This guide provides troubleshooting and FAQs to help scientific professionals navigate the common challenges associated with this form of testimony, framed within the context of research on its cross-examination.

Troubleshooting Guides

Guide 1: Resolving Misinterpretation of Statistical Evidence

- Problem: The fact-finder (judge or jury) misinterprets a likelihood ratio as the probability that the prosecution's proposition is true. This is a classic instance of the Prosecutor's Fallacy [13].

- Example Incorrect Statement: "The DNA evidence is one million times more probable if the defendant is the source; therefore, there is a 99.9999% probability the defendant is the source."

- Root Cause: This fallacy conflates the probability of the evidence given a proposition (which the expert can address) with the probability of the proposition given the evidence (which requires prior probabilities) [14] [13].

- Solution:

- Clarify the Question: Emphasize that the LR addresses the question: "How much more likely is the observed evidence under the prosecution's proposition compared to the defense's proposition?" It does not directly answer: "How likely is the proposition itself to be true?" [13].

- Use Clear Testimony: Structure testimony to strictly separate the LR from posterior probabilities. Explicitly state that the LR is a statement about the evidence, not the hypotheses [14].

- Visual Aid: Use visual aids, such as the logical relationship diagram below, to explain the distinct roles of the expert and the fact-finder in the Bayesian updating process.

Guide 2: Addressing Challenges to the Assumption of Prior Probabilities

- Problem: An expert witness is challenged for overstepping their role by assigning a prior probability to a proposition (e.g., assuming the prior odds are 1:1, or 50% probability) [14].

- Example Incorrect Action: In a paternity case, a DNA analyst assumes the prior probability of paternity is 0.5, reports a posterior probability of 0.999999, and is challenged for violating the presumption of innocence in a criminal context [14].

- Root Cause: The expert has conflated their scientific role with the fact-finding role. Assigning prior probabilities requires considering all non-scientific evidence in the case, which is the exclusive responsibility of the judge or jury [14].

- Solution:

- Stay Within the Scope: Limit testimony to the calculation and presentation of the likelihood ratio. Do not assign prior odds or calculate posterior probabilities [14].

- Affirm the Fact-Finder's Role: In testimony, explicitly acknowledge that the LR is one piece of the puzzle and that the fact-finder must combine it with other evidence to reach a final conclusion.

- Methodology Justification: Be prepared to explain why the methodology for calculating the LR is sound and generally accepted, independent of any prior probabilities [15].

Guide 3: Formulating and Stating Expert Opinions

- Problem: An expert's opinion is excluded or challenged for being stated as mere possibility or speculation rather than a probabilistic conclusion [16].

- Example Incorrect Statement: "The formulation error could have caused the instability."

- Root Cause: The opinion does not meet the legal standard for admissibility, which in civil cases is typically "more likely than not" (i.e., >50% probability) [16].

- Solution:

- Use Appropriate Phrases: Frame conclusions using legally sufficient phrases that convey a "reasonable degree of scientific certainty" [16].

- Base Opinions on Reliable Data: Ensure the opinion is supported by sufficient facts, data, and a reliable methodology, such as rigorous root cause analysis [17] [16].

- Prepare for Cross-Examination: Be ready to defend the methodology, data, and the logical connection between them and the stated opinion [16].

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a Likelihood Ratio and a Posterior Probability? The Likelihood Ratio is the ratio of the probabilities of the observed scientific evidence under two competing propositions (e.g., prosecution's vs. defense's). It is a measure of the strength of the forensic evidence itself. A Posterior Probability is the probability that a proposition is true, given all the evidence, both scientific and non-scientific. The LR is a component used to update a prior probability into a posterior probability, but the expert's domain is typically limited to the LR [14] [13].

FAQ 2: Why shouldn't a forensic expert assign a prior probability to a proposition? Assigning a prior probability requires an assessment of all the non-scientific evidence in the case (e.g., witness statements, alibis). This assessment is the core duty of the judge or jury. For an expert to assign a prior usurps this role, risks "double-counting" evidence, and may violate legal principles like the presumption of innocence by making an assumption about the defendant's guilt before considering the scientific evidence [14].

FAQ 3: What is the "Prosecutor's Fallacy" and how can I avoid perpetuating it in my testimony? The Prosecutor's Fallacy is the mistaken transposition of the conditional probability. It incorrectly presents the probability of the evidence given the proposition (e.g., "The chance of this DNA match if the defendant is innocent is 1 in a million") as the probability of the proposition given the evidence (e.g., "The chance the defendant is innocent is 1 in a million"). To avoid it, consistently and clearly articulate which probability you are discussing and use the LR framework correctly [13].

FAQ 4: What language should I use to state my expert opinion to ensure it is legally sufficient? To meet the "preponderance of the evidence" standard common in civil cases, your opinion should be stated in terms of probability, not mere possibility. Use phrases such as "more likely than not," "based on a reasonable degree of scientific certainty," or "based on a reasonable degree of medical probability" to convey that your conclusion is more than 50% likely to be correct [16].

Experimental Protocols & Methodologies

Protocol: Validating a Probabilistic Genotyping (PG) Software for DNA Evidence

This protocol outlines key steps for validating computational methods like PG software, which calculates likelihood ratios for complex DNA mixtures, ensuring the methodology is robust and defensible in court [15].

- 1. Define Scope and Principles: Establish that the software is based on generally accepted scientific principles for population genetics and whole genome sequencing [15].

- 2. Reference Database Validation: Verify the use of an appropriate, widely accepted reference panel (e.g., the 1,000 Genomes Project) for calculating genotype probabilities and ensure the software is accurate when calibrated with it [15].

- 3. Performance Testing with Known Samples:

- 3.1. Same-Source Comparisons: Test the software with known matching samples (e.g., a hair and saliva from the same person). The reported LRs should strongly support the match [15].

- 3.2. Different-Source Comparisons: Test with known non-matching samples. The reported LRs should fall below 1, providing support for the exclusion (different-source) proposition [15].

- 3.3. Record Results: Document the range of LRs obtained for true and false propositions to understand the software's performance and potential for over/under-estimation [15].

- 4. Peer Review and Publication: Subject the validation study and software methodology to peer review and publication to demonstrate general acceptance in the scientific community [15].
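The results recorded in step 3.3 can be summarized numerically. The sketch reports the rate of misleading evidence for each ground truth (same-source LRs below 1, different-source LRs above 1) and the log-likelihood-ratio cost (Cllr), a summary used in the forensic LR validation literature; neither metric is named in the protocol above, so their use here is an assumption, and the LR values are invented.

```python
import math


def validation_summary(same_source_lrs: list[float],
                       diff_source_lrs: list[float]) -> dict[str, float]:
    """Summaries for LRs from comparisons with known ground truth.

    - misleading_same_source: share of same-source LRs below 1
    - misleading_diff_source: share of different-source LRs above 1
    - cllr: log-likelihood-ratio cost; 0 is perfect, 1 equals a system
      that always reports the uninformative LR = 1
    """
    mis_ss = sum(lr < 1 for lr in same_source_lrs) / len(same_source_lrs)
    mis_ds = sum(lr > 1 for lr in diff_source_lrs) / len(diff_source_lrs)
    cllr = 0.5 * (
        sum(math.log2(1 + 1 / lr) for lr in same_source_lrs) / len(same_source_lrs)
        + sum(math.log2(1 + lr) for lr in diff_source_lrs) / len(diff_source_lrs)
    )
    return {"misleading_same_source": mis_ss,
            "misleading_diff_source": mis_ds,
            "cllr": cllr}


# Hypothetical LRs from a small validation run.
same = [1e6, 3e4, 250, 0.8]
diff = [1e-5, 0.002, 0.3, 4.0]
s = validation_summary(same, diff)
print(s)
```

Cllr penalizes misleading LRs more heavily the further they point in the wrong direction, so it complements the simple misleading-evidence rates.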

The workflow for this validation is a critical path that ensures reliability.

The Scientist's Toolkit: Key Research Reagents for Forensic Testimony

The following table details essential conceptual "reagents" for formulating and defending likelihood ratio testimony.

| Item/Concept | Function & Explanation |
|---|---|
| Competing Propositions | The two mutually exclusive hypotheses framed by the court (e.g., "The DNA came from the defendant" vs. "The DNA came from an unrelated person in population X"). The LR measures the support of the evidence for one proposition over the other [14]. |
| Likelihood Ratio (LR) | A quantitative measure of the strength of the evidence. It is calculated as the probability of the evidence under the prosecution's proposition divided by the probability of the evidence under the defense's proposition [14] [13]. |
| Reference Population Database | A dataset of genetic markers from a relevant population (e.g., the 1,000 Genomes Project). It is used to calculate the probability of observing the evidence under the proposition that someone other than the defendant was the source [15]. |
| Validation Protocol | A documented plan that provides a high degree of assurance that a specific process (e.g., PG software) will consistently produce reliable results meeting pre-determined acceptance criteria [15]. |
| "Reasonable Degree of Scientific Certainty" | A legal standard for the expression of an expert opinion, signifying that the conclusion is more probable than not (i.e., greater than 50% probability) and is based on reliable methods, not speculation [16]. |

Data Presentation: Common Statistical Fallacies

The table below summarizes common fallacies to avoid when presenting probabilistic evidence.

| Fallacy Name | Erroneous Interpretation | Correct Interpretation |
|---|---|---|
| Prosecutor's Fallacy | Transposes the conditional. Treats P(Evidence \| Proposition) as P(Proposition \| Evidence). Example: "The LR of 1,000,000 means there is only a 1 in a million chance the defendant is innocent." [13] | The LR of 1,000,000 means the evidence is 1 million times more likely if the prosecution's proposition is true than if the defense's is. It is not a probability of innocence or guilt. |
| Defense Fallacy | Dismisses strong evidence by arguing that in a large population, other people could also match. Example: "Since the city has 1 million people, several others would also match, so the evidence is meaningless." [14] | While others might match, the evidence is still highly relevant. The LR quantitatively assesses the strength of the evidence against the specific defendant, given the match. |
| Source Probability Error | Presents the source probability (a posterior probability) as if it were derived from the forensic evidence alone, ignoring prior odds [14]. | A source probability can only be validly calculated by combining the LR with a prior probability, which is the role of the fact-finder, not the expert. |
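The arithmetic behind the first and third rows can be made concrete with Bayes' theorem in odds form (posterior odds = LR × prior odds). In this sketch the prior odds are a hypothetical assumption: the defendant plus one million other people are treated as equally plausible sources before the DNA evidence is considered. Assigning such a prior is the fact-finder's role, not the expert's.

```python
def posterior_probability(lr, prior_odds):
    """Combine an LR with prior odds using Bayes' theorem in odds form."""
    posterior_odds = lr * prior_odds
    return posterior_odds / (1 + posterior_odds)

lr = 1_000_000
# Hypothetical prior: the defendant and 1,000,000 others are equally likely sources
prior_odds = 1 / 1_000_000
print(round(posterior_probability(lr, prior_odds), 3))  # 0.5
```

With an LR of 1,000,000 and this prior, the posterior probability is about 0.5, not the 0.999999 implied by the prosecutor's fallacy; yet the evidence has still shifted the odds by a factor of a million, so it is far from "meaningless" as the defense fallacy claims.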

Troubleshooting Guides

Guide 1: Low Comprehension of Likelihood Ratios by Laypersons

Problem: Experimental data shows that laypersons (mock jurors) often do not correctly interpret the meaning of Likelihood Ratios (LRs) presented in expert testimony, leading to a failure in understanding the true strength of the presented evidence [6] [18].

Solution: Implement and test different presentation formats.

- Root Cause: Research indicates that the traditional presentation of a numerical LR value, without further explanation, is insufficient for clear comprehension. A significant proportion of laypersons commit the "prosecutor's fallacy," misinterpreting the LR as the probability that the prosecution's hypothesis is true [6].

- Diagnosis: During experimental simulations, compare the "Presented Likelihood Ratio" (PLR) with the "Effective Likelihood Ratio" (ELR), which is derived from the participant's elicited posterior odds divided by their prior odds. A discrepancy between the ELR and PLR indicates a comprehension failure [6].

- Resolution Steps:

- Provide a Clear Explanation: The expert witness should include a plain-language explanation of what the LR means in their testimony. For example, explaining that it measures the relative support the evidence provides for one proposition over another [6].

- Explore Alternative Formats: Move beyond simple numerical values. Research is actively investigating whether verbal expressions of strength of support (e.g., "moderate support," "strong support") or numerical random-match probabilities are more easily understood [18].

- Validate Effectiveness: Post-explanation, it is crucial to test whether understanding has improved. The small positive effect observed in one study, where explanation slightly increased the number of participants whose ELRs matched the PLRs, suggests this is a complex issue requiring rigorous validation [6].
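The diagnosis step reduces to a short calculation once a participant's beliefs have been elicited. The sketch below assumes beliefs are elicited as probabilities and converts them to odds; the matching criterion (ELR within 0.1 on the log10 scale of the PLR, roughly a factor of 1.26) is a hypothetical choice, not the criterion used in [6].

```python
import math

def to_odds(probability):
    """Convert a probability (strictly between 0 and 1) to odds."""
    return probability / (1 - probability)

def effective_lr(prior_probability, posterior_probability):
    """ELR = posterior odds / prior odds, from a participant's elicited beliefs."""
    return to_odds(posterior_probability) / to_odds(prior_probability)

def matches_presented_lr(elr, plr, tolerance_log10=0.1):
    """Hypothetical criterion: ELR within tolerance_log10 of the PLR on a log10 scale."""
    return abs(math.log10(elr) - math.log10(plr)) <= tolerance_log10

# Invented responses: 10% belief in guilt before the expert, 90% after; PLR = 100
elr = effective_lr(0.10, 0.90)
print(round(elr))                      # 81
print(matches_presented_lr(elr, 100))  # True
```

Comparing on a log scale treats over- and under-weighting of the evidence symmetrically, which a simple difference between ELR and PLR would not.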

Guide 2: Choosing an Inappropriate Method for LR Calculation

Problem: A researcher or forensic practitioner uses a method for calculating LRs that does not properly account for both similarity and typicality, potentially overstating the strength of the forensic evidence [19].

Solution: Select a calculation method that inherently incorporates typicality with respect to the relevant population.

- Root Cause: Some calculation methods, particularly those based solely on similarity scores, consider how similar two items are but fail to account for how common or rare those features are in the broader population. This can lead to inflated and misleading LR values [19].

- Diagnosis: Review the methodology used for LR calculation. If the method converts feature values into similarity scores without a model of the feature distribution in the relevant population, it likely does not account for typicality [19].

- Resolution Steps:

- Avoid Similarity-Only Scores: Do not rely on methods that use simple similarity scores without a population model [19].

- Adopt Robust Methods: Use specific-source or common-source methods for calculation. These methods are designed to take account of both similarity and typicality [19].

- Method Recommendation: Since case-relevant data for specific-source models is often scarce, the common-source method is generally recommended as the preferred alternative to the similarity-score method [19].

Frequently Asked Questions (FAQs)

Q1: What is the current state of comprehension research regarding the presentation of likelihood ratios?

A1: Existing empirical literature has not yet definitively identified the best way to present LRs. Past research has tended to study the understanding of "strength of evidence" broadly, rather than focusing specifically on LRs. Furthermore, while various formats (numerical LRs, random-match probabilities, verbal statements) have been compared, no single method has emerged as a clear winner for maximizing comprehension among legal decision-makers. This has led to calls for more targeted future research with improved methodologies [18].

Q2: Does explaining the meaning of a likelihood ratio to laypersons improve their understanding?

A2: The evidence is nuanced. One experimental study using video testimony found that providing an explanation of the LR's meaning led to a small increase in the number of participants who correctly interpreted its value. However, this explanation did not reduce the rate at which participants committed the prosecutor's fallacy. Therefore, while potentially helpful, a simple explanation is not a complete solution to the problem of comprehension [6].

Q3: What are the key methodological indicators for assessing comprehension of likelihood ratios in research?

A3: When designing experiments to test LR understanding, researchers should measure comprehension against established indicators such as the CASOC indicators [18]:

- Coherence: Does the participant's interpretation of the evidence align with the rules of probability?

- Orthodoxy: Does the participant's interpretation match the intended meaning of the LR?

- Sensitivity: Does the participant's interpretation of the evidence strength change appropriately when the value of the LR changes?

Q4: In the context of U.S. drug and medical device litigation, how are expert witnesses utilized?

A4: In the U.S. legal system, experts are almost always selected and retained by the parties themselves, not appointed by the court. This creates a partisan dynamic where each expert advances a party's interests. These experts are essential, as cases often require specialized knowledge from multiple fields, such as engineering (for device design), pharmacology (for drugs), epidemiology (for post-market data), and various medical specialists (to address alleged injuries). A failure to support a case with admissible expert testimony can lead to its dismissal [20].

Data Presentation

Table 1: Impact of LR Explanation on Comprehension in an Experimental Setting

The following table summarizes key quantitative findings from a study that tested whether explaining the meaning of LRs improved layperson comprehension. Participants watched video testimony and their understanding was measured by comparing their Effective Likelihood Ratio (ELR) to the Presented Likelihood Ratio (PLR) [6].

| Experimental Condition | Percentage of Participants with ELR = PLR | Occurrence of Prosecutor's Fallacy | Key Finding |
|---|---|---|---|
| With LR Explanation | Higher percentage | Not lower than the "no explanation" group | Small improvement in matching ELR to PLR, but no reduction in major fallacy [6] |
| Without LR Explanation | Lower percentage | Not higher than the "explanation" group | Basic presentation of LR value is insufficient for robust comprehension [6] |

Table 2: Comparison of Likelihood Ratio Calculation Methods

This table compares different methodological approaches for calculating LRs, highlighting the critical importance of accounting for "typicality" to avoid overstating evidence [19].

| Calculation Method | Accounts for Similarity? | Accounts for Typicality? | Recommended for Use? | Rationale |
|---|---|---|---|---|
| Similarity-Score Method | Yes | No | No | Overstates evidence by ignoring feature commonness; should be avoided [19] |
| Specific-Source Method | Yes | Yes | If possible | Requires extensive case-relevant data for modeling, which is often unavailable [19] |
| Common-Source Method | Yes | Yes | Yes | Properly accounts for both similarity and typicality; recommended as the standard alternative [19] |

Experimental Protocols

Protocol 1: Testing the Efficacy of LR Explanations in Testimony

Objective: To measure whether including an explanation of the Likelihood Ratio (LR) in expert testimony improves laypersons' comprehension and reduces the incidence of logical fallacies like the prosecutor's fallacy [6].

Materials:

- Video recording equipment for creating realistic expert testimony.

- A scripted legal case scenario involving forensic evidence.

- Two versions of expert testimony: one with a plain-language LR explanation, and one without.

- A questionnaire to elicit participants' prior odds, posterior odds, and understanding of the evidence.

Methodology:

- Participant Recruitment & Group Assignment: Recruit a pool of laypersons eligible for jury duty. Randomly assign them to either the "Explanation" group or the "No Explanation" control group [6].

- Stimulus Presentation: Participants watch the video testimony. The expert presents the same LR value in both groups, but only the "Explanation" group receives a clarification of its meaning [6].

- Data Elicitation: After viewing, participants provide:

- Their prior odds (belief about the defendant's guilt before hearing the expert).

- Their posterior odds (belief about guilt after hearing the expert) [6].

- Data Calculation:

- Calculate the Effective Likelihood Ratio (ELR) for each participant using the formula: ELR = Posterior Odds / Prior Odds [6].

- Compare each participant's ELR to the Presented LR (PLR) from the testimony.

- Analysis:

- Determine the percentage of participants in each group for whom the ELR equals the PLR.

- Identify the percentage of participants who commit the prosecutor's fallacy (e.g., by interpreting the LR as the probability of guilt).
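The data-calculation and analysis steps can be sketched for one group at a time. In this sketch the matching tolerance is a hypothetical choice, and the prosecutor's-fallacy flag is a hypothetical operationalization: a participant is flagged when their posterior odds roughly equal the PLR even though their prior odds were not even, as if the LR had been read directly as the odds of guilt. The responses are invented.

```python
import math

def summarize_group(responses, plr, tolerance_log10=0.1):
    """Summarize one experimental group.

    responses: list of (prior_odds, posterior_odds) pairs, one per participant.
    Returns (percent with ELR close to the PLR, percent flagged for the fallacy).
    """
    n_match = n_fallacy = 0
    for prior_odds, posterior_odds in responses:
        elr = posterior_odds / prior_odds  # Effective Likelihood Ratio
        if abs(math.log10(elr) - math.log10(plr)) <= tolerance_log10:
            n_match += 1
        # Hypothetical heuristic: posterior odds ~ PLR despite non-even prior odds
        prior_not_even = not math.isclose(prior_odds, 1.0)
        if prior_not_even and abs(math.log10(posterior_odds) - math.log10(plr)) <= tolerance_log10:
            n_fallacy += 1
    n = len(responses)
    return 100 * n_match / n, 100 * n_fallacy / n

# Invented (prior odds, posterior odds) responses; PLR = 100 in both groups
explanation = [(0.25, 25.0), (1 / 9, 100.0), (0.5, 50.0), (0.2, 1.0)]
no_explanation = [(0.25, 100.0), (0.5, 100.0), (0.1, 10.0), (1.0, 3.0)]
print(summarize_group(explanation, 100))     # (50.0, 25.0)
print(summarize_group(no_explanation, 100))  # (25.0, 50.0)
```

Participants with even prior odds (1.0) are not flagged, because for them a correct update and the fallacy produce the same posterior odds and cannot be told apart.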

Protocol 2: Evaluating LR Calculation Methods for Typicality

Objective: To demonstrate that likelihood ratio calculation methods which fail to account for "typicality" can overstate the strength of forensic evidence [19].

Materials:

- A dataset of forensic feature measurements from a relevant population (e.g., glass fragments, voice recordings).

- Software for statistical analysis (e.g., MATLAB, R).

- A pair of items for comparison (the "questioned" and "known" item).

Methodology:

- Feature Extraction: Measure and define the relevant features from the "questioned" and "known" items [19].

- Method Application: Calculate the LR using different methods:

- Similarity-Score Method: Compute a score based only on the similarity between the two items' features [19].

- Common-Source Method: Calculate the LR using a model that evaluates the probability of the feature data under the hypothesis that the items come from the same source versus the hypothesis that they come from different sources, explicitly using the population data to assess typicality [19].

- Comparison and Validation:

- Compare the LR values generated by each method.

- Using synthetic data experiments, verify that the Similarity-Score method diverges from the Common-Source method because it ignores typicality: it overstates the strength of the evidence when the shared features are common in the population, and can understate it when they are rare [19].
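The comparison in Protocol 2 can be reproduced in a simplified univariate setting. This sketch assumes a two-level normal model with known population mean, within-source variance W, and between-source variance B (in practice these would be estimated from the population database); it is an illustration of the general idea, not the specific methods evaluated in [19]. The similarity-score LR looks only at the difference between the two measurements, while the common-source LR evaluates the pair jointly against the population.

```python
import math

def normal_pdf(x, mean, var):
    """Univariate normal density."""
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def similarity_score_lr(x, y, within_var, between_var):
    """Score-based LR using only the difference d = x - y (similarity, no typicality)."""
    d = x - y
    same = normal_pdf(d, 0.0, 2 * within_var)                        # d under same source
    different = normal_pdf(d, 0.0, 2 * (within_var + between_var))  # d under different sources
    return same / different

def common_source_lr(x, y, within_var, between_var, mean=0.0):
    """Common-source LR for a two-level normal model with known parameters."""
    total = within_var + between_var
    dx, dy = x - mean, y - mean
    # Same source: (x, y) bivariate normal, each variance B + W, covariance B
    det = total ** 2 - between_var ** 2
    quad = (total * (dx ** 2 + dy ** 2) - 2 * between_var * dx * dy) / det
    same = math.exp(-quad / 2) / (2 * math.pi * math.sqrt(det))
    # Different sources: x and y independent draws from N(mean, B + W)
    different = normal_pdf(x, mean, total) * normal_pdf(y, mean, total)
    return same / different

W, B = 0.1, 1.0            # hypothetical within- and between-source variances
common_pair = (0.0, 0.05)  # features near the population mean (common)
rare_pair = (3.0, 3.05)    # equally similar, but far in the tail (rare)
for x, y in (common_pair, rare_pair):
    print(round(similarity_score_lr(x, y, W, B), 2), round(common_source_lr(x, y, W, B), 2))
```

Both pairs differ by the same 0.05, so the similarity-score LR is identical for them (about 3.3). The common-source LR is lower for the common pair (about 2.4) and much higher for the rare pair (over 100), which is exactly the typicality information the similarity-only score discards.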

Diagrams

Diagram 1: LR Comprehension Experiment Workflow

Diagram 2: LR Calculation Method Decision Tree

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details essential methodological components and "research reagents" for conducting robust studies on Likelihood Ratio testimony and its impact.

| Item Name | Function/Description | Application in Research |
|---|---|---|
| Video Testimony Stimuli | Recorded, scripted expert testimony allowing for controlled manipulation of variables (e.g., with/without explanation). | Essential for creating ecologically valid experiments that mimic a courtroom setting, as used in [6]. |
| Prior/Posterior Odds Elicitation Tool | A questionnaire or interactive tool to quantitatively measure a participant's beliefs before and after hearing the expert evidence. | Critical for calculating the Effective Likelihood Ratio (ELR), the key metric for gauging actual comprehension [6]. |
| CASOC Comprehension Framework | A set of indicators (Coherence, Orthodoxy, Sensitivity) used to assess the quality of a participant's understanding of the evidence. | Provides a structured, multi-faceted approach for analyzing and interpreting comprehension data in research [18]. |
| Common-Source Model Software | Statistical software or code (e.g., in MATLAB or R) designed to implement common-source LR calculation methods. | Necessary for computing LRs that properly account for typicality, avoiding the overstatement of evidence [19]. |
| Population Reference Database | A curated dataset of feature measurements from a relevant population, used to model typicality and feature distribution. | Serves as the foundational data required for robust LR calculation methods like the common-source approach [19]. |

Common Misconceptions and the 'Straw Man' Arguments in LR Interpretation

Frequently Asked Questions

Q1: What is a Likelihood Ratio (LR) in the context of forensic testimony? A Likelihood Ratio (LR) is a statistical measure used to evaluate the strength of forensic evidence. It compares the probability of observing the evidence under two competing hypotheses [9]:

- The Prosecutor's Hypothesis (H1): The evidence came from the suspect.

- The Defense Hypothesis (H0): The evidence came from an unrelated, random person from the population.

The formula is expressed as: LR = P(E|H1) / P(E|H0)

For single-source DNA evidence where the suspect's profile matches the trace, P(E|H1) = 1, so the formula often simplifies to LR = 1 / P, where P is the random match probability of the genotype [9].
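A minimal sketch of this simplification, assuming Hardy-Weinberg genotype frequencies (p² for a homozygote, 2pq for a heterozygote) and independence across loci (the product rule). The allele frequencies are invented, and the sketch omits the subpopulation (theta) correction that casework commonly applies.

```python
def genotype_frequency(p, q=None):
    """Genotype frequency under Hardy-Weinberg equilibrium (no theta correction)."""
    if q is None:      # homozygote: both alleles have frequency p
        return p * p
    return 2 * p * q   # heterozygote with allele frequencies p and q

def single_source_lr(loci):
    """LR = 1 / P for a matching single-source profile.

    Assumes P(E | H1) = 1 and multiplies genotype frequencies across loci.
    """
    match_probability = 1.0
    for alleles in loci:
        match_probability *= genotype_frequency(*alleles)
    return 1.0 / match_probability

# Invented allele frequencies: one heterozygous locus and one homozygous locus
profile = [(0.1, 0.2), (0.05,)]
print(single_source_lr(profile))  # approximately 10000
```

Here P = 0.04 × 0.0025 = 0.0001, so the LR is about 10,000; real profiles use many more loci, which is why single-source LRs are often astronomically large.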

Q2: What is a common misconception about linear regression assumptions, and how does it relate to LR validation? A common misconception, identified in a cross-sectional study of health research, is that the dependent variable (Y) itself must be normally distributed. The correct assumption is that the residuals (the differences between observed and predicted values) should be normally distributed [21]. This is analogous to LR testimony, where the focus must be on the underlying statistical model's validity. Flawed foundational assumptions can invalidate the entire analysis, leading to misleading conclusions in court.

Q3: What is a "Straw Man" argument, and how might it appear in the cross-examination of LR testimony? A Straw Man fallacy occurs when someone distorts an opponent's argument into a weaker or exaggerated version and then attacks that distortion instead of the original point [22]. In cross-examination, this might look like:

- Oversimplification: "So, you're claiming this DNA evidence proves with 100% certainty that my client was there?"

- Exaggeration: "Your method is just fancy statistics that can be made to say anything you want."

- Misrepresentation: "You only ran the test once; how can you possibly call this science?"

Q4: How should I respond if my LR testimony is misrepresented with a Straw Man argument? The most effective response is to politely but firmly draw attention to the misrepresentation.

- Acknowledge and Clarify: "With respect, that is not what my testimony stated. The LR does not prove anything; it assesses the strength of the evidence under two specific hypotheses."

- Reiterate the Core Principle: "The LR is a measure of support for one hypothesis over another, not a statement of absolute truth or probability of guilt [9] [23]."

- Redirect: "The question is not whether the statistics are 'fancy,' but whether the method is scientifically valid and properly applied to the evidence in this case."

Q5: Can LRs be validated for use in series (i.e., applying multiple LRs sequentially to different tests)? No, this is a critical limitation. While it may seem intuitive to chain LRs together, there is no established scientific validation for using LRs in series or in parallel [23]. Applying one LR to generate a post-test probability and then using that as the pre-test probability for a second, different LR is only valid if the two findings are conditionally independent given each hypothesis, an assumption that is rarely demonstrated. The practice should be avoided or explicitly acknowledged as an unvalidated extension.
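The reason chaining fails is that sequential updating silently assumes the findings are conditionally independent given each hypothesis. The sketch below uses an invented joint model for two binary tests whose results are correlated within each hypothesis, and compares the naive product of the two single-test LRs with the LR for the joint finding computed from the unified model.

```python
# Invented conditional probabilities for two binary tests under each hypothesis.
# Within each hypothesis, Test 2 depends on the outcome of Test 1.
model = {
    "H1": {"p_t1": 0.9, "p_t2_given_t1_pos": 0.95, "p_t2_given_t1_neg": 0.5},
    "H0": {"p_t1": 0.1, "p_t2_given_t1_pos": 0.8, "p_t2_given_t1_neg": 0.05},
}

def p_t2_positive(h):
    """Marginal P(Test 2 positive | h), the basis of a published single-test LR."""
    m = model[h]
    return m["p_t1"] * m["p_t2_given_t1_pos"] + (1 - m["p_t1"]) * m["p_t2_given_t1_neg"]

def p_both_positive(h):
    """Joint P(Test 1 positive and Test 2 positive | h) from the unified model."""
    m = model[h]
    return m["p_t1"] * m["p_t2_given_t1_pos"]

lr_test1 = model["H1"]["p_t1"] / model["H0"]["p_t1"]
lr_test2 = p_t2_positive("H1") / p_t2_positive("H0")
naive_chained_lr = lr_test1 * lr_test2  # sequential updating, assumes independence
joint_lr = p_both_positive("H1") / p_both_positive("H0")
print(round(naive_chained_lr, 1), round(joint_lr, 1))  # roughly 65.2 versus 10.7
```

Because a positive Test 2 is largely explained by an already-positive Test 1, the chained LR overstates the combined evidence about sixfold in this example.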

The Scientist's Toolkit: Research Reagent Solutions

| Item/Concept | Function & Explanation |
|---|---|
| Simple vs. Composite Hypotheses | Function: Defines the scope of the LR. Simple hypotheses (e.g., θ=θ₀ vs. θ=θ₁) are used in foundational tests, while composite hypotheses (e.g., θ∈S₀ vs. θ∈S₁) are used for more complex, real-world models [24]. |
| Pre-Test Probability | Function: The estimated probability of a proposition before new evidence is considered. It is the crucial starting point for applying Bayes' Theorem with an LR to update to a Post-Test Probability [23]. |
| Verbal Equivalents Table | Function: A guide to translate numerical LR values into qualitative statements of support for the benefit of a lay audience, such as a jury [9]. |
| Fagan Nomogram | Function: A graphical tool used to bypass complex calculations. By drawing a line from the pre-test probability through the LR, one can easily read the resulting post-test probability [23]. |
| Sensitivity & Specificity | Function: The fundamental properties of a diagnostic test used to calculate LRs in clinical and diagnostic fields (LR+ = sensitivity / (1 - specificity)) [23]. |
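The sensitivity, specificity, and Fagan nomogram entries reduce to a few lines of arithmetic. This sketch computes LR+ and LR- (LR- = (1 - sensitivity) / specificity) and performs the odds conversion that a Fagan nomogram approximates graphically; the test characteristics and pre-test probability are invented.

```python
def positive_lr(sensitivity, specificity):
    """LR+ = sensitivity / (1 - specificity)."""
    return sensitivity / (1 - specificity)

def negative_lr(sensitivity, specificity):
    """LR- = (1 - sensitivity) / specificity."""
    return (1 - sensitivity) / specificity

def post_test_probability(pre_test_probability, lr):
    """The calculation a Fagan nomogram performs graphically."""
    pre_odds = pre_test_probability / (1 - pre_test_probability)
    post_odds = pre_odds * lr
    return post_odds / (1 + post_odds)

lr_pos = positive_lr(0.90, 0.80)  # about 4.5
lr_neg = negative_lr(0.90, 0.80)  # about 0.125
print(round(post_test_probability(0.20, lr_pos), 3))  # 0.529
print(round(post_test_probability(0.20, lr_neg), 3))  # 0.03
```

The same pre-test probability of 20% moves to about 53% after a positive result and to about 3% after a negative one, showing that the LR updates a prior rather than replacing it.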

Structured Data for LR Interpretation

Table 1: Interpretation Guide for Likelihood Ratio Values

| LR Value | Support for H1 (Prosecutor's Hypothesis) | Verbal Equivalent (Guide) |
|---|---|---|
| > 10,000 | Extremely Strong | Very strong evidence to support |
| 1,000 to 10,000 | Very Strong | Strong evidence to support |
| 100 to 1,000 | Strong | Moderately strong evidence to support |
| 10 to 100 | Moderately Strong | Moderate evidence to support |
| 1 to 10 | Limited | Limited evidence to support |
| 1 | None | The evidence has equal support for both hypotheses |
| < 1 | Supports H0 (Defense Hypothesis) | The evidence provides more support for the denominator hypothesis (H0) |
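Applying a verbal scale consistently is easier when the bands are written down as code. This sketch transcribes the verbal-equivalent column of Table 1; treating each band as lower-exclusive (so that exactly 10,000 falls in "1,000 to 10,000") is an assumption, because the table leaves the shared endpoints ambiguous.

```python
# Bands transcribed from Table 1, checked from the top down.
# Lower-exclusive boundaries are an assumption; the table does not specify them.
VERBAL_BANDS = [
    (10_000, "Very strong evidence to support"),
    (1_000, "Strong evidence to support"),
    (100, "Moderately strong evidence to support"),
    (10, "Moderate evidence to support"),
]

def verbal_equivalent(lr):
    """Map a numerical LR to the verbal-equivalent column of Table 1."""
    if lr <= 0:
        raise ValueError("an LR must be positive")
    if lr == 1:
        return "Equal support for both hypotheses"
    if lr < 1:
        return "More support for the denominator hypothesis (H0)"
    for lower, phrase in VERBAL_BANDS:
        if lr > lower:
            return phrase
    return "Limited evidence to support"

print(verbal_equivalent(50_000))  # Very strong evidence to support
print(verbal_equivalent(10_000))  # Strong evidence to support
```

Fixing the boundary convention in advance avoids an expert choosing a more favorable phrase for a borderline value during testimony.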

Table 2: Common Misconceptions in Statistical Model Interpretation

| Misconception | Reality | Core Principle |
|---|---|---|
| The Y variable in linear regression must be normally distributed [21]. | The errors or residuals of the model should be normally distributed. | A model's validity depends on the distribution of its unexplained variance, not the raw data. |
| An LR represents the probability that the suspect is the source. | An LR quantifies how much the evidence supports one hypothesis over another, not the probability of the hypotheses themselves [9] [23]. | The LR is about the probability of the evidence given the hypothesis, not the probability of the hypothesis given the evidence. |
| LRs from different tests can be chained together sequentially. | LRs have not been validated for use in series or in parallel [23]. | The application of multiple LRs requires a unified model, not sequential updates. |

Experimental Protocols for LR Research

Protocol 1: Performing a Likelihood Ratio Test for Simple Hypotheses

This methodology decides between two precise, simple hypotheses [24].

- Define Hypotheses: State the null (H₀: θ = θ₀) and alternative (H₁: θ = θ₁) hypotheses.

- Calculate Likelihoods: Based on observed data x₁, x₂, ..., xₙ, compute the likelihood functions L(θ₀) and L(θ₁).

- Form the Ratio: Compute the likelihood ratio λ = L(θ₀) / L(θ₁).

- Set Decision Threshold: Choose a constant c for the desired significance level α, so that the probability of a Type I error, P(λ < c | H₀), equals α.

- Make a Decision: Reject H₀ if λ < c (or a monotonically related statistic exceeds a threshold).
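The protocol can be sketched for a concrete case: i.i.d. normal data with known σ, testing H₀: θ = θ₀ against H₁: θ = θ₁ with θ₁ > θ₀. The normal model and the example data are assumptions for illustration. Note that λ here follows the protocol's orientation, L(θ₀) / L(θ₁), which is the reciprocal of the forensic convention of placing the prosecution hypothesis in the numerator.

```python
import math
from statistics import NormalDist

def log_likelihood(data, theta, sigma):
    """Log-likelihood of i.i.d. normal observations with known sigma."""
    return sum(-0.5 * math.log(2 * math.pi * sigma ** 2) - (x - theta) ** 2 / (2 * sigma ** 2)
               for x in data)

def simple_lrt(data, theta0, theta1, sigma, alpha=0.05):
    """Test H0: theta = theta0 against H1: theta = theta1, assuming theta1 > theta0.

    Returns (lambda, c, reject) with lambda = L(theta0) / L(theta1).
    """
    n = len(data)
    log_lam = log_likelihood(data, theta0, sigma) - log_likelihood(data, theta1, sigma)
    # lambda depends on the data only through the sample mean and decreases as it grows,
    # so "lambda < c" is the same event as "sample mean > k" with P(mean > k | H0) = alpha.
    k = theta0 + NormalDist().inv_cdf(1 - alpha) * sigma / math.sqrt(n)
    # c is the value lambda takes when the sample mean equals k exactly
    log_c = log_likelihood([k] * n, theta0, sigma) - log_likelihood([k] * n, theta1, sigma)
    return math.exp(log_lam), math.exp(log_c), log_lam < log_c

lam, c, reject = simple_lrt([1.2, 0.8, 1.1, 0.9], theta0=0.0, theta1=1.0, sigma=1.0)
print(reject)  # True: the sample mean 1.0 exceeds k ≈ 0.82
```

Working with log-likelihoods avoids numerical underflow when the sample is large.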

Protocol 2: Evaluating a Forensically Reported LR

A framework for critiquing LR testimony based on common misconceptions.

- Identify the Hypotheses: Clearly state H₁ and H₀ as presented in the testimony. Are they fair and balanced?

- Interrogate the Model: Scrutinize the statistical model used to calculate P(E|H) for each hypothesis. Ask: "Have the model's assumptions been checked and validated?" (See misconception in Table 2).

- Check for Fallacies: Listen for "Straw Man" arguments that misrepresent the meaning of the LR (e.g., conflating "support for a hypothesis" with "probability of guilt").

- Assess the Conclusion: Ensure the conclusion is limited to the strength of the evidence and does not make claims about the hypotheses themselves that are not justified by the LR alone.

Logical Relationship Diagrams

Diagram 1: The Straw Man Fallacy

Diagram 2: LR Methodology

From Theory to Practice: Implementing LR Methods in Research and Testimony

Frequently Asked Questions

What are competing propositions, and why are they crucial in court? Competing propositions are pairs of alternative explanations—typically one from the prosecution and one from the defense—offered for the same forensic findings. Instead of stating that evidence "matches" a suspect, scientists evaluate the probability of the evidence under each proposition, often expressed as a Likelihood Ratio (LR). This structured approach is fundamental to modern, balanced reporting and helps the court avoid logical fallacies, such as the prosecutor's fallacy, by separating the statistical strength of the evidence from the ultimate issue of guilt [25] [13].

How do I move from a 'source' proposition to an 'activity' proposition? Many DNA cases now involve tiny, easily transferred traces where the source may not be disputed. The real question becomes, "How did the DNA get there?" [25]. To formulate activity-level propositions:

- Start with the alleged activity: For example, "The suspect stabbed the victim."

- Define a specific alternative activity: This should be a reasonable alternative explaining the presence of the trace, such as, "The suspect was present at the scene but did not stab the victim, and the DNA was transferred via secondary means."

- Consider the implications: Activity-level evaluation requires considering additional factors like the mechanisms of transfer, persistence, and background prevalence of DNA, which go beyond simple profile rarity [25].

What is a common mistake when formulating the alternative proposition? A common and critical error is proposing an alternative that is unrealistically specific or narrow, such as "an unknown person unrelated to the defendant is the source." This can artificially inflate the strength of the evidence. The alternative should be a reasonable and relevant explanation for the findings, often phrased as "the DNA came from an unknown person in the population" [13]. The framework provides a transparent way for experts to evaluate a case, where differences of opinion about the propositions can be discussed and resolved [25].

Troubleshooting Guides

Issue: The Likelihood Ratio Seems Overwhelmingly Biased Toward One Proposition

| Symptom | Possible Cause | Diagnostic Steps | Resolution |
|---|---|---|---|
| The LR strongly favors one proposition, but the overall case context seems weak. | The competing propositions are unbalanced. One proposition may be too vague or inherently improbable, making the other seem more likely by default. | (1) Review the propositions for clarity and specificity. (2) Check if the alternative proposition is a realistic and legitimate possibility in the case. (3) Conduct a sensitivity analysis to see how small changes in the propositions affect the LR [25]. | Reformulate the propositions to be more balanced and mutually exclusive. Ensure they are at the same hierarchical level (e.g., both at the activity level). |
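One concrete sensitivity analysis is to change the alternative proposition from "an unrelated person" to "a full sibling of the suspect" and recompute the LR. The sketch below uses standard full-sibling match probabilities ((1 + p + q + 2pq) / 4 for a heterozygote, (1 + p)² / 4 for a homozygote) without a theta correction, and invented allele frequencies; it illustrates how strongly the choice of alternative can drive the result.

```python
def unrelated_match_probability(p, q=None):
    """Genotype frequency for an unrelated person (Hardy-Weinberg, no theta)."""
    return p * p if q is None else 2 * p * q

def full_sibling_match_probability(p, q=None):
    """Probability that a full sibling shares the genotype (no theta correction)."""
    if q is None:
        return (1 + p) ** 2 / 4
    return (1 + p + q + 2 * p * q) / 4

def lr_for_alternative(loci, match_probability):
    """LR = 1 / product of per-locus match probabilities under the chosen alternative."""
    product = 1.0
    for alleles in loci:
        product *= match_probability(*alleles)
    return 1.0 / product

profile = [(0.1, 0.2), (0.05,)]  # invented allele frequencies
print(lr_for_alternative(profile, unrelated_match_probability))     # about 10,000
print(lr_for_alternative(profile, full_sibling_match_probability))  # about 11
```

The same two-locus profile yields an LR of about 10,000 against an unrelated person but only about 11 against a full sibling, so the wording of the alternative proposition must match the realistic alternatives in the case.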

Issue: Difficulty Incorporating Activity-Level Factors like Transfer and Persistence

| Symptom | Possible Cause | Diagnostic Steps | Resolution |
|---|---|---|---|
| Lack of data or knowledge to assign probabilities for how DNA was transferred or persisted. | Reluctance to use data from controlled laboratory studies, fearing they don't perfectly match the unique circumstances of the case [25]. | (1) Identify the key activity factors (e.g., shedder status, type of contact). (2) Search the scientific literature for relevant experimental data on these factors. (3) Acknowledge the uncertainty and use a range of probabilities based on the available data. | Use data from controlled experiments, as their inherent variation often accounts for real-world uncertainty. If exact states of factors are unknown, incorporate all possible states weighted by their probabilities [25]. |
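Incorporating "all possible states weighted by their probabilities" is an application of the law of total probability: P(E | H) = Σₛ P(E | H, s) P(s). The sketch assumes a single unknown factor (the suspect's shedder status) with invented probabilities, and assumes the shedder-status probabilities do not depend on which proposition is true.

```python
# Invented probabilities, for illustration only.
shedder_prior = {"high": 0.3, "low": 0.7}  # P(shedder status)
p_findings = {                              # P(E | H, shedder status)
    "H1": {"high": 0.6, "low": 0.2},
    "H2": {"high": 0.15, "low": 0.02},
}

def marginal_probability(hypothesis):
    """P(E | H) = sum over states s of P(E | H, s) * P(s)."""
    return sum(p_findings[hypothesis][s] * shedder_prior[s] for s in shedder_prior)

lr = marginal_probability("H1") / marginal_probability("H2")
print(round(lr, 2))
```

Here P(E | H1) = 0.32 and P(E | H2) = 0.059, giving an LR of about 5.4 without having to assume a particular shedder status.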

The Scientist's Toolkit: Key Reagents for Proposition Formulation

The following table details the essential conceptual "reagents" required for robust evaluation of forensic evidence.

| Research Reagent | Function & Explanation |
|---|---|
| Hierarchy of Propositions | A conceptual framework that classifies propositions into three levels: Source (who is the source?), Activity (how did it get there?), and Offense (is the suspect guilty?). Scientists typically address the source or activity levels [25]. |
| Likelihood Ratio (LR) | The core quantitative measure of probative value. The LR is the probability of the evidence under the prosecution's proposition divided by the probability of the evidence under the defense's proposition. An LR greater than 1 supports the prosecution's case; an LR less than 1 supports the defense's [13]. |
| Prosecutor's Fallacy | A logical error where the probability of the evidence given the proposition (e.g., "the probability of this DNA if it came from someone else") is mistakenly transposed with the probability of the proposition given the evidence (e.g., "the probability it came from someone else given this DNA") [13]. Using the LR helps avoid this. |
| Transfer & Persistence Data | Empirical data from controlled studies used to inform probabilities at the activity level. This includes data on how much DNA is deposited during specific activities and how long it remains under various conditions [25]. |
| 1000 Genomes Project | A large, publicly available reference panel of human genome sequences from diverse populations. It is widely accepted and used to estimate allele frequencies and thus the rarity of DNA profiles, including in low-template or complex mixtures [15]. |

Experimental Protocol: Evaluating Evidence Under Activity-Level Propositions

Objective: To quantitatively assess the probative value of forensic DNA evidence given two competing activity-level propositions using a likelihood ratio framework.

Methodology:

- Define Competing Propositions (H₁ and H₂): Formulate two mutually exclusive propositions at the activity level. For example:

- H₁ (Prosecution): The suspect assaulted the victim.

- H₂ (Defense): The suspect and victim shook hands socially earlier in the day.

- Identify Relevant Probabilities: Break down each activity into the necessary probabilistic components. This typically includes:

- Transfer (T): The probability of DNA being transferred in the amount recovered, given the activity.

- Persistence (P): The probability of DNA persisting until collection, given the time elapsed and environment.

- Background (B): The probability of finding the DNA profile as background on the surface or item, unrelated to the alleged activity.

- Rarity (R): The random match probability of the DNA profile in the relevant population.

- Assign Probabilities: Use a combination of case-specific information, relevant scientific literature, and empirical data from controlled experiments to assign values or distributions for T, P, B, and R under each proposition, H₁ and H₂ [25].

- Calculate the Likelihood Ratio: Construct a ratio that compares the probability of the entire set of findings (E) under both propositions.

LR = P(E | H₁) / P(E | H₂)

The complexity of this formula depends on the specific propositions and findings. In many activity-level cases, it expands to include the factors listed above.

- Report and Interpret: Report the LR and provide a clear, balanced interpretation of what this value means regarding the support the evidence provides for one proposition over the other, without infringing on the ultimate issue of guilt.
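As a deliberately simplified sketch of the calculation step, assume (hypothetically, not as the model used in [25]) that the suspect's DNA can end up on the item by two independent routes: transfer during the proposed activity that then persists (probability T × P), or pre-existing background (probability B). Because the source of the DNA is not in dispute in this example, the rarity term R does not enter; it would matter if the source were also contested. All numbers are invented.

```python
def p_findings(transfer, persistence, background):
    """P(E | H) that the suspect's DNA is recovered, under a simplified model.

    Assumes two independent routes: the activity in H deposits DNA that persists
    (probability transfer * persistence), or the DNA is present as background
    unrelated to the activity (probability background).
    """
    via_activity = transfer * persistence
    return 1 - (1 - via_activity) * (1 - background)

# Invented values for the assault (H1) and handshake (H2) propositions
p_e_h1 = p_findings(transfer=0.8, persistence=0.5, background=0.05)
p_e_h2 = p_findings(transfer=0.3, persistence=0.2, background=0.05)
lr = p_e_h1 / p_e_h2
print(round(p_e_h1, 3), round(p_e_h2, 3), round(lr, 1))
```

Under these assumptions P(E | H₁) = 0.43 and P(E | H₂) = 0.107, an LR of about 4. Small changes to the transfer or background values can move this substantially, which is why the sources of each probability should be disclosed.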

Logical Framework for Proposition Formulation

The diagram below visualizes the decision-making process for structuring a balanced forensic evaluation.

Within research on cross-examining likelihood ratio (LR) testimony, selecting the appropriate statistical model is a critical step. The choice often centers on whether to use a feature-based approach, which works directly with the raw features of the evidence, or a score-based approach, which uses a similarity score generated by some comparison algorithm as an intermediate step [26]. This technical guide outlines these two methodologies, provides protocols for their implementation, and addresses common troubleshooting issues encountered by researchers and scientists in the field.

Core Concepts: Feature-Based vs. Score-Based Likelihood Ratios

The likelihood ratio is a fundamental statistical tool for comparing two competing hypotheses in light of observed evidence [27]. In a forensic context, it typically weighs the probability of the evidence under the prosecution's hypothesis (e.g., the suspect is the source of the trace) against the probability of the evidence under the defense's hypothesis (e.g., someone else is the source) [26].

| Aspect | Feature-Based LR | Score-Based LR |
|---|---|---|
| Definition | A statistical model where the LR is computed directly from the feature distributions of the population of sources [26]. | A two-step method where a similarity score is calculated first, and the LR is then computed from the distributions of this score under the two hypotheses [26]. |
| Input Data | Raw or preprocessed feature vectors (e.g., specific measurements, characteristics) [26]. | A single scalar value representing the similarity between two feature vectors, generated by a comparator. |
| Model Complexity | Can be high, as it requires a full probabilistic model of the feature space. | Simpler, as it reduces the problem to modeling one-dimensional score distributions. |
| Key Challenge | Requires a well-defined and accurate population model for all features, which can be complex for high-dimensional data [26]. | Requires representative data to accurately model the within-source and between-source score distributions. |
| Interpretability | High, as the contribution of individual features can, in principle, be understood. | Lower, as the similarity score may obscure the contribution of individual features. |
| Primary Use Case | Often preferred when a comprehensive statistical model of the feature space is feasible and necessary. | Common in fields like fingerprints or DNA where a comparison algorithm generates a score [28]. |

FAQs & Troubleshooting Guides

How do I choose between a feature-based and a score-based model for my evidence type?

The decision is fundamentally a matter of the available information and the complexity of your data [26].

- Scenario A: Choose a Feature-Based Model if:
  - You have a reliable probabilistic model for your raw data features.
  - The feature space is not excessively high-dimensional.
  - High interpretability and the ability to trace the impact of each feature are required.

- Scenario B: Choose a Score-Based Model if:
  - A well-validated comparison algorithm exists that outputs a reliable similarity score.
  - The raw feature space is too complex to model directly, but score distributions are manageable.
  - Operational speed is a priority, as score-based systems can be faster once the score is computed.

Troubleshooting Tip: A common point of confusion is viewing these as fundamentally different "systems." In reality, the choice is pragmatic. If you have the information to build a feature-based model, you should. If not, a score-based approach using a well-calibrated algorithm is a valid alternative [26].

What are the common pitfalls when implementing a score-based LR system and how can I avoid them?

Several limitations have been identified in the literature, particularly for forensic applications like latent print analysis [28].

| Common Pitfall | Description | Solution / Mitigation |
|---|---|---|
| Inadequate Score Distribution Modeling | The LR is highly sensitive to the accuracy of the within-source and between-source score distributions. | Use large, representative datasets for modeling. Validate distributions on separate test data. Consider the potential for different performance across evidence subtypes. |
| Ignoring Dependencies | Assuming feature independence when it does not hold, leading to biased LRs. | Use models that can account for feature correlations, or ensure your scoring algorithm inherently handles these dependencies. |
| Instability for "Close Non-Matches" | The model may produce unreliable LRs for comparisons that are very similar but not matches [28]. | Research is ongoing to improve software so that it accounts for differences between a latent print and a known print and provides more accurate LRs [28]. |
| Lack of Standardization | Different experts or software can produce substantially different LRs for the same evidence [29]. | Promote transparency by documenting all data sources, model assumptions, and software parameters. The field requires continued development of standardized measurement practices [29]. |
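The "Ignoring Dependencies" pitfall is easiest to see in a deliberately extreme case. The sketch below assumes Python 3.9+ and hypothetical Normal models for a single feature (all parameter values are invented for illustration), then adds a second feature that is an exact copy of the first. A duplicated feature carries no new information, yet multiplying per-feature LRs as if the features were independent squares the LR and overstates the evidence.

```python
from statistics import NormalDist

# Hypothetical single-feature models (values invented for illustration).
SOURCE = NormalDist(mu=10.0, sigma=0.5)      # within-source distribution (H1)
POPULATION = NormalDist(mu=12.0, sigma=2.0)  # population distribution (H2)


def lr_one_feature(x: float) -> float:
    """Density ratio f(x | H1) / f(x | H2) for one feature."""
    return SOURCE.pdf(x) / POPULATION.pdf(x)


def lr_assuming_independence(xs: list[float]) -> float:
    """Multiply per-feature LRs, i.e. treat the features as independent."""
    result = 1.0
    for x in xs:
        result *= lr_one_feature(x)
    return result


x = 10.2
single = lr_one_feature(x)
# Feature 2 is an exact copy of feature 1 (perfect correlation): it adds no
# information, but the naive product squares the LR.
naive = lr_assuming_independence([x, x])
print(f"LR from one feature:                  {single:.1f}")
print(f"'Independent' LR, duplicated feature: {naive:.1f}")
```

Real features are rarely perfectly correlated, so the distortion is usually smaller, but its direction and size depend on the dependence structure, which is why the mitigation above recommends modeling it explicitly.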

My likelihood ratios are unstable. What could be the cause?

Instability, where small changes in input data lead to large changes in the LR, can stem from several issues:

- Cause 1: Small or Non-Representative Training Data. The statistical models for either features or scores are not robust. Solution: Increase the size and diversity of your background population data.

- Cause 2: Highly Correlated Features. In a feature-based model, strong correlations can make parameter estimation unstable. Solution: Use dimensionality reduction techniques or models designed for correlated data.

- Cause 3: Poorly Calibrated Similarity Score. The algorithm generating the score may not be optimal for the task. Solution: Re-calibrate or choose a different comparison algorithm.
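Cause 1 can be checked directly by refitting the score model on bootstrap resamples of the training data and watching how much the LR for a fixed score moves. This is a minimal illustration, not a validated procedure: it assumes Normal score distributions fitted with Python's `statistics.NormalDist`, uses simulated scores, and the function names are hypothetical.

```python
import random
from statistics import NormalDist


def fitted_lr(score, h1_scores, h2_scores):
    """Score-based LR from Normal fits to mated (H1) and non-mated (H2) scores."""
    f_h1 = NormalDist.from_samples(h1_scores)
    f_h2 = NormalDist.from_samples(h2_scores)
    return f_h1.pdf(score) / f_h2.pdf(score)


def bootstrap_lr_interval(score, h1_scores, h2_scores, n_boot=200, seed=0):
    """Refit on bootstrap resamples; return the 5th and 95th percentile LR."""
    rng = random.Random(seed)
    lrs = sorted(
        fitted_lr(
            score,
            rng.choices(h1_scores, k=len(h1_scores)),
            rng.choices(h2_scores, k=len(h2_scores)),
        )
        for _ in range(n_boot)
    )
    return lrs[int(0.05 * n_boot)], lrs[int(0.95 * n_boot) - 1]


# Simulated scores (an assumption): mated ~ N(0.8, 0.1), non-mated ~ N(0.3, 0.15).
data_rng = random.Random(42)
for n in (15, 400):
    h1 = [data_rng.gauss(0.8, 0.10) for _ in range(n)]
    h2 = [data_rng.gauss(0.3, 0.15) for _ in range(n)]
    lo, hi = bootstrap_lr_interval(0.6, h1, h2)
    print(f"n = {n:>3} per class: 90% bootstrap range of LR at s = 0.6: {lo:.2f} to {hi:.2f}")
```

A wide range at small n is a warning that the reported LR depends heavily on which reference samples happened to be collected.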

Experimental Protocols for LR Model Validation

Protocol 1: Validating a Score-Based LR System

This protocol outlines the steps to empirically validate the performance of a score-based LR system.

- Dataset Curation: Assemble a large dataset of known same-source (mated, H1) pairs and known different-source (non-mated, H2) pairs.

- Score Generation: For all pairs in the dataset, compute the similarity score using your chosen comparison algorithm.

- Model Fitting: Use the scores from H1 pairs to model the within-source (mated) score distribution, and the scores from H2 pairs to model the between-source (non-mated) score distribution. Common models include kernel density estimation and parametric distributions (e.g., Gamma, Normal).

- LR Computation: For a given test score s, compute the LR as LR = f(s | H1) / f(s | H2), where f is the probability density function of the corresponding fitted distribution.

- Validation: Use a separate test set not used for model fitting. Calculate LRs for all test pairs and analyze the results with:
  - Discrimination Plots: Histograms of log10(LR) for the H1 and H2 populations. Good performance shows clear separation.
  - Calibration Plots: Plot the observed proportion of H1 pairs against the probability predicted from the LR (e.g., LR / (1 + LR) under equal prior odds). A well-calibrated system follows the diagonal line.
  - Rates of Misleading Evidence: Calculate the proportion of H1 pairs with LR < 1 (false support for H2) and of H2 pairs with LR > 1 (false support for H1).
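The protocol's model-fitting, LR-computation, and rates-of-misleading-evidence steps can be sketched in a few lines. This is a minimal sketch under stated assumptions: scores are simulated rather than drawn from a curated reference dataset, both score distributions are taken to be Normal (one of the parametric options named in the protocol; kernel density estimation would be a drop-in alternative), and the function names are illustrative. Discrimination and calibration plots are omitted for brevity.

```python
import random
from statistics import NormalDist


def fit_score_model(h1_train, h2_train):
    """Model fitting: Normal densities for mated (H1) and non-mated (H2) scores."""
    return NormalDist.from_samples(h1_train), NormalDist.from_samples(h2_train)


def score_lr(s, model):
    """LR computation: LR = f(s | H1) / f(s | H2) for one comparison score s."""
    f_h1, f_h2 = model
    return f_h1.pdf(s) / f_h2.pdf(s)


def rates_of_misleading_evidence(h1_test, h2_test, model):
    """Share of H1 test pairs with LR < 1 and of H2 test pairs with LR > 1."""
    rme_h1 = sum(score_lr(s, model) < 1 for s in h1_test) / len(h1_test)
    rme_h2 = sum(score_lr(s, model) > 1 for s in h2_test) / len(h2_test)
    return rme_h1, rme_h2


# Simulated scores stand in for a curated reference dataset (an assumption).
rng = random.Random(1)


def simulate(n, mu, sigma):
    return [rng.gauss(mu, sigma) for _ in range(n)]


model = fit_score_model(simulate(500, 0.8, 0.10), simulate(500, 0.3, 0.15))
# Validation uses a separate test set that played no part in model fitting.
h1_test, h2_test = simulate(200, 0.8, 0.10), simulate(200, 0.3, 0.15)
print(f"LR at s = 0.6: {score_lr(0.6, model):.2f}")
print("Rates of misleading evidence (H1, H2):",
      rates_of_misleading_evidence(h1_test, h2_test, model))
```

Normal densities with unequal variances cross twice, so the LR can swing back below 1 far in the upper tail, and very extreme scores can underflow to zero density; both are reasons to inspect the fitted models rather than rely on summary rates alone.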

Protocol 2: Building a Simple Feature-Based LR Model (for Continuous Features)

This protocol describes a method for a simple two-feature system, assuming feature independence.

- Population Modeling: For each feature, gather measurements from a representative population of sources. Model the distribution of each feature in the general population (e.g., using a Normal distribution, estimating the mean μ and standard deviation σ).

- Measurement Uncertainty Modeling: For the specific source in question (e.g., a suspect's sample), take repeated measurements to estimate the within-source variability for each feature (e.g., estimate a mean m and standard deviation s).

- LR Calculation: For an evidence sample with measurements x1, x2:
  - Under H1 (same source), the likelihood is the product of the probability densities of observing x1 and x2 given the specific source's distribution.
  - Under H2 (different source), the likelihood is the product of the probability densities of observing x1 and x2 given the general population distributions.
  - The LR is the ratio of these two likelihoods: LR = [f(x1|H1) × f(x2|H1)] / [f(x1|H2) × f(x2|H2)].
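A minimal sketch of Protocol 2, assuming Normal models for each feature as described in the steps. Because the features are continuous, the per-feature terms are probability densities from `statistics.NormalDist.pdf`. The function name and all parameter values are hypothetical. Like the protocol, it evaluates the H2 term from the population model alone; a fuller treatment would also account for within-source variation under H2 and for correlation between features.

```python
from statistics import NormalDist


def feature_based_lr(x, source_params, population_params):
    """LR for continuous features under the protocol's independence assumption.

    x:                 evidence measurements, one value per feature
    source_params:     (m, s) per feature for the specific source (H1)
    population_params: (mu, sigma) per feature for the population (H2)
    """
    if not len(x) == len(source_params) == len(population_params):
        raise ValueError("need one (m, s) and one (mu, sigma) per feature")
    numerator = 1.0    # product of f(x_i | H1)
    denominator = 1.0  # product of f(x_i | H2)
    for xi, (m, s), (mu, sigma) in zip(x, source_params, population_params):
        numerator *= NormalDist(m, s).pdf(xi)
        denominator *= NormalDist(mu, sigma).pdf(xi)
    return numerator / denominator


# Hypothetical parameters for two features (invented values).
source = [(5.0, 0.2), (1.5, 0.1)]      # suspect's (m, s) per feature
population = [(6.0, 1.0), (2.0, 0.5)]  # population (mu, sigma) per feature

print(feature_based_lr((5.1, 1.45), source, population))  # evidence near the source
print(feature_based_lr((7.0, 2.5), source, population))   # evidence far from the source
```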

Workflow Visualization

The following diagram illustrates the logical workflow and key decision points for choosing and implementing an LR model.

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key components and their functions for researchers developing or validating LR systems.

| Item / Solution | Function in LR Research |
|---|---|
| Reference Datasets | Curated datasets with known ground truth (mated and non-mated pairs) are essential for training statistical models, validating system performance, and estimating error rates [28]. |
| Comparison Algorithm | The software or function that generates a similarity score from two pieces of evidence. This is the core of a score-based system and must be carefully selected and validated [26]. |
| Statistical Modeling Software | Tools (e.g., R, Python with scikit-learn) used to fit probability distributions to features or scores, and to compute the resulting likelihood ratios. |
| Population Data | Data representative of the relevant population, used to model the distribution of features or scores under the different-source hypothesis (H2) [26]. |
| Validation Framework | A set of scripts and protocols for performing discrimination and calibration analysis, which is critical for demonstrating the validity and reliability of the LR system [28]. |

FAQs on Likelihood Ratios (LRs) in Research

Q1: What is a Likelihood Ratio (LR), and why is it important in scientific research? A Likelihood Ratio (LR) is a statistical measure that compares the probability of observing specific evidence under two competing hypotheses. In scientific research, it is a fundamental tool for quantifying the strength of evidence, helping researchers replace a purely qualitative reading of the data with an explicit, quantitative measure (although that measure still depends on modeling choices and reference data). Its importance spans multiple fields:

- Drug Safety: LRs can be used in disproportionality analysis to detect potential safety signals by comparing the probability of a specific adverse event being reported for a target drug versus all other drugs in a database [30].

- Forensic DNA: The LR compares the probability of observing a DNA profile if the defendant is the source versus if another person from the population is the source. Proper presentation and interpretation are critical to avoid miscarriages of justice, such as the "Prosecutor's Fallacy" [31].
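In the simplest DNA case the LR reduces to a familiar calculation: if a single-source evidence profile matches the defendant, P(E | Hp) is taken as 1 and P(E | Hd) is the random match probability (RMP), so LR = 1 / RMP. The sketch below assumes the product rule (Hardy-Weinberg and linkage equilibrium), no subpopulation correction, no typing error, and hypothetical allele frequencies; real casework, especially with mixtures, uses considerably richer models.

```python
from math import prod


def genotype_frequency(p: float, q: float, homozygous: bool) -> float:
    """Hardy-Weinberg genotype frequency: p^2 if homozygous, else 2pq."""
    return p * p if homozygous else 2 * p * q


# Hypothetical three-locus profile: (allele 1 freq, allele 2 freq, homozygous?)
profile = [
    (0.10, 0.20, False),
    (0.05, 0.05, True),
    (0.15, 0.30, False),
]

# Product rule across loci gives the random match probability P(E | Hd).
rmp = prod(genotype_frequency(p, q, hom) for p, q, hom in profile)
lr = 1.0 / rmp  # P(E | Hp) = 1 for a matching single-source profile
print(f"RMP = {rmp:.2e}, LR = {lr:,.0f}")
```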

Q2: What are common pitfalls when presenting Likelihood Ratios to non-expert audiences? A primary challenge is ensuring that the numerical value of the LR is understood correctly by laypersons, such as legal decision-makers or regulatory professionals. Common pitfalls include:

- The Prosecutor's Fallacy: This is the misinterpretation of the LR as the probability of the prosecution's hypothesis being true. For example, an LR of 10,000 does not mean there is a 99.99% probability the defendant is guilty; it means the evidence is 10,000 times more likely under the prosecution's hypothesis than the defense's [31].

- Miscommunication of Strength: Presenting only the raw number without contextual, verbal scales of support can lead to confusion. Research is ongoing to determine the best way to present LRs to maximize understandability [5].
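The gap between an LR and the probability of a hypothesis is easiest to see by applying Posterior Odds = LR × Prior Odds. This minimal sketch uses hypothetical prior odds chosen only for illustration; in court, assessing the prior is generally regarded as a matter for the fact-finder, not the expert.

```python
def posterior_probability(lr: float, prior_odds: float) -> float:
    """P(Hp | E) from Bayes' theorem in odds form: posterior odds = LR x prior odds."""
    posterior_odds = lr * prior_odds
    return posterior_odds / (1 + posterior_odds)


LR = 10_000
# Reading the LR as "99.99%" silently assumes even prior odds (1 : 1).
print(f"{posterior_probability(LR, 1.0):.4f}")  # 0.9999
# Hypothetical alternative: 100,000 equally plausible sources, prior odds 1 : 99,999.
print(f"{posterior_probability(LR, 1 / 99_999):.3f}")  # 0.091
```

The same LR of 10,000 yields a posterior of about 9% under the second prior, which is why the LR alone cannot be quoted as a probability that Hp is true.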

Q3: How can a researcher validate a Likelihood Ratio model in drug safety signal detection? Validation ensures the model reliably identifies true signals and minimizes false positives. Key methodologies include:

- Using Multiple Algorithms: A robust approach employs several statistical methods (e.g., ROR, PRR, BCPNN, MGPS) concurrently. A signal is considered stronger or more reliable when it is flagged by more than one algorithm, bearing in mind that all of them are computed from the same reports and are therefore not fully independent checks [30].

- Clinical Review and Triage: All statistically significant signals must undergo a clinical review by a subject matter expert (e.g., a physician or pharmacist) to determine if there is a plausible biological mechanism and to assess the potential clinical impact [32] [33].

- Comparison with External Data: Validating signals against other data sources, such as findings published in scientific literature or results from other databases, is a critical step [33].

Troubleshooting Common Experimental Issues

Problem: High Rate of False Positive Signals in Drug Safety Surveillance

- Potential Cause: Over-reliance on a single statistical algorithm or an inappropriately low threshold for signal detection.

- Solution: Implement a multi-method approach. Use a combination of algorithms (e.g., ROR, PRR, BCPNN, MGPS) and require a signal to be detected by more than one method before it is considered for further investigation [30]. Requiring agreement across methods tends to reduce false positives. Additionally, always check statistical findings for clinical plausibility.
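The multi-method rule can be sketched for two of the frequentist measures named above, the reporting odds ratio (ROR) and the proportional reporting ratio (PRR), both computed from the standard 2×2 table of report counts. This is a minimal sketch, not a validated pipeline: the decision rule (lower 95% confidence bound above 1 for both measures, with at least three cases) is an assumed convention rather than one taken from the source, the counts are invented, and BCPNN and MGPS are omitted because they rely on Bayesian models.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Counts:
    """2x2 table of spontaneous reports (all four cells assumed non-zero)."""
    a: int  # target drug, target adverse event
    b: int  # target drug, all other events
    c: int  # all other drugs, target adverse event
    d: int  # all other drugs, all other events


Z95 = 1.96  # standard normal quantile for a two-sided 95% interval


def ror_with_ci(t: Counts) -> tuple[float, float, float]:
    """Reporting odds ratio (a/b)/(c/d) with a log-scale 95% CI."""
    ror = (t.a * t.d) / (t.b * t.c)
    se = math.sqrt(1 / t.a + 1 / t.b + 1 / t.c + 1 / t.d)
    return ror, math.exp(math.log(ror) - Z95 * se), math.exp(math.log(ror) + Z95 * se)


def prr_with_ci(t: Counts) -> tuple[float, float, float]:
    """Proportional reporting ratio [a/(a+b)] / [c/(c+d)] with a log-scale 95% CI."""
    prr = (t.a / (t.a + t.b)) / (t.c / (t.c + t.d))
    se = math.sqrt(1 / t.a - 1 / (t.a + t.b) + 1 / t.c - 1 / (t.c + t.d))
    return prr, math.exp(math.log(prr) - Z95 * se), math.exp(math.log(prr) + Z95 * se)


def multi_method_signal(t: Counts, min_cases: int = 3) -> bool:
    """Assumed rule: flag only if BOTH lower 95% bounds exceed 1 and a >= min_cases."""
    if t.a < min_cases:
        return False
    return ror_with_ci(t)[1] > 1 and prr_with_ci(t)[1] > 1


examples = {
    "disproportionate": Counts(a=20, b=980, c=200, d=98_800),
    "background rate": Counts(a=5, b=995, c=500, d=98_500),
    "too few cases": Counts(a=2, b=98, c=200, d=98_800),
}
for name, table in examples.items():
    print(f"{name}: signal = {multi_method_signal(table)}")
```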

Problem: Misinterpretation of Forensic DNA Evidence by a Jury

- Potential Cause: The LR is presented as a bare, very large number without a clear explanation, or the presentation inadvertently invites the "Prosecutor's Fallacy."

- Solution: Adopt a standardized, clear format for presenting testimony. This includes:
  - Explicitly stating the two competing hypotheses (Hp: Prosecution's and Hd: Defense's).
  - Clearly explaining that the LR measures the strength of the evidence, not the probability of the hypothesis.
  - Using verbal scales (e.g., "moderate support," "strong support") alongside numerical values to aid comprehension, though the exact phrasing should be carefully considered based on ongoing research [5] [31].
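A minimal sketch of pairing the number with a verbal label, as the solution above suggests. The band edges and wording are hypothetical placeholders, not a published scale; a real deployment should adopt the scale required by the relevant guideline or laboratory policy. The function also states which hypothesis is favoured, so that an LR below 1 is not misreported as weak support for Hp.

```python
import math

# Hypothetical scale: band edges on |log10(LR)| and wording are placeholders,
# NOT taken from any published guideline.
VERBAL_BANDS = [
    (1.0, "weak support"),          # 1 < LR <= 10 (or 0.1 <= LR < 1)
    (2.0, "moderate support"),      # up to 100 (or down to 0.01)
    (4.0, "strong support"),        # up to 10,000 (or down to 0.0001)
    (math.inf, "very strong support"),
]


def describe_lr(lr: float) -> str:
    """Pair a numerical LR with a verbal label and the hypothesis it favours."""
    if lr <= 0:
        raise ValueError("an LR must be positive")
    if lr == 1:
        return "LR = 1: the evidence favours neither Hp nor Hd"
    favoured = "Hp over Hd" if lr > 1 else "Hd over Hp"
    magnitude = abs(math.log10(lr))
    label = next(text for upper, text in VERBAL_BANDS if magnitude <= upper)
    return f"LR = {lr:g}: {label} for {favoured}"


for value in (50, 5_000, 0.02):
    print(describe_lr(value))
```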

Problem: Inconsistent Findings Between Preclinical Animal Studies and Human Clinical Trials

- Potential Cause: Animal models can be poor predictors of human toxicity; one analysis reports that their predictive accuracy is often little better than chance [34].

- Solution: While animal testing generally remains part of regulatory requirements, researchers should:
  - Interpret results with caution: Understand the significant limitations and high failure rate of animal-to-human translation.
  - Invest in alternatives: Explore and validate human-relevant alternatives, such as in vitro models and human tissue-based tests, which are being developed to better predict human responses [34].

Experimental Protocols & Data Presentation

Protocol 1: Drug Safety Signal Detection Using the FAERS Database

This protocol outlines the methodology for detecting adverse event (AE) signals associated with a specific drug from the FDA Adverse Event Reporting System (FAERS) database [30].

- Data Extraction: Download and process data from the FAERS database for the desired time period. Remove duplicate reports.