Case Assessment and Interpretation (CAI): A Robust Protocol for Reliable Scientific Opinion in Research and Development

Abstract

This article provides a comprehensive guide to the Case Assessment and Interpretation (CAI) model, a formal framework designed to enhance the robustness and reliability of expert opinion in scientific research and development. Tailored for researchers, scientists, and drug development professionals, we explore CAI's foundational principles rooted in Bayesian logic and likelihood ratios. The content covers its methodological application across various disciplines, strategies for troubleshooting and optimizing its implementation, and a review of validation studies that demonstrate its concordance with established methods. This guide aims to equip professionals with the knowledge to integrate CAI into their workflows, thereby improving decision-making and ensuring value in complex research and development projects.

Understanding CAI: The Bayesian Framework for Robust Scientific Assessment

Case Assessment and Interpretation (CAI) represents a paradigm shift in the evaluation and application of expert testimony within drug development. This protocol establishes a structured framework designed to overcome long-standing challenges such as cognitive bias, inconsistent methodologies, and opaque decision-making processes that have historically undermined the reliability of expert evidence. By integrating quantitative data analysis with structured, case-based reasoning, CAI provides researchers and drug development professionals with a standardized yet adaptable tool for generating robust, defensible, and transparent expert opinions [1] [2]. The implementation of CAI is critical for upholding the highest standards of scientific rigor in regulatory submissions and legal proceedings.

Core CAI Protocol Framework

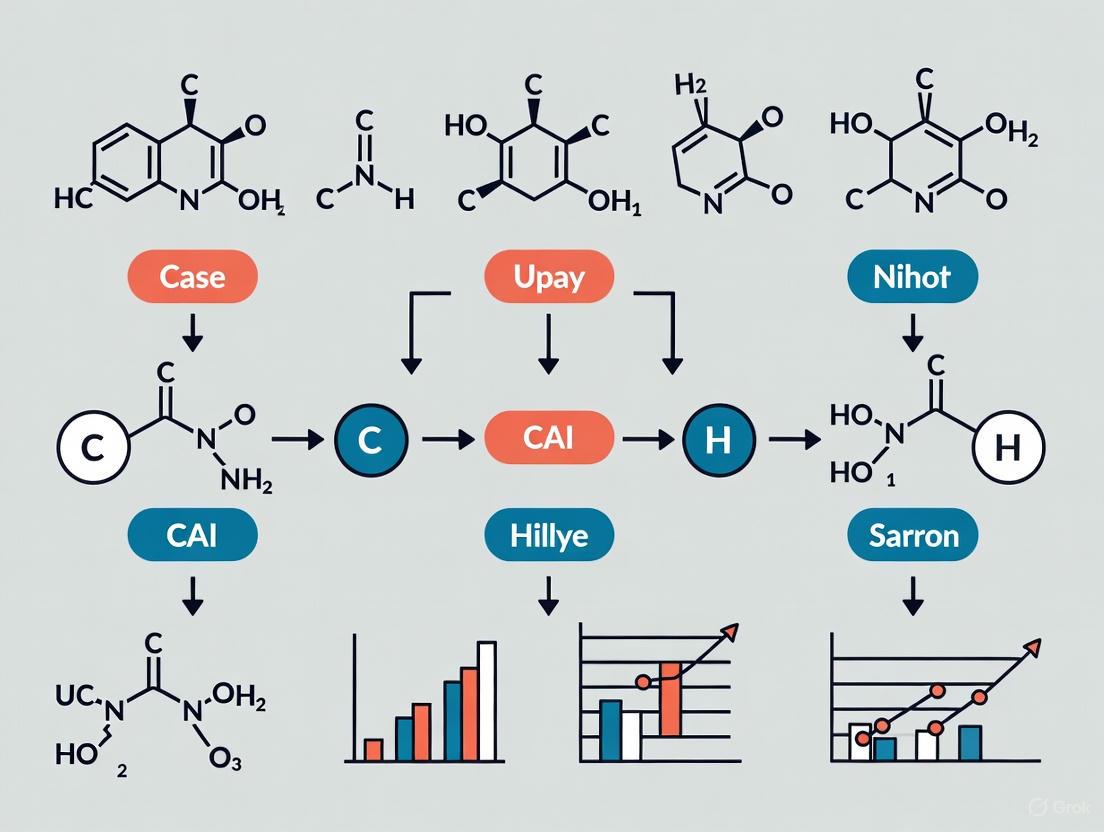

The CAI protocol is built on a continuous cycle of evaluation, designed to systematically interpret complex data within the context of existing knowledge. The workflow, summarized in Figure 1, ensures a consistent and transparent assessment process. This structured approach mitigates the risk of individual cognitive biases and enhances the reproducibility of expert conclusions [2].

Figure 1. The CAI protocol operates on a 4R cycle (Retrieve, Re-use, Revise, Retain), ensuring a systematic approach to case assessment [2]. The process begins with a new query, retrieves analogous past cases, adapts previous solutions, rigorously evaluates the proposed output, and finally updates the knowledge base with validated conclusions for future use.

Quantitative Analysis in CAI

A cornerstone of the CAI protocol is the rigorous application of quantitative data analysis to transform raw numerical data into objective, evidence-based insights. The selection of the appropriate analytical method is determined by the specific research question and data type [1].

Table 1: Quantitative Data Analysis Methods for Expert Testimony

| Analysis Type | Primary Function | Example Application in Drug Development | Key Statistical Methods |
|---|---|---|---|
| Descriptive | Summarizes what the data shows. | Calculating average patient response, dosage frequency, or most common adverse event. | Means, medians, modes, standard deviation, frequency distributions [1]. |
| Diagnostic | Identifies reasons or causes for observed phenomena. | Analyzing why a specific patient subgroup experienced a higher rate of a particular side effect. | Correlation analysis, Chi-square tests, regression analysis [1]. |
| Predictive | Forecasts future outcomes or trends based on historical data. | Modeling patient survival rates or predicting long-term treatment efficacy. | Time series analysis, regression modeling, machine learning algorithms [1]. |
| Prescriptive | Recommends specific actions based on diagnostic and predictive insights. | Optimizing clinical trial design or determining go/no-go decisions for drug development phases. | Optimization algorithms, simulation models, decision analysis frameworks [1]. |

Experimental Protocols & Workflows

Protocol: Quantitative Analysis of Clinical Trial Data

This protocol provides a standardized methodology for analyzing clinical trial data within the CAI framework, ensuring consistency and transparency in expert testimony.

Objective: To systematically analyze clinical trial outcomes to determine drug efficacy and safety.

Materials: See Section 5 for a detailed list of research reagents and solutions.

Procedure:

- Data Preparation: Clean and preprocess raw clinical data. Handle missing values using predefined rules (e.g., imputation or exclusion) and identify statistical outliers for further investigation.

- Descriptive Analysis: Calculate key summary statistics for all primary and secondary endpoints (e.g., mean change from baseline, response rates, incidence of adverse events). Visualize data distributions using histograms or box plots.

- Statistical Testing:

  - Perform t-tests (for continuous data like blood pressure) or Chi-square tests (for categorical data like responder/non-responder) to compare treatment and control groups [1].

  - Conduct Analysis of Variance (ANOVA) for comparisons involving more than two groups.

  - Report p-values and confidence intervals for all key comparisons.

- Diagnostic & Predictive Analysis:

- Interpretation & Documentation: Integrate all quantitative findings into a comprehensive report. Clearly state conclusions, acknowledge any analytical limitations, and document all steps and parameters used in the analysis for full transparency.
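The statistical testing step in the procedure above can be sketched with the Python standard library alone. The example below is a minimal illustration, not a validated analysis pipeline: the function names `welch_t` and `chi_square_2x2` and all input values are assumptions introduced here. It computes Welch's t statistic for two continuous samples and a Pearson chi-square statistic (without continuity correction) for a 2x2 responder table. A p-value for the t statistic needs the t distribution, which a statistics package would normally supply.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def chi_square_2x2(a, b, c, d):
    """Pearson chi-square for the table [[a, b], [c, d]].

    Returns the statistic and its p-value for 1 degree of freedom,
    using the identity P(chi2_1 > x) = erfc(sqrt(x / 2)).
    """
    n = a + b + c + d
    stat = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    return stat, math.erfc(math.sqrt(stat / 2))


# Hypothetical data: change in a continuous endpoint for two arms.
treatment = [1, 2, 3, 4, 5]
control = [2, 3, 4, 5, 6]
t_stat, t_df = welch_t(treatment, control)

# Hypothetical 2x2 table: rows = arm, columns = responder / non-responder.
chi2_stat, chi2_p = chi_square_2x2(30, 20, 15, 35)
```

In a real analysis the test choice, any multiplicity adjustment, and the confidence intervals would be fixed in the statistical analysis plan before the data are examined.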

Workflow: Case-Based Reasoning for Toxicological Assessment

The following workflow leverages historical data to assess the toxicological risk of a new compound, a common challenge in drug development and expert testimony.

Figure 2. The Case-Based Reasoning (CBR) workflow for toxicological assessment. A new compound is assessed by retrieving and adapting solutions from a knowledge base of historical toxicology data, creating a data-driven and auditable risk profile [2].
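The retrieve, re-use, and retain steps of this workflow can be sketched as a nearest-neighbour lookup over a small case base. Everything in the example is an assumption made for illustration: the two-element feature vectors, the "low"/"high" risk labels, the Euclidean distance, and the majority-vote adaptation rule. The revise step is left to expert review and is not modelled.

```python
import math


def euclidean(a, b):
    """Distance between two feature vectors (features assumed pre-scaled)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def retrieve(case_base, query, k=3):
    """Retrieve: return the k stored cases closest to the query."""
    return sorted(case_base, key=lambda c: euclidean(c["features"], query))[:k]


def reuse(neighbours):
    """Re-use: propose the most common label among the retrieved cases."""
    labels = [c["label"] for c in neighbours]
    return max(set(labels), key=labels.count)


def retain(case_base, features, label):
    """Retain: store a reviewed case so later queries can use it."""
    case_base.append({"features": features, "label": label})


# Hypothetical historical cases; real descriptors would be far richer.
case_base = [
    {"features": (0.10, 0.20), "label": "low"},
    {"features": (0.20, 0.10), "label": "low"},
    {"features": (0.90, 0.80), "label": "high"},
    {"features": (0.80, 0.90), "label": "high"},
    {"features": (0.85, 0.70), "label": "high"},
]

query = (0.75, 0.80)
neighbours = retrieve(case_base, query, k=3)
proposal = reuse(neighbours)

# After expert review (the Revise step), the confirmed case is stored.
retain(case_base, query, proposal)
```

Keeping the retrieved neighbours alongside the proposal is what makes the assessment auditable: a reviewer can see which historical cases drove the conclusion.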

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for CAI-Driven Research

| Item | Function in CAI Protocol |
|---|---|
| Statistical Software (R, Python, SAS) | Performs the quantitative analyses central to the CAI protocol, including descriptive statistics, hypothesis testing, and regression modeling [1]. |
| Structured Case Knowledge Base | A repository of historical cases, including compound data, experimental results, and expert conclusions. Serves as the foundation for case-based reasoning and similarity assessment [2]. |
| Similarity Calculation Algorithm | A computational tool (e.g., k-Nearest Neighbors) used in the CBR workflow to quantitatively identify the most relevant historical cases for a new query [2]. |
| Data Visualization Toolkit | Software libraries (e.g., ggplot2, Matplotlib) used to create clear, accessible charts and graphs for communicating complex data in expert reports and testimony [3] [4]. |
| Accessible Color Palette | A predefined set of colors with sufficient contrast (≥ 3:1 ratio) to ensure data visualizations are interpretable by all stakeholders, including those with color vision deficiencies [4]. |

Bayesian logic is a framework for updating the probability of a hypothesis as new evidence is acquired. Its central result, Bayes' theorem, is named after Thomas Bayes and provides a mathematical rule for inverting conditional probabilities, so that the probability of a cause can be found from its observed effect [5]. Within the Case Assessment and Interpretation (CAI) protocol, this offers a formal structure for evidence-based decision-making throughout the drug development lifecycle.

The core mathematical expression of Bayes' theorem is:

P(H|E) = [P(E|H) × P(H)] / P(E)

Where:

- P(H|E) is the posterior probability: the probability of hypothesis H given the evidence E.

- P(E|H) is the likelihood: the probability of observing evidence E if hypothesis H is true.

- P(H) is the prior probability: the initial probability of H before seeing evidence E.

- P(E) is the marginal probability of the evidence, often calculated as P(E) = P(E|H)P(H) + P(E|not H)P(not H) [5] [6].
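As a minimal sketch of the formula and its terms, the function below computes the posterior from a prior and the two conditional probabilities, expanding P(E) with the law of total probability given above. The function name and the numeric inputs are illustrative assumptions. The loop shows iterative updating, where each posterior becomes the next prior; this is only valid if the successive pieces of evidence are conditionally independent given the hypothesis.

```python
def posterior_probability(prior, p_e_given_h, p_e_given_not_h):
    """Bayes' theorem: P(H|E) = P(E|H) P(H) / P(E)."""
    if not 0.0 <= prior <= 1.0:
        raise ValueError("prior must be a probability")
    # Marginal probability of the evidence (law of total probability).
    p_e = p_e_given_h * prior + p_e_given_not_h * (1.0 - prior)
    if p_e == 0.0:
        raise ValueError("evidence has zero probability under both hypotheses")
    return p_e_given_h * prior / p_e


# Illustrative numbers: P(H) = 0.10, P(E|H) = 0.80, P(E|not H) = 0.20.
single_update = posterior_probability(0.10, 0.80, 0.20)

# Two independent observations of the same kind of evidence.
belief = 0.10
for _ in range(2):
    belief = posterior_probability(belief, 0.80, 0.20)
```

With these inputs a single observation raises the probability from 0.10 to about 0.31, and a second independent observation raises it to 0.64.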

This formalism enables researchers to move beyond simple binary outcomes and quantitatively update their beliefs in the face of new data, which is fundamental to CAI protocols that emphasize iterative learning and evidence integration.

Fundamental Principles of Likelihood Ratios

The Likelihood Ratio (LR) quantifies the diagnostic strength of a piece of evidence by comparing how likely that evidence is under two competing hypotheses. It serves as a direct multiplier for updating prior beliefs to posterior beliefs, bridging the gap between evidence and hypothesis within Bayesian logic [7] [8].

Calculation and Interpretation

For a given test result and a target condition (e.g., a disease), the LR is calculated using the test's sensitivity and specificity [8]:

- Positive Likelihood Ratio (LR+) = Sensitivity / (1 - Specificity)

- Negative Likelihood Ratio (LR-) = (1 - Sensitivity) / Specificity
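The two formulas translate directly into code. The sketch below is illustrative: the function name and the example sensitivity and specificity are assumptions, not values from any particular assay.

```python
def likelihood_ratios(sensitivity, specificity):
    """Return (LR+, LR-) for a dichotomous test.

    LR+ = sensitivity / (1 - specificity)
    LR- = (1 - sensitivity) / specificity
    """
    if not (0.0 < sensitivity < 1.0 and 0.0 < specificity < 1.0):
        raise ValueError("sensitivity and specificity must lie strictly between 0 and 1")
    lr_pos = sensitivity / (1.0 - specificity)
    lr_neg = (1.0 - sensitivity) / specificity
    return lr_pos, lr_neg


# Hypothetical test with 90% sensitivity and 80% specificity.
lr_pos, lr_neg = likelihood_ratios(0.90, 0.80)
```

For this hypothetical test, LR+ is 4.5 and LR- is 0.125: a positive result multiplies the odds of the condition by 4.5, and a negative result divides them by 8.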

Table 1: Interpreting Likelihood Ratios in Diagnostic and Research Contexts

| LR Value | Interpretation of Evidence Strength |
|---|---|
| > 10 | Large and often conclusive increase in the probability of the target condition |
| 5 - 10 | Moderate increase in probability |
| 2 - 5 | Small but sometimes important increase in probability |
| 1 - 2 | Minimal and rarely important increase in probability |
| 1 | No diagnostic or evidential value |
| 0.5 - 1.0 | Minimal decrease in probability |
| 0.2 - 0.5 | Small decrease in probability |
| 0.1 - 0.2 | Moderate decrease in probability |
| < 0.1 | Large and often conclusive decrease in probability |

LRs provide a critical advantage in CAI by harmonizing the interpretation of test results that may otherwise be expressed in various units and manufacturer-defined scales, making it possible to compare results across different assay platforms and technical methods [7].

Integration with Bayesian Logic

The relationship between Bayes' Theorem and the Likelihood Ratio is fundamental. The posterior odds of a hypothesis are calculated by multiplying the prior odds by the LR [8]:

Posterior Odds = Prior Odds × Likelihood Ratio

This provides a direct mechanism for moving from pre-test to post-test probability. The pre-test probability, often estimated from disease prevalence or clinical context, is converted to pre-test odds, multiplied by the LR, and the result is converted back to a post-test probability [8]. This workflow is essential for quantitative CAI.
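The three-step conversion described above (probability to odds, multiply by the LR, odds back to probability) can be sketched as follows. The function name and the example inputs are assumptions chosen for illustration.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Update a pre-test probability with a likelihood ratio via odds."""
    if not 0.0 <= pre_test_probability < 1.0:
        raise ValueError("pre-test probability must be in [0, 1)")
    if likelihood_ratio < 0.0:
        raise ValueError("likelihood ratio cannot be negative")
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1.0 + post_test_odds)


# Illustrative case: 20% pre-test probability.
after_positive = post_test_probability(0.20, 4.5)    # result with LR = 4.5
after_negative = post_test_probability(0.20, 0.125)  # result with LR = 0.125
```

Here the pre-test odds are 0.25. A result with LR 4.5 gives post-test odds of 1.125, or a probability of about 0.53, while a result with LR 0.125 gives a probability of about 0.03.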

Application in CAI Protocol Research

Quantitative Diagnostic Interpretation

In clinical and laboratory diagnostics, LRs move beyond simple "positive/negative" dichotomies. By calculating LRs for specific intervals of quantitative test results, the precise diagnostic weight of any result can be communicated, which is vital for biomarker interpretation in clinical trials [7]. For instance, a D-dimer value of 500 ng/mL and another of 1500 ng/mL may both be "positive," but they carry vastly different implications for the probability of thrombotic disease, which can be precisely expressed via their different LRs.

Protocol for Establishing Test-Specific Likelihood Ratios

Implementing LRs in research requires a structured approach.

Table 2: Experimental Protocol for Likelihood Ratio Determination

| Step | Action | Key Output |
|---|---|---|
| 1. Cohort Definition | Define a study population with a representative spectrum of the target condition and relevant differential diagnoses. | Clearly characterized patient cohorts. |
| 2. Reference Standard | Apply a gold standard diagnostic method (e.g., histopathology, clinical follow-up) to all subjects to establish true disease status. | A robust "ground truth" classification. |
| 3. Index Test Measurement | Perform the test or assay under investigation on all subjects, ensuring blinding to the reference standard result. | Raw, quantitative test results for all subjects. |
| 4. ROC Analysis | Construct a Receiver Operating Characteristic (ROC) curve by plotting sensitivity vs. (1-specificity) across all possible test cut-offs. | A complete ROC curve. |
| 5. LR Calculation | For a specific test result or result interval, calculate LR as (Percentage of Diseased with result) / (Percentage of Non-Diseased with result). The slope of the secant on the ROC curve corresponds to the LR for an interval [7]. | Test result-specific Likelihood Ratios. |
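Step 5 of the protocol can be illustrated with a short calculation of interval-specific LRs from counts. The interval labels and subject counts below are invented for the example and do not come from any study; the function name is likewise an assumption.

```python
def interval_likelihood_ratios(diseased_counts, non_diseased_counts):
    """LR per result interval: P(interval | diseased) / P(interval | non-diseased)."""
    n_diseased = sum(diseased_counts.values())
    n_non_diseased = sum(non_diseased_counts.values())
    ratios = {}
    for interval, count in diseased_counts.items():
        p_diseased = count / n_diseased
        p_non_diseased = non_diseased_counts[interval] / n_non_diseased
        # An interval never seen in non-diseased subjects has an unbounded LR.
        ratios[interval] = (
            p_diseased / p_non_diseased if p_non_diseased > 0 else float("inf")
        )
    return ratios


# Hypothetical counts per result interval, classified by the reference standard.
diseased = {"low": 10, "intermediate": 30, "high": 60}
non_diseased = {"low": 70, "intermediate": 25, "high": 5}

lrs = interval_likelihood_ratios(diseased, non_diseased)
```

With these invented counts the LRs are roughly 0.14, 1.2, and 12. Two results that a single cut-off would both call "positive" (intermediate and high) therefore carry very different evidential weight. Sparse intervals give unstable estimates, so confidence intervals would be needed in practice.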

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Bayesian Diagnostic Research

| Item | Function in Research |
|---|---|
| Well-Characterized Biobank Samples | Provides the necessary patient cohorts with linked clinical data for robust LR calculation and assay validation. |
| Reference Standard Assays | Gold-standard tests (e.g., PCR, sequencing, validated ELISA) used to establish the definitive disease status for each subject in the study. |
| Index Test Kits/Platforms | The diagnostic assay or technology being evaluated. Multiple platforms can be harmonized via their LRs [7]. |
| Statistical Software (R, Python) | Used for data analysis, including ROC curve construction, AUC calculation, and LR computation for result intervals. |
| Data Management System | Securely manages patient data, test results, and reference standard information, ensuring data integrity for analysis. |

Visualizing Bayesian Reasoning and Workflows

Core Bayesian Inference Relationship

This diagram illustrates the fundamental relationship in Bayesian inference, showing how prior belief is updated with evidence via Bayes' Theorem to form a posterior belief.

Diagnostic Test Evaluation Workflow

This flowchart outlines the key steps for evaluating a diagnostic test and establishing its quantitative interpretability through Likelihood Ratios.

From Pre-Test to Post-Test Probability

This diagram demonstrates the complete clinical reasoning pathway, showing how a pre-test probability is quantitatively updated to a post-test probability using a Likelihood Ratio.

Case Assessment and Interpretation (CAI) represents a foundational methodology originally developed to address the complex challenges of forensic science. Initially proposed in 1998, CAI introduced a structured framework based on the underlying logic of Bayes' Theorem and the use of likelihood ratios as a model of good practice for forensic science [9]. This model emerged in response to a critical need within forensic laboratories for a process that could deliver both robust, reliable opinion and value-for-money, especially as technological advances increased the volume and complexity of cases [9]. The core philosophy of CAI provides a systematic approach to forming and expressing expert opinions, particularly when handling ambiguous, complex, or trace evidence where traditional binary interpretations prove insufficient.

The evolution of CAI from its forensic origins to its current applications demonstrates the methodology's adaptability and power. Originally designed to help forensic scientists meet new challenges in evidence interpretation, the fundamental principles of CAI have since proven applicable across diverse scientific domains [9]. The integration of artificial intelligence and machine learning into the CAI framework represents the latest stage in this evolution, enabling more sophisticated, dynamic, and automated approaches to data interpretation across research and development fields.

Historical Development and Core Principles

The development of the CAI model was driven by several factors that exposed limitations in traditional forensic practice. The creation of a commercial market for forensic science in the 1990s in England and Wales created additional pressures on suppliers to provide cost-effective services without compromising scientific integrity [9]. Furthermore, highly publicized miscarriages of justice highlighted the dangers of poorly formulated expert opinions, creating an urgent need for a more rigorous and transparent methodology [9].

Over the 12 years following its initial proposal, the CAI model was systematically applied to most mainstream forensic science disciplines, leading to refinement of its core ideas and fresh insights into the nature of expertise [9]. The model evolved through practical application, demonstrating its versatility across different types of evidence and forensic contexts.

Table: Historical Development of CAI in Forensic Science

| Time Period | Key Development | Impact on Forensic Science |
|---|---|---|
| Pre-1998 | Traditional expert opinion evidence | Highly publicized miscarriages of justice revealed limitations of unstructured approaches [9] |
| 1998 | Initial CAI model proposed | Introduced Bayesian framework and likelihood ratios as systematic approach [9] |
| 1998-2010 | Application across forensic disciplines | Model refined through practical application; fresh insights on expertise gained [9] |
| 2011-Present | Integration with AI technologies | Enhanced capability for handling complex, trace, and challenging samples [10] |

The core principles that have defined CAI throughout its evolution include:

- Bayesian Framework: The use of likelihood ratios to quantitatively evaluate evidence under competing propositions [9]

- Transparency: Making the reasoning process explicit and open to scrutiny

- Contextual Assessment: Considering the full context of a case when interpreting evidence

- Robustness: Ensuring conclusions remain reliable under challenging conditions

- Efficiency: Delivering value through optimized processes and reduced errors

Modern CAI Applications Across Research Domains

AI-Enhanced Forensic DNA Analysis

The integration of artificial intelligence with CAI principles has revolutionized forensic DNA analysis, particularly for challenging samples. Traditional PCR methods used in forensic DNA profiling have followed a standardized approach with fixed cycling conditions since their adoption in the 1990s [10]. These methods struggle with degraded, trace, and inhibited samples and in many cases fail to yield usable profiles [10].

Modern CAI approaches address these limitations through machine-learning-driven "smart" PCR systems that dynamically adjust cycling conditions in real-time [10]. This AI-enhanced optimization represents a significant evolution beyond static protocols:

- Real-time Fluorescence Feedback: Monitoring amplification efficiency as a proxy for assessing changing reaction conditions [10]

- Dynamic Condition Adjustment: Altering cycling parameters during the run based on real-time feedback [10]

- Machine Learning Integration: Using comprehensive databanks of DNA profiles to train algorithms that associate cycling conditions with profile quality [10]

This approach has demonstrated particular value for samples containing degraded DNA, inhibitory compounds, or low DNA quantities—challenges common in forensic casework [10]. By increasing success rates for these challenging samples, AI-enhanced CAI methods reduce the number of cases where no usable profile is obtained, thereby increasing the efficacy of forensic DNA analysis [10].

Table: Performance Comparison of Traditional PCR vs. AI-Enhanced CAI Approach

| Parameter | Traditional PCR | AI-Enhanced CAI Approach |
|---|---|---|
| Cycling Conditions | Static, fixed throughout run [10] | Dynamic, adjusted in real-time [10] |
| Feedback Mechanism | End-point analysis only [10] | Real-time fluorescence monitoring [10] |
| Handling Challenging Samples | Often yields poor or unusable data [10] | Improved success rates and profile quality [10] |
| Process Optimization | Uniform for all samples [10] | Tailored to each sample's characteristics [10] |
| Workflow Efficiency | Separate qPCR and endpoint PCR steps [10] | Potential to consolidate into single process [10] |

CAI in Pharmaceutical and Biomedical Research

Beyond forensic science, CAI principles have found significant applications in pharmaceutical and biomedical research. The structured framework for evidence assessment and interpretation translates effectively to drug development processes, particularly in areas requiring complex decision-making under uncertainty.

In pharmaceutical manufacturing, CAI approaches are being applied to AI-driven process optimization. For instance, at Johnson & Johnson Innovative Medicine, researchers are specializing in AI-driven pharmaceutical manufacturing, applying computational modeling and data science to enhance production processes [11]. The CAI framework provides a structure for interpreting complex data from multiple sources to optimize manufacturing parameters and ensure quality control.

In behavioral health research, organizations are implementing CAI-inspired approaches to analyze qualitative data more efficiently. The Research and Evaluation Team at one organization partnered with Project SUCCEED to apply AI to qualitative data analysis [12]. Their AI-powered strategy systematically analyzes interview transcripts and is reported to be three times faster than manual coding, with a 97% initial accuracy rate [12]. According to the same source, a streamlined human review protocol then brings reliability to 100% [12].

CAI in Data Science and AI Governance

The expansion of CAI principles into data science represents a natural evolution of the methodology. As organizations increasingly rely on data-driven decision making, the need for structured assessment and interpretation frameworks has grown correspondingly.

Leading technology companies are now applying CAI principles to ensure responsible AI development. Tutorials on "Applying Responsible AI Principles for GenAI" are being offered to AI researchers and practitioners, focusing on ethical implications of advancements in large language models and generative systems [13]. These trainings address critical issues including bias mitigation, privacy preservation, and trustworthy AI assessment [13], all within a framework that echoes the structured assessment approach of traditional CAI.

The emergence of specialized assessment methodologies like Z-inspection further demonstrates the evolution of CAI principles into new domains [13]. This comprehensive ethical AI assessment methodology spans the entire AI lifecycle and aligns with the European Commission's expert group guidelines on Trustworthy AI [13]. The framework incorporates four key principles—respect for human autonomy, prevention of harm, fairness, and explicability [13]—that parallel the rigorous, transparent assessment goals of forensic CAI.

Experimental Protocols and Methodologies

Protocol: AI-Optimized PCR for Challenging Forensic Samples

This protocol details the methodology for implementing AI-enhanced PCR within a CAI framework for forensic DNA analysis, based on research by Caitlin McDonald at Flinders University [10].

Objective: To improve DNA amplification efficiency and profile quality from challenging forensic samples (degraded, inhibited, or low-template) through machine learning-driven optimization of PCR cycling conditions.

Materials and Equipment:

- Thermal cycler with real-time fluorescence monitoring capability

- Standard forensic DNA extraction kits

- Commercial forensic STR amplification kits

- Quantitative PCR (qPCR) instrumentation

- Machine learning software platform (Python with scikit-learn or equivalent)

- High-performance computing resources for model training

Procedure:

Step 1: Training Data Collection

- Establish a comprehensive databank of DNA profiles characterizing the impact of altering specific elements in the PCR process [10]

- Systematically vary parameters including denaturation timing, annealing temperature, extension time, and cycle number

- For each parameter combination, record resulting profile quality metrics including allele balance, peak heights, and peak height ratios [10]

- Include diverse sample types: pristine, degraded, inhibited, and low-template DNA

Step 2: Machine Learning Model Development

- Develop a machine learning algorithm using PCR cycling conditions as inputs and DNA profile features as outputs [10]

- Train the model to associate different cycling conditions with resulting profile quality

- Implement a reinforcement learning approach in which PCR programs that produce "good" quality DNA profiles are attractive to the system and programs that produce "poor" quality profiles are repulsive [10]

- Validate model performance using cross-validation with holdout sample sets

Step 3: Real-time PCR Optimization

- For each new sample, initiate amplification with standard baseline conditions

- Monitor amplification efficiency in real-time using fluorescence feedback [10]

- Input efficiency metrics into trained machine learning model

- Dynamically adjust cycling conditions during the run based on model recommendations [10]

- Continue optimization through completion of amplification cycles
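The feedback loop in Step 3 can be sketched in outline. The example below is purely hypothetical and is not the method described in [10]: `recommend_adjustment` is a fixed-threshold rule standing in for the trained model, and the fluorescence readings, threshold, and step size are invented. The only general relation used is that, during exponential amplification, baseline-corrected fluorescence grows as F_n = F_(n-1) × (1 + E), so the per-cycle efficiency can be estimated as E = F_n / F_(n-1) - 1.

```python
def cycle_efficiencies(fluorescence):
    """Per-cycle efficiency E_n = F_n / F_(n-1) - 1 from baseline-corrected readings."""
    return [
        current / previous - 1.0
        for previous, current in zip(fluorescence, fluorescence[1:])
        if previous > 0
    ]


def recommend_adjustment(efficiency, threshold=0.8, step_seconds=5):
    """Hypothetical stand-in for the trained model.

    Returns extra extension time (seconds) when efficiency drops below
    the threshold, otherwise 0. A real system would query the model.
    """
    return step_seconds if efficiency < threshold else 0


# Invented baseline-corrected readings for five consecutive cycles.
readings = [1.0, 1.9, 3.6, 6.1, 9.2]

efficiencies = cycle_efficiencies(readings)
adjustments = [recommend_adjustment(e) for e in efficiencies]
```

In this invented run the efficiency falls from about 0.90 to about 0.51, so the placeholder rule requests extra extension time for the last two cycles. Which parameter to change, and by how much, is exactly what the trained model would be expected to decide.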

Step 4: Profile Assessment and Model Refinement

- Assess profile quality using standard forensic metrics (peak height, balance, mixture indicators)

- Compare resulting DNA profiles with historical data

- Incorporate new results into the training database to continuously improve model accuracy

Troubleshooting Notes:

- If model recommendations produce suboptimal results, review training data for similar sample types

- Ensure fluorescence monitoring is calibrated correctly to provide accurate efficiency measurements

- For novel sample types, consider running parallel standard PCR for comparison

Protocol: AI-Assisted Qualitative Data Analysis for Behavioral Health Research

This protocol adapts the CAI approach for qualitative data analysis in behavioral health research, based on methodology implemented by CAI's Research and Evaluation Team [12].

Objective: To systematically analyze qualitative interview transcripts using AI-powered strategies that increase efficiency while maintaining reliability.

Materials and Equipment:

- Qualitative interview transcripts (text format)

- AI-powered text analysis platform (e.g., NLP-based classification system)

- Structured codebook for qualitative analysis

- Statistical software for inter-rater reliability assessment

- Secure data storage environment

Procedure:

Step 1: AI Model Training

- Develop a comprehensive codebook based on preliminary manual review of transcript subset

- Train natural language processing algorithm on manually-coded transcripts

- Establish clear classification criteria for each thematic code

- Validate initial AI coding against human coders using Cohen's kappa statistic
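The validation in the last bullet relies on Cohen's kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). A minimal standard-library sketch follows; the function name, code labels, and segment assignments are assumptions for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning one code per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items coded identically.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal code frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / n ** 2
    if expected == 1.0:
        return 1.0  # both raters used a single identical code throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical codes for ten transcript segments.
human = ["barrier", "barrier", "barrier", "barrier", "barrier",
         "other", "other", "other", "other", "other"]
ai = ["barrier", "barrier", "barrier", "barrier", "other",
      "other", "other", "other", "other", "barrier"]

kappa = cohens_kappa(human, ai)
```

Here the raters agree on 8 of 10 segments (p_o = 0.8) and chance agreement is 0.5, giving kappa = 0.6. Raw agreement alone would overstate how well the AI coding matches the human coder.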

Step 2: AI-Powered Transcript Analysis

- Process interview transcripts through trained AI model

- Implement systematic analysis to identify thematic patterns [12]

- Generate confidence scores for each automated coding decision

- Flag low-confidence classifications for human review

Step 3: Streamlined Human Review

- Establish protocol for human review of AI-generated analyses

- Focus human review on flagged low-confidence classifications and random sample of high-confidence classifications [12]

- Resolve discrepancies through consensus coding

- Document all revisions to automated coding
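The review selection described in Steps 2 and 3 (all low-confidence classifications plus a random sample of high-confidence ones) can be sketched as below. The confidence threshold, the audit fraction, and the record layout are assumptions; the source does not specify them.

```python
import math
import random


def select_for_review(coded_segments, threshold=0.8, audit_fraction=0.1, seed=0):
    """Split AI-coded segments into those needing human review.

    Returns (flagged, audit): every segment below the confidence
    threshold, plus a random sample of the remaining segments.
    """
    flagged = [s for s in coded_segments if s["confidence"] < threshold]
    confident = [s for s in coded_segments if s["confidence"] >= threshold]
    n_audit = min(len(confident), math.ceil(audit_fraction * len(confident)))
    # A seeded generator keeps the audit sample reproducible and documentable.
    audit = random.Random(seed).sample(confident, n_audit)
    return flagged, audit


# Hypothetical output of the AI coding step for 20 segments.
segments = [
    {"id": i, "code": "theme_a", "confidence": 0.5 if i < 4 else 0.95}
    for i in range(20)
]

flagged, audit = select_for_review(segments)
```

With these inputs, 4 segments are flagged and 2 of the 16 high-confidence segments are drawn for audit. Recording the seed alongside the revisions supports the documentation requirement in the final bullet.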

Step 4: Reliability Assessment

- Calculate final inter-rater reliability between AI and human coders

- Assess accuracy metrics across thematic categories

- Confirm that the workflow meets the reported targets: a 97% initial accuracy rate for the AI coding, with the streamlined human review protocol bringing final reliability to 100% [12]

Validation Metrics:

- Measure time efficiency compared to manual coding (target: 3x faster analysis) [12]

- Track initial accuracy rate (target: 97%) [12]

- Document human review time reduction while maintaining 100% reliability [12]

Research Reagent Solutions

Table: Essential Research Reagents and Materials for CAI Methodologies

| Reagent/Material | Function/Application | Specific Examples/Notes |
|---|---|---|
| STR Amplification Kits | Forensic DNA profiling using short tandem repeat markers | Commercial kits compatible with real-time fluorescence monitoring [10] |
| DNA Polymerase Enzymes | Catalyzing DNA amplification during PCR | Enzymes with demonstrated stability under varying cycling conditions [10] |
| Real-time Fluorescence Dyes | Monitoring amplification efficiency during PCR | Dyes compatible with STR amplification chemistry and detection systems [10] |
| Quality Control Standards | Ensuring reagent performance and process reliability | Reference DNA standards of known concentration and quality [10] |
| Inhibition Removal Reagents | Addressing PCR inhibitors in challenging samples | Chemical or enzymatic treatments to improve amplification efficiency [10] |
| AI Training Datasets | Developing machine learning models for optimization | Curated databases of DNA profiles with associated amplification conditions [10] |
| Qualitative Codebooks | Structured analysis frameworks for qualitative data | Comprehensive coding criteria for thematic analysis of interview transcripts [12] |
| NLP Algorithm Platforms | Automated text analysis for qualitative research | Natural language processing tools trainable on domain-specific content [12] |

Workflow and Conceptual Diagrams

Diagrams (images not reproduced): CAI Process Evolution; AI-Optimized PCR Workflow; Cross-Domain CAI Application.

The evolution of Case Assessment and Interpretation from its origins in forensic science to its current applications across diverse research domains demonstrates the versatility and enduring value of its core principles. The integration of artificial intelligence with the CAI framework represents a natural progression that enhances the methodology's capability to handle complex, ambiguous, or trace data across multiple fields.

The successful application of CAI principles in domains as varied as forensic DNA analysis, pharmaceutical manufacturing, qualitative research, and AI governance indicates the methodology's robust foundation and adaptability. As research challenges grow increasingly complex and data-intensive, the structured yet flexible approach offered by CAI provides a valuable framework for ensuring robust, transparent, and reliable interpretation of evidence.

Future developments in CAI will likely focus on further cross-pollination across disciplines, enhanced real-time decision support systems, and standardized implementation frameworks that maintain the methodology's core principles while adapting to new technological capabilities. The continued evolution of CAI promises to enhance research quality, efficiency, and reliability across an expanding range of scientific and technical domains.

Application Note: Quantitative Framework for Intervention Assessment in Prediabetes

Background and Rationale

Prediabetes (PD) is a critical intervention target characterized by impaired glucose tolerance (IGT) or impaired fasting glucose (IFG). It carries a 5-10% annual rate of progression to type 2 diabetes mellitus (T2DM) and a lifetime conversion risk exceeding 70% [14]. The economic burden of progression is substantial: costs rise from approximately US$500 per PD case to US$13,240 annually for patients with diagnosed T2DM, which calls for a rigorous methodology for evaluating interventions that balances clinical reliability with economic feasibility [14]. This application note establishes a standardized CAI (Case Assessment and Interpretation) protocol for the systematic evaluation of non-surgical interventions (NSIs), incorporating both clinical effectiveness and cost-effectiveness metrics within a unified analytical framework.

Quantitative Analysis of Intervention Outcomes

Table 1: Comparative Effectiveness of Non-Surgical Interventions for Prediabetes Management

| Intervention Category | Specific Intervention | Diabetes Incidence Reduction | Annual Cost per Participant (USD) | NNT | Cost per Averted Diabetes Case |

|---|---|---|---|---|---|

| Lifestyle Modification | Intensive Diet & Exercise Program | 40-70% [14] | $300-$700 [14] | TBD* | TBD* |

| Pharmacological Therapy | Metformin | ~30% [14] | $100-$200 [14] | TBD* | TBD* |

| Community-Based Programs | Behavioral Therapy | 35-50% (estimated) | $400-$600 (estimated) | TBD* | TBD* |

| Digital Therapeutics | Mobile Health Platforms | 25-45% (estimated) | $200-$500 (estimated) | TBD* | TBD* |

*TBD: to be determined through systematic review and meta-analysis of included studies.

Table 2: Cardiovascular Risk Factor Outcomes from PD Interventions

| Intervention Type | Change in HbA1c (%) | Change in FPG (mg/dL) | Change in Body Weight (kg) | Change in Systolic BP (mmHg) | Change in LDL-C (mg/dL) |

|---|---|---|---|---|---|

| Lifestyle Modification | -0.3 to -0.6 [14] | -5 to -10 [14] | -3 to -7 [14] | -3 to -7 | -5 to -10 |

| Pharmacological Therapy | -0.2 to -0.5 [14] | -4 to -8 [14] | -2 to +1 (variable) | -1 to -3 | -3 to -8 |

| Combined Approach | -0.4 to -0.8 [14] | -7 to -12 [14] | -4 to -6 | -4 to -8 | -6 to -12 |

Economic Evaluation Framework

The assessment of cost-effectiveness utilizes standardized metrics including Incremental Cost-Effectiveness Ratio (ICER), cost per quality-adjusted life year (QALY) gained, and cost per averted diabetes case [14]. The partitioned survival model approach, demonstrated in recent economic evaluations, provides a robust methodology for long-term cost-effectiveness projections [15]. This model structure partitions overall survival into discrete health states relevant to disease progression, enabling precise estimation of intervention impact on both clinical outcomes and economic burden.

Experimental Protocols

Protocol 1: Systematic Review Methodology for Intervention Assessment

Purpose: To comprehensively identify, evaluate, and synthesize scientific literature reporting on the effectiveness and cost-effectiveness of NSIs for preventing the progression of PD to T2DM among adults [14].

Eligibility Criteria:

- Population: Adults aged ≥18 years diagnosed with PD according to American Diabetes Association (ADA) or WHO criteria (IFG, IGT, or elevated HbA1c) [14]

- Interventions: NSIs including LM strategies (dietary changes, physical activity programs, educational initiatives) and pharmacological treatments [14]

- Comparators: Standard care, placebo, or active comparators

- Outcomes: Primary outcome: diabetes incidence (ADA or WHO glycaemic criteria). Secondary outcomes: (1) CVD risk factors, (2) health utilities, (3) healthcare cost analyses [14]

- Study Designs: Observational studies (cross-sectional, case-control, cohort) and interventional studies (RCTs, non-RCTs, non-controlled trials, quasi-experimental), economic evaluations [14]

- Time Frame: Intervention period ≥1 year [14]

Information Sources and Search Strategy:

- Electronic Databases: PubMed, Cochrane Library, Scopus, Web of Science [14]

- Search Date: Records published up to July 2024 [14]

- Search Concepts: (1) Adult with PD, (2) Non-surgical approaches to PD management, (3) Clinical and economic outcomes [14]

- Methodology: Combination of Medical Subject Headings (MeSH) and free-text terms with Boolean operators; search strategy initially designed for PubMed and adapted for other databases [14]

Study Selection Process:

- Two independent reviewers screen titles/abstracts followed by full-text assessment [14]

- Discrepancies resolved through consensus or third reviewer adjudication [14]

- Data extraction using standardized forms capturing study characteristics, methodology, participant demographics, intervention details, outcome measures, and results [14]

Data Synthesis:

- Narrative synthesis of included studies structured around intervention types, populations, and outcomes [14]

- Meta-analysis if sufficient homogeneity in interventions, comparators, and outcome measures [14]

- Calculation of number needed to treat (NNT) for studies reporting T2DM incidence [14]

- Economic evaluation synthesis including cost per QALY gained and ICER [14]
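The NNT and cost-per-averted-case calculations named in the synthesis steps can be sketched as follows. This is a minimal illustration, not the protocol's own implementation: the function names are hypothetical, and the inputs (10% one-year progression risk, 30% relative reduction, USD 150 per participant) are illustrative values picked from within the ranges quoted earlier, not pooled estimates.

```python
def number_needed_to_treat(control_risk: float, relative_risk_reduction: float) -> float:
    """NNT = 1 / absolute risk reduction (ARR), with ARR = control risk x RRR."""
    if not 0 < control_risk <= 1:
        raise ValueError("control_risk must be in (0, 1]")
    if not 0 < relative_risk_reduction <= 1:
        raise ValueError("relative_risk_reduction must be in (0, 1]")
    absolute_risk_reduction = control_risk * relative_risk_reduction
    return 1.0 / absolute_risk_reduction


def cost_per_averted_case(nnt: float, cost_per_participant: float) -> float:
    """Cost of treating NNT participants over the period the risk refers to."""
    return nnt * cost_per_participant


# Illustrative inputs only (assumptions, not results of the review).
nnt = number_needed_to_treat(control_risk=0.10, relative_risk_reduction=0.30)
cost = cost_per_averted_case(nnt, cost_per_participant=150.0)
print(f"NNT = {nnt:.1f}, cost per averted case = USD {cost:,.0f}")
```

The risk and the cost must refer to the same time window; a one-year risk paired with a multi-year programme cost would misstate the result.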

Protocol 2: Partitioned Survival Modeling for Economic Evaluation

Purpose: To estimate long-term cost-effectiveness of interventions using a state-transition modeling approach that partitions overall survival into health states relevant to disease progression [15].

Model Structure:

- Develop a multi-state partitioned survival model with discrete health states (e.g., PD, T2DM, diabetes complications, death) [15]

- Model transitions between states based on time-dependent probabilities derived from survival analysis of relevant Kaplan-Meier data [15]

- Implement operational model in R programming environment for transparency and reproducibility [15]
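The idea of partitioning survival into health states can be illustrated with a small sketch. It is written in Python for consistency with the other examples in this article, although the protocol itself specifies R. It uses only three states (PD, T2DM, death) and two Weibull curves whose parameters are hypothetical placeholders rather than values fitted to Kaplan-Meier data; state occupancy is read directly off the curves, which is the usual partitioned survival construction.

```python
import math


def weibull_survival(t: float, scale: float, shape: float) -> float:
    """Weibull survival function S(t) = exp(-(t / scale) ** shape)."""
    return math.exp(-((t / scale) ** shape))


def state_occupancy(t: float) -> dict:
    """Partition the cohort at time t (years) using two survival curves.

    All curve parameters are hypothetical placeholders, not fitted values.
    """
    overall = weibull_survival(t, scale=40.0, shape=1.5)        # alive
    diabetes_free = weibull_survival(t, scale=15.0, shape=1.2)  # alive without T2DM
    diabetes_free = min(diabetes_free, overall)  # curves must not cross
    return {
        "PD": diabetes_free,
        "T2DM": overall - diabetes_free,
        "Death": 1.0 - overall,
    }


def mean_years_in_state(horizon_years: int, step: float = 0.25) -> dict:
    """Area under each occupancy curve (trapezoidal rule) = mean time in state."""
    n_steps = int(horizon_years / step)
    totals = {"PD": 0.0, "T2DM": 0.0, "Death": 0.0}
    previous = state_occupancy(0.0)
    for i in range(1, n_steps + 1):
        current = state_occupancy(i * step)
        for state in totals:
            totals[state] += 0.5 * (previous[state] + current[state]) * step
        previous = current
    return totals


years = mean_years_in_state(horizon_years=30)
print({state: round(value, 2) for state, value in years.items()})
```

Multiplying the time in each state by state-specific costs and utility weights (with discounting) would give the cost and QALY totals that feed the economic comparison.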

Parameter Estimation:

- Clinical Effectiveness: Derive from systematic review and meta-analysis of intervention studies

- Cost Parameters: Incorporate healthcare costs (drug acquisition, monitoring, administration) and out-of-pocket costs from relevant societal perspective [15]

- Utility Weights: Measure quality of life using standardized instruments (e.g., EQ-5D-5L) and time trade-off valuation of health-state vignettes matching model states [15]

Analysis Framework:

- Base Case Analysis: Compare lifetime costs and outcomes of intervention versus standard care [15]

- Sensitivity Analyses: Conduct probabilistic and deterministic sensitivity analyses to evaluate parameter uncertainty and identify key drivers of cost-effectiveness [15]

- Scenario Analyses: Explore alternative modeling assumptions, discount rates, time horizons, and subgroup populations [15]
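A probabilistic sensitivity analysis can be sketched as a Monte Carlo loop over uncertain inputs. The example below is a simplified illustration under stated assumptions: the function name, the gamma distribution for incremental cost, and the normal distribution for incremental QALYs are all hypothetical, and a full analysis would resample every model parameter and re-run the survival model for each draw.

```python
import random


def probabilistic_sensitivity_analysis(n_draws: int, willingness_to_pay: float,
                                       seed: int = 1) -> float:
    """Share of Monte Carlo draws with positive incremental net monetary
    benefit, where NMB = willingness_to_pay x delta_QALY - delta_cost."""
    rng = random.Random(seed)
    favourable = 0
    for _ in range(n_draws):
        # Hypothetical input distributions (assumptions for illustration).
        delta_cost = rng.gammavariate(16.0, 125.0)  # shape 16, scale 125: mean USD 2,000
        delta_qaly = rng.gauss(0.10, 0.05)          # mean 0.10 QALYs, SD 0.05
        if willingness_to_pay * delta_qaly - delta_cost > 0:
            favourable += 1
    return favourable / n_draws


for threshold in (10_000, 30_000, 50_000):
    share = probabilistic_sensitivity_analysis(5_000, threshold)
    print(f"WTP USD {threshold:,}: P(cost-effective) = {share:.2f}")
```

Plotting the resulting share against the threshold gives a cost-effectiveness acceptability curve, a common way to report this kind of uncertainty.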

Output Metrics:

- Incremental quality-adjusted life years (QALYs)

- Incremental costs

- Incremental cost-effectiveness ratio (ICER) [15]

- Cost per averted diabetes case
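The ICER listed among the output metrics is the ratio of incremental cost to incremental QALYs. A minimal sketch follows, with hypothetical function names and invented inputs; it also shows why the ratio alone is not reported when one option dominates the other.

```python
def icer(delta_cost: float, delta_qaly: float) -> float:
    """Incremental cost-effectiveness ratio: extra cost per extra QALY."""
    if delta_qaly == 0:
        raise ValueError("ICER is undefined when incremental QALYs are zero")
    return delta_cost / delta_qaly


def interpret(delta_cost: float, delta_qaly: float, willingness_to_pay: float) -> str:
    """Classify an intervention versus its comparator on the cost-effectiveness plane."""
    if delta_qaly > 0 and delta_cost > 0:
        ratio = icer(delta_cost, delta_qaly)
        verdict = "cost-effective" if ratio <= willingness_to_pay else "not cost-effective"
        return f"ICER = {ratio:,.0f} per QALY ({verdict})"
    if delta_qaly >= 0 and delta_cost <= 0:
        return "dominant or equivalent: no more costly and no less effective"
    if delta_qaly <= 0 and delta_cost >= 0:
        return "dominated: no more effective and no less costly"
    return "less costly and less effective: ICER needs separate interpretation"


# Invented example: USD 2,000 extra cost for 0.2 extra QALYs.
print(interpret(delta_cost=2_000.0, delta_qaly=0.2, willingness_to_pay=30_000.0))
```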

Visualization Framework

Figures (diagrams not reproduced here): CAI Protocol Decision Pathway; Partitioned Survival Model Structure; Economic Evaluation Workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Components for CAI Protocol Implementation

| Tool/Component | Specification | Application in CAI Protocol |

|---|---|---|

| Systematic Review Framework | PRISMA-P 2015 guidelines [14] | Protocol development and reporting for comprehensive evidence synthesis |

| Economic Evaluation Model | Partitioned survival model in R [15] | Long-term projection of costs and outcomes for intervention comparison |

| Quality Assessment Tool | Cochrane Risk of Bias tool; CHEERS checklist for economic evaluations | Methodological rigor assessment of included studies |

| Data Extraction Platform | Customized standardized extraction forms | Systematic capture of clinical, economic, and methodological data |

| Statistical Analysis Package | R with specialized packages (survival, metafor), or Python with equivalent libraries | Meta-analysis, survival modeling, and cost-effectiveness calculation |

| Cost-Effectiveness Threshold | Country-specific willingness-to-pay benchmarks | Decision context for intervention value assessment |

| Sensitivity Analysis Framework | Probabilistic sensitivity analysis with Monte Carlo simulation [15] | Quantification of parameter uncertainty and model robustness |

| Visualization Toolkit | Graphviz DOT language, statistical plotting libraries | Transparent communication of model structures and results |

Implementing CAI: A Step-by-Step Methodology for Research and Analysis

Case Assessment and Interpretation (CAI) is a formalized framework that provides a systematic methodology for designing effective, efficient, and robust examination strategies across scientific disciplines [16]. Founded on Bayesian principles, the CAI model brings clarity to the role of scientists within broader investigative processes, encourages consistency of approach, and helps direct research effort [16]. Originally developed in the 1990s by the Forensic Science Service in the UK, CAI offers a structured procedure for the interpretation of findings within the context of case circumstances to form an optimal examination and interpretation strategy [17]. This framework has since been adapted beyond its forensic origins to inform evidence-based evaluation in other fields requiring rigorous assessment of complex data, including the interpretation of artificial intelligence (AI) outputs in drug development.

The core strength of CAI lies in its logical framework for evaluating evidence, which mandates that all findings are considered within a framework of circumstances, evaluated against at least two competing propositions, and that the expert's role is strictly to consider the probability of the findings given the propositions—not the probability of the propositions themselves [17]. This separation of responsibilities ensures balanced, transparent, and robust conclusions while minimizing cognitive biases. This application note details the CAI process flow and adapts its principles for researchers, scientists, and drug development professionals navigating the complex evaluation of AI-generated data in pharmaceutical research and development.

Theoretical Foundation: The Bayesian Framework of CAI

The CAI framework is grounded in Bayesian reasoning, which provides a mathematically rigorous method for updating beliefs based on new evidence. At the heart of this approach is the likelihood ratio (LR), a measure of the strength of evidence that quantifies how much more likely the evidence is under one proposition compared to an alternative [17].

The likelihood ratio is expressed as: Likelihood Ratio (LR) = p(E|H1,I) / p(E|H2,I)

In this equation:

- E represents the observed evidence or findings

- H1 and H2 represent two competing propositions or hypotheses

- I represents the relevant contextual information

- p(E|H1,I) is the probability of observing the evidence E given that proposition H1 is true and considering context I

- p(E|H2,I) is the probability of observing the evidence E given that proposition H2 is true and considering context I [17]

Within the framework of Bayes' theorem, the likelihood ratio mathematically updates prior beliefs about the relative probabilities of propositions to arrive at posterior probabilities: p(E|H1,I)/p(E|H2,I) × p(H1|I)/p(H2|I) = p(H1|E,I)/p(H2|E,I) [17]

This Bayesian framework clearly delineates the roles of the scientist from those of the decision-maker. The scientist's expertise lies in assigning the probabilities of the evidence given the propositions (the likelihood ratio), while the decision-maker (which could be a research team, regulatory body, or ethics committee in drug development) considers the prior and posterior probabilities of the propositions themselves [17].
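The odds form of Bayes' theorem given above can be worked through numerically. The sketch below uses hypothetical function names and invented probabilities; it keeps the scientist's contribution (the likelihood ratio) separate from the decision-maker's prior odds, mirroring the role separation described in the text.

```python
def likelihood_ratio(p_e_given_h1: float, p_e_given_h2: float) -> float:
    """LR = p(E|H1,I) / p(E|H2,I)."""
    if p_e_given_h2 <= 0:
        raise ValueError("p(E|H2,I) must be greater than zero")
    return p_e_given_h1 / p_e_given_h2


def posterior_odds(lr: float, prior_odds: float) -> float:
    """Posterior odds = LR x prior odds."""
    return lr * prior_odds


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of H1 into p(H1), assuming H1 and H2 are exhaustive."""
    return odds / (1.0 + odds)


# Scientist's assignment (invented values): evidence is 8 times more
# probable under H1 than under H2.
lr = likelihood_ratio(p_e_given_h1=0.8, p_e_given_h2=0.1)

# Decision-maker's prior (invented value): odds of 1 to 4 in favour of H1.
odds = posterior_odds(lr, prior_odds=0.25)
print(f"LR = {lr:.1f}, posterior odds = {odds:.2f}, "
      f"p(H1|E,I) = {odds_to_probability(odds):.3f}")
```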

Table 1: Core Principles of Case Assessment and Interpretation

| Principle | Description | Implication for Scientific Practice |

|---|---|---|

| Framework of Circumstances | All findings should be evaluated within the specific context of the case [17]. | Ensures conclusions are relevant to the specific decision needing resolution. |

| Competing Propositions | Findings should be evaluated with respect to at least two alternative explanations [17]. | Guarantees balanced evaluation by comparing evidence across multiple scenarios. |

| Role Separation | Experts consider probability of findings given propositions, not probability of propositions themselves [17]. | Maintains scientific objectivity and defers ultimate decisions to appropriate stakeholders. |

The CAI Process Flow: A Stage-by-Stage Analysis

The CAI process follows a structured, sequential flow that guides the scientist from initial case reception through to final interpretation and reporting. The workflow ensures methodological rigor at every stage.

Stage 1: Pre-Assessment

The pre-assessment phase represents the strategic planning stage where the examination and interpretation strategy is designed before laboratory work begins. During this critical preliminary stage, scientists collaborate with relevant stakeholders to define the scope of work, identify key issues in the case, and formulate the competing propositions that will frame the evaluation [17]. For each proposition, the team considers: What types of findings might we expect? What is the probability of observing these potential findings if each proposition were true? The assessment should be informed by scientific literature, existing data, case-specific experiments, or the knowledge and experience of the experts involved [17].

In the context of AI-assisted drug development, this phase might involve defining competing propositions about an AI model's performance, such as "The AI model reliably predicts patient stratification for Trial X" versus "The AI model does not reliably predict patient stratification for Trial X." The pre-assessment would then outline the types of evidence needed to distinguish between these propositions and the methods for obtaining that evidence.
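Pre-assessment can be made concrete by tabulating, before any analysis is run, the probability of each possible finding under each proposition and the likelihood ratio each finding would yield. The sketch below is an illustration only: the finding categories, the probabilities, and the function name are invented assumptions, and in practice the probabilities would be drawn from literature, pilot data, or expert elicitation.

```python
import math

# Hypothetical pre-assessment table: for each possible finding, the assigned
# probability under H1 (model reliable) and H2 (model not reliable).
PRE_ASSESSMENT = {
    "AUC >= 0.80":        (0.60, 0.05),
    "0.70 <= AUC < 0.80": (0.30, 0.25),
    "AUC < 0.70":         (0.10, 0.70),
}


def expected_likelihood_ratios(table: dict) -> dict:
    """Return the LR each possible finding would produce, after checking that
    the assigned probabilities sum to 1 under each proposition."""
    for name, column in (("H1", 0), ("H2", 1)):
        total = sum(probabilities[column] for probabilities in table.values())
        if not math.isclose(total, 1.0, abs_tol=1e-9):
            raise ValueError(f"probabilities under {name} sum to {total}, not 1")
    return {finding: p_h1 / p_h2 for finding, (p_h1, p_h2) in table.items()}


expected = expected_likelihood_ratios(PRE_ASSESSMENT)
for finding, lr in expected.items():
    print(f"{finding:<20} LR = {lr:.2f}")
```

If every possible finding would give an LR close to 1, the planned examination is unlikely to discriminate between the propositions, which is a signal to redesign the strategy before committing resources.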

Stage 2: Analysis and Examination

During this phase, the planned laboratory examinations and analyses are executed according to the strategy determined during pre-assessment [17]. The forensic examiner or researcher conducts the technical work—which could include chemical analysis, genetic testing, or assessment of AI model outputs—to generate the findings that will inform the interpretation. The examination is conducted as objectively as possible, following validated protocols and standardized procedures to ensure the reliability and reproducibility of the results. In our AI drug development example, this might involve running the AI model on validation datasets, comparing its predictions with known outcomes, and documenting its performance metrics according to predefined criteria.

Stage 3: Interpretation

The interpretation phase involves evaluating the findings generated during the analysis in the context of the competing propositions defined during pre-assessment. The scientist assigns probabilities to the findings under each proposition and calculates the likelihood ratio to quantify the strength of the evidence [17]. This probability assignment is inherently subjective to some degree but should be informed by all available relevant information, including experimental data, scientific literature, and case-specific studies. The sources of information used must be transparently documented to enable the fact-finder to assess the robustness of the probability assignment [17].

The following diagram illustrates the complete CAI process flow from initial case reception through the three core stages to final reporting:

Application of CAI in Pharmaceutical Sciences: Evaluating AI in Drug Development

The CAI framework provides a robust methodology for addressing the complex evidentiary challenges presented by the integration of artificial intelligence into drug development. Regulatory agencies are increasingly emphasizing the need for transparent, validated, and well-documented AI applications throughout the medicinal product lifecycle [18] [19].

Regulatory Context for AI in Drug Development

Both the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA) have developed approaches to oversee the implementation of AI in pharmaceutical development. The FDA has adopted a flexible, dialog-driven model that includes a risk-based credibility assessment framework for evaluating AI models in specific contexts of use [18] [19]. The EMA has established a more structured, risk-tiered approach that mandates comprehensive documentation, validation, and performance monitoring, particularly for high-impact applications affecting patient safety or regulatory decision-making [19].

Table 2: Regulatory Approaches to AI in Drug Development

| Agency | Overall Approach | Key Features | CAI Alignment |

|---|---|---|---|

| U.S. FDA | Flexible, case-specific assessment [19] | Seven-step risk-based credibility framework for specific "contexts of use" [18] | Focus on defining propositions based on context of use |

| EMA (EU) | Structured, risk-tiered oversight [19] | Explicit requirements for high patient risk and high regulatory impact applications [19] | Systematic evaluation of competing risk propositions |

| Japan PMDA | "Incubation function" with adaptive approval [18] | Post-Approval Change Management Protocol for AI systems [18] | Ongoing assessment as new evidence emerges |

CAI Protocol for Validating AI-Assisted Clinical Trial Optimization

The following detailed protocol applies the CAI framework to the validation of an AI tool used to optimize clinical trial design through patient stratification.

Protocol Title: CAI-Based Validation of AI-Assisted Patient Stratification for Clinical Trials

1. Pre-Assessment Phase

- Stakeholder Consultation: Engage multidisciplinary team including clinical researchers, statisticians, bioethicists, and regulatory affairs specialists.

- Define Competing Propositions:

  - H1: The AI model accurately identifies patient subgroups that will exhibit enhanced response to the investigational treatment.

  - H2: The AI model does not improve identification of responsive patient subgroups beyond standard methods.

- Define Potential Findings: Specify the types of evidence needed, including:

  - Predictive accuracy metrics (sensitivity, specificity, AUC-ROC)

  - Comparison with conventional stratification methods

  - Robustness across demographic subgroups

  - Computational reproducibility

- Plan Examination Strategy: Design validation study using historical clinical trial data with known outcomes, reserving a portion for blind testing.

2. Analysis Phase

- Data Curation: Implement pre-specified data preprocessing pipeline following FAIR principles (Findable, Accessible, Interoperable, Reusable).

- Model Execution: Run the AI model on validation datasets under controlled conditions.

- Performance Assessment: Calculate predefined metrics for predictive performance, fairness, and robustness.

- Bias Evaluation: Conduct subgroup analysis to identify potential disparities in model performance across demographic groups.
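The performance and subgroup calculations in the analysis phase can be sketched with the standard library alone. The data, the 0.5 decision threshold, and the subgroup labels below are invented for illustration; a real validation would use the reserved blind test set and the metrics fixed during pre-assessment.

```python
import math


def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels coded 0/1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sens = tp / (tp + fn) if (tp + fn) else math.nan
    spec = tn / (tn + fp) if (tn + fp) else math.nan
    return sens, spec


def auc_roc(y_true, scores):
    """AUC as the probability that a random positive case scores higher
    than a random negative case (ties count as one half)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy validation set (invented): true response, model score, subgroup label.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.7, 0.1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

overall = sensitivity_specificity(y_true, y_pred)
auc = auc_roc(y_true, scores)

# Subgroup analysis: repeat the same metrics within each demographic group.
by_group = {}
for g in sorted(set(groups)):
    idx = [i for i, label in enumerate(groups) if label == g]
    by_group[g] = sensitivity_specificity([y_true[i] for i in idx],
                                          [y_pred[i] for i in idx])

print("overall (sens, spec):", overall, "AUC:", auc)
print("by subgroup:", by_group)
```

The pairwise AUC computation is quadratic in sample size, which is acceptable for a sketch but would normally be replaced by a rank-based implementation on large datasets.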

3. Interpretation Phase

- Probability Assignment: Estimate the probability of observing the performance metrics under H1 and H2, based on existing literature on predictive models in similar contexts.

- Likelihood Ratio Calculation: Compute LR using the formula: LR = p(Observed Performance Metrics | H1) / p(Observed Performance Metrics | H2)

- Sensitivity Analysis: Assess robustness of probability assignments to variations in underlying assumptions.
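One way to turn an observed performance metric into a likelihood ratio, and to check how sensitive that ratio is to the underlying assumptions, is sketched below. This is an assumption-laden illustration rather than a prescribed method: it treats the observed AUC as normally distributed under each proposition, and the means, standard deviations, and observed value are invented.

```python
from statistics import NormalDist


def lr_for_observed_auc(observed: float, mean_h1: float, mean_h2: float,
                        sd: float) -> float:
    """LR = density of the observed AUC under H1 / density under H2,
    assuming a normal model with a common standard deviation."""
    return NormalDist(mean_h1, sd).pdf(observed) / NormalDist(mean_h2, sd).pdf(observed)


observed_auc = 0.78  # invented observation

# Base case (hypothetical): AUC centred on 0.80 if H1 holds, 0.70 if H2 holds.
base_lr = lr_for_observed_auc(observed_auc, mean_h1=0.80, mean_h2=0.70, sd=0.04)

# Sensitivity analysis: vary the assumed spread and see how the LR responds.
sensitivity = {
    sd: lr_for_observed_auc(observed_auc, mean_h1=0.80, mean_h2=0.70, sd=sd)
    for sd in (0.03, 0.04, 0.05)
}

print(f"base-case LR = {base_lr:.2f}")
for sd, lr in sensitivity.items():
    print(f"sd = {sd:.2f}: LR = {lr:.2f}")
```

If the LR stays on the same side of 1 and within the same order of magnitude across plausible assumptions, the reported strength of evidence can be regarded as reasonably robust; large swings should be disclosed in the report.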

4. Reporting

- Transparent Documentation: Report all assumptions, data sources, analytical methods, and probability assignments.

- Contextualize Findings: Present likelihood ratio with clear explanation of its meaning in practical terms.

- Communicate Limitations: Acknowledge any constraints in the validation approach and areas requiring further research.

The following diagram illustrates the application of the CAI framework to this AI validation context:

Successfully implementing the CAI framework requires both conceptual tools and practical resources. The following table details key research reagents and methodological solutions essential for applying CAI in pharmaceutical research contexts.

Table 3: Essential Research Reagent Solutions for CAI Implementation

| Tool Category | Specific Solution | Function in CAI Process |

|---|---|---|

| Statistical Software | R, Python with SciPy/StatsModels | Bayesian analysis and likelihood ratio calculation |

| Data Management | Electronic Lab Notebooks, FAIR Data Repositories | Ensuring data integrity and traceability throughout assessment |

| Validation Frameworks | AI Credibility Assessment Framework (FDA) [18] | Structured approach for evaluating AI model reliability |

| Reference Materials | Historical control data, Standardized reference datasets | Providing baseline information for probability assignments |

| Bias Assessment Tools | Fairness metrics, Subgroup analysis scripts | Identifying and quantifying potential discriminatory impacts |

| Documentation Systems | Computational notebooks, Version control (Git) | Maintaining transparent record of all assumptions and analyses |

The Case Assessment and Interpretation framework provides a rigorous, transparent, and logically sound methodology for evaluating complex evidence in pharmaceutical research and development. By adopting its structured approach—from pre-assessment through analysis to interpretation—drug development professionals can enhance the robustness and credibility of their research outputs, particularly when navigating emerging technologies like artificial intelligence. The Bayesian foundation of CAI offers a mathematically coherent approach to evidence evaluation that aligns well with regulatory expectations for transparent and validated methods. As the pharmaceutical industry increasingly embraces AI-driven approaches, the principles of CAI will play an essential role in ensuring that these innovative tools are implemented responsibly, ethically, and effectively to advance public health.

In the specialized domain of Case Assessment and Interpretation (CAI) protocol research, the formulation of competing hypotheses is a foundational scientific activity. It provides the structural framework for evaluating evidence, guiding analytical processes, and deriving robust, defensible conclusions. For researchers, scientists, and drug development professionals, this practice moves inquiry beyond simple confirmation of a single theory, mitigating cognitive biases and ensuring that investigative or validation pathways remain objective and comprehensive. This protocol details the application of this principle within modern research environments, particularly those leveraging Artificial Intelligence (AI) and data-driven methodologies to manage complex compliance and analytical landscapes [20]. The systematic testing of competing propositions is paramount where conclusions have significant ramifications, such as in regulatory compliance, therapeutic development, and diagnostic innovation.

Core Principles and Theoretical Framework

The evaluation of competing hypotheses is governed by several core principles essential for maintaining scientific integrity in CAI research.

- Falsifiability and Testability: Every proposition must be structured in a way that allows for empirical refutation. A hypothesis that cannot be tested or falsified by data falls outside the realm of scientific evaluation.

- Mutual Exclusivity and Exhaustiveness: A robust set of competing hypotheses should be structured to be mutually exclusive where possible, and collectively exhaustive of the plausible explanations for the observed data or phenomenon. This ensures that the evaluation covers the relevant spectrum of possibilities.

- Parsimony (Occam's Razor): Given multiple hypotheses with equivalent explanatory power, the simplest explanation is generally favored. However, this principle does not override empirical evidence.

- Quantitative Scrutiny and Bayesian Reasoning: The framework encourages the assignment of likelihoods and the continuous updating of the probability for each hypothesis as new evidence emerges, facilitating a quantitative approach to evidence evaluation [20].
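The continuous updating described in the last principle can be sketched for a set of mutually exclusive and exhaustive hypotheses. The function name, the uniform priors, and the likelihood values are invented for illustration; the sketch does not apply to hypothesis sets that overlap.

```python
def update_posteriors(priors: dict, likelihoods: dict) -> dict:
    """One Bayesian update: posterior is proportional to prior x likelihood,
    normalised over a mutually exclusive and exhaustive hypothesis set."""
    unnormalised = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalised.values())
    if total == 0:
        raise ValueError("evidence has zero probability under every hypothesis")
    return {h: value / total for h, value in unnormalised.items()}


# Invented example: four hypotheses with equal prior probability.
beliefs = {"H1": 0.25, "H2": 0.25, "H3": 0.25, "H4": 0.25}

# p(evidence | hypothesis) for two successive pieces of evidence (invented).
evidence_stream = [
    {"H1": 0.9, "H2": 0.3, "H3": 0.3, "H4": 0.1},
    {"H1": 0.6, "H2": 0.6, "H3": 0.1, "H4": 0.2},
]

history = [beliefs]
for likelihoods in evidence_stream:
    beliefs = update_posteriors(beliefs, likelihoods)
    history.append(beliefs)

for step, state in enumerate(history):
    print(step, {h: round(p, 3) for h, p in state.items()})
```

Treating successive pieces of evidence this way assumes they are conditionally independent given each hypothesis; correlated evidence would need a joint likelihood instead.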

Within CAI, this process is increasingly augmented by AI. Large Language Models (LLMs) and machine learning algorithms can analyze vast corpora of regulatory texts, identify potential compliance risks, and even help generate initial hypothesis sets based on historical patterns and predefined rules [20]. This transforms hypothesis formulation from a purely manual, expert-driven task to a collaborative, AI-assisted process, enhancing both speed and coverage.

Application Notes: Implementing the Framework in CAI

Defining the Analytical Question and Propositions

The initial phase involves precisely defining the analytical question to be resolved. The question must be specific, measurable, and actionable. Subsequently, a set of competing propositions is formulated.

For example, in a CAI context focused on GDPR compliance within a business process, the question might be: "Does the data processing activity 'X' comply with the GDPR's lawfulness principle?" The competing propositions could be:

- H1: The processing activity is based on unambiguous consent obtained from the data subject.

- H2: The processing activity is necessary for the performance of a contract with the data subject.

- H3: The processing activity is necessary for compliance with a legal obligation.

- H4: The processing activity does not meet any condition for lawfulness under Article 6 of the GDPR [20].

Evidence Identification and Mapping

Once propositions are defined, the relevant evidence is identified and mapped against each hypothesis. This step involves determining the diagnostic value of each piece of evidence—how well it helps distinguish between the competing hypotheses. A key tool in this phase is the use of a matrix, which systematically tracks the consistency of evidence with each proposition.

AI-Enhanced Analysis and Refinement

Modern CAI protocols leverage technology to enhance this process. AI applications can automate the repetitive tasks of evidence gathering and initial consistency checks [20]. Predictive compliance monitoring models, potentially using mashup-based approaches, can analyze real-time data streams to test hypotheses about ongoing process adherence [20]. Furthermore, AI can assist in root-cause analysis by identifying patterns that may not be immediately apparent to human analysts, leading to the refinement of existing hypotheses or the generation of new ones.

The final phase involves synthesizing the analyzed evidence to arrive at a conclusion. The goal is to identify the hypothesis that is most consistent with the preponderance of the evidence. The analysis should also acknowledge and document any remaining uncertainties and the rationale for rejecting alternative propositions, ensuring the audit trail is transparent and justifiable.

Experimental Protocols for Hypothesis Evaluation

Protocol 1: Manual Matrix-Based Hypothesis Analysis

This protocol outlines the traditional, manual method for evaluating competing hypotheses, ideal for smaller-scale analyses or when a transparent, step-by-step audit trail is required.

- 1. Preparation: Clearly define the central question and formulate 3-5 competing propositions. Record these in a matrix, with hypotheses as columns.

- 2. Evidence Collection: Gather all available data and evidence relevant to the question. List each discrete piece of evidence as a row in the matrix.

- 3. Diagnostic Evaluation: For each cell in the matrix (evidence-hypothesis intersection), assign a consistency rating:

  - C+: The evidence is consistent with the hypothesis.

  - C-: The evidence is inconsistent with the hypothesis.

  - N/A: The evidence is not applicable or does not discriminate.

- 4. Refinement and Analysis: Review the matrix for gaps in evidence and refine hypotheses as needed. Identify evidence that is highly diagnostic (e.g., supports one hypothesis while refuting others).

- 5. Reporting: Document the matrix, the final conclusion, and the reasoning process, including the rejection of alternative hypotheses.

Protocol 2: AI-Augmented Workflow for Predictive Compliance Monitoring

This protocol describes a methodology for using AI to automate and enhance hypothesis testing in regulatory compliance, as explored in workshops on Compliance in the Era of AI [20].

- 1. System Setup and Model Training:

  - Ingest relevant regulatory texts (e.g., GDPR, SOX, AML) and organizational policies into a knowledge base.

  - Train or fine-tune LLMs and machine learning classifiers to recognize compliance patterns and control requirements [20].

- 2. Hypothesis Generation:

  - Input a description of a business process or data operation.

  - Use the AI system to generate initial compliance hypotheses (e.g., "Process is compliant," "Process violates data retention policy," "Process lacks necessary consent mechanism") [20].

- 3. Automated Evidence Mapping:

  - The system automatically maps process attributes, data logs, and control outputs against the relevant regulatory clauses.

  - It assigns a probabilistic score indicating the consistency of the evidence with each hypothesis.

- 4. Human-in-the-Loop Analysis:

  - Researchers review the AI-generated hypothesis matrix and evidence mappings.

  - Analysts refine the model's conclusions, incorporate contextual knowledge, and make the final determination.

- 5. Feedback and Model Iteration:

  - The outcomes of the analysis are fed back into the AI system to continuously improve its accuracy and predictive capabilities [20].

The following workflow diagram illustrates the key stages of this AI-augmented protocol:

Data Presentation and Analysis

The quantitative and qualitative data generated during hypothesis evaluation must be systematically organized to facilitate clear comparison and decision-making.

Table 1: Hypothesis Evaluation Matrix for GDPR Lawfulness Analysis

| Evidence Item | H1: Based on Consent | H2: Necessary for Contract | H3: Legal Obligation | H4: Non-Compliant |

|---|---|---|---|---|

| Signed consent form on file | C+ | N/A | N/A | C- |

| Processing is required to deliver service | N/A | C+ | N/A | C- |

| No relevant law identified | N/A | N/A | C- | C+ |

| User attempted to withdraw | C+ | C- | N/A | N/A |

| Diagnostic Summary | Strongly Supported | Partly Supported | Refuted | Partly Supported |
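The ratings in the matrix above can be tallied mechanically as a cross-check on the qualitative summary row. The sketch below encodes the four evidence rows exactly as rated in the table; the function names and the rule used to flag diagnostic evidence (at least one C+ and one C- in the same row) are illustrative assumptions, and the counts are an aid to, not a substitute for, the analyst's judgement.

```python
# Evidence rows and ratings copied from the GDPR lawfulness matrix.
MATRIX = {
    "Signed consent form on file":
        {"H1": "C+", "H2": "N/A", "H3": "N/A", "H4": "C-"},
    "Processing is required to deliver service":
        {"H1": "N/A", "H2": "C+", "H3": "N/A", "H4": "C-"},
    "No relevant law identified":
        {"H1": "N/A", "H2": "N/A", "H3": "C-", "H4": "C+"},
    "User attempted to withdraw":
        {"H1": "C+", "H2": "C-", "H3": "N/A", "H4": "N/A"},
}


def tally(matrix: dict) -> dict:
    """Count C+, C- and N/A ratings for each hypothesis."""
    counts = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            per_hypothesis = counts.setdefault(hypothesis, {"C+": 0, "C-": 0, "N/A": 0})
            per_hypothesis[rating] += 1
    return counts


def diagnostic_items(matrix: dict) -> list:
    """Evidence that supports at least one hypothesis while contradicting another."""
    return [
        item for item, ratings in matrix.items()
        if "C+" in ratings.values() and "C-" in ratings.values()
    ]


counts = tally(MATRIX)
diagnostic = diagnostic_items(MATRIX)
for hypothesis, c in counts.items():
    print(hypothesis, c)
print("diagnostic evidence:", diagnostic)
```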

Table 2: Performance Metrics for AI-Augmented vs. Manual CAI Protocols

| Evaluation Metric | Manual Protocol | AI-Augmented Protocol |

|---|---|---|

| Analysis Time (hrs/case) | 40-50 | 8-12 |

| Hypothesis Coverage | Limited by expert knowledge | Broad, data-driven |

| Consistency Rating Accuracy | ~85% (Subject to bias) | >95% (Based on trained model) |

| Evidence Items Processed/Case | ~100-200 | >1000 |

| Adaptability to New Regulations | Slow (Manual updates) | Rapid (Model retraining) |

The Scientist's Toolkit: Research Reagent Solutions

The effective application of CAI protocols requires a suite of methodological and technological "reagents."

Table 3: Essential Reagents for CAI and Hypothesis Evaluation Research

| Reagent / Tool | Type | Function in CAI Protocol |

|---|---|---|

| Regulatory Knowledge Base | Software/Database | A centralized repository of regulatory texts (GDPR, SOX, ISO) and internal policies that serves as the foundational dataset for hypothesis formulation [20]. |

| Large Language Model (LLM) | AI Model | Analyzes complex regulatory text, helps generate initial hypotheses, and assists in mapping evidence to relevant clauses [20]. |

| Hypothesis Evaluation Matrix | Analytical Framework | A structured worksheet (digital or physical) for systematically recording and visualizing the consistency of evidence with each competing proposition. |

| Predictive Compliance Monitor | Software Tool | An application, potentially using mashup-based approaches, that performs real-time monitoring of business processes against compliance rules to test adherence hypotheses [20]. |

| Root-Cause Analysis Algorithm | AI/Software Tool | Helps identify the underlying causes of compliance deviations, supporting the refinement of hypotheses during the investigative process [20]. |

| Data Analysis Pipeline (e.g., diatools) | Software Protocol | A reproducible environment for processing raw data into analyzable formats, ensuring consistency and reliability in the evidence used for evaluation [21]. |

Visualizing Logical Relationships in Hypothesis Testing

The logical pathway from question to conclusion can be modeled as a decision tree, which is particularly useful for understanding the points of discrimination between hypotheses. The following diagram maps this relationship, highlighting how evidence directs the analytical flow.

The rigorous formulation and evaluation of competing hypotheses are not merely an academic exercise but a critical operational necessity within Case Assessment and Interpretation protocol research. The structured approach detailed in these application notes and protocols provides a defensible methodology for navigating complex analytical landscapes, from regulatory compliance to drug development. The integration of AI and machine learning represents a paradigm shift, offering unprecedented scalability and precision in handling large-scale, complex data [20]. By adhering to these principles and leveraging the outlined toolkit, researchers and professionals can enhance the objectivity, reliability, and traceability of their interpretations, ultimately leading to more robust and scientifically sound outcomes.

The Likelihood Ratio (LR) is a fundamental statistical measure for evaluating evidence, enabling researchers to quantify how strongly observed data supports one hypothesis over another. Within a Case Assessment and Interpretation (CAI) framework, the LR provides a structured, transparent, and quantitative method for evidence evaluation, which is critical for making objective decisions in forensic science, clinical diagnostics, and drug development [22] [23]. The LR compares the probability of observing the evidence under two competing propositions. Typically, these are the prosecution proposition (Hp) and the defense proposition (Hd) in forensic contexts, or, more generally, a test hypothesis versus a null hypothesis [22] [24].

The core formula for the likelihood ratio is: LR = P(Evidence | Hp) / P(Evidence | Hd)

An LR greater than 1 indicates that the evidence is more likely under Hp, while an LR less than 1 suggests the evidence is more likely under Hd. An LR equal to 1 means the evidence is uninformative and does not change the prior odds [23] [25]. The strength of this evidence is often interpreted using standardized scales, which provide verbal equivalents for ranges of LR values.

Calculating the Likelihood Ratio

Foundational Formulas and Concepts

The calculation of a likelihood ratio depends on the nature of the data and the competing hypotheses. The following presents the core formulas and concepts.

Binary Data (Sensitivity and Specificity): For diagnostic tests with dichotomous outcomes (positive/negative), the LR can be derived from a 2x2 contingency table [23].

- Positive Likelihood Ratio (LR+): Indicates how much the odds of the target condition increase when a test is positive.

LR+ = Sensitivity / (1 - Specificity)

- Negative Likelihood Ratio (LR-): Indicates how much the odds of the target condition decrease when a test is negative.

LR- = (1 - Sensitivity) / Specificity
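As a minimal sketch of the binary case, the two ratios can be computed directly from the cells of a 2x2 contingency table. The function name and the counts below are hypothetical and chosen only for illustration.

```python
def likelihood_ratios(tp, fp, fn, tn):
    """Return (LR+, LR-) from the four cells of a 2x2 contingency table.

    tp/fn: diseased subjects testing positive/negative
    fp/tn: non-diseased subjects testing positive/negative
    Note: raises ZeroDivisionError when specificity is exactly 0 or 1.
    """
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    lr_pos = sensitivity / (1 - specificity)
    lr_neg = (1 - sensitivity) / specificity
    return lr_pos, lr_neg


# Hypothetical counts: sensitivity = 0.90, specificity = 0.80
lr_pos, lr_neg = likelihood_ratios(tp=90, fp=20, fn=10, tn=80)
print(f"LR+ = {lr_pos:.3f}, LR- = {lr_neg:.3f}")
```

With these illustrative counts, a positive result multiplies the odds of the target condition by about 4.5 and a negative result multiplies them by about 0.125.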

Complex Data and Models: For more complex data, such as continuous measurements or high-dimensional data, the probabilities P(Evidence | H) are often derived from statistical models (e.g., probability density functions). The LR is then calculated as the ratio of the probability densities under the two hypotheses [26] [22]. In machine learning applications, similarity scores across multiple data types (e.g., drug structure, gene expression) can be converted into individual likelihood ratios and combined into a total likelihood ratio [27].
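For a continuous measurement, the ratio of probability densities can be sketched as follows. The Gaussian form and the parameter values are assumptions made for illustration; in practice the densities would come from whatever model has been fitted to the reference data.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of a normal distribution N(mean, sd^2) evaluated at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2 * math.pi))


def density_lr(x, mean_hp, sd_hp, mean_hd, sd_hd):
    """LR = f(x | Hp) / f(x | Hd), with both densities assumed Gaussian."""
    return normal_pdf(x, mean_hp, sd_hp) / normal_pdf(x, mean_hd, sd_hd)


# Hypothetical models: Hp ~ N(2, 1), Hd ~ N(0, 1)
for x in (0.0, 1.0, 2.0):
    print(x, round(density_lr(x, 2.0, 1.0, 0.0, 1.0), 3))
```

At the midpoint between the two assumed means the densities are equal, so the LR is 1 and the measurement is uninformative; moving toward the Hp mean pushes the LR above 1.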

Accounting for Similarity and Typicality

A critical consideration in forensic LR calculation, particularly for source-level propositions, is accounting for both similarity (how close two pieces of evidence are to each other) and typicality (how common or rare that evidence is within the relevant population) [26]. Research by Morrison (2024) demonstrates that methods failing to account for typicality, such as those based solely on similarity scores or converted percentile-rank values, should not be used. Instead, common-source methods that properly incorporate population data are generally recommended [26].
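The role of typicality can be illustrated with a deliberately simple common-source calculation. The sketch below assumes a univariate two-level Gaussian model (source means distributed as N(mu, tau^2) in the population, measurements distributed as N(source mean, sigma^2) within a source); this model and all parameter values are illustrative assumptions, not the specific procedure evaluated in [26].

```python
import math


def common_source_lr(x, y, mu, tau, sigma):
    """LR for 'x and y share a source' vs 'x and y come from different sources'.

    Assumed model: source means ~ N(mu, tau^2); each measurement
    ~ N(source mean, sigma^2). Under the same-source proposition x and y are
    jointly normal with correlation rho; under the different-source
    proposition they are independent with the same marginal variance.
    """
    v = tau ** 2 + sigma ** 2          # marginal variance of one measurement
    rho = tau ** 2 / v                 # same-source correlation
    zx = (x - mu) / math.sqrt(v)
    zy = (y - mu) / math.sqrt(v)
    one_minus_rho2 = 1 - rho ** 2
    # Log densities; the shared factor 1 / (2 * pi * v) cancels in the ratio.
    log_same = (-(zx * zx - 2 * rho * zx * zy + zy * zy) / (2 * one_minus_rho2)
                - 0.5 * math.log(one_minus_rho2))
    log_diff = -(zx * zx + zy * zy) / 2
    return math.exp(log_same - log_diff)


# Two pairs with identical similarity (difference of 0.1) but different typicality.
lr_typical = common_source_lr(0.0, 0.1, mu=0.0, tau=1.0, sigma=0.1)
lr_rare = common_source_lr(3.0, 3.1, mu=0.0, tau=1.0, sigma=0.1)
print(f"typical pair: LR = {lr_typical:.1f}")
print(f"rare pair:    LR = {lr_rare:.1f}")
```

Both pairs are equally similar, yet the pair lying far from the population mean receives a much larger LR because agreement on a rare value is harder to explain by coincidence. A method based on the similarity score alone would assign both pairs the same value.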

Quantitative Data Interpretation Table

For clinical laboratory results, which are often continuous, the LR can be calculated for specific intervals or values of the test result. This allows for a more nuanced interpretation than a simple positive/negative dichotomy [7].
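An interval-specific LR can be estimated as the proportion of diseased subjects whose result falls in the interval divided by the corresponding proportion of non-diseased subjects. The sketch below uses small hypothetical cohorts and arbitrary cut-points; real applications need much larger samples and some handling of empty intervals.

```python
def interval_lrs(diseased, non_diseased, edges):
    """Return [((low, high), LR), ...] for half-open intervals [low, high).

    LR = P(result in interval | diseased) / P(result in interval | non-diseased).
    An interval containing no non-diseased results is reported as infinity.
    """
    results = []
    for low, high in zip(edges[:-1], edges[1:]):
        p_d = sum(low <= v < high for v in diseased) / len(diseased)
        p_n = sum(low <= v < high for v in non_diseased) / len(non_diseased)
        lr = p_d / p_n if p_n > 0 else float("inf")
        results.append(((low, high), lr))
    return results


# Hypothetical test results (arbitrary units) for two reference cohorts
diseased = [2, 3, 5, 6, 7, 8, 8, 9, 9, 9]
non_diseased = [1, 1, 2, 2, 3, 3, 4, 5, 6, 8]
edges = [0, 4, 7, 10]

for (low, high), lr in interval_lrs(diseased, non_diseased, edges):
    print(f"[{low}, {high}): LR = {lr:.2f}")
```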

Table 1: Interpreting Quantitative Test Results Using Likelihood Ratios

| Likelihood Ratio Value | Approximate Change in Disease Probability* | Interpretation of Evidence Strength |

|---|---|---|

| > 10 | +45% | Large increase in disease likelihood, often "rules in" a disease |

| 5 - 10 | +30% to +45% | Moderate to substantial increase |

| 2 - 5 | +15% to +30% | Small but sometimes important increase |

| 1 - 2 | 0% to +15% | Minimal change, rarely significant |

| 1 | 0% | Non-diagnostic |

| 0.5 - 1.0 | 0% to -15% | Minimal decrease |

| 0.2 - 0.5 | -15% to -30% | Small decrease |

| 0.1 - 0.2 | -30% to -45% | Moderate decrease, often "rules out" a disease |

| < 0.1 | -45% | Large decrease in disease likelihood |

Note: *Approximate change from a pre-test probability between 30% and 70% [25].

Calibration and Validation of LR Systems

As automated LR systems become more prevalent, ensuring their validity and calibration is paramount. A well-calibrated LR system produces values where the reported LR accurately reflects the true strength of the evidence [22]. For example, when an LR of 10 is reported, the evidence should indeed be ten times more probable under Hp than under Hd. Ill-calibration can lead to misleadingly large or small posterior odds, resulting in incorrect conclusions [22].

Metrics for Calibration

Several metrics exist to measure the calibration of an LR system. A comparative simulation study based on Gaussian Log LR-distributions evaluated four metrics [22]:

- Cllrcal: A metric from the literature that measures the cost of miscalibration in a log-likelihood ratio framework.

- devPAV: A newly proposed metric that demonstrated equal or better performance compared to Cllrcal under almost all simulated conditions.

The study recommends using both devPAV and Cllrcal for measuring calibration, with devPAV being the preferred metric [22].

Other metrics, such as the rate of misleading evidence (e.g., an LR > 1 when Hd is true) or the expected values of LR and 1/LR, were found to be less effective in differentiating between well- and ill-calibrated systems [22].
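As a starting point for such checks, the sketch below computes the log-likelihood-ratio cost (Cllr) and the rates of misleading evidence from a set of validation LRs with known ground truth. It does not implement Cllrcal or devPAV: Cllrcal is usually obtained as Cllr minus the minimum Cllr after an isotonic (PAV) recalibration, a step omitted here. The LR values are hypothetical.

```python
import math


def cllr(lrs_hp_true, lrs_hd_true):
    """Log-likelihood-ratio cost.

    Penalises small LRs when Hp is true and large LRs when Hd is true.
    A system that always reports LR = 1 scores exactly 1.0.
    """
    pen_hp = sum(math.log2(1 + 1 / lr) for lr in lrs_hp_true) / len(lrs_hp_true)
    pen_hd = sum(math.log2(1 + lr) for lr in lrs_hd_true) / len(lrs_hd_true)
    return 0.5 * (pen_hp + pen_hd)


def misleading_rates(lrs_hp_true, lrs_hd_true):
    """Fraction of LRs < 1 when Hp is true and of LRs > 1 when Hd is true."""
    rate_hp = sum(lr < 1 for lr in lrs_hp_true) / len(lrs_hp_true)
    rate_hd = sum(lr > 1 for lr in lrs_hd_true) / len(lrs_hd_true)
    return rate_hp, rate_hd


# Hypothetical validation LRs with known ground truth
lrs_hp = [50.0, 12.0, 8.0, 0.7, 30.0]
lrs_hd = [0.02, 0.1, 0.5, 3.0, 0.05]

print("Cllr:", round(cllr(lrs_hp, lrs_hd), 3))
print("Misleading rates (Hp true, Hd true):", misleading_rates(lrs_hp, lrs_hd))
```

A Cllr well below 1 indicates that the system is informative overall, but it mixes discrimination and calibration, which is why a dedicated calibration metric is still needed.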

Application Protocols

Protocol for Diagnostic Test Evaluation in Clinical Research

This protocol outlines the steps for calculating and applying LRs to interpret diagnostic test results, harmonizing different assays and units into a universal measure of diagnostic evidence [7] [23].

Table 2: Research Reagent Solutions for Diagnostic Test Evaluation

| Reagent/Material | Function in LR Calculation |

|---|---|

| Well-characterized patient cohorts (Diseased & Non-diseased) | Serves as the reference population for establishing the distribution of test results under Hp and Hd. |

| Validated diagnostic assay kit | Generates the quantitative or semi-quantitative test result (evidence) to be evaluated. |

| Statistical software (e.g., R, Python, SAS) | Used to perform ROC analysis, calculate probability densities, and compute the final LR values. |

| ROC curve data | Serves as the basis for calculating test result interval-specific LRs; the slope of the tangent to the ROC curve gives the LR for a single test result [7]. |

Step-by-Step Workflow:

- Define the Diagnostic Hypothesis: Precisely state the target condition (Hp: Disease is present) and the alternative condition (Hd: Disease is absent).

- Generate ROC Curve: Using the data from your characterized cohorts, plot the Receiver Operating Characteristic (ROC) curve, which plots sensitivity against (1-specificity) across all possible test cut-offs.

- Calculate Likelihood Ratios: For a specific test result value or interval, calculate the LR.

- Apply the LR using Bayes' Theorem: Convert the pre-test probability of disease to pre-test odds, multiply by the LR to obtain post-test odds, and then convert back to a post-test probability [23].

- Simplified Estimation: For a rapid bedside assessment, use the approximation table (Table 1) to estimate the change in probability without calculations involving odds [25].

- Report and Interpret: Report the test result alongside its specific LR. Use the interpretation guidelines in Table 1 to convey the strength of the evidence.
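The Bayes' theorem step of the workflow above can be sketched in a few lines. The function name and the example inputs are illustrative assumptions.

```python
def post_test_probability(pre_test_probability, lr):
    """Update a pre-test probability with a likelihood ratio via odds.

    Requires 0 <= pre_test_probability < 1.
    """
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * lr
    return post_test_odds / (1 + post_test_odds)


# Hypothetical case: 30% pre-test probability, test result with LR = 10
p_post = post_test_probability(0.30, 10)
print(f"Post-test probability: {p_post:.3f}")
```

For this example the post-test probability is roughly 0.81. An LR of 1 leaves the probability unchanged, which is a quick sanity check for any implementation.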

Figure 1: Workflow for Diagnostic LR Application

Protocol for Evidence Evaluation in Forensic Science

This protocol emphasizes the calculation of calibrated LRs for forensic evidence, integrating population data to account for both similarity and typicality [26] [22].

Step-by-Step Workflow:

- Define Propositions: Formulate two mutually exclusive propositions at the same hierarchical level (e.g., source-level: Hp - "The sample comes from the suspect," Hd - "The sample comes from another person in the relevant population").

- Select a Validated Method: Choose an LR calculation method that properly accounts for both similarity and typicality, such as the common-source method [26].

- Model the Evidence: Develop or use a statistical model to calculate the probability of the observed evidence (e.g., a set of feature measurements) given each proposition. This requires a relevant population database.

- Calculate the LR: Compute the ratio of the two probabilities obtained in the previous step.

- Validate and Calibrate: Test the performance of the LR system using calibration metrics such as devPAV and Cllrcal to ensure it is not over- or understating the evidence [22].

- Report the Findings: Report the LR value and, if appropriate, its interpretation based on a recognized scale. The report should transparently state the methods and population data used.

Figure 2: Workflow for Forensic LR Calculation

Protocol for Integrating Diverse Data in Drug Discovery

The BANDIT framework provides a powerful example of using LRs to integrate multiple, disparate data types for drug target identification, a complex problem in pharmaceutical development [27].

Step-by-Step Workflow:

- Compile Diverse Data Types: For a set of drugs with known targets, gather data from multiple sources, such as:

  - Drug structure

  - Post-treatment transcriptional responses

  - Drug efficacy (e.g., from NCI-60 screens)

  - Reported adverse effects

  - Bioassay results

- Calculate Similarity Scores: For each drug pair and each data type, calculate a dataset-specific similarity score.

- Convert to Likelihood Ratios: For each similarity score, calculate a likelihood ratio that represents the probability of that similarity if the drugs share a target versus the probability if they do not.

- Combine Evidence: Combine the individual LRs from all data types into a Total Likelihood Ratio (TLR), which is proportional to the overall odds that two drugs share a target.

- Predict New Targets: For an "orphan" drug with an unknown target, identify its nearest neighbors (drugs with known targets) based on a high TLR. The most frequently occurring targets among these neighbors are the top predictions for the orphan drug's target [27].
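The combination and prediction steps can be sketched as follows. This is an illustrative outline, not BANDIT's actual implementation: it assumes the individual LRs are combined by multiplication (which treats the data types as independent), and the drug names, targets, LR values, and threshold are all hypothetical.

```python
import math
from collections import Counter


def total_lr(lrs):
    """Combine per-data-type LRs by multiplication (assumes independence)."""
    return math.prod(lrs)


def predict_targets(orphan_lrs, known_targets, threshold, top_n=3):
    """Vote over the targets of known drugs whose TLR with the orphan is high.

    orphan_lrs:    {known_drug: [LR per data type vs. the orphan drug]}
    known_targets: {known_drug: [target, ...]}
    """
    votes = Counter()
    for drug, lrs in orphan_lrs.items():
        if total_lr(lrs) >= threshold:
            votes.update(known_targets[drug])
    return [target for target, _ in votes.most_common(top_n)]


# Hypothetical LRs between one orphan drug and four drugs with known targets
orphan_lrs = {
    "drug_A": [4.0, 2.5, 3.0],
    "drug_B": [5.0, 1.5, 2.0],
    "drug_C": [0.5, 1.2, 0.8],
    "drug_D": [3.0, 4.0, 1.0],
}
known_targets = {
    "drug_A": ["T1"],
    "drug_B": ["T1", "T2"],
    "drug_C": ["T3"],
    "drug_D": ["T1"],
}

print(predict_targets(orphan_lrs, known_targets, threshold=10))
```

Multiplying LRs is only justified when the data types provide approximately independent evidence; correlated data types would overstate the combined support.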

The likelihood ratio is a versatile and robust statistical tool for evidence evaluation across scientific disciplines. Its proper calculation requires careful consideration of the underlying data and hypotheses, with a particular emphasis on using methods that account for both similarity and typicality in forensic applications. For any automated or semi-automated LR system, rigorous validation and calibration are essential to ensure the reported LRs truthfully represent the strength of the evidence, thereby preventing misleading conclusions. When integrated within a CAI framework, the LR provides a structured and transparent methodology for researchers, scientists, and drug development professionals to make objective, data-driven decisions, from diagnosing diseases and evaluating forensic evidence to identifying novel drug targets.