Building Better Evidence: A Comprehensive Guide to Developing Forensic Text Comparison Datasets

Abstract

This article provides a structured framework for researchers and forensic professionals developing datasets for forensic text comparison (FTC). It explores the foundational principles of FTC, including the linguistic basis of authorship and the critical role of empirical validation. The guide details modern methodological approaches, from leveraging Large Language Models (LLMs) for synthetic data generation to constructing Question-Context-Answer (Q-C-A) formats. It addresses key challenges such as algorithmic bias, topic mismatch, and data scarcity, offering practical optimization strategies. Furthermore, the article establishes a robust validation framework centered on the Likelihood Ratio (LR) and comparative performance analysis, aiming to advance the creation of reliable, forensically relevant datasets that meet the stringent demands of legal admissibility and scientific rigor.

The Linguistic and Evidential Basis of Forensic Text Comparison

An idiolect refers to the unique and distinctive language use of an individual speaker or writer. This linguistic fingerprint encompasses vocabulary preferences, syntactic patterns, grammatical idiosyncrasies, and other stylistic features that remain consistent across an individual's texts. In forensic authorship analysis, the systematic examination of idiolect provides the theoretical foundation for determining authorship of questioned documents, whether in criminal investigations, civil litigation, or academic integrity cases. The development of robust datasets and standardized protocols for idiolect analysis represents a critical research direction for advancing the scientific rigor of forensic text comparison.

The idiolect R package provides a comprehensive suite of tools specifically designed for comparative authorship analysis within a forensic context using the Likelihood Ratio Framework [1]. This package implements several authorship analysis methods that process sets of texts and output scores that can be calibrated into likelihood ratios, offering a statistically grounded approach to quantifying the strength of authorship evidence. Dependent on the quanteda package for natural language processing functions, idiolect enables researchers to perform sophisticated analyses while maintaining methodological transparency [1] [2].

Experimental Protocols for Authorship Analysis

Corpus Creation and Preprocessing Protocol

Purpose: To create a standardized textual corpus suitable for authorship analysis.

Materials Required:

- Digital text documents from known and questioned authors

- R statistical software environment

- idiolect and quanteda R packages

Procedure:

- Data Collection: Gather text documents of sufficient length (typically > 500 words per document) from potential authors. Ensure documents are in plain text format (.txt).

- Corpus Initialization: Use the create_corpus() function from the idiolect package to import texts into a structured corpus object [1].

- Text Cleaning:

- Remove extraneous formatting, headers, and footers

- Normalize text by converting to lowercase

- Handle special characters and punctuation appropriately

- Content Masking (Optional): Apply the contentmask() function to reduce topic-dependent vocabulary, focusing analysis on stylistic rather than content features [1].

- Feature Extraction: Transform texts into document-feature matrices capturing lexical, syntactic, or character-level features.

Troubleshooting Tips:

- For imbalanced corpora, consider stratified sampling approaches

- Validate text encoding to prevent character recognition errors

- Document all preprocessing decisions for methodological transparency
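The cleaning and feature-extraction steps above can be illustrated outside R as well. The following is a minimal Python sketch, not the idiolect or quanteda implementation: the function names `normalize` and `char_ngram_profile` are hypothetical, and the choice of character trigrams is an assumption made for illustration.

```python
import re
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace to single spaces."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def char_ngram_profile(text: str, n: int = 3) -> dict[str, float]:
    """Return relative frequencies of character n-grams in the normalized text."""
    cleaned = normalize(text)
    grams = [cleaned[i:i + n] for i in range(len(cleaned) - n + 1)]
    total = len(grams)
    if total == 0:  # text shorter than n characters
        return {}
    return {gram: count / total for gram, count in Counter(grams).items()}


# One row of a document-feature matrix for a toy document.
profile = char_ngram_profile("The cat sat.\nThe cat ran.")
```

Each profile is one row of a document-feature matrix; stacking the profiles of all documents over a shared n-gram vocabulary gives the matrix described in the Feature Extraction step.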

Authorship Method Application Protocol

Purpose: To apply computational authorship analysis methods to distinguish between authors.

Materials Required:

- Preprocessed textual corpus

- R software with idiolect package installed

- High-performance computing resources for large datasets

Procedure:

- Feature Selection: Identify the linguistic features to analyze (e.g., function words, character n-grams, syntactic patterns).

- Method Selection: Choose appropriate authorship analysis methods based on research questions:

- Method Application: Execute selected methods using corresponding functions in the idiolect package.

- Performance Validation: Test method performance on ground truth data using the performance() function to evaluate accuracy metrics [1].

- Likelihood Ratio Calibration: Apply calibrate_LLR() to questioned texts to generate likelihood ratios quantifying evidence strength [1].

Quality Control Measures:

- Implement cross-validation procedures

- Establish confidence intervals for accuracy metrics

- Document all parameter settings and methodological choices
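To make the feature-selection and method-application steps concrete, here is a small Python sketch of Burrows's Delta, the classic form of the Delta method listed in Table 1. It is an illustration under stated assumptions rather than the idiolect package's implementation: the four-word function-word list, the whitespace tokenizer, and the function names are all hypothetical simplifications, and texts are assumed to be non-empty.

```python
from statistics import mean, pstdev

FUNCTION_WORDS = ["the", "and", "of", "to"]  # illustrative list only


def relative_freqs(text: str) -> list[float]:
    """Relative frequency of each function word (naive whitespace tokens)."""
    tokens = text.lower().split()
    return [tokens.count(word) / len(tokens) for word in FUNCTION_WORDS]


def burrows_delta(questioned: str, known: str, reference: list[str]) -> float:
    """Mean absolute difference between z-scored function-word frequencies."""
    ref = [relative_freqs(t) for t in reference]
    q, k = relative_freqs(questioned), relative_freqs(known)
    diffs = []
    for i in range(len(FUNCTION_WORDS)):
        column = [row[i] for row in ref]
        mu, sigma = mean(column), pstdev(column)
        if sigma == 0:  # feature does not vary in the reference set; skip it
            continue
        diffs.append(abs((q[i] - mu) / sigma - (k[i] - mu) / sigma))
    return mean(diffs) if diffs else 0.0
```

A smaller Delta indicates more similar function-word usage. The value is a score, not a likelihood ratio, and would still need calibration before being reported as evidence strength.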

Validation and Likelihood Ratio Framework Protocol

Purpose: To validate authorship analysis results and express findings within the Likelihood Ratio framework.

Materials Required:

- Processed output from authorship analysis methods

- Ground truth data for validation

- R software with idiolect package

Procedure:

- Performance Assessment: Use the performance() function to evaluate method accuracy on texts with known authorship, generating metrics such as precision, recall, and AUC values [1].

- Likelihood Ratio Calculation: Apply the calibrate_LLR() function to questioned texts to compute likelihood ratios that quantify the strength of evidence for authorship hypotheses [1].

- Uncertainty Quantification: Calculate confidence intervals for likelihood ratios using bootstrapping or other resampling methods.

- Sensitivity Analysis: Test the robustness of results to different parameter settings and feature selections.

- Result Interpretation: Frame conclusions according to the Likelihood Ratio framework, avoiding categorical claims of authorship.

Interpretation Guidelines:

- Likelihood ratios > 1 support the prosecution hypothesis

- Likelihood ratios < 1 support the defense hypothesis

- Likelihood ratios close to 1 provide limited evidential value

- Always report confidence intervals and methodological limitations
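The uncertainty-quantification step can be sketched with a percentile bootstrap. The example below is a generic Python illustration, not a procedure taken from the idiolect package: the function name, the number of resamples, and the made-up log10 LR values are assumptions, and what is actually resampled in a real study (reference documents, calibration scores, and so on) is a design decision that this sketch does not settle.

```python
import random
from statistics import mean


def bootstrap_interval(values, statistic=mean, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for `statistic` computed over `values`."""
    rng = random.Random(seed)  # fixed seed so the interval is reproducible
    estimates = sorted(
        statistic(rng.choices(values, k=len(values))) for _ in range(n_resamples)
    )
    lower = estimates[int((alpha / 2) * n_resamples)]
    upper = estimates[int((1 - alpha / 2) * n_resamples) - 1]
    return lower, upper


log10_lrs = [1.2, 0.8, 1.5, 0.3, 1.1, 0.9, 1.7, 0.6]  # made-up values
low, high = bootstrap_interval(log10_lrs)
```

Reporting the interval alongside the point estimate makes it visible when an apparently strong LR rests on little data.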

Data Presentation and Analysis

Table 1: Performance Metrics of Authorship Analysis Methods

| Method | Precision | Recall | F1-Score | AUC-ROC | Optimal Text Length |

|---|---|---|---|---|---|

| Delta | 0.85 | 0.82 | 0.835 | 0.89 | > 1,000 words |

| N-gram Tracing | 0.88 | 0.79 | 0.833 | 0.91 | > 500 words |

| Impostors Method | 0.92 | 0.85 | 0.884 | 0.94 | > 1,500 words |

| LambdaG | 0.94 | 0.89 | 0.914 | 0.96 | > 800 words |
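The F1-Score column in Table 1 is the harmonic mean of precision and recall, F1 = 2PR / (P + R). As a quick consistency check, the short Python sketch below recomputes it from the precision and recall values in the table; the variable and function names are illustrative only.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# (precision, recall) pairs copied from Table 1.
table_1 = {
    "Delta": (0.85, 0.82),
    "N-gram Tracing": (0.88, 0.79),
    "Impostors Method": (0.92, 0.85),
    "LambdaG": (0.94, 0.89),
}
f1_by_method = {name: round(f1_score(p, r), 3) for name, (p, r) in table_1.items()}
```

The recomputed values agree with the F1-Score column to three decimal places.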

Table 2: Feature Type Performance in Authorship Attribution

| Feature Category | Sample Features | Accuracy (%) | Cross-Author Stability | Topic Resistance |

|---|---|---|---|---|

| Function Words | "the", "and", "of", "to" | 78.3 | High | High |

| Character N-grams | "ing", "the_" | 85.7 | Medium | High |

| Syntactic Patterns | POS tag sequences | 82.4 | High | Medium |

| Vocabulary Richness | Type-token ratio | 65.2 | Low | Low |

| Punctuation Patterns | Comma usage frequency | 71.8 | Medium | High |

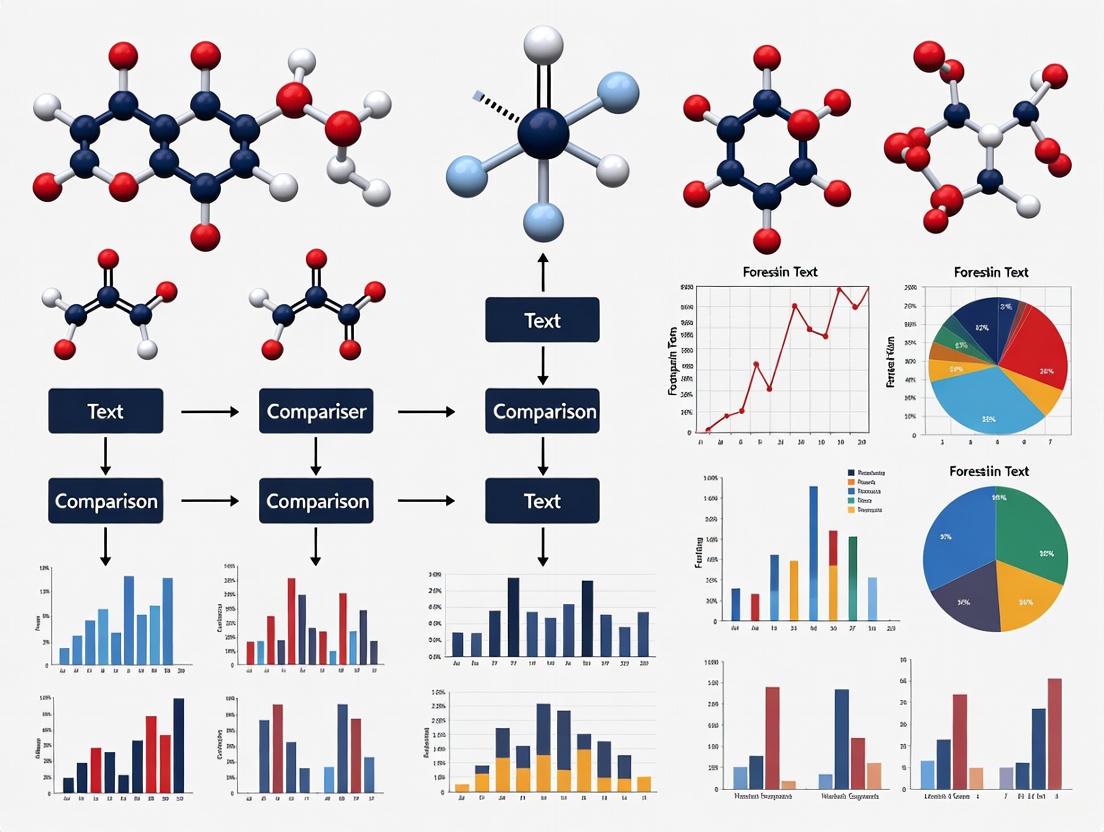

Workflow Visualization

Forensic Authorship Analysis Workflow

Linguistic Feature Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Forensic Authorship Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| idiolect R Package | Provides comprehensive suite for comparative authorship analysis using Likelihood Ratio Framework | Primary analysis tool for forensic text comparison [1] |

| quanteda R Package | Natural language processing infrastructure for text analysis | Required dependency for text preprocessing and feature extraction [1] |

| MAXDictio/MAXQDA | Quantitative text analysis with vocabulary and dictionary-based analysis | Alternative commercial solution for quantitative content analysis [3] |

| ForensicsData Dataset | Extensive Question-Context-Answer dataset from malware reports | Model dataset for forensic text analysis development [4] |

| PubMed Central Corpus | 15 million full-text scientific articles for methodological validation | Large-scale corpus for testing authorship methods [5] |

| ANY.RUN Platform | Malware analysis reports for forensic dataset development | Source of authentic forensic texts for dataset creation [4] |

| LambdaG Method | Advanced authorship analysis method with improved performance | State-of-the-art technique for authorship attribution [2] |

The Likelihood Ratio (LR) framework is widely recognized as the logically and legally correct approach for evaluating the strength of forensic evidence, including textual evidence in forensic text comparison (FTC) [6]. It provides a transparent, reproducible, and quantitative method that is intrinsically resistant to cognitive bias, making it particularly suitable for scientific and legal applications [6]. The core of this framework is a formula that compares the probability of the observed evidence under two competing hypotheses [6]:

LR = p(E|Hp) / p(E|Hd)

In this equation, p(E|Hp) represents the probability of observing the evidence (E) given that the prosecution hypothesis (Hp) is true, while p(E|Hd) represents the probability of the same evidence given that the defense hypothesis (Hd) is true [6]. In practical terms, these probabilities can be interpreted as measuring similarity (how similar the compared texts are) and typicality (how distinctive this similarity is within the relevant population) [6].

The LR framework logically updates the beliefs of the trier-of-fact through Bayes' Theorem, which in its odds form states [6]:

Prior Odds × LR = Posterior Odds

This means that the fact-finder's prior belief about the hypotheses (prior odds) is rationally updated by the strength of the forensic evidence (LR) to form a new belief (posterior odds) [6]. Critically, the forensic scientist's role is limited to presenting the LR, as they are not positioned to know the fact-finder's prior beliefs, and venturing into posterior probabilities would encroach on the ultimate issue of guilt or innocence [6].
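The odds form of Bayes' Theorem is simple enough to work through numerically. The Python sketch below uses made-up numbers purely for illustration; in practice the prior odds belong to the trier-of-fact, and the forensic scientist reports only the LR.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' Theorem: prior odds x LR = posterior odds."""
    return prior_odds * likelihood_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of a hypothesis into a probability."""
    return odds / (1 + odds)


prior = 1 / 100   # hypothetical prior odds of 1 to 100 for Hp
lr = 1000         # hypothetical LR reported by the forensic scientist
post = posterior_odds(prior, lr)          # posterior odds of about 10 to 1
probability = odds_to_probability(post)   # roughly 0.91
```

The same LR of 1000 combined with prior odds of 1 to 100,000 would leave the posterior odds at 1 to 100, which is why the LR alone cannot be read as a probability of authorship.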

Essential Principles for Validation

For LR-based systems to be scientifically defensible, they must undergo rigorous empirical validation. Research in forensic science broadly, and in FTC specifically, indicates that proper validation must fulfill two critical requirements [6]:

- Reflecting Casework Conditions: The experimental design must replicate the conditions of the case under investigation.

- Using Relevant Data: The data used for validation must be appropriate and relevant to the specific case.

These requirements are crucial because the presence of mismatches between compared documents (e.g., in topics, genres, or communicative situations) can significantly impact system performance [6]. The complex nature of textual data, where writing style is influenced by multiple factors including the author's idiolect, social background, and immediate context, makes validation under realistic conditions essential for reliable results [6].

Table 1: Key Requirements for Empirical Validation of LR Systems in FTC

| Requirement | Description | Implication for FTC Research |

|---|---|---|

| Casework Condition Replication | Experimental setup must mirror the conditions of actual cases under investigation. | Researchers must identify and simulate realistic mismatches (e.g., in topics, genres) that occur in real forensic texts [6]. |

| Use of Relevant Data | Data used for validation must be appropriate to the case circumstances. | Dataset collection must prioritize authenticity and relevance, including factors like text type, topic variation, and author demographics [6]. |

| Quantitative Measurement | Use of numerical measurements of evidential properties. | Relies on computational text analysis, such as stylometric features (e.g., vocabulary richness, punctuation patterns) [7]. |

| Statistical Modeling | Application of probabilistic models to interpret the measured data. | Implementation of statistical models (e.g., Multivariate Kernel Density, Poisson models) for LR calculation [7] [8]. |

Experimental Protocols for FTC Research

Core Protocol: LR-Based Authorship Analysis with Stylometric Features

This protocol outlines a methodology for evaluating the strength of authorship attribution evidence using word- and character-based stylometric features within the LR framework, based on published research [7].

Purpose: To quantify the strength of evidence for authorship attribution using multivariate likelihood ratios and to investigate the effect of sample size on system performance.

Materials and Reagents:

- Text Corpora: Collection of texts from multiple authors. The protocol used chatlog messages from 115 authors in a real forensic context [7].

- Text Analysis Software: Tools for extracting stylometric features from texts (e.g., vocabulary richness, average characters per word, punctuation ratios) [7].

- Statistical Computing Environment: Software capable of implementing the Multivariate Kernel Density formula and calculating log-likelihood ratio costs (Cllr), such as R or Python with appropriate statistical libraries [7].

Procedure:

- Text Preparation:

- Select authentic text samples relevant to the forensic context (e.g., chatlogs, reviews, emails).

- For each author, create text samples of varying lengths (e.g., 500, 1000, 1500, and 2500 words) to analyze the impact of sample size [7].

- Feature Extraction:

- For each text sample, extract a set of stylometric features. Robust features include [7]:

- Average character number per word token

- Punctuation character ratio

- Vocabulary richness measures

- Likelihood Ratio Calculation:

- System Performance Assessment:

- Data Analysis:

- Compare discrimination accuracy across different sample sizes.

- Analyze the magnitude of LRs that are consistent-with-fact versus those that are contrary-to-fact [7].

Expected Outcomes:

- The system should achieve higher discrimination accuracy with larger sample sizes (e.g., approximately 76% with 500 words versus 94% with 2500 words, as reported in one study) [7].

- Larger sample sizes should improve discriminability, increase the magnitude of correct LRs, and decrease the magnitude of incorrect LRs [7].

- Features such as 'Average character number per word token', 'Punctuation character ratio', and vocabulary richness measures should demonstrate robustness across varying sample sizes [7].
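The three feature families named in this protocol can be computed with very little code. The Python sketch below is a simplified illustration, and the exact feature definitions used in [7] may differ: it assumes whitespace tokenization, treats ASCII punctuation only, and uses the type-token ratio as one of several possible vocabulary richness measures. The function name is hypothetical, and the text is assumed to contain at least one word.

```python
import string


def stylometric_features(text: str) -> dict[str, float]:
    """Compute three simple stylometric features for a non-empty text."""
    # Word tokens: split on whitespace, strip surrounding punctuation.
    words = [t.strip(string.punctuation).lower() for t in text.split()]
    words = [w for w in words if w]
    non_space = [c for c in text if not c.isspace()]
    punct = [c for c in non_space if c in string.punctuation]
    return {
        "avg_chars_per_word": sum(len(w) for w in words) / len(words),
        "punctuation_ratio": len(punct) / len(non_space),
        "type_token_ratio": len(set(words)) / len(words),
    }


features = stylometric_features("Well, well. The cat sat; the dog ran!")
```

Type-token ratio depends on text length, so comparing samples of 500 and 2500 words with this measure would need length normalization or fixed-size windows.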

Advanced Protocol: Feature-Based Comparison Using a Poisson Model

This protocol describes a feature-based method for forensic text comparison using a Poisson model for likelihood ratio estimation, which has demonstrated advantages over traditional score-based methods [8].

Purpose: To implement a feature-based LR estimation method that accounts for both similarity and typicality, overcoming limitations of distance-based measures.

Materials and Reagents:

- Text Corpora: Large collection of texts from numerous authors (e.g., 2,157 authors) to ensure robust background population representation [8].

- Computational Linguistics Tools: Software for extracting linguistic features from text corpora.

- Statistical Software: Environment capable of implementing Poisson models and calculating Cosine distances for comparative analysis.

Procedure:

- Data Collection:

- Gather a substantial dataset of texts from a wide array of authors to build a representative background population [8].

- Methodology Comparison:

- Implement a score-based method using Cosine distance as a baseline, as distance measures are standard in authorship attribution studies [8].

- Implement a feature-based method using a Poisson model, which is theoretically more appropriate for textual data as it can handle the violation of statistical assumptions common in distance-based models [8].

- Feature Selection:

- For the feature-based method, perform feature selection to identify the most discriminative linguistic features for authorship attribution [8].

- LR Estimation and Evaluation:

- Estimate LRs using both methods.

- Evaluate system performance using the log-likelihood ratio cost (Cllr) to compare the discrimination accuracy of both approaches [8].

Expected Outcomes:

- The feature-based Poisson model method is expected to outperform the score-based Cosine distance method, with a Cllr improvement of approximately 0.09 under optimal settings [8].

- Feature selection should further improve the performance of the feature-based method [8].

- The feature-based method should provide more reliable LR estimates as it assesses both similarity and typicality, unlike distance-based models that primarily measure similarity [8].
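Both methods are compared using Cllr, which averages a logarithmic penalty over same-author and different-author comparisons. The Python sketch below implements the standard definition; the function name and the example LR values are illustrative, and the LRs are assumed to be positive and finite.

```python
from math import log2


def cllr(same_author_lrs: list[float], different_author_lrs: list[float]) -> float:
    """Log-likelihood-ratio cost for LRs from comparisons of known origin.

    Same-author comparisons are penalized for small LRs, and
    different-author comparisons are penalized for large LRs.
    """
    penalty_same = sum(log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    penalty_diff = sum(log2(1 + lr) for lr in different_author_lrs) / len(different_author_lrs)
    return 0.5 * (penalty_same + penalty_diff)


uninformative = cllr([1.0, 1.0], [1.0, 1.0])      # every LR equals 1
well_behaved = cllr([100.0, 50.0], [0.01, 0.02])  # LRs point the right way
```

A system that always returns LR = 1 scores exactly 1, so values below 1 indicate that the system is providing useful information and lower values are better.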

Visualization of Workflows

LR Framework Logic and Application

Experimental Validation Workflow

The Researcher's Toolkit

Table 2: Essential Research Reagents and Materials for FTC-LR Research

| Tool/Category | Specific Examples | Function in FTC-LR Research |

|---|---|---|

| Text Corpora | Amazon Authorship Verification Corpus (AAVC) [6], Forensic Chatlog Archives [7] | Provides authentic textual data for developing and validating LR systems; enables testing under realistic conditions including topic mismatch. |

| Stylometric Features | Vocabulary Richness, Punctuation Character Ratio, Average Characters Per Word [7] | Serves as measurable linguistic elements that capture authorial style; used as variables in statistical models for LR calculation. |

| Statistical Models | Multivariate Kernel Density Formula [7], Poisson Models [8] | Provides the mathematical framework for calculating likelihood ratios from observed textual features; translates similarity and typicality into quantitative LRs. |

| Performance Metrics | Log-Likelihood Ratio Cost (Cllr) [7] [8], Tippett Plots [6] | Assesses the discrimination accuracy and validity of the LR system; Cllr provides an overall measure of system performance across all LRs. |

| Validation Frameworks | Casework-Replication Protocol, Relevant-Data Requirement [6] | Ensures empirical validation reflects real forensic conditions; critical for establishing scientific defensibility and demonstrable reliability of FTC methods. |

Forensic text comparison (FTC) is a scientific discipline that involves the analysis and interpretation of textual evidence for legal purposes. The core challenge resides in establishing a methodology that ensures results are not only scientifically sound but also legally admissible in court. The admissibility of forensic evidence, including textual analysis, is often judged against standards such as the Daubert Standard, which provides a legal framework for assessing the reliability and validity of scientific evidence [9] [10]. This standard emphasizes several key factors: the testability of the methods used, their submission to peer review, the establishment of known error rates, and the general acceptance of the methodologies within the relevant scientific community [9] [10].

A scientifically defensible approach to FTC increasingly relies on a framework incorporating quantitative measurements, statistical models, and the Likelihood Ratio (LR) as a measure of evidentiary strength [6]. The LR quantitatively expresses the probability of the evidence under two competing hypotheses: the prosecution hypothesis (Hp) and the defense hypothesis (Hd) [6]. Crucially, the empirical validation of any FTC system or methodology must be performed by replicating the conditions of the case under investigation and using data relevant to that specific case [6]. Failure to do so risks producing misleading results and compromises the legal admissibility of the evidence.

Core Data Requirements for Forensic Relevance

For a data set to be considered forensically relevant and to satisfy the prerequisites for legal admissibility, it must be constructed with specific, rigorous criteria in mind. These requirements ensure that the analysis is both scientifically robust and applicable to the context of a real-world investigation.

Table 1: Core Data Requirements for Forensic Text Comparison

| Requirement Category | Description | Legal/Scientific Rationale |

|---|---|---|

| Casework Relevance | Data must reflect the specific conditions of the case under investigation, including potential confounding factors like topic mismatch between known and questioned documents [6]. | Ensures external validity and meets Daubert's requirement for reliable application to case facts. |

| Data Authenticity & Integrity | The provenance and integrity of data must be verifiable, often through hash validation and a documented chain of custody [11]. | Authenticity is a foundational requirement for evidence admissibility under rules of evidence (e.g., Rule 901) [11]. |

| Representative Sampling | Data must be representative of the population of potential authors and the stylistic variations within a single author's idiolect. | Strengthens the statistical model's accuracy and the reliability of the calculated Likelihood Ratio [6]. |

| Quantitative Measurement | Data must be amenable to quantitative feature extraction (e.g., lexical, syntactic, character-level features). | Moves analysis from subjective opinion to objective, testable science, satisfying a key Daubert factor [6]. |

| Metadata Completeness | Data should be accompanied by relevant metadata (e.g., genre, topic, creation date, medium) to control for stylistic covariates. | Allows for proper experimental design and validation under controlled, case-realistic conditions [6]. |

Beyond the requirements outlined in Table 1, researchers must account for the complexity of textual evidence. A text encodes not only information about its authorship but also about the author's social group and the communicative situation (e.g., topic, genre, formality) [6]. A forensically relevant data set must therefore allow for the isolation of authorship signals from these other confounding factors. The concept of "idiolect"—an individual's distinctive way of speaking and writing—is central to this endeavor and is compatible with modern theories of language processing [6].
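One way to make the metadata requirement concrete is to attach a small, fixed record to every document in the dataset. The Python sketch below is only a suggested shape: the class name, the field names, and the helper function are hypothetical and would need to be adapted to the case conditions being studied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DocumentRecord:
    """Metadata kept alongside each text in the dataset (illustrative fields)."""
    doc_id: str
    author_id: str   # pseudonymous identifier, never a real name
    genre: str       # e.g. "email", "chatlog", "review"
    topic: str
    medium: str      # e.g. "mobile", "desktop", "handwritten"
    created: str     # ISO 8601 date string
    sha256: str      # hash of the stored text file, for integrity checks


def topic_mismatch(known: DocumentRecord, questioned: DocumentRecord) -> bool:
    """True when a known/questioned pair differs in topic."""
    return known.topic != questioned.topic
```

With records like these, validation experiments can select comparisons by condition, for example only pairs that share a genre but differ in topic.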

Experimental Protocols for Validation

To validate an FTC methodology and establish its error rates, a structured experimental protocol is essential. The following provides a detailed methodology for a validation study targeting a specific case condition, such as topic mismatch.

Protocol: Validation under Topic Mismatch Conditions

1. Objective: To empirically determine the performance and reliability of a forensic text comparison system when the known and questioned documents exhibit a mismatch in topic.

2. Hypotheses:

- Hp (Prosecution Hypothesis): The questioned and known documents were written by the same author.

- Hd (Defense Hypothesis): The questioned and known documents were written by different authors.

3. Experimental Design:

- Data Collection & Curation: Assemble a corpus of documents from multiple authors. For each author, collect texts written on multiple distinct topics. The topics should be sufficiently different to represent a realistic challenge for the authorship attribution model.

- Data Partitioning: For each author, designate one topic as the "known" data and a different topic as the "questioned" data. This creates a cross-topic comparison scenario.

- Likelihood Ratio Calculation: For each author and topic pair, calculate the Likelihood Ratio (LR) using a pre-defined statistical model (e.g., a Dirichlet-multinomial model). The LR is computed as LR = p(E|Hp) / p(E|Hd), where E represents the quantitative evidence extracted from the texts [6].

- Model Calibration: Apply a post-hoc calibration, such as logistic regression calibration, to the output LRs to improve their reliability and interpretability [6].

- Performance Assessment: Evaluate the calibrated LRs using metrics like the log-likelihood-ratio cost (Cllr) and visualize the results using Tippett plots [6]. These tools help assess the discrimination and calibration of the system, effectively establishing its "error rate."

4. Controls and Replication:

- The experiment should include same-author/different-topic and different-author/different-topic comparisons.

- Following best practices in digital forensics, key experiments should be performed in triplicate to establish repeatability metrics [9].
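The partitioning and control steps of this protocol amount to enumerating document pairs that differ in topic and labelling each pair by whether the authors match. The Python sketch below shows one possible way to do this; the tuple layout and the function name are assumptions for illustration.

```python
from itertools import combinations


def cross_topic_pairs(docs):
    """Build labelled comparison pairs whose two documents differ in topic.

    `docs` is a list of (author_id, topic, text) tuples. Each returned item is
    (text_a, text_b, same_author), covering both same-author/different-topic
    and different-author/different-topic comparisons.
    """
    pairs = []
    for (author_a, topic_a, text_a), (author_b, topic_b, text_b) in combinations(docs, 2):
        if topic_a != topic_b:
            pairs.append((text_a, text_b, author_a == author_b))
    return pairs


docs = [
    ("A", "sport", "text A1"),
    ("A", "politics", "text A2"),
    ("B", "sport", "text B1"),
    ("B", "politics", "text B2"),
]
pairs = cross_topic_pairs(docs)
```

For two authors and two topics this yields four pairs, two same-author and two different-author. With more authors the different-author pairs quickly outnumber the same-author pairs, which is worth keeping in mind when summarizing results.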

The following workflow diagram illustrates the key stages of this experimental protocol.

Experimental Workflow for FTC Validation

The Researcher's Toolkit for Forensic Text Comparison

The successful implementation of FTC research requires a suite of methodological tools and conceptual frameworks. The table below details essential components of the researcher's toolkit.

Table 2: Essential Research Reagent Solutions for Forensic Text Comparison

| Tool Category | Specific Example(s) | Function in FTC Research |

|---|---|---|

| Statistical Framework | Likelihood Ratio (LR), Bayes' Theorem | Provides a logically and legally sound method for evaluating and interpreting the strength of evidence [6]. |

| Computational Models | Dirichlet-Multinomial Model, n-gram models, Deep Learning Models | Enables the quantitative analysis of textual data and the calculation of probabilities underpinning the LR [6]. |

| Validation Software | LRmix Studio, STRmix, EuroForMix | Software platforms (from related forensic fields) that demonstrate the implementation of qualitative and quantitative models for LR calculation and validation [12]. |

| Performance Metrics | Log-Likelihood-Ratio Cost (Cllr), Tippett Plots | Used to empirically assess the validity, discrimination, and calibration of a forensic inference system, thereby establishing its reliability and error rates [6]. |

| Data Integrity Tools | Hash Validation (e.g., MD5, SHA-256), Chain-of-Custody Documentation | Critical for maintaining and demonstrating the authenticity and integrity of digital evidence from collection to analysis [11]. |

The following diagram illustrates the logical and procedural relationships between the core components of the FTC research process, from data preparation to legal presentation.

Core Logical Flow for FTC Research

Application Note: Understanding the Core Challenges

The development of forensically relevant datasets for text comparison research is fundamentally constrained by a triad of interconnected challenges: the scarcity of representative data, stringent privacy protections, and multifaceted legal restrictions. These barriers impede the creation of standardized evaluation frameworks and hinder the validation of methods under true casework conditions.

Dataset Scarcity arises from the absence of large-scale, realistic collections that mirror the complex variables encountered in forensic casework. The Forensic Handwritten Document Analysis Challenge 2025 highlights this by creating a novel dataset specifically to address the need for diverse handwriting styles, writing instruments, and environmental conditions for cross-modal authorship verification [13]. Furthermore, the problem extends to the nuanced representation of casework conditions. Research demonstrates that validation must be performed using data relevant to the specific case under investigation, including factors like topic mismatch between documents, which significantly impacts the reliability of forensic text comparison methods [6].

Privacy Considerations are paramount, especially when dealing with personal communications like text messages or social media data. The handling of such data is governed by strict legal frameworks. Research into the forensic analysis of social media data for criminal investigations underscores the critical need to adhere to privacy laws such as GDPR and jurisdiction-specific guidelines, often requiring legal warrants or subpoenas for access to private data [14]. Litigation against Clearview AI in multiple jurisdictions exemplifies the heightened sensitivity surrounding biometric and personal data, where evidence of processing must meticulously document data collection, storage, and access patterns [15].

Legal Restrictions encompass both the admissibility of digital evidence in court and the legal authority to collect data. Courts increasingly demand technical proof over policy narratives, expecting reproducible evidence like network logs and packet captures to prove data transfers and tracking [15]. For evidence to be admissible, the methods used must satisfy legal standards such as the Daubert Standard, which assesses factors like testability, peer review, error rates, and general acceptance by the scientific community [9]. The ISO 21043 international standard for forensic science further provides a framework to ensure the quality of the entire forensic process, from recovery to reporting [16].

Table 1: Core Challenges in Forensic Text Comparison Dataset Development

| Challenge | Key Aspects | Impact on Dataset Development |

|---|---|---|

| Dataset Scarcity | Lack of large-scale, realistic data; Need to represent diverse casework conditions (e.g., topic mismatch, writing modalities) [13] [6] | Limits model training and robust validation, risking poor real-world performance. |

| Privacy | Compliance with GDPR, CCPA, and other data protection laws; Sensitivity of personal communications and biometric data [14] [15] | Restricts data sourcing and sharing, necessitates anonymization and secure storage protocols. |

| Legal Restrictions | Admissibility standards (e.g., Daubert); Legal authority for data collection (warrants, subpoenas); ISO 21043 forensic standards [16] [15] [9] | Dictates the methodologies for data acquisition and evidence handling to ensure judicial acceptance. |

Protocol for Developing a Forensically Relevant Text Dataset

This protocol outlines a standardized methodology for the collection, validation, and documentation of textual data intended for forensic comparison research, ensuring scientific rigor and compliance with legal and privacy norms.

Phase 1: Project Definition and Legal Scoping

Objective: Define the dataset's scope and establish a legally compliant foundation for data collection.

- Define Casework Conditions: Formally specify the relevant conditions the dataset must reflect. This includes:

- Modality: Scanned paper documents vs. born-digital documents (e.g., from tablets) [13].

- Topic Variation: Explicitly plan for and document topic mismatches between known and questioned text samples [6].

- Text Type: Define the genres and formats (e.g., formal letters, social media messages, handwritten notes).

- Legal Compliance Review:

- Identify all applicable privacy laws (e.g., GDPR, CCPA) based on the data source and jurisdiction [14] [15].

- Determine the lawful basis for data collection (e.g., explicit participant consent, court order, subpoena). For public data, review terms of service.

- Consult with legal experts to ensure the data collection and usage plan is court-defensible.

Phase 2: Data Acquisition and Preservation

Objective: Collect data using forensically sound methods that preserve integrity and chain of custody.

- Source Data Collection:

- Cloud/Server Data: For data from services like email or social media, issue legal processes (e.g., subpoenas) to the service provider to obtain data bundles. Avoid relying solely on screenshots [17].

- Device Data: Perform a

forensic acquisitionof the physical device (e.g., smartphone, computer) using specialized tools (e.g., Autopsy, FTK) to create a bit-for-bit copy. This captures deleted items and metadata and allows for integrity verification viacryptographic hash values(e.g., SHA-256) [9] [18] [17]. - Manual Examination (If acquisition is impossible): If a forensic acquisition is not feasible (e.g., with a witness's device), a manual examination may be conducted with strict documentation:

- Obtain written consent from the device owner.

- Perform a continuous video recording of the entire process, from power-on to navigating to and photographing the relevant messages.

- Photograph the device, messages, and associated metadata [18].

- Preservation of Integrity:
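As one illustration of integrity preservation, the hash check mentioned under Device Data can be sketched with Python's standard library. The function names `sha256_of_file` and `verify_image` are hypothetical, and this is a minimal sketch that assumes the acquired image is an ordinary file on disk; it is not a substitute for the verification features of a dedicated forensic tool.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, reading it in fixed-size chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Chunked reading keeps memory use flat for multi-gigabyte images.
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def verify_image(path, recorded_digest):
    """Compare a freshly computed digest with the one recorded at acquisition."""
    return sha256_of_file(path) == recorded_digest.lower()
```

Recording the digest at acquisition time and re-running `verify_image` before each analysis session gives a simple, documentable check that the working copy has not changed.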

Phase 3: Data Preparation and Validation

Objective: Process the raw data into a structured, research-ready dataset and validate its quality and representativeness.

- Anonymization and Redaction:

- Remove or redact all direct personal identifiers (names, addresses, phone numbers) and sensitive personal information to comply with privacy laws [14].

- Document all redaction procedures performed.

- Structuring and Annotation:

- Structure the data into pairs (questioned vs. known samples) with binary labels indicating whether they originate from the same author [13].

- Annotate the data with metadata detailing the casework conditions, such as modality, topic, and writing instrument.

- Empirical Validation:

- Design validation experiments that test the dataset's utility. This involves using statistical models to calculate Likelihood Ratios (LRs) and evaluating system performance using metrics like the log-likelihood-ratio cost (Cllr) [6] [19].

- The validation must demonstrate that the dataset can be used to develop methods that are calibrated and validated under casework conditions [16].
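The Cllr metric named in the validation step can be computed directly from two lists of LRs. The sketch below uses the standard definition, Cllr = 0.5 × (mean over same-author pairs of log2(1 + 1/LR) + mean over different-author pairs of log2(1 + LR)). The function name `cllr` and the plain-list inputs are assumptions made for illustration.

```python
import math


def cllr(same_author_lrs, different_author_lrs):
    """Log-likelihood-ratio cost for a set of validation comparisons.

    Both arguments are lists of likelihood ratios (not logs), all > 0.
    """
    # Same-author pairs are penalised when the LR is small.
    sa_cost = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    # Different-author pairs are penalised when the LR is large.
    da_cost = sum(math.log2(1 + lr) for lr in different_author_lrs) / len(different_author_lrs)
    return 0.5 * (sa_cost + da_cost)
```

A system that always reports LR = 1 (no information) scores exactly 1, so values well below 1 indicate that the LRs carry useful, reasonably calibrated information.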

Dataset Development Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Forensic Dataset Development

| Tool / Material | Function in Research |

|---|---|

| Cryptographic Hash Algorithms (SHA-256, MD5) | Provides a digital fingerprint for data, verifying the integrity of the forensic image and proving it has not been altered since collection [9] [17]. |

| Open-Source Forensic Tools (Autopsy, Sleuth Kit) | Cost-effective software for creating forensic acquisitions of digital devices. Their reliability for evidence admissibility is strengthened when used within a validated framework [9]. |

| Write-Blocking Hardware | A physical device that allows a computer to read data from a storage drive (e.g., HDD) without any possibility of writing to it, preserving evidence integrity [17]. |

| Likelihood Ratio (LR) Framework | The logically correct framework for interpreting forensic evidence strength. It quantifies the probability of the evidence under two competing hypotheses (same source vs. different sources) [6] [16] [19]. |

| Validation Metrics (Cllr, Tippett Plots) | Used to empirically validate the performance of a forensic method. Cllr measures the overall accuracy of the LR system, while Tippett plots visualize the distribution of LRs for same-source and different-source comparisons [6] [19]. |

| ISO 21043 Forensic Standard | An international standard providing requirements and recommendations to ensure the quality of the entire forensic process, from vocabulary and recovery to interpretation and reporting [16]. |

| Daubert Standard Criteria | A legal test used to assess the admissibility of expert scientific testimony. Guides researchers to ensure their methods are testable, peer-reviewed, have known error rates, and are generally accepted [9]. |

Modern Methods for Building Forensic Text Comparison Datasets

Leveraging Large Language Models (LLMs) for Synthetic Data Generation

The field of digital forensics faces a significant challenge: a scarcity of realistic, publicly available datasets for training and evaluating analytical tools due to stringent privacy regulations, legal restrictions, and the inherently sensitive nature of forensic evidence [4]. This data scarcity hampers the development of robust forensic tools and limits research reproducibility, particularly in specialized sub-fields like forensic text comparison [4]. Synthetic data generation using Large Language Models (LLMs) presents a transformative solution to this bottleneck. By leveraging LLMs to create artificial datasets that preserve the linguistic and structural properties of authentic forensic data, researchers can generate the large-scale, diverse training and testing resources necessary for advancing forensic text comparison research without relying on sensitive real-world evidence [4].

Core Methodologies for LLM-Driven Synthetic Data Generation

Several methodological paradigms have emerged for generating high-quality synthetic data using LLMs. The table below summarizes the primary approaches, their mechanisms, and relevant applications in forensic contexts.

Table 1: Core Methodologies for LLM-Driven Synthetic Data Generation

| Method | Mechanism | Key Features | Representative Techniques | Relevance to Forensic Text Analysis |

|---|---|---|---|---|

| Prompt-Based Generation [20] [21] | Uses carefully crafted instructions to guide a pre-trained LLM to generate specific data types. | Highly accessible; leverages model's inherent knowledge; requires meticulous prompt engineering. | Direct prompting, few-shot examples. | Generating synthetic suspect statements, forensic reports, or phishing emails with specified stylistic attributes. |

| Data Evolution [20] | Iteratively enhances simple seed queries into more complex and diverse instructions. | Systematically increases complexity and diversity; mimics realistic data variation. | In-depth evolving, in-breadth evolving, elimination evolving. | Creating complex forensic query pairs for text comparison from basic templates. |

| Self-Improvement [20] | A model generates data iteratively from its own output without external dependencies. | Enables model alignment without external models; risk of amplifying biases. | Self-Instruct, STaR (Self-Taught Reasoner) [22]. | Refining a model's capability to generate forensic linguistic patterns internally. |

| Distillation [20] | A stronger, often larger, model generates synthetic data to train or evaluate a weaker model. | Achieves higher data quality; limited only by the best available model. | Symbolic Knowledge Distillation [22]. | Transferring forensic analysis expertise from a powerful, general-purpose LLM to a smaller, specialized model. |

| Retrieval-Augmented Generation (RAG) [23] | Grounds the LLM's generation process by retrieving relevant information from a knowledge base before synthesis. | Enhances factual consistency and traceability; reduces hallucination. | Vector database integration, context-aware generation. | Ensuring synthetic forensic texts are grounded in real-world legal or procedural contexts. |

Application Notes and Protocols for Forensic Text Comparison

The following section outlines specific protocols and workflows for generating synthetic datasets tailored to forensic text comparison research.

Protocol 1: Generating a Synthetic Forensic Q&A Dataset

This protocol, inspired by the creation of the ForensicsData dataset, details the generation of Question-Context-Answer (Q-C-A) triplets for evaluating forensic text analysis capabilities [4].

Workflow Overview:

Detailed Procedure:

Data Collection and Preprocessing:

- Source: Collect raw text data from relevant forensic sources. For malware analysis, this could be execution reports from platforms like ANY.RUN [4]. For text comparison, this could be a corpus of genuine forensic texts (e.g., transcribed interviews, police reports) where privacy-permitting.

- Preprocessing: Clean the source data (e.g., remove excess whitespace, standardize formats). For document-based generation, split documents into coherent chunks using a token splitter, considering chunk size and overlap to mirror the application's retriever logic [20].
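The chunking step can be illustrated with a small overlap-aware splitter. This is a minimal sketch that treats whitespace-separated words as tokens, which is an assumption; a production pipeline would normally use the tokenizer of the target model, and the name `chunk_tokens` and its default sizes are hypothetical.

```python
def chunk_tokens(text, chunk_size=200, overlap=50):
    """Split text into chunks of at most chunk_size tokens, each sharing
    `overlap` tokens with the previous chunk."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    tokens = text.split()  # whitespace tokens stand in for model tokens
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):
            break  # the last chunk already reaches the end of the text
    return chunks
```

Larger overlaps reduce the chance that an answer span is cut at a chunk boundary, at the cost of more near-duplicate context for the later deduplication stage.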

Structured Data Extraction:

- Programmatically extract structured elements from the preprocessed reports. This includes metadata (e.g., author demographics, document type) and key textual entities (e.g., named entities, specific forensic terminology, psychological markers).

LLM-Driven Q-C-A Synthesis:

- Model Selection: Choose a state-of-the-art LLM suitable for the task. Evaluations suggest models like Gemini 2 Flash have demonstrated strong performance in aligning with forensic terminology [4].

- Prompt Engineering: Design a system prompt that instructs the LLM to generate Q-C-A triplets based on the provided structured data and context chunks. The prompt should specify:

- Question Generation: To create questions that a forensic analyst might ask about the text.

- Context Sourcing: To clearly identify the text span from the source data that contains the answer.

- Answer Formulation: To provide a precise, context-grounded answer.

- Execution: Process the extracted data through the LLM in a parallelized, automated pipeline to generate a large pool of candidate Q-C-A triplets [4].

Multi-Stage Quality Validation:

- Format Validation: Automatically check that all triplets adhere to the required Q-C-A structure.

- Semantic Deduplication: Remove triplets that are semantically redundant to ensure dataset diversity [4].

- LLM-as-a-Judge: Use a separate, powerful LLM to score the quality of the triplets based on criteria such as clarity, consistency, relevance, and factual correctness against the source context [20] [4].

- Expert Review (Optional but Recommended): Have domain experts (e.g., forensic linguists) review a subset of the data to validate its forensic relevance and accuracy.
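The first two validation stages above lend themselves to a short automated filter. In the sketch below, the dictionary keys `question`, `context`, and `answer` are an assumed representation of a Q-C-A triplet, and token-level Jaccard overlap is used as a simple lexical stand-in for semantic similarity; a real pipeline would more likely compare sentence embeddings.

```python
import re

REQUIRED_KEYS = ("question", "context", "answer")


def is_well_formed(triplet):
    """Format validation: every field is present and a non-empty string."""
    return all(
        isinstance(triplet.get(key), str) and triplet[key].strip()
        for key in REQUIRED_KEYS
    )


def jaccard(a, b):
    """Token-set overlap between two strings (lexical proxy for similarity)."""
    ta = set(re.findall(r"[a-z0-9]+", a.lower()))
    tb = set(re.findall(r"[a-z0-9]+", b.lower()))
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def filter_triplets(triplets, threshold=0.8):
    """Drop malformed triplets, then drop near-duplicate questions."""
    kept = []
    for triplet in triplets:
        if not is_well_formed(triplet):
            continue
        if any(jaccard(triplet["question"], k["question"]) >= threshold for k in kept):
            continue
        kept.append(triplet)
    return kept
```

The threshold of 0.8 is an arbitrary starting point; it should be tuned against a manually reviewed sample before being applied to the full candidate pool.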

Protocol 2: Evolving Simple Texts for Complex Psycholinguistic Analysis

This protocol uses data evolution techniques to create complex textual data for analyzing psycholinguistic features like deception and emotion, which are central to forensic text comparison [20] [24].

Workflow Overview:

Detailed Procedure:

Seed Collection:

- Start with a small set of simple, human-created textual statements or queries. For a fictional crime scenario, this could be basic character statements or simple Q&A pairs [24].

In-Depth Evolution:

- Apply evolution techniques to make each seed instruction more complex. The LLM is prompted to:

- Complicate the input by adding constraints or multi-step reasoning requirements.

- Increase the need for reasoning, for example, by asking for justification.

- Incorporate deeper psycholinguistic features. For instance, evolve a straightforward alibi statement into one that contains subtle cues of deception, emotional distress, or subjective narration [20] [24].

- Example Evolution:

- Seed: "The suspect said he was at home all night."

- Evolved: "Generate a first-person alibi statement from a suspect who was at home all night but is experiencing high levels of fear and neutrality, and incorporates subtle deceptive elements regarding their interaction with a household object." [24]


In-Breadth Evolution:

- From a single seed instruction, generate new yet related instructions to increase the diversity of the dataset. This creates multiple variations on a theme, covering different aspects of forensic text analysis [20].

Elimination Evolving:

- Filter the evolved dataset to remove instructions that are low-quality, failed, or do not meet the complexity and relevance criteria for forensic text comparison [20].

Styling and Formatting:

- Ensure the final evolved texts are styled appropriately for the target application. This may involve structuring the output in a specific JSON format for automated analysis or ensuring the text mimics the narrative style of a police interview transcript [20].

Quality Assurance and Validation Framework

Generating synthetic data for forensic research demands rigorous quality control to ensure the data's utility and reliability. The following table outlines a multi-faceted validation framework.

Table 2: Synthetic Data Quality Assurance and Validation Framework

| Validation Stage | Technique | Description | Key Metrics/Outcomes |

|---|---|---|---|

| Context Filtering [20] | LLM-as-Judge | Uses an LLM to evaluate and filter out low-quality context chunks (e.g., unintelligible, poorly structured) before synthetic input generation. | Clarity, Depth, Structure, Relevance, Precision, Novelty, Conciseness, Impact. |

| Input Filtering [20] | LLM-as-Judge | Evaluates the generated synthetic inputs (queries) based on specific criteria to ensure they are fit for purpose. | Self-containment, Clarity, Consistency, Relevance, Completeness. |

| Automated Validation [4] | Format & Semantic Checks | Applies automated checks for format correctness and semantic deduplication to remove redundant entries. | Format adherence, Diversity (low semantic similarity). |

| Expert Evaluation [4] | Human-in-the-Loop | Forensic domain experts assess a curated subset of the data for realism, relevance, and accuracy. | Forensic relevance, Realism, Ground-truth alignment. |

| Performance Benchmarking [25] | Model Fine-Tuning & Testing | The synthetic dataset is used to fine-tune a model (e.g., a specialized ForensicLLM), and performance is quantitatively evaluated against a baseline. | Attribution accuracy (e.g., 86.6% [25]), Correctness, Relevance (via user surveys). |

The Scientist's Toolkit: Essential Research Reagents and Solutions

This section catalogs key tools, models, and datasets essential for implementing the aforementioned protocols in a forensic text comparison research context.

Table 3: Essential Research Reagents and Solutions for Forensic Synthetic Data Generation

| Item | Type | Function/Description | Example Instances |

|---|---|---|---|

| Base LLM | Model | A powerful, general-purpose model used for data generation and distillation. | GPT-4, Claude, Gemini 2 Flash [4], LLaMA series [25]. |

| Specialized Forensic LLM | Model | A fine-tuned model designed for digital forensics, used as a benchmark or for data annotation. | ForensicLLM (a fine-tuned LLaMA model) [25]. |

| Evaluation Framework | Software | An open-source framework to facilitate the generation and evaluation of synthetic data and LLM outputs. | DeepEval's Synthesizer [20]. |

| Forensic Dataset | Data | A publicly available, structured dataset for training and benchmarking models in forensic applications. | ForensicsData (5,000+ Q-C-A triplets from malware reports) [4]. |

| Vector Database | Infrastructure | Enables semantic search and Retrieval-Augmented Generation (RAG) by storing data as numerical vectors, ensuring generated content is grounded in a knowledge base. | Chroma, Pinecone, Weaviate [23]. |

| Fine-Tuning Library | Software | Provides efficient methods to adapt general LLMs to forensic terminology and tasks, reducing computational cost. | LoRA (Low-Rank Adaptation), QLoRA [23]. |

| Psycholinguistic Analysis Library | Software | Provides tools for extracting features relevant to forensic text comparison, such as deception and emotion. | Empath (for deception over time analysis) [24], LIWC (Linguistic Inquiry and Word Count). |

| Forensic Text Corpus | Data | A foundational collection of genuine forensic texts (e.g., interviews, reports) used as a source for context or for seed generation. | (Researcher must assemble, subject to privacy constraints). |

The Question-Context-Answer (Q-C-A) format provides a structured framework for developing forensic text comparison data sets. This methodology addresses the critical need for empirical validation in forensic science, which requires replicating case-specific conditions and using relevant data [6]. The Q-C-A structure ensures transparent documentation of the investigative process, from initial inquiry through analytical context to interpretative conclusions, facilitating scientifically defensible and demonstrably reliable forensic text analysis.

Quantitative Data Framework

WCAG Color Contrast Requirements for Data Visualization

The following table summarizes the minimum contrast ratios required for accessible data visualization, ensuring information is perceivable to all researchers and end-users of forensic data sets.

Table 1: WCAG Contrast Requirements for Visual Elements

| Element Type | Contrast Ratio (Enhanced) | Size & Weight Specifications | Application in Forensic Visualization |

|---|---|---|---|

| Normal Text | 7:1 [26] | Less than 18pt/24px or 14pt/19px bold [27] | Labels, annotations, detailed analysis text |

| Large Text | 4.5:1 [26] | At least 18pt/24px or 14pt/19px bold [27] | Headers, titles, highlighted findings |

| User Interface Components | 3:1 [28] | Graphical objects, charts, diagrams [28] | Timelines, network graphs, evidence boards |

| Logos, Brand Names | Exempt [26] | Decorative or non-informative | Institutional branding on reports |

Forensic Text Comparison Metrics

Table 2: Quantitative Metrics for Forensic Text Validation

| Metric | Application in Q-C-A Framework | Target Threshold | Data Relevance Requirement |

|---|---|---|---|

| Likelihood Ratio (LR) | Strength of evidence evaluation [6] | LR > 1 supports prosecution hypothesis; LR < 1 supports defense hypothesis [6] | Must reflect case conditions |

| Magic Number (Color Grade Difference) | Accessible data visualization [28] | 50+ for AA contrast; 70+ for AAA contrast [28] | Ensures readability for all users |

| Text Size Validation | Determining contrast requirements [29] | Large text starts at 24px (18pt), or at 18.66px (14pt) when bold [29] | Accurate measurement of visual presentation |

| Log-Likelihood-Ratio Cost | Performance assessment of FTC systems [6] | Lower values indicate better performance [6] | Requires relevant reference data |

Experimental Protocols

Protocol: Implementing Q-C-A Framework for Forensic Text Comparison

Question Formulation Phase

- Define Competing Hypotheses: Establish prosecution hypothesis (Hp) that source-questioned and source-known documents share authorship, and defense hypothesis (Hd) that they originate from different authors [6].

- Identify Case Conditions: Document specific mismatches between documents (topics, genres, registers) that must be reflected in validation experiments [6].

- Establish Prior Odds: Recognize that prior belief of trier-of-fact forms before new evidence presentation, though quantification falls outside forensic scientist's role [6].

Context Documentation Phase

- Data Relevance Assessment: Select reference data that matches the specific conditions of the case under investigation, including topic domains, writing contexts, and demographic factors [6].

- Text Feature Extraction: Apply quantitative measurements of linguistic features including lexical, syntactic, and structural properties that constitute authorial "idiolect" [6].

- Cross-Topic Validation: Implement specific validation for topic mismatches, known to be challenging for authorship attribution algorithms [6].

Answer Derivation Phase

- Likelihood Ratio Calculation: Compute LR using statistical models (e.g., Dirichlet-multinomial model followed by logistic-regression calibration) [6].

- Performance Assessment: Evaluate derived LRs using log-likelihood-ratio cost and visualize using Tippett plots [6].

- Interpretation Framework: Present LR as quantitative statement of evidence strength without computing posterior odds, which would encroach on ultimate issue of guilt [6].

Protocol: Accessible Visualization for Forensic Data Presentation

Color Selection Process

- Magic Number Application: Calculate difference between color grades (e.g., gray-90 background with grade 40 or below text ensures AA contrast) [28].

- Relative Luminance Verification: Consult luminance ranges for specific grades to ensure WCAG contrast compliance [28].

- Color Deficiency Consideration: Avoid color-exclusive meaning conveyance, as approximately 4.5% of population has color insensitivity [28].
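Where a grade-based color system is not available, the contrast ratio can be checked numerically from the WCAG relative-luminance formula. The sketch below assumes six-digit hexadecimal sRGB colors; the function names are hypothetical, and the `meets_enhanced` thresholds follow the enhanced ratios listed in the contrast table above (7:1 for normal text, 4.5:1 for large text).

```python
def _linear(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """WCAG relative luminance of a colour given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colours, from 1 (identical) to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_enhanced(foreground, background, large_text=False):
    """Check the enhanced thresholds: 7:1 normal text, 4.5:1 large text."""
    return contrast_ratio(foreground, background) >= (4.5 if large_text else 7.0)
```

For example, `meets_enhanced("#000000", "#ffffff")` holds for any text size, while a mid-grey such as `#767676` on white passes only the large-text threshold.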

Timeline Construction for Digital Forensic Analysis

- Initial Event Anchoring: Begin with point of known compromise and fill in data chronologically before and after event [30].

- Multi-Thread Correlation: Create separate, color-coded timelines for different connection types, file activities, or user actions [30].

- Temporal Analysis: Correlate events across timelines to identify patterns, causality, and attacker intent [30].

Visualization Schematics

Q-C-A Framework Implementation Workflow

Forensic Text Comparison Validation Methodology

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Forensic Text Comparison Research

| Research Reagent | Function in Q-C-A Framework | Application Specification |

|---|---|---|

| Likelihood Ratio Framework | Quantitative evidence evaluation [6] | Calculates probability of evidence under competing hypotheses; prevents ultimate issue encroachment |

| Dirichlet-Multinomial Model | Statistical analysis of text features [6] | Processes quantitative measurements of linguistic properties for authorship attribution |

| Logistic Regression Calibration | Model performance optimization [6] | Adjusts derived likelihood ratios to improve accuracy and reliability |

| Color Grade System | Accessible data visualization [28] | Ensures WCAG compliance through magic number application (50+ for AA contrast) |

| Timelining Methodology | Visual correlation of digital events [30] | Maps chronological relationships in forensic data using visuospatial sketchpad principles |

| Tippett Plots | Visualization of LR performance [6] | Assesses calculated likelihood ratios across multiple validation trials |

| Contrast Color Function | Automated accessibility compliance [31] | CSS function returning white or black for maximum contrast with input color |

| Visuospatial Sketchpad Techniques | Enhanced cognitive processing [30] | Leverages human visual learning for pattern recognition in complex data sets |

The reliability of any forensic text comparison (FTC) study is fundamentally dependent on the quality and relevance of its underlying data sets. Developing robust, forensically realistic datasets is a critical prerequisite for the empirical validation that the field now demands [6]. This document provides detailed Application Notes and Protocols for sourcing and curating data from two prevalent modern domains: cybersecurity malware reports and social media platforms. The procedures outlined herein are designed to support the development of data sets for FTC research that meet the dual requirements of reflecting real-world case conditions and utilizing relevant data, thereby ensuring the scientific defensibility of the analysis [6].

Data Presentation: Malware and Social Media Landscapes

To inform data collection strategies, it is essential to first understand the current quantitative landscape of these domains. The following tables summarize key metrics and trends from 2025.

Table 1: Q3 2025 Open Source Malware Ecosystem Metrics [32]

| Metric | Value | Trend & Implication |

|---|---|---|

| New Malware Packages | 34,319 (Q3) | 140% increase from Q2; indicates rapidly accelerating threat volume. |

| Total Malware Packages | >877,000 | Cumulative threat environment is vast and requires filtering. |

| Most Common Threat Type | Data Exfiltration (37%) | Shift toward intelligence-gathering and data monetization. |

| Fastest-Growing Threat Type | Droppers (38% of Q3 threats) | 2,887% increase; signifies rise in multi-stage, modular attacks. |

| Notable Incident: Package Hijack | chalk, debug (npm) | Impact on projects with >2B weekly downloads; highlights software supply chain risk. |

| Notable Incident: Self-Replicating Malware | Shai-Hulud worm (npm) | First-of-its-kind; compromised >500 components; demonstrates automated propagation. |

Table 2: 2025 Social Media Trends Relevant for Data Sourcing [33]

| Trend Category | Key Statistic | Implication for FTC Data Collection |

|---|---|---|

| Content Experimentation | >60% of social content aims to entertain, educate, or inform. | Data will contain diverse communicative purposes beyond promotion. |

| Brand Persona Shifts | 80-100% of content is entertainment-driven for 25% of organizations. | Authorial style (e.g., corporate brands) may vary significantly from other channels. |

| Outbound Engagement | 41% of organizations test proactive engagements (e.g., commenting on creators' posts). | Creates rich, interactive text for analyzing conversational style and response patterns. |

| AI-Generated Content | 69% of marketers see AI as revolutionary, with high adoption for content creation. | Introduces a new variable: machine-generated text that may mimic human authorship. |

Experimental Protocols for Data Sourcing and Curation

Protocol: Sourcing Malware Reports and Threat Intelligence

Objective: To collect a comprehensive corpus of malware-related text from trusted sources for analyzing the writing styles of threat actors and security researchers.

Materials:

- Computer with internet access

- Web scraping tool (e.g., Python requests/BeautifulSoup, Scrapy) or API clients

- Secure storage (e.g., encrypted drive or server)

- Data organization software (e.g., spreadsheet or database)

Methodology:

- Source Identification and Selection:

- Identify and vet high-authority sources such as security vendor blogs (e.g., Bitsight [34], Sonatype [32]), official cybersecurity advisories (e.g., CISA), and curated threat intelligence platforms.

- Prioritize sources that provide detailed technical analysis, Indicators of Compromise (IoCs), and excerpts from dark web forums.

Data Collection:

- For web-based sources, use a web scraper to extract the textual content from relevant reports and blog posts. Configure the scraper to respect robots.txt and implement polite crawling delays.

- Where available, use official APIs (e.g., Twitter API for threat intelligence feeds) for more efficient and structured data collection.

- For each collected text, record essential metadata in a structured format (e.g., CSV, JSON). This must include:
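For the scraper configuration step, the robots.txt check and crawl delay can be sketched with the standard library's `urllib.robotparser`. The user-agent string `ftc-research-bot`, the two-second default delay, and the function names are assumptions for illustration. The sketch parses robots.txt text that has already been downloaded, so it performs no network access itself.

```python
from urllib.robotparser import RobotFileParser


def build_policy(robots_txt):
    """Parse the text of a robots.txt file into a reusable policy object."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def plan_fetches(parser, urls, user_agent="ftc-research-bot", default_delay=2.0):
    """Return (url, delay_seconds) for every URL the policy allows.

    The site's Crawl-delay is used when declared; otherwise default_delay.
    """
    delay = parser.crawl_delay(user_agent) or default_delay
    return [(url, float(delay)) for url in urls if parser.can_fetch(user_agent, url)]
```

A collection loop would then fetch each planned URL in turn and call `time.sleep(delay)` between requests, logging skipped URLs so the exclusion is documented.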

Data Sanitization:

- Remove all HTML/XML tags, extraneous JavaScript, and CSS.

- Identify and redact or remove any Personally Identifiable Information (PII) inadvertently present in the texts.

- Normalize text encoding to UTF-8 to ensure consistency.
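The three sanitization steps can be combined into one function, as in the sketch below. It uses only the standard library, handles e-mail addresses as the single PII example (a real project would need a broader, reviewed set of patterns), and the names `sanitize` and `[REDACTED_EMAIL]` are hypothetical. Note that it collapses whitespace, which may itself remove stylistic information, so that step should be a deliberate choice.

```python
import re
import unicodedata
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect visible text, skipping the contents of script and style tags."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def sanitize(raw_bytes, encoding="utf-8"):
    """Decode, strip markup, redact e-mail addresses, and tidy whitespace."""
    text = raw_bytes.decode(encoding, errors="replace")
    extractor = _TextExtractor()
    extractor.feed(text)
    extractor.close()
    plain = unicodedata.normalize("NFC", "".join(extractor.parts))
    plain = EMAIL.sub("[REDACTED_EMAIL]", plain)
    return re.sub(r"\s+", " ", plain).strip()
```

The function returns a Python string; writing it out with `encoding="utf-8"` completes the normalization to UTF-8.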

Protocol: Curating Social Media Corpora

Objective: To build a dataset of social media texts suitable for studying authorial variation across platforms, topics, and time.

Materials:

- Computer with internet access

- Social media API access (e.g., X, Reddit, Meta)

- Social listening or data aggregation tools (e.g., Hootsuite [33])

- Data organization software

Methodology:

- Research Question Formulation:

- Define the specific FTC variable to be studied. This will dictate the collection parameters. Examples include:

- Topic Mismatch: Collecting posts from the same author on different topics.

- Platform-induced Style Shift: Collecting content from the same author on different platforms (e.g., X vs. Threads).

- AI vs. Human Authored Text: Collecting posts identified as AI-generated and those claimed to be human-written [33].


Stratified Data Collection:

- Use platform APIs to collect public posts based on predefined strata:

- Author Strata: Collect multiple posts from a set of identified authors.

- Topic Strata: Use keywords and hashtags to collect posts about specific topics (e.g., "malware," "content curation").

- Platform Strata: Collect data from multiple platforms to compare linguistic features.

- Temporal Strata: Collect data over time to analyze stylistic evolution.


Metadata Annotation:

- Annotate each post with rich metadata, which is critical for subsequent experimental control [6]:

- Author_ID

- Platform

- Timestamp

- Topic_Category

- Post_Type (e.g., original, reply, quote)

- Engagement_Metrics (e.g., likes, shares)

- AI_Flag (if determinable)
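The metadata fields for each post can be captured in a small typed record so that every entry in the corpus carries the same schema. The sketch below is one possible layout: the lower-case field names, the added `text` field, and the JSON Lines output are assumptions, not a prescribed format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Optional

POST_TYPES = {"original", "reply", "quote"}


@dataclass
class PostRecord:
    author_id: str
    platform: str
    timestamp: str          # ISO 8601 string, e.g. "2025-01-01T00:00:00Z"
    topic_category: str
    post_type: str          # one of POST_TYPES
    text: str
    engagement_metrics: dict = field(default_factory=dict)
    ai_flag: Optional[bool] = None  # None when authorship type is not determinable

    def __post_init__(self):
        if self.post_type not in POST_TYPES:
            raise ValueError(f"unknown post_type: {self.post_type!r}")


def to_json_line(record):
    """Serialise one record as a single JSON line with stable key order."""
    return json.dumps(asdict(record), ensure_ascii=False, sort_keys=True)
```

Keeping `ai_flag` as an explicit `None` when unknown, instead of defaulting to `False`, avoids silently labeling unverified posts as human-written.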

Protocol: Forensic Text Comparison Validation Experiment

Objective: To empirically validate an FTC methodology using a sourced and curated dataset, specifically testing its performance under a condition like topic mismatch.

Materials:

- Curated text corpus with author and topic annotations.

- Statistical software (e.g., R, Python with scikit-learn).

- Computational model for text comparison (e.g., Dirichlet-multinomial model [6]).

Methodology:

- Define Hypotheses and Conditions:

- Prosecution Hypothesis (Hp): The questioned and known documents were written by the same author.

- Defense Hypothesis (Hd): The questioned and known documents were written by different authors [6].

- Define the specific "condition" to test, e.g., "topic mismatch between known and questioned documents."

Create Experimental Pairs:

- Same-Author (SA) Pairs: Create document pairs where the same author writes two documents on different topics. This reflects the casework condition.

- Different-Author (DA) Pairs: Create document pairs where two different authors write documents on different topics.
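Pair construction under the topic-mismatch condition can be sketched as follows. The document representation (dictionaries with `doc_id`, `author`, and `topic` keys) and the function name are assumptions for illustration.

```python
from itertools import combinations


def build_cross_topic_pairs(documents):
    """Return (doc_id_a, doc_id_b, label) for every topic-mismatched pair.

    label is "SA" when both documents share an author and "DA" otherwise.
    """
    pairs = []
    for a, b in combinations(documents, 2):
        if a["topic"] == b["topic"]:
            continue  # same-topic pairs do not reflect the condition under test
        label = "SA" if a["author"] == b["author"] else "DA"
        pairs.append((a["doc_id"], b["doc_id"], label))
    return pairs
```

Because DA pairs grow much faster than SA pairs as authors are added, it is usually worth reporting both counts and, where needed, sampling DA pairs so that results are not dominated by one class.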

Feature Extraction & Likelihood Ratio (LR) Calculation:

- Extract quantitative features from the text pairs (e.g., character n-grams, syntactic features).

- For each text pair, calculate a Likelihood Ratio (LR) using a pre-defined statistical model [6]. The LR is: LR = p(E|Hp) / p(E|Hd) where E is the evidence (the textual features).
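To make the LR definition concrete, the sketch below computes a likelihood ratio from feature counts under a simplified Dirichlet-multinomial formulation. It assumes that, under Hp, both documents are drawn from one multinomial whose parameters have a Dirichlet prior and that, under Hd, each document has its own parameters drawn from the same prior. This is an illustrative formulation and not necessarily the exact model used in [6]; the function names are hypothetical, and in practice the prior would be estimated from relevant background data.

```python
import math


def _log_beta(alpha):
    """Log of the multivariate beta function B(alpha)."""
    return sum(math.lgamma(a) for a in alpha) - math.lgamma(sum(alpha))


def log10_lr(questioned_counts, known_counts, prior):
    """log10 of p(E|Hp) / p(E|Hd) for two count vectors of equal length.

    The multinomial coefficients cancel, leaving a ratio of beta functions:
    LR = B(prior + xq + xk) * B(prior) / (B(prior + xq) * B(prior + xk)).
    """
    post_q = [a + x for a, x in zip(prior, questioned_counts)]
    post_k = [a + x for a, x in zip(prior, known_counts)]
    post_both = [a + xq + xk for a, xq, xk in zip(prior, questioned_counts, known_counts)]
    log_lr = _log_beta(post_both) + _log_beta(prior) - _log_beta(post_q) - _log_beta(post_k)
    return log_lr / math.log(10)
```

The output is an uncalibrated score on the log10 LR scale; the calibration step that follows in the protocol is what turns such scores into LRs suitable for reporting.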

Validation and Performance Assessment:

- Use logistic regression calibration to refine the LRs [6].

- Assess the validity and performance of the LRs using metrics like the Log-Likelihood-Ratio Cost (Cllr), which penalizes both poor discrimination and poor calibration. Lower values are better, and a system that always outputs LR = 1 scores exactly 1.

- Visualize the results using Tippett plots, which show the cumulative proportion of LRs supporting the correct and incorrect hypotheses for both SA and DA pairs.
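The data behind a Tippett plot can be prepared without any plotting library. The sketch below returns, for each observed log10 LR value, the proportion of SA and DA comparisons whose LR is at least that large; the function name and the tuple layout are assumptions, and the resulting points can be passed to any plotting tool.

```python
import math


def tippett_points(same_author_lrs, different_author_lrs):
    """Return sorted (log10_lr, sa_proportion, da_proportion) tuples.

    Each proportion is the share of comparisons in that set whose
    log10 LR is greater than or equal to the threshold.
    """
    sa = [math.log10(v) for v in same_author_lrs]
    da = [math.log10(v) for v in different_author_lrs]
    points = []
    for threshold in sorted(set(sa + da)):
        sa_prop = sum(v >= threshold for v in sa) / len(sa)
        da_prop = sum(v >= threshold for v in da) / len(da)
        points.append((threshold, sa_prop, da_prop))
    return points
```

In a well-performing system the SA curve stays high until well to the right of log10 LR = 0, while the DA curve has already dropped close to zero by that point.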

Workflow Visualization

The following diagram illustrates the end-to-end process of data sourcing, curation, and experimental validation for FTC research.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for FTC Data Workflows

| Item/Reagent | Function in FTC Research |

|---|---|

| Web Scraping Framework (e.g., Scrapy, BeautifulSoup) | Automated collection of textual data from public websites and forums. |

| Social Media APIs (e.g., X, Reddit) | Programmatic, policy-compliant access to structured social media data. |

| Social Listening Tools (e.g., Hootsuite, Talkwalker) | Provides aggregated data and trend analysis across multiple social platforms [33]. |

| Statistical Software Environment (e.g., R, Python with NumPy/SciPy) | Platform for quantitative text measurement, statistical modeling, and LR calculation [6]. |

| Dirichlet-Multinomial Model | A specific statistical model used for calculating likelihood ratios from text count data (e.g., n-grams) [6]. |

| Logistic Regression Calibration | A method to calibrate the output scores of a model to produce well-calibrated Likelihood Ratios [6]. |

| Secure Data Storage (Encrypted Drives/Servers) | Ensures the integrity and confidentiality of collected text corpora. |

| Metadata Schema (Structured CSV/JSON templates) | Provides a consistent framework for annotating texts with author, topic, and platform data, which is critical for validation [6]. |

Within forensic text comparison (FTC) research, the empirical validation of methodologies requires replicating specific case conditions using forensically relevant data [6]. A significant challenge in real-world authorship analysis involves comparing documents with topic mismatches, where writing styles may vary substantially based on subject matter [6]. This case study details the construction of a specialized dataset designed specifically for cross-topic authorship verification, addressing a critical gap in forensic linguistics resources. Such datasets enable rigorous testing of authorship verification methods under conditions that mirror actual forensic challenges, where questioned and known documents often differ in thematic content.

The importance of this work extends to multiple domains where authorship verification is applied, including forensic investigations, academic integrity cases, journalism attribution, and social media analysis [35]. By providing a structured framework for dataset development with explicit documentation of topic variation, this resource supports the advancement of more robust and forensically valid authorship verification techniques.

Dataset Design and Composition

Core Design Principles

The dataset construction adheres to two fundamental requirements established for empirical validation in forensic science [6]:

- Reflecting case conditions: Explicitly modeling the topic mismatch scenario commonly encountered in casework

- Using relevant data: Incorporating authentic textual materials comparable to those examined in actual investigations

Topic mismatch represents one of the most challenging conditions in authorship analysis, as writing style often varies substantially across different subject matters [6]. The dataset systematically controls for this variable to enable testing method robustness under these adverse conditions.

Dataset Specifications

Table 1: Dataset composition and structure

| Component | Specification | Purpose |
|---|---|---|
| Authors | 100-150 individuals | Provides an author population large enough for statistically reliable performance estimates |
| Documents per Author | 4-6 documents minimum | Enables multiple cross-topic comparisons per author |
| Topic Categories | 5-8 distinct themes | Ensures substantial topical variation within and between authors |
| Text Length | 500-5000 words | Maintains practical forensic relevance while ensuring sufficient features |
| Genre | Single consistent genre (e.g., blogs, emails, academic abstracts) | Controls for genre as a confounding variable |
| Metadata | Author demographics, topic labels, collection dates | Supports controlled experiments and confounding factor analysis |
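The specifications in the table can be checked mechanically before any experiments are run. The following is a minimal sketch, not a prescribed tool: the record keys (`author_id`, `topic`, `text`), the function name `check_corpus`, and the requirement of at least two topics per author are assumptions made for illustration, and the thresholds are simply the lower bounds from the table.

```python
from collections import defaultdict

# Thresholds taken from the lower bounds in the composition table.
MIN_DOCS_PER_AUTHOR = 4
MIN_TOPICS_PER_AUTHOR = 2  # assumption: two topics are needed for any cross-topic pair
MIN_WORDS, MAX_WORDS = 500, 5000


def check_corpus(records):
    """Return a list of human-readable problems found in `records`.

    Each record is a dict with the hypothetical keys
    'author_id', 'topic' and 'text'.
    """
    problems = []
    docs = defaultdict(int)
    topics = defaultdict(set)
    for i, rec in enumerate(records):
        # Whitespace tokenization is a rough word count, good enough for a sanity check.
        n_words = len(rec["text"].split())
        if not MIN_WORDS <= n_words <= MAX_WORDS:
            problems.append(
                f"record {i}: {n_words} words is outside {MIN_WORDS}-{MAX_WORDS}"
            )
        docs[rec["author_id"]] += 1
        topics[rec["author_id"]].add(rec["topic"])
    for author in sorted(docs):
        if docs[author] < MIN_DOCS_PER_AUTHOR:
            problems.append(f"author {author}: only {docs[author]} document(s)")
        if len(topics[author]) < MIN_TOPICS_PER_AUTHOR:
            problems.append(f"author {author}: only {len(topics[author])} topic(s)")
    return problems
```

An empty return value means the corpus satisfies these minimal constraints; it says nothing about authorship verification quality, which still requires the manual checks described in the protocol.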

The dataset structure enables three primary authorship verification decision problems [35]:

- AV_Core: Determining whether two specific documents were written by the same author

- AV_Batch: Assessing whether two sets of documents share the same authorship

- AV_Known: Verifying if a disputed document was written by a specific candidate author based on their known writings

Experimental Protocol for Dataset Construction

Data Collection and Curation

The dataset construction follows a systematic workflow to ensure forensic relevance and methodological rigor:

Figure 1: Workflow for constructing a cross-topic authorship verification dataset.

Phase 1: Source Identification and Author Selection

- Identify appropriate text sources with verified authorship and substantial topical diversity

- Select 100-150 authors with multiple documents across different topics

- Ensure each author has at least 4-6 documents with explicit topic variation

- Balance author demographics (gender, age, expertise) where possible

Phase 2: Topic Categorization and Text Extraction

- Define 5-8 broad topic categories relevant to the text genre

- Manually annotate each document with primary and secondary topic labels

- Extract and clean text content, removing boilerplate and non-text elements

- Perform text normalization (lowercasing, punctuation standardization)

Phase 3: Quality Verification and Dataset Splitting

- Verify authorship attribution through multiple independent sources

- Ensure minimum text length requirements are met

- Create standardized training, validation, and test splits with no author overlap

- Document all processing steps and quality control measures
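The "no author overlap" requirement in Phase 3 is easiest to guarantee by splitting at the author level rather than the document level. The sketch below illustrates one way to do this; the `author_id` key, the function name `split_by_author`, and the 70/15/15 proportions are assumptions for illustration and are not specified by the protocol.

```python
import random


def split_by_author(records, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split records into train/validation/test so that no author spans two splits.

    `fractions` gives the approximate share of authors (not documents)
    assigned to train and validation; the remainder goes to test.
    """
    authors = sorted({rec["author_id"] for rec in records})
    random.Random(seed).shuffle(authors)  # seeded so the split is reproducible
    n_train = int(len(authors) * fractions[0])
    n_val = int(len(authors) * fractions[1])

    # Assign each author, and therefore all of their documents, to one split.
    assignment = {}
    for idx, author in enumerate(authors):
        if idx < n_train:
            assignment[author] = "train"
        elif idx < n_train + n_val:
            assignment[author] = "validation"
        else:
            assignment[author] = "test"

    splits = {"train": [], "validation": [], "test": []}
    for rec in records:
        splits[assignment[rec["author_id"]]].append(rec)
    return splits
```

Recording the seed alongside the released splits supports the documentation step above, since anyone can regenerate the same partition.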

Cross-Topic Pair Construction

For authorship verification tasks, the dataset systematically constructs positive and negative pairs with varying degrees of topic overlap:

- Positive pairs: Documents from the same author across different topics, plus same-topic pairs from the same author for the control condition

- Negative pairs: Documents from different authors with both matched and mismatched topics

This structure enables testing of verification methods under three conditions:

- Same topic, same author (control condition)

- Different topics, same author (cross-topic verification)

- Different topics, different authors (cross-topic impostor detection)
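The pair construction can be sketched as a simple enumeration that labels every document pair by authorship and topic relation. This is an illustrative sketch only: the keys `doc_id`, `author_id`, and `topic` and the function name `build_pairs` are assumptions, and a real dataset would normally subsample the far more numerous different-author pairs.

```python
from itertools import combinations


def build_pairs(records):
    """Group all document pairs by (same_author, same_topic).

    Returns a dict whose keys are (bool, bool) tuples and whose values are
    lists of (doc_id, doc_id) pairs. The three conditions listed above are
    (True, True), (True, False) and (False, False); (False, True) holds the
    topic-matched negative pairs.
    """
    pairs = {(a, t): [] for a in (True, False) for t in (True, False)}
    for d1, d2 in combinations(records, 2):
        same_author = d1["author_id"] == d2["author_id"]
        same_topic = d1["topic"] == d2["topic"]
        pairs[(same_author, same_topic)].append((d1["doc_id"], d2["doc_id"]))
    return pairs
```

Pairs should be built separately within each data split so that the author-disjoint partition is preserved.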

Validation Framework

Benchmark Experimental Protocol

The validation of authorship verification methods using the cross-topic dataset follows a standardized experimental protocol:

Figure 2: Experimental protocol for validating authorship verification methods.

Implementation Details:

- Data Partitioning: Strict separation of training, validation, and test sets with no author overlap

- Feature Extraction: Multiple feature types at different linguistic levels

- Model Training: Training on single-topic pairs, testing on cross-topic pairs

- Evaluation: Comprehensive metrics including accuracy, AUC, Cllr, and Tippett plots

Evaluation Metrics

Table 2: Evaluation metrics for authorship verification performance

| Metric | Calculation | Interpretation | Forensic Relevance |
|---|---|---|---|
| Area Under Curve (AUC) | Area under ROC curve | Overall discrimination ability | General method performance |
| Log-Likelihood Ratio Cost (Cllr) | $\frac{1}{2}\left[\frac{1}{N_{ss}}\sum_{i=1}^{N_{ss}}\log_2\left(1+\frac{1}{LR_i}\right)+\frac{1}{N_{ds}}\sum_{j=1}^{N_{ds}}\log_2\left(1+LR_j\right)\right]$, where the first sum runs over the $N_{ss}$ same-author comparisons and the second over the $N_{ds}$ different-author comparisons | Calibration quality (lower is better) | Reliability of likelihood ratios |
| Accuracy | $\frac{TP+TN}{TP+TN+FP+FN}$ | Overall correct decisions | Practical utility |
| Tippett Plot | Graphical representation of LR distributions | Method calibration | Forensic evidence interpretation |

The Cllr metric is particularly important in forensic applications as it assesses the reliability of likelihood ratios, which form the basis of forensic evidence evaluation under the likelihood ratio framework [6].
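As a worked illustration, Cllr averages log2(1 + 1/LR) over same-author comparisons and log2(1 + LR) over different-author comparisons, then halves the sum of the two averages. The sketch below assumes the likelihood ratios are already available as plain positive floats; the function name `cllr` and its argument names are chosen here for illustration.

```python
import math


def cllr(same_author_lrs, diff_author_lrs):
    """Log-likelihood-ratio cost for two lists of positive likelihood ratios.

    A system that always outputs LR = 1 (no information) scores exactly 1;
    lower values are better, and values above 1 indicate misleading LRs.
    """
    if not same_author_lrs or not diff_author_lrs:
        raise ValueError("both LR lists must be non-empty")
    # Penalty for same-author comparisons grows as the LR falls below 1.
    ss = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    # Penalty for different-author comparisons grows as the LR rises above 1.
    ds = sum(math.log2(1 + lr) for lr in diff_author_lrs) / len(diff_author_lrs)
    return 0.5 * (ss + ds)
```

For example, `cllr([1.0], [1.0])` returns 1.0, the uninformative baseline, while strongly supportive LRs in the correct direction push the value toward 0.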

The Scientist's Toolkit

Essential Research Reagents

Table 3: Key research reagents and computational tools for authorship verification research

| Tool/Resource | Type | Function | Application in Protocol |
|---|---|---|---|
| stylo R Package [36] | Software Library | Implements imposters method and stylometric analysis | Authorship verification using general imposters method |
| LambdaG Method [35] | Computational Method | Calculates likelihood ratio based on grammar models | Authorship verification using grammatical features |
| n-gram Language Models | Computational Method | Models language using contiguous character/word sequences | Feature extraction for stylistic analysis |
| LIWC (Linguistic Inquiry and Word Count) | Software Tool | Analyzes psychological processes in text | Psycholinguistic feature extraction |
| Empath Python Library [37] | Software Library | Analyzes text against lexical categories | Deception and emotion analysis in forensic texts |
| AIDBench Benchmark [38] | Evaluation Framework | Benchmarks authorship identification capabilities | Performance comparison across methods |
| ForensicsData Dataset [4] | Data Resource | Provides malware analysis reports in Q-C-A format | Training data for forensic question answering |

This case study presents a comprehensive framework for constructing cross-topic authorship verification datasets to advance forensic text comparison research. By systematically addressing the challenge of topic mismatch—a prevalent condition in real forensic cases—this approach enables more rigorous validation of authorship verification methods. The detailed protocols for dataset construction, experimental validation, and performance assessment provide researchers with standardized methodologies for developing forensically relevant resources.

The resulting datasets support the development of more robust authorship verification techniques that can withstand challenging cross-topic conditions, ultimately enhancing the scientific foundation of forensic text comparison. Future work will expand this framework to incorporate additional confounding factors such as genre variation, temporal evolution of writing style, and multi-author documents, further increasing the forensic relevance of the resources.

Navigating Challenges in Dataset Creation: Bias, Mismatch, and Ethics

Mitigating Algorithmic Bias and Ensuring Fairness in Training Data

Algorithmic bias refers to the systematic and repeatable errors that create unfair outcomes, such as privileging one arbitrary group of users over others. In forensic text comparison research, biased datasets can perpetuate and even amplify societal inequalities, leading to discriminatory outcomes and reduced validity of scientific conclusions. A landmark case is the COMPAS recidivism algorithm, which was found to disproportionately classify Black defendants as higher risk compared to White defendants, despite race not being an explicit input feature [39]. This bias stemmed from historical data that reflected existing societal disparities, which were then learned and perpetuated by the algorithm.

The sources of bias in training data are multifaceted. Systemic bias occurs when societal conditions and inequalities become embedded in datasets. Data collection and annotation bias arises during the processes of gathering or labeling data. Algorithm or system design bias originates from the choices made in developing the model architecture or objective functions [39]. A well-documented example of data collection bias is Amazon's AI recruiting tool, which penalized resumes containing the word "women's" because it was trained on historical hiring data dominated by male applicants [39] [40]. Similarly, the "Gender Shades" study exposed significant race and gender biases in commercial facial recognition software, with accuracy rates dropping to as low as 65.3% for darker-skinned women compared to over 99% for lighter-skinned men, due to training data heavily skewed toward lighter-skinned subjects [40].

Frameworks and Standards for Bias Mitigation

The IEEE 7003-2024 standard, "Standard for Algorithmic Bias Considerations," provides a comprehensive framework for addressing bias throughout the AI system lifecycle [41]. This landmark framework establishes processes to help organizations define, measure, and mitigate algorithmic bias while promoting transparency and accountability. The standard encourages an iterative, lifecycle-based approach that considers bias from initial system design through decommissioning [41].

Another foundational framework is the FAIR Principles, which provide guidelines to improve the Findability, Accessibility, Interoperability, and Reuse of digital assets [42]. These principles emphasize machine-actionability – the capacity of computational systems to find, access, interoperate, and reuse data with minimal human intervention – which is crucial for dealing with the increasing volume, complexity, and creation speed of data in forensic research [42].

For organizations implementing these standards, key steps include establishing a bias profile to document considerations throughout the system's lifecycle, identifying stakeholders early in development, ensuring data representation, monitoring for data drift and concept drift, and promoting accountability through clear documentation [41].

Experimental Protocols for Bias Assessment and Mitigation

Three-Stage Bias Intervention Framework

Table 1: Stages of Bias Intervention in Machine Learning

| Intervention Stage | Description | Key Techniques | Pros and Cons |
|---|---|---|---|
| Pre-processing | Adjusts data before model training | Resampling, reweighting, relabeling, feature selection [40] [43] | Pros: Addresses root causes. Cons: Data collection can be expensive/difficult [40] |
| In-processing | Modifies model training process | Prejudice removers, adversarial debiasing, fairness constraints [40] [43] | Pros: Provides theoretical fairness guarantees. Cons: Computationally intensive [40] |
| Post-processing | Adjusts model outputs after training | Threshold adjustment, reject option classification, calibration [40] [43] | Pros: Computationally efficient, works with black-box models. Cons: May require sensitive attribute information [40] |
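To make the pre-processing stage concrete, the sketch below shows one common reweighting scheme, in which each training sample receives the weight P(group) × P(label) / P(group, label) so that group membership and label become statistically independent in the weighted data. This is offered as an illustration of the general idea rather than the specific procedure used in the cited sources; the function name `reweighing_weights` and the example group and label values are assumptions.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label).

    `groups` holds a protected attribute value per sample (for example a
    dialect or demographic category) and `labels` holds the training label
    (for example same-author vs. different-author).
    """
    if len(groups) != len(labels):
        raise ValueError("groups and labels must have the same length")
    n = len(groups)
    g_count = Counter(groups)
    y_count = Counter(labels)
    gy_count = Counter(zip(groups, labels))
    # Over-represented (group, label) combinations get weights below 1,
    # under-represented combinations get weights above 1.
    return [
        (g_count[g] * y_count[y]) / (n * gy_count[(g, y)])
        for g, y in zip(groups, labels)
    ]
```

The resulting weights can be passed to any learner that accepts per-sample weights. Combinations that never occur in the data receive no weight at all, so reweighting cannot substitute for collecting data from missing subgroups.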

Protocol for Bias Evaluation in Forensic Text Comparison

Objective: To systematically evaluate and quantify algorithmic bias in forensic text comparison models across protected subgroups.

Materials and Dataset Requirements:

- Text Corpora: Representative samples of forensic text data (e.g., chat logs, written statements, transcribed interviews)