Building a Robust Risk Assessment Framework for Forensic Method Validation in Pharmaceutical Sciences


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to implementing a risk assessment framework for forensic method validation. It covers foundational principles from international standards like ISO 21043, details methodological steps for application, addresses common troubleshooting scenarios, and establishes rigorous validation and comparative techniques. The content is designed to ensure that forensic methods in drug development are accurate, reliable, legally defensible, and suitable for regulatory submission, with a focus on managing uncertainties and leveraging emerging technologies such as Artificial Intelligence.

The Pillars of Forensic Validation: Principles, Standards, and Regulatory Requirements

Forensic validation is a systematic process essential for ensuring the reliability and accuracy of tools, methods, and analytical findings in forensic science. In the context of a risk assessment framework, validation provides the empirical foundation that allows researchers and practitioners to trust and defend their scientific conclusions, whether in a laboratory setting or a legal proceeding. At its core, validation refers to the process of ensuring that extracted data truly represents real-world events and that the methods used to obtain this data are robust, reproducible, and fit for purpose [1]. This process serves as a critical form of quality assurance, confirming that data is accurate, correctly interpreted, and meaningful within the specific context of a case [1].

The importance of validation extends beyond mere technical compliance. In digital forensics, for example, improperly validated evidence can be challenged for credibility in legal settings, potentially undermining case outcomes [1]. Similarly, in forensic chemistry and psychiatry, the validity of methods and tools directly impacts public safety, judicial decisions, and therapeutic interventions [2] [3] [4]. As forensic science continues to evolve with new technologies and methodologies, establishing a rigorous risk assessment framework for validation becomes paramount for ensuring that novel approaches meet the stringent requirements of scientific and legal scrutiny.

Core Dimensions of Forensic Validation

Forensic validation encompasses three interconnected dimensions, each addressing distinct aspects of the forensic workflow but collectively contributing to the overall reliability of forensic conclusions.

Tool Validation

Tool validation focuses on verifying that the software, instruments, and hardware used in forensic investigations produce accurate and consistent results. This dimension recognizes that forensic tools parse raw data into human-readable form, but no tool is infallible [1]. Parsing errors, software bugs, or unsupported data formats can lead to significant inaccuracies if undetected [1]. In digital forensics, for instance, the distinction between carved versus parsed data highlights this necessity. Parsed data is extracted from known database schemas and is generally more reliable, while carved data obtained by scanning raw data for patterns can produce false positives if not properly validated [1].

Tool validation extends to various forensic domains. In chemical analysis, Gas Chromatography-Mass Spectrometry (GC-MS) instruments require rigorous validation to ensure they detect and quantify substances accurately [3]. For forensic tools assessing risk in psychiatric populations, validation establishes whether these instruments reliably predict dangerousness or recidivism [2] [4]. The validation process for tools typically involves testing against known standards, verifying output consistency across multiple platforms, and assessing performance under different operating conditions.

Method Validation

Method validation establishes that the overall procedures and protocols used in forensic investigations are scientifically sound and consistently executable. This dimension addresses the complete analytical process rather than just the tools employed. A validated method demonstrates specificity, sensitivity, precision, and accuracy under defined operational parameters [3].

In forensic chemistry, method validation follows established guidelines such as those from the Scientific Working Group for the Analysis of Seized Drugs (SWGDRUG) and includes parameters like limit of detection (LOD), limit of quantification (LOQ), linearity, robustness, and reproducibility [3]. For example, a validated rapid GC-MS method for screening seized drugs improved detection limits by at least 50% for key substances such as cocaine and heroin; the cocaine threshold, for instance, fell from 2.5 μg/mL with conventional methods to 1 μg/mL, a 60% reduction [3]. The method also exhibited excellent repeatability and reproducibility, with relative standard deviations (RSDs) below 0.25% for stable compounds [3].

Analysis Validation

Analysis validation ensures that the interpretation of results is correct and contextually appropriate. This dimension addresses the human element of forensic science – how experts draw conclusions from data generated by validated tools and methods. Analysis validation involves cross-artifact corroboration, where multiple independent pieces of evidence are examined to determine if they tell a consistent story [1]. It also requires understanding the limitations of analytical techniques and recognizing when results may be misleading or inconclusive.

In digital forensics, analysis validation might involve verifying that a timestamp extracted from a device correctly accounts for timezone offsets and daylight saving time, rather than simply accepting the raw value at face value [1]. In forensic psychiatry, it entails ensuring that risk assessment scores are interpreted in the context of the individual's clinical history and current presentation, rather than being applied mechanistically [4]. Proper analysis validation acknowledges that even with validated tools and methods, interpretative errors can occur if the context and limitations of the data are not fully understood.
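The timestamp check described above can be sketched in a few lines. This is a minimal illustration, not a forensic tool: it assumes the raw value is a Unix epoch in seconds, and both the sample value and the fixed UTC+4 device offset are hypothetical. Regions that observe daylight saving time would need a named time zone (for example via Python's `zoneinfo` module) rather than a fixed offset.

```python
from datetime import datetime, timedelta, timezone

def interpret_epoch(raw_seconds: int, utc_offset_hours: float) -> datetime:
    """Render a raw Unix epoch (seconds since 1970-01-01 UTC) in a given fixed offset."""
    target_tz = timezone(timedelta(hours=utc_offset_hours))
    return datetime.fromtimestamp(raw_seconds, tz=timezone.utc).astimezone(target_tz)

raw = 1_700_000_000                  # hypothetical raw value extracted from a device
as_utc = interpret_epoch(raw, 0)     # 2023-11-14 22:13:20 UTC
as_local = interpret_epoch(raw, 4)   # same instant at an assumed UTC+4 offset

# Same instant, but the calendar date differs between the two renderings.
print(as_utc.isoformat(), as_local.isoformat())
```

The two values denote the same instant, yet the local rendering falls on the following calendar day. A discrepancy like this can matter when a timeline is reconstructed from raw values.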

Table 1: Key Aspects of the Three Dimensions of Forensic Validation

| Dimension | Primary Focus | Validation Parameters | Common Challenges |
|---|---|---|---|
| Tool Validation | Instruments, software, hardware | Accuracy, consistency, output reliability, compatibility | Parser errors, software bugs, unsupported data formats, version compatibility |
| Method Validation | Procedures, protocols, workflows | Specificity, sensitivity, precision, accuracy, LOD, LOQ, robustness | Reproducibility across operators, environmental factors, matrix effects |
| Analysis Validation | Interpretation, contextualization, conclusion | Logical consistency, cross-artifact corroboration, contextual understanding | Cognitive biases, contextual misunderstandings, overinterpretation of limited data |

Experimental Protocols for Method Validation

The following section provides detailed protocols for validating forensic methods, with specific examples from forensic chemistry and risk assessment tool development.

Protocol for Validating a Rapid GC-MS Method for Seized Drug Analysis

This protocol outlines the systematic validation of a rapid Gas Chromatography-Mass Spectrometry (GC-MS) method for screening seized drugs, based on research conducted by the Dubai Police Forensic Laboratories [3].

Instrumentation and Materials

- GC-MS System: Agilent 7890B gas chromatograph connected to an Agilent 5977A single quadrupole mass spectrometer

- Column: Agilent J&W DB-5 ms column (30 m × 0.25 mm × 0.25 μm)

- Carrier Gas: Helium (99.999% purity) at a fixed flow rate of 2 mL/min

- Data Acquisition: Agilent MassHunter software (version 10.2.489) and Agilent Enhanced ChemStation software

- Reference Standards: Certified reference materials for target compounds (e.g., Tramadol, Cocaine, Codeine, Diazepam, THC, Heroin) from reputable suppliers like Cayman Chemical or Sigma-Aldrich

- Solvents: High-purity methanol (99.9%) for sample preparation

Method Optimization Procedure

Temperature Programming Optimization:

- Begin with an initial oven temperature of 80°C, held for 0.5 minutes

- Implement a ramp rate of 40°C/minute to 220°C

- Follow with a second ramp of 25°C/minute to 300°C, held for 1.5 minutes

- Maintain the transfer line temperature at 280°C

Flow Rate Optimization:

- Set helium carrier gas flow rate to 2 mL/min constant flow

- Use a 10:1 split ratio for injection

MS Parameter Configuration:

- Set ion source temperature to 280°C

- Configure quadrupole temperature at 180°C

- Utilize electron impact (EI) ionization mode at 70 eV

- Employ selected ion monitoring (SIM) mode for target compounds
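As a quick plausibility check on the temperature program above, the oven run time can be computed from the hold times and ramp rates. The helper below is an illustrative sketch (the function name and tuple layout are assumptions, not part of the cited method).

```python
def oven_run_time(initial_temp, initial_hold, ramps):
    """Total oven program time in minutes.

    ramps is a list of (rate_C_per_min, target_temp_C, hold_min) tuples.
    """
    total = initial_hold
    temp = initial_temp
    for rate, target, hold in ramps:
        total += (target - temp) / rate + hold  # time to ramp, then hold at target
        temp = target
    return total

# Program from the protocol: 80 °C (0.5 min) -> 40 °C/min to 220 °C -> 25 °C/min to 300 °C (1.5 min)
program = [(40, 220, 0.0), (25, 300, 1.5)]
run_time = oven_run_time(80, 0.5, program)
print(f"{run_time:.1f} min")
```

The program works out to 8.7 minutes of oven time, which is consistent with the roughly 10-minute total analysis time reported for the method once overhead outside the oven program is allowed for.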

Validation Parameters and Acceptance Criteria

Table 2: Validation Parameters for Rapid GC-MS Method for Seized Drug Analysis [3]

| Validation Parameter | Experimental Procedure | Acceptance Criteria | Reported Results |
|---|---|---|---|
| Limit of Detection (LOD) | Serial dilution of standards until S/N ratio ≥ 3 | Improvement over conventional methods | 50% improvement for key substances; cocaine LOD: 1 μg/mL vs. 2.5 μg/mL conventional |
| Precision (Repeatability) | Multiple injections (n=6) of same sample | RSD ≤ 2% for retention times | RSD < 0.25% for stable compounds |
| Reproducibility | Analysis by different analysts on different days | RSD ≤ 5% for retention times and peak areas | RSD < 0.25% under operational conditions |
| Specificity | Analysis of blank samples and potential interferents | No interference at retention times of target analytes | Baseline separation of all target compounds |
| Identification Accuracy | Comparison with reference standards and spectral libraries | Match quality score ≥ 90% | Match quality scores consistently > 90% across tested concentrations |
| Analysis Time | Comparison with conventional method | Significant reduction without sacrificing quality | Reduction from 30 minutes to 10 minutes total analysis time |
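The repeatability criterion in Table 2 can be checked with a short calculation. The sketch below computes the relative standard deviation (sample standard deviation divided by the mean) for six replicate injections; the retention times are hypothetical values chosen for illustration, not data from the cited study.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation as a percentage (sample SD / mean * 100)."""
    return 100 * stdev(values) / mean(values)

# Hypothetical retention times (minutes) from six replicate injections
retention_times = [4.512, 4.515, 4.510, 4.514, 4.511, 4.513]

rsd = rsd_percent(retention_times)
passes = rsd <= 2.0  # acceptance criterion for retention-time repeatability in Table 2
print(f"RSD = {rsd:.3f}%  pass = {passes}")
```

The same function applies to peak areas, with the 5% reproducibility limit substituted for data gathered by different analysts on different days.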

Application to Real Case Samples

Sample Preparation:

- For solid samples: Grind tablets/capsules to fine powder using mortar and pestle

- Extract approximately 0.1 g with 1 mL methanol via sonication for 5 minutes

- Centrifuge and transfer supernatant to GC-MS vial

- For trace samples: Swab surfaces with methanol-moistened swabs, extract swabs in 1 mL methanol via vortexing

Data Analysis:

- Compare retention times with certified standards

- Perform library searches using Wiley Spectral Library (2021 edition) and Cayman Spectral Library

- Verify identifications based on retention time and mass spectral match
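The two-part identification rule above (retention time agreement plus spectral match) might be expressed as follows. The 90% match threshold comes from Table 2; the retention-time tolerance of 0.05 minutes is a hypothetical placeholder that a laboratory would set from its own validation data.

```python
def confirm_identification(rt_sample, rt_standard, match_score,
                           rt_tolerance=0.05, min_match=90.0):
    """Accept an identification only when both independent criteria agree.

    rt_tolerance (minutes) is an assumed value; min_match follows Table 2.
    """
    rt_ok = abs(rt_sample - rt_standard) <= rt_tolerance
    match_ok = match_score >= min_match
    return rt_ok and match_ok

# Hypothetical peaks: (sample RT, standard RT, library match score)
print(confirm_identification(4.51, 4.50, 95.0))  # both criteria met
print(confirm_identification(4.70, 4.50, 95.0))  # retention time out of tolerance
print(confirm_identification(4.51, 4.50, 85.0))  # spectral match too weak
```

Requiring both criteria keeps a strong library hit from being accepted when the peak elutes at the wrong time, and vice versa.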

Protocol for Validating a Risk Assessment Tool in Forensic Psychiatry

This protocol outlines the development and validation of a risk assessment tool for forensic psychiatry, based on the methodology used for the Dangerousness Index in Forensic Psychiatry (IPPML) [4].

Participant Recruitment and Sampling

Sample Composition:

- Recruit 261 participants (157 males, 104 females) aged 19-75 years

- Divide into experimental group (n=126) with history of forensic psychiatric examination and control group (n=135) diagnosed with schizophrenia but no forensic history

- Obtain written informed consent from all participants

- Secure ethical approval from institutional review board

Inclusion/Exclusion Criteria:

- Inclusion: Age ≥ 18 years, history of forensic psychiatric examination (for experimental group), ability to provide informed consent

- Exclusion: Uncontrolled mental illness, inability to provide informed consent, age < 18 years

Tool Development and Validation Procedure

Item Generation:

- Develop a broad set of initial items covering all potential aspects of dangerousness in forensic psychiatry

- Convene a panel of 10 experts (5 university professors, 5 medical specialists) to evaluate each statement for content validity and formal validity

- Experts rate each item on a 5-point scale from "Strongly Disagree (1)" to "Strongly Agree (5)"

- Retain items scoring above 3 or selected by at least one expert

- Further reduce item list by keeping only items assigned a priori by at least 60% of evaluators

Factor Analysis:

- Conduct exploratory factor analysis to identify underlying factor structure

- Determine number of factors based on eigenvalues > 1 and scree plot examination

- Interpret factors based on items with high loadings (> 0.4)

Reliability Assessment:

- Calculate internal consistency using Cronbach's alpha for the entire scale and for each identified factor

- Establish test-retest reliability through repeated administration to a subset of participants

Validity Testing:

- Assess discriminant validity by comparing scores between experimental and control groups

- Evaluate predictive validity through longitudinal follow-up of recidivism outcomes

- Examine convergent validity by correlating with established risk assessment tools
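For the reliability assessment step, internal consistency is commonly summarized with Cronbach's alpha, computed as k/(k-1) × (1 − Σ item variances / variance of total scores) for k items. The sketch below uses only the standard library; the response data are hypothetical and far smaller than a real validation sample.

```python
from statistics import variance

def cronbach_alpha(items):
    """Cronbach's alpha.

    items: list of per-item score lists, each holding one score per respondent.
    """
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]   # total score per respondent
    item_var_sum = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))

# Hypothetical ratings: 3 items answered by 5 respondents
responses = [
    [1, 2, 3, 4, 5],
    [2, 2, 3, 5, 5],
    [1, 3, 3, 4, 4],
]
alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.3f}")
```

Applying the function to the items of each factor separately yields per-factor values of the kind reported for a two-factor instrument.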

Validation Parameters and Outcomes

Table 3: Validation Parameters for Forensic Psychiatry Risk Assessment Tool [4]

| Validation Parameter | Methodology | Reported Outcomes for IPPML |
|---|---|---|
| Internal Consistency | Cronbach's alpha | α = 0.881 for entire sample; α = 0.896 for Factor 1; α = 0.628 for Factor 2 |
| Factor Structure | Exploratory factor analysis | Two factors identified: Performance and Social, explaining 45.55% of variance |
| Discriminant Validity | Comparison between experimental and control groups | Higher scores in forensic psychiatric evaluation group vs. schizophrenia-only group |
| Group Differences | Comparison of scores by gender | Higher dangerousness with forensic implications in males |
| Content Validity | Expert panel evaluation | 20 items retained from initial pool after expert review |

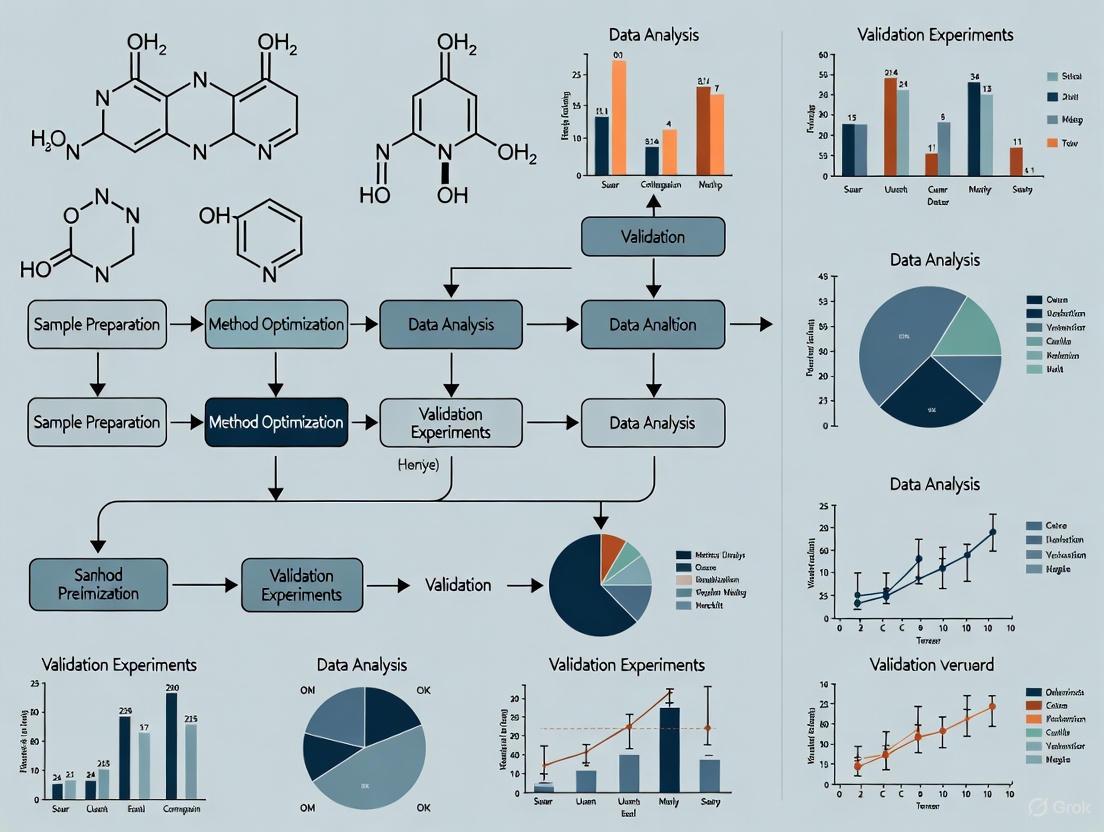

Visualization of Forensic Validation Workflows

[Figure: Digital Forensic Validation Workflow]

[Figure: Forensic Chemistry Method Validation Workflow]

Essential Research Reagent Solutions

Table 4: Essential Research Reagents and Materials for Forensic Validation Studies

| Category | Specific Items | Function in Validation | Example Applications |
|---|---|---|---|
| Reference Standards | Certified reference materials (CRMs) for drugs, explosives, toxicology | Provide known quantities for method calibration and accuracy determination | GC-MS method development for seized drugs [3] |
| Quality Control Materials | Blank matrices, spiked samples, proficiency test materials | Monitor analytical performance and detect contamination or interference | Validation of forensic toxicology methods |
| Software Tools | Volatility, Autopsy, Sleuth Kit, Wireshark, FTK Imager | Enable digital evidence acquisition, analysis, and verification | Memory forensics, disk imaging, network analysis [5] [6] |
| Instrumentation | GC-MS systems, HPLC, spectroscopic instruments, microscopy | Generate analytical data for qualitative and quantitative analysis | Drug identification, material analysis, trace evidence [3] |
| Statistical Packages | R, SPSS, Python with scikit-learn, specialized psychometric software | Perform statistical analysis of validation data and reliability assessments | Risk assessment tool validation, method comparison studies [2] [4] |
| Validation Guidelines | SWGDRUG guidelines, ISO standards, professional organization protocols | Provide standardized frameworks for validation parameters and acceptance criteria | Method validation in forensic laboratories [3] |

Forensic validation represents a multifaceted process that spans tools, methods, and analytical interpretations. Within a risk assessment framework, validation provides the evidentiary foundation that supports reliable and defensible forensic conclusions across diverse domains from digital forensics to forensic chemistry and psychiatry. The protocols and workflows presented in this document offer practical approaches for implementing comprehensive validation procedures that meet both scientific and legal standards.

As forensic science continues to advance with new technologies and methodologies, the principles of validation remain constant: systematic testing, empirical verification, and critical assessment of limitations. By adhering to rigorous validation practices, forensic researchers and practitioners can enhance the reliability of their findings, support the administration of justice, and contribute to the ongoing development of forensic science as a rigorous scientific discipline.

Forensic validation is a fundamental practice that ensures the tools and methods used to analyze evidence are accurate, reliable, and legally admissible [7]. Within a risk assessment framework for forensic research, validation functions as a critical safeguard against error, bias, and misinterpretation [7]. The core principles of Reproducibility, Transparency, and Error Rate Awareness form the foundational pillars of this process. These principles are essential for establishing scientific credibility and gaining legal acceptance under standards such as the Daubert Standard, which requires that scientific methods be demonstrably reliable [7]. This document outlines detailed application notes and experimental protocols to implement these principles effectively in forensic method validation research.

Core Principles and Application Notes

Principle 1: Reproducibility

Reproducibility ensures that results can be consistently repeated by different qualified professionals using the same method and data [7]. In practice, this means that any forensic method must produce equivalent outcomes when applied to the same evidence sample across different laboratories, instruments, and analysts.

Application Notes:

- Definition: Reproducibility is achieved when an independent party can follow the documented protocol and obtain results that fall within an acceptable margin of error of the original findings [7].

- Risk of Non-Compliance: Lack of reproducibility severely undermines the credibility of forensic findings and can lead to the exclusion of evidence in legal proceedings [7]. It introduces uncertainty and potential for miscarriages of justice.

- Implementation Strategy: Utilize controlled reference materials and standardized testing environments to establish a baseline for repeatable outcomes. Key practices include using hash values to confirm data integrity and comparing tool outputs against known datasets [7].
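The hash-based integrity check mentioned in the implementation strategy can be sketched with Python's standard `hashlib` module. The helper names are illustrative, and the throwaway temporary file merely stands in for an evidence image.

```python
import hashlib
import os
import tempfile

def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large images need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def integrity_preserved(path, baseline_hash):
    """True when the file still hashes to the value recorded before analysis."""
    return sha256_of(path) == baseline_hash

# Demo on a temporary file standing in for an evidence image
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"abc")
baseline = sha256_of(tmp.name)                        # recorded before analysis
unchanged = integrity_preserved(tmp.name, baseline)   # re-checked after analysis
os.remove(tmp.name)
print(baseline, unchanged)
```

Recording the baseline digest in the case notes lets an independent party repeat the comparison later without access to the original analyst's environment.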

Principle 2: Transparency

Transparency requires that all procedures, software versions, logs, assumptions, and chain-of-custody records are thoroughly and clearly documented [7] [8]. A transparent methodology allows for the critical evaluation of the process and conclusions by the broader scientific and legal communities.

Application Notes:

- Definition: Full transparency involves disclosing all matters of scientific relevance, including fundamental principles, methodology, validity and error, assumptions and limitations, and areas of scientific controversy [8].

- Risk of Non-Compliance: Opaque methods create doubt regarding the reliability and impartiality of the analysis. This can lead to legal challenges, loss of credibility for the expert or laboratory, and increased questioning in court [7] [8].

- Implementation Strategy: Adopt a comprehensive reporting framework that documents every stage of the forensic process. As demonstrated by one multi-disciplinary laboratory, this includes information on competency testing, quality assurance, and cognitive factors, which ultimately improves the use of scientific evidence in courts [8].

Principle 3: Error Rate Awareness

Error Rate Awareness involves understanding, quantifying, and disclosing the known or potential error rates associated with a forensic method [7]. This principle is a key factor for courts in assessing the reliability of scientific evidence.

Application Notes:

- Definition: The error rate refers to the frequency with which a method produces false positives, false negatives, or other erroneous results under specified conditions. This must be established through rigorous empirical testing [7].

- Risk of Non-Compliance: Operating with an unknown or high error rate risks basing decisions on flawed evidence, potentially leading to wrongful convictions, acquittals of the guilty, and civil liability [7].

- Implementation Strategy: Conduct repeated testing (e.g., in triplicate) using control samples to calculate quantitative error rates [9]. Cross-validate results using multiple tools or methods to identify and account for inconsistencies [7].
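One way to quantify error rates against a control sample is to compare the set of artifacts a tool reports with the set known to be present. The sketch below is illustrative and the file names are hypothetical. Because a recovery task often has no well-defined set of true negatives, it reports the false discovery rate (false positives as a share of everything reported) rather than a classical false positive rate; that substitution is an assumption of this example.

```python
def error_metrics(known, reported):
    """Compare reported artifacts with a known control set."""
    known, reported = set(known), set(reported)
    tp = len(known & reported)   # correctly recovered
    fp = len(reported - known)   # reported but not in the control set
    fn = len(known - reported)   # present in the control set but missed
    return {
        "true_positives": tp,
        "false_positives": fp,
        "false_negatives": fn,
        "recall": tp / len(known) if known else 0.0,
        "false_discovery_rate": fp / len(reported) if reported else 0.0,
        "false_negative_rate": fn / len(known) if known else 0.0,
    }

# Hypothetical control set and tool output
known_artifacts = {"a.jpg", "b.doc", "c.pdf", "d.txt"}
tool_output = {"a.jpg", "b.doc", "c.pdf", "x.tmp"}
metrics = error_metrics(known_artifacts, tool_output)
print(metrics)
```

Running the comparison once per replicate and reporting the spread across runs gives a more defensible figure than a single pass.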

Experimental Protocols for Validation

The following protocols provide a template for designing validation studies that adhere to the core principles.

Protocol: Tool Performance and Reproducibility Assessment

This protocol is designed to test the reliability and repeatability of a specific forensic tool or software.

1. Objective: To determine the reproducibility and error rate of [Tool Name] in performing [Specific Function, e.g., deleted file recovery].

2. Materials:

- See "Research Reagent Solutions" table for standard tools and reference materials.

3. Methodology:

- Sample Preparation: Create a controlled testing environment with a standardized digital evidence sample (e.g., a forensic disk image) containing a known set of artifacts [9].

- Experimental Replication: Execute the core function (e.g., data carving) in triplicate to establish repeatability metrics [9].

- Data Integrity Checks: Use cryptographic hash values (e.g., SHA-256) to confirm the evidence integrity before and after analysis [7].

- Data Analysis: Calculate the tool's error rate by comparing the acquired artifacts against the known control reference. Metrics should include true positives, false positives, and false negatives [9].

4. Documentation: Record all parameters, tool version, operating environment, and raw results. Any deviation from the protocol must be documented.

Protocol: Method Transparency and Cross-Validation

This protocol assesses the consistency of a forensic method across different tools or analysts.

1. Objective: To validate the transparency and robustness of the [Method Name] for [Analysis Type] by cross-validating results.

2. Materials:

- See "Research Reagent Solutions" table.

3. Methodology:

- Independent Analysis: Have multiple trained analysts or different software tools (e.g., commercial and open-source) analyze the same standardized evidence sample [7] [9].

- Result Comparison: Systematically compare the outputs from all sources to identify any inconsistencies in recovered data or interpreted results [7].

- Blind Testing: Where possible, incorporate blind testing to minimize cognitive bias.

4. Documentation: Maintain detailed logs from all tools and analysts. The final report must clearly present all findings, highlight any discrepancies, and discuss their potential impact on the conclusions.
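The result comparison step of this protocol can be sketched as a set comparison across sources. The function and the source and artifact names below are hypothetical; real outputs would first need normalizing (paths, hashes, timestamps) so that like is compared with like.

```python
def compare_outputs(outputs):
    """Split findings into consensus items and discrepancies.

    outputs: dict mapping source name -> set of recovered artifact identifiers.
    Returns (consensus, discrepancies), where discrepancies maps each disputed
    item to the sorted list of sources that reported it.
    """
    sources = list(outputs.values())
    consensus = set.intersection(*sources)
    everything = set.union(*sources)
    discrepancies = {
        item: sorted(name for name, found in outputs.items() if item in found)
        for item in sorted(everything - consensus)
    }
    return consensus, discrepancies

# Hypothetical outputs from two tools run on the same evidence sample
outputs = {
    "tool_a": {"f1", "f2", "f3"},
    "tool_b": {"f1", "f2", "f4"},
}
consensus, discrepancies = compare_outputs(outputs)
print(sorted(consensus), discrepancies)
```

Each entry in the discrepancy map is a candidate for the report's discussion of inconsistencies and their potential impact on the conclusions.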

Validation Workflow

The following diagram illustrates the logical workflow for integrating the core principles into a forensic method validation study, from planning through to court admission.

[Figure: Forensic Validation Workflow]

Research Reagent Solutions

The table below catalogues essential tools and materials for conducting rigorous forensic validation experiments, drawing on examples from digital forensics.

| Item Name | Type/Category | Function in Validation | Example Products/Tools |
|---|---|---|---|
| Commercial Forensic Suite | Software | Provides a benchmark for comparison; often court-accepted and commercially validated [9]. | FTK, EnCase, Forensic MagiCube [9] |
| Open-Source Forensic Tool | Software | A cost-effective alternative for cross-validation; allows peer review of methodologies [9]. | Autopsy, Sleuth Kit, ProDiscover Basic [9] |
| Standardized Reference Material | Data Set | A controlled evidence sample (disk image) with known content for testing tool accuracy and calculating error rates [7] [9]. | Custom-made disk images, NIST test datasets |
| Hash Algorithm Tool | Software/Utility | Generates cryptographic hashes (e.g., SHA-256) to verify data integrity and ensure evidence is unaltered during analysis [7]. | Built-in OS tools, forensic software modules |
| Validation Framework | Protocol | A structured methodology outlining steps for testing and confirming the reliability of tools and methods [9]. | Enhanced framework per Ismail et al., NIST Computer Forensics Tool Testing standards [9] |

Data Presentation and Analysis

Quantitative Metrics from Tool Validation Studies

The following table summarizes example outcomes from a comparative tool validation study, illustrating how key metrics like error rates are quantified.

| Tool Name | Tool Type | Test Scenario | Success Rate (%) | False Positive Rate (%) | False Negative Rate (%) |
|---|---|---|---|---|---|
| Tool A | Commercial | Data Carving | 99.5 | 0.5 | 0.1 |
| Tool B | Open-Source | Data Carving | 98.7 | 1.2 | 0.2 |
| Tool A | Commercial | Artifact Search | 98.9 | 0.8 | 0.4 |
| Tool B | Open-Source | Artifact Search | 97.5 | 2.1 | 0.5 |
| Tool C | Commercial | File Recovery | 99.8 | 0.1 | 0.1 |

Note: Data is illustrative, based on experimental methodologies described in the literature [9]. Success Rate is defined as the percentage of known artifacts correctly identified and recovered. Rates should be established through repeated testing in triplicate [9].

The ISO 21043 Forensic sciences standard series represents a comprehensive, internationally recognized framework designed to unify and advance forensic science as a discipline. Developed by ISO Technical Committee (TC) 272, this series provides a well-structured framework that addresses the entire forensic process, from crime scene to courtroom [10]. The standard aims to enhance the reliability of expert opinions and ultimately improve trust in the justice system by establishing common requirements, recommendations, and terminology across forensic practices [10].

The development of ISO 21043 was a worldwide effort, bringing together experts in forensic science, law, law enforcement, and quality management from 27 participating and 21 observing national standards organizations [10]. The complete publication of Parts 3, 4, and 5 in 2025 marks a significant milestone in establishing a unified approach to forensic science practice internationally [10].

Structural Framework of ISO 21043

The ISO 21043 standard is organized into five distinct parts, each addressing specific stages of the forensic process while working in tandem with established standards like ISO/IEC 17025 for testing and calibration laboratories [10].

Table 1: Components of the ISO 21043 Series

| Part Number | Title | Scope and Focus | Publication Status |
|---|---|---|---|
| ISO 21043-1 | Vocabulary [10] | Defines terminology and provides a common language for discussing forensic science [10] | Published [10] |
| ISO 21043-2 | Recognition, recording, collecting, transport and storage of items [11] | Addresses forensic science at the scene; early stages that can impact all subsequent processes [10] | Published 2018 [11] |
| ISO 21043-3 | Analysis [12] | Applies to all forensic analysis, emphasizing issues specific to forensic science [10] | Published 2025 [12] |
| ISO 21043-4 | Interpretation [10] | Centers on case questions and answers provided as opinions; links observations to case questions [10] | Published 2025 [10] |
| ISO 21043-5 | Reporting [10] | Addresses communication of forensic process outcomes, including reports and testimony [10] | Published 2025 [10] |

The relationship between these components follows the logical progression of the forensic process, with outputs from one stage serving as inputs for the next. This creates a seamless framework that maintains integrity and continuity throughout the entire forensic workflow [10].

Core Principles and Requirements for Forensic Analysis

Foundational Principles of ISO 21043-3

ISO 21043-3: Analysis establishes critical requirements to safeguard the process for analyzing items of potential forensic value. The standard is designed to ensure the use of suitable methods, proper controls, qualified personnel, and appropriate analytical strategies throughout the forensic analysis of items [12]. It applies to activities conducted by forensic service providers at the scene and within a facility, covering all disciplines of forensic science with the exception of digital data recovery, which falls under ISO/IEC 27037 [12].

The requirements and recommendations in ISO 21043-3 are designed to facilitate comprehensive, accurate, and reliable analysis of items through standardized approaches [12]. The standard works in conjunction with ISO/IEC 17025, referencing it where issues are not specific to forensic science while emphasizing aspects particularly relevant to forensic analysis [10].

Method Validation Framework

A cornerstone of reliable forensic science is the demonstration that analytical methods are fit for purpose. Validation involves providing objective evidence that a method, process, or device is suitable for its specific intended purpose [13]. This process is critical for meeting accreditation requirements under ISO/IEC 17025 and ensuring that results presented in legal contexts can be relied upon [13].

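The validation framework outlined in forensic guidance documents follows a structured process. It begins with the end-user requirements and specification, proceeds through risk assessment, acceptance criteria, and a validation plan, and ends with the validation study itself, an assessment of compliance with the acceptance criteria, and a validation report.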

End-User Requirements Specification

A critical component of method validation involves determining end-user requirements. This process captures what different users of the method output require and focuses particularly on aspects that experts will rely on for their critical findings [13]. The end-user requirement directly influences the dataset needed to adequately assess the efficiency, effectiveness, and competence to perform the activity [13].

For novel methods developed in-house, user requirements may originate from method development documentation, while adopted or adapted methods require creating these requirements from scratch with focus on features affecting reliable results [13]. Defining these requirements specifically helps ensure that validation testing uses representative data that reflects real-life applications without being unnecessarily complex [13].

Experimental Protocols for Method Validation

Protocol for Developmental Validation of Novel Methods

Purpose: To establish objective evidence that a novel forensic method is fit for purpose when no prior validation data exists [13].

Scope: Applicable to newly developed analytical techniques, instruments, or methodologies with limited or no existing validation history.

Procedure:

Define Requirements Specification

- Document functional and non-functional requirements

- Identify critical findings the method must support

- Establish minimum performance thresholds

- Define operational constraints and limitations

Conduct Risk Assessment

- Identify potential failure modes

- Assess impact on result reliability

- Determine control measures for identified risks

- Document risk mitigation strategies

Set Acceptance Criteria

- Establish quantitative performance metrics

- Define qualitative assessment parameters

- Determine statistical confidence levels

- Specify tolerance ranges for control measures

Develop Validation Plan

- Design experiments to challenge method capabilities

- Select test materials representing casework scenarios

- Include stress testing with extreme conditions

- Plan replication studies to assess reproducibility

Execute Validation Study

- Generate test data under controlled conditions

- Implement quality assurance checks at critical points

- Document all deviations from protocol

- Collect data for statistical analysis

Assess Acceptance Criteria Compliance

- Analyze data against predefined metrics

- Evaluate method limitations and boundaries

- Document observed error rates or uncertainties

- Verify risk control effectiveness

Compile Validation Report

- Summarize objective evidence of fitness for purpose

- Document all method limitations

- Provide statement of validation completion

- Include implementation recommendations

Quality Control: Incorporate reality checks by independent experts, instrument calibration verification, and control samples throughout the validation process [13].

Protocol for Adopted Method Verification

Purpose: To demonstrate laboratory competence for methods previously validated by another organization [13].

Scope: Applicable to standardized methods or techniques with existing validation data from reputable sources.

Procedure:

Review Existing Validation Records

- Evaluate original validation study design robustness

- Assess relevance to intended application

- Verify data comprehensiveness

- Identify potential gaps for specific applications

Define Laboratory-Specific Requirements

- Adapt end-user requirements to local context

- Consider population-specific factors

- Account for equipment differences

- Address jurisdictional legal standards

Design Verification Study

- Select representative test materials

- Focus on critical method aspects

- Include known reference materials

- Plan limited challenge testing

Execute Verification Testing

- Demonstrate analyst competency

- Verify equipment performance

- Confirm result reproducibility

- Validate reporting procedures

Document Verification Evidence

- Record performance metrics achieved

- Document any limitations identified

- Provide statement of verification

- Reference original validation data

Acceptance Criteria: Performance metrics must meet or exceed those documented in original validation studies and satisfy laboratory-specific requirements [13].
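As a rough illustration of the acceptance rule above, the following Python sketch compares verification results against the figures documented in an original validation study. The `Metric` class, the metric names, and the numbers are hypothetical. The `higher_is_better` flag is an assumption added here, because "meet or exceed" points in opposite directions for a detection rate and for a coefficient of variation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    original: float         # value documented in the original validation study
    observed: float         # value obtained by the verifying laboratory
    higher_is_better: bool  # True for e.g. detection rates, False for CV or error rates


def meets_or_exceeds(m: Metric) -> bool:
    """Return True when the observed value is at least as good as the original."""
    if m.higher_is_better:
        return m.observed >= m.original
    return m.observed <= m.original


def verification_failures(metrics):
    """List the names of metrics that fall short of the original validation."""
    return [m.name for m in metrics if not meets_or_exceeds(m)]


if __name__ == "__main__":
    results = [
        Metric("cv_percent", original=4.0, observed=3.5, higher_is_better=False),
        Metric("true_positive_rate", original=0.95, observed=0.93, higher_is_better=True),
    ]
    print(verification_failures(results))
```

Laboratory-specific requirements would be checked separately; this sketch only covers the comparison with the original validation data.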

Quantitative Framework for Validation Assessment

Table 2: Validation Metrics for Forensic Method Evaluation

| Validation Parameter | Assessment Methodology | Acceptance Criteria Guidelines | Data Documentation Requirements |
|---|---|---|---|
| Accuracy | Comparison with reference materials or known values [13] | Agreement within established uncertainty margins [13] | Deviation from reference values, measurement uncertainty [13] |
| Precision | Repeated analysis of homogeneous samples [13] | Coefficient of variation ≤ laboratory-defined threshold [13] | Within-run and between-run variability estimates [13] |
| Specificity | Challenge with potentially interfering substances [13] | No significant interference at relevant concentrations [13] | List of substances tested and interference levels observed [13] |
| Robustness | Deliberate variation of operational parameters [13] | Method performance maintained within acceptable limits [13] | Parameter variations tested and their impact on results [13] |
| Sensitivity | Analysis of samples with decreasing analyte levels [13] | Reliable detection at or below relevant decision point [13] | Limit of detection, limit of quantification values [13] |
| Reproducibility | Inter-laboratory comparison or different analysts [13] | Consistent results across different implementations [13] | Between-operator, between-instrument, between-day variation [13] |
| Reliability | Extended analysis under routine conditions [13] | Consistent performance throughout method application [13] | Summary of performance over time and maintenance cycles [13] |
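The Precision row above can be made concrete with a short Python sketch that summarizes within-run and between-run variability as coefficients of variation. This is a simplified descriptive approach (the between-run figure is the CV of the run means), not a full variance-components analysis. The function names and the threshold value are assumptions for illustration, since the table leaves the threshold to the laboratory.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (sample SD / mean) as a percentage.

    Needs at least two values, because the sample SD is undefined otherwise.
    """
    return 100.0 * stdev(values) / mean(values)


def precision_summary(runs):
    """runs: list of runs, each a list of replicate results for one homogeneous sample."""
    run_means = [mean(r) for r in runs]
    return {
        "within_run_cv_percent": [cv_percent(r) for r in runs],
        "between_run_cv_percent": cv_percent(run_means),
    }


def within_threshold(summary, threshold_percent):
    """True when every CV is at or below the laboratory-defined threshold."""
    cvs = summary["within_run_cv_percent"] + [summary["between_run_cv_percent"]]
    return all(cv <= threshold_percent for cv in cvs)


if __name__ == "__main__":
    # Hypothetical replicate results from two analytical runs.
    summary = precision_summary([[9.0, 11.0], [10.0, 12.0]])
    print(summary, within_threshold(summary, threshold_percent=15.0))
```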

Table 3: Risk Assessment Matrix for Method Validation

| Risk Category | Potential Impact | Control Measures | Validation Approach |
|---|---|---|---|
| False Positive Results | Wrongful associations; miscarriage of justice [14] | Confirmatory techniques; independent verification [13] | Challenge with known exclusion samples; specificity testing [13] |
| False Negative Results | Missed associations; failure to solve crimes [14] | Sensitivity controls; minimum detection levels [13] | Analysis of low-level samples; dilution studies [13] |
| Contextual Bias | Influenced interpretation; skewed results [14] | Sequential unmasking; linear examination [13] | Blind testing; variation of irrelevant contextual information [13] |
| Method Limitations | Inappropriate application; overstatement of conclusions [13] | Clear documentation; staff training [13] | Boundary testing; application outside intended scope [13] |
| Data Integrity | Compromised results; challenged admissibility [13] | Audit trails; access controls; version management [13] | System security testing; audit trail verification [13] |

The Scientist's Toolkit: Essential Materials for Validation Research

Table 4: Research Reagent Solutions for Forensic Method Validation

| Reagent/Category | Function in Validation Studies | Application Examples | Quality Control Requirements |
|---|---|---|---|
| Reference Standards | Establish accuracy and calibration curves [13] | Quantification of analytes; method calibration [13] | Certified purity; documentation of traceability [13] |
| Control Materials | Monitor method performance and stability [13] | Positive and negative controls; process verification [13] | Documented stability; appropriate storage conditions [13] |
| Matrix Samples | Assess specificity and potential interferences [13] | Testing with different sample types; interference studies [13] | Representative of casework samples; documented composition [13] |
| Challenge Samples | Evaluate method limitations and robustness [13] | Stress testing; boundary condition assessment [13] | Known characteristics; appropriate heterogeneity [13] |
| Calibration Verification | Confirm instrument performance and response [13] | Regular performance checks; instrument qualification [13] | Traceable reference values; defined acceptance ranges [13] |

Integration with Risk Assessment Framework

The ISO 21043 standards provide an essential foundation for implementing a comprehensive risk assessment framework for forensic method validation research. By establishing standardized requirements across the entire forensic process, these standards enable systematic identification, evaluation, and mitigation of risks associated with forensic analysis [10].

The framework incorporates four key guidelines for evaluating forensic feature-comparison methods: plausibility, soundness of research design and methods, intersubjective testability, and availability of valid methodology to reason from group data to statements about individual cases [14]. These guidelines help bridge the gap between general scientific principles and the specific requirements of forensic applications, supporting the development of validated methods that meet both scientific and legal standards [14].

Implementation of ISO 21043 within a risk assessment framework emphasizes the importance of error rate quantification, method limitation documentation, and clear communication of uncertainties in forensic conclusions [14]. This approach aligns with legal admissibility standards such as Daubert, which require demonstration of methodological reliability and known error rates for scientific evidence presented in court proceedings [14] [15].

For researchers and scientists developing forensic methods, understanding the legal admissibility of expert testimony is crucial for ensuring that analytical techniques withstand judicial scrutiny. In the United States, admissibility is governed primarily by two competing standards: the Frye standard and the Daubert standard [16] [17]. The appropriate standard depends on the jurisdiction in which testimony is offered, with federal courts and a majority of states following Daubert, while a minority of states continue to adhere to Frye [16] [17].

This article provides application notes and experimental protocols to help forensic researchers design validation studies that satisfy these legal thresholds. A robust validation framework not only enhances scientific integrity but also ensures that expert testimony based on research findings will be admitted in legal proceedings.

Core Legal Standards: Comparative Analysis

The Frye Standard: General Acceptance

The Frye standard originates from the 1923 case Frye v. United States [16] [17]. This standard employs a "general acceptance" test, requiring that the scientific methodology underlying an expert's opinion be generally accepted as reliable within the relevant scientific community [16] [17].

Key Frye Characteristics:

- Primary Question: Has the methodology gained general acceptance in the relevant scientific field? [16] [17]

- Judicial Role: Courts evaluate whether the principle from which the deduction is made is sufficiently established in its field [16].

- Application Scope: Primarily applied to novel scientific techniques rather than all expert testimony [16].

- Hearing Focus: Frye hearings are narrow, addressing only general acceptance of the methodology, not the reliability or relevance of the conclusions [16].

The Daubert Standard: Relevance and Reliability

The Daubert standard emerged from the 1993 Supreme Court case Daubert v. Merrell Dow Pharmaceuticals, Inc., which held that the Federal Rules of Evidence superseded the Frye standard [17] [18]. Daubert assigns trial judges a "gatekeeping" role to ensure expert testimony rests on a reliable foundation and is relevant to the case [17] [18].

Daubert's five-factor test provides a framework for evaluating methodology reliability [18]:

- Testability: Whether the expert's technique or theory can be or has been tested

- Peer Review: Whether the technique or theory has been subjected to peer review and publication

- Error Rate: The known or potential rate of error of the technique

- Standards: The existence and maintenance of standards controlling the technique's operation

- General Acceptance: Whether the technique or theory has attained general acceptance in the relevant scientific community

The Daubert trilogy of cases further refined this standard:

- Daubert (1993): Established the factors and held that the Federal Rules of Evidence superseded Frye in federal courts [18]

- General Electric Co. v. Joiner (1997): Clarified that conclusions and methodology are not entirely distinct and established abuse of discretion as the standard for appellate review [18]

- Kumho Tire Co. v. Carmichael (1999): Extended Daubert to all expert testimony, not just scientific testimony [18]

Table 1: Comparison of Frye and Daubert Standards

| Feature | Frye Standard | Daubert Standard |
|---|---|---|
| Originating Case | Frye v. United States (1923) [16] [17] | Daubert v. Merrell Dow Pharmaceuticals (1993) [17] [18] |
| Primary Test | "General Acceptance" in the relevant scientific community [16] [17] | Relevance and Reliability, with a five-factor analysis [17] [18] |
| Judicial Role | Determines acceptance within scientific community [16] | "Gatekeeper" ensuring reliable foundation and relevance [17] [18] |
| Scope | Primarily novel scientific techniques [16] | All expert testimony (scientific, technical, specialized knowledge) [18] |
| Burden of Proof | Proponent must demonstrate general acceptance [16] | Proponent must demonstrate admissibility by preponderance of evidence [19] [18] |
| Key Considerations | Widespread acceptance in field; scientific publications; judicial decisions [16] | Testability; peer review; error rate; standards and controls; general acceptance [18] |

Recent Legal Developments

Recent amendments to Federal Rule of Evidence 702 (effective December 2023) emphasize that the proponent of expert testimony must demonstrate by a preponderance of the evidence that the testimony meets all admissibility requirements [19]. The rule now explicitly states that the expert's opinion must "reflect[] a reliable application of the principles and methods to the facts of the case" [19]. This amendment clarifies that courts must perform their gatekeeping role with diligence, ensuring that expert testimony stays within the bounds of what can be concluded from a reliable application of the expert's basis and methodology [19].

Quantitative Data in Risk Assessment Validation

Forensic risk assessment tools require rigorous quantitative validation to meet legal admissibility standards. The following data points are critical for demonstrating reliability and accuracy under both Frye and Daubert.

Table 2: Key Quantitative Metrics for Risk Assessment Tool Validation

| Metric Category | Specific Measures | Daubert Consideration | Data Presentation Requirements |
|---|---|---|---|
| Predictive Accuracy | Sensitivity and specificity; area under the curve (AUC); positive/negative predictive values [20] | Known or potential rate of error [18] | Report rates for relevant subpopulations; avoid highly selected samples [20] |
| Population Norms | True/false positive rates; true/false negative rates [20] | General acceptance in relevant community [18] | Present raw numbers and percentages; disclose conflicts of interest [20] |
| Reliability | Inter-rater reliability; test-retest reliability; internal consistency | Maintenance of standards and controls [18] | Report statistical coefficients and confidence intervals |
| Validation Evidence | Cross-validation results; external validation findings [20] | Whether theory has been tested [18] | Specify validation sample characteristics and generalizability [20] |
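For the Reliability row, inter-rater agreement on categorical ratings is often summarized with Cohen's kappa. The sketch below is a minimal pure-Python version for two raters. The choice of Cohen's kappa over other coefficients (such as weighted kappa or intraclass correlation) is an assumption, and the rating labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning one category per case."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set of cases")
    n = len(rater_a)
    # Observed proportion of cases on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal frequencies.
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (observed - expected) / (1.0 - expected)


if __name__ == "__main__":
    a = ["high", "high", "low", "low"]
    b = ["high", "low", "low", "low"]
    print(cohens_kappa(a, b))
```

A confidence interval for the coefficient would normally be reported alongside the point estimate, as the table requires; that step is omitted here for brevity.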

Experimental Protocols for Method Validation

Protocol 1: Establishing Predictive Accuracy

Objective: To determine the predictive accuracy of a risk assessment tool for violent behavior using quantitative measures.

Materials:

- Validated risk assessment tool (e.g., HCR-20, VRAG, LS/CMI)

- Representative sample population (ensure inclusion of relevant subpopulations)

- Data collection instruments (standardized protocols)

- Statistical analysis software (R, SPSS, or SAS)

Procedure:

- Sample Recruitment: Recruit a prospective cohort of participants (N ≥ 300 recommended) from the target population. Document inclusion/exclusion criteria.

- Baseline Assessment: Administer the risk assessment tool to all participants at baseline. Record individual item scores and total scores.

- Rater Training: Train all assessors to established competency standards. Calculate inter-rater reliability coefficients (kappa ≥ 0.70 acceptable).

- Follow-up Period: Track participants for a predetermined follow-up period (typically 1-5 years for violence risk). Document all violent incidents using standardized definitions.

- Data Analysis:

  - Calculate sensitivity, specificity, positive predictive value, and negative predictive value

  - Generate receiver operating characteristic (ROC) curves and calculate area under the curve (AUC)

  - Report true positive, false positive, true negative, and false negative rates

- Subgroup Analysis: Conduct stratified analyses for relevant demographic subgroups (e.g., by gender, ethnicity, age) to evaluate predictive fairness.

Validation Criteria: The tool demonstrates at least moderate predictive accuracy (AUC ≥ 0.70) with comparable performance across relevant demographic subgroups.
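The data analysis step of Protocol 1 can be sketched in a few lines of Python. The first function derives the four classification measures from a 2x2 table at a chosen cut-off. The second computes AUC as the probability that a participant with a recorded violent incident scored higher than one without, counting ties as half, which is equivalent to the area under the empirical ROC curve. Function names and the example counts are hypothetical.

```python
def classification_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, PPV and NPV from a 2x2 table.

    Raises ZeroDivisionError if a row or column of the table is empty.
    """
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


def auc_from_scores(scores_event, scores_no_event):
    """AUC via pairwise comparison of tool scores (ties count as 0.5)."""
    wins = 0.0
    for s_event in scores_event:
        for s_none in scores_no_event:
            if s_event > s_none:
                wins += 1.0
            elif s_event == s_none:
                wins += 0.5
    return wins / (len(scores_event) * len(scores_no_event))


if __name__ == "__main__":
    print(classification_metrics(tp=40, fp=20, tn=180, fn=10))
    auc = auc_from_scores([3, 4, 5], [1, 2, 3])
    print(auc, auc >= 0.70)  # compare against the AUC criterion in the protocol
```

Running the same two functions separately on each demographic subgroup gives the stratified figures needed for the subgroup analysis.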

Protocol 2: Error Rate Determination

Objective: To establish the known error rate of a forensic methodology as required under Daubert.

Materials:

- Standardized protocol for the methodology

- Reference standards or ground truth data

- Blinded examiners with appropriate expertise

- Statistical analysis package

Procedure:

- Test Design: Create a set of test cases with known ground truth (e.g., known positive and negative samples). Ensure case complexity reflects real-world applications.

- Examiner Blinding: Assign examiners to analyze test cases under blinded conditions to prevent confirmation bias.

- Data Collection: Have each examiner analyze all test cases using the standardized methodology. Record all observations, interpretations, and conclusions.

- Error Calculation:

  - Compare examiner results to ground truth

  - Calculate false positive and false negative rates

  - Determine overall accuracy rate

  - Compute confidence intervals for error rates

- Sources of Error: Document and categorize sources of error (e.g., measurement error, interpretation error, contamination).

- Replication: Conduct multiple rounds of testing to establish stable error rate estimates.

Validation Criteria: The methodology demonstrates a known and acceptable error rate with confidence intervals that support reliability for forensic application.
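To illustrate the confidence-interval step of Protocol 2, the sketch below computes a Wilson score interval for an observed error proportion. The protocol does not prescribe a particular interval method, so the use of the Wilson interval is an assumption; it is chosen here because it behaves sensibly when few or no errors are observed. The counts in the example are hypothetical.

```python
from math import sqrt


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an error proportion (z=1.96 gives about 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("need trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1.0 + z * z / trials
    centre = (p + z * z / (2.0 * trials)) / denom
    half_width = z * sqrt(p * (1.0 - p) / trials + z * z / (4.0 * trials * trials)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the boundaries.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


if __name__ == "__main__":
    # Hypothetical study: 5 false positives among 100 known-negative test cases.
    low, high = wilson_interval(errors=5, trials=100)
    print(f"false positive rate 0.05, interval {low:.4f} to {high:.4f}")
```

Note that zero observed errors does not yield a zero upper bound: with 0 errors in 100 trials the upper limit is still several percent, which is why the number of test cases matters when reporting an error rate.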

Daubert Admissibility Assessment Workflow

[Figure: Workflow relating research validation activities to judicial admissibility determinations under Daubert]

The Scientist's Toolkit: Research Reagent Solutions

Forensic validation research requires specific methodological "reagents": standardized components that ensure reproducibility and reliability.

Table 3: Essential Research Reagents for Forensic Method Validation

| Research Reagent | Function | Application in Legal Standards |
|---|---|---|
| Standardized Protocols | Detailed, step-by-step procedures for method application | Ensures consistent application and maintenance of standards (Daubert factor) [18] |
| Reference Materials | Certified controls and standards with known properties | Provides basis for method calibration and accuracy determination |
| Validation Datasets | Curated collections of data with known ground truth | Enables empirical testing and error rate determination (Daubert factor) [18] |
| Statistical Analysis Plans | Pre-specified protocols for data analysis | Demonstrates methodological rigor and minimizes analytical flexibility |
| Blinded Assessment Tools | Instruments for unbiased evaluation of outcomes | Reduces bias in validation studies and error rate determination |
| Peer-Reviewed Publications | Scholarly articles vetted by experts in the field | Provides evidence of peer review and general acceptance (Daubert factors) [18] |

Navigating the Daubert and Frye standards requires forensic researchers to implement robust validation frameworks that address specific legal criteria. By employing the protocols, metrics, and reagents outlined in this article, researchers can generate evidence that demonstrates the reliability, validity, and general acceptance of their methodologies. This scientific rigor not only advances forensic science but also ensures that expert testimony based on research findings meets the evolving standards for legal admissibility.

The Critical Role of Risk Assessment in Proactive Method Development

The integrity of forensic and pharmaceutical data rests upon the reliability of analytical methods. A proactive, risk-based framework for method development, aligned with the forensic-data-science paradigm, ensures that methods are transparent, reproducible, and intrinsically resistant to cognitive bias [21]. This approach shifts the paradigm from a reactive "quality by testing" (QbT) model to a systematic Analytical Quality by Design (AQbD) framework, where quality and robustness are built into the method from its inception [22].

International guidelines, such as ICH Q9 on Quality Risk Management, define risk as the combination of the probability of occurrence of harm and the severity of that harm [22]. In the context of method development, this translates to a systematic process of identifying potential variables that may impact method performance and employing structured experiments to understand and control them. This is particularly critical in forensic science, where the method's output must withstand rigorous legal scrutiny. The adoption of a lifecycle management model, as reinforced by the modernized ICH Q2(R2) and ICH Q14 guidelines, moves validation from a one-time event to a continuous process that begins with predefined objectives [23].

The Analytical Method Lifecycle and Risk Management

The analytical method lifecycle encompasses all stages from initial conception through routine use and eventual retirement. A holistic risk management strategy must cover the entire lifecycle to guarantee the method remains fit-for-purpose [22].

The following diagram illustrates the continuous, risk-informed stages of the analytical method lifecycle:

Figure 1: The Analytical Method Lifecycle. This continuous process begins with method design and development (yellow), transitions to formal validation and operational control (green), and includes ongoing monitoring and improvement (red). Knowledge gained in later stages feeds back to inform future development cycles [22].

The initial Design and Development phase is where risk assessment plays its most crucial role. Here, the Analytical Target Profile (ATP) is defined, and risks to achieving its performance criteria are identified. The subsequent Validation phase confirms that the method meets the ATP. The Control Strategy and Continual Improvement phases rely on ongoing risk monitoring to manage post-approval changes and performance trends, ensuring the method's long-term robustness [22]. This lifecycle approach, supported by tools like the ATP, provides a structured framework that is consistent with the principles of ISO 21043 for forensic sciences, which emphasizes vocabulary, interpretation, and reporting [21].

Core Principles and Regulatory Framework

The transition from an unstructured approach to a systematic framework is guided by key principles and regulatory guidelines.

From Quality by Testing (QbT) to Analytical Quality by Design (AQbD)

The traditional Quality by Testing (QbT) approach involves varying one factor at a time (OFAT) and often leads to a "false optimum" with limited understanding of variable interactions, making the method fragile and difficult to modify [22]. In contrast, Analytical Quality by Design (AQbD) is a systematic, risk-based approach that begins with predefined objectives. It incorporates prior knowledge, risk assessment, and multivariate experiments via Design of Experiments (DoE) to build a deep understanding of the method [22]. The outcome is a well-understood Method Operable Design Region (MODR) within which method performance is assured with a defined probability.
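The difference between OFAT and a multivariate design can be seen by simply enumerating the runs. The Python sketch below builds a two-level full factorial design and the corresponding OFAT plan for three chromatographic factors. The factor names and levels are hypothetical, and a full factorial is only one of several DoE designs that could be used.

```python
from itertools import product


def full_factorial(levels):
    """All combinations of factor levels; levels maps factor name -> list of levels."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*(levels[n] for n in names))]


def ofat(baseline, levels):
    """One-factor-at-a-time plan: the baseline run plus runs changing a single factor."""
    runs = [dict(baseline)]
    for name, values in levels.items():
        for value in values:
            if value != baseline[name]:
                run = dict(baseline)
                run[name] = value
                runs.append(run)
    return runs


if __name__ == "__main__":
    levels = {"column_temp_c": [30, 40], "flow_ml_min": [0.8, 1.2], "ph": [2.8, 3.2]}
    baseline = {"column_temp_c": 30, "flow_ml_min": 0.8, "ph": 2.8}
    design = full_factorial(levels)
    plan = ofat(baseline, levels)
    print(len(design), len(plan))
    # Runs that change two or more factors together appear only in the factorial design.
    print([run for run in design if run not in plan])
```

Because the OFAT plan never changes two factors in the same run, it cannot reveal interactions between them, which is the limitation described above.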

Key Regulatory Guidelines: ICH Q2(R2), Q14, and Q9

Modern regulatory guidance firmly supports this proactive, scientific approach:

- ICH Q2(R2): Validation of Analytical Procedures: This revised guideline is the global standard for validation. It expands its scope to include modern technologies and formalizes a science- and risk-based approach, emphasizing that validation is part of a broader lifecycle [23].

- ICH Q14: Analytical Procedure Development: This new guideline complements Q2(R2) by providing a structured framework for development. It introduces the Analytical Target Profile (ATP) as a foundational element and differentiates between traditional and enhanced, more flexible development approaches [23] [24].

- ICH Q9: Quality Risk Management: This guideline provides the overarching principles for risk management, defining risk and outlining systematic processes for risk assessment, control, communication, and review [22].

The following table summarizes the core validation parameters as outlined in ICH Q2(R2), which form the basis of the ATP and method performance criteria [23].

Table 1: Core Analytical Method Validation Parameters as per ICH Q2(R2)

| Parameter | Definition | Typical Acceptance Criteria |
|---|---|---|
| Accuracy | The closeness of test results to the true value. | Measured by recovery of a known amount; typically ±10-15% of the theoretical value for assay. |
| Precision | The degree of agreement among individual test results. Includes repeatability, intermediate precision, and reproducibility. | Relative Standard Deviation (RSD) < 2% for assay, < 5-10% for impurities. |
| Specificity | The ability to assess the analyte unequivocally in the presence of other components. | No interference from blank, placebo, or known impurities. |
| Linearity | The ability to obtain test results proportional to the analyte concentration. | Correlation coefficient (r) > 0.998. |
| Range | The interval between upper and lower analyte concentrations for which linearity, accuracy, and precision are demonstrated. | Defined by the intended use of the method (e.g., 50-150% of test concentration). |
| LOD / LOQ | Limit of Detection (LOD): the lowest amount of analyte that can be detected. Limit of Quantitation (LOQ): the lowest amount that can be quantitated with suitable accuracy and precision. | Signal-to-noise ratio of 3:1 for LOD, 10:1 for LOQ. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters. | Method meets all validation criteria when parameters are deliberately altered. |
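Several of the criteria in the table reduce to simple calculations. The Python sketch below shows percent recovery for accuracy, the Pearson correlation coefficient for linearity, and a signal-to-noise classification for LOD and LOQ. The function names and example data are hypothetical, and the 3:1 and 10:1 ratios are taken from the table as typical values rather than fixed requirements.

```python
from math import sqrt


def recovery_percent(measured, theoretical):
    """Accuracy expressed as percent recovery of a known amount."""
    return 100.0 * measured / theoretical


def pearson_r(x, y):
    """Pearson correlation coefficient between concentration and response."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def classify_signal(signal, noise, lod_ratio=3.0, loq_ratio=10.0):
    """Classify a peak by signal-to-noise ratio against LOD and LOQ ratios."""
    ratio = signal / noise
    if ratio >= loq_ratio:
        return "quantifiable"
    if ratio >= lod_ratio:
        return "detectable"
    return "below LOD"


if __name__ == "__main__":
    print(recovery_percent(measured=9.5, theoretical=10.0))
    concentrations = [1.0, 2.0, 4.0, 8.0]
    responses = [10.1, 19.8, 40.3, 79.9]
    print(pearson_r(concentrations, responses) > 0.998)
    print(classify_signal(signal=5.0, noise=1.0))
```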

Practical Application: A Risk Assessment Workflow

Implementing a risk-based program requires a practical and standardized workflow. The following protocol and diagram outline a robust process for conducting an analytical risk assessment.

Experimental Protocol: Conducting an Analytical Risk Assessment (RA)

Objective: To systematically evaluate a developed analytical method to identify and mitigate risks, ensuring it is fit-for-purpose and ready for formal validation and technical transfer to a quality control (QC) environment [24].

Materials and Reagents:

- Method Documentation: Complete method development history, including the defined Analytical Target Profile (ATP) and proposed standard operating procedure (SOP).

- Risk Assessment Tool: A predefined spreadsheet template with lists of potential method concerns for the specific technique (e.g., LC, GC, LC/MS) [24].

- Data: All robustness study data and any method performance data from the development phase.

Procedure:

- Preparation: The method developer collates all method information, including the ATP, validation requirements, and robustness study data. The method risk assessment spreadsheet template is pre-populated with known parameters and potential concerns [24].

- Team Assembly: Convene a meeting with key stakeholders, including the method developer, analytical project lead, subject matter experts (SMEs), and quality and commercial analytical representatives [24].

- Risk Assessment Meeting (Round 1):

  a. Present the method development history, challenges, and data to "set the scene."

  b. Using the pre-populated spreadsheet tool, review the method line by line. The tool should assess variables related to sample preparation and sample analysis, organized by categories such as the "6 Ms" (Machine, Method, Material, Manpower, Measurement, Mother Nature) [24].

  c. For each variable, the team discusses and agrees on one of three outcomes: the variable meets the ATP, it is a risk (graded as medium/yellow or high/red), or there is a knowledge gap.

  d. All identified risks and gaps are transcribed to a "Risk Heat Map" along with a proposed mitigation plan (e.g., perform further DoE, implement a control) [24].

- Mitigation Actions: Execute the experimental plan defined in the mitigation strategy (e.g., additional robustness testing or knowledge-gathering experiments).

- Re-assessment (Round 2): Re-convene the team to review the new data. The "Risk Heat Map" is updated to reflect the revised risk level (e.g., green after successful mitigation). The outcome is a formal confirmation of the method's readiness for validation or a directive for further work [24].
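The two-round procedure above can be modelled with a small data structure. The Python sketch below records an outcome per variable, extracts the entries that belong on the risk heat map, and reports readiness once every variable meets the ATP. The class names, the example variables, and the "gap" label for knowledge gaps are assumptions for illustration; the cited spreadsheet tool is not reproduced here.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    MEETS_ATP = "green"
    MEDIUM_RISK = "yellow"
    HIGH_RISK = "red"
    KNOWLEDGE_GAP = "gap"  # label assumed here; the procedure assigns no colour to gaps


@dataclass
class Variable:
    name: str
    category: str          # one of the "6 Ms", e.g. "Machine" or "Method"
    outcome: Outcome
    mitigation: str = ""   # proposed action, e.g. "further DoE"


def heat_map(variables):
    """Variables that carry a risk or a knowledge gap and need a mitigation plan."""
    return [v for v in variables if v.outcome is not Outcome.MEETS_ATP]


def ready_for_validation(variables):
    """True when no open risks or gaps remain after re-assessment."""
    return not heat_map(variables)


if __name__ == "__main__":
    variables = [
        Variable("column temperature", "Machine", Outcome.MEETS_ATP),
        Variable("extraction time", "Method", Outcome.HIGH_RISK, "further DoE"),
    ]
    print([v.name for v in heat_map(variables)], ready_for_validation(variables))
    # Round 2: mitigation data show the variable is now under control.
    variables[1].outcome = Outcome.MEETS_ATP
    print(ready_for_validation(variables))
```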

The Risk Assessment Workflow

The following diagram visualizes the iterative workflow of the risk assessment process:

Figure 2: The Iterative Risk Assessment Workflow. The process begins with a proposed method and its data. A formal risk assessment evaluates it against the ATP, leading to a decision point. Unacceptable risks trigger additional experiments, creating an iterative cycle until the method is deemed ready for validation [24].

The Scientist's Toolkit: Essential Research Reagent Solutions

A robust risk assessment program is supported by both conceptual tools and practical materials. The following table details key reagents and materials critical for developing and validating analytical methods, particularly in a pharmaceutical QC or forensic context.

Table 2: Essential Research Reagent Solutions for Analytical Method Development

| Item | Function & Importance in Risk Mitigation |
|---|---|
| Certified Reference Standards | High-purity materials with certified identity and purity. Essential for accurately determining method Accuracy, Specificity, and for calibrating instruments. Using sub-standard materials is a major risk to data integrity. |
| System Suitability Test (SST) Mixtures | A prepared mixture of analytes and key impurities designed to verify that the chromatographic system (or other instrument) is operating correctly before analysis. A critical control to mitigate risks related to instrument performance [24]. |
| Stable Isotope-Labeled Internal Standards | Used in mass spectrometric methods (e.g., for mutagenic impurities). They correct for matrix effects and variability in sample preparation and ionization, directly improving Accuracy and Precision, thereby mitigating a key risk in quantitative bioanalysis [24]. |
| Forced Degradation Samples | Samples of the drug substance or product that have been intentionally stressed (e.g., with heat, light, acid, base, oxidant). Used to validate the Specificity of stability-indicating methods and demonstrate that the method can accurately measure the analyte in the presence of its degradation products. |
| Placebo/Blank Matrix | The formulation base without the active ingredient (for drugs) or a representative biological fluid/sample without the analyte (for forensics). Critical for assessing Specificity by confirming the absence of interfering signals from the sample matrix itself. |

The integration of a proactive risk assessment framework into analytical method development is no longer merely a best practice but a scientific and regulatory imperative. By adopting the principles of AQbD and leveraging tools like the ATP and structured risk assessments, researchers can build quality and robustness directly into their methods. This systematic approach yields methods that are not only compliant with global standards like ICH Q2(R2) and ISO 21043 but are also more resilient, understandable, and adaptable throughout their entire lifecycle. This ultimately ensures the generation of reliable, defensible data that is crucial for both patient safety and the integrity of the justice system.

A Step-by-Step Framework: Implementing Risk Assessment in Validation Protocols

Within a comprehensive risk assessment framework for forensic method validation, the initial and most critical step is the systematic identification of risks. This process involves cataloging potential vulnerabilities inherent in analytical procedures before they can compromise data integrity, result reliability, or regulatory compliance. In forensic science, where findings must withstand legal scrutiny, and in drug development, where they impact patient safety, a structured approach to risk identification is an ethical and professional imperative [7]. This document provides detailed application notes and protocols for researchers and scientists to execute this foundational step effectively.

Methodology for Risk Identification

A multi-faceted approach ensures a holistic cataloging of vulnerabilities. The following methodologies should be employed concurrently.

Process Deconstruction and Mapping

The analytical procedure must be deconstructed into its discrete, sequential steps, from sample receipt and preparation to data analysis and reporting. Each step is then examined for potential failure modes. This mapping creates a logical workflow that is essential for visualizing and analyzing the entire process.

Expert-Led Brainstorming Sessions

Leverage the collective expertise of cross-functional teams, including analytical scientists, quality assurance personnel, and regulatory affairs specialists. Sessions should be structured using prompts derived from key validation parameters, such as "How could this method fail to be specific for the target analyte?" or "What conditions could affect the accuracy of this result?" [25].

Review of Historical Data and Deviations

Analyze data from past method validations, transfers, and routine use. Previous deviations, out-of-specification (OOS) results, and audit findings are invaluable resources for identifying recurrent or latent vulnerabilities.
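A simple tally of past events per process step is often enough to surface recurrent vulnerabilities. The sketch below assumes a hypothetical deviation log held as (process step, event type) pairs; the entries and the `recurrent_steps` helper are illustrative only.

```python
from collections import Counter

# Hypothetical deviation log: one (process step, event type) pair per recorded event.
deviation_log = [
    ("Sample preparation", "OOS result"),
    ("Instrumental analysis", "System suitability failure"),
    ("Sample preparation", "OOS result"),
    ("Reporting", "Audit finding"),
    ("Sample preparation", "Deviation"),
]


def recurrent_steps(log, min_count=2):
    """Return (step, count) pairs, most frequent first, for steps with at least min_count events."""
    counts = Counter(step for step, _ in log)
    return [(step, n) for step, n in counts.most_common() if n >= min_count]


print(recurrent_steps(deviation_log))
```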

Quantitative Risk Assessment Framework

Once potential vulnerabilities are identified, they must be assessed and prioritized based on their Likelihood (probability of occurrence) and Impact (severity of consequence). A risk matrix is the standard tool for this prioritization [26] [27].

Likelihood (Probability) Rating Scale

The following 5-point scale defines the probability of a risk event occurring. Definitions should be customized for the specific application, whether the concern is a design flaw (as in a design failure mode and effects analysis, DFMEA) or an operational failure [27].

Table 1: 5-Point Likelihood Rating Scale

| Likelihood Rating | Label | Description | Quantitative Guide (Probability) |
|---|---|---|---|
| 1 | Rare | Failure is highly improbable; method is proven and highly reliable. | < 0.01% |
| 2 | Unlikely | Failure is unlikely; low risk exposure with strong controls. | 0.1% - 1% |
| 3 | Occasional | Failure may occur under specific conditions; moderate controls. | 1% - 20% |
| 4 | Likely | Failure is likely; method shows weaknesses or insufficient controls. | 20% - 95% |
| 5 | Almost Certain | Failure is expected; method is new, untested, or has inherent flaws. | > 95% |

Impact (Severity) Rating Scale

The Impact scale measures the consequence of a single occurrence of the failure. The rating should consider multiple dimensions of effect [27].

Table 2: 5-Point Impact Rating Scale

| Impact Rating | Label | Operational & Scientific Impact | Regulatory & Legal Impact |
|---|---|---|---|
| 1 | Insignificant | Negligible delay or data noise; no impact on conclusion. | No regulatory impact. |
| 2 | Minor | Minor operational delay; requires data re-processing. | Minor documentation finding. |
| 3 | Moderate | Significant project delay; unreliable data for a parameter. | Regulatory observation; requires response. |
| 4 | Major | Widespread project disruption; invalidates a critical result. | Submission rejection; compliance warning. |
| 5 | Catastrophic | Project failure; scientifically incorrect conclusion. | Legal exclusion of evidence; wrongful conviction [7]. |

Risk Scoring and Prioritization Matrix

The Risk Score is calculated by multiplying the Likelihood and Impact ratings, emphasizing high-likelihood, high-impact risks. The resulting score places the risk into a priority category, which dictates the required response [26] [27].

Table 3: Risk Prioritization Matrix (Score = Likelihood x Impact)

| Impact (rows) / Likelihood (columns) | 1 (Rare) | 2 (Unlikely) | 3 (Occasional) | 4 (Likely) | 5 (Almost Certain) |
|---|---|---|---|---|---|
| 1 (Insignificant) | 1 (Low) | 2 (Low) | 3 (Low) | 4 (Low) | 5 (Medium) |
| 2 (Minor) | 2 (Low) | 4 (Low) | 6 (Medium) | 8 (High) | 10 (High) |
| 3 (Moderate) | 3 (Low) | 6 (Medium) | 9 (High) | 12 (Extreme) | 15 (Extreme) |
| 4 (Major) | 4 (Low) | 8 (High) | 12 (Extreme) | 16 (Extreme) | 20 (Extreme) |
| 5 (Catastrophic) | 5 (Medium) | 10 (High) | 15 (Extreme) | 20 (Extreme) | 25 (Extreme) |

- Extreme (score 12-25; Red; Generally Unacceptable, GU): Unacceptable. Immediate mitigation and stringent controls are required. Action must be taken before method use.

- High (score 8-10; Orange; between As Low As Reasonably Practicable, ALARP, and GU): Undesirable. Requires specific mitigation plans and management attention.

- Medium (score 5-6; Yellow; ALARP): Acceptable with review. Mitigation measures should be considered.

- Low (score 1-4; Green; Generally Acceptable, GA): Acceptable. Managed by routine procedures.

The relationship between the risk components and the resulting mitigation strategy can be visualized as a decision pathway.
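The scoring and categorization logic can be sketched in a few lines of code. The category boundaries below are inferred from the cells of Table 3 (Low 1-4, Medium 5-6, High 8-10, Extreme 12-25); the function names and the wording of the response strings are illustrative assumptions rather than a prescribed implementation.

```python
# Upper score bound for each category, inferred from the cells of Table 3.
CATEGORY_UPPER_BOUNDS = [(4, "Low"), (6, "Medium"), (10, "High"), (25, "Extreme")]

# Required response per category, paraphrased from the legend under Table 3.
RESPONSES = {
    "Low": "Acceptable; manage by routine procedures.",
    "Medium": "Acceptable with review; consider mitigation measures.",
    "High": "Undesirable; prepare a specific mitigation plan.",
    "Extreme": "Unacceptable; mitigate before the method is used.",
}


def risk_score(likelihood: int, impact: int) -> int:
    """Multiply the two 1-5 ratings to obtain the risk score."""
    for name, rating in (("likelihood", likelihood), ("impact", impact)):
        if rating not in range(1, 6):
            raise ValueError(f"{name} must be an integer from 1 to 5, got {rating!r}")
    return likelihood * impact


def risk_category(score: int) -> str:
    """Map a risk score onto its priority category."""
    if score < 1:
        raise ValueError(f"score must be at least 1, got {score!r}")
    for upper_bound, label in CATEGORY_UPPER_BOUNDS:
        if score <= upper_bound:
            return label
    raise ValueError(f"score {score!r} exceeds the maximum of 25")


# Example: a 'Likely' failure (4) with 'Moderate' impact (3).
score = risk_score(likelihood=4, impact=3)
category = risk_category(score)
print(score, category, RESPONSES[category])
```

Keeping the boundaries in a single table-like constant makes it straightforward to adjust them if an organization adopts different thresholds.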

Catalog of Common Vulnerabilities and Experimental Protocols

The following table catalogs common vulnerabilities associated with key analytical method validation parameters, providing a structured starting point for risk identification. It integrates the risk scoring framework and links vulnerabilities to experimental protocols for their detection.

Table 4: Catalog of Vulnerabilities in Analytical Method Validation

| Validation Parameter | Identified Vulnerability (Failure Mode) | Potential Root Cause | Risk Score (L x I) | Experimental Detection Protocol |
|---|---|---|---|---|
| Specificity/Selectivity | Interference from sample matrix or impurities co-eluting with the analyte. | Inadequate chromatographic separation or detection wavelength. | 4-16 (L-E) | Protocol 1: Specificity Challenge. Inject blank matrix, placebo, and standard solutions. Compare chromatograms to confirm baseline resolution of the analyte from any interfering peaks. Calculate resolution factor (Rs > 1.5). [25] |
| Accuracy & Precision | Systematic bias (inaccuracy) or high variability (imprecision) in results. | Faulty reference standard, sample preparation error, or instrumental drift. | 6-20 (M-E) | Protocol 2: Spike/Recovery & Repeatability. Prepare samples at 3 concentration levels (low, mid, high) in triplicate. Calculate accuracy as mean % recovery (e.g., 98-102%). Calculate precision as %RSD of the measurements (e.g., RSD < 2%). [25] |
| Linearity & Range | Non-linear response across the intended working range. | Saturation of detector or non-optimal sample concentration. | 3-12 (L-E) | Protocol 3: Linearity Curve. Analyze a minimum of 5 concentration levels across the specified range. Plot response vs. concentration. Determine the coefficient of determination (R² > 0.998) and inspect the residual plots. [25] |
| Robustness & Ruggedness | Method performance is highly sensitive to small, deliberate variations in parameters. | Poorly optimized method conditions (e.g., pH, temperature, mobile phase). | 4-15 (L-E) | Protocol 4: Deliberate Variation. Intentionally vary one parameter at a time (e.g., flow rate ±0.1 mL/min, temperature ±2°C). Monitor the effect on critical performance attributes (e.g., retention time, resolution). [25] |
| LOD & LOQ | Inability to detect or quantify analytes at low concentrations. | Insufficient method sensitivity or high background noise. | 2-10 (L-H) | Protocol 5: Signal-to-Noise Determination. Analyze low concentration samples and measure the signal-to-noise ratio (S/N). LOD is typically S/N ≥ 3, and LOQ is S/N ≥ 10. [25] |
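Several of the acceptance checks in Table 4 reduce to short calculations. The sketch below shows one possible way to compute mean recovery, %RSD, R², and chromatographic resolution. All data values are hypothetical, the function names are illustrative, and the limits are the example criteria quoted in the table, which a laboratory would replace with its own justified limits. The resolution formula assumes baseline peak widths.

```python
import statistics


def percent_recovery(measured: float, nominal: float) -> float:
    """Accuracy as recovery: measured concentration relative to the spiked (nominal) one."""
    return 100.0 * measured / nominal


def percent_rsd(values) -> float:
    """Precision as relative standard deviation (sample standard deviation / mean)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def r_squared(x, y) -> float:
    """Coefficient of determination for a straight-line least-squares fit of y on x."""
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy ** 2 / (sxx * syy)


def resolution(t1: float, t2: float, w1: float, w2: float) -> float:
    """Resolution Rs from retention times (t1 < t2) and baseline peak widths."""
    return 2.0 * (t2 - t1) / (w1 + w2)


# Hypothetical data for one concentration level spiked at 10.0 units.
spiked = 10.0
replicates = [9.92, 10.05, 9.98]
mean_recovery = statistics.mean(percent_recovery(m, spiked) for m in replicates)
rsd = percent_rsd(replicates)

# Hypothetical five-level calibration data.
concentrations = [5.0, 10.0, 20.0, 40.0, 80.0]
responses = [50.4, 99.1, 201.3, 398.8, 801.0]
r2 = r_squared(concentrations, responses)

# Hypothetical retention times and baseline widths (minutes) for two adjacent peaks.
rs = resolution(4.10, 4.85, 0.32, 0.36)

# Example acceptance criteria as quoted in Table 4.
checks = {
    "accuracy (98-102% recovery)": 98.0 <= mean_recovery <= 102.0,
    "precision (RSD < 2%)": rsd < 2.0,
    "linearity (R² > 0.998)": r2 > 0.998,
    "specificity (Rs > 1.5)": rs > 1.5,
}
for name, passed in checks.items():
    print(f"{name}: {'pass' if passed else 'FAIL'}")
```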

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and solutions required for conducting the experiments outlined in the risk identification and validation protocols.

Table 5: Essential Research Reagent Solutions and Materials

| Item Name | Function / Rationale for Use | Example / Specification |
|---|---|---|
| Certified Reference Standard | Provides the benchmark for accurate quantification and method calibration. Ensures traceability and validity of results. | Certified purity (e.g., > 99.5%), with valid Certificate of Analysis (CoA). Stored under specified conditions. |
| Blank Matrix | Used in specificity experiments to identify and account for interfering components from the sample itself. | The actual sample material (e.g., blood, tablet excipients) without the target analyte. |
| Internal Standard | Added to samples to correct for analyte loss during sample preparation and for instrumental variability. | A stable, non-interfering compound with similar chemical properties to the analyte, but distinguishable analytically. |
| Chromatographic Mobile Phase | The solvent system that carries the sample through the HPLC/UPLC column. Its composition is critical for retention and separation. | High-purity solvents (HPLC-grade) and buffers, prepared with precise pH and composition. Filtered and degassed. |
| System Suitability Test (SST) Solutions | A standardized solution used to verify that the total analytical system is performing adequately before and during sample analysis. | A mixture containing the analyte and any critical-pair compounds at a known concentration to test parameters like retention, resolution, and peak shape. |

Risk analysis is a fundamental step in establishing a robust risk assessment framework for forensic method validation research. It involves the systematic process of evaluating identified risks to determine their potential impact on the validation outcomes and the likelihood of their occurrence. In forensic science, where results carry significant weight in the criminal justice system, demonstrating that analytical methods are fit for purpose and produce reliable results is paramount [28]. This analysis occurs after risk identification and provides the critical data needed to prioritize risks and allocate resources effectively for risk treatment. The process enables researchers and scientists to make informed decisions about which risks require immediate mitigation and which can be accepted or monitored, ensuring that validation studies meet the rigorous standards expected by courts and regulatory bodies [28].

The Forensic Science Regulator's guidance emphasizes that validation involves "providing objective evidence that a method, process or device is fit for the specific purpose intended" [28]. Within the criminal justice system, there is a very reasonable expectation that forensic science results can be shown to be reliable. The risk assessment element helps ensure that "the validation study is scaled appropriately to the needs of the end-user," which for forensic science is primarily the criminal justice system rather than any particular analyst or laboratory [28]. This document provides detailed application notes and protocols for conducting both qualitative and quantitative risk assessments specifically within the context of forensic method validation research.

Foundational Concepts and Definitions

Key Risk Parameters

Understanding the core parameters of risk is essential for conducting a thorough analysis. The table below summarizes these fundamental concepts:

Table 1: Core Risk Parameters in Forensic Method Validation

| Parameter | Definition | Application in Forensic Validation |
|---|---|---|
| Impact | The effect a risk will have on the validation project if it occurs [29] | Also called consequence; measured in terms of effect on cost, schedule, functionality, and quality [29] |
| Likelihood | The extent to which the risk effects are likely to occur [29] | Comprises probability of occurrence and intervention difficulty; measured on defined scales [29] |
| Precision | The degree to which the risk is currently known and understood [29] | Indicates confidence in impact and likelihood estimates; rated as low, medium, or high [29] |
| Risk Severity | Combined measurement derived from impact and likelihood [29] | Determined using a risk matrix; used to prioritize risks [29] |
| Risk Appetite | The amount and type of risk an organization is willing to pursue or retain [30] | In forensic validation, typically very low for risks affecting result reliability [28] |

Qualitative vs. Quantitative Risk Analysis

Risk analysis approaches fall into two primary categories, each with distinct characteristics and applications:

Qualitative Risk Analysis involves identifying threats and opportunities, assessing how likely they are to happen, and evaluating the potential impacts if they do occur. The results are typically shown using a Probability/Impact ranking matrix [31]. This approach operates in a more generalized, "big-picture" space and is particularly valuable for prioritizing risks according to probability and impact, identifying the main areas of risk exposure, and improving understanding of project risks [31]. In forensic contexts, qualitative analysis helps researchers quickly identify which aspects of a method validation require the most attention.

Quantitative Risk Analysis (QRA) involves assessing and quantifying risks by assigning probabilistic values to potential outcomes. This technique helps organizations make more informed decisions by measuring the probability and impact of risks in financial or measurable terms [32]. According to Meyer, quantitative risk management in project management is "the process of converting the impact of risk on the project into numerical terms" [33]. This numerical information is frequently used to determine cost and time contingencies. In forensic validation, QRA might be applied to quantify the probability of false positives/negatives or to estimate the financial impact of validation delays.
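As one illustration of quantifying the probability of false positives, an observed error rate from a validation study can be reported with a confidence interval rather than as a bare point estimate. The sketch below uses the Wilson score interval, which is one common choice and is an assumption of this example, not a method prescribed by the cited sources; the counts are hypothetical.

```python
import math


def wilson_interval(events: int, trials: int, z: float = 1.96):
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95% confidence)."""
    if trials <= 0 or not 0 <= events <= trials:
        raise ValueError("require trials > 0 and 0 <= events <= trials")
    p = events / trials
    denominator = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denominator
    half_width = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denominator
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical validation study: 2 false positives among 100 known-negative samples.
false_positives, known_negatives = 2, 100
lower, upper = wilson_interval(false_positives, known_negatives)
print(f"Observed false-positive rate: {false_positives / known_negatives:.1%}")
print(f"Approximate 95% interval: {lower:.1%} to {upper:.1%}")
```

The interval remains informative even when no errors are observed: with zero events the upper bound stays above zero, which reflects that a small study cannot demonstrate a zero error rate.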

Qualitative Risk Assessment Protocols

Core Qualitative Assessment Methodology

The qualitative risk assessment process for forensic method validation involves a structured approach to evaluating risks based on their potential impact and likelihood of occurrence. The protocol consists of the following key steps: