Blind vs. Traditional Proficiency Testing: A 2025 Comparative Analysis for Biomedical Research

Abstract

This article provides a comparative analysis of blind and traditional proficiency testing methodologies, tailored for researchers and professionals in drug development and clinical diagnostics. It explores the foundational principles of both approaches, details their practical application across laboratory and clinical trial settings, and addresses key implementation challenges. The analysis synthesizes current regulatory trends, including recent CLIA updates, and offers evidence-based insights to guide the selection, optimization, and validation of testing strategies to enhance data integrity, reduce bias, and ensure regulatory compliance in biomedical research.

Defining the Paradigms: Core Concepts in Traditional and Blind Proficiency Testing

Defining Traditional Declared Proficiency Testing

Traditional Declared Proficiency Testing (PT) is a fundamental quality assurance process in which laboratories analyze samples supplied by an external provider, with values unknown to the laboratory, to evaluate their analytical performance. After testing, laboratories receive comparative data showing their results alongside those of other laboratories that tested the same specimens, enabling them to identify potential issues and implement corrective actions [1].

This form of testing serves as an external quality control mechanism, contrasting with internal quality checks. Originally developed as an educational tool to help laboratories investigate procedural problems, it has evolved into a mandatory requirement for accreditation and regulatory compliance across numerous testing industries [1] [2]. In declared PT—the most common format—participants know they are being tested, which distinguishes it from blind proficiency testing where analysts are unaware they are evaluating test samples [3] [4].

The Operational Framework of Declared Proficiency Testing

Core Components and Process Flow

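The traditional declared PT process follows a standardized workflow with distinct stages and participants: the PT provider prepares and distributes samples, participating laboratories analyze them and report their results, and the provider evaluates performance and returns graded reports.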

Regulatory Context and Requirements

For many laboratories, participation in declared PT programs is not optional but mandated by regulatory frameworks:

- Clinical Laboratory Improvement Amendments (CLIA): Requires most U.S. facilities performing tests on human specimens to participate in approved PT programs, with specific requirements for test frequency and sample numbers [1]

- ISO/IEC 17025:2017: International standard for testing laboratories requiring participation in proficiency testing as evidence of technical competence [5]

- ISO/IEC 17043:2010: Specifies general requirements for the competence of PT providers, ensuring they operate under standardized quality systems (the standard has since been revised as ISO/IEC 17043:2023) [6]

For regulated analytes, CLIA requires laboratories to analyze five samples, or "challenges," per testing event, with three testing events per year [1]. This structured approach helps ensure consistent quality monitoring throughout the year.
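The grading logic behind these requirements can be sketched in a few lines. This is a minimal illustration, assuming the general CLIA rules that an event is satisfactory for an analyte when at least 80% of its challenges are acceptable, and that participation becomes unsuccessful after two consecutive unsatisfactory events or two unsatisfactory events out of three consecutive events. The function names are hypothetical, and tests held to a 100% criterion are not modeled.

```python
from typing import Sequence

# Assumed general CLIA rule: an event is satisfactory for an analyte when at least
# 80% of its challenges are acceptable (e.g. 4 of 5). Tests held to 100% are not modeled.
PASS_THRESHOLD = 0.80

def event_score(acceptable: Sequence[bool]) -> float:
    """Fraction of challenges in one testing event graded acceptable."""
    if len(acceptable) == 0:
        raise ValueError("a testing event needs at least one challenge")
    return sum(acceptable) / len(acceptable)

def event_satisfactory(acceptable: Sequence[bool]) -> bool:
    return event_score(acceptable) >= PASS_THRESHOLD

def unsuccessful(history: Sequence[bool]) -> bool:
    """history[k] is True if event k was satisfactory. Flags two consecutive
    unsatisfactory events, or two unsatisfactory events among three consecutive ones."""
    for start in range(len(history) - 1):
        window = history[start:start + 3]
        if sum(1 for ok in window if not ok) >= 2:
            return True
    return False

print(event_satisfactory([True, True, True, True, False]))   # 4/5 = 80% -> True
print(event_satisfactory([True, True, True, False, False]))  # 3/5 = 60% -> False
print(unsuccessful([False, True, False]))                    # 2 of 3 -> True
```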

Experimental Protocols in Declared Proficiency Testing

Standardized Implementation Methodology

The experimental protocol for traditional declared PT follows rigorous standardization to ensure fair assessment across participating laboratories:

Sample Development: PT providers create characterized samples with predetermined values that closely mimic real patient, environmental, or product samples [2]. These samples are homogeneous and stable to ensure all participants receive equivalent materials.

Sample Distribution: Providers ship coded samples, with their assigned values withheld, to participating laboratories according to a predefined schedule, typically three times annually for regulated tests [1].

Laboratory Analysis: Participating laboratories analyze the PT samples using their standard methods, equipment, and personnel. The testing is performed with the knowledge that it is a proficiency assessment, but without knowing the expected values [2].

Result Submission: Laboratories confidentially report their analytical results to the PT provider within specified deadlines.

Performance Assessment: PT providers statistically evaluate each laboratory's results against pre-established acceptable performance criteria, which may include peer group comparisons and deviation from assigned values [2].

Grading and Reporting: Participants receive detailed reports showing their performance relative to peers and whether they met acceptance criteria, enabling identification of potential areas for improvement.
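The statistical evaluation step is often expressed as a z-score. The sketch below assumes conventional ISO 13528-style scoring, z = (x - X) / sigma_pt, where x is a laboratory's result, X the assigned value, and sigma_pt the standard deviation for proficiency assessment, with |z| <= 2 read as satisfactory, 2 < |z| < 3 as questionable, and |z| >= 3 as unsatisfactory. The laboratory IDs, assigned value, and sigma_pt are hypothetical, and individual PT providers may use other criteria, such as fixed percentage limits.

```python
from dataclasses import dataclass

@dataclass
class PTResult:
    lab_id: str
    value: float

def z_score(x: float, assigned: float, sigma_pt: float) -> float:
    """z = (x - X) / sigma_pt."""
    if sigma_pt <= 0:
        raise ValueError("sigma_pt must be positive")
    return (x - assigned) / sigma_pt

def classify(z: float) -> str:
    # Conventional reading: |z| <= 2 acceptable, 2 < |z| < 3 warning, |z| >= 3 action.
    a = abs(z)
    if a <= 2.0:
        return "satisfactory"
    if a < 3.0:
        return "questionable"
    return "unsatisfactory"

# Hypothetical round: assigned value 5.0, sigma_pt 0.4 (same units as the results).
assigned_value, sigma = 5.0, 0.4
results = [PTResult("lab-A", 5.1), PTResult("lab-B", 5.9), PTResult("lab-C", 6.4)]
for r in results:
    z = z_score(r.value, assigned_value, sigma)
    print(f"{r.lab_id}: z = {z:+.2f} -> {classify(z)}")
```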

Veterinary Diagnostic Case Study Protocol

A 2025 study demonstrated a comprehensive approach to declared PT in veterinary diagnostics [7]. Fourteen veterinary diagnostic laboratories participated in an exercise to identify the root cause of simulated lead toxicosis in cattle using a multi-step methodology:

- Materials Provided: Participants received a clinical case description, digitized brain histology slides, and tissue samples (liver and brain) for optional chemical analysis [7]

- Experimental Timeline: Laboratories had 14 days to report histopathologic findings and differential diagnoses, and 21 days total to complete chemical analyses and final diagnosis [7]

- Assessment Criteria: Performance was evaluated across multiple competencies including histology interpretation, differential diagnosis formulation, appropriate test selection, analytical accuracy, and final diagnosis [7]

- Outcome Measurement: Thirteen of fourteen laboratories successfully diagnosed lead toxicosis by correctly completing all investigative stages [7]

Key Research Reagents and Materials

Table: Essential Components in Proficiency Testing Programs

| Component | Function | Quality Requirements |
|---|---|---|
| PT Samples | Unknown test materials for analysis | Homogeneous, stable, matrix-matched to routine samples [2] |
| Certified Reference Materials (CRMs) | Calibration and quality control | Certified values with established uncertainty, ISO 17034 accredited [2] |
| Method Validation Documents | Verify test procedures are fit for purpose | Established accuracy, precision, linearity, LOD/LOQ [2] |
| Statistical Analysis Package | Performance evaluation and peer comparison | Compliance with ISO 13528:2005 statistical methods [6] |
| Quality Control Materials | Internal process monitoring | Commutable with patient samples, well-characterized [2] |

Performance Data and Market Context

Adoption Rates and Comparative Effectiveness

Table: Declared vs. Blind Proficiency Testing Adoption and Characteristics

| Characteristic | Traditional Declared PT | Blind PT |
|---|---|---|
| Adoption in Forensic Labs | ~90% of U.S. forensic laboratories [4] | ~10% of U.S. forensic laboratories [4] |
| Analyst Awareness | Analysts know they are being tested | Analysts unaware they are being tested |
| Error Rate Detection | May not reflect real-world error rates due to heightened awareness | More accurately reflects routine performance and true error rates [8] |
| Implementation Complexity | Relatively straightforward, well-established protocols | Logistically challenging, requires external cooperation [3] |
| Cultural Acceptance | Widely accepted, minimal resistance | May challenge "myth of 100% accuracy" in some fields [4] |

Market Presence and Economic Impact

The laboratory proficiency testing market reflects the widespread adoption of declared PT schemes across industries:

- The global laboratory PT market was valued at USD 1.58 billion in 2025 and is projected to reach USD 1.98 billion by 2030 [5]; the source reports a 6.5% compound annual growth rate, although these two endpoints imply roughly 4.6% per year

- Clinical diagnostics represents the largest segment with 38.67% market share in 2024, driven largely by regulatory mandates [5]

- Independent/third-party providers dominate the market with 54.45% share, highlighting the preference for vendor-neutral schemes [5]
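The compound-growth arithmetic behind market figures like these can be checked directly, since CAGR = (end / start)^(1 / years) - 1. The sketch below, assuming five compounding years between 2025 and 2030, computes the rate implied by the two reported values and the 2030 value that a 6.5% rate would produce from the 2025 base.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by a start and end value."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0

def project(start: float, rate: float, years: float) -> float:
    """Value after compounding `start` at `rate` per year for `years` years."""
    return start * (1.0 + rate) ** years

implied = cagr(1.58, 1.98, 5)        # USD billions, 2025 -> 2030
projected = project(1.58, 0.065, 5)  # 6.5% per year applied to the 2025 base
print(f"implied CAGR: {implied:.1%}")
print(f"2030 value at 6.5%: USD {projected:.2f} billion")
```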

Advantages and Limitations in Research Applications

Strengths of Traditional Declared PT

- Educational Value: Serves as a powerful teaching tool for laboratory staff to recognize methodological limitations and improve techniques [1]

- Comparative Benchmarking: Provides interlaboratory comparison that helps laboratories understand their performance relative to peers [2]

- Regulatory Compliance: Meets accreditation requirements for major regulatory bodies including CLIA and ISO [1] [5]

- Process Improvement: Identifies specific areas needing corrective action through standardized performance metrics [2]

Limitations and Methodological Considerations

- Potential for Enhanced Performance: Knowing they are being tested may cause analysts to exercise special care, potentially producing results that don't reflect routine performance [3]

- Limited Error Rate Data: May not detect all sources of error present in the complete testing process from sample receipt to reporting [8]

- Behavioral Modification: The knowledge of testing can change normal workflow patterns, reducing the ecological validity of the assessment [3]

Traditional declared proficiency testing represents the foundational approach to external quality assessment across diagnostic, forensic, and research laboratories. While it provides essential comparative data and educational value for continuous improvement, its limitations—particularly the potential for altered behavior when analysts know they are being tested—have prompted the development and increasing adoption of blind proficiency testing methods.

For researchers and drug development professionals, understanding both declared and blind PT methodologies is crucial for designing comprehensive quality systems. The optimal approach often involves implementing both methods strategically: using declared PT for educational development and method validation, while incorporating blind PT to obtain more realistic error rate data and validate the entire testing pipeline under routine operational conditions [3] [8]. This integrated strategy provides the most complete assessment of laboratory performance, supporting the generation of reliable, reproducible scientific data across all research and diagnostic applications.

In scientific validation and product development, the methodology used for performance assessment can significantly influence outcomes and interpretations. Traditional proficiency testing, long considered the gold standard across many scientific disciplines, rests on a fundamental premise: participants know they are being evaluated using standardized materials under controlled conditions. While this approach provides valuable benchmarking data, it introduces potential biases that can limit the real-world applicability of results. Blind testing shifts assessment toward conditions that mirror authentic usage, with the aim of producing less biased data that better predict real-world performance.

This comparative analysis examines the fundamental distinctions between these two methodological approaches, with a focus on their application in technological and scientific fields. Using experimental data and case studies, it illustrates how blind testing can surface performance insights that traditional proficiency testing may miss. As assessment protocols evolve, understanding the relative advantages, limitations, and appropriate applications of each approach becomes important for researchers, product developers, and quality assurance professionals who need to base decisions on the most reliable validation data available.

Understanding the Methodological Spectrum

Traditional Proficiency Testing: Structured Assessment with Known Samples

Traditional proficiency testing is a structured approach to evaluation in which analysts or laboratories are assessed using standardized reference materials whose expected outcomes are known to the PT provider but not to the participants. This system has formed the backbone of quality assurance programs across numerous industries, particularly in regulated fields such as food safety and clinical diagnostics.

The U.S. Food and Drug Administration's Grade "A" Milk Proficiency Testing Program exemplifies a mature, well-integrated proficiency testing system. This program annually distributed blinded milk samples to certified laboratories nationwide, requiring analysis for key safety parameters including bacterial counts, coliform levels, somatic cell counts, and antibiotic residues [9]. Laboratories analyzed these samples using prescribed methodologies and reported their results to the FDA's Moffett Center Proficiency Testing Laboratory for statistical analysis against expected values [9]. This system operated under a cooperative federal-state structure, with the National Conference on Interstate Milk Shipments (NCIMS) providing oversight and uniform standards across all participating laboratories [9]. The program demonstrated measurable success in improving laboratory performance over time, with peer-reviewed data showing a steady increase in correct results reported by laboratories from 2012 to 2018 [9].

Blind Testing: Real-World Evaluation Through Anonymous Assessment

Blind testing takes a fundamentally different approach by concealing information that could influence the assessment. In the product-evaluation form discussed here, evaluators make comparative assessments without knowing the identity of the products, systems, or solutions they are evaluating. This design reduces several forms of bias, including brand preference, expectation effects, and contextual influences that can consciously or subconsciously shape human judgment.

The LMArena platform, developed at the University of California, Berkeley, implements a blind testing framework for evaluating AI models [10]. The platform presents users with anonymous outputs from different AI systems and collects preference data based solely on perceived quality, without brand identification. This blind evaluation mechanism has become an internationally recognized benchmark for AI model performance; a recent assessment compared 26 models head to head [10]. The platform's large global user base generates substantial preference data that directly shapes public performance rankings, making it one of the most influential evaluation systems in the AI field [10].

Table: Fundamental Characteristics of Testing Methodologies

| Characteristic | Traditional Proficiency Testing | Blind Testing |
|---|---|---|
| Awareness of Evaluation | Participants know they are being assessed | Evaluators unaware of specific assessment context |
| Sample Identity | Reference materials with expected values known to the provider | Anonymous samples without identification |
| Primary Objective | Verify technical competence and method accuracy | Measure real-world performance and user preference |
| Data Output | Quantitative accuracy against reference standard | Qualitative preference and comparative ranking |
| Evaluation Context | Controlled laboratory conditions | Simulated real-world usage scenarios |
| Bias Control | Standardized methods to minimize procedural variation | Anonymous assessment to reduce brand/preference bias |

Comparative Case Study: AI Model Evaluation

Experimental Protocol and Methodology

The LMArena blind testing platform employs a rigorous experimental protocol designed to eliminate bias while generating robust comparative data. The evaluation process begins with users submitting textual prompts or questions to the platform. The system then processes each query through two different AI models selected randomly from a pool of candidates. Critically, the outputs are presented to users without any identification of the underlying AI systems that generated them [10].
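The randomized, anonymized pairing step can be illustrated with a short sketch. The model identifiers and the `make_battle` helper are hypothetical, and this is not LMArena's code; it only shows the core idea of sampling two distinct models and exposing neutral labels, while the mapping back to real identities stays hidden until after the vote.

```python
import random
from typing import Dict, List, Tuple

def make_battle(pool: List[str], rng: random.Random) -> Tuple[Dict[str, str], List[str]]:
    """Pick two distinct models at random and hide them behind neutral labels.
    Returns the label -> model mapping (kept server-side) and the labels shown to users."""
    if len(pool) < 2:
        raise ValueError("need at least two models")
    first, second = rng.sample(pool, 2)  # two distinct models, in random order
    mapping = {"Model A": first, "Model B": second}
    return mapping, list(mapping.keys())

rng = random.Random(42)  # seeded only so the sketch is reproducible
pool = ["model-1", "model-2", "model-3", "model-4"]  # hypothetical model IDs
mapping, shown = make_battle(pool, rng)
print(shown)  # the user only ever sees the neutral labels
```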

Users then evaluate the anonymous responses based on their subjective preference, considering factors such as accuracy, completeness, clarity, and usefulness. This preference data is aggregated across thousands of independent comparisons to generate a global performance ranking. The platform's authority stems from its massive scale and elimination of brand identification, forcing evaluations based solely on output quality rather than reputation or market presence [10].
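Turning many pairwise votes into a global ranking is commonly done with a Bradley-Terry model, in which each model i has a strength p_i and the probability that i is preferred over j is p_i / (p_i + p_j). The sketch below fits it with the classic minorization-maximization (MM) update; the votes are invented, and it is a generic example rather than the platform's actual aggregation pipeline. It assumes every model has at least one win; more generally, the maximum-likelihood fit exists only when the win/loss comparison graph is strongly connected.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

def bradley_terry(votes: List[Tuple[str, str]], iters: int = 500) -> Dict[str, float]:
    """Fit Bradley-Terry strengths from (winner, loser) votes with the MM update
    p_i <- W_i / sum_j n_ij / (p_i + p_j), where W_i is i's total wins and
    n_ij the number of comparisons between i and j."""
    wins: Dict[str, int] = defaultdict(int)
    pair_counts: Dict[Tuple[str, str], int] = defaultdict(int)
    for winner, loser in votes:
        wins[winner] += 1
        pair_counts[tuple(sorted((winner, loser)))] += 1
    models = sorted({m for pair in pair_counts for m in pair})
    if any(wins[m] == 0 for m in models):
        raise ValueError("every model needs at least one win for the MLE to exist")
    p = {m: 1.0 for m in models}
    for _ in range(iters):
        new_p = {}
        for i in models:
            denom = sum(n / (p[a] + p[b]) for (a, b), n in pair_counts.items() if i in (a, b))
            new_p[i] = wins[i] / denom
        scale = len(models) / sum(new_p.values())  # strengths are defined only up to scale
        p = {m: v * scale for m, v in new_p.items()}
    return p

# Invented votes: A beats B 7-3, B beats C 6-4, A beats C 8-2.
votes = ([("A", "B")] * 7 + [("B", "A")] * 3
         + [("B", "C")] * 6 + [("C", "B")] * 4
         + [("A", "C")] * 8 + [("C", "A")] * 2)
strengths = bradley_terry(votes)
ranking = sorted(strengths, key=strengths.get, reverse=True)
print(ranking)  # ['A', 'B', 'C']
```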

In a recent evaluation cycle, the platform assessed 26 competing AI models through this blind comparison methodology. The resulting dataset identified Tencent's Hunyuan Image 3.0 as the top-performing model, ahead of established competitors including Seedream 4 and Gemini 2.5 Flash Image Preview [10]. The outcome was particularly noteworthy because it was the first time an open-source model reached the top position in these rankings, suggesting that blind testing can reveal performance advantages that might be obscured when evaluators know which system produced each output.

Quantitative Results and Performance Metrics

The blind testing results provided multidimensional insights into model performance that extended beyond simple ranking positions. The evaluation categorized Hunyuan Image 3.0 as the "Best Comprehensive Text-to-Image Model" and "Best Open-Source Text-to-Image Model," indicating strengths across both general performance and specific implementation attributes [10].

Qualitative descriptions of the winning model's capabilities highlight several distinctive strengths: exceptional semantic understanding accuracy, robust commonsense reasoning, and what was described as "ultimate aesthetic quality" in generated images [10]. The system also supports both Chinese and English text generation with sophisticated long-text rendering. These attribute-level characterizations accompany the ranking rather than being produced by it: the blind evaluation itself records pairwise preferences, not scores against predetermined attribute benchmarks.

Table: Blind Testing Performance Evaluation of AI Models

| Evaluation Metric | Hunyuan Image 3.0 | Seedream 4 | Gemini 2.5 Flash Image Preview |
|---|---|---|---|
| Overall Ranking | 1st | Ranked below Hunyuan Image 3.0 | Ranked below Hunyuan Image 3.0 |
| Model Type | Open-source | Not specified | Proprietary |
| Semantic Understanding | Exceptional accuracy | Not specified | Not specified |
| Aesthetic Quality | Ultimate aesthetic quality | Not specified | Not specified |
| Multilingual Support | Chinese and English | Not specified | Not specified |
| Text Rendering | Advanced long-text capability | Not specified | Not specified |
| Commonsense Reasoning | Strong capabilities | Not specified | Not specified |

Comparative Analysis: Methodological Strengths and Limitations

Bias Elimination and Real-World Predictive Value

The fundamental advantage of blind testing lies in its ability to reduce assessment biases that arise when evaluators know what, or that, they are evaluating. By removing brand identification and evaluation context, blind testing bases assessments on performance and output quality alone. This gives it stronger predictive value for real-world performance, where end users typically engage with products or systems without being aware that they are participating in an evaluation.

In the AI model assessment case, the blind methodology limited the influence that the reputation of established technology providers could have on results, allowing a relatively new open-source model to demonstrate its competitive advantages on output quality alone [10]. The scale of the evaluation, with thousands of independent comparisons, provided statistical power that offsets the inherent subjectivity of individual preference judgments. This combination of bias reduction and large-sample validation makes a strong case for blind testing when the primary concern is predicting actual user satisfaction and adoption.

Measurement Precision and Technical Competence Assessment

Traditional proficiency testing excels in its ability to generate precise, quantitative measurements of technical competence against established reference standards. The FDA milk testing program, for instance, provided specific, measurable performance metrics for laboratory analytical capabilities across multiple critical safety parameters [9]. The program's structured approach allowed for direct comparison across laboratories and over time, creating a robust dataset for tracking performance trends and identifying areas needing improvement.

The 2021 review of the FDA proficiency exercises demonstrated the effectiveness of this approach, showing steady improvement in correct results from participating laboratories between 2012 and 2018 [9]. This longitudinal improvement suggests that the iterative feedback loop inherent in traditional proficiency testing—where laboratories receive specific performance data and can implement corrective measures—drives tangible improvements in technical competence. This characteristic makes traditional proficiency testing particularly valuable for regulatory compliance and quality assurance in fields where precise measurement against established standards is paramount.

Implementation Challenges and Resource Requirements

Both methodologies present distinct implementation challenges that influence their appropriateness for specific assessment contexts. Traditional proficiency testing requires sophisticated reference material preparation, standardized distribution protocols, and centralized data analysis capabilities. The suspension of the FDA milk proficiency testing program in 2025 highlights the vulnerability of these complex systems to resource constraints and organizational changes [9]. The program's suspension was directly attributed to "major federal workforce reductions" and the pending closure of the supporting laboratory facility, demonstrating how resource-intensive traditional proficiency testing programs can be [9].

Blind testing implementations face different challenges, particularly regarding scale and evaluation criteria. Preference-based blind testing typically requires very large numbers of comparisons to produce statistically reliable rankings; the LMArena platform relies on its global user base to reach the necessary volume [10]. In addition, the subjective nature of preference-based evaluation requires careful design so that assessments capture meaningful quality dimensions rather than superficial characteristics. For AI model evaluation, this meant designing interfaces that let users engage with model outputs as they would in real-world use, then capturing preference data from that interaction [10].
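Why scale matters can be illustrated with a confidence interval for a single head-to-head win rate. The sketch below uses the Wilson score interval, an assumed and standard choice for a binomial proportion, with hypothetical counts: the same 55% observed win rate is compatible with a coin flip at 40 comparisons but not at 4,000.

```python
import math
from typing import Tuple

def wilson_interval(wins: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if n <= 0 or not 0 <= wins <= n:
        raise ValueError("need n > 0 and 0 <= wins <= n")
    p = wins / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half

# Hypothetical: one model is preferred in 55% of its comparisons against another.
for wins, n in [(22, 40), (220, 400), (2200, 4000)]:
    lo, hi = wilson_interval(wins, n)
    print(f"n={n:>5}: 95% CI for win rate {lo:.3f} to {hi:.3f}")
```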

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Research Reagent Solutions for Testing and Evaluation

| Reagent/Material | Function in Testing Protocol | Application Context |
|---|---|---|
| Standardized PT Samples | Reference materials with assigned values for accuracy verification | Traditional proficiency testing programs [9] |
| Bacterial Count Spikes | Milk samples with predetermined bacterial concentrations | Standard Plate Count (SPC), coliform, and Plate Loop Count (PLC) proficiency testing [9] |
| Antibiotic Residue Spikes | Samples containing known drug residue concentrations | Pasteurized Milk Ordinance (PMO) Appendix N drug residue screening tests [9] |
| Somatic Cell Count Standards | Reference materials with established somatic cell levels | Milk quality assessment in proficiency testing [9] |
| Alkaline Phosphatase Controls | Samples with known enzyme activity levels | Pasteurization verification testing [9] |
| Text Prompt Libraries | Standardized input sets for consistent model evaluation | AI model blind testing platforms [10] |
| Response Comparison Interfaces | Software systems for anonymous output presentation | Blind preference evaluation platforms [10] |

The comparative analysis of blind testing versus traditional proficiency testing reveals complementary rather than competing methodologies. Each approach brings distinctive strengths to the assessment landscape, with optimal application depending on the specific objectives and constraints of the evaluation context.

Traditional proficiency testing remains indispensable for verifying technical competence, ensuring regulatory compliance, and driving continuous improvement in analytical capabilities. The highly structured nature of these programs provides unambiguous performance metrics against established standards, making them particularly valuable in fields where measurement precision directly impacts safety and quality outcomes. The documented improvement in laboratory performance within the FDA milk testing program demonstrates how iterative proficiency testing with structured feedback creates tangible quality enhancements over time [9].

Blind testing appears better suited to predicting real-world adoption, user satisfaction, and overall quality perception in competitive environments. By reducing the biases inherent in branded evaluations, blind testing offers insight into how products or systems are likely to perform in authentic usage scenarios. Its ability to surface unexpected performance advantages, such as the top ranking of an open-source AI model against established proprietary competitors [10], shows its value for strategic decision-making and product development.

For research and quality assurance professionals, the most effective approach involves strategically combining these methodologies to leverage their complementary advantages. Traditional proficiency testing ensures technical excellence and compliance with established standards, while blind testing validates user-centric quality attributes and predicts market acceptance. As assessment methodologies continue to evolve, this integrated framework will provide the most comprehensive understanding of performance across both technical and user-experience dimensions.

Proficiency testing (PT) serves as a critical component of external quality assurance, enabling laboratories to validate their testing accuracy and demonstrate competency to accreditation bodies and regulators. In clinical diagnostics, the Clinical Laboratory Improvement Amendments (CLIA) establish the foundational requirements for laboratory testing, including mandatory participation in proficiency testing for regulated analytes. The recent updates to CLIA regulations, implemented in January 2025, represent the most significant changes in decades, tightening acceptance limits for numerous analytes to reflect advancing analytical capabilities and clinical needs.

Within this regulatory framework, two distinct methodological approaches have emerged for assessing laboratory performance: traditional declared proficiency testing and blind proficiency testing. While both methods serve quality assessment purposes, they differ fundamentally in design, implementation, and ability to reflect real-world laboratory performance. This guide provides a comparative analysis of these approaches, examining their respective advantages, limitations, and applications within modern laboratory medicine amidst evolving regulatory standards.

CLIA 2025 Updates: Key Changes and Implications

The updated CLIA regulations, formalized through CMS-3355-F, introduce significant modifications to proficiency testing requirements that laboratories must incorporate into their quality assurance programs. These changes, which became fully implemented on January 1, 2025, include tighter performance standards for many established analytes and the addition of new regulated tests.

Major Changes in Acceptance Criteria

The following tables summarize key changes in acceptable performance criteria across different testing specialties:

Table: Selected CLIA 2025 Changes in Chemistry and Toxicology

| Analyte or Test | OLD Acceptance Criteria | NEW 2025 Acceptance Criteria |
|---|---|---|
| Alanine aminotransferase (ALT) | Target value ± 20% | Target value ± 15% or ± 6 U/L (greater) |
| Glucose | Target value ± 6 mg/dL or ± 10% (greater) | Target value ± 6 mg/dL or ± 8% (greater) |
| Creatinine | Target value ± 0.3 mg/dL or ± 15% (greater) | Target value ± 0.2 mg/dL or ± 10% (greater) |
| Hemoglobin A1c | Not previously regulated | Target value ± 8% |
| Blood Alcohol | Target value ± 25% | Target value ± 20% |
| Blood Lead | Target value ± 10% or ± 4 mcg/dL (greater) | Target value ± 10% or ± 2 mcg/dL (greater) |
| Troponin I | Not previously regulated | Target value ± 0.9 ng/mL or ± 30% (greater) |
| Troponin T | Not previously regulated | Target value ± 0.2 ng/mL or ± 30% (greater) |
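Criteria written as "target value ± X or ± Y (greater)" mean that the allowable deviation is whichever of the relative and absolute limits is larger at that target. A minimal sketch of that rule, using three of the 2025 criteria above; the `Criterion` class is hypothetical, and real PT grading involves further details (for example, how the target value is established and how results are rounded) that are not modeled.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Criterion:
    """Hypothetical helper for limits of the form 'target ± pct% or ± absolute (greater)'."""
    pct: float                        # relative limit in percent, e.g. 8.0 for ±8%
    absolute: Optional[float] = None  # fixed limit in the analyte's units, if any

    def limit(self, target: float) -> float:
        relative = abs(target) * self.pct / 100.0
        if self.absolute is None:
            return relative
        return max(relative, self.absolute)  # "whichever is greater"

    def acceptable(self, result: float, target: float) -> bool:
        return abs(result - target) <= self.limit(target)

# 2025 criteria (units: mg/dL for glucose and creatinine, % for HbA1c results).
GLUCOSE = Criterion(pct=8.0, absolute=6.0)
CREATININE = Criterion(pct=10.0, absolute=0.2)
HBA1C = Criterion(pct=8.0)

print(GLUCOSE.acceptable(107, 100))      # limit = max(8, 6) = 8 mg/dL -> True
print(GLUCOSE.acceptable(57, 50))        # limit = max(4, 6) = 6 mg/dL -> False
print(CREATININE.acceptable(1.15, 1.0))  # limit = max(0.1, 0.2) = 0.2 mg/dL -> True
```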

Table: Selected CLIA 2025 Changes in Hematology and Immunology

| Analyte or Test | OLD Acceptance Criteria | NEW 2025 Acceptance Criteria |
|---|---|---|
| Hematocrit | Target value ± 6% | Target value ± 4% |
| Hemoglobin | Target value ± 7% | Target value ± 4% |
| Leukocyte count | Target value ± 15% | Target value ± 10% |
| Unexpected antibody detection | 80% accuracy | 100% accuracy |
| Complement C3 | Target value ± 3 SD | Target value ± 15% |
| IgA, IgE, IgG, IgM | Target value ± 3 SD | Target value ± 20% |

Implications for Laboratory Operations

These updated requirements reflect several important trends in laboratory medicine. The tighter acceptance limits for many established analytes demonstrate increasing expectations for analytical precision, driven by technological advancements in instrumentation and reagents. The addition of new regulated analytes, including hemoglobin A1c, troponins, and various endocrinology tests, expands the scope of quality monitoring to reflect evolving clinical practice guidelines and the growing importance of these markers in diagnostic and therapeutic decisions.

Furthermore, the shift from standard deviation-based criteria to percentage-based criteria for immunology tests (e.g., Complement C3, immunoglobulins) represents a move toward more consistent evaluation methods across different concentration levels. Laboratories must review their method verification data, establish new baseline performance metrics, and potentially enhance quality control procedures to meet these updated standards consistently.
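The practical effect of moving from SD-based to percentage-based criteria can be seen in a worked example. Under ± 3 SD, the width of the acceptable window depends on how tightly the peer group agrees; under ± 20%, it depends only on the target value. The IgG target and peer-group SDs below are hypothetical.

```python
from typing import Tuple

def sd_window(target: float, peer_sd: float, k: float = 3.0) -> Tuple[float, float]:
    """Acceptable range under an SD-based criterion (target ± k * peer-group SD)."""
    return target - k * peer_sd, target + k * peer_sd

def pct_window(target: float, pct: float) -> Tuple[float, float]:
    """Acceptable range under a percentage criterion (target ± pct%)."""
    half = abs(target) * pct / 100.0
    return target - half, target + half

target = 1000.0  # hypothetical IgG target value, mg/dL
for peer_sd in (40.0, 100.0):  # hypothetical tight vs. dispersed peer groups
    print(f"old ±3 SD (SD = {peer_sd:.0f}): {sd_window(target, peer_sd)}")
print(f"new ±20%:               {pct_window(target, 20.0)}")
```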

Comparative Methodologies: Blind vs. Traditional Proficiency Testing

Traditional Declared Proficiency Testing

Traditional proficiency testing, the most widely implemented approach in accredited laboratories, involves the scheduled distribution of PT samples, whose target values are not disclosed, to participating laboratories. These samples are clearly identified as part of a proficiency testing program, and personnel know they are being evaluated when processing them.

Key Characteristics:

- Scheduled distribution at regular intervals (typically 3 times annually)

- Overt identification as proficiency testing materials

- Predictable timing and sample type expectations

- Standardized grading against peer group performance

- Educational focus with detailed performance reports

Because PT events are announced and their samples are overtly identified, laboratories can prepare specifically for them, for example by assigning their most experienced personnel or applying extra quality checks, even though CLIA requires PT samples to be tested in the same manner as patient specimens by the personnel who routinely perform the testing. While declared PT provides valuable educational benefits and helps identify methodological limitations, it may therefore not accurately reflect routine laboratory operations [3].

Blind Proficiency Testing

Blind proficiency testing involves the submission of test samples that mimic routine patient specimens, with laboratory personnel unaware they are being evaluated. This approach, while logistically challenging, provides a more authentic assessment of routine laboratory performance.

Key Characteristics:

- Covert submission disguised as routine patient specimens

- Unpredictable timing and sample characteristics

- Testing of entire laboratory pipeline from receipt to reporting

- Assessment of routine performance without special preparation

- Potential to detect misconduct or systematic procedural deviations

Blind PT programs are more established in federal forensic facilities and certain medical testing industries, but remain underrepresented in clinical laboratory practice despite their significant advantages for quality assessment [3].

Experimental Comparison: Methodologies and Outcomes

Historical Case Study Design

A seminal 1977 study provides compelling experimental data comparing laboratory performance with blind and traditional proficiency testing methodologies [11]. The investigation employed a rigorous paired-comparison design:

Experimental Protocol:

- Sample Preparation: Identical simulated addict urine samples containing drugs were prepared for distribution

- Dual Distribution Mechanism:

  - Blind testing arm: Samples were sent to collaborating hospital administrators and methadone center officials, who forwarded them to their supporting laboratories as ordinary patient specimens

  - Traditional testing arm: The identical samples were mailed directly to the same laboratories as part of a regular CDC proficiency testing program

- Participating Laboratories: Multiple laboratories already participating in the CDC proficiency testing program

- Performance Evaluation: Comparison of testing accuracy between the two distribution methods for identical samples

Table: Experimental Design of 1977 Proficiency Testing Comparison

| Experimental Component | Blind Testing Arm | Traditional Testing Arm |
|---|---|---|
| Sample Composition | Identical simulated addict urine samples with drugs | Identical simulated addict urine samples with drugs |
| Sample Identification | Presented as routine patient specimens | Identified as proficiency testing materials |
| Distribution Pathway | Via hospital administrators and methadone center officials | Direct mail from CDC proficiency testing program |
| Laboratory Awareness | Unaware of testing situation | Aware of proficiency testing evaluation |
| Performance Metric | Testing accuracy for drug detection | Testing accuracy for drug detection |
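Because the same laboratories received identical samples through both channels, the outcomes are paired, and a paired test such as McNemar's is a natural way to compare pass rates. The sketch below computes an exact two-sided McNemar p-value from the discordant pairs. The counts are hypothetical placeholders, not results from the 1977 study, which this section does not report numerically.

```python
from math import comb


def exact_mcnemar(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from discordant pair counts.

    b: labs that passed the declared sample but failed the blind one.
    c: labs that failed the declared sample but passed the blind one.
    Concordant pairs (same outcome in both arms) carry no information
    about the difference and are ignored.
    """
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    # Under H0 each discordant pair is equally likely to fall either way,
    # so b ~ Binomial(n, 0.5); double the smaller tail for a two-sided test.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical discordant counts from a paired comparison (not the 1977 data).
print(exact_mcnemar(b=9, c=1))
```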

Comparative Results and Implications

The findings revealed significant disparities in laboratory performance between the two testing approaches:

Performance Outcomes:

- Most laboratories performed acceptably with the traditionally distributed proficiency testing samples

- Many of these same laboratories performed poorly when analyzing the identical samples submitted as blind specimens

- The performance gap indicated that awareness of being tested substantially influenced laboratory operations and result quality

This study highlighted fundamental limitations of traditional proficiency testing alone and prompted recommendations for complementary monitoring approaches, including onsite performance evaluation programs to provide more comprehensive quality assessment [11].

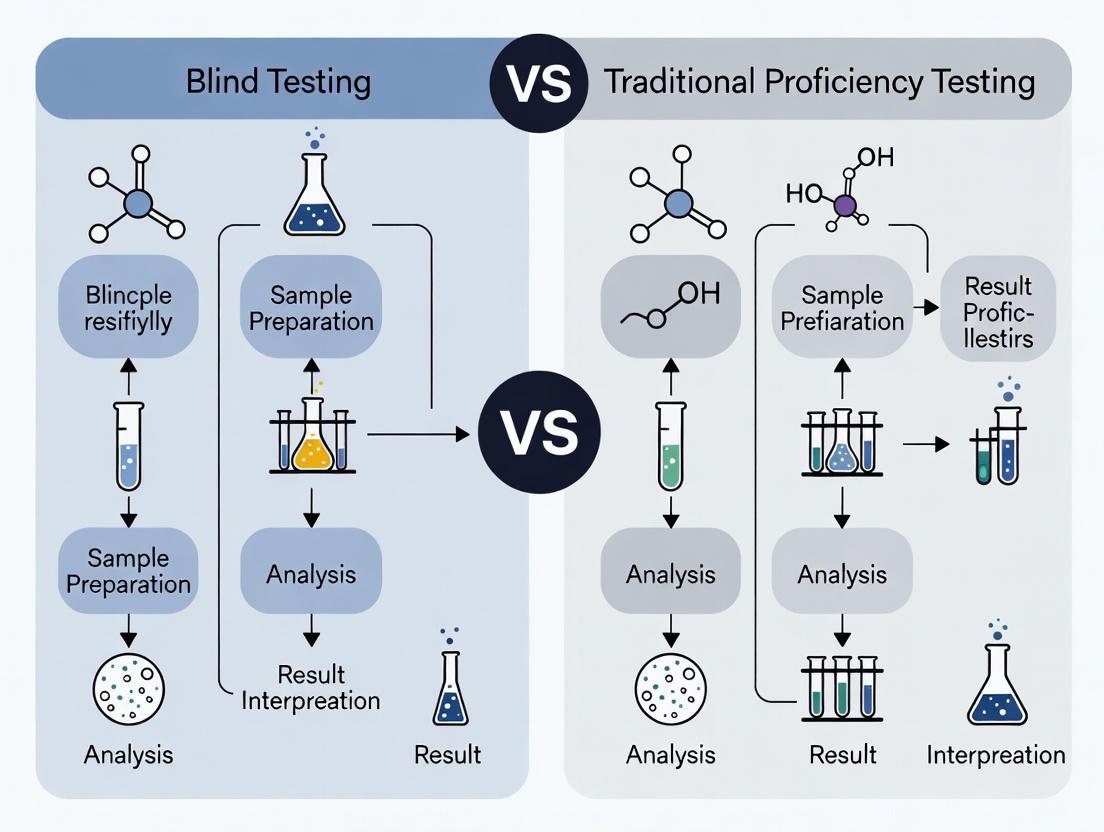

Visualizing Proficiency Testing Methodologies

Diagram: Proficiency Testing Methodologies Comparison

This workflow illustrates the key differences between traditional and blind proficiency testing. The two approaches diverge at the distribution phase: traditional PT triggers special handling protocols, while blind PT preserves normal operating conditions, which can lead to different performance outcomes.

Implementation Considerations and Industry Outlook

Barriers to Blind Proficiency Testing Adoption

Despite its theoretical advantages, blind proficiency testing faces significant implementation challenges in clinical laboratory settings:

Logistical Constraints:

- Sample authenticity: Creating blind samples that perfectly mimic patient specimens across all test modalities

- Regulatory compliance: Navigating CLIA requirements while maintaining blinding integrity

- Result reconciliation: Managing clinical reporting obligations for disguised specimens

- Program administration: Higher complexity and cost compared to traditional PT programs

Cultural and Operational Barriers:

- Resource intensiveness: Requires significant coordination with clinical partners for sample submission

- Resistance to change: Laboratories may perceive blind testing as unfairly punitive rather than educational

- Accreditation frameworks: Traditional PT is deeply embedded in current quality assurance paradigms [3]

The Evolving Proficiency Testing Market

The global proficiency testing market reflects growing emphasis on laboratory quality standards, with the market valued at approximately $1.2 billion in 2023 and projected to reach $1.6 billion by 2028 [12]. Key providers driving innovation include:

Table: Leading Proficiency Testing Providers and Specializations

| Provider | Specializations | Global Reach |
|---|---|---|
| LGC Limited (UK) | Clinical, food, environmental, pharmaceutical | ~19% market share; 13,000+ labs in 160+ countries |
| College of American Pathologists (US) | Clinical laboratory medicine | 25,000+ participating laboratories worldwide |
| Bio-Rad Laboratories (US) | Clinical chemistry, immunoassays, hematology | ~14% market share; 150+ countries |
| Randox Laboratories (UK) | RIQAS - clinical chemistry, hematology, immunoassay | 70,000+ participants across 140 countries |
| Fera Science (UK) | FAPAS - food, water, environmental | Thousands of labs in 130+ countries |

These organizations are increasingly incorporating technological innovations, including AI-driven result analysis and expanded test menus for emerging diagnostics, to enhance the value and efficiency of proficiency testing programs [12].
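The market figures cited above ($1.2 billion in 2023, $1.6 billion projected for 2028) imply a compound annual growth rate of about 5.9%. A short sketch of that arithmetic; the helper name is illustrative:

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# $1.2B in 2023 -> $1.6B in 2028, i.e. five years of growth.
rate = cagr(1.2, 1.6, 2028 - 2023)
print(f"{rate:.1%}")  # 5.9%
```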

Essential Research Reagent Solutions for Proficiency Testing

Implementing robust proficiency testing programs requires specific materials and reagents to ensure accurate, reproducible results. The following solutions are fundamental to both traditional and blind PT methodologies:

Table: Essential Research Reagent Solutions for Proficiency Testing

| Reagent Category | Specific Examples | Primary Function in PT |
|---|---|---|
| Matrix-Matched Materials | Synthetic urine, artificial serum, lyophilized blood | Provides physiologically relevant sample matrices that mimic patient specimens for realistic testing conditions |
| Stable Analyte Solutions | Certified reference materials, spiked solutions | Delivers known analyte concentrations at critical decision levels for accurate performance assessment |
| Preservation and Stabilization Reagents | Antimicrobial agents, enzyme inhibitors, stabilizers | Maintains sample integrity during shipping and storage, preventing analyte degradation |
| Interference Testing Panels | Hemolyzed, icteric, lipemic samples | Evaluates method specificity and identifies potential interferents affecting accuracy |
| Calibration Verification Materials | Standards traceable to reference methods | Ensures analytical measurement continuity and standardization across testing events |

These reagent systems must demonstrate commutability with patient samples (reacting similarly in analytical systems), long-term stability throughout PT event cycles, and concentration accuracy at clinically relevant decision points to provide meaningful performance assessment.

The evolving regulatory landscape, exemplified by the CLIA 2025 updates, reflects increasing expectations for analytical quality in laboratory medicine. While traditional proficiency testing remains a foundational component of quality assurance programs, evidence suggests that supplementing with blind testing methodologies could provide more authentic assessment of routine laboratory performance.

The evidence presented, notably the 1977 paired comparison, indicates that methodology can substantially influence performance outcomes, with laboratories performing better under declared testing conditions. As the proficiency testing industry continues to evolve, incorporating technological innovations and complementary assessment approaches will be essential for advancing quality standards.

For researchers, scientists, and drug development professionals, understanding these methodological distinctions is crucial when evaluating laboratory performance data or designing quality assessment protocols. A balanced approach incorporating both traditional educational PT and periodic blind assessment may offer the most comprehensive evaluation of laboratory competency, ultimately supporting improved patient care through enhanced diagnostic accuracy.

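Blind Testing vs. Traditional Proficiency Testing: Dual Paths to Quality Assurance and Performance Verification

Methodological Foundations and Core Objectives

In scientific research and product development, and in drug development in particular, quality assurance and performance verification are the foundation of reliable results. Blind testing and traditional proficiency testing are two core experimental approaches. Although they share the ultimate goal of ensuring data accuracy, they differ systematically in their philosophical basis, implementation, and applicable scenarios.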

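Blind testing, particularly in online controlled experiments, randomly assigns units to treatment and control groups and applies different interventions without the units' knowledge in order to verify causal relationships. The approach derives from "double-blind testing" in biomedicine: randomization effectively controls confounding variables other than the intervention, so that differences in outcomes can be attributed to the intervention itself. Ideally, by creating comparable groups it approximates "parallel universes", allowing the benefits, risks, and costs of a strategy to be evaluated quantitatively [13].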
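A minimal sketch of the random-assignment step, assuming a simple balanced two-arm design; the unit identifiers and seed are hypothetical. Production experimentation platforms often use hash-based bucketing instead, so that assignments stay stable without being stored; this version simply shuffles and splits.

```python
import random


def randomize(units: list[str], seed: int) -> dict[str, str]:
    """Randomly assign units to 'treatment' or 'control' in near-equal groups.

    Shuffling a copy of the list and splitting it in half gives a balanced,
    completely randomized design; with an odd count, control gets the extra
    unit. A fixed seed makes the allocation reproducible for auditing.
    """
    rng = random.Random(seed)
    shuffled = units[:]
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    allocation = {u: "treatment" for u in shuffled[:half]}
    allocation.update({u: "control" for u in shuffled[half:]})
    return allocation


users = [f"user-{i:03d}" for i in range(10)]
groups = randomize(users, seed=2025)
print(sum(g == "treatment" for g in groups.values()))  # 5
```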

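Traditional proficiency testing, by contrast, is an external quality assessment program that determines a laboratory's competence to perform specific tests through interlaboratory comparison. As a core component of the Clinical Laboratory Improvement Amendments (CLIA), it distributes samples of established value to participating laboratories and compares their results with reference values or peer results, thereby evaluating and demonstrating the accuracy of their testing systems. A 2024 rule from the U.S. Centers for Medicare & Medicaid Services (CMS) updated the program to include more analytes, more challenges, and stricter grading criteria, in line with the needs of modern medical practice [14].

Systematic Comparison of Key Parameters

The comparison table below details how the two methods differ across core parameters, providing a basis for researchers choosing between them.

Table: Comparison of Key Parameters for Blind Testing and Traditional Proficiency Testing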

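| Dimension | Blind Testing | Traditional Proficiency Testing |
|---|---|---|
| Core objective | Verify causal relationships; quantitatively assess intervention effects | Assess laboratory testing accuracy; ensure comparability of results |
| Methodological basis | Random assignment; control principle; hypothesis testing | Sample circulation; interlaboratory comparison; agreement assessment |
| Use of randomization | Core element; eliminates confounding bias through random assignment | Usually not involved; relies mainly on established testing procedures |
| Frequency | On demand, in step with product iterations or strategy changes | Periodic, typically 3 challenges per year [14] |
| Sample type | Real users, laboratory animals, or simulated cases | Reference materials or clinical samples with known characteristics |
| Result evaluation | Statistical significance testing; effect size calculation | Deviation from target or consensus value; pass/fail determination |
| Main output | Qualitative conclusion about causality and quantitative estimate of effect size | Objective evidence of testing accuracy; demonstration of laboratory competence |
| Regulatory status | In most cases an internal decision-making tool | Explicitly required by regulations such as CLIA; essential for laboratory accreditation [14] |
| Application areas | Drug efficacy trials, product feature evaluation, user experience optimization | Clinical diagnostics, environmental monitoring, forensic testing |
| Typical unit of analysis | User behavior metrics, clinical endpoint events, product usage data | Analyte concentrations, microbial identification, gene sequences [15] |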

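Proficiency testing grading criteria have continued to evolve in recent years. In clinical chemistry, for example, the new rule changes the acceptable performance limits for many analytes from standard-deviation-based limits to percentage-based limits, or to whichever is more lenient of an absolute value and a percentage. For example, the requirement for bilirubin is ±20% or ±0.4 mg/dL, for thyroid-stimulating hormone ±20% or ±0.2 mIU/L, and for lithium ±15% or ±0.3 mmol/L [14].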
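The "whichever is more lenient" rule amounts to taking the maximum of a proportional and an absolute tolerance. The sketch below applies the bilirubin limits quoted above; the function names and the example target and result values are hypothetical.

```python
def acceptance_limit(target: float, pct: float, absolute: float) -> float:
    """Allowed deviation: the greater of pct% of the target or a fixed absolute amount."""
    return max(abs(target) * pct / 100.0, absolute)


def is_acceptable(result: float, target: float, pct: float, absolute: float) -> bool:
    """True if the result lies within the acceptance window around the target."""
    return abs(result - target) <= acceptance_limit(target, pct, absolute)


# Bilirubin: ±20% or ±0.4 mg/dL, whichever is greater.
print(is_acceptable(1.2, target=1.0, pct=20, absolute=0.4))  # True: limit is 0.4 mg/dL
print(is_acceptable(6.5, target=5.0, pct=20, absolute=0.4))  # False: limit is 1.0 mg/dL
```

At low concentrations the absolute term dominates, which keeps the criterion from becoming unrealistically tight near zero; at higher concentrations the percentage term takes over.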

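Experimental Protocols and Workflows

Standard Implementation Process for Blind Testing

A well-designed blind test comprises several key stages: experimental design, randomization, intervention, data collection, and statistical analysis. The flowchart below details this standardized process.

Execution Path of Traditional Proficiency Testing

Traditional proficiency testing follows a systematic process of sample preparation, distribution, testing, result reporting, and performance evaluation. Its standard operating procedure is as follows: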

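Research Reagents and Key Materials

In both approaches, a range of standardized reagents and materials is essential to experimental quality. The table below lists key research reagent solutions and their functions.

Table: Key Reagents and Materials in Quality Verification Studies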

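| Reagent/Material Category | Primary Function | Application Scenarios |
|---|---|---|
| Certified reference materials | Provide traceable quantitative standards | PT sample preparation; method calibration |
| Stabilized clinical samples | Reproduce the matrix effects of real patient samples | Proficiency testing; test method validation |
| Lyophilized QC materials | Long-term stability; easy to ship | Internal laboratory quality control; proficiency testing |
| Analyte-specific reagents | Ensure test method specificity | Method development and validation; blind-test endpoint measurement |
| Standardized culture media | Provide consistent growth conditions for microorganisms | Microbiology proficiency testing; blind testing |
| DNA extraction and purification kits | Ensure nucleic acid quality and consistency | Molecular diagnostics proficiency testing [15] |
| PCR master mixes | Provide amplification reaction stability | Blind testing of nucleic acid assays; molecular method validation |
| Calibrator sets | Establish assay standard curves | Method standardization; instrument calibration |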

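Proficiency testing samples must meet requirements for homogeneity, stability, and commutability. The new rule requires three proficiency testing challenges per year, each containing five samples, an increase from the previous two challenges, to improve the reliability of the assessment [14]. In microbiology proficiency testing, the mixed-culture requirement has been reduced from 50% to 25%, reflecting the real complexity of clinical samples [14].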
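To make the event structure concrete, the sketch below scores five-sample challenges and checks a run of events. It assumes the commonly cited CLIA conventions that an analyte needs at least 80% acceptable responses per event, and that failing two consecutive events, or two of three consecutive events, counts as unsuccessful participation. These thresholds are assumptions to confirm against 42 CFR Part 493, Subpart I; the event data are hypothetical.

```python
def event_score(acceptable: list[bool]) -> float:
    """Percentage of acceptable responses for one analyte in one PT event."""
    return 100.0 * sum(acceptable) / len(acceptable)


def is_satisfactory(acceptable: list[bool], threshold: float = 80.0) -> bool:
    """Assumed CLIA-style rule: at least `threshold` percent acceptable responses."""
    return event_score(acceptable) >= threshold


def unsuccessful_participation(history: list[bool]) -> bool:
    """True if two of any three consecutive events (or two consecutive events) failed.

    `history` holds one satisfactory (True) / unsatisfactory (False) flag per
    event, oldest first. Two consecutive failures always fall inside some
    three-event window, so checking every window covers both cases.
    """
    failures = [not ok for ok in history]
    if len(failures) < 3:
        return sum(failures) >= 2
    return any(sum(failures[i:i + 3]) >= 2 for i in range(len(failures) - 2))


# Four hypothetical five-sample events for one analyte.
events = [
    [True] * 5,                         # 100%: satisfactory
    [True, True, True, False, False],   # 60%: unsatisfactory
    [True, True, True, True, False],    # 80%: satisfactory
    [True, False, False, True, True],   # 60%: unsatisfactory
]
history = [is_satisfactory(e) for e in events]
print(history, unsuccessful_participation(history))
```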

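Application Scenarios and Typical Cases

Typical Applications of Blind Testing

Blind testing is widely used across many fields and is particularly strong where causal relationships must be established:

- Drug clinical trials: Randomized double-blind controlled studies evaluate the efficacy and safety of new drugs and are the gold standard for drug registration.

- Product strategy optimization: For example, when Meituan evaluated a new subsidy strategy, it randomly divided users into treatment and control groups to quantify the strategy's effect on order volume [13].

- Interface and user experience testing: A/B tests compare the effects of different designs on user behavior, for example verifying whether in-app pop-ups and label displays increase users' willingness to place orders [13].

- Algorithm performance evaluation: Different algorithms are tested blind on the same dataset so that subjective bias does not influence the performance assessment.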
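For the A/B-style applications above, the statistical-analysis stage often reduces to comparing two proportions, such as order conversion rates. Below is a minimal two-sided, pooled two-proportion z-test using only the standard library; the counts are hypothetical, and the normal approximation assumes reasonably large groups.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(x1: int, n1: int, x2: int, n2: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: p1 == p2, using the pooled proportion."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical: 540 of 5,000 treated users ordered vs 480 of 5,000 controls.
z, p = two_proportion_z_test(540, 5000, 480, 5000)
print(f"z = {z:.2f}, p = {p:.3f}")
```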

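Core Application Areas of Traditional Proficiency Testing

Traditional proficiency testing plays an indispensable role in ensuring laboratory testing quality:

- Clinical laboratory testing: Performance verification for key markers such as cardiac troponin and HbA1c; the new rule adds these markers, important in modern medicine, to the required proficiency testing analytes [14].

- Microbial identification and antimicrobial susceptibility testing: Sample circulation ensures the accuracy of species identification and susceptibility testing.

- Molecular diagnostic testing: For example, validation of DNA sequencing workflows to ensure the reproducibility of molecular biomonitoring results [15].

- Forensic testing: Interlaboratory comparison ensures the comparability and reliability of results.

- Environmental monitoring: For example, verifying testing capability through the analysis of water and air samples.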

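Method Selection and Integration Strategies

When choosing between the two methods, researchers need to consider research objectives, resource constraints, and regulatory requirements. Blind testing is better suited to causal inference and to evaluating the effects of strategies, whereas traditional proficiency testing is an essential element of laboratory quality assurance and regulatory compliance.

In practice, the two methods can be used together. For example, when evaluating a new test method, blind testing can first establish its diagnostic performance, and proficiency testing can then confirm its robustness under routine laboratory conditions. Guidance on the new proficiency testing rule emphasizes that laboratories should treat acceptance limits not as performance goals but as minimum standards, and should pursue better quality goals beyond them [14].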

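In complex situations involving small samples or spillover effects, such as regional strategy tests in Meituan's delivery fulfillment business, more refined experimental designs are needed, such as randomized rotation experiments or quasi-experimental designs, to overcome the limitations of conventional methods [13]. These approaches extend the boundaries of quality verification methodology and offer new ways to verify performance in complex scenarios.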
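One reading of the randomized rotation design mentioned above is a switchback experiment: instead of splitting users who share a region (and therefore the same delivery capacity), each region alternates between treatment and control across time slots. The sketch below generates such a schedule. The region names, slot count, and balanced per-region shuffling are assumptions for illustration, not the design used in [13].

```python
import random


def switchback_schedule(regions: list[str], n_slots: int, seed: int) -> dict[str, list[str]]:
    """Assign each (region, time slot) cell to 'treatment' or 'control'.

    Within one slot, every order in a region receives the same arm, so the two
    arms never compete for the same local resources at the same time. Each
    region's slots are split evenly between arms and shuffled, so every region
    also serves as its own control over time.
    """
    rng = random.Random(seed)
    schedule = {}
    for region in regions:
        arms = ["treatment"] * (n_slots // 2) + ["control"] * (n_slots - n_slots // 2)
        rng.shuffle(arms)
        schedule[region] = arms
    return schedule


plan = switchback_schedule(["north", "south", "east"], n_slots=8, seed=7)
for region, arms in plan.items():
    print(region, arms)
```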

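Together, the two methods form a quality assurance system for scientific research and professional practice, each following its own path to ensure reliability and credibility along the entire chain from laboratory discovery to product application.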

From Theory to Practice: Implementing Testing Strategies in Research and Diagnostics

This guide provides a comparative analysis of traditional (declared) proficiency testing (PT) and blind proficiency testing, two critical methodologies for ensuring quality in forensic laboratories. For researchers and drug development professionals, understanding the structures, workflows, and comparative effectiveness of these approaches is essential for implementing robust quality assurance systems. Traditional PT, while established and logistically simpler, exhibits significant limitations in ecological validity compared to blind PT, which tests the entire laboratory pipeline under realistic conditions. The data and workflows presented herein stem from current practices and research within forensic science, offering a framework for evaluating these complementary quality assessment tools.

Understanding Proficiency Testing Modalities

Proficiency testing (PT) is a mandatory quality assurance component for accredited forensic laboratories, designed to monitor and validate the performance of examiners and analytical processes [3] [16]. The execution and ecological validity of PT, however, differ substantially based on whether the testing is declared or blind.

Traditional (Declared) Proficiency Testing: In this common model, examiners are aware that they are being evaluated. Known samples are submitted explicitly as a test, and examiners typically process them outside the normal casework flow. This approach helps identify gross technical errors but fails to assess the full laboratory ecosystem.

Blind Proficiency Testing: This method involves submitting known samples to the laboratory disguised as regular casework [17]. The goal is to test the entire laboratory pipeline—from evidence intake and assignment to analysis and reporting—without altering examiner behavior due to the awareness of being assessed [3]. It is one of the only methods capable of detecting systemic issues and misconduct [3].

The core distinction lies in behavioral fidelity. As noted by researchers, when examiners know they are being tested, they "will possibly behave differently than they do in everyday casework" [17]. Blind testing eliminates this "observer effect," providing a more authentic measure of a laboratory's operational performance [3].

Comparative Analysis: Blind vs. Traditional PT

The table below summarizes the key characteristics and comparative performance of traditional and blind proficiency testing models based on current implementations in forensic laboratories.

Table 1: Comparative Analysis of Traditional vs. Blind Proficiency Testing

| Feature | Traditional (Declared) PT | Blind PT |
|---|---|---|
| Primary Objective | Technical competency check of individual examiners [3] | Assessment of the entire laboratory pipeline and operational performance [3] [17] |
| Ecological Validity | Low; does not mimic real casework pressure and workflow [3] | High; designed to resemble actual cases [3] |
| Examiner Behavior | Potentially altered (Hawthorne Effect) [17] | Reflects normal, real-world behavior [3] |
| Error Rate Estimation | Provides limited, potentially optimistic error rates | Offers realistic preliminary data on performance in casework-like situations [17] |
| Misconduct Detection | Limited capability | One of the only reliable methods for detection [3] |
| Logistical Complexity | Low; easily integrated into quality manual protocols | High; requires careful planning and resources to mimic casework [3] [16] |
| Current Adoption | Majority of forensic laboratories [3] [16] | Limited, primarily in some federal facilities; growing interest [3] [17] |
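Because blind programs usually accumulate relatively few cases, an error rate estimated from them is uncertain, and a confidence interval conveys that better than a point estimate. A sketch using the Wilson score interval, which behaves sensibly even when few or no errors are observed; the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def wilson_interval(errors: int, trials: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score confidence interval for a binomial error rate."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p_hat = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p_hat + z ** 2 / (2 * trials)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / trials + z ** 2 / (4 * trials ** 2)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the extremes.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical: 2 errors observed in 40 blind cases.
low, high = wilson_interval(2, 40)
print(f"point estimate {2 / 40:.1%}, 95% CI {low:.1%} to {high:.1%}")
```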

Workflow Breakdown: A Step-by-Step Guide

The execution of traditional and blind PT programs follows distinct workflows. The following diagrams and breakdowns illustrate the procedural steps for each.

Traditional (Declared) PT Workflow

The traditional PT process is a linear, controlled sequence managed within the laboratory's quality assurance framework.

Diagram 1: Traditional declared PT follows a linear, controlled path.

- Step 1: Program Initiation & Sample Receipt: The Quality Assurance (QA) Manager receives a known proficiency test sample from an external provider or an internal source. The sample is explicitly logged as a PT sample.

- Step 2: Declared Assignment: The QA manager assigns the PT sample to an examiner or a team. The assignment explicitly communicates that the task is a proficiency test, not actual casework.

- Step 3: Examiner Analysis: The examiner analyzes the sample following standard operating procedures. Critically, their behavior may be altered because they know they are being evaluated (e.g., increased caution, repetition, consultation) [17].

- Step 4: Result Submission & Scoring: The examiner submits their findings to the QA manager or the external PT provider. The results are scored against the known ground truth.

- Step 5: Performance Review: The scores are reviewed. Satisfactory performance is documented. Unsatisfactory results trigger a predefined corrective action process, which may include retraining and re-testing.

Blind PT Workflow

The blind PT workflow is a cyclical, integrated process designed to inject test samples seamlessly into the regular casework stream, testing the system from intake to final report.

Diagram 2: Blind PT integrates test samples secretly into the casework flow.

- Step 1: Covert Sample Introduction: A blind proficiency test sample, designed to closely resemble actual casework, is submitted to the laboratory through its standard intake channels [3]. This is often orchestrated by researchers or a dedicated internal team in collaboration with external partners [17].

- Step 2: Evidence Intake & Assignment: The evidence receiving unit processes the sample according to standard protocols, logging it as a regular case. It is then assigned to an examiner through the normal workflow management system, with no indication it is a test.

- Step 3: Unaware Examiner Analysis: The examiner analyzes the sample as they would any other case, with no behavioral changes due to test awareness [3]. This step tests the entire analytical pipeline under realistic conditions.

- Step 4: Normal Result Reporting: The examiner completes their analysis and generates a final report, which is submitted through the standard chain of command.

- Step 5: Reveal & Systemic Debrief: Once the final report is issued, the test is revealed. Laboratory leadership and researchers then conduct a comprehensive debriefing. This analyzes not just the accuracy of the result, but also the entire process, "from workflow to customer service," to identify systemic strengths and weaknesses [17].

Experimental Protocols & Key Methodologies

Implementing a robust blind PT program requires meticulous experimental design. The following protocol is synthesized from successful implementations discussed in forensic science workshops and literature [17] [16].

Protocol for Implementing a Blind Proficiency Test

- Objective: To assess the accuracy, efficiency, and adherence to protocol of the laboratory's casework pipeline under realistic operating conditions.

- Hypothesis: Error rates and procedural compliance measured under blind conditions will either match those derived from declared PT or provide a more valid estimate of them.

- Materials: See "The Scientist's Toolkit" below for specific reagent solutions and materials. The core material is a pre-validated, stable test sample with a ground-truth value known only to the test administrators.

- Methodology:

- Sample Design & Validation: Develop or source a test sample that is forensically realistic and relevant. It must be stable and safe to handle, and its "ground truth" must be unequivocally known and pre-validated by a reference method.

- Blinding Procedure: A dedicated "blinding team" (which may include external researchers) prepares the sample for submission. This includes creating a plausible, fictional scenario or donor information and using standard evidence packaging.

- Submission and Monitoring: The blinding team submits the sample through the laboratory's standard evidence intake. Where possible, case progress is monitored discreetly through the laboratory's case management system without alerting staff.

- Data Collection: Data points collected include: the analysis result, the time-to-completion, all case notes, procedures used, any chain-of-custody documentation, and the final report.

- Reveal and Analysis: After the final report is issued, the test is revealed. The result is compared to the ground truth. The process is reviewed not just for accuracy, but for all aspects of laboratory function.
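The data-collection and reveal steps map naturally onto one record per blind case. The sketch below is hypothetical throughout: the field names, the categorical ground truth, and scoring turnaround time against a laboratory target are assumptions for illustration, not part of any published protocol.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Optional


@dataclass
class BlindCase:
    """One covert PT submission tracked by the blinding team (hypothetical schema)."""
    case_id: str
    ground_truth: str                 # known only to the blinding team until the reveal
    submitted: datetime
    reported: Optional[datetime] = None
    reported_result: Optional[str] = None
    deviations: list[str] = field(default_factory=list)  # protocol deviations found in review


def reveal(case: BlindCase, turnaround_target_days: float) -> dict[str, object]:
    """Compare the issued report with ground truth; valid only after the final report."""
    if case.reported is None or case.reported_result is None:
        raise ValueError("reveal only after the final report is issued")
    days = (case.reported - case.submitted).total_seconds() / 86400
    return {
        "correct": case.reported_result == case.ground_truth,
        "turnaround_days": round(days, 1),
        "within_target": days <= turnaround_target_days,
        "deviations": len(case.deviations),
    }


case = BlindCase("BP-001", ground_truth="cocaine", submitted=datetime(2025, 3, 3, 9, 0))
case.reported = datetime(2025, 3, 13, 17, 0)
case.reported_result = "cocaine"
case.deviations.append("chain-of-custody form missing initials")
print(reveal(case, turnaround_target_days=14))
```

Keeping accuracy, timeliness, and procedural deviations in separate fields reflects the protocol's point that the review covers all aspects of laboratory function, not only whether the answer was right.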

The Scientist's Toolkit

Implementing proficiency testing, particularly the blind model, requires both conceptual and material resources. The table below details essential components for establishing a proficiency testing program.

Table 2: Key Research Reagent Solutions for Proficiency Testing

| Item / Solution | Function in PT Execution |
|---|---|
| Pre-Validated Reference Materials | Serve as the ground-truth sample for blind or declared PT. Their known composition is the benchmark against which examiner performance is measured. |
| Realistic Matrix Blanks | Provide the substrate (e.g., synthetic sweat, inert cloth, mock biological tissue) for the reference material, ensuring the test sample mimics real evidence. |
| Secure Case Management System | The software platform for tracking the blind PT case through the laboratory's normal workflow, allowing for discreet monitoring by administrators. |
| Standard Evidence Packaging | Used to present the blind PT sample identically to real case evidence, maintaining the deception necessary for ecological validity. |
| Statistical Analysis Package | Software used to analyze results, calculate error rates, and determine the statistical significance of performance data from multiple PT rounds. |
| Corrective Action Protocol | A predefined, documented process for addressing unsatisfactory PT results, which is a critical component of a closed-loop quality system. |

The comparative analysis reveals that traditional declared PT and blind PT are not mutually exclusive but serve complementary roles in a comprehensive quality assurance program [17]. Traditional PT remains a logistically straightforward tool for mandatory competency checks and foundational skill assessment. However, its limitation in ecological validity is a significant shortcoming. Blind PT, while resource-intensive to implement, provides unparalleled insights into the true operational health of a forensic laboratory, testing the entire system from intake to reporting and capturing realistic error rates [3] [17].

The primary obstacles to blind PT are logistical and cultural, including the difficulty of designing realistic cases and integrating them seamlessly into workflow, as well as potential resistance from within the laboratory culture [3] [16]. However, the trend is toward greater adoption. As noted by Dr. Jeff Salyards, "The future is bright as more and more laboratory leaders see value of blind proficiency testing" [17]. For researchers and professionals committed to rigorous quality assessment, a dual-strategy approach—using declared PT for fundamental competency and blind PT for systemic validation—represents the current state-of-the-art in ensuring the reliability and integrity of forensic and analytical sciences.

Blind testing serves as a critical methodology in comparative analysis, providing a mechanism for objectively evaluating product performance while minimizing bias. Unlike traditional proficiency testing, which may involve open assessments where participants know they are being evaluated, blind testing conceals the test's identity from participants, ensuring they perform as they would under normal conditions [18]. This approach is particularly valuable in scientific fields and drug development, where it helps generate unbiased data on error rates, accuracy, repeatability, and reproducibility of methods and instruments [19].

Framed within a broader thesis on comparative analysis, this guide explores how blind testing offers distinct advantages over traditional proficiency testing by more accurately simulating real-world conditions and providing less biased performance metrics. Where traditional proficiency testing often follows established protocols with known samples, blind testing introduces an element of realism that can better reveal true performance characteristics under operational conditions [18]. This objective comparison is essential for researchers, scientists, and drug development professionals who rely on accurate performance data to make informed decisions about methodologies, instruments, and technologies.

Key Concepts and Definitions

Fundamental Terminology

- Blind Test: A controlled assessment where the examiner or participant is unaware they are being tested, ensuring performance reflects normal operational conditions [18]. The test source or purpose is concealed until after completion.

- Proficiency Testing (PT): The determination of calibration or testing performance of a laboratory or inspection body against pre-established criteria through interlaboratory comparisons [18].

- Interlaboratory Comparisons (ILC): The organization, performance, and evaluation of tests on the same or similar items by two or more laboratories in accordance with predetermined conditions [18].

- Internal Validity: The extent to which study results are trustworthy and free from biases, ensuring observed effects truly result from the variables being studied rather than external factors [20].

- External Validity: The extent to which research findings can be generalized or applied to situations, settings, populations, or times outside the study itself [20].

Experimental Design and Methodologies

Core Blind Test Design Principles

Effective blind testing requires meticulous planning across several key dimensions. The sample design must incorporate appropriate challenge levels that reflect real-world scenarios while controlling for variables that could confound results. Participant selection should represent the target user population, with sample sizes determined by statistical power requirements rather than convenience [21].
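Sizing a study by statistical power rather than convenience can be sketched with the standard normal-approximation formula for comparing two proportions, for example error rates under two conditions. The error rates below are hypothetical, and an exact or simulation-based calculation may be preferable when rates are very small.

```python
from math import ceil, sqrt
from statistics import NormalDist


def n_per_group(p1: float, p2: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate sample size per group to detect p1 != p2 with a two-sided test.

    Normal-approximation formula; requires p1 != p2.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


# Hypothetical: detect a 10% error rate versus a 2% error rate.
print(n_per_group(0.10, 0.02))
```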

Three primary design architectures dominate blind testing methodologies:

- Full Blind Design: Participants are completely unaware of their involvement in a test, with test materials integrated seamlessly into normal workflow [18].

- Single-Blind Design: Participants know they are being tested but lack critical information about expected outcomes or sample origins.

- Double-Blind Design: Neither participants nor administrators know critical test parameters until after evaluation, minimizing unconscious influence on results [19].

The selection of appropriate positive and negative controls is paramount, as these determine the test's ability to accurately classify performance. Positive controls should represent known functioning systems, while negative controls should include samples with confirmed absence of the target characteristic or effect.
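Outcomes on positive and negative controls can be summarized as sensitivity and specificity, which quantify the test's ability to classify correctly. A minimal sketch with hypothetical counts and an illustrative function name:

```python
def classification_metrics(tp: int, fn: int, tn: int, fp: int) -> dict[str, float]:
    """Sensitivity, specificity, and false-negative/positive rates from control outcomes.

    tp / fn: positive controls correctly detected / missed.
    tn / fp: negative controls correctly cleared / wrongly called positive.
    """
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "false_negative_rate": 1 - sensitivity,
        "false_positive_rate": 1 - specificity,
    }


# Hypothetical panel: 48 of 50 positive controls detected, 49 of 50 negatives cleared.
print(classification_metrics(tp=48, fn=2, tn=49, fp=1))
```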

Quantitative Research Designs Hierarchy

The hierarchy of evidence provides a framework for evaluating research design strength, with blind testing occupying the higher tiers due to its robust controls against bias [20].

Figure 1: Evidence Hierarchy in Research Design

Logistics Framework Implementation

Sample Design and Distribution Logistics

Implementing a successful blind test requires meticulous logistical planning, particularly regarding sample design and distribution. The sample matrix must represent the full spectrum of challenges encountered in real-world applications, including edge cases and potential interferents. For drug development studies, this includes varying concentrations, matrices, and stability conditions.

Distribution logistics must maintain the blind nature of the study while ensuring sample integrity. For physical samples, this requires standardized packaging, shipping conditions, and chain-of-custody documentation. Electronic sample distribution offers advantages for data integrity but requires secure, validated systems to prevent technical artifacts from influencing results [18].

The following workflow illustrates a comprehensive blind testing implementation process:

Figure 2: Blind Test Implementation Workflow

Data Collection and Management

Data collection in blind testing must balance comprehensive information gathering with the need to maintain blinding. Standardized data collection forms (either electronic or paper-based) should capture all relevant variables without revealing test parameters. For comparative studies, this includes:

- Raw instrument outputs and calculated results

- Environmental conditions during testing

- Operator information and experience level

- Time stamps for each testing phase

- Any deviations from standard protocols

Data management systems must ensure confidentiality while allowing for appropriate aggregation and analysis. Automated data validation checks should flag outliers or missing values without revealing expected results to maintain blinding.
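One way to flag outliers without consulting the expected (target) values is to compare each submission only with the other submissions, for example with the median/MAD-based modified z-score. The sketch assumes a cutoff of 3.5 (a common rule of thumb, not a requirement) and treats `None` as a missing value; the laboratory labels and results are hypothetical.

```python
import statistics
from typing import Optional


def flag_submissions(results: dict[str, Optional[float]], cutoff: float = 3.5) -> dict[str, str]:
    """Flag missing values and outliers using only the submitted results.

    Uses the modified z-score 0.6745 * (x - median) / MAD, so the check never
    needs the blinded expected value.
    """
    flags = {lab: "missing" for lab, value in results.items() if value is None}
    values = {lab: v for lab, v in results.items() if v is not None}
    if not values:
        return flags
    median = statistics.median(values.values())
    mad = statistics.median(abs(v - median) for v in values.values())
    for lab, v in values.items():
        if mad == 0:
            # More than half the results are identical: route any departure to a human.
            flags[lab] = "ok" if v == median else "review"
        else:
            score = 0.6745 * (v - median) / mad
            flags[lab] = "outlier" if abs(score) > cutoff else "ok"
    return flags


submissions = {"lab-A": 5.1, "lab-B": 5.0, "lab-C": 4.9, "lab-D": 5.2, "lab-E": 7.9, "lab-F": None}
print(flag_submissions(submissions))
```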

Comparative Analysis: Blind Testing vs Traditional Proficiency Testing

Methodological Comparison

Blind testing and traditional proficiency testing represent complementary but distinct approaches to performance assessment. Understanding their relative strengths and limitations enables researchers to select the most appropriate methodology for their specific comparative analysis needs.

Table 1: Methodological Comparison of Testing Approaches

| Characteristic | Blind Testing | Traditional Proficiency Testing |
|---|---|---|
| Participant Awareness | Unaware of being tested [18] | Aware of evaluation [18] |
| Sample Origin | Concealed until after assessment [18] | Often known or suspected |
| Performance Realism | High (simulates real conditions) [18] | Variable (potential for optimized performance) |
| Error Rate Detection | More accurate representation of operational errors [18] | May underestimate true error rates |
| Implementation Complexity | High (requires deception infrastructure) | Moderate (standardized protocols) |
| Cost Considerations | Generally higher due to complexity | Typically lower |
| Regulatory Acceptance | Growing recognition as superior method | Well-established in many industries |

Performance Metrics and Outcomes

Comparisons of error rates between blind and traditional proficiency testing indicate systematic differences in how faithfully each approach captures operational performance.

Table 2: Performance Metrics Comparison

| Performance Metric | Blind Testing Results | Traditional Proficiency Testing Results |
|---|---|---|
| False Positive Rate | Higher, more accurate reflection of operational performance [18] | Often lower due to heightened participant caution |
| False Negative Rate | More representative of real-world conditions [18] | May be underestimated |
| Inter-laboratory Variability | Better identification of true methodological differences [18] | May be masked by optimized performance |
| Repeatability | Accurate assessment under normal conditions [19] | Potentially inflated |
| Reproducibility | Realistic measure across different operators [19] | May not reflect daily performance |
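Whether an observed difference in error rates between blind and declared rounds exceeds what chance would explain can be checked with Fisher's exact test on a 2×2 table, which suits the small counts typical of PT programs. A standard-library sketch of the two-sided version (summing every table no more probable than the observed one); the counts are hypothetical.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Rows: blind, declared. Columns: errors, correct results. With all margins
    fixed, the number of errors in the blind row is hypergeometric.
    """
    row1, row2, col1 = a + b, c + d, a + c
    n = row1 + row2

    def prob(x: int) -> float:
        # Probability of x errors in the blind row given the fixed margins.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    observed = prob(a)
    total = 0.0
    for x in range(max(0, col1 - row2), min(row1, col1) + 1):
        p = prob(x)
        if p <= observed * (1 + 1e-7):  # small tolerance so exact ties are counted
            total += p
    return min(1.0, total)


# Hypothetical: 6 errors in 30 blind cases vs 1 error in 30 declared tests.
print(fisher_exact_two_sided(6, 24, 1, 29))
```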

The Scientist's Toolkit: Essential Research Reagents and Materials

Core Research Materials

Table 3: Essential Research Reagents and Solutions

| Item | Function/Purpose | Application Context |
|---|---|---|
| Reference Standards | Certified materials with known properties for instrument calibration and method validation | Quality control, assay calibration, method verification |
| Internal Controls | Samples with predetermined results for monitoring assay performance | Process control, error detection, validity determination |
| Matrix-Matched Samples | Test materials in appropriate biological or chemical matrices | Simulation of real-world conditions, interference assessment |
| Blinded Sample Panels | Curated sample sets with concealed identities | Performance assessment, bias minimization, competency evaluation |
| Stability Materials | Samples for evaluating stability under various conditions | Shelf-life determination, storage condition optimization |

Regulatory Considerations and Compliance

Recent regulatory changes have raised expectations for proficiency testing. In clinical laboratories, updated CLIA proficiency testing rules took effect in January 2025: regulated analytes are challenged three times a year with five samples per challenge, and acceptance criteria were revised for many analytes [14]. These changes reflect a growing emphasis on comprehensive performance assessment.

Both blind testing and traditional proficiency testing must satisfy regulatory compliance requirements, though they may do so in different ways. Traditional proficiency testing usually follows prescribed protocols with defined acceptance criteria, such as the limits in the updated CLIA rules that pair a percentage with an absolute value: bilirubin results must fall within ±20% or ±0.4 mg/dL of the target, and thyroid stimulating hormone results within ±20% or ±0.2 mIU/L, whichever is greater [14]. Blind testing methodologies, while potentially offering a more realistic performance assessment, may require additional validation to demonstrate equivalence to regulatory standards.
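
A minimal sketch of how a paired percentage/absolute limit can be applied is shown below. It assumes the usual CLIA convention that the wider of the two limits applies, and that an event is passed when at least 80% of its samples are acceptable (the threshold CLIA applies to most regulated analytes). The function names, target values, and results are hypothetical.

```python
def allowable_error(target: float, pct: float, absolute: float) -> float:
    """Half-width of the acceptance window: the greater of pct * target and the absolute limit."""
    return max(pct * abs(target), absolute)


def is_acceptable(result: float, target: float, pct: float, absolute: float) -> bool:
    """True if the result lies within target +/- the allowable error."""
    return abs(result - target) <= allowable_error(target, pct, absolute)


def event_score(results, targets, pct: float, absolute: float) -> float:
    """Percentage of samples in one PT event graded acceptable."""
    graded = [is_acceptable(r, t, pct, absolute) for r, t in zip(results, targets)]
    return 100.0 * sum(graded) / len(graded)


# Hypothetical five-sample bilirubin event (mg/dL), limits of +/-20% or +/-0.4 mg/dL
targets = [0.8, 1.5, 3.0, 6.2, 12.0]
results = [1.1, 1.6, 3.7, 6.0, 12.9]

score = event_score(results, targets, pct=0.20, absolute=0.4)
print(f"Event score: {score:.0f}% -> {'pass' if score >= 80 else 'fail'}")
```

At low concentrations the absolute limit dominates (0.4 mg/dL exceeds 20% of 0.8 mg/dL), while at higher concentrations the percentage limit takes over. In this hypothetical event, only the 3.0 mg/dL sample falls outside its window.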

Blind testing offers distinct advantages over traditional proficiency testing for assessing true operational performance. Because participants do not know they are being tested, blind testing yields more accurate error-rate data, exposes operational weaknesses, and shows how a method performs under normal working conditions [18].

Blind testing may see wider adoption in research and drug development as regulators place more weight on assessing routine operational performance. Possible directions include virtual blind testing platforms, AI-assisted result analysis, and integrated frameworks that combine blind and traditional approaches for comprehensive performance assessment.

For researchers designing comparative studies, the methodological framework presented here provides a foundation for implementing robust blind testing protocols that yield meaningful, actionable data for product development and method validation. As the scientific community continues to prioritize data quality and reproducibility, blind testing methodologies will play an increasingly central role in evidence generation across diverse scientific disciplines.

Detection bias is a systematic error that occurs in clinical trials when the knowledge of a patient's assigned treatment influences how outcomes are ascertained or measured [22]. This bias is a paramount concern in unblinded pragmatic trials and observational studies, where patients, healthcare providers, or outcome assessors are aware of the treatment assignment. Such knowledge can consciously or subconsciously affect behaviors; for instance, patients might report symptoms differently, clinicians might monitor more closely, or outcome assessors might interpret ambiguous data favorably towards the expected treatment effect [22] [23]. The direction of this bias is often towards exaggerating the perceived benefits of an intervention.

Blinding, also known as masking, is a critical methodological procedure designed to mitigate this bias. It involves concealing information about treatment allocation from one or more individuals involved in the trial [24]. While blinding patients and treating clinicians is important, this article focuses specifically on the role of blinding outcome assessors, the personnel who collect, interpret, and adjudicate endpoint data. When these individuals are unaware of whether a patient received the experimental treatment or control, their assessments are less likely to be influenced by preconceptions about the treatment's effectiveness, thereby yielding more objective and reliable results [24] [23]. Empirical evidence shows that non-blinded outcome assessors can exaggerate effect sizes; one meta-analysis found that odds ratios in studies with binary outcomes were exaggerated by an average of 36% [23].

Experimental Evidence: Quantitative Data on Blinding Effectiveness

The quantitative impact of unblinded outcome assessment is not merely theoretical. Data from real clinical trials and meta-analyses provide compelling evidence of the bias it introduces.

A salient example comes from the Interventional Management of Stroke (IMS) III trial, a prospective randomized open blinded endpoint (PROBE) design study [25]. In this trial, local outcome assessors, who were intended to be blinded, guessed the patient's actual treatment allocation significantly more often than would be expected by chance alone (58.2% correct guesses, p=0.0003). More importantly, the success of their guess was strongly associated with the patient's measured outcome. A correctly guessed allocation was associated with better scores on the modified Rankin Scale in the intervention group (cOR: 2.28, 95% CI: 1.50–3.48) and with worse scores in the control group (cOR: 0.47, 95% CI: 0.27–0.83). This interaction was highly significant (p<0.001), suggesting that the assessors' knowledge, or subconscious inference, of the treatment directly biased their assessment of this functional outcome [25].
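
The comparison against chance described above can be illustrated with an exact two-sided binomial test. The sketch below assumes that each assessor guess is an independent trial with a 50% probability of being correct under random guessing between two arms. The counts are hypothetical and do not reproduce the IMS III analysis, whose denominators are not given here.

```python
from math import comb


def binomial_two_sided_p(successes: int, trials: int, p: float = 0.5) -> float:
    """Exact two-sided binomial test: sum the probabilities of all outcomes
    that are no more likely than the observed count under H0."""
    pmf = [comb(trials, k) * p**k * (1 - p) ** (trials - k) for k in range(trials + 1)]
    observed = pmf[successes]
    # Small relative tolerance so tied outcomes are not lost to rounding
    return min(1.0, sum(q for q in pmf if q <= observed * (1 + 1e-7)))


# Hypothetical: assessors guessed the allocation for 300 patients, 175 correctly
correct, total = 175, 300
print(f"Correct guesses: {correct / total:.1%}")
print(f"Two-sided p-value vs. 50% chance: {binomial_two_sided_p(correct, total):.4f}")
```

Summing the probabilities of outcomes no more likely than the observed one is the same definition R's `binom.test` uses. With p = 0.5 the distribution is symmetric, so the result equals twice the one-sided tail.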

Table 1: Association Between Correctly Guessed Treatment Allocation and 90-day Modified Rankin Scale Score in the IMS III Trial

| Actual Treatment Group | Assessor's Guess | Common Odds Ratio (cOR) for a Better mRS Score | 95% Confidence Interval |
|---|---|---|---|
| Intervention | Correct | 2.28 | 1.50–3.48 |
| Intervention | Incorrect | Reference | - |
| Control | Correct | 0.47 | 0.27–0.83 |
| Control | Incorrect | Reference | - |

These findings are consistent with broader meta-epidemiological studies. A series of meta-analyses by Hróbjartsson et al. quantified the impact of non-blinded assessment across different outcome types, demonstrating that failure to blind outcome assessors leads to a systematic overestimation of treatment effects [23].

Table 2: Summary of Meta-Analyses on the Impact of Non-Blinded Outcome Assessment on Effect Size

| Outcome Type | Exaggeration of Effect Size in Non-Blinded vs. Blinded Assessment | Source |
|---|---|---|
| Time-to-event outcomes | Exaggerated hazard ratios by 27% on average | Hróbjartsson et al. [23] |
| Binary outcomes | Exaggerated odds ratios by 36% on average | Hróbjartsson et al. [23] |
| Measurement scale outcomes | Exaggerated pooled effect size by 68% | Hróbjartsson et al. [23] |
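
To make the ratio-based percentages concrete, the sketch below assumes that effects are coded so a ratio below 1 favours the experimental treatment, and that the reported exaggeration equals 1 minus the ratio of the non-blinded to the blinded estimate. Under that assumption, 36% corresponds to a ratio of odds ratios of 0.64 and 27% to a ratio of hazard ratios of 0.73. The blinded estimate of 0.80 is hypothetical.

```python
def nonblinded_estimate(blinded_ratio: float, exaggeration: float) -> float:
    """Apply an average exaggeration to a ratio measure (OR or HR) where < 1 favours treatment.

    Assumes exaggeration = 1 - (non-blinded ratio / blinded ratio).
    """
    ratio_of_ratios = 1.0 - exaggeration
    return blinded_ratio * ratio_of_ratios


# Hypothetical trial: blinded assessors observe a ratio estimate of 0.80
blinded_ratio = 0.80
for outcome, exaggeration in (("binary (OR)", 0.36), ("time-to-event (HR)", 0.27)):
    biased = nonblinded_estimate(blinded_ratio, exaggeration)
    print(f"{outcome}: blinded {blinded_ratio:.2f} -> expected non-blinded {biased:.3f}")
```

The 68% figure for measurement-scale outcomes refers to a pooled standardized effect size rather than a ratio measure, so this arithmetic does not apply to it.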

Methodological Protocols for Blinding Outcome Assessors

Implementing effective blinding for outcome assessors requires deliberate planning and execution. The following protocols detail established methodologies.

Core Blinding Techniques

- Independent and Uninformed Assessors: The most fundamental technique is to employ outcome assessors who are not involved in the patient's clinical care and have no access to information that could reveal the treatment assignment. This includes shielding them from clinical notes, medication records, and conversations with the treating team [24].

- Centralized Adjudication Committees: For major, often subjective, clinical events (e.g., myocardial infarction, stroke), a common practice is to use a centralized committee of experts who adjudicate outcomes based on pre-specified, standardized criteria. This committee reviews source documents that have been redacted to remove any references to the treatment arm [23].

- Concealment of Physical Evidence: In surgical or device trials, physical evidence like incisions or scars can unintentionally unblind assessors. Techniques to prevent this include using identical dressings over all incision sites or conducting assessments via telephone to eliminate visual cues [24].

- Blinded Analysis of Diagnostic Data: For outcomes based on diagnostic tests (e.g., radiographs, pathology slides, lab results), the data can be anonymized and presented to assessors in a random order without identifying information. In some cases, advanced techniques like digitally altering radiographs to mask the type of implant used have been employed [24].
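
The anonymization and random-ordering step for diagnostic data can be sketched in a few lines. In this sketch the field names, the `R0000` review-code format, and the separate unblinding key are hypothetical illustrations. In practice, the key would be held only by unblinded staff.

```python
import random
from typing import Dict, List, Optional, Tuple

# Hypothetical fields that could reveal identity or treatment assignment
IDENTIFYING_FIELDS = {"patient_id", "arm", "site", "implant_type"}


def blind_for_review(
    records: List[dict], seed: Optional[int] = None
) -> Tuple[List[dict], Dict[str, str]]:
    """Return (assessor_packet, unblinding_key).

    Each record loses its identifying fields and gets a random review code,
    and the packet is shuffled so its order carries no information about arm.
    """
    rng = random.Random(seed)
    # Unique, non-sequential codes drawn without replacement
    codes = [f"R{n:04d}" for n in rng.sample(range(10_000), len(records))]
    packet, key = [], {}
    for code, rec in zip(codes, records):
        key[code] = rec["patient_id"]  # held only by unblinded staff
        cleaned = {k: v for k, v in rec.items() if k not in IDENTIFYING_FIELDS}
        packet.append({"review_code": code, **cleaned})
    rng.shuffle(packet)  # present cases to assessors in random order
    return packet, key


# Image file names are assumed to be non-identifying already
records = [
    {"patient_id": "P001", "arm": "device", "image": "img_0193.dcm"},
    {"patient_id": "P002", "arm": "control", "image": "img_0457.dcm"},
    {"patient_id": "P003", "arm": "device", "image": "img_0812.dcm"},
]
packet, key = blind_for_review(records, seed=42)
for item in packet:
    print(item)  # review_code and image only
```

Keeping the key out of the assessor packet mirrors the separation between unblinded coordinators and blinded adjudicators. Any free-text field would also need redaction before it is included.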

Validation and Quality Control

- Testing the Success of Blinding: It is considered good practice to formally test the success of blinding. At the end of the outcome assessment process, assessors can be asked to guess the treatment allocation for each participant. The proportion of correct guesses is then compared with what would be expected by chance (e.g., 50% when guessing at random between two arms), as was done in the IMS III trial [25].

- Use of Negative Control Outcomes: A more advanced method to detect the presence of detection bias is the use of negative control outcomes [22]. These are outcomes that the treatment under study cannot plausibly affect (e.g., using a diagnosis of peptic ulcer as a negative control in a study of statins and diabetes). An association between the treatment and the negative control outcome suggests the presence of bias, including detection bias, provided the control outcome shares the same determinants of ascertainment as the primary outcome [22].
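
A negative-control check amounts to estimating the association between treatment and an outcome the treatment should not affect, then asking whether that association departs from the null. The sketch below computes an odds ratio with a Woolf (log-scale) 95% confidence interval from a 2x2 table. The counts are hypothetical, and a real observational analysis would adjust for the same covariates used for the primary outcome.

```python
import math
from typing import Tuple


def odds_ratio_ci(a: int, b: int, c: int, d: int, z: float = 1.96) -> Tuple[float, float, float]:
    """Odds ratio and Woolf confidence interval for a 2x2 table.

    a: treated with outcome     b: treated without outcome
    c: untreated with outcome   d: untreated without outcome
    """
    if min(a, b, c, d) == 0:
        raise ValueError("zero cell; apply a continuity correction first")
    or_ = (a * d) / (b * c)
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)  # SE of log(OR)
    lo = math.exp(math.log(or_) - z * se_log)
    hi = math.exp(math.log(or_) + z * se_log)
    return or_, lo, hi


# Hypothetical counts for a negative control outcome (e.g., peptic ulcer diagnosis)
or_, lo, hi = odds_ratio_ci(a=70, b=930, c=40, d=960)
flag = "possible bias" if lo > 1 or hi < 1 else "no signal"
print(f"Negative-control OR {or_:.2f} (95% CI {lo:.2f} to {hi:.2f}): {flag}")
```

A confidence interval that excludes 1 for the negative control outcome does not identify the source of bias by itself. As noted above, it points to detection bias only if the control outcome is ascertained in the same way as the primary outcome.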

Comparative Analysis: Blinded Assessment vs. Traditional Proficiency Testing

The concept of ensuring accuracy in measurement has a direct parallel in laboratory medicine through Proficiency Testing (PT). Comparing the two reveals both philosophical and practical distinctions between blinding in clinical trials and traditional PT, and shows why keeping the measurer unaware is central to mitigating detection bias in therapeutic research.

Table 3: Comparison of Blinded Outcome Assessment and Laboratory Proficiency Testing

| Feature | Blinded Outcome Assessment in Clinical Trials | Traditional Laboratory Proficiency Testing |
|---|---|---|
| Primary Objective | Mitigate detection/ascertainment bias in outcome measurement [24] [22] | Ensure analytical accuracy and precision of lab test methods [14] |
| What is Tested | The objectivity and interpretation of the human assessor | The technical performance of equipment and reagents |
| Nature of Test | Integrated into actual patient follow-up; continuous process | External simulated samples; periodic event (e.g., 3x/year) [14] |
| State of Awareness | Assessor is unaware a "test" is occurring; mimics real conditions | Analyst is aware they are being tested, which may alter behavior [3] |
| Key Advantage | Prevents bias from influencing the primary study results | Identifies technical deficiencies in laboratory procedures |

A significant limitation of traditional PT is that it is predominantly declared, or non-blinded, meaning the analysts know they are being evaluated. This awareness can trigger a "Hawthorne effect," in which performance temporarily improves because people know they are being observed, so results may not reflect routine conditions [3]. In contrast, blind proficiency testing, in which samples are submitted as routine patient samples, is recognized as a more robust way to test the entire laboratory pipeline and is one of the few methods that can detect misconduct [3]. Implementing blind PT in fields such as forensic science faces logistical hurdles, but it represents a gold standard toward which testing programs can strive. This evolution mirrors the rationale in clinical trials: the most valid assessment occurs when the measurer is unaware that a measurement is being scrutinized, so the result reflects true performance rather than a reaction to being tested.

The Researcher's Toolkit: Essential Reagents and Materials

Successful implementation of blinding strategies often relies on specific materials and operational plans. Below is a list of key resources for designing a trial with blinded outcome assessment.

Table 4: Essential Reagents and Materials for Blinding Outcome Assessors

| Item / Solution | Function in Blinding |
|---|---|
| Redacted Source Documents | Physical or digital copies of medical records, imaging reports, and lab reports with all treatment identifiers removed. Serves as the primary data source for blinded adjudicators. |
| Centralized Adjudication Charter | A detailed, pre-approved protocol defining outcome definitions, procedures for review, and rules for handling ambiguous cases. Ensures standardized, objective judgment. |
| Telephone Interview Scripts | Standardized scripts for conducting patient interviews by phone, ensuring all patients are asked identical questions in the same way, minimizing verbal cues from the interviewer. |
| Digital Alteration Software | In surgical or device trials, software to anonymize or alter medical images (e.g., radiographs) to hide evidence of the specific intervention received. |
| Blinding Success Questionnaire | A short form administered to outcome assessors at the trial's end to record their guess of the treatment allocation and their confidence, used to validate blinding integrity [25]. |