Bibliometric Analysis of Economic Growth and Environmental Degradation: Research Trends, Methods, and Future Directions

Abstract

This article provides a comprehensive guide to conducting bibliometric analysis on the complex relationship between economic growth and environmental degradation. Tailored for researchers and professionals, it explores foundational concepts, methodological applications using tools like VOSviewer and Bibliometrix, troubleshooting for common analytical challenges, and validation techniques for robust research. By synthesizing current trends and emerging themes such as the Environmental Kuznets Curve and low-carbon growth, this review serves as an essential resource for designing, executing, and validating rigorous bibliometric studies in environmental economics and sustainable development.

Understanding the Research Landscape: Core Concepts and Evolutionary Trends

Defining Bibliometric Analysis in Environmental Economics

Bibliometric analysis serves as a critical quantitative methodology for mapping the intellectual landscape of scientific research through statistical analysis of publications. Within environmental economics, this approach systematically examines research trends, collaboration patterns, and conceptual evolution in domains intersecting economic activity and environmental systems. This technical guide elaborates the fundamental principles, methodological protocols, and analytical frameworks specific to applying bibliometric analysis within environmental economics, with particular emphasis on research addressing economic growth and environmental degradation. The comprehensive examination covers database selection, data extraction protocols, analytical techniques, and visualization methodologies, providing environmental economics researchers with rigorous tools to quantify and interpret scholarly communication patterns within this interdisciplinary field.

Bibliometric analysis is defined as a quantitative approach that employs mathematical and statistical methods to analyze scientific activities within a specific research field [1]. As an integral component of scientometrics, this methodology enables large-scale analysis of academic literature to identify emerging trends, intellectual structures, and knowledge diffusion patterns within defined knowledge domains [1]. In the context of environmental economics, bibliometric analysis has emerged as an indispensable technique for navigating the expansive, interdisciplinary literature examining relationships between economic systems and environmental outcomes.

The methodology has gained substantial traction in environmental economics research due to its capacity to objectively analyze thousands of publications, identify research hotspots, and trace conceptual evolution [1] [2]. The analysis of publications, citations, authors, and keywords provides valuable insights into the dynamics of scholarly communication and knowledge production in fields such as environmental Kuznets curve (EKC) hypothesis testing [3], sustainable development assessment [2] [4], and environmental degradation drivers [5]. By quantifying research impact, collaboration networks, and thematic clusters, bibliometric analysis offers systematic approaches to synthesizing fragmented literature and identifying knowledge gaps in the complex interplay between economic growth and environmental systems.

Theoretical Foundations and Key Concepts

Historical Development

Bibliometric analysis originated from early 20th-century efforts to systematically organize knowledge, with foundational contributions from Paul Otlet and Henri La Fontaine's Universal Decimal Classification system [6]. The term "bibliometrics" was formally coined by Alan Pritchard, who defined it as "the application of mathematical and statistical methods to books and other media of communication" [6]. Seminal developments include Eugene Garfield's introduction of the Science Citation Index in 1964, which revolutionized citation analysis, and Derek J. de Solla Price's pioneering work on the exponential growth of scientific literature [6].

The methodology has evolved substantially with computational advances, enabling sophisticated analysis of large bibliographic datasets through specialized software tools. In environmental economics, bibliometric approaches have gained prominence as the field has expanded rapidly in response to growing concerns about resource depletion, climate change, and sustainability challenges [2] [5].

Core Analytical Components

Bibliometric analysis in environmental economics encompasses several distinct but interrelated analytical approaches:

Citation Analysis: Examines frequency and patterns of citations received by publications, authors, or journals to measure scholarly impact and influence within the research community [6]. Highly cited works on topics like the Environmental Kuznets Curve [3] or sustainable inclusive economic growth [4] represent foundational knowledge within the field.

Co-citation Analysis: Identifies relationships between publications that are frequently cited together, revealing intellectual connections and schools of thought within environmental economics research [6]. This approach can separate research traditions, such as ecological economics and environmental econometrics, into distinct clusters.

Keyword Co-occurrence Analysis: Maps conceptual structure by analyzing frequency and relationships between author keywords, identifying dominant research themes and emerging topics [1] [2]. Applications in sustainability research have revealed clusters focusing on environmental sustainability, sustainable development, urban sustainability, and ecological footprint [2].

Collaboration Analysis: Examines co-authorship patterns across individuals, institutions, and countries to identify research networks and knowledge exchange channels [6]. Studies of sustainable inclusive economic growth research identify China, India, and Italy as particularly productive countries with extensive collaboration networks [4].

Bibliographic Coupling: Connects publications that share common references, indicating intellectual similarities and thematic relationships [6]. This approach effectively groups contemporary research addressing similar aspects of economic growth-environmental degradation relationships.
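Co-citation and bibliographic coupling are two readings of the same citation data: co-citation links cited works that appear together in a reference list, while coupling links citing papers that share references. The minimal Python sketch below assumes the records have already been reduced to a mapping from each citing paper to the set of reference identifiers it cites; the IDs (P1, R1, and so on) are hypothetical toy data, and raw counts are used without the normalization that tools such as VOSviewer apply.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy data: citing paper -> set of references it cites.
references = {
    "P1": {"R1", "R2", "R3"},
    "P2": {"R1", "R2"},
    "P3": {"R2", "R3"},
}


def co_citation(refs_by_paper):
    """Count how often each pair of cited works appears in the same reference list."""
    counts = Counter()
    for refs in refs_by_paper.values():
        for pair in combinations(sorted(refs), 2):
            counts[pair] += 1
    return counts


def bibliographic_coupling(refs_by_paper):
    """Count the references shared by each pair of citing papers."""
    strengths = {}
    for p, q in combinations(sorted(refs_by_paper), 2):
        shared = len(refs_by_paper[p] & refs_by_paper[q])
        if shared:
            strengths[(p, q)] = shared
    return strengths


print(co_citation(references))             # R1-R2 and R2-R3 are co-cited twice
print(bibliographic_coupling(references))  # P1 shares two references with P2 and with P3
```

Co-citation strength grows as later papers cite two works together, so it tends to map the field's intellectual base; coupling strength is fixed at publication time, which is why it suits grouping recent work.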

Methodological Protocol

Research Design and Question Formulation

The foundation of a robust bibliometric analysis in environmental economics lies in precisely defining research objectives and questions. Research questions should be specific enough to yield meaningful insights while accommodating the expansive nature of bibliographic data. Exemplary research questions from published bibliometric studies, in environmental economics and adjacent fields, include:

- "How has the application of data visualization techniques evolved in program evaluation research from 2010-2025?" [7]

- "What are the most frequent topics of papers published in Environment, Development and Sustainability?" [2]

- "Which countries, institutions and funding agencies lead in FDI research and FDI research dedicated to climate change?" [8]

For research focusing on economic growth and environmental degradation, specific questions might examine the conceptual evolution of the Environmental Kuznets Curve hypothesis, identify emerging methodological approaches, or map international collaboration patterns in climate change economics research.

Database Selection and Search Strategy

Database selection significantly influences bibliometric analysis outcomes. The primary databases used in environmental economics research include:

Table 1: Bibliometric Database Comparison

| Database | Coverage Strengths | Environmental Economics Relevance | Key Limitations |
|---|---|---|---|
| Scopus | Comprehensive social sciences coverage, robust citation metrics | Strong coverage of environmental and sustainability journals | 2,000-record export limit per download [7] |
| Web of Science (WoS) | High-impact journals, strong citation analysis tools | Extensive coverage of economics and environmental studies | Limited coverage of some evaluation journals [7] |
| Google Scholar | Broadest coverage including gray literature | Useful for capturing interdisciplinary work | Uncurated, noisy data unsuitable for systematic analysis [7] |

Effective search strategy development employs Boolean operators (AND, OR, NOT) and field-specific tags (such as Scopus's TITLE-ABS-KEY) to balance recall and precision. For environmental economics topics, search strings typically combine conceptual terms ("sustainable development," "economic growth") with environmental indicators ("CO2 emissions," "ecological footprint") and methodological filters ("bibliometric analysis"). An EKC search, for example, would group the synonyms for each concept (growth, environmental indicator, EKC) with OR and join the concept groups with AND.
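As an illustration of that structure, the sketch below assembles a query string in Python. The term lists are hypothetical examples rather than a validated search strategy, and the default output follows Scopus advanced-search syntax (quoted phrases inside a TITLE-ABS-KEY field code); other databases use different field tags, for example TS= for topic searches in Web of Science.

```python
def or_group(terms):
    """Quote each synonym and join them with OR."""
    return "(" + " OR ".join(f'"{term}"' for term in terms) + ")"


def build_query(concept_groups, field="TITLE-ABS-KEY"):
    """AND together one OR-group per concept inside a single field code."""
    return f"{field}(" + " AND ".join(or_group(g) for g in concept_groups) + ")"


# Hypothetical term lists; a real review would refine these iteratively.
growth_terms = ["economic growth", "GDP per capita", "income"]
environment_terms = ["CO2 emissions", "ecological footprint", "environmental degradation"]
ekc_terms = ["environmental Kuznets curve", "EKC"]

query = build_query([growth_terms, environment_terms, ekc_terms])
print(query)
```

Keeping the term lists as data rather than a hand-typed string makes it easier to log each revision of the search for PRISMA reporting.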

Search results should be documented using the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) framework to ensure transparency and reproducibility [4].

Data Extraction and Cleaning

Data extraction involves exporting complete bibliographic records including titles, authors, abstracts, keywords, publication years, journals, citations, and references. For large-scale analyses, API (Application Programming Interface) access enables automated data retrieval, though this requires approval and may be subject to weekly limits (e.g., Scopus's 20,000 publication weekly cap) [7].

Data cleaning is essential for analytical accuracy and involves:

- Duplicate removal using DOI matching or title matching [7]

- Standardization of author names, affiliations, and journal titles

- Filtering by document type (e.g., retaining only journal articles) and relevance [1]

- Field-specific cleaning such as keyword normalization using R's janitor or dplyr packages [7]
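The duplicate-removal step can be sketched in Python as follows. This minimal version assumes each record is a dict with hypothetical `doi` and `title` keys (for instance after merging Scopus and WoS exports) and drops a record when its lower-cased DOI or its normalized title has already been seen. Exact title matching can wrongly merge distinct papers that share a generic title, so flagged pairs are worth a manual check.

```python
import re
import unicodedata


def normalize_title(title):
    """Lower-case, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9 ]+", " ", text.lower())
    return " ".join(text.split())


def deduplicate(records):
    """Keep the first record; drop later ones whose DOI or normalized title was already seen."""
    seen_dois, seen_titles, unique = set(), set(), []
    for rec in records:
        doi = (rec.get("doi") or "").strip().lower()
        title_key = normalize_title(rec.get("title") or "")
        if (doi and doi in seen_dois) or (title_key and title_key in seen_titles):
            continue
        if doi:
            seen_dois.add(doi)
        if title_key:
            seen_titles.add(title_key)
        unique.append(rec)
    return unique


# Hypothetical merged Scopus + WoS export.
records = [
    {"doi": "10.1000/ABC", "title": "Growth and Emissions"},
    {"doi": "10.1000/abc", "title": "Growth and emissions."},  # same DOI, different case
    {"doi": None, "title": "Growth and Emissions"},            # no DOI, same title
    {"doi": None, "title": "Energy use and the EKC"},
]
print([r["title"] for r in deduplicate(records)])
```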

Automated screening tools like Loonlens.com, Rayyan.ai, and ASReview can expedite the screening process using predefined inclusion/exclusion criteria [7].

Analytical Techniques and Software Tools

Bibliometric analysis employs both performance analysis and science mapping approaches. Performance analysis examines publication and citation metrics to measure productivity and impact, while science mapping techniques reveal conceptual and intellectual structures within the research landscape.

Table 2: Bibliometric Analysis Software Tools

| Software | Primary Functionality | Strengths in Environmental Economics |
|---|---|---|
| VOSviewer | Network visualization, clustering, density maps | Intuitive interface for keyword co-occurrence and co-authorship networks [2] [5] |
| Bibliometrix (R package) | Comprehensive bibliometric analysis, multiple format support | Statistical power for trend analysis and thematic evolution [7] [4] |
| CiteSpace | Temporal pattern detection, burst analysis | Identifies emerging trends and pivotal publications [1] |
| ScientoPy | Bibliographic data analysis and visualization | Specialized for thematic evolution mapping [1] |

The analytical workflow typically involves both quantitative bibliometric indicators and qualitative content analysis to provide comprehensive insights into research patterns.

Application in Environmental Economics: Economic Growth and Environmental Degradation Research

Research Trends and Patterns

Bibliometric analysis reveals substantial growth in environmental economics research intersecting economic growth and environmental concerns. Publication output on the determinants of environmental degradation has grown by more than 80% annually in recent years [5]. Research has particularly accelerated around themes like renewable energy, ecological footprint, and the Environmental Kuznets Curve [3] [5].

Analysis of 997 sustainability articles in Environment, Development and Sustainability identified six major research clusters: (1) environmental sustainability, (2) sustainable development, (3) urban sustainability, (4) ecological footprint, (5) environment, and (6) climate change [2]. Each cluster represents distinct yet interconnected research trajectories within the broader sustainability domain.

Key Research Themes and Conceptual Structure

Keyword co-occurrence analysis of environmental degradation research reveals several dominant thematic areas:

Economic Growth and Environmental Kuznets Curve: The EKC hypothesis, proposing an inverted U-shaped relationship between economic development and environmental degradation, represents a central research stream [3] [5]. Bibliometric analysis shows continuous scholarly debate around this hypothesis across different economic and institutional contexts.

Energy Consumption and Carbon Emissions: Research examining relationships between energy consumption, economic growth, and CO2 emissions constitutes a major research cluster, with particular focus on renewable energy transitions and energy efficiency [5].

Sustainable Inclusive Economic Growth (SIEG): Emerging research integrates social equity dimensions with environmental sustainability concerns within the SDG 8 framework [4]. Thematic evolution shows a shift from financial inclusion and CSR (2014-2023) toward digital economy, blue economy, employment, and entrepreneurship (2024-2025) [4].

Foreign Direct Investment (FDI) and Environment: Research examines FDI's dual role in promoting economic growth while potentially contributing to environmental degradation through pollution haven effects [8]. Bibliometric analysis reveals only 15% of FDI research addresses climate change impacts, highlighting a significant research gap [8].

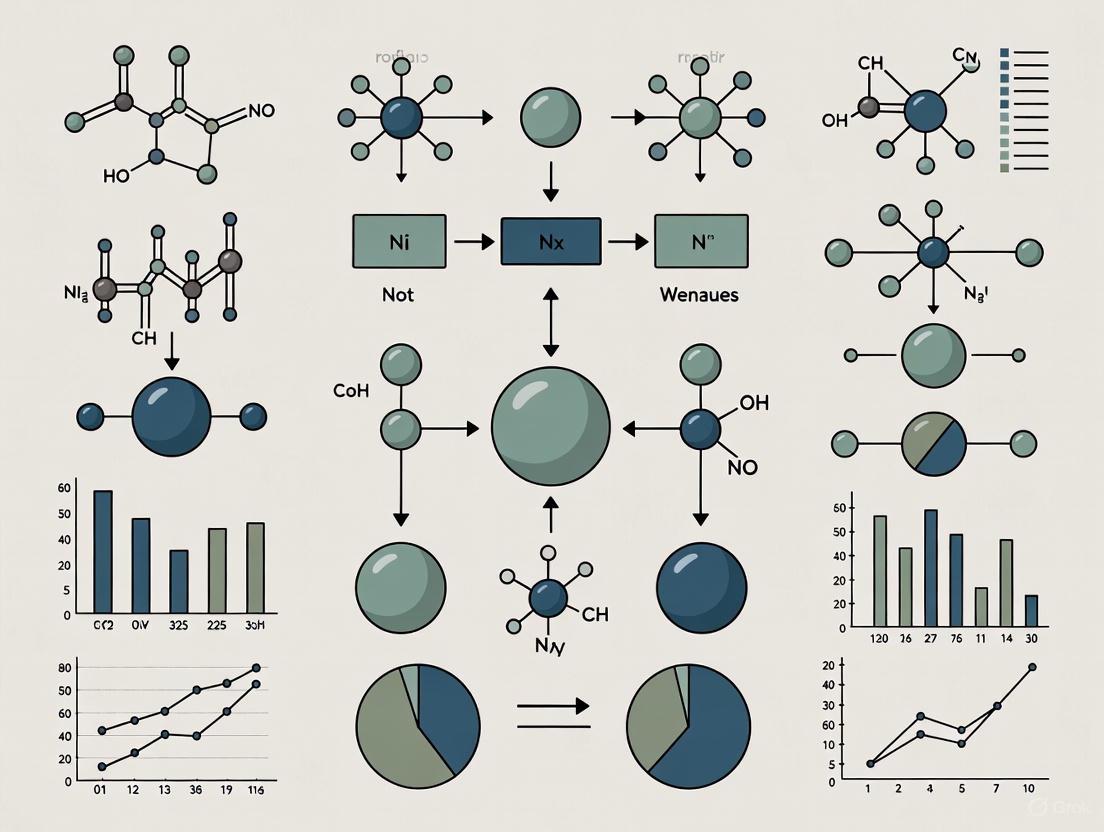

The following diagram illustrates the conceptual structure and relationships between key themes in environmental economics research on economic growth and environmental degradation:

Methodological Approaches in EKC Research

Bibliometric analysis of Environmental Kuznets Curve research reveals diverse methodological approaches employed in testing the hypothesis. The standard EKC model specification takes the form:

\[ Y_{it} = \sigma_{it} + \gamma_1 X_{it} + \gamma_2 X_{it}^2 + \gamma_3 X_{it}^3 + \gamma_4 D_{it} + \varepsilon_{it} \]

Where \( Y \) represents environmental indicators (typically CO2 emissions), \( X \) represents economic development (typically GDP per capita), \( D \) represents additional independent variables, \( \varepsilon \) is the error term, and the subscripts \( i \) and \( t \) index the cross-sectional unit (e.g., country) and the time period [3]. Different combinations of coefficients produce varied relationships between economic growth and environmental indicators:

Table 3: EKC Model Specifications and Interpretations

| γ1 | γ2 | γ3 | Relationship | EKC Support | Environmental Economics Interpretation |
|---|---|---|---|---|---|
| + | - | Not significant | Inverted U-shape | Supported | Environmental degradation increases then decreases with economic growth |
| - | + | Not significant | U-shape | Not supported | Environmental quality improves then deteriorates |
| + | Not significant | Not significant | Monotonic increase | Not supported | Growth consistently increases degradation |
| - | Not significant | Not significant | Monotonic decrease | Not supported | Growth consistently improves environment |
| + | - | + | N-shaped | Partial support | Degradation increases, decreases, then increases again |
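Table 3's decision rules, and the level of X at which the fitted curve turns, can be computed directly from estimated coefficients. The Python sketch below is illustrative: it takes γ1, γ2, γ3 as already-estimated values, uses None to mean "not statistically significant", and solves dY/dX = γ1 + 2γ2X + 3γ3X² = 0 for the turning points. The coefficient values are hypothetical, and treating X as log GDP per capita is an assumption.

```python
import math


def _sign(coef):
    """'+' or '-' for a significant coefficient; 'ns' when it is None (not significant) or zero."""
    if coef is None or coef == 0:
        return "ns"
    return "+" if coef > 0 else "-"


# Sign patterns (γ1, γ2, γ3) from Table 3.
SHAPES = {
    ("+", "-", "ns"): "inverted U-shape (EKC supported)",
    ("-", "+", "ns"): "U-shape (EKC not supported)",
    ("+", "ns", "ns"): "monotonic increase (EKC not supported)",
    ("-", "ns", "ns"): "monotonic decrease (EKC not supported)",
    ("+", "-", "+"): "N-shape (partial support)",
}


def classify_ekc(g1, g2=None, g3=None):
    """Look up the Table 3 shape for the coefficients (None = not significant)."""
    return SHAPES.get((_sign(g1), _sign(g2), _sign(g3)), "pattern not listed in Table 3")


def turning_points(g1, g2, g3=0.0):
    """Real roots of dY/dX = g1 + 2*g2*X + 3*g3*X**2, i.e. where the fitted curve turns."""
    if g3 == 0:
        return [] if g2 == 0 else [-g1 / (2 * g2)]
    a, b, c = 3 * g3, 2 * g2, g1
    disc = b * b - 4 * a * c
    if disc < 0:
        return []
    root = math.sqrt(disc)
    return sorted([(-b - root) / (2 * a), (-b + root) / (2 * a)])


# Hypothetical estimates, with X assumed to be log GDP per capita.
print(classify_ekc(1.0, -0.05), turning_points(1.0, -0.05))
print(classify_ekc(3.0, -1.5, 0.2), turning_points(3.0, -1.5, 0.2))
```

For the quadratic case the turning point reduces to X* = -γ1/(2γ2); with X in logarithms, the example's X* = 10 corresponds to an income of e^10, about 22,000 in the data's currency units. Turning points far outside the sample's income range are a common warning sign in EKC studies.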

Bibliometric analysis of EKC research identifies predominant methodological approaches including panel data analysis, time series techniques, and increasingly, heterogeneous estimation methods accounting for cross-sectional dependencies [3].

Analytical Framework and Visualization

Network Analysis and Mapping

Network visualization represents a core component of bibliometric analysis, enabling intuitive interpretation of complex relationships within environmental economics literature. VOSviewer software creates maps based on co-occurrence networks, citation networks, and co-authorship structures [5]. In these visualizations:

- Node size indicates frequency or importance (e.g., keyword occurrence, author publication count)

- Node proximity reflects relationship strength

- Cluster colors identify thematic groupings

- Link thickness represents connection strength between nodes [1]
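The counts behind such a map can be sketched in a few lines of Python. The example assumes each paper's author keywords are already harmonized, uses full counting (each keyword pair counted once per paper), and applies a simple association-strength normalization: observed co-occurrences divided by the product of the two keywords' occurrence counts. VOSviewer's own normalization works from total link weights and includes a scaling constant, so these numbers will not match its output; the keyword lists are hypothetical.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(keyword_lists):
    """Occurrences per keyword and co-occurrences per keyword pair (full counting)."""
    occurrences, pairs = Counter(), Counter()
    for keywords in keyword_lists:
        unique = sorted(set(keywords))
        occurrences.update(unique)
        for pair in combinations(unique, 2):
            pairs[pair] += 1
    return occurrences, pairs


def association_strength(occurrences, pairs):
    """Co-occurrences divided by the product of the two keywords' occurrence counts."""
    return {(a, b): c / (occurrences[a] * occurrences[b]) for (a, b), c in pairs.items()}


# Hypothetical author-keyword lists, one per paper.
papers = [
    ["economic growth", "CO2 emissions", "energy consumption"],
    ["economic growth", "CO2 emissions", "urbanization"],
    ["economic growth", "energy consumption"],
    ["ecological footprint", "urbanization"],
]
occ, co = cooccurrence(papers)
strength = association_strength(occ, co)
for pair, value in sorted(strength.items(), key=lambda kv: -kv[1]):
    print(pair, co[pair], round(value, 3))
```

In the toy data the rare pair ecological footprint/urbanization receives the highest normalized strength (0.5) even though economic growth co-occurs more often in absolute terms. Normalization is meant to have this effect, which is also why a minimum occurrence threshold is usually set before mapping.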

For environmental degradation research, network mapping typically reveals dense interconnections between economic growth, CO2 emissions, energy consumption, and urbanization concepts [5].

Temporal Trend Analysis

Temporal analysis examines the evolution of research themes and impact over time. Citation burst detection identifies publications experiencing sharp increases in citation frequency, signaling growing influence or controversy [6]. Thematic evolution maps track conceptual shifts, such as the movement from pollution haven hypothesis studies toward more nuanced institutional and governance perspectives in FDI-environment research [8].
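CiteSpace implements burst detection with Kleinberg's algorithm, which models a citation stream as switching between a baseline and an elevated state. The Python sketch below is a much simpler stand-in, not Kleinberg's method: it flags a year when its citation count exceeds the mean of the preceding years by more than k standard deviations. The window length, the threshold k, and the floor of 1.0 on the standard deviation are arbitrary assumptions, and the yearly counts are hypothetical.

```python
from statistics import mean, pstdev


def flag_bursts(counts_by_year, window=3, k=2.0):
    """Flag years whose count exceeds the mean of the previous `window` years
    by more than k standard deviations (with a floor of 1.0 on the deviation).
    Simple heuristic that assumes consecutive years; NOT Kleinberg's algorithm."""
    years = sorted(counts_by_year)
    flagged = []
    for idx in range(window, len(years)):
        history = [counts_by_year[y] for y in years[idx - window:idx]]
        threshold = mean(history) + k * max(pstdev(history), 1.0)
        if counts_by_year[years[idx]] > threshold:
            flagged.append(years[idx])
    return flagged


# Hypothetical yearly citation counts for one publication.
citations = {2015: 2, 2016: 3, 2017: 2, 2018: 3, 2019: 15, 2020: 18, 2021: 16}
print(flag_bursts(citations))
```

Only 2019 is flagged here: once the jump enters the baseline window, it raises both the mean and the spread, so this heuristic reports a sustained burst only at its onset, whereas Kleinberg's model reports a burst's start and end years.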

Analysis of sustainability research shows remarkable growth from 4 publications in 1999 to 255 in 2021 in Environment, Development and Sustainability, reflecting accelerating scholarly attention to sustainability challenges [2].
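Growth figures are easier to compare when the growth definition is explicit. The compound annual growth rate (CAGR) implied by these counts, (255/4)^(1/22) - 1, is roughly 21% per year; the short Python check below computes it. A CAGR over a long window is naturally much lower than year-on-year growth in a short recent window, which is one reason headline rates such as the 80% figure cited earlier should state how they were calculated.

```python
def cagr(start_count, end_count, years):
    """Compound annual growth rate between two yearly publication counts."""
    return (end_count / start_count) ** (1 / years) - 1


rate = cagr(4, 255, 2021 - 1999)
print(f"{rate:.1%}")  # 20.8%
```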

Geographic and Institutional Analysis

Spatial bibliometrics examines geographic patterns in research production and collaboration. China, Pakistan, and Turkey emerge as particularly productive countries in environmental degradation research [5], while China, India, and Italy lead in sustainable inclusive economic growth studies [4]. International collaboration networks reveal knowledge flow patterns, with developed countries typically maintaining more extensive cooperative ties than developing nations despite the latter's often more direct experience with environmental challenges [8].
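Collaboration patterns of this kind are commonly summarized by splitting each country's output into single-country publications (SCP) and multiple-country publications (MCP), the split Bibliometrix reports, and by counting co-authoring country pairs. A minimal Python sketch follows; it assumes each paper is given as a list of author countries with the corresponding author's country first, and the input lists are hypothetical.

```python
from collections import Counter
from itertools import combinations


def collaboration_summary(author_countries):
    """SCP/MCP counts per lead country and co-authorship counts per country pair.

    Each paper is a list of author countries; the first entry is treated as the
    corresponding author's country (an assumption about the input format).
    """
    scp, mcp, pairs = Counter(), Counter(), Counter()
    for countries in author_countries:
        lead = countries[0]
        distinct = sorted(set(countries))
        if len(distinct) == 1:
            scp[lead] += 1
        else:
            mcp[lead] += 1
            for pair in combinations(distinct, 2):
                pairs[pair] += 1
    return scp, mcp, pairs


# Hypothetical papers.
papers = [["China", "China"], ["China", "Pakistan"], ["Turkey", "Pakistan", "Turkey"], ["Turkey"]]
scp, mcp, pairs = collaboration_summary(papers)
for country in sorted(set(scp) | set(mcp)):
    total = scp[country] + mcp[country]
    print(country, "SCP", scp[country], "MCP", mcp[country], f"MCP ratio {mcp[country] / total:.2f}")
print(dict(pairs))
```

The MCP ratio gives a size-independent view of how internationally connected a country's output is, which is the comparison the developed/developing contrast above relies on.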

Research Tools and Resources

Table 4: Essential Bibliometric Research Resources

| Tool Category | Specific Solutions | Function in Bibliometric Analysis |
|---|---|---|
| Reference Management | EndNote, Mendeley, Zotero | Duplicate removal, bibliographic data organization [6] |
| Data Extraction | Scopus API, WoS API, Bibliometrix R package | Automated retrieval of bibliographic records [7] |
| Network Visualization | VOSviewer, CiteSpace, Gephi | Creating co-authorship, keyword co-occurrence, citation network maps [2] [6] |
| Statistical Analysis | R (Bibliometrix, biblioshiny), Python | Statistical computation, trend analysis, model fitting [7] [4] |
| Content Analysis | WordStat, NVivo | Qualitative analysis of publication content, topic modeling [2] |

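The sequential stages of bibliometric analysis in environmental economics research are: research design and question formulation, database selection and search, data extraction and cleaning, performance analysis and science mapping, and visualization and interpretation.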

Limitations and Methodological Considerations

While bibliometric analysis offers powerful quantitative insights, several limitations require consideration in environmental economics applications:

Citation Biases: Citation counts may reflect popularity, controversy, or perfunctory referencing rather than genuine scholarly quality or impact [6]. The Matthew Effect describes how prominent researchers receive disproportionate credit, potentially skewing analyses [6].

Database Coverage Limitations: Selective coverage of journals, languages, and publication types in major databases may underrepresent research from developing regions or in non-English languages [6] [5].

Temporal Lags: The time required for publications to accumulate citations means bibliometric analyses may not capture the very latest research developments [6].

Methodological Oversimplification: Quantitative metrics cannot capture nuanced intellectual contributions, theoretical innovations, or policy relevance of research [6].

Context Interpretation Challenges: Co-occurrence patterns require domain expertise for accurate interpretation beyond statistical relationships [7].

These limitations necessitate complementary qualitative assessment and expert interpretation to validate bibliometric findings in environmental economics research.

Bibliometric analysis represents a sophisticated methodological approach for mapping the intellectual structure and evolutionary dynamics of environmental economics research. The technique provides powerful quantitative tools to identify influential publications, trace conceptual developments, analyze collaboration patterns, and detect emerging research frontiers in the complex interplay between economic growth and environmental systems.

For researchers examining economic growth-environmental degradation relationships, bibliometric methods offer systematic approaches to navigate expansive, interdisciplinary literature and identify knowledge gaps. The integration of performance analysis, science mapping, and temporal trend analysis provides multidimensional insights into how scholarly understanding of environmental-economic relationships has evolved and where future research should be directed.

As environmental economics continues to address pressing sustainability challenges, bibliometric analysis will play an increasingly important role in synthesizing research findings, identifying innovation opportunities, and informing evidence-based policy decisions. The methodological rigor, visualization capabilities, and comprehensive scope of bibliometric approaches make them indispensable for researchers seeking to understand and advance this critically important field.

Historical Evolution of Economic Growth-Environment Research

The interplay between economic growth and environmental degradation represents one of the most critical research domains in sustainability science. This field has evolved from early theoretical explorations to a sophisticated, data-rich interdisciplinary area employing advanced bibliometric techniques to map knowledge trajectories. The Environmental Kuznets Curve (EKC) hypothesis, which posits an inverted U-shaped relationship between economic development and environmental degradation, has served as a foundational framework driving empirical investigation for decades [3]. As environmental challenges have intensified, research has expanded to examine multifaceted drivers including energy consumption, globalization, urbanization, and institutional factors that mediate the growth-environment relationship [5]. This article provides a comprehensive bibliometric analysis of this evolving research landscape, offering researchers methodological protocols, conceptual frameworks, and analytical tools to navigate this complex domain. The exponential growth in publications—exceeding an 80% annual growth rate recently—demonstrates the field's accelerating importance in addressing global sustainability challenges [5].

Quantitative Landscape of Research Output

Publication Trends and Growth Patterns

Research examining the economic growth-environment nexus has experienced remarkable expansion over the past three decades. A recent analysis of 1365 research papers from 1993 to 2024 reveals accelerating publication output, particularly around themes like economic growth, renewable energy, and the Environmental Kuznets Curve [5]. This growth pattern reflects both increasing scientific concern and policy urgency regarding environmental challenges.

Table 1: Annual Publication Trends in Economic Growth-Environment Research

| Time Period | Publication Output | Characteristic Features | Key Emerging Themes |
|---|---|---|---|
| 1993-2011 | Limited but steady output | Founding theoretical work; Early EKC validation | Environmental Kuznets Curve; Basic growth-degradation relationships |
| 2012-2018 | Rapid growth | Methodological diversification; Panel data studies | Renewable energy; FDI impacts; Urbanization effects |
| 2019-2024 | Exponential growth (>80% annually) | Integration of advanced metrics; Country-specific studies | Green TFP; ESG metrics; Digitalization; Climate policy alignment |

Analysis of citation patterns and publication metrics reveals key contributors shaping the research domain. China, Pakistan, and Turkey lead in research output, with China particularly dominant as both a research subject and producer of knowledge [5]. The most prominent journals include Environmental Science and Pollution Research and Sustainability, which have published extensively on economic growth as the most frequently studied factor in environmental degradation [5].

Table 2: Key Contributors and Research Focus Areas

| Category | Top Contributors | Research Focus | Citation Impact |
|---|---|---|---|
| Authors | Ozturk I. (13 papers) | EKC hypothesis; Energy-growth nexus | 3153 citations [3] |
| | Dogan E. (7 papers) | Methodological approaches to EKC | 2190 citations [3] |
| | Shahbaz B. (7 papers) | Policy implications of EKC | 1347 citations [3] |
| Countries | China | Domestic environmental policy; EKC validation | Core force in publications [9] |
| | European nations | Comparative policy analysis; Regulatory impacts | Important collaborative force [9] |
| | United States | Theoretical development; Innovation studies | Significant influence metrics [10] |

Methodological Protocols for Bibliometric Analysis

Data Collection and Preprocessing

Protocol 1: Database Selection and Query Formulation

Database Identification: Select comprehensive abstract and citation databases—primarily Scopus and Web of Science (WoS) core collections—which provide robust coverage of environmental economics literature [5] [9].

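Keyword Strategy: Develop search queries using Boolean operators that combine conceptual domains, grouping the synonyms within each domain with OR and linking the domains (for example, economic growth terms and environmental degradation terms) with AND.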

Time Delineation: Define appropriate temporal boundaries based on research objectives. For comprehensive evolution analysis, include publications from 1993 to present [5].

Document Filtering: Restrict to peer-reviewed articles; exclude conference proceedings, books, and non-English publications unless specifically relevant to research questions [5].

Protocol 2: Data Extraction and Cleaning

Export Citation Data: Download full record and cited references in standardized formats (e.g., RIS, Plain Text).

Data Cleaning:

- Standardize author names and institutional affiliations

- Harmonize keyword variations (e.g., "CO2" → "carbon dioxide")

- Resolve journal name discrepancies

- Remove duplicates using two-step verification [10]
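A thesaurus-based version of the keyword and author-name harmonization steps is sketched below in Python. The thesaurus entries are hypothetical examples (real thesauri are built by inspecting the extracted keyword list), and reducing names to surname plus first initial is a common but lossy convention: it merges accent variants such as Öztürk/Ozturk, but it can also merge different authors who share a surname and initial.

```python
import re
import unicodedata

# Hypothetical thesaurus: variant (already lower-cased) -> preferred term.
THESAURUS = {
    "co2": "carbon dioxide",
    "co2 emissions": "carbon dioxide emissions",
    "ekc": "environmental kuznets curve",
    "environmental kuznets curve (ekc)": "environmental kuznets curve",
}


def harmonize_keyword(keyword):
    """Lower-case, trim, collapse whitespace, then map known variants to one form."""
    key = re.sub(r"\s+", " ", keyword.strip().lower())
    return THESAURUS.get(key, key)


def strip_accents(text):
    """Remove diacritics, e.g. 'Öztürk' -> 'Ozturk'."""
    return "".join(ch for ch in unicodedata.normalize("NFKD", text) if not unicodedata.combining(ch))


def author_key(name):
    """Reduce 'Surname, Given names' to 'SURNAME I' (surname plus first initial)."""
    surname, _, given = strip_accents(name).partition(",")
    initial = given.strip()[:1].upper()
    return f"{surname.strip().upper()} {initial}".strip()


print(harmonize_keyword("  CO2 "), "|", harmonize_keyword("Environmental  Kuznets Curve (EKC)"))
print(author_key("Öztürk, İlhan"), "|", author_key("Ozturk, I."))
```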

Field Extraction: Parse structured data fields (authors, titles, abstracts, keywords, citations, publication years, institutions, countries) for analysis.
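For Web of Science plain-text exports, field extraction amounts to reading two-letter field tags (AU authors, TI title, PY year, DE author keywords, CR cited references, and so on), where continuation lines begin with three spaces and ER closes a record. In R, Bibliometrix's `convert2df()` handles this step; the standalone Python sketch below only shows the idea, keeping every field as a list of lines. The sample record is hypothetical, and a full pipeline would also need readers for other formats such as Scopus CSV.

```python
def parse_wos_plain_text(text):
    """Parse a Web of Science plain-text export into one dict per record.

    Lines start with a two-letter tag; continuation lines start with three
    spaces; 'ER' ends a record. Every field is kept as a list of lines.
    """
    records, current, tag = [], {}, None
    for line in text.lstrip("\ufeff").splitlines():  # exports may begin with a BOM
        if not line.strip():
            continue
        if line.startswith("   ") and tag:
            current[tag].append(line.strip())
            continue
        tag, value = line[:2], line[3:].strip()
        if tag == "ER":
            records.append(current)
            current, tag = {}, None
        elif tag in ("FN", "VR", "EF"):  # file-level header/footer tags
            tag = None
        else:
            current.setdefault(tag, []).append(value)
    return records


# Hypothetical one-record export.
sample = """FN Clarivate Analytics Web of Science
VR 1.0
PT J
AU Smith, J
   Lee, K
TI Growth and emissions: a
   panel study
PY 2020
DE economic growth; CO2 emissions
ER

EF"""

rec = parse_wos_plain_text(sample)[0]
print(rec["AU"], " ".join(rec["TI"]), rec["PY"][0], rec["DE"][0].split("; "))
```

Keeping multi-line fields as lists preserves the one-author-per-line and one-reference-per-line structure of AU and CR; single-valued fields such as TI can then be joined with spaces.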

Analytical Software and Visualization Techniques

Software Selection Criteria:

- VOSviewer: Well suited to creating distance-based maps, in which the distance between items indicates their relatedness; excels at network visualization of co-authorship, citation, and co-occurrence relationships [5] [10].

- CiteSpace: Specializes in temporal pattern detection, burst analysis, and emerging trend identification through time-slicing algorithms [11] [9].

- Biblioshiny: R-based interface for Bibliometrix package; provides comprehensive suite of bibliometric indicators and integration with statistical analysis [10].

Diagram 1: Bibliometric Analysis Workflow

Analytical Framework and Metrics

Performance Analysis:

- Productivity Metrics: Publication counts by author, institution, country

- Impact Indicators: Citation counts, h-index, g-index

- Collaboration Measures: Co-authorship index, international collaboration rate
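The two citation-based impact indicators listed above can be computed from a list of per-paper citation counts. In this Python sketch the h-index is the largest h such that h papers have at least h citations each, and the g-index is the largest g such that the top g papers together have at least g² citations (capped here at the number of papers, one of the conventions in use); the citation counts are hypothetical.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    # In descending order, cites >= rank holds for a prefix of the list only,
    # so counting the papers that satisfy it gives h.
    return sum(1 for rank, cites in enumerate(ranked, start=1) if cites >= rank)


def g_index(citations):
    """Largest g such that the top g papers have at least g**2 citations in total
    (capped at the number of papers)."""
    total, g = 0, 0
    for rank, cites in enumerate(sorted(citations, reverse=True), start=1):
        total += cites
        if total >= rank * rank:
            g = rank
    return g


# Hypothetical citation counts for one author's papers.
cites = [25, 8, 5, 3, 3, 1, 0]
print(h_index(cites), g_index(cites))
```

The g-index (6 here) exceeds the h-index (3) because it credits the heavily cited top paper, whose citations beyond the h threshold the h-index ignores.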

Science Mapping:

- Co-word Analysis: Keyword co-occurrence networks to identify conceptual structure

- Thematic Evolution: Longitudinal analysis of conceptual dynamics using Callon's density-centrality framework [10]

- Co-citation Analysis: Document and author co-citation patterns to map intellectual base

Conceptual Framework and Thematic Evolution

Foundational Theories and Their Development

The Environmental Kuznets Curve (EKC) hypothesis represents the seminal theoretical framework in growth-environment research. Originating from Kuznets' 1955 work on income inequality, it was adapted to environmental studies in the early 1990s by Grossman and Krueger [3]. The hypothesis is usually explained through three effects whose relative strength shifts as an economy develops:

Scale Effect: Initial economic development increases environmental degradation through resource exploitation and pollution-intensive industrialization.

Composition Effect: Structural economic changes shift production toward less pollution-intensive sectors as economies develop.

Technique Effect: Advanced economies develop and deploy cleaner technologies through innovation and stricter environmental regulations.
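The three effects can be made precise with a standard accounting decomposition, given here as an illustration rather than as part of the cited framework. Writing total emissions as \( E = Q \sum_i s_i e_i \), with \( Q \) total output, \( s_i \) the output share of sector \( i \), and \( e_i \) its emission intensity (symbols introduced here), the growth rate of emissions splits exactly, for small changes, into scale, composition, and technique terms:

```latex
\[
E = Q \sum_i s_i e_i
\quad\Longrightarrow\quad
\frac{dE}{E}
= \underbrace{\frac{dQ}{Q}}_{\text{scale}}
+ \underbrace{\sum_i \omega_i \frac{ds_i}{s_i}}_{\text{composition}}
+ \underbrace{\sum_i \omega_i \frac{de_i}{e_i}}_{\text{technique}},
\qquad
\omega_i = \frac{s_i e_i}{\sum_j s_j e_j}
\]
```

Here \( \omega_i \) is sector \( i \)'s share of total emissions. Growth makes the scale term positive, so the EKC's downward segment requires the composition and technique terms to become negative enough to outweigh it.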

The EKC framework has been empirically tested using various model specifications, most commonly:

\[ Y_{it} = \sigma_{it} + \gamma_1 X_{it} + \gamma_2 X_{it}^2 + \gamma_3 X_{it}^3 + \gamma_4 D_{it} + \varepsilon_{it} \]

Where Y represents environmental indicators, X denotes economic development measures, D encompasses control variables, and γ coefficients determine the shape of the relationship [3].

Diagram 2: Environmental Kuznets Curve Framework

Research Themes and Conceptual Evolution

Bibliometric analysis reveals four distinct thematic categories in the growth-environment research landscape, mapped using Callon's density-centrality framework:

- Motor Themes (well-developed, central): Circular economy, sustainability assessment, green technology innovation

- Basic Themes (fundamental, cross-cutting): SDGs alignment, corporate governance, environmental policy

- Niche Themes (specialized, peripheral): Economic growth measurements, emissions accounting techniques

- Emerging/Declining Themes (developmental): ESG integration, digital transformation impacts [10]
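The strategic-diagram logic behind these four categories can be sketched in Python. Centrality is taken as the total weight of links from a theme's keywords to keywords in other themes, and density as the total weight of links inside the theme divided by its number of keywords (up to the scaling constants some tools apply); themes are split at the median of each measure, which is one possible threshold choice. Theme memberships and link weights are hypothetical, constructed to mirror the categories listed above rather than measured.

```python
from statistics import median


def callon_metrics(themes, links):
    """Return theme -> (centrality, density).

    themes: theme name -> set of keywords; links: (keyword, keyword) -> weight.
    Centrality = total weight of links to keywords in other themes;
    density = total weight of links inside the theme / number of its keywords.
    """
    theme_of = {kw: theme for theme, kws in themes.items() for kw in kws}
    internal = {theme: 0.0 for theme in themes}
    external = {theme: 0.0 for theme in themes}
    for (a, b), weight in links.items():
        ta, tb = theme_of.get(a), theme_of.get(b)
        if ta is None or tb is None:
            continue  # ignore keywords not assigned to any theme
        if ta == tb:
            internal[ta] += weight
        else:
            external[ta] += weight
            external[tb] += weight
    return {t: (external[t], internal[t] / len(themes[t])) for t in themes}


def strategic_quadrants(metrics):
    """Split themes at the median centrality and median density."""
    c_mid = median(c for c, _ in metrics.values())
    d_mid = median(d for _, d in metrics.values())
    labels = {}
    for theme, (c, d) in metrics.items():
        if c > c_mid and d > d_mid:
            labels[theme] = "motor"
        elif d > d_mid:
            labels[theme] = "niche"
        elif c > c_mid:
            labels[theme] = "basic"
        else:
            labels[theme] = "emerging or declining"
    return labels


themes = {
    "circular economy": {"circular economy", "recycling", "waste"},
    "SDGs": {"sdgs", "policy"},
    "emissions accounting": {"carbon accounting", "inventory"},
    "ESG": {"esg", "disclosure"},
}
links = {
    ("circular economy", "recycling"): 0.6, ("circular economy", "waste"): 0.6,
    ("recycling", "waste"): 0.6, ("sdgs", "policy"): 0.2,
    ("carbon accounting", "inventory"): 0.8, ("esg", "disclosure"): 0.1,
    ("circular economy", "sdgs"): 0.5, ("waste", "policy"): 0.4,
    ("inventory", "policy"): 0.1, ("disclosure", "sdgs"): 0.05,
}
print(strategic_quadrants(callon_metrics(themes, links)))
```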

Table 3: Thematic Evolution in Growth-Environment Research

| Time Period | Dominant Themes | Emerging Concepts | Methodological Innovations |
|---|---|---|---|
| 1993-2005 | EKC validation; Growth-environment tradeoffs | Ecological modernization; Institutional theory | Time-series analysis; Basic panel data methods |
| 2006-2015 | Renewable energy; FDI impacts; Urbanization | Carbon footprint; Ecological footprint | Advanced panel techniques; Spatial econometrics |
| 2016-2021 | Green innovation; Climate policy; ESG metrics | Green TFP; Digitalization effects | Network analysis; Machine learning applications |
| 2022-Present | Carbon neutrality; AI applications; Circular business models | Behavioral factors; Sector-specific innovations | Bibliometric synthesis; Integrated assessment models |

The Scientist's Toolkit: Research Tools and Resources

Table 4: Essential Analytical Tools for Growth-Environment Research

| Tool Category | Specific Solutions | Function/Application | Key Features |
|---|---|---|---|
| Bibliometric Software | VOSviewer | Network visualization and analysis | Distance-based mapping; Cluster analysis; User-friendly interface [5] |
| | CiteSpace | Temporal pattern detection and burst analysis | Time-slicing capability; Betweenness centrality; Structural variation analysis [11] [9] |
| | Biblioshiny | Comprehensive bibliometric indicators | R-based statistical power; Diverse visualization options; Integration with Bibliometrix [10] |
| Data Resources | Scopus Database | Primary literature data source | Comprehensive coverage; Robust citation tracking; API access [5] [10] |
| | Web of Science Core Collection | Secondary validation database | Selective quality coverage; Citation network data; Historical depth [3] [9] |
| | World Development Indicators | Supplementary economic and environmental data | Standardized country statistics; Longitudinal consistency; Cross-national comparability [5] |
| Analytical Frameworks | Callon's Density-Centrality | Thematic mapping and classification | Strategic diagram creation; Theme categorization; Evolution tracking [10] |
| | Directional Distance Functions | Green productivity measurement | Environmental technology modeling; Undesirable output incorporation [12] |
| | STIRPAT Model | Driver impact analysis | Stochastic impacts estimation; Factor decomposition; Policy scenario testing [11] |

Emerging Frontiers and Future Research Trajectories

The bibliometric analysis reveals several promising research frontiers in the economic growth-environment domain. Green Total Factor Productivity (GTFP) has emerged as a crucial metric that incorporates energy consumption and pollution outputs, addressing limitations of conventional productivity measures [12]. The integration of ESG (Environmental, Social, Governance) metrics with traditional economic indicators represents another significant evolution, particularly in corporate sustainability assessment [10].

Future research directions identified through bibliometric mapping include:

Methodological Innovations: Development of standardized ESG measurement frameworks adapted for emerging markets; integration of artificial intelligence and big data analytics in environmental performance assessment [10].

Conceptual Expansions: Incorporation of behavioral and psychological factors influencing environmental decisions; examination of sector-specific innovation pathways [5].

Policy Applications: Analysis of environmental regulation synergies; impact assessment of digital economy on green development efficiency; design of adaptive governance frameworks for climate resilience [9].

The field continues to evolve toward more integrated approaches that account for the complex interdependencies between economic systems and ecological constraints, with bibliometric analysis serving as an essential tool for mapping this dynamic landscape and guiding future inquiry.

This whitepaper provides an in-depth technical examination of the Environmental Kuznets Curve (EKC) hypothesis, a foundational framework in environmental economics that posits an inverted U-shaped relationship between economic development and environmental degradation. Through bibliometric analysis of research spanning nearly three decades (1994-2021), we map the intellectual structure and evolution of EKC scholarship, identifying dominant research trends, influential contributors, and emerging directions. The analysis synthesizes findings from over 200 empirical studies. It reveals China and Turkey as the most prolific contributors and identifies economic growth, CO2 emissions, and energy consumption as the predominant thematic foci. Beyond the classical EKC framework, we explore theoretical extensions accounting for technological progress, consumption patterns, and institutional factors that reshape the growth-environment nexus. Methodological protocols for bibliometric analysis and EKC testing are detailed alongside visualizations of theoretical relationships and research workflows, providing researchers with comprehensive analytical tools for advancing this critical field of study.

The Environmental Kuznets Curve (EKC) hypothesis represents one of the most extensively debated and empirically tested frameworks in environmental economics, attempting to explain the dynamic relationship between economic growth and environmental degradation [3]. First proposed by Grossman and Krueger in 1991, the EKC challenges conventional assumptions by suggesting that environmental quality may first deteriorate and then improve as economies develop beyond specific income thresholds [3]. The hypothesis has generated substantial scholarly attention. Bibliometric analyses reveal a steady increase in publications over the years, establishing the EKC as a central paradigm for understanding the tradeoffs between economic development and environmental sustainability [13].

This technical guide situates the EKC within a broader bibliometric analysis of economic growth-environmental degradation research, examining the hypothesis's theoretical foundations, empirical evidence, methodological approaches, and evolving critiques. The EKC debate now spans more than three decades and warrants continued empirical scrutiny as global environmental challenges intensify [3]. Our analysis draws on curated records from the Scopus and Web of Science databases, employing bibliometric methods to trace the evolution, trends, and knowledge gaps within this prolific research domain [3]. The whitepaper specifically addresses how environmental challenges have grown increasingly complex. It also examines whether the EKC framework remains adequate for capturing the intricate interrelationships between economic and environmental systems in an era of climate change and resource constraints.

Theoretical Foundations of the Environmental Kuznets Curve

Origins and Conceptual Framework

The EKC hypothesis derives its name and characteristic shape from Nobel laureate Simon Kuznets' work on economic development and income inequality, which proposed an inverted U-shaped relationship between per capita income and income inequality [3]. In the early 1990s, researchers adapted this framework to environmental quality, and the inverted-U shape subsequently gained wide acceptance in environmental studies [3]. The seminal work of Grossman and Krueger (1991) provided the first comprehensive empirical test of this relationship, examining air quality measures across countries at different development stages [3].

The fundamental EKC proposition suggests that during early stages of economic development, nations prioritize growth over environmental protection, leading to increased resource exploitation and pollution. However, beyond a certain income threshold (the "turning point"), societies begin to value environmental quality, implement stricter regulations, develop cleaner technologies, and shift toward less pollution-intensive service sectors, resulting in environmental improvement [3]. This progression occurs through three interconnected effects:

- Scale Effect: Initial economic expansion increases resource consumption and pollution emissions due to heightened industrial activity and energy use [3].

- Composition Effect: Economic structural transformation from agriculture to industry then to services alters pollution intensity [3].

- Technique Effect: Advanced technologies and environmental regulations improve resource efficiency and reduce pollution per unit of output [3].

These effects collectively generate the characteristic inverted U-shaped curve when environmental degradation is plotted against per capita income.

EKC Functional Specifications and Mathematical Formulations

Empirical tests of the EKC hypothesis typically employ reduced-form econometric models that relate environmental indicators to income measures and other control variables. The base specification follows this general form [3]:

$$Y_{it} = \sigma + \gamma_1 X_{it} + \gamma_2 X_{it}^2 + \gamma_3 X_{it}^3 + \gamma_4 D_{it} + \varepsilon_{it}$$

where Y represents an environmental indicator, X denotes economic development (typically per capita income), D contains additional independent variables, σ is a constant, γ represents coefficients, and ε is the error term. The subscripts i and t index cross-sectional units (typically countries) and time periods.

The relationship between economic development (X) and environmental degradation (Y) manifests in several potential forms based on the significance and signs of the coefficients:

Table 1: EKC Functional Forms and Interpretations

| Functional Form | γ₁ Coefficient | γ₂ Coefficient | γ₃ Coefficient | Economic-Environment Relationship |
|---|---|---|---|---|
| Monotonic increase | Positive | Not significant | Not significant | Linear degradation with growth |
| Monotonic decrease | Negative | Not significant | Not significant | Linear improvement with growth |
| U-shaped | Negative | Positive | Not significant | Improvement then degradation |
| Inverted U-shaped | Positive | Negative | Not significant | Classic EKC pattern |
| N-shaped | Positive | Negative | Positive | Degradation resumes after improvement |
| Inverted N-shaped | Negative | Positive | Negative | Improvement-Degradation-Improvement |

The cubic terms (N-shaped and inverted N-shaped curves) represent more recent theoretical extensions, suggesting that environmental improvement may not persist indefinitely with income growth [3]. These complex functional forms acknowledge that at very high income levels, scale effects may once again overwhelm technique and composition effects, potentially leading to renewed environmental degradation.
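The sign patterns in Table 1 translate directly into a decision rule. The sketch below maps estimated coefficients on X, X², and X³, together with their p-values, to the functional forms listed there. Two choices are assumptions rather than part of the source:

- Any coefficient with p ≥ 0.05 is treated as zero, standing in for "not significant".
- The function name `ekc_shape` is hypothetical.

For a quadratic-only model, pass a cubic coefficient of 0 with a p-value of 1.

```python
def ekc_shape(coefs, pvalues, alpha=0.05):
    """Map (g1, g2, g3) and their p-values to a functional form from Table 1.

    A coefficient with p-value >= alpha is treated as not significant (zero).
    """
    signs = tuple(0 if p >= alpha else (1 if g > 0 else -1) for g, p in zip(coefs, pvalues))
    forms = {
        (1, 0, 0): "monotonic increase",
        (-1, 0, 0): "monotonic decrease",
        (-1, 1, 0): "U-shaped",
        (1, -1, 0): "inverted U-shaped (classic EKC)",
        (1, -1, 1): "N-shaped",
        (-1, 1, -1): "inverted N-shaped",
    }
    return forms.get(signs, "pattern not listed in Table 1")


# Hypothetical estimates: positive linear, negative quadratic, insignificant cubic term
print(ekc_shape((0.85, -0.04, 0.001), (0.001, 0.01, 0.42)))  # inverted U-shaped (classic EKC)
```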

Bibliometric Analysis of EKC Research

Publication Trends and Intellectual Structure

Bibliometric analysis of EKC research reveals a steadily growing field with distinctive productivity patterns and intellectual networks. Analysis of publications from 1994-2021 shows a gradual increase in research output, with particular acceleration following the adoption of the Paris Agreement in 2015 [13]. The descriptive analysis component of bibliometric studies typically examines publication trends, language distribution, publisher influence, Web of Science categories, and citation patterns, while networking analysis includes keyword co-occurrence, co-authorship, and co-citation patterns [13].
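Publication trends of this kind are often summarized by a compound annual growth rate. The minimal sketch below assumes a dictionary of yearly publication counts and computes ((N_last / N_first)^(1/(n−1)) − 1) × 100, where n is the number of years in the window. The counts in the usage line are invented for illustration and are not taken from [13].

```python
def annual_growth_rate(counts_by_year):
    """Compound annual growth rate (%) of publications between the first and last year.

    counts_by_year: dict mapping year -> number of publications (hypothetical input format).
    """
    years = sorted(counts_by_year)
    first, last = counts_by_year[years[0]], counts_by_year[years[-1]]
    n_years = years[-1] - years[0] + 1
    if n_years < 2 or first == 0:
        raise ValueError("need at least two years and a non-zero first-year count")
    return ((last / first) ** (1 / (n_years - 1)) - 1) * 100


# Illustrative counts only
print(round(annual_growth_rate({2015: 10, 2016: 12, 2017: 40}), 1))  # 100.0
```

Because only the endpoints enter the formula, a single anomalous first or last year can distort the rate. Reporting it alongside the full annual series is advisable.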

The most prolific publishers in the EKC domain are Elsevier and Springer Nature, which collectively dominate the dissemination of research in this field [13]. Geographic analysis indicates that researchers from China and Turkey represent the most prolific contributors, producing the highest volume of publications along with the most citations, co-authorships, and co-citations [13]. This geographic concentration reflects both the environmental challenges facing rapidly developing economies and the growing research capacity within these regions.

Table 2: Influential Contributors to EKC Research (Based on Bibliometric Analysis)

| Researcher | Publication Count | Total Citations | Link Strength | Primary Contribution Focus |
|---|---|---|---|---|
| Ozturk I. | 13 | 3,153 | 2 | Energy-economic growth nexus |
| Dogan E. | 7 | 2,190 | 0 | Methodology & econometrics |
| Shahbaz B. | 7 | 1,347 | 1 | Financial development |
| Saboori B. | 7 | 677 | 1 | Renewable energy transition |
| Liu Y. | 6 | 582 | 0 | Trade openness impacts |
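Columns such as those in Table 2 come from straightforward counting over the retrieved records. The sketch below assumes each record is a dict with hypothetical keys `authors` and `citations`. It tallies publications, total citations, and co-authorship total link strength under full counting, in which each co-authored paper adds 1 to the link between every pair of its authors. VOSviewer also offers fractional counting, which this sketch does not reproduce, so link-strength values from the tool may differ. The author names are placeholders.

```python
from collections import Counter
from itertools import combinations


def author_performance(records):
    """Publications, total citations and co-authorship total link strength per author.

    records: list of dicts with hypothetical keys "authors" (list of names) and
    "citations" (int). Full counting: each co-authored paper adds 1 to the link
    between every pair of its authors.
    """
    pubs, cites, links = Counter(), Counter(), Counter()
    for rec in records:
        authors = sorted(set(rec["authors"]))
        for author in authors:
            pubs[author] += 1
            cites[author] += rec["citations"]
        for a, b in combinations(authors, 2):
            links[a] += 1
            links[b] += 1
    return {a: (pubs[a], cites[a], links[a]) for a in sorted(pubs)}


records = [
    {"authors": ["A", "B"], "citations": 10},
    {"authors": ["A"], "citations": 5},
    {"authors": ["A", "B", "C"], "citations": 3},
]
print(author_performance(records))  # {'A': (3, 18, 3), 'B': (2, 13, 3), 'C': (1, 3, 2)}
```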

Citation analysis further reveals the intellectual pillars of EKC research, with seminal works by Grossman and Krueger (1991), Panayotou (1993), and Stern (2004) forming the foundational literature. The co-citation networks demonstrate how later research builds upon these theoretical and methodological foundations while introducing new variables and contextual applications.

Thematic Evolution and Keyword Analysis

Keyword co-occurrence analysis provides valuable insights into the conceptual structure and evolving research priorities within the EKC domain. Analysis of more than 200 articles from 1998 to 2022 identifies several persistent and emerging thematic clusters [14]. The most frequent keywords appearing in EKC studies include "economic growth," "CO2 emissions," "energy consumption," "China," "renewable energy," and "financial development" [13]. These keywords represent the core concerns of the field, reflecting both the fundamental relationships under investigation and the most studied geographical contexts.

The keyword analysis reveals several important thematic shifts over time:

- Early Phase (1990s): Focus on air pollutants (SO2, NOx) and basic model specification

- Expansion Phase (2000s): Incorporation of energy consumption, deforestation, water quality

- Contemporary Phase (2010s-present): Integration of institutional factors, renewable energy, financial development, and ecological footprint

The rising prominence of "renewable energy" and "financial development" keywords in recent years indicates a growing research interest in policy mechanisms and transition pathways that might accelerate or reshape the EKC trajectory. Similarly, the focus on specific countries like China reflects both data availability concerns and scholarly interest in testing the hypothesis in the world's largest developing economy.

Methodological Protocols for EKC Research

Bibliometric Analysis Methodology

Bibliometric analysis follows standardized protocols to map the intellectual structure of research fields. For EKC studies, the standard methodology encompasses:

Data Collection Protocol:

- Database Selection: SCOPUS and Web of Science Core Collection

- Time Frame: Typically 1994-2021 or similar multi-decade spans

- Search Query: ("environmental Kuznets curve" OR "EKC") in titles, abstracts, keywords

- Inclusion Criteria: English-language research articles, reviews

- Exclusion Criteria: Editorials, conference proceedings, non-peer-reviewed works
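The data-collection protocol above can be expressed as a Scopus advanced-search string plus a post-export filter. The sketch assumes exported records have been loaded as dicts with hypothetical keys (`title`, `abstract`, `keywords`, `year`, `doc_type`, `language`). Real Scopus and Web of Science exports use their own column names, and the query syntax should be checked against the current Scopus search documentation before use. Peer-review status cannot be verified from these fields, so that criterion still requires manual screening.

```python
import re

# Scopus advanced-search string mirroring the protocol above: 1994-2021,
# English-language articles and reviews. Check field codes before running it.
SCOPUS_QUERY = (
    'TITLE-ABS-KEY("environmental Kuznets curve" OR "EKC") '
    "AND PUBYEAR > 1993 AND PUBYEAR < 2022 "
    "AND LANGUAGE(english) AND (DOCTYPE(ar) OR DOCTYPE(re))"
)

INCLUDED_TYPES = {"article", "review"}  # editorials, proceedings, etc. are excluded
EKC_PATTERN = re.compile(r"environmental kuznets curve|\bekc\b")


def meets_criteria(record, start=1994, end=2021):
    """Check one exported record (hypothetical keys) against the protocol's criteria."""
    text = " ".join([record["title"], record["abstract"], " ".join(record["keywords"])]).lower()
    return (
        EKC_PATTERN.search(text) is not None
        and start <= record["year"] <= end
        and record["language"].lower() == "english"
        and record["doc_type"].lower() in INCLUDED_TYPES
    )


def apply_criteria(records, start=1994, end=2021):
    """Return the records that satisfy every inclusion criterion."""
    return [r for r in records if meets_criteria(r, start, end)]
```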

Analytical Framework:

- Descriptive Analysis: Publication trends, citation counts, journal impact

- Network Analysis: Co-authorship, co-citation, keyword co-occurrence

- Software Tools: VOSviewer, CiteSpace, or Bibliometrix for visualization

- Performance Analysis: Most cited authors, institutions, countries

The bibliometric methodology enables systematic, reproducible identification of research trends, influential works, and emerging topics. It reduces, though does not eliminate, the subjective judgments that can shape traditional literature reviews, since choices such as the search query and database still influence the results [3]. This approach is particularly valuable for a field as extensive and methodologically diverse as EKC research.

Empirical EKC Testing Protocol

Empirical testing of the EKC hypothesis follows established econometric protocols with specific considerations for environmental economics:

Model Specification:

- Dependent Variable Selection: Environmental indicators (CO2, SO2, ecological footprint)

- Core Independent Variables: GDP per capita, squared term, cubed term

- Control Variables: Energy consumption, trade openness, population density, institutional quality

- Functional Form: Base model with incremental complexity

Data Considerations:

- Data Type: Panel data (preferred for cross-country comparison), time series

- Transformation: Natural logarithms to normalize distribution and interpret coefficients as elasticities

- Time Span: Sufficiently long to capture development trajectories

Estimation Techniques:

- Panel Estimation: Fixed effects, random effects, Hausman test for specification

- Advanced Methods: GMM, ARDL, quantile regression for heterogeneity

- Diagnostic Testing: Cross-sectional dependence, unit roots, cointegration

Validation Procedures:

- Turning Point Calculation: Derived from estimated coefficients

- Robustness Checks: Alternative specifications, sub-samples, additional controls

- Policy Relevance: Interpretation of findings for sustainable development strategies
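The turning-point step in the validation procedures follows from the quadratic specification. Setting dY/dX = γ₁ + 2γ₂X = 0 gives X* = −γ₁ / (2γ₂), which is converted back to an income level with exp(X*) when X is log income. The sketch below does three things:

- It simulates an inverted-U relationship with an assumed turning point at ln income = 10.
- It estimates the relationship by pooled ordinary least squares, with the normal equations solved by hand so that no third-party library is needed.
- It recovers the turning point from the estimated coefficients.

It is deliberately minimal. It ignores the fixed effects, cross-sectional dependence, unit-root, and cointegration checks listed above, and the simulated coefficients are invented for illustration.

```python
import math
import random


def ols(X, y):
    """Ordinary least squares: solve the normal equations (X'X) b = X'y."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting on the augmented matrix [X'X | X'y]
    a = [xtx[i] + [xty[i]] for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(k):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [v - factor * w for v, w in zip(a[r], a[col])]
    return [a[i][k] / a[i][i] for i in range(k)]


def turning_point(g1, g2, log_income=True):
    """Income level at which dY/dX = g1 + 2*g2*X equals zero (requires g2 != 0)."""
    x_star = -g1 / (2 * g2)
    return math.exp(x_star) if log_income else x_star


def simulate_ekc(n=200, seed=42):
    """Hypothetical data: ln CO2 = 1 + 4.0 ln y - 0.2 (ln y)^2 + noise, peak at ln y = 10."""
    rng = random.Random(seed)
    ln_income = [6 + 5 * i / (n - 1) for i in range(n)]
    ln_co2 = [1 + 4.0 * x - 0.2 * x ** 2 + rng.gauss(0, 0.05) for x in ln_income]
    design = [[1.0, x, x ** 2] for x in ln_income]  # constant, X, X^2
    return design, ln_co2


design, ln_co2 = simulate_ekc()
sigma, g1, g2 = ols(design, ln_co2)
print(f"g1={g1:.3f}, g2={g2:.3f}, turning point ln y={-g1 / (2 * g2):.2f}")
```

A turning point that falls outside the observed income range should be reported as such rather than read as evidence for an inverted U within the sample.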

Research Reagent Solutions: Methodological Toolkit

EKC researchers employ a standardized methodological toolkit comprising specific analytical approaches, data resources, and software solutions. This "reagent kit" enables consistent, replicable research across the field.

Table 3: EKC Research Reagent Solutions and Methodological Toolkit

| Research Component | Standard Solutions | Application in EKC Research | Advanced Alternatives |
|---|---|---|---|
| Environmental Indicators | CO₂ emissions, SO₂ concentrations, ecological footprint | Dependent variable measuring environmental degradation | PM2.5, biodiversity loss, water quality indices |
| Economic Data | GDP per capita (constant USD), industrialization index | Core independent variable for economic development | Green GDP, wealth accounts, nighttime lights |
| Control Variables | Energy consumption, trade openness, urbanization rate | Account for confounding factors | Institutional quality, financial development, technology transfer |
| Econometric Software | Stata, EViews, R (plm package) | Model estimation and diagnostic testing | Python (statsmodels), MATLAB, Gauss |
| Bibliometric Tools | VOSviewer, CiteSpace, Bibliometrix | Research trend analysis and visualization | CitNetExplorer, SciMAT, Leximancer |
| Data Sources | World Bank WDI, IEA, EDGAR, GTAP | Authoritative secondary data collection | National statistical offices, satellite data |

Beyond the EKC: Theoretical Extensions and Critiques

Theoretical Limitations and Empirical Contradictions

Despite its influential position in environmental economics, the EKC hypothesis faces substantial theoretical and empirical challenges. The fundamental assumption of "growth-first, clean-later" has been questioned on both theoretical and ethical grounds, particularly given the urgency of contemporary environmental crises [3]. Several critical limitations have emerged:

Methodological Concerns:

- Specification Sensitivity: EKC estimation proves highly sensitive to functional form, variable selection, and dataset composition [14].

- Omitted Variable Bias: Early models frequently excluded crucial factors like energy consumption, trade patterns, and institutional quality.

- Heterogeneity Issues: Single turning points may not apply universally across pollutants, countries, or temporal contexts.

Theoretical Shortcomings:

- Consumption Oversight: EKC focuses on production-based emissions while ignoring consumption-based environmental impacts from imported goods [14].

- Pollution Offshoring: Developed economies may appear to improve environmentally by relocating polluting industries abroad rather than genuinely reducing impacts [14].

- Irreversible Damage: The growth path traced by the inverted U-shaped curve may prove inefficient, and environmental damage caused in early development phases may be irreversible [14].
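The consumption-oversight and offshoring critiques rest on a simple accounting identity. Consumption-based emissions equal production-based (territorial) emissions, minus emissions embodied in exports, plus emissions embodied in imports. The sketch below applies that identity and flags the pattern the offshoring critique describes: territorial emissions falling while consumption-based emissions do not. The function names and the figures in the usage lines are hypothetical. In practice the embodied-emissions estimates come from multi-regional input-output models.

```python
def consumption_based(production, embodied_exports, embodied_imports):
    """Consumption-based emissions = territorial - embodied in exports + embodied in imports."""
    return production - embodied_exports + embodied_imports


def offshoring_signal(start, end):
    """True if territorial emissions fall while consumption-based emissions do not.

    start, end: (production, embodied_exports, embodied_imports) tuples for two years.
    """
    falling_territorial = end[0] < start[0]
    non_falling_consumption = consumption_based(*end) >= consumption_based(*start)
    return falling_territorial and non_falling_consumption


# Hypothetical figures (Mt CO2) for one economy in two years
year_a = (500, 80, 120)
year_b = (450, 60, 190)
print(consumption_based(*year_a), consumption_based(*year_b))  # 540 580
print(offshoring_signal(year_a, year_b))  # True
```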

Empirical evidence for the EKC remains mixed, with some studies supporting the inverted U-shape for certain local air pollutants while finding little evidence for greenhouse gases like CO2 [3]. The Russian-Ukrainian conflict and post-COVID-19 pandemic recovery have further complicated the global economic-environment relationship, highlighting how external shocks can disrupt presumed development trajectories [3].

Theoretical Extensions and Alternative Frameworks

In response to these limitations, researchers have developed several theoretical extensions and alternative frameworks that refine or challenge the conventional EKC model:

Modified EKC Specifications:

- Technology-Enhanced EKC: Incorporates endogenous technological progress and innovation diffusion

- Institutional EKC: Integrates governance quality, regulatory effectiveness, and corruption controls

- Trade-Augmented EKC: Accounts for emissions embedded in international trade and specialization patterns

Alternative Conceptual Frameworks:

- Green Solow Model: Integrates technological progress in abatement activities to explain simultaneous economic growth and environmental improvement [14].

- Ecological Modernization Theory: Emphasizes how societies can restructure institutions to reconcile economic and environmental goals.

- Decoupling Framework: Distinguishes between relative (reduced emission intensity) and absolute (reduced total emissions) decoupling of economic growth from environmental impacts.
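The relative/absolute distinction in the decoupling framework reduces to comparing growth rates of output and emissions over the same period. The sketch below assumes growth rates expressed as fractions (0.03 for 3%) and uses hypothetical function names. Finer-grained, elasticity-based decoupling typologies exist but are not reproduced here.

```python
def growth_rate(start, end):
    """Fractional change between two levels, e.g. GDP or emissions."""
    return end / start - 1


def decoupling_status(gdp_growth, emissions_growth):
    """Classify decoupling of emissions from GDP over one period."""
    if gdp_growth <= 0:
        return "not applicable (economy did not grow)"
    if emissions_growth < 0:
        return "absolute decoupling"  # total emissions fall while GDP rises
    if emissions_growth < gdp_growth:
        return "relative decoupling"  # emission intensity (emissions / GDP) falls
    return "no decoupling"


# Hypothetical levels: GDP 100 -> 110, emissions 50 -> 52
print(decoupling_status(growth_rate(100, 110), growth_rate(50, 52)))  # relative decoupling
```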

These theoretical extensions acknowledge that the relationship between economic development and environmental quality is neither automatic nor universally applicable. Instead, it depends on policy choices, technological availability, and institutional contexts. Incorporating climate finance, technological progress, and the energy transition could significantly improve EKC assessments, and these same factors may accelerate the movement toward environmental improvement [14].

Emerging Trends and Future Research Directions

Contemporary EKC research reflects several emerging trends that respond to both methodological critiques and evolving global environmental challenges. Analysis of more than 200 articles from 1998 to 2022 identifies several promising directions for future inquiry [14]:

Conceptual Expansions:

- Beyond CO2: Incorporation of biodiversity loss, water stress, and composite environmental indicators

- Spatial Dependencies: Integration of spatial econometrics to account for transboundary pollution

- Nonlinear Dynamics: Application of regime-switching models and threshold effects

Policy-Relevant Innovations:

- Climate Finance: Investigation of how financial mechanisms can lower EKC turning points [14]

- Technological Acceleration: Analysis of how renewable energy costs and digital technologies reshape development pathways [15]

- Just Transition Frameworks: Integration of equity considerations into environmental policy modeling [15]

The integration of artificial intelligence presents both challenges and opportunities for EKC trajectories, as AI has the potential to radically improve energy efficiency and resource use while simultaneously increasing electricity demand from data centers [15]. Similarly, emerging sustainability reporting standards and natural capital accounting initiatives may create more robust datasets for testing refined EKC specifications [15].

Future research directions emphasize practical solutions coupled with increasing ambition levels that could unlock meaningful private capital mobilization for environmental protection [15]. The continuing evolution of carbon markets, particularly following agreements on Article 6 of the Paris Agreement at COP29, offers new mechanisms for countries to cooperate in reducing carbon emissions [15]. These developments suggest that the next generation of EKC research will increasingly focus on policy mechanisms and transition pathways rather than simply documenting historical relationships.

The Environmental Kuznets Curve hypothesis has evolved from a provocative theoretical proposition into an extensively researched framework with substantial policy relevance. Bibliometric analysis reveals a dynamically growing field with a distinctive intellectual structure, geographic concentrations, and evolving research priorities. The core EKC model provides a valuable heuristic for understanding economy-environment relationships. Contemporary research, however, increasingly emphasizes the contingent nature of these relationships and the critical importance of policy interventions, technological innovation, and institutional contexts.

The future trajectory of EKC research lies in developing more nuanced models that account for consumption-based environmental impacts, spatial dependencies, and heterogeneous development pathways across countries and pollutants. As global environmental challenges intensify, particularly climate change and biodiversity loss, the integration of EKC insights with practical policy mechanisms offers promise for accelerating the transition toward sustainable development. The continued evolution of this research domain will likely focus on identifying leverage points, policy interventions, and transition pathways that can lower turning points and minimize environmental damage during early development stages, ultimately contributing to both economic prosperity and environmental sustainability.

Identifying Major Research Clusters and Knowledge Domains

Bibliometric analysis has emerged as a powerful quantitative method for mapping the intellectual structure of scientific fields, enabling researchers to identify major research clusters and knowledge domains through systematic examination of publication patterns, citation networks, and keyword co-occurrences. Within the context of economic growth and environmental degradation research, this methodology provides valuable insights into the evolution of scholarly discourse, emerging trends, and collaborative networks. The growing urgency of environmental challenges, coupled with the need for sustainable economic development, has generated a substantial body of literature that can be effectively analyzed through bibliometric techniques to guide future research directions and policy decisions. This technical guide provides researchers with comprehensive methodologies for conducting bibliometric analyses, with specific application to the economic growth-environmental degradation nexus.

Core Principles of Bibliometric Analysis

Bibliometric analysis employs statistical methods to examine publication patterns and citation relationships within a body of scientific literature. When applied to the study of economic growth and environmental degradation, this approach enables the identification of intellectual structures, emerging trends, and collaborative networks that define the field. The fundamental premise involves treating scientific publications as measurable artifacts that reveal the conceptual organization of knowledge domains.

The analytical process typically incorporates several complementary approaches. Co-citation analysis identifies frequently cited reference pairs, suggesting conceptual relationships and foundational knowledge structures. Co-word analysis examines keyword co-occurrence patterns to map the conceptual landscape of a research field. Bibliographic coupling links documents that share common references, indicating thematic relationships among current research. Collaboration analysis maps networks of co-authorship, revealing patterns of scientific cooperation across institutions and countries. These methods collectively enable the quantification and visualization of knowledge domains within complex research landscapes like the economic growth-environmental degradation nexus.
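Co-citation and bibliographic coupling differ only in which side of the citation link is paired:

- Co-citation counts how many papers cite a given pair of references together.
- Coupling strength counts how many references a pair of citing papers share.

The sketch below assumes a hypothetical mapping from each citing paper to the set of references it cites. The identifiers P1-P3 and R1-R3 are invented labels.

```python
from collections import Counter
from itertools import combinations


def co_citation(references_by_paper):
    """Number of citing papers that cite each pair of references together."""
    counts = Counter()
    for refs in references_by_paper.values():
        counts.update(combinations(sorted(refs), 2))
    return counts


def bibliographic_coupling(references_by_paper):
    """Number of shared references for each pair of citing papers (pairs with none omitted)."""
    strengths = {}
    for p, q in combinations(sorted(references_by_paper), 2):
        shared = len(references_by_paper[p] & references_by_paper[q])
        if shared:
            strengths[(p, q)] = shared
    return strengths


# Hypothetical citing papers P1-P3 and cited references R1-R3
refs = {"P1": {"R1", "R2", "R3"}, "P2": {"R1", "R2"}, "P3": {"R3"}}
print(co_citation(refs)[("R1", "R2")])  # 2
print(bibliographic_coupling(refs))     # {('P1', 'P2'): 2, ('P1', 'P3'): 1}
```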

Performance analysis constitutes another critical dimension, focusing on productivity and impact metrics for authors, institutions, countries, and journals. When combined with the science mapping approaches above, this multidimensional analysis provides a comprehensive understanding of the research field's structural and dynamic characteristics, effectively highlighting major research clusters and their temporal evolution.

Methodological Framework

Data Collection and Preprocessing

The initial phase involves systematic data collection from established scholarly databases, primarily Scopus and Web of Science, which provide comprehensive coverage of high-quality, peer-reviewed literature. As demonstrated in recent studies, search strategies typically employ carefully constructed query strings combining keywords related to economic growth ("economic growth," "GDP," "economic development") and environmental degradation ("environmental degradation," "carbon emissions," "CO2," "ecological footprint," "pollution") [5] [2].

Inclusion and exclusion criteria must be explicitly defined, including document types (e.g., research articles, review articles), time spans, and language restrictions. The data extraction process should be documented using frameworks such as PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) to ensure transparency and reproducibility [16]. Following data retrieval, preprocessing involves standardization of terminology (e.g., merging synonyms), removal of duplicates, and extraction of relevant metadata including citations, author affiliations, keywords, and references.
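The preprocessing steps can be sketched as follows. The sketch assumes records from both databases have been loaded as dicts with hypothetical `doi`, `title`, and `keywords` keys, and it does two things:

- It detects duplicates by normalized DOI, falling back to a normalized title when no DOI is present.
- It lower-cases keywords and maps them through a thesaurus.

The thesaurus entries shown are illustrative. A real thesaurus has to be built by inspecting the corpus's keyword list.

```python
import re

# Illustrative thesaurus; a real one is built by inspecting the corpus's keyword list
THESAURUS = {
    "ekc": "environmental kuznets curve",
    "environmental kuznets curve (ekc)": "environmental kuznets curve",
    "co2 emissions": "carbon emissions",
    "carbon dioxide emissions": "carbon emissions",
}


def normalize_doi(doi):
    """Lower-case a DOI and strip resolver prefixes such as https://doi.org/."""
    return re.sub(r"^(https?://(dx\.)?doi\.org/|doi:\s*)", "", doi.strip().lower())


def normalize_title(title):
    """Lower-case a title and collapse punctuation and whitespace."""
    return re.sub(r"\W+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first record per DOI, falling back to the normalized title."""
    seen, unique = set(), []
    for rec in records:
        key = normalize_doi(rec["doi"]) if rec.get("doi") else normalize_title(rec["title"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique


def standardize_keywords(keywords, thesaurus=THESAURUS):
    """Lower-case, collapse whitespace, merge synonyms, and drop repeats (order kept)."""
    cleaned = []
    for kw in keywords:
        term = " ".join(kw.lower().split())
        term = thesaurus.get(term, term)
        if term not in cleaned:
            cleaned.append(term)
    return cleaned
```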

Analytical Workflow

The core analytical workflow encompasses both performance analysis and science mapping, implemented through specialized software tools. The following diagram illustrates the standard bibliometric analysis workflow for identifying research clusters:

Figure 1: Bibliometric Analysis Workflow

Performance analysis focuses on quantifying productivity and impact through metrics such as publication counts, citation rates, h-index, and field-weighted citation impact. This analysis can be conducted at multiple levels including authors, institutions, countries, and journals to identify key contributors and influential works within the research domain.
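Of the performance metrics above, the h-index is the one most easily computed directly from exported citation counts. It is the largest h such that h papers have at least h citations each, and it applies equally to an author, journal, institution, or country. The sketch below is minimal, and the citation list in the usage line is hypothetical. Field-weighted citation impact is not reproduced because it depends on database-specific field baselines.

```python
def h_index(citations):
    """Largest h such that h papers have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


def performance_summary(citations):
    """Basic productivity and impact indicators for one author, journal, or country."""
    return {"papers": len(citations), "citations": sum(citations), "h_index": h_index(citations)}


print(performance_summary([25, 8, 5, 3, 3]))  # {'papers': 5, 'citations': 44, 'h_index': 3}
```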

Science mapping employs several complementary techniques to uncover intellectual structures and relationships. Co-citation analysis reveals foundational knowledge structures by identifying frequently cited reference pairs. Co-word analysis maps conceptual networks through keyword co-occurrence patterns. Bibliographic coupling connects documents sharing common references, highlighting current thematic relationships. Collaboration analysis visualizes networks of co-authorship across individuals, institutions, and countries.

Software Tools for Bibliometric Analysis

Several specialized software tools facilitate the implementation of bibliometric analysis, each with distinctive capabilities and functions:

Table 1: Essential Software Tools for Bibliometric Analysis

| Tool | Primary Function | Key Features | Application in Research |
|---|---|---|---|
| VOSviewer | Network visualization | Creates maps based on bibliometric networks, intuitive visualizations | Used in environmental degradation studies to visualize keyword co-occurrence and citation networks [5] [2] |
| Biblioshiny (R Package) | Comprehensive bibliometrics | Provides suite of bibliometric analysis functions, integrates with R | Employed for co-authorship, citation, and bibliographic coupling analysis [16] |
| CiteSpace | Temporal pattern detection | Identifies emerging trends and paradigm shifts, burst detection | Useful for tracking evolution of research themes over time |
| CitNetExplorer | Citation network analysis | Explores and analyzes citation networks of publications | Helps identify key papers and their intellectual connections |

Key Research Clusters in Economic Growth and Environmental Degradation

Bibliometric analyses of the economic growth-environmental degradation nexus have consistently identified several major research clusters that structure the field. These clusters represent concentrated areas of scholarly activity with distinct thematic focus.

Table 2: Major Research Clusters in Economic Growth and Environmental Degradation

| Research Cluster | Key Concepts | Methodological Approaches | Seminal Contributions |
|---|---|---|---|
| Environmental Kuznets Curve (EKC) | Income-environment relationship, inverted U-curve, turning point | Panel data regression, time-series analysis, non-linear models | Grossman & Krueger (1995); pioneering EKC empirical studies [17] [18] |
| Energy Consumption and Emissions | Renewable energy, fossil fuels, carbon emissions, energy intensity | Decomposition analysis, input-output models, life cycle assessment | Studies linking energy consumption patterns to environmental degradation [5] |
| Globalization and Trade | Foreign direct investment (FDI), pollution haven hypothesis, international trade | Simultaneous equations, gravity models, global value chain analysis | Research on FDI-environment relationship and pollution haven debate [19] |
| Sustainable Development Pathways | Green growth, decoupling, circular economy, sustainable development goals (SDGs) | Integrated assessment models, scenario analysis, sustainability indicators | Work on reconciling economic development with environmental constraints [2] [20] |
| Sectoral Analysis and Technological Innovation | Sectoral complexity, green technology, research and development | Sectoral complexity index (SCI), patent analysis, innovation metrics | Studies examining sector-specific environmental impacts and technological solutions [17] |

The Environmental Kuznets Curve Cluster

The Environmental Kuznets Curve (EKC) hypothesis represents one of the most prominent research clusters, proposing an inverted U-shaped relationship between economic development and environmental degradation [17] [18]. This cluster encompasses studies testing the EKC hypothesis across different countries, time periods, and environmental indicators, with recent work introducing more nuanced approaches including the sectoral complexity index (SCI) to examine sector-specific environmental dynamics [17].

Key methodological developments in this cluster include the application of non-linear models such as the Markov regime-switching vector autoregression (MS-VAR) model to capture the complex, multi-stage dynamic processes governing the relationship between environmental pollution and economic growth [18]. Recent bibliometric analyses reveal that economic growth remains the most frequently studied factor in environmental degradation research, with the EKC hypothesis continuing to generate substantial scholarly interest and debate [5].

Emerging Research Frontiers

Beyond established clusters, bibliometric analysis has identified several emerging research frontiers. The "Triple Green Strategy" integrating green energy, green innovation, and green finance has gained prominence as a framework for achieving environmental sustainability without compromising economic development [20]. Research on ecological footprint as a comprehensive measure of environmental degradation has also expanded, moving beyond traditional focus on carbon emissions to include broader ecological impacts [20].

The sectoral economic complexity approach is another emerging frontier, offering more granular analysis of how the sophistication of specific economic sectors influences environmental outcomes [17]. In addition, research on technologies such as artificial intelligence and blockchain, and on their applications to environmental sustainability, increasingly intersects with traditional work on economic growth and the environment [16] [21].

Experimental Protocols and Analytical Techniques

Network Construction and Analysis

The construction of bibliometric networks follows standardized protocols that ensure reproducibility and analytical rigor. For co-occurrence analysis, the process involves:

- Keyword Extraction and Standardization: Author keywords and database index terms are extracted, with synonymous terms merged and spelling variations standardized.

- Co-occurrence Matrix Construction: A matrix is created counting how frequently each keyword pair appears together in the same publications.

- Network Normalization: Association strength normalization is typically applied to the co-occurrence matrix to account for differences in keyword frequencies.

- Clustering and Visualization: The VOS clustering technique groups related items, and network visualization maps are generated displaying items as nodes and relationships as links [5] [2].
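The first three steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not VOSviewer's implementation: the synonym map (`SYNONYMS`) and all function names are hypothetical, each keyword is counted at most once per publication, and association strength is computed in a simple form, the pair's co-occurrence count divided by the product of the two keywords' occurrence counts. VOSviewer may scale and weight items differently, and the VOS clustering step itself is left to the software.

```python
from collections import Counter
from itertools import combinations

# Hypothetical synonym map: variant spelling -> canonical keyword.
SYNONYMS = {
    "co2 emissions": "carbon emissions",
    "carbon dioxide emissions": "carbon emissions",
    "ekc": "environmental kuznets curve",
}


def standardize(keyword: str) -> str:
    """Lower-case, collapse whitespace, and map known variants to one term."""
    term = " ".join(keyword.lower().split())
    return SYNONYMS.get(term, term)


def cooccurrence(records: list[list[str]]) -> tuple[Counter, Counter]:
    """Count keyword occurrences and unordered keyword-pair co-occurrences.

    Each record is the keyword list of one publication. Duplicates within a
    record are collapsed, so each keyword counts at most once per publication.
    """
    occurrences: Counter = Counter()
    pairs: Counter = Counter()
    for keywords in records:
        unique = sorted({standardize(k) for k in keywords})
        occurrences.update(unique)
        # combinations() of a sorted list yields each pair in a fixed order.
        pairs.update(combinations(unique, 2))
    return occurrences, pairs


def association_strength(occurrences: Counter, pairs: Counter) -> dict:
    """Normalize each pair count by the product of the keywords' occurrences."""
    return {
        (a, b): count / (occurrences[a] * occurrences[b])
        for (a, b), count in pairs.items()
    }


if __name__ == "__main__":
    records = [
        ["Economic growth", "CO2 emissions", "EKC"],
        ["economic growth", "Carbon dioxide emissions", "renewable energy"],
        ["Economic Growth", "renewable energy"],
    ]
    occ, pairs = cooccurrence(records)
    for pair, strength in sorted(association_strength(occ, pairs).items()):
        print(pair, pairs[pair], round(strength, 3))
```

The normalization rewards pairs that co-occur more often than their individual frequencies would suggest: in the toy data, "carbon emissions" and "environmental kuznets curve" co-occur only once, but because both are comparatively rare, that pair receives a higher strength than the more frequent pairing of "carbon emissions" with "economic growth".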

For citation-based networks (co-citation and bibliographic coupling), similar protocols are followed with appropriate normalization to account for citation practices across fields and over time.
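The raw link weights for the two citation-based networks can be illustrated in the same way. In this sketch, the document and reference identifiers are hypothetical placeholders: bibliographic coupling counts the references that two citing documents share, and co-citation counts how many documents cite two references together. Normalization (for example, association strength or a cosine measure) would then be applied to these raw counts.

```python
from collections import Counter
from itertools import combinations


def bibliographic_coupling(references: dict[str, set[str]]) -> dict[tuple[str, str], int]:
    """Link citing documents by the number of references they share."""
    strengths = {}
    for a, b in combinations(sorted(references), 2):
        shared = len(references[a] & references[b])
        if shared:  # keep only pairs that are actually linked
            strengths[(a, b)] = shared
    return strengths


def co_citation(references: dict[str, set[str]]) -> Counter:
    """Link cited references by the number of documents that cite both."""
    counts: Counter = Counter()
    for cited in references.values():
        counts.update(combinations(sorted(cited), 2))
    return counts


if __name__ == "__main__":
    # Hypothetical citing documents (P*) mapped to the references (R*) they cite.
    refs = {
        "P1": {"R1", "R2", "R3"},
        "P2": {"R1", "R2"},
        "P3": {"R2", "R3"},
    }
    print(bibliographic_coupling(refs))
    print(co_citation(refs).most_common())
```

The two measures are duals of the same citing-to-cited data: coupling links the citing documents (rows), while co-citation links the cited references (columns).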

Temporal Analysis and Trend Detection

Analyzing the evolution of research clusters requires specialized temporal analysis techniques. The following diagram illustrates the protocol for detecting emerging trends and thematic evolution:

Figure 2: Temporal Analysis Protocol

CiteSpace software provides specialized algorithms for detecting emerging trends through burst detection, which identifies sharp increases in citation frequency or keyword usage that signal growing research interest. Thematic evolution maps can be created by comparing keyword co-occurrence networks across successive time periods, revealing how research clusters have merged, split, or dissolved over time.
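CiteSpace's burst detection is based on Kleinberg's state-machine model, which is more involved than can be shown here. The sketch below substitutes a deliberately simplified frequency-ratio heuristic: a keyword is flagged in a given year when its share of that year's publications is at least `ratio` times its share across the whole dataset. It also links themes (keyword sets) in consecutive periods by Jaccard overlap, as a simple stand-in for the overlap measures used in thematic evolution maps. All thresholds, function names, and data are hypothetical.

```python
from collections import Counter, defaultdict


def flag_bursts(records, ratio=2.0, min_count=2):
    """Flag (keyword, year) pairs whose yearly share far exceeds the baseline.

    records: iterable of (year, keywords) tuples, one per publication.
    This is a simple frequency-ratio heuristic, not Kleinberg's algorithm.
    """
    docs_per_year = Counter()
    kw_per_year = defaultdict(Counter)
    for year, keywords in records:
        docs_per_year[year] += 1
        kw_per_year[year].update(set(keywords))

    total_docs = sum(docs_per_year.values())
    total_kw = Counter()
    for counts in kw_per_year.values():
        total_kw.update(counts)

    bursts = []
    for year in sorted(kw_per_year):
        for kw, count in sorted(kw_per_year[year].items()):
            baseline = total_kw[kw] / total_docs  # share across all years
            share = count / docs_per_year[year]   # share within this year
            if count >= min_count and share >= ratio * baseline:
                bursts.append((kw, year))
    return bursts


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def link_themes(earlier: dict[str, set], later: dict[str, set], min_overlap=0.2):
    """Link themes in consecutive periods whose keyword sets overlap enough.

    One earlier theme linked to several later themes suggests a split, several
    earlier themes linked to one later theme suggest a merge, and an earlier
    theme with no links suggests dissolution.
    """
    return [
        (a, b, round(jaccard(ka, kb), 3))
        for a, ka in earlier.items()
        for b, kb in later.items()
        if jaccard(ka, kb) >= min_overlap
    ]


if __name__ == "__main__":
    records = ([(2010, {"economic growth"})] * 3
               + [(2015, {"economic growth"})] * 3
               + [(2020, {"economic growth", "green finance"})] * 3)
    print(flag_bursts(records))
```

A keyword that appears everywhere (here "economic growth") never bursts, because its yearly share cannot exceed its baseline; only terms concentrated in particular years are flagged.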

Research Reagent Solutions: Essential Analytical Tools

Successful implementation of bibliometric analysis requires specific "research reagents": the essential tools, data sources, and analytical components that enable comprehensive investigation of research clusters and knowledge domains.

Table 3: Essential Research Reagent Solutions for Bibliometric Analysis

| Reagent Category | Specific Tools/Sources | Function/Purpose | Application Notes |
|---|---|---|---|
| Data Sources | Scopus, Web of Science | Comprehensive bibliographic data with citation information | Scopus generally provides broader coverage; WoS offers more selective content [5] [16] |
| Analytical Software | VOSviewer, Biblioshiny, CiteSpace | Network analysis, visualization, trend detection | VOSviewer excels at intuitive visualization; Biblioshiny offers comprehensive metric suites [5] [16] [2] |
| Reference Management | EndNote, Zotero, Mendeley | Organization of retrieved records, duplicate removal | Critical for handling large datasets from multiple database searches |
| Data Processing Tools | R (bibliometrix), Python | Data cleaning, transformation, and analysis | Custom scripts enable specialized analyses beyond standard software capabilities |
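Custom scripts of the kind listed in the last row often handle a routine preprocessing task: combining Scopus and WoS exports and removing records that appear in both. The sketch assumes each export has already been parsed into dictionaries with hypothetical `title`, `year`, and `doi` keys (real export columns differ by database). A record is treated as a duplicate if it shares a normalized DOI, or a normalized title plus year, with a record already kept. Exact-match keys like these miss near-duplicates such as typos or truncated titles, so manual checking or fuzzy matching may still be needed.

```python
import re


def normalize_title(title: str) -> str:
    """Lower-case, replace punctuation with spaces, and collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", " ", title.lower()).split())


def record_keys(record: dict) -> set[str]:
    """Build the matching keys for one record: DOI and/or title+year."""
    keys = set()
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        keys.add("doi:" + doi.removeprefix("https://doi.org/"))
    title = normalize_title(record.get("title") or "")
    if title:
        keys.add(f"title:{title}|{record.get('year')}")
    return keys


def merge_records(*sources: list[dict]) -> list[dict]:
    """Concatenate sources in order, skipping records that match a kept one."""
    seen: set[str] = set()
    merged = []
    for source in sources:
        for record in source:
            keys = record_keys(record)
            if keys & seen:
                continue  # duplicate of a record from an earlier source
            seen |= keys
            merged.append(record)
    return merged


if __name__ == "__main__":
    # Hypothetical records; 10.1000 is a documentation-only DOI prefix.
    scopus = [{"title": "Economic growth and CO2: an EKC test", "year": 2020,
               "doi": "10.1000/abc"}]
    wos = [{"title": "ECONOMIC GROWTH AND CO2 - AN EKC TEST", "year": 2020,
            "doi": None},
           {"title": "Ecological footprint", "year": 2022, "doi": "10.1000/def"}]
    for record in merge_records(scopus, wos):
        print(record["title"])
```

Keeping both a DOI key and a title key per record lets a Scopus entry that carries a DOI still match a WoS entry for the same paper that lacks one.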

Interpretation and Validation Framework

Robust interpretation of bibliometric findings requires systematic validation and contextualization. Cluster labels should be derived both algorithmically (from prominent terms within clusters) and through expert review to ensure conceptual coherence. Validation techniques include:

- Stability Testing: Assessing the sensitivity of cluster solutions to parameter choices and data variations

- Conceptual Consistency: Evaluating whether publications within clusters share substantive theoretical or methodological commonalities

- Triangulation: Comparing results from different bibliometric techniques (e.g., co-citation vs. co-word analysis) to identify robust patterns
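Stability testing can be made concrete by re-running the clustering with a different parameter setting (for example, VOSviewer's clustering resolution) and measuring how far the two partitions agree. The sketch below computes the plain Rand index, the share of item pairs that both runs place either together or apart; the keyword labels are hypothetical. A chance-corrected variant such as the adjusted Rand index (available as `adjusted_rand_score` in scikit-learn) is often preferred when partitions contain many clusters.

```python
from itertools import combinations


def rand_index(run_a: dict[str, int], run_b: dict[str, int]) -> float:
    """Fraction of item pairs on which two clusterings agree.

    A pair agrees if both runs put its items in the same cluster, or both
    runs put them in different clusters. Only items present in both runs
    are compared; cluster label values themselves do not matter.
    """
    items = sorted(run_a.keys() & run_b.keys())
    pairs = list(combinations(items, 2))
    if not pairs:
        return 1.0
    agree = sum(
        (run_a[x] == run_a[y]) == (run_b[x] == run_b[y]) for x, y in pairs
    )
    return agree / len(pairs)


if __name__ == "__main__":
    # Hypothetical cluster labels from two runs with different resolutions.
    run_a = {"ekc": 1, "carbon emissions": 1, "economic growth": 1,
             "green finance": 2, "green innovation": 2}
    run_b = {"ekc": 1, "carbon emissions": 1, "economic growth": 3,
             "green finance": 2, "green innovation": 2}
    print(rand_index(run_a, run_b))
```

Here moving a single keyword into its own cluster changes the agreement on 2 of the 10 keyword pairs; a cluster solution whose index stays high across reasonable parameter settings can be reported with more confidence.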

Interpretation should place findings within broader scientific and policy discourses, which is particularly important in a domain as economically and politically salient as economic growth and environmental degradation. For instance, the prominence of the EKC cluster reflects ongoing debates about the feasibility of decoupling economic growth from environmental harm, while emerging clusters around green innovation reflect policy interest in technological solutions to sustainability challenges [17] [20].

Bibliometric analysis provides powerful methodological approaches for identifying and characterizing research clusters and knowledge domains in the study of economic growth and environmental degradation. Through systematic application of the protocols and techniques outlined in this guide, researchers can map the intellectual structure of this complex, interdisciplinary field, track its evolution over time, and identify emerging research frontiers. The continuing development of bibliometric methods, including integration with natural language processing and machine learning techniques, promises to further enhance our ability to understand and navigate the expanding scientific literature on one of the most critical challenges of our time – achieving sustainable economic development within planetary boundaries.

Leading Journals, Institutions, and Country Contributions

Bibliometric analysis has become an indispensable tool for mapping the intellectual structure of scientific fields, quantifying research trends, and identifying key contributors. In the interdisciplinary research domain linking economic growth and environmental degradation, such analyses clarify how scholarly focus has shifted from purely economic metrics toward integrated frameworks that balance prosperity with planetary health. This section synthesizes findings from recent bibliometric studies to present an overview of the leading journals, institutions, and country contributions in this field. The analysis reveals a research landscape that is increasingly global and aligned with the United Nations Sustainable Development Goals (SDGs), particularly SDG 8 (Decent Work and Economic Growth) and SDG 13 (Climate Action). Understanding these patterns of contribution and collaboration is essential for researchers, policymakers, and funding agencies seeking to navigate this rapidly evolving field and address the pressing challenge of achieving sustainable economic development.

Bibliometric studies consistently reveal a significant increase in research output concerning economic growth and environmental degradation, with a notable acceleration post-2015, coinciding with the adoption of the UN 2030 Agenda for Sustainable Development [4]. The field is global in scope, with contributions from developed and developing economies alike, though leadership in publication volume is concentrated in a few key nations.

Table 1: Leading Countries in Economic Growth and Environmental Degradation Research

| Country | Key Research Focus | Remarks | Primary Source |
|---|---|---|---|
| China | Sustainable Inclusive Economic Growth (SIEG), determinants of carbon emissions | Most productive country; high research output | [4] [5] |
| India | SIEG, collaboration networks | Leads in collaboration, contributing 63 publications to one network analysis | [4] |
| USA | Sustainable financial inclusion, ESG | A leading contributor alongside China and India | [22] |
| Pakistan | Determinants of environmental degradation | A leading country in research output on environmental degradation | [5] |
| Italy | Sustainable Inclusive Economic Growth (SIEG) | Ranks among the most productive countries | [4] |
| Turkey | Determinants of carbon emissions | A leading country in research output on environmental degradation | [5] |

Table 2: Leading Academic Journals in the Field

| Journal Name | Key Published Research | Remarks | Primary Source |
|---|---|---|---|
| Sustainability (Switzerland) | Sustainable Inclusive Economic Growth (SIEG) | Ranked as the leading journal in the SIEG domain | [4] |
| Environmental Science and Pollution Research | Determinants of environmental degradation | One of the most frequent journals for research on economic growth and environmental degradation | [5] |
| Journal of Cleaner Production | Green Total Factor Productivity (GTFP) | A key journal for GTFP literature | [12] |
| Journal of Risk and Financial Management | Sustainable financial inclusion | Publishes research at the intersection of finance and sustainability | [22] |

Table 3: Key Research Institutions and Authors

| Institution/Author | National Context | Research Focus | Primary Source |
|---|---|---|---|
| Bekun FV | Turkey | Highly cited researcher in SIEG and environmental degradation | [4] [5] |
| Onifade ST | Turkey | Highly cited researcher in SIEG | [4] |
| Zhang X | China | Highly cited researcher in SIEG | [4] |
| Tamil Nadu Agricultural University | India | Contributed to a bibliometric analysis of institutional climate adaptation | [23] |
| Guangdong University of Science and Technology | China | Contributed research on the "Triple Green Strategy" in OECD countries | [20] |

Methodological Protocols for Bibliometric Analysis

The credibility of bibliometric findings hinges on rigorous, transparent, and reproducible methodologies. The following protocols, synthesized from recent high-quality studies, outline the standard workflow.

Data Collection and Preprocessing

The foundation of any bibliometric analysis is a comprehensive and relevant dataset, typically sourced from established academic databases.

- Database Selection: Scopus and the Web of Science (WoS) Core Collection are the most frequently used databases because of their high-quality indexing, comprehensive coverage, and reliable citation data [4] [22] [23]. Using both databases in tandem can help mitigate the coverage biases inherent in each.

- Search Strategy: A structured, reproducible search query is developed from key terms combined with Boolean operators (AND, OR, NOT).

- Data Screening: The retrieved records are filtered according to predefined inclusion and exclusion criteria. This often follows the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) protocol, which ensures transparency and rigor in the selection process [4] [22] [23]. Criteria may include document type (e.g., prioritizing research articles), language (typically English), and publication year.

- Data Extraction: Key metadata is extracted for analysis, including title, authors, affiliations, publication year, source journal, abstract, keywords, and citation data.
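The search and screening steps lend themselves to scripting, which also makes them easier to report. The sketch below assembles a Boolean query from groups of synonyms (OR within a group, AND between groups) using Scopus's `TITLE-ABS-KEY` field code (WoS uses `TS=` for a topic search). It then screens records against inclusion criteria and tallies exclusions by reason, the kind of counts a PRISMA flow diagram reports. The search terms, year window, and record field names are hypothetical examples, not the strategy of any cited study.

```python
from collections import Counter


def or_group(terms: list[str]) -> str:
    """Quote each term and join synonyms with OR."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def build_query(concepts: list[list[str]], field: str = "TITLE-ABS-KEY") -> str:
    """AND together the OR-groups and wrap them in a field code."""
    return f"{field}(" + " AND ".join(or_group(g) for g in concepts) + ")"


# Hypothetical inclusion criteria, checked in order; field names vary by export.
CRITERIA = {
    "document type": lambda r: r.get("document_type") == "Article",
    "language": lambda r: r.get("language") == "English",
    "publication year": lambda r: 2000 <= r.get("year", 0) <= 2024,
}


def screen(records: list[dict]) -> tuple[list[dict], Counter]:
    """Keep records meeting all criteria; count exclusions by first failed criterion."""
    kept, excluded = [], Counter()
    for record in records:
        failed = next((name for name, ok in CRITERIA.items() if not ok(record)), None)
        if failed is None:
            kept.append(record)
        else:
            excluded[failed] += 1
    return kept, excluded


if __name__ == "__main__":
    concepts = [
        ["economic growth", "GDP"],
        ["environmental degradation", "carbon emissions", "ecological footprint"],
    ]
    print(build_query(concepts))               # Scopus advanced search
    print(build_query(concepts, field="TS="))  # WoS topic search
```

Recording the exact query string and the exclusion tallies alongside the results is what makes the selection process reproducible by other researchers.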

Analytical and Visualization Techniques

Once the dataset is finalized, a suite of software tools and analytical techniques is employed to uncover patterns.

- Analytical Software:

  - VOSviewer: A widely used tool for constructing and visualizing bibliometric networks based on co-authorship, co-citation, and keyword co-occurrence [4] [5] [22]. It is valued for its intuitive, color-coded cluster maps.

  - Biblioshiny (R-tool): An R-based tool integrated with the bibliometrix package, used to conduct a comprehensive suite of bibliometric analyses and generate data summaries [4] [10].

- Key Analytical Methods:

  - Performance Analysis: Uses bibliometric indicators to quantify the productivity and impact of countries, institutions, authors, and journals. Common metrics include publication count, citation count, and h-index.

  - Science Mapping: Reveals the intellectual structure of the research field.

    - Co-authorship Analysis: Maps collaboration networks between countries and institutions [4].

    - Keyword Co-occurrence Analysis: Identifies the main research themes and how they are interconnected by analyzing how often keywords appear together in publications [4] [10].

    - Co-citation Analysis: Examines the relationships between frequently cited documents and authors, helping to identify foundational knowledge groups [12].

  - Thematic Evolution: Tracks how research themes emerge, mature, or decline over different time periods [4] [10]. Callon's density-centrality methodology is sometimes used to categorize themes as motor, basic, niche, or emerging/declining [10].
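Two of the indicators named above reduce to short calculations. The sketch below computes an h-index from a list of citation counts, and Callon centrality and density for keyword themes using one commonly cited formulation: the equivalence index e_ij = c_ij^2 / (c_i * c_j), centrality = 10 × the sum of e_ij over links leaving a theme, and density = 100 × the sum of e_ij over links inside a theme divided by its number of keywords. Themes are then placed in quadrants by a median split. Bibliometrix's own thematic-map implementation may differ in details; the function names, themes, and counts here are hypothetical.

```python
from statistics import median


def h_index(citations: list[int]) -> int:
    """Largest h such that h items have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    return sum(1 for rank, c in enumerate(ranked, start=1) if c >= rank)


def callon_metrics(themes, occurrences, pairs):
    """Return {theme: (centrality, density)}.

    themes: theme name -> set of keywords
    occurrences: keyword -> number of documents containing it
    pairs: (keyword_a, keyword_b) -> co-occurrence count
    """
    metrics = {}
    for name, keywords in themes.items():
        internal = external = 0.0
        for (a, b), c_ab in pairs.items():
            e_ab = c_ab ** 2 / (occurrences[a] * occurrences[b])  # equivalence index
            inside = (a in keywords) + (b in keywords)
            if inside == 2:
                internal += e_ab  # link within the theme
            elif inside == 1:
                external += e_ab  # link from the theme to an outside keyword
        metrics[name] = (10 * external, 100 * internal / len(keywords))
    return metrics


def strategic_quadrants(metrics):
    """Classify themes by a median split on centrality and density."""
    c_med = median(c for c, _ in metrics.values())
    d_med = median(d for _, d in metrics.values())
    labels = {}
    for name, (c, d) in metrics.items():
        if c >= c_med and d >= d_med:
            labels[name] = "motor"
        elif c >= c_med:
            labels[name] = "basic"
        elif d >= d_med:
            labels[name] = "niche"
        else:
            labels[name] = "emerging or declining"
    return labels


if __name__ == "__main__":
    print(h_index([10, 8, 5, 4, 3]))
    themes = {"A": {"x", "y"}, "B": {"z"}}
    print(callon_metrics(themes, {"x": 2, "y": 2, "z": 2},
                         {("x", "y"): 2, ("y", "z"): 1}))
```

Centrality measures how strongly a theme connects to the rest of the field, and density measures how well developed it is internally; the quadrant labels follow directly from those two readings.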

The workflow below illustrates the sequential stages of a robust bibliometric analysis.

The Researcher's Toolkit: Essential Reagents for Bibliometric Analysis

In bibliometric research, "research reagents" refer to the essential digital tools, data sources, and software required to conduct a robust analysis. The table below details these core components.

| Tool/Resource | Type | Primary Function | Key Feature |
|---|---|---|---|
| Scopus Database | Data Source | Provides comprehensive bibliographic data and citations. | High-quality indexing, reliable citation metrics. [4] [10] |
| Web of Science (WoS) | Data Source | Provides comprehensive bibliographic data and citations. | Rigorous journal selection, strong historical data. [22] [8] |