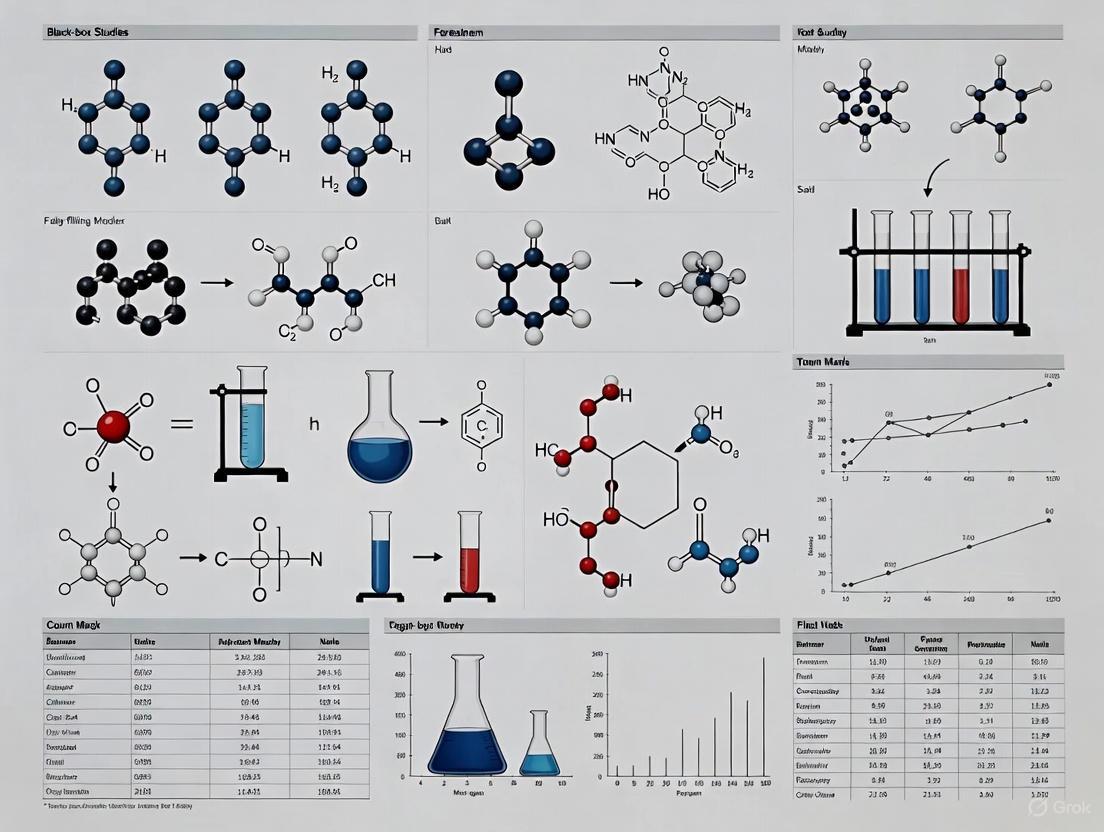

Beyond the Black Box: A Critical Analysis of Forensic Method Error Rates and Validity

Abstract

This article provides a comprehensive analysis of black-box studies in forensic science, examining their critical role in estimating method error rates and establishing scientific validity for the courts. It explores the foundational principles of black-box design, its application across disciplines like firearms and latent prints, and the significant methodological challenges that compromise existing studies. By dissecting debates on inconclusive findings, hidden multiple comparisons, and sampling biases, this analysis offers a framework for troubleshooting and optimizing future research. Aimed at researchers and scientific professionals, the article synthesizes key insights to guide the development of more rigorous, transparent, and forensically sound validation studies.

The Foundation of Forensic Validity: Understanding Black-Box Studies and Legal Imperatives

Black-box studies represent the gold standard experimental design for establishing the foundational validity and estimating error rates across forensic science disciplines. These studies assess the accuracy of forensic examiners by presenting them with evidence samples of known origin, a fact concealed from the participants, thereby simulating real-world decision-making conditions. The framework for these studies has gained paramount importance following critical reports from the National Academy of Sciences (NAS) and the President's Council of Advisors on Science and Technology (PCAST), which highlighted the need for establishing empirical measures of reliability for feature-comparison methods [1] [2]. The fundamental principle underlying black-box testing is its double-blind, controlled approach, which quantifies examiner performance through statistically rigorous measures of false positives, false negatives, and inconclusive rates across a representative sample of practitioners and challenging evidence specimens.

The 2009 NAS report criticized the fact that much forensic evidence, including firearm and toolmark identification, was introduced in trials "without any meaningful scientific validation, determination of error rates, or reliability testing" [2]. In response, black-box studies have emerged as a primary methodology to address these scientific concerns. These studies are characterized by an "open set" design in which a questioned specimen does not necessarily have a matching reference. This avoids the underestimation of false positives inherent in closed sets and provides a more realistic assessment of examiner performance in operational contexts [1]. The framework now provides the empirical foundation for evaluating the validity and reliability of forensic disciplines, with error rates from these studies increasingly informing legal proceedings and judicial rulings on the admissibility of forensic evidence.

Comparative Performance Across Forensic Disciplines

Quantitative Error Rate Comparisons

Black-box studies have been implemented across multiple forensic disciplines, revealing distinct patterns of examiner performance and methodological challenges. The following table summarizes key findings from recent large-scale studies:

Table 1: Forensic Black-Box Study Error Rates Across Disciplines

| Discipline | False Positive Rate | False Negative Rate | Sample Size (Examiners/Decisions) | Study Characteristics |
|---|---|---|---|---|
| Firearms (Bullets) | 0.656% (0.305%-1.42%) | 2.87% (1.89%-4.26%) | 173 examiners/8,640 comparisons | Open set design; consecutive manufacture firearms; challenging specimens [1] |
| Firearms (Cartridge Cases) | 0.933% (0.548%-1.57%) | 1.87% (1.16%-2.99%) | 173 examiners/8,640 comparisons | Same participant pool as bullets; steel cartridge cases [1] |
| Palmar Friction Ridge | 0.7% | 9.5% | 226 examiners/12,279 decisions | First large-scale palm print study; stratified by size/difficulty [3] |
| Probabilistic Genotyping (STRmix) | N/A | N/A | 156 sample pairs | Quantitative model; 21 STR markers; 2-3 contributor mixtures [4] |
| Probabilistic Genotyping (EuroForMix) | N/A | N/A | 156 sample pairs | Quantitative model; same sample set as STRmix [4] |

The observed variance in error rates across disciplines reflects both inherent methodological differences and study design parameters. Firearms examination demonstrates relatively low false positive rates (0.656%-0.933%) but higher false negative rates (1.87%-2.87%), while palmar friction ridge analysis shows a notably higher false negative rate at 9.5% [1] [3]. Importantly, these studies consistently reveal that errors are not uniformly distributed across examiners, with a limited number of examiners accounting for the majority of incorrect decisions [1]. This finding underscores the importance of large sample sizes in black-box studies to reliably estimate discipline-wide error rates rather than individual examiner performance.
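To illustrate how an interval attaches to an observed error proportion, the following minimal sketch computes a Wilson score interval. It is a simplified stand-in, not the method behind the intervals in Table 1: it ignores clustering of errors within examiners, and the counts used are hypothetical.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = errors / trials
    denom = 1 + z**2 / trials
    centre = (p_hat + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p_hat * (1 - p_hat) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical counts, not taken from any cited study.
errors, trials = 20, 2000
low, high = wilson_interval(errors, trials)
print(f"observed rate = {errors / trials:.3%}, interval = ({low:.3%}, {high:.3%})")
```

Because errors concentrate in a few examiners, an interval that treats every comparison as independent will generally be too narrow, which is one motivation for the examiner-level models discussed later.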

Analysis of Inconclusive Determinations

Inconclusive decisions represent a significant methodological challenge in interpreting black-box study results, with approaches varying across studies and disciplines. Some studies treat inconclusives as functionally correct, others consider them irrelevant to error rates, while yet others treat them as potential errors [5]. A variance decomposition approach to analyzing inconclusives in fingerprint and bullet studies reveals that the overall pattern of inconclusives can shed light on the proportion attributable to examiner variability versus other factors [5]. The reporting of error rates is substantially affected by how inconclusives are handled, with "failure rate" analyses that incorporate inconclusives yielding dramatically different results than traditional error rate calculations [5].

Table 2: Methodological Variations in Black-Box Study Designs

| Design Element | Variations | Impact on Error Rates |
|---|---|---|
| Study Design | Open set vs. closed set | Open set avoids false positive underestimation but may increase inconclusives [1] |
| Specimen Selection | Consecutively manufactured sources; challenging specimens | Provides upper bound error estimates; more rigorous testing [1] |
| Ground Truth | Known manufacturing history; reference samples | Critical for validating match/non-match determinations [1] [2] |
| Inconclusive Handling | Varied classification methods | Significantly affects reported error rates and interpretability [5] |
| Statistical Modeling | Beta-binomial vs. simple proportion | Accounts for unequal examiner-specific error rates [1] |

Experimental Protocols for Black-Box Studies

Standardized Implementation Framework

Implementing a forensically rigorous black-box study requires meticulous attention to experimental design, participant recruitment, specimen preparation, and data analysis protocols. The following workflow outlines the standardized methodology derived from recent high-impact studies:

Critical Methodological Components

Specimen Preparation and Ground Truth Establishment

The foundation of any valid black-box study lies in the meticulous preparation of specimens with unequivocally established ground truth. In firearms studies, this involves using consecutively manufactured components (e.g., barrels and slides) to create challenging comparisons that test examiner ability to distinguish between highly similar sources [1]. The protocol includes a firearm "break-in" process (e.g., 30-60 test firings) to stabilize internal wear and achieve consistent toolmarks before evidentiary specimen collection [1]. Test packets are assembled using an open-set design: each comparison set contains one questioned item and two reference items, and a matching reference does not necessarily exist for every questioned specimen. This approach prevents artificial inflation of performance by mimicking real-world conditions where examiners cannot assume a match exists.

Participant Recruitment and Blind Administration

Maintaining strict double-blind protocols and recruiting a representative sample of examiners are critical methodological requirements. Participant recruitment typically occurs through professional organizations (e.g., Association of Firearm and Toolmark Examiners), forensic conferences, and email listservs, with voluntary participation from qualified examiners working across multiple jurisdictions [1]. The median examiner experience in recent studies was approximately 9 years, representing realistic operational expertise levels [1]. Communication between participants and researchers is strictly compartmentalized to preserve anonymity and prevent bias, with Institutional Review Board (IRB) oversight ensuring ethical compliance and informed consent [1]. This rigorous approach maintains the integrity of the black-box design while addressing logistical challenges associated with large-scale multi-laboratory studies.

Statistical Analysis and Error Rate Calculation

The statistical analysis of black-box data requires specialized approaches that account for the hierarchical nature of forensic decisions. The beta-binomial probability model provides maximum-likelihood estimates that do not depend on the assumption of equal examiner-specific error rates, addressing the reality that error probabilities are not identical across all examiners [1]. This approach is particularly important given that most errors tend to be committed by a limited number of examiners rather than being uniformly distributed across all participants [1]. Variance decomposition methods further enhance analysis by distinguishing between item difficulty and examiner variability as contributors to inconclusive decisions, providing more nuanced understanding of performance factors [5].
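As a rough illustration of the beta-binomial idea, the sketch below fits the model to per-examiner (errors, comparisons) counts. The data are hypothetical, and the coarse grid search is a deliberately simple stand-in for a proper optimizer; it is not the fitting procedure of the cited study. The parameterization by a mean error rate `mu` and an overdispersion term `rho` is also an assumption made here for readability.

```python
import math


def betabinom_loglik(alpha: float, beta: float, data) -> float:
    """Log-likelihood of (errors, trials) pairs under a beta-binomial model."""
    total = 0.0
    for k, n in data:
        total += (
            math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + math.lgamma(k + alpha) + math.lgamma(n - k + beta)
            - math.lgamma(n + alpha + beta)
            + math.lgamma(alpha + beta) - math.lgamma(alpha) - math.lgamma(beta)
        )
    return total


def fit_betabinom_grid(data, mu_steps: int = 100, rho_steps: int = 50):
    """Coarse grid-search maximum-likelihood fit.

    mu is the mean error rate, searched over (0, 0.1); rho in (0, 1) controls
    how much error rates differ between examiners. The mapping used is
    alpha = mu * (1 - rho) / rho and beta = (1 - mu) * (1 - rho) / rho.
    """
    best = None
    for i in range(1, mu_steps):
        mu = 0.1 * i / mu_steps
        for j in range(1, rho_steps):
            rho = j / rho_steps
            scale = (1 - rho) / rho
            ll = betabinom_loglik(mu * scale, (1 - mu) * scale, data)
            if best is None or ll > best[0]:
                best = (ll, mu, rho)
    return best


# Hypothetical data: 20 examiners, 50 comparisons each, errors concentrated in a few.
data = [(0, 50)] * 16 + [(1, 50)] * 2 + [(4, 50), (6, 50)]
loglik, mu, rho = fit_betabinom_grid(data)
pooled = sum(k for k, _ in data) / sum(n for _, n in data)
print(f"pooled rate = {pooled:.3%}, fitted mean = {mu:.3%}, overdispersion rho = {rho:.2f}")
```

A fitted `rho` well above zero indicates that examiners do not share a single error probability, which is the situation the text describes.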

Emerging Methodologies and Quantitative Approaches

Probabilistic Genotyping Software Comparisons

Forensic DNA analysis has evolved from traditional capillary electrophoresis interpretation to sophisticated probabilistic genotyping methods implemented as specialized software. These systems employ either qualitative models (considering only detected alleles) or quantitative models (incorporating both alleles and peak height information) to compute likelihood ratios (LRs) comparing probabilities of evidence under alternative hypotheses [4]. A recent comparative analysis of 156 sample pairs using LRmix Studio (qualitative), STRmix (quantitative), and EuroForMix (quantitative) revealed that quantitative tools generally produce higher LRs than qualitative approaches, with STRmix typically generating higher LRs than EuroForMix [4]. This demonstrates how different mathematical models and statistical approaches within the same forensic discipline can yield varying evidentiary strength measurements, highlighting the importance of understanding underlying methodologies when interpreting black-box results.
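The likelihood ratio these tools report can be written compactly. The symbols are conventional labels assumed here rather than notation from the cited comparison: $E$ is the observed DNA evidence, and $H_p$ and $H_d$ are the two competing hypotheses (for example, that the person of interest is or is not a contributor to the mixture).

```latex
\mathrm{LR} = \frac{\Pr(E \mid H_p)}{\Pr(E \mid H_d)}
```

Values above 1 favor $H_p$ and values below 1 favor $H_d$. Qualitative and quantitative models differ in how they compute the two probabilities, which is why the same sample pair can yield different LRs across software.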

Quantitative Fracture Surface Topography

Emerging quantitative approaches aim to supplement or replace traditional pattern-matching methodologies with objective statistical frameworks. For fracture matching, researchers are developing methods that use spectral analysis of surface topography mapped by three-dimensional microscopy, with multivariate statistical learning tools classifying "match" and "non-match" candidates [2]. This approach leverages the unique, non-self-affine characteristics of fracture surfaces at microscopic length scales (typically 50-70μm), where the interaction between propagating cracks and material microstructure creates distinctive topographical signatures [2]. The methodology produces likelihood ratios similar to those used in fingerprint and ballistic identification, providing a statistical foundation for source attribution while enabling estimation of misclassification probabilities [2]. These quantitative frameworks represent the next generation of forensic methodologies designed specifically to address the scientific validity concerns raised in the NAS and PCAST reports.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Methodologies for Forensic Black-Box Studies

| Research Reagent | Function in Experimental Design | Exemplary Implementation |
|---|---|---|
| Consecutively Manufactured Firearms | Provides challenging specimens with subclass characteristics; tests discrimination ability | Jimenez JA-Nine, Beretta M9A3-FDE pistols with new consecutively manufactured barrels [1] |
| Specialized Ammunition | Creates subtle toolmarks; increases comparison difficulty | Wolf Polyformance 9mm with steel cartridge cases and steel-jacketed bullets [1] |
| Probabilistic Genotyping Software | Computes likelihood ratios for DNA mixture interpretation; enables quantitative evidence assessment | STRmix, EuroForMix, LRmix Studio for analyzing complex DNA mixtures [4] |
| 3D Microscopy Systems | Captures surface topography for quantitative fracture analysis; enables statistical matching | Spectral analysis of fracture surfaces at transition scale (50-70 μm) [2] |
| Statistical Modeling Packages | Analyzes hierarchical decision data; computes error rates accounting for examiner variability | Beta-binomial models for error rate estimation; variance decomposition for inconclusives [5] [1] |
| Open Set Design Framework | Prevents underestimation of false positives; mimics real-world operational conditions | Comparison sets in which not every questioned specimen has a matching reference [1] |

The black-box study framework represents a transformative development in forensic science, providing empirically validated measures of examiner performance across disciplines. Current research demonstrates that error rates vary significantly between forensic domains, with false positive rates generally lower than false negative rates, and inconclusive determinations presenting ongoing methodological challenges. The consistent finding that errors are not uniformly distributed across examiners underscores the importance of large-scale studies with representative participant pools.

Future directions include developing more sophisticated statistical models that account for item difficulty and examiner expertise, standardizing the treatment of inconclusive decisions across studies, and expanding the implementation of quantitative methodologies that provide objective statistical foundations for source attributions. As black-box studies become increasingly central to establishing the scientific validity of forensic methods, their continued refinement and standardization will play a crucial role in strengthening the reliability and credibility of forensic science in legal proceedings.

The quest for scientific validity within the U.S. justice system converged dramatically with the demands of modern forensic science in the last three decades. Two pivotal events created a legal and practical catalyst for enduring reform: the U.S. Supreme Court's 1993 decision in Daubert v. Merrell Dow Pharmaceuticals, which established a new legal standard for the admissibility of expert testimony [6], and the 2004 Madrid train bombing fingerprint misidentification, a high-profile error that exposed critical vulnerabilities in forensic practice [7]. This article examines how these two events, one legal and one practical, collectively spurred a movement toward greater scientific rigor, with a specific focus on their impact on black-box studies and the assessment of forensic method error rates. For researchers and scientists, this interplay between legal precedent and forensic practice provides a powerful case study in how systemic pressure can accelerate empirical research and the implementation of robust scientific protocols.

The Daubert Standard: Reshaping the Admissibility of Expert Evidence

The Legal Framework

Prior to 1993, the dominant standard for admitting expert testimony was the Frye standard, established in 1923, which required that the methods an expert uses be "generally accepted" in the relevant scientific community [8]. The Supreme Court's ruling in Daubert replaced this with a more flexible, yet more demanding, standard derived from the Federal Rules of Evidence. The Daubert ruling cast trial judges in the role of "gatekeepers" responsible for ensuring that proffered expert testimony is not only relevant but also reliable [6] [9].

The Court provided a set of illustrative factors for judges to consider when assessing reliability [6] [8]:

- Testing and Falsifiability: Whether the expert's theory or technique can be (and has been) tested.

- Peer Review: Whether the method has been subjected to peer review and publication.

- Error Rates: The known or potential error rate of the technique.

- Standards and Controls: The existence and maintenance of standards controlling the technique's operation.

- General Acceptance: The degree to which the theory or technique is "generally accepted" within the relevant scientific community (a vestige of the Frye standard).

Subsequent rulings refined the standard: General Electric Co. v. Joiner (1997) held that appellate courts should review a trial judge's admissibility decisions for an "abuse of discretion," and Kumho Tire Co. v. Carmichael (1999) clarified that the gatekeeping function applies to all expert testimony, not just "scientific" knowledge [6] [8].

Catalyzing Scrutiny of Forensic Disciplines

Daubert's requirement to consider a method's known or potential error rate created a direct legal imperative for the forensic science community to quantify the reliability of its practices [6]. For disciplines long assumed to be infallible, such as fingerprint analysis, this legal catalyst forced a reckoning. Courts began to demand empirical evidence of validity and reliability, moving beyond an uncritical acceptance of expert assertions. This legal pressure created a burgeoning need for black-box studies—experiments that measure the accuracy of forensic examiners' decisions by presenting them with evidence samples of known origin—to generate the error rate data demanded by the legal standard [10].

The Madrid Bombing Misidentification: A Case Study in Systemic Failure

The Incident and the Error

In 2004, a series of bombs exploded on commuter trains in Madrid, Spain, killing 191 people and wounding thousands. During the investigation, the FBI identified a latent fingerprint found on a bag of detonators as belonging to Brandon Mayfield, an American attorney from Oregon [11]. The FBI's Latent Print Unit reported a match between the latent print and Mayfield's prints in the database, and he was detained as a material witness for two weeks. However, the Spanish National Police subsequently identified the print as belonging to an Algerian national. The FBI was forced to concede the error and release Mayfield [7].

Root Causes and Revelations

An internal FBI review and an external inquiry committee identified several critical failures [7] [11]:

- Flawed Application of Methodology: The examiners failed to correctly apply the ACE-V methodology (Analysis, Comparison, Evaluation, and Verification), a structured protocol for fingerprint examination.

- Confirmation Bias: The initial examiner's "match" declaration influenced the subsequent verification process, which became a confirmation rather than an independent check.

- Lack of Robust Error Rate Data: The incident highlighted that the field operated without a clear understanding of its own potential for error, undermining the reliability of its evidence in court.

The Mayfield case was a watershed moment. It demonstrated that even the most established forensic disciplines were vulnerable to human error and cognitive bias, providing a concrete and devastating example of why the scientific rigor demanded by Daubert was necessary.

The Research Response: Black-Box Studies and the Challenge of Error Rates

The combined pressure of Daubert's legal requirements and the practical demonstration of error in the Madrid case galvanized the research community. A primary response has been the proliferation of black-box studies, particularly in pattern evidence disciplines like firearms and toolmark analysis.

The Critical Challenge of "Inconclusive" Findings

Black-box studies have consistently reported low error rates, often below 1% [12]. However, researchers have demonstrated that the calculation of these rates is highly sensitive to how inconclusive results are treated [10]. In a typical study, examiners may conclude "identification," "elimination," or "inconclusive." The methodological debate centers on whether to:

- Exclude inconclusives from error rate calculations.

- Count inconclusives as correct results (a "conservative" approach).

- Count inconclusives as incorrect results.

A study led by researchers at the Center for Statistics and Applications in Forensic Evidence (CSAFE) revisited several major firearms examination black-box studies and found that the treatment of inconclusives dramatically changes the resulting error rate estimates [10]. The researchers noted that examiners were more likely to report an inconclusive result for different-source evidence, a pattern that could mask potential errors in real casework [12].

Table 1: Impact of Inconclusive Result Treatment on Error Rate Calculations in Firearms Studies

| Treatment Method | Impact on Error Rate | Interpretation |
|---|---|---|
| Exclude Inconclusives | Artificially lowers error rate | Fails to account for a significant examiner decision, overstating reliability |
| Count as Correct | Lowers or stabilizes error rate | Assumes inconclusive is a safe, neutral decision, which may not be valid |
| Count as Incorrect | Artificially inflates error rate | Over-penalizes a cautious decision that may be methodologically justified |
| Proposed Separated Analysis | Provides bounds for potential error | Calculates error rates for identification and elimination decisions separately for a more accurate range [10] |
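The sensitivity to inconclusive handling is easy to see with a small calculation. The sketch below applies the first three treatments from the table to one set of decision counts; the counts and the `Tally` structure are hypothetical and chosen only to make the contrast visible.

```python
from dataclasses import dataclass


@dataclass
class Tally:
    """Decision counts for one ground-truth class (e.g., different-source pairs)."""
    correct: int
    inconclusive: int
    erroneous: int


def error_rates(t: Tally) -> dict[str, float]:
    """Error rate under three treatments of inconclusive decisions."""
    total = t.correct + t.inconclusive + t.erroneous
    conclusive = t.correct + t.erroneous
    if conclusive == 0:
        raise ValueError("no conclusive decisions to score")
    return {
        "exclude_inconclusives": t.erroneous / conclusive,
        "inconclusive_as_correct": t.erroneous / total,
        "inconclusive_as_incorrect": (t.erroneous + t.inconclusive) / total,
    }


# Hypothetical counts for different-source comparisons.
different_source = Tally(correct=600, inconclusive=380, erroneous=20)
rates = error_rates(different_source)
for name, rate in rates.items():
    print(f"{name:>26}: {rate:.2%}")
```

With these illustrative counts the same data yield 2.00%, 3.23%, or 40.00% depending on the convention, so a reported error rate is only interpretable alongside the rule used for inconclusives.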

Experimental Protocols in Black-Box Studies

A standard black-box study in a pattern evidence discipline follows a core protocol designed to simulate real-world conditions while maintaining experimental control.

- Stimuli Creation: Researchers assemble a set of evidence samples (e.g., cartridge cases fired from known firearms, fingerprints from known donors). These are organized into pairs that are either "same-source" (mated) or "different-source" (non-mated).

- Participant Recruitment: Certified practicing forensic examiners are recruited to participate, ensuring the study tests the relevant expertise.

- Blinded Administration: Examiners are presented with the sample pairs without knowledge of their ground truth (mated or non-mated status). The study is "black-box" because it evaluates only the examiners' conclusions against ground truth, without examining the reasoning that produced them.

- Data Collection: For each comparison, examiners provide one of the predetermined conclusions (e.g., Identification, Inconclusive, Elimination).

- Data Analysis: Examiner responses are compared to the ground truth. The analysis focuses on calculating false positive (different-source pair called an identification) and false negative (same-source pair called an elimination) rates. The central analytical challenge lies in determining how to classify and weight inconclusive findings [10] [12].
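The final scoring step can be sketched as a tally of (ground truth, decision) records. The record format and label strings are assumptions for illustration, the records themselves are hypothetical, and the denominators here include inconclusive decisions, which is only one of the conventions debated above.

```python
from collections import Counter


def score(records):
    """Tally (ground_truth, decision) pairs into basic black-box study rates.

    ground_truth is "mated" or "non-mated"; decision is "identification",
    "inconclusive", or "elimination". Rates use all comparisons of the
    relevant ground-truth class as the denominator.
    """
    counts = Counter(records)
    mated = sum(n for (truth, _), n in counts.items() if truth == "mated")
    non_mated = sum(n for (truth, _), n in counts.items() if truth == "non-mated")
    if mated == 0 or non_mated == 0:
        raise ValueError("need both mated and non-mated comparisons")
    inconclusive = counts[("mated", "inconclusive")] + counts[("non-mated", "inconclusive")]
    return {
        "false_positive_rate": counts[("non-mated", "identification")] / non_mated,
        "false_negative_rate": counts[("mated", "elimination")] / mated,
        "inconclusive_rate": inconclusive / (mated + non_mated),
    }


# Hypothetical examiner responses.
records = (
    [("mated", "identification")] * 45
    + [("mated", "inconclusive")] * 4
    + [("mated", "elimination")] * 1
    + [("non-mated", "elimination")] * 30
    + [("non-mated", "inconclusive")] * 19
    + [("non-mated", "identification")] * 1
)
summary = score(records)
print(summary)
```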

Visualizing the Catalytic Cycle of Reform

The dynamic relationship between the legal catalyst, forensic error, and scientific reform can be visualized as a self-reinforcing cycle.

Current Research Priorities and the Scientist's Toolkit

The momentum generated by Daubert and the Madrid case continues to shape the forensic science research agenda. The National Institute of Justice (NIJ), a key funder of forensic research, has outlined strategic priorities that directly address the identified challenges [13].

Table 2: Key Forensic Science Research Priorities and Objectives (2022-2026)

| Strategic Priority | Key Research Objectives |
|---|---|
| Advance Applied R&D | Develop automated tools to support examiners' conclusions; standardize criteria for analysis and interpretation; optimize analytical workflows [13]. |
| Support Foundational Research | Measure accuracy/reliability via black-box studies; identify sources of error via white-box studies; research human factors [13]. |
| Maximize Research Impact | Disseminate research products; support implementation of new methods; assess the role and value of forensic science in the criminal justice system [13]. |
| Cultivate the Workforce | Foster the next generation of researchers; facilitate research within public labs; advance workforce training and continuing education [13]. |

The Scientist's Toolkit: Research Reagent Solutions

For researchers embarking on studies of forensic method error rates, the following "reagents" are essential:

- Black-Box Study Designs: The core methodology for estimating real-world accuracy. Function: Provides an empirical measure of an examiner's decision-making performance by presenting them with samples of known origin under blinded conditions [10].

- Open-Set vs. Closed-Set Designs: Two fundamental experimental structures. Function: Closed-set designs (all samples come from a known pool) simplify analysis, while open-set designs (including samples from unknown sources outside the pool) more accurately mimic casework complexity and help measure the rate of inconclusive decisions [10].

- Statistical Models for Inconclusive Results: Advanced statistical frameworks. Function: Allow researchers to model the impact of inconclusive findings on error rates, providing a bounded estimate of potential error rather than a single, potentially misleading number [12].

- Human Factors Analysis: A research approach borrowed from psychology and engineering. Function: Identifies cognitive biases (e.g., contextual bias, confirmation bias) and ergonomic factors that contribute to examiner error, leading to improved protocols and procedures [13].

- Validated Reference Material Databases: Curated, diverse collections of known evidence samples (e.g., fingerprints, cartridge cases, fibers). Function: Serves as the ground-truth foundation for creating valid and reliable black-box study stimuli, ensuring that results are based on realistic and forensically relevant materials [13].

The journey from the Supreme Court's chamber in Daubert to the fingerprint misidentification in the Madrid bombing investigation has forged a new era of accountability in forensic science. The legal catalyst established a framework for scrutiny, while the practical failure provided an undeniable impetus for change. Together, they ignited a sustained research enterprise focused on empirically validating forensic methods through black-box studies and a clear-eyed assessment of error rates. For the research community, this history underscores a critical mandate: to continue developing rigorous, transparent, and statistically sound methods for measuring reliability. The ultimate goal is a forensic science system that is not only effective in the pursuit of justice but is also fundamentally and demonstrably scientific.

In the rigorous world of scientific research, particularly in fields concerned with error rates such as forensic method validation, the choice of experimental design is paramount. It directly determines the validity, reliability, and ultimate interpretability of the data. Three core methodological components—randomized designs, double-blind protocols, and open-set recognition techniques—serve as critical pillars for minimizing bias, establishing causality, and ensuring that systems perform reliably in real-world conditions. This guide provides a comparative analysis of these foundational designs, framing them within the context of black-box studies and forensic error rate research. It is tailored for researchers, scientists, and drug development professionals who require a clear understanding of the experimental protocols, advantages, and limitations of each approach to design robust and defensible studies.

Comparative Analysis of Research Designs

The table below summarizes the key characteristics, applications, and quantitative measures associated with randomized, double-blind, and open-set designs.

Table 1: Comparison of Key Research Design Components

| Feature | Randomized Designs | Double-Blind Protocols | Open-Set Recognition |
|---|---|---|---|
| Primary Function | Assigns subjects to groups by chance to eliminate selection bias [14]. | Withholds treatment allocation information from participants and researchers to prevent bias [15]. | Enables classification of known categories and identification of unknown inputs [16]. |
| Core Methodology | Random allocation using simple, block, or stratified methods [14]. | Concealment of group identity (e.g., treatment vs. placebo) from subjects and investigators [15]. | Utilizes prototype learning, one-versus-all frameworks, and threshold calibration [16]. |
| Key Advantage | Maximizes internal validity; balances known and unknown confounding factors [17] [18]. | Minimizes performance and assessment bias, plus placebo effects [15]. | Acknowledges and manages real-world uncertainty where not all classes are pre-defined. |
| Common Applications | Clinical trials, efficacy studies, causal inference research [19] [18]. | Drug efficacy trials, psychological interventions, any study susceptible to subjective judgment [15]. | Autonomous navigation, medical diagnostics, cybersecurity, and forensic analysis [16]. |
| Typical Data Output | Causal effect size with measures of statistical significance (p-values, confidence intervals). | Treatment effect estimates free of observer and participant bias. | Classification labels with "unknown" flags; confidence scores for known classes. |
| Key Error Metrics | Type I (false positive) and Type II (false negative) error rates [18]. | Inflation of effect size due to failed blinding; increased risk of Type I error [15]. | False Positive Rate (FPR) and False Negative Rate (FNR); the latter is often overlooked [20]. |

Detailed Examination of Components

Randomized Designs

Randomized designs refer to the experimental strategy where participants are allocated to different study groups (e.g., treatment or control) using a chance mechanism, ensuring every participant has an equal probability of being assigned to any group [14].

Experimental Protocol for Randomization

- Define Population and Sample: Clearly specify the target population and use a sampling method (often random sampling) to obtain the initial study participants.

- Choose Randomization Technique: Select an appropriate method:

  - Simple Randomization: Using a random number generator or coin flip for each assignment. Best for large sample sizes [14].

  - Block Randomization: Participants are divided into small blocks (e.g., of 4 or 6) to ensure equal group sizes at multiple points during recruitment. This prevents an imbalance in the number of subjects per group [14].

  - Stratified Randomization: Participants are first grouped (stratified) based on key prognostic factors (e.g., age, disease severity). Within each stratum, randomization is performed to ensure balance of these specific factors across the study groups [14].

- Implement Allocation Concealment: The sequence of group assignments should be concealed from the researchers enrolling participants. This prevents selection bias, as the person enrolling a subject cannot know or influence the upcoming assignment [18].

- Execute Assignment: As each eligible participant is enrolled, the next assignment in the concealed sequence is revealed.
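The block and stratified techniques in the protocol above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the arm names, participant identifiers, strata, and fixed seed are all hypothetical, and a real trial would generate and conceal the sequence through an independent party.

```python
import random
from collections import defaultdict


def block_randomize(n_participants, arms=("treatment", "control"), block_size=4, seed=0):
    """Return an allocation sequence built from shuffled, balanced blocks."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)
    sequence = []
    while len(sequence) < n_participants:
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)  # order within each block is random, counts are fixed
        sequence.extend(block)
    return sequence[:n_participants]


def stratified_randomize(stratum_of, arms=("treatment", "control"), block_size=4, seed=0):
    """Block-randomize separately within each stratum.

    stratum_of maps participant id -> stratum label (e.g., disease severity).
    """
    by_stratum = defaultdict(list)
    for pid, stratum in stratum_of.items():
        by_stratum[stratum].append(pid)
    assignment = {}
    for offset, stratum in enumerate(sorted(by_stratum)):
        members = by_stratum[stratum]
        sequence = block_randomize(len(members), arms, block_size, seed + offset)
        assignment.update(zip(members, sequence))
    return assignment


# Hypothetical participants split into two strata.
strata = {f"P{i:02d}": ("severe" if i % 2 else "mild") for i in range(8)}
allocation = stratified_randomize(strata)
for pid in sorted(allocation):
    print(pid, strata[pid], allocation[pid])
```

Balance is exact only when a stratum's size is a multiple of the block size; a partially filled final block can leave a small imbalance.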

The following workflow diagram illustrates the key decision points in selecting and implementing a randomization strategy.

Strengths and Limitations

Randomized designs are considered the gold standard for establishing causal relationships because they minimize selection bias and confounding, ensuring that the groups are comparable at baseline [17] [18]. However, they can be logistically challenging, expensive, and sometimes unethical. Furthermore, their strict inclusion criteria can limit the generalizability (external validity) of the findings to real-world populations [19] [17] [18].

Double-Blind Protocols

A double-blind study is one in which both the subjects and the researchers directly involved in the study (e.g., those administering treatment or assessing outcomes) are kept unaware of (blinded to) the treatment allocation [15] [21].

Experimental Protocol for Double-Blinding

- Preparation of Interventions: The investigational treatment and the control (e.g., placebo or active comparator) are prepared by a third party (e.g., a pharmacist or an independent methodologist) not involved in patient care or outcome assessment.

- Coding: Each intervention is assigned a unique code. The master list linking codes to actual treatments is held securely and is inaccessible to investigators and participants.

- Distribution: The coded interventions are distributed to the study sites and administered to participants based on their randomization assignment.

- Maintaining the Blind: Throughout the trial, all personnel (clinicians, nurses, data collectors) and participants are prevented from discovering the treatment codes. This includes using placebos that are identical in appearance, smell, and taste to the active treatment.

- Unblinding Procedure: A formal procedure for emergency unblinding must be established in advance. Any unblinding that occurs before the trial ends, whether planned or accidental, must be documented and reported, as it is a potential source of bias [15].
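The coding step of this protocol can be illustrated with a short sketch. It assumes a third party runs the code and keeps the master list. The function name, the `schedule` values, and the 8-character hexadecimal code format are hypothetical choices for illustration.

```python
import secrets

def build_master_list(assignments):
    """Give each allocation an opaque kit code.

    Returns (master_list, site_view): the master list maps code -> treatment
    and stays with the third party; the site view holds codes only.
    """
    master_list = {}
    for assignment in assignments:
        code = secrets.token_hex(4)       # random code, unrelated to treatment
        while code in master_list:        # regenerate on the rare collision
            code = secrets.token_hex(4)
        master_list[code] = assignment
    site_view = list(master_list)         # codes in dispensing order
    return master_list, site_view

# Hypothetical randomization schedule for four participants.
schedule = ["active", "placebo", "placebo", "active"]
master_list, site_view = build_master_list(schedule)
```

Only `site_view` would be shared with study sites. Consulting `master_list` corresponds to unblinding, so each lookup should be logged.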

The logical structure of a double-blind, randomized, placebo-controlled trial, which is considered the gold standard for therapeutic validation, is shown below.

Strengths and Limitations

The primary strength of double-blinding is its power to minimize multiple forms of bias, including performance bias (if caregivers treat groups differently) and assessment/detection bias (if outcome assessors interpret results differently) [15]. It also helps control for placebo effects. The main limitations are practical: it is not always feasible to blind participants or clinicians (e.g., in surgical trials), and maintaining the blind throughout the study can be complex [15] [22].

Open-Set Recognition

Open-set recognition is a classification paradigm in machine learning and pattern recognition where the system is trained on a set of known classes but must also correctly identify and flag inputs that belong to unknown classes not encountered during training [16].

Experimental Protocol for Open-Set Validation

- Dataset Partitioning: Split data into training, validation, and test sets. Crucially, the training set contains only data from "known" classes. The validation and test sets contain data from both known classes and "unknown" classes that are withheld from training.

- Model Training: Train a classifier on the known classes only, so that the withheld classes remain genuinely unseen until validation and testing.

- Threshold Calibration: Using the validation set, determine a decision threshold on the model's output (e.g., confidence score, distance to a prototype) to decide when to classify an input as "unknown."

- Performance Evaluation: Test the model on the held-out test set. Reporting must include metrics for both the classification of known classes and the correct rejection of unknown classes.
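The threshold calibration and evaluation steps can be sketched as follows. This is a simplified illustration, not the method of any cited study: it calibrates the threshold from known-class validation scores alone, and the score values, class labels, and 90% acceptance target are made-up assumptions.

```python
def calibrate_threshold(known_scores, target_known_acceptance=0.95):
    """Choose the score below which an input is flagged as 'unknown'.

    Roughly the target fraction of known-class validation samples
    will score at or above the returned threshold.
    """
    ranked = sorted(known_scores, reverse=True)
    k = max(1, int(round(target_known_acceptance * len(ranked))))
    return ranked[k - 1]

def predict(score, label, threshold):
    """Keep the classifier's label only when its confidence clears the threshold."""
    return label if score >= threshold else "unknown"

def evaluate(samples, threshold):
    """samples: (confidence, predicted_label, true_label) tuples.

    true_label is 'unknown' for classes withheld from training.
    """
    known = [s for s in samples if s[2] != "unknown"]
    unknown = [s for s in samples if s[2] == "unknown"]
    known_correct = sum(predict(c, p, threshold) == t for c, p, t in known)
    rejected = sum(predict(c, p, threshold) == "unknown" for c, p, _ in unknown)
    return {
        "known_accuracy": known_correct / len(known),
        "unknown_rejection_rate": rejected / len(unknown),
    }

# Hypothetical validation scores for known-class samples.
validation_scores = [0.99, 0.97, 0.95, 0.92, 0.90, 0.88, 0.85, 0.80, 0.75, 0.60]
threshold = calibrate_threshold(validation_scores, target_known_acceptance=0.9)

# Hypothetical held-out test set mixing known classes and withheld classes.
test_samples = [
    (0.98, "A", "A"), (0.91, "B", "B"), (0.70, "A", "A"), (0.82, "B", "A"),
    (0.40, "A", "unknown"), (0.78, "B", "unknown"),
]
report = evaluate(test_samples, threshold)
```

Reporting both numbers in `report` reflects the requirement above that known-class accuracy and unknown-class rejection be stated together.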

The conceptual process for developing and testing an open-set recognition system is outlined below.

Strengths and Limitations in Forensic Context

Open-set recognition is crucial for real-world applications where systems cannot be trained on every possible object or pattern they will encounter. In forensic science, this is analogous to an examiner declaring a piece of evidence "inconclusive" or "not from a known source." A critical strength is its formal framework for handling the unknown. A major limitation, as highlighted in forensic literature, is the frequent failure to empirically validate and report the false negative rate (FNR)—the risk of incorrectly excluding a true source—which can have serious consequences in closed-pool suspect scenarios [20].

Essential Research Reagents and Solutions

The following table details key methodological "reagents" essential for implementing the three core components discussed.

Table 2: Key Research Reagents and Methodological Solutions

| Reagent/Solution | Function | Relevant Design |

|---|---|---|

| Random Number Generator (RNG) | Generates a statistically random sequence for assigning participants to groups, forming the foundation of unbiased allocation [14]. | Randomized Designs |

| Allocation Concealment Mechanism | A tool (e.g., sealed opaque envelopes or a secure computer system) that implements allocation concealment to prevent foreknowledge of the next assignment and thus selection bias [18]. | Randomized Designs |

| Placebo | An inert substance or procedure designed to be indistinguishable from the active intervention in every way (appearance, smell, administration). This is the key reagent for blinding [15]. | Double-Blind Protocols |

| Coded Intervention Pack | The physical or digital kit containing the active treatment or placebo, identifiable only by a unique code that is linked to the master allocation list held by a third party. | Double-Blind Protocols |

| Validated Known-Class Dataset | A curated and labeled dataset representing the "known" classes used to train the core classification model. Its quality and representativeness are paramount. | Open-Set Recognition |

| Curated Unknown-Class Library | A small, evolving library of samples from novel or unknown classes. Used during deployment to adapt the model and refine the decision threshold for rejection [16]. | Open-Set Recognition |

| Decision Threshold Algorithm | The method (e.g., based on maximum softmax probability or distance metrics in a latent space) for calibrating the system's sensitivity to flagging unknown inputs [16]. | Open-Set Recognition |

In forensic science, particularly in disciplines such as firearm and toolmark examination, "black-box studies" are instrumental for estimating the reliability of expert conclusions. These studies measure how often examiners correctly identify or eliminate sources (true positives and true negatives) and how often they err (false positives and false negatives). A false positive occurs when an examiner incorrectly concludes that two items share a common origin (an identification), when in fact they do not. Conversely, a false negative occurs when an examiner incorrectly concludes that two items do not share a common origin (an elimination), when in fact they do [20].

Accurately measuring these error rates is not just an academic exercise; it is a fundamental requirement for establishing the scientific validity of a forensic method. The 2016 report from the President's Council of Advisors on Science and Technology (PCAST) emphasized that a forensic method is not scientifically valid unless its error rates have been measured in studies that reflect casework conditions [20] [23]. Despite this, a significant asymmetry exists in forensic practice. While recent reforms have focused on reducing false positives, the risk of false negatives has often been overlooked [20]. This is a critical gap, as false negatives can be equally detrimental, especially in cases involving a closed pool of suspects where an elimination can function as a de facto identification of another individual [20].

This guide provides a comparative analysis of the current state of error rate measurement in forensic black-box studies, detailing the key findings, methodological challenges, and essential components for robust experimental design.

Current Landscape of Forensic Error Rate Studies

Key Findings from Black-Box Studies

The table below summarizes the core challenges and findings regarding error rate estimation in forensic firearm comparisons, as revealed by recent analyses and studies.

| Aspect | Key Finding | Implication |

|---|---|---|

| State of Validity | The scientific validity of forensic firearm comparisons has not been demonstrated, as adequate studies on accuracy and reproducibility are lacking [23]. | Statements about the common origin of bullets or cartridge cases based on individual characteristics currently lack a scientific foundation [23]. |

| Methodological Foundation | A 2024 evaluation concluded that every existing black-box study of forensic firearm comparisons has methodological flaws so grave that they render the studies invalid [23]. | Current error rates for firearms examiners, both collectively and individually, remain unknown [23]. |

| Reporting Asymmetry | Professional guidelines and major government reports have focused on false positive rates, often failing to report false negative rates [20]. | The potential for false negative errors has escaped scrutiny, leading to unmeasured error and potential miscarriages of justice [20]. |

| Context Dependence | An examiner's performance can vary substantially based on the specific conditions of the case (e.g., quality of the evidence) [24]. | A single, general error rate is insufficient; error rates must be estimated under conditions that reflect the specific circumstances of a case [24]. |

The False Negative Challenge in Forensic Practice

The problem of false negatives is particularly acute. An elimination conclusion based on class characteristics or intuitive judgment, without empirical support, carries a high risk of error [20]. This risk is compounded by contextual bias, where an examiner's knowledge of investigative constraints (e.g., a closed suspect pool) can unconsciously influence their decision-making [20]. Consequently, an elimination must be subjected to the same rigorous empirical validation as an identification to ensure the integrity of forensic conclusions.

Experimental Protocols for Valid Error Rate Studies

To address the methodological flaws in prior research, future black-box studies must adhere to rigorous experimental design and statistical analysis protocols. The following outlines the key requirements for producing scientifically valid error rates.

Core Methodological Requirements

- Comprehensive Error Reporting: Studies must move beyond reporting only false positive rates. A complete assessment of a method's accuracy requires the balanced reporting of both false positive and false negative rates to provide a full picture of performance [20].

- Individual Examiner Performance Data: For a likelihood ratio or error rate to be meaningful in a specific case, the underlying data must be representative of the performance of the particular examiner who performed the analysis. A model trained on data pooled from multiple examiners may not accurately reflect the skill level of an individual practitioner [24].

- Condition-Specific Validation: The test trials used in a validation study must reflect the conditions of the case at hand. This includes factors such as the quality of the questioned item and the characteristics of the known-source item. An examiner's performance, and thus their error rate, can vary significantly between challenging and straightforward conditions [24].

- Rigorous Design and Analysis: As statisticians with expertise in experimental design have highlighted, past studies have suffered from fundamental flaws in their design and statistical analysis. Future studies must be developed with expert statistical input to ensure they are capable of producing reliable estimates of examiner performance [23].

Protocol for a Condition-Specific Black-Box Study

The following workflow details the steps for conducting a black-box study designed to generate valid, condition-specific error rates. This process addresses the core methodological requirements and is adapted from proposals in the literature [24].

Diagram: Black-Box Study Workflow

Workflow Steps Explained:

- Define Casework Conditions: Subject-area expertise is used to determine the relevant sets of conditions for the study (e.g., for a fired cartridge case, this could include caliber, firearm type, and quality of the impression) [24].

- Select Examiner Cohort: Identify the examiners who will participate in the study.

- Develop Test Trials: Create a set of test trials where the ground truth (same-source or different-source) is known. The items in these trials must reflect the conditions defined in the first step (Define Casework Conditions).

- Administer Trials: The trials are administered to examiners in a blinded and randomized fashion to prevent contextual bias [20].

- Collect Categorical Conclusions: Examiners provide their conclusions using a standardized scale (e.g., Identification, Inconclusive A, Inconclusive B, Inconclusive C, Elimination) [24].

- Calculate Ground-Truth Conditional Probabilities: For each examiner, calculate the probability of each categorical conclusion given that the items were from the same source, and the probability given they were from different sources [24].

- Model Individual Examiner Performance: Use statistical models, such as Bayesian methods with informed priors from multiple examiners updated by the individual's data, to estimate performance specific to that examiner [24].

- Generate Key Outputs: The model produces two critical outputs:

  - Individual Error Rates: Calculation of the examiner's specific false positive and false negative rates [20] [24].

  - Condition-Specific Likelihood Ratios: Conversion of categorical conclusions into a likelihood ratio that is meaningful for the specific case conditions and the individual examiner's performance [24].
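The last three steps (conditional probabilities, individual modelling, and key outputs) can be sketched with a simple Dirichlet-multinomial update, the multi-category analogue of a beta-binomial model. This is an illustrative simplification, not the model specified in [24]. All counts are invented, and the `prior_strength` parameter and the add-one smoothing of the pooled prior are assumptions made for the example.

```python
SCALE = ["Identification", "Inconclusive A", "Inconclusive B",
         "Inconclusive C", "Elimination"]

def posterior_probs(examiner_counts, pooled_counts, prior_strength=10.0):
    """Posterior mean of P(conclusion | ground truth) for one examiner.

    The pooled counts from many examiners define the prior; prior_strength
    is how many pseudo-trials that prior is worth.
    """
    pooled_total = sum(pooled_counts.get(c, 0) for c in SCALE)
    n = sum(examiner_counts.get(c, 0) for c in SCALE)
    probs = {}
    for c in SCALE:
        # Add-one smoothing keeps every prior pseudo-count positive.
        prior = prior_strength * (pooled_counts.get(c, 0) + 1) / (pooled_total + len(SCALE))
        probs[c] = (examiner_counts.get(c, 0) + prior) / (n + prior_strength)
    return probs

def likelihood_ratio(conclusion, p_same, p_diff):
    """P(conclusion | same source) / P(conclusion | different sources)."""
    return p_same[conclusion] / p_diff[conclusion]

# Hypothetical pooled data (all examiners) and one examiner's own trials.
pooled_same = {"Identification": 800, "Inconclusive A": 80, "Inconclusive B": 50,
               "Inconclusive C": 30, "Elimination": 40}
pooled_diff = {"Identification": 10, "Inconclusive A": 40, "Inconclusive B": 100,
               "Inconclusive C": 150, "Elimination": 700}
examiner_same = {"Identification": 42, "Inconclusive A": 4,
                 "Inconclusive B": 2, "Elimination": 2}
examiner_diff = {"Inconclusive B": 5, "Inconclusive C": 8, "Elimination": 37}

p_same = posterior_probs(examiner_same, pooled_same)
p_diff = posterior_probs(examiner_diff, pooled_diff)

false_positive_rate = p_diff["Identification"]  # identification, different sources
false_negative_rate = p_same["Elimination"]     # elimination, same source
lr_identification = likelihood_ratio("Identification", p_same, p_diff)
```

With few trials from an individual examiner the estimates stay close to the pooled prior. As that examiner's data accumulate, the estimates move toward the examiner's own observed rates.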

The Scientist's Toolkit: Research Reagent Solutions

The table below details essential materials and conceptual tools required for conducting robust error rate studies in forensic science.

| Tool / Reagent | Function in Research |

|---|---|

| Validated Test Materials | Sets of items (e.g., cartridge cases, bullets) with known ground truth used to create test trials that reflect real-world casework conditions [24]. |

| Standardized Conclusion Scales | Ordinal scales (e.g., the AFTE Range of Conclusions) that provide a consistent framework for examiners to report their decisions, enabling data pooling and analysis [24]. |

| Statistical Models for Individual Performance | Bayesian models (e.g., beta-binomial) that leverage pooled data from multiple examiners as an informed prior, which is then updated with data from a specific examiner to estimate their personal error rates [24]. |

| Likelihood Ratio Framework | The logically correct framework for interpreting forensic evidence, which quantifies the strength of evidence for one proposition (same source) against an alternative proposition (different sources) [24]. |

| Blinded Testing Protocols | Experimental procedures that prevent examiners from having access to extraneous contextual information, thereby mitigating contextual bias and producing more reliable error rate estimates [20]. |

| Conditional Probability Calculations | The mathematical foundation for calculating likelihood ratios and error rates, based on the probability of an examiner's response given same-source and different-source scenarios [24]. |

The accurate measurement of both false positive and false negative rates is a cornerstone of scientifically valid forensic practice. Current research indicates that this field is in a state of development, with existing black-box studies suffering from significant methodological shortcomings [23]. A paradigm shift is required—one that moves from reporting aggregate, general error rates to adopting a more nuanced approach that accounts for individual examiner performance and specific casework conditions [24]. By implementing the rigorous experimental protocols and utilizing the tools outlined in this guide, the forensic science community can generate the reliable error rate data necessary to uphold the integrity of forensic conclusions and strengthen the administration of justice.

From Theory to Practice: Implementing Black-Box Studies in Firearms and Latent Prints

The interpretation of forensic fingerprint evidence has relied on examiner expertise for over a century, yet until 2011, its accuracy and reliability had not been systematically measured through large-scale empirical research [25]. Increased scrutiny of the discipline emerged following highly publicized misidentifications, including the 2004 Madrid train bombing case where the FBI erroneously identified Oregon attorney Brandon Mayfield [26] [27]. These errors, combined with legal challenges to the scientific basis of fingerprint evidence under the Daubert standard—which requires courts to consider a method's known or potential error rate—created an urgent need for rigorous validation studies [26].

In response, the FBI Laboratory commissioned a groundbreaking black-box study to examine the accuracy and reliability of forensic latent fingerprint decisions [26] [25]. This research approach, conceived by physicist and philosopher Mario Bunge, treats examiners as "black boxes" where inputs (fingerprint pairs) are entered and outputs (decisions) emerge without considering the internal decision-making processes [26]. The study, conducted in partnership with the scientific nonprofit Noblis, represented a pivotal moment for forensic science, marking the first large-scale effort to empirically measure the performance of latent print examiners under controlled conditions [25].

Experimental Design and Methodologies

Core Study Parameters and Participant Recruitment

The FBI/Noblis study was designed to replicate operational conditions while maintaining scientific rigor through a double-blind, open-set, randomized approach [26]. The research team developed specific parameters to ensure statistically meaningful results while incorporating realistic casework challenges.

Table 1: Key Study Design Parameters

| Parameter Category | Specification | Rationale |

|---|---|---|

| Participants | 169 practicing latent print examiners | Broad representation from federal, state, local agencies, and private practice [25] |

| Experience Level | Median 10 years; 83% certified | Representative of qualified practitioner community [25] |

| Fingerprint Data | 744 latent-exemplar image pairs (520 mated, 224 nonmated) | Sufficient volume for statistical analysis while encompassing quality range [25] |

| Assignment Structure | Each examiner received ~100 pairs from total pool | Open-set design prevents process of elimination; mirrors real AFIS searches [26] |

| Image Selection | Experts curated pairs from larger pool to include challenging comparisons | Intentionally incorporate difficult determinations to establish upper error bounds [26] [25] |

| Presentation Software | Custom-developed application with limited image processing capabilities | Standardized testing environment while maintaining operational relevance [25] |

The Examination Process and Decision Framework

The study evaluated examiners using the Analysis, Comparison, Evaluation, and Verification (ACE-V) method, the prevailing approach in latent print examination [26] [25]. However, a significant design decision excluded the verification step for all decisions, allowing researchers to establish baseline error rates without the safety net of peer review [26]. Participants could render one of four decisions at key points in the examination process:

- No Value: The latent print was unsuitable for comparison

- Individualization: The latent and exemplar originated from the same source (Identification)

- Exclusion: The latent and exemplar originated from different sources

- Inconclusive: Neither individualization nor exclusion could be determined

The fingerprint data incorporated intentional challenges, including low-quality latents and nonmated pairs selected through AFIS searches to identify "close non-matches" [25]. This design element was crucial for measuring performance boundaries rather than optimal conditions.

Diagram 1: Experimental workflow of the FBI/Noblis latent print study

Key Findings and Quantitative Results

Accuracy Metrics and Error Rates

The 2011 study yielded groundbreaking quantitative data on examiner performance, providing the first large-scale error rate estimates for the latent print discipline [25]. The results demonstrated a notable asymmetry between false positive and false negative errors.

Table 2: Primary Accuracy Findings from FBI/Noblis Study

| Decision Type | Mated Pairs (Same Source) | Nonmated Pairs (Different Sources) | Error Classification |

|---|---|---|---|

| Individualization | 62.6% (True Positive) | 0.1% (False Positive) | False positive error: 1 in 1,000 |

| Exclusion | 7.5% (False Negative) | 69.8% (True Negative) | False negative error: 7.5 in 100 |

| Inconclusive | 17.5% | 12.9% | Context-dependent interpretation |

| No Value | 15.8% | 17.2% | Not considered in error rate calculations |

The false positive rate of 0.1% translates to examiners wrongly identifying two prints as coming from the same source only once in every 1,000 determinations [26]. Conversely, the false negative rate of 7.5% means examiners incorrectly excluded mated pairs nearly 8 out of 100 times [26] [25]. This asymmetry suggests the discipline is tilted toward avoiding false incriminations, a conservative approach that may reflect the serious consequences of wrongful convictions.

Reproducibility and Follow-up Research

The legacy of the original FBI/Noblis study continues through ongoing research. A 2025 follow-up study examined examiner performance with next-generation identification systems, confirming the general reliability of the discipline while providing updated metrics [28].

Table 3: Comparison of Original and 2025 Follow-up Findings

| Performance Metric | 2011 FBI/Noblis Study | 2025 Follow-up Study |

|---|---|---|

| False Positive Rate | 0.1% | 0.2% |

| False Negative Rate | 7.5% | 4.2% |

| Inconclusive Rate (Mated) | 17.5% | 17.5% |

| Inconclusive Rate (Nonmated) | 12.9% | 12.9% |

| No Value Rate (Mated) | 15.8% | 15.8% |

| No Value Rate (Nonmated) | 17.2% | 17.2% |

| Primary Concern | Potential for false positives | Individual examiner variability (one participant made majority of false IDs) |

The 2025 study noted that despite concerns that larger AFIS databases might increase false identification risks, no evidence supported this hypothesis, suggesting that risk mitigation strategies at implementing agencies may be effective [28].

Impact on Forensic Science and Legal Proceedings

Legal Admissibility and Judicial Scrutiny

The FBI/Noblis study rapidly influenced legal proceedings, with courts referencing its findings almost immediately after publication [26]. In one notable case involving a bombing at the Edward J. Schwartz federal courthouse in San Diego, the study results were cited in an opinion denying a motion to exclude FBI latent print evidence [26]. This demonstrated the practical legal significance of black-box validation studies for satisfying Daubert factors, particularly the requirement for known error rates.

The study also provided courts with scientifically rigorous data to assess the validity of fingerprint evidence, offering empirical support for what had previously been accepted largely based on historical precedent and practitioner experience [26] [27]. This shift toward evidence-based forensic science represented a significant development in both legal and scientific communities.

Methodological Legacy and Discipline Reform

The President's Council of Advisors on Science and Technology (PCAST) later cited the FBI/Noblis study as an exemplary model for black-box research in its 2016 report, recommending similar approaches for other forensic disciplines [26]. The study's design elements—including its scale, diversity of participants, incorporation of challenging comparisons, and double-blind protocols—established a benchmark for future forensic validation research.

The research also prompted increased attention to quality assurance measures within the latent print community. The finding that independent verification could detect all false positive errors and most false negative errors reinforced the importance of robust quality control procedures in operational crime laboratories [25].

Research Materials and Methodological Tools

Essential Research Components for Black-Box Studies

The FBI/Noblis study established several critical components for conducting valid black-box research in forensic science. These elements provide a framework for similar studies across pattern evidence disciplines.

Table 4: Essential Methodological Components for Forensic Black-Box Studies

| Component | Function | Implementation in FBI/Noblis Study |

|---|---|---|

| Double-Blind Design | Eliminates conscious and unconscious bias | Researchers unaware of examiner identities; examiners unaware of ground truth [26] |

| Open-Set Testing | Mimics real-world operational conditions | Examiners received 100 pairs from pool of 744; not every print had corresponding mate [26] [25] |

| Stimulus Diversity | Represents range of casework challenges | Experts selected pairs to include varied quality and difficulty levels [25] |

| Participant Diversity | Enhances generalizability of findings | Examiners from multiple agencies with varied experience levels (0.5-31 years) [25] |

| Standardized Platform | Controls for technological variables | Custom software with consistent image processing capabilities [25] |

| Ground Truth Validation | Ensures accuracy of reference data | Known mated and nonmated pairs with documented sources [25] |

Diagram 2: Conceptual framework of black-box testing methodology

The landmark FBI/Noblis latent print study fundamentally advanced the scientific understanding of fingerprint examination reliability. By providing the first large-scale empirical data on examiner accuracy, it established a new standard for forensic method validation. The findings demonstrated that while false positive identifications were rare (0.1% of nonmated comparisons), false negatives were considerably more common (7.5% of mated comparisons), and that these measurable error rates must be acknowledged and addressed through rigorous quality control procedures.

The study's legacy extends beyond fingerprint evidence, serving as a model for black-box research across forensic disciplines. Its balanced approach—recognizing both the general reliability of the discipline and its specific limitations—provides a template for evidence-based forensic science that meets the demands of the legal system while maintaining scientific integrity. As forensic science continues to evolve toward more rigorous validation standards, the FBI/Noblis study remains a pivotal reference point for researchers, practitioners, and legal professionals engaged in the critical work of forensic evidence evaluation.

Forensic firearm and toolmark examination plays a critical role in the criminal justice system by linking ballistic evidence from crime scenes to specific firearms. This discipline relies on the expertise of highly trained examiners who visually compare microscopic markings on bullets and cartridge cases. Unlike many forensic disciplines that utilize objective, automated metrics, firearm examination remains largely subjective, depending on examiner judgment and experience. The scientific validity of this subjective feature-comparison method has been scrutinized in recent years, leading to calls for rigorous performance assessment through black-box studies that test examiner accuracy under controlled conditions [29].

This case study examines the current state of error rate research for bullet and cartridge case comparisons, focusing specifically on insights gained from black-box studies. We analyze the methodological frameworks employed in key studies, synthesize quantitative error rate data, and explore statistical challenges in interpreting results. The analysis is situated within the broader context of establishing the foundational validity of the forensic firearms discipline, responding directly to recommendations from the National Academy of Sciences (NAS) and President's Council of Advisors on Science and Technology (PCAST) [1] [30].

Experimental Approaches in Black-Box Studies

Core Design Principles

Black-box studies in firearms examination are designed to mimic real-world operational casework while maintaining scientific rigor through controlled conditions and known ground truth. These studies typically share several key design elements:

Open-Set Design: Unlike closed-set designs where a match always exists for every questioned specimen, open-set designs may include items with no matching counterpart, preventing examiners from assuming matches must exist and better simulating actual casework conditions [1].

Independent Pairwise Comparisons: Each comparison set is treated as an independent evaluation, typically consisting of one questioned item and two reference items. This approach avoids the correlated, round-robin comparisons that can artificially inflate performance metrics [29].

Blinded Conditions: Examiners participate without knowledge of the ground truth or study hypotheses, preventing confirmation bias. The compartmentalization of specimen preparation and data collection from examiner interaction preserves study integrity [1].

Specimen Selection and Preparation

The construction of test materials significantly influences study outcomes. Specimens are typically selected to represent a range of challenging scenarios:

Firearm Types: Studies often include firearms with different rifling characteristics, including conventional rifling and polygonal rifling (e.g., Glock generations 1-4), which leaves fewer reproducible individual characteristics on bullets and presents greater comparison difficulty [29].

Ammunition Variants: Both jacketed hollow-point (JHP) and full metal jacket (FMJ) bullets are included. JHP bullets are designed to expand on impact, potentially creating greater deformation that complicates comparison [29].

Comparison Types: Studies evaluate both Known-Questioned (KQ) comparisons (unknown evidence compared to known exemplars from a specific firearm) and Questioned-Questioned (QQ) comparisons (two unknown bullets compared to determine if they came from the same source) [31].

Table 1: Key Design Elements in Major Black-Box Studies

| Study Feature | Hicklin et al. (2024) | Monson et al. (2022) | Dunagan et al. (2024) |

|---|---|---|---|

| Sample Size | 49 examiners, 3,156 comparisons | 173 examiners, 8,640 comparisons | 49 examiners, 3,156 comparisons |

| Design | Known-Questioned & Questioned-Questioned | Open-set | Known-Questioned & Questioned-Questioned |

| Firearm Types | Conventional & polygonal rifling | Consecutively manufactured barrels | Multiple makes/models |

| Ammunition Types | JHP & FMJ | Steel-jacketed | JHP & FMJ |

| Specimen Quality | Pristine to damaged | Challenging specimens | Range of quality levels |

Quantitative Findings: Error Rates and Performance Metrics

Recent comprehensive black-box studies have produced error rate estimates that provide insights into examiner performance under testing conditions. The 2022 study by Monson et al., one of the largest to date, reported the following overall error rates [1]:

Table 2: Overall Error Rates from Monson et al. (2022) Study

| Error Type | Bullets | Cartridge Cases |

|---|---|---|

| False Positive | 0.656% (95% CI: 0.305%, 1.42%) | 0.933% (95% CI: 0.548%, 1.57%) |

| False Negative | 2.87% (95% CI: 1.89%, 4.26%) | 1.87% (95% CI: 1.16%, 2.99%) |

These findings are particularly notable as the study utilized challenging specimens designed to push the limits of examiner capability, suggesting these error rates may represent an upper bound compared to what might be expected with less challenging casework specimens [1].
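To show how an interval like those in the table accompanies a point estimate, the sketch below computes a Wilson score interval for a single error proportion. This is a swapped-in, simpler technique, not necessarily the procedure used by Monson et al. The counts are hypothetical, and the method treats every comparison as independent, ignoring clustering of decisions within examiners.

```python
import math

def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical tally: 20 false positives in 2,000 different-source comparisons.
low, high = wilson_interval(20, 2000)
print(f"point estimate {20 / 2000:.3%}, 95% interval ({low:.3%}, {high:.3%})")
```

An interval that accounts for examiner-to-examiner variation would generally be wider than this independence-based one.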

Factors Influencing Decision Accuracy

The 2024 Hicklin et al. study identified several factors that significantly impact comparison difficulty and decision outcomes [29] [31]:

Rifling Type: Examiners had substantially higher rates of inconclusive responses and lower identification rates for bullets fired from firearms with polygonal rifling compared to conventional rifling.

Bullet Quality: The rate of inconclusive responses was inversely related to the quality of the questioned bullets, with damaged or suboptimal specimens producing more indeterminate conclusions.

Ammunition Compatibility: Comparisons involving different types of ammunition fired from the same firearm resulted in high rates of erroneous exclusions.

Firearm Relatedness: The rate of true exclusions was particularly high when comparing different caliber bullets and was higher for comparisons of different firearm makes/models versus the same model.

Methodological Challenges and Statistical Considerations

The Inconclusive Dilemma

A significant challenge in interpreting black-box studies lies in how inconclusive results are treated statistically. Inconclusive responses occur frequently in firearms comparison studies, particularly with challenging specimens, and their treatment dramatically impacts reported error rates [10] [12].

Researchers have identified three primary approaches to handling inconclusive results in error rate calculations, each with different implications:

- Exclusion: Inconclusive responses are removed from error rate calculations entirely

- Correct Classification: Inconclusives are counted as correct responses

- Incorrect Classification: Inconclusives are counted as errors

A Bayesian analysis by Carriquiry et al. (2023) demonstrated that error rates currently reported as low as 0.4% could potentially be as high as 8.4% in models that account for non-response, and over 28% when inconclusives are counted as missing responses [30]. This highlights the critical importance of transparent reporting regarding how inconclusive results are treated.
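The sensitivity of a reported error rate to the three treatments listed above can be illustrated with a short calculation. The sketch below uses hypothetical counts chosen only for illustration; the function name and dictionary keys are assumptions, not terminology from the cited studies.

```python
def error_rates_by_treatment(errors: int, correct: int, inconclusive: int) -> dict[str, float]:
    """Error rate under three treatments of inconclusive responses."""
    conclusive = errors + correct
    total = conclusive + inconclusive
    if conclusive == 0:
        raise ValueError("at least one conclusive response is required")
    return {
        # Exclusion: inconclusives are dropped from the denominator.
        "excluded": errors / conclusive,
        # Correct classification: inconclusives stay in the denominator as non-errors.
        "counted_correct": errors / total,
        # Incorrect classification: inconclusives are added to the errors.
        "counted_incorrect": (errors + inconclusive) / total,
    }


# Hypothetical counts: 10 errors, 690 correct conclusions, 300 inconclusives.
rates = error_rates_by_treatment(errors=10, correct=690, inconclusive=300)
for treatment, rate in rates.items():
    print(f"{treatment:>18}: {rate:.2%}")
```

With these made-up counts the same data yield roughly 1.4%, 1.0%, and 31%, which is why a study should state which treatment underlies its headline figure.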

Signal Detection Theory Applications

Recent research has advocated for applying signal detection theory to better understand firearm examiner performance. This approach distinguishes between accuracy (discriminability) and response bias, providing a more nuanced understanding of examiner decision-making [32].
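As a rough illustration of how signal detection theory separates discriminability from response bias, the sketch below computes the standard equal-variance Gaussian measures d′ and criterion c from a 2×2 table. It assumes, for illustration only, that responses are collapsed to "identification" versus "anything else"; how inconclusives should be mapped is itself a modelling choice that the cited work treats in more depth. The counts are hypothetical.

```python
from statistics import NormalDist


def sdt_measures(hits: int, misses: int, false_alarms: int, correct_rejections: int) -> tuple[float, float]:
    """Return (d_prime, criterion) under an equal-variance Gaussian model.

    hits / misses: same-source pairs called / not called identification.
    false_alarms / correct_rejections: different-source pairs called / not called identification.
    """
    # Log-linear correction (add 0.5 per cell) keeps the rates away from 0 and 1,
    # where the inverse normal CDF is undefined.
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    d_prime = z(hit_rate) - z(fa_rate)             # discriminability
    criterion = -0.5 * (z(hit_rate) + z(fa_rate))  # response bias; > 0 means conservative
    return d_prime, criterion


# Hypothetical examiner: cautious about calling identifications.
d_prime, criterion = sdt_measures(hits=70, misses=30, false_alarms=1, correct_rejections=99)
print(f"d' = {d_prime:.2f}, c = {criterion:.2f}")
```

Two examiners with the same d′ can show very different false positive rates simply because one adopts a more conservative criterion, which is the distinction a single error rate cannot express.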

The ordered probit model has been proposed as one method to translate examiner responses into quantitative measures of evidence strength. This model summarizes the distribution of examiner responses along a latent axis representing support for the "same source" proposition, allowing for the calculation of likelihood ratios that express evidential strength numerically [33].

Figure 1: Black-Box Study Workflow for Firearm Evidence Comparisons

Research Reagents and Materials

Successful execution of firearms comparison studies requires carefully selected materials and reagents that approximate real-world conditions while introducing controlled challenges.

Table 3: Essential Research Materials for Firearms Comparison Studies

| Material/Reagent | Function in Research | Examples from Studies |
|---|---|---|
| Consecutively Manufactured Firearms | Tests discrimination of subclass characteristics; assesses individual characteristics | Jimenez JA-9, Beretta M9A3-FDE, Ruger SR-9c [1] |
| Polygonal Rifling Firearms | Creates challenging comparisons with fewer reproducible marks | Glock generations 1-4 [29] |
| Steel-Jacketed Ammunition | Harder substrate that receives fewer toolmarks; creates difficult comparisons | Wolf Polyformance 9mm Luger [1] |
| Jacketed Hollow-Point (JHP) Bullets | Tests comparison of deformed expanded bullets | Various JHP ammunition [29] |
| Full Metal Jacket (FMJ) Bullets | Standard comparison for baseline performance | Various FMJ ammunition [29] |
| Comparison Microscopy Equipment | Standardized examination under controlled conditions | Forensic comparison microscopes [29] |

Alternative Analytical Frameworks

Likelihood Ratio Approach

Traditional categorical conclusions (Identification, Inconclusive, Elimination) have been criticized for potentially overstating evidence strength. Research comparing the verbal conclusion scale to likelihood ratios derived from black-box study data suggests that current terminology may overstate the strength of evidence by several orders of magnitude [33].

The likelihood ratio approach quantifies evidence strength as the ratio of the probability of the observed evidence under two competing propositions (same source versus different sources). This framework allows examiners to communicate their interpretation of evidence strength without making ultimate decisions about source attribution, which properly remains the purview of the trier of fact [33].
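The ratio described above can be sketched directly from black-box response counts. The example below is a simplified empirical estimate, not the ordered probit model discussed earlier: it divides the smoothed relative frequency of each conclusion among same-source pairs by its relative frequency among different-source pairs. All counts, the category labels, and the `pseudo_count` smoothing parameter are hypothetical.

```python
def empirical_likelihood_ratios(
    same_source_counts: dict[str, int],
    different_source_counts: dict[str, int],
    pseudo_count: float = 0.5,
) -> dict[str, float]:
    """LR for each conclusion: P(conclusion | same source) / P(conclusion | different sources)."""
    categories = sorted(set(same_source_counts) | set(different_source_counts))
    # Additive smoothing avoids division by zero when a conclusion was never observed.
    n_same = sum(same_source_counts.values()) + pseudo_count * len(categories)
    n_diff = sum(different_source_counts.values()) + pseudo_count * len(categories)
    ratios = {}
    for category in categories:
        p_same = (same_source_counts.get(category, 0) + pseudo_count) / n_same
        p_diff = (different_source_counts.get(category, 0) + pseudo_count) / n_diff
        ratios[category] = p_same / p_diff
    return ratios


# Hypothetical response counts from a black-box study.
same = {"identification": 900, "inconclusive": 90, "elimination": 10}
different = {"identification": 10, "inconclusive": 290, "elimination": 700}
for conclusion, lr in empirical_likelihood_ratios(same, different).items():
    print(f"{conclusion:>15}: LR = {lr:.3g}")
```

In this made-up data set an identification carries a likelihood ratio of under 100, far weaker than the near-certainty a categorical "identification" may convey, which illustrates the kind of gap the cited comparison reports.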

Figure 2: Ordered Probit Model for Translating Examiner Responses to Quantitative Measures

Black-box studies have provided valuable insights into the performance of forensic firearms examiners, yielding quantitative error rate estimates that inform discussions of foundational validity. The current body of research suggests that while examiners generally demonstrate high accuracy rates under testing conditions, these rates are significantly influenced by multiple factors including specimen quality, firearm type, ammunition characteristics, and methodological decisions regarding the treatment of inconclusive results.

The estimated false positive error rates below 1% and false negative rates between 1.87% and 2.87% from the largest studies provide a benchmark for the discipline, though these figures represent performance under challenging conditions designed to test the limits of examiner capability [1]. The field continues to grapple with complex methodological questions regarding optimal study design, statistical treatment of inconclusive results, and the most appropriate frameworks for communicating evidence strength.

Future research directions should include larger-scale studies with enhanced design to address missing data issues, continued development of quantitative frameworks for evidence evaluation, and exploration of hybrid approaches that combine human expertise with statistical algorithms. As the scientific foundation of firearms examination continues to evolve, transparent reporting of methodological limitations and continued refinement of error rate estimation will be essential for both scientific progress and appropriate application in legal contexts.

The validity of error rates estimated through black-box studies in forensic science is not a preordained fact but a direct consequence of study design. Two pillars of this design, item bank construction and participant sampling, fundamentally shape the resulting accuracy estimates, influencing whether reported error rates reflect true examiner proficiency or are artifacts of the study itself. This section examines the experimental protocols and outcomes from seminal studies in latent prints and firearms analysis to objectively compare their approaches and findings.

Quantitative Comparison of Black-Box Study Designs and Outcomes

The design of a black-box study, particularly the composition of its item bank and the selection of its participants, creates the conditions under which error rates are observed. The table below provides a structured comparison of two foundational studies, highlighting how their differing approaches yield different interpretations of forensic accuracy [34].

Table 1: Comparative Analysis of Forensic Black-Box Studies

| Feature | Latent Prints Study (Ulery et al., 2011) | Firearms (Bullets) Study (Monson et al., 2023) |
|---|---|---|
| Item Bank Composition | 744 items [34] | 228 items [34] |
| Same-Source vs. Different-Source Ratio | 70% same-source, 30% different-source [34] | 17% same-source, 83% different-source [34] |
| Item Difficulty & Realism | Intentional inclusion of low-quality latents; non-mated pairs included "close non-matches" from an IAFIS search [34] | Items from three firearm types; 'break-in' firings used to achieve 'consistent and reproducible toolmarks' [34] |
| Participant Sampling & Task | 169 practicing latent print examiners; no noted exclusions; each assigned 98-110 items [34] | 173 participants; restricted to US and excluded FBI examiners; assigned 15, 30, or 45 items [34] |
| Reported Error Rate | Computed as erroneous determinations / total determinations (including inconclusives) [34] | Computed as erroneous determinations / total determinations (including inconclusives) [34] |
| Impact of Inconclusive Treatments | Error rates are "substantially smaller" than "failure rate" analyses that count inconclusives as potential errors [34] | Error rates are "substantially smaller" than "failure rate" analyses that count inconclusives as potential errors [34] |
| Key Design Limitation | High concentration of same-source items may not reflect the prevalence in casework [34] | The asymmetry in same/different source items makes it difficult to calculate a reliable false negative rate [10] |

Detailed Experimental Protocols in Black-Box Studies

The following section outlines the standard methodologies employed in black-box studies, detailing the protocols for constructing the experiment and analyzing the resulting data.

Core Experimental Workflow

The general protocol for a black-box study follows a sequence from foundational design choices to data interpretation. The diagram below outlines this workflow and the critical decisions at each stage [34] [30].

Protocol 1: Item Bank Construction

The item bank forms the foundation of the study, representing the universe of potential comparisons from which examiners' skills are inferred [34] [30].

- Determining Ground Truth: Researchers create test items where the ground truth (same-source or different-source) is known. This often involves using controlled reference samples, such as fingerprints from known individuals or bullets fired from specific barrels [34].

- Controlling Item Difficulty and Representativeness: A critical step is selecting items that reflect the challenges of real casework. This includes intentionally including low-quality samples (e.g., smudged latents) and, crucially, "close non-mates"—different-source items that share superficial similarities to test an examiner's ability to discriminate [34].

- Setting the Prevalence of Same-Source Pairs: The ratio of same-source to different-source pairs in the item bank is a major design choice. This prevalence can heavily influence outcome metrics and may not match the prior probability encountered in actual casework [34].
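The effect of prevalence noted in the last item can be made concrete with Bayes' rule. False positive and false negative rates are conditioned on ground truth and so do not depend on the same-source share, but the probability that a reported identification is correct does. The sketch below evaluates that probability at the two same-source shares from the comparison table (70% and 17%); the sensitivity and false positive rate are hypothetical values chosen only for illustration.

```python
def probability_identification_is_correct(
    prevalence: float, sensitivity: float, false_positive_rate: float
) -> float:
    """P(same source | examiner reports identification), by Bayes' rule."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    if true_positives + false_positives == 0:
        raise ValueError("no identifications are expected under these inputs")
    return true_positives / (true_positives + false_positives)


# Hypothetical examiner performance, held fixed across both item banks.
SENSITIVITY = 0.90
FALSE_POSITIVE_RATE = 0.01

for same_source_share in (0.70, 0.17):
    ppv = probability_identification_is_correct(
        same_source_share, SENSITIVITY, FALSE_POSITIVE_RATE
    )
    print(f"same-source share {same_source_share:.0%}: P(correct | identification) = {ppv:.2%}")
```

With identical examiner behaviour, the share of identifications that are wrong is roughly ten times larger in the low-prevalence item bank, so metrics that mix same-source and different-source items cannot be compared across studies without accounting for the ratio.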

Protocol 2: Participant Sampling and Assignment

This protocol ensures that the examiners in the study constitute a representative sample from which meaningful error rates can be generalized [34].

- Defining the Population and Eligibility: Researchers must define the population of interest (e.g., all practicing latent print examiners in the US) and set eligibility criteria. These criteria can introduce selection bias, for instance, if certain agencies or examiners are excluded [34].

- Assignment of Items: In large studies, it is logistically impractical for every examiner to evaluate every item. Instead, a design is used where each examiner is assigned a subset of the total item bank. This requires careful randomization or balancing to ensure that item difficulty and type are distributed across examiners [34].
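One simple way to realize the subset assignment described above is a randomized, exposure-balanced allocation. The sketch below is a hypothetical scheme, not the procedure used in any cited study: each examiner receives the items that have been assigned least often so far, with ties broken at random. It balances only how often each item is seen; balancing item difficulty or type would require stratifying the item bank first.

```python
import random


def assign_items(
    item_ids: list, examiner_ids: list, items_per_examiner: int, seed: int = 0
) -> dict:
    """Give each examiner a random subset while keeping item exposure nearly equal."""
    if items_per_examiner > len(item_ids):
        raise ValueError("cannot assign more distinct items than the bank contains")
    rng = random.Random(seed)  # fixed seed so the allocation is reproducible
    times_assigned = {item: 0 for item in item_ids}
    assignments = {}
    for examiner in examiner_ids:
        # Least-used items first; the random second key breaks ties.
        order = sorted(item_ids, key=lambda item: (times_assigned[item], rng.random()))
        chosen = order[:items_per_examiner]
        for item in chosen:
            times_assigned[item] += 1
        assignments[examiner] = chosen
    return assignments


# Hypothetical study: 20 items, 6 examiners, 7 items each.
plan = assign_items(list(range(20)), [f"E{i}" for i in range(1, 7)], items_per_examiner=7)
for examiner, items in plan.items():
    print(examiner, sorted(items))
```

Because the least-used items are always drawn first, no item is ever assigned more than once more often than any other, and no examiner sees the same item twice.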

Protocol 3: Variance Decomposition Analysis for Inconclusive Results

This advanced statistical protocol moves beyond simplistic treatments of inconclusive findings by analyzing their underlying patterns [34].

- Objective: To determine whether inconclusive responses are primarily due to specific examiners (examiner variability) or specific test items (item characteristics). This helps quantify what proportion of inconclusives should be considered potential errors versus a reflection of the study's design [34].

- Methodology: The analysis involves computing raw variance in inconclusive rates across both examiners and items. These variances are then compared. A high examiner variance suggests inconclusives are a matter of individual examiner judgment or tendency, while a high item variance suggests they are driven by inherently challenging test items created by the study designers [34].

- Statistical Modeling: A more refined approach uses a logistic regression model with parameters for each examiner and each test item. This model estimates the tendency of each examiner to choose "inconclusive" and how likely each item is to be rated as inconclusive, providing a robust basis for attributing the source of ambiguity [34].
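The raw-variance step of this protocol can be sketched in a few lines. The example below computes the variance of inconclusive rates across examiners and across items from a list of response records; the record layout and the data are hypothetical, and the sketch covers only the descriptive comparison, not the logistic regression refinement.

```python
from collections import defaultdict
from statistics import pvariance


def inconclusive_rate_variances(responses: list) -> tuple[float, float]:
    """Return (variance across examiners, variance across items) of inconclusive rates.

    responses: iterable of (examiner_id, item_id, is_inconclusive) records.
    """
    by_examiner = defaultdict(list)
    by_item = defaultdict(list)
    for examiner, item, is_inconclusive in responses:
        by_examiner[examiner].append(is_inconclusive)
        by_item[item].append(is_inconclusive)
    examiner_rates = [sum(flags) / len(flags) for flags in by_examiner.values()]
    item_rates = [sum(flags) / len(flags) for flags in by_item.values()]
    return pvariance(examiner_rates), pvariance(item_rates)


# Hypothetical data: every examiner is inconclusive on items I3 and I4 only,
# so the ambiguity is driven entirely by the items.
records = [
    (examiner, item, item in {"I3", "I4"})
    for examiner in ("E1", "E2", "E3")
    for item in ("I1", "I2", "I3", "I4")
]
examiner_var, item_var = inconclusive_rate_variances(records)
print(f"examiner variance = {examiner_var:.3f}, item variance = {item_var:.3f}")
```

Here the examiner variance is zero and the item variance is 0.25, the pattern the protocol attributes to challenging test items rather than to individual examiner tendencies. Real studies use incomplete designs in which examiners see different items, so raw rates are confounded with item mix; that is the motivation for the model-based approach in the final step.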

The Research Toolkit for Black-Box Studies

Conducting a rigorous black-box study requires specific "reagents" and materials. The following table details key components beyond standard laboratory equipment.

Table 2: Essential Research Reagents and Materials for Black-Box Studies

| Item/Category | Function in the Research Context |
|---|---|
| Validated Item Bank | A collection of forensic comparisons with known ground truth. It is the core reagent against which examiner accuracy is tested. Its construction, including the ratio of same/different source pairs and inclusion of difficult items, is the most critical aspect of the study [34]. |
| Standardized Conclusion Scale | A predefined set of conclusions (e.g., Identification, Exclusion, Inconclusive) that examiners must use. Standardization, such as the AFTE scale for firearms, ensures consistent data collection across all participants [34]. |