Beyond 'Mild' and 'Severe': A Research Guide to Optimizing Verbal Rating Scales with Strong Support Statements

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical role of support statements in verbal rating scales (VRS). It covers foundational concepts of VRS in patient-reported outcomes (PROs), methodologies for developing and applying robust verbal descriptors, strategies for troubleshooting common pitfalls like respondent confusion and scale variance, and frameworks for rigorous psychometric validation. By synthesizing current evidence and best practices, this resource aims to enhance the reliability, validity, and sensitivity of verbal scales in clinical trials and healthcare research, ultimately improving the quality of data used to assess treatment efficacy and patient experience.

The Building Blocks: Understanding Verbal Rating Scales and the Critical Role of Support Statements

Defining Verbal Rating Scales (VRS) and Their Place in Clinical Research

A Verbal Rating Scale (VRS), also known as a verbal descriptor scale, is a psychometric tool used to quantify subjective experiences, such as pain, fatigue, or nausea. In a typical application, patients are presented with a series of ordered phrases (e.g., "none," "mild," "moderate," "severe") and are asked to select the one that best describes their current state [1]. Unlike visual analog or numerical scales, the VRS translates a patient's subjective feeling directly into a categorical verbal descriptor, which is then converted into an ordinal number for analysis. This direct use of language makes VRS intuitively simple for patients and clinicians, facilitating quick assessment and communication. However, this reliance on language also introduces core challenges regarding the precision and interpretation of each verbal anchor.
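The conversion from verbal descriptor to ordinal number described above can be sketched as follows. This is a minimal illustration, not part of any validated instrument: the 0-3 integer coding and the names `VRS_LEVELS` and `score_vrs` are assumptions made for this example.

```python
# Hypothetical 4-point VRS; the 0-3 integer coding is an assumption for illustration.
VRS_LEVELS = ["none", "mild", "moderate", "severe"]

def score_vrs(response: str) -> int:
    """Map a verbal descriptor to its ordinal rank (0 = lowest)."""
    key = response.strip().lower()
    if key not in VRS_LEVELS:
        raise ValueError(f"Unknown descriptor: {response!r}")
    return VRS_LEVELS.index(key)

# Toy responses, used only to exercise the function.
responses = ["Mild", "severe", "none", "Moderate"]
scores = [score_vrs(r) for r in responses]
```

The resulting codes carry order but not necessarily equal spacing, so the gap between "mild" and "moderate" need not match the gap between "moderate" and "severe".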

VRS in Clinical and Research Settings

Verbal Rating Scales are a cornerstone of Patient-Reported Outcome (PRO) measures, playing a critical role in clinical trials and routine care. Their applications are diverse, spanning from monitoring post-operative symptoms to evaluating the efficacy of new pharmaceuticals.

A primary application is symptom and adverse event monitoring. In oncology, for instance, VRS items are widely used to track patient symptoms and are often adapted from validated instruments such as the National Cancer Institute's Patient-Reported Outcomes version of the Common Terminology Criteria for Adverse Events (PRO-CTCAE) [1]. In this context, VRS data can be linked directly to clinical alerting systems: a patient reporting "severe" pain on a daily recovery tracker may trigger an automatic follow-up call from a nurse, enabling proactive management of side effects and potentially reducing avoidable urgent care visits [1].
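A rule of this kind might look like the sketch below. The threshold, the `SymptomReport` structure, and the function name are hypothetical; the cited study's actual alerting logic is not described here, so treat this only as one plausible shape for such a rule.

```python
from dataclasses import dataclass

# Ordered descriptors of a five-point VRS, lowest to highest.
VRS_ORDER = ["none", "mild", "moderate", "severe", "very severe"]

# Assumption: any response at or above "severe" triggers a follow-up call.
ALERT_THRESHOLD = "severe"

@dataclass
class SymptomReport:
    patient_id: str
    symptom: str
    descriptor: str

def needs_follow_up(report: SymptomReport, threshold: str = ALERT_THRESHOLD) -> bool:
    """Return True when the reported descriptor ranks at or above the threshold."""
    rank = VRS_ORDER.index(report.descriptor.strip().lower())
    return rank >= VRS_ORDER.index(threshold)

# Toy reports for illustration only.
reports = [
    SymptomReport("P001", "pain", "Mild"),
    SymptomReport("P002", "pain", "Severe"),
    SymptomReport("P003", "nausea", "Very severe"),
]
flagged = [r.patient_id for r in reports if needs_follow_up(r)]
```

Because the rule depends on a single descriptor boundary, any ambiguity in how patients read "severe" translates directly into missed or unnecessary follow-ups.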

Furthermore, VRS is instrumental in assessing functional interference. Scales often move beyond measuring mere symptom intensity to evaluate how much a symptom interferes with daily activities. For example, a "mild" pain interference might be described as "I can do most of my daily activities without any problem, but some are a little harder because of pain," whereas "somewhat" interference could be defined as "I can do some things okay, but most of my daily activities are harder because of pain" [1]. This provides clinicians and researchers with actionable data on a treatment's impact on a patient's quality of life.

Comparative Responsiveness of VRS

A critical question in clinical research is how the performance of Verbal Rating Scales compares to other common scales, such as Numerical Rating Scales (NRS). Responsiveness, a key psychometric property, refers to an instrument's ability to detect change over time. The choice between VRS and NRS can significantly impact the outcomes and sensitivity of a clinical study.

Table 1: Comparison of Scale Responsiveness in Chronic Pain Patients

| Scale Type | Description | Responsiveness (Standardized Response Mean) | Key Finding |
|---|---|---|---|
| VRS (Current Pain) | 6-point scale assessing current pain | Small to Moderate | Less responsive than NRS for detecting patient-reported improvement [2]. |
| NRS (Current Pain) | 11-point scale (0-10) assessing current pain | Moderate to Large | Significantly larger responsiveness and greater discriminatory ability than VRS in patients with improved pain [2]. |
| NRS (Composite Score) | Composite of worst, least, average, and current pain | Moderate to Large | More responsive than VRS and individual NRS items for worst, least, or average pain [2]. |

These data suggest that while VRS is a valid tool, NRS (particularly a current pain item or a composite score) may be more sensitive for detecting changes in clinical state, especially in studies of interventions such as self-management programs, where measuring improvement is a primary goal [2].
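The standardized response mean (SRM) used to express responsiveness is the mean change score divided by the standard deviation of the change scores. The sketch below computes it with the standard library; the function name and the toy scores are assumptions for illustration and are not data from [2].

```python
from statistics import mean, stdev

def standardized_response_mean(baseline, follow_up):
    """SRM = mean(change) / SD(change), with change = baseline - follow_up.

    For pain scores, a positive change means pain decreased.
    """
    if len(baseline) != len(follow_up):
        raise ValueError("baseline and follow_up must be paired")
    changes = [b - f for b, f in zip(baseline, follow_up)]
    sd = stdev(changes)  # sample standard deviation; needs at least 2 pairs
    if sd == 0:
        raise ValueError("SRM is undefined when all change scores are identical")
    return mean(changes) / sd

# Toy paired 0-10 pain ratings, invented only to exercise the function.
baseline = [7, 6, 8, 5, 7, 6]
follow_up = [4, 5, 5, 4, 3, 5]
srm = standardized_response_mean(baseline, follow_up)
```

Computing the SRM separately for each scale on the same patients is one way to compare responsiveness directly, since both values then share the same underlying change in clinical state.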

The Challenge of Interpretation and Standardization

A significant limitation of Verbal Rating Scales is the inherent subjectivity and potential for miscommunication. The same verbal descriptor can hold different meanings for different individuals, including both patients and the experts interpreting the data.

Research has demonstrated a troubling misalignment between expert intentions and lay interpretations of verbal phrases used in scales. One study using a membership function approach—which quantifies how people map verbal phrases to numerical probabilities—found that while laypersons generally order verbal conclusion phrases (e.g., "weak," "strong," "very strong") as experts intend, their actual numerical interpretations show substantial overlap and variability [3]. For instance, the terms "weak" and "limited" were found to be virtually interchangeable, with preferred numerical replacement values of 62.50% and 60.97%, respectively [3]. This indicates a high potential for miscommunication, as the intended precision of the scale is lost in translation.

This problem is not merely theoretical. A real-world study at a cancer center tested whether replacing brief VRS descriptors (e.g., "mild," "moderate") with more explicit ones (e.g., "Mild: I can generally ignore my pain") would improve the scale's properties. Contrary to the hypothesis, the explicit descriptors did not reduce variance and, in fact, led to a slightly higher coefficient of variation. Furthermore, the addition of descriptive text increased the time patients took to complete the questionnaire without improving the association between symptom scores and known clinical predictors [1]. This suggests that simply adding more words may not resolve the fundamental challenge of verbal scale interpretation and can introduce new inefficiencies.
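The coefficient of variation (CV) referred to here is the standard deviation divided by the mean. The following sketch compares it across two cohorts; the scores are invented placeholders rather than data from [1], and the function name is an assumption.

```python
from statistics import mean, stdev

def coefficient_of_variation(scores):
    """CV = sample standard deviation / mean (undefined when the mean is 0)."""
    m = mean(scores)
    if m == 0:
        raise ValueError("CV is undefined for a zero mean")
    return stdev(scores) / m

# Invented 0-4 symptom scores, used only to show the calculation.
brief_scores = [0, 1, 1, 2, 2, 3, 1, 2]
explicit_scores = [0, 1, 1, 2, 1, 3, 0, 2]

cv_brief = coefficient_of_variation(brief_scores)
cv_explicit = coefficient_of_variation(explicit_scores)
```

Because the CV divides by the mean, a cohort with lower average scores can show a higher CV even when the absolute spread changes little, which is worth keeping in mind when interpreting a CV comparison between scale versions.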

Experimental Protocols for VRS Research

To investigate the properties and effectiveness of VRS, rigorous experimental designs are required. The following outlines a protocol derived from published research.

Protocol: Interrupted Time Series Design for VRS Modification

Objective: To compare the properties of a standard VRS versus a VRS with explicit descriptors in a clinical population.

Methodology: This design leverages a large historical database as a control, implementing the modified scale at a specific point in time and comparing outcomes before and after the change [1].

Table 2: Key Components of an Interrupted Time Series Experiment

| Component | Description | Example from Literature |
|---|---|---|
| Population | Ambulatory surgery patients undergoing cancer treatment [1]. | 17,500 patients undergoing 21,497 operations (before change); 1,417 patients (after change) [1]. |
| Intervention | Implementation of a VRS with explicit verbal descriptors. | Replacing "mild" with "Mild: I can generally ignore my pain" [1]. |
| Control | Historical cohort completing the standard VRS with brief descriptors. | Data from patients who completed questionnaires before the change was implemented [1]. |
| Primary Outcomes | 1. Coefficient of variation of symptom scores. 2. Strength of association between symptom scores and known predictors (e.g., age, procedure type). 3. Time to questionnaire completion [1]. | |
| Statistical Analysis | Multivariable mixed-effects linear regression adjusting for postoperative day and using nested random effects for patients and surgeries. Comparison of coefficients of variation and interaction tests between cohorts [1]. | |

Experimental Workflow for VRS Comparison

A Scientist's Toolkit for VRS Implementation

Successfully deploying and analyzing Verbal Rating Scales in a research context requires a set of well-defined "reagents" or materials. The following table details essential components for a robust VRS-based study.

Table 3: Research Reagent Solutions for VRS Studies

| Item | Function / Definition | Example / Notes |
|---|---|---|
| Validated PRO Instrument | A foundation questionnaire from which VRS items can be adapted. | The PRO-CTCAE (Patient-Reported Outcomes version of the Common Terminology Criteria for Adverse Events) is a common source for symptom tracking in oncology [1]. |
| Brief VRS Descriptors | The standard set of verbal anchors. | The five-point scale: "None," "Mild," "Moderate," "Severe," "Very severe" [1]. Serves as the control condition in comparative studies. |
| Explicit VRS Descriptors | Experimental descriptors that elaborate on the brief anchors. | "Mild: I can generally ignore my pain."; "Somewhat: I can do some things okay, but most of my daily activities are harder because of fatigue" [1]. |
| Clinical & Demographic Covariates | Patient and treatment variables used to validate scale performance. | Age, gender, procedure type, American Society of Anesthesiology (ASA) score, Body Mass Index (BMI), Apfel score (for nausea) [1]. |
| Statistical Analysis Plan | A pre-defined plan for analyzing scale properties. | Includes mixed-effects models with nested random effects, calculation of the coefficient of variation, and receiver operating characteristic (ROC) curve analysis for responsiveness [1] [2]. |

Conclusion and Future Directions

Verbal Rating Scales remain a vital, patient-centric tool for capturing subjective experiences in clinical research. Their strength lies in their intuitive simplicity and direct communication of patient states. However, their place in research must be informed by a clear understanding of their limitations. Evidence indicates that while VRS is valid, Numerical Rating Scales may offer superior responsiveness for detecting clinical change [2]. Furthermore, the fundamental challenge of standardizing the interpretation of verbal descriptors persists, as attempts to clarify scales with more explicit language have not yielded consistent improvements in psychometric properties and can increase respondent burden [1] [3].

Future research should continue to explore the optimal design of verbal scales, perhaps through co-creation with patients to ensure descriptors are meaningful and interpreted as intended. The use of methodologies like membership functions can help quantify and mitigate interpretation errors [3]. For the practicing researcher, the choice to use a VRS should be deliberate, weighing its ease of use against the need for precision and responsiveness, and should always be accompanied by a rigorous plan for validating its performance within the specific study context and population.

The precise wording of verbal descriptors is a cornerstone of reliable data collection in scientific research, particularly in fields that rely on subjective human interpretation. Verbal rating scales (VRS) are fundamental tools across diverse domains—from clinical outcome assessments in drug development to forensic evidence evaluation and sports science research. These scales use verbal expressions (e.g., "mild," "severe," "likely," "strong support") to quantify subjective experiences, perceptions, or opinions. The strength of support statements within these scales—the specific phrases used to anchor response options—directly influences how participants interpret and use the scale, ultimately determining data quality, reliability, and validity.

Research consistently demonstrates that the choice of verbal descriptors is not merely a presentational concern but a methodological variable with profound implications for data interpretation. Inconsistencies in how individuals interpret these descriptors introduce measurement error, potentially compromising statistical analyses, obscuring true treatment effects in clinical trials, and leading to flawed conclusions. This technical guide examines the impact of verbal descriptor wording on data quality and participant interpretation, framed within the context of verbal scales research, to equip researchers and drug development professionals with evidence-based strategies for optimizing these critical measurement tools.

The Mechanisms of Interpretation: How Wording Influences Data

Cognitive and Contextual Factors in Descriptor Interpretation

The interpretation of verbal descriptors is a complex cognitive process influenced by multiple factors. Individuals naturally translate verbal probability expressions and qualitative descriptors into numerical values to facilitate decision-making, but this translation process is highly variable [4]. This variability stems from several sources:

- Linguistic Uncertainty: Inherent vagueness in qualitative terms like "mild," "moderate," and "severe" allows for broad interpretation ranges. Without explicit anchors, individuals default to personal, subjective frameworks for meaning.

- Demographic and Experiential Factors: Evidence suggests that age, education level, and cultural background can influence how verbal descriptors are interpreted, necessitating adjustment for these factors in non-randomized data analyses [5] [6].

- Contextual Framing: The specific context in which a scale is administered—such as a clinical trial versus a forensic report—can prime participants toward different interpretations, even for identical verbal anchors.

The Precision-Usability Tradeoff in Descriptor Design

A fundamental challenge in verbal scale design lies in balancing precision with usability. While more explicit, detailed descriptors can reduce ambiguity, they also increase cognitive load and may not be suitable for all populations. Research indicates that vulnerable participants, including those with limited literacy, cognitive impairments, or different linguistic backgrounds, may struggle with both brief and explicit descriptors, potentially leading to under- or over-estimation of their true experiences [6] [7]. This tradeoff necessitates careful consideration of target population characteristics when selecting or developing verbal descriptors for research instruments.

Empirical Evidence: Quantitative Studies on Descriptor Interpretation

Numeric Equivalents of Common Verbal Descriptors

Substantial research has quantified how individuals assign numeric values to verbal probability expressions. Consistent patterns emerge across studies, enabling the creation of standardized interpretation guidelines. Table 1 summarizes the numeric interpretations of common verbal probability terms based on empirical studies with both laypersons and healthcare professionals.

Table 1: Numeric Interpretations of Verbal Probability Terms

| Probability Term | Frequency Term | Central Estimate (%) | Typical Range (%) |
|---|---|---|---|
| Very Likely | Very Frequently | 90 | 80-95 |
| Likely/Probable | Frequently | 70 | 60-80 |
| Possible | Often | 40 | 30-60 |
| Unlikely | Infrequently | 20 | 10-30 |
| Very Unlikely | Rarely | 10 | 5-15 |

Source: Adapted from empirical studies reviewed in [4]

These data reveal important patterns for research design: terms like "likely/probable" and "very likely" show relatively consistent interpretation, while middle-range terms like "possible" exhibit wider variation. Notably, terms incorporating "risk" (e.g., "low risk") are particularly problematic as respondents often confuse frequency with severity, making them poor choices for precise scientific measurement [4].
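One practical use of the ranges in Table 1 is to check which terms occupy overlapping numeric territory, since overlapping terms are harder for respondents to tell apart. The sketch below uses the tabulated ranges; the overlap rule (shared width greater than zero, so ranges that only touch at an endpoint are not counted) is an assumption of this example.

```python
from itertools import combinations

# Typical ranges (%) taken from Table 1 above.
TERM_RANGES = {
    "Very Likely": (80, 95),
    "Likely/Probable": (60, 80),
    "Possible": (30, 60),
    "Unlikely": (10, 30),
    "Very Unlikely": (5, 15),
}

def overlapping_terms(ranges):
    """Return (term_a, term_b, shared_width) for every pair of overlapping ranges."""
    found = []
    for (a, (a_lo, a_hi)), (b, (b_lo, b_hi)) in combinations(ranges.items(), 2):
        width = min(a_hi, b_hi) - max(a_lo, b_lo)
        if width > 0:  # assumption: touching endpoints do not count as overlap
            found.append((a, b, width))
    return found

overlaps = overlapping_terms(TERM_RANGES)
```

With these ranges, only "Unlikely" and "Very Unlikely" share more than an endpoint, sharing the interval from 10% to 15%.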

Variability in Interpretation Across Descriptor Sets

Research has specifically investigated which sets of verbal descriptors yield the most consistent interpretation. Mutebi et al. (2016) found that among common five-point descriptor sets, certain combinations demonstrated superior interpretive consistency [5] [6]:

- "None," "Mild," "Moderate," "Severe," "Very Severe"

- "Not at all," "A little bit," "Somewhat," "Quite a bit," "Very much"

These sets showed mean numeric scores closest to theoretically ideal fixed intervals (0.0, 2.5, 5.0, 7.5, 10.0), with descriptors like "mild" (2.50), "moderate" (5.01), "a little bit" (2.35), and "quite a bit" (7.65) aligning remarkably well with their expected values [5]. In contrast, sets using "never, rarely, sometimes, often, always" or "poor, fair, good, very good, excellent" demonstrated greater variability in interpretation, making them less reliable for precise measurement.
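The comparison against ideal fixed intervals can be reproduced with a few lines of arithmetic. In this sketch the observed means are the four values quoted above from [5]; the helper name `ideal_value` and the zero-based position coding are assumptions for illustration.

```python
def ideal_value(position: int, n_points: int = 5, scale_max: float = 10.0) -> float:
    """Equally spaced ideal value for a zero-based position on an n-point scale."""
    return scale_max * position / (n_points - 1)

# (descriptor, zero-based position in its five-point set, mean score quoted in the text)
observed = [
    ("mild", 1, 2.50),
    ("moderate", 2, 5.01),
    ("a little bit", 1, 2.35),
    ("quite a bit", 3, 7.65),
]

# Absolute distance of each observed mean from its ideal value, rounded to 2 decimals.
deviations = {
    name: round(abs(score - ideal_value(pos)), 2)
    for name, pos, score in observed
}
```

All four descriptors fall within 0.15 points of their ideal positions on the 0-10 scale, which is the sense in which they align with equal spacing.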

Impact of Explicit Versus Brief Descriptors

A critical question in descriptor design is whether adding explicit, detailed descriptions to brief terms improves measurement properties. A recent large-scale study compared brief VRS descriptors ("mild," "moderate," "severe") with explicit descriptors ("Mild: I can generally ignore my pain") in patients reporting post-operative symptoms [1].

Table 2: Comparison of Brief vs. Explicit Verbal Descriptors

| Metric | Brief Descriptors | Explicit Descriptors | Interpretation |
|---|---|---|---|
| Symptom Scores | Baseline reference | ~10% lower | Explicit descriptors may reduce score inflation |
| Coefficient of Variation | Baseline reference | Slightly higher | Increased relative variability with explicit terms |
| Association with Known Predictors | Stronger for some symptoms (e.g., nausea) | Weaker for some associations | Brief descriptors may preserve expected relationships |
| Completion Time | Baseline reference | Significantly longer | Increased respondent burden with explicit descriptors |

Source: Data from [1]

Contrary to expectations, explicit descriptors did not improve scale properties and actually slightly increased the coefficient of variation [1]. This suggests that while patients may report uncertainty with brief descriptors, elaborating on these descriptors may not enhance measurement precision and could potentially introduce new sources of variation through increased cognitive complexity.

Experimental Protocols for Descriptor Validation

Protocol 1: Quantifying Numeric Equivalents for Verbal Descriptors

Objective: To establish reliable numeric ranges for verbal probability expressions or qualitative descriptors used in research instruments.

Methodology:

- Participant Recruitment: Recruit a sufficiently large sample (N>300 recommended) representative of the target population, including both laypersons and domain experts when relevant [4].

- Survey Administration: Present participants with verbal terms (e.g., "likely," "possible," "strong support") in random order to avoid ordering effects.

- Numeric Assignment: Ask participants to assign a numeric probability (0-100%) or intensity value (0-10) to each term that corresponds to their interpretation.

- Data Analysis: Calculate central tendencies (mean, median) and variability measures (standard deviation, interquartile range) for each term. Terms with overlapping ranges or excessive variability should be flagged as problematic.

Key Considerations: This protocol can be adapted for cross-cultural validation by administering in different languages and comparing results across demographic subgroups to identify interpretation differences [5].
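The data analysis step of this protocol could be sketched as below. The ratings are invented placeholders, and the flagging threshold of 15 percentage points is an arbitrary assumption for the example; a real study would pre-specify its own criterion.

```python
from statistics import mean, median, stdev, quantiles

# Assumption: flag any term whose ratings have an SD above this many percentage points.
SD_FLAG_THRESHOLD = 15.0

def summarize_term(ratings):
    """Central tendency and spread for one term's numeric ratings (0-100%)."""
    q1, _, q3 = quantiles(ratings, n=4)  # quartile cut points
    return {
        "mean": mean(ratings),
        "median": median(ratings),
        "sd": stdev(ratings),
        "iqr": q3 - q1,
    }

# Invented participant ratings, used only to exercise the code.
ratings_by_term = {
    "likely": [70, 75, 65, 80, 70, 60],
    "possible": [20, 50, 40, 70, 30, 60],
}

summary = {term: summarize_term(r) for term, r in ratings_by_term.items()}
flagged = [term for term, stats in summary.items() if stats["sd"] > SD_FLAG_THRESHOLD]
```

Running the same summary within demographic subgroups is one way to support the cross-cultural comparison mentioned in the key considerations.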

Protocol 2: Testing Explicit Versus Brief Descriptors

Objective: To determine whether adding explicit descriptions to standard verbal anchors improves measurement properties in a specific research context.

Methodology:

- Instrument Development: Create two versions of the assessment instrument: one with brief descriptors (e.g., "mild," "moderate," "severe") and one with explicit, context-specific descriptions for each level.

- Study Design: Implement an interrupted time-series design or randomized controlled trial where participant cohorts complete different instrument versions [1].

- Outcome Measures: Compare key psychometric properties between groups, including:

  - Score distributions and variances

  - Associations with known predictors or convergent validity measures

  - Completion times

  - Error rates or missing data patterns

- Qualitative Feedback: Collect participant feedback on interpretation ease and clarity for both descriptor types.

Key Considerations: Ensure adequate sample size to detect meaningful differences in variability. Account for potential learning effects in within-subjects designs [1].

Protocol 3: Error Rate Assessment in Low-Literacy Populations

Objective: To evaluate the usability and error rates of verbal descriptor scales in populations with varying literacy levels.

Methodology:

- Participant Stratification: Recruit participants across a spectrum of educational backgrounds and literacy levels, using standardized literacy assessments where appropriate [7].

- Cognitive Interviewing: Employ think-aloud protocols where participants verbalize their thought process while completing scales with different verbal descriptors.

- Error Coding: Develop pre-specified criteria for classification of errors (e.g., misinterpretation, inconsistent responses, arbitrary pattern responding) [7].

- Analysis: Quantify error rates across different descriptor formats and participant literacy levels, identifying specific descriptor types that pose particular challenges.

Key Considerations: This approach is particularly valuable for research involving diverse populations or when developing instruments for global clinical trials [7].
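The final analysis step, quantifying error rates by descriptor format and literacy level, could be tabulated along these lines. The record layout, the category labels, and the toy records are all hypothetical and carry no empirical meaning.

```python
from collections import defaultdict

def error_rates(records):
    """Compute the error rate for each (literacy_level, descriptor_format) cell.

    Each record is a tuple: (literacy_level, descriptor_format, is_error).
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for literacy, fmt, is_error in records:
        totals[(literacy, fmt)] += 1
        errors[(literacy, fmt)] += int(is_error)
    return {cell: errors[cell] / totals[cell] for cell in totals}

# Hypothetical coded responses, invented only to exercise the function.
records = [
    ("low", "brief", True),
    ("low", "brief", False),
    ("low", "explicit", False),
    ("low", "explicit", False),
    ("high", "brief", False),
    ("high", "brief", False),
    ("high", "explicit", True),
    ("high", "explicit", False),
]
rates = error_rates(records)
```

Keeping the error coding as a simple boolean per response assumes the pre-specified criteria have already been applied by trained coders; the tabulation itself does not judge what counts as an error.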

Domain-Specific Considerations and Applications

Clinical Research and Drug Development

In clinical research and drug development, verbal descriptors form the foundation of Patient-Reported Outcome (PRO) measures used as endpoints in clinical trials. The FDA's emphasis on PRO measurement in drug approval underscores the critical importance of well-defined verbal descriptors that consistently reflect treatment effects across diverse patient populations [6] [1]. Research demonstrates that the choice of verbal descriptors can directly impact clinical decision-making; for instance, patients reporting "severe" pain on a PRO trigger nurse follow-ups in some clinical systems, making precise interpretation of this term essential for appropriate resource allocation [1].

Forensic Science Applications

Verbal scales are used in forensic science to communicate the strength of evidence, with standardized terms like "limited support," "moderate support," and "strong support" intended to convey likelihood ratios to courts [8]. However, research reveals significant perception problems with these verbal scales. A pilot study found that participants' understanding of these terms diverged substantially from their intended meanings, with generally inflated perceptions of lower-strength terms and deflated perceptions of higher-strength terms [8]. This misinterpretation poses serious implications for judicial decision-making and highlights the critical need for validated verbal scales in this high-stakes domain.

Sports Science and Performance Measurement

In sports science research, verbal encouragement (VE) serves as a powerful intervention that utilizes specific verbal descriptors to enhance performance. Studies demonstrate that consistent, repeated VE containing motivating words and cues significantly improves strength and endurance outcomes in athletes [9]. The psychophysiological impact of carefully selected verbal descriptors in this context includes reduced perceived exertion and increased physical activity enjoyment, highlighting how strategic wording can directly influence both psychological and physiological parameters in research settings [9].

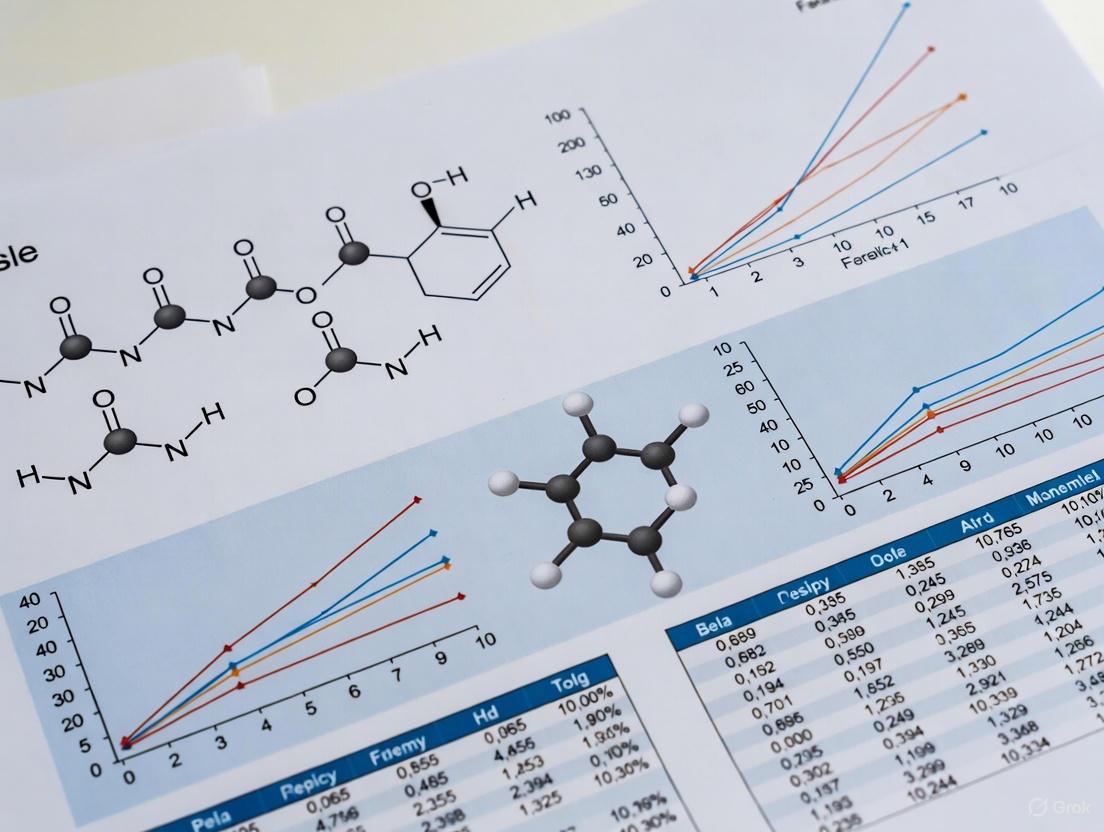

Visualization: Experimental Workflow for Descriptor Validation

The following diagram illustrates a comprehensive experimental workflow for validating verbal descriptors in research instruments:

The Researcher's Toolkit: Essential Methodological Components

Table 3: Research Reagent Solutions for Verbal Descriptor Studies

| Component | Function | Examples & Specifications |
|---|---|---|
| Standardized Descriptor Sets | Provides consistent response anchors for rating scales | Five-point sets: "None, Mild, Moderate, Severe, Very Severe" or "Not at all, A little bit, Somewhat, Quite a bit, Very much" [5] [6] |
| Visual Risk Scale | Translates verbal probabilities into standardized numeric ranges | Visual scale displaying "Very Likely" (80-95%), "Likely" (60-80%), "Possible" (30-60%), etc. [4] |
| Cognitive Interviewing Protocol | Elicits participant thought processes during scale completion | Think-aloud methods, verbal probing for specific descriptors [7] |
| Error Classification System | Quantifies misunderstanding or misapplication of descriptors | Pre-specified criteria for coding errors in response patterns [7] |
| Mixed-Effects Modeling Framework | Accounts for nested data structures in repeated measures | Statistical models with random intercepts for participants and nested observations [1] |

The evidence consistently demonstrates that wording choices in verbal rating scales directly impact data quality and interpretation across research domains. Based on the empirical findings reviewed in this guide, researchers should adopt the following best practices:

- Select High-Performance Descriptor Sets: Prioritize verbal descriptor sets with demonstrated interpretive consistency, particularly the "None, Mild, Moderate, Severe, Very Severe" set for symptom assessment or "Not at all, A little bit, Somewhat, Quite a bit, Very much" for frequency or intensity measurement [5] [6].

- Validate Numeric Equivalents for Your Population: Never assume consistent interpretation of probability terms across different populations. Conduct local validation studies to establish how your specific research population interprets key verbal descriptors, particularly when working with diverse cultural or demographic groups [4] [7].

- Balance Precision with Practicality: While explicit descriptions may seem theoretically superior, evidence suggests they may not always improve measurement and can increase respondent burden. Test both brief and explicit descriptors with your target population before finalizing research instruments [1].

- Account for Demographic and Clinical Factors: Adjust analyses for factors known to influence descriptor interpretation, particularly in non-randomized studies where age, education, and clinical status may confound results [5] [6].

- Implement Robust Validation Protocols: Adopt systematic experimental approaches to verbal descriptor validation, including quantitative interpretation studies, comparative psychometric testing, and error rate assessment, particularly when developing instruments for vulnerable populations or high-stakes research contexts [7] [1].

By applying these evidence-based principles to verbal descriptor selection and validation, researchers can significantly enhance the reliability, validity, and interpretability of data collected using verbal rating scales across scientific disciplines—strengthening the foundation of research that depends on accurate human interpretation of qualitative response options.

This technical guide examines the critical differentiation between symptom severity, interference, and frequency within Verbal Rating Scales (VRS), a fundamental component of patient-reported outcome (PRO) measures in clinical research and drug development. While these constructs are interrelated, they represent distinct clinical dimensions that require precise methodological approaches for valid measurement. Contemporary research demonstrates that VRS ratings of symptom severity are significantly influenced by psychosocial factors including pain interference, catastrophizing, and patient beliefs, beyond pure intensity alone [10]. This whitepaper synthesizes current evidence, provides structured experimental protocols, and offers methodological recommendations to strengthen the scientific rigor of VRS applications in pharmaceutical research.

Conceptual Foundations and Definitions

Core Construct Definitions

Within the framework of verbal rating scales, three distinct but interrelated constructs form the foundation of comprehensive symptom assessment:

Symptom Severity: The subjective intensity or magnitude of the symptom experience, typically measured through sensory descriptors (e.g., mild, moderate, severe) [10]. Historically, severity was assumed to represent a pure intensity measure, but emerging evidence indicates it incorporates cognitive and affective dimensions.

Symptom Interference: The degree to which symptoms disrupt normal physical, mental, and social functioning [10] [11]. This construct captures the functional impact of symptoms on daily activities, representing a critical outcome measure in clinical trials.

Symptom Frequency: The temporal occurrence or recurrence of symptoms over a specified time period. While less studied in VRS-specific literature, frequency provides essential contextual information about symptom patterns.

The Conceptual Relationship Between Constructs

The differentiation between these constructs is not merely academic but reflects fundamental aspects of the patient experience. Research indicates that severity ratings on VRS cannot be assumed to measure only symptom intensity; they may also reflect patient perceptions about pain interference and beliefs about their pain [10]. This conceptual overlap presents both challenges and opportunities for clinical researchers seeking to understand the full impact of therapeutic interventions.

Methodological Approaches and Measurement

Verbal Rating Scale Structures

VRS implementations vary significantly in their structure and descriptor choices, which directly impact their ability to differentiate between key constructs:

Table 1: Common VRS Structures in Symptom Assessment

| Scale Type | Descriptor Options | Primary Construct Measured | Clinical Applications |
|---|---|---|---|
| 4-point VRS | None, Mild, Moderate, Severe | Symptom Severity | WHO Pain Ladder guidelines [10] |
| 5-point VRS | None, Mild, Moderate, Severe, Very Severe | Symptom Severity | Post-operative symptom tracking [1] |
| 6-point VRS | None, Very Mild, Mild, Moderate, Severe, Very Severe | Symptom Severity | Chronic pain populations [10] |
| Explicit Descriptor VRS | "Mild: I can generally ignore my pain" [1] | Severity with interference context | Enhanced specificity applications |
| Interference-Specific VRS | "Somewhat: I can do some things okay, but most daily activities are harder" [1] | Pure Interference | Functional impact assessment |

Comparative Scale Properties

Understanding the relative performance characteristics of different assessment approaches is crucial for appropriate scale selection in research protocols:

Table 2: Psychometric Properties of Pain Assessment Scales

| Scale Type | Responsiveness | Factor Influence Beyond Intensity | Elderly Population Suitability | Key Limitations |

|---|---|---|---|---|

| Verbal Rating Scale (VRS) | Small in all patients, moderate to large in improved patients [2] | High (pain interference, catastrophizing, beliefs) [10] | High [10] | Limited response options, non-ratio scale properties [10] |

| Numerical Rating Scale (NRS) | Significantly larger than VRS in improved patients [2] | Lower than VRS [10] | Moderate (more difficult than VRS) [10] | Can be challenging for elderly patients [10] |

| FACES Pain Scale | Not specifically reported | High (pain intensity + affect) [10] | High | Reflects combination of intensity and distress [10] |

Experimental Evidence and Research Protocols

Key Experimental Findings

Recent investigations have substantially advanced our understanding of the factors that influence VRS responses:

Table 3: Experimental Evidence on Factors Influencing VRS Ratings

| Study Population | Experimental Design | Key Findings | Research Implications |

|---|---|---|---|

| Chronic pain patients with physical disabilities (N=594) [10] | Cross-sectional survey comparing VRS and NRS | After controlling for NRS pain intensity, VRS ratings showed significant associations with: • Pain interference (β=0.24, p<0.01) • Pain catastrophizing (β=0.18, p<0.01) • Pain control beliefs (β=-0.22, p<0.01) | VRS cannot be assumed to measure only pain intensity; incorporates interference and cognitive factors |

| Ambulatory cancer surgery patients (N=18,936) [1] | Interrupted time series comparing brief vs. explicit descriptors | Explicit descriptors (e.g., "Mild: I can generally ignore my pain"): • Reduced symptom scores by ~10% • Increased completion time • Did not improve scale variance properties | Brief descriptors may be preferable for efficient postoperative monitoring |

| Chronic pain patients (N=254) [2] | Pre-post treatment responsiveness analysis | NRS current pain showed significantly larger responsiveness (SRM=0.84) than VRS (SRM=0.61) in patients with improved pain | NRS may be preferable for detecting treatment effects in clinical trials |
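
The responsiveness figures in the last row are standardized response means (SRM). As a minimal sketch, assuming the usual definition (mean of the paired change scores divided by the standard deviation of those change scores), the calculation looks like the following. The function name and the example scores are hypothetical and are not data from the cited studies.

```python
from statistics import mean, stdev


def standardized_response_mean(baseline, follow_up):
    """Return the SRM: mean paired change divided by the SD of the changes.

    Change is computed as baseline minus follow-up, so a positive SRM
    means scores dropped (i.e., pain improved) on average.
    """
    if len(baseline) != len(follow_up):
        raise ValueError("baseline and follow_up must be paired (same length)")
    changes = [b - f for b, f in zip(baseline, follow_up)]
    spread = stdev(changes)  # sample SD (n - 1 denominator)
    if spread == 0:
        raise ValueError("SRM is undefined when all change scores are identical")
    return mean(changes) / spread


if __name__ == "__main__":
    # Hypothetical 0-10 NRS scores before and after treatment.
    nrs_before = [7, 6, 8, 5, 7, 6]
    nrs_after = [4, 5, 5, 4, 3, 5]
    print(round(standardized_response_mean(nrs_before, nrs_after), 2))
```

Running the same function on VRS scores coded as integers would allow a like-for-like comparison of the two scales, on the assumption that treating the ordinal VRS codes as numbers is acceptable for this purpose.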

Detailed Experimental Protocol: Factor Influence on VRS Severity Ratings

Based on methodologies from published research, the following protocol provides a framework for investigating construct differentiation in VRS:

Protocol Implementation Details:

- Participant Recruitment: Target sample of 200+ participants with chronic pain conditions, ensuring diversity in pain etiology and demographic characteristics [10].

- Assessment Instruments:

- Primary Outcome: 6-point VRS for usual pain severity ("None" to "Very Severe")

- Covariate Measure: 0-10 NRS for average pain intensity

- Predictor Variables: Validated measures of pain interference, catastrophizing, and pain beliefs

- Statistical Analysis: Employ multivariable ordinal logistic regression with the VRS severity rating as the dependent variable and the NRS score as the primary covariate, then test whether additional factors make significant independent contributions to VRS variance [10].
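
The logic of "controlling for NRS intensity" can be illustrated with a much simpler stand-in than the ordinal logistic regression named above: a first-order partial correlation. The sketch below is not the cited study's method. It assumes the 6-point VRS can be coded 0 to 5 and treated as numeric, which is a simplification, and every variable name and data value is hypothetical.

```python
from math import sqrt

# Assumed integer coding of the 6-point VRS (illustrative only).
VRS_CODES = {"None": 0, "Very Mild": 1, "Mild": 2,
             "Moderate": 3, "Severe": 4, "Very Severe": 5}


def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def partial_correlation(x, y, control):
    """Correlation between x and y after removing the linear effect of control."""
    r_xy = pearson(x, y)
    r_xc = pearson(x, control)
    r_yc = pearson(y, control)
    return (r_xy - r_xc * r_yc) / sqrt((1 - r_xc ** 2) * (1 - r_yc ** 2))


if __name__ == "__main__":
    # Hypothetical participants: VRS label, NRS intensity, interference score.
    vrs = [VRS_CODES[v] for v in
           ["Mild", "Moderate", "Severe", "Moderate", "Very Severe", "Mild"]]
    nrs = [3, 5, 7, 4, 9, 2]
    interference = [2, 6, 7, 5, 9, 1]
    print(round(partial_correlation(vrs, interference, nrs), 3))
```

A partial correlation clearly different from zero would point the same way as the regression finding: interference relates to VRS ratings even after NRS intensity is accounted for. A full analysis would still use the ordinal model, which respects the categorical nature of the outcome.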

Research Reagent Solutions: Essential Methodological Tools

Table 4: Essential Assessment Tools for VRS Research

| Research Tool | Primary Function | Application in VRS Research |

|---|---|---|

| Multidimensional Pain Inventory | Assesses pain interference across multiple domains [11] | Quantifies functional impact distinct from severity |

| Pain Catastrophizing Scale | Measures exaggerated negative orientation toward pain [10] | Tests cognitive influences on severity ratings |

| Brief Pain Inventory | Evaluates pain intensity and interference [11] | Provides parallel measures of key constructs |

| Descriptor Differential Scale | Measures sensory and affective pain components [11] | Differentiates physiological vs. emotional aspects |

| Numerical Rating Scale (0-10) | Pure intensity assessment [10] [2] | Control variable for isolating non-intensity VRS factors |

Implications for Clinical Research and Drug Development

Clinical Trial Design Considerations

The differentiation between symptom severity, interference, and frequency has substantial implications for endpoint selection in clinical trials:

- Primary Endpoint Selection: Trials targeting functional improvement should consider interference-specific measures rather than assuming severity captures functional impact [10].

- Response Scale Selection: NRS may be preferable for detecting treatment effects in pharmacological trials due to superior responsiveness, while VRS provides complementary information on the patient experience [2].

- Descriptor Specification: Brief descriptors may enhance practicality, while explicit descriptors can be developed for specific contexts where conceptual clarity is paramount [1].

Analytical Recommendations

- Multivariate Modeling: Always control for pure intensity measures (e.g., NRS) when analyzing VRS outcomes to isolate non-intensity factors [10].

- Complementary Scale Implementation: Deploy both VRS and NRS in early phase trials to characterize full treatment effects across multiple dimensions [2].

- Interference-Specific Assessment: Include dedicated interference measures regardless of primary endpoint selection to fully capture treatment benefits [10] [11].

Future Research Directions

The evolving understanding of VRS construct measurement suggests several promising research avenues:

- Development of optimized descriptor sets that balance specificity, reliability, and practicality

- Investigation of cultural and linguistic influences on VRS construct interpretation

- Longitudinal studies examining how relationships between severity, interference, and frequency evolve throughout treatment

- Integration of ecological momentary assessment to capture real-time dynamics between constructs

This synthesis of current evidence and methodological recommendations provides a framework for enhancing the scientific rigor of Verbal Rating Scale applications in pharmaceutical research and clinical trials, ultimately supporting more precise measurement of treatment outcomes.

Within the rigorous framework of pharmaceutical development, the precision of verbal descriptors in patient-reported outcome (PRO) instruments and clinical outcome assessments (COAs) is paramount. These descriptors form the foundational language that translates a patient's subjective experience into quantifiable data for regulatory and treatment decisions. This whitepaper presents a case study, set within the broader thesis on strength-of-support statements in verbal scales research, that shows how direct patient feedback was systematically integrated to refine the verbal descriptors of a digital endpoint. The refinement process ensured the tool was not only scientifically sound but also conceptually relevant and cognitively accessible to the target patient population.

The U.S. Food and Drug Administration's (FDA) Patient-Focused Drug Development (PFDD) guidance series, particularly the third guidance on "Selecting, Developing, or Modifying Fit-for-Purpose Clinical Outcome Assessments," underscores the necessity of this iterative process. It advises that a COA's content validity—the degree to which it measures the concept it intends to measure—must be supported by evidence from the target population [12] [13]. This case study provides a real-world model for implementing these guidelines, illustrating a pathway from initial patient engagement to refined, actionable descriptors.

Regulatory and Methodological Foundation

The FDA’s PFDD initiative, mandated by the 21st Century Cures Act, represents a significant shift toward incorporating the patient's voice into medical product development. The four-part guidance series outlines a systematic approach for collecting and submitting robust patient experience data [13].

- Guidance 1 focuses on collecting comprehensive and representative input, defining the target population and sampling strategies.

- Guidance 2 details methods for eliciting patient information through qualitative research, which is crucial for understanding the symptoms and impacts that matter most to patients.

- Guidance 3, which this case study directly operationalizes, provides the framework for "Selecting, Developing, or Modifying Fit-for-Purpose Clinical Outcome Assessments" [12]. It emphasizes that COAs must be "fit-for-purpose," meaning the instrument and its descriptors are appropriate for the context of use and the specific population.

- Guidance 4 will address incorporating these COAs into endpoints for regulatory decision-making.

A core tenet of this framework is the critical importance of early and continuous patient engagement. As emphasized by regulatory experts, engaging patients before a study begins is essential for ensuring digital endpoints are relevant, reliable, and ultimately acceptable to regulators and payers [14]. This aligns with the PFDD guidance's emphasis on using qualitative data and patient input to establish content validity [12].

Case Study: Refining a Digital Endpoint for Parkinson's Disease

Background and Initial Challenge

A recent initiative in the development of a digital endpoint for a Parkinson's disease clinical trial serves as a powerful case study. The research team developed a digital platform to capture patient-generated data on motor symptoms, intended for use as a key secondary endpoint. The platform's initial design used a set of verbal descriptors and on-screen instructions (e.g., "tap the circle with moderate speed") to guide patients through motor function tasks. While the design was scientifically sound, early internal testing suggested the language might not be intuitive for the target population, potentially leading to variable task performance that reflected comprehension issues rather than true motor function.

Experimental Protocol for Descriptor Refinement

The team implemented a structured, iterative feedback protocol aligned with PFDD Guidance 2 and 3 principles [12] [13]. The methodology comprised two phases:

Phase 1: Formative Feedback through Patient Committee Workshop

- Objective: To gather initial feedback on the conceptual relevance and clarity of the task instructions and descriptors.

- Participants: An independent patient committee (n=12) composed of individuals with Parkinson's disease and not tied to any single study [14].

- Method: A dedicated workshop was conducted where participants were shown prototypes of the digital tasks. Through structured discussions and interviews, they were asked to describe the tasks in their own words, identify any confusing terms, and suggest alternative phrasing. This qualitative approach is endorsed by PFDD Guidance 2 for eliciting patient information [13].

Phase 2: Usability Testing with Pre-Study Platform Access

- Objective: To observe how patients interacted with the platform and identify specific points of friction related to the language used.

- Participants: A subset of patients (n=8) enrolled in the upcoming Parkinson's trial.

- Method: Patients were given access to the platform before the study launch. The research team collected both performance data (task completion rates, errors) and qualitative feedback through cognitive debriefing. Patients were asked to "think aloud" as they completed tasks, providing real-time insight into their interpretation of the descriptors [14].

Quantitative and Qualitative Findings

The following table summarizes the key data points that drove the descriptor refinement:

Table 1: Summary of Patient Feedback and Corresponding Refinements

| Feedback Metric | Initial Prototype Data | Post-Refinement Data | Implication & Action Taken |

|---|---|---|---|

| Task Misinterpretation Rate | 42% of users (5/12 in Phase 1) misinterpreted "moderate speed" as relating to movement pace, not finger tap speed. | Reduced to <10% after refinement. | Descriptor was ambiguous. Action: Replaced with "tap at your normal, comfortable speed." |

| Cognitive Load Score (self-reported 1-5 scale) | Average score of 3.8 for tasks requiring precision. | Average score reduced to 2.1. | Term "precision" induced performance anxiety. Action: Changed to "try to tap the center of the circle." |

| UI Navigation Burden | 75% of users (6/8 in Phase 2) struggled with fine motor control for specific navigation elements. | Task success rate improved to 92%. | UI was not accommodating of motor symptoms. Action: Redesigned screen navigation and repositioned measurement tools to reduce physical burden [14]. |

| Data Quality Indicator | High variability in initial task performance scores unrelated to clinical severity. | Smoother, more clinically consistent performance data. | Refined descriptors and UI led to data that more accurately reflected the underlying motor function. |
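
Because the rates in this table come from very small groups (12 and 8 participants), it can help to report them with an interval rather than as a bare percentage. The sketch below uses the Wilson score interval, a standard choice for small-sample proportions. It is an illustrative addition rather than part of the case study's reported analysis, and the function name is an assumption.

```python
from math import sqrt


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion.

    z=1.96 corresponds to an approximate 95% interval.
    Returns (lower, upper) as proportions between 0 and 1.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p_hat = successes / n
    denom = 1 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half_width = z * sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


if __name__ == "__main__":
    # Counts taken from the table: 5 of 12 (Phase 1) and 6 of 8 (Phase 2).
    for label, k, n in [("misinterpretation", 5, 12), ("navigation burden", 6, 8)]:
        low, high = wilson_interval(k, n)
        print(f"{label}: {k}/{n} = {k / n:.0%}, 95% CI {low:.0%} to {high:.0%}")
```

With samples this small the intervals span several tens of percentage points, which is a reason to treat the formative findings as directional signals for refinement rather than as precise estimates.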

Refined Output and Validated Endpoint

The iterative process resulted in concrete changes to the digital endpoint:

- Descriptor Refinement: The verbal instruction was changed from a prescriptive "tap the circle with moderate speed" to the more intuitive "tap at your normal, comfortable speed." This shifted the focus from a potentially confusing external standard to an internal, patient-centric one.

- UI/UX Optimization: The screen layout and interactive elements were physically redesigned to accommodate the specific motor challenges of the patient population, ensuring the tool itself did not confound the measurement [14].

These refinements, grounded directly in patient feedback, enhanced the content validity of the endpoint. The data collected became a more reliable and meaningful measure of the intended concept, thereby strengthening its potential for regulatory submission.

The Scientist's Toolkit: Key Reagents for Patient-Centric Research

Implementing a robust patient feedback loop requires specific methodological "reagents." The table below details essential components for designing such studies, drawing from the case study and the referenced research.

Table 2: Essential Research Reagents for Patient Feedback Studies on Descriptor Refinement

| Research Reagent | Function & Application | Example from Case Study & Literature |

|---|---|---|

| Semi-Structured Interview Guides | A semi-structured protocol to ensure consistent, open-ended questioning that avoids priming patients, as recommended in PFDD Guidance 2 [13]. | Used in Phase 1 workshops to elicit patients' understanding of terms like "moderate speed" and "precision" without leading their responses. |

| Cognitive Debriefing Protocol | A "think-aloud" method where patients verbalize their thought process while completing a task, revealing real-time comprehension issues [15]. | Employed in Phase 2 to identify specific points of confusion in the digital task flow that were not caught in interviews alone. |

| Text Mining & NLP Pipelines | A suite of computational tools (e.g., sentiment analysis, topic modeling) to analyze large volumes of unstructured free-text feedback at scale [15]. | While not used in this small-scale study, these methods are powerful for analyzing feedback from larger patient committees or open-ended survey responses. |

| Latent Dirichlet Allocation (LDA) | A topic modeling algorithm used to identify emergent themes and patterns across a corpus of patient comments [15]. | Can be applied to categorize feedback into themes (e.g., "UI complaints," "descriptor confusion") to prioritize refinement efforts. |

| Patient & Site Committees | Independent groups of patients and clinical site staff that provide ongoing, study-agnostic feedback throughout the product development lifecycle [14]. | The foundational source of feedback in the case study, ensuring the tool was refined based on representative user needs before the trial began. |

This case study demonstrates that the refinement of verbal descriptors is not a mere editorial exercise but a critical scientific process that strengthens the validity and reliability of clinical trial endpoints. By adopting a structured, iterative, and patient-engaged approach—as outlined in the FDA's PFDD guidance series—researchers can ensure that the language of science is seamlessly translated into the language of patients.

The strength of support for any verbal scale is ultimately determined by the robustness of the evidence demonstrating its relevance and comprehension within the target population. The methodologies outlined here—from formative workshops and usability testing to the application of advanced text analytics—provide a replicable framework for building this evidence. As the industry moves towards more patient-centric drug development, the ability to systematically gather and integrate this feedback will be a key differentiator in developing meaningful endpoints that accelerate the delivery of effective therapies.

From Vague to Valid: A Step-by-Step Methodology for Developing Explicit Support Statements

Within pharmaceutical research and drug development, the precision of data collection instruments directly impacts the reliability and validity of the resulting data. This technical guide examines the critical process of moving from brief descriptors to explicit item wording in verbal scales, a cornerstone of robust subjective response measurement. Framed within the broader thesis on strength-of-support statements in verbal scales research, this paper synthesizes empirical evidence and methodological protocols to demonstrate that explicit, standardized wording is not merely a procedural formality but a fundamental determinant of data quality. We detail experimental evidence illustrating how specific wording choices significantly influence participant interpretation and quantitative outcomes, providing researchers and drug development professionals with a structured framework for optimizing scale design to support rigorous scientific conclusions.

In the context of drug development, verbal scales serve as the primary conduit for quantifying subjective, yet critically important, patient and participant experiences. These measures inform decisions on drug safety, efficacy, and ultimately, regulatory approval. The transition from brief to explicit descriptors represents a methodological imperative rooted in the need to minimize measurement error and maximize construct validity. Evidence suggests that many research instruments, including those in widespread use, are methodologically flawed, with item wording representing a pervasive and often unaddressed source of bias [16]. The Drug Effects Questionnaire (DEQ), for instance, is widely used in studies of acute subjective response to substances but exists in numerous variations that differ in instructional set, item order, and response format, leading to challenges in cross-study comparability [17]. This lack of standardization underscores a fundamental challenge in verbal scales research: without explicit, consistently applied descriptors, the strength of support for any given conclusion is inherently weakened. This guide establishes a framework for strengthening this support through methodical item wording practices, with a specific focus on applications within clinical and pharmacovigilance research.

Empirical Foundations: Quantitative Evidence for Explicit Wording

The Impact of Combined Verbal and Numerical Descriptors on Risk Perception

Empirical investigations consistently demonstrate that the explicit integration of verbal and numerical descriptors alters participant understanding and risk estimation. A pivotal study evaluated European Medicines Agency (EMA) recommendations on communicating frequency information for side-effect risks, providing a clear quantitative assessment of how descriptor explicitness influences perception [18].

Table 1: Experimental Design for Risk Expression Evaluation

| Factor | Level 1 | Level 2 |

|---|---|---|

| Descriptor Format | Numerical only (e.g., "may affect up to 1 in 10 people") | Combined verbal & numerical (e.g., "Common: may affect up to 1 in 10 people") |

| Uncertainty Qualifier | "may affect up to..." | "will affect up to..." |

| Sample Size | 339 participants (37.5% with cancer) | Recruited via CancerHelpUK website |

| Primary Outcome | Side-effect frequency estimates and risk perceptions | |

The study's findings revealed that the explicit combination of verbal terms with numerical bands significantly shifted participant perceptions compared to numerical information alone [18].

Table 2: Impact of Explicit (Combined) Descriptors on Side-Effect Estimates

| Risk Expression Format | Effect on Frequency Estimates | Statistical Significance (P-value) | Effect on Broader Risk Perceptions |

|---|---|---|---|

| Combined Verbal & Numerical | Higher estimates for four out of ten side-effects | < 0.05 for four side-effects | Participants reported side-effects would be more likely to occur |

| Numerical Only | Lower, baseline estimates | Used as reference for comparison | Baseline likelihood perception |

| Uncertainty Qualifier ("may" vs. "will") | No significant difference in estimates | Not Significant (NS) | No differences in any estimates |

This evidence indicates that while explicit wording enhances specificity, it can also introduce a "framing" effect, leading to systematic overestimation of risks. This has direct implications for patient information leaflets and clinical trial informed consent documents, where precise communication is paramount [18].

Psychometric Validation of Explicit Item Wording

The move toward explicit wording is further supported by psychometric validation studies. An analysis of the DEQ, which assesses constructs like "Feel," "High," "Like," "Dislike," and "Want More," demonstrated that well-defined items produce reliable and valid measurements across different substances [17].

Table 3: Psychometric Properties of Explicit DEQ Items

| DEQ Construct | Sample Item Wording | Response Format | Psychometric Support |

|---|---|---|---|

| FEEL | "Do you FEEL a drug effect right now?" | 100mm Visual Analog Scale ("Not at all" to "Extremely") | Supported for amphetamine, nicotine, alcohol |

| HIGH | "Are you HIGH right now?" | 100mm Visual Analog Scale ("Not at all" to "Extremely") | Supported for amphetamine, nicotine, alcohol |

| LIKE | "Do you LIKE any of the effects you are feeling right now?" | 100mm Visual Analog Scale ("Not at all" to "Extremely") | Supported for amphetamine, nicotine, alcohol |

| DISLIKE | "Do you DISLIKE any of the effects you are feeling right now?" | 100mm Visual Analog Scale ("Not at all" to "Extremely") | Supported for amphetamine, nicotine, alcohol |

| MORE | "Would you like MORE of the drug you took, right now?" | 100mm Visual Analog Scale ("Not at all" to "Extremely") | Supported for amphetamine, nicotine, alcohol |

The study concluded that the simplicity and brevity of the DEQ, combined with its promising psychometric properties when items are explicitly worded and standardized, support its use in future subjective response research across various substances [17]. This exemplifies how explicit descriptors underpin measurement validity.

Experimental Protocols for Wording Validation

Protocol: Evaluating Risk Expression Formats

This protocol is adapted from the study on EMA risk communication recommendations, providing a template for validating the explicitness of verbal descriptors [18].

Objective: To compare the impact of combined verbal-numerical risk expressions versus numerical-only expressions on participant risk perceptions and understanding.

- Design: 2x2 factorial randomized trial.

- Participants: Target sample of approximately 340 participants, ensuring a subset with the relevant medical condition (e.g., cancer) for ecological validity. Recruitment can occur via healthcare websites or clinical settings.

- Interventions: Participants are randomly assigned to one of four groups, receiving information about drug side-effects using:

- Group 1: Numerical terms only; 'may affect up to...'

- Group 2: Combined verbal and numerical expression; 'may affect up to...'

- Group 3: Numerical terms only; 'will affect up to...'

- Group 4: Combined verbal and numerical expression; 'will affect up to...'

- Materials: Develop a hypothetical scenario (e.g., "Your doctor has told you that you need to take Paclitaxel...") followed by a list of 10 side-effects, each presented with its likelihood using the assigned risk expression format.

- Outcome Measures:

- Primary: Participant estimates of the chance they will experience specific side-effects (on a 0-100% scale).

- Secondary: Likert-scale measures of satisfaction with information, perceived badness of side-effects, likelihood of having any side-effect, general risk to health, and the influence on their decision to take the medicine.

- Data Analysis: Use analysis of variance (ANOVA) to detect significant differences in frequency estimates and risk perceptions between the experimental groups, with power analysis guiding sample size determination.
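
The random assignment step in the protocol above can be sketched as follows. This is a minimal illustration using permuted blocks of four so that the 2x2 cells stay balanced. The source does not state which randomization scheme the original study used, so the block design, the arm labels, and the function name are all assumptions.

```python
import random
from itertools import product

# The two factors of the 2x2 design described in the protocol.
FORMATS = ("numerical_only", "combined_verbal_numerical")
QUALIFIERS = ("may", "will")
ARMS = list(product(FORMATS, QUALIFIERS))  # four (format, qualifier) cells


def permuted_block_allocation(n_participants, seed=None):
    """Assign participants to the four arms using shuffled blocks of four.

    Each block contains every arm exactly once, so group sizes never
    differ by more than one at any point during recruitment.
    """
    rng = random.Random(seed)
    allocation = []
    while len(allocation) < n_participants:
        block = ARMS[:]       # one copy of each arm
        rng.shuffle(block)    # random order within the block
        allocation.extend(block)
    return allocation[:n_participants]


if __name__ == "__main__":
    schedule = permuted_block_allocation(340, seed=2024)
    for arm in ARMS:
        print(arm, schedule.count(arm))
```

Fixing the seed makes the schedule reproducible for audit purposes. In a real trial the allocation list would normally be generated and held by someone independent of recruitment.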

Protocol: Psychometric Validation of Scale Items

This protocol outlines the steps for establishing the reliability and validity of explicitly worded items, based on methodologies used to evaluate the DEQ [17].

Objective: To assess the internal structure and validity of a multi-item scale featuring explicit verbal descriptors.

- Design: Cross-sectional study analyzing data from placebo-controlled substance administration studies.

- Participants: Participants from controlled studies involving different substances (e.g., amphetamine, nicotine, alcohol).

- Materials: Administer the target scale (e.g., the DEQ) with explicit items (FEEL, HIGH, LIKE, DISLIKE, MORE) using a consistent, fine-grained response format such as a 100mm Visual Analog Scale (VAS).

- Procedure:

- Administer the scale at predetermined time points following substance or placebo administration.

- Collect concurrent measures of similar constructs (e.g., other validated liking scales) and substance-related behaviors (e.g., self-administration, future use) for validation.

- Data Analysis:

- Internal Structure: Examine inter-item correlations and factor structure.

- Convergent Validity: Correlate target scale items with measures of similar constructs.

- Predictive Validity: Correlate scale items with behavioral outcomes (e.g., the "MORE" item should predict subsequent self-administration).

- Item-Level Statistics: Calculate descriptive statistics (mean, SD, skewness, kurtosis) for each item to ensure they perform appropriately across different populations and substances.
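
The item-level statistics and inter-item correlations listed above can be computed with a few lines of standard-library Python. The sketch below assumes each item is scored 0 to 100 on a VAS and uses moment-based skewness and excess kurtosis (other estimators apply small-sample corrections). The item names mirror the DEQ constructs, but the scores are made up for illustration.

```python
from math import sqrt


def describe(scores):
    """Mean, sample SD, moment-based skewness, and excess kurtosis for one item."""
    n = len(scores)
    mean = sum(scores) / n
    m2 = sum((x - mean) ** 2 for x in scores) / n  # second central moment
    m3 = sum((x - mean) ** 3 for x in scores) / n
    m4 = sum((x - mean) ** 4 for x in scores) / n
    return {
        "mean": mean,
        "sd": sqrt(m2 * n / (n - 1)),         # n - 1 denominator
        "skewness": m3 / m2 ** 1.5,
        "excess_kurtosis": m4 / m2 ** 2 - 3,  # 0 for a normal distribution
    }


def pearson(x, y):
    """Pearson correlation between two equal-length score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def inter_item_correlations(items):
    """Return {(item_a, item_b): r} for every unordered pair of items."""
    names = list(items)
    return {(a, b): pearson(items[a], items[b])
            for i, a in enumerate(names) for b in names[i + 1:]}


if __name__ == "__main__":
    # Hypothetical VAS scores (mm) from six participants.
    deq = {"FEEL": [62, 40, 75, 20, 55, 68],
           "HIGH": [58, 35, 80, 15, 50, 60],
           "DISLIKE": [10, 30, 5, 45, 20, 12]}
    for name, scores in deq.items():
        print(name, {k: round(v, 2) for k, v in describe(scores).items()})
    for pair, r in inter_item_correlations(deq).items():
        print(pair, round(r, 2))
```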

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Verbal Scale Development and Validation

| Research Reagent | Function/Best Practice Application |

|---|---|

| Visual Analog Scales (VAS) | A 100mm unipolar line (e.g., "Not at all" to "Extremely") provides a fine-grained, continuous measure of subjective states, superior to coarse Likert scales for detecting subtle effects [17]. |

| PhenX Toolkit | A web-based catalog of high-quality measures, recommended by the NIH, which provides standardized protocols for data collection, including versions of the DEQ, to enhance cross-study comparability [17]. |

| International Council for Harmonisation (ICH) Guidelines | Provide internationally accepted standards for clinical research, including E6(R3) for Good Clinical Practice and E9 for Statistical Principles, ensuring data integrity and regulatory compliance [19]. |

| Color Contrast Analyzers | Tools (e.g., WebAIM's Color Contrast Checker) help ensure that text in digital scales or study materials meets the WCAG AA minimum contrast ratios (4.5:1 for normal-size text, 3:1 for large text), supporting legibility for participants [20] [21]. |

| Cochrane Methodological Standards | Detailed guidance for conducting systematic, methodologically rigorous evidence syntheses, which are essential for validating the use of specific scales and items during the literature review phase [16]. |

| Automated Accessibility Testing Tools | Open-source libraries like axe-core can be integrated into testing workflows to automatically check digital data collection instruments for common accessibility issues, such as insufficient color contrast [20]. |
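
The contrast check mentioned in the table can also be reproduced directly. The sketch below follows the relative luminance and contrast ratio definitions published in WCAG 2.x and applies the 4.5:1 AA threshold for normal-size text. The function names and the example colors are illustrative assumptions, and a dedicated checker remains the safer option for formal audits.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to its linear-light value (WCAG 2.x formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    digits = hex_color.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected a color in #RRGGBB form")
    r, g, b = (int(digits[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground, background):
    """WCAG contrast ratio, always expressed as lighter over darker."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa_normal_text(foreground, background):
    """True if the pair reaches the 4.5:1 AA minimum for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


if __name__ == "__main__":
    # Hypothetical anchor-label colors on a white scale background.
    for color in ("#000000", "#767676", "#AAAAAA"):
        ratio = contrast_ratio(color, "#FFFFFF")
        print(color, f"{ratio:.2f}:1", meets_aa_normal_text(color, "#FFFFFF"))
```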

A Conceptual Framework for Explicitness

The decision to use more explicit descriptors is not merely a binary choice but exists on a continuum, with significant implications for the strength of support for a study's conclusions.

Along this continuum, more explicit descriptors reduce ambiguity and strengthen the validity of the resulting data. However, as the empirical evidence on risk communication shows, each step can also introduce new cognitive influences, such as framing effects, which must be accounted for in the study's interpretation and in the strength-of-support statements [18]. The goal is not to simply maximize explicitness at all costs, but to achieve a level of clarity that is both psychometrically sound and appropriate for the target population and research context.

In specialized research fields such as the study of verbal scales for strength of support statements, robust and structured development processes are paramount. These processes ensure that the resulting frameworks are not only scientifically sound but also practically relevant and accurately interpreted by end-users. A structured methodology that integrates comprehensive literature reviews with systematic patient feedback analysis provides a powerful approach to developing and validating research tools. This guide details the technical protocols for such an integrated development process, contextualized within a broader thesis on verbal scales. It provides researchers and drug development professionals with actionable methodologies for creating more effective and reliably understood communication tools.

Core Methodological Frameworks

The Scoping Review for Literature Synthesis

A scoping review is an ideal methodology for mapping the existing literature and identifying key concepts, theories, and evidence gaps, particularly in emerging or complex fields [22]. This approach is exceptionally valuable for framing research on verbal scales, where understanding the landscape of existing methodologies and reported challenges is a critical first step.

- Protocol Registration: Pre-register the review protocol on a platform like the Open Science Framework (OSF) to ensure transparency and reduce reporting bias.

- Systematic Search Strategy:

- Databases: Conduct searches in major scientific databases (e.g., MEDLINE/PubMed, Embase, CINAHL, PsycINFO) and repositories of guideline developers.

- Search Terms: Use controlled vocabulary (MeSH, Emtree) and keywords related to the core concepts. For verbal scales, this includes terms like "verbal scale," "likelihood ratio," "strength of support," "communication," and "interpretation."

- Pilot Testing: Perform multiple pilot searches to refine terms and indexing.

- Eligibility Criteria: Define inclusion and exclusion criteria using the PCC (Population, Concept, Context) framework.

- Population: The target audience for the verbal scale (e.g., patients, clinicians, jurors).

- Concept: Methods for developing, validating, or testing the interpretation of verbal conclusion phrases.

- Context: The specific field of application (e.g., forensic science, healthcare communication, drug development).

- Data Extraction and Synthesis: Extract data in a standardized manner. The synthesis is typically narrative, summarizing methodologies, findings, and gaps. Data should be managed using specialized software (e.g., Rayyan).

Table 1: Quantitative Data from a Scoping Review on Feedback Interventions [23]

| Reviewed Aspect | Number of Studies | Percentage of Total |
|---|---|---|
| Studies implementing peer comparisons in feedback | 184 out of 279 | 66% |
| Studies using active feedback delivery | 181 out of 279 | 65% |
| Studies providing timely feedback | 156 out of 279 | 56% |
| Studies combining feedback with other co-interventions | 190 out of 279 | 68% |
| Studies showing improvement in quality indicators | 226 out of 279 | 81% |

Knowledge Discovery in Databases (KDD) for Patient Feedback

The KDD process provides a systematic framework for transforming raw, unstructured patient feedback into actionable insights [24]. This is crucial for empirically testing how verbal statements are perceived and understood by different audiences, moving beyond expert intention to actual interpretation.

Key Protocol Steps [24]:

The KDD process consists of five stages that convert raw data into knowledge:

- Selection: Acquire the target dataset. In verbal scales research, this involves collecting free-text feedback from participants exposed to different verbal phrases.

- Preprocessing: Clean the data. This includes removing duplicates, handling missing values, and anonymizing personally identifiable information (PII).

- Transformation: Engineer features for analysis. Techniques include:

  - Tokenization: Splitting text into words or phrases.

  - TF-IDF (Term Frequency-Inverse Document Frequency): Weighing the importance of terms.

  - n-gram extraction: Identifying sequences of words (e.g., bigrams like "very strong").

  - Part-of-Speech (POS) Tagging: Labeling words as nouns, verbs, adjectives, etc.

- Data Mining: Apply techniques to discover patterns.

  - Sentiment Analysis: Quantifies the polarity (positive, negative, neutral) of feedback.

  - Topic Modeling (e.g., Latent Dirichlet Allocation): Identifies latent themes in the feedback corpus.

  - Aspect-Based Sentiment Analysis: Links sentiment to specific aspects of the verbal scale (e.g., clarity, perceived strength).

  - Emotion Detection: Maps feedback to basic emotions (joy, trust, fear, anger, etc.).

- Interpretation/Evaluation: Synthesize the mined patterns into usable knowledge. This involves stakeholder validation workshops to contextualize findings and co-develop improvements.
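The transformation stage can be illustrated with a short standard-library sketch covering tokenization, bigram extraction, and an unsmoothed TF-IDF weighting (term frequency times log(N / document frequency)). The comments are invented for illustration and the function names are hypothetical. POS tagging is omitted because it needs a trained tagger, and library implementations often use smoothed TF-IDF variants that give different numbers.

```python
import math
import re
from collections import Counter

# Hypothetical free-text comments, invented for illustration only.
comments = [
    "Very strong support sounds almost certain to me",
    "Moderate support is unclear",
    "Very strong is clearer than moderate",
]


def tokenize(text):
    """Lowercase the text and keep runs of letters (a deliberately simple tokenizer)."""
    return re.findall(r"[a-z]+", text.lower())


def bigrams(tokens):
    """Return adjacent word pairs, e.g. ('very', 'strong')."""
    return list(zip(tokens, tokens[1:]))


def tf_idf(docs):
    """Return one {term: weight} dict per document using tf * log(N / df)."""
    n_docs = len(docs)
    doc_freq = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        weights.append(
            {t: (c / len(doc)) * math.log(n_docs / doc_freq[t]) for t, c in counts.items()}
        )
    return weights


tokens = [tokenize(c) for c in comments]
bigram_counts = Counter(bg for doc in tokens for bg in bigrams(doc))
weights = tf_idf(tokens)

print(bigram_counts.most_common(1))
print(sorted(weights[1].items(), key=lambda kv: -kv[1])[0])
```

In this toy corpus a word that appears in only one comment, such as "unclear", receives a higher weight than words shared across comments, which is the behavior TF-IDF is meant to provide.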

Table 2: Text Mining Results from a Patient Feedback Analysis [24]

| Analytical Technique | Key Metric | Result |
|---|---|---|
| Sentiment Analysis | Average Polarity Score | 0.42 (on a scale of -1 to +1) |
| Sentiment Analysis | Comments Classified as Positive | 68.8% (63,685/92,578) |
| Sentiment Analysis | Comments Classified as Negative | 5.8% (5,378/92,578) |
| Topic Modeling | Distinct Topics Identified | 10 |
| Topic Modeling | Most Frequent Topic: "Staff Attitude" | 10.2% (9,443/92,578) |
| Aspect-Based Sentiment Analysis | Most Positive Aspect: "Nurse Attitude" | Sentiment Score: 0.65 |
| Aspect-Based Sentiment Analysis | Most Negative Aspect: "Waiting Time" | Sentiment Score: -0.42 |
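Metrics of the kind reported for sentiment analysis (an average polarity score and the share of comments per class) can be produced by scoring each comment and then aggregating. The sketch below uses a tiny hand-made lexicon as a stand-in. It is not the algorithm of any named library, and the lexicon, the comments, and the 0.1 classification threshold are all assumptions chosen for illustration.

```python
import re
from statistics import mean

# Hypothetical mini-lexicon: word -> polarity in [-1, 1].
LEXICON = {"clear": 1.0, "helpful": 1.0, "kind": 0.5, "confusing": -1.0, "slow": -0.5}


def polarity(text):
    """Mean lexicon score of the matched words; 0.0 when no word matches."""
    words = re.findall(r"[a-z]+", text.lower())
    scores = [LEXICON[w] for w in words if w in LEXICON]
    return mean(scores) if scores else 0.0


def label(score, threshold=0.1):
    """Map a polarity score to a class; the threshold is an assumption."""
    if score > threshold:
        return "positive"
    if score < -threshold:
        return "negative"
    return "neutral"


# Hypothetical comments, invented for illustration only.
comments = [
    "The nurse was kind and helpful",
    "The wording was confusing",
    "Clear explanation but slow service",
    "I filled in the form",
]

scores = [polarity(c) for c in comments]
labels = [label(s) for s in scores]
average_polarity = mean(scores)
share_positive = labels.count("positive") / len(labels)
share_negative = labels.count("negative") / len(labels)

print(average_polarity, share_positive, share_negative)
```

Reporting both the count and the denominator for each share, as the table does, lets readers check the percentages directly.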

Integrated Experimental Workflow

The literature review and patient feedback processes are integrated within a single research and development workflow.

[Figure: Integrated R&D Workflow for Verbal Scales]

Experimental Protocol for Validation

Once a preliminary verbal scale is developed through integrated literature and feedback review, a controlled experiment is essential for validating its interpretation and efficacy [25].

Detailed Experimental Protocol:

Define Variables:

- Independent Variable: The specific verbal phrases comprising the scale (e.g., "weak," "moderate," "strong," "very strong" support).

- Dependent Variable: The quantitative interpretation of the phrases by participants (e.g., a numerical likelihood ratio or percentage value assigned) [3].

- Extraneous/Confounding Variables: Participant demographics (age, education), prior experience with statistical concepts, and the context of the case scenario. These can be controlled statistically or through design choices such as blocking.

Formulate Hypothesis:

- Null Hypothesis (H₀): There is no difference between the intended numerical range of a verbal phrase (expert intention) and its perceived numerical value by the target audience.

- Alternative Hypothesis (H₁): There is a statistically significant difference between the intended and perceived numerical values for one or more phrases in the verbal scale [3].
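One way to examine H₀ for a single phrase is an exact sign-flip (permutation) test on the differences between each participant's perceived value and the intended value. The sketch below is an illustration under stated assumptions, not the analysis used in the cited work: the differences are invented, the function name `sign_flip_p_value` is hypothetical, and full enumeration of all 2^n sign patterns is only practical for small samples.

```python
from itertools import product

# Hypothetical differences (perceived minus intended, in percentage points)
# for one verbal phrase, one value per participant.
differences = [12, 8, 15, -3, 10, 7, 9, 11]


def sign_flip_p_value(diffs):
    """Exact two-sided sign-flip test of H0: differences are symmetric about zero."""
    observed = abs(sum(diffs))
    n_extreme = 0
    n_total = 0
    # Under H0 each difference is equally likely to be positive or negative,
    # so every sign pattern is enumerated and compared with the observed sum.
    for signs in product((1, -1), repeat=len(diffs)):
        flipped_sum = sum(s * d for s, d in zip(signs, diffs))
        n_total += 1
        if abs(flipped_sum) >= observed:
            n_extreme += 1
    return n_extreme / n_total


p_value = sign_flip_p_value(differences)
print(p_value)
```

A small p-value suggests that perceived values differ systematically from the intended value for that phrase. With several phrases tested, a multiplicity correction would also be needed.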

Design Experimental Treatments: Decide on the number of verbal phrases to test and the context in which they are presented (e.g., embedded in a forensic report or a clinical trial summary).

Assign Subjects to Groups:

- Study Size: Determine sample size via power analysis to ensure statistical reliability.

- Design Type: A within-subjects design (repeated measures) is often most efficient, where each participant rates all verbal phrases. To mitigate order effects, the order of phrase presentation should be counterbalanced or randomized across participants [25].
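The two design decisions above can be sketched in a few lines. The sample-size function uses the normal approximation n = ((z₁₋α/₂ + z_power) / d)² for a paired comparison with standardized effect size d, which slightly underestimates the t-based requirement. The counterbalancing function builds a cyclic Latin square so that each phrase appears once in every presentation position. The effect size of 0.5 and the function names are assumptions for illustration.

```python
import math
from statistics import NormalDist


def paired_sample_size(effect_size, alpha=0.05, power=0.80):
    """Normal-approximation sample size for a two-sided paired comparison."""
    z = NormalDist().inv_cdf
    n = ((z(1 - alpha / 2) + z(power)) / effect_size) ** 2
    return math.ceil(n)


def latin_square_orders(items):
    """Cyclic Latin square: row r is the item list rotated by r positions."""
    k = len(items)
    return [[items[(row + col) % k] for col in range(k)] for row in range(k)]


phrases = ["weak", "moderate", "strong", "very strong"]
orders = latin_square_orders(phrases)

# Assumed standardized effect size of 0.5 for the intended-vs-perceived gap.
n_required = paired_sample_size(effect_size=0.5)

# Participants are cycled through the rows so each order is used equally often
# when the sample size is a multiple of the number of phrases.
assignment = {pid: orders[pid % len(orders)] for pid in range(n_required)}

print(n_required)
print(orders[1])
```

A cyclic square balances position effects only. If carryover between adjacent phrases is a concern, a Williams design or full randomization of order per participant are common alternatives.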

Measure Dependent Variable: Develop a clear protocol for capturing the participant's interpretation. The Membership Function Approach is a validated method where participants rate the appropriateness of a verbal phrase for a series of numerical values [3].

Table 3: Example Membership Function Results for Verbal Phrases [3]

| Verbal Phrase | Preferred Replacement Value (%) | Expert Intention (Likelihood Ratio) | Interpretation Gap |
|---|---|---|---|
| Weak / Limited | ~62% | 1 - 10 | Overvaluation by participants |
| Moderate | Data not specified in results | 10 - 100 | Data not specified in results |
| Moderately Strong | Data not specified in results | 100 - 1,000 | Data not specified in results |
| Strong | Data not specified in results | 1,000 - 10,000 | Overvaluation by participants |
| Very Strong | Data not specified in results | 10,000 - 1,000,000 | Undervaluation by participants |
| Extremely Strong | Data not specified in results | > 1,000,000 | Data not specified in results |
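A membership function for one phrase can be estimated by averaging participants' appropriateness ratings at each probe value and then reading off the peak and the range of values the phrase is judged to fit. The sketch below is a minimal illustration, not the procedure of the cited study: the 0 to 1 rating scale, the probe values, the mean aggregation, the 0.5 cutoff, and all ratings are assumptions.

```python
from statistics import mean

# Hypothetical probe values (in %) presented alongside one verbal phrase.
probe_values = [10, 30, 50, 70, 90]

# ratings[p][i] is participant p's appropriateness rating (0 to 1) of the
# phrase for probe_values[i]; the data are invented for illustration.
ratings = [
    [0.0, 0.2, 0.8, 1.0, 0.4],
    [0.1, 0.3, 0.6, 0.9, 0.5],
    [0.0, 0.1, 0.7, 0.8, 0.3],
]


def membership_function(all_ratings):
    """Average the ratings across participants at each probe value."""
    return [mean(column) for column in zip(*all_ratings)]


def peak_value(values, membership):
    """Probe value with the highest mean membership."""
    best = max(range(len(values)), key=lambda i: membership[i])
    return values[best]


def support_range(values, membership, cutoff=0.5):
    """Lowest and highest probe values whose membership reaches the cutoff."""
    selected = [v for v, m in zip(values, membership) if m >= cutoff]
    return (min(selected), max(selected)) if selected else None


membership = membership_function(ratings)
peak = peak_value(probe_values, membership)
fit_range = support_range(probe_values, membership)

print(peak, fit_range)
```

Comparing the peak and range with the expert-intended interval for the same phrase gives a direct, per-phrase measure of the interpretation gap.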

The Scientist's Toolkit: Research Reagent Solutions

This table details essential resources and tools for implementing the described structured development process.

Table 4: Key Research Reagents and Solutions

| Item Name | Function / Application | Example / Note |
|---|---|---|
| Systematic Review Accelerator (SRA) | Software to automate the initial screening and de-duplication of literature search results. | Improves efficiency and reduces human error in the scoping review phase [22]. |
| NLTK (Natural Language Toolkit) | A leading Python platform for building Python programs to work with human language data. | Used for tokenization, POS tagging, and other preprocessing tasks in the KDD pipeline [24]. |
| TextBlob | A Python library for processing textual data. It provides a simple API for diving into common natural language processing (NLP) tasks such as sentiment analysis. | Used to calculate sentiment polarity scores for patient feedback [24]. |
| Gensim | A robust, efficient, and hassle-free Python library for topic modeling. | Implements algorithms like Latent Dirichlet Allocation (LDA) to identify latent themes in text corpora [24]. |
| Membership Function Task | A psychometric instrument designed to quantify how individuals map verbal phrases onto numerical scales. | Critical for the experimental validation of verbal scales, revealing gaps between expert intention and lay interpretation [3]. |
| Stakeholder Workshop Framework | A structured approach to collaboratively interpreting data mining results and co-developing solutions. | Ensures that insights from literature and feedback are translated into practical, contextually appropriate improvements [24]. |

The structured integration of rigorous literature review and systematic patient feedback analysis, followed by controlled experimental validation, provides a powerful, evidence-based methodology for developing and refining verbal scales. This approach directly addresses the critical research problem of miscommunication, as identified in studies of forensic science and beyond, where lay interpretations of verbal phrases consistently diverge from expert intentions [3]. By adopting these detailed protocols for scoping reviews, KDD processes, and membership function experiments, researchers and drug development professionals can create strength of support statements that are not only statistically grounded but also clearly and reliably understood by their intended audiences. This enhances scientific communication, supports better decision-making, and ultimately strengthens the validity of research outcomes.

The Role of Cognitive Interviewing and Focus Groups in Descriptor Development

Within verbal scale research, robust descriptors are foundational to generating valid and reliable data. This is particularly true in the pharmaceutical and health sectors, where strength of support statements (such as verbal risk descriptors for medication side effects) directly influence patient understanding and behavior. The Patient-Reported Outcomes Measurement Information System (PROMIS) initiative, for example, identifies cognitive interviewing as an essential component in the development of standardized patient-reported outcome measures [26]. Imperfect descriptor development can have significant real-world consequences: a study on verbal risk descriptors in patient information leaflets found that patients greatly overestimated the frequencies implied by terms like "common" and "rare," potentially affecting medication adherence and inducing nocebo effects [27]. This technical guide details how cognitive interviewing and focus groups help refine such descriptors and ensure they are interpreted as intended by the target audience.

Theoretical Foundations and Definitions

Core Cognitive Processes in Survey Response

The Cognitive Aspects of Survey Methodology (CASM) movement marked a shift from a purely behavioral perspective on survey response to a cognitive one. This framework postulates that respondents move through a sequence of stages when answering a questionnaire item [28]:

- Comprehension: The respondent interprets the meaning of the item stem and specific descriptors.

- Retrieval: The respondent searches memory for relevant information.

- Judgement: The respondent assesses the completeness and relevance of the retrieved information.

- Response: The respondent maps their judgement onto the provided response categories [28].

Cognitive interviewing is explicitly designed to probe each of these stages to identify potential breakdowns, such as the misinterpretation of a verbal risk descriptor like "uncommon" [28] [26].

Approaches to Cognitive Interviewing

Two primary approaches underpin the application of cognitive interviews:

- The Reparative Approach (Applied CASM): This "inspect and repair" model focuses on identifying problems with draft questions and fixing them to reduce response error. The goal is pragmatic improvement of survey questions [28].

- The Descriptive Approach (Basic CASM): This approach aims not to fix problems but to build a rich understanding of how a question measures an underlying construct, even if the question functions adequately. It shifts the focus from problem-solving to concept elucidation [28].

These approaches are not mutually exclusive but represent endpoints on a continuum, and the choice between them influences the interview guide and analysis.

Methodological Protocols

Cognitive Interviewing: A Standard Protocol

Cognitive interviewing is a technique used to explore an individual's mental processes as they interpret and respond to questionnaire items, thereby ensuring the items are easily understood and valid [28] [26]. The following protocol outlines the key steps, as exemplified by the PROMIS pediatric item bank development [26].

Table 1: Key Phases of a Cognitive Interview Protocol for Descriptor Development

| Phase | Description | Best Practices & Considerations |
|---|---|---|
| 1. Preparation | Develop interview guide with probes; recruit and train interviewers. | Probes should target comprehension, recall, judgement, and response processes. Interviewers require extensive training (e.g., 16 hours) [26]. |
| 2. Recruitment & Sampling | Identify participants representing the target audience. | Use purposive sampling to ensure demographic and cognitive diversity. Sample sizes can vary; the PROMIS study reviewed each item with at least 5 participants [26]. |
| 3. Conducting the Interview | Administer the draft items followed by probing. | Use think-aloud (participant verbalizes thoughts) and/or verbal probing (interviewer asks targeted questions). Create a comfortable environment, especially for vulnerable groups [26] [29]. |
| 4. Data Analysis | Identify systematic patterns of item misinterpretation. | Compile comments for each item; items deemed problematic by multiple participants are flagged for revision. Analysis can use summary statements or formal coding [26]. |
| 5. Reporting & Revision | Document findings and refine descriptors/items. | The final output is a report detailing problematic items, the nature of the issues, and proposed revisions [30]. |
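The data analysis phase, in which comments are compiled per item and items reported as problematic by multiple participants are flagged, can be sketched as a simple tally. The record layout, the problem codes (borrowed from the four response stages), and the threshold of two participants are assumptions for illustration; a real study would set its own flagging rule.

```python
from collections import defaultdict

# Hypothetical interview notes: (participant_id, item_id, problem_code or None).
notes = [
    ("P1", "item_1", "comprehension"),
    ("P2", "item_1", "comprehension"),
    ("P3", "item_1", None),
    ("P1", "item_2", None),
    ("P2", "item_2", "response"),
    ("P3", "item_2", None),
]


def flag_items(records, min_participants=2):
    """Return items for which at least min_participants reported a problem."""
    reporters = defaultdict(set)
    for participant, item, problem in records:
        if problem is not None:
            # A set counts each participant once per item, however many comments they made.
            reporters[item].add(participant)
    return sorted(
        item for item, people in reporters.items() if len(people) >= min_participants
    )


flagged = flag_items(notes)
print(flagged)
```

Counting distinct participants rather than raw comments keeps one talkative interviewee from triggering a revision on their own.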

Focus Group-Based Cognitive Interviewing

Focus groups are increasingly used as a platform for cognitive interviewing, particularly for exploring culturally specific behaviors. In this methodology, a group of participants completes the survey and then engages in a facilitated discussion where they are probed about their interpretation of items and descriptors [29]. This format can encourage open-ended dialogue where participants build on each other's ideas, generating a rich source of information on contextual understanding and cultural relevance [28] [29].