Beyond Guilt or Innocence: A Scientific Framework for Formulating Prosecution and Defense Hypotheses in Forensic Science

Abstract

This article provides a comprehensive framework for researchers and scientific professionals on the rigorous formulation and evaluation of prosecution and defense hypotheses. It explores the foundational principles of evaluative reporting, details methodological applications like Likelihood Ratios, addresses critical reasoning barriers and optimization strategies, and establishes validation techniques for robust hypothesis testing. By synthesizing insights from forensic statistics and decision science, this guide aims to enhance the objectivity, reliability, and scientific integrity of evidentiary analysis in legal and investigative contexts.

The Bedrock of Justice: Core Principles and Critical Importance of Hypothesis Formulation

In forensic science, the evaluation of biological evidence has traditionally focused on source-level propositions, which address the question of "Whose DNA is this?" [1]. However, with advancements in DNA profiling technology capable of analyzing minute quantities of material, the focus is shifting toward activity-level propositions that help address the more complex question of "How did an individual's cell material get there?" [2] [1]. This evolution reflects the reality that in modern forensic practice, the source of DNA is often not contested, whereas the mechanism of transfer and the timing of activities frequently are [1]. This technical guide examines the formulation, evaluation, and application of activity-level propositions within the context of prosecution and defense hypothesis formulation research, providing researchers and practitioners with a framework for addressing the 'how' and 'when' of evidence.

Activity-level propositions represent a crucial level in the hierarchy of propositions framework, operating above sub-source and source levels but below the ultimate offense level [2]. They require scientists to consider not just the DNA profile itself, but additional factors including transfer mechanisms, persistence dynamics, and background prevalence of DNA [1]. The proper application of this framework enables forensic scientists to provide courts with more focused and valuable contributions regarding the activities surrounding a criminal incident, moving beyond mere identification to reconstruct potential sequences of events [1].

Theoretical Framework: Concepts and Terminology

The Hierarchy of Propositions

The hierarchy of propositions provides a structured approach to formulating questions at different levels of abstraction in forensic casework. The relationship between these levels is fundamental to proper evidence evaluation:

- Sub-source Level: Concerns the source of the DNA profile itself, without regard to the type of biological material (e.g., blood or saliva) from which it was obtained [2]

- Source Level: Addresses the biological source of the material (e.g., "The bloodstain comes from Mr. A") [1]

- Activity Level: Pertains to the activities that led to the deposition of the material (e.g., "Mr. A punched the victim") [1]

- Offense Level: Deals with the ultimate issues before the court, typically requiring integration of all evidence [2]

It is crucial to recognize that the value of evidence calculated for a DNA profile cannot be carried over from lower to higher levels in this hierarchy [2]. Each level requires separate calculation and consideration of different factors, with activity-level evaluations incorporating transfer and persistence mechanisms not relevant to source-level assessments.

Core Components of Activity-Level Propositions

Activity-level propositions integrate several forensic concepts that extend beyond DNA profiling alone:

- Transfer Mechanisms: The processes by which DNA moves from a person to a surface or another person, including primary transfer (direct contact) and secondary transfer (via intermediate surface) [1]

- Persistence Dynamics: How DNA persists on surfaces over time and under varying environmental conditions [1]

- Background Prevalence: The presence and quantity of DNA from unknown individuals that may be expected on various surfaces in different environments [1]

- Temporal Considerations: The timeframe between the alleged activity and the recovery of evidence, affecting transfer and persistence probabilities [2]

These components collectively inform the expectations under competing activity-level propositions and enable quantitative assessment of the evidence.

Table 1: Core Concepts in Activity-Level Proposition Formulation

| Concept | Definition | Role in Activity-Level Evaluation |
|---|---|---|
| Transfer Mechanisms | Processes by which DNA is deposited on surfaces or people | Helps distinguish between direct and indirect transfer scenarios |
| Persistence | Duration that DNA remains detectable on a surface | Informs expectations about recovery likelihood over time |
| Background Prevalence | Naturally occurring DNA on surfaces in various environments | Provides context for evaluating the significance of findings |
| Hierarchy of Propositions | Framework for addressing questions at different abstraction levels | Ensures proper scope and prevents overstatement of conclusions |

Formulating Activity-Level Propositions

Principles of Proposition Formulation

Effective activity-level propositions must be balanced, relevant, and mutually exclusive to enable meaningful evidence evaluation [2]. They should ideally be set before knowledge of the forensic results to prevent cognitive biases and ensure objective analysis. A key principle is avoiding the use of the word 'transfer' in the propositions themselves, as propositions are assessed by the Court, while DNA transfer is a factor scientists consider for interpretation [2].

Properly formulated propositions:

- Address the issues actually in dispute between prosecution and defense positions [1]

- Are framed before the specific analytical results are known [2]

- Distinguish clearly between results, propositions, and explanations [2]

- Enable the scientist to assign the probability of the evidence under each proposition [2]

Proposition Pair Formulation

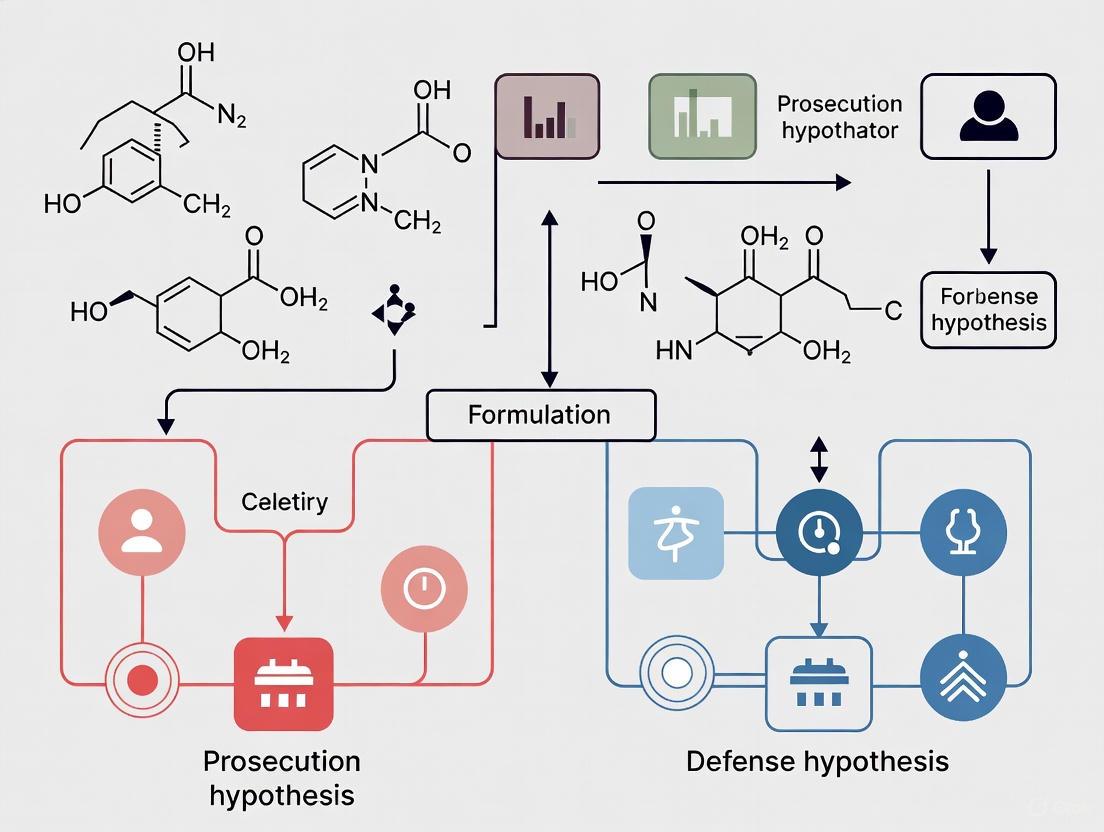

Activity-level propositions are always evaluated in pairs representing competing explanations for the evidence, typically aligning with prosecution and defense positions. The following diagram illustrates the logical structure of proposition development and evaluation:

Examples of Activity-Level Propositions

The following examples illustrate properly formulated activity-level proposition pairs for different forensic scenarios:

- Assault Scenario: "Mr. A punched the victim" versus "The person who punched the victim shook hands with Mr. A" [1]

- Sexual Offense Scenario: "Mr. A had sex with Ms. B" versus "Mr. A and Ms. B attended the same party, and they had social interaction only" [1]

- Stabbing Scenario: "X stabbed Y" versus "An unknown person stabbed Y but X met Y the day before" [2]

These examples demonstrate how activity-level propositions address the disputed activities themselves rather than merely the presence or source of DNA. They help courts distinguish between different accounts of events that could explain the same DNA findings.

Table 2: Activity-Level Proposition Examples Across Forensic Scenarios

| Scenario | Prosecution Proposition | Defense Proposition | Key Distinction |
|---|---|---|---|
| Violent Assault | The suspect grabbed the victim by the neck | The suspect and victim only shook hands earlier | Nature and intensity of physical contact |
| Sexual Assault | The suspect had forcible sexual contact with the victim | The suspect and victim had consensual contact days earlier | Type of contact and temporal framework |
| Burglary | The suspect handled the broken window during entry | The suspect touched the window during a lawful visit days earlier | Context and timing of contact with evidence item |
| Weapons Offense | The suspect fired the weapon during the crime | The suspect handled the weapon at a shooting range a week earlier | Activity context and temporal association |

Quantitative Evaluation Framework

The Likelihood Ratio Approach

The evaluation of evidence given activity-level propositions employs a likelihood ratio (LR) framework to quantify the strength of evidence [2] [3]. The LR represents the probability of the observed evidence (E) under the prosecution proposition (Hp) divided by the probability of that same evidence under the defense proposition (Hd):

LR = P(E|Hp) / P(E|Hd)

Within this framework, the scientist assigns the probability of the evidence if each of the alternate propositions is true [2]. To do this effectively, the scientist must ask:

- "What are the expectations if each of the propositions is true?" [2]

- "What data are available to assist in the evaluation of the results given the propositions?" [2]

The likelihood ratio approach provides a transparent and balanced method for expressing the strength of forensic evidence, allowing recipients of expert information to understand how strongly the evidence supports one proposition over the other [3].

Bayesian Networks for Complex Evaluation

Bayesian Networks (BNs) are increasingly valuable for evaluating activity-level propositions because they force explicit consideration of all relevant possibilities in a logical way [2]. These probabilistic graphical models represent variables and their conditional dependencies via directed acyclic graphs, enabling complex reasoning under uncertainty.
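As a rough illustration of the reasoning such a network encodes, the Python sketch below marginalizes over two hidden nodes (whether DNA was transferred by the alleged activity, and whether matching DNA was already present as background) to obtain P(E|Hp), P(E|Hd), and the resulting LR. The network structure, the node names, and every probability value are assumptions chosen for illustration only; they are not taken from the cited studies.

```python
from itertools import product

# Hypothetical probabilities (illustrative only, not empirical data).
# P(DNA of the person of interest is transferred | proposition)
P_TRANSFER = {"Hp": 0.60, "Hd": 0.05}
# P(matching DNA already present as background, whatever the proposition)
P_BACKGROUND = 0.01
# P(DNA is recovered and detected | DNA is present on the item)
P_RECOVERY = 0.90


def prob_evidence(proposition: str) -> float:
    """P(E | proposition): probability that matching DNA is detected."""
    total = 0.0
    # Enumerate every combination of the two hidden binary nodes.
    for transferred, background in product([True, False], repeat=2):
        p_t = P_TRANSFER[proposition] if transferred else 1 - P_TRANSFER[proposition]
        p_b = P_BACKGROUND if background else 1 - P_BACKGROUND
        present = transferred or background
        p_e = P_RECOVERY if present else 0.0
        total += p_t * p_b * p_e
    return total


def likelihood_ratio() -> float:
    """LR = P(E | Hp) / P(E | Hd) for the toy network above."""
    return prob_evidence("Hp") / prob_evidence("Hd")


print(f"P(E|Hp) = {prob_evidence('Hp'):.4f}")
print(f"P(E|Hd) = {prob_evidence('Hd'):.4f}")
print(f"LR      = {likelihood_ratio():.2f}")
```

In casework-oriented models the nodes, their states, and the conditional probability tables would be considerably richer and would be informed by transfer, persistence, and background data; the value of the exercise is that every assumption is written down explicitly.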

[Figure: Simplified Bayesian Network for evaluating DNA transfer evidence]

Data Requirements for Quantitative Assessment

Robust evaluation of activity-level propositions requires empirical data on transfer, persistence, and background prevalence [2]. The following table summarizes key data requirements:

Table 3: Data Requirements for Activity-Level Proposition Evaluation

| Data Category | Specific Parameters | Research Methods | Application in Evaluation |
|---|---|---|---|
| Transfer Probabilities | Primary/secondary transfer rates by substrate, pressure, duration | Controlled transfer experiments | Informs expectations under different activity scenarios |
| Persistence Dynamics | Degradation rates under different environmental conditions | Time-series sampling studies | Informs expectations about recovery likelihood |
| Background Prevalence | DNA quantities and profiles on various surfaces in different environments | Systematic environmental sampling | Provides reference for evaluating significance of findings |
| Shedder Status | Variation in DNA deposition among individuals | Controlled deposition studies | Accounts for inter-individual variability in transfer |

Experimental Design for Knowledge Bases

Designing Forensically Relevant Experiments

Building reliable knowledge bases for activity-level evaluation requires careful experimental design that captures the complexity of real-world scenarios while maintaining scientific rigor [2]. Effective experiments should:

- Mimic alleged activities with sufficient variation to account for uncertainties in real case circumstances [1]

- Study the impact of different factors on DNA transfer during activities to identify which variables have substantial effects on evaluations [1]

- Incorporate realistic temporal frameworks that reflect the time elapsed between alleged activities and evidence collection

- Account for inter-individual variation in factors such as shedder status that significantly impact DNA transfer [1]

When exact details of alleged activities are unknown, experiments should incorporate the range of plausible scenarios, with sensitivity analyses determining which factors substantially affect the strength of observations [1].

Addressing Case-Specific Uncertainties

A common concern in activity-level evaluation is that each case has unique features, making laboratory data potentially inapplicable [1]. However, this challenge can be addressed through:

- Probability Weighting: Incorporating unknown factors by considering all possible states within the evaluation, weighted by probabilities informed by controlled experiments [1]

- Sensitivity Analysis: Determining how much effect unknown factors have on the value of the findings [1]

- Data Relevance Assessment: Ensuring that experimental data are relevant to the specific case circumstances [2]

This approach acknowledges uncertainties while still providing quantitative assessments based on the best available scientific knowledge.
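The probability weighting and sensitivity analysis described above can be sketched in a few lines of Python. The example treats shedder status as the unknown factor; the category names, the weights, and all conditional probabilities are hypothetical placeholders, and real assignments would need to come from experiments relevant to the case.

```python
# Hypothetical values for illustration only.
# P(shedder category) for a person whose shedder status is unknown.
SHEDDER_WEIGHTS = {"low": 0.3, "medium": 0.5, "high": 0.2}
# P(E | Hp, category) and P(E | Hd, category)
P_E_GIVEN_HP = {"low": 0.30, "medium": 0.60, "high": 0.85}
P_E_GIVEN_HD = {"low": 0.01, "medium": 0.04, "high": 0.10}


def weighted_probability(conditional: dict, weights: dict) -> float:
    """Average P(E | H, state) over the unknown states, weighted by P(state)."""
    return sum(conditional[state] * weights[state] for state in weights)


def weighted_lr(weights: dict) -> float:
    """LR with the unknown factor averaged out under both propositions."""
    numerator = weighted_probability(P_E_GIVEN_HP, weights)
    denominator = weighted_probability(P_E_GIVEN_HD, weights)
    return numerator / denominator


def sensitivity() -> dict:
    """LR that would result if the shedder category were known exactly."""
    return {s: P_E_GIVEN_HP[s] / P_E_GIVEN_HD[s] for s in SHEDDER_WEIGHTS}


print(f"Weighted LR: {weighted_lr(SHEDDER_WEIGHTS):.2f}")
for state, lr in sensitivity().items():
    print(f"LR if shedder status were '{state}': {lr:.1f}")
```

Comparing the weighted LR with the per-state values indicates how much the unknown factor matters: if the per-state LRs are close together, the factor has little influence on the conclusion, whereas a wide spread suggests that more case information or more data would be worth obtaining.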

The Scientist's Toolkit: Essential Methodologies

Implementation of activity-level proposition evaluation requires specific methodological approaches and analytical tools. The following table details key components of the research toolkit:

Table 4: Essential Methodologies for Activity-Level Proposition Research

| Methodology Category | Specific Techniques | Application in Activity-Level Research |
|---|---|---|
| DNA Quantification | qPCR, digital PCR | Measures DNA quantity for transfer and persistence studies |
| Profile Analysis | Probabilistic genotyping, mixture deconvolution | Interprets complex DNA mixtures from transfer experiments |
| Statistical Modeling | Bayesian Networks, likelihood ratio frameworks | Provides structure for evaluating evidence under competing propositions |
| Data Generation | Controlled transfer studies, environmental sampling | Creates empirical basis for assignment of probabilities |
| Sensitivity Analysis | Monte Carlo simulation, factor prioritization | Identifies which uncertain factors most impact conclusions |

Implementation Challenges and Solutions

Addressing Common Concerns

The implementation of activity-level propositions in forensic practice faces several perceived challenges that require systematic addressing:

- Proposition Specification: Scientists often express concern that they cannot know every aspect of alleged activities [1]. However, the evaluation framework does not require exhaustive knowledge but focuses on factors that substantially impact expectations about the evidence [1].

- Data Limitations: The lack of relevant data on transfer and persistence is frequently cited [1]. The solution involves actively building knowledge bases through targeted research and using Bayesian methods to explicitly account for uncertainties [2].

- Legal Framework Alignment: Some critics argue that probabilistic evaluation infringes on the presumption of innocence [3]. Properly implemented, the LR framework does not assign guilt but merely evaluates the strength of scientific evidence, leaving ultimate conclusions to the trier of fact [3].

Reporting and Communication

Effective communication of activity-level evaluations requires:

- Clear distinction between scientific conclusions and legal decisions

- Transparent explanation of assumptions and limitations

- Appropriate placement within the hierarchy of propositions

- Balanced consideration of competing explanations

Scientists must work within the hierarchy of propositions framework, recognizing that the value of evidence calculated for a DNA profile cannot be carried over to higher levels in the hierarchy [2].

Activity-level propositions represent a necessary evolution in forensic science, enabling more meaningful contributions to legal inquiries about how biological material was deposited at crime scenes. The proper formulation and evaluation of these propositions requires a solid theoretical framework, robust empirical data, and appropriate statistical methods. While implementation challenges exist, they can be addressed through continued research, knowledge base development, and clear communication between scientific and legal stakeholders. By embracing this framework, forensic scientists can provide courts with more nuanced and relevant information about the probative value of biological evidence in the context of alleged activities.

In both scientific research and legal proceedings, the pathway to reliable conclusions is built upon a foundation of precisely formulated hypotheses. Well-defined hypotheses establish the essential framework for rigorous evidence evaluation, ensuring that conclusions are structured, logical, and transparent. The legal system's dependence on this structured approach is profound; it creates the necessary conditions for rational decision-making by directing the collection and assessment of evidence, minimizing cognitive biases, and establishing clear boundaries for inferential reasoning. Within the context of prosecution and defense strategy, the formulation of competing hypotheses represents not merely a procedural formality but a fundamental imperative that safeguards the integrity of the fact-finding process.

The critical role of hypothesis formulation becomes particularly evident when examining the intersection of science and law, especially in cases involving complex forensic evidence or statistical data. Statistical inference, a cornerstone of evidence-based medicine and clinical research, relies on formal hypothesis testing to determine whether observed differences between treatment groups represent true effects or occurred by chance [4] [5]. This methodological parallel between scientific and legal reasoning underscores a universal principle: without clearly stated alternative explanations, any evaluation of evidence lacks structure, coherence, and ultimately, validity.

The Anatomy of Legal and Scientific Hypotheses

Fundamental Definitions and Structure

At its core, a hypothesis represents a precise, educated guess about a relationship or outcome that can be tested through systematic investigation [4]. In legal contexts, hypotheses are articulated as competing propositions that frame the evidence within a case.

- Prosecution Hypothesis (Hp): Typically asserts that the defendant is the source of the forensic evidence or committed the alleged act [6]. Example: "The DNA recovered from the crime scene originated from the defendant."

- Defense Hypothesis (Hd): Proposes an alternative explanation, often that someone other than the defendant is the source or that the incident occurred differently [6]. Example: "The DNA recovered from the crime scene originated from an unknown individual unrelated to the defendant."

This dichotomous framework mirrors the scientific method used in clinical research and drug development, where investigators formulate a null hypothesis (H0) stating that no difference exists between groups and an alternative hypothesis (H1) stating that a difference does exist [4]. The parallel structure enables similar logical frameworks to be applied in evaluating evidence across disciplines.

The Role of Hypotheses in Evidence Evaluation

Well-constructed hypotheses serve multiple critical functions in legal proceedings:

- Direction of Inquiry: They guide investigators and legal professionals in determining what evidence to collect and how to evaluate its relevance [4].

- Framework for Interpretation: They provide the logical structure for interpreting forensic findings, particularly when using statistical methodologies like likelihood ratios [6].

- Safeguard Against Bias: By explicitly stating alternative explanations, they reduce the risk of confirmation bias where investigators might selectively seek or interpret evidence to support a single theory.

- Standardization: They create a consistent approach to evidence evaluation across different cases and forensic disciplines, enhancing the reliability and fairness of legal outcomes.

Quantitative Frameworks for Hypothesis Testing

Statistical Foundations in Science and Law

The evaluation of hypotheses in both scientific research and legal proceedings employs robust statistical frameworks to quantify the strength of evidence and determine significance. Descriptive statistics summarize and organize data in a meaningful way, while inferential statistics allow researchers to make generalizations about populations based on sample data and test hypotheses about true effects [5].

Table 1: Key Statistical Measures for Hypothesis Testing

| Statistical Measure | Function | Application Context |
|---|---|---|
| P-values | Probability of obtaining an effect at least as extreme as the one observed if the null hypothesis is true | Determines statistical significance in clinical trials; conventionally p < 0.05 considered significant [4] |
| Confidence Intervals (CI) | Range of values likely to contain the true population parameter with a specified confidence level (typically 95%) | Provides estimate precision and clinical significance; more informative than p-values alone [4] |
| Likelihood Ratios (LR) | Measures how much more likely evidence is under one hypothesis versus another | Forensic evidence evaluation; quantifies strength of support for prosecution vs. defense hypotheses [6] |
| Type I Error (α) | Incorrectly rejecting a true null hypothesis (false positive) | Clinical trial risk management; typically set at 0.05 [4] |
| Type II Error (β) | Failing to reject a false null hypothesis (false negative) | Power calculations in research design; often set at 0.20 [4] |
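To make the first two rows of the table concrete, the following Python sketch computes a two-sided p-value and a 95% confidence interval for the difference between two response proportions, using only the standard library. The choice of a two-proportion z-test with a Wald interval and the trial counts in the usage line are assumptions made for illustration; an actual analysis would follow the study's pre-specified statistical analysis plan.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_test(x1: int, n1: int, x2: int, n2: int, alpha: float = 0.05):
    """Two-sided z-test and Wald confidence interval for p1 - p2."""
    p1, p2 = x1 / n1, x2 / n2
    diff = p1 - p2

    # Standard error under the null hypothesis (p1 == p2) uses the pooled rate.
    pooled = (x1 + x2) / (n1 + n2)
    se_null = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = diff / se_null
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))

    # The confidence interval uses the unpooled standard error.
    se = sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return p_value, (diff - z_crit * se, diff + z_crit * se)


# Hypothetical trial: 60/100 responders on treatment, 40/100 on control.
p_value, ci = two_proportion_test(60, 100, 40, 100)
print(f"p-value = {p_value:.4f}")
print(f"95% CI for the difference = ({ci[0]:.3f}, {ci[1]:.3f})")
```

The interval conveys both the direction and the plausible size of the effect, which is why it is often regarded as more informative than the p-value alone.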

Standards of Proof as Hypothesis Testing Thresholds

The legal system employs standardized thresholds for decision-making that function similarly to statistical significance levels in scientific research. These standards represent the minimum degree of certainty required to accept a factual proposition as proven in different legal contexts.

Table 2: Legal Standards of Proof as Hypothesis Testing Thresholds

| Legal Standard | Required Certainty | Application Context | Quantitative Estimate* |
|---|---|---|---|
| Beyond Reasonable Doubt | Abiding conviction that charge is true; moral certainty | Criminal conviction [7] | 90-100% (judicial surveys) [8] |
| Clear and Convincing Evidence | Highly probable | Civil cases with severe consequences (parental rights, restraining orders) [7] | Not precisely quantified |
| Preponderance of Evidence | More likely than not | Most civil litigation [7] | >50% probability |
| Probable Cause | Reasonable grounds for belief | Arrests, search warrants [7] | Similar to preponderance standard in judicial quantification [8] |
| Reasonable Suspicion | Objective, articulable reasons | Investigative detentions [7] | Not precisely quantified |

*Note: Quantitative estimates for legal standards are derived from judicial survey data [8] and represent approximations, as these standards are formally expressed verbally rather than numerically.

Methodological Protocols for Hypothesis-Driven Analysis

Experimental Design in Clinical Research

Robust hypothesis testing in drug development follows rigorous methodological protocols to ensure valid and reliable results:

- Research Question Formulation: Identify gaps in current clinical practice or research based on literature review and clinical observation [4].

- Hypothesis Specification: Transform research questions into precise, testable hypotheses stating predicted relationships between variables [4].

- Study Population Definition: Establish clear inclusion/exclusion criteria to define the target population and ensure sample representativeness [5].

- Randomization and Blinding: Implement random assignment to treatment groups and blinding procedures to minimize selection bias and confounding [4].

- Data Collection Protocol: Standardize measurement instruments, timing of assessments, and data recording procedures across all study sites [5].

- Statistical Analysis Plan: Pre-specify primary and secondary endpoints, analytical methods, and significance thresholds before data collection [4] [5].

- Interpretation Framework: Establish criteria for clinical significance alongside statistical significance, considering confidence intervals and effect sizes [4].

Forensic Evidence Evaluation Using Likelihood Ratios

The likelihood ratio framework provides a formal methodology for evaluating hypotheses with forensic evidence:

- Proposition Formulation: Clearly articulate the prosecution hypothesis (Hp) and defense hypothesis (Hd) based on the case circumstances [6].

- Evidence Analysis: Conduct forensic examination to characterize the evidence (E) shared between the known and questioned samples [6].

- Probability Calculation: Assign the probability of observing the evidence under each of the competing hypotheses and take their ratio [6]:

  LR = P(E|Hp) / P(E|Hd)

- Strength of Support Interpretation: Interpret the LR value according to standardized scales:

  - LR > 1: Evidence supports the prosecution hypothesis over the defense hypothesis

  - LR = 1: Evidence does not discriminate between the hypotheses (no probative value)

  - LR < 1: Evidence supports the defense hypothesis over the prosecution hypothesis

- Communication of Findings: Report the LR value with appropriate explanations of its meaning, avoiding the prosecutor's fallacy (transposing the conditional, for example by presenting P(E|Hd) as if it were P(Hd|E)) [6].
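The calculation and interpretation steps above can be sketched as follows. The function names and all numeric inputs are hypothetical, and the final step, which combines the LR with prior odds, is included only to show why a small P(E|Hd) must not be read as a small P(Hd|E); assigning prior odds is a matter for the trier of fact, not the scientist.

```python
def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = P(E | Hp) / P(E | Hd)."""
    if not (0.0 <= p_e_given_hp <= 1.0 and 0.0 < p_e_given_hd <= 1.0):
        raise ValueError("probabilities must lie in [0, 1] and P(E|Hd) must be > 0")
    return p_e_given_hp / p_e_given_hd


def direction_of_support(lr: float) -> str:
    """Qualitative direction only; verbal strength scales are not modeled here."""
    if lr > 1:
        return "supports Hp over Hd"
    if lr < 1:
        return "supports Hd over Hp"
    return "no probative value"


def posterior_probability_hp(lr: float, prior_prob_hp: float) -> float:
    """Odds form of Bayes' theorem: posterior odds = LR * prior odds."""
    prior_odds = prior_prob_hp / (1.0 - prior_prob_hp)
    posterior_odds = lr * prior_odds
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical inputs: the evidence is certain under Hp and has
# probability 0.001 under Hd, so the LR is 1000.
lr = likelihood_ratio(1.0, 0.001)
print(direction_of_support(lr))

# With hypothetical prior odds of 1 to 10,000 for Hp, the posterior
# probability of Hp stays far below the 0.999 that the fallacy would suggest.
posterior = posterior_probability_hp(lr, prior_prob_hp=1 / 10_001)
print(f"LR = {lr:.0f}, P(Hp|E) = {posterior:.3f}")
```

Keeping the LR and the prior odds in separate functions mirrors the division of roles in the framework: the scientist reports the LR, and the combination with other case information happens elsewhere.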

Visualizing the Hypothesis Testing Framework

[Figure: Legal and Scientific Hypothesis Evaluation Workflow]

[Figure: Likelihood Ratio Calculation Process]

Essential Methodological Tools for Hypothesis Testing

Table 3: Essential Methodological Tools for Hypothesis-Driven Research

| Research Tool | Function | Application Context |
|---|---|---|
| Statistical Software (R, SPSS, Python) | Data analysis, significance testing, confidence interval calculation | Clinical trial analysis, forensic statistics [4] [5] |
| Probabilistic Genotyping Software | DNA mixture interpretation, likelihood ratio calculation | Complex forensic DNA analysis [3] |
| Randomization Protocols | Random assignment to treatment/control groups | Clinical trial design to minimize bias [4] |
| Blinding Procedures | Single/double-blind protocols to prevent bias | Drug trials, forensic analysis to reduce contextual bias [4] [6] |
| Standardized Reporting Frameworks (CONSORT, ENFSI guidelines) | Structured reporting of methods, results, conclusions | Clinical research publications, forensic expert testimony [4] [6] |

The integrity of both legal outcomes and scientific conclusions depends fundamentally on the rigorous formulation and testing of well-defined hypotheses. This structured approach transcends disciplinary boundaries, providing a universal framework for rational decision-making under conditions of uncertainty. In legal proceedings, precisely articulated prosecution and defense hypotheses create the necessary architecture for fair and reliable evidence evaluation, safeguarding against cognitive biases and logical fallacies while enabling appropriate application of statistical methods. For researchers and drug development professionals, this hypothesis-driven methodology ensures that conclusions about treatment efficacy and safety rest upon robust statistical foundations rather than ambiguous interpretations of data. The parallel structures underlying hypothesis testing across these domains reveal a fundamental truth: the path to valid conclusions in any complex inquiry must be paved with clearly stated, testable alternative explanations.

The wrongful conviction of Sally Clark for the murder of her two sons represents a critical case study in the consequences of flawed statistical reasoning and improper hypothesis formulation within legal proceedings. This case exemplifies a fundamental tension between legal and scientific principles: legal decisions seek finality through precedent, while scientific conclusions evolve with new evidence [6]. The Clark case demonstrates how improper handling of probabilistic reasoning can lead to grave miscarriages of justice, with lessons that extend directly to scientific research and hypothesis testing methodologies.

For researchers, particularly those in drug development and clinical sciences, the Clark case offers a powerful analogy for understanding how flawed foundational assumptions and incorrect hypothesis specification can invalidate study conclusions. Just as legal fact-finders must evaluate hypotheses about guilt or innocence, scientists continuously test hypotheses about treatment efficacy, biological mechanisms, and clinical outcomes. The statistical and logical fallacies present in Clark's case mirror common pitfalls in scientific research, making this legal case unexpectedly relevant for research professionals seeking to strengthen their methodological rigor.

Case Background: Scientific Tragedy in the Courtroom

Sally Clark, an English solicitor, experienced the sudden deaths of her two infant sons—Christopher in 1996 and Harry in 1998 [9]. Both children initially appeared healthy before their sudden collapses, with the first death attributed to Sudden Infant Death Syndrome (SIDS). Following the second death, Clark was arrested and charged with double murder, despite the absence of direct physical evidence linking her to the crimes [9].

The prosecution's case relied heavily on the statistical testimony of pediatrician Professor Sir Roy Meadow, who claimed that the probability of two SIDS deaths occurring in an affluent family like the Clarks was "1 in 73 million" [10] [9]. He presented this figure by squaring the estimated SIDS rate for similar families (1 in 8,500), vividly comparing it to an "80:1 longshot winning the Grand National horse race four years in a row" [10]. This statistical argument proved devastatingly persuasive despite its fundamental flaws, leading to Clark's conviction in 1999 and a life sentence [9].

The case underwent multiple appeals, with the Royal Statistical Society taking the unprecedented step of writing to the Lord Chancellor to object to the statistical methodology [9]. Clark's conviction was ultimately overturned in 2003 after previously undisclosed medical evidence emerged showing that Harry had a potentially lethal bacterial infection, which provided a natural explanation for his death [9]. Despite her release, Clark never recovered psychologically from the ordeal and died from alcohol-related causes four years later [9].

Deconstructing the Statistical and Hypothesis Formulation Errors

The Prosecutor's Fallacy: A Fundamental Confusion of Conditional Probabilities

At the heart of the statistical misunderstanding in Clark's case lies the prosecutor's fallacy—the confusion between the probability of the evidence given innocence versus the probability of innocence given the evidence [11]. This fallacy represents a fundamental error in conditional probability reasoning that can equally afflict scientific interpretation.

Professor Meadow testified that the probability of two SIDS deaths in the same family was 1 in 73 million, which the court mistakenly interpreted as the probability that Clark was innocent [10]. Mathematically, this confuses P(Evidence|Innocence) with P(Innocence|Evidence). The correct Bayesian interpretation shows that even with a low probability of observing two SIDS deaths under innocence, the posterior probability of innocence could remain substantial when considering the prior probability of a mother murdering her children [10].

The Independence Assumption Error: Ignoring Clustering Factors

Meadow's calculation multiplied the individual SIDS probabilities (1/8,500 × 1/8,500) based on the incorrect assumption that SIDS deaths within a family are independent events [9]. The Royal Statistical Society noted this violated biological reality, as genetic and environmental factors create dependencies that increase the probability of a second SIDS death within the same family [9]. Proper statistical analysis would account for this clustering effect, with some estimates suggesting the actual probability could be as high as 1 in 77, rather than 1 in 73 million [9].
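The effect of the independence assumption can be checked with simple arithmetic. The sketch below squares the rounded 1-in-8,500 rate, which gives roughly 1 in 72 million (close to the quoted 1 in 73 million), and then recomputes the joint probability with a conditional probability for the second death. The conditional value of 1 in 100 is a purely hypothetical placeholder chosen to show the mechanics, not an estimate from the case or the literature.

```python
P_SIDS_SINGLE = 1 / 8500  # rounded single-death rate quoted in the testimony


def joint_if_independent(p_single: float) -> float:
    """P(two SIDS deaths) when the deaths are wrongly treated as independent."""
    return p_single * p_single


def joint_with_dependence(p_first: float, p_second_given_first: float) -> float:
    """P(two SIDS deaths) = P(first) * P(second | first)."""
    return p_first * p_second_given_first


independent = joint_if_independent(P_SIDS_SINGLE)

# Hypothetical assumption: shared genetic and environmental factors raise
# the risk of a second death to 1 in 100 after a first death.
dependent = joint_with_dependence(P_SIDS_SINGLE, 1 / 100)

print(f"Independent: 1 in {1 / independent:,.0f}")
print(f"Dependent:   1 in {1 / dependent:,.0f}")
print(f"Understatement factor: {dependent / independent:,.0f}x")
```

Under these assumed numbers the independence shortcut understates the joint probability by a factor of 85; the size of the factor depends entirely on the conditional probability, which is why that quantity needs to be estimated from data rather than assumed away.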

Hypothesis Specification Error: Formulating Competing Hypotheses

A critical but often overlooked error concerns the formulation of the competing legal hypotheses. The prosecution presented the hypothesis as "both babies were murdered" versus "both babies died of SIDS" [12]. A more appropriate prosecution hypothesis would have been "at least one baby was murdered," which better corresponds to what would be required for conviction [12].

This hypothesis mis-specification dramatically affected the probabilistic calculations. Using the same assumptions from the case, the prior odds favored the defense hypothesis over the double murder hypothesis by 30 to 1, but favored the defense hypothesis over the "at least one murder" hypothesis by only 5 to 2 [12]. This subtle but crucial difference in hypothesis formulation fundamentally changes the statistical interpretation of the evidence.

Table 1: Impact of Hypothesis Specification on Prior Probabilities

| Hypothesis Formulation | Prior Odds (Defense vs. Prosecution) | Statistical Impact |
|---|---|---|
| Both murdered (M) vs. Both SIDS (S) | 30 to 1 in favor of S | Greatly exaggerates defense position |
| At least one murdered (H) vs. Both SIDS (S) | 5 to 2 in favor of S | More balanced assessment |

Bayesian Reasoning: The Mathematical Antidote to Flawed Logic

Bayes' Theorem as a Corrective Framework

Bayes' theorem provides the mathematical framework to correct the reasoning errors present in Clark's case. The theorem dictates that the probability assigned to a hypothesis in light of new evidence is proportional to both the conditional probability of the evidence assuming the hypothesis is true, and the prior probability of the hypothesis before considering the evidence [10].

For the Sally Clark case, a Bayesian approach would balance the unusualness of two infant deaths against the baseline rarity of double infanticide. One analysis suggested that, considering the prior probability of a mother committing double infanticide as approximately 1 in 100 million, the posterior probability of Clark's innocence would be about 58%—far from the "virtually impossible" impression created by the 1 in 73 million figure [10].
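The 58% figure can be reproduced with a few lines of arithmetic. The sketch below assumes, as a simplification, that double SIDS and double murder are the only two explanations considered and that each fully accounts for the two deaths; the function name is hypothetical.

```python
from fractions import Fraction


def posterior_two_explanations(p_a: Fraction, p_b: Fraction) -> Fraction:
    """Posterior probability of explanation A when A and B are treated as the
    only candidate explanations and each fully accounts for the outcome."""
    return p_a / (p_a + p_b)


p_double_sids = Fraction(1, 73_000_000)      # figure presented at trial
p_double_murder = Fraction(1, 100_000_000)   # prior quoted in the text

p_innocent = posterior_two_explanations(p_double_sids, p_double_murder)
print(f"Posterior probability of innocence: {float(p_innocent):.1%}")  # 57.8%
```

Two very small probabilities are being compared with each other, so the result depends on their ratio (100 to 73), not on how small either one is in isolation.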

The Likelihood Ratio Framework for Evidence Evaluation

Modern forensic science increasingly uses likelihood ratios to evaluate evidence, which avoids the pitfalls of the prosecutor's fallacy [6]. The likelihood ratio compares the probability of the evidence under two competing hypotheses:

\[ LR = \frac{P(E|Hp)}{P(E|Hd)} \]

where Hp represents the prosecution hypothesis and Hd the defense hypothesis [6]. This approach keeps the expert's testimony within their domain of expertise without requiring them to comment on prior probabilities or posterior probabilities of guilt, which properly remain the domain of the fact-finder [6].
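The division of labor between expert and fact-finder can be illustrated with a minimal sketch. The probabilities below are hypothetical, and the conversion from prior to posterior assumes Hp and Hd are mutually exclusive and exhaustive; it shows that one fixed likelihood ratio leads to very different posterior probabilities depending on the prior the fact-finder brings.

```python
def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = P(E|Hp) / P(E|Hd); the quantity the expert reports."""
    if not 0.0 <= p_e_given_hp <= 1.0 or not 0.0 < p_e_given_hd <= 1.0:
        raise ValueError("probabilities must lie in [0, 1] and P(E|Hd) must be > 0")
    return p_e_given_hp / p_e_given_hd


def posterior_probability(prior_hp: float, lr: float) -> float:
    """Posterior P(Hp|E) from a prior P(Hp), assuming Hd is the negation of Hp."""
    prior_odds = prior_hp / (1.0 - prior_hp)
    posterior_odds = prior_odds * lr
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical values chosen only for illustration.
lr = likelihood_ratio(p_e_given_hp=0.8, p_e_given_hd=0.01)

for prior in (0.001, 0.01, 0.5):
    print(f"prior P(Hp) = {prior:<6} -> posterior P(Hp|E) = {posterior_probability(prior, lr):.3f}")
```

The expert's contribution is the single number `lr`; the loop stands in for fact-finders who start from different priors.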

Table 2: Comparison of Statistical Approaches in Evidence Evaluation

| Approach | Strengths | Limitations | Appropriate Use |
|---|---|---|---|
| Frequentist (Significance Testing) | Widely familiar, standardized thresholds | Ignores prior probabilities, prone to prosecutor's fallacy | Initial screening, controlled experiments |
| Bayesian (Posterior Probability) | Incorporates prior knowledge, provides direct probability statements | Requires subjective priors, computationally complex | Complex decision-making, sequential analysis |
| Likelihood Ratio | Avoids fallacy, respects boundaries of expertise | Less intuitive, requires clear hypothesis specification | Forensic science, expert testimony |

Parallels to Scientific Research: Lessons for Hypothesis Formulation

Proper Hypothesis Specification in Clinical Trials

The hypothesis formulation errors in Clark's case directly parallel challenges in clinical trial design. Research hypotheses should constitute a complete partition of all possible probability models, such that the alternative hypothesis can be logically inferred upon rejection of the null hypothesis [13]. Many clinical trials fail to properly specify their alternative hypotheses, leading to ambiguous conclusions upon rejection of the null [13].

Clinical researchers must carefully consider whether their alternative hypothesis claims are too strong or too weak for the biological properties being investigated [13]. An excessively strong claim (e.g., requiring hazard ratio superiority across all timepoints) may miss real treatment benefits detectable through weaker but more appropriate claims (e.g., superior median survival) [13].

Accounting for Multiple Comparisons and Dependencies

Meadow's independence assumption error mirrors a common problem in scientific research: failing to account for multiple testing and dependencies in data. Just as SIDS deaths within families aren't independent, repeated measurements within patients, genetic correlations in biological samples, or temporal correlations in longitudinal data require appropriate statistical modeling to avoid inflated significance claims.
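As a small illustration of the multiple-testing side of this problem, the sketch below computes the family-wise error rate for a set of tests and the corresponding Bonferroni-adjusted threshold. The error-rate formula itself assumes the tests are independent, so it is a reference point, not a model for correlated data; the function names are hypothetical.

```python
def familywise_error_rate(alpha: float, n_tests: int) -> float:
    """P(at least one false positive) across n_tests independent tests,
    each run at significance level alpha, when every null hypothesis is true."""
    return 1.0 - (1.0 - alpha) ** n_tests


def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test threshold that keeps the family-wise error rate at or below alpha."""
    return alpha / n_tests


for m in (1, 5, 20):
    fwer = familywise_error_rate(0.05, m)
    print(f"{m:>2} tests: FWER = {fwer:.3f}, Bonferroni threshold = {bonferroni_threshold(0.05, m):.4f}")
```

With 20 tests at the conventional 0.05 level, the chance of at least one spurious finding is close to two in three, which is why uncorrected multiple comparisons inflate significance claims.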

Experimental Protocols for Robust Hypothesis Testing

A Framework for Proper Legal and Scientific Hypothesis Formulation

Based on the lessons from the Clark case and clinical research methodology, the following protocol provides a systematic approach to hypothesis formulation:

- Define the Universe of Possibilities: Explicitly delineate all possible explanations or outcomes relevant to the inquiry [13].

- Formulate Mutually Exclusive and Exhaustive Hypotheses: Ensure the competing hypotheses cover all possibilities without overlap, typically as logical negations [12] [13].

- Consider Biological/Legal Realism: Incorporate known dependencies, biological mechanisms, or contextual factors that affect probabilities [9].

- Select Appropriate Statistical Framework: Choose frequentist, Bayesian, or likelihood ratio approaches based on the decision context and available information [6].

- Pre-specify Decision Thresholds: Establish evidentiary standards (e.g., p-value thresholds, Bayes factors) before data collection [14].

Signaling Pathways: From Flawed to Sound Reasoning

The following diagram illustrates the logical progression from flawed to sound hypothesis evaluation, mapping both the errors in the Clark case and their corrective methodologies:

Research Reagent Solutions: Essential Methodological Tools

Table 3: Methodological Tools for Robust Hypothesis Testing

| Methodological Tool | Function | Application Context |
|---|---|---|
| Bayesian Analysis Software | Computes posterior probabilities from priors and likelihoods | Complex decision environments with prior knowledge |
| Multiple Testing Corrections | Controls false discovery rates in multiple comparisons | Genomic studies, high-throughput screening |
| Dependency Structure Modeling | Accounts for correlations in clustered data | Family studies, repeated measures, spatial data |
| Likelihood Ratio Calculators | Quantifies evidence strength for competing hypotheses | Forensic science, diagnostic test evaluation |
| Pre-specification Registries | Documents planned analyses before data collection | Clinical trials, confirmatory research |

The Sally Clark case remains a powerful cautionary tale about the consequences of flawed statistical reasoning and improper hypothesis formulation. For researchers and drug development professionals, this case underscores several critical principles: the necessity of proper hypothesis specification that reflects biological reality, the importance of understanding conditional probabilities, and the value of selecting statistical frameworks appropriate to the decision context.

Implementing robust methodological safeguards—including pre-specified analysis plans, appropriate statistical modeling of dependencies, and clarity about the precise definition of alternative hypotheses—can prevent analogous errors in scientific research. Just as the legal system has gradually incorporated lessons from cases like Clark's through improved statistical training and evidence guidelines, the research community must continuously refine its approach to hypothesis testing and statistical inference.

The tragedy of Sally Clark ultimately highlights the profound responsibility shared by legal and scientific professionals: to pursue truth through rigorous methodology, transparent reasoning, and humility in the face of uncertainty. By learning from these hard-won lessons, researchers can strengthen the foundation of scientific inference and avoid perpetuating statistical fallacies in their own work.

The successful adoption of new medical interventions on a global scale is a critical public health objective. However, this process is hindered by a complex array of barriers spanning socioeconomic, methodological, and cultural domains. These barriers create significant disparities in access to essential medicines, with an estimated two billion people globally lacking access [15]. Within the framework of prosecution hypothesis (the assertion of a treatment's benefit) and defense hypothesis (the challenge to this assertion) formulation in medical research, these barriers represent fundamental challenges to the validity and generalizability of clinical evidence. This whitepaper provides a technical analysis of these global adoption barriers, focusing on the reticence rooted in cultural identity, profound data gaps in clinical evidence generation, and methodological disparities in trial design and reporting. The objective is to equip researchers, scientists, and drug development professionals with a structured understanding of these challenges and the methodologies to address them, thereby strengthening the hypothesis testing and defense process in global drug development.

A data-driven assessment reveals the scale and nature of global disparities in healthcare access and research representation. The following tables summarize key quantitative findings.

Table 1: Global Burden and Access Disparities

| Indicator | Metric | Data Source / Period |
|---|---|---|
| People lacking access to essential medicines | 2 billion | UN Human Rights Report, 2025 [15] |
| Proportion of neglected tropical disease burden in LMICs | 80% (across 16 countries) | UN Human Rights Report, 2025 [15] |
| Increase in DALYs from Drug Use Disorders (DUDs), 1990-2021 | 14.7% | Global Burden of Disease Study, 2021 [16] |
| Slope Index of Inequality (SII) for DUDs burden (1990 to 2021) | 82.4 to 289.24 | Global Burden of Disease Study, 2021 [16] |

Table 2: Data Gaps in Regulatory Approvals of AI/ML Medical Devices (n=692) [17]

| Reporting Dimension | Percentage Reported | Implied Data Gap |
|---|---|---|
| Race/Ethnicity Data | 3.6% | 96.4% |
| Socioeconomic Data | 0.9% | 99.1% |
| Age of Study Subjects | 18.4%* | 81.6% |
| Comprehensive Performance Results | 46.1% | 53.9% |
| Link to Scientific Publication | 1.9% | 98.1% |
| Prospective Post-Market Surveillance | 9.0% | 91.0% |

*Note: Age was reported in 19.4% of documents (134 documents containing information), while 81.6% provided no data. These two figures do not sum to 100%; the table row uses 18.4%, the complement of 81.6%.
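The "Implied Data Gap" column is simply the complement of the reported percentage, so it can be checked mechanically. The sketch below recomputes each gap from the values in the table and also recomputes the share corresponding to 134 of 692 documents; the dictionary keys are shortened labels chosen for this illustration.

```python
TOTAL_DEVICES = 692  # n stated in the table caption

# Percentage of documents reporting each dimension, as listed in the table.
reported_pct = {
    "race_ethnicity": 3.6,
    "socioeconomic": 0.9,
    "age": 18.4,
    "performance_results": 46.1,
    "publication_link": 1.9,
    "post_market_surveillance": 9.0,
}


def implied_gap(pct_reported: float) -> float:
    """Percentage of documents that did not report the dimension."""
    return round(100.0 - pct_reported, 1)


gaps = {name: implied_gap(pct) for name, pct in reported_pct.items()}
for name, gap in gaps.items():
    print(f"{name:<26} reported {reported_pct[name]:>5.1f}%  gap {gap:>5.1f}%")

# Share implied by the document count quoted in the table note.
age_share_from_count = round(100.0 * 134 / TOTAL_DEVICES, 1)
print(f"134 of {TOTAL_DEVICES} documents = {age_share_from_count}%")
```

A check of this kind makes it easy to spot when a count and a percentage quoted for the same quantity do not agree.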

The Reticence Barrier: Cultural Identity and Pharmaceutical Skepticism

Resistance to pharmaceutical intervention, or "reticence," is not merely a matter of access but is often a conscious expression of cultural and racial identity.

Experimental Evidence of Cultural Skepticism

A focus group-based study provided direct evidence of this phenomenon. The study design and key findings are summarized below.

- Methodology: Drawing on focus groups with patients recently prescribed medication, researchers investigated the role of marginalization, measured by acculturation and race, in shaping subjective experiences with prescription drugs [18].

- Core Findings:

  - Cultural Preference: Racial minorities reported a greater skepticism of prescription drugs compared to whites and expressed that they turned to prescription drugs as a last resort [18].

  - Alternative Remedies: While highly acculturated participants rarely discussed alternatives, less acculturated racial minorities indicated a preference for complementary and alternative remedies [18].

  - Identity Expression: This skepticism is framed as an act of "resistance" to pharmaceuticalization pressures, serving to express cultural and racial identities that may be marginalized by mainstream Western medical systems [18].

Quantitative Validation of Divergent Beliefs

A cross-sectional questionnaire study quantitatively validated the influence of cultural background on medication beliefs.

- Protocol: The study compared beliefs about medicines between UK undergraduate students of Asian and European cultural backgrounds using the Beliefs about Medicines Questionnaire (BMQ) [19]. The BMQ General scale includes sub-scales assessing beliefs about medicine being inherently harmful (General-Harm) and about doctors overprescribing them (General-Overuse) [19].

- Key Results: Students with an Asian cultural background perceived modern medicines as significantly more harmful and believed more strongly in doctor overuse than their European counterparts. This was evident after controlling for degree course, medication experience, and gender [19].

- Interpretation: These beliefs are not isolated opinions but are shaped by broader "health ideologies" and "cultural models of health" that are reproduced through the act of taking medicine [19]. This creates a significant barrier to adoption that is rooted in deeply held worldviews.

Data Gaps and Methodological Disparities in Evidence Generation

The foundation of the prosecution hypothesis—robust clinical evidence—is often undermined by significant gaps and methodological weaknesses that limit the generalizability of findings.

Deficiencies in Clinical Trial Diversity and Reporting

A critical barrier is the failure to ensure clinical trial populations are demographically representative of the intended treatment populations.

- Underrepresentation of Key Demographics: An analysis of FDA-approved AI/ML medical devices revealed a severe underreporting of demographic data in the supporting documents, as detailed in Table 2. This lack of transparency exacerbates the risk of algorithmic bias and health disparities, as the performance of these devices in specific populations cannot be verified [17].

- Scientific and Regulatory Imperative for Diversity: International guidelines (e.g., ICH) specify that clinical trial populations should be representative of the population for whom the medicine is intended [20]. Intrinsic factors (age, sex, race, ethnicity, comorbidities) and extrinsic factors (diet, drug interactions) are known to cause interindividual variability in pharmacokinetics, pharmacodynamics, and treatment response [20]. The FDA guidance "Enhancing the Diversity of Clinical Trial Populations" (2020) is a direct response to the ongoing failure to meet this imperative [20].

- Specific Impact of Age-Related Gaps: Older adults, a major consumer of medications, are often underrepresented. Age-related changes in physiology (e.g., renal function, body composition), comorbidities, and polypharmacy significantly alter drug absorption, distribution, and interaction potential [20]. Trials that fail to enroll adequate numbers of older adults generate evidence that is not generalizable to this key demographic.

Methodological Flaws in Clinical Trial Inference

Even perfectly executed clinical trials can generate false or irreproducible results due to inherent methodological shortcomings in statistical inference.

- The Challenge of Heterogeneity: A core problem is the assumption of "distributional homogeneity of subjects’ responses to medical interventions" [21]. In reality, patient samples are highly heterogeneous in unpredictable ways due to complex biological mechanisms and interactions. Statistical models that ignore this heterogeneity can lead to false conclusions about a drug's efficacy and safety for individuals or subgroups [21].

- Ethical Sampling vs. Representativeness: Ethical considerations often lead to the recruitment of subjects who are "younger, healthier and less medicated than the targeted population," making the trial sample non-representative [21]. This creates a major obstacle to extrapolating trial findings to the real-world population.

- The Role of Bias and Conflict of Interest: Beyond statistical issues, deliberate biases—such as withholding data, selective reporting, and manipulating patient inclusion criteria—have been documented, turning some trials into "marketing tools in disguise" [21]. This directly corrupts the hypothesis defense process.
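The heterogeneity problem described above can be made concrete with a toy calculation. The subgroup shares and effect sizes below are entirely hypothetical; the sketch only shows that a positive pooled effect is compatible with a sizeable subgroup being harmed.

```python
def pooled_effect(subgroups: list[tuple[float, float]]) -> float:
    """Population-weighted average treatment effect.

    Each entry is (population_share, mean_effect); shares need not sum to 1.
    """
    total_share = sum(share for share, _ in subgroups)
    return sum(share * effect for share, effect in subgroups) / total_share


# HYPOTHETICAL subgroups: 70% of patients improve by 4 units, 30% worsen by 6.
subgroups = [(0.7, 4.0), (0.3, -6.0)]

average = pooled_effect(subgroups)
harmed_share = sum(share for share, effect in subgroups if effect < 0)

print(f"Pooled average effect: {average:+.1f}")
print(f"Share of patients with a negative effect: {harmed_share:.0%}")
```

A model that assumes one homogeneous response would report only the pooled figure and would miss the subgroup for whom the intervention is harmful.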

The Emergence of Innovative Trial Designs

In response to the high costs and inefficiencies of traditional trials, innovative designs are being adopted, though unevenly across therapeutic areas.

- Methodology for Tracking Innovation: A large-scale analysis of ClinicalTrials.gov registrations (2005-2024) used a keyword-based algorithm (e.g., "adaptive," "Bayes," "seamless") to classify 348,818 trials as innovative or traditional [22]. A Large Language Model (LLM) was employed to classify trials by therapeutic area with high accuracy (94.6%) [22].

- Adoption Patterns: Of the analyzed trials, 5,827 were classified as innovative. Their adoption has grown since 2011, spurred by regulatory advancements and funding. These designs are predominantly observed in early-phase trials, pediatric research, and fields like neuroscience and rare diseases, but have limited representation in elderly-focused or sex-specific studies [22].

- Types of Innovative Designs:

  - Adaptive Designs: Allow modifications to trial parameters (e.g., sample size, randomization ratios) based on interim results, improving efficiency and ethics by minimizing patient exposure to inferior treatments [22].

  - Bayesian Designs: Incorporate prior knowledge with accumulating trial data to provide a more holistic view, which is particularly useful when historical data can guide the trial or for smaller, underrepresented populations [22].
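A minimal sketch of how a Bayesian design combines prior knowledge with accumulating data is the conjugate beta-binomial update for a response rate. The prior and the interim counts below are hypothetical, and real designs involve many further choices (stopping rules, prior discounting) that are not shown.

```python
def beta_binomial_update(
    prior_alpha: float, prior_beta: float, successes: int, failures: int
) -> tuple[float, float]:
    """Posterior Beta parameters after observing binomial data under a Beta prior."""
    return prior_alpha + successes, prior_beta + failures


def beta_mean(alpha: float, beta: float) -> float:
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


# HYPOTHETICAL prior: historical data worth about 10 patients with a 40% response rate.
prior = (4.0, 6.0)

# HYPOTHETICAL interim data from the current trial: 12 responders, 8 non-responders.
posterior = beta_binomial_update(*prior, successes=12, failures=8)

print(f"Prior mean response rate:     {beta_mean(*prior):.3f}")
print(f"Posterior mean response rate: {beta_mean(*posterior):.3f}")
```

The posterior mean sits between the prior mean and the observed rate, weighted by how much information each contributes, which is why this approach can help when the trial population itself is small.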

The Scientist's Toolkit: Research Reagent Solutions

To address these barriers, researchers require a suite of methodological tools and approaches.

Table 3: Essential Research Reagents and Methodologies

| Tool / Reagent | Primary Function | Application in Addressing Barriers |
|---|---|---|
| Joinpoint Regression Analysis | Identifies significant temporal trend changes in disease burden or adoption rates. | Quantifying shifts in global health burdens (e.g., analyzing DALYs over time) to inform resource allocation [16]. |
| Slope Index of Inequality (SII) & Concentration Index (CI) | Measures absolute and relative health inequality across socioeconomic groups. | Objectively quantifying disparities in the burden of disease and access to care across countries with different SDI levels [16]. |
| Nordpred Age-Period-Cohort Model | Projects future disease burden based on past trends. | Informing long-term public health planning and intervention strategies for conditions like drug use disorders [16]. |
| Beliefs about Medicines Questionnaire (BMQ) | Quantifies cognitive representations of medication, including perceptions of harm and overuse. | Objectively measuring cultural and individual-level reticence towards pharmaceutical interventions [19]. |
| Large Language Models (LLMs) for Trial Classification | Automates the categorization of clinical trials from registries into therapeutic areas. | Enabling large-scale, real-time monitoring of trial design innovation and diversity across medical specialties [22]. |
| Adaptive & Bayesian Trial Designs | Dynamic methodologies that improve trial efficiency and ethical standards. | Accelerating development for rare diseases and pediatric populations; allowing for more complex hypothesis testing within a single trial [22]. |
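To make one of these tools concrete, the sketch below computes a Slope Index of Inequality using one common formulation: a population-weighted least-squares regression of the outcome on each group's midpoint rank in the cumulative socioeconomic distribution. The group data are hypothetical, and the estimator used in the cited burden-of-disease analysis may differ in detail.

```python
def slope_index_of_inequality(groups: list[tuple[float, float]]) -> float:
    """Weighted regression slope of outcome on relative socioeconomic rank.

    groups: (population_share, outcome_rate) pairs ordered from the most
    disadvantaged group to the most advantaged. At least two groups are needed.
    """
    total = sum(share for share, _ in groups)
    weights = [share / total for share, _ in groups]
    outcomes = [rate for _, rate in groups]

    # Relative rank: midpoint of each group's span in the cumulative distribution.
    ranks, cumulative = [], 0.0
    for w in weights:
        ranks.append(cumulative + w / 2.0)
        cumulative += w

    mean_rank = sum(w * x for w, x in zip(weights, ranks))
    mean_outcome = sum(w * y for w, y in zip(weights, outcomes))
    covariance = sum(
        w * (x - mean_rank) * (y - mean_outcome)
        for w, x, y in zip(weights, ranks, outcomes)
    )
    variance = sum(w * (x - mean_rank) ** 2 for w, x in zip(weights, ranks))
    return covariance / variance


# HYPOTHETICAL burden rates per 100,000 across four equally sized groups.
groups = [(0.25, 300.0), (0.25, 250.0), (0.25, 200.0), (0.25, 150.0)]
sii = slope_index_of_inequality(groups)
print(f"SII: {sii:.1f} per 100,000")
```

With this ordering, a negative value means the burden falls as socioeconomic position rises; the magnitude is the modeled difference between the two ends of the distribution.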

Visualizing the Hypothesis Defense Framework in Global Adoption

The following diagram illustrates the interconnected barriers to global adoption and the reinforcing nature of data gaps and methodological disparities within the prosecution-defense hypothesis framework.

Diagram 1: The Hypothesis Defense Framework of Global Adoption Barriers

Visualizing the Experimental Protocol for Analyzing Clinical Trial Innovation

The workflow below details the methodology for quantifying the adoption of innovative clinical trial designs using registry data and large language models, as employed in recent research [22].

Diagram 2: Protocol for Analyzing Innovative Clinical Trial Adoption
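The keyword-based step of this protocol can be sketched as follows. Only the three example keywords quoted earlier are used, the trial records are invented placeholders, and the actual algorithm in [22] is not reproduced here; the LLM-based therapeutic-area step is omitted.

```python
import re

# Keywords quoted in the text; the full list used in [22] is not given here.
INNOVATIVE_KEYWORDS = ("adaptive", "bayes", "seamless")


def is_innovative(description: str, keywords: tuple[str, ...] = INNOVATIVE_KEYWORDS) -> bool:
    """Flag a trial whose free-text description contains a word starting with a keyword."""
    text = description.lower()
    return any(re.search(rf"\b{re.escape(keyword)}", text) for keyword in keywords)


# HYPOTHETICAL registry records used only to exercise the function.
trials = [
    {"id": "T1", "title": "A Bayesian adaptive dose-finding study"},
    {"id": "T2", "title": "Randomized placebo-controlled trial of drug X"},
    {"id": "T3", "title": "Seamless phase II/III design in rare disease"},
]

flags = [is_innovative(trial["title"]) for trial in trials]
n_innovative = sum(flags)
print(f"{n_innovative} of {len(trials)} trials flagged as innovative")
```

A prefix match catches variants such as "Bayesian", but it would also flag phrases like "non-adaptive", so a production classifier would need exclusion rules or manual validation.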

The path to equitable global adoption of medical innovations is obstructed by a triad of deeply interconnected barriers: cultural reticence, significant data gaps, and fundamental methodological disparities. Within the context of prosecution-defense hypothesis research, these barriers collectively challenge the validity and generalizability of the central prosecution hypothesis that a drug is safe and effective for broad populations. The quantitative data reveals stark global inequalities in access and burden of disease, while analyses of regulatory approvals and clinical trials show a pervasive failure to represent diverse populations in the evidence base. Overcoming these challenges requires a multipronged strategy: the application of robust methodological tools to quantify disparities, the deliberate adoption of innovative and inclusive trial designs, and a respectful engagement with the cultural dimensions of medication use. For researchers and drug development professionals, addressing these issues is not merely an ethical imperative but a scientific necessity for generating defensible hypotheses and delivering on the promise of global health equity.

From Theory to Practice: Implementing Robust Methodologies for Hypothesis Testing

The formulation of prosecution and defense hypotheses represents a foundational step in the application of probabilistic reasoning to forensic science and legal proceedings. Properly structured hypotheses must be both mutually exclusive and exhaustive to ensure logical rigor and prevent misinterpretation of evidence [23]. When hypotheses do not meet these criteria, there is significant risk of statistical fallacies that can fundamentally undermine the validity of legal conclusions [12] [6]. The principle of mutual exclusivity requires that the hypotheses cannot both be true simultaneously, while exhaustiveness demands that together they cover all possible explanations for the evidence [23].

The impact of hypothesis formulation extends beyond theoretical importance into practical consequences, as subtle changes in the structure of alternative hypotheses can dramatically alter the resulting probabilities assigned to evidence [12]. This technical guide, situated within broader research on prosecution-defense hypothesis formulation, provides researchers and forensic professionals with methodological protocols for constructing logically sound hypothesis frameworks. Through proper implementation of these structured approaches, the scientific community can enhance the validity of evaluative reporting and maintain alignment with fundamental justice principles, including the presumption of innocence [3].

Theoretical Foundation: Principles of Logical Negation in Forensic Reasoning

The Mutual Exclusivity and Exhaustiveness Requirement

In probabilistic reasoning for forensic applications, the prosecution hypothesis (Hp) and defense hypothesis (Hd) must form a logical negation pair [12] [23]. This relationship means that if Hp is false, Hd must be true, and vice versa, with no overlapping territory between them. The requirement of exhaustiveness ensures that no possible explanation is omitted from consideration, while mutual exclusivity prevents ambiguity in evidentiary interpretation [23].

The theoretical basis for this approach stems from probability theory, where the relationship between competing hypotheses follows the principle of additivity [24]. For a set of mutually exclusive hypotheses to be exhaustive, their probabilities must sum to 1, so that all possibilities are accounted for in the analytical framework. Mutual exclusivity guarantees that the probability of any two hypotheses being true simultaneously is zero [24]. When both conditions are met, Bayes' theorem can be properly applied to update prior beliefs based on new evidence through the likelihood ratio framework [6].

Consequences of Improper Hypothesis Formulation

Failure to establish properly negated hypotheses can lead to significant errors in evidence evaluation. In the notorious Sally Clark case, the prosecution presented the hypothesis "both babies were murdered" as the alternative to the defense hypothesis "both babies died of SIDS" [12]. This formulation proved problematic because it ignored intermediate possibilities such as one murder and one SIDS death. A more appropriate prosecution hypothesis would have been "at least one baby was murdered," which forms a true logical negation with the defense hypothesis [12].

The probabilistic impact of this hypothesis misspecification was substantial. Using the same assumptions as probability experts in the case, the prior odds favoring the defense hypothesis over the double murder hypothesis were 30 to 1. However, when compared to the more appropriate "at least one murder" hypothesis, the prior odds in favor of the defense reduced dramatically to only 5 to 2 [12]. This stark difference demonstrates how hypothesis formulation directly influences the perceived strength of evidence and ultimate conclusions.
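The logical gap in the restricted pair can be checked by enumerating scenarios as sets. The sketch below makes the simplifying assumption that each death has exactly one of two causes (SIDS or murder); the function and variable names are hypothetical.

```python
from itertools import product

# Simplifying ASSUMPTION: each death is attributed to exactly one of two causes.
CAUSES = ("SIDS", "murder")

# Every scenario is (cause of first death, cause of second death).
scenarios = set(product(CAUSES, repeat=2))


def is_mutually_exclusive(hp: set, hd: set) -> bool:
    """True when no scenario belongs to both hypotheses."""
    return not (hp & hd)


def is_exhaustive(hp: set, hd: set, universe: set) -> bool:
    """True when every scenario belongs to at least one hypothesis."""
    return (hp | hd) == universe


hd = {("SIDS", "SIDS")}                                   # both died of SIDS
hp_restricted = {("murder", "murder")}                    # both were murdered
hp_negation = {s for s in scenarios if "murder" in s}     # at least one was murdered

for label, hp in (("restricted", hp_restricted), ("negation", hp_negation)):
    print(
        f"{label:<10} exclusive={is_mutually_exclusive(hp, hd)} "
        f"exhaustive={is_exhaustive(hp, hd, scenarios)}"
    )

uncovered = scenarios - (hp_restricted | hd)
print("Scenarios omitted by the restricted pair:", sorted(uncovered))
```

Both pairs are mutually exclusive, but only the "at least one murdered" formulation is exhaustive; the restricted pair leaves the two mixed scenarios unassigned.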

Methodological Framework: Structured Approach to Hypothesis Construction

Hypothesis Formulation Protocol

The following experimental protocol provides a systematic methodology for constructing mutually exclusive and exhaustive hypothesis pairs across various forensic contexts:

Table 1: Hypothesis Formulation Experimental Protocol

| Step | Procedure | Purpose | Validation Check |
|---|---|---|---|
| 1. Define the Fundamental Question | Identify the core disputed issue requiring resolution through evidence evaluation. | Establish the conceptual boundaries for hypothesis development. | The question should be specific, answerable, and forensically relevant. |
| 2. Enumerate All Possible Explanations | Brainstorm all plausible scenarios that could account for the available evidence. | Ensure no reasonable explanation is omitted from consideration. | List should be comprehensive without being overly speculative. |
| 3. Group Explanations by Stakeholder Perspective | Categorize explanations according to prosecution and defense positions. | Create alignment with adversarial legal framework. | Each category should reflect a coherent narrative position. |
| 4. Formulate Logical Negations | Structure Hp and Hd such that they cannot simultaneously be true and cover all possibilities. | Establish proper logical relationship between competing hypotheses. | Test that Hp = NOT Hd and Hd = NOT Hp. |
| 5. Validate Mutual Exclusivity | Check that no enumerated scenario is consistent with both Hp and Hd. | Prevent overlapping hypotheses that create analytical ambiguity. | Confirm that P(Hp AND Hd) = 0. |
| 6. Validate Exhaustiveness | Verify that P(Hp) + P(Hd) = 1 given all possible scenarios. | Ensure the hypothesis pair accounts for all possible realities. | No scenario exists where neither Hp nor Hd is true. |
| 7. Document Rationale | Record the reasoning behind hypothesis formulation decisions. | Create transparency and allow for critical review. | Documentation should enable replication and critique. |

Hierarchical Hypothesis Framework

Forensic hypotheses operate at three distinct levels of specificity, each with different implications for mutual exclusivity and exhaustiveness [23]:

Source Level Hypotheses address the origin of physical traces and typically represent the most straightforward level for creating mutually exclusive and exhaustive pairs [23]. For example:

- Hp: The fingerprint on the drawer originated from the defendant's finger.

- Hd: The fingerprint on the drawer originated from someone other than the defendant.

Activity Level Hypotheses concern the actions through which traces were created or left and involve greater complexity due to multiple influencing factors [23]. For example:

- Hp: The defendant broke the window.

- Hd: Someone other than the defendant broke the window.

Crime Level Hypotheses encompass the entire criminal act and represent the ultimate question before the court [23]. These hypotheses typically extend beyond forensic science into legal domains.

Quantitative Impact Analysis: Measuring the Effect of Hypothesis Specification

Case Study: Statistical Analysis of Hypothesis Formulation

The Sally Clark case provides a compelling demonstration of how hypothesis specification dramatically impacts quantitative outcomes. The following table summarizes the probabilistic consequences of different hypothesis formulations using data from this case [12]:

Table 2: Impact of Hypothesis Formulation on Prior Probabilities in Sally Clark Case

| Hypothesis Pair | Prosecution Hypothesis (Hp) | Defense Hypothesis (Hd) | Prior Odds (Hd:Hp) | Posterior Odds (Hd:Hp) with LR = 5 favoring Hd | Qualitative Impact |
|---|---|---|---|---|---|
| Restricted Pair | Both babies murdered | Both babies died of SIDS | 30:1 | 150:1 | Greatly overstates evidence for defense |
| Exhaustive Pair | At least one baby murdered | Both babies died of SIDS | 5:2 | 25:2 | Appropriately represents modest evidence for defense |
| Probability Basis | P(Hp) = 1/2,152,224,291 | P(Hd) = 1/12,600,000 | | | |

The table illustrates how the same evidence, expressed through a likelihood ratio of 5, produces dramatically different conclusions depending on the hypothesis formulation. The restricted pair creates a misleading impression that the evidence strongly supports the defense hypothesis, while the exhaustive pair provides a more balanced representation [12].
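The posterior odds for each pair follow from multiplying the prior odds by the likelihood ratio. The sketch below assumes the likelihood ratio of 5 is oriented the same way as the prior odds (Hd over Hp), which is what the step from 30:1 to 150:1 implies; exact fractions are used so the odds print in ratio form.

```python
from fractions import Fraction


def posterior_odds(prior_odds: Fraction, likelihood_ratio: Fraction) -> Fraction:
    """Odds form of Bayes' theorem: posterior odds = prior odds x likelihood ratio.

    The odds and the likelihood ratio must be oriented the same way
    (here both are expressed as Hd over Hp).
    """
    return prior_odds * likelihood_ratio


lr_hd_over_hp = Fraction(5)  # ASSUMPTION: P(E|Hd) / P(E|Hp) = 5

prior_odds_hd_over_hp = {
    "restricted pair": Fraction(30, 1),
    "exhaustive pair": Fraction(5, 2),
}

results = {}
for label, prior in prior_odds_hd_over_hp.items():
    results[label] = posterior_odds(prior, lr_hd_over_hp)
    print(f"{label}: {results[label].numerator}:{results[label].denominator}")
# restricted pair: 150:1
# exhaustive pair: 25:2
```

The same evidence moves the odds by the same factor in both rows; the difference in the final figures comes entirely from the prior odds set by the hypothesis formulation.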

Likelihood Ratio Framework

The likelihood ratio (LR) provides a standardized measure of evidentiary strength under competing hypotheses [3] [6]. The formula for calculating LR is:

\[ LR = \frac{P(E|Hp)}{P(E|Hd)} \]

Where:

- P(E|Hp) = Probability of observing evidence E if prosecution hypothesis Hp is true

- P(E|Hd) = Probability of observing evidence E if defense hypothesis Hd is true

When hypotheses are mutually exclusive and exhaustive, the LR cleanly relates to the posterior odds through Bayes' theorem [6]:

\[ \frac{P(Hp|E)}{P(Hd|E)} = LR \times \frac{P(Hp)}{P(Hd)} \]

This relationship provides the mathematical foundation for updating beliefs about hypotheses in light of new evidence. However, when hypotheses violate the mutual exclusivity or exhaustiveness requirements, this relationship breaks down, potentially leading to incorrect interpretations [12].

Common Errors and Fallacies in Practice

Prosecutor's Fallacy and Related Reasoning Errors

The prosecutor's fallacy represents one of the most prevalent errors in statistical reasoning within legal contexts [6] [24]. This fallacy occurs when a conditional probability such as P(E|Hd) is mistakenly interpreted as P(Hd|E), effectively transposing the conditional [6]. In practical terms, this means confusing the probability of finding the evidence if the defense hypothesis is true with the probability that the defense hypothesis is true given the evidence.

When hypotheses are not properly formulated as logical negations, the risk of the prosecutor's fallacy increases substantially [12] [6]. In the Sally Clark case, the erroneous calculation that there was only a 1 in 73 million chance of two SIDS deaths in the same family was misinterpreted as the probability of Sally Clark's innocence, representing a classic example of this fallacy [12]. Proper hypothesis formulation creates a logical framework that helps prevent such misinterpretations.

Independence Assumption Errors

Another common error involves unjustified assumptions of independence between events [24]. In People v. Collins, the prosecutor multiplied the individual probabilities of several characteristics to arrive at an astronomically small probability that a random couple would possess all characteristics [24]. This calculation incorrectly assumed these characteristics were independent, dramatically overstating the probative value of the evidence.

Proper hypothesis formulation helps mitigate independence errors by forcing explicit consideration of the relationships between different pieces of evidence and their probabilities under competing explanations [12] [24]. When constructing mutually exclusive and exhaustive hypotheses, analysts must carefully consider how different evidentiary elements interact within each hypothetical scenario.
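The effect of an unjustified independence assumption can be sketched with a small, hypothetical population table for two characteristics, A and B. The counts are invented for illustration; the point is only that the product of the marginal frequencies can differ sharply from the observed joint frequency when the characteristics are correlated.

```python
# Hypothetical population counts keyed by (has_A, has_B).
counts = {
    (True, True): 80,
    (True, False): 20,
    (False, True): 120,
    (False, False): 780,
}


def joint_and_naive(counts):
    """Return (observed joint frequency of A and B, product of marginals)."""
    total = sum(counts.values())
    p_a = sum(n for (a, _), n in counts.items() if a) / total
    p_b = sum(n for (_, b), n in counts.items() if b) / total
    p_joint = counts[(True, True)] / total
    return p_joint, p_a * p_b


p_joint, p_naive = joint_and_naive(counts)
print(f"observed joint frequency: {p_joint:.3f}")
print(f"product of marginals:     {p_naive:.3f}")
print(f"overstatement factor:     {p_joint / p_naive:.1f}")
```

Multiplying marginals here makes the combination look rarer than it is, so a match on both characteristics would be credited with more probative value than the data support.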

Research Reagent Solutions: Methodological Toolkit

Table 3: Essential Methodological Tools for Hypothesis Formulation Research

| Research Tool | Function | Application Example |
|---|---|---|
| Bayesian Probability Framework | Mathematical structure for updating beliefs based on evidence | Calculating posterior probabilities from prior odds and likelihood ratios [6] [24] |
| Likelihood Ratio Calculator | Quantitative measure of evidentiary strength under competing hypotheses | Comparing P(E\|Hp) to P(E\|Hd) to generate LR values [3] [6] |
| Logical Negation Validator | Algorithmic check for mutual exclusivity and exhaustiveness | Verifying that Hp = NOT Hd and Hd = NOT Hp [12] [23] |
| Dependency Analyzer | Tool for identifying conditional relationships between evidentiary elements | Testing independence assumptions between different pieces of evidence [24] |
| Scenario Enumeration Protocol | Systematic method for generating all possible explanations | Ensuring comprehensive hypothesis development before categorization [23] |
| Fallacy Detection Algorithm | Computational check for common reasoning errors | Identifying prosecutor's fallacy and base rate neglect [6] [24] |

Implementation Workflow: From Theory to Practice

The implementation workflow illustrates the sequential process for developing and applying properly structured hypothesis pairs. This methodology begins with precise question formulation, proceeds through systematic scenario development, and culminates in hypothesis validation before quantitative analysis [12] [23]. Each stage builds upon the previous one, creating a robust framework for evidentiary evaluation.

Critical validation checkpoints at the mutual exclusivity and exhaustiveness stages ensure the logical integrity of the hypothesis pair before proceeding to likelihood ratio calculation [12] [6]. This prevents the propagation of structural errors into subsequent quantitative analysis, which could compromise the validity of final interpretations.
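One way to picture the two validation checkpoints is to represent each hypothesis as the set of enumerated scenarios it covers and test the sets directly. This is a minimal sketch: the scenario labels and the `check_hypothesis_pair` helper are hypothetical, and a real case would need a far more careful enumeration.

```python
def check_hypothesis_pair(all_scenarios, hp_scenarios, hd_scenarios):
    """Return (mutually_exclusive, exhaustive) for a hypothesis pair.

    Each hypothesis is modelled as the set of enumerated scenarios it covers.
    """
    universe = set(all_scenarios)
    hp, hd = set(hp_scenarios), set(hd_scenarios)
    mutually_exclusive = hp.isdisjoint(hd)   # no scenario satisfies both
    exhaustive = (hp | hd) == universe       # every scenario is covered
    return mutually_exclusive, exhaustive


# Hypothetical scenario labels, for illustration only.
scenarios = {"direct_contact", "secondary_transfer",
             "contamination", "unrelated_prior_visit"}
hp = {"direct_contact"}
hd_incomplete = {"secondary_transfer"}   # leaves two scenarios uncovered
hd_negation = scenarios - hp             # Hd defined as NOT Hp

print(check_hypothesis_pair(scenarios, hp, hd_incomplete))
print(check_hypothesis_pair(scenarios, hp, hd_negation))
```

Defining Hd as the set complement of Hp passes both checks by construction, which mirrors the logical-negation requirement described above.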

The structured formulation of prosecution and defense hypotheses as mutually exclusive and exhaustive logical negations represents a fundamental requirement for valid probabilistic reasoning in forensic science [12] [23]. Proper hypothesis specification ensures that likelihood ratios accurately represent evidentiary strength and prevents reasoning fallacies that can dramatically impact legal outcomes [6] [24].

Researchers and practitioners must adhere to methodological protocols that systematically enumerate possible scenarios, validate logical relationships between hypotheses, and maintain alignment with the principles of probability theory [12] [24]. Through rigorous application of these frameworks, the forensic science community can enhance the validity of evaluative reporting and better serve the interests of justice.

Future research should continue to develop standardized protocols for hypothesis formulation across different forensic disciplines, with particular attention to complex cases involving multiple pieces of evidence and alternative explanations. Such efforts will further strengthen the theoretical foundation and practical application of logical negation in forensic hypothesis testing.

The Likelihood Ratio (LR) framework is a quantitative method for evaluating the strength of forensic evidence, providing a standardized metric to assist legal decision-makers. This framework answers a fundamental question: how much more likely is the evidence under one proposition compared to an alternative proposition? Within the context of prosecution and defense hypothesis formulation, the LR quantitatively compares the probability of observing the evidence given the prosecution's hypothesis (\(H_p\)) to the probability of observing the same evidence given the defense hypothesis (\(H_d\)) [25]. The forensic science community has increasingly adopted this approach to convey evidential weight objectively, moving away from less standardized expressions of evidential significance [25].

The LR framework's theoretical foundation is rooted in Bayesian reasoning, a normative paradigm for decision-making under uncertainty [25]. According to the odds form of Bayes' rule, a decision-maker's posterior odds regarding a proposition are equal to their prior odds multiplied by the likelihood ratio: Posterior Odds = Prior Odds × LR [25]. This mathematical relationship formally separates the role of the forensic expert (who provides the LR based on the evidence) from the role of the legal decision-maker (who holds the prior beliefs about the case and updates them based on the expert's testimony). This separation is crucial for maintaining the respective roles within the judicial process while providing a logically coherent framework for updating beliefs in light of new evidence.

Mathematical Foundations and Formulation

Core Likelihood Ratio Equation

The likelihood ratio is mathematically defined by a deceptively simple equation that compares the probability of the evidence under two competing hypotheses:

\[ LR = \frac{P(E \mid H_p)}{P(E \mid H_d)} \]

Where:

- \(P(E \mid H_p)\) = Probability of observing the evidence \(E\) if the prosecution's hypothesis (\(H_p\)) is true

- \(P(E \mid H_d)\) = Probability of observing the evidence \(E\) if the defense hypothesis (\(H_d\)) is true [25]

The numerator and denominator in this ratio represent conditioned probabilities that must be estimated based on relevant data, statistical models, and appropriate assumptions about the evidence-generating process. The LR value provides a continuous measure of evidential strength, where values greater than 1 support the prosecution's hypothesis, values less than 1 support the defense hypothesis, and values equal to 1 indicate the evidence is equally likely under both hypotheses and therefore has no probative value [25].
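The ratio and its direction of support can be sketched in a few lines of Python. The function names are illustrative assumptions, and the inputs are treated as already-estimated probabilities or densities; estimating them is the hard part and is not shown here.

```python
import math


def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = P(E | Hp) / P(E | Hd) for already-estimated values."""
    if p_e_given_hp < 0 or p_e_given_hd < 0:
        raise ValueError("probabilities or densities must be non-negative")
    if p_e_given_hd == 0:
        if p_e_given_hp == 0:
            raise ValueError("evidence is impossible under both hypotheses")
        return math.inf  # evidence is impossible under Hd
    return p_e_given_hp / p_e_given_hd


def direction_of_support(lr: float) -> str:
    """LR > 1 supports Hp, LR < 1 supports Hd, LR == 1 supports neither."""
    if lr > 1:
        return "Hp"
    if lr < 1:
        return "Hd"
    return "neither"


# Hypothetical values for illustration only.
lr = likelihood_ratio(0.9, 0.001)
print(lr, direction_of_support(lr))
```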

Bayesian Interpretation for Legal Decision-Making

The LR framework operates within a broader Bayesian interpretative structure that facilitates rational belief updating. The fundamental Bayesian equation linking the LR to prior and posterior beliefs is:

\[ \text{Posterior Odds}_{DM} = \text{Prior Odds}_{DM} \times LR_{DM} \]

In this formulation, the decision-maker's (DM) posterior odds regarding a claim represent their revised degree of belief after considering the evidence, calculated by multiplying their prior odds by their personal likelihood ratio [25]. This equation highlights the subjectivity inherent in Bayesian reasoning – the LR used in Bayes' rule must be the personal LR of the decision-maker, as it incorporates all uncertainties relevant to that individual [25].

Table 1: Interpretation of Likelihood Ratio Values

| LR Value Range | Strength of Evidence | Direction of Support |
|---|---|---|
| >10,000 | Extremely strong | Supports \(H_p\) |
| 1,000-10,000 | Very strong | Supports \(H_p\) |
| 100-1,000 | Strong | Supports \(H_p\) |
| 10-100 | Moderate | Supports \(H_p\) |
| 1-10 | Limited | Supports \(H_p\) |
| 1 | No value | Neither |
| 0.1-1 | Limited | Supports \(H_d\) |
| 0.01-0.1 | Moderate | Supports \(H_d\) |
| 0.001-0.01 | Strong | Supports \(H_d\) |
| <0.001 | Very strong | Supports \(H_d\) |
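The verbal scale in Table 1 can be expressed as a simple lookup. This is a sketch that follows the table's rows as written; because adjacent ranges share their endpoints, the handling of boundary values (for example, assigning an LR of exactly 10 to "Moderate") is an assumption made here, not something the table specifies.

```python
def verbal_scale(lr: float) -> tuple[str, str]:
    """Map an LR to (strength, direction) following Table 1.

    Boundary values are assigned to the band further from LR = 1,
    except 10,000, which stays "Very strong" because the top band is >10,000.
    """
    if lr <= 0:
        raise ValueError("LR must be positive")
    if lr == 1:
        return "No value", "Neither"
    if lr > 1:
        if lr > 10_000:
            strength = "Extremely strong"
        elif lr >= 1_000:
            strength = "Very strong"
        elif lr >= 100:
            strength = "Strong"
        elif lr >= 10:
            strength = "Moderate"
        else:
            strength = "Limited"
        return strength, "Supports Hp"
    if lr < 0.001:
        strength = "Very strong"
    elif lr < 0.01:
        strength = "Strong"
    elif lr < 0.1:
        strength = "Moderate"
    else:
        strength = "Limited"
    return strength, "Supports Hd"


for value in (25_000, 500, 1, 0.05):
    print(value, verbal_scale(value))
```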

Practical Implementation and Calculation

General Methodology for LR Calculation

Implementing the LR framework requires a systematic approach to evidence evaluation. The general methodology involves several critical steps. First, clearly define the competing hypotheses (\(H_p\) and \(H_d\)) at the source level. These hypotheses must be mutually exclusive and exhaustive within the context of the case. Second, identify and quantify the relevant features of the evidence that will be used to distinguish between the hypotheses. Third, develop statistical models that can calculate the probability of observing the evidence under each hypothesis. This typically requires representative background data to estimate the distribution of features in relevant populations. Finally, compute the ratio of these probabilities to obtain the LR [25] [26].

For different evidence types, specialized statistical models are necessary. In forensic disciplines involving categorical count data, such as digital forensics analyzing user-generated events, the LR can be calculated in closed form using specific probability distributions [26]. For 2×2 contingency tables commonly encountered in medical and forensic research, the log-likelihood ratio support (S) can be calculated using the formula:

\[ S = \sum_{i=1}^{2}\sum_{j=1}^{2} O_{ij} \times \ln\left(\frac{O_{ij}}{E_{ij}}\right) \]

Where \(O_{ij}\) represents the observed count in the i-th row and j-th column, and \(E_{ij}\) represents the expected count under the null model of independence [27]. This approach forms the basis of the Likelihood Ratio Test (LRT), which has been shown to have higher statistical power compared to alternatives like the Pearson chi-square test for testing whether binomial proportions are equal [27].
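The support statistic for a 2×2 table can be computed directly from the observed counts, with expected counts taken from the row and column totals under independence. The sketch below uses hypothetical counts; the function name is an assumption, and cells with an observed count of zero are treated as contributing zero, following the usual convention that 0 · ln 0 = 0.

```python
import math


def log_likelihood_support(observed):
    """S = sum over cells of O_ij * ln(O_ij / E_ij) for a contingency table.

    E_ij is the expected count under independence:
    (row total * column total) / grand total.
    """
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    support = 0.0
    for i, row in enumerate(observed):
        for j, o in enumerate(row):
            if o == 0:
                continue  # 0 * ln(0) is taken as 0
            expected = row_totals[i] * col_totals[j] / n
            support += o * math.log(o / expected)
    return support


# Hypothetical 2x2 table of counts, for illustration only.
table = [[30, 10],
         [10, 30]]
s = log_likelihood_support(table)
print(f"S = {s:.3f}")
print(f"2S = {2 * s:.3f}")
```

Twice the support, 2S, is the statistic commonly referred to a chi-square distribution in the likelihood ratio test; for a 2×2 table that distribution has one degree of freedom.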

Experimental Protocol for LR Assessment

A standardized experimental protocol for LR assessment ensures reliability and reproducibility. For a same-source versus different-source forensic comparison, the protocol should include these key steps. Begin with evidence collection and feature extraction, where relevant characteristics are quantified from both the crime scene evidence and known reference samples. Next, perform data preprocessing and normalization to ensure comparability across samples. Then, conduct model selection and training using appropriate background data to estimate the probability distributions under both hypotheses. Following this, compute the probability densities of the evidence under each hypothesis, \(P(E \mid H_p)\) and \(P(E \mid H_d)\), using the trained models. Finally, calculate the LR by taking the ratio of these probability densities [26].
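The protocol steps can be sketched end to end for a deliberately simple case: a single comparison score per pair of items, modelled with a normal distribution under each hypothesis. Everything here is an assumption made for illustration, including the background scores, the choice of a normal model, and the function names; real casework would need validated features, models, and data.

```python
from statistics import NormalDist

# Hypothetical background data: comparison scores from known
# same-source pairs and known different-source pairs.
same_source_scores = [0.82, 0.91, 0.88, 0.79, 0.93, 0.85]
different_source_scores = [0.21, 0.35, 0.15, 0.42, 0.30, 0.27]


def fit_models(same, different):
    """Model training: fit one normal distribution per hypothesis."""
    hp_model = NormalDist.from_samples(same)       # same source (Hp)
    hd_model = NormalDist.from_samples(different)  # different source (Hd)
    return hp_model, hd_model


def score_lr(score, hp_model, hd_model):
    """LR as the ratio of probability densities at the observed score."""
    return hp_model.pdf(score) / hd_model.pdf(score)


hp_model, hd_model = fit_models(same_source_scores, different_source_scores)

# Hypothetical observed scores for two casework comparisons.
for observed in (0.85, 0.25):
    print(f"score {observed}: LR = {score_lr(observed, hp_model, hd_model):.3g}")
```

Feature extraction and normalization are collapsed into the pre-computed scores here; in practice those steps determine what the score means and therefore which background data are relevant.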

This protocol requires careful attention to model assumptions and uncertainty quantification. The choice of statistical model significantly impacts the resulting LR, and different reasonable models can produce substantially different LR values for the same evidence [25]. Therefore, sensitivity analyses should be conducted to evaluate how the LR changes under different modeling assumptions or parameter choices.
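A basic sensitivity analysis can be sketched by evaluating the same score under two different modelling choices. The example below compares a parametric normal model with a hand-written Gaussian kernel density estimate; the background scores, the bandwidth of 0.05, and the observed score are all hypothetical, and neither model is presented as the appropriate one.

```python
from statistics import NormalDist

# Hypothetical background scores, for illustration only.
same_source_scores = [0.82, 0.91, 0.88, 0.79, 0.93, 0.85]
different_source_scores = [0.21, 0.35, 0.15, 0.42, 0.30, 0.27]


def normal_density(score, samples):
    """Density under a single normal distribution fitted to the samples."""
    return NormalDist.from_samples(samples).pdf(score)


def kde_density(score, samples, bandwidth=0.05):
    """Density under a Gaussian kernel density estimate (assumed bandwidth)."""
    kernels = (NormalDist(x, bandwidth).pdf(score) for x in samples)
    return sum(kernels) / len(samples)


def lr_by_model(score):
    """LR for the same score under each modelling choice."""
    return {
        "normal": normal_density(score, same_source_scores)
        / normal_density(score, different_source_scores),
        "kde": kde_density(score, same_source_scores)
        / kde_density(score, different_source_scores),
    }


lrs = lr_by_model(0.60)  # a hypothetical score between the two groups
for name, value in lrs.items():
    print(f"{name}: LR = {value:.3g}")
print(f"ratio of largest to smallest LR: {max(lrs.values()) / min(lrs.values()):.3g}")
```

Scores that fall in the tails of the background data tend to be the most model-dependent, so that is where the spread between defensible LR values is usually worth reporting.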

Table 2: Essential Research Reagents for LR Implementation

| Reagent/Category | Primary Function in LR Framework | Implementation Considerations |
|---|---|---|
| Statistical Software (R, Python) | Probability calculation and modeling | Must support appropriate probability distributions and Bayesian computation |
| Reference Databases | Providing population data for probability estimation | Must be relevant to the specific evidence type and population |
| Probability Distribution Models | Modeling the evidence under competing hypotheses | Choice affects LR validity; should be empirically validated |
| Feature Extraction Tools | Quantifying relevant evidence characteristics | Must be standardized and reproducible |
| Validation Datasets | Testing model performance and calibration | Should include ground truth for performance assessment |

Uncertainty Characterization and the Assumptions Lattice

The Uncertainty Pyramid Framework

A critical advancement in the rigorous application of the LR framework is the formal recognition and characterization of uncertainty. Even with a calculated LR value, forensic scientists must assess and communicate the uncertainty associated with this value to ensure proper interpretation by legal decision-makers [25]. The uncertainty pyramid framework provides a structured approach for this assessment, where each level of the pyramid corresponds to a different set of assumptions about the evidence evaluation process.

At the base of the pyramid lies the broadest set of plausible assumptions, resulting in the widest range of potentially defensible LR values. As one moves up the pyramid, assumptions become more restrictive, narrowing the range of possible LR values but potentially increasing the risk of model misspecification [25]. This framework acknowledges that multiple statistical models may satisfy stated criteria for reasonableness, and each may produce different LR values for the same evidence. The assumptions lattice concept complements this by providing a systematic way to explore the relationships between different sets of assumptions and their impact on the calculated LR [25].
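The narrowing of the LR range from the base to the top of the pyramid can be sketched with nested sets of candidate models. The candidate background models, their parameters, the fixed same-source model, and the level names below are hypothetical; the only structural assumption is that each higher level keeps a subset of the models admitted at the level beneath it.

```python
from statistics import NormalDist

# Hypothetical same-source (Hp) model, held fixed for simplicity.
hp_model = NormalDist(0.85, 0.06)

# Hypothetical candidate background (Hd) models.
candidate_hd_models = {
    "normal_wide": NormalDist(0.30, 0.15),
    "normal_mid": NormalDist(0.30, 0.10),
    "normal_narrow": NormalDist(0.28, 0.08),
    "normal_shifted": NormalDist(0.35, 0.10),
}

# Each level admits a subset of the models allowed at the level below it.
pyramid_levels = [
    ("base", ["normal_wide", "normal_mid", "normal_narrow", "normal_shifted"]),
    ("middle", ["normal_mid", "normal_narrow"]),
    ("top", ["normal_mid"]),
]


def lr_range(score, model_names):
    """Smallest and largest LR across the admitted background models."""
    lrs = [hp_model.pdf(score) / candidate_hd_models[name].pdf(score)
           for name in model_names]
    return min(lrs), max(lrs)


def pyramid_ranges(score):
    """LR range at each level of the pyramid for one observed score."""
    return {level: lr_range(score, names) for level, names in pyramid_levels}


for level, (low, high) in pyramid_ranges(0.70).items():
    print(f"{level:>6}: LR between {low:.3g} and {high:.3g}")
```

Because the model sets are nested, each level's range sits inside the range of the level below it; the single value at the top is only as trustworthy as the assumptions that excluded the other models.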

Practical Implications of Uncertainty Assessment

In practice, uncertainty assessment requires forensic experts to conduct comprehensive sensitivity analyses that examine how the LR changes under different modeling choices, parameter estimates, or background population selections. For example, when evaluating glass evidence based on refractive index measurements, the calculated LR may vary substantially depending on the statistical model used to represent the distribution of refractive indices in the relevant population [25]. Similarly, in automated fingerprint comparison systems, the LR depends on the specific algorithm and score calibration method employed [25].