Beyond Certainty: The Paradigm Shift from Categorical to Probabilistic Interpretation in Forensic Chemistry

Abstract

This article explores the critical evolution in forensic chemistry from traditional, categorical reporting towards modern, probabilistic frameworks. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis of the foundational principles, methodological applications, and practical challenges of this transition. The scope covers the limitations of categorical statements, the implementation of statistical and machine learning models for evaluative reporting, strategies for overcoming data and validation hurdles, and the comparative efficacy of different approaches. By synthesizing current research and future directions, this review serves as a guide for integrating robust, transparent, and quantitatively sound practices into forensic and biomedical chemical analysis.

Defining the Divide: Categorical Certainty vs. Probabilistic Strength of Evidence

In scientific fields ranging from forensic chemistry to industrial material production, the communication of findings has traditionally relied on categorical reporting: definitive, binary statements about the identity or conformity of a substance. This legacy is deeply rooted in the practices established by standards organizations, which provide the foundational benchmarks for quality and safety. ASTM International, formerly known as the American Society for Testing and Materials, is among the most prominent of these bodies, developing technical standards for a wide array of materials, including metals, paints, and polymers [1] [2]. These standards create a common technical language that ensures reliability, consistency, and performance of materials across global industries [1].

The very structure of an ASTM standard designation, such as A967 for stainless steel passivation, embodies a categorical philosophy [3]. It presents a set of definitive pass/fail criteria—specific chemical treatment procedures, precise concentration parameters, and validated testing methods—against which a material or process is judged to be either compliant or non-compliant [3]. This framework of absolute conformity provides the backbone for material selection in critical applications, from medical implants to construction, and has historically influenced the broader scientific culture of evidence interpretation, including within forensic chemistry [1] [3]. This article explores the legacy of this categorical system, its interplay with emerging probabilistic models of interpretation, and its practical application in modern research and development.

ASTM Standards: A Paradigm of Definitive Classification

ASTM International operates through a collaborative process involving thousands of experts from industry, academia, and government to establish voluntary consensus standards [2]. These standards are meticulously categorized to address every aspect of material evaluation, functioning as a comprehensive system for ensuring quality and safety [4].

The Anatomy of an ASTM Standard

An ASTM code is a precise language in itself. Decoding its structure reveals the systematic approach to material specification. For example, the standard "ASTM A582/A582M-95b (2000), Grade 303Se" can be broken down as follows [1]:

- A: The prefix letter designating a ferrous metal (steel).

- 582: A sequentially assigned number identifying the specific standard.

- M: Signifies that the standard uses rationalized SI units.

- 95b: Indicates the year of adoption (1995) and the third revision that year ('b').

- (2000): The year the standard was last reapproved.

- Grade 303Se: The specific grade of steel, in this case, a selenium-modified version of grade 303.

This detailed nomenclature ensures unambiguous communication and traceability for every material and process governed by a standard [1].
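The breakdown above can be sketched as a small parser. This is a minimal illustration, assuming designations follow the exact layout of the example string; the regular expression and the function name `parse_designation` are hypothetical and do not cover every form ASTM uses.

```python
import re

# Pattern for the layout shown in the example only (an assumption, not an ASTM rule set).
DESIGNATION = re.compile(
    r"ASTM\s+(?P<prefix>[A-G])(?P<number>\d+)"      # e.g. A582
    r"(?:/(?P=prefix)(?P=number)(?P<metric>M))?"    # optional /A582M companion
    r"-(?P<year>\d{2})(?P<revision>[a-z])?"         # e.g. -95b
    r"(?:\s*\((?P<reapproved>\d{4})\))?"            # optional (2000)
    r"(?:,\s*Grade\s+(?P<grade>\S+))?"              # optional grade
)


def parse_designation(text: str) -> dict:
    """Split a designation string into its named components."""
    match = DESIGNATION.fullmatch(text.strip())
    if match is None:
        raise ValueError(f"unrecognized designation: {text!r}")
    return match.groupdict()


parts = parse_designation("ASTM A582/A582M-95b (2000), Grade 303Se")
for name, value in parts.items():
    print(f"{name}: {value}")
```

Components that are absent from a given designation, such as the revision letter or the grade, come back as `None`.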

Types of ASTM Standards and Their Categorical Nature

ASTM standards are developed to provide clear, actionable, and definitive guidance. They are organized into distinct categories, each serving a specific function in the quality assurance ecosystem, as outlined in the table below [3] [4].

Table: Categories of ASTM Standards and Their Functions

| Standard Type | Primary Function | Example |
|---|---|---|
| Test Method | Defines an exact procedure for conducting a test to generate reproducible data. | Procedures for measuring tensile strength or hardness. |
| Specification | Establishes explicit requirements a material or product must meet to be deemed compliant. | ASTM A967 specifying chemical treatment procedures for passivating stainless steel. |
| Practice | Provides detailed instructions for performing specific operations without generating a test result. | Procedures for cleaning equipment or preparing test samples. |
| Guide | Offers a collection of information or series of options without recommending a specific course of action. | Guidance on selecting appropriate passivation treatments for different stainless steel grades. |
| Terminology | Defines terms, symbols, and abbreviations to remove ambiguity from technical communication. | Standardized definitions for technical terms used across all other standards. |

Categorical vs. Probabilistic Interpretation in Forensic Chemistry

The definitive nature of ASTM standards reflects a categorical interpretation framework, which has parallels in other scientific disciplines. In forensic chemistry, this has traditionally manifested in expert opinions stating that a seized drug sample does or does not contain a controlled substance, or that two samples do or do not originate from the same source, with an implied absolute certainty [5]. This binary reporting provides a simple, easily understood conclusion for the legal system.

However, a paradigm shift is underway toward probabilistic reporting, which assigns a statistical likelihood or weight to a given finding [5] [6]. Instead of a definitive "match," a probabilistic approach might state that the observed chemical characteristics are, for example, 100 times more likely if the two samples have a common origin than if they do not. This approach aims to provide a more scientifically rigorous and transparent representation of the evidence, acknowledging the inherent uncertainties in analytical measurements and the potential for overlapping chemical profiles in different sources [6].

Table: Comparison of Categorical and Probabilistic Reporting Frameworks

| Aspect | Categorical Reporting | Probabilistic Reporting |
|---|---|---|
| Conclusion Type | Definitive, binary (e.g., match/no match, pass/fail) | Statistical, expressed as a likelihood ratio or probability |
| Underlying Mindset | Conforms to a fixed, pre-defined standard or classification | Evaluates evidence on a continuous scale of support |
| Handling of Uncertainty | Often implicitly discounted or subsumed into the binary decision | Explicitly quantified and reported as part of the conclusion |
| Primary Advantage | Simplicity, clarity, and ease of communication for decision-makers | Higher scientific rigor and nuanced expression of evidential value |
| Primary Challenge | Potential for overstating the conclusiveness of findings | Complexity in calculation and communication to non-experts |

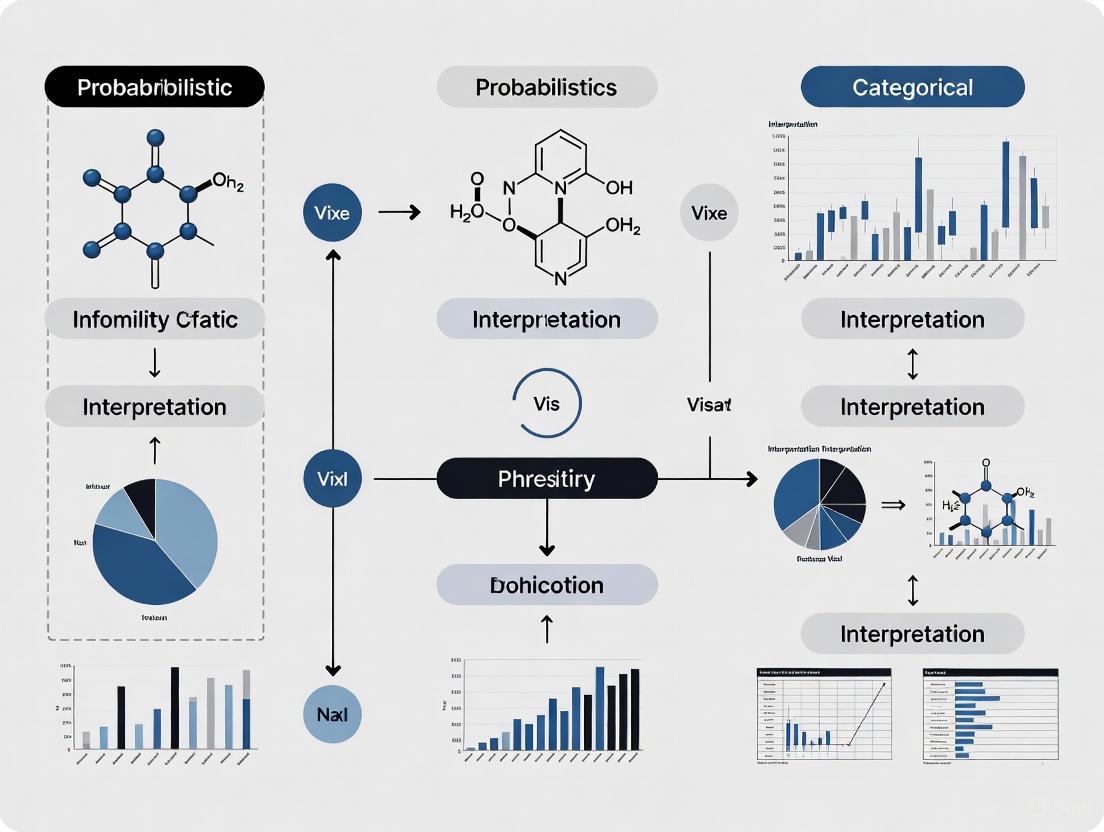

The following diagram illustrates the logical flow of evidence interpretation within these two competing frameworks.

Experimental Protocols: Bridging Categorical Standards and Probabilistic Data

Experimental data forms the bridge between rigid categorical standards and the emerging world of probabilistic interpretation. The following section outlines standard methodologies for material verification, which can produce data suitable for both frameworks.

Detailed Methodology: Passivation Quality Verification per ASTM A967

This experiment assesses the effectiveness of a passivation treatment on 300-series stainless steel, a critical process for enhancing corrosion resistance in medical and aerospace components [3].

1. Principle: The passivation process removes exogenous iron and promotes the formation of a protective, chromium-rich oxide layer on the stainless steel surface. The test verifies the integrity of this layer and the absence of free iron contamination [3].

2. Equipment & Reagents:

- Passivation Treatment Line: Configured for immersion in nitric acid or citric acid baths per A967 specifications.

- Test Chambers: Designed for salt spray (ASTM B117), high humidity, or water immersion tests.

- Analytical Balance: With precision to 0.1 mg.

- Reagents: Copper sulfate solution (5-10 g CuSO₄·5H₂O in 100 mL distilled water), hydrochloric acid (1:1 v/v), and potassium ferricyanide indicator solution, as specified in the standard test methods [3].

3. Procedure:

- Step 1: Sample Preparation. Cut stainless steel coupons to a standard size (e.g., 100 mm x 150 mm). Degrease and clean all samples thoroughly per ASTM A380 to remove any organic residues or soils [3].

- Step 2: Passivation Treatment. Immerse the cleaned coupons in the appropriate acid bath as defined by A967 for the specific grade of stainless steel. For example, treat Type 304 stainless steel in a 20-25% v/v nitric acid bath with sodium dichromate at 120-130°F for 20 minutes. Rinse thoroughly with deionized water and dry [3].

- Step 3: Post-Treatment Verification Testing.

- Copper Sulfate Test: Swab the entire surface of the passivated coupon with the prepared copper sulfate solution or immerse it for 6 minutes. Observe for any deposition of metallic copper, which indicates the presence of free iron and constitutes a test failure [3].

- Salt Spray Test: Place the passivated coupon in a salt spray (fog) apparatus operating per ASTM B117. Examine periodically for signs of rust over a duration specified in the standard (e.g., 2 hours for a rapid test, or longer for more stringent verification) [3].

- Step 4: Data Recording. Document the appearance of each coupon after testing, noting any presence of copper deposition or corrosion products. A categorical "pass" is assigned if no failure is observed. For a probabilistic approach, the time-to-failure or the percentage of surface area affected could be quantified and used in a statistical model to assign a likelihood of conformity under specified environmental conditions.
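Step 4 leaves the statistical model unspecified. As one minimal sketch, assuming replicate coupons are independent and each outcome is recorded simply as pass or fail, a Beta-binomial update yields a probability that the next coupon conforms. The uniform Beta(1, 1) prior, the function name, and the counts are illustrative assumptions and are not part of ASTM A967.

```python
def conformity_posterior(passes: int, trials: int,
                         prior_a: float = 1.0, prior_b: float = 1.0):
    """Return (a, b, mean) of the Beta posterior for the per-coupon pass probability."""
    if not 0 <= passes <= trials:
        raise ValueError("passes must lie between 0 and trials")
    a = prior_a + passes                # prior pseudo-passes plus observed passes
    b = prior_b + (trials - passes)     # prior pseudo-failures plus observed failures
    return a, b, a / (a + b)


# Hypothetical batch: 18 of 20 coupons showed no copper deposition.
a, b, mean = conformity_posterior(passes=18, trials=20)
print(f"Beta({a:g}, {b:g}) posterior, mean pass probability {mean:.3f}")
```

A model built on time-to-failure or affected surface area would need a different likelihood. The point here is only that the same test data can support a graded statement rather than a single pass or fail.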

Example Data: Categorical vs. Probabilistic Interpretation of Passivation Test Results

The data generated from the above protocol can be reported in a purely categorical manner or used to generate probabilistic insights, as shown in the following comparative table.

Table: Example Passivation Test Results for Stainless Steel Grades (Hypothetical Data)

| Steel Grade | Passivation Treatment | Categorical Result (Pass/Fail per A967) | Time to Failure in Salt Spray (hours) | Relative Likelihood of Conforming to Spec |
|---|---|---|---|---|
| 304 Stainless | Nitric Acid, 25%, 25 min | Pass | >500 | Extremely High (>99.9%) |
| 304 Stainless | Citric Acid, 4%, 10 min | Fail | 48 | Low (<5%) |
| 316 Stainless | Nitric Acid, 25%, 25 min | Pass | >600 | Extremely High (>99.9%) |
| 416 Stainless | Nitric Acid, 25%, 25 min | Fail | 2 | Very Low (<1%) |

The Scientist's Toolkit: Essential Reagents and Materials for Compliance Testing

Adherence to ASTM standards requires the use of specific, high-purity reagents and materials. The following toolkit details critical items for conducting standardized experiments, such as the passivation verification described above.

Table: Key Research Reagent Solutions for ASTM-Compliant Testing

| Research Reagent/Material | Technical Function | Example Application in ASTM Standards |
|---|---|---|
| Nitric Acid (HNO₃) | Oxidizing mineral acid used to dissolve free iron from the surface and promote the formation of the passive chromium oxide layer. | Primary chemical for passivation treatments of stainless steel (ASTM A967) [3]. |
| Citric Acid (C₆H₈O₇) | Organic chelating agent that binds to and removes free iron ions from the metal surface; considered a safer, "greener" alternative. | Alternative passivation treatment for certain stainless steel grades (ASTM A967) [3]. |
| Copper Sulfate (CuSO₄) | Reacts with free iron particles on the surface to deposit metallic copper, providing a visual indicator of passivation failure. | Key reagent in the copper sulfate test for verifying passivation quality (ASTM A967) [3]. |
| Potassium Ferricyanide | Chemical indicator used in combination with nitric acid to detect the presence of free iron on the surface of stainless steel. | Component of the ferricyanide-nitric acid spot test (ASTM A967) [3]. |
| Sodium Chloride (NaCl) | Ionic compound used to create a corrosive saline environment for accelerated corrosion testing. | Primary component of the electrolyte solution in salt spray (fog) testing (ASTM B117) [3]. |

The legacy of categorical reporting, as exemplified by the definitive nature of ASTM standards, has provided an indispensable foundation for quality control, safety, and interoperability across global industries [1] [2]. Its strength lies in its clarity and its ability to deliver unambiguous decisions for engineers and regulators. However, the rise of probabilistic interpretation in fields like forensic chemistry highlights a growing recognition of the need for more nuanced reporting that quantifies and communicates uncertainty [5] [6].

The future of scientific evidence interpretation does not necessarily lie in the wholesale replacement of one framework by the other. Instead, a synergistic approach is emerging. The rigorous, standardized experimental protocols defined by categorical systems like ASTM can generate the high-quality, reproducible data necessary for robust probabilistic models. In this integrated view, categorical standards provide the critical baseline for material and method qualification, while probabilistic methods offer a sophisticated tool for interpreting complex data in cases where absolutes are scientifically untenable. For researchers and drug development professionals, mastering both frameworks is becoming essential for driving innovation while maintaining the highest standards of scientific rigor and accountability.

For decades, forensic chemistry and DNA analysis relied on categorical interpretation, where examiners would opine that evidence did or did not originate from a particular source. This binary framework is increasingly being supplanted by probabilistic genotyping (PG) systems that calculate Likelihood Ratios (LRs) to quantify the strength of forensic evidence [7] [5]. This paradigm shift represents a fundamental transformation in how forensic scientists communicate evidential value, moving from assertive statements to calibrated expressions of probability that more accurately represent the scientific method.

The LR provides a mathematically robust framework for updating beliefs about competing propositions based on new evidence. Within forensic chemistry, particularly for DNA mixtures, continuous PG systems have become the default method for calculating LRs for competing propositions about the contributors to a DNA sample [7]. This framework reframes forensic interpretation as a structured and transparent process, replacing intuition-driven reasoning with a quantitative method that allows probabilities to evolve dynamically as evidence accrues [8].

Theoretical Foundation: Likelihood Ratios and Bayesian Interpretation

Core Mathematical Framework

The Likelihood Ratio represents the heart of the Bayesian interpretive framework for forensic evidence. It is formally defined as the ratio of two conditional probabilities:

LR = P(E|H₁) / P(E|H₂) [7]

Where:

- E represents the observed evidence (e.g., DNA profile)

- H₁ and H₂ represent two competing propositions (typically the prosecution and defense hypotheses)

- P(E|H₁) is the probability of observing the evidence if proposition H₁ is true

- P(E|H₂) is the probability of observing the evidence if proposition H₂ is true

The resulting LR value quantifies how much more likely the evidence is under one proposition compared to the other. An LR > 1 supports H₁, an LR < 1 supports H₂, and an LR of exactly 1 means the evidence does not discriminate between the two propositions. The magnitude of the LR indicates the strength of the evidence, with values further from 1 providing stronger support [7].
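The definition can be made concrete with a short sketch. The two conditional probabilities below are hypothetical numbers chosen for illustration, and the function names are not drawn from any probabilistic genotyping package.

```python
import math


def likelihood_ratio(p_e_given_h1: float, p_e_given_h2: float) -> float:
    """LR = P(E|H1) / P(E|H2); the denominator must be strictly positive."""
    if p_e_given_h1 < 0 or p_e_given_h2 <= 0:
        raise ValueError("need P(E|H1) >= 0 and P(E|H2) > 0")
    return p_e_given_h1 / p_e_given_h2


def supported_proposition(lr: float) -> str:
    """Report which proposition the evidence favors."""
    if lr > 1:
        return "H1"
    if lr < 1:
        return "H2"
    return "neither"


# Hypothetical values: the evidence is common under H1 and rare under H2.
lr = likelihood_ratio(p_e_given_h1=0.8, p_e_given_h2=0.008)
print(f"LR = {lr:.1f}, log10(LR) = {math.log10(lr):.2f}, "
      f"supports {supported_proposition(lr)}")
```

Reporting log10(LR) alongside the raw ratio is a common convenience, because support for H₁ and H₂ then sits symmetrically around zero.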

Bayesian Hypothesis Generation in Scientific Reasoning

The Bayesian framework extends beyond mere hypothesis testing to what has been termed Bayesian Hypothesis Generation (BHG). This formal probabilistic framework structures belief-updating by defining priors, estimating likelihood ratios, and updating posteriors [8]. Unlike Bayesian hypothesis testing (BHT), which responds to data within established theoretical frameworks, BHG is forward-looking—it applies Bayesian logic to evaluate whether a novel hypothesis is worth pursuing before new data exist, relying on indirect signals or biological plausibility [8].

In practical terms, BHG reframes the earliest phase of scientific inquiry as a structured and transparent process. Rather than dismissing novel or uncertain hypotheses as epistemically weak, BHG offers a rational framework for evaluating their plausibility based on priors, likelihoods, and the explanatory value of early observations [8]. This approach is particularly valuable in forensic contexts where evidence may be complex or ambiguous.

Comparative Analysis of Probabilistic Genotyping Systems

Multiple continuous probabilistic genotyping systems have been developed and validated for forensic DNA analysis. These systems employ sophisticated algorithms to model the probability distributions of observed peak heights in STR electropherograms under different scenarios, which are then used to generate likelihoods for competing propositions [7].

Table 1: Major Continuous Probabilistic Genotyping Systems

| System Name | Development Model | Key Features | Algorithmic Approach |
|---|---|---|---|
| STRmix | Commercial [7] | Models peak height variance and stutter [7] | Markov chain Monte Carlo (MCMC) simulation [7] |
| TrueAllele | Commercial [7] | Calculates LRs for DNA mixtures [7] | Numerical methods and probabilistic simulations [7] |
| EuroForMix | Open Source [7] | Extended version of Cowell et al. model [7] | Simultaneous probabilistic simulations of multiple variables [7] |
| DNAxs | Open Source [7] | Extended version of Cowell et al. model [7] | Models laboratory-specific processes and artefacts [7] |
| DNA·VIEW | Commercial [7] | Generates LRs for complex mixtures [7] | Numerical methods rather than analytical solutions alone [7] |

Performance Comparison and Reproducibility

Inter-laboratory comparisons represent a standard feature of forensic DNA analysis methods, indicating the reproducibility of a particular method across different laboratories and the variance of quantitative results [7]. Such comparisons are essential for demonstrating consistency in results from multiple laboratories and help ensure equality of justice outcomes across jurisdictions [7].

Recent studies have challenged the assumption that LRs produced by continuous PG are unique and cannot be compared across systems. Research indicates there are specific conditions defining particular DNA mixtures that can produce an aspirational LR, thereby providing a measure of reproducibility for DNA profiling systems incorporating PG [7]. Such DNA mixtures could serve as the basis for inter-laboratory comparisons, even when different STR amplification kits are employed [7].

Table 2: Performance Characteristics of Probabilistic Genotyping Systems

| Performance Metric | STRmix | EuroForMix | DNAxs | TrueAllele |
|---|---|---|---|---|
| Reproducibility across laboratories | Demonstrated with defined mixtures [7] | Demonstrated with defined mixtures [7] | Validated in 5 laboratories [7] | Commercial implementation available [7] |
| Handling of complex mixtures | Capable with MCMC [7] | Capable with probabilistic simulations [7] | Capable with probabilistic simulations [7] | Capable with numerical methods [7] |
| Inter-system comparison | Possible under specific conditions [7] | Possible under specific conditions [7] | LRs mostly within an order of magnitude [7] | Commercial implementation available [7] |
| Variance in LR estimation | Intra-model variability increases with contributor number and low template [7] | Shows reproducibility for high template amounts [7] | LRs mostly within an order of magnitude for same data [7] | Uses numerical methods and simulations [7] |

Bright et al. proposed a series of tests for validating PG systems using single source, simulated major/minor (3:1) mixtures and simulated balanced (1:1) mixtures [7]. Their results showed good agreement between expected results, continuous PG, and semicontinuous PG for single source and balanced profiles, though continuous PG yielded higher LRs than semicontinuous PG for major/minor profiles, as expected from the extra peak height information considered by continuous PG [7].

Experimental Protocols and Methodologies

Standardized Workflow for Probabilistic Genotyping

The following diagram illustrates the generalized experimental workflow for conducting probabilistic genotyping analysis in forensic chemistry, synthesizing methodologies from multiple established systems:

Inter-Laboratory Comparison Protocol

For inter-laboratory comparisons of PG systems, researchers have developed specific protocols to ensure meaningful results:

Sample Preparation: Defined DNA mixtures are created with specific contributor ratios and template amounts. These include single-source samples, two-person mixtures (both balanced and unbalanced), and three-person mixtures [7].

Data Generation: Multiple laboratories process the same DNA samples using their standard STR amplification kits and capillary electrophoresis protocols. This incorporates realistic laboratory-to-laboratory variation in instrumentation and processes [7].

PG Analysis: Each laboratory analyzes the electropherogram data using their preferred probabilistic genotyping system, calculating LRs for predefined propositions [7].

LR Comparison: The resulting LRs are compared across systems and laboratories. Studies have shown that for high DNA template amounts, LRs from different PG systems (DNA·VIEW, STRmix, and EuroForMix) are reproducible across a wide range of mixture types and contributor numbers [7].

Statistical Analysis: Variance components are estimated, including intra-system variability (through replicate analyses) and inter-system variability. LR values are often binned into ranges corresponding to verbal expressions of evidential strength to facilitate comparison [7].
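The comparison and binning steps can be sketched as follows. The bin edges and verbal labels are hypothetical placeholders on the log10(LR) scale, not taken from [7] or from any specific guideline, and the system names and LR values are invented for illustration.

```python
import math

# Hypothetical upper bin edges on the log10(LR) scale, with placeholder labels.
VERBAL_BINS = [
    (1.0, "weak support"),
    (2.0, "moderate support"),
    (4.0, "strong support"),
    (6.0, "very strong support"),
]


def verbal_bin(lr: float) -> str:
    """Map an LR onto a verbal category for support of H1."""
    if lr <= 0:
        raise ValueError("LR must be positive")
    log_lr = math.log10(lr)
    if log_lr < 0:
        return "supports the alternative proposition"
    if log_lr == 0:
        return "neutral"
    for upper_edge, label in VERBAL_BINS:
        if log_lr < upper_edge:
            return label
    return "extremely strong support"


def within_order_of_magnitude(lr_a: float, lr_b: float) -> bool:
    """True when two LRs differ by no more than a factor of ten."""
    return abs(math.log10(lr_a) - math.log10(lr_b)) <= 1.0


# Invented LRs reported by two unnamed systems for the same mixture.
reported = {"system_a": 2.0e5, "system_b": 9.0e5}
for name, lr in reported.items():
    print(f"{name}: LR = {lr:.1e} -> {verbal_bin(lr)}")
print("agree within one order of magnitude:",
      within_order_of_magnitude(*reported.values()))
```

Working on the log scale keeps the comparison symmetric, so a tenfold overestimate and a tenfold underestimate are treated alike.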

Bayesian Reasoning in Diagnostic Contexts

The Bayesian framework extends beyond forensic chemistry into medical diagnostics, where similar challenges in test interpretation occur. A 2025 randomized-controlled crossover trial compared how effectively medical students calculated positive predictive values (PPVs) using natural frequencies versus odds/likelihood ratio formats [9].

The study found that while the proportion of correct PPVs for a single test was significantly higher with natural frequencies (36.2%) compared to the odds/LR format (21.6%), the opposite pattern emerged for sequential testing: the proportion of correct PPVs after two sequential positive tests was significantly higher in the odds/LR format (10.6%) compared to natural frequencies (4.9%) [9]. This demonstrates the particular utility of likelihood ratios for complex, sequential diagnostic decisions.
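A brief sketch shows why the odds/LR format scales well to sequential testing. The prevalence, sensitivity, and specificity are hypothetical and are not the values used in the trial [9]. The sketch also assumes the two tests are conditionally independent given disease status.

```python
def ppv_natural_frequencies(population: int, prevalence: float,
                            sensitivity: float, specificity: float) -> float:
    """PPV for one positive test, counted out of a notional population."""
    sick = population * prevalence
    healthy = population - sick
    true_positives = sick * sensitivity
    false_positives = healthy * (1.0 - specificity)
    return true_positives / (true_positives + false_positives)


def ppv_odds_lr(prevalence: float, sensitivity: float,
                specificity: float, n_positive: int = 1) -> float:
    """PPV after n positive results: multiply prior odds by LR+ once per test."""
    positive_lr = sensitivity / (1.0 - specificity)
    odds = prevalence / (1.0 - prevalence)
    odds *= positive_lr ** n_positive
    return odds / (1.0 + odds)


# Hypothetical test characteristics.
prev, sens, spec = 0.01, 0.90, 0.90
print(f"one test, natural frequencies: {ppv_natural_frequencies(1000, prev, sens, spec):.3f}")
print(f"one test, odds/LR:             {ppv_odds_lr(prev, sens, spec, 1):.3f}")
print(f"two tests, odds/LR:            {ppv_odds_lr(prev, sens, spec, 2):.3f}")
```

Both formats agree for a single test. For the second test, the odds/LR route needs only one more multiplication, whereas natural frequencies require recounting a new notional population.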

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Probabilistic Genotyping

| Reagent/Material | Function | Application in PG Workflow |
|---|---|---|
| STR Amplification Kits (e.g., GlobalFiler, PowerPlex) | Simultaneous amplification of multiple short tandem repeat (STR) loci | Generates the DNA profiles used for probabilistic genotyping analysis [7] |
| Quantification Standards | Accurate measurement of DNA concentration | Ensures optimal amplification and informs PG models about template amount [7] |
| Capillary Electrophoresis Systems | Separation and detection of amplified STR fragments | Generates electropherograms with peak height data essential for continuous PG [7] |
| Probabilistic Genotyping Software (STRmix, EuroForMix, etc.) | Calculation of likelihood ratios from complex DNA mixtures | Implements mathematical models to evaluate competing propositions [7] |
| Reference DNA Samples | Known profiles for comparison and validation | Provides ground truth for evaluating PG system performance and reliability [7] |
| Validation Sets (defined mixtures) | System performance assessment | Tests PG system reproducibility and reliability across different laboratories [7] |

The adoption of likelihood ratios and Bayesian interpretation represents a significant advancement in forensic chemistry, replacing categorical conclusions with transparent, quantitative assessments of evidential strength. The probabilistic framework acknowledges and quantifies uncertainty rather than ignoring it, aligning forensic science more closely with the scientific method.

As probabilistic genotyping systems continue to evolve, ongoing inter-laboratory comparisons and validation studies will be essential for establishing reliability and reproducibility across different platforms and methodologies [7]. The future of forensic chemistry lies in embracing these probabilistic frameworks while maintaining rigorous standards of validation and interpretation, ensuring that forensic evidence continues to meet the highest standards of scientific rigor and contributes meaningfully to the administration of justice.

The 2009 NAS Report as a Catalyst for Change in Forensic Science

The 2009 National Academy of Sciences (NAS) report, "Strengthening Forensic Science in the United States: A Path Forward," represents a watershed moment in the history of forensic science [10]. This groundbreaking report provided a comprehensive critique of the field, noting that with the exception of nuclear DNA analysis, no forensic method had been "rigorously shown to have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [11] [12]. The report identified a "notable dearth of peer-reviewed, published studies establishing the scientific bases and validity of many forensic methods" [11], fundamentally challenging the perception of forensic evidence's reliability that had prevailed for decades.

In the years following its publication, the NAS report has catalyzed an ongoing paradigm shift in forensic science, particularly in the interpretation and reporting of evidence. This shift has centered on moving from traditional categorical reporting toward more scientifically rigorous probabilistic reporting [12]. Where categorical reporting requires analysts to make definitive decisions about evidence classification or source identification, probabilistic reporting communicates the strength of evidence using statistical measures, typically likelihood ratios, allowing for more transparent expression of uncertainty [12]. This transition represents a fundamental change in how forensic evidence is conceptualized, analyzed, and presented in legal contexts.

The NAS Critique and Its Immediate Impact

Fundamental Limitations Identified in Forensic Science

The 2009 NAS report provided a systematic analysis of the shortcomings across multiple forensic disciplines. The report highlighted that commonly used techniques like bite mark analysis, microscopic hair analysis, shoe print comparisons, handwriting comparisons, fingerprint examination, and firearms and toolmark examinations lacked sufficient scientific validation [11]. According to the report, these methods did not "have the capacity to consistently, and with a high degree of certainty, demonstrate a connection between evidence and a specific individual or source" [11].

The report identified several key challenges contributing to these limitations:

- Absence of standardization in operational procedures across laboratories and jurisdictions

- Lack of uniformity in certification of practitioners or accreditation of crime laboratories

- Unevenness in techniques, methodologies, reliability, and error rates across disciplines

- Insufficient research on established limits and measures of performance [13]

These deficiencies were particularly pronounced in disciplines relying on pattern recognition and subjective interpretation, where contextual bias and the absence of robust scientific foundations threatened the reliability of evidence presented in courtrooms.

Initial Responses and Recognition

The NAS report received immediate recognition from scientific and legal communities. Justice Scalia cited the report within three months of its publication in a Supreme Court decision, noting that "Serious deficiencies have been found in the forensic evidence used in criminal trials" [10]. Both the Senate and House held hearings on the report's findings, and legislation was introduced in Congress to address the identified issues [10].

The report was variously described in legal literature as a "blockbuster," "a watershed," "a scathing critique," "a milestone," and "pioneering" [10]. This recognition reflected the profound impact the report had in challenging long-held assumptions about forensic science and catalyzing calls for reform.

Methodological Evolution: From Categorical to Probabilistic Reporting

Traditional Categorical Reporting Framework

Categorical reporting has been the traditional approach in most forensic disciplines. This method requires analysts to make definitive decisions about evidence interpretation and report conclusions in categorical terms [12]. For example:

- In forensic analysis of ignitable liquid residues, the ASTM E1618-19 standard requires reporting samples as simply positive or negative for presence of such residues [12].

- In comparative glass analysis, ASTM E2927-16e1 mandates binary reporting of evidence as either an exclusion (different sources) or an inclusion (same source) [12].

This approach has several scientific limitations. A categorical opinion can appear dogmatic, carries no indication of evidentiary strength or analyst uncertainty, and can seem entirely subjective and open to bias [12]. The reporting terms are frequently not clearly defined, do not convey the strength of the evidence, and do not accommodate more than one interpretation of the evidence [12].

Probabilistic Reporting Framework

Probabilistic reporting, also called evaluative reporting, represents a more scientifically rigorous approach to forensic evidence interpretation. Under this framework, analysts report the strength of evidence in probabilistic terms, typically using a likelihood ratio, without offering a conclusive interpretation [12]. The likelihood ratio is defined with respect to two competing hypotheses:

- Prosecution hypothesis (Hp): The evidence is associated with the suspect

- Defense hypothesis (Hd): The evidence is associated with someone else

The likelihood ratio then represents the probability of the evidence under Hp divided by the probability of the evidence under Hd. This approach allows the court to interpret the statistical strength of the evidence while considering prior odds of the competing propositions [12].
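The division of labor described here, where the analyst reports the LR and the court supplies the prior odds, can be sketched with the odds form of Bayes' theorem. The reported LR and the range of prior odds are hypothetical, and the function name is illustrative.

```python
def posterior_probability(prior_odds: float, lr: float) -> float:
    """Combine prior odds on Hp with a likelihood ratio and return P(Hp | E)."""
    if prior_odds <= 0 or lr <= 0:
        raise ValueError("prior odds and LR must be positive")
    post_odds = prior_odds * lr          # posterior odds = prior odds x LR
    return post_odds / (1.0 + post_odds)


REPORTED_LR = 100.0  # hypothetical value reported by the analyst

# The same LR leads to very different posteriors depending on the prior odds.
for prior_odds in (1 / 1000, 1 / 10, 1.0):
    p = posterior_probability(prior_odds, REPORTED_LR)
    print(f"prior odds {prior_odds:g} -> posterior probability {p:.3f}")
```

With prior odds of 1 to 1000, an LR of 100 gives a posterior probability of only about 0.09. This is why the LR on its own should not be read as the probability that Hp is true.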

Comparative Analysis of Reporting Methods

Table 1: Comparison of Categorical vs. Probabilistic Reporting Frameworks

| Aspect | Categorical Reporting | Probabilistic Reporting |
|---|---|---|
| Decision process | Analyst makes definitive decision about evidence | Analyst calculates strength of evidence without conclusive interpretation |
| Uncertainty expression | Rarely expresses uncertainty or error rates | Quantitatively expresses uncertainty through statistical measures |
| Scientific foundation | Often based on tradition and precedent | Grounded in statistical theory and empirical data |
| Transparency | Obscures decision-making process | Makes decision process more transparent |
| Jury interpretation | Easier for laypersons to understand but may be misleading | More accurate but potentially difficult for laypersons to interpret |
| Bias potential | Higher potential for cognitive bias | Lower potential for bias through quantitative framework |
| Current usage | Still dominant in many forensic disciplines | Gaining traction with support from scientific community |

Experimental and Statistical Foundations

Receiver Operating Characteristic (ROC) Framework

The relationship between categorical and probabilistic reporting can be understood and visualized through a decision theory construct known as the receiver operating characteristic (ROC) curve [12]. Originally developed by electrical engineers during World War II for RADAR operators, the ROC method is particularly useful for binary decisions between two competing propositions, making it highly applicable to forensic science [12].

The ROC curve is generated by plotting the true positive rate (TPR) against the false positive rate (FPR) across various decision thresholds. In forensic terms:

- True Positive Rate: Probability of correctly identifying a match when samples truly come from the same source

- False Positive Rate: Probability of incorrectly identifying a match when samples come from different sources

Each point on the ROC curve represents a different decision threshold, with the overall shape of the curve indicating the discriminative power of the forensic method. The area under the ROC curve provides a measure of overall performance, with larger areas indicating better discrimination [12].
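The construction described above can be sketched in a few lines of Python. This is an illustrative sketch only: the scores are hypothetical similarity scores from ground-truth known comparisons, a "match" is assumed to mean score >= threshold, and the function names are not taken from any forensic package.

```python
from typing import List, Tuple


def roc_points(same_source: List[float], diff_source: List[float]) -> List[Tuple[float, float]]:
    """Return (FPR, TPR) pairs, declaring a 'match' when score >= threshold."""
    thresholds = sorted(set(same_source) | set(diff_source), reverse=True)
    points = [(0.0, 0.0)]  # threshold above every score: nothing is called a match
    for t in thresholds:
        tpr = sum(s >= t for s in same_source) / len(same_source)
        fpr = sum(s >= t for s in diff_source) / len(diff_source)
        points.append((fpr, tpr))
    return points


def auc(points: List[Tuple[float, float]]) -> float:
    """Area under the ROC curve by the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area


# Hypothetical similarity scores from ground-truth known comparisons.
same = [0.9, 0.8, 0.75, 0.6, 0.4]
diff = [0.7, 0.5, 0.3, 0.2, 0.1]
curve = roc_points(same, diff)
print(f"AUC = {auc(curve):.2f}")
```

An AUC of 0.5 corresponds to a method that cannot separate the two populations, and an AUC of 1.0 to perfect separation.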

The ROC Framework for Forensic Decision-Making illustrates how statistical analysis of evidence scores against ground truth data leads to optimized decision thresholds.

Error Classification and Statistical Foundations

The statistical framework for evaluating forensic evidence draws heavily on hypothesis testing approaches developed by Fisher, Neyman, and Pearson in the early 20th century [12]. Within this framework, two types of errors must be considered:

- Type I Error (False Positive): Incorrectly associating evidence with a source when no true association exists (probability designated as α)

- Type II Error (False Negative): Failing to associate evidence with a source when a true association exists (probability designated as β)

The Neyman-Pearson approach to classification fixes an upper bound on the rate of the more serious type I error (false positive, α) and, subject to that bound, minimizes the rate of type II errors (false negatives, β) [12]. In forensic science, where false convictions are of paramount concern, controlling false positive rates is particularly critical.
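As an illustration of how α and β might be estimated in practice, the sketch below counts errors in a set of categorical decisions whose ground truth is known. The function name and the example data are hypothetical.

```python
from typing import Iterable, Tuple


def error_rates(decisions: Iterable[bool], same_source_truth: Iterable[bool]) -> Tuple[float, float]:
    """Return (alpha, beta) = (false positive rate, false negative rate).

    A decision is True when the analyst reported an association; the matching
    truth value is True when the samples really share a source.
    """
    fp = fn = n_diff = n_same = 0
    for called, truth in zip(decisions, same_source_truth):
        if truth:
            n_same += 1
            fn += not called  # missed a true association (type II error)
        else:
            n_diff += 1
            fp += bool(called)  # reported a false association (type I error)
    if n_same == 0 or n_diff == 0:
        raise ValueError("need at least one same-source and one different-source case")
    return fp / n_diff, fn / n_same


# Hypothetical validation set of six comparisons.
reported = [True, True, False, True, False, False]
truth = [True, True, True, False, False, False]
alpha, beta = error_rates(reported, truth)
print(f"alpha (false positive rate) = {alpha:.2f}, beta (false negative rate) = {beta:.2f}")
```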

Implementation in Specific Disciplines

Different forensic disciplines have made varying progress in implementing probabilistic approaches:

- DNA Analysis: Recognized as the "forensic gold-standard" by the NAS report, DNA evidence has employed likelihood ratios to communicate evidence strength for many years, with continuing advances in reporting practices [12].

- Latent Print Analysis: Research has demonstrated that latent print comparison has achieved "foundational validity," with ongoing work to establish appropriate statistical frameworks for reporting [11] [14].

- Fire Debris and Glass Analysis: Recent research has focused on evaluating evidence strength using likelihood ratios, moving these disciplines toward probabilistic reporting [12].

- Firearms and Toolmark Analysis: This discipline has taken "strong steps toward achieving foundational validity" but continues to develop appropriate statistical frameworks [11].

Table 2: Essential Research Tools for Advancing Forensic Methodologies

| Tool/Resource | Function | Application in Forensic Research |
|---|---|---|
| Likelihood Ratio Framework | Quantifies strength of evidence for competing hypotheses | Foundation for probabilistic reporting across multiple disciplines |
| ROC Analysis | Visualizes relationship between true positive and false positive rates | Optimizing decision thresholds and evaluating method performance |
| Ground Truth Data Sets | Provides known-source samples with verified origins | Essential for validating methods and establishing error rates |
| Statistical Software Platforms | Implements complex statistical calculations and models | Calculating likelihood ratios, building classification models |
| ASTM Standards | Provides standardized procedures for evidence analysis | Ensuring consistency and reliability across laboratories |
| OSAC Registry Standards | Offers consensus-based practice standards | Implementing current best practices in forensic analysis |
| NIST Reference Materials | Supplies certified reference materials | Ensuring analytical accuracy and method validation |

Implementation Challenges and Practitioner Resistance

Cultural and Institutional Barriers

Despite the compelling scientific arguments for probabilistic reporting, implementation faces significant cultural and institutional barriers within the forensic science community. A recent survey of fingerprint examiners' attitudes toward probabilistic reporting found that 98% of respondents continue to report categorically with explicit or implicit statements of certainty [14].

The primary reasons for this resistance include:

- Comfort with traditional practices: Practitioners are accustomed to established reporting methods

- Concerns about defense exploitation: Fear that expressing uncertainty will be exploited by defense attorneys

- Institutional norms: Long-standing cultural expectations within forensic laboratories

- Resource constraints: Limited time and resources for implementing new approaches [14]

As one researcher noted, forensic practitioners "view this [probabilistic reporting] as having little gain and a lot of risk, and they are bound by what they are comfortable with" [14].

Standardization Efforts and Voluntary Adoption

The Organization of Scientific Area Committees for Forensic Science (OSAC), administered by the National Institute of Standards and Technology (NIST), was created in 2014 to address the lack of discipline-specific standards identified in the NAS report [14]. OSAC has developed and recommended 95 specific standards for crime labs and forensic practitioners, with 87 forensic science service providers declaring implementation of some of these standards [14].

However, significant challenges remain:

- Voluntary adoption: OSAC lacks authority to require adoption of standards

- Resource limitations: Crime laboratories face constant backlogs and limited resources

- Casework pressures: Practitioners must balance standards implementation with urgent casework demands [14]

As OSAC Program Manager John Paul Jones II noted, crime lab professionals constantly battle backlogs, and "everything they do involves analyzing evidence for pending cases," making it difficult to find time for implementing new standards [14].

Current Status and Future Directions

Progress Assessment

Fifteen years after the NAS report, significant progress has been made, but substantial work remains. The forensic science community has undergone what David Stoney describes as a "paradigm shift" characterized by:

- Expanded research involvement: Broader scientific community engagement with forensic science

- Increased external scrutiny: Greater critical examination of forensic methods by scientists outside the field

- Ongoing development: Recognition that improvement represents a continuum rather than an endpoint [14]

In 2019, the Honorable Harry T. Edwards assessed progress of the forensic science community as "still facing serious problems," noting that the fundamental issue identified in the NAS report remained: forensic practitioners often "didn't know what they didn't know" [12].

Legal System Adaptation

The courts have increasingly recognized the limitations of traditional forensic evidence. Senior United States District Judge Jed Rakoff noted that "the impact of the report, modest at first but gathering steam in recent years," combined with the work of the Innocence Project establishing that "questionable forensic science testimony was often associated with wrongful convictions," has caused "a growing number of judges to explore with much greater rigor than previously the reliability, and admissibility, of much forensic science testimony that they used to take for granted" [11].

This judicial scrutiny has been particularly evident in cases involving bite mark analysis, microscopic hair analysis, and other disciplines criticized in the NAS report. These efforts have led to exonerations of wrongfully convicted individuals such as Steven Chaney (bite mark evidence), George Perrot (microscopic hair comparison), Timothy Bridges (microscopic hair comparison), and Alfred Swinton (bite mark) [11].

The Evolution of Forensic Science Post-NAS Report shows the transition from pre-2009 limitations to ongoing developments catalyzed by the report's findings.

The 2009 NAS report has served as a powerful catalyst for change in forensic science, fundamentally challenging established practices and sparking a necessary transition toward more scientifically rigorous approaches. The shift from categorical to probabilistic reporting represents a cornerstone of this transformation, offering a more statistically sound framework for expressing the strength of forensic evidence.

While significant progress has been made in the years since the report's publication—including substantial research investment, standardization efforts, and increased scientific scrutiny—the transformation remains incomplete. The continued resistance from practitioners, the voluntary nature of standards adoption, and the challenges of implementing statistical approaches in legal settings highlight the complexity of reforming an established field.

The future of forensic science will likely involve continued integration of probabilistic approaches, development of more transparent reporting standards, and ongoing collaboration between forensic practitioners, research scientists, and legal stakeholders. As this evolution continues, the fundamental critique articulated in the NAS report will continue to guide efforts to strengthen the scientific foundations of forensic evidence and ensure its proper application in the pursuit of justice.

Forensic science has traditionally relied on categorical reporting, where analysts must render definitive conclusions about evidence by assigning it to specific classes or making binary source determinations [12]. Standards such as ASTM E1618-19 for fire debris analysis or ASTM E2927-16e1 for comparative glass analysis require analysts to report results in categorical terms: positive or negative for ignitable liquid residue, exclusion or inclusion for glass sources [12]. This methodological framework requires the analyst to make the ultimate decision regarding the interpretation of evidence, presenting conclusions that often appear dogmatic and carry no indication of evidentiary strength or analytical uncertainty [12]. The 2009 National Academy of Sciences (NAS) report profoundly questioned this approach, finding that with the exception of nuclear DNA analysis, no forensic method has been rigorously shown to consistently demonstrate connections between evidence and specific sources with high certainty [12]. This critique underscores fundamental limitations in categorical methods that this analysis will explore in detail, focusing on how they obscure decision-making processes and introduce multiple forms of bias into forensic evaluations.

Core Limitations of Categorical Methods

Obscured Decision-Making Processes

Categorical reporting frameworks systematically obscure critical dimensions of forensic decision-making, primarily through the omission of evidentiary strength and the concealment of decision thresholds that determine categorical assignments.

Strength of Evidence Omission: Traditional categorical statements provide no quantitative information about how strongly the evidence supports a particular conclusion [12]. Without reference to evidentiary strength, the court cannot properly integrate the analyst's testimony into the overall evidence assessment, as the categorical conclusion stands alone without context about its reliability or limitations [12].

Unspecified Decision Thresholds: The critical thresholds that must be crossed for an analyst to declare an "identification" or "exclusion" remain implicit and undefined in categorical frameworks [12]. Different examiners may apply different internal thresholds for the same categorical conclusion, creating inconsistency and unpredictability in evidence interpretation.

Subjectivity and Opacity: Categorical reporting "obscures the decision-making process" by failing to reveal the analytical journey from data to conclusion [12]. The final categorical statement presents as an authoritative endpoint without transparency about the underlying reasoning, uncertainties, or alternative explanations that were considered and rejected.

The structural limitations of categorical methods create fertile ground for multiple cognitive biases to influence forensic decision-making. The table below summarizes key biases that particularly affect categorical frameworks:

Table 1: Cognitive Biases in Categorical Decision-Making

| Bias Type | Mechanism of Influence | Impact on Categorical Decisions |
|---|---|---|
| Confirmation Bias | Selective gathering or interpretation of information that supports initial conclusions [15] [16] | Analysts may disproportionately focus on features supporting their initial hypothesis while discounting contradictory evidence |
| Overconfidence Bias | Excessive optimism about the correctness of one's judgments [15] | Analysts may express unwarranted certainty in categorical conclusions, potentially overstating evidential value |
| Representative Bias | Judging situations based on perceived similarities rather than objective probabilities [15] | Analysts might make categorical calls based on pattern matching to ideal types rather than objective feature analysis |
| Anchoring Bias | Fixating on initial information and failing to adjust for subsequent data [15] [16] | Early impressions about evidence may unduly influence final categorical assignments |

The insulation from quantitative calibration represents perhaps the most significant bias-amplifying feature of categorical methods. Without quantitative feedback on performance, analysts operate in an echo chamber of subjective judgment, where erroneous categorical calls may never be identified or corrected [12]. This lack of calibration and feedback prevents the refinement of decision thresholds over time, potentially institutionalizing erroneous decision patterns.

Experimental Comparisons: Categorical vs. Probabilistic Approaches

Methodological Framework for Comparison

Research comparing categorical and probabilistic methods typically employs ground-truth known datasets where the true sources of evidence are definitively established. These datasets are evaluated using both traditional categorical protocols and emerging probabilistic frameworks, enabling direct comparison of performance metrics [12]. The receiver operating characteristic (ROC) curve methodology provides a particularly powerful framework for this comparison, originally developed for RADAR operators during World War II to distinguish between true targets and noise [12]. In forensic applications, ROC analysis plots the true positive rate (TPR) against the false positive rate (FPR) across various decision thresholds, creating a visual representation of the trade-off between sensitivity and specificity that characterizes any identification system [12].

Table 2: Experimental Protocol for Method Comparison Studies

| Protocol Phase | Categorical Approach | Probabilistic Approach |
|---|---|---|
| Sample Preparation | Ground-truth known samples with verified sources | Same ground-truth known samples with verified sources |
| Data Collection | Examiners render categorical conclusions (e.g., Identification, Inconclusive, Elimination) [17] | Quantitative feature extraction and statistical modeling |
| Analysis Method | Subjective pattern matching and professional judgment | Calculation of likelihood ratios based on statistical models |
| Output | Binary or ordinal categorical assignments | Continuous measures of evidentiary strength |
| Performance Assessment | Simple accuracy rates without discrimination measure | ROC curves with calculated AUC (Area Under Curve) |

Key Comparative Findings

Experimental studies directly comparing categorical and probabilistic approaches have yielded insightful results, particularly in fingerprint evidence analysis. A 2018 study by Garrett et al. presented a nationally representative sample of jury-eligible adults with a hypothetical robbery case featuring fingerprint evidence [5]. The research examined how participants evaluated evidence presented in either categorical terms or probabilistic terms with varying strength levels.

Table 3: Results from Fingerprint Evidence Comparison Study

| Evidence Presentation Format | Participant Assessment of Match Likelihood | Assessment of Guilt Likelihood |
|---|---|---|
| Categorical Conclusion | Baseline level | Baseline level |
| Strong Probabilistic Match | Similar to categorical | Similar to categorical |
| Weak Probabilistic Match | Reduced likelihood | Reduced likelihood |

The findings demonstrate that participants appropriately discriminated between strong and weak probabilistic evidence, reducing their assessments of match and guilt likelihood when presented with weaker probabilistic evidence [5]. However, participants exposed to categorical conclusions lacked this discriminative ability, as the categorical framework provided no information about evidence strength. This suggests that categorical reporting may over-simplify complex evidence for decision-makers, potentially leading to over-weighting of forensically weak evidence when presented categorically.

The Probabilistic Alternative: Likelihood Ratios and Statistical Frameworks

Foundations of Probabilistic Reporting

Probabilistic reporting represents a paradigm shift from definitive categorical conclusions to continuous measures of evidentiary strength, most commonly expressed through likelihood ratios (LR) [12]. The likelihood ratio framework evaluates evidence under two competing propositions—typically the prosecution hypothesis (Hp) and defense hypothesis (Hd)—and calculates the ratio of the probability of the evidence under each hypothesis [17]. This approach explicitly acknowledges that forensic evidence rarely provides absolute answers but rather strengthens or weakens particular propositions to varying degrees. The mathematical formulation is:

LR = P(E|Hp) / P(E|Hd)

Where P(E|Hp) represents the probability of observing the evidence if the prosecution's hypothesis is true, and P(E|Hd) represents the probability of observing the evidence if the defense's hypothesis is true [17]. This framework is "the logically correct framework for interpretation of forensic evidence" according to key organizations [17].
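One way to put numbers on P(E|Hp) and P(E|Hd) is a score-based likelihood ratio: model a comparison score under each proposition and take the ratio of the two densities at the observed score. The sketch below assumes, purely for illustration, that scores are normally distributed under each proposition; the model parameters are hypothetical and would in practice be estimated from ground-truth known data.

```python
from statistics import NormalDist


def score_based_lr(score: float, same_source: NormalDist, diff_source: NormalDist) -> float:
    """LR = density of the score under Hp divided by its density under Hd."""
    return same_source.pdf(score) / diff_source.pdf(score)


# Hypothetical score models (assumed, not fitted to real casework data).
hp_model = NormalDist(mu=0.8, sigma=0.1)   # same-source comparisons
hd_model = NormalDist(mu=0.3, sigma=0.15)  # different-source comparisons

for observed in (0.4, 0.55, 0.8):
    lr = score_based_lr(observed, hp_model, hd_model)
    print(f"score = {observed:.2f}  LR = {lr:.3g}")
```

The same score can give very different likelihood ratios under different models, which is why the choice of model and reference data needs to be reported alongside the number.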

Relationship Between Categorical and Probabilistic Reporting

The receiver operating characteristic (ROC) curve provides a powerful conceptual bridge between categorical and probabilistic reporting [12]. Each point on an ROC curve represents a potential decision threshold for a categorical system, with corresponding true positive and false positive rates. The slope of a tangent to the curve at any point corresponds directly to a likelihood ratio value, creating a mathematical relationship between categorical decisions and their probabilistic equivalents [12]. This relationship reveals that every categorical decision implicitly carries a probabilistic value, although traditional categorical frameworks leave that value implicit and uncalibrated rather than making it explicit.
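The link between the ROC slope and the likelihood ratio can be checked numerically. The sketch below assumes hypothetical normal score distributions for same-source and different-source comparisons, and compares a finite-difference estimate of dTPR/dFPR at a threshold with the ratio of the two score densities at that threshold.

```python
from statistics import NormalDist

# Hypothetical score models, assumed only for this illustration.
hp_model = NormalDist(mu=0.8, sigma=0.1)   # same-source scores
hd_model = NormalDist(mu=0.3, sigma=0.15)  # different-source scores


def tpr(threshold: float) -> float:
    """True positive rate when a 'match' means score >= threshold."""
    return 1.0 - hp_model.cdf(threshold)


def fpr(threshold: float) -> float:
    """False positive rate when a 'match' means score >= threshold."""
    return 1.0 - hd_model.cdf(threshold)


def roc_slope(threshold: float, step: float = 1e-5) -> float:
    """Finite-difference slope dTPR/dFPR of the ROC curve at a threshold."""
    d_tpr = tpr(threshold + step) - tpr(threshold - step)
    d_fpr = fpr(threshold + step) - fpr(threshold - step)
    return d_tpr / d_fpr


def density_ratio(threshold: float) -> float:
    """Likelihood ratio of a score that sits exactly at the threshold."""
    return hp_model.pdf(threshold) / hd_model.pdf(threshold)


for t in (0.5, 0.6, 0.7):
    print(f"threshold = {t:.1f}  slope = {roc_slope(t):.4f}  LR = {density_ratio(t):.4f}")
```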

Visualization 1: Probabilistic to Categorical Reporting Workflow

Implementing Transparent Reporting: ROC-Based Frameworks

Theoretical Foundation for ROC-Integrated Reporting

The integration of ROC curves into forensic reporting creates a transparent mechanism for connecting probabilistic evidence assessment to categorical reporting thresholds [12]. This approach acknowledges that categorical decisions are sometimes necessary for legal purposes but insists they should be derived from calibrated, transparent thresholds rather than subjective judgment. The ROC framework allows forensic systems to explicitly define their false positive tolerance and select decision thresholds that maximize true positives within that constraint, implementing a Neyman-Pearson approach to decision-making [12]. This method prioritizes controlling the more serious error type (typically false positives) while maximizing detection capability, creating a statistically principled approach to categorical decision-making.
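A Neyman-Pearson style threshold choice can be sketched as a constrained search: among candidate thresholds whose empirical false positive rate stays within a stated tolerance, keep the one with the highest true positive rate. The scores, the tolerance values, and the function name below are hypothetical.

```python
from typing import List, Optional, Tuple


def select_threshold(same_source: List[float], diff_source: List[float],
                     max_fpr: float) -> Optional[Tuple[float, float, float]]:
    """Pick the threshold with the highest TPR whose empirical FPR <= max_fpr.

    Returns (threshold, fpr, tpr), or None if no candidate meets the constraint.
    A 'match' is declared when score >= threshold; candidates are the observed scores.
    """
    best = None
    for t in sorted(set(same_source) | set(diff_source)):
        fpr = sum(s >= t for s in diff_source) / len(diff_source)
        tpr = sum(s >= t for s in same_source) / len(same_source)
        if fpr <= max_fpr and (best is None or tpr > best[2]):
            best = (t, fpr, tpr)
    return best


# Hypothetical ground-truth known scores.
same = [0.9, 0.8, 0.75, 0.6, 0.4]
diff = [0.7, 0.5, 0.3, 0.2, 0.1]
print(select_threshold(same, diff, max_fpr=0.0))
print(select_threshold(same, diff, max_fpr=0.2))
```

Stating the tolerance explicitly is the point of the exercise: the trade-off that a purely subjective categorical call leaves hidden becomes a documented parameter.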

Practical Implementation Framework

Implementing ROC-facilitated reporting requires systematic collection of ground-truth known datasets that represent the full spectrum of evidence quality and conditions encountered in casework [12]. These datasets are used to:

- Generate score distributions for same-source and different-source comparisons

- Construct ROC curves that visualize the discrimination power of the analytical system

- Select optimal decision thresholds based on explicit error trade-off preferences

- Validate threshold performance using separate test datasets to ensure reliability

For this framework to produce meaningful results in actual casework, the data used to train statistical models must be representative of both the particular examiner's performance and the specific conditions of the evidence being evaluated [17]. An examiner's performance can differ substantially from the average, and evidence characteristics significantly impact the reliability of conclusions [17]. Therefore, implementation requires careful attention to both examiner-specific calibration and condition-specific validation.
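The validation step can be illustrated with a hold-out split: the threshold is fixed on calibration data only, and its error rates are then re-estimated on scores that played no part in choosing it. Everything below is a sketch under stated assumptions: the scores are synthetic draws from hypothetical normal distributions, and the 99th-percentile rule is just one possible way to set a threshold.

```python
import random
from typing import List, Tuple


def split(items: List[float], test_fraction: float, rng: random.Random) -> Tuple[List[float], List[float]]:
    """Shuffle a copy of the items and split it into (calibration, test) parts."""
    shuffled = items[:]
    rng.shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def rates_at(threshold: float, same_source: List[float], diff_source: List[float]) -> Tuple[float, float]:
    """Empirical (FPR, TPR) when a 'match' is declared for score >= threshold."""
    fpr = sum(s >= threshold for s in diff_source) / len(diff_source)
    tpr = sum(s >= threshold for s in same_source) / len(same_source)
    return fpr, tpr


rng = random.Random(0)  # fixed seed so the sketch is repeatable
same = [rng.gauss(0.8, 0.1) for _ in range(500)]   # synthetic same-source scores
diff = [rng.gauss(0.3, 0.15) for _ in range(500)]  # synthetic different-source scores
same_cal, same_test = split(same, 0.3, rng)
diff_cal, diff_test = split(diff, 0.3, rng)

# Threshold near the 99th percentile of different-source calibration scores,
# so the calibration FPR is about 1%.
threshold = sorted(diff_cal)[int(0.99 * len(diff_cal))]

print("calibration (FPR, TPR):", rates_at(threshold, same_cal, diff_cal))
print("held-out    (FPR, TPR):", rates_at(threshold, same_test, diff_test))
```

A large gap between calibration and held-out rates would suggest that the threshold does not generalise, for example because the reference data are not representative of the examiner or of the casework conditions.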

Visualization 2: ROC-Based Reporting Implementation

Essential Research Reagents and Tools

Table 4: Essential Research Tools for Forensic Method Development

| Tool Category | Specific Examples | Research Function |
|---|---|---|
| Statistical Software | R, Python with scikit-learn, specialized forensic packages | Implementation of statistical models and ROC analysis |
| Reference Databases | Ground-truth known samples of fingerprints, firearms, fibers, etc. [17] | Method validation and performance assessment |
| Chemometric Tools | Principal Component Analysis (PCA), Linear Discriminant Analysis (LDA), Support Vector Machines (SVM) [18] | Pattern recognition in complex chemical data |
| Likelihood Ratio Frameworks | Probabilistic genotyping software, continuous models for fingerprint evidence [17] | Quantitative evidence evaluation |
| Validation Materials | Blind proficiency tests, reference standards with known ground truth [17] | Method reliability testing and error rate estimation |

The limitations of categorical methods—obscured decision-making processes and vulnerability to multiple forms of bias—represent significant challenges to forensic science reliability and validity. The categorical framework's failure to communicate evidentiary strength and its insulation from quantitative calibration undermine both the accuracy and transparency of forensic conclusions. Probabilistic approaches centered on likelihood ratios and supported by ROC-based decision thresholds offer a scientifically rigorous alternative that preserves necessary categorical reporting while embedding it within a calibrated, transparent statistical framework. Implementing these approaches requires significant investment in ground-truth datasets, statistical training, and methodological validation, but offers the potential for forensic science to achieve the scientific rigor demanded by the NAS report and expected by the justice system.

In forensic science, the evaluation of evidence is structured through a hierarchy of propositions, a framework essential for providing logical, balanced, and transparent opinions in legal contexts. This framework distinguishes between source-level, activity-level, and offence-level propositions, each addressing different questions about the evidence. Furthermore, the interpretation of evidence under these propositions can be presented either categorically (conclusive statements) or probabilistically (using likelihood ratios to convey the strength of the evidence). This guide objectively compares these core concepts, underpinned by the ongoing methodological shift in forensic chemistry towards probabilistic interpretation, and provides structured comparisons, experimental data, and visual workflows to aid researcher understanding.

The hierarchy of propositions is a fundamental concept for the logical evaluation of forensic findings, helping scientists reason in the face of uncertainty [19]. It provides a structured way to address questions at different levels of case circumstances, moving from the specific source of a trace to the activities that led to its deposition and ultimately to the legal implications of those activities.

The value of evidence is critically dependent on the propositions defined, and the calculations given for different levels in the hierarchy are all separate [20]. This framework ensures that the scientific assessment remains within the boundaries of the forensic expert’s knowledge, while allowing the evidence to be contextualized for the court.

Defining the Core Terminology

Source-Level Propositions

Source-level propositions concern the origin of a specific piece of trace material. They address questions such as, "Is the suspect the source of the DNA found on the item?" or "Did this paint chip originate from that car?" [19]. The focus is purely on establishing a link between a recovered trace (from the crime scene) and a control sample (from a known source).

- Core Question: "Whose or what is this?"

- Scientific Assessment: Typically involves comparative analysis to determine if two samples share the same physical or chemical origin.

- Example Propositions:

  - Prosecution Proposition (Hp): The DNA profile from the crime scene originates from the suspect.

  - Defense Proposition (Hd): The DNA profile from the crime scene originates from an unknown, unrelated person [19].

Activity-Level Propositions

Activity-level propositions represent a higher level in the hierarchy and help the court address the question of "How did an individual’s cell material get there?" [20]. These propositions consider the transfer, persistence, and presence of material in the context of alleged activities. For instance, they help distinguish between direct transfer (e.g., from stabbing a victim) and indirect transfer (e.g., from meeting the victim the day before) [20].

- Core Question: "How did this evidence get here, and what activity does it support?"

- Scientific Assessment: Requires evaluating the probability of the evidence given specific activities, which involves understanding mechanisms of transfer and persistence. It is important to avoid using the word 'transfer' in the propositions themselves, as transfer is a factor for scientists to consider during interpretation, not a proposition for the court to assess [20].

- Example Propositions:

  - Prosecution Proposition (Hp): The suspect stabbed the victim.

  - Defense Proposition (Hd): The suspect met the victim the day before the incident, and the trace material was transferred indirectly [20].

Offence-Level Propositions

Offence-level propositions sit at the top of the hierarchy and relate directly to the ultimate issue before the court: whether a crime has been committed. These are typically outside the remit of the forensic scientist, whose expertise lies in evaluating the physical evidence, not legal guilt or innocence.

- Core Question: "Did the suspect commit the offence?"

- Scientific Assessment: A forensic scientist does not typically evaluate results directly given offence-level propositions. Instead, their evaluations at the source or activity level inform the court's deliberations on the ultimate issue [19].

- Example Propositions:

  - Prosecution Proposition (Hp): The suspect committed the murder.

  - Defense Proposition (Hd): The suspect is innocent of the murder.

The following table summarizes the key characteristics of each level in the hierarchy.

Table 1: Core Characteristics of Proposition Levels

| Proposition Level | Core Question | Focus of Assessment | Example |
|---|---|---|---|
| Source-Level | What or who is the origin of this trace? | Linking a trace to a specific source. | "This DNA profile originates from the suspect." |
| Activity-Level | How did this trace get here? | Evaluating transfer and persistence in the context of an activity. | "The suspect stabbed the victim vs. The suspect met the victim the day before." |
| Offence-Level | Was a crime committed? | The ultimate issue of guilt or innocence (generally for the court to decide). | "The suspect committed the murder." |

Probabilistic vs. Categorical Interpretation

A central thesis in modern forensic science is the debate between probabilistic and categorical reporting of evidence. This distinction cuts across all levels of the hierarchy of propositions.

Categorical Reporting

Categorical reporting requires the analyst to make a definitive decision and report their conclusion in absolute terms (e.g., inclusion/exclusion, match/no match) [12]. This approach can obscure the strength of the evidence and the decision-making process, potentially allowing for inconsistency and bias [12].

- Typical Language: "The samples match," "The substance is identified as cocaine," or "The suspect is excluded as the source."

- Role in the Justice Process: Often used in investigative opinions to help investigators make decisions about what happened or who could be involved [19].

- Limitations: Provides no indication of evidentiary strength or analyst uncertainty, and can appear totally subjective [12].

Probabilistic Reporting

Probabilistic reporting, in contrast, involves reporting the strength of the evidence in probabilistic terms, typically using a Likelihood Ratio (LR) [12]. The scientist assigns the probability of the evidence under each of two competing propositions to derive the LR [20]. This approach is a cornerstone of evaluative reporting for use in court [19].

- Core Formula:

LR = P(E | Hp) / P(E | Hd)

where P(E | Hp) is the probability of the evidence given the prosecution proposition and P(E | Hd) is the probability of the evidence given the defense proposition.

- Interpretation: An LR greater than 1 supports the prosecution's proposition; an LR less than 1 supports the defense's proposition; an LR of exactly 1 supports neither.

- Advantages: Provides a transparent, logical, and balanced way to convey the weight of evidence, allowing the court to integrate it with other case facts [19].
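The direction of support can be read directly from the LR, and the base-10 logarithm is a convenient way to put support for either proposition on a symmetric scale. The helper below is a hypothetical sketch: it reports direction only, does not attach any verbal scale of strength, and expects a positive LR.

```python
import math


def describe_lr(lr: float) -> str:
    """State which proposition an LR supports, together with its log10 value."""
    if lr <= 0:
        raise ValueError("this sketch expects a positive likelihood ratio")
    log_lr = math.log10(lr)
    if lr > 1:
        direction = "supports Hp over Hd"
    elif lr < 1:
        direction = "supports Hd over Hp"
    else:
        direction = "is neutral between Hp and Hd"
    return f"LR = {lr:g} (log10 LR = {log_lr:+.2f}) {direction}"


for value in (1000.0, 1.0, 0.01):
    print(describe_lr(value))
```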

Table 2: Comparison of Reporting Methods

| Feature | Categorical Reporting | Probabilistic Reporting |
|---|---|---|
| Output | A definitive conclusion (e.g., match, exclusion). | A Likelihood Ratio (LR) indicating the strength of the evidence. |
| Transparency | Low; obscures the strength of evidence and decision threshold. | High; makes the strength of the evidence explicit. |
| Role | Often used for investigative opinions [19]. | Typically used for evaluative opinions in court [19]. |
| Jury Interpretation | Easily understood but can be misleadingly dogmatic [12]. | Can be difficult for a lay jury to interpret without guidance [12]. |
| Foundation | Based on professional judgment and experience. | Based on calibrated probabilities and relevant data [12]. |

Experimental Protocols for Evaluative Reporting

The implementation of a robust evaluative report, particularly for activity-level propositions, follows a structured protocol.

Pre-Assessment and Formulating Propositions

The first step is pre-assessment, where the scientist reviews the case circumstances to define relevant propositions before knowing the analytical results [19]. The propositions must be:

- Case-specific: Grounded in the framework of the case circumstances.

- Mutually exclusive: Only one of the propositions can be true.

- Set at the same level: Both the prosecution and defense propositions must address the same level in the hierarchy (e.g., both activity-level) [19].

Data Requirements and Knowledge Bases

To assign probabilities for the likelihood ratio, the analyst must have relevant data. This necessitates further research and the collection of data to form knowledge bases [20]. For activity-level propositions, this could include data on:

- Transfer and Persistence: How easily a material transfers and how long it persists on various surfaces.

- Background Prevalence: How common a particular material is in the relevant environment.

The Use of Bayesian Networks

Bayesian Networks are graphical tools that are "extremely useful to help us think about a problem, because they force us to consider all relevant possibilities in a logical way" [20]. They provide a visual model to compute complex probabilities involving multiple interdependent factors, such as the various ways transfer could occur, which is essential for evaluating activity-level propositions [20].
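To make the idea concrete, here is a deliberately small sketch of a Bayesian network evaluated by brute-force enumeration. It assumes a hypothetical three-node structure: the proposition determines the probability of transfer and persistence, background presence is independent of the proposition, and the material is found if either occurred. The structure, variable names, and every probability are illustrative assumptions, not values from the cited work.

```python
from itertools import product

# Hypothetical conditional probabilities (assumptions for illustration only).
P_TRANSFER = {"Hp": 0.60, "Hd": 0.01}  # P(transfer and persistence | proposition)
P_BACKGROUND = 0.05                    # P(material present as background)


def p_found(transfer: bool, background: bool) -> float:
    """Deterministic OR node: material is found if either route occurred."""
    return 1.0 if (transfer or background) else 0.0


def p_evidence_given(proposition: str) -> float:
    """P(material found | proposition), summing over all parent states."""
    total = 0.0
    for transfer, background in product([True, False], repeat=2):
        p_t = P_TRANSFER[proposition] if transfer else 1.0 - P_TRANSFER[proposition]
        p_b = P_BACKGROUND if background else 1.0 - P_BACKGROUND
        total += p_t * p_b * p_found(transfer, background)
    return total


lr = p_evidence_given("Hp") / p_evidence_given("Hd")
print("P(E | Hp):", p_evidence_given("Hp"))
print("P(E | Hd):", p_evidence_given("Hd"))
print("Activity-level LR:", lr)
```

Enumeration is only practical for a handful of nodes; it is used here because it makes every considered combination of transfer and background explicit, which is the discipline the network is meant to enforce.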

Visualizing the Logical Workflow

The following diagram illustrates the logical flow from evidence analysis through the hierarchy of propositions to the final reporting method, highlighting the key questions and decision points for a forensic scientist.

Figure: Logical Workflow of Forensic Evidence Interpretation

The Scientist's Toolkit: Key Reagents and Materials

The application of these interpretive frameworks relies on a foundation of robust analytical chemistry. Below is a table of key reagents, tools, and methodologies essential for generating the data used in forensic interpretation.

Table 3: Essential Research Reagent Solutions and Methodologies

| Tool/Reagent/Method | Core Function | Role in Interpretation |
|---|---|---|
| Gas Chromatography-Mass Spectrometry (GC-MS) | Separates and provides a definitive "fingerprint" for volatile compounds [21]. | Gold-standard confirmatory test for drug analysis; provides data for source-level propositions. |
| Statistical Design of Experiments (DoE) | A mathematical tool to optimize analytical methods by evaluating multiple variables at once [22]. | Improves method robustness and efficiency, providing reliable data for probability assignment. |
| Likelihood Ratio (LR) Framework | A statistical formula to evaluate the strength of evidence under two competing propositions [20]. | The core mathematical engine for probabilistic reporting at all levels of the hierarchy. |
| Bayesian Networks | A graphical model representing probabilistic relationships between multiple variables [20]. | Aids in logically computing complex probabilities for activity-level propositions involving transfer. |
| Validated Methods (Phase II-IV) | Analytical methods whose performance characteristics (precision, accuracy) have been rigorously tested [23]. | Ensures the reliability of the underlying data used in any evaluative report. |
| Proficiency Test Samples | Samples provided by an external agency to test a laboratory's procedures and performance [24]. | Critical for quality assurance and for documenting low rates of misleading opinions. |

The clear distinction between source-level, activity-level, and offence-level propositions provides the essential scaffolding for a logical and transparent forensic evaluation. The ongoing paradigm shift from categorical to probabilistic reporting, facilitated by the Likelihood Ratio, represents the modern standard for evaluative opinions in court. This approach better respects the boundaries of scientific expertise, providing the court with the strength of the evidence rather than a potentially misleading categorical conclusion. For researchers and scientists, mastering this terminology and its associated methodologies—from optimized analytical techniques using DoE to the construction of Bayesian Networks—is fundamental to producing forensic evidence that is both scientifically robust and forensically relevant.

Implementing Probabilistic Frameworks: From Likelihood Ratios to Machine Learning

Forensic science is undergoing a fundamental transformation in how evidence is interpreted and reported in legal proceedings. Traditional categorical reporting requires analysts to make definitive decisions regarding evidence interpretation, assigning samples as matches or non-matches without quantifying uncertainty [12]. This approach has faced significant criticism, as it provides no indication of evidentiary strength or analyst uncertainty, potentially appearing subjective and open to bias [12]. In contrast, probabilistic reporting quantifies evidentiary strength statistically, typically using likelihood ratios (LRs) that assess the probability of the evidence under two competing hypotheses (e.g., same source versus different source) [25] [12]. This shift represents a move toward more transparent, measurable, and scientifically rigorous forensic practice that better communicates the probative value of forensic evidence to courts.

The 2009 National Academy of Sciences (NAS) report concluded that, with the exception of nuclear DNA analysis, no forensic method had been rigorously shown to demonstrate, consistently and with a high degree of certainty, a connection between evidence and a specific source [12]. This landmark assessment accelerated the adoption of statistical approaches in forensic science, particularly the likelihood ratio framework, which provides a quantitative measure of evidence strength that can be more objectively evaluated and validated [25]. The LR framework offers several benefits, including improved reproducibility, mitigation of cognitive bias, reduced evaluation time, and more transparent comparisons between analytical models [25].

Theoretical Framework of Likelihood Ratio Models

Fundamental Principles and Mathematical Formulation

The likelihood ratio is a fundamental concept in forensic statistics that compares the probability of observing evidence under two competing hypotheses. Formally, the LR is expressed as:

LR = P(E|H₁)/P(E|H₂)

Where E represents the observed evidence, H₁ typically represents the prosecution hypothesis (e.g., the questioned and known samples originate from the same source), and H₂ typically represents the defense hypothesis (e.g., the questioned and known samples originate from different sources) [25]. The numerator represents the probability of observing the evidence if the prosecution hypothesis is true, while the denominator represents the probability of observing the same evidence if the defense hypothesis is true.

LR values greater than 1 support the prosecution hypothesis, with higher values indicating stronger support. Conversely, LR values less than 1 support the defense hypothesis, with values closer to 0 indicating stronger support for different sources. An LR equal to 1 indicates that the evidence is equally probable under both hypotheses and is therefore non-discriminative [26].
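Because LRs can span many orders of magnitude, the same interpretation is often easier to read on a logarithmic scale. The block below restates it in base-10 log form; it is a notational convenience added here for illustration, not a formula taken from the cited studies.

```latex
\log_{10}\mathrm{LR} = \log_{10} P(E \mid H_1) - \log_{10} P(E \mid H_2),
\qquad
\begin{cases}
\log_{10}\mathrm{LR} > 0 & \text{support for } H_1 \\
\log_{10}\mathrm{LR} = 0 & \text{no discrimination} \\
\log_{10}\mathrm{LR} < 0 & \text{support for } H_2
\end{cases}
```

On this scale, an LR of 1000 and an LR of 0.001 sit symmetrically at +3 and -3, which makes support of equal strength in opposite directions directly comparable.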

Performance Metrics for LR System Validation

Robust validation of LR systems requires multiple performance metrics that assess different aspects of system behavior [25]. The following key metrics are essential for comprehensive evaluation:

- Discrimination: The system's ability to distinguish between same-source and different-source specimens, typically measured using the area under the ROC curve (AUC) [25].

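- Calibration: The degree to which the numerical values of the calculated LRs agree with the ground truth, measured using metrics like the log-likelihood ratio cost (Cllr) [25] [26]. Cllr penalizes LRs that point the wrong way (below 1 for same-source comparisons, above 1 for different-source comparisons), and the penalty grows the further such LRs lie from 1; lower values indicate better performance.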

- Rates of Misleading Evidence (ROME): Quantitative measures of system error, including ROME for same-source comparisons (ROME-ss) and different-source comparisons (ROME-ds) [26]. These rates provide transparent information about system reliability under specific conditions.
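The three metrics above can be sketched in a few lines of Python. The Cllr expression used here is the standard definition (the mean of log2(1 + 1/LR) over same-source comparisons and of log2(1 + LR) over different-source comparisons, averaged); the function names and the validation LRs are hypothetical and are not data from the cited studies.

```python
import math


def cllr(lr_same: list[float], lr_diff: list[float]) -> float:
    """Log-likelihood ratio cost; 0 is ideal, 1 matches an uninformative system."""
    penalty_ss = sum(math.log2(1.0 + 1.0 / lr) for lr in lr_same) / len(lr_same)
    penalty_ds = sum(math.log2(1.0 + lr) for lr in lr_diff) / len(lr_diff)
    return 0.5 * (penalty_ss + penalty_ds)


def rates_of_misleading_evidence(lr_same: list[float], lr_diff: list[float]):
    """ROME-ss: share of same-source LRs below 1; ROME-ds: share of different-source LRs above 1."""
    rome_ss = sum(lr < 1.0 for lr in lr_same) / len(lr_same)
    rome_ds = sum(lr > 1.0 for lr in lr_diff) / len(lr_diff)
    return rome_ss, rome_ds


def auc(lr_same: list[float], lr_diff: list[float]) -> float:
    """Probability that a same-source LR exceeds a different-source LR (ties count half)."""
    wins = 0.0
    for s in lr_same:
        for d in lr_diff:
            if s > d:
                wins += 1.0
            elif s == d:
                wins += 0.5
    return wins / (len(lr_same) * len(lr_diff))


# Hypothetical validation LRs with known ground truth.
same_source = [100.0, 20.0, 0.5, 8.0]
different_source = [0.01, 0.2, 3.0, 0.05]

print("Cllr:", cllr(same_source, different_source))
print("ROME (ss, ds):", rates_of_misleading_evidence(same_source, different_source))
print("AUC:", auc(same_source, different_source))
```

A system that always reports LR = 1 scores a Cllr of exactly 1, which gives a convenient reference point: values below 1 indicate that the system conveys useful information.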

Relationship Between Categorical and Probabilistic Reporting

The relationship between categorical decisions and probabilistic statements can be visualized and understood using receiver operating characteristic (ROC) curves [12]. ROC curves plot the true positive rate (sensitivity) against the false positive rate (1-specificity) across all possible decision thresholds. Each point on the ROC curve represents a potential decision threshold, with the slope of the tangent at any point corresponding to a likelihood ratio value [12]. This relationship provides a mathematical bridge between binary decisions and continuous probability measures, allowing forensic scientists to select decision thresholds based on explicit trade-offs between error rates according to the specific requirements of each case context.
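The link between the ROC slope and the LR can be checked numerically. The sketch below assumes, purely for illustration, that comparison scores are normally distributed under each hypothesis with hypothetical means and a shared standard deviation; it compares a finite-difference estimate of the ROC slope at a threshold with the density ratio at that same threshold.

```python
import math

# Hypothetical score distributions (assumptions for illustration only).
MU_SS, MU_DS, SIGMA = 2.0, 0.0, 1.0


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def normal_sf(x: float, mu: float, sigma: float) -> float:
    """Survival function: probability that a score exceeds x."""
    return 0.5 * math.erfc((x - mu) / (sigma * math.sqrt(2.0)))


def roc_point(threshold: float) -> tuple[float, float]:
    """(false positive rate, true positive rate) when declaring a match above threshold."""
    return normal_sf(threshold, MU_DS, SIGMA), normal_sf(threshold, MU_SS, SIGMA)


def roc_slope(threshold: float, h: float = 1e-4) -> float:
    """Central finite-difference estimate of dTPR/dFPR at the given threshold."""
    fpr_lo, tpr_lo = roc_point(threshold - h)
    fpr_hi, tpr_hi = roc_point(threshold + h)
    return (tpr_hi - tpr_lo) / (fpr_hi - fpr_lo)


def lr_at(score: float) -> float:
    """Likelihood ratio of a score: same-source density over different-source density."""
    return normal_pdf(score, MU_SS, SIGMA) / normal_pdf(score, MU_DS, SIGMA)


for t in (0.5, 1.0, 1.5):
    print(f"threshold={t}: ROC slope={roc_slope(t):.4f}, LR={lr_at(t):.4f}")
```

The two columns agree to within the finite-difference error, showing that each categorical threshold implicitly corresponds to a particular LR value.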

Experimental Comparison of LR Modeling Approaches

Methodology for Comparative Performance Assessment

A comprehensive comparison of LR modeling approaches requires standardized experimental protocols across different forensic domains. The following methodologies represent current best practices for evaluating LR system performance:

Data Collection and Preparation

- Sample Set Composition: Assembling representative sample sets with known ground truth is fundamental. Studies should include both same-source and different-source specimens that reflect the natural variation encountered in casework [25] [26].

- Analytical Techniques: Standardized analytical protocols ensure reproducible data generation. Common techniques include gas chromatography-mass spectrometry (GC/MS) for chemical analysis [25] and laser ablation inductively coupled plasma mass spectrometry (LA-ICP-MS) for elemental analysis of materials like glass [26].

- Data Preprocessing: Appropriate data transformation and normalization techniques prepare raw data for statistical modeling. This may include LambertW transformations to achieve normality [25] and peak alignment algorithms for chromatographic data.

Model Implementation and Validation Framework

- Nested Cross-Validation: When limited data availability prevents separate training, validation, and test sets, nested cross-validation provides robust performance estimation while minimizing overfitting [25].

- Benchmarking Against Traditional Methods: Experimental LR systems should be compared against established statistical methods and human expert performance using the same dataset [25].

- Interlaboratory Studies: Multi-laboratory collaborations assess method robustness across different instruments, operators, and environmental conditions [26].
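The nested cross-validation scheme mentioned above can be sketched with the standard library alone. The generator below only produces index splits: each outer fold is held out for testing, and the remaining samples are split again into inner folds for model selection. The fold counts, seed handling, and function names are assumptions; a real LR study would likely also split by source so that specimens from one source never appear on both sides of a split.

```python
import random


def k_fold_indices(indices, k: int, seed: int) -> list[list[int]]:
    """Shuffle the indices and deal them into k folds of near-equal size."""
    shuffled = list(indices)
    random.Random(seed).shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]


def nested_cv_splits(n_samples: int, outer_k: int = 5, inner_k: int = 4, seed: int = 0):
    """Yield (inner_splits, test_idx) for each outer fold.

    inner_splits is a list of (train_idx, val_idx) pairs drawn only from
    samples outside the outer test fold.
    """
    outer_folds = k_fold_indices(range(n_samples), outer_k, seed)
    for i, test_idx in enumerate(outer_folds):
        development = [j for f, fold in enumerate(outer_folds) if f != i for j in fold]
        inner_folds = k_fold_indices(development, inner_k, seed + 1 + i)
        inner_splits = []
        for m, val_idx in enumerate(inner_folds):
            train_idx = [j for f, fold in enumerate(inner_folds) if f != m for j in fold]
            inner_splits.append((train_idx, val_idx))
        yield inner_splits, test_idx


# Example with 136 samples and hypothetical fold counts.
for inner_splits, test_idx in nested_cv_splits(136):
    train_idx, val_idx = inner_splits[0]
    print(len(train_idx), "train /", len(val_idx), "validation /", len(test_idx), "test")
```

Hyperparameters would be chosen using the inner splits only, so that the outer test fold never influences model selection.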

Table 1: Experimental Design for LR Model Comparison

| Experimental Component | Implementation Details | Performance Metrics |
|---|---|---|
| Sample Set | 136 diesel oil samples from Swedish gas stations/refineries (2015-2020) [25] | Ground truth establishment via known provenance |
| Analytical Method | Gas chromatography-mass spectrometry (GC/MS) with Agilent 7890A GC system [25] | Chromatographic peak resolution, retention time stability |
| Data Representations | Raw chromatographic signals vs. selected peak height ratios [25] | Feature discriminativity, computational efficiency |
| Validation Approach | Nested cross-validation with multiple folds [25] | Discrimination, calibration, rates of misleading evidence |

Performance Comparison of LR Modeling Strategies

Recent empirical studies have directly compared the performance of different LR modeling approaches across various evidence types. The following results highlight key performance differences:

Diesel Oil Analysis Using Chromatographic Data

A comprehensive study compared three LR models for source attribution of diesel oil samples using gas chromatographic data [25]:

- Model A (Experimental): Score-based machine learning model using feature vectors from a convolutional neural network (CNN) trained on raw chromatographic signals.

- Model B (Benchmark): Score-based statistical model using similarity scores derived from ten selected peak height ratios.

- Model C (Benchmark): Feature-based statistical model constructing probability densities in a three-dimensional space defined by three peak height ratios.
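To illustrate what "score-based" means in general terms, the sketch below converts a similarity score into an LR by comparing its density under score distributions fitted to same-source and different-source training comparisons. It is not the method of the cited study: the Gaussian fit is an assumption made here for brevity (kernel density estimates or other calibration models are common alternatives), and all scores are invented.

```python
import math
from statistics import mean, stdev

# Hypothetical similarity scores from training comparisons with known ground truth.
SS_SCORES = [0.91, 0.88, 0.95, 0.90, 0.86]  # same-source pairs
DS_SCORES = [0.40, 0.55, 0.35, 0.60, 0.48]  # different-source pairs


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def score_based_lr(score: float, ss_scores: list[float], ds_scores: list[float]) -> float:
    """LR = density of the score among same-source pairs / density among different-source pairs."""
    mu_ss, sd_ss = mean(ss_scores), stdev(ss_scores)
    mu_ds, sd_ds = mean(ds_scores), stdev(ds_scores)
    return normal_pdf(score, mu_ss, sd_ss) / normal_pdf(score, mu_ds, sd_ds)


print("LR for score 0.90:", score_based_lr(0.90, SS_SCORES, DS_SCORES))
print("LR for score 0.45:", score_based_lr(0.45, SS_SCORES, DS_SCORES))
```

A feature-based model such as Model C differs in that it assigns probability densities to the measured features themselves rather than to a similarity score derived from them.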

Table 2: Performance Comparison of LR Models for Diesel Oil Attribution

| Model | Model Type | Median LR (H₁) | Median LR (H₂) | Discrimination Performance | Calibration Performance |
|---|---|---|---|---|---|
| Model A | Score-based CNN | ≈ 1800 | ≈ 0.001 | High discrimination | Good calibration with Cllr < 0.02 |
| Model B | Score-based statistical | ≈ 180 | ≈ 0.01 | Moderate discrimination | Good calibration with Cllr < 0.02 |
| Model C | Feature-based statistical | ≈ 3200 | ≈ 0.0003 | High discrimination | Good calibration with Cllr < 0.02 |