Bayes' Theorem in Forensic Science: A Framework for Statistical Evidence Evaluation


Abstract

This article provides a comprehensive examination of the application of Bayes' Theorem for the evaluation of forensic evidence. Tailored for researchers and forensic professionals, it covers the foundational principles of Bayesian reasoning, its methodological implementation in disciplines from DNA to trace evidence, and the critical challenges in its application, including the selection of prior probabilities and avoiding cognitive fallacies. It further explores validation strategies and compares Bayesian methods with classical statistical approaches, synthesizing key takeaways to outline future directions for robust and transparent forensic science practice.

The Bayesian Framework: Foundations for Forensic Reasoning

Mathematical Foundation

Bayes' Theorem provides a rigorous mathematical framework for updating the probability of a hypothesis in light of new evidence. It is the fundamental rule of inverse probability: it lets us compute the conditional probability P(A|B) when the reverse conditional probability P(B|A) is known, together with the marginal probabilities P(A) and P(B) [1] [2].

Theorem Statement

Bayes' Theorem is mathematically stated as:

P(A|B) = [P(B|A) × P(A)] / P(B) [1]

Where:

- P(A|B) is the posterior probability - the probability of hypothesis A given evidence B

- P(B|A) is the likelihood - the probability of evidence B given that hypothesis A is true

- P(A) is the prior probability - the initial probability of hypothesis A before observing evidence B

- P(B) is the marginal probability - the total probability of evidence B under all possible hypotheses [1] [2]

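For two competing hypotheses, H and its negation ¬H, the expanded form is more practical because it writes the marginal probability P(E) explicitly via the law of total probability: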

P(H|E) = [P(H) × P(E|H)] / [P(H) × P(E|H) + P(¬H) × P(E|¬H)] [2]
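The expanded form translates directly into a few lines of code. The following is a minimal sketch, not a method taken from the cited sources; the function name `posterior_probability` and the input values are illustrative assumptions.

```python
def posterior_probability(prior, p_e_given_h, p_e_given_not_h):
    """Return P(H|E) using the expanded form of Bayes' Theorem."""
    if not 0.0 <= prior <= 1.0:
        raise ValueError("prior must lie in [0, 1]")
    numerator = prior * p_e_given_h
    # P(E) by the law of total probability over H and not-H
    marginal = numerator + (1.0 - prior) * p_e_given_not_h
    if marginal == 0.0:
        raise ValueError("P(E) is zero, so the posterior is undefined")
    return numerator / marginal


# Illustrative inputs (assumptions, not values from the article)
p = posterior_probability(prior=0.5, p_e_given_h=0.8, p_e_given_not_h=0.2)
print(f"P(H|E) = {p:.3f}")
```

With equal prior probabilities, the posterior is driven entirely by the two likelihoods, which is why the example returns 0.8.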

Theorem Derivation

Bayes' Theorem derives from the definition of conditional probability. The probability of event A given event B is defined as:

P(A|B) = P(A ∩ B) / P(B), provided P(B) ≠ 0 [1]

Similarly, the probability of B given A is:

P(B|A) = P(A ∩ B) / P(A), provided P(A) ≠ 0 [1]

Solving for P(A ∩ B) in both equations and equating them gives:

P(A|B) × P(B) = P(B|A) × P(A)

Dividing both sides by P(B) yields Bayes' Theorem [1].

Bayesian Inference in Forensic Evidence Evaluation

Theoretical Framework for Forensic Science

Bayesian inference provides the mathematical foundation for interpreting forensic evidence through the likelihood ratio framework. When evaluating evidence E against competing propositions (H₁ and H₂), forensic scientists use the likelihood ratio:

LR = P(E|H₁) / P(E|H₂) [3]

This ratio quantifies how much more likely the evidence is under one proposition than under the alternative. The posterior odds are then obtained by multiplying this likelihood ratio by the prior odds [2] [3]:

Posterior Odds = Likelihood Ratio × Prior Odds

This framework discourages binary "yes/no" testimony and promotes transparency in forensic reporting by explicitly separating the statistical evidence from prior assumptions [3].
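The odds update can be sketched as follows. This is a hedged illustration under assumed inputs: the helper names and the numbers (a prior probability of 0.01 and an LR of 1000) are hypothetical and chosen only to show the mechanics.

```python
def probability_to_odds(p):
    """Convert a probability in [0, 1) to odds in favour."""
    if not 0.0 <= p < 1.0:
        raise ValueError("probability must lie in [0, 1)")
    return p / (1.0 - p)


def odds_to_probability(odds):
    """Convert non-negative odds back to a probability."""
    if odds < 0.0:
        raise ValueError("odds must be non-negative")
    return odds / (1.0 + odds)


def update_odds(prior_odds, likelihood_ratio):
    """Posterior Odds = Likelihood Ratio x Prior Odds."""
    return likelihood_ratio * prior_odds


# Hypothetical inputs: the prior belongs to the fact finder, the LR to the expert
prior_odds = probability_to_odds(0.01)
posterior_odds = update_odds(prior_odds, 1000.0)
posterior_probability = odds_to_probability(posterior_odds)
print(f"posterior odds = {posterior_odds:.2f}, "
      f"posterior probability = {posterior_probability:.4f}")
```

Keeping the prior and the LR as separate arguments mirrors the separation the framework asks for: the same LR yields different posterior odds for different priors.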

Bayesian Networks for Activity Level Propositions

Bayesian Networks (BNs) extend Bayes' Theorem to complex forensic scenarios with multiple dependent variables. Recent research has developed narrative Bayesian networks specifically for evaluating forensic fibre evidence given activity-level propositions [4] [5].

These networks provide:

- Transparent incorporation of case information

- Sensitivity assessment of evaluations to variations in data

- Interdisciplinary collaboration through alignment with approaches in other forensic disciplines [4]

A key advancement is the development of template Bayesian networks that handle disputes about the relation between an item and an activity. This is particularly valuable in interdisciplinary casework where multiple evidence types must be evaluated against a single set of activity-level propositions [6].

Table 1: Bayesian Network Applications in Forensic Science

| Network Type | Application | Key Features | Reference |
|---|---|---|---|
| Narrative Bayesian Network | Fibre evidence evaluation | Simplified methodology, accessible starting point for practitioners | [4] |
| Template Bayesian Network | Combining forensic evidence | Handles disputes about item-activity relations, interdisciplinary focus | [6] |
| Extended Template Model | Multiple case variations | Flexible framework adaptable to various forensic scenarios | [6] |

Experimental Protocols and Methodologies

Protocol for Constructing Narrative Bayesian Networks

The methodology for constructing narrative BNs for forensic fibre evidence involves [4] [5]:

- Case Scenario Definition: Develop an illustrative case scenario capturing the essence of cases where the template model applies

- Network Structure Development: Create three examples of BNs designed as accessible starting points for case-specific networks

- Variable Identification: Identify key variables and their probabilistic dependencies based on forensic expertise

- Sensitivity Analysis: Assess the evaluation's sensitivity to variations in input data and assumptions

- Validation: Verify network structure and conditional probabilities against known case outcomes and expert knowledge

This methodology emphasizes qualitative, narrative formats that are easier for both experts and courts to follow [4].
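To make the idea of a network concrete, the sketch below evaluates a deliberately tiny three-node chain (proposition, transfer, finding) by direct enumeration. It is an assumption-laden toy, not one of the networks from [4] or [5]: the node structure, the names `P_TRANSFER` and `P_FINDING`, and every probability are hypothetical.

```python
# Conditional probability tables. All numbers are illustrative assumptions.
P_TRANSFER = {"Hp": 0.70, "Hd": 0.05}   # P(transfer occurred | proposition)
P_FINDING = {True: 0.80, False: 0.02}   # P(matching fibres recovered | transfer)


def p_finding_given(proposition):
    """Marginalise over the transfer node: P(E|H) = sum_T P(E|T) * P(T|H)."""
    p_t = P_TRANSFER[proposition]
    return P_FINDING[True] * p_t + P_FINDING[False] * (1.0 - p_t)


p_e_hp = p_finding_given("Hp")
p_e_hd = p_finding_given("Hd")
lr = p_e_hp / p_e_hd
print(f"P(E|Hp) = {p_e_hp:.3f}, P(E|Hd) = {p_e_hd:.3f}, LR = {lr:.2f}")
```

Real casework networks have many more nodes (persistence, recovery, background sources), and dedicated BN software performs the same marginalisation automatically.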

Protocol for Template Bayesian Network Construction

For template BNs that combine multiple evidence types [6]:

- Proposition Definition: Define activity-level propositions considering disputes about actors, activities, and item-activity relationships

- Association Proposition Specification: Include a set of association propositions enabling combined evaluation of evidence concerning alleged activities and evidence concerning item usage

- Discipline Integration: Structure the network to accommodate evidence from different forensic disciplines

- Relation Uncertainty Incorporation: Model uncertainty about the connection between trace material and the offender (relevance of trace material)

- Likelihood Ratio Calculation: Derive likelihood ratios for competing propositions based on network computations

This protocol specifically addresses cases where the relationship between an item of interest and an activity is contested, which previous evaluative procedures at activity level failed to adequately account for [6].

Visualization of Bayesian Networks

[Figure omitted: Basic Bayesian Network Structure (basic Bayesian inference)]

[Figure omitted: Forensic Bayesian Network for Activity Level Propositions (forensic evidence evaluation)]

[Figure omitted: Interdisciplinary Evidence Integration (interdisciplinary evidence combination)]

Quantitative Data in Forensic Bayesian Analysis

Diagnostic Test Performance Metrics

Bayesian analysis is particularly valuable for interpreting diagnostic test results in contexts such as disease screening or forensic analysis. The following table summarizes key quantitative metrics used in these applications [1] [7]:

Table 2: Diagnostic Test Performance Metrics for Bayesian Analysis

| Metric | Definition | Formula | Application in Forensics |
|---|---|---|---|
| Sensitivity (True Positive Rate) | Probability test is positive given condition is present | P(T+ \| D+) | Ability to detect target evidence when present |
| Specificity (True Negative Rate) | Probability test is negative given condition is absent | P(T- \| D-) | Ability to exclude non-target evidence when absent |
| Prevalence | Proportion of population with the condition | P(D+) | Base rate of target evidence in relevant population |
| Positive Predictive Value | Probability condition is present given positive test | P(D+ \| T+) | Probability evidence is relevant given positive finding |
| Negative Predictive Value | Probability condition is absent given negative test | P(D- \| T-) | Probability evidence is not relevant given negative finding |

Case Study: Disease Diagnosis with Bayesian Analysis

Consider a medical diagnostic scenario with the following parameters [1]:

- Disease prevalence: 0.05 (5%)

- Test sensitivity: 0.90 (90%)

- Test specificity: 0.80 (80%)

The probability of having the disease given a positive test result is calculated as:

P(Disease|Positive) = [P(Positive|Disease) × P(Disease)] / [P(Positive|Disease) × P(Disease) + P(Positive|No Disease) × P(No Disease)]

P(Disease|Positive) = [0.90 × 0.05] / [0.90 × 0.05 + 0.20 × 0.95] = 0.045 / (0.045 + 0.19) ≈ 0.191 or 19.1% [1]

This demonstrates how even with a test that appears accurate (90% sensitivity), the posterior probability of having the disease can be relatively low when prevalence is low, due to false positives.
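The calculation above can be reproduced and extended to show how strongly the result depends on prevalence. This is a minimal sketch: the function name `predictive_values` and the extra prevalence values in the loop are illustrative assumptions, while the first call uses the case-study parameters.

```python
def predictive_values(prevalence, sensitivity, specificity):
    """Return (PPV, NPV) from prevalence, sensitivity and specificity."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    false_neg = (1.0 - sensitivity) * prevalence
    true_neg = specificity * (1.0 - prevalence)
    ppv = true_pos / (true_pos + false_pos)   # P(D+ | T+)
    npv = true_neg / (true_neg + false_neg)   # P(D- | T-)
    return ppv, npv


# Case-study parameters from the text
ppv, npv = predictive_values(prevalence=0.05, sensitivity=0.90, specificity=0.80)
print(f"PPV = {ppv:.3f}, NPV = {npv:.3f}")

# Hypothetical prevalence values, to show the base-rate effect
for prevalence in (0.01, 0.05, 0.20, 0.50):
    ppv_i, _ = predictive_values(prevalence, 0.90, 0.80)
    print(f"prevalence = {prevalence:.2f} -> PPV = {ppv_i:.3f}")
```

The same sensitivity and specificity give very different positive predictive values as the base rate changes, which is the point of the case study.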

The Scientist's Toolkit: Bayesian Research Reagents

Table 3: Essential Methodological Components for Bayesian Forensic Research

| Component | Function | Application Example |
|---|---|---|
| Prior Probability Distribution | Quantifies initial beliefs about hypotheses before considering current evidence | Base rate of fibre transfer in alleged activity scenarios [4] |
| Likelihood Function | Quantifies how probable observed evidence is under different hypotheses | Probability of finding matching fibres given specific transfer scenarios [6] |
| Posterior Probability Distribution | Updated belief about hypotheses after considering evidence | Probability that suspect performed activity given all forensic findings [3] |
| Likelihood Ratio | Measures diagnostic strength of evidence for distinguishing hypotheses | Ratio of probabilities of evidence under prosecution and defence propositions [3] |
| Bayesian Network Software | Computational tools for implementing complex probabilistic models | Constructing template networks for interdisciplinary evidence evaluation [6] |
| Sensitivity Analysis Framework | Assesses robustness of conclusions to changes in inputs | Evaluating how variations in transfer probabilities affect activity level propositions [4] |

Advanced Applications in Forensic Science

Template Models for Interdisciplinary Evidence

Recent advances in Bayesian networks for forensic science include template models that enable evaluation of combined evidence from different disciplines. These models specifically address cases where [6]:

- The actor (who performed the activity) is disputed

- The activity itself is disputed

- The relationship between an item and an activity is disputed

The template model includes association propositions that enable combined evaluation of evidence concerning alleged activities of the suspect and evidence concerning the use of an alleged item in those activities. This is particularly valuable for interdisciplinary casework where DNA evidence, fibre evidence, and other trace materials must be evaluated against a single set of activity-level propositions [6].

Historical Development and Modern Implementation

Bayes' Theorem was discovered by Thomas Bayes (c. 1701-1761) and independently developed by Pierre-Simon Laplace (1749-1827) [8] [9]. After periods of controversy and decline, Bayesian methods have emerged as powerful tools across numerous fields, including forensic science [8].

The modern resurgence stems from:

- Computational advances enabling complex Bayesian calculations

- Theoretical developments in Bayesian networks and probabilistic reasoning

- Forensic science reforms emphasizing quantitative evidence evaluation

- Judicial recognition of the logical framework provided by Bayesian methods [3]

Bayesian inference now provides the mathematical foundation for interpreting forensic evidence through the likelihood ratio framework, which has been widely applied and validated across forensic disciplines including DNA analysis, fingerprint examination, voice comparison, and trace evidence evaluation [3].

The evolution of forensic evidence evaluation represents a paradigm shift from qualitative, experience-based reasoning to a structured, quantitative science. This transformation is exemplified by the journey from Wigmore Charts, an early graphical method for analyzing legal evidence, to the sophisticated Bayesian networks and probabilistic frameworks that underpin modern forensic science [10]. This whitepaper examines this historical progression, framing it within the context of Bayes' theorem and its critical role in contemporary evidence evaluation for researchers and scientific professionals.

The foundational work of John Henry Wigmore, developed in the early 20th century, provided the first systematic attempt to map the complex relationships between items of evidence and legal hypotheses using charts composed of lines and shapes [10]. Although largely forgotten by the 1960s, Wigmore's analytical method has been rediscovered and recognized as a precursor to modern belief networks [10] [11]. The subsequent integration of Bayesian statistics addresses a crucial need in forensic science: to objectively evaluate the strength of evidence under competing propositions, such as those presented by prosecution and defense, thereby minimizing cognitive biases and potential miscarriages of justice [12].

The Wigmorean Foundation: A Precursor to Analytical Rigor

Core Principles of Wigmore Charts

Developed by John Henry Wigmore after the completion of his Treatise in 1904, Wigmore Charts (or Wigmorean analysis) were born from his conviction that "something was missing" in legal evidence analysis [10]. This system employs:

- Graphical Representation: Using lines to represent reasoning, explanations, refutations, and conclusions.

- Symbolic Logic: Employing shapes to denote facts, claims, explanations, and refutations [10].

- Structured Analysis: Providing a method to deconstruct complex evidence into component relationships for clearer evaluation.

This methodology offered a nascent framework for managing evidentiary complexity, though it lacked the mathematical formalism that would later emerge through Bayesian probability.

The Decline and Rediscovery

Despite being taught in classrooms during the early 20th century, Wigmore's charting method was nearly obsolete by the 1960s [10]. Contemporary scholars have since revived his work, using it as a foundation for developing modern analytic standards that bridge legal reasoning and scientific evidence evaluation [13]. This rediscovery coincides with growing recognition of the need for transparent, structured approaches to evidence interpretation in forensic contexts.

The Bayesian Revolution in Forensic Science

Theoretical Foundation: Likelihood Ratios and Hypothesis Testing

The Bayesian framework for forensic evidence evaluation centers on the Likelihood Ratio (LR) as a measure of evidentiary strength. The LR quantitatively compares the probability of observing the evidence under two competing hypotheses [12]:

LR = p(E|Hp) / p(E|Hd)

Where:

- p(E|Hp) is the probability of the evidence given the prosecution hypothesis

- p(E|Hd) is the probability of the evidence given the defense hypothesis

This approach forms the cornerstone of evaluative reporting standards developed by the European Network of Forensic Science Institutes (ENFSI) and other regulatory bodies, requiring forensic scientists to consider evidence under all relevant hypotheses rather than focusing solely on a single proposition [12].

Addressing Cognitive Biases Through Systematic Reasoning

The adoption of Bayesian methods directly addresses critical vulnerabilities in human cognition identified through the work of Kahneman and Tversky on System 1 (intuitive) and System 2 (analytical) thinking [12]. Forensic science failures, such as the erroneous statistical evidence in the Sally Clark and Kathleen Folbigg cases, often stem from:

- Transposition of Conditional Probabilities: Confusing p(E|H) with p(H|E)

- Baseline Neglect: Ignoring prior probabilities or background prevalence

- Prosecutor's Fallacy: Misrepresenting the strength of evidence by failing to consider alternative explanations [12]

Bayesian reasoning provides a systematic, System 2 approach that mitigates these heuristic errors by requiring explicit consideration of prior probabilities and alternative hypotheses.
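A short numerical sketch shows how far apart p(E|H) and p(H|E) can be. Everything here is a hypothetical illustration rather than data from any case: it assumes a match probability of 1 in 10,000 for a non-source, a pool of 100,000 equally likely potential sources, and a true source that always matches.

```python
def posterior_source_probability(match_probability, population_size):
    """P(source | match), assuming a uniform prior over the population."""
    prior = 1.0 / population_size
    p_match_given_source = 1.0  # assumption: the true source always matches
    numerator = p_match_given_source * prior
    denominator = numerator + match_probability * (1.0 - prior)
    return numerator / denominator


match_probability = 1e-4      # hypothetical P(match | not the source)
population_size = 100_000     # hypothetical pool of potential sources

# Transposed conditional: reading P(match | not source) as P(not source | match)
fallacious = 1.0 - match_probability
correct = posterior_source_probability(match_probability, population_size)
print(f"fallacious P(source|match) = {fallacious:.4f}")
print(f"Bayesian   P(source|match) = {correct:.4f}")
```

Under these assumptions roughly ten other people in the pool would also be expected to match, so the match alone leaves the posterior probability below 10 percent, far from the near-certainty the transposed reading suggests.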

Modern Implementation: Bayesian Networks for Evidence Evaluation

Construction Methodology for Forensic Applications

Contemporary implementation of Bayesian reasoning employs Bayesian Networks (BNs) as direct descendants of Wigmore's graphical approach. The construction methodology for forensic applications involves:

- Narrative Development: Building qualitative, narrative-based networks that align representation across forensic disciplines [4]

- Variable Identification: Defining key factors and relationships specific to case circumstances

- Probability Assignment: Quantifying relationships based on empirical data and expert knowledge

- Sensitivity Analysis: Testing the model's robustness to variations in input parameters [4]

This methodology offers a simplified, accessible starting point for practitioners to build case-specific networks while maintaining mathematical rigor.

Application to Trace Evidence: Fiber Analysis Case Example

In forensic fiber evidence evaluation at the activity level, Bayesian Networks provide a structured framework for handling complex transfer and persistence scenarios [4]. The network construction:

- Aligns representation with other forensic disciplines like biology

- Incorporates case information transparently

- Facilitates assessment of evaluation sensitivity to data variations

- Enhances user-friendliness and accessibility for courts [4]

This approach demonstrates the maturation of Wigmore's original concept into practical, interdisciplinary tools for forensic evaluation.

Table 1: Evolution of Analytical Approaches in Forensic Evidence Evaluation

| Era | Primary Method | Key Features | Limitations |
|---|---|---|---|
| Early 20th Century | Wigmore Charts [10] | Graphical representation, Symbolic logic, Qualitative relationships | Lacked mathematical formalism, Subjective interpretation |
| Mid-Late 20th Century | Traditional Statistical Methods | Frequency-based analysis, Class characteristics matching | Prosecutor's fallacy, Neglect of alternative hypotheses |
| 21st Century | Bayesian Networks & Likelihood Ratios [4] [12] | Quantitative probability framework, Explicit hypothesis testing, Transparency | Computational complexity, Data requirements, Training needs |

Experimental Protocols for Forensic Evidence Evaluation

Protocol 1: Fiber Evidence Analysis Using Bayesian Networks

Purpose: To evaluate forensic fiber findings given activity-level propositions using a narrative Bayesian network construction methodology [4].

Methodology:

- Case Scenario Definition: Develop detailed case circumstances and factors of consideration

- Hypothesis Formulation: Define prosecution and defense propositions regarding activities

- Network Structure Development:

  - Identify key variables (transfer, persistence, recovery, background)

  - Establish conditional dependencies between variables

  - Align network structure with interdisciplinary approaches

- Probability Assignment:

  - Parameterize nodes with empirical data where available

  - Use expert knowledge for missing data with appropriate uncertainty

- Likelihood Ratio Calculation: Compute LR for competing propositions based on network output

- Sensitivity Analysis: Test robustness to variations in input probabilities and assumptions

Validation: Compare network outputs with known case outcomes and assess discriminatory power [4].
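The sensitivity-analysis step of this protocol can be sketched as a one-at-a-time sweep over a single input. The model below is a simplified stand-in, not the network from [4]: the parameter names, the baseline values, and the swept range for the background probability are all illustrative assumptions.

```python
def likelihood_ratio(p_transfer_hp, p_transfer_hd, p_recover, p_background):
    """LR for 'matching fibres found' in a toy transfer/background model."""
    def p_evidence(p_transfer):
        # Fibres are found either after a transfer or from background sources
        return p_recover * p_transfer + p_background * (1.0 - p_transfer)
    return p_evidence(p_transfer_hp) / p_evidence(p_transfer_hd)


# Hypothetical baseline inputs
BASELINE = {
    "p_transfer_hp": 0.70,
    "p_transfer_hd": 0.05,
    "p_recover": 0.80,
    "p_background": 0.02,
}


def one_at_a_time(parameter, values):
    """Vary one input, hold the others at baseline, and return (value, LR) pairs."""
    results = []
    for value in values:
        inputs = dict(BASELINE, **{parameter: value})
        results.append((value, likelihood_ratio(**inputs)))
    return results


sweep = one_at_a_time("p_background", [0.005, 0.02, 0.05, 0.10])
for value, lr in sweep:
    print(f"p_background = {value:.3f} -> LR = {lr:.2f}")
```

In this toy model the LR falls as background fibres become more common, so a report would state the background assumption alongside the LR.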

Protocol 2: Paper Analysis Using Multi-Technique Characterization

Purpose: To discriminate between paper sources for forensic document examination using integrated analytical techniques [13].

Methodology:

- Sample Preparation: Collect representative paper samples with known provenance

- Multi-Technique Analysis:

  - Spectroscopic Analysis: FTIR, Raman spectroscopy for molecular composition

  - Elemental Analysis: LIBS, XRF for inorganic filler characterization

  - Mass Spectrometry: Py-GC/MS for organic additive profiling

  - Isotopic Analysis: IRMS for geographical origin determination [13]

- Data Integration: Combine multiple analytical outputs using chemometric methods

- Statistical Classification: Apply machine learning algorithms for source discrimination

- Validation: Test method performance with forensically realistic samples including aged, contaminated, and degraded materials

Addressing Limitations: Account for environmental degradation pathways and extrinsic contamination typical of forensic exhibits [13].

Table 2: Analytical Techniques for Forensic Paper Characterization

| Technique Category | Specific Methods | Targeted Signatures | Forensic Utility |
|---|---|---|---|
| Spectroscopic | FTIR, Raman, LIBS, XRF, UV-Vis [13] | Molecular structure, Fillers, Additives, Elemental composition | Discrimination of paper types, Batch differentiation |
| Chromatographic/Mass Spectrometric | Py-GC/MS, HPLC, IRMS [13] | Organic additives, Sizing agents, Isotopic ratios | Source identification, Geographical origin determination |
| Physical & Imaging | Microscopy, Texture analysis, Thickness measurement [13] | Surface topology, Fiber distribution, Physical properties | Alteration detection, Manufacturing process identification |

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Forensic Evidence Evaluation

| Reagent/Material | Composition/Type | Primary Function in Forensic Analysis |
|---|---|---|
| DNA Profiling Kits | PCR/STR Amplification Reagents [14] | Genetic marker amplification for human identification from biological evidence |
| Reference Fiber Collections | Synthetic/Natural Fiber Libraries | Comparative analysis of questioned fibers against known sources |
| Chromatographic Standards | Certified Reference Materials | Instrument calibration and quantitative analysis of inks, dyes, and additives |
| Spectroscopic Calibration Sets | Elemental/Molecular Standards | Validation of spectroscopic methods for paper and trace evidence analysis |
| Bayesian Network Software | Specialized Statistical Platforms | Implementation of probabilistic models for evidence evaluation under competing hypotheses |

Visualizing the Evolution: From Wigmore to Bayesian Analysis

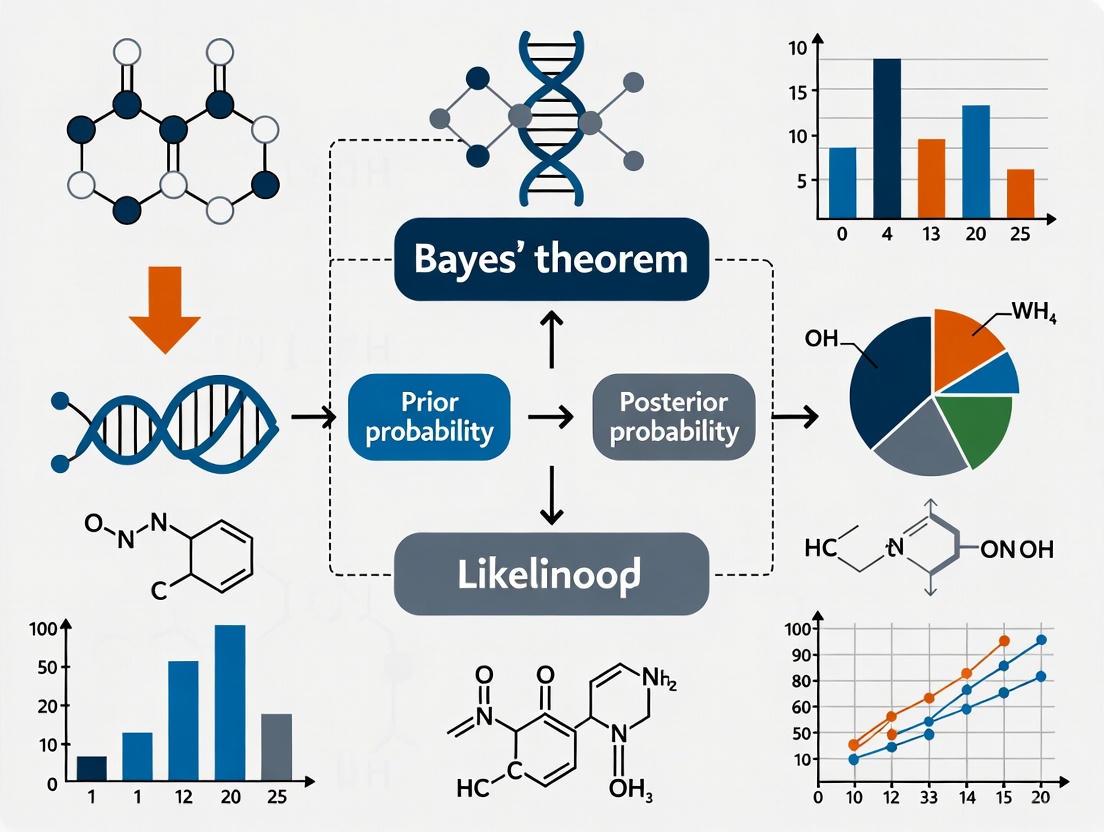

Figure 1: Evolution of Forensic Evidence Analysis Methods. This diagram traces the development from early qualitative methods to contemporary quantitative frameworks.

Figure 2: Bayesian Workflow for Forensic Evidence Evaluation. This diagram illustrates the systematic process of evidence evaluation using Bayesian principles, highlighting the iterative nature of hypothesis testing.

The historical trajectory from Wigmore Charts to modern Bayesian networks represents a fundamental transformation in forensic evidence evaluation—from qualitative mapping to quantitative probabilistic reasoning. This evolution addresses critical needs for transparency, robustness, and scientific rigor in forensic science, particularly in light of documented miscarriages of justice stemming from flawed statistical reasoning [12].

Future developments in this field will likely focus on:

- Enhanced Computational Tools: Refining Bayesian network software for greater accessibility to forensic practitioners

- Expanded Empirical Databases: Developing comprehensive reference data for probability assignments across evidence types

- Interdisciplinary Collaboration: Leveraging approaches successful in disciplines like forensic biology for other evidence categories [4]

- Standardization and Validation: Establishing universally accepted protocols for evaluative reporting across forensic specialties

- Education and Training: Implementing curriculum requirements for statistical and probabilistic reasoning in forensic science education [12]

The integration of Wigmore's structured approach with Bayesian mathematical formalism provides a powerful framework for advancing the scientific foundations of forensic evidence evaluation, offering researchers and practitioners robust tools for addressing complex evidentiary questions in legal contexts.

Within the rigorous domain of forensic evidence evaluation, the Bayesian framework provides a structured and mathematically sound methodology for updating beliefs in the presence of uncertainty [15]. This approach is increasingly recognized as a normative standard for quantifying the probative value of evidence, moving beyond subjective assertion to a calculable measure of support for one proposition over another [16]. The core of this paradigm rests on three interconnected components: prior odds, which represent the initial belief about competing hypotheses before considering the new evidence; the likelihood ratio (LR), which quantifies the strength of the new evidence; and posterior odds, which reflect the updated belief after integrating the evidence [17] [16]. The relationship between these components is governed by a simple yet powerful multiplicative rule, offering a transparent model for reasoning under uncertainty that is particularly vital in legal contexts [15].

This technical guide details the core components and methodologies of the Bayesian framework as applied to forensic science. It is structured to provide researchers and practitioners with a deep understanding of the mathematical underpinnings, practical applications, and experimental protocols essential for implementing this approach in casework and research.

Mathematical Foundation

Bayes' Theorem provides the fundamental equation for updating probabilistic beliefs [1]. Its utility in forensic science is most clearly expressed in its odds form, which directly relates the three core components [16].

Bayes' Theorem and the Odds Form

The standard probability form of Bayes' Theorem is:

P(A|B) = [P(B|A) × P(A)] / P(B) [1] [18]

Where:

- P(A|B) is the posterior probability of hypothesis A given evidence B.

- P(B|A) is the likelihood of observing evidence B if hypothesis A is true.

- P(A) is the prior probability of hypothesis A.

- P(B) is the probability of evidence B.

For forensic evaluation, where two competing propositions (e.g., proposed by the prosecution, Hp, and the defense, Hd) are compared, the theorem is more effectively used in its odds form [16]:

Posterior Odds = Likelihood Ratio × Prior Odds [16]

This can be written as:

P(Hp|E) / P(Hd|E) = LR × [P(Hp) / P(Hd)]

In this framework, the Likelihood Ratio (LR) is the central term that forensic experts can evaluate and present to the court. The prior and posterior odds fall within the remit of the trier of fact (e.g., the jury), as they incorporate non-scientific, context-dependent information [15].
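For completeness, the odds form follows from writing the probability form once for each proposition and dividing, so that the common term P(E) cancels. The subscripted symbols below simply restate Hp, Hd and E from the text.

```latex
\begin{align*}
P(H_p \mid E) &= \frac{P(E \mid H_p)\, P(H_p)}{P(E)} \\
P(H_d \mid E) &= \frac{P(E \mid H_d)\, P(H_d)}{P(E)} \\
\frac{P(H_p \mid E)}{P(H_d \mid E)}
  &= \underbrace{\frac{P(E \mid H_p)}{P(E \mid H_d)}}_{\text{LR}}
     \times \frac{P(H_p)}{P(H_d)}
\end{align*}
```

Because P(E) drops out, the expert never needs to assess the overall probability of the evidence, only its probability under each proposition.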

Core Components Definition

Table 1: Core Components of the Bayesian Framework in Forensic Science

| Component | Mathematical Representation | Forensic Interpretation | Responsible Party |
|---|---|---|---|
| Prior Odds | P(Hp) / P(Hd) | The odds in favor of the prosecution's proposition (Hp) over the defense's proposition (Hd) before considering the scientific evidence (E). | Trier of Fact (Jury) |
| Likelihood Ratio (LR) | P(E\|Hp) / P(E\|Hd) | The probability of observing the evidence (E) if Hp is true, divided by the probability of observing E if Hd is true. It quantifies the strength of the evidence. | Forensic Expert |
| Posterior Odds | P(Hp\|E) / P(Hd\|E) | The odds in favor of Hp over Hd after considering the scientific evidence (E). | Trier of Fact (Jury) |

Bayesian Framework in Forensic Evidence Evaluation

The application of the LR is the primary contribution of the forensic scientist to the Bayesian framework. It is a measure of diagnosticity that helps the trier of fact update their beliefs in a coherent manner [16].

The Likelihood Ratio as the Weight of Evidence

The LR is a continuous measure of evidential strength [16]. Its value can be interpreted as follows:

- LR > 1: The evidence is more likely under the prosecution's proposition (Hp) than under the defense's proposition (Hd). The higher the value, the stronger the support for Hp.

- LR = 1: The evidence is equally likely under both propositions. The evidence has no probative value and does not alter the prior odds.

- LR < 1: The evidence is more likely under the defense's proposition (Hd) than under the prosecution's proposition (Hp). The smaller the value, the stronger the support for Hd.

This approach explicitly separates the role of the forensic expert (providing the LR) from the role of the judiciary (assessing prior and posterior odds), thereby adhering to the Ultimate Issue Rule by preventing experts from directly pronouncing on the guilt or innocence of a defendant [15].
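The three cases above can be captured in a small helper. This is a sketch only: the function name `describe_lr` is hypothetical, and reporting the LR on a log10 scale is a common convention added here for illustration rather than something prescribed by the cited sources.

```python
import math


def describe_lr(lr):
    """Return (log10 of the LR, direction of support) for a likelihood ratio."""
    if lr <= 0.0:
        raise ValueError("a likelihood ratio must be positive")
    log10_lr = math.log10(lr)
    if lr > 1.0:
        direction = "supports Hp over Hd"
    elif lr < 1.0:
        direction = "supports Hd over Hp"
    else:
        direction = "neutral: does not alter the prior odds"
    return log10_lr, direction


# Illustrative values only
for value in (1000.0, 1.0, 0.01):
    log10_lr, direction = describe_lr(value)
    print(f"LR = {value:g}: log10(LR) = {log10_lr:+.1f}, {direction}")
```

On the log scale, support for Hp and Hd is symmetric around zero, and the contributions of conditionally independent items of evidence add rather than multiply.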

Conceptual Workflow of Forensic Bayesian Reasoning

The following diagram visualizes the logical sequence and relationship between the core components, the responsible parties, and the outcome in a forensic evaluation.

Bayesian Forensic Evaluation Workflow - This diagram illustrates how the court's prior odds and the expert's likelihood ratio combine to form the posterior odds, which represent the court's updated belief.

Experimental Protocols and Uncertainty Analysis

Implementing the Bayesian framework in practice requires careful methodology and a critical assessment of the uncertainties involved in calculating the LR.

General Protocol for LR Evaluation

Table 2: Generalized Protocol for Forensic Likelihood Ratio Evaluation

| Step | Action | Methodological Considerations |
|---|---|---|
| 1. Define Propositions | Formulate two competing, mutually exclusive propositions at the same hierarchical level (e.g., source level, activity level). | Propositions must be forensically relevant and agreed upon by parties. Hp: Prosecution proposition. Hd: Defense proposition. |
| 2. Identify Relevant Data & Populations | Determine the data needed to estimate the probabilities P(E\|Hp) and P(E\|Hd). This often involves selecting relevant reference populations. | Population data must be appropriate and representative. The choice can significantly impact the LR. |
| 3. Develop a Probabilistic Model | Construct a statistical model that formalizes the relationship between the evidence and the propositions under consideration. | Model complexity can vary. Assumptions must be explicitly stated and justified (e.g., assuming independence of features). |
| 4. Calculate the LR | Compute the ratio P(E\|Hp) / P(E\|Hd) using the developed model and available data. | For complex evidence (e.g., DNA mixtures), this may require specialized software and algorithms. |
| 5. Conduct Uncertainty Analysis | Evaluate the sensitivity of the LR to changes in model assumptions, parameters, and data quality. | Use frameworks like the "lattice of assumptions" and "uncertainty pyramid" to explore a range of plausible LR values [16]. |

Uncertainty Analysis: The Lattice of Assumptions

A reported LR is not an absolute, objective number. Its value depends on a chain of assumptions and modeling choices [16]. A comprehensive experimental protocol must therefore include an uncertainty analysis. The "lattice of assumptions" is a proposed framework for this purpose. It involves:

- Identifying Key Assumptions: Listing all major assumptions made in the modeling process (e.g., independence of features, choice of population database, statistical distribution used).

- Varying Assumptions Systematically: Recalculating the LR under different, plausible sets of assumptions.

- Building an Uncertainty Pyramid: The range of LR values obtained from this process forms a pyramid of results, from the most specific (a single set of assumptions) to the most general (encompassing all reasonable assumptions). This provides the trier of fact with a more complete picture of the robustness and reliability of the LR characterization, ensuring its fitness for purpose [16].
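The three steps above can be sketched as a loop over combinations of modelling choices. This is a loose, simplified illustration and not the procedure from [16]: the two assumption axes, the names `MATCH_FREQUENCY_BY_DATABASE` and `DEPENDENCE_FACTOR`, the crude dependence adjustment, and all numbers are hypothetical.

```python
from itertools import product

# Hypothetical alternatives for two modelling choices (illustrative numbers)
MATCH_FREQUENCY_BY_DATABASE = {"database_A": 0.010, "database_B": 0.025}
DEPENDENCE_FACTOR = {"independent": 1.0, "correlated": 2.0}


def likelihood_ratio(frequency, dependence_factor):
    """Toy LR: P(E|Hp) is taken as 1, P(E|Hd) is an adjusted match frequency."""
    p_e_given_hp = 1.0
    p_e_given_hd = min(1.0, frequency * dependence_factor)
    return p_e_given_hp / p_e_given_hd


# Recalculate the LR under every combination of assumptions
lattice = {}
for (database, frequency), (dependence, factor) in product(
    MATCH_FREQUENCY_BY_DATABASE.items(), DEPENDENCE_FACTOR.items()
):
    lattice[(database, dependence)] = likelihood_ratio(frequency, factor)

lr_min, lr_max = min(lattice.values()), max(lattice.values())
for assumptions, lr in sorted(lattice.items()):
    print(f"{assumptions}: LR = {lr:.1f}")
print(f"range across the lattice: {lr_min:.1f} to {lr_max:.1f}")
```

Reporting the whole range rather than a single value shows the fact finder how much of the stated LR depends on the analyst's choices.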

The Scientist's Toolkit: Essential Research Reagents and Materials

The practical application of the Bayesian framework across various forensic disciplines relies on a suite of specialized tools, databases, and software.

Table 3: Key Research Reagent Solutions for Bayesian Forensic Evaluation

| Tool / Material | Function in Bayesian Evaluation | Example Use-Case |

|---|---|---|

| Reference Population Databases | Provide the data needed to estimate the probability of observing the evidence under the defense proposition, P(E|Hd); in a simple source-level comparison this corresponds to the random match probability. | DNA frequency databases, automotive paint databases, fibre population studies. |

| Probabilistic Genotyping Software (PGS) | Implements complex statistical models to calculate LRs for DNA mixtures, where the evidence contains DNA from multiple contributors. | STRmix, TrueAllele. These are essential for calculating LRs in low-template or complex mixture DNA evidence. |

| Bayesian Network (BN) Software | Allows for the construction of graphical models that represent the probabilistic relationships between multiple variables and pieces of evidence in a case. | Hugin, GeNIe. Used for activity-level propositions (e.g., evaluating fibre evidence given a proposed scenario) [4]. |

| Validated Quantitative Models | Discipline-specific mathematical models that form the basis for calculating likelihoods, for example models for glass refractive index or bullet lead composition. | Provide the "likelihood function" P(E|H) for continuous evidence types, moving beyond simplistic categorical matching. |

| Calibrated Black-Box Studies | Provide empirical data on the performance and error rates of forensic feature-comparison methods, which inform the uncertainty analysis of the LR. | Studies in which practitioners evaluate evidence from sources of known ground truth; used to validate the reliability of the LR output for a given discipline [16]. |

The Bayesian framework, with its core components of prior odds, likelihood ratio, and posterior odds, offers a logically coherent and legally appropriate structure for the evaluation of forensic evidence [15] [16]. Its power lies in its ability to clearly separate the roles of the scientist and the jurist, while providing a common language of quantification for the "weight of evidence." The widespread adoption of this paradigm, particularly through the use of the likelihood ratio, represents a significant step toward the epistemological reform of forensic science, addressing historical criticisms concerning subjectivity and a lack of robust reliability testing [17].

However, the implementation of this framework is not a mere mechanical calculation. It requires careful formulation of propositions, development of valid probabilistic models, and, crucially, a thorough analysis of the uncertainty inherent in any reported LR value [16]. The ongoing development of standardized protocols, reference databases, and specialized software will continue to enhance the robustness and accessibility of Bayesian methods. For researchers and practitioners, mastering these core components is not just an academic exercise but a necessary skill for advancing the scientific rigor of modern forensic practice and, with it, the quality of justice it serves.

The Odds Form of Bayes' Theorem and its Legal Relevance

This technical guide provides an in-depth examination of the odds form of Bayes' Theorem and its critical applications in forensic science and legal evidence evaluation. The document outlines the mathematical formalism, presents structured probabilistic frameworks for forensic reasoning, and details experimental protocols for implementing Bayesian analysis in casework. Designed for researchers and forensic professionals, this whitepaper establishes a foundational framework for integrating Bayesian methodologies into forensic evidence evaluation research, with particular emphasis on interdisciplinary applications and the quantification of evidential value.

Bayesian inference provides a coherent framework for updating beliefs about propositions based on new evidence. Within forensic science, this framework enables the quantitative evaluation of evidence given competing propositions, typically advanced by prosecution and defense parties. The odds form of Bayes' Theorem serves as the mathematical cornerstone for this process, transforming prior beliefs into posterior beliefs by incorporating the strength of the evidence [19]. The theorem's application addresses a fundamental goal of forensic science: to determine the degree to which scientific evidence supports one proposition over another in legal proceedings [20].

The historical development of Bayesian methods in law traces back to foundational works by scholars such as John Henry Wigmore, who developed graphical systems to map legal reasoning [17]. The formal integration of probability theory into evidence scholarship gained significant momentum in the 1960s and 1970s through the work of Finkelstein and Fairley, Kaplan, and others who advocated for probabilistic approaches to legal decision-making [20]. Today, Bayesian networks—graphical models that represent variables and their conditional dependencies—have become increasingly sophisticated tools for handling complex forensic scenarios involving multiple pieces of evidence and propositions [4] [6].

Mathematical Foundations of the Odds Form

Theorem Formulation

The odds form of Bayes' Theorem provides a computationally efficient method for updating beliefs about competing hypotheses. The theorem states that the posterior odds in favor of hypothesis H₁ over hypothesis H₂, given evidence E, equal the prior odds multiplied by the likelihood ratio (LR):

Posterior Odds = Likelihood Ratio × Prior Odds

Mathematically, this is expressed as:

[ \frac{P(H_1|E)}{P(H_2|E)} = \frac{P(E|H_1)}{P(E|H_2)} \times \frac{P(H_1)}{P(H_2)} ]

Where:

- ( \frac{P(H_1|E)}{P(H_2|E)} ) represents the posterior odds of H₁ versus H₂ given evidence E

- ( \frac{P(E|H_1)}{P(E|H_2)} ) constitutes the likelihood ratio, quantifying how much more likely the evidence E is under H₁ than under H₂

- ( \frac{P(H_1)}{P(H_2)} ) represents the prior odds of H₁ versus H₂ before considering evidence E [21] [20]

The likelihood ratio (LR) serves as the fundamental measure of evidentiary strength in this framework: values greater than 1 support H₁, values less than 1 support H₂, and a value of 1 means the evidence does not discriminate between the two hypotheses [19].
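As a worked illustration of the odds-form update, the following minimal Python sketch multiplies prior odds by an LR and converts the result back to a probability. The function names and the input values are hypothetical, and the conversion between probability and odds assumes that H₁ and H₂ are mutually exclusive and exhaustive.

```python
def probability_to_odds(p_h1: float) -> float:
    """Odds of H1 versus H2, assuming H2 is the complement of H1."""
    return p_h1 / (1.0 - p_h1)


def posterior_odds(lr: float, prior_odds: float) -> float:
    """Odds form of Bayes' Theorem: posterior odds = LR x prior odds."""
    return lr * prior_odds


def odds_to_probability(odds: float) -> float:
    """Convert odds of H1 versus H2 back to P(H1)."""
    return odds / (1.0 + odds)


# Hypothetical inputs, for illustration only.
prior_probability = 0.01   # corresponds to prior odds of 1 to 99
lr = 1000.0                # evidence is 1000 times more likely under H1 than under H2

post_odds = posterior_odds(lr, probability_to_odds(prior_probability))
post_prob = odds_to_probability(post_odds)

print(f"Posterior odds: {post_odds:.2f}")         # 10.10
print(f"Posterior probability: {post_prob:.4f}")  # 0.9099
```

The same LR applied to different prior odds gives different posterior odds, which is why the framework leaves the assessment of the prior to the trier of fact.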

Comparative Formulations

Table 1: Comparison of Bayes' Theorem Formulations

| Formulation Type | Mathematical Expression | Primary Application Context |

|---|---|---|

| Standard Form | ( P(A|B) = \frac{P(B|A)P(A)}{P(B)} ) | Basic probability calculations, single evidence evaluation |

| Odds Form | ( \frac{P(H_1|E)}{P(H_2|E)} = \frac{P(E|H_1)}{P(E|H_2)} \times \frac{P(H_1)}{P(H_2)} ) | Comparative hypothesis testing, forensic evidence evaluation |

| Extended Odds Form (Multiple Evidence) | ( O(H_1:H_2|E_1,E_2) = LR_1 \times LR_2 \times O(H_1:H_2) ) | Complex cases with multiple pieces of evidence that are conditionally independent given each hypothesis [21] |

The odds form offers distinct advantages for forensic applications. It separates the role of the forensic scientist (who assesses the likelihood ratio) from the role of the trier of fact (who assesses prior odds based on other case information). This distinction maintains appropriate boundaries between scientific evaluation and legal judgment [20].

Bayesian Networks for Complex Legal Reasoning

Network Architecture for Evidence Evaluation

Bayesian networks (BNs) provide a graphical framework for representing complex probabilistic relationships among multiple variables in legal reasoning. These networks consist of nodes (representing variables), edges (representing dependencies), and conditional probability tables (quantifying these dependencies) [19]. In forensic applications, BNs enable the structured evaluation of evidence given activity-level propositions, which concern specific actions rather than source identification [4].

Table 2: Core Components of Forensic Bayesian Networks

| Component | Description | Forensic Application Example |

|---|---|---|

| Hypothesis Nodes | Represent competing propositions (e.g., prosecution vs. defense positions) | "Suspect present at scene" vs. "Suspect not present at scene" |

| Evidence Nodes | Represent observable forensic findings | DNA match, fiber transfer, eyewitness testimony |

| Intermediate Nodes | Represent unobservable events or sub-hypotheses | "Transfer occurred," "Persistence occurred," "Detection occurred" |

| Conditional Probability Tables | Quantify probabilistic relationships between connected nodes | Probability of observing fibers given specific activities |

Recent research has developed template Bayesian networks that can be adapted to various case scenarios, providing a standardized approach for forensic evaluators [6]. These templates facilitate interdisciplinary casework by offering a common structural framework for combining different types of evidence (e.g., DNA, fibers, digital evidence) within a single probabilistic model.

Visualizing Bayesian Reasoning

The following diagram illustrates a basic Bayesian network for evaluating forensic evidence given activity-level propositions:

Diagram 1: Basic Bayesian network for forensic evidence evaluation, incorporating uncertainty about the relationship between an item and an activity [6].

For more complex reasoning patterns involving multiple items of evidence, the following network structure captures the relationship between different evidentiary reports and their connection to underlying events:

Diagram 2: Bayesian network for multiple evidence reports referring to different events, connected by a "weft" representing conditional dependence [19].

Quantitative Framework for Evidence Evaluation

Likelihood Ratio Calculations

The likelihood ratio serves as the quantitative measure of evidentiary strength in the Bayesian framework. For forensic evidence E and competing propositions H₁ and H₂, the likelihood ratio is calculated as:

[ LR = \frac{P(E|H_1)}{P(E|H_2)} ]

LR values are commonly communicated using a verbal scale such as the following; the exact thresholds and wording vary between guidelines:

Table 3: Likelihood Ratio Interpretation Scale

| LR Value Range | Interpretation | Evidential Strength |

|---|---|---|

| 1 to 10 | Limited support for H₁ over H₂ | Weak |

| 10 to 100 | Moderate support for H₁ over H₂ | Moderate |

| 100 to 1000 | Strong support for H₁ over H₂ | Strong |

| >1000 | Very strong support for H₁ over H₂ | Very strong |

In practice, the likelihood ratio formulation can be expanded to account for relevant background information I:

[ LR = \frac{P(E|H_1,I)}{P(E|H_2,I)} ]

This formulation explicitly acknowledges that probabilistic assessments are always made within a specific context and with reference to available background information [20] [19].
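The interpretation column of Table 3 (Likelihood Ratio Interpretation Scale) can be encoded as a simple lookup, sketched below in Python. Two choices here are assumptions of the sketch and are not specified by the table: each lower bound is treated as inclusive, and an LR below 1 is handled by applying the same scale to 1/LR in favor of H₂.

```python
def verbal_support(lr: float) -> str:
    """Map an LR to the verbal interpretation used in Table 3.

    Assumptions of this sketch: boundaries such as 10 and 100 belong to the
    higher band, and LR < 1 is interpreted as support for H2 with strength 1/LR.
    """
    if lr <= 0:
        raise ValueError("A likelihood ratio must be positive.")
    if lr == 1:
        return "No support for either proposition"

    favoured, other = ("H1", "H2") if lr > 1 else ("H2", "H1")
    magnitude = lr if lr > 1 else 1.0 / lr

    if magnitude < 10:
        label = "Limited"
    elif magnitude < 100:
        label = "Moderate"
    elif magnitude <= 1000:
        label = "Strong"
    else:
        label = "Very strong"
    return f"{label} support for {favoured} over {other}"


for value in (5, 250, 20000, 0.02):
    print(value, "->", verbal_support(value))
```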

Weight of Evidence

An alternative measure of evidential strength is the "weight of evidence," formally defined as the logarithm of the likelihood ratio:

[ WoE = \log_{10}(LR) ]

This logarithmic transformation provides practical benefits for combining multiple pieces of evidence, as the weights of evidence become additive when the pieces are conditionally independent given each proposition [19]. For two such pieces of evidence E₁ and E₂:

[ WoE_{\text{total}} = WoE_1 + WoE_2 ]

Which corresponds to multiplying likelihood ratios:

[ LR_{\text{total}} = LR_1 \times LR_2 ]

This additive property simplifies complex evidence combination problems and aligns with intuitive reasoning about cumulative evidential strength.
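The equivalence between adding weights of evidence and multiplying likelihood ratios can be checked numerically. The sketch below uses two hypothetical LR values and assumes the two findings are conditionally independent given each proposition.

```python
import math

# Hypothetical LRs for two findings, assumed conditionally independent
# given each proposition (illustrative values only).
likelihood_ratios = [50.0, 200.0]

# Weight of evidence for each finding: WoE = log10(LR).
weights = [math.log10(lr) for lr in likelihood_ratios]

woe_total = sum(weights)                 # additive on the log scale
lr_total = math.prod(likelihood_ratios)  # multiplicative on the LR scale

print(f"WoE total = {woe_total:.3f}")    # 4.000
print(f"LR total  = {lr_total:.0f}")     # 10000
print(math.isclose(10 ** woe_total, lr_total))  # both routes agree
```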

Experimental Protocols for Forensic Bayesian Analysis

Protocol for Template Bayesian Network Construction

Objective: Develop a standardized methodology for constructing Bayesian networks to evaluate forensic evidence given activity-level propositions.

Materials and Equipment:

- Case information documentation

- Probabilistic reasoning software (e.g., GeNIe, Hugin)

- Template Bayesian network structures [4] [6]

- Relevant empirical data on transfer, persistence, and detection probabilities

Procedure:

- Case Analysis: Identify the disputed issues and relevant activity-level propositions (H₁, H₂, ..., Hₙ).

- Evidence Identification: Catalog all relevant forensic findings and their relationships to the propositions.

- Network Structure Development:

  - Place proposition nodes at the top of the network hierarchy

  - Add intermediate nodes representing transfer, persistence, and detection events

  - Incorporate evidence nodes at the bottom level

  - Include nodes representing uncertainties (e.g., item-activity relationships)

- Parameterization:

  - Define conditional probability tables for each node

  - Incorporate relevant empirical data where available

  - Use expert judgment for unavailable parameters, documenting all assumptions

- Validation:

  - Test network behavior under extreme scenarios

  - Verify that the network produces intuitively reasonable results

  - Conduct sensitivity analysis to identify influential parameters

Analysis:

- Calculate likelihood ratios for evidence given competing propositions

- Evaluate the sensitivity of conclusions to changes in key parameters

- Document the overall support for competing propositions

This methodology aligns with recent research on narrative Bayesian network construction, emphasizing transparent incorporation of case information and assessment of evaluation sensitivity [4].
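A heavily simplified version of such a network can be sketched in plain Python by enumerating the intermediate nodes. The structure (proposition, transfer, persistence, findings), the function names, and every probability below are hypothetical simplifications for illustration; this is not one of the template networks from [4] or [6], and real casework would use dedicated BN software and empirically supported parameters.

```python
from itertools import product


def p_findings(p_transfer, p_persist, p_detect, p_background):
    """P(fibres reported), summing over the unobserved transfer and persistence nodes."""
    total = 0.0
    for transfer, persist in product([True, False], repeat=2):
        p_t = p_transfer if transfer else 1.0 - p_transfer
        # Fibres can only persist if they were transferred in the first place.
        p_persist_given_t = p_persist if transfer else 0.0
        p_p = p_persist_given_t if persist else 1.0 - p_persist_given_t
        # Evidence node: detection of persisting fibres, or chance background fibres.
        p_e = p_detect if persist else p_background
        total += p_t * p_p * p_e
    return total


def likelihood_ratio(p_transfer_h1, p_transfer_h2, p_persist, p_detect, p_background):
    """LR = P(findings | H1) / P(findings | H2); the propositions differ only in transfer."""
    numerator = p_findings(p_transfer_h1, p_persist, p_detect, p_background)
    denominator = p_findings(p_transfer_h2, p_persist, p_detect, p_background)
    return numerator / denominator


# Hypothetical parameter values (illustrative only).
params = dict(p_persist=0.6, p_detect=0.9, p_background=0.02)

lr = likelihood_ratio(p_transfer_h1=0.8, p_transfer_h2=0.0, **params)
print(f"LR = {lr:.2f}")  # 22.12

# Extreme-scenario check: if transfer is impossible under both propositions,
# the findings cannot discriminate between them and the LR must equal 1.
lr_extreme = likelihood_ratio(0.0, 0.0, **params)
print(f"LR with no transfer under either proposition = {lr_extreme:.2f}")

# Sensitivity analysis: vary the transfer probability under H1.
for p_t in (0.2, 0.5, 0.8):
    print(f"P(transfer|H1) = {p_t:.1f} -> LR = {likelihood_ratio(p_t, 0.0, **params):.2f}")
```

The extreme-scenario check and the sensitivity loop correspond to the validation steps of the protocol: the first confirms that the model behaves sensibly at a boundary, and the second shows how much the LR depends on a parameter that may have to be assigned by expert judgment.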

Protocol for Interdisciplinary Evidence Combination

Objective: Establish a framework for combining multiple types of forensic evidence (e.g., DNA, fibers, digital evidence) within a unified Bayesian model.

Materials and Equipment:

- Specialized knowledge from multiple forensic disciplines

- Extended template Bayesian networks [6]

- Interdisciplinary collaboration framework

Procedure:

- Proposition Definition: Establish a common set of activity-level propositions relevant to all evidence types.

- Network Integration:

  - Utilize template networks with association propositions linking items to activities

  - Create separate evidence pathways for each forensic discipline

  - Implement wefts (cross-connections) between evidence pathways where appropriate

- Conditional Probability Assessment:

  - Discipline-specific experts provide likelihood assessments for their evidence

  - Document all assumptions and limitations for each evidence type

  - Establish consensus on inter-disciplinary dependencies

- Combined Evaluation:

  - Calculate overall likelihood ratios considering all evidence

  - Evaluate synergistic or contradictory relationships between different evidence types

  - Assess the combined weight of evidence

Analysis:

- Determine the cumulative support for competing propositions

- Identify evidential conflicts or synergies between different evidence types

- Quantify the value added by each additional evidence type

This protocol addresses the growing demand for interdisciplinary evidence evaluation in modern forensic casework [6].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Analytical Tools for Bayesian Forensic Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| Bayesian Network Software | Graphical creation and probability calculation | Implementing complex probabilistic models for case evaluation |

| Template Bayesian Networks | Pre-structured models for common forensic scenarios | Accelerating model development and ensuring methodological consistency [6] |

| Empirical Transfer/Persistence Databases | Source of quantitative data for probability assignments | Informing realistic parameter estimates for transfer evidence |

| Sensitivity Analysis Tools | Identification of influential model parameters | Focusing resources on highest-impact data collection [4] |

| Likelihood Ratio Calculation Frameworks | Standardized metrics for evidential strength | Providing quantitative measures of evidence value [19] |

Applications in Forensic Evidence Evaluation

Activity Level Propositions

The odds form of Bayes' Theorem finds particularly valuable application in evaluating evidence given activity-level propositions, which concern specific actions rather than source identification. For example, in a case involving fibers matching the suspect's sweater found at a crime scene, relevant activity-level propositions might be:

- H₁: The suspect strangled the victim while wearing the sweater

- H₂: The suspect never visited the crime scene [6]

The likelihood ratio would then assess the probability of finding the fibers under these competing explanations, considering factors such as:

- The probability of fiber transfer given physical contact

- The probability of fiber persistence until collection

- The background prevalence of similar fibers

- The possibility of indirect transfer mechanisms

This approach moves beyond simple source attribution to address the more forensically relevant question of what activities could explain the evidence findings.

Interdisciplinary Evidence Combination

Bayesian networks provide a structured framework for combining different types of forensic evidence within a single analysis. For example, a case involving both DNA evidence and fiber evidence can be evaluated using an extended network that incorporates:

- Separate evidence pathways for each discipline

- Association propositions linking items to activities

- Uncertainties about the relationship between items and activities [6]

This approach enables a more holistic evaluation of the total evidence than separate disciplinary evaluations, properly accounting for dependencies between different types of evidence.

The odds form of Bayes' Theorem provides a mathematically rigorous framework for evaluating forensic evidence given competing propositions. Its implementation through Bayesian networks offers a transparent, structured approach to complex probabilistic reasoning in legal contexts. The methodology supports both single-discipline evaluations and interdisciplinary evidence combination, addressing a critical need in modern forensic practice.

Future research directions include developing more sophisticated template networks for specific forensic scenarios, expanding empirical databases for transfer and persistence probabilities, and creating standardized protocols for sensitivity analysis and validation. As Bayesian methodologies continue to evolve, they promise to enhance the logical foundation of forensic science and improve the administration of justice through more transparent, quantitative evidence evaluation.

Bayesian probability theory, named after Thomas Bayes, provides a formal mathematical framework for updating beliefs in the presence of uncertainty. This approach stands in contrast to classical frequentist statistics by treating probabilities as measures of belief rather than as long-run frequencies. The cornerstone of this framework is Bayes' Theorem, which mathematically describes how to update the probability of a hypothesis based on new evidence [1]. The theorem's power lies in its ability to invert conditional probabilities: moving from the probability of observing data given a hypothesis to the probability that the hypothesis is true given the observed data. This inversion is particularly valuable in scientific fields where researchers must draw conclusions about underlying causes from observed effects.

The application of Bayesian methods has expanded dramatically across diverse scientific disciplines, from forensic science and medicine to machine learning and drug development. In forensic science specifically, Bayesian networks have emerged as a powerful tool for evaluating evidence under activity-level propositions, where the complexity of circumstances requires a transparent and structured approach to reasoning under uncertainty [4] [5]. The flexibility of the Bayesian framework allows researchers to incorporate both data-driven information and expert knowledge into a coherent probabilistic model, making it particularly suited for real-world scientific problems where multiple sources of uncertainty must be reconciled.

Theoretical Foundations of Bayes' Theorem

Mathematical Formulation

Bayes' Theorem provides a mathematical rule for inverting conditional probabilities. The theorem is stated mathematically as follows:

P(A|B) = [P(B|A) × P(A)] / P(B) [1]

Where:

- P(A|B) is the posterior probability - the probability of hypothesis A given evidence B

- P(B|A) is the likelihood - the probability of evidence B given that hypothesis A is true

- P(A) is the prior probability - the initial probability of hypothesis A before observing evidence B

- P(B) is the marginal probability - the total probability of evidence B across all possible hypotheses

This deceptively simple formula provides a rigorous method for updating beliefs in light of new evidence. The theorem can be derived from the definition of conditional probability, where the probability of both A and B occurring together, P(A∩B), can be expressed as either P(A|B)×P(B) or P(B|A)×P(A). Setting these equal and solving for P(A|B) yields Bayes' Theorem [1].

Conceptual Framework

The power of Bayesian reasoning extends beyond its mathematical formulation to its conceptual framework for scientific inference. This framework involves:

- Prior Probability (P(A)): Representing initial beliefs about a hypothesis before collecting new data

- Likelihood (P(B|A)): Expressing how probable the observed data is under the hypothesis

- Posterior Probability (P(A|B)): Representing updated beliefs about the hypothesis after considering the evidence

- Marginal Likelihood (P(B)): Serving as a normalizing constant that ensures probabilities sum to one

This Bayesian inference process creates a continuous cycle of knowledge updating, where today's posterior probabilities become tomorrow's priors as new evidence emerges. The framework explicitly acknowledges and incorporates prior knowledge while providing a mathematically coherent mechanism for updating beliefs based on empirical observations [1].

Bayesian Networks for Complex Reasoning

Representation and Structure

Bayesian networks (BNs) are graphical models that represent complex probabilistic relationships among multiple variables. They combine graph theory with probability theory to provide a natural tool for dealing with uncertainty and complexity in scientific reasoning [22]. A Bayesian network consists of two components:

- A directed acyclic graph (DAG) where nodes represent random variables and edges represent conditional dependencies between variables

- Conditional probability distributions for each node given its parents in the graph

The key advantage of Bayesian networks is their ability to represent the full joint probability distribution over all variables in a compact form by leveraging conditional independence relationships. For a set of variables X₁, X₂, ..., Xₙ, the chain rule of probability states that the joint probability is P(X₁, X₂, ..., Xₙ) = ΠP(Xᵢ|X₁, ..., Xᵢ₋₁). In a Bayesian network, each variable Xᵢ is conditionally independent of its non-descendants given its parents, allowing the joint distribution to be simplified to P(X₁, X₂, ..., Xₙ) = ΠP(Xᵢ|Parents(Xᵢ)) [22].

Table 1: Components of a Bayesian Network

| Component | Description | Function in the Model |

|---|---|---|

| Nodes | Represent random variables | Capture key factors in the problem domain |

| Edges | Represent conditional dependencies | Show direct influences between variables |

| Conditional Probability Tables (CPTs) | Quantify relationships between variables | Specify P(node|parents) for discrete variables |

| Prior Probabilities | Initial beliefs for root nodes | Represent knowledge before evidence |

Inference in Bayesian Networks

The primary computational task in Bayesian networks is probabilistic inference - calculating posterior probabilities of query variables given observed evidence. Consider the classic "water sprinkler" example [22]:

- Cloudy (C): A parent node with prior probability P(C=true) = 0.5

- Sprinkler (S): Child of C with P(S=true|C=true) = 0.1 and P(S=true|C=false) = 0.5

- Rain (R): Child of C with P(R=true|C=true) = 0.8 and P(R=true|C=false) = 0.2

- Wet Grass (W): Child of S and R with P(W=true|S=true,R=true) = 0.99, P(W=true|S=true,R=false) = 0.9, P(W=true|S=false,R=true) = 0.9, P(W=true|S=false,R=false) = 0.0

If we observe that the grass is wet (W=true), we can use Bayes' theorem to calculate the posterior probability that the sprinkler was on:

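P(S=true|W=true) = P(S=true, W=true) / P(W=true) = 0.2781 / 0.6471 ≈ 0.430

Here both P(S=true, W=true) and P(W=true) are obtained by summing the joint distribution P(C) × P(S|C) × P(R|C) × P(W|S,R) over the unobserved variables.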

This demonstrates diagnostic reasoning - inferring causes from effects. Bayesian networks also support causal (top-down) reasoning, such as computing the probability that the grass will be wet given that it is cloudy [22].
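The diagnostic query can be reproduced by brute-force enumeration of the joint distribution defined by the conditional probabilities listed above. The following Python sketch is self-contained; the variable and function names are illustrative choices, not part of [22].

```python
from itertools import product

BOOL = (True, False)

# Conditional probability tables from the water sprinkler example.
P_C_TRUE = 0.5
P_S_TRUE_GIVEN_C = {True: 0.1, False: 0.5}
P_R_TRUE_GIVEN_C = {True: 0.8, False: 0.2}
P_W_TRUE_GIVEN_SR = {
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.9,
    (False, False): 0.0,
}


def bernoulli(p_true: float, value: bool) -> float:
    """Probability that a binary variable takes `value` when P(true) = p_true."""
    return p_true if value else 1.0 - p_true


def joint(c: bool, s: bool, r: bool, w: bool) -> float:
    """P(C, S, R, W) = P(C) P(S|C) P(R|C) P(W|S,R)."""
    return (
        bernoulli(P_C_TRUE, c)
        * bernoulli(P_S_TRUE_GIVEN_C[c], s)
        * bernoulli(P_R_TRUE_GIVEN_C[c], r)
        * bernoulli(P_W_TRUE_GIVEN_SR[(s, r)], w)
    )


# Marginal probability of wet grass: sum over all unobserved variables.
p_w = sum(joint(c, s, r, True) for c, s, r in product(BOOL, repeat=3))

# Joint probabilities of each candidate cause together with wet grass.
p_s_and_w = sum(joint(c, True, r, True) for c, r in product(BOOL, repeat=2))
p_r_and_w = sum(joint(c, s, True, True) for c, s in product(BOOL, repeat=2))

p_s_given_w = p_s_and_w / p_w
p_r_given_w = p_r_and_w / p_w

print(f"P(W=true)        = {p_w:.4f}")          # 0.6471
print(f"P(S=true|W=true) = {p_s_given_w:.4f}")  # 0.4298
print(f"P(R=true|W=true) = {p_r_given_w:.4f}")  # 0.7079
```

With these tables, rain is the more probable explanation for wet grass than the sprinkler.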

Diagram: Bayesian network for the water sprinkler example.

Methodological Approaches and Experimental Protocols

Narrative Bayesian Networks for Forensic Evidence Evaluation

The evaluation of forensic fibre evidence given activity-level propositions presents particular challenges due to case-specific circumstances and multiple interacting factors. A recently proposed methodology uses narrative Bayesian networks to address this complexity through a simplified construction approach [4] [5]. The experimental protocol for this methodology involves:

- Case Scenario Development: Creating illustrative case scenarios that capture the essential elements of forensic fibre evidence evaluation

- Network Structure Design: Developing BN structures that align with the narrative of the case while maintaining computational tractability

- Parameter Estimation: Specifying conditional probability distributions based on empirical data, expert knowledge, or a combination of both

- Sensitivity Analysis: Assessing how the evaluation changes with variations in input data and assumptions

This approach emphasizes transparent incorporation of case information, making the reasoning process more accessible to both experts and legal professionals [4]. The narrative format enhances user-friendliness and facilitates interdisciplinary collaboration by aligning with successful approaches in related forensic disciplines like forensic biology.

Bayesian Clinical Reasoning with Structured and Unstructured Data

In clinical medicine, Bayesian networks face the challenge of integrating both structured tabular data (lab results, demographic information) and unstructured text data (clinical notes, consultation summaries). A recent study proposed and compared different architectures for this integration in the context of pneumonia diagnosis [23]:

Experimental Protocol for Clinical Bayesian Networks:

- Data Generation: Creating an artificial yet realistic dataset containing both structured variables (symptoms, background factors) and unstructured clinical text

- Model Architectures:

  - BN-gen-text: A generative approach that models the distribution of text representations directly

  - BN-discr-text: A discriminative approach that uses text classifiers integrated with the BN

- Baseline Models:

  - Standard BN without text variables

  - Enhanced BN with additional observed symptoms

  - Discriminative neural network using both tabular and text data

- Evaluation: Comparing posterior diagnostic probabilities across different evidence scenarios

This protocol allows researchers to investigate the impact of different modeling approaches for integrating textual information into the clinical reasoning process, with the goal of more accurately diagnosing conditions like pneumonia [23].

Table 2: Bayesian Model Comparison for Clinical Reasoning

| Model Type | Data Handling | Advantages | Limitations |

|---|---|---|---|

| Standard BN | Tabular data only | High interpretability, handles uncertainty well | Cannot utilize textual information |

| BN-gen-text | Tabular + generative text | Coherent probabilistic framework | Complex parameter estimation |

| BN-discr-text | Tabular + discriminative text | Effective use of text representations | Less coherent probabilistic semantics |

| Neural Network | Fused tabular and text | Powerful pattern recognition | Black box, poor interpretability |

Advanced Computational Techniques

Efficient Inference Algorithms

Performing exact inference by computing the full joint probability distribution and marginalizing out unwanted variables requires time exponential in the number of nodes, making it computationally intractable for large networks. Variable Elimination is a fundamental exact inference algorithm that efficiently computes marginal probabilities by leveraging the factored structure of the joint distribution [22].

The algorithm works by:

- Organizing the computation around the network structure

- "Pushing sums in" as far as possible when marginalizing out irrelevant variables

- Creating intermediate factors that are reused across computations

- Systematically eliminating variables in an order that minimizes computational complexity

For example, when computing P(W) in the water sprinkler network, we can efficiently compute:

P(W) = Σ_{C,S,R} P(C) × P(S|C) × P(R|C) × P(W|S,R)

By strategically ordering the operations as:

P(W) = Σ_{S,R} P(W|S,R) × [Σ_C P(R|C) × P(S|C) × P(C)]

This approach significantly reduces computational complexity compared to naive computation of the full joint distribution [22].
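The reordering can be sketched in Python for the water sprinkler network. The code below computes P(W=true) twice: once by summing the full joint distribution, and once by first eliminating C into an intermediate factor over (S, R). The names are illustrative, and in a network this small the saving is negligible; the point is only to show that the two orderings agree.

```python
from itertools import product

BOOL = (True, False)

# Conditional probability tables from the water sprinkler example.
p_c = {True: 0.5, False: 0.5}
p_s_true = {True: 0.1, False: 0.5}   # P(S=true | C)
p_r_true = {True: 0.8, False: 0.2}   # P(R=true | C)
p_w_true = {                          # P(W=true | S, R)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.9,
    (False, False): 0.0,
}


def p_s(s: bool, c: bool) -> float:
    return p_s_true[c] if s else 1.0 - p_s_true[c]


def p_r(r: bool, c: bool) -> float:
    return p_r_true[c] if r else 1.0 - p_r_true[c]


# Naive computation: sum the full joint over all assignments of (C, S, R).
p_w_naive = sum(
    p_c[c] * p_s(s, c) * p_r(r, c) * p_w_true[(s, r)]
    for c, s, r in product(BOOL, repeat=3)
)

# Variable elimination: push the sum over C inwards to build an
# intermediate factor f(S, R) = sum_C P(C) P(S|C) P(R|C).
factor_sr = {
    (s, r): sum(p_c[c] * p_s(s, c) * p_r(r, c) for c in BOOL)
    for s, r in product(BOOL, repeat=2)
}

# Then sum the remaining variables against P(W=true | S, R).
p_w_eliminated = sum(p_w_true[sr] * factor_sr[sr] for sr in factor_sr)

print(f"Naive:                {p_w_naive:.4f}")       # 0.6471
print(f"Variable elimination: {p_w_eliminated:.4f}")  # 0.6471
```

The intermediate factor `factor_sr` does not depend on W, so it could be reused for other queries involving S and R without summing over C again.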

Learning from Incomplete Data

Real-world scientific data often contains missing values, presenting challenges for Bayesian network learning. The Node-Average Likelihood (NAL) method provides a consistent and computationally efficient approach for learning BN structures from incomplete data [24]. The methodology involves:

- Theoretical Foundation: Establishing sufficient conditions for the consistency of the NAL estimator across different BN types, including discrete, Gaussian, and conditional Gaussian networks

- Model Identifiability: Proving that the true network structure can be identified from incomplete data under appropriate conditions

- Simulation Studies: Confirming theoretical results through independent simulation studies comparing NAL with Expectation-Maximization (EM) approaches

This research demonstrates that NAL maintains competitive performance with EM algorithms while offering greater computational efficiency, particularly for conditional Gaussian Bayesian networks [24].

Applications in Scientific Research

Forensic Science Applications

Bayesian networks have found particularly valuable applications in forensic science, where reasoning under uncertainty is essential. The evaluation of forensic fibre evidence demonstrates how BNs can address activity-level propositions through:

- Transparent Reasoning: Making the logical structure of forensic evaluation explicit and accessible

- Sensitivity Analysis: Understanding how conclusions depend on various evidential elements

- Interdisciplinary Alignment: Creating consistency with approaches used in other forensic disciplines

- Courtroom Communication: Facilitating clearer explanation of probabilistic reasoning to legal professionals [4] [5]

The narrative approach to BN construction in this context emphasizes the story-like nature of forensic evidence evaluation, where different hypotheses about activities compete to explain the observed trace evidence.

Biomedical and Clinical Applications

Bayesian methods support various biomedical applications:

Drug Development:

- Calculating the probability that a drug is effective based on clinical trial results

- Incorporating prior knowledge from earlier trial phases into later phase decisions

- Balancing evidence of efficacy against safety concerns [25] [1]

Diagnostic Testing:

- Determining the positive and negative predictive values of medical tests

- Adjusting interpretations based on population prevalence and individual risk factors

- Combining multiple diagnostic indicators into coherent probability assessments

For example, consider a drug test with 90% sensitivity and 80% specificity used in a population with 5% prevalence. The probability that a random person who tests positive is actually a user is only:

P(User|Positive) = [P(Positive|User) × P(User)] / [P(Positive|User) × P(User) + P(Positive|Non-user) × P(Non-user)] = [0.90 × 0.05] / [0.90 × 0.05 + 0.20 × 0.95] ≈ 19% [1]

This demonstrates how Bayesian reasoning prevents misinterpretation of diagnostic results.
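The arithmetic above can be reproduced with a few lines of Python. This is a minimal sketch, not a clinical tool; the function name `posterior_given_positive` is introduced here purely for illustration.

```python
def posterior_given_positive(sensitivity, specificity, prevalence):
    """Return P(condition | positive test) using Bayes' theorem."""
    # P(Positive | User) * P(User)
    true_positive_mass = sensitivity * prevalence
    # P(Positive | Non-user) * P(Non-user); the false positive rate is 1 - specificity
    false_positive_mass = (1.0 - specificity) * (1.0 - prevalence)
    return true_positive_mass / (true_positive_mass + false_positive_mass)


# Values taken from the drug test example in the text.
ppv = posterior_given_positive(sensitivity=0.90, specificity=0.80, prevalence=0.05)
print(f"P(User | Positive) = {ppv:.3f}")  # prints 0.191
```

Raising the prevalence argument shows how quickly the same test becomes more convincing in a higher-risk population.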

Clinical Reasoning with Multimodal Data

Research Reagent Solutions

Table 3: Essential Research Tools for Bayesian Modeling

| Research Tool | Function | Application Context |

|---|---|---|

| Probabilistic Programming Languages (Stan, PyMC3) | Specify complex Bayesian models | General Bayesian modeling, hierarchical models |

| Bayesian Network Software (GeNIe, Hugin) | Graphical BN construction and inference | Educational purposes, prototype development |

| Bayesplot Color Schemes | Visualization of posterior distributions | Model checking, result communication [26] |

| Node-Average Likelihood (NAL) Algorithm | BN structure learning from incomplete data | Handling missing data in real-world datasets [24] |

| Variable Elimination Algorithm | Exact inference in BNs | Probabilistic queries in moderate-sized networks [22] |

| Neural Text Representations | Integrating unstructured text into BNs | Clinical reasoning with narrative notes [23] |

| Narrative BN Templates | Standardized frameworks for evidence evaluation | Forensic fiber analysis, activity level propositions [4] |

Bayesian methods provide a powerful, coherent framework for scientific reasoning under uncertainty. From theoretical foundations to practical applications in fields ranging from forensic science to clinical medicine, the Bayesian approach offers principled methods for incorporating both data and prior knowledge into scientific conclusions. The ongoing development of computational methods - including efficient inference algorithms, techniques for handling incomplete data, and approaches for integrating diverse data types - continues to expand the applicability of Bayesian reasoning across scientific domains. As these methods become more accessible and computational power increases, Bayesian approaches are poised to play an increasingly central role in scientific research, particularly in complex, real-world problems where uncertainty is inherent and multiple sources of evidence must be reconciled.

From Theory to Practice: Implementing Bayesian Methods in Forensics

The evaluation of evidence, whether in a forensic laboratory or a clinical trial, is a fundamental scientific process. The Likelihood Ratio (LR) has emerged as a powerful and coherent statistical framework for quantifying the strength of evidence, providing a standardized measure for reasoning under uncertainty. Rooted in Bayesian probability theory, the LR enables researchers to update their beliefs about competing propositions based on new data [16] [27]. This technical guide explores the LR as a quantitative measure of evidential value, focusing specifically on its application within forensic evidence evaluation and its intrinsic relationship with Bayes' theorem.

The LR provides a method for comparing how well evidence supports one hypothesis against an alternative. This approach has gained significant traction in forensic science as practitioners seek objective quantitative methods to convey the meaning of evidence to legal decision-makers [16] [28]. The framework is equally valuable in diagnostic medicine and pharmaceutical research, where it helps assess the diagnostic value of tests or the strength of clinical findings [29] [30] [31].

Theoretical Foundations

Bayes' Theorem and the Role of the Likelihood Ratio

Bayes' Theorem, named after Reverend Thomas Bayes, provides the mathematical foundation for updating probabilities based on new evidence [32] [27]. In the context of evidence evaluation, it is typically expressed in its odds form:

Posterior Odds = Prior Odds × Likelihood Ratio [16]

This equation separates the fact-finder's ultimate degree of belief (posterior odds) into their initial belief before considering the evidence (prior odds) and the weight of the evidence itself, quantified by the Likelihood Ratio [16]. The prior odds represent the fact-finder's initial assessment of the relative probabilities of the propositions based on all other information. The posterior odds represent the updated assessment after considering the new evidence. The Likelihood Ratio acts as the bridge between these two states of knowledge, quantifying how much the evidence should change one's beliefs [27].

Formally, the Likelihood Ratio is defined as the ratio of two probabilities: the probability of observing the evidence (E) if the first hypothesis (H1) is true, divided by the probability of observing the same evidence if the second hypothesis (H2) is true:

LR = P(E|H1) / P(E|H2) [32] [30] [31]
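The odds form follows from writing Bayes' Theorem once for each hypothesis and dividing, so that the marginal probability P(E) cancels. A short derivation sketch in LaTeX, using the symbols H1, H2 and E defined above and assuming P(E) > 0 and P(H2|E) > 0:

```latex
\begin{align}
P(H_1 \mid E) &= \frac{P(E \mid H_1)\,P(H_1)}{P(E)}, \qquad
P(H_2 \mid E) = \frac{P(E \mid H_2)\,P(H_2)}{P(E)} \\[4pt]
\underbrace{\frac{P(H_1 \mid E)}{P(H_2 \mid E)}}_{\text{posterior odds}}
  &= \underbrace{\frac{P(E \mid H_1)}{P(E \mid H_2)}}_{\text{LR}}
     \times
     \underbrace{\frac{P(H_1)}{P(H_2)}}_{\text{prior odds}}
\end{align}
```

Because P(E) drops out, the expert can report the LR without knowing the prior odds, which remain the responsibility of the fact-finder.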

Interpreting the Likelihood Ratio Value

The value of the LR provides direct insight into the strength and direction of the evidence. The further the LR is from 1, the stronger the evidence. The interpretation scale for LRs, as suggested by Jeffreys and others, provides a qualitative meaning to the quantitative values [33].

Table 1: Interpretation of the Likelihood Ratio Value

| LR Value | Strength of Evidence | Interpretation |

|---|---|---|

| > 10,000 | Decisive | Extreme support for H1 over H2 [33] [34]. |

| 1,000 - 10,000 | Very Strong | Very strong support for H1 over H2 [33]. |

| 100 - 1,000 | Strong | Strong support for H1 over H2 [33]. |

| 10 - 100 | Moderate | Moderate support for H1 over H2 [33]. |

| 1 - 10 | Limited | Weak support for H1 over H2 [33]. |

| 1 | None | The evidence is equally probable under both hypotheses; it has no evidential value [30] [31]. |

| 0.1 - 1 | Limited | Weak support for H2 over H1 [33]. |

| 0.01 - 0.1 | Moderate | Moderate support for H2 over H1 [33]. |

| 0.001 - 0.01 | Strong | Strong support for H2 over H1 [33]. |

| < 0.001 | Decisive | Extreme support for H2 over H1 [33] [34]. |
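Table 1 can be expressed as a simple lookup. The sketch below copies the thresholds from the table as printed, including the fact that the H2 side has no separate "Very Strong" band. Two things are assumptions made here for illustration: the function name `verbal_scale`, and the handling of boundary values (for example LR = 10), which the table leaves ambiguous and which are assigned to the stronger category.

```python
def verbal_scale(lr):
    """Map a likelihood ratio to (strength label, favoured hypothesis) per Table 1."""
    if lr <= 0:
        raise ValueError("a likelihood ratio must be positive")
    if lr == 1:
        return "None", None  # evidence equally probable under both hypotheses
    if lr > 1:
        # Support for H1 over H2
        if lr > 10_000:
            return "Decisive", "H1"
        if lr >= 1_000:  # boundary placement is an assumption
            return "Very Strong", "H1"
        if lr >= 100:
            return "Strong", "H1"
        if lr >= 10:
            return "Moderate", "H1"
        return "Limited", "H1"
    # Support for H2 over H1 (bands as printed in Table 1)
    if lr < 0.001:
        return "Decisive", "H2"
    if lr <= 0.01:  # boundary placement is an assumption
        return "Strong", "H2"
    if lr <= 0.1:
        return "Moderate", "H2"
    return "Limited", "H2"


for value in (4600, 20.4, 1, 0.55, 0.0005):
    print(value, verbal_scale(value))
```

A verbal label of this kind is a communication aid only; the numerical LR carries the actual evidential weight.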

The Likelihood Ratio in Forensic Evidence Evaluation

Framework for Forensic Interpretation

In forensic science, the LR paradigm is increasingly advocated as a normative approach for expert testimony [16] [28]. The framework requires the examiner to define two mutually exclusive propositions, typically proposed by the prosecution and the defense. The role of the forensic expert is to evaluate the evidence under these two propositions and report the LR to the court [16].

For a piece of evidence E, the standard hypotheses are:

- H1 (Prosecution's Proposition): The evidence originated from the suspect.

- H2 (Defense's Proposition): The evidence originated from someone other than the suspect (a random member of the population).

The LR is then calculated as LR = P(E|H1) / P(E|H2). An LR of 1000 would mean that the observed evidence is 1000 times more likely if the suspect is the source than if a random person from the population is the source. This provides the trier of fact with a clear, quantitative measure of the evidence's strength, which they can then combine with other case information using Bayes' theorem [16] [28].
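An LR of 1000 is a statement about the evidence, not about the probability that the suspect is the source. The following sketch makes this concrete by combining the same LR with different prior odds. The pool sizes and the "one suspect among N equally likely potential sources" prior are hypothetical assumptions chosen only for illustration; they are not taken from any cited case or guideline.

```python
def posterior_probability(prior_odds, lr):
    """Apply the odds form of Bayes' theorem and convert the result to a probability."""
    posterior_odds = prior_odds * lr
    return posterior_odds / (1.0 + posterior_odds)


LR = 1000.0  # value used in the text

# Hypothetical prior: the suspect is one of n equally likely potential sources,
# so the prior odds in favour of H1 are 1 : (n - 1).
for n_sources in (2, 100, 10_000, 1_000_000):
    prior_odds = 1.0 / (n_sources - 1)
    prob = posterior_probability(prior_odds, LR)
    print(f"potential sources: {n_sources:>9,}  P(H1 | E) = {prob:.4f}")
```

With even prior odds the posterior probability is close to 1, whereas with a pool of 10,000 potential sources the same LR leaves it below 10%. Equating the LR with the posterior probability is the error commonly called the prosecutor's fallacy.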

Application to Categorical and Digital Evidence

The LR framework is versatile and can be applied to various evidence types. For categorical count data, such as that encountered in digital forensics (e.g., patterns of user behavior), a Bayesian model can be developed to compute the LR in closed form [28]. In such applications, user-generated events are categorized, and the LR measures the relative probability of the observed pattern of counts under the two competing propositions about the user's identity. This approach provides a statistically rigorous method for comparing behavioral patterns from known and unknown sources [28].
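One way a closed-form LR can arise for categorical counts is through a Dirichlet-multinomial model. The sketch below is an illustrative assumption and is not necessarily the model used in [28]. Under H1 the questioned counts come from the same user as the known counts, so the Dirichlet prior is first updated with the known counts; under H2 they come from a different user whose category probabilities follow the prior alone. The function names, the flat prior and the example counts are all hypothetical.

```python
from math import exp, lgamma


def log_marginal(counts, alpha):
    """Log Dirichlet-multinomial marginal likelihood of `counts`.

    The multinomial coefficient is omitted because it is identical under
    both propositions and cancels in the likelihood ratio.
    """
    total_alpha = sum(alpha)
    total_count = sum(counts)
    value = lgamma(total_alpha) - lgamma(total_alpha + total_count)
    for a, c in zip(alpha, counts):
        value += lgamma(a + c) - lgamma(a)
    return value


def log_lr_same_source(questioned, known, alpha):
    """log LR for H1 (same user) versus H2 (different user)."""
    updated_alpha = [a + k for a, k in zip(alpha, known)]  # prior updated by known counts
    return log_marginal(questioned, updated_alpha) - log_marginal(questioned, alpha)


alpha = [1.0, 1.0, 1.0]   # hypothetical flat prior over three event categories
known = [40, 5, 5]        # hypothetical counts from the known user
similar = [16, 2, 2]      # questioned counts with a similar pattern
different = [2, 2, 16]    # questioned counts with a different pattern

print("LR, similar pattern:  ", exp(log_lr_same_source(similar, known, alpha)))
print("LR, different pattern:", exp(log_lr_same_source(different, known, alpha)))
```

Working on the log scale with `lgamma` avoids overflow for large counts. In practice the prior parameters would need to be justified from relevant reference data rather than set to a flat default.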

Quantitative Application and Protocols

Calculation Methods and Formulae

The calculation of the LR depends on the nature of the test or evidence. For diagnostic tests with dichotomous outcomes (positive/negative), the LR can be derived directly from the test's sensitivity and specificity [30] [31].

Table 2: Likelihood Ratio Calculation for Diagnostic Tests

| Test Result | Formula | Component Definitions |

|---|---|---|

| Positive (LR+) | LR+ = Sensitivity / (1 - Specificity) | Sensitivity: Probability a diseased patient tests positive (P(T+|D+)). |

| Negative (LR-) | LR- = (1 - Sensitivity) / Specificity | Specificity: Probability a disease-free patient tests negative (P(T-|D-)). |

For instance, a test with 92% sensitivity and 99.98% specificity has an LR+ of 0.92 / (1 - 0.9998) = 0.92 / 0.0002 = 4600. A positive result is therefore 4600 times more likely in a patient with the disease than in a patient without it [29], which corresponds to very strong support for the disease on the scale in Table 1. For evidence that is not dichotomous, such as a continuous measurement or a result with multiple categories, an LR can be calculated for each specific result or category, providing more granular information than a simple positive/negative dichotomy [32] [30].
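The two formulas in Table 2 translate directly into code. This is a minimal sketch with illustrative function names; the guard clauses are an added assumption to avoid division by zero for a perfectly specific or perfectly non-specific test.

```python
def lr_positive(sensitivity, specificity):
    """LR+ = sensitivity / (1 - specificity)."""
    if specificity >= 1.0:
        raise ValueError("LR+ is undefined when specificity equals 1")
    return sensitivity / (1.0 - specificity)


def lr_negative(sensitivity, specificity):
    """LR- = (1 - sensitivity) / specificity."""
    if specificity <= 0.0:
        raise ValueError("LR- is undefined when specificity equals 0")
    return (1.0 - sensitivity) / specificity


# Values from the worked example in the text.
print(f"LR+ = {lr_positive(0.92, 0.9998):.0f}")
print(f"LR- = {lr_negative(0.92, 0.9998):.4f}")
```

For this test a negative result has an LR- of roughly 0.08, so a negative finding also carries evidential weight, in the opposite direction.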

From Likelihood Ratio to Post-Test Probability

A critical application of the LR is updating the prior probability of a hypothesis (e.g., disease presence) to a posterior probability after incorporating the test result. This process involves converting probabilities to odds, multiplying by the LR, and converting back to probabilities [30] [31].

Protocol for Calculating Post-Test Probability:

- Establish Pre-Test Probability: Based on prevalence, clinical judgment, or base rates. Example: Pre-test probability = 0.1 (10%).

- Convert Pre-Test Probability to Pre-Test Odds: Pre-test Odds = Pre-test Probability / (1 - Pre-test Probability). Example: 0.1 / (1 - 0.1) = 0.1 / 0.9 ≈ 0.111.

- Calculate Post-Test Odds: Post-test Odds = Pre-test Odds × LR. Example (using LR+ of 20.4 from Table 3): 0.111 × 20.4 ≈ 2.264.

- Convert Post-Test Odds to Post-Test Probability: Post-test Probability = Post-test Odds / (1 + Post-test Odds). Example: 2.264 / (1 + 2.264) = 2.264 / 3.264 ≈ 0.694.

This means a pre-test probability of 10% is updated to a post-test probability of about 69.4% after a positive test result with an LR of 20.4 [30]. This workflow can be visualized as a sequential updating process.
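The protocol maps onto one small function with a line per step. The sketch below uses an illustrative function name and the example values from the text.

```python
def post_test_probability(pre_test_probability, lr):
    """Update a pre-test probability with a likelihood ratio."""
    # Step 2: probability -> odds
    pre_test_odds = pre_test_probability / (1.0 - pre_test_probability)
    # Step 3: multiply by the likelihood ratio
    post_test_odds = pre_test_odds * lr
    # Step 4: odds -> probability
    return post_test_odds / (1.0 + post_test_odds)


# Step 1: pre-test probability of 10%, combined with the LR of 20.4 from the text.
p = post_test_probability(0.10, 20.4)
print(f"Post-test probability = {p:.3f}")  # prints 0.694
```

The function assumes a pre-test probability strictly between 0 and 1; a value of exactly 1 would require division by zero in the odds conversion.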

Illustrative Example from Clinical Research

The following table summarizes data from a study on smoking history as a diagnostic indicator for obstructive airway disease (OAD). The LR is calculated for each category of smoking exposure, demonstrating how LRs can utilize all available data without forcing dichotomization [30].

Table 3: Likelihood Ratios for Smoking History and Obstructive Airway Disease

| Smoking Habit (Pack Years) | OAD Present (n=148) | OAD Absent (n=144) | Likelihood Ratio (LR) | 95% CI |

|---|---|---|---|---|

| ≥ 40 | 42 (28.4%) | 2 (1.4%) | (42/148)/(2/144) = 20.4 | 5.04 to 82.8 |

| 20 - 40 | 25 (16.9%) | 24 (16.7%) | (25/148)/(24/144) = 1.01 | 0.61 to 1.69 |

| 0 - 20 | 29 (19.6%) | 51 (35.4%) | (29/148)/(51/144) = 0.55 | 0.37 to 0.82 |

| Never smoked | 52 (35.1%) | 67 (46.5%) | (52/148)/(67/144) = 0.76 | 0.57 to 1.00 |

Source: Adapted from Straus et al., 2000, as cited in [30].

This example shows that a history of ≥40 pack years provides strong positive evidence for OAD (LR=20.4), while a history of 0-20 pack years provides weak evidence against OAD (LR=0.55) [30]. The category of 20-40 pack years is uninformative (LR≈1). This multi-level approach preserves more diagnostic information than a simple "smoker/non-smoker" dichotomy.
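The category-specific LRs in Table 3 can be recomputed from the raw counts. The sketch below also attaches an approximate 95% interval using a normal approximation on the log scale. That method is an assumption made here, since the source does not state how its intervals were derived, although the results appear to agree with the tabulated intervals to the precision shown. The function name and the ASCII row labels are illustrative.

```python
from math import exp, log, sqrt


def lr_with_ci(present, n_present, absent, n_absent, z=1.96):
    """Category LR with an approximate confidence interval (log-scale normal approximation)."""
    lr = (present / n_present) / (absent / n_absent)
    # Standard error of log(LR) for a ratio of two independent proportions
    se_log = sqrt(1 / present - 1 / n_present + 1 / absent - 1 / n_absent)
    lower = exp(log(lr) - z * se_log)
    upper = exp(log(lr) + z * se_log)
    return lr, lower, upper


# (OAD present, OAD absent) counts per category, from Table 3
counts = {
    ">= 40": (42, 2),
    "20 - 40": (25, 24),
    "0 - 20": (29, 51),
    "Never smoked": (52, 67),
}
N_PRESENT, N_ABSENT = 148, 144

results = {
    label: lr_with_ci(present, N_PRESENT, absent, N_ABSENT)
    for label, (present, absent) in counts.items()
}
for label, (lr, lower, upper) in results.items():
    print(f"{label:<13} LR = {lr:5.2f}  95% CI {lower:.2f} to {upper:.1f}")
```

The wide interval for the highest exposure category reflects that only two disease-free patients fall into it, so the point estimate of 20.4 should be read with that uncertainty in mind.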

Advanced Considerations and Uncertainty

The Scientist's Toolkit: Key Reagents and Models

Implementing the LR framework requires careful consideration of the underlying statistical models and data requirements.

Table 4: Essential Components for LR Calculation

| Component | Function / Description | Considerations |

|---|---|---|

| Reference Data | Data from known populations (e.g., known non-diseased and diseased populations; relevant background population in forensics). | Used to estimate the probability densities P(E|H1) and P(E|H2). Quality and representativeness are critical [16]. |

| Probabilistic Model | A statistical model (e.g., binomial, normal, kernel density, multivariate model) that describes the distribution of the evidence. | The choice of model can significantly impact the computed LR. The model must be a reasonable fit for the data [16] [28]. |

| Sensitivity Analysis | A procedure to evaluate how changes in assumptions or model parameters affect the resulting LR. | Assesses the robustness and uncertainty of the LR. An essential step for validating the conclusion [16]. |
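A sensitivity analysis in the sense of Table 4 can be as simple as recomputing the LR over a plausible range of an uncertain input. The sketch below reuses the diagnostic test example (sensitivity 0.92) and varies the specificity. The alternative specificity values are hypothetical and are chosen only to show how strongly the LR+ reacts when the false positive rate is very small.

```python
def lr_positive(sensitivity, specificity):
    """LR+ = sensitivity / (1 - specificity)."""
    return sensitivity / (1.0 - specificity)


def specificity_sweep(sensitivity, specificities):
    """Return {specificity: LR+} for each candidate specificity value."""
    return {spec: lr_positive(sensitivity, spec) for spec in specificities}


# 0.9998 is the value from the text; the others are hypothetical alternatives.
sweep = specificity_sweep(0.92, (0.9990, 0.9995, 0.9998, 0.9999))
for spec, lr in sweep.items():
    print(f"specificity = {spec:.4f}  ->  LR+ = {lr:,.0f}")
```

A shift in specificity from 0.9990 to 0.9999 changes the LR+ by a factor of ten, which is why the quality and size of the reference data used to estimate such rates matter so much for the reported value.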