Assessing Linkage Feasibility: A 2025 Guide to Quantifying Identifier Discriminatory Power for Researchers



Abstract

This article provides a comprehensive framework for researchers and drug development professionals to assess the feasibility of data linkage projects by quantifying the discriminatory power of identifiers. It covers foundational concepts like Shannon entropy and record uniqueness, explores deterministic, probabilistic, and machine learning linkage methods, addresses common challenges in data quality and privacy, and outlines validation techniques to ensure linkage accuracy. With the increasing reliance on linked real-world data in clinical research and regulatory submissions, this guide offers practical strategies for planning and executing robust, high-quality data linkages that are essential for generating reliable evidence.

The Foundation of Linkage: Understanding Discriminatory Power and Record Uniqueness

Defining Discriminatory Power in Data Linkage

In the context of data linkage, discriminatory power refers to the ability of a set of identifiers to correctly distinguish between different entities and accurately link records that belong to the same entity across multiple datasets. This concept is fundamental to assessing the feasibility of any linkage project, as the quality and usefulness of the resulting linked data depend heavily on the accuracy of this matching process. A fundamental step in any linkage effort is the prospective assessment of linkage feasibility, which depends largely on the quantity and quality of the identifying information available in the data sources being linked [1].

Identifiers possess varying levels of informativeness based on their discriminatory power, or the number of unique values they contain. This power determines how likely it is that two records will agree on an identifier simply by chance. For instance, sex (with only 2 unique values) has low discriminatory power, as randomly matched record pairs will agree 50% of the time by chance alone. In contrast, month of birth (with 12 unique values) is more informative, with random matches occurring only 8.3% of the time. When a matched pair agrees on month of birth, it is less likely due to chance and more likely that the records represent the same individual [1].

The principle of discriminatory power extends beyond simply counting unique values. Additional information can be gleaned from the values themselves—matches on rarely occurring values are less likely to occur by chance than matches on frequently occurring values. For example, a match on a rare surname such as "Lebowski" provides stronger evidence of a true match than a match on a common surname like "Smith" [1]. Combining multiple identifiers further increases the number of unique value combinations (or "pockets"), thereby decreasing the probability that two records will match by chance alone and enhancing the overall discriminatory power of the linkage strategy.
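
The chance-agreement figures above can be reproduced with a short sketch. It assumes that two records are drawn independently, so the probability of agreeing on an identifier is the sum of squared value proportions (1/k when k values are equally common), and that the identifiers being combined are independent of each other. The data and the function name `chance_agreement` are illustrative, not taken from the cited source.

```python
from collections import Counter
from math import prod


def chance_agreement(values):
    """Probability that two independently drawn records agree on this identifier.

    Computed as the sum of squared value proportions, which reduces to 1/k
    when the k unique values are equally common.
    """
    counts = Counter(values)
    n = len(values)
    return sum((c / n) ** 2 for c in counts.values())


# Hypothetical, evenly distributed toy data.
sex = ["F", "M"] * 6               # 2 equally common values
birth_month = list(range(1, 13))   # 12 equally common values

p_sex = chance_agreement(sex)             # 1/2
p_month = chance_agreement(birth_month)   # 1/12

# Assuming the identifiers are independent, chance agreement on both
# is the product of the individual probabilities (1/24).
p_both = prod([p_sex, p_month])

print(f"sex: {p_sex:.3f}, month: {p_month:.3f}, both: {p_both:.3f}")
```

When values are unevenly distributed, the same function returns a probability above 1/k, which is why a common surname is weaker evidence than a rare one.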

Quantitative Assessment of Discriminatory Power

Theoretical Framework and Metrics

The discriminatory power of identifiers can be quantified using formal mathematical approaches, enabling researchers to objectively evaluate and compare different linkage strategies. Shannon entropy provides one method for this quantification, calculated as the sum of the absolute value of (p*log₂(p)), where p is the proportion of records captured by each unique value of that identifier or set of identifiers [1].

To illustrate this calculation, consider a simple dataset with one variable (sex) and three records: one male and two females. The discriminatory power of sex in this scenario would be equal to abs((0.33)(log₂0.33)) + abs((0.67)(log₂0.67)) = 0.92. Using this method, researchers can measure the discriminatory power of each available identifier or set of identifiers and rank them from most to least discriminatory. Notably, two variables with the same number of unique values can have varying levels of discriminatory power depending on the distribution of unique values across the records [1].
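
The worked example above can be checked with a minimal sketch. The function name `shannon_entropy` and the second, balanced dataset are illustrative assumptions; the first dataset is the one-male, two-female example from the text.

```python
from collections import Counter
from math import log2


def shannon_entropy(values):
    """Sum of abs(p * log2(p)) over the unique values of an identifier."""
    counts = Counter(values)
    n = len(values)
    return sum(abs((c / n) * log2(c / n)) for c in counts.values())


# The three-record example from the text: one male, two females.
h_example = shannon_entropy(["M", "F", "F"])

# Same number of unique values, but evenly distributed (hypothetical data).
h_balanced = shannon_entropy(["M", "F", "M", "F"])

print(round(h_example, 2))   # 0.92
print(round(h_balanced, 2))  # 1.0
```

The second call illustrates the closing point of the paragraph: both variables have two unique values, but the evenly distributed one has the higher entropy.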

For the purposes of record linkage, the minimum set of variables needed to successfully link two or more datasets is that combination of variables for which each record is identified uniquely (the mean number of records in each pocket is approximately 1.00). Variable combinations that approach this threshold of approximately one record per pocket in both datasets to be linked are most likely to succeed [1].

Comparative Discriminatory Power of Common Identifiers

Research has empirically evaluated the discriminatory power of various personal identifiers commonly used in health data linkage. One French study assessed six identifiers—date of birth, maiden name, usual last name, first and second Christian names, and gender—using a probabilistic record linkage method based on likelihood ratios [2].

The findings demonstrated that date of birth consistently exhibited the best discriminating power, followed by first and last names. The study also revealed that including a poorly discriminating identifier like gender did not improve results, and adding a second Christian name, which was often missing, actually increased linkage errors. The research further suggested that using a phonetic treatment adapted to the French language slightly improved linkage results compared to the Soundex algorithm [2].

Table 1: Relative Discriminatory Power of Common Identifiers in Data Linkage

| Identifier | Relative Discriminatory Power | Key Characteristics | Considerations |
|---|---|---|---|
| Date of Birth | Highest | Fixed at birth, numerous unique values | Stable over time |
| Last Name | High | Cultural variations in distribution | May change after marriage |
| First Name | High | Cultural and generational patterns | Nicknames common |
| Maiden Name | Moderate to High | Fixed at birth | Availability issues |
| Middle Name | Moderate | Often missing or abbreviated | Limited utility if incomplete |
| ZIP/Post Code | Variable | Many unique values | Changes with relocation |
| Gender | Lowest | Only 2 possible values | Limited discriminatory value |

Methodological Approaches for Assessing Discriminatory Power

Record Uniqueness Assessment

A critical methodology for evaluating linkage feasibility involves assessing record uniqueness within datasets. This approach examines the frequency distributions for every possible combination of variables in the dataset to identify variable combinations that uniquely identify records [1].

The implementation involves calculating the percentage of unique records in a dataset identified by each combination of variables available for release. Researchers can request that data vendors examine this percentage, focusing on variable combinations that approach the threshold of approximately one record per pocket in both datasets to be linked. Variable combinations meeting this threshold are likely to support successful linkage [1].

For hypothesis-testing research, adhering to the approximately 1.00 record per pocket threshold is advised, as false positives and false negatives introduced during linkage can bias subsequent statistical analyses. For exploratory research, this threshold can be relaxed. SAS code for assessing record uniqueness in a dataset has been developed and made publicly available by Tiefu Shen [1].
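
As a rough illustration of the uniqueness assessment described above, the following Python sketch tabulates the mean number of records per pocket and the percentage of unique records for every combination of variables. It is not the SAS code referenced in the text; the function name `uniqueness_report`, the variable names, and the four toy records are assumptions made for illustration.

```python
from collections import Counter
from itertools import combinations


def uniqueness_report(records, variables):
    """Return (combination, mean records per pocket, percent unique records)
    for every non-empty combination of the given variables."""
    report = []
    n = len(records)
    for size in range(1, len(variables) + 1):
        for combo in combinations(variables, size):
            # A "pocket" is one distinct combination of values.
            pockets = Counter(tuple(rec[v] for v in combo) for rec in records)
            mean_per_pocket = n / len(pockets)
            pct_unique = 100 * sum(1 for c in pockets.values() if c == 1) / n
            report.append((combo, mean_per_pocket, pct_unique))
    return report


# Hypothetical toy dataset.
records = [
    {"sex": "F", "birth_year": 1980, "zip": "60601"},
    {"sex": "F", "birth_year": 1980, "zip": "60614"},
    {"sex": "M", "birth_year": 1980, "zip": "60601"},
    {"sex": "M", "birth_year": 1975, "zip": "60601"},
]

report = uniqueness_report(records, ["sex", "birth_year", "zip"])
for combo, mean_per_pocket, pct_unique in report:
    print(combo, round(mean_per_pocket, 2), round(pct_unique, 1))
```

In this toy data only the full three-variable combination reaches 1.00 record per pocket. Enumerating every combination grows exponentially with the number of variables, so on real data it may be necessary to cap the combination size.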

Probabilistic Linkage Methods

Probabilistic linkage methods provide a framework for formally incorporating the discriminatory power of identifiers into the linkage decision process. Unlike deterministic approaches that require exact matches, probabilistic methods allow for errors in matching variables and assign a probability of a correct match [3].

The probabilistic approach uses the discriminatory power of identifiers to calculate agreement weights for each identifier. Rare values that match contribute more evidence toward a true match than common values. The method determines the level of discriminatory power needed to link two records with a certain desired degree of confidence (e.g., 95%) by comparing the combined discriminatory power (assessed as weights) over all available variables with the difference between the current weight and the desired weight [1].

This methodology allows researchers to set an information threshold for the discriminatory power needed to successfully match files while meeting a prespecified false-positive rate. Once this information threshold is met, researchers can identify the minimum discriminatory power needed, avoiding unnecessary costs or compromises to subject confidentiality [1].
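
The weighting idea can be sketched with the m- and u-probabilities of the Fellegi-Sunter model, where m is the probability of agreement given a true match and u is the probability of agreement given a non-match. The log2(m/u) form used here is the common textbook formulation rather than something spelled out in this article, and every numeric value below is a hypothetical placeholder, not an estimate from real data.

```python
from math import log2


def agreement_weight(m, u):
    """Weight added when a field agrees: log2(m / u)."""
    return log2(m / u)


def disagreement_weight(m, u):
    """Weight added (a negative number) when a field disagrees."""
    return log2((1 - m) / (1 - u))


# Hypothetical (m, u) pairs. u approximates chance agreement, so it is
# large for sex and small for a rare surname.
fields = {
    "sex": (0.98, 0.5),
    "birth_month": (0.97, 1 / 12),
    "surname": (0.95, 0.001),
}


def pair_score(agreements):
    """Total weight for a record pair, given per-field agreement flags."""
    total = 0.0
    for name, (m, u) in fields.items():
        if agreements[name]:
            total += agreement_weight(m, u)
        else:
            total += disagreement_weight(m, u)
    return total


score_all = pair_score({"sex": True, "birth_month": True, "surname": True})
score_sex_only = pair_score({"sex": True, "birth_month": False, "surname": False})
print(round(score_all, 2), round(score_sex_only, 2))
```

A pair is then classified by comparing its total score with a threshold chosen to meet the desired confidence level. Agreement on sex alone contributes less than one unit of weight, which is why it cannot offset disagreement on more discriminating fields.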

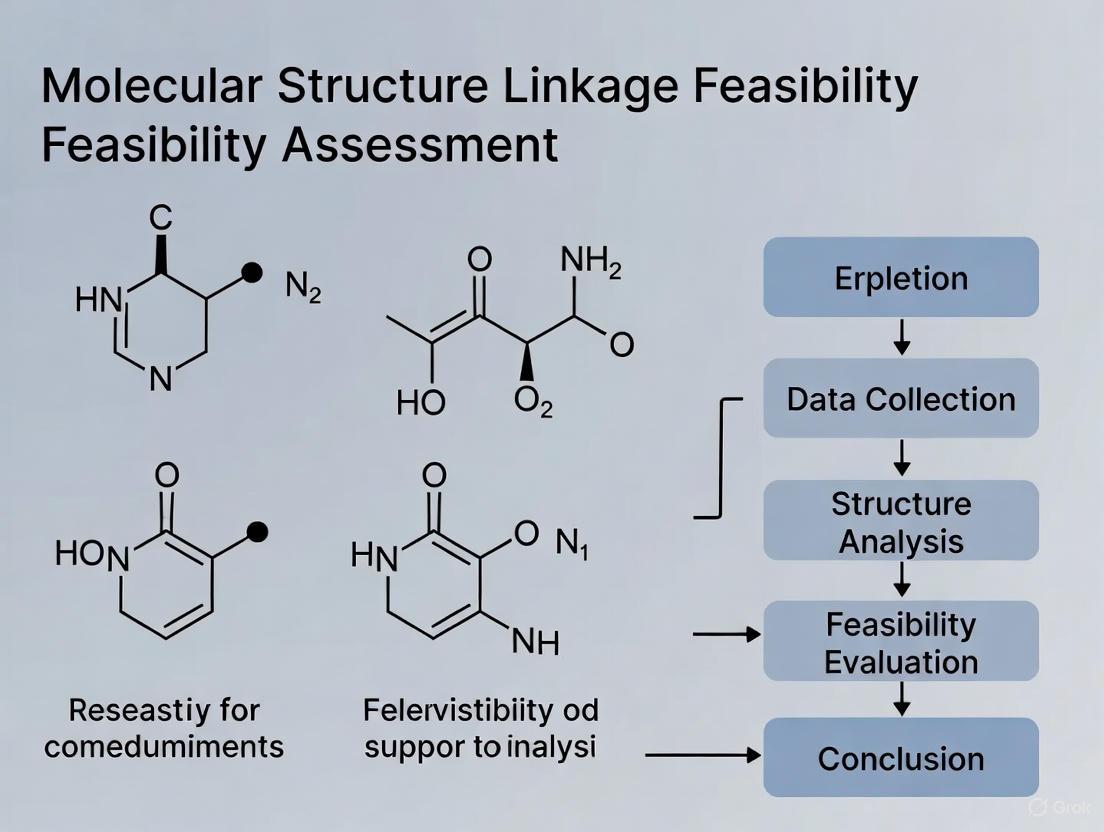

Data Linkage Methodology Workflow

Comparative Analysis of Linkage Methods

Deterministic vs. Probabilistic Linkage

Data linkage methods primarily fall into two categories: deterministic and probabilistic, each with distinct strengths, weaknesses, and appropriate use cases based on the discriminatory power of available identifiers [4] [3].

Deterministic linkage, also known as exact matching, requires records to agree exactly on every character of every matching variable to be declared a match. This method can be implemented in a single step (comparing all identifiers at once) or multiple steps (iterative approach with progressively less restrictive criteria). The primary advantage of deterministic linkage is its simplicity and lower computational requirements. However, it treats all identifiers as equally important, failing to account for their varying discriminatory power, and is vulnerable to even minor data errors [4] [3].
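
A multi-step deterministic linkage can be sketched as follows. This is one possible reading of the iterative approach, assuming that each pass matches only records left unlinked by earlier passes and accepts a link only when exactly one candidate remains. The function name, field names, and records are hypothetical.

```python
def deterministic_link(file_a, file_b, passes):
    """Iterative exact matching: each pass uses a less restrictive key.

    Returns a list of (index_in_a, index_in_b, key_fields) tuples.
    """
    links = []
    matched_a, matched_b = set(), set()
    for keys in passes:
        # Index the still-unmatched records of file_b by their exact key values.
        index = {}
        for j, rec in enumerate(file_b):
            if j not in matched_b:
                index.setdefault(tuple(rec[k] for k in keys), []).append(j)
        for i, rec in enumerate(file_a):
            if i in matched_a:
                continue
            candidates = [
                j for j in index.get(tuple(rec[k] for k in keys), [])
                if j not in matched_b
            ]
            # Accept only unambiguous exact matches.
            if len(candidates) == 1:
                j = candidates[0]
                links.append((i, j, keys))
                matched_a.add(i)
                matched_b.add(j)
    return links


# Hypothetical records; the second surname differs by one spelling variant.
file_a = [
    {"surname": "LEBOWSKI", "dob": "1942-12-04", "sex": "M"},
    {"surname": "SMITH", "dob": "1980-01-15", "sex": "F"},
]
file_b = [
    {"surname": "LEBOWSKI", "dob": "1942-12-04", "sex": "M"},
    {"surname": "SMYTH", "dob": "1980-01-15", "sex": "F"},
]

passes = [("surname", "dob", "sex"), ("dob", "sex")]
links = deterministic_link(file_a, file_b, passes)
print(links)
```

The second pair is linked only in the relaxed pass, which shows both the benefit of iterating and the risk: dropping surname reduces discriminatory power, so later passes are more likely to produce false matches on larger files.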

Probabilistic linkage incorporates the discriminatory power of identifiers through a weight-based system that accounts for the relative importance of each identifier. This method allows for partial agreements and can accommodate errors in matching variables. While more computationally intensive, probabilistic linkage typically achieves higher match rates and better accuracy, particularly when data quality issues are present [3].

Table 2: Comparison of Deterministic and Probabilistic Linkage Methods

| Characteristic | Deterministic Linkage | Probabilistic Linkage |
|---|---|---|
| Matching Principle | Exact agreement on all specified identifiers | Probability-based with partial agreements |
| Identifier Weighting | All identifiers treated equally | Weights based on discriminatory power |
| Error Tolerance | Low | High |
| Computational Demand | Lower | Higher |
| Data Quality Dependence | High | Moderate |
| Typical Match Rate | Lower | Higher |
| False Match Control | Good with high-quality data | Good across data quality spectrum |
| Implementation Complexity | Simple | Complex |

Practical Implementation Considerations

Successfully implementing a data linkage project requires careful consideration of multiple factors beyond theoretical discriminatory power. Data quality issues significantly impact practical discriminatory power, as identifiers may contain typographical errors, missing values, or inconsistent formatting [4].

Temporal disparities between datasets present another critical challenge. Addresses, phone numbers, and names change over time, and if information is collected at different times for different data sources, linkage success may be diminished. This affects both the mechanical linkage process and the functional utility of the linked data, potentially leading to misinterpretation of joint data patterns [1].

The regulatory and ethical environment also constrains linkage feasibility. Researchers must consider the original purpose of data collection, any limitations on data use, data ownership, and governance requirements before embarking on a linkage project. These considerations should be evaluated to the extent possible before applying for grant funding [1].

The Researcher's Toolkit for Data Linkage

Implementing a robust data linkage strategy requires both methodological expertise and practical tools. The following toolkit outlines essential components for assessing and utilizing discriminatory power in linkage projects.

Table 3: Essential Research Toolkit for Data Linkage

| Tool/Resource | Function/Purpose | Implementation Examples |
|---|---|---|
| Data Cleaning Algorithms | Standardize formats and correct errors | SAS, Stata, OpenRefine |
| Phonetic Encoding | Account for spelling variations | Soundex, NYSIIS |
| String Comparators | Measure similarity between strings | Jaro-Winkler distance |
| Record Uniqueness Assessment | Evaluate identifier combinations | SAS code by Tiefu Shen |
| Probabilistic Linkage Frameworks | Implement weight-based matching | Fellegi-Sunter model |
| Deterministic Linkage Algorithms | Perform exact matching | Iterative matching routines |

Data cleaning and standardization form the foundation of successful linkage. Techniques include parsing fused strings (e.g., separating full names into first, middle, and last names), standardizing formats (e.g., dates, case), and handling missing values consistently across datasets. The extent of cleaning should be proportional to data quality—more intensive cleaning is warranted when data quality is poor or few identifiers are available [4].
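
The cleaning steps named above (parsing fused strings, standardizing formats, handling missing values consistently) might look like the following sketch. The parsing rule (first token, last token, everything in between as middle) and the list of accepted date formats are simplifying assumptions. In particular, treating slash-separated dates as month/day/year is a choice that must be confirmed against each data source.

```python
from datetime import datetime


def parse_full_name(full_name):
    """Split a fused name into upper-cased first, middle, and last parts.

    Missing parts are returned as None so that absent values are
    represented the same way in every dataset.
    """
    parts = (full_name or "").strip().upper().split()
    if not parts:
        return {"first": None, "middle": None, "last": None}
    return {
        "first": parts[0],
        "middle": " ".join(parts[1:-1]) or None,
        "last": parts[-1] if len(parts) > 1 else None,
    }


def standardize_date(text, formats=("%Y-%m-%d", "%m/%d/%Y")):
    """Return the date in ISO format (YYYY-MM-DD), or None if unparseable."""
    if not text:
        return None
    for fmt in formats:
        try:
            return datetime.strptime(text.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


print(parse_full_name("  jeffrey  j lebowski "))
print(standardize_date("12/04/1942"))
```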

Advanced matching techniques enhance practical discriminatory power. Phonetic codes like Soundex or NYSIIS transform strings based on pronunciation, accounting for spelling variations. String comparator metrics quantify similarity between strings: the Jaro and Jaro-Winkler comparators score shared characters and transpositions, while edit-distance measures count insertions, deletions, and substitutions. These techniques help compensate for data quality issues while maintaining linkage accuracy [4] [3].
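
To make the string comparator idea concrete, here is a minimal sketch of the Jaro similarity and its Jaro-Winkler extension. The prefix scale of 0.1 and the four-character prefix cap are the conventional defaults, assumed here rather than taken from the cited sources. Production work would normally rely on a tested library rather than this illustration.

```python
def jaro(s1, s2):
    """Jaro similarity in [0, 1], based on matching characters and transpositions."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Characters count as matching only within this distance of each other.
    window = max(max(len1, len2) // 2 - 1, 0)
    match1 = [False] * len1
    match2 = [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(len2, i + window + 1)):
            if not match2[j] and s2[j] == ch:
                match1[i] = match2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Count matched characters that appear in a different order.
    k = 0
    out_of_order = 0
    for i in range(len1):
        if match1[i]:
            while not match2[k]:
                k += 1
            if s1[i] != s2[k]:
                out_of_order += 1
            k += 1
    t = out_of_order / 2
    return (matches / len1 + matches / len2 + (matches - t) / matches) / 3


def jaro_winkler(s1, s2, prefix_scale=0.1):
    """Jaro similarity boosted for a shared prefix of up to four characters."""
    base = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:4], s2[:4]):
        if a != b:
            break
        prefix += 1
    return base + prefix * prefix_scale * (1 - base)


print(round(jaro("MARTHA", "MARHTA"), 4))          # 0.9444
print(round(jaro_winkler("MARTHA", "MARHTA"), 4))  # 0.9611
```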

[Diagram: Discriminatory Power Assessment Process]

Discriminatory power serves as the cornerstone of successful data linkage, determining both the feasibility and quality of linked datasets. This comprehensive analysis demonstrates that effective linkage requires careful assessment of identifier quality, appropriate method selection, and meticulous attention to implementation details. By quantitatively evaluating discriminatory power through methods like Shannon entropy and record uniqueness assessment, researchers can make informed decisions about linkage strategies before committing significant resources.

The comparative analysis reveals a tradeoff between deterministic and probabilistic approaches, with the optimal choice dependent on data quality, identifier availability, and research objectives. As data privacy concerns grow and access to unique identifiers becomes more restricted, understanding and leveraging the discriminatory power of partial identifiers becomes increasingly crucial. Future advancements in linkage methodology will likely focus on enhancing discriminatory power through sophisticated algorithms while maintaining strict privacy protections, ensuring that data linkage remains a powerful tool for evidence-based research and policy development.

In health services research and drug development, linking data from disparate sources—such as clinical trials, electronic health records, and administrative claims—is essential for generating comprehensive evidence. The feasibility of any linkage project hinges on the discriminatory power of the identifiers available within the datasets. This guide objectively compares the informational value of common identifiers, from highly specific ones like Social Security Numbers to broader demographic data, providing a framework for researchers to assess linkage feasibility for their studies.

Comparative Informational Value of Key Identifiers

The ability of an identifier to correctly link records depends on its "discriminatory power," or the number of unique values it can take. Combining identifiers increases the number of unique combinations ("pockets"), making it less likely that records match by chance alone [1]. The table below summarizes the key characteristics and relative power of common identifiers.

Table 1: Comparative Analysis of Key Identifiers Used in Data Linkage

| Identifier | Type | Discriminatory Power & Notes | Common Data Quality Issues | Best Suited For |
|---|---|---|---|---|
| Social Security Number (SSN) | Unique Identifier | Very High; Near-perfect accuracy if available and correct [5]. | May be entered incorrectly or represent the primary subscriber for an entire family in claims data [1]. | Deterministic linking in datasets with verified, unique SSNs [5]. |
| Personal Health Number | Unique Identifier | Very High; Similar to SSN where available [5]. | Country-specific; may not be available in all datasets. | Deterministic linking within a single healthcare system. |
| Full Name | Quasi-Identifier | Moderate to High; Power increases with rarity (e.g., "Lebowski" > "Smith") [1]. | Typos, nicknames, hyphenation, cultural variations, and name changes [5]. | Probabilistic linking, often used in blocking strategies. |
| Date of Birth | Quasi-Identifier | Moderate to High; Month of birth alone is more informative than sex (1/12 chance of random agreement vs. 1/2) [1], and the full date more so. | Formatting inconsistencies, data entry errors. | A core component of most probabilistic matching models. |
| Address/Postal Code | Quasi-Identifier | Moderate to High; Informative, especially in smaller geographic areas. | High volatility over time, formatting differences (e.g., "St." vs. "Street") [1] [5]. | Probabilistic linking; useful for validating other identifiers. |
| Sex | Quasi-Identifier | Low; Records matched randomly will agree on sex 50% of the time by chance [1]. | Binary coding may not reflect gender identity; generally immutable. | A supporting variable in probabilistic models; low power alone. |

Quantifying Discriminatory Power and Linkage Feasibility

Before embarking on a linkage project, researchers must determine if a reliable and accurate link is possible with the available identifiers. This involves quantitative assessment of identifier quality.

Measuring Discriminatory Power with Shannon Entropy

The discriminatory power of an identifier or a set of identifiers can be quantified using Shannon entropy. This metric accounts for both the number of unique values and their distribution across the records [1].

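The formula for Shannon entropy (H) is $H = -\sum_i p_i \log_2 p_i$, where $p_i$ is the proportion of records captured by the $i$-th unique value of the identifier. Because each term $p_i \log_2 p_i$ is zero or negative, this is the same as summing the absolute values of the terms.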

Experimental Protocol for Calculating Entropy:

- Data Preparation: Isolate the identifier variable(s) to be assessed from your dataset.

- Frequency Calculation: For a single identifier, calculate the frequency and proportion (p_i) of each unique value in the dataset.

- Entropy Computation: For each unique value, compute the expression abs(p_i * log2(p_i)). Sum these values across all unique values to obtain the total entropy for that identifier.

- Combination Assessment: To evaluate a combination of identifiers (e.g., sex, date of birth, and postal code), first create a new composite variable representing each unique combination of values. Then, calculate the entropy for this new composite variable using the same method.

- Interpretation: A higher entropy value indicates greater discriminatory power. Identifiers or combinations with higher entropy are better suited for record linkage. Researchers should aim for a combination of variables where the mean number of records per unique combination ("pocket") is approximately 1.00, which indicates a high probability of uniquely identifying records [1].
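
The protocol above, including the composite-variable step, can be sketched in a few lines of Python. The records, field names, and helper names are hypothetical. The composite variable is built as a tuple of the selected field values.

```python
from collections import Counter
from math import log2


def entropy(values):
    """Sum of abs(p_i * log2(p_i)) over the unique values."""
    n = len(values)
    return sum(abs((c / n) * log2(c / n)) for c in Counter(values).values())


def composite(records, fields):
    """Build one composite value per record from the selected fields."""
    return [tuple(rec[f] for f in fields) for rec in records]


# Hypothetical toy dataset.
records = [
    {"sex": "F", "birth_year": 1980, "zip": "60601"},
    {"sex": "F", "birth_year": 1980, "zip": "60614"},
    {"sex": "M", "birth_year": 1980, "zip": "60601"},
    {"sex": "M", "birth_year": 1975, "zip": "60601"},
]

h_sex = entropy(composite(records, ["sex"]))
h_year = entropy(composite(records, ["birth_year"]))
h_all = entropy(composite(records, ["sex", "birth_year", "zip"]))

# Mean number of records per pocket for the full combination.
mean_per_pocket = len(records) / len(set(composite(records, ["sex", "birth_year", "zip"])))

print(round(h_sex, 3), round(h_year, 3), round(h_all, 3), mean_per_pocket)
```

For a dataset of N records, entropy cannot exceed log2(N); the full combination here reaches that ceiling (2 bits for 4 records), which coincides with a mean of 1.00 record per pocket.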

Table 2: Workflow for Assessing Record Uniqueness in a Dataset

| Step | Action | Tool/Code |
|---|---|---|
| 1 | Examine frequency distributions for every possible combination of variables in the dataset. | SAS code for this purpose is available from the North American Association of Central Cancer Registries (NAACCR) [1]. |
| 2 | Identify variable combinations that result in a mean number of records per pocket close to ~1.00. | Custom scripts in R or Python can also be developed to perform this analysis. |
| 3 | Select the minimal set of variables that achieves the desired uniqueness threshold for the linkage. | The chosen set should balance discriminatory power with data privacy concerns. |

Methodologies for Data Linking

Once identifiers are assessed, a linking method must be selected. The choice depends on data quality, available identifiers, and privacy constraints [5].

Table 3: Comparison of Core Data Linking Methodologies

| Feature | Deterministic Linking | Probabilistic Linking | Machine Learning Linking |
|---|---|---|---|
| Basis | Exact match on unique identifiers or a combination of quasi-identifiers [5]. | Statistical probability based on weights assigned to multiple fields [5]. | Learned patterns from training data [5]. |
| Accuracy | High when identifiers are perfect and complete, but fails with any imperfection [5]. | Robust to errors and variations; may produce false positives/negatives requiring manual review [5]. | Potentially the highest accuracy, adapts to data nuances [5]. |
| Data Needs | Requires a common, high-quality key (e.g., National ID) or exact agreement on a set of variables [5]. | Works with non-unique, imperfect identifiers (name, DOB, address) [5]. | Requires a large, labeled training set for supervised learning [5]. |
| Complexity | Simple to implement and compute [5]. | Computationally intensive; requires tuning of m- and u-probabilities [5]. | High complexity; requires significant ML expertise and resources [5]. |
| Best Application | Datasets with high-quality, shared unique IDs or standardized demographic data. | Most real-world scenarios with no perfect IDs or with data errors [5]. | Complex linking problems where high accuracy is paramount and resources are available. |

The following diagram illustrates the workflow for a probabilistic linkage process, which is commonly used in research settings where perfect identifiers are unavailable.

[Diagram: Probabilistic Record Linkage Workflow]

The Scientist's Toolkit: Essential Reagents for Linkage Research

This table details key solutions and materials required for implementing a data linkage study, particularly in the context of clinical and real-world data.

Table 4: Essential Research Reagents and Solutions for Data Linkage

| Tool/Solution | Function & Explanation |
|---|---|
| Tokenization Solutions | Enable privacy-preserving linkage by de-identifying patient data and replacing identifiers with irreversible tokens. This allows connecting trial data to EHRs or claims without exposing PII [6]. |
| Privacy-Preserving Record Linkage (PPRL) | A suite of computational techniques that allow records to be matched across organizations without the original, identifiable data ever being shared or revealed [5]. |
| Linkage Feasibility Framework | A structured process to assess variable overlap, data quality, and ethical/legal restrictions before starting a project. This ensures the linkage is technically and legally possible [1]. |
| Statistical Software (SAS/R/Python) | Used for calculating Shannon entropy, assessing record uniqueness, performing probabilistic matching, and analyzing the final linked dataset. SAS code for record uniqueness is publicly available [1]. |
| Fellegi-Sunter Model | The foundational probabilistic model for record linkage. It calculates m-probabilities (agreement given a true match) and u-probabilities (agreement given a non-match) to score record pairs [5]. |
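
The tokenization entry in the table can be illustrated with a keyed hash. This sketch assumes that a token is an HMAC-SHA256 digest of normalized identifiers under a secret key shared by the data holders. That is one simple construction chosen for illustration, not a description of any particular commercial tokenization product or PPRL protocol, and the field names and key are hypothetical.

```python
import hashlib
import hmac
import unicodedata


def normalize(value):
    """Upper-case the value and keep only letters and digits."""
    text = unicodedata.normalize("NFKD", str(value))
    return "".join(ch for ch in text if ch.isalnum()).upper()


def make_token(record, fields, secret_key):
    """Derive a token from the selected identifiers using a keyed hash."""
    message = "|".join(normalize(record[f]) for f in fields)
    return hmac.new(secret_key, message.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key and records; a real key must be generated and stored securely.
key = b"example-shared-secret"
trial_record = {"surname": "Lebowski ", "dob": "1942-12-04"}
claims_record = {"surname": "LEBOWSKI", "dob": "1942/12/04"}

token_a = make_token(trial_record, ["surname", "dob"], key)
token_b = make_token(claims_record, ["surname", "dob"], key)
print(token_a == token_b)  # True
```

Because the digest changes completely with any difference that survives normalization, this kind of token supports only exact (deterministic) matching; a single typo in the surname would produce a different token.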

Selecting the right identifiers and understanding their informational value is the cornerstone of assessing linkage feasibility. While unique identifiers like SSNs offer the highest discriminatory power, their absence or unreliable quality often necessitates the use of quasi-identifiers and robust probabilistic methods. By quantitatively evaluating identifiers using measures like Shannon entropy and adhering to structured experimental protocols, researchers and drug development professionals can design higher-quality, more feasible linkage studies that generate reliable evidence for regulatory and payer decisions.


Shannon entropy, introduced by Claude Shannon in 1948, is a fundamental concept from information theory that quantifies the average level of uncertainty or information inherent in a random variable's possible outcomes [7]. In essence, it measures the expected or "average" amount of information needed to specify the state of a variable, considering the probability distribution across all its potential states. The core intuition is that the informational value of a message is tied to how surprising its content is; a highly likely event carries little information, while a highly unlikely event is much more informative [7].

The entropy, H, of a discrete random variable X is mathematically defined as

$$H(X) = -\sum_{x} p(x) \log p(x)$$

where p(x) represents the probability of each possible outcome x [7]. The higher the entropy, the greater the uncertainty or the more information is required to describe the variable.

This guide frames Shannon entropy within the specific research context of assessing linkage feasibility, which is crucial in health services research when linking datasets like administrative claims and disease registries [1]. A fundamental step in such projects is prospectively evaluating whether a reliable and accurate linkage is possible, which largely depends on the discriminatory power of the available identifiers (e.g., sex, month of birth, Social Security Number) [1]. Shannon entropy provides a powerful, quantitative means to measure this discriminatory power, helping researchers determine if their data contains sufficient information to successfully and accurately link records.

Core Concepts and Linkage Feasibility Assessment

Key Principles of Information Theory

Shannon entropy rests on the principle of surprisal. The information content (or surprisal) of a single event E is defined as I(E) = -log(p(E)), where p(E) is the event's probability [7]. Entropy is then the expected value of this surprisal across all possible events or outcomes for the random variable [7]. This means it quantifies the average level of uncertainty. A key feature is that entropy is maximized when all outcomes are equally likely (e.g., a fair coin toss), representing a state of maximum uncertainty and minimum predictability. Conversely, entropy is zero when the outcome is deterministic (a single outcome has a probability of 1) [7].

The unit of entropy depends on the base of the logarithm used. Using log base 2 gives bits, while the natural logarithm yields nats. In the context of data linkage, the bit is a common and intuitive unit.
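
As a short worked example of the unit conversion, changing the logarithm base only rescales the entropy by a constant factor:

$$H_{\text{nats}}(X) = -\sum_{x} p(x) \ln p(x) = (\ln 2)\, H_{\text{bits}}(X)$$

For a fair coin, $H_{\text{bits}} = -2 \cdot \tfrac{1}{2} \log_2 \tfrac{1}{2} = 1$ bit, which corresponds to $\ln 2 \approx 0.693$ nats.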

Quantifying Discriminatory Power for Linkage

In record linkage, the "random variable" can be thought of as the value of an identifier or a combination of identifiers across all records in a dataset. The discriminatory power of an identifier refers to its ability to uniquely identify a record, which is a function of the number and distribution of its unique values [1].

- Low-Discriminatory Identifiers: Variables with very few unique values, such as sex (2 values), contain little information. Random record pairs will agree on sex 50% of the time by chance alone [1].

- Higher-Discriminatory Identifiers: Variables with more unique values, such as month of birth (12 values), are more informative. The chance of a random match is only 8.3% (1/12) [1]. Identifiers with even more unique values, like a full birth date or SSN, are more powerful still.

The power of combining identifiers is multiplicative. For example, while sex alone has 2 unique values and month of birth has 12, the combination of sex + month of birth creates 2 * 12 = 24 unique "pockets," drastically reducing the probability of a match by chance and increasing the confidence that a matched pair is a true match [1].
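
In entropy terms, the multiplication of pockets becomes addition. Assuming the two identifiers are independent and each is uniformly distributed (idealizations that real data only approximate), the worked arithmetic is:

$$H(\text{sex}, \text{month}) = \log_2(2 \times 12) = \log_2 2 + \log_2 12 \approx 1 + 3.585 = 4.585 \text{ bits}$$

When identifiers are correlated or unevenly distributed, the joint entropy is lower than this sum, so the combination discriminates less well than the pocket count alone suggests.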

Shannon entropy formally quantifies this intuitive understanding. The entropy for an identifier is calculated as the sum of the absolute value of (p * log2(p)) for every unique value of that identifier, where p is the proportion of records captured by each unique value [1]. This calculation accounts not only for the number of unique values but also for their distribution. An identifier with uniformly distributed values will have higher entropy than one where a few values are very common, making it a more powerful discriminator.

Table 1: Discriminatory Power of Common Linkage Identifiers

| Identifier | Number of Unique Values (Theoretical) | Probability of Random Match (Uniform) | Information Content (Typical) |
|---|---|---|---|
| Sex | 2 | 50% | Low |
| Month of Birth | 12 | ~8.3% | Medium |
| 5-Digit ZIP Code | ~100,000 | ~0.001% | High |
| Social Security Number | ~1 billion | ~0.0000001% | Very High |

Shannon Entropy in Action: Experimental Protocols and Applications

Detailed Methodology for Assessing Linkage Feasibility

The following workflow, as outlined by the Agency for Healthcare Research and Quality (AHRQ), details the steps for using Shannon entropy to assess the feasibility of a data linkage project [1].

1. Data Preparation and Identifier Selection:

- Obtain the datasets to be linked.

- Identify the common variables (potential identifiers) available in both datasets. Examples include sex, date of birth, ZIP code, and SSN.

2. Calculate Proportion and Entropy for Each Identifier:

- For each identifier variable, calculate the proportion p of records for each of its unique values. For example, for the variable "sex," calculate the proportion of records that are "male" and "female."

- Compute the Shannon entropy H for the variable using the formula $H(\text{Identifier}) = -\sum_i p_i \log_2 p_i$, where the sum is over all unique values $i$ of the identifier [1].

3. Assess Record Uniqueness:

- Examine the frequency distributions for every possible combination of variables.

- The goal is to find the minimal set of variables for which each record is (almost) uniquely identified, meaning the mean number of records in each value combination "pocket" is approximately 1.00 [1].

- SAS or other statistical code can be used to automate this analysis of record uniqueness across variable combinations.

4. Set an Information Threshold:

- Based on the entropy and uniqueness analysis, researchers can set a threshold for the discriminatory power needed to successfully match files at a prespecified confidence level (e.g., 95%), which in turn bounds the false-positive rate [1].

- This helps avoid paying for or using more identifying information than is necessary, thus protecting subject confidentiality.
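
Steps 3 and 4 can be combined into a search for the smallest identifier set that meets a uniqueness target in both files. The sketch below is one way to do that. The `tolerance` of 1.05 records per pocket is a hypothetical cut-off standing in for "approximately 1.00", and the datasets, variable names, and function names are illustrative.

```python
from collections import Counter
from itertools import combinations


def mean_records_per_pocket(records, combo):
    """Number of records divided by the number of distinct value combinations."""
    pockets = Counter(tuple(rec[v] for v in combo) for rec in records)
    return len(records) / len(pockets)


def minimal_identifier_set(dataset_a, dataset_b, variables, tolerance=1.05):
    """Return the first smallest combination whose mean pocket size is at or
    below the tolerance in both datasets, or None if no combination qualifies."""
    for size in range(1, len(variables) + 1):
        for combo in combinations(variables, size):
            if all(
                mean_records_per_pocket(data, combo) <= tolerance
                for data in (dataset_a, dataset_b)
            ):
                return combo
    return None


# Hypothetical toy datasets.
dataset_a = [
    {"sex": "F", "birth_year": 1980, "zip": "60601"},
    {"sex": "F", "birth_year": 1975, "zip": "60601"},
    {"sex": "M", "birth_year": 1980, "zip": "60614"},
    {"sex": "M", "birth_year": 1975, "zip": "60614"},
]
dataset_b = [
    {"sex": "F", "birth_year": 1980, "zip": "60601"},
    {"sex": "M", "birth_year": 1980, "zip": "60601"},
    {"sex": "M", "birth_year": 1975, "zip": "60614"},
]

chosen = minimal_identifier_set(dataset_a, dataset_b, ["sex", "birth_year", "zip"])
print(chosen)  # ('sex', 'birth_year')
```

Searching from the smallest combinations upward reflects the confidentiality goal in step 4: the search stops as soon as a sufficient set is found, so no additional identifiers are requested.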

Performance Comparison with Traditional Methods

The application of Shannon entropy provides a significant advantage over traditional, less formal methods of assessing linkage feasibility, which might rely on ad hoc judgments about identifier quality.

Table 2: Comparison of Linkage Feasibility Assessment Methods

| Assessment Feature | Traditional / Ad Hoc Methods | Shannon Entropy-Based Method |

|---|---|---|

| Basis of Evaluation | Subjective intuition about identifier "quality." | Quantitative, mathematical measure of information content. |

| Handling of Value Distribution | Often ignores distribution, focusing only on the number of unique values. | Explicitly accounts for the frequency distribution of each unique value (e.g., rare vs. common surnames). |

| Combination of Identifiers | Difficult to objectively gauge the combined power of multiple variables. | Allows for the calculation of entropy for any variable combination, objectively quantifying total discriminatory power. |

| Decision Threshold | Vague and difficult to standardize across projects. | Enables the setting of a precise information threshold tied to a desired confidence level (e.g., 95%). |

| Efficiency | May lead to acquiring more identifying data than necessary, increasing cost and privacy risk. | Helps identify the minimal set of identifiers required, protecting confidentiality. |
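The "Handling of Value Distribution" row can be made concrete with a small sketch. It compares two hypothetical surname columns that have the same number of unique values but very different frequency distributions; the data and the function name are assumptions for illustration only.

```python
import math
from collections import Counter


def shannon_entropy(values):
    """Shannon entropy in bits: H = -sum(p_i * log2(p_i))."""
    counts = Counter(values)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Two hypothetical columns, each with four unique surnames.
balanced = ["Smith"] * 25 + ["Jones"] * 25 + ["Lee"] * 25 + ["Lebowski"] * 25
skewed = ["Smith"] * 97 + ["Jones"] + ["Lee"] + ["Lebowski"]

h_balanced = shannon_entropy(balanced)  # four equally likely values: 2 bits
h_skewed = shannon_entropy(skewed)      # dominated by one value: far lower
print(h_balanced, h_skewed)
```

A count of unique values would score both columns identically, whereas the entropy of the skewed column is much lower because almost every record carries the same surname.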

Advanced Applications and Computational Tools

Beyond Linkage: Shannon Entropy in Biomedical Research

The utility of Shannon entropy extends far beyond data linkage, proving to be a versatile tool in various biomedical research domains.

Drug Target Identification: A seminal study applied Shannon entropy to temporal gene expression data from rat spinal cord development. The core hypothesis was that genes with the highest entropy in their expression patterns over time are the most active participants in a biological process (like development or disease) and thus represent the best putative drug targets. The study found that recognized functional categories, like ionotropic neurotransmitter receptors, were over-represented at the highest entropy levels, validating the approach [8]. This method allows researchers to rank genes by physiological relevance and focus resources on the most promising candidates; in responses to toxins, such candidates often represent less than 10% of the genome [8].

Quantifying Neural Dysregulation: In neuroimaging, Shannon entropy has been used to quantify dysregulation in the limbic system. One study using fMRI and near-infrared spectroscopy (NIRS) found that individuals with greater trait anxiety showed increased entropy in their prefrontal cortex response to aversive stimuli [9]. This suggests that a less regulated neural system exhibits a more disordered, higher entropy signal, providing a quantitative biomarker for psychological traits.

Molecular Property Prediction: In cheminformatics, a Shannon Entropy Framework (SEF) based on molecular string representations (like SMILES) has been used as descriptors in machine learning models. These SEF descriptors, which are low-correlation and sensitive to subtle structural changes, have been shown to significantly enhance the prediction accuracy of molecular properties, such as binding efficiency, sometimes outperforming standard descriptors like Morgan fingerprints [10].

Computational Tools for Entropy Calculation

As the applications of Shannon entropy grow, so does the need for scalable computational tools. Recent research has focused on overcoming the challenge of precisely computing entropy for complex systems, which traditionally requires a number of model-counting queries that grows exponentially with the number of variables.

PSE (Precise Shannon Entropy): A state-of-the-art tool designed for programs modeled by Boolean constraints. PSE optimizes the entropy computation process in two stages: first, it uses a knowledge compilation language (ADD[∧]) to avoid exhaustively enumerating all possible outputs; second, it optimizes model counting queries by caching shared components across queries. This makes precise computation feasible for larger problems [11].

Performance Comparison: In experimental evaluations on 441 benchmarks, PSE solved 55 more instances than the prior state-of-the-art probably approximately correct (PAC) tool, EntropyEstimation. For 98% of the benchmarks solved by both tools, PSE was at least 10 times more efficient, marking a significant advance in scalable, precise entropy computation [11].

Table 3: Comparison of Computational Tools for Shannon Entropy

| Tool Name | Core Methodology | Key Advantage | Demonstrated Performance |

|---|---|---|---|

| PSE (Precise Shannon Entropy) | Knowledge compilation & optimized model counting. | Scalable, precise computation. | Solved 329/441 benchmarks; >10x faster than EntropyEstimation on most shared benchmarks [11]. |

| EntropyEstimation | Probably Approximately Correct (PAC) estimation via uniform sampling. | Avoids output enumeration; good scalability. | Solved 274/441 benchmarks [11]. |
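To see why naive computation scales poorly, consider the brute-force baseline that these tools are designed to avoid. The sketch below is neither PSE nor EntropyEstimation; it simply enumerates every input of a small Boolean "program" and assumes inputs are uniformly distributed. The two example programs and all names are hypothetical.

```python
import math
from collections import Counter
from itertools import product


def output_entropy(program, n_inputs):
    """Exact output entropy in bits, by enumerating all 2**n_inputs inputs.

    Assumes every input bit vector is equally likely.
    """
    counts = Counter(program(bits) for bits in product((0, 1), repeat=n_inputs))
    total = 2 ** n_inputs
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def identity(bits):
    """Hypothetical program that reveals its whole input."""
    return bits


def and_all(bits):
    """Hypothetical program that reveals only the AND of its input bits."""
    return int(all(bits))


h_identity = output_entropy(identity, 3)  # 8 equally likely outputs: 3 bits
h_and = output_entropy(and_all, 3)
print(h_identity, h_and)
```

The loop visits 2**n_inputs inputs, so each additional input bit doubles the work, which is the kind of exponential growth that motivates more scalable approaches.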

The Scientist's Toolkit

Table 4: Essential Research Reagents and Solutions for Entropy-Based Analysis

| Item / Solution | Function in Research |

|---|---|

| Administrative Datasets (e.g., Claims Data) | Provide the raw, real-world data on which linkage feasibility is assessed and entropy of identifiers is calculated [1]. |

| Statistical Software (e.g., SAS, R, Python) | Platforms used to execute code for calculating proportions, Shannon entropy, and record uniqueness across variable combinations [1]. |

| Record Uniqueness Analysis Code | Pre-written scripts (e.g., in SAS) that automate the process of finding variable combinations that uniquely identify records, a key step in feasibility assessment [1]. |

| Gene Expression Datasets (Microarray, RNA-seq) | Provide temporal or condition-specific mRNA abundance data, which serves as the input for calculating gene expression entropy in drug target identification [8]. |

| PSE (Precise Shannon Entropy) Tool | A specialized software tool for performing scalable and precise computation of Shannon entropy on Boolean models, crucial for quantitative information flow analysis in software/program analysis [11]. |

| Shannon Entropy Framework (SEF) Descriptors | A set of numerical descriptors derived from the Shannon entropy of molecular string representations (e.g., SMILES), used as input for machine learning models predicting molecular properties [10]. |

Shannon entropy has evolved from a cornerstone of communication theory into a versatile quantitative tool across scientific disciplines. In the specific context of assessing linkage feasibility, it provides an objective, mathematical framework to measure the discriminatory power of identifiers, enabling researchers to make informed decisions before embarking on complex and costly data linkage projects. By calculating the entropy of individual identifiers and their combinations, researchers can determine if a high-quality link is possible, identify the minimal set of data required, and protect subject confidentiality.

The continued development of advanced computational tools, like PSE, ensures that precise entropy calculations can be performed at scale. Furthermore, its successful application in diverse fields—from identifying drug targets from gene expression data to enhancing molecular property prediction in machine learning—underscores its fundamental utility in extracting meaningful information from complex, noisy biological data. For researchers in health services and drug development, mastering the application of Shannon entropy is a powerful step towards more rigorous, efficient, and successful data-driven research.

In identifier discriminatory power research, the principle of record uniqueness is paramount for ensuring data integrity and linkage feasibility. The "~1.00 Record per Pocket" threshold emerges as a critical benchmark, signifying an ideal state where identifiers possess sufficient discriminatory power to minimize ambiguity. In scientific data analysis, particularly in fields like genomics and drug discovery, the ability to distinguish true biological signals from artifacts introduced during experimental processes like PCR amplification is foundational [12] [13]. This guide objectively compares methodologies and tools for achieving and assessing this threshold, providing researchers with a framework for evaluating the feasibility of record linkage based on the discriminatory power of their identifying systems.

Unique Molecular Identifiers (UMIs), short random nucleotide sequences, provide a powerful model system for this research. They are incorporated into each molecule in a sample library prior to any PCR amplification steps, acting as unique tags that enable precise tracking of individual molecules through the sequencing process [12] [13]. The core challenge that necessitates such identifiers is amplification bias, where certain sequences are overrepresented during PCR, distorting the true representation of molecules in the original sample and complicating the accurate assessment of record uniqueness [14] [13].

Core Methodologies for Assessing Uniqueness with UMIs

The journey from raw sequencing data to a confident count of unique molecules involves sophisticated bioinformatic techniques. Below is a detailed comparison of the primary methods used to account for errors and resolve complex networks of related sequences.

Comparative Analysis of UMI Deduplication Methods

Table 1: Comparison of UMI Deduplication Methods for Assessing Record Uniqueness

| Method Name | Core Principle | Handling of UMI Errors | Key Advantage | Key Limitation |

|---|---|---|---|---|

| Unique [14] | Counts every distinct UMI sequence at a genomic locus as a unique molecule. | Does not account for sequencing errors in the UMI. | Simplicity and computational speed. | Overestimates true molecule count due to artifactual UMIs from errors. |

| Percentile [14] | Removes UMIs whose counts fall below a set threshold (e.g., 1% of mean counts at the locus). | Filters out low-count UMIs assumed to be errors. | Straightforward filtering of likely noise. | Relies on an arbitrary threshold; may remove true, low-abundance molecules. |

| Cluster [14] | Merges all UMIs within a defined edit distance (e.g., 1 or 2) into a single, representative UMI. | Groups similar UMIs to correct for errors. | Effectively reduces error-induced inflation. | Can underestimate count in complex networks from multiple true molecules. |

| Adjacency [14] | Iteratively removes the most abundant UMI in a network and all its neighbors within one edit distance. | Uses count information to resolve networks, allowing for multiple origin molecules. | More accurate for complex networks than the "cluster" method. | Resolution of networks with an edit distance of 2 can be suboptimal. |

| Directional [14] | Forms directional networks based on edit distance and count ratios (na ≥ 2nb − 1) to identify error-derived UMIs. | Models the likelihood that a UMI originated as an error from a "parent" UMI. | High accuracy by leveraging both sequence similarity and abundance. | More computationally complex than other network-based methods. |
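The three simpler counting rules in the table can be sketched for a single locus as follows. This is an illustration under stated assumptions, not the UMI-tools implementation: the UMI sequences, their counts, and the function names are hypothetical, and edit distance is taken to be the number of mismatched bases between equal-length UMIs.

```python
def hamming(a, b):
    """Number of mismatched positions between two equal-length UMIs."""
    return sum(x != y for x, y in zip(a, b))


def count_unique(umi_counts):
    """'Unique': every distinct UMI is counted as one molecule."""
    return len(umi_counts)


def count_percentile(umi_counts, fraction=0.01):
    """'Percentile': keep UMIs with count >= fraction of the mean count."""
    mean = sum(umi_counts.values()) / len(umi_counts)
    return sum(1 for c in umi_counts.values() if c >= fraction * mean)


def count_cluster(umi_counts, max_dist=1):
    """'Cluster': connected components when UMIs within max_dist are linked."""
    seen, components = set(), 0
    for start in umi_counts:
        if start in seen:
            continue
        components += 1
        seen.add(start)
        stack = [start]
        while stack:
            current = stack.pop()
            for other in umi_counts:
                if other not in seen and hamming(current, other) <= max_dist:
                    seen.add(other)
                    stack.append(other)
    return components


# Hypothetical read counts per UMI at one genomic locus.
umi_counts = {"ACGT": 456, "TCGT": 2, "CCGT": 2, "ACAT": 72, "AAAT": 90, "ACAG": 1}

n_unique = count_unique(umi_counts)
n_percentile = count_percentile(umi_counts)
n_cluster = count_cluster(umi_counts)
print(n_unique, n_percentile, n_cluster)
```

On this example the three rules give 6, 5, and 1 molecules respectively, which illustrates the over- and under-counting tendencies listed in the table.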

Detailed Experimental Protocol for UMI Error Analysis

The network-based methods (Cluster, Adjacency, Directional) rely on a defined experimental workflow to quantify and correct for artifactual UMIs. The following protocol, as implemented in tools like UMI-tools, details this process [14]:

- Data Extraction and Grouping: For a given sequencing experiment using UMI-tagged libraries, the UMI sequence and its corresponding genomic alignment coordinates are extracted for each read. All reads are then grouped by their identical genomic coordinates, creating pools of UMIs for each unique locus.

- Edit Distance Calculation: Within each group of UMIs (at the same genomic locus), the average edit distance (number of base differences) between all UMI pairs is calculated. This distribution is compared to a null expectation generated by randomly sampling UMIs. An enrichment of low edit distances indicates the presence of artifactual UMIs created by PCR or sequencing errors [14].

- Network Formation: For each group of UMIs at a shared locus, a network graph is constructed. In this graph, each node represents a unique UMI sequence, and edges connect nodes that are separated by a single edit distance (a one-nucleotide difference) [14].

- Network Resolution (Deduplication): Each connected network is resolved using one of the methods described in Table 1 (e.g., Cluster, Adjacency, Directional). The goal is to reduce the network down to the estimated number of unique molecules that originated prior to amplification. For the Adjacency method, this involves iteratively removing the most abundant node and its connected neighbors until the network is accounted for, with the number of removal steps equaling the estimated unique molecule count [14].

- Quantification and Threshold Application: The final output is a deduplicated count of unique molecules for each genomic locus. Researchers can then analyze the distribution of molecules per locus (or "pocket") and apply thresholds, such as assessing how many loci approach the ideal of ~1.00 record per pocket, indicating high-confidence, unique observations.
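The network resolution step can be sketched for the Adjacency and Directional rules as described above. This is a simplified reading of those descriptions, not the UMI-tools source code; the UMI counts and function names are hypothetical, and edges connect UMIs that differ at exactly one base.

```python
def hamming(a, b):
    """Number of mismatched positions between two equal-length UMIs."""
    return sum(x != y for x, y in zip(a, b))


def count_adjacency(umi_counts):
    """Repeatedly remove the most abundant UMI and its distance-1 neighbours.

    The number of removal steps is the estimated molecule count.
    """
    remaining = dict(umi_counts)
    steps = 0
    while remaining:
        top = max(remaining, key=remaining.get)
        # hamming(top, top) == 0, so the hub itself is removed as well.
        for umi in [u for u in remaining if hamming(top, u) <= 1]:
            del remaining[umi]
        steps += 1
    return steps


def count_directional(umi_counts):
    """Count groups reachable via edges a -> b where n_a >= 2 * n_b - 1."""
    assigned, groups = set(), 0
    for umi in sorted(umi_counts, key=umi_counts.get, reverse=True):
        if umi in assigned:
            continue
        groups += 1
        assigned.add(umi)
        queue = [umi]
        while queue:
            parent = queue.pop()
            for child in umi_counts:
                if (
                    child not in assigned
                    and hamming(parent, child) == 1
                    and umi_counts[parent] >= 2 * umi_counts[child] - 1
                ):
                    assigned.add(child)
                    queue.append(child)
    return groups


# Hypothetical read counts per UMI at one genomic locus.
umi_counts = {"ACGT": 456, "TCGT": 2, "CCGT": 2, "ACAT": 72, "AAAT": 90, "ACAG": 1}

n_adjacency = count_adjacency(umi_counts)
n_directional = count_directional(umi_counts)
print(n_adjacency, n_directional)
```

Here the Adjacency rule needs three removal steps, while the Directional rule yields two groups: the count ratio lets the low-count UMI "ACAG" be absorbed through "ACAT", but keeps the abundant "AAAT" as a separate molecule.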

Workflow Visualization for UMI Uniqueness Assessment

The following diagram illustrates the logical workflow for processing UMIs to assess record uniqueness, from raw data to final threshold application.

The Scientist's Toolkit: Essential Reagents and Computational Solutions

Successful experimentation with UMIs and the assessment of record uniqueness require both specific laboratory reagents and robust bioinformatic tools.

Table 2: Key Research Reagent Solutions for UMI Workflows

| Item / Solution Name | Function / Application in UMI Protocols |

|---|---|

| UMI-Adopted Library Prep Kits | Commercial kits (e.g., from Illumina, Lexogen) that incorporate UMI tagging into the standard library preparation workflow, ensuring UMIs are added before PCR amplification [12] [13]. |

| Unique Molecular Identifiers (UMIs) | Short, random nucleotide sequences (e.g., 10nt = ~1 million unique tags) that uniquely label each individual molecule in a sample, enabling digital counting and error correction [12] [13]. |

| High-Fidelity DNA Polymerase | Essential for the PCR amplification step to minimize the introduction of novel errors within the UMI sequences themselves during library preparation. |

| Bioinformatic Tools (UMI-tools) | A dedicated software package providing implemented network-based methods (directional, adjacency, etc.) for accurate UMI deduplication and error correction [14]. |

| Deduplication-Aware Aligners | Computational pipelines that are aware of and can correctly process and group sequencing reads based on both their UMI sequences and genomic mapping coordinates. |
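The "10nt = ~1 million unique tags" figure in the table follows from four possible bases per position. The sketch below reproduces that arithmetic and adds a standard occupancy estimate of how many distinct tags to expect when a given number of molecules is labelled; the assumption that tags are drawn uniformly at random is mine, and the function names are hypothetical.

```python
def umi_space(length):
    """Number of possible UMIs of a given length (4 bases per position)."""
    return 4 ** length


def expected_distinct_tags(n_molecules, length):
    """Expected number of distinct tags if each molecule draws one uniformly."""
    space = umi_space(length)
    return space * (1 - (1 - 1 / space) ** n_molecules)


space_10 = umi_space(10)
distinct_1000 = expected_distinct_tags(1000, 10)
print(space_10, distinct_1000)
```

When the expected number of distinct tags is close to the number of molecules, tag collisions are rare and each tag approximates a unique record.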

Comparative Performance Data: Accuracy in Molecular Counting

The choice of deduplication method has a direct and measurable impact on quantification accuracy, which is critical for achieving a reliable assessment of record uniqueness.

Table 3: Performance Comparison of UMI Deduplication Methods on Real and Simulated Datasets

| Evaluation Metric | Unique Method | Percentile Method | Cluster Method | Adjacency Method | Directional Method |

|---|---|---|---|---|---|

| Quantification Accuracy (iCLIP Data) | Low (High false-positive rate) | Moderate | Good | Better | Best (Improved reproducibility between replicates) [14] |

| Handling of UMI Sequencing Errors | No | Partial | Yes | Yes | Yes (Most sophisticated) [14] |

| Resolution of Complex UMI Networks | N/A | N/A | Poor (Underestimates count) | Good | Best [14] |

| Impact on Single-Cell RNA-seq Clustering | Suboptimal | N/A | N/A | N/A | Optimal (Improved cell type separation) [14] |

The pursuit of the ~1.00 record per pocket threshold is a quest for data fidelity. As the comparative data demonstrates, simplistic UMI deduplication methods like "Unique" or "Percentile" are insufficient for rigorous assessments of identifier discriminatory power, as they are highly susceptible to inflation from experimental noise. Network-based methods, particularly the "Directional" and "Adjacency" approaches implemented in tools like UMI-tools, provide the necessary sophistication to correct for errors and deliver accurate counts of true unique molecules [14]. The choice of method should be guided by the specific application: while "Cluster" may suffice for simple datasets, the "Directional" method offers superior performance for complex experiments like single-cell RNA-seq or iCLIP, where accurate linkage and quantification are paramount for valid scientific conclusions [14]. By adopting these advanced methodologies, researchers can robustly assess the linkage feasibility of their identifier systems, ensuring that downstream analyses are built upon a foundation of accurate and unique records.

The Critical Role of Feasibility Assessment in Pre-Study Planning

In the scientific method, the step between a conceptual idea and a full-scale study is critical. Feasibility assessment serves as this essential bridge, systematically evaluating whether a proposed study can be successfully implemented within real-world constraints. For research involving data linkage—the integration of records from distinct datasets—a rigorous pre-study feasibility assessment is not merely beneficial but fundamental to ensuring the validity and reliability of subsequent findings. Such assessments methodically examine logistical practicality, resource availability, and, specific to linkage, the technical possibility of accurately merging datasets based on available common identifiers. By identifying potential pitfalls in protocols, data collection methods, and intervention delivery early, researchers can mitigate risks of costly failures, thereby safeguarding valuable resources and upholding the integrity of the scientific process [15] [16].

Within the specialized domain of data linkage, feasibility centers on a core technical question: can the available identifiers (e.g., name, date of birth) correctly and uniquely link records pertaining to the same individual across different databases? The answer hinges on the discriminatory power of these identifiers—a measure of their ability to reduce false matches (linking records that do not belong to the same person) and false non-matches (failing to link records that do belong to the same person). Assessing this power before a study begins is a cornerstone of rigorous research planning, transforming a linkage project from a hopeful endeavor into a calculated, evidence-based initiative [1].

The Feasibility Assessment Framework: A Multi-Faceted Approach

A comprehensive feasibility assessment extends beyond technical data linkage to evaluate the entire research ecosystem. The National Center for Complementary and Integrative Health (NCCIH) framework emphasizes testing methods and procedures to gauge feasibility and acceptability for a larger study [16]. This involves a multi-pronged approach, the key components of which can be visualized in the following workflow.

Figure: Feasibility Assessment Workflow for Pre-Study Planning (diagram not reproduced here)

This workflow underscores that a robust assessment investigates several interconnected domains [15] [16]:

- Protocol and Design Feasibility: Examining the practicality of the study protocol itself, including the clarity of specifications and the burden of data collection procedures on participants.

- Resource and Operational Feasibility: Evaluating the availability of a suitable participant population, adequate staff capacity, and the necessary physical and financial resources.

- Methodological and Technical Feasibility: For data linkage studies, this is the critical evaluation of identifier quality and discriminatory power, which is explored in detail in the following sections.

- Ethical and Regulatory Feasibility: Ensuring the study complies with data governance, determines data ownership, and adheres to restrictions on data use established during the original data collection [1].

Pilot studies are the primary vehicle for conducting these assessments. They are small-scale tests designed not to provide definitive answers to research questions but to field-test the logistical aspects of the future study. The focus is on confidence intervals for feasibility parameters rather than on point estimates of effect sizes, which are often unstable in small samples [16].

Quantitative Feasibility Indicators for Pilot Studies

Effective feasibility assessment relies on concrete, measurable indicators. The table below summarizes key metrics that should be tracked during a pilot study to inform the planning of a larger-scale investigation.

Table: Key Quantitative Feasibility Indicators for Pilot Studies

| Assessment Area | Specific Indicator | Measurement Strategy | Interpretation for Full Study |

|---|---|---|---|

| Participant Recruitment | Recruitment Rate | Number of participants recruited per month [16] | Estimates timeline and resources needed for full-scale recruitment. |

| Data Collection | Assessment Completion Rate | Percentage of participants completing complex assessments (e.g., biospecimens, performance tests) [16] | Identifies overly burdensome protocols needing simplification. |

| Intervention Fidelity | Protocol Adherence | Percentage of intervention sessions delivered as intended, measured by observer checklists [16] | Determines if interventionists require additional training or support. |

| Participant Retention | Drop-out Rate | Percentage of participants lost to follow-up over the study period [16] | Informs strategies to improve retention and minimize bias. |

| Measure Acceptability | Respondent Burden | Average time to complete surveys or questionnaires [16] | Helps refine and shorten measures to reduce participant fatigue. |
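Because pilot samples are small, rate-type indicators in the table are more usefully reported with a confidence interval than as a bare percentage. The sketch below uses the Wilson score interval, which is one common choice and not one prescribed by the cited framework; the pilot numbers are hypothetical.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z = 1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half


# Hypothetical pilot: 24 of 30 enrolled participants completed follow-up.
low, high = wilson_interval(24, 30)
print(f"retention 80%, 95% CI roughly {low:.0%} to {high:.0%}")
```

The width of the interval makes explicit how much uncertainty a 30-person pilot leaves around an observed 80% retention rate.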

The Core of Linkage Feasibility: Discriminatory Power of Identifiers

For studies dependent on linking two or more datasets, the feasibility of the linkage itself is paramount. A fundamental step is the prospective assessment of whether a reliable and accurate linkage is possible given the available identifiers and their quality [1].

The discriminatory power of an identifier refers to its ability to distinguish one individual from another within a dataset. This power is not uniform; it varies significantly based on the number of unique values an identifier can take and the distribution of those values. The principle is that record pairs matched randomly are less likely to agree on an identifier with high discriminatory power simply by chance [1].

- High vs. Low Power Identifiers: A date of birth is highly informative because it has many unique values (365 possibilities per year). In contrast, sex has only two common values, so randomly matched records will agree 50% of the time by chance, making it a weak identifier on its own [1].

- The Role of Rare Values: Matches on rarely occurring values (e.g., an uncommon surname like "Lebowski") provide more confidence that a record pair is a true match than matches on frequently occurring values (e.g., "Smith") [1].

- Quantifying Power with Shannon Entropy: The discriminatory power of an identifier or a set of identifiers can be quantitatively measured using Shannon entropy. This metric is calculated as H = -Σ p_i · log₂(p_i), that is, the sum of the absolute values of p_i · log₂(p_i), where p_i is the proportion of records captured by the i-th unique value. Higher entropy indicates greater discriminatory power, so this method allows researchers to rank identifiers or combinations from most to least discriminatory, guiding the selection of the optimal set for linkage [1].

- Assessing Record Uniqueness: The ultimate goal is to find the minimum combination of variables where each record is uniquely identified (a mean of approximately 1.00 record per "pocket"). Researchers can request data vendors to analyze the percentage of unique records identified by different variable combinations available for release. Combinations approaching this uniqueness threshold in both datasets to be linked are likely to succeed [1].
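The chance-agreement figures quoted above can be reproduced with a short calculation. The sketch assumes two records are drawn independently from the same value distribution, so the probability that they agree is the sum of the squared proportions; the columns and the function name are hypothetical.

```python
from collections import Counter


def chance_agreement(values):
    """Probability that two randomly drawn records agree: sum of p_i ** 2."""
    counts = Counter(values)
    total = sum(counts.values())
    return sum((c / total) ** 2 for c in counts.values())


# Hypothetical columns with equally common values.
sex = ["F", "M"] * 600
birth_month = list(range(1, 13)) * 100

p_sex = chance_agreement(sex)            # two equal values: 0.5
p_month = chance_agreement(birth_month)  # twelve equal values: 1/12
print(p_sex, p_month)
```

The same formula shows why rare values are more persuasive: a common surname contributes a large squared proportion to the chance of agreement, while a rare one contributes almost nothing.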

Experimental Protocol: Evaluating Linkage Feasibility

The following protocol provides a detailed methodology for assessing the feasibility of a proposed data linkage prior to initiating a full-scale study.

Objective: To determine the feasibility of accurately linking Dataset A and Dataset B using available personal identifiers and to identify the minimal set of identifiers required to achieve a high-quality linkage.

Methodology:

- Inventory and Map Identifiers: Compile a complete list of all common personal identifiers available in both Dataset A and Dataset B (e.g., full name, date of birth, sex, address, Social Security Number). Document the format and coding of each variable (e.g., date format: DD/MM/YYYY vs. MM/DD/YYYY).

- Assess Data Quality and Overlap: For each identifier, work with data custodians to assess data completeness (percentage of non-missing values) and potential quality issues. Investigate temporal disparities in data collection that could affect variable stability (e.g., addresses that change over time) [1].

- Calculate Discriminatory Power: For each identifier and for promising combinations of identifiers, calculate the Shannon entropy to quantify their discriminatory power [1].

- Formula: H = -Σ p_i · log₂(p_i), where p_i is the proportion of records in the dataset with the i-th unique value of the identifier.

- Software: This analysis can be performed using statistical software like R or Python (Pandas, NumPy) [17].

- Analyze Record Uniqueness: Using the most promising identifier sets, calculate the mean number of records per "pocket" (i.e., per unique combination of identifiers). The goal is a set where the mean is close to 1.00, indicating most records are unique [1]. SAS code for this purpose has been made publicly available by Tiefu Shen [1].

- Estimate Linkage Confidence: Methods exist, such as that developed by Cook et al., to determine if the combined discriminatory power of the available identifiers meets a pre-specified threshold for a desired degree of linkage confidence (e.g., 95%) while controlling for a prespecified false-positive rate [1].

Expected Output: A feasibility report concluding whether a high-quality linkage is technically possible and recommending the optimal set of identifiers to use. This report should also highlight any data quality issues that need resolution before the full linkage proceeds.
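Steps 2 and 3 of the protocol can be sketched together: measure completeness for each identifier, then rank candidate identifier sets by their joint entropy. This is a minimal illustration with hypothetical records and function names; skipping records that lack any field in a set is an assumption of the sketch, not a rule from the cited method.

```python
import math
from collections import Counter

MISSING = (None, "")


def completeness(records, field):
    """Share of records with a non-missing value for the field."""
    return sum(rec.get(field) not in MISSING for rec in records) / len(records)


def joint_entropy(records, fields):
    """Shannon entropy in bits of the combined value of several fields.

    Records missing any of the fields are skipped (sketch assumption).
    """
    keys = [
        tuple(rec[f] for f in fields)
        for rec in records
        if all(rec.get(f) not in MISSING for f in fields)
    ]
    counts = Counter(keys)
    total = len(keys)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical extract from one of the files to be linked.
records = [
    {"sex": "F", "birth_year": 1980, "zip3": "606"},
    {"sex": "M", "birth_year": 1980, "zip3": "606"},
    {"sex": "F", "birth_year": 1975, "zip3": None},
    {"sex": "M", "birth_year": 1975, "zip3": "607"},
]
candidates = [("sex",), ("sex", "birth_year"), ("sex", "zip3")]
ranking = sorted(candidates, key=lambda f: joint_entropy(records, f), reverse=True)

zip3_complete = completeness(records, "zip3")
print(zip3_complete, ranking)
```

Running the same ranking on both datasets shows whether an identifier set is discriminating in each file, not just in one of them.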

The Researcher's Toolkit for Linkage Feasibility

Successfully executing a linkage feasibility assessment requires a combination of conceptual tools, data sources, and analytical software. The following table details essential "research reagent solutions" for this field.

Table: Essential Research Reagents and Tools for Linkage Feasibility Assessment

| Tool or Resource | Type | Primary Function in Feasibility Assessment |

|---|---|---|

| Shannon Entropy | Analytical Metric | Quantifies the information content and discriminatory power of individual identifiers or combinations thereof [1]. |

| Record Uniqueness Analysis | Analytical Method | Determines the proportion of records in a dataset that can be uniquely identified by a given set of variables, targeting ~1 record per pocket [1]. |

| Personal Identifiers | Data | Common identifiers include date of birth, full name, and sex. The quality and completeness of this data are paramount [2]. |

| TriNetX | Data Query Service | Used in clinical research to query de-identified patient data and get counts of patients who may qualify for a study, informing participant availability [15]. |

| R/Python (Pandas, NumPy) | Statistical Software | Open-source programming environments ideal for performing custom calculations of entropy, record uniqueness, and other statistical analyses on datasets [17]. |

| SAS | Statistical Software | A commercial software platform often used in health research; code exists for performing record uniqueness analysis [1]. |

Visualizing the Linkage Feasibility Decision Pathway

The process of assessing linkage feasibility follows a logical sequence, from initial identifier evaluation to the final go/no-go decision. The following diagram maps this critical pathway, incorporating the core concepts of discriminatory power and uniqueness.

Figure: Linkage Feasibility Decision Pathway (diagram not reproduced here)

A meticulously executed feasibility assessment is the bedrock upon which successful, impactful research is built. It is a critical investment that de-risks projects by systematically evaluating logistical protocols, resource availability, and participant engagement strategies before major resources are committed [15] [16]. Within the specific and growing domain of data linkage research, this assessment must pivot on a rigorous, quantitative evaluation of the discriminatory power of identifiers. Techniques such as Shannon entropy and record uniqueness analysis provide the empirical evidence needed to determine if a proposed linkage is mechanically possible and statistically sound [1].

Ignoring this step risks two fundamental errors: creating linked datasets riddled with false connections or failing to connect records that should be linked, either of which can introduce profound bias into subsequent analyses [1]. Therefore, integrating a thorough feasibility assessment, particularly one that rigorously tests the power of linkage variables, is not a preliminary administrative task. It is a fundamental scientific responsibility. By adopting these practices, researchers and drug development professionals can ensure their studies are not only conceptually elegant but also methodologically robust and ethically compliant, thereby maximizing the validity and utility of their findings.

Linking in Action: Methodologies for Maximizing Discriminatory Power

In an era defined by large-scale secondary data analysis, record linkage is a cornerstone of comprehensive health services and comparative effectiveness research. The fundamental challenge lies not merely in linking datasets, but in assessing the feasibility of doing so accurately. This assessment hinges critically on the discriminatory power of the available identifiers—their ability to uniquely identify individuals within a dataset [1]. Before embarking on a linkage project, researchers must prospectively determine whether a reliable linkage is possible, a process that depends almost entirely on the quantity and quality of the identifying information [1].

Deterministic matching, or exact-match linkage, is a method that relies on observed data and exact agreement on a given set of identifiers to link records [4] [18]. It operates on discrete, "all-or-nothing" outcomes, declaring a match only if two records agree character-for-character on all specified identifiers [4]. The central thesis of this guide is that the decision to use deterministic linkage is not arbitrary; it is a direct function of the assessed discriminatory power of the linkage variables and the quality of the data to be linked. The following sections will provide an objective comparison of linkage methods, supported by experimental data, and detail the protocols for implementing deterministic matching when conditions are favorable.

Understanding Deterministic and Probabilistic Linkage

Record linkage methods are broadly categorized into two types: deterministic and probabilistic. Understanding their core mechanisms is essential for selecting the appropriate approach.

Deterministic linkage uses fixed rules to determine whether record pairs agree or disagree on a given set of identifiers [4]. It can be executed in a single step, requiring exact agreement on all identifiers, or iteratively (stepwise), where records not matched in a first round are passed to a second, potentially less restrictive, round of matching [4]. For example, a single-step strategy might require a perfect match on Social Security Number, first name, and last name. In contrast, an iterative approach might first try to match on SSN and name, and for unmatched records, proceed to match on a combination of last name, date of birth, and sex [4]. The primary strength of deterministic matching is its high precision and low false-positive rate, making it suitable for contexts where certainty is paramount [18] [19].
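The single-step and iterative strategies can be sketched with one function that tries a list of key sets in order. The field names, the toy records, and the rule of linking only when a key is unique in both files are assumptions made for this example rather than a prescribed algorithm.

```python
from collections import defaultdict


def _index(records, fields, skip_ids):
    """Map each complete key to the ids of not-yet-linked records."""
    index = defaultdict(list)
    for rec in records:
        if rec["id"] in skip_ids:
            continue
        key = tuple(rec.get(f) for f in fields)
        if None not in key:  # records with a missing key field cannot match
            index[key].append(rec["id"])
    return index


def deterministic_link(file_a, file_b, passes):
    """Exact-match linkage; each pass uses a different set of key fields.

    Returns (id_a, id_b, pass_number) for keys unique in both files.
    """
    links, used_a, used_b = [], set(), set()
    for pass_no, fields in enumerate(passes, start=1):
        idx_a = _index(file_a, fields, used_a)
        idx_b = _index(file_b, fields, used_b)
        for key, ids_a in idx_a.items():
            ids_b = idx_b.get(key, [])
            if len(ids_a) == 1 and len(ids_b) == 1:
                links.append((ids_a[0], ids_b[0], pass_no))
                used_a.add(ids_a[0])
                used_b.add(ids_b[0])
    return links


# Hypothetical records for two files.
file_a = [
    {"id": "a1", "ssn": "111", "first": "ANN", "last": "LEE", "dob": "1980-01-02", "sex": "F"},
    {"id": "a2", "ssn": None, "first": "BOB", "last": "RAY", "dob": "1975-05-06", "sex": "M"},
    {"id": "a3", "ssn": "333", "first": "CAT", "last": "DOE", "dob": "1990-09-09", "sex": "F"},
]
file_b = [
    {"id": "b1", "ssn": "111", "first": "ANN", "last": "LEE", "dob": "1980-01-02", "sex": "F"},
    {"id": "b2", "ssn": "222", "first": "ROBERT", "last": "RAY", "dob": "1975-05-06", "sex": "M"},
    {"id": "b3", "ssn": "333", "first": "KAT", "last": "DOE", "dob": "1990-09-10", "sex": "F"},
]
passes = [("ssn", "first", "last"), ("last", "dob", "sex")]
links = deterministic_link(file_a, file_b, passes)
print(links)
```

Passing a single key set reproduces the single-step strategy. In the toy data the third pair is never linked because a spelling variant and a one-day date discrepancy defeat both passes, which is the kind of missed match that exact-agreement rules cannot recover.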

Probabilistic linkage, in contrast, uses statistical models to account for uncertainty and inconsistencies in the data [19]. Instead of requiring exact matches, it calculates the probability that two records refer to the same entity based on the agreement and disagreement of various identifiers, often using algorithms like the Fellegi-Sunter model [20]. This method is designed to handle typographical errors, missing data, and other real-world data quality issues by assigning weights to different identifiers and using a threshold to declare a match [19] [20]. Its key strength is higher sensitivity, or a better ability to capture true matches in datasets with poorer quality information [21].
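The weighting idea behind the Fellegi-Sunter model can be sketched as follows. Each field has an m-probability (agreement among true matches) and a u-probability (agreement among non-matches); agreement adds log2(m/u) to the score and disagreement adds log2((1-m)/(1-u)). The m and u values and the threshold below are hypothetical; in practice they would be estimated from the data.

```python
import math


def field_weights(m, u):
    """Agreement and disagreement weights as log2 likelihood ratios."""
    return math.log2(m / u), math.log2((1 - m) / (1 - u))


def match_score(agreements, params):
    """Sum the field weights for one candidate record pair."""
    score = 0.0
    for field, agrees in agreements.items():
        agree_w, disagree_w = field_weights(*params[field])
        score += agree_w if agrees else disagree_w
    return score


# Hypothetical (m, u) values; u reflects chance agreement for each field.
params = {
    "sex": (0.98, 0.5),
    "birth_month": (0.97, 1 / 12),
    "surname": (0.95, 0.01),
}
threshold = 8.0  # hypothetical cut-off for declaring a match

score_all = match_score({"sex": True, "birth_month": True, "surname": True}, params)
score_partial = match_score({"sex": True, "birth_month": True, "surname": False}, params)
print(score_all, score_partial)
```

Agreement on surname contributes far more than agreement on sex because its u-probability is much lower, which is how the model encodes discriminatory power.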

The choice between these methods represents a classic trade-off between precision and recall, heavily influenced by the underlying data quality.

Comparative Performance Analysis: Experimental Data

A simulation study designed to understand the data characteristics affecting linkage performance provides critical, objective data for method selection [21]. The study created 96 scenarios representing real-life situations with non-unique identifiers, systematically varying discriminative power, rates of missing data and errors, and file size.

The results across these scenarios reveal a nuanced picture of the performance trade-offs, which can be summarized in the following table.

Table 1: Performance Comparison of Deterministic and Probabilistic Linkage Across Data Quality Scenarios [21]

| Performance Metric | Deterministic Linkage | Probabilistic Linkage | Contextual Findings |
|---|---|---|---|
| Sensitivity | Lower | Higher (Uniformly superior) | The performance gap is smallest in data with low rates of missingness and error. |
| Positive Predictive Value (PPV) | Higher | Lower | Deterministic linkage showed a distinct advantage in PPV. |
| Trade-off Balance | Less optimal | Better trade-off between sensitivity and PPV | Probabilistic linkage provided a superior balance across most scenarios. |
| Computational Resource Efficiency | High (Execution in <1 min) | Variable (Execution from 2 min to 2 hours) | Deterministic linkage is significantly faster, a key practical consideration. |
| Key Determining Factor | Data quality | Data quality | The intrinsic rate of missing data and error in linkage variables is the key to choosing a method. |

The study's overarching conclusion is that probabilistic linkage generally outperforms deterministic linkage by achieving a better trade-off between sensitivity and PPV across a wide range of conditions [21]. However, a crucial finding for researchers is that deterministic linkage "performed not significantly worse" and was a "more resource efficient choice" in the specific context of exceptionally high-quality data—defined as having an error rate of less than 5% [21].

Furthermore, both methods performed poorly if the linkage rules relied only on identifiers with low discriminative power, underscoring the foundational importance of assessing identifier quality before linkage begins [21].
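For readers who want to reproduce this kind of comparison on their own data, sensitivity and PPV can be computed directly from a set of declared links and a gold-standard set of true links. The sketch below is a generic illustration; the pair identifiers and both example link sets are made up and are not results from the cited study.

```python
def linkage_metrics(declared_links, true_links):
    """Compute sensitivity, PPV, and F1 from declared vs. true record pairs."""
    declared, truth = set(declared_links), set(true_links)
    tp = len(declared & truth)   # true links that were found
    fp = len(declared - truth)   # declared links that are wrong
    fn = len(truth - declared)   # true links that were missed
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    ppv = tp / (tp + fp) if tp + fp else float("nan")
    if tp == 0:
        f1 = 0.0
    else:
        f1 = 2 * sensitivity * ppv / (sensitivity + ppv)
    return {"sensitivity": sensitivity, "ppv": ppv, "f1": f1}


# Hypothetical gold standard and two hypothetical linkage outputs.
truth = {("A1", "B1"), ("A2", "B2"), ("A3", "B3"), ("A4", "B4")}
strict_rule = {("A1", "B1"), ("A2", "B2")}
looser_rule = {("A1", "B1"), ("A2", "B2"), ("A3", "B3"), ("A5", "B9")}

strict_metrics = linkage_metrics(strict_rule, truth)
looser_metrics = linkage_metrics(looser_rule, truth)
```

The strict rule in this toy example makes no false links but misses half of the true ones, while the looser rule recovers more true links at the price of one false link.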

A Framework for Assessing Linkage Feasibility and Method Selection

The experimental data indicate that data quality is the primary determinant of method selection. Researchers can apply the following framework to make an evidence-based choice.

Evaluating Identifier Discriminatory Power

The feasibility of a linkage project depends on the discriminatory power of the available identifiers [1]. Discriminatory power refers to the number of unique values an identifier has and the distribution of those values. For example, month of birth (12 unique values) is more informative than sex (2 unique values) because a random match is less likely to occur by chance (8.3% vs. 50%) [1]. Matches on rare values (e.g., a rare surname like "Lebowski") provide more confidence than matches on common values (e.g., "Smith") [1].
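The chance-agreement figures above assume values are evenly distributed. More generally, the probability that two randomly drawn records agree on an identifier is the sum of the squared value proportions, which reduces to 1/k for k equally likely values. The sketch below illustrates this with made-up value lists; the function name and data are hypothetical.

```python
from collections import Counter


def chance_agreement(values):
    """Probability that two records drawn at random (with replacement) agree."""
    n = len(values)
    return sum((count / n) ** 2 for count in Counter(values).values())


# Evenly distributed toy data reproduce the 50% and 8.3% figures.
sex = ["F", "M"] * 60
birth_month = list(range(1, 13)) * 10

# A skewed surname distribution: agreement is dominated by the common value.
surnames = ["SMITH"] * 90 + ["LEBOWSKI"] * 10

p_sex = chance_agreement(sex)
p_month = chance_agreement(birth_month)
p_surname = chance_agreement(surnames)
```

With the skewed surname list, chance agreement on "SMITH" accounts for 0.81 of the total while chance agreement on "LEBOWSKI" accounts for only 0.01, which is why a match on the rare value carries more evidence.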

This power can be quantified using Shannon entropy, H = -Σ p·log2(p), where p is the proportion of records taking each unique value and the sum runs over all unique values (equivalently, the sum of the absolute values of p·log2(p)) [1]. This metric allows researchers to rank identifiers and their combinations from most to least discriminatory. The goal is to find the minimum set of variables that identifies each record uniquely, that is, a mean of ~1.00 record per "pocket" (a group of records sharing the same values on the chosen variables) [1]. Research has shown that in practice, date of birth, first name, and last name typically have the highest discriminating power for linking patient data [2].
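Both quantities are straightforward to compute. The following sketch calculates the Shannon entropy of a single identifier and the mean number of records per pocket for a combination of identifiers. The four records and their field names are hypothetical and serve only to show the mechanics.

```python
import math
from collections import Counter


def shannon_entropy(values):
    """Entropy in bits: -sum(p * log2(p)) over the unique values."""
    n = len(values)
    return -sum((c / n) * math.log2(c / n) for c in Counter(values).values())


def mean_records_per_pocket(records, fields):
    """Average number of records sharing the same values on `fields`."""
    pockets = Counter(tuple(rec[f] for f in fields) for rec in records)
    return len(records) / len(pockets)


# Hypothetical toy file.
records = [
    {"sex": "F", "birth_month": 1, "last": "SMITH"},
    {"sex": "F", "birth_month": 1, "last": "LEE"},
    {"sex": "M", "birth_month": 2, "last": "SMITH"},
    {"sex": "M", "birth_month": 3, "last": "NG"},
]

entropy_by_field = {
    field: shannon_entropy([rec[field] for rec in records])
    for field in ("sex", "birth_month", "last")
}
pocket_sex = mean_records_per_pocket(records, ("sex",))
pocket_sex_month = mean_records_per_pocket(records, ("sex", "birth_month"))
pocket_month_last = mean_records_per_pocket(records, ("birth_month", "last"))
```

Ranking candidate identifier sets by `mean_records_per_pocket` and stopping at the smallest set that reaches approximately 1.00 is one simple way to operationalize the goal described above.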

Table 2: Discriminatory Power of Common Identifiers and Research Reagent Solutions

| Identifier / Research Reagent | Primary Function in Linkage | Considerations for Use & Discriminatory Power |
|---|---|---|
| Social Security Number (SSN) | Provides a near-unique key for exact matching. | High theoretical power, but quality can be compromised by data entry errors or misuse (e.g., a primary subscriber's SSN used for dependents) [4] [1]. |
| Date of Birth | High-discrimination demographic field used in exact and iterative matching. | Consistently identified as the identifier with the best discriminating power [2]. Can be parsed into day, month, and year to allow for partial credit in probabilistic matching [4]. |
| First and Last Names | Primary textual identifiers for linkage. | High discriminating power, second only to date of birth [2]. Require cleaning and standardization (e.g., parsing, phonetic coding like Soundex) to account for misspellings and typos [4]. |
| Phonetic Coding Algorithms (e.g., Soundex) | Software-based reagent to account for minor misspellings in names. | Improves linkage accuracy by converting strings to phonetic codes before comparison. One study suggested a language-adapted phonetic treatment can slightly improve results over Soundex [2]. |
| Address Information | Provides geographic context for matching. | Can be parsed into street, city, state, and ZIP code. Useful for iterative matching, but subject to change over time, which can diminish linkage success if datasets are temporally disparate [4] [1]. |
| Sex/Gender | Basic demographic field. | Very low discriminating power on its own due to few unique values; adding it to a matching algorithm may not improve results [2]. |

A Practical Workflow for Method Selection

The following diagram synthesizes the experimental findings and feasibility assessment principles into a logical workflow for choosing between deterministic and probabilistic linkage.

Diagram 1: Linkage Method Selection Workflow
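One way to encode the findings cited in this section as a decision rule is sketched below. It is an interpretation of the text, not a reproduction of Diagram 1: the 5% error-rate cut-off comes from the simulation study discussed above, whereas the function name, the return labels, and the pocket-size tolerance of 1.05 are assumptions made for illustration.

```python
def choose_linkage_method(
    error_rate,
    mean_records_per_pocket,
    max_error_rate=0.05,      # "less than 5%" threshold reported in the text
    max_pocket_size=1.05,     # assumed tolerance around ~1.00 record per pocket
):
    """Suggest a linkage approach from two feasibility indicators."""
    if mean_records_per_pocket > max_pocket_size:
        # Both methods performed poorly with weakly discriminating identifiers.
        return "revisit identifiers"
    if error_rate < max_error_rate:
        # High-quality data: deterministic linkage is the resource-efficient choice.
        return "deterministic"
    # Otherwise favour the method with the better sensitivity/PPV trade-off.
    return "probabilistic"


clean_case = choose_linkage_method(error_rate=0.02, mean_records_per_pocket=1.00)
noisy_case = choose_linkage_method(error_rate=0.12, mean_records_per_pocket=1.01)
weak_case = choose_linkage_method(error_rate=0.02, mean_records_per_pocket=3.40)
```

A real assessment would also weigh missingness, file size, and available computing resources, all of which the simulation study varied.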

Implementation Protocols for Deterministic Linkage

When the feasibility assessment indicates that deterministic linkage is appropriate, researchers should follow a structured protocol to ensure optimal results.

Data Cleaning and Standardization Protocol

The first step after data delivery is a thorough examination of the data to understand how information is stored, its completeness, and any idiosyncrasies [4]. Subsequent cleaning and standardization are critical to minimize false matches caused by typographical errors. Key techniques include [4]:

- Standardization: Force variables into the same case (e.g., all uppercase), format, and length across all data sources. Remove all punctuation and digits where appropriate.

- Parsing: Break composite identifiers into separate pieces. For example, parse a full name into first, middle, and last names; a date of birth into month, day, and year; and an address into street, city, state, and ZIP code. This allows the algorithm to give credit for partial agreement.

- Error Handling: Apply techniques to account for minor misspellings. This includes converting strings to phonetic codes (e.g., Soundex) and using string distance algorithms to measure the number of edits required to make two strings identical.

The extent of cleaning is a cost-benefit decision; it is highly recommended when data quality is poor or only a few identifiers are available [4].
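The three techniques listed above can be illustrated with standard-library Python. The sketch below is a simplified example: the function names are hypothetical, the standardization step keeps only unaccented Latin letters (an assumption that would need revisiting for names with diacritics), `soundex` follows the common American Soundex rules, and `edit_distance` is the Levenshtein distance.

```python
import re

# Letter-to-digit table for American Soundex.
_GROUPS = {"1": "BFPV", "2": "CGJKQSXZ", "3": "DT", "4": "L", "5": "MN", "6": "R"}
_CODES = {ch: digit for digit, letters in _GROUPS.items() for ch in letters}


def standardize(text):
    """Uppercase, drop punctuation and digits, and collapse whitespace."""
    letters_only = re.sub(r"[^A-Za-z\s]", "", text).upper()
    return " ".join(letters_only.split())


def parse_dob(dob):
    """Split an ISO 'YYYY-MM-DD' date so parts can be compared separately."""
    year, month, day = dob.split("-")
    return {"year": int(year), "month": int(month), "day": int(day)}


def soundex(name):
    """Four-character Soundex code, e.g. a letter followed by three digits."""
    name = standardize(name).replace(" ", "")
    if not name:
        return ""
    code = name[0]
    previous = _CODES.get(name[0], "")
    for ch in name[1:]:
        digit = _CODES.get(ch, "")
        if digit and digit != previous:
            code += digit
        if ch not in "HW":  # H and W do not separate repeated codes
            previous = digit
    return (code + "000")[:4]


def edit_distance(a, b):
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    previous_row = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        current_row = [i]
        for j, cb in enumerate(b, 1):
            current_row.append(
                min(
                    previous_row[j] + 1,               # deletion
                    current_row[j - 1] + 1,            # insertion
                    previous_row[j - 1] + (ca != cb),  # substitution
                )
            )
        previous_row = current_row
    return previous_row[-1]


cleaned = standardize("  o'Brien,  jr. 3 ")
dob_parts = parse_dob("1980-02-14")
same_sound = soundex("Smith") == soundex("Smyth")
typo_distance = edit_distance("JOHNSON", "JOHNSTON")
```

Phonetic codes and edit distances are typically applied after standardization, so that case and punctuation differences do not inflate the apparent disagreement.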

Iterative Deterministic Matching Protocol

A robust approach to deterministic linkage is the iterative (or multi-step) method. A validated example of this protocol is the one employed by the National Cancer Institute to create the SEER-Medicare linked dataset [4]. The protocol can be broken down into the following steps, which serve as a model for researchers:

Step 1: High-Confidence Match. Link records that match on SSN and one of the following secondary criteria:

- First and last name (allowing for fuzzy matches like nicknames)

- Last name, month of birth, and sex

- First name, month of birth, and sex

Step 2: Lower-Confidence Match. For records not matched in Step 1, declare a match if they agree on last name, first name, month of birth, sex, and one of the following:

- Seven to eight digits of the SSN

- Two or more of the following: year of birth, day of birth, middle initial, or date of death

This stepwise protocol demonstrates high validity and reliability by using a sequence of progressively less restrictive deterministic matches, successfully balancing the capture of true matches with the maintenance of high accuracy [4].
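The two steps can be expressed as rule functions over a pair of records. The sketch below follows the rules as listed above, but it is not the National Cancer Institute's implementation: the field names, the tiny nickname table, the choice to treat missing values as disagreement, and the use of exact first-name comparison in Step 2 are all assumptions made for illustration.

```python
# Hypothetical nickname lookup standing in for fuzzy first-name matching.
NICKNAMES = {"BOB": "ROBERT", "BILL": "WILLIAM"}


def same(a, b, field):
    """True only if both records carry the field and the values are equal."""
    return a.get(field) is not None and a.get(field) == b.get(field)


def first_agrees_fuzzy(a, b):
    """First names agree after mapping nicknames to a canonical form."""
    fa, fb = a.get("first"), b.get("first")
    if fa is None or fb is None:
        return False
    return NICKNAMES.get(fa, fa) == NICKNAMES.get(fb, fb)


def ssn_digits_agreeing(a, b):
    """Count position-wise agreeing digits between two 9-digit SSNs."""
    sa, sb = a.get("ssn"), b.get("ssn")
    if not sa or not sb or len(sa) != 9 or len(sb) != 9:
        return 0
    return sum(x == y for x, y in zip(sa, sb))


def step1(a, b):
    """SSN agreement plus one of three secondary criteria."""
    if not same(a, b, "ssn"):
        return False
    month_sex = same(a, b, "birth_month") and same(a, b, "sex")
    return (
        (first_agrees_fuzzy(a, b) and same(a, b, "last"))
        or (same(a, b, "last") and month_sex)
        or (first_agrees_fuzzy(a, b) and month_sex)
    )


def step2(a, b):
    """Name, birth month, and sex agreement plus partial SSN or two extras."""
    core = all(same(a, b, f) for f in ("last", "first", "birth_month", "sex"))
    if not core:
        return False
    if ssn_digits_agreeing(a, b) in (7, 8):
        return True
    extras = ("birth_year", "birth_day", "middle_initial", "death_date")
    return sum(same(a, b, f) for f in extras) >= 2


def classify_pair(a, b):
    """Apply Step 1 first and fall back to Step 2 only if it fails."""
    if step1(a, b):
        return "step 1"
    if step2(a, b):
        return "step 2"
    return "no match"


base = {"ssn": "123456789", "first": "BOB", "last": "DIAZ", "birth_month": 7,
        "sex": "M", "birth_year": 1975, "birth_day": 1, "middle_initial": "J"}
nickname_variant = {**base, "first": "ROBERT"}
ssn_typo = {**base, "ssn": "123456780"}
no_ssn = {**base, "ssn": None, "middle_initial": None}
different_person = {**base, "ssn": None, "birth_year": 1976, "birth_day": 2,
                    "middle_initial": None}
```

Here `classify_pair(base, nickname_variant)` is resolved in Step 1, the single-digit SSN discrepancy and the missing SSN are both resolved in Step 2, and the last record is left unmatched.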

The decision to use deterministic, exact-match linkage is not one of mere preference but of strategic feasibility. As the experimental data and frameworks presented here demonstrate, deterministic linkage is a valid and highly resource-efficient choice only under specific conditions of high data quality and strong identifier discriminatory power. When these conditions are met—notably, when error rates are low (<5%) and identifiers like date of birth and name are complete and accurate—deterministic methods can provide the high precision and auditability required for hypothesis-driven research. Conversely, in the more common scenario of imperfect, real-world data, probabilistic methods offer a superior and more robust approach. Therefore, a prospective and rigorous assessment of linkage feasibility, centered on evaluating the discriminatory power of identifiers, is an indispensable first step in any research project involving data linkage.

The Fellegi-Sunter (FS) model serves as the foundational theoretical framework for probabilistic record linkage, operating as an unsupervised classification algorithm that assigns field-specific weights based on agreement or disagreement between corresponding fields [22]. Its primary strength lies in achieving reasonable performance without requiring training data, making it widely applicable in numerous domains [22]. However, real-world data complexities—including missing values, typographical errors, and varying identifier discriminatory power—present significant challenges for practical implementation.

This guide examines the FS model's performance against these real-world challenges, comparing core methodologies and their adaptations. We present experimental data from health information exchange deduplication, public health registry linkage, and administrative cohort construction to objectively assess accuracy across varying data conditions. The analysis is framed within assessing linkage feasibility through identifier discriminatory power research, providing researchers and drug development professionals with evidence-based protocols for implementing probabilistic matching in scientific and operational contexts.

Performance Comparison: FS Methods and Experimental Results

Quantitative Performance Across Methodologies

Experimental studies across healthcare and administrative data demonstrate how FS model adaptations address specific real-world data challenges. The following table summarizes performance metrics from multiple implementations:

Table 1: Performance comparison of Fellegi-Sunter model adaptations across real-world applications

| Application Context | Data Source & Size | Methodology | Key Performance Metrics | Reference |
|---|---|---|---|---|
| Health Data Deduplication | 765,814 HL7 messages from Health Information Exchange | Frequency-based FS using last name rarity | Potential accuracy improvement demonstrated via frequency distribution differentials between matches/non-matches | [22] |
| Administrative Cohort Construction | Mexican Hospital Discharges & Death Records | Probabilistic linkage with trigram blocking & EM algorithm | Sensitivity: 90.72%; Positive Predictive Value: 97.10% | [23] |
| Multi-Source HIE Linkage | 4 use cases including HIE deduplication & public health registry linkage | FS with MAR assumption & data-driven field selection | Optimized F1-scores across all use cases | [24] |
| Privacy-Preserved Linkage | Synthetic datasets with 0-20% error rates | FS with Bloom filters & EM parameter estimation | High F-measure comparable to calculated probabilities even with 20% error rates | [25] |

Core Methodological Approaches

Table 2: Comparison of Fellegi-Sunter model methodologies for handling real-world data challenges

| Methodology | Core Approach | Advantages | Limitations | Best-Suited Applications |
|---|---|---|---|---|
| Standard FS Model | Binary agreement/disagreement weights based on m/u probabilities | Simple implementation, no training data required | Identical weights for rare/common values; missing data challenges | Clean datasets with consistent formatting and complete identifiers |
| Frequency-Based FS | Adjusts weights based on value rarity in the dataset | Accounts for greater discriminatory power of rare values | Requires estimating value frequency distributions | Datasets with non-uniform value distributions (names, locations) |
| FS with MAR Assumption | Models missing data as Missing At Random conditional on match status | Maintains or improves F1-scores; avoids information loss | Requires validation of MAR assumption plausibility | Datasets with substantial missing values in key identifiers |
| Privacy-Preserving FS | Uses Bloom filters for encrypted linkage | Enables linkage without sharing identifiable information | Increased computational complexity; specialized expertise needed | Multi-institutional research with privacy restrictions |

Experimental Protocols and Workflows

Frequency-Based Matching Implementation

The two-step frequency-based FS procedure addresses a critical limitation of the standard model: identical classification regardless of whether records agree on rare or common values [22]. Agreement on rare values (e.g., surname "Harezlak") is less likely to occur by chance than agreement on common values (e.g., "Smith"), making rare value agreements more indicative of true matches [22].
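The intuition can be illustrated with value-specific agreement weights, in which the chance of agreeing on a given value among non-matches is approximated by that value's relative frequency in the file. This is a generic sketch of frequency adjustment, not the two-step procedure from the cited study; the surname counts and the m-probability of 0.95 are hypothetical.

```python
import math
from collections import Counter


def frequency_weights(values, m=0.95):
    """Agreement weight per value: log2(m / relative frequency of the value).

    Assumption: the u-probability for agreeing on a specific value is
    approximated by that value's share of the file.
    """
    n = len(values)
    return {
        value: math.log2(m / (count / n))
        for value, count in Counter(values).items()
    }


# Hypothetical surname distribution in a file of 100 records.
surnames = ["SMITH"] * 50 + ["JONES"] * 30 + ["LEE"] * 19 + ["HAREZLAK"] * 1

weights = frequency_weights(surnames)
ranked = sorted(weights, key=weights.get, reverse=True)
```

Under these assumptions, agreement on "HAREZLAK" earns a weight of log2(95), roughly 6.6, while agreement on "SMITH" earns less than 1, so two record pairs that would receive the same score under the standard model are now separated by the rarity of the value they share.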

Experimental Protocol (from health data deduplication study):

- Blocking: Applied three blocking schemes: (1) day/month of birth and zip code, (2) telephone number, and (3) next-of-kin's last and first names [22]

- Sample Review: Randomly selected and manually reviewed record pairs from each block (12,643; 4,138; and 1,904 pairs respectively) to establish true match status [22]