Applying the Likelihood Ratio Framework in Forensic Text Comparison: Principles, Methods, and Validation

Abstract

This article provides a comprehensive guide to the Likelihood Ratio (LR) framework for forensic text comparison, tailored for researchers and forensic professionals. It explores the foundational Bayesian principles underpinning the LR, reviews methodological approaches from score-based to feature-based models, and addresses key challenges such as uncertainty quantification and topic mismatch. The content also covers critical validation requirements and performance metrics, synthesizing current research to offer a scientifically defensible and practical roadmap for implementing the LR framework in forensic linguistics.

The Bayesian Bedrock: Understanding the Likelihood Ratio Framework

Core Principles

The Likelihood Ratio (LR) framework is recognized as the logically and legally correct method for the evaluation of forensic evidence, including textual evidence [1]. At the heart of this framework is Bayes' Theorem, which provides a formal mechanism for updating beliefs in the presence of new evidence.

The odds form of Bayes' Theorem offers an intuitive and practical way to understand this updating process [1]. It is formally expressed as:

Posterior Odds = Prior Odds × Likelihood Ratio

This can be written as:

\[ \frac{P(H_p \mid E)}{P(H_d \mid E)} = \frac{P(H_p)}{P(H_d)} \times \frac{P(E \mid H_p)}{P(E \mid H_d)} \]

Where:

- Posterior Odds: The updated belief about the competing hypotheses after considering the evidence, \( \frac{P(H_p \mid E)}{P(H_d \mid E)} \).

- Prior Odds: The initial belief about the hypotheses before considering the new evidence, \( \frac{P(H_p)}{P(H_d)} \).

- Likelihood Ratio (LR): The probability of the evidence under the prosecution hypothesis, \( P(E \mid H_p) \), divided by the probability of the evidence under the defense hypothesis, \( P(E \mid H_d) \) [1].
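
The odds form above can be illustrated with a few lines of Python. This is a minimal sketch: the function names and the numerical values (prior odds of 1 to 100, an LR of 100) are hypothetical and chosen only to show the arithmetic.

```python
def posterior_odds(prior_odds: float, likelihood_ratio: float) -> float:
    """Odds form of Bayes' Theorem: posterior odds = prior odds x LR."""
    if prior_odds < 0 or likelihood_ratio < 0:
        raise ValueError("odds and likelihood ratios must be non-negative")
    return prior_odds * likelihood_ratio


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of H_p into the probability of H_p."""
    return odds / (1.0 + odds)


# Hypothetical values for illustration only.
post = posterior_odds(prior_odds=1 / 100, likelihood_ratio=100.0)
print(post, odds_to_probability(post))
```

With these illustrative numbers the evidence moves the odds from 1 to 100 against \( H_p \) to roughly even odds. Only the LR is the forensic scientist's contribution; the prior odds belong to the trier-of-fact.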

The role of the forensic scientist is strictly limited to the evaluation and presentation of the Likelihood Ratio. The scientist is not in a position to know the trier-of-fact's prior beliefs, and it is legally inappropriate for the scientist to present posterior odds, as this would address the ultimate issue of guilt or innocence [1].

Quantitative Data in Forensic Text Comparison

The following tables summarize key quantitative aspects of the Likelihood Ratio framework as applied to forensic text comparison.

Table 1: Interpretation of Likelihood Ratio Values

| Likelihood Ratio Value | Interpretation of Support for \( H_p \) vs. \( H_d \) |
|---|---|
| > 1 | Supports the prosecution hypothesis (\( H_p \)) |
| 1 | Evidence has no probative value; neutral |
| < 1 | Supports the defense hypothesis (\( H_d \)) |
| >> 1 (e.g., 10, 100) | Strong support for \( H_p \) |
| << 1 (e.g., 0.1, 0.01) | Strong support for \( H_d \) |

Table 2: Performance Metrics for LR Systems

| Metric | Description | Application in Validation |
|---|---|---|
| Log-Likelihood-Ratio Cost (C~llr~) | A single scalar metric for system performance; lower values indicate better performance [1]. | Used to assess the validity and reliability of a forensic text comparison system [1]. |
| Tippett Plots | A graphical method for visualizing the distribution of LRs for both same-source and different-source comparisons [1]. | Used to empirically validate a method; shows the proportion of LRs that exceed a given value for both true \( H_p \) and true \( H_d \) cases [1]. |

Experimental Protocols

Protocol for Validating a Forensic Text Comparison System

Objective: To empirically validate a Forensic Text Comparison (FTC) system using the LR framework under conditions that reflect real casework.

Background: Empirical validation must satisfy two critical requirements: 1) reflecting the conditions of the case under investigation, and 2) using data relevant to the case [1]. Failure to do so may mislead the trier-of-fact.

Materials:

- Relevant text corpora (see Reagent Solutions).

- Computational resources for statistical modeling (e.g., R, Python).

- Calibration and validation software (e.g., for calculating C~llr~ and generating Tippett plots).

Procedure:

1. Define Casework Conditions: Identify the specific conditions of the forensic text comparison for which the system is being validated. In FTC, these could include variables such as:

   - Topic: A topic mismatch between questioned and known documents is a known challenging condition that must be validated [1].

   - Genre (e.g., formal letter vs. informal chat).

   - Text Length.

   - Modality (e.g., email vs. social media post).

2. Source Relevant Data: Obtain text corpora that accurately reflect the defined casework conditions. The data must be representative of the population relevant to the case [1].

3. Develop Statistical Model: Implement a statistical model to calculate LRs from quantitative measurements of the texts. An example from recent research is the Dirichlet-multinomial model, followed by logistic-regression calibration [1].

4. Compute Likelihood Ratios: For each pair of texts (same-author and different-author) in the validation dataset, compute the LR using the developed model.

5. Assess System Performance: Evaluate the computed LRs using the log-likelihood-ratio cost (C~llr~) and Tippett plots described in Table 2.

6. Interpret Results: A validated system will show good discrimination (LRs > 1 for same-author pairs and < 1 for different-author pairs) and good calibration (LRs accurately reflect the strength of the evidence). High C~llr~ values or poorly separated Tippett plots indicate a need for model refinement.
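
The logistic-regression calibration mentioned in the procedure can be sketched as follows. This is an illustrative implementation, not the one used in [1]: it assumes uncalibrated comparison scores are already available, fits the two calibration parameters by plain gradient descent, and removes the training prior log-odds so that the output is a log LR. The function names, step size, and iteration count are assumptions.

```python
import math


def fit_logistic_calibration(same_scores, diff_scores, step_size=0.1, n_iter=5000):
    """Fit log-odds = a * score + b on labelled training scores; return (a, b)."""
    data = [(s, 1.0) for s in same_scores] + [(s, 0.0) for s in diff_scores]
    a, b = 0.0, 0.0
    for _ in range(n_iter):
        grad_a = grad_b = 0.0
        for s, y in data:
            p = 1.0 / (1.0 + math.exp(-(a * s + b)))
            grad_a += (p - y) * s  # gradient of the logistic loss w.r.t. a
            grad_b += p - y        # gradient of the logistic loss w.r.t. b
        a -= step_size * grad_a / len(data)
        b -= step_size * grad_b / len(data)
    # Logistic regression models posterior log-odds; subtracting the training
    # prior log-odds leaves the log likelihood ratio.
    b -= math.log(len(same_scores) / len(diff_scores))
    return a, b


def score_to_log10_lr(score, a, b):
    """Map an uncalibrated score to a calibrated log10 LR."""
    return (a * score + b) / math.log(10)


# Hypothetical training scores (higher = more similar).
a, b = fit_logistic_calibration([0.5, 1.0, 1.5, 2.0, 2.5], [-2.0, -1.5, -1.0, -0.5, 0.6])
print(score_to_log10_lr(2.0, a, b), score_to_log10_lr(-2.0, a, b))
```

In practice the calibration parameters would be fitted on development data that is kept separate from the validation data.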

Protocol for a Stylometric Authorship Analysis

Objective: To apply a full Bayesian framework to quantify the evidence for authorship of a questioned document.

Background: Stylometry uses quantitative features of writing style (e.g., character n-grams, word frequencies) to infer authorship. A Bayesian framework allows for a legally sound evaluation of this evidence [2].

Materials:

- Questioned text (e.g., a disputed play).

- Known text samples from candidate authors.

- Computational software for Bayesian analysis.

Procedure:

1. Define Hypotheses: Formulate two competing hypotheses.

   - \( H_p \): The questioned document was written by Author A.

   - \( H_d \): The questioned document was written by Author B.

2. Feature Extraction: From the questioned and known documents, extract stylometric features. Character n-grams (sequences of 'n' characters) are often considered highly selective for authorship [2].

3. Model Building: Construct a probabilistic model that describes the generation of the extracted features under both \( H_p \) and \( H_d \).

4. Calculate the Bayes Factor: Compute the Bayes Factor (BF), which is the Likelihood Ratio in this context.

   \[ BF = \frac{P(\text{Features of Questioned Text} \mid H_p)}{P(\text{Features of Questioned Text} \mid H_d)} \]

5. Report Interpretation: Report the BF as the strength of the evidence. For example, a study on the authorship of Molière's plays reported a BF that strongly supported the hypothesis that Corneille did not write them [2].
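
The feature-extraction step of this protocol can be illustrated with a short character n-gram counter. This is a minimal sketch: the function names are hypothetical, and real systems typically add normalization (case folding, whitespace handling) that is not shown here.

```python
from collections import Counter


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count every contiguous sequence of n characters in the text."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def relative_frequencies(counts: Counter) -> dict:
    """Turn raw n-gram counts into relative frequencies."""
    total = sum(counts.values())
    return {gram: c / total for gram, c in counts.items()} if total else {}


counts = char_ngrams("the theatre", n=3)
print(counts.most_common(3))
```

Relative frequencies make documents of different lengths comparable, whereas count-based models work on the raw counts directly.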

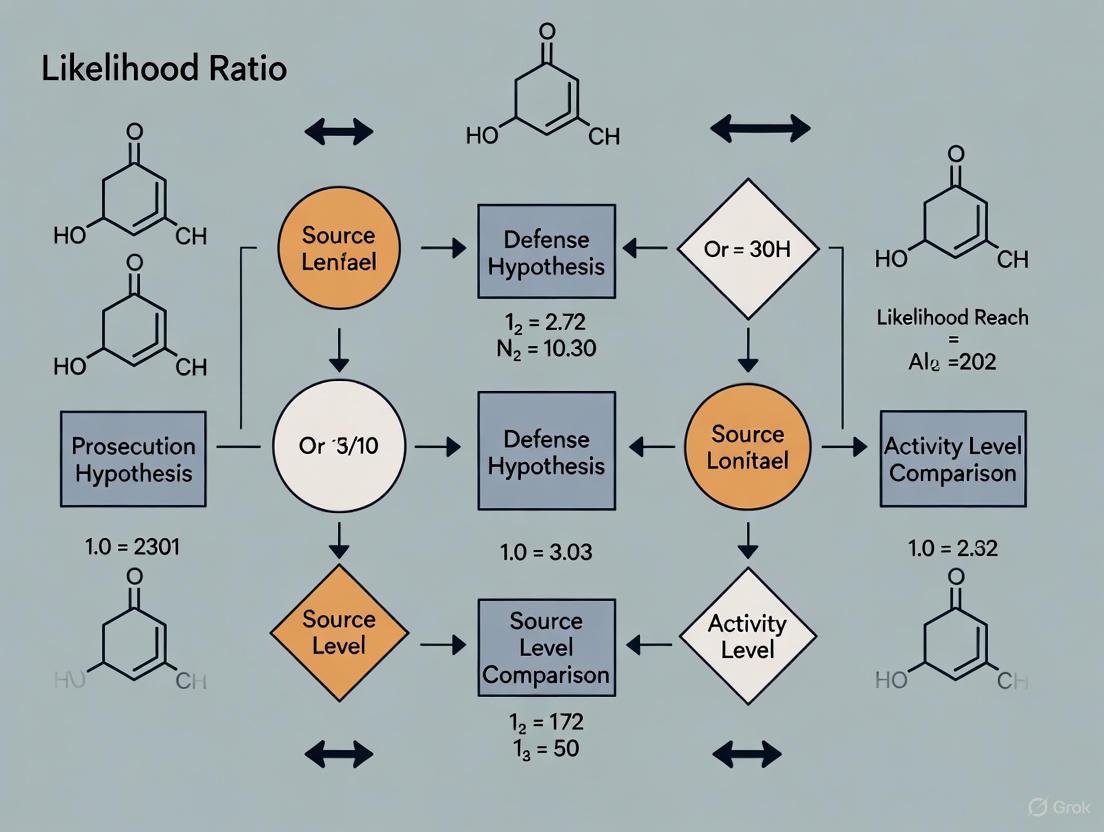

Workflow and Logical Diagrams

Figure 1. High-level workflow for a forensic text comparison case, from evidence intake to reporting.

Figure 2. The logical relationship of the odds form of Bayes' Theorem.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Forensic Text Comparison Research

| Tool / Solution | Function / Description | Application in FTC |
|---|---|---|
| Relevant Text Corpora | Collections of texts that mirror real-world case conditions (e.g., topic, genre, modality). | Critical for empirical validation; using irrelevant data can invalidate results and mislead the trier-of-fact [1]. |
| Dirichlet-Multinomial Model | A statistical model for discrete data, often used for text represented as counts of features. | Used to calculate initial likelihood ratios from textual features [1]. |
| Logistic Regression Calibration | A statistical method for calibrating the output of a model to ensure it is meaningful and interpretable. | Applied to the raw scores from a model (e.g., Dirichlet-multinomial) to produce well-calibrated LRs [1]. |
| Log-Likelihood-Ratio Cost (C~llr~) | A scalar performance metric that measures both the discrimination and calibration of an LR system. | The primary metric for validating the performance and reliability of an FTC system [1]. |
| Tippett Plot Software | Software capable of generating Tippett plots, which visualize the distribution of LRs for same-source and different-source pairs. | Used for the empirical validation and presentation of system performance [1]. |
| Character N-gram Analyzer | A tool that breaks text into contiguous sequences of 'n' characters for analysis. | A highly selective feature set for capturing an author's stylistic fingerprint in stylometric analysis [2]. |

The Likelihood Ratio (LR) has emerged as a fundamental framework for the interpretation of forensic evidence, providing a logically sound and statistically rigorous method for evaluating the strength of evidence under competing propositions [1]. In forensic disciplines, including the complex domain of forensic text comparison (FTC), the LR framework offers a transparent and quantifiable alternative to traditional opinion-based testimony. Its adoption addresses growing demands for empirical validation and demonstrable reliability in forensic science [3] [4]. This document outlines the theoretical foundation of the LR, detailed protocols for its application in forensic text analysis, and the essential validation criteria required for its use in casework.

Theoretical Foundation of the Likelihood Ratio

Core Definition and Mathematical Formulation

The Likelihood Ratio is a quantitative measure of the strength of evidence. It compares the probability of observing the evidence under two mutually exclusive hypotheses: the prosecution's proposition (Hp) and the defense's proposition (Hd) [1]. This is formally expressed as:

LR = p(E | Hp) / p(E | Hd)

In this equation [1]:

- p(E | Hp) represents the probability of observing the evidence (E) given that the prosecution's hypothesis is true.

- p(E | Hd) represents the probability of observing the same evidence given that the defense's hypothesis is true.

The interpretation of the LR is straightforward [1]:

- LR > 1: The evidence supports Hp.

- LR = 1: The evidence is equally probable under both hypotheses and thus offers no support for either.

- LR < 1: The evidence supports Hd.

The further the LR is from 1, the stronger the evidence. For instance, an LR of 10 means the evidence is ten times more likely under Hp than under Hd, while an LR of 0.1 means it is ten times more likely under Hd [1].

Integration within the Bayesian Framework

The LR's power is fully realized when integrated into the Bayesian framework, which describes how prior beliefs should be rationally updated in the face of new evidence [1]. This is captured by the odds form of Bayes' Theorem:

Prior Odds × LR = Posterior Odds

Where:

- Prior Odds represent the fact-finder's belief about the hypotheses before the new evidence is presented.

- Posterior Odds represent the updated belief after considering the new evidence.

This framework clearly delineates the roles of the forensic scientist and the trier-of-fact (e.g., judge or jury). The forensic scientist's role is to compute and present the LR, a task of evidence evaluation. The trier-of-fact's role is to assess the prior odds in light of all other circumstances of the case and to combine them with the LR to reach the posterior odds, a task of decision-making [1]. It is legally inappropriate for a forensic practitioner to present posterior odds, as this encroaches on the ultimate issue of the suspect's guilt or innocence [1].

Application in Forensic Text Comparison

Defining Propositions for Textual Evidence

The first and most critical step in applying the LR framework to textual evidence is the careful formulation of the competing propositions, Hp and Hd. These must be mutually exclusive, forensically relevant, and framed at the appropriate level (e.g., source level or activity level) [1].

Table 1: Example Propositions in Forensic Text Comparison

| Hypothesis Type | Typical Formulation in FTC |
|---|---|
| Prosecution (Hp) | "The questioned document and the known document were written by the same author (the suspect)." |
| Defense (Hd) | "The questioned document and the known document were written by different authors (the suspect is not the author of the questioned document)." |

Quantitative Measurement of Textual Features

A scientific FTC approach requires the conversion of linguistic properties into quantitative data [1]. The choice of features is driven by the concept of idiolect—an individual's distinctive and consistent way of using language [1]. The following feature types are commonly used in state-of-the-art authorship verification methods [5]:

- Lexical Features: Frequency of function words, character n-grams, word n-grams.

- Syntactic Features: Patterns of punctuation, sentence length distributions, part-of-speech tags.

- Stylistic Features: Measures of richness and complexity (e.g., type-token ratio).

LR Calculation Methods in FTC

Several computational methods can be used to calculate LRs from the quantified textual data. These can be broadly categorized as feature-based or score-based [3]. Recent research has tested and validated various authorship analysis methods for their suitability in forensic contexts, including on speech data [5].

Table 2: Likelihood Ratio Methods in Forensic Text Comparison

| Method | Brief Description | Key Characteristics |
|---|---|---|
| Cosine Delta [5] | Measures the cosine distance between vectors of standardized (z-scored) frequencies of the most frequent words in each document. | A simple, common baseline method in authorship verification. |
| N-gram Tracing [5] | Exploits the occurrence and frequency of character or word n-grams. | A variant that uses both typicality and similarity information has shown strong performance [5]. |
| The Impostors Method [5] | Tests if a known document is more similar to a questioned document than to a set of "impostor" documents. | A state-of-the-art method that directly addresses the question of distinctiveness. |
| Dirichlet-Multinomial Model [1] | A generative statistical model for discrete data (e.g., word counts). | Allows for direct, feature-based LR calculation; can be followed by logistic regression calibration [1]. |
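
To make the feature-based entry in the table more concrete, the sketch below computes an LR from feature counts with a Dirichlet-multinomial distribution. It is one plausible construction and not necessarily the model used in [1]: the numerator updates a background Dirichlet prior with the known author's counts, the denominator uses the background prior alone, and all names and numbers are hypothetical.

```python
import math


def log_dirichlet_multinomial(counts, alpha):
    """Log probability of a count vector under a Dirichlet-multinomial."""
    n, a0 = sum(counts), sum(alpha)
    out = math.lgamma(n + 1) + math.lgamma(a0) - math.lgamma(n + a0)
    for x, a in zip(counts, alpha):
        out += math.lgamma(x + a) - math.lgamma(x + 1) - math.lgamma(a)
    return out


def log10_lr(questioned, known, background_alpha):
    """log10 LR: questioned counts given the known author vs. given the background."""
    # Assumption: under H_p the author's feature distribution is the background
    # prior updated with the counts observed in the known document.
    author_alpha = [a + k for a, k in zip(background_alpha, known)]
    log_num = log_dirichlet_multinomial(questioned, author_alpha)
    log_den = log_dirichlet_multinomial(questioned, background_alpha)
    return (log_num - log_den) / math.log(10)


# Hypothetical counts of two features in the questioned and known documents.
print(log10_lr(questioned=[8, 2], known=[80, 20], background_alpha=[1.0, 1.0]))
```

The resulting values are uncalibrated and would normally be passed through a calibration step before being reported.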

Experimental Protocol for Validating an FTC System

The following protocol provides a step-by-step guide for the empirical validation of a Likelihood Ratio method used for forensic text comparison, ensuring its performance is fit for purpose before deployment in casework [3] [6].

Pre-Validation: Defining Scope and Criteria

Define Performance Characteristics: Identify the key characteristics that the LR method must demonstrate. These typically include [6]:

- Discriminating Power: The ability to clearly distinguish between same-author and different-author comparisons.

- Accuracy/Calibration: The degree to which the computed LRs can be taken at face value as statements of evidential strength. In a well-calibrated system, an LR of 100 is 100 times more probable when Hp is true than when Hd is true.

- Robustness: Performance stability when input conditions vary (e.g., topic mismatch, text length).

- Coherence: Consistency of results across different subsystems or feature sets.

Select Performance Metrics: Choose quantitative metrics to measure each characteristic [3] [6].

- Cllr (Log-Likelihood-Ratio Cost): A primary metric that measures the overall performance, considering both discrimination and calibration. Lower values indicate better performance.

- Cllr~min~: The minimum possible Cllr, representing the pure discriminating power of the system, isolated from calibration issues.

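- EER (Equal Error Rate): The error rate at the decision threshold where the false-acceptance rate (different-author comparisons scored above the threshold) equals the false-rejection rate (same-author comparisons scored below it).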
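
The Cllr metric in the list above can be computed directly from validation LRs. The sketch below uses the standard definition, Cllr = ½ [ mean over same-author LRs of log2(1 + 1/LR) + mean over different-author LRs of log2(1 + LR) ]; the function name and the example LRs are hypothetical.

```python
import math


def cllr(same_author_lrs, different_author_lrs):
    """Log-likelihood-ratio cost from LRs of ground-truth-labelled comparisons."""
    # Same-author comparisons are penalized for small LRs.
    ss_cost = sum(math.log2(1 + 1 / lr) for lr in same_author_lrs) / len(same_author_lrs)
    # Different-author comparisons are penalized for large LRs.
    ds_cost = sum(math.log2(1 + lr) for lr in different_author_lrs) / len(different_author_lrs)
    return 0.5 * (ss_cost + ds_cost)


# Hypothetical validation LRs.
print(cllr([100.0, 1000.0, 5.0], [0.01, 0.001, 0.5]))
```

A system that always outputs LR = 1 scores exactly 1.0 on this metric, which is why values approaching or exceeding 1 signal that a system provides no useful information.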

Set Validation Criteria: Establish pass/fail thresholds for each performance metric. These criteria are laboratory-specific but must be transparent and justified. For example: "The method will be deemed valid for casework if Cllr < 0.2 and the rate of misleading evidence with LR > 1000 is below 1%." [3] [6].

Experimental Design and Data Preparation

Secure Relevant Data: Validation must use data that is relevant to the casework conditions under which the system will be applied [1] [3]. This involves:

- Using real-world or realistically simulated forensic texts.

- Reflecting specific challenges encountered in casework, such as mismatch in topics between known and questioned documents, which is a known challenging factor in authorship analysis [1].

- Ensuring the dataset includes a sufficient number of same-author and different-author comparisons.

Split Data: Use separate datasets for system development (training/tuning) and validation (testing) to prevent over-optimistic performance estimates [6].
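
One way to keep development and validation data separate is to split at the level of authors, so that no author contributes texts to both sets. The sketch below assumes this author-disjoint design, which the source does not prescribe; the function name, the `docs_by_author` structure, and the default split fraction are hypothetical.

```python
import random


def split_by_author(docs_by_author, test_fraction=0.5, seed=0):
    """Split a {author: [documents]} mapping into author-disjoint dev and test sets."""
    authors = sorted(docs_by_author)
    random.Random(seed).shuffle(authors)  # seeded for reproducibility
    n_test = int(round(len(authors) * test_fraction))
    test_authors = set(authors[:n_test])
    dev = {a: d for a, d in docs_by_author.items() if a not in test_authors}
    test = {a: d for a, d in docs_by_author.items() if a in test_authors}
    return dev, test


corpus = {"A": ["doc1", "doc2"], "B": ["doc3"], "C": ["doc4"], "D": ["doc5", "doc6"]}
dev, test = split_by_author(corpus)
print(sorted(dev), sorted(test))
```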

System Validation and Reporting

Run Validation Experiments: Compute LRs for all comparisons in the test dataset. The experimental protocol must replicate the intended forensic application, including the specific propositions being tested [1] [6].

Generate Performance Graphics: Create standard plots to visualize performance [6]:

- Tippett Plots: Show the cumulative distribution of LRs for both same-source and different-source comparisons, illustrating the rates of misleading evidence.

- ECE (Empirical Cross-Entropy) Plots: Visualize the calibration of the LRs and the Cllr metric.

- DET (Detection Error Trade-off) Plots: Display the trade-off between false positive and false negative rates at different decision thresholds.
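
The data behind a Tippett plot, and the rates of misleading evidence it illustrates, can be computed without any plotting library. This is a minimal sketch with hypothetical function names and example values; a plot would draw `tippett_curve` for the same-author and the different-author LRs against a log-scaled threshold axis.

```python
def tippett_curve(lrs, thresholds):
    """Proportion of LRs greater than or equal to each threshold."""
    n = len(lrs)
    return [sum(lr >= t for lr in lrs) / n for t in thresholds]


def misleading_evidence_rates(same_author_lrs, different_author_lrs):
    """Share of same-author LRs below 1 and of different-author LRs above 1."""
    ss_misleading = sum(lr < 1 for lr in same_author_lrs) / len(same_author_lrs)
    ds_misleading = sum(lr > 1 for lr in different_author_lrs) / len(different_author_lrs)
    return ss_misleading, ds_misleading


# Hypothetical validation LRs.
same = [0.5, 2.0, 10.0, 20.0]
diff = [0.1, 0.2, 3.0, 0.5]
print(tippett_curve(same, [0.1, 1.0, 10.0]))
print(misleading_evidence_rates(same, diff))
```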

Compile Validation Report: Document the entire process and results in a validation report. A validation matrix is a useful tool for summarizing this information [6].

Table 3: Simplified Validation Matrix Example

| Performance Characteristic | Performance Metric | Graphical Representation | Validation Criterion | Analytical Result | Validation Decision (Pass/Fail) |
|---|---|---|---|---|---|
| Discriminating Power | Cllr~min~ | DET Plot | Cllr~min~ < 0.2 | 0.14 | Pass |
| Accuracy/Calibration | Cllr | ECE Plot | Cllr < 0.3 | 0.28 | Pass |
| Robustness (to topic mismatch) | Cllr degradation | Tippett Plot | Degradation < 25% | 15% degradation | Pass |
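
The pass/fail logic of such a validation matrix is simple enough to automate. The sketch below reuses the criterion thresholds and analytical results from the example table; the tuple layout and the function name are hypothetical, and it assumes every criterion is of the form "result must be below the threshold".

```python
def validation_decision(result: float, threshold: float) -> str:
    """Pass when the analytical result is strictly below the criterion threshold."""
    return "Pass" if result < threshold else "Fail"


# (characteristic, metric, analytical result, criterion threshold)
matrix = [
    ("Discriminating power", "Cllr_min", 0.14, 0.20),
    ("Accuracy/calibration", "Cllr", 0.28, 0.30),
    ("Robustness (topic mismatch)", "Cllr degradation (%)", 15.0, 25.0),
]

decisions = [validation_decision(result, threshold) for _, _, result, threshold in matrix]
for (characteristic, metric, result, threshold), decision in zip(matrix, decisions):
    print(f"{characteristic}: {metric} = {result} (criterion < {threshold}) -> {decision}")
```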

Visualization of the LR Framework and Workflow

[Figure: The Logic of the Likelihood Ratio in Forensic Science]

[Figure: Experimental Protocol for FTC System Validation]

The Researcher's Toolkit for FTC

Table 4: Essential Research Reagent Solutions for Forensic Text Comparison

| Research Reagent | Function in FTC Research |
|---|---|
| Relevant Text Corpora | Provides the empirical data foundation for developing and validating LR models. Data must be forensically relevant, reflecting real-world conditions like topic mismatch [1]. |
| Quantitative Feature Set | Converts qualitative text into measurable data for statistical modeling. Examples include function word frequencies and n-grams, which have demonstrated speaker discriminatory power [5]. |
| LR Computation Method (e.g., N-gram Tracing, Impostors) | The core algorithm that calculates the likelihood ratio from the quantified feature data. Different methods have varying performance and underlying assumptions [5]. |
| Validation Software & Metrics (e.g., Cllr, ECE plots) | Tools to empirically test the performance, discriminating power, and calibration of the LR system, as required for accreditation [3] [6]. |
| Calibration Model (e.g., Logistic Regression) | A post-processing step that adjusts the output of an LR system to ensure that the numerical values it produces are legally and statistically meaningful (i.e., well-calibrated) [1]. |

The adoption of the Likelihood Ratio framework represents a paradigm shift towards a more scientific, transparent, and robust practice in forensic text comparison. By providing a structured methodology for evaluating evidence, the LR framework helps ensure that conclusions are data-driven, reproducible, and presented in a logically correct manner. However, the application of the LR in FTC faces unique challenges, primarily due to the complex, multi-faceted nature of textual data, where authorial style is influenced by topic, genre, and other situational factors [1]. Therefore, a rigorous validation process that replicates casework conditions is not merely beneficial but essential. Future research must continue to refine LR methods, explore a broader range of linguistic features, and establish comprehensive, standardized validation protocols to fully realize the potential of a scientifically defensible and demonstrably reliable forensic text comparison.

Core Principles of Forensic Interpretation Using LRs

The Likelihood Ratio (LR) framework provides a formal and logically sound method for evaluating the strength of forensic evidence, including evidence derived from text comparisons. Within the context of forensic text comparison (FTC), the LR quantifies the support the evidence provides for one proposition over another—typically, the prosecution's proposition (that a given text was written by a specific suspect) versus the defense's proposition (that it was written by someone else from a relevant population) [7]. This approach moves beyond categorical assertions of authorship, offering a transparent and balanced measure of evidentiary strength that is crucial for scientific and legal applications. Its adoption represents a significant shift towards more rigorous, statistically grounded practices in forensic linguistics.

The core expression of the LR is:

- LR = Probability of observing the evidence given the prosecution's proposition / Probability of observing the evidence given the defense's proposition.

An LR greater than 1 supports the prosecution's proposition, while an LR less than 1 supports the defense's proposition. The magnitude of the LR indicates the degree of support. This framework helps prevent logical fallacies, such as the prosecutor's fallacy, by clearly separating the evaluation of the evidence itself from the prior odds of the propositions.

Core Quantitative Principles and Experimental Data

Experimental data is critical for validating the LR framework and understanding its performance under different conditions. A foundational experiment in FTC investigated the strength of evidence derived from various stylometric features using a Multivariate Kernel Density formula for LR estimation [7]. The experiment utilized a corpus of 115 authors from a real chatlog archive. To assess the impact of data quantity, authorship attribution was modeled using four different text lengths (500, 1000, 1500, and 2500 words).

Table 1: Influence of Sample Size on System Performance in Forensic Text Comparison [7]

| Sample Size (Words) | Discrimination Accuracy (Approx.) | Log-Likelihood Ratio Cost (Cllr) | System Performance Interpretation |
|---|---|---|---|
| 500 | 76% | 0.68258 | Moderate discriminability; useful but limited evidential strength. |
| 1000 | - | - | Progressive improvement in accuracy and reliability. |
| 1500 | - | - | Progressive improvement in accuracy and reliability. |
| 2500 | 94% | 0.21707 | High discriminability; strong and reliable evidential strength. |

Performance was primarily assessed using the log-likelihood ratio cost (Cllr), a metric that evaluates the overall quality of the LR system by considering both discrimination and calibration. A lower Cllr value indicates better performance [7]. The study also found that larger sample sizes not only improved discriminability but also increased the magnitude of LRs that were consistent with the fact and decreased the magnitude of LRs that were contrary to the fact.

Table 2: Robust Stylometric Features for Authorship Attribution [7]

| Feature Category | Specific Feature Examples | Robustness |
|---|---|---|
| Lexical | Vocabulary Richness | Robust across different sample sizes. |
| Character-Level | Average character number per word token | Robust across different sample sizes. |
| Punctuation | Punctuation character ratio | Robust across different sample sizes. |
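
The three feature types in the table can be approximated with a few lines of Python. This is a rough sketch under stated assumptions: tokens are split on whitespace, punctuation means ASCII punctuation, and the type-token ratio stands in for vocabulary richness. The exact feature definitions used in [7] may differ.

```python
import string


def stylometric_features(text: str) -> dict:
    """Compute three simple stylometric features from raw text."""
    # Words: whitespace tokens with surrounding punctuation removed, lowercased.
    words = [t.strip(string.punctuation).lower() for t in text.split()]
    words = [w for w in words if w]
    n_chars = sum(1 for ch in text if not ch.isspace())
    n_punct = sum(1 for ch in text if ch in string.punctuation)
    return {
        "avg_chars_per_word": sum(len(w) for w in words) / len(words) if words else 0.0,
        "punctuation_ratio": n_punct / n_chars if n_chars else 0.0,
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
    }


print(stylometric_features("Hi, hi there!"))
```

Because the type-token ratio depends on text length, comparisons are only meaningful between samples of similar size, which is one reason the cited experiment fixes the sample lengths.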

Experimental Protocol for Forensic Text Comparison

The following protocol outlines a detailed methodology for conducting a forensic text comparison study within the LR framework, based on established experimental design [7].

Protocol: Multivariate LR Analysis of Stylometric Features

Objective: To compute a likelihood ratio quantifying the strength of evidence for authorship attribution based on stylometric features.

Materials:

- Reference Material: A set of known texts from a suspect.

- Questioned Material: The text of unknown authorship under investigation.

- Background Corpus: A collection of texts from a relevant population of potential authors.

Procedure:

Corpus Compilation and Preparation:

- Select a relevant background corpus. The cited experiment used 115 authors from a chatlog archive [7].

- For each author in the background corpus, and for the suspect, compile text samples of predetermined lengths (e.g., 500, 1000, 1500, 2500 words) to analyze the effect of sample size.

Feature Extraction:

- From all text samples (questioned, suspect, and background corpus), extract a set of stylometric features. The protocol should specify the exact features to be used.

- Recommended features include [7]:

- Average character number per word token

- Punctuation character ratio

- Vocabulary richness features

Statistical Modeling and LR Calculation:

- Use a multivariate statistical model, such as the Multivariate Kernel Density formula, to estimate the probability density of the features under both propositions [7].

- Proposition 1 (Hp): The suspect and the questioned document share a common source. The feature data is modeled assuming the suspect is the author.

- Proposition 2 (Hd): The questioned document originates from a different source within the relevant population. The feature data is modeled using the background corpus.

- Calculate the LR for the specific case as follows:

LR = Probability(Feature Data | H<sub>p</sub>) / Probability(Feature Data | H<sub>d</sub>)

System Validation:

- Conduct a validation study by treating each known author in the background corpus in turn as a "suspect" and calculating LRs for both known matches (same-author comparisons) and known non-matches (different-author comparisons).

- Calculate performance metrics, primarily the log-likelihood ratio cost (Cllr), to assess the discrimination and calibration of the system [7]. Other metrics like credible intervals and equal error rates can also be informative.
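
The modeling step of this protocol can be illustrated with a deliberately simplified, single-feature analogue. The sketch below is not the Multivariate Kernel Density formula used in [7]: it handles one feature only, treats the suspect's mean as known, and assumes a common within-author standard deviation. All names, the bandwidth, and the numbers are hypothetical.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of a normal distribution at x."""
    z = (x - mean) / sd
    return math.exp(-0.5 * z * z) / (sd * math.sqrt(2 * math.pi))


def kde_lr(questioned_value, suspect_values, background_means, within_sd, bandwidth):
    """LR for one feature: suspect model vs. kernel density over background authors."""
    suspect_mean = sum(suspect_values) / len(suspect_values)
    # H_p: the questioned value comes from the suspect's distribution.
    numerator = normal_pdf(questioned_value, suspect_mean, within_sd)
    # H_d: the questioned value comes from some author in the relevant population,
    # modeled as a Gaussian kernel density over the background authors' means.
    kernel_sd = math.sqrt(within_sd ** 2 + bandwidth ** 2)
    denominator = sum(
        normal_pdf(questioned_value, m, kernel_sd) for m in background_means
    ) / len(background_means)
    return numerator / denominator


# Hypothetical average word lengths.
print(kde_lr(4.0, [4.0, 4.1, 3.9], [3.0, 5.0, 6.0, 4.5], within_sd=0.2, bandwidth=0.5))
```

The numerator captures similarity to the suspect and the denominator captures typicality in the population, which is the same division of labour the multivariate formula performs across several features at once.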

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for FTC Research

| Item Name | Function / Description | Application in FTC |
|---|---|---|
| Chatlog Archive Corpus | A collection of authentic digital communications, serving as a background population for modeling language use. | Provides the relevant population data necessary for estimating the probability of evidence under the defense proposition (Hd) [7]. |
| Stylometric Feature Set | A defined group of quantifiable features that capture an author's stylistic habits. | Forms the basis for comparison between the questioned text and reference materials. Examples include vocabulary richness and punctuation ratios [7]. |
| Multivariate Kernel Density Model | A statistical model used to estimate the probability density of multivariate feature data. | The core computational engine for calculating the probability of observing the evidence under both the prosecution and defense propositions, leading to the LR value [7]. |
| Log-Likelihood Ratio Cost (Cllr) | A key performance metric that measures the overall quality of an LR-based forensic system. | Used during system validation to assess both the discrimination (separation of LRs for same-source and different-source cases) and calibration (accuracy of the LR values) of the method [7]. |

Within the Likelihood Ratio (LR) framework for forensic text comparison, a central tension exists between the pursuit of purely objective, computational methods and the inescapable role of expert subjectivity. The LR framework provides a formal structure for evaluating the strength of evidence, quantifying the ratio of the probability of the evidence under the prosecution hypothesis to that under the defense hypothesis [7]. However, the practical application of this framework, from feature selection to model construction, involves a series of decisions that introduce a subjective dimension. This document outlines application notes and experimental protocols for researchers and forensic scientists navigating this complex interplay, ensuring that the scientific rigor of the LR framework is maintained while acknowledging and controlling for the inherent subjectivity in its application.

The performance of different LR estimation methodologies varies significantly based on the model used and the sample size. The following tables summarize key findings from empirical research in forensic text comparison.

Table 1: System Performance vs. Text Sample Size (Multivariate Kernel Density Model) [7]

| Sample Size (Words) | Discrimination Accuracy (%) | Log-Likelihood Ratio Cost (Cllr) |
|---|---|---|
| 500 | ~76 | 0.68258 |
| 1000 | Information Not Provided | Information Not Provided |
| 1500 | Information Not Provided | Information Not Provided |
| 2500 | ~94 | 0.21707 |

Note: This study utilized word- and character-based stylometric features with the Multivariate Kernel Density formula. The Cllr is a performance metric for which a lower value indicates better overall system performance, covering both discrimination and calibration.

Table 2: Method Comparison for LR Estimation (Poisson Model vs. Cosine Distance) [8]

| LR Estimation Method | Key Characteristics | Reported Performance (Cllr) |
|---|---|---|
| Feature-Based (Poisson Model) | Accounts for both similarity and typicality; theoretically more appropriate for textual data. | Cllr lower than the score-based method's by ~0.09 (under best-performing settings) |
| Score-Based (Cosine Distance) | A standard distance measure in authorship attribution; assesses similarity only. | Higher Cllr than the feature-based method |
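
For the score-based row of the table, the score itself is easy to compute. The sketch below calculates a cosine distance between two feature-count vectors; the function name and the example texts are hypothetical. A distance of this kind is only a similarity score, so a separate score-to-LR model would still be needed to turn it into a likelihood ratio.

```python
import math
from collections import Counter


def cosine_distance(counts_a: Counter, counts_b: Counter) -> float:
    """1 minus the cosine similarity of two sparse count vectors."""
    shared = set(counts_a) & set(counts_b)
    dot = sum(counts_a[k] * counts_b[k] for k in shared)
    norm_a = math.sqrt(sum(v * v for v in counts_a.values()))
    norm_b = math.sqrt(sum(v * v for v in counts_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 1.0  # convention chosen here: an empty document is maximally distant
    return 1.0 - dot / (norm_a * norm_b)


known = Counter("the cat sat on the mat".split())
questioned = Counter("the dog sat on the log".split())
print(cosine_distance(known, questioned))
```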

Experimental Protocols

Protocol: Feature-Based LR Estimation Using a Poisson Model

1. Objective: To estimate the strength of forensic text comparison evidence using a feature-based method with a Poisson model, which accounts for both similarity and typicality of authorship features [8].

2. Materials:

- A collection of text documents from a known set of authors (e.g., the chatlog corpus of 115 authors [7] or 2,157 authors [8]).

- Text processing software for tokenization and normalization.

- Computational environment for statistical modeling (e.g., R, Python).

3. Procedure:

- Step 1: Feature Extraction. From each document, extract relevant stylometric features. Robust features include:

- Average character number per word token.

- Punctuation character ratio.

- Vocabulary richness measures [7].

- Step 2: Feature Selection. Implement a feature selection process to improve model performance by identifying the most discriminative features for the specific corpus [8].

- Step 3: Model Formulation. Develop a Poisson model to represent the distribution of the extracted linguistic features across the population of authors. The model should be capable of calculating the probability of observing the specific feature counts in both the known and questioned documents.

- Step 4: LR Calculation. For a given questioned document and a known document from a specific author, compute the Likelihood Ratio using the Poisson model. The LR is the ratio of the probability of the observed evidence (the linguistic features) given the hypothesis that the known and questioned documents share the same author, to the probability of the evidence given the hypothesis that they were written by different authors [8].

- Step 5: System Validation. Assess the performance and validity of the system using metrics such as the log-likelihood ratio cost (Cllr) and credible intervals [7].
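
The features named in Step 1 can be illustrated with a minimal sketch. The whitespace tokenization, the use of the type-token ratio as the vocabulary richness measure, and the function name `stylometric_features` are assumptions made for illustration; they are not taken from [7].

```python
import string


def stylometric_features(text: str) -> dict:
    """Compute three simple stylometric features from raw text."""
    # Naive whitespace tokenization (an assumption; real systems use a tokenizer).
    tokens = text.split()
    # Strip surrounding punctuation so word length counts letters only.
    words = [t.strip(string.punctuation).lower() for t in tokens]
    words = [w for w in words if w]
    if not words:
        raise ValueError("text contains no word tokens")

    n_chars = sum(1 for c in text if not c.isspace())
    n_punct = sum(1 for c in text if c in string.punctuation)
    return {
        "avg_chars_per_word": sum(len(w) for w in words) / len(words),
        "punct_ratio": n_punct / n_chars,
        # Type-token ratio: one simple (length-sensitive) richness measure.
        "type_token_ratio": len(set(words)) / len(words),
    }


features = stylometric_features("Well, I think so. I really think so!")
```

The type-token ratio depends strongly on document length, so it is only meaningful when the compared documents are of similar size.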

Protocol: Assessing the Comprehension of LR Presentation Formats

1. Objective: To empirically determine the most effective way to present Likelihood Ratios to legal decision-makers (e.g., jurors, judges) to maximize understandability [9].

2. Materials:

- A pool of laypersons representative of a jury pool.

- Experimental materials presenting the same forensic evidence using different formats:

- Numerical Likelihood Ratios.

- Numerical Random-Match Probabilities.

- Verbal Strength-of-Support Statements.

- Standardized questionnaires to measure comprehension.

3. Procedure:

- Step 1: Subject Recruitment. Recruit a sufficient number of participants and randomly assign them to different experimental groups.

- Step 2: Intervention. Each group is presented with the same mock forensic scenario and evidence, but the strength of the evidence is communicated using only one of the presentation formats listed above.

- Step 3: Comprehension Assessment. Administer a questionnaire designed to measure key indicators of comprehension as defined by the CASOC framework, particularly:

- Sensitivity: The ability to distinguish between different strengths of evidence.

- Orthodoxy: The alignment of the interpreted strength with the intended, statistically derived strength.

- Coherence: The internal consistency of the interpretation across different scenarios [9].

- Step 4: Data Analysis. Compare the performance of the different groups on the comprehension indicators to identify which presentation format leads to the highest levels of sensitivity, orthodoxy, and coherence.

- Step 5: Iteration and Recommendation. Use the results to formulate evidence-based recommendations for forensic practitioners on presenting LRs in legal settings.

Workflow and Relationship Visualizations

Diagram: LR framework in forensic text comparison.

Diagram: Subjectivity in the LR process.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Forensic Text Comparison Research

| Item Name | Function/Description |

|---|---|

| Chatlog Corpus | A collection of real-world digital communications (e.g., from chatlog archives) used as a ground-truthed dataset for developing and validating authorship attribution models [7]. |

| Stylometric Features | Quantifiable linguistic characteristics extracted from text, such as "Average character number per word token," "Punctuation character ratio," and vocabulary richness measures, which serve as the data points for model computation [7]. |

| Multivariate Kernel Density Formula | A statistical method used to estimate the probability density of multivariate data, applied in LR frameworks to model the distribution of multiple stylometric features simultaneously [7]. |

| Poisson Model | A feature-based statistical model suitable for count-based linguistic data. It is theoretically advantageous as it considers both the similarity and typicality of features, unlike simple distance measures [8]. |

| Log-Likelihood Ratio Cost (Cllr) | A primary performance metric used to assess the overall discrimination accuracy and calibration of a forensic evaluation system based on likelihood ratios. A lower Cllr indicates better performance [7] [8]. |

The Likelihood Ratio (LR) framework is widely recognized as the logically and legally correct method for evaluating forensic evidence, including in the domain of Forensic Text Comparison (FTC) [1]. An LR is a quantitative statement of the strength of evidence, formulated as the ratio of two probabilities under competing hypotheses [1]. In the context of FTC, the typical prosecution hypothesis (Hp) is that "the source-questioned and source-known documents were produced by the same author," while the typical defense hypothesis (Hd) is that "the source-questioned and source-known documents were produced by different individuals" [1]. The LR provides a transparent and reproducible method for expressing how strongly the evidence supports one hypothesis over the other, enabling decision-makers to update their beliefs in a logically coherent manner via Bayes' Theorem [1]. This framework is increasingly mandated by forensic science regulators and professional associations, making its proper communication essential for researchers and practitioners [1].

Core Concepts and Quantitative Foundation

Likelihood Ratio Formulation

The Likelihood Ratio is mathematically expressed as:

LR = p(E|Hp) / p(E|Hd)

In this equation:

- p(E|Hp) represents the probability of observing the evidence (E) assuming the prosecution hypothesis (Hp) is true. This can be interpreted as the similarity between the questioned and known documents.

- p(E|Hd) represents the probability of observing the same evidence (E) assuming the defense hypothesis (Hd) is true. This can be interpreted as the typicality of the observed similarity—how common or distinctive it is in the relevant population [1].

The interpretation of LR values follows a standardized scale, as outlined in Table 1.

Table 1: Interpretation of Likelihood Ratio Values

| LR Value Range | Verbal Interpretation | Strength of Evidence |

|---|---|---|

| >1 to 10 | Limited support for Hp over Hd | Weak |

| 10 to 100 | Moderate support for Hp over Hd | Moderate |

| 100 to 1000 | Strong support for Hp over Hd | Strong |

| >1000 | Very strong support for Hp over Hd | Very Strong |

| 1 | Evidence has no diagnostic value | Neutral |

| <1 to 0.1 | Limited support for Hd over Hp | Weak |

| 0.1 to 0.01 | Moderate support for Hd over Hp | Moderate |

| 0.01 to 0.001 | Strong support for Hd over Hp | Strong |

| <0.001 | Very strong support for Hd over Hp | Very Strong |
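
As a rough illustration, the scale in Table 1 can be expressed as a lookup function. The function name `verbal_scale` is hypothetical, and because the table's ranges share their endpoints (10, 100, 1000), this sketch assumes that a boundary value belongs to the weaker band.

```python
def verbal_scale(lr: float) -> str:
    """Map a likelihood ratio to the verbal interpretation in Table 1."""
    if lr <= 0:
        raise ValueError("a likelihood ratio must be positive")
    if lr == 1:
        return "Evidence has no diagnostic value"

    # LRs below 1 support Hd; their strength is read from the reciprocal.
    favoured, other = ("Hp", "Hd") if lr > 1 else ("Hd", "Hp")
    magnitude = lr if lr > 1 else 1 / lr

    if magnitude <= 10:
        label = "Limited"
    elif magnitude <= 100:
        label = "Moderate"
    elif magnitude <= 1000:
        label = "Strong"
    else:
        label = "Very strong"
    return f"{label} support for {favoured} over {other}"


example = verbal_scale(50)
```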

Bayesian Interpretation for Decision-Makers

The LR's true utility emerges when combined with prior beliefs through the odds form of Bayes' Theorem:

Prior Odds × LR = Posterior Odds

This can be expressed as:

[P(Hp)/P(Hd)] × [p(E|Hp)/p(E|Hd)] = [P(Hp|E)/P(Hd|E)] [1]

It is crucial to understand that the forensic expert's role is to provide the LR, not to calculate the posterior odds or to opine on the ultimate issue of guilt. The prior odds fall within the purview of the trier-of-fact (e.g., the judge or jury), as they incorporate other case evidence beyond the specific textual analysis [1]. Presenting the LR separately maintains the logical separation of responsibilities and prevents the expert from usurping the court's authority.
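
The arithmetic of the odds form is shown in the short sketch below. The prior odds of 1 to 100 and the LR of 500 are invented numbers used purely for illustration; in casework the prior odds belong to the trier-of-fact, and the expert would report only the LR.

```python
def posterior_odds(prior_odds: float, lr: float) -> float:
    """Odds form of Bayes' Theorem: posterior odds = prior odds x LR."""
    return prior_odds * lr


def odds_to_probability(odds: float) -> float:
    """Convert odds in favour of Hp into a probability of Hp."""
    return odds / (1.0 + odds)


prior = 1 / 100            # hypothetical prior odds held by the trier-of-fact
lr = 500                   # hypothetical LR reported by the expert
post = posterior_odds(prior, lr)
prob = odds_to_probability(post)
```

With these numbers the posterior odds are 5 to 1 in favour of Hp. The same LR applied to different prior odds gives a different posterior, which is why the two quantities are kept separate.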

Critical Validation Requirements for Meaningful LRs

For an LR value to be scientifically defensible and meaningful in a specific case, the underlying validation must meet two critical requirements, as detailed in Table 2.

Table 2: Core Validation Requirements for Forensically Valid LRs

| Requirement | Description | Pitfalls of Neglect |

|---|---|---|

| Relevant Data | Data used for validation and model training must be relevant to the specific conditions of the case under investigation [1]. | LRs derived from mismatched data (e.g., different topics or genres) misrepresent the actual strength of evidence and can mislead the trier-of-fact [1]. |

| Reflective Conditions | The conditions of the test trials must reflect the specific conditions of the questioned-source and known-source items in the case [10]. | A model trained on pooled data from non-representative conditions will produce miscalibrated LRs that are not valid for the case at hand [10]. |

These requirements are particularly critical in FTC due to the complexity of textual evidence. An author's writing style is influenced by multiple factors beyond identity, including topic, genre, formality, and emotional state [1]. For instance, a mismatch in topics between compared documents is a known challenging factor for authorship analysis [1]. Empirical validation must therefore account for these variables to ensure that the calculated LR is both relevant and reliable for the specific context of the case.

Experimental Protocols for Forensic Text Comparison

Protocol 1: Empirical Validation Under Case-Specific Conditions

This protocol ensures that LR calculations are validated using data and conditions relevant to a specific case.

- Case Condition Analysis: Define the specific conditions of the case. This includes variables such as text topic, genre, register, and the length and quality of the questioned and known documents [1].

- Relevant Data Collection: Compile a reference dataset where these conditions are reflected. For a case involving a mismatch in topics, the validation dataset must include texts with similar topical mismatches [1].

- Feature Extraction & Quantification: Apply quantitative measurements to the texts. This may involve extracting linguistic features such as:

- Lexical features: Word n-grams, character n-grams, vocabulary richness.

- Syntactic features: Part-of-speech tags, punctuation patterns, sentence length distributions.

- Stylistic features: Function word frequencies, complexity measures [1].

- Statistical Modeling: Train a statistical model (e.g., a Dirichlet-multinomial model) using the quantified features from the relevant dataset [1].

- LR Calculation & Calibration: Calculate LRs using the trained model. Subsequently, apply a calibration step, such as logistic regression calibration, to improve the realism and fairness of the LR values [1].

- Performance Validation: Assess the system's performance using metrics like the log-likelihood-ratio cost (Cllr) and visualize the results with Tippett plots [10] [1].
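
The calibration step can be sketched as follows, assuming a two-parameter logistic regression that maps a raw score to a log LR. The Newton solver, the small ridge term, the function names, and the synthetic scores are all assumptions made for illustration, not the implementation used in [1].

```python
import numpy as np


def fit_logreg_calibration(scores_ss, scores_ds, n_iter=50, ridge=1e-6):
    """Fit ln(LR) = a * score + b by logistic regression (Newton's method)."""
    x = np.concatenate([scores_ss, scores_ds]).astype(float)
    y = np.concatenate([np.ones(len(scores_ss)), np.zeros(len(scores_ds))])
    X = np.column_stack([x, np.ones_like(x)])
    w = np.zeros(2)
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X @ w))
        grad = X.T @ (p - y) + ridge * w
        hess = (X * (p * (1.0 - p))[:, None]).T @ X + ridge * np.eye(2)
        w -= np.linalg.solve(hess, grad)
    # The fitted intercept includes the training prior log-odds; remove them
    # so that the output is a log LR rather than log posterior odds.
    prior_log_odds = np.log(len(scores_ss) / len(scores_ds))
    return w[0], w[1] - prior_log_odds


def calibrated_log10_lr(score, a, b):
    """Convert a raw score into a calibrated log10 LR."""
    return (a * score + b) / np.log(10)


# Synthetic, ground-truthed scores (higher score = more similar).
rng = np.random.default_rng(0)
scores_ss = rng.normal(1.0, 1.0, 1000)    # same-author comparisons
scores_ds = rng.normal(-1.0, 1.0, 1000)   # different-author comparisons
a, b = fit_logreg_calibration(scores_ss, scores_ds)
```

For this synthetic data the theoretical values are a = 2 and b = 0, so the fitted parameters should lie close to them. In practice the calibration data must be kept separate from the test data used for validation.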

Protocol 2: Examiner-Specific Calibration Using Bayesian Updating

A primary criticism of methods that convert examiner conclusions to LRs is their reliance on data pooled from multiple examiners, which may not represent the performance of the specific examiner in a given case [10]. The following protocol addresses this via a Bayesian approach.

- Prior Elicitation: Use a large dataset of responses from multiple examiners (excluding the examiner of interest) to establish informed prior distributions for same-source and different-source responses [10].

- Blind Proficiency Testing: Integrate blind test trials into the specific examiner's regular workflow. The conditions of these trials should mirror the challenges of real casework [10].

- Posterior Updating: As the examiner completes test trials, use their specific responses (both same-source and different-source) to update the prior models to examiner-specific posterior models [10].

- LR Calculation: Calculate a Bayes' factor using the expected values from the examiner's own same-source and different-source posterior models [10].

- Iterative Refinement: Continuously update the model as more data from the examiner becomes available. Over time, the LRs will become more reflective of the individual examiner's performance [10].

The following diagram illustrates this Bayesian updating workflow.

Diagram: Bayesian workflow for examiner-specific LR calibration.
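
The updating steps of this protocol can be sketched with a Dirichlet-categorical model, in which priors are expressed as pseudo-counts over the examiner's response categories. The model choice, the three-category response scale, and all counts below are assumptions made for illustration; [10] may use a different formulation.

```python
# Hypothetical response scale for an examiner's conclusions.
CATEGORIES = ("same-author", "inconclusive", "different-author")


def posterior_mean(prior_pseudo_counts, examiner_counts):
    """Posterior expected response probabilities under a Dirichlet prior."""
    alpha = [p + c for p, c in zip(prior_pseudo_counts, examiner_counts)]
    total = sum(alpha)
    return [a / total for a in alpha]


def bayes_factor(response, prior_ss, prior_ds, counts_ss, counts_ds):
    """Ratio of the expected probability of a response under the
    same-source model to that under the different-source model."""
    i = CATEGORIES.index(response)
    p_ss = posterior_mean(prior_ss, counts_ss)[i]
    p_ds = posterior_mean(prior_ds, counts_ds)[i]
    return p_ss / p_ds


# Priors from pooled data of other examiners, scaled to 10 pseudo-counts.
prior_ss = [8.0, 1.5, 0.5]
prior_ds = [0.5, 1.5, 8.0]
# The examiner's own blind-test responses (invented numbers).
counts_ss = [18, 2, 0]
counts_ds = [1, 3, 16]

bf_before = bayes_factor("same-author", prior_ss, prior_ds, [0, 0, 0], [0, 0, 0])
bf_after = bayes_factor("same-author", prior_ss, prior_ds, counts_ss, counts_ds)
```

As more test trials accumulate, the examiner's own counts dominate the pseudo-counts, so the Bayes' factor moves from the pooled value toward one that reflects the individual examiner.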

The Scientist's Toolkit: Essential Reagents & Materials

The following table details key components necessary for conducting validated forensic text comparison research.

Table 3: Essential Research Reagents for Forensic Text Comparison

| Tool/Reagent | Function & Application | Key Considerations |

|---|---|---|

| Reference Text Corpora | Provides population data for estimating typicality (p(E\|Hd)) and for validation under specific conditions [1]. | Must be relevant to case conditions (topic, genre, language). Size and representativeness are critical for robust model building. |

| Quantitative Feature Sets | Converts textual data into measurable units for statistical analysis (e.g., n-grams, syntax, style markers) [1]. | Features must be linguistically meaningful and sufficiently discriminative between authors while being stable within an author's work. |

| Statistical Models (e.g., Dirichlet-Multinomial) | Computational engines for calculating probabilities and deriving LRs from quantitative feature data [1]. | Model choice affects performance. Must be calibrated and validated for the specific task and data type. |

| Calibration Datasets | Used to adjust raw model outputs to ensure LRs are fair and correctly scaled (e.g., not overstating the evidence) [1]. | Requires known-ground-truth data with same-source and different-source pairs that reflect casework conditions. |

| Validation Metrics (e.g., Cllr) | Provides a quantitative measure of the system's performance and the validity of the LRs it produces [10] [1]. | Cllr measures overall system performance, rewarding good discrimination and calibration. A single number summarizes accuracy. |

Data Presentation & Visualization Protocols

Tippett Plots for System Validation

A Tippett plot is a standard method for visualizing the performance of a forensic evaluation system. It shows the cumulative distribution of LRs for both same-source (Hp true) and different-source (Hd true) conditions.

- Data Preparation: Collect LRs from a validation test for both same-source and different-source comparisons.

- Plot Generation:

- On the x-axis, plot the log10(LR) value.

- On the y-axis, plot the cumulative proportion of cases.

- Plot two curves: one showing the proportion of same-source comparisons with an LR greater than or equal to a given value, and another showing the proportion of different-source comparisons with an LR less than a given value.

- Interpretation:

- The further the same-source curve is to the right and the further the different-source curve is to the left, the better the system's discrimination.

- The closer the curves are to the extremes (top left and bottom right), the more calibrated the LRs are. For example, a well-calibrated system will show that 90% of its same-source comparisons yield LRs greater than 10 [10] [11].
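
The two curves described under Plot Generation can be computed as in the sketch below, which follows the convention stated there (same-source: proportion at or above a value; different-source: proportion below it). The function name and the LR values are invented for illustration, and the resulting lists could be passed to any plotting library.

```python
import math


def tippett_curves(lr_ss, lr_ds, grid):
    """Return the two Tippett curves evaluated on a grid of log10(LR) values."""
    log_ss = [math.log10(lr) for lr in lr_ss]
    log_ds = [math.log10(lr) for lr in lr_ds]
    # Same-source curve: proportion of LRs greater than or equal to each value.
    prop_ss = [sum(v >= g for v in log_ss) / len(log_ss) for g in grid]
    # Different-source curve: proportion of LRs less than each value.
    prop_ds = [sum(v < g for v in log_ds) / len(log_ds) for g in grid]
    return prop_ss, prop_ds


grid = [-2, -1, 0, 1, 2]
lr_same = [0.5, 5, 50, 500]          # invented validation LRs, Hp true
lr_diff = [0.002, 0.02, 0.2, 2]      # invented validation LRs, Hd true
prop_ss, prop_ds = tippett_curves(lr_same, lr_diff, grid)
```

At log10(LR) = 0 both curves read 0.75 here, meaning that a quarter of the comparisons of each type produced an LR pointing toward the wrong hypothesis.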

The logical process for generating and interpreting a validation report is summarized below.

Diagram: Workflow for generating a system validation report.

Effectively communicating LR values to decision-makers in forensic science, and specifically in FTC, requires more than just presenting a number. It demands a rigorous, scientifically defensible process built upon two pillars: validation with data relevant to the case and under conditions that reflect the case [10] [1]. The protocols outlined herein—for empirical validation, examiner-specific calibration, and result visualization—provide a pathway toward producing LRs that are not only logically sound but also forensically valid and meaningful in a specific context. As the field moves towards greater adoption of the LR framework, adherence to these principles is paramount for maintaining scientific integrity and ensuring that evidence presented to the trier-of-fact is both reliable and accurately interpreted.

From Theory to Text: Methodological Approaches for LR Calculation

Within the Likelihood Ratio (LR) framework for forensic text comparison, the score-based approach provides a statistically robust method for quantifying the strength of evidence. This methodology involves reducing multivariate textual data into a single, comparable score via distance measures, which is then converted into a likelihood ratio. This document details the application notes and experimental protocols for implementing two prominent distance measures—Cosine distance and Burrows’s Delta—within this forensic paradigm. The LR framework offers a logically correct structure for evidence interpretation, balancing the probabilities of the evidence under competing prosecution and defense hypotheses, and is increasingly aligned with international forensic standards such as ISO 21043 [12].

Theoretical Foundation: The LR Framework and Score-Based Methods

The core of the likelihood ratio framework in forensic science is the Bayesian interpretation of evidence. It assesses the probability of the observed evidence (E) under two competing propositions: the prosecution hypothesis (Hp) that the suspect and the author of the questioned text are the same person, and the defense hypothesis (Hd) that they are different individuals [13]. The LR is calculated as:

LR = p(E|Hp) / p(E|Hd)

A score-based method simplifies this calculation when dealing with the high-dimensional data typical of textual evidence. Instead of working directly with the multivariate feature space (e.g., word frequencies), this approach uses a distance measure to calculate a univariate score representing the (dis)similarity between a known source text (e.g., from a suspect) and a questioned text (e.g., from a crime) [13]. The subsequent step, score-to-LR conversion, relies on modelling the probability densities of these scores from many known same-author and different-author comparisons [13]. The log-likelihood ratio cost (Cllr) is a primary metric for assessing the validity and performance of the computed LRs, with lower values indicating a more reliable system [8] [7].

Table 1: Core Components of the Score-Based LR Framework

| Component | Description | Role in Forensic Text Comparison |

|---|---|---|

| Likelihood Ratio (LR) | Ratio of the probability of evidence under Hp to the probability under Hd [13] | Quantifies the strength of evidence for one of two competing hypotheses. |

| Score-Based Approach | A method that reduces multivariate data to a univariate similarity/distance score [13] | Enables practical computation of LRs from complex textual data. |

| Distance Measure | An algorithm that computes a scalar value representing the (dis)similarity between two texts. | Produces the score used for LR calculation; central to method performance. |

| Cllr (Log-LR Cost) | A metric measuring the average cost of misrepresenting the evidence strength [8] [7] | Assesses the overall accuracy and discrimination power of the LR system. |

Distance Measures: Protocols and Implementation

Cosine Distance

Cosine distance is derived from the cosine similarity metric, which measures the cosine of the angle between two non-zero vectors in a multi-dimensional space. In text comparison, these vectors typically represent word frequencies from a Bag-of-Words model.

Experimental Protocol: Cosine Distance with Bag-of-Words

Text Preprocessing: For both the known (K) and questioned (Q) text samples, perform the following:

- Convert all text to lowercase.

- Remove punctuation and numerical characters.

- (Optional) Apply stemming or lemmatization.

Feature Vector Construction (Bag-of-Words):

- Create a unified vocabulary (V) from the N most frequent words across a large, representative background corpus. The value of N is a tunable parameter (e.g., 100 to 2000) [13].

- Represent each document (K and Q) as a vector of length N, where each element corresponds to the frequency (or normalized frequency) of a word from V in that document.

Score Calculation:

- Compute the cosine similarity between the vector for K (vec_k) and Q (vec_q): similarity = (vec_k · vec_q) / (||vec_k|| * ||vec_q||), where · denotes the dot product and || || denotes the Euclidean norm.

- The cosine distance is then: distance = 1 - similarity
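
A minimal sketch of the vector construction and score calculation, using only the standard library. The four-word vocabulary and the two sample sentences are invented for illustration; a real system would draw V from a background corpus and apply the preprocessing described above.

```python
import math
from collections import Counter


def bow_vector(text, vocabulary):
    """Count how often each vocabulary word occurs in a text."""
    counts = Counter(text.lower().split())
    return [counts[word] for word in vocabulary]


def cosine_distance(u, v):
    """Cosine distance = 1 - cosine similarity of two count vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_u * norm_v)


vocabulary = ["the", "of", "and", "to"]   # toy vocabulary V with N = 4
vec_k = bow_vector("the cat and the dog went to the park", vocabulary)
vec_q = bow_vector("the end of the road and the start of another", vocabulary)
distance = cosine_distance(vec_k, vec_q)
```

Because the cosine measure depends only on the angle between the vectors, raw and length-normalized counts give the same distance.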

LR Conversion:

- This distance score must be converted into a likelihood ratio. This requires reference distributions for same-author (SR) and different-author (DR) scores, built using a common source method on a large background corpus [13].

- The probability density of the calculated distance score under the SR and DR models is used to compute the LR: LR = f(distance | SR) / f(distance | DR), where f is the probability density function. Parametric models (e.g., Normal, Log-normal) can be fitted to the SR and DR score distributions for this purpose [13].
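
The conversion can be sketched with Normal models fitted to the two reference distributions. The choice of the Normal family and the reference scores below are assumptions made for illustration; the fit should be checked against the actual score distributions before such a model is relied upon.

```python
from statistics import NormalDist


def score_to_lr(distance, sr_scores, dr_scores):
    """LR = f(distance | SR) / f(distance | DR) with fitted Normal densities."""
    sr_model = NormalDist.from_samples(sr_scores)   # same-author scores
    dr_model = NormalDist.from_samples(dr_scores)   # different-author scores
    return sr_model.pdf(distance) / dr_model.pdf(distance)


# Invented reference scores; real SR/DR sets come from a background corpus.
sr_scores = [0.10, 0.15, 0.20, 0.25, 0.30]
dr_scores = [0.50, 0.60, 0.70, 0.80, 0.90]

lr_small_distance = score_to_lr(0.20, sr_scores, dr_scores)
lr_large_distance = score_to_lr(0.70, sr_scores, dr_scores)
```

A small distance falls where same-author scores are dense, giving an LR above 1, while a large distance gives an LR below 1. Scores far in the tails of both models can produce extreme LRs that the reference data do not support.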

Burrows's Delta

Burrows's Delta is a distance measure specifically designed for stylometric analysis and authorship attribution. It is known for its effectiveness in quantifying stylistic differences based on the relative frequencies of very common words, which are largely used unconsciously by authors [14].

Experimental Protocol: Burrows's Delta

Text Preprocessing: Similar to the Cosine protocol, standardize the texts (K and Q) by lowercasing and removing punctuation.

Feature Selection:

- The features are typically the N most frequent words (e.g., 100-500 words) across the entire corpus under analysis. These are overwhelmingly function words (e.g., "the", "and", "of", "to") [14].

Feature Standardization:

- For each of the N words, calculate its mean frequency and standard deviation across all documents in a large background corpus.

- For both K and Q, convert the raw frequency of each word to a z-score: z = (frequency - mean_frequency) / standard_deviation.

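Delta Calculation:

- Compute Delta as the mean absolute difference between the z-scores of K and Q over the N words: Delta = (1/N) × Σ |z_i(K) - z_i(Q)|. A smaller Delta indicates more similar usage of the selected words.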
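
A minimal sketch of the Delta computation, taking Delta in its usual form as the mean absolute difference of the z-scores of K and Q. The three-word feature set and all frequencies are invented for illustration.

```python
def z_scores(freqs, means, sds):
    """Standardize relative frequencies against background statistics."""
    return [(f - m) / s for f, m, s in zip(freqs, means, sds)]


def burrows_delta(freqs_k, freqs_q, means, sds):
    """Mean absolute difference between the z-scores of two documents."""
    z_k = z_scores(freqs_k, means, sds)
    z_q = z_scores(freqs_q, means, sds)
    return sum(abs(a - b) for a, b in zip(z_k, z_q)) / len(z_k)


# Background mean and standard deviation of each word's relative frequency.
means = [0.06, 0.03, 0.02]
sds = [0.01, 0.005, 0.004]
# Relative frequencies of the same words in the known and questioned texts.
freqs_k = [0.07, 0.03, 0.018]
freqs_q = [0.05, 0.035, 0.022]

delta = burrows_delta(freqs_k, freqs_q, means, sds)
```

The background means cancel in the difference, so Delta equals the mean of |f_K - f_Q| / sd over the words. The standard deviations therefore act as per-word weights.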

LR Conversion:

- Similar to the Cosine distance method, the Delta score must be converted to an LR. This involves building reference distributions of Delta scores from numerous known same-author and different-author comparisons.

- The probability densities of the computed Delta score under these two distributions form the basis of the LR.

Diagram 1: A generalized workflow for implementing score-based methods, showing the common steps from raw text to a computed Likelihood Ratio, with a selection of distance measures.

Performance and Validation

Empirical validation is critical for demonstrating the reliability of any forensic method. Research has shown that the choice of distance measure and parameters significantly impacts performance.

Table 2: Quantitative Performance of Score-Based Methods (Exemplary Data)

| Distance Measure | Best-Performing Context / Parameters | Reported Performance (Cllr) | Key Findings |

|---|---|---|---|

| Cosine Distance | Bag-of-Words model with ~1500 most frequent words; document length ≥1400 words [13] | Varies with parameters; lower Cllr indicates better performance. | Outperforms Euclidean and Manhattan distances in Bag-of-Words models for authorship attribution [13]. |

| Burrows's Delta | Used with several hundred most frequent function words; applied to texts of the same genre and period [14] | Not explicitly quantified in provided results, but widely validated in stylometry. | A standard, effective tool in authorship attribution studies [8]. Sensitive to genre and topic influences. |

| Feature-Based (Poisson Model) | Compared against Cosine; benefits from feature selection [8] | Cllr improvement of ~0.09 over score-based Cosine [8] | Feature-based methods can outperform score-based by assessing both similarity and typicality, not just similarity [8]. |

Validation Protocol:

- Data Preparation: Use a large, relevant corpus of known authorship. Split the data into known and questioned samples for testing.

- System Calibration: For a given set of parameters (e.g., N most frequent words, document length), compute scores for a vast set of same-author and different-author comparisons to build the reference SR and DR distributions.

- Performance Assessment:

- Primary Metric: Calculate the Cllr for the system. This metric evaluates the discriminative power and calibration of the computed LRs [8] [7].

- Visual Assessment: Generate Tippett plots to graphically represent the distribution of LRs for ground-truth same-author and different-author pairs [13].

- Robustness Testing: Investigate how performance varies with text length and feature set size, as these are critical factors in real-world applications where data may be limited [7] [13].
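
The primary metric can be computed directly from the validation LRs. The sketch below uses the standard definition of Cllr, in which same-author LRs are penalized for being small and different-author LRs for being large; the LR values are invented for illustration.

```python
import math


def cllr(lr_ss, lr_ds):
    """Log-likelihood-ratio cost for same-author and different-author LRs."""
    # Same-author comparisons: the penalty grows as the LR falls below 1.
    penalty_ss = sum(math.log2(1 + 1 / lr) for lr in lr_ss) / len(lr_ss)
    # Different-author comparisons: the penalty grows as the LR rises above 1.
    penalty_ds = sum(math.log2(1 + lr) for lr in lr_ds) / len(lr_ds)
    return 0.5 * (penalty_ss + penalty_ds)


uninformative = cllr([1.0, 1.0], [1.0, 1.0])            # every LR equals 1
informative = cllr([100.0, 1000.0], [0.01, 0.001])      # strong, correct LRs
```

A system that always reports LR = 1 scores exactly 1, which gives a convenient reference point: values below 1 indicate that the system provides useful information, and values above 1 indicate LRs that mislead on balance.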

The Scientist's Toolkit: Research Reagents & Materials

Table 3: Essential Materials for Forensic Text Comparison Experiments

| Category / Item | Function / Description | Example / Specification |

|---|---|---|

| Reference Corpora | Provides a background population for modeling feature distributions (e.g., word means/standard deviations for Delta) and building same-author/different-author score distributions. | Amazon Product Data Corpus [13]; Chatlog archives from real cases [7]. |

| Text Preprocessing Tools | Software libraries to standardize text data before analysis, ensuring comparability. | Python's nltk (Natural Language Toolkit) for tokenization, lowercasing, and punctuation removal [14]. |

| Feature Sets | The linguistic variables used to represent an author's style. | Most Frequent Words (MFW) [14]; Character N-grams; Vocabulary Richness Measures [7]; Punctuation Ratios [7]. |

| Computational Environment | Software and hardware for performing intensive calculations and statistical modeling. | Python with scikit-learn for machine learning and scipy for statistical modeling; sufficient RAM/CPU for high-dimensional vector operations. |

| Validation Metrics | Quantitative tools to measure the accuracy and reliability of the method. | Log-Likelihood Ratio Cost (Cllr) [8] [7]; Tippett Plots [13]; Equal Error Rate (EER). |

Within the Likelihood Ratio framework for forensic text comparison, quantifying the strength of evidence is paramount. This framework assesses whether observed textual evidence more strongly supports one proposition (e.g., that a questioned document originated from a specific suspect) or an alternative proposition (e.g., that it originated from someone else) [8]. Two principal methodological approaches exist for this quantification: score-based methods and feature-based methods. Score-based methods, which often use distance measures like Cosine distance, are commonly used in authorship attribution but possess significant limitations. They primarily assess the similarity between two documents without adequately accounting for the typicality of the features within a relevant population, and they often rely on statistical assumptions that textual data may violate [8].

Feature-based methods, in contrast, directly model the distribution of linguistic features in a population. This approach allows for the computation of a Likelihood Ratio (LR) that naturally incorporates both similarity and typicality, offering a more statistically robust foundation for evidence evaluation. The log-Likelihood Ratio cost (Cllr) is a key metric for evaluating the performance of these methods, with lower values indicating better system performance [8]. These application notes detail the implementation of two powerful feature-based models: a Poisson model for discrete feature counts and a Multivariate Kernel Density Estimation (KDE) model for continuous data. The integration of these models provides a comprehensive toolkit for forensic text comparison, enabling analysts to handle diverse types of linguistic evidence.

The Poisson Model for Discrete Linguistic Features

Model Rationale and Foundation

The Poisson model is theoretically well-suited for authorship attribution tasks because it can directly model the occurrence rates of discrete linguistic features, such as the frequencies of specific function words, character n-grams, or syntactic patterns [8]. In a study comparing score- and feature-based methods, a Poisson model was implemented for forensic text comparison using a corpus of texts from 2,157 authors. Under the best-performing settings, the feature-based Poisson model achieved a Cllr approximately 0.09 lower than the score-based Cosine distance method, supporting its practical advantage [8]. Feature selection, which identifies the most discriminative linguistic variables, can improve this performance further.

The Poisson model operates on the principle that the number of occurrences of a particular linguistic feature in a text document follows a Poisson distribution. The model estimates the rate parameters (λ) for these features across different authors or author populations. When comparing two documents, the Likelihood Ratio is computed by comparing the probability of observing the feature counts under the assumption that both documents come from the same source versus the assumption that they come from different sources.

Experimental Protocol and Implementation

Protocol 2.1: Implementing the Poisson Model for Forensic Text Comparison

- Objective: To compute a forensic Likelihood Ratio using a Poisson model for the comparison of textual documents based on discrete linguistic features.

Materials and Data Requirements:

- A representative corpus of text documents from known authors (reference population)

- Questioned document(s) of unknown authorship

- Computational environment with statistical software (e.g., R, Python)

- Predefined set of linguistic features (e.g., word frequencies, character n-grams)

Procedure:

- Feature Extraction: From all documents in the reference population and the questioned document, extract counts for each predefined linguistic feature. This creates a feature vector for each document where each element represents the count of a specific feature.

- Feature Selection: Identify the most discriminative features to improve model performance and reduce dimensionality. This can be achieved through methods such as:

- Frequency-based filtering (removing very rare or very common features)

- Measures of association with author categories

- Regularization techniques (e.g., L1 regularization/Lasso)

- Parameter Estimation: For each feature in the model, estimate the rate parameters (λ) for the Poisson distributions under relevant hypotheses. This typically involves:

- Estimating author-specific parameters for known authors

- Estimating population-wide parameters for the reference corpus

- Likelihood Calculation: Calculate the probability of observing the feature counts in the questioned document under two competing hypotheses:

- H1: The questioned document originated from a specific suspect author.

- H2: The questioned document originated from someone else in the relevant population.

- Likelihood Ratio Computation: Compute the LR as the ratio of the probabilities: LR = P(Evidence|H1) / P(Evidence|H2).
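
The procedure above can be sketched in a deliberately simplified form. This sketch assumes independent features, plug-in rate estimates with additive smoothing, and rates scaled by document length; the function names and counts are invented, and the model in [8] may differ in all of these respects.

```python
import math


def poisson_log_pmf(k, lam):
    """Natural log of the Poisson probability of observing count k."""
    return k * math.log(lam) - lam - math.lgamma(k + 1)


def poisson_log10_lr(q_counts, q_len, k_counts, k_len,
                     pop_counts, pop_len, smoothing=0.5):
    """log10 LR for the questioned counts: suspect rates (H1) against
    population rates (H2), multiplied over independent features."""
    log_lr = 0.0
    for q, k, p in zip(q_counts, k_counts, pop_counts):
        rate_suspect = (k + smoothing) / k_len     # per-token rate under H1
        rate_pop = (p + smoothing) / pop_len       # per-token rate under H2
        log_lr += poisson_log_pmf(q, rate_suspect * q_len)
        log_lr -= poisson_log_pmf(q, rate_pop * q_len)
    return log_lr / math.log(10)


# Invented counts for two features in a 200-token questioned document,
# a 1,000-token known document, and a 100,000-token reference corpus.
k_counts, k_len = [60, 14], 1000
pop_counts, pop_len = [3000, 1500], 100000

log10_lr_like_suspect = poisson_log10_lr([12, 3], 200, k_counts, k_len,
                                         pop_counts, pop_len)
log10_lr_like_population = poisson_log10_lr([6, 3], 200, k_counts, k_len,
                                            pop_counts, pop_len)
```

The first feature drives the result because the suspect uses it about twice as often as the population does, while the second feature is typical of the population and contributes almost nothing. This is the sense in which a feature-based LR reflects typicality as well as similarity.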

Validation:

- Evaluate system performance using the Cllr metric.

- Conduct cross-validation experiments to ensure generalizability.

- Compare performance against baseline methods (e.g., Cosine distance).

Table 1: Key Parameters for Poisson Model Implementation

| Parameter | Description | Considerations for Selection |

|---|---|---|

| Linguistic Features | Discrete countable elements (e.g., word frequencies, character n-grams) | Should be sufficiently frequent for modeling yet discriminative between authors |

| Feature Vector Dimension | Number of features used in the model | Balance between model richness and computational complexity; typically refined through feature selection |

| Rate Parameters (λ) | Expected occurrence rates for each feature | Estimated from reference population data using maximum likelihood estimation |

| Smoothing Parameter | Adjustment for zero counts | Prevents undefined probabilities when unobserved features appear in questioned documents |

Multivariate Kernel Density Estimation for Continuous Features

Theoretical Background

Kernel Density Estimation (KDE) is a nonparametric technique for estimating probability density functions from data without making strong assumptions about the underlying distribution [15] [16] [17]. This is particularly valuable in forensic text comparison because linguistic data often exhibits complex, irregular distributions that do not conform to standard parametric forms [16]. The multivariate extension of KDE allows for the modeling of multiple continuous linguistic variables simultaneously, capturing their interdependencies—a capability crucial for representing the complex feature spaces encountered in textual analysis.

For a d-variate sample (\mathbf{X}_1, \ldots, \mathbf{X}_n) drawn from an unknown density function (f), the multivariate KDE at point (\mathbf{x}) is defined as:

[\hat{f}_{\mathbf{H}}(\mathbf{x}) = \frac{1}{n} \sum_{i=1}^n K_{\mathbf{H}}(\mathbf{x} - \mathbf{X}_i)]

where (K_{\mathbf{H}}(\mathbf{x}) = |\mathbf{H}|^{-1/2}K(\mathbf{H}^{-1/2}\mathbf{x})) is the scaled kernel function, and (\mathbf{H}) is the (d \times d) bandwidth matrix that controls the smoothness of the estimate [15] [18]. A common and computationally efficient simplification uses a diagonal bandwidth matrix (\mathbf{H} = \mathrm{diag}(h_1^2, \ldots, h_d^2)), which leads to the product kernel formulation:

[\hat{f}(\mathbf{x};\mathbf{h}) = \frac{1}{n} \sum_{i=1}^n K_{h_1}(x_1 - X_{i,1}) \times \cdots \times K_{h_d}(x_d - X_{i,d})]

The most frequently used kernel is the Gaussian (normal) kernel, though other kernels like Epanechnikov, triangle, or box can be employed [17] [19]. The choice of kernel function is generally less critical than the selection of an appropriate bandwidth [16].
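The product kernel estimator with a diagonal bandwidth matrix can be written in a few lines. The sketch below assumes a Gaussian kernel and NumPy; the function name is hypothetical, and the code is meant to make the formula concrete rather than to replace a dedicated KDE package.

```python
import numpy as np

def product_gaussian_kde(x, data, h):
    """Evaluate a Gaussian product-kernel KDE at a single point.

    x:    evaluation point, shape (d,)
    data: sample, shape (n, d)
    h:    per-dimension bandwidths, shape (d,)
    """
    x = np.asarray(x, dtype=float)
    data = np.asarray(data, dtype=float)
    h = np.asarray(h, dtype=float)
    z = (x - data) / h                                          # shape (n, d)
    kernels = np.exp(-0.5 * z ** 2) / (h * np.sqrt(2 * np.pi))  # univariate kernels
    # Multiply across dimensions, then average over the n sample points.
    return float(np.mean(np.prod(kernels, axis=1)))

# One sample point at the origin with unit bandwidths reduces to the
# standard bivariate normal density at its mode, 1 / (2 * pi).
print(product_gaussian_kde([0.0, 0.0], [[0.0, 0.0]], [1.0, 1.0]))
```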

Bandwidth Selection: Critical Considerations

The bandwidth matrix (\mathbf{H}) profoundly influences the resulting density estimate, balancing between undersmoothing (high variance) and oversmoothing (high bias) [15] [17]. Selecting an optimal bandwidth is therefore crucial for producing reliable density estimates for forensic evaluation. The most common optimality criterion is the Mean Integrated Squared Error (MISE) or its asymptotic approximation (AMISE) [15] [17].

For practical implementation, especially with multivariate data, two main classes of bandwidth selectors are widely used:

- Plug-in Selectors: These replace the unknown quantities in the AMISE with estimates, in particular the functionals of the Hessian (second-derivative matrix) of the unknown density [15].

- Smoothed Cross Validation: A subset of cross-validation techniques that modifies the standard cross-validation criterion to reduce variance [15].

For high-dimensional data, using a full bandwidth matrix becomes computationally challenging, as the number of parameters grows quadratically with dimension. In such cases, a diagonal bandwidth matrix is often employed, which scales linearly with dimension and can be further simplified to a single bandwidth parameter when variables are standardized to common scales [18].

Table 2: Bandwidth Selection Methods for Multivariate KDE

| Method | Principle | Advantages | Limitations |

|---|---|---|---|

| Plug-in (PI) | Minimizes an estimate of the AMISE where unknown functionals of the density are directly estimated [15] | Fast convergence; stable performance | Computational complexity increases with dimension |

| Smoothed Cross Validation (SCV) | Modifies the cross-validation criterion to reduce variance [15] | More robust than standard cross-validation | Can be computationally intensive for large datasets |

| Rule-of-Thumb | Uses distributional assumptions (e.g., Silverman's rule for Gaussian data) [17] [19] | Computationally simple; easy to implement | Can yield inaccurate estimates for non-Gaussian data |
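As an illustration of the rule-of-thumb row in Table 2, the sketch below computes per-dimension bandwidths with the normal reference rule, which is optimal only when the data are approximately Gaussian. The function name is hypothetical; for one dimension the rule reduces to the familiar factor of roughly 1.06 times the standard deviation times n to the power of -1/5.

```python
import numpy as np

def normal_reference_bandwidths(data):
    """Per-dimension bandwidths for a diagonal bandwidth matrix.

    Uses h_j = sigma_j * (4 / ((d + 2) * n)) ** (1 / (d + 4)),
    which assumes the data are roughly Gaussian.
    """
    data = np.asarray(data, dtype=float)
    n, d = data.shape
    sigma = data.std(axis=0, ddof=1)          # sample standard deviation per feature
    factor = (4.0 / ((d + 2) * n)) ** (1.0 / (d + 4))
    return sigma * factor

rng = np.random.default_rng(0)
sample = rng.normal(size=(200, 3))            # simulated data, for illustration only
print(normal_reference_bandwidths(sample))
```

Because the rule depends only on the sample size, the dimension, and the per-feature spread, it is a convenient starting point before moving to plug-in or smoothed cross-validation selectors.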

Experimental Protocol and Implementation

Protocol 3.1: Implementing Multivariate KDE for Forensic Comparison

- Objective: To estimate multivariate probability densities for continuous linguistic features to compute Likelihood Ratios in forensic text comparison.

Materials and Data Requirements:

- Reference population data with multiple continuous linguistic measurements

- Questioned document feature vector

- Software with multivariate KDE capabilities (e.g., ks package in R, mvksdensity in MATLAB)

- Sufficient computational resources for handling multidimensional data

Procedure:

- Feature Space Definition: Identify and measure continuous linguistic variables (e.g., sentence length statistics, vocabulary richness measures, syntactic complexity indices).

- Data Standardization: Standardize variables to common scales if using simplified bandwidth selectors, particularly when variables exhibit different variances.

- Bandwidth Selection: Select an appropriate bandwidth matrix using a chosen method (see Table 2). For initial implementation, consider:

- Diagonal bandwidth matrix with univariate rule-of-thumb applied to each dimension

- More sophisticated plug-in or smoothed cross-validation selectors for refined analysis

- Density Estimation: Compute the multivariate KDE for the reference population using the selected bandwidth.

- Likelihood Evaluation: Evaluate the density estimate at the feature vector of the questioned document to obtain probabilities for Likelihood Ratio computation.
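The procedure above can be sketched end to end as follows. This is an illustration under stated assumptions rather than a validated implementation: the numerator density is estimated from the suspect's known documents and the denominator from the reference population, both with a Gaussian product kernel and rule-of-thumb bandwidths, and all data are simulated. The function names are hypothetical.

```python
import numpy as np

def log_kde(x, data, h):
    """Log of a Gaussian product-kernel KDE at x (log-sum-exp for stability)."""
    z = (x - data) / h
    d = data.shape[1]
    log_k = -0.5 * np.sum(z ** 2, axis=1) - np.sum(np.log(h)) - 0.5 * d * np.log(2 * np.pi)
    m = log_k.max()
    return m + np.log(np.mean(np.exp(log_k - m)))

def rule_of_thumb(data):
    """Normal reference bandwidths, one per dimension."""
    n, d = data.shape
    return data.std(axis=0, ddof=1) * (4.0 / ((d + 2) * n)) ** (1.0 / (d + 4))

def kde_log10_lr(questioned, suspect_docs, reference_docs):
    # Data standardization: common scale taken from the reference population.
    mu = reference_docs.mean(axis=0)
    sd = reference_docs.std(axis=0, ddof=1)
    q = (questioned - mu) / sd
    s = (suspect_docs - mu) / sd
    r = (reference_docs - mu) / sd
    # Bandwidth selection, density estimation, and likelihood evaluation.
    log_num = log_kde(q, s, rule_of_thumb(s))   # density under Hp
    log_den = log_kde(q, r, rule_of_thumb(r))   # density under Hd
    return (log_num - log_den) / np.log(10)

# Simulated features: mean sentence length and a vocabulary richness score.
rng = np.random.default_rng(0)
reference = rng.normal([20.0, 0.50], [5.0, 0.10], size=(500, 2))
suspect = rng.normal([28.0, 0.62], [1.5, 0.03], size=(30, 2))

print(kde_log10_lr(np.array([27.5, 0.61]), suspect, reference))  # close to the suspect
print(kde_log10_lr(np.array([15.0, 0.40]), suspect, reference))  # typical of the population
```

Working in log space avoids numerical underflow when the questioned vector lies far from every sample point, which becomes more likely as the number of features grows.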

Technical Considerations:

- The optimal MISE convergence rate for multivariate KDE is (O(n^{-4/(d+4)})), which deteriorates as dimension (d) increases [15]. This underscores the importance of dimensionality management through feature selection.

- For computational efficiency with large datasets, binned KDE implementations can be employed, though these may have limitations in higher dimensions (e.g., ks::kde disables binning for (d > 4)) [18].

- Boundary correction methods (e.g., log transformation or reflection) should be employed when the density has bounded support [19].
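The practical effect of the convergence rate can be seen with a small calculation. The sketch below ignores the constants hidden in the O(·) notation and compares orders of magnitude only: it asks how large a sample is needed in d dimensions to reach the same rate that n points achieve in one dimension. The function names are hypothetical.

```python
def mise_rate(n, d):
    """Order of the optimal MISE, n ** (-4 / (d + 4)), with constants ignored."""
    return n ** (-4.0 / (d + 4))

def equivalent_sample_size(n_1d, d):
    """Sample size in d dimensions matching the 1-D rate obtained with n_1d points."""
    return n_1d ** ((d + 4) / 5.0)

for d in (1, 2, 6, 11):
    print(d, round(equivalent_sample_size(100, d)))
```

Under this simplification, 100 observations in one dimension correspond to about 10,000 in six dimensions and about 1,000,000 in eleven, which is why feature selection is usually applied before the density is estimated.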

Integration and Comparative Analysis

Workflow Integration for Forensic Text Comparison

The integration of Poisson and multivariate KDE models within a unified forensic text comparison framework provides a comprehensive approach to handling diverse types of linguistic evidence. The following workflow diagram illustrates the logical relationship between these methods and their role in the Likelihood Ratio framework:

Comparative Performance and Application Contexts

Empirical research demonstrates that feature-based methods, including both Poisson and KDE approaches, can outperform traditional score-based methods. In direct comparisons, the feature-based Poisson model achieved superior performance (lower Cllr values) compared to Cosine distance-based score methods [8]. The performance of these models can be further enhanced through appropriate feature selection techniques.

Table 3: Model Selection Guide for Forensic Text Comparison

| Criterion | Poisson Model | Multivariate KDE |

|---|---|---|

| Primary Application | Discrete count data (word frequencies, character n-grams) | Continuous features (sentence length, syntactic complexity) |

| Data Requirements | Frequency counts of linguistic features | Continuous measurements of linguistic variables |

| Key Strengths | Naturally models count data; theoretically appropriate for linguistics [8] | Makes no distributional assumptions; flexible for complex distributions [16] |

| Computational Load | Generally moderate | Increases with dimensionality and dataset size |

| Performance Consideration | Demonstrated superior to Cosine distance (Cllr improvement ~0.09) [8] | Performance heavily dependent on bandwidth selection [15] [17] |

| Implementation Tools | Custom implementation in statistical software | R: ks::kde, MATLAB: mvksdensity, Python: sklearn.neighbors.KernelDensity |

Table 4: Essential Resources for Implementation

| Resource Category | Specific Tools/Software | Function in Research | Implementation Notes |

|---|---|---|---|

| Programming Environments | R, Python, MATLAB | Primary platforms for statistical modeling and algorithm implementation | R offers comprehensive packages for specialized statistical modeling |

| Specialized KDE Packages | ks package (R) [18], mvksdensity (MATLAB) [19] | Implements multivariate KDE with sophisticated bandwidth selection | ks::kde supports up to 6 dimensions; for d > 4, use binned = FALSE [18] |

| Text Processing Libraries | NLTK, spaCy (Python); tm, tidytext (R) | Preprocessing raw text and extracting linguistic features | Critical for feature engineering prior to model application |

| Data Resources | Representative text corpora | Provides reference population for modeling feature distributions | Must be relevant to forensic context (e.g., general language, specialized domains) |

| Performance Validation Tools | Custom implementations for Cllr calculation | Evaluates system reliability and calibration | Essential for demonstrating methodological validity in forensic context |

The implementation of feature-based methods, specifically Poisson models for discrete data and multivariate KDE for continuous data, provides a robust statistical foundation for forensic text comparison within the Likelihood Ratio framework. These methods offer significant advantages over traditional score-based approaches because they account for both the similarity and the typicality of textual features. The experimental protocols detailed in these application notes give researchers practical guidance for implementing these techniques. Continued refinement of these methods, particularly through advanced bandwidth selection for KDE and optimized feature selection for both approaches, promises to further enhance the reliability and scientific validity of forensic text comparison in both research and casework applications.

Within the discipline of forensic text comparison (FTC), the selection of discriminative stylometric features is paramount for performing robust authorship analysis. This process forms the core of a scientifically defensible approach to evaluating textual evidence, which must be integrated within the likelihood ratio (LR) framework to quantitatively express the strength of evidence for authorship hypotheses [1]. This document provides detailed application notes and protocols for the selection and analysis of two pivotal categories of stylometric features: vocabulary richness and punctuation patterns.

The LR framework offers a logically and legally correct method for evaluating forensic evidence, including authorship [1]. It requires a transparent, reproducible, and empirically validated methodology. The stylometric features discussed herein serve as the quantitative measurements fed into statistical models to compute LRs, thereby assisting the trier-of-fact in updating their beliefs regarding whether a suspect is the author of a questioned document [20] [1].

Stylometric Features in the LR Framework

The Role of Features in a Scientifically Defensible Approach

A scientifically defensible approach to forensic authorship analysis is built upon four key elements: the use of quantitative measurements, statistical models, the LR framework, and empirical validation [1]. Stylometric features constitute the essential quantitative measurements. The concept of idiolect—a distinctive, individuating way of writing—provides the theoretical foundation, suggesting that authors unconsciously exhibit consistent and measurable patterns in their use of language [1]. The task of the forensic analyst is to detect and quantify these patterns.

In the context of the LR framework, the evidence (E) is typically the multivariate data derived from the stylometric features measured in both questioned and known documents. The two competing hypotheses are:

- Prosecution hypothesis (Hp): The suspect is the author of the questioned document.

- Defense hypothesis (Hd): The suspect is not the author of the questioned document [1].

The LR is then calculated as LR = p(E|Hp) / p(E|Hd), representing the strength of the evidence under these two propositions [1].

Classification of Stylometric Features

Stylometric features are diverse and can be categorized in various ways. A primary distinction exists between:

- Class characteristics: Features that can restrict the circle of potential authors to a specific population (e.g., social group) [21].

- Individual characteristics: Features that point toward a specific individual [21].

The features detailed in this protocol—vocabulary richness and punctuation—are largely individual characteristics, though they can also exhibit class-based variations.

Table 1: Major Categories of Stylometric Features

| Feature Category | Description | Examples | Key References |

|---|---|---|---|

| Lexical | Features related to vocabulary usage and word choice. | Word n-grams, vocabulary richness, word length distribution. | [20] [21] |

| Character | Features based on character-level patterns. | Character n-grams, average characters per word. | [20] [7] |

| Syntactic | Features describing sentence structure and grammar. | Sentence length, phrase structures, part-of-speech tags. | [21] [22] |

| Punctuation | Features capturing the use of punctuation marks. | Punctuation character ratio, frequency of specific marks. | [7] |

| Structural | Features related to the organization of the text. | Paragraph length, use of capitalization. | [22] |
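Several of the feature categories in the table can be measured with very little code. The sketch below uses only the Python standard library and deliberately naive tokenization (a regular expression for words, sentence splitting on terminal punctuation); the function name, the feature names, and these tokenization choices are assumptions for illustration, and the code expects non-empty text.

```python
import re
import string

def stylometric_features(text):
    """Compute a few simple lexical, syntactic, and punctuation features."""
    words = re.findall(r"[A-Za-z']+", text.lower())
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    punct_count = sum(ch in string.punctuation for ch in text)
    return {
        # Lexical: distinct words divided by total words (a basic richness measure).
        "type_token_ratio": len(set(words)) / len(words),
        # Character-related: average characters per word.
        "mean_word_length": sum(len(w) for w in words) / len(words),
        # Syntactic: average number of words per sentence.
        "mean_sentence_length": len(words) / len(sentences),
        # Punctuation: share of punctuation characters and commas per word.
        "punctuation_ratio": punct_count / len(text),
        "comma_rate": text.count(",") / len(words),
    }

features = stylometric_features("The cat sat. The dog ran, fast!")
for name, value in features.items():
    print(name, round(value, 3))
```

In practice the tokenization and sentence segmentation would come from a text processing library, and the resulting feature vectors would be computed for the questioned, known, and reference documents alike before any statistical modeling.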

Vocabulary Richness Analysis

Definition and Historical Context