Advancing Forensic Science: A TRL-Based Protocol for Interpreting Complex DNA Mixtures

Abstract

This article presents a comprehensive Technology Readiness Level (TRL)-based framework for the interpretation of complex forensic DNA mixture evidence, addressing a critical need for standardized protocols in biomedical and forensic research. It explores the foundational challenges of mixture deconvolution, including allele drop-out, stutter, and low-template DNA, and details the evolution of statistical methods from the Combined Probability of Inclusion/Exclusion (CPI/CPE) to modern probabilistic genotyping systems (PGS) like STRmix™ and TrueAllele®. The protocol provides methodological guidance for application, troubleshooting for complex casework, and emphasizes the necessity of rigorous validation and comparative analysis to ensure reliability and reproducibility across laboratories, thereby enhancing the quality of evidence presented in clinical and legal contexts.

The Complex Landscape of Forensic DNA Mixtures: Foundational Concepts and Interpretational Challenges

Defining DNA Mixtures and the Rise of Complex Evidence in Modern Casework

A DNA mixture refers to a biological sample that contains genetic material from two or more individuals [1]. While such evidence has always been part of forensic casework, contemporary laboratories are facing a substantial increase in the frequency and complexity of these mixtures [1] [2]. This shift is largely driven by advances in forensic methodology that enable analysis of increasingly challenging samples, including those with low quantities of DNA, degraded DNA, or contributions from three or more individuals [1] [2]. Modern DNA analysis typically targets short tandem repeat (STR) polymorphisms at multiple genetic loci, where the presence of three or more allelic peaks at two or more loci generally indicates a mixture [1].
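The screening rule described above can be sketched in a few lines of Python. The function names and the example allele counts are hypothetical, and the lower bound on contributor number (each person carries at most two alleles per locus) is a standard counting argument rather than something taken from the cited sources. Stutter and drop-in peaks, which can inflate allele counts, are ignored in this sketch.

```python
from math import ceil

def looks_like_mixture(allele_counts, min_peaks=3, min_loci=2):
    """True if at least `min_loci` loci show `min_peaks` or more allelic peaks."""
    loci_over = sum(1 for n in allele_counts.values() if n >= min_peaks)
    return loci_over >= min_loci

def min_contributors(allele_counts):
    """Lower bound on contributors: at most two alleles per person per locus."""
    return max(1, ceil(max(allele_counts.values()) / 2))

# Hypothetical number of allelic peaks observed at each locus
profile = {"D3S1358": 4, "vWA": 3, "FGA": 2, "TH01": 5}

print(looks_like_mixture(profile))  # True
print(min_contributors(profile))    # 3
```

The bound is only a minimum: allele sharing between contributors means the true number can be higher.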

The rising complexity of DNA evidence presents substantial interpretative challenges, including allele drop-out (failure to detect alleles from a true contributor), allele stacking (shared alleles between contributors appearing as a single peak), and difficulty distinguishing PCR stutter artifacts from true alleles [1] [2]. Low-template DNA samples (often below 200 pg) are particularly prone to stochastic effects that compound these issues [2]. These challenges necessitate robust, standardized protocols for interpretation and statistical evaluation to ensure the reliability of forensic conclusions.

Table 1: Characteristics of Simple versus Complex DNA Mixtures

| Feature | Simple Mixture | Complex Mixture |

|---|---|---|

| Number of Contributors | Typically two | Often three or more [3] |

| DNA Quantity | Sufficient, high-quality | Low-template or degraded [1] [2] |

| Stochastic Effects | Minimal | Significant (allele drop-out, drop-in) [2] |

| Profile Clarity | Major and minor contributors often distinguishable | Contributors may not be easily separable [1] |

| Statistical Approach | Combined Probability of Inclusion (CPI) may be appropriate | Often requires probabilistic genotyping/Likelihood Ratios [1] |

Experimental Protocols for DNA Mixture Analysis

Sample Preparation and STR Amplification

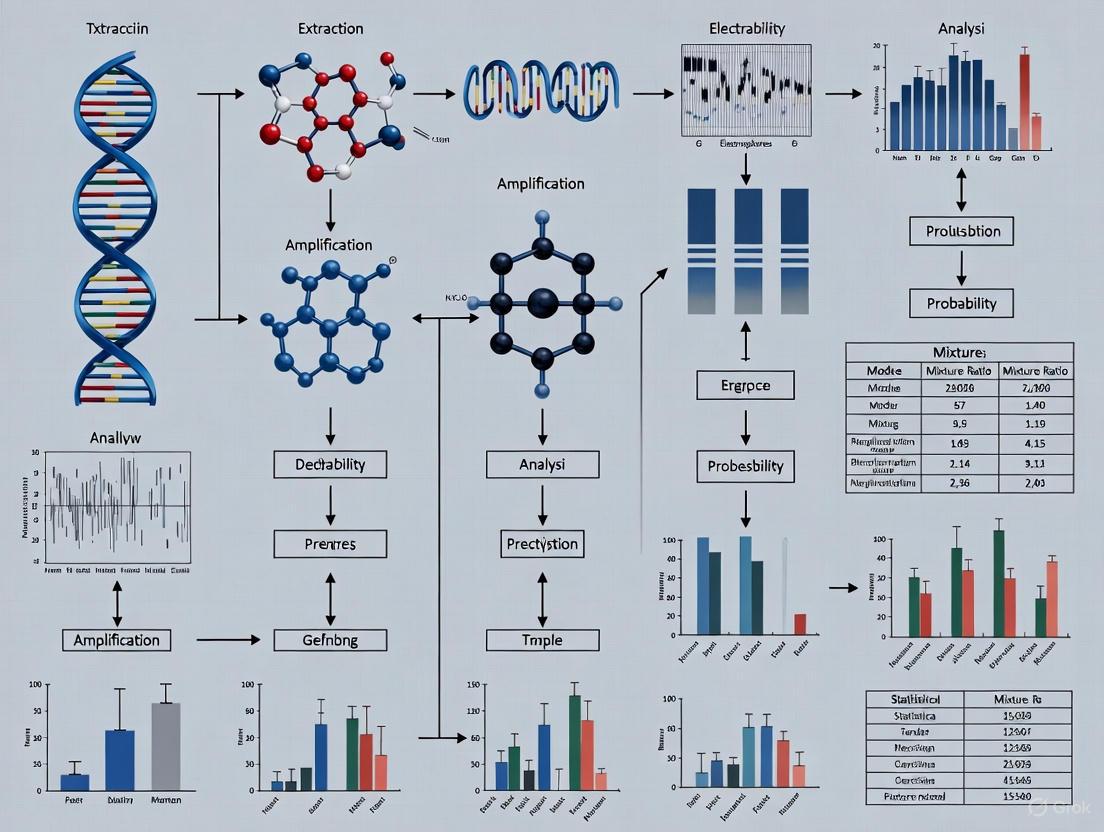

The initial phase of DNA mixture analysis involves careful sample processing to generate interpretable genetic profiles. The following protocol outlines the standard workflow for processing forensic DNA mixtures:

- DNA Extraction: Isolate DNA from forensic specimens using validated extraction methods optimized for the sample type (e.g., semen, blood, saliva, touched items) [2].

- DNA Quantification: Precisely measure total human DNA and, if relevant, male DNA content using quantitative PCR (qPCR) or digital PCR (dPCR) methods [3]. Accurate quantification is critical for determining optimal input into amplification reactions.

- Amplification Setup: Amplify target STR loci using commercial multiplex kits (e.g., PowerPlex Fusion, GlobalFiler, ForenSeq DNA Signature Prep) following manufacturer protocols [3] [4]. These kits typically target 15-24 highly polymorphic autosomal STR loci plus amelogenin for sex determination [2].

- PCR Amplification: Conduct polymerase chain reaction (PCR) using thermal cycling parameters specified by the kit manufacturer. For low-template samples, the number of PCR cycles may be increased (e.g., >28 cycles), though this heightens stochastic effects [2].

- Capillary Electrophoresis: Separate amplified DNA fragments by size using capillary electrophoresis instruments (e.g., Applied Biosystems 3500 Genetic Analyzer) [4]. The instrument generates electropherograms (EPGs) displaying peaks representing detected alleles [1].

Data Interpretation Workflow

The interpretation of DNA mixture data requires systematic analysis of the EPG to determine the number of contributors and their potential genotypes prior to statistical evaluation.

Protocol for Combined Probability of Inclusion (CPI) Calculation

The Combined Probability of Inclusion (CPI) remains one of the most widely used statistical methods for evaluating DNA mixture evidence, particularly in the Americas, Asia, Africa, and the Middle East [1]. The CPI represents the proportion of a population that would be included as potential contributors to the observed mixture. The following protocol details its proper application:

- Locus-by-Locus Assessment: Examine each genetic locus independently. Disqualify any locus where allele drop-out is considered possible based on peak height observations at other loci in the profile [1].

- Allele Identification: Identify all alleles present at each qualified locus above the analytical threshold.

- Calculate Probability of Inclusion (PI): For each qualified locus, calculate PI using the formula:

PI = (sum of allele frequencies in the mixture)^2 [1]

For example, if alleles A, B, and C are observed in a mixture with frequencies p, q, and r, then PI = (p + q + r)^2.

- Compute the CPI: Multiply the individual PI values across all qualified loci to obtain the overall CPI:

CPI = PI₁ × PI₂ × PI₃ × ... × PIₙ [1]

- Report Combined Probability of Exclusion: The CPE, calculated as 1 - CPI, represents the probability of excluding a random unrelated individual as a potential contributor [1].

Critical Considerations: The CPI method requires that all alleles of the donor being considered are detected above the analytical threshold. If a profile component is low-level, additional considerations are needed to ensure allele drop-out has not occurred. Loci omitted from CPI calculation may still be used for exclusionary purposes [1].
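As a minimal sketch of the arithmetic in this protocol, the following Python computes PI per locus, the CPI, and the CPE. The locus labels and allele frequencies are hypothetical, and the code assumes that loci where drop-out is possible have already been disqualified.

```python
from math import prod

def probability_of_inclusion(observed_freqs):
    """PI for one locus: square of the summed frequencies of observed alleles."""
    total = sum(observed_freqs)
    if not 0.0 <= total <= 1.0 + 1e-9:
        raise ValueError("allele frequencies at a locus must sum to at most 1")
    return total ** 2

def combined_probability_of_inclusion(loci):
    """CPI: product of the per-locus PI values over all qualified loci."""
    return prod(probability_of_inclusion(f) for f in loci.values())

# Hypothetical qualified loci with frequencies of the alleles seen in the mixture
loci = {
    "locus_1": [0.10, 0.20, 0.15],        # p + q + r = 0.45
    "locus_2": [0.05, 0.25],              # 0.30
    "locus_3": [0.12, 0.08, 0.10, 0.20],  # 0.50
}

cpi = combined_probability_of_inclusion(loci)
cpe = 1 - cpi
print(f"CPI = {cpi:.6f}, CPE = {cpe:.6f}")
```

With these made-up frequencies, about 0.46% of the population would be included as potential contributors.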

Advanced Statistical Approaches for Complex Mixtures

Probabilistic Genotyping and Likelihood Ratios

For complex mixtures with potential allele drop-out, low-template DNA, or multiple contributors, probabilistic genotyping methods using Likelihood Ratios (LRs) are increasingly employed [1] [4]. These systems use statistical models to consider all possible genotype combinations that could explain the observed DNA data, assigning probabilities to each scenario [4].

The LR compares the probability of the observed DNA evidence under two competing propositions:

- Proposition 1 (Prosecution): The suspect contributed to the DNA mixture.

- Proposition 2 (Defense): Unknown individuals from the population contributed to the DNA mixture.

The formula is expressed as:

LR = P(E | H₁) / P(E | H₂)

where E represents the evidence DNA profile, H₁ is the prosecution proposition, and H₂ is the defense proposition [4].
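A small worked example may help fix the notation. The per-locus probabilities below are invented for illustration, and multiplying per-locus LRs assumes independence between loci, which is an assumption of this sketch. Real probabilistic genotyping systems derive these probabilities from statistical models of the observed data rather than taking them as inputs.

```python
from math import log10, prod

def likelihood_ratio(p_e_given_h1, p_e_given_h2):
    """LR = P(E | H1) / P(E | H2)."""
    if p_e_given_h2 <= 0:
        raise ValueError("P(E | H2) must be positive")
    return p_e_given_h1 / p_e_given_h2

# Hypothetical (P(E|H1), P(E|H2)) pairs, one per locus
per_locus = [(0.50, 0.05), (0.25, 0.05), (0.40, 0.02)]

locus_lrs = [likelihood_ratio(h1, h2) for h1, h2 in per_locus]
combined_lr = prod(locus_lrs)  # assumes independent loci

print(f"combined LR = {combined_lr:.1f}, log10(LR) = {log10(combined_lr):.2f}")
```

An LR above 1 supports H₁, an LR below 1 supports H₂, and log10(LR) is a convenient scale for reporting very large or very small values.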

Table 2: Comparison of DNA Mixture Interpretation Methods

| Method | Application Scope | Strengths | Limitations |

|---|---|---|---|

| Combined Probability of Inclusion (CPI) | Simple mixtures with no allele drop-out [1] | Simple calculation, easy to explain in court [1] | Not suitable for complex mixtures with potential drop-out [1] |

| Likelihood Ratio (LR) with Probabilistic Genotyping | Complex mixtures, low-template DNA, multiple contributors [1] [4] | Accounts for drop-out/drop-in; uses all data including peak heights [4] | Computationally intensive; requires specialized software [4] |

| TrueAllele System | Mixtures with up to 10 contributors [4] | Uses continuous interpretation; no analytical threshold [4] | Proprietary system; requires validation [4] |

| STRmix | Complex forensic mixtures [3] | Fully continuous; validated for casework | Requires detailed validation and training |

Validation of Probabilistic Genotyping Systems

Validation studies for probabilistic genotyping systems typically assess reliability through metrics including:

- Sensitivity: Ability to detect true contributors.

- Specificity: Ability to exclude non-contributors.

- Reproducibility: Consistency of results across repeated analyses [4].

These studies demonstrate that the amount of DNA match information (measured as log(LR)) is proportional to the quantity of DNA contributed by an individual [4]. Recent validation research has confirmed the reliability of probabilistic genotyping systems for interpreting complex DNA mixtures containing up to ten contributors [4].
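One simple way to summarize such a validation study is sketched below. Treating LR > 1 as support for inclusion and LR < 1 as support for exclusion is an assumption made for this example, as are the function names and the LR values; laboratories may define and threshold these metrics differently.

```python
from statistics import stdev

def sensitivity(true_contributor_lrs, threshold=1.0):
    """Fraction of known contributors whose LR supports inclusion."""
    hits = sum(lr > threshold for lr in true_contributor_lrs)
    return hits / len(true_contributor_lrs)

def specificity(non_contributor_lrs, threshold=1.0):
    """Fraction of known non-contributors whose LR supports exclusion."""
    hits = sum(lr < threshold for lr in non_contributor_lrs)
    return hits / len(non_contributor_lrs)

def reproducibility(replicate_log10_lrs):
    """Spread (sample standard deviation) of log10(LR) over repeated runs."""
    return stdev(replicate_log10_lrs)

# Hypothetical validation results
true_lrs = [1e6, 3e4, 250.0, 0.8]
non_lrs = [1e-5, 0.02, 0.5, 3.0, 1e-3]
replicates = [6.1, 6.0, 6.2]

print(sensitivity(true_lrs), specificity(non_lrs), round(reproducibility(replicates), 3))
```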

Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Forensic DNA Mixture Analysis

| Reagent/Material | Function | Example Products |

|---|---|---|

| STR Multiplex Kits | Simultaneous amplification of multiple STR loci | PowerPlex Fusion, GlobalFiler, AmpFlSTR NGM SElect [2] [4] |

| Quantification Kits | Precise measurement of human and male DNA | Quantifiler Duo, Plexor HY System [2] [4] |

| Next-Generation Sequencing Assays | High-resolution sequencing of STR and SNP markers | ForenSeq DNA Signature Prep Kit, Precision ID GlobalFiler NGS Panel [3] |

| Reference DNA Standards | Quality control and method validation | NIST Standard Reference Materials (SRM 2391d) [5] |

| Probabilistic Genotyping Software | Statistical interpretation of complex DNA mixtures | TrueAllele, STRmix, EuroForMix [1] [4] |

The evolution of forensic DNA analysis has led to increased encounter rates with complex mixture evidence, necessitating advanced interpretation protocols and statistical approaches. While the Combined Probability of Inclusion method remains appropriate for straightforward mixtures without allele drop-out, probabilistic genotyping systems using Likelihood Ratios offer a more scientifically rigorous framework for evaluating complex mixtures with potential stochastic effects [1] [4]. Proper application of these methods requires thorough validation, standardized protocols, and ongoing training to ensure reliable interpretation of DNA mixture evidence in modern forensic casework. The forensic community continues to develop new resources, including publicly available mixture data sets and standardized reference materials, to support improved consistency and reliability in DNA mixture interpretation [5] [3].

Forensic DNA analysis, particularly of complex mixtures, faces significant interpretational challenges due to stochastic effects and technical artifacts. Allele drop-out, drop-in, and stochastic effects represent three core hurdles that can compromise the reliability of DNA evidence if not properly accounted for in analytical protocols. These phenomena become increasingly prevalent with low-template DNA (LTDNA), degraded samples, and mixtures with multiple contributors, directly impacting the Technology Readiness Level (TRL) of forensic DNA interpretation methods by introducing uncertainty in results. This paper outlines detailed application notes and experimental protocols to identify, quantify, and mitigate these issues within a TRL-based framework for forensic DNA mixture interpretation research.

Definitions and Underlying Mechanisms

Key Definitions and Impact on DNA Analysis

Table 1: Core Interpretational Challenges in Forensic DNA Analysis

| Term | Definition | Primary Cause | Impact on Profile |

|---|---|---|---|

| Allele Drop-out | The failure to detect an allele that is present in the sample [6]. | Stochastic sampling effects, low template DNA, degradation [6] [7]. | Incomplete genetic profile; heterozygotes may be mistaken for homozygotes [7]. |

| Drop-in | The sporadic, random appearance of an allele that is not part of the true biological sample. | Contamination from exogenous DNA, typically a single allele [8]. | Introduction of extraneous alleles, potentially leading to false inclusions or complex mixture artifacts. |

| Stochastic Effects | Random fluctuations in PCR amplification due to low starting quantities of DNA [9]. | Pre-PCR stochastic sampling of alleles and randomness during PCR replication [9] [10]. | Imbalanced heterozygote peak heights, drop-out, and increased stutter [9]. |

Mechanistic Workflow of Artifact Generation

The following diagram illustrates the sequential processes leading to the core interpretational hurdles, from sample collection to data analysis.

Quantitative Data and Experimental Characterization

Characterizing Stochastic Effects and Drop-out

Empirical characterization is essential for developing robust protocols. The following table summarizes key quantitative relationships derived from controlled studies.

Table 2: Experimentally Observed Relationships in Stochastic DNA Analysis

| Experimental Variable | Observed Effect | Quantitative Relationship / Model | Experimental Context |

|---|---|---|---|

| Reduced Template DNA | Decreased Heterozygote Peak-Height Ratio (PHR) [9]. | PHR = (Height of smaller allele / Height of taller allele). PHR becomes increasingly less balanced, often below 0.6, as template decreases [9]. | Dilution series of NIST SRM 2372A DNA; Identifiler Plus and MiniFiler kits [9] [10]. |

| Reduced Template DNA | Increased Allelic Drop-out Frequency [9]. | Frequency successfully predicted by a pre-PCR stochastic sampling model using the Poisson distribution and by logistic regression methods [9] [10]. | Replicate amplifications of low-template DNA dilutions; dropout frequencies recorded and modeled [9]. |

| Increasing Number of Loci | Increased Number of Potential Drop-outs [11]. | Analyzing more loci (e.g., with Massively Parallel Sequencing) increases the absolute number of drop-outs in challenging samples, complicating probabilistic assessment [11]. | Computational analysis using a generic Python algorithm for Random Man Not Excluded (RMNE) calculations across variable locus numbers [11]. |

Detailed Experimental Protocols

Protocol 1: Quantifying Stochastic Imbalance and Drop-out

This protocol provides a method to characterize stochastic effects and establish laboratory-specific thresholds for low-template analysis.

I. Sample Preparation and Dilution

- Materials: Accurately quantified human DNA standard (e.g., NIST SRM 2372A [9] [10]), nuclease-free water, precision pipettes and tubes.

- Procedure:

- Prepare a serial dilution of the DNA standard to cover a range from standard (e.g., 0.5 ng) to low-template (e.g., 15 pg) amounts.

- For each dilution level, prepare a minimum of 30 replicates to ensure robust statistical analysis of stochastic events [9].

II. PCR Amplification and Electrophoresis

- Materials: Commercial STR kit (e.g., AmpFlSTR Identifiler Plus or MiniFiler [9]), thermal cycler, capillary electrophoresis instrument (e.g., 3130xL or 3500 Genetic Analyzer [9]).

- Procedure:

- Amplify all replicate samples according to the manufacturer's recommended protocol and cycle number.

- Separate and detect amplified products via capillary electrophoresis using standard platform settings.

III. Data Analysis and Modeling

- Materials: STR genotyping software (e.g., GeneMapper), statistical software (e.g., R, Python).

- Procedure:

- Peak Height Ratio (PHR) Calculation: For each heterozygous locus, calculate PHR = (Height of smaller allele / Height of taller allele) [9]. Calculate the mean and standard deviation of PHRs for each dilution level.

- Drop-out Frequency Calculation: For each dilution, record the number of replicates where a known heterozygous allele fails to be detected. Calculate the empirical dropout frequency as (Number of dropouts / Total number of expected alleles).

- Poisson Simulation: Model the pre-PCR sampling process using a Poisson distribution, where the probability of sampling k template molecules is P(k) = (λ^k * e^{-λ}) / k!, with λ being the mean number of template molecules per allele per PCR [9].

- Logistic Regression Modeling: Fit a logistic regression model to the binary dropout data (1=dropout, 0=no dropout) versus template amount or peak height to predict dropout probabilities for casework samples [9] [10].
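The first three analysis steps can be sketched as follows. This is an illustrative outline, not the cited studies' code: the function names are hypothetical, the conversion of roughly 6.6 pg per diploid human genome is an approximate figure assumed here, and equating drop-out with P(0) ignores PCR stochasticity and the analytical threshold. Logistic regression is omitted because it is usually fitted with a statistics package.

```python
from math import exp, factorial

PG_PER_DIPLOID_GENOME = 6.6  # assumed approximate mass of one human diploid genome

def peak_height_ratio(height_a, height_b):
    """PHR = height of the smaller allele peak / height of the taller one."""
    smaller, taller = sorted((height_a, height_b))
    if taller <= 0:
        raise ValueError("at least one peak must have positive height")
    return smaller / taller

def empirical_dropout_frequency(n_dropouts, n_expected_alleles):
    """Observed dropouts divided by the number of alleles expected."""
    return n_dropouts / n_expected_alleles

def poisson_pmf(k, lam):
    """P(k) = lam^k * exp(-lam) / k!"""
    return lam ** k * exp(-lam) / factorial(k)

def copies_per_allele(template_pg):
    """Mean copies of one heterozygous allele (assumption: one per genome)."""
    return template_pg / PG_PER_DIPLOID_GENOME

lam = copies_per_allele(15.0)  # 15 pg low-template input
print(f"PHR = {peak_height_ratio(420, 700):.2f}")
print(f"lambda = {lam:.2f}, P(no copy sampled) = {poisson_pmf(0, lam):.3f}")
```

Comparing the modeled P(0) with the empirical dropout frequency at each dilution level shows how much of the observed drop-out is explained by pre-PCR sampling alone.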

Protocol 2: Implementing a Dropout-Conscious RMNE Calculation

This protocol outlines a method to calculate the Random Man Not Excluded (RMNE) probability while accounting for potential allelic dropouts, suitable for profiles where probabilistic genotyping software is not employed.

I. Profile Assessment and Locus Categorization

- Materials: Evidentiary STR profile data, population allele frequency database.

- Procedure:

- For each locus in the mixed profile, list all observed alleles and their population frequencies P(Ai).

- Determine the total set of possible alleles m at that locus and calculate the sum of frequencies of all non-observed alleles: ΣP(Ax) [6].

II. Locus-Specific RMNE Probability Calculation

- The calculation depends on the number of dropouts (x) allowed per locus (0, 1, or 2) [6].

- For x = 0 (no dropout allowed): P(EL0) = (ΣP(Ai))^2 [6]. This is the standard CPI calculation for a mixture.

- For x = 1 (one dropout allowed): P(EL1) = 2 * (ΣP(Ai)) * (ΣP(Ax)) [6]. This accounts for "random men" with one observed and one non-observed allele.

- For x = 2 (two dropouts allowed): P(EL2) = (ΣP(Ax))^2 [6]. This includes only "random men" with two non-observed alleles.
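The three locus-level terms can be computed directly from the observed allele frequencies. In this sketch the function name and the frequencies are hypothetical, and ΣP(Ax) is taken as 1 − ΣP(Ai), which assumes the allele frequencies at a locus sum to 1.

```python
def locus_rmne_terms(observed_freqs):
    """Return (P(EL0), P(EL1), P(EL2)) for one locus."""
    s_obs = sum(observed_freqs)    # sum of P(Ai), observed alleles
    s_non = 1.0 - s_obs            # sum of P(Ax), non-observed alleles
    p_el0 = s_obs ** 2             # both alleles observed, no dropout needed
    p_el1 = 2 * s_obs * s_non      # one observed and one non-observed allele
    p_el2 = s_non ** 2             # both alleles non-observed
    return p_el0, p_el1, p_el2

p0, p1, p2 = locus_rmne_terms([0.10, 0.20, 0.15])
print(f"P(EL0) = {p0:.4f}, P(EL1) = {p1:.4f}, P(EL2) = {p2:.4f}")
```

The three terms partition all possible genotypes at the locus, so they sum to 1, which is a useful sanity check.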

III. Combined RMNE Probability

- Procedure: Combine probabilities across all λ analyzed loci. The overall RMNE probability allowing for up to d total dropouts across the profile is the sum of the products of locus probabilities for all possible ways of having 0, 1, ..., d dropouts [6] [11]. For complex profiles, this requires specialized algorithms [11].

- Tools: Use available online tools or source code (e.g., http://forensic.ugent.be/rmne, GitHub: fvnieuwe/rmne) to perform this complex calculation for any number of loci and allowed drop-outs [11].
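One way to carry out this summation without enumerating every combination is a small dynamic program over loci. The sketch below is an illustration under stated assumptions, not the implementation of the referenced tools: the function name and frequencies are hypothetical, loci are treated as independent, and the non-observed frequency sum at each locus is taken as 1 minus the observed sum.

```python
def combined_rmne(observed_freqs_per_locus, max_dropouts):
    """RMNE probability allowing up to `max_dropouts` dropouts across all loci."""
    # dist[j] = probability that a random person needs exactly j dropouts so far
    dist = [1.0]
    for freqs in observed_freqs_per_locus:
        s_obs = sum(freqs)
        s_non = 1.0 - s_obs
        terms = (s_obs ** 2, 2 * s_obs * s_non, s_non ** 2)  # 0, 1, 2 dropouts
        new_dist = [0.0] * (len(dist) + 2)
        for j, p_j in enumerate(dist):
            for x, p_x in enumerate(terms):
                new_dist[j + x] += p_j * p_x
        dist = new_dist
    return sum(dist[: max_dropouts + 1])

# Hypothetical observed allele frequencies at three loci
loci = [[0.10, 0.20, 0.15], [0.05, 0.25], [0.12, 0.08, 0.10, 0.20]]

for d in range(3):
    print(f"up to {d} dropout(s): RMNE = {combined_rmne(loci, d):.6f}")
```

With zero dropouts allowed the result equals the CPI, and the probability rises as more dropouts are permitted, reaching 1 when every allele is allowed to drop out.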

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Investigating Stochastic Effects

| Item | Function/Application | Example Products / Methods |

|---|---|---|

| Accurately Quantified DNA Standard | Serves as a gold-standard template for creating dilution series to model stochastic effects and validate kits/platforms [9]. | NIST Standard Reference Material (SRM) 2372 [9] [10]. |

| Enhanced Multiplex STR Kits | Amplify multiple loci simultaneously; sensitivity and marker set impact stochastic thresholds and dropout rates. | AmpFlSTR Identifiler Plus, MiniFiler, PowerPlex Fusion System [9] [12]. |

| Sensitive DNA Quantitation Kits | Precisely measure the quantity of human DNA in a sample, which is critical for determining the potential for stochastic effects. | Quantifiler Trio DNA Quantification Kit [12]. |

| Probabilistic Genotyping Software | Advanced statistical platforms that coherently incorporate peak heights, dropout, and drop-in to compute likelihood ratios (LR) for complex mixtures [7]. | STRmix [12]. |

| RMNE Calculation Tools | Software tools for calculating RMNE statistics while accounting for a specified number of allelic dropouts. | Web-based tool at forensic.ugent.be/rmne; Python algorithm on GitHub [11]. |

Addressing the core interpretational hurdles of allele drop-out, drop-in, and stochastic effects is paramount for advancing the reliability of forensic DNA mixture interpretation. The experimental protocols and analytical frameworks presented here, grounded in empirical data and statistical modeling, provide a pathway for researchers and practitioners to characterize these challenges systematically. Integrating such rigorous, quantitative approaches into a TRL-based development protocol ensures that DNA mixture interpretation methods progress from validated technology to operationally proven systems, ultimately enhancing the quality and credibility of forensic science.

The evolution of forensic DNA analysis towards techniques capable of analyzing Low Copy Number (LCN) DNA has fundamentally transformed investigative capabilities. While enabling analysis from minute biological samples, this enhanced sensitivity introduces significant interpretative complexity, particularly for mixed DNA profiles. The shift from conventional Short Tandem Repeat (STR) profiling to advanced multiplex systems and probabilistic genotyping represents a critical technological progression necessary to manage this complexity. This application note examines the relationship between analytical sensitivity and interpretative challenge, framed within a Technology Readiness Level (TRL)-based protocol for forensic DNA mixture research, to guide reliable implementation of these powerful tools.

The Sensitivity-Complexity Paradigm in Forensic DNA Analysis

The Challenge of Low Template and Mixed Samples

Forensic samples often contain DNA from multiple individuals, creating complex mixtures where minor contributor alleles can be obscured by major contributors, stochastic effects, and technical artifacts like stutter peaks [2]. The analysis is further complicated by LCN DNA (<200 pg), which is highly susceptible to stochastic effects causing allelic drop-out, drop-in, and heterozygous imbalance [2]. These challenges are quantified in Table 1, which summarizes key performance data from recent advanced analytical methods.

Table 1: Performance Comparison of Advanced Forensic DNA Analysis Methods

| Method/Kit | DNA Input | Key Performance Metrics | Complexity Handled | Limitations |

|---|---|---|---|---|

| GlobalFiler with Amplicon RX Clean-up [13] | Trace DNA (0.0001 - 0.0028 ng/µL) | Significantly improved allele recovery vs. 29-cycle (p=8.30×10⁻¹²) and 30-cycle (p=0.019) protocols; Increased signal intensity (p=2.70×10⁻⁴). | Extremely low-template, compromised samples. | Performance declines at lowest concentrations (0.0001 ng/µL). |

| FD Multi-SNP Mixture Kit (NGS) [14] | 0.009765625 ng (single source); 1 ng (mixtures) | ~70-80 loci detected from 0.009765625 ng; >65% minor alleles distinguishable at 0.5% frequency in 2-4 person mixtures. | Complex mixtures (2-10 persons), low minor contributor proportions. | Distinguishing alleles below 1.5% frequency remains challenging. |

| Probabilistic Genotyping (STRmix, EuroForMix) [15] | Standard/LCN inputs | Quantitative models compute Likelihood Ratios (LRs); Generally higher LRs for 2-contributor vs. 3-contributor mixtures. | Complex DNA mixtures, accounting for stutter, drop-out/drop-in. | Model-dependent results; requires expert understanding for court testimony. |

Technological Evolution and Methodological Shifts

The field has progressed from Capillary Electrophoresis (CE)-STR typing, which struggles with minor contributors below 5-20% [14], to more powerful solutions. Next-Generation Sequencing (NGS) enables parallel analysis of hundreds of multi-SNP markers, providing significantly more information from limited samples [16] [14]. Concurrently, probabilistic genotyping software like STRmix and EuroForMix uses statistical models to compute Likelihood Ratios (LRs) that quantitatively evaluate evidence under competing propositions, moving beyond less robust qualitative methods [15].

Experimental Protocols for Enhanced Sensitivity and Interpretation

Protocol: Enhanced Trace DNA Profile Recovery using Post-PCR Clean-up

Application: Improving STR profile quality from extremely low-template and compromised casework samples amplified with the GlobalFiler PCR Amplification Kit [13].

Detailed Methodology:

DNA Extraction and Quantification:

- Collect trace DNA from touched items (tools, weapons, phones) using cotton swabs moistened with molecular-grade water [13].

- Extract DNA using the PrepFiler Express DNA extraction kit on the AutoMate Express liquid handling system with a final elution volume of 50 µL [13].

- Quantify DNA using the Investigator Quantiplex Pro DNA Quantification Kit on the QuantStudio 5 Real-Time PCR system [13].

PCR Amplification:

- Use the GlobalFiler PCR Amplification Kit on a Veriti Thermal Cycler [13].

- For trace samples (<0.0028 ng/µL), perform amplifications in parallel using both 29-cycle and 30-cycle protocols as per manufacturer's recommendations [13].

- Use a 25 µL reaction volume (15 µL DNA extract + 10 µL PCR reaction mix) [13].

Post-PCR Clean-up:

- Apply the Amplicon Rx Post-PCR Clean-up kit to the amplified PCR products according to the manufacturer's instructions [13].

- This step concentrates the amplicons and removes salts, primers, and enzymes that can inhibit electrokinetic injection during capillary electrophoresis, thereby enhancing signal intensity [13].

Capillary Electrophoresis and Analysis:

- Analyze the cleaned-up PCR products using capillary electrophoresis [13].

- Compare profiles generated from the Amplicon RX-treated 29-cycle and 30-cycle amplifications against the standard 30-cycle protocol without clean-up. Key metrics include total allele recovery and peak height/signal intensity [13].

Protocol: Complex Mixture Deconvolution using Multi-SNP Markers and NGS

Application: Deconvoluting complex DNA mixtures with low-level contributors and high contributor numbers, which are challenging for standard CE-STR methods [14].

Detailed Methodology:

Marker Design and Selection:

- Screen for multi-SNP markers (multiple linked SNPs within a 75 bp window) from public genome databases (e.g., 1000 Genomes) [14].

- Calculate a D-value (diversity value) for each window. Select markers with a D-value ≥ 0.6 to ensure high polymorphism and discriminatory power. Amplicon length should be kept below 140 bp for compatibility with degraded DNA [14].

Library Preparation and Sequencing:

- Construct sequencing libraries using 5 µL of DNA with the MGIEasy Universal DNA Library Prep Set, using 28 PCR cycles [14].

- Add sample-specific barcodes in an additional 10 PCR cycles for multiplexing [14].

- Sequence the pooled libraries on a platform such as the Illumina NovaSeq X to generate 150 bp paired-end reads [14].

Data Analysis and Mixture Deconvolution:

- Apply a computational error correction method to account for sequencing errors (~0.1% per base) which is crucial for detecting low-abundance alleles [14].

- Perform allele calling and haplotype phasing. The multi-SNP nature of the markers provides more information than single SNPs, improving the ability to distinguish and identify minor contributors in mixtures from multiple individuals [14].
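The source does not specify the error-correction method, so the following is a generic binomial screen rather than the kit's algorithm. It asks how likely an allele's read count would be if it arose from sequencing error alone, assuming independent reads and a per-read error rate of about 0.1% (a simplification of the per-base figure above). The function names and read counts are hypothetical.

```python
from math import exp, lgamma, log

def binom_log_pmf(k, n, p):
    """Log of the Binomial(n, p) probability of exactly k successes."""
    return (lgamma(n + 1) - lgamma(k + 1) - lgamma(n - k + 1)
            + k * log(p) + (n - k) * log(1 - p))

def prob_at_least(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p), summed over the upper tail."""
    if k <= 0:
        return 1.0
    return min(1.0, sum(exp(binom_log_pmf(i, n, p)) for i in range(k, n + 1)))

total_reads = 10_000   # hypothetical read depth at one marker
error_rate = 0.001     # assumed per-read error rate (~0.1%)

# A candidate minor allele at 0.5% of reads versus one at 0.1% of reads
print(prob_at_least(50, total_reads, error_rate))  # very small: unlikely to be error alone
print(prob_at_least(10, total_reads, error_rate))  # large: consistent with error
```

The comparison illustrates why alleles near the error rate are hard to call: at 0.1% of reads the count is indistinguishable from noise under these assumptions, while at 0.5% it is not.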

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for Advanced Forensic DNA Mixture Analysis

| Kit/Reagent | Primary Function | Key Features | Application Context |

|---|---|---|---|

| PrepFiler Express DNA Extraction Kit (Thermo Fisher) [13] | Automated DNA extraction from forensic samples. | Optimized for low-yield and challenging samples; used with AutoMate Express system. | Standardized extraction for trace DNA casework. |

| GlobalFiler PCR Amplification Kit (Thermo Fisher) [13] | Multiplex amplification of 21 autosomal STR loci, Amelogenin. | High sensitivity for direct amplification of forensic samples. | Standard STR profiling; baseline for sensitivity enhancement studies. |

| Amplicon RX Post-PCR Clean-up Kit (Independent Forensics) [13] | Purification of PCR products post-amplification. | Removes inhibitors, concentrates amplicons, enhances CE signal. | Critical for improving data quality from low-template and inhibitor-containing samples. |

| FD Multi-SNP Mixture Kit [14] | NGS-based multiplex assay for 567 multi-SNP markers. | High polymorphism, short amplicons (<140 bp), no stutter. | Deconvolution of complex mixtures (2-10 persons) and LCN samples. |

| STRmix / EuroForMix [15] | Probabilistic genotyping software. | Computes Likelihood Ratios (LRs) using quantitative (peak height) models. | Statistical interpretation of complex DNA mixtures for court testimony. |

| Investigator Quantiplex Pro Kit (Qiagen) [13] | Quantitative real-time PCR for DNA quantification. | Determines human DNA quantity and presence of inhibitors. | Essential quality control step before DNA amplification. |

The pursuit of greater sensitivity in forensic DNA analysis is a double-edged sword. Techniques like increased PCR cycles, post-PCR clean-up, and NGS-based multi-SNP analysis empower scientists to generate profiles from previously unusable trace evidence [13] [14]. However, this very success compels the field to confront and manage the significant interpretative complexity that follows. The resulting data, especially from complex mixtures, often cannot be interpreted using traditional binary methods.

The path forward requires an integrated framework that couples cutting-edge wet-lab chemistry with sophisticated computational statistics. The TRL-based protocol for mixture interpretation research must prioritize the validation and integration of probabilistic genotyping as the standard for interpreting complex, sensitive data [15]. Furthermore, methods like microhaplotypes and multi-SNP analysis via NGS represent the future, offering a path to resolve mixtures that are currently intractable [14]. Ultimately, the forensic community's goal is to balance the powerful inclusionary potential of high-sensitivity analysis with rigorous scientific standards that ensure the reliability and transparent communication of conclusions in the justice system.

For decades, simple allele counting served as the foundational method for forensic DNA mixture interpretation, providing a straightforward approach to analyzing samples from multiple individuals. This technique, primarily reliant on visual assessment of allele presence and absence, formed the basis of forensic DNA analysis during its stabilization and standardization phase (1995-2005) [17]. However, as forensic science has advanced into a period of increased sophistication (2015-2025 and beyond), the limitations of these traditional methods have become increasingly apparent when confronting complex mixture scenarios [17]. The paradigm in forensic evidence evaluation is now shifting toward methods grounded in relevant data, quantitative measurements, and statistical models that offer greater transparency, reproducibility, and empirical validation [18].

Simple allele counting methods prove particularly inadequate when facing three critical challenges: mixtures with increased contributor numbers, samples from populations with varying genetic diversity, and mixtures comprising related individuals. These scenarios reveal fundamental weaknesses in qualitative interpretation approaches, potentially leading to both false exclusions and false inclusions with significant legal implications. The emergence of probabilistic genotyping software (PGS) represents a technological evolution designed to address these limitations by accounting for peak heights, stutter, and other quantitative data within a rigorous statistical framework [15]. This application note examines the specific failure points of traditional allele counting methods and provides detailed protocols for validating and implementing advanced interpretation approaches within a Technology Readiness Level (TRL) framework.

Critical Limitations of Simple Allele Counting

Impact of Genetic Diversity on False Positive Rates

Traditional allele counting methods are vulnerable to population genetic variation, with systematically higher false inclusion rates observed in groups with lower genetic diversity. Recent research quantifying DNA mixture analysis accuracy across 83 human groups shows that false inclusion rates reach 1 × 10⁻⁵ or higher for 36 of the 83 groups in three-contributor mixtures where two contributors are known and the reference group is correctly specified [19]. At this error rate, and depending on how many comparisons are performed, false inclusions can be expected in routine casework when simple allele counting is used.

Table 1: False Inclusion Rates Based on Genetic Diversity and Contributor Numbers

| Number of Contributors | Level of Genetic Diversity | False Inclusion Rate | Key Implications |

|---|---|---|---|

| 3 contributors (2 known) | Low diversity groups | ≥1x10⁻⁵ for 36/83 groups | Expected false inclusions with multiple testing |

| 3 contributors (2 known) | High diversity groups | <1x10⁻⁵ | Better discrimination capability |

| Increasing contributors | All populations | Rate increases | Compounded effect with lower diversity |

The fundamental issue stems from the increased allele sharing in populations with reduced genetic heterogeneity, which creates ambiguity that simple threshold-based allele counting cannot resolve. This limitation persists even when laboratory protocols are correctly specified, indicating a fundamental methodological constraint rather than procedural error [19]. These findings underscore the necessity of either more selective and conservative use of DNA mixture analysis with traditional methods or migration to probabilistic approaches that can quantitatively account for population genetic parameters.
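
A short calculation can make the link between a per-comparison false inclusion rate and multiple testing concrete. The sketch below is illustrative only: it assumes independent comparisons, and the number of comparisons is a hypothetical value rather than a figure from [19].

```python
def expected_false_inclusions(rate: float, comparisons: int) -> float:
    """Expected number of false inclusions across independent comparisons."""
    return rate * comparisons


def prob_at_least_one(rate: float, comparisons: int) -> float:
    """Probability of one or more false inclusions, assuming independence."""
    return 1.0 - (1.0 - rate) ** comparisons


# Hypothetical scenario: a per-comparison rate of 1e-5 applied to
# 100,000 non-donor comparisons (for example, a database search).
rate = 1e-5
comparisons = 100_000
print(expected_false_inclusions(rate, comparisons))
print(round(prob_at_least_one(rate, comparisons), 3))
```

Under these assumptions, a rate of 1 × 10⁻⁵ applied to 100,000 comparisons yields roughly one expected false inclusion, which is why the per-comparison rate should not be read in isolation.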

Limitations with Related Contributors

Simple allele counting methods face particular challenges when interpreting mixtures containing related individuals due to increased allele sharing patterns that violate core assumptions of qualitative interpretation. Research investigating mixtures comprising related persons identifies four specific effects that compromise traditional analysis [20]:

- Underestimation of the number of contributors due to allele sharing (e.g., a three-person mixture of two parents and their biological child appearing as a two-person mixture by allele count alone)

- Preferential selection of alternate genotype explanations during deconvolution

- More frequent high adventitious support for non-donating relatives of true sample donors than for unrelated non-donors

- Increased adventitious support for non-donors as the fraction of related donors in the mixture increases

Table 2: Adventitious Support Risk in Related Contributor Scenarios

| Mixture Composition | Compared Non-donor | Adventitious Support Risk | Primary Genetic Cause |

|---|---|---|---|

| Multiple relatives of non-donor | Relative | Highest frequency/magnitude | Complete allele sharing expectation |

| One relative + unrelated individuals | Relative | Moderate | Partial allele sharing |

| All unrelated individuals | Unrelated person | Lowest baseline | Random allele matches |

These effects are particularly pronounced in specific familial relationships. For instance, a balanced mixture of a mother and father will provide adventitious support for all their biological children because, barring complexities like mutation and dropout, all children's alleles will be shared with the mixture [20]. This phenomenon represents an expected consequence of Mendelian inheritance rather than methodological error, but simple allele counting lacks the statistical framework to quantify or account for these relationships appropriately.
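
The parent-parent example can be checked with a small simulation. The following sketch assumes a hypothetical set of 21 loci with ten equally frequent alleles each, no mutation, and no dropout; the function names are illustrative and do not come from [20].

```python
import random


def random_genotype(alleles, rng):
    """Draw two alleles independently (hypothetical equal frequencies)."""
    return (rng.choice(alleles), rng.choice(alleles))


def child_of(mother, father, rng):
    """Mendelian transmission: one allele from each parent, no mutation."""
    return (rng.choice(mother), rng.choice(father))


def fully_included(profile, mixture):
    """True if every allele of the profile appears in the mixture at every locus."""
    return all(set(g) <= m for g, m in zip(profile, mixture))


def simulate(n_loci=21, n_trials=1000, seed=1):
    rng = random.Random(seed)
    alleles = list(range(8, 18))  # hypothetical repeat numbers
    mother = [random_genotype(alleles, rng) for _ in range(n_loci)]
    father = [random_genotype(alleles, rng) for _ in range(n_loci)]
    # Allele-count view of a balanced two-parent mixture with no dropout.
    mixture = [set(m) | set(f) for m, f in zip(mother, father)]
    children = unrelated = 0
    for _ in range(n_trials):
        child = [child_of(m, f, rng) for m, f in zip(mother, father)]
        stranger = [random_genotype(alleles, rng) for _ in range(n_loci)]
        children += fully_included(child, mixture)
        unrelated += fully_included(stranger, mixture)
    return children, unrelated


included_children, included_unrelated = simulate()
print(included_children, included_unrelated)
```

Every simulated child is included by construction, whereas an unrelated person must match by chance at all loci, which illustrates why allele presence alone cannot separate a non-donating child from a true donor.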

Limitations with Increasing Contributor Numbers

As the number of contributors to a DNA mixture increases, simple allele counting methods experience rapid degradation in performance due to overlapping alleles and complex stutter patterns. The exponential increase in possible genotype combinations overwhelms the capabilities of qualitative assessment, particularly with low-template DNA samples where stochastic effects further complicate interpretation [17].

Comparative studies of qualitative versus quantitative probabilistic genotyping approaches demonstrate that likelihood ratio (LR) values computed by quantitative tools are generally higher than those obtained by qualitative methods, with three-contributor mixtures showing generally lower LR values than two-contributor mixtures across all platforms [15]. This performance gap widens as mixture complexity increases, revealing the fundamental constraints of allele counting in evidentiary weight evaluation.
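
The growth in candidate genotype combinations can be illustrated by brute-force enumeration at a single locus. This sketch assumes no dropout and no drop-in, treats contributors as ordered, and uses hypothetical allele labels; it is not how any particular software enumerates genotypes.

```python
from itertools import combinations_with_replacement, product


def count_explanations(observed_alleles, n_contributors):
    """Count ordered genotype assignments whose alleles are exactly the observed set."""
    observed = set(observed_alleles)
    # All unordered genotypes that can be built from the observed alleles.
    genotypes = list(combinations_with_replacement(sorted(observed), 2))
    count = 0
    for assignment in product(genotypes, repeat=n_contributors):
        covered = {allele for genotype in assignment for allele in genotype}
        if covered == observed:
            count += 1
    return count


locus_alleles = [14, 15, 16, 17]  # hypothetical four-allele locus
for n in (2, 3, 4):
    print(n, count_explanations(locus_alleles, n))
```

For four observed alleles, the count rises from 6 assignments with two contributors to 294 with three and 5,298 with four, before dropout, stutter, or multiple loci are even considered.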

Experimental Protocols for Method Validation

Protocol for Evaluating Population Genetic Effects

Objective: To quantify false inclusion rates across diverse population groups and mixture complexities.

Materials:

- Reference DNA samples from genetically diverse populations

- Commercial STR amplification kits (e.g., GlobalFiler, PowerPlex Fusion)

- Capillary electrophoresis system

- Probabilistic genotyping software (e.g., STRmix, EuroForMix)

Procedure:

- Prepare in vitro mixtures with 2-4 contributors at balanced ratios (1:1 for 2 contributors; 1:1:1 for 3 contributors; 1:1:1:1 for 4 contributors)

- Generate DNA profiles using standard amplification and electrophoresis protocols

- Analyze profiles using simple allele counting method (qualitative assessment)

- Re-analyze same profiles using probabilistic genotyping software

- Calculate false inclusion rates by comparing non-donors from same population group

- Statistical analysis: Compute confidence intervals for false positive rates across population groups

Validation Metrics:

- False inclusion rate by population group and contributor number

- Sensitivity and specificity calculations

- Comparative likelihood ratios between qualitative and quantitative methods
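
For the confidence-interval step, one reasonable choice for rare events is the Wilson score interval. The sketch below is an assumption about how the interval could be computed, not a method prescribed by the cited studies; the counts are hypothetical.

```python
import math


def wilson_interval(false_inclusions: int, comparisons: int, z: float = 1.96):
    """Wilson score interval for a false inclusion rate (z = 1.96 for about 95%)."""
    if comparisons <= 0 or not 0 <= false_inclusions <= comparisons:
        raise ValueError("counts must satisfy 0 <= false_inclusions <= comparisons, comparisons > 0")
    p = false_inclusions / comparisons
    denom = 1.0 + z ** 2 / comparisons
    centre = (p + z ** 2 / (2 * comparisons)) / denom
    half_width = z * math.sqrt(
        p * (1 - p) / comparisons + z ** 2 / (4 * comparisons ** 2)
    ) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical result: 5 false inclusions in 100 non-donor comparisons.
low, high = wilson_interval(5, 100)
print(round(low, 4), round(high, 4))
```

Unlike the simple normal approximation, this interval stays informative when zero false inclusions are observed, which is a common outcome in small validation sets.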

Protocol for Assessing Relatedness Effects

Objective: To evaluate adventitious match rates for related non-donors across different familial relationships.

Materials:

- DNA samples from complete family units (parents and multiple children)

- Quantitative PCR system for DNA quantification

- STR amplification and detection systems

- Probabilistic genotyping software with relatedness modeling capabilities

Procedure:

- Prepare mixture series including:

- Parent-Parent-Child (PPC) triads

- Parent-Child-Child (PCC) triads

- Sibling-Sibling-Sibling (SSS) triads

- Maintain balanced (1:1:1) and unbalanced (varying ratios) mixture proportions

- Process samples through standard forensic workflow: extraction, quantification, amplification, separation

- Analyze each mixture using:

- Simple allele counting with combined probability of inclusion (CPI)

- Probabilistic genotyping software with appropriate proposition setting

- Compute likelihood ratios for:

- True donors (all relationships)

- Related non-donors (siblings, parents, children)

- Unrelated non-donors

- Assess number of contributor (NoC) estimates for each mixture type

Data Analysis:

- Compare LR distributions for true donors versus non-donors

- Quantify rate of adventitious support (LR > 1) for related non-donors

- Evaluate NoC estimation accuracy across relationship types

- Document instances of false exclusions for true donors
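
The adventitious support rate in the data analysis above can be tabulated per relationship category. This is a minimal sketch; the category labels and LR values are hypothetical placeholders, not study results.

```python
from collections import defaultdict


def adventitious_support_rate(non_donor_results):
    """Fraction of non-donor comparisons with LR > 1, grouped by category.

    non_donor_results: iterable of (category, likelihood_ratio) pairs.
    """
    totals = defaultdict(int)
    supportive = defaultdict(int)
    for category, lr in non_donor_results:
        totals[category] += 1
        if lr > 1.0:
            supportive[category] += 1
    return {category: supportive[category] / totals[category] for category in totals}


# Hypothetical non-donor comparisons.
results = [
    ("sibling", 12.0),
    ("sibling", 0.3),
    ("parent", 45.0),
    ("unrelated", 0.001),
    ("unrelated", 0.02),
]
print(adventitious_support_rate(results))
```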

Diagram 1: Relatedness effects assessment protocol workflow

Protocol for Software Comparison Studies

Objective: To compare performance characteristics of qualitative versus quantitative genotyping approaches.

Materials:

- 156 sample pairs (mixture profile + single source reference)

- GeneMapper files for data input

- LRmix Studio (v.2.1.3 - qualitative)

- STRmix (v.2.7 - quantitative)

- EuroForMix (v.3.4.0 - quantitative)

Procedure:

- Import anonymized GeneMapper files into all three software platforms

- Maintain consistent parameters across platforms:

- Number of contributors

- Population genetic data

- Stutter models

- Compute likelihood ratios using identical proposition pairs

- Analyze 21 STR autosomal markers for all samples

- Compare LR outputs for:

- Two-contributor mixtures

- Three-contributor mixtures

- Varying template amounts

- Statistical analysis: Compute descriptive statistics and correlation measures between software outputs

Output Metrics:

- Likelihood ratio values across software platforms

- Sensitivity analysis for template amount effects

- Discrimination efficiency between true donors and non-donors

- Computational requirements and time investments
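
Because LRs span many orders of magnitude, software outputs are usually compared on the log10 scale. The sketch below shows one way to compute a correlation and a mean log10 difference between two platforms; the function names and LR values are hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length samples with at least two values")
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


def compare_log_lrs(lrs_a, lrs_b):
    """Summarise agreement between two platforms on the log10(LR) scale."""
    log_a = [math.log10(v) for v in lrs_a]
    log_b = [math.log10(v) for v in lrs_b]
    diffs = [b - a for a, b in zip(log_a, log_b)]
    return {
        "pearson_r": pearson(log_a, log_b),
        "mean_log10_difference": sum(diffs) / len(diffs),
    }


# Hypothetical LRs for the same four sample pairs on two platforms.
qualitative = [1e3, 1e5, 1e8, 1e12]
quantitative = [1e4, 1e6, 1e9, 1e13]
print(compare_log_lrs(qualitative, quantitative))
```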

Advanced Interpretation Workflow

The transition from traditional allele counting to probabilistic genotyping requires a structured workflow that incorporates validation data from the previously described protocols. The following diagram illustrates the decision pathway for implementing mixture interpretation methods based on mixture characteristics and validation performance.

Diagram 2: Advanced interpretation workflow pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Forensic Mixture Interpretation Research

| Research Reagent | Specific Example | Function in Experimental Protocol |

|---|---|---|

| DNA Quantification Kit | Quantifiler Trio DNA Quantification Kit | Determines human DNA concentration and degradation state using multiple-copy target loci (Small Autosomal, Large Autosomal, Y-chromosome) [21] |

| STR Amplification Kits | GlobalFiler PCR Amplification Kit | Simultaneously amplifies 21 autosomal STR loci, one Y-STR locus (DYS391), one Y indel, and amelogenin for sex determination [17] |

| Capillary Electrophoresis System | Applied Biosystems 3500 Genetic Analyzer | Separates fluorescently labeled PCR products by size with single-base resolution for accurate allele calling [17] |

| Probabilistic Genotyping Software | STRmix v.2.7 | Implements quantitative statistical models that consider both qualitative (allele presence) and quantitative (peak height) information to compute likelihood ratios [15] [20] |

| Qualitative Analysis Software | LRmix Studio v.2.1.3 | Provides qualitative genotyping approach considering only detected alleles without quantitative peak information for comparison studies [15] |

| Internal Validation Standards | Standard Reference Material 2391d | Certified human DNA standards for calibration and validation of quantitative measurements across experiments [21] |

The limitations of simple allele counting in forensic DNA mixture interpretation present significant challenges that increase with mixture complexity, genetic diversity considerations, and contributor relatedness. Traditional approaches demonstrate systematically higher false positive rates in populations with lower genetic diversity and show particular vulnerability to adventitious matching when interpreting mixtures containing related individuals. These methodological constraints necessitate a paradigm shift toward probabilistic genotyping methods that incorporate quantitative data, statistical models, and empirical validation.

The experimental protocols outlined provide a framework for validating mixture interpretation methods within a TRL-based research context, enabling forensic researchers to quantitatively assess performance characteristics across diverse scenarios. Implementation of these advanced interpretation approaches requires careful consideration of mixture characteristics, available validation data, and computational resources. As the field continues its progression toward more sophisticated analytical frameworks, these protocols and methodologies will support the development of robust, transparent, and scientifically valid mixture interpretation practices that meet the evolving needs of the forensic genetics community.

From CPI to Probabilistic Genotyping: A Methodological Evolution for DNA Mixture Analysis

The Combined Probability of Inclusion/Exclusion (CPI/CPE) represents a foundational statistical method for evaluating forensic DNA mixture evidence. A DNA mixture is identified when a biological sample originates from two or more individuals, typically indicated by the presence of three or more allelic peaks at two or more genetic loci or significant peak height imbalances [1]. The CPI specifically measures the proportion of a given population that would be included as potential contributors to the observed DNA mixture, while its complement, the CPE, calculates the probability of exclusion [1] [22].

This method remains the most prevalent statistical approach for DNA mixture evaluation across many regions, including the Americas, Asia, Africa, and the Middle East [1]. Its persistence in forensic practice necessitates a thorough understanding of its proper application, inherent limitations, and position within the evolving landscape of DNA mixture interpretation, particularly within a Technology Readiness Level (TRL) framework for protocol development and validation.

Fundamental Principles and Mathematical Formulation

The CPI approach is fundamentally grounded in population genetics and statistical theory. The calculation involves determining the sum of the frequencies of all possible genotype combinations that are included within the observed DNA mixture profile [1].

For a single locus, the Probability of Inclusion (PI) is calculated as follows:

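PI = (Sum of the frequencies of all included alleles)²

Under Hardy-Weinberg assumptions, this square equals the summed frequencies of all genotypes that can be formed from the included alleles.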

The Combined Probability of Inclusion (CPI) is then obtained by multiplying the individual PIs across all interpreted loci:

CPI = PI₁ × PI₂ × PI₃ × ... × PIₙ

This multiplicative combination follows the product rule and assumes independence across the genetic loci tested. The corresponding Combined Probability of Exclusion (CPE) is:

CPE = 1 - CPI
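
The formulas above translate directly into a few lines of code. The sketch below uses hypothetical allele frequencies at three loci purely for illustration; real casework would use validated population data and only qualified loci.

```python
from math import prod


def probability_of_inclusion(allele_frequencies):
    """PI for one locus: squared sum of the included allele frequencies."""
    return sum(allele_frequencies) ** 2


def combined_probability_of_inclusion(loci):
    """CPI: product of per-locus PIs (product rule, independent loci)."""
    return prod(probability_of_inclusion(freqs) for freqs in loci.values())


# Hypothetical frequencies of the alleles observed at each locus.
loci = {
    "D8S1179": [0.10, 0.20, 0.15],
    "D21S11": [0.05, 0.25, 0.10, 0.10],
    "TH01": [0.20, 0.30],
}
cpi = combined_probability_of_inclusion(loci)
cpe = 1 - cpi
print(cpi, cpe)
```

With these illustrative values the per-locus PIs are 0.2025, 0.25, and 0.25, so the CPI is about 0.0127 and the CPE about 0.9873.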

Table 1: Core Components of CPI/CPE Calculation

| Component | Description | Function in Calculation |

|---|---|---|

| Observed Alleles | Allelic peaks above the analytical threshold at each locus | Forms the basis for determining possible genotype combinations |

| Allele Frequencies | Population-specific frequencies for each observed allele | Used to calculate the probability of genotype combinations |

| Included Genotypes | All possible pairs of alleles that could explain the observed mixture | Summed frequencies form the basis for the PI calculation |

| Locus Multiplier | Application of the product rule across multiple loci | Combines individual PIs to generate the final CPI statistic |

Protocol for CPI/CPE Analysis: A TRL-Based Framework

The reliable application of CPI/CPE requires a structured, phased protocol that aligns with TRL-based development, progressing from basic assessment to statistical calculation.

Phase 1: Profile Assessment and Deconvolution

The initial phase focuses on the qualitative assessment of the DNA profile to determine the presence of a mixture and its fundamental characteristics.

Step 1: Mixture Identification. Analyze the electropherogram for indicators of multiple contributors:

- Presence of three or more allelic peaks at multiple loci

- Significant imbalance in peak heights beyond expected heterozygote ratios [1]

- Inconsistencies in peak height patterns across multiplexed loci

Step 2: Determine the Number of Contributors. Estimate the minimum number of contributors required to explain the observed profile. This critical step informs subsequent decisions about potential allele dropout [1]. For instance, observing four alleles at a locus in a two-person mixture indicates that every contributor allele was detected, whereas fewer than four alleles leaves open allele sharing, homozygosity, or dropout.
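
The maximum allele count rule behind this step can be sketched as follows. The profile and locus names are hypothetical, and the helper only flags loci for review; it does not distinguish allele sharing from dropout.

```python
import math


def min_contributors(profile):
    """Minimum contributors implied by the largest allele count at any locus.

    profile: non-empty dict mapping locus name to the set of observed alleles.
    """
    max_alleles = max(len(alleles) for alleles in profile.values())
    return max(1, math.ceil(max_alleles / 2))


def loci_below_expected_count(profile, n_contributors):
    """Loci showing fewer than 2 * n alleles (sharing, homozygosity, or dropout)."""
    return sorted(
        locus for locus, alleles in profile.items()
        if len(alleles) < 2 * n_contributors
    )


# Hypothetical mixture profile.
profile = {
    "D3S1358": {"14", "15", "16"},
    "vWA": {"16", "17", "18", "19"},
    "FGA": {"21", "22"},
}
n = min_contributors(profile)
print(n, loci_below_expected_count(profile, n))
```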

Step 3: Deconvolution and Artifact Identification

- Differentiate true alleles from PCR stutter artifacts using validated stutter ratios

- Identify potential off-ladder alleles and other analytical artifacts

- Assess peak height balance to evaluate potential major and minor contributors [1]
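
A simple threshold filter shows how a validated stutter ratio can be applied to separate likely stutter from candidate alleles. This sketch makes several simplifying assumptions: only back stutter one repeat shorter than the parent is considered, alleles are whole repeat numbers, and the 10% ratio is a hypothetical value rather than a validated one.

```python
def flag_back_stutter(peaks, stutter_ratio):
    """Return alleles whose height is consistent with n-1 stutter of a parent peak.

    peaks: dict mapping integer repeat number to peak height (RFU).
    stutter_ratio: maximum expected stutter height as a fraction of the parent.
    """
    flagged = set()
    for allele, height in peaks.items():
        parent_height = peaks.get(allele + 1)  # parent is one repeat longer
        if parent_height is not None and height <= stutter_ratio * parent_height:
            flagged.add(allele)
    return flagged


# Hypothetical peaks at one locus.
peaks = {14: 90, 15: 1000, 16: 950}
print(flag_back_stutter(peaks, stutter_ratio=0.10))
```

A flagged peak is only a candidate artifact; in a mixture it may also contain signal from a minor contributor, which is one reason threshold filtering is replaced by explicit stutter modeling in probabilistic approaches.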

Figure 1: Workflow for DNA mixture assessment and deconvolution prior to CPI calculation

Phase 2: Comparative Analysis and Inclusion/Exclusion Determination

This phase involves comparing the evidence profile with known reference samples and making determinations about inclusion or exclusion.

Step 4: Comparison with Reference Profiles

- Compare the mixture profile with known reference samples from persons of interest, victims, or other known contributors

- Evaluate whether the known individual's alleles are present at all loci

Step 5: Subtraction and Inclusion/Exclusion Decision

- Where appropriate, "subtract" the known contributor's alleles from the mixture profile [1]

- Determine if the known individual cannot be excluded as a potential contributor

- Make explicit exclusion decisions when a known individual's profile contains alleles not present in the mixture evidence
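
The allele-presence comparison in Steps 4 and 5 amounts to a subset check per locus. The sketch below assumes no allele dropout, so any reference allele missing from the mixture is treated as grounds for exclusion; the profiles are hypothetical.

```python
def alleles_absent_from_mixture(mixture, reference):
    """Map each locus to the reference alleles that are not seen in the mixture.

    An empty result means the reference cannot be excluded on allele presence alone.
    """
    absent = {}
    for locus, ref_alleles in reference.items():
        missing = set(ref_alleles) - mixture.get(locus, set())
        if missing:
            absent[locus] = missing
    return absent


# Hypothetical evidence and reference profiles.
mixture = {"D3S1358": {"14", "15", "16"}, "vWA": {"17", "18"}}
reference = {"D3S1358": ("14", "16"), "vWA": ("17", "19")}
print(alleles_absent_from_mixture(mixture, reference))
```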

Phase 3: Statistical Calculation and Interpretation

The final phase involves the actual CPI calculation with careful consideration of locus usability and potential limitations.

Step 6: Locus Qualification for CPI. This critical step determines which loci are suitable for inclusion in the CPI calculation based on dropout potential:

- Include: Loci where all alleles from all contributors are presumed to be detected (e.g., four alleles observed in a two-person mixture) [1]

- Exclude: Loci where allele dropout is a reasonable possibility based on low peak heights or other stochastic effects [1]

- Loci excluded from CPI calculation may still be used for qualitative exclusionary purposes [1]

Step 7: CPI Calculation and Reporting

- Calculate the PI for each qualified locus using the formula (a + b + ... + n)², where a, b, ... n are the frequencies of the included alleles

- Multiply individual PIs across all qualified loci to obtain the CPI

- Report the CPE as 1 - CPI where appropriate

- Clearly document all loci included in the calculation and justifications for any excluded loci

Table 2: Locus Qualification Criteria for CPI Analysis

| Locus Condition | Suitability for CPI | Rationale |

|---|---|---|

| All expected alleles observed (e.g., 4 alleles in 2-person mix) | Suitable | Confidence that no allele dropout has occurred |

| Fewer alleles than expected (e.g., 3 alleles in 2-person mix) | Not Suitable | High probability of allele dropout violating CPI assumptions |

| Low template DNA with stochastic effects | Not Suitable | Increased risk of allele dropout and drop-in |

| High degradation affecting larger loci | Not Suitable | Potential for locus-specific dropout |

| Allele stacking from shared alleles | Conditionally Suitable | Requires careful evaluation of peak heights and mixture proportions |

Essential Research Reagents and Materials

The reliable application of the CPI method depends on several critical reagents and analytical tools.

Table 3: Essential Research Reagents for DNA Mixture Analysis

| Reagent/Material | Function/Application |

|---|---|

| Commercial STR Multiplex Kits | Simultaneous amplification of multiple short tandem repeat loci |

| DNA Quantitation Standards | Accurate measurement of DNA concentration for input control |

| Positive Control DNA | Verification of amplification efficiency and profile quality |

| Population-Specific Allelic Ladders | Accurate allele designation and population frequency estimation |

| Analytical Threshold Materials | Establishment of minimum peak height thresholds for allele calling |

| Stutter Calculation Standards | Characterization of stutter ratios for artifact identification |

| DNA Size Separation Matrix | High-resolution capillary electrophoresis for allele separation |

Limitations and Methodological Constraints

The CPI method possesses significant limitations that restrict its application to certain types of DNA mixtures and affect its positioning within a TRL-based developmental framework.

Primary Limitations

Inability to Account for Allele Dropout: The most significant limitation is that standard CPI calculation requires all alleles from all contributors to be present and detected [1]. In low-template or degraded samples where dropout is possible, the CPI method becomes unreliable unless modified, and such loci must be excluded from calculation [1].

Binary Nature: The CPI approach operates on a binary inclusion/exclusion paradigm without the flexibility to incorporate probabilistic weighting of potential genotypes [1]. This contrasts with more advanced likelihood ratio approaches that can evaluate the probability of the evidence given different propositions.

Sensitivity to Contributor Number: While the CPI calculation itself does not require an assumption about the number of contributors, the interpretation prior to calculation does require such an assumption to evaluate potential dropout [1]. An incorrect estimate can lead to improper locus inclusion or exclusion.

Statistical Conservatism: When loci must be excluded due to potential dropout, the resulting CPI statistic may become less informative and potentially overstate the evidence against an innocent person who shares common alleles with the true contributor.

Figure 2: Key limitations of the CPI method and its position in methodological progression

Comparative Analysis with Advanced Methods

The forensic genetics community is increasingly moving toward probabilistic genotyping approaches using Likelihood Ratios (LRs), which offer significant advantages for complex mixture interpretation [1] [23].

Table 4: CPI versus Probabilistic Genotyping Methods

| Analytical Aspect | CPI/CPE Method | Probabilistic Genotyping |

|---|---|---|

| Statistical Framework | Combined probability of inclusion | Likelihood ratio |

| Handling of Dropout | Loci must be excluded | Can explicitly model probability |

| Peak Height Information | Used qualitatively for interpretation | Quantitatively incorporated into model |

| Number of Contributors | Assumed for interpretation | Explicitly considered in model |

| Complex Mixtures | Limited application | Suitable for higher-order mixtures |

| Software Implementation | Manual calculation or simple tools | Advanced computational systems |

| Statistical Efficiency | Often more conservative | Typically more informative |

The CPI/CPE method represents an important historical and current approach for DNA mixture interpretation, particularly for straightforward mixtures with no potential for allele dropout. Its protocol requires meticulous attention to profile assessment, locus qualification, and understanding of its inherent limitations.

Within a TRL-based framework for forensic DNA mixture interpretation research, the CPI method represents an established, technologically mature approach with defined limitations in addressing contemporary challenges such as complex mixtures, low-template DNA, and probabilistic evaluation. The field is progressively transitioning toward fully continuous probabilistic methods that can more flexibly and efficiently account for stochastic effects and complex mixture scenarios [1] [23] [24].

For research and casework application, laboratories choosing to implement CPI must adhere to strict protocols regarding its proper application, particularly in disqualifying loci where allele dropout is possible. Future methodological development should focus on validation frameworks that position CPI appropriately within a hierarchy of analytical approaches, recognizing both its utility for simpler mixtures and its limitations for more complex evidentiary samples.

The interpretation of forensic DNA mixtures, particularly complex ones containing genetic material from multiple individuals, has long presented a significant challenge for forensic analysts. Probabilistic Genotyping (PG) represents a fundamental paradigm shift from traditional binary methods to a sophisticated statistical framework for interpreting forensic DNA evidence [25]. Unlike conventional methods that may yield only an inclusion or exclusion, probabilistic genotyping uses statistical models to compute a Likelihood Ratio (LR), quantifying the strength of evidence that a person of interest contributed to a mixed DNA sample [26] [27].

This shift is particularly crucial for complex mixture samples where DNA profiles reveal multiple contributors of varying proportion and clarity, often resulting from sensitive collection techniques that recover DNA from surfaces touched by multiple individuals [25]. Traditional methods struggle with these complexities due to issues like allele drop-in or drop-out and poor signal-to-noise ratios that can obscure the true number of contributors and their individual DNA profiles [25].

Table 1: Comparison of Traditional vs. Probabilistic Genotyping Approaches

| Feature | Traditional Methods | Probabilistic Genotyping |

|---|---|---|

| Interpretation Approach | Binary (match/no match) | Statistical (likelihood ratio) |

| Complex Mixtures | Subjective interpretation | Objective, model-based evaluation |

| Statistical Output | Random match probability | Likelihood Ratio (LR) |

| Information Utilized | Allelic presence/absence | Peak heights, stutter, degradation |

| Result Presentation | Qualitative statements | Quantitative evidence strength |

The Core Concept: Likelihood Ratios (LR)

At the heart of probabilistic genotyping lies the Likelihood Ratio (LR), a statistical measure that evaluates the strength of DNA evidence by comparing two competing hypotheses [25] [26]. The LR is calculated as the ratio of two probabilities:

- The probability of observing the DNA evidence if the person of interest (POI) was a contributor to the mixture (prosecution hypothesis, Hp)

- The probability of observing the DNA evidence if the POI was not a contributor (defense hypothesis, Hd) [25]

Formula 1: Likelihood Ratio Calculation

LR = P(E | Hp) / P(E | Hd)

Where:

- E = Observed DNA evidence

- Hp = Prosecution hypothesis (POI is a contributor)

- Hd = Defense hypothesis (POI is not a contributor)

The resulting value indicates how many times more likely the evidence is under one hypothesis versus the other [26]. For example, an LR of 10,000 means the evidence is 10,000 times more likely if the POI was a contributor than if they were not. It is crucial to understand that the LR estimates the strength of the evidence that an individual's DNA is included in a mixture sample—not the probability of innocence or guilt [25].
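
The ratio described above, and its combination across loci, can be sketched in a few lines. The per-locus probabilities are hypothetical inputs, and multiplying across loci assumes the loci are independent; how those probabilities are obtained is the task of the genotyping model itself.

```python
import math


def likelihood_ratio(p_e_given_hp: float, p_e_given_hd: float) -> float:
    """LR = P(E | Hp) / P(E | Hd)."""
    if p_e_given_hd <= 0:
        raise ValueError("P(E | Hd) must be positive")
    return p_e_given_hp / p_e_given_hd


def combined_log10_lr(per_locus_probabilities):
    """Sum of per-locus log10(LR) values, assuming independent loci."""
    return sum(
        math.log10(likelihood_ratio(p_hp, p_hd))
        for p_hp, p_hd in per_locus_probabilities
    )


# Hypothetical (P(E | Hp), P(E | Hd)) pairs for two loci.
per_locus = [(0.5, 0.05), (0.2, 0.002)]
print(round(combined_log10_lr(per_locus), 6))
```

Here the per-locus LRs are 10 and 100, so the combined log10(LR) is 3, corresponding to an overall LR of 1,000.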

Table 2: Interpreting Likelihood Ratio Values

| Likelihood Ratio Value | Interpretation of Evidence Strength |

|---|---|

| 1 | Evidence has no probative value; equally likely under both hypotheses |

| 1 - 10 | Limited support for Hp over Hd |

| 10 - 100 | Moderate support for Hp over Hd |

| 100 - 1,000 | Moderately strong support for Hp over Hd |

| 1,000 - 10,000 | Strong support for Hp over Hd |

| >10,000 | Very strong support for Hp over Hd |
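
The verbal scale in Table 2 can be encoded as a lookup. Because the table's ranges share their endpoints, the sketch below assumes each upper bound is inclusive; that boundary convention, and the label returned for LR values below 1, are assumptions not stated in the table.

```python
def verbal_equivalent(lr: float) -> str:
    """Map a likelihood ratio to the verbal scale of Table 2."""
    if lr <= 0:
        raise ValueError("LR must be positive")
    if lr < 1:
        # Table 2 does not cover this range.
        return "Supports Hd over Hp (not covered by Table 2)"
    if lr == 1:
        return "No probative value"
    bands = [
        (10, "Limited support for Hp over Hd"),
        (100, "Moderate support for Hp over Hd"),
        (1_000, "Moderately strong support for Hp over Hd"),
        (10_000, "Strong support for Hp over Hd"),
    ]
    for upper_bound, label in bands:
        if lr <= upper_bound:  # assumed inclusive upper bound
            return label
    return "Very strong support for Hp over Hd"


print(verbal_equivalent(50))
```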

Technical Foundations and Methodologies

Algorithmic Foundations: Markov Chain Monte Carlo (MCMC)

The two most commonly used probabilistic genotyping systems in the United States, TrueAllele and STRmix, rely on computational algorithms known as Markov Chain Monte Carlo (MCMC) methods [25]. MCMC is a stochastic sampling approach that examines a mixture sample's DNA profile, simulates possible genotype combinations from different contributors, and evaluates how likely each combination is to have generated the observed profile [25].

The MCMC process involves these key steps [27]:

- Initialization: Begin with a model containing parameters for variables like mixture ratios, degradation rates, and stutter percentages

- Prediction: Generate predicted peak heights based on the current model parameters

- Comparison: Compare predictions to actual observed data

- Acceptance/Rejection: Accept models that closely match observations; reject or modify others

- Iteration: Repeat the process thousands of times to explore the vast parameter space

This iterative sampling allows the system to explore billions of possible genotype combinations that would be computationally infeasible to calculate directly [27]. The collection of accepted models forms a distribution representing the range of possible explanations for the observed data.
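
The propose, compare, and accept-or-reject loop can be illustrated with a toy Metropolis sampler for a single parameter, the mixture proportion of a two-person mixture. This is a deliberately simplified sketch and not the model used by STRmix or TrueAllele: it assumes every peak is already attributed to one contributor, Gaussian peak-height noise, a uniform prior on the proportion, and hypothetical values for the total signal, noise, and peak heights.

```python
import math
import random


def log_likelihood(w, heights_a, heights_b, total, sigma):
    """Gaussian log-likelihood (up to a constant) of peak heights for proportion w."""
    ll = 0.0
    for h in heights_a:  # peaks attributed to contributor A
        ll -= 0.5 * ((h - w * total) / sigma) ** 2
    for h in heights_b:  # peaks attributed to contributor B
        ll -= 0.5 * ((h - (1.0 - w) * total) / sigma) ** 2
    return ll


def sample_mixture_proportion(heights_a, heights_b, total=1000.0, sigma=80.0,
                              iterations=20_000, burn_in=2_000, step=0.05, seed=0):
    """Metropolis sampling of contributor A's proportion, uniform prior on (0, 1)."""
    rng = random.Random(seed)
    w = 0.5  # initialization
    current = log_likelihood(w, heights_a, heights_b, total, sigma)
    samples = []
    for i in range(iterations):
        proposal = w + rng.gauss(0.0, step)  # symmetric random-walk proposal
        if 0.0 < proposal < 1.0:  # proposals outside the prior support are rejected
            candidate = log_likelihood(proposal, heights_a, heights_b, total, sigma)
            if rng.random() < math.exp(min(0.0, candidate - current)):
                w, current = proposal, candidate
        if i >= burn_in:  # discard early, initialization-dependent draws
            samples.append(w)
    return samples


# Hypothetical peak heights (RFU) for alleles unique to each contributor.
heights_a = [720.0, 690.0, 750.0, 705.0]
heights_b = [310.0, 280.0, 295.0, 330.0]
samples = sample_mixture_proportion(heights_a, heights_b)
posterior_mean = sum(samples) / len(samples)
print(round(posterior_mean, 2))
```

The retained samples approximate the posterior distribution of the proportion; production systems sample genotype sets and many more parameters jointly, but the accept-or-reject logic follows the same pattern.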

Addressing DNA Mixture Complexity

Probabilistic genotyping systems specifically address challenges in DNA mixture interpretation that confound traditional methods. The complexity increases exponentially with each additional contributor due to various factors [27]:

- Peak height imbalance: Heterozygous loci showing unequal peak heights due to amplification variability

- Stutter artifacts: PCR byproducts that can be mistaken for minor contributor alleles

- Allelic dropout: Failure to detect alleles actually present in the sample

- Degraded DNA: Sample breakdown affecting larger fragments more severely

In a two-person mixture, several scenarios create interpretation challenges [27]:

- Four Peaks: Simplest scenario where each allele can be clearly attributed

- Three Peaks: Occurs when contributors share one allele, potentially masking contribution ratios

- Two Peaks: Multiple scenarios creating significant ambiguity in interpretation

- Single Peak: Nearly impossible to determine contributor number or ratio without additional information

Experimental Protocols and Validation

SWGDAM Validation Guidelines for PG Systems

The Scientific Working Group on DNA Analysis Methods (SWGDAM) has established comprehensive guidelines for validating probabilistic genotyping software [27]. These validation requirements are essential safeguards that help ensure PG results are reliable and can withstand both scientific and legal scrutiny.

Table 3: SWGDAM Validation Requirements for Probabilistic Genotyping Systems

| Validation Component | Purpose | Key Parameters Assessed |

|---|---|---|

| Sensitivity Studies | Evaluate detection of low-level contributors | Limit of detection, minor contributor thresholds |

| Specificity Testing | Ensure discrimination between contributors and non-contributors | False positive/negative rates |

| Precision & Reproducibility | Verify consistent results across multiple analyses | Inter-run variability, operator consistency |

| Complex Mixture Studies | Assess performance with varying numbers of contributors | 3-, 4-, and 5-person mixtures with varying ratios |

| Method Comparison | Establish concordance with accepted practices | Comparison with traditional methods |

Comprehensive PG Validation Protocol

A thorough validation study for probabilistic genotyping software should include these essential experimental components [27]:

Single-Source Samples Testing

- Purpose: Establish baseline performance with straightforward cases

- Methodology: Analyze known single-source profiles across expected concentration ranges (0.1-1.0 ng)

- Success Criteria: Correct genotype identification with high confidence (LR > 10⁶)

Simple Mixture Analysis

- Purpose: Test deconvolution capability with two-person mixtures

- Methodology: Prepare mixtures in varying ratios (1:1, 3:1, 9:1, 99:1) with total DNA quantities of 0.5-2.0 ng

- Success Criteria: Correct identification of both contributors across ratio spectrum
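
When planning the mixture series, it helps to convert each ratio and total input into per-contributor template amounts. The helper below is an illustrative sketch with a hypothetical function name; it simply apportions the total by ratio.

```python
def template_per_contributor(ratio, total_ng):
    """Split a total DNA input (ng) among contributors according to a mixture ratio."""
    parts = sum(ratio)
    if parts <= 0 or total_ng <= 0:
        raise ValueError("ratio parts and total input must be positive")
    return [total_ng * part / parts for part in ratio]


# Ratios and total inputs taken from the ranges listed in the methodology above.
for ratio in [(1, 1), (3, 1), (9, 1), (99, 1)]:
    amounts = template_per_contributor(ratio, total_ng=0.5)
    print(ratio, [round(a, 4) for a in amounts])
```

At a 99:1 ratio and 0.5 ng total input, the minor contributor receives only 0.005 ng, which places it below the low-template range (0.01-0.1 ng) tested separately in this protocol, so dropout of minor alleles should be anticipated when setting success criteria.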

Complex Mixture Evaluation

- Purpose: Assess performance limits with challenging scenarios

- Methodology: Create 3-, 4-, and 5-person mixtures with various ratios, degradation levels, and relatedness scenarios

- Success Criteria: Reliable performance within established system limits

Degraded and Low-Template DNA Testing

- Purpose: Verify performance with suboptimal samples

- Methodology: Artificially degrade samples or use low-quantity DNA (0.01-0.1 ng)

- Success Criteria: Established operational thresholds and reliability metrics

Mock Casework Samples

- Purpose: Simulate real evidence conditions

- Methodology: Create mixtures from touched items, mixed body fluids, or other challenging scenarios

- Success Criteria: Performance comparable to validation samples

Implementation Workflow for Forensic Laboratories

Implementing probabilistic genotyping in a forensic laboratory requires developing comprehensive workflows that integrate with existing processes while maintaining strict quality control [27].

Preliminary Data Evaluation

- Assess electropherogram quality including size standards, allelic ladders, and controls

- Identify and address poor-quality data before PG interpretation

Number of Contributors Determination

- Estimate contributors using maximum allele count, peak height patterns, and mixture proportions

- Use statistical tools such as NOCIt to support a more objective estimate
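The maximum allele count rule can be sketched in a few lines: each contributor carries at most two alleles per locus, so the locus with the most distinct alleles sets a lower bound on the number of contributors. This is only the simplest of the approaches listed above and is not how NOCIt works; the function name and the example profile are illustrative.

```python
import math


def min_contributors(profile):
    """Lower bound on the number of contributors from the maximum allele count.

    profile maps each locus name to the list of alleles observed there.
    """
    max_alleles = max(len(set(alleles)) for alleles in profile.values())
    return max(1, math.ceil(max_alleles / 2))


# Hypothetical three-locus profile.
profile = {
    "D3S1358": [14, 15, 16, 17],
    "vWA": [16, 17, 18],
    "FGA": [20, 21, 22, 23, 24],
}
print(min_contributors(profile))  # 3
```

Because allele sharing and drop-out both hide alleles, this bound tends to underestimate the true number in complex mixtures, which is why peak height patterns and statistical tools are used alongside it.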

Hypothesis Formulation

- Define clear hypotheses for testing:

  - Hp: Person of interest is a contributor

  - Hd: Person of interest is not a contributor

- Address potential close relatives or population substructure if relevant
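For the simplest case, a single-source profile that matches the person of interest, the LR for this hypothesis pair reduces to the reciprocal of the genotype match probability, and population substructure can be handled with a theta correction. The sketch below uses the widely cited NRC II (1996) form of that correction; the function names and allele frequencies are illustrative, and mixture LRs require a full probabilistic genotyping model rather than this shortcut.

```python
import math


def match_probability(p, q=None, theta=0.0):
    """Genotype match probability with a theta (substructure) correction.

    Pass one frequency for a homozygote, two for a heterozygote.
    With theta = 0 this reduces to p**2 and 2*p*q.
    """
    denom = (1 + theta) * (1 + 2 * theta)
    if q is None:  # homozygote
        return (2 * theta + (1 - theta) * p) * (3 * theta + (1 - theta) * p) / denom
    return 2 * (theta + (1 - theta) * p) * (theta + (1 - theta) * q) / denom


def log10_lr(genotype_freqs, theta=0.0):
    """log10 LR for a matching single-source profile, assuming independent loci."""
    return sum(-math.log10(match_probability(*g, theta=theta)) for g in genotype_freqs)


# Hypothetical allele frequencies: heterozygote, homozygote, heterozygote.
loci = [(0.1, 0.2), (0.15,), (0.05, 0.3)]
print(round(log10_lr(loci), 2), round(log10_lr(loci, theta=0.01), 2))
```

With theta = 0 the three loci give a log10 LR of about 4.57; a positive theta raises each match probability and therefore lowers the LR, which is the conservative direction for the person of interest.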

MCMC Analysis Configuration

- Set appropriate parameters:

  - Iterations: 10,000-1,000,000 depending on complexity

  - Burn-in period: 10-20% of total iterations

  - Thinning interval to reduce autocorrelation

  - Degradation, stutter, and peak height variation parameters
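To show what iterations, burn-in, and thinning mean operationally, the following toy Metropolis sampler estimates a two-person mixture proportion from peak heights. It is a deliberately simplified illustration, not the model used by any commercial PG system: the Gaussian peak-height model, the fixed `total` and `sigma` values, the proposal width, and the example heights are all assumptions.

```python
import math
import random


def log_likelihood(w, heights_a, heights_b, total=2000.0, sigma=150.0):
    """Gaussian log-likelihood of unshared peak heights given mixture proportion w."""
    ll = 0.0
    for h in heights_a:  # peaks attributed to contributor A
        ll -= (h - w * total) ** 2 / (2 * sigma ** 2)
    for h in heights_b:  # peaks attributed to contributor B
        ll -= (h - (1 - w) * total) ** 2 / (2 * sigma ** 2)
    return ll


def run_chain(heights_a, heights_b, iterations=20000, burn_in_frac=0.1, thin=10, seed=1):
    """Metropolis sampler for w with a uniform prior on (0, 1)."""
    rng = random.Random(seed)
    w = 0.5
    current = log_likelihood(w, heights_a, heights_b)
    burn_in = int(iterations * burn_in_frac)
    samples = []
    for i in range(iterations):
        proposal = w + rng.gauss(0.0, 0.05)
        if 0.0 < proposal < 1.0:
            candidate = log_likelihood(proposal, heights_a, heights_b)
            # Accept with probability min(1, likelihood ratio).
            if rng.random() < math.exp(min(0.0, candidate - current)):
                w, current = proposal, candidate
        # Discard the burn-in, then keep every `thin`-th state.
        if i >= burn_in and (i - burn_in) % thin == 0:
            samples.append(w)
    return samples


samples = run_chain([1500, 1450, 1550, 1480], [520, 480, 500, 510])
print(len(samples), round(sum(samples) / len(samples), 3))
```

With 20,000 iterations, a 10% burn-in, and a thinning interval of 10, the chain retains 1,800 samples. Changing `seed` gives slightly different estimates from the same data, which is the same stochastic behaviour that makes replicate PG runs differ.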

Result Interpretation and Technical Review

- Interpret likelihood ratios with an understanding of their statistical meaning and limitations

- Conduct a comprehensive technical review by a qualified second analyst

- Verify all analysis parameters and software settings

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Materials for Probabilistic Genotyping

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Reference DNA Standards | Quality control and calibration | Use certified reference materials traceable to standards |
| PCR Amplification Kits | Target amplification for STR analysis | Select kits with demonstrated validation data |
| Allelic Ladders | Fragment size standardization | Essential for accurate allele designation |
| Software Systems | PG analysis and interpretation | STRmix, TrueAllele, MaSTR |
| Validation Samples | Method performance assessment | Create characterized samples with known contributors |
| Quantification Standards | DNA quantity and quality assessment | Critical for interpreting low-template results |
| Stutter Models | Accounting for PCR artifacts | Laboratory-specific validation required |
| Degradation Models | Addressing sample quality issues | Must reflect laboratory-specific conditions |

Critical Considerations in Probabilistic Genotyping

Technical Limitations and Assumptions

While probabilistic genotyping represents a significant advancement, several technical considerations require careful attention [25]:

Analyst-Dependent Inputs: Although PG systems automate much of the interpretation, they remain constrained by analyst inputs, particularly the estimated number of contributors to the mixture. Determining the true number of contributors can be exceptionally difficult, especially for mixtures that require probabilistic rather than manual interpretation [25]. An incorrectly specified number of contributors can significantly affect the results.

Genetic Relatedness Assumptions: Probabilistic genotyping systems typically assume that possible contributors to a mixture are unrelated. When biological relationships exist among potential contributors, genetic relatedness can mask the true number of alleles and their abundance, confounding attempts to identify the number of contributors and their relative DNA fractions [25].

Software-Specific Results: Different PG software can yield contradictory results when analyzing the same sample, as the systems are based on different models and assumptions [25]. Even reanalysis of the same sample with the same software may not reproduce identical likelihood ratio values, because MCMC sampling is stochastic [25].

Interpretation Guidelines

Understanding Uninformative Results: PG software will always report a result regardless of DNA sample quality, number of contributors, or the algorithm's ability to find likely contributor-genotype combinations [25]. Profiles with limited information will generally produce likelihood ratios close to 1 [25]. An LR near 1 means the evidence is about equally probable under both hypotheses, so the result is uninformative.
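One way to keep near-1 results from being over-read is to map each LR to a verbal category before reporting. The sketch below is illustrative only: the uninformative band of 0.5 to 2 and the strength cut-offs are assumptions chosen for the example, and a laboratory would set its own bands from its validation data and reporting policy.

```python
def describe_lr(lr, uninformative_band=(0.5, 2.0)):
    """Map a likelihood ratio to an illustrative verbal category."""
    if lr <= 0:
        raise ValueError("A likelihood ratio must be positive")
    low, high = uninformative_band
    if low <= lr <= high:
        return "uninformative"
    favoured = "Hp" if lr > 1 else "Hd"
    magnitude = lr if lr > 1 else 1 / lr  # treat support for Hd symmetrically
    if magnitude < 100:
        strength = "limited"
    elif magnitude < 10_000:
        strength = "moderate"
    elif magnitude < 1_000_000:
        strength = "strong"
    else:
        strength = "very strong"
    return f"{strength} support for {favoured}"


for value in (1.0, 35.0, 5e7, 1e-3):
    print(value, "->", describe_lr(value))
```

Treating LRs below 1 symmetrically makes explicit that a small LR is evidence for the alternative hypothesis, not merely a weak inclusion.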

Validation and Transparency: In the absence of testable "ground truth" for expected likelihood ratio outputs, forensic laboratories must demonstrate extensive validation of their methods, specific to the quality and complexity of the samples being analyzed [25]. Scrutiny of the software itself is also essential, as third-party audits have previously identified source-code issues with meaningful case impacts [25].

Probabilistic genotyping represents a fundamental advancement in forensic DNA analysis, providing a statistically robust framework for evaluating complex mixture evidence. When properly validated and implemented, these systems offer forensic analysts powerful tools for extracting meaningful information from challenging DNA mixtures, supported by quantitative measures of evidence strength through likelihood ratios.

The interpretation of forensic DNA mixtures, particularly those involving multiple contributors, low-template DNA, or degraded samples, presents significant challenges for traditional methods. These challenges include allele dropout, allele stacking due to shared alleles, and the differentiation of stutter artifacts from true alleles [7]. Probabilistic genotyping systems have emerged as a sophisticated solution, enabling the statistical evaluation of complex DNA evidence that was previously considered inconclusive. These systems employ computational methods to deconvolve mixtures and calculate a Likelihood Ratio (LR), which compares the probability of the observed evidence under the proposition that a specific individual contributed to the DNA sample with its probability under the proposition that they did not.

Among the most prominent probabilistic genotyping systems used in forensic practice are STRmix and TrueAllele. This article provides a detailed overview of STRmix, based on available data, and outlines the core principles expected to be present in systems like TrueAllele. The content is framed within the context of developing a Technology Readiness Level (TRL)-based protocol for forensic DNA mixture interpretation research, providing researchers and forensic professionals with a clear understanding of the operational methodologies, experimental protocols, and practical applications of these advanced systems.

Table 1: Key Challenges in Forensic DNA Mixture Interpretation Addressed by Probabilistic Genotyping

| Challenge | Impact on Interpretation | Probabilistic Genotyping Solution |
|---|---|---|
| Low Template/Degraded DNA | Leads to allele and locus dropout [7] | Models probability of dropout events using statistical methods |
| Multiple Contributors | Causes allele stacking, making deconvolution difficult [7] | Computes all possible genotype combinations to explain the profile |
| PCR Stutter Artifacts | Difficult to distinguish from true alleles [7] | Models stutter peak heights and behavior mathematically |
| Complex Mixtures | Traditional methods may yield inconclusive results | Uses sophisticated biological modelling to interpret a wide range of complex profiles [28] |
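To make "models probability of dropout events" concrete, the sketch below computes a single-locus, single-contributor LR under a simple semi-continuous model that uses allele presence only, not peak heights. It is a simplified illustration and not the model of any named software: the drop-out probability `d`, the drop-in probability `c`, the use of `d**2` for homozygote drop-out, and the allele frequencies are all assumptions.

```python
from itertools import combinations_with_replacement


def prob_evidence_given_genotype(observed, genotype, freqs, d, c):
    """P(observed allele set | genotype) with drop-out d and drop-in c."""
    observed = set(observed)
    prob = 1.0
    for allele in set(genotype):
        # d for one copy (heterozygote), d**2 for two copies (homozygote).
        p_drop = d ** genotype.count(allele)
        prob *= (1 - p_drop) if allele in observed else p_drop
    extras = observed - set(genotype)
    if extras:
        for allele in extras:  # each unexplained allele is treated as drop-in
            prob *= c * freqs[allele]
    else:
        prob *= 1 - c
    return prob


def single_locus_lr(observed, poi_genotype, freqs, d=0.2, c=0.05):
    """LR: evidence given the POI's genotype vs. a random unrelated person."""
    numerator = prob_evidence_given_genotype(observed, poi_genotype, freqs, d, c)
    denominator = 0.0
    for a, b in combinations_with_replacement(sorted(freqs), 2):
        genotype_prob = freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]
        denominator += genotype_prob * prob_evidence_given_genotype(
            observed, (a, b), freqs, d, c
        )
    return numerator / denominator


freqs = {12: 0.2, 13: 0.3, 14: 0.5}  # hypothetical frequencies summing to 1
partial = single_locus_lr({12}, (12, 13), freqs)    # allele 13 has dropped out
full = single_locus_lr({12, 13}, (12, 13), freqs)   # both alleles observed
print(round(partial, 2), round(full, 2))
```

A binary approach would treat the missing allele 13 as an exclusion or discard the locus, whereas this model still returns a modest LR above 1 for the partial profile and a larger one when both alleles are seen.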

The STRmix Probabilistic Genotyping System

Operating Principles and Mathematical Foundation

STRmix employs a sophisticated computational framework that integrates biological modeling with established statistical methods to interpret complex DNA profiles. The core of its methodology involves comparing the evidentiary DNA profile against millions of possible genotype combinations to determine which ones best explain the observed data [28].

The software utilizes a Markov Chain Monte Carlo (MCMC) engine to model various electrophoretic phenomena, including allelic and stutter peak heights, as well as drop-in and drop-out behavior [28]. This approach allows STRmix to rapidly analyze DNA mixture evidence that was previously beyond the reach of traditional forensic methods. The system builds millions of conceptual DNA profiles and grades them against the evidential sample, identifying the combinations that provide the best explanation for the observed profile [28]. This process generates Likelihood Ratios (LRs) that facilitate subsequent comparisons to reference profiles.
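The idea of grading candidate genotype combinations against the evidential profile can be illustrated with a toy example at one locus. The sketch below enumerates every two-person genotype pair exhaustively, scores each against observed peak heights, and normalizes the scores into weights. It is not STRmix's algorithm: it replaces MCMC with brute-force enumeration, fixes the mixture proportion `w`, ignores genotype priors, stutter, and drop-out, and uses an assumed Gaussian peak-height model.

```python
import math
from itertools import combinations_with_replacement, product


def expected_heights(pair, alleles, w, total):
    """Expected peak height per allele for an ordered pair of genotypes."""
    expected = dict.fromkeys(alleles, 0.0)
    for genotype, share in zip(pair, (w, 1 - w)):
        for allele in genotype:
            expected[allele] += share * total / 2  # each allele copy gets half
    return expected


def genotype_weights(observed, w=0.75, total=2000.0, sigma=100.0):
    """Normalized weights for every ordered two-person genotype pair."""
    alleles = sorted(observed)
    genotypes = list(combinations_with_replacement(alleles, 2))
    scores = {}
    for pair in product(genotypes, repeat=2):
        expected = expected_heights(pair, alleles, w, total)
        squared_error = sum((observed[a] - expected[a]) ** 2 for a in alleles)
        scores[pair] = -squared_error / (2 * sigma ** 2)
    top = max(scores.values())
    unnormalized = {pair: math.exp(s - top) for pair, s in scores.items()}
    norm = sum(unnormalized.values())
    return {pair: value / norm for pair, value in unnormalized.items()}


observed = {10: 1480.0, 11: 260.0, 12: 240.0}  # hypothetical peak heights (RFU)
weights = genotype_weights(observed)
best = max(weights, key=weights.get)
print(best, round(weights[best], 3))
```

Here the pair with a homozygous 10,10 major and an 11,12 minor receives almost all of the weight. A real system explores this space by sampling because exhaustive enumeration becomes infeasible across many loci and contributors.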

STRmix Workflow and Architecture

The analytical process within STRmix follows a logical progression from raw data input to the generation of interpretable results. The workflow can be conceptualized as a series of interconnected modules that transform electropherogram data into statistically robust likelihood ratios.

STRmix Adoption and Casework Applications

STRmix has seen substantial adoption within the global forensic community. According to recent survey data, the software has been used in at least 220,000 cases worldwide since its introduction in 2012, with evidence derived from STRmix presented in more than 80 successful admissibility hearings across multiple jurisdictions [29]. This record of use indicates broad acceptance of the system in legal proceedings.

The technology is currently deployed in 59 organizations across the United States, including federal agencies such as the FBI and the Bureau of Alcohol, Tobacco, Firearms, and Explosives (ATF), as well as numerous state, local, and private forensic laboratories [29]. An additional 60 organizations are in various stages of installation, validation, and training, indicating continued growth in adoption [29]. STRmix has proven particularly valuable in solving violent crimes, sexual assaults, and cold cases where evidence was previously dismissed as inconclusive [29].

Table 2: STRmix Quantitative Deployment and Casework Statistics

| Metric | Value | Context |
|---|---|---|
| Global Case Usage | 220,000+ cases | Since its introduction in 2012 [29] |
| U.S. Organizations | 59 laboratories | Includes FBI, ATF, state, local, and private labs [29] |
| Successful Admissibility Hearings | 80+ hearings | Double the number reported the previous year [29] |
| Additional Pending Implementations | 60+ organizations | In various stages of installation, validation, and training [29] |

Experimental Protocols for STRmix Implementation

Protocol 1: STRmix Analysis of Complex DNA Mixtures

Purpose: To provide a standardized methodology for analyzing complex forensic DNA mixtures using the STRmix probabilistic genotyping software.

Materials and Equipment:

- STRmix software (v2.8 or later)

- FaSTR DNA software for initial profile analysis

- DBLR for database searches (optional)

- Validated genetic analyzer and STR multiplex kits

- Reference DNA profiles from persons of interest

Procedure:

Sample Preparation and Electrophoresis: Extract DNA from forensic samples following standard protocols. Amplify using appropriate STR multiplex kits and analyze using capillary electrophoresis to generate raw electropherogram data.

Data Pre-processing: Input raw data into FaSTR DNA software for initial analysis. This software rapidly analyzes DNA profiles, assigns a number of contributors (NoC) estimate, and performs peak classification, optionally using artificial neural networks for this process [29].

Profile Assessment in STRmix:

Statistical Analysis:

- STRmix builds millions of conceptual DNA profiles and grades them against the evidential sample [28].

- The software identifies genotype combinations that best explain the observed profile.

- For casework involving known contributors, apply the "top-down" approach available in STRmix v2.8, which allows users to set the number of major contributors of interest and obtain LRs specifically for those contributors [29].

Results Interpretation:

- Review the calculated Likelihood Ratios provided by STRmix.

- Generate a formal report suitable for courtroom presentation, ensuring results are explained clearly and comprehensibly [28].

Validation and Quality Assurance: STRmix has been extensively validated and is in use for casework interpretation at multiple international laboratories, having first been implemented in 2012 [28]. The software has achieved Certificate of Networthiness (CoN) status on the United States Army Network [28].

Protocol 2: Comparative Analysis Using CPI vs. Probabilistic Genotyping

Purpose: To compare traditional Combined Probability of Inclusion/Exclusion (CPI/CPE) methods with probabilistic genotyping approaches for DNA mixture interpretation.
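For reference, the CPI side of this comparison is simple to compute: at each locus, sum the population frequencies of all alleles observed in the mixture and square the sum, then multiply across loci; CPE is one minus CPI. The sketch below assumes independent loci and no drop-out, and the allele frequencies are made up for illustration.

```python
def combined_probability_of_inclusion(loci):
    """CPI: product over loci of (sum of observed allele frequencies) squared.

    loci is a list with one list of allele frequencies per locus.
    """
    cpi = 1.0
    for freqs in loci:
        cpi *= sum(freqs) ** 2
    return cpi


# Hypothetical frequencies of the alleles observed at three loci.
loci = [[0.1, 0.2, 0.15], [0.05, 0.3], [0.25, 0.1, 0.2, 0.05]]
cpi = combined_probability_of_inclusion(loci)
cpe = 1 - cpi
print(f"CPI = {cpi:.6f}, CPE = {cpe:.6f}")
```

Because CPI uses only which alleles are present, it ignores peak heights and any reference genotype, which is one reason it tends to use less of the available information than a probabilistic genotyping LR.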