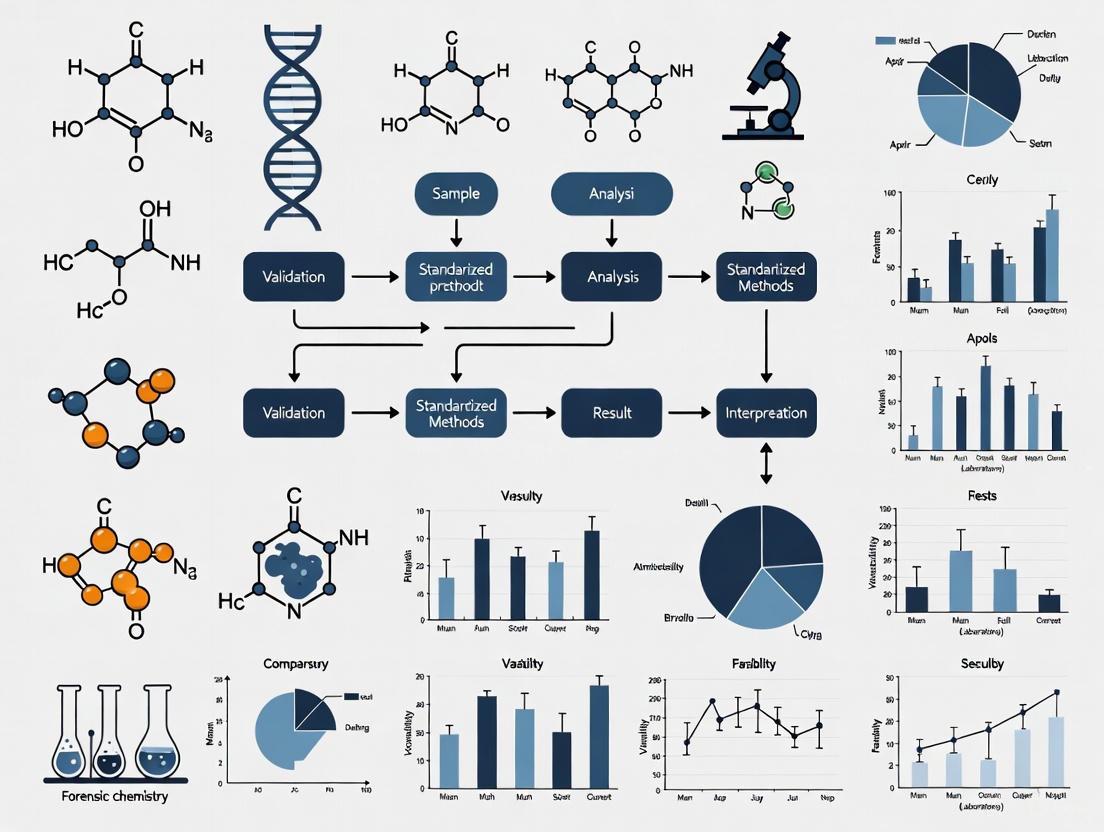

Advancing Forensic Chemistry: A Strategic Roadmap for Inter-Laboratory Validation and Standardized Methods

Abstract

This article provides a comprehensive overview of the critical role inter-laboratory validation and standardized methods play in strengthening the foundations of forensic chemistry. Aimed at researchers, scientists, and drug development professionals, it explores the current landscape of forensic standards, details the development and implementation of robust methodological protocols, addresses common challenges and optimization strategies, and establishes frameworks for rigorous validation and comparative analysis. Synthesizing insights from recent reports, standards updates, and peer-reviewed research, the content is designed to guide the adoption of practices that enhance the validity, reliability, and consistency of forensic analyses for both research and casework.

The Landscape of Standardization in Forensic Chemistry: Building a Foundation for Reliability

In forensic science, the reliability of evidence presented in court hinges on the scientific validity and reproducibility of the analytical methods used. A significant gap exists between the development of novel analytical techniques in research and their routine application in casework. This gap is bridged through inter-laboratory validation and the establishment of standardized methods, which are critical for demonstrating that a technique produces reliable, consistent, and reproducible results across different laboratories and practitioners. The legal system imposes rigorous standards for the admissibility of expert testimony, including the Daubert Standard and Federal Rule of Evidence 702, under which courts consider whether a method has been tested, has been peer-reviewed, has a known error rate, and is generally accepted in the scientific community [1]. This review identifies key challenges in the field through the lens of inter-laboratory studies, providing a comparative analysis of method performance and outlining the essential pathway from research innovation to forensically validated practice.

Critical Gaps and Comparative Analysis of Forensic Methods

Despite advancements, several forensic chemistry subfields face challenges related to standardization, objectivity, and the need for robust quantitative metrics. The table below summarizes the current state and identified gaps for several key evidence types based on recent inter-laboratory studies.

Table 1: Critical Gaps in Forensic Evidence Analysis Identified Through Inter-Laboratory Studies

| Evidence Type | Analytical Method(s) | Key Challenge/Gap | Inter-Laboratory Study Findings | Quantitative Performance Data |
|---|---|---|---|---|
| Duct Tape Physical Fits [2] | Physical comparison of edge patterns (Edge Similarity Score - ESS) | Lack of standardized protocols for examination and interpretation. | High accuracy (>95% for high-confidence fits) but greater variance in mid-range similarity scores [2]. | Overall accuracy of 95.5% (Study 2); False positive rate of 0.7% [2]. |
| Drug Analysis & Novel Psychoactive Substances (NPS) [3] | DART-MS, GC-MS, HPLC | Rapidly evolving drug market and need for efficient, confident identification [3]. | Need for standardized databases, algorithms, and methods for instrument calibration [3]. | NIST program focuses on developing standard methods and data-sharing tools [3]. |
| Glass Fragments [4] | Particle-Induced X-ray Emission (PIXE), µ-XRF, LA-ICP-MS | Insufficient discriminative power of classical methods (e.g., refractive index) [4]. | Machine learning models on combined lab data achieved equal or better accuracy than lab-specific models [4]. | Unified classification model accuracy >80% for car manufacturer identification [4]. |
| Shooting Distance Estimation [5] | Color tests (MGT, SRT), SEM-EDS, FTIR-ATR | Subjectivity and lack of sensitivity in traditional color tests [5]. | Integrated instrumental methods (SEM-EDS, FTIR) enhanced sensitivity and objectivity vs. color tests alone [5]. | RSD for SRT increased to 28.6% at 100 cm; strong negative correlation (PCC = -0.72) between distance and residue [5]. |
| Comprehensive GC×GC [1] | GC×GC-MS (Various applications) | Transition from research to court-admissible method requires extensive validation [1]. | Most applications (e.g., fire debris, drugs) are at low Technology Readiness Levels (TRLs) for routine forensic use [1]. | Requires defined error rates and intra-/inter-laboratory validation to meet legal standards [1]. |
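Table 1 reports a relative standard deviation (RSD) and a Pearson correlation coefficient (PCC) for the shooting distance study. The sketch below shows how such statistics are typically computed. The function names and all numeric values are hypothetical illustrations, not data from [5].

```python
import math
import statistics


def relative_standard_deviation(values):
    """Return the RSD in percent: 100 * sample standard deviation / mean."""
    mean = statistics.mean(values)
    return 100.0 * statistics.stdev(values) / mean


def pearson_correlation(x, y):
    """Return the Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)


# Hypothetical example values, invented purely for illustration.
distances_cm = [10, 25, 50, 75, 100]
residue_signal = [98.0, 80.0, 55.0, 40.0, 21.0]
replicates_at_100_cm = [18.0, 25.0, 21.0, 15.0, 26.0]

print(f"RSD at 100 cm: {relative_standard_deviation(replicates_at_100_cm):.1f}%")
print(f"PCC distance vs. residue: {pearson_correlation(distances_cm, residue_signal):.2f}")
```

A rising RSD at longer distances signals poorer repeatability, while a PCC near -1 indicates that the measured residue falls steadily as distance increases.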

Experimental Protocols: A Case Study in Standardization

The evolution of a method for duct tape physical fit analysis exemplifies the rigorous process required to develop a standardized protocol. The following workflow and detailed methodology from recent inter-laboratory studies highlight the steps taken to ensure reliability and objectivity.

Detailed Experimental Methodology for Duct Tape Physical Fits

The inter-laboratory study for duct tape physical fits provides a model for rigorous method validation [2].

- Sample Preparation: Test samples originated from a medium-quality grade duct tape (Duck Brand Electrician's Grade Gray Duct Tape). A large population study of over 3,000 duct tape samples informed the selection of samples representing a range of edge patterns from hand-torn separations [2].

- Experimental Design: Selected tape comparison pairs were divided into seven groups, each containing three similar pairs. These were assembled into three distribution kits. Each kit contained seven pairs: three with high-confidence fits (F+ with Edge Similarity Score (ESS) of 86-99%), one with moderate similarity (F with ESS of 50-85%), two with low similarity (F- with ESS of 20-49%), and one known non-fit (NF). This design tested participants' ability to distinguish subtle differences [2].

- Analysis Protocol: Participants followed a systematic method for examining, documenting, and interpreting duct tape physical fits. The core of the method involved calculating an Edge Similarity Score (ESS), a quantitative metric estimating the percentage of corresponding scrim fiber ("scrim bins") along the fracture edge between two tape pieces. This objective score was supplemented with qualitative descriptors and a final conclusion (Fit, No Fit, or Inconclusive) [2].

- Data Consolidation and Analysis: The coordinating body collected all participant data and compared the reported ESS scores against pre-established consensus values. Statistical analysis measured overall accuracy, false positive/negative rates, and inter-participant agreement. A key finding was that most participant scores fell within a 95% confidence interval of the mean consensus values, demonstrating the method's robustness [2].
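The scoring and consolidation steps above can be sketched in a few lines. This is a minimal illustration, not the study's actual software: the function names, the rounding of ESS to whole percent, the "unclassified" label, and the treatment of inconclusive results (counted as neither correct nor an error) are all assumptions. Only the ESS ranges for F+, F, and F- come from the study design described in [2].

```python
def edge_similarity_score(bin_matches):
    """Return the ESS: percentage of scrim bins judged to correspond.

    bin_matches is a list of booleans, one per scrim bin along the edge.
    """
    if not bin_matches:
        raise ValueError("at least one scrim bin is required")
    return 100.0 * sum(bin_matches) / len(bin_matches)


def similarity_category(ess):
    """Map an ESS to the kit categories (ranges from the study design)."""
    score = round(ess)  # assumption: scores are compared as whole percents
    if 86 <= score <= 99:
        return "F+"
    if 50 <= score <= 85:
        return "F"
    if 20 <= score <= 49:
        return "F-"
    return "unclassified"  # no ESS range is stated for other values


def summarize(results):
    """Summarize (ground_truth_is_fit, reported_conclusion) pairs.

    reported_conclusion is "Fit", "No Fit", or "Inconclusive".
    """
    true_fits = [reported for truth, reported in results if truth]
    true_nonfits = [reported for truth, reported in results if not truth]
    if not true_fits or not true_nonfits:
        raise ValueError("need at least one true fit and one true non-fit")
    correct = true_fits.count("Fit") + true_nonfits.count("No Fit")
    return {
        "accuracy": correct / len(results),
        "false_positive_rate": true_nonfits.count("Fit") / len(true_nonfits),
        "false_negative_rate": true_fits.count("No Fit") / len(true_fits),
    }


# Hypothetical participant data for illustration only.
ess = edge_similarity_score([True] * 9 + [False])
print(ess, similarity_category(ess))
print(summarize([(True, "Fit"), (True, "Inconclusive"), (False, "No Fit")]))
```

How inconclusive results enter the accuracy denominator materially changes the reported rates, so any real analysis should state that choice explicitly.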

The Scientist's Toolkit: Essential Research Reagent Solutions

Forensic chemistry relies on a suite of analytical instruments and reagents, each chosen based on the evidence type and required information. The following table details key tools and their functions in modern forensic analysis.

Table 2: Essential Research Reagent Solutions and Instrumentation in Forensic Chemistry

| Tool/Reagent | Primary Function in Forensic Analysis | Common Applications |
|---|---|---|
| Gas Chromatography-Mass Spectrometry (GC-MS) [1] [6] | Separates complex mixtures (GC) and identifies individual components by mass (MS). Considered the "gold standard" for many analyses. | Drug identification, toxicology, fire debris analysis (ignitable liquids), explosive residues [1] [6]. |
| Fourier Transform Infrared Spectroscopy (FTIR) [6] [5] | A nondestructive technique that identifies organic and inorganic materials by measuring their absorption of infrared light. | Polymer identification (e.g., tapes, paints), drug screening, detection of organic gunshot residues [6] [5]. |
| Scanning Electron Microscopy with Energy Dispersive X-ray Spectroscopy (SEM-EDS) [5] | Provides high-resolution imaging and simultaneous elemental composition of a sample. | Inorganic gunshot residue (GSR) analysis, identification of trace metals, examination of material morphology [5]. |
| Particle-Induced X-ray Emission (PIXE) [4] | A non-destructive nuclear analytical technique for determining the elemental composition of a material. | Analysis of glass fragments, paint layers, and other trace evidence for major, minor, and trace elements [4]. |
| Direct Analysis in Real Time Mass Spectrometry (DART-MS) [3] | An ambient ionization technique that allows for rapid, high-throughput analysis of samples with minimal preparation. | Rapid screening of drugs of abuse, novel psychoactive substances (NPS), and other chemical evidence [3]. |
| Modified Griess Test (MGT) & Sodium Rhodizonate Test (SRT) [5] | Color tests used as chemical reagents to detect specific compounds. MGT detects nitrites, SRT detects lead. | Presumptive tests for gunshot residue patterns on fabrics to estimate shooting distance [5]. |

The path to overcoming the grand challenges in forensic evidence analysis is unequivocally paved with robust, multi-laboratory validation. As demonstrated by the case studies in duct tape, glass, and shooting distance estimation, the consistent application of standardized methods, objective quantitative metrics, and inter-laboratory collaboration is paramount. These practices directly address the core requirements of the legal system for reliable, reproducible, and error-rated scientific evidence. Future research must prioritize large-scale validation studies, the development of standardized data-sharing platforms, and the integration of objective statistical models to quantify the weight of evidence. By closing these critical gaps, the forensic science community can fortify the foundation of expert testimony, thereby enhancing the administration of justice.

In forensic chemistry, the legal system requires the use of scientifically valid and reliable methods to ensure that evidence presented in courts is consistent, accurate, and trustworthy [7] [8]. The 2009 National Academies report, "Strengthening Forensic Science in the United States: A Path Forward," highlighted significant challenges within the forensic science community, noting that it often lacked the culture of rigorous validation, bias avoidance, and connection to peer-reviewed research that characterizes other scientific fields [8]. This identified need for greater scientific rigor has driven the development of centralized standards.

The Organization of Scientific Area Committees for Forensic Science (OSAC), administered by the National Institute of Standards and Technology (NIST), was created to address the critical lack of discipline-specific, technically sound standards [9]. OSAC strengthens the nation's use of forensic science by facilitating the development and promoting the use of high-quality standards that define minimum requirements, best practices, and standard protocols to help ensure that forensic results are reliable and reproducible [9]. The OSAC Registry, a key output of this effort, serves as a repository of approved standards that have undergone a rigorous technical and quality review process, encouraging the forensic science community to adopt them to advance the practice of forensic science [10].

The OSAC Registry: Structure and Core Components

Architecture of the OSAC Registry

The OSAC Registry is a dynamic repository containing two distinct types of standards, both of which have passed a stringent review process that encourages feedback from practitioners, research scientists, statisticians, legal experts, and the public [10].

- SDO-Published Standards: These are standards that have completed the consensus process of an external Standards Developing Organization (SDO), such as ASTM International or the Academy Standards Board (ASB), and have subsequently been approved by OSAC for placement on the Registry [10]. Placement on the Registry requires a consensus (evidenced by a two-thirds vote or more) of both the relevant OSAC subcommittee and the Forensic Science Standards Board [10].

- OSAC Proposed Standards: These are draft standards that have been developed by OSAC and handed off to an SDO for the external development and publication process [10]. They remain on the Registry as proposed standards to help fill the standards gap while the SDO completes its own consensus process. OSAC encourages laboratories to implement these proposed standards even before the SDO publishes a final version [10].

The Registry covers a wide array of forensic disciplines, including specific subcommittees for Human Forensic Biology, Seized Drugs, Trace Materials, and Ignitable Liquids, Explosives & Gunshot Residue [10] [3]. As of the latest data, the OSAC Registry hosts 245 standards in total, comprising 162 SDO-published standards and 83 OSAC Proposed Standards [10].

The Standards Development Workflow

The process for creating and vetting a standard that is ultimately posted to the OSAC Registry is a transparent, consensus-based endeavor that involves multiple stages of development and review. The following diagram illustrates the key stages a standard passes through before being added to the OSAC Registry.

Comparative Analysis: OSAC Registry vs. Traditional Validation Approaches

The implementation of standards from the OSAC Registry facilitates a more collaborative and efficient model for method validation compared to traditional, insular approaches. The following table summarizes the key differences between these two paradigms, drawing on a collaborative model proposed in forensic literature.

| Feature | Traditional Isolated Validation | OSAC Registry & Collaborative Validation |
|---|---|---|
| Core Philosophy | Individual laboratories tailor validations to their specific needs, often modifying parameters. | Adopt and verify standardized methods from the Registry; promotes uniformity [7]. |
| Resource Expenditure | High redundancy; each lab spends significant time and resources on similar tasks [7]. | Significant savings by sharing expertise and data; reduces activation energy for smaller labs [7]. |
| Methodological Consistency | Results in many similar techniques with minor differences across labs, hindering data comparison [7]. | Enables direct cross-comparison of data between labs using the same standardized methods [7]. |
| Scientific Rigor | Varies by laboratory; no universal benchmark for performance. | Incorporates technically sound, consensus-based standards from inception, raising all labs to a high level [7]. |
| Implementation Speed | Slow; each lab must navigate method development and validation independently. | Accelerated; labs can move directly to verification if they adopt a published, validated method [7]. |

Quantitative Impact of Collaborative Validation

A proposed collaborative validation model demonstrates the profound efficiency gains achievable when Forensic Science Service Providers (FSSPs) adopt published standards and validation data. The table below quantifies the benefits, using a business case analysis from a published model.

Table: Quantitative Business Case for Collaborative Validation Model [7]

| Metric | Traditional Independent Validation | Collaborative Validation (Using Published Data) |
|---|---|---|
| Primary Activity | Method development and full validation | Method verification |
| Reported Cost Savings | Baseline | Significant savings in salary, sample, and opportunity costs |
| Data Comparability | No benchmark for cross-lab comparison | Provides an inter-laboratory study, building a shared knowledge base [7]. |
| Path to Publication | Rarely pursued for individual validations | Encouraged; original validations are published for community use [7]. |

Experimental Protocols for Implementing OSAC Standards

Protocol for Verifying an OSAC-Registered Standard Method

This protocol is designed for a laboratory (the "verifying lab") that wishes to implement a method previously validated by another FSSP (the "originating lab") and published in a peer-reviewed journal, in alignment with OSAC's principles [7].

Method Selection and Documentation Review:

- Identify the specific OSAC-registered standard and the corresponding peer-reviewed publication detailing the original validation [7].

- Obtain the exact written method, including all instrumentation, software, reagents, and procedural parameters from the originating lab.

Acquisition and Calibration:

- Procure the specified instrumentation, reagents, and quality control materials as defined in the method.

- Perform installation, operational, and performance qualification (IQ/OQ/PQ) of equipment as required. Calibrate instruments according to the manufacturer's and the method's specifications.

Verification Testing and Competency Assessment:

- Analyze a representative set of samples that mimic evidence, including positive controls, negative controls, and authentic or mock case samples.

- Lab personnel must successfully complete training and demonstrate competency by generating data that meets the performance characteristics (e.g., precision, accuracy, sensitivity) established in the original published validation.

Data Analysis and Report Generation:

- Compare the verification data against the benchmarks set by the originating lab. The results should demonstrate that the verifying lab can successfully operate the method and obtain comparable results.

- Compile a verification report that documents the process, presents all data, and includes a statement of successful verification, authorizing the method for use in casework.
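The comparison step in this protocol amounts to checking the verifying lab's replicate data against acceptance limits taken from the original validation. The sketch below assumes two such limits, a maximum relative bias and a maximum RSD. The function name, the choice of metrics, and every numeric value are hypothetical, since real acceptance criteria come from the published method.

```python
import statistics


def verify_against_benchmark(measurements, reference_value,
                             max_bias_pct, max_rsd_pct):
    """Compare replicate control results with benchmark limits.

    measurements: replicate results for a control of known value.
    reference_value: the accepted value of that control.
    max_bias_pct, max_rsd_pct: acceptance limits (assumed to be taken
    from the originating lab's published validation).
    """
    mean = statistics.mean(measurements)
    bias_pct = 100.0 * (mean - reference_value) / reference_value
    rsd_pct = 100.0 * statistics.stdev(measurements) / mean
    return {
        "bias_pct": bias_pct,
        "rsd_pct": rsd_pct,
        "passed": abs(bias_pct) <= max_bias_pct and rsd_pct <= max_rsd_pct,
    }


# Hypothetical control data and limits, for illustration only.
report = verify_against_benchmark(
    measurements=[9.8, 10.0, 10.2],
    reference_value=10.0,
    max_bias_pct=5.0,
    max_rsd_pct=5.0,
)
print(report)
```

Recording the computed bias and RSD alongside the pass/fail flag gives the verification report the underlying numbers as well as the conclusion.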

Workflow for Standard Implementation and Verification

The following diagram outlines the end-to-end process for a laboratory to adopt and verify a method based on an OSAC-registered standard and published validation data.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents, materials, and instruments commonly referenced in OSAC standards and foundational to modern forensic chemistry research and analysis [11] [3].

Table: Key Research Reagent Solutions in Forensic Chemistry

| Item | Function / Application in Forensic Chemistry |
|---|---|
| Gas Chromatography (GC) Systems | Separates volatile components in complex mixtures, such as drugs, ignitable liquids, and explosives residues. Often coupled with mass spectrometry (MS) for detection [11]. |
| Mass Spectrometry (MS) | Provides definitive identification of compounds based on their mass-to-charge ratio. Central to methods like DART-MS for drug analysis and LA-ICP-MS for glass evidence [3]. |
| Inductively Coupled Plasma Mass Spectrometry (ICP-MS) | An ultra-sensitive technique for elemental analysis, used in the characterization of materials like glass and gunshot residue [11]. |
| Infrared Spectroscopy | Used for the identification of organic compounds, including seized drugs and trace materials, by analyzing the absorption of infrared light by molecular bonds [11]. |
| Polarized Light Microscopy | A fundamental tool for the forensic examination and comparison of trace evidence such as soils, fibers, and explosives [10]. |
| Certified Reference Materials | High-purity standards with certified chemical composition or properties, essential for instrument calibration, method validation, and ensuring quantitative accuracy [3]. |

The OSAC Registry represents a transformative shift in forensic science, moving from a fragmented landscape of individual laboratory practices to a unified community built on shared, scientifically robust standards. For researchers and professionals in forensic chemistry and drug development, the Registry provides a critical foundation for ensuring that analytical methods are reliable, reproducible, and legally defensible. The collaborative validation approach it enables—where one lab's rigorous validation paves the way for others' efficient verification—dramatically increases efficiency, reduces costs, and enhances the overall scientific rigor of the discipline [7]. By providing a central hub for high-quality, technically sound standards, the OSAC Registry is not just a repository of documents but a proactive engine for continuous improvement and trust in forensic science.

For researchers, scientists, and drug development professionals, the presentation of scientific evidence in a legal context is a critical juncture where rigorous laboratory work meets the procedural rules of the courtroom. The admissibility of forensic chemical data and expert opinions hinges on specific legal standards designed to ensure the reliability and relevance of scientific testimony. The Daubert Standard and Federal Rule of Evidence 702 collectively form the primary framework governing this process in federal courts and many state jurisdictions [12] [13]. These standards establish trial judges as "gatekeepers" responsible for screening expert testimony to prevent "junk science" from influencing legal proceedings [12] [14]. For forensic chemistry research involving inter-laboratory validation of standardized methods, understanding these legal benchmarks is not merely academic—it is essential for ensuring that scientific findings will be deemed admissible and persuasive in litigation.

The evolution of these standards reflects an ongoing effort to balance scientific innovation with legal reliability. The older Frye Standard, stemming from the 1923 case Frye v. United States, admitted scientific evidence only if it was "generally accepted" by the relevant scientific community [15] [13]. This was largely superseded in federal courts by the more flexible Daubert Standard, established in the 1993 Supreme Court case Daubert v. Merrell Dow Pharmaceuticals, Inc. [12] [16]. Subsequent cases including General Electric Co. v. Joiner and Kumho Tire Co. v. Carmichael (collectively known as the "Daubert Trilogy") reinforced and expanded this gatekeeping role, culminating in the codification of these principles in Federal Rule of Evidence 702 [12] [17] [16]. A significant clarification to Rule 702 took effect in December 2023, emphasizing that the proponent of expert testimony must demonstrate to the court that "it is more likely than not" that the testimony meets the rule's admissibility requirements [14] [18] [19].

Comparative Analysis of Evidentiary Standards

The landscape of expert evidence admissibility is not monolithic across the United States. While federal courts uniformly apply the Daubert framework, state courts exhibit a diverse patchwork of standards, with some retaining the older Frye test, others adopting Daubert, and many implementing hybrid approaches [15]. This variation necessitates that forensic chemists and researchers understand the specific jurisdictional requirements where their evidence might be presented.

Table 1: Comparison of Frye and Daubert Evidentiary Standards

| Feature | Frye Standard | Daubert Standard |
|---|---|---|
| Originating Case | Frye v. United States (1923) [13] | Daubert v. Merrell Dow Pharmaceuticals, Inc. (1993) [12] |
| Core Question | Is the method "generally accepted" in the relevant scientific community? [15] [13] | Is the testimony based on reliable methodology and principles, and are they reliably applied to the facts? [12] [17] |
| Gatekeeper | Scientific community [15] | Trial judge [12] |
| Primary Focus | Consensus within the scientific field [13] | Methodological reliability and relevance [12] [13] |
| Flexibility | Rigid; excludes novel science [13] [18] | Flexible; allows for case-by-case evaluation [15] [13] |
| Impact on New Methods | Excludes "good science" that is not yet widely accepted [15] [18] | Potentially admits reliable but novel science [15] [13] |
| Sample Jurisdictions | California, Illinois, Pennsylvania, Washington [15] [16] | All federal courts and the majority of states (e.g., Arizona, Georgia, Texas) [15] |

The fundamental distinction lies in the locus of authority. Under Frye, the scientific community serves as the gatekeeper through its established consensus, whereas under Daubert, the judge actively assumes this role [15] [12]. This has practical implications: Frye offers a "bright-line rule" that is simple to apply but can exclude reliable emerging science, while Daubert provides a more nuanced, multi-factor test that requires judges to engage more deeply with the scientific methodology [15] [13] [18]. As one court noted, Daubert may admit reliable but not yet generally accepted methodologies, while excluding "bad science" derived from an otherwise accepted method [18].

Table 2: State Jurisdictions and Their Primary Evidentiary Standards

| Daubert Standard | Frye Standard | Hybrid or Modified Standards |
|---|---|---|
| Alabama [15] | California [15] [16] | Colorado (Shreck/Daubert) [15] |
| Arizona [15] | Illinois [15] [16] | Connecticut (Porter/Daubert) [15] |
| Florida [15] | Maryland [15] | Indiana (Modified Daubert) [15] |
| Georgia [15] | Pennsylvania [18] [16] | New Jersey (varies by case type) [15] |
| Texas (Modified Daubert) [15] | Washington [15] [16] | New Mexico (Daubert/Alberico) [15] |

Federal Rule of Evidence 702: A Detailed Framework

Federal Rule of Evidence 702 codifies the Daubert standard and provides the specific criteria that expert testimony must meet to be admissible in federal court. The rule was amended in 2023 to clarify and emphasize the judge's gatekeeping role and the proponent's burden of proof [14] [18] [19].

The current rule states: A witness who is qualified as an expert by knowledge, skill, experience, training, or education may testify in the form of an opinion or otherwise if the proponent demonstrates to the court that it is more likely than not that: (a) the expert’s scientific, technical, or other specialized knowledge will help the trier of fact to understand the evidence or to determine a fact in issue; (b) the testimony is based on sufficient facts or data; (c) the testimony is the product of reliable principles and methods; and (d) the expert’s opinion reflects a reliable application of the principles and methods to the facts of the case. [17] [14] [18]

The 2023 amendments introduced two critical clarifications. First, they explicitly state that the proponent must demonstrate admissibility by a preponderance of the evidence ("more likely than not") [14] [19]. Second, they change the language from "the expert has reliably applied" to "the expert’s opinion reflects a reliable application," making clear that the court must assess whether the expert's conclusions are within the bounds of what the methodology can reliably support [18] [20]. This reinforces that questions about the sufficiency of an expert's basis and the application of their methodology are threshold questions of admissibility for the judge, not merely questions of weight for the jury [19] [20].

The Daubert Factors and Inter-Laboratory Validation

The Daubert decision provided a non-exclusive checklist of factors for judges to consider in evaluating expert testimony [12] [17]. For forensic chemists, these factors align closely with the principles of robust scientific practice and validation.

Figure 1: Mapping Daubert Legal Factors to Forensic Chemistry Research Practices

Experimental Protocols for Legal Readiness

To satisfy Daubert's factors and the requirements of Rule 702, forensic chemistry research protocols must be designed with legal admissibility as a key objective. The following methodological framework is essential for establishing reliability.

Inter-Laboratory Validation Studies: A core methodology for addressing the testing and standards factors of Daubert involves designing and executing inter-laboratory validation studies [21]. These studies should engage multiple independent laboratories to perform the same analytical method on homogeneous, representative reference materials. The experimental workflow must be thoroughly documented in a detailed study protocol that specifies the standardized method, equipment specifications, reagent qualifications, data acceptance criteria, and statistical analysis plan. The resulting data is analyzed to determine key validation metrics, including inter-laboratory precision (e.g., reproducibility standard deviation), bias estimates, and the method's robustness to variations in operational and environmental conditions.
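As an illustration of the precision metrics named above, the sketch below estimates repeatability and reproducibility standard deviations from a balanced study using the usual one-way variance decomposition (within-laboratory plus between-laboratory variance). The function name and the example data are hypothetical, and outlier screening, which a full study protocol would normally specify, is omitted.

```python
import math
import statistics


def precision_estimates(lab_results):
    """Estimate repeatability and reproducibility standard deviations.

    lab_results maps a laboratory label to its replicate results for one
    material. A balanced design (same replicate count per lab) is assumed.
    """
    replicate_counts = {len(values) for values in lab_results.values()}
    if len(lab_results) < 2 or len(replicate_counts) != 1:
        raise ValueError("need at least two labs with equal replicate counts")
    n = replicate_counts.pop()
    if n < 2:
        raise ValueError("need at least two replicates per lab")

    # Repeatability variance: average of the within-laboratory variances.
    within_var = statistics.mean(
        [statistics.variance(values) for values in lab_results.values()]
    )
    # Between-laboratory variance: variance of the lab means minus the part
    # explained by within-lab scatter, floored at zero.
    lab_means = [statistics.mean(values) for values in lab_results.values()]
    between_var = max(statistics.variance(lab_means) - within_var / n, 0.0)

    return {
        "repeatability_sd": math.sqrt(within_var),
        "reproducibility_sd": math.sqrt(within_var + between_var),
    }


# Hypothetical results (arbitrary units) from three labs, two replicates each.
study = {"Lab A": [10.0, 10.2], "Lab B": [10.4, 10.6], "Lab C": [9.8, 10.0]}
print(precision_estimates(study))
```

A reproducibility standard deviation much larger than the repeatability standard deviation points to laboratory-to-laboratory effects, such as calibration or operator differences, as the dominant source of variation.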

Error Rate and Uncertainty Quantification: To address the error rate factor, researchers must employ rigorous statistical analysis to establish the reliability of their methods [12] [21]. This involves calculating the method's total uncertainty budget by quantifying all significant sources of uncertainty, including those from calibration, instrumentation, and sample preparation. Proficiency testing using blinded samples should be conducted regularly to monitor ongoing performance and estimate potential false-positive and false-negative rates. Statistical confidence intervals (e.g., at 95% or 99% confidence) must be reported for all quantitative results to communicate the inherent limitations and reliability of the data to the court.
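Two of the calculations described above can be sketched briefly: combining independent uncertainty components by root-sum-of-squares into a combined and expanded uncertainty, and placing a confidence interval on an observed error rate from proficiency testing. The Wilson score interval is used here as one common choice and is not prescribed by the source. Component names and all numbers are hypothetical, and the components are assumed to be uncorrelated standard uncertainties in the same units.

```python
import math


def combined_standard_uncertainty(components):
    """Root-sum-of-squares of independent standard uncertainty components."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


def expanded_uncertainty(components, coverage_factor=2.0):
    """Expanded uncertainty; k = 2 gives roughly 95% coverage if normal."""
    return coverage_factor * combined_standard_uncertainty(components)


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for an error proportion (z = 1.96 for ~95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Hypothetical uncertainty budget (same units for every component).
budget = {"calibration": 0.03, "instrument": 0.04, "sample_prep": 0.12}
print(f"u_c = {combined_standard_uncertainty(budget):.3f}")
print(f"U (k=2) = {expanded_uncertainty(budget):.3f}")

# Hypothetical proficiency record: 1 false positive in 150 blind trials.
low, high = wilson_interval(errors=1, trials=150)
print(f"False-positive rate 95% interval: {low:.4f} to {high:.4f}")
```

Reporting an interval rather than a bare rate matters most when few or no errors have been observed, because zero observed errors does not imply a zero error rate.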

Peer-Review and Publication Strategy: A deliberate strategy for peer review and publication is critical for demonstrating that a methodology has been scrutinized by the scientific community [12] [16]. Researchers should prioritize submitting their complete validation study reports, including all experimental data and statistical analyses, to reputable, peer-reviewed scientific journals. The peer review process itself serves as independent validation of the method's scientific soundness. Furthermore, presenting these findings at major scientific conferences provides additional opportunities for professional critique and acceptance, strengthening the argument for the method's reliability under the Daubert framework.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and materials are fundamental to conducting forensic chemistry research that meets the exacting standards of legal admissibility.

Table 3: Essential Research Reagents and Materials for Legally Defensible Forensic Chemistry

| Item | Function in Research & Validation |
|---|---|
| Certified Reference Materials (CRMs) | Provides traceable calibration and method validation with known purity and uncertainty, essential for establishing the accuracy and reliability of quantitative analyses. |
| Analytical Grade Solvents & Reagents | Ensures minimal interference from impurities during sample preparation and analysis, directly supporting the "standards and controls" factor of Daubert. |
| Stable Isotope-Labeled Internal Standards | Compensates for matrix effects and analytical variability in mass spectrometric methods, allowing for precise quantification and supporting a known, controlled error rate. |
| Proficiency Test Materials | Allows for blind testing of laboratory performance and method reproducibility across different operators and instruments, providing empirical data on method error rates. |
| Quality Control Check Standards | Monitors the stability and precision of analytical instrumentation over time, demonstrating the existence of maintained standards and controls for the methodology. |

For the forensic chemistry research community, the path to legal readiness is paved with rigorous, transparent, and collaborative science. The Daubert Standard and Federal Rule of Evidence 702 are not merely legal hurdles but embody principles of sound scientific inquiry: testability, peer scrutiny, error awareness, standardized operation, and eventual consensus. By consciously designing inter-laboratory validation studies and routine analytical workflows with the Daubert factors in mind, researchers and drug development professionals can ensure that their scientific findings are not only chemically sound but also legally admissible. This synergy between scientific and legal rigor fortifies the integrity of both disciplines and ensures that the evidence presented in courtrooms is founded upon the most reliable scientific knowledge available.

The reliability of forensic evidence presented in courtrooms hinges on the rigor and consistency of the analytical methods used in crime laboratories. In forensic chemistry, the analysis of seized drugs and trace evidence represents a critical front in the legal system's pursuit of justice. Without standardized methods validated across laboratories, forensic results remain vulnerable to challenge, potentially undermining their probative value. The scientific and legal communities have increasingly recognized this imperative, leading to a concerted effort to develop, refine, and implement robust standards that ensure analytical results are both reliable and reproducible across different laboratories and jurisdictions.

This guide explores the current landscape of standardization initiatives, objectively comparing emerging and established analytical techniques. It places particular emphasis on the legal admissibility criteria, such as the Daubert Standard, which requires that scientific evidence be derived from testable methods with known error rates and general acceptance within the relevant scientific community [1]. The drive for standardization is not merely academic; it is a foundational element for ensuring that forensic science can meet these legal benchmarks.

Current Standardization Initiatives and Key Organizations

The movement toward forensic standardization is championed by several key organizations. The Organization of Scientific Area Committees (OSAC) for Forensic Science, administered by the National Institute of Standards and Technology (NIST), plays a pivotal role in evaluating and registering consensus-based standards for a wide range of forensic disciplines [22] [23]. Similarly, Standards Developing Organizations (SDOs) like the Academy Standards Board (ASB) and ASTM International are actively generating new standards and updating existing ones.

Recent initiatives highlight a significant focus on the analysis of seized drugs, a critical area given the ongoing opioid crisis. As of early 2025, several new standard proposals are moving through the development pipeline at ASTM, indicating a direct response to evolving forensic needs [24]. These include:

- WK93504: A new test method for the analysis of seized drugs using Gas Chromatography/Mass Spectrometry (GC/MS), designed to comprehensively identify over 400 substances [24].

- WK93971: A proposed new standard for analyzing fentanyl and related substances using Gas Chromatography-Infrared Spectroscopy (GC-IR) [22].

- WK93533: A new practice for establishing intralaboratory blind quality control programs specific to seized-drugs analysis [24].

Furthermore, there is a concerted effort to reinstate and revise foundational guides, such as the standard guide for sampling seized drugs for qualitative and quantitative analysis (WK93516) [24]. This flurry of activity underscores a dynamic and responsive standards environment aimed at enhancing the quality and consistency of forensic chemical analysis.

Comparison of Analytical Techniques and Their Standardization Status

Forensic laboratories employ a variety of techniques for drug identification and analysis, each with distinct advantages, limitations, and varying levels of acceptance within the standardization framework. The table below provides a comparative overview of key technologies.

Table 1: Comparison of Forensic Drug Testing Techniques

| Technique | Principle of Operation | Detection Capabilities & Accuracy | Ease of Use & Cost | Standardization & Legal Readiness |
|---|---|---|---|---|
| Mass Spectrometry (MS) [25] | Measures mass-to-charge ratio of ions. Often coupled with GC or LC for separation. | Gold standard. High specificity & sensitivity (detection to attomolar range). Identifies virtually any substance. | Requires expert operator. High equipment cost ($5k-$1M). High ongoing costs. | Well-established, legally accepted. GC/MS is subject of new standard (WK93504) [24]. |
| Immunoassays [26] | Antibody-based detection of drug classes. | Presumptive only. Prone to false positives/negatives due to cross-reactivity. Lower sensitivity. | Easy to use, low cost. Suitable for point-of-care. | Well-established for clinical use; considered presumptive in forensic context. |
| Comprehensive 2D Gas Chromatography (GC×GC) [1] | Two sequential GC separations with different stationary phases, greatly increasing resolution. | Superior separation of complex mixtures. Higher peak capacity and signal-to-noise vs 1D GC. | Expert operation needed. Specialized equipment. Higher cost than 1D GC. | Early research stage. Not yet routine. Lacks extensive validation and standardized methods for court. |
| Ion Mobility Spectrometry (IMS) [25] | Separates ions by their mobility through a drift gas under an electric field. | Fast, accurate for small molecules. Selective (ppb detection). Non-destructive. | Does not require trained operator. Requires database for identification. | Gaining traction; standard methods are developing. |

Insights from Comparative Data

The choice of technique involves a clear trade-off between discriminatory power and accessibility. While MS remains the undisputed gold standard for confirmatory analysis, its cost and operational complexity can be prohibitive for non-laboratory settings [25]. Immunoassays, though affordable and easy to use, serve only as a preliminary screening tool due to their inherent limitations in specificity [26].

Emerging techniques like GC×GC offer a powerful solution for complex mixtures that challenge conventional 1D-GC, such as emerging psychoactive substances or intricate trace evidence. However, its technology readiness level for routine forensic casework remains low, primarily due to a lack of intra- and inter-laboratory validation studies and established, court-ready standards [1]. In contrast, IMS strikes a balance, offering rapid and reliable identification that is easier to operationalize than MS, making it a strong candidate for harmonization and future standard adoption in point-of-care or field applications [25].

Experimental Protocols for Standardized Analysis

The path from a submitted evidence sample to a forensically defensible result requires a meticulously controlled workflow. The following protocols detail the standardized methodologies for the most critical techniques in seized drug analysis.

Gas Chromatography-Mass Spectrometry (GC/MS) for Seized Drugs

GC/MS is the cornerstone of confirmatory drug analysis. The proposed ASTM standard WK93504 will provide a formalized test method for analyzing over 400 seized drug substances [24]. A generalized, high-level workflow is as follows:

- Sample Preparation: A small, representative portion (e.g., milligrams) of the seized material is weighed. The sample is then dissolved and diluted in an appropriate solvent (e.g., methanol). The solution may be centrifuged and filtered to remove particulate matter.

- Instrumental Analysis:

  - Separation (GC): A microliter of the prepared sample is injected into the GC inlet, vaporized, and carried by an inert gas through a capillary column. The components of the mixture separate based on their differing interactions with the column's stationary phase.

  - Ionization and Detection (MS): As separated compounds elute from the GC column, they are ionized (commonly by Electron Ionization, EI) in the MS source. The ions are separated by their mass-to-charge ratio (m/z) in the mass analyzer, and a detector records the abundance of each m/z.

- Data Interpretation & Confirmation: The resulting mass spectrum for each compound is compared against a certified reference library of known controlled substances. Identification is confirmed based on the retention time and the correspondence of the sample's mass spectrum with the reference spectrum, including the relative abundances of characteristic ions.
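The confirmation step above combines two checks: retention time agreement and spectral correspondence. The sketch below illustrates one common way to express this, using cosine similarity between spectra. It is a minimal illustration, not the algorithm of any particular library search program; the function names, the retention time tolerance, the 90-point threshold, and all spectral values are hypothetical and are not taken from a reference library.

```python
import math


def cosine_match(sample: dict[int, float], reference: dict[int, float]) -> float:
    """Return a 0-100 cosine similarity between two spectra given as {m/z: abundance}."""
    mz_values = set(sample) | set(reference)
    dot = sum(sample.get(mz, 0.0) * reference.get(mz, 0.0) for mz in mz_values)
    norm_s = math.sqrt(sum(v * v for v in sample.values()))
    norm_r = math.sqrt(sum(v * v for v in reference.values()))
    if norm_s == 0 or norm_r == 0:
        return 0.0
    return 100.0 * dot / (norm_s * norm_r)


def is_identified(sample_rt, ref_rt, sample_spec, ref_spec,
                  rt_tol_min=0.05, min_score=90.0):
    """Require BOTH retention time agreement and a spectral score above threshold."""
    rt_ok = abs(sample_rt - ref_rt) <= rt_tol_min  # tolerance is an assumed value
    return rt_ok and cosine_match(sample_spec, ref_spec) >= min_score


# Hypothetical relative abundances, for illustration only
reference_spectrum = {82: 100.0, 182: 85.0, 303: 25.0, 105: 30.0}
sample_spectrum = {82: 100.0, 182: 80.0, 303: 22.0, 105: 33.0}

score = cosine_match(sample_spectrum, reference_spectrum)
match = is_identified(6.42, 6.44, sample_spectrum, reference_spectrum)
```

Requiring both criteria means a good spectral score alone does not produce an identification when the retention time falls outside the tolerance.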

Inter-Laboratory Validation Workflow

For any method to be considered standardized and reliable, it must undergo rigorous inter-laboratory validation. This process assesses whether different laboratories can reproduce the same results from the same sample, a core requirement for legal admissibility [1] [5]. The workflow is adapted from successful models in trace evidence, such as glass analysis [4].

The corresponding protocol for this workflow involves:

- Single-Laboratory Validation: A lead laboratory fully optimizes and validates the analytical method, establishing standard operating procedures (SOPs) and key performance metrics [27].

- Sample and Lab Selection: Homogeneous and stable blind samples (e.g., certified drug standards or characterized seized material) are prepared. Multiple independent laboratories with relevant expertise are recruited.

- Parallel Testing and Data Collection: All participating laboratories receive the same set of blind samples and the SOP. They perform the analysis independently and report their raw data and results to a central coordinating body.

- Statistical Analysis and Assessment: The coordinating body performs a statistical analysis of the collated data. Key metrics include:

  - Relative Standard Deviation (RSD): Measures the precision of quantitative results (e.g., drug concentration) across labs. A low RSD indicates high reproducibility [5].

  - Pearson Correlation Coefficient (PCC): Assesses the strength of the linear relationship between results from different labs, indicating consistent trends [5].

  - Paired t-tests: Determine if there are statistically significant differences between the results obtained by different laboratories [5].

- Standard Formulation: Based on the outcomes of the inter-laboratory study, the method is refined, and a consensus-based standard is drafted, balloted, and published by an SDO like ASTM or ASB [22] [24].
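The three metrics named in the statistical analysis step can be sketched with the Python standard library alone. The data below are invented purity values used purely for illustration, and the function names are hypothetical. The paired t-test here returns only the t statistic and degrees of freedom; the result is compared against the tabulated two-sided critical value for 4 degrees of freedom at the 0.05 level (2.776) rather than computing a p-value.

```python
import math
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) as a percentage."""
    return 100.0 * stdev(values) / mean(values)


def pearson_r(x, y):
    """Pearson correlation coefficient between paired results."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def paired_t(x, y):
    """Paired t statistic and degrees of freedom for two labs on the same samples."""
    diffs = [a - b for a, b in zip(x, y)]
    n = len(diffs)
    return mean(diffs) / (stdev(diffs) / math.sqrt(n)), n - 1


# Hypothetical results: one blind sample measured by five labs (% purity)
one_sample_across_labs = [25.1, 24.8, 25.4, 24.9, 25.3]
# Hypothetical results: five blind samples measured by two labs (% purity)
lab_a = [10.1, 20.3, 29.8, 40.2, 50.1]
lab_b = [10.4, 20.1, 30.5, 39.7, 50.6]

rsd = rsd_percent(one_sample_across_labs)
r = pearson_r(lab_a, lab_b)
t_stat, dof = paired_t(lab_a, lab_b)
no_significant_bias = abs(t_stat) < 2.776  # two-sided critical t, df = 4, alpha = 0.05
```

A real study would typically involve more laboratories and replicate measurements, and a coordinating body may prefer a dedicated interlaboratory precision procedure over pairwise tests.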

Essential Research Reagents and Materials

The execution of standardized forensic methods relies on a suite of high-quality reagents and reference materials. The following table details key components essential for reliable seized drug analysis.

Table 2: Key Reagents and Materials for Forensic Drug Analysis

| Reagent/Material | Function/Application | Importance in Standardization |
|---|---|---|
| Certified Reference Materials (CRMs) [4] | Pure analyte standards used for method calibration, qualification of instruments, and comparison/identification of unknown compounds. | Provides the metrological traceability essential for accurate and defensible results. The cornerstone of inter-laboratory reproducibility. |
| Internal Standards (IS) [25] | A known quantity of a non-native compound added to a sample to correct for variability during sample preparation and instrument analysis. | Improves the precision and accuracy of quantitative analyses, a critical factor in achieving consistent results across different labs. |
| Chromatography Solvents [25] | High-purity solvents (e.g., methanol, acetonitrile) used for sample dissolution, dilution, and mobile phase preparation in GC and LC. | Minimizes background interference and chemical noise, ensuring optimal separation and detection while protecting instrumentation. |
| Derivatization Reagents | Chemicals that modify a drug molecule to improve its volatility, stability, or chromatographic behavior for GC analysis. | Standardized derivatization protocols are necessary for analyzing certain drugs (e.g., cannabinoids) to ensure consistent and reproducible chromatographic profiles. |
| Quality Control (QC) Materials [24] | Characterized control samples with known drug identity and concentration, used to monitor ongoing analytical performance. | The use of blind QC samples is the subject of a proposed new standard (WK93533), vital for continuous assessment of laboratory proficiency [24]. |
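To make the role of the internal standard in the table above concrete, the following sketch shows a response-ratio calibration: the analyte peak area is divided by the internal standard peak area, the ratio is fitted against known concentrations, and an unknown is quantified from its own ratio. All calibration values, peak areas, and function names are hypothetical, and a simple unweighted least-squares line is assumed.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return slope, my - slope * mx


def quantify(analyte_area, is_area, slope, intercept):
    """Convert an analyte/internal-standard area ratio into a concentration."""
    ratio = analyte_area / is_area
    return (ratio - intercept) / slope


# Hypothetical calibration: concentration (ug/mL) vs. analyte/IS area ratio
cal_conc = [1.0, 2.0, 5.0, 10.0]
cal_ratio = [0.10, 0.21, 0.49, 1.00]

slope, intercept = fit_line(cal_conc, cal_ratio)
# Hypothetical unknown: analyte area 5000, internal standard area 10000
unknown_conc = quantify(5000.0, 10000.0, slope, intercept)
```

Because both peaks come from the same injection, losses during preparation or drift in injection volume affect numerator and denominator together and largely cancel in the ratio.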

The field of forensic chemistry is in a dynamic state of advancement, driven by a clear mandate for greater scientific rigor and reliability. Current initiatives led by OSAC, ASTM, and ASB are actively producing and refining standards that directly address the analysis of seized drugs and trace evidence. The progression of techniques like GC×GC from research to routine application is contingent upon successful inter-laboratory validation, which provides the necessary data on error rates and reproducibility demanded by the Daubert Standard.

For researchers and forensic service providers, engagement in this ongoing standardization process is crucial. This can be achieved by participating in public comment periods for draft standards, conducting and publishing validation studies, and implementing registered standards in daily practice. The ultimate goal is a robust, scientifically sound, and universally trusted forensic science system, where analytical results stand up to scrutiny both in the laboratory and the courtroom.

From Theory to Practice: Implementing Standardized Protocols and Novel Techniques

The global escalation of drug-related crimes has placed unprecedented pressure on forensic laboratories, where analytical backlogs can impede judicial processes and law enforcement responses. Within this challenging context, Gas Chromatography-Mass Spectrometry (GC-MS) has remained a cornerstone technique in forensic drug analysis due to its high specificity and sensitivity [28] [29]. However, traditional GC-MS methods often require extensive analysis times, creating a critical need for faster analytical techniques that do not compromise evidential accuracy. The emergence of rapid GC-MS methodologies presents a promising solution, potentially reducing analysis times from approximately 30 minutes to as little as 10 minutes or even one minute with certain configurations [28] [30].

The adoption of any new analytical technique in forensic science necessitates rigorous validation to ensure its reliability and admissibility in legal proceedings. Courts rely on standards such as the Daubert Standard and Federal Rule of Evidence 702, which require demonstrated testing, peer review, known error rates, and general acceptance within the scientific community [1]. A significant challenge facing the forensic chemistry community has been the lack of standardized validation protocols, making instrument validation a challenging and time-consuming task that can hinder technological adoption [31] [32]. This case study explores how structured validation templates facilitate the implementation of a rapid GC-MS method for seized drug screening, providing a framework for inter-laboratory standardization and robust method validation.

Validation Framework: Components for Forensic Reliability

A comprehensive validation framework for rapid GC-MS methods must address multiple performance characteristics to establish forensic reliability. Recent research has systematized this process through a nine-component validation template, providing laboratories with a structured approach to evaluate instrumental capabilities and limitations [31] [32]. This framework encompasses:

- Selectivity: Assessing the method's ability to distinguish target analytes from other compounds and matrix interferences.

- Matrix Effects: Evaluating how different sample matrices influence analytical results.

- Precision: Measuring the reproducibility of retention times and mass spectral matches under varying conditions.

- Accuracy: Determining the correctness of identifications through comparison with known standards.

- Range: Establishing the concentration interval over which the method provides accurate and precise results.

- Carryover/Contamination: Verifying that samples do not contaminate subsequent analyses.

- Robustness: Testing the method's resilience to deliberate, small variations in operational parameters.

- Ruggedness: Assessing performance across different instruments, operators, or laboratories.

- Stability: Determining the constancy of analytical results over time [31] [32].
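One way a laboratory might track progress through the nine components is a simple checklist structure. The sketch below is a hypothetical illustration and does not reproduce the published validation workbooks; the class and method names are assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Component(Enum):
    """The nine validation components listed above."""
    SELECTIVITY = "Selectivity"
    MATRIX_EFFECTS = "Matrix Effects"
    PRECISION = "Precision"
    ACCURACY = "Accuracy"
    RANGE = "Range"
    CARRYOVER = "Carryover/Contamination"
    ROBUSTNESS = "Robustness"
    RUGGEDNESS = "Ruggedness"
    STABILITY = "Stability"


@dataclass
class ValidationRecord:
    """Tracks which components have been assessed and whether each passed."""
    method_name: str
    results: dict = field(default_factory=dict)  # Component -> bool

    def record(self, component: Component, passed: bool) -> None:
        self.results[component] = passed

    def outstanding(self) -> list:
        """Components that have not been assessed yet."""
        return [c for c in Component if c not in self.results]

    def is_complete(self) -> bool:
        """True only when all nine components are assessed and all passed."""
        return not self.outstanding() and all(self.results.values())


record = ValidationRecord("rapid GC-MS screening")
record.record(Component.SELECTIVITY, True)
record.record(Component.PRECISION, True)
remaining = [c.value for c in record.outstanding()]
```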

This multi-faceted approach provides forensic laboratories with a comprehensive template for establishing method validity, addressing the stringent requirements of legal standards while creating a pathway for inter-laboratory comparison and standardization.

Experimental Comparison: Rapid GC-MS Versus Conventional Methodology

Instrumentation and Operational Parameters

The optimized rapid GC-MS method employs specific instrumentation and parameters to achieve faster analysis times while maintaining analytical integrity.

Instrumentation: Development and validation typically utilize an Agilent 7890B gas chromatograph coupled with an Agilent 5977A single quadrupole mass spectrometer, equipped with a standard 30-m DB-5 ms column (30 m × 0.25 mm × 0.25 μm) [28] [29].

Key Method Parameters: The dramatic reduction in analysis time is achieved primarily through optimized temperature programming and flow rate adjustments as detailed in the table below:

Table 1: Comparative Instrumental Parameters for Conventional and Rapid GC-MS Methods

| Method Parameter | Conventional Method | Rapid GC-MS Method |
|---|---|---|
| Temperature Program | Initial: 70°C, ramp to 300°C at 15°C/min (hold 12 min) | Initial: 120°C, ramp to 300°C at 70°C/min (hold 7.43 min) |
| Total Run Time | 30.33 minutes | 10.00 minutes |
| Carrier Gas Flow Rate | 1 mL/min | 2 mL/min |
| Injection Type | Split (20:1 fixed) | Split (20:1 fixed) |
| Inlet Temperature | 280°C | 280°C |
| Ion Source Temperature | 230°C | 230°C |
| Mass Scan Range | m/z 40 to m/z 550 | m/z 40 to m/z 550 |
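The relationship between the temperature program and total run time can be checked with simple arithmetic: ramp duration is the temperature span divided by the ramp rate, plus any hold times. The sketch below uses a hypothetical helper, `run_time_min`, with the values from the table. The rapid program reproduces the stated 10.00 minutes. The conventional program as listed accounts for about 27.33 minutes of the stated 30.33 minutes; the remaining 3.00 minutes presumably corresponds to an initial hold or similar segment not shown in the table, which is an assumption rather than something the source states.

```python
def run_time_min(initial_c, final_c, ramp_c_per_min, final_hold_min,
                 initial_hold_min=0.0):
    """Total run time (min) for a single-ramp oven program."""
    ramp_time = (final_c - initial_c) / ramp_c_per_min
    return initial_hold_min + ramp_time + final_hold_min


# Values taken from the table above
rapid = run_time_min(120, 300, 70, 7.43)         # ramp of about 2.57 min plus hold
conventional = run_time_min(70, 300, 15, 12.0)   # ramp of about 15.33 min plus hold

# Gap between the stated conventional run time and the listed program segments
unexplained = 30.33 - conventional
```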

Analytical Performance and Detection Capabilities

Systematic validation studies demonstrate that the optimized rapid GC-MS method not only reduces analysis time but also enhances several key performance metrics compared to conventional approaches:

Table 2: Performance Metrics for Rapid GC-MS Method in Drug Screening

| Performance Metric | Conventional GC-MS | Rapid GC-MS | Improvement |
|---|---|---|---|
| Analysis Time | 30.33 minutes | 10.00 minutes | 67% reduction |
| Cocaine LOD | 2.5 μg/mL | 1.0 μg/mL | 60% improvement |
| Heroin LOD | Value not stated | ≥50% lower than conventional | ≥50% improvement |
| Retention Time Precision (RSD) | Variable | <0.25% | Excellent repeatability |
| Spectral Match Scores | Typically high | >90% | Consistently high accuracy |
| Application to Case Samples | Reliable | 20/20 accurate IDs | Maintained reliability |

The method has been successfully applied to diverse drug classes, including synthetic opioids, stimulants, cannabinoids, and benzodiazepines, with match quality scores consistently exceeding 90% across tested concentrations [28] [29]. When applied to 20 real case samples from forensic laboratories, the rapid GC-MS method accurately identified controlled substances in both solid and trace samples, demonstrating its utility in authentic forensic contexts [28].
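The percentage figures in the performance table follow directly from the reported values. The short check below uses a hypothetical helper, `percent_reduction`, applied to the analysis times and cocaine LODs given above.

```python
def percent_reduction(baseline, new):
    """Percentage by which `new` is lower than `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - new) / baseline


time_reduction = percent_reduction(30.33, 10.00)  # analysis time, minutes
lod_reduction = percent_reduction(2.5, 1.0)       # cocaine LOD, ug/mL
```

For LOD, a lower value is better, so a 60% reduction in the detection limit is what the table reports as a 60% improvement.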

Practical Application: From Validation to Casework Implementation

Sample Preparation and Processing

The validation template emphasizes standardized sample preparation protocols to ensure consistent results across different sample types:

Solid Samples: Tablets and capsules are ground into a fine powder using a mortar and pestle. Approximately 0.1 g of material is added to 1 mL of methanol, followed by sonication for 5 minutes and centrifugation. The supernatant is transferred to a GC-MS vial for analysis [28].

Trace Samples: Swabs moistened with methanol are used to collect residues from drug-related items using a single-direction technique to maintain controlled pressure and prevent contamination. Swab tips are immersed in 1 mL of methanol, vortexed vigorously, and the extract is transferred to a GC-MS vial [28].

Data Processing: Advanced software solutions like PARADISe (PARAFAC2 based Deconvolution and Identification System) enable robust processing of complex GC-MS data, effectively handling challenges such as overlapped peaks, retention time shifts, and low signal-to-noise ratios [33]. This integrated approach converts raw data files into compiled peak tables with automated compound identification using integrated NIST library searches.

Essential Research Reagent Solutions

The following reagents and reference materials are critical for implementing and validating rapid GC-MS methods for seized drug screening:

Table 3: Essential Research Reagents and Materials for Rapid GC-MS Drug Screening

| Reagent/Material | Function/Application | Source or Edition |
|---|---|---|
| Drug Reference Standards | Qualitative identification and quantification | Sigma-Aldrich (Cerilliant), Cayman Chemical |
| Methanol (99.9%) | Primary extraction solvent for solid and trace samples | Sigma-Aldrich |
| Helium Carrier Gas (99.999%) | Mobile phase for chromatographic separation | Various suppliers |
| DB-5 ms Capillary Column | Stationary phase for compound separation | Agilent J&W |
| Wiley Spectral Library | Compound identification through spectral matching | 2021 Edition |
| Cayman Spectral Library | Specialized database for emerging drugs | September 2024 Edition |

Implementation Strategy: Toward Inter-Laboratory Standardization

The transition from conventional to rapid GC-MS methods requires careful implementation planning and consideration of legal standards. Successful adoption involves several critical phases:

Validation Template Adoption: Laboratories can utilize published validation templates that include validation plans and automated workbooks specifically designed for rapid GC-MS applications [31] [32]. These resources reduce implementation barriers by providing structured frameworks for assessing the nine key validation components.

Technology Readiness Assessment: Current rapid GC-MS methods for seized drug screening demonstrate high technology readiness levels, with extensive validation studies and successful application to real case samples [30] [31]. The technology meets forensic admissibility standards through demonstrated reliability, peer-reviewed publication, and established error rates.

Legal Considerations: Forensic methods must satisfy legal admissibility standards, including the Daubert Standard and Federal Rule of Evidence 702 in the United States, which emphasize testing, peer review, error rates, and general acceptance [1]. The comprehensive validation approach for rapid GC-MS directly addresses these requirements through systematic error rate determination and demonstration of robust performance across operational parameters.

Organizational Standards Alignment: Implementation should align with established quality assurance standards, such as the FBI Quality Assurance Standards for forensic laboratories, which were updated in 2025 to include provisions for rapid DNA technologies, setting a precedent for rapid analytical methods [34]. Additionally, OSAC maintains a registry of approved standards that supports forensic standardization [35].

The validation template approach for rapid GC-MS method implementation represents a significant advancement in forensic chemistry standardization. By providing a structured framework for assessing key performance characteristics, these templates facilitate robust method validation while supporting inter-laboratory comparability. The case study demonstrates that properly validated rapid GC-MS methods can reduce analysis time by over 65% while simultaneously improving detection limits and maintaining the high accuracy required for forensic evidence.

As forensic laboratories continue to face increasing caseloads and evolving substance threats, standardized validation protocols for rapid screening technologies will play an increasingly vital role in ensuring both efficiency and reliability. The template-based approach outlined here provides a pathway for laboratories to adopt innovative methodologies with confidence, supported by comprehensive validation data that meets the rigorous standards of the judicial system. Through continued refinement and inter-laboratory collaboration, these validation frameworks will strengthen the foundation of forensic chemistry practice and enhance the administration of justice.

The analysis of complex mixtures represents a significant challenge in fields ranging from forensic chemistry to pharmaceutical development. While conventional one-dimensional gas chromatography-mass spectrometry (1D GC-MS) has long been the gold standard, its limitations in resolving power become apparent when dealing with increasingly complex samples. Comprehensive two-dimensional gas chromatography coupled with mass spectrometry (GC×GC-MS) addresses these limitations by providing superior separation capabilities, enhancing compound detectability, and offering a more complete chemical profile of samples. Within the context of forensic chemistry research, the adoption of any new analytical technique must be framed within a rigorous framework of inter-laboratory validation and standardized methods to ensure the reliability, reproducibility, and ultimate admissibility of generated data in legal proceedings [1]. This article objectively compares the performance of GC×GC-MS against established alternatives, providing supporting experimental data and detailing the methodologies required to validate this powerful technology for routine application.

Technology Comparison: GC×GC-MS vs. Alternatives

The selection of an analytical technique for mixture analysis depends on the specific requirements of resolution, sensitivity, speed, and operational considerations. The following table provides a structured comparison of GC×GC-MS against other chromatographic techniques.

Table 1: Technical Comparison of Separation Technologies

| Technology | Separation Mechanism | Key Strengths | Key Limitations | Typical Forensic Applications |
|---|---|---|---|---|
| GC×GC-MS | Two independent separation columns connected via a modulator [1]. | Highest peak capacity; enhanced signal-to-noise ratio; superior for non-targeted analysis of complex mixtures [1]. | Higher instrumental cost; complex data processing; requires specialized expertise; not yet standardized for routine forensic use [1]. | Illicit drug profiling, ignitable liquid residue (ILR) analysis, decomposition odor analysis, fingerprint aging [1] [36]. |
| Rapid GC-MS | A single column with optimized, fast temperature programming and/or shorter columns [28]. | Very fast analysis (as low as 1-10 minutes); high-throughput screening; reduced solvent consumption [37] [28]. | Lower resolution; limited peak capacity; potential for co-elution [37]. | High-throughput seized drug screening [37] [28]. |
| Conventional GC-MS | A single column with standard temperature programming. | "Gold standard"; well-established, validated protocols; widely accepted in court; extensive spectral libraries [1]. | Limited peak capacity leading to co-elution; lower sensitivity for trace analytes in complex matrices [1]. | Confirmatory analysis of seized drugs, toxicology [37] [1]. |
| GC-VUV | A single column with vacuum ultraviolet (VUV) absorbance detection. | Provides spectral differentiation of isomers that MS struggles with; can deconvolute co-eluting peaks [36]. | Emerging technique; limited established application base and library data compared to MS. | Distinguishing positional isomers of synthetic cannabinoids [36]. |

The quantitative performance of these techniques further elucidates their differences. The data below, synthesized from validation studies, highlights key metrics.

Table 2: Comparative Quantitative Performance Data

| Performance Metric | GC×GC-TOF-MS [1] | Rapid GC-MS [28] | Conventional GC-MS [28] |
|---|---|---|---|
| Analysis Time | Long (can be >60 min) | Short (~10 min) | Long (~30 min) |
| Peak Capacity | Very High (up to 1000) | Low | Moderate (100-500) |
| Limit of Detection (LOD) | Significantly improved for trace compounds | 1 µg/mL (Cocaine) | 2.5 µg/mL (Cocaine) |
| Retention Time Precision (%RSD) | Not reported | < 0.25% | Not reported |
| Isomer Differentiation | Excellent | Limited [37] | Moderate |

Experimental Protocols for Method Validation

For a technology to be adopted in forensic laboratories, its methods must undergo comprehensive validation. The following protocols are critical for establishing the reliability of GC×GC-MS and are prerequisites for inter-laboratory studies.

Selectivity and Isomer Separation

Objective: To assess the method's ability to separate and distinguish analytes from each other and from the matrix, with a focus on challenging isomer pairs [37].

Protocol:

- Preparation: Analyze single- and multi-compound test solutions of commonly encountered compounds and their isomers (e.g., synthetic cannabinoid positional isomers) [37] [36].

- Data Processing: Apply chemometric strategies such as PARAFAC2 (PARAllel FACtor analysis 2) or MCR-ALS (Multivariate Curve Resolution - Alternating Least Squares) for peak deconvolution and resolution [38].

- Assessment: Evaluate separation based on two independent retention times (1D and 2D) and mass spectral data. For GC×GC-VUV, apply second-derivative processing of VUV absorbance curves to reveal unique spectral features for each isomer [36].

Precision and Ruggedness

Objective: To determine the method's repeatability (intra-day precision) and reproducibility (inter-day, inter-operator, inter-instrument precision) [37].

Protocol:

- Preparation: Prepare a custom 14-compound test solution at a specified concentration (e.g., 0.25 mg/mL per compound) [37].

- Analysis: Analyze the solution repeatedly (n=5) within a single day and over multiple days (n=15) by different analysts [37].

- Data Analysis: Calculate the percent relative standard deviation (%RSD) for both retention time and mass spectral search scores. An acceptance criterion of ≤10% RSD is commonly used in accredited forensic laboratories [37].
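The precision protocol above can be sketched as a small calculation: %RSD is computed within each day (repeatability) and across all pooled runs (reproducibility), and each is compared with the ≤10% acceptance criterion. The retention times below are invented for illustration, and the function names are hypothetical.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Percent relative standard deviation using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def precision_summary(runs_by_day, limit=10.0):
    """Return per-day %RSD, pooled %RSD, and whether all meet the limit."""
    intra = {day: percent_rsd(vals) for day, vals in runs_by_day.items()}
    pooled = [v for vals in runs_by_day.values() for v in vals]
    inter = percent_rsd(pooled)
    passed = inter <= limit and all(r <= limit for r in intra.values())
    return intra, inter, passed


# Hypothetical retention times (min) for one compound, n=5 per day over three days
retention_times = {
    "day_1": [4.512, 4.515, 4.510, 4.513, 4.514],
    "day_2": [4.520, 4.518, 4.522, 4.519, 4.521],
    "day_3": [4.508, 4.511, 4.509, 4.512, 4.510],
}

intra_day, inter_day, meets_criterion = precision_summary(retention_times)
```

The same function could be applied to mass spectral search scores, since the protocol evaluates %RSD for both quantities.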

Sensitivity and Limit of Detection (LOD)

Objective: To determine the lowest concentration of an analyte that can be reliably detected.

Protocol:

- Preparation: Serially dilute a standard solution of the target analyte to create a concentration series [28].

- Analysis: Analyze each dilution and establish the signal-to-noise ratio (S/N).

- Calculation: The LOD is typically defined as the concentration yielding an S/N of 3:1. Using this approach, rapid GC-MS achieved a cocaine LOD of 1 µg/mL compared with 2.5 µg/mL for the conventional method, a 60% reduction, and GC×GC-MS offers further gains for trace compounds [28].
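The S/N criterion in the protocol above can be sketched as follows: compute S/N for each dilution and report the lowest concentration that still meets the 3:1 threshold. The signal and noise values are invented for illustration and are not measurements from the cited studies; `estimate_lod` is a hypothetical helper.

```python
def estimate_lod(series, threshold=3.0):
    """Lowest concentration whose signal-to-noise ratio meets the threshold.

    `series` is a list of (concentration, peak_signal, baseline_noise) tuples.
    Returns None when no dilution reaches the threshold.
    """
    detected = [
        conc for conc, signal, noise in series
        if noise > 0 and signal / noise >= threshold
    ]
    return min(detected) if detected else None


# Hypothetical dilution series: (concentration in ug/mL, peak height, noise)
dilutions = [
    (10.0, 520.0, 10.0),
    (5.0, 260.0, 10.0),
    (2.5, 130.0, 10.0),
    (1.0, 45.0, 10.0),
    (0.5, 20.0, 10.0),  # S/N = 2.0, below the 3:1 criterion
]

lod = estimate_lod(dilutions)
```

In practice a laboratory would usually confirm the estimated LOD with replicate injections at that concentration rather than rely on a single dilution series.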

Workflow and Legal Admissibility Pathway

The analytical process for forensic substance analysis using advanced techniques involves a structured workflow, and the path to legal admissibility for new methods like GC×GC-MS is defined by specific legal standards.

Diagram 1: Forensic Analysis Workflow

Diagram 2: Legal Admissibility Pathway

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation and validation of GC×GC-MS methods require specific, high-quality materials and reagents.

Table 3: Essential Research Reagents and Materials for GC×GC-MS Analysis

| Item | Function / Purpose | Example Specifications / Notes |
|---|---|---|
| Reference Standards | Provides known retention times and mass spectra for compound identification and quantification. | Analytical reference materials (e.g., from Cayman Chemical, Cerilliant/Sigma-Aldrich); custom multi-compound mixtures [37] [28]. |
| GC×GC Column Set | Provides two independent separation mechanisms. | A primary column (e.g., non-polar 5% phenyl polysilphenylene-siloxane) and a secondary column (e.g., mid-polarity) connected via a modulator [1]. |
| Modulator | The "heart" of GC×GC; traps and re-injects effluent from the first column onto the second. | Can be thermal or pneumatic; critical for preserving 1D separation and creating the 2D chromatogram [1]. |
| Mass Spectrometer | Provides detection, identification, and structural elucidation of separated compounds. | Time-of-flight (TOF) MS is often preferred for its fast acquisition rate, essential for capturing narrow peaks from the second dimension [1] [36]. |
| Chemometrics Software | Processes complex, high-dimensional data from GC×GC-MS for peak detection, deconvolution, and pattern recognition. | PARADISe (for PARAFAC2), MCR-ALS algorithms; essential for interpreting results [38]. |
| Extraction Solvents | Used in sample preparation to extract analytes from the sample matrix. | High-purity solvents like methanol (HPLC grade) or acetonitrile are used for solvent extraction [37] [28]. |
| Carrier Gas | The mobile phase that carries the sample through the chromatographic system. | Helium is traditional, but hydrogen is increasingly used because of helium shortages and its faster analysis times [39]. |

GC×GC-MS represents a powerful analytical tool with demonstrated superiority in separating complex mixtures, as evidenced by its high peak capacity and application in challenging forensic domains such as drug isomer differentiation, fingerprint aging, and decomposition odor analysis. However, its performance must be objectively weighed against factors like operational complexity, cost, and the current lack of standardized, court-ready validation compared to the entrenched status of conventional GC-MS and the raw speed of rapid GC-MS. The future integration of GC×GC-MS into routine forensic practice is inextricably linked to a concerted, community-wide effort focused on rigorous inter-laboratory validation, the establishment of standardized protocols, and a clear demonstration of its reliability under the stringent criteria set by the Daubert and Frye standards. By systematically addressing these challenges, the forensic science community can fully leverage this advanced technology to uncover deeper insights from complex evidence, thereby enhancing the accuracy and reliability of chemical analysis within the justice system.

The forensic science disciplines have historically exhibited wide variability in techniques, methodologies, and training, creating a pressing need for uniform and enforceable standards. In response to the landmark 2009 National Research Council report "Strengthening Forensic Science in the United States: A Path Forward," the National Institute of Standards and Technology (NIST) established the Organization of Scientific Area Committees (OSAC) for Forensic Science to accelerate the development and adoption of high-quality, technically sound standards [40]. OSAC-approved standards provide minimum requirements, best practices, standard protocols, and definitions to help ensure that forensic analysis results are valid, reliable, and reproducible [40].

This guide examines the implementation of three OSAC Proposed Standards within the context of inter-laboratory validation for forensic chemistry research. For researchers and forensic science service providers (FSSPs), understanding these standards' technical requirements and implementation pathways is crucial for advancing methodological rigor. The voluntary adoption of these standards strengthens forensic chemistry research by providing a consistent framework for evaluating analytical techniques across different laboratory environments [41].

OSAC Standards Landscape and Registry Process

The OSAC Registry serves as a repository of high-quality published and proposed standards for forensic science. As of January 2025, it contained 225 standards (152 published and 73 OSAC Proposed) representing over 20 forensic science disciplines [35]. Standards progress through a multi-tiered development pathway before achieving full recognition, as visualized below:

Figure 1: OSAC Standards Development and Registry Pathway

The implementation of these standards across forensic laboratories remains voluntary, with OSAC encouraging adoption through its "Open Enrollment" approach for collecting implementation data. By 2024, 224 Forensic Science Service Providers had contributed implementation data to OSAC, representing a significant increase in participation over previous years [35]. This growing engagement demonstrates the forensic community's recognition of standards as critical for ensuring reliability and building trust in forensic results [41].

Comparative Analysis of Selected OSAC Proposed Standards

Standard for the Examination and Comparison of Toolmarks for Source Attribution (OSAC 2024-S-0002)

This standard establishes a uniform methodology for toolmark examination, addressing one of the most subjective areas in forensic science. It sets requirements for the analytical process of comparing toolmarks to determine whether they originate from the same source, with particular relevance to research on manufacturing marks and their reproducibility.

Key Technical Requirements:

- Defines standardized protocols for microscopic examination of toolmarks

- Establishes criteria for identifying subclass and individual characteristics

- Provides a framework for reaching source attribution conclusions

- Supports implementation of 3D technologies for toolmark analysis [42]

Research Implications: Implementation of this standard enables inter-laboratory studies on toolmark reproducibility, allowing researchers to quantify error rates and validate comparison methodologies across different instrumental platforms.

Best Practice Recommendation for the Chemical Processing of Footwear and Tire Impression Evidence (OSAC 2022-S-0032)

This standard addresses the chemical enhancement of footwear and tire impression evidence, providing a critical framework for evaluating development techniques on various substrates. For forensic chemistry research, it establishes baseline protocols for comparing the efficacy of different chemical treatments.

Key Technical Requirements:

- Standardizes chemical processing sequences for impression evidence

- Establishes validation requirements for new chemical formulations

- Defines substrate-specific application protocols

- Provides guidance on documentation and quality control

Research Implications: The standard enables controlled studies comparing sensitivity, specificity, and background interference of chemical enhancement methods across multiple laboratories, strengthening the evidential value of footwear and tire impression evidence.

Standard Practice for the Forensic Analysis of Geological Materials by SEM/EDX (OSAC 2024-S-0012)

This standard provides comprehensive guidelines for analyzing geological materials using scanning electron microscopy with energy dispersive X-ray spectrometry (SEM/EDX). Currently, no other standards specifically address forensic applications of SEM analysis of geological material, making this particularly valuable for inter-laboratory validation studies [35].

Key Technical Requirements:

- Standardizes sample preparation methods for diverse geological materials

- Establishes instrument calibration and validation protocols

- Defines analytical parameters for SEM/EDX analysis

- Provides a framework for comparative analysis of geological samples

Research Implications: This standard enables multi-laboratory validation of SEM/EDX methods for forensic geology, allowing researchers to establish error rates, discrimination power, and transfer probabilities for geological evidence.

Implementation Workflow for OSAC Proposed Standards

Successful implementation of OSAC Proposed Standards requires a systematic approach that integrates validation, training, and quality assurance processes. The following workflow outlines the key stages for laboratories adopting these standards:

Figure 2: OSAC Proposed Standards Implementation Workflow

For inter-laboratory validation studies, particular emphasis should be placed on the Method Validation and Proficiency Testing phases, where consistent application of the standard across participating laboratories is essential for generating comparable data. The OSAC Implementation Reporting phase contributes to the broader forensic community's understanding of standard effectiveness [41].

Experimental Design for Inter-laboratory Validation Studies

Core Methodological Framework

Inter-laboratory validation of OSAC Proposed Standards requires carefully controlled experimental designs that isolate variables while testing the standards' applicability across different laboratory environments. The core methodology should include:

- Blinded Sample Sets: Circulate identical sample sets to participating laboratories, with the ground truth known to the study organizers but concealed from the analysts

- Standardized Data Collection Forms: Ensure consistent recording of observations and results across all participants

- Control Samples: Include known positive and negative controls to monitor analytical performance

- Statistical Power Analysis: Determine appropriate sample sizes to detect significant differences between methods
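As an illustration of the power analysis step, the sketch below estimates how many samples each method would need in order to detect a difference between two success rates. It uses the standard normal-approximation formula for a two-sided comparison of two proportions; the function name `samples_per_group` and the example rates (90% versus 75%) are assumptions chosen for illustration, not values from any OSAC standard.

```python
from math import ceil, sqrt
from statistics import NormalDist

def samples_per_group(p1, p2, alpha=0.05, power=0.80):
    """Approximate samples per group for a two-sided test of two proportions.

    Uses the normal approximation; p1 and p2 are the expected success rates
    of the two methods being compared.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # quantile corresponding to the desired power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)

# Hypothetical scenario: method A succeeds on 90% of samples, method B on 75%
n = samples_per_group(0.90, 0.75)
print(f"Samples needed per method: {n}")
```

Smaller expected differences require substantially larger sample sets, which is one reason pooling samples across participating laboratories can be attractive. For small samples or rates near 0 or 1, an exact method would be more appropriate than this approximation.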

Quantitative Metrics for Standard Assessment

Table 1: Key Metrics for Inter-laboratory Validation of OSAC Proposed Standards

| Metric Category | Specific Measurement | Application to Footwear Standards | Application to Toolmarks Standards | Application to Geological Materials Standards |
|---|---|---|---|---|
| Accuracy Measures | False Positive Rate | Chemical processing interference | Erroneous source attribution | Misclassification of mineral components |
| Accuracy Measures | False Negative Rate | Failure to develop usable impression | Missed true matches | Failure to detect trace elements |
| Precision Measures | Intra-laboratory Reproducibility | Consistency of enhancement quality | Repeatability of comparison conclusions | SEM/EDX measurement variability |
| Precision Measures | Inter-laboratory Reproducibility | Cross-lab consistency of developed impressions | Agreement on source conclusions between labs | Instrument-to-instrument calibration consistency |
| Sensitivity Measures | Limit of Detection | Minimal impression residue detectable | Minimal toolmark characteristics identifiable | Minimal elemental concentrations quantifiable |
| Robustness Measures | Substrate Variability | Performance on porous vs. non-porous surfaces | Consistency across different tool materials | Analysis of heterogeneous geological samples |
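The accuracy measures in Table 1 can be computed directly from blinded study results. The following sketch assumes each result is recorded as a hypothetical `(laboratory, ground_truth, reported)` tuple with boolean values, where `True` means "same source" or "analyte present"; inconclusive results, which many forensic disciplines report, are not modeled here and would need their own category.

```python
from collections import defaultdict

def error_rates(results):
    """Compute per-laboratory false positive and false negative rates.

    results: iterable of (lab, ground_truth, reported) tuples with boolean
    ground_truth and reported values.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for lab, truth, reported in results:
        if truth:
            key = "tp" if reported else "fn"
        else:
            key = "fp" if reported else "tn"
        counts[lab][key] += 1

    rates = {}
    for lab, c in counts.items():
        negatives = c["fp"] + c["tn"]  # samples whose ground truth is negative
        positives = c["tp"] + c["fn"]  # samples whose ground truth is positive
        rates[lab] = {
            "fpr": c["fp"] / negatives if negatives else None,
            "fnr": c["fn"] / positives if positives else None,
        }
    return rates

# Hypothetical blinded results from two laboratories
results = [
    ("Lab A", True, True), ("Lab A", True, False),
    ("Lab A", False, False), ("Lab A", False, False),
    ("Lab B", True, True), ("Lab B", True, True),
    ("Lab B", False, True), ("Lab B", False, False),
]

rates = error_rates(results)
for lab, r in rates.items():
    print(lab, r)
```

Reporting rates per laboratory, rather than only pooled, makes it easier to see whether errors are concentrated in a few participants or spread across the study.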

Table 2: Research Reagent Solutions and Essential Materials for OSAC Standards Implementation

| Item/Category | Technical Function | Application Examples |
|---|---|---|
| 3D Surface Scanning Microscopes | Creation of virtual models for comparative analysis | Firearm and toolmark analysis using standardized 3D protocols [42] |
| Algorithm Comparison Software | Generation of numerical match scores for objective comparison | Bullet and cartridge case comparison with quantifiable uncertainty metrics [42] |
| Standard Reference Materials | Instrument calibration and method validation | SEM/EDX analysis of geological materials using certified reference standards [35] |
| Chemical Enhancement Reagents | Development of latent impressions on various substrates | Footwear and tire impression processing using standardized chemical sequences [35] |
| Validated Sampling Kits | Standardized collection and preservation of evidence | Geological sample collection maintaining chain of custody and sample integrity [35] |
| Quality Control Materials | Monitoring analytical process performance | Positive and negative controls for toolmark examination procedures [41] |

Data Interpretation and Statistical Framework

The implementation of OSAC Proposed Standards necessitates proper statistical frameworks for data interpretation, particularly for inter-laboratory studies. The likelihood-ratio framework has emerged as the logically correct method for interpreting forensic evidence and is incorporated into international standards such as ISO 21043 [43]. This approach provides a transparent method for weighing evidence and expressing analytical uncertainty, which is particularly valuable when implementing standards that introduce new technologies such as 3D imaging systems for firearm and toolmark analysis [42].
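To make the likelihood-ratio idea concrete, the sketch below evaluates a comparison score under two competing propositions: that the questioned and known items share a source, and that they do not. The Gaussian score distributions and their parameters are purely hypothetical stand-ins; in a real study they would be estimated from validation data with known ground truth, and need not be normal.

```python
from math import log10
from statistics import NormalDist

def likelihood_ratio(score, same_source, different_source):
    """LR = p(score | same source) / p(score | different source)."""
    return same_source.pdf(score) / different_source.pdf(score)

# Hypothetical score distributions estimated from ground-truth-known comparisons
same_source = NormalDist(mu=0.80, sigma=0.10)
different_source = NormalDist(mu=0.30, sigma=0.15)

lr = likelihood_ratio(0.70, same_source, different_source)
print(f"LR = {lr:.1f}, log10(LR) = {log10(lr):.2f}")
```

An LR greater than 1 supports the same-source proposition, an LR less than 1 supports the different-source proposition, and the magnitude expresses the strength of that support.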

For the three standards examined here, specific statistical considerations include:

- Toolmark Analysis: Implementation of 3D systems generates algorithmically-derived match statistics that express the amount of uncertainty in the analysis, providing a quantitative measure that investigators and jurors can use when weighing evidence [42]

- Geological Materials: Multivariate statistical approaches for comparing SEM/EDX spectral data from multiple laboratories

- Footwear Impressions: Binary logistic regression models for predicting development success rates across different substrate types
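For the footwear case, a binary logistic regression can be sketched without external libraries. The example below fits a single-predictor model by gradient descent, using a hypothetical indicator for substrate type (0 = porous, 1 = non-porous) and invented success/failure outcomes; a real analysis would use an established statistics package, more predictors, and data pooled from the participating laboratories.

```python
from math import exp

def sigmoid(z):
    """Logistic function mapping a linear score to a probability."""
    return 1.0 / (1.0 + exp(-z))

def fit_logistic(xs, ys, learning_rate=0.1, epochs=5000):
    """Fit P(y=1) = sigmoid(b0 + b1*x) by batch gradient descent on the log-loss."""
    b0, b1 = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        g0 = g1 = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(b0 + b1 * x) - y  # gradient of the log-loss w.r.t. the linear score
            g0 += err
            g1 += err * x
        b0 -= learning_rate * g0 / n
        b1 -= learning_rate * g1 / n
    return b0, b1

# Hypothetical data: substrate indicator (0 = porous, 1 = non-porous)
# and whether chemical processing developed a usable impression (1 = yes)
substrate = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
developed = [0, 0, 1, 0, 1, 1, 1, 1, 0, 1]

b0, b1 = fit_logistic(substrate, developed)
p_porous = sigmoid(b0)
p_non_porous = sigmoid(b0 + b1)
print(f"P(success | porous) = {p_porous:.2f}")
print(f"P(success | non-porous) = {p_non_porous:.2f}")
```

With a single binary predictor the fitted probabilities simply approach the observed success proportions for each substrate; the model becomes more useful once continuous or multiple predictors (for example, impression age or reagent sequence) are included.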

The implementation of OSAC Proposed Standards for footwear, toolmarks, and geological materials represents a significant advancement in forensic chemistry research methodology. By providing standardized frameworks for analytical procedures, these standards enable robust inter-laboratory validation studies that strengthen the scientific foundation of forensic science. The ongoing development of these standards through OSAC's consensus-based process ensures they remain current with technological advances while maintaining the rigorous technical requirements necessary for producing valid and reliable forensic results [40].