Advanced Strategies for Sensitivity Testing of Degraded DNA in Forensic Panels

This article provides a comprehensive guide for researchers and scientists on navigating the challenges of sensitivity testing with degraded DNA in forensic panels.


Abstract

This article provides a comprehensive guide for researchers and scientists on navigating the challenges of sensitivity testing with degraded DNA in forensic panels. It covers the foundational science of DNA degradation and explores established and emerging methodological approaches, from optimized Short Tandem Repeat (STR) analysis to dense Single Nucleotide Polymorphism (SNP) profiling and mass spectrometry. It also delivers practical troubleshooting protocols for sample purification and inhibition removal, and concludes with rigorous validation frameworks and comparative analyses of forensic techniques to ensure reliable, reproducible results in biomedical and clinical research.

Understanding DNA Degradation: Mechanisms, Challenges, and Impact on Forensic Assays

In forensic genetic applications, the integrity of DNA is paramount for successful analysis. Degraded DNA samples, frequently encountered in forensic casework, present significant challenges for profiling and interpretation due to the reduced size of DNA fragments, which can hamper the performance of genetic tests like Short Tandem Repeat (STR) analysis [1]. DNA degradation is a natural process that impacts the quality of genetic material, making it difficult to analyze or amplify. This occurs through several primary mechanisms: oxidation, hydrolysis, and enzymatic activity [2]. Understanding these pathways is crucial for developing effective strategies to minimize damage, preserve sample integrity, and validate forensic genotyping applications. Each mechanism contributes to DNA fragmentation, creating breaks in the sequence that interfere with polymerase chain reaction (PCR), sequencing, and other downstream forensic analyses [2]. Consequently, sample handling, preservation, and extraction techniques must be meticulously optimized to maintain DNA integrity for sensitivity testing in forensic panels.

Primary DNA Degradation Pathways

Oxidative DNA Damage

Oxidative damage is one of the most common causes of DNA degradation, particularly in samples exposed to environmental stressors. Reactive oxygen species (ROS), heat, and UV radiation modify nucleotide bases, leading to strand breaks and structural changes that interfere with replication and sequencing [2]. These modifications can cause mutations and stall polymerases during amplification. To mitigate oxidative damage, the use of antioxidants and proper storage conditions—such as freezing samples at -80°C or maintaining them in oxygen-free environments—is recommended [2]. In forensic contexts, exposure to environmental elements can exacerbate oxidative damage, making samples recalcitrant to standard analysis.

Hydrolytic DNA Damage

Hydrolysis involves the breakdown of DNA through the cleavage of chemical bonds by water molecules. This process can lead to depurination, where purine bases (adenine and guanine) are removed, resulting in abasic sites that can halt polymerase activity during amplification [2]. If extensive, hydrolytic damage fragments DNA into unusable pieces. Key strategies to reduce hydrolysis include using buffered solutions that maintain a stable pH and storing samples in dry or frozen conditions [2]. The susceptibility of DNA to hydrolysis is a critical consideration for the long-term storage of forensic samples.

Enzymatic DNA Degradation

Enzymatic breakdown, primarily driven by nucleases, represents a significant challenge in biological samples such as blood, tissue, or saliva. These enzymes degrade nucleic acids and can rapidly dismantle DNA if not properly inactivated during collection or initial processing [2]. Standard countermeasures include heat treatment during extraction, the use of chelating agents like EDTA to inhibit nuclease activity, and the application of nuclease inhibitors [2]. In forensic workflows, rapid inactivation of nucleases is essential to preserve the DNA template from the moment of collection.

Table 1: Primary DNA Degradation Pathways and Their Characteristics

| Degradation Pathway | Primary Causes | Resultant DNA Lesions | Common Protective Measures |
|---|---|---|---|
| Oxidation | Reactive oxygen species (ROS), heat, UV radiation [2] | Base modifications, single- and double-strand breaks [2] | Antioxidants, storage at -80°C, oxygen-free environments [2] |
| Hydrolysis | Water molecules, acidic or basic conditions [2] | Depurination (loss of A/G), abasic sites, deamination [2] | Buffered solutions, controlled pH, dry or frozen storage [2] |
| Enzymatic Breakdown | Endogenous and exogenous nucleases (e.g., DNases) [2] | Strand scission, fragmentation [2] | Heat treatment, chelating agents (EDTA), nuclease inhibitors [2] |

Protocols for Simulating and Analyzing DNA Degradation

Rapid UV-C Irradiation Protocol for Artificially Degrading DNA

This protocol describes a method to reproducibly generate degraded DNA in only five minutes using UV-C light irradiation, suitable for developmental evaluation and validation of new forensic markers and technologies [1].

- Principle: Ultraviolet radiation, particularly UV-C light, induces cyclobutane pyrimidine dimers and other photolesions in DNA, leading to strand breaks and fragmentation. This mimics the degradation state observed in biological samples exposed to environmental factors [1].

- Materials and Equipment:

- DNA extracted from blood (or other sources)

- UV-C light source (e.g., germicidal lamp, 254 nm)

- Microcentrifuge tubes

- Pipettes and tips

- Spectrophotometer or fluorometer for DNA quantification

- Procedure:

- Sample Preparation: Dilute the extracted DNA to different concentrations (e.g., 1 ng/μL, 10 ng/μL, 100 ng/μL) in low-EDTA TE buffer or nuclease-free water. Aliquot different volumes (e.g., 20 μL, 50 μL, 100 μL) into microcentrifuge tubes.

- UV-C Exposure: Place the open tubes containing DNA aliquots directly under the UV-C light source. Expose samples for varying durations (e.g., 1, 3, 5 minutes) at a fixed distance to create a gradient of degradation. A longer exposure time results in a higher degree of fragmentation.

- Post-Irradiation Handling: Close tubes and store at 4°C if used immediately, or -20°C for long-term storage.

- Assessment of Degradation:

- Quantitative Real-Time PCR (qPCR): Use a multiplex qPCR assay that targets both long and short DNA fragments. A decreasing large-to-small target ratio indicates successful degradation [1].

- Fragment Analyzer/Capillary Electrophoresis: Analyze the DNA to visualize the size distribution profile. Degraded samples will show a smear of low-molecular-weight fragments compared to a tight, high-molecular-weight band in intact DNA [1].

- STR Analysis: Amplify the degraded DNA using your standard forensic STR panel. Compare the profile to that of intact DNA; degraded samples typically show a progressive drop in peak height with increasing amplicon size (a "ski-slope" peak height imbalance) and allele dropout [1].
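The peak-height trend described in the STR assessment step can be summarized as a single number. The sketch below is one possible way to do this, not a method taken from [1]: it fits a least-squares line to the natural log of peak height against amplicon size, so that a more negative slope reflects a steeper decline. The function name `ski_slope` and all peak heights are hypothetical illustration values, and only detected peaks (height above zero) should be passed in.

```python
import math


def ski_slope(amplicon_sizes_bp, peak_heights_rfu):
    """Least-squares slope of ln(peak height) versus amplicon size (per bp)."""
    xs = list(amplicon_sizes_bp)
    ys = [math.log(h) for h in peak_heights_rfu]  # requires heights > 0
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


# Hypothetical peak heights (RFU) at four amplicon sizes; not measured data.
sizes = [100, 200, 300, 400]
intact_heights = [2000, 1900, 2100, 2000]
degraded_heights = [2000, 900, 400, 150]

slope_intact = ski_slope(sizes, intact_heights)
slope_degraded = ski_slope(sizes, degraded_heights)
print(f"intact slope:   {slope_intact:.5f} per bp")
print(f"degraded slope: {slope_degraded:.5f} per bp")
```

A slope near zero is what a balanced profile would give under this summary, while the degraded example yields a clearly negative slope. Any threshold separating the two would need to be established during validation.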

Table 2: Key Research Reagent Solutions for DNA Degradation Studies

| Reagent/Equipment | Primary Function | Application Note |
|---|---|---|
| EDTA (Ethylenediaminetetraacetic acid) | Chelates magnesium ions, inhibiting nuclease activity (enzymatic breakdown) [2]. | Balance is critical; excess EDTA can inhibit downstream PCR [2]. |
| UV-C Light Source (254 nm) | Induces DNA strand breaks and pyrimidine dimers to create artificially degraded DNA for validation [1]. | Allows for reproducible generation of degradation patterns in minutes [1]. |
| Bead Ruptor Elite Homogenizer | Mechanical homogenization for efficient lysis of tough samples while minimizing DNA shearing [2]. | Precise control over speed and cycle duration preserves DNA integrity [2]. |
| Antioxidants (e.g., DTT) | Protect DNA from oxidative damage by neutralizing reactive oxygen species (ROS) [2]. | Useful in storage buffers for precious or low-copy-number samples. |
| Short Amplicon qPCR Assay | Quantifies and assesses the degree of DNA degradation by targeting amplicons of varying lengths [3]. | A decreased long/short amplicon ratio is a key indicator of degradation [1]. |

DNA Purification and Quality Control for Degraded Samples

Specialized DNA analysis techniques adapted from oligonucleotide workflows offer high-resolution solutions for analyzing degraded DNA [3].

- Reversed-Phase High Performance Liquid Chromatography (RP-HPLC): This technique is effective for purifying fragmented DNA, removing inhibitors, and separating degradation products from intact DNA. It is critical for maximizing recovery and minimizing interference in downstream forensic analysis [3].

- Liquid Chromatography-Mass Spectrometry (LC-MS): LC-MS reveals chemical modifications, sequence variations, and degradation patterns—even in harsh sample conditions. Optimization of ionization conditions improves detection sensitivity and confidence for trace forensic samples [3].

- Capillary Electrophoresis (CE): CE provides high-resolution separation of DNA fragments by size, which is ideal for the complex mixture of fragments in a degraded forensic sample. It is the standard method for assessing DNA fragment size distribution and for STR fragment analysis [3].

Data Presentation and Analysis in Degradation Studies

Effective presentation of quantitative data from degradation experiments, such as qPCR results and fragment size distributions, is essential for accurate communication in forensic science [4].

- Frequency Tables for Fragment Size Distribution: When analyzing fragment sizes via capillary electrophoresis, data should be organized into a frequency table with class intervals [4]. The class intervals (size bins) should be equal in size, and the number of intervals should typically be between 5 and 16 for clarity [5]. The table should include absolute frequencies (count of fragments in each bin) and relative frequencies (percentage of the total) [4].

- Histograms for Visualizing Size Distribution: A histogram is the appropriate graphical representation for the frequency distribution of DNA fragment sizes [6]. The horizontal axis is a number line representing fragment size (in base pairs), and the vertical axis represents the frequency (absolute or relative) of fragments in each size class. The bars are contiguous, touching each other without space, because the data (fragment size) is continuous [5].
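The frequency-table construction described above can be sketched in a few lines. This is a minimal illustration that assumes fragment sizes are available as whole base-pair values; the function name `frequency_table` and the example sizes are hypothetical. It uses equal-width class intervals and reports both absolute and relative frequencies, which are the same values a histogram of the data would plot.

```python
def frequency_table(sizes_bp, bin_width, lower=0):
    """Return rows of (bin_start, bin_end, absolute_count, relative_percent).

    Bins are equal-width, contiguous, and start at `lower`.
    Sizes are assumed to be integers in base pairs, all >= `lower`.
    """
    if not sizes_bp:
        raise ValueError("at least one fragment size is required")
    n_bins = (max(sizes_bp) - lower) // bin_width + 1
    counts = [0] * n_bins
    for size in sizes_bp:
        counts[(size - lower) // bin_width] += 1
    total = len(sizes_bp)
    return [
        (lower + i * bin_width, lower + (i + 1) * bin_width - 1, c, 100.0 * c / total)
        for i, c in enumerate(counts)
    ]


# Hypothetical fragment sizes (bp) from an electropherogram export.
example_sizes = [62, 75, 88, 110, 130, 145, 160, 210, 240, 330]
table = frequency_table(example_sizes, bin_width=50)

for start, end, absolute, relative in table:
    print(f"{start:>4}-{end:<4} bp  n={absolute:<3} {relative:5.1f}%")
```

With a 50 bp class width the example produces seven intervals, which falls inside the 5 to 16 range recommended above. Empty classes are kept so that the histogram bars remain contiguous.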

Table 3: Simulated DNA Fragment Size Distribution After UV-C Exposure

| Fragment Size Class (bp) | Absolute Frequency (0 min) | Absolute Frequency (3 min UV-C) | Absolute Frequency (5 min UV-C) | Relative Frequency % (5 min UV-C) |
|---|---|---|---|---|
| > 500 | 850 | 150 | 25 | 2.5% |
| 300 - 500 | 120 | 280 | 100 | 10.0% |
| 200 - 299 | 25 | 320 | 225 | 22.5% |
| 100 - 199 | 5 | 200 | 450 | 45.0% |
| < 100 | 0 | 50 | 200 | 20.0% |
| Total Yield (ng) | 1000 | 800 | 500 | - |
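The relative frequencies in the simulated table follow directly from the absolute counts. The short sketch below recomputes them for all three exposure times using the counts from the table; the variable and function names are illustrative only.

```python
# Absolute frequencies copied from the simulated table, ordered from the
# largest size class (> 500 bp) to the smallest (< 100 bp).
size_classes = ["> 500", "300 - 500", "200 - 299", "100 - 199", "< 100"]
table3_counts = {
    "0 min": [850, 120, 25, 5, 0],
    "3 min UV-C": [150, 280, 320, 200, 50],
    "5 min UV-C": [25, 100, 225, 450, 200],
}


def relative_frequencies(counts):
    """Convert absolute counts to percentages of the column total."""
    total = sum(counts)
    return [round(100.0 * c / total, 1) for c in counts]


relative = {label: relative_frequencies(c) for label, c in table3_counts.items()}

for label, percentages in relative.items():
    row = ", ".join(f"{cls}: {p}%" for cls, p in zip(size_classes, percentages))
    print(f"{label}: {row}")
```

In this simulated data set the share of fragments shorter than 200 bp rises from 0.5% before exposure to 65% after five minutes, which is the shift a histogram of the same data would show.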

The analysis of degraded DNA remains a significant challenge in forensic genetics, impacting the success of human identification from compromised samples such as skeletal remains, aged evidence, and forensic casework. DNA degradation involves the fragmentation of DNA strands into shorter pieces through enzymatic, chemical, and environmental processes, which directly affects the amplification efficiency of forensic markers [7]. The extent of degradation determines the maximum achievable amplicon length, making marker selection critical for analytical success. This application note examines the differential impacts of degradation on Short Tandem Repeats (STRs) and Single Nucleotide Polymorphisms (SNPs), and outlines optimized protocols for their analysis within the context of sensitivity testing for forensic panels.

Technical Background: Degradation Mechanisms and Marker Selection

DNA Degradation Processes

Upon cell death, endogenous nucleases become activated and begin fragmenting the DNA backbone. This is followed by exogenous enzymatic attack from microorganisms and chemical processes including hydrolytic and oxidative damage [7]. Hydrolytic attacks cause depurination and base deamination, while oxidative damage leads to base modifications and strand breaks. Environmental factors, particularly temperature, humidity, UV radiation, and pH, significantly accelerate these processes, resulting in DNA fragments typically ranging from 100 to 500 base pairs [7]. The fragmentation pattern is non-random, with longer fragments being disproportionately affected.
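A simple calculation helps show why longer targets suffer most. The sketch below deliberately uses the simplest possible model, in which strand breaks occur independently and with equal probability at every position. This is an assumption made for illustration and not the model described in [7]. Under it, the chance that a target of length L contains no break is (1 - p)^L, where p is approximated as the reciprocal of the mean fragment length. The function name and the mean fragment length of 150 bp are hypothetical.

```python
def intact_fraction(amplicon_bp, mean_fragment_bp):
    """Fraction of templates with no break inside an amplicon.

    Assumes independent, uniformly distributed breaks with a per-base
    break probability of 1 / mean_fragment_bp (a simplifying assumption).
    """
    p_break = 1.0 / mean_fragment_bp
    return (1.0 - p_break) ** amplicon_bp


# Compare short SNP-sized targets with long STR-sized targets.
for amplicon in (75, 145, 235, 450):
    fraction = intact_fraction(amplicon, mean_fragment_bp=150)
    print(f"{amplicon:>3} bp amplicon: {fraction:.3f} of templates intact")
```

Even under this idealized model the intact fraction falls exponentially with amplicon length, so a 450 bp target is far less likely to survive than a 75 bp target in the same sample.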

Key Forensic Genetic Markers

Forensic genetics employs several marker types with distinct characteristics and degradation tolerance:

- Short Tandem Repeats (STRs): Highly polymorphic length-based markers (1-6 bp repeat units) requiring longer intact DNA templates (typically 100-450 bp) for successful amplification [8].

- Single Nucleotide Polymorphisms (SNPs): Biallelic base substitutions distributed throughout the genome with minimal amplicon requirements (often <150 bp) [7].

- Microhaplotypes: Novel compound markers containing multiple closely linked SNPs within 200 bp, combining high polymorphism with minimal stutter artifacts [9].

Table 1: Comparative Characteristics of Forensic Genetic Markers

| Characteristic | STRs | SNPs | Microhaplotypes |
|---|---|---|---|
| Marker Type | Length polymorphism | Sequence polymorphism | Multi-SNP haplotype |
| Amplicon Size | 100-450 bp [8] | Typically <150 bp [7] | 60-150 bp [9] |
| Degradation Resistance | Low | High | High |
| Multiplex Capacity | Moderate (CE limited by dyes) | High (NGS/Microarrays) [8] | High |
| Stutter Artifacts | Present | Absent | Absent [9] |
| Mixture Deconvolution | Challenging | Moderate | Enhanced |

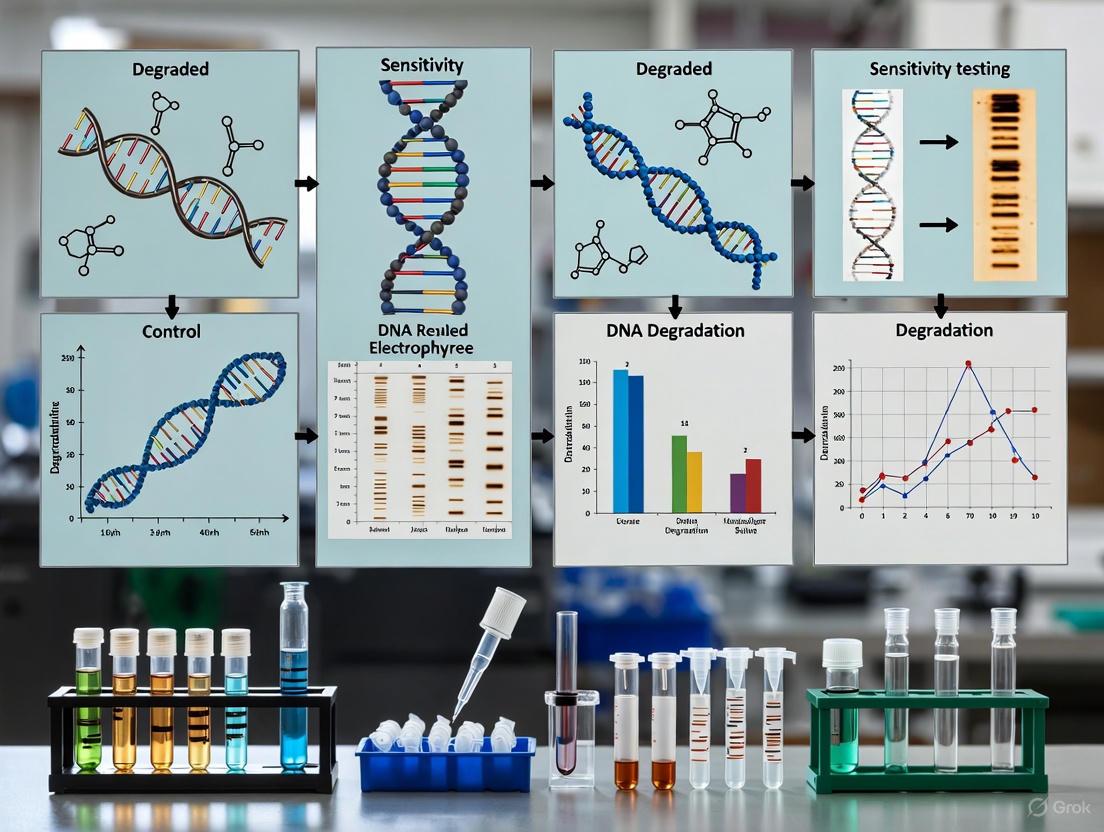

Figure 1: Impact of DNA Degradation on Forensic Marker Analysis. Environmental factors accelerate DNA fragmentation, disproportionately affecting STR analysis due to longer amplicon requirements compared to SNP and microhaplotype markers.

Experimental Approaches for Degraded DNA Analysis

Quantitative Assessment of DNA Degradation

Accurate quantification of DNA quality and quantity is essential before STR amplification. Traditional real-time quantitative PCR (qPCR) methods often fail with highly degraded samples where fragments are <150 bp [10]. A novel triplex droplet digital PCR (ddPCR) system has been developed to precisely quantify DNA degradation by targeting three autosomal conserved regions of 75 bp, 145 bp, and 235 bp fragment sizes [10]. This approach introduces a Degradation Rate (DR) indicator that combines absolute quantification of copy numbers for DNA fragments of varying sizes, enabling comprehensive evaluation of degradation severity.

Table 2: Performance Characteristics of Degradation Assessment Methods

| Method | Principle | Effective Range | Limitations | Advantages |
|---|---|---|---|---|
| Agarose Gel Electrophoresis | Visual fragment size distribution | High DNA concentrations [10] | Low precision, qualitative | Simple, inexpensive |
| qPCR with Degradation Index | Ratio of long:short amplicons | Mild-moderate degradation [10] | Fails with severe degradation (<150 bp) | Quantitative, established workflow |
| Triplex ddPCR System | Absolute quantification of 75/145/235 bp targets | All degradation levels [10] | Requires specialized equipment | High precision, inhibitor tolerant, absolute quantification |
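Digital PCR obtains absolute copy numbers from the fraction of positive droplets using Poisson statistics: the mean number of target copies per droplet is λ = -ln(1 - positive/total). The sketch below illustrates that general calculation for three targets. The nominal droplet volume of 0.85 nL and all droplet counts are assumptions for illustration, and the vendor software performs its own version of this computation.

```python
import math

# Assumed nominal droplet volume in microlitres (0.85 nL); check the
# instrument documentation before relying on this value.
DROPLET_VOLUME_UL = 0.00085


def copies_per_ul(positive, total, droplet_volume_ul=DROPLET_VOLUME_UL):
    """Poisson-corrected target concentration in copies per uL of reaction."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total and total > 0")
    mean_copies_per_droplet = -math.log(1.0 - positive / total)
    return mean_copies_per_droplet / droplet_volume_ul


# Hypothetical (positive, total) droplet counts per target length in bp.
hypothetical_counts = {75: (6000, 15000), 145: (3000, 15000), 235: (900, 15000)}

concentrations = {
    bp: copies_per_ul(pos, tot) for bp, (pos, tot) in hypothetical_counts.items()
}
for bp, conc in concentrations.items():
    print(f"{bp:>3} bp target: {conc:8.1f} copies/uL")
```

In a degraded sample the measured concentration is expected to fall as target length increases, as in this made-up example. The calculation is undefined when every droplet is positive, which is why saturated wells need dilution and re-measurement.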

Protocol: Triplex ddPCR Degradation Assessment

Principle: Simultaneous quantification of three target lengths (75 bp, 145 bp, 235 bp) using a ddPCR system to determine fragment length distribution and degradation rate [10].

Materials:

- QX200 Droplet Digital PCR System (Bio-Rad)

- Triplex ddPCR assay (75 bp, 145 bp, 235 bp targets)

- HiPure Universal DNA Kit (Magen Biotechnology)

- Degraded DNA samples (formalin-fixed paraffin-embedded tissues, aged blood samples)

Procedure:

- DNA Extraction: Extract DNA using HiPure Universal DNA Kit according to manufacturer's protocols.

- Reaction Setup:

- Prepare 20 μL reaction mixture containing:

- 10 μL of 2× ddPCR Supermix

- 1 μL of triplex primer-probe mix (optimized concentrations)

- 5 μL of template DNA

- 4 μL of nuclease-free water

- Include no-template controls for contamination assessment.

- Droplet Generation:

- Transfer 20 μL reaction mixture to DG8 cartridge.

- Add 70 μL of droplet generation oil.

- Place in QX200 Droplet Generator.

- PCR Amplification:

- Transfer emulsified samples to 96-well plate.

- Seal plate and run PCR with optimized thermal cycling conditions:

- 95°C for 10 min (initial denaturation)

- 40 cycles of: 94°C for 30 s, 60°C for 60 s

- 98°C for 10 min (enzyme deactivation)

- 4°C hold

- Droplet Reading and Analysis:

- Place plate in QX200 Droplet Reader.

- Analyze using QuantaSoft software to determine target copy numbers.

- Calculate the Degradation Rate (DR) from the copy numbers of the 75 bp, 145 bp, and 235 bp targets using the formula defined in [10].

Interpretation: Lower DR values indicate more severe degradation, with negative values suggesting preferential loss of longer fragments.
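When several samples are run together, the Reaction Setup volumes above are usually scaled into a master mix. The sketch below shows that arithmetic using the per-reaction volumes listed in the protocol. The 10% pipetting overage and the function name are assumptions for illustration, and template DNA is excluded because it is added to each well individually.

```python
# Per-reaction volumes (uL) taken from the Reaction Setup step.
PER_REACTION_UL = {
    "2x ddPCR Supermix": 10.0,
    "triplex primer-probe mix": 1.0,
    "nuclease-free water": 4.0,
}
TEMPLATE_UL = 5.0  # added separately to each well


def master_mix(n_reactions, overage=0.10):
    """Scale shared components for n reactions plus a pipetting overage."""
    factor = n_reactions * (1.0 + overage)
    return {name: round(vol * factor, 2) for name, vol in PER_REACTION_UL.items()}


# Example: 7 samples plus 1 no-template control.
volumes = master_mix(n_reactions=8)
for component, microlitres in volumes.items():
    print(f"{component}: {microlitres} uL")
print(f"dispense {sum(PER_REACTION_UL.values())} uL mix + {TEMPLATE_UL} uL template per well")
```

Each well receives 15 μL of master mix and 5 μL of template (or water for the no-template control), giving the 20 μL reaction volume specified above.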

Protocol: SNP-Based Analysis of Degraded DNA Using Microhaplotypes

Principle: Microhaplotypes (SNP-SNP markers) enable analysis of highly degraded DNA through short amplicons (60-150 bp) and absence of stutter artifacts, making them ideal for unbalanced degraded mixtures [9].

Materials:

- Amplification Refractory Mutation System (ARMS) PCR primers

- SNaPshot Multiplex Kit (Thermo Fisher Scientific)

- ABI 3500 Genetic Analyzer (Applied Biosystems)

- 15 SNP-SNP marker panel [9]

Procedure:

- ARMS-PCR Amplification:

- Design allele-specific primers for each SNP-SNP marker with amplicons <150 bp.

- Prepare 10 μL reaction mixture containing:

- 1× PCR buffer

- 2.5 mM MgCl₂

- 200 μM dNTPs

- 0.5 U DNA polymerase

- 1 μL template DNA (0.025-0.05 ng for sensitivity testing)

- Primer mix (optimized concentrations)

- Thermal cycling:

- 95°C for 5 min

- 32 cycles of: 95°C for 30 s, optimized annealing temperature for 30 s, 72°C for 30 s

- 72°C for 7 min

- SNaPshot Multiplex Reaction:

- Purify PCR products with shrimp alkaline phosphatase (SAP) and exonuclease I (Exo I)

- Prepare SNaPshot reaction:

- 3 μL purified PCR product

- 2 μL SNaPshot Multiplex Ready Reaction Mix

- 1 μL primer mix (0.5-1.0 μM each)

- Thermal cycling:

- 25 cycles of: 96°C for 10 s, 50°C for 5 s, 60°C for 30 s

- Capillary Electrophoresis:

- Purify SNaPshot products with SAP

- Mix 1 μL purified product with 8.7 μL Hi-Di formamide and 0.3 μL GeneScan-120 LIZ size standard

- Denature at 95°C for 5 min, snap-cool on ice

- Analyze on ABI 3500 Genetic Analyzer using POP-7 polymer

- Analyze data with GeneMapper ID-X v1.2 software

Validation: Test sensitivity with dilution series (1:1 to 1:1000 mixtures) and artificially degraded DNA (heat treatment at 98°C for 35-45 min) [9].
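For planning the dilution series, it helps to know how much minor-contributor DNA each mixture ratio actually delivers. The sketch below converts a total input and a minor:major ratio into minor-contributor mass and approximate genome copies. It assumes roughly 3.3 pg per haploid human genome, a commonly used approximation; the function names and the 1 ng total input are hypothetical.

```python
HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome


def minor_contributor_pg(total_pg, major_parts, minor_parts=1):
    """Mass of the minor contributor in a minor:major mixture."""
    return total_pg * minor_parts / (major_parts + minor_parts)


def haploid_copies(mass_pg):
    """Approximate number of haploid genome copies in a given DNA mass."""
    return mass_pg / HAPLOID_GENOME_PG


total_input_pg = 1000.0  # hypothetical 1 ng total input
for major in (1, 10, 100, 1000):
    minor_pg = minor_contributor_pg(total_input_pg, major)
    print(
        f"1:{major:<4} minor = {minor_pg:8.2f} pg "
        f"(~{haploid_copies(minor_pg):.1f} haploid copies)"
    )
```

At a 1:1000 ratio with 1 ng of total input, the minor contributor supplies about 1 pg, which is less than one haploid genome copy under this approximation. Stochastic effects are therefore expected to dominate at the extreme end of the series.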

Figure 2: Decision Workflow for Analysis of Degraded Forensic Samples. The analytical pathway is determined by DNA quality assessment, with SNP-based methods preferred for severely degraded samples.

Advanced Applications and Emerging Technologies

Next-Generation Sequencing for Degraded DNA

Massively Parallel Sequencing (MPS) technologies enable simultaneous analysis of multiple marker types (STRs, SNPs, microhaplotypes) from degraded samples by leveraging short amplicon designs [11] [8]. MPS provides enhanced mixture deconvolution through linked SNP analysis and enables parallel investigation of biogeographical ancestry, phenotypic traits, and identity from minimal DNA [11]. For severely compromised samples, whole genome sequencing using ancient DNA (aDNA) techniques—originally developed for archaeological specimens—can recover genetic information from fragments as short as 50-70 bp [11].

Genetic Record-Matching Between SNP and STR Profiles

When degradation prevents STR profiling, genetic record-matching enables comparison between SNP profiles from degraded samples and existing STR databases [12]. This approach leverages linkage disequilibrium between STRs and neighboring SNPs to establish matches despite non-overlapping markers. The method shows promise even with low-coverage genome data (5-10% coverage), significantly expanding investigative possibilities for cold cases and unidentified remains [12].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Degraded DNA Analysis

| Reagent/Kit | Application | Key Features | Reference |
|---|---|---|---|
| HiPure Universal DNA Kit | DNA extraction from challenging samples | Optimized for degraded samples, inhibitor removal | [10] |
| QX200 Droplet Digital PCR System | Absolute quantification of degraded DNA | High precision, tolerant to PCR inhibitors | [10] |
| PowerPlex 35GY System | STR analysis with mini-STRs | Includes 15 mini-STRs (<250 bp) for degraded DNA | [8] |
| SNaPshot Multiplex Kit | SNP/microhaplotype multiplexing | CE-based, minimal amplicon requirements | [9] |
| MagMAX cfDNA Isolation Kit | Cell-free DNA extraction | Optimized for short fragments (e.g., cffDNA) | [9] |
| GlobalFiler PCR Amplification Kit | Conventional STR analysis | 21+ markers, requires high DNA quality | [8] |

DNA degradation presents significant challenges for traditional STR analysis due to the preferential amplification of shorter fragments in compromised samples. SNPs and microhaplotypes offer superior performance with degraded DNA through their minimal amplicon requirements and absence of stutter artifacts. The triplex ddPCR system provides a precise method for quantifying degradation severity and predicting STR amplification success. For forensic laboratories handling challenging samples, implementing a tiered approach—beginning with DNA quality assessment, followed by marker selection appropriate for the degradation level—maximizes the likelihood of obtaining usable genetic profiles. Emerging technologies including MPS and genetic record-matching further expand the possibilities for extracting investigative leads from even the most severely degraded forensic evidence.

Deoxyribonucleic acid (DNA) is a fundamental molecule in forensic science, providing unique genetic information that enables the identification of individuals from biological evidence [13]. However, the integrity of DNA is often compromised between the time of deposition at a crime scene and its analysis in the laboratory. DNA degradation is a dynamic process that fragments the DNA molecule into progressively smaller pieces, presenting significant challenges for forensic genetic analysis [13] [7]. Despite these challenges, advanced extraction and analytical methods now enable the study of poorly preserved and degraded DNA, making it a potent tool in forensic investigations [13] [7].

Understanding the sources, types, and degradation mechanisms of forensic samples is crucial for selecting appropriate analytical strategies. This knowledge forms the essential context for sensitivity testing of forensic DNA panels, as the performance of genetic markers is directly influenced by the preservation state of the DNA template [7]. The extent of DNA preservation in biological evidence depends on numerous factors, with environmental variables being among the most influential [7]. This article provides a comprehensive overview of degraded forensic samples, from cold cases to ancient DNA, and presents detailed protocols for their analysis within the framework of sensitivity testing for forensic DNA panels.

Mechanisms and Impact of DNA Degradation

Biochemical Mechanisms of DNA Degradation

Upon an organism's death, or when biological material is separated from its source, enzymatic DNA repair mechanisms cease, exposing the genome to destructive factors [7]. The degradation process occurs through several biochemical mechanisms:

- Hydrolytic damage: Water molecules attack the DNA, leading to depurination (loss of purine bases) and deamination of cytosine to uracil [7]. These lesions can cause strand breaks and miscoding during polymerase chain reaction (PCR) amplification.

- Oxidative damage: Free radicals and reactive oxygen species cause base modifications, sugar alterations, and strand breaks [13] [7].

- Enzymatic cleavage: Endogenous nucleases become activated and begin cleaving the DNA backbone, followed by exogenous enzymatic attack from microbes that colonize decomposing remains [7].

- UV radiation: Exposure to ultraviolet light induces the formation of cyclobutane pyrimidine dimers between neighboring pyrimidines, which distort the DNA helix and block polymerase activity [14] [7].

The most visible outcome of these processes is fragmentation, where long, continuous DNA strands are cleaved into shorter pieces, typically ranging from 50 to 500 base pairs [7]. This fragmentation directly impacts the success of subsequent genetic analysis, as the reduced size of DNA fragments may hamper the performance of standard genetic tests [14].

Impact on Genetic Analysis

The effectiveness of forensic DNA profiling, particularly short tandem repeat (STR) analysis, depends on how well the DNA in the biological sample has been preserved [7]. STR markers typically display fragment sizes between 100 and 450 base pairs, and their successful analysis depends primarily on the availability of intact target molecules within that size range [14]. With increasing degradation of nuclear DNA, STR profiles become incomplete due to preferential amplification of shorter fragments, resulting in loss of discriminatory power [14] [7].

Table 1: Impact of DNA Degradation on Genetic Markers

| Genetic Marker | Typical Amplicon Size | Impact of Degradation | Suitable for Degraded DNA? |
|---|---|---|---|
| Standard STRs | 100-450 bp [14] | Significant dropout of larger loci [7] | Limited |
| Mini-STRs | <100-150 bp | Reduced dropout compared to standard STRs | Yes [15] |
| Autosomal SNPs | 50-150 bp [7] | Minimal impact due to short length | Yes [7] [16] |
| Mitochondrial DNA | Variable (can target <50 bp) [14] | Minimal impact due to high copy number and circular structure | Yes [7] |
| Identity SNPs | ~100 bp [16] | Minimal impact with optimized panels | Yes [16] |

Forensic scientists encounter degraded DNA across diverse sample types, each with characteristic preservation challenges and degradation patterns. Understanding these sources is crucial for selecting appropriate analytical approaches.

Sample Typology and Degradation Characteristics

Table 2: Forensic Sample Types and Their Degradation Characteristics

| Sample Type | Common Sources | Degradation Factors | Typical DNA Fragment Size | Recommended Analysis Methods |
|---|---|---|---|---|
| Skeletal Remains | Cold cases, mass disasters, ancient DNA [7] | Environmental exposure, microbial activity, time [7] | 50-200 bp [7] | NGS, mtDNA, targeted SNPs [7] |
| Formalin-Fixed Paraffin-Embedded (FFPE) Tissues | Medical archives, pathological specimens [7] | Protein-DNA crosslinking, nucleic acid fragmentation [7] | <100-300 bp [17] | Specialized extraction, RC-PCR [16] |
| Touch DNA | Crime scene evidence [16] | Low quantity, environmental exposure, inhibitor presence [16] | Variable (often fragmented) [16] | Enhanced amplification, mini-STRs [15] |
| Ancient DNA | Archaeological specimens, historical remains [13] | Extreme age, hydrolytic damage, oxidative damage [7] | 30-100 bp [7] | NGS, specialized authentication [13] |
| Burned/Charred Remains | Arson cases, fire scenes [13] | Thermal degradation, oxidation [13] | Highly fragmented (<100 bp) [13] | Mini-STRs, SNPs, mtDNA [7] |
| Hair Shafts | Crime scene evidence, missing persons cases [18] | Keratinization, low nuclear DNA content | Primarily mtDNA [7] | mtDNA sequencing, NGS [7] |

Environmental Factors Influencing DNA Degradation

The preservation of DNA postmortem is determined by a complex interplay of environmental conditions that collectively influence degradation rates [7]:

Temperature: Considered the most influential factor, temperature controls the kinetic energy of all atoms and molecules, dictating the rate of every chemical reaction, including hydrolysis and oxidation [7]. A 10°C rise can double or triple the rate of destructive chemical processes [7]. Constant cold environments like permafrost show exceptional DNA preservation, while high heat conditions accelerate degradation [7].

Humidity and Water Activity: Moisture acts as both a necessary reactant in hydrolysis and a prerequisite for microbial life [7]. Environments with high water activity are extremely detrimental to DNA preservation.

Ultraviolet Radiation: UV exposure, particularly UV-C light at 254 nm, causes photochemical changes in DNA, including cyclobutane pyrimidine dimers and 6-4-photoproducts [14]. These lesions reduce the amount of intact, amplifiable DNA available for PCR-based genetic analysis [14].

pH: Highly acidic or alkaline conditions catalyze chemical degradation, while neutral to slightly alkaline pH is generally most favorable for preservation [7]. Soil pH profoundly affects preservation, with decomposition proceeding up to three times faster in acidic soils compared to alkaline counterparts [7].

Microbial Activity: Microorganisms colonize decomposing remains and biological traces, releasing nucleases that further fragment genetic material [7]. Microbial activity is heavily influenced by temperature, moisture, and oxygen availability.

Time: The duration of exposure to environmental conditions is a critical variable, with longer intervals generally leading to more extensive degradation [7].
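The temperature rule of thumb given above, where a 10°C rise doubles or triples reaction rates, corresponds to a temperature coefficient (commonly written Q10) of 2 to 3. The sketch below applies that relationship to other temperature differences. It is an illustration of the rule of thumb only, the function name is hypothetical, and real degradation kinetics depend on the other factors listed above as well.

```python
def rate_multiplier(delta_t_celsius, q10):
    """Relative reaction rate after a temperature change, given Q10."""
    return q10 ** (delta_t_celsius / 10.0)


# Compare storage at room temperature (about 20 C) with other conditions.
for label, delta in (("refrigerated, 4 C", -16), ("hot vehicle, 50 C", 30)):
    low = rate_multiplier(delta, q10=2.0)
    high = rate_multiplier(delta, q10=3.0)
    print(f"{label}: rate x{min(low, high):.2f} to x{max(low, high):.2f} vs 20 C")
```

Under this rule a 30°C increase multiplies the rate of chemical damage by roughly 8 to 27, which is consistent with the poor recovery often seen from heat-exposed evidence.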

Experimental Protocols for Degraded DNA Analysis

Artificial DNA Degradation Protocol

For sensitivity testing of forensic DNA panels, generating artificially degraded DNA with predictable fragment sizes is essential for method validation and optimization [14]. The following protocol uses UV-C irradiation to produce controlled DNA degradation.

Protocol Title: Rapid, Reproducible Generation of Artificially Degraded DNA Using UV-C Irradiation [14]

Principle: UV-C light at 254 nm induces photochemical changes in DNA, including cyclobutane pyrimidine dimers and 6-4-photoproducts, leading to predictable fragmentation patterns suitable for mimicking naturally degraded forensic samples [14].

Materials and Equipment:

- DNA extracts (1 ng/μL, 7 ng/μL, 14 ng/μL concentrations)

- UV-C irradiation unit equipped with three 30 W G13 germicidal lamps (main spectral line at 254 nm)

- 0.6 mL microtubes (Axygen, Corning Life Sciences)

- Real-time quantitative PCR system (e.g., QuantStudio 5)

- SD quants real-time PCR assay [14]

- STR typing kit (e.g., AmpFLSTR NGM SElect PCR Amplification Kit)

Procedure:

- Sample Preparation: Extract DNA from whole blood using standardized methods (e.g., QIAamp DNA Blood Maxi Kit). Quantify DNA via real-time quantitative PCR targeting both nuclear and mitochondrial DNA regions (69 bp and 143 bp) [14].

- Dilution and Aliquoting: Dilute DNA with low TE buffer (10 mM Tris, 0.1 mM EDTA, pH 8) to prepare stock solutions of 1 ng/μL, 7 ng/μL, and 14 ng/μL. Prepare aliquots of 10 μL or 20 μL in 0.6 mL microtubes [14].

- UV-C Exposure: Place sample aliquots in microtubes laid on their side on the laboratory bench under the UV-C light source at a distance of approximately 11 cm from the lamps. Expose aliquots to UV-C radiation for timed intervals (0-5 minutes), removing replicates at 30-second intervals [14].

- Post-Irradiation Analysis: Quantify the degraded DNA aliquots using SD quants on a real-time PCR system, then analyze them by STR typing on a genetic analyzer. Calculate the degradation index (DI) by dividing the DNA quantity of the long target (mt143bp) by that of the short target (mt69bp) [14].

Quality Control: Include non-irradiated controls in each experiment. Monitor degradation pattern reproducibility across technical replicates. Validate fragmentation patterns using capillary electrophoresis.

Analysis of Degraded DNA Using Modified PCR Methods

For analyzing highly degraded DNA samples where conventional STR typing fails, alternative approaches targeting shorter fragments are necessary.

Protocol Title: Reverse Complement PCR (RC-PCR) for Analysis of Highly Degraded DNA [16]

Principle: RC-PCR is a novel target enrichment and library preparation method for next generation sequencing that uses two reverse-complement, target-specific primer probes and a universal primer to generate target-specific index primers capable of multiplex amplification of target regions [16].

Materials and Equipment:

- RC-PCR 85-plex SNP panel (e.g., IDseek SNP85 panel)

- Reverse complement PCR reagents

- Next generation sequencing platform

- DNA quantification system (e.g., qPCR)

- Fragmented DNA samples (50-100 bp fragment size)

Procedure:

- DNA Quantification: Quantify input DNA using sensitive qPCR methods. For highly degraded samples, use assays targeting short fragments (50-100 bp) to accurately assess amplifiable DNA content [16].

- RC-PCR Reaction Setup: Set up reactions according to manufacturer specifications. The closed-tube system combines target enrichment and indexing in a single reaction, reducing handling steps and contamination risk [16].

- Library Preparation: The RC-PCR method automatically generates sequencing-ready libraries through the hybridization and extension processes.

- Sequencing and Analysis: Perform sequencing on appropriate NGS platform. Analyze data using specialized software to call SNP genotypes.

Performance Characteristics: Preliminary tests of the RC-PCR 85-plex demonstrate sensitivity with 78% of SNP loci recovered at 31 pg input DNA and over 99% recovery at 62 pg input. Allele dropout rates of 6-8% have been observed at low DNA inputs [16].
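
Locus recovery and allele dropout, the two performance figures quoted above, can be computed from genotype calls as in the following sketch. The data layout (alleles as `(locus, allele)` pairs), the function names, and the example values are assumptions made for illustration; they are not part of the RC-PCR software or the cited study.

```python
def locus_recovery_pct(called_loci, panel_size=85):
    """Percentage of panel loci that produced a genotype call."""
    if panel_size <= 0:
        raise ValueError("panel_size must be positive")
    return 100.0 * called_loci / panel_size


def allele_dropout_pct(expected_alleles, observed_alleles):
    """Percentage of reference alleles missing from the test profile.

    Both arguments are iterables of (locus, allele) pairs; the expected
    set would come from a high-input reference profile of the same donor.
    """
    expected = set(expected_alleles)
    if not expected:
        raise ValueError("expected_alleles must not be empty")
    missing = expected - set(observed_alleles)
    return 100.0 * len(missing) / len(expected)


# Hypothetical example: 66 of 85 loci called at low input
print(round(locus_recovery_pct(66), 1))

# Hypothetical reference and low-input calls for two SNP loci
reference = [("rs0001", "A"), ("rs0001", "G"), ("rs0002", "C"), ("rs0002", "T")]
low_input = [("rs0001", "A"), ("rs0001", "G"), ("rs0002", "C")]
print(allele_dropout_pct(reference, low_input))
```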

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Essential Research Reagents for Degraded DNA Analysis

| Reagent/Material | Function | Application Notes | References |

|---|---|---|---|

| UV-C Irradiation Unit | Artificial degradation of DNA for validation studies | Custom-built unit with three 30 W G13 germicidal lamps (254 nm) at ~11 cm distance | [14] |

| SD Quants Real-Time PCR Assay | DNA quantification and degradation assessment | Targets one nuclear and two differently sized mtDNA regions (69 bp and 143 bp) | [14] |

| Reverse Complement PCR (RC-PCR) Kit | Target enrichment and library preparation for degraded DNA | Enables analysis of DNA fragmented to 50-100 bp; 85-plex SNP panel available | [16] |

| Magnetic Bead-Based Extraction Kits | DNA extraction from challenging samples | Effective for bone, teeth, and formalin-fixed tissues; enables inhibitor removal | [7] [17] |

| Next-Generation Sequencing Kits | Comprehensive analysis of fragmented DNA | ForenSeq DNA Signature Prep Kit includes STRs, SNPs; suitable for degraded samples | [15] |

| Mini-STR Amplification Kits | STR analysis of degraded DNA | Shorter amplicons (typically <200 bp) reduce dropout in degraded samples | [15] |

| Quantifiler Trio DNA Quantification Kit | DNA quantification and degradation assessment | Simultaneously targets long and short genomic targets to calculate degradation index | [15] |

The analysis of degraded DNA samples presents significant challenges in forensic genetics, but ongoing technological advancements continue to expand the limits of what is possible. Understanding the sources, types, and degradation mechanisms of forensic samples provides the essential foundation for developing and validating sensitive DNA analysis panels. The protocols and methodologies described herein, from artificial degradation models to advanced amplification techniques, offer forensic researchers comprehensive tools for sensitivity testing of forensic DNA panels. As the field evolves with emerging technologies like NGS, microfluidic platforms, and advanced bioinformatics, the ability to extract meaningful genetic information from even the most compromised samples will continue to improve, enhancing the power of forensic science to deliver justice in increasingly challenging cases.

In forensic genetic casework, analyzing compromised deoxyribonucleic acid (DNA) from difficult samples, such as ancient bones, formalin-fixed tissues, or environmentally exposed crime scene evidence, is routine. DNA degradation is a dynamic process that progressively fragments the DNA molecule, directly impacting the analytical sensitivity of forensic DNA panels and the reliability of generated profiles [13]. Defining sensitivity benchmarks for these compromised samples is therefore not merely an academic exercise but a fundamental laboratory requirement. It ensures that the data presented in court are both scientifically robust and reproducible, forming the core of a reliable forensic service. This document outlines standardized protocols and metrics for establishing laboratory-specific sensitivity thresholds for degraded DNA samples, framed within the broader context of validation requirements for forensic DNA testing laboratories [19] [20].

The integrity of DNA is compromised by factors including hydrolysis, oxidation, and ultraviolet radiation, which break the sugar-phosphate backbone and nitrogenous base bonds [13]. This fragmentation process results in a reduction of amplifiable template, particularly affecting larger polymerase chain reaction (PCR) amplicons. Consequently, establishing sensitivity benchmarks requires quantifying not just the amount of human DNA present, but also its quality. These benchmarks are critical for making informed decisions during the analytical process, such as selecting the most appropriate short tandem repeat (STR) amplification kit or interpreting partial DNA profiles with confidence [21].

Quantitative Assessment of DNA Degradation

The precise determination of human DNA concentration and integrity is a critical first step in the analytical workflow. Modern forensic quantitative PCR (qPCR) assays enable simultaneous quantification of total human DNA, determination of the presence of male DNA, assessment of PCR inhibitors, and, crucially, evaluation of the DNA degradation status [21].

The Principle of Differential Quantification

DNA degradation is quantitatively assessed using multi-target qPCR assays. These assays target genomic regions of differing lengths; in a pristine DNA sample, the concentration ratio between these targets is approximately 1:1. However, in a degraded sample, the longer target is more susceptible to fragmentation, leading to a reduced apparent concentration compared to the shorter target [21]. The ratio between the concentrations of the small and large targets provides a Degradation Index (DI), a quantitative measure of DNA fragmentation.

- PowerQuant System (Promega): This system uses an 84 bp (Auto) target and a 294 bp (D) target, both autosomal, to calculate an [Auto]/[D] ratio [21].

- Plexor HY System (Promega): A study demonstrated that the ratio between the estimated concentrations of a 99 bp autosomal target and a 133 bp Y-chromosomal target ([Auto]/[Y]) can serve as a proxy for degradation in male samples. This ratio showed a statistically significant inverse correlation (R = -0.65, p < 0.001) with STR profile quality scores [21].

- Quantifiler Trio (Thermo Fisher Scientific): This and other similar kits operate on the same fundamental principle of differential amplification efficiency based on template length.
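
As a minimal sketch of the small/large convention described above, the Degradation Index can be computed directly from the two target concentrations. The function name, the handling of an undetected large target, and the example concentrations are assumptions for illustration; commercial kit software performs its own calculation.

```python
def degradation_index(small_conc, large_conc):
    """Degradation Index as [small target] / [large target].

    Returns float('inf') when the large target is undetected, which is
    one possible way to flag severe degradation (an assumption here).
    """
    if small_conc <= 0:
        raise ValueError("small-target concentration must be positive")
    if large_conc <= 0:
        return float("inf")
    return small_conc / large_conc


# Hypothetical concentrations in ng/uL
print(degradation_index(0.5, 0.5))    # intact sample: ratio of 1
print(degradation_index(0.5, 0.125))  # degraded sample: ratio above 1
```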

Key Quantitative Metrics and Correlations

The quantitative data derived from qPCR is used to establish key sensitivity benchmarks. The following table summarizes the core metrics and their implications for downstream STR analysis.

Table 1: Key Quantitative Metrics for Assessing Degraded DNA

| Metric | Typical Calculation | Interpretation | Correlation with STR Data |

|---|---|---|---|

| Human DNA Concentration | ng/μL (from small target, e.g., 84 bp) | The effective quantity of amplifiable DNA; used for normalizing input into STR amplification. | Directly impacts peak heights; low concentration (<0.1 ng/μL) increases stochastic effects [21]. |

| Degradation Index (DI) | [Small Target]/[Large Target] (e.g., [Auto]/[D]) | A ratio >1 indicates degradation; higher values signify greater fragmentation. | Statistically significant inverse correlation with profile quality scores (e.g., average peak height) [21]. |

| Male DNA Concentration | ng/μL (Y-chromosomal target, e.g., 133 bp) | Quantity of male DNA in a sample; critical for analyzing mixtures. | In degraded male samples, the [Auto]/[Y] ratio increases as the longer Y-target fails to amplify efficiently [21]. |

| Inhibition Indicator | Shift in the cycle threshold (CT, also called Cq) of the Internal PCR Control (IPC) | An IPC shift above the assay-specific threshold (e.g., ≥2 cycles or ≥0.3 Cq, depending on the kit and laboratory validation) indicates the presence of PCR inhibitors. | Can cause peak height imbalance, locus-to-locus drop-out, or complete amplification failure [21]. |
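
The inhibition check in the last table row amounts to comparing a sample's IPC value with a reference IPC value. The sketch below assumes the reference is the mean IPC Cq of the quantification standards and takes the threshold as a parameter, because the appropriate cutoff depends on the kit and on laboratory validation. All names and numbers are illustrative.

```python
from statistics import mean


def ipc_shift(sample_ipc_cq, standard_ipc_cqs):
    """IPC shift: sample IPC Cq minus the mean IPC Cq of the standards."""
    return sample_ipc_cq - mean(standard_ipc_cqs)


def is_inhibited(sample_ipc_cq, standard_ipc_cqs, threshold_cq):
    """Flag a sample whose IPC shift meets or exceeds the chosen threshold."""
    return ipc_shift(sample_ipc_cq, standard_ipc_cqs) >= threshold_cq


# Hypothetical IPC values for three standards and two samples
standards = [20.0, 20.5, 19.5]
print(is_inhibited(22.5, standards, threshold_cq=2.0))  # shift of 2.5 cycles
print(is_inhibited(20.1, standards, threshold_cq=2.0))  # shift of 0.1 cycles
```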

Experimental Protocol for Establishing Sensitivity Benchmarks

This protocol provides a detailed methodology for conducting a degradation sensitivity study, a critical component of the validation process for implementing a new forensic DNA testing method [20].

Sample Preparation and Artificial Degradation

Objective: To create a calibrated series of degraded DNA samples for testing.

Materials:

- Commercially available human genomic DNA (e.g., from cell lines).

- DNase I (e.g., from Qiagen or Thermo Fisher Scientific).

- DNase I reaction buffer.

- Thermomixer or water bath.

- EDTA for reaction termination.

Procedure:

- Dilution: Dilute the human genomic DNA to a working concentration of 50 ng/μL in nuclease-free water or TE buffer.

- Degradation Series Setup: Set up a series of 0.5 mL microcentrifuge tubes. To each tube, add 1 μg (20 μL of 50 ng/μL) of DNA and 5 μL of 10X DNase I buffer (1X in the 50 μL final volume).

- Enzymatic Digestion: Add varying amounts of DNase I (e.g., 0, 0.5, 1, 2, 4 mU) to each tube. Adjust the final volume to 50 μL with nuclease-free water.

- Incubation: Incubate the reactions at 25°C for 10 minutes. Alternatively, to control the degree of degradation by time rather than enzyme amount, hold the enzyme concentration fixed and vary the incubation time (e.g., 5, 10, 20 minutes).

- Reaction Termination: Stop the digestion by adding 5 μL of 50 mM EDTA to each tube and heating at 65°C for 10 minutes.

- Purification (Optional): Purify the degraded DNA using a commercial clean-up kit (e.g., QIAamp DNA Mini Kit) to remove enzymes and salts. Elute in a final volume of 50 μL.

- Verification: Verify the success of the degradation by running an aliquot (e.g., 50 ng) of each sample on a 1.5% agarose gel. A successful degradation series will show a progressive smearing of DNA from high to low molecular weight.
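
The pipetting volumes for the digestion series above follow from simple arithmetic, sketched here in Python. The DNA volume, final volume, and enzyme amounts come from the procedure; the DNase I working stock of 1 mU/μL and the function name are assumptions chosen only to make the example concrete.

```python
def dnase_reaction_volumes(dnase_mu, enzyme_stock_mu_per_ul=1.0,
                           dna_ul=20.0, final_ul=50.0):
    """Component volumes (uL) for one tube of the degradation series.

    enzyme_stock_mu_per_ul is a hypothetical working-stock concentration;
    substitute the value for the enzyme dilution actually prepared.
    """
    buffer_ul = final_ul / 10.0  # 10X buffer diluted to 1X
    enzyme_ul = dnase_mu / enzyme_stock_mu_per_ul
    water_ul = final_ul - dna_ul - buffer_ul - enzyme_ul
    if water_ul < 0:
        raise ValueError("components exceed the final reaction volume")
    return {"dna_ul": dna_ul, "buffer_10x_ul": buffer_ul,
            "enzyme_ul": enzyme_ul, "water_ul": water_ul}


# Enzyme amounts (mU) from the series in the procedure
for mu in (0, 0.5, 1, 2, 4):
    print(mu, dnase_reaction_volumes(mu))
```

Keeping the DNA and buffer volumes constant and letting water absorb the difference means that only the enzyme amount varies between tubes.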

qPCR Quantification and STR Profiling

Objective: To correlate quantitative DNA metrics with STR profiling success.

Materials:

- PowerQuant or Quantifiler Trio qPCR kit.

- Real-Time PCR instrument (e.g., CFX96 Touch, 7500 Real-Time PCR System).

- STR amplification kit (e.g., GlobalFiler, Identifiler).

- Genetic Analyzer for capillary electrophoresis.

Procedure:

- Quantification: Quantify all samples in the degradation series, including the non-degraded control, in duplicate using the selected qPCR kit according to the manufacturer's instructions [21].

- Data Analysis: Calculate the Human DNA Concentration (from small target), Degradation Index ([Auto]/[D] or equivalent), and assess inhibition for each sample.

- STR Amplification: Normalize all samples to the same input concentration (e.g., 0.5 ng based on the small target concentration) for STR amplification. Perform PCR amplification following the kit's standard protocol.

- Capillary Electrophoresis: Analyze the PCR products on a genetic analyzer according to established laboratory protocols.

- STR Data Analysis: Generate STR profiles and record key quality metrics, including:

- Average Peak Height (RFU)

- Profile Completeness (% of alleles called)

- Heterozygote Peak Height Ratio

- Inter-locus Peak Height Balance
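
Input normalization and two of the profile quality metrics listed above can be expressed as short helper functions. This is a sketch under stated assumptions: the 15 μL maximum template volume is a placeholder that depends on the STR kit, and the function names and example values are hypothetical.

```python
def template_volume_ul(target_ng, small_target_conc_ng_per_ul,
                       max_template_ul=15.0):
    """Volume needed to reach the target input, and whether it fits.

    max_template_ul is a placeholder; use the limit for the kit in use.
    """
    if small_target_conc_ng_per_ul <= 0:
        raise ValueError("concentration must be positive")
    volume = target_ng / small_target_conc_ng_per_ul
    return volume, volume <= max_template_ul


def profile_completeness_pct(alleles_called, alleles_expected):
    """Percentage of expected alleles that were called."""
    if alleles_expected <= 0:
        raise ValueError("alleles_expected must be positive")
    return 100.0 * alleles_called / alleles_expected


def heterozygote_peak_height_ratio(height_a, height_b):
    """Lower peak height divided by higher peak height at one locus."""
    if min(height_a, height_b) <= 0:
        raise ValueError("peak heights must be positive")
    return min(height_a, height_b) / max(height_a, height_b)


# Hypothetical sample: 0.1 ng/uL by the small target, 0.5 ng desired input
print(template_volume_ul(0.5, 0.1))
print(profile_completeness_pct(30, 40))
print(heterozygote_peak_height_ratio(400, 800))
```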

Data Analysis and Benchmark Definition

Objective: To define laboratory-specific sensitivity thresholds for degraded DNA.

Procedure:

- Correlation Analysis: Plot the Degradation Index (DI) against STR profile quality metrics (e.g., Average Peak Height, Profile Completeness). Perform linear or non-linear regression analysis to determine the correlation coefficient (R value), as demonstrated in studies showing an inverse correlation (R = -0.65) between [Auto]/[Y] and quality score [21].

- Threshold Establishment: Define the maximum acceptable DI value for obtaining a "usable" or "interpretable" profile based on your laboratory's internal data interpretation guidelines. For instance, a laboratory may set a benchmark that a DI ≤ 5 is required to achieve a profile with ≥70% completeness and average peak heights ≥200 RFU.

- Decision Matrix: Develop a standard operating procedure based on the established benchmarks. The workflow below outlines a logical decision process for analyzing a casework sample based on qPCR data.
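
The correlation and threshold steps above can be prototyped as follows. The sketch computes a Pearson correlation coefficient by hand and then reports the highest DI in a degradation series whose profile still meets the example criteria from the text (≥70% completeness, ≥200 RFU). The data are invented for illustration, and taking the largest passing DI as the benchmark is one possible rule rather than a prescribed method; it assumes profile quality falls steadily as DI rises.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def highest_passing_di(samples, min_completeness=70.0, min_rfu=200.0):
    """Largest DI whose profile meets both quality criteria, or None.

    samples: iterable of (di, completeness_pct, avg_peak_height_rfu).
    """
    passing = [di for di, comp, rfu in samples
               if comp >= min_completeness and rfu >= min_rfu]
    return max(passing) if passing else None


# Hypothetical degradation series: (DI, completeness %, average RFU)
series = [(1, 100, 1200), (2, 95, 900), (4, 80, 450),
          (8, 55, 180), (16, 20, 60)]

r = pearson_r([s[0] for s in series], [s[1] for s in series])
print(f"R between DI and completeness: {r:.2f}")
print("Highest DI meeting criteria:", highest_passing_di(series))
```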

The Scientist's Toolkit: Research Reagent Solutions

Successful analysis of compromised DNA requires a suite of specialized reagents and kits. The following table details essential materials and their functions in the workflow.

Table 2: Essential Research Reagents for Degraded DNA Analysis

| Reagent / Kit | Primary Function | Key Features for Degraded DNA |

|---|---|---|

| PowerQuant System (Promega) | Simultaneous DNA quantification, degradation, and inhibition assessment. | Contains an 84 bp (small autosomal) and a 294 bp (large autosomal) target for calculating a Degradation Index ([Auto]/[D]) [21]. |

| Quantifiler Trio (Thermo Fisher) | Simultaneous DNA quantification, degradation, and inhibition assessment. | Provides a multi-copy target for human DNA quantification and a synthetic IPC for robust inhibition detection. |

| QIAamp DNA FFPE Tissue Kit (Qiagen) | DNA extraction from formalin-fixed, paraffin-embedded (FFPE) tissues. | Specialized buffers reverse formalin-induced crosslinks, maximizing recovery of fragmented DNA [21]. |

| DNase I (RQ1 RNase-Free DNase, Promega) | Enzymatic digestion of DNA for creating controlled degradation. | Used in validation studies to artificially degrade high-quality DNA for establishing sensitivity thresholds (see the Sample Preparation and Artificial Degradation protocol). |

| Mini-STR Amplification Kits | PCR amplification of highly degraded DNA. | Amplify shorter STR amplicons (<200 bp) to overcome the drop-out of larger loci in degraded samples [13]. |

| Next-Generation Sequencing (NGS) Systems | Massively parallel sequencing of DNA fragments. | Enables sequencing of ultra-short amplicons, providing data from severely degraded samples where CE methods fail [13]. |

Implementation and Compliance

Integrating these sensitivity benchmarks into laboratory practice is mandated by quality assurance standards. The FBI's Quality Assurance Standards (QAS) for Forensic DNA Testing Laboratories, which take effect on July 1, 2025, require laboratories to define and validate the performance characteristics of their methodologies [19]. The data generated from the protocols described herein directly fulfill the validation requirements outlined in Section 8 of these standards, providing objective evidence of a method's reliability with compromised samples [20]. Furthermore, the revised validation guidelines of the Scientific Working Group on DNA Analysis Methods (SWGDAM) recommend examining at least 50 samples as part of a comprehensive validation study, a sample size that can be effectively achieved using the artificial degradation series described [20].

Laboratories must document all validation data, including the established sensitivity benchmarks and decision matrices, in their standard operating procedures. This documentation is critical for demonstrating technical competency during audits and for ensuring the admissibility of DNA evidence in legal proceedings. The workflow for analyzing a casework sample, once validated, provides a defensible and standardized approach for handling the complex samples frequently encountered in forensic casework.

Methodological Arsenal: Techniques for Profiling and Sequencing Degraded DNA

Optimizing Short Tandem Repeat (STR) Analysis for Fragmented DNA

In forensic genetics, the analysis of degraded DNA remains a significant challenge, often compromising the effectiveness of conventional Short Tandem Repeat (STR) typing. DNA degradation initiates immediately after an organism's death, driven by enzymatic breakdown, hydrolytic processes, and oxidative damage [7]. These processes fragment the DNA molecule into progressively shorter pieces, directly impacting the success of PCR-based methods that require intact template DNA for amplification of longer target sequences [7]. Environmental factors such as temperature, humidity, ultraviolet radiation, pH, and microbial activity significantly influence the rate and extent of DNA degradation [7]. When DNA becomes fragmented, the maximum achievable amplicon length through polymerase chain reaction (PCR) is inherently limited, leading to allele dropout, incomplete profiles, and reduced overall success of STR analysis [7] [22].

The limitations of traditional STR analysis have prompted the development of optimized methodologies for recovering genetic information from compromised samples. This document outlines advanced protocols and application notes for optimizing STR analysis specifically for fragmented DNA, providing forensic researchers and scientists with structured approaches to overcome these challenges. The strategies discussed here include procedural refinements from extraction through interpretation, the implementation of alternative genetic markers, and the adoption of novel sequencing technologies that collectively enhance the recovery of informative data from degraded forensic specimens.

Methodological Approaches for Degraded DNA Analysis

Strategic Framework for Fragment Analysis

Optimizing STR analysis for degraded DNA requires a comprehensive strategy that addresses each stage of the forensic workflow. The fundamental principle involves targeting shorter DNA fragments that are more likely to persist in compromised samples [7]. This can be achieved through multiple complementary approaches:

- Reduced Amplicon Size Strategies: Implementing mini-STR primers that target smaller regions flanking the standard STR loci increases the probability of amplifying degraded templates [7] [22].

- Enhanced Sensitivity Protocols: Modifying amplification conditions, such as increasing cycle numbers or adjusting reaction components, can improve signal recovery from low-template DNA [23].

- Advanced Sequencing Technologies: Utilizing next-generation sequencing (NGS) enables simultaneous analysis of multiple marker types (STRs, SNPs) with shorter amplicon requirements, providing a more comprehensive genetic profile from degraded samples [11] [24].

The following table summarizes the primary genetic markers used in forensic analysis and their comparative performance with degraded DNA:

Table 1: Comparison of Forensic Genetic Markers for Degraded DNA Analysis

| Marker Type | Average Amplicon Size | Degraded DNA Performance | Primary Advantages | Key Limitations |

|---|---|---|---|---|

| Standard STRs | 200-500 bp | Poor for heavily degraded samples | High discrimination power, standardized databases | Large amplicon size susceptible to dropout |

| miniSTRs | 70-250 bp | Good | Maintains STR discrimination with smaller targets | Requires separate amplification systems |

| iiSNPs | <150 bp | Excellent | Very short amplicons, suitable for highly degraded DNA | Biallelic nature requires more loci for discrimination |

| X-STRs | Varies (NGS provides sequence data) | Good with NGS | Specialized kinship applications, sequence polymorphism | More complex interpretation, population data limited |

| mtDNA | Variable (can target hypervariable regions) | Excellent | High copy number per cell, more persistent | Maternal inheritance only, lower discrimination power |
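
To illustrate why shorter amplicons fare better, the sketch below uses a simple random-breakage model that is not taken from the cited sources: if each position in the template has an independent probability `p` of carrying a strand break, an amplicon of length `L` survives intact with probability of roughly `(1 - p) ** L`. The break probability and the representative amplicon lengths are assumptions chosen from within the size ranges in the table.

```python
def intact_fraction(amplicon_bp, break_prob_per_base):
    """Approximate fraction of templates spanning the amplicon unbroken.

    Assumes independent, uniformly distributed breaks (a simplification).
    """
    if not 0.0 <= break_prob_per_base < 1.0:
        raise ValueError("break probability must be in [0, 1)")
    return (1.0 - break_prob_per_base) ** amplicon_bp


# Representative amplicon lengths (bp), picked for illustration only
markers = {"standard STR": 350, "miniSTR": 160, "iiSNP": 100}
break_prob = 0.01  # hypothetical: one break per 100 bases on average

for name, length in markers.items():
    print(f"{name:>12} ({length} bp): {intact_fraction(length, break_prob):.3f}")
```

Under this model the surviving fraction falls exponentially with amplicon length, which is consistent with the ranking of marker types in the table.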

DNA Extraction and Quantitation Optimization

Effective recovery of degraded DNA begins with optimized extraction protocols specifically designed for compromised samples. The Organic Extraction method and QIAcube/EZ1 systems have demonstrated particular efficacy for challenging forensic samples [25]. For skeletal remains, specialized bone DNA extraction protocols incorporate extended digestion times and demineralization steps to recover DNA from the mineral matrix [25] [2]. A critical consideration is balancing effective sample disruption with DNA preservation, as overly aggressive mechanical processing can cause excessive shearing and further degrade already fragmented DNA [2].

For formalin-fixed paraffin-embedded (FFPE) tissues, which present unique challenges due to formalin-induced fragmentation and cross-linking, specialized kits like the Maxwell RSC Xcelerate DNA FFPE Kit have shown improved DNA recovery, though STR profile completeness may remain limited even with optimized extraction [22]. Successful extraction from these challenging samples often requires:

- Strategic use of protein-degrading enzymes (e.g., proteinase K) with extended incubation [22]

- Chemical demineralization for skeletal elements using EDTA, with careful optimization to avoid PCR inhibition [2]

- Mechanical homogenization with controlled parameters (speed, cycle duration, temperature) to minimize additional DNA fragmentation [2]

- Combined chemical and mechanical methods for particularly resistant samples like bone [2]

Following extraction, accurate DNA quantification is essential for determining the appropriate input for downstream STR analysis. The Quantifiler Trio DNA Quantification Kit provides valuable quality metrics, including degradation indices, that guide protocol selection and interpretation strategies for compromised samples [25].

Amplification and Analysis Enhancements

For low-template and degraded DNA, conventional PCR amplification often produces suboptimal results characterized by stochastic effects. The abasic-site-mediated semi-linear preamplification (abSLA PCR) method represents a significant advancement for these challenging samples [23]. This innovative approach utilizes primers containing abasic sites that prevent nascent strands from serving as templates in subsequent cycles, thereby minimizing error accumulation and achieving high-fidelity amplification [23].

The abSLA PCR method has demonstrated improved sensitivity and allele recovery from trace DNA samples when coupled with standard STR kits like the Identifiler Plus system [23]. Implementation requires careful optimization of abasic site positioning, with locations at the 8th to 10th bases from the 3' end of primers proving most effective for facilitating PCR amplification [23]. This method significantly enhances the recovery of STR loci in low-template genomic DNA and single-cell analyses, making it particularly valuable for the most challenging forensic evidence.

For capillary electrophoresis analysis of degraded samples, analytical thresholds may require adjustment to account for increased baseline noise and stochastic effects. The New York City Office of Chief Medical Examiner protocols recommend specific GeneMarker Quality Reasons Index parameters and ReRun Codes for samples producing partial or complex profiles [25]. Additionally, probabilistic genotyping software such as STRmix v2.7 provides advanced interpretation capabilities for complex mixtures and partial profiles derived from degraded samples [25].

Advanced Technologies and Future Directions

Next-Generation Sequencing Applications

Next-generation sequencing (NGS) technologies have revolutionized forensic analysis of degraded DNA by enabling simultaneous sequencing of multiple genetic markers with significantly enhanced resolution. Unlike capillary electrophoresis, which detects only length-based polymorphisms, NGS identifies sequence-level variations within STR repeats, substantially increasing discriminatory power and providing more genetic information from compromised samples [11] [24].

The implementation of NGS panels specifically designed for forensic applications, such as the 55-plex X-STR NGS panel, demonstrates the technology's capacity to overcome limitations of traditional methods [24]. This comprehensive system captures both length and sequence polymorphisms across 55 X-chromosomal STR loci, providing enhanced discrimination power particularly valuable for complex kinship cases involving degraded samples [24]. The technology has shown robust performance with low-template DNA, degraded samples, and mixtures—all common challenges in forensic casework with compromised evidence [24].

Massively parallel sequencing also facilitates the simultaneous analysis of multiple marker types (autosomal STRs, Y-STRs, X-STRs, and SNPs) in a single workflow, maximizing information recovery from limited or degraded samples [11]. This multi-marker approach is particularly beneficial when sample quantity is insufficient for multiple separate analyses. The integration of NGS into forensic workflows represents a significant advancement in degraded DNA analysis, with continuing improvements in sequencing chemistry and bioinformatics promising further enhancements.

Single Nucleotide Polymorphisms and Forensic Genetic Genealogy

For severely degraded DNA where STR analysis fails entirely, single nucleotide polymorphisms (SNPs) offer a powerful alternative approach. SNPs possess several advantages for degraded DNA analysis: their shorter amplicon requirements (typically under 150 base pairs) align well with the fragment sizes preserved in degraded samples, and their genome-wide distribution provides abundant targets for analysis [11] [7].

The implementation of forensic genetic genealogy (FGG) represents a paradigm shift in analyzing compromised samples from cold cases and unidentified remains [11]. This approach leverages dense SNP testing (hundreds of thousands of markers) to establish familial connections well beyond first-degree relationships, generating investigative leads through pedigree development [11]. While FGG typically utilizes different markers than traditional STR analysis, the underlying principle of adapting genetic analysis to the limitations of degraded DNA remains consistent.

Beyond kinship analysis, SNP testing enables biogeographical ancestry inference and forensic DNA phenotyping, which can provide investigative leads in cases where no reference samples are available for comparison [11]. These complementary applications further enhance the utility of genetic analysis from degraded samples when conventional STR typing fails to produce actionable results.

Research Reagent Solutions

Table 2: Essential Research Reagents for STR Analysis of Fragmented DNA

| Reagent/Kit | Primary Function | Application in Degraded DNA Analysis |

|---|---|---|

| QIAamp DNA Investigator Kit | DNA extraction from challenging forensic samples | Optimized protocol for recovery of fragmented DNA from various substrate types [23] |

| Maxwell RSC Xcelerate DNA FFPE Kit | DNA extraction from formalin-fixed tissues | Specialized formulation for reversing formalin-induced cross-links and recovering fragmented DNA [22] |

| Quantifiler Trio DNA Quantification Kit | DNA quantification and quality assessment | Provides degradation index (DI) to guide optimal input amount for STR amplification [25] |

| PowerPlex Fusion System | Multiplex STR amplification | Commercial kit with optimized chemistry for challenging samples, compatible with CE platforms [25] |

| ForenSeq DNA Signature Prep Kit | NGS library preparation for forensic samples | Enables simultaneous analysis of STRs and SNPs with sequence-level resolution from degraded templates [11] [26] |

| Phusion Plus DNA Polymerase | High-fidelity PCR amplification | Used in specialized methods like abSLA PCR for improved allele recovery from low-template DNA [23] |

| Proteinase K | Enzymatic digestion of proteins | Critical for breaking down cellular structures and nucleases that would otherwise degrade DNA further during extraction [22] |

Experimental Protocols

abSLA PCR Preamplification for Low-Template DNA

The following protocol describes the abasic-site-mediated semi-linear preamplification method for enhancing STR recovery from low-template and degraded DNA samples [23]:

Principle: This method utilizes primer pairs consisting of one normal primer and one primer containing an abasic site. The abasic site prevents nascent strands from serving as templates in subsequent cycles by eliminating primer-binding sites, ensuring only the original template and its primary products are replicated, thereby minimizing error accumulation.

Reagents:

- Phusion Plus DNA Polymerase (2× Master Mix)

- Custom abasic primers (abasic site positioned at the 8th to 10th base from the 3' end)

- Normal primers for STR loci of interest

- Template DNA (degraded or low-template)

- dNTP mix

- Molecular biology grade water

Procedure:

- Prepare reaction mix in a total volume of 10 μL containing:

- 5 μL 2× Phusion Plus PCR Master Mix

- 1 μL primer mix (containing both abasic and normal primers)

- 1-3 μL DNA template

- Molecular biology grade water to volume

- Perform thermal cycling with the following parameters:

- Initial denaturation: 98°C for 30 seconds

- 10-15 cycles of:

- Denaturation: 98°C for 10 seconds

- Annealing: 60°C for 30 seconds

- Extension: 72°C for 30 seconds

- Final extension: 72°C for 5 minutes

- Hold at 4°C

- Use 1-2 μL of the abSLA product as template for subsequent standard STR amplification using commercial kits (e.g., Identifiler Plus).

- Analyze PCR products using capillary electrophoresis according to manufacturer recommendations.

Validation: The efficiency of abSLA preamplification should be assessed using absolute quantitative real-time PCR with serial dilutions of control DNA to create a standard calibration curve. Allelic balance and stutter ratios should be evaluated compared to standard amplification without preamplification [23].
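
The reaction setup and cycling parameters in the procedure above reduce to a small amount of arithmetic, sketched here. The component volumes, temperatures, and hold times are those listed in the protocol; the function names are hypothetical, and the time estimate covers programmed holds only, excluding ramping between temperatures and the final 4°C hold.

```python
def absla_reaction_mix(template_ul, total_ul=10.0,
                       master_mix_ul=5.0, primer_mix_ul=1.0):
    """Component volumes (uL) for one 10 uL abSLA preamplification."""
    if not 1.0 <= template_ul <= 3.0:
        raise ValueError("protocol specifies 1-3 uL of template")
    water_ul = total_ul - master_mix_ul - primer_mix_ul - template_ul
    return {"master_mix_2x_ul": master_mix_ul,
            "primer_mix_ul": primer_mix_ul,
            "template_ul": template_ul,
            "water_ul": water_ul}


def programmed_hold_seconds(cycles):
    """Sum of programmed hold times, excluding ramps and the 4 C hold."""
    if not 10 <= cycles <= 15:
        raise ValueError("protocol specifies 10-15 cycles")
    initial_denaturation = 30
    per_cycle = 10 + 30 + 30  # denaturation + annealing + extension
    final_extension = 5 * 60
    return initial_denaturation + cycles * per_cycle + final_extension


print(absla_reaction_mix(2.0))
print(programmed_hold_seconds(10), programmed_hold_seconds(15))
```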

Mini-STR Amplification for Degraded DNA

This protocol adapts standard STR amplification for degraded DNA by targeting reduced amplicon sizes:

Principle: Redesigning primers to bind closer to the STR repeat region generates shorter amplicons that are more likely to amplify successfully from fragmented DNA templates.

Reagents:

- Commercial STR kit or custom mini-STR primer sets

- DNA polymerase optimized for amplification efficiency

- Template DNA (degraded)

- Appropriate buffer systems

- Capillary electrophoresis materials

Procedure:

- Select or design mini-STR primers that produce amplicons 70-250 bp in length for standard STR loci.

- Prepare amplification reactions according to kit specifications or standard PCR protocols, with potential modifications:

- Increased cycle number (30-34 cycles)

- Extended extension time

- Potential adjustment of annealing temperature based on primer design

- Perform thermal cycling using conditions optimized for the specific primer set.

- Analyze products using capillary electrophoresis with appropriate size standards.

- Interpret results with consideration for potential increased stutter and allelic imbalance inherent to degraded samples.

Validation: Compare mini-STR profiles with standard STR profiles from high-quality DNA samples to ensure concordance of genotype calls. Establish specific analytical thresholds and interpretation guidelines for mini-STR data from degraded samples.
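
The concordance check described in the validation step can be sketched as a comparison of genotype calls at loci typed by both methods. The dictionary layout, function name, and example genotypes below are assumptions for illustration, not a format used by any particular genotyping software.

```python
def genotype_concordance(reference_profile, test_profile):
    """Compare genotype calls at loci present in both profiles.

    Profiles map locus name -> tuple of allele designations. Returns the
    percentage of shared loci with identical genotypes and a sorted list
    of discordant loci. Allele order within a genotype is ignored.
    """
    shared = reference_profile.keys() & test_profile.keys()
    if not shared:
        raise ValueError("profiles share no loci")
    discordant = sorted(
        locus for locus in shared
        if sorted(reference_profile[locus]) != sorted(test_profile[locus])
    )
    rate = 100.0 * (len(shared) - len(discordant)) / len(shared)
    return rate, discordant


# Hypothetical standard STR (reference) and mini-STR (test) genotypes
standard_str = {"D3S1358": (15, 16), "TH01": (6, 9.3), "FGA": (21, 24)}
mini_str = {"D3S1358": (16, 15), "TH01": (6, 9.3), "FGA": (21, 21)}

rate, discordant = genotype_concordance(standard_str, mini_str)
print(f"Concordance: {rate:.1f}%  Discordant loci: {discordant}")
```

A discordant locus such as the apparent homozygote in this example would prompt a check for allele dropout or a primer-binding-site variant before any genotype is reported.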

Workflow and Pathway Diagrams

Diagram 1: Strategic workflow for STR analysis of degraded DNA, illustrating decision points for method selection based on sample quality assessment.

Diagram 2: abSLA PCR mechanism showing how abasic sites in primers enable semi-linear amplification to improve STR recovery from low-template DNA.

Optimizing STR analysis for fragmented DNA requires a multifaceted approach that addresses each stage of the forensic workflow. Through implementation of specialized extraction methods, reduced amplicon size strategies, advanced amplification techniques like abSLA PCR, and the integration of NGS technologies, forensic researchers can significantly enhance genetic information recovery from compromised samples. The continued development and validation of these methodologies will expand the boundaries of forensic identification capabilities, providing crucial investigative leads in challenging cases where biological evidence has undergone degradation. As the field progresses, the integration of artificial intelligence and machine learning into analytical workflows promises further enhancements in interpreting complex DNA profiles derived from degraded samples.

Leveraging Dense SNP Panels and MPS for Enhanced Sensitivity with Low-Input DNA

The analysis of low-input and degraded DNA represents a significant challenge in forensic genetics, often resulting in incomplete or uninformative profiles when using traditional Short Tandem Repeat (STR) typing methods [27]. The integration of Massively Parallel Sequencing (MPS) technologies with dense Single Nucleotide Polymorphism (SNP) panels has revolutionized forensic DNA analysis by enabling successful genotyping from minute amounts of degraded genetic material [11] [28]. This paradigm shift is primarily due to the fundamental advantages of SNPs over STRs, including their smaller amplicon size requirements, lower mutation rates, and higher genomic density, making them particularly suitable for compromised forensic samples [7] [28].

The limitations of conventional STR analysis become particularly apparent with highly degraded DNA: shorter fragments amplify preferentially, so the larger STR loci tend to drop out and the resulting profiles are often incomplete [7]. In contrast, SNP-based methods coupled with MPS technology can generate usable genetic information from samples that would otherwise yield inconclusive results with standard forensic techniques [11] [29]. This technical advancement has profound implications for solving cold cases, identifying unidentified human remains, and generating investigative leads where biological evidence is minimal or severely compromised [11] [30].

Advantages of SNP-Based Analysis Over STR Typing

Technical Comparisons

The transition from STR to SNP-based analysis represents a significant advancement in forensic genetics, particularly for challenging samples. Table 1 summarizes the key differences between these approaches.

Table 1: Comparison of STR and SNP Markers for Forensic DNA Analysis

| Characteristic | STR Markers | SNP Markers |
|---|---|---|
| Marker size | Larger (typically 100-450 bp) | Smaller (can be <50 bp) |
| Mutation rate | High (~1 in 1000) | Low (~1 in 100 million) |
| Number of markers | Limited (typically 20-30 in commercial kits) | Extensive (hundreds to thousands) |
| Amplicon length | Longer fragments required | Short fragments sufficient |
| Performance with degraded DNA | Limited, especially for larger fragments | Excellent, due to small target size |
| Kinship analysis | Effective for first-degree relationships | Effective for distant relationships (up to 7th degree) |
| Multiplexing capacity | Limited by capillary electrophoresis | High with MPS technology |
| Additional information | Primarily identity only | Ancestry, phenotype, and kinship |

Practical Implications for Degraded DNA Analysis

The molecular characteristics of SNPs make them better suited than STRs to forensic applications involving degraded DNA [28]. Foremost, a SNP can be typed from a much shorter amplicon, so it remains detectable in highly degraded samples where the DNA has fragmented into short pieces [28]. SNPs also have a far lower mutation rate (approximately 1 in 100 million per replication) than STRs (about 1 in 1000), which reduces the mutation-related complications often encountered in kinship analysis [28].
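A simple calculation shows why amplicon length matters so much. The sketch below assumes a deliberately idealized model that the cited sources do not state: strand breaks fall at random along the molecule (a Poisson process), so the chance that a target of length L is unbroken is exp(-L / mean fragment length). The function name and the 100 bp mean fragment length are illustrative assumptions.

```python
import math

def intact_template_fraction(amplicon_bp, mean_fragment_bp):
    """Fraction of target copies with no break inside the amplicon.

    Assumes breaks occur at random with rate 1 / mean_fragment_bp per base,
    an idealized model rather than a measured degradation profile.
    """
    return math.exp(-amplicon_bp / mean_fragment_bp)

# Compare a short SNP amplicon with small and large STR amplicons
# in a sample whose mean fragment length is assumed to be 100 bp.
for label, length in [("SNP, 50 bp", 50),
                      ("STR, 150 bp", 150),
                      ("STR, 450 bp", 450)]:
    fraction = intact_template_fraction(length, mean_fragment_bp=100)
    print(f"{label}: {fraction:.1%} of copies amplifiable")
```

Under these assumptions the amplifiable fraction falls exponentially with amplicon length, which is consistent with larger STR loci being the first to drop out of a degraded profile.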

The high genomic density of SNPs enables the analysis of hundreds of thousands of markers simultaneously, providing a vastly richer dataset than STR profiling [11]. This expanded marker set allows for kinship associations to be inferred well beyond first-degree relationships, which is particularly valuable in cases involving unknown suspects or unidentified human remains [11]. Furthermore, SNP testing enables biogeographical ancestry inference and forensic DNA phenotyping, which can provide additional investigative context about an unknown individual [11].

Performance Characteristics and Sensitivity Data

Quantitative Performance Metrics

Extensive validation studies have demonstrated the enhanced sensitivity of MPS-based SNP panels for low-input and degraded DNA analysis. Table 2 summarizes key performance characteristics from recent studies.

Table 2: Performance Characteristics of MPS-Based SNP Panels with Low-Input and Degraded DNA

| Parameter | Performance | Experimental Details |
|---|---|---|
| Minimum input DNA | Full profiles with 0.1-5 ng; reliable down to 50-125 pg | MPS multiplex assay targeting 41 HIrisPlex-S SNPs [29] |
| Degraded DNA performance | High success rate with artificially degraded and skeletal remains | Analysis of bones, teeth, and artificially degraded samples [29] |
| Genotype accuracy | >99% with UMI correction; >90% with coverage >10X without UMIs | FORCE panel with QIAseq UMI technology; benchmarking study [31] [32] |
| Coverage requirements | 10X coverage provides >90% genotyping accuracy | Simulation study with degraded DNA sequences [32] |
| PCR cycle optimization | Increased cycles (21-25) improve success with low-template DNA | Testing different PCR cycles for library preparation [29] |
| Fragment length | Successful with fragments as short as 40 bp | Simulation of degraded DNA with normal distribution around 40 bp [32] |

Impact of Unique Molecular Indices (UMIs)

The incorporation of Unique Molecular Indices represents a significant advancement for enhancing sensitivity and accuracy in low-input DNA analysis [31]. UMIs are short random nucleotide sequences (typically 8-12 base pairs) ligated to each template molecule prior to amplification, enabling bioinformatic detection of original template molecules and distinction from PCR-amplified copies [31].

Studies applying UMIs with the FORCE panel demonstrated substantial improvements in genotype accuracy and sensitivity when analyzing low-input DNA samples [31]. The UMI-based approach enabled very high genotype accuracies (>99%) for both reference DNA and challenging samples down to 125 pg input DNA [31]. By creating consensus reads from sequences sharing the same UMI, this technology effectively filters out artifacts resulting from amplification and sequencing errors, which is particularly valuable when analyzing low-copy-number DNA where stochastic effects are more pronounced [31].
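The consensus idea can be illustrated with a small sketch. This is not the QIAseq or FORCE bioinformatic pipeline: it handles a single SNP position, treats each read as a (UMI, observed base) pair, and uses hypothetical thresholds (`min_family_size`, `min_agreement`) chosen only for the example.

```python
from collections import Counter, defaultdict

def umi_consensus(reads, min_family_size=2, min_agreement=0.6):
    """Collapse reads sharing a UMI into one consensus base per molecule.

    reads: iterable of (umi, base) pairs observed at one SNP position.
    Families that are too small or too inconsistent are discarded.
    """
    families = defaultdict(list)
    for umi, base in reads:
        families[umi].append(base)

    consensus = []
    for umi in sorted(families):
        bases = families[umi]
        if len(bases) < min_family_size:
            continue  # a single read cannot be error-corrected
        base, count = Counter(bases).most_common(1)[0]
        if count / len(bases) >= min_agreement:
            consensus.append(base)
    return consensus

# Made-up reads: the first family contains one discordant read (G).
reads = [("AACGTTGA", "A"), ("AACGTTGA", "A"), ("AACGTTGA", "G"),
         ("CCGTAGTA", "G"), ("CCGTAGTA", "G"),
         ("TTGACCAT", "A")]

molecules = umi_consensus(reads)
print(Counter(molecules))  # counts of original template molecules per allele
```

Counting consensus molecules instead of raw reads removes the distortion introduced by uneven PCR duplication, which is the property that makes UMIs useful at low template numbers.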

Experimental Protocols

Workflow for MPS-Based SNP Analysis of Degraded DNA

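The workflow for processing degraded DNA samples with MPS-based SNP panels runs from library preparation with UMI incorporation, through MPS sequencing and variant calling, to genotype refinement and imputation for low-coverage samples. Each stage is detailed in the protocols that follow.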

Detailed Methodological Protocols

Library Preparation and UMI Incorporation

For the FORCE panel with QIAseq technology, library preparation follows a targeted approach with UMI incorporation [31]:

- DNA Input: 0.1-10 ng of DNA is recommended, with optimal results in the 1-5 ng range

- UMI Ligation: Unique Molecular Indices are ligated to each DNA template molecule prior to amplification using the QIAseq Targeted DNA Custom Panel (Qiagen)

- Library Amplification: PCR is performed with 21-25 cycles, with increased cycles improving success rates for low-template DNA [29]

- Pooling and Cleanup: Libraries are purified and normalized before sequencing
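To put the input amounts and cycle numbers above in perspective, the sketch below converts DNA mass to approximate genome copies and estimates ideal PCR yield. It relies on the commonly used approximation of about 3.3 pg per haploid human genome, which is an assumption here and not a figure from the cited studies. The constant and function names are hypothetical.

```python
PG_PER_HAPLOID_GENOME = 3.3  # approximate mass of one haploid human genome (assumption)

def haploid_copies(input_pg):
    """Approximate number of haploid genome copies in a DNA input."""
    return input_pg / PG_PER_HAPLOID_GENOME

def copies_after_pcr(start_copies, cycles, efficiency=1.0):
    """Idealized exponential amplification; efficiency 1.0 means doubling."""
    return start_copies * (1.0 + efficiency) ** cycles

for input_pg in (125, 1000, 5000):
    print(f"{input_pg} pg ~ {haploid_copies(input_pg):.0f} haploid copies per locus")

# Effect of four extra cycles on yield, assuming perfect efficiency.
gain = copies_after_pcr(1, 25) / copies_after_pcr(1, 21)
print(f"25 vs 21 cycles: {gain:.0f}x more product")
```

At 125 pg only a few dozen copies of each allele enter the reaction, so sampling variation between the two alleles of a heterozygote becomes appreciable. Extra cycles raise yield but do not add template molecules.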

MPS Sequencing and Data Analysis

- Sequencing Platform: MiSeq FGx instrument (Verogen) or similar MPS platforms

- Coverage Target: Minimum 10X coverage for >90% genotyping accuracy [32]

- UMI Processing: Bioinformatic pipeline groups reads by UMI sequence and generates consensus sequences to eliminate PCR and sequencing errors

- Variant Calling: Specialized tools like ATLAS (developed for ancient DNA) outperform conventional methods for degraded samples, achieving over 90% genotyping accuracy at coverages greater than 10X [32]
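One reason coverage matters for genotyping is plain read sampling at heterozygous sites. The sketch below is a simplified binomial calculation, not the benchmarking approach of [32]: it ignores sequencing error, DNA damage, and mapping bias, and the `min_reads_per_allele` threshold is a hypothetical calling rule introduced for illustration.

```python
from math import comb

def prob_both_alleles_seen(coverage, min_reads_per_allele=2, allele_fraction=0.5):
    """Probability that each allele of a true heterozygote is observed
    at least min_reads_per_allele times among `coverage` independent reads."""
    total = 0.0
    upper = coverage - min_reads_per_allele
    for k in range(min_reads_per_allele, upper + 1):
        total += (comb(coverage, k)
                  * allele_fraction ** k
                  * (1 - allele_fraction) ** (coverage - k))
    return total

for depth in (4, 6, 10, 20):
    print(f"{depth}X: {prob_both_alleles_seen(depth):.3f}")
```

Under these assumptions the probability rises steeply between 4X and 10X. This does not reproduce the accuracy figures reported in [32]; it only shows how depth limits heterozygote detection before damage and error are considered.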

Genotype Refinement and Imputation

For samples with lower coverage (<10X), genotype refinement and imputation using population reference panels can improve accuracy:

- Reference Panels: The 1000 Genomes Project provides a representative worldwide population reference

- Imputation Tools: Beagle and GLIMPSE are commonly used for genotype imputation

- Accuracy Improvement: Imputation can significantly enhance genotype calling for low-coverage samples by leveraging haplotype information from reference populations [32]

Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for MPS-Based SNP Analysis

| Reagent/Platform | Function | Application Notes |
|---|---|---|
| QIAseq Targeted DNA Custom Panel (Qiagen) | UMI-based library preparation | Enables accurate molecular counting and error correction; suitable for low-input DNA [31] |
| FORCE Panel | Comprehensive SNP marker set | ~5500 SNPs for ancestry, phenotype, identity, and kinship applications [31] |
| HIrisPlex-S System | Phenotype prediction SNP panel | 41 SNPs for eye, hair, and skin color prediction; adaptable to MPS [29] |
| Ion AmpliSeq Designer (Thermo Fisher) | Custom MPS panel design | Enables design of panels with amplicons <180 bp for degraded DNA [29] |
| Precision ID Library Kit (Thermo Fisher) | Library preparation for challenging samples | Optimized for low-input and degraded DNA [29] |
| MiSeq FGx Sequencing System (Verogen) | Forensic-grade MPS platform | Validated for forensic applications; integrated workflow [31] |
| ATLAS Variant Calling Tool | Genotyping for degraded DNA | Outperforms conventional tools (GATK, SAMtools) for degraded samples [32] |

The integration of dense SNP panels with MPS technology represents a transformative advancement in forensic genetics, particularly for analyzing low-input and degraded DNA samples. The technical advantages of SNPs—including their smaller amplicon size, genome-wide distribution, and lower mutation rate—make them ideally suited for challenging forensic evidence that would otherwise yield inconclusive results with traditional STR typing [11] [28].

Methodological enhancements such as UMI incorporation and specialized bioinformatic tools like ATLAS further improve genotype accuracy and sensitivity, enabling reliable analysis of samples with input quantities as low as 50-125 pg [32] [31]. Additionally, genotype imputation using comprehensive population reference panels can rescue data from low-coverage sequences, expanding the range of samples amenable to successful analysis [32].

As these technologies continue to evolve and sequencing costs decrease, MPS-based SNP analysis is poised to become an indispensable tool in forensic genetics, particularly for cold cases, unidentified human remains, and other challenging scenarios where biological evidence is minimal or severely compromised [11]. The implementation of standardized protocols, validation frameworks, and appropriate quality control measures will be essential for the widespread adoption of these powerful techniques in forensic practice.

The analysis of degraded DNA presents significant challenges in forensic science, where samples are often compromised by environmental factors such as heat, moisture, and ultraviolet radiation. These conditions break long DNA molecules into short, damaged fragments that resist analysis by standard genetic tools like polymerase chain reaction (PCR) and Sanger sequencing [3]. Advanced analytical techniques adapted from oligonucleotide research—including liquid chromatography-mass spectrometry (LC-MS), microarray analysis, and hybridization methods—are now enabling forensic researchers to recover meaningful genetic information from these compromised samples. This document outlines detailed application notes and protocols for these techniques, framed within sensitivity testing for degraded DNA in forensic panel research.

Core Analytical Techniques

Liquid Chromatography-Mass Spectrometry (LC-MS)

LC-MS has emerged as a powerful technique for detecting DNA adducts and modifications in degraded samples, providing both sensitive determination and structural information on formed adducts.

Protocol: LC-MS/MS Analysis of DNA Adducts from Cell Cultures

Sample Preparation and DNA Isolation

- Cell Culture: Seed HepG2 cells in 10 cm culture dishes at a density of 3 × 10⁶ cells/dish. After 24 hours, replace medium with serum-free medium containing 1-100 µM β-naphthoflavone (β-NF) to induce cytochrome P450 expression [33].

- Treatment: After 24 hours of induction, replace medium with Hank's Balanced Salt Solution (HBSS) containing 10 µM of the compound of interest (e.g., aflatoxin B1). Incubate for 24 hours [33].

- DNA Isolation:

- Wash cells twice with phosphate-buffered saline (PBS)