Advanced Paper Analysis Techniques in Questioned Document Examination: Methodologies, Applications, and Scientific Validation

Abstract

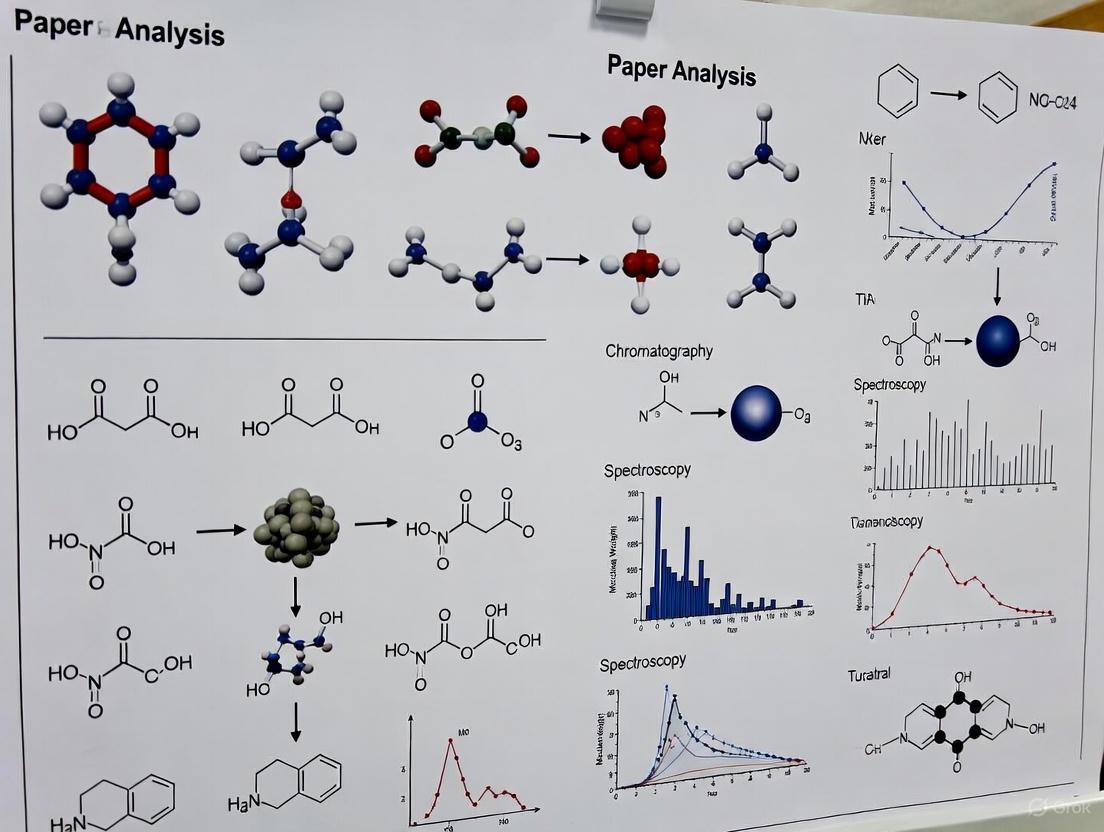

This article provides a comprehensive overview of modern paper analysis techniques within the field of Questioned Document Examination (QDE), tailored for researchers and forensic science professionals. It explores the foundational principles defining questioned documents and their legal significance, details advanced methodological approaches for physical and chemical paper analysis, addresses common challenges and optimization strategies in laboratory practice, and examines the critical frameworks for validating findings and ensuring their admissibility in legal contexts. By synthesizing current research trends and technological advancements, this review serves as a vital resource for enhancing analytical capabilities, promoting standardized practices, and driving future innovation in forensic document science.

Defining the Scope and Scientific Basis of Paper Analysis in QDE

What is a Questioned Document? Expanding Beyond Paper to Any Message-Bearing Object

Questioned Document Examination (QDE) is a forensic science discipline dedicated to analyzing documents to ascertain their origin, authenticity, and history [1]. Its primary purpose is to provide evidence about a suspicious or questionable document using scientific processes and methods for the legal system [2]. The term "document" is defined broadly in forensic science, encompassing any material bearing marks, signs, or symbols intended to convey a message or meaning to someone [2]. This scope extends far beyond traditional paper documents to include items such as graffiti on a wall or stamp impressions on meat products [1] [2].

The discipline has evolved from an initial focus on handwriting analysis to now include the examination of modern mass reproduction devices and a wide array of security documents [3]. Forensic Document Examiners (FDEs) are often called upon to provide evidence in cases involving fraud, forgery, counterfeiting, and threats [1] [2]. Their work is integral to the judicial process, helping to establish facts and connections between documents and their sources.

Defining the "Questioned Document"

A questioned document is any material bearing marks, signs, or symbols that is potentially disputed in a court of law [2]. The "question" can relate to its authenticity, origin, date, integrity, or authorship [2]. The evidence sought from a questioned document can include alterations, the chain of possession, damage, forgery, or other challenges that arise when a document is presented in a legal context [2].

The Expanding Scope of Materials

The following table categorizes the wide range of materials that fall under the purview of modern document examination.

Table: Types of Questioned Documents and Examination Focus

| Category of Document | Specific Examples | Primary Focus of Examination |
|---|---|---|
| Traditional Paper Documents | Contracts, wills, handwritten letters, cheques, diaries, ransom notes [1] [2] [3] | Handwriting & signature analysis; detection of alterations (erasures, additions); ink and paper analysis [1] [4] |
| Modern Machine-Produced Documents | Office printer output, photocopies, facsimiles [2] [3] | Identification of printer/copier make and model; analysis of machine defects and Machine Identification Codes (MIC) [3] |
| Security and Identity Documents | Passports, driver's licenses, academic certificates, birth certificates, voting ballots, counterfeit currency [3] | Verification of security features (watermarks, holograms, microprinting); detection of forgery or tampering [3] |
| Non-Traditional Message-Bearing Objects | Graffiti on walls, markings on whiteboards, stamp impressions on products, writings damaged by fire or water [1] [2] | Recovery of latent evidence; deciphering original text; determining source [1] |

Core Analytical Techniques: Application Notes and Protocols

Forensic document examination relies on a systematic approach and specialized techniques to uncover evidence not visible to the naked eye. The following protocols detail standard methodologies used in the field.

Protocol 1: Indented Writing Analysis using Electrostatic Detection Apparatus (ESDA)

Principle: The ESDA technique uses electrostatic charges to detect and visualize indented impressions left on a sheet of paper that lay beneath the sheet originally written on [5]. These impressions are valuable latent evidence of earlier writing.

Application Notes: This method is particularly useful in investigations where a notepad may have been used to write a message, and examiners need to recover what was written on the now-missing top pages. It can link a suspect to a specific notepad or document sequence.

Workflow:

- Sample Preparation: The document is placed on a vacuum bed, and a humidifying chamber is used to condition the paper to optimal moisture content.

- Charging Process: A thin, transparent polymer film is placed over the document. A corona charge is applied uniformly across the surface, creating an electrostatic potential.

- Development: Black toner particles are cascaded over the charged film. The particles are attracted to areas with differential charge, corresponding to the indented writing.

- Fixation: The developed image is permanently fixed, either photographically or by using a second transparent film to laminate the toner in place.

The following diagram illustrates the ESDA workflow:

Protocol 2: Ink and Paper Analysis via Video Spectral Analysis

Principle: Video Spectral Comparators (VSC) use different wavelengths of light (from ultraviolet to infrared) and filters to examine a document's properties [5] [3]. This non-destructive method can reveal alterations, differentiate between ink types, and examine security features.

Application Notes: The VSC is essential for authenticating security documents and detecting forgeries. It can reveal writing that has been obliterated or erased, and determine if different inks were used in a document, suggesting tampering.

Workflow:

- Initial Examination: The document is placed under the VSC camera and examined under white light to note visible characteristics.

- Infrared (IR) Examination: The document is viewed under infrared illumination and through IR filters. Inks that appear identical in visible light may absorb or reflect IR differently, revealing inconsistencies.

- Ultraviolet (UV) Examination: The document is exposed to UV radiation to observe fluorescence or absorption in inks and paper. This can detect erasures and identify paper coatings.

- Spectral Response Analysis: The reflectance or fluorescence of specific areas is measured across the spectrum to create a unique spectral signature for an ink, allowing for objective comparison.
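The spectral comparison in the last step can be sketched numerically. The example below is a minimal illustration, assuming reflectance values for two ink entries have been exported at the same wavelengths; the data, the function name `spectral_angle`, and the choice of the spectral angle as a similarity measure are all assumptions made for illustration, not a description of any VSC vendor's software.

```python
import math

def spectral_angle(a, b):
    """Angle (radians) between two spectra sampled at the same wavelengths.

    Near 0 means the curves have the same shape; larger values mean the
    inks respond differently across the spectrum.
    """
    if len(a) != len(b) or not a:
        raise ValueError("spectra must be non-empty and equally sampled")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("spectrum has zero magnitude")
    # Clamp to guard against floating-point overshoot before acos.
    cos_theta = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cos_theta)

# Hypothetical reflectance (0-1) at 400, 500, ..., 1000 nm.
ink_entry_1 = [0.05, 0.06, 0.08, 0.10, 0.12, 0.15, 0.18]
ink_entry_2 = [0.05, 0.06, 0.08, 0.11, 0.45, 0.70, 0.82]  # becomes IR-reflective

angle_same = spectral_angle(ink_entry_1, ink_entry_1)
angle_diff = spectral_angle(ink_entry_1, ink_entry_2)
print(f"same ink: {angle_same:.3f} rad, different inks: {angle_diff:.3f} rad")
```

The spectral angle ignores overall brightness, so a lighter pen stroke of the same ink still scores close to zero. Any threshold for calling two inks "different" would need to be validated against known samples.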

The following diagram illustrates the VSC workflow:

Protocol 3: Systematic Handwriting and Signature Comparison

Principle: Handwriting comparison is based on the principle that while every person has a range of natural variation in their writing, no two skilled writers exhibit identical features [3]. The examination involves a side-by-side comparison of questioned writing with known specimens (exemplars) to identify consistent individual characteristics or significant discrepancies [4].

Application Notes: This is a core technique in verifying the authenticity of signatures on contracts and wills, or linking a suspect to a handwritten ransom note. The examiner must have an adequate number of known exemplars for a valid comparison.

Workflow:

- Collection of Exemplars: Obtain known handwriting samples (requested writing) from the individual under controlled conditions for comparison [4].

- Analysis of Questioned Document: Thoroughly examine the questioned handwriting or signature for its overall form, movement, and spatial arrangement.

- Examination of Individual Characteristics: Break down the writing into specific elements such as letter formations, pen pressure, spacing, slant, line quality, and the presence of tremors or hesitations.

- Side-by-Side Comparison: Directly compare the characteristics of the questioned writing with the known exemplars, looking for both similarities and fundamental dissimilarities [6].

- Evaluation: Weigh the evidence to form an opinion regarding authorship, which can range from identification (same writer) to elimination (different writer), with qualified conclusions or inconclusive findings in between.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key equipment and materials essential for a comprehensive questioned document examination laboratory.

Table: Essential Toolkit for Questioned Document Examination

| Tool / Material | Category | Primary Function |
|---|---|---|
| Electrostatic Detection Apparatus (ESDA) | Indentation Analysis | To visualize and recover indented writing impressions that are invisible to the naked eye [5]. |
| Video Spectral Comparator (VSC) | Spectral Analysis | To examine documents under different light wavelengths (UV, IR) to detect alterations, differentiate inks, and verify security features [5] [3]. |
| Comparison Microscope | Magnification & Analysis | To perform side-by-side microscopic comparison of fine details in handwriting, typewriting, ink lines, and paper fibers [1] [7]. |
| Stereo Microscope | Magnification & Analysis | To provide a three-dimensional view for examining the surface topography of documents, including impressions, erasures, and alterations [7]. |
| Chemical Test Kits / Reagents | Chemical Analysis | To perform tests for ink age determination and to reveal erased or obliterated writing through chemical reactions [1] [7]. |
| Chromatography Equipment | Chemical Analysis | To separate and identify components in ink mixtures, helping to determine ink formulation and potential differences between samples [1]. |
| High-Resolution Document Scanner | Imaging | To capture fine details of documents for digital analysis, archiving, and presentation of evidence [7]. |
| Forensic Photography Setup | Imaging | To document evidence with macro lenses, adjustable tripods, and specialized lighting for low-angle and oblique lighting techniques [5]. |

The Crucial Role of Paper Analysis in Fraud, Counterfeiting, and Threat Investigations

This application note details the advanced protocols of forensic paper analysis, a critical sub-discipline of Questioned Document Examination (QDE). Within the framework of a broader thesis on QDE techniques, we outline standardized methodologies for analyzing paper substrates to determine origin, authenticity, and history. These procedures are vital for researchers and forensic professionals investigating document-based crimes such as fraud, counterfeiting, and threats, providing objective, scientific evidence for legal and investigative proceedings [1] [8].

Questioned Document Examination is a forensic science discipline focused on analyzing documents to ascertain their origin and authenticity [1]. A "questioned document" is any signature, handwriting, or material whose authenticity is in doubt [9]. Paper analysis forms a foundational pillar of QDE, moving beyond the surface ink to investigate the substrate itself.

The primary objectives of paper analysis are to:

- Establish Authenticity: Determine if a document is genuine or forged.

- Identify Origin: Link a document to a specific source, manufacturer, or batch.

- Detect Alterations: Reveal additions, erasures, or other tampering.

- Provide Evidence: Supply scientifically-grounded data for criminal and civil litigation [1] [10].

In the context of national security, document and benefit fraud create vulnerabilities that enable threats to public safety, making robust analytical techniques essential [8].

Key Analytical Techniques and Experimental Protocols

The following section provides detailed methodologies for the core techniques used in the forensic analysis of paper.

Microscopic Surface and Fiber Analysis

This protocol aims to examine the physical structure and composition of paper to identify class characteristics and individualizing features.

Materials & Reagents:

- Sterile tweezers and scalpels

- Glass slides and cover slips

- Distilled water

- Iodine-based stain (e.g., for fiber identification)

- Polarizing light microscope

Procedure:

- Sample Preparation: Under sterile conditions, use tweezers to isolate a small fiber sample (approx. 2-4 mm²) from an unobtrusive area of the document.

- Wet Mount: Place the sample on a glass slide with a drop of distilled water and apply a cover slip.

- Dry Mount: For surface texture analysis, place a separate sample directly on a slide without mounting medium.

- Examination:

  - Observe the dry mount under low magnification (10x-40x) to assess surface texture, fillers, and coatings.

  - Examine the wet mount under higher magnification (100x-400x) to identify fiber types (e.g., wood pulp, cotton, linen) and their processing method.

- Polarized Light: Use polarized light to assess birefringence and identify specific mineral fillers or pigments.

- Documentation: Photomicrograph all characteristic features for comparison with known standards.

Video Spectral Analysis (VSA)

VSA is used to examine the optical properties of paper and any security features under different wavelengths of light, revealing alterations or hidden information [5].

Materials & Reagents:

- Video Spectral Comparator (VSC) system

- Known standard paper samples for comparison

Procedure:

- System Calibration: Calibrate the VSC using a standard white reference tile according to manufacturer specifications.

- Initial Observation: Place the questioned document in the examination chamber and observe under white light to document its baseline appearance.

- Spectral Scanning:

  - Systematically examine the document across a range of wavelengths (UV, visible, and IR).

  - Observe and document the document's absorption, reflection, and luminescence properties at each wavelength.

- Filter Application: Use various filters to enhance contrast and reveal obscured or erased entries, indented writing, or watermarks.

- Comparison: Directly compare the reactions of the questioned document with those of known paper standards to identify similarities or differences in composition.

Electrostatic Detection Apparatus (ESDA) for Indented Writing

ESDA is a non-destructive technique used to visualize and recover indented impressions on paper, which may not be visible to the naked eye [5].

Materials & Reagents:

- ESDA unit

- Mylar film

- Electrostatic toner powder

- Copying film or plastic packaging for preservation

Procedure:

- Humidification: Lightly humidify the document to enhance its ability to hold an electrostatic charge, if necessary.

- Charging: Place the document on the ESDA's porous bed and cover it with a thin Mylar film. Apply a high-voltage electrostatic charge across the film.

- Development: Apply a fine toner powder over the Mylar surface. The toner will be preferentially attracted to areas where the paper's surface has been disturbed by indented writing.

- Fixation: Once the indented writing is clearly visualized, permanently fix the image onto a piece of plastic film or photograph the result immediately, as the image is temporary.

- Interpretation: Analyze the recovered indented writing for content and compare it with writing samples from potential sources.

The logical workflow for applying these techniques is outlined below.

Data Presentation: Paper Characteristics and Analytical Findings

Forensic paper analysis generates both qualitative observations and quantitative data. The following tables summarize key characteristics and their investigative significance.

Table 1: Class Characteristics of Paper and Their Forensic Significance

| Characteristic | Description | Analytical Method | Forensic Significance |
|---|---|---|---|
| Fiber Composition | Types of pulp (e.g., wood, cotton, rag). | Microscopy, Staining | Identifies paper grade and manufacturer; links to a common source. |
| Filler/Coating | Minerals like clay, calcium carbonate. | Microscopy, SEM-EDS | Indicates paper type and intended use; provides batch information. |
| Grammage | Weight per unit area (g/m²). | Precision Weighing | A quantifiable metric for comparison with known standards. |
| Thickness (Caliper) | Measured in micrometers (µm). | Micrometer | Another physical property for distinguishing paper batches. |
| Watermarks | Designs impressed during manufacturing. | Transmitted Light, VSA | Strong indicator of brand, manufacturer, and production date. |
| Fluorescence | Brightness under UV light. | VSA (UV) | Can identify specific paper brands or batches; reveals stains or alterations. |

Table 2: Summary of Core Analytical Techniques for Paper Examination

| Technique | Principle | Information Obtained | Destructive? |
|---|---|---|---|
| Microscopic Analysis | High-magnification visual inspection. | Fiber type, fillers, surface erasures, mechanical damage. | Typically micro-destructive |
| Video Spectral Analysis (VSA) | Analysis of light interaction (UV, Vis, IR). | Ink differentiation, latent security features, alterations. | Non-destructive |
| Electrostatic Detection (ESDA) | Electrostatic charge attraction of toner. | Visualization of indented writing. | Non-destructive |
| Chemical Testing | Reactivity of paper/ink with specific reagents. | Chemical composition of paper sizing or coatings. | Destructive |

The Scientist's Toolkit: Essential Research Reagents and Materials

A well-equipped document laboratory maintains a suite of specialized materials and reagents for comprehensive analysis.

Table 3: Essential Research Reagent Solutions for Document Analysis

| Item | Function/Application |
|---|---|
| Polarizing Light Microscope | The primary tool for identifying fiber types, fillers, and the physical structure of paper. |
| Video Spectral Comparator (VSC) | A core instrument for examining documents under various light wavelengths to detect alterations and security features [5]. |
| Electrostatic Detection Apparatus (ESDA) | Specialized equipment for recovering indented writings without damaging the original document [5]. |
| Sterile Sampling Tools | Tweezers, scalpels, and probes for taking minute paper samples for destructive testing without contaminating evidence. |
| Chemical Test Kits | Reagents for thin-layer chromatography (TLC) and other tests to analyze ink and paper composition [1]. |
| Reference Standards | Libraries of known paper samples, watermarks, and security features for comparative analysis. |

Paper analysis provides indispensable, objective data in the investigation of fraudulent, counterfeit, and threatening documents. The techniques detailed in this application note—from basic microscopy to advanced electrostatic detection—enable researchers and forensic professionals to uncover the hidden history of a document. By applying these standardized protocols, scientists can reliably determine a document's authenticity, trace its origin, and detect tampering, thereby playing a crucial role in upholding the integrity of legal and financial systems [1] [8] [10]. The continued development and rigorous application of these methodologies are fundamental to advancing the field of forensic document examination.

Forensic document examination is a branch of forensic science focused on analyzing documents to ascertain their origin and authenticity [1] [11]. This discipline, often referred to as Questioned Document Examination (QDE), involves the scientific examination of documents such as contracts, wills, checks, and anonymous letters to determine their provenance and detect any alterations or forgeries [1] [11]. Within this field, paper analysis represents a crucial investigative pathway for tracing the origin of documents and establishing their historical context.

Paper examination falls under the broader category of "writing media examination," which also includes analysis of writing instruments and inks [11]. Forensic document examiners employ paper analysis to address critical questions in legal and investigative contexts: Can a threatening letter be linked to a specific notepad recovered from a suspect? Was a page added to a business contract after its original execution? Do multiple documents share a common origin? [1]. By systematically analyzing both class and individual characteristics of paper, examiners can provide valuable evidence regarding document authenticity and historical usage, which is particularly vital in cases involving fraud, forgery, counterfeiting, and threats [1].

Fundamental Principles: Class vs. Individual Characteristics

In forensic document examination, the distinction between class and individual characteristics forms the foundational framework for analysis [12]. This systematic differentiation allows examiners to progressively narrow down the origin of paper evidence.

Class characteristics are shared by a group of items manufactured by a common process or to a common specification [12]. For paper, these include features determined during mass production, such as basic composition, standard size, and general manufacturing attributes that allow the paper to be categorized into specific groups. These characteristics can demonstrate that a questioned document could have originated from a particular source but cannot exclusively identify a single source.

Individual characteristics are unique to a specific item and arise from random variations during manufacturing, natural aging, or subsequent use [12]. For paper, these include microscopic fiber distributions, unique imperfections from manufacturing equipment, and acquired features from handling and storage. These characteristics have the potential to individually identify a specific source or document with a high degree of certainty.

The relationship between these characteristic types follows a hierarchical identification process: class characteristics first narrow the field of possible sources, while individual characteristics subsequently provide the potential for unique identification.

Comprehensive Characteristics of Paper

Class Characteristics

Class characteristics represent the shared attributes imparted during the paper manufacturing process. These features allow forensic examiners to categorize paper into broad groups and potentially link a questioned document to a specific production batch or manufacturer.

Table 1: Class Characteristics of Paper

| Characteristic | Description | Forensic Significance |
|---|---|---|
| Paper Composition | Fiber sources (wood pulp, cotton, rag), filler materials (clay, calcium carbonate), and sizing agents [11] | Indicates paper grade and intended use; provides manufacturing era information |
| Basic Weight/Thickness | Grammage (g/m²) and caliper (thickness) measurements [11] | Identifies conformity to specific product standards and specifications |
| Sheet Dimensions | Standard paper sizes (A4, legal, letter) or specialized cut dimensions | Links to specific product lines or industrial applications |
| Color | Base paper color including bright whites, creams, and colored stocks | Suggests intended use and narrows manufacturer possibilities |
| Watermarks | Manufacturer logos, brand names, or designs incorporated during manufacturing [1] | Identifies specific brands, production mills, and sometimes date ranges |
| Fluorescence | Optical brightening agents (OBAs) that glow under UV light | Characteristic of specific manufacturers and production periods |
| Surface Texture | Wove, laid, or specialized finishes imparted during manufacturing | Indicates manufacturing method and potential end-use applications |

Individual Characteristics

Individual characteristics represent the unique, often microscopic features that distinguish one sheet of paper from another, even within the same production batch.

Table 2: Individual Characteristics of Paper

| Characteristic | Description | Forensic Significance |
|---|---|---|
| Microscopic Fiber Distribution | Random orientation and distribution of cellulose fibers at microscopic level | Creates a unique "fingerprint" for each sheet; highly discriminatory |
| Manufacturing Imperfections | Random debris, consistency variations, or coating irregularities from production | Provides unique identifiers traceable to specific manufacturing moments |
| Edge Characteristics | Micro-tears, cuts, or imperfections along sheet edges from cutting process | Can be matched to remaining sheets in a pad or ream |
| Acquired Surface Features | Stains, indentations, tears, or holes acquired during use or storage | Creates a unique usage history that individualizes the document |
| Aging Patterns | Unique yellowing, brittleness, or foxing patterns based on storage conditions | Provides information about document history and potential timeline |
| Previous Application Marks | Indented writing from prior use, staple holes, or crease patterns | Links document to specific contexts or prior uses |

The following workflow diagram illustrates the systematic process for analyzing these paper characteristics in forensic investigations:

Experimental Protocols for Paper Analysis

Protocol 1: Comprehensive Paper Examination Workflow

Objective: To systematically examine questioned paper documents for class and individual characteristics to determine origin and authenticity.

Materials and Equipment:

- Stereo microscope (10x-40x magnification)

- Polarized light microscope

- UV light source (longwave and shortwave)

- Micrometer or paper thickness gauge

- Analytical balance (0.0001g sensitivity)

- Reference collection of paper standards

- Forensic photography system with macro capabilities

Procedure:

- Documentation and Preservation

  - Photograph the entire document under standardized lighting before any analysis

  - Note any folds, stains, or pre-existing damage

  - Use powder-free gloves and document handling tools to prevent contamination

- Visual Examination under Normal Light

  - Examine surface characteristics, texture, and color under incident light at varying angles

  - Document watermarks, logos, or manufacturer identifications using transmitted light

  - Record sheet dimensions to the nearest millimeter using calibrated rulers

  - Examine edge conditions for cutting patterns or tears

- Examination under Ultraviolet Light

  - Observe and document fluorescence patterns under longwave (365 nm) UV light

  - Examine under shortwave (254 nm) UV light for variations in fluorescence

  - Photograph fluorescence patterns with appropriate filters

- Microscopic Fiber Analysis

  - Collect fiber samples from document edges using fine tweezers

  - Prepare temporary mounts in water or glycerin on glass slides

  - Examine fiber morphology under polarized light at 100x-400x magnification

  - Document fiber type, length, and processing characteristics

- Physical Measurement

  - Measure basis weight using analytical balance (weight per unit area)

  - Determine thickness using micrometer at multiple document locations

  - Calculate density from weight and thickness measurements

- Comparative Analysis

  - Compare all characteristics with known standard samples

  - Look for both class correspondence and individual matching features

  - Document any differences or points of correspondence

Troubleshooting:

- Faint watermarks may require specialized watermark photography techniques

- Low fluorescence may necessitate longer exposure times for photography

- Mixed paper compositions may require chemical staining for fiber differentiation
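The physical measurement step reduces to two short calculations. The sketch below is illustrative only: the function names and the sample readings are hypothetical, and it assumes a rectangular sheet weighed whole. Note the convenient unit identity that grammage in g/m² divided by thickness in µm gives apparent density directly in g/cm³.

```python
from statistics import mean

def grammage_g_per_m2(mass_g, length_mm, width_mm):
    """Mass per unit area of a rectangular sheet, in g/m²."""
    area_m2 = (length_mm / 1000.0) * (width_mm / 1000.0)
    if area_m2 <= 0:
        raise ValueError("sheet dimensions must be positive")
    return mass_g / area_m2

def apparent_density_g_per_cm3(grammage, thickness_um):
    """Apparent sheet density: (g/m²) / µm equals g/cm³."""
    if thickness_um <= 0:
        raise ValueError("thickness must be positive")
    return grammage / thickness_um

# Hypothetical readings for one A4 sheet (210 mm x 297 mm).
sheet_mass_g = 4.9896
thickness_readings_um = [102, 98, 101, 99, 100]  # several locations on the sheet

gsm = grammage_g_per_m2(sheet_mass_g, 297, 210)
caliper_um = mean(thickness_readings_um)
density = apparent_density_g_per_cm3(gsm, caliper_um)
print(f"grammage {gsm:.1f} g/m², caliper {caliper_um:.0f} µm, density {density:.2f} g/cm³")
```

Averaging the thickness readings before dividing keeps a single local compression (a fold or an indentation) from dominating the density figure.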

Protocol 2: Fiber Analysis and Composition Testing

Objective: To identify the fiber composition and filler materials in paper samples.

Materials and Equipment:

- Polarized light microscope with magnification to 400x

- Herzberg stain or Graff "C" stain for fiber identification

- Micro cover slips and glass slides

- Centrifuge tubes and small beakers

- Muffle furnace for ash content determination

- pH testing strips or micro pH electrode

Procedure:

- Sample Preparation

  - Take small samples (approximately 1 cm²) from document edges or areas with minimal writing

  - Separate samples for different analytical techniques

  - Macerate samples in distilled water for fiber separation

- Fiber Staining and Identification

  - Prepare Herzberg stain (zinc chloride, potassium iodide, iodine in water)

  - Place macerated fibers on slide and apply stain

  - Observe color reactions under microscope:

    - Chemically pulped wood fibers: Blue to blue-purple (lignified mechanical pulp stains yellow)

    - Cotton/rag fibers: Red to wine red

    - Esparto: Pinkish red

  - Document fiber types and approximate proportions

- Filler Content Determination

  - Accurately weigh approximately 1 g of paper sample and record the mass

  - Ash sample in muffle furnace at 900 °C for 1 hour

  - Cool in desiccator and reweigh

  - Calculate filler (ash) percentage as the mass of the residue divided by the original sample mass

- Surface pH Determination

  - Apply distilled water to document edge using micro-dropper

  - Touch pH test strip to moistened area immediately

  - Record pH value and compare to standards

Safety Considerations:

- Use fume hood when preparing chemical stains

- Wear appropriate PPE including lab coat, gloves, and safety glasses

- Follow proper disposal procedures for chemical waste
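The filler determination comes down to one ratio: the mass of residue left after ignition over the mass of paper that went into the crucible. The sketch below uses hypothetical weighings and a hypothetical function name, and assumes the sample mass is recorded on the same moisture basis for every comparison. One caveat: at 900 °C calcium carbonate decomposes to calcium oxide, so the ash figure understates carbonate filler. Questioned and reference papers should therefore be ashed under identical conditions.

```python
def ash_percent(crucible_g, crucible_plus_ash_g, sample_g):
    """Ash (filler residue) as a percentage of the original sample mass."""
    if sample_g <= 0:
        raise ValueError("sample mass must be positive")
    ash_g = crucible_plus_ash_g - crucible_g
    if ash_g < 0:
        raise ValueError("ash mass is negative; check the weighings")
    return 100.0 * ash_g / sample_g

# Hypothetical weighings in grams.
empty_crucible = 25.0000
sample_mass = 1.0020
crucible_after_ashing = 25.1503

result = ash_percent(empty_crucible, crucible_after_ashing, sample_mass)
print(f"ash content: {result:.1f} %")
```

Weighing the empty crucible separately lets the residue be obtained by difference, without having to transfer the ash.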

Research Reagent Solutions and Essential Materials

The following table details key reagents and materials essential for comprehensive paper analysis in forensic document examination:

Table 3: Essential Research Reagents and Materials for Paper Analysis

| Reagent/Material | Function/Application | Technical Specifications |
|---|---|---|
| Herzberg Stain | Differential staining of cellulose fibers for type identification | Zinc chloride, potassium iodide, iodine solution; specific color reactions distinguish wood, cotton, and other fibers |
| Graff 'C' Stain | Alternative staining solution for fiber differentiation | Aluminum chloride, calcium chloride, and zinc chloride solutions combined with iodine-potassium iodide; provides contrasting coloration for various paper components |
| Polarized Light Microscope | Examination of fiber morphology and optical properties | 40x-400x magnification with cross-polarizers and compensator for birefringence observations |
| UV Light Source | Observation of optical brighteners and fluorescence patterns | Longwave (365 nm) and shortwave (254 nm) capabilities with appropriate safety filters |
| Reference Paper Collection | Comparative standards for dating and sourcing | Comprehensive collection of dated papers from various manufacturers with known production histories |
| Analytical Balance | Precise basis weight measurements | 0.0001 g sensitivity with static elimination capability for accurate paper weighing |

Data Analysis and Interpretation Framework

The analytical process for paper characteristics requires systematic data interpretation, as illustrated in the following decision pathway:

Interpretation Guidelines:

- Excluded Source: Significant differences in class characteristics definitively eliminate a potential source

- Possible Source: Correspondence in class characteristics with no significant individual characteristic analysis

- Probable Source: Correspondence in class characteristics with multiple consistent individual characteristics

- Identified Source: Correspondence in class characteristics with matching unique individual characteristics that individualize the source
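The four guideline levels can be written as a small decision function. This is a sketch only: the names are hypothetical, and reading "multiple" as two or more consistent individual characteristics is an assumption a laboratory would replace with its own validated criterion.

```python
from enum import Enum

class SourceConclusion(Enum):
    EXCLUDED = "Excluded Source"
    POSSIBLE = "Possible Source"
    PROBABLE = "Probable Source"
    IDENTIFIED = "Identified Source"

def classify_source(class_differences, consistent_individual_count,
                    unique_individual_match):
    """Map examination findings onto the interpretation guidelines.

    class_differences: True if significant class-level differences exist.
    consistent_individual_count: number of consistent individual features.
    unique_individual_match: True if a matching individualizing feature
        (for example a physical edge fit) was found.
    """
    if class_differences:
        return SourceConclusion.EXCLUDED      # class mismatch overrides everything
    if unique_individual_match:
        return SourceConclusion.IDENTIFIED
    if consistent_individual_count >= 2:      # assumed meaning of "multiple"
        return SourceConclusion.PROBABLE
    return SourceConclusion.POSSIBLE

print(classify_source(False, 3, False).value)
```

Checking class differences first mirrors the hierarchy in the guidelines: no amount of individual correspondence is considered once the class characteristics disagree.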

Quantitative Measurement Standards:

- Basis weight measurements should be reported as grams per square meter (g/m²) with standard deviation across multiple measurements

- Thickness measurements should be reported in micrometers (μm) with sampling from multiple document locations

- Fiber composition should be reported as percentage estimates with differentiation between fiber types

- Fluorescence intensity can be qualitatively reported as none, weak, moderate, or strong relative to standards
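
As a minimal sketch of the basis weight reporting convention above, the following computes g/m² from a weighed specimen of known dimensions and summarizes replicate results as mean and standard deviation. The function names and the example figures are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence, Tuple


def basis_weight_gsm(mass_g: float, length_mm: float, width_mm: float) -> float:
    """Basis weight in g/m^2 from specimen mass and dimensions."""
    area_m2 = (length_mm / 1000.0) * (width_mm / 1000.0)
    if area_m2 <= 0:
        raise ValueError("specimen dimensions must be positive")
    return mass_g / area_m2


def summarize(values: Sequence[float]) -> Tuple[float, float]:
    """Return (mean, sample standard deviation) of replicate measurements."""
    if len(values) < 2:
        raise ValueError("at least two replicates are needed")
    return mean(values), stdev(values)


if __name__ == "__main__":
    # Hypothetical replicate weighings of A4-sized sheets (297 mm x 210 mm).
    replicates = [basis_weight_gsm(m, 297, 210) for m in (4.98, 4.99, 5.01)]
    avg, sd = summarize(replicates)
    print(f"{avg:.1f} g/m2 (SD {sd:.2f}, n={len(replicates)})")
```

The same `summarize` helper applies to thickness readings in micrometers taken at several locations on the document.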

The systematic analysis of class and individual characteristics in paper provides a powerful methodology for origin tracing in questioned document examination. By progressing from broad categorization through class characteristics to specific identification via individual characteristics, forensic examiners can provide scientifically robust evidence regarding document provenance and authenticity. The experimental protocols and analytical frameworks outlined in this application note provide researchers and forensic professionals with comprehensive methodologies for conducting rigorous paper analysis that meets the evidentiary standards required in legal proceedings. As paper manufacturing technologies evolve, continued research and refinement of these analytical techniques remain essential for maintaining the efficacy of forensic document examination in addressing questions of document origin and integrity.

Questioned Document Examination (QDE) stands as a crucial forensic science discipline dedicated to analyzing documents to determine their authenticity, origin, and detect alterations [13]. This field has evolved from a practice reliant on expert opinion to a rigorous scientific discipline employing a wide array of analytical techniques. The journey of QDE, from its historical roots to its modern applications, demonstrates a continuous adaptation of scientific principles to meet the challenges of document fraud. This evolution is particularly critical in legal contexts, where the integrity of documents can determine the outcomes of criminal and civil cases. This article details the key protocols and applications that define contemporary QDE practice, providing a resource for researchers and professionals engaged in the scientific analysis of document evidence.

Historical Context and Foundational Cases

The systematic foundation of QDE was largely established in the late 19th and early 20th centuries, notably with the 1910 publication of "Questioned Documents" by Albert S. Osborn, who is often regarded as the father of this field [13]. The application of document analysis in legal proceedings has since been tested in several landmark cases:

- Lindbergh Kidnapping Case (1932): Forensic handwriting analysis was instrumental in convicting Bruno Hauptmann for the kidnapping and murder of Charles Lindbergh's son, showcasing the early legal acceptance of document evidence [13].

- Hitler Diaries Hoax (1983): QDE techniques exposed what was claimed to be Adolf Hitler's personal diaries as modern forgeries, demonstrating the role of document examiners in historical authentication [13].

- The Unabomber Case (1995): The identification of Ted Kaczynski was significantly aided by comparison of the language and writing style of his published manifesto with his known writings, linking him to a series of mail bombings [13].

These cases underscore the real-world impact of QDE and established the core principles of document comparison and authenticity testing that remain relevant today.

Modern QDE Techniques: Application Notes and Protocols

Modern QDE employs a multi-faceted approach, utilizing a suite of scientific instruments and methodologies to uncover evidence imperceptible to the naked eye.

Handwriting and Signature Analysis

Application Note: This fundamental QDE technique involves comparing questioned handwriting or signatures with known samples to identify the writer or detect simulation [13]. It relies on the principle that individual handwriting is unique and exhibits consistent, habitual characteristics.

Protocol 1: Comparative Handwriting Analysis

- Objective: To determine whether a questioned document was written by a specific individual by comparing its handwriting characteristics with verified specimens.

- Materials: Questioned document, known handwriting exemplars (requested and collected), stereomicroscope, transparent overlays, digital imaging software with overlay capabilities, high-resolution scanner.

- Procedure:

- Acquisition of Exemplars: Obtain adequate known writing samples from the suspected writer under controlled conditions that mimic the questioned document (e.g., writing instrument, paper, writing speed).

- Examination under Microscope: Systematically examine both questioned and known writings under a stereomicroscope (typically 10x-40x magnification). Observe and document individual characteristics, including:

- Letter Formation: The shape and construction of individual letters.

- Slant and Alignment: The angle and baseline orientation of the writing.

- Spacing: The proportion and rhythm between letters, words, and lines.

- Pen Pressure: The variations in pressure applied during writing, often visible in ink density and paper indentation.

- Digital Comparison: Scan the documents at high resolution (≥600 dpi). Use specialized software to create digital overlays, comparing the alignment of specific characters and words.

- Analysis of Evidence: Identify points of agreement and disagreement between the questioned and known writings. Evaluate the significance of these findings, considering natural variation in a person's handwriting.

- Reporting: Document all observations, methodologies, and conclusions in a detailed report, including annotated photographs to illustrate key points of comparison.

Ink and Paper Analysis

Application Note: This technique analyzes the physical composition of the document's materials to determine origin, authenticity, and detect alterations. It can reveal if different inks were used or if a document's paper is inconsistent with its alleged age [13] [14].

Protocol 2: Thin-Layer Chromatography (TLC) for Ink Comparison

- Objective: To separate and compare the chemical components of ink from a questioned document to determine if they are consistent with a known source or if multiple inks are present.

- Materials: Micro-sampling tool (hypodermic needle or scalpel), small glass vials, micropipettes, TLC plates (silica gel), developing chamber, solvent system (e.g., ethyl acetate:ethanol:water in specific ratios), UV light chamber.

- Procedure:

- Micro-Sampling: Using a sterile hypodermic needle, carefully extract minute ink samples (≈0.5 mm) from the questioned writing and from control ink pens. Take multiple samples if an alteration is suspected.

- Extraction: Place each sample in a separate vial and dissolve it in a few microliters of a suitable solvent (e.g., pyridine).

- Spot Application: Using a micropipette, apply each dissolved ink sample as a small spot on a TLC plate, approximately 1 cm from the bottom. Label each spot.

- Chromatogram Development: Place the TLC plate in a developing chamber containing a shallow layer of solvent. Ensure the solvent level is below the application spots. Seal the chamber and allow the solvent to migrate up the plate until it is near the top.

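- Visualization: Mark the solvent front, then remove the plate and allow it to dry. Observe the developed chromatogram under visible light and then under UV light (254 nm and 365 nm). Document the color and Rf value of each separated dye band, where Rf is the distance traveled by the band divided by the distance traveled by the solvent front, both measured from the application line.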

- Interpretation: Compare the banding pattern, colors, and Rf values of the questioned ink to the known controls. Different patterns indicate different ink formulations.
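
The Rf calculation and band-by-band comparison in this protocol can be sketched as follows. The band representation as `(color, rf)` pairs and the 0.03 matching tolerance are illustrative assumptions; a laboratory would set its own tolerance from replicate runs.

```python
from typing import List, Tuple

Band = Tuple[str, float]  # (observed color, Rf value)


def rf_value(band_distance_mm: float, solvent_front_mm: float) -> float:
    """Rf = distance moved by the band / distance moved by the solvent front."""
    if solvent_front_mm <= 0:
        raise ValueError("solvent front distance must be positive")
    if not 0 <= band_distance_mm <= solvent_front_mm:
        raise ValueError("band cannot travel further than the solvent front")
    return band_distance_mm / solvent_front_mm


def profiles_consistent(questioned: List[Band], known: List[Band],
                        tolerance: float = 0.03) -> bool:
    """True if both inks show the same bands (color and Rf within tolerance)."""
    if len(questioned) != len(known):
        return False
    q_sorted = sorted(questioned, key=lambda band: band[1])
    k_sorted = sorted(known, key=lambda band: band[1])
    return all(
        q_color == k_color and abs(q_rf - k_rf) <= tolerance
        for (q_color, q_rf), (k_color, k_rf) in zip(q_sorted, k_sorted)
    )


if __name__ == "__main__":
    questioned = [("violet", rf_value(32, 80)), ("blue", rf_value(56, 80))]
    control = [("blue", 0.71), ("violet", 0.41)]
    print(profiles_consistent(questioned, control))
```

A consistent profile only means the inks were not differentiated by this solvent system; a differing profile is the stronger finding.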

Detection of Erasures and Alterations

Application Note: This involves using non-destructive imaging techniques to reveal latent evidence of document tampering, such as erased text, additions, or impressions from writing on previous pages [13].

Protocol 3: Multispectral Imaging with a Video Spectral Comparator (VSC)

- Objective: To reveal erased, obliterated, or altered writing, and to differentiate between inks that appear similar under normal light.

- Materials: Video Spectral Comparator (VSC) system or similar multispectral imaging device, high-resolution digital camera.

- Procedure:

- Initial Examination: Place the document on the VSC stage and perform an initial examination under white light at various magnifications to note any visible irregularities.

- Infrared (IR) Examination:

- Engage the IR light source and an IR-sensitive camera.

- Apply various IR filters while observing the monitor. Some inks (especially blue and black ballpoint pens) may become transparent under specific IR wavelengths, revealing underlying text or erased areas.

- Document all findings with digital capture.

- Ultraviolet (UV) Examination:

- Switch to UV illumination (both long-wave and short-wave).

- Observe the document for luminescence differences. Erased areas, adhesive residues, or different paper types often exhibit different luminescent properties compared to the original document.

- Document the findings.

- Analysis of Intersecting Lines: Use the VSC to determine the sequence of intersecting strokes (e.g., whether a signature passes over a printed line or vice versa). This is often assessed by observing the continuity of the upper stroke over the lower one under microscopic magnification and specific lighting.

- Reporting: Compile all digital images with annotations explaining what each technique revealed, providing objective evidence of alteration.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and instruments used in a modern QDE laboratory.

Table 1: Essential Materials and Instruments for a QDE Laboratory

| Item | Function & Application Note |

|---|---|

| Stereomicroscope | Provides low-power magnification (typically 10x-40x) for the detailed observation of handwriting features, paper fiber structure, and erasure marks [13]. |

| Video Spectral Comparator (VSC) | A core instrument that uses different wavelengths of light (UV, IR, visible) to differentiate inks, reveal erased text, and examine security features non-destructively [13]. |

| Electrostatic Detection Device (EDD) | Detects and visualizes subtle indentations or impressions on paper left from writing on pages above, which can be critical for recovering content from burned or damaged documents [13]. |

| Thin-Layer Chromatography (TLC) Kit | Used for the chemical separation and comparison of ink components to determine if multiple inks are present or to link an ink to a specific pen [13] [14]. |

| Digital Imaging Software | Allows for precise comparison of handwriting through overlays, enhancement of faint images, and calibration of measurements for objective analysis [13]. |

Workflow Visualization and Data Analysis

The analytical process in QDE follows a logical, sequential workflow to ensure comprehensive and unbiased analysis. The following diagram illustrates the standard progression from receiving a questioned document to forming a conclusion.

Table 2: Quantitative Data from scRNA-seq Study Featuring QDE-SVM

The following table summarizes performance metrics from a recent bioinformatics study that used a Quantum-inspired Differential Evolution algorithm wrapped with a Support Vector Machine (QDE-SVM) for gene selection [15]. In that study, "QDE" abbreviates Quantum-inspired Differential Evolution rather than Questioned Document Examination. Although it comes from a different field, it exemplifies the kind of quantitative performance reporting that algorithm-driven document examination aims for.

| Feature Selection Method | Average Classification Accuracy | Number of Datasets Evaluated | Key Application Area |

|---|---|---|---|

| QDE-SVM (Proposed Method) | 0.9559 | 12 | scRNA-seq Cell Type Identification |

| FSCAM | 0.8872 | 12 | scRNA-seq Cell Type Identification |

| SSD-LAHC | 0.8614 | 12 | scRNA-seq Cell Type Identification |

| MA-HS | 0.8463 | 12 | scRNA-seq Cell Type Identification |

| BSF | 0.8292 | 12 | scRNA-seq Cell Type Identification |

The discipline of Questioned Document Examination has undergone a profound transformation, evolving from a skill-based art to a rigorous scientific practice. This evolution is characterized by the adoption of standardized protocols, sophisticated analytical instrumentation, and a commitment to empirical evidence. Techniques such as VSC analysis, TLC, and digital comparison provide examiners with powerful, objective tools to address questions of document authenticity. As the field continues to advance, particularly with the challenges posed by digital documentation and sophisticated forgery, the integration of new technologies and the rigorous application of the scientific method will remain paramount for upholding the integrity of document evidence in legal and research contexts.

In the field of questioned document examination, the forensic analysis of paper provides critical insights into the origin, authenticity, and history of documents. Paper is a complex composite material whose properties are determined by three fundamental components: fibers, chemical additives, and watermarks. Understanding the production processes behind these components enables forensic scientists to identify unique characteristics that may link a document to a specific source, date, or manufacturing batch. This article presents detailed application notes and experimental protocols for the analysis of these key paper components, providing researchers with standardized methodologies for forensic paper analysis.

The interaction between paper fibers and chemical additives creates a unique signature that can be quantified through specialized analytical techniques. Recent advancements in measurement technologies, such as zeta potential analysis, now allow for precise characterization of fiber-additive interactions, offering new dimensions for comparative analysis in forensic investigations [16]. This scientific framework establishes the foundation for objective, reproducible analysis in questioned document examination.

Analytical Techniques for Fiber and Additive Characterization

Zeta Potential Measurement for Fiber-Chemical Interaction Analysis

Principle: The zeta potential of paper fibers represents the electrostatic potential at the slipping plane of the fiber-solution interface. It directly influences how chemical additives interact with and adhere to fibers during paper production. Measuring zeta potential provides forensic scientists with a quantitative method to predict additive demand and understand the chemical profile of paper samples.

Protocol: The following methodology details the standardized approach for measuring zeta potential in paper fibers using specialized instrumentation:

Sample Preparation: Obtain a representative paper sample of approximately 0.5-1.0 grams. Disintegrate the sample in 1 liter of deionized water using a standard disintegrator for 10,000 revolutions at 1.5% consistency. Filter the resulting slurry through a 200-mesh screen to remove large contaminants.

Instrument Calibration: Power on the SZP-16 or similar zeta potential instrument. Perform a three-point calibration using standard solutions of known zeta potential (-50 mV, 0 mV, +50 mV) according to manufacturer specifications. Verify calibration stability with a control sample before proceeding with unknown samples.

Measurement Procedure: Transfer 50 mL of the prepared fiber suspension to the measurement cell. Ensure the cell is free of air bubbles. Initiate the automated measurement cycle, which typically completes within 2 minutes. The instrument applies an electric field and measures the electrophoretic mobility of particles, which is converted to zeta potential using the Smoluchowski approximation.

Data Interpretation: Record the average zeta potential value from three replicate measurements. A highly negative zeta potential (typically -30 mV to -50 mV for cellulose fibers) indicates strong anionic character and predicts high demand for cationic additives like wet-strength resins. Compare values against known paper samples for forensic comparison.

Forensic Application: This technique enables the classification of paper types based on their surface chemistry and can detect anomalous additive patterns that may indicate document alteration or forgery. The SZP-16 instrument's portability allows for analysis in various laboratory settings [16].
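
The conversion from electrophoretic mobility to zeta potential mentioned in the measurement procedure can be sketched with the Smoluchowski relation, ζ = μη / (ε₀εᵣ). The default viscosity and relative permittivity below are assumed values for water at about 25 °C, and the function names and mobility figures are hypothetical; an instrument applies its own temperature corrections.

```python
from statistics import mean
from typing import Sequence

EPSILON_0 = 8.8541878128e-12  # vacuum permittivity, F/m


def zeta_potential_mv(mobility_m2_per_vs: float,
                      viscosity_pa_s: float = 8.9e-4,
                      relative_permittivity: float = 78.5) -> float:
    """Smoluchowski approximation: zeta = mobility * viscosity / permittivity.

    Defaults assume water at roughly 25 degrees C. Result is in millivolts.
    """
    permittivity = relative_permittivity * EPSILON_0
    zeta_volts = mobility_m2_per_vs * viscosity_pa_s / permittivity
    return zeta_volts * 1000.0


def mean_zeta_mv(mobilities: Sequence[float]) -> float:
    """Average zeta potential over replicate mobility readings."""
    return mean(zeta_potential_mv(m) for m in mobilities)


if __name__ == "__main__":
    # Three hypothetical replicate mobilities in m^2/(V*s).
    replicates = [-3.0e-8, -3.1e-8, -2.9e-8]
    print(f"{mean_zeta_mv(replicates):.1f} mV")
```

With these assumed constants, a mobility of -3.0 × 10⁻⁸ m²/(V·s) corresponds to roughly -38 mV, which falls inside the range quoted above for cellulose fibers.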

Chemical Additive Demand Quantification

Principle: The Particle Charge Detector (PCD) measures the colloidal charge demand of process water and fiber suspensions, directly indicating the optimal dosage of chemical additives required for paper formation. This measurement complements zeta potential data by providing information on the total charge demand of the system.

Protocol:

Sample Preparation: Collect process water from paper maceration or prepare a fiber suspension as described in the zeta potential protocol above. Centrifuge at 3000 rpm for 5 minutes to remove suspended solids if analyzing water only.

Titration Procedure: Transfer 10 mL of sample to the PCD-06 measurement cell. Add 0.001N poly-DADMAC standard titrant in 0.1 mL increments. After each addition, measure the streaming current potential. Continue titration until the endpoint is reached (sign change of the streaming current).

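Calculation: Calculate the charge demand using the formula: Charge Demand (μeq/L) = (Vt × Nt × 10⁶) / Vs, where Vt is titrant volume (mL), Nt is titrant normality (eq/L), and Vs is sample volume (mL). The factor of 10⁶ converts equivalents to microequivalents; for example, 1.0 mL of 0.001 N titrant consumed by a 10 mL sample corresponds to 100 μeq/L.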

Forensic Application: Variations in charge demand between paper samples from different sources provide distinctive chemical signatures. Anomalous values in specific document areas may indicate localized alterations or additions.
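
The titration arithmetic can be sketched with the unit conversions carried explicitly (mL to L, eq to μeq). The endpoint is estimated by linear interpolation between the two readings that bracket the sign change; the interpolation, the function names, and the example readings are assumptions made for illustration.

```python
from typing import List, Tuple


def endpoint_volume_ml(readings: List[Tuple[float, float]]) -> float:
    """Estimate titrant volume at the sign change of the streaming current.

    readings: (cumulative titrant volume in mL, streaming current signal).
    """
    for (v0, s0), (v1, s1) in zip(readings, readings[1:]):
        if s0 == 0:
            return v0
        if s0 * s1 < 0:
            # Linear interpolation to the zero crossing.
            return v0 + (v1 - v0) * (0 - s0) / (s1 - s0)
    if readings and readings[-1][1] == 0:
        return readings[-1][0]
    raise ValueError("no sign change found; endpoint not reached")


def charge_demand_ueq_per_l(titrant_ml: float,
                            titrant_normality_eq_per_l: float,
                            sample_ml: float) -> float:
    """Charge demand in microequivalents per liter of sample."""
    if sample_ml <= 0:
        raise ValueError("sample volume must be positive")
    equivalents = (titrant_ml / 1000.0) * titrant_normality_eq_per_l
    micro_equivalents = equivalents * 1e6
    return micro_equivalents / (sample_ml / 1000.0)


if __name__ == "__main__":
    readings = [(0.0, -300.0), (0.1, -150.0), (0.2, 50.0)]
    v_end = endpoint_volume_ml(readings)
    print(charge_demand_ueq_per_l(v_end, 0.001, 10.0))
```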

Table 1: Quantitative Analysis of Wet Strength Additives in Paper Products

| Paper Product Type | Common Additive Chemistry | Typical Strength Improvement | Key Analytical Signatures |

|---|---|---|---|

| Packaging Materials | Polyamide-epichlorohydrin (PAE) resins | 20-30% increase in wet durability [17] | High nitrogen content, chlorine residues from cross-linking |

| Hygiene Products & Wipes | Polyacrylamides, Glyoxalated resins | 15-25% improvement in product lifespan [17] | Aldehyde groups, thermal curing response |

| Tissue and Towels | Polyamide-epichlorohydrin resins | 10-20% increase in wet strength [17] | Medium nitrogen content, specific ionic charge profile |

| Medical & Sanitary Products | Biocompatible PAE, Polyethyleneimine | Regulatory compliance focused [17] | Low cytotoxicity, specific extractables profile |

| Specialty & Security Papers | Cross-linked polyacrylamides | Enhanced resistance to solvent alteration [17] | Unique fluorescence markers, specific thermal decomposition products |

Experimental Protocols for Comprehensive Paper Analysis

Integrated Fiber and Additive Characterization Workflow

The following workflow diagram illustrates the comprehensive protocol for forensic paper analysis, integrating multiple analytical techniques to characterize fibers, additives, and watermarks:

Diagram 1: Paper analysis workflow for forensic examination.

Watermark Analysis and Documentation Protocol

Principle: Watermarks are distinctive patterns created during paper manufacturing by impressing a design with a raised wire mesh (dandy roll) onto the wet paper web. These features provide valuable forensic markers for dating, authenticating, and sourcing paper documents.

Protocol:

Non-Destructive Examination:

- Place the document on a light box with adjustable intensity. Use oblique lighting at 15-45 degrees to enhance contrast.

- Capture high-resolution images (minimum 600 DPI) using a digital camera with a macro lens. Take multiple exposures with varying lighting angles.

- For low-contrast watermarks, employ infrared or ultraviolet photography to enhance visibility. Use appropriate safety filters.

Beta Radiography (When Optical Examination Is Inadequate):

- This specialized technique requires proper licensing and radiation safety protocols.

- Place the document in close contact with beta radiography film (e.g., Kodak SR-45) in a vacuum frame.

- Expose to a beta radiation source (Carbon-14) for 2-5 minutes, depending on paper density.

- Develop the film according to manufacturer specifications to obtain a high-contrast negative of the watermark.

Watermark Classification:

- Compare the obtained watermark image to reference databases (e.g., Churchill, Briquet).

- Document key characteristics: design type, placement, spacing of lines, and any distinctive features.

- Note any inconsistencies in watermark clarity or distortion that may indicate addition or alteration.

Forensic Application: Watermark analysis can establish the earliest possible creation date of a document (terminus post quem) and provide evidence of authenticity when compared to known genuine samples from the same paper manufacturer and production period.

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Reagents and Instruments for Forensic Paper Analysis

| Reagent/Instrument | Function/Application | Forensic Analysis Significance |

|---|---|---|

| SZP-16 Zeta Potential Instrument [16] | Measures surface charge of fibers in suspension | Quantifies fiber-additive interaction potential; provides chemical signature for paper comparison |

| PCD-06 Particle Charge Detector [16] | Determines total charge demand of fiber suspensions | Identifies optimal additive dosage; detects anomalous chemical treatments in questioned documents |

| Poly-DADMAC Standard Titrant | Cationic polymer for charge titration | Standardized reagent for charge demand measurements; enables quantitative comparison between samples |

| Wet Strength Additives (PAE resins, Polyacrylamides) [17] | Reference materials for analytical comparison | Provides benchmarks for identifying unknown additives via chromatography and spectroscopy |

| Fiber Staining Reagents (Graff "C" Stain, Herzberg Stain) | Differentiates fiber types under microscopy | Identifies wood vs. non-wood fibers; detects fiber blends characteristic of specific paper grades |

| Beta Radiography System | Creates detailed images of watermarks and paper structure | Non-destructive visualization of internal paper features for authentication and dating |

Advanced Analytical Integration for Questioned Document Examination

The forensic analysis of paper requires a systematic approach that integrates multiple analytical techniques to build a comprehensive profile of a questioned document. The combination of zeta potential measurements, charge demand titration, additive characterization, and watermark analysis creates a multi-parameter signature that is difficult to replicate, providing strong scientific evidence in document authentication.

Emerging trends in paper manufacturing, including the development of eco-friendly additives and compatibility with recycled fibers, are introducing new variables that forensic scientists must understand [17]. These developments create temporal markers that can help date documents based on the technological landscape of paper production at specific time periods.

Future directions in forensic paper analysis include the development of standardized reference databases for chemical additive profiles, advanced spectral imaging techniques for non-destructive analysis, and machine learning algorithms for pattern recognition in watermark and fiber distribution analysis. These advancements will further strengthen the scientific foundation of questioned document examination, providing increasingly sophisticated tools for legal proceedings and historical authentication.

A Practical Guide to Modern Paper Examination Techniques and Equipment

Non-destructive optical examination forms the cornerstone of forensic document analysis, allowing researchers to investigate questioned documents without altering or damaging the evidentiary material. These techniques exploit the ways light interacts with document substrates and inks to reveal latent information, detect alterations, and authenticate materials. Within the broader subject of paper analysis in questioned document examination, this section details application notes and standardized protocols for three principal optical methods: Video Spectral Comparators (VSC), microscopy, and alternate light source analysis. Because these techniques are non-destructive, they preserve the integrity of original documents for subsequent examinations or legal proceedings, making them the preferred first line of investigation in forensic document laboratories worldwide [18] [13].

The Scientific Principles of Light-Document Interaction

The fundamental principle underlying non-destructive optical examination is the analysis of how light interacts with document materials. When light strikes a document surface, several interactions can occur, including reflection, absorption, transmission, and luminescence [19]. Different inks, papers, and alterations exhibit characteristic responses to these interactions, creating spectral signatures that trained examiners can interpret.

- Reflection: The document surface reflects incident light. Variations in surface topography, such as indented writing, can be visualized using oblique lighting.

- Absorption: Materials on the document absorb specific light wavelengths. Infrared (IR) absorption, for instance, can differentiate between inks that appear identical under visible light.

- Transmission: Light passes through the document substrate. Transmission microscopy can reveal watermarks and paper density variations.

- Luminescence: Materials absorb light at one wavelength and re-emit it at a longer wavelength. Ultraviolet (UV) radiation often induces visible luminescence in paper additives, security features, or certain inks.

The electromagnetic spectrum utilized in these examinations extends beyond visible light (approximately 400-700 nm) into the ultraviolet (200-400 nm) and infrared (700-1000 nm) ranges [19] [18]. Specialized instruments like VSCs use filters to isolate these non-visible wavelengths, converting them into visible images for analysis [18].
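
As a small illustration of the wavelength ranges just described, the following helper assigns a wavelength to its band. The function name and the handling of the exact 400 nm and 700 nm boundaries are assumptions; the ranges themselves are the approximate ones given above.

```python
def spectral_band(wavelength_nm: float) -> str:
    """Classify a wavelength into the bands used in optical document examination."""
    if 200 <= wavelength_nm < 400:
        return "ultraviolet"
    if 400 <= wavelength_nm <= 700:
        return "visible"
    if 700 < wavelength_nm <= 1000:
        return "infrared"
    return "outside examined range"


if __name__ == "__main__":
    for nm in (254, 365, 550, 850):
        print(nm, spectral_band(nm))
```

Both common UV lamp settings, short-wave (254 nm) and long-wave (365 nm), fall in the ultraviolet band under this scheme.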

Core Instrumentation and Research Toolkit

The following section catalogs the essential equipment and reagents constituting the core research toolkit for non-destructive document analysis.

Table 1: Essential Research Toolkit for Non-Destructive Optical Document Examination

| Tool/Instrument | Primary Function | Key Applications in Document Analysis |

|---|---|---|

| Video Spectral Comparator (VSC) | Multi-spectral imaging system with high-resolution camera and varied illumination sources (UV-Vis-IR) [19]. | Ink differentiation, detection of alterations/obliterations, visualization of security features, examination of passports and travel documents [19] [20]. |

| Stereomicroscope | Provides three-dimensional, magnified view of document surfaces [18]. | Handwriting and signature analysis, examination of paper fiber structure, detection of mechanical erasures, writing instrument tip analysis [18] [13]. |

| Alternate Light Source (ALS) | High-intensity light source with selectable wavelengths (filters) [18]. | Inducing and observing luminescence in inks and papers, preliminary ink differentiation. |

| Electrostatic Detection Device (EDD) | Creates electrostatic image of indented writing on a plastic film [20] [13]. | Visualizing indented impressions on a document, such as text from a page that was written on above it. |

| Bandpass, Longpass, & Shortpass Filters | Optical filters that isolate specific wavelength ranges for the camera [19]. | Used with VSC and ALS to isolate UV, IR, or specific visible light responses. |

| Polarizing Filters | Filters that reduce glare from reflective surfaces [19]. | Improving contrast and visualizing details on glossy paper or laminated surfaces. |

Application Notes & Experimental Protocols

Video Spectral Comparator (VSC) Analysis

Video Spectral Comparators represent the most advanced optical systems for document examination, integrating high-resolution digital imaging with precisely controlled multi-wavelength illumination [19] [20].

Theoretical Basis and Applications

The VSC operates on the principle that different materials absorb, reflect, transmit, and luminesce differently across the electromagnetic spectrum [19]. Inks that are visually identical may exhibit starkly different characteristics in the IR or UV ranges. This allows examiners to:

- Differentiate Inks: Determine if multiple inks were used on a document, even if they are the same color [18].

- Detect Alterations and Obliterations: Reveal text that has been erased, covered, or chemically bleached [19] [13].

- Examine Security Features: Visualize and authenticate hidden security elements in passports, IDs, and banknotes [19] [20].

Quantitative VSC Performance Data

Modern VSC systems offer a range of technical capabilities, as summarized in the table below.

Table 2: Quantitative Performance Specifications of Modern VSC Systems

| Examination Feature | VSC9000/8000-HS Performance | VSC90/80 Series Performance | Primary Application |

|---|---|---|---|

| Spectral Range | UV through IR (Full Spectrum) [20] | UV-Vis-IR (Multispectral) [20] | Broad-spectrum analysis. |

| Camera Resolution | Up to 127 MP (Super-resolution) [19] | High-resolution (e.g., 12MP) [19] | Microscopic detail capture. |

| Imaging Modes | Multi-spectral, Hyper-spectral, 3D Topographical [20] | Multi-spectral, Fluorescence [20] | Diverse evidence visualization. |

| Additional Analytics | Integrated Micro-spectrometry [19] | e-Chip data extraction (VSC STAC) [20] | Ink chemistry; Digital document authentication. |

Standardized Protocol: VSC-Based Ink Differentiation

Objective: To determine if two visually similar ink entries on a document were made with the same or different ink compositions.

Materials: VSC workstation (e.g., Foster+Freeman VSC8000/HS or similar), computer with VSC software, questioned document [19] [20].

Workflow:

- Document Preparation: Place the document on the VSC examination stage. Ensure it is flat and secure.

- Initial Documentation: Capture a high-resolution color image under standard white light illumination.

- Infrared (IR) Absorption Analysis:

- Engage the IR light source.

- Apply a deep red or near-IR longpass filter (e.g., >700nm) to the camera.

- Observe and capture images. Inks that absorb little IR will appear to fade or become transparent, while IR-absorbing inks remain dark and opaque [19].

- Infrared Luminescence Analysis:

- Illuminate the document with high-intensity blue-green light.

- Use a longpass filter blocking the illuminating light but transmitting any emitted IR radiation (>800nm).

- Capture images. Some inks will exhibit IR luminescence, appearing bright against a dark background [18].

- UV Fluorescence Analysis:

- Switch to UV illumination (e.g., 200-400nm).

- Use a filter to block the UV light and observe the visible fluorescence.

- Capture images. Paper and inks will fluoresce at different intensities and colors [18].

- Analysis and Reporting: Compare the spectral responses of the questioned ink entries. Different behaviors in IR absorption, luminescence, or UV fluorescence indicate different ink compositions. Compile all images into a formal report.
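
The comparison logic in the final step can be sketched as a record of each ink entry's behavior under the three examination modes, with any difference reported as an indication of different ink compositions. The `InkResponse` fields and the qualitative fluorescence labels are hypothetical; this does not represent the software interface of any VSC system.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class InkResponse:
    ir_absorbing: bool       # remains dark under IR illumination
    ir_luminescent: bool     # appears bright in IR luminescence mode
    uv_fluorescence: str     # e.g. "none", "weak", "moderate", "strong"


def differing_modes(a: InkResponse, b: InkResponse) -> List[str]:
    """List the examination modes in which two ink entries behave differently."""
    modes = []
    if a.ir_absorbing != b.ir_absorbing:
        modes.append("IR absorption")
    if a.ir_luminescent != b.ir_luminescent:
        modes.append("IR luminescence")
    if a.uv_fluorescence != b.uv_fluorescence:
        modes.append("UV fluorescence")
    return modes


def compare_inks(a: InkResponse, b: InkResponse) -> str:
    diffs = differing_modes(a, b)
    if diffs:
        return "different ink compositions indicated (" + ", ".join(diffs) + ")"
    return "not differentiated by these examinations"


if __name__ == "__main__":
    entry_1 = InkResponse(ir_absorbing=True, ir_luminescent=False, uv_fluorescence="none")
    entry_2 = InkResponse(ir_absorbing=False, ir_luminescent=True, uv_fluorescence="none")
    print(compare_inks(entry_1, entry_2))
```

The sketch deliberately reports matching responses as "not differentiated" rather than "same ink", since two different formulations can behave alike under all three modes.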

The following workflow diagram illustrates this standardized protocol.

Microscopic Examination

Microscopy serves as a fundamental, first-line tool for the physical examination of questioned documents, providing magnification and enhanced depth perception [18] [13].

Theoretical Basis and Applications

Stereomicroscopes offer a three-dimensional view of the document surface, revealing fine details imperceptible to the naked eye. This technique is crucial for:

- Handwriting and Signature Analysis: Examining line quality, pen lifts, tremors, retouching, and stroke sequence that may indicate forgery [13].

- Writing Instrument Identification: Analyzing stroke characteristics to determine whether a ballpoint pen, gel pen, fountain pen, or fiber-tip pen was used [18].

- Erasure and Alteration Detection: Identifying disturbances to the paper surface from mechanical erasures or abrasive tools [13].

Standardized Protocol: Microscopic Analysis of Line Intersections

Objective: To determine the sequence of intersecting lines (e.g., which pen stroke was applied first).

Materials: Stereomicroscope (10x to 40x magnification), fiber-optic oblique lighting, questioned document.

Workflow:

- Microscope Setup: Place the document under the stereomicroscope. Use low magnification to locate the intersection of interest.

- Oblique Lighting: Position the fiber-optic light source at a low, oblique angle (5-30 degrees) to the document surface. This creates shadows that enhance the perception of depth and topography.

- Focus and Examination: Systematically adjust the focus through different planes of the intersection. Observe the behavior of the ink lines at the crossing point.

- Interpretation:

- The second stroke applied will typically appear continuous and on top, crossing the first stroke without interruption.

- The first stroke may appear to be "broken" or may show a slight ridge of ink from the second pen crossing over it.

- Minute ink spatter or "shouldering" can often be seen along the edges of the second stroke.

- Documentation: Capture digital images at the highest possible resolution from the optimal angle and focal plane. Use focus stacking software if necessary to create a fully sharp composite image.
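
The benefit of the low lighting angle in the Oblique Lighting step follows from simple geometry: a surface feature of a given height casts a shadow whose length is the height divided by the tangent of the lighting angle. The sketch below assumes an idealized flat surface and a hypothetical 10 μm ink ridge; the function name `shadow_length` is introduced here for illustration.

```python
import math


def shadow_length(feature_height_um, light_angle_deg):
    """Length of the shadow cast by a surface feature under oblique light.

    Idealized geometry: shadow = height / tan(angle of the light above the surface).
    """
    return feature_height_um / math.tan(math.radians(light_angle_deg))


# Hypothetical 10 micrometre ink ridge lit from both ends of the 5-30 degree range.
for angle in (5, 30):
    print(f"{angle:>2} deg: shadow ~ {shadow_length(10, angle):.0f} um")
```

Under these assumptions the shadow at 5 degrees is several times longer than at 30 degrees, which is why shallow angles make fine topography easier to see.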

Alternate Light Source (ALS) and Filtered Light Analysis

This technique uses specific wavelengths of light to excite luminescence in document materials, which is then observed through blocking filters [18].

Theoretical Basis and Applications

Many organic compounds, including dyes in inks and additives in paper, fluoresce when excited by light of a specific wavelength. An ALS with a range of wavelength filters can optimize this response for:

- Enhancing Faded/Washed Writing: Recovering text that has been intentionally faded or accidentally washed out.

- Detecting Stains and Latent Impressions: Visualizing otherwise invisible biological or chemical stains on documents.

- Security Feature Verification: Activating luminescent security threads or inks in passports and currencies.

Standardized Protocol: Using ALS to Detect Obliterated Writing

Objective: To recover text that has been covered or obliterated by another ink.

Materials: Alternate Light Source (ALS) with a range of excitation filters, appropriate safety goggles, camera with a matching barrier filter.

Workflow:

- Safety First: Put on appropriate safety goggles rated for the wavelength of light being used.

- Initial Observation: Observe the obliterated area under normal white light and document its appearance.

- Wavelength Sweep: Begin with the ALS set to a long wavelength (e.g., red) and progressively move to shorter wavelengths (e.g., green, blue, UV). Observe the document through corresponding barrier filters that block the excitation light.

- Optimize Contrast: Adjust the wavelength and angle of the light source to maximize the contrast between the obliterating material and the underlying writing. The goal is to find a wavelength where the underlying ink fluoresces and the covering ink does not, or vice versa.

- Image Capture: Once the optimal contrast is achieved, attach the correct barrier filter to the camera lens and capture a high-resolution photograph. Long exposure times may be necessary due to low light levels.
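
The Optimize Contrast step can be made more repeatable by scoring each setting numerically. The sketch below assumes the examiner has measured mean pixel intensities for the underlying writing and the covering ink at each excitation setting; it uses Michelson contrast as the score, which is a choice made here for illustration and not a metric prescribed by the protocol. The intensity values and function names are invented.

```python
def michelson_contrast(a, b):
    """Contrast between two mean intensities, from 0 (identical) to 1."""
    if a + b == 0:
        return 0.0
    return abs(a - b) / (a + b)


def best_setting(readings):
    """Pick the excitation setting with the highest contrast.

    readings maps a setting label to (underlying_ink, covering_ink) mean
    pixel intensities measured through the matching barrier filter.
    """
    return max(readings, key=lambda label: michelson_contrast(*readings[label]))


# Invented 8-bit intensities for illustration only.
readings = {
    "red": (40, 38),
    "green": (90, 35),
    "blue": (150, 30),
    "UV": (60, 55),
}
print(best_setting(readings))
```

Because the score uses the absolute difference, it treats both cases named in the protocol alike: the underlying ink luminescing while the covering ink stays dark, or the reverse.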

The true power of non-destructive optical examination is realized when these techniques are used in an integrated, complementary manner. A typical examination might begin with stereomicroscopy to assess physical characteristics, proceed to VSC analysis for a full spectral investigation, and use specific ALS settings to target particular luminescent responses. This multi-layered approach builds a robust and defensible body of evidence.

These non-destructive methods form the indispensable foundation of modern questioned document examination. The protocols outlined herein provide a standardized framework for researchers and forensic scientists to reliably authenticate documents, detect forgeries, and uncover hidden evidence, thereby making a critical contribution to the integrity of legal and investigative processes. Future advancements in sensor technology, machine learning-based image analysis, and portable spectroscopic systems will further enhance the sensitivity and applicability of these essential techniques.

Application Notes

This document provides detailed application notes and protocols for the chemical analysis of paper in questioned document examination. The techniques outlined—Thin-Layer Chromatography (TLC), Gas Chromatography-Mass Spectrometry (GC-MS), and Raman Spectroscopy—enable the characterization of inks, binding media, and paper substrates to support document dating and authentication.

Thin-Layer Chromatography (TLC) for Ink Analysis

Principle and Forensic Application

Thin-Layer Chromatography is a solid-liquid chromatographic method ideal for separating the complex dye mixtures found in writing inks. Its principle is based on the differential migration of analyte components between a polar stationary phase (e.g., silica gel) and a mobile solvent phase, resulting in distinct spots characterized by their retardation factor (Rf) [21] [22]. In forensic document analysis, TLC is indispensable for comparing ink formulations, detecting ink mismatches, and tracking the degradation of specific dye components over time, which can contribute to relative dating studies [23].

Key Data and Performance

The analytical outcome hinges on the Rf value, calculated as the distance travelled by the substance divided by the distance travelled by the solvent front [21]. This value is characteristic of a compound under a specific set of conditions. A well-optimized method will show clear separation of the dye components. Visualization is a critical step: while colored dyes may be visible directly, many components become apparent only under ultraviolet light (where they fluoresce or appear as dark spots that quench the plate's fluorescence) or after treatment with chemical reagents (e.g., ninhydrin for specific functional groups such as amines) [21] [22].
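
As a worked illustration of the Rf definition above, the following uses hypothetical distances (a spot at 2.4 cm and a solvent front at 4.0 cm, both measured from the baseline); the numbers are assumptions chosen only to show the arithmetic.

```latex
R_f = \frac{d_{\text{substance}}}{d_{\text{solvent front}}}
    = \frac{2.4\ \text{cm}}{4.0\ \text{cm}} = 0.60,
\qquad 0 \le R_f \le 1
```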

Table 1: Typical TLC Solvent Systems for Ink Analysis

| Solvent System | Polarity | Best For | Visualization Method |
|---|---|---|---|
| Hexane / Ethyl Acetate (20:1) [22] | Low | Non-polar dyes | UV, Phosphomolybdic acid |
| Dichloromethane / Methanol (var.) [22] | Medium-High | Polar dyes, ballpoint inks | UV, Ninhydrin |
| Ethyl Acetate / Ethanol / Water (70:35:30) [23] | High | Water-soluble inks | Specific chemical stains |

Gas Chromatography-Mass Spectrometry (GC-MS) for Substrate and Medium Analysis

Principle and Forensic Application

GC-MS combines the separation power of gas chromatography with the identification capability of mass spectrometry. It is particularly suited for analyzing volatile and semi-volatile organic components in paper and its coatings, such as binders, resins, waxes, and sizing agents [23]. In substrate dating, GC-MS can profile the organic composition of paper, identify specific additives that were historically introduced at known times, and detect degradation products that accumulate with aging.

Key Data and Performance

Recent advancements have led to rapid GC-MS methods, which reduce analysis times from approximately 30 minutes to about 10 minutes while maintaining or improving data quality [24] [25]. This is achieved through optimized temperature programming and carrier gas flow rates. Method validation data, reported for seized-drug analysis, show retention time relative standard deviations (RSDs) of ≤ 0.25% for stable compounds and detection limits for key analytes improved by at least 50% compared to conventional methods (e.g., cocaine detection as low as 1 μg/mL) [24]. These validation parameters support results that are precise, accurate, and forensically defensible [25].
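
The two validation figures quoted above can be checked with a few lines of arithmetic. The sketch below computes a percent RSD from replicate retention times and the relative improvement between two detection limits. The replicate retention times are invented for illustration; the cocaine detection limits are the ones reported in Table 2. The function names are assumptions introduced here.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (%): sample standard deviation / mean x 100."""
    return stdev(values) / mean(values) * 100


def percent_improvement(old_lod, new_lod):
    """Percentage reduction of a detection limit relative to the old value."""
    return (old_lod - new_lod) / old_lod * 100


# Invented replicate retention times (minutes) for one compound.
retention_times = [4.512, 4.515, 4.509, 4.514, 4.511]
print(f"RSD = {percent_rsd(retention_times):.3f}%")

# Cocaine LODs from Table 2: 2.5 ug/mL (conventional) and 1 ug/mL (rapid).
print(f"LOD improvement = {percent_improvement(2.5, 1.0):.0f}%")
```

With the Table 2 values the improvement works out to 60%, consistent with the stated "at least 50%".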

Table 2: Performance Metrics of Rapid vs. Conventional GC-MS

| Parameter | Conventional GC-MS | Rapid GC-MS |
|---|---|---|
| Total Run Time | ~30 minutes [24] | ~10 minutes [24] |
| Retention Time Precision (RSD) | N/A | < 0.25% [24] |
| Exemplary Limit of Detection (LOD) | Cocaine: 2.5 μg/mL [24] | Cocaine: 1 μg/mL [24] |
| Key Application | General analysis of seized drugs [24] | Fast screening for complex mixtures [24] [25] |

Raman Spectroscopy for Non-Destructive In Situ Analysis

Principle and Forensic Application

Raman spectroscopy is a powerful, non-destructive technique that provides a molecular fingerprint based on inelastic scattering of light from a sample [26]. Its primary advantage in document examination is the ability to analyze inks and paper directly, in situ, with minimal to no sample preparation. This is crucial for analyzing valuable evidence without altering it. It can identify specific pigments, differentiate between visually similar inks, and characterize paper composition.

Key Data and Performance

A significant challenge in Raman analysis of paper is the inherent background fluorescence of the cellulose substrate, which can obscure the weaker Raman signal [26]. Advanced techniques have been developed to overcome this:

- Wavelength Modulated Raman Spectroscopy (WMRS): This method uses multiple, slightly shifted excitation wavelengths to computationally separate the Raman signal from the constant fluorescent background, achieving over a 100-fold improvement in signal-to-noise ratio [26].

- Surface-Enhanced Raman Spectroscopy (SERS): This approach uses nanostructured plasmonic materials (e.g., silver or gold nanoparticles) to dramatically enhance the Raman signal, allowing for trace-level detection. SERS-active substrates can be fabricated by impregnating paper with silver nanoparticles, creating "hot spots" for enhancement and achieving detection limits in the parts-per-billion range for model analytes [27] [28].
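
The idea behind the WMRS item above can be illustrated with synthetic data. On the detector axis the fluorescence background stays fixed when the excitation wavelength is shifted slightly, whereas Raman peaks move with the laser. The sketch below is a deliberately simplified stand-in, not the published WMRS algorithm: it subtracts the per-pixel mean across the modulated spectra (the centring step that would precede a PCA) to cancel the static background. All spectra, peak positions, and function names are invented for illustration.

```python
import math


def gaussian(x, centre, width):
    return math.exp(-((x - centre) ** 2) / (2 * width ** 2))


def simulate(shift, n=200):
    """One synthetic spectrum: broad fluorescence plus a weak Raman peak.

    The fluorescence term ignores the excitation shift; the Raman peak moves with it.
    """
    return [
        100 * gaussian(x, 100, 80)          # strong, static fluorescence background
        + 2 * gaussian(x, 120 + shift, 3)   # weak Raman peak that follows the laser
        for x in range(n)
    ]


def remove_static_background(spectra):
    """Subtract the per-pixel mean taken across all modulated spectra."""
    n = len(spectra[0])
    mean = [sum(s[i] for s in spectra) / len(spectra) for i in range(n)]
    return [[s[i] - mean[i] for i in range(n)] for s in spectra]


spectra = [simulate(shift) for shift in (0, 4, 8, 12, 16)]
residual = remove_static_background(spectra)

# Far from the Raman peak the residual is essentially zero;
# near the peak a modulated signal survives the subtraction.
print(max(abs(v) for v in residual[0][:60]))
print(max(abs(v) for v in residual[0][100:140]))
```

The residual has a derivative-like shape and is not a conventional Raman spectrum; recovering one requires the further processing described in [26].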

Table 3: Raman Techniques for Paper and Ink Analysis

| Technique | Mechanism | Key Advantage | Reported Sensitivity |
|---|---|---|---|
| Standard Raman | Normal scattering | Non-destructive, fingerprinting | Limited by fluorescence |
| WMRS | Multi-wavelength excitation & PCA | Suppresses paper fluorescence | Nanomolar for pharmaceuticals on paper [26] |
| SERS | Plasmonic enhancement on nanoparticles | Ultra-high sensitivity | ~5 ppb for 4-ATP on Ag/chitosan paper [27] |

Experimental Protocols

Protocol A: TLC Analysis of Ink Dyes

Objective: To separate and identify the dye components of a writing ink from a questioned document.

Materials and Reagents

- TLC Plates: Silica gel (e.g., Whatman 1 CHR) [21] [27]

- Solvents for Extraction: Methanol (99.9%) for liquid inks [24]

- Mobile Phase: e.g., Ethyl Acetate/Hexane mixture; must be optimized [22]

- Micropipette or Glass Capillaries: For sample application [21]

- Developing Chamber: A jar with a lid or a beaker covered with foil [22]

- Visualization Agents: UV lamp (254 nm/365 nm), ninhydrin reagent (for specific functional groups) [21]

Procedure

- Sample Preparation: For an ink line on paper, carefully excise a 1-2 mm segment using a scalpel. Extract the ink by soaking the segment in 50-100 μL of methanol in a micro-vial for 10-15 minutes [24].

- Plate Preparation: Cut a TLC plate to size (~5x5 cm). Using a pencil (not pen), draw a baseline 1 cm from the bottom and mark spots at least 1 cm apart [21] [22].

- Spotting: Using a capillary or micropipette, apply ~1 μL of the extracted ink solution as a small spot on the baseline. Allow the spot to dry completely. Re-apply if necessary to concentrate the sample [22].

- Development: Pour the mobile phase into the chamber to a depth of ~0.5 cm. Place the spotted TLC plate in the chamber, ensuring the solvent level is below the baseline. Seal the chamber and allow the solvent to ascend via capillary action until it is 5-10 mm from the top of the plate [21].

- Visualization: Remove the plate and immediately mark the solvent front with a pencil.

- First, examine under UV light (254 nm and 365 nm) and outline any fluorescent or quenching spots.

- If needed, carefully spray with a visualizing agent like ninhydrin and heat as required to develop color [21].

- Analysis: Calculate the Rf value for each separated spot. Compare the pattern and Rf values to those from known ink standards run on the same plate [21] [22].
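
The Analysis step can be sketched as follows: Rf values are computed from distances measured on the plate, and the questioned lane is compared with a standard lane run on the same plate. The measurements, the 0.03 matching tolerance, and the function names are hypothetical and would need to be set by the laboratory's own validation.

```python
def rf_value(spot_distance_mm, solvent_front_mm):
    """Rf = distance travelled by the spot / distance travelled by the solvent front."""
    if solvent_front_mm <= 0 or not 0 <= spot_distance_mm <= solvent_front_mm:
        raise ValueError("spot must lie between the baseline and the solvent front")
    return spot_distance_mm / solvent_front_mm


def patterns_match(rf_a, rf_b, tolerance=0.03):
    """True if both lanes show the same number of spots at matching Rf values."""
    if len(rf_a) != len(rf_b):
        return False
    return all(abs(a - b) <= tolerance for a, b in zip(sorted(rf_a), sorted(rf_b)))


solvent_front = 40.0  # mm from the baseline (invented measurement)
questioned = [rf_value(d, solvent_front) for d in (8.0, 21.0, 33.0)]
standard = [rf_value(d, solvent_front) for d in (8.5, 20.5, 33.0)]

print([round(rf, 2) for rf in questioned])
print("Consistent with standard:", patterns_match(questioned, standard))
```

This sketch compares positions only; in practice the color and fluorescence of each spot are compared as well.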

Protocol B: Rapid GC-MS Analysis of Paper Extracts

Objective: To rapidly screen and identify semi-volatile organic components (e.g., binders, additives) in a paper sample.

Materials and Reagents

- GC-MS System: Agilent 7890B GC/5977A MSD or equivalent, equipped with a DB-5 ms column (30 m × 0.25 mm × 0.25 μm) [24]

- Carrier Gas: Helium (99.999% purity), fixed flow rate of 2 mL/min [24]

- Extraction Solvent: Methanol (99.9%), high purity [24]

- Vials: 2 mL GC-MS capped vials [24]

Procedure

- Sample Preparation (Liquid Extraction):

- For paper substrate, weigh approximately 0.1 g of material and grind it into a fine powder.