Achieving TRL 4: A Practical Guide to Inter-Laboratory Validation for Forensic Techniques

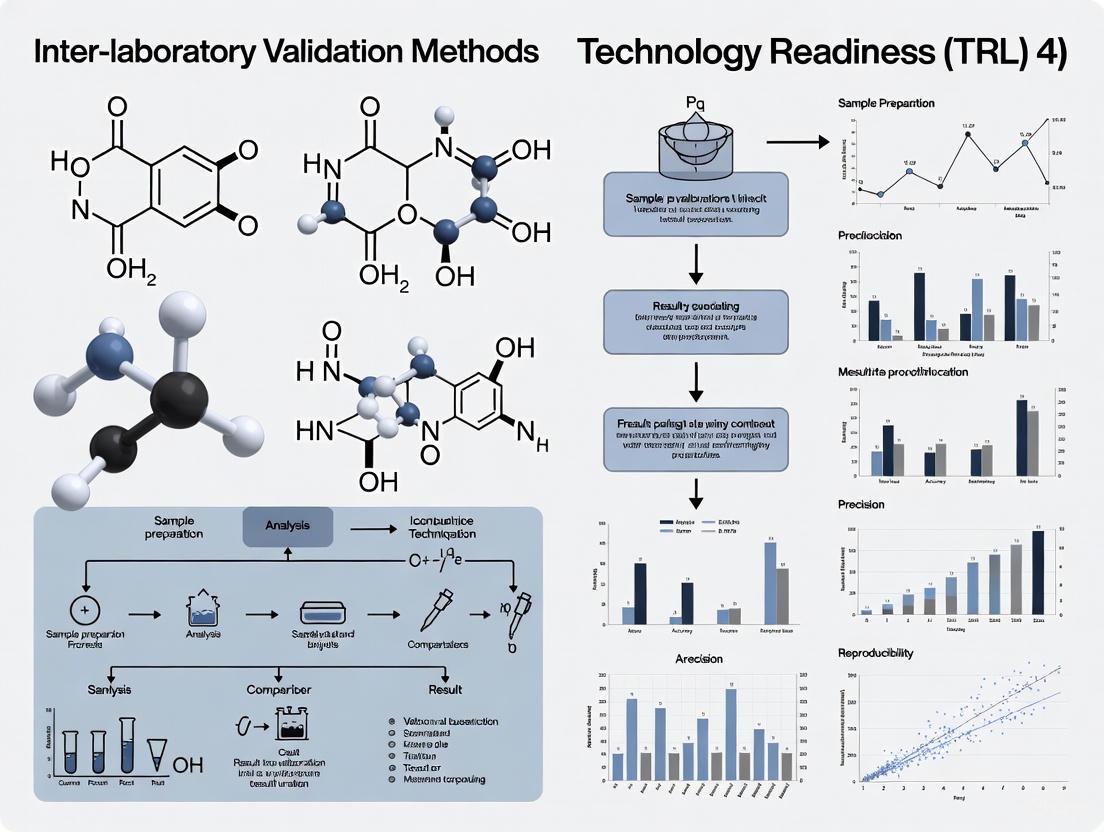

This article provides a comprehensive guide for researchers and forensic science professionals on conducting inter-laboratory validation studies to advance forensic techniques to Technology Readiness Level (TRL) 4.

Abstract

This article provides a comprehensive guide for researchers and forensic science professionals on conducting inter-laboratory validation studies to advance forensic techniques to Technology Readiness Level (TRL) 4. TRL 4 represents a critical milestone where a method transitions from a single-laboratory proof-of-concept to a standardized procedure with demonstrated intra-laboratory validation and initial inter-laboratory trials. We cover the foundational principles of inter-laboratory comparisons (ILC) and proficiency testing (PT), methodological frameworks for implementation, strategies for troubleshooting and optimization, and the rigorous validation required to meet legal admissibility standards such as the Daubert Standard and Federal Rule of Evidence 702. The content is designed to support the development of reliable, reproducible, and court-ready forensic methods.

The Bedrock of Reliability: Understanding TRL 4 and Inter-Laboratory Comparisons

Technology Readiness Level 4 serves as a critical gateway in the maturation of forensic methods, marking the transition from fundamental concept to validated laboratory procedure. This stage is defined by the integration of basic technological components to establish that they work together in a controlled laboratory environment [1]. For forensic science, TRL 4 represents the first systematic validation of a method within a single laboratory, forming the essential foundation required for subsequent inter-laboratory standardization efforts [2] [1].

Achieving TRL 4 demonstrates that a forensic technique can produce reliable results under controlled conditions before facing the complexities of multi-laboratory implementation. This progression is vital for maintaining the scientific rigor and reliability demanded by legal standards, including the Daubert Standard and Federal Rule of Evidence 702, which require demonstrated validity, known error rates, and peer review for scientific evidence [3]. This article delineates the principles, components, and experimental pathways for achieving TRL 4 validation as a prerequisite for robust inter-laboratory standardization in forensic science.

Technology Readiness Levels in Context

The TRL framework provides a systematic approach for assessing the maturity of a developing technology. At a fundamental research level, TRLs 1-3 encompass basic principle observation and experimental proof-of-concept. The subsequent research and development phase, which includes TRL 4 and TRL 5, focuses on validation in laboratory and simulated environments [1].

TRL 4 is specifically characterized as "Validation of component(s) in a laboratory environment," where basic technological components are integrated to establish that they work together [1]. In forensic science, this translates to developing and testing a method's core components in a controlled setting to verify they function as an integrated system. The immediate next stage, TRL 5, involves "Validation of semi-integrated component(s) in a simulated environment," where the integrated components are tested in an environment that more closely resembles real-world conditions [1].

The following workflow illustrates the progression from technology development at TRL 4 to inter-laboratory standardization:

Core Components of TRL 4 Validation

Defining the TRL 4 Stage

According to the Government of Canada's TRL Assessment Tool, TRL 4 is defined as "Validation of component(s) in a laboratory environment" with the following specific characteristics [1]:

- Integration of basic technological components to establish that they work together in a laboratory environment

- Use of systems that may be "ad-hoc," potentially comprising available equipment and special-purpose components

- Special handling, calibration, or alignment may be required for components to function together

- Testing occurs in a "laboratory environment" - a fully controlled test environment where a limited number of functions and variables are tested [1]

This stage represents a crucial departure from earlier TRLs, as it focuses on component integration rather than individual component performance. As one practitioner notes, "Success at TRL 4 is about components working together harmoniously" after potential issues like electromagnetic interference between components are identified and resolved [4].

Key Activities and Documentation Requirements

The critical activities for achieving TRL 4 in forensic science include:

- Controlled Integration Testing: Establishing that all methodological components work together in a controlled laboratory setting [1]

- Baseline Performance Establishment: Documenting performance metrics under ideal conditions to establish baseline expectations [4]

- Protocol Drafting: Creating preliminary standard operating procedures that integrate all methodological components [5]

- Error Identification: Systematically identifying and documenting sources of error and variability in the integrated system [5]

Documentation at this stage must include [4]:

- Detailed records of integration testing procedures and outcomes

- Comprehensive documentation of unexpected behaviors or system interactions

- Preliminary standard operating procedures that integrate all methodological components

- Initial validation data demonstrating the integrated system's performance

Experimental Protocols for TRL 4 Validation

Case Study: Duct Tape Physical Fit Analysis

A recent interlaboratory study on duct tape physical fit examinations exemplifies the TRL 4 validation process. The researchers developed a systematic method for examining, documenting, and interpreting duct tape physical fits using standardized qualitative descriptors and quantitative metrics [5] [6].

Experimental Protocol:

- Sample Preparation: Medium-quality grade duct tape samples were prepared using various separation methods including scissor-cut and hand-torn separations [5]

- Component Integration: The method integrated multiple examination components including:

  - Visual examination of tape edges

  - Documentation of scrim fiber patterns

  - Calculation of Edge Similarity Score (ESS)

  - Statistical interpretation framework [5]

- Controlled Testing: Examination of known fit and non-fit pairs under controlled laboratory conditions

- Performance Metrics: Evaluation based on accuracy rates, false positive rates, and false negative rates [5]

Key Integration Challenge: The method required harmonizing subjective visual examination with quantitative ESS scoring, ensuring these components worked together reliably before interlaboratory distribution [5].

Case Study: Forensic Glass Analysis

Another exemplar TRL 4 validation comes from forensic glass analysis, where multiple analytical techniques were integrated and validated [7].

Integrated Techniques:

- Refractive Index (RI) measurement

- Micro X-ray Fluorescence Spectroscopy (μXRF)

- Laser Induced Breakdown Spectroscopy (LIBS) [7]

Validation Protocol:

- Sample Set Establishment: Automotive windshield glass fragments of known origin were curated as reference materials [7]

- Method Integration: Multiple elemental analysis techniques were integrated with traditional optical methods

- Controlled Comparison: Known vs. questioned sample comparisons performed under standardized laboratory conditions

- Performance Benchmarking: Establishment of correct association rates (>92% for same-source samples) and exclusion rates (82-96% for different-source samples) [7]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 1: Key Research Reagent Solutions for TRL 4 Validation in Forensic Science

| Item | Function in TRL 4 Validation | Exemplary Application |

|---|---|---|

| Reference Materials | Provide ground truth for method validation | Automotive windshield glass samples of known origin [7] |

| Standardized Scoring Metrics | Enable quantitative assessment of method performance | Edge Similarity Score for duct tape physical fits [5] |

| Control Samples | Monitor method performance and identify drift | NIST SRM 1831 for glass analysis quality control [7] |

| Protocol Documentation Templates | Ensure consistent implementation across operators | Standardized forms for bin-by-bin documentation of tape edges [5] |

| Data Analysis Frameworks | Provide statistical interpretation of results | Likelihood ratio calculations for evidence weight assessment [7] |

Quantitative Assessment and Performance Metrics

Establishing quantitative performance metrics is essential for TRL 4 validation. The following data from forensic method validation studies illustrate typical performance benchmarks:

Table 2: Performance Metrics from Forensic Method Validation Studies

| Method | Sample Type | Correct Association Rate | Correct Exclusion Rate | Key Metric |

|---|---|---|---|---|

| Duct Tape Physical Fits [5] | Hand-torn duct tape | 92% | Not reported | Edge Similarity Score (ESS) |

| Duct Tape Physical Fits [5] | Scissor-cut duct tape | 81% | Not reported | Edge Similarity Score (ESS) |

| Refractive Index Glass Analysis [7] | Automotive windshield | >92% | 82% | Refractive index |

| μXRF Glass Analysis [7] | Automotive windshield | >92% | 96% | Spectral overlay comparison |

| LIBS Glass Analysis [7] | Automotive windshield | >92% | 87% | Elemental profile |

These quantitative metrics provide the essential foundation for evaluating method performance before proceeding to interlaboratory studies. The duct tape physical fit study demonstrated particularly rigorous validation, with initial testing on "greater than 3000 duct tape comparisons" before interlaboratory distribution [5].
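As a minimal sketch of how the accuracy, false positive, and false negative rates named in the duct tape protocol can be derived from ground-truth comparisons, the Python below tallies outcomes for known fit and non-fit pairs. The class name, function name, and counts are hypothetical illustrations, not data from the cited studies, and inconclusive conclusions are assumed to be excluded before counting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FitOutcomes:
    """Examiner conclusions tallied against ground truth (hypothetical structure)."""
    true_fit: int      # known fits reported as fits
    false_fit: int     # known non-fits reported as fits (false positives)
    true_nonfit: int   # known non-fits reported as non-fits
    false_nonfit: int  # known fits reported as non-fits (false negatives)


def performance_rates(o: FitOutcomes) -> dict[str, float]:
    """Return accuracy, false positive rate, and false negative rate."""
    fits = o.true_fit + o.false_nonfit       # all ground-truth fits
    nonfits = o.true_nonfit + o.false_fit    # all ground-truth non-fits
    if fits == 0 or nonfits == 0:
        raise ValueError("need at least one known fit and one known non-fit")
    return {
        "accuracy": (o.true_fit + o.true_nonfit) / (fits + nonfits),
        "false_positive_rate": o.false_fit / nonfits,
        "false_negative_rate": o.false_nonfit / fits,
    }


# Hypothetical counts chosen only to illustrate the arithmetic.
rates = performance_rates(
    FitOutcomes(true_fit=90, false_fit=2, true_nonfit=98, false_nonfit=10)
)
for name, value in rates.items():
    print(f"{name}: {value:.3f}")
```

Reporting the two error rates separately, rather than a single accuracy figure, keeps false positives and false negatives visible when the numbers of fit and non-fit pairs differ.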

Pathway to Interlaboratory Standardization

Successful TRL 4 validation enables progression to more advanced testing stages, ultimately leading to interlaboratory standardization. The critical next steps include:

Transition to TRL 5

TRL 5 involves "Validation of semi-integrated component(s) in a simulated environment" where the integrated components are tested in conditions more closely resembling real-world applications [1]. This represents a crucial advancement from TRL 4, moving from controlled laboratory validation to simulated realistic conditions [4].

Interlaboratory Studies as Validation Tool

Interlaboratory studies represent a powerful mechanism for validating forensic methods across multiple operational environments. These studies:

- Evaluate consistency of results across different laboratories and practitioners [5] [7]

- Identify sources of variability in method application and interpretation [8]

- Provide data on method robustness and reproducibility [6]

- Establish foundational data for consensus standards and protocols [5]

The duct tape physical fit study exemplifies this process, with 38 participants across 23 laboratories conducting 266 separate examinations, yielding overall accuracy rates of 95-99% after method refinement [6].

Standardization and Implementation

The ultimate goal of TRL 4 validation is to establish methods sufficiently robust for standardization and implementation across forensic laboratories. This requires:

- Development of Consensus Protocols: Incorporating feedback from multiple laboratories to create practical, implementable methods [5]

- Training Programs: Ensuring consistent application of methods across different practitioners and laboratories [5]

- Performance Monitoring: Establishing ongoing quality assurance measures to maintain method reliability [7]

The iterative refinement process demonstrated in the duct tape study—where feedback from the first interlaboratory exercise was used to improve methods for a second exercise—exemplifies this standardization pathway [5] [6].

Technology Readiness Level 4 represents a pivotal stage in forensic method development, where integrated components are first validated in a controlled laboratory environment. This stage provides the essential foundation for subsequent validation in simulated and operational environments, ultimately leading to robust interlaboratory standardization. Through systematic integration, controlled testing, and quantitative performance assessment, TRL 4 validation establishes the scientific reliability necessary for forensic methods to meet legal standards and contribute meaningfully to the administration of justice. The progression from single-laboratory validation to multi-laboratory standardization ensures that forensic methods produce consistent, reproducible results across the diverse landscape of operational forensic laboratories.

For forensic techniques, particularly those in the Technology Readiness Level (TRL) 4 research phase, the transition from experimental method to legally admissible evidence presents a significant challenge [3]. Interlaboratory Comparisons (ILCs) and Proficiency Testing (PT) are critical validation tools that provide the empirical foundation required by legal systems for evidence admissibility [9]. These processes deliver the objective performance data necessary to demonstrate that forensic methods are reliable, reproducible, and scientifically sound, thereby bridging the gap between laboratory research and courtroom application [3] [9]. For researchers developing new forensic techniques, integrating ILC/PT protocols early in the validation process is essential for establishing the method's error rates, limitations, and operational boundaries, which courts increasingly require under evidentiary standards such as Daubert and Mohan [3].

Legal Frameworks Governing Forensic Evidence Admissibility

The admissibility of forensic evidence in legal proceedings is governed by specific legal standards that directly implicate the need for robust validation through ILC and PT.

Key Legal Standards

Table 1: Legal Standards for Expert Testimony and Forensic Evidence

| Standard | Jurisdiction | Core Requirements | ILC/PT Relevance |

|---|---|---|---|

| Daubert Standard [3] | U.S. Federal Courts | Theory/technique can be tested; known or potential error rate; peer review and publication; general acceptance | Provides direct evidence of testability and error rates |

| Frye Standard [3] | Some U.S. State Courts | "General acceptance" in the relevant scientific community | Demonstrates community acceptance through participatory validation |

| Federal Rule 702 [3] | U.S. Federal Courts | Testimony based on sufficient facts/data; reliable principles/methods; reliable application of methods | Supplies quantitative data on method reliability |

| Mohan Criteria [3] | Canada | Relevance; necessity; absence of exclusionary rules; properly qualified expert | Establishes necessity and reliability of novel techniques |

The Role of Error Rates and Validation

The Daubert standard's emphasis on "known or potential error rate" creates a direct imperative for PT programs [3]. Forensic laboratories must quantitatively characterize their methods' performance through controlled testing scenarios that mimic casework conditions [9]. Without such data, experts cannot truthfully testify to their method's reliability, potentially rendering their evidence inadmissible. For TRL 4 research, this means that error rate estimation cannot be an afterthought; it must be built into the development and validation lifecycle from the start.

Proficiency Testing and Interlaboratory Comparisons: Definitions and Schemes

Conceptual Foundations

While often used interchangeably, PT and ILC represent distinct but related concepts in quality assurance:

Proficiency Testing (PT): "The determination of the calibration or testing performance of a laboratory or the testing performance of an inspection body against pre-established criteria by means of interlaboratory comparisons" [9]. PT is a formal evaluation managed by a coordinating body with a reference laboratory, where results are assessed against predetermined criteria [10].

Interlaboratory Comparison (ILC): "The organisation, performance and evaluation of calibration/tests on the same or similar items by two or more laboratories or inspection bodies in accordance with predetermined conditions" [9]. ILCs may be conducted without a reference laboratory, comparing performance among participant laboratories [10].

Experimental Protocols: Implementing PT and ILC Schemes

Protocol 1: Sequential Participation (Round-Robin Testing)

This design is optimal for stable, transportable artifacts [10]:

- Reference Analysis: A reference laboratory first characterizes the artifact to establish reference values.

- Sequential Circulation: The artifact is successively shipped to each participant laboratory.

- Independent Testing: Each laboratory tests the artifact using their standard methods and procedures.

- Result Submission: Participants submit their results to the coordinating body.

- Performance Evaluation: The coordinating body evaluates results using statistical measures (e.g., Normalized Error (Eₙ), Z-score).

Protocol 2: Simultaneous Participation (Split-Sample Testing)

This scheme is ideal for materials that can be homogenized and subdivided [10]:

- Sample Homogenization: A material source is homogenized to ensure uniformity.

- Sample Distribution: Sub-samples are randomly selected and simultaneously distributed to all participants.

- Concurrent Testing: All laboratories test their sub-samples within a defined time window.

- Data Collection: Results are collected by the coordinating body.

- Statistical Analysis: Performance is evaluated using consensus values and Z-scores.
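The consensus-based evaluation in the final step can be sketched as follows. This is an illustrative Python example, assuming the consensus value is the plain arithmetic mean of participant results and the spread is their sample standard deviation; PT providers often use robust estimators instead (for example those described in ISO 13528). The laboratory labels and values are hypothetical.

```python
from statistics import mean, stdev


def z_scores(results: dict[str, float]) -> dict[str, float]:
    """Z-score of each laboratory relative to the participant consensus."""
    values = list(results.values())
    if len(values) < 3:
        raise ValueError("too few participants for a meaningful consensus")
    consensus = mean(values)   # assumption: simple mean as consensus value
    spread = stdev(values)     # assumption: sample standard deviation
    if spread == 0:
        raise ValueError("all results identical; z-scores are undefined")
    return {lab: (x - consensus) / spread for lab, x in results.items()}


def classify(z: float) -> str:
    """Apply the conventional |Z| thresholds of 2 and 3."""
    magnitude = abs(z)
    if magnitude <= 2:
        return "satisfactory"
    if magnitude < 3:
        return "questionable"
    return "unsatisfactory"


# Hypothetical split-sample results reported by five laboratories.
reported = {"Lab A": 10.0, "Lab B": 10.2, "Lab C": 9.8, "Lab D": 10.1, "Lab E": 9.9}
scores = z_scores(reported)
for lab, z in scores.items():
    print(f"{lab}: z = {z:+.2f} ({classify(z)})")
```

Because the sign of Z only indicates the direction of the deviation, the classification uses the absolute value.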

Quantitative Evaluation of PT and ILC Results

Statistical analysis of PT/ILC data provides the objective metrics required for legal defensibility.

Statistical Evaluation Methods

Table 2: Statistical Methods for Evaluating PT/ILC Results

| Method | Calculation | Interpretation | Legal Relevance |

|---|---|---|---|

| Normalized Error (Eₙ) [10] | Eₙ = (Lab_result - Ref_result) / √(U_Lab² + U_Ref²), where U = expanded measurement uncertainty | Satisfactory: \|Eₙ\| ≤ 1; Unsatisfactory: \|Eₙ\| > 1 | Directly validates measurement uncertainty claims |

| Z-Score [10] | Z = (Lab_result - Mean) / Standard Deviation | Satisfactory: \|Z\| ≤ 2; Questionable: 2 < \|Z\| < 3; Unsatisfactory: \|Z\| ≥ 3 | Demonstrates performance relative to peer laboratories |

Experimental Protocol: Calculating Proficiency Statistics

Protocol 3: Statistical Evaluation of PT Results

For a hypothetical toxicology PT measuring blood alcohol content (BAC):

Participant Data Collection:

- Laboratory result (x_lab): 0.079 g/dL

- Reference value (x_ref): 0.082 g/dL

- Laboratory uncertainty (U_lab): 0.003 g/dL (k=2)

- Reference uncertainty (U_ref): 0.002 g/dL (k=2)

Normalized Error Calculation:

- Numerator: 0.079 - 0.082 = -0.003 g/dL

- Denominator: √(0.003² + 0.002²) = √(0.000009 + 0.000004) = √0.000013 = 0.0036 g/dL

- Eₙ: -0.003 / 0.0036 = -0.83

Interpretation: Since |Eₙ| = 0.83 ≤ 1, the result is statistically satisfactory.

This quantitative demonstration of competency provides tangible evidence that can be referenced in court to support an expert's testimony regarding their method's reliability.
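The worked BAC example above can be reproduced with a few lines of Python. This is a minimal sketch; the function and variable names are illustrative, and it assumes both uncertainties are expanded uncertainties at the same coverage factor (k=2), as stated in the example.

```python
import math


def normalized_error(x_lab: float, x_ref: float, u_lab: float, u_ref: float) -> float:
    """Normalized error: difference divided by the combined expanded uncertainty."""
    return (x_lab - x_ref) / math.sqrt(u_lab**2 + u_ref**2)


# Values from the hypothetical toxicology PT round (g/dL).
en = normalized_error(x_lab=0.079, x_ref=0.082, u_lab=0.003, u_ref=0.002)
verdict = "satisfactory" if abs(en) <= 1 else "unsatisfactory"
print(f"En = {en:.2f} ({verdict})")
```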

The Scientist's Toolkit: Essential Materials for Forensic Validation

Table 3: Research Reagent Solutions for Forensic Method Validation

| Material/Reagent | Function in ILC/PT | Application Examples |

|---|---|---|

| Certified Reference Materials (CRMs) | Provides traceable reference values for quantitative analysis | Drug quantification, toxicology, arson analysis (ignitable liquids) |

| Homogenized Biological Samples | Ensures sample uniformity across participants in split-sample designs | Blood, urine, tissue analysis for toxicology and DNA extraction |

| Stable Isotope-Labeled Internal Standards | Corrects for analytical variability in mass spectrometry-based methods | LC-MS/MS confirmation of drugs of abuse in sweat patches [11] |

| Characterized Illicit Drug Mixtures | Validates qualitative identification and quantitative determination | Seized drug analysis, purity determination, cutting agent identification |

| Synthetic Matrix Blanks | Controls for matrix effects and interference in complex samples | Novel psychoactive substance detection, environmental forensics |

| Data Analysis Software | Enables statistical evaluation of Eₙ, Z-scores, and consensus values | All quantitative forensic disciplines |

Implementation Workflow: From TRL 4 to Courtroom Ready

A structured approach to implementing ILC/PT ensures developmental methods meet legal admissibility standards.

Case Study: Forensic Defensibility in Practice

The application of these principles is exemplified by the PharmChek Sweat Patch, which has established forensic defensibility through rigorous validation [11]. Key factors in its judicial acceptance include:

- Scientific Validation: Extensive testing over three decades demonstrating accuracy and reliability in detecting drugs [11].

- Advanced Confirmatory Techniques: Use of LC-MS/MS for confirmation testing, reducing false positives/negatives [11].

- Tamper-Evident Design: Ensures integrity of evidence collection, critical for chain of custody [11].

- Third-Party Validation: Independent verification by external laboratories [11].

- Judicial Acceptance: Recognition under both Frye and Daubert standards [11].

This case illustrates how comprehensive validation creates a foundation for expert testimony that withstands legal challenges, even under rigorous cross-examination.

For forensic researchers operating at TRL 4, integrating ILC and PT protocols is not merely an accreditation formality but a scientific necessity for courtroom admissibility. These processes generate the quantitative evidence courts require to assess a method's reliability, error rate, and general acceptance. As forensic science continues to evolve, establishing robust validation frameworks through interlaboratory studies will remain fundamental to ensuring that novel techniques meet the exacting standards of both the scientific and legal communities.

The integration of novel forensic techniques into legal proceedings requires navigating complex admissibility standards. For forensic research at Technology Readiness Level (TRL) 4, which focuses on component validation in laboratory environments, understanding these legal frameworks is crucial for designing experiments that will eventually meet judicial scrutiny. Three primary standards govern the admissibility of expert scientific testimony in the United States and Canada: the Daubert Standard, Federal Rule of Evidence (FRE) 702, and the Mohan Criteria [3]. These standards ensure that forensic evidence presented in court derives from reliable principles and methods properly applied to the facts of a case.

Recent amendments to FRE 702, effective December 2023, clarify that the proponent must demonstrate "that it is more likely than not that the proffered testimony meets the admissibility requirements set forth in the rule" [12]. This emphasizes the trial court's role as a gatekeeper in excluding unreliable expert testimony, extending to all forms of expert evidence, including emerging forensic technologies [13] [12]. For research scientists, this means validation studies must specifically address the factors articulated in these legal standards during method development.

Comparative Analysis of Legal Standards

Table 1: Comparative Analysis of Legal Admissibility Standards for Forensic Evidence

| Admissibility Factor | Daubert Standard | FRE 702 | Mohan Criteria |

|---|---|---|---|

| Core Principle | Judicial gatekeeping for reliable scientific testimony [13] | Proponent must show testimony is more likely than not admissible [12] | Threshold reliability and necessity for expert evidence [3] |

| Testing & Falsifiability | Whether theory/technique can be/has been tested [13] [14] | Testimony is product of reliable principles/methods [13] | Relevance to the case at hand [3] |

| Peer Review | Whether theory/technique has been peer-reviewed [13] [14] | Implicit in reliable principles/methods requirement | Not explicitly stated |

| Error Rates | Known or potential error rate of technique [13] [14] | Implicit in reliable application requirement | Not explicitly stated |

| Standards & Controls | Existence of standards controlling technique operation [13] [14] | Testimony reflects reliable application to facts [13] | Absence of exclusionary rules |

| General Acceptance | General acceptance in relevant scientific community [13] [14] | Expert qualified by knowledge, skill, etc. [13] | Properly qualified expert testifying [3] |

| Helpfulness to Trier of Fact | Helps trier understand evidence/determine facts [13] | Helps trier understand evidence/determine facts [13] | Necessity in assisting trier of fact [3] |

Application to Inter-Laboratory Validation (TRL 4)

Designing Legally Compliant Validation Studies

For forensic techniques at TRL 4, inter-laboratory studies are critical for establishing method robustness and reproducibility—key factors considered under Daubert and FRE 702 [15] [16]. Recent studies demonstrate effective approaches for addressing legal admissibility requirements during validation.

The 2025 inter-laboratory evaluation of the VISAGE Enhanced Tool for epigenetic age estimation provides a model protocol. This study involved six laboratories conducting reproducibility, concordance, and sensitivity assessments using standardized DNA methylation controls and samples [16]. The resulting mean absolute errors (MAEs) of 3.95 years for blood and 4.41 years for buccal swabs established known error rates, directly addressing a key Daubert factor [16].
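For readers unfamiliar with the metric, the mean absolute error is the average magnitude of the difference between estimated and true values. The sketch below shows the calculation in Python; the ages are hypothetical and are not taken from the VISAGE study.

```python
def mean_absolute_error(predicted: list[float], actual: list[float]) -> float:
    """Average absolute difference between predicted and true values."""
    if not predicted or len(predicted) != len(actual):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


# Hypothetical estimated vs. chronological ages (years) for four samples.
estimated_age = [31.0, 45.5, 52.0, 27.0]
chronological_age = [28.0, 50.0, 49.0, 30.5]
mae = mean_absolute_error(estimated_age, chronological_age)
print(f"MAE = {mae:.2f} years")
```

Computing the MAE separately per laboratory and per tissue type makes it possible to see whether the error is a property of the method or of a particular site.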

Similarly, a 2025 inter-laboratory exercise for Massively Parallel Sequencing (MPS) involved five forensic DNA laboratories from four countries analyzing identical STR and SNP reference samples [15]. This study established foundational data for proficiency testing by comparing genotyping performance across different laboratories, platforms, and analysis tools—specifically evaluating sensitivity, reproducibility, and concordance [15].

Experimental Protocol: Inter-Laboratory Validation for Legal Admissibility

Table 2: Essential Research Reagents for Forensic Validation Studies

| Research Reagent | Technical Function | Legal Standard Addressed |

|---|---|---|

| Standard Reference Materials | Provides standardized controls for inter-laboratory comparisons [15] [16] | Daubert: Standards & Controls; FRE 702: Sufficient facts/data |

| Multiplex PCR Kits | Enables simultaneous amplification of multiple DNA markers | Daubert: Testing & Reliability; FRE 702: Reliable principles/methods |

| Bisulfite Conversion Reagents | Facilitates DNA methylation analysis for epigenetic methods [16] | Daubert: Testing & Falsifiability; FRE 702: Reliable application |

| Massively Parallel Sequencing Assays | Provides high-throughput sequencing of forensic markers [15] | Daubert: General Acceptance; FRE 702: Qualified expert knowledge |

| Bioinformatic Analysis Tools | Enables standardized data interpretation across laboratories [15] | Daubert: Peer Review; FRE 702: Reliable application |

Protocol Title: Inter-Laboratory Validation of Forensic Methods for Legal Admissibility Compliance

Objective: To establish analytical validity of [Technique Name] through multi-laboratory testing that addresses specific admissibility criteria under Daubert, FRE 702, and Mohan.

Materials:

- Standardized reference samples with known properties [15] [16]

- Identical protocol documents distributed to all participating laboratories

- Control materials for establishing baseline performance metrics

- Standardized data reporting templates

Methodology:

- Participant Laboratory Selection: Recruit 3-5 independent testing laboratories with relevant technical expertise [15] [16]

- Sample Distribution: Provide identical sample sets including:

  - Reference materials with known properties

  - Blind-coded proficiency samples

  - Sensitivity series (e.g., 5 ng, 10 ng, 20 ng DNA inputs) [16]

- Data Generation: Each laboratory performs analysis using standardized protocols

- Data Analysis:

  - Statistical Analysis: Apply appropriate statistical methods to determine error rates and confidence intervals

Deliverables:

- Quantitative error rate estimation

- Demonstration of reproducibility across laboratory environments

- Documentation of standard operating procedures and controls

- Peer-reviewed publication of validation data
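One way to produce the quantitative error rate estimate with a confidence interval is sketched below. The Wilson score interval is used here as one common choice for a binomial proportion; it is an assumption of this example, not a method prescribed by the protocol or the cited studies, and the counts are hypothetical.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an error proportion (z = 1.96 gives about 95%)."""
    if trials <= 0 or not 0 <= errors <= trials:
        raise ValueError("require trials > 0 and 0 <= errors <= trials")
    p = errors / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Hypothetical pooled outcome: 3 erroneous conclusions in 150 blind-coded samples.
low, high = wilson_interval(errors=3, trials=150)
print(f"observed error rate = {3 / 150:.3f}, 95% interval = ({low:.4f}, {high:.4f})")

# Zero observed errors still leaves a non-zero upper bound on the error rate.
low_zero, high_zero = wilson_interval(errors=0, trials=150)
print(f"zero errors observed: upper bound = {high_zero:.4f}")
```

The second call illustrates why a study with no observed errors supports only an upper bound on the error rate, not a claim of zero error.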

Visualization of Legal Admissibility Pathway

For forensic researchers developing techniques at TRL 4, incorporating legal admissibility requirements directly into validation study designs is essential. The 2023 amendments to FRE 702 have further emphasized that courts must rigorously evaluate whether expert testimony rests on reliable foundations [12]. By implementing inter-laboratory validation protocols that specifically address Daubert factors, FRE 702 requirements, and Mohan criteria, researchers can significantly enhance the judicial acceptance of novel forensic methods. This integrated approach ensures that scientific advances not only demonstrate technical efficacy but also meet the rigorous standards of evidence required in legal proceedings.

Achieving Technology Readiness Level (TRL) 4 is a critical milestone in the development of forensic chemical methods, signifying the transition from a proof-of-concept to a validated laboratory technique. Within the framework of a broader thesis on inter-laboratory validation, establishing a robust intra-laboratory foundation is an indispensable first step. According to the journal Forensic Chemistry, a TRL 4 method is characterized by the "application of an established technique... with measured figures of merit, some measurement of uncertainty, and developed aspects of intra-laboratory validation" [17]. This application note delineates the core components and experimental protocols necessary to meet these criteria, providing researchers and drug development professionals with a roadmap to demonstrate that a method is sufficiently mature and reliable for subsequent multi-laboratory studies.

Core Component 1: Figures of Merit

Figures of merit (FOMs) are quantitative parameters used to characterize the performance of an analytical method, providing the fundamental metrics for comparing techniques and confirming their fitness for purpose [18]. At TRL 4, measuring these parameters is mandatory to demonstrate that the method operates at an acceptable standard on commercially available instrumentation [17].

Table 1: Essential Figures of Merit and Their Definitions at TRL 4

| Figure of Merit | Definition | TRL 4 Requirement |

|---|---|---|

| Sensitivity (SEN) | The change in analytical response for a given change in analyte concentration. Must be based on the Net Analyte Signal (NAS), the portion of the signal unique to the target analyte [18]. | Establish a calibration model and calculate NAS to determine SEN. |

| Selectivity (SEL) | The ability of the method to distinguish and quantify the analyte in the presence of other components in the sample. Defined as the ratio of the NAS to the total analyte signal [18]. | Demonstrate high selectivity for the target analyte against common interferents expected in forensic matrices. |

| Limit of Detection (LOD) | The lowest concentration of an analyte that can be reliably detected, but not necessarily quantified, under the stated experimental conditions. | Determine via signal-to-noise ratio (e.g., 3:1) or calibration curve standards (e.g., 3.3σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve). |

| Limit of Quantification (LOQ) | The lowest concentration of an analyte that can be reliably quantified with acceptable precision and accuracy. | Determine via signal-to-noise ratio (e.g., 10:1) or calibration curve standards (e.g., 10σ/S). |
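
The calibration-curve route to LOD and LOQ in Table 1 can be sketched in a few lines. This is a minimal illustration, assuming that σ is taken as the residual standard deviation of an unweighted linear fit (one of several accepted choices; the standard deviation of blank responses is another). The function name `calibration_limits` and the example data are hypothetical.

```python
import math


def calibration_limits(conc, resp):
    """Fit resp = intercept + slope * conc and derive LOD and LOQ.

    Assumption: sigma is the residual standard deviation of the fit
    (n - 2 degrees of freedom), not the standard deviation of blanks.
    """
    n = len(conc)
    if n < 3 or n != len(resp):
        raise ValueError("need at least three paired calibration points")
    mean_x = sum(conc) / n
    mean_y = sum(resp) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    sigma = math.sqrt(ss_res / (n - 2))
    return {
        "slope": slope,
        "intercept": intercept,
        "sigma": sigma,
        "lod": 3.3 * sigma / slope,   # LOD = 3.3 * sigma / S
        "loq": 10.0 * sigma / slope,  # LOQ = 10 * sigma / S
    }


# Hypothetical five-level calibration (concentration vs. detector response).
limits = calibration_limits([1, 2, 3, 4, 5], [2.1, 3.9, 6.2, 7.8, 10.0])
print(limits)
```

Both limits come out in the same concentration units as the calibration standards, so they can be compared directly with the lowest calibrator to confirm that the claimed range is supported.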

Experimental Protocol: Determining Sensitivity and Selectivity

This protocol is designed for a separation technique like comprehensive two-dimensional gas chromatography (GC×GC).

- Preparation of Solutions: Prepare a minimum of five standard solutions of the pure target analyte across a concentration range that is linear for the detector. Separately, prepare a solution containing the target analyte at a mid-range concentration in the presence of all expected interferents (e.g., common cutting agents or matrix components).

- Instrumental Analysis: Analyze each solution in triplicate using the optimized GC×GC method, ensuring data is collected in a form amenable to multi-way calibration (e.g., as an unfolded vector) [18].

- Data Calculation:

- Net Analyte Signal (NAS): Calculate the NAS for the target analyte using the formula \( \mathrm{NAS}_a = (I - R_{-a} R_{-a}^{+})\, r_a \), where \( r_a \) is the spectral profile of the analyte, \( R_{-a} \) is the matrix of spectral profiles of all interferents, \( I \) is the identity matrix, and \( ^{+} \) indicates the Moore-Penrose pseudo-inverse [18].

- Sensitivity: Calculate as \( \mathrm{SEN} = \lVert \mathrm{NAS}_a \rVert / c_0 \), where \( c_0 \) is the unit concentration [18].

- Selectivity: Calculate as \( \mathrm{SEL} = \lVert \mathrm{NAS}_a \rVert / \lVert r_a \rVert \) [18].
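
The three quantities in the data-calculation step can be computed directly from the projection formula. The sketch below assumes NumPy is available and that each profile has already been unfolded into a vector, with interferent profiles stored as the columns of a matrix. The function names and the toy three-channel data are illustrative, not taken from the cited method.

```python
import numpy as np


def net_analyte_signal(r_a, R_interf):
    """Return NAS_a = (I - R R^+) r_a.

    r_a      : analyte profile, shape (channels,)
    R_interf : interferent profiles as columns, shape (channels, n_interferents)
    """
    r_a = np.asarray(r_a, dtype=float)
    R = np.asarray(R_interf, dtype=float)
    # R @ pinv(R) projects onto the interferent space; subtracting it from
    # the identity keeps only the part of r_a orthogonal to every interferent.
    projector = np.eye(r_a.size) - R @ np.linalg.pinv(R)
    return projector @ r_a


def sen_sel(r_a, R_interf, c0=1.0):
    """Return (SEN, SEL) as ||NAS|| / c0 and ||NAS|| / ||r_a||."""
    nas_norm = np.linalg.norm(net_analyte_signal(r_a, R_interf))
    return nas_norm / c0, nas_norm / np.linalg.norm(np.asarray(r_a, dtype=float))


# Toy example: the analyte overlaps one interferent on the first channel only.
r_a = [1.0, 1.0, 0.0]
R_interf = [[1.0], [0.0], [0.0]]
nas = net_analyte_signal(r_a, R_interf)
sen, sel = sen_sel(r_a, R_interf)
print(nas, sen, sel)
```

A selectivity of 1 means that no part of the analyte signal is shared with the interferents. Values near 0 indicate that most of the signal is lost to overlap.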

Core Component 2: Uncertainty Quantification

Uncertainty quantification moves beyond simple repeatability measurements to provide a quantitative assessment of the doubt surrounding a measurement result. For a TRL 4 method, this involves a structured approach to identifying, quantifying, and combining all significant sources of variability. This process is critical for establishing the reliability required by legal standards such as the Daubert Standard, which emphasizes known error rates [3].

A practical approach to uncertainty quantification for a spectrophotometric enzymatic assay, as used in dietary supplement analysis, is outlined below [19].

- Identify Uncertainty Sources: Construct a cause-and-effect (fishbone) diagram to identify all potential sources of uncertainty. Key sources typically include:

- Sample Preparation: Weighing, dilution volume, recovery.

- Instrumental Response: Calibration curve fitting, detector noise, drift.

- Environmental Conditions: Temperature fluctuations.

- Operator: Technique in sample handling.

- Quantify Uncertainty Components:

- Repeatability: Perform at least 10 independent analyses of a homogeneous sample. The standard deviation of the results is the standard uncertainty due to repeatability, \( u(\mathrm{rep}) \).

- Calibration Curve: From the linear least-squares regression of the calibration curve, calculate the standard uncertainty of the predicted concentration, \( u(\mathrm{cal}) \).

- Balance and Glassware: Obtain standard uncertainties for weighing, \( u(\mathrm{mass}) \), and volume, \( u(\mathrm{vol}) \), from manufacturer certificates or standard values.

- Calculate Combined Uncertainty: Combine the individual standard uncertainties using the root sum of squares method to obtain the combined standard uncertainty, \( u_c = \sqrt{u(\mathrm{rep})^2 + u(\mathrm{cal})^2 + u(\mathrm{mass})^2 + u(\mathrm{vol})^2} \).

- Calculate Expanded Uncertainty: Multiply the combined standard uncertainty by a coverage factor (typically \( k = 2 \), for a 95% confidence level) to obtain the expanded uncertainty, \( U = k \cdot u_c \).
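
As a worked illustration of the last two steps, the following sketch combines a set of standard uncertainties and applies the coverage factor. It assumes the components are uncorrelated and already expressed in the same units (or all as relative uncertainties). The numerical values are invented for the example.

```python
import math


def combined_uncertainty(components):
    """Root sum of squares of uncorrelated standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components.values()))


def expanded_uncertainty(components, k=2.0):
    """Expanded uncertainty U = k * u_c (k = 2 for roughly 95 % coverage)."""
    return k * combined_uncertainty(components)


# Hypothetical budget, all values in the same concentration units.
budget = {"rep": 0.012, "cal": 0.009, "mass": 0.002, "vol": 0.004}
u_c = combined_uncertainty(budget)
U = expanded_uncertainty(budget)
print(f"u_c = {u_c:.4f}, U = {U:.4f}")
```

Because the terms add in quadrature, the largest one or two components dominate the budget. In this example, repeatability and calibration account for almost all of the combined value.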

Core Component 3: Intra-Laboratory Validation

Intra-laboratory validation is the process of providing objective evidence that a method consistently performs as intended within a single laboratory's controlled environment. It is a prerequisite for any future inter-laboratory study and is a core requirement for TRL 4 [17]. This process ensures the method is robust, reproducible, and ready for more extensive testing.

Table 2: Intra-Laboratory Validation Parameters and Target Criteria

| Validation Parameter | Experimental Approach | Target TRL 4 Criteria |

|---|---|---|

| Specificity | Analyze the target analyte in the presence of likely interferents (e.g., matrix, excipients). | Chromatographic resolution > 1.5; no interference at the retention time of the analyte. |

| Linearity | Analyze a minimum of 5 concentrations of the analyte in triplicate. | Correlation coefficient (r) > 0.995. |

| Accuracy | Spike a known amount of analyte into a blank matrix and analyze (recovery). | Mean recovery of 90–108% with RSD < 5%. |

| Precision (Repeatability) | Analyze 6 replicates of a homogeneous sample at 100% of the test concentration. | Relative Standard Deviation (RSD) < 3%. |

| Intermediate Precision | Perform the analysis on different days, by different analysts, or with different equipment. | RSD between two sets < 5%. |
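
The repeatability and accuracy rows of Table 2 reduce to a few summary statistics. A minimal sketch follows, using only the standard library and the thresholds stated in the table. The function names and example data are hypothetical, and a real study would add linearity and intermediate-precision checks.

```python
import statistics


def rsd_percent(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def check_precision_and_accuracy(replicates, spike_results, spiked_amount):
    """Compare repeatability and recovery with the Table 2 target criteria."""
    recoveries = [100.0 * m / spiked_amount for m in spike_results]
    mean_recovery = statistics.mean(recoveries)
    repeatability = rsd_percent(replicates)
    recovery_rsd = rsd_percent(recoveries)
    return {
        "repeatability_rsd": repeatability,
        "repeatability_ok": repeatability < 3.0,          # RSD < 3 %
        "mean_recovery": mean_recovery,
        "recovery_rsd": recovery_rsd,
        "accuracy_ok": 90.0 <= mean_recovery <= 108.0     # 90-108 % recovery
        and recovery_rsd < 5.0,                           # with RSD < 5 %
    }


# Six replicates at the test concentration and three spiked blanks (5.0 units).
report = check_precision_and_accuracy(
    replicates=[10.0, 10.1, 9.9, 10.0, 10.2, 9.8],
    spike_results=[4.9, 5.0, 5.1],
    spiked_amount=5.0,
)
print(report)
```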

Experimental Protocol: A Tiered Validation Study

The following workflow, adapted from the intra-laboratory validation of an alpha-galactosidase assay, provides a structured path to completion [19].

The Scientist's Toolkit: Research Reagent Solutions

The following reagents and materials are essential for developing and validating a TRL 4 method in forensic chemistry.

Table 3: Essential Research Reagents and Materials

| Item | Function / Purpose |

|---|---|

| Certified Reference Materials (CRMs) | Provides a traceable and definitive value for the target analyte, essential for calibration, determining accuracy, and establishing measurement uncertainty. |

| Chromatography Columns | The primary column (1D) and secondary column (2D) with different stationary phases are the core of GC×GC separation, providing the high peak capacity needed for complex forensic samples [3]. |

| Modulator | The "heart" of the GC×GC system, it traps and re-injects effluent from the first column onto the second column, preserving separation and enabling two independent retention mechanisms [3]. |

| Stable Isotope-Labeled Internal Standards | Used to correct for analyte loss during sample preparation and for variations in instrument response, significantly improving the accuracy and precision of quantitative results. |

| Simulated/Blank Matrix | A drug-free sample matrix used for preparing calibration standards and quality control samples, allowing for accurate assessment of specificity, linearity, and recovery in a realistic background. |

The rigorous implementation of figures of merit, uncertainty quantification, and intra-laboratory validation forms the foundational triad of a TRL 4 method. By adhering to the detailed protocols and criteria outlined in this document, researchers can generate the objective evidence required to prove a method's maturity and robustness within a single laboratory. This disciplined approach not only satisfies the technical requirements of TRL 4 but also lays the essential groundwork for the next critical phase of development: inter-laboratory validation. A method that successfully meets these criteria is well-positioned to undergo the collaborative testing necessary to achieve the standardization and general acceptance demanded by the forensic science community and the legal system [3].

From Theory to Practice: Designing and Executing a TRL 4 Validation Study

Step-by-Step Framework for Planning an Inter-Laboratory Comparison

Inter-laboratory comparisons (ILCs) are a cornerstone of quality assurance in analytical science, serving as a critical tool for validating method performance, ensuring result reliability, and demonstrating competency [20]. For forensic techniques at Technology Readiness Level (TRL) 4, where core technology components are validated in a laboratory environment, ILCs provide the initial, essential evidence that a method is robust and reproducible across multiple operational settings [3]. This framework outlines a systematic, step-by-step protocol for planning and executing an ILC, specifically contextualized for the rigorous demands of forensic research and development.

A Four-Phase Framework for ILC Planning

A robust ILC plan should be conceptualized as a multi-year strategy, ensuring that all critical techniques and measurement ranges within a laboratory's scope are verified over a defined period, typically four years [20]. The process can be broken down into four sequential phases.

Phase 1: Preliminary Planning and Definition of Scope

- Step 1.1: Establish the Primary Objective: Clearly define the purpose of the ILC. Is it to validate a new forensic method (e.g., one based on comprehensive two-dimensional gas chromatography-mass spectrometry, GC×GC-MS), compare performance between laboratories, identify biases, or support accreditation efforts? [3]

- Step 1.2: Define Technical Scope and Measurands: Specify the analyte(s) or property to be measured (e.g., identification and quantification of a specific illicit drug), the matrix of the test material, and the specific analytical technique(s) to be used [20].

- Step 1.3: Formulate a Participant Plan: Identify and invite participating laboratories. A minimum of 8-10 participants is generally recommended to obtain statistically meaningful results.

Phase 2: Experimental and Logistics Design

- Step 2.1: Select and Homogenize Test Material: Source or prepare a test material that is homogeneous, stable, and representative of real casework samples. The material's stability must be confirmed over the entire duration of the ILC.

- Step 2.2: Confirm Method Protocol: Decide whether the ILC will use a standardized method (all participants follow an identical, prescribed protocol) or a validated in-house method (each laboratory uses its own accredited method). For TRL 4 research, a standardized protocol is often preferable to isolate variables related to the technique itself [3].

- Step 2.3: Develop Reporting and Timeline Protocol: Create detailed instructions for participants, including data reporting formats, units of measurement, uncertainty estimation requirements, and a clear timeline for material distribution, analysis, and result submission.

Phase 3: Execution and Data Collection

- Step 3.1: Distribute Test Materials: Ship materials to participants under conditions that ensure stability, with clear labeling and handling instructions.

- Step 3.2: Provide Ongoing Support: Designate a coordinator to answer participant questions during the analysis phase to ensure protocol adherence.

- Step 3.3: Collect and Secure Data: Gather all participant results through a secure and confidential system.

Phase 4: Data Analysis, Reporting, and Review

- Step 4.1: Perform Statistical Analysis: Analyze the submitted data to determine assigned values (e.g., consensus mean from participants) and measures of dispersion (e.g., standard deviation for proficiency assessment). Calculate performance metrics like z-scores for each laboratory.

- Step 4.2: Draft and Circulate Individualized Reports: Prepare confidential reports for each participant, detailing their performance relative to the consensus and other participants.

- Step 4.3: Incorporate into Management Review: The findings and outcomes of the ILC must be included in the laboratory's broader review of its Quality Management System (QMS) to drive continuous improvement [20].

The following workflow diagram visualizes this structured planning process:

Diagram 1: Four-Phase ILC Planning Workflow

Multi-Year Planning Table for Forensic Techniques

For an accredited laboratory, participation in ILCs must be carefully planned over a multi-year cycle to cover all significant techniques and measuring ranges in its scope of accreditation [20]. The following table provides a hypothetical 4-year plan for a forensic laboratory developing GC×GC-MS methods, aligning with the transition from TRL 4 to higher readiness levels.

Table 1: Exemplary Four-Year ILC Plan for Forensic Method Validation

| Year | Primary Technique | Target Analyte/Application | Measuring Range | ILC Focus / TRL Context |

|---|---|---|---|---|

| 1 | GC×GC-MS | Illicit Drugs (e.g., synthetic cannabinoids) | 0.1 - 10 mg/mL | Initial Validation (TRL 4): Demonstrate basic reproducibility and separation power vs. 1D-GC [3]. |

| 2 | GC×GC-TOFMS | Ignitable Liquid Residues (ILR) in fire debris | NIST classes (e.g., gasoline, diesel) | Advanced Application (TRL 4-5): Validate capability for complex mixture analysis and pattern recognition [3]. |

| 3 | GC×GC-MS/MS | Toxicology (e.g., drugs in blood) | 0.01 - 1 µg/mL | Complex Matrix (TRL 5): Assess method robustness and sensitivity in biological matrices with high interference potential. |

| 4 | GC×GC-HRMS | Chemical Warfare Agent Biomarkers | Varies by agent | Specialized/CBRN Focus (TRL 5+): Final validation for low-abundance analytes in challenging scenarios [3]. |

Detailed Experimental Protocol: GC×GC-MS ILC for Illicit Drug Identification

This protocol provides a detailed methodology for an ILC corresponding to Year 1 in the multi-year plan, focusing on a technique at TRL 4.

Scope and Application

This protocol describes the procedure for an ILC to validate the identification and semi-quantification of a synthetic cannabinoid (e.g., 5F-MDMB-PICA) in a simulated herbal material using GC×GC-MS.

Materials and Reagents

Table 2: Research Reagent Solutions and Essential Materials

| Item | Function / Description |

|---|---|

| Certified Reference Standard | High-purity analyte for accurate quantification and method calibration. |

| Internal Standard (e.g., deuterated analog) | Corrects for analytical variability and losses during sample preparation. |

| Simulated Herbal Matrix | Inert plant material free of interferents, serving as a consistent and homogeneous background. |

| Sample Preparation Solvents | HPLC-grade methanol, acetone, and ethyl acetate for compound extraction. |

| Derivatization Reagent (if required) | Used to modify the analyte for improved chromatographic behavior and detectability. |

| GC×GC-MS System | Instrumentation comprising a GC, a thermal or flow modulator, and a mass spectrometer detector. |

Step-by-Step Procedure

- Test Material Distribution: The coordinating laboratory distributes pre-weighed, homogeneous samples of the simulated herbal material, spiked with the target synthetic cannabinoid at a concentration unknown to the participants (e.g., 2 mg/g).

- Sample Preparation:

- Participants are instructed to add a specified volume of internal standard solution to 100 mg of the test material.

- Extract using 10 mL of chilled acetone via ultrasonication for 15 minutes.

- Centrifuge and evaporate the supernatant to dryness under a gentle stream of nitrogen.

- Reconstitute the residue in 1 mL of ethyl acetate.

- Instrumental Analysis:

- GC×GC Conditions: Participants use a standardized method. Example parameters:

- Primary Column: Non-polar (e.g., Rxi-5Sil MS, 30 m x 0.25 mm i.d. x 0.25 µm).

- Secondary Column: Mid-polar (e.g., Rxi-17Sil MS, 1 m x 0.15 mm i.d. x 0.15 µm).

- Modulator Period: 4 s.

- Oven Program: 60°C (hold 1 min) to 300°C at 5°C/min.

- MS Conditions: Electron ionization (EI) at 70 eV; mass range: m/z 40-550.

- Data Processing and Reporting:

- Identify the target analyte based on its retention time in the first (¹tᵣ) and second (²tᵣ) dimensions, and its mass spectrum compared to a standard.

- Perform semi-quantification against the internal standard.

- Report the identified compound and its calculated concentration (mg/g).
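
The semi-quantification step can be illustrated with a single-point internal-standard calculation. This sketch assumes a relative response factor (RRF) defined as the analyte-to-internal-standard area ratio divided by the corresponding mass ratio, and it defaults to 1.0 when no RRF has been measured. That default, the function name, and the peak areas are assumptions for illustration rather than part of the protocol.

```python
def semi_quant_mg_per_g(area_analyte, area_is, is_added_ug, sample_mass_mg, rrf=1.0):
    """Estimate analyte content in mg/g from a single internal-standard point.

    rrf is an assumed relative response factor; 1.0 treats the analyte and
    the internal standard as responding equally per unit mass.
    """
    if area_is <= 0 or sample_mass_mg <= 0 or rrf <= 0:
        raise ValueError("areas, sample mass and RRF must be positive")
    analyte_ug = (area_analyte / area_is) * is_added_ug / rrf
    return analyte_ug / sample_mass_mg  # ug per mg is numerically mg per g


# 100 mg of test material, 100 ug of internal standard added,
# and an analyte peak twice the area of the internal-standard peak.
result = semi_quant_mg_per_g(2.0e6, 1.0e6, is_added_ug=100.0, sample_mass_mg=100.0)
print(f"{result:.2f} mg/g")
```

Because the final reconstitution volume cancels out of the area ratio, the reported value depends only on the amount of internal standard added and the mass of material extracted.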

Data Analysis and Performance Evaluation

- The assigned value for the test material is established as the robust consensus mean of all participant results.

- Participant performance is evaluated using z-scores: \( z = (x_{lab} - X)/\sigma \), where \( x_{lab} \) is the participant's result, \( X \) is the assigned value, and \( \sigma \) is the standard deviation for proficiency assessment. A |z| ≤ 2.0 is considered satisfactory.
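
The scoring step can be sketched as follows. As a simple stand-in for a full robust algorithm, the assigned value is taken as the median and, when no standard deviation for proficiency assessment is supplied, the scaled median absolute deviation is used in its place. Both choices are assumptions of this example. The "questionable" and "unsatisfactory" bands follow the usual proficiency-testing convention and go beyond the single threshold stated above.

```python
import statistics


def robust_consensus(values):
    """Median and scaled MAD as simple robust location and spread estimates."""
    med = statistics.median(values)
    mad = statistics.median([abs(v - med) for v in values])
    return med, 1.4826 * mad  # 1.4826 makes the MAD consistent with a normal SD


def z_scores(results, sigma_pt=None):
    """Return {lab: z}. sigma_pt is the SD for proficiency assessment."""
    assigned, robust_sd = robust_consensus(list(results.values()))
    sigma = sigma_pt if sigma_pt is not None else robust_sd
    if sigma <= 0:
        raise ValueError("standard deviation for proficiency assessment must be positive")
    return {lab: (x - assigned) / sigma for lab, x in results.items()}


def classify(z):
    """|z| <= 2 satisfactory; 2 < |z| < 3 questionable; |z| >= 3 unsatisfactory."""
    if abs(z) <= 2.0:
        return "satisfactory"
    return "questionable" if abs(z) < 3.0 else "unsatisfactory"


# Hypothetical reported concentrations in mg/g from five laboratories.
reported = {"A": 2.0, "B": 2.1, "C": 1.9, "D": 2.05, "E": 3.0}
scores = z_scores(reported, sigma_pt=0.2)
for lab, z in scores.items():
    print(lab, round(z, 2), classify(z))
```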

Legal and Technical Readiness Considerations for Forensic Applications

For forensic research, demonstrating methodological validity extends beyond the laboratory to meet legal admissibility standards. Techniques like GC×GC-MS must satisfy criteria such as the Daubert Standard, which emphasizes testing, peer review, known error rates, and general acceptance [3]. A well-documented ILC is a direct response to these requirements, providing empirical data on a method's reproducibility and error rate, thereby bridging the gap from TRL 4 research to court-admissible evidence.

The following diagram illustrates the critical path from methodological development to legal admissibility, highlighting the role of ILCs.

Diagram 2: ILC Role in Forensic Legal Admissibility

For forensic techniques at Technology Readiness Level (TRL) 4, where validation occurs in a laboratory setting, the foundation of any successful inter-laboratory study is the quality and consistency of the test materials used. The reliability of validation data hinges on two fundamental properties of these materials: homogeneity and stability. Homogeneity ensures that every sub-sample sent to participating laboratories is chemically and physically identical, guaranteeing that any variability in results stems from the analytical methods or laboratories themselves, not from the test material. Stability ensures that these properties remain unchanged from the time of preparation through distribution, storage, and analysis, thus ensuring the integrity of the validation data [21] [22]. This document outlines best practices for selecting, preparing, and characterizing test materials to support robust inter-laboratory validation studies for forensic techniques.

Defining Requirements and Selecting Source Materials

The process begins with a clear definition of the test material's purpose, which dictates its required characteristics.

Purpose-Driven Material Selection

The choice of test material is intrinsically linked to the forensic technique being validated. For DNA typing methods, this may involve creating samples with a specific number of contributors, known degradation levels, or defined mixture ratios to challenge and validate interpretive protocols [21]. For forensic toxicology, the test materials could be biological matrices spiked with known concentrations of target analytes, such as anticholinesterase pesticides, to validate quantitative analytical methods like HPLC-DAD [23]. The material must be fit-for-purpose, meaning it should accurately represent the challenges encountered with real casework samples.

Sourcing with Integrity

Source materials must be obtained with explicit informed consent that permits their use in research and allows for the sharing of data among collaborators and laboratories [21]. For biological materials, this often involves working with commercial blood banks or tissue providers under protocols reviewed by an ethics board. The provenance and handling of the source material should be thoroughly documented to ensure ethical and legal compliance.

Protocol for Achieving and Assessing Homogeneity

Homogeneity is a prerequisite for a valid inter-laboratory study. The following protocol provides a detailed methodology for its achievement and verification.

Experimental Protocol: Homogeneity Testing

Objective: To ensure that the variation between sub-samples (vials) of the test material is significantly less than the expected inter-laboratory variation.

Materials and Reagents:

- Bulk test material (e.g., DNA extract in TE buffer, spiked blood/urine, or synthetic mixture).

- Appropriate vials (e.g., sterile cryovials).

- Calibrated pipetting system or automated bottle filler [21].

- Reagents for quantitative analysis (e.g., dPCR or qPCR reagents for DNA, internal standards for HPLC).

Procedure:

- Preparation: Prepare a large, well-mixed bulk batch of the test material. Ensure it is in a homogenous state (e.g., fully solubilized DNA, a liquid suspension, or a finely ground and blended powder) [21].

- Sub-sampling: Using a calibrated and precise method, fill at least 30 vials from the bulk batch. For liquid materials, automated filling systems are preferred to minimize variability [21].

- Sampling for Testing: Randomly select a minimum of 10 vials from the entire batch for homogeneity testing.

- Quantitative Analysis: From each of the selected vials, perform at least two independent replicate measurements of a key property that defines the material. For DNA, this is typically concentration using a validated digital PCR (dPCR) or qPCR assay [21]. For a chemical analyte, this would be concentration using a core validated method like HPLC-DAD [23].

- Statistical Analysis: Analyze the resulting data using one-way analysis of variance (ANOVA). The variation between vials should not be statistically significant, or should be negligible compared to the target method reproducibility.

Homogeneity Assessment Data

The following table summarizes the key parameters and acceptance criteria for a typical homogeneity study, as applied to different forensic test materials.

Table 1: Homogeneity Assessment Parameters for Forensic Test Materials

| Parameter | DNA Typing Material [21] | Toxicological Material (e.g., Spiked Blood) [23] | General Chemical Forensic Material |

|---|---|---|---|

| Key Property Measured | DNA Concentration (copies/μL) | Analyte Concentration (e.g., μg/mL) | Analyte Concentration or Property Value |

| Analytical Method | Digital PCR (dPCR) | High-Performance Liquid Chromatography (HPLC-DAD) | Fit-for-purpose core method (e.g., GC-MS, HPLC) |

| Number of Vials Tested | ≥ 10 | ≥ 10 | ≥ 10 |

| Replicates per Vial | ≥ 2 | ≥ 2 | ≥ 2 |

| Acceptance Criterion | Between-vial variance < 30% of total variance (or non-significant ANOVA result) | Coefficient of Variation (CV) < 5% for between-vial measurements | CV < pre-defined target based on method precision |
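
The one-way ANOVA behind the homogeneity assessment can be written out with the standard library alone. The sketch below assumes a balanced design (the same number of replicates per vial) and reports the F ratio together with the between-vial share of total variance. Judging significance still requires an F critical value or p-value from statistical tables or software, which this example deliberately leaves out. The small data sets are illustrative only and use fewer vials than the protocol requires.

```python
import statistics


def homogeneity_anova(vials):
    """One-way ANOVA for a balanced homogeneity study.

    vials: list of per-vial replicate lists, all of equal length (>= 2).
    """
    n_rep = len(vials[0])
    if n_rep < 2 or any(len(v) != n_rep for v in vials):
        raise ValueError("each vial needs the same number (>= 2) of replicates")
    k = len(vials)
    vial_means = [statistics.mean(v) for v in vials]
    grand_mean = statistics.mean(vial_means)
    ms_between = n_rep * sum((m - grand_mean) ** 2 for m in vial_means) / (k - 1)
    ms_within = sum(
        (x - m) ** 2 for v, m in zip(vials, vial_means) for x in v
    ) / (k * (n_rep - 1))
    # Between-vial variance component; negative estimates are set to zero.
    var_between = max(0.0, (ms_between - ms_within) / n_rep)
    total = var_between + ms_within
    return {
        "F": ms_between / ms_within if ms_within > 0 else float("inf"),
        "var_between": var_between,
        "var_within": ms_within,
        "between_fraction": var_between / total if total > 0 else 0.0,
    }


homogeneous = homogeneity_anova([[10.0, 10.2], [10.1, 9.9], [10.0, 10.0]])
inhomogeneous = homogeneity_anova([[1.0, 1.0], [2.0, 2.0]])
print(homogeneous)
print(inhomogeneous)
```

The `between_fraction` value maps directly onto the "between-vial variance < 30% of total variance" criterion in the table above.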

Protocol for Establishing and Monitoring Stability

Stability testing confirms that the test material does not undergo significant degradation or change under the anticipated storage and shipping conditions.

Experimental Protocol: Stability Monitoring

Objective: To determine the shelf-life of the test material by monitoring its key properties over time under defined storage conditions.

Materials and Reagents:

- Filled vials of the homogeneous test material.

- Controlled temperature storage chambers (e.g., 4°C, -20°C, room temperature).

- Reagents for quantitative analysis (same as for homogeneity testing).

Procedure:

- Study Design: Create a stability study protocol that defines the storage conditions (e.g., 4°C is common for DNA extracts), testing intervals, and the tests to be performed at each interval [24]. The protocol should be comprehensive, covering product, package, study design, and tests [24].

- Storage: Store the test materials under the selected conditions. For materials shipped to multiple laboratories, include conditions that simulate transportation (e.g., short-term temperature cycling) [24].

- Stability Testing: At pre-defined intervals (e.g., 0, 1, 3, 6, 12 months), randomly pull a minimum of three vials from storage.

- Analysis: Perform quantitative analysis on the pulled vials using the same method as for homogeneity testing (e.g., dPCR for DNA, HPLC for chemical analytes).

- Data Interpretation: Compare the results at each time point to the baseline (T=0) measurements. The material is considered stable if no statistically significant trend or change is observed, and the measured values remain within pre-defined acceptance criteria of the baseline value.
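
The data-interpretation step can be sketched as a comparison of each time point with the baseline plus a simple trend estimate. The 5 % tolerance, the function name, and the example values are hypothetical. A formal study would also test the slope for statistical significance, which is omitted here.

```python
import statistics


def stability_summary(timepoints, tolerance_pct=5.0):
    """Summarise a stability series.

    timepoints: {month: [measurements]}, where month 0 is the baseline.
    tolerance_pct: assumed acceptance window around the baseline mean.
    """
    months = sorted(timepoints)
    means = [statistics.mean(timepoints[m]) for m in months]
    baseline = statistics.mean(timepoints[0])
    pct_change = {
        m: 100.0 * (mu - baseline) / baseline for m, mu in zip(months, means)
    }
    # Least-squares slope of the mean value against time (units per month).
    mean_t = statistics.mean(months)
    mean_y = statistics.mean(means)
    sxx = sum((m - mean_t) ** 2 for m in months)
    slope = sum((m - mean_t) * (mu - mean_y) for m, mu in zip(months, means)) / sxx
    return {
        "pct_change": pct_change,
        "slope_per_month": slope,
        "within_tolerance": all(abs(c) <= tolerance_pct for c in pct_change.values()),
    }


# Three vials pulled at 0, 1, 3 and 6 months (arbitrary concentration units).
series = {
    0: [100.0, 100.0, 100.0],
    1: [99.0, 100.0, 101.0],
    3: [98.0, 99.0, 100.0],
    6: [97.0, 98.0, 99.0],
}
summary = stability_summary(series)
print(summary)
```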

Stability Study Parameters and Data

A well-designed stability study is documented in a detailed protocol. The data collected is used to establish expiration dates or recommended use-by dates.

Table 2: Key Elements of a Stability Protocol and Monitoring Plan [24]

| Protocol Element | Description & Examples |

|---|---|

| Product & Package | Specific product name, dosage form, strength; Container-closure system description (e.g., 2 mL cryovial, screw cap) [24]. |

| Batch Information | Lot number, date of manufacture, batch size, manufacturing site. |

| Storage Conditions | Defined storage conditions (e.g., long-term at 4°C ± 2°C), testing frequency (intervals), and study duration [24]. |

| Test Attributes & Methods | List of tests (e.g., DNA quantification, STR profile, analyte concentration) with reference to specific test methods and their version codes [24]. |

| Acceptance Criteria | Pre-defined specification limits for each test attribute, which may include stability-indicating parameters like assay purity and degradation products [24]. |

The Scientist's Toolkit: Essential Materials and Reagents

The following table details key reagents and materials required for the preparation and characterization of homogeneous and stable forensic test materials.

Table 3: Research Reagent Solutions for Test Material Preparation

| Item | Function & Application |

|---|---|

| Digital PCR (dPCR) System | Provides absolute quantification of target DNA sequences without a standard curve; critical for precisely determining the concentration and homogeneity of DNA-based test materials [21]. |

| HPLC-DAD System | A reliable and cost-effective platform for identifying and quantifying analytes (e.g., pesticides, drugs) in spiked biological test materials; DAD allows for spectral confirmation of identity [23]. |

| Stabilization Reagents | Reagents such as carrier RNA or TE buffer are added to low-quantity DNA samples to prevent adsorption to tube walls and stabilize the material during long-term storage [21]. |

| Extraction Solvents (e.g., Pyridine/Water) | Used to extract dyes from fabric fibers for the creation of forensic fabric analysis test materials, enabling comparison via Thin Layer Chromatography (TLC) [25]. |

| Matrix-Matched Standards | Analytical standards prepared in a blank sample of the same biological matrix (e.g., drug-free blood, liver); essential for achieving accurate quantification and compensating for matrix effects during method validation [23]. |

| Validated Reference Materials | Well-characterized materials, such as NIST's Research Grade Test Materials (RGTMs), used as benchmarks for quantifying in-house test materials or for validating new analytical methods [21]. |

Workflow for Test Material Preparation

The entire process, from definition to distribution, can be visualized in the following workflow. This diagram integrates the key stages of material selection, homogeneity and stability studies, and final release.

Defining Protocols and Data Reporting Requirements for Participating Laboratories

Inter-laboratory validation studies are a critical final step in maturing a forensic technique from research to routine application. For techniques reaching Technology Readiness Level (TRL) 4, the focus shifts to refinement, enhancement, and rigorous inter-laboratory validation to ensure the method is ready for implementation in forensic laboratories [17]. The transition of a technique like comprehensive two-dimensional gas chromatography (GC×GC) into the forensic mainstream illustrates this pathway, moving from proof-of-concept studies toward standardized methods suitable for evidence analysis in a legal context [3]. The overarching goal of such validation is to produce methods that are not only scientifically sound but also meet the stringent admissibility standards for expert testimony in legal proceedings, as defined by the Daubert Standard or the Mohan Criteria [3] [26]. These standards emphasize that scientific testimony must be reliable, which requires that the underlying method has been tested, has a known error rate, has been peer-reviewed, and is generally accepted [3]. This document outlines the protocols and data reporting requirements for laboratories participating in a TRL 4 inter-laboratory validation study, providing a framework to demonstrate that a method is accurate, reproducible, and forensically valid.

Experimental Protocols

Core Validation Methodology

The following protocol provides a generalized framework for the inter-laboratory validation of a quantitative forensic technique. The example of quantitative fracture surface matching is used for illustration, but the principles are applicable to a wide range of forensic chemical and physical analysis methods [26].

- Objective: To validate a quantitative method for matching fractured surfaces of materials (e.g., glass, metal, plastic) by comparing the topography of their fracture surfaces using statistical learning models. The primary goal is to establish the method's discriminatory power and estimate its false match and false non-match rates across multiple laboratories and operators.

- Materials and Sample Sets:

- Reference Materials: A central coordinating laboratory shall prepare and distribute standardized sample sets. These sets must include known matching pairs (KM) and known non-matching pairs (KN) covering a range of materials relevant to forensic casework (e.g., different steel alloys, types of glass, or polymers).

- Sample Preparation: The coordinating laboratory will generate fractures under controlled conditions (e.g., three-point bending, tension) to create the KM pairs. KN pairs will be created from different source objects or different fracture events. All samples must be cleaned (e.g., ultrasonically in solvent) to remove debris without altering the fracture surface topography.

- Blinding: Participating laboratories will receive blinded sample sets where the identity (KM or KN) of each pair is unknown to them.

- Procedure:

- Imaging and Topography Mapping: Participants shall use a three-dimensional (3D) microscope (e.g., laser scanning confocal microscope, white light interferometer) to map the fracture surface topography of each sample. The imaging must be performed at a scale that captures both self-affine surface roughness and the unique, non-self-affine topographical details, typically at a resolution and field of view determined to be optimal during single-laboratory validation (e.g., a field of view greater than 10 times the self-affine transition scale of the material) [26].

- Data Pre-processing: Raw topographic data (height maps) must be processed to remove tilt and curvature, creating a flattened surface for analysis. Participants will use standardized software or scripts (e.g., an R package provided by the coordinating laboratory) to ensure consistent pre-processing.

- Feature Extraction: The pre-processed topography data will be analyzed to extract quantitative features. The primary feature will be the height-height correlation function, which characterizes surface roughness across different length scales [26]. The transition scale where the surface behavior deviates from self-affine to unique should be identified and recorded.

- Statistical Classification: Participants will use a provided multivariate statistical learning tool (e.g., a likelihood-ratio model) to compare the extracted features from two surfaces. The model will output a score or a likelihood ratio indicating the strength of support for the proposition that the two surfaces are a match [26] [27].

- Result Reporting: For each sample pair, participants will report the quantitative score and their categorical assessment ("match," "non-match," or "inconclusive") based on a pre-defined threshold.

Workflow and Logical Relationships

The following diagram illustrates the core experimental workflow for the inter-laboratory validation study, from sample receipt to final data submission.

Data Reporting Requirements

For the validation study to be successful, data must be reported in a consistent, complete, and usable format. Incomplete data reporting has been identified as a significant issue in the transmission of laboratory results to end-users, with one study finding only 69.6% of test results contained all essential reporting elements [28]. The following tables detail the mandatory data reporting requirements for all participating laboratories.

Table 1: Mandatory Data Fields for Sample and Laboratory Information

| Category | Data Field | Format/Units | Description |
|---|---|---|---|
| Laboratory Info | Laboratory ID | Text | Unique identifier assigned to the participating lab. |
| | Analyst Name | Text | Name of operator performing the analysis. |
| | Instrument Model | Text | Make and model of the 3D microscope used. |
| Sample Info | Sample Pair ID | Text | Unique identifier for the pair being analyzed. |
| | Material Type | Text | e.g., Borosilicate glass, 1045 steel, Polypropylene. |
| | Date of Analysis | YYYY-MM-DD | Date the analysis was performed. |

Table 2: Mandatory Data Fields for Analytical Results

| Category | Data Field | Format/Units | Description |
|---|---|---|---|
| Imaging Parameters | Field of View (FOV) | µm x µm | Dimensions of the imaged area. |
| | Lateral Resolution | nm | Resolution in the x-y plane. |
| | Vertical Resolution | nm | Resolution in the z-axis (height). |
| Extracted Features | Transition Scale (ξ) | µm | The length scale where topography becomes non-self-affine [26]. |
| | Saturation Roughness | µm | The saturated value of the height-height correlation function. |
| Statistical Output | Likelihood Ratio / Score | Numeric | The quantitative output of the statistical model. |
| | Categorical Conclusion | Text | "Match", "Non-match", or "Inconclusive". |
| Quality Metrics | Signal-to-Noise Ratio | Numeric | A measure of data quality from the topographic map. |

Table 3: Required Metadata for Method and Error Reporting

| Category | Data Field | Format/Units | Description |
|---|---|---|---|
| Methodology | Software Version | Text | Version of pre-processing/analysis software used. |
| | Reference Interval | Text | The score threshold used for "Match" conclusion. |
| Uncertainty & Error | Internal Precision Data | Numeric | Results of repeatability tests on a control sample. |
| | Audit Trail | Text | Documentation of any anomalous events or data corrections. |
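Because incomplete reporting is the failure mode these tables are meant to prevent, a coordinating laboratory might screen each submission automatically before accepting it. The sketch below shows one possible check, using hypothetical snake_case field names that mirror a subset of the mandatory fields above; it is not a prescribed schema, and the function name is illustrative.

```python
import re
from datetime import datetime

# Hypothetical field names covering a subset of the mandatory fields (assumption).
REQUIRED_TEXT_FIELDS = [
    "laboratory_id", "analyst_name", "instrument_model",
    "sample_pair_id", "material_type", "software_version",
]
REQUIRED_NUMERIC_FIELDS = ["transition_scale_um", "saturation_roughness_um", "score"]
ALLOWED_CONCLUSIONS = {"Match", "Non-match", "Inconclusive"}


def find_reporting_problems(record):
    """Return a list of problems; an empty list means the record passes these checks."""
    problems = []
    for name in REQUIRED_TEXT_FIELDS:
        value = record.get(name)
        if not isinstance(value, str) or not value.strip():
            problems.append(f"missing or empty text field: {name}")
    for name in REQUIRED_NUMERIC_FIELDS:
        value = record.get(name)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"missing or non-numeric field: {name}")
    raw_date = record.get("date_of_analysis", "")
    if not isinstance(raw_date, str) or not re.fullmatch(r"\d{4}-\d{2}-\d{2}", raw_date):
        problems.append("date_of_analysis must be YYYY-MM-DD")
    else:
        try:
            datetime.strptime(raw_date, "%Y-%m-%d")
        except ValueError:
            problems.append("date_of_analysis is not a valid calendar date")
    if record.get("categorical_conclusion") not in ALLOWED_CONCLUSIONS:
        problems.append("categorical_conclusion must be Match, Non-match, or Inconclusive")
    return problems
```

Returning every problem at once, rather than stopping at the first, lets a participating laboratory correct a submission in a single round trip.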

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key materials, instruments, and software solutions essential for conducting a TRL 4 inter-laboratory validation study in forensic fracture analysis.

Table 4: Key Research Reagent Solutions for Quantitative Fracture Matching

| Item | Function in Validation |

|---|---|

| Standardized Reference Materials | Certified materials with known fracture properties are used to calibrate instruments and verify the accuracy of the topographic measurement process across all participating labs. |

| Three-Dimensional (3D) Microscope | This instrument is used to map the surface topography of fracture surfaces at a high resolution, providing the raw quantitative data (height maps) for subsequent analysis [26]. |

| Height-Height Correlation Analysis Software | Specialized software or scripts are required to process the raw topographic data and calculate the height-height correlation function, which is a key feature for quantifying surface uniqueness [26]. |

| Statistical Learning Software (e.g., R package) | A validated software package (e.g., MixMatrix) is used to perform the multivariate statistical analysis and compute the likelihood ratio that forms the basis of the objective "match" conclusion [26]. |

| Blinded Validation Sample Sets | These sets, containing known matches and non-matches, are the primary tool for empirically measuring the method's false positive and false negative rates in a realistic, inter-laboratory setting. |

Data Analysis and Statistical Interpretation

The analysis of data collected from multiple laboratories must provide a transparent and statistically sound measure of the method's performance and reliability.

Statistical Framework and Workflow

The core of the quantitative approach is the likelihood ratio framework, which is the logically correct framework for the interpretation of forensic evidence and is a key component of the paradigm shift in forensic science [27]. The following diagram outlines the process for aggregating and analyzing inter-laboratory data.
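For reference, the likelihood ratio is conventionally written as the probability of the evidence under two competing propositions. The symbols below are notation chosen here for illustration, not taken from the study protocol: E denotes the extracted features compared between two surfaces, H_p the proposition that the surfaces come from the same fracture, and H_d the proposition that they come from different fractures.

```latex
\mathrm{LR} = \frac{p(E \mid H_p)}{p(E \mid H_d)}
```

Values above 1 support the same-fracture proposition, values below 1 support the different-fracture proposition, and the magnitude expresses the strength of that support.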

Key Performance Indicators and Error Rate Analysis

- Calculation of Performance Metrics: The coordinating laboratory will aggregate all blinded results to calculate key performance indicators. These include sensitivity (the ability to correctly identify matching pairs), specificity (the ability to correctly exclude non-matching pairs), and overall accuracy.

- Error Rate Determination: A critical requirement under the Daubert Standard is the establishment of a known error rate [3] [26]. The false positive rate (false matches) and false negative rate (false non-matches) will be calculated directly from the results of the blinded validation study. Confidence intervals for these rates must be reported to quantify the uncertainty in the estimates.

- Assessment of Reproducibility: The data will be analyzed to assess inter-laboratory reproducibility. This involves measuring the variation in quantitative scores and categorical conclusions for the same sample pairs analyzed across different laboratories. Statistical tests (e.g., ANOVA) may be used to determine if differences between laboratories are significant.
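As a minimal sketch of the calculations described above, the following code derives sensitivity, specificity, accuracy, and the two error rates from blinded results, and attaches Wilson score intervals to the error rates. The Wilson interval is one reasonable choice for binomial proportions, not one mandated by the text. The function names are hypothetical, and the handling of inconclusive calls (counted separately and excluded from the denominators) is an assumption that a real study would need to fix in its protocol.

```python
import math


def wilson_interval(events, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 is roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = events / trials
    denom = 1 + z * z / trials
    centre = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def summarise(results):
    """Summarise (ground_truth, conclusion) pairs from a blinded study.

    ground_truth is 'match' or 'non-match'; conclusion may also be 'inconclusive'.
    ASSUMPTION: inconclusive calls are tallied separately and left out of the
    error-rate denominators.
    """
    tp = fn = tn = fp = inconclusive = 0
    for truth, conclusion in results:
        if conclusion == "inconclusive":
            inconclusive += 1
        elif truth == "match":
            if conclusion == "match":
                tp += 1
            else:
                fn += 1
        else:
            if conclusion == "non-match":
                tn += 1
            else:
                fp += 1
    # Intervals first: wilson_interval raises if a class has no decided pairs.
    fnr_ci = wilson_interval(fn, tp + fn)
    fpr_ci = wilson_interval(fp, tn + fp)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / (tp + fn + tn + fp),
        "false_negative_rate": fn / (tp + fn),
        "false_positive_rate": fp / (tn + fp),
        "false_negative_rate_ci": fnr_ci,
        "false_positive_rate_ci": fpr_ci,
        "inconclusive": inconclusive,
    }
```

The Wilson interval stays informative when zero errors are observed, which matters here: a study with no false positives still yields a non-zero upper bound on the false positive rate.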

Compliance with Legal Admissibility Standards

For a forensic method to be implemented, it must satisfy legal criteria for the admissibility of scientific evidence. The data generated through these protocols is designed to directly address these criteria [3].

- Daubert Standard Compliance: The described validation directly addresses the four Daubert factors:

  - Testability: The hypothesis that fracture surfaces are unique is tested through the blinded validation study.

  - Peer Review: The methodology and results will be submitted for publication in peer-reviewed journals like Forensic Chemistry [17].

  - Error Rate: The false positive and false negative rates are empirically measured through the inter-laboratory study.

  - General Acceptance: Widespread successful implementation across multiple independent laboratories, as demonstrated in this study, is a strong step toward establishing general acceptance.

- Transparency and Reliability: By replacing subjective pattern recognition with quantitative measurements and statistical models, the method becomes more transparent, reproducible, and resistant to cognitive bias, fulfilling the calls for reform in forensic science [26] [27]. The final validation report must clearly articulate how the method and its performance metrics meet these legal standards.

The integration of robust inter-laboratory validation methods is fundamental to advancing forensic techniques from research concepts to legally admissible evidence. This application note details protocols and case studies for applying Inter-Laboratory Comparisons (ILC) and Proficiency Testing (PT) to forensic techniques, specifically framed within Technology Readiness Level (TRL) 4 research. At TRL 4, component validation is conducted in a laboratory environment, focusing on establishing reproducibility and reliability through cross-laboratory collaboration [3]. For forensic science, this stage is critical for transitioning novel analytical methods toward courtroom acceptance under standards such as Daubert and Federal Rule of Evidence 702, which emphasize testing, peer review, known error rates, and general acceptance within the scientific community [3]. Successful ILC/PT at this stage provides the necessary foundation for these legal requirements.

These programs offer numerous technical and quality benefits beyond mere accreditation. They enable laboratories to compare their performance against peers, evaluate new methods against established ones, demonstrate method precision and accuracy, and provide valuable data for estimating measurement uncertainty [29]. Participation also provides external validation of a laboratory's quality management system and offers a mechanism for continuous improvement and confidence-building for both internal staff and external stakeholders [29].

ILC/PT Protocol for Cannabis Potency Analysis

Background and Legal Context

The 2018 Farm Bill redefined hemp as Cannabis containing no more than 0.3% Δ9-tetrahydrocannabinol (Δ9-THC) by dry weight, creating an urgent need for quantitative analytical methods in forensic laboratories [30]. Previously, qualitative identification of THC was sufficient to confirm a controlled substance. Now, laboratories must accurately quantify THC concentration to distinguish legal hemp from illegal marijuana, a task requiring high metrological confidence [30].
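To illustrate why measurement uncertainty matters near the 0.3% limit, the sketch below compares a reported concentration and its expanded uncertainty against that limit. The three-way decision rule and the function name are assumptions made for illustration only; they are not a legal or regulatory procedure, and a laboratory would follow the decision rule set by its own jurisdiction and quality system.

```python
THC_LIMIT_PERCENT = 0.3  # Δ9-THC limit by dry weight, as given in the text


def compare_to_limit(measured_percent, expanded_uncertainty_percent, limit=THC_LIMIT_PERCENT):
    """Classify a result relative to the limit (illustrative rule, assumption).

    The result is only called above or below the limit when the whole
    uncertainty interval lies on one side of it.
    """
    low = measured_percent - expanded_uncertainty_percent
    high = measured_percent + expanded_uncertainty_percent
    if high < limit:
        return "below limit"
    if low > limit:
        return "above limit"
    return "indeterminate"
```

For example, a result of 0.29% with an expanded uncertainty of 0.03% spans the limit and cannot be assigned to either side under this rule, which is the situation that inter-laboratory comparison of quantitative methods is meant to make rarer.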

Case Study: NIST Cannabis Quality Assurance Program (CannaQAP)

The National Institute of Standards and Technology (NIST) established CannaQAP as a perpetual interlaboratory study mechanism to help laboratories assess and improve their in-house quantitative measurements for cannabinoids [30].

Study Design:

- Objective: To assess the comparability of quantitative results for cannabinoids (e.g., Δ9-THC, CBD) across multiple forensic laboratories using various analytical platforms.

- Materials: Participating laboratories analyze identical, homogeneous Cannabis-based test items provided by NIST.

- Anonymity: Participant identities are anonymized in published reports, encouraging open participation and data sharing [30].

Participant Instructions:

- Sample Preparation: Utilize the provided sample preparation protocol or a validated in-house method. Record all deviations.

- Instrumentation: Analyze test items using one or more of the following techniques:

  - Gas Chromatography-Mass Spectrometry (GC-MS)

  - Liquid Chromatography with Ultraviolet Detection (LC-UV)

  - Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS)

- Data Reporting: Report the determined concentration (by dry weight) for each target cannabinoid, along with the measurement uncertainty and a description of the analytical method used.

Data Analysis and Output: NIST compiles all participant data and generates a report that allows each laboratory to:

- Compare their results to the consensus mean value.

- Evaluate their method's performance relative to other techniques (e.g., GC-MS vs. LC-MS).

- Identify any potential biases in their analytical workflow.
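One common way to express the comparison against a consensus value is a z-score per laboratory. The sketch below is a generic illustration and is not a description of how NIST processes CannaQAP data: it uses a plain mean and sample standard deviation, whereas proficiency testing schemes often use robust estimators, and the function names are hypothetical. The rating bands (|z| ≤ 2 satisfactory, 2 < |z| < 3 questionable, |z| ≥ 3 unsatisfactory) follow the widely used proficiency testing convention.

```python
import statistics


def z_scores(reported):
    """Map each laboratory ID to (value - consensus mean) / sample standard deviation."""
    values = list(reported.values())
    if len(values) < 2:
        raise ValueError("at least two laboratories are needed")
    consensus = statistics.mean(values)
    spread = statistics.stdev(values)
    if spread == 0:
        raise ValueError("all reported values are identical; z-scores are undefined")
    return {lab: (value - consensus) / spread for lab, value in reported.items()}


def rate(z):
    """Apply the conventional proficiency-testing bands to a z-score."""
    magnitude = abs(z)
    if magnitude <= 2.0:
        return "satisfactory"
    if magnitude < 3.0:
        return "questionable"
    return "unsatisfactory"
```

A consistently positive or negative z-score across several exercises points to a systematic bias in the workflow rather than random scatter.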

Table 1: Key Characteristics of the CannaQAP ILC/PT Study

| Feature | Description |

|---|---|

| Primary Goal | Method assessment and improvement for quantitative cannabinoid analysis [30] |

| TRL Focus | TRL 4 (Component validation in laboratory environment) |

| Legal Driver | Need to accurately distinguish hemp (≤0.3% Δ9-THC) from marijuana [30] |

| Test Materials | Homogeneous Cannabis plant material or extracts |

| Output | Peer-reviewed NIST Internal Report with anonymized data [30] |

Experimental Workflow

The following diagram illustrates the end-to-end workflow for a laboratory participating in the CannaQAP study, from registration to performance assessment.

ILC/PT Protocol for Comprehensive Two-Dimensional Gas Chromatography (GC×GC)

Background and Forensic Application

Comprehensive two-dimensional gas chromatography (GC×GC) provides superior peak capacity and separation for complex forensic mixtures like ignitable liquid residues (ILR) in arson investigations, illicit drugs, and toxicological evidence [3]. However, as an emerging technique, it requires extensive validation before routine adoption.

Proposed ILC/PT Study for GC×GC-MS Ignitable Liquid Residue Analysis

This proposed protocol is designed to validate GC×GC-MS methods for a key forensic application.

Study Design:

- Objective: To evaluate the ability of laboratories to correctly identify and classify ignitable liquid residues (ILR) from fire debris samples using GC×GC-MS.

- Test Items: Participants receive simulated fire debris samples containing a known ILR from a specific ASTM class (e.g., gasoline, diesel, heavy petroleum distillate) and a negative control sample.

Participant Instructions:

- Sample Extraction: Perform headspace solid-phase microextraction (HS-SPME) or solvent extraction on the provided samples.

- GC×GC-MS Analysis:

  - Primary Column: Use a non-polar or weakly polar column (e.g., 5% phenyl polysilphenylene-siloxane).

  - Secondary Column: Use a mid-polarity column (e.g., 50% phenyl polysilphenylene-siloxane).

  - Modulation: Set an appropriate modulation period (e.g., 2-4 seconds).

  - Detection: Use time-of-flight mass spectrometry (TOFMS) for non-targeted analysis [3].

- Data Analysis: Process the two-dimensional chromatographic data to identify characteristic patterns and biomarker compounds for ILR classification.
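The role of the modulation period in the data-analysis step can be made concrete with a short sketch. A GC×GC detector records a single continuous trace, and the two-dimensional chromatogram is obtained by cutting that trace into consecutive segments of one modulation period each. The function name and parameters below are hypothetical, and instrument vendor software normally performs this step.

```python
def fold_chromatogram(signal, sampling_rate_hz, modulation_period_s):
    """Cut a 1D detector trace into rows of one modulation period each.

    Row index corresponds to first-dimension retention time (in modulation
    periods); position within a row corresponds to second-dimension retention
    time. Trailing points that do not fill a whole period are dropped.
    """
    points_per_modulation = round(sampling_rate_hz * modulation_period_s)
    if points_per_modulation <= 0:
        raise ValueError("sampling rate and modulation period must give at least one point")
    n_modulations = len(signal) // points_per_modulation
    return [
        signal[i * points_per_modulation:(i + 1) * points_per_modulation]
        for i in range(n_modulations)
    ]
```

Because the modulation period fixes the row length, laboratories using different periods produce differently shaped 2D data, which is one reason the study asks participants to record it.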

Data Reporting and Performance Metrics: Participants must report:

- The identified ILR type (or "none detected").

- The confidence level of the identification.