A Modern Framework for Likelihood Ratio Calculation in Complex DNA Mixtures: From Foundational Principles to Clinical Applications

Abstract

This article provides a comprehensive guide for researchers and scientists on the interpretation of complex forensic DNA mixtures using likelihood ratios (LRs). It covers the foundational statistical principles, explores advanced methodological applications including probabilistic genotyping software (PGS) and single-cell pipelines, addresses critical troubleshooting and optimization challenges such as degradation and mixture complexity, and reviews validation frameworks and comparative performance metrics. By synthesizing the latest research and standards from institutions like NIST, this resource aims to equip professionals with the knowledge to implement robust, reliable, and statistically sound DNA mixture interpretation in biomedical and clinical contexts.

Understanding the Core Principles and Challenges of DNA Mixture Interpretation

The Critical Role of the Likelihood Ratio (LR) in Forensic DNA Evidence

The Likelihood Ratio (LR) has become a cornerstone of modern forensic science, providing a robust statistical framework for evaluating the strength of DNA evidence. In the context of complex DNA mixtures—samples originating from two or more individuals—the LR offers a coherent method to quantify evidence under competing propositions posed by the prosecution and defense. This approach is increasingly vital as forensic laboratories encounter more challenging casework involving low-template, degraded, or complex mixture evidence [1].

The fundamental principle of the LR involves comparing the probability of the observed DNA evidence under two alternative hypotheses. For sub-source propositions, where the evidence is the DNA profile itself, this typically involves propositions such as whether a particular individual is a contributor to the mixture versus whether the DNA originated from unknown, unrelated individuals [2]. The LR provides a clear, quantitative measure of evidential strength that helps courts understand the significance of DNA matches while properly accounting for uncertainty in complex mixture interpretation.

LR Calculation Framework and Proposition Setting

Fundamental LR Formulae

The likelihood ratio is calculated using the following fundamental formula, where E represents the observed DNA evidence, and Hp and Hd represent the prosecution and defense hypotheses respectively:

LR = P(E | Hp) / P(E | Hd)

In practice, forensic DNA analysis utilizes different LR formulations depending on case circumstances. The three primary types are:

- Simple LR: Assesses how well a single questioned individual explains the data [2]

- Conditioned LR: Calculated for remaining individuals when one or more individuals are assumed to be contributors [2]

- Compound LR: Evaluates multiple questioned individuals together in the inclusionary proposition versus an equal number of unknown contributors [2]

Table 1: Likelihood Ratio Types and Applications

| LR Type | Propositions | Use Case |
|---|---|---|
| Simple LR | Hp: ID₁ + U vs Hd: U + U | Single-suspect cases |
| Conditioned LR | Hp: ID₁ + ID₂ vs Hd: U + ID₂ | Known contributor present |
| Compound LR | Hp: ID₁ + ID₂ vs Hd: U + U | Multiple suspects jointly |
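A deliberately simplified single-locus calculation helps make the three LR types concrete. The sketch below makes several assumptions. It uses a binary (presence/absence) model with no drop-out, drop-in, peak heights, or θ correction. Genotype frequencies follow Hardy-Weinberg proportions, and the allele frequencies are hypothetical. The function names are illustrative and do not come from any probabilistic genotyping package.

```python
from itertools import combinations_with_replacement, product


def genotype_prob(genotype, freqs):
    """Hardy-Weinberg probability of an unordered genotype (a, b)."""
    a, b = genotype
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]


def likelihood(observed, known, n_unknown, freqs):
    """P(observed allele set | known genotypes + n_unknown unrelated unknowns).

    Binary model (an assumption): the contributors' alleles must reproduce
    the observed set exactly; drop-out, drop-in and peak heights are ignored.
    """
    genotypes = list(combinations_with_replacement(sorted(freqs), 2))
    total = 0.0
    # Each unknown contributor is an independent draw from the population.
    for unknowns in product(genotypes, repeat=n_unknown):
        alleles = {a for g in list(known) + list(unknowns) for a in g}
        if alleles == observed:
            p = 1.0
            for g in unknowns:
                p *= genotype_prob(g, freqs)
            total += p
    return total


# Hypothetical four-allele locus; frequencies sum to 1.
freqs = {"a": 0.1, "b": 0.2, "c": 0.3, "d": 0.4}
observed = {"a", "b", "c", "d"}
id1, id2 = ("a", "b"), ("c", "d")

# Simple: ID1 + U vs U + U
simple_lr = likelihood(observed, [id1], 1, freqs) / likelihood(observed, [], 2, freqs)
# Conditioned: ID1 + ID2 vs U + ID2
conditioned_lr = likelihood(observed, [id1, id2], 0, freqs) / likelihood(observed, [id2], 1, freqs)
# Compound: ID1 + ID2 vs U + U
compound_lr = likelihood(observed, [id1, id2], 0, freqs) / likelihood(observed, [], 2, freqs)
print(simple_lr, conditioned_lr, compound_lr)
```

Under these assumptions the results are as follows:

- The simple LR is 1/(12·p_a·p_b), about 4.17.
- The conditioned LR is 1/(2·p_a·p_b) = 25.
- The compound LR is about 17.4.

The compound LR exceeds the product of the two simple LRs (about 4.17 × 0.69 ≈ 2.89). This is a small instance of the gain from evaluating contributors jointly.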

Proposition Formulation Guidelines

Proper proposition formulation is critical for meaningful LR calculations. The recent NISTIR 8351-DRAFT emphasizes how strongly the choice of propositions affects the calculated LR value and encourages standardization in how propositions are developed [2]. Key considerations include:

- Propositions must be mutually exclusive and exhaust all reasonable possibilities

- The defense proposition should specify the number of unknown contributors

- Alternative propositions might consider related individuals or different population groups

- The complexity of propositions should match the complexity of the mixture

For simple two-person mixtures where major and minor contributors can be distinguished, the propositions might focus on whether a suspect is the major contributor. For complex mixtures with potential allele dropout, propositions must account for the possibility that not all alleles of a contributor are detected [1].

Comparative Statistical Approaches

Combined Probability of Inclusion/Exclusion (CPI/CPE)

At the time of the cited work, the Combined Probability of Inclusion (CPI) was the most commonly used method for statistical evaluation of DNA mixtures in many parts of the world, including the USA [1]. The CPI is the proportion of a given population that would be expected to be included as potential contributors to an observed DNA mixture. Its complement, the Combined Probability of Exclusion (CPE), is the proportion that would be excluded.

The CPI approach is considered simpler to calculate and explain because the calculation itself does not require an assumed number of contributors. However, proper interpretation before the calculation does require considering the likely number of contributors in order to assess potential allele dropout [1]. The CPI method becomes problematic for low-level DNA mixtures in which allele dropout may have occurred. The formula assumes that both alleles of every donor are detected above the analytical threshold, which dropout violates.
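The CPI arithmetic is short enough to show directly. The sketch below rests on three assumptions:

- Hardy-Weinberg equilibrium within loci and independence between loci.
- No population-substructure correction.
- Hypothetical allele frequencies.

It sums the frequencies of every observed allele at every locus. That is exactly why it cannot accommodate a donor whose alleles have dropped out.

```python
from math import prod


def locus_pi(observed_freqs):
    """Probability of inclusion at one locus: (sum of observed allele frequencies)^2."""
    return sum(observed_freqs) ** 2


def cpi(loci):
    """Combined Probability of Inclusion: product of per-locus PIs (independent loci)."""
    return prod(locus_pi(freqs) for freqs in loci)


# Hypothetical frequencies of the alleles observed at two loci.
loci = [[0.1, 0.2, 0.3], [0.25, 0.25]]
combined = cpi(loci)   # 0.6**2 * 0.5**2
cpe = 1 - combined     # Combined Probability of Exclusion
```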

Advantages of Likelihood Ratio Approach

The likelihood ratio framework offers several significant advantages over the CPI method for complex DNA mixture interpretation:

- Flexibility to handle uncertainty: LR methods can coherently incorporate potential allele dropout in complex mixtures through probabilistic genotyping [1]

- More efficient information use: Utilizes quantitative data such as peak heights and mixture proportions

- Clearer statement of evidential strength: Directly addresses the question of how much the evidence supports one proposition over another

- Theoretical coherence: Provides a mathematically rigorous framework based on probability theory

- Adaptability: Can accommodate different sets of propositions tailored to case circumstances

Empirical studies demonstrate that compound LRs (evaluating multiple individuals jointly) typically exceed the product of simple LRs (evaluating individuals separately), with log(LR) differences ranging from approximately -2.7 to 28.3 in controlled studies [2]. This information gain results from reduced ambiguity when considering constrained genotype combinations.
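The "log(LR) difference" used to express this gain can be computed as follows. The sketch assumes base-10 logarithms and uses hypothetical LR values. A positive result means joint evaluation adds support beyond the separate evaluations, and a negative result means it adds less.

```python
import math


def information_gain(compound_lr, simple_lrs):
    """log10(compound LR) minus the sum of log10(simple LRs) for the same individuals."""
    return math.log10(compound_lr) - sum(math.log10(lr) for lr in simple_lrs)


# Hypothetical LRs for two questioned individuals evaluated jointly and separately.
gain = information_gain(1e20, [1e8, 1e9])
```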

Table 2: Statistical Method Comparison for DNA Mixture Interpretation

| Feature | CPI/CPE | Likelihood Ratio |
|---|---|---|
| Handling of Uncertainty | Limited | Flexible incorporation via probabilistic genotyping |
| Peak Height Information | Not utilized | Fully utilized in probabilistic systems |
| Allele Dropout Accommodation | Locus disqualification required | Probabilistic weighting |
| Statistical Framework | Frequentist | Bayesian |
| Complex Mixture Suitability | Limited | High |
| Information Efficiency | Lower | Higher |

Experimental Protocols for LR Calculation

DNA Mixture Interpretation Workflow

The following workflow outlines the standard protocol for forensic DNA mixture interpretation using probabilistic genotyping and LR calculation:

- Profile Assessment: Examine electropherogram data for peak characteristics, stutter patterns, and potential artifacts

- Mixture Deconvolution: Separate mixture components into individual contributor profiles where possible

- Proposition Formulation: Define appropriate prosecution and defense hypotheses based on case circumstances

- Statistical Modeling: Apply probabilistic genotyping software to calculate likelihoods under competing propositions

- LR Calculation: Compute the ratio of likelihoods to quantify evidence strength

- Quality Assessment: Review results for consistency and reliability

For complex mixtures, the protocol emphasizes that interpretation should not be done by simple allele counting but through systematic deconvolution efforts [1]. If a probative single-source profile can be determined at some or all loci, single-source statistics may be used for those portions of the profile.
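Where a single-source genotype can be deduced, the usual single-source statistic is a random match probability (RMP). For Hp "the POI is the donor" against Hd "an unknown unrelated person is the donor", the LR equals 1/RMP. The sketch below assumes Hardy-Weinberg genotype frequencies (p² for homozygotes, 2pq for heterozygotes) and independent loci. It omits the θ correction many laboratories apply, and the frequencies are hypothetical.

```python
from math import prod


def genotype_frequency(genotype, freqs):
    """Hardy-Weinberg genotype frequency (no theta correction)."""
    a, b = genotype
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]


def random_match_probability(profile):
    """profile: list of (genotype, allele-frequency dict) pairs, one per locus."""
    return prod(genotype_frequency(g, f) for g, f in profile)


# Hypothetical deduced genotypes at two loci.
profile = [(("12", "14"), {"12": 0.1, "14": 0.2}), (("8", "8"), {"8": 0.3})]
rmp = random_match_probability(profile)
single_source_lr = 1 / rmp
```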

Protocol for Complex Mixture Analysis

Laboratories implementing LR calculations for complex mixtures should adhere to the following detailed protocol:

- Threshold Establishment: Set analytical thresholds based on validation studies to distinguish true alleles from background noise

- Stutter Filtering: Apply validated stutter ratios to identify potential stutter artifacts

- Mixture Ratio Estimation: Determine approximate contribution proportions from peak heights where possible

- Probabilistic Genotyping: Use validated software (e.g., STRmix) to calculate genotype probabilities under competing propositions

- Model Verification: Check model assumptions against observed data characteristics

- Result Interpretation: Report LRs with appropriate explanations of their meaning and limitations
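Two of the quantitative steps above, stutter filtering and mixture-ratio estimation, can be sketched as follows. The sketch makes these assumptions:

- The stutter ratio of 0.10 is hypothetical; real ratios are locus- and kit-specific and come from validation.
- Only integer repeat numbers and back stutter are handled.
- The mixture is two-person, and the major contributor's alleles at each locus are already assigned.

A flagged peak may still be a genuine minor-contributor allele. This sketch only marks candidates for review.

```python
def flag_back_stutter(heights, stutter_ratio):
    """Alleles whose height is at most stutter_ratio x the peak one repeat larger.

    heights maps integer repeat number to peak height (RFU); microvariants
    are omitted for simplicity.
    """
    flagged = set()
    for allele, h in heights.items():
        parent = heights.get(allele + 1)
        if parent is not None and h <= stutter_ratio * parent:
            flagged.add(allele)
    return flagged


def mixture_proportion(loci, major_alleles):
    """Average fraction of total peak height attributed to the major contributor."""
    fractions = []
    for heights, major in zip(loci, major_alleles):
        total = sum(heights.values())
        fractions.append(sum(heights[a] for a in major) / total)
    return sum(fractions) / len(fractions)


# Hypothetical data: one locus for stutter, two four-allele loci for proportions.
stutter_candidates = flag_back_stutter({15: 120, 16: 1500, 17: 1400}, 0.10)
loci = [{"12": 1500, "14": 1400, "16": 600, "17": 500},
        {"8": 1300, "9": 1500, "10": 550, "11": 650}]
major = mixture_proportion(loci, [{"12", "14"}, {"8", "9"}])
```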

When employing probabilistic genotyping software, laboratories must conduct extensive validation studies demonstrating reliable performance across the range of mixture types and template amounts encountered in casework [1].

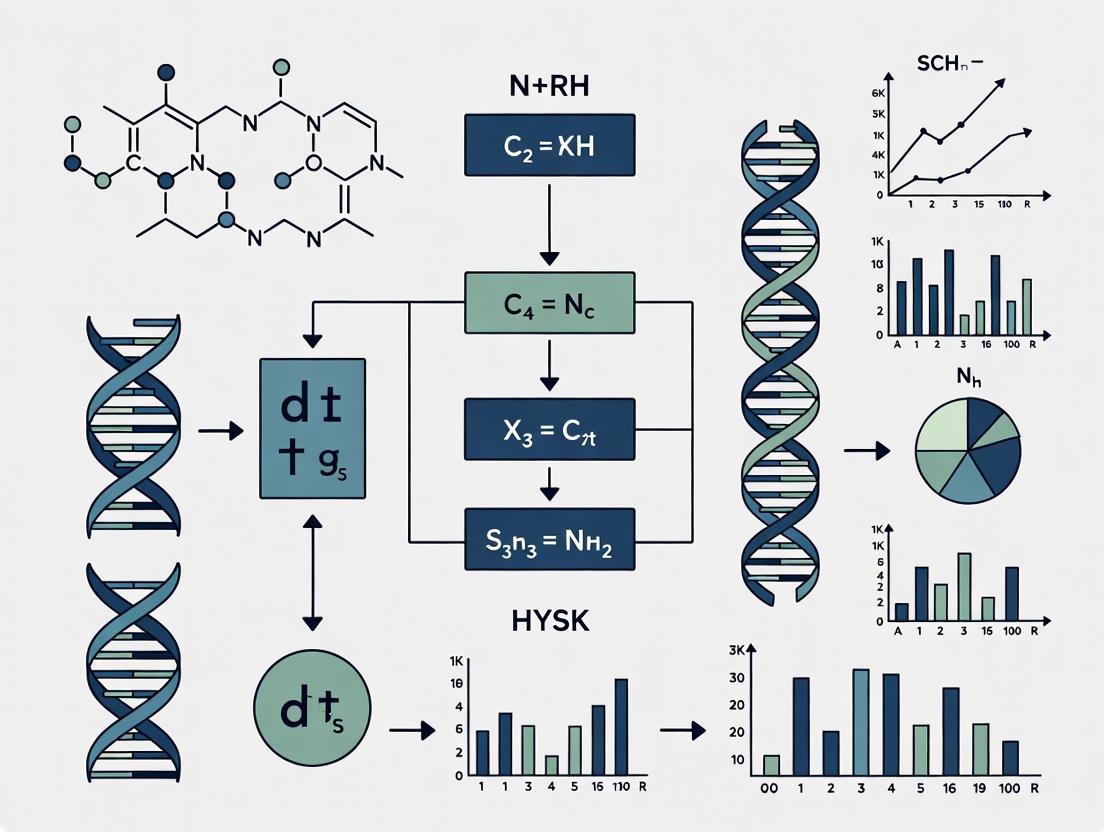

Workflow Visualization

Figure 1: Workflow for DNA mixture interpretation and LR calculation.

Proposition Relationships in LR Framework

Figure 2: Relationships between LR types and their quantitative behavior.

Research Reagent Solutions

Table 3: Essential Research Reagents for Forensic DNA Mixture Analysis

| Reagent/Kit | Function | Application in LR Research |
|---|---|---|
| STR Multiplex Kits | Amplification of multiple STR loci | Generating DNA profile data for mixture interpretation |
| Quantifiler Trio | DNA quantification | Determining input amounts for mixture construction |
| PrepFiler DNA Extraction | DNA purification from biological samples | Isolating DNA for experimental mixture studies |
| STRmix Software | Probabilistic genotyping | LR calculation for complex DNA mixtures |
| CE Instrumentation | Capillary electrophoresis separation | Detection of STR alleles and peak height measurement |

Quantitative Data on LR Behavior in DNA Mixtures

Empirical studies systematically assessing LR behavior across different mixture types provide valuable insights for researchers. One comprehensive study examined two-, three-, and four-person DNA mixtures of various proportions and template amounts, interpreting results using STRmix software [2].

Table 4: LR Magnitude Relationships in Empirical Studies

| Comparison Type | LR Relationship | Magnitude Range (log(LR) difference) | Key Influencing Factors |
|---|---|---|---|
| Compound vs Simple LR Product | Compound LR usually ≥ simple LR product | ~-2.7 to ~28.3 | Template level, mixture composition |
| Conditioned vs Unconditioned LR | Conditioned LR usually ≥ unconditioned LR | Similar to compound/simple differences | Reduction in genotype ambiguity |
| Information Gain | Positive in most cases | Peak probability density at ~0.5 | Constraint on genotype combinations |

The distribution of log(LR) differences between compound and simple LR comparisons demonstrates that considering individuals jointly typically provides increased evidential strength compared to evaluating them separately. This information gain stems primarily from the reduction in possible genotype combinations when multiple contributors are constrained in the model [2].

Studies specifically examined mixtures with high major-to-minor contributor ratios (e.g., 99:1, 100:100:4, 100:100:100:6), as these extreme ratios present particular interpretation challenges. The research confirmed that probabilistic genotyping systems can reliably handle such extreme mixtures, providing valid LRs across diverse mixture compositions and template amounts [2].

Implementation Considerations for Forensic Laboratories

Transitioning from CPI to LR-based interpretation requires careful planning and validation. Laboratories should consider the following key aspects:

- Software Validation: Conduct extensive validation studies covering the range of mixture types and template amounts encountered in casework

- Personnel Training: Ensure analysts understand probabilistic genotyping principles and LR interpretation

- Proposition Framework: Develop standardized approaches to proposition setting based on case circumstances

- Reporting Standards: Establish clear reporting protocols that explain LR meaning without overstating conclusions

- Quality Assurance: Implement technical review procedures specific to probabilistic genotyping results

The forensic community increasingly recognizes that LR approaches offer more scientifically defensible solutions for complex mixture interpretation compared to traditional CPI methods [1]. However, this transition requires significant investment in validation, training, and infrastructure to ensure reliable implementation.

In forensic DNA analysis, the likelihood ratio (LR) is the fundamental statistic used to evaluate the strength of evidence, providing a measure of support for one proposition over another [3]. The LR is a ratio of two conditional probabilities under competing propositions, typically formulated as the prosecution proposition (Hp) and the defense or alternate proposition (Hd or Ha) [3]. Properly defining these propositions is critical, as they must be mutually exclusive, address the issue of interest, and be exhaustive within the known framework of case circumstances [3]. The hierarchy of propositions—spanning offense, activity, and source levels—provides a structured framework for formulating these hypotheses. This document focuses on the application of sub-source to activity level propositions within the context of complex DNA mixture interpretation, detailing protocols for LR calculation and the analysis of challenging forensic samples.

The Hierarchy of Propositions and Likelihood Ratio Calculation

Theoretical Foundation of the Likelihood Ratio

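The likelihood ratio follows from Bayes' theorem, which in its odds form reads [3]:

\[ \frac{P(H_p \mid E, I)}{P(H_d \mid E, I)} = \frac{P(E \mid H_p, I)}{P(E \mid H_d, I)} \times \frac{P(H_p \mid I)}{P(H_d \mid I)} \]

The LR is the first factor on the right-hand side, the term that converts prior odds into posterior odds:

\[ \mathrm{LR} = \frac{P(E \mid H_p, I)}{P(E \mid H_d, I)} \]

where E represents the evidence, I represents relevant background information, and Hp and Hd represent the alternate hypotheses or propositions [3]. An LR greater than 1 supports the prosecution proposition, while an LR less than 1 supports the defense proposition. The magnitude of the LR indicates the strength of this support.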
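The odds form of Bayes' theorem amounts to one multiplication. The sketch below uses hypothetical numbers. In the usual division of roles, the prior odds belong to the fact-finder, and the forensic scientist reports only the LR. The prior odds here are therefore purely illustrative.

```python
def posterior_odds(lr, prior_odds):
    """Odds form of Bayes' theorem: posterior odds = LR x prior odds."""
    return lr * prior_odds


def odds_to_probability(odds):
    """Convert odds in favour of Hp into a probability for Hp."""
    return odds / (1.0 + odds)


# Hypothetical prior odds of 1:1000 combined with an LR of one million.
post = posterior_odds(1e6, 1 / 1000)
prob = odds_to_probability(post)
```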

Proposition Levels and Types

Forensic DNA evidence is typically evaluated at different levels within the hierarchy. The following table summarizes the primary proposition types used in complex mixture analysis:

Table 1: Hierarchy of Proposition Types in DNA Mixture Analysis

| Proposition Type | Definition | Example Scenario | Typical Application |
|---|---|---|---|
| Simple Proposition | A single Person of Interest (POI) is considered under Hp and replaced with an unknown under Ha [3]. | Hp: DNA from POI + 1 unknown; Ha: DNA from 2 unknown individuals [3]. | Initial screening of a POI in a mixture. |
| Compound Proposition | Multiple POIs are considered together under Hp and replaced with unknown donors in Ha [3]. | Hp: DNA from POI1 + POI2; Ha: DNA from 2 unknown individuals [3]. | Assessing whether multiple POIs explain a mixture together. |
| Conditional Proposition | The contribution of all POIs is assumed under Hp, and all but one POI is assumed under Ha, isolating the evidence for a single individual [3]. | Hp: DNA from POI1, POI2, POI3; Ha: DNA from POI2, POI3 + 1 unknown [3]. | Isolating the evidence for each POI in a multi-contributor mixture. |

Logical Workflow for Proposition Assignment

The following diagram outlines the decision process for selecting and applying proposition types in the analysis of a DNA mixture.

Experimental Protocols for Complex Mixture Analysis

Protocol: LR Calculation Using Probabilistic Genotyping Software

Objective: To compute Likelihood Ratios for propositions on complex DNA mixtures using probabilistic genotyping software (e.g., STRmix).

Materials and Reagents:

- Extracted DNA sample

- Amplification kit (e.g., GlobalFiler)

- PCR thermocycler

- Capillary Electrophoresis instrument

- Probabilistic Genotyping Software (e.g., STRmix)

Procedure:

DNA Profiling:

Profile Interpretation:

Proposition and LR Assignment:

- For Simple Propositions: For each POI, assign an LR using the proposition pair Hp: POI + (N-1) unknown individuals vs. Ha: N unknown individuals [3].

- For Compound Propositions: To test whether multiple POIs explain the mixture together, assign an LR using Hp: POI1 + POI2 + ... vs. Ha: N unknown individuals [3].

- For Conditional Propositions: To isolate the evidence for one POI among known contributors, assign an LR using Hp: POI1 + POI2 + POI3 + ... vs. Ha: POI2 + POI3 + ... + 1 unknown [3].
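The three proposition pairs can be generated mechanically from a list of POIs and an assumed number of contributors N. The sketch below is illustrative only:

- `PropositionPair` and the label `"U"` (an unknown individual unrelated to any POI) are hypothetical names.
- Padding a compound Hp with unknowns when there are fewer POIs than contributors is an assumed extension.
- The sketch assumes that Hp and Ha must describe the same number of contributors.

```python
from dataclasses import dataclass

UNKNOWN = "U"  # hypothetical label for an unknown, unrelated individual


@dataclass(frozen=True)
class PropositionPair:
    hp: tuple
    ha: tuple

    def __post_init__(self):
        # Both propositions should describe the same number of contributors (N).
        if len(self.hp) != len(self.ha):
            raise ValueError("Hp and Ha must specify the same number of contributors")


def simple(poi, n):
    """Hp: POI + (N-1) unknowns vs. Ha: N unknowns."""
    return PropositionPair((poi,) + (UNKNOWN,) * (n - 1), (UNKNOWN,) * n)


def compound(pois, n):
    """Hp: all POIs (padded with unknowns if fewer than N) vs. Ha: N unknowns."""
    if len(pois) > n:
        raise ValueError("more POIs than contributors")
    return PropositionPair(tuple(pois) + (UNKNOWN,) * (n - len(pois)), (UNKNOWN,) * n)


def conditional(poi, other_pois):
    """Hp: POI + other POIs vs. Ha: other POIs + 1 unknown."""
    others = tuple(other_pois)
    return PropositionPair((poi,) + others, others + (UNKNOWN,))


# Hypothetical three-person mixture with three POIs.
pois = ["POI1", "POI2", "POI3"]
simple_pairs = [simple(p, 3) for p in pois]
compound_pair = compound(pois, 3)
conditional_pairs = [conditional(p, [q for q in pois if q != p]) for p in pois]
```

Each pair would then be passed to the probabilistic genotyping software as one LR calculation.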

Validation and Reporting:

- If a compound LR for true donors returns 0 (exclusion), re-deconvolute using increased numbers of burn-in and post-burn-in accepts (e.g., ×10 or ×100 compared to defaults) [3].

- Per ASB draft standard guidance, report LRs from simple proposition pairs unless the compound LR is exclusionary, as compound LRs can overstate evidence [3].

Protocol: Analysis of Complex Mixtures Using Multi-SNP Markers

Objective: To employ a high-resolution Multi-SNP kit for the detection of minor contributors in complex mixtures where CE-STR methods may fail.

Materials and Reagents:

- "FD Multi-SNP Mixture Kit" (contains 567 multi-SNP markers) [4]

- MGIEasy Universal DNA Library Prep Set [4]

- Illumina NovaSeq X Platform for sequencing [4]

- Bioinformatics tools (e.g., bowtie2 for sequence alignment) [4]

Procedure:

Library Preparation:

Sequencing:

- Pool libraries and sequence on an Illumina NovaSeq X platform to obtain 150 bp paired-end reads [4].

Bioinformatic Analysis & Error Correction:

- Map raw reads to the human reference genome using bowtie2 and discard unmapped or partially mapped reads [4].

- For a fully mapped read, take the nucleotide sequence spanning a multi-SNP locus as its allele [4].

- Apply computational error correction: ignore variants that lie within, or within two base pairs of, repeat sequences, consecutive mismatches, or indels, to reduce alignment errors [4].
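The allele-calling rule above ("the sequence spanning the locus in a fully mapped read") can be sketched without any alignment library. The sketch rests on these assumptions:

- Reads are already aligned, for example by bowtie2.
- Each read is reduced to a 0-based reference start plus its sequence, with a gap-free alignment. Reads containing indels are assumed to have been discarded.
- The locus coordinates and reads are hypothetical.

The repeat-proximity filter is not implemented here.

```python
from collections import Counter


def call_allele(read_start, read_seq, locus_start, locus_end):
    """Return the read's sequence over [locus_start, locus_end), or None.

    Coordinates are 0-based, end-exclusive, on the reference; a gap-free
    alignment is assumed, so read offset equals reference offset.
    """
    read_end = read_start + len(read_seq)
    if read_start > locus_start or read_end < locus_end:
        return None  # read does not fully span the multi-SNP locus
    offset = locus_start - read_start
    return read_seq[offset:offset + (locus_end - locus_start)]


def count_alleles(reads, locus_start, locus_end):
    """Tally multi-SNP alleles (haplotype strings) supported by spanning reads."""
    counts = Counter()
    for read_start, read_seq in reads:
        allele = call_allele(read_start, read_seq, locus_start, locus_end)
        if allele is not None:
            counts[allele] += 1
    return counts


# Hypothetical reads (reference start, sequence) over a locus at [103, 107).
reads = [(100, "ACGTACGTAC"), (102, "GTACGTTT"), (101, "CGTTCGTA"), (105, "CGTAAAA")]
locus_counts = count_alleles(reads, 103, 107)
```

In a mixture, the relative read counts per allele play a role loosely analogous to peak heights in CE data.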

Sensitivity and Mixture Analysis:

Data Presentation and Analysis

Comparative Performance of Proposition Types

The following table summarizes quantitative data from a study investigating the performance of simple, compound, and conditional propositions on mixed DNA profiles analyzed with probabilistic genotyping software [3].

Table 2: Performance Comparison of Proposition Types in DNA Mixture Analysis

| Proposition Type | LR for True Donors | LR for Non-Contributors | Key Findings and Caveats |
|---|---|---|---|
| Simple | Inclusionary | Exclusionary (LR ~ 0) | Standard approach; may have lower power to differentiate true from false donors than conditional propositions [3]. |
| Compound | Can be highly inclusionary | Can be exclusionary | Can misstate the weight of evidence strongly in either direction. The log(LR) is approximately the sum of the simple log(LRs) for true donors [3]. Should not be reported alone unless exclusionary [3]. |
| Conditional | Higher than simple LRs | More exclusionary than simple LRs | Provides a higher ability to differentiate true from false donors. A good approximation of the exhaustive LR [3]. |

Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for Complex DNA Mixture Analysis

| Reagent / Material | Function / Application | Example Product / Kit |
|---|---|---|
| STR Amplification Kit | Generates multi-locus DNA profiles from extracted samples for capillary electrophoresis analysis. | GlobalFiler PCR Amplification Kit [3] |
| Probabilistic Genotyping Software | Interprets complex DNA mixture data by calculating the probability of the evidence given different proposition pairs to compute a Likelihood Ratio. | STRmix [3] |
| Multi-SNP Marker Kit | Provides highly polymorphic markers for analyzing highly complex or low-template mixtures via Next-Generation Sequencing. | FD Multi-SNP Mixture Kit [4] |
| NGS Library Prep Kit | Prepares DNA libraries for sequencing on high-throughput platforms for Multi-SNP or microhaplotype analysis. | MGIEasy Universal DNA Library Prep Set [4] |

Forensic DNA analysis is a cornerstone of modern criminal investigations. However, the evidential value of DNA profiles can be compromised by several technical challenges. Low-template DNA (ltDNA), degraded DNA, and mixtures from multiple contributors represent the most significant hurdles in forensic genetics, directly impacting the reliability of statistical assessments, including likelihood ratio (LR) calculations. These challenges induce stochastic effects, reduce the number of reportable alleles, and complicate genotype deconvolution. This application note details these challenges and provides validated protocols to support robust forensic analysis within a framework designed for complex mixture research.

Key Challenges and Quantitative Data

The interplay of low template, degradation, and multiple contributors exacerbates stochastic effects, thereby challenging the formulation of a reliable probabilistic genotyping framework for accurate LR calculation. The table below summarizes the core issues and their impacts on DNA profiling.

Table 1: Key Challenges in Forensic DNA Analysis

| Challenge | Key Characteristics | Impact on DNA Profile | Implication for LR Calculation |
|---|---|---|---|
| Low-Template DNA (ltDNA) [5] [6] [7] | DNA quantity below 100-200 pg. Increased stochastic effects due to low copy number of template molecules. | Allele and locus drop-out, allele drop-in, heterozygote peak height imbalance [6] [8]. | Increased uncertainty must be accounted for in the probabilistic model. Failure to do so can over- or underestimate the strength of evidence. |
| Degraded DNA [5] [9] [10] | Fragmented DNA molecules due to environmental factors (heat, UV, humidity) or enzymatic activity. | Preferential loss of longer STR amplicons, leading to a downward slope in profile and partial profiles [5] [9]. | The probability of observing an allele becomes dependent on its fragment length, adding complexity to the LR model. |
| Multiple Contributors [11] [12] [8] | DNA from two or more individuals mixed in a single sample. Major and minor contributors. | Overlapping alleles, complex peak height ratios, and potential for allele masking. Difficulty in determining the number of contributors [8]. | The genotype of interest is not directly observed. The LR must consider all possible genotype combinations under the prosecution and defense propositions, requiring sophisticated software. |
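The degradation row can be made concrete with a toy model in which allele detection depends on fragment length. The sketch rests on two modelling assumptions:

- Expected peak height decays exponentially with amplicon length.
- Drop-out probability follows a logistic function of log peak height, one commonly described form.

All parameter values are hypothetical. Real probabilistic genotyping systems estimate degradation and drop-out within their own models.

```python
import math


def expected_peak_height(h0, amplicon_bp, decay_per_bp):
    """Assumed exponential loss of amplifiable template with amplicon length."""
    return h0 * math.exp(-decay_per_bp * amplicon_bp)


def dropout_probability(height, beta0, beta1):
    """Logistic drop-out model: logit Pr(D) = beta0 + beta1 * ln(height), beta1 < 0."""
    return 1.0 / (1.0 + math.exp(-(beta0 + beta1 * math.log(height))))


# Hypothetical parameters: 2000 RFU at zero length, decay rate 0.01 per bp,
# logistic coefficients chosen only for illustration.
amplicons = [100, 200, 300, 400]
heights = [expected_peak_height(2000.0, bp, 0.01) for bp in amplicons]
p_dropout = [dropout_probability(h, beta0=10.0, beta1=-2.0) for h in heights]
```

With these illustrative values, drop-out probability rises from about 0.04 at 100 bp to about 0.94 at 400 bp. This mirrors the "downward slope" seen in degraded profiles.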

The challenges are not mutually exclusive. A sample can be low-template, degraded, and a mixture simultaneously, creating a perfect storm of complexity. Recent research indicates that the accuracy of DNA mixture analysis is not uniform across populations; groups with lower genetic diversity have been shown to experience higher false inclusion rates, highlighting a critical consideration for the equity of forensic applications [11].

Experimental Protocols

Protocol for Sensitivity Analysis with Low-Template DNA

This protocol evaluates the performance of a DNA profiling system across a range of low DNA quantities to establish stochastic thresholds and assess allelic drop-out/drop-in rates [5] [6].

- Objective: To determine the sensitivity and reliability of the STR or SNP multiplex kit when analyzing ltDNA.

- Materials:

- Reference DNA (e.g., control 007 DNA)

- Quantification kit (e.g., Quantifiler Trio)

- STR or SNP multiplex PCR kit (e.g., AmpFlSTR NGM SElect, Ion AmpliSeq Identity panel)

- Thermal cycler, Capillary Electrophoresis instrument or Massively Parallel Sequencer

- Method Steps:

- Sample Preparation: Perform serial dilutions of the reference DNA to create a dilution series from 1 ng down to 1 pg. Quantify each dilution using a qPCR assay [5].

- Amplification: For each dilution level, perform a minimum of 10 replicate PCR amplifications using the standard and/or enhanced cycle number as defined by the kit or laboratory protocol [6].

- Analysis: Separate and detect PCR products. Analyze the resulting profiles for:

- Consensus Profiling: For replicates at the same dilution level, generate a consensus profile. An allele is typically reported if it appears in two or more replicate profiles [6] [7].
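The consensus rule and the drop-out/drop-in assessment for one dilution level can be sketched as follows. The sketch makes these assumptions:

- Allele calls above the analytical threshold are available per replicate for a single locus.
- The reference genotype of the control DNA is known.
- The rate definitions (drop-out per reference allele, drop-in alleles per replicate) are simple illustrative choices.
- The data are hypothetical.

```python
from collections import Counter


def consensus_alleles(replicates, min_count=2):
    """Alleles seen in at least min_count replicate amplifications (one locus)."""
    counts = Counter(allele for rep in replicates for allele in set(rep))
    return {allele for allele, n in counts.items() if n >= min_count}


def dropout_dropin_rates(replicates, reference):
    """Per-allele drop-out rate and mean drop-in alleles per replicate (one locus)."""
    reference = set(reference)
    dropouts = sum(len(reference - set(rep)) for rep in replicates)
    dropins = sum(len(set(rep) - reference) for rep in replicates)
    return dropouts / (len(replicates) * len(reference)), dropins / len(replicates)


# Hypothetical replicate calls for a 12,14 reference at one locus.
reps = [{"12", "14"}, {"12"}, {"12", "14", "16"}]
consensus = consensus_alleles(reps)
dropout_rate, dropin_rate = dropout_dropin_rates(reps, {"12", "14"})
```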

Protocol for Generating Artificially Degraded DNA

This protocol uses UV-C irradiation to produce DNA with controlled degradation in a rapid and reproducible manner, useful for validating assays on degraded samples [9].

- Objective: To reproducibly generate degraded DNA samples for validating profiling systems.

- Materials:

- DNA extract from whole blood.

- Custom UV-C irradiation unit with 254 nm germicidal lamps.

- Low TE buffer.

- Microtubes.

- Method Steps:

- Sample Aliquoting: Prepare aliquots of DNA extract (e.g., 10 µL of 7 ng/µL) in microtubes [9].

- UV-C Exposure: Place the open microtubes on their side under the UV-C light source at a fixed distance (e.g., ~11 cm). Expose aliquots for different time intervals (e.g., 0.5 to 5.0 minutes). Include non-exposed controls [9].

- Post-Exposure Quantification: Quantify the degraded aliquots using a qPCR assay that targets nuclear DNA and multiple-sized mtDNA targets (e.g., 69 bp and 143 bp) to calculate a Degradation Index (DI) [9].

- Profiling and Analysis: Subject the degraded DNA to STR or SNP profiling. Correlate the DI with the success rate of amplification, noting the progressive loss of longer amplicons [9].
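A Degradation Index is the ratio of the quantity measured with a short target to that measured with a longer target. Values near 1 suggest intact DNA, and values rise as longer fragments are lost. The sketch below makes these assumptions:

- The inputs are the short- and long-target quantification results, for example the 69 bp and 143 bp targets mentioned above.
- Returning infinity when the long target is undetected is a design choice of this sketch.
- The exposure series is hypothetical.

```python
import math


def degradation_index(short_conc, long_conc):
    """Ratio of the short-target to the long-target quantification result."""
    if long_conc <= 0:
        return math.inf  # long target undetected: treat as maximally degraded
    return short_conc / long_conc


# Hypothetical qPCR results (short, long) in ng/uL by UV-C exposure time (minutes).
series = {0.0: (7.0, 6.8), 1.0: (5.2, 2.6), 3.0: (3.1, 0.6), 5.0: (1.9, 0.0)}
di_by_exposure = {t: degradation_index(s, l) for t, (s, l) in series.items()}
```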

Workflow and Decision Pathway

The following diagram illustrates a logical workflow for processing challenging forensic samples, integrating the challenges and methodologies discussed.

The Scientist's Toolkit: Research Reagent Solutions

Successful analysis of complex DNA samples relies on a suite of specialized reagents and tools. The following table details key solutions for addressing the outlined challenges.

Table 2: Essential Research Reagents and Materials

| Item | Function/Application | Example Product(s) |
|---|---|---|
| High-Sensitivity qPCR Kit | Precisely quantifies low-level DNA and assesses degradation by targeting sequences of different lengths. Critical for deciding downstream workflow [5] [9]. | Quantifiler Trio DNA Quantification Kit |
| STR Multiplex Kits | Simultaneously amplifies multiple STR loci for core identity testing. Newer kits feature improved primer designs and buffer systems for better performance on challenging samples [5] [8]. | AmpFlSTR NGM SElect, PowerPlex 16 HS System |
| SNP Panels (MPS) | Provides an alternative for highly degraded or ltDNA. Shorter amplicons and sequencing-based analysis can recover information from samples where STR analysis fails [5]. | Ion AmpliSeq Identity Panel (MPS) |
| Probabilistic Genotyping Software (PGS) | Statistical software that calculates LRs for complex DNA mixtures. It accounts for stochastic effects, peak heights, and all possible genotype combinations under competing propositions [12]. | N/A (Various commercial and open-source platforms) |
| UV-C Irradiation Unit | A custom apparatus for generating artificially degraded DNA in a reproducible manner, essential for validation studies and assessing assay limitations [9]. | Custom-made unit with 254 nm germicidal lamps |

The Shift from Combined Probability of Inclusion (CPI) to LR Frameworks

The analysis of complex DNA mixtures has long posed a significant challenge in forensic genetics. As forensic short tandem repeat (STR) genotyping assays have become more sensitive, DNA samples that were previously classified as single-source are now recognized as having multiple contributors as low-level alleles are detected [13]. This evolution has necessitated a parallel shift in the statistical frameworks used to evaluate DNA evidence. The Combined Probability of Inclusion (CPI), also known as Random Man Not Excluded (RMNE), has been largely superseded by the Likelihood Ratio (LR) framework for quantifying the statistical weight of mixed DNA profiles, particularly when individual contributors cannot be readily deconvoluted [14]. This application note details this critical methodological transition, framed within broader research on likelihood ratio calculations for complex DNA mixtures.

The Limitations of Combined Probability of Inclusion

The CPI approach calculates the probability that a random person would be included as a potential contributor to a mixed DNA profile. While historically important and intuitively accessible, CPI exhibits significant limitations:

- Treatment of Drop-out: CPI struggles with allelic drop-out (the failure to detect an allele) which becomes increasingly common with low-template DNA samples [14].

- Information Utilization: CPI does not fully utilize all available data in an electropherogram, such as peak height information and stutter patterns [13].

- Statistical Weight: The method can be limiting for evaluating propositions involving DNA transfer, persistence, prevalence, and recovery (TPPR) in cases with multiple contributors [13].

The DNA commission of the International Society of Forensic Genetics (ISFG) recommends using LR over CPI as more available data are utilized and allelic drop-out and drop-in can be explicitly incorporated in the calculation [14].

The Likelihood Ratio Framework

Fundamental Principles

The Likelihood Ratio (LR) provides a more robust statistical framework for evaluating DNA evidence. The LR is a ratio of two conditional probabilities:

\[ \mathrm{LR} = \frac{P(E \mid H_p)}{P(E \mid H_d)} \]

where E represents the evidence (the electropherogram data), Hp is the prosecution hypothesis, and Hd is the defense hypothesis [13] [3]. The LR directly addresses the support for one hypothesis relative to another rather than simply indicating inclusion or exclusion.

Continuous Probabilistic Genotyping

Continuous Probabilistic Genotyping (PG) systems represent the most advanced implementation of the LR framework. These systems model probability distributions of observed peak heights in STR electropherograms under different scenarios to generate likelihoods for propositions [13]. Available PG systems include:

- STRmix: Commercial software used for forensic DNA mixture interpretation [3]

- EuroForMix: Open-source PG system based on the model proposed by Cowell et al. [13]

- TrueAllele: Commercial software using Markov chain Monte Carlo (MCMC) for mixture deconvolution [14]

- Forensic Statistical Tool (FST): Software developed by the Office of Chief Medical Examiner of New York City for LR analysis that allows for drop-out and drop-in [14]
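To show what "modelling the distribution of peak heights" means, here is a toy single-locus continuous model. It is in the spirit of gamma peak-height models but is not the actual model of STRmix, EuroForMix, TrueAllele, or FST. The sketch makes these assumptions:

- Expected height at an allele is μ·Σ m_k·n_ka, where m_k is a contributor's mixture proportion and n_ka the number of copies of allele a.
- Heights are gamma-distributed with shape Σ m_k·n_ka / σ² and scale μσ².
- Mixture proportions, μ and σ² are fixed rather than estimated.
- There is no drop-out, drop-in, stutter or degradation, and Hardy-Weinberg priors apply for unknowns.
- All numbers are hypothetical.

```python
import math
from itertools import combinations_with_replacement, product


def gamma_logpdf(x, shape, scale):
    """Log density of a gamma distribution (shape/scale parametrization)."""
    return ((shape - 1) * math.log(x) - x / scale
            - math.lgamma(shape) - shape * math.log(scale))


def height_density(heights, genotypes, props, mu, sigma2):
    """Density of observed peak heights given contributor genotypes.

    Mean height at allele a is mu * dose[a], with dose[a] = sum_k m_k * n_ka.
    Contributed and observed alleles must match (no drop-out or drop-in).
    """
    dose = {}
    for genotype, m in zip(genotypes, props):
        for allele in genotype:  # a homozygote contributes two copies
            dose[allele] = dose.get(allele, 0.0) + m
    if set(dose) != set(heights):
        return 0.0
    return math.exp(sum(gamma_logpdf(heights[a], dose[a] / sigma2, mu * sigma2)
                        for a in heights))


def likelihood(heights, known, props, freqs, mu, sigma2):
    """Sum over unknown genotypes; known contributors take the first proportions."""
    genotypes = list(combinations_with_replacement(sorted(freqs), 2))
    total = 0.0
    for unknowns in product(genotypes, repeat=len(props) - len(known)):
        prior = math.prod(freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]
                          for a, b in unknowns)
        total += prior * height_density(heights, list(known) + list(unknowns),
                                        props, mu, sigma2)
    return total


# Hypothetical locus: POI (a, b) as a 70% major donor, one 30% minor donor.
freqs = {"a": 0.1, "b": 0.2, "c": 0.3, "d": 0.4}
heights = {"a": 1400.0, "b": 1400.0, "c": 600.0, "d": 600.0}
props, mu, sigma2 = (0.7, 0.3), 2000.0, 0.05
lr = (likelihood(heights, [("a", "b")], props, freqs, mu, sigma2)
      / likelihood(heights, [], props, freqs, mu, sigma2))
```

The peak heights fit "POI as major donor" much better than any alternative pairing of the four alleles. As a result, this toy LR comes out just under 1/(2·p_a·p_b) = 25. A presence/absence model that ignores peak heights gives 1/(12·p_a·p_b), about 4.2, for the same alleles.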

Table 1: Comparison of Major Probabilistic Genotyping Systems

| System Name | Availability | Key Methodology | Drop-out/Drop-in Handling |
|---|---|---|---|
| STRmix | Commercial | Continuous model | Empirical modeling |
| EuroForMix | Open source | Extended Cowell model | User-defined parameters |
| TrueAllele | Commercial | Markov chain Monte Carlo | Heuristic penalty |
| FST | Institutional | Empirical rates | Function of template, cycles, loci |

Proposition Formulation in LR Framework

The formulation of appropriate propositions is critical to meaningful LR calculation. Research demonstrates that different proposition types significantly impact LR outcomes [3]:

Simple Propositions

For a two-person mixture considering one Person of Interest (POI):

- $H_p$: The DNA originated from the POI and one unknown individual

- $H_d$: The DNA originated from two unknown individuals [3]

Conditional Propositions

For a four-person mixture considering POI1:

- $H_p$: The DNA originated from POI1, POI2, POI3, and POI4

- $H_d$: The DNA originated from POI2, POI3, POI4, and one unknown individual [3]

Compound Propositions

For a two-person mixture with two POIs:

- $H_p$: The DNA originated from POI1 and POI2

- $H_d$: The DNA originated from two unknown individuals [3]

Research shows that conditional propositions have a much higher ability to differentiate true from false donors than simple propositions, while compound propositions can misstate the weight of evidence [3].
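Enumerating a single locus under a binary (yes/no) model shows how the three proposition types relate. The weight is 1 when the contributors' alleles exactly explain the observed alleles and 0 otherwise. The sketch also assumes no drop-out or drop-in, ignores peak heights, and uses HWE with theta = 0. Allele frequencies and reference genotypes are hypothetical, and the code does not reproduce any PGS. Algebraically, the compound LR equals the conditional LR for POI1 multiplied by the simple LR for POI2. This is why a compound LR can look large: it folds in the evidence against both people.

```python
import math
from itertools import combinations_with_replacement

FREQS = {"a": 0.10, "b": 0.20, "c": 0.15, "d": 0.55}  # hypothetical, sums to 1
EVIDENCE = {"a", "b", "c"}                              # alleles observed at the locus
GENOTYPES = list(combinations_with_replacement(sorted(FREQS), 2))


def gfreq(genotype):
    """HWE genotype frequency (theta = 0)."""
    x, y = genotype
    return FREQS[x] ** 2 if x == y else 2 * FREQS[x] * FREQS[y]


def prob_evidence(known, n_unknown):
    """P(E | known genotypes + n_unknown random, unrelated contributors)."""
    def recurse(alleles, prob, remaining):
        if remaining == 0:
            # Binary weight: 1 if the contributors explain exactly the observed alleles.
            return prob if alleles == EVIDENCE else 0.0
        return sum(recurse(alleles | set(g), prob * gfreq(g), remaining - 1)
                   for g in GENOTYPES)
    return recurse(set().union(*known), 1.0, n_unknown)


POI1, POI2 = ("a", "b"), ("c", "c")  # hypothetical reference genotypes

simple_poi1 = prob_evidence([POI1], 1) / prob_evidence([], 2)
simple_poi2 = prob_evidence([POI2], 1) / prob_evidence([], 2)
conditional_poi1 = prob_evidence([POI1, POI2], 0) / prob_evidence([POI2], 1)
compound = prob_evidence([POI1, POI2], 0) / prob_evidence([], 2)

for name, value in [("simple POI1", simple_poi1), ("simple POI2", simple_poi2),
                    ("conditional POI1", conditional_poi1), ("compound", compound)]:
    print(f"{name:17s} log10 LR = {math.log10(value):.2f}")

# Identity: compound LR = conditional LR (POI1 given POI2) x simple LR (POI2).
assert math.isclose(compound, conditional_poi1 * simple_poi2)
```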

Table 2: Performance Characteristics of Different Proposition Types

| Proposition Type | True Donor LR | Non-contributor LR | Key Application |
|---|---|---|---|
| Simple | Moderate | Less exclusionary | Standard casework |
| Conditional | Higher | More exclusionary | Isolating individual evidence |
| Compound | Variable | Can overinflate | Assessing multiple POIs together |

Experimental Protocols for PG System Validation

Inter-Laboratory Comparison Framework

McNevin et al. propose a standardized procedure for inter-laboratory comparisons of continuous PG systems [13]:

Sample Design: Prepare DNA mixtures with defined numbers of contributors (2-5 persons), mixture ratios, and template amounts covering expected casework range.

Laboratory Processing: Distribute identical DNA extracts to participating laboratories for independent processing using their standard STR amplification kits and capillary electrophoresis parameters.

Data Analysis: Each laboratory analyzes its own electropherograms using its preferred PG system with predetermined propositions.

LR Comparison: Compare calculated LRs across laboratories using defined metrics, focusing on reproducibility and variance.
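One way to summarize inter-laboratory reproducibility is to work on the log10 scale, because LRs span many orders of magnitude. The sketch below takes the LRs that several laboratories report for the same extract and propositions and computes their mean, sample standard deviation, and range in log10 units. These metrics are an illustrative choice, not the metric set defined by McNevin et al., and the LR values are hypothetical placeholders.

```python
import math
import statistics


def reproducibility_summary(lrs_by_lab: dict) -> dict:
    """Mean, sample SD and range of log10(LR) across laboratories."""
    logs = [math.log10(lr) for lr in lrs_by_lab.values()]
    return {
        "mean_log10_lr": statistics.mean(logs),
        "sd_log10_lr": statistics.stdev(logs),    # sample SD across laboratories
        "range_log10_lr": max(logs) - min(logs),  # orders of magnitude spanned
    }


# Hypothetical LRs from four laboratories for the same extract and propositions
lrs = {"lab_1": 3.2e9, "lab_2": 8.5e8, "lab_3": 1.1e10, "lab_4": 4.0e9}
for name, value in reproducibility_summary(lrs).items():
    print(f"{name}: {value:.2f}")
```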

Validation Protocol for Drop-out and Drop-in Parameters

The Office of Chief Medical Examiner (OCME) validation protocol for the Forensic Statistical Tool incorporates [14]:

Empirical Rate Estimation:

- Analyze 2000+ amplifications of 700+ mixtures and single-source samples

- Estimate drop-out probabilities by locus, template quantity, cycle number, and number of contributors

- Calculate separate probabilities for partial heterozygous, complete heterozygous, and complete homozygous drop-out

- Estimate drop-in rates as a function of amplification cycles only
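The stratified rate estimation above can be sketched as simple counting, in the spirit of the OCME approach but not taken from the OCME code. Each record states whether a known allele was detected in a replicate amplification, and the records are grouped by locus and template-quantity bin. The bin edges, locus records, and outcomes are all hypothetical. A validated model would also separate the heterozygous and homozygous drop-out types listed above and would typically smooth the rates across template amounts (for example with a regression) rather than rely on raw bins.

```python
from collections import defaultdict

# Hypothetical template bins (pg of DNA): (lower inclusive, upper exclusive, label)
BINS = [(0, 25, "<25 pg"), (25, 100, "25-100 pg"), (100, float("inf"), ">=100 pg")]


def template_bin(pg: float) -> str:
    """Assign a template amount to one of the hypothetical bins."""
    for lo, hi, label in BINS:
        if lo <= pg < hi:
            return label
    raise ValueError(f"template amount out of range: {pg}")


def dropout_rates(records):
    """records: iterable of (locus, template_pg, allele_detected: bool)."""
    counts = defaultdict(lambda: [0, 0])  # (locus, bin) -> [dropped, total]
    for locus, pg, detected in records:
        key = (locus, template_bin(pg))
        counts[key][1] += 1
        if not detected:
            counts[key][0] += 1
    return {key: dropped / total for key, (dropped, total) in counts.items()}


records = [  # hypothetical observations of known alleles
    ("D8S1179", 10, False), ("D8S1179", 15, True), ("D8S1179", 20, False),
    ("D8S1179", 12, True), ("D8S1179", 150, True), ("D8S1179", 200, True),
    ("FGA", 18, False), ("FGA", 22, False), ("FGA", 60, True), ("FGA", 80, False),
]
for (locus, label), rate in sorted(dropout_rates(records).items()):
    print(f"{locus:8s} {label:10s} drop-out rate = {rate:.2f}")
```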

Validation Testing:

- Generate 454 mock evidence samples including single-source, deliberate mixtures, and touched objects

- Compute LRs for true contributors and 1200+ non-contributors from population databases

- Compare FST results with qualitative assessments

Validation Workflow for PG Systems

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for PG System Research and Validation

| Item | Function | Example Specifications |
|---|---|---|
| STR Amplification Kits | Multi-locus amplification for DNA profiling | GlobalFiler, Identifiler |
| DNA Quantification Systems | Precise template DNA measurement | qPCR-based systems |
| Capillary Electrophoresis Instruments | Electropherogram generation | 3500 Genetic Analyser |
| Probabilistic Genotyping Software | LR calculation for complex mixtures | STRmix, EuroForMix, TrueAllele |
| Reference DNA Samples | Controlled mixture preparation | Commercial standards or characterized donors |
| Population Databases | Allele frequency estimation for LR calculation | Laboratory-specific or standardized databases |

Advanced Considerations in LR Implementation

Reproducibility and Credible Intervals

A significant challenge in continuous PG systems is establishing reproducibility and credible intervals for LRs. Swaminathan et al. found that intra-model variability increases with the number of contributors and decreases as template mass increases [13]. In their study, 9% of intra-model comparisons yielded LRs that fell into different verbal expression bins, highlighting the importance of establishing performance characteristics for PG systems [13].
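Small run-to-run differences matter most when an LR sits near a bin boundary. The sketch below maps replicate LRs onto a verbal scale and counts how often a pair of replicates lands in different bins. The scale is hypothetical: half-open bins on log10(LR) with edges at 0, 2, 4, and 6. Laboratories define their own scales, and the replicate LR pairs are also hypothetical.

```python
import bisect
import math

# Hypothetical verbal scale: half-open bins on log10(LR) with edges 0, 2, 4, 6.
EDGES = [0, 2, 4, 6]
LABELS = ["supports Hd", "limited support", "moderate support",
          "strong support", "very strong support"]


def verbal_bin(lr: float) -> str:
    """Map an LR to its verbal bin on the hypothetical scale above."""
    return LABELS[bisect.bisect_right(EDGES, math.log10(lr))]


def fraction_discordant(replicate_pairs) -> float:
    """Share of replicate LR pairs that fall into different verbal bins."""
    discordant = sum(verbal_bin(a) != verbal_bin(b) for a, b in replicate_pairs)
    return discordant / len(replicate_pairs)


# Hypothetical replicate PGS runs on the same profile and propositions
pairs = [(2.0e7, 5.1e6), (8.0e3, 1.2e4), (40.0, 65.0), (1.2e5, 9.8e4)]
print(f"discordant fraction: {fraction_discordant(pairs):.2f}")
```

The second pair differs by well under one order of magnitude, yet it straddles the 10^4 boundary and changes the verbal statement.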

Signaling Pathways and Logical Relationships in PG Systems

PG System Logical Framework

The shift from the Combined Probability of Inclusion (CPI) to LR frameworks represents a fundamental advancement in forensic DNA mixture interpretation. Continuous probabilistic genotyping systems enable more nuanced and statistically robust evaluation of DNA evidence, particularly for complex mixtures with potential drop-out or drop-in. Implementing these systems requires careful validation, appropriate proposition formulation, and an understanding of performance characteristics across different laboratory conditions. As noted in recent research, conditional propositions generally provide better differentiation between true and false donors than simple propositions, while compound propositions require careful application to avoid misstating the weight of evidence [3]. This methodological evolution continues to enhance the scientific rigor of forensic genetics while presenting new challenges in standardization and reproducibility across laboratories.

The National Institute of Standards and Technology (NIST) conducts Scientific Foundation Reviews to evaluate the technical merit and reliability of forensic science methods. Initiated with appropriated Congressional funds starting in 2018, these reviews fulfill a critical need identified by the National Academy of Sciences' 2009 landmark report and a 2016 recommendation from the National Commission on Forensic Science [15] [16]. The primary objective is to identify and document the empirical evidence supporting forensic methods, explore their capabilities and limitations, and identify knowledge gaps requiring future research [15]. These reviews are particularly vital for disciplines interpreting complex evidence, such as DNA mixture interpretation, where methods must rest on solid scientific foundations to ensure just outcomes in the criminal justice system [15] [12].

Within the context of complex DNA mixture research, the likelihood ratio (LR) serves as the fundamental statistical framework for evaluating the strength of evidence [17]. The NIST review provides a critical assessment of the methodologies and reliability of LR calculation in this complex context.

Core Concepts for Complex DNA Mixtures

The Challenge of Complex DNA Mixtures

Advances in DNA testing sensitivity allow profiles to be generated from minute quantities of DNA, such as a few skin cells. While beneficial, this increased sensitivity introduces interpretation challenges for mixtures, including distinguishing contributors, estimating the number of individuals present, assessing potential contamination, and determining the relevance of trace amounts of DNA [12]. These complexities, if not properly managed and communicated, can lead to misunderstandings regarding the strength and relevance of DNA evidence [12].

The Likelihood Ratio Framework

The likelihood ratio is a cornerstone of forensic DNA evidence evaluation, providing a measure of support for one proposition versus another [17]. It is calculated as the ratio of two conditional probabilities:

LR = Pr(E | Hp, I) / Pr(E | Hd, I)

where E represents the DNA evidence, Hp is the prosecution proposition, Hd is the defense proposition, and I represents case background information [17]. An LR greater than 1 supports the prosecution's proposition, while an LR less than 1 supports the defense's alternative proposition [17].

Proposition Formulation in DNA Mixtures

The formulation of propositions (Hp and Hd) is critical and exists within a hierarchy, with DNA evidence typically evaluated at the sub-source level [17]. The NIST review identifies three primary proposition types used in mixture interpretation, each yielding different LRs and interpretations [12] [17].

Table 1: Types of Propositions Used in DNA Mixture Interpretation

| Proposition Type | Definition | Example for a Two-Person Mixture | Key Characteristic |
|---|---|---|---|
| Simple Proposition [17] | Considers one Person of Interest (POI) with all other contributors unknown. | Hp: POI + 1 unknown; Hd: 2 unknown individuals | Default approach; does not assume other known contributors. |
| Compound Proposition [17] | Considers multiple POIs together in a single ratio. | Hp: POI₁ + POI₂; Hd: 2 unknown individuals | Can misstate the weight of evidence if not reported with simple LRs. |
| Conditional Proposition [17] | Assumes the contribution of all POIs under Hp and all but one POI under Hd. | Hp: POI₁ + POI₂; Hd: POI₂ + 1 unknown | Isolates evidence for each POI; approximates an exhaustive LR. |

Research demonstrates that conditional propositions offer superior performance, providing a "much higher ability to differentiate true from false donors than simple propositions" [17]. For true donors, correctly assuming relatedness between contributors, such as full siblings, generally increases the LR, while ignoring such relatedness typically yields a more conservative (lower) LR [18].

Reliability Assessment and Quantitative Insights

The NIST foundation review establishes reliability by evaluating empirical data from validation studies, interlaboratory studies, and proficiency tests [12]. For DNA mixture interpretation, this involves assessing the performance of Probabilistic Genotyping Software (PGS), which uses statistical models to calculate LRs from complex mixture data [12].

Quantitative Data on Proposition Performance

A key study evaluated 32 mixed DNA samples involving 2 to 5 contributors, interpreting profiles with the STRmix PGS to compare the performance of different proposition types [17]. The findings provide critical quantitative insights for researchers assessing methodological reliability.

Table 2: Performance Comparison of Proposition Types for True Donors

| Number of Contributors (N) | Simple Proposition (Log10 LR) | Conditional Proposition (Log10 LR) | Compound Proposition (Log10 LR) | Key Finding |
|---|---|---|---|---|
| 2 | 12.4 | 13.1 | 25.5 | Conditional LRs are higher than simple LRs for true donors. |
| 3 | 8.7 | 9.5 | 28.6 | The sum of simple log(LRs) approximates the compound log(LR). |
| 4 | 6.2 | 7.1 | 25.9 | Compound LRs can be obtained as the product of conditional LRs. |
| 5 | 4.5 | 5.3 | 21.8 | Conditional LRs provide the clearest distinction for each POI. |

Technology Comparison for Challenging Samples

The reliability of LR calculation is also influenced by the analytical technology. A 2024 study compared Massively Parallel Sequencing (MPS) to traditional Capillary Electrophoresis (CE) for analyzing challenging surface DNA samples [19].

Table 3: MPS vs. Capillary Electrophoresis for Surface DNA Samples

| Performance Metric | Capillary Electrophoresis (CE) | Massively Parallel Sequencing (MPS) | Implication for Research |
|---|---|---|---|
| Data Complexity/Content | Lower | Higher number of sequences/peaks observed | MPS provides more genetic data markers. |
| Average LR for Contributors | Higher | Lower for the tested data set | Current MPS data preprocessing may require optimization. |
| Potential Artefacts | Standard | Elevated unknown alleles/artefacts noted | Increased complexity of MPS data impacts LR output. |

Experimental Protocol for LR Calculation

This protocol outlines the procedure for calculating likelihood ratios from complex DNA mixtures using probabilistic genotyping software, based on methodologies cited in the NIST Scientific Foundation Review [12] [17].

Materials and Equipment

- DNA Extracts: from casework samples or reference specimens.

- Amplification Kit: e.g., GlobalFiler PCR Amplification Kit.

- Genetic Analyzer: e.g., 3500 Genetic Analyzer (Thermo Fisher Scientific).

- Genotyping Software: e.g., GeneMapper ID-X.

- Probabilistic Genotyping Software (PGS): e.g., STRmix or EuroForMix.

- Computing Hardware: Workstation meeting PGS vendor specifications.

Step-by-Step Procedure

Profile Generation and Analysis

- Amplify DNA samples using a standard PCR protocol (e.g., 28-29 cycles) [17].

- Separate and detect amplified fragments on a genetic analyzer.

- Analyze electropherograms using genotyping software (e.g., GeneMapper ID-X V1.6) with a set analytical threshold (AT), typically between 100 and 150 RFU [17]. Export the quantitative peak data.

Profile Interpretation in PGS

- Import the peak data and reference profiles into the PGS.

- Specify the number of contributors to the mixture. This can be based on the maximum number of alleles at a locus or other statistical indicators.

- The PGS will model the data, estimating parameters like the mixture proportion and the DNA template amount for each contributor [17].
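The first two interpretation steps can be sketched in a few lines. First, peaks below the analytical threshold are discarded. Then a lower bound on the number of contributors comes from the maximum allele count, because each contributor adds at most two alleles per locus. The peak heights, threshold, and data layout are hypothetical and do not mirror the GeneMapper ID-X export format. The maximum allele count can underestimate the true number of contributors when alleles are shared or have dropped out, which is why the protocol mentions other statistical indicators.

```python
import math


def apply_threshold(peaks: dict, at_rfu: float) -> dict:
    """Drop peaks below the analytical threshold (AT), per locus."""
    return {locus: {allele: h for allele, h in alleles.items() if h >= at_rfu}
            for locus, alleles in peaks.items()}


def min_contributors(peaks: dict) -> int:
    """Maximum-allele-count lower bound: a contributor adds at most 2 alleles per locus."""
    max_alleles = max(len(alleles) for alleles in peaks.values())
    return math.ceil(max_alleles / 2)


# Hypothetical peak heights (RFU), assumed already stutter-filtered
peaks = {
    "D3S1358": {"15": 1850, "16": 920, "17": 410, "18": 95, "19": 240},
    "vWA": {"14": 1200, "17": 1100, "18": 380},
    "FGA": {"21": 2100, "22": 160, "23": 150, "24": 130, "25": 105},
}
filtered = apply_threshold(peaks, at_rfu=100)
print(min_contributors(filtered))  # 3 (five alleles at FGA)
```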

Define Propositions and Calculate LRs

- Formulate the proposition pairs (Hp and Hd). For a single POI, start with a simple proposition pair [17]:

  - Hp: The DNA originated from the POI and N-1 unknown individuals.

  - Hd: The DNA originated from N unknown individuals.

- Run the PGS calculation to obtain the LR for the specified propositions.

- If multiple POIs are present, evaluate the evidence using conditional propositions [17]:

  - Hp: The DNA originated from POI₁, POI₂, ..., and POIₓ.

  - Hd: The DNA originated from POI₂, ..., POIₓ, and one unknown individual (to test POI₁).

- Repeat the conditional LR calculation for each POI individually.

Data and Reporting

- Document all input parameters, proposition sets, and the resulting LRs.

- The final report should clearly state which proposition type was used and the computed LR value.

Workflow Visualization

The following diagram illustrates the logical workflow for the interpretation of complex DNA mixtures and calculation of likelihood ratios, integrating key decision points from the protocol.

The Scientist's Toolkit

This section details key research reagents, software, and analytical tools essential for conducting reliable DNA mixture interpretation and likelihood ratio calculation, as referenced in the NIST review and supporting literature.

Table 4: Essential Research Reagents and Solutions for DNA Mixture Analysis

| Tool Name | Type/Category | Primary Function in Research |
|---|---|---|
| GlobalFiler PCR Kit [17] | Chemical Reagent | Simultaneously amplifies 21 autosomal STR loci, 1 Y-STR, and 2 sex-determination markers to generate multi-locus DNA profiles from evidentiary samples. |
| STRmix [17] | Software | A probabilistic genotyping system that uses a continuous model to interpret complex DNA mixtures and calculate evidentiary LRs, accounting for peak heights and other artifacts. |
| EuroForMix [19] | Software | An open-source probabilistic genotyping software for interpreting STR profiles from mixed DNA samples, enabling LR calculation under different propositions. |
| MPSproto [19] | Software | A probabilistic genotyping software designed to analyze and interpret the complex data output from Massively Parallel Sequencing (MPS) technologies. |
| GeneMapper ID-X [17] | Software | Genotyping software used after capillary electrophoresis to size alleles, call peaks against a set analytical threshold, and generate the quantitative data file for PGS. |
| 3500 Genetic Analyzer [17] | Laboratory Instrument | A capillary electrophoresis instrument used for the high-resolution separation and detection of fluorescently labeled DNA fragments to generate DNA profiles. |

Implementing Advanced Methods and Software for LR Calculation

Probabilistic Genotyping Software (PGS) represents a paradigm shift in the interpretation of forensic DNA evidence, particularly for complex mixtures involving DNA from multiple contributors or low-template samples. These systems employ sophisticated statistical models to calculate a Likelihood Ratio (LR), which quantitatively assesses the strength of evidence by comparing the probability of the observed DNA data under two competing propositions [20]. The move to PGS marks a significant advancement over older, more subjective binary methods, with the President's Council of Advisors on Science and Technology (PCAST) noting that these programs "clearly represent a major improvement over purely subjective interpretation" [21]. This document provides a detailed overview of two prominent PGS systems—STRmix and TrueAllele—framed within the context of advanced LR calculation research for complex DNA mixtures. It is intended to serve as a technical resource for researchers, scientists, and professionals engaged in the development and validation of forensic genomic tools.

Theoretical Foundations of Probabilistic Genotyping

The Likelihood Ratio (LR) Framework

The core of any PGS is the calculation of the Likelihood Ratio. The LR is formally defined as the ratio of the probabilities of observing the electrophoretic data (the DNA profile, denoted as O) given two opposing hypotheses [20]. Formulaically, this is expressed as:

LR = Pr(O | H1, I) / Pr(O | H2, I)

Here, H1 typically represents the prosecution's proposition (e.g., the suspect is a contributor to the sample), and H2 represents the defense's proposition (e.g., an unknown, unrelated individual is a contributor in place of the suspect). The term I represents relevant background information. To compute these probabilities, the software must account for all possible genotype combinations (Sj) that could explain the mixed profile, along with nuisance parameters such as the DNA amount from each contributor, degradation levels, and stutter. This leads to the expanded calculation [20]:

$$LR = \frac{\sum_j \Pr(O \mid S_j)\,\Pr(S_j \mid H_1)}{\sum_j \Pr(O \mid S_j)\,\Pr(S_j \mid H_2)}$$

The terms Pr(O | Sj) are the weights, representing the probability of the observed data given a specific genotype set. The method by which these weights are assigned fundamentally distinguishes the different classes of probabilistic genotyping models.
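The weighted sum can be made concrete with a semi-continuous (drop-out) weight for a single-source, single-locus example. In this sketch, only allele a is observed and the POI's genotype is (a, b). Each distinct allele of a candidate genotype is assumed to be seen with probability 1 - D and lost with probability D. Homozygotes are treated as a single allele copy, which is a simplification. Drop-in is not allowed, and the priors Pr(S_j | H2) use HWE with theta = 0. The allele frequencies and D values are hypothetical. With D = 0 the weights collapse to the binary model, and the POI is excluded (LR = 0).

```python
from itertools import combinations_with_replacement

FREQS = {"a": 0.1, "b": 0.3, "c": 0.6}  # hypothetical allele frequencies (sum to 1)
OBSERVED = {"a"}                         # alleles seen in the profile O


def weight(genotype, d):
    """Pr(O | S_j) with per-allele drop-out probability d and no drop-in."""
    carried = set(genotype)
    if not OBSERVED <= carried:  # an observed allele would need drop-in: impossible here
        return 0.0
    w = 1.0
    for allele in carried:       # simplification: homozygote = one allele copy
        w *= (1 - d) if allele in OBSERVED else d
    return w


def prior_h2(genotype):
    """Pr(S_j | H2): HWE genotype frequency of an unknown, unrelated person."""
    x, y = genotype
    return FREQS[x] ** 2 if x == y else 2 * FREQS[x] * FREQS[y]


def lr(poi, d):
    """Pr(S_j | H1) is 1 for the POI's genotype only, so the numerator has one term."""
    numerator = weight(poi, d)
    denominator = sum(weight(g, d) * prior_h2(g)
                      for g in combinations_with_replacement(sorted(FREQS), 2))
    return numerator / denominator


for d in (0.0, 0.2, 0.5):
    print(f"D = {d:.1f}: LR = {lr(('a', 'b'), d):.3f}")
```

For this toy model the sum simplifies to LR = D / (p_a² + 2 p_a (1 - p_a) D), which gives 0.2 / 0.046 ≈ 4.35 at D = 0.2.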

Evolution of Statistical Models for DNA Interpretation

The development of statistical models for DNA interpretation has progressed through several distinct stages, each offering increasing sophistication in handling data uncertainty.

Table 1: Evolution of Statistical Models for DNA Mixture Interpretation

| Model Type | Key Characteristics | Treatment of Peak Heights | Handling of Low-Template/Drop-out |
|---|---|---|---|
| Binary Models | Uses yes/no decisions; genotype sets are either possible (weight=1) or impossible (weight=0). | Not modeled. | Limited to no consideration. |
| Qualitative (Semi-Continuous) Models | Calculates weights using probabilities of drop-in and drop-out. | Used indirectly to inform drop-out probabilities, but not modeled directly. | Can account for these phenomena probabilistically. |
| Quantitative (Continuous) Models | Uses peak height information directly to assign numerical weights via statistical models. | Directly modeled using peak height data and expectations. | Explicitly models these effects within a continuous framework. |

Quantitative models, such as those employed by STRmix and TrueAllele, represent the most advanced approach because they fully utilize the quantitative peak height information in the electrophoretic data [20]. These systems use this information to infer real-world properties like the DNA amount from each contributor and the level of DNA degradation, leading to a more accurate and efficient assignment of the probabilities Pr(O | Sj) [20].

Software-Specific Methodologies and Protocols

STRmix

STRmix is a Bayesian-based continuous PGS that is in widespread use, with 91 organizations in the U.S. and 29 internationally using it for casework as of 2024 [22]. Its methodology involves specifying prior distributions on unknown model parameters, such as mixture proportions, and then using Markov Chain Monte Carlo (MCMC) sampling to explore the possible genotype combinations [20].

Key Experimental Protocol: STRmix Deconvolution and LR Calculation

- Input Data Preparation: The protocol begins with the analyzed electrophoretic data, including allele designations and peak heights, which can be generated automatically by companion software like FaSTR DNA [22].

- Model Parameterization: The user must define key parameters, including:

  - Number of Contributors (NoC): This can be a fixed value or a variable input (varNOC) using the stratified LR approach to account for uncertainty [22].

  - Propositions (H1 and H2): The competing hypotheses about who contributed to the DNA sample.

  - Allele Frequencies: The relevant population genetic database.

- Profile Deconvolution: STRmix performs quantitative modeling of the DNA profile. It calculates the posterior probability of different genotype sets by considering all possible combinations of genotypes that explain the observed peaks, weighted by their probability given the peak heights and model parameters.

- LR Calculation: The final LR is computed by comparing the probability of the observed data under the two propositions (H1 and H2), summing over all the plausible genotype sets and model parameters [23].
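Uncertainty in the number of contributors (N) can be handled with the law of total probability: $\Pr(E \mid H) = \sum_N \Pr(E \mid H, N)\Pr(N)$. The sketch below assumes the same prior on N under both propositions. It is a generic illustration of that idea, not a description of how STRmix implements varNOC. The likelihood values stand in for PGS outputs and are hypothetical. The combined LR is a weighted average of the per-N LRs, so it always lies between them.

```python
import math


def stratified_lr(lik_hp: dict, lik_hd: dict, prior_n: dict) -> float:
    """Integrate over N: sum_N P(E|Hp,N)P(N) / sum_N P(E|Hd,N)P(N)."""
    numerator = sum(lik_hp[n] * prior_n[n] for n in prior_n)
    denominator = sum(lik_hd[n] * prior_n[n] for n in prior_n)
    return numerator / denominator


prior_n = {3: 0.7, 4: 0.3}          # hypothetical P(N), e.g. from an NoC estimator
lik_hp = {3: 2.0e-30, 4: 5.0e-31}   # hypothetical P(E | Hp, N) from a PGS run per N
lik_hd = {3: 4.0e-36, 4: 2.5e-36}   # hypothetical P(E | Hd, N)

combined = stratified_lr(lik_hp, lik_hd, prior_n)
for n in prior_n:
    print(f"N = {n}: log10 LR = {math.log10(lik_hp[n] / lik_hd[n]):.2f}")
print(f"stratified: log10 LR = {math.log10(combined):.2f}")
```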

The software ecosystem around STRmix includes DBLR, an investigative application used for tasks such as superfast database searches, mixture-to-mixture matching, and complex kinship analysis [22]. DBLR v1.5 allows for the use of varNOC inputs and can include the Amelogenin locus in LR calculations [22].

TrueAllele

TrueAllele is another continuous PGS that uses a Bayesian approach coupled with MCMC methods to separate DNA mixtures. Its protocol shares the same fundamental steps as other continuous systems but differs in specific implementation details and model assumptions, which can lead to divergent LR results on the same sample.

Key Experimental Protocol: TrueAllele Statistical Decomposition

- Data Input and Thresholding: The raw DNA data is input, and an analytical threshold (AT) is applied. It is critical to note that differences in the selected AT between different software analyses can significantly impact the resulting LR [24].

- MCMC Exploration: TrueAllele uses MCMC to sample the "universe" of possible genotype combinations and model parameters that could explain the observed data, rather than enumerating them exhaustively. This process estimates the posterior probability distribution of contributor genotypes.

- LR Computation: Once the genotype probabilities are estimated, the LR is calculated by forming the ratio of the probabilities for the evidence under the two competing hypotheses, given the inferred genotype distributions.
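A toy random-walk Metropolis sampler for one nuisance parameter illustrates the MCMC idea. The parameter is the mixture proportion w of a two-person mixture at a single locus. This is not TrueAllele's (or STRmix's) model. The sketch assumes both genotypes are known, (a, b) and (c, d). It also assumes a known total peak-height response T, an expected height of T × share / 2 per allele, log-normal peak-height noise with SD sigma, and a uniform prior on w. The peak heights are hypothetical. Real systems sample genotypes, degradation, stutter, and other parameters jointly.

```python
import math
import random

T, SIGMA = 2000.0, 0.1  # assumed total response (RFU) and log-scale noise SD
PEAKS = {"a": 700.0, "b": 720.0, "c": 310.0, "d": 290.0}  # hypothetical heights
CONTRIBUTOR_1, CONTRIBUTOR_2 = ("a", "b"), ("c", "d")       # assumed known genotypes


def log_likelihood(w: float) -> float:
    """Log-normal peak-height model; w is contributor 1's mixture proportion."""
    ll = 0.0
    for alleles, share in ((CONTRIBUTOR_1, w), (CONTRIBUTOR_2, 1.0 - w)):
        expected = T * share / 2.0  # each heterozygous allele gets half the share
        for allele in alleles:
            ll -= (math.log(PEAKS[allele]) - math.log(expected)) ** 2 / (2 * SIGMA ** 2)
    return ll


def metropolis(n_iter=20000, burn_in=2000, step=0.05, seed=1):
    """Random-walk Metropolis sampler for w under a uniform prior on (0, 1)."""
    rng = random.Random(seed)
    w, ll = 0.5, log_likelihood(0.5)
    samples = []
    for i in range(n_iter):
        proposal = w + rng.gauss(0.0, step)  # symmetric proposal
        if 0.0 < proposal < 1.0:             # zero prior density outside (0, 1): reject
            ll_new = log_likelihood(proposal)
            if ll_new >= ll or rng.random() < math.exp(ll_new - ll):
                w, ll = proposal, ll_new
        if i >= burn_in:
            samples.append(w)
    return samples


samples = metropolis()
mean_w = sum(samples) / len(samples)
print(f"posterior mean of w: {mean_w:.3f}")
```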

A pivotal case study highlighted the profound impact of subtle differences in software methodologies. When analyzing the same low-template DNA evidence, STRmix reported an LR of 24, while TrueAllele reported LRs ranging from 1.2 million to 16.7 million [25]. This discrepancy was attributed to differences in modeling parameters, analytic thresholds, and mixture ratios, underscoring the fact that PG analysis "rests on a lattice of contestable assumptions" [25]. Critics of varying the AT in casework argue that it is "pointless, and potentially dangerous" as the decision should be based on data reliability, not on the resulting LR value [24].

Workflow Visualization

The following diagram illustrates the generalized logical workflow for probabilistic genotyping and LR calculation, integrating the roles of different software components.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key solutions and materials essential for conducting research and validation in the field of probabilistic genotyping.

Table 2: Key Research Reagent Solutions for Probabilistic Genotyping

| Item / Solution | Function / Application in PGS Research |
|---|---|
| Commercial STR Multiplex Kits | Provides the foundational DNA profiles from known-source samples necessary for creating positive and negative controls, and for generating validation data sets with known ground truth. |
| Validated Reference DNA | Genomically characterized DNA from cell lines used as a standard reagent for calibration, run-to-run performance monitoring, and inter-laboratory reproducibility studies. |
| Characterized Mixed DNA Samples | Pre-made mixtures with defined contributor ratios and quantities, crucial as controlled reagents for testing software sensitivity, specificity, and performance limits (e.g., minor contributor %). |
| Synthetic DNA Profile Data | Computer-generated data files simulating electrophoretic output; used as a reagent for stress-testing software models, exploring edge cases, and developer training without consuming physical resources. |
| Population Allele Frequency Databases | A critical statistical reagent used to calculate the prior probability of genotype sets (Pr(Sj\|Hx)); must be representative and appropriate for the population under study. |
| Software Development Kits | For developers, SDKs and APIs (e.g., for STRmix or DBLR) act as tools to create custom validation protocols, automated testing suites, and bespoke investigative workflows. |

STRmix and TrueAllele represent the cutting edge of forensic DNA analysis, enabling the statistical interpretation of complex DNA evidence that was previously considered intractable. While both are validated, continuous PGS that use Bayesian methods, differences in their underlying modeling assumptions, parameter choices, and computational implementations can lead to significantly different LRs for the same evidentiary sample [25]. This highlights a critical area for ongoing research: understanding and quantifying the uncertainty and sensitivity of PGS outputs. The field is supported by a growing ecosystem of software tools, such as DBLR and FaSTR DNA, which automate and extend analytical capabilities from evaluation to intelligence generation [22]. Future research must focus on rigorous, independent validation using known-source test samples that mirror the challenging nature of casework evidence [25] [20], ensuring that these powerful tools continue to provide reliable, transparent, and scientifically defensible results for the justice system.

End-to-End Single-Cell Pipelines (EESCIt) for Resolving Complex Mixtures

The interpretation of complex DNA mixtures, particularly those comprising multiple contributors or related individuals, represents one of the most challenging problems in forensic genetics. Traditional bulk processing methods, which extract DNA from all cells collectively, often produce composite profiles where minor contributors can be overwhelmed by major ones, and subtle genetic relationships become obscured [26]. These limitations directly impact the reliability of likelihood ratio (LR) calculations, which are fundamental for evidential weighting in forensic casework.

End-to-End Single-Cell Pipelines (EESCIt) present a paradigm shift by physically separating individual cells before genetic analysis. This approach fundamentally transforms the mixture deconvolution problem, allowing for the generation of single-source genetic profiles from complex biological samples [27] [28]. By analyzing cells individually, EESCIt enables precise determination of the number of contributors, accurate mixture ratio estimation, and robust genotype calling—addressing critical limitations that plague traditional bulk mixture analysis. This protocol details the implementation of EESCIt within a forensic framework, emphasizing its integration with probabilistic genotyping systems for enhanced LR calculation.

Table 1: Performance Comparison of Single-Cell vs. Traditional Bulk Analysis

| Parameter | Traditional Bulk Analysis | EESCIt Pipeline |
|---|---|---|
| Ability to detect minor contributors | Limited (typically >5% contribution) | Excellent (>92% probability of detecting 1:20 minor contributor with 40 cells sampled) [27] |
| Impact of contributor number on LR | LR approaches 1 as number increases | LR remains highly informative regardless of contributor number (91% of clusters rendered LR>10¹⁸) [27] |
| Genotype resolution in complex mixtures | Challenging with overlapping alleles | High (99.3% of true genotypes included in 99.8% credible set) [27] |
| Effect of related contributors | Problematic, requires specialized software | Robust deconvolution possible without prior kinship assumptions [27] |

Technical Specifications & Workflow Architecture

The EESCIt framework integrates several advanced technologies to enable high-resolution genetic analysis at the single-cell level. The system is compatible with both STR profiling using capillary electrophoresis and single-cell multi-omics approaches utilizing next-generation sequencing platforms [29].

Core Technological Components

Cell Isolation Platforms: EESCIt supports multiple cell isolation methods, including fluorescence-activated cell sorting (FACS), dielectrophoresis systems (DEPArray), and microfluidic platforms [26]. The semi-permeable capsules (SPCs) technology offers particular advantages for microbial analysis, enabling multistep workflows on thousands of individual cells in parallel without reaction compatibility constraints [30].

Direct-to-PCR Extraction: A critical innovation in forensically relevant single-cell pipelines is the implementation of direct-to-PCR extraction treatments, which eliminate DNA purification steps that lead to sample loss. This approach maintains compatibility with standard downstream forensic reagents and protocols [28].

Amplification Systems: The pipeline supports both whole genome amplification (WGA) for comprehensive genetic analysis and targeted amplification of forensic STR markers. Studies comparing commercial WGA kits have identified significant differences in performance, with REPLI-g demonstrating the lowest allele drop-out (ADO) rate of 8.33% for STR profiling [26].

Bioinformatic Integration

The EESCIt bioinformatic framework incorporates specialized algorithms for single-cell data processing, including:

- Quality control and normalization procedures addressing single-cell specific technical variations [31]

- Model-based clustering for grouping single-cell electropherograms (scEPGs) by genetic similarity without reference to known genotypes [27]

- Probabilistic models for calculating genotype probabilities given single-cell data characteristics [27]
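Grouping single-cell profiles and calling a consensus genotype per group can be sketched as follows. This deliberately swaps in a simple greedy method for the model-based clustering described above. Cells are grouped by Jaccard similarity of their (locus, allele) pairs, and each cluster's consensus keeps the (up to) two most frequently observed alleles per locus. The similarity threshold, loci, and single-cell profiles are hypothetical. Unlike a probabilistic model, this approach cannot distinguish a true homozygote from consistent drop-out, and it ignores drop-in.

```python
from collections import Counter


def similarity(p: dict, q: dict) -> float:
    """Jaccard similarity of (locus, allele) pairs over loci typed in both cells."""
    shared = [locus for locus in p if locus in q]
    a = {(locus, x) for locus in shared for x in p[locus]}
    b = {(locus, x) for locus in shared for x in q[locus]}
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def greedy_cluster(cells, threshold=0.6):
    """Join the most similar cluster (compared with its first member) or start a new one."""
    clusters = []
    for cell in cells:
        best = max(clusters, key=lambda c: similarity(cell, c[0]), default=None)
        if best is not None and similarity(cell, best[0]) >= threshold:
            best.append(cell)
        else:
            clusters.append([cell])
    return clusters


def consensus(cluster_cells) -> dict:
    """Keep the (up to) two most frequently observed alleles at each locus."""
    genotype = {}
    for locus in sorted({locus for cell in cluster_cells for locus in cell}):
        counts = Counter(x for cell in cluster_cells for x in cell.get(locus, ()))
        genotype[locus] = tuple(sorted(allele for allele, _ in counts.most_common(2)))
    return genotype


cells = [  # hypothetical single-cell STR calls
    {"D3S1358": {"15", "16"}, "vWA": {"17", "18"}},
    {"D3S1358": {"15"}, "vWA": {"17", "18"}},   # allele 16 dropped out
    {"D3S1358": {"14", "17"}, "vWA": {"16"}},
    {"D3S1358": {"15", "16"}, "vWA": {"18"}},   # allele 17 dropped out
    {"D3S1358": {"14"}, "vWA": {"16"}},         # allele 17 dropped out
]
clusters = greedy_cluster(cells)
for i, members in enumerate(clusters, 1):
    print(f"cluster {i}: {len(members)} cells, consensus {consensus(members)}")
```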

Experimental Protocols

Protocol 1: Single-Cell Isolation from Complex Mixtures

Principle: Physical separation of individual cells from forensic samples before DNA extraction to eliminate mixture formation at the source [28].

Materials:

- Biological sample (buccal swabs, blood stains, touch evidence)

- Cell isolation platform (FACS, DEPArray, or microfluidic system)

- Lysis buffer (compatible with direct-to-PCR)

- Nuclease-free water

- Phosphate-buffered saline (PBS)

Procedure:

- Sample Preparation: Suspend 0.1 g of sample in 150 μL of 2.5% NaCl solution. Add 50 μL detergent mix (100 mM EDTA, 100 mM sodium pyrophosphate, 1% Tween 80) and 50 μL methanol [30].

- Cell Detachment: Vigorously shake suspension for 60 min at 500 r.p.m. using a shaker.

- Sonication: Sonicate sediment slurry three times for 1 min each in a water bath.

- Filtration: Add 1 mL of 2.5% NaCl solution and filter through an 8 μm pore-size syringe filter.

- Concentration: Centrifuge collected supernatant at 15,000 × g for 10 min. Remove supernatant.

- Washing: Suspend cell pellets in 1x PBS and wash twice at 8,000 × g for 5 min.

- Cell Isolation: Load the prepared sample onto the cell isolation platform. For microfluidic systems, target a loading density (λ) of 0.1 cells per compartment to minimize multiple-cell encapsulation [30].

- Collection: Individually collect isolated cells into PCR-compatible tubes or plates.

Quality Control: Count total number of cells using impedance flow cytometry. Verify single-cell isolation efficiency via microscopy for a subset of compartments [30].
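The λ = 0.1 target can be justified with Poisson loading statistics. The sketch assumes cells are distributed independently at random, so the number of cells per compartment follows a Poisson distribution with mean λ. This is a standard approximation, not a claim about any specific platform. At λ = 0.1, about 90% of compartments are empty, and only about 5% of occupied compartments hold more than one cell. Raising λ fills more compartments but quickly increases the share of mixed (multi-cell) compartments.

```python
import math


def loading_stats(lam: float) -> dict:
    """Poisson(lam) occupancy: empty, single-cell, and multi-cell share among occupied."""
    p0 = math.exp(-lam)        # P(0 cells)
    p1 = lam * math.exp(-lam)  # P(exactly 1 cell)
    occupied = 1.0 - p0
    return {
        "empty": p0,
        "single": p1,
        "multiple_among_occupied": (occupied - p1) / occupied,
    }


for lam in (0.05, 0.1, 0.3, 1.0):
    s = loading_stats(lam)
    print(f"lambda={lam}: empty={s['empty']:.3f}, single={s['single']:.3f}, "
          f"multi|occupied={s['multiple_among_occupied']:.3f}")
```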

Protocol 2: Direct-to-PCR Extraction and Amplification

Principle: Perform cell lysis and DNA amplification in the same reaction vessel to minimize DNA loss, followed by forensic STR profiling [28].

Materials:

- Isolated single cells in PCR tubes

- Direct-to-PCR extraction reagents (e.g., Arcturus PicoPure)

- PCR amplification master mix

- GlobalFiler or other STR amplification kit

- Thermal cycler

Procedure:

- Cell Lysis: Add 5 μL of direct-to-PCR extraction reagent to each cell. Incubate according to the manufacturer's specifications.

- PCR Setup: Directly add STR amplification master mix to the lysed cell without DNA purification.

- Thermal Cycling: Amplify using manufacturer-recommended cycling conditions with increased cycle numbers (28-32 cycles) to compensate for low template.

- Capillary Electrophoresis: Inject PCR products following standard forensic protocols with injection parameters optimized for low template (1.2-1.6 kV, 20-30 s) [27].

Troubleshooting:

- Low signal intensity: Increase injection time or PCR cycle number within validation limits.

- High baseline noise: Optimize direct-to-PCR reagent concentration to reduce inhibition.

- Allele drop-out: Implement replicate amplifications or consensus profiling from multiple cells.

Protocol 3: Single-Cell Data Analysis and LR Calculation

Principle: Implement probabilistic framework for analyzing single-cell electropherograms (scEPGs) and calculating likelihood ratios for contributor identification [27].

Materials:

- Single-cell EPG data

- Computational resources with appropriate software (R, Python)

- Probabilistic genotyping system (STRmix or custom implementations)

- Population allele frequency database

Procedure:

- Data Preprocessing: Apply quality control filters to remove scEPGs with insufficient genetic information.

- Clustering Analysis: Group scEPGs by genetic similarity using model-based clustering, without reference to known genotypes:

  - Assume that all scEPGs in a cluster originate from a single contributor.

  - Calculate similarity from shared alleles across multiple loci.

- Consensus Genotyping: For each cluster, determine the consensus genotype by evaluating the probability of the observed data under each possible genotype [27]:

  $$P(G_l = g_l \mid C) = \frac{\left[\prod_{i=1}^{v} P(E_{il} \mid G_l = g_l)\right] P(G_l = g_l)}{\sum_{g'_l} \left[\prod_{i=1}^{v} P(E_{il} \mid G_l = g'_l)\right] P(G_l = g'_l)}$$

  where $G_l$ is the genotype at locus $l$, $C$ is the data for a cluster of $v$ scEPGs, and $E_{il}$ is the scEPG data for cell $i$ at locus $l$.

- Likelihood Ratio Calculation: For each cluster, compute the LR comparing the prosecution and defense propositions:

  $$LR(C, s) = \frac{P(C \mid H_p, s)}{P(C \mid H_d)}$$

  where $s$ is the POI's reference genotype, $H_p$ states that the POI is the source of the cluster, and $H_d$ states that an unknown, unrelated individual is the source [27].

- Credible Genotype Set Determination: Rank candidate genotypes by posterior probability and add them to the set, highest first, until their cumulative probability is at least $1 - \alpha$ (typically $\alpha = 0.002$, giving a 99.8% credible set) [27].
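The consensus-genotyping, LR and credible-set steps can be sketched for a single locus as below. This is a minimal illustration under stated assumptions, not the implementation in [27]: the per-cell likelihoods P(E_il | G_l = g) are hypothetical placeholder numbers (in [27] they come from calibration data), the three-allele locus and its genotype priors (Hardy-Weinberg values for assumed frequencies a = 0.5, b = 0.3, c = 0.2) are invented, and the function names are our own.

```python
from math import prod

# Hypothetical genotype priors P(G_l = g): Hardy-Weinberg values for assumed
# allele frequencies a = 0.5, b = 0.3, c = 0.2 (they sum to 1).
priors = {("a", "a"): 0.25, ("a", "b"): 0.30, ("a", "c"): 0.20,
          ("b", "b"): 0.09, ("b", "c"): 0.12, ("c", "c"): 0.04}

# Hypothetical per-cell likelihoods P(E_il | G_l = g) for a cluster of v = 3 cells.
cells = [
    {("a", "a"): 0.05, ("a", "b"): 0.60, ("a", "c"): 0.01,
     ("b", "b"): 0.05, ("b", "c"): 0.01, ("c", "c"): 0.001},
    {("a", "a"): 0.30, ("a", "b"): 0.40, ("a", "c"): 0.01,  # b likely dropped out
     ("b", "b"): 0.01, ("b", "c"): 0.01, ("c", "c"): 0.001},
    {("a", "a"): 0.02, ("a", "b"): 0.55, ("a", "c"): 0.01,
     ("b", "b"): 0.10, ("b", "c"): 0.02, ("c", "c"): 0.001},
]


def posterior(cell_liks, priors):
    """P(G_l = g | C): product of per-cell likelihoods times the prior, normalized.
    Also returns the normalizing constant, which is P(C | Hd) at this locus."""
    joint = {g: prod(cell[g] for cell in cell_liks) * p for g, p in priors.items()}
    total = sum(joint.values())
    return {g: j / total for g, j in joint.items()}, total


def credible_set(post, alpha=0.002):
    """Add genotypes in decreasing posterior order until cumulative >= 1 - alpha."""
    chosen, cum = [], 0.0
    for g, p in sorted(post.items(), key=lambda kv: kv[1], reverse=True):
        chosen.append(g)
        cum += p
        if cum >= 1 - alpha:
            break
    return chosen, cum


def locus_lr(cell_liks, priors, poi_genotype):
    """Single-locus LR(C, s) = P(C | Hp, s) / P(C | Hd)."""
    numerator = prod(cell[poi_genotype] for cell in cell_liks)
    _, denominator = posterior(cell_liks, priors)
    return numerator / denominator


post, _ = posterior(cells, priors)
cs, cum = credible_set(post)
lr = locus_lr(cells, priors, ("a", "b"))
```

With these numbers the posterior for ab is about 0.99798, just under the 99.8% threshold, so aa also enters the credible set. The single-locus LR for s = ab is about 3.3, close to its ceiling of 1/P(ab) ≈ 3.33. Under the additional assumption that loci are independent, per-locus LRs would be multiplied to give the cluster LR.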

Table 2: Single-Cell Analysis Performance Metrics Across Mixture Complexity

| Number of Contributors | True Genotypes in Credible Set | LR > 10¹⁸ for True Donors | Most Probable Genotype Correct |
|---|---|---|---|
| 2 | 99.5% | 94% | 98% |
| 3 | 99.4% | 92% | 97% |
| 4 | 99.2% | 90% | 96% |
| 5 | 99.1% | 89% | 96% |
| Average | 99.3% | 91% | 97% |

Performance data based on analysis of 630 admixtures containing up to 5 donors [27]

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for EESCIt Implementation

| Item | Function | Example Products |
|---|---|---|
| Semi-permeable Capsules (SPCs) | Enable multistep workflows on thousands of individual cells in parallel without reaction compatibility constraints [30] | Atrandi Biosciences SPCs Innovator Kit |
| Direct-to-PCR Extraction Kits | Cell lysis and DNA extraction compatible with immediate PCR amplification, minimizing sample loss [28] | Arcturus PicoPure, REPLI-g Single Cell Kit |
| STR Amplification Kits | Target amplification of forensic STR markers from single-cell templates | GlobalFiler, PowerPlex ESX Fast |
| Microfluidic Platforms | High-throughput single-cell isolation and processing | 10x Genomics Chromium, ONYX Platform |
| Probabilistic Genotyping Software | Calculate likelihood ratios from single-cell data accounting for stochastic effects | STRmix, EuroForMix |
| Cell Isolation Systems | Physical separation of individual cells from complex mixtures | DEPArray, FACS systems |

Data Interpretation & Statistical Analysis

The interpretation of single-cell data requires specialized statistical approaches that account for the unique characteristics of low-template DNA analysis, including allele drop-out (ADO), allele drop-in (ADI), and imbalanced amplification.

Probabilistic Modeling Framework

The core statistical framework for EESCIt data analysis employs Bayesian approaches to determine posterior probability distributions for genotypes given the observed single-cell data:

For a cluster $C$ of $v$ single-cell electropherograms, the probability of genotype $g_l$ at locus $l$ given the cluster data is [27]:

$$P(G_l = g_l \mid C) = \frac{\left[\prod_{i=1}^{v} P(E_{il} \mid G_l = g_l)\right] P(G_l = g_l)}{\sum_{g'_l} \left[\prod_{i=1}^{v} P(E_{il} \mid G_l = g'_l)\right] P(G_l = g'_l)}$$

Where:

- $E_{il}$ represents the electropherogram data for cell $i$ at locus $l$

- $P(E_{il} \mid G_l = g_l)$ is determined from calibration data generated from cells of known genotype

- $P(G_l = g_l)$ is calculated from population allele frequencies
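The genotype prior in the last bullet can be made concrete under Hardy-Weinberg equilibrium: p² for a homozygote and 2pq for a heterozygote. This is an assumption for illustration; the section does not state which population-genetic model is used, and many casework implementations also apply a subpopulation (θ) correction, which this sketch omits. The function name and allele frequencies are hypothetical.

```python
from itertools import combinations_with_replacement


def hwe_genotype_priors(freqs):
    """Genotype priors P(G_l = g) at one locus under Hardy-Weinberg equilibrium,
    keyed by allele pairs. No subpopulation/theta correction is applied."""
    alleles = sorted(freqs)
    priors = {}
    for a, b in combinations_with_replacement(alleles, 2):
        priors[(a, b)] = freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]
    return priors


# Hypothetical frequencies for a three-allele locus (they sum to 1).
priors = hwe_genotype_priors({"14": 0.5, "15": 0.3, "16": 0.2})
```

When the allele frequencies sum to 1, the genotype priors also sum to 1, so they can be used directly as $P(G_l = g_l)$ in the posterior above.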

Likelihood Ratio Calculation in Kinship Scenarios

Single-cell data significantly enhances the capacity to resolve mixtures containing related individuals, a particularly challenging scenario for traditional bulk analysis. When kinship between contributors is suspected, the LR framework can incorporate relatedness:

$$LR = \frac{P(E \mid H_p, I)}{P(E \mid H_d, I)}$$

where $I$ denotes the background information, $H_p$ may specify that the contributors include known relatives, and $H_d$ may specify unrelated individuals [18]. Studies demonstrate that correctly assuming relatedness increases LRs for true donors, whereas ignoring relatedness is conservative in most cases [18].
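One standard building block for incorporating relatedness is the probability of a person's genotype conditioned on a relative's genotype, computed from the identity-by-descent (IBD) sharing probabilities (k0, k1, k2): (1/4, 1/2, 1/4) for full siblings, (0, 1, 0) for parent-child, and (1, 0, 0) for unrelated individuals. A relatedness-aware model substitutes this conditional probability for the unconditional population genotype probability. The sketch below is a minimal single-locus illustration that assumes Hardy-Weinberg equilibrium with no θ correction; it is not the specific model used in [18], and the function name and frequencies are hypothetical.

```python
def genotype_prob_given_relative(g, h, freqs, k0, k1, k2):
    """P(G_x = g | G_y = h) for two relatives with IBD probabilities k0, k1, k2."""
    a, b = g

    # Zero alleles IBD: the unconditional Hardy-Weinberg genotype probability.
    p0 = freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]

    # One allele IBD: one allele of h (each with prob 1/2) is passed on,
    # and the other allele of x is drawn from the population.
    def share(allele):
        return h.count(allele) / 2

    if a == b:
        p1 = share(a) * freqs[a]
    else:
        p1 = share(a) * freqs[b] + share(b) * freqs[a]

    # Two alleles IBD: x carries exactly the relative's genotype.
    p2 = 1.0 if sorted(g) == sorted(h) else 0.0

    return k0 * p0 + k1 * p1 + k2 * p2


freqs = {"12": 0.1, "14": 0.2, "15": 0.7}  # hypothetical allele frequencies
poi = ("12", "14")
sibling = genotype_prob_given_relative(poi, poi, freqs, 0.25, 0.5, 0.25)
parent_child = genotype_prob_given_relative(poi, poi, freqs, 0.0, 1.0, 0.0)
unrelated = genotype_prob_given_relative(poi, poi, freqs, 1.0, 0.0, 0.0)
```

With these frequencies, a full sibling of a POI with genotype 12,14 shares that genotype with probability 0.335, compared with 0.15 for a parent or child and 0.04 for an unrelated person, which is why assumptions about relatedness can change an LR substantially.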

Performance Validation

Rigorous validation of EESCIt performance demonstrates its superior capabilities for complex mixture resolution:

- Credible Genotype Accuracy: 99.3% of true genotypes are included in the 99.8% credible set, regardless of the number of mixture contributors [27]

- Evidential Strength: 91% of clusters from true contributors render LR > 10¹⁸, providing exceptionally strong evidence for contributor identification [27]

- Minor Contributor Detection: The probability of detecting a minor contributor present at 5% (approximately 1:20) exceeds 92% when 40 cells are sampled, a marked improvement in sensitivity over traditional methods [27]

Applications in Forensic Investigations

The EESCIt framework provides particular value in several challenging forensic scenarios:

Sexual Assault Evidence: Resolution of complex mixtures containing epithelial and sperm cells from multiple individuals, even with pronounced contributor imbalance.

Touch DNA Evidence: Enhanced analysis of minimal quantity samples where traditional methods produce uninterpretable mixed profiles.

Kinship Analysis in Mixtures: Identification of related contributors without prior kinship assumptions, overcoming limitations of traditional mixture interpretation [18].

Database Searching: Generation of high-quality single-source profiles from complex mixtures for effective DNA database searches.

The implementation of end-to-end single-cell pipelines represents a transformative advancement for forensic genetics, fundamentally changing the approach to complex mixture resolution and enabling robust likelihood ratio calculations even in the most challenging evidentiary samples.

The probabilistic interpretation of DNA evidence recovered from crime scenes is a central and widely investigated issue in forensic biology, particularly with Low-Template DNA (LT-DNA) samples and complex mixtures involving multiple contributors [32]. The selection of an appropriate statistical model is paramount for accurately quantifying the weight of evidence, typically expressed as a Likelihood Ratio (LR). This LR compares the probability of the evidence under two competing hypotheses: the prosecution hypothesis (Hp) and the defense hypothesis (Hd) [3]. Over time, the forensic community has transitioned from simple binary models to more sophisticated semi-continuous (qualitative) and fully-continuous (quantitative) approaches, which represent the current gold standard for mixture interpretation [32]. These models differ significantly in their complexity, underlying assumptions, and the extent to which they utilize the information contained within the DNA profile data [33]. This application note provides a detailed comparison of these two dominant approaches, outlining their theoretical foundations, practical applications, and performance characteristics within the context of likelihood ratio calculation for complex DNA mixtures.

Model Definitions and Theoretical Foundations

Semi-Continuous (Qualitative) Models

Semi-continuous models represent an intermediate level of complexity. They consider the presence or absence of alleles in the electropherogram but do not use the quantitative information in peak heights [32]. These models incorporate the possibility of major stochastic effects such as allelic drop-out (the failure to detect an allele present in a contributor) and drop-in (the appearance of a spurious allele from contamination) [32] [34]. However, they rely on predefined analytical thresholds to distinguish true alleles from baseline noise, and any peak below this threshold is disregarded [34]. The algorithms in semi-continuous software are generally more straightforward, making the process and results easier to explain in legal settings [32].
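A minimal single-contributor, single-locus sketch of the semi-continuous idea follows: only allele labels enter the likelihood, with an assumed per-copy drop-out probability d and drop-in probability c. The specific formulation (a homozygote drops out with probability d², each unexplained allele is treated as a drop-in with probability c times its frequency, Hardy-Weinberg genotype priors) is a simplified assumption for illustration, not the exact model of LRmix Studio or Lab Retriever. Function names and frequencies are hypothetical.

```python
from itertools import combinations_with_replacement


def hwe(g, freqs):
    """Hardy-Weinberg genotype probability (no theta correction)."""
    a, b = g
    return freqs[a] ** 2 if a == b else 2 * freqs[a] * freqs[b]


def p_evidence(observed, genotype, d, c, freqs):
    """P(observed allele set | single contributor genotype), drop-out d, drop-in c."""
    prob = 1.0
    for allele in set(genotype):
        p_drop = d ** genotype.count(allele)  # homozygote drops out only if both copies do
        prob *= (1 - p_drop) if allele in observed else p_drop
    extra = observed - set(genotype)
    if extra:
        for allele in extra:
            prob *= c * freqs[allele]  # each unexplained allele is a drop-in
    else:
        prob *= 1 - c  # no drop-in occurred
    return prob


def semi_continuous_lr(observed, poi, d, c, freqs):
    """Hp: the POI is the single contributor; Hd: an unknown unrelated person is."""
    numerator = p_evidence(observed, poi, d, c, freqs)
    denominator = sum(p_evidence(observed, g, d, c, freqs) * hwe(g, freqs)
                      for g in combinations_with_replacement(sorted(freqs), 2))
    return numerator / denominator


freqs = {"12": 0.1, "14": 0.2, "15": 0.3, "16": 0.4}
lr_clean = semi_continuous_lr({"12", "14"}, ("12", "14"), d=0.0, c=0.0, freqs=freqs)
lr_dropout = semi_continuous_lr({"12"}, ("12", "14"), d=0.3, c=0.05, freqs=freqs)
```

With d = c = 0 the model reduces to the familiar 1/(2 p₁₂ p₁₄) = 25. When only allele 12 is observed, allowing drop-out still supports the POI, but with a smaller LR (about 4.4), because many other genotypes containing allele 12 can also explain the observation.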

Fully-Continuous (Quantitative) Models

Fully-continuous models constitute a more advanced approach that uses both the qualitative (allelic identity) and quantitative (peak height) information from the DNA profile [32] [34]. By modeling peak heights, these methods can account for each contributor's proportion of DNA in the mixture and more effectively model stochastic effects such as drop-in, drop-out, and stutter artifacts within their statistical framework, often eliminating the need for a rigid stochastic threshold [32] [33]. Because these models incorporate more of the available data, they can have greater power to discriminate between true contributors and non-contributors, especially for complex, low-level mixtures [33].
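To illustrate how peak heights carry information about mixture proportions, the following toy sketch scores every ordered pair of genotypes for a two-person mixture at one locus. It assumes, hypothetically, fixed mixture proportions, a known total signal, log-normal variation of each peak around its expected height with an arbitrary σ of 0.15, and no drop-out, drop-in or stutter. It is not the model used by STRmix, EuroForMix or DNA•VIEW, which estimate or integrate over such parameters and model the artifacts explicitly. Function names and peak heights are hypothetical.

```python
from itertools import combinations_with_replacement, product
from math import log, pi, sqrt


def log_lognormal(h, mu, sigma):
    """Log density of a log-normally distributed peak height h with median mu."""
    return -log(h * sigma * sqrt(2 * pi)) - (log(h) - log(mu)) ** 2 / (2 * sigma ** 2)


def expected_heights(genotypes, proportions, total_signal):
    """Each contributor adds proportion * total_signal, split over its two allele copies."""
    mu = {}
    for (a, b), phi in zip(genotypes, proportions):
        for allele in (a, b):
            mu[allele] = mu.get(allele, 0.0) + phi * total_signal / 2
    return mu


def log_likelihood(observed, genotypes, proportions, total_signal, sigma=0.15):
    mu = expected_heights(genotypes, proportions, total_signal)
    if set(mu) != set(observed):  # toy model: no drop-out, drop-in or stutter
        return float("-inf")
    return sum(log_lognormal(h, mu[a], sigma) for a, h in observed.items())


def rank_genotype_pairs(observed, proportions, total_signal):
    """Score all ordered (contributor 1, contributor 2) genotype pairs, best first."""
    single = list(combinations_with_replacement(sorted(observed), 2))
    scores = {pair: log_likelihood(observed, pair, proportions, total_signal)
              for pair in product(single, repeat=2)}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


peaks = {"10": 1600.0, "11": 1600.0, "12": 400.0, "13": 400.0}  # hypothetical RFU
ranking = rank_genotype_pairs(peaks, proportions=(0.8, 0.2), total_signal=4000.0)
best_pair, best_score = ranking[0]
```

With a 4:1 proportion, the major contributor's alleles are expected at 1,600 RFU and the minor contributor's at 400 RFU, so the pair 10,11 / 12,13 ranks first. A model that sees only the four allele labels could not separate this pairing from, for example, 10,12 / 11,13.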

Table 1: Core Characteristics of Semi-Continuous and Fully-Continuous Models

| Feature | Semi-Continuous Model | Fully-Continuous Model |
|---|---|---|
| Primary Input | Presence/absence of alleles | Allelic presence and peak heights |
| Treatment of Peak Heights | Not considered | Integral to the model [32] |
| Stochastic Threshold | Required [32] | Often not required [33] |
| Handling of Artifacts | Accounts for drop-in/drop-out via user-defined probabilities [34] | Models stutter, drop-in, and drop-out within the peak height framework [34] |
| Statistical Complexity | Lower; more straightforward to implement and present [32] | Higher; involves complex algorithms and computations [32] |
| Typical Software | LRmix Studio, Lab Retriever [32] | STRmix, EuroForMix, DNA•VIEW [32] |

Performance Comparison and Experimental Data

Comparative studies have consistently demonstrated performance differences between semi-continuous and fully-continuous software. A proof-of-concept multi-software comparison analyzed 2- and 3-person mixtures with varying DNA proportions and multiple amplification kits [32]. The study found that fully-continuous computations yielded higher likelihood ratios than the semi-continuous approach, with differences expressed in orders of magnitude, irrespective of the amplification kit used [32].

Another study comparing the effectiveness of statistical models for low-template two-person mixtures concluded that as the sophistication of the models increases, so does the power of discrimination [33]. This enhanced discrimination often correlates with each model's ability to use observed data effectively. Fully-continuous models, such as STRmix, incorporate all stochastic events into the calculation, making the most effective use of the observed data [33].

Table 2: Example Likelihood Ratio (LR) Outputs from Model Comparison Studies

| Mixture Type & Proportion | Amplification Kit | Semi-Continuous LR (e.g., LRmix Studio) | Fully-Continuous LR (e.g., STRmix) | Key Study Finding |
|---|---|---|---|---|
| 2-person, 1:1 | GlobalFiler | Varies with specifics | Varies with specifics | Fully-continuous LRs were consistently higher in magnitude [32] |
| 2-person, 19:1 | PowerPlex Fusion 6C | Varies with specifics | Varies with specifics | Fully-continuous models showed greater power to discriminate [33] |
| 3-person, 1:1:1 | Multiple Kits | Varies with specifics | Varies with specifics | Fully-continuous models more effectively use peak data for complex mixtures [32] |
| Low-Template DNA | Multiple Kits | Lower LR magnitude | Higher LR magnitude | Fully-continuous approaches are more powerful for LT-DNA [32] |

Critical Experimental Parameters and Protocols