A Comprehensive Guide to Validating Probabilistic Genotyping Software in Forensic and Biomedical Research



Abstract

This article provides a detailed framework for the validation of probabilistic genotyping (PG) software, essential for interpreting complex DNA mixtures in forensic and biomedical contexts. It explores the scientific and legal foundations of PG software, outlines methodological approaches for internal validation as per SWGDAM and international guidelines, addresses common troubleshooting scenarios and optimization strategies for parameters like stutter and degradation, and offers a comparative analysis of major software tools including STRmix™, EuroForMix, and MaSTR™. Aimed at researchers, scientists, and laboratory professionals, this guide synthesizes current standards and published validation studies to support robust implementation, ensure statistical reliability, and navigate legal admissibility.

The Science and Standards Behind Probabilistic Genotyping

Defining Probabilistic Genotyping and the Likelihood Ratio (LR)

Frequently Asked Questions (FAQs)

What is probabilistic genotyping?

Probabilistic genotyping (PG) is a scientific method for interpreting complex DNA mixtures using statistical models. Unlike traditional binary methods that declare a simple "match" or "non-match," PG software uses statistical algorithms to evaluate all possible genotype combinations that could explain a mixed DNA sample. It then calculates a Likelihood Ratio (LR) to quantify the strength of the evidence for whether a person of interest is a contributor to the mixture [1] [2] [3]. This approach is particularly vital for interpreting challenging samples, such as those with low-quality DNA, multiple contributors, or where stochastic effects like allelic drop-out have occurred [1] [2].

What is a Likelihood Ratio (LR) and how is it calculated?

A Likelihood Ratio (LR) is a statistical measure that compares the probability of the observed DNA evidence under two competing propositions [4]. The formula is:

LR = Pr(E | H1) / Pr(E | H2)

Where:

- Pr(E | H1) is the probability of the evidence (E) given the prosecution's proposition (H1)—typically that the person of interest is a contributor.

- Pr(E | H2) is the probability of the evidence (E) given the defense's proposition (H2)—typically that the person of interest is not a contributor and the DNA comes from an unknown, unrelated individual [4] [1] [3].

The LR tells you how many times more likely the evidence is under one proposition compared to the other.
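The ratio above can be sketched in a few lines of Python. This is a minimal illustration of the arithmetic only; the function name and the two probabilities are hypothetical and do not come from any PG software, which derives these values from a full statistical model of the profile.

```python
import math


def likelihood_ratio(p_e_given_h1: float, p_e_given_h2: float) -> float:
    """Return LR = Pr(E | H1) / Pr(E | H2)."""
    if p_e_given_h1 < 0 or p_e_given_h2 <= 0:
        raise ValueError("Pr(E | H1) must be >= 0 and Pr(E | H2) must be > 0")
    return p_e_given_h1 / p_e_given_h2


# Hypothetical probabilities, chosen only to illustrate the arithmetic.
lr = likelihood_ratio(p_e_given_h1=0.02, p_e_given_h2=0.00004)
print(f"LR = {lr:.0f}")
print(f"log10(LR) = {math.log10(lr):.2f}")
```

Reporting log10(LR) alongside the LR is a common convenience because LRs from complex mixtures can span many orders of magnitude.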

How should LR results be interpreted?

The value of the LR indicates the strength of support for one proposition over the other [4]:

| LR Value | Interpretation | Direction of Support |
|---|---|---|
| LR > 1 | The evidence is more likely if the person of interest is a contributor. | Supports H1 (prosecution proposition) |
| LR = 1 | The evidence is equally likely under both propositions. | Inconclusive / Neutral |
| LR < 1 | The evidence is more likely if the person of interest is not a contributor. | Supports H2 (defense proposition) |

Furthermore, the magnitude of the LR can be qualitatively described using verbal equivalents. The following table provides a general guide [4]:

| LR Range | Verbal Equivalent of Support |
|---|---|
| 1 to 10 | Limited evidence to support |
| 10 to 100 | Moderate evidence to support |
| 100 to 1,000 | Moderately strong evidence to support |
| 1,000 to 10,000 | Strong evidence to support |
| > 10,000 | Very strong evidence to support |
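The verbal scale can be expressed as a small lookup, sketched below. Two choices here are assumptions rather than part of the scale itself: boundary values (10, 100, and so on) are assigned to the lower band, and LRs below 1 are described by applying the same bands to 1/LR in support of H2. A laboratory's own reporting policy should take precedence.

```python
# Upper bound of each band and its label, taken from the table above.
VERBAL_SCALE = [
    (10, "Limited"),
    (100, "Moderate"),
    (1_000, "Moderately strong"),
    (10_000, "Strong"),
]


def verbal_equivalent(lr: float) -> str:
    """Map a positive LR to a verbal statement of support."""
    if lr <= 0:
        raise ValueError("LR must be positive; handle LR = 0 separately")
    if lr == 1:
        return "Neutral: the evidence is equally likely under both propositions"
    # Assumption: for LR < 1, describe the support for H2 using 1 / LR.
    proposition = "H1" if lr > 1 else "H2"
    magnitude = lr if lr > 1 else 1 / lr
    for upper_bound, label in VERBAL_SCALE:
        if magnitude <= upper_bound:  # assumption: boundaries fall in the lower band
            return f"{label} evidence to support {proposition}"
    return f"Very strong evidence to support {proposition}"


for example_lr in (5, 250, 50_000, 0.002):
    print(example_lr, "->", verbal_equivalent(example_lr))
```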

What are common misconceptions about the Likelihood Ratio?

It is critical to understand what the LR does not represent [3]:

- It is not the probability of guilt or innocence. The LR only assesses the probability of the evidence given the propositions, not the probability of the propositions given the evidence.

- A high LR does not mean "proof." It is a measure of the strength of the DNA evidence, which must be considered alongside all other evidence in a case.

- An LR greater than 1 does not definitively "include" a person, just as an LR less than 1 does not definitively "exclude" them. The LR provides continuous statistical weight to a conclusion [5].

Troubleshooting Guides

How to address "low" or unexpected LR values

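A lower-than-expected LR is a common issue. The usual causes are an incorrect number of contributors, data-quality problems in the profile, poorly specified propositions, or a sample that falls outside the validated range.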

Recommended Actions:

- Re-evaluate the Number of Contributors (NoC): Use statistical tools like NOCIt to support your estimate. Overestimating the NoC is a known cause of LRs tending towards 1 (neutral evidence) [2] [5].

- Inspect Raw Data: Closely examine the electropherogram for signs of high degradation, extreme peak height imbalance, or stutter artifacts that the model may have struggled to account for [2] [7].

- Review Proposition Setting: Ensure the competing hypotheses (H1 and H2) correctly reflect the case circumstances. For example, if relatedness is a possibility, the propositions and model should account for it [6].

- Consult Validation Boundaries: Ensure the sample's characteristics (e.g., number of contributors, DNA quantity) fall within the scope of your laboratory's internal validation of the PG software [7] [6].

How to validate probabilistic genotyping software for research use

A robust internal validation is essential before implementing any PG software for research or casework. The protocol should comply with guidelines from bodies like the Scientific Working Group on DNA Analysis Methods (SWGDAM) [2] [7].

Detailed Validation Protocol:

| Validation Stage | Key Objectives | Methodology & Metrics |
|---|---|---|
| 1. Sensitivity & Specificity | Determine the system's ability to identify true contributors and exclude non-contributors. | Test with known true and false contributors; calculate false positive/negative rates; generate ROC curves and calculate the Area Under the Curve (AUC) to measure discriminatory power [7] [5]. |
| 2. Precision & Reproducibility | Assess the consistency of LR results across repeated analyses. | Re-run analyses of the same profile multiple times; monitor the standard deviation of log(LR); for MCMC-based software, ensure sufficient iterations and burn-in periods to achieve stable results [2] [7]. |
| 3. Complex Mixture Performance | Evaluate the software's limits with high-order mixtures. | Test with 3, 4, and 5-person mixtures at varying ratios; document the rate of inconclusive or misleading results (e.g., high LRs for non-contributors) [2] [5]. |
| 4. Calibration Assessment | Check if the LRs reported are statistically well-calibrated. | Use Tippett plots or Empirical Cross-Entropy (ECE) plots; a well-calibrated system will show that when an LR of X is reported, the evidence is X times more likely under H1 than H2 [5]. |
| 5. Mock Casework Samples | Simulate real-world conditions. | Use samples that mimic actual evidence, such as degraded DNA or touched items; verify concordance with established methods where possible [2]. |
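As a rough sketch of the discriminatory-power metric in stage 1, the AUC can be computed directly from the LRs of known contributors and known non-contributors without plotting the ROC curve. The function below uses the rank-based (Mann-Whitney) definition of AUC; the function name and the LR values are hypothetical, and a real study would use many more comparisons.

```python
def auc_from_lrs(true_contributor_lrs, non_contributor_lrs):
    """Probability that a random true-contributor LR exceeds a random
    non-contributor LR, counting ties as one half (rank-based AUC)."""
    if not true_contributor_lrs or not non_contributor_lrs:
        raise ValueError("both groups need at least one LR")
    score = 0.0
    for lr_true in true_contributor_lrs:
        for lr_false in non_contributor_lrs:
            if lr_true > lr_false:
                score += 1.0
            elif lr_true == lr_false:
                score += 0.5
    return score / (len(true_contributor_lrs) * len(non_contributor_lrs))


# Hypothetical validation results, for illustration only.
true_lrs = [1e6, 3e4, 250.0, 12.0, 0.8]
false_lrs = [1e-9, 1e-4, 0.02, 0.5, 3.0]
print(f"AUC = {auc_from_lrs(true_lrs, false_lrs):.2f}")
```

An AUC of 1.0 means every true contributor received a higher LR than every non-contributor; 0.5 means the LRs do not separate the two groups at all.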

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key software and tools essential for research in probabilistic genotyping.

| Tool Name | Type / Function | Brief Description & Research Application |
|---|---|---|
| STRmix | Probabilistic Genotyping Software | A widely adopted, continuous PG system that uses a Bayesian framework to compute LRs for complex DNA mixtures [1] [7]. |
| EuroForMix | Probabilistic Genotyping Software | An open-source PG system based on a maximum likelihood estimation (MLE) method, useful for research and method comparisons [1] [5]. |
| MaSTR | Probabilistic Genotyping Software | A continuous PG software that employs Markov Chain Monte Carlo (MCMC) for interpreting 2-5 person mixtures, with advanced validation tools [2]. |
| NOCIt | Statistical Tool | A tool to determine the Number of Contributors (NoC) in a DNA mixture with statistical confidence, a critical first step in PG analysis [2]. |
| DNAStatistX | Probabilistic Genotyping Software | A PG software that, like EuroForMix, uses the MLE method and is used in operational laboratories [1] [5]. |

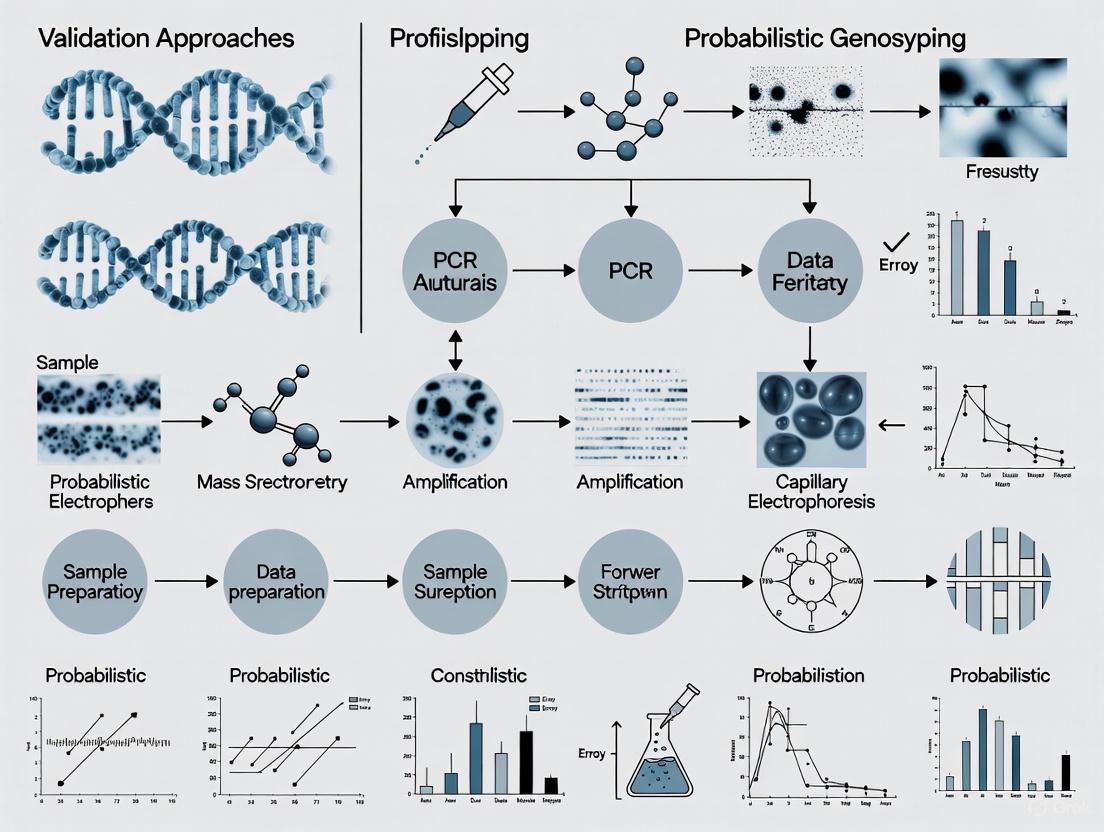

Visual Guide: The Probabilistic Genotyping Workflow

The core process of using PG software in an evaluative context follows a structured path, from raw data to statistical interpretation, as illustrated below.

The interpretation of DNA mixtures, especially those involving low-template or degraded DNA, is complicated by several stochastic effects. Allele drop-out occurs when an allele from a true contributor fails to amplify to a detectable level, while allele drop-in involves the random appearance of allelic peaks not originating from a true contributor [8] [9]. These phenomena, along with general stochastic amplification effects, present significant challenges for forensic analysts and researchers working with complex DNA mixtures [10].

These challenges are particularly relevant in the context of validating probabilistic genotyping software (PGS), where understanding and modeling these artifacts is essential for generating reliable likelihood ratios [11]. This guide addresses the specific issues users may encounter during their experiments and provides troubleshooting guidance based on current research and validation studies.

Troubleshooting Guides

Understanding and Identifying Common Artifacts

Table 1: Characteristics and Identification of Common Stochastic Effects

| Artifact Type | Definition | Key Identifying Features | Common Causes |
|---|---|---|---|
| Allele Drop-out | Failure of an allele to amplify above the analytical threshold [8] | Missing alleles in an otherwise complete profile; heterozygous imbalance; signatures of degradation [10] | Low template DNA (<100 pg), degraded DNA, inhibition, poor DNA quality |
| Allele Drop-in | Spurious appearance of allelic peaks not from biological contributors [9] | Isolated peaks (typically 1-2) below 400 RFU; non-reproducible across replicates; inconsistent with stutter patterns [12] [9] | Contamination from random DNA fragments, environmental contamination, laboratory procedures |
| Stochastic Effects | Random fluctuations in amplification efficiency [11] | Extreme peak height imbalances; heterozygote peak height ratios outside expected ranges; variable mixture ratios across loci [7] | Very low DNA quantities, inefficient amplification, primer binding issues |

Quantitative Characterization of Artifacts

Table 2: Empirical Data on Drop-in and Drop-out Characteristics

| Parameter | Drop-in Findings | Drop-out Findings |
|---|---|---|
| Frequency | 2472/13485 negative controls (18.3%) showed drop-in [12]; 5652/28842 (19.6%) over extended study [9] | Probability increases exponentially as DNA quantity decreases; can be modeled using logistic regression [8] |
| Peak Height | Typically below 400 RFU [12] [9]; majority below 150 RFU [9] | N/A (absence of peaks) |
| Locus Trends | Some loci show higher drop-in rates, though trends are not conclusive [12] | Varies by locus and template amount; more prevalent at larger loci, especially with degraded DNA [13] |
| Multiplicity | 71.9% single peaks, 28.1% two peaks in same sample [12] | Can affect single or multiple alleles depending on degradation levels and template quantity |
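To make the logistic drop-out model in the table concrete, the sketch below evaluates a logistic curve over the log of template quantity. The two coefficients are purely hypothetical placeholders, not published or validated values; in practice they would be fitted by logistic regression to a laboratory's own low-template data, often per locus.

```python
import math

# Hypothetical coefficients for illustration only (assumptions, not fitted values).
BETA_0 = 4.0   # intercept
BETA_1 = -2.5  # slope on log10(template in pg); negative so drop-out falls as template rises


def dropout_probability(template_pg: float) -> float:
    """Logistic model: Pr(drop-out) = 1 / (1 + exp(-(b0 + b1 * log10(template))))."""
    if template_pg <= 0:
        raise ValueError("template quantity must be positive")
    z = BETA_0 + BETA_1 * math.log10(template_pg)
    return 1.0 / (1.0 + math.exp(-z))


for pg in (10, 50, 100, 500):
    print(f"{pg:>4} pg -> Pr(drop-out) = {dropout_probability(pg):.3f}")
```

The shape, not the numbers, is the point: the modeled drop-out probability rises steeply once the template falls into the low-picogram range.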

Frequently Asked Questions (FAQs)

Q1: How can I distinguish between genuine drop-in and low-level contamination? Drop-in typically presents as one or two isolated peaks below 400 RFU that are inconsistent with stutter or other artifacts and are non-reproducible across replicates [12] [9]. In contrast, contamination generally shows three or more alleles and may form a partial profile. If multiple alleles from a single source are detected, it is classified as contamination rather than drop-in and may require adding an unknown contributor to the probabilistic model [9].
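The rule of thumb in Q1 can be written as a simple screening function. This is a sketch under stated assumptions: the `Peak` record and function name are hypothetical, the peaks passed in are assumed to have already been ruled out as stutter or other artifacts, and the thresholds (two peaks, 400 RFU) are the figures quoted above rather than universal constants.

```python
from dataclasses import dataclass


@dataclass
class Peak:
    locus: str
    allele: str
    height_rfu: float
    reproduced: bool  # True if the peak reappears in a replicate amplification


def classify_extraneous_peaks(peaks, max_dropin_peaks=2, max_dropin_height=400.0):
    """Classify unexplained peaks as drop-in, possible contamination, or review."""
    if not peaks:
        return "none"
    few = len(peaks) <= max_dropin_peaks
    low = all(p.height_rfu < max_dropin_height for p in peaks)
    sporadic = not any(p.reproduced for p in peaks)
    if few and low and sporadic:
        return "drop-in"
    if len(peaks) > max_dropin_peaks:
        return "possible contamination"
    return "review"  # few peaks, but tall or reproducible: needs analyst judgment


# Hypothetical example: one low, non-reproducible peak.
print(classify_extraneous_peaks([Peak("D3S1358", "15", 72.0, False)]))
```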

Q2: What approaches are most effective for managing allele drop-out in complex mixtures? Probabilistic genotyping software explicitly models drop-out probabilities based on peak heights, template quantity, and locus-specific factors [8]. Fully continuous systems like STRmix and EuroForMix incorporate quantitative data to estimate drop-out probabilities [11] [14]. Validation studies recommend testing software with low-template samples exhibiting stochastic effects to establish locus-specific drop-out parameters and ensure the software can handle expected drop-out scenarios in casework [11].

Q3: How do different probabilistic genotyping software platforms handle stutter compared to drop-in? Stutter is typically modeled using expected stutter ratios derived from empirical data. Some software (like STRmix) requires stutter to be included in the analysis, while other software (like EuroForMix) makes stutter modeling a user option [14]. Drop-in, in contrast, is generally modeled as a set of independent events in which the probability of a given allele dropping in follows its frequency in the population database. Peak height is often taken into account as well, with larger drop-in peaks having a greater impact on the likelihood ratio [9].

Q4: What validation approaches are essential for ensuring reliable probabilistic genotyping results? Comprehensive validation should include: accuracy testing with known samples, sensitivity/specificity analyses, precision assessment, evaluation of software parameters, testing with varying contributor numbers, mixture ratios, degradation levels, and allele sharing patterns [11]. Studies should specifically test for Type I (false exclusion) and Type II (false inclusion) errors using both contributors and non-contributors [11]. The Scientific Working Group on DNA Analysis Methods (SWGDAM) provides detailed validation guidelines for probabilistic genotyping systems [7] [11].

Experimental Protocols for Validation Studies

Protocol for Characterizing Laboratory-Specific Drop-in Parameters

Purpose: To establish laboratory-specific drop-in rates and characteristics for configuring probabilistic genotyping software.

Materials:

- QIAamp DNA Investigator kit (Qiagen) or equivalent DNA extraction system [12] [9]

- Appropriate STR amplification kits (e.g., PowerPlex Fusion 5C, GlobalFiler) [11] [14]

- Capillary electrophoresis system (e.g., 3130-Avant Genetic Analyzer) [11]

- Negative control samples (extraction negatives, PCR negatives) [12]

Procedure:

- Process a large set of negative controls (recommended: >1000 samples) alongside casework samples over an extended period (e.g., 3-6 months) [9]

- Record all instances where one or two peaks appear between the analytical threshold (typically 40 RFU) and 400 RFU and are inconsistent with stutter or other artifacts [12]

- Categorize drop-in events by: locus, peak height, run date, and negative control type [9]

- Calculate per-sample and per-locus drop-in probabilities from the data

- Use this empirical data to set drop-in parameters (rate and peak height distribution) in probabilistic genotyping software

Validation: Periodically repeat this analysis to monitor for changes in laboratory drop-in rates and adjust parameters accordingly [9].
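The rate calculation in the procedure above might look like the following sketch. The data layout and function name are assumptions made for illustration: each negative control is represented as a list of (locus, peak height) tuples for peaks already screened as drop-in, and the per-locus rate is taken as events at that locus divided by the number of controls.

```python
from collections import Counter


def dropin_rates(controls, loci):
    """Return (per-sample rate, per-locus rates) from negative-control records.

    controls: one list per negative control, holding (locus, height_rfu)
              tuples for each screened drop-in peak (empty list = clean control).
    """
    n_controls = len(controls)
    if n_controls == 0:
        raise ValueError("at least one negative control is required")
    with_dropin = sum(1 for events in controls if events)
    locus_counts = Counter(locus for events in controls for locus, _ in events)
    per_sample = with_dropin / n_controls
    per_locus = {locus: locus_counts[locus] / n_controls for locus in loci}
    return per_sample, per_locus


# Hypothetical mini data set: 10 negative controls, 2 of them with drop-in.
controls = [[], [], [("D3S1358", 62.0)], [], [("FGA", 88.0), ("D3S1358", 120.0)],
            [], [], [], [], []]
per_sample, per_locus = dropin_rates(controls, ["D3S1358", "FGA", "TH01"])
print(per_sample, per_locus)

# The same per-sample arithmetic applied to the counts quoted in Table 2:
print(f"{2472 / 13485:.1%}")
```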

Protocol for Evaluating Software Performance with Stochastic Effects

Purpose: To validate probabilistic genotyping software performance with samples exhibiting drop-out, drop-in, and stochastic effects.

Materials:

- Quantified human DNA extracts from single donors [11]

- Real-time PCR quantification system (e.g., 7500 real-time PCR system with Quantifiler kit) [11]

- STR amplification and capillary electrophoresis systems [11]

Procedure:

- Prepare mixture samples with varying contributor numbers (2-5 persons), template quantities (0.016-1 ng total DNA), and mixture ratios [15] [11]

- Include samples with high and low levels of allele sharing between contributors [11]

- Analyze all samples using the probabilistic genotyping software with appropriate analytical thresholds (e.g., 30-50 RFU) [11]

- For each sample, compute likelihood ratios for both true contributors and non-contributors using multiple propositions [11]

- Assess software accuracy, sensitivity, and specificity across the tested conditions

- Document instances of Type I (LR<1 for true contributor) and Type II (LR>1 for non-contributor) errors [11]

Interpretation: The software is considered validated for specific casework scenarios when it demonstrates acceptable performance across the tested range of conditions, with documented limitations [7] [11].
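Tallying the two error types from the procedure is straightforward once each comparison is labeled with its ground truth. The sketch below assumes a hypothetical list of (is true contributor, LR) pairs and uses the definitions given above: Type I is LR < 1 for a true contributor, and Type II is LR > 1 for a non-contributor. An LR of exactly 1 is counted as neither.

```python
def error_summary(results):
    """Count Type I and Type II errors in labeled validation results.

    results: iterable of (is_true_contributor: bool, lr: float) pairs.
    """
    results = list(results)
    true_lrs = [lr for is_true, lr in results if is_true]
    false_lrs = [lr for is_true, lr in results if not is_true]
    type_1 = sum(lr < 1 for lr in true_lrs)   # false exclusions
    type_2 = sum(lr > 1 for lr in false_lrs)  # false inclusions
    return {
        "type_1_count": type_1,
        "type_1_rate": type_1 / len(true_lrs) if true_lrs else None,
        "type_2_count": type_2,
        "type_2_rate": type_2 / len(false_lrs) if false_lrs else None,
    }


# Hypothetical results, for illustration only.
validation_results = [
    (True, 2.4e5), (True, 18.0), (True, 0.6), (True, 0.0),
    (False, 1e-7), (False, 0.3), (False, 4.2), (False, 1e-3),
]
print(error_summary(validation_results))
```

Breaking the same tally down by contributor number, template quantity, and mixture ratio shows where in the tested range the errors concentrate.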

Workflow Visualization

DNA Analysis Workflow and Challenges - This diagram illustrates the standard DNA analysis process and points where stochastic effects introduce challenges, along with corresponding mitigation strategies implemented during data analysis and interpretation.

Research Reagent Solutions

Table 3: Essential Materials and Reagents for DNA Mixture Research

| Reagent/Kit | Primary Function | Application Notes | References |
|---|---|---|---|
| QIAamp DNA Investigator Kit | DNA extraction from forensic samples | Optimized for low-template and challenging samples; used in validation studies | [13] [9] |
| PowerPlex Fusion 5C | STR multiplex amplification | 24-locus system; used in validation studies for complex mixture analysis | [11] |
| GlobalFiler/GlobalFiler Express | STR multiplex amplification | 24-locus kits; used in validation studies and casework applications | [7] [14] |
| Quantifiler Human DNA Quantification Kit | Real-time PCR quantification | Essential for determining input DNA for mixture studies | [11] |
| FD multi-SNP Mixture Kit | MNP multiplex amplification | Covers 567 multi-SNP markers; useful for degraded DNA analysis | [13] |
| Identifiler Plus PCR Amplification Kit | STR multiplex amplification | Conventional CE-STR analysis; comparator for new technologies | [13] |

Advanced Methodologies for Complex Mixtures

For particularly challenging samples involving severe degradation or extreme low-template DNA, alternative marker systems may be necessary. Multi-SNPs (MNPs), which are genetic markers similar to microhaplotypes but with smaller molecular sizes (<75 bp), have demonstrated significant potential for analyzing degraded and trace amount DNA samples [13]. In validation studies, next-generation sequencing-based MNP analysis successfully detected a contributor's DNA in a cold case sample stored for over a decade where conventional CE-STR analysis produced inconclusive results [13].

When establishing laboratory protocols for probabilistic genotyping software validation, it is essential to consider population-specific allele frequencies and laboratory-specific parameters. These include stutter ratios, drop-in rates, and analytical thresholds, all of which significantly impact likelihood ratio calculations and should be derived from empirical laboratory data rather than relying solely on manufacturer defaults or data from other laboratories [7] [11] [9].

Navigating the validation of probabilistic genotyping software (PGS) requires a clear understanding of the key organizations that publish authoritative guidelines. These bodies provide the legal and scientific framework that ensures forensic DNA analysis is accurate, reliable, and admissible in court. The three primary organizations shaping this landscape are the Scientific Working Group on DNA Analysis Methods (SWGDAM), the AAFS Standards Board (ASB), an ANSI-accredited standards developer whose documents are published as ANSI/ASB standards, and the International Society for Forensic Genetics (ISFG).

Each organization serves a distinct purpose. SWGDAM provides guidance and recommendations specifically for the U.S. forensic DNA community, the ASB publishes formal, consensus-based standards, and the ISFG offers international perspectives and recommendations through its DNA Commission. For laboratories implementing probabilistic genotyping systems like STRmix or MaSTR, compliance with these guidelines is not merely advisory; it is a fundamental requirement for forensic accreditation and legal acceptance [7] [11].

Table 1: Key Guideline Bodies for Probabilistic Genotyping Software Validation

| Organization | Primary Role & Focus | Key Document Examples | Authority & Jurisdiction |
|---|---|---|---|
| SWGDAM | Develops guidance for U.S. forensic DNA labs; recommends changes to FBI Quality Assurance Standards (QAS) [16]. | SWGDAM Validation Guidelines for Probabilistic Genotyping Systems [7] [11]. | U.S. national focus; closely tied to the FBI and CODIS operations [17]. |
| ANSI/ASB | Develops formal, consensus-based national standards for a broad range of forensic disciplines [18]. | ANSI/ASB Standard 018: Standard for Validation of Probabilistic Genotyping Systems [18] [19]. | U.S. national standards; often referenced for accreditation. |
| ISFG (DNA Commission) | Provides international recommendations and consensus guidelines on forensic genetics topics [20]. | DNA Commission recommendations on DNA transfer and recovery, PGS, and terminology [21] [11]. | International authority; promotes global standardization. |

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

FAQ 1: Our laboratory is performing an internal validation of a probabilistic genotyping system. Are we required to follow both SWGDAM guidelines and ASB standards?

Answer: While there can be overlap, both sets of documents are critical. The FBI Quality Assurance Standards (QAS) represent the minimum requirements for forensic DNA testing laboratories in the United States [17]. SWGDAM, which has a unique statutory relationship with the FBI, provides detailed guidance to help laboratories meet these standards and discusses emerging technologies like PGS [16] [17]. ANSI/ASB Standard 018 is a formal national standard that lays out specific requirements for PGS validation [18]. A robust internal validation study should demonstrate compliance with the relevant ASB standard and incorporate recommendations from SWGDAM guidelines to ensure it meets the expectations of the broader forensic community. Published validation studies often state compliance with both to establish scientific rigor [7] [11].

FAQ 2: During validation, we encountered a rare case where the software excluded a true contributor (LR = 0). Does this mean our validation has failed?

Answer: Not necessarily. Encountering such edge cases is a primary goal of a thorough validation. The purpose of validation is to define the limits and performance of the system under a wide range of conditions. One study noted that extreme heterozygote imbalance or significant stochastic effects can, in rare instances, lead to an LR of 0 for a true contributor [7]. Your validation report should document these observations, explain the likely causes (e.g., stochastic effects, allele drop-out), and define the limitations of the software. This documentation is essential for providing context when testifying about the strengths and limitations of the method.

FAQ 3: What is the difference between a SWGDAM "Guideline" and an ANSI/ASB "Standard"?

Answer: The key difference lies in their formality and purpose.

- A SWGDAM Guideline is a recommendation for best practices, providing a detailed framework for laboratories to develop their own procedures. SWGDAM's mission is to "develop guidance documents to enhance the delivery of forensic biology services" [16].

- An ANSI/ASB Standard is a formal, consensus-based standard developed in accordance with procedures accredited by the American National Standards Institute (ANSI). While the QAS are the minimum standards, ASB standards like ANSI/ASB 018 provide highly specific, technical requirements for validation [18]. As noted in SWGDAM FAQs, their guideline documents are more detailed than the QAS, and laboratories use them to inform their protocols [17].

Troubleshooting Common Validation Challenges

Issue 1: Inconsistent Results with High Contributor Numbers or Low-Level DNA

- Problem: The software produces unreliable or unpredictable Likelihood Ratios (LRs) when analyzing mixtures with four or more contributors, or when dealing with very low-template DNA.

- Solution:

- Define Limits: Your validation study must explicitly define the software's limits. The study by Green et al. demonstrated that MaSTR was validated for mixtures of up to five contributors, but your lab must confirm this for your specific system and conditions [11].

- Use Appropriate Controls: Include samples with known contributors and non-contributors to test for Type I (false exclusion) and Type II (false inclusion) errors under these challenging conditions [11].

- Document Stochastic Effects: Acknowledge and document that stochastic effects like allele drop-out and heterozygote imbalance are expected with low-level DNA. One study found that these effects can, in rare instances, lead to an LR of 0 for a true contributor, which must be understood and reported as a limitation [7].

Issue 2: Determining the Appropriate Number of Contributors for a Mixture

- Problem: Incorrectly estimating the number of contributors (NOC) to a DNA mixture can lead to erroneous LR calculations.

- Solution:

- Validate NOC Estimation Protocol: Your validation must include a specific experiment to assess your laboratory's method for estimating the NOC. This should involve analyzing mixtures with known numbers of contributors and assessing the accuracy of your estimates.

- Test Proposition Setting: Follow the guidance in ASB Standard 018 and SWGDAM guidelines to test the effect of assuming an incorrect NOC. For instance, analyze a three-person mixture while hypothesizing only two contributors and evaluate the impact on the LR for true contributors and non-contributors [7].

Issue 3: Setting Laboratory-Specific Parameters

- Problem: The probabilistic genotyping software does not perform optimally with default parameters for your laboratory's specific chemistry, instrumentation, and population.

- Solution:

- Develop Custom Parameters: The validation must establish laboratory-specific parameters for stutter, peak height variation, and other model variables. This process is fundamental to the "internal" part of internal validation.

- Follow a Rigorous Workflow: The process for establishing and validating these parameters should be methodical and well-documented, as outlined in the diagram below.

Diagram 1: Laboratory Parameter Validation Workflow

Essential Experimental Protocols for PGS Validation

A robust internal validation for probabilistic genotyping software must be comprehensive. The following protocol synthesizes core requirements from SWGDAM, ANSI/ASB Standard 018, and established scientific practice [7] [11].

Core Validation Protocol

Objective: To verify that the probabilistic genotyping software performs with acceptable accuracy, sensitivity, specificity, and precision within the laboratory's specific environment and with its chosen DNA analysis kits.

Materials and Reagents:

Table 2: Research Reagent Solutions for PGS Validation

| Reagent / Material | Function in Validation | Example Product(s) |
|---|---|---|
| Commercial STR Kits | Generates the DNA profiles for software interpretation. Defines the loci available for analysis. | PowerPlex Fusion 5C, GlobalFiler [7] [11]. |
| Quantification Kits | Accurately determines the quantity of human DNA in a sample, which is critical for creating mixtures with specific ratios and quantities. | Quantifiler Human DNA Quantification Kit [11]. |
| Capillary Electrophoresis System | Separates and detects amplified PCR products, generating the raw electropherogram data. | 3130-Avant Genetic Analyzer [11]. |
| Genotyping Software | Performs initial allele calling and peak height analysis from electropherograms, creating the input file for PGS. | GeneMarker HID, GeneMapper ID-X [11]. |
| De-identified Human DNA Extracts | Serves as known single-source reference material for creating controlled mixture samples. | Nebraska BioBank [11]. |

Methodology:

Preparation of Mock Mixtures:

- Create mixtures with varying numbers of contributors (e.g., 2 to 5) [11].

- Prepare samples with a wide range of mixture ratios (e.g., 1:1 to 1:20) and total DNA quantities (from optimal down to the limit of detection) [7] [11].

- Intentionally select contributors with different levels of allele sharing, from minimal to extensive, to challenge the software's ability to deconvolute profiles [11].
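A mixture design like the one described above can be enumerated programmatically so that no combination is missed. The sketch below is illustrative only: the ratio sets and total template quantities are hypothetical choices within the ranges mentioned in this guide, and the helper simply splits a total template amount according to a mixture ratio.

```python
from itertools import product


def contributor_templates(total_ng, ratio):
    """Split a total template amount (ng) across contributors by mixture ratio."""
    parts = sum(ratio)
    return [total_ng * share / parts for share in ratio]


# Hypothetical design choices, for illustration only.
RATIOS_BY_NOC = {
    2: [(1, 1), (4, 1), (20, 1)],
    3: [(1, 1, 1), (4, 2, 1)],
}
TOTAL_TEMPLATE_NG = [1.0, 0.25, 0.016]

design = []
for noc, ratios in RATIOS_BY_NOC.items():
    for ratio, total_ng in product(ratios, TOTAL_TEMPLATE_NG):
        design.append({
            "contributors": noc,
            "ratio": ratio,
            "total_ng": total_ng,
            "per_contributor_ng": contributor_templates(total_ng, ratio),
        })

print(len(design), "mixtures planned")
print(design[0])
```

Listing the per-contributor template makes it easy to see which minor contributors fall into the range where drop-out is expected.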

Data Generation and Analysis:

- Process the mock mixture samples through your standard laboratory workflow: extraction, quantification, PCR amplification, and capillary electrophoresis [11].

- Analyze the resulting profiles using your genotyping software to create the input files for the probabilistic genotyping software.

Software Testing:

- Accuracy & Specificity: For each mock mixture, calculate the LR for known true contributors and known true non-contributors. True contributors should generate an LR > 1 (supporting inclusion), and true non-contributors should generate an LR < 1 (supporting exclusion) [11].

- Sensitivity & Limits: Systematically reduce the quantity of DNA or the proportion of a minor contributor to determine the point at which the software can no longer reliably include the true contributor.

- Precision: Run the same sample multiple times to ensure the software produces reproducible LRs.

- Robustness: Test the effect of incorrect assumptions, such as specifying the wrong number of contributors, to understand how this impacts the LR [7].
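For the precision check, one simple summary is the mean and standard deviation of log10(LR) across replicate runs of the same profile and propositions. The sketch below uses Python's standard `statistics` module; the replicate values are hypothetical, and what counts as acceptable spread is a laboratory decision that this example does not define.

```python
import math
import statistics


def log_lr_precision(replicate_lrs):
    """Return (mean, sample standard deviation) of log10(LR) across replicates."""
    if len(replicate_lrs) < 2:
        raise ValueError("at least two replicate runs are required")
    if any(lr <= 0 for lr in replicate_lrs):
        raise ValueError("all replicate LRs must be positive to take log10")
    logs = [math.log10(lr) for lr in replicate_lrs]
    return statistics.mean(logs), statistics.stdev(logs)


# Hypothetical LRs from four repeat analyses of one mixture.
replicates = [1.2e6, 9.0e5, 1.5e6, 1.1e6]
mean_log, sd_log = log_lr_precision(replicates)
print(f"mean log10(LR) = {mean_log:.2f}, SD = {sd_log:.2f}")
```

For MCMC-based software, a spread that stays large after increasing the number of iterations is worth investigating before the result is relied on.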

The logical flow of the entire validation process, from sample creation to data interpretation, is summarized in the following diagram:

Diagram 2: PGS Validation Workflow

Successful validation of probabilistic genotyping software is a non-negotiable prerequisite for its use in forensic casework and research. By integrating the structured requirements of ANSI/ASB Standard 018, the practical guidance from SWGDAM, and the international perspective of the ISFG's DNA Commission, researchers and laboratories can build a scientifically defensible and legally sound validation framework. The troubleshooting guides and experimental protocols outlined here provide a concrete foundation for navigating this complex process, ensuring that the powerful tools of probabilistic genotyping are applied with the highest degree of scientific rigor.

Probabilistic genotyping software (PGS) represents a fundamental shift in the interpretation of complex DNA mixtures, moving from traditional "binary" methods to sophisticated statistical models [22]. For researchers and scientists validating these systems, understanding the underlying software architecture—specifically the distinction between fully continuous and semi-continuous models—is critical for robust experimental design and accurate assessment of software performance.

These architectural approaches differ primarily in how they handle and weight the electropherogram data, which directly impacts the validation protocols, computational demands, and the types of DNA profiles for which each is best suited [22].

Core Architectural Models: A Comparative Analysis

The two predominant architectural models for probabilistic genotyping software offer different approaches to managing the uncertainty in DNA mixture interpretation.

Semi-Continuous Architecture

Semi-continuous architectures represent an intermediate step between traditional binary methods and fully continuous models. They consider the presence or absence of alleles (the binary characteristic) but also incorporate some quantitative information from the electropherogram, such as peak heights, primarily to guide the interpretation and to apply filters for stochastic thresholds [22].

Fully Continuous Architecture

Fully continuous architectures utilize all available quantitative data from the electropherogram, including peak heights, areas, and morphology. They employ complex statistical models to account for molecular processes like stutter, dye blobs, and peak height variability due to PCR amplification effects. Software like STRmix exemplifies this architecture [7] [22].

Table 1: Comparative Analysis of Architectural Models in Probabilistic Genotyping

| Feature | Semi-Continuous Model | Fully Continuous Model |

|---|---|---|

| Core Data Used | Allelic presence/absence; limited quantitative data [22] | All quantitative data (peak heights, areas, morphology) [22] |

| Statistical Approach | Likelihood Ratios (LR) based on allele presence [22] | Fully continuous probability distributions modeling all peak data [22] |

| Handling of Uncertainty | Through a stochastic threshold; data below threshold may be excluded [22] | Explicitly models all sources of uncertainty (stutter, imbalance) [22] |

| Computational Demand | Lower | Higher [22] |

| Typical Output | Likelihood Ratio | Likelihood Ratio [7] |

| Optimal Profile Context | Higher template DNA, simpler mixtures | Low-template DNA, complex mixtures [22] |

The Scientist's Toolkit: Essential Research Reagents & Materials

Validation of probabilistic genotyping software requires carefully characterized materials to assess performance across diverse scenarios.

Table 2: Key Research Reagents and Materials for PGS Validation

| Reagent/Material | Function in Validation |

|---|---|

| Control DNA Samples | Provide known genotype templates for creating reference mixture profiles with defined ratios [7]. |

| Commercial STR Multiplex Kits | Generate standardized DNA profiles from samples; parameters from these kits are used to configure the PGS [7]. |

| Mixed DNA Profiles | The core input data for the software; created in-house from control DNA at varying mixture ratios and template quantities to test sensitivity and specificity [7] [22]. |

| Laboratory-Specific Parameters | Calibration data (e.g., stutter, peak height ratios) derived from your lab's specific protocols and equipment, which are input into the PGS to ensure accurate modeling [7]. |

| Sensitivity Panels | Series of samples with progressively decreasing amounts of DNA to determine the lower limits of reliable interpretation [7]. |

Experimental Protocols for Architectural Validation

A rigorous internal validation is mandatory to ensure the probabilistic genotyping software performs reliably within your specific experimental environment.

Core Validation Framework

Adhere to established guidelines such as those from the Scientific Working Group on DNA Analysis Methods (SWGDAM) [7]. The validation should assess key performance characteristics:

- Sensitivity: Determine how software performance changes with low-template DNA or minor contributors.

- Specificity: Ensure the software does not incorrectly include non-contributors.

- Precision & Robustness: Test the software's consistency and its performance when model assumptions are challenged [7].

Key Experimental Methodologies

Mixture Ratio and Contributor Number Studies:

- Purpose: To assess the software's accuracy under different mixture complexities and when the number of contributors is mis-specified.

- Protocol: Prepare mixtures with known contributors at varying ratios (e.g., 1:1, 1:4, 1:9). Analyze the profiles using the software with both the correct and incorrect number of contributors specified. Record the Likelihood Ratio (LR) outputs for true contributors and non-contributors [7].

Known Contributor Addition Studies:

- Purpose: To validate the software's ability to incorporate known reference profiles correctly during the interpretation process.

- Protocol: For a complex mixture profile, re-analyze the data while providing the software with the genotype of a known contributor. Observe the impact on the LR for the remaining contributors [7].

Specificity and Precision Testing:

- Purpose: To confirm that the software reliably excludes non-contributors and produces consistent results.

- Protocol: Run the same mixture profile multiple times to check for result consistency (precision). Furthermore, compute LRs for a large number of non-contributor profiles from a population database to confirm that they are correctly excluded (specificity) [7].
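The specificity step above depends on a large pool of non-contributor genotypes. As a minimal sketch, assuming a hypothetical allele-frequency table and independent sampling of the two alleles at each locus (Hardy-Weinberg proportions, no subpopulation correction), simulated non-contributors could be generated as follows. The locus frequencies and function names are illustrative and not taken from any cited study. The LR calculation itself is left to the software under validation.

```python
import random

# Hypothetical allele frequencies (illustrative values, not a real database).
ALLELE_FREQS = {
    "D3S1358": {"14": 0.10, "15": 0.30, "16": 0.25, "17": 0.20, "18": 0.15},
    "TH01": {"6": 0.25, "7": 0.20, "9": 0.15, "9.3": 0.40},
}


def sample_genotype(freqs, rng):
    """Draw two alleles independently, weighted by population frequency."""
    alleles = list(freqs)
    weights = [freqs[a] for a in alleles]
    first, second = rng.choices(alleles, weights=weights, k=2)
    # Sort numerically so ("9.3", "6") and ("6", "9.3") are the same genotype.
    return tuple(sorted((first, second), key=float))


def sample_non_contributors(locus_freqs, n, seed=0):
    """Return n simulated profiles, each a dict of locus -> (allele, allele)."""
    rng = random.Random(seed)  # fixed seed keeps the test set reproducible
    return [
        {locus: sample_genotype(freqs, rng) for locus, freqs in locus_freqs.items()}
        for _ in range(n)
    ]


profiles = sample_non_contributors(ALLELE_FREQS, n=1000)
print(profiles[0])
```

Fixing the seed means the same non-contributor set can be re-used whenever parameters or software versions change, which makes before-and-after comparisons easier to interpret.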

Frequently Asked Questions (FAQs) for Troubleshooting

Q1: Our validation shows that the software occasionally excludes a true contributor (LR=0). What could be the cause? A: This rare event, as noted in STRmix validation, can occur due to extreme heterozygote imbalance or significant stochastic differences in the mixture ratio between loci caused by PCR amplification effects. It highlights the importance of understanding the software's model limitations and reviewing the raw data carefully, especially for low-level components [7].

Q2: When validating, should we use the default model parameters or develop our own? A: You must use laboratory-specific parameters. The software should be configured with stutter, peak height ratio, and other parameters derived from your own validation data generated with your specific STR multiplex kits and laboratory protocols. Using default parameters from a different environment is not forensically sound [7].

Q3: How do we handle the choice between semi-continuous and fully continuous architectures for our laboratory? A: The choice involves a trade-off. Fully continuous models are more powerful for complex, low-template mixtures but are computationally intensive. Semi-continuous may be sufficient for simpler casework but might require more manual intervention and data exclusion via thresholds. The decision should be based on your laboratory's typical casework and validation outcomes [22].

Q4: What is the most critical factor for a successful software validation? A: A comprehensive and well-designed experimental plan that challenges the software with a wide range of scenarios reflective of your actual casework. This includes testing various mixture ratios, template amounts, and potential mis-specifications of the number of contributors [7].

Workflow and Conceptual Diagrams

The following diagram illustrates the high-level logical workflow and decision points involved in the internal validation of probabilistic genotyping software, from experimental setup to data interpretation.

This second diagram contrasts the fundamental data processing flows of the semi-continuous and fully continuous architectural models, highlighting key differentiators.

Executing a Robust Internal Validation: A Step-by-Step Framework

Designing Validation Studies According to SWGDAM Recommendations

FAQs: Navigating SWGDAM Validation Guidelines

What are the SWGDAM guidelines and why are they critical for validation?

The Scientific Working Group on DNA Analysis Methods (SWGDAM) is a collaborative body of scientists from federal, state, and local forensic DNA laboratories across the United States. SWGDAM is recognized as a leader in developing guidance documents to enhance forensic biology services, including specific guidelines for validating probabilistic genotyping systems [16]. Following these guidelines is not merely a best practice but is fundamental to ensuring the scientific rigor and legal admissibility of your validation data. Internal validation studies conducted according to SWGDAM recommendations provide the objective evidence required to demonstrate that a probabilistic genotyping software performs reliably and reproducibly within your specific laboratory environment [7].

How should I structure my internal validation study for probabilistic genotyping software?

Your internal validation should be a comprehensive investigation designed to characterize software performance under conditions mimicking casework. A key publication demonstrates this by validating STRmix according to SWGDAM guidelines, focusing on several core performance areas [7]. The study should be structured to evaluate:

- Sensitivity and Specificity: Assess the software's ability to correctly include true contributors and exclude non-contributors across a range of DNA template quantities and mixture ratios.

- Precision: Determine the reproducibility and variability of Likelihood Ratio (LR) outputs for the same DNA profile.

- Robustness and Limitations: Probe the boundaries of the software by testing the effects of incorrect user assumptions (like wrong number of contributors) and challenging profiles affected by stochastic effects like extreme heterozygote imbalance or significant mixture ratio differences between loci [7].

What are common pitfalls during validation, and how can I troubleshoot them?

Even well-designed validations can encounter issues. Below is a troubleshooting guide for common experimental problems.

| Problem | Underlying Issue | Troubleshooting Steps |

|---|---|---|

| Unexpected Exclusions | True contributor is excluded due to PCR stochastic effects (e.g., extreme heterozygote imbalance) [7]. | Re-examine profile data for low-level alleles or imbalance. Adjust laboratory-specific model parameters (e.g., stutter, peak height threshold) and re-run calculations. Document the profile characteristics causing the issue. |

| Unrealistically High LRs | Model may be over-fitting the data or the parameters may not adequately account for laboratory-specific noise. | Verify that stutter and baseline noise parameters are correctly calibrated. Test the same profile with a different biological model or with a known non-contributor to check for LR inflation. |

| Software Fails to Deconvolve | The complexity of the profile (e.g., high number of contributors, low-template components) exceeds the software's current capabilities. | Simplify the experiment by starting with a lower number of contributors. Ensure the assumed number of contributors is correct. Check that the profile data meets the minimum required input criteria for the software. |

| Inconsistent Results | Lack of precision or reproducibility between replicate runs. | Standardize all input parameters and profile interpretation thresholds. Ensure the same biological model is applied across all replicates. Investigate if the inconsistency is tied to a specific profile type (e.g., very low-level mixtures). |

Experimental Protocols for Key Validation Experiments

The following tables summarize the detailed methodologies for core validation experiments as referenced in scientific literature adhering to SWGDAM principles [7].

Sensitivity and Specificity Testing

| Objective | Experimental Method | Data Analysis | Key Parameters |

|---|---|---|---|

| Determine the effect of DNA quantity and mixture ratio on the software's ability to identify true contributors. | Prepare mixed DNA profiles from known contributors. Systematically vary the total DNA input and the ratio of contributors (e.g., 1:1, 1:4, 1:19). | Calculate Likelihood Ratios (LRs) for true contributors and non-contributors across all sensitivity series. Record the rate of false exclusions (LR < 1 for true contributors) and false inclusions (LR > 1 for non-contributors). | Total DNA input (ng), Mixture ratio, Profile quality metrics (peak heights, balance). |
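The data analysis step in the table above reduces to counting LRs on the wrong side of 1. A minimal sketch, assuming the LRs have already been exported from the software as plain numbers (the function name and the example values are hypothetical):

```python
def error_rates(true_contributor_lrs, non_contributor_lrs):
    """Fraction of true contributors with LR < 1 and non-contributors with LR > 1."""
    if not true_contributor_lrs or not non_contributor_lrs:
        raise ValueError("both LR lists must be non-empty")
    false_exclusions = sum(1 for lr in true_contributor_lrs if lr < 1)
    false_inclusions = sum(1 for lr in non_contributor_lrs if lr > 1)
    return {
        "false_exclusion_rate": false_exclusions / len(true_contributor_lrs),
        "false_inclusion_rate": false_inclusions / len(non_contributor_lrs),
    }


# Hypothetical LRs from one sensitivity series.
rates = error_rates(
    true_contributor_lrs=[1e6, 0.5, 1e3, 0.0],
    non_contributor_lrs=[1e-4, 2.0, 0.0, 0.1],
)
print(rates)
```

Reporting the rates separately for each template amount and mixture ratio, rather than pooled, shows where performance starts to degrade.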

Precision and Reproducibility Assessment

| Objective | Experimental Method | Data Analysis | Key Parameters |

|---|---|---|---|

| Evaluate the consistency of LR results for the same evidence profile. | Process the same DNA profile through the probabilistic genotyping software multiple times (n≥10). Ensure all input parameters and the biological model are identical for each run. | Calculate the mean, standard deviation, and coefficient of variation (CV) of the log10(LR) values. A low CV indicates high precision. | log10(LR), Standard Deviation, Coefficient of Variation (CV). |
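The summary statistics in the table above can be computed directly from replicate LRs. The sketch below assumes every replicate LR is positive, and the replicate values shown are hypothetical. Note that the coefficient of variation becomes unstable when the mean log10(LR) is close to zero, so the standard deviation is usually the safer number to compare against an acceptance criterion.

```python
import math
import statistics


def precision_summary(lrs):
    """Summarize replicate LRs (all > 0) on the log10 scale."""
    if len(lrs) < 2 or any(lr <= 0 for lr in lrs):
        raise ValueError("need at least two positive LR values")
    logs = [math.log10(lr) for lr in lrs]
    mean = statistics.mean(logs)
    sd = statistics.stdev(logs)  # sample standard deviation
    cv = sd / abs(mean) if mean != 0 else float("inf")
    return {"mean_log10_lr": mean, "sd_log10_lr": sd, "cv": cv}


# Hypothetical replicate LRs for one profile and one pair of propositions.
replicates = [2.1e9, 1.8e9, 2.4e9, 2.0e9, 1.9e9, 2.2e9, 2.3e9, 1.7e9, 2.0e9, 2.1e9]
summary = precision_summary(replicates)
print(summary)
```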

Robustness and Model Limitations

| Objective | Experimental Method | Data Analysis | Key Parameters |

|---|---|---|---|

| Test the software's performance when given incorrect user-directed assumptions. | Analyze known mixed DNA profiles while intentionally specifying an incorrect number of contributors (e.g., analyze a 3-person mixture while assuming 2 contributors). | Compare the resulting LRs for true contributors obtained with the correct vs. incorrect number of contributors. Note any false exclusions or significant changes in LR magnitude. | Assumed number of contributors, LR with correct vs. incorrect assumption. |

| Investigate the impact of known PCR artifacts. | Select or create profiles exhibiting known stochastic effects, such as severe heterozygote imbalance or allele drop-out. | Process these challenging profiles and document the software's output, including any instances where a true contributor is assigned an LR of 0 (exclusion) [7]. | Presence of heterozygote imbalance, Allele drop-out/drop-in, Stochastic threshold. |
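For the contributor-number experiment, the comparison of interest is the shift in log10(LR) between the correct and incorrect assumption, together with a flag for any true contributor that moves from inclusion to exclusion. A minimal sketch with hypothetical function names and values, treating an LR of 0 as negative infinity on the log scale:

```python
import math


def log10_lr(lr):
    """log10 of an LR, mapping an exclusion (LR = 0) to negative infinity."""
    return math.log10(lr) if lr > 0 else float("-inf")


def noc_effect(lr_correct, lr_incorrect):
    """Compare a true contributor's LR under correct vs. incorrect N."""
    if lr_correct <= 0:
        raise ValueError("LR under the correct N must be positive to compute a shift")
    return {
        "log10_shift": log10_lr(lr_incorrect) - log10_lr(lr_correct),
        "false_exclusion": lr_correct >= 1 and lr_incorrect < 1,
    }


# Hypothetical example: a 3-person mixture analyzed assuming 3, then 2, contributors.
print(noc_effect(lr_correct=1e8, lr_incorrect=1e5))
print(noc_effect(lr_correct=1e4, lr_incorrect=0.0))
```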

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and software essential for executing a SWGDAM-aligned validation study for probabilistic genotyping software.

| Item | Function in Validation | Example Product(s) |

|---|---|---|

| Reference DNA Profiles | Provides known, controlled source material for creating mixed DNA samples used in validation studies. | Commercially available DNA standards (e.g., from NIST), or internally characterized cell lines. |

| PCR Amplification Kit | Generates the DNA profiles from extracted DNA. The choice of kit determines the genetic markers available for analysis. | GlobalFiler, Identifiler, PowerPlex systems. |

| Genetic Analyzer | Separates amplified DNA fragments by size to produce the electropherograms that are the raw data for software interpretation. | Applied Biosystems 3500 Series. |

| Probabilistic Genotyping Software | The system under validation; interprets complex DNA mixture data and calculates a statistical weight of evidence (Likelihood Ratio). | STRmix, TrueAllele, EuroForMix. |

| Laboratory Information System | Tracks chain of custody, sample processing data, and results throughout the validation study, ensuring data integrity. | Lab-specific LIMS (e.g., LabWare, STARLIMS). |

Diagram: SWGDAM Validation Workflow

Diagram: Software Performance Verification

Assessing Sensitivity, Specificity, and Precision with Known Samples

Troubleshooting Guides

Guide 1: Troubleshooting False Negative Results (Unexpectedly Low LR for a True Contributor)

Q: I am observing Likelihood Ratio (LR) values that support exclusion (LR ≈ 0) for a known true contributor in my validation study. What could be causing this, and how can I resolve it?

A: False negatives, where a true contributor receives an LR supporting exclusion, are often caused by extreme stochastic effects that the software's model cannot reconcile with the proposed hypothesis.

Potential Cause 1: Extreme Heterozygote Imbalance or Stochastic Effects. PCR amplification stochasticity can cause severe peak height imbalance within a heterozygous allele pair or significant differences in the mixture ratio across loci. This can make a true contributor's genotype appear unlikely under the software's model [7].

- Solution: Review the electropherogram for the sample in question. Check for loci where the peak heights of heterozygous alleles are highly imbalanced or where the proportion of a contributor's alleles varies dramatically from the overall mixture ratio. Re-running the amplification, if possible, or incorporating replicate amplifications into the probabilistic genotyping analysis can help mitigate these effects [23].
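Screening for imbalanced loci can be partly automated before the manual electropherogram review. The sketch below computes heterozygote balance as the smaller peak height divided by the larger and flags loci below a cut-off. The 0.6 cut-off, the locus names, and the peak heights are placeholders. A laboratory would substitute the value supported by its own validation data.

```python
def heterozygote_balance(height_a, height_b):
    """Ratio of the smaller to the larger peak height (RFU) of a heterozygous pair."""
    if height_a <= 0 or height_b <= 0:
        raise ValueError("peak heights must be positive")
    return min(height_a, height_b) / max(height_a, height_b)


def flag_imbalanced(locus_heights, threshold=0.6):
    """Return loci whose heterozygote balance falls below the threshold."""
    return [
        locus
        for locus, (h1, h2) in locus_heights.items()
        if heterozygote_balance(h1, h2) < threshold
    ]


# Hypothetical peak heights (RFU) for a single-source reference profile.
heights = {"D3S1358": (1000, 900), "vWA": (800, 200), "FGA": (450, 400)}
print(flag_imbalanced(heights))
```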

Potential Cause 2: Incorrect Assumption of the Number of Contributors (N). Overestimating the number of contributors can lead to the software incorrectly allocating the alleles of a true contributor to multiple hypothetical individuals, thereby reducing the LR for the actual contributor [7] [24].

- Solution: Re-evaluate the estimate of the number of contributors using multiple methods (e.g., maximum allele count, mixture proportion estimation). Run the analysis with a different N hypothesis to see how sensitive the LR is to this change. Ensure your validation studies include testing the impact of assuming an incorrect N [7] [23].
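The maximum allele count method mentioned above gives a lower bound on N: each contributor carries at most two alleles per locus, so the locus with the most distinct alleles sets the minimum. A minimal sketch with a hypothetical profile follows. The bound can be inflated by stutter or drop-in peaks left in the data and deflated by allele sharing or drop-out, so it is a starting point rather than an answer.

```python
import math


def min_contributors(profile):
    """Lower bound on the number of contributors from the maximum allele count.

    profile: dict mapping locus name -> iterable of observed allele labels.
    """
    if not profile:
        raise ValueError("profile must contain at least one locus")
    max_alleles = max(len(set(alleles)) for alleles in profile.values())
    return math.ceil(max_alleles / 2)


# Hypothetical mixture profile after artifact filtering.
mixture = {
    "D3S1358": ["14", "15", "16"],
    "vWA": ["16", "17", "18", "19", "20"],
    "FGA": ["21"],
}
print(min_contributors(mixture))
```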

Potential Cause 3: Poorly Calibrated Laboratory-Specific Parameters. The biological models within the software (e.g., for peak height, stutter, and degradation) are based on laboratory-specific validation data. If these parameters are not accurately determined for your lab's conditions, the model may not perform optimally [7] [25].

Guide 2: Troubleshooting False Positive Results (Unexpectedly High LR for a Non-Contributor)

Q: A known non-contributor is producing an LR greater than 1 in my analysis, suggesting a false association. What are the common sources of such false positives?

A: False positives can arise from allele sharing or artifacts being misinterpreted as true alleles.

Potential Cause 1: High Degree of Allele Sharing. If a non-contributor shares a large number of alleles with the true contributors by chance, the software may calculate a moderate LR value [26].

- Solution: This is a known limitation of mixture interpretation. Evaluate the LR in the context of the allele frequencies in the relevant population. Validation should include tests with individuals who have varying degrees of allele sharing to establish expected baseline LRs for non-contributors under these conditions [26].

Potential Cause 2: Incorrectly Set Analytical Threshold or Drop-In Parameter. If the analytical threshold is set too low, noise may be interpreted as true allelic peaks, which can then be matched to a non-contributor. Similarly, if the drop-in parameter is not properly set, spurious peaks may not be adequately accounted for, leading to an inflation of the LR [25].

- Solution: Re-visit the data from your internal validation used to set the analytical threshold. Ensure the drop-in frequency and model (e.g., gamma or uniform distribution) are appropriately calibrated using your laboratory's negative control data [25].

Potential Cause 3: Underestimation of the Number of Contributors. If the number of contributors is set too low, the software may be forced to explain all alleles with fewer genotypes, potentially leading it to incorrectly include a non-contributor whose genotype "fits" the leftover alleles [24].

- Solution: As with false negatives, carefully re-assess the evidence for the number of contributors. Your validation should test the software's performance when N is intentionally set incorrectly [23].

Guide 3: Troubleshooting Issues with Precision and Reproducibility

Q: When I run the same analysis multiple times, I get somewhat different LR values. Is this normal, and how do I determine if the variation is acceptable?

A: Some variation is expected in fully continuous systems that use stochastic algorithms like Markov Chain Monte Carlo (MCMC), but the variation should be within acceptable bounds [26].

Potential Cause 1: Inherent Stochasticity of MCMC Algorithms. Software like STRmix and MaSTR use MCMC with the Metropolis-Hastings algorithm to explore possible genotype combinations. By nature, this method involves random sampling, which can lead to slight variations between runs [26] [27].

- Solution: Perform replicate analyses (e.g., 3-5 runs) for the same input data and propositions. Calculate the standard deviation or coefficient of variation of the log10(LR) values. Your internal validation should establish a precision threshold, such as a standard deviation of less than 0.05 or 0.1 in log10(LR) space, for results to be considered reproducible [26].

Potential Cause 2: Insufficient MCMC Convergence. The MCMC chains may not have run for enough iterations to fully converge on a stable posterior distribution.

- Solution: Use the software's diagnostic tools. MaSTR, for instance, provides mixture ratio plots that indicate if chains have converged. Ensure you are using the manufacturer's recommended number of iterations and burn-in periods, and increase them if diagnostics suggest poor convergence [26].
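Where per-chain samples of a parameter (for example a mixture proportion) can be exported, which is an assumption because export options vary by package, a generic between-chain check can complement the built-in diagnostics. The sketch below is a textbook form of the Gelman-Rubin statistic, not the diagnostic implemented by any software named in this guide. Values close to 1 suggest the chains agree, and markedly larger values suggest the chains have not yet converged.

```python
import math
import statistics


def gelman_rubin(chains):
    """Potential scale reduction factor for equal-length chains of one parameter."""
    m = len(chains)
    n = len(chains[0]) if m else 0
    if m < 2 or n < 2 or any(len(chain) != n for chain in chains):
        raise ValueError("need at least two chains of equal length >= 2")
    chain_means = [statistics.mean(chain) for chain in chains]
    within = statistics.mean([statistics.variance(chain) for chain in chains])
    if within == 0:
        raise ValueError("within-chain variance is zero")
    between = n * statistics.variance(chain_means)
    pooled = (n - 1) / n * within + between / n
    return math.sqrt(pooled / within)


# Hypothetical post-burn-in samples of a minor contributor's mixture proportion.
agreeing = [[0.21, 0.19, 0.20, 0.22], [0.20, 0.22, 0.19, 0.21]]
disagreeing = [[0.21, 0.19, 0.20, 0.22], [0.41, 0.39, 0.40, 0.42]]
print(gelman_rubin(agreeing), gelman_rubin(disagreeing))
```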

Frequently Asked Questions (FAQs)

Q: What are the key differences between semi-continuous and fully continuous probabilistic genotyping software?

A: Semi-continuous systems (e.g., LRmix Studio) use only qualitative information—the presence or absence of alleles—and incorporate probabilities for drop-in and drop-out. Fully continuous systems (e.g., STRmix, EuroForMix, MaSTR) use both qualitative and quantitative information, including allele peak heights and their relationships, to model stutter, degradation, and other profile characteristics. Fully continuous systems generally utilize more of the available data in the electropherogram [26] [25].

Q: According to validation guidelines, what are the essential performance characteristics that must be assessed for probabilistic genotyping software?

A: Guidelines from SWGDAM, ISFG, and ANSI/ASB stipulate that internal validation must assess sensitivity, specificity, and precision. It should also investigate the impact of software input parameters, the effects of an incorrect number of contributors, the addition of known contributors, allele sharing, and the modeling of locus and allele drop-out, stutter, and peak height variation [7] [26] [27].

Q: How can the modeling of stutter impact the calculated LR?

A: Proper stutter modeling is crucial. If stutter peaks are not accounted for, they may be misinterpreted as true alleles from a minor contributor, potentially leading to false inclusions or exclusions. Studies comparing different stutter models (e.g., modeling only back stutter vs. both back and forward stutter) have shown that while LRs are often similar, significant differences can occur in more complex samples with unbalanced contributions or greater degradation [14]. Retaining stutter peaks in the data and modeling them accurately lets the LR draw on as much of the profile information as possible [14].

Q: What are the legal challenges associated with probabilistic genotyping software?

A: Some PG tools are proprietary, and their source code is often protected as a trade secret. This has led to legal challenges regarding the defendant's right to examine the tools used against them. Appellate courts in the U.S. have begun to grant defense teams access to source code for independent review to ensure the software is functioning as claimed [24].

| Software Validated | Scope of Testing | Key Quantitative Findings on Performance | Cited Reference |

|---|---|---|---|

| STRmix (Japanese population) | Sensitivity, specificity, precision; effects of wrong contributor number & adding a known donor. | Correctly included true contributors; rare exclusions due to extreme PCR stochasticity. LRs for non-contributors were typically less than 1 [7]. | [7] |

| MaSTR (2–5 person mixtures) | >280 mixed profiles; >2600 analyses; Type I/II error testing. | Accurate & precise LRs for up to 5 contributors, including minor donors with stochastic effects. Robust performance against known standards [26]. | [26] |

| STRmix (FBI Laboratory) | >300 single-source & mixed profiles; >60,000 tests. | Comprehensive assessment of sensitivity & specificity via known contributor/non-contributor comparisons across a wide template range [23]. | [23] |

Core Experimental Protocol for Sensitivity/Specificity

This protocol is synthesized from common elements in the cited validation studies [7] [26] [23].

- Sample Preparation: Create mixtures of 2 to 5 contributors using known DNA extracts. The mixtures should cover a broad range of template amounts (e.g., from 10 pg to 500 pg per contributor) and mixture ratios (e.g., 1:1 to 1:20).

- Profiling: Amplify the mixtures using your standard STR kit (e.g., GlobalFiler, PowerPlex Fusion 5C). Perform capillary electrophoresis and genotyping with established analytical thresholds.

- Analysis: For each mixture profile, perform two sets of analyses in the probabilistic genotyping software:

  - Sensitivity (True Contributor Tests): Calculate the LR for each known true contributor to the mixture.

  - Specificity (Non-Contributor Tests): Calculate the LR for known non-contributors (individuals whose DNA is not in the mixture).

- Precision Testing: Run the same analysis multiple times (e.g., 3-5 replicates) to assess the variation in the reported LR.

- Conditioned and Error Testing: Re-run analyses with an incorrect number of contributors and with the addition of known contributors to the hypothesis to see how the software performs.
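When planning the sample preparation step, it helps to tabulate how much template each contributor receives for every combination of total input and mixture ratio, so that minor donors falling into the low-template range are known in advance. A minimal sketch, in which the totals and ratios are hypothetical design choices:

```python
from itertools import product


def contributor_templates(total_pg, ratio):
    """Split a total template amount (pg) across contributors by ratio parts."""
    if total_pg <= 0 or any(part <= 0 for part in ratio):
        raise ValueError("total and ratio parts must be positive")
    parts = sum(ratio)
    return [total_pg * part / parts for part in ratio]


# Hypothetical design grid: total template amounts and two-person ratios.
totals_pg = [50, 200, 500]
ratios = [(1, 1), (1, 4), (1, 20)]

for total, ratio in product(totals_pg, ratios):
    amounts = contributor_templates(total, ratio)
    print(total, ratio, [round(a, 1) for a in amounts])
```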

Workflow Visualization

Diagram 1: Internal Validation Workflow

Diagram 2: PG Software Analysis & Troubleshooting

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Internal Validation Studies

| Item | Function in Validation | Example Products / Kits |

|---|---|---|

| Commercial STR Multiplex Kits | Amplifies multiple STR loci simultaneously to generate the DNA profile for analysis. | GlobalFiler [7], PowerPlex Fusion 5C [26] |

| Quantification Kits | Precisely measures the amount of human DNA in a sample prior to amplification to ensure optimal input. | Quantifiler Human DNA Quantification Kit [26] |

| Capillary Electrophoresis System | Separates and detects amplified STR fragments by size, generating the electropherogram data. | 3130-Avant Genetic Analyzer [26] |

| Genotyping Software | Performs initial analysis of electrophoretic data: sizing alleles, calling peaks, and applying filters. | GeneMarker HID [26], GeneMapper ID-X [26] |

| Probabilistic Genotyping Software | Interprets complex DNA mixtures; calculates likelihood ratios by comparing prosecution and defense hypotheses. | STRmix [7], MaSTR [26], EuroForMix [14] |

| Reference DNA Extracts | Provides known, single-source donor DNA for constructing controlled mixtures of defined composition and ratio. | Commercially available from biobanks [26] |

FAQs: Addressing Core Technical Challenges

FAQ 1: What are the most critical factors to test when validating probabilistic genotyping software for low-template DNA? Low-template (LT-DNA) or low-copy number (LCN) DNA analysis is inherently susceptible to stochastic effects, which must be a central focus of validation. The primary factors to test are:

- Allele and Locus Drop-out: The stochastic failure to detect an allele or an entire locus in a sample where it is actually present. Your validation must establish the probability of drop-out across different DNA quantities and levels of degradation [28] [29].

- Allele Drop-in: The random appearance of one or more allelic peaks that are not part of the true DNA profile, typically due to contamination. The validation should quantify the expected rate and pattern of drop-in [28] [30].

- Heterozygote Imbalance: In LT-DNA, the two alleles of a heterozygous individual can amplify with significant imbalance, which the software must accurately model. Extreme imbalance can even lead to incorrect exclusions [7].

- Impact of Replicate Testing: A key method to mitigate stochastic effects is through replicate PCR amplifications and the generation of a consensus profile. Your validation protocol should assess the software's performance with and without replicate data [28] [30].

FAQ 2: How can I experimentally model DNA degradation for a software validation study? DNA degradation can be modeled in a controlled laboratory setting to create reproducible standards for validation.

- Controlled Thermal Degradation: A published protocol involves incubating DNA samples (e.g., 50 µL aliquots at 1 ng/µL) at 99°C for varying durations (e.g., 1 to 5 hours). This process generates fragmented DNA with a predictable and increasing degree of damage, mimicking environmental degradation [30].

- Quantitative Assessment with qPCR: The success of the degradation protocol is confirmed using a qPCR assay capable of assessing degradation, such as the PowerQuant system. This assay quantifies both a short autosomal target (e.g., 84 bp) and a long autosomal target (e.g., 294 bp). The ratio of the concentration of the small target to the large target ([Auto]/[D]) provides a quantitative Degradation Index (DI). A higher ratio indicates more severe degradation [31].
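The Degradation Index described above is a simple ratio of the two qPCR concentrations. The sketch below applies it to a hypothetical heat-degradation series. The concentrations are invented for illustration, and an undetected long target is treated as an error rather than as an infinite index.

```python
def degradation_index(short_conc, long_conc):
    """Degradation Index: short-target concentration divided by long-target concentration."""
    if short_conc < 0:
        raise ValueError("short-target concentration cannot be negative")
    if long_conc <= 0:
        raise ValueError("long target not detected; DI is undefined")
    return short_conc / long_conc


# Hypothetical qPCR results (ng/uL) keyed by hours at 99 degrees C.
series = {0: (1.00, 1.00), 1: (0.90, 0.45), 3: (0.60, 0.10), 5: (0.40, 0.02)}
for hours, (short, long_) in series.items():
    print(hours, round(degradation_index(short, long_), 1))
```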

FAQ 3: Our laboratory's probabilistic genotyping software is reporting a likelihood ratio (LR) greater than 1 for a known non-contributor (a Type II error). What are potential causes? A "false positive" LR can occur due to several factors related to software inputs or complex evidence profiles.

- Incorrect Specification of the Number of Contributors (N): This is a critical and often challenging input. If the analyst underestimates N, the software may be forced to attribute alleles from an unknown contributor to a known individual who is not actually present, potentially generating an LR greater than 1 for that non-contributor [6].

- High Level of Allele Sharing: When contributors to a mixture share many alleles, it becomes statistically more likely that a non-contributor's genotype will, by chance, be consistent with a large portion of the mixture's alleles [26].

- Software and Model Limitations: Probabilistic genotyping software will always report a result, even with uninformative or highly complex data. In such cases, the LR may be low (close to 1) but still above the reporting threshold. Different software programs, based on different models, can also yield contradictory results for the same sample [6]. This underscores the necessity of rigorous, lab-conducted internal validation to understand the software's behavior at its limits.

Troubleshooting Guides

Troubleshooting Guide 1: Managing Stochastic Effects in Low-Template DNA Analysis

Stochastic effects are random fluctuations in the PCR amplification process that become significant when analyzing low amounts of DNA template (typically below 100-150 pg) [28]. The following workflow outlines a systematic approach to identify and mitigate these challenges during your software validation.

Specific Protocols:

- Replicate Testing & Consensus Profile: To generate reliable data, perform multiple (e.g., 3-10 for validation studies) PCR amplifications from the same DNA extract. Create a consensus profile by including only those alleles that appear in at least two independent replicates. This method helps distinguish true alleles from stochastic drop-in events [28].

- Validation Data Collection: Follow a protocol similar to the NIST validation study. Dilute pristine control DNA to low amounts (e.g., 10 pg, 30 pg, 100 pg). Perform a large number of replicate amplifications (e.g., 10 per quantity) using standard and enhanced cycle protocols. Analyze the resulting electropherograms to establish baseline rates for allele drop-out, locus drop-out, and allele drop-in specific to your laboratory's methods [28].
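The consensus rule described above (keep an allele only if it appears in at least two independent replicates) can be sketched as follows. The replicate data are hypothetical, and alleles are kept as string labels so that microvariants such as 9.3 are preserved.

```python
from collections import Counter


def consensus_profile(replicates, min_count=2):
    """Keep alleles seen in at least min_count replicate amplifications.

    replicates: list of dicts mapping locus name -> iterable of allele labels.
    """
    counts = {}
    for replicate in replicates:
        for locus, alleles in replicate.items():
            # set() ensures an allele is counted once per replicate.
            counts.setdefault(locus, Counter()).update(set(alleles))
    return {
        locus: {allele for allele, seen in counter.items() if seen >= min_count}
        for locus, counter in counts.items()
    }


# Hypothetical low-template replicates: "17" appears once (possible drop-in).
replicates = [
    {"D3S1358": {"14", "15"}, "TH01": {"9.3"}},
    {"D3S1358": {"14", "17"}, "TH01": {"6", "9.3"}},
    {"D3S1358": {"14", "15"}, "TH01": {"6"}},
]
print(consensus_profile(replicates))
```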

Troubleshooting Guide 2: Incorporating Degradation and Inhibition in Software Models

Degradation and inhibition are key factors that impact STR profile quality and must be accurately modeled by probabilistic software. The following workflow details the experimental steps for generating and analyzing degraded samples.

Specific Protocols:

- Modeling DNA Degradation:

  - Sample Preparation: Create multiple 50 µL aliquots of a control DNA sample (e.g., at 1 ng/µL) [30].

  - Thermal Stress: Incubate the aliquots in a thermal cycler or heat block at 99°C for different time periods (e.g., 1, 2, 3, 4, and 5 hours) to create a degradation series [30].

  - Quantify Degradation: Use a qPCR kit like PowerQuant or Quantifiler Trio to measure the concentration of a short target (e.g., 84 bp) and a long target (e.g., 294 bp). The Degradation Index (DI) is calculated as [Small Target]/[Large Target]. A higher DI indicates more severe degradation [31].

- Assessing PCR Inhibition:

- Internal PCR Control (IPC): Use a qPCR quantification kit that includes an IPC. The IPC is a synthetic DNA target added to the reaction to detect the presence of substances that inhibit the PCR.

- Measure Inhibition: Inhibition is typically indicated by a shift in the quantification cycle (Cq) of the IPC. For example, the Plexor HY system defines inhibition as a ≥2 cycle shift in the IPC Cq compared to a non-inhibited standard of the same DNA concentration [31].
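
The two calculations described above, the Degradation Index and the IPC Cq shift, are simple enough to sketch directly. The function names and example values below are hypothetical, and the 2-cycle default mirrors only the Plexor HY example in the text; other kits define their own thresholds.

```python
def degradation_index(small_target_conc, large_target_conc):
    """DI = [small target] / [large target], both in the same units (e.g., ng/uL)."""
    if large_target_conc <= 0:
        raise ValueError("large-target concentration must be positive")
    return small_target_conc / large_target_conc


def ipc_shift(sample_ipc_cq, reference_ipc_cq):
    """Cq shift of the internal PCR control relative to a non-inhibited standard."""
    return sample_ipc_cq - reference_ipc_cq


def is_inhibited(sample_ipc_cq, reference_ipc_cq, threshold_cycles=2.0):
    """Flag inhibition when the IPC Cq shift meets the kit-specific threshold.

    The default of 2.0 cycles is taken from the Plexor HY example above and
    is an assumption for any other kit.
    """
    return ipc_shift(sample_ipc_cq, reference_ipc_cq) >= threshold_cycles


# Illustrative values only.
di = degradation_index(small_target_conc=0.50, large_target_conc=0.125)
flag = is_inhibited(sample_ipc_cq=23.4, reference_ipc_cq=21.0)
print(f"DI = {di:.1f}, inhibited = {flag}")
```

Recording the DI for each point in a heat-degradation series gives a quantitative axis against which the software's degradation modelling can later be compared.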

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Reagents and Kits for Validation Studies

| Item Name | Function in Validation | Key Application Note |

|---|---|---|

| PowerQuant / Quantifiler Trio | Simultaneous DNA quantification & degradation assessment. | Measures a short vs. long autosomal target to calculate a Degradation Index (DI) [31]. |

| Formalin-Fixed, Paraffin-Embedded (FFPE) Samples | A source of naturally degraded DNA for real-world validation. | Provides a challenging substrate to test software models for degradation and low-template DNA [31]. |

| GlobalFiler / PowerPlex Fusion 5C | STR Amplification Kits for generating DNA profiles. | Used to create the electrophoretic data that is input into the probabilistic genotyping software for interpretation [32] [26]. |

| Amplicon Rx Post-PCR Clean-up Kit | Post-amplification purification of PCR products. | Enhances signal intensity in capillary electrophoresis for low-template samples, improving allele recovery without increasing PCR cycles [32]. |

| Control Male 007 DNA | A standardized, high-quality DNA source for creating dilution series. | Used in sensitivity studies to create low-template and degraded samples with known genotypes for controlled validation experiments [30]. |

Experimental Protocols for Software Validation

Protocol 1: Sensitivity and Stochastic Variation Analysis

Objective: To determine the minimum DNA quantity at which the probabilistic genotyping software produces reliable and accurate results, and to characterize stochastic effects at low template levels.

Methodology:

- Sample Preparation: Create a serial dilution of a control DNA sample (e.g., 007 DNA) from 1 ng/µL down to 0.0001 ng/µL (0.1 pg/µL) using TE buffer [30] [32].

- Amplification and Analysis: For each dilution level, perform multiple replicate PCR amplifications (a minimum of 3, but more for robust validation data) using your standard STR kit [28].

- Data Collection and Scoring: Analyze the resulting profiles and score each replicate for allele drop-out, locus drop-out, and allele drop-in.

- Software Testing: Input all replicate data into the probabilistic genotyping software. Test the software's ability to compute valid LRs for true contributors and correctly exclude non-contributors across the dilution series.
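
As a rough sketch of the scoring step in this protocol, the code below lists the concentrations of a dilution series and estimates drop-out and drop-in rates by comparing replicate profiles with the known donor genotype. Several things here are assumptions rather than part of the protocol: the ten-fold dilution factor, the function names, the data layout, and the simplification that any non-donor allele counts as drop-in (stutter is not filtered).

```python
def tenfold_series(start_ng_per_ul=1.0, steps=5):
    """Concentrations for a ten-fold serial dilution (assumed dilution factor)."""
    return [start_ng_per_ul / 10 ** i for i in range(steps)]


def score_replicates(expected, replicates):
    """Estimate drop-out and drop-in rates against a known single-source genotype.

    expected:   dict of locus -> set of the donor's alleles
    replicates: list of dicts of locus -> set of alleles called in that replicate
    """
    expected_alleles = allele_dropouts = locus_dropouts = drop_ins = 0
    for observed in replicates:
        for locus, true_alleles in expected.items():
            seen = observed.get(locus, set())
            missing = true_alleles - seen
            expected_alleles += len(true_alleles)
            allele_dropouts += len(missing)
            # Locus drop-out: none of the donor's alleles detected at this locus.
            locus_dropouts += missing == true_alleles
            # Any non-donor allele is counted as drop-in (stutter not filtered).
            drop_ins += len(seen - true_alleles)
    n_loci = len(expected) * len(replicates)
    return {
        "allele_dropout_rate": allele_dropouts / expected_alleles,
        "locus_dropout_rate": locus_dropouts / n_loci,
        "drop_ins_per_replicate": drop_ins / len(replicates),
    }


# Illustrative genotype and two low-template replicates.
expected = {"D3S1358": {"15", "16"}, "vWA": {"17", "19"}, "FGA": {"22"}}
replicates = [
    {"D3S1358": {"15", "16"}, "vWA": {"17"}, "FGA": {"22"}},
    {"D3S1358": {"15"}, "vWA": set(), "FGA": {"22", "24"}},
]
series = tenfold_series()
rates = score_replicates(expected, replicates)
print(series)
print(rates)
```

Running the same scoring at every dilution level gives the rate-versus-template curves that the software's drop-out and drop-in parameters can then be checked against.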

Protocol 2: Complex Mixture Analysis with Varying Contributor Ratios

Objective: To validate the software's performance with mixed DNA profiles, assessing its ability to deconvolute contributors and accurately compute LRs under challenging but forensically relevant conditions.

Methodology:

- Mixture Creation: Prepare mixtures with 2 to 5 contributors. For each contributor number, create different mixture ratios. Examples include:

- Two-Person: 1:1, 1:4, 1:9, 1:19 [26].

- Five-Person: Create unbalanced ratios where minor contributors represent a very small fraction of the total DNA.

- Amplification and Profiling: Amplify the mixture samples and generate STR profiles using standard laboratory protocols.

- Software Input and Analysis: For each mixture profile, analyze the data in the probabilistic genotyping software using a range of proposed numbers of contributors (N). Run analyses with both true contributors and known non-contributors.

- Validation Metrics:

- Accuracy: Does the software assign an LR > 1 for true contributors and an LR < 1 for non-contributors?

- Sensitivity/Specificity: Calculate the rates of true positives, false positives, true negatives, and false negatives.

- Impact of N: Document how changes in the assumed number of contributors affect the calculated LR for a given proposition [26].
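
The accuracy and sensitivity/specificity metrics above can be tallied from a list of LRs paired with ground truth. The sketch below assumes a decision threshold of LR = 1 and treats an LR of exactly 1 as not supporting inclusion; both choices, along with the function name and example values, are illustrative assumptions.

```python
def classify_lrs(results, threshold=1.0):
    """Tally outcomes from (lr, is_true_contributor) pairs.

    An LR above `threshold` is read as support for inclusion. An LR equal to
    the threshold is neutral and is counted here as not supporting inclusion.
    """
    tp = fp = tn = fn = 0
    for lr, is_true_contributor in results:
        supports_inclusion = lr > threshold
        if is_true_contributor and supports_inclusion:
            tp += 1
        elif is_true_contributor:
            fn += 1  # false exclusion (Type I error in this guide's terms)
        elif supports_inclusion:
            fp += 1  # false inclusion (Type II error in this guide's terms)
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return {"tp": tp, "fp": fp, "tn": tn, "fn": fn,
            "sensitivity": sensitivity, "specificity": specificity}


# Illustrative LRs: three true contributors, four known non-contributors.
results = [
    (1e6, True), (350.0, True), (0.2, True),
    (1e-4, False), (0.03, False), (4.5, False), (1e-9, False),
]
summary = classify_lrs(results)
print(summary)
```

Repeating the tally for each assumed number of contributors and each mixture ratio shows where in the tested range the error rates start to rise.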

Table 2: Key Validation Metrics for Probabilistic Genotyping Software

| Validation Aspect | Metric | Target Outcome |

|---|---|---|

| Sensitivity | Likelihood Ratio (LR) for true contributors in low-template (<100 pg) samples. | LR > 1 (Increasingly higher LRs with better-quality data). |

| Specificity | Likelihood Ratio (LR) for known non-contributors. | LR < 1 (Ideal is LR ≈ 0, exclusion). |

| Precision | Reproducibility of LR for the same sample and proposition across multiple runs. | Low coefficient of variation in LR. |

| Model Limits | Rate of Type I Error (False Exclusion) and Type II Error (False Inclusion). | Minimized and quantified error rates, established for different DNA quantities and mixture complexities [7] [26]. |
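
For the precision row in Table 2, one way to summarize run-to-run variation is the coefficient of variation of the LR across repeated analyses of the same profile and proposition. The sketch below also reports the standard deviation of log10(LR), a complementary summary added here because LRs span many orders of magnitude. The function name and the example LRs are hypothetical.

```python
import math
import statistics


def lr_precision(lrs):
    """Summarize reproducibility of LRs from repeated runs of one analysis."""
    if len(lrs) < 2:
        raise ValueError("need at least two runs to estimate variation")
    if any(lr <= 0 for lr in lrs):
        raise ValueError("LRs must be positive")
    mean_lr = statistics.mean(lrs)
    log_lrs = [math.log10(lr) for lr in lrs]
    return {
        # Sample standard deviation divided by the mean.
        "cv": statistics.stdev(lrs) / mean_lr,
        # Spread on the log10 scale (an extra summary, not from Table 2).
        "sd_log10_lr": statistics.stdev(log_lrs),
    }


# Illustrative LRs from four repeated runs of the same profile.
precision = lr_precision([1.2e6, 1.0e6, 0.9e6, 1.1e6])
print(precision)
```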

NGS Assay Validation Guidelines

What are the key components of a proper analytical validation for a targeted NGS oncology panel?

A proper analytical validation for a targeted NGS oncology panel must establish the test's performance characteristics across key metrics. The Association for Molecular Pathology (AMP) and the College of American Pathologists (CAP) provide consensus recommendations that laboratories should follow [33].

Table: Key Performance Metrics for NGS Oncology Panel Validation

| Performance Metric | Recommended Validation Approach | Target Performance |

|---|---|---|

| Positive Percentage Agreement (Sensitivity) | Evaluate using reference materials and cell lines for each variant type (SNV, indel, CNA, fusion) [33]. | Establish for each variant type and allele frequency. |

| Positive Predictive Value (PPV) | Assess by comparing NGS results with orthogonal methods [33]. | >99% for variant calls [33]. |

| Precision (Repeatability & Reproducibility) | Run replicates across different operators, instruments, and days [33]. | 100% concordance for variant calls [33]. |

| Limit of Detection (LoD) | Determine using diluted samples to find the lowest allele frequency reliably detected [33]. | Establish minimum variant allele fraction and tumor purity [33]. |

The validation should use an error-based approach that identifies potential sources of errors throughout the analytical process and addresses them through test design, method validation, or quality controls [33]. The laboratory director must define the panel's intended use, including sample types (e.g., solid tumors vs. hematological malignancies) and the types of variants reported (SNVs, indels, CNAs, or fusions), as this influences the validation design [33].
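
The first two metrics in the table are computed from concordance counts against a reference method: positive percent agreement is TP / (TP + FN), and positive predictive value is TP / (TP + FP). A minimal sketch, tallied per variant type, follows. The `Counts` class, the function names, and the counts themselves are hypothetical and are not taken from the cited guideline.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    tp: int  # variants called by NGS and confirmed by the reference method
    fp: int  # variants called by NGS but not confirmed
    fn: int  # reference variants missed by NGS


def ppa(c):
    """Positive percent agreement: TP / (TP + FN)."""
    return c.tp / (c.tp + c.fn) if (c.tp + c.fn) else float("nan")


def ppv(c):
    """Positive predictive value: TP / (TP + FP)."""
    return c.tp / (c.tp + c.fp) if (c.tp + c.fp) else float("nan")


# Illustrative counts per variant type.
by_type = {
    "SNV": Counts(tp=198, fp=1, fn=2),
    "indel": Counts(tp=47, fp=1, fn=3),
}
report = {name: (ppa(c), ppv(c)) for name, c in by_type.items()}
for name, (a, v) in report.items():
    print(f"{name}: PPA = {a:.3f}, PPV = {v:.3f}")
```

Keeping the counts separate per variant type matters because performance for indels, CNAs, and fusions often differs from that for SNVs.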

Single-Cell Sequencing Applications

How is single-cell sequencing being applied in advanced research, and what methods are used?

Single-cell sequencing assays the nucleic acids of individual cells, revealing cellular heterogeneity that is masked in bulk sequencing [34] [35]. It has revolutionized fields like cancer research, neurobiology, developmental biology, and microbiology [34].

Key Applications:

- Cancer Research: Tracking tumor heterogeneity at its most basic level, the single cell [34].

- Developmental Biology & Embryology: Studying cell lineage and the spatiotemporal organization of cells from embryonic development to aging [34].

- Microbial Ecology: Assigning functional roles to unculturable members of the human microbiome [34].

- In Vitro Fertilization (IVF): Screening embryos for genetic diseases like trisomy 21 from a single cell [34].

Common Methodologies: High-throughput methods like droplet-based encapsulation (e.g., 10x Genomics Chromium) allow for the parallel profiling of tens of thousands of single-cell transcriptomes [34] [35]. The standard workflow involves [35]:

- Sample Submission: Isolated cells, cultured cells, or primary tissues.

- Sample QC & Cell Partitioning: Cell counting, viability testing, and isolation of single cells with barcoded beads in droplets.

- Library Preparation: Adding a cell-specific barcode and unique molecular identifiers (UMIs) to each cell's RNA or DNA.

- Sequencing: High-throughput sequencing on platforms like Illumina NovaSeq.

- Data Analysis: Custom bioinformatic analysis to deconvolute the single-cell data.

Probabilistic Genotyping Software Validation

What are the requirements for the internal validation of probabilistic genotyping software like STRmix or MaSTR?

Internal validation of probabilistic genotyping (PG) software must demonstrate that the system is accurate, precise, and robust for use in casework. Validation must comply with guidelines from the Scientific Working Group on DNA Analysis Methods (SWGDAM) or other standard-setting bodies [7] [26].

Table: Core Components of Probabilistic Genotyping Software Validation

| Validation Component | Description | Acceptance Criteria |

|---|---|---|

| Accuracy & Sensitivity | Software correctly includes true contributors and excludes non-contributors across a range of mixture ratios [7] [26]. | High Likelihood Ratios (LR) for true contributors; LR < 1 for non-contributors [26]. |

| Specificity & Precision | Tests for Type I (false exclusion) and Type II (false inclusion) errors. Results are reproducible across repeated analyses [26]. | Minimal false exclusions/inclusions; reproducible LRs [7] [26]. |

| Sensitivity to Input Parameters | Assess effects of changing the number of contributors, adding known contributors, and using different analytical thresholds [7] [26]. | Software performs robustly under different, reasonable propositions [7]. |

| Performance at Limits | Challenge software with low-template DNA, high levels of allele sharing, and extreme mixture ratios that induce stochastic effects (allele drop-out, drop-in) [7] [26]. | Software provides reliable, though potentially more conservative, LRs [7]. |

A study validating MaSTR software performed over 2,600 analyses on 280+ mixed DNA profiles with 2-5 contributors. It successfully included true contributors and excluded non-contributors, though rare Type I errors (LR < 1 for a true contributor) occurred in cases of extreme stochastic effects [26]. Similarly, an internal validation of STRmix using Japanese individuals found it suitable for interpreting mixed DNA profiles, with rare exclusions of true contributors due to extreme heterozygote imbalance or significant mixture ratio differences between loci [7].

NGS & Sanger Sequencing Troubleshooting FAQs

Why did my Sanger sequencing reaction fail, and how can I fix it?

Sanger sequencing failures commonly result from poor template quality, incorrect template concentration, or contaminants [36].

Table: Common Sanger Sequencing Issues and Solutions

| Problem | Possible Causes | Solutions |

|---|---|---|

| Failed Reaction (mostly N's) | Low template concentration, poor quality DNA, contaminants, bad primer [36]. | Check concentration (100-200 ng/µL), clean DNA, use high-quality primer [36]. |

| High Background Noise | Low signal intensity from poor amplification, low template, or inefficient primer binding [36]. | Ensure correct template concentration and a high-efficiency primer [36]. |

| Sequence Stops Abruptly | Secondary structures (e.g., hairpins) in the template that the polymerase cannot pass [36]. | Use "difficult template" chemistry or design a new primer past/through the structure [36]. |

| Double Peaks / Mixed Sequence | Colony contamination (multiple clones) or a toxic sequence in the DNA causing deletions [36]. | Re-pick a single colony; use a low-copy vector and do not overgrow cells [36]. |

My NGS library yield is low. What could be the cause, and how can I improve it?

Low NGS library yield is a frequent issue often traced to sample input, fragmentation, or ligation steps [37].

- Root Cause 1: Poor Input Quality or Contaminants. Degraded DNA/RNA or contaminants (phenol, salts) inhibit enzymes [37].

- Solution: Re-purify the input sample. Confirm purity from absorbance ratios (260/280 ~1.8, 260/230 >1.8), and quantify by fluorometry (e.g., Qubit) rather than relying on UV absorbance alone [37].

- Root Cause 2: Fragmentation or Ligation Inefficiency. Over- or under-fragmentation reduces ligation efficiency. An improper adapter-to-insert ratio can cause adapter dimer formation [37].

- Solution: Optimize fragmentation parameters. Titrate the adapter:insert molar ratio and ensure fresh ligase and optimal reaction conditions [37].

- Root Cause 3: Overly Aggressive Purification. Incorrect bead-based size selection ratios or over-drying beads can lead to significant sample loss [37].

- Solution: Precisely follow cleanup protocol instructions for bead-to-sample ratios and drying times [37].
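
Titrating the adapter:insert molar ratio requires converting the insert mass to moles. The sketch below uses the widely used approximation of about 660 g/mol per base pair of double-stranded DNA. The function names, the 10:1 ratio, and the 15 µM adapter stock are illustrative assumptions, not recommendations from the cited source.

```python
AVG_G_PER_MOL_PER_BP = 660.0  # common approximation for double-stranded DNA


def dsdna_pmol(mass_ng, length_bp):
    """Convert a dsDNA mass (ng) and mean fragment length (bp) to picomoles."""
    if length_bp <= 0:
        raise ValueError("fragment length must be positive")
    return mass_ng * 1000.0 / (length_bp * AVG_G_PER_MOL_PER_BP)


def adapter_volume_ul(insert_ng, insert_bp, ratio, adapter_stock_um):
    """Adapter stock volume (uL) for a target adapter:insert molar ratio.

    A stock of X uM contains X pmol per uL.
    """
    adapter_pmol = dsdna_pmol(insert_ng, insert_bp) * ratio
    return adapter_pmol / adapter_stock_um


# Illustrative case: 100 ng of 300 bp fragments, 10:1 ratio, 15 uM adapter stock.
insert_pmol = dsdna_pmol(100, 300)
volume = adapter_volume_ul(100, 300, ratio=10, adapter_stock_um=15)
print(f"insert = {insert_pmol:.3f} pmol, adapter volume = {volume:.3f} uL")
```

Because the molar amount scales inversely with fragment length, the same mass of a shorter library needs proportionally more adapter to hold the ratio constant.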

Single-Cell RNA-seq Data Analysis Challenges

What are the major challenges in analyzing single-cell RNA-seq data, and what are the proposed solutions?

scRNA-seq data are complex and prone to technical artifacts, so accurate interpretation requires specialized computational tools [38].

Table: Key Challenges in scRNA-seq Data Analysis

| Challenge | Impact on Data | Recommended Solutions |

|---|---|---|

| Dropout Events | False-negative signals where a transcript is not detected in a cell, especially for lowly expressed genes [38]. | Use computational imputation methods and UMIs to account for and correct dropouts [34] [38]. |

| Amplification Bias & Technical Noise | Skewed representation of genes due to stochastic amplification, overestimating expression levels [38]. | Apply UMIs to count original molecules and use spike-in controls for normalization [34] [38]. |

| Batch Effects | Systematic technical variations between different sequencing runs that confound biological differences [38]. | Use batch correction algorithms (e.g., ComBat, Harmony, Scanorama) during data integration [38]. |
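
To illustrate how UMIs counter amplification bias, the sketch below collapses reads that share the same cell barcode, gene, and UMI into a single molecule count. It is a deliberately simplified example: the function name and read tuples are hypothetical, and it matches UMIs exactly, whereas production pipelines typically also correct sequencing errors within UMIs.

```python
from collections import defaultdict


def umi_counts(reads):
    """Count distinct UMIs per (cell barcode, gene) pair.

    `reads` is an iterable of (cell_barcode, gene, umi) tuples. PCR duplicates
    share all three fields and therefore collapse to one molecule.
    """
    seen = defaultdict(set)
    for cell, gene, umi in reads:
        seen[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}


# Illustrative reads; the first two are PCR duplicates of one molecule.
reads = [
    ("AAAC", "GAPDH", "TTGCA"),
    ("AAAC", "GAPDH", "TTGCA"),
    ("AAAC", "GAPDH", "CCGTA"),
    ("AAAC", "ACTB", "GGATC"),
    ("TTTG", "GAPDH", "TTGCA"),
]
counts = umi_counts(reads)
print(counts)
```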