A Comprehensive Guide to Forensic Sample Collection and Preservation: Protocols, Pitfalls, and Best Practices for Researchers

Abstract

This guide provides researchers, scientists, and drug development professionals with a systematic framework for forensic sample collection and preservation. It covers foundational principles, detailed methodological protocols for diverse sample types, strategies for troubleshooting common errors, and the latest validation standards. The article synthesizes current best practices, from ANSI/ASB guidelines and ISO 21043 standards to advanced techniques like Next-Generation Sequencing, to ensure evidence integrity, support reliable analytical results, and maintain legal admissibility in biomedical and clinical research contexts.

Core Principles and Standards for Forensic Sample Integrity

Understanding the Critical Role of Sample Integrity in Forensic Analysis

In forensic science, the ability to extract reliable and admissible evidence hinges on a single, foundational principle: sample integrity. From the crime scene to the laboratory, the preservation of a sample's physical and chemical state directly determines the validity of analytical results. This guide details the core principles, standardized protocols, and advanced methodologies that researchers and forensic professionals must implement to safeguard sample integrity, thereby ensuring that scientific evidence stands up to legal scrutiny.

Core Principles of Sample Integrity

Maintaining sample integrity is not a single action but a continuous process governed by several non-negotiable principles.

Contamination Prevention: This is the paramount concern. Personnel must wear full personal protective equipment (PPE), including disposable caps, masks, gloves, and protective clothing [1]. Gloves should be changed after handling each individual sample or after touching different surfaces to prevent cross-contamination [1]. All collection tools must be either single-use or thoroughly sterilized before use [1].

Chain of Custody: This is the documented chronological history of a sample's movement and handling [1]. Every step—from sample discovery, collection, packaging, transportation, storage, to final handover to the laboratory—must be meticulously recorded [1]. Details must include the time, location, collector, every individual who handled the evidence, and the storage conditions. A robust chain of custody is essential for ensuring evidence admissibility in court [1].

Non-Destructive First, Photography First: Before any physical sample is touched or collected, its original state must be comprehensively documented through photography and video recording [1]. Furthermore, analytical priorities should favor non-destructive testing methods, such as examination under UV or multi-band light sources, before proceeding to any physical extraction that might alter the sample [1].

Collection of Control Samples: When collecting suspected biological stains, it is critical to also collect a "blank substrate" sample from an unstained area nearby [1]. This practice helps exclude environmental background interference and reagent contamination during laboratory analysis, providing a crucial baseline for comparison [1].

Technical Protocols for Sample Collection and Preservation

The following protocols outline the specific methods required for different evidence types, emphasizing techniques that preserve sample integrity for subsequent analysis.

Biological Samples

Biological evidence is a primary source of DNA and requires careful handling to prevent degradation.

Table 1: Collection and Preservation Methods for Biological Evidence

| Sample Type | Detection Method | Extraction Method | Packaging and Storage |
|---|---|---|---|
| Blood/Bloodstains | Visual, white/blue-green light | Wet stains: Absorb with sterile gauze or syringe. Dry stains: Collect entire item if movable; for immovable surfaces, use a water-moistened swab, air-dry, and place in evidence bag [1]. | Paper bags/envelopes (dried swabs/stains); cryotubes (liquid blood). Avoid sealing wet samples in plastic. Refrigerate short-term; -20°C long-term [1]. |
| Semen/Vaginal Secretions | UV fluorescence (confirm with pre-test) | Similar to bloodstains. Use a swab moistened with deionized water to rotate and scrub firmly. FTA cards for direct adsorption are excellent for PCR and preservation [1]. | Air-dry, package in paper bags. Refrigerate [1]. |
| Saliva Stains (e.g., cigarette butts) | Visual inspection | Collect entirely with forceps. For surfaces like bottle mouths, use moistened swabs [1]. | Paper bags. Refrigerate [1]. |
| Hair | Visual, strong light | Collect with clean forceps. Focus on roots with follicles (nuclear DNA). Plucked hair is superior to naturally shed hair [1]. | Place in paper folds or screw-top tubes to avoid static loss. Room temperature or refrigerate [1]. |
| Bones/Teeth | Excavation | Select dense bones (e.g., mid-shaft of long bones, teeth). Clean surface, grind to remove contaminants, and drill bone powder [1]. | Paper bags. Room temperature [1]. |
| Touch DNA | Trace, invisible | High contamination risk. Use the double-swab method (one dry, one wet, or both wet) on suspected contact surfaces. Alternatively, use tape lifting [1]. | Air-dry swabs, place in evidence tubes. Refrigerate [1]. |

Non-Biological Evidence

A wide array of non-biological materials can serve as critical evidence and require specialized handling.

Fingerprints:

- Visible Prints (e.g., in dust or blood): Photograph directly before any enhancement [1].

- Latent Prints: The development technique is substrate-dependent. For hard surfaces (e.g., glass, metal), use magnetic or fluorescent powder followed by tape lifting onto evidence cards. For porous surfaces (e.g., paper, wood), use ninhydrin or DFO reagent treatment. For wet surfaces, small particle reagent (SPR) is effective, while plastic bags often require cyanoacrylate (superglue, sold in some markets as "502 glue") fuming [1].

Trace Evidence:

- Fibers: Collect with forceps or adhesive tape and compare with on-site blank samples [1].

- Glass Fragments: Collect entirely for physical matching and elemental analysis [1].

- Soil: Collect samples from different layers for geological provenance analysis [1].

- Gunshot Residue (GSR): Collect from suspects' hands using adhesive carbon stubs for subsequent SEM-EDS composition analysis [1].

Digital Evidence:

- For devices like phones, computers, and hard drives, disconnect power immediately to avoid triggering self-destruction mechanisms. Place the device in a Faraday bag to block all signals and prevent remote wiping. The device should then be handed over to digital forensic experts for laboratory-based imaging and extraction [1].

Analytical Techniques and Sample Integrity

The choice of analytical technique is crucial, with many modern methods prioritizing non-destructive or minimal-damage analysis to preserve sample integrity.

Fourier Transform Infrared (FTIR) microscopy is a particularly useful technique for forensic scientists because it enables rapid, non-destructive investigation of microscopic samples [2]. For example, it can be used to:

- Analyze illicit pills without requiring sample dissolution, which can degrade evidence [2].

- Chemically image ink on paper and multi-layer paint chips, providing unambiguous data for comparison without destroying the sample [2].

- Examine hair fibers to detect residual styling agents or protein structural changes from bleaching, combining visual inspection with chemical information [2].

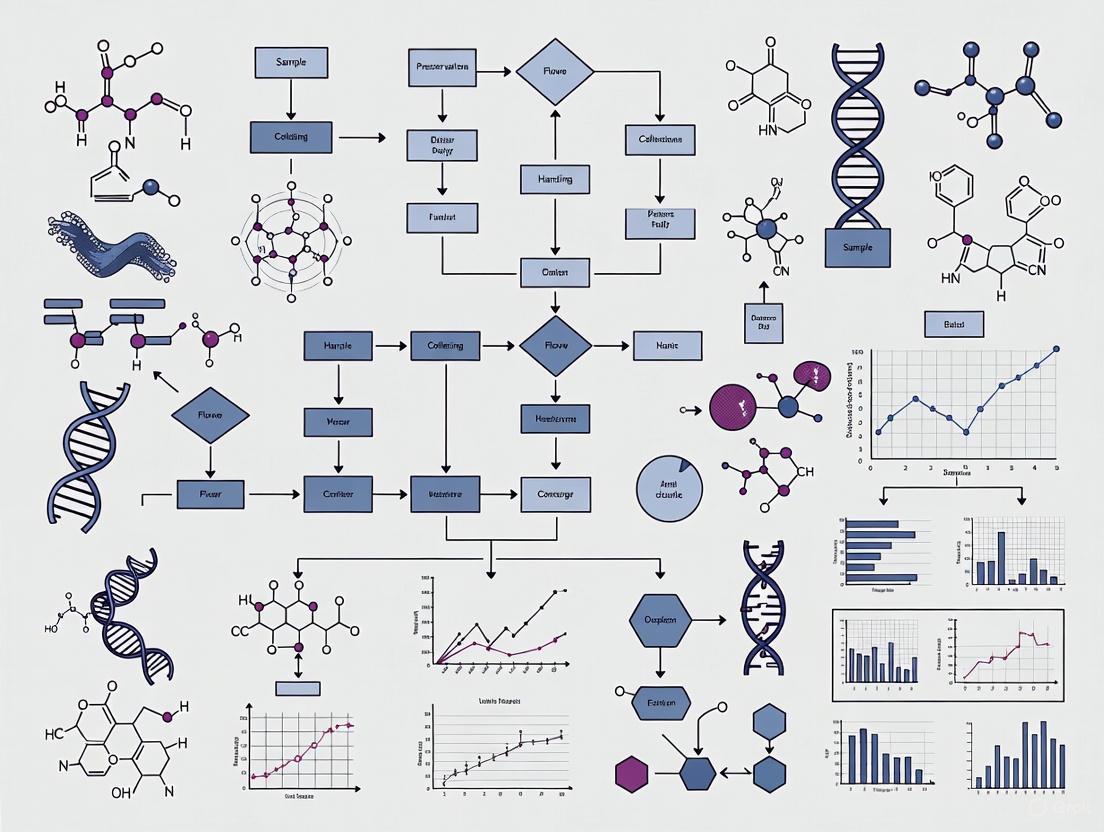

Workflow for Evidence Integrity Management

The entire process, from crime scene to lab, must follow a strict, standardized workflow to preserve sample integrity. The following diagram visualizes this integrated system.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful sample collection and preservation rely on a suite of essential tools and reagents.

Table 2: Essential Materials for Forensic Sample Collection

| Category / Item | Specific Examples | Function and Importance |
|---|---|---|
| Personal Protective Equipment (PPE) | Disposable protective suits, shoe covers, N95 masks, powder-free gloves [1] | Primary barrier for contamination prevention, protecting both the evidence and the personnel [1]. |
| Collection Tools | Sterile swabs, FTA cards, scalpels, forceps, syringes, pipettes [1] | Enable sterile, precise collection of various sample types (biological, trace). FTA cards stabilize DNA for transport and storage [1]. |
| Packaging Materials | Paper bags, kraft paper envelopes, screw-top evidence bottles, leak-proof biohazard bags [1] | Secure evidence while allowing breathability (critical for biological samples). Prevents degradation and cross-contamination [1]. |
| Detection & Lighting | Multi-band light sources, UV lamps, magnifiers [1] | Reveal latent or invisible evidence like bodily fluids, fingerprints, and hairs that are not visible to the naked eye [1]. |
| Preservation & Storage | Low-temperature ice packs, dry ice, -20°C/-80°C storage, portable refrigerators [1] | Maintain sample integrity by slowing biological and chemical degradation during transport and before lab analysis [1]. |
| Analytical Instruments | FTIR Microscope (e.g., Nicolet iN10), Portable Rapid DNA Analyzers [2] [1] | Provide non-destructive chemical analysis (FTIR) or rapid on-site DNA screening, guiding the investigation while preserving evidence [2] [1]. |

Advanced Technologies and Future Outlook

The field of forensic science is evolving with new technologies that enhance the ability to maintain and extract information from evidence.

- Portable Rapid DNA Analyzers: These devices provide Short Tandem Repeat (STR) profiles on-site within hours, offering rapid leads for investigators. However, laboratory confirmation is still required for formal reporting [1].

- Microbiome Analysis: This innovative approach analyzes the microbial communities on corpses or in the environment to estimate the post-mortem interval (PMI) or suggest a geographic location [1].

- Forensic Phenotyping: This technique predicts a person's biogeographic ancestry, hair color, eye color, and other external visible characteristics from DNA evidence, providing invaluable investigative leads [1].

- Environmental DNA (eDNA): It is now possible to extract human DNA from soil or water samples to help locate burial sites or submerged evidence [1].

By 2025, forensic testing services are expected to become more integrated with AI and machine learning, enabling faster and more accurate analysis. The trend points toward a more automated, secure, and interoperable forensic ecosystem [3].

The critical role of sample integrity in forensic analysis cannot be overstated. It is the bedrock upon which reliable, defensible, and scientifically sound evidence is built. This guide has outlined the systematic approach—from strict adherence to core principles, implementation of detailed technical protocols, and the use of advanced technologies—required to achieve this goal. For researchers and forensic professionals, ongoing training, development of detailed standard operating procedures, and rigorous quality control are not merely recommendations but essential practices to ensure that the integrity of evidence is preserved from the crime scene to the courtroom.

Forensic science relies on standardized frameworks to ensure the validity, reliability, and reproducibility of its analytical results. This technical guide provides researchers, scientists, and drug development professionals with an in-depth analysis of two cornerstone standards in forensic sample collection and preservation: the ANSI/ASB Best Practice Recommendation 156 and the ISO 21043 Forensic Sciences series. These documents provide a structured, end-to-end framework for the forensic process, from crime scene to courtroom. Adherence to these standards is critical for reducing variability, minimizing cognitive bias, and ensuring that forensic evidence meets the rigorous demands of both scientific inquiry and the justice system [4] [5].

The development of uniform, enforceable standards addresses long-identified needs within forensic science, aiming to strengthen its scientific foundation and quality management [5]. Standards provide:

- Minimum Requirements & Best Practices: Defining essential procedures to ensure evidence integrity.

- Standard Protocols & Definitions: Creating a common language and methodology across laboratories and jurisdictions.

- Quality Management: Offering a framework for accreditation and audits, enhancing confidence in forensic results.

Internationally, standards are developed and maintained by organizations such as the International Organization for Standardization (ISO), the Academy Standards Board (ASB), and ASTM International. In the United States, the National Institute of Standards and Technology (NIST) administers the Organization of Scientific Area Committees (OSAC) for Forensic Science, which maintains a public registry of high-quality, technically sound standards for implementation by forensic service providers [6] [5].

ANSI/ASB Best Practice 156: Specimen Collection & Preservation

ANSI/ASB Best Practice Recommendation 156, titled "Best Practices for Specimen Collection and Preservation for Forensic Toxicology," provides targeted guidelines for the early stages of the forensic process. Its primary scope covers specimen collection for laboratories performing analysis in postmortem toxicology, human performance toxicology (e.g., drug-facilitated crimes, driving under the influence), and other forensic testing (e.g., court-ordered toxicology). It is not intended for the specific area of breath alcohol toxicology [7].

The standard delineates specific guidelines for:

- Types of specimens to be collected

- Required amounts or volumes

- Appropriate preservatives to use

- Optimal storage conditions for various sample types

By standardizing these pre-analytical variables, the guideline helps ensure that specimens arriving at the laboratory are of sufficient quality and quantity to permit reliable toxicological analysis, thereby safeguarding the integrity of the entire testing process.

Research Reagent Solutions & Essential Materials

The following table details key materials and reagents referenced in forensic standards for specimen collection and analysis.

Table: Essential Materials for Forensic Specimen Collection and Analysis

| Item | Primary Function | Application Context |
|---|---|---|
| Specimen Containers | Secure containment and preservation of biological samples. | Collection of blood, urine, and other fluids; may contain preservatives like EDTA or sodium fluoride [7]. |
| Solvents for Extraction | Separation of analytes from complex matrices. | Techniques like solvent extraction of ignitable liquid residues from fire debris [8]. |
| Chemical Test Reagents | Presumptive testing for specific elements or compounds. | Reagents for chemical testing of suspected projectile impacts for copper and lead [8]. |
| Microspectrophotometry | Color measurement and analysis of microscopic materials. | Forensic fiber analysis, providing objective data on color and composition [6] [8]. |
| Polarized Light Microscopy | Identification and characterization of crystalline materials. | Forensic examination and comparison of soils and analysis of explosives [6]. |

ISO 21043 is an international standard series designed specifically for forensic science. It provides requirements and recommendations structured around the complete forensic process. The importance of ISO 21043 extends beyond traditional quality management; it is guided by principles of logic, transparency, and relevance, and introduces a common language to support both evaluative and investigative interpretation [4]. This standard works in tandem with established standards like ISO/IEC 17025 (for testing and calibration laboratories), but removes the guesswork in applying them to the unique context of forensic service providers [4].

Structure of ISO 21043

The ISO 21043 series is organized into five parts, each corresponding to a different stage of the forensic process [9] [4]:

- Part 1: Vocabulary - Establishes standardized terminology, providing the essential common language for discussing forensic science and reducing fragmentation across disciplines. It contains definitions but no requirements or recommendations [4].

- Part 2: Recognition, recording, collecting, transport and storage of items - Addresses the initial crime scene phase, covering the handling of items (the standard's term for evidential material) in a way that "can make or break anything that follows" [4].

- Part 3: Analysis - Specifies requirements for the analysis of items of potential forensic value, including the selection and application of suitable methods, use of controls, and analytical strategies. It applies to work at the scene and within a facility [10].

- Part 4: Interpretation - Centers on linking observations from analysis to the questions in a case, forming opinions based on a logically correct framework (the likelihood-ratio framework) and helping to minimize cognitive bias [9] [4].

- Part 5: Reporting - Governs the communication of results, covering forensic reports and testimony, ensuring findings are conveyed accurately and clearly [4].
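The likelihood-ratio framework named under Part 4 is commonly written in the general form below. This is the standard textbook expression, not a formula quoted from ISO 21043; the symbols $E$, $H_1$, and $H_2$ are notation assumed here for illustration.

$$
\mathrm{LR} = \frac{P(E \mid H_1)}{P(E \mid H_2)}
$$

Here $E$ stands for the observations produced by analysis, and $H_1$ and $H_2$ are the two competing propositions relevant to the case question. A value above 1 means the observations are more probable under $H_1$ than under $H_2$, a value below 1 means the reverse, and a value of 1 means the observations do not discriminate between the two.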

Key Terminology and Requirements

In ISO standards, specific keywords have legally significant meanings that indicate the level of obligation [4]:

- Shall: Indicates a mandatory requirement. Compliance is obligatory unless physically or legally impossible ("comply or explain").

- Should: Indicates a recommendation, not a strict requirement. Organizations should have justifiable reasons for deviating.

- May: Indicates permission or an allowable option.

- Can: Refers to a possibility or capability.

It is critical to note that a standard can never require an action that breaks the law. The legal context of forensic science means that the law of the land can overrule a requirement in a standard, though the law may itself require adherence to such standards [4].

Integrated Workflow: From Collection to Reporting

The following diagram illustrates the integrated forensic process as defined by the ISO 21043 series, showing how each part of the standard maps to the workflow from crime scene to courtroom.

This workflow visualizes the logical flow of information and evidence through the forensic process. The process begins with a Request, which is the input for the recovery phase (covered by ISO 21043-2). The output of this phase is a number of Items (the standard's term for evidential material). These items serve as the input for the Analysis phase (ISO 21043-3), which results in Observations (a term encompassing both instrumental results and direct visual examinations). The observations are then the input for Interpretation (ISO 21043-4), where they are logically linked to the case questions to produce Opinions. Finally, these opinions, along with all prior data, become the input for Reporting (ISO 21043-5), with the final output being a Report or testimony [4]. This structured process ensures traceability, transparency, and scientific rigor at every stage.
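The input/output chain described above can be sketched as a small data structure. This is only an illustrative model: the `Stage` class, its field names, and the stage label "Recovery" are assumptions made for the example, and the sketch simplifies reporting, which per the text also draws on all prior data and not only on opinions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One phase of the forensic process (class and field names are illustrative)."""
    name: str
    standard_part: str
    consumes: str
    produces: str


# Order and terminology follow the workflow description in the text.
FORENSIC_PROCESS = [
    Stage("Recovery", "ISO 21043-2", "Request", "Items"),
    Stage("Analysis", "ISO 21043-3", "Items", "Observations"),
    Stage("Interpretation", "ISO 21043-4", "Observations", "Opinions"),
    Stage("Reporting", "ISO 21043-5", "Opinions", "Report"),
]


def is_traceable(stages):
    """True when every stage consumes exactly what the previous stage produced."""
    return all(prev.produces == nxt.consumes for prev, nxt in zip(stages, stages[1:]))


def trace(stages):
    """Render the chain of inputs and outputs as a single string."""
    return " -> ".join([stages[0].consumes] + [s.produces for s in stages])


print(trace(FORENSIC_PROCESS))
# prints: Request -> Items -> Observations -> Opinions -> Report
```

Modelling each stage's output as the next stage's input makes the traceability requirement checkable: reordering or dropping a stage causes `is_traceable` to return `False`.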

The following tables summarize key quantitative data from the forensic standards landscape, providing a clear overview of the scope and activity in this field.

Table: OSAC Forensic Science Standards Library Metrics (as of 2024-2025)

| Standard Category | Count | Description |
|---|---|---|
| OSAC Registry | 225-245+ | Approved standards endorsed for implementation [6] [11]. |
| SDO Published | 262+ | Standards developed via consensus and published by a standards body [6]. |
| In SDO Development | 277+ | Standards currently under development at an SDO [6]. |

Table: Recent Standard Additions to OSAC Registry (2025 Examples)

| Standard Designation | Subject Area | Type |
|---|---|---|
| ANSI/ASTM E1386-23 | Separation of Ignitable Liquid Residues by Solvent Extraction | SDO Published [8]. |
| OSAC 2023-N-0014 | Standard for the Medical Forensic Examination in the Clinical Setting | OSAC Proposed [8]. |
| OSAC 2025-N-0002 | Standard for Qualifications for Forensic Anthropology Practitioners | OSAC Proposed [8]. |

Implementation and Impact

The implementation of these standards is critical for advancing forensic science as a unified discipline. As of early 2025, over 220 Forensic Science Service Providers (FSSPs) had contributed data on their implementation of OSAC Registry standards, reflecting a significant and growing adoption within the community [11]. The benefits of implementation extend far beyond quality management [4]:

- Scientific Progress: Standards anchor previous scientific progress and provide the common language necessary for productive debate and further innovation.

- Reduced Error: By promoting transparent, reproducible methods and a logically correct framework for evidence interpretation (the likelihood-ratio framework), standards reduce cognitive bias and enhance the reliability of expert opinions.

- Trust in Justice: Improving the quality of forensic science through standardization is a fundamental path toward reducing errors in the justice system, potentially leading to fewer wrongful convictions and more accurate identifications of the guilty [4].

For researchers and drug development professionals, understanding and applying these standards ensures that forensic data generated during research—whether related to toxicology, material analysis, or other disciplines—is forensically and legally defensible, thereby strengthening the overall validity and impact of their work.

The modern forensic process represents an integrated, holistic system where actions at the crime scene directly determine outcomes in the laboratory. This seamless continuity from scene to laboratory is fundamental to maintaining the integrity and evidential value of forensic findings, ensuring that scientific analysis can withstand legal scrutiny. The process is governed by an international standard, ISO 21043, which provides requirements and recommendations designed to ensure the quality of the entire forensic process, from initial recovery through to reporting [9]. Within this framework, every step—from the first documentation of the scene to the final statistical interpretation of results—forms an unbroken chain that must be meticulously managed and documented.

This technical guide examines the forensic holistic process through the lens of the forensic-data-science paradigm, which emphasizes methods that are transparent, reproducible, intrinsically resistant to cognitive bias, and use the logically correct framework for evidence interpretation [9]. For researchers and forensic professionals, understanding this integrated process is critical for generating reliable, defensible results that advance both case resolution and scientific knowledge.

Crime Scene Phase: Evidence Recovery & Preservation

The initial scene handling phase sets the foundation for all subsequent laboratory analysis. Proper execution at this stage preserves evidence that would otherwise be lost, contaminated, or rendered inadmissible.

Core Principles of Evidence Handling

The fundamental principles for handling forensic evidence focus on preservation and documentation. Practitioners must wear appropriate personal protective equipment including gloves, gowns, and goggles to protect both the evidence and themselves from cross-contamination [12]. Key considerations include:

- Minimal Handling: Objects should be handled as little as possible to prevent loss of evidence or cross-contamination [12].

- Preservation of State: Evidence should not be rinsed, washed, or wiped, as this directly impacts the amount and integrity of available evidence [12].

- Early Documentation: Photography should capture the state of evidence before removal, and the patient's hands should be secured in paper bags to preserve trace evidence [12].

- Proper Clothing Removal: When removing clothing, cut near seams or around bullet/stab holes to preserve the original shape of damaged areas [12].

Specialized Evidence Collection Techniques

Different evidence types require specialized collection methodologies to preserve their analytical value:

- Ballistic Evidence: Bullets must be retrieved with nonmetal or rubber-shod instruments to avoid scratching the surface and to preserve the firing patterns that can match a bullet to a specific firearm [12].

- Biological Evidence: Collection must prevent degradation; the use of appropriate preservatives and controlled storage conditions is essential [7].

- Trace Evidence: The application of a universal experimental protocol for transfer and persistence enables reliable collection and subsequent analysis of materials like fibers, pollen, GSR, and DNA [13].

The Chain of Custody Documentation

Maintaining an unbroken chain of custody is a legal requirement for forensic evidence. This process involves a paper log that tracks possession from collection to courtroom, including dates, times, evidence types, and signatures of all individuals handling the evidence [12]. When evidence is transferred to law enforcement, the official's name and badge number are recorded, creating a documented pedigree that proves the evidence has not been tampered with and ensuring its admissibility in legal proceedings [12].
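The custody log described above maps naturally onto a list of hand-off records. The following is a minimal sketch of that idea, not a prescribed format: the `CustodyTransfer` class, its field names, and the `find_gaps` check are hypothetical, and the names and badge number in the sample log are invented for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class CustodyTransfer:
    """One hand-off in the custody log (field names are illustrative)."""
    evidence_id: str
    evidence_type: str
    released_by: str
    received_by: str
    timestamp: datetime
    badge_number: Optional[str] = None  # filled in when the receiver is a law enforcement official


def find_gaps(log: List[CustodyTransfer]) -> List[str]:
    """Describe every break in the chain; an empty list means none were found."""
    problems = []
    for prev, nxt in zip(log, log[1:]):
        # The person releasing the item must be the person who last received it.
        if nxt.released_by != prev.received_by:
            problems.append(
                f"{nxt.evidence_id}: received by {prev.received_by} "
                f"but next released by {nxt.released_by}"
            )
        # Entries must be in chronological order.
        if nxt.timestamp < prev.timestamp:
            problems.append(
                f"{nxt.evidence_id}: entry dated {nxt.timestamp.isoformat()} is out of order"
            )
    return problems


# Hypothetical log for a single item of clothing.
log = [
    CustodyTransfer("E-001", "clothing", "Nurse A", "Officer B", datetime(2025, 1, 1, 9, 0), "1234"),
    CustodyTransfer("E-001", "clothing", "Officer B", "Lab Tech C", datetime(2025, 1, 1, 11, 30)),
]
print(find_gaps(log))  # prints [] because each receiver is the next releaser and times increase
```

A check like this only confirms that the written record is internally consistent. It does not replace the signatures and physical seals that the paper log relies on.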

Table 1: Evidence Packaging Specifications

| Evidence Type | Packaging Material | Preservation Rationale | Labeling Requirements |
|---|---|---|---|
| Clothing/Textiles | Paper bags | Prevents deterioration, condensation, or microbial growth | Patient ID, date, time, collector |
| Bullets/Cartridges | Plastic containers | Preserves markings and firing patterns on metal surfaces | Patient ID, date, time, ballistic info |
| Biological Fluids | Sealed containers with preservatives | Prevents degradation and bacterial growth | Patient ID, date, time, biohazard warning |
| Trace Evidence | Paper (pharmacist's) folds | Prevents static buildup and loss of particles | Patient ID, date, time, source location |

Laboratory Analysis Phase: From Receipt to Results

Once evidence enters the laboratory, it undergoes systematic analysis using increasingly sophisticated technologies and methodologies that must balance sensitivity, specificity, and statistical robustness.

Modern Forensic Technologies

Forensic laboratories now employ technologies that seemed futuristic just a decade ago. According to Forensic Science Colleges, employment of forensic science technicians is projected to grow 14% between 2023 and 2033, driven in large part by new techniques that enhance the availability and reliability of objective forensic information [14].

- Next-Generation Sequencing (NGS): This groundbreaking forensic technology allows scientists to analyze DNA in greater detail than ever before, examining entire genomes or specific regions with high precision [14]. Unlike traditional DNA profiling, NGS is particularly useful for damaged, minimal, or aged DNA samples, significantly speeding up investigations and reducing backlogs through parallel sample processing [14] [15].

- Next Generation Identification (NGI) System: This advanced biometric technology enhances law enforcement identification capabilities through integrated palm prints, facial recognition, improved fingerprint analysis, and iris scans [14]. A key feature is the 'Rap Back' system that continuously monitors individuals in law enforcement databases, providing real-time updates on new criminal activity, particularly valuable for tracking individuals on probation or parole [14].

- Automated Firearm Identification: The Integrated Ballistic Identification System (IBIS) represents cutting-edge solutions for firearm and tool mark identification, facilitating the sharing, comparison, and automated identification of exhibit information across imaging networks [14]. The latest generation features exceptional 3D imaging, advanced comparison algorithms, and robust infrastructure for police and military organizations [14].

- Artificial Intelligence in Forensics: AI is increasingly deployed across forensic domains, from digital forensics to fingerprint comparison and photograph analysis [14] [15]. These systems can identify patterns or anomalies in vast datasets that would take human investigators weeks or months to uncover, though they require careful validation to ensure courtroom admissibility [14] [15].

Analytical Chemistry in Forensic Science

The core of forensic laboratory work involves sophisticated chemical analysis to identify substances and determine their concentrations.

- Qualitative Analysis: This aims to identify the presence or absence of specific chemicals, often relying on physical properties such as color, texture, and melting point [16]. This type of analysis is essential for confirming the presence of substances like illicit drugs or poisons, though it doesn't determine quantities [16].

- Quantitative Analysis: Following qualitative identification, quantitative analysis measures how much of each identified substance is present, providing critical information for cases like assessing blood alcohol levels or drug concentrations [16].

- Analytical Techniques: Forensic laboratories employ various sophisticated techniques, including chromatography and spectroscopy, which can be adapted for both qualitative and quantitative purposes [16]. For example, liquid chromatography coupled with mass spectrometry (LC-MS) is widely used for confirmatory and quantitative analyses and serves as a powerful tool for drug screening [16].
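To make the step from qualitative to quantitative analysis concrete, the sketch below uses external calibration with a straight-line fit, which is one common way to turn an instrument response into a concentration. The source does not prescribe this method; the function names and all numbers are hypothetical, and a real assay would also need validation steps (replicates, quality controls, a defined working range) that are omitted here.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired calibration points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("calibration concentrations must not all be equal")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def quantify(response, slope, intercept):
    """Invert the calibration line to estimate the concentration of an unknown."""
    return (response - intercept) / slope


# Hypothetical calibration standards: concentration (mg/L) vs. instrument response.
concentrations = [0.0, 0.5, 1.0, 2.0]
responses = [0.1, 5.1, 10.1, 20.1]

slope, intercept = fit_line(concentrations, responses)
estimate = quantify(12.6, slope, intercept)
print(round(estimate, 3))  # prints 1.25
```

The standards establish how response scales with concentration; the unknown is then read off the fitted line. Responses outside the range spanned by the standards should not be extrapolated.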

Table 2: Analytical Techniques in Forensic Chemistry

| Technique | Primary Use | Applications | Quantitative/Qualitative |
|---|---|---|---|
| Gas Chromatography-Mass Spectrometry (GC-MS) | Drug screening, toxicology | Quantifying alcohol, illicit drugs in body fluids | Both |
| Liquid Chromatography-Mass Spectrometry (LC-MS) | Confirmatory drug analysis | Drug metabolites, explosives, marker dyes | Both |
| Fourier Transform Infrared (FTIR) Spectroscopy | Material identification | Polymers, fibers, paints, drugs | Primarily qualitative, can be quantitative |
| High-Performance Liquid Chromatography (HPLC) | Drug analysis | Metabolites, explosives, inks | Both |
| Ultraviolet-Visible Spectrophotometry | Screening | Determining presence/absence of suspected compounds | Both with standards |

Experimental Design & Data Interpretation

The forensic-data-science paradigm requires methods that are transparent, reproducible, and use empirically validated frameworks for evidence interpretation.

Statistical Design of Experiments (DoE) in Forensic Analysis

The application of Statistical Design of Experiments (DoE) represents a significant advancement in forensic methodology. DoE offers substantial advantages over traditional "one factor at a time" (OFAT) experimentation by requiring fewer experiments, involving lower costs, shorter analysis time, and less consumption of samples and reagents [17]. Critically, DoE allows the effect of interactions between independent variables to be assessed, which OFAT approaches cannot detect [17].

The DoE process in forensic analysis typically follows a structured pipeline:

- Screening Stage: When many independent variables are in play, screening designs (Full Factorial, Fractional Factorial, or Plackett-Burman) identify the factors that significantly affect the response [17].

- Optimization Stage: Response surface methodologies (Central Composite, Face-Centered Central Composite, and Box-Behnken Designs) generate polynomial equations that describe datasets and create predictive mathematical models [17].

- Model Validation: The final model's quality is assessed through its ability to fit experimental data (model adequacy) and its predictive utility (model validation) by comparing predictions with additional experimental data [17].
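
The screening idea above can be illustrated with a minimal sketch of a two-level, two-factor full factorial design. The factor names (`pH`, `solvent_volume`) and the recovery values are hypothetical and are not taken from [17]; the point is only to show how a factorial layout exposes an interaction effect that varying one factor at a time would not estimate.

```python
from itertools import product


def full_factorial(levels_per_factor):
    """Return every combination of factor levels (a full factorial design)."""
    names = list(levels_per_factor)
    return [dict(zip(names, combo))
            for combo in product(*(levels_per_factor[n] for n in names))]


def effects_2x2(responses):
    """Main and interaction effects for a two-level, two-factor design.

    `responses` maps coded (a, b) settings in {-1, +1} to a measured response.
    Each effect is the mean response at +1 minus the mean response at -1.
    """
    runs = list(responses.items())
    half = len(runs) / 2
    effect_a = sum(a * y for (a, _), y in runs) / half
    effect_b = sum(b * y for (_, b), y in runs) / half
    interaction = sum(a * b * y for (a, b), y in runs) / half
    return effect_a, effect_b, interaction


# Hypothetical extraction-recovery data (%), coded levels -1 / +1.
design = full_factorial({"pH": [-1, 1], "solvent_volume": [-1, 1]})
recovery = {(-1, -1): 60.0, (1, -1): 70.0, (-1, 1): 65.0, (1, 1): 95.0}
effect_ph, effect_vol, effect_interaction = effects_2x2(recovery)
```

With these made-up numbers the interaction term is non-zero, meaning the effect of one factor depends on the level of the other. A fractional factorial or Plackett-Burman design would use a subset of these runs when the number of factors makes the full design impractical.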

Universal Experimental Protocols

The development and implementation of universal experimental protocols for the transfer and persistence of trace evidence represent another significant methodological advancement. These protocols enable consistent methodology across studies, allowing meaningful comparison of results between experiments [13].

A recent implementation involved over 2500 images collected from 57 highly replicated transfer experiments and 2 persistence experiments, with results showing reliable and consistent outcomes under all conditions tested [13]. The protocol utilizes computational image analysis with open-source software like ImageJ for particle counting, ensuring objective, reproducible measurements [13].

The transfer ratio is calculated as the number of particles that have moved from the donor to the receiver material as a proportion of the total number of particles originally recorded on the donor material prior to transfer, with complete transfer represented by a ratio of 1 and no transfer by 0 [13].
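
As a small worked illustration of the ratio just described, the sketch below computes it from two particle counts. The function name, the input checks, and the example counts are assumptions made for illustration; in practice the counts would come from the image analysis step.

```python
def transfer_ratio(donor_count_before, receiver_count_after):
    """Fraction of the donor's original particles found on the receiver.

    Returns 1.0 for complete transfer and 0.0 for no transfer.
    """
    if donor_count_before <= 0:
        raise ValueError("donor must carry at least one particle before transfer")
    if not 0 <= receiver_count_after <= donor_count_before:
        # Assumption: a receiver count above the donor count signals a
        # counting or contamination problem rather than a valid ratio.
        raise ValueError("receiver count must lie between 0 and the donor count")
    return receiver_count_after / donor_count_before


# Hypothetical counts: 400 particles on the donor, 100 found on the receiver.
example_ratio = transfer_ratio(400, 100)
```

Here one quarter of the particles originally on the donor moved to the receiver, so the ratio is 0.25.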

Research Reagent Solutions & Essential Materials

The following toolkit details essential materials and reagents used in modern forensic analysis, particularly in trace evidence and toxicological studies.

Table 3: Essential Research Reagent Solutions for Forensic Analysis

| Reagent/Material | Function/Application | Technical Specifications |

|---|---|---|

| UV Powder-Flour Mixture | Proxy material for transfer studies | 1:3 ratio by weight; enables fluorescence tracking under UV light [13] |

| Fluorescent Carbon Dot Powder | Fingerprint enhancement | Applied to fingerprints, fluoresces under UV light in red, yellow, or orange [14] |

| Immunochromatography Test Strips | Rapid substance detection | Detects drugs, medications in bodily fluids; some smartphone-compatible [14] |

| Solid Phase Extraction (SPE) Sorbents | Sample preparation | Extracts and concentrates analytes from complex biological matrices [17] |

| Dispersive Liquid-Liquid Microextraction (DLLME) Solvents | Microscale sample preparation | Provides high enrichment factors for trace analyte detection [17] |

| Stable Isotope-Labeled Standards | Quantitative analysis | Internal standards for mass spectrometry-based quantification [16] |

| Biosensors | Fingerprint analysis | Detects age, medications, gender from trace bodily fluids in fingerprints [14] |

| Nanosensors | Molecular detection | Examines illegal drugs, explosives on molecular level [14] |

Visualization of Forensic Processes

[Figure: Holistic Forensic Workflow]

[Figure: Trace Evidence Transfer & Persistence Protocol]

The holistic forensic process represents an increasingly sophisticated integration of crime scene handling with advanced laboratory methodologies. The future of this field lies in technologies like Next-Generation Sequencing, Artificial Intelligence, and automated identification systems that enhance both the speed and reliability of forensic analysis [14] [15]. Underpinning these technological advances must be rigorous methodological frameworks including Statistical Design of Experiments, universal experimental protocols, and adherence to international standards like ISO 21043 [17] [13] [9].

For researchers and forensic professionals, success demands expertise that spans from meticulous crime scene preservation to sophisticated statistical interpretation of laboratory results. The continuity between these phases—maintained through unbroken chain of custody documentation, standardized protocols, and quality assurance processes—ensures that forensic science continues to provide reliable, defensible evidence that meets both scientific and legal standards. As the field evolves, this holistic approach will become increasingly critical for delivering justice through scientific rigor.

Essential Pre-Collection Planning and Kit Preparation

The integrity and admissibility of forensic evidence are fundamentally established during the pre-collection phase, long before any sample is gathered. In forensic science, the reliability of analytical results—whether for toxicological screening, DNA profiling, or substance identification—is entirely dependent on the meticulous planning and preparation undertaken prior to evidence collection. Proper kit preparation constitutes the first and most critical link in the chain of custody, ensuring that biological and physical evidence can withstand legal scrutiny while producing scientifically defensible results. This guide provides researchers, scientists, and drug development professionals with comprehensive technical protocols for assembling and utilizing forensic collection kits across various evidence types, incorporating both established standards and emerging technological solutions.

The convergence of biological and digital evidence in modern forensic labs demands increasingly sophisticated collection methodologies [18]. As forensic technology advances, with techniques such as probabilistic genotyping providing greater statistical power to DNA mixture interpretations [19] and novel methods emerging for quantitative fracture analysis [20], the initial collection and preservation protocols become even more crucial. Failure to implement forensically secure procedures during collection may jeopardize the acceptance of analytical results as evidence in criminal, civil, judicial, or administrative proceedings [21]. This guide establishes essential frameworks for maintaining specimen integrity through standardized kit configurations, specialized handling protocols, and rigorous documentation practices that meet international quality standards.

Forensic Collection Kit Configurations

Core Components and Specifications

Forensic collection kits must be tailored to specific evidence types while maintaining consistent standards for preservation, documentation, and contamination prevention. The following table summarizes essential components across various specialized kits:

Table 1: Forensic Collection Kit Components and Specifications

| Kit Type | Primary Components | Preservation Methods | Volume/Quantity Requirements | Special Considerations |

|---|---|---|---|---|

| Blood Collection | Sterile tubes, lancets/needles, alcohol swabs, gauze, sealable bags, labels, transport containers [22] | Anticoagulants to prevent clotting; preservatives (e.g., 1% sodium fluoride) to prevent alcohol formation and drug degradation [21] | Minimum 10 mL for forensic toxicology; collect both heart and peripheral blood if possible for postmortem cases [21] | Use gray top Vacutainer tubes or equivalent with fluoride/oxalate preservative; avoid freezing in glass tubes [21] |

| Postmortem Toxicology | Containers for blood, urine, vitreous fluid, gastric contents, tissues; seals; chain-of-custody forms [21] | Fluoride preservative for blood; light-protected containers for urine and vitreous fluid to prevent photo-decomposition [21] | 10 mL femoral blood; up to 40 mL urine; up to 40 mL gastric contents; up to 10 mL bile [21] | Each specimen container must be individually labeled with anatomic site of origin; collect specimens before embalming [21] |

| DNA Evidence | Swabs, sterile containers, desiccant, protective packaging [23] [18] | Drying at room temperature; desiccant for stabilization; anhydrobiosis technology for long-term storage [24] | Varies by source; specialized protocols for low-quantity samples (≤1 ng) [24] | Prevent contamination through single-use components; maintain dry environment to inhibit DNA degradation [23] |

| Urine Examination | Sterile collection cups, tamper-evident seals, preservatives, temperature strips [21] | Refrigeration or chemical preservation; protection from light for light-sensitive substances [21] | Up to 40 mL; record total volume and appearance [21] | Wrap containers in foil to protect against photo-decomposition of light-sensitive substances [21] |

| Digital Evidence | Faraday bags, write-blockers, static-free packaging, encrypted storage media [18] | Climate-controlled environments; bit-for-bit forensic imaging; hash verification [18] | Complete sector-level copies of storage media [18] | Maintain logical access controls (encryption, passwords); preserve metadata integrity [18] |

Specialized Kit Configurations for Unique Scenarios

Certain forensic scenarios require highly specialized collection approaches with particular attention to preservation methods and potential analytical interference:

Sexual Assault Evidence Kits: These comprehensive kits typically include swabs for biological fluid collection, alternative light sources for evidence detection, paper bags for clothing collection, and drying racks to prevent microbial degradation of biological evidence. Proper documentation includes detailed anatomical collection sites.

Toolmark and Fracture Surface Evidence: Specialized kits for physical matching should include sterile gloves, anti-static tools, magnification equipment, and rigid containers to prevent contact damage. The emerging science of quantitative fracture matching relies on undisturbed surface topography, making careful handling essential [20].

Post-Accident Industrial Testing: For workplace incident investigation, kits should accommodate both blood and urine collection to maximize detection windows. As the presence of drugs and toxicants in any biological fluid is time-dependent, the two specimens offer the greatest opportunity for detection [21].

Technical Protocols for Sample Collection and Handling

Standardized Collection Workflows

The following diagram illustrates the generalized workflow for forensic sample collection, emphasizing critical decision points and documentation requirements:

Specialized Collection Methodologies

Postmortem Specimen Collection Protocol

Postmortem specimens require particular attention to anatomical site documentation and prevention of postmortem redistribution effects:

Femoral Blood Collection: Using a clean knife, cut the iliac veins while avoiding arteries. Press blood from the upper portion of the iliac veins, then from the popliteal and femoral veins into the collection tube. Pool blood from both sides, avoiding the lower vena cava. Add potassium fluoride to a concentration of 1% or place directly into a gray top Vacutainer tube [21].

Vitreous Fluid Collection: Collect vitreous from one eye, then wait 3 hours to collect from the other eye. Send vitreous fluid from each eye separately in screw-capped, foil-wrapped plastic vials to prevent photo-decomposition of light-sensitive substances [21].

Gastric Contents Documentation: Note the total amount, appearance (including recognizable constituents), color, and odor found during initial examination. Intact tablets, capsules, or other materials should be packaged separately and identified as being found in stomach contents [21].
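
The 1% fluoride concentration mentioned for femoral blood can be turned into a mass with simple arithmetic. The sketch below assumes the percentage is weight per volume (1 g per 100 mL), which the protocol text does not state explicitly, and the function name is hypothetical.

```python
def preservative_mass_mg(sample_volume_ml, percent_w_v=1.0):
    """Mass of preservative (mg) needed to reach a given % w/v.

    1% w/v is 1 g per 100 mL, which is 10 mg per mL of sample.
    """
    if sample_volume_ml <= 0 or percent_w_v <= 0:
        raise ValueError("volume and concentration must be positive")
    return sample_volume_ml * percent_w_v * 10.0


# A 10 mL blood specimen at 1% w/v (assumed interpretation of "1%").
mass_for_10_ml = preservative_mass_mg(10)
```

Under that assumption a 10 mL specimen needs 100 mg of fluoride salt. Pre-filled gray top tubes make this calculation unnecessary, since the preservative is already dispensed.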

DNA Preservation Protocol for Room Temperature Storage

Recent advances in DNA stabilization technologies offer alternatives to conventional frozen storage:

Anhydrobiosis Technology Application: Add 1.65 mL of ultra-pure water to dehydrated GenTegra matrix. Once rehydrated, store at 4°C and use within three months [24].

Sample Preparation: Place 15 µL of rehydrated matrix into a 200 µL well of a 96-well plate and dry for 24 hours under a laminar flow hood at room temperature (average 20°C) and constant air humidity (average 35%) prior to sample deposit [24].

Sample Preservation: Apply 30 µL of the sample solution onto the prepared matrix and dry for 24 hours under a laminar flow hood at room temperature with constant humidity. Seal plates with self-adhesive film and store in the dark [24].

Quality Assurance and Methodological Frameworks

Evidence Integrity and Documentation Standards

Maintaining an unbroken chain of custody is fundamental to forensic evidence admissibility. The following documentation practices must be implemented:

Collection Documentation: Record date, time, location, and person responsible for initial collection. For biological specimens, document anatomical collection sites and preservation methods applied [21] [18].

Transfer Documentation: Log each transfer of custody, including identity of relinquishing and receiving parties, reason for transfer, and exact date and time [18].

Storage Conditions: Document secure storage location with specific details of environmental conditions (temperature, humidity) and access restrictions [18].

Disposition Tracking: Final documentation must record return, destruction, or long-term archiving of evidence with appropriate authorization [18].
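
The documentation practices above can be sketched as a simple data structure. This is a minimal illustration under stated assumptions, not a description of any real evidence management system: the class names, field names, and the two consistency checks are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass(frozen=True)
class Transfer:
    """One documented hand-over between two custodians."""
    released_by: str
    received_by: str
    reason: str
    timestamp: datetime


@dataclass
class EvidenceItem:
    """Collection details plus the ordered list of custody transfers."""
    item_id: str
    collected_by: str
    collected_at: datetime
    location: str
    transfers: list = field(default_factory=list)

    def current_custodian(self) -> str:
        return self.transfers[-1].received_by if self.transfers else self.collected_by

    def transfer(self, released_by, received_by, reason, timestamp):
        """Append a transfer, rejecting entries that would break the chain."""
        if released_by != self.current_custodian():
            raise ValueError(f"{released_by} does not currently hold {self.item_id}")
        last_event = self.transfers[-1].timestamp if self.transfers else self.collected_at
        if timestamp < last_event:
            raise ValueError("transfer predates the previous custody event")
        self.transfers.append(Transfer(released_by, received_by, reason, timestamp))


# Hypothetical item and custodians, for illustration only.
item = EvidenceItem("ITEM-001", "Collector A", datetime(2024, 1, 5, 9, 30), "Scene 1")
item.transfer("Collector A", "Lab Intake B", "submission for toxicology",
              datetime(2024, 1, 5, 14, 0))
```

Requiring the releasing party to match the current custodian is one way to make a gap in the chain visible at the moment of entry rather than during later review. Storage conditions and final disposition could be recorded as further fields in the same structure.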

Storage and Preservation Requirements

Different evidence categories demand specific storage conditions to maintain analytical integrity:

Table 2: Evidence Storage Requirements and Preservation Methods

| Evidence Type | Short-Term Storage | Long-Term Preservation | Temperature Monitoring | Integrity Verification |

|---|---|---|---|---|

| Biological (DNA) | Refrigeration (4°C) or freezing (-20°C) for blood and tissue [21] | Anhydrobiosis technology for room temperature DNA storage [24] | Continuous temperature logging with alarm systems [18] | DNA quantification and degradation index measurement [24] |

| Chemical/Toxicology | Specific temperature controls (refrigeration); protection from light [21] [18] | Frozen storage (-20°C to -80°C) with limited freeze-thaw cycles [21] | Calibrated temperature monitoring devices [18] | Positive controls and calibration verification [21] |

| Trace Evidence | Dry, cool environment; individual packaging to prevent loss [18] | Climate-controlled environments with humidity control [18] | Environmental monitoring systems [18] | Microscopic examination and comparative analysis [20] |

| Digital Evidence | Climate-controlled server rooms; Faraday bags for active devices [18] | Offline storage for backups; periodic data integrity checks [18] | Server room environmental monitoring [18] | Hash value verification throughout lifecycle [18] |
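
Hash value verification for digital evidence, listed in the last row of the table, can be sketched with Python's standard `hashlib` module. The choice of SHA-256, the helper names, and the temporary file standing in for a forensic image are assumptions made for illustration.

```python
import hashlib
import os
import tempfile


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large images need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_recorded_hash(path, recorded_hash):
    """True when the file's current hash equals the hash logged at acquisition."""
    return sha256_of_file(path) == recorded_hash.strip().lower()


# Stand-in for a forensic image: a small temporary file with known content.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"example disk image bytes")
    image_path = tmp.name

acquisition_hash = sha256_of_file(image_path)      # value logged at imaging time
intact = matches_recorded_hash(image_path, acquisition_hash)

with open(image_path, "ab") as handle:             # simulate an alteration
    handle.write(b"!")
altered_detected = not matches_recorded_hash(image_path, acquisition_hash)

os.remove(image_path)
```

Recomputing the hash at each custody event and comparing it with the value recorded at acquisition shows that the copy has not changed; a single appended byte is enough to make the comparison fail.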

Advanced Experimental Protocols

Quantitative Fracture Matching Methodology

Emerging quantitative approaches to fracture matching provide statistical foundations for physical comparisons:

Imaging Protocol: Acquire three-dimensional topological images of fracture surfaces at appropriate magnification and field of view. The imaging scale should be greater than approximately 10 times the self-affine transition scale (typically 50-75 μm for metallic materials) to avert signal aliasing [20].

Surface Topography Analysis: Calculate the height-height correlation function to characterize surface roughness: δh(δx) = √⟨[h(x+δx) − h(x)]²⟩ₓ, where the ⟨⋯⟩ₓ operator denotes averaging over the x-direction, so that δh is the root-mean-square height difference at separation δx. Identify the transition scale at which roughness deviates from self-affine behavior and reaches saturation [20].

Statistical Classification: Employ multivariate statistical learning tools to classify articles based on spectral analysis of surface topography. Use likelihood ratios or log-odds ratios for classifying matching and non-matching surfaces, estimating misclassification probabilities through validation testing [20].
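
The height-height correlation step can be sketched for a single one-dimensional height profile. The version below takes the square root of the mean squared height difference so that the result has units of height, which is an assumption about the intended normalization; the function name and the toy profile are hypothetical, and real data would be a two-dimensional height map from the imaging step.

```python
import math


def height_height_correlation(heights, lag):
    """RMS height difference between points `lag` samples apart on a profile."""
    if not 0 < lag < len(heights):
        raise ValueError("lag must be between 1 and len(heights) - 1")
    squared_diffs = [(heights[i + lag] - heights[i]) ** 2
                     for i in range(len(heights) - lag)]
    return math.sqrt(sum(squared_diffs) / len(squared_diffs))


# Toy profile (arbitrary units) alternating between two heights.
profile = [0.0, 1.0, 0.0, 1.0, 0.0, 1.0]
delta_h_lag1 = height_height_correlation(profile, 1)
delta_h_lag2 = height_height_correlation(profile, 2)
```

Evaluating the function over a range of lags and plotting the result on log-log axes is one way to look for the scale at which the curve stops rising and saturates.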

Probabilistic Genotyping for DNA Mixtures

Complex DNA mixtures require advanced statistical approaches for interpretation:

Software Selection: Choose between qualitative software (LRmix Studio) that considers detected alleles or quantitative software (STRmix, EuroForMix) that incorporates both allele identification and peak height information [19].

Likelihood Ratio Calculation: Compute likelihood ratios comparing probabilities of observations given alternative hypotheses about contributors to the mixture. Quantitative tools generally produce higher LR values than qualitative approaches, with three-contributor mixtures typically yielding lower LRs than two-contributor mixtures [19].

Result Interpretation: Understand that different software products utilize distinct mathematical and statistical models, necessarily producing different LR values. Forensic experts must comprehend underlying methodologies to support conclusions in legal proceedings [19].
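
The likelihood ratio itself is a simple quotient, sketched below with hypothetical probabilities. This shows only the final ratio and its log10 form; it does not reproduce how LRmix Studio, STRmix, or EuroForMix model alleles or peak heights to obtain the two probabilities.

```python
import math


def likelihood_ratio(p_evidence_given_h1, p_evidence_given_h2):
    """LR = P(E | H1) / P(E | H2) for two competing hypotheses."""
    if not 0.0 <= p_evidence_given_h1 <= 1.0:
        raise ValueError("P(E | H1) must be a probability")
    if not 0.0 < p_evidence_given_h2 <= 1.0:
        raise ValueError("P(E | H2) must be a non-zero probability")
    return p_evidence_given_h1 / p_evidence_given_h2


# Hypothetical values: H1 = person of interest is a contributor,
# H2 = an unknown, unrelated person is a contributor instead.
lr = likelihood_ratio(0.02, 0.00002)
log10_lr = math.log10(lr)
```

With these made-up inputs the observations are about a thousand times more probable under H1 than under H2 (log10 LR of about 3). Because each software product computes the two probabilities with its own model, the same profile can yield different LR values across tools.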

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Materials for Forensic Sample Processing

| Item | Function | Application Notes |

|---|---|---|

| GenTegra DNA Matrix | Stabilizes DNA extracts in dry, ambient state through protective coating [24] | Enables room temperature DNA storage; effective for quantities as low as 0.2 ng; uses Active Chemical Protection technology [24] |

| Sodium Fluoride/Potassium Oxalate | Preservative for forensic blood samples [21] | Prevents alcohol formation and slows drug degradation; used at 1% concentration in blood samples [21] |

| Crime Prep Adem-Kit | DNA extraction from forensic samples [24] | Validated for casework samples; compatible with subsequent quantification and amplification steps [24] |

| Investigator Quantiplex Pro Kit | Quantitative PCR for DNA quantification and degradation assessment [24] | Provides DNA concentration and degradation index; can be used with half-volume reactions to conserve sample [24] |

| GlobalFiler IQC Kit | STR amplification for DNA profiling [24] | 30 amplification cycles; compatible with capillary electrophoresis on platforms such as Applied Biosystems 3500XL Genetic Analyzer [24] |

| NIST Standard Reference Material | Quantitation standards for method validation [24] | Provides reference DNA for standardization and quality control; essential for maintaining measurement traceability [24] |

Essential pre-collection planning and kit preparation represent the foundational stage of forensic analysis, determining the ultimate reliability and admissibility of scientific evidence. As forensic science continues to evolve, with emerging technologies such as quantitative fracture matching [20], probabilistic genotyping [19], and ambient DNA storage [24], the principles of meticulous preparation remain constant. By implementing the standardized protocols, specialized methodologies, and quality assurance frameworks outlined in this guide, researchers and forensic professionals can ensure that evidence collection meets the rigorous standards required for both scientific validity and legal proceedings. The integration of these established and emerging approaches strengthens the foundation of forensic science, supporting accurate and defensible analytical outcomes across the spectrum of forensic disciplines.

In forensic science, the validity of analytical results is entirely dependent on the integrity of the sample from which they are derived. Two interdependent principles form the foundation of reliable forensic practice: contamination prevention and chain of custody. Contamination prevention encompasses the procedures and protocols designed to maintain the biological and chemical purity of evidence from collection through analysis. The chain of custody provides the chronological documentation that tracks every individual who handles the evidence, ensuring its integrity can be verified throughout its lifecycle. Together, these principles ensure that forensic evidence remains legally defensible and scientifically valid, ultimately serving the interests of justice. This technical guide examines the core protocols, emerging technologies, and standardized procedures that constitute universal best practices in forensic sample management, providing researchers and drug development professionals with the framework necessary to maintain evidentiary integrity.

Foundational Principles of Evidence Integrity

The Critical Role of Chain of Custody

The chain of custody represents the procedural lifeline that preserves evidence integrity from crime scene to courtroom. This foundational principle encompasses the chronological documentation and systematic tracking of evidence throughout its entire lifecycle, from collection and movement to storage and final disposition. Its primary function is to establish an unbroken, documented trail demonstrating that evidence has been collected, handled, and preserved in a manner that prevents tampering, loss, or contamination. Research indicates that improper evidence handling contributed to wrongful convictions in approximately 29% of DNA exoneration cases, underscoring the profound real-world consequences of chain-of-custody failures [25].

The integrity of this process rests on several critical pillars. Documentation requires that every interaction with evidence be recorded, creating a transparent and traceable history of who collected the evidence, when, where, and under what conditions. Secure storage mandates that evidence be kept in environments that protect it from tampering, contamination, or degradation, with specific standards often set by accrediting bodies such as the American Society of Crime Laboratory Directors/Laboratory Accreditation Board (ASCLD/LAB, now part of the ANSI National Accreditation Board, ANAB). Transfer protocols govern the movement of evidence between custodians, requiring sealed and signed evidence bags, documented handovers, and secure transport methods. These elements work in concert to create a robust framework for evidence integrity [25].

The Pervasive Challenge of Contamination

Contamination represents the introduction of exogenous materials or substances that compromise the analytical integrity of a sample. In forensic contexts, this can include cross-contamination between evidence items, introduction of environmental contaminants, or inadvertent addition of human DNA through improper handling. The challenges are particularly acute with modern analytical techniques capable of detecting minute quantities of genetic material. For instance, a recent study demonstrated the feasibility of extracting human mitochondrial DNA from air samples, highlighting both the sensitivity of modern forensic tools and the corresponding need for stringent contamination controls [26].

The consequences of contamination extend beyond scientific validity to legal admissibility. Courts increasingly scrutinize the protocols used to handle evidence, and failure to demonstrate adequate contamination controls can result in evidence being excluded from proceedings. This is especially critical in pharmaceutical research and development, where contaminated samples can lead to inaccurate toxicological assessments, flawed pharmacokinetic data, and compromised drug safety profiles.

Current Landscape: Knowledge and Practice Gaps

Assessment of Current Practitioner Knowledge

Research reveals significant gaps in forensic knowledge among healthcare professionals who often serve as first responders in evidence collection. A recent study assessing nurses' knowledge and practices regarding forensic evidence found that among 110 participants, the majority (61.8%) possessed only a moderate level of knowledge, while just 32.7% demonstrated adequate knowledge related to the collection, preservation, and transportation of forensic evidence. Perhaps more concerningly, only 0.9% of nurses had received any formal education, certification, or workshops related to forensic nursing, indicating a substantial training deficit among frontline healthcare providers [27].

This knowledge gap manifests in critical procedural errors. The same study documented deficient practices in sample handling: gastric content samples were frequently collected and sent without preservatives, oral samples were rarely preserved in moist conditions, and proper collection techniques for hair samples and genital swabs were inconsistently applied. These findings highlight an urgent need for standardized, evidence-based training programs for all professionals potentially involved in forensic sample collection [27].

Table 1: Knowledge Assessment and Practices Among Healthcare Professionals in Forensic Evidence Handling

| Assessment Area | Finding | Percentage | Implication |

|---|---|---|---|

| Overall Knowledge | Moderate knowledge level | 61.8% | Significant knowledge gaps among frontline staff |

| Overall Knowledge | Adequate knowledge level | 32.7% | Minority demonstrate competency |

| Formal Training | Received forensic education | 0.9% | Extreme training deficit |

| Evidence Collection Experience | Had practical experience | 9.1% | Limited hands-on exposure |

| Specific Practice Deficiencies | Gastric content sent without preservative | Common practice | Potential sample degradation |

| Specific Practice Deficiencies | Oral samples preserved moist | 1.8% | Improper preservation technique |

| Specific Practice Deficiencies | Hair sample collection | 1.8% | Rarely performed procedure |

Common Procedural Challenges and Vulnerabilities

The implementation of contamination prevention and chain-of-custody protocols faces numerous practical challenges. Human error represents the most pervasive vulnerability, manifesting as mislabeled evidence, improper handling that leads to contamination, or failure to document custody transfers. Technological failures present growing concerns, with cybersecurity reports noting an increase of more than 50% in cyberattacks targeting digital evidence storage systems over the past five years. Logistical complexities emerge in multi-agency investigations, where variations in procedures and standards between organizations can create weaknesses in the chain of evidence [25].

Additional challenges include inadequate training and awareness among personnel, who may not fully comprehend the critical nature of their role in preserving evidence integrity. The complexity of modern evidence types, particularly digital evidence and advanced biological samples, further complicates proper handling. Environmental factors also pose significant threats, with improper environmental controls responsible for approximately 15% of evidence degradation incidents [25].

Technical Protocols for Contamination Prevention

Standardized Collection Methodologies

Proper evidence collection forms the first line of defense against contamination. Techniques must be tailored to specific evidence types while maintaining universal precautions. For physical evidence, protocols include wearing appropriate personal protective equipment (gloves, masks, coveralls), using sterile, single-use instruments for each sample, and collecting control samples from the environment when appropriate. For biological specimens, specific containers and preservatives are required—for example, blood samples for DNA analysis should be collected in color-coded vacutainers containing EDTA to prevent coagulation and preserve DNA integrity [27].

Advanced collection techniques are emerging for novel sample types. AirDNA collection, for instance, employs specialized filtration systems with either glass fiber or cotton filters to capture airborne genetic material. Research indicates cotton filters may provide superior recovery for mitochondrial DNA sequencing, offering valuable insights for forensic investigations where nuclear DNA is scarce or degraded [26]. The selection of collection materials must be evidence-specific, considering the potential for sample absorption, chemical interaction, or DNA adhesion.

Table 2: Forensic Sample Collection and Preservation Specifications

| Sample Type | Collection Method | Preservation Solution | Storage Conditions | Key Considerations |

|---|---|---|---|---|

| Blood for DNA | Color-coded vacutainer | EDTA | Room temperature | Prevents coagulation; maintains DNA integrity |

| Gastric Content | Sterile container | Appropriate preservative | Refrigeration | Often sent without preservative—critical error |

| Oral Swabs | Sterile swab | Maintain moisture | Room temperature | Only 1.8% properly preserved moist [27] |

| Tissue for Morphology & DNA | DESS solution | DMSO/EDTA/saturated NaCl | Room temperature | Maintains both morphology and DNA [28] |

| AirDNA | Vacuum filtration | Cotton filters preferred | -20°C | Better for mtDNA sequencing [26] |

| Digital Evidence | Forensic imaging | Write-blockers | Secure server | Creates bit-for-bit copy without altering original |

Preservation Solutions and Stabilization Methods

Effective preservation is sample-specific and critical for maintaining analytical integrity. Traditional methods like ethanol fixation effectively preserve DNA but often compromise morphological integrity through tissue dehydration and hardening. Recent research demonstrates that DESS (DMSO/EDTA/saturated NaCl solution) effectively preserves both high molecular weight DNA and morphological features across diverse taxonomic groups at room temperature [28].

The DESS preservation method offers particular advantages for field collection and for institutions lacking cryogenic facilities. Studies show that DESS-preserved nematode samples maintained DNA integrity even after 10 years of room temperature storage, with DNA fragments exceeding 15 kb remaining viable for analysis. Notably, DNA integrity was maintained even after complete evaporation of the DESS solution, providing unexpected robustness to preservation failures [28]. For biological samples intended for RNA analysis, immediate preservation in RNAlater solution, flash-freezing in liquid nitrogen, and storage at -80°C represent the gold standard. Even these measures do not prevent all degradation: one study reported RNA Integrity Numbers (RIN) of only 1.1 to 3.1 for most sample types despite proper preservation [29].

Metatranscriptomic Workflow for Body Fluid Identification

Advanced forensic identification increasingly employs metatranscriptomic analysis to characterize active microbial communities in body fluids. The detailed methodology below demonstrates a contamination-aware workflow:

This workflow demonstrates sophisticated contamination controls throughout processing. Samples including venous blood, semen, saliva, vaginal secretion, menstrual blood, and skin tissue are collected with immediate preservation in RNA stabilization solutions to prevent degradation. Following flash-freezing in liquid nitrogen and storage at -80°C, total RNA extraction includes a critical host RNA depletion step to enrich for microbial transcripts. Following sequencing on platforms such as MGI, bioinformatic processing involves quality control, host read filtering, and assembly of hundreds of thousands of unigenes. Taxonomic annotation identifies thousands of microbial species, with diversity analyses revealing distinct profiles for different body fluids. Finally, machine learning models (Artificial Neural Networks, Random Forest, and Support Vector Machines) are trained on these metatranscriptomic profiles to accurately classify unknown samples, with research demonstrating particularly strong performance from ANN and RF models for this multidimensional data [29].

Chain of Custody Documentation Framework

Comprehensive Tracking Workflow

The chain of custody process requires meticulous documentation at each transition point from collection through final disposition. The following workflow visualization encapsulates the complete evidence journey:

This workflow illustrates the continuous documentation requirements throughout the evidence lifecycle. Initial collection must capture foundational information including precise date/time, location, and collector identity. Proper labeling requires unique identifiers and tamper-evident sealing. Secure storage necessitates restricted access facilities with appropriate environmental controls—improper storage conditions contribute to approximately 15% of evidence degradation incidents [25]. Each transfer between custodians requires complete documentation including signatures from both the releasing and receiving parties. Any access to stored evidence must be logged with purpose stated, and the final disposition (whether return, destruction, or archiving) must be thoroughly documented to complete the chain.

Specialized Research Reagents and Materials

The following toolkit details essential materials for forensic evidence collection and preservation, compiled from current research protocols:

Table 3: Essential Research Reagent Solutions for Forensic Sample Preservation

| Reagent/Material | Composition/Type | Primary Function | Application Specifics |
|---|---|---|---|
| DESS Solution | 20% DMSO, 250 mM EDTA, saturated NaCl | DNA preservation at room temperature | Maintains DNA integrity >15 kb after 10 years; preserves morphology [28] |
| EDTA Vacutainers | EDTA anticoagulant | Prevents blood coagulation | Preserves DNA for analysis; color-coded for identification [27] |
| RNA Stabilization Solutions | Quaternary ammonium salts, glycerol | RNA preservation | Prevents degradation; used before freezing [29] |
| Cotton Filters | Natural cellulose fibers | AirDNA collection | Superior mtDNA recovery compared to glass fiber [26] |
| Glass Fiber Filters | Borosilicate microfibers | Particulate collection | Heated to 450°C to destroy organic contaminants [26] |
| Tamper-Evident Seals | Security tape with unique patterns | Evidence integrity | Shows visible damage if opened improperly [25] |
| Sterile Swabs | Medical-grade cotton/polyester | Biological sample collection | Single-use with controlled pressure application [27] |
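As a worked example of the DESS composition listed in the table, the sketch below computes the DMSO volume and EDTA mass for a chosen batch size. It rests on assumptions the table does not state: that 20% DMSO means volume per volume, and that the EDTA is supplied as disodium EDTA dihydrate (molar mass 372.24 g/mol). The function name `dess_recipe` is hypothetical, and NaCl is not calculated because it is simply added until no more dissolves.

```python
# Molar mass of disodium EDTA dihydrate in g/mol.
# Assumption: this salt form is used; the table only says "250 mM EDTA".
EDTA_DISODIUM_DIHYDRATE_MW = 372.24


def dess_recipe(final_volume_ml: float,
                dmso_fraction: float = 0.20,   # assumed to be v/v
                edta_molar: float = 0.250,     # 250 mM
                edta_mw: float = EDTA_DISODIUM_DIHYDRATE_MW) -> dict:
    """Return DMSO volume (mL) and EDTA mass (g) for one batch of DESS."""
    if final_volume_ml <= 0:
        raise ValueError("final volume must be positive")
    liters = final_volume_ml / 1000.0
    return {
        "dmso_ml": final_volume_ml * dmso_fraction,
        "edta_g": edta_molar * liters * edta_mw,
    }


batch = dess_recipe(1000.0)
print(f"DMSO: {batch['dmso_ml']:.1f} mL, EDTA salt: {batch['edta_g']:.2f} g")
```

Under these assumptions a 1 L batch needs 200 mL of DMSO and about 93.06 g of the EDTA salt; a different EDTA salt form would change the mass, so the `edta_mw` parameter is left adjustable.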

Advanced Analytical Techniques and Emerging Technologies

Spectroscopic Methods for Forensic Analysis

Advanced spectroscopic techniques are revolutionizing forensic analysis through non-destructive, rapid characterization of evidence. Raman spectroscopy is being deployed in mobile systems with improved optics and advanced data processing, enabling field-based analysis of diverse sample types. Handheld X-ray fluorescence (XRF) spectrometers provide non-destructive elemental analysis, with researchers demonstrating the ability to distinguish between tobacco brands through analysis of cigarette ash composition [30].

ATR FT-IR spectroscopy combined with chemometrics has shown remarkable capability in estimating the age of bloodstains, providing crucial temporal information for crime scene reconstruction. Similarly, near-infrared (NIR) and ultraviolet-visible (UV-vis) spectroscopy are being investigated for determining time since deposition of bloodstains, offering potential improvements in dating accuracy. The development of portable LIBS (Laser-Induced Breakdown Spectroscopy) sensors enables rapid, on-site analysis of forensic samples with enhanced sensitivity, functioning in both handheld and tabletop configurations for field deployment [30].

Novel Molecular Approaches

Metatranscriptomic analysis represents a paradigm shift in forensic microbiology, moving beyond composition to function by examining actively transcribed microbial genes. This approach has demonstrated exceptional capability for body fluid identification, with one study annotating 4690 microbial species across six forensic sample types (venous blood, menstrual blood, semen, saliva, vaginal secretion, and skin) [29]. Unlike DNA-based methods, metatranscriptomics reveals the active microbial community at the time of collection, potentially providing more relevant information for sample identification.

The sensitivity of modern molecular techniques continues to advance, with studies now successfully recovering airDNA from enclosed spaces. This approach captures both nuclear and mitochondrial DNA from skin cells and respiratory droplets suspended in air or settled as dust. While nuclear DNA quantification remains challenging from air samples, mitochondrial DNA sequencing has proven viable, offering a potential method for demonstrating presence in locations where surface samples are unavailable [26].

The universal core principles of contamination prevention and chain of custody represent interdependent components of forensic integrity. Contamination prevention requires specialized knowledge, appropriate materials, and standardized procedures throughout collection, preservation, and analysis. The chain of custody provides the verification framework that documents evidence integrity through an unbroken documentation trail. Implementation challenges—including knowledge gaps among practitioners, human error, and technological limitations—require ongoing training, quality control measures, and adoption of technological solutions like evidence management software.

Emerging technologies including advanced spectroscopic methods, metatranscriptomic analysis, and novel sampling approaches like airDNA collection are expanding forensic capabilities while introducing new contamination control considerations. As these techniques evolve, the fundamental principles outlined in this guide will remain essential for maintaining the scientific validity and legal defensibility of forensic evidence. For researchers and drug development professionals, adherence to these protocols ensures data integrity, reproducibility, and ultimately, the credibility of analytical results in both scientific and regulatory contexts.

Practical Protocols for Specific Biological and Trace Evidence Collection

The integrity of forensic biological evidence, pivotal for criminal investigations and judicial outcomes, is fundamentally dependent on the initial steps of collection, preservation, and transportation. This guide provides an in-depth technical overview of standardized methodologies for collecting key biological fluids—blood, semen, and saliva—using swabs, gauze, and FTA cards. It synthesizes current practices, highlights knowledge gaps among practitioners, and introduces advanced analytical techniques that are revolutionizing forensic serology. The article is structured to serve researchers, scientists, and drug development professionals by providing detailed protocols, comparative data on preservation methods, and a discussion on the integration of novel omic technologies for body fluid identification.

Biological fluids such as blood, semen, and saliva are frequently encountered as critical evidence in forensic investigations. They can provide a direct link between a crime scene, a victim, and a perpetrator through DNA analysis [31] [32]. The reliability of this DNA evidence, however, is contingent upon the proper collection and preservation of the biological samples at the outset. Inadequate handling can lead to sample degradation, contamination, or false-negative results, ultimately compromising the forensic investigation [27].

The expanding role of various healthcare and forensic personnel in evidence collection necessitates a clear and standardized approach. Studies indicate that knowledge regarding the optimal collection, preservation, and transportation of forensic evidence is often moderate, with expressed practices frequently deviating from established standards [27]. This guide details the core methods for collecting blood, semen, and saliva stains using three common media (swabs, gauze, and FTA cards), thereby aiming to bridge the gap between research and practice within the broader context of forensic science.

Core Collection Methodologies

The choice of collection medium is determined by the nature of the stain, the substrate it is on, and the anticipated analytical techniques.

Swab Collection

Swabs with cotton or synthetic tips are universally employed for collecting latent or dried stains from surfaces.

- Procedure: The swab tip is moistened slightly with deionized water to facilitate the transfer of cellular material from the surface to the swab. The stained area is then swabbed with a rotating motion to maximize recovery. The swab must be air-dried completely at room temperature before packaging to prevent microbial growth and DNA degradation [32]. It should then be placed in a paper envelope or breathable container [27].

Gauze Collection

Gauze, typically sterile cotton gauze, is suitable for collecting larger volumes of liquid blood or pooling stains.

- Procedure: For liquid blood, the gauze is gently pressed against the fluid to allow for absorption. For dried stains, a moistened section of the gauze can be used. As with swabs, the blood-stained gauze must be thoroughly air-dried before final packaging. Research on expressed practices shows that blood samples for DNA analysis are often collected on dried gauze pieces and stored in paper bags or envelopes [27].

FTA Card Collection

FTA (Flinders Technology Associates) cards are chemically treated filter papers designed for the rapid preservation of biological samples for molecular analysis.

- Procedure: Liquid samples, such as blood or saliva, are spotted directly onto the indicated area of the FTA card. For stains, a small cutting from the evidence can be pressed onto the card, or a moistened swab can be applied to the card's surface. The chemicals on the card lyse cells, denature proteins, and protect DNA from nucleases and oxidative damage, allowing for stable storage at room temperature [33] [27]. Once the sample is applied, the card is air-dried and can be stored in a protective envelope.
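The drying and packaging rules in the three procedures above can be expressed as a simple pre-packaging check. This is a hedged sketch: the function name `packaging_issues`, the medium labels, and the container strings are hypothetical, and the rules encode only what the procedures state (air-dry before packaging; swabs and gauze go into paper or other breathable containers).

```python
from typing import List

# Containers the procedures above name for swabs and gauze (labels are illustrative).
BREATHABLE_CONTAINERS = {"paper envelope", "paper bag", "breathable container"}
KNOWN_MEDIA = {"swab", "gauze", "fta_card"}


def packaging_issues(medium: str, air_dried: bool, container: str) -> List[str]:
    """Return a list of problems; an empty list means the item may be packaged."""
    if medium not in KNOWN_MEDIA:
        raise ValueError(f"unknown collection medium: {medium!r}")
    issues = []
    if not air_dried:
        # All three media must be air-dried before final packaging.
        issues.append("sample must be air-dried before packaging")
    if medium in ("swab", "gauze") and container not in BREATHABLE_CONTAINERS:
        issues.append("swabs and gauze require a paper or breathable container")
    return issues


print(packaging_issues("swab", air_dried=False, container="plastic bag"))
```

FTA cards are only checked for drying here, because the procedure specifies a protective envelope without stating a breathability requirement.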

Collection and Preservation Workflow

The process from sample collection to laboratory analysis follows a strict, sequential workflow to ensure evidence integrity. The diagram below illustrates the generalized pathway for handling biological fluid stains.

Comparative Data on Collection Methods

Table 1: Comparative analysis of biological fluid collection methods and their performance characteristics.

| Collection Method | Typical Use Case | Preservation Action | Key Advantages | Documented Issues & Considerations |
|---|---|---|---|---|
| Cotton Swab | Latent stains on surfaces | Air-drying | High flexibility, good recovery from various surfaces | Potential for DNA retention on swab fibers; requires careful drying [27] |
| Gauze Piece | Large liquid blood volumes | Air-drying | Highly absorbent, cost-effective | May require larger storage space; same drying requirements as swabs [27] |
| FTA Card | Liquid blood & saliva, reference samples | Chemical lysis & DNA stabilization | Integrated DNA preservation, room-temperature storage, direct amplification possible [33] | Higher per-unit cost; not always suitable for all stain types |

Table 2: Performance metrics of modern body fluid identification (BFID) technologies following sample collection [34].

| Technology | Specificity (%) | Sensitivity (%) | Error Rate (%) | Key Characteristics |
|---|---|---|---|---|
| Targeted Proteomics | 100 | 88.5 | 0 | Identifies specific protein markers via mass spectrometry |
| mRNA Profiling | 99.7 | 94.1 | 1.5 | Can detect multiple fluids in a single reaction |
| DNA Methylation | 99.5 | 72.5 | 3.8 | Uses epigenetic markers to distinguish fluid origin |
| Immunoassay (Traditional) | ~96 | ~87.1 | ~15.9 | Rapid but limited specificity for some fluids |
| Shotgun Proteomics | 93.4 | 67.7 | 32.3 | Large-scale screening, higher false positives |
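The table does not restate how its metrics are calculated. Under the conventional definitions, sensitivity is the share of true positives among all samples that actually contain the fluid, and specificity is the share of true negatives among all samples that do not. The sketch below applies those standard formulas to made-up counts; the function names and the example numbers are illustrative and are not drawn from [34].

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Percentage of fluid-positive samples that the method correctly detects."""
    total = true_pos + false_neg
    if total == 0:
        raise ValueError("no positive samples to evaluate")
    return 100.0 * true_pos / total


def specificity(true_neg: int, false_pos: int) -> float:
    """Percentage of fluid-negative samples that the method correctly rejects."""
    total = true_neg + false_pos
    if total == 0:
        raise ValueError("no negative samples to evaluate")
    return 100.0 * true_neg / total


# Hypothetical validation counts, chosen only to illustrate the arithmetic.
print(sensitivity(true_pos=90, false_neg=10))   # 90.0
print(specificity(true_neg=95, false_pos=5))    # 95.0
```

A method can score well on one metric and poorly on the other, which is why the table reports both.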

Advanced Body Fluid Identification Technologies

Following proper collection, identifying the specific body fluid present is crucial for reconstructing events. Traditional methods rely on presumptive tests (e.g., catalytic tests for blood) and confirmatory tests (e.g., microscopic identification of spermatozoa) [31] [35]. These methods, while useful, can lack specificity and are often destructive.

The field is rapidly advancing with "omic" technologies that offer greater specificity and sensitivity, even with mixed samples [34].

- mRNA Profiling: Identifies body fluid-specific gene expression patterns. It can differentiate between multiple fluids, including venous and menstrual blood [34] [29].

- DNA Methylation Analysis: Distinguishes body fluids based on their unique patterns of DNA methylation, which regulates gene expression [34].

- Proteomics: Involves the large-scale study of proteins. Targeted proteomics focuses on a pre-defined set of proteins and has demonstrated 100% specificity in body fluid identification [34].

- Metatranscriptomics: This emerging approach characterizes the active microbial communities (microbiome) in different body fluids through RNA sequencing, providing a new dimension for identification [29].

- Fluorescence Spectroscopy: A non-destructive technique that identifies body fluids based on their unique fluorescent signatures when exposed to different wavelengths of light [36].

The diagram below outlines the decision-making process for selecting an appropriate body fluid identification technology based on the sample and investigation needs.
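One simple way to make such a selection concrete is a threshold filter over the Table 2 figures. The sketch below is not the decision process from the source; it assumes that only specificity and sensitivity matter, ignores practical factors such as cost, sample quantity, and whether the test is destructive, and treats the approximate immunoassay values as exact. The names `BFID_METRICS` and `shortlist` are hypothetical.

```python
from typing import Dict, List, Tuple

# (specificity %, sensitivity %) copied from Table 2; immunoassay values are approximate.
BFID_METRICS: Dict[str, Tuple[float, float]] = {
    "Targeted Proteomics": (100.0, 88.5),
    "mRNA Profiling": (99.7, 94.1),
    "DNA Methylation": (99.5, 72.5),
    "Immunoassay (Traditional)": (96.0, 87.1),
    "Shotgun Proteomics": (93.4, 67.7),
}


def shortlist(min_specificity: float, min_sensitivity: float) -> List[str]:
    """Return technologies meeting both thresholds, most sensitive first."""
    passing = [
        (name, sens)
        for name, (spec, sens) in BFID_METRICS.items()
        if spec >= min_specificity and sens >= min_sensitivity
    ]
    passing.sort(key=lambda pair: pair[1], reverse=True)
    return [name for name, _ in passing]


print(shortlist(min_specificity=99.0, min_sensitivity=85.0))
# ['mRNA Profiling', 'Targeted Proteomics']
```

Raising either threshold narrows the shortlist, and an empty result signals that no single technology in the table meets the stated requirements.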

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key reagents and materials for forensic biological evidence collection and analysis.

| Reagent/Material | Primary Function | Application Notes |
|---|---|---|
| FTA Cards | Chemical preservation of DNA; lysis of cells and inactivation of nucleases. | Enables stable, room-temperature storage of reference samples (buccal, blood); facilitates direct amplification [33]. |
| Prep-n-Go Buffer | Lysis buffer for direct amplification of DNA. | Used to lyse reference samples (swab tips, FTA punches); lysate can be directly added to PCR amplification mix, bypassing DNA extraction [33]. |
| GlobalFiler PCR Kit | Amplification of multiple STR loci for DNA profiling. | Designed for purified DNA but can be adapted for direct amplification from lysates with optimized protocols [33]. |
| RSID Kits (e.g., RSID-Saliva) | Immunochromatographic identification of body fluid-specific proteins. | Used as a confirmatory test for saliva (human salivary α-amylase) and other fluids; higher specificity than presumptive tests [35]. |
| Phadebas Amylase Test | Presumptive test for salivary α-amylase activity. | Detects enzymatic activity; blue pigment release is measured quantitatively or qualitatively [35]. |